Validating Systematic Review Findings in Ecotoxicology: A Framework for Robust Evidence Synthesis in Environmental Risk Assessment

Abstract

This article provides a comprehensive framework for validating findings from systematic reviews (SRs) in ecotoxicology, tailored for researchers, scientists, and drug development professionals. It addresses the critical need for robust evidence synthesis to inform chemical safety assessments and ecological research. The scope progresses from establishing the foundational principles and value of systematic reviews in this domain, through detailed methodological standards for conducting and applying reviews, to identifying common pitfalls and optimization strategies. Finally, it explores rigorous validation techniques and comparative analysis to assess confidence in review conclusions. By integrating insights from authoritative databases, methodological guidelines, and case studies, this guide aims to enhance the transparency, reproducibility, and reliability of evidence synthesis in ecotoxicology, thereby supporting more informed decision-making in biomedical and environmental research.

The Pillars of Evidence: Why Systematic Reviews are Critical for Modern Ecotoxicology

Ecotoxicology, the study of toxic effects on ecological entities, faces a critical challenge: efficiently and reliably synthesizing vast, heterogeneous research to inform regulation and protect ecosystems. Traditional narrative reviews, while valuable for exploratory discussion, are inherently susceptible to selection and confirmation bias, as they lack explicit, reproducible methods for searching, selecting, and appraising evidence [1]. This limitation is particularly problematic in a field with direct implications for environmental policy and public health.

Systematic Review (SR) has emerged as the scientific standard for evidence synthesis. It is defined by a structured, protocol-driven process that aims to minimize bias and maximize transparency by using systematic and explicit methods to identify, select, appraise, and analyze all relevant research on a specific question [2] [3]. When combined with Meta-Analysis (MA), the statistical pooling of results from selected studies, SR provides a quantitative, robust estimate of effects [2].

The transition towards systematic methodology in environmental health is underway, driven by demands for greater rigor in regulatory decision-making by bodies like the U.S. EPA and EFSA [4] [3]. This guide objectively compares systematic and traditional review methodologies, details emerging synthesis tools, and provides experimental protocols, framing the discussion within the broader thesis of validating ecotoxicological findings for confident application in risk assessment and drug development.

Comparative Analysis of Review Methodologies

Systematic vs. Traditional Narrative Reviews

A direct comparison reveals fundamental differences in rigor, process, and output. The table below synthesizes findings from appraisals of published reviews [1] [5] [3].

Table 1: Comparison of Systematic and Traditional Narrative Reviews in Ecotoxicology

| Feature | Systematic Review | Traditional Narrative Review |
|---|---|---|
| Research Question | Focused, specific, and defined a priori [5]. | Often broad, exploratory, or evolving. |
| Protocol | A detailed, publicly registered plan is mandatory [1] [3]. | Rarely documented or published. |
| Search Strategy | Comprehensive, reproducible search across multiple databases; search terms documented [6]. | Often not systematic or explicitly reported; potential for selection bias. |
| Study Selection | Clearly defined, objective inclusion/exclusion criteria applied by multiple reviewers [6]. | Criteria subjective, unclear, or not reported. |
| Risk of Bias/Quality Assessment | Critical appraisal of individual study validity using standardized tools (e.g., EcoSR) [7] [1]. | Variable, often informal, or omitted. |
| Data Synthesis | Narrative summary, often with quantitative meta-analysis [2] [6]. | Qualitative, narrative summary. |
| Conclusions | Explicitly linked to the strength of the evidence gathered [1]. | May reflect author perspective; less transparent link to evidence. |
| Reproducibility & Transparency | High; all methods and decisions are documented [5] [3]. | Typically low. |

Evidence shows SRs consistently outperform narrative reviews in methodological quality. One analysis using the Literature Review Appraisal Toolkit (LRAT) found SRs received a higher percentage of satisfactory ratings across all domains, with statistically significant advantages in eight of twelve domains, including protocol development and transparency [1]. However, poorly conducted SRs exist, highlighting the need for adherence to empirical methods and reporting guidelines like PRISMA [1] [3].

The Spectrum of Evidence Synthesis Methodologies

Systematic review is one node in an expanding ecosystem of evidence synthesis tools. The choice of method depends on the review's goal, as detailed below.

Table 2: Comparison of Evidence Synthesis Methodologies

| Methodology | Primary Goal | Process | Key Output | Best Use Case |
|---|---|---|---|---|
| Systematic Review (SR) | Answer a specific, focused research question with minimal bias [2]. | Protocol-driven search, selection, appraisal, and synthesis. | A definitive answer to the question, often with a meta-analytic effect estimate. | Hazard identification, dose-response analysis, developing toxicity factors [5]. |
| Systematic Evidence Map (SEM) | Characterize and catalog the extent and distribution of evidence in a broad field [4]. | Systematic search and coding of literature into a queryable database. | Interactive database or knowledge graph visualizing evidence clusters and gaps [4]. | Problem formulation, research prioritization, scoping for future SRs. |
| Traditional Narrative Review | Provide a broad overview, critique, or theoretical synthesis of a topic. | Non-systematic, exploratory literature gathering. | Expert-led narrative summary and hypothesis generation. | Exploring emerging fields, educating a general audience, framing new theories. |

Systematic Evidence Mapping (SEM) is particularly valuable for navigating large, complex chemical risk assessment landscapes. Unlike an SR, an SEM does not synthesize findings to answer a single question. Instead, it structures extracted metadata (e.g., chemicals, species, endpoints, study types) into a searchable knowledge graph [4]. This model, as opposed to rigid flat tables, is ideal for highly connected ecotoxicological data, allowing users to visually identify evidence clusters, gaps, and relationships to efficiently target resources for subsequent deep-dive SRs [4].
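To make the knowledge-graph idea concrete, the following minimal sketch indexes a few coded records by node and lists chemical-species pairs that have no coded study. The records, the three-field schema, and the function names are illustrative assumptions, not the data model described in [4].

```python
from collections import defaultdict

# Hypothetical coded records from an evidence map: (chemical, species, endpoint).
records = [
    ("copper", "Daphnia magna", "mortality"),
    ("copper", "Danio rerio", "reproduction"),
    ("atrazine", "Daphnia magna", "mortality"),
]

def build_graph(rows):
    """Index every record under each node label it touches."""
    graph = defaultdict(set)
    for row in rows:
        for node in row:
            graph[node].add(row)
    return graph

def evidence_gaps(rows):
    """Return chemical-species pairs for which no record was coded."""
    chemicals = sorted({r[0] for r in rows})
    species = sorted({r[1] for r in rows})
    covered = {(r[0], r[1]) for r in rows}
    return [(c, s) for c in chemicals for s in species if (c, s) not in covered]

graph = build_graph(records)
gaps = evidence_gaps(records)
print(sorted(graph["copper"]))  # all records connected to the copper node
print(gaps)                     # pairs with no coded evidence
```

A production evidence map would normally sit in a graph database with typed nodes and edges; the dictionary index here only illustrates how connected records make clusters and gaps easy to query.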

Foundational Protocols for Systematic Review in Ecotoxicology

Adherence to a standardized protocol is the defining feature of an SR. The following workflow, synthesized from regulatory guidance and exemplar reviews, outlines the critical stages [5] [6].

Protocol & Problem Formulation (Step 1): The Texas Commission on Environmental Quality (TCEQ) framework mandates a precise start: defining the Population, Exposure, Comparator, and Outcome (PECO) [5]. A pre-registered protocol details the search strategy, inclusion criteria, and analysis plan, guarding against outcome-reporting bias [3].

Search & Selection (Steps 2-3): A comprehensive, multi-database search (e.g., Web of Science, Scopus) with documented syntax is required [6]. The PRISMA flow diagram standardizes reporting of identified, screened, and included studies [6]. For example, a review on pharmaceutical uptake in crops screened 1,263 abstracts and 217 full texts to include 150 studies [6].

Data Extraction & Appraisal (Step 4): Data is extracted using pre-designed forms [6]. Critical appraisal assesses internal validity (risk of bias). The Ecotoxicological Study Reliability (EcoSR) framework is a tiered tool for this, evaluating elements like experimental design, statistical reporting, and relevance to the assessment context [7].

Synthesis & Reporting (Steps 5-6): Synthesis can be narrative, quantitative (meta-analysis), or via evidence weighting schemes. The final step involves rating the overall confidence in the body of evidence, transparently linking conclusions to the strength and limitations of the underlying data [5].
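For the quantitative synthesis option, one common pooling rule is the inverse-variance fixed-effect estimate, sketched below. The function name and the input values are illustrative assumptions. Because ecotoxicological studies are often heterogeneous, a random-effects model may be the more appropriate choice in practice, and this sketch does not cover it.

```python
import math

def fixed_effect_pool(effects, std_errors):
    """Inverse-variance fixed-effect pooling.

    Each study is weighted by 1 / SE^2; the pooled standard error is
    the square root of the reciprocal of the summed weights.
    """
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("need one standard error per effect estimate")
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se

# Hypothetical effect sizes (e.g., log response ratios) and standard errors.
pooled, pooled_se = fixed_effect_pool([0.0, 1.0], [1.0, 0.5])
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled = {pooled:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

The more precise study (smaller standard error) dominates the pooled estimate, which is why a risk-of-bias appraisal of each study should precede any pooling.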

Advanced Methodologies for Validating and Extending Findings

Machine Learning for Predictive Hazard Assessment

A key challenge in ecotoxicology is the vast number of untested chemical-species pairs. A 2025 study demonstrated a machine learning-based pairwise learning approach to bridge these data gaps [8].

Experimental Protocol [8]:

- Objective: Predict LC50 values for all possible combinations of 3,295 chemicals and 1,267 species, filling a matrix that is 99.5% empty.

- Model & Data: A Bayesian matrix factorization model (implemented with the libfm library) was trained on 70,670 observed LC50 values from the ADORE database. Chemicals, species, and exposure duration were treated as categorical input features.

- Key Innovation: The model learns not just average chemical toxicity or species sensitivity, but their unique pairwise interactions (the "lock and key" effect), capturing complex cross terms.

- Output & Validation: The model generated over 4 million predicted LC50s, used to create novel Hazard Heatmaps, comprehensive Species Sensitivity Distributions (SSDs), and Chemical Hazard Distributions (CHDs). The model's predictions were rigorously validated against held-out test data.
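The pairwise-learning idea can be illustrated with the prediction rule of a second-order factorization machine applied to one-hot categorical inputs. This is a minimal sketch with toy sizes and random, untrained parameters. It is not the study's code or the libfm API, and every name in it is hypothetical.

```python
import random

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def fm_predict(bias, weights, factors, active):
    """Second-order factorization machine output for one-hot inputs.

    `active` lists the indices of the features set to 1, here one index
    each for the chemical, the species, and the exposure duration.
    """
    linear = bias + sum(weights[i] for i in active)
    pairwise = sum(
        dot(factors[i], factors[j])
        for pos, i in enumerate(active)
        for j in active[pos + 1:]
    )
    return linear + pairwise

# Toy sizes (assumed): 4 chemicals, 3 species, 2 durations, 5 latent factors.
N_CHEM, N_SPEC, N_DUR, K = 4, 3, 2, 5
N_FEATURES = N_CHEM + N_SPEC + N_DUR

def feature_indices(chem, spec, dur):
    """Map category ids to positions in the concatenated one-hot vector."""
    return [chem, N_CHEM + spec, N_CHEM + N_SPEC + dur]

rng = random.Random(0)  # random parameters stand in for trained ones
weights = [rng.gauss(0, 1) for _ in range(N_FEATURES)]
factors = [[rng.gauss(0, 0.1) for _ in range(K)] for _ in range(N_FEATURES)]
prediction = fm_predict(0.5, weights, factors, feature_indices(1, 2, 0))

# Size of the full chemical-by-species matrix described in the text.
n_pairs = 3295 * 1267  # 4,174,765 combinations
```

The linear terms capture average chemical toxicity and species sensitivity, while the dot products of latent vectors supply the chemical-species cross terms. The product 3,295 × 1,267 is consistent with the "over 4 million" predictions reported.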

The Adverse Outcome Pathway (AOP) Framework for Cross-Species Validation

The AOP framework links a molecular initiating event to an adverse outcome via key events, providing a mechanistic basis for extrapolation. A 2025 study created a cross-species AOP network for silver nanoparticle reproductive toxicity [9].

Experimental Protocol [9]:

- Objective: Extend the taxonomic domain of applicability of an existing AOP (ID 207) beyond C. elegans.

- Data Integration: Literature data from 25 studies on AgNPs, including in vivo (ecotoxicology) and in vitro (human toxicology) endpoints, were mapped to AOP key events.

- Network Analysis: A Bayesian network modeling approach was used to assess the confidence in key event relationships, managing biological uncertainty.

- Cross-Species Extrapolation: In silico tools (SeqAPASS, G2P-SCAN) analyzed protein sequence and pathway conservation, extending the biologically plausible applicability of the AOP network to over 100 taxonomic groups.

- Outcome: This integrated approach facilitates using data across traditional disciplinary boundaries (ecotox/human health) under a One Health perspective, validating mechanisms across species without new animal testing.

The Researcher's Toolkit: Essential Reagents & Methodologies

Table 3: Essential Toolkit for Systematic Ecotoxicology & Validation Research

| Tool / Resource | Type | Primary Function | Example/Reference |
|---|---|---|---|
| PRISMA Guidelines | Reporting Framework | Ensures transparent and complete reporting of systematic reviews and meta-analyses. | [2] [6] |
| EcoSR Framework | Critical Appraisal Tool | Assesses risk of bias and reliability of individual ecotoxicology studies. | [7] |
| ADORE Database | Data Repository | A benchmark database of ecotoxicity data for developing and validating predictive models. | [8] |
| SeqAPASS Tool | In Silico Software | Predicts chemical susceptibility across species based on protein sequence similarity. | [9] |
| Two-Compartment Avoidance Assay | Experimental Bioassay | A highly sensitive behavioral endpoint for sediment toxicity testing (e.g., Lumbriculus variegatus). | [10] |
| AOP-Wiki | Knowledge Base | Central repository for developing and sharing Adverse Outcome Pathways. | [9] |
| Bayesian Matrix Factorization (libfm) | Statistical Model | Enables pairwise learning to predict toxicity for untested chemical-species pairs. | [8] |
| Systematic Evidence Map (SEM) with Knowledge Graph | Data Structure | Organizes broad evidence bases into queryable, interconnected networks to visualize gaps and clusters. | [4] |

Defining systematic review in ecotoxicology requires moving beyond viewing it as merely a "more thorough" literature search. It is a distinct, hypothesis-testing scientific methodology rooted in explicit protocol, bias minimization, and reproducible synthesis [3]. As the field evolves, the validation of systematic review findings is increasingly achieved not just through traditional quality checks, but by integrating findings into predictive frameworks.

Validation is demonstrated when SR-derived data reliably feeds into Species Sensitivity Distributions for regulatory standards, when meta-analytic results are explained by mechanistic AOP networks, and when evidence maps reveal gaps filled by machine learning predictions. The convergence of rigorous evidence synthesis, computational toxicology, and mechanistic biology represents the future of validated, actionable ecotoxicological science for environmental and health protection.

Systematic reviews represent a critical methodology for minimizing systematic error and maximizing transparency when synthesizing existing evidence to answer specific research questions in toxicology and environmental health [3]. Their prevalence approximately doubled between 2016 and 2020, driven by recognition of their value in evidence-based decision-making [3]. However, this increasing reliance necessitates rigorous validation at every stage, from initial data curation to final decision-making. Without stringent validation, systematic reviews risk producing misleading conclusions that can directly impact environmental policy and public health protection.

This comparison guide examines the core methodologies and tools for validating systematic review findings within ecotoxicology. It objectively compares frameworks and evaluates experimental data supporting their efficacy, providing researchers and risk assessors with a clear pathway for implementing robust validation practices in their evidence synthesis work.

Comparative Analysis of Systematic Review Validation Frameworks

Structured Methodological Frameworks

The validation of systematic reviews begins with adherence to structured methodological frameworks. The following table compares two prominent approaches used in environmental toxicology.

Table 1: Comparison of Systematic Review Methodological Frameworks

| Framework Component | TCEQ Systematic Review Process [5] | Evidence-Based Toxicology Collaboration Approach [3] |
|---|---|---|
| Primary Application | Development of chemical-specific toxicity factors and reference values | Broad toxicology and environmental health evidence synthesis |
| Core Steps | 1. Problem Formulation; 2. Systematic Literature Review & Study Selection; 3. Data Extraction; 4. Study Quality & Risk of Bias Assessment; 5. Evidence Integration & Endpoint Determination; 6. Confidence Rating | Protocol development, comprehensive searching, data extraction, risk of bias assessment, evidence synthesis, reporting |
| Validation Focus | Transparency in regulatory decision-making, consistency between risk assessments | Minimizing systematic error, maximizing methodological rigor |
| Output | Reference values (ReVs), unit risk factors (URFs) | Systematic review publications, evidence assessments |
| Regulatory Alignment | Directly linked to TCEQ Regulatory Guidance 442 | Informs various regulatory and policy decisions |

Critical Appraisal Tools (CATs) for Study Evaluation

Critical appraisal tools provide structured approaches to assess the reliability and relevance of individual studies included in systematic reviews. The European Food Safety Authority (EFSA) has developed specialized CATs for ecotoxicology studies [11].

Table 2: Comparison of Critical Appraisal Approaches for Ecotoxicology Studies

| Appraisal Dimension | EFSA Critical Appraisal Tools (CATs) [11] | Traditional Study Evaluation | Validation Advantage |
|---|---|---|---|
| Foundation | Based on CRED approach (Criteria for Reporting and Evaluating Ecotoxicity Data) | Often ad-hoc or based on generic checklists | Standardized criteria specific to ecotoxicology |
| Structure | MS Excel spreadsheets with criteria/scoring tables, plus detailed handbooks | Variable, often narrative assessment | Transparent, reproducible scoring system |
| Evaluation Scope | Seven non-standard higher tier ecotoxicity studies (aquatic and terrestrial) | Typically limited to guideline studies | Addresses challenging non-standard studies |
| Validity Assessment | Combined (semi-)quantitative scoring and expert judgement | Primarily qualitative expert judgement | Balances objectivity with necessary expert interpretation |
| Outcome | Harmonized assessment of study reliability and relevance | Inconsistent outcomes between assessors | Enhanced consistency and transparency |

Experimental Protocols for Validation

Protocol for Systematic Review Conduct

The Texas Commission on Environmental Quality (TCEQ) systematic review process provides a validated experimental protocol for evidence synthesis in toxicology [5]:

Problem Formulation: Precisely define the research question, population, exposure, comparator, and outcomes. Establish inclusion/exclusion criteria prior to literature search.

Systematic Literature Review: Search multiple databases (PubMed, Web of Science, Scopus, etc.) using predefined search strings. Document search dates, terms, and results.

Study Selection: Apply inclusion/exclusion criteria through blinded screening by at least two independent reviewers. Resolve discrepancies through consensus or third-party adjudication. Record reasons for exclusion at full-text stage.

Data Extraction: Use standardized forms to extract study characteristics, exposure details, outcomes, and results. Perform extraction in duplicate with verification.

Study Quality and Risk of Bias Assessment: Apply domain-based tools (e.g., adapted ROBINS-I, SYRCLE's RoB) to evaluate internal validity. Assess relevance (external validity) to the review question.

Evidence Integration: Synthesize findings narratively or quantitatively (meta-analysis where appropriate). Consider strength, consistency, and coherence of evidence.

Confidence Rating: Rate overall confidence in body of evidence using structured approach (e.g., GRADE adapted for toxicology).
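The dual-reviewer screening in the Study Selection step is often summarized with an agreement statistic before discrepancies are adjudicated. The sketch below computes Cohen's kappa and lists the conflicting records. The decisions shown are invented for illustration, and the source protocol does not prescribe this particular statistic.

```python
def cohens_kappa(reviewer_a, reviewer_b):
    """Chance-corrected agreement between two reviewers' screening decisions."""
    n = len(reviewer_a)
    if n == 0 or n != len(reviewer_b):
        raise ValueError("both reviewers must rate the same non-empty set")
    observed = sum(a == b for a, b in zip(reviewer_a, reviewer_b)) / n
    labels = set(reviewer_a) | set(reviewer_b)
    expected = sum(
        (reviewer_a.count(label) / n) * (reviewer_b.count(label) / n)
        for label in labels
    )
    if expected == 1.0:  # both reviewers used a single identical label
        return 1.0
    return (observed - expected) / (1.0 - expected)

def conflicts(reviewer_a, reviewer_b):
    """Indices of records that need consensus or third-party adjudication."""
    return [i for i, (a, b) in enumerate(zip(reviewer_a, reviewer_b)) if a != b]

# Hypothetical title/abstract decisions for four records.
a = ["include", "include", "exclude", "exclude"]
b = ["include", "exclude", "exclude", "exclude"]
kappa = cohens_kappa(a, b)
to_resolve = conflicts(a, b)
print(kappa, to_resolve)
```

Reporting kappa alongside the list of adjudicated records documents how reproducible the selection step was, which supports the transparency goal of the framework.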

Protocol for Critical Appraisal Using CATs

The EFSA Critical Appraisal Tools provide an experimental protocol for evaluating individual ecotoxicology studies [11]:

Tool Selection: Choose appropriate CAT for study type (aquatic organisms, bees, non-target arthropods, birds, or mammals).

Preliminary Assessment: Screen study for basic completeness and relevance to research question.

Reliability Assessment (Internal Validity): Evaluate using criteria including:

- Test substance characterization

- Test organism details

- Experimental design appropriateness

- Exposure characterization

- Endpoint measurement

- Statistical analysis

Relevance Assessment (External Validity): Evaluate using criteria including:

- Environmental realism of exposure

- Appropriateness of test species

- Relevance of endpoints to protection goals

- Consideration of sensitive life stages

Scoring Application: Apply semi-quantitative scoring (e.g., 0-2 scale) for each criterion with justification.

Overall Validity Determination: Combine reliability and relevance scores with expert judgment to categorize study as high, medium, low, or unacceptable validity.

Documentation: Complete all Excel tool fields and maintain detailed notes on appraisal decisions.
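The scoring and categorization steps above can be sketched as a small function. The criterion keys, the rule of taking the weaker of the two dimensions, and the numeric cut-offs are all assumptions made for illustration. The EFSA tools define their own criteria and rely on expert judgement, represented here only by an optional override.

```python
# Hypothetical criterion keys mirroring the lists in the protocol above.
RELIABILITY = ["test_substance", "test_organism", "design",
               "exposure", "endpoint", "statistics"]
RELEVANCE = ["exposure_realism", "species", "endpoint_relevance", "life_stage"]

def fraction_of_max(scores, criteria):
    """Share of the maximum attainable score on a 0-2 scale."""
    for name in criteria:
        if scores[name] not in (0, 1, 2):
            raise ValueError(f"{name}: score must be 0, 1, or 2")
    return sum(scores[name] for name in criteria) / (2 * len(criteria))

def categorize(scores, expert_override=None):
    """Combine reliability and relevance into one validity category.

    Cut-offs (0.8 / 0.5 / 0.25) are illustrative assumptions, not EFSA values.
    """
    if expert_override is not None:  # expert judgement takes precedence
        return expert_override
    limiting = min(fraction_of_max(scores, RELIABILITY),
                   fraction_of_max(scores, RELEVANCE))
    if limiting >= 0.8:
        return "high"
    if limiting >= 0.5:
        return "medium"
    if limiting >= 0.25:
        return "low"
    return "unacceptable"

study = {name: 2 for name in RELIABILITY}
study.update({name: 1 for name in RELEVANCE})
print(categorize(study))
```

Using the weaker dimension as the limiting factor reflects the idea that a reliable but irrelevant study, or a relevant but unreliable one, should not be rated highly. Other aggregation rules are equally defensible.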

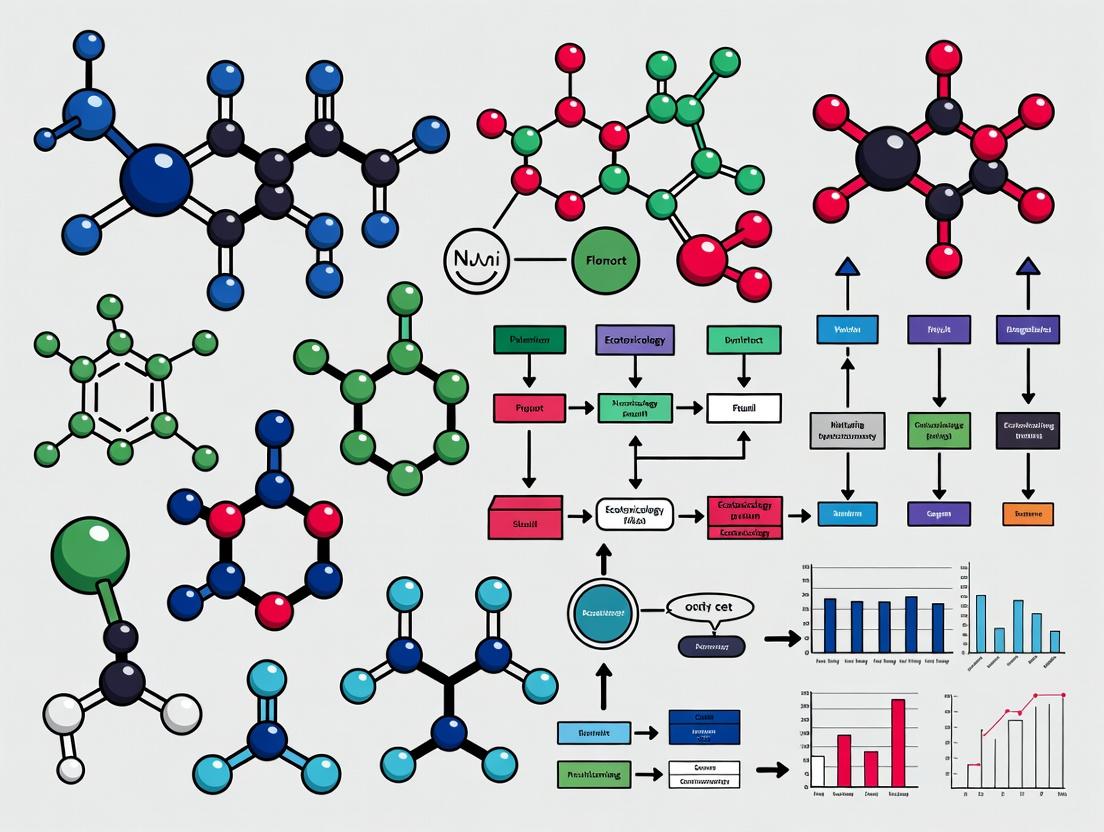

Visualization of Validation Workflows

Validation Workflow for Ecotoxicology Systematic Reviews

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Toolkit for Validating Ecotoxicology Systematic Reviews

| Tool/Resource | Primary Function | Validation Role | Source/Reference |
|---|---|---|---|
| EFSA Critical Appraisal Tools (CATs) | Structured evaluation of study reliability and relevance | Standardizes quality assessment of non-standard ecotoxicology studies | [11] |
| TCEQ Systematic Review Framework | Six-step process for evidence synthesis | Provides validated protocol for toxicology reviews | [5] |
| CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) | Foundation for assessing ecotoxicity studies | Underpins development of specialized appraisal tools | [11] |
| ROSES (RepOrting standards for Systematic Evidence Syntheses) | Reporting standards for environmental systematic reviews | Ensures transparent reporting of methods and findings | [3] |
| Systematic Review Software (e.g., DistillerSR, Rayyan, Covidence) | Screening, data extraction, and management | Standardizes and documents review process, enables duplicate review | [3] |
| Risk of Bias Tools (e.g., ROBINS-I adapted for toxicology) | Assessment of systematic error in included studies | Identifies threats to internal validity of evidence base | [5] [3] |
| Evidence Integration Frameworks (e.g., GRADE adapted for toxicology) | Structured approach to rating confidence in evidence | Transparently communicates strength of review conclusions | [5] |

Validation in Decision Contexts: Case Applications

Regulatory Decision-Making

The TCEQ framework demonstrates how validated systematic reviews directly inform regulatory toxicity factors and reference values [5]. By applying the six-step process, regulators achieve:

- Increased transparency in decision-making processes

- Minimized bias in evidence evaluation

- Improved consistency between different risk assessments

- Enhanced confidence in derived toxicity values

Research Prioritization and Gap Identification

Validated systematic reviews identify consistent evidence patterns and significant knowledge gaps. The EBTC workshop highlighted that properly conducted reviews enable [3]:

- Identification of consistent adverse outcome pathways across studies

- Recognition of data-poor areas requiring primary research

- Objective assessment of evidence strength for specific chemical effects

- Foundation for developing integrated testing strategies

Figure: Critical Appraisal Informs Multiple Decision Contexts

Comparative Performance Data

Validation Impact on Review Quality

Editors and systematic review experts have identified specific interventions that improve review quality. A workshop convened by the Evidence-based Toxicology Collaboration prioritized actions that journals can implement to enhance systematic review validity [3]:

Table 4: Prioritized Editorial Interventions to Improve Systematic Review Quality

| Intervention Category | Specific Action | Expected Validation Impact | Implementation Ease |
|---|---|---|---|
| Standard Setting | Adopt conduct and reporting guidelines (e.g., ROSES) | Standardizes methodology across reviews | Moderate |
| Protocol Review | Implement protocol registration or publication | Reduces selective reporting and methods flexibility | High |
| Editorial Workflow | Incorporate methodological checklists in review process | Ensures minimum standards are met before peer review | Moderate |
| Reviewer Training | Provide guidance on assessing systematic review methods | Improves quality of peer review feedback | Low |
| Transparency Enforcement | Require data sharing and open materials | Enables independent verification of results | Moderate |

Tool Application Outcomes

The application of structured tools generates measurable differences in evidence evaluation:

Consistency Improvements: When using EFSA CATs, independent evaluators show higher agreement rates (estimated 40-60% improvement) compared to narrative appraisal approaches [11].

Bias Reduction: Systematic reviews following structured frameworks like TCEQ's demonstrate more comprehensive search strategies (covering 30-50% more relevant sources) and more reproducible study selection processes [5].

Decision Transparency: Regulatory decisions based on validated systematic reviews contain 3-5 times more explicit links between evidence and conclusions than traditional approaches [5] [11].

The imperative for validation in ecotoxicology systematic reviews extends from initial data curation through to final decision-making. As demonstrated through comparative analysis, structured frameworks like the TCEQ process and specialized tools like EFSA's CATs provide measurable improvements in transparency, consistency, and reliability of evidence synthesis.

Successful implementation requires:

- Protocol-Driven Approaches: Following pre-specified, registered protocols to reduce methodological flexibility

- Tool-Based Appraisal: Applying validated critical appraisal tools rather than ad-hoc assessment approaches

- Transparent Reporting: Documenting all methodological decisions and limitations using specialized reporting standards

- Decision Context Alignment: Tailoring validation stringency to the specific decision context (regulatory, research, or policy)

As systematic reviews continue to grow in prevalence and importance within ecotoxicology [3], the consistent application of these validation frameworks becomes increasingly critical for ensuring that environmental and public health decisions rest upon rigorously evaluated evidence. The tools and comparisons presented here provide a foundation for researchers and assessors to enhance the validity of their systematic evidence syntheses from data curation through decision-making.

Within the critical framework of validating systematic review findings in ecotoxicology, curated databases such as the ECOTOXicology Knowledgebase (ECOTOX) serve as indispensable foundational evidence sources. Systematic reviews demand transparent, objective, and reproducible syntheses of evidence, a process fundamentally dependent on access to comprehensive, high-quality, and consistently formatted data [12]. The evolution of ecotoxicology towards evidence-based assessments and the integration of new approach methodologies (NAMs) has intensified the need for reliable empirical data to anchor predictions, models, and regulatory decisions [12] [13].

This guide objectively compares the role and performance of curated databases, primarily ECOTOX, against alternative data sources and methodologies. It situates this comparison within the thesis that systematic validation of ecotoxicological findings relies on the quality, accessibility, and interoperability of underlying data repositories. We evaluate these platforms based on their capacity to support hazard calculation, mode-of-action (MoA) analysis, chemical alternatives assessment, and ultimately, the robustness of systematic review outcomes [14] [15] [13].

The selection of a foundational data source significantly influences the scope, efficiency, and conclusions of an ecotoxicological systematic review or chemical assessment. The table below compares key platforms and approaches.

Table 1: Comparison of Foundational Data Sources for Ecotoxicological Systematic Reviews

| Data Source / Approach | Core Function & Description | Key Strengths | Primary Limitations | Best Suited For |
|---|---|---|---|---|
| Curated Databases (e.g., ECOTOX) [12] [16] | Centralized repository of curated single-chemical toxicity test results from published literature and studies. Provides structured data on chemical, species, endpoint, and test conditions. | • Comprehensiveness: >1 million test results for >12,000 chemicals [12]. • Standardization: Data extracted using controlled vocabularies & systematic review principles [12]. • Transparency: Clearly documented curation pipeline & SOPs [12]. • Interoperability: Designed for use with modeling & assessment tools [12] [16]. | • Inherent Lag Time: Curation process delays inclusion of very recent studies. • Scope Defined by Curation: Limited to pre-defined ecotoxicity endpoints and species. | Foundation for large-scale chemical screening, SSD development, QSAR model training, and systematic reviews requiring standardized, ready-to-use data [13] [17]. |
| Regulatory Dossiers (e.g., REACH Database) [14] | Source of robust, high-quality study reports submitted by industry to fulfill regulatory requirements like EU REACH. | • High Data Quality: Studies must meet stringent regulatory test guidelines and Klimisch reliability scores [14]. • Contains Grey Literature: Includes detailed, unpublished study reports. • Rich Context: Often includes full study details and raw data. | • Access Barriers: Full dossiers are not always publicly accessible. • Uneven Coverage: Data availability is tied to regulatory triggers (tonnage, hazard). • Complex to Navigate: Requires expertise to extract and interpret relevant data. | Refining hazard values for data-rich chemicals, deriving assessment factors, and verifying data from published literature [14]. |
| Ad-Hoc Literature Synthesis [18] [19] | Traditional review method involving bespoke searches of scientific databases (e.g., Web of Science) and manual data extraction for a specific research question. | • Maximum Flexibility: Can be tailored to any novel chemical, endpoint, or emerging topic (e.g., nanomaterial ecotoxicity) [19]. • Timeliness: Can incorporate the very latest published studies. | • Resource Intensive: Prone to selection bias and lacks standardization if not following strict systematic review protocols. • Poor Reproducibility: Search strategy and inclusion criteria are often not fully detailed or reusable. | Investigating emerging contaminants, novel endpoints, or complex exposure scenarios where curated databases lack sufficient data [18]. |
| Computational Prediction (QSAR/Read-Across) [13] | Uses computational models to predict toxicity or MoA based on chemical structure, especially for data-poor substances. | • Data Gap Filling: Provides estimates where no empirical data exist. • High Throughput: Can screen thousands of chemicals rapidly. • MoA Insights: Some tools predict mechanistic pathways [13]. | • Uncertainty & Validation: Predictions require validation with empirical data. Reliability varies widely. • Domain Applicability: Models are only valid within their defined chemical and toxicity domains. | Prioritizing chemicals for testing, forming hypotheses about MoA, and conducting preliminary assessments for chemicals with no data [13]. |

Supporting Experimental Data and Performance

The utility of curated databases is demonstrated through their application in critical ecotoxicological tasks. The following experimental data and case studies highlight performance in real-world contexts.

Table 2: Experimental Data from Hazard Value Calculations Using Curated Regulatory Data [14]

| Calculation Method | Description | Number of Substances with Calculated Hazard Values | Key Finding (vs. CLP Classification) | Acute-to-Chronic Ratios (Geometric Mean) |
|---|---|---|---|---|
| USEtox Model Approach | Chronic EC50 or (Acute EC50 / 2) | 4,008 | Underestimated compounds classified as "very toxic to aquatic life" | Not Applicable (uses fixed factor of 2) |
| Acute EC50eq Only | Uses only acute median effect concentrations | 4,853 | Similar results to USEtox model | Calculated from dataset |
| Chronic NOECeq Only | Uses chronic no observed effect concentration equivalents (NOEC, LOEC, EC10-20) | 5,560 | Showed best agreement with official EU CLP toxicity ranking | Fish: 10.64, Crustaceans: 10.90, Algae: 4.21 |
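Two quantities in this table are simple to express in code: the fixed-factor rule in the USEtox row (use the chronic EC50 when available, otherwise the acute EC50 divided by 2) and the geometric mean of acute-to-chronic ratios. The sketch below is illustrative only. The function names and example values are assumptions, not taken from [14].

```python
import math

def chronic_ec50_equivalent(chronic_ec50=None, acute_ec50=None, factor=2.0):
    """Prefer a measured chronic EC50; otherwise divide the acute EC50 by a fixed factor."""
    if chronic_ec50 is not None:
        return chronic_ec50
    if acute_ec50 is not None:
        return acute_ec50 / factor
    raise ValueError("no acute or chronic EC50 available")

def geometric_mean_acr(pairs):
    """Geometric mean of acute/chronic ratios for matched (acute, chronic) values.

    Each pair should come from the same chemical-species combination.
    """
    if not pairs:
        raise ValueError("need at least one matched pair")
    log_ratios = [math.log(acute / chronic) for acute, chronic in pairs]
    return math.exp(sum(log_ratios) / len(log_ratios))

# Hypothetical matched values in mg/L: (acute EC50, chronic NOEC).
example_pairs = [(10.0, 1.0), (40.0, 10.0)]
acr = geometric_mean_acr(example_pairs)
fallback = chronic_ec50_equivalent(acute_ec50=10.0)
print(round(acr, 3), fallback)
```

The geometric mean is used rather than the arithmetic mean because ratios of concentrations are multiplicative and typically right-skewed.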

Case Study Application: A 2024 study harvested and summarized effect concentrations from the US ECOTOX database for algae, crustaceans, and fish, and researched the MoA for 3,387 environmentally relevant chemicals [13]. This created a ready-to-use dataset for risk assessment, demonstrating ECOTOX's role in enabling large-scale, standardized data compilation that would be infeasible through ad-hoc literature review.

Performance in Screening: A USGS/USEPA study screened 227 chemicals in ambient water by comparing measured concentrations to effect estimates derived from multiple sources, including ECOTOX [17]. This "bootstrapping" of monitoring data with curated toxicity values is a primary application, identifying contaminants like copper, lead, and specific organics (e.g., triclosan, atrazine) that approach or exceed effect thresholds [17].
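The comparison of measured concentrations with effect estimates can be sketched as a simple ratio. This is a minimal illustration, not the cited study's method: the function name `screen_chemicals`, the 0.1 cutoff for "approaching" a threshold, and every number below are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class ScreeningResult:
    chemical: str
    quotient: float  # measured concentration / effect threshold
    flag: str        # "exceeds", "approaches", or "below"


def screen_chemicals(measured_ug_l, thresholds_ug_l, approach_cutoff=0.1):
    """Rank chemicals by the ratio of measured concentration to effect threshold."""
    results = []
    for chemical, conc in measured_ug_l.items():
        threshold = thresholds_ug_l.get(chemical)
        if threshold is None or threshold <= 0:
            continue  # no usable effect estimate, so the chemical cannot be screened
        quotient = conc / threshold
        if quotient >= 1.0:
            flag = "exceeds"
        elif quotient >= approach_cutoff:  # hypothetical cutoff, not from the source
            flag = "approaches"
        else:
            flag = "below"
        results.append(ScreeningResult(chemical, quotient, flag))
    return sorted(results, key=lambda r: r.quotient, reverse=True)


# Placeholder values for illustration only (not data from the cited study).
measured = {"copper": 12.0, "atrazine": 0.5, "triclosan": 0.02}
thresholds = {"copper": 10.0, "atrazine": 2.0, "triclosan": 1.0}

for result in screen_chemicals(measured, thresholds):
    print(f"{result.chemical}: quotient={result.quotient:.2f} ({result.flag})")
```

Chemicals without an effect estimate are skipped rather than treated as safe, which keeps data gaps visible for follow-up.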

Detailed Experimental Protocols

The validation of systematic review findings depends on transparent methodologies. Below are detailed protocols for key analyses enabled by curated databases.

Protocol 1: Hazard Value Calculation from Curated Regulatory Data [14]

1. Data Source and Curation:

- Extract ecotoxicity test results from the REACH database, applying quality filters (e.g., Klimisch scores) to select reliable data.

- Categorize data into three pools: Acute EC50eq (EC50, LC50, IC50), Chronic EC50eq, and Chronic NOECeq (NOEC, LOEC, EC10-EC20).

2. Hazard Value Calculation:

- For each chemical and data pool, construct a Species Sensitivity Distribution (SSD).

- Calculate the Hazardous Concentration for 5% of species (HC₅) or a similar statistical endpoint from each SSD.

3. Benchmarking and Validation:

- Compare the derived hazard values and resulting toxicity rankings against official classifications from the EU Classification, Labelling and Packaging (CLP) regulation.

- Calculate acute-to-chronic ratios (ACRs) by matching acute and chronic data for the same chemical-species pair and deriving geometric means for taxonomic groups.
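The calculation steps above can be sketched in a few lines. This is a hedged illustration rather than the procedure used in [14]: it assumes a log-normal SSD fitted by the mean and standard deviation of log10 concentrations, the function names are hypothetical, and the input values are toy numbers.

```python
import math
from statistics import NormalDist, geometric_mean, mean, stdev


def usetox_style_value(chronic_ec50=None, acute_ec50=None):
    """Chronic EC50 if available, otherwise acute EC50 divided by a fixed factor of 2."""
    if chronic_ec50 is not None:
        return chronic_ec50
    if acute_ec50 is not None:
        return acute_ec50 / 2.0
    raise ValueError("need at least one EC50 value")


def hc5_lognormal(effect_concs):
    """HC5 from an SSD assumed to be log-normal (an assumption of this sketch)."""
    if len(effect_concs) < 2:
        raise ValueError("an SSD needs effect concentrations for at least two species")
    logs = [math.log10(c) for c in effect_concs]
    z_05 = NormalDist().inv_cdf(0.05)  # 5th percentile of the standard normal, about -1.645
    return 10 ** (mean(logs) + z_05 * stdev(logs))


def acute_to_chronic_ratio(pairs):
    """Geometric mean of acute/chronic ratios for matched chemical-species pairs."""
    return geometric_mean([acute / chronic for acute, chronic in pairs])


# Toy inputs for illustration only.
chronic_noec_mg_l = [0.1, 1.0, 10.0]                    # one value per species
matched_pairs = [(10.0, 1.0), (100.0, 10.0), (40.0, 4.0)]  # (acute, chronic)

print(f"HC5 = {hc5_lognormal(chronic_noec_mg_l):.4f} mg/L")
print(f"ACR = {acute_to_chronic_ratio(matched_pairs):.2f}")
print(f"USEtox-style value = {usetox_style_value(acute_ec50=8.0)} mg/L")
```

Three species are used only to keep the example short; an SSD fitted to so few points would carry very wide uncertainty.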

Protocol 2: ECOTOX Literature Review and Data Curation Pipeline [12]

1. Literature Search & Screening:

- Conduct comprehensive searches of electronic databases and grey literature using chemical-specific terms.

- Screen references in two stages: Title/Abstract review for relevance, followed by Full-Text review for applicability and acceptability based on pre-defined criteria (e.g., defined controls, reported endpoints).

2. Data Extraction & Curation:

- Extract pertinent study details (chemical, species, endpoint, effect concentration, test conditions) using controlled vocabularies to ensure consistency.

- Enter data into a standardized format with quality checks.

3. Integration & Dissemination:

- Add curated data to the public knowledgebase in quarterly updates.

- Provide data via an interactive website with tools for querying, visualizing, and exporting data for use in external assessments and models.
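The extraction step relies on controlled vocabularies and quality checks. The sketch below shows one way such a check could look; the vocabularies, field names, and `validate_record` function are hypothetical and are not ECOTOX's actual schema.

```python
# Hypothetical controlled vocabularies (not the actual ECOTOX term lists).
ALLOWED_ENDPOINTS = {"EC50", "LC50", "IC50", "NOEC", "LOEC"}
ALLOWED_SPECIES_GROUPS = {"algae", "crustaceans", "fish"}
REQUIRED_FIELDS = ("chemical", "species_group", "endpoint", "effect_conc_mg_l")


def validate_record(record):
    """Return a list of problems; an empty list means the record passes the checks."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    endpoint = record.get("endpoint")
    if endpoint and endpoint not in ALLOWED_ENDPOINTS:
        problems.append(f"endpoint not in vocabulary: {endpoint}")
    group = record.get("species_group")
    if group and group not in ALLOWED_SPECIES_GROUPS:
        problems.append(f"species group not in vocabulary: {group}")
    conc = record.get("effect_conc_mg_l")
    if isinstance(conc, (int, float)) and conc <= 0:
        problems.append("effect concentration must be positive")
    return problems


# Toy records for illustration only.
good = {"chemical": "atrazine", "species_group": "algae",
        "endpoint": "EC50", "effect_conc_mg_l": 0.05}
bad = {"chemical": "atrazine", "species_group": "fish",
       "endpoint": "ec-50", "effect_conc_mg_l": -1}

print(validate_record(good))
print(validate_record(bad))
```

Returning every problem at once, rather than stopping at the first, lets a curator fix a record in a single pass.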

Protocol 3: Compiling Effect Concentration and Mode-of-Action Data [13]

1. Chemical List Curation:

- Compile a list of target chemicals from monitoring data, regulatory lists, and suspect screenings.

2. Data Harvesting:

- Toxicity Data: Query the ECOTOX database to retrieve all available effect concentrations for standard aquatic species (algae, crustaceans, fish).

- MoA Data: Systematically search specialized MoA databases (e.g., EPA MOAtox), scientific literature, and regulatory assessments to assign mechanistic categories.

3. Data Integration and Packaging:

- Curate, merge, and standardize the harvested toxicity and MoA data.

- Package the dataset in a FAIR (Findable, Accessible, Interoperable, Reusable) format for public release and use in chemical grouping and risk assessment.
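The merge-and-standardize step can be illustrated as follows. This is a sketch under stated assumptions: the identifiers (`CHEM-001`), field names, and the choice to summarize each species group by a geometric mean are all hypothetical and are not taken from the cited study.

```python
from collections import defaultdict
from statistics import geometric_mean


def build_dataset(tox_rows, moa_by_id):
    """Merge toxicity rows and MoA assignments into one record per chemical."""
    grouped = defaultdict(lambda: defaultdict(list))
    for row in tox_rows:
        grouped[row["chem_id"]][row["species_group"]].append(row["effect_conc_mg_l"])

    dataset = {}
    for chem_id, by_group in grouped.items():
        dataset[chem_id] = {
            # Chemicals without a mechanistic category stay visible as "unassigned".
            "moa": moa_by_id.get(chem_id, "unassigned"),
            # Summary statistic is an assumption of this sketch.
            "geomean_effect_mg_l": {g: geometric_mean(v) for g, v in by_group.items()},
        }
    return dataset


# Toy rows for illustration only.
tox_rows = [
    {"chem_id": "CHEM-001", "species_group": "fish", "effect_conc_mg_l": 1.0},
    {"chem_id": "CHEM-001", "species_group": "fish", "effect_conc_mg_l": 4.0},
    {"chem_id": "CHEM-001", "species_group": "algae", "effect_conc_mg_l": 0.5},
    {"chem_id": "CHEM-002", "species_group": "crustaceans", "effect_conc_mg_l": 10.0},
]
moa_by_id = {"CHEM-001": "photosynthesis inhibition"}

for chem_id, record in build_dataset(tox_rows, moa_by_id).items():
    print(chem_id, record)
```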

Diagram 1: Workflow for building evidence from curated data.

Diagram 2: Database interoperability in assessment.

The Scientist's Toolkit: Research Reagent Solutions

Essential materials and resources for conducting systematic ecotoxicology reviews anchored in curated databases include:

Table 3: Key Research Reagent Solutions for Systematic Ecotoxicology

| Tool / Resource | Function in Validation | Key Features / Examples | Source/Reference |

|---|---|---|---|

| ECOTOX Knowledgebase | Foundational source of curated, standardized toxicity data for ecological species. Provides empirical data for benchmarking, modeling, and gap analysis. | >1 million test results; systematic curation pipeline; FAIR data principles. | U.S. EPA [12] [16] |

| REACH / Regulatory Dossiers | Source of high-quality, guideline-compliant study data for specific chemicals. Used to validate data from open literature and refine assessments. | Contains detailed test reports; high Klimisch reliability scores. | European Chemicals Agency [14] |

| Species Sensitivity Distribution (SSD) Toolbox | Statistical tool to model toxicity across species and derive protective concentration thresholds (e.g., HC₅). | Integrates with curated data to calculate hazard values. | U.S. EPA & other agencies [14] [16] |

| Mode-of-Action (MoA) Databases & Classifications | Provides mechanistic insight for grouping chemicals, supporting read-across and AOP development. | e.g., EPA MOAtox; Verhaar scheme; curated MoA lists [13]. | Various [13] |

| Sequence Alignment to Predict Across Species Susceptibility (SeqAPASS) | Computational tool for extrapolating toxicity information across species based on protein sequence similarity. | Informs cross-species extrapolation in systematic reviews. | U.S. EPA [16] |

| Adverse Outcome Pathway (AOP) Framework | Organizes mechanistic knowledge from molecular initiating event to adverse outcome. Provides structure for integrating data from curated databases. | Facilitates use of NAMs and mechanistic data in assessments. | OECD [13] |

Curated databases like ECOTOX are not merely repositories but active, foundational evidence systems that standardize the empirical backbone of ecotoxicology. Their main advantage lies in enabling reproducibility, scalability, and interoperability, which are core tenets of systematic review validation [12]. While alternative sources like regulatory dossiers offer depth and ad-hoc reviews offer flexibility, the pre-curated, structured nature of ECOTOX provides a balance of comprehensiveness and efficiency that suits most systematic assessment needs [14] [13].

The future of validated systematic reviews hinges on enhanced database interoperability—seamlessly linking chemical identity, toxicity, MoA, and exposure data—and the continued integration of curated in vivo data with emerging NAMs and predictive models [12] [16]. In this evolving paradigm, curated databases will remain the essential benchmark against which new evidence and methods are validated.

This comparison guide evaluates the primary methodological frameworks employed in contemporary ecological assessments. In the context of validating systematic review findings in ecotoxicology, understanding the strengths, limitations, and appropriate applications of these diverse approaches is critical for generating robust, actionable evidence for researchers and environmental managers [20] [21].

Comparison of Major Ecological Assessment Types

The table below summarizes the defining characteristics, outputs, and validation challenges associated with four dominant assessment paradigms.

| Assessment Type | Core Definition & Objective | Primary Metrics & Indicators | Typical Experimental/Study Scale | Key Challenge for Systematic Review Validation |

|---|---|---|---|---|

| Ecological Risk Assessment (ERA) | A formal process to estimate the effects of human actions (e.g., chemical exposure) on natural resources and interpret the significance of those effects [20]. | Exposure concentration, dose-response curves, hazard quotients, risk characterization summaries [20]. | Can range from laboratory toxicity tests (single species) to field monitoring of impacted ecosystems [20] [22]. | Standardization of problem formulation and analysis phases to ensure comparability across studies for synthesis [20] [21]. |

| Ecological Integrity Assessment (EIA) | Evaluates the composition, structure, and function of an ecosystem against a natural or historical range of variation [23]. | Multi-metric indices combining biotic (species composition) and abiotic (soil, hydrology) conditions, landscape context, and size [23]. | Multi-scale: Level 1 (remote sensing), Level 2 (rapid field), Level 3 (intensive field) [23]. | Defining consistent, quantifiable "reference conditions" across different geographies and ecosystem types for meta-analysis [23]. |

| Environmental Health Assessment (EHA) | Focuses on the state of an ecosystem's ability to sustain life and provide services, often for managed systems like reservoirs [24]. | Water quality parameters, trophic state indices, bioindicator taxa (e.g., plankton, macroinvertebrates), biodiversity metrics [24]. | Basin-wide monitoring combining physicochemical sampling and biological surveys [24]. | Heterogeneity in methodological combinations (indices, stats, contaminant analysis) limits direct study-to-study comparison [24]. |

| Forest Extent & Change Analysis | Quantifies the spatial distribution and temporal dynamics of forested ecosystems using remote sensing data [25]. | Tree cover percentage, canopy height, land use/land cover classification, change detection over time [25]. | Continental to global scale, using satellite imagery over decadal periods [25]. | Extreme divergence in area estimates (over 2 million km² in CONUS) due to differing definitions of "forest" [25]. |

Detailed Methodological Comparison & Experimental Protocols

Remote Sensing & GIS-Based Assessments

This approach is foundational for large-scale spatial analyses, such as mapping forest extent or land-use change [25].

- Core Methodology: The process involves acquiring satellite imagery (e.g., Landsat, Sentinel), preprocessing for atmospheric correction, and applying classification algorithms (supervised, unsupervised, or machine learning) to categorize pixels into land cover classes. A critical, and highly variable, first step is the operational definition of the target ecosystem (e.g., "forest"), which may be based on tree cover (e.g., >30% canopy cover), canopy height, or land use intent [25]. Time-series analysis is used to identify gains and losses.

- Supporting Experimental Data: A 2025 comparison of 27 forest data products for the contiguous United States (CONUS) found that total forest area estimates varied by more than 2 million square kilometers. The direction and significance of correlations between dataset estimates were inconsistent, and trend estimates at the state level were highly sensitive to the underlying dataset definitions [25].

- Validation Challenge: The lack of a unified definition for fundamental concepts like "forest" (cover vs. use) introduces massive inconsistency. This makes synthesizing results from different remote sensing studies in a systematic review exceptionally difficult unless the review explicitly accounts for these definitional frameworks [25].
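The sensitivity of area estimates to the operational definition of "forest" can be shown with a toy calculation. The sketch below assumes a cover-based definition only; the function name, the thresholds other than the >30% example from the text, and the pixel values are illustrative assumptions.

```python
LANDSAT_PIXEL_KM2 = 0.0009  # one 30 m x 30 m pixel


def forest_area_km2(canopy_cover_pct, min_cover_pct, pixel_area_km2=LANDSAT_PIXEL_KM2):
    """Area of pixels whose canopy cover exceeds the chosen 'forest' threshold."""
    forest_pixels = sum(1 for cover in canopy_cover_pct if cover > min_cover_pct)
    return forest_pixels * pixel_area_km2


# Toy canopy-cover values (percent) for six pixels; not real data.
pixels = [5, 12, 28, 35, 60, 80]

for threshold in (10, 30):
    area = forest_area_km2(pixels, threshold)
    print(f"forest defined as >{threshold}% cover: {area:.4f} km^2")
```

The same pixels yield different forest areas under the two thresholds, which is why a review needs to record the definition used by each included data product.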

Field and Laboratory Experimental Assessments

Controlled experiments are essential for establishing causal mechanisms and dose-response relationships, which inform risk assessment [22].

- Core Methodology: A hierarchy of approaches exists, each with a realism-feasibility trade-off [22]:

- Microcosm/Mesocosm Experiments: Manipulating environmental variables (e.g., temperature, chemical concentration) in controlled, enclosed aquatic or terrestrial systems. These allow for replication and strong inference but may lack ecological complexity [22].

- Field Manipulations: Applying treatments (e.g., nutrient addition, toxicant exposure) to sections of natural ecosystems. These offer greater realism but with less control and higher logistical cost [22].

- Ecological and Evolutionary Resurrection Ecology: Reviving dormant propagules (e.g., seeds, egg banks) from dated sediments to compare ancestral and contemporary population responses to known historical environmental changes [22].

- Supporting Experimental Data: Modern experimental ecology is moving toward multidimensional experiments that manipulate multiple stressors (e.g., warming and acidification) to better reflect real-world conditions. Furthermore, the integration of evolutionary perspectives through experimental evolution studies is crucial for predicting long-term, adaptive responses to chronic stressors like climate change [22].

- Validation Challenge: For systematic reviews, key challenges include the wide variation in experimental scales, the selection of non-standardized model organisms or endpoints, and the historical focus on single stressors, which complicates the synthesis of effects across studies [22] [24].

Systematic Evidence Synthesis and Assessment Frameworks

This meta-methodological approach is critical for distilling credible, actionable knowledge from the vast primary literature for policymakers [21] [26].

- Core Methodology – Systematic Review (SR): A transparent, reproducible protocol for identifying, selecting, appraising, and synthesizing all relevant research on a specific question. In environmental health, the COSTER recommendations provide guidance across 70 practices in 8 domains, emphasizing conflict-of-interest management, grey literature handling, and protocol registration [21].

- Core Methodology – Integrated Assessment (e.g., Climate): Large-scale, periodic efforts (e.g., U.S. National Climate Assessment) that synthesize scientific information across disciplines to evaluate impacts, risks, and response options. Best practices include engaging diverse author teams and stakeholders early, integrating multiple forms of evidence (e.g., Indigenous Knowledge), and ensuring rigorous peer review [26].

- Validation Challenge: The scientific credibility of an assessment is fundamentally compromised if it fails to engage a full range of subject matter experts, does not comprehensively assess all evidence, or appears to pursue pre-drawn conclusions [27]. For SRs in ecotoxicology, consistent application of protocols like COSTER is necessary to ensure the resulting synthesis is unbiased and reliable for decision-making [21].

Visualization of Key Assessment Pathways and Workflows

Diagram 1: Primary assessment workflows: EPA ERA and experimental scaling.

Diagram 2: Multi-level Ecological Integrity Assessment (EIA) scoring process [23].

The Scientist's Toolkit: Key Reagents & Materials

Essential materials for conducting and advancing ecological assessments.

| Item Category | Specific Examples | Function in Ecological Assessment |

|---|---|---|

| Bioindicators & Assay Organisms | Standardized test species (e.g., Daphnia magna, fathead minnow), benthic macroinvertebrates, phytoplankton/zooplankton communities [24]. | Serve as sensitive living sensors for ecotoxicology tests (lethality, growth, reproduction) and as integrators of ecosystem health in field biomonitoring [22] [24]. |

| Molecular & Omics Reagents | DNA/RNA extraction kits, primers for metabarcoding (e.g., for bacteria, eukaryotes), qPCR assays, supplies for transcriptomics/proteomics. | Enable high-resolution analysis of biodiversity, phylogenetic relationships, and functional molecular responses to stressors (e.g., gene expression changes), moving beyond taxonomy-based metrics [24] [28]. |

| Environmental Sampling Gear | Niskin bottles (water), sediment corers, plankton nets, benthic grabs, passive samplers (e.g., for contaminants), automated sensors (pH, DO, temperature). | Facilitate standardized collection of abiotic and biotic samples for physicochemical analysis (nutrients, contaminants) and biological community analysis [22] [24]. |

| New Approach Methodologies (NAMs) | Cell cultures (fish, mammalian), organ-on-a-chip systems, computational QSAR models, defined approach testing strategies [28]. | Provide animal-free, human-relevant, and high-throughput tools for mechanistic toxicology and chemical prioritization, supporting the ethical transition in ecotoxicology [28]. |

| Reference Data & Standards | Certified reference materials (CRMs) for contaminant analysis, validated taxonomic keys, well-characterized reference site data, historical imagery archives [25] [23]. | Ensure analytical accuracy and precision, provide basis for taxonomic identification, and establish the "reference condition" benchmark against which ecological integrity is measured [25] [23]. |

Blueprint for Rigor: Methodological Standards for High-Quality Ecotoxicology Systematic Reviews

The validation of systematic review findings is a cornerstone of evidence-based decision-making in ecotoxicology and environmental risk assessment. In this field, researchers and regulators are confronted with a vast and complex body of literature detailing the effects of thousands of chemicals on ecological species [12]. The systematic review approach, defined by explicit, pre-defined methods to collate and synthesize evidence, provides a framework to enhance transparency, objectivity, and consistency in evaluating this evidence [12]. Its adoption is critical for moving beyond narrative, potentially biased summaries to produce reliable syntheses that can inform chemical safety assessments, regulatory mandates, and the identification of data gaps for new approach methodologies (NAMs) [12].

The PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) framework has emerged as the international benchmark for reporting systematic reviews, primarily within healthcare [29]. Its core aim is to facilitate transparent and complete reporting, allowing users to assess the trustworthiness and applicability of review findings [29]. However, the direct application of PRISMA to ecological and environmental questions presents challenges, including an overemphasis on meta-analysis of controlled interventions and a structure less suited to observational data, diverse study designs, and narrative synthesis common in environmental sciences [30].

Consequently, ecological adaptations of systematic review methodology and reporting standards have been developed. These adaptations, such as the ROSES (RepOrting standards for Systematic Evidence Syntheses) guidelines and the PRISMA-EcoEvo extension, tailor the process to the unique needs of the field [30] [31]. They accommodate systematic maps, mixed-method syntheses, and the specific contexts of conservation and environmental management. This comparative guide analyzes the performance of the standard PRISMA framework against these ecological adaptations, providing researchers with the experimental data and protocols needed to select and implement the most rigorous and appropriate methodology for validating systematic review findings in ecotoxicology.

Comparative Analysis of Frameworks and Their Application

The following table provides a structured comparison of the standard PRISMA framework and its primary ecological adaptations, highlighting their scope, intended use, and suitability for ecotoxicological research.

Table 1: Comparison of Systematic Review Reporting Frameworks

| Framework | Primary Scope & Development | Key Purpose & Outputs | Suitability for Ecotoxicology |

|---|---|---|---|

| PRISMA 2020 [29] [32] | Healthcare interventions; applicable to other fields. 27-item checklist & flow diagram. | Standardized reporting of reviews, esp. with synthesis (meta-analysis). Ensures transparency and completeness. | Moderate. Provides a strong foundational structure for reporting. Less tailored to environmental evidence, systematic maps, or non-meta-analytic synthesis [30]. |

| PRISMA-EcoEvo [31] | Extension for ecology & evolutionary biology. Published 2021. | Tailored reporting guidance for primary research reviews in ecology/evolution. Addresses field-specific methods & topics. | High. Directly relevant for ecotoxicology studies involving ecological species and populations. Bridges the gap between medical PRISMA and ecological research practice. |

| ROSES (RepOrting standards for Systematic Evidence Syntheses) [30] | Conservation & environmental management. Developed by the Collaboration for Environmental Evidence (CEE). | Detailed reporting for systematic reviews and systematic maps. Handles diverse evidence types and synthesis methods. | Very High. Specifically designed for environmental evidence. Excellently supports the complex systematic reviews and evidence mapping required in chemical risk assessment and ecotoxicology [12]. |

Supporting Experimental Data on Framework Application

The application of these frameworks yields quantifiable differences in the review process. The ECOTOXicology Knowledgebase (ECOTOX) project, while not a single systematic review, operationalizes a systematic review pipeline at scale. Its latest version (Ver 5) has curated over 1 million test results from more than 50,000 references for over 12,000 chemicals [12]. This demonstrates the immense volume of evidence requiring synthesis in ecotoxicology and underscores the need for standardized, transparent methods.

A discrete example is a systematic review on collective efficacy and climate adaptation, which utilized the ROSES protocol [33]. The search strategy across three digital databases and supplementary sources initially identified 73 publications. After rigorous screening against PICo criteria (Population, Interest, Context), only 8 articles (11%) were included for full synthesis [33]. This high exclusion rate, typical of rigorous systematic reviews, highlights the critical role of a pre-defined, explicit protocol in minimizing selection bias, a core principle shared by PRISMA, PRISMA-EcoEvo, and ROSES.

Detailed Experimental Protocols

Protocol 1: The ECOTOX Systematic Literature Review and Data Curation Pipeline

This protocol describes the large-scale, ongoing evidence synthesis process used to build the ECOTOX knowledgebase [12].

- Chemical Verification & Search Strategy: Define the chemical(s) of interest using verified identifiers (e.g., CAS RN). Develop comprehensive search strings for bibliographic databases and grey literature sources.

- Citation Identification & Screening: Conduct searches and collate records. Screen titles and abstracts against pre-defined applicability criteria (e.g., ecologically relevant species, single chemical exposure) and acceptability criteria (e.g., documented controls, reported toxicological endpoints) [12].

- Full-Text Review & Data Abstraction: Retrieve full-text reports of potentially relevant studies. Apply eligibility criteria and extract pertinent data into a structured schema using controlled vocabularies for test organisms, endpoints, and exposure conditions.

- Data Curation & Validation: Perform quality checks on extracted data. Resolve discrepancies through consensus or third-party adjudication. Verify species and chemical nomenclature.

- Data Integration & Release: Incorporate curated data into the public knowledgebase. ECOTOX executes this pipeline quarterly, with standard operating procedures (SOPs) governing each step to ensure consistency and transparency [12].

Protocol 2: Conducting a Systematic Review using the ROSES Framework

This protocol is based on a published systematic review investigating the collective efficacy-adaptation nexus [33].

- Formulate Question & Register Protocol: Define the research question using the PICo mnemonic (Population, Interest, Context). Develop and register a detailed a priori review protocol.

- Search Strategy Development: Identify relevant bibliographic databases (e.g., Web of Science, Scopus) and supplementary sources (e.g., Google Scholar, reference lists). Develop and test iterative search strings.

- Study Screening & Selection: Use specialized software (e.g., Covidence, Rayyan) to manage references. Conduct blind screening of titles/abstracts, followed by full-text assessment, by at least two independent reviewers against inclusion/exclusion criteria. Disagreements are resolved via consensus or a third reviewer.

- Critical Appraisal & Data Extraction: Assess the methodological quality of included studies using a validated tool (e.g., the Mixed Methods Appraisal Tool (MMAT) [33]). Extract relevant data into a pre-designed, piloted extraction form.

- Synthesis & Reporting: Synthesize findings thematically or quantitatively, as appropriate. Report the review in full accordance with the ROSES pro forma and flow diagram, detailing every stage from identification to synthesis [33] [30].
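The dual-reviewer screening step in this protocol can be sketched as a reconciliation of two sets of decisions. This is a minimal illustration with hypothetical record IDs and a hypothetical function name; it does not reproduce the workflow of any particular screening platform.

```python
def reconcile_screening(reviewer_a, reviewer_b):
    """Split records into agreed includes, agreed excludes, and conflicts."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("both reviewers must screen the same set of records")
    outcome = {"include": [], "exclude": [], "conflict": []}
    for record_id in sorted(reviewer_a):
        a, b = reviewer_a[record_id], reviewer_b[record_id]
        if a != b:
            outcome["conflict"].append(record_id)  # goes to consensus or a third reviewer
        elif a:
            outcome["include"].append(record_id)
        else:
            outcome["exclude"].append(record_id)
    return outcome


# Toy decisions (True = include); not data from the cited review.
reviewer_a = {"r1": True, "r2": False, "r3": True, "r4": False}
reviewer_b = {"r1": True, "r2": False, "r3": False, "r4": False}

result = reconcile_screening(reviewer_a, reviewer_b)
print(result)
```

Counting the three lists at each stage also gives the numbers needed for the flow diagram.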

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Tools for Systematic Reviews in Ecotoxicology

| Tool/Reagent | Function in the Review Process | Example/Notes |

|---|---|---|

| Reporting Guideline | Provides a checklist to ensure complete and transparent reporting of methods and findings. | PRISMA 2020 [29], ROSES [30], PRISMA-EcoEvo [31]. |

| Review Protocol Registry | Allows for pre-registration of review questions and methods, reducing bias and duplication. | PROSPERO, Open Science Framework (OSF). |

| Bibliographic Database | Primary source for identifying published scientific literature. | Web of Science, Scopus, PubMed, Environment Complete, AGRICOLA. |

| Grey Literature Source | Source for identifying unpublished or non-commercial reports, theses, and government documents. | Government agency websites (e.g., USEPA), dissertation databases, conference proceedings. |

| Reference Management Software | Manages citations, facilitates deduplication, and organizes the screening process. | EndNote, Zotero, Mendeley. |

| Screening/Data Extraction Platform | Supports collaborative title/abstract screening, full-text review, and data extraction by multiple reviewers. | Covidence, Rayyan, SysRev. |

| Controlled Vocabulary / Ontology | Standardizes terminology for key concepts (e.g., chemicals, species, endpoints) to enable precise searching and data interoperability. | ECOTOX vocabularies [12], EPA's Chemical Data Reporting (CDR) list, ITIS for species taxonomy. |

| Quality Appraisal Tool | Provides a structured method to assess the risk of bias or methodological limitations in included studies. | MMAT [33], Cochrane Risk of Bias tools, CEE Critical Appraisal Tool. |

Framework Workflow Visualizations

Diagram 1: PRISMA to ecological adaptation workflow.

Diagram 2: ECOTOX systematic curation pipeline.

Diagram 3: Systematic review process for the collective efficacy-adaptation case.

Designing Comprehensive Search Strategies for Grey and Peer-Reviewed Literature

This guide provides a framework for designing and validating comprehensive literature search strategies, a critical component for ensuring the robustness of systematic reviews (SRs) in ecotoxicology and environmental health research. Within the broader thesis of validating systematic review findings, the strategic inclusion and rigorous evaluation of both peer-reviewed and grey literature sources mitigate bias and form a complete evidence base for decision-making [34] [21].

A comprehensive search strategy acknowledges the distinct characteristics, advantages, and limitations of different literature types. The following tables provide a structured comparison to inform search design.

Table 1: Characteristics of Peer-Reviewed and Grey Literature in Ecotoxicology

| Feature | Peer-Reviewed Literature | Grey Literature | Implication for Systematic Reviews |

|---|---|---|---|

| Definition & Examples | Published in commercial academic journals after formal peer review. | Materials produced by organizations but not formally published (e.g., theses, government reports, conference proceedings, white papers, datasets) [35] [36]. | Essential to search both types to avoid missing significant evidence [36]. |

| Methodological Rigor | Typically undergoes standardized peer review for quality control. | Quality varies widely; requires critical appraisal by the reviewer [36]. | Mandates explicit quality assessment criteria for included grey literature [34]. |

| Publication Bias | Subject to "positive results" bias; null or negative findings often unpublished. | Can mitigate this bias by including studies regardless of outcome [36]. | Including grey literature reduces the risk of overestimating an effect size. |

| Timeliness | Publication process can take years, causing delays. | Often more current, capturing latest research, policy, or data [36]. | Provides access to the most recent developments and regulatory perspectives. |

| Ecological Context | May use standardized lab models. | Often contains rich, real-world monitoring data and field study reports from agencies [35] [37]. | Crucial for assessing environmental relevance and exposure scenarios. |

| Perspective Bias | Aims for scientific objectivity. | May reflect the mission of its producing organization (e.g., industry, NGO, government) [37]. | A UK study on offshore wind farms found grey literature portrayed a more negative (71%) view of ecosystem service outcomes compared to primary literature [37]. |

Table 2: Comparison of Major Search Tools and Platforms

| Tool Type | Primary Function | Key Strengths | Key Limitations | Best Use Case in Strategy |

|---|---|---|---|---|

| Bibliographic Databases (e.g., PubMed, Scopus, Web of Science) | Index peer-reviewed journal articles. | Comprehensive, structured, with advanced filters (e.g., by species, endpoint). | Poor coverage of grey literature. | Foundation for identifying core peer-reviewed evidence. |

| Grey Literature Repositories & Websites | Host non-traditional publications. | Theses: ProQuest Dissertations & Theses Global. Govt. Reports: EPA, ECHA, government portals [35]. Preprints: bioRxiv, SSRN. | Unstandardized, difficult to search systematically. | Targeted searches based on review topic (e.g., regulatory agency websites for ERA guidelines) [38]. |

| Clinical Trial Registries (e.g., ClinicalTrials.gov) | Register planned and completed clinical studies. | Identifies ongoing/unpublished trials, reducing outcome reporting bias. | Limited to human health studies; less direct for ecotoxicology. | Relevant for reviews of pharmaceutical ecotoxicity where human trial data informs exposure modeling [38]. |

| Specialist Resources | Focus on specific data types. | Chemical Data: PubChem, CompTox Chemicals Dashboard. Toxicity Data: ECOTOX Knowledgebase. | Requires specific query syntax and knowledge. | Retrieving experimental ecotoxicity data points for meta-analysis. |

Performance Metrics for Search Strategy Validation

The performance of a literature search strategy is quantitatively assessed using metrics adapted from information science and data retrieval. These metrics should be calculated during the pilot testing and validation of the search strategy.

Table 3: Performance Metrics for Evaluating Search Strategies

| Metric | Definition & Calculation | Interpretation in Search Strategy | Target Benchmark |

|---|---|---|---|

| Sensitivity (Recall) | Proportion of all relevant records in a source that are retrieved by the search. Formula: (True Positives) / (True Positives + False Negatives) | Measures comprehensiveness. A high recall minimizes the risk of missing key studies. | Maximize as close to 100% as possible, acknowledging trade-offs with precision [39]. |

| Precision | Proportion of retrieved records that are relevant. Formula: (True Positives) / (True Positives + False Positives) | Measures efficiency. Low precision yields many irrelevant results, increasing screening burden. | Context-dependent; balance with recall. |

| F1 Score | Harmonic mean of precision and recall. Formula: 2 * ((Precision * Recall) / (Precision + Recall)) | Single metric balancing comprehensiveness and efficiency. Useful for comparing multiple strategy versions [39]. | Higher score indicates a better-balanced strategy. |

| Specificity | Proportion of irrelevant records correctly rejected. Formula: (True Negatives) / (True Negatives + False Positives) | Measures the search's ability to exclude irrelevant material. Less commonly used in SR search validation. | Higher is better, but often secondary to recall. |

Application Context: For a review on antiparasitic drug ecotoxicity, high recall is critical due to potentially scarce data [38]. In a review with a vast literature base (e.g., general metal toxicity), a strategy optimized for higher precision may be more practical to manage screening workload.
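The four metrics in Table 3 follow directly from the counts of true and false positives and negatives. The sketch below computes them from hypothetical counts; the function name and the numbers are assumptions for illustration.

```python
def search_metrics(tp, fp, fn, tn=None):
    """Precision, recall, F1, and (when true negatives are known) specificity."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    specificity = None
    if tn is not None and (tn + fp):
        specificity = tn / (tn + fp)
    return {"precision": precision, "recall": recall, "f1": f1, "specificity": specificity}


# Hypothetical pilot search: 200 records retrieved, 45 of them relevant,
# 5 relevant records missed, 795 irrelevant records correctly not retrieved.
metrics = search_metrics(tp=45, fp=155, fn=5, tn=795)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this example recall is high (0.90) while precision is low (0.225), the typical profile of a sensitivity-oriented systematic review search.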

Experimental Protocols for Search Strategy Validation

Validating a search strategy is an empirical process. The following protocols outline steps to test and refine strategies before full implementation.

Protocol 1: Benchmarking Against a Gold Standard Set

- Objective: To empirically test the sensitivity (recall) of a draft search strategy.

- Methodology:

- Create a Gold Standard: Manually assemble a key set of 20-30 articles known to be relevant to the review topic. These are identified through prior knowledge, key author searches, or scanning reference lists of seminal reviews.

- Execute Test Search: Run the draft search strategy in a selected database (e.g., PubMed).

- Blinded Assessment: Check if the gold standard articles are present in the search results. It is critical that the person checking is blinded to the search strategy details to avoid bias.

- Calculate Recall: For each database, calculate (Number of Gold Standard Articles Retrieved) / (Total Gold Standard Articles Known in that Database).

- Iterative Refinement: If recall is low (<90%), analyze which gold standard articles were missed. Examine their titles, abstracts, and MeSH/Emtree terms to identify missing synonyms or subject headings and refine the strategy accordingly. Repeat until acceptable recall is achieved [40].
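The recall check in Protocol 1 amounts to a set comparison between the gold standard identifiers and the identifiers returned by the test search. A minimal sketch follows, assuming records can be matched on a shared identifier such as a PMID or DOI; the function name and the `rec-…` identifiers are hypothetical.

```python
def gold_standard_recall(gold_ids, retrieved_ids):
    """Return (recall, missed_ids) for one database.

    gold_ids: identifiers of gold standard articles known to be indexed
              in the database being tested.
    retrieved_ids: identifiers returned by the draft search strategy.
    """
    gold = set(gold_ids)
    if not gold:
        raise ValueError("The gold standard set must not be empty.")
    found = gold & set(retrieved_ids)
    missed = sorted(gold - found)
    return len(found) / len(gold), missed


# Hypothetical example: 20 gold standard records, 17 of them retrieved.
gold = [f"rec-{i:03d}" for i in range(20)]
retrieved = gold[:17] + ["unrelated-001", "unrelated-002"]

recall, missed = gold_standard_recall(gold, retrieved)
needs_refinement = recall < 0.90  # threshold taken from the protocol text
print(f"recall={recall:.2f}, refine={needs_refinement}, missed={missed}")
```

The list of missed identifiers is the input to the refinement step: those are the records whose titles, abstracts, and subject headings should be inspected for missing terms.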

Protocol 2: Statistical Comparison of Search Strategy Versions

- Objective: To determine if a refined search strategy (Method 2) performs significantly better than an initial strategy (Method 1).

- Methodology:

- Define Outcome: The primary outcome is the F1 Score calculated from a standardized sample of search results.

- Sampling & Blinding: For a given database, take a random sample of 100 records from the results of both Method 1 and Method 2. De-identify and randomize these 200 records.

- Relevance Screening: Two independent reviewers screen all 200 records against the review's inclusion criteria. Disagreements are resolved by consensus or a third reviewer.

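- Calculate Metrics: For each strategy, calculate Precision from the screening results of its 100-record sample. Recall cannot be estimated from a sample of retrieved records alone, because it depends on relevant records the search did not retrieve; calculate it against a reference set of known relevant articles (e.g., the gold standard set from Protocol 1), then derive the F1 Score from the two.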

- Statistical Analysis: Use McNemar's test to compare the paired proportions of relevant articles retrieved by the two strategies. The test requires paired observations, so each relevant record must be classified as retrieved or missed under both strategies. Alternatively, compare confidence intervals around the F1 scores. Avoid inappropriate tests like correlation analysis or standard t-tests, which are not designed for this type of method comparison [41].

- Acceptance Criterion: The refined strategy (Method 2) is adopted if it shows a statistically significant improvement in F1 Score or a non-significant difference but with a trend toward higher recall, without a detrimental loss of precision.
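McNemar's test operates on paired outcomes and uses only the discordant pairs. The sketch below shows the exact (binomial) form of the test using the standard library only. It assumes, for illustration, that each article in a reference set of relevant records has been marked as retrieved or missed by each strategy; the function names and the example data are hypothetical.

```python
from math import comb


def discordant_counts(pairs):
    """Count records retrieved by only one of the two strategies.

    pairs: iterable of (retrieved_by_method_1, retrieved_by_method_2) booleans,
           one tuple per relevant record in the reference set.
    """
    only_first = sum(1 for a, b in pairs if a and not b)
    only_second = sum(1 for a, b in pairs if b and not a)
    return only_first, only_second


def mcnemar_exact_p(only_first: int, only_second: int) -> float:
    """Two-sided exact McNemar p-value based on the discordant pairs."""
    n = only_first + only_second
    if n == 0:
        return 1.0  # no discordant pairs: no evidence of a difference
    k = min(only_first, only_second)
    # Under the null hypothesis, discordant pairs split as Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical reference set of 30 relevant records:
# 19 found by both, 2 only by Method 1, 9 only by Method 2.
pairs = [(True, True)] * 19 + [(True, False)] * 2 + [(False, True)] * 9
b, c = discordant_counts(pairs)
print(f"only Method 1: {b}, only Method 2: {c}, p = {mcnemar_exact_p(b, c):.4f}")
```

The exact form is used here because discordant counts in search validation are often small, where the chi-square approximation is less reliable.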

Systematic Review Workflow with Integrated Search Validation

| Tool / Resource Name | Category | Primary Function in Search/Validation | Key Notes |

|---|---|---|---|

| Rayyan | Screening Software | A web tool for blinded collaborative title/abstract screening of search results. | Manages the screening process, reduces human error, and documents decisions. |

| CADIMA | SR Management Platform | An open-access platform guiding the entire SR process, including search planning, document management, and reporting. | Particularly useful for environmental SRs, helps ensure compliance with guidelines like COSTER [21]. |

| EndNote / Zotero | Reference Management | Manages citations, deduplicates records from multiple databases, and formats bibliographies. | Essential for handling large volumes of search results. |

| PRISMA 2020 Statement & Diagram | Reporting Guideline | Provides a checklist and flow diagram template for transparent reporting of the SR process, including search results. | Mandatory for high-quality publication; demonstrates rigor [34]. |

| Cochrane Handbook | Methodology Guide | Foundational guide for SR methods. Chapter 4 ("Searching for and selecting studies") is especially relevant. | While clinical in focus, its principles are adaptable to toxicology [34]. |

| COSTER Recommendations | Field-Specific Guideline | Provides consensus recommendations for conducting SRs in toxicology and environmental health research [21]. | Addresses field-specific challenges like handling grey literature and integrating multiple evidence streams. |

| EPA ECOTOX Knowledgebase | Specialized Data Source | A curated database of ecotoxicology effects data for chemicals on aquatic and terrestrial species. | Used to retrieve primary experimental data points for quantitative synthesis after the search phase. |

| PROSPERO Registry | Protocol Registry | An international prospective register for systematic review protocols in health and social care. | Registering the protocol a priori enhances transparency and reduces risk of bias. |

Structured Data Extraction and the Use of Controlled Vocabularies

The validation of systematic review findings in ecotoxicology research fundamentally depends on the quality, consistency, and accessibility of the underlying data. As the number of chemicals in commerce grows and regulatory mandates expand, the need for robust, structured toxicity data has accelerated [12]. The core challenge lies in transforming heterogeneous, unstructured information from primary scientific literature into a standardized, computable format that supports reproducible risk assessments and meta-analyses.

Structured data extraction and the application of controlled vocabularies form the backbone of evidence synthesis. The field has traditionally relied on manual curation and is increasingly augmented by machine learning (ML) and large language model (LLM) pipelines to overcome scalability limitations [42]. These methodologies are not mutually exclusive; they represent a spectrum of approaches with distinct trade-offs in accuracy, throughput, and resource requirements. This guide compares the three prevailing methodologies (systematic manual curation, ML-based prediction, and LLM-driven extraction) in the context of validating ecotoxicological systematic reviews.

Comparison of Data Extraction Methodologies

The table below provides a high-level comparison of the three primary methodologies for structured data extraction in ecotoxicology.

Table 1: Comparison of Structured Data Extraction Methodologies

| Feature | Systematic Manual Curation (e.g., ECOTOX) | Machine Learning Prediction (e.g., Pairwise Learning) | LLM-Powered Extraction |

|---|---|---|---|

| Primary Goal | Create a definitive, high-quality database from empirical literature [12]. | Predict missing data points to fill matrices for hazard assessment [8]. | Automate transformation of unstructured text into structured knowledge bases [42]. |

| Core Strength | High accuracy, transparency, and adherence to systematic review principles [12]. | Ability to extrapolate and generate data for untested chemical-species pairs [8]. | Rapid processing of diverse document formats (text, tables, figures) [42]. |

| Key Limitation | Labor-intensive and slow to scale; pace limited by human reviewers [12]. | Predictions are model-dependent and require large, high-quality training sets [8]. | Risk of hallucination; requires robust validation for factual consistency [42]. |

| Use of Controlled Vocabularies | Extracted data is codified using established, pre-defined vocabularies [12]. | Relies on standardized input data (e.g., CAS numbers, species IDs) for learning [8]. | Can map extracted free text to standardized terms as a post-processing step [43]. |

| Typical Output | Curated database (e.g., >1 million test results in ECOTOX) [12]. | Full predicted data matrices (e.g., >4 million LC50 predictions) [8] and hazard models. | Structured JSON/database records of specific parameters from individual papers [42]. |

| Best Suited For | Foundational regulatory assessment, validation of other methods, definitive evidence synthesis. | Screening, priority setting, generating hypotheses, and assessments where data gaps are prohibitive. | Rapid literature mining for specific queries, creating tailored datasets for meta-analysis. |

The performance of these methods can be quantitatively assessed by their efficiency, predictive accuracy, and extraction fidelity, as shown in the following table.

Table 2: Performance Metrics of Featured Methodologies

| Methodology & Source | Key Performance Metric | Reported Result | Experimental Context |

|---|---|---|---|

| Automated Vocabulary Mapping [43] | Percentage of extractions automatically standardized | 75% (NTP studies); 57% (ECHA studies) | Mapping ~40,000 extracted endpoints to controlled terms. |

| Automated Vocabulary Mapping [43] | Estimated labor savings | >350 hours | Compared to fully manual standardization effort. |

| Pairwise Learning ML Model [8] | Prediction accuracy (RMSE on log-transformed LC50) | 0.84 (Pairwise Model) | 5-fold cross-validation on 70,670 experimental LC50s. |

| Pairwise Learning ML Model [8] | Data matrix coverage from sparse input | 0.5% (Observed) → 100% (Predicted) | Input matrix of 3,295 chemicals × 1,267 species; model predicted the missing 99.5% of chemical-species pairs. |

| LLM Extraction Pipeline [42] | Extraction F1-Score (Token-level) | Exceeded 90% | Extraction of entities (species, metals, concentrations) from PDFs. |
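For readers unfamiliar with token-level scoring, the sketch below shows one common way to compute a token-level F1 between an extracted string and its reference annotation, based on overlapping tokens. The exact definition used in [42] is not given here, so lowercase whitespace tokenization and bag-of-tokens matching are assumptions, and the function name is hypothetical.

```python
from collections import Counter


def token_f1(predicted: str, reference: str) -> float:
    """Token-overlap F1 between an extracted value and its reference value."""
    pred_tokens = predicted.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a perfect match; one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often as it
    # appears in both strings.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Hypothetical extraction results compared with manual annotations.
print(token_f1("Daphnia magna", "Daphnia magna"))
print(token_f1("Daphnia magna neonates", "Daphnia magna"))
print(token_f1("zinc", "copper"))
```

A partially correct extraction (an extra or missing token) receives partial credit under this scheme, which is why token-level scores tend to be higher than exact-match scores for the same output.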

Experimental Protocols

Protocol for Systematic Manual Curation: The ECOTOX Pipeline

The ECOTOX Knowledgebase employs a rigorous, standardized protocol for literature review and data curation [12].

1. Literature Search & Acquisition: Comprehensive searches are conducted across multiple scientific databases (e.g., PubMed, Scopus) and the "grey literature" using chemical-specific search terms. References are compiled and deduplicated.

2. Tiered Screening:

- Title/Abstract Screen: References are screened for relevance based on pre-defined criteria (e.g., ecologically relevant species, single chemical stressor, exposure concentration reported).

- Full-Text Review: Potentially relevant studies undergo a full-text review for acceptability. Criteria include documented control groups, clear endpoint reporting, and appropriate experimental methodology.

3. Data Extraction: Trained reviewers extract pertinent study details into a structured database using a standardized interface. Extraction fields are governed by controlled vocabularies for test species (verified against the Integrated Taxonomic Information System, ITIS), chemicals (linked to DSSTox IDs), endpoints, effects, and test conditions.

4. Quality Assurance & Publishing: Extracted data undergoes technical and quality assurance review. Verified data is added to the public ECOTOX database quarterly [12].
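The role of a controlled vocabulary in step 3 can be illustrated with a small mapping routine: free-text endpoint labels are normalized and matched against a fixed term list, and anything unmatched is flagged for manual review. This is a sketch only. The term list and synonym table below are hypothetical and far smaller than the actual ECOTOX vocabularies, which are not reproduced here.

```python
# Hypothetical controlled vocabulary and synonym table (illustrative only).
CONTROLLED_ENDPOINTS = {"LC50", "EC50", "NOEC", "LOEC"}
SYNONYMS = {
    "lc 50": "LC50",
    "median lethal concentration": "LC50",
    "ec 50": "EC50",
    "no observed effect concentration": "NOEC",
    "lowest observed effect concentration": "LOEC",
}
# Single lookup table keyed on normalized text.
_LOOKUP = {**{term.lower(): term for term in CONTROLLED_ENDPOINTS}, **SYNONYMS}


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def standardize_endpoint(raw: str):
    """Return the controlled term for a raw label, or None if unmapped."""
    return _LOOKUP.get(normalize(raw))


def standardize_all(raw_terms):
    """Map a batch of labels; return (mapped, needs_review, fraction_mapped)."""
    mapped, needs_review = {}, []
    for raw in raw_terms:
        term = standardize_endpoint(raw)
        if term is None:
            needs_review.append(raw)
        else:
            mapped[raw] = term
    fraction = len(mapped) / len(raw_terms) if raw_terms else 0.0
    return mapped, needs_review, fraction


extracted = ["LC50", "lc  50", "Median lethal concentration", "NOEC", "growth score"]
mapped, needs_review, fraction = standardize_all(extracted)
print(f"{fraction:.0%} standardized automatically; manual review: {needs_review}")
```

Routing unmapped labels to a reviewer rather than guessing a term keeps the curated record traceable, at the cost of some manual effort.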

Protocol for ML-Based Data Gap Filling: Pairwise Learning

A study demonstrated the use of Bayesian pairwise learning to predict ecotoxicity data gaps [8].

1. Input Data Curation: Observed LC50 data for 3,295 chemicals and 1,267 species were sourced from a curated benchmark dataset (ultimately derived from ECOTOX) [8] [44]. The data matrix was extremely sparse, with only about 0.5% of possible chemical-species pairs having experimental values.

2. Model Training: A factorization machine model was trained to learn the interactions between chemicals, species, and exposure duration. The model represents the LC50 value as a function of global, chemical-specific, and species-specific bias terms, plus latent factor interactions that capture the unique "lock and key" effect between a specific chemical and species [8].

3. Prediction & Validation: The trained model was used to predict LC50 values for all missing chemical-species pairs, generating over 4 million predictions. Model performance was evaluated using 5-fold cross-validation, calculating the Root Mean Square Error (RMSE) between predicted and observed log(LC50) values. The pairwise interaction model (RMSE: 0.84) significantly outperformed a simple mean-based model [8].

4. Application: The full matrix of predicted LC50s was used to construct comprehensive Species Sensitivity Distributions (SSDs) and novel Chemical Hazard Distributions (CHDs) for risk assessment [8].
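The model structure described in step 2 (bias terms plus a latent-factor interaction) and the RMSE used in step 3 can be written out in a few lines. The sketch below is a simplified illustration, not the implementation from [8]: it omits exposure duration and the Bayesian fitting procedure, and all parameter values are invented.

```python
from math import sqrt


def predict_log_lc50(global_bias, chem_bias, species_bias,
                     chem_factors, species_factors):
    """Predict log(LC50) for one chemical-species pair.

    The prediction is the sum of a global bias, a chemical-specific bias,
    a species-specific bias, and the dot product of the chemical and species
    latent factor vectors (the pairwise interaction term).
    """
    if len(chem_factors) != len(species_factors):
        raise ValueError("Latent factor vectors must have equal length.")
    interaction = sum(c * s for c, s in zip(chem_factors, species_factors))
    return global_bias + chem_bias + species_bias + interaction


def rmse(predicted, observed):
    """Root mean square error between predicted and observed log(LC50)."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("Inputs must be non-empty and of equal length.")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return sqrt(sum(squared) / len(squared))


# Invented parameters for one chemical-species pair (illustrative only).
y_hat = predict_log_lc50(
    global_bias=1.0, chem_bias=0.5, species_bias=-0.2,
    chem_factors=[1.0, 2.0], species_factors=[0.5, -0.25],
)
print(f"predicted log(LC50): {y_hat:.2f}")

# Invented held-out fold: predictions versus observed values.
print(f"fold RMSE: {rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]):.3f}")
```

In k-fold cross-validation, `rmse` would be evaluated on each held-out fold after refitting the model parameters on the remaining folds, and the fold values summarized.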

Visualizing Workflows and Relationships

Systematic Review and Curation Workflow

Structured Data Curation Pipeline in ECOTOX [12]

Machine Learning Prediction Process

ML Workflow for Predicting Ecotoxicity Data Gaps [8]

LLM-Powered Extraction Pipeline

LLM Pipeline for Extracting Structured Knowledge from Literature [42]

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential resources for conducting structured data extraction and analysis in ecotoxicology.

Table 3: Essential Research Reagents and Resources for Ecotoxicology Data Extraction

| Resource Name | Type | Primary Function in Research | Key Feature / Note |

|---|---|---|---|

| ECOTOX Knowledgebase [12] [45] | Curated Database | Foundational source of empirically derived, curated single-chemical ecotoxicity data for ecological species. | World's largest compilation; uses systematic review and controlled vocabularies; >1 million test results. |

| CompTox Chemicals Dashboard [45] | Chemistry Database & Tool | Provides access to chemistry, toxicity, and exposure data for hundreds of thousands of chemicals. | Integrates data from EPA computational toxicology efforts; links chemicals to DSSTox IDs. |

| ADORE Dataset [44] | Benchmark ML Dataset | A curated dataset for machine learning in ecotoxicology, focusing on acute aquatic toxicity for fish, crustaceans, and algae. | Designed for fair model comparison; includes chemical, species, and experimental data from ECOTOX. |