Validating Landscape Ecological Risk Assessments: Integrating Field Data for Robust Environmental Insights

Abstract

This article provides a comprehensive overview of landscape ecological risk assessment validation with field data, tailored for researchers, scientists, and professionals in environmental and related fields. It covers foundational concepts, methodological applications, troubleshooting strategies, and comparative validation techniques, drawing on current case studies and frameworks to enhance assessment accuracy and reliability in diverse ecological contexts.

Understanding Landscape Ecological Risk: Core Concepts and Exploratory Frameworks

Landscape Ecological Risk Assessment (LERA) is a methodological framework that evaluates the potential adverse effects of natural and anthropogenic stressors on ecosystem structure, function, and processes at a regional scale. Unlike traditional ecological risk assessments that focus on single contaminants or specific receptors, LERA adopts a holistic, pattern-based approach. It treats the landscape mosaic—comprising patches, corridors, and a matrix of different land uses—as the primary unit of analysis [1]. This shift in perspective is critical for understanding complex, multi-source risks in the context of rapid global changes such as urbanization, climate change, and land-use transformation. The importance of LERA lies in its capacity to spatially visualize and quantify ecological risk, thereby providing a scientific foundation for land-use planning, ecosystem management, and the formulation of policies aimed at sustainable development and ecological security [2] [3].

Comparative Analysis of Landscape Ecological Risk Assessment Methodologies

Current LERA methodologies predominantly follow two conceptual frameworks: the "Risk Source-Sink" model and the "Landscape Pattern Index" method [1] [4]. The choice between these models depends on the study's objectives, data availability, and the nature of the dominant risks.

The following table provides a comparative overview of different LERA studies conducted in diverse geographical and ecological contexts, highlighting their core methodologies and key findings.

Table 1: Comparison of Landscape Ecological Risk Assessment (LERA) Case Studies

| Study Area (Context) | Core Methodology | Key Landscape Pattern Indices Used | Temporal Trend of Ecological Risk | Primary Driving Factors Identified | Validation Approach |
|---|---|---|---|---|---|
| Core Water Source, South-to-North Water Diversion (Ecological Function Zone) [5] | Landscape Pattern Index, Spatial Autocorrelation (Moran's I) | Landscape Ecological Risk Index (ERI) based on land use transformation | Increased (2010-2015), then decreased (2015-2020) | Terrain, soil type, climate, national ecological policies | Spatial correlation analysis with geostatistical techniques |
| Engebei, Kubuqi Desert (Ecologically Fragile Zone) [6] | Landscape Pattern Index, Grain Size Effect Analysis | Patch Density (PD), Landscape Shape Index (LSI), Aggregation Index (AI), Shannon's Diversity Index (SHDI) | Overall slight decrease (0.1944 to 0.1940, 2005-2021) | Human disturbance (desertification control), landscape fragmentation | Supervised classification of Landsat data, field survey correlation |
| Fuchunjiang River Basin (Suburban Basin of Large City) [1] | Landscape Pattern Index, Geodetector | Risk index based on landscape disturbance and loss degrees | Decreasing over the long term (1990-2020) | GDP, human interference, transfer of arable land | Analysis at township-scale administrative units |
| Guiyang City (Multi-Mountainous City) [7] | Landscape Pattern Index, Geodetector, PLUS Model Simulation | Landscape Ecological Risk Index (LERI) | Gradual decrease (0.0341 to 0.0304, 2000-2020) | Ecological factors (terrain) primary; influence of social factors increasing | Multi-scenario simulation (2030) for predictive validation |
| Ebinur Lake Basin (Arid Region Watershed) [2] | Landscape Pattern Index, Geographical Detector Model (GDM) | Landscape Ecological Risk Index (LERI) | Downward trend (1985-2022) | Climatic factors (temperature, precipitation) most significant | Long-term (37-year) data series analysis |
| Southwest China (Regional Scale) [8] | Production-Living-Ecological Space (PLEs) perspective, Random Forest & Geodetector | Landscape disturbance, vulnerability, and fractal dimension indices | Stable, ranging from 0.20 to 0.21 (2000-2020) | Anthropogenic disturbance, land use intensity, interaction of natural and economic factors | Ecological network construction (corridors, nodes) as spatial validation |

Analysis of Comparative Trends: A significant finding across multiple studies is that despite intense human pressure, overall landscape ecological risk in many Chinese regions has shown a stable or declining trend over recent decades [7] [2] [8]. This counterintuitive result is frequently attributed to the positive impacts of large-scale, national ecological conservation and restoration policies, such as the Grain for Green Program and strict protection of water source areas [5] [6]. However, this macroscale improvement often masks persistent localized high-risk areas, typically associated with urban expansion, water body shrinkage, or intense agricultural activity [1] [9].

Experimental Protocols for Landscape Pattern-Based Risk Assessment

The landscape pattern index method is the most widely applied protocol in regional LERA. Its strength lies in utilizing readily available land use/cover (LULC) data to derive indices that reflect landscape structure, which is closely linked to ecosystem function and resilience.

Standardized Experimental Workflow:

- Data Acquisition and Preprocessing: Acquire multi-temporal LULC data, typically derived from remotely sensed imagery at 30 m resolution (e.g., Landsat) or finer (e.g., 10 m Sentinel-2), classified into categories such as forest, grassland, cropland, water, built-up land, and barren land [6] [2]. Ancillary data on natural (e.g., DEM, precipitation) and socio-economic (e.g., GDP, population density) factors are also collected.

- Determination of Assessment Units: The study area is divided into spatial assessment units. A common practice is to create a grid of risk cells, with the cell size often set at 2–5 times the average landscape patch area to ensure statistical meaning [8]. Alternatively, watershed units or township administrative boundaries may be used [1].

- Calculation of Landscape Pattern Indices: Using software such as FRAGSTATS, key landscape indices are calculated for each assessment unit and each LULC type. Core indices include:

  - Disturbance Index (Ei): Characterizes the external pressure on a landscape type, often combining indices such as Fragmentation, Dominance, and Isolation.

  - Vulnerability Index (Vi): Represents the intrinsic sensitivity of a landscape type to disturbances. It is usually determined by an expert-based ranking, in which ecosystems such as water bodies and wetlands are assigned higher vulnerability than cropland or built-up land.

  - Loss Index (Ri): Calculated as $R_i = E_i \times V_i$, representing the potential ecological loss degree of landscape type $i$.

- Construction of the Landscape Ecological Risk Index (LERI): The overall risk for each assessment unit $k$ is calculated using an area-weighted model:

  $$\mathrm{LERI}_k = \sum_{i=1}^{n} \frac{A_{ki}}{A_k} R_i$$

  where $A_{ki}$ is the area of landscape type $i$ in unit $k$, $A_k$ is the total area of unit $k$, $n$ is the number of landscape types, and $R_i$ is the loss index of landscape type $i$ [8]. A higher LERI value indicates greater ecological risk.

- Spatial and Statistical Analysis: The computed LERI values are interpolated to produce a continuous spatial risk surface. Spatial autocorrelation analysis (Global/Local Moran's I) is performed to identify significant risk clusters (High-High, Low-Low) [5] [3]. Tools like the Geodetector are used to quantify the explanatory power (q-statistic) of various natural and human factors on the observed risk pattern [7] [2].
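The loss-index and area-weighted LERI steps in the workflow above reduce to a few lines of arithmetic. The sketch below is a minimal illustration, not the method of any cited study: it assumes the disturbance index of each landscape type has already been computed (for example, from FRAGSTATS output), converts a hypothetical 1-6 expert vulnerability ranking into weights that sum to 1, and uses made-up areas for a single grid cell.

```python
# Minimal sketch of the area-weighted LERI: R_i = E_i * V_i, then
# LERI_k = sum_i (A_ki / A_k) * R_i. All rankings, indices, and areas are
# hypothetical placeholders, not values from the cited studies.

# Hypothetical expert vulnerability ranking (higher = more vulnerable).
VULNERABILITY_RANK = {
    "built_up": 1, "forest": 2, "grassland": 3,
    "cropland": 4, "water": 5, "barren": 6,
}


def normalized_vulnerability(ranks):
    """Convert expert ranks into weights V_i that sum to 1."""
    total = sum(ranks.values())
    return {lulc: rank / total for lulc, rank in ranks.items()}


def loss_index(disturbance, vulnerability):
    """R_i = E_i * V_i for every type in `disturbance` (each needs a V_i)."""
    return {lulc: e * vulnerability[lulc] for lulc, e in disturbance.items()}


def leri(unit_areas, loss):
    """LERI_k for one assessment unit, given the area of each type in it."""
    total_area = sum(unit_areas.values())
    return sum(area / total_area * loss[lulc] for lulc, area in unit_areas.items())


if __name__ == "__main__":
    V = normalized_vulnerability(VULNERABILITY_RANK)
    # Hypothetical disturbance indices E_i (e.g., derived from FRAGSTATS output).
    E = {"forest": 0.20, "cropland": 0.35, "water": 0.40, "built_up": 0.25}
    R = loss_index(E, V)
    cell = {"forest": 60.0, "cropland": 30.0, "water": 10.0}  # km² per type in one grid cell
    print(round(leri(cell, R), 4))  # → 0.041
```

Running `leri` over every grid cell yields the unit-level values that are later interpolated and tested for spatial autocorrelation.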

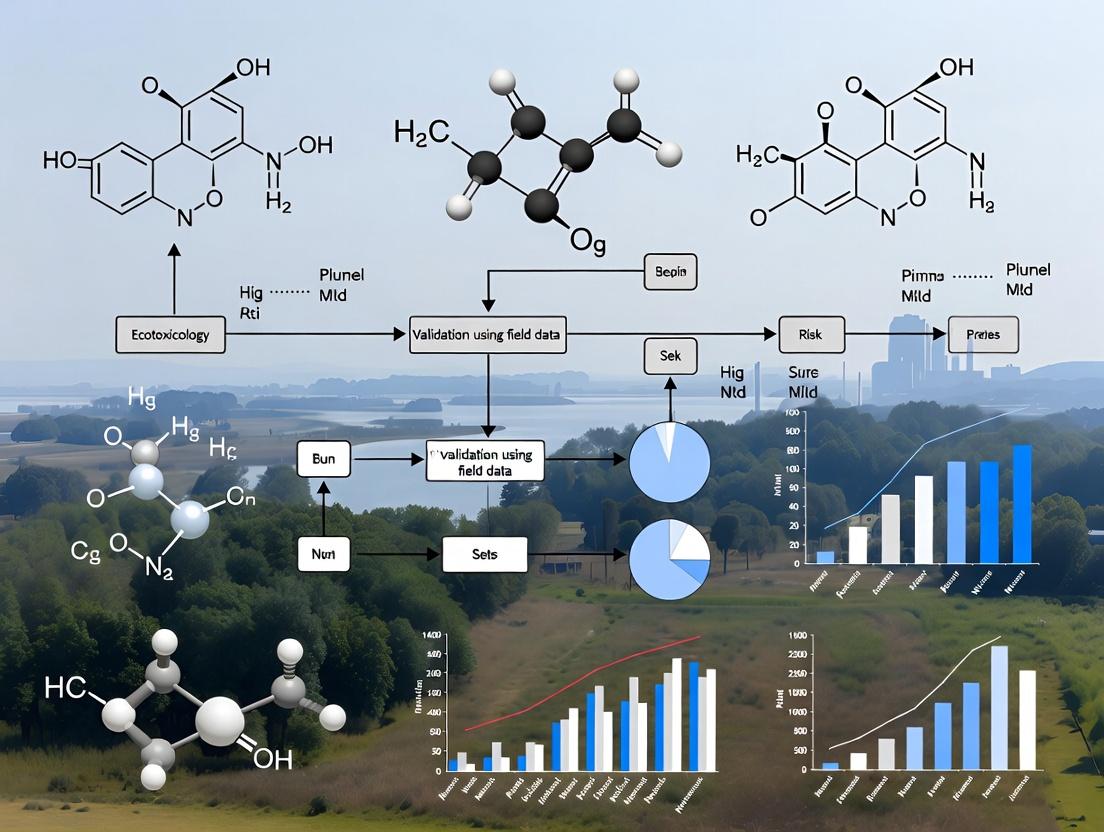

Landscape Ecological Risk Assessment Core Workflow

Visualization of Factor Interactions and Risk Drivers

A key advancement in LERA is moving beyond descriptive mapping to diagnosing the driving mechanisms behind risk patterns. Geodetector results indicate that single factors rarely act alone; instead, enhancement through factor interaction (bivariate or nonlinear) is the typical finding.

Interaction of Primary Drivers Influencing Landscape Ecological Risk

The Geodetector's interaction detector consistently shows that the combined explanatory power of two interacting factors exceeds that of either factor alone [7] [4]. For instance, in mountainous Guiyang, the interaction between elevation (a natural factor) and GDP (a social factor) was identified as a dominant force driving the spatial differentiation of risk [7]. In arid watersheds such as the Ebinur Lake Basin, the interaction between precipitation and temperature often exhibits the strongest explanatory power for ecological risk [2]. This underscores the integrated nature of ecological risk, which arises from the complex interplay between the physical environment and human socio-economic activities.
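To make the q-statistic and the interaction detector concrete, here is a minimal pure-Python sketch of the standard Geodetector formulas. It is not the official Geodetector software or the R `GD` package: it assumes each driver has already been discretized into categorical strata (e.g., elevation classes), omits the F-test used to judge significance, and uses hypothetical data chosen so that neither driver explains risk alone while their combination explains it fully.

```python
from collections import defaultdict


def _population_variance(values):
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def q_statistic(y, strata):
    """Factor detector: q = 1 - sum_h(N_h * var_h) / (N * var).

    `strata` holds one categorical label per observation; continuous drivers
    must be discretized beforehand. Requires y to have non-zero variance.
    """
    groups = defaultdict(list)
    for value, stratum in zip(y, strata):
        groups[stratum].append(value)
    within = sum(len(g) * _population_variance(g) for g in groups.values())
    return 1.0 - within / (len(y) * _population_variance(y))


def interaction(y, x1, x2, tol=1e-9):
    """Interaction detector: compare q(X1 ∩ X2) with q(X1) and q(X2)."""
    q1, q2 = q_statistic(y, x1), q_statistic(y, x2)
    q12 = q_statistic(y, list(zip(x1, x2)))  # overlay: every (x1, x2) combination is a stratum
    if q12 < min(q1, q2):
        kind = "nonlinear weaken"
    elif q12 < max(q1, q2):
        kind = "univariate weaken"
    elif abs(q12 - (q1 + q2)) <= tol:
        kind = "independent"
    elif q12 > q1 + q2:
        kind = "nonlinear enhance"
    else:
        kind = "bivariate enhance"
    return q1, q2, q12, kind


if __name__ == "__main__":
    # Hypothetical cells: risk is high only where the two driver classes differ.
    elevation_class = [0, 0, 1, 1] * 3
    gdp_class = [0, 1, 0, 1] * 3
    risk = [1.0, 5.0, 5.0, 1.0] * 3
    print(interaction(risk, elevation_class, gdp_class))  # → (0.0, 0.0, 1.0, 'nonlinear enhance')
```

The interaction classes follow the usual Geodetector convention: "bivariate enhance" when the joint q exceeds the larger single-factor q, and "nonlinear enhance" when it also exceeds their sum.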

Conducting robust LERA relies on a suite of specialized software, datasets, and analytical tools.

Table 2: Key Research Reagent Solutions for Landscape Ecological Risk Assessment

| Tool/Reagent Category | Specific Name/Example | Primary Function in LERA | Key Utility for Researchers |
|---|---|---|---|
| Remote Sensing Data Platforms | USGS Earth Explorer, Google Earth Engine (GEE), Geospatial Data Cloud [6] | Source of multi-temporal, multi-spectral satellite imagery for LULC classification. | Provides foundational spatial data; GEE enables cloud-based processing of large datasets. |
| Land Use/Land Cover Datasets | CLCD (China Land Cover Dataset) [4], GlobeLand30 [10] | Pre-classified, standardized LULC data products. | Offers ready-made, often validated LULC maps, reducing preprocessing workload. |
| Landscape Analysis Software | FRAGSTATS | Calculates a wide array of landscape pattern metrics at class and landscape levels. | The de facto standard for quantifying landscape composition and configuration indices. |
| Geographic Information Systems | ArcGIS (with ModelBuilder), QGIS | Spatial data management, assessment unit creation, interpolation, map visualization, and workflow automation. | Essential for all spatial operations, from geoprocessing to final cartographic output. |
| Spatial Statistics & Modeling Tools | Geodetector, GeoDa, PLUS Model | Analyzes driving factors, tests spatial autocorrelation, and simulates future land-use/risk scenarios. | Moves analysis from "where" to "why" and enables predictive, scenario-based forecasting [7] [3]. |
| Programming & Analysis Environments | R (with sf, raster packages), Python (with geopandas, scikit-learn libraries) | Custom script-based analysis, statistical modeling, and integration of machine learning algorithms. | Provides flexibility for advanced, reproducible analyses and handling of big geospatial data. |

Validation with Field Data and Future Risk Simulation

Validation is a critical yet challenging component of LERA. Direct validation often involves correlating LERI patterns with field-measured ecological indicators, such as soil erosion rates, water quality parameters, or biodiversity surveys [6] [10]. For example, high-risk zones predicted by the model should correspond to areas with measured soil degradation or poor habitat quality.
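A simple way to test that correspondence is a rank correlation between the LERI value at each field plot and the indicator measured there. The sketch below assumes LERI has already been extracted at the plot locations (e.g., by point-sampling the risk raster) and that all values are hypothetical. Spearman's rho is used because the expected relationship is monotonic rather than necessarily linear; no significance test is included (`scipy.stats.spearmanr` reports a p-value).

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def pearson(a, b):
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def spearman(a, b):
    """Spearman's rho = Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(a), average_ranks(b))


if __name__ == "__main__":
    # Hypothetical plots: LERI sampled at each plot location vs. field measurements.
    leri_at_plots = [0.12, 0.18, 0.25, 0.31, 0.40, 0.22]
    soil_erosion = [2.1, 2.9, 3.5, 5.2, 6.8, 3.1]           # expected: positive rho
    habitat_quality = [0.82, 0.75, 0.51, 0.40, 0.33, 0.66]  # expected: negative rho
    print(spearman(leri_at_plots, soil_erosion), spearman(leri_at_plots, habitat_quality))
```

A strongly positive rho with erosion and a negative rho with habitat quality would support the model's risk ranking; weak or reversed correlations flag a mismatch worth investigating.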

A more indirect, model-based form of validation is multi-scenario simulation. Using models such as the PLUS (Patch-generating Land Use Simulation) model, researchers can project future LULC patterns under different development scenarios (e.g., Natural Development, Ecological Priority, Farmland Protection) and assess the corresponding future ecological risks [7] [3]. Studies in cities such as Guiyang and Harbin reported that an Ecological Priority scenario led to the smallest expansion of high-risk areas, supporting the model's utility for policy planning and providing a target for sustainable management [7] [3]. This approach shifts LERA from a diagnostic tool to a proactive decision-support system, aligning research directly with the needs of ecosystem management and spatial planning.

This guide compares the core methodologies and key terminology of landscape ecological risk assessment (ERA). Framed within the broader thesis of validating ERA frameworks with empirical field data, it compares conceptual approaches, measurement techniques, and model performance using data from recent peer-reviewed studies and authoritative guidelines [11] [12].

Comparative Analysis of Key Terminology Frameworks

The table below compares three dominant conceptual frameworks used to structure ecological risk assessments, highlighting their core components and primary applications.

Table 1: Comparison of Ecological Risk Assessment Conceptual Frameworks

| Framework Name | Core Components & Sequence | Primary Assessment Focus | Typical Scale of Application | Key Advantage |
|---|---|---|---|---|
| EPA Three-Phase ERA [11] | 1. Problem Formulation; 2. Analysis (Exposure & Effects); 3. Risk Characterization | A comprehensive process evaluating the likelihood of adverse ecological effects from one or more environmental stressors [11]. | Site-specific to Regional | Structured, regulatory-friendly process that integrates planning and stakeholder input. |
| Source-Pathway-Receptor (SPR) [13] | Source → Pathway → Receptor | Systematic evaluation of contaminant impacts by identifying the origin, migration route, and exposed entity [13]. | Often site-specific (e.g., contaminated land) | Intuitive for tracing contamination; clearly identifies intervention points (break the pathway). |
| Landscape Ecological Risk Index (LERI) [5] [7] [12] | Landscape Pattern (Fragmentation, Loss) → Ecological Disturbance → Risk Index | Spatial and temporal ecological risks driven by changes in land use and landscape pattern [12]. | Regional to Landscape | Quantifies spatially explicit risk based on landscape metrics; excellent for land-use planning. |

Quantitative Comparison of Landscape Ecological Risk Assessment Applications

Recent empirical studies demonstrate how these frameworks are applied and validated with field and remote sensing data. The following table summarizes key quantitative findings from landscape-scale studies.

Table 2: Summary of Quantitative Findings from Recent Landscape Ecological Risk Studies

| Study Area & Reference | Time Period | Primary Stressor Analyzed | Key Metric: Landscape Ecological Risk Index (LERI) Trend | Major Driving Factors Identified |
|---|---|---|---|---|
| Core water source, South-to-North Water Diversion, China [5] | 2010-2020 | Land-use transformation | Increased slightly (2010-2015), then decreased (2015-2020) [5]. | Land conversion to industry/agriculture (risk increase); policy protection & forest land (risk decrease) [5]. |
| Guiyang, a multi-mountainous city, China [7] | 2000-2020 | Urban expansion under topographical constraints | Average LERI decreased: 0.0341 (2000) → 0.0320 (2010) → 0.0304 (2020) [7]. | Ecological drivers (primary); social drivers' impact growing over time [7]. |
| Zhangjiachuan County, China [12] | 2000-2020 | Land-use change | "Inverted U-shaped" trend: increased, then decreased [12]. | Aligned with theoretical "ecological risk transition" framework [12]. |

Across these studies, three general findings emerge: land-use change is a dominant physical stressor [14] [12]; policy intervention and ecological protection can reverse risk trends [5] [7] [12]; and spatial autocorrelation of risk is common but can weaken with development [5] [7].

Experimental Protocols for Field-Data Validation

Validating ERA models requires robust methodologies that link field observations to landscape patterns. Below are detailed protocols for common approaches.

3.1 Landscape Pattern Analysis via Remote Sensing

- Objective: To quantify changes in landscape structure that indicate ecological disturbance [5] [12].

- Methodology:

- Data Acquisition: Obtain multi-temporal (e.g., 2000, 2010, 2020) Landsat or Sentinel satellite imagery for the study area [7] [12].

- Land Use/Land Cover (LULC) Classification: Use supervised classification algorithms (e.g., Maximum Likelihood, Support Vector Machine) to categorize each pixel into classes like forest, farmland, urban land, and water. Accuracy is assessed with ground-truthed points [5].

- Calculate Landscape Metrics: Using FRAGSTATS or similar software, compute indices for each LULC type in a moving window or spatial grid:

  - Disturbance Index (Ei): Based on metrics such as fragmentation (e.g., Patch Density), loss of connectivity (e.g., Splitting Index), and shape complexity [12].

  - Vulnerability Index (Si): A weight assigned to each LULC type based on its ecological sensitivity and function, often determined through expert judgment or literature review [5].

- Construct LERI: The Landscape Ecological Risk Index for each spatial unit $k$ is calculated as the area-weighted sum of the disturbance-vulnerability products:

  $$\mathrm{LERI}_k = \sum_{i=1}^{n} \frac{A_{ki}}{A_k} E_i S_i$$

  where $A_{ki}$ is the area of LULC type $i$ in unit $k$ and $A_k$ is the total area of unit $k$ [7] [12]. This produces a spatial risk map.

- Spatial Statistical Analysis: Use tools such as GeoDa to perform spatial autocorrelation analysis (e.g., Moran's I) on LERI results to identify clustered risk patterns [5] [7].
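The global Moran's I reported by tools such as GeoDa can be sketched directly on a gridded LERI surface. This minimal version assumes a regular grid of LERI values, binary rook-contiguity weights (edge-sharing neighbours only), and hypothetical data; it returns only the statistic, without the permutation test that GeoDa or PySAL's esda package would add. Values near +1 indicate clustering of similar risk levels, values near -1 a checkerboard-like dispersion, and values near -1/(N-1) spatial randomness.

```python
def morans_i(grid):
    """Global Moran's I with binary rook-contiguity weights on a regular grid.

    I = (N / W) * sum_ij w_ij (x_i - mean)(x_j - mean) / sum_i (x_i - mean)^2,
    where W is the sum of all weights. Requires non-constant values.
    """
    rows, cols = len(grid), len(grid[0])
    n = rows * cols
    mean = sum(v for row in grid for v in row) / n
    dev = [[v - mean for v in row] for row in grid]
    cross, weight_sum = 0.0, 0
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    cross += dev[r][c] * dev[rr][cc]
                    weight_sum += 1
    squares = sum(d * d for row in dev for d in row)
    return (n / weight_sum) * (cross / squares)


if __name__ == "__main__":
    clustered = [[0.1, 0.1, 0.4, 0.4]] * 4  # hypothetical: low-risk west, high-risk east
    checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]
    print(morans_i(clustered), morans_i(checkerboard))
```

Local indicators (LISA), which label individual High-High and Low-Low cells, build on the same deviations but are not computed here.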

3.2 Integrating Ecosystem Services as Assessment Endpoints

- Objective: To extend conventional ecological endpoints to include benefits to human welfare, linking ecological risk to societal outcomes [15] [16].

- Methodology:

- Endpoint Selection: In the Problem Formulation phase [11], define assessment endpoints that are explicit expressions of environmental value [17]. Complement conventional endpoints (e.g., species reproduction) with ecosystem service generic ecological assessment endpoints (ES-GEAEs), such as "Water Yield for Municipal Supply" or "Carbon Sequestration Capacity" [15] [16].

- Biophysical Modeling: Use models like InVEST or ARIES to quantify the selected ecosystem services (e.g., water yield, nutrient retention, habitat quality) based on LULC maps and other spatial data (soil, precipitation, topography).

- Risk Characterization: Overlay spatial LERI maps with ecosystem service supply maps. Analyze how areas of high ecological risk correlate with degradation or loss of key ecosystem services, thereby quantifying risk in terms of service loss [16].
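The overlay step can be summarized by grouping cells into risk classes and comparing ecosystem-service change within each class. The sketch below assumes the LERI map and two ecosystem-service maps (for example, InVEST water yield at two dates) are aligned on the same grid and flattened to lists; the class breaks, labels, and all values are hypothetical, and published studies often use natural-breaks classification instead of fixed thresholds.

```python
from statistics import mean


def risk_class(value, breaks):
    """Index of the first upper break that `value` does not exceed."""
    for k, upper in enumerate(breaks):
        if value <= upper:
            return k
    return len(breaks)


def es_change_by_risk_class(leri, es_before, es_after, breaks, labels):
    """Summarize ecosystem-service change within each LERI class.

    All inputs are flat, cell-aligned sequences; `labels` needs one more
    entry than `breaks`. Classes with no cells are omitted from the result.
    """
    if len(labels) != len(breaks) + 1:
        raise ValueError("need exactly one more label than breaks")
    changes = {label: [] for label in labels}
    for risk, before, after in zip(leri, es_before, es_after):
        changes[labels[risk_class(risk, breaks)]].append(after - before)
    return {
        label: {
            "cells": len(values),
            "mean_change": mean(values),
            "share_with_loss": sum(v < 0 for v in values) / len(values),
        }
        for label, values in changes.items()
        if values
    }


if __name__ == "__main__":
    # Hypothetical cells: LERI and water yield (mm) at two dates.
    leri = [0.05, 0.08, 0.15, 0.22, 0.30]
    water_yield_t0 = [100, 100, 100, 100, 100]
    water_yield_t1 = [105, 100, 98, 90, 80]
    summary = es_change_by_risk_class(
        leri, water_yield_t0, water_yield_t1,
        breaks=[0.1, 0.2], labels=["low", "medium", "high"])
    for label, stats in summary.items():
        print(label, stats)
```

If service losses concentrate in the high-risk class, the risk map is expressing risk in terms of service loss, as the characterization step intends.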

3.3 Future Scenario Simulation using the PLUS Model

- Objective: To project future landscape ecological risk under different development pathways [7].

- Methodology:

- Driving Factor Analysis: Use the Geodetector method (GDM) to quantify the explanatory power (q-statistic) of various natural (e.g., slope, elevation) and socio-economic (e.g., GDP, distance to roads) factors on historical land-use change [7].

- Model Calibration: Train the Patch-generating Land Use Simulation (PLUS) model using historical LULC changes and the dominant driving factors identified by GDM.

- Scenario Development & Simulation: Define development scenarios for a target year (e.g., 2030), such as:

  - Natural Development Scenario: Extends current trends.

  - Ecological Priority Scenario: Imposes strict constraints on converting ecological lands.

  - Farmland Protection Scenario: Prioritizes the protection of agricultural land [7].

- Future Risk Assessment: Calculate the LERI for each simulated future LULC map. Compare the area and spatial distribution of risk zones across scenarios to inform planning [7].
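PLUS allocates land-use change spatially, but it first needs the total area of each class in the target year. A common way to obtain that demand, and the one implied by the "Markov-PLUS" pairing used in some studies, is a Markov chain built from two historical maps. In the sketch below one projection step equals the historical interval (e.g., 2010-2020 projects to 2030); the maps and areas are hypothetical, and `restrict_conversion` is a hypothetical illustration of how an Ecological Priority scenario might dampen conversion of ecological land, not the rule used in any cited study.

```python
def transition_matrix(before, after, classes):
    """Row-normalized transition probabilities from two co-registered LULC maps."""
    counts = {i: {j: 0 for j in classes} for i in classes}
    for a, b in zip(before, after):
        counts[a][b] += 1
    matrix = {}
    for i in classes:
        total = sum(counts[i].values())
        # A class absent at the start date is assumed to persist unchanged.
        matrix[i] = {j: counts[i][j] / total if total else float(i == j) for j in classes}
    return matrix


def restrict_conversion(matrix, protected, target, factor):
    """Hypothetical scenario rule: keep only `factor` of each protected class's
    conversion to `target`; the remainder stays in the original class."""
    adjusted = {i: dict(row) for i, row in matrix.items()}
    for i in protected:
        removed = adjusted[i][target] * (1 - factor)
        adjusted[i][target] -= removed
        adjusted[i][i] += removed
    return adjusted


def markov_project(areas, matrix, steps=1):
    """a_{t+1}[j] = sum_i a_t[i] * P[i][j]; `areas` must cover every class."""
    current = dict(areas)
    for _ in range(steps):
        current = {j: sum(current[i] * matrix[i][j] for i in matrix) for j in matrix}
    return current


if __name__ == "__main__":
    classes = ["forest", "cropland", "built_up"]
    # Hypothetical 20-cell maps for 2010 and 2020.
    lulc_2010 = ["forest"] * 10 + ["cropland"] * 10
    lulc_2020 = ["forest"] * 8 + ["built_up"] * 2 + ["cropland"] * 9 + ["built_up"]
    P = transition_matrix(lulc_2010, lulc_2020, classes)
    areas_2020 = {"forest": 80.0, "cropland": 90.0, "built_up": 30.0}  # km²
    natural = markov_project(areas_2020, P)  # one 10-year step → 2030
    ecological = markov_project(areas_2020, restrict_conversion(P, ["forest"], "built_up", 0.25))
    print(natural)
    print(ecological)
```

The projected areas are only quantities; computing LERI for each scenario still requires the spatially allocated PLUS output.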

Visualizing Assessment Pathways and Relationships

Landscape ERA Process from Stressor to Decision

Source-Pathway-Receptor (SPR) Risk Assessment Framework

The Scientist's Toolkit: Essential Research Reagents & Materials

Conducting landscape ecological risk assessments that integrate field validation requires specific data, software, and analytical tools.

Table 3: Essential Research Reagents & Materials for Landscape ERA

| Tool Category | Specific Item / Software | Primary Function in ERA | Key Consideration for Validation |
|---|---|---|---|
| Spatial Data Inputs | Landsat/Sentinel Satellite Imagery | Provides multi-temporal land use/cover data for calculating landscape pattern changes [7] [12]. | Ground truthing with field surveys is critical for validating classification accuracy. |
| | Digital Elevation Model (DEM), Soil Maps, Climate Data | Serves as inputs for ecosystem service models and analysis of risk-driving factors [5]. | Resolution and currency of datasets must match the study's spatial and temporal scale. |
| Analytical Software | GIS Software (ArcGIS, QGIS) | Platform for spatial analysis, map algebra, and visualizing LERI and ecosystem service results. | Essential for integrating disparate spatial data layers for exposure assessment. |
| | FRAGSTATS | Calculates a wide array of landscape pattern metrics (e.g., patch density, edge density) from LULC maps [12]. | Choice of metrics must be ecologically meaningful for the study area and stressors. |
| | R/Python with spatial packages | Used for statistical analysis, spatial autocorrelation (e.g., Moran's I), and running models such as Geodetector [7]. | Provides flexibility for custom analysis and linking spatial patterns to statistical drivers. |
| Assessment Models | InVEST or ARIES Model | Quantifies and maps the supply of ecosystem services (e.g., carbon storage, water purification) [15]. | Outputs provide the link between ecological risk and human well-being endpoints [16]. |
| | PLUS or CLUE-S Model | Simulates future land-use change scenarios based on driving factors and spatial policies [7]. | Scenario assumptions must be clearly defined and plausible for informing risk management. |
| Reference Guides | EPA Guidelines for Ecological Risk Assessment [11] | Provides the authoritative conceptual framework and process for conducting ERA. | Ensures assessment structure is scientifically defensible and meets regulatory standards. |
| | Generic Ecological Assessment Endpoints (GEAEs) [15] | Offers a standardized list of potential assessment endpoints, including ecosystem services. | Helps in selecting relevant and measurable endpoints during problem formulation [17]. |

The Role of Spatial Scales and Granularity in Risk Analysis

In landscape ecology and risk analysis, spatial scale—encompassing both extent (the overall area of study) and granularity (the resolution or cell size of data)—is not merely a technical detail but a fundamental determinant of assessment outcomes. The sensitivity of ecological risk patterns to scale means that the choice of spatial parameters can dramatically alter the perceived severity, distribution, and drivers of risk [18]. This comparison guide examines established and emerging methodologies for determining optimal spatial scales in landscape ecological risk assessment (LERA), framing the discussion within the critical need for validation with field data. For researchers and scientists, understanding these methodological nuances is essential for producing reliable, actionable risk models that can inform land-use planning, conservation, and ecological management [3] [19].

Comparative Analysis of Spatial Scale Methodologies in LERA

Different methodological frameworks offer distinct approaches to handling scale. The selection among them depends on research objectives, data availability, and the specific ecological context of the study area.

Table 1: Comparison of Methodologies for Spatial Scale Determination in Landscape Ecological Risk Assessment

| Methodology | Core Approach | Typical Application | Key Strength | Primary Scale Output | Representative Study & Year |
|---|---|---|---|---|---|
| Response Curves & Area Loss Model [18] | Empirical analysis of how landscape indices change with grain size; identifies the "inflection point" where information loss accelerates. | Watershed-scale assessment in heterogeneous landscapes. | Directly links scale choice to informational integrity of landscape pattern data. | Optimal Spatial Granularity (e.g., 30 m) | Luan River Basin, China (2024) [18] |
| Fixed Grid Unit (2-5x Avg. Patch) [19] | Divides the study area into a grid whose cell size is a multiple (2-5 times) of the historical average patch area. | Regional assessment in agricultural or fragmented landscapes. | Simple, replicable, and ties the assessment unit directly to inherent landscape structure. | Risk Assessment Unit Size (e.g., 3 km grid) | Jianghan Plain, China (2025) [19] |
| Semi-Variation Analysis [18] | Analyzes spatial autocorrelation and variance as a function of distance to identify characteristic spatial scales of processes. | Identifying the dominant spatial amplitude of ecological processes. | Objectively identifies the spatial scale at which processes are most consistent. | Optimal Spatial Amplitude (e.g., 3200 m) | Luan River Basin, China (2024) [18] |
| Multi-Scenario Simulation (PLUS Model) [3] [19] | Projects future land use and associated risk under different development scenarios (e.g., ecological priority, natural development). | Predictive risk assessment and planning support. | Evaluates how scale-dependent risk patterns may evolve under future land-use change. | Future Risk Patterns at Projected Scale | Harbin & Jianghan Plain, China (2025) [3] [19] |
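The semi-variation approach in the table rests on the empirical semivariogram. The sketch below computes it on a regular raster for lags measured in whole cells along rows and columns only (a simplification of an omnidirectional, distance-binned estimate). For LULC types, the raster would be an indicator grid (1 where the class is present, 0 elsewhere). `approximate_range` is a hypothetical shortcut that treats the largest observed semivariance as the sill, standing in for fitting a spherical or exponential model.

```python
def semivariogram(grid, max_lag):
    """Empirical semivariance gamma(h) = sum((z_a - z_b)^2) / (2 * N(h)) over
    cell pairs h cells apart along rows and columns (axis-aligned lags only)."""
    rows, cols = len(grid), len(grid[0])
    gamma = {}
    for h in range(1, max_lag + 1):
        squared, pairs = 0.0, 0
        for r in range(rows):
            for c in range(cols):
                if c + h < cols:  # horizontal pair
                    squared += (grid[r][c] - grid[r][c + h]) ** 2
                    pairs += 1
                if r + h < rows:  # vertical pair
                    squared += (grid[r][c] - grid[r + h][c]) ** 2
                    pairs += 1
        if pairs:  # lags longer than the grid have no pairs and are skipped
            gamma[h] = squared / (2 * pairs)
    return gamma


def approximate_range(gamma, fraction=0.95):
    """Hypothetical shortcut: first lag whose semivariance reaches `fraction`
    of the largest observed value, which stands in for the sill."""
    sill = max(gamma.values())
    for lag in sorted(gamma):
        if gamma[lag] >= fraction * sill:
            return lag
    return None


if __name__ == "__main__":
    print(semivariogram([[0, 1, 2, 3, 4]], max_lag=3))  # → {1: 0.5, 2: 2.0, 3: 4.5}
    lag = approximate_range({1: 0.2, 2: 0.6, 3: 0.97, 4: 1.0, 5: 0.99})
    print(lag, "cells, i.e.", lag * 30, "m at 30 m resolution")  # → 3 cells, i.e. 90 m at 30 m resolution
```

Multiplying the range in cells by the cell size converts it to a distance that can be compared with candidate spatial amplitudes.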

Experimental Protocols and Validation Frameworks

Protocol for Determining Optimal Scale via Response and Loss Models

The integrated protocol used in the Luan River Basin study [18] provides a replicable framework:

- Data Preparation: Acquire multi-temporal land use/cover (LULC) maps for the study period (e.g., 2000, 2008, 2016, 2022).

- Resampling Test: Resample the LULC data to a range of grain sizes (e.g., from 30m to 150m) using different algorithms (Nearest Neighbor, Bilinear, etc.). The study identified Nearest Neighbor as most appropriate for landscape pattern analysis [18].

- Landscape Index Calculation: For each grain size, calculate key landscape pattern indices (e.g., Fragmentation, Dominance, Connectivity) using software such as FRAGSTATS.

- Generate Response Curves: Plot the values of each landscape index against grain size. The optimal granularity is identified at the inflection point where the index value stabilizes or begins to degrade rapidly.

- Area Accuracy Loss Modeling: Quantify the area information lost through aggregation for each LULC type. Because this loss generally grows as the grain coarsens, the optimal grain is one at which the loss remains small, before it rises sharply.

- Semi-Variation Analysis: Calculate semi-variograms for LULC types to identify the range, the distance at which semivariance levels off at the sill and spatial autocorrelation effectively disappears. This range defines the optimal spatial amplitude for analysis [18].
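The resampling and area-loss steps can be illustrated with a pure-Python stand-in for GIS nearest-neighbour resampling (in GDAL, for example, `gdalwarp -r near`). The sketch assumes a categorical raster whose dimensions are exact multiples of the coarsening factor and uses hypothetical class codes; it shows why small patches, such as a single-cell pond, can vanish as grain coarsens. Repeating it for factors 2, 3, 4, and so on yields the loss curve whose sharp rise marks the limit of an acceptable grain.

```python
from collections import Counter


def nearest_neighbor_resample(grid, factor):
    """Coarsen a categorical raster by an integer factor, keeping the fine cell
    at (or, for even factors, just up-left of) each coarse cell's center."""
    rows, cols = len(grid), len(grid[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be multiples of factor")
    offset = (factor - 1) // 2
    return [[grid[r + offset][c + offset] for c in range(0, cols, factor)]
            for r in range(0, rows, factor)]


def area_loss(fine, coarse, factor):
    """Relative area change per class, |A_coarse - A_fine| / A_fine, in fine-cell units."""
    fine_counts = Counter(v for row in fine for v in row)
    coarse_counts = Counter(v for row in coarse for v in row)
    return {lulc: abs(coarse_counts[lulc] * factor ** 2 - n) / n
            for lulc, n in fine_counts.items()}


if __name__ == "__main__":
    # Hypothetical 30 m LULC raster: f = forest, w = small pond, c = cropland.
    lulc = [
        ["f", "f", "f", "f"],
        ["f", "w", "f", "f"],
        ["f", "f", "c", "c"],
        ["f", "f", "c", "c"],
    ]
    coarse = nearest_neighbor_resample(lulc, 2)  # 30 m → 60 m
    print(coarse)  # → [['f', 'f'], ['f', 'c']]
    print(area_loss(lulc, coarse, 2))
```

Nearest-neighbour resampling is used here because it preserves valid class codes; bilinear interpolation would blend category labels into meaningless values.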

Protocol for Multi-Scenario Predictive Risk Assessment

Studies in Harbin and the Jianghan Plain [3] [19] employed a forward-looking validation protocol:

- Historical Baseline Establishment: Assess landscape ecological risk (LER) spatiotemporally from past to present using an Improved Landscape Ecological Risk Index (ILERI).

- Driver Analysis: Use the GeoDetector model to quantify the explanatory power (q-statistic) of natural (DEM, precipitation) and anthropogenic (population density, GDP) factors on LER patterns.

- Scenario Definition: Define future development scenarios (e.g., Natural Development, Ecological Protection, Cropland Priority).

- Land Use Simulation: Employ the Markov-PLUS or PLUS model to simulate 2030 LULC under each scenario.

- Future Risk Projection & Validation: Calculate projected LER for 2030. The validity rests on the model's ability to replicate past changes and the plausibility of scenario-driven projections.
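Whether a land-use model can "replicate past changes" is usually checked by starting from an earlier map, simulating a year for which an observed map exists, and comparing the two. Overall accuracy, Cohen's kappa, and the figure of merit (FoM) are widely reported for this purpose; the sketch below computes all three for co-registered categorical maps flattened to lists. The maps are hypothetical, and the same accuracy and kappa check also applies to LULC classification against ground-truth points.

```python
from collections import Counter


def accuracy_and_kappa(observed, predicted):
    """Overall accuracy and Cohen's kappa for two co-registered categorical maps."""
    n = len(observed)
    p_observed = sum(o == p for o, p in zip(observed, predicted)) / n
    obs_counts, pred_counts = Counter(observed), Counter(predicted)
    # Chance agreement from the two maps' class proportions.
    p_chance = sum(obs_counts[k] * pred_counts[k] for k in obs_counts) / n ** 2
    if p_chance == 1.0:  # both maps are a single identical class
        return p_observed, 1.0
    return p_observed, (p_observed - p_chance) / (1 - p_chance)


def figure_of_merit(baseline, observed, predicted):
    """FoM = hits / (misses + hits + wrong hits + false alarms), judged on change
    from `baseline` to the observed and simulated maps of the target year."""
    hits = misses = wrong_hits = false_alarms = 0
    for b, o, p in zip(baseline, observed, predicted):
        changed, simulated_change = o != b, p != b
        if changed and simulated_change:
            if p == o:
                hits += 1
            else:
                wrong_hits += 1
        elif changed:
            misses += 1
        elif simulated_change:
            false_alarms += 1
    denominator = hits + misses + wrong_hits + false_alarms
    # FoM is undefined when neither map shows any change.
    return hits / denominator if denominator else float("nan")


if __name__ == "__main__":
    # Hypothetical 5-cell maps: 2010 baseline, observed 2020, simulated 2020.
    lulc_2010 = ["f", "f", "f", "c", "c"]
    observed_2020 = ["f", "b", "b", "c", "b"]
    simulated_2020 = ["b", "b", "c", "c", "c"]
    print(accuracy_and_kappa(observed_2020, simulated_2020))
    print(figure_of_merit(lulc_2010, observed_2020, simulated_2020))  # → 0.25
```

FoM is stricter than overall accuracy because it scores only cells that changed in either map, so persistent land cover cannot inflate it.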

Diagram 1: A 9-step workflow for optimal scale determination and risk assessment.

Quantitative Findings: The Impact of Scale on Risk Metrics

Empirical studies consistently show that scale choices directly influence quantitative risk indicators, changing the absolute values and spatial distribution of calculated risk.

Table 2: Impact of Spatial Scale on Quantitative Risk Assessment Metrics

| Study Region | Recommended Optimal Scale | Key Quantitative Finding at Optimal Scale | Spatial Autocorrelation (Moran's I) | Dominant Driving Factors Identified |
|---|---|---|---|---|
| Luan River Basin [18] | Granularity: 30 m; Amplitude: 3200 m | Overall ILERI decreased from 0.250 (2016) to 0.234 (2022). Medium-low/medium risk areas comprised 70.55% in 2022. | Not explicitly stated. | Interaction of precipitation, population density, and primary industry. |
| Harbin [3] | Assessment unit derived from land use patches. | Overall LER trended downward (2000-2020). Highest risk concentrated around water bodies. | 0.798, 0.828, 0.852 (increasing from 2000-2020). | DEM had the greatest individual explanatory power; interaction of DEM & precipitation was dominant. |
| Jianghan Plain [19] | Assessment Unit: 3 km x 3 km grid | LER showed an "increase then decrease" trend (2000-2020). Risk was high in the southeast, low in the central/north. | Significant spatial aggregation was detected. | NDVI was the first dominant single factor. |
| Cross-Vendor Climate Risk [20] | Asset-level (high-resolution) vs. Regional Aggregates | Extreme dispersion in hazard (e.g., flood depth) and damage estimates for identical assets across vendors due to methodological and scale differences. | Not applicable. | Data granularity, hazard model downscaling methods, and asset geocoding accuracy. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Tools for Scale-Explicit Risk Analysis

| Tool/Reagent Category | Specific Example/Product | Primary Function in Scale & Risk Analysis |
|---|---|---|
| Geospatial Data Platforms | Google Earth Engine, USGS EarthExplorer | Provides access to multi-temporal, multi-resolution satellite imagery (Landsat, Sentinel) for generating LULC data at different grains. |
| Landscape Pattern Analysis Software | FRAGSTATS | Computes a wide array of landscape metrics (patch, class, landscape level) which are sensitive to grain and extent changes [3] [19]. |
| Spatial Statistics & Modeling Suites | R (sp, raster, GD packages), GeoDa, ArcGIS with Geodetector | Performs spatial autocorrelation (Moran's I), semi-variogram analysis, and executes the GeoDetector model for factor analysis [18] [3]. |
| Land Use Change Simulation Models | PLUS Model, CLUE-S, CA-Markov | Projects future LULC under different scenarios; the PLUS model is noted for improved simulation of patch-level dynamics [3] [19]. |
| High-Resolution Asset & Hazard Data | Vendor-specific climate hazard layers (e.g., flood, fire), NatureAlpha biodiversity data | Enables asset-level risk analysis in financial contexts; highlights the critical role of location precision and granular hazard data [20] [21]. |

Diagram 2: Key factors driving variation in landscape ecological risk assessment outcomes.

The comparative analysis confirms that there is no universally "correct" scale for ecological risk assessment; the optimal scale is context-dependent [18]. However, best practices emerge:

- Explicit Scale Sensitivity Testing: Studies should not adopt an arbitrary scale. Protocols like response curve analysis should be used to justify grain and extent [18].

- Multi-Scale and Multi-Scenario Analysis: Employing a single scale is insufficient. Robust assessments should test the stability of conclusions across scales and project risks under multiple plausible futures [3] [19].

- Granular, Validated Data is Paramount: The principle that "location matters" is universal [21]. High-resolution, accurately geocoded data significantly reduces uncertainty, whether in ecological or financial risk modeling [20].

- Integration of Field Validation: The final, crucial step is grounding scale-optimized model outputs in field-collected data on ecosystem structure and function to validate the real-world ecological significance of the identified risk patterns. This bridges the gap between spatial pattern analysis and ecological process.

The validation of landscape ecological risk assessment (LERA) models with empirical field data represents a critical frontier in environmental science, bridging theoretical spatial analysis with on-the-ground ecological reality. This guide compares prevailing methodological frameworks applied across diverse fragile landscapes—from arid inland basins and urbanizing river systems to restored forest farms and global tipping point ecosystems. The comparative analysis focuses on their core algorithms, data requirements, validation protocols, and resultant risk insights, providing researchers with an evidence-based toolkit for selecting and applying these models. The synthesis is framed within the overarching thesis that robust risk assessment requires iterative dialogue between model prediction and field validation, where spatial patterns of risk are tested against measurable biotic, abiotic, and socio-ecological endpoints.

Comparative Analysis of Assessment Methodologies

Table 1: Methodological Comparison of Key Case Studies

| Case Study / Location | Core Assessment Model | Primary Data Inputs & Key Tools | Field Validation & Risk Indicators | Key Spatial Outcome / Risk Metric |

|---|---|---|---|---|

| Aksu River Basin (Arid Inland Basin) [22] [23] | Ecosystem Service Value (ESV) + Minimum Cumulative Resistance (MCR) | Land Use/Cover (LULC), biomass, socio-economic data; InVEST model, PLUS simulator [22] [23] | ESV change trends (1990-2018); correlation with landscape indices (AI, SHDI) [23] | Ecological Security Pattern (ESP): High/Medium/Low level source areas (1806.3, 3416.8, 4804.32 km²) [22] |

| Guiyang (Multi-Mountainous City) [7] | Landscape Ecological Risk Index (LERI) + Geodetector + PLUS Simulation | Multi-temporal remote sensing images, landscape pattern indices, socio-ecological drivers [7] | Spatial autocorrelation of LER; scenario simulation (Natural development, Farmland protection, Ecological priority) for 2030 [7] | Decreasing average LERI (0.0341 to 0.0304, 2000-2020); future risk expansion largest in Natural Development scenario [7] |

| Daling River Basin (Water Quality) [24] | Enhanced LSTM with Back Propagation (ELSTM-EBP) Model | Water quality monitoring data (7 stations), spatiotemporal weighted imputation, weighted Pearson feature selection [24] | Prediction accuracy vs. LSTM, GRU, BP models; correlation of TN with water temp (negative) & dissolved oxygen (positive) [24] | “U”-shaped annual TN fluctuation; ELSTM-EBP model outperforms others; 7-step prediction error within ±0.4 mg/L [24] |

| Engebei (Kubuqi Desert) [6] | Landscape Ecological Risk Assessment Model | Landsat imagery (SVM classification), landscape pattern indices (PD, LSI, SHDI, etc.), spatial grain analysis [6] | Moran's I spatial autocorrelation; validation via grain size effect analysis and area information loss evaluation [6] | Overall risk index slight decrease (0.1944 to 0.1940, 2005-2021); positive spatial correlation with Low-Low/High-High aggregation [6] |

| Velika Morava Basin (Ecological Sustainability) [25] | Modified ESE-HIPPO*River Basin Model | Ichthyological field surveys (2001-2021), HIPPO factor scoring, abiotic parameters [25] | Fish community structure (diversity, biomass, age) as indicator; ecological status aligned with Water Framework Directive [25] | 80% of basin deemed ecologically unsustainable; HIPPO impact outweighs Ecosystem Stability (ESE) [25] |

| Fuchunjiang River Basin (Suburban) [1] | LERI Model + Geodetector | Land use data (1990-2020), township-scale administrative data, GDP [1] | Influence of dominant factors (GDP, human interference) tested via factor detection; Environmental Kuznets Curve (EKC) validation [1] | Risk “high in NW, low in SE”; inverted “U” relationship between risk and GDP in 2020 [1] |

| Saihanba Mechanical Forest Farm [26] | Landscape Ecological Risk Index + Geographic Detector | Landsat imagery (SVM classification), NDVI, topographic & climatic factors, forest subcompartment data [26] | Risk drivers quantified via factor (q statistic) and interaction detection; spatial autocorrelation analysis [26] | High-risk area dropped from 72.3% (1987) to clustered points (2020); landscape type is strongest driver (q-value) [26] |

| Global Tipping Points (Amazon, AMOC, Coral Reefs) [27] | Tipping Point Risk Assessment | Climate models, observational data, carbon stock analysis, governance indicators [27] | Evidence of ongoing regime shifts (e.g., coral bleaching >80% reefs, AMOC weakening) [27] | Thresholds breached (e.g., coral reef central TP ~1.2°C); risk of irreversible systemic collapse [27] |

Table 2: Quantitative Performance of Predictive Models from Case Studies

| Model Name | Study Context | Key Performance Metric | Comparative Advantage / Validation Outcome |

|---|---|---|---|

| PLUS (Patch-generating Land Use Simulation) | Aksu River Basin ESV Simulation [23], Guiyang LER Simulation [7] | Simulated future LULC and ESV/LER spatial distribution under multiple scenarios. | Integrates driving factors (natural & human) to project landscape change; validated against historical LULC transitions. |

| ELSTM-EBP (Enhanced LSTM with Back Propagation) | Daling River Basin Water Quality Prediction [24] | Multi-step (7-step) prediction error range: -0.4 to 0.4 mg/L for Total Nitrogen (TN). | Outperformed QLSTM, LSTM, GRU-QIMAS, EQINN, and BP models in accuracy and generalization [24]. |

| ESE-HIPPO*River Basin Model | Velika Morava Basin Sustainability [25] | Sustainability score derived from difference between Ecological Stability (ESE) and cumulative HIPPO impact. | 80% unsustainable basin rating; uses field-validated biotic (fish) indicators and structured HIPPO factor scoring [25]. |

| Geographic Detector | Aksu [23], Guiyang [7], Fuchunjiang [1], Saihanba [26] | q-statistic (power of determinant) for driver quantification; interaction detector reveals factor interplay. | Identified dominant drivers: e.g., Human Activity (HAILS) in Aksu (q=0.332) [23], landscape type in Saihanba [26]. |

Detailed Experimental Protocols for Model Validation

3.1 Protocol for Landscape Pattern-Based Risk Assessment & Validation (Aksu, Engebei, Saihanba)

This protocol underpins studies in arid basins [22] [23], the Kubuqi Desert [6], and restored forests [26].

- Step 1: Data Acquisition & Land Use/Land Cover (LULC) Classification

- Acquire multi-temporal remote sensing imagery (e.g., Landsat series, Sentinel-2). Preprocess for radiometric and atmospheric correction.

- Perform LULC classification using machine learning algorithms (e.g., Support Vector Machine - SVM [6] [26]) based on established classification systems. Validate classification accuracy with field samples or high-resolution imagery (Kappa >0.75 acceptable [26]).

- Step 2: Landscape Pattern Index Calculation & Risk Model Construction

- Using FRAGSTATS or similar software, calculate a suite of landscape pattern indices (e.g., Patch Density - PD, Landscape Shape Index - LSI, Aggregation Index - AI, Shannon's Diversity Index - SHDI) for a systematic sampling grid or ecological risk units.

- Construct a Landscape Ecological Risk Index (LERI). A common formula combines, for each land use type, a disturbance index, a fragility (vulnerability) index, and a loss index:

$$\mathrm{LERI} = \sum_{i} S_i \, E_i \, F_i$$

where $S_i$ is the landscape disturbance index for land use type $i$, $E_i$ is the landscape fragility index, and $F_i$ is the landscape loss index [6] [1].

- Step 3: Spatial Analysis & Statistical Validation

- Perform spatial autocorrelation analysis (Global & Local Moran's I) to identify clustering patterns of high/low risk [6] [26].

- Validate risk patterns using field-data proxies:

- For desert/arid areas (Engebei, Aksu): Correlate LERI with field-measured soil erosion rates, vegetation canopy cover (with NDVI serving as a remote-sensing proxy), or groundwater salinity surveys.

- For forest farms (Saihanba): Correlate LERI with forest inventory data (e.g., tree species diversity, stand density, soil organic carbon from subcompartment surveys) [26].

- Use Geographic Detector to quantify driving forces (e.g., NDVI, temperature, human activity intensity) and test their interactive effects on LERI [1] [23] [26].
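The two statistics in Step 3 can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the cited studies. It assumes the LERI values sit on a regular grid and uses binary rook (4-neighbour) contiguity for Moran's I; queen or distance-based weights are equally common choices. It also expects each driver to be discretized into strata before the q statistic is computed. The grid and strata below are hypothetical.

```python
import numpy as np


def global_morans_i(grid) -> float:
    """Global Moran's I for a 2-D grid of risk values.

    Uses binary rook (4-neighbour) contiguity weights: w_ij = 1 for
    horizontally or vertically adjacent cells, 0 otherwise.
    """
    grid = np.asarray(grid, dtype=float)
    z = grid - grid.mean()
    # Sum of z_i * z_j over unordered adjacent pairs; it is doubled below
    # because the symmetric weight matrix counts every pair twice.
    pair_sum = np.sum(z[:, :-1] * z[:, 1:]) + np.sum(z[:-1, :] * z[1:, :])
    rows, cols = grid.shape
    s0 = 2.0 * (rows * (cols - 1) + (rows - 1) * cols)  # sum of all weights
    return float((grid.size / s0) * (2.0 * pair_sum) / np.sum(z ** 2))


def geodetector_q(y, strata) -> float:
    """Factor-detector q statistic: q = 1 - sum_h(N_h * var_h) / (N * var).

    `strata` must already be discrete (e.g., a driver binned into classes);
    variances are population variances (ddof=0).
    """
    y = np.asarray(y, dtype=float).ravel()
    strata = np.asarray(strata).ravel()
    within = sum(np.sum(strata == h) * y[strata == h].var() for h in np.unique(strata))
    return float(1.0 - within / (y.size * y.var()))


# Hypothetical 4 x 4 LERI grid with a smooth west-east gradient.
leri = np.array([[0.10, 0.12, 0.20, 0.25],
                 [0.11, 0.14, 0.22, 0.27],
                 [0.12, 0.15, 0.24, 0.30],
                 [0.13, 0.16, 0.26, 0.31]])
land_type = np.array([[1, 1, 2, 2]] * 4)          # hypothetical landscape-type strata
print(round(global_morans_i(leri), 3))            # positive: similar values cluster
print(round(geodetector_q(leri, land_type), 3))   # share of LERI variance explained
```

Moran's I near +1 indicates clustering of similar risk values and near -1 a checkerboard pattern. q ranges from 0 (the strata explain none of the LERI variance) to 1 (they explain all of it). Significance testing is omitted from this sketch.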

3.2 Protocol for Machine Learning-Based Water Quality Prediction (Daling River Basin)

This detailed protocol is derived from the Daling River Basin study [24].

- Step 1: Data Preprocessing & Imputation

- Collect multi-source time-series data from monitoring stations: target variable (e.g., Total Nitrogen - TN) and driving variables (water temperature, dissolved oxygen, pH, turbidity, permanganate index).

- Address missing data using a Spatiotemporal Weighted Imputation Method. This involves constructing a weighted objective function that considers temporal similarity, spatial similarity, and spatiotemporal coupling to estimate missing values, improving upon simple linear interpolation [24].

- Step 2: Feature Selection

- Implement a Weighted Pearson Correlation Coefficient method for feature selection. Introduce a standard deviation-weighted coefficient to optimize correlation analysis between driving factors and TN, enhancing model efficiency and prediction accuracy [24].

- Step 3: Model Construction, Training & Validation

- Develop the ELSTM-EBP model:

- Decompose the preprocessed TN time series into subsequences with different frequencies.

- The Enhanced LSTM (ELSTM) component incorporates a multi-head attention mechanism and adaptive cell state updates to capture complex temporal dependencies.

- The Error Back-Propagation (EBP) network further corrects prediction errors, with an adaptive learning rate optimizing the process [24].

- Divide data into training, validation, and test sets (e.g., 70%-15%-15%).

- Train the model and compare its performance against benchmarks (e.g., standard LSTM, GRU, BP) using metrics like Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and Nash-Sutcliffe Efficiency (NSE).

- Validate by conducting multi-step-ahead forecasts (e.g., up to 7 steps) and comparing predictions with actual, unseen monitoring data [24].

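The data split and evaluation metrics named above can be sketched as follows. These are illustrative helpers, not the Daling River Basin code. They assume the 70%-15%-15% split is made chronologically (no shuffling), which keeps the test block strictly later in time than the training data. The `within_band` helper is a hypothetical check that corresponds to the ±0.4 mg/L TN error range reported for the 7-step forecasts [24].

```python
import numpy as np


def chronological_split(series, train=0.70, val=0.15):
    """Split a time-ordered series into train/validation/test blocks.

    No shuffling: the test block is always the most recent data, so forecasts
    are evaluated on observations the model has not seen.
    """
    series = np.asarray(series)
    n_train = int(round(len(series) * train))
    n_val = int(round(len(series) * val))
    return (series[:n_train],
            series[n_train:n_train + n_val],
            series[n_train + n_val:])


def rmse(obs, pred) -> float:
    """Root Mean Square Error."""
    obs, pred = np.asarray(obs, float), np.asarray(pred, float)
    return float(np.sqrt(np.mean((obs - pred) ** 2)))


def mae(obs, pred) -> float:
    """Mean Absolute Error."""
    obs, pred = np.asarray(obs, float), np.asarray(pred, float)
    return float(np.mean(np.abs(obs - pred)))


def nse(obs, pred) -> float:
    """Nash-Sutcliffe Efficiency: 1 is a perfect fit, 0 is no better than
    predicting the observed mean, negative is worse than the mean."""
    obs, pred = np.asarray(obs, float), np.asarray(pred, float)
    return float(1.0 - np.sum((obs - pred) ** 2) / np.sum((obs - obs.mean()) ** 2))


def within_band(obs, pred, band=0.4) -> bool:
    """True if every prediction error lies within +/- band (e.g., mg/L of TN)."""
    errors = np.asarray(pred, float) - np.asarray(obs, float)
    return bool(np.all(np.abs(errors) <= band))
```

The same four functions can score the benchmark models (LSTM, GRU, BP) on the identical test block, so the comparison is not affected by different splits.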

3.3 Protocol for Biotic Indicator-Based Sustainability Assessment (Velika Morava Basin)

This protocol outlines the ESE-HIPPO model [25].

- Step 1: Field Sampling of Biotic Endpoint (Fish Community)

- Conduct standardized ichthyological surveys across the river network (main stem and tributaries) using methods like electrofishing at representative reaches.

- Record species identification, abundance, biomass, and individual body length/age for key species.

- Step 2: Calculate Ecological Stability of the Ecosystem (ESE) Index

- For each sampling site, score predefined indicators (e.g., trend in species diversity, trend in biomass of autochthonous species, age structure of dominant predators) on a three-point scale (1, 3, 5) representing poor, moderate, and good condition [25].

- Aggregate scores to compute a composite ESE value for the site.

- Step 3: Assess Cumulative Stressor Impact (HIPPO Factors)

- For the same sites, score the intensity of five HIPPO factors (Habitat alteration, Invasive species, Pollution, Population growth, Overexploitation) on a scale (e.g., 0-3: None, Low, Medium, High), based on field observations, water quality data, and socio-economic statistics [25].

- Calculate a cumulative HIPPO impact score.

- Step 4: Model Integration and Validation

- Compute the ecological sustainability score as the difference between the ESE value and the HIPPO impact score.

- Validate the model output by correlating sustainability scores with independent measures of ecological status (e.g., ecological quality ratios from benthic macroinvertebrates or diatom assessments under the EU Water Framework Directive) [25].

- Use a Self-Organizing Map (SOM) to visualize the complex relationships between HIPPO stressors and fish community (ESE) responses [25].
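Steps 2 to 4 reduce to simple arithmetic once the field scores are in hand. The sketch below is hypothetical. The source specifies the scoring scales and states that sustainability is the difference between ESE and cumulative HIPPO impact, but it does not say how indicator scores are aggregated, so unweighted sums are assumed for both. Spearman's rank correlation is shown as one plausible way to correlate site sustainability scores with independent ecological quality ratios during validation; the factor key names are illustrative.

```python
import numpy as np

ESE_LEVELS = {1, 3, 5}        # poor, moderate, good (three-point scale)
HIPPO_LEVELS = {0, 1, 2, 3}   # none, low, medium, high
HIPPO_FACTORS = ("habitat_alteration", "invasive_species", "pollution",
                 "population_growth", "overexploitation")


def ese_score(indicator_scores) -> int:
    """Composite ESE for one site. ASSUMPTION: simple sum of indicator scores."""
    if any(s not in ESE_LEVELS for s in indicator_scores):
        raise ValueError("ESE indicators must be scored 1, 3 or 5")
    return sum(indicator_scores)


def hippo_score(factor_scores: dict) -> int:
    """Cumulative HIPPO impact. ASSUMPTION: unweighted sum of the five factors."""
    if set(factor_scores) != set(HIPPO_FACTORS):
        raise ValueError(f"expected exactly these factors: {HIPPO_FACTORS}")
    if any(v not in HIPPO_LEVELS for v in factor_scores.values()):
        raise ValueError("HIPPO factors must be scored 0-3")
    return sum(factor_scores.values())


def sustainability(ese: float, hippo: float) -> float:
    """Ecological sustainability = ESE - cumulative HIPPO impact."""
    return ese - hippo


def average_ranks(x) -> np.ndarray:
    """1-based ranks; tied values receive the average of their ranks."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(x.size)
    i = 0
    while i < x.size:
        j = i
        while j + 1 < x.size and x[order[j + 1]] == x[order[i]]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def spearman_rho(a, b) -> float:
    """Spearman rank correlation (Pearson correlation of average ranks)."""
    return float(np.corrcoef(average_ranks(a), average_ranks(b))[0, 1])
```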

Workflow and Pathway Visualizations

4.1 Generalized Workflow for Landscape Ecological Risk Assessment & Validation

Diagram Title: Generalized LERA Workflow with Field Validation Loop

4.2 Decision Pathway for Selecting an Assessment Methodology

Diagram Title: Decision Pathway for Selecting LERA Methodology

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents, Models, and Tools for LERA

| Tool/Reagent Category | Specific Example | Primary Function in Assessment | Application Context (Example) |

|---|---|---|---|

| Remote Sensing & Classification Tools | Landsat/Sentinel-2 Imagery; SVM Classifier in ENVI/ArcGIS | Provides multi-temporal LULC data, the foundational spatial dataset for pattern analysis. | Used in virtually all spatial studies [22] [6] [1]. |

| Landscape Pattern Analysis Software | FRAGSTATS | Calculates a wide array of landscape pattern indices (PD, LSI, SHDI, etc.) from LULC maps. | Core for LERI construction in Engebei [6], Saihanba [26], Fuchunjiang [1]. |

| Ecosystem Service Modeling Suite | InVEST (Integrated Valuation of Ecosystem Services) | Models and maps multiple ecosystem services (water yield, carbon storage, habitat quality) based on LULC and biophysical data. | Used to inform ESV calculations and identify ecological sources in Aksu Basin [22]. |

| Spatial Statistical Analysis Package | GeoDa; R with spdep/gd packages | Performs spatial autocorrelation (Moran's I) and geographical detector (q-statistic) analysis to identify risk clusters and drivers. | Key for driver detection in Guiyang [7], Saihanba [26], Fuchunjiang [1]. |

| Machine Learning Framework | Python (TensorFlow/PyTorch) or MATLAB | Enables building and training custom predictive models like ELSTM-EBP for time-series forecasting of ecological parameters. | Core platform for the Daling River Basin water quality prediction model [24]. |

| Land Use Change Simulator | PLUS (Patch-generating Land Use Simulation) Model | Simulates future LULC scenarios by integrating the impacts of multiple drivers, providing input for future risk projections. | Used to simulate 2030 ESV in Aksu [23] and LER in Guiyang under different scenarios [7]. |

| Biotic Field Survey Protocol | Standardized Electrofishing Kit; WFD-compliant sampling protocols | Provides validated, quantitative field data on biotic endpoints (fish community structure) for direct ecological validation of risk. | Foundation of the ESE-HIPPO model in Velika Morava [25]. |

| Global Tipping Point Data | CMIP6 Climate Models; Satellite-derived sea surface temp/forest loss alerts | Provides large-scale, long-term data on climate and Earth system variables to assess proximity to global ecological thresholds. | Underpins risk assessments for Amazon, AMOC, and coral reefs [27]. |

Advanced Methodologies for Landscape Ecological Risk Assessment and Practical Applications

Landscape Pattern Index Methods and Ecological Risk Model Construction

The evolution of landscape ecological risk assessment has progressed from static, pattern-based evaluations to dynamic, multi-process models that integrate ecological functions and future scenarios. The following table summarizes the core characteristics, strengths, and validation contexts of five prominent methodological frameworks identified in current research.

Table 1: Comparison of Landscape Ecological Risk Assessment Methodologies

| Methodology / Core Model | Primary Function & Approach | Key Landscape Indices & Drivers Analyzed | Strengths & Innovations | Application Context & Validation Basis |

|---|---|---|---|---|

| Optimal Scale Deterministic Model [28] [29] | Identifies the most appropriate spatial grain and extent for analysis to minimize scale effect biases. | Fragmentation, Aggregation, Spatial Heterogeneity. Analyzes response curves of indices to grain size. | Enhances precision and comparability of spatial analysis; foundational for reliable pattern quantification. | Used in Bosten Lake [28] and Yellow River Basins [29]. Validated via semi-variance function and coefficient of variation. |

| Landscape Ecological Risk Index (ERI) Model [30] [3] | A classic additive model that integrates landscape pattern indices with vulnerability. | Fragmentation (Aᵢ), Separation (Bᵢ), Dominance (Cᵢ), combined into a structure index (Sᵢ). | Simple, interpretable, widely applicable for spatiotemporal risk trend analysis. | Applied in Harbin [3] and Yellow River Basin [30]. Validated through spatial autocorrelation (Moran’s I) and trend analysis against land use change. |

| Multi-Scale Driving Force Analysis (GeoDetector) [31] [3] [32] | Quantifies the explanatory power of drivers and their interactions on LER spatial heterogeneity. | Natural (elevation, climate) and Anthropogenic (population, GDP, distance to roads) factors. | Reveals scale-dependent driver roles; identifies interaction effects that intensify risk. | Applied in Yunnan plateau lakes [31], Harbin [3], and Changshagongma Wetland [32]. Validation relies on factor detector (q-statistic) and interaction detector results. |

| Multi-Scenario Simulation Model (e.g., PLUS) [3] [33] | Projects future LER under different land use scenarios (e.g., natural development, ecological priority). | Simulated future land use patterns serve as input for ERI calculations. | Supports proactive planning and policy testing; reveals consequences of development pathways. | Demonstrated in Harbin [3] and Jinpu New Area [33]. Validated by model accuracy (FoM, Kappa) and spatial conflict analysis with carbon stock [33]. |

| Ecosystem Service-Optimized LER Model [34] | Integrates ecosystem service valuations to objectively weight landscape vulnerability in the ERI model. | Ecosystem services (e.g., water yield, soil retention, NPP) replace subjective vulnerability scores. | Reduces subjectivity; strengthens ecological connotation of risk; links risk to functional loss. | Implemented in the Luo River watershed [34]. Validated via spatial correlation with ecosystem resilience and statistical fit. |

Experimental Protocols for Key Methodological Frameworks

Protocol 1: Determining the Optimal Spatial Scale for Analysis

This protocol is critical for ensuring the robustness of all subsequent landscape pattern analyses [28] [29].

- Data Preparation: Acquire high-resolution Land Use/Land Cover (LULC) data for the study area (e.g., 30m resolution).

- Grain Size Analysis:

- Re-sample the LULC data to a series of progressively coarser grain sizes (e.g., 30m, 60m, 90m, 120m, 150m).

- Calculate key landscape pattern indices (e.g., Patch Density, Largest Patch Index) for each grain size.

- Plot the index values against grain size to generate response curves. The optimal grain size is identified at the point where indices begin to stabilize or show an inflection point (e.g., 150m in the Bosten Lake Basin) [28].

- Analysis Extent Determination:

- Using the optimal grain size, overlay the study area with grids of different extents (e.g., 2km, 4km, 6km, 8km, 10km squares).

- Calculate the semi-variance for a key index within each grid size.

- The optimal analysis extent is where the semi-variance first reaches a plateau (e.g., 10km in Bosten Lake [28] or 90km in the Yellow River Basin [29]), indicating the scale capturing maximum spatial heterogeneity.
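The grain and extent analyses can be prototyped with NumPy alone. This sketch is not the procedure of [28] or [29], and it rests on four assumptions. Coarsening uses majority (modal) resampling. Patches are 4-connected regions. Patch density follows the FRAGSTATS unit of patches per 100 ha. The semivariance is the classical empirical estimator, pooled over row and column pairs at a given cell lag. The 30 m input map is random and purely illustrative.

```python
import numpy as np
from collections import deque


def coarsen_majority(lulc: np.ndarray, factor: int) -> np.ndarray:
    """Resample a categorical raster to a coarser grain using the modal class
    of each factor x factor block (ties go to the smallest class code;
    trailing rows/columns that do not fill a block are dropped)."""
    r = (lulc.shape[0] // factor) * factor
    c = (lulc.shape[1] // factor) * factor
    blocks = (lulc[:r, :c]
              .reshape(r // factor, factor, c // factor, factor)
              .swapaxes(1, 2)
              .reshape(r // factor, c // factor, factor * factor))
    out = np.empty(blocks.shape[:2], dtype=lulc.dtype)
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            classes, counts = np.unique(blocks[i, j], return_counts=True)
            out[i, j] = classes[np.argmax(counts)]
    return out


def count_patches(lulc: np.ndarray) -> int:
    """Number of patches, i.e. 4-connected regions of identical class."""
    rows, cols = lulc.shape
    seen = np.zeros(lulc.shape, dtype=bool)
    n = 0
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0, c0]:
                continue
            n += 1  # new patch: flood-fill every connected cell of this class
            seen[r0, c0] = True
            queue = deque([(r0, c0)])
            while queue:
                r, c = queue.popleft()
                for rr, cc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= rr < rows and 0 <= cc < cols and not seen[rr, cc]
                            and lulc[rr, cc] == lulc[r0, c0]):
                        seen[rr, cc] = True
                        queue.append((rr, cc))
    return n


def patch_density(lulc: np.ndarray, cell_size_m: float) -> float:
    """Patch Density in patches per 100 ha (the FRAGSTATS convention)."""
    area_ha = lulc.size * cell_size_m ** 2 / 10_000
    return count_patches(lulc) / area_ha * 100


def semivariance(values: np.ndarray, lag: int) -> float:
    """Empirical semivariance gamma(h) = sum (z_i - z_{i+h})^2 / (2 N(h)),
    pooling row-wise and column-wise pairs at an integer cell lag h >= 1."""
    dx = values[:, lag:] - values[:, :-lag]
    dy = values[lag:, :] - values[:-lag, :]
    sq = np.concatenate([dx.ravel() ** 2, dy.ravel() ** 2])
    return float(sq.sum() / (2 * sq.size))


# Grain-size response curve for a hypothetical 30 m map: PD at 30, 60, ..., 150 m.
rng = np.random.default_rng(0)
lulc30 = rng.integers(1, 4, size=(60, 60))
curve = {30 * f: patch_density(coarsen_majority(lulc30, f), 30 * f) for f in range(1, 6)}
```

Plotting `curve` against grain size gives the response curve used to choose the grain. For the extent, a landscape index mapped on each candidate grid can be passed to `semivariance` at increasing lags to see where the values level off.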

Protocol 2: Constructing and Applying the Landscape Ecological Risk Index (ERI)

This is the core computational procedure for quantifying relative ecological risk [30] [3].

- Landscape Classification & Gridding: Classify LULC data into landscape types (e.g., forest, grassland, cropland, urban, water, bare land). Overlay the study area with a grid of the determined optimal extent.

- Calculate Landscape Pattern Indices per Grid Cell:

- Fragmentation (Aᵢ):

$$A_i = \frac{N_i}{A_{ki}}$$

where $N_i$ is the number of patches of landscape type $i$ and $A_{ki}$ is its total area in grid $k$.

- Separation (Bᵢ):

$$B_i = \frac{D_i}{P_i}, \qquad D_i = \frac{1}{2}\sqrt{\frac{N_i}{A_k}}, \qquad P_i = \frac{A_{ki}}{A_k}$$

where $D_i$ is the distance index, $P_i$ is the area index (the area proportion of type $i$), and $A_k$ is the total area of grid $k$.

- Dominance (Cᵢ):

$$C_i = \frac{Q_i + M_i}{4} + \frac{P_i}{2}$$

where $Q_i$ is the patch frequency (the share of sample units in which type $i$ occurs), $M_i$ is the patch density (the share of all patches that belong to type $i$), and $P_i$ is the area proportion defined above.

- Compute Composite Landscape Structure Index (Sᵢ):

$$S_i = 0.5\,A_i + 0.3\,B_i + 0.2\,C_i$$

The weights can be adjusted based on expert judgment [30].

- Assign Landscape Vulnerability Index (Fᵢ): Traditionally based on expert scores (e.g., 1 for urban, 6 for water) [30]. The optimized model instead uses an inverse normalized composite of key ecosystem services (water yield, soil retention, carbon storage) [34].

- Calculate the Ecological Risk Index (ERI) per Grid Cell:

$$\mathrm{ERI}_k = \sum_{i=1}^{m} \frac{A_{ki}}{A_k}\, F_i\, S_i$$

where the sum runs over all $m$ landscape types in grid $k$, $A_{ki}$ is the area of type $i$ in grid $k$, and $A_k$ is the total area of grid $k$ [30].

- Risk Level Classification: Use natural breaks or quantile methods to classify final ERI values into levels (e.g., Lowest, Lower, Medium, Higher, Highest).
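The ERI calculation can be sketched as follows. This is an illustrative implementation under stated assumptions, not the code of [30]:

- The three structure indices are computed once for the whole map, with the risk units serving as sample units for the patch frequency Qᵢ, rather than separately inside each grid cell.
- Fragmentation is Aᵢ = Nᵢ / (area of i), separation is Bᵢ = ½√(Nᵢ / total area) / Pᵢ, and dominance is Cᵢ = (Qᵢ + Mᵢ)/4 + Pᵢ/2, where Pᵢ is the area proportion.
- The indices are not rescaled before weighting.
- The class codes and vulnerability scores are hypothetical.

```python
import numpy as np
from collections import deque


def patch_counts(lulc: np.ndarray) -> dict:
    """Number of 4-connected patches for each class code in the raster."""
    rows, cols = lulc.shape
    seen = np.zeros(lulc.shape, dtype=bool)
    counts = {}
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0, c0]:
                continue
            cls = lulc[r0, c0].item()
            counts[cls] = counts.get(cls, 0) + 1
            seen[r0, c0] = True
            queue = deque([(r0, c0)])
            while queue:
                r, c = queue.popleft()
                for rr, cc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= rr < rows and 0 <= cc < cols and not seen[rr, cc]
                            and lulc[rr, cc] == cls):
                        seen[rr, cc] = True
                        queue.append((rr, cc))
    return counts


def structure_indices(lulc: np.ndarray, cell: int, pixel_area: float = 1.0) -> dict:
    """Landscape-level S_i = 0.5*A_i + 0.3*B_i + 0.2*C_i for every class.

    ASSUMPTION: indices are computed once for the whole map (dimensions must
    be multiples of `cell`), with the risk units as sample units for Q_i.
    """
    n_patch = patch_counts(lulc)
    total_patches = sum(n_patch.values())
    total_area = lulc.size * pixel_area
    rows, cols = lulc.shape
    units = (lulc.reshape(rows // cell, cell, cols // cell, cell)
             .swapaxes(1, 2).reshape(-1, cell * cell))
    s = {}
    for cls, n_i in n_patch.items():
        area_i = np.count_nonzero(lulc == cls) * pixel_area
        p_i = area_i / total_area                          # area proportion P_i
        frag = n_i / area_i                                # A_i
        sep = 0.5 * np.sqrt(n_i / total_area) / p_i        # B_i = D_i / P_i
        q_i = np.mean(np.any(units == cls, axis=1))        # patch frequency Q_i
        m_i = n_i / total_patches                          # patch share M_i
        dom = (q_i + m_i) / 4 + p_i / 2                    # C_i
        s[cls] = 0.5 * frag + 0.3 * sep + 0.2 * dom
    return s


def eri_grid(lulc: np.ndarray, cell: int, vulnerability: dict,
             pixel_area: float = 1.0) -> np.ndarray:
    """ERI_k = sum_i (A_ki / A_k) * F_i * S_i for each cell x cell risk unit."""
    rows = (lulc.shape[0] // cell) * cell
    cols = (lulc.shape[1] // cell) * cell
    lulc = lulc[:rows, :cols]                 # drop incomplete edge units
    s = structure_indices(lulc, cell, pixel_area)
    eri = np.zeros((rows // cell, cols // cell))
    for kr in range(eri.shape[0]):
        for kc in range(eri.shape[1]):
            unit = lulc[kr * cell:(kr + 1) * cell, kc * cell:(kc + 1) * cell]
            for cls, s_i in s.items():
                eri[kr, kc] += np.mean(unit == cls) * vulnerability[cls] * s_i
    return eri
```

An ecosystem-service-based Fᵢ [34] would simply replace the expert scores passed in `vulnerability`. The returned grid can then be classified with natural breaks or quantiles as described above.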

Protocol 3: Multi-Scenario Simulation of Future LER Using the PLUS Model

This protocol projects future risk to inform strategic planning [3] [33].

- Historical Land Use Change Analysis: Analyze transitions between land use types from historical data (e.g., 2000, 2010, 2020) to identify change trajectories.

- Driver Variable Preparation: Prepare raster data for natural (slope, elevation, precipitation) and socioeconomic (GDP, population, distance to roads/water) drivers.

- Land Expansion Analysis Strategy (LEAS): Use the PLUS model's LEAS module to analyze the contributions of each driver to the expansion of each land use type during the historical period via random forest mining.

- Scenario Definition & Simulation:

- Define scenario rules (e.g., Ecological Priority: restrict urban expansion, enhance forest/grassland conversion probability; Urban Development: prioritize economic growth) [3].

- Using a Multi-type Random Seed Cellular Automata (CARS) model, simulate future land use maps under each scenario (e.g., for 2030).

- Future LER Assessment: Apply the ERI model (Protocol 2) to the simulated future land use maps to assess and compare risk outcomes under different scenarios.
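The historical transition analysis in the first step, together with a simple projection of future class areas, can be sketched as below. This does not reproduce PLUS: its LEAS random-forest module and CARS allocation are not implemented. The Markov-chain projection is an assumed, commonly used way of estimating future land-use demand, and the maps are hypothetical.

```python
import numpy as np


def transition_matrix(lulc_t0, lulc_t1, classes, pixel_area: float = 1.0) -> np.ndarray:
    """Area transition matrix: entry [a, b] is the area that was classes[a]
    at t0 and classes[b] at t1 (both maps must share the same grid)."""
    index = {c: i for i, c in enumerate(classes)}
    t0 = np.array([index[v] for v in np.ravel(lulc_t0).tolist()])
    t1 = np.array([index[v] for v in np.ravel(lulc_t1).tolist()])
    m = np.zeros((len(classes), len(classes)))
    np.add.at(m, (t0, t1), pixel_area)   # accumulate area for each from->to pair
    return m


def transition_probabilities(m: np.ndarray) -> np.ndarray:
    """Row-normalise areas to transition probabilities (empty rows stay 0)."""
    totals = m.sum(axis=1, keepdims=True)
    return np.divide(m, totals, out=np.zeros_like(m), where=totals > 0)


def markov_project(areas, p: np.ndarray, steps: int = 1) -> np.ndarray:
    """Project class areas forward, ASSUMING the historical transition
    probabilities stay constant at every step."""
    areas = np.asarray(areas, dtype=float)
    for _ in range(steps):
        areas = areas @ p
    return areas
```

Scenario rules such as Ecological Priority would then be expressed by adjusting the probabilities (e.g., lowering conversion into urban land) before projecting.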

Protocol 4: Spatial Conflict Analysis Between LER and Ecosystem Services

This advanced protocol identifies areas of competing ecological objectives [33].

- Parallel Calculation: Independently calculate the LER (via Protocol 2) and a key ecosystem service (e.g., carbon stock using the InVEST model) for the same region and period.

- Data Classification: Reclassify both the LER and ecosystem service rasters into equivalent levels (e.g., Low, Medium, High).

- Spatial Overlay and Conflict Identification: Overlay the two classified rasters. Define "spatial conflict areas" as zones where high ecological risk coincides with high ecosystem service value (e.g., high carbon stock) [33]. These areas represent critical tension zones where development pressure threatens significant ecological function.

- Scenario Evaluation: Repeat the analysis using future simulated land use maps (from Protocol 3) to evaluate how different development pathways alter the spatial conflict pattern.
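The reclassification and overlay steps can be sketched as follows. Tercile breaks are an assumption made here for simplicity; the source only requires equivalent Low/Medium/High levels, and natural breaks would work the same way. Both rasters are assumed to share the same grid and to contain no missing values.

```python
import numpy as np


def tercile_levels(values) -> np.ndarray:
    """Reclassify a raster into 0 = Low, 1 = Medium, 2 = High using its own
    33.3rd and 66.7th percentiles (ASSUMPTION: terciles; NaN not handled)."""
    values = np.asarray(values, dtype=float)
    lo, hi = np.percentile(values, [100 / 3, 200 / 3])
    return np.digitize(values, [lo, hi])


def conflict_mask(ler, service) -> np.ndarray:
    """True where High ecological risk coincides with High service value."""
    return (tercile_levels(ler) == 2) & (tercile_levels(service) == 2)


def level_crosstab(ler_levels, service_levels, n_levels: int = 3) -> np.ndarray:
    """Cell counts for every (risk level, service level) combination."""
    table = np.zeros((n_levels, n_levels), dtype=int)
    np.add.at(table, (np.ravel(ler_levels), np.ravel(service_levels)), 1)
    return table
```

Running `conflict_mask` on the current maps and again on each simulated scenario gives directly comparable conflict areas for the scenario evaluation step.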

Workflow and Analytical Pathway Visualizations

Landscape Ecological Risk Assessment General Workflow

Landscape Ecological Risk Index (ERI) Model Components

Research Reagent Solutions: Essential Data and Toolkits

Table 2: Essential Research Reagents and Computational Tools for LER Assessment

| Category | Item / Tool | Primary Function in LER Research | Key Source / Platform Examples |

|---|---|---|---|

| Core Data | Land Use/Land Cover (LULC) Data | The fundamental input for calculating landscape patterns and tracking change. | National Geographic Info Resources [30], GLOBELAND30, ESA WorldCover, USGS Landsat. |

| | Socioeconomic & Driving Factor Data | Used to explain risk patterns (GeoDetector) and simulate future scenarios (PLUS). | Resource & Environmental Science Data Center [30], WorldPop (population), OpenStreetMap (roads). |

| | Terrain & Climate Data | Key natural drivers of landscape pattern and ecological vulnerability. | Digital Elevation Models (DEM), WorldClim, national meteorological agencies. |

| Software & Platforms | Geographic Information System (GIS) | The primary platform for spatial data management, grid analysis, overlay, and cartography. | ArcGIS, QGIS (open source). |

| | Remote Sensing & Cloud Platform | For acquiring, preprocessing, and classifying LULC data, especially over large areas. | Google Earth Engine (GEE) [32] [33], ENVI. |

| | Statistical Analysis Software | For performing spatial statistics (Moran's I), running GeoDetector, and general data analysis. | R (with spdep, GD packages), Python (with geodetector lib), SPSS. |

| Specialized Models | Landscape Pattern Analysis Tools | Calculate fragmentation, aggregation, and diversity indices from LULC rasters. | FRAGSTATS, landscapemetrics (R package). |

| | Land Use Change Simulation Model | Projects future land use under different scenarios for proactive risk assessment. | PLUS Model [3], FLUS, CA-Markov. |

| | Ecosystem Service Assessment Model | Quantifies services (carbon, water, soil) for optimizing LER models and conflict analysis. | InVEST [33], SoLVES. |

Integrating Remote Sensing and GIS Technologies for Data Collection and Analysis

Integrating remote sensing (RS) and Geographic Information Systems (GIS) has fundamentally transformed the scientific approach to landscape ecological risk assessment (LERA). This integration provides a robust, scalable framework for analyzing the impact of human activities and natural changes on ecosystem structure, function, and stability [7] [1]. RS technologies enable the consistent, wide-area collection of spatial data on land cover, vegetation health, and environmental conditions [35]. GIS provides the critical platform to manage, analyze, and model this data, translating raw imagery into actionable insights about ecological vulnerability and risk patterns [36] [35]. This comparative guide examines the performance of this integrated technological approach against alternative methods within the critical context of validating landscape ecological risk assessments with field data, a cornerstone for credible research informing conservation policy and sustainable development [1] [37].

Comparative Analysis of Technological Approaches for Risk Assessment

The choice of methodology for spatial data analysis significantly influences the accuracy, scale, and applicability of landscape ecological risk assessments. The table below summarizes the core characteristics of three predominant approaches.

Table: Comparison of Methodological Approaches for Landscape Ecological Risk Assessment

| Methodological Approach | Core Principle | Typical Data Sources | Spatial Scale & Best-Use Context | Key Performance Metrics / Validation |

|---|---|---|---|---|

| Geostatistical Interpolation (e.g., Kriging) | Estimates values at unmeasured locations based on the spatial correlation structure of data from point samples [38]. | Ground monitoring station data (e.g., air/water quality samples). | Best for areas with dense monitoring networks. Accuracy declines with distance from sample points (>100 km) [38]. | Cross-validation R², Mean Squared Prediction Error. Validated against held-out ground stations [38]. |

| Remote Sensing (RS)-Based Estimation | Derives spatial variables through spectral analysis of imagery from satellite, aerial, or drone platforms [39] [35]. | Satellite imagery (e.g., Landsat, Sentinel), aerial photography, drone data, LiDAR. | Global to regional scale. Essential for areas with no or sparse ground networks [38]. Provides wall-to-wall coverage. | Correlation with ground truth data; classification accuracy (e.g., Kappa Coefficient); model R²/RMSE for derived parameters [39] [40]. |

| Integrated RS & GIS Hybrid Analysis | Combines RS-derived data layers with other spatial data (topography, climate, socio-economic) in a GIS for comprehensive modeling and analysis [36] [35]. | RS imagery, Digital Elevation Models (DEMs), climate grids, soil maps, census data. | Flexible, multi-scale. Ideal for complex, multi-factor risk assessments where landscape pattern and context are critical [7] [1]. | Map accuracy assessment, statistical significance of driver analysis (e.g., Geodetector q-statistic), predictive performance of combined models [7] [1]. |

Performance Insights from Comparative Studies:

- Accuracy vs. Coverage Trade-off: A direct comparison for PM2.5 estimation found kriging more accurate near (<100 km) monitoring stations, while satellite-based RS estimates performed better in remote areas farther from stations [38]. This highlights a fundamental trade-off between point-based accuracy and continuous coverage.

- Superiority in Complex Landscape Analysis: For landscape ecological risk, integrated RS/GIS approaches are dominant. Studies consistently use RS to classify land use/cover and calculate landscape pattern indices (e.g., fragmentation, loss), which are then processed within a GIS to model ecological risk indices and analyze their spatial drivers [7] [9] [1]. This method captures the spatial heterogeneity and multi-factor influences (ecological and social) that pure interpolation or standalone RS analysis cannot [1].

- Validation with Field Data: The integration pathway directly supports validation. For example, a study mapping mineral alteration zones used ASTER satellite data processed with spectral algorithms to create mineral maps, which were integrated with field-collected spectral signatures and sample locations in a GIS for validation, achieving approximately 80% accuracy [36].

Diagram 1: Integrated RS/GIS Workflow for Landscape Ecological Risk Assessment [7] [9] [36]

Core Experimental Protocols for Assessment and Validation

The validation of landscape ecological risk assessments with field data follows a structured, replicable protocol. The following outlines a generalized workflow, synthesized from multiple contemporary studies [7] [9] [36].

Phase 1: Data Acquisition and Preprocessing

- Remote Sensing Data Collection: Acquire multi-temporal cloud-free satellite imagery (e.g., Landsat, Sentinel-2) for the study area. Key specifications include spatial resolution (e.g., 10-30m), spectral bands (visible, NIR, SWIR), and acquisition dates matching the assessment period [9] [37].

- Field Data Campaign Design: Strategically plan field surveys to collect ground truth data. This includes:

- Land Cover Validation Points: Using stratified random sampling across different land cover classes to collect GPS points for training and validating the RS classification [1].

- Physicochemical Sampling: For soil or water-related risks, collect samples (e.g., soil cores, water samples) from representative management zones or risk strata. Sample depth and density should follow statistical guidance for the chosen interpolation model [40].

- In-situ Spectral Measurements: In advanced studies, use handheld spectroradiometers (e.g., ASD TerraSpec) to collect the spectral signature of rocks, soils, or vegetation at sample locations for direct comparison with satellite pixel spectra [36].

- Ancillary Data Compilation: Gather GIS layers for topography (Digital Elevation Model), climate (precipitation, temperature), soil types, infrastructure (roads, settlements), and socio-economic data (population density, GDP) [7] [1].

- Preprocessing: Perform radiometric and atmospheric correction on RS imagery. Georeference all field data and ancillary layers to a common coordinate system and pixel resolution (resampling) within the GIS [36].

Phase 2: Landscape Pattern and Risk Index Modeling

- Land Use/Land Cover (LULC) Classification: Apply a supervised classifier to the RS imagery to generate a LULC map, using either a parametric method (e.g., Maximum Likelihood) or a machine-learning method (e.g., Support Vector Machine, Random Forest) [39] [1]. Validate accuracy with field-collected points, typically aiming for >85% overall accuracy.

- Landscape Pattern Analysis: Using the LULC map and software like Fragstats, calculate landscape pattern indices for each land cover type or for a moving window across a grid. Common indices include Patch Density (PD), Edge Density (ED), Landscape Fragmentation Index, and Landscape Loss Index [9] [37].

- Landscape Ecological Risk Index (LERI) Construction: Construct a composite LERI within the GIS. A common model is:

LERI = Landscape Disturbance Index × Landscape Vulnerability Index

The disturbance index is often derived from pattern indices (e.g., fragmentation, separation, dominance), while vulnerability is assigned by expert weighting based on LULC type (e.g., forest has low vulnerability, bare land has high) [9] [1] [37].

- Spatial Interpolation of Point Samples (if applicable): For soil or air quality parameters, use geostatistical kriging in the GIS to interpolate values from field sample points into continuous surface maps. Validate the model using cross-validation techniques [38] [40].
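
The disturbance × vulnerability model is frequently operationalized in the LERA literature as a per-class landscape loss index that is then area-weighted within each assessment cell of the grid. The sketch below assumes that formulation: disturbance E_i = a·C_i + b·N_i + c·D_i (fragmentation, separation, and dominance, pre-normalized to 0–1), loss R_i = E_i × F_i with F_i the expert-assigned vulnerability, and LERI_k = Σ_i (A_ki / A_k) · R_i for cell k. The weights (0.5, 0.3, 0.2) are a commonly used choice rather than values taken from [9] [1] [37], and every class value below is invented for illustration.

```python
def disturbance_index(frag, sep, dom, weights=(0.5, 0.3, 0.2)):
    """Landscape disturbance E_i = a*C_i + b*N_i + c*D_i (weights are an assumption)."""
    a, b, c = weights
    return a * frag + b * sep + c * dom


def leri(cell_classes, weights=(0.5, 0.3, 0.2)):
    """Area-weighted LERI for one assessment cell.

    cell_classes maps an LULC class to a dict with its area in the cell and
    pre-normalized (0-1) fragmentation, separation, dominance and vulnerability.
    """
    total_area = sum(props["area"] for props in cell_classes.values())
    risk = 0.0
    for props in cell_classes.values():
        e = disturbance_index(props["frag"], props["sep"], props["dom"], weights)
        loss = e * props["vuln"]  # landscape loss index R_i = E_i * F_i
        risk += (props["area"] / total_area) * loss  # weight by the class's share of the cell
    return risk


# Hypothetical values for a single grid cell (areas in hectares).
cell = {
    "forest": {"area": 60, "frag": 0.2, "sep": 0.1, "dom": 0.6, "vuln": 0.2},
    "cropland": {"area": 30, "frag": 0.5, "sep": 0.4, "dom": 0.3, "vuln": 0.4},
    "bare land": {"area": 10, "frag": 0.8, "sep": 0.9, "dom": 0.1, "vuln": 1.0},
}
print(round(leri(cell), 4))  # 0.1506
```

Repeating this calculation for every cell of a regular grid (or moving window) yields the continuous risk surface that the later spatial-statistics steps operate on.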

Phase 3: Integration, Validation, and Driver Analysis

- Data Integration and Mapping: Integrate the LERI map with ancillary data layers in the GIS. Perform spatial overlay and zonal statistics to calculate average risk per administrative unit (e.g., township) [1].

- Model Validation: Rigorously validate outputs.

  - LULC Map: Use error matrices and Kappa statistics derived from independent field validation points [1].

  - LERI/Surface Map: For soil attributes, compare the values predicted by RS/GIS models with laboratory-analyzed values from a separate set of field samples, using R² and RMSE [40]. For ecological risk, where independent field-based indicators of ecosystem stress are available, test their statistical correlation with the modeled LERI.

- Spatial and Statistical Analysis:

  - Spatial Autocorrelation: Use Moran’s I in the GIS to determine whether ecological risk exhibits clustered, dispersed, or random spatial patterns [9] [37].

  - Driver Detection: Apply statistical tools such as the Geodetector model to quantify the explanatory power (q-statistic) of natural and socio-economic factors (e.g., slope, GDP, population density) on the observed risk pattern [7] [1].
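
The validation and spatial-autocorrelation steps rest on a few standard formulas. The following plain-Python sketch shows them under simple assumptions: the error matrix has map classes in rows and field (reference) classes in columns, R² is computed as the coefficient of determination 1 − SS_res/SS_tot (some studies instead report the squared Pearson correlation), and global Moran's I uses binary rook (four-neighbour) weights on a regular risk grid. GIS tools usually add a significance test (e.g., permutation-based p-values), which is omitted here; all input values are hypothetical.

```python
def accuracy_and_kappa(matrix):
    """Overall accuracy and Cohen's kappa from a square error (confusion) matrix."""
    k = len(matrix)
    n = sum(sum(row) for row in matrix)
    observed = sum(matrix[i][i] for i in range(k)) / n  # overall accuracy
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[i][j] for i in range(k)) for j in range(k)]
    expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / (n * n)  # chance agreement
    return observed, (observed - expected) / (1 - expected)


def rmse_r2(observed, predicted):
    """RMSE and coefficient of determination for lab-measured vs. predicted values."""
    n = len(observed)
    mean_obs = sum(observed) / n
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return (ss_res / n) ** 0.5, 1 - ss_res / ss_tot


def morans_i(grid):
    """Global Moran's I for a 2-D grid of risk values with binary rook weights."""
    rows, cols = len(grid), len(grid[0])
    n = rows * cols
    mean = sum(sum(row) for row in grid) / n
    dev = [[grid[r][c] - mean for c in range(cols)] for r in range(rows)]
    cross, w_sum = 0.0, 0
    for r in range(rows):
        for c in range(cols):
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:  # neighbour lies inside the grid
                    cross += dev[r][c] * dev[rr][cc]
                    w_sum += 1
    variance_sum = sum(d * d for row in dev for d in row)
    return (n / w_sum) * (cross / variance_sum)


oa, kappa = accuracy_and_kappa([[45, 5], [5, 45]])
rmse, r2 = rmse_r2([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
clustered = [[1, 1, 0, 0]] * 4  # high risk on the left half, low risk on the right half
print(round(oa, 3), round(kappa, 3), round(rmse, 3), round(r2, 3), round(morans_i(clustered), 3))
# 0.9 0.8 0.158 0.98 0.667
```

A positive Moran's I (about 0.67 for the clustered toy grid) indicates spatial clustering of risk, while a perfect checkerboard pattern gives −1.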

Diagram 2: Hybrid Kriging/RS Model for Optimal PM2.5 Estimation [38]

The Scientist's Toolkit: Essential Research Tools and Materials

Table: Key Research Tools and Materials for RS/GIS-Based Ecological Risk Assessment

| Tool / Material | Category | Primary Function in Research | Example in Context |
|---|---|---|---|
| Landsat / Sentinel-2 Satellite Imagery | Remote Sensing Data | Provides multi-spectral, medium-resolution data for land cover classification, change detection, and vegetation index calculation over large areas and long time series [9] [37]. | Used to map deforestation, urban expansion, and agricultural land changes as foundational inputs for risk assessment [7] [1]. |
| ASD TerraSpec Halo Spectroradiometer | Field Instrument | Collects high-resolution spectral signatures of materials (soil, rock, vegetation) in situ for calibrating satellite data and validating spectral mapping algorithms [36]. | Used to measure the spectral profile of alteration minerals at field sites to validate ASTER satellite-based mineral maps [36]. |
| Digital Elevation Model (DEM) | GIS Data | Provides topographic data (elevation, slope, aspect) essential for modeling hydrological processes, erosion risk, and for integrating terrain effects into ecological models [36] [40]. | Used to extract lineaments and understand topographic controls on the spatial distribution of ecological risks [36]. |
| Random Forest (RF) Algorithm | Analysis Software / AI | A machine learning classifier used for accurate land cover classification from RS imagery and for modeling the relationship between environmental variables and measured soil/ecological properties [39] [40]. | Used to predict soil attributes (clay content, CEC) from a fusion of Sentinel-1 & Sentinel-2 data, or to classify complex urban landscapes [39] [40]. |
| Fragstats Software | Analysis Software | Calculates a wide array of landscape pattern metrics from categorical land cover maps, which are fundamental for constructing landscape disturbance indices [9] [37]. | Used to compute patch density, edge density, and landscape shape index within a moving window to quantify spatial fragmentation [37]. |
| ArcGIS / QGIS Platform | GIS Software | The core platform for integrating multi-source spatial data, performing spatial analysis (overlay, zonal statistics), executing models, and producing final risk maps [36] [35]. | Used to perform weighted overlay analysis of alteration zones and lineaments, and to visualize the final ecological risk zoning maps [9] [36]. |
| Geodetector Model | Statistical Tool | Identifies and quantifies the spatial stratified heterogeneity of a dependent variable (e.g., LERI) and tests the explanatory power of independent driving factors [7] [1]. | Used to determine that GDP and human interference are dominant drivers of landscape ecological risk in a river basin [1]. |

Integrating remote sensing and GIS is arguably the most effective methodology for robust, spatially explicit landscape ecological risk assessment. As the studies cited here show, it surpasses purely ground-based or standalone techniques by providing comprehensive spatial coverage, enabling multi-factor analysis, and directly supporting validation with field data [38] [1]. The future of this field is closely linked to advances in artificial intelligence (AI) and cloud computing. Machine learning and deep learning models are substantially improving the automatic extraction of information from RS data, enhancing the precision of LULC maps and of predictive models of ecosystem properties [39]. Furthermore, cloud platforms such as Google Earth Engine are democratizing access to massive RS data archives and processing power, allowing larger-scale and more frequent risk assessments [39]. The critical research frontier remains strengthening the feedback loop between RS/GIS models and field validation. This includes designing more sophisticated ground sampling schemes informed by preliminary RS analysis, and developing novel drone-mounted sensors and field kits that provide direct, quantitative measures of ecosystem stress, thereby closing the validation loop and increasing the operational reliability of landscape ecological risk warnings for scientists and policymakers alike [36] [40].

In landscape ecological risk assessment (LERA), the core challenge lies in validating spatial predictions and causal inferences against empirical field data. This validation is crucial for transforming theoretical risk models into reliable tools for environmental management and policy. Two powerful computational tools have emerged as cornerstones in this process: Geodetector and Random Forest (RF) [19] [3].

Geodetector is a spatial statistical method designed to quantify the driving forces behind geographical phenomena. Its primary strength is factor detection—measuring how much a spatial independent variable (e.g., elevation, precipitation) explains the spatial heterogeneity of a dependent variable (e.g., ecological risk index). It operates on the principle that if an independent variable significantly influences a dependent variable, their spatial distributions will exhibit similarity [41] [42].
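
The factor detector's explanatory power is usually written as q = 1 − Σ_h N_h σ_h² / (N σ²), where the study area is divided into strata h of the independent variable, N_h and σ_h² are the number of units and the variance of the dependent variable in stratum h, and N and σ² are the same quantities for the whole area. The plain-Python sketch below computes q directly from that definition. It is an illustration rather than the Geodetector software itself, and the risk values and elevation classes are made up.

```python
from collections import defaultdict


def q_statistic(y, strata):
    """Geodetector q: share of the variance of y explained by a stratification.

    y: dependent variable per spatial unit (e.g., LERI of each grid cell).
    strata: class label of the independent variable for the same units.
    """
    n = len(y)
    mean = sum(y) / n
    sst = sum((v - mean) ** 2 for v in y)  # equals N * sigma^2

    groups = defaultdict(list)
    for value, h in zip(y, strata):
        groups[h].append(value)

    ssw = 0.0  # sum over strata of N_h * sigma_h^2
    for values in groups.values():
        m = sum(values) / len(values)
        ssw += sum((v - m) ** 2 for v in values)
    return 1 - ssw / sst


risk = [0.10, 0.20, 0.15, 0.70, 0.80, 0.75]
elevation_class = ["low", "low", "low", "high", "high", "high"]
print(round(q_statistic(risk, elevation_class), 3))  # 0.982
```

A q close to 1 (about 0.98 here) means the elevation classes separate low-risk from high-risk cells almost perfectly; a factor with no spatial association with risk yields q near 0. The factor detector also reports an F-test p-value, which this sketch omits.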

Random Forest, in contrast, is a machine learning algorithm known for its high predictive accuracy and its ability to model complex, non-linear relationships. It builds many decision trees, each trained on a bootstrap sample of the data, and outputs the mode of the classes (classification) or the mean prediction (regression) of the individual trees. Its key advantages include built-in estimation of feature importance and relative robustness to overfitting, because averaging many decorrelated trees reduces variance [43] [44].

Increasingly, researchers are not using these tools in isolation but are integrating them into hybrid analytical frameworks. This synergy leverages Geodetector's strength in identifying and quantifying key drivers and their interactions, and RF's power in making accurate spatial predictions. This integrated approach is proving particularly effective for validating LERA models against field observations, as it provides both explanatory insight and predictive validation [43] [45]. The following workflow diagram illustrates a typical integrated methodological framework for landscape ecological risk assessment and validation.

Comparative Performance Analysis: Experimental Data and Results

The integration of Geodetector and Random Forest has been tested across diverse landscapes, from fragile alpine heritage sites to intensively managed agricultural plains. The performance is consistently benchmarked against standalone models and traditional statistical methods. The following table summarizes key experimental outcomes from recent studies, highlighting the validation metrics central to a robust thesis.

Table 1: Comparative Performance of Geodetector, Random Forest, and Hybrid Models in Environmental Applications

| Study Context & Reference | Primary Tool(s) Used | Key Comparative Metric | Performance Result | Validation Against Field Data |
|---|---|---|---|---|
| Landslide Susceptibility Mapping [43] | RF, GeoDetector-RF, RFE-RF | Prediction Accuracy; AUC | Standalone RF: Accuracy = 0.860, AUC = 0.853; GeoDetector-RF hybrid: Accuracy = 0.868, AUC = 0.863; RFE-RF hybrid: Accuracy = 0.869, AUC = 0.860 | Models trained (70%) and tested (30%) on an inventory of 406 landslide and 2030 non-landslide points. Both hybrid models slightly outperformed standalone RF. |
| Landscape Ecological Risk (LER) Driving Forces [19] | GeoDetector | q-statistic (explanatory power) | Top LER drivers: NDVI (highest q), followed by GDP density, population density, and distance to roads. Two-factor interactions showed nonlinear enhancement. | LER index derived from land use data (2000-2020). Factor contributions were quantitatively diagnosed, confirming the role of both natural and socio-economic drivers. |
| Alpine Land Cover Change [45] | RF & GeoDetector | Factor importance ranking & optimal range identification | Both models identified elevation, precipitation, and temperature as the top drivers. Geodetector further quantified optimal ranges for the forest/grassland transition (e.g., precipitation: 275-375 mm). | Supervised classification of Landsat imagery (1994-2023) provided land cover data for driver analysis, confirming climate and terrain as the primary drivers. |
| Ecological Vulnerability Classification [44] | RF, LightGBM, MLP, etc.; GeoDetector | Multiclass classification AUC | Random Forest performed best: AUC = 0.954, F1-score = 0.78. GeoDetector identified the interaction between NPP and precipitation as the dominant driver (q = 0.50). | Models trained on an Ecological Vulnerability Index (EVI) calculated with the SRP framework. SHAP analysis validated the RF model and agreed with the Geodetector results. |
| Landslide Susceptibility (Alternative Method) [46] | Frequency Ratio (FR), AHP, Shannon Entropy | Predictive capability (AUC) | FR: AUC = 0.92 (success rate), 0.90 (prediction rate); AHP: AUC = 0.89, 0.87; Shannon Entropy: AUC = 0.81, 0.77 | Validated on 30% of the landslide inventory data (n = 14,698), providing a benchmark for machine learning model performance. |
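
The AUC values in Table 1 summarize how well each model ranks held-out landslide points above non-landslide points. As a rough illustration (not the evaluation code used in [43] or [46]), AUC can be computed from its probabilistic definition: the chance that a randomly chosen positive receives a higher score than a randomly chosen negative, with ties counted as one half. Accuracy additionally depends on a classification threshold, assumed here to be 0.5. The scores and labels are hypothetical.

```python
def auc(scores, labels):
    """ROC AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def accuracy(scores, labels, threshold=0.5):
    """Share of points whose thresholded prediction matches the observed label."""
    correct = sum((s >= threshold) == (y == 1) for s, y in zip(scores, labels))
    return correct / len(labels)


# Hypothetical susceptibility scores for held-out points (1 = landslide, 0 = non-landslide).
scores = [0.90, 0.80, 0.35, 0.60, 0.20, 0.10]
labels = [1, 1, 1, 0, 0, 0]
print(round(auc(scores, labels), 3), round(accuracy(scores, labels), 3))  # 0.889 0.667
```

The pairwise loop costs one comparison per positive/negative pair; for large test sets, the equivalent rank-based (Mann-Whitney U) formulation gives the same value more efficiently.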

Beyond predictive accuracy, a critical advantage of the Geodetector-RF synergy is its capability for factor optimization. Redundant or collinear variables can degrade model performance and interpretability. Geodetector addresses this by identifying the unique explanatory power of each factor and revealing synergistic interactions. This refined set of drivers is then fed into the RF model, enhancing its efficiency and robustness [43] [45]. The process is visualized below.

Detailed Experimental Protocols for Validation

For a thesis centered on validation, the reproducibility of methods is paramount. Below are detailed protocols for key experiments that integrate Geodetector and RF, as implemented in recent studies.

This protocol details the steps to create and validate a GeoDetector-optimized Random Forest model, a common application in geohazard risk assessment.

Inventory & Factor Database Creation:

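- Landslide Inventory: Compile a historical landslide inventory map from satellite imagery, aerial photographs, and field surveys, representing landslides as point features. Generate randomly distributed non-landslide points outside the mapped landslides, typically in equal number; the study summarized in Table 1 [43] used 2030 non-landslide points for 406 landslides (a 5:1 ratio).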

- Initial Factor Selection: Select a comprehensive set of conditioning factors (e.g., 22 factors in the cited study). Common categories include topography (slope, aspect, elevation), hydrology (distance to rivers, TWI), geology (lithology, distance to faults), land cover (NDVI, land use), and climate (rainfall).

- Data Partitioning: Randomly split the entire dataset (landslide and non-landslide points) into a training subset (70%) for model building and a testing subset (30%) for final validation.
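
The 70/30 partition can be sketched as follows. The sketch assumes each point carries a 1/0 landslide label; unlike the plain random split described above, it shuffles each class separately so that the landslide to non-landslide ratio is identical in both subsets, a common refinement assumed here rather than taken from the cited study. The counts mirror the 406 landslide and 2030 non-landslide points reported for [43] in Table 1, but the points themselves are placeholders and `stratified_split` is a hypothetical helper.

```python
import random


def stratified_split(labels, train_frac=0.7, seed=7):
    """Return sorted (train_idx, test_idx), splitting each class separately."""
    rng = random.Random(seed)  # fixed seed keeps the partition reproducible
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, label in enumerate(labels) if label == cls]
        rng.shuffle(idx)
        cut = round(train_frac * len(idx))  # number of points of this class used for training
        train_idx.extend(idx[:cut])
        test_idx.extend(idx[cut:])
    return sorted(train_idx), sorted(test_idx)


labels = [1] * 406 + [0] * 2030  # 1 = landslide, 0 = non-landslide (placeholder points)
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 1705 731
```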

Factor Optimization using Geodetector:

- Discretization: Geodetector requires categorical (stratified) inputs, so discretize each continuous factor into strata, either in the GIS or with the discretization tools offered by some Geodetector implementations. The classification method (e.g., natural breaks, quantiles) and the number of strata for each factor can be determined experimentally to maximize the q-statistic.

- Factor Detection: Run the factor detector to calculate the q-value for each factor, which represents its explanatory power (0-1).

- Interaction Detection: Run the interaction detector to assess whether the combined influence of any two factors is stronger than their individual effects (e.g., non-linear enhancement).

- Optimized Factor Set: Select factors with high q-values and meaningful interactions, while removing those with very low explanatory power to reduce redundancy.
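
The discretization and interaction-detection steps can be illustrated with a short plain-Python sketch. It assumes quantile (equal-count) discretization as the classification method, recomputes q on the overlay of two stratifications, and labels the interaction using the five categories commonly reported by Geodetector (nonlinear weaken, univariate weaken, bivariate enhance, independent, nonlinear enhance). The function names and the toy factor values, chosen so that neither factor alone explains risk but their combination does, are hypothetical.

```python
import math
from collections import defaultdict


def quantile_strata(x, k):
    """Assign each value to one of k equal-count classes (0..k-1) by rank; ties follow sort order."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    strata = [0] * len(x)
    for rank, i in enumerate(order):
        strata[i] = rank * k // len(x)
    return strata


def q_statistic(y, strata):
    """Geodetector q = 1 - (within-strata sum of squares) / (total sum of squares)."""
    mean = sum(y) / len(y)
    sst = sum((v - mean) ** 2 for v in y)
    groups = defaultdict(list)
    for value, h in zip(y, strata):
        groups[h].append(value)
    ssw = sum(sum((v - sum(g) / len(g)) ** 2 for v in g) for g in groups.values())
    return 1 - ssw / sst


def interaction(y, s1, s2):
    """Compare q of the overlaid strata with the single-factor q values."""
    q1, q2 = q_statistic(y, s1), q_statistic(y, s2)
    q12 = q_statistic(y, list(zip(s1, s2)))  # each (class1, class2) pair is one overlay stratum
    # In exact arithmetic q12 >= max(q1, q2), because the overlay refines both
    # stratifications; the two "weaken" branches mirror the full classification.
    if math.isclose(q12, q1 + q2):
        kind = "independent"
    elif q12 > q1 + q2:
        kind = "nonlinear enhance"
    elif q12 > max(q1, q2):
        kind = "bivariate enhance"
    elif q12 >= min(q1, q2):
        kind = "univariate weaken"
    else:
        kind = "nonlinear weaken"
    return q1, q2, q12, kind


slope = [1.0, 2.0, 8.0, 9.0]
rainfall = [10.0, 90.0, 20.0, 80.0]
risk = [0.0, 1.0, 1.0, 0.0]
s_slope, s_rain = quantile_strata(slope, 2), quantile_strata(rainfall, 2)
print(s_slope, s_rain)                     # [0, 0, 1, 1] [0, 1, 0, 1]
print(interaction(risk, s_slope, s_rain))  # (0.0, 0.0, 1.0, 'nonlinear enhance')
```

Factors whose single-factor q stays near zero and that show no enhancing interaction are the natural candidates for removal before the optimized set is passed to the Random Forest model.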

Random Forest Modeling & Validation:

- Model Training: Train a Random Forest classifier using the training subset and the optimized factor set. Tune hyperparameters (e.g., number of trees, maximum depth) via cross-validation.