Validating In Silico LD50 Models: A Comprehensive Guide for AI-Driven Toxicity Prediction in Drug Discovery

Abstract

This article provides a systematic framework for researchers and drug development professionals to evaluate and validate in silico models for predicting acute oral toxicity (LD50). It covers the foundational principles of computational toxicology and essential data sources, details the methodological pipeline from data preprocessing to model application, addresses common challenges and optimization strategies, and presents rigorous validation and comparative analysis techniques. By synthesizing current advances in AI, machine learning, and consensus modeling, the article aims to equip scientists with practical knowledge to enhance the reliability, interpretability, and regulatory acceptance of computational LD50 predictions, ultimately accelerating safer drug candidate selection.

The Core of Computational Toxicity: Understanding LD50 and Essential Data Landscapes

The median lethal dose (LD50) is defined as the single dose of a substance required to kill 50% of a test animal population within a specified timeframe [1] [2] [3]. Since its introduction by J.W. Trevan in 1927, it has served as a standardized quantitative benchmark for comparing the acute toxicity of diverse chemicals [1] [3] [4]. The value is typically expressed as the mass of substance per unit body weight of the test animal (e.g., milligrams per kilogram) [1]. A fundamental principle in toxicology is that a lower LD50 value indicates higher toxicity [1] [3].

The primary role of the LD50 test has been to provide a reproducible point of comparison for hazard identification and safety assessment [1] [5]. By using death as a universal endpoint, it allows for the comparison of chemicals with vastly different mechanisms of action [1]. Regulatory frameworks have historically relied on this data point to classify chemicals into toxicity categories, such as those defined by the Hodge and Sterner or Gosselin scales, which help predict risk and guide safe handling procedures [1].

However, within modern drug development, the necessity of determining a precise LD50 value has been questioned [6] [4]. Scientific critiques point to its significant consumption of animals and resources, ethical concerns, and the fact that a highly precise LD50 is rarely needed for safety assessment [6] [4]. Consequently, the field is undergoing a paradigm shift, emphasizing the "3Rs" (Replacement, Reduction, Refinement) and accelerating the validation of alternative methods [7]. This guide explores the traditional LD50 benchmark and objectively compares it with emerging in silico prediction models, framing the discussion within the critical research context of validating these computational approaches.

Traditional Experimental Protocol for LD50 Determination

The classical in vivo LD50 test is a rigorous, multi-stage process designed to pinpoint the dose-mortality curve with statistical confidence.

Detailed Experimental Methodology

Test System Selection: The test is most commonly performed on rats or mice, though other species like rabbits, dogs, or guinea pigs may be used [1]. Animals of a defined strain, age, sex, and weight are acclimatized under standardized housing conditions.

Dose Preparation and Administration: The test substance is administered in its pure form [1]. The route of administration is critical and must be relevant to potential human exposure:

- Oral (Gavage): Most common for initial assessment [1].

- Dermal: Applied to shaved skin for assessing absorption toxicity [1].

- Inhalation (LC50): Animals are exposed to a measured concentration of the chemical in air for a set period (often 4 hours) [1].

- Parenteral (Intravenous, Intraperitoneal): Used for specific drug delivery studies [1].

Study Design and Dosing: A traditional definitive LD50 study uses multiple dose groups (typically 4-6) with 5-10 animals per group [4]. Doses are spaced logarithmically to bracket the expected median lethal dose. A control group receives the vehicle only.

Observation Period: Following administration, animals are clinically observed for up to 14 days [1]. Observations include time of onset of toxic signs (e.g., lethargy, convulsions), morbidity, and mortality. Body weights and food consumption may be monitored.

Necropsy and Histopathology: Animals that die during the study and survivors sacrificed at its conclusion typically undergo gross necropsy. Tissues may be preserved for histopathological examination to identify target organ toxicity.

Data Analysis and LD50 Calculation: Mortality data at the end of the observation period are analyzed using statistical methods (e.g., probit analysis, moving average, or up-and-down methods) to generate a dose-mortality curve and calculate the LD50 value with its confidence intervals [4].
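
To make the calculation step concrete, the sketch below estimates an LD50 with a simplified probit approach: mortality fractions are converted to probits (standard normal quantiles) and regressed on log10(dose) by ordinary least squares. This is an illustration only. Full probit analysis uses weighted maximum-likelihood fitting and reports confidence intervals, which are omitted here. The function name `probit_ld50` and the dose-mortality figures are hypothetical.

```python
import math
from statistics import NormalDist

def probit_ld50(doses_mg_per_kg, n_dead, n_tested):
    """Estimate LD50 by unweighted least squares of probit(mortality) on log10(dose)."""
    std_normal = NormalDist()
    xs, ys = [], []
    for dose, dead, total in zip(doses_mg_per_kg, n_dead, n_tested):
        p = dead / total
        # The probit transform is undefined at exactly 0% or 100% mortality,
        # so those dose groups are skipped in this simplified version.
        if 0.0 < p < 1.0:
            xs.append(math.log10(dose))
            ys.append(std_normal.inv_cdf(p))
    if len(xs) < 2:
        raise ValueError("need at least two dose groups with partial mortality")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # A probit of 0 corresponds to 50% mortality, so solve intercept + slope * x = 0.
    log_ld50 = -intercept / slope
    return 10 ** log_ld50, slope

# Hypothetical study: five logarithmically spaced doses, 10 animals per group.
doses = [10, 20, 40, 80, 160]
dead = [1, 3, 5, 8, 9]
tested = [10, 10, 10, 10, 10]

ld50, slope = probit_ld50(doses, dead, tested)
print(f"Estimated LD50 ~ {ld50:.1f} mg/kg (probit slope {slope:.2f})")
```

Working on log10(dose) matches the logarithmic dose spacing described above and keeps the dose-response relationship approximately linear on the probit scale.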

Key Considerations and Limitations

- Species and Route Variability: LD50 values can vary dramatically between species and routes of administration [1]. For example, dichlorvos shows an oral LD50 in rats of 56 mg/kg but an inhalation LC50 of 1.7 ppm, indicating much higher toxicity via the respiratory route [1].

- Ethical and Resource Costs: The procedure is resource-intensive, time-consuming (weeks), and ethically contentious due to the suffering and death of a significant number of animals [7] [4].

- Limited Predictive Scope: The LD50 is a measure of acute lethality only. It does not provide information on sublethal chronic toxicity, mechanisms of action, organ-specific damage, or long-term health effects [1].

Traditional in vivo LD50 determination workflow.

Comparative Analysis: Traditional LD50 vs. Modern In Silico Approaches

The following table provides a direct comparison between the traditional experimental benchmark and the emerging computational prediction paradigms.

Table 1: Comparative Analysis of Traditional LD50 Testing and Modern In Silico Prediction Models

| Aspect | Traditional In Vivo LD50 Test | In Silico LD50 Prediction Models |

|---|---|---|

| Primary Objective | Determine the precise dose causing 50% mortality in a test animal population [1] [3]. | Predict acute toxicity endpoints (LD50, toxicity class) from chemical structure and/or in vitro data [8] [7]. |

| Fundamental Basis | Empirical observation of a biological outcome (death) in a whole, complex organism. | Statistical and machine learning correlations between molecular descriptors/features and known toxicological outcomes [7] [9]. |

| Key Advantages | • Provides a direct, observed biological endpoint. • Long history of use and regulatory acceptance. • Captures complex systemic physiology and metabolism. | • High-throughput: Can screen thousands of compounds in minutes [7]. • Cost-effective: Drastically reduces animal and material costs [7]. • Ethical alignment: Adheres to the 3R principle (Replacement) [7]. • Provides mechanistic insights via interpretable features [9]. |

| Key Limitations | • Low-throughput and time-consuming (weeks) [7]. • High cost (animals, facilities, compound) [7]. • Ethical concerns regarding animal suffering [7] [4]. • Species extrapolation uncertainty to humans [3]. | • Dependent on quality and quantity of training data [7] [9]. • Limited predictability for novel chemical scaffolds outside the training domain. • Challenges in model interpretability (especially for deep learning) [7]. • Ongoing need for regulatory validation and acceptance. |

| Typical Output | A single, precise LD50 value (e.g., 56 mg/kg) with confidence intervals for a specific species and route [1]. | A predicted LD50 value, a toxicity class (e.g., "highly toxic"), or a probability score for acute lethality [9]. |

| Regulatory Status | Historically required; now often replaced by alternative tests that use fewer animals (e.g., Fixed Dose Procedure) [6] [4]. | Gaining traction for early screening and priority setting; subject to ongoing validation for full regulatory acceptance [8] [7]. |

Validation of In Silico LD50 Prediction Models: Frameworks and Data

The validation of computational models is a multi-layered process essential for establishing scientific and regulatory confidence. Current research focuses on several core frameworks:

- Use of Diverse, High-Quality Benchmark Datasets: Models are trained and validated on large, curated databases. Key sources include ToxCast and PubChem [8] [7] [9].

- Rigorous Performance Metrics: Models are evaluated using standard metrics. For regression tasks (predicting a continuous LD50 value), Mean Squared Error (MSE), Root Mean Squared Error (RMSE), and the coefficient of determination (R²) are used. For classification tasks (predicting a toxicity category), accuracy, precision, recall, F1-score, and the Area Under the Receiver Operating Characteristic Curve (AUROC) are standard [9].

- Advanced Modeling Architectures: The field is transitioning from traditional Quantitative Structure-Activity Relationship (QSAR) models to more sophisticated AI.

  - Graph Neural Networks (GNNs): Directly operate on molecular graph structures, automatically learning relevant features associated with toxicity [7] [9].

  - Multimodal Models: Integrate multiple data types (e.g., chemical structure images, molecular descriptors, bioassay data) to improve predictive accuracy and robustness [10]. For example, a 2025 study combined a Vision Transformer (ViT) for molecular images with a Multilayer Perceptron (MLP) for numerical property data, achieving a high predictive accuracy (0.872) and Pearson Correlation Coefficient (0.9192) [10].

  - Large Language Models (LLMs): Emerging for mining toxicological literature and integrating knowledge [7].

- External Validation and Applicability Domain: A critical step is testing the model on a completely external dataset not used during training. This assesses generalizability. Defining the model's "applicability domain" is crucial to understand for which types of chemicals its predictions are reliable [9].
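
One common way to approximate an applicability domain is a nearest-neighbor similarity check against the training set. The sketch below assumes fingerprints are already available as sets of "on" bit indices (for example, exported from a cheminformatics toolkit). The function names and the 0.3 similarity threshold are illustrative assumptions, not values taken from the cited studies.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def in_applicability_domain(query_fp, training_fps, threshold=0.3):
    """Return (inside_domain, nearest_similarity) for a query fingerprint.

    A query counts as inside the domain when its most similar training
    compound reaches the (assumed) similarity threshold.
    """
    nearest = max(tanimoto(query_fp, fp) for fp in training_fps)
    return nearest >= threshold, nearest

# Hypothetical fingerprints: each set lists the indices of the bits that are set.
training_fps = [{1, 4, 9, 16, 25}, {2, 4, 8, 16, 32}, {3, 9, 27, 81}]
similar_query = {1, 4, 9, 16, 36}  # shares four bits with the first training compound
novel_query = {5, 7, 11, 13}       # shares no bits with any training compound

inside_similar, sim_similar = in_applicability_domain(similar_query, training_fps)
inside_novel, sim_novel = in_applicability_domain(novel_query, training_fps)
print(inside_similar, round(sim_similar, 3))
print(inside_novel, round(sim_novel, 3))
```

Predictions for queries that fall outside the domain can then be flagged as low-confidence rather than silently reported.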

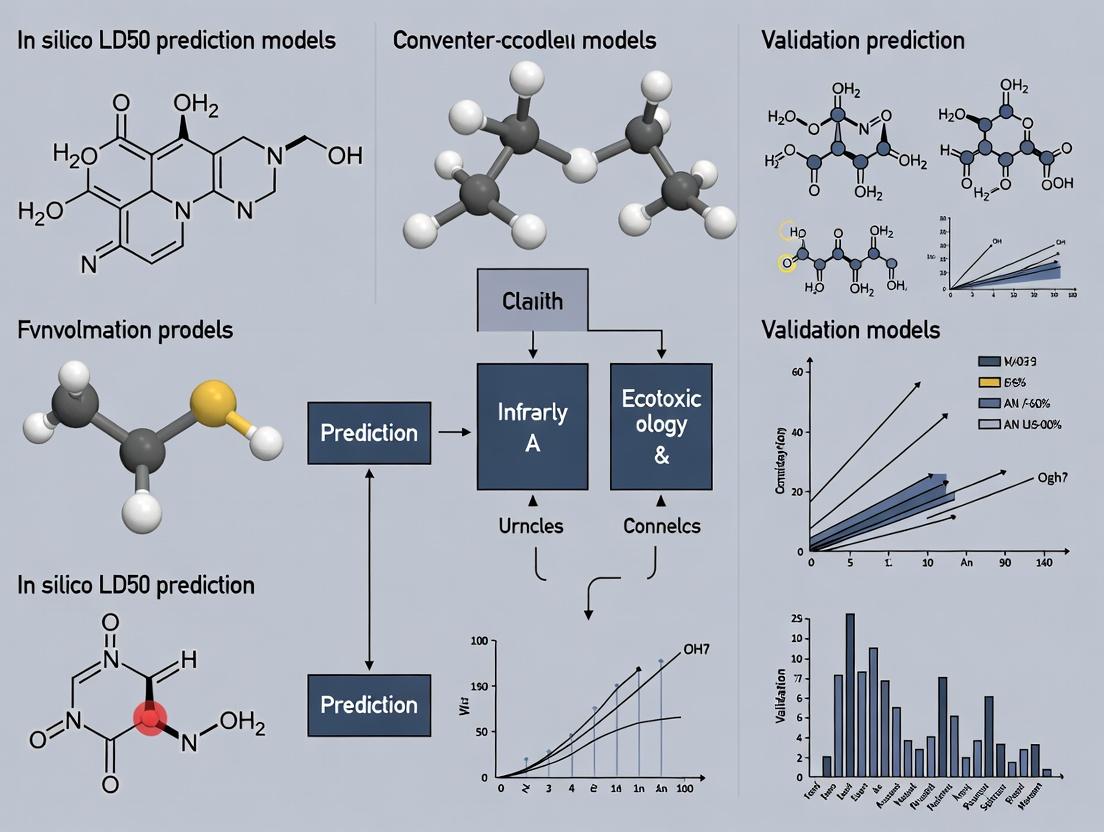

In silico toxicity prediction model validation pipeline.

Experimental Data and Performance Benchmarks

Illustrative Examples of Experimental LD50 Values

Table 2: Examples of Experimental Acute Oral LD50 Values in Rats [1] [3]

| Substance | Approximate LD50 (mg/kg) | Toxicity Classification (Hodge Scale) |

|---|---|---|

| Botulinum toxin | 0.000001 (1 ng/kg) | Extremely Toxic |

| Sodium cyanide | 6.4 | Highly Toxic |

| Paracetamol (Acetaminophen) | 2,000 | Slightly Toxic |

| Ethanol | 7,060 | Practically Non-toxic |

| Table Sugar (Sucrose) | 29,700 | Relatively Harmless |

| Water | >90,000 | Relatively Harmless |

Reported Performance of In Silico Models

Recent literature demonstrates the evolving capability of computational models. A 2025 study on a multimodal deep learning model (ViT + MLP) for multi-label toxicity prediction reported an accuracy of 0.872, an F1-score of 0.86, and a Pearson Correlation Coefficient (PCC) of 0.9192 [10]. Models specifically trained on large datasets like ToxCast for various endpoints show strong performance, though accuracy varies by specific toxicity target (e.g., endocrine disruption vs. hepatotoxicity) [8]. The field acknowledges that while models excel at screening and prioritizing compounds, they are not yet a complete substitute for all in vivo observations, particularly for complex chronic outcomes [7].

The Scientist's Toolkit: Key Reagent Solutions for Toxicity Assessment

Table 3: Essential Research Tools and Reagents for Toxicity Assessment

| Item / Solution | Primary Function in Toxicity Assessment |

|---|---|

| Standardized Laboratory Animals (Rat, Mouse) | The in vivo biological system for traditional acute and chronic toxicity studies, providing a whole-organism physiological context [1]. |

| Cell-Based Assay Kits (e.g., HepG2, primary hepatocytes) | Provide in vitro models for high-throughput screening of cytotoxicity, metabolic disruption, and organ-specific toxicity mechanisms, feeding data for computational models [8] [7]. |

| High-Content Screening (HCS) Imaging Systems | Automates the analysis of cellular morphology and multiple biomarkers in in vitro assays, generating rich, quantitative data for model training [8]. |

| Molecular Descriptor Calculation Software (e.g., RDKit) | Computes thousands of quantitative features (e.g., logP, polar surface area, topological indices) from a chemical's structure, serving as fundamental input for QSAR and machine learning models [7] [9]. |

| Curated Toxicity Databases (e.g., ToxCast, PubChem) | Provide the large-scale, structured experimental data necessary for training, validating, and benchmarking predictive in silico models [8] [7] [9]. |

| Machine Learning/AI Platforms (e.g., Scikit-learn, Deep Graph Libraries) | Offer the algorithmic frameworks (Random Forest, GNNs, Transformers) to build, train, and deploy predictive toxicity models from chemical and biological data [7] [9]. |

| Interpretability Toolkits (e.g., SHAP, LIME) | Help deconstruct "black-box" model predictions to identify which chemical substructures or features drove a toxicological prediction, adding mechanistic insight and trust [9]. |

The LD50 remains a foundational concept in toxicology, providing a historical and quantitative benchmark for acute lethality. However, its practical determination via traditional in vivo testing is increasingly seen as inefficient, costly, and ethically problematic [6] [7] [4]. The field is decisively moving towards a computational paradigm centered on the validation and adoption of in silico prediction models.

These models, powered by AI and diverse data streams, offer a complementary approach that often precedes physical testing. They enable the early and rapid screening of vast chemical libraries, guiding synthetic efforts towards safer compounds and reducing late-stage attrition [7] [9]. The ongoing research thesis is no longer about whether computational tools will be used, but about how to rigorously validate them to ensure their predictions are reliable, interpretable, and ultimately acceptable for regulatory decision-making. The future of preclinical safety assessment lies in integrated workflows that begin with high-throughput in silico screens, continue with targeted in vitro assays, and reserve traditional in vivo studies for final confirmation, thereby upholding the principles of the 3Rs while enhancing predictive accuracy.

The validation of in silico LD50 prediction models represents a critical frontier in modern toxicology, driven by converging ethical, scientific, and regulatory forces. The landmark 2025 FDA decision to phase out mandatory animal testing for many drug types has catalyzed a structural transformation in safety science [11]. This guide objectively compares the performance of emerging artificial intelligence (AI)-driven computational models against traditional animal-based and in vitro methods, providing researchers with a framework for evaluating these tools within a rigorous validation paradigm. Reported data indicate that AI models, including Quantitative Structure-Activity Relationship (QSAR) and advanced machine learning systems, can predict acute oral toxicity (LD50) and other endpoints with accuracy rivaling or surpassing traditional methods for many applications, while offering substantial gains in speed, cost, and human relevance [12] [9] [13]. This shift is supported by a growing ecosystem of validated toxicity databases, explainable AI algorithms, and regulatory pilot programs, positioning in silico toxicology not merely as an alternative but as the foundation for a new, evidence-based safety assessment paradigm [14] [15].

The Context: Regulatory, Ethical, and Scientific Drivers for Change

The movement toward AI-driven prediction is not merely technological but is embedded within a broader reassessment of drug development's foundational principles. Traditional animal models are limited by species differences, high costs, lengthy timelines, and ethical concerns, often failing to predict human-specific toxicities [12] [11]. These limitations have directly contributed to high failure rates in clinical trials, where safety issues account for approximately 30% of drug candidate attrition [14].

In response, a regulatory evolution is underway. The FDA Modernization Act 2.0 and the European Commission's roadmap to phase out animal testing have created a policy environment conducive to alternative methods [12] [11]. The FDA's 2025 announcement is particularly pivotal, signaling acceptance of New Approach Methodologies (NAMs) and Model-Informed Drug Development (MIDD) as credible evidence for regulatory submissions [11]. This shift is reflected in the growing market for in silico clinical trials, projected to reach USD 6.39 billion by 2033, with drug development applications accounting for over half of the market share [15].

Scientifically, the convergence of high-performance computing, curated toxicogenomics databases, and advanced machine learning algorithms has enabled the development of models that can integrate chemical structure, biological pathway data, and omics signatures to predict toxicity with mechanistic insight [9] [14]. This positions in silico models not as simple replacements, but as superior tools for human-relevant risk assessment.

Table 1: Drivers for the Paradigm Shift from Animal Testing to In Silico Models

| Driver Category | Specific Factor | Impact on Toxicology & Drug Development |

|---|---|---|

| Regulatory | FDA Modernization Act 2.0 & 2025 Animal Testing Phase-out [11] | Enables use of NAMs for regulatory submissions; accelerates adoption. |

| Regulatory | EMA, PMDA, and MHRA promotion of MIDD [11] [15] | Creates global regulatory alignment for computational evidence. |

| Scientific & Technical | Limitations of animal-to-human translation [12] | Drives demand for more human-relevant predictive models. |

| Scientific & Technical | Advances in AI/ML (e.g., GNNs, Transformers) [9] | Enables analysis of complex chemical-biological interactions. |

| Scientific & Technical | Expansion of curated toxicity databases (e.g., Tox21, ChEMBL) [9] [14] | Provides high-quality data for training and validating models. |

| Economic | High cost of animal studies & clinical trial failures [11] [14] | In silico models reduce R&D costs by early identification of toxicants. |

| Economic | Market growth of in silico trials (5.5% CAGR) [15] | Signifies industry investment and confidence in the approach. |

| Ethical | 3Rs principle (Replace, Reduce, Refine) [12] | Aligns research with ethical mandates to minimize animal use. |

Performance Comparison Guide: In Silico AI Models vs. Traditional Methods

This section provides a quantitative and qualitative comparison of predictive performance across key toxicity endpoints, focusing on the context of validating LD50 prediction models.

Predictive Accuracy for Acute Oral Toxicity (LD50)

Direct comparisons between in silico predictions and experimental animal data are essential for validation. A 2025 study leveraging the QSAR Toolbox provided a clear benchmark, predicting LD50 values for several marketed drugs and comparing them to experimental values [13]. The results demonstrate a high degree of accuracy for certain compounds, validating the utility of computational approaches.

Table 2: Comparison of Predicted vs. Experimental LD50 Values for Selected Compounds [13]

| Compound | Predicted LD50 (mg/kg, oral) | Experimental LD50 Range (mg/kg, oral) | Prediction Accuracy | Notes |

|---|---|---|---|---|

| Amoxicillin | 15,000 | Aligns with high experimental values (low toxicity) | High | Close alignment with experimental data. |

| Isotretinoin | 4,000 | Aligns with experimental data | High | Close alignment with experimental data. |

| Risperidone | 361 | Moderate accuracy | Moderate | Model prediction within plausible range. |

| Doxorubicin | 570 | Moderate accuracy | Moderate | Model prediction within plausible range. |

| Guaifenesin | 1,510 | Intermediate consistency | Moderate | Shows utility for screening. |

| Baclofen | 940 (mouse) | ~300-1500 (varies by study/species) | Moderate to High | Demonstrates route/species specific prediction. |

Key Insight: The accuracy of in silico predictions is compound-dependent, with models performing best where the relevant chemical space is well represented in the training data. The ability to generate estimates for Baclofen across different species and routes (oral mouse, intraperitoneal rat) highlights the models' flexibility [13]. For early-stage screening and prioritization, this level of accuracy is often sufficient to flag compounds with unacceptably high toxicity and to deprioritize them, reducing the number of compounds that require animal testing.

Performance Across Broad Toxicity Endpoints

Beyond acute lethality, AI models are validated against a wide array of regulatory toxicity endpoints. Performance is typically measured by metrics such as Area Under the Receiver Operating Characteristic Curve (AUROC), where a value of 1.0 represents perfect prediction and 0.5 represents chance.
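
As a brief illustration of the metric, AUROC can be computed as the fraction of (toxic, non-toxic) compound pairs in which the toxic compound receives the higher score, with ties counted as half. The sketch below uses this pairwise definition. The function name `auroc` and the labels and scores are hypothetical.

```python
def auroc(labels, scores):
    """AUROC via pairwise comparison of positive and negative scores (ties count 0.5)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Hypothetical labels (1 = toxic, 0 = non-toxic) and model scores.
labels = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2, 0.1, 0.7]

model_auroc = auroc(labels, scores)
chance_auroc = auroc(labels, [0.5] * len(labels))  # constant scores carry no information
print(model_auroc, chance_auroc)
```

The pairwise form is quadratic in the number of compounds. It is fine for illustration, but large benchmarks would normally use a rank-based implementation from an established library.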

Table 3: Performance Benchmark of AI Models Across Key Toxicity Endpoints

| Toxicity Endpoint | Example Model/Database | Reported Performance (AUROC/Accuracy) | Comparative Advantage Over Traditional Methods |

|---|---|---|---|

| Skin Sensitization | QSAR, Deep Learning Models [12] | High Accuracy | Replaces guinea pig/mouse tests; provides mechanistic insight (key event prediction). |

| Cardiotoxicity (hERG blockade) | Models trained on hERG Central database [9] | AUROC often >0.8 | High-throughput screening alternative to electrophysiology assays; rapid SAR exploration. |

| Drug-Induced Liver Injury (DILI) | Models trained on DILIrank dataset [9] | Variable; top models >0.7 AUROC | Identifies hepatotoxicants missed by animal models due to species-specific metabolism. |

| Carcinogenicity | Integrated QSAR & ML models [12] | Improved accuracy over single tests | More cost-effective and faster than 2-year rodent bioassays; reduces animal use. |

| Endocrine Disruption | ToxCast/Tox21 AI models [12] [9] | Good performance for nuclear receptor targets | Screens thousands of chemicals vs. limited in vivo throughput; identifies mechanisms. |

| Genotoxicity | ICH M7 compliant QSAR models [14] | High sensitivity (>90%) | Reliable first-tier screening alternative to Ames test, reducing reagent use and time. |

Key Insight: AI models do not uniformly outperform all traditional assays but offer decisive advantages in throughput, cost, and mechanistic clarity. Their strength lies in prioritization and screening, reliably identifying high-risk compounds to guide more resource-intensive testing. Furthermore, hybrid approaches that combine in silico predictions with focused in vitro assays (e.g., for specific metabolic pathways) are emerging as a gold standard for regulatory submissions [12].

Detailed Experimental Protocols for Model Validation

The validation of an in silico LD50 prediction model is a multi-stage process that ensures its scientific rigor and regulatory acceptability.

Protocol for Developing and Validating an AI-Driven LD50 Prediction Model

This protocol outlines a standard workflow for creating a robust model [9] [14].

Data Curation and Preprocessing:

- Source Data: Compile LD50 data from trusted databases such as ACToR (ICE), DSSTox, or curated proprietary sources. Data must include chemical identifier (SMILES, InChIKey), numeric LD50 value (mg/kg), species, route of administration, and source reference [9] [14].

- Standardization: Standardize chemical structures (remove salts, neutralize charges, generate canonical tautomers). Convert LD50 values to a uniform scale (e.g., log10(mmol/kg)).

- Chemical Domain Definition: Calculate chemical descriptors (e.g., Morgan fingerprints, molecular weight, logP) to define the model's Applicability Domain (AD). Predictions for compounds within the AD are considered more reliable than those for compounds outside it.
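
The unit standardization step can be sketched as follows. Dividing a dose in mg/kg by the molecular weight in g/mol gives mmol/kg, which is then log-transformed. The function name is hypothetical; the LD50 values are the approximate figures from the table of experimental values earlier in this article, and the molecular weights are standard values.

```python
import math

def ld50_to_log_mmol_per_kg(ld50_mg_per_kg, mol_weight_g_per_mol):
    """Convert an LD50 in mg/kg to log10(mmol/kg)."""
    if ld50_mg_per_kg <= 0 or mol_weight_g_per_mol <= 0:
        raise ValueError("LD50 and molecular weight must be positive")
    # mg divided by (g/mol) yields mmol, so the result is in mmol per kg body weight.
    mmol_per_kg = ld50_mg_per_kg / mol_weight_g_per_mol
    return math.log10(mmol_per_kg)

# (LD50 in mg/kg, molecular weight in g/mol)
compounds = {
    "sodium cyanide": (6.4, 49.01),
    "ethanol": (7060.0, 46.07),
}

log_values = {
    name: ld50_to_log_mmol_per_kg(ld50, mw) for name, (ld50, mw) in compounds.items()
}
for name, value in log_values.items():
    print(f"{name}: log10(mmol/kg) = {value:.3f}")
```

Molar units let the model compare potency per molecule rather than per unit mass, and the log transform compresses a range that spans many orders of magnitude.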

Model Training:

- Algorithm Selection: Choose algorithms based on data size and complexity. Random Forest and Gradient Boosting (XGBoost) are common for structured descriptor data. Graph Neural Networks (GNNs) are preferred for learning directly from molecular graphs [9].

- Feature Engineering: Use molecular fingerprints or learned representations from deep learning.

- Data Splitting: Perform scaffold splitting to ensure training and test sets contain distinct molecular backbones. This rigorously tests the model's ability to generalize to novel chemotypes, preventing over-optimistic performance estimates [9].
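
A minimal sketch of scaffold splitting is shown below. It assumes each compound already carries a scaffold string (for example, a Bemis-Murcko scaffold SMILES computed beforehand with a cheminformatics toolkit), so only the grouping logic is shown. The function name, compound identifiers, and scaffold assignments are hypothetical.

```python
from collections import defaultdict

def scaffold_split(compound_scaffolds, test_fraction=0.2):
    """Split compound IDs so that no scaffold appears in both train and test.

    compound_scaffolds maps compound ID -> precomputed scaffold string.
    """
    groups = defaultdict(list)
    for compound_id, scaffold in compound_scaffolds.items():
        groups[scaffold].append(compound_id)

    # Largest scaffold groups are placed first, so rarer scaffolds tend to land in test.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_total = len(compound_scaffolds)
    train_cutoff = n_total - round(test_fraction * n_total)

    train, test = [], []
    for group in ordered:
        # A whole scaffold group goes to one side only; groups are never divided.
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test

# Hypothetical compounds with precomputed scaffold SMILES.
compound_scaffolds = {
    "cmpd_01": "c1ccccc1", "cmpd_02": "c1ccccc1", "cmpd_03": "c1ccccc1",
    "cmpd_04": "c1ccccc1", "cmpd_05": "c1ccncc1", "cmpd_06": "c1ccncc1",
    "cmpd_07": "c1ccncc1", "cmpd_08": "C1CCCCC1", "cmpd_09": "C1CCCCC1",
    "cmpd_10": "c1ccc2ccccc2c1",
}

train_ids, test_ids = scaffold_split(compound_scaffolds, test_fraction=0.2)
print("train:", train_ids)
print("test:", test_ids)
```

Because whole scaffold groups are assigned to one side, the realized test fraction only approximates the requested one.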

Internal and External Validation:

- Internal Validation: Use k-fold cross-validation on the training set to optimize hyperparameters.

- External Validation: Test the final model on a completely held-out dataset not used during training or optimization. This is the primary measure of real-world performance.

- Performance Metrics: For regression (predicting continuous LD50), report Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and coefficient of determination (R²). For classification (e.g., classifying into GHS toxicity categories), report AUROC, accuracy, sensitivity, and specificity [9].
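
The regression metrics named above can be computed directly from paired observed and predicted values. The sketch below uses hypothetical log-scale LD50 values, and the function name `regression_metrics` is an assumption for illustration.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return RMSE, MAE, and R² for paired observed and predicted values."""
    n = len(y_true)
    errors = [pred - true for true, pred in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)          # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1.0 - ss_res / ss_tot,
    }

# Hypothetical observed and predicted values on a log10(mmol/kg) scale.
observed = [-1.0, 0.0, 1.0, 2.0]
predicted = [-0.5, 0.0, 1.5, 2.0]

metrics = regression_metrics(observed, predicted)
print(metrics)
```

RMSE penalizes large errors more heavily than MAE, so reporting both gives a sense of whether a few compounds dominate the error.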

Interpretability and Reporting:

- Apply explainable AI (XAI) techniques like SHAP (SHapley Additive exPlanations) or attention mechanism visualization to identify which chemical substructures contribute most to predicted toxicity [9].

- Document the Applicability Domain, all preprocessing steps, software versions, and hyperparameters to ensure reproducibility.

Protocol for Experimental Validation Using In Vivo Data

To anchor an in silico model in biological reality, prospective or retrospective validation against animal data is required.

- Selection of Test Compounds: Choose a set of 20-30 compounds not included in the model's training set. Include compounds both within and outside the model's defined Applicability Domain to assess its boundaries [13].

- Reference Animal Study Analysis: Use existing, high-quality OECD Guideline-compliant acute oral toxicity studies (e.g., OECD TG 425) from literature or in-house archives. Extract precise LD50 values, confidence intervals, species, strain, and dosing details.

- Blinded Prediction: Input the chemical structures of the test compounds into the in silico model to generate LD50 predictions without access to the experimental results.

- Statistical Comparison: Compare predicted vs. experimental LD50 values using Bland-Altman analysis to assess bias and limits of agreement, and linear regression to evaluate correlation. For categorical classification (e.g., GHS categories), calculate Cohen's kappa to measure agreement beyond chance.

- Discrepancy Analysis: Investigate compounds where predictions and experimental results significantly diverge. Consider factors like species-specific metabolism, impurities in test material, or limitations in the model's training data.
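
The statistical comparison step can be sketched as below, under stated assumptions: Bland-Altman statistics are computed on log10-transformed LD50 values, category agreement uses the GHS acute oral toxicity cut-offs (5, 50, 300, 2000, and 5000 mg/kg), and compounds above 5000 mg/kg are labeled 0 for "not classified". All function names and the predicted and experimental values are hypothetical.

```python
import math
from statistics import mean, stdev

# GHS acute oral toxicity categories: (upper bound in mg/kg, category number).
GHS_UPPER_BOUNDS = [(5, 1), (50, 2), (300, 3), (2000, 4), (5000, 5)]

def ghs_category(ld50_mg_per_kg):
    """Map an oral LD50 to a GHS category; 0 means not classified (> 5000 mg/kg)."""
    for upper, category in GHS_UPPER_BOUNDS:
        if ld50_mg_per_kg <= upper:
            return category
    return 0

def bland_altman(predicted, experimental):
    """Return (bias, lower limit, upper limit) of agreement on the log10 scale."""
    diffs = [math.log10(p) - math.log10(e) for p, e in zip(predicted, experimental)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two label lists, corrected for chance."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    all_labels = set(labels_a) | set(labels_b)
    expected = sum(
        (labels_a.count(label) / n) * (labels_b.count(label) / n) for label in all_labels
    )
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1.0 - expected)

# Hypothetical predicted and experimental oral LD50 values (mg/kg).
predicted = [4, 40, 250, 900, 2500, 6000]
experimental = [3, 60, 200, 1500, 3000, 7000]

bias, lower, upper = bland_altman(predicted, experimental)
kappa = cohens_kappa(
    [ghs_category(v) for v in predicted],
    [ghs_category(v) for v in experimental],
)
print(f"bias = {bias:.3f} log units, limits = [{lower:.3f}, {upper:.3f}]")
print(f"Cohen's kappa = {kappa:.2f}")
```

A bias near zero with narrow limits indicates no systematic over- or under-prediction, while kappa shows whether the model places compounds in the same hazard category as the animal study.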

The following diagram illustrates the integrated workflow for developing and validating an AI-driven toxicity prediction model, highlighting the critical feedback loop between computational and experimental validation.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Adopting in silico toxicology requires a blend of computational tools and experimental assets for validation.

Table 4: Essential Research Toolkit for In Silico Toxicology Validation

| Tool/Resource Category | Specific Item | Function & Utility in Validation |

|---|---|---|

| Core Databases | ACToR/ICE, DSSTox, ChEMBL [9] [14] | Provide standardized, curated experimental toxicity data (e.g., LD50) for model training and benchmarking. |

| Core Databases | DrugBank, PubChem [14] | Offer comprehensive chemical, pharmacological, and safety data for known drugs, useful for cross-checking. |

| Software & Platforms | QSAR Toolbox (OECD) [13] | A regulatory-accepted platform for (Q)SAR, read-across, and LD50 prediction; key for regulatory alignment. |

| Software & Platforms | ADMETlab, ProTox-3.0, DeepTox [11] [9] | Web servers and suites for predicting various toxicity endpoints; useful for initial screening and comparison. |

| Software & Platforms | Commercial Suites (e.g., Certara, Simulations Plus) [15] | Provide enterprise-grade PBPK/PD and QSP modeling platforms integrated with toxicity modules for advanced R&D. |

| Experimental Validation Assets | Patient-Derived Xenografts (PDXs) & Organoids [16] | Complex in vitro/vivo models used to validate AI-predicted organ-specific toxicities in a human-relevant context. |

| Experimental Validation Assets | High-Content Screening (HCS) Assays | Generate rich in vitro phenotypic data for compounds, which can be used to train or challenge AI models. |

| Computational Infrastructure | High-Performance Computing (HPC) / Cloud (AWS, GCP, Azure) | Necessary for training large deep learning models (e.g., GNNs, Transformers) on massive chemical datasets [16]. |

| Computational Infrastructure | Explainable AI (XAI) Libraries (SHAP, LIME) | Critical for interpreting model predictions, identifying structural alerts, and building regulatory trust [9]. |

The paradigm shift toward AI-driven prediction is accelerating, with digital twin technology and virtual patient cohorts poised to extend in silico validation beyond single endpoints to simulating entire toxicological pathways in populations [11] [17]. The key challenge remains demonstrating robust external predictability and gaining universal regulatory acceptance for novel chemical entities [12] [18]. Success will depend on the community's commitment to generating high-quality, FAIR (Findable, Accessible, Interoperable, Reusable) data for model training and adopting standardized good in silico practice guidelines.

For researchers, the imperative is clear: competency in computational toxicology is no longer niche but essential. The future of validated safety assessment lies in hybrid workflows that strategically leverage AI for rapid, human-relevant prioritization, guided and confirmed by targeted, ethical experimental science. This integrated approach promises to deliver safer therapeutics to patients faster, fulfilling both ethical mandates and scientific ambitions [12] [11].

The following diagram summarizes this transformative paradigm shift, contrasting the traditional linear pipeline with the new, AI-integrated, and iterative approach to toxicity prediction and drug safety assessment.

Thesis Context: The Critical Role of Databases in Validating In Silico LD50 Prediction Models

The validation of in silico models for predicting the median lethal dose (LD50) hinges on the quality, scope, and accessibility of the underlying toxicological data. Within the broader thesis of LD50 prediction model research, public databases serve as the essential bedrock for training, testing, and benchmarking algorithms [7]. The transition from traditional animal testing to computational toxicology is driven by the need for efficiency, cost-reduction, and adherence to ethical principles, making robust data repositories more critical than ever [7]. This guide objectively compares four pivotal public resources—TOXRIC, DSSTox, ChEMBL, and PubChem—focusing on their utility in fueling and validating computational models, with particular emphasis on acute toxicity and LD50 endpoints.

Comparative Analysis of Public Toxicity Databases

The landscape of toxicity databases is diverse, with each resource offering unique strengths in content, curation, and intended application. The following analysis synthesizes their core characteristics and specific value for LD50 prediction research.

Table 1: Core Characteristics of Key Public Toxicity Databases

| Feature | TOXRIC | DSSTox | ChEMBL | PubChem |

|---|---|---|---|---|

| Primary Focus | ML-ready toxicology data & benchmarks [19] | High-quality chemical structure curation for risk assessment [20] | Bioactive molecules & drug-like properties [21] | Comprehensive repository of chemical substances & activities [14] |

| Key Provider | Academic Consortium | U.S. Environmental Protection Agency (EPA) [20] | EMBL's European Bioinformatics Institute (EMBL-EBI) [21] | National Institutes of Health (NIH) [14] |

| Total Compounds | 113,372 [19] | >1,000,000 substances [20] | >2,000,000 compounds [22] | >100 million compounds [14] |

| Toxicity Endpoints | 1,474 across 13 categories (in vivo/in vitro) [19] | Foundational for ToxCast/Tox21 assays; provides toxicity values (ToxVal) [20] [23] | ADMET data, including toxicity endpoints [14] | Massive bioassay results, including toxicity data from multiple sources [14] |

| Unique Strength | Provides pre-computed molecular features, benchmarks, and visualization for model development [19] | High-confidence chemical identifier-structure mapping for accurate data integration [20] | Manually curated bioactivity data (IC50, Ki, etc.) from literature [22] | Unparalleled scale and aggregation of public screening data [14] |

| Data Structure | Endpoint-specific, ML-formatted datasets [19] | Structure-annotated chemical lists [20] | Target-centric bioactivity records [22] | Substance-Compound-Bioassay triple hierarchy [14] |

| Best For | Training & benchmarking ML models for specific toxicity tasks [19] [24] | Building reliable QSAR models and chemical risk assessment [20] | Drug discovery, target profiling, and ADMET prediction [22] | Broad chemical look-up, initial toxicity screening, and data aggregation [14] |

Table 2: Database Utility for Acute Toxicity and LD50 Model Validation

| Aspect | TOXRIC | DSSTox | ChEMBL | PubChem |

|---|---|---|---|---|

| LD50-Specific Data | Extensive curated acute toxicity data, including LD50, LDLo, TDLo for multiple species [19] [24]. | Provides underlying chemical data for ToxCast; toxicity values available via ToxVal [20] [14]. | Contains LD50 data within bioactivity records, though not its primary focus. | Vast amounts of LD50 data aggregated from many sources, requiring significant curation [14]. |

| Data Readiness for ML | High: Datasets are pre-curated, standardized (e.g., to -log(mol/kg)), and split for ML [19] [24]. | Medium: Provides clean chemical inputs; toxicity endpoints often need to be assembled from related projects. | Medium: Bioactivity data is clean, but extracting and formatting specific toxicity endpoints requires work. | Low: Offers raw scale; extracting a clean, unified LD50 dataset requires extensive filtering and deduplication. |

| Support for Multi-Species Prediction | Excellent: Explicitly includes endpoints across >15 species, enabling studies on extrapolation [19] [24]. | Good: Supports models through chemical data for eco-toxicology and human health [20]. | Moderate: Focus is human targets, but contains data from other species. | Variable: Contains data for many species, but not systematically organized for cross-species modeling. |

| Benchmarking Resources | Provides built-in benchmarks and baseline model performance for endpoints [19]. | Not a primary feature; supports benchmarking indirectly via reliable data. | Not a primary feature. | Not a primary feature. |

| Use Case in Validation | Ideal for training and testing new models against standardized benchmarks. | Ideal for ensuring chemical structure quality in training data. | Useful for integrating toxicity with broader pharmacological profiles. | Useful for gathering supplemental data or validating model predictions on novel structures. |

The validation of LD50 prediction models relies on rigorous, reproducible methodologies. The following protocol, exemplified by the ToxACoL study, which used TOXRIC data, outlines a standard workflow for developing and validating a multi-species acute toxicity model [24].

1. Data Acquisition and Curation:

- Source: Data for 59 acute toxicity endpoints (e.g., mouse oral LD50, human oral TDLo) were extracted from the TOXRIC database and PubChem [24].

- Standardization: All toxicity values (LD50, LDLo, TDLo) were converted to a uniform chemical unit of -log(mol/kg) to enable cross-endpoint comparison and modeling [24].

- Compound Representation: Molecular structures were encoded as Simplified Molecular Input Line Entry System (SMILES) strings, which were then used to generate features (e.g., molecular fingerprints, graph representations).
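The unit standardization step can be illustrated with a short sketch. This is not the ToxACoL or TOXRIC code; the function name and the example compound are hypothetical, and the molecular weight is assumed to be supplied by the caller in g/mol.

```python
import math


def to_neg_log_mol_per_kg(dose_mg_per_kg: float, mol_weight_g_per_mol: float) -> float:
    """Convert a dose in mg/kg body weight to -log10(mol/kg)."""
    if dose_mg_per_kg <= 0 or mol_weight_g_per_mol <= 0:
        raise ValueError("dose and molecular weight must be positive")
    # mg/kg -> g/kg -> mol/kg
    mol_per_kg = (dose_mg_per_kg / 1000.0) / mol_weight_g_per_mol
    return -math.log10(mol_per_kg)


# Hypothetical compound: LD50 = 200 mg/kg, molecular weight = 200 g/mol.
# 200 mg/kg is 0.2 g/kg, i.e. 0.001 mol/kg, so the transformed value is about 3.
example_value = to_neg_log_mol_per_kg(200.0, 200.0)
```

On this scale a larger value means a more toxic compound, which is the reverse of the mg/kg convention, so the direction of the scale should be checked before comparing results across studies.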

2. Model Architecture and Training (ToxACoL Paradigm):

- Adjoint Correlation Learning: This paradigm introduces an endpoint graph where nodes represent different toxicity endpoints (e.g., rat intravenous LD50, rabbit dermal LD50). Edges represent learned relationships between these endpoints [24].

- Dual Learning Pathway: The model operates through two interacting branches:

- A compound branch processes molecular features through neural networks.

- An endpoint branch processes endpoint information via graph convolution on the endpoint graph.

- Information Fusion: At each layer, correlation operations fuse information between the two branches, generating endpoint-aware molecular representations. This allows knowledge from data-rich endpoints (e.g., rat LD50) to inform predictions for data-scarce endpoints (e.g., human TDLo) [24].

3. Validation and Performance Metrics:

- Evaluation: Model performance was rigorously evaluated using stratified cross-validation to ensure robustness.

- Key Metrics: Primary metrics included the Coefficient of Determination (R²) and Root Mean Square Error (RMSE) for regression tasks on continuous toxicity values [24].

- Result: The ToxACoL model demonstrated significant performance improvements, particularly for data-scarce human endpoints, with R² improvements of 43%-87% compared to state-of-the-art baselines, while also reducing required training data by 70-80% for some endpoints [24].
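For readers who want to reproduce the two regression metrics named above, a minimal sketch follows. The function names and the four example values are illustrative only and are not taken from the ToxACoL study.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error between observed and predicted values."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all observed values are identical")
    return 1.0 - ss_res / ss_tot


# Made-up observed and predicted toxicity values in -log(mol/kg).
observed = [2.0, 3.0, 4.0, 5.0]
predicted = [2.5, 2.5, 4.5, 4.5]
example_rmse = rmse(observed, predicted)
example_r2 = r_squared(observed, predicted)
```

Because RMSE is expressed in the units of the endpoint, it is only comparable between studies that use the same transformed scale.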

Database-Driven Workflow for LD50 Model Validation

Experimental Protocol for Multi-Species Toxicity Model Development

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Computational Toxicology Research

| Item | Function in Research |

|---|---|

| Standardized Toxicity Datasets (e.g., from TOXRIC) | Pre-curated, machine-learning-ready data for training and benchmarking predictive models for specific endpoints like LD50 [19]. |

| High-Quality Chemical Identifiers (e.g., DSSTox SID) | Ensures accurate linkage between chemical structures and associated toxicological data, which is fundamental for building reliable QSAR models [20]. |

| Canonical SMILES Strings | A standardized text representation of molecular structure used as the primary input for most modern graph-based and deep learning models [24]. |

| Molecular Descriptors & Fingerprints (e.g., Morgan Fingerprints) | Numerical representations of chemical structures generated by toolkits like RDKit, used as feature vectors in traditional machine learning models [7]. |

| Graph Neural Network (GNN) Frameworks | Software libraries (e.g., PyTorch Geometric, DGL) for implementing models that directly process molecular graphs, capturing complex structure-activity relationships [7] [24]. |

| Toxicity Value Units (mg/kg, -log(mol/kg)) | Standardized units, particularly the molar-based -log(mol/kg), are crucial for comparing toxicity across compounds and endpoints in regression modeling [19] [24]. |

| Benchmark Performance Metrics (R², RMSE) | Standard statistical metrics used to quantitatively validate and compare the predictive performance of regression models for continuous toxicity values like LD50 [24]. |

The validation of in silico models for predicting median lethal dose (LD50) represents a critical frontier in modern toxicology and drug development. These computational models promise to reduce reliance on animal testing, accelerate safety assessments, and lower research costs [25]. However, their reliability is fundamentally dependent on the quality and integration of the diverse biological data used for their training and validation. This process necessitates a synthesis of in vivo data from whole organisms, in vitro data from controlled cellular systems, and clinical data from human subjects [26] [27].

The core challenge lies in the inherent strengths and limitations of each data type. In vivo studies in animals provide a holistic view of systemic toxicity, pharmacokinetics, and complex organismal responses but are resource-intensive, ethically contentious, and suffer from interspecies translation gaps [28] [29]. In vitro models offer a controlled, high-throughput, and human-cell-based alternative for mechanistic studies but often fail to replicate the intricate physiology of a whole organism [29] [30]. Clinical data is the ultimate gold standard for human relevance but is often limited in availability for early-stage toxicity prediction and is confounded by patient variability [26]. Therefore, robust LD50 prediction models are not built on a single data source but on a strategic, integrated framework that leverages the complementary value of all three. This guide compares the performance characteristics of these data sources and outlines methodologies for their effective integration within the context of validating next-generation in silico toxicology models.

The development and validation of predictive toxicology models require a clear understanding of the attributes of each foundational data stream. The following table provides a structured comparison of in vivo, in vitro, and clinical data sources across key dimensions relevant to LD50 model building.

Table 1: Comparative Analysis of Data Sources for LD50 Prediction Model Development

| Aspect | In Vivo Data (Animal Models) | In Vitro Data (Cellular/Subcellular Models) | Clinical Data (Human Subjects) |

|---|---|---|---|

| Physiological Relevance | High; captures systemic, organ-level interactions and ADME processes. | Low to Moderate; limited to specific cell types or pathways, lacks systemic integration. | Highest; direct human relevance, includes full genetic and physiological complexity. |

| Data Generation Cost & Time | Very High (costly animal care, lengthy protocols) and Time-Consuming [28]. | Low to Moderate (relatively inexpensive materials, scalable assays) and Rapid [28] [29]. | Extremely High (clinical trials are costly and long) and Slow. |

| Throughput & Scalability | Low; limited by ethical and practical constraints on animal numbers. | Very High; amenable to automation in 96/384-well plates for screening thousands of compounds [29]. | Very Low; patient recruitment and trial conduct are inherently limited. |

| Primary Role in Model Building | Provides benchmark toxicity endpoints (e.g., experimental LD50) for model training and validation [25]. | Elucidates mechanistic pathways and generates high-dimensional bioactivity data for feature identification. | Serves as the ultimate validation set to assess translational accuracy and human predictive performance [26]. |

| Key Limitations | Ethical concerns, interspecies translation uncertainty, high variability [28] [31]. | Poor correlation with whole-organism outcomes, oversimplified biology [29] [30]. | Scarce for early toxicity prediction, ethically restricted, highly heterogeneous. |

| Typical Endpoints for LD50 Context | Observed mortality, histopathology, clinical chemistry, organ weights. | Cell viability (IC50), cytotoxicity, apoptosis, specific pathway inhibition (e.g., AChE activity) [32]. | Adverse event reports, pharmacokinetic data from Phase I trials, overdose case studies. |

Methodologies for Data Generation and Integration

Experimental Protocols for Core Data Generation

Valid integration begins with rigorous, standardized protocols for generating each data type.

In Vivo Acute Oral Toxicity Study (OECD Guideline 423/425): This is a standard source for experimental LD50 values. The protocol involves administering a single oral dose of a test compound to groups of laboratory rodents (typically rats). Animals are closely observed for signs of toxicity, morbidity, and mortality over 14 days. The LD50 value, expressed in mg/kg body weight, is calculated using statistical methods (e.g., probit analysis) based on the dose-mortality relationship [33] [25]. Histopathological examination of organs provides supplemental data on target organ toxicity.

In Vitro Cytotoxicity Screening (e.g., for Mechanistic Insight): A common protocol involves treating human cell lines (e.g., HepG2 liver cells) with a range of compound concentrations in 96-well plates. After incubation (24-72 hours), cell viability is measured using assays like MTT or ATP-luciferase. The half-maximal inhibitory concentration (IC50) is calculated. While not directly equivalent to LD50, patterns of cytotoxicity across cell types and assays can inform quantitative structure-activity relationship (QSAR) models about potential mechanisms and relative toxicity [29] [30].

Clinical Data Integration via Silent Pilot Trials: As demonstrated in recent research, clinical predictive models can be validated through a structured "silent pilot" framework before active clinical deployment [26]. The methodology involves:

- Technical Component Analysis: Running the model and its clinical decision support (CDS) software in the background of a live clinical environment (e.g., Emergency Department) to check for coding errors and operational failures.

- Technical Fidelity Analysis: Comparing the model's in vivo (live clinical) screening decisions against its in vitro (original test environment) outputs. This quantifies the agreement (e.g., raw agreement percentage, kappa statistic) to ensure performance is maintained in a real-world, noisy data setting [26].
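The agreement statistics mentioned in the fidelity analysis can be sketched as below. The cited study is not quoted here on which kappa variant it used, so Cohen's kappa for two label sequences is an assumption, and the screening decisions are invented for illustration.

```python
from typing import Sequence


def raw_agreement(a: Sequence[int], b: Sequence[int]) -> float:
    """Fraction of cases where the two sets of decisions match."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a: Sequence[int], b: Sequence[int]) -> float:
    """Agreement corrected for the agreement expected by chance."""
    n = len(a)
    p_observed = raw_agreement(a, b)
    labels = set(a) | set(b)
    # Chance agreement: product of the marginal label frequencies, summed over labels.
    p_expected = sum((list(a).count(lab) / n) * (list(b).count(lab) / n) for lab in labels)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical screening decisions (1 = flagged) in the test and live environments.
test_env = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
live_env = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
example_agreement = raw_agreement(test_env, live_env)
example_kappa = cohens_kappa(test_env, live_env)
```

Reporting both numbers is useful because raw agreement can look high purely because one decision dominates, whereas kappa discounts that chance component.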

Framework for Multi-Source Data Integration

A practical workflow for integrating these disparate data types to build and validate an in silico LD50 model is shown in the following diagram.

Performance Benchmarking of In Silico Prediction Tools

A critical step in model validation is benchmarking the performance of different in silico tools, which are trained on integrated data from the sources described above. These tools are essential for applying the principles of Next-Generation Risk Assessment (NGRA), which prioritizes prediction before animal testing [32] [33]. The following table compares widely used software for predicting acute oral toxicity.

Table 2: Comparison of In Silico Tools for Acute Oral Toxicity (LD50) Prediction

| Tool Name | Primary Methodology | Key Advantages | Reported Performance & Application | Major Limitations |

|---|---|---|---|---|

| QSAR Toolbox (OECD) | Read-across, structural analogue categorization [33]. | Endorsed by regulatory bodies (OECD, ECHA); excellent for filling data gaps for structurally similar compounds. | Used to predict LD50 for V-series nerve agents, identifying VX and VM as most toxic [33]. | Performance highly dependent on the availability of close analogues in the database. |

| TEST (US EPA) | Consensus of multiple QSAR methods (Hierarchical, FDA, Nearest Neighbor) [32] [33]. | Open-source; provides a consensus prediction from several models, improving reliability. | Demonstrated utility in predicting toxicity of Novichok agents (e.g., A-232, A-230) [32]. | Consensus can mask high uncertainty if individual model predictions diverge widely. |

| ProTox-II (Browser Application) | Machine learning based on molecular similarity and fragment counts. | Web-based, user-friendly, provides toxicity predictions across multiple endpoints. | Applied in tandem with QSAR Toolbox and TEST for V-agent profiling [33]. | "Black box" nature of models; less transparent than read-across. |

| Integrated AI/ML Models (e.g., from [25]) | Advanced ensemble methods combining SAR, QSAR, and knowledge-based rules. | Can achieve high predictive accuracy (e.g., RMSE <0.50 log units) by leveraging large, curated datasets. | Developed on a database of ~12,000 rat LD50 values, showing balanced accuracy >0.80 for binary toxicity classification [25]. | Requires significant expertise and computational resources to develop and maintain. |
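The limitation noted for consensus predictions, that an average can hide disagreement between the underlying models, can be made explicit by reporting the spread alongside the mean. The sketch below is a generic illustration and not the internal logic of TEST or any other listed tool; the model names, the log10(mg/kg) scale, and the 1.0 log-unit threshold are all assumptions.

```python
from statistics import mean
from typing import Dict


def consensus_with_spread(predictions: Dict[str, float], max_spread: float = 1.0) -> dict:
    """Average individual predictions and flag cases where they diverge widely.

    `predictions` maps a model name to its predicted value on a log scale.
    `max_spread` is a hypothetical tolerance on (max - min).
    """
    if not predictions:
        raise ValueError("at least one prediction is required")
    values = list(predictions.values())
    spread = max(values) - min(values)
    return {
        "consensus": mean(values),
        "spread": spread,
        "divergent": spread > max_spread,
    }


# Invented predictions from three individual QSAR methods.
example_result = consensus_with_spread(
    {"hierarchical": 2.1, "fda": 2.4, "nearest_neighbor": 3.6}
)
```

A flagged prediction is not necessarily wrong, but it is a reasonable candidate for read-across review or experimental follow-up before the consensus value is relied upon.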

Validation Cycle and Pathway Analysis

The ultimate test of an integrated in silico model is its ability to accurately predict outcomes in a biological system. This involves a continuous validation cycle and an understanding of the toxicological pathways it aims to simulate. For neurotoxic agents like organophosphates (e.g., Novichoks, V-series), a key mechanism is acetylcholinesterase (AChE) inhibition, leading to a cholinergic crisis [32] [33]. The diagram below illustrates this pathway and the corresponding points where different data types inform model validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Building and validating integrated models requires a specific set of tools and reagents. The following table details key solutions for the experimental workflows discussed.

Table 3: Key Research Reagent Solutions for Integrated Toxicity Studies

| Item / Solution | Function in Research | Relevance to Data Integration |

|---|---|---|

| Primary Human Cell Lines & Co-culture Systems (e.g., hepatocytes, neurons) | Provide a human-relevant in vitro system for high-throughput cytotoxicity screening and mechanistic studies [29]. | Generates in vitro bioactivity data (e.g., IC50) that serves as input features for in silico models and helps bridge the gap to in vivo outcomes. |

| Organ-on-a-Chip (OOC) Platforms | Advanced microphysiological systems that emulate organ-level structure and function, including fluid flow and mechanical cues [29]. | Produces in vitro data with higher physiological relevance, improving the translational value of mechanistic data for model training. |

| Tandem Mass Tag (TMT) Proteomics Kits | Enable multiplexed, quantitative analysis of protein expression changes in tissues or cells following toxicant exposure [27]. | Generates rich, multi-parametric in vitro/vivo "omics" data that can be used to discover novel toxicity biomarkers and refine predictive models. |

| Toxicity Estimation Software Tool (TEST) | An open-source software suite that employs multiple QSAR methodologies to predict acute toxicity endpoints from chemical structure [32] [33]. | A key in silico tool for generating initial predictions, which are then validated against experimental in vivo and in vitro data. |

| Curated Toxicity Databases (e.g., EPA DSSTox, NICEATM LD50 inventory) | Centralized repositories of high-quality experimental toxicity data (e.g., rat oral LD50) [25]. | Provide the essential ground-truth in vivo data required for both training machine learning models and benchmarking their predictions. |

| Patient-Derived Xenograft (PDX) or Cell-Derived Xenograft (CDX) Mouse Models | In vivo models where human tumor cells/tissues are grown in immunocompromised mice, used for efficacy and toxicity testing [27]. | Offer a hybrid data source that combines human-derived cellular material with a whole-organism (in vivo) context, aiding translation. |

This comparison guide objectively evaluates the performance and applicability of leading in silico models for predicting rat acute oral toxicity (LD50). Framed within a broader thesis on model validation, the analysis focuses on defining the domain where these computational tools provide reliable predictions and identifying their inherent limitations for researchers and drug development professionals.

Performance Comparison of LD50 Predictive Models

The performance of predictive models is not absolute but is intrinsically tied to their Applicability Domain (AD)—the chemical, mechanistic, and data space where reliable predictions can be expected. The following tables compare two established expert systems, TEST and TIMES, based on a large-scale evaluation using a curated reference dataset of ~16,713 studies for 11,992 substances compiled under the ICCVAM Acute Toxicity Workgroup (ATWG) [34].

Table 1: Core Model Architectures and Training

| Model | Core Approach | Training Set Size | Reported Performance (R²) | Key Characteristics |

|---|---|---|---|---|

| TEST (Toxicity Estimation Software Tool) | Consensus of QSAR methods (Hierarchical Clustering, FDA, Nearest Neighbor) [34]. | 7,413 chemicals [34]. | 0.626 (External test set) [34]. | Statistical, consensus-driven; can make predictions for a broad chemical space. |

| TIMES (Tissue Metabolism Simulator) | Hybrid expert system: baseline QSAR + 73 mechanistic categories [34]. | 1,814 chemicals [34]. | 0.85 (Training set) [34]. | Mechanistically grounded; predictions are based on assigned toxicological categories. |

Table 2: Performance on ICCVAM ATWG Reference Dataset

| Performance Metric | TEST Model | TIMES Model | Notes |

|---|---|---|---|

| Coverage (of 10,886 processed chemicals) | Higher | Lower | TEST could generate predictions for more chemicals in the reference set [34]. |

| Overall Predictive Performance | Similar | Similar | Performance was comparable, but models showed different strengths/weaknesses [34]. |

| RMSE (Root Mean Square Error) | ~0.594 [34] | Not explicitly stated | For reference, modern integrated models on similar data can achieve RMSE <0.50 [35]. |

| Chemical Features of Low Accuracy | Distinct patterns | Distinct patterns | Enrichment analysis using ToxPrint fingerprints found different chemical features were associated with inaccurate predictions for each model [34]. |

Table 3: Hazard Classification Performance (Example from Modeling Initiatives)

| Endpoint (Classification) | Model Type | Reported Balanced Accuracy | Regulatory Context |

|---|---|---|---|

| Binary (Very Toxic: LD50 < 50 mg/kg) | Integrated Modeling Strategies | > 0.80 [35] | U.S. EPA, GHS hazard labeling [35]. |

| Binary (Non-Toxic: LD50 > 2000 mg/kg) | Integrated Modeling Strategies | > 0.80 [35] | U.S. EPA, GHS hazard labeling [35]. |

| Multi-class (e.g., GHS 5-category) | Integrated Modeling Strategies | > 0.70 [35] | Globally Harmonized System (GHS) classification [35]. |

Experimental Protocols for Model Evaluation

The reliable evaluation of predictive models depends on rigorous, standardized protocols for data curation and performance assessment, as demonstrated by the ICCVAM ATWG initiative [34].

Protocol 1: Compilation and Curation of the Reference LD50 Dataset

Objective: To create a high-quality, consolidated dataset from diverse sources to serve as a benchmark for evaluating model performance and variability [34].

- Data Aggregation: LD50 values were collated from multiple public databases (e.g., OECD eChemPortal, Acutetoxbase, ChemIDplus) [34].

- Deduplication and Error Correction: Removal of duplicate study records and correction of obvious transcription errors (e.g., "20005000 mg/kg") [34].

- Structure Standardization: Chemical structures were retrieved and standardized to "QSAR-ready" Simplified Molecular-Input Line-Entry System (SMILES) using the EPA's CompTox Chemicals Dashboard to ensure consistency. This step includes desalting and neutralizing structures [34].

- Representative Value Calculation: For chemicals with multiple point estimates (≥3), a robust processed LD50 was derived:

- Values outside the Tukey fences (more than 1.5 × the interquartile range below the first quartile or above the third quartile) were removed as extremes.

- The median of the lowest quartile of the remaining values was calculated [34].
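The representative-value calculation can be sketched with the standard library. This is an illustration of the described steps rather than the workgroup's code: the function name is hypothetical, the quartile interpolation method (`"inclusive"`) is an assumption, and the values are processed as given because the text here does not state whether a log transform is applied first.

```python
from statistics import median, quantiles
from typing import Sequence


def processed_ld50(values: Sequence[float]) -> float:
    """Derive one representative LD50 from several point estimates."""
    if len(values) < 3:
        raise ValueError("the procedure applies to chemicals with at least 3 point estimates")
    # Step 1: Tukey fences at 1.5 * IQR beyond the first and third quartiles.
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    kept = sorted(v for v in values if lower <= v <= upper)
    if len(kept) < 2:
        return median(kept)
    # Step 2: median of the values at or below the first quartile of what remains.
    q1_kept = quantiles(kept, n=4, method="inclusive")[0]
    lowest_quartile = [v for v in kept if v <= q1_kept]
    return median(lowest_quartile)


# Invented rat oral LD50 estimates in mg/kg; 5000 is removed as an extreme value.
example_ld50 = processed_ld50([100, 120, 150, 180, 200, 220, 250, 5000])
```

Taking the median of the lowest quartile rather than the overall median biases the representative value toward higher toxicity, which is the health-protective direction.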

Protocol 2: Model Prediction and Enrichment Analysis for Domain Identification

Objective: To evaluate model accuracy and systematically identify chemical subclasses where predictions fall outside acceptable limits [34].

- Prediction Generation: Standardized QSAR-ready SMILES for the curated dataset are used as input for the models (TEST and TIMES).

- Performance Benchmarking: Model predictions are compared against the processed experimental LD50 values. The subset of data with high experimental variability is used to contextualize model error [34].

- Chemical Enrichment Analysis: Using ToxPrint chemical fingerprints (a 729-bit binary representation), chemicals are grouped by structural and functional features [34].

- Domain Identification: Statistical analysis identifies specific ToxPrint features that are significantly enriched in the set of chemicals where model predictions lie outside the 95% confidence interval of experimental variability. These features help delineate the model's AD and highlight structural domains prone to over- or under-prediction [34].
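The enrichment step can be illustrated with a one-sided Fisher exact (hypergeometric) test for a single fingerprint bit. The text does not name the statistical test that was used, so this choice is an assumption, as are the function name and the counts in the example.

```python
from math import comb


def enrichment_p_value(n_total: int, n_feature: int, n_outside: int, n_feature_outside: int) -> float:
    """Probability of seeing at least `n_feature_outside` feature-bearing chemicals
    among the `n_outside` poorly predicted ones, if the feature were unrelated to error.

    n_total           -- all evaluated chemicals
    n_feature         -- chemicals carrying the fingerprint feature
    n_outside         -- chemicals predicted outside the experimental variability interval
    n_feature_outside -- chemicals in both groups
    """
    if not (0 <= n_feature <= n_total and 0 <= n_outside <= n_total):
        raise ValueError("group sizes must lie between 0 and n_total")
    max_overlap = min(n_feature, n_outside)
    if not (0 <= n_feature_outside <= max_overlap):
        raise ValueError("overlap is inconsistent with the group sizes")
    total_ways = comb(n_total, n_outside)
    tail = sum(
        comb(n_feature, k) * comb(n_total - n_feature, n_outside - k)
        for k in range(n_feature_outside, max_overlap + 1)
    )
    return tail / total_ways


# Invented counts: 20 chemicals, 5 carry the feature, 6 are poorly predicted, 4 are both.
example_p = enrichment_p_value(n_total=20, n_feature=5, n_outside=6, n_feature_outside=4)
```

With several hundred fingerprint bits tested in parallel, the resulting p-values would normally need a multiple-testing correction before any feature is declared enriched.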

Visualizing Workflows and Conceptual Frameworks

LD50 Data Curation and Model Evaluation Workflow

Adverse Outcome Pathway (AOP) Predictive Framework

Categorizing Model Uncertainty for Decision-Making

Table 4: Essential Resources for In Silico LD50 Prediction Research

| Resource / Tool | Primary Function | Relevance to Applicability Domain |

|---|---|---|

| EPA CompTox Chemicals Dashboard | Provides curated chemical structures, properties, and "QSAR-ready" SMILES [34]. | Essential for standardizing chemical inputs, ensuring consistency between training and prediction compounds. |

| TEST (Toxicity Estimation Software Tool) | Free QSAR software that estimates toxicity from molecular structure using a consensus approach [34]. | A widely used tool for generating predictions; understanding its consensus methodology is key to interpreting its AD. |

| TIMES Platform | Commercial hybrid expert system integrating QSARs with mechanistic SARs and metabolic simulators [34]. | Useful for predictions grounded in mechanistic reasoning; its AD is defined by its covered toxicological categories. |

| ToxPrint Fingerprints | A set of 729 chemical structure and feature descriptors (Chemotyper software) [34]. | Critical for enrichment analysis to identify chemical features associated with model error, thereby mapping the AD. |

| ICCVAM ATWG Reference Dataset | A large, publicly curated dataset of rat acute oral LD50 values [34] [35]. | The benchmark for objective model evaluation and a source of training data for new model development. |

| AOP-Wiki (OECD) | Knowledgebase of Adverse Outcome Pathways [36]. | Provides a mechanistic framework for interpreting model alerts and linking molecular predictions to higher-order toxicity. |

Building and Applying Predictive Models: From QSAR to Advanced AI Architectures

The prediction of acute oral toxicity, quantified as the median lethal dose (LD₅₀), is a cornerstone of chemical safety assessment in drug development, forensics, and environmental health. Traditional in vivo testing is resource-intensive, ethically challenging, and cannot keep pace with the vast number of new chemical entities requiring evaluation. This reality has propelled the development and validation of in silico predictive models as indispensable tools within a modern research framework focused on the 3Rs principle (Replacement, Reduction, and Refinement of animal use) [37] [38].

This guide delineates a comprehensive modeling pipeline for LD₅₀ prediction, framed within the critical research thesis of model validation. It moves beyond a simple software tutorial to provide researchers and drug development professionals with a rigorous, evidence-based comparison of methodologies—from established Quantitative Structure-Activity Relationship (QSAR) consensus models to cutting-edge hybrid neural networks. We objectively analyze performance data, detail experimental protocols for validation, and provide the essential toolkit for implementing these approaches, thereby empowering scientists to build confidence in computational predictions and integrate them effectively into safety decision-making [14] [39].

Pipeline Stage 1: Data Collection & Curation

The foundation of any robust predictive model is high-quality, well-curated data. This initial stage is critical, as the applicability domain and predictive accuracy of the final model are directly constrained by the chemical space and data quality of the training set [40] [41].

Primary Data Sources: Key databases for acute oral toxicity (LD₅₀) data include:

- ChemIDplus: A comprehensive database from the U.S. National Library of Medicine containing toxicity data for hundreds of thousands of chemicals [40].

- Toxin and Toxin Target Database (T3DB): Provides detailed information on toxic chemicals, including experimental toxicity values [40].

- DrugBank and ChEMBL: While focused on drugs and bioactive molecules, these curated databases contain valuable toxicity data for pharmaceutical compounds [14].

- EPA Databases: Public resources from the U.S. Environmental Protection Agency include toxicity data used in regulatory risk assessments [40].

Curation Protocol: Raw data must be rigorously processed.

- Standardization: Chemical structures (often from SMILES strings) are standardized (e.g., neutralizing charges, removing salts) and converted to consistent formats (e.g., 2D/3D SDF) [40].

- Deduplication: Remove duplicate entries for the same compound.

- Filtering: Exclude entries with missing critical data (e.g., exact LD₅₀ value, exposure route) or compounds outside the scope (e.g., inorganic metals, mixtures) [40].

- Annotation: Assign categorical labels based on LD₅₀ cut-offs (e.g., for binary classification: toxic/nontoxic at 500 mg/kg) or Globally Harmonized System (GHS) toxicity categories [42] [40].
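The annotation step can be sketched as a pair of small labeling functions. The category bounds are the GHS acute oral toxicity cut-offs (5, 50, 300, 2000, and 5000 mg/kg); the function names are hypothetical, and treating the 500 mg/kg binary cut-off as inclusive is an assumption, since the text does not say which side the boundary falls on.

```python
from typing import Optional


def ghs_acute_oral_category(ld50_mg_per_kg: float) -> Optional[int]:
    """Return the GHS acute oral toxicity category (1-5), or None if not classified."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    upper_bounds = [(5.0, 1), (50.0, 2), (300.0, 3), (2000.0, 4), (5000.0, 5)]
    for bound, category in upper_bounds:
        if ld50_mg_per_kg <= bound:
            return category
    return None  # above 5000 mg/kg


def binary_label(ld50_mg_per_kg: float, cutoff: float = 500.0) -> str:
    """Binary toxic/nontoxic label at a single cut-off (inclusive by assumption)."""
    return "toxic" if ld50_mg_per_kg <= cutoff else "nontoxic"


# Invented LD50 values in mg/kg.
example_labels = [(v, ghs_acute_oral_category(v), binary_label(v)) for v in (3, 250, 1500, 6000)]
```

Category 5 is optional in some jurisdictions, so a four-category variant may be needed depending on the regulatory context the model is meant to serve.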

Pipeline Stage 2: Descriptor Calculation & Feature Selection

Once a clean dataset is obtained, molecular descriptors are calculated to translate chemical structures into numerical features that machine learning algorithms can process.

Descriptor Types:

- Physicochemical: LogP (lipophilicity), molecular weight, polar surface area, number of hydrogen bond donors/acceptors [40].

- Topological & Structural: Molecular fingerprints (e.g., MACCS keys), graph-based indices describing molecular connectivity [40].

- ADMET-Related: Predictions for absorption, distribution, metabolism, excretion, and toxicity properties generated by platforms like ADMETlab [40] [43].

Feature Selection: Not all calculated descriptors are relevant. Techniques like variance thresholding, correlation analysis, and feature importance ranking (e.g., from Random Forest models) are used to reduce dimensionality from hundreds of descriptors to a critical set of 50-100, preventing model overfitting and improving interpretability [40].
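Two of the filters named above, variance thresholding and correlation analysis, are simple enough to sketch without any external library. The thresholds, function names, and descriptor values below are illustrative assumptions; importance ranking from a trained model is a separate step and is not shown.

```python
from statistics import pvariance
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation of two equally long, non-constant columns."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    norm_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (norm_x * norm_y)


def select_features(
    columns: Dict[str, List[float]],
    min_variance: float = 1e-8,
    max_abs_corr: float = 0.95,
) -> List[str]:
    """Drop near-constant descriptors, then drop descriptors that are highly
    correlated with one already kept (greedy, in input order)."""
    varying = [name for name, vals in columns.items() if pvariance(vals) > min_variance]
    kept: List[str] = []
    for name in varying:
        if all(abs(pearson(columns[name], columns[k])) <= max_abs_corr for k in kept):
            kept.append(name)
    return kept


# Invented descriptor table for four compounds.
example_descriptors = {
    "mol_weight": [180, 250, 310, 420],
    "mol_weight_copy": [181, 251, 311, 421],  # redundant with mol_weight
    "logp": [1.2, 3.4, 0.5, 2.8],
    "constant_flag": [1, 1, 1, 1],  # carries no information
}
example_selected = select_features(example_descriptors)
```

The greedy pass keeps whichever of two correlated descriptors appears first, so ordering the columns by prior relevance or interpretability is a reasonable way to control which one survives.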

Pipeline Stage 3: Model Building & Training

This stage involves selecting an algorithm and training it on the curated data. The choice of model depends on the data size, problem type (regression for exact LD₅₀ or classification for category), and desired interpretability.

Model Architectures:

- Consensus QSAR Models: Tools like TEST, CATMoS, and VEGA employ individual QSAR models (e.g., based on regression, partial least squares, or neural networks). A consensus approach aggregates predictions from multiple models or tools to improve reliability [42] [41].

- Traditional Machine Learning: Algorithms like Random Forest (RF), Support Vector Machine (SVM), and k-Nearest Neighbors (kNN) are widely used for classification tasks [40].

- Advanced Deep Learning: Hybrid Neural Networks (HNN) that combine architectures like Convolutional Neural Networks (CNN) for structural feature extraction with Feed-Forward Neural Networks (FFNN) for regression/classification have shown state-of-the-art performance. For example, the HNN-Tox model was trained on 59,373 chemicals and demonstrated high accuracy in dose-range toxicity prediction [40].

Experimental Protocol for Model Training (e.g., HNN-Tox):

- Dataset Partitioning: The full dataset (e.g., 59,373 chemicals) is randomly split into a training set (e.g., ~90%) and a hold-out test set (e.g., ~10%) [40].

- Model Configuration: A hybrid architecture is defined. For instance, a CNN branch processes molecular fingerprints, while an FFNN branch processes physicochemical descriptors. The outputs are fused in later layers [40].

- Training Loop: The model is trained on the training set using a loss function (e.g., cross-entropy for classification) and an optimizer (e.g., Adam). Performance is monitored on a separate validation set (split from the training set) to avoid overfitting [40].

- Hyperparameter Tuning: Critical parameters (learning rate, network depth, dropout rate) are optimized via grid or random search using the validation set performance [40].
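The partitioning logic in this protocol can be sketched as follows. The ~90/10 train/test ratio comes from the text above. The 10% validation fraction, the fixed seed, and the function name `partition` are assumptions for illustration, since the source does not state how the validation set was sized.

```python
import random


def partition(compound_ids, test_frac=0.10, val_frac=0.10, seed=42):
    """Shuffle once, hold out a test set, then split validation from the rest.

    val_frac is applied to the data remaining after the test set is removed.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(compound_ids)
    rng.shuffle(shuffled)

    n_test = round(len(shuffled) * test_frac)
    test, remainder = shuffled[:n_test], shuffled[n_test:]

    n_val = round(len(remainder) * val_frac)
    val, train = remainder[:n_val], remainder[n_val:]
    return train, val, test


# Integer IDs stand in for the 59,373 chemicals mentioned in the protocol.
train, val, test = partition(range(59_373))
print(len(train), len(val), len(test))
```

The test set is carved off before any tuning takes place, so hyperparameter search only ever sees `train` and `val`. A purely random split can still place close structural analogs on both sides of the boundary. Scaffold-based or cluster-based splitting is a common stricter alternative, though the source does not say whether it was used.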

Pipeline Stage 4: Validation & Performance Benchmarking

Rigorous validation is central to establishing model credibility. It assesses how well predictions generalize to new, unseen data.

- Internal Validation: Uses the training data itself, typically via k-fold cross-validation, to provide an initial performance estimate [38].

- External Validation: The gold standard. The trained model is used to predict the hold-out test set that was never used during training or tuning. Performance here best simulates real-world use [40].

- True External Validation: Predicting on a completely independent dataset from a different source (e.g., validating a model built on ChemIDplus data using the NTP dataset) [40].

- Performance Metrics:

- For Classification (GHS Category): Accuracy, Sensitivity, Specificity, Balanced Accuracy, and Matthews Correlation Coefficient (MCC).

- For Regression (LD₅₀ Value): Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and Coefficient of Determination (R²).
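The metrics listed above follow directly from their standard definitions. The sketch below computes them for a binary label (1 = toxic, 0 = nontoxic) and for a continuous target. For the multi-class GHS setting, the binary metrics would typically be computed per category in a one-vs-rest fashion. Function names and example values are illustrative assumptions.

```python
from math import sqrt


def classification_metrics(y_true, y_pred):
    """Binary metrics from the confusion matrix (labels must be 0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)

    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    mcc_denominator = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "mcc": (tp * tn - fp * fn) / mcc_denominator if mcc_denominator else 0.0,
    }


def regression_metrics(y_true, y_pred):
    """RMSE, MAE and R^2 for continuous predictions (e.g., log10 LD50)."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
        "r2": 1 - ss_res / ss_tot,  # undefined if all true values are equal
    }


# Made-up labels and values, purely to exercise the functions.
cls = classification_metrics([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 0, 0, 0, 0, 1])
reg = regression_metrics([1.0, 2.0, 3.0], [1.5, 2.0, 2.5])
print(cls)
print(reg)
```

Balanced accuracy and MCC are the more informative figures when toxic compounds are a small minority of the dataset, because plain accuracy can look high for a model that rarely predicts the toxic class.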

Comparative Performance Analysis of Modeling Approaches

The table below summarizes key performance data from recent studies, enabling an objective comparison of different modeling strategies.

Table 1: Performance Comparison of In Silico LD₅₀ Prediction Models

| Model / Approach | Dataset & Context | Key Performance Metric | Reported Outcome | Strategic Advantage |
|---|---|---|---|---|
| Conservative Consensus Model (CCM) [42] | 6,229 organic compounds; predicts GHS category from rat oral LD₅₀. | Under-prediction Rate (Health Protective Bias) | 2% (Lowest among compared models) | Maximizes safety; ideal for priority screening where missing a hazard is unacceptable. |
| TEST (Individual Model) [42] | Same dataset as above. | Under-prediction Rate | 20% | General-purpose QSAR tool. |
| CATMoS (Individual Model) [42] | Same dataset as above. | Under-prediction Rate | 10% | Consensus platform integrating multiple models. |
| VEGA (Individual Model) [42] | Same dataset as above. | Under-prediction Rate | 5% | User-friendly platform with good explainability. |
| Hybrid Neural Network (HNN-Tox) [40] | 59,373 chemicals; binary classification (toxic/nontoxic at 500 mg/kg). | Predictive Accuracy (External Test Set) | 84.9% (with 51 descriptors) | Handles large, diverse chemical spaces; capable of dose-range prediction. |
| Integrated In Silico Workflow [43] | Case study on fentanyl analogs; uses 8+ tools (ProTox, ADMETlab, etc.). | Qualitative Hazard Identification | Identified cardiotoxicity (hERG), organ-specific effects for valerylfentanyl. | Provides a weight-of-evidence approach; mitigates limitations of single tools. |

The data reveal a clear trade-off. The Conservative Consensus Model (CCM) is optimized for minimal under-prediction (2%), which makes it exceptionally health-protective, but this comes at the cost of a higher over-prediction rate (37%) [42]. In contrast, hybrid neural networks such as HNN-Tox achieve high overall accuracy (~85%) on large, diverse datasets [40]. Because these figures come from different datasets and different metrics, they indicate complementary strengths rather than a direct ranking.
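The under-/over-prediction trade-off can be illustrated with a small sketch. It assumes that a conservative consensus takes the lowest (most toxic) LD₅₀ predicted by any contributing tool. It also assumes that "under-prediction" means assigning a less toxic GHS category than the experimental one. The tool names `tool_a` to `tool_c` and all dose values are invented placeholders, not results from [42].

```python
from bisect import bisect_left

GHS_ORAL_UPPER_BOUNDS = [5, 50, 300, 2000, 5000]  # mg/kg, Categories 1-5


def ghs_rank(ld50):
    """1 = most toxic ... 5; 6 stands for 'not classified' (> 5000 mg/kg)."""
    return bisect_left(GHS_ORAL_UPPER_BOUNDS, ld50) + 1


def conservative_consensus(tool_predictions):
    """Assumed rule: keep the lowest LD50 predicted by any tool."""
    return min(tool_predictions.values())


def prediction_bias(experimental, predicted):
    """Return (under_rate, over_rate) based on GHS category comparison."""
    n = len(experimental)
    # Under-prediction: predicted category is less toxic than the experimental one.
    under = sum(ghs_rank(p) > ghs_rank(e) for e, p in zip(experimental, predicted))
    # Over-prediction: predicted category is more toxic than the experimental one.
    over = sum(ghs_rank(p) < ghs_rank(e) for e, p in zip(experimental, predicted))
    return under / n, over / n


# Placeholder data: three hypothetical compounds, three hypothetical tools.
experimental = [40.0, 400.0, 2500.0]
per_compound = [
    {"tool_a": 60.0, "tool_b": 30.0, "tool_c": 45.0},
    {"tool_a": 350.0, "tool_b": 250.0, "tool_c": 900.0},
    {"tool_a": 3000.0, "tool_b": 2200.0, "tool_c": 6000.0},
]

consensus = [conservative_consensus(p) for p in per_compound]
single_tool = [p["tool_a"] for p in per_compound]

consensus_bias = prediction_bias(experimental, consensus)
single_bias = prediction_bias(experimental, single_tool)
print("consensus (under, over):", consensus_bias)
print("tool_a    (under, over):", single_bias)
```

In this toy case the consensus removes the single tool's under-prediction but introduces an over-prediction, which mirrors the direction of the trade-off reported for the CCM.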

Pipeline Stage 5: Prediction & Interpretation

The final stage involves deploying the validated model to predict new compounds and interpreting the results within a defined applicability domain.

- Making a Prediction: The SMILES string of the new compound is input, descriptors are calculated, and the model outputs a prediction (LD₅₀ value or toxicity class) [41].

- Applicability Domain (AD) Assessment: It is crucial to determine if the new compound is structurally similar to the training set. If it falls outside the model's AD, the prediction should be flagged as unreliable [41] [43].

- Interpretation & Reporting:

- For QSAR models, some tools identify toxicophores—substructural features associated with toxicity [43].

- For complex models like HNNs, post-hoc explainable AI (XAI) methods (e.g., SHAP values) can highlight which molecular features drove the prediction.

- Predictions should always be reported with confidence estimates (e.g., probability scores) and AD analysis [41].
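One common way to approximate an applicability domain check is a nearest-neighbor similarity test on fingerprints. The sketch below is one such heuristic, not the method used by any specific tool cited here. Fingerprints are represented as sets of on-bit indices. The neighbor count `k`, the 0.3 threshold, and the toy fingerprints are assumptions for illustration.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = bits_a | bits_b
    return len(bits_a & bits_b) / len(union) if union else 0.0


def in_applicability_domain(query_bits, training_fingerprints, k=3, threshold=0.3):
    """Flag a query as in-domain if its mean similarity to the k most similar
    training compounds reaches the threshold. Returns (in_domain, mean_similarity).
    """
    if not training_fingerprints:
        raise ValueError("training set is empty")
    similarities = sorted(
        (tanimoto(query_bits, fp) for fp in training_fingerprints), reverse=True
    )
    nearest = similarities[:k]
    mean_similarity = sum(nearest) / len(nearest)
    return mean_similarity >= threshold, mean_similarity


# Toy fingerprints (arbitrary bit indices, not derived from real molecules).
training = [{1, 2, 3, 4}, {2, 3, 4, 5}, {1, 3, 5, 7}, {10, 11, 12}]

similar_query = in_applicability_domain({1, 2, 3, 5}, training)
novel_query = in_applicability_domain({20, 21, 22}, training)
print("similar query:", similar_query)
print("novel query:  ", novel_query)
```

A prediction for the second query would be reported as outside the AD and therefore unreliable, regardless of how confident the model's raw output appears.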

Visualizing the Integrated Prediction Workflow

The following diagram synthesizes the complete modeling pipeline, from data sourcing to final decision-making, incorporating both single-model and consensus strategies.

Figure: Integrated In Silico LD50 Prediction Pipeline

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Computational Tools & Resources for In Silico Toxicity Prediction

| Tool / Resource Name | Type / Category | Primary Function in the Pipeline | Key Feature / Application |
|---|---|---|---|
| OECD QSAR Toolbox [41] | Integrated Software Suite | Data curation, read-across, (Q)SAR model application. | Profiling chemicals for structural alerts and filling data gaps via read-across; supports the WoE approach. |
| VEGA Platform [42] [41] [43] | QSAR Model Platform | Making predictions for multiple toxicological endpoints. | User-friendly interface; provides predictions with reliability and applicability domain indices for various models (acute toxicity, mutagenicity, etc.). |
| TEST (T.E.S.T.) [42] [41] | QSAR Software | Estimating toxicity values from molecular structure. | Provides multiple estimation methods (e.g., group contribution, neural network) for endpoints like oral LD₅₀ and mutagenicity. |
| ADMETlab [40] [43] | Web-Based Prediction Platform | Calculating ADMET and toxicity descriptors/predictions. | Generates a large profile of ~119 properties, useful as descriptors for machine learning or for independent endpoint checks. |
| ProTox 3.0 [43] | Web-Based Prediction Platform | Predicting various toxicity endpoints, including acute oral toxicity. | Provides predicted LD₅₀ values, toxicity classes, and visualizations of potential toxicophores. |
| Schrödinger Suite (Canvas, QikProp) [40] | Commercial Computational Chemistry Software | Molecular descriptor calculation and featurization. | Used in research to generate thousands of physicochemical and topological descriptors from 2D/3D structures for model building. |
| Python (scikit-learn, TensorFlow/PyTorch) | Programming Libraries | Building, training, and validating custom machine learning/deep learning models. | Offers full flexibility for implementing algorithms like RF, SVM, and custom HNN architectures (e.g., HNN-Tox) [40]. |

Case Study: Application in Forensic Toxicology

The integrated workflow finds critical application in forensic toxicology, particularly for assessing Novel Psychoactive Substances (NPS) like synthetic opioids, where experimental data is scarce. A 2025 study on fentanyl and valerylfentanyl exemplifies this [43].

- Experimental Protocol:

- Input: SMILES structures of fentanyl and valerylfentanyl were obtained.

- Multi-Tool Prediction: Structures were submitted to a battery of 8+ in silico tools (e.g., ProTox 3.0, TEST, VEGA, ADMETlab 3.0) to predict endpoints: acute oral LD₅₀, organ-specific toxicity, hERG inhibition (cardiotoxicity), skin irritation, and genotoxicity.

- Results Synthesis: Predictions were aggregated. Valerylfentanyl showed high acute toxicity (predicted LD₅₀ as low as 18.0 mg/kg by ProTox), a 95.7% probability of hERG inhibition, and high alerts for lung and cardiovascular toxicity.

- Structural Analysis: Tools identified the piperidine-related toxicophore as responsible for the effects.

- Conclusion: The integrated in silico approach provided a rapid, ethical hazard profile, confirming that minor structural modifications (from fentanyl to valerylfentanyl) significantly alter toxicity, supporting risk assessment in forensic and clinical contexts [43].

Visualizing a Multi-Tool Consensus Strategy

The case study above utilized a consensus strategy by employing multiple independent tools. The following diagram illustrates this specific methodological approach.

Figure: Multi-Tool Consensus Strategy for NPS Hazard Assessment

The modeling pipeline for LD₅₀ prediction has evolved from a simple QSAR exercise to a sophisticated, multi-stage process integrating big data, advanced machine learning, and rigorous validation. As evidenced by comparative studies, no single model is universally superior; the choice between a health-protective consensus model (CCM), a high-accuracy hybrid neural network (HNN-Tox), or a multi-tool weight-of-evidence approach must be strategically aligned with the research or regulatory objective—be it early hazard screening, lead compound optimization, or forensic case assessment [42] [40] [43].

The future of the field lies in enhancing model interpretability, expanding high-quality training data, and establishing standardized validation protocols to meet evolving regulatory expectations for New Approach Methodologies (NAMs) [41] [38]. By adhering to the comprehensive pipeline detailed herein—meticulous data curation, transparent model building, exhaustive validation, and cautious interpretation within applicability domains—researchers can robustly validate in silico LD₅₀ models and confidently integrate them as indispensable components of modern, ethical toxicological science.

The validation of in silico LD50 prediction models is fundamentally constrained by the choice of molecular representation. This initial step, which translates a chemical structure into a computationally interpretable format, directly determines a model's capacity to learn the complex relationships between structure and biological activity. Within the context of regulatory acceptance and health-protective toxicology, selecting an appropriate representation is not merely a technical decision but a foundational one that influences predictive accuracy, interpretability, and mechanistic plausibility [42] [9].

The field has evolved from traditional quantitative structure-activity relationship (QSAR) models relying on hand-crafted descriptors to modern artificial intelligence (AI) and machine learning (ML) approaches that can learn representations directly from data [7] [9]. This shift is driven by the need to predict complex toxicity endpoints like acute oral toxicity (LD50) more reliably, thereby reducing late-stage drug attrition and reliance on animal testing [44]. The core challenge lies in balancing molecular fidelity with computational efficiency. While quantum mechanical descriptions offer the highest precision, they are often prohibitively expensive for large-scale screening [45]. Consequently, most practical workflows rely on simplified representations: molecular descriptors, fingerprints, and graph-based inputs, each with distinct advantages and limitations for modeling LD50 [45] [46].

This guide provides an objective comparison of these three paradigms, focusing on their application in validating acute oral toxicity prediction models. We present supporting experimental data, detailed protocols from key studies, and a framework to guide researchers and drug development professionals in selecting the optimal representation for their specific validation goals.

Comparative Analysis of Representation Paradigms

The performance of a representation type is contextual, varying with dataset size, endpoint complexity, and model architecture. The following section provides a structured comparison based on quantitative benchmarks.

Definition and Characteristics

- Molecular Descriptors: These are numerical quantities that capture specific physicochemical or topological properties of a molecule (e.g., molecular weight, logP, topological polar surface area, number of rotatable bonds). They are often based on expert knowledge and can be directly linked to biochemical mechanisms, such as permeability or metabolic stability [7] [9].

- Molecular Fingerprints: These are typically bit-string vectors where each bit indicates the presence or absence of a particular substructural pattern or path within the molecule. Common types include MACCS keys (structural keys), Morgan fingerprints (circular fingerprints), and data-driven deep learning fingerprints. They excel at rapid similarity searching and are widely used in QSAR and virtual screening [46] [47].

- Graph-Based Inputs: In this representation, a molecule is treated natively as a graph, with atoms as nodes and bonds as edges. This format is the direct input for graph neural networks (GNNs), which can learn features by propagating information across the molecular graph. This approach automatically captures intricate structural and relational information without manual feature engineering [9] [46].
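To make the graph-based representation concrete, the sketch below encodes the heavy atoms of ethanol (SMILES `CCO`) as nodes and bonds as edges, then performs one round of neighbor aggregation. This is a deliberately simplified stand-in for a GNN layer: real graph neural networks apply learned weight matrices and nonlinearities at each step. The element vocabulary, function names, and sum-based readout are assumptions for illustration.

```python
# Ethanol heavy-atom graph: atoms are nodes, bonds are undirected edges.
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]


def build_neighbors(n_atoms, bond_list):
    """Adjacency list: atom index -> indices of bonded atoms."""
    neighbors = {i: [] for i in range(n_atoms)}
    for a, b in bond_list:
        neighbors[a].append(b)
        neighbors[b].append(a)
    return neighbors


def one_hot(symbol, vocabulary=("C", "N", "O")):
    """Initial node feature: one-hot encoding of the element symbol."""
    return [1.0 if symbol == element else 0.0 for element in vocabulary]


def message_passing_step(features, neighbors):
    """Each atom's new feature is its own vector plus the sum of its
    neighbors' vectors (no learned parameters in this sketch)."""
    updated = []
    for i, own in enumerate(features):
        aggregated = list(own)
        for j in neighbors[i]:
            aggregated = [x + y for x, y in zip(aggregated, features[j])]
        updated.append(aggregated)
    return updated


neighbors = build_neighbors(len(atoms), bonds)
features = [one_hot(symbol) for symbol in atoms]
updated = message_passing_step(features, neighbors)

# Readout: sum the node vectors into a single fixed-length molecule vector.
graph_embedding = [sum(column) for column in zip(*updated)]
print(updated)
print(graph_embedding)
```

After one step each atom's vector already reflects its immediate bonding environment. Stacking further steps widens that neighborhood, which is how graph models capture substructure context without predefined fragment dictionaries.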

Quantitative Performance Comparison

The table below summarizes key performance metrics for different representation types as reported in benchmark studies for toxicity and ADMET property prediction.

Table 1: Performance Comparison of Molecular Representation Types

| Representation Type | Example / Variant | Best-Performing Endpoint (Example) | Reported Performance Metric | Key Advantage | Primary Limitation |

|---|---|---|---|---|---|

| Classical Descriptors | 2D/3D Molecular descriptors (e.g., from RDKit) | Acute Oral Toxicity (LD50) [47] | Comparable to fingerprints for many endpoints [47] | High interpretability; Direct link to mechanism | May not capture complex structural patterns; requires expert curation |

| Rule-Based Fingerprints | MACCS, Morgan (ECFP4) | Hepatic & Cardiac Toxicity [47] | BACC: 0.70-0.85; AUC: 0.76-0.89 [47] | Computationally efficient; Excellent for similarity search | Limited to predefined substructures; fixed representation |