Unlocking the Future of Ecotoxicology: A Comprehensive Roadmap for Validating New Approach Methodologies (NAMs)

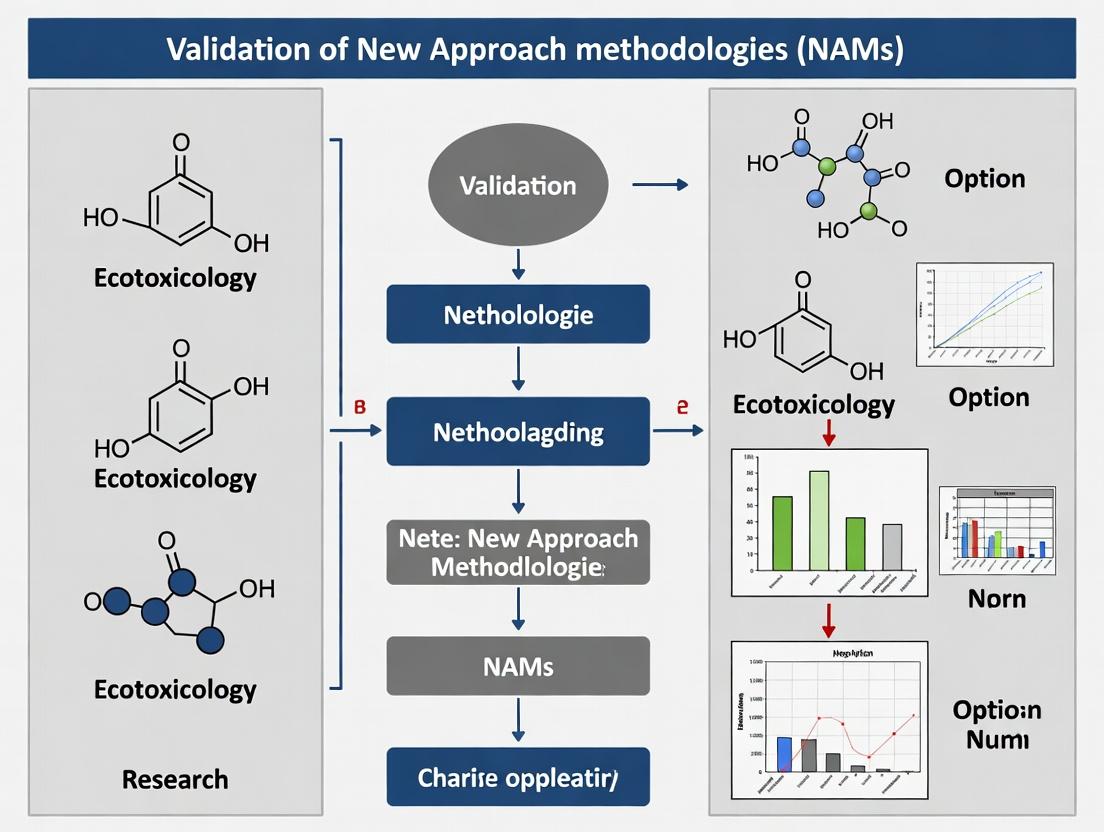

Abstract

This article provides researchers, scientists, and drug development professionals with a structured analysis of the validation landscape for New Approach Methodologies (NAMs) in ecotoxicology. It explores the foundational shift from traditional animal testing to human- and ecologically relevant models, driven by scientific, ethical, and regulatory imperatives. The scope encompasses a detailed review of key methodological tools and integrated testing strategies, an examination of common technical and cultural barriers to adoption with practical solutions, and a critical analysis of contemporary validation frameworks and benchmarking paradigms. By synthesizing current initiatives and case studies, this article aims to equip professionals with the knowledge to develop, apply, and advocate for robust, fit-for-purpose NAMs that accelerate modernized ecological risk assessment.

Redefining the Foundation: Core Principles and Drivers for NAMs in Ecotoxicology

New Approach Methodologies (NAMs) represent a transformative shift in toxicity testing, defined as any technology, methodology, approach, or combination thereof that can provide information on chemical hazard and risk assessment while avoiding the use of animal testing [1]. In ecotoxicology, NAMs are purposefully designed to replace, reduce, or refine (the 3Rs) reliance on traditional animal-based tests and allow for more rapid and effective prioritization of chemicals [2]. This suite of methods includes in silico (computational), in chemico (abiotic chemical reactivity), and in vitro (cell-based) assays, as well as advanced tools like high-throughput screening (HTS) and omics technologies [1] [2]. The critical distinction of a NAM is not necessarily its novelty as a scientific technique, but its "fit-for-purpose" use within a regulatory context to support decision-making that is as protective, or more so, than existing animal-intensive methods [2] [3].

The driving force behind the adoption of NAMs is rooted in the limitations of traditional toxicity testing. Conventional studies, often reliant on rodent and other animal models, can be time-consuming, expensive, and limited in their ability to reveal underlying physiological mechanisms of toxicity [4]. Furthermore, the global scientific and regulatory community is increasingly committed to the 3Rs principle (Replacement, Reduction, and Refinement of animal use) [5]. Landmark regulatory changes underscore a definitive paradigm shift, such as the U.S. FDA Modernization Act 2.0, enacted as part of the 2023 federal appropriations legislation, under which animal testing is no longer mandated for all new human drugs [5]. Consequently, the validation and qualification of NAMs have become a central focus, ensuring they provide reliable, biologically relevant data for specific regulatory purposes [3].

The Paradigm Shift: From Traditional Testing to a NAM-Centric Framework

The evolution from traditional animal-centric toxicology to a NAM-integrated paradigm represents a fundamental change in philosophy and practice. This shift is characterized by a move from observational, whole-organism endpoints to a mechanistically driven, hypothesis-testing approach that leverages human and ecologically relevant models.

Table 1: Comparison of Traditional Animal Testing vs. NAMs in Ecotoxicology

| Aspect | Traditional Animal Testing | New Approach Methodologies (NAMs) |

|---|---|---|

| Core Principle | Direct observation of adverse outcomes in intact, protected laboratory animals (e.g., fish, rodents). | Application of non-animal or alternative methods to predict toxicity, anchored to biological pathways. |

| Primary Objective | Generate data for hazard identification and risk assessment as required by regulatory guidelines. | Replace, reduce, or refine animal use while providing equivalent or more informative data for decision-making [5] [2]. |

| Typical Duration & Cost | Long (months to years) and high (animal husbandry, space). | Rapid (days to weeks) and lower cost per chemical, enabling higher throughput [4]. |

| Data Output | Apical endpoints (e.g., mortality, growth, reproduction). | Mechanistic data (e.g., receptor binding, gene expression, pathway perturbation) [4]. |

| Regulatory Status | Well-established, historically mandated. | Undergoing validation and acceptance; increasingly encouraged and accepted (e.g., FDA 2023 legislation) [5] [6]. |

| Species Relevance | Uses standardized test species; extrapolation to other species (including human) is inferential. | Can utilize human cells or ecologically relevant species/models; tools like SeqAPASS allow for cross-species extrapolation [7] [2]. |

| Key Limitation | Limited mechanistic insight, ethical concerns, low throughput. | May not capture complex systemic organismal interactions; validation for regulatory use is ongoing [3]. |

This paradigm is actively supported by major regulatory and research agencies worldwide. Collaborative initiatives, such as the webinar series co-organized by the European Medicines Agency (EMA), the U.S. EPA, and the FDA, focus on advancing the state of the science and regulatory acceptance of NAMs for specific endpoints like bioaccumulation [6]. Furthermore, agencies provide extensive training resources for tools central to the NAM framework, including the CompTox Chemicals Dashboard, ToxCast, and SeqAPASS, ensuring researchers can effectively implement these new strategies [7].

Validation of NAMs: Establishing Scientific Confidence for Ecotoxicology

The integration of NAMs into regulatory ecotoxicology hinges on robust validation—a process to establish scientific confidence by determining a method's fitness for a specific Context of Use (COU) [3]. The COU is a precise statement defining how the NAM will be used (e.g., for chemical prioritization, hazard identification, or quantitative risk assessment) and is the cornerstone against which all validation criteria are measured [3].

Table 2: Key Validation Study Designs for Evaluating NAM Performance

| Study Design | Description & Application in NAM Validation | Key Performance Metrics | Considerations |

|---|---|---|---|

| Pre-Post / Benchmarking | Compares NAM output against a reference dataset (e.g., traditional in vivo results) for a set of chemicals. | Accuracy, Sensitivity, Specificity, Positive Predictive Value. | Requires a high-quality reference dataset. Does not establish causality. |

| Interrupted Time Series (ITS) | Analyzes trends in a performance metric (e.g., predictability) before and after implementing a new NAM protocol or model update [8]. | Trend analysis, slope change post-"intervention". | Useful for monitoring the impact of refinements within a defined testing strategy. |

| Controlled Comparison (e.g., CITS/DID) | Compares outcomes between a group using the NAM and a control group using traditional methods under similar conditions [8]. | Difference-in-differences estimates. | Helps control for external confounding factors when assessing the NAM's effect on decision quality. |

| Synthetic Control Method (SCM) | Constructs a weighted combination of control units (e.g., other testing strategies) to create a counterfactual for the NAM's performance [8]. | Weighted prediction error, bias reduction. | Data-adaptive; useful when no single ideal control group exists. |

| Integrated Assessment (IATA) | Not a single study, but a framework for integrating evidence from multiple NAMs and traditional data within a weight-of-evidence (WOE) approach [6]. | Consistency, concordance, and reliability of the overall conclusion. | Framework for decision-making; requires predefined methods for evidence weighting. |
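The benchmarking metrics in the first row of the table can be computed from a simple tally of agreement between NAM calls and reference calls. The following is a minimal sketch under stated assumptions: the names `Concordance`, `tally`, and `metrics` are illustrative, and the eight chemical calls are made-up values, not data from any cited study.

```python
from dataclasses import dataclass


@dataclass
class Concordance:
    tp: int  # NAM positive, reference positive
    fp: int  # NAM positive, reference negative
    tn: int  # NAM negative, reference negative
    fn: int  # NAM negative, reference positive


def tally(nam_calls, reference_calls):
    """Count agreement between NAM calls and reference calls (True = toxic)."""
    if len(nam_calls) != len(reference_calls):
        raise ValueError("call lists must have the same length")
    tp = fp = tn = fn = 0
    for nam, ref in zip(nam_calls, reference_calls):
        if nam and ref:
            tp += 1
        elif nam and not ref:
            fp += 1
        elif not nam and ref:
            fn += 1
        else:
            tn += 1
    return Concordance(tp, fp, tn, fn)


def metrics(c):
    """Return accuracy, sensitivity, specificity, and positive predictive value."""
    def ratio(num, den):
        return num / den if den else float("nan")

    total = c.tp + c.fp + c.tn + c.fn
    return {
        "accuracy": ratio(c.tp + c.tn, total),
        "sensitivity": ratio(c.tp, c.tp + c.fn),
        "specificity": ratio(c.tn, c.tn + c.fp),
        "ppv": ratio(c.tp, c.tp + c.fp),
    }


# Hypothetical calls for eight chemicals (illustrative only).
nam = [True, True, False, True, False, False, True, False]
ref = [True, True, True, False, False, False, True, False]
result = metrics(tally(nam, ref))
print(result)
```

In practice the reference calls would come from a curated in vivo dataset, and the quality of that dataset bounds how much the metrics can say about the NAM itself.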

A critical component of validation is demonstrating biological relevance. This involves linking the NAM to the biological effect of interest, ideally through established Adverse Outcome Pathways (AOPs) or a clear understanding of the toxicological mechanism [3]. For example, a NAM measuring binding to the aryl hydrocarbon receptor can be anchored to an AOP leading to developmental toxicity in fish. The relevance also depends on the model system—such as using trout gill cell lines for assessing aquatic toxicity—and should consider genetic and population variability where appropriate [3].

Ultimately, validation seeks to demonstrate that a NAM provides information of equivalent or better quality for regulatory decision-making compared to the traditional animal test it is intended to replace or supplement [3].

Comparative Analysis of Key NAM Tools and Platforms

The NAM ecosystem comprises a diverse array of interoperable tools, databases, and predictive models. These resources are designed to address different questions within the chemical assessment workflow, from initial screening to detailed hazard characterization.

Table 3: Comparison of Select U.S. EPA NAM Tools and Resources (2024-2025)

| Tool/Resource Name | Category | Primary Function in Ecotoxicology | Key Features & Recent Updates |

|---|---|---|---|

| SeqAPASS | In silico / Cross-species Extrapolation | Extrapolates known chemical toxicity information across species by comparing protein sequence similarity [7]. | Online screening tool; Version 8 user guide released in 2024; training materials updated in 2025 [7]. |

| ToxCast & invitroDB | In vitro / High-Throughput Screening | Provides high-throughput bioactivity data from hundreds of cell-free and cell-based assays for thousands of chemicals [7]. | Database and software enhancements featured in 2024 training; integrated into case studies with SeqAPASS and ECOTOX [7]. |

| ECOTOX Knowledgebase | Database | Curated repository of single-chemical toxicity data for aquatic and terrestrial life [7]. | Foundation for model development and validation; featured in 2024-2025 NAMs workshops [7]. |

| CompTox Chemicals Dashboard | Cheminformatics & Data Integration | Central hub for chemistry, toxicity, and exposure data for ~900,000 chemicals; links to multiple NAM tools [7]. | Includes toxicity estimation tools (TEST); virtual training held in 2025 [7]. |

| Web-ICE (v4.0) | In silico / Cross-species Extrapolation | Estimates acute toxicity to aquatic and terrestrial organisms using interspecies correlation models [7]. | Web-based application; user manual updated in 2024 [7]. |

| httk R Package | Toxicokinetics | Predicts in vivo tissue chemical concentrations from in vitro data for human and rodent models [7]. | Used to evaluate NAM effectiveness; training focused on this package in 2025 [7]. |

The power of NAMs is amplified when these tools are used in an integrated manner. A standard workflow might involve: 1) Using the CompTox Dashboard to gather existing data and physicochemical properties for a chemical; 2) Screening for bioactivity using ToxCast data; 3) Applying httk for toxicokinetic modeling to translate bioactive concentrations; and 4) Using SeqAPASS or Web-ICE to extrapolate potential hazards to ecologically relevant species [7]. This integrated approach forms the basis of an IATA (Integrated Approach to Testing and Assessment), which is championed by regulators for making efficient and protective decisions [6].
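Step 3 of this workflow is often done by reverse dosimetry: an in vitro bioactive concentration is converted into an external dose using a predicted steady-state plasma concentration. The sketch below assumes linear kinetics, so that the steady-state concentration scales proportionally with dose. The function name and the numbers are illustrative assumptions, and the calculation does not call httk itself.

```python
def administered_equivalent_dose(ac50_um, css_um_per_mg_kg_day):
    """Convert an in vitro AC50 (uM) to an equivalent external dose (mg/kg/day).

    css_um_per_mg_kg_day is the predicted steady-state plasma concentration
    (uM) for a 1 mg/kg/day dose, for example from a toxicokinetic model.
    Assumes concentration is proportional to dose (linear kinetics).
    """
    if ac50_um <= 0 or css_um_per_mg_kg_day <= 0:
        raise ValueError("inputs must be positive")
    return ac50_um / css_um_per_mg_kg_day


# Hypothetical values: AC50 of 3.0 uM, Css of 1.5 uM per 1 mg/kg/day.
aed = administered_equivalent_dose(3.0, 1.5)
print(f"Administered equivalent dose: {aed:.2f} mg/kg/day")
```

The resulting dose can then be compared with exposure estimates. Applying the same logic to ecological species would need species-appropriate kinetic parameters, which this sketch does not provide.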

Experimental Protocols: Methodologies for Key NAM Assays and Validation Studies

Implementing NAMs requires standardized protocols to ensure reproducibility and reliability. Below are generalized methodologies for two cornerstone NAM approaches and a framework for validation studies.

Generalized Protocol for a High-Throughput In Vitro Toxicity Screening (e.g., ToxCast-like assay):

- Cell/Assay Preparation: Seed cells (e.g., human primary cells or engineered cell lines) or prepare enzyme/receptor targets in appropriate microtiter plates.

- Chemical Exposure: Prepare a serial dilution of the test chemical in DMSO or assay medium. Use a liquid handler to add chemical stocks to wells, ensuring final solvent concentration is non-cytotoxic (typically ≤1%). Include vehicle control and reference chemical control wells.

- Incubation: Incubate plates under standardized conditions (e.g., 37°C, 5% CO₂) for a predetermined time (e.g., 24, 48, or 72 hours).

- Endpoint Measurement: At assay end-point, measure viability or a specific mechanistic endpoint (e.g., reporter gene activation, calcium flux, ATP content) using validated kits and a plate reader. Fluorescent or luminescent signals are common.

- Data Processing: Normalize raw data to vehicle and positive controls. Fit dose-response curves (e.g., using a 4-parameter logistic model) to calculate potency estimates (e.g., AC50, the concentration giving half-maximal activity, or LEC, the lowest effective concentration) and efficacy values.

- Data Integration: Upload processed data to a database like invitroDB for cross-assay analysis and integration with chemical descriptors [7].
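The data-processing step above can be sketched in a few lines. This example normalizes raw plate signals to controls and then fits a Hill (4-parameter logistic) curve by brute-force grid search. It is a simplified stand-in, not the ToxCast pipeline's fitting procedure: the bottom asymptote is held at 0, so only top, AC50, and slope are searched, and the plate values are synthetic.

```python
def normalize(raw, vehicle_mean, positive_mean):
    """Rescale raw signals so vehicle control = 0% and positive control = 100%."""
    span = positive_mean - vehicle_mean
    if span == 0:
        raise ValueError("vehicle and positive controls must differ")
    return [100.0 * (r - vehicle_mean) / span for r in raw]


def hill(conc, bottom, top, ac50, slope):
    """Four-parameter logistic (Hill) response at a given concentration."""
    return bottom + (top - bottom) / (1.0 + (ac50 / conc) ** slope)


def fit_hill(concs, responses, bottom=0.0):
    """Least-squares fit over a coarse parameter grid (bottom held fixed)."""
    best = None
    for top in range(50, 125, 5):              # efficacy, % of positive control
        for k in range(-30, 31):               # AC50 from 0.001 to 1000 uM
            ac50 = 10 ** (k / 10)
            for slope in (0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0):
                sse = sum(
                    (hill(c, bottom, top, ac50, slope) - r) ** 2
                    for c, r in zip(concs, responses)
                )
                if best is None or sse < best["sse"]:
                    best = {"top": float(top), "ac50": ac50,
                            "slope": slope, "sse": sse}
    return best


# Synthetic plate: raw signals generated from a known curve (illustrative only).
concs = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0]  # uM
vehicle_mean, positive_mean = 200.0, 1200.0
raw = [
    vehicle_mean
    + (positive_mean - vehicle_mean) * hill(c, 0.0, 100.0, 1.0, 1.0) / 100.0
    for c in concs
]
responses = normalize(raw, vehicle_mean, positive_mean)
fit = fit_hill(concs, responses)
print(fit)
```

A production analysis would use a proper optimizer, replicate wells, and confidence intervals. The grid search is used here only to keep the example dependency-free.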

Generalized Protocol for In Silico Cross-Species Extrapolation Using SeqAPASS:

- Define Assessment Goal: Identify the target protein(s) or pathway known to be involved in the toxicity of interest (the Molecular Initiating Event within an AOP).

- Input Sequence: Obtain the amino acid sequence for the protein of interest from the "source" species (e.g., human or rat with known toxicity data).

- Run Sequence Alignment: In the SeqAPASS tool, select the taxonomic group(s) of interest (e.g., all fish species). The tool performs pairwise alignment of the source sequence against predicted proteomes of the "target" species [7].

- Analyze Results: Review output scores (e.g., percent identity, similarity weighting) across taxonomic space. Higher scores suggest a greater potential for conserved chemical interaction and effect.

- Weight-of-Evidence Determination: Use predefined thresholds (as per SeqAPASS guidance) to categorize confidence levels (High, Moderate, Low) for extrapolating toxicity to each target species [7].
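To make the last two steps concrete, the toy example below computes percent identity between two sequences that are already aligned and maps the score to a confidence label. It does not reproduce SeqAPASS, which performs its own alignments and applies its own metrics and cut-offs. The sequences, the function names, and the 70% and 40% thresholds are hypothetical.

```python
def percent_identity(aligned_a, aligned_b):
    """Percent of identical residues over gap-free columns of a pairwise alignment."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have equal length")
    compared = matches = 0
    for a, b in zip(aligned_a, aligned_b):
        if a == "-" or b == "-":
            continue  # skip columns containing a gap
        compared += 1
        if a == b:
            matches += 1
    return 100.0 * matches / compared if compared else 0.0


def confidence(pid, high=70.0, moderate=40.0):
    """Map percent identity to a label using hypothetical cut-offs."""
    if pid >= high:
        return "High"
    if pid >= moderate:
        return "Moderate"
    return "Low"


# Made-up aligned fragments: one source sequence and two target species.
source = "MKTAYIAK"
targets = {"species_a": "MKSAY-AR", "species_b": "MRSGY-GR"}

labels = {}
for name, seq in targets.items():
    pid = percent_identity(source, seq)
    labels[name] = confidence(pid)
    print(f"{name}: {pid:.1f}% identity, {labels[name]} confidence")
```

Any real assessment should take its thresholds from the tool's guidance rather than from the placeholder values used here.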

Framework for a Validation Study Using a Quasi-Experimental Design (e.g., CITS): This design is suitable for evaluating the impact of adopting a NAM within an existing testing program [8].

- Define Groups & Timeline: Establish a Treated Group (labs/projects using the new NAM) and a Control Group (labs/projects continuing with the traditional method). Collect performance or outcome data for multiple time periods before and after NAM adoption.

- Specify Model: Apply a Controlled Interrupted Time Series (CITS) model. The model estimates the difference in the outcome trend between the treated and control groups after the NAM implementation, controlling for pre-existing differences and temporal trends [8].

- Collect Outcome Data: Define a relevant quantitative outcome, such as chemical throughput rate, cost per assessment, or concordance with a gold-standard reference decision.

- Analysis: Fit the CITS model to estimate the average treatment effect on the treated (ATT), which quantifies the change in the outcome attributable to the NAM, accounting for what would have happened if the traditional method had continued [8].
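The core comparison behind this framework can be illustrated with a plain difference-in-differences on group means. This is a deliberately simplified stand-in for a full CITS regression, which would also model pre- and post-adoption trends. The outcome values (chemicals assessed per quarter) are invented for illustration.

```python
from statistics import mean


def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """Difference-in-differences on group means.

    Subtracts the control group's change from the treated group's change,
    so a shift common to both groups is not attributed to the NAM.
    """
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical throughput (chemicals assessed per quarter).
treated_pre, treated_post = [10, 11, 12], [18, 19, 20]
control_pre, control_post = [9, 10, 11], [11, 12, 13]

effect = did_estimate(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated effect of NAM adoption: {effect} chemicals per quarter")
```

The estimate is only interpretable if the two groups would have followed parallel trends without the NAM, which is the assumption a CITS model lets the analyst inspect more directly.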

Advancing NAMs requires specialized tools and materials. The following table details key solutions for researchers in this field.

Table 4: Essential Research Reagent Solutions and Resources for NAMs in Ecotoxicology

| Tool/Reagent Category | Specific Examples | Function & Role in NAMs Research |

|---|---|---|

| Computational & Data Tools | httk R Package [7], CompTox Chemicals Dashboard [7], Toxicity Estimation Software Tool (TEST) [7] | Enable toxicokinetic modeling, chemical property prediction, and data integration, forming the in silico backbone of NAMs workflows. |

| Bioinformatics & Extrapolation Tools | SeqAPASS [7], Web-ICE v4.0 [7] | Facilitate cross-species extrapolation of toxicity data by analyzing protein sequence similarity or statistical correlations between species. |

| Reference Databases | ECOTOX Knowledgebase [7], DSSTox Database [7], invitroDB (ToxCast) [7] | Provide curated in vivo and in vitro toxicity reference data essential for developing, training, and validating predictive models. |

| Alternative Test Organisms | C. elegans (roundworm) [4], Fish Embryo Models (e.g., Zebrafish) [2] | Serve as non-protected, whole-organism models that can replace protected larval/adult animal tests for specific endpoints. |

| Exposure & Kinetic Modeling Tools | SHEDS-HT (Stochastic Human Exposure & Dose Simulation) [7], Chemical Transformation Simulator (CTS) [7] | Predict human and environmental exposure potential and simulate chemical transformation pathways for risk assessment context. |

| Integrated Workflow Resources | ChemExpo Knowledgebase [7], IATA (Integrated Approach to Testing & Assessment) Framework [6] | Support the integration of chemical use/exposure data and guide the structured combination of multiple lines of evidence from NAMs. |

The validation of New Approach Methodologies (NAMs) in ecotoxicology represents a critical paradigm shift from traditional animal-dependent testing toward a predictive, human-relevant, and systems-based framework. NAMs encompass in vitro, in silico, and ex vivo methods designed to provide faster, more cost-effective, and mechanistically informative safety assessments [4]. The transition is propelled by concurrent and interdependent scientific, ethical, regulatory, and economic imperatives. Scientifically, NAMs offer the potential to overcome the limited predictivity of rodent models for human toxicity, for which reported concordance is only about 40-65% [9]. Ethically, the global commitment to the 3Rs principles (Replacement, Reduction, and Refinement of animal use) provides a strong moral impetus [4]. On the regulatory side, while challenges persist, successful adoptions for endpoints like skin sensitization demonstrate a pathway to acceptance [9]. Economically, the prospect of accelerated testing and reduced reliance on costly, lengthy in vivo studies presents a compelling business case, particularly within dynamic industrial sectors [10]. This series of comparison guides objectively evaluates the performance of leading NAM platforms against traditional alternatives, providing experimental data and protocols to inform researchers and drug development professionals engaged in the validation and application of these transformative tools.

Scientific Imperative: Performance and Predictive Capacity

The core scientific imperative for NAMs is their capacity to provide equal or superior protective and predictive data for human and ecological health compared to traditional models. Validation hinges on demonstrating reliability, relevance, and robustness within a defined context of use [11].

Comparison Guide 1: Model Systems for Systemic Toxicity Screening

This guide compares traditional animal models with emerging NAM platforms for initial hazard identification.

Table 1: Comparative Performance of Models for Systemic Toxicity Screening

| Model Type | Test System Example | Throughput | Human Relevance | Key Endpoints Measured | Reported Concordance with Human Toxicity | Cost per Compound (Estimated) |

|---|---|---|---|---|---|---|

| Traditional In Vivo | 28-day Rat Oral Toxicity Study (OECD 407) | Very Low | Moderate (Species Differences) | Clinical Pathology, Histopathology, Organ Weights | ~40-65% (Rodent to Human) [9] | $100,000 - $250,000 |

| In Vitro Cell-Based | 2D Hepatic Cytotoxicity (e.g., HepG2) | High | Moderate (Limited Metabolism) | Cell Viability, Apoptosis, Stress Markers | Variable; high false-positive rate for liver toxicity | $1,000 - $5,000 |

| Microphysiological System (MPS) | Liver-on-a-Chip (Primary Human Hepatocytes) | Medium | High (3D Architecture, Flow) | Albumin/Urea Secretion, CYP450 Activity, Barrier Integrity | Emerging; High mechanistic fidelity [9] | $20,000 - $50,000 |

| Non-Mammalian Model | C. elegans Toxicity Screen [4] | Very High | Moderate (Conserved Core Pathways) | Lifespan, Reproduction, Motility, Gene Expression | Promising for neurotoxicity and metabolic toxicity [4] | $500 - $2,000 |

Supporting Experimental Data: A 2024 study benchmarking a defined approach for the crop protection products Captan and Folpet utilized 18 in vitro assays, including OECD TG-compliant tests for irritation and sensitization. This NAM package correctly identified the compounds as contact irritants, aligning with risk assessments derived from existing mammalian data, demonstrating the feasibility of integrated NAM strategies for safety decision-making [9].

Detailed Experimental Protocol: C. elegans Acute Toxicity Screening

- Objective: To assess the acute toxic effects of chemical compounds on viability and locomotion.

- Organism: Synchronized L4 larval stage C. elegans (N2 wild-type strain).

- Exposure: Compounds dissolved in M9 buffer or DMSO (≤0.1% final). Worms exposed in 96-well plates (~30-50 worms/well) for 24 hours at 20°C.

- Endpoint Assessment:

  - Viability: Using an automated fluorescent image analyzer after staining with a viability dye (e.g., Sytox Green). Live worms are counted based on movement and dye exclusion.

  - Locomotion: Record 60-second videos per well. Analyze thrashing rate (body bends/minute) or track path length using software like ImageJ with wrMTrck plugin.

- Data Analysis: Dose-response curves are generated for lethality and locomotion inhibition. LC50 (the concentration lethal to 50% of worms) and EC50 (the concentration producing a 50% effect) values are estimated by fitting a dose-response model (e.g., probit or log-logistic regression) [4].
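As a quick check on the data-analysis step, an LC50 can be approximated by interpolating on log concentration between the two tested concentrations that bracket 50% mortality. This is a simpler technique than the regression named in the protocol and is shown only as a sketch. The worm counts are hypothetical.

```python
import math


def lc50_by_interpolation(concs, fraction_dead):
    """Estimate LC50 by linear interpolation on log10(concentration).

    Returns None when no pair of adjacent concentrations brackets 50% mortality.
    """
    pairs = sorted(zip(concs, fraction_dead))
    for (c_lo, m_lo), (c_hi, m_hi) in zip(pairs, pairs[1:]):
        if m_lo <= 0.5 <= m_hi and m_hi > m_lo:
            weight = (0.5 - m_lo) / (m_hi - m_lo)
            log_lc50 = math.log10(c_lo) + weight * (
                math.log10(c_hi) - math.log10(c_lo)
            )
            return 10 ** log_lc50
    return None


# Hypothetical counts per well: (concentration in uM, dead worms, total worms).
wells = [(1.0, 0, 40), (10.0, 8, 40), (100.0, 32, 40), (1000.0, 40, 40)]
concs = [c for c, _, _ in wells]
mortality = [dead / total for _, dead, total in wells]

lc50 = lc50_by_interpolation(concs, mortality)
print(f"Approximate LC50: {lc50:.1f} uM")
```

Interpolation gives no confidence interval and ignores control mortality, so a fitted model remains the better choice for reported values.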

Visualization: Integrated NAM Workflow for Systemic Risk Assessment

This diagram illustrates the conceptual workflow of a Next-Generation Risk Assessment (NGRA), integrating multiple NAMs and exposure science.

Diagram 1: NAM Integration in Next-Generation Risk Assessment (NGRA) [9].

Ethical Imperative: The 3Rs and Beyond

The ethical imperative is a primary driver, focusing on the replacement of animal tests, the reduction of animal numbers, and the refinement of procedures to minimize suffering [4]. This extends into a broader framework of responsible innovation, which demands proactive ethical stewardship throughout the technology development lifecycle [12].

Comparison Guide 2: Defined Approaches for Regulatory Toxicity Endpoints

Defined Approaches (DAs)—fixed combinations of NAMs with a data interpretation procedure—are key ethical tools that directly replace animal tests for specific endpoints.

Table 2: Defined Approaches (DAs) Replacing Traditional Animal Tests

| Toxicity Endpoint | Traditional Animal Test (OECD TG) | Validated Non-Animal Defined Approach (OECD TG) | DA Components (Example) | Regulatory Acceptance Status |

|---|---|---|---|---|

| Skin Sensitization | Guinea Pig Maximization Test (TG 406), Murine Local Lymph Node Assay (LLNA, TG 429) | Defined Approaches for Skin Sensitisation (TG 497) | In chemico (DPRA), in vitro (KeratinoSens, h-CLAT), in silico | Adopted by EU, UK, US agencies; used for classification [9] |

| Eye Irritation/Serious Damage | Draize Rabbit Eye Test (TG 405) | Defined Approaches for Serious Eye Damage and Eye Irritation (TG 467) | Reconstructed human cornea-like epithelium (RhCE) assays, in vitro membrane barrier test | Widely used within a tiered testing strategy under GHS [9] |

| Skin Corrosion/Irritation | Draize Rabbit Skin Test (TG 404) | In vitro skin corrosion/irritation tests (TGs 430, 431, 439) | Reconstructed human epidermis (RHE) models, measuring cell viability via MTT assay | Full replacement accepted for corrosion; key part of irritation DAs [9] |

Supporting Experimental Data: For skin sensitization, a combination of human-based in vitro approaches demonstrated similar performance to the murine LLNA. Notably, a specific combination of three in vitro assays outperformed the LLNA in specificity, reducing false positives and providing a more human-relevant prediction [9].

Detailed Experimental Protocol: OECD TG 497 Defined Approach for Skin Sensitization

- Objective: To classify a chemical as a skin sensitizer of potency subcategory 1A or 1B, or as not classified, without animal testing.

- Test Sequence:

  - DPRA (in chemico): Measures peptide reactivity (lysine and cysteine depletion) after 24-hour co-incubation. Predicts the chemical's ability to act as a hapten.

  - KeratinoSens (in vitro): Uses a reporter gene assay in human keratinocytes to detect activation of the Keap1-Nrf2 antioxidant response pathway.

  - h-CLAT (in vitro): Measures changes in surface expression of CD86 and CD54 on a human monocytic cell line (THP-1) following 24-hour exposure, indicating dendritic cell activation.

- Data Interpretation Procedure (DIP): Results from the three assays are input into a prediction model/decision tree (e.g., the "2 out of 3" rule or a more complex integrated algorithm). The DIP provides a final prediction of the sensitization potency category based on predefined weight-of-evidence criteria [9].
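The "2 out of 3" rule mentioned in the data interpretation step can be written as a small majority vote. This sketch returns only a hazard call. Potency subcategorization would require the more complex integrated algorithm, and the guideline's handling of borderline results is omitted. The function name and the return labels are illustrative.

```python
def two_out_of_three(dpra, keratinosens, hclat):
    """Majority call across three assays.

    Each argument is True (positive), False (negative), or None
    (result missing or unusable). Two concordant results decide the call.
    """
    calls = [c for c in (dpra, keratinosens, hclat) if c is not None]
    positives = sum(1 for c in calls if c)
    negatives = len(calls) - positives
    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"


# Hypothetical assay outcomes for three chemicals.
print(two_out_of_three(True, True, False))    # two positives decide the call
print(two_out_of_three(False, None, False))   # two negatives decide the call
print(two_out_of_three(True, None, False))    # discordant pair: no call
```

Because two concordant results are enough, the third assay is only needed when the first two disagree, which is part of what makes the rule economical.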

Regulatory Imperative: Validation and Acceptance

Regulatory acceptance is the critical gateway for NAMs. It requires a unified validation framework based on measurable quality standards, standardized protocols, and transparent data sharing to build confidence among regulators, industry, and the public [10].

The Scientist's Toolkit: Essential Research Reagents for NAMs Validation

Table 3: Key Research Reagent Solutions for NAMs Development

| Reagent/Material | Function in NAMs Research | Example Application |

|---|---|---|

| Primary Human Cells (e.g., Hepatocytes, Renal Proximal Tubule Epithelial Cells) | Provide species-relevant, metabolically competent cellular models for organ-specific toxicity. | Liver-on-a-chip models for repeated-dose hepatotoxicity studies [9]. |

| Reconstructed Human Tissues (RhE, RhCE) | 3D, differentiated tissue models for topical toxicity endpoints (irritation, corrosion). | EpiDerm (RhE) used in OECD TG 439 for skin irritation testing [9]. |

| Directional Flow Chip (Organ-on-a-Chip) | Microfluidic device that provides physiological shear stress, tissue-tissue interfaces, and multi-organ interaction. | Lung-on-a-chip for inhalation toxicology; linked liver-kidney chip for systemic ADME-tox [9]. |

| Multi-omics Analysis Kits (Transcriptomics, Metabolomics) | Enable high-content, mechanistic analysis of cellular responses to toxicants, identifying pathways of toxicity. | Toxicogenomics to distinguish genotoxic from non-genotoxic carcinogens or to derive point-of-departure doses [9]. |

| High-Content Screening (HCS) Imaging Systems | Automated microscopy and image analysis for multiplexed endpoint measurement (cell health, morphology, biomarker expression). | Screening for chemical-induced steatosis using lipid droplet staining in hepatic spheroids. |

| Physiologically Based Kinetic (PBK) Modeling Software | In silico tool to extrapolate in vitro effective concentrations to human equivalent doses by modeling absorption, distribution, metabolism, and excretion. | Used in NGRA to calculate a margin of safety between predicted plasma/blood concentrations and bioactivity concentrations from in vitro assays [9]. |
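The margin-of-safety calculation described in the last row of the table reduces to a ratio of a bioactivity concentration to a predicted internal exposure. In this sketch, taking the lowest AC50 across assays as the point of departure is an assumption made for illustration, as are the function name and all values.

```python
def bioactivity_exposure_margin(ac50_values_um, predicted_cmax_um):
    """Ratio of the most sensitive in vitro AC50 to the predicted plasma Cmax.

    Both inputs are in uM. A larger ratio means bioactivity is expected
    only at concentrations well above the predicted exposure.
    """
    if not ac50_values_um:
        raise ValueError("at least one AC50 value is required")
    if predicted_cmax_um <= 0:
        raise ValueError("predicted Cmax must be positive")
    return min(ac50_values_um) / predicted_cmax_um


# Hypothetical inputs: three assay AC50s and a PBK-predicted plasma Cmax.
margin = bioactivity_exposure_margin([12.0, 4.0, 30.0], 0.05)
print(f"Margin of safety: {margin:.0f}-fold")
```

How large a margin is considered protective depends on the context of use and on the uncertainty in both the in vitro and the kinetic estimates.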

Visualization: Pathway to Regulatory Confidence for a Novel NAM

This diagram outlines the multi-step evaluation framework proposed to build confidence and facilitate regulatory acceptance of new methodologies.

Diagram 2: Framework for Building Confidence in NAMs for Regulatory Use [10] [11].

Economic Imperative: Cost, Speed, and Market Dynamics

The economic case for NAMs is built on efficiency gains, risk reduction in late-stage drug development, and alignment with a growing market demand for sustainable and ethical product stewardship.

Comparison Guide 3: Economic and Operational Comparison

This guide contrasts the resource footprint of traditional versus NAM-based testing strategies.

Table 4: Economic and Operational Comparison of Testing Paradigms

| Aspect | Traditional Animal-Based Paradigm | NAM-Integrated Paradigm | Economic Implication for R&D |

|---|---|---|---|

| Testing Timeline | Months to years for chronic/carcinogenicity studies. | Weeks to months for high-throughput screening and MPS testing. | Accelerated candidate selection reduces time-to-market, extending patent-protected sales period. |

| Direct Cost per Study | High (animal procurement, long-term housing, veterinary care, histopathology). | Lower initial reagent costs; higher capital investment in robotics and instrumentation. | Lower variable cost per compound screened enables broader chemical evaluation, especially in early phases. |

| Attrition Detection | Late-stage failure (preclinical or Phase I) due to unpredicted human toxicity. | Earlier detection of hazard liabilities during lead optimization. | Massive cost avoidance by filtering out problematic candidates before costly in vivo and clinical development. |

| Regulatory Data Flexibility | Often standardized, checklist-based data packages. | Mechanistic, hypothesis-driven data that may support waivers or refined testing strategies. | Potential for reduced animal testing costs and more efficient regulatory submissions via justified alternative approaches. |

| Market Positioning | Increasing scrutiny from ethical investors and consumers. | Aligns with ESG (Environmental, Social, Governance) goals and responsible innovation principles [12]. | Enhances brand value and access to capital from ESG-focused funds; meets regulatory trends (e.g., EU Chemical Strategy for Sustainability). |

Supporting Data: The global toxicology testing market, valued at USD 13.1 billion in 2024, is projected to grow at a CAGR of 8.9%, driven significantly by advancements in in vitro testing. In vitro methods accounted for approximately 35% of the market share in 2024, with significant investment flowing into the sector [13].

The transition to a NAM-dominant paradigm in ecotoxicology and safety sciences is driven not by a single factor but by several that reinforce one another. Scientific advancements demonstrating human relevance build the technical confidence needed for regulatory acceptance. Successful regulatory case studies, in turn, validate the economic model of faster, cheaper testing, while fulfilling the strong ethical mandate to replace animal use. Together, these drivers are propelling the field toward a future where safety assessment is more predictive, preventive, and ethical. For researchers and drug developers, engagement in the standardization of protocols, transparent data sharing, and active participation in consortium-based validation projects is no longer optional but essential to steer this transition and realize the full potential of New Approach Methodologies [10].

The protection of environmental species from chemical contamination represents a critical imperative for ecological stability and biodiversity conservation. In recent decades, wildlife populations have declined by an average of more than two-thirds, with chemical pollution representing a significant contributing factor among multiple anthropogenic stressors [14]. The field of ecotoxicology faces the unique challenge of predicting and preventing adverse effects across diverse species and complex ecosystems, a task complicated by the vast number of chemicals in commerce and the limitations of traditional animal-intensive testing approaches [15].

This challenge is being addressed through the development and validation of New Approach Methodologies (NAMs), defined as any technology, methodology, approach, or combination thereof that can replace, reduce, or refine animal toxicity testing while enabling more rapid and effective chemical prioritization and assessment [2]. The broader thesis framing this discussion posits that the strategic validation and integration of NAMs within regulatory and research ecotoxicology is not merely a technical advancement but an ethical and practical necessity for achieving comprehensive environmental species protection. This transition is driven by the need to assess thousands of chemicals with varying modes of action, the ethical imperative to reduce vertebrate animal testing, and the growing recognition of pharmaceutical pollutants as potent environmental contaminants with specific biological activities [16] [17].

The validation of NAMs for ecotoxicology must account for extraordinary biological diversity, interspecies extrapolation challenges, and the complexities of real-world exposure scenarios. Successful integration requires navigating not only scientific and technical hurdles but also sociological factors within the professional community, including concerns about regulatory "error costs," varying levels of familiarity with new methods, and fundamental beliefs about toxicological principles [18].

Comparative Analysis of Ecotoxicological Testing Approaches

The evolution from traditional whole-organism testing to integrated NAM strategies represents a paradigm shift in ecotoxicology. The following comparison examines the performance characteristics, applications, and validation status of these approaches.

Table 1: Comparison of Traditional and NAM-Based Ecotoxicological Testing Approaches

| Testing Approach | Typical Test Organisms/Systems | Key Endpoints Measured | Timeframe | Throughput | Regulatory Acceptance | Key Advantages | Major Limitations |
|---|---|---|---|---|---|---|---|
| Traditional Whole-Organism Tests | Fathead minnow (Pimephales promelas), Daphnia (D. magna), Earthworm (Eisenia fetida), Algae (Raphidocelis subcapitata) | Mortality, growth inhibition, reproduction impairment, behavioral changes | Days to months (7-90 days) | Low | High (OECD, EPA, ISO guidelines) | Ecological relevance, direct observable effects, established history | Low throughput, high cost, ethical concerns, species-limited |
| In Vitro & Cell-Based Assays | Fish cell lines (e.g., RTgill-W1, PLHC-1), Primary hepatocytes, Receptor-binding assays | Cytotoxicity, specific pathway activation (e.g., endocrine), genotoxicity, oxidative stress | Hours to days | Medium-High | Moderate (e.g., EPA Endocrine Disruptor Screening Program) | Mechanistic insight, human-relevant pathways, reduced animal use | Limited metabolic capacity, may not reflect whole-organism response |
| Computational (In Silico) Methods | N/A (Based on chemical structure and existing data) | Predicted toxicity, physicochemical properties, bioaccumulation potential, cross-species susceptibility | Minutes to hours | Very High | Emerging (Used for prioritization and screening) | Extremely fast and inexpensive, can predict un-tested chemicals | Dependent on quality of training data, limited for novel chemistries |
| Omics Technologies | Any species with sequenced genome/transcriptome | Gene expression changes, protein profiles, metabolic perturbations | Days | Medium | Low (Mostly research applications) | Systems-level understanding, mode-of-action elucidation, sensitive early indicators | Complex data interpretation, high cost per sample, need for specialized bioinformatics |
| Toxicity Forecaster (ToxCast) & High-Throughput Screening | Hundreds of automated biochemical and cell-based assays | Bioactivity across ~1000 molecular and cellular targets | Hours | Very High | Growing (Used in chemical prioritization) | Broad biological coverage, quantitative concentration-response, public database | Extrapolation to ecological endpoints and chronic exposures remains challenging |

Traditional testing, while ecologically relevant, suffers from critical limitations in addressing the scale of the chemical assessment challenge. U.S. federal agencies, including the EPA, FDA, and USDA, rely on data from standardized tests utilizing species such as fish, invertebrates, and algae to fulfill mandates under statutes like the Endangered Species Act and the Toxic Substances Control Act [15]. However, these tests are resource-intensive, time-consuming, and raise ethical concerns, creating a bottleneck for chemical safety evaluations [15].

In contrast, NAMs offer a multifaceted toolkit. In vitro assays provide mechanistic insight into specific toxicity pathways, such as endocrine disruption, which is particularly relevant for pharmaceuticals like anticancer agents and antiparasitics that are designed to interact with conserved biological targets [16] [17]. Computational tools like the EPA's CompTox Chemicals Dashboard and the SeqAPASS (Sequence Alignment to Predict Across-Species Susceptibility) tool enable rapid prediction of chemical properties and hazards, as well as extrapolation of toxicity information across species based on sequence conservation of molecular targets [19] [20]. Omics technologies (transcriptomics, proteomics, metabolomics) offer powerful means to discover biomarkers of effect and understand adverse outcome pathways (AOPs) at a systems level [2].

The integration of these methods into a weight-of-evidence framework represents the most promising path forward. For instance, computational predictions can prioritize chemicals for targeted in vitro testing, the results of which can inform the design of more focused and efficient traditional tests when necessary, thereby reducing overall animal use—a process aligned with the 3Rs principles (Replacement, Reduction, Refinement) [19].
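The tiered logic described above can be sketched as a small triage function. This is only an illustration: the field names, the single concern threshold, and the tier labels are hypothetical, and a real weight-of-evidence framework would combine many more lines of evidence.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical screening threshold (mg/L); a real program sets this per context of use.
CONCERN_THRESHOLD_MG_L = 1.0

@dataclass
class ChemicalEvidence:
    name: str
    predicted_lc50_mg_l: float                  # in silico estimate, e.g., from a QSAR
    in_vitro_ec50_mg_l: Optional[float] = None  # set once targeted in vitro testing is done

def next_step(chem: ChemicalEvidence) -> str:
    """Suggest the next testing tier from the evidence collected so far."""
    if chem.predicted_lc50_mg_l > CONCERN_THRESHOLD_MG_L:
        return "low priority: no further testing at this tier"
    if chem.in_vitro_ec50_mg_l is None:
        return "run targeted in vitro assay"
    if chem.in_vitro_ec50_mg_l <= CONCERN_THRESHOLD_MG_L:
        return "design focused in vivo test"
    return "low priority: in vitro data do not confirm concern"

print(next_step(ChemicalEvidence("chemical A", predicted_lc50_mg_l=0.2)))
```

Animal testing is reached only when both the computational and the in vitro tiers flag a concern, which is where the reduction in animal use comes from.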

Detailed Experimental Protocols for Key NAMs

Protocol for a High-Throughput Transcriptomics Assay Using Fish Cell Lines

This protocol is designed to assess chemical-induced changes in gene expression as an early indicator of potential chronic toxicity, using the RTgill-W1 fish gill cell line as a model.

Cell Culture and Exposure:

- Maintain RTgill-W1 cells in Leibovitz's L-15 medium supplemented with 10% fetal bovine serum at 19°C without CO₂.

- Seed cells into 96-well tissue culture plates at a density of 2x10⁴ cells per well and allow to attach for 24 hours.

- Prepare a logarithmic dilution series (e.g., 0.1, 1, 10, 100 µM) of the test chemical in exposure medium. Include a vehicle control (e.g., 0.1% DMSO) and a positive control (e.g., 10 µM rotenone for mitochondrial disruption).

- Expose cells in triplicate to each concentration for 48 hours.
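As a planning aid, the exposure design above (four test concentrations plus vehicle and positive controls, each in triplicate) can be enumerated in a few lines. This is a minimal sketch; the function names are illustrative and the labels simply restate the example values from the protocol.

```python
def log_dilution_series(top_um, n_points, step=10.0):
    """Return n_points concentrations (µM), ascending, spaced `step`-fold apart."""
    return sorted(top_um / step ** i for i in range(n_points))

def exposure_conditions(test_concs_um, replicates=3):
    """List one (label, replicate) entry per well, controls included."""
    labels = ["vehicle control (0.1% DMSO)", "positive control (10 µM rotenone)"]
    labels += [f"test chemical {c:g} µM" for c in test_concs_um]
    return [(label, rep) for label in labels for rep in range(1, replicates + 1)]

concs = log_dilution_series(top_um=100.0, n_points=4)
wells = exposure_conditions(concs)
print(concs)
print(len(wells), "wells needed")
```

Six conditions in triplicate occupy 18 wells, so several chemicals fit on one 96-well plate.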

RNA Isolation and Quality Control:

- Lyse cells directly in the plate wells using a guanidinium-thiocyanate-based lysis buffer.

- Isolate total RNA using silica-membrane spin columns. Include an on-column DNase I digestion step to remove genomic DNA contamination.

- Assess RNA concentration and purity using a spectrophotometer (260/280 nm ratio ~2.0). Evaluate RNA integrity via microfluidic electrophoresis (RNA Integrity Number, RIN > 8.0).

Library Preparation and Sequencing:

- Convert 500 ng of total RNA per sample to cDNA.

- Prepare sequencing libraries using a stranded mRNA-seq kit, incorporating unique dual-index adapters for sample multiplexing.

- Quantify libraries by qPCR and pool at equimolar ratios.

- Sequence the pooled library on an appropriate platform (e.g., Illumina NextSeq) to a depth of ~25 million paired-end reads per sample.

Bioinformatic Analysis:

- Quality-trim raw sequencing reads and align them to the reference genome of the cell line's source species (rainbow trout, Oncorhynchus mykiss) using a splice-aware aligner (e.g., STAR).

- Quantify reads mapping to annotated genes.

- Perform differential gene expression analysis using a statistical package (e.g., DESeq2 in R). Genes with an adjusted p-value (FDR) < 0.05 and an absolute log₂ fold change > 1 are considered significantly differentially expressed.

- Conduct pathway enrichment analysis (e.g., using Gene Ontology or KEGG databases) to identify biological processes perturbed by the chemical exposure.
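The significance filter in the differential expression step can be made explicit. DESeq2 already reports adjusted p-values, so the sketch below is not a replacement for it; it only illustrates, in plain Python, how a Benjamini-Hochberg adjustment combines with the fold-change cutoff. The gene names and values are made up.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotonic.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

def significant_genes(results, fdr=0.05, min_abs_lfc=1.0):
    """results: list of (gene, log2_fold_change, raw_p_value) tuples."""
    padj = benjamini_hochberg([p for _, _, p in results])
    return [gene for (gene, lfc, _), q in zip(results, padj)
            if q < fdr and abs(lfc) > min_abs_lfc]

# Hypothetical results for four genes.
results = [("cyp1a", 3.2, 0.0001), ("hsp70", 1.5, 0.004),
           ("actb", 0.1, 0.8), ("mt", -0.6, 0.01)]
print(significant_genes(results))
```

In this toy example the gene labelled `mt` passes the FDR cutoff but is excluded because its absolute log₂ fold change is below 1.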

Protocol for an In Chemico Reactive Oxygen Species (ROS) Assay

This abiotic assay measures the potential of a chemical to generate oxidative stress, a common mechanism of toxicity.

Reagent Preparation:

- Prepare a 10 mM stock solution of the probe 2',7'-Dichlorodihydrofluorescein diacetate (H₂DCFDA) in anhydrous DMSO. Protect from light.

- Prepare 10 mM stock solutions of test chemicals in appropriate solvents (DMSO, ethanol, or water).

- Prepare 100 mM phosphate buffer (pH 7.4).

Assay Execution:

- In a black 384-well plate, add 90 µL of phosphate buffer per well.

- Add 5 µL of H₂DCFDA stock solution to each well (final probe concentration = 500 µM). Mix gently.

- Initiate the reaction by adding 5 µL of the test chemical at varying concentrations (final concentrations typically from 1 µM to 100 µM). Include a negative control (solvent only) and a positive control (e.g., 100 µM tert-Butyl hydroperoxide).

- Immediately place the plate in a pre-warmed (e.g., 25°C) fluorescence microplate reader.
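The concentrations in these steps follow from the dilution relation C_final = C_stock × V_added / V_total. A minimal sketch of that arithmetic, using the volumes stated above (the function names are illustrative):

```python
def final_concentration_um(stock_mm, added_ul, total_ul):
    """Final concentration in µM after adding `added_ul` of a stock given in mM."""
    return stock_mm * 1000.0 * added_ul / total_ul

def working_stock_um(target_final_um, added_ul, total_ul):
    """Working-stock concentration (µM) needed to reach a target final concentration."""
    return target_final_um * total_ul / added_ul

total_ul = 90 + 5 + 5  # buffer + probe + test chemical
print(final_concentration_um(10, 5, total_ul))  # probe in the well, µM
print(working_stock_um(100, 5, total_ul))       # stock needed for 100 µM final, µM
```

Because 5 µL is diluted 20-fold in the 100 µL well, the 10 mM test-chemical stocks need intermediate dilution: a 100 µM final concentration corresponds to a 2 mM working stock, and 1 µM to a 20 µM working stock.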

Measurement and Data Acquisition:

- Measure fluorescence (Excitation: 485 nm, Emission: 535 nm) kinetically every 5 minutes for 60-90 minutes.

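- The non-fluorescent probe is oxidized by ROS to the highly fluorescent DCF. Note that the diacetate form (H₂DCFDA) must first be deacetylated to H₂DCF before it can be oxidized; in a cell-free assay, where no esterases are present, this typically requires a chemical hydrolysis step during probe preparation.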

Data Analysis:

- Calculate the slope of the fluorescence increase over the linear portion of the reaction for each well.

- Normalize the slope for each test chemical well to the average slope of the negative control wells.

- Plot normalized ROS activity versus log chemical concentration to generate a concentration-response curve and determine an effective concentration (e.g., EC₁₀ or EC₅₀).
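The slope and normalization steps above can be sketched as follows. This is a minimal illustration using an ordinary least-squares slope over readings assumed to lie in the linear portion of the reaction; the fluorescence values are invented.

```python
def slope(times_min, fluorescence):
    """Ordinary least-squares slope (fluorescence units per minute)."""
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_f = sum(fluorescence) / n
    sxy = sum((t - mean_t) * (f - mean_f) for t, f in zip(times_min, fluorescence))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    return sxy / sxx

def normalized_ros_activity(test_slopes, control_slopes):
    """Express each test slope as a fold change over the mean negative-control slope."""
    mean_control = sum(control_slopes) / len(control_slopes)
    return [s / mean_control for s in test_slopes]

times = [0, 5, 10, 15]                          # minutes
control = slope(times, [100, 110, 120, 130])    # solvent-only well (hypothetical)
treated = slope(times, [100, 130, 160, 190])    # test-chemical well (hypothetical)
print(normalized_ros_activity([treated], [control]))
```

The resulting fold-change values, one per concentration, are what would be plotted against log concentration to derive an EC₁₀ or EC₅₀.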

Visualizing Pathways and Workflows

The following diagrams, generated using Graphviz DOT language, illustrate key conceptual frameworks and experimental workflows in modern ecotoxicology.

Diagram 1: A Generalized Adverse Outcome Pathway (AOP) Framework.

Diagram 2: A Tiered, Integrated Testing Strategy (ITS) Workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

The implementation of NAMs in ecotoxicology relies on a suite of specialized reagents, tools, and databases. The following table details key components of this toolkit.

Table 2: Essential Research Reagent Solutions for Ecotoxicology NAMs

| Tool/Reagent Category | Specific Example(s) | Primary Function in Ecotoxicology | Key Provider(s)/Sources |
|---|---|---|---|
| Reference Chemical Sets | EPA's ToxCast Chemical Library, EURL ECVAM Reference Chemicals | Provide standardized, well-characterized chemicals for assay development, validation, and benchmarking of NAM performance. | U.S. EPA, European Commission Joint Research Centre |
| Cell Lines and Primary Cells | RTgill-W1 (Rainbow trout gill), PLHC-1 (Topminnow liver), primary fish hepatocytes | Serve as in vitro models for assessing cytotoxicity, specific pathway activation (e.g., xenobiotic metabolism, endocrine disruption), and transcriptomic responses. | Cell repositories and vendors (e.g., ECACC, ATCC), academic laboratories |
| Biochemical Assay Kits | Luciferase-based reporter gene kits (e.g., ERα, AR), ATP quantitation kits (cytotoxicity), ROS detection kits (e.g., H₂DCFDA) | Enable high-throughput measurement of specific molecular endpoints relevant to mechanisms of toxicity (e.g., receptor binding, metabolic disruption, oxidative stress). | Various life science suppliers (e.g., Thermo Fisher, Promega, Abcam) |
| 'Omics Reagents & Platforms | RNA/DNA extraction kits, sequencing library prep kits, microarray platforms, mass spectrometry standards | Facilitate genome-wide analysis of gene expression (transcriptomics), protein profiles (proteomics), and metabolic changes (metabolomics) to elucidate modes of action. | Illumina, Thermo Fisher, Agilent, Waters, etc. |
| Computational Databases & Software | CompTox Chemicals Dashboard [20], ECOTOX Knowledgebase [19], SeqAPASS Tool [19], OECD QSAR Toolbox | Provide access to curated chemical property, fate, toxicity, and bioactivity data; enable cross-species extrapolation and predictive modeling. | U.S. EPA, OECD, European Chemicals Agency (ECHA) |
| Standardized Test Guidelines | OECD Test Guidelines for in vitro assays (e.g., TG 455, ERα transactivation), EPA Ecological Effects Test Guidelines | Define internationally recognized protocols to ensure the reliability, reproducibility, and regulatory relevance of generated data. | Organisation for Economic Co-operation and Development (OECD), U.S. Environmental Protection Agency |

Case Study Application: Antiparasitic and Anticancer Pharmaceuticals

The principles and tools discussed above apply directly to specific, high-concern pharmaceutical classes. Antiparasitic drugs, such as benzimidazoles and ivermectin, are extensively used in veterinary medicine and enter the environment primarily through animal waste [16]. Their ecological risk is heightened because they act on evolutionarily conserved targets (e.g., β-tubulin, the target of benzimidazoles), making non-target invertebrates particularly susceptible [16]. The European Medicines Agency's tiered Environmental Risk Assessment (ERA) process for veterinary medicinal products begins with exposure estimation (Phase I) and proceeds to effect testing (Phase II) only if thresholds are exceeded [16]. NAMs can streamline this process: in silico tools like SeqAPASS can predict susceptibility across soil and aquatic invertebrates based on target conservation, and targeted in vitro assays can confirm binding affinity to non-target species' tubulin, potentially reducing the need for definitive higher-tier invertebrate toxicity tests.

Similarly, anticancer agents are highly potent ecosystem contaminants designed to disrupt cell proliferation [17]. Their environmental risk assessment benefits from a green chemistry and NAM-driven approach. Mechanism-based in vitro assays using fish cell lines can screen for DNA damage, cell cycle arrest, and apoptosis. Transcriptomic profiling can reveal specific pathway activation even at sub-cytotoxic concentrations, providing sensitive early indicators of hazard. These data can feed into AOP networks to predict potential population-level consequences, such as impacts on fish reproduction and early life-stage survival.

The ecotoxicology imperative demands a transformative shift in how chemical hazards are evaluated for environmental species protection. The validation and adoption of NAMs are central to this transformation, offering a path to more mechanistic, efficient, and ethical risk assessment. As highlighted in the U.S. Strategic Roadmap for Establishing New Approaches, success requires connecting developers with end-users, establishing confidence in new methods, and encouraging their adoption by agencies and industry [15]. Continued investment in foundational resources—such as the CompTox Chemicals Dashboard, expanded ECOTOX Knowledgebase, and standardized in vitro assay protocols—is essential [19] [20].

The ultimate goal is a predictive, integrated testing strategy where computational models, high-throughput in vitro bioassays, and targeted omics are used intelligently to prioritize chemicals and design definitive tests, minimizing animal use while maximizing ecological relevance. This progression will enable regulators and scientists to address the vast universe of chemical contaminants more effectively, fulfilling the imperative to protect vulnerable wildlife populations and the intricate ecosystems they inhabit [2] [21].

The field of ecotoxicology research is undergoing a fundamental paradigm shift, driven by the development and adoption of New Approach Methodologies (NAMs). These methods encompass a suite of in chemico, in vitro, in silico, and omics-based approaches designed to provide more human- and ecologically-relevant hazard data while reducing reliance on traditional animal testing [22] [9]. The transition is motivated by scientific imperatives—recognizing the limited predictivity of rodent models for human toxicity (with true positive rates of 40–65%)—as well as ethical and economic drivers [9]. In the context of ecological hazard assessment, NAMs offer tools to understand chemical effects on diverse species and complex ecosystems, moving beyond mammalian-centric models [23]. The ultimate goal is to enable a Next Generation Risk Assessment (NGRA), an exposure-led, hypothesis-driven framework that integrates various NAMs for a more protective and mechanistic safety evaluation [22] [9]. This guide provides a comparative analysis of the core NAM types, supported by experimental data and protocols, to inform their validated application in ecotoxicological research.

The table below provides a high-level comparison of the four primary NAM categories, summarizing their fundamental principles, key technologies, and primary applications within ecotoxicology.

Table 1: Core Characteristics of Primary NAM Types

| NAM Type | Fundamental Principle | Key Technologies & Models | Primary Applications in Ecotoxicology |
|---|---|---|---|
| In Chemico | Measures direct chemical reactivity with biological molecules. | Peptide/protein binding assays, Reactivity probes. | Screening for electrophilic potential (skin sensitization), protein denaturation (eye irritation), abiotic transformation studies. |
| In Vitro | Studies biological responses using cells, tissues, or organs outside a living organism. | 2D cell cultures, 3D organoids/spheroids, Microphysiological Systems (MPS, organs-on-a-chip). | High-throughput toxicity screening (ToxCast), mechanism-of-action studies, organ-specific toxicity, and as a component of Defined Approaches. |
| In Silico | Uses computational models to predict toxicity from chemical structure or existing data. | QSAR, Machine Learning (ML), PBPK/PBK modeling, Molecular docking. | Priority screening of large chemical inventories, read-across, predicting toxicokinetics, and quantitative in vitro to in vivo extrapolation (QIVIVE). |
| Omics | Provides a holistic analysis of molecular-level changes in response to chemical exposure. | Transcriptomics, Metabolomics, Proteomics, Epigenomics. | Unbiased biomarker discovery, elucidating adverse outcome pathways (AOPs), diagnosing mechanisms of action, and assessing sub-lethal effects. |

Technical Comparison and Experimental Data

The utility of NAMs for decision-making depends on their predictive performance, throughput, and relevance. The following tables compare these methodologies based on quantitative validation metrics, operational parameters, and their application in integrated testing strategies.

Table 2: Performance Metrics and Validation Status of Key NAMs

| Methodology (Example) | Predictive Endpoint | Validation Benchmark | Reported Performance | Regulatory Status |
|---|---|---|---|---|
| Liver-on-a-Chip (MPS) [24] | Human hepatotoxicity | 27 drugs (known human safe/toxic) | 87% sensitivity (correct ID of toxic drugs), 100% specificity (no safe drugs flagged as toxic) | Advanced non-GLP investigative tool; case-by-case regulatory use. |
| ToxCast In Vitro Bioactivity [23] | Ecological Point of Departure (POD) | In vivo PODs from ECOTOX database (649 chemicals) | Weak overall correlation; moderate correlation for specific classes (e.g., antimicrobials). | Used for chemical prioritization and screening within Integrated Approaches to Testing and Assessment (IATA). |
| Defined Approaches (DA) for Skin Sensitization [9] | Skin sensitization hazard & potency | Human data & Local Lymph Node Assay (LLNA) | Combination of in chemico and in vitro NAMs matched LLNA performance and exceeded it in specificity. | OECD Test Guidelines adopted (e.g., TG 497). |
| QSAR Models [23] | Acute toxicity | In vivo PODs from ECOTOX | Significant association found between QSAR-predicted PODs and ECOTOX PODs. | Accepted for read-across and category formation under REACH; specific regulatory use cases. |
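Sensitivity and specificity figures such as those in the table are derived from a confusion matrix against the benchmark classification. A minimal sketch of the calculation follows; the counts are entirely hypothetical and are not taken from any of the cited studies.

```python
def sensitivity(true_pos, false_neg):
    """Fraction of benchmark-toxic chemicals that the method flags as toxic."""
    return true_pos / (true_pos + false_neg)

def specificity(true_neg, false_pos):
    """Fraction of benchmark-safe chemicals that the method leaves unflagged."""
    return true_neg / (true_neg + false_pos)

def balanced_accuracy(true_pos, false_neg, true_neg, false_pos):
    """Mean of sensitivity and specificity; robust to unequal class sizes."""
    return 0.5 * (sensitivity(true_pos, false_neg) + specificity(true_neg, false_pos))

# Hypothetical benchmark: 20 toxic and 10 safe reference chemicals.
tp, fn, tn, fp = 18, 2, 9, 1
print(sensitivity(tp, fn), specificity(tn, fp), balanced_accuracy(tp, fn, tn, fp))
```

With small reference sets, a single misclassified chemical shifts these percentages substantially, which is one reason the size and composition of the validation benchmark matter.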

Table 3: Operational and Application Comparison

| Parameter | In Chemico | In Vitro (2D/3D) | In Silico (QSAR/ML) | Omics |
|---|---|---|---|---|
| Throughput | Very High | High (2D) to Medium (3D/MPS) | Very High (once built) | Low to Medium |
| Cost per Compound | Low | Low (2D) to High (MPS) | Very Low | High |
| Mechanistic Insight | Low (specific reaction) | Medium to High (cellular pathway) | Varies (model-dependent) | Very High (systems-level) |
| Species Relevance | Not applicable (abiotic) | Can be human or ecologically relevant cell lines | Depends on training data | Can be applied to any species with genomic data |
| Key Limitation | Limited biological context | Lack of systemic interaction (unless MPS) | Dependent on quality/scope of training data | Complex data interpretation; quantitative extrapolation challenges |
| Role in IATA/NGRA | Provides molecular initiating event (MIE) data for AOPs. | Provides key event (KE) data for AOPs; basis for QIVIVE. | Enables prioritization, prediction, and extrapolation. | Informs AOP development and provides mechanistic evidence for WoE. |

Detailed Methodologies and Experimental Protocols

In Chemico Assays for Reactivity Assessment

Protocol: Direct Peptide Reactivity Assay (DPRA) – A Core Method for Skin Sensitization Assessment

The DPRA is an OECD-approved (TG 442C) in chemico method that quantifies a chemical's ability to react with nucleophilic amino acids, representing the Molecular Initiating Event (MIE) for skin sensitization [9].

- Reagent Preparation: Prepare separate solutions of the model peptides containing either lysine or cysteine in phosphate buffer. Dissolve the test chemical in a suitable solvent (e.g., acetonitrile, water).

- Reaction: Mix the peptide solution with the test chemical solution and incubate at 25°C for 24 hours. Include control incubations (peptide alone, chemical alone).

- Termination & Analysis: Quench the reaction with an acidifying solution. Analyze the samples using High-Performance Liquid Chromatography (HPLC) with a UV detector.

- Data Calculation: Calculate the percent depletion of each peptide based on the reduction in chromatographic peak area compared to the control. The average depletion of cysteine and lysine peptides is used for classification.
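The data calculation step reduces to a ratio of peak areas. A minimal sketch follows, with invented peak areas; the reactivity class cutoffs applied to the mean depletion are defined in the test guideline and are deliberately not reproduced here.

```python
def percent_depletion(sample_peak_area, control_peak_area):
    """Percent loss of peptide relative to the peptide-only control."""
    return (1.0 - sample_peak_area / control_peak_area) * 100.0

def mean_depletion(cysteine_pct, lysine_pct):
    """Average of cysteine and lysine peptide depletion, used for classification."""
    return (cysteine_pct + lysine_pct) / 2.0

# Hypothetical HPLC peak areas (arbitrary units).
cys = percent_depletion(sample_peak_area=600.0, control_peak_area=1000.0)
lys = percent_depletion(sample_peak_area=900.0, control_peak_area=1000.0)
print(mean_depletion(cys, lys))
```

In this example, cysteine depletion of about 40% and lysine depletion of about 10% give a mean depletion of about 25%.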

In Vitro Cytotoxicity and Advanced Models

Protocol: MTT Assay for Baseline Cytotoxicity Screening [25]

This colorimetric assay measures metabolic activity as a surrogate for cell viability and is a foundational in vitro method.

- Cell Seeding: Seed relevant cells (e.g., fish cell line RTgill-W1 for ecotoxicology) in a 96-well plate at an optimized density (e.g., 10,000 cells/well) and allow to adhere.

- Chemical Exposure: Expose cells to a serial dilution of the test chemical for a defined period (e.g., 24-48 h). Include a solvent control and a positive control (e.g., 1% Triton X-100 for 100% cytotoxicity).

- MTT Incubation: Add MTT reagent (3-(4,5-dimethylthiazol-2-yl)-2,5-diphenyltetrazolium bromide) to each well and incubate for 2-4 hours to allow formazan crystal formation.

- Solubilization & Measurement: Carefully remove the medium, dissolve the formazan crystals in an organic solvent (e.g., DMSO or isopropanol), and measure the absorbance at 570 nm using a plate reader.

- Data Analysis: Calculate cell viability as a percentage of the solvent control. Fit concentration-response curves to determine IC50 or other point-of-departure values.
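The data analysis step can be sketched in a few lines. Note the simplification: instead of fitting a full concentration-response model (e.g., a four-parameter logistic curve), this sketch estimates the IC50 by linear interpolation on log concentration between the two points that bracket 50% viability. The function names and data are illustrative.

```python
import math

def percent_viability(absorbance, mean_solvent_control, blank=0.0):
    """Viability as a percentage of the solvent control, after blank subtraction."""
    return (absorbance - blank) / (mean_solvent_control - blank) * 100.0

def ic50_log_interpolation(concentrations, viabilities):
    """Estimate IC50 by interpolating on log10(concentration) across the 50% crossing."""
    points = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% viability is not bracketed by the tested range

# Hypothetical viabilities (%) at 1, 10, and 100 µM.
print(ic50_log_interpolation([1.0, 10.0, 100.0], [90.0, 70.0, 30.0]))
```

Returning `None` when the tested range does not bracket 50% avoids reporting an extrapolated IC50, which is a common source of unreliable point-of-departure values.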

Workflow for Organ-on-a-Chip (Liver Chip) Experimentation [24]

- Chip Fabrication & Seeding: A microfluidic device with separate channels lined with human liver sinusoidal endothelial cells and primary human hepatocytes is prepared. The channels are fluidically connected to mimic perfusion.

- System Stabilization: Culture medium is perfused through the system for several days to allow tissue maturation and establishment of stable albumin and urea production.

- Dosing Regimen: The test compound is introduced into the perfusion medium at a physiologically relevant concentration. Multiple dosing scenarios (single, repeated) can be simulated.

- Real-time Monitoring: Sensors may monitor parameters like pH and oxygen. Effluent medium is collected periodically for analysis.

- Endpoint Analysis: Assess biomarkers of toxicity (e.g., release of ALT, AST), metabolic competence (e.g., cytochrome P450 activity), and tissue morphology via imaging. Compare to known positive and negative controls.

In Silico QSAR Modeling Workflow

Protocol: Developing a QSAR Model for Acute Aquatic Toxicity [23]

- Data Curation: Compile a high-quality dataset of chemical structures and corresponding experimental endpoint values (e.g., LC50 for fathead minnow). The data should be from a reliable source like the ECOTOX Knowledgebase.

- Chemical Descriptor Calculation: Use cheminformatics software to calculate numerical descriptors (e.g., topological, electronic, geometrical) for each chemical in the dataset.

- Data Splitting & Model Training: Split the data into training and test sets. Use a machine learning algorithm (e.g., random forest, support vector machine) on the training set to build a model that relates descriptors to toxicity.

- Model Validation: Apply the model to the held-out test set. Evaluate predictive performance using metrics such as the root-mean-square error (RMSE), coefficient of determination (R²), and concordance correlation coefficient with the in vivo benchmark [23].

- Applicability Domain Definition: Statistically define the chemical space for which the model's predictions are considered reliable.
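The validation metrics and a simple applicability domain check from the workflow above can be written out directly. This sketch uses only the standard library. The bounding-box domain (each descriptor within the training minimum and maximum) is one simple choice among several and is an assumption here, not the method of the cited work.

```python
import math

def rmse(observed, predicted):
    """Root-mean-square error between observed and predicted values."""
    n = len(observed)
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / n)

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def concordance_cc(observed, predicted):
    """Lin's concordance correlation coefficient (population moments)."""
    n = len(observed)
    mo, mp = sum(observed) / n, sum(predicted) / n
    var_o = sum((o - mo) ** 2 for o in observed) / n
    var_p = sum((p - mp) ** 2 for p in predicted) / n
    cov = sum((o - mo) * (p - mp) for o, p in zip(observed, predicted)) / n
    return 2.0 * cov / (var_o + var_p + (mo - mp) ** 2)

def in_applicability_domain(query, training_descriptors):
    """True if every descriptor of `query` lies within the training min-max range."""
    columns = list(zip(*training_descriptors))
    return all(min(col) <= q <= max(col) for q, col in zip(query, columns))

# Hypothetical held-out test set: observed vs. predicted log LC50 values.
obs, pred = [1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 4.0, 5.0]
print(rmse(obs, pred), r_squared(obs, pred), concordance_cc(obs, pred))
```

The example predictions are perfectly correlated with the observations but offset by one log unit, so R² and the concordance coefficient both fall well below 1. Correlation alone would miss this systematic bias.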

Omics Analysis Workflow

Protocol: Transcriptomics for Elucidating Mechanisms of Action

- Exposure & Sampling: Expose model organisms or in vitro systems to sub-lethal concentrations of the chemical and appropriate controls. Collect tissue or cells at multiple time points.

- RNA Extraction & Sequencing: Extract total RNA, ensure high quality (RIN > 8), and prepare sequencing libraries for RNA-Seq.

- Bioinformatics Analysis: Map sequencing reads to a reference genome. Perform differential gene expression analysis to identify significantly up- or down-regulated genes.

- Functional Enrichment & Pathway Analysis: Use tools like Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) to identify biological processes, molecular functions, and pathways significantly enriched among the differentially expressed genes.

- Integration with AOPs: Map the perturbed pathways and key genes to known Adverse Outcome Pathways (AOPs) to hypothesize or confirm a chemical's mechanism of action.
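The functional enrichment step commonly rests on an over-representation test. A minimal sketch using the hypergeometric distribution follows; dedicated GO/KEGG tools add multiple-testing correction and curated gene sets, and the numbers here are illustrative.

```python
from math import comb

def enrichment_p_value(n_background, n_pathway, n_de, n_overlap):
    """Upper-tail hypergeometric probability P(X >= n_overlap).

    n_background: genes measured in total
    n_pathway:    background genes annotated to the pathway
    n_de:         differentially expressed genes
    n_overlap:    differentially expressed genes that are in the pathway
    """
    total = comb(n_background, n_de)
    upper = min(n_pathway, n_de)
    tail = sum(comb(n_pathway, k) * comb(n_background - n_pathway, n_de - k)
               for k in range(n_overlap, upper + 1))
    return tail / total

# Hypothetical: 20,000 genes measured, 150 in the pathway, 400 DE genes, 12 shared.
print(enrichment_p_value(20000, 150, 400, 12))
```

About three shared genes would be expected by chance in this example (400 × 150 / 20,000), so an overlap of 12 yields a small p-value.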

Visualization of NAM Integration and Workflows

Diagram 1: Conceptual Integration of NAMs for Ecotoxicological Prediction. This diagram shows how data from different NAM types inform Adverse Outcome Pathways (AOPs) and are integrated within frameworks like IATA/NGRA, driven by computational (in silico) tools, to generate risk predictions.

Diagram 2: Tiered NAM-Based Testing Strategy Workflow. This illustrates a pragmatic, tiered workflow for ecological risk assessment, starting with exposure-driven prioritization and moving through increasingly complex testing, with in silico tools framing and integrating the process.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for NAM Implementation

| Category | Item | Function in NAMs | Example Application / Note |
|---|---|---|---|
| In Chemico | Synthetic Peptides (Cysteine, Lysine-containing) | Serve as molecular surrogates for protein nucleophiles to measure covalent binding reactivity. | Core reagent in the Direct Peptide Reactivity Assay (DPRA) for skin sensitization [9]. |
| In Vitro (Foundation) | Cell Lines (e.g., RTgill-W1, HepG2, NHBE) | Provide a biologically relevant platform for toxicity screening and mechanistic study. | Species-relevant lines (e.g., fish, human) are critical for ecotoxicology and translational research. |
| In Vitro (Advanced) | Extracellular Matrix (ECM) Hydrogels (e.g., Matrigel, Collagen) | Provide a 3D scaffold to support complex cell growth, differentiation, and organoid formation. | Essential for creating 3D organoids and spheroids that better mimic tissue architecture [24] [25]. |
| In Vitro (Advanced) | Microfluidic Organ-Chip Devices | Create dynamic, perfusable micro-environments that allow tissue-tissue interaction and mimic physiological shear stress. | Enables Microphysiological Systems (MPS) like lung- or liver-on-a-chip for systemic effect modeling [24]. |
| Viability Assays | MTT, Resazurin, LDH Assay Kits | Provide standardized, quantifiable readouts for cellular metabolic activity, proliferation, and membrane integrity. | Multiplexing these assays is a best practice to overcome limitations of any single endpoint [25]. |
| Omics | Next-Generation Sequencing (NGS) Kits (RNA-Seq, Whole Genome) | Enable genome-wide, unbiased profiling of transcriptional, epigenetic, or mutational changes. | Key for transcriptomics to discover biomarkers and define mechanisms within AOPs. |
| Omics | LC-MS/MS Metabolomics & Proteomics Platforms | Enable high-throughput identification and quantification of small molecules (metabolites) and proteins. | Used for phenotypic anchoring and discovering early, sensitive biomarkers of effect. |
| In Silico | Chemical Descriptor Calculation Software (e.g., PaDEL, DRAGON) | Generates numerical representations of chemical structure for input into QSAR/ML models. | The choice of descriptors heavily influences model performance and interpretability. |
| In Silico | Physiologically-Based Kinetic (PBK) Modeling Software | Simulates the absorption, distribution, metabolism, and excretion (ADME) of chemicals in virtual organisms. | Critical for QIVIVE, bridging in vitro effective concentrations to predicted in vivo doses [25]. |
| Data Management | FAIR-Compliant Database Solutions | Ensure data are Findable, Accessible, Interoperable, and Reusable for model building and validation. | Fundamental for building robust in silico models and sharing NAM data for regulatory acceptance [22]. |

The comparative analysis demonstrates that no single NAM is a universal substitute for traditional ecotoxicology tests. Instead, the power lies in their strategic integration within IATA and NGRA frameworks [22] [9]. Success stories, such as Defined Approaches for skin sensitization, show that validated, reproducible combinations of NAMs can support regulatory decisions [9]. The primary challenge for wider adoption, particularly for complex endpoints like chronic systemic toxicity, remains scientific validation and regulatory acceptance [10] [26]. A critical ongoing debate is whether NAMs should be benchmarked against inherently variable animal data or validated based on their ability to accurately measure human- and ecologically-relevant Key Events within established AOPs [9]. Future progress depends on generating high-quality, interoperable data from NAMs and developing agreed-upon, fit-for-purpose validation frameworks that assess their reliability for specific contexts of use in ecological risk assessment [10] [26]. This will accelerate the transition to a more predictive, mechanistic, and ethical paradigm in ecotoxicology.

From Theory to Toolbox: Methodological Arsenal and Application Strategies for Ecotoxicology NAMs

The validation of New Approach Methodologies (NAMs) in ecotoxicology represents a paradigm shift from traditional, resource-intensive whole-animal testing toward more efficient, predictive, and mechanistic-based strategies [27]. This transition is driven by regulatory mandates, such as the U.S. Toxic Substances Control Act (TSCA), which directs the EPA to reduce vertebrate animal testing and promote the development of alternative methods [28]. Computational, or in silico, tools are foundational to this shift, enabling researchers to extrapolate existing data, predict potential hazards, and prioritize testing across thousands of species and chemicals.

This guide focuses on four pivotal tools that exemplify this computational approach: SeqAPASS, ECOTOX, Web-ICE, and QSAR models. Each tool addresses a critical challenge in ecological risk assessment—from cross-species extrapolation and data curation to quantitative toxicity prediction. Their integrated use forms a powerful framework for generating and validating scientific evidence, thereby building confidence in NAMs for regulatory decision-making [10]. The following sections provide a comparative analysis of these tools, supported by experimental data and detailed protocols, to guide researchers and risk assessors in their practical application.

Comparative Analysis of Key Tools

The table below provides a structured comparison of the four core tools, highlighting their primary function, data inputs, predictive outputs, key strengths, and their specific role in the validation of NAMs.

Table 1: Comparison of Key EPA and OECD Computational Tools for Ecotoxicology

| Tool (Developer) | Primary Function & Purpose | Core Data Input | Primary Output / Prediction | Key Strengths | Role in NAM Validation |
|---|---|---|---|---|---|
| SeqAPASS (U.S. EPA) [27] [29] | Predicts cross-species chemical susceptibility based on protein target conservation. | Protein sequence (e.g., NCBI accession), known sensitive species. | Prediction of relative intrinsic susceptibility across taxa; quality visualizations & summary reports. | Extrapolates from model organisms to thousands of species; integrates three levels of sequence analysis. | Provides mechanistic line of evidence for extrapolating in vitro assay data (e.g., ToxCast) to ecological species. |
| ECOTOX (U.S. EPA) [30] | Curated knowledgebase of peer-reviewed toxicity data for aquatic and terrestrial life. | Chemical, species, and effect keywords. | Compiled experimental toxicity results (e.g., LC50, EC50) from literature. | Largest publicly available single source of curated ecological toxicity data; supports empirical anchoring. | Serves as the critical empirical benchmark for validating predictions from SeqAPASS, QSARs, and Web-ICE. |
| Web-ICE (U.S. EPA) [30] | Estimates toxicity for untested species using Interspecies Correlation Estimation (ICE) models. | A known toxicity value for a surrogate species and a chemical. | Predicted acute toxicity value (e.g., LC50) for a selected species, with confidence intervals. | Generates Species Sensitivity Distributions (SSDs) for risk assessment; models for aquatic, algal, and wildlife taxa. | Extends the utility of existing animal data to predict for untested species, reducing need for new tests. |
| QSAR Models (OECD Principle) [31] [32] | Predicts chemical toxicity or activity based on quantitative structure-activity relationships. | Chemical structure (e.g., SMILES) and/or molecular descriptors. | Continuous (e.g., pIC50) or categorical (active/inactive) prediction for a specific endpoint. | High-throughput, cost-effective; applicable early in chemical assessment where no data exist. | Provides screening-level hazard data for data-poor chemicals, forming a hypothesis for testing. |

Detailed Tool Protocols and Experimental Validation

SeqAPASS Protocol for Cross-Species Extrapolation

The SeqAPASS tool operates on the principle that chemical susceptibility is determined by the conservation of specific protein targets [27]. The following protocol is adapted from the published methodology [27].

Protocol:

- Identify Protein Target: Review literature to identify the specific protein (e.g., estrogen receptor, acetylcholinesterase) through which a chemical elicits toxicity in a known sensitive species (e.g., rat, zebrafish) [27].

- Submit Query: Log in to the SeqAPASS web application and input the protein sequence via NCBI accession number or FASTA format [27].

- Conduct Level 1 Analysis (Primary Sequence): The tool performs a primary amino acid sequence alignment (BLASTp) against all species in its database. Results are visualized as a taxonomic tree with susceptibility predictions [27].

- Refine with Level 2 (Functional Domain) and Level 3 (Critical Residue) Analyses: Use known functional domains or specific amino acid residues critical for chemical binding to refine predictions and increase taxonomic resolution [27].

- Interpret and Validate: Download summary reports and visualizations. Use the integrated widget to cross-reference predictions with empirical toxicity data in the ECOTOX Knowledgebase for validation [27] [29].
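
The logic behind Levels 1 and 3 can be illustrated with a toy comparison. The sketch below is not the SeqAPASS algorithm or its interface: the sequences are invented, pre-aligned fragments, and the names `QUERY`, `HITS`, `CRITICAL`, and `CUTOFF` (including the 70% cutoff value) are assumptions made purely for illustration. It shows how overall sequence similarity and conservation of specific residues can point in different directions, which is why the later levels are used to refine Level 1 results.

```python
# Toy illustration only: NOT the SeqAPASS algorithm or API.
# All sequences are invented, pre-aligned fragments of equal length ('-' = gap).

def percent_identity(query: str, hit: str) -> float:
    """Percent of non-gap query positions where the hit has the same residue."""
    if len(query) != len(hit):
        raise ValueError("sequences must be pre-aligned to the same length")
    compared = [(q, h) for q, h in zip(query, hit) if q != "-"]
    matches = sum(1 for q, h in compared if q == h)
    return 100.0 * matches / len(compared)


def critical_residues_conserved(query: str, hit: str, positions) -> bool:
    """True if the hit matches the query at every critical alignment column (0-based)."""
    return all(hit[p] == query[p] for p in positions)


QUERY = "MKTAYIAKQR"      # hypothetical fragment from the known sensitive species
HITS = {                  # hypothetical aligned fragments from other species
    "species_A": "MKTAYIAKQR",
    "species_B": "MKTSYIAKHR",
    "species_C": "MR-AFLSKQ-",
}
CRITICAL = [3, 8]         # hypothetical residues involved in chemical binding
CUTOFF = 70.0             # hypothetical similarity cutoff (percent)


def screen(query, hits, positions, cutoff):
    """Combine a Level 1-style similarity call with a Level 3-style residue check."""
    results = {}
    for name, seq in hits.items():
        pid = percent_identity(query, seq)
        results[name] = {
            "percent_identity": round(pid, 1),
            "level1_similar": pid >= cutoff,
            "level3_conserved": critical_residues_conserved(query, seq, positions),
        }
    return results


results = screen(QUERY, HITS, CRITICAL, CUTOFF)
for species, summary in results.items():
    print(species, summary)
```

In this invented example, `species_B` passes the similarity cutoff but differs at both critical positions, while `species_C` fails the cutoff yet retains both residues. Cases like these are where empirical data from ECOTOX become most useful for resolving the prediction.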

Experimental Validation Case: SeqAPASS predictions for honey bee nicotinic acetylcholine receptor susceptibility to neonicotinoid pesticides were consistent with available toxicity data, demonstrating its utility for prioritizing pollinator risk assessment [29].

Web-ICE Protocol for Toxicity Estimation

Web-ICE uses log-linear regression models built from curated empirical data to predict acute toxicity [30].

Protocol:

- Input Surrogate Data: Access Web-ICE and select a surrogate species (e.g., Daphnia magna) for which an experimental toxicity value (e.g., 48-h LC50) for the chemical of interest is known [30].

- Select Prediction Taxa: Choose the untested species or taxonomic group for which a prediction is needed.

- Run Model: The platform applies the relevant ICE model (species-, genus-, or family-level) using the equation: Log10(Predicted Taxa Toxicity) = a + b * Log10(Surrogate Species Toxicity) [30].

- Analyze Output: Retrieve the predicted toxicity value with its confidence interval. The tool can generate multi-species predictions to construct a Species Sensitivity Distribution (SSD) for ecological risk assessment [30].
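
The last two steps can be sketched in a few lines. The example below applies the ICE equation given above and then summarizes several predictions as a simple log-normal SSD with a 5th-percentile hazard concentration (HC5). It is a minimal sketch under stated assumptions: the intercepts and slopes in `ICE_MODELS`, the species names, and the surrogate LC50 are invented, and the moment-based log-normal fit is one common way to build an SSD, not necessarily the procedure Web-ICE uses.

```python
import math
from statistics import NormalDist, mean, stdev


def ice_predict(surrogate_tox: float, a: float, b: float) -> float:
    """Apply log10(predicted) = a + b * log10(surrogate); return original units."""
    return 10 ** (a + b * math.log10(surrogate_tox))


def hc5_lognormal(tox_values) -> float:
    """5th percentile of a log-normal SSD fitted by moments on log10 values."""
    logs = [math.log10(v) for v in tox_values]
    dist = NormalDist(mu=mean(logs), sigma=stdev(logs))
    return 10 ** dist.inv_cdf(0.05)


# Hypothetical (intercept a, slope b) pairs; real values come from the fitted ICE models.
ICE_MODELS = {
    "species_X": (0.20, 0.95),
    "species_Y": (-0.10, 1.05),
    "species_Z": (0.50, 0.80),
}
surrogate_lc50 = 100.0  # hypothetical measured surrogate LC50, in ug/L

# Predict each untested species from the single surrogate value.
predicted = {
    species: ice_predict(surrogate_lc50, a, b)
    for species, (a, b) in ICE_MODELS.items()
}

# Build the SSD from the measured surrogate value plus the predictions.
hc5 = hc5_lognormal(list(predicted.values()) + [surrogate_lc50])

for species, value in predicted.items():
    print(f"{species}: predicted LC50 = {value:.1f} ug/L")
print(f"HC5 = {hc5:.1f} ug/L")
```

An SSD built from four values is far too small for a real assessment; the point is only to show how per-species predictions feed a distribution-based hazard estimate.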

Validation Metrics: Model reliability is assessed via the coefficient of determination (R²) and the square of the prediction error variance (SPEV). A lower SPEV indicates higher prediction accuracy [30].
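
To make the role of these metrics concrete, the sketch below fits a log-log regression to paired toxicity values, computes R², and estimates a leave-one-out prediction error. The paired values are synthetic and chosen only for illustration, and the leave-one-out mean squared error is used here as a generic stand-in for a prediction-error measure; it is an assumption, not necessarily the statistic reported by Web-ICE.

```python
import math


def fit_loglog(x, y):
    """Ordinary least squares of log10(y) on log10(x); returns (a, b)."""
    lx = [math.log10(v) for v in x]
    ly = [math.log10(v) for v in y]
    n = len(lx)
    mx, my = sum(lx) / n, sum(ly) / n
    sxx = sum((u - mx) ** 2 for u in lx)
    sxy = sum((u - mx) * (v - my) for u, v in zip(lx, ly))
    b = sxy / sxx
    a = my - b * mx
    return a, b


def r_squared(x, y, a, b):
    """Coefficient of determination on the log10 scale."""
    ly = [math.log10(v) for v in y]
    pred = [a + b * math.log10(v) for v in x]
    my = sum(ly) / len(ly)
    ss_res = sum((o - p) ** 2 for o, p in zip(ly, pred))
    ss_tot = sum((o - my) ** 2 for o in ly)
    return 1.0 - ss_res / ss_tot


def loo_mean_squared_error(x, y):
    """Refit without each chemical in turn, predict it, and average the squared log10 error."""
    errors = []
    for i in range(len(x)):
        a, b = fit_loglog(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        errors.append((math.log10(y[i]) - (a + b * math.log10(x[i]))) ** 2)
    return sum(errors) / len(errors)


# Synthetic paired acute values (same chemicals tested in both species), in ug/L.
surrogate = [1.0, 10.0, 100.0, 1000.0, 10000.0]
target = [2.5, 18.0, 150.0, 1300.0, 9000.0]

a, b = fit_loglog(surrogate, target)
r2 = r_squared(surrogate, target, a, b)
loo_mse = loo_mean_squared_error(surrogate, target)
print(f"a = {a:.3f}, b = {b:.3f}, R^2 = {r2:.3f}, LOO MSE = {loo_mse:.4f}")
```

A high R² with a large leave-one-out error would suggest that the fit depends heavily on a few chemicals, which is why a prediction-error measure is reported alongside R².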

Development and Validation of a 3D-QSAR Model

This protocol outlines the development of a predictive QSAR model, following OECD principles, as demonstrated for thyroid peroxidase (TPO) inhibitors [31] [32].

Protocol:

- Data Curation and Preparation: Curate a dataset of chemicals with reliable experimental activity data (e.g., IC50 for TPO inhibition). Standardize chemical structures, remove duplicates, and correct errors [32].

- Descriptor Calculation & Model Building: Calculate molecular descriptors (e.g., 3D spatial, electronic). Split data into training and test sets. Use machine learning algorithms (e.g., Random Forest, k-Nearest Neighbor) on the training set to build a model correlating descriptors with activity [31].

- Internal and External Validation:

  - Internal: Use cross-validation on the training set to assess robustness.

  - External: Apply the model to the held-out test set to evaluate predictive performance (e.g., R², classification accuracy) [31].

- Define Applicability Domain: Characterize the chemical space of the model to identify for which new compounds its predictions are reliable [32].

- Experimental Confirmation: Synthesize or acquire new chemicals predicted to be active by the model and test them experimentally in an in vitro assay (e.g., rat microsomal TPO assay) to confirm activity. This step is critical for ultimate validation [31].
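
The computational steps of this protocol (model building, internal and external validation, and the applicability domain) can be sketched without any specialist software. The example below is a minimal illustration, not the published TPO model: the two descriptors and the activity values are randomly generated, the k-nearest-neighbor regressor is written from scratch, and the distance-based applicability domain with its `AD_THRESHOLD` value is one simple, assumed choice among many.

```python
import math
import random


def distance(u, v):
    """Euclidean distance between two descriptor vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def knn_predict(train_X, train_y, x, k=3):
    """Predict activity as the mean of the k nearest training compounds."""
    nearest = sorted(zip((distance(x, t) for t in train_X), train_y))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def rmse(observed, predicted):
    """Root mean squared error."""
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))


def in_domain(train_X, x, threshold):
    """Applicability domain check: is the nearest training compound close enough?"""
    return min(distance(x, t) for t in train_X) <= threshold


# Synthetic data: two made-up descriptors per compound and a made-up pIC50-like activity.
rng = random.Random(0)
X = [[rng.uniform(0, 1), rng.uniform(0, 1)] for _ in range(40)]
y = [4.0 + 2.0 * d1 - 1.0 * d2 + rng.gauss(0, 0.05) for d1, d2 in X]

# The compounds were generated in random order, so slicing acts as a random split.
split = 30
train_X, train_y = X[:split], y[:split]
test_X, test_y = X[split:], y[split:]

# Internal validation: leave-one-out cross-validation on the training set.
loo_pred = [
    knn_predict(train_X[:i] + train_X[i + 1:], train_y[:i] + train_y[i + 1:], train_X[i])
    for i in range(split)
]
internal_rmse = rmse(train_y, loo_pred)

# External validation: predict the held-out test set.
test_pred = [knn_predict(train_X, train_y, x) for x in test_X]
external_rmse = rmse(test_y, test_pred)

# Applicability domain: flag query compounds far from the training chemical space.
AD_THRESHOLD = 0.3  # hypothetical distance cutoff
query = [5.0, 5.0]  # deliberately far outside the training descriptors
print(f"internal RMSE = {internal_rmse:.3f}, external RMSE = {external_rmse:.3f}")
print("query in domain:", in_domain(train_X, query, AD_THRESHOLD))
```

A prediction for a compound flagged as out of domain should be treated as unsupported rather than as a negative result. The final step of the protocol, experimental confirmation, has no computational substitute.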

Integrated Workflow and Validation Framework

The practical power of these tools is amplified when they are used in an integrated workflow. The diagram below illustrates how these tools can be sequenced from early screening to refined risk assessment, creating a weight-of-evidence approach for NAMs.

Diagram 1: Integrated NAM Workflow for Ecological Risk Assessment. This workflow shows how tools are chained to leverage different data types, from initial QSAR screening to final integrated assessment. Key: SSD = Species Sensitivity Distribution; WoE = Weight of Evidence.

The validation of NAMs within this framework relies on anchoring computational predictions to high-quality traditional data. The diagram below details this validation logic, showing how predictions from in silico tools are compared against curated in vivo data to build scientific confidence.

Diagram 2: Validation Logic for NAM Predictions Against Traditional Data. This process underpins the acceptance of NAMs by demonstrating their reliability and relevance through comparison with established data sources like ToxRefDB [20].
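
The comparison step in this validation logic can be expressed as a few summary statistics. The sketch below assumes paired predicted and measured toxicity values for the same chemical and species and reports how often predictions fall within a chosen fold difference of the measurement. The pairs and the 10-fold limit are illustrative assumptions, not values taken from ECOTOX, ToxRefDB, or any guidance document.

```python
import math
from statistics import median


def fold_error(predicted: float, measured: float) -> float:
    """Symmetric fold difference between prediction and measurement (always >= 1)."""
    return 10 ** abs(math.log10(predicted) - math.log10(measured))


def concordance_summary(pairs, fold_limit=10.0):
    """Summarize agreement between (predicted, measured) toxicity pairs."""
    folds = [fold_error(p, m) for p, m in pairs]
    log_errors = [math.log10(p) - math.log10(m) for p, m in pairs]
    return {
        "n": len(pairs),
        "fraction_within_limit": sum(1 for f in folds if f <= fold_limit) / len(pairs),
        "median_fold_error": median(folds),
        # Positive bias: predictions are higher (less toxic) than measurements on average.
        "mean_log10_bias": sum(log_errors) / len(log_errors),
    }


# Hypothetical (predicted, measured) LC50 pairs in mg/L.
pairs = [(10.0, 12.0), (100.0, 30.0), (5.0, 80.0), (0.5, 0.4)]

summary = concordance_summary(pairs)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Reporting the bias alongside the fold error matters for protective decisions: a method that systematically predicts higher LC50 values than were measured underestimates hazard even when most predictions fall within the fold limit.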

Table 2: Key Research Reagents and Resources for NAM-Based Ecotoxicology

| Category | Resource / Reagent | Function in NAM Workflow | Example/Source |
|---|---|---|---|
| Protein & Sequence Data | NCBI Protein Database | Provides the primary amino acid sequences used as input for SeqAPASS cross-species comparisons [27] [29]. | Accession numbers for target proteins (e.g., human estrogen receptor). |
| Chemical Data | Curated Chemical Datasets | Essential for developing and validating QSAR models; requires standardized structures and reliable activity data [32]. | EPA CompTox Chemicals Dashboard; datasets from ToxCast/Tox21 [20]. |
| Toxicity Benchmark Data | ECOTOX Knowledgebase | Serves as the source of empirical toxicity data for validating predictions and as surrogate input for Web-ICE models [30]. | Curated LC50/EC50 values for aquatic and terrestrial species. |
| Validation Benchmark | ToxRefDB | A highly curated database of traditional in vivo guideline studies; used as a gold-standard benchmark for building confidence in NAM predictions [20]. | Chronic toxicity study results for mammals, birds, and fish. |
| Computational Software | Modeling & Docking Software | Used to calculate molecular descriptors, perform homology modeling, and conduct molecular docking as part of advanced QSAR and mechanistic studies [31]. | Open-source tools (e.g., RDKit) or commercial platforms. |
| In Vitro Assay System | Target-based Bioassay | Provides experimental validation for predictions from SeqAPASS or QSAR models (e.g., testing predicted TPO inhibitors) [31]. | Rat thyroid microsomal TPO assay; cell-based receptor activation assays. |

The validation of New Approach Methodologies (NAMs) represents a paradigm shift in ecotoxicology and safety science, moving away from traditional animal studies toward faster, more informative, and human-relevant methods [4]. Integrated Approaches to Testing and Assessment (IATA) and Defined Approaches (DAs) are critical frameworks within this shift. They provide structured, scientifically robust strategies for integrating diverse data sources to answer specific hazard and risk assessment questions [33].

IATA is a broad, flexible framework that guides the gathering and integration of all relevant existing information—including toxicity data, computational predictions, exposure scenarios, and chemical properties—to characterize a hazard [33]. Its strength lies in its ability to incorporate expert judgment and adapt to data gaps, often following an iterative, weight-of-evidence process [33] [34].

In contrast, a Defined Approach (DA) is a specific, rule-based testing strategy that fits within an IATA. It is characterized by two fixed elements: a defined set of information sources (e.g., specific in vitro or in silico tests) and a fixed data interpretation procedure (DIP) that mechanically translates the data into a prediction without further expert judgment [33]. This structure makes DAs objective, transparent, and reproducible, which is essential for regulatory acceptance.
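
The idea of a fixed data interpretation procedure is easy to show in code. The sketch below implements a simple majority rule over three binary assay calls, similar in spirit to the "2 out of 3" approach used for skin sensitization hazard identification. The source names in `REQUIRED_SOURCES` are placeholders, and the function is an illustrative assumption rather than the DIP of any specific guideline.

```python
from typing import Mapping

# Hypothetical names for the fixed set of information sources.
REQUIRED_SOURCES = ("assay_1", "assay_2", "assay_3")


def two_of_three_dip(calls: Mapping[str, bool]) -> str:
    """Fixed rule: the prediction is 'positive' if at least two of three sources are positive.

    No expert judgment enters the decision; missing inputs are rejected
    instead of being interpreted case by case.
    """
    missing = [s for s in REQUIRED_SOURCES if s not in calls]
    if missing:
        raise ValueError(f"missing information sources: {missing}")
    positives = sum(bool(calls[s]) for s in REQUIRED_SOURCES)
    return "positive" if positives >= 2 else "negative"


# The same inputs always give the same prediction.
example = {"assay_1": True, "assay_2": False, "assay_3": True}
print(two_of_three_dip(example))
```

Because both the inputs and the rule are fixed in advance, two laboratories given the same assay results must reach the same call, which is the reproducibility property that distinguishes a DA from the broader IATA around it.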

The ongoing validation of NAMs within these frameworks is crucial for their adoption. As regulatory bodies like the U.S. FDA and international organizations like the OECD encourage the replacement of animal tests, proving the reliability and predictive capacity of IATA and DA frameworks is central to modern ecotoxicology research [4] [35].

Core Characteristics and Comparative Framework

IATAs and DAs serve complementary but distinct roles within the NAM ecosystem. The following table summarizes their key characteristics.

Table 1: Comparison of IATA and Defined Approach (DA) Core Characteristics

| Characteristic | Integrated Approach to Testing and Assessment (IATA) | Defined Approach (DA) |
|---|---|---|
| Core Definition | A flexible framework for integrating multiple sources of evidence to address a hazard question [33]. | A fixed, rule-based strategy comprising a defined set of inputs and a data interpretation procedure [33]. |
| Decision Process | Often involves expert judgment and weight-of-evidence within a structured workflow [33]. | Fully automated, mechanical application of rules (algorithm, decision tree, Bayesian model) [33]. |
| Flexibility | High. Can incorporate new data types, adapt testing strategies based on interim results, and address data gaps [34]. | Low. The inputs and interpretation rules are fixed prior to application to ensure consistency [33]. |
| Primary Output | Hazard characterization informed by integrated evidence, often supporting a qualitative or semi-quantitative decision [34]. | A specific, categorical prediction (e.g., hazard yes/no, potency category) without additional interpretation [33]. |
| Regulatory Use | Supports broader chemical assessment, grouping, read-across, and prioritization [33] [34]. | Used for standardized testing for specific endpoints (e.g., skin sensitization, eye irritation) under OECD Test Guidelines [33]. |
| Example Context | Grouping nanomaterials based on dissolution rate, dispersion stability, and transformation in aquatic systems [34]. | Predicting skin sensitization potency using data from three defined in vitro assays processed through a Bayesian network [33]. |

Performance Data and Validation Metrics

The validation and performance of DAs are well-documented for several key toxicological endpoints. The following table presents quantitative performance metrics for established Defined Approaches, primarily drawn from collaborative work led by NICEATM and accepted into OECD Test Guidelines [33].

Table 2: Validation Performance Metrics for Selected Defined Approaches

| Defined Approach (OECD Guideline) | Endpoint | Key Assays/Inputs | Reported Accuracy | Key Validation Study |

|---|---|---|---|---|