Unlocking Predictive Power: How Raw Data Sharing is Revolutionizing Ecotoxicology and Risk Assessment

This article explores the transformative benefits of sharing raw data in ecotoxicology for researchers, scientists, and drug development professionals.

Abstract

This article explores the transformative benefits of sharing raw data in ecotoxicology for researchers, scientists, and drug development professionals. It first establishes the foundational shift towards open science, highlighting how data sharing addresses critical challenges in chemical risk assessment and enables meta-analyses. The article then details practical methodologies and frameworks, such as the ATTAC workflow and FAIR principles, for effective data preparation and application. It further addresses common barriers to sharing, including concerns about credit and policy compliance, and offers optimization strategies. Finally, the piece validates the impact of shared data through case studies on toxicokinetic modeling, machine learning benchmarks, and integrative visual analytics. The conclusion synthesizes how a collaborative data ecosystem accelerates discovery, improves regulatory decisions, and fosters a more reproducible and efficient research culture.

The Open Science Paradigm: Why Raw Data Sharing is a Game-Changer for Ecotoxicology

Chemical risk assessment is the cornerstone of environmental protection and sustainable innovation, yet it is fundamentally constrained by systemic data scarcity. This scarcity manifests not merely as a shortage of data points, but as a crisis of fragmented, inaccessible, and non-standardized information that severely limits the predictive power and timeliness of ecological safety evaluations. Current assessment processes are chronically inefficient, with teams spending an average of 24.7 hours per chemical just on Chemical Hazard Assessments (CHAs), often relying on incomplete datasets that live in silos across suppliers, toxicology reports, and regulatory notices [1].

This inefficiency translates into tangible risks: delayed innovation, compliance gaps, regrettable substitutions, and eroded credibility [1]. The core thesis of this whitepaper is that the principled, widespread sharing of raw, well-curated ecotoxicological data is the most direct and powerful mechanism for overcoming this scarcity. By transitioning from isolated data generation to collaborative, open ecosystems, the research community can fuel advanced computational models, enable robust meta-analyses, and accelerate the development of New Approach Methodologies (NAMs), ultimately creating a more predictive and protective framework for chemical safety.

The Current Landscape: Quantifying the Data Gap and Its Consequences

The challenges of chemical assessment are widespread, stemming from fragmented data systems and a lack of harmonization [1]. This data scarcity has direct, measurable impacts on scientific understanding and regulatory decision-making.

Key Systemic Challenges

The following table summarizes the primary operational and scientific challenges that perpetuate data scarcity.

Table 1: Core Challenges in Chemical Risk Assessment Contributing to Data Scarcity

| Challenge Category | Specific Issues | Impact on Data Availability & Quality |
|---|---|---|
| Operational & Process | Inconsistent data formats and standards [1]. | Hinders data aggregation, comparison, and reuse. |
| | Resource-heavy manual processes (avg. 24.7 hrs/CHA) [1]. | Limits capacity for new data generation and curation. |
| | Reactive, compliance-driven approaches [1]. | Prioritizes limited data for known risks over systematic data generation for emerging threats. |
| Scientific Complexity | Heterogeneity of test organisms, endpoints, and conditions [2]. | Creates "apples-to-oranges" comparisons; complicates data synthesis. |
| | Lack of data on emerging materials (e.g., MCNMs, polymers) [3] [4]. | Critical gaps for novel substances entering the environment. |
| | Reliance on supra-environmental concentrations in labs [2]. | Limits ecological relevance and extrapolation to real-world risk. |

Consequences for Emerging Contaminants: The Case of Biodegradable Microplastics

The meta-analysis by Cao et al. (2025) on biodegradable microplastics (BMPs) exemplifies the consequences of data limitations [2]. Although it analyzed 717 endpoints from 28 studies, high heterogeneity and the small number of studies on specific polymers prevented definitive conclusions. The analysis nonetheless revealed significant toxic effects, quantified as Hedges' g values:

Table 2: Ecotoxicological Effects of Biodegradable Microplastics (Meta-Analysis Results) [2]

| Biological Endpoint | Hedges' g (Effect Size) | Interpretation & Confidence |
|---|---|---|
| Behavior | -2.358 | Large, significant negative effect (strongest signal). |
| Reproduction | -1.821 | Large, significant negative effect. |
| Oxidative Stress | 0.645 | Moderate, significant increase. |
| Growth | -0.864 | Moderate, significant inhibition. |
| Survival | Not significant | Effect not statistically significant across studies. |

The pronounced behavioral disruption highlights a key ecological risk—impaired locomotion and predator avoidance—that could have population-level consequences but is often underrepresented in standard toxicity testing [2].

Regulatory Drivers and the Push for Modernization

Regulatory agencies worldwide are explicitly identifying data gaps and promoting strategies to overcome them. The European Chemicals Agency's (ECHA) 2025 report outlines critical research needs that directly underscore the urgency of data sharing [4].

ECHA's Key Research Priorities Requiring Enhanced Data [4]:

- For Hazard Assessment: Developing NAMs for neurotoxicity, immunotoxicity, and endocrine disruption. This requires shared data to build and validate adverse outcome pathways (AOPs) and computational models.

- For Environmental Fate: Improving assessment of chemical persistence and bioaccumulation, which depends on access to high-quality environmental monitoring and degradation data.

- For Complex Materials: Understanding the ecotoxicity of polymers, nanomaterials, and multicomponent substances. As noted, SAR models for multicomponent nanomaterials (MCNMs) are sparse due to limited datasets [3].

- Promoting Alternatives: Accelerating the use of non-animal methods (e.g., in vitro fish toxicity tests, read-across) relies on shared data to define chemical categories and validate predictions.

These priorities create a clear mandate: filling these data gaps is impossible through isolated research efforts. A coordinated, data-sharing ecosystem is essential to provide the volume and diversity of data needed to develop, train, and validate the next generation of assessment tools.

Foundational Frameworks for Effective Raw Data Sharing

Moving from a culture of data competition to one of collaboration requires addressing both technical and sociological barriers [5]. Successful frameworks demonstrate that with proper support and incentives, these barriers can be overcome.

The FAIR Principles and Quality-Curated Repositories

The FAIR (Findable, Accessible, Interoperable, Reusable) principles provide the technical foundation. Effective implementation, as seen in systems like Edaphobase for soil biodiversity, involves rigorous, multi-stage quality control [6]:

- Pre-import control: Automated checks during data upload.

- Peri-import review: Manual peer-review after submission.

- Post-import control: Final semi-automated review by the data provider within the system.

This process transforms raw data into a trusted, reusable resource. Similarly, the NIH HEAL Data Ecosystem facilitates sharing of complex data from pain and addiction research by providing a centralized platform for discovery and secure access, supported by dedicated data stewards who assist researchers [5].
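The automated pre-import stage can be pictured with a minimal Python sketch. Everything in it is an assumption for illustration: the flat record layout, the field names in `REQUIRED_FIELDS`, the `ALLOWED_UNITS` set, and the coordinate bounds are hypothetical and do not reflect Edaphobase's actual schema or code.

```python
from typing import Any, Dict, List

# Illustrative schema only: field names, units and bounds are assumptions.
REQUIRED_FIELDS = ("sample_id", "species", "endpoint", "value", "unit", "latitude", "longitude")
ALLOWED_UNITS = {"mg/L", "ug/L", "mg/kg"}


def pre_import_issues(record: Dict[str, Any]) -> List[str]:
    """Return human-readable problems in one record; an empty list means it passes."""
    issues: List[str] = []
    # Completeness: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            issues.append(f"missing required field: {field}")
    # Type check on the measured value.
    value = record.get("value")
    if value is not None and not isinstance(value, (int, float)):
        issues.append("value is not numeric")
    # Controlled vocabulary for units.
    unit = record.get("unit")
    if unit and unit not in ALLOWED_UNITS:
        issues.append(f"unrecognised unit: {unit}")
    # Plausibility of coordinates.
    lat, lon = record.get("latitude"), record.get("longitude")
    if isinstance(lat, (int, float)) and not -90 <= lat <= 90:
        issues.append("latitude out of range")
    if isinstance(lon, (int, float)) and not -180 <= lon <= 180:
        issues.append("longitude out of range")
    return issues
```

Records returning a non-empty list would go back to the provider before the manual peri-import review; checks like these catch only mechanical errors and do not replace expert review.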

Overcoming Sociological and Incentive Barriers

Researchers' hesitancy to share data is well-documented, rooted in fear of being scooped, lack of time/resources for curation, and insufficient institutional credit [5]. Proactive strategies to build a sharing culture include [5]:

- Providing Clear Incentives: Ensuring data producers receive citable digital object identifiers (DOIs), authorship credit where appropriate, and institutional recognition.

- Reducing the Burden: Offering consulting, tools, and hands-on support for data formatting, metadata generation, and repository submission.

- Establishing Clear Policies: Journals and funders play a critical role. A 2025 study of 275 ecology/evolution journals found that while 38.2% mandated data-sharing, compliance monitoring and enforcement remain inconsistent [7]. Strong, clear, and enforced policies are necessary.

Computational & In Silico Advancements Fueled by Shared Data

Shared, high-quality datasets are the essential fuel for computational toxicology, enabling the development of predictive models that can partially replace animal testing and rapidly screen chemicals.

Machine Learning and Benchmark Datasets

The ADORE dataset exemplifies a purpose-built, community resource for machine learning in ecotoxicology [8]. It integrates acute aquatic toxicity data for fish, crustaceans, and algae from the US EPA's ECOTOX database with chemical descriptors and species traits. Its value lies in its standardized, pre-processed format, which allows researchers to benchmark different ML models fairly and accelerate method development [8].

In Silico Model Development: A Protocol for SARs

Structure-Activity Relationship (SAR) models are critical for predicting toxicity based on chemical structure. Gakis et al. (2025) developed a classification SAR model for multicomponent nanomaterials (MCNMs), utilizing the largest curated dataset of its kind (652 measurements on 214 MCNMs) [3]. Their methodological protocol is a template for leveraging shared data.

Experimental Protocol: Developing a Classification SAR Model for MCNM Ecotoxicity [3]

- Data Compilation: Systematically retrieve ecotoxicity measurements (EC50, LC50) from scientific literature for target organisms (e.g., D. rerio, D. magna, E. coli).

- Data Curation & Classification: Standardize toxicity values. Classify each measurement as "toxic" or "non-toxic" based on a defined threshold (e.g., EC50 < 100 mg/L).

- Descriptor Calculation: Compute physicochemical descriptors for each nanomaterial. Key descriptors identified include the hydration enthalpy of the metal ion and the energy difference between the MCNM conduction band and the redox potential in biological media.

- Model Training & Validation: Use machine learning algorithms (e.g., Support Vector Machines, Random Forests) on a training subset to build a classifier that links descriptors to toxicity classification. Validate model performance using a held-out test dataset.

- Mechanistic Interpretation: Analyze the model to identify which descriptors are most influential, providing insight into the mechanisms of toxic action (e.g., ion release, oxidative stress).

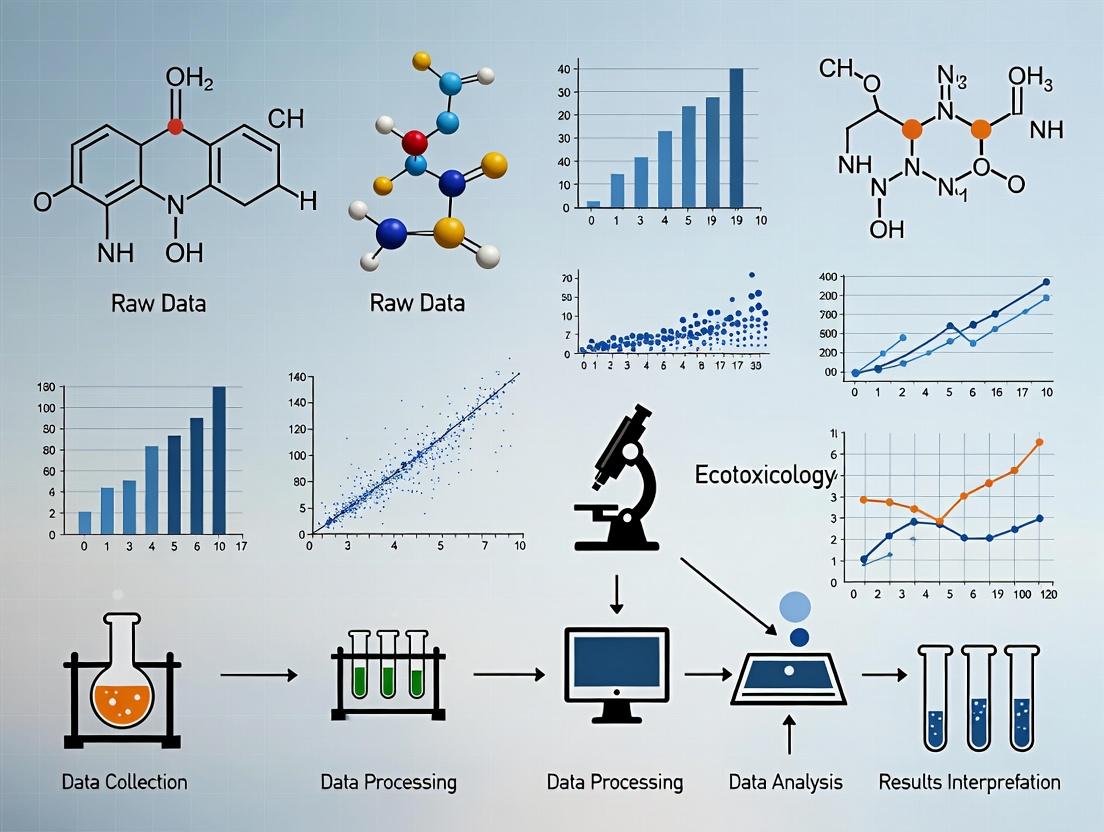

Diagram 1: Workflow for SAR Model Development
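The classification protocol can be sketched end to end with the Python standard library. This is an illustrative sketch, not the model of Gakis et al.: the two-number descriptor vectors and EC50 values used in testing are invented toy data, and a nearest-centroid classifier is swapped in for the Support Vector Machines or Random Forests named in the protocol so the example stays dependency-free.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

Row = Tuple[Sequence[float], str]  # (descriptor vector, toxicity class)
TOXIC_THRESHOLD_MG_L = 100.0       # example threshold from the protocol: EC50 < 100 mg/L


def classify_ec50(ec50_mg_l: float, threshold: float = TOXIC_THRESHOLD_MG_L) -> str:
    """Binary label: an EC50 below the threshold counts as 'toxic'."""
    return "toxic" if ec50_mg_l < threshold else "non-toxic"


def split(rows: Sequence[Row], test_fraction: float = 0.25,
          seed: int = 0) -> Tuple[List[Row], List[Row]]:
    """Shuffle reproducibly and hold out a test subset; returns (train, test)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_centroids(train: Sequence[Row]) -> Dict[str, List[float]]:
    """Nearest-centroid 'training': the mean descriptor vector of each class."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for x, label in train:
        acc = sums.setdefault(label, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(centroids: Dict[str, List[float]], x: Sequence[float]) -> str:
    """Assign the class whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], x))


def accuracy(centroids: Dict[str, List[float]], test: Sequence[Row]) -> float:
    """Fraction of held-out rows classified correctly."""
    return sum(predict(centroids, x) == label for x, label in test) / len(test)
```

For example, `classify_ec50(45.0)` returns `"toxic"` and `classify_ec50(450.0)` returns `"non-toxic"` under the 100 mg/L threshold. In practice one would standardize the descriptors before computing distances and use an established SVM or Random Forest implementation. The threshold labeling and held-out evaluation are the parts of this sketch that carry over directly.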

The Critical Role of Public Data Infrastructures

Agencies like the U.S. EPA maintain public data infrastructures that are vital for the field. The CompTox Chemicals Dashboard, ECOTOX Knowledgebase, and ToxCast program provide centralized access to chemical properties, toxicity data, and high-throughput screening results [9] [8]. These platforms not only distribute data but also foster communities of practice where scientists collaborate on computational toxicology challenges [9].

Case Study: Meta-Analysis as a Tool for Synthesizing Disparate Data

Meta-analysis is a powerful statistical technique to overcome data scarcity by quantitatively synthesizing findings from multiple independent studies. It is particularly valuable for addressing controversial or emerging topics, such as the ecotoxicity of biodegradable microplastics (BMPs) [2].

Experimental Protocol: Conducting an Ecotoxicological Meta-Analysis [2]

- Define Scope & Protocol: Formulate a clear research question (e.g., "What is the magnitude of BMP effect on aquatic organism behavior?"). Pre-register the review protocol and plan reporting according to the PRISMA guidelines.

- Systematic Literature Search: Search multiple databases (e.g., Web of Science) using a comprehensive, predefined string of keywords. Define explicit inclusion/exclusion criteria (e.g., peer-reviewed studies, specific endpoints, exposure durations).

- Data Extraction: From each eligible study, extract quantitative endpoint data (e.g., mean, standard deviation, sample size for control and exposed groups). Also extract moderating variables (e.g., polymer type, particle size, organism species, exposure concentration).

- Calculate Effect Sizes: Convert all extracted data into a common standardized effect size, such as Hedges' g (a standardized mean difference corrected for small-sample bias). This allows comparison across different measured endpoints and experimental designs.

- Statistical Synthesis & Modeling: Use a random-effects model to calculate the overall pooled effect size and its confidence interval. Conduct subgroup analysis and meta-regression to test if moderators (e.g., polymer type: PLA vs. PHB) explain heterogeneity in the results.

- Risk of Bias & Sensitivity Assessment: Evaluate the quality of included studies and test the robustness of findings by conducting sensitivity analyses (e.g., removing one study at a time).

Diagram 2: Meta-Analysis Workflow for Ecotoxicology
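The effect-size, pooling, and sensitivity steps can be illustrated with a short Python sketch. It assumes common textbook formulas: Hedges' g from the pooled standard deviation with the correction factor J = 1 - 3/(4df - 1), and the DerSimonian-Laird estimator for the between-study variance τ². It is not the code used by Cao et al.; dedicated tools such as the R package metafor are the usual choice for real analyses.

```python
import math
from typing import List, Sequence, Tuple


def hedges_g(m_exp: float, sd_exp: float, n_exp: int,
             m_ctl: float, sd_ctl: float, n_ctl: int) -> Tuple[float, float]:
    """Hedges' g (exposed minus control) and its sampling variance.

    Negative g means the exposed group scored lower than the control.
    """
    df = n_exp + n_ctl - 2
    s_pooled = math.sqrt(((n_exp - 1) * sd_exp ** 2 + (n_ctl - 1) * sd_ctl ** 2) / df)
    d = (m_exp - m_ctl) / s_pooled          # Cohen's d with pooled SD
    j = 1 - 3 / (4 * df - 1)                # small-sample correction factor J
    var_d = (n_exp + n_ctl) / (n_exp * n_ctl) + d ** 2 / (2 * (n_exp + n_ctl))
    return j * d, j ** 2 * var_d


def random_effects(effects: Sequence[float],
                   variances: Sequence[float]) -> Tuple[float, Tuple[float, float], float]:
    """DerSimonian-Laird random-effects pooling: (pooled g, 95% CI, tau^2)."""
    w = [1 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * y for wi, y in zip(w, effects)) / sum_w
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, effects))  # Cochran's Q
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (len(effects) - 1)) / c) if c > 0 else 0.0
    w_re = [1 / (v + tau2) for v in variances]                   # random-effects weights
    pooled = sum(wi * y for wi, y in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    return pooled, (pooled - 1.96 * se, pooled + 1.96 * se), tau2


def leave_one_out(effects: Sequence[float], variances: Sequence[float]) -> List[float]:
    """Pooled effect recomputed with each study omitted in turn (sensitivity check)."""
    pooled = []
    for i in range(len(effects)):
        ys = [y for k, y in enumerate(effects) if k != i]
        vs = [v for k, v in enumerate(variances) if k != i]
        pooled.append(random_effects(ys, vs)[0])
    return pooled
```

For instance, `hedges_g(8, 2, 10, 10, 2, 10)` returns a g of about -0.958 (exposed mean two units below the control, SD 2, ten replicates per group). `leave_one_out` corresponds to the one-study-at-a-time sensitivity analysis in the final protocol step.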

Table 3: Research Reagent Solutions for Data-Sharing and Computational Ecotoxicology

| Tool/Resource Name | Type | Primary Function in Overcoming Data Scarcity | Key Reference/Availability |
|---|---|---|---|
| ADORE Dataset | Benchmark Data | Provides a curated, standardized dataset for fish, crustacea, and algae acute toxicity to enable fair benchmarking and development of ML models. | [8] |
| ECOTOX Knowledgebase | Public Database | Aggregates ecotoxicology test results from the literature, providing a primary source for exposure/effect data on thousands of chemicals and species. | U.S. EPA [8] |
| CompTox Chemicals Dashboard | Data Integration Platform | Provides access to chemical structures, properties, hazard data, and bioactivity screening results from multiple EPA programs, enabling read-across and in silico modeling. | U.S. EPA [9] |
| Edaphobase | Thematic Data Warehouse | Demonstrates a functional model for ingesting, quality-reviewing, and sharing complex ecological data (soil biodiversity) with FAIR principles. | [6] |
| HEAL Data Ecosystem Platform | Data Sharing Infrastructure | Provides a cloud-based platform for discovering and securely accessing shared research data, supported by stewardship to lower barriers for contributors. | NIH [5] |
| Structure-Activity Relationship (SAR) Models | Computational Model | Predicts toxicity based on chemical structure descriptors, allowing for prioritization and screening when experimental data is absent. Requires curated training data. | [3] |

Overcoming data scarcity in chemical risk assessment is an urgent, solvable challenge. The path forward requires a concerted shift toward open, collaborative science built on three pillars:

- Cultural Commitment: Institutions, funders, and journals must align incentives to reward data sharing as a valuable scholarly output [5] [7]. This includes mandating and enforcing strong data-sharing policies.

- Technical Infrastructure: Investment must continue in FAIR-aligned data repositories with robust quality control (like Edaphobase) and user-friendly platforms (like the HEAL ecosystem) that make sharing simpler than hoarding [6] [5].

- Strategic Utilization: The research community must actively leverage shared data to power computational toxicology—building benchmark datasets like ADORE, developing predictive models for emerging substances like MCNMs, and conducting definitive meta-analyses [2] [3] [8].

The benefits of raw data sharing for ecotoxicology research are profound: accelerated discovery, reduced redundant testing, enhanced predictive model capability, and ultimately, more robust and timely protection of ecosystem health. By transforming data from a private asset into a public good, the scientific community can decisively meet the urgent need for better chemical safety assessment.

The discipline of ecology, fundamentally concerned with interactions within complex systems, is undergoing a profound transformation in its research culture. The prevailing model is shifting from data hoarding, in which raw datasets are closely guarded as individual intellectual property, to systematic sharing. The hoarding model echoes a well-documented biological phenomenon: food-hoarding animals such as scatter-hoarding corvids evolved sophisticated memory to protect and retrieve their scattered caches [10]. In scientific research, however, the "scatter hoarding" of data across isolated labs creates inefficiencies, impedes reproducibility, and slows collective understanding [7].

This whitepaper frames this transition within the specific context of ecotoxicology, a field where understanding the fate and effects of contaminants is critical for environmental and human health. The benefits of raw data sharing in ecotoxicology are multifaceted: it enhances the reproducibility of dose-response studies, enables powerful meta-analyses across heterogeneous exposure scenarios, accelerates the identification of emerging contaminants, and provides a robust evidence base for chemical risk assessment and drug development. By moving from a model of individual cache protection to one of collaborative resource pooling, the ecological and ecotoxicological research community can significantly accelerate the pace of discovery and application.

The Current State: Data Sharing Policies and Compliance

The adoption of data-sharing practices is increasingly mandated by journals and funding agencies, yet implementation remains inconsistent. A 2025 assessment of 275 journals in ecology and evolution reveals the current landscape of policy strictness [7].

Table 4: Data and Code Sharing Policies in Ecology/Evolution Journals (n=275) [7]

| Policy Type | Data-Sharing (% of Journals) | Code-Sharing (% of Journals) |
|---|---|---|
| Mandated | 38.2% | 26.9% |
| Encouraged | 22.5% | 26.6% |
| Not Mentioned/Optional | 39.3% | 46.5% |

The timing of sharing is equally critical for effective peer review. The same study found that among journals mandating sharing, 59.0% required data submission for peer review, and 77.0% required code for review [7]. When journals merely encouraged sharing, these figures dropped to 40.3% and 24.7%, respectively. This pattern suggests that mandatory policies are considerably more effective at building transparency into the validation process.

Compliance data from leading journals illustrates the impact of policy changes. At Ecology Letters, the implementation of a mandatory data- and code-sharing policy for peer review in 2023 was followed by a dramatic increase in sharing upon submission [7]. Pre-mandate, a small minority of submissions included data or code; post-mandate, the vast majority complied, demonstrating that clear, required policies effect rapid cultural change.

Adopting open science practices requires a new suite of methodological tools and resources. The following toolkit is essential for researchers transitioning to a data-sharing paradigm.

Table 5: Research Reagent Solutions for Open Ecoinformatics

| Tool/Resource Category | Example & Function | Key Benefit for Sharing |
|---|---|---|
| Data Repositories | Zenodo, Dryad: Provide persistent, citable storage for raw datasets. Curated knowledgebases such as EPA's ECOTOX Knowledgebase aggregate published test results rather than hosting deposited datasets. | Ensures long-term accessibility and data integrity; general repositories assign a DOI for citation. |
| Code & Workflow Platforms | GitHub, GitLab, R/Python Notebooks (e.g., Jupyter): Version control and documentation of analytical code. | Enables full reproducibility and transparent methodological reporting. |
| Metadata Standards | Ecological Metadata Language (EML): Structured format for describing dataset content, structure, and origin. | Makes data discoverable, interpretable, and reusable by other researchers. |
| Data Visualization Tools | R ggplot2, Python Matplotlib/Seaborn, GIS software: Create clear, accessible visualizations from complex data [11]. | Facilitates communication of findings to diverse audiences, from scientists to policymakers [12]. |
| Policy Databases | Living Database of Journal Policies in Ecology & Evolution: Tracks journal-specific data-sharing requirements [7]. | Helps researchers comply with mandates and understand disciplinary norms. |

Foundational Protocols for Reproducible Research

The core of the sharing paradigm is a commitment to reproducible workflows. Below are detailed protocols for key activities that ensure data is both sharable and meaningful.

Protocol: Field Data Collection with Embedded Metadata

Objective: To collect ecological or ecotoxicological field data in a manner that ensures its future usability by any researcher.

Materials: GPS unit, calibrated environmental sensors (e.g., for pH, conductivity, temperature), digital data loggers, standardized field data sheets (digital or physical), camera.

Procedure:

- Pre-Deployment Calibration: Calibrate all sensors according to manufacturer specifications. Record calibration dates, standards used, and any adjustments.

- Spatio-Temporal Tagging: For each observation or sample, record precise GPS coordinates (with error estimate) and timestamp (in UTC). Photograph the sampling site and microhabitat.

- Contextual Data Capture: Record all relevant abiotic and biotic covariates (e.g., weather conditions, habitat type, presence of other species) that may influence the primary measurement.

- Immediate Data Entry & Validation: Enter data into a structured digital format (e.g., .csv) in the field or at day's end. Perform range and logic checks to catch errors early.

- Provenance Logging: Maintain a master log linking raw data files, sensor calibration records, field notes, and personnel.
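The UTC tagging and the range and logic checks from this protocol can be sketched in Python. The sketch assumes the logger records naive local timestamps with a known UTC offset; the limits in `RANGES` and the `n_collected`/`n_dead` fields are hypothetical and should be replaced by site- and sensor-specific values.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List

# Plausible ranges are illustrative assumptions; set them per site, season and sensor.
RANGES = {
    "ph": (0.0, 14.0),
    "temperature_c": (-5.0, 45.0),
    "conductivity_us_cm": (0.0, 100000.0),
}


def to_utc_iso(local_time: str, utc_offset_hours: float) -> str:
    """Attach the logger's UTC offset to a naive ISO 8601 timestamp and convert it to UTC."""
    naive = datetime.fromisoformat(local_time)
    local = naive.replace(tzinfo=timezone(timedelta(hours=utc_offset_hours)))
    return local.astimezone(timezone.utc).isoformat()


def check_row(row: Dict[str, float]) -> List[str]:
    """Range checks on covariates plus one cross-field logic check."""
    problems: List[str] = []
    for field, (low, high) in RANGES.items():
        value = row.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    # Logic check (hypothetical fields): dead organisms cannot outnumber those collected.
    if row.get("n_dead", 0) > row.get("n_collected", 0):
        problems.append("n_dead exceeds n_collected")
    return problems
```

Running these checks at day's end, as the protocol suggests, lets a sensor fault or transcription slip be corrected while the site conditions are still fresh in the field notes.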

Protocol: Laboratory Ecotoxicology Bioassay

Objective: To generate dose-response data for a contaminant on a model organism in a fully documented and replicable manner.

Materials: Test compound of known purity, model organisms (e.g., Daphnia magna, Danio rerio embryos), certified dilution water, exposure chambers, environmentally controlled incubators, water quality testing kits (for DO, pH, hardness), and tools for measuring behavioral or morphological endpoints.

Procedure:

- Stock Solution Preparation: Prepare a concentrated stock of the test compound using an appropriate solvent (e.g., acetone, DMSO). Record solvent type, concentration, and preparation date. Include a solvent control in experimental design.

- Serial Dilution: Perform a logarithmic serial dilution to create at least five test concentrations plus a negative control. Document dilution factors and final concentrations.

- Exposure Setup: Randomly allocate organisms to exposure chambers. Use at least three replicates per concentration. Record water quality parameters (temperature, pH, dissolved oxygen) at test initiation and termination.

- Endpoint Assessment: At defined intervals (e.g., 24h, 48h, 96h), assess predefined endpoints (e.g., mortality, immobilization, growth inhibition, behavioral change) by a researcher blinded to treatment groups.

- Data Curation: Compile raw endpoint data, water quality measurements, and detailed methodological metadata (including any deviations from protocol) into a single annotated dataset, with its metadata documented using the Ecological Metadata Language (EML) standard.
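The serial-dilution and randomization steps can be planned in code, so the design itself becomes part of the shared record. This is a sketch under assumptions: a constant dilution factor, equal numbers of organisms per chamber, and hypothetical function names. The seed is passed explicitly so the allocation can be reproduced.

```python
import random
from typing import Dict, List, Sequence, Tuple


def log_dilution_series(highest: float, factor: float, n_levels: int = 5) -> List[float]:
    """Concentrations separated by a constant factor (equal steps on a log scale), highest first."""
    if n_levels < 5:
        raise ValueError("the protocol calls for at least five test concentrations")
    if factor <= 1:
        raise ValueError("dilution factor must be greater than 1")
    return [highest / factor ** i for i in range(n_levels)]


def allocate(organism_ids: Sequence[int], treatments: Sequence[str],
             replicates: int = 3, seed: int = 0) -> Dict[Tuple[str, int], List[int]]:
    """Randomly assign organisms to (treatment, replicate) chambers, equal numbers per chamber."""
    chambers = [(t, r) for t in treatments for r in range(1, replicates + 1)]
    if len(organism_ids) % len(chambers):
        raise ValueError("organisms must divide evenly among chambers")
    shuffled = list(organism_ids)
    random.Random(seed).shuffle(shuffled)  # seeded so the allocation is reproducible
    per_chamber = len(shuffled) // len(chambers)
    return {chamber: shuffled[i * per_chamber:(i + 1) * per_chamber]
            for i, chamber in enumerate(chambers)}
```

With a 100 mg/L top concentration and a factor of 2, `log_dilution_series(100.0, 2.0, 5)` gives 100, 50, 25, 12.5, and 6.25 mg/L. Adding a negative control and a solvent control yields seven treatments, or 21 chambers at three replicates.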

Protocol: Computational Analysis & Dynamic Documentation

Objective: To analyze data using scripts that create a transparent, self-documented record of all transformations and statistical tests.

Materials: Statistical software (R, Python), integrated development environment (RStudio, Jupyter Lab), version control system (Git).

Procedure:

- Project Structure: Create a well-organized directory with subfolders for /raw_data, /scripts, /outputs, and /figures. Keep raw data files immutable (read-only).

- Scripted Analysis: Write code that reads raw data, performs cleaning (documenting any exclusions), executes analyses, and generates outputs/figures in a single executable workflow. Avoid manual point-and-click operations.

- Dynamic Documentation: Use literate programming tools (e.g., R Markdown, Jupyter Notebook) to weave narrative text, code, and results into a single document.

- Version Control: Initialize a Git repository for the project. Commit code frequently with descriptive messages. Host the repository on a platform like GitHub or GitLab to archive and share the full analytical provenance.
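The project-structure step can be sketched in Python, assuming a POSIX-like file system (on Windows, `chmod` only toggles the read-only flag). The folder names follow the protocol; `create_project` and `lock_raw_data` are hypothetical helpers. Clearing write permissions guards against accidental edits but does not replace version control or repository checksums.

```python
import stat
from pathlib import Path
from typing import List, Union

SUBFOLDERS = ("raw_data", "scripts", "outputs", "figures")
WRITE_BITS = stat.S_IWUSR | stat.S_IWGRP | stat.S_IWOTH


def create_project(root: Union[str, Path]) -> Path:
    """Create the standard subfolders under root (existing folders are left alone)."""
    root = Path(root)
    for name in SUBFOLDERS:
        (root / name).mkdir(parents=True, exist_ok=True)
    return root


def lock_raw_data(root: Union[str, Path]) -> List[Path]:
    """Clear the write bits on every file under raw_data/ and return the locked paths."""
    locked = []
    for path in sorted((Path(root) / "raw_data").rglob("*")):
        if path.is_file():
            path.chmod(stat.S_IMODE(path.stat().st_mode) & ~WRITE_BITS)
            locked.append(path)
    return locked
```

Calling `lock_raw_data` right after copying instrument exports into /raw_data means any later cleaning step has to write its results elsewhere, typically to /outputs, which keeps the original files as the fixed starting point of the scripted workflow.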

Visualizing the Paradigm Shift and Workflow

Effective visualization is key to understanding complex systems and processes [11]. The following diagrams, created with Graphviz DOT language, map the conceptual and practical shift in ecological research.

The Paradigm Shift in Ecological Research

Open Data Workflow in Ecotoxicology

Future Directions and Implementation Roadmap

The full realization of the sharing paradigm requires concerted action across multiple levels of the research ecosystem. Based on current assessments [7], the following roadmap is proposed:

- Journal Policy Harmonization (Short-Term): Journals should adopt clear, mandatory data- and code-sharing policies that require submission for peer review. Policies must move from vague encouragement to explicit requirements with consistent terminology [7].

- Researcher Training and Incentives (Medium-Term): Graduate programs and professional societies must integrate data management, reproducible coding, and open science practices into core curricula. Tenure and promotion criteria should recognize data publication and software contributions as scholarly outputs.

- Infrastructure for Interoperability (Long-Term): Investment is needed in cyberinfrastructure that allows federated querying across distributed ecotoxicological databases (e.g., linking chemical exposure data from EPA with genomic response data from NCBI). This enables the systems-level analysis required for modern environmental challenges.

The trajectory is clear. By embracing the shift from hoarding to sharing, ecological and ecotoxicological research will enhance its rigor, accelerate the translation of science into policy and application, and build a resilient, cumulative knowledge base capable of addressing the complex environmental threats of the 21st century.

Ecotoxicology faces a critical challenge: the increasing volume and diversity of chemical substances in the environment outpaces our ability to assess their cumulative risks. Scattered, inaccessible data limit robust synthesis, hindering evidence-based decisions. The sharing of raw, primary data is a foundational practice of Open Science that directly addresses this bottleneck. This technical guide details the three core benefits of raw data sharing—enhancing research visibility, enabling powerful meta-analyses, and providing robust support for policy—within the context of advancing ecotoxicological science and chemical safety.

Sharing raw data in public, FAIR-aligned repositories significantly increases the discoverability and impact of research. Data become independent, citable research outputs that extend the reach of the associated publication.

Quantitative Evidence: Multiple studies across disciplines confirm a measurable "citation advantage" for articles that share data.

Table 6: Documented Citation Advantage from Data Sharing

| Study / Source | Field | Reported Citation Increase | Key Finding |
|---|---|---|---|
| Colavizza et al. (2020)[reference:0] | Multi-disciplinary (PLOS/BMC) | Up to 25.36% | Data sharing in a repository was the only method significantly correlated with higher citation impact. |
| PathOS Scoping Review (2025)[reference:1] | General Open Science | ~9% (upper bound) | A causal model estimates a ~9% increase, with about two-thirds mediated by data reuse. |
| Nature Ecology & Evolution (2024)[reference:2] | Ecology & Evolution | Significant increase | Confirms that repository sharing benefits authors through increased citations. |
| ATTAC Principles (2023)[reference:3] | Wildlife Ecotoxicology | Contributes to greater citations | Transparent data description builds trust and increases citation of work. |

Mechanisms: The advantage arises from enhanced reuse potential (data serve as a foundation for further research) and improved reproducibility and transparency, which signals credibility to the community[reference:4]. Journals are now integrating data submission with manuscript review, streamlining the process and ensuring data are available for peer assessment[reference:5].

Enabling Robust Meta-Analyses

Meta-analysis is a cornerstone for synthesizing evidence across studies to derive generalizable conclusions about chemical effects. Its reliability is fundamentally dependent on access to raw or sufficiently detailed data.

The Critical Challenge: Inadequate reporting and lack of raw data access severely hamper meta-analytic efforts. A 2025 attempt to meta-analyze sublethal effects of plant protection products on bees starkly illustrates this problem. The study had to exclude 332 of 389 experiment datapoints (about 85%) because essential methodological or statistical information was missing or ambiguous[reference:6]. This prevented a formal synthesis, turning the project into a case study on reporting failures.

Detailed Protocol: Data Extraction for Ecotoxicological Meta-Analysis

The bee study provides a rigorous protocol for data extraction, highlighting the minimum information required for inclusion:

- Literature Search & Screening: Execute a systematic search using predefined keywords (e.g., chemical classes, species, endpoints). Apply inclusion/exclusion criteria based on population, exposure, comparator, and outcome (PECO).

- Data Extraction Criteria: For each experiment, extract the following for both treatment and control groups:

  - Exposure Metrics: Concentration/dose at start and exposure duration.

  - Effect Metrics: Central tendency (mean/median) and measure of variation (SD, SE).

  - Vitality Metrics: Background mortality rates.

- Extraction Source: Rely solely on information in the main text and supplementary materials. Due to resource constraints, authors are typically not contacted, and data are not extracted from graphs or recalculated[reference:7].

- Exclusion Decision: Experiments missing any of the above information are deemed unreliable and excluded from quantitative synthesis[reference:8].

This protocol underscores that without detailed raw data or summary statistics, even a large body of literature cannot support a quantitative meta-analysis, leading to abandoned synthesis efforts and persistent knowledge gaps.
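The exclusion decision in this protocol is mechanical enough to express as a screening function. This is a sketch under assumptions: each experiment is a dictionary with `treatment` and `control` entries, the field names in `REQUIRED_PER_GROUP` are hypothetical labels for the minimum information listed above, and `None` marks a value that was missing or ambiguous in the source.

```python
from typing import Any, Dict, List, Sequence, Tuple

# Hypothetical field names for the minimum information listed in the protocol,
# required for BOTH the treatment and the control group.
REQUIRED_PER_GROUP = (
    "concentration",         # exposure: concentration/dose at start
    "duration",              # exposure: exposure duration
    "central_tendency",      # effect: mean or median
    "variation",             # effect: SD or SE
    "background_mortality",  # vitality
)

Experiment = Dict[str, Dict[str, Any]]


def is_extractable(experiment: Experiment) -> bool:
    """True only if every required value is reported for treatment and control."""
    for group in ("treatment", "control"):
        data = experiment.get(group, {})
        if any(data.get(field) is None for field in REQUIRED_PER_GROUP):
            return False
    return True


def screen(experiments: Sequence[Experiment]) -> Tuple[List[Experiment], float]:
    """Keep extractable experiments and report the fraction excluded."""
    if not experiments:
        return [], 0.0
    kept = [e for e in experiments if is_extractable(e)]
    return kept, 1 - len(kept) / len(experiments)
```

Because the rule is all-or-nothing, a single unreported SD removes an experiment from quantitative synthesis; the second return value is the excluded fraction a review would report.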

Supporting Regulatory and Policy Decisions

Raw data sharing transforms isolated research findings into a collective evidence base that can directly inform chemical regulation and environmental management policies.

Workflow for Policy-Relevant Science: The ATTAC (Access, Transparency, Transferability, Add-ons, Conservation sensitivity) workflow is a guiding framework designed to promote the reuse of wildlife ecotoxicology data specifically to support regulations[reference:9]. Its structured steps ensure data are prepared for integration into regulatory risk assessments.

Regulatory Integration: Policymakers require comprehensive, integrated data to evaluate chemical risks. The OECD Best Practice Guide on Chemical Data Sharing Between Companies (2025) provides a critical framework for fair and transparent data sharing to support regulatory compliance, reduce duplicate testing, and accelerate risk assessments[reference:10][reference:11]. Similarly, the ATTAC workflow aims to provide "strong scientific support for regulations and management actions"[reference:12]. By making raw data FAIR (Findable, Accessible, Interoperable, Reusable), the ecotoxicology community directly contributes to more efficient and protective chemical governance.

The Scientist's Toolkit: Essential Reagents for Ecotoxicological Data Generation

High-quality, shareable data begin with standardized experimental materials. The following table lists key reagents and their functions in common ecotoxicological testing.

Table 2: Key Research Reagent Solutions in Standard Ecotoxicology

| Item | Function & Purpose | Example Use Case |
|---|---|---|
| Reference Toxicants | Positive control substances used to validate test organism health and assay performance. | Potassium dichromate (fish toxicity), copper sulfate (daphnia), sodium chloride (algae). |
| Standardized Test Media | Chemically defined water or soil formulations that eliminate confounding variables. | OECD reconstituted freshwater, EPA sediment formulations, ISO algal growth medium. |
| Enzyme Activity Kits | Assay kits for measuring biochemical sublethal effects. | Acetylcholinesterase (AChE) kit for neurotoxicity screening in invertebrates and fish. |
| Metabolite Detection Kits | Kits for measuring oxidative stress or detoxification biomarkers. | Glutathione (GSH) assay kit, lipid peroxidation (MDA) assay kit. |
| Cell Viability Assays | In vitro assays for high-throughput screening of cytotoxic effects. | Neutral Red Uptake (NRU) assay using fish cell lines (e.g., RTgill-W1). |
| DNA/RNA Extraction Kits | Kits for isolating genetic material for transcriptomic or genomic effect studies. | RNA extraction for qPCR analysis of stress gene expression (e.g., cyp1a, hsp70). |
| Data Logging Software | Software for capturing raw instrument readings and experimental metadata. | Systems for logging dissolved oxygen, pH, temperature, and organism behavior in real-time. |

The commitment to raw data sharing is not merely a compliance exercise but a strategic investment in the power and relevance of ecotoxicological research. As demonstrated, it directly enhances the visibility and impact of scientific work, unlocks the potential for rigorous, conclusive meta-analyses, and provides the integrated evidence base required for effective environmental policy and regulation. Adopting frameworks like ATTAC and utilizing standardized toolkits are concrete steps toward a more open, collaborative, and impactful future for the field.

Ecotoxicology, the study of the effects of toxic chemicals on populations, communities, and ecosystems, is fundamental to environmental protection and chemical risk assessment [13]. However, the field is undergoing a paradigm shift towards open science, where the sharing and re-use of primary research data are increasingly seen as essential for scientific advancement [6]. This whitepaper examines the current state of raw data availability within ecotoxicology, identifying critical gaps that hinder meta-analyses, large-scale modeling, and the rapid assessment of emerging contaminants like nanoparticles [14]. It quantifies the systemic barriers to data sharing, from inconsistent journal policies to a lack of researcher incentives, and details the high cost of inaction, which includes slower scientific progress, inefficient use of research funds, and impaired environmental decision-making [7]. Framed within the broader thesis that raw data sharing is a transformative benefit for the field, this guide provides actionable protocols for implementing quality-controlled data publication and a toolkit for researchers to navigate this evolving landscape.

Ecotoxicology research generates complex datasets critical for understanding how pollutants affect organisms from the molecular to the ecosystem level. The traditional model, where data remains siloed within individual research groups or is published only in summarized form, is increasingly recognized as a major bottleneck. Sharing raw, well-annotated data unlocks significant benefits: it enables powerful synthesis efforts like meta-analyses, increases the visibility and citation impact of original research, and allows for the re-analysis of data with new scientific questions or computational tools [6]. This is particularly urgent for addressing modern challenges such as assessing the ecotoxicology of nanoparticles and nanomaterials, where data on terrestrial and marine species is notably lacking [14].

Despite these clear advantages, data sharing is not yet the norm. Researchers often face significant individual and institutional barriers, including a lack of time, funding, or data-science skills needed to properly document and format data for public use [6]. Furthermore, journal policies governing data and code sharing are inconsistent and often poorly enforced. A 2025 assessment of 275 ecology and evolution journals revealed that while 38.2% mandated data sharing, only 26.9% mandated code sharing, and the clarity and timing of these requirements varied widely [7]. This policy ambiguity leads researchers to take the "path of least resistance," depositing data with minimal documentation, which severely hinders its future re-usability and undermines the reproducibility of scientific findings [6] [7]. The cost of this inaction is a fragmented knowledge base, slowing our response to environmental threats and compromising the robustness of ecological risk assessments.

Quantifying the Gaps: Data Availability and Policy Inconsistency

The transition to an open-data paradigm in ecotoxicology is hindered by measurable gaps in policy implementation and researcher compliance. The following tables synthesize current data on these systemic challenges.

Table 1: Journal Policy Landscape for Data and Code Sharing in Ecology & Evolution (2025 Assessment of 275 Journals) [7]

| Policy Strictness | Data Sharing (Percentage of Journals) | Code Sharing (Percentage of Journals) |
|---|---|---|
| Mandated | 38.2% | 26.9% |
| Encouraged | 22.5% | 26.6% |
| Not Mentioned / Other | 39.3% | 46.5% |

Note: "Mandated" indicates a journal requirement; "Encouraged" indicates a journal recommendation without enforcement.

Table 2: Policy Timing and Compliance in Select Journals [7]

| Journal & Policy Period | Submissions Sharing Data | Submissions Sharing Code | Key Finding |
|---|---|---|---|
| Ecology Letters (Pre-mandate: Jun-Aug 2021) | 45.4% | 15.0% | Low voluntary sharing, especially for code. |
| Ecology Letters (Post-mandate: Sep-Nov 2023) | 96.1% | 85.4% | Mandatory policies dramatically increase compliance. |
| Proceedings of the Royal Society B (Mar 2023-Feb 2024) | 90.2% | 79.1% | High compliance under a long-standing mandate. |

Table 3: Critical Knowledge Gaps in Nanomaterial Ecotoxicology [14]

| Research Area | Specific Gaps | Consequence for Risk Assessment |
|---|---|---|
| Test Organisms & Biomes | Limited data on bacteria, terrestrial species, marine species, and higher plants. Heavy reliance on a few standard freshwater species. | Assessments may not protect vulnerable species or entire ecosystems (e.g., soil, oceans). |
| Material Characterization | Inconsistent reporting of nanoparticle properties (size, shape, surface area, charge) and environmental behavior (aggregation, adsorption). | Difficult to compare studies, identify key toxic properties, or predict fate in real environments. |
| Mechanistic & ADME Studies | Few detailed investigations on Absorption, Distribution, Metabolism, and Excretion (ADME) across major phyla. | Limited understanding of internal exposure, target organs, and mechanisms of toxicity. |
| Long-Term & Chronic Effects | Predominance of short-term, acute toxicity data. | Underestimates potential population-level impacts and chronic ecological damage. |

The High Cost of Inaction: Scientific and Conservational Impacts

Failure to address the data availability gap carries substantial costs that extend beyond individual research projects to impede the entire field and its application to environmental protection.

- Impaired Scientific Synthesis and Innovation: Without accessible raw data, the ability to perform robust meta-analyses or train predictive models is severely limited. For example, understanding the ecosystem-level risk of a chemical requires integrating hundreds of toxicity tests across species, endpoints, and environmental conditions—a task impossible without shared, standardized data [6]. This slows the pace of discovery and innovation in environmental safety.

- Reduced Reproducibility and Eroded Trust: The reproducibility crisis in science is exacerbated when data and code are unavailable for scrutiny [7]. In ecotoxicology, where findings directly inform regulatory decisions, the inability to verify or build upon published results undermines scientific credibility and public trust.

- Inefficient Use of Resources and Duplication of Effort: Public funds are wasted when expensive ecotoxicology studies cannot be fully utilized by the broader community. Researchers may unknowingly replicate past experiments, and risk assessors spend excessive time searching for or requesting data instead of analyzing it.

- Delayed and Weakened Environmental Policy: Conservation and policy decisions rely on timely, comprehensive evidence. Gaps in data on key species or ecosystems—such as the noted lack of information on nanomaterials for marine and terrestrial organisms—mean that policies are formulated on an incomplete picture, potentially failing to prevent biodiversity loss or ecosystem degradation [6] [14].

Experimental Protocols for Implementing Quality-Controlled Data Sharing

Overcoming barriers requires more than policy mandates; it requires practical, researcher-friendly systems. The following protocols detail methodologies for establishing effective data sharing practices.

This protocol outlines a structured workflow to ensure shared data is findable, accessible, interoperable, and reusable (FAIR), mitigating common concerns about data misuse and poor quality.

- Objective: To transform a raw, researcher-held ecotoxicology dataset into a quality-reviewed, publicly accessible resource that is ready for synthesis and re-use.

- Pre-Submission Preparation:

- Data Compilation: Gather all raw data files, experimental metadata (e.g., test organism life stage, exposure regime, water chemistry), and analytical code.

- Standardization: Map variables to community-accepted ontologies (e.g., ECOTOX ontology) and use standardized units. Structure data in a tidy format (one observation per row).

- Documentation: Create a detailed README file describing the study design, methodologies, column definitions, and any data processing steps.

- Pre-Import Control (Automated Check):

- Upload data to a repository (e.g., Edaphobase, Zenodo, Dryad) that features an automated validation tool.

- The tool checks for file format compatibility, basic schema compliance (required columns), and obvious errors (e.g., values outside plausible ranges).

- The researcher addresses any automated feedback before final submission.

- Peri-Import Review (Manual Peer-Review):

- Upon submission, a data curator or peer reviewer with domain expertise examines the dataset and documentation.

- The review assesses ecological relevance, logical consistency, completeness of metadata, and adherence to field-specific standards.

- The reviewer provides confidential feedback to the data provider for corrections or clarifications.

- Post-Import Control (Final Researcher Verification):

- After revisions, the data provider performs a final semi-automated review within the repository system to confirm all changes are correctly integrated.

- The provider sets access terms (e.g., CC-BY license) and can opt for a temporary embargo if needed.

- Upon final approval, the repository issues a persistent, citable Digital Object Identifier (DOI) for the dataset [6].
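The pre-import automated check can be approximated with a small validator. This is a sketch under stated assumptions, not the validation tool of any named repository. It assumes a tidy CSV (one observation per row) with hypothetical column names and illustrative plausibility ranges. It reports missing required columns and any non-numeric or out-of-range values.

```python
import csv
import io

# Hypothetical schema: required columns and illustrative plausibility ranges
REQUIRED_COLUMNS = {"species", "cas_rn", "concentration_ug_per_l", "duration_h", "endpoint", "value"}
PLAUSIBLE_RANGES = {
    "concentration_ug_per_l": (0.0, 1e6),
    "duration_h": (0.0, 8760.0),  # up to one year of exposure
}


def validate_tidy_csv(text: str) -> list:
    """Return human-readable problems; an empty list means the file passed.

    Line numbers assume one physical line per record (no embedded newlines).
    """
    reader = csv.DictReader(io.StringIO(text))
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        return [f"missing required column(s): {', '.join(sorted(missing))}"]
    problems = []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        for column, (low, high) in PLAUSIBLE_RANGES.items():
            try:
                value = float(row[column])
            except (TypeError, ValueError):  # TypeError: short row filled with None
                problems.append(f"line {line_no}: {column} is not numeric ({row[column]!r})")
                continue
            if not low <= value <= high:
                problems.append(f"line {line_no}: {column}={value} outside [{low}, {high}]")
    return problems


sample = (
    "species,cas_rn,concentration_ug_per_l,duration_h,endpoint,value\n"
    "Daphnia magna,7440-50-8,12.5,48,immobilisation,0.30\n"
    "Daphnia magna,7440-50-8,-3,48,immobilisation,0.10\n"
)
for problem in validate_tidy_csv(sample):
    print(problem)  # line 3: concentration_ug_per_l=-3.0 outside [0.0, 1000000.0]
```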

This methodology describes how journals can empirically evaluate the effectiveness of their data and code sharing mandates.

- Objective: To measure the change in data and code sharing rates before and after the implementation of a mandatory journal policy, and to identify ongoing compliance barriers.

- Design: A retrospective, observational study comparing two time periods: pre-mandate and post-mandate.

- Data Collection:

- Sample: All original research submissions to a selected journal (e.g., Ecology Letters) during two defined windows (e.g., a 3-month period before policy change and a 3-month period after full implementation) [7].

- Variables: For each manuscript, record: (1) Presence/Absence of a data file/archive link, (2) Presence/Absence of a code/script file/link, (3) Accessibility of the shared materials (e.g., link functional, no paywall).

- Source: Data is obtained from the journal's editorial management system or provided directly by the editorial office [7].

- Analysis:

- Calculate the proportion of submissions sharing data and code for each time period.

- Perform a chi-squared test to determine if the difference in sharing rates between the pre- and post-mandate periods is statistically significant.

- Qualitatively analyze the reasons for non-compliance in the post-mandate period (e.g., granted exemptions, author oversight).
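The chi-squared comparison in the analysis step can be computed directly for a 2 × 2 table (period × shared/not shared). The sketch below uses Pearson's statistic without continuity correction. For one degree of freedom, the p-value has the closed form erfc(√(χ²/2)). The counts in the usage line are hypothetical, because the compliance figures cited above are reported only as percentages.

```python
import math


def chi_squared_2x2(shared_pre: int, total_pre: int, shared_post: int, total_post: int):
    """Pearson chi-squared test (no continuity correction) for a 2x2 table.

    Rows: pre- and post-mandate periods; columns: shared vs. not shared.
    Returns (statistic, p_value) with 1 degree of freedom.
    """
    observed = [
        [shared_pre, total_pre - shared_pre],
        [shared_post, total_post - shared_post],
    ]
    row_totals = [total_pre, total_post]
    col_totals = [observed[0][j] + observed[1][j] for j in range(2)]
    if 0 in col_totals:
        raise ValueError("all submissions fall in one category; the test is undefined")
    grand_total = total_pre + total_post
    statistic = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed[i][j] - expected) ** 2 / expected
    # For 1 df the chi-squared survival function equals erfc(sqrt(x / 2))
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


# Hypothetical counts: 45 of 100 submissions shared data before, 96 of 100 after
stat, p = chi_squared_2x2(shared_pre=45, total_pre=100, shared_post=96, total_post=100)
print(f"chi2 = {stat:.1f}, p = {p:.1e}")
```

When expected cell counts are small (a common rule of thumb is below 5), Fisher's exact test is usually preferred over the chi-squared approximation.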

Data Quality Review and Publication Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful ecotoxicology research and data sharing depend on both biological and digital "reagents." The following table details key materials and their functions.

Table 4: Research Reagent Solutions for Ecotoxicology

| Item | Category | Function in Research & Data Sharing |
|---|---|---|
| Reference Toxicant | Biological Control | A standardized chemical (e.g., KCl, sodium lauryl sulfate) used to periodically assess the health and sensitivity of cultured test organisms. Ensures the reliability and reproducibility of toxicity test results over time. |
| Standardized Test Organism | Biological Model | A species with established culturing and testing protocols (e.g., Daphnia magna, fathead minnow, Lemna minor). Enables inter-laboratory comparison of data, which is foundational for data sharing and meta-analysis. |
| Algal Culture Media | Growth Substrate | A chemically defined nutrient solution (e.g., OECD TG 201 medium) for cultivating phytoplankton in toxicity tests. Standardization minimizes background variability, making shared toxicity data more comparable. |
| Data Repository with DOI | Digital Tool | A platform (e.g., Zenodo, Dryad, Edaphobase) that stores datasets, assigns a permanent Digital Object Identifier (DOI) for citation, and provides metadata for discovery [6]. Essential for FAIR data sharing. |
| Metadata Schema / Ontology | Digital Standard | A controlled vocabulary or framework (e.g., Ecotox Ontology, Darwin Core) for describing data. Ensures shared data is properly annotated and interoperable, allowing machines and researchers to correctly interpret variables. |
| Statistical Code Script | Digital Record | A documented script (e.g., in R or Python) that performs the data analysis from raw data to final results. Sharing this code is critical for computational reproducibility and is increasingly mandated by journals [7]. |

Visualizing the Impact: From Data Gaps to Systemic Consequences

The interconnected nature of data gaps, research limitations, and real-world impacts can be conceptualized as a cascade of failures. The diagram below maps this logical relationship, illustrating how primary barriers lead to fragmented science and, ultimately, weaker environmental protection.

Multi-Scale Impacts of Ecotoxicology Data Gaps

The landscape of ecotoxicology is at a crossroads. The gaps in data availability and the inconsistent application of sharing policies incur a demonstrably high cost, stalling scientific progress and compromising environmental conservation [6] [7]. However, the path forward is clear. Embracing raw data sharing as a foundational practice, supported by robust systems like the three-step quality review protocol and the use of persistent repositories, can transform these gaps into opportunities [6].

To realize the full benefits, the field must implement concrete changes:

- For Journals: Adopt clear, mandatory data and code sharing policies that require submission at the peer review stage and employ verification checks [7].

- For Institutions and Funders: Create "intrinsic" rewards and recognition for data publication, provide training in data management, and allocate specific resources for data curation activities [6].

- For Researchers: Proactively use standardized tools and ontologies, deposit data in FAIR-aligned repositories, and view data publication as an integral, valued output of their research.

By systematically addressing these challenges, the ecotoxicology community can build a comprehensive, reusable knowledge base. This will accelerate our understanding of complex chemical threats, from legacy pollutants to novel nanomaterials, and provide the robust evidence needed to protect ecosystems and public health effectively [14].

From Theory to Practice: Frameworks and Best Practices for Sharing Ecotoxicology Data

Ecotoxicology faces a critical challenge: the increasing total amount and diversity of chemical substances in the environment generates vast, scattered data that remains largely unintegrated [15]. This inability to quantitatively synthesize information limits our capacity to determine whether existing regulations sufficiently protect wildlife. While systematic reviews and meta-analyses are powerful tools aligned with the Open Science and FAIR (Findable, Accessible, Interoperable, Reusable) movements, the emergence of novel insights from existing data remains rare relative to its hidden potential [15]. The central thesis is that sharing raw, primary data—not just summarized results—is a fundamental prerequisite for transformative ecotoxicological research. It enables more powerful meta-analyses, validation of findings, novel secondary research, and ultimately, stronger scientific support for conservation regulation. The ATTAC workflow (Access, Transparency, Transferability, Add-ons, and Conservation sensitivity) is proposed as a structured, collaborative guide to overcome the barriers to effective data reuse in wildlife ecotoxicology [15].

The ATTAC Workflow: Core Principles and Technical Specifications

The ATTAC framework provides a stepwise guide for both data contributors ("prime movers") and re-users to enhance the utility and reuse of ecotoxicological data [15]. Its five pillars address the entire chain of data collection, homogenization, and integration.

Pillar 1: Access

The foundation of the workflow is ensuring data is proactively accessible. This moves beyond simple availability to structured, discoverable sharing.

- Technical Implementation: Data and metadata should be deposited in recognized generalist or discipline-specific repositories (e.g., Dryad, Zenodo, EPA's ECOTOX Knowledgebase) with persistent identifiers (DOIs). A machine-readable data dictionary must accompany all datasets.

- Protocol for Contributors: Prior to submission, data must be de-identified to remove sensitive location information for threatened species (see Pillar 5). A submission package should include: 1) raw data file (in non-proprietary format, e.g., .csv, .txt), 2) metadata file (using a standard like EML - Ecological Metadata Language), 3) a README file detailing collection methods, units, and abbreviations, and 4) the specific license for reuse (e.g., CC-BY).
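A contributor could check the four-part submission package automatically before upload. The file-naming conventions here are assumptions made for illustration: an `.xml` file with `eml` in its name holds the EML metadata, and a file starting with `LICENSE` states the reuse terms. The license might equally be declared inside the metadata.

```python
NON_PROPRIETARY_DATA = (".csv", ".txt")


def check_package(filenames: list) -> list:
    """Return the missing components of a submission package (empty list = complete)."""
    names = [name.lower() for name in filenames]
    readmes = [n for n in names if n.startswith("readme")]
    licenses = [n for n in names if n.startswith("license")]
    # A README.txt or LICENSE.txt must not be mistaken for the raw data file
    data_files = [n for n in names
                  if n.endswith(NON_PROPRIETARY_DATA) and n not in readmes and n not in licenses]
    metadata_files = [n for n in names if n.endswith(".xml") and "eml" in n]

    missing = []
    if not data_files:
        missing.append("raw data file in a non-proprietary format (.csv/.txt)")
    if not metadata_files:
        missing.append("EML metadata file")
    if not readmes:
        missing.append("README")
    if not licenses:
        missing.append("license")
    return missing


print(check_package(["zinc_daphnia_raw.csv", "metadata_eml.xml", "README.md", "LICENSE"]))  # []
print(check_package(["results.xlsx", "README.md"]))
```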

Pillar 2: Transparency

Transparency ensures the data's origins and processing steps are fully documented, enabling critical evaluation and accurate reuse.

- Technical Implementation: Use of the Contributor Role Taxonomy (CRediT) to precisely attribute contributions (e.g., data curation, formal analysis) [15]. All data transformations, cleaning steps, and quality control procedures must be documented in a scripted workflow (e.g., using R or Python scripts shared via GitHub).

- Protocol for Re-users: Re-users should document the provenance of the sourced data, including its DOI, and clearly distinguish between the original contributor's work and their own subsequent analyses. Any data cleaning or transformation performed by the re-user must be explicitly detailed and scripted.
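One lightweight way to keep cleaning steps scripted and documented is to log each transformation as it runs. The sketch below is hypothetical, and its function and step names are invented for illustration. It applies named steps in order and records how many rows each step kept, producing a log that could be shared alongside the data and scripts.

```python
def run_pipeline(records, steps):
    """Apply (description, function) steps in order; return cleaned records and a provenance log."""
    log = []
    for description, transform in steps:
        rows_in = len(records)
        records = transform(records)
        log.append({"step": description, "rows_in": rows_in, "rows_out": len(records)})
    return records, log


def drop_missing_values(records):
    """Remove rows whose measured value is missing."""
    return [r for r in records if r.get("value") is not None]


def mg_to_ug(records):
    """Rescale mg/L rows to ug/L; assumes every row carries a 'unit' field."""
    return [{**r, "value": r["value"] * 1000, "unit": "ug/L"} if r["unit"] == "mg/L" else r
            for r in records]


raw = [
    {"value": 0.2, "unit": "mg/L"},
    {"value": None, "unit": "ug/L"},
    {"value": 35.0, "unit": "ug/L"},
]
cleaned, provenance = run_pipeline(raw, [
    ("drop rows with missing values", drop_missing_values),
    ("convert mg/L to ug/L", mg_to_ug),
])
for entry in provenance:
    print(entry)
```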

Pillar 3: Transferability

Transferability ensures data is structured and annotated for seamless integration with other datasets, which is essential for meta-analysis.

- Technical Implementation: Data should be homogenized into standardized formats and vocabularies. For example, chemical names should use CAS Registry Numbers, species names should follow authoritative taxonomic backbones (e.g., ITIS), and effect endpoints should use controlled terms (e.g., from the OECD glossary).

- Protocol for Homogenization: A recommended methodology involves a multi-stage process: 1) Compilation of raw data from diverse sources; 2) Curation to correct errors and flag uncertainties; 3) Harmonization of variables and units to a common schema; 4) Annotation with standardized identifiers and vocabularies.
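Two of the homogenization tasks above are easy to automate: validating chemical identifiers and converting units. The CAS check follows the standard check-digit rule. Each digit before the check digit is multiplied by its position counted from the right, and the sum modulo 10 must equal the check digit. The unit table is an illustrative assumption for mass-per-volume aqueous concentrations. It deliberately rejects units it does not know; molar units, for example, would need molecular weights.

```python
import re

# CAS RN layout: 2-7 digits, hyphen, 2 digits, hyphen, 1 check digit
CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_cas(cas_rn: str) -> bool:
    """Validate the format and check digit of a CAS Registry Number."""
    match = CAS_PATTERN.match(cas_rn.strip())
    if match is None:
        return False
    body = match.group(1) + match.group(2)
    # Weight each digit by its position counted from the right (1, 2, 3, ...)
    total = sum(int(digit) * position
                for position, digit in enumerate(reversed(body), start=1))
    return total % 10 == int(match.group(3))


# Illustrative factors to a common unit (ug/L) for mass-per-volume aqueous concentrations
TO_UG_PER_L = {"ng/L": 1e-3, "ug/L": 1.0, "µg/L": 1.0, "mg/L": 1e3, "g/L": 1e6}


def harmonize_concentration(value: float, unit: str) -> float:
    """Convert a concentration to ug/L; unknown units raise ValueError instead of passing through."""
    if unit not in TO_UG_PER_L:
        raise ValueError(f"no conversion defined for unit {unit!r}")
    return value * TO_UG_PER_L[unit]


print(is_valid_cas("7732-18-5"), is_valid_cas("7732-18-4"))  # True False (water; corrupted check digit)
print(harmonize_concentration(0.5, "mg/L"))  # 500.0
```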

Pillar 4: Add-ons

Add-ons refer to the enrichment of shared datasets with additional value-added layers, such as model parameters or cross-references.

- Technical Implementation: Link exposure or response data to relevant model parameters. For instance, toxicological data for a species can be linked to its Dynamic Energy Budget (DEB) parameters in the Add-my-Pet database [15], enabling mechanistic modeling of effects across life stages and endpoints.

- Protocol for Enrichment: Contributors or specialized curators can create a cross-walk table that maps dataset records (species, chemical, endpoint) to entries in external knowledge bases (e.g., NIST Chemistry WebBook, Add-my-Pet, TRY Plant Trait Database). This table should be shared as part of the data package.
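A cross-walk table can be applied as a simple left join on a shared key. In this sketch, the cross-walk rows and the `amp_entry` field are hypothetical placeholders for links to a database such as Add-my-Pet, not verified identifiers. Records without a match are kept unchanged rather than dropped, so enrichment never silently shrinks the dataset.

```python
def enrich(records, crosswalk, key):
    """Left-join records to cross-walk rows on `key`; unmatched records are returned unchanged."""
    lookup = {row[key]: row for row in crosswalk}
    enriched = []
    for record in records:
        links = lookup.get(record.get(key), {})
        # Copy the external links, but never overwrite the record's own fields
        extra = {k: v for k, v in links.items() if k not in record}
        enriched.append({**record, **extra})
    return enriched


# Hypothetical cross-walk entry (placeholder, not a verified database identifier)
crosswalk = [{"species": "Daphnia magna", "amp_entry": "Daphnia_magna"}]
records = [
    {"species": "Daphnia magna", "cas_rn": "7440-66-6", "endpoint": "reproduction"},
    {"species": "Danio rerio", "cas_rn": "7440-66-6", "endpoint": "hatching"},
]
for row in enrich(records, crosswalk, key="species"):
    print(row)
```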

Pillar 5: Conservation Sensitivity

This pillar mandates the ethical handling of data concerning species and locations vulnerable to disturbance, balancing openness with protection.

- Technical Implementation: Implement a sensitivity flagging system within the metadata. For data concerning threatened species (IUCN Red List) or sensitive ecosystems, precise geographic coordinates should be generalized (e.g., to a 10km grid or administrative region) before public sharing.

- Protocol for Risk Assessment: Before sharing, data contributors must conduct a sensitivity screen: 1) Check the species conservation status (e.g., via IUCN Red List API); 2) Assess if location data could facilitate disturbance or illegal collection; 3) Apply appropriate spatial obfuscation if risks are identified; 4) Document all modifications made for conservation reasons.
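Steps 1, 3, and 4 of the sensitivity screen can be sketched as follows; step 2 remains a human judgment. The `status_lookup` dictionary stands in for a conservation-status query such as one against the IUCN Red List API, which is not called here. Treating CR, EN, and VU as sensitive is an assumption. Coordinates are snapped to the center of a 0.1° cell, roughly 11 km in latitude. Longitude cells narrow toward the poles, so a true 10 km grid would require a projected coordinate system.

```python
import math

# Assumption: IUCN threatened categories (Critically Endangered, Endangered, Vulnerable)
SENSITIVE_CATEGORIES = {"CR", "EN", "VU"}


def snap_to_cell_center(coordinate: float, cell_deg: float) -> float:
    """Replace a coordinate with the center of the grid cell that contains it."""
    return math.floor(coordinate / cell_deg) * cell_deg + cell_deg / 2


def screen_record(record: dict, status_lookup: dict, cell_deg: float = 0.1) -> dict:
    """Return a copy of the record; sensitive species get generalized, documented coordinates."""
    status = status_lookup.get(record["species"])  # stand-in for a Red List query (step 1)
    screened = dict(record)
    if status in SENSITIVE_CATEGORIES:
        # Step 3: spatial obfuscation
        screened["lat"] = round(snap_to_cell_center(record["lat"], cell_deg), 6)
        screened["lon"] = round(snap_to_cell_center(record["lon"], cell_deg), 6)
        # Step 4: document the modification in the record itself
        screened["conservation_note"] = (
            f"coordinates generalized to a {cell_deg} degree grid (IUCN status {status})")
    return screened


status_lookup = {"Species A": "EN", "Species B": "LC"}  # hypothetical statuses
print(screen_record({"species": "Species A", "lat": 51.234, "lon": 6.789}, status_lookup))
```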

Table 1: The Five Pillars of the ATTAC Workflow and Their Technical Requirements

| ATTAC Pillar | Primary Objective | Key Technical Actions | Output for Re-users |
|---|---|---|---|
| Access | Guarantee data discovery and availability. | Deposit in FAIR repository; Assign DOI; Create README. | A permanently accessible, citable data package. |
| Transparency | Provide complete provenance and processing history. | Use CRediT roles; Share analysis scripts; Document QC. | Full understanding of data lineage and quality. |
| Transferability | Enable data integration and meta-analysis. | Harmonize units/vocabularies; Use standard identifiers (CAS, ITIS). | Data that is interoperable with other studies. |
| Add-ons | Enhance data utility with external knowledge links. | Link to model parameters (e.g., DEB), chemical databases. | Data enriched for advanced modeling and synthesis. |
| Conservation Sensitivity | Protect vulnerable species and habitats. | Flag sensitive data; Generalize sensitive coordinates. | Ethically shared data that minimizes conservation risk. |

ATTAC in Practice: Methodological Protocols for Data Re-use

Protocol for a Systematic Data Integration and Meta-Analysis

This protocol enables researchers to synthesize data collected under the ATTAC principles.

- Query Formulation: Define the precise ecological question (e.g., "What is the dose-response relationship of chemical X on reproduction in freshwater fish?").

- Discovery and Acquisition: Search ATTAC-formatted repositories using standardized keywords and chemical/species identifiers. Download data packages and their associated metadata/README files.

- Homogenization and Curation: Execute curation scripts (if provided by contributor) or apply standardized curation routines to convert all data to common units and formats. Resolve any discrepancies via the documented provenance.

- Integration: Merge datasets using the standardized identifiers (CAS, ITIS). Utilize add-on links (e.g., DEB parameters) to create enriched analysis tables.

- Analysis: Fit meta-analytic models (e.g., mixed-effects models) that estimate the ecological effects of interest while accounting for the hierarchical structure of the integrated data (e.g., study-level random effects).

- Sensitivity and Conservation Check: Ensure the presentation of results does not inadvertently expose sensitive location information. Generalize findings as necessary.
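As a minimal illustration of study-level random effects, the sketch below implements the DerSimonian-Laird estimator for pooling per-study effect sizes. It is a deliberately simple random-effects model with one between-study variance component. It is not a full mixed-effects model with moderators or nested levels; in practice, a dedicated package such as R's metafor would typically be used. The input values in the usage line are hypothetical.

```python
import math


def random_effects_dl(effects, variances):
    """DerSimonian-Laird random-effects pooling.

    effects: per-study effect sizes (e.g., log response ratios)
    variances: their sampling variances
    Returns (pooled_effect, standard_error, tau_squared).
    """
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies with matching variances")
    w = [1.0 / v for v in variances]                 # inverse-variance (fixed-effect) weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)    # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]   # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, se, tau2


# Hypothetical log response ratios and sampling variances from four studies
pooled, se, tau2 = random_effects_dl([-0.35, -0.10, -0.52, -0.21], [0.02, 0.05, 0.03, 0.04])
print(f"pooled = {pooled:.3f} ± {1.96 * se:.3f}, tau^2 = {tau2:.4f}")
```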

Experimental Protocol for Validating Model Predictions Using Shared Data

Shared raw data provide a strong basis for validating ecological and toxicological models, particularly when those data were not used to calibrate the model being tested.

- Model Selection: Choose a predictive model (e.g., a DEB-Tox model, a QSAR model).

- Test Data Extraction: From ATTAC-formatted repositories, extract raw experimental data that matches the model's domain (species, chemical, endpoint) but was not used in the model's calibration.

- Data Preparation: Prepare the independent test data according to model input requirements, leveraging "Add-on" information (e.g., species-specific DEB parameters from linked databases).

- Prediction and Comparison: Run the model to generate predictions for the test conditions. Statistically compare model predictions against the observed experimental data (e.g., using root-mean-square error, comparison of confidence intervals).

- Feedback Loop: Document the validation performance. This evaluation can be shared as a new "Add-on" to the original dataset, creating a virtuous cycle of data enrichment.
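The statistical comparison step can start with root-mean-square error. The sketch below can optionally work on a log10 scale. That choice is an assumption, but it suits endpoints such as effect concentrations that span orders of magnitude. RMSE is only one of several possible validation metrics, and the values in the usage line are hypothetical.

```python
import math


def rmse(predicted, observed, log10=False):
    """Root-mean-square error between paired predictions and observations.

    With log10=True both series are log10-transformed first (all values must be positive).
    """
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    if log10:
        predicted = [math.log10(p) for p in predicted]
        observed = [math.log10(o) for o in observed]
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical predicted vs. observed EC50 values (ug/L) for three independent tests
print(rmse([12.0, 40.0, 95.0], [10.0, 55.0, 80.0], log10=True))
```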

Table 2: Comparison of Data Sharing Approaches in Ecotoxicology

| Characteristic | Traditional Publication (PDF Summary) | Data Supplement (Static Table) | ATTAC Workflow Implementation |
|---|---|---|---|
| Findability | Low. Buried in text. | Medium. Connected to article. | High. Repository with rich metadata. |
| Accessibility | Medium. Behind paywall possible. | Medium. Often proprietary format. | High. Open, non-proprietary formats. |
| Interoperability | Very Low. Manual extraction needed. | Low. Structure often study-specific. | High. Standardized vocabularies & IDs. |
| Reusability | Low. Lack of provenance & context. | Medium. Basic data provided. | Very High. Full transparency & add-ons. |
| Suitability for Meta-analysis | Poor. | Difficult. | Designed for integration. |

Visualizing the ATTAC Workflow and Data Transformation

Diagram 1: The ATTAC Workflow Process & Data States

Diagram 2: Mapping ATTAC Implementation to FAIR Principles

Diagram 3: Data Homogenization and Enrichment Protocol

Implementing the ATTAC workflow requires both conceptual understanding and practical tools. The following toolkit details essential resources for researchers contributing to or re-using data within this framework.

Table 3: Research Reagent Solutions for ATTAC Implementation

| Tool Category | Specific Tool / Resource | Function in ATTAC Workflow | Key Benefit |
|---|---|---|---|
| Repository & Storage | Zenodo / Dryad | Provides a FAIR-aligned repository for data publication, ensuring Access and citability via DOI assignment. | Long-term preservation, versioning, and integration with GitHub. |
| Metadata Specification | Ecological Metadata Language (EML) | A standardized schema for describing ecological data, critical for Transparency and Transferability. | Ensures machine-readable, comprehensive documentation of data context. |
| Data & Script Management | GitHub / GitLab | Hosts and versions scripts for data cleaning, transformation, and analysis, fulfilling Transparency requirements. | Tracks provenance, enables collaboration, and links code directly to data. |
| Identifier Services | CAS Registry / ITIS | Provides authoritative numeric identifiers for chemicals and taxa, essential for Transferability and integration. | Resolves ambiguity in names, enabling accurate merging of datasets. |
| Model Parameter Database | Add-my-Pet (AmP) Database [15] | A key "Add-on" resource linking species to Dynamic Energy Budget (DEB) model parameters for mechanistic extrapolation. | Transforms simple toxicity data into a basis for trait-based modeling. |
| Conservation Screening | IUCN Red List API | Allows programmatic checking of species conservation status to inform Conservation Sensitivity decisions. | Automates risk assessment for data sharing related to threatened species. |
| Controlled Vocabularies | OECD Glossary of Statistical Terms | Provides standard definitions for ecotoxicological endpoints and metrics, aiding Transferability. | Reduces heterogeneity in how experimental results are described. |
| Data Validation Tool | Morpho Data Editor (w/ EML) | Assists researchers in creating and validating metadata files that comply with EML standards. | User-friendly interface for generating high-quality metadata. |

Implementing FAIR Principles for Findable, Accessible, Interoperable, and Reusable Data

The credibility and pace of ecotoxicology research are fundamentally linked to the availability of high-quality, reusable data. A growing body of evidence positions the sharing of raw data as a critical catalyst for innovation, enabling more robust meta-analyses, accelerating chemical risk assessments, and fostering interdisciplinary collaboration[reference:0]. However, realizing these benefits requires moving beyond simple data deposition to adopting a structured framework that ensures data can be effectively discovered, understood, and utilized by both humans and machines. This technical guide details the implementation of the FAIR (Findable, Accessible, Interoperable, Reusable) principles[reference:1], providing a roadmap for researchers to enhance the value and impact of their ecotoxicological data within the broader scientific community.

The FAIR Principles: A Framework for Data Stewardship

The FAIR principles, established in 2016, provide a comprehensive set of guidelines to transform data into a reliable, machine-actionable asset[reference:2]. Each principle addresses a specific challenge in data reuse:

- Findable: Data and metadata must be assigned persistent, globally unique identifiers (e.g., DOIs) and be richly described with metadata to enable discovery by search engines and catalogues.

- Accessible: Data should be retrievable by their identifier using a standardized, open, and free protocol, with metadata remaining accessible even if the data itself is restricted.

- Interoperable: Data and metadata should use formal, accessible, shared, and broadly applicable languages and vocabularies (ontologies) to enable integration with other datasets.

- Reusable: Data should be described with multiple, relevant attributes (provenance, license, methodological details) to allow accurate interpretation and replication.
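The four principles can be turned into a quick self-check on a metadata record. The sketch below is illustrative only. The field names are hypothetical, and the DOI pattern is a simplification rather than the full DOI syntax. Passing the check does not make a dataset FAIR; it only flags obvious gaps.

```python
import re

# Simplified DOI shape: "10." + registrant code + "/" + suffix (not the full DOI syntax)
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def fair_gaps(metadata: dict) -> list:
    """Flag obvious FAIR gaps in a metadata record; field names are hypothetical."""
    gaps = []
    if not DOI_PATTERN.match(metadata.get("identifier") or ""):
        gaps.append("Findable: no persistent identifier (DOI)")
    if not (metadata.get("access_url") or "").startswith("https://"):
        gaps.append("Accessible: no retrieval URL over a standard open protocol")
    if not metadata.get("vocabularies"):
        gaps.append("Interoperable: no controlled vocabularies declared")
    if not (metadata.get("license") and metadata.get("provenance")):
        gaps.append("Reusable: license or provenance missing")
    return gaps


record = {
    "identifier": "10.1234/example-dataset",      # placeholder DOI
    "access_url": "https://example.org/dataset",  # placeholder URL
    "vocabularies": ["ChEBI", "ENVO"],
    "license": "CC-BY-4.0",
    "provenance": "Raw data from 96-h acute tests; cleaning script v1.0",
}
print(fair_gaps(record))  # []
print(len(fair_gaps({})))  # 4
```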

The State of Data Sharing: A Quantitative Snapshot

Despite policy pushes, the adoption of structured data-sharing practices in environmental sciences remains inconsistent. Recent analyses quantify the current landscape:

Table 1: Prevalence of Data and Code Sharing Policies in Ecology & Evolution Journals (2025)[reference:3]

| Policy Aspect | Percentage of Journals (n=275) | Key Detail |
|---|---|---|
| Data-Sharing Encouraged | 22.5% | - |
| Data-Sharing Mandated | 38.2% | 59.0% of these require sharing for peer review |
| Code-Sharing Encouraged | 26.6% | - |
| Code-Sharing Mandated | 26.9% | 77.0% of these require sharing for peer review |

Table 2: Availability of Supplementary Materials (SM) in Biomedical Literature[reference:4]

| Metric | Value | Note |
|---|---|---|
| PMC Articles with ≥1 SM file (historical) | 27% | - |
| PMC Articles with ≥1 SM file (2023) | 40% | Indicates a positive trend |
| Primary content of SM (tabular data) | >90% | Highlights need for machine-readable formats |

These figures underscore a dual challenge: while the volume of shared materials is growing, significant gaps remain in mandatory, structured sharing that aligns with FAIR criteria.

Experimental Protocol: The ATTAC Workflow for Wildlife Ecotoxicology Data

To translate FAIR principles into practice, domain-specific protocols are essential. The ATTAC (Access, Transparency, Transferability, Add-ons, Conservation sensitivity) workflow provides a detailed, five-step methodology for curating and sharing wildlife ecotoxicology data[reference:5].

Materials and Pre-Processing

- Data Sources: Gather raw data from laboratory experiments, field monitoring, and legacy literature.

- Homogenization Toolkit: Use spreadsheet software (e.g., Excel, Google Sheets) or script-based tools (R, Python) for initial data cleaning.

- Metadata Schema: Prepare a template based on standards like Ecological Metadata Language (EML) or ISA-Tab.
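As a minimal sketch of the script-based option, the Python below homogenizes a raw CSV export: it normalizes column headers to snake_case and converts common missing-value tokens to `None`. The token list and the header rule are assumptions; adapt them to the conventions used in your own lab sheets. Censored entries such as "<LOD" are deliberately left as strings so they are not silently treated as missing.

```python
import csv
import io
import re

# Tokens treated as "missing"; an assumption to be matched to local conventions.
MISSING_TOKENS = {"", "na", "n/a", "nan", "-"}


def normalize_header(name):
    """Lower-case a column name and collapse runs of non-alphanumerics into '_'."""
    return re.sub(r"[^0-9a-z]+", "_", name.strip().lower()).strip("_")


def homogenize(raw_csv):
    """Return rows from a raw CSV string with clean headers and None for missing values."""
    rows = []
    for row in csv.DictReader(io.StringIO(raw_csv)):
        clean = {}
        for key, value in row.items():
            if key is None:  # extra unnamed fields beyond the header are dropped
                continue
            value = (value or "").strip()
            clean[normalize_header(key)] = None if value.lower() in MISSING_TOKENS else value
        rows.append(clean)
    return rows


raw = "Species, Conc (mg/L) ,Mortality %\nDaphnia magna,1.5,NA\nDaphnia magna, 3.0 ,40\n"
for r in homogenize(raw):
    print(r)
```

Values are kept as strings at this stage; type conversion belongs to the later standardization step, where units are known.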

Step-by-Step Procedure

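- Access: Deposit the finalized dataset in a trusted, public repository (e.g., Zenodo, Dryad) to obtain a persistent identifier (DOI). Curated domain databases such as EPA's ECOTOX Knowledgebase[reference:6], which abstracts toxicity results from the published literature, complement rather than replace such a deposit.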

- Transparency: Document all methodological details, including chemical exposure concentrations, species/strain information, experimental duration, and endpoint measurements. Link this protocol directly to the dataset metadata.

- Transferability: Convert data into non-proprietary, machine-readable formats (e.g., CSV, JSON). Apply controlled vocabularies (e.g., ChEBI for chemicals, ENVO for environments) to key variables to ensure interoperability.

- Add-ons: Provide supplemental code (e.g., R/Python scripts) used for statistical analysis or graph generation, alongside a README file explaining execution steps.

- Conservation Sensitivity: Clearly flag any data subject to ethical or conservation restrictions. If applicable, provide a rationale for data embargo and specify the terms under which restricted data can be accessed.
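The Transferability and Conservation Sensitivity steps can be sketched together: export a non-proprietary CSV, write a JSON metadata sidecar that links columns to controlled vocabularies, and withhold flagged columns from the public file while recording the reason. The function name, metadata keys, and script name are hypothetical, the `XXXXX` term IDs are placeholders rather than real ChEBI or ENVO terms, and the example row is invented.

```python
import csv
import io
import json

# Hypothetical column annotations; term IDs are placeholders, not verified ontology IDs.
COLUMN_ANNOTATIONS = {
    "chemical": {"vocabulary": "ChEBI", "term_id": "CHEBI:XXXXX"},
    "habitat": {"vocabulary": "ENVO", "term_id": "ENVO:XXXXXXXX"},
}


def export_attac(rows, sensitive_columns, embargo_reason=None):
    """Return (csv_text, metadata_json); sensitive columns are withheld and flagged."""
    public_cols = [c for c in rows[0] if c not in sensitive_columns]
    buffer = io.StringIO()
    # extrasaction="ignore" drops the withheld columns when writing each row.
    writer = csv.DictWriter(buffer, fieldnames=public_cols,
                            extrasaction="ignore", lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    metadata = {
        "format": "text/csv",
        "columns": {c: COLUMN_ANNOTATIONS.get(c, {}) for c in public_cols},
        "restricted_columns": sorted(sensitive_columns),
        "embargo_reason": embargo_reason,
        "analysis_code": "analysis.R",  # hypothetical Add-on script shipped with the data
    }
    return buffer.getvalue(), json.dumps(metadata, indent=2)


rows = [{"chemical": "compound_A", "habitat": "pond", "lc50_mg_l": "0.42", "site_gps": "hidden"}]
csv_text, meta = export_attac(rows, {"site_gps"}, "location of a protected population")
print(csv_text)
print(meta)
```

Keeping the restriction rationale in the metadata, rather than only omitting the column, lets the record stay findable and accessible even when part of the data is not.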

Quality Control and Validation

- Verify that all dataset variables are clearly defined in the metadata.

- Test the provided code/scripts to ensure they run successfully and reproduce key results.

- Validate the dataset identifier (DOI) resolves to the correct landing page.
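These checks can be partly automated. The sketch assumes the data dictionary is a simple mapping of column names to definitions; it reports undefined columns, builds the `https://doi.org/` resolver URL for a syntactically valid DOI, and compares a re-computed result with the reported one within a relative tolerance. Actually fetching the URL to confirm it reaches the correct landing page needs network access and is left out; the helper names and the default tolerance are assumptions.

```python
import math
import re

DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def undefined_columns(columns, data_dictionary):
    """Columns present in the data file but lacking a definition in the metadata."""
    return sorted(set(columns) - set(data_dictionary))


def doi_resolver_url(doi):
    """Return the doi.org URL for a syntactically valid DOI; raise ValueError otherwise."""
    if not DOI_PATTERN.match(doi):
        raise ValueError(f"not a DOI: {doi!r}")
    return "https://doi.org/" + doi


def reproduces(reported, recomputed, rel_tol=1e-3):
    """True if re-running the shared script reproduces a reported value within rel_tol."""
    return math.isclose(reported, recomputed, rel_tol=rel_tol)


print(undefined_columns(["species", "conc_mg_l", "notes"],
                        {"species": "Accepted scientific name",
                         "conc_mg_l": "Nominal concentration in mg/L"}))
print(doi_resolver_url("10.5281/zenodo.123"))
print(reproduces(1.234, 1.2341))
```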

Visualization of Workflows and Relationships

Diagram 1: The FAIR Data Lifecycle

This diagram illustrates the iterative cycle of implementing FAIR principles, where each step feeds into the next to enhance data utility.

Diagram 2: The ATTAC Workflow for Data Curation

This flowchart outlines the sequential and decision-based steps in the ATTAC protocol for preparing wildlife ecotoxicology data for sharing and reuse.

Implementing FAIR principles requires a combination of platforms, standards, and software tools. The following table details key solutions for each stage of the data lifecycle.

Table 3: Research Reagent Solutions for FAIR Data Management

| Tool/Resource | Category | Primary Function in FAIR Implementation |
|---|---|---|
| Zenodo / Dryad | Repository | Provides persistent identifiers (DOIs) and long-term storage for data, code, and supplements, fulfilling the Findable and Accessible principles. |
| ISA-Tab / EML | Metadata Standard | Frameworks for structuring and reporting metadata in a machine-readable format, essential for Interoperability and Reusability. |
| ECOTOX Knowledgebase | Domain Repository | A curated database for environmental toxicity data that allows download of raw data files, exemplifying FAIR access in ecotoxicology[reference:7]. |
| FAIR-SMART API | Access Tool | A system that standardizes and provides programmatic access to supplementary materials, addressing the Accessible and Interoperable principles for SM[reference:8]. |
| R / Python (tidyverse, pandas) | Analysis Software | Script-based environments that promote reproducible analysis workflows. Sharing code alongside data is critical for Reusability. |
| Ontobee / OLS | Vocabulary Service | Provides access to biomedical and environmental ontologies (e.g., ChEBI, ENVO) for annotating data, a core requirement for Interoperability. |

The transition to a culture of open, reusable data in ecotoxicology is both a technical and a cultural endeavor. As quantified in this guide, current sharing practices are advancing but require systematic implementation of frameworks like the FAIR principles. By adopting structured protocols such as the ATTAC workflow, leveraging the essential tools in the research toolkit, and visualizing the data lifecycle, researchers can transform raw data from a static publication supplement into a dynamic, foundational resource. This shift is paramount for addressing complex environmental health challenges, where the integration and reuse of diverse data streams are key to generating reliable evidence for policy and protection.

Ecotoxicology research is fundamental for understanding the impacts of chemicals on ecosystems and for informing evidence-based environmental regulations [16]. The field faces a critical challenge: a vast and ever-growing amount of data on chemical toxicity is scattered across individual studies, often in heterogeneous formats, making quantitative integration and synthesis difficult [16]. This fragmentation limits our ability to perform robust meta-analyses, identify broad patterns, and ascertain whether existing management actions sufficiently protect wildlife [16] [17].

The paradigm of raw data sharing presents a transformative solution. Moving beyond the sharing of only summarized or published results to sharing primary, unaggregated experimental data unlocks significant scientific and societal benefits [17]. These benefits include: advancing science through reproducible research; allowing verification of results that underpin environmental policies; and enabling the creation of "megadata" resources that permit analyses impossible with smaller, isolated datasets [17]. For instance, large aggregated databases can help answer fundamental questions about the relationship between chemical structure and toxicity or predict adverse outcomes from molecular events [17].

However, the immense potential of shared raw data can only be realized through rigorous data stewardship. Direct pooling of disparate datasets without processing leads to a "Tower of Babel" scenario, where data inconsistency cripples analysis. Therefore, a structured approach to data curation is essential. This guide details the three interdependent pillars of this approach: Standardization (establishing common formats and units), Harmonization (mapping diverse data to a common model), and Quality Review (assessing reliability and relevance) [18] [6]. When implemented within frameworks like the FAIR principles (Findable, Accessible, Interoperable, Reusable), these processes transform scattered data into a powerful, reusable resource for high-impact, collaborative science in ecotoxicology [18] [19].

Data Standardization: Establishing a Common Language

Data standardization is the foundational process of converting data into a consistent format using common units, terminologies, and structural rules. It is the first critical step to ensure that data from different sources can be technically compared and combined.

Core Standardization Procedures

- Unit Conversion and Normalization: Ecotoxicity data are reported in various units (e.g., mg/L, µg/L, ppb, molarity). A primary standardization step involves converting all values to a single, canonical unit system (typically SI or a field standard such as mg/L for aqueous concentrations) [20]. For toxicity endpoints (e.g., EC50, LC50, NOEC), this also includes grouping or filtering reported values by standard exposure durations (e.g., 48 h for Daphnia, 96 h for fish) where possible, because the effect concentration may vary with exposure time [8].

- Chemical Identifier Harmonization: Chemicals may be identified by common names, trade names, CAS Registry Numbers, or internal database IDs. Standardization requires mapping all entries to authoritative, unique identifiers. Best practice is to use persistent identifiers like the DSSTox Substance ID (DTXSID) or InChIKey, which are less ambiguous than CAS numbers [20] [8]. Tools like the US EPA's CompTox Chemicals Dashboard facilitate this mapping.

- Taxonomic Name Resolution: Organism names are prone to synonyms and changes in classification. Standardization involves resolving all species names to an accepted taxonomic backbone, such as the Integrated Taxonomic Information System (ITIS) or the World Register of Marine Species (WoRMS). This ensures that data for Daphnia magna, for example, are consolidated regardless of reporting variations [20] [8].

- Endpoint Categorization: Similar toxicological effects may be described with different terminology across studies. Standardization involves categorizing free-text effect descriptions (e.g., "immobilization," "intoxication," "lack of movement") into a controlled vocabulary of standardized effect groups, such as "Mortality," "Growth," "Reproduction," or "Behavior" [20] [8].
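A compact sketch of three of these procedures follows. Chemical identifier mapping is omitted because it depends on an external service such as the CompTox Chemicals Dashboard. The taxon table stands in for an ITIS or WoRMS query, and the effect keywords stand in for a curated controlled vocabulary; all table entries and keyword lists are illustrative assumptions, and the ppm/ppb factors assume dilute aqueous solutions.

```python
"""Sketch of unit conversion, taxon resolution and effect categorization.

All lookup tables are small illustrative stand-ins, not curated vocabularies.
"""

# Factors converting common aqueous mass-concentration units to mg/L.
MASS_UNITS_TO_MG_PER_L = {
    "g/l": 1e3, "mg/l": 1.0, "ug/l": 1e-3, "µg/l": 1e-3, "ng/l": 1e-6,
    "ppm": 1.0,   # assumes a dilute aqueous solution (1 ppm ~ 1 mg/L)
    "ppb": 1e-3,  # assumes a dilute aqueous solution (1 ppb ~ 1 µg/L)
}
# Molar units in mol/L; converting these needs the substance's molar mass.
MOLAR_UNITS_TO_MOL_PER_L = {"mol/l": 1.0, "mmol/l": 1e-3, "umol/l": 1e-6, "µmol/l": 1e-6}


def to_mg_per_l(value, unit, molar_mass_g_per_mol=None):
    """Convert a concentration to mg/L."""
    u = unit.strip().lower()
    if u in MASS_UNITS_TO_MG_PER_L:
        return value * MASS_UNITS_TO_MG_PER_L[u]
    if u in MOLAR_UNITS_TO_MOL_PER_L:
        if molar_mass_g_per_mol is None:
            raise ValueError(f"molar mass required to convert {unit!r}")
        # mol/L x g/mol = g/L, then x 1000 for mg/L
        return value * MOLAR_UNITS_TO_MOL_PER_L[u] * molar_mass_g_per_mol * 1000.0
    raise ValueError(f"unsupported unit: {unit!r}")


# Stand-in for an ITIS/WoRMS lookup: normalized reported name -> accepted name.
ACCEPTED_TAXA = {
    "daphnia magna": "Daphnia magna",
    "d. magna": "Daphnia magna",
    "oncorhynchus mykiss": "Oncorhynchus mykiss",
    "salmo gairdneri": "Oncorhynchus mykiss",  # older synonym of rainbow trout
}


def resolve_taxon(name):
    """Return the accepted name, or None so unresolved names go to manual review."""
    return ACCEPTED_TAXA.get(" ".join(name.split()).lower())


# Free-text effect descriptions -> standardized effect groups (illustrative keywords).
EFFECT_KEYWORDS = {
    "Mortality": ("mortality", "death", "immobili", "intoxication", "lack of movement"),
    "Growth": ("growth", "biomass", "length"),
    "Reproduction": ("reproduction", "fecundity", "offspring"),
    "Behavior": ("behavio", "avoidance", "swimming"),
}


def categorize_effect(description):
    """Return the first effect group with a keyword in the description, else None."""
    text = description.lower()
    for group, keywords in EFFECT_KEYWORDS.items():
        if any(k in text for k in keywords):
            return group
    return None


print(to_mg_per_l(250, "ug/L"), resolve_taxon("Salmo gairdneri"), categorize_effect("Immobilization"))
```

Returning `None` for unknown taxa and effects, instead of guessing, keeps unresolved records visible for a curator rather than silently misclassified.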

The following table summarizes the scale and scope of a major standardized ecotoxicity resource, illustrating the outcome of rigorous standardization processes applied to a primary data source.

Table 1: Scale of a Standardized Ecotoxicity Database (Standartox Tool) [20]

| Data Category | Count | Description |
|---|---|---|
| Test Results | ~600,000 | Individual ecotoxicological test results after filtering for common endpoints. |
| Unique Chemicals | ~8,000 | Distinct chemical substances tested. |
| Taxa | ~10,000 | Unique species or other taxonomic groups used in tests. |
| Primary Data Source | US EPA ECOTOX | Quarterly updated source database containing over 1.1 million test results for more than 12,000 chemicals and 14,000 species [8]. |
| Key Standardized Endpoints | XX50 (EC50, LC50), LOEC, NOEC | Filtered and harmonized to ensure comparability. |

Data Harmonization: Integrating Diverse Data Structures

While standardization addresses format, harmonization addresses meaning. It is the process of semantically integrating data collected using different methodologies, experimental designs, or measurement tools into a coherent, unified structure suitable for analysis [21].

The Harmonization Workflow

The harmonization workflow typically follows a multi-stage process, as exemplified by large collaborative cohorts and database projects.

Figure 1: A Generalized Data Harmonization Workflow

- Common Data Model (CDM) Definition: The first step is establishing a target schema—the CDM. This model defines the structure, variable names, data types, and allowed values for the unified database. In the ECHO-wide Cohort, this involved defining "essential" and "recommended" data elements for each life stage [21]. For animal ecology, the Euromammals initiative developed a shared database model with core tables for animals, sensors, deployments, and locations [19].

- Semantic Mapping and Variable Derivation: Each source dataset must be mapped to the CDM. This often requires complex transformations. For example, multiple questionnaires measuring "stress" must be mapped to a derived "stress score" variable in the CDM [21]. In ecotoxicology, this could mean deriving a standardized "acute mortality" flag from various reported effect descriptions and exposure durations [8].

- Execution and Integration: The mapping rules are executed via Extract, Transform, Load (ETL) scripts, populating the harmonized database. Continuous communication with data providers is crucial to resolve ambiguities. The Euromammals model highlights the importance of data curators who perform quality checks and iterate with providers to fix inconsistencies [19].
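The three stages can be illustrated with a toy example: a frozen dataclass plays the role of the CDM and enforces its allowed values, one mapping function per source performs semantic mapping and variable derivation (including an acute-mortality flag that uses the ≤ 96 h acute cut-off), and a small ETL loop loads conforming rows while setting aside failures for curator follow-up. The two source schemas, all field names, and the example values are hypothetical.

```python
from dataclasses import dataclass

# Allowed values for the effect_group field of the hypothetical CDM.
ALLOWED_EFFECT_GROUPS = {"Mortality", "Growth", "Reproduction", "Behavior", "Other"}


@dataclass(frozen=True)
class ToxRecord:
    """One row of a hypothetical common data model for aquatic toxicity tests."""
    chemical_id: str    # e.g. a DTXSID or InChIKey
    species: str        # accepted scientific name
    endpoint: str       # e.g. "LC50", "EC50"
    effect_group: str   # controlled vocabulary (ALLOWED_EFFECT_GROUPS)
    duration_h: float   # exposure duration in hours
    value_mg_l: float   # effect concentration in mg/L
    source: str         # provenance: which provider the row came from

    def __post_init__(self):
        # Enforce the CDM's allowed values when a record is created.
        if self.effect_group not in ALLOWED_EFFECT_GROUPS:
            raise ValueError(f"unknown effect group: {self.effect_group!r}")

    @property
    def acute_mortality(self):
        """Derived variable: mortality-type effect within a 96-h exposure."""
        return self.effect_group == "Mortality" and self.duration_h <= 96


def from_lab_a(row):
    # Assumed schema: duration in days, concentration in µg/L, free-text effect.
    effect = "Mortality" if row["effect"].lower() in {"death", "mortality"} else "Other"
    return ToxRecord(row["dtxsid"], row["organism"], row["endpoint"].upper(), effect,
                     float(row["days"]) * 24, float(row["conc_ug_l"]) / 1000, "lab_a")


def from_lab_b(row):
    # Assumed schema: already in hours and mg/L, with a coded effect group.
    return ToxRecord(row["chem_id"], row["taxon"], row["endpoint_type"], row["effect_group"],
                     float(row["exposure_h"]), float(row["mg_per_l"]), "lab_b")


MAPPERS = {"lab_a": from_lab_a, "lab_b": from_lab_b}


def etl(batches):
    """Map each provider's rows into the CDM; keep failures for curator review."""
    loaded, rejected = [], []
    for source, rows in batches.items():
        for row in rows:
            try:
                loaded.append(MAPPERS[source](row))
            except (KeyError, ValueError) as err:
                rejected.append((source, row, repr(err)))
    return loaded, rejected
```

Rejected rows are returned with the error text rather than dropped, which mirrors the curator loop described above: the provider can be asked to fix the source record and the batch re-run.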

Protocol for Harmonizing Ecotoxicity Data for Machine Learning

The creation of benchmark datasets for machine learning (ML) requires particularly rigorous harmonization. The following protocol is derived from the ADORE (Aquatic Toxicity Datasets for Open REsearch) benchmark dataset construction [8].

Experimental Protocol 1: Assembling a Machine Learning-Ready Ecotoxicity Dataset

- Objective: To create a clean, standardized, and feature-rich dataset for ML models predicting acute aquatic toxicity.

- Data Source: US EPA ECOTOX database (quarterly release) [8].

- Filtering & Inclusion Criteria:

  - Taxonomic Groups: Restrict data to three key groups: Fish, Crustaceans, and Algae.

  - Endpoint Harmonization:

    - Fish: Include only entries with Effect = "Mortality" and standardized endpoints like LC50.

    - Crustaceans: Include entries with Effect = "Mortality" or "Intoxication" (the latter often used as a proxy for immobilization/mortality).

    - Algae: Include entries related to population health: Effects = "Mortality," "Growth," "Population," "Physiology."

  - Exposure Duration: Include tests with durations ≤ 96 hours to focus on acute toxicity.

  - Life Stage: Exclude tests on isolated eggs, embryos, or in vitro cell assays to maintain a focus on whole-organism, in vivo data [8].

- Feature Expansion: