Toxicological Dose Descriptors Decoded: From LD50 to NAMs for Modern Risk Assessment

Abstract

This article provides a comprehensive guide to toxicological dose descriptors, the fundamental metrics that quantify the relationship between chemical exposure and adverse effects. Designed for researchers, scientists, and drug development professionals, it covers the foundational definitions and applications of classic descriptors like NOAEL, LD50, and BMD. It then explores modern methodological applications, including their critical role in deriving health-based guidance values such as the Reference Dose (RfD) and in high-throughput screening. The discussion extends to troubleshooting common challenges in dose-setting and data interpretation, highlighting the shift from Maximum Tolerated Dose (MTD) to Kinetic Maximum Dose (KMD) principles. Finally, it examines the validation and comparative use of these descriptors within New Approach Methodologies (NAMs) and large-scale, curated databases like the EPA's ToxValDB. This holistic view equips practitioners to confidently select, apply, and interpret dose descriptors across both traditional and next-generation toxicological paradigms.

Understanding the Core Language of Hazard: Key Toxicological Dose Descriptors Defined

In toxicology and risk assessment, a dose descriptor is a term used to identify the relationship between a specific effect of a chemical substance and the dose at which it takes place [1]. These quantifiable metrics serve as the fundamental bridge between experimental toxicological data and the protective safety limits established for human health and the environment, such as the Derived No-Effect Level (DNEL), Reference Dose (RfD), or Predicted No-Effect Concentration (PNEC) [1]. The core principle underpinning their use is the dose-response relationship, which describes how the likelihood and severity of adverse health effects are related to the amount and condition of exposure to an agent [2].

The process of human health risk assessment is a structured, four-step paradigm: Hazard Identification, Dose-Response Assessment, Exposure Assessment, and Risk Characterization [3]. Dose descriptors are the pivotal output of the Dose-Response Assessment step and are critical inputs for the final Risk Characterization. Their derivation and application are framed within the understanding of two key toxicological concepts: thresholds for systemic toxicity and non-threshold mechanisms for carcinogenicity. For systemic toxicants, it is generally accepted that homeostatic and adaptive mechanisms must be overcome before an adverse effect is manifested, implying the existence of an exposure threshold below which no adverse effect is expected [4]. In contrast, for carcinogens and mutagens, it is often assumed that even a small number of molecular events can initiate a process leading to cancer, a mechanism treated as nonthreshold [4]. This fundamental distinction dictates the choice of dose descriptor (e.g., NOAEL for threshold effects, T25 or BMD for non-threshold carcinogens) and the subsequent mathematical approach for deriving safe exposure levels [3].

This article provides an in-depth examination of the primary dose descriptors utilized in modern toxicology. It details their definitions, the experimental studies from which they are derived, and their central role in the quantitative risk assessment framework that protects public health.

Core Dose Descriptors: Definitions, Acquisition, and Applications

Dose descriptors are determined through standardized toxicological studies and are expressed using specific units. The following section delineates the key descriptors, categorized by their primary application in assessing acute toxicity, systemic (repeated-dose) toxicity, carcinogenicity, and ecotoxicity.

Table 1: Summary of Key Toxicological Dose Descriptors

| Dose Descriptor | Full Name | Definition | Typical Study Source | Common Units | Primary Application |
|---|---|---|---|---|---|
| LD₅₀ / LC₅₀ | Lethal Dose (or Concentration) 50% | A statistically derived single dose (or concentration) at which 50% of the test animals are expected to die [1]. | Acute toxicity studies [1]. | mg/kg body weight (LD₅₀); mg/L (LC₅₀) [1]. | Acute toxicity hazard classification and labeling [1]. |
| NOAEL | No Observed Adverse Effect Level | The highest exposure level at which there are no biologically significant increases in adverse effects between exposed and control groups [1]. | Repeated dose (28-day, 90-day, chronic) and reproductive toxicity studies [1]. | mg/kg bw/day (oral); mg/L/6h/day (inhalation) [1]. | Derivation of safe human exposure levels (e.g., RfD, ADI, OEL) [1]. |
| LOAEL | Lowest Observed Adverse Effect Level | The lowest exposure level at which there are biologically significant increases in adverse effects [1]. | Repeated dose and reproductive toxicity studies (when NOAEL is not identified) [1]. | mg/kg bw/day (oral) [1]. | Used with higher assessment factors to derive safe exposure levels when NOAEL is unavailable [1]. |
| BMD/BMDL₁₀ | Benchmark Dose (Lower Confidence Limit) | A model-derived dose that produces a predetermined change in response (e.g., 10% extra risk). The BMDL is the lower confidence bound [3]. | Dose-response studies (often chronic or carcinogenicity). | mg/kg bw/day [1]. | Modern alternative to NOAEL/LOAEL for deriving reference values; used for cancer and non-cancer endpoints [3]. |
| T₂₅ | Tumorigenic Dose 25 | The chronic dose rate estimated to give 25% of the animals tumors at a specific tissue, after correction for spontaneous incidence [1]. | Carcinogenicity bioassays. | mg/kg bw/day [1]. | Risk assessment for non-threshold carcinogens to calculate a Derived Minimal Effect Level (DMEL) [1]. |
| EC₅₀ | Median Effective Concentration | The concentration of a substance that results in a 50% reduction in a specified sub-lethal effect (e.g., algal growth rate, Daphnia immobilization) [1]. | Acute aquatic toxicity studies. | mg/L [1]. | Acute environmental hazard classification and PNEC calculation [1]. |
| NOEC | No Observed Effect Concentration | The highest tested concentration in an environmental compartment at which no unacceptable effect is observed [1]. | Chronic aquatic and terrestrial toxicity studies. | mg/L [1]. | Chronic environmental hazard classification and PNEC calculation [1]. |

Acute Toxicity Descriptors (LD₅₀/LC₅₀): These values are foundational for hazard classification and labeling (e.g., GHS). A lower LD₅₀/LC₅₀ value indicates higher acute toxicity [1]. While informative for immediate hazards, they do not predict chronic toxicity effects [5].

Systemic Toxicity Descriptors (NOAEL, LOAEL, BMD): These are the most critical descriptors for protecting human health from repeated exposures. The NOAEL is identified from the critical study, that is, the study showing the adverse effect (or its known precursor) at the lowest dose in the most sensitive species [3]. A higher NOAEL indicates lower chronic toxicity [1]. The NOAEL/LOAEL approach has two significant scientific limitations: the result depends on the dose spacing and sample size chosen for the study, and it disregards the shape of the dose-response curve [4]. Benchmark Dose (BMD) modeling is a more statistically rigorous alternative that addresses these shortcomings by fitting a model to all of the dose-response data and estimating the dose associated with a predefined benchmark response [3].

Carcinogenicity Descriptors (T₂₅, BMD): For substances considered non-threshold carcinogens, descriptors like T₂₅ or BMD₁₀ are used to quantify potency. These values serve as points of departure for low-dose extrapolation, often using linear models to estimate cancer risk at environmental exposure levels [3].
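The linear extrapolation mentioned above can be sketched in a few lines. This is a minimal illustration, assuming a straight line from the origin to the point of departure. The function names and the numerical inputs (a BMDL₁₀ of 5 mg/kg bw/day and an exposure of 0.001 mg/kg bw/day) are hypothetical and do not come from any assessment.

```python
def linear_slope_factor(pod_dose: float, benchmark_response: float = 0.10) -> float:
    """Slope of a straight line drawn from the origin to the point of departure.

    pod_dose: point of departure in mg/kg bw/day (for example a BMDL10).
    benchmark_response: extra risk at the point of departure
        (0.10 for a BMDL10, 0.25 for a T25).
    """
    if pod_dose <= 0:
        raise ValueError("pod_dose must be positive")
    return benchmark_response / pod_dose


def extrapolated_risk(exposure: float, slope: float) -> float:
    """Extra risk at a low exposure, assuming linearity down to zero dose."""
    return exposure * slope


# Hypothetical inputs, for illustration only.
slope = linear_slope_factor(pod_dose=5.0, benchmark_response=0.10)
risk = extrapolated_risk(exposure=0.001, slope=slope)
print(f"slope = {slope:.3f} per mg/kg bw/day, extra risk = {risk:.1e}")
```

Whether a linear model is appropriate at all depends on the mode-of-action analysis; the sketch only shows the arithmetic once that choice has been made.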

Ecotoxicity Descriptors (EC₅₀, NOEC): These are used in parallel to human health descriptors to assess environmental risk. They are derived from studies on species representing different trophic levels (e.g., algae, Daphnia, fish) and are pivotal for calculating the Predicted No-Effect Concentration (PNEC) for an ecosystem [1].

From Descriptor to Decision: Deriving Safe Exposure Limits

The ultimate objective of calculating dose descriptors is to derive health-based guidance values that define presumed safe exposure levels for humans. This process involves applying assessment factors, historically called safety factors, to account for scientific uncertainties [4].

The Reference Dose (RfD) Framework

The RfD is an oral exposure level (RfC for inhalation) estimated to be without appreciable risk of adverse effects over a lifetime [4]. It is derived using the following formula:

RfD = POD / (UF₁ × UF₂ × ... × UFₙ) = POD / Total UF, where the point of departure (POD) is the NOAEL, LOAEL, or BMDL [4] [3]

The Uncertainty Factors (UFs) are typically 10-fold defaults but can be modified based on chemical-specific data [3]. Common UFs include:

- UFₐ (Interspecies Variability): Accounts for the extrapolation from average animal to average human.

- UFₕ (Intraspecies Variability): Accounts for variability within the human population (e.g., genetic, life stage, health status).

- UFₛ (Subchronic to Chronic): Applied when the NOAEL is from a subchronic study.

- UFₗ (LOAEL to NOAEL): Applied when a LOAEL is used instead of a NOAEL.

- UFₚ (Database Deficiencies): Applied when the overall toxicological database is incomplete [3].

Calculation and Uncertainty

The process is illustrated by a sample calculation from the U.S. EPA: If a chronic rat study identifies a NOAEL of 10 mg/kg-day, and standard UFs of 10 for interspecies and 10 for intraspecies variation are applied, the RfD would be calculated as 10 mg/kg-day / (10 × 10) = 0.1 mg/kg-day [4]. It is crucial to understand that the RfD is not a precise threshold of safety but a "soft" estimate with bounds of uncertainty that may span an order of magnitude [4]. Exceeding the RfD indicates an increased level of concern and triggers closer scrutiny, not a certainty of harm [4].
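The sample calculation can be reproduced with a short helper. This is a sketch under stated assumptions: the function name `reference_dose` and the dictionary keys are illustrative choices, and the function performs only the division described in the text, without any judgment about which uncertainty factors apply.

```python
from math import prod


def reference_dose(pod: float, uncertainty_factors: dict[str, float]) -> float:
    """Divide a point of departure (mg/kg-day) by the product of the UFs."""
    if pod <= 0:
        raise ValueError("pod must be positive")
    if any(uf < 1 for uf in uncertainty_factors.values()):
        raise ValueError("uncertainty factors should be >= 1")
    total_uf = prod(uncertainty_factors.values())
    return pod / total_uf


# Values from the sample calculation in the text: a NOAEL of 10 mg/kg-day
# with an interspecies UF of 10 and an intraspecies UF of 10.
rfd = reference_dose(10.0, {"interspecies": 10, "intraspecies": 10})
print(rfd)  # 0.1
```

Starting from a LOAEL instead would simply add one more entry to the dictionary (for example a hypothetical `"loael_to_noael": 10`), which lowers the resulting value tenfold.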

Table 2: Derivation of Human Health Guidance Values from Dose Descriptors

| Source Descriptor | Target Guidance Value | Core Formula | Key Uncertainty/Assessment Factors | Primary Regulatory Context |
|---|---|---|---|---|
| NOAEL | Reference Dose (RfD) / Acceptable Daily Intake (ADI) [4] [1]. | NOAEL / Total UF [4]. | Interspecies (UFₐ), Intraspecies (UFₕ), Study duration, Database adequacy [3]. | Chemical safety in food, water, and environment [4]. |
| BMDL₁₀ | Reference Dose (RfD) [3]. | BMDL₁₀ / Total UF [3]. | Same as for NOAEL, but with reduced need for UFₗ. | Modern risk assessment where robust dose-response data exist [3]. |
| LOAEL | Reference Dose (RfD) [3]. | LOAEL / (Total UF × UFₗ) [3]. | Includes an additional factor (often 10) for using a LOAEL instead of a NOAEL. | Used when a NOAEL cannot be determined from the critical study. |
| T₂₅ or BMD | Derived Minimal Effect Level (DMEL) [1]. | Varies; often involves linear extrapolation from the point of departure [3]. | Mode-of-action analysis; choice between linear or nonlinear low-dose extrapolation models [3]. | Risk assessment for substances treated as non-threshold carcinogens [1]. |

Experimental Protocols for Determining Key Dose Descriptors

The reliability of dose descriptors hinges on rigorously conducted, standardized toxicological studies. The following protocols outline the general methodologies for key study types.

Protocol for a 90-Day Repeated Dose Oral Toxicity Study (to Identify NOAEL/LOAEL)

Objective: To identify the target organ(s) for toxicity and establish a NOAEL/LOAEL following repeated daily oral administration [6].

- Test System: Young adult rodents (typically rats), assigned randomly to groups.

- Test Groups: At least three dose groups and a concurrent vehicle control group. The high dose should elicit observable toxicity but not excessive mortality; the low dose should aim to produce no adverse effects (a potential NOAEL); and the mid dose(s) should be spaced to define the dose-response curve.

- Administration: The test substance is administered daily, 7 days a week, via oral gavage or incorporation into diet, for 90 days.

- In-life Observations: Daily clinical observations; weekly detailed physical examinations, body weight, and food consumption measurements.

- Terminal Procedures: At study end, hematology, clinical chemistry, and urinalysis are performed. A full necropsy is conducted. All major organs are weighed (absolute and relative to body/brain weight). Tissues are preserved for histopathological examination of a comprehensive list of organs, with special attention to potential target organs.

- Data Analysis & NOAEL/LOAEL Identification: Statistical analysis compares dose groups to the control. The NOAEL is identified as the highest dose showing no statistically or biologically significant adverse effect. The LOAEL is the lowest dose showing a significant adverse effect [1].
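The final identification step can be expressed as a small lookup once each dose group has been judged adverse or not. The sketch below assumes that judgment has already been made (it is the hard part and is not automated here). The function name, the data layout, and the example doses are hypothetical.

```python
from typing import Optional


def identify_noael_loael(
    groups: list[tuple[float, bool]],
) -> tuple[Optional[float], Optional[float]]:
    """Return (NOAEL, LOAEL) from (dose, adverse_effect_observed) pairs.

    The control group (dose 0) should not be included. The adverse flag is
    assumed to already reflect statistical and biological judgment.
    """
    ordered = sorted(groups)
    adverse_doses = [dose for dose, adverse in ordered if adverse]
    loael = adverse_doses[0] if adverse_doses else None
    # NOAEL: highest dose without an adverse effect that lies below the LOAEL.
    candidates = [
        dose for dose, adverse in ordered
        if not adverse and (loael is None or dose < loael)
    ]
    noael = candidates[-1] if candidates else None
    return noael, loael


# Hypothetical 90-day study with doses in mg/kg bw/day.
study = [(10.0, False), (50.0, False), (250.0, True)]
print(identify_noael_loael(study))  # (50.0, 250.0)
```

If every treated group shows an adverse effect, the function returns `None` for the NOAEL, which corresponds to the case where only a LOAEL is available.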

Protocol for an Acute Oral Toxicity Study (to Determine LD₅₀)

Objective: To estimate the median lethal dose (LD₅₀) after a single oral administration [6].

- Test System: Healthy young adult rodents (rats or mice), fasted prior to dosing.

- Test Design: Following OECD Test Guideline 423 (Acute Toxic Class Method) or 425 (Up-and-Down Procedure). Doses are selected from predefined series. Animals are dosed sequentially.

- Administration: Single bolus dose via oral gavage.

- Observation: Intensive observation for the first 30 minutes, then periodically for the first 24 hours, and daily for a total of 14 days. Signs of toxicity, time of onset, and mortality are recorded.

- LD₅₀ Calculation: Mortality data are analyzed using specified statistical methods (e.g., probit analysis, maximum likelihood method) to calculate the dose estimated to be lethal to 50% of the test population [1].

Protocol for a Carcinogenicity Bioassay (to Identify T₂₅ or BMD)

Objective: To evaluate the carcinogenic potential of a substance over the majority of the test species' lifespan.

- Test System: Two rodent species (typically rats and mice) with adequate sample size (e.g., 50 animals/sex/group).

- Test Groups: At least three dose groups and a control. The high dose is the maximum tolerated dose (MTD); the lower doses are set as fractions of the MTD (e.g., 1/10 to 1/4) so that a dose-related carcinogenic effect can be detected and characterized.

- Duration: Administration for most of the species' natural lifespan (e.g., 24 months for rats, 18 months for mice).

- Endpoint: Comprehensive histopathological examination of all tissues for neoplastic and pre-neoplastic lesions.

- Data Analysis: Tumor incidence data are analyzed using statistical models to determine dose-related trends. The T₂₅ is calculated as the chronic dose rate estimated to produce a 25% tumor incidence above background [1]. Alternatively, Benchmark Dose (BMD) modeling is applied to estimate the dose corresponding to a 10% extra risk (BMD₁₀) [1] [3].
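As a rough illustration of the T₂₅ idea, the sketch below corrects the tumor incidence at a single dose for the control incidence and then scales the dose linearly to a 25% net incidence. The specific background correction and the linear scaling are assumptions of this sketch, and it omits adjustments such as study duration or dosing schedule that a formal T₂₅ derivation would consider. All numbers are hypothetical.

```python
def t25_estimate(dose: float, incidence_treated: float, incidence_control: float) -> float:
    """Simplified T25 from one dose group, with incidences given as fractions (0 to 1)."""
    if dose <= 0:
        raise ValueError("dose must be positive")
    if not (0.0 <= incidence_control < 1.0 and 0.0 <= incidence_treated <= 1.0):
        raise ValueError("incidences must be fractions, with control below 1")
    # Net incidence among animals that would not have developed tumors spontaneously.
    net_incidence = (incidence_treated - incidence_control) / (1.0 - incidence_control)
    if net_incidence <= 0:
        raise ValueError("no tumor increase over background at this dose")
    # Linear scaling of the tested dose to a 25% net incidence.
    return dose * 0.25 / net_incidence


# Hypothetical bioassay group: 50 mg/kg bw/day, 40% tumors vs. 10% in controls.
print(f"T25 = {t25_estimate(50.0, 0.40, 0.10):.1f} mg/kg bw/day")
```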

The Scientist's Toolkit: Essential Research Reagents and Materials

The determination of precise dose descriptors relies on high-quality, standardized reagents and materials. The following table details key components of the experimental toolkit.

Table 3: Essential Research Reagents & Materials for Dose-Response Studies

| Item | Specification / Example | Function in Protocol |
|---|---|---|
| Test Substance | High purity (e.g., >98%), known and stable composition, appropriate vehicle (e.g., corn oil, methyl cellulose, saline). | The agent whose toxicity is being characterized; purity ensures observed effects are due to the substance itself [6]. |
| Vehicle/Control Article | The substance (e.g., 0.5% carboxymethylcellulose) used to dissolve/suspend the test article for administration to the control group. | Provides a baseline for comparison to ensure effects are due to the test article and not the administration method [6]. |
| Animal Models | Defined species, strain, age, and weight (e.g., Sprague-Dawley rat, 6-8 weeks old). Certified pathogen-free status. | Provides a biological system to model potential human effects; genetic uniformity reduces variability [2]. |
| Clinical Chemistry & Hematology Assay Kits | Commercial kits for analyzing serum (e.g., ALT, AST, BUN, creatinine) and blood (e.g., RBC, WBC, platelet count). | Detect systemic toxicity and identify target organs (e.g., liver, kidney) [6]. |
| Histopathology Supplies | Neutral buffered formalin (10%), paraffin embedding media, hematoxylin & eosin (H&E) stain, microscope slides. | Preserve and prepare tissues for microscopic examination to identify morphological changes and lesions [6]. |
| Analytical Standard | Certified reference material of the test substance. | Used to calibrate analytical equipment (e.g., HPLC, MS) for verifying dosing formulation concentrations and conducting toxicokinetic analyses [6]. |
| Data Analysis Software | Statistical packages (e.g., SAS, R) with specific tools for probit analysis (LD₅₀) and Benchmark Dose modeling (e.g., EPA BMDS). | Enables robust statistical evaluation of data and derivation of dose descriptors [3]. |

Dose descriptors such as NOAEL, LOAEL, BMD, and LD₅₀ are the indispensable quantitative outputs of toxicological science. They transform observations from controlled experimental studies into the pivotal metrics that anchor the risk assessment process. By understanding their definitions, the methodologies behind their derivation, and the framework for their application—including the use of uncertainty factors to account for interspecies and interindividual variation—researchers and risk assessors can construct scientifically defensible estimates of safe exposure levels. As toxicology evolves, the field is moving from traditional descriptors like the NOAEL toward more data-driven and statistically robust approaches like Benchmark Dose modeling, which promises to reduce uncertainty and enhance the precision of public health protection [3]. Mastery of these core concepts remains fundamental for any professional engaged in the research and regulation of chemical safety.

Conceptual Foundations and Historical Context

Within the framework of toxicological dose descriptor research, the median lethal dose (LD50) and median lethal concentration (LC50) serve as cornerstone metrics for quantifying the intrinsic acute toxicity of chemical substances. The LD50 is defined as the amount of a material, administered in a single dose, that causes the death of 50% of a group of test animals within a specified observation period [7]. Similarly, the LC50 describes the concentration of a chemical in air (or water) that is lethal to 50% of the test population over a defined exposure duration, typically 4 hours for inhalation studies [7]. These values are fundamental for hazard identification, safety assessment, and the comparative ranking of chemical potencies.

The conceptualization of the LD50 is attributed to J.W. Trevan in 1927, who sought a standardized method to estimate the relative poisoning potency of drugs and medicines [7] [8]. The selection of the 50% mortality endpoint provides a statistically robust benchmark that avoids the extremes of dose-response curves and reduces experimental variability [8]. In toxicology, these are known as "quantal" tests, measuring an effect—death—that either occurs or does not [7]. The derived values are expressed relative to body weight (e.g., mg/kg for LD50) or environmental medium (e.g., mg/m³ or ppm for LC50), enabling direct comparison between substances of differing potencies and across studies using animals of different sizes [7] [8].

Methodological Approaches and Experimental Design

Determining LD50/LC50 values requires a controlled, systematic experimental protocol. While methods have evolved since Trevan's initial work, the core principles involve administering graduated doses of a pure test substance to defined animal populations and observing mortality [7].

Core Experimental Protocol

A standard acute toxicity test incorporates the following key stages [7]:

- Test System Selection: Experiments are most commonly performed on rats and mice. Other species, including rabbits, guinea pigs, dogs, and monkeys, may be used for specific regulatory or translational purposes. The species, strain, sex, age, and weight must be standardized and documented [7].

- Route of Administration: The substance is usually administered by the route most relevant to expected human exposure. The main options are:

- Oral (Gavage): The chemical is delivered directly to the stomach. The result is reported as LD50 (oral) [7].

- Dermal: The chemical is applied to the shaved skin under an occlusive covering to assess absorption. The result is reported as LD50 (skin) [7].

- Inhalation: Animals are exposed to a measured concentration of a gas, vapor, or aerosol in an inhalation chamber for a fixed period (usually 4 hours). The result is reported as LC50, specifying the exposure time (e.g., LC50 (4h)) [7].

- Parenteral: Injections may be given intravenously (i.v.), intraperitoneally (i.p.), or intramuscularly (i.m.) for specific research purposes [7].

- Dose Preparation and Administration: The test substance is prepared in a suitable vehicle. Several groups of animals (typically 5-10 per group) are then administered different doses, spaced by a constant multiplicative factor (e.g., 2x), spanning a range expected to cause mortality from 0% to 100% [7].

- Observation Period: Following administration, animals are clinically observed for signs of toxicity (e.g., lethargy, convulsions) and mortality for a period of up to 14 days [7].

- Data Analysis and Calculation: The number of deaths in each dose group is recorded. The LD50 or LC50 value, along with its confidence interval, is calculated using statistical methods such as the probit analysis, logit analysis, or the method of Reed and Muench, which model the sigmoidal relationship between dose and probability of death [8].
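The dose-spacing step above (a constant multiplicative factor between groups) amounts to a geometric series. The following sketch shows one way to generate it; the function name and the starting dose of 25 mg/kg are hypothetical, and real dose selection would also draw on range-finding data.

```python
def geometric_dose_series(lowest_dose: float, factor: float, n_groups: int) -> list[float]:
    """Doses spaced by a constant multiplicative factor, from lowest to highest."""
    if lowest_dose <= 0:
        raise ValueError("lowest_dose must be positive")
    if factor <= 1:
        raise ValueError("factor must be greater than 1")
    if n_groups < 1:
        raise ValueError("n_groups must be at least 1")
    return [lowest_dose * factor ** i for i in range(n_groups)]


# Five groups spaced by a factor of 2, starting at a hypothetical 25 mg/kg.
print(geometric_dose_series(25.0, 2.0, 5))  # [25.0, 50.0, 100.0, 200.0, 400.0]
```

A constant factor gives doses that are evenly spaced on a logarithmic axis, which suits the log-dose models used in the analysis step.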

Experimental Workflow Visualization

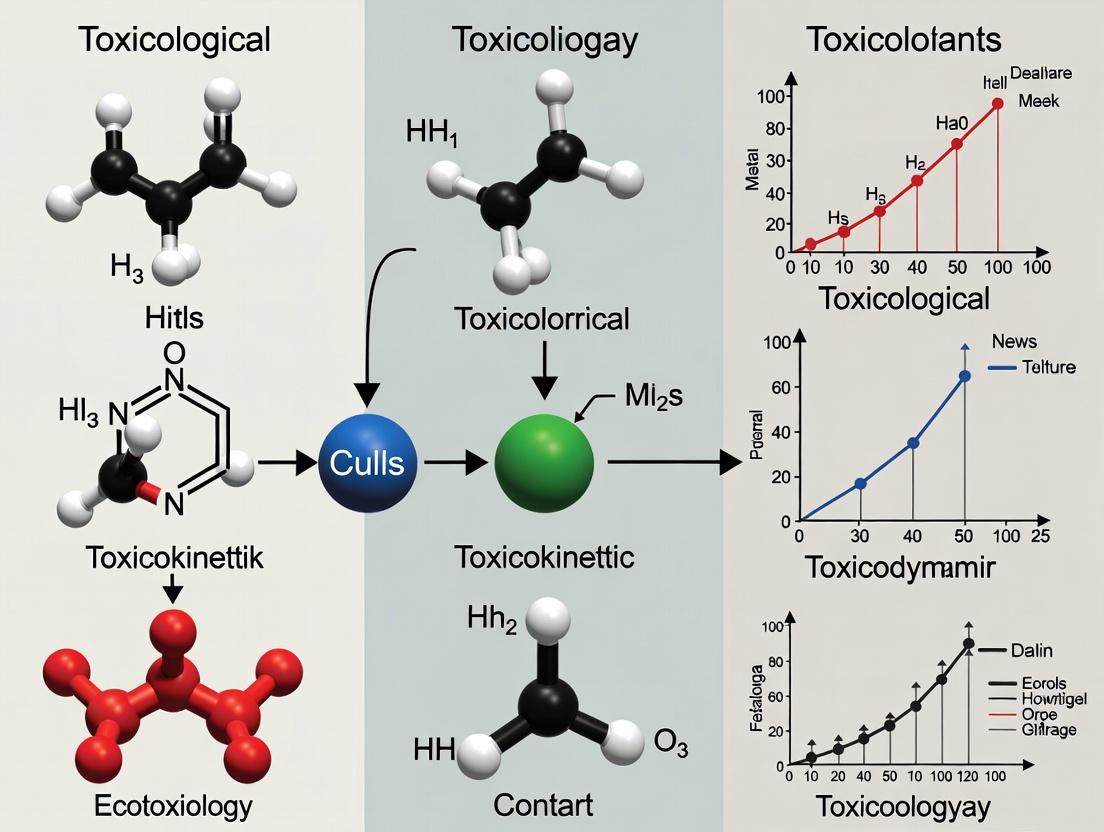

The following diagram outlines the generalized workflow for an acute oral LD50 determination study.

Diagram 1: Workflow for acute oral LD50 determination.

Quantitative Data and Toxicity Classification

LD50 and LC50 values provide a numerical basis for comparing acute toxicity. A fundamental rule is that a lower LD50/LC50 value indicates higher toxicity [7] [9]. For instance, aspirin (LD50 oral, rat = 1,600 mg/kg) is more acutely toxic than table salt (LD50 oral, rat = 3,000 mg/kg), because a smaller dose per unit of body weight is needed to kill half of the test animals [8] [9]. To facilitate hazard communication, these numerical values are often categorized into toxicity classes using established scales, though the specific class names and boundaries can vary between systems [7].

Table 1: Comparative Acute Toxicity of Common Substances (Oral Route, Rat) [8]

| Substance | Approximate LD50 (mg/kg) | Relative Toxicity Class (Per Table 2) |
|---|---|---|
| Botulinum toxin | 0.000001 (1 ng/kg) | Super Toxic |
| Sodium cyanide | ~5 | Extremely Toxic |
| Strychnine | 5-50 | Extremely Toxic |
| Caffeine | 192 | Very Toxic |
| Arsenic (elemental) | 763 | Moderately Toxic |
| Aspirin | 1,600 | Moderately Toxic |
| Table Salt (Sodium chloride) | 3,000 | Moderately Toxic |
| Ethanol | 7,060 | Slightly Toxic |
| Vitamin C (Ascorbic acid) | 11,900 | Slightly Toxic |
| Water | >90,000 | Practically Non-toxic |

Table 2: Toxicity Classification Schemes for Human Risk Contextualization [7] [10]

| Toxicity Rating | Hodge & Sterner Scale (Oral LD50, rat) | Gosselin, Smith & Hodge (Probable Human Lethal Dose) | Example [10] |
|---|---|---|---|
| Super Toxic | ≤ 1 mg/kg | A taste (< 7 drops) | Botulinum toxin |
| Extremely Toxic | 1 – 50 mg/kg | < 1 teaspoonful (4 ml) | Arsenic trioxide, Strychnine |
| Very Toxic | 50 – 500 mg/kg | < 1 ounce (30 ml) | Phenol, Caffeine |
| Moderately Toxic | 500 – 5,000 mg/kg | < 1 pint (600 ml) | Aspirin, Sodium chloride |
| Slightly Toxic | 5,000 – 15,000 mg/kg | < 1 quart (1 L) | Ethyl alcohol, Acetone |
| Practically Non-toxic | > 15,000 mg/kg | > 1 quart | — |
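The oral LD50 bands in the table above can be applied mechanically. The sketch below encodes those bands as a lookup; the function name is hypothetical, and treating each upper bound as inclusive is an assumption, since the table does not say which class a boundary value belongs to.

```python
# Upper bounds (mg/kg, oral LD50 in rat) and class names taken from the table above.
# Treating each upper bound as inclusive is an assumption of this sketch.
_BANDS = [
    (1.0, "Super Toxic"),
    (50.0, "Extremely Toxic"),
    (500.0, "Very Toxic"),
    (5_000.0, "Moderately Toxic"),
    (15_000.0, "Slightly Toxic"),
]


def classify_oral_ld50(ld50_mg_per_kg: float) -> str:
    """Map an oral LD50 (mg/kg) to the toxicity rating of the table above."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper_bound, rating in _BANDS:
        if ld50_mg_per_kg <= upper_bound:
            return rating
    return "Practically Non-toxic"


# LD50 values quoted earlier in this article (oral, rat).
for substance, ld50 in [("caffeine", 192), ("sodium chloride", 3_000), ("ethanol", 7_060)]:
    print(substance, "->", classify_oral_ld50(ld50))
```

Because class names and boundaries differ between schemes, a lookup like this is only meaningful when the scheme it encodes is stated alongside the result.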

It is critical to note that the route of exposure dramatically influences toxicity. For example, the insecticide dichlorvos has an oral LD50 (rat) of 56 mg/kg, which falls in the Very Toxic band of Table 2, but an inhalation LC50 (4h, rat) of 1.7 ppm, which is rated Extremely Toxic [7]. Therefore, the route must always be specified when reporting or using these values.

The Scientist's Toolkit: Essential Reagents and Materials

Conducting OECD Guideline-compliant acute toxicity studies requires specialized materials and reagents to ensure precision, reproducibility, and animal welfare.

Table 3: Key Research Reagent Solutions and Materials for LD50/LC50 Testing

| Item | Function & Specification |
|---|---|
| Pure Test Substance | The chemical agent of interest, typically of high purity (≥95%). Necessary for generating accurate, interpretable dose-response data without confounding effects from impurities [7]. |
| Appropriate Vehicle | A physiologically compatible solvent or suspending agent (e.g., saline, methylcellulose, corn oil) used to prepare accurate, homogenous dosing solutions/suspensions for administration [7]. |
| Laboratory Rodents | Specifically pathogen-free (SPF) rats or mice of a defined strain, age, and weight. The standard test system for generating foundational toxicity data [7]. |
| Inhalation Exposure Chamber | A whole-body or nose-only exposure system for generating and maintaining a precise, homogenous concentration of a test article (gas, vapor, aerosol) in air for the duration of the exposure period [7]. |
| Gavage Needles | Blunt-tipped, stainless steel or flexible plastic cannulas of appropriate length and gauge for the safe and accurate oral administration of liquid test formulations directly to the animal's stomach [7]. |
| Clinical Observation Scoring System | A standardized checklist or software for recording detailed observations (mortality, morbidity, behavioral changes, clinical signs) at fixed intervals during the post-dosing period [7]. |
| Statistical Analysis Software | Software (e.g., specialized toxicology packages, SAS, R with appropriate libraries) capable of performing probit, logit, or other non-linear regression analyses on mortality data to calculate the LD50/LC50 and its confidence limits [8]. |

Data Analysis and Statistical Pathway

The raw mortality data from the experimental dose groups are transformed into a point estimate (LD50) through statistical modeling. The process assumes a sigmoidal relationship between the logarithm of the dose and the probability of response, which is typically linearized for analysis.

Diagram 2: Statistical pathway for LD50 calculation.
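The linearization described above can be sketched with the Python standard library alone. This is a deliberately simplified version: it fits an unweighted least-squares line to probit-transformed mortality against log10 dose and drops groups with 0% or 100% mortality, whereas dedicated software typically uses iteratively weighted or maximum likelihood fitting and also reports confidence limits. The function name and the mortality counts are hypothetical.

```python
from math import log10
from statistics import NormalDist


def probit_ld50(doses: list[float], deaths: list[int], group_size: int) -> float:
    """Estimate the LD50 by unweighted least squares on probit-transformed mortality."""
    points = []
    for dose, dead in zip(doses, deaths):
        p = dead / group_size
        if 0.0 < p < 1.0:  # the probit is undefined at 0% and 100% mortality
            points.append((log10(dose), NormalDist().inv_cdf(p)))
    if len(points) < 2:
        raise ValueError("need at least two partial-mortality groups")
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # A probit of 0 corresponds to 50% mortality, so solve intercept + slope * x = 0.
    return 10 ** (-intercept / slope)


# Hypothetical study: five groups of 10 animals, doses in mg/kg.
doses = [25.0, 50.0, 100.0, 200.0, 400.0]
deaths = [1, 3, 5, 7, 9]
print(f"LD50 = {probit_ld50(doses, deaths, 10):.1f} mg/kg")
```

In this symmetric example the fitted line crosses 50% mortality at the middle dose, so the estimate is about 100 mg/kg.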

Regulatory Context and Practical Applications

LD50/LC50 data are integral to regulatory safety assessments worldwide. In the United States, the Toxic Substances Control Act (TSCA) mandates the reporting of "substantial risk" information, which can include new, unexpected acute toxicity findings from chemical manufacturers [11]. These data points inform critical safety decisions: they are used to assign hazard classifications and signal words (e.g., "Danger" or "Warning") on product labels and Safety Data Sheets (SDSs) [9], establish exposure limits for occupational settings, and guide the selection of safer chemicals in research and industry [7] [10].

From a drug development perspective, the LD50 is a starting point for establishing the therapeutic index (TI), which is the ratio of the lethal dose (LD50) to the effective dose (ED50). A higher TI indicates a wider safety margin for a pharmaceutical agent [8].
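The ratio described above is simple to compute. The sketch below uses hypothetical LD50 and ED50 values purely to show the arithmetic; both must be expressed in the same units and refer to the same species and route for the ratio to be meaningful.

```python
def therapeutic_index(ld50: float, ed50: float) -> float:
    """Ratio of the median lethal dose to the median effective dose."""
    if ld50 <= 0 or ed50 <= 0:
        raise ValueError("ld50 and ed50 must be positive")
    return ld50 / ed50


# Hypothetical compound: LD50 of 500 mg/kg and ED50 of 5 mg/kg.
print(therapeutic_index(ld50=500.0, ed50=5.0))  # 100.0
```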

Critical Limitations and Future Directions

While foundational, the classical LD50/LC50 test has significant limitations that must be acknowledged in modern toxicological research:

- High Animal Use and Welfare Concerns: The traditional method can require 40-100 animals per substance to achieve an accurate estimate, raising ethical concerns [8].

- Limited Mechanistic Insight: The test yields a single numerical endpoint (death) without providing information on the mechanism of toxicity, target organs, or the shape of the dose-response curve at lower, more relevant exposure levels [7].

- Interspecies and Intraspecies Variability: Results can vary significantly based on animal species, strain, sex, age, and husbandry, making precise extrapolation to humans uncertain [8]. For example, chocolate is relatively harmless to humans but toxic to dogs.

- Focus on Lethality Alone: It does not address chronic toxicity, carcinogenicity, mutagenicity, or other important health endpoints [7].

Consequently, regulatory and scientific trends are moving toward alternative methods. These include the Fixed Dose Procedure (OECD TG 420), the Acute Toxic Class Method (OECD TG 423), and the Up-and-Down Procedure (OECD TG 425), which use sequential dosing strategies to classify toxicity while significantly reducing animal numbers [7] [8]. Furthermore, in vitro and in silico models are being actively developed and validated to predict acute toxicity, aligning with the global push for the principles of Replacement, Reduction, and Refinement (the 3Rs) in animal testing [8].

Within the systematic study of toxicological dose descriptors, the concepts of the No-Observed-Adverse-Effect Level (NOAEL) and the Lowest-Observed-Adverse-Effect Level (LOAEL) serve as cornerstone practical tools. They are operational definitions applied to experimental data to identify key points on a dose-response curve for systemic toxicants [4]. This guide, framed within broader research on dose descriptors, details their technical definitions, methodological derivation, inherent uncertainties, and critical role in translating nonclinical findings to protect human health in drug development and chemical risk assessment.

A foundational principle is the threshold hypothesis, which states that for most systemic toxic effects, a range of exposures exists that can be tolerated by an organism with no adverse response [4]. This threshold exists because homeostatic, compensating, and adaptive mechanisms must be overcome before toxicity is manifested [4]. The NOAEL and LOAEL are experimental estimates that bracket this theoretical threshold, providing a basis for calculating safety margins such as the Reference Dose (RfD) or Acceptable Daily Intake (ADI) [1] [4].

Foundational Definitions and Biological Context

- NOAEL (No-Observed-Adverse-Effect Level): The highest exposure level at which there are no statistically or biologically significant increases in the frequency or severity of adverse effects between the exposed population and its appropriate control group. Effects may be produced at this level, but they are not considered adverse or precursors to adverse effects [1] [12]. It is typically expressed in units of mg/kg body weight/day [1].

- LOAEL (Lowest-Observed-Adverse-Effect Level): The lowest exposure level at which there are statistically or biologically significant increases in the frequency or severity of adverse effects between the exposed population and its appropriate control group [1].

- Adverse Effect: A biochemical change, functional impairment, or pathologic lesion that affects the performance of the whole organism, reduces an organism's ability to respond to additional environmental challenge, or is irreversible during or after exposure [13]. Distinguishing adverse from non-adverse (e.g., adaptive, pharmacological) effects is a critical scientific judgment in determining the NOAEL [13].

- Relationship to Other Dose Descriptors: NOAEL and LOAEL are distinct from more general terms like NOEL (No-Observed-Effect Level), which includes any effect, and from acute toxicity metrics like LD₅₀ (median lethal dose). They are primarily derived from repeated-dose studies (e.g., 28-day, 90-day, chronic) and reproductive toxicity studies [1]. For non-threshold endpoints like carcinogenicity of genotoxic compounds, metrics such as the Benchmark Dose (BMD) or T₂₅ are often used instead [1] [14].

Table 1: Key Toxicological Dose Descriptors

| Dose Descriptor | Full Name | Definition | Typical Study Source | Primary Use |

|---|---|---|---|---|

| NOAEL | No-Observed-Adverse-Effect Level | Highest dose with no significant adverse effect [1]. | Repeated-dose, reproductive studies [1]. | Point of departure for RfD/ADI [4]. |

| LOAEL | Lowest-Observed-Adverse-Effect Level | Lowest dose with a significant adverse effect [1]. | Repeated-dose, reproductive studies [1]. | Point of departure (with UF) if NOAEL not found [14]. |

| NOEL | No-Observed-Effect Level | Highest dose with no observed effect (adverse or non-adverse) [13]. | Various toxicity studies. | Less commonly used in regulatory safety assessment. |

| BMD | Benchmark Dose | A dose producing a predetermined, low incidence of effect (e.g., 10%) [1]. | Any study with dose-response data. | Alternative to NOAEL; uses full curve [14]. |

| LD₅₀/LC₅₀ | Lethal Dose/Concentration 50% | Dose/concentration estimated to kill 50% of test population [1]. | Acute toxicity studies. | Hazard classification and labeling. |

Methodological Protocols for Determination

Standard Protocol for NOAEL/LOAEL Determination in Repeated-Dose Toxicity Studies

The definitive identification of NOAEL and LOAEL follows a structured in vivo experimental design, most commonly a 90-day repeated-dose toxicity study in rodents or non-rodents, conducted under Good Laboratory Practice (GLP) [13].

1. Study Design:

- Animals: Relevant species (often rat and dog), with sufficient sample size (e.g., 10-20 animals/sex/group) to detect biological signals [15] [13].

- Groups: Minimum of four groups: a vehicle control group and three treated groups receiving the test article at graduated dose levels [13].

- Dose Selection: Doses are selected based on prior range-finding studies to span from a predicted no-effect level to a dose that produces clear toxicity. Doses are often spaced at half-log increments [15].

- Administration: Daily dosing via the intended clinical route (oral gavage, intravenous, etc.) for 90 days [13].

2. Endpoint Monitoring: A comprehensive set of observations is collected:

- Clinical Observations: Mortality, morbidity, signs of toxicity, food consumption, body weight [13].

- Clinical Pathology: Hematology, clinical chemistry, urinalysis at termination and often interim time points [13].

- Ophthalmology and Functional Tests.

- Gross Necropsy and Histopathology: Full tissue examination from all control and high-dose animals, and target organs from all groups [13].

3. Data Analysis and NOAEL/LOAEL Identification:

- Data are compared between each treated group and the concurrent control group.

- Statistical (e.g., ANOVA, Dunnett's test) and biological significance are evaluated.

- The NOAEL is identified as the highest dose level that does not show a significant adverse effect.

- The LOAEL is the next higher dose level, where significant adverse effects are first observed [16].

- A weight-of-evidence approach is used, correlating findings across clinical signs, clinical pathology, and histopathology to determine adversity [13].
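The identification logic in step 3 can be sketched in a few lines. This is a minimal illustration, assuming each treated dose group has already been reduced to a single adverse/non-adverse flag after statistical testing and weight-of-evidence review; the function name and input format are hypothetical, and the control group is not passed in.

```python
from typing import Optional

def identify_noael_loael(
    groups: list[tuple[float, bool]],
) -> tuple[Optional[float], Optional[float]]:
    """Return (NOAEL, LOAEL) from (dose, adverse_flag) pairs for treated groups.

    adverse_flag is True when the group shows a statistically or biologically
    significant adverse effect versus the concurrent control.
    """
    ordered = sorted(groups)  # ascending dose
    adverse_doses = [dose for dose, adverse in ordered if adverse]
    loael = adverse_doses[0] if adverse_doses else None
    # NOAEL: highest non-adverse dose below the LOAEL (or the top dose
    # when no treated group is adverse).
    clean = [
        dose for dose, adverse in ordered
        if not adverse and (loael is None or dose < loael)
    ]
    noael = clean[-1] if clean else None
    return noael, loael

# Illustrative doses in mg/kg/day (not taken from any study).
print(identify_noael_loael([(10, False), (30, False), (100, True)]))
```

If the lowest treated dose is already adverse, the sketch returns no NOAEL, which corresponds to the case where the LOAEL must serve as the point of departure.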

Advanced Protocol: A Weight-Based Classification Method

To address common inconsistencies in NOAEL reporting, a systematic three-step, weight-based classification method has been proposed [13].

Step 1: Establish Criteria for Effect Classification.

- Adverse Effect Criteria: Findings showing a clear dose-response in clinical or histopathological parameters not seen in controls, or lesions consistent with statistically significant clinical pathology changes [13].

- Non-Adverse Effect Criteria: Findings showing a weak dose-response for parameters also present in controls, often mild and reversible [13].

Step 2: Classify Individual Findings. Each finding is categorized into one of three classes:

- Important Compound-Related Change: Adverse, part of an adverse constellation, or reflects known target organ toxicity [13].

- Minor Compound-Related Change: Compound-related but of low magnitude, biologically irrelevant, or reflecting desirable pharmacology [13].

- Non-Compound-Related Change: No dose response, consistent with historical control data [13].

Step 3: Derive Dose Descriptors from Classification.

- If any important compound-related change exists, the lowest dose at which it occurs is the LOAEL.

- The highest dose with only minor compound-related changes is designated the NOAEL.

- The highest dose with only non-compound-related changes is the NOEL [13].
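Step 3 can be read as a simple mapping from classified findings to dose descriptors. The sketch below is one interpretation of that mapping, not the published method itself: the labels "important", "minor", and "none" and the function name are hypothetical, and the NOAEL and NOEL are taken as the highest doses below the first important and first compound-related change, respectively.

```python
from typing import Optional

def derive_descriptors(
    findings_by_dose: dict[float, list[str]],
) -> dict[str, Optional[float]]:
    """Map per-dose finding classes to LOAEL, NOAEL and NOEL."""
    doses = sorted(findings_by_dose)
    # Doses with at least one important compound-related change.
    important = [d for d in doses if "important" in findings_by_dose[d]]
    # Doses with any compound-related change (important or minor).
    related = [
        d for d in doses
        if any(c in ("important", "minor") for c in findings_by_dose[d])
    ]
    loael = important[0] if important else None
    first_related = related[0] if related else None
    below_loael = [d for d in doses if loael is None or d < loael]
    below_related = [d for d in doses if first_related is None or d < first_related]
    return {
        "LOAEL": loael,
        "NOAEL": below_loael[-1] if below_loael else None,
        "NOEL": below_related[-1] if below_related else None,
    }

# Illustrative classification for three treated groups (mg/kg/day).
print(derive_descriptors({10: ["none"], 30: ["minor"], 100: ["important", "minor"]}))
```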

Simulation Protocol for Assessing NOAEL Uncertainty

A 2024 simulation study quantified the uncertainty in applying animal NOAEL to humans [15].

1. Pharmacokinetic (PK) Simulation:

- Animal PK (e.g., monkey) was modeled with linear clearance.

- Human clearance was predicted via allometric scaling (exponent 0.75), incorporating a random uncertainty factor (1/3 to 3-fold) to reflect real-world prediction inaccuracy [15].

- Between-subject variability (BSV) in clearance was set at either low (CV%=30%) or high (CV%=70%) [15].

2. Toxicity (PD) Simulation:

- The probability of a dose-limiting adverse event was modeled using a sigmoidal Emax function of AUC (area under the concentration-time curve).

- The animal sensitivity parameter (A₅₀, the AUC for 50% probability) was fixed.

- Human sensitivity was varied to be 5-fold more sensitive, equal, or 5-fold less sensitive than animals [15].

- BSV in sensitivity (A₅₀) was also modeled at low (CV%=30%) or high (CV%=70%) levels [15].

3. Virtual Experiment and Analysis:

- For each scenario (see Table 2), 500 virtual animal toxicology experiments were run, each with 10 animals/dose at half-log increments plus a control group.

- A NOAEL was determined for each virtual study as the highest dose with no AE count increase over controls [15].

- Corresponding human trials were simulated, and the probability of AEs occurring at or below the animal-derived NOAEL exposure was calculated [15].
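The building blocks of such a simulation can be sketched as follows. This is a simplified illustration under stated assumptions, not the study's code: between-subject variability is omitted, the Hill coefficient and the `fold_error` parameter are hypothetical, and the NOAEL rule is read as "highest dose below the first dose with any adverse event" because controls have zero exposure.

```python
import random

def human_clearance(cl_animal, bw_animal, bw_human, exponent=0.75, fold_error=1.0):
    """Allometrically scaled human clearance times a prediction-error factor."""
    return cl_animal * (bw_human / bw_animal) ** exponent * fold_error

def p_adverse_event(auc, a50, hill=1.0):
    """Sigmoidal Emax probability of a dose-limiting AE as a function of AUC."""
    if auc <= 0:
        return 0.0
    return auc ** hill / (a50 ** hill + auc ** hill)

def virtual_noael(doses, clearance, a50, n_per_group=10, rng=None):
    """Run one virtual animal study and return its NOAEL (or None)."""
    rng = rng or random.Random()
    noael = None
    for dose in sorted(doses):
        auc = dose / clearance  # linear PK: AUC = dose / CL
        p = p_adverse_event(auc, a50)
        events = sum(rng.random() < p for _ in range(n_per_group))
        if events > 0:  # any AE exceeds the zero-exposure control group
            break
        noael = dose
    return noael

# 500 virtual studies with half-log spaced doses (illustrative values).
rng = random.Random(1)
noaels = [virtual_noael([1, 3, 10, 30], clearance=1.0, a50=50.0, rng=rng)
          for _ in range(500)]
```

The spread of values in `noaels` across replicates illustrates how much the NOAEL depends on chance and design, even when the underlying exposure-response relationship is fixed.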

Table 2: Simulation Results on Cross-Species NOAEL Translation Uncertainty [15]

| Scenario | PK BSV (CV%) | PD BSV (CV%) | Human:Animal Sensitivity Ratio | % of Simulated Human Trials with AEs at ≤ Animal NOAEL Exposure (Mean) |

|---|---|---|---|---|

| 1 | 30 | 30 | 1 (Equal) | 32% |

| 2 | 30 | 30 | 0.2 (Human 5x More Sensitive) | 66% |

| 3 | 30 | 30 | 5 (Human 5x Less Sensitive) | 10% |

| 7 | 70 (High) | 30 | 1 (Equal) | 30% |

| 11 | 70 (High) | 70 (High) | 0.2 (Human 5x More Sensitive) | 63% |

| 12 | 70 (High) | 70 (High) | 5 (Human 5x Less Sensitive) | 8% |

Critical Role and Application in Safety Translation

The Risk Assessment Paradigm: From NOAEL to Safe Exposure

The primary application of the NOAEL is as the point of departure (POD) for calculating a safe human exposure level [4] [14].

The Reference Dose (RfD) or Acceptable Daily Intake (ADI) is derived using the formula:

RfD = NOAEL / (UF₁ × UF₂ × ... × UFₙ × MF)

where the uncertainty factors (UFs) and the modifying factor (MF) account for:

- UFₐ (Interspecies): Default 10-fold for extrapolating from animal to human [17] [4].

- UFₕ (Intraspecies): Default 10-fold to protect sensitive human subpopulations [17] [4].

- UFₛ (LOAEL-to-NOAEL): An additional 10-fold applied if a LOAEL is used instead of a NOAEL [14].

- UFₗ (Subchronic-to-Chronic): Applied if the POD comes from a subchronic study [14].

- MF (Modifying Factor): A professional judgment factor (typically 1-10) for database deficiencies [14].

This process yields a conservative exposure limit (e.g., mg/kg-day) intended to protect lifelong human health [4].
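As a worked illustration of the formula, the sketch below divides a point of departure by the product of the applicable factors. The function name, dictionary keys, and input values are illustrative assumptions, not values from any specific assessment.

```python
from math import prod

def reference_dose(pod_mg_per_kg_day, uncertainty_factors, modifying_factor=1.0):
    """RfD = POD / (product of uncertainty factors x modifying factor)."""
    divisor = prod(uncertainty_factors.values()) * modifying_factor
    return pod_mg_per_kg_day / divisor

# Hypothetical NOAEL of 10 mg/kg-day with the two default 10-fold factors.
rfd = reference_dose(10.0, {"interspecies": 10.0, "intraspecies": 10.0})
print(f"RfD = {rfd:g} mg/kg-day")
```

Starting from a LOAEL instead would add a further entry (for example `"loael_to_noael": 10.0`) to the dictionary, lowering the result by another factor of ten.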

First-in-Human (FIH) Clinical Trial Starting Dose

In drug development, the animal NOAEL is pivotal for determining the Maximum Recommended Starting Dose (MRSD) for FIH trials [15] [13]. Regulatory guidance recommends converting the NOAEL to a Human Equivalent Dose (HED) using body surface area scaling, then applying a safety factor (often 10) to arrive at the MRSD [15]. This is intended to ensure a safe starting exposure for healthy volunteers.
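The conversion can be sketched as below. The Km values are the body-surface-area conversion factors commonly tabulated from the FDA's 2005 starting-dose guidance; they do not appear in this article and should be checked against the guidance before use. Function names and the example NOAEL are hypothetical.

```python
# Body-surface-area conversion factors (Km); assumed values, verify before use.
KM = {"mouse": 3.0, "rat": 6.0, "monkey": 12.0, "dog": 20.0, "human": 37.0}

def human_equivalent_dose(noael_mg_per_kg, species):
    """HED (mg/kg) = animal NOAEL x (animal Km / human Km)."""
    return noael_mg_per_kg * KM[species] / KM["human"]

def mrsd(noael_mg_per_kg, species, safety_factor=10.0):
    """Maximum recommended starting dose = HED / safety factor."""
    return human_equivalent_dose(noael_mg_per_kg, species) / safety_factor

# Hypothetical rat NOAEL of 50 mg/kg/day.
print(round(human_equivalent_dose(50.0, "rat"), 2), round(mrsd(50.0, "rat"), 2))
```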

Limitations, Uncertainties, and Modern Perspectives

The simulation study (Table 2) starkly highlights the inherent limitations of the traditional NOAEL approach, even under idealized conditions [15]. When human and animal sensitivity are assumed equal, limiting human exposure to the animal NOAEL still carries a 32% mean risk of causing toxicity due to estimation uncertainty and inter-individual variability [15]. If humans are 5-fold more sensitive, this risk exceeds 60% [15]. Conversely, if humans are less sensitive, capping exposure at the animal NOAEL risks under-dosing and undermining a drug's therapeutic potential [15].

Key Limitations Include:

- Experimental Design Dependence: The NOAEL is constrained to one of the tested doses. Its value is influenced by dose spacing, group size, and the statistical power of the study [15] [18].

- Ignores Dose-Response Shape: The NOAEL does not utilize information on the slope of the dose-response curve below the observed effect [4].

- Cross-Species Translational Uncertainty: As simulated, differences in pharmacokinetics and pharmacodynamics between animals and humans create major uncertainty [15].

- Subjectivity in "Adversity": Distinguishing adverse from non-adverse effects requires expert judgment, leading to potential inconsistency [13].

Modern Advancements:

- Benchmark Dose (BMD) Modeling: The BMD approach, which models the full dose-response curve to estimate a POD for a predefined benchmark response (e.g., 10% extra risk), is increasingly favored as a more robust and informative alternative to the NOAEL [14].

- Kinetic Maximum Dose (KMD): A paradigm shift proposed for dose-setting in toxicology studies, where doses are informed by saturation of metabolic pathways rather than inducing overt toxicity, aiming for more human-relevant outcomes [19].

- New Approach Methodologies (NAMs): Increased use of in vitro and in silico models aims to improve human relevance and reduce reliance on animal data [17].

Flowchart: Standard Workflow for NOAEL/LOAEL Determination

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for NOAEL/LOAEL Studies

| Item | Function/Description | Key Consideration |

|---|---|---|

| Test Article/Compound | The substance being evaluated for toxicity. | Requires full characterization (purity, stability, formulation) under GLP [13]. |

| Vehicle/Excipient | Substance used to dissolve or suspend the test article for administration. | Must be non-toxic at administered volumes; appropriate controls are essential [13]. |

| Laboratory Animals | In vivo model (e.g., rodent, non-rodent). | Species relevance, health status, genetic stability, and appropriate housing are critical [15] [13]. |

| Clinical Pathology Assays | Kits and analyzers for hematology, clinical chemistry, urinalysis. | Validated methods; historical control data for the species/strain is vital for interpretation [13]. |

| Histopathology Supplies | Fixatives (e.g., 10% Neutral Buffered Formalin), stains (H&E), embedding media. | Standardized protocols for tissue trimming, processing, and evaluation ensure consistency [13]. |

| Statistical Software | Software for data analysis (e.g., SAS, R). | Used for trend analysis, group comparisons (ANOVA), and determining statistical significance [15] [13]. |

| Toxicokinetic (TK) Assays | Bioanalytical methods (e.g., LC-MS/MS) to measure compound levels in blood/plasma. | Links administered dose to systemic exposure (AUC, Cmax); crucial for cross-species scaling [15] [19]. |

Diagram: Uncertainty Pathway in Cross-Species NOAEL Translation

NOAEL and LOAEL remain fundamental operational dose descriptors in toxicology, providing a pragmatic, though imperfect, bridge from experimental data to human safety decisions. Their determination requires rigorous, standardized protocols and expert judgment on the adversity of effects. However, as contemporary simulation research confirms, the translational uncertainty in applying animal-derived NOAELs to humans is substantial [15]. This underscores the necessity of moving beyond a rigid reliance on the NOAEL as a "red line" [15]. The future of the field lies in integrating these traditional tools with more sophisticated approaches—including BMD modeling, kinetic data, and human-relevant NAMs—to build a more predictive and mechanistic foundation for safety assessment within the evolving science of toxicological dose descriptors.

This technical guide provides a comprehensive examination of two critical dose descriptors for non-threshold toxic effects: the T25 and the Benchmark Dose (BMD). Framed within the broader context of toxicological dose descriptor research, this whitepaper details their fundamental principles, computational methodologies, and applications in quantitative risk assessment (QRA). The T25 is defined as the chronic dose rate expected to produce tumors in 25% of test animals after correction for spontaneous incidence, serving as a transparent, single-point estimate for carcinogen risk characterization [20]. In contrast, the BMD is a model-derived dose corresponding to a specified Benchmark Response (BMR), typically a 10% extra risk (BMD10), utilizing the full dose-response curve for more robust and data-efficient potency estimation [21] [22]. This guide elucidates their integration into modern, tiered assessment frameworks such as New Approach Methodologies (NAMs), which seek to reduce animal testing through integrated in silico, in vitro, and toxicokinetic modeling [23]. Supported by structured data comparisons, experimental protocols, and workflow visualizations, this resource is designed for researchers and drug development professionals navigating the transition from traditional hazard identification to next-generation, probabilistic risk assessment.

Toxicological dose descriptors are quantitative metrics that define the relationship between the administered dose of a chemical and the incidence or magnitude of a specific adverse effect. They are the cornerstone of hazard characterization, forming the critical link between experimental data and the derivation of safety thresholds for human health, such as Reference Doses (RfDs) or Derived No-Effect Levels (DNELs) [22].

Traditionally, descriptors like the No-Observed-Adverse-Effect Level (NOAEL) and the Lowest-Observed-Adverse-Effect Level (LOAEL) have been used for threshold effects—where a dose below a certain level is presumed safe. However, for non-threshold effects, notably genotoxic carcinogenicity, it is assumed that any exposure carries some risk. This paradigm necessitates descriptors that quantify potency to enable low-dose extrapolation and risk estimation [20] [22].

The evolution of dose descriptors is increasingly intertwined with the development of New Approach Methodologies (NAMs). NAMs represent a paradigm shift toward integrating non-animal data—including in silico predictions, high-throughput in vitro bioactivity, and toxicokinetic modeling—into chemical safety assessments [23]. In this context, standardized and transparent dose descriptors like T25 and BMD are essential for benchmarking and calibrating these new approaches against traditional toxicological data, thereby bridging historical and next-generation risk assessment frameworks [24].

Table 1: Common Toxicological Dose Descriptors and Their Applications

| Dose Descriptor | Full Name | Definition | Primary Use | Typical Study Source |

|---|---|---|---|---|

| LD₅₀/LC₅₀ | Lethal Dose/Concentration 50% | A statistically derived single dose/concentration expected to cause death in 50% of treated animals. | Acute toxicity hazard classification [22]. | Acute toxicity studies. |

| NOAEL | No-Observed-Adverse-Effect Level | The highest exposure level with no biologically significant increase in adverse effects compared to the control group. | Deriving safety thresholds (e.g., ADI, RfD) for threshold effects [22]. | Repeated dose toxicity studies (28-day, 90-day, chronic). |

| LOAEL | Lowest-Observed-Adverse-Effect Level | The lowest exposure level that produces a statistically or biologically significant increase in adverse effects. | Used when a NOAEL cannot be determined; requires larger assessment factors for safety threshold derivation [22]. | Repeated dose toxicity studies. |

| T25 | Tumoral dose for 25% incidence | The chronic dose rate predicted to induce a 25% tumor incidence in a specific tissue, corrected for background rates. | Quantitative risk assessment of non-threshold carcinogens [20] [22]. | Chronic carcinogenicity bioassays. |

| BMD/BMDL | Benchmark Dose (Lower Confidence Limit) | The dose (and its lower confidence limit) that produces a specified Benchmark Response (BMR, e.g., 10% extra risk), derived from model-fitting. | A robust point of departure for risk assessment, preferred over NOAEL as it uses all dose-response data [21]. | Any study with graded or quantal dose-response data. |

| EC₅₀ | Effective Concentration 50% | The concentration of a substance that causes 50% of the maximal effect in an ecotoxicity test. | Aquatic environmental hazard classification [22]. | Acute aquatic toxicity tests (e.g., Daphnia immobilization). |

The T25 Dose Descriptor: A Single-Point Risk Estimation Tool

Conceptual Foundation and Calculation

The T25 is a pragmatic dose descriptor designed specifically for the quantitative risk assessment (QRA) of non-threshold carcinogens. It is defined as the chronic daily dose rate (in mg/kg body weight/day) that is expected to induce a 25% tumor incidence in a specific target tissue or organ in an animal population, after correction for spontaneous background incidence, over the standard lifespan of the species [20] [22].

The calculation methodology is intentionally straightforward to ensure transparency and accessibility without requiring complex software [20]:

- Data Selection: Identify the dose group in a chronic rodent carcinogenicity study with an observed tumor incidence significantly above the control group.

- Correction for Background: Adjust the observed tumor incidence (P_obs) by subtracting the background incidence in the control group (P_control): P_corrected = P_obs - P_control.

- Linear Interpolation: If no dose group shows exactly a 25% corrected incidence, the T25 is calculated via linear interpolation between the dose (D_low) that gives an incidence below 25% (I_low) and the dose (D_high) that gives an incidence above 25% (I_high): T25 = D_low + [(0.25 - I_low) * (D_high - D_low) / (I_high - I_low)]
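The calculation described above can be sketched as follows. This is a minimal illustration that follows the subtraction-based background correction and two-point interpolation exactly as stated in this section; the function names and example incidences are hypothetical.

```python
def corrected_incidence(p_obs, p_control):
    """Background-corrected tumor incidence: P_corrected = P_obs - P_control."""
    return p_obs - p_control

def t25_by_interpolation(d_low, i_low, d_high, i_high, target=0.25):
    """Linearly interpolate the dose giving the target corrected incidence."""
    if not (i_low <= target <= i_high) or i_low == i_high:
        raise ValueError("target incidence must be bracketed by the two groups")
    return d_low + (target - i_low) * (d_high - d_low) / (i_high - i_low)

# Hypothetical bioassay: 5% of controls, 20% at 10 and 40% at 30 mg/kg/day.
i_low = corrected_incidence(0.20, 0.05)   # about 0.15
i_high = corrected_incidence(0.40, 0.05)  # about 0.35
t25 = t25_by_interpolation(10.0, i_low, 30.0, i_high)
print(f"T25 = {t25:.1f} mg/kg/day")
```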

Protocol for Deriving a Human Risk Estimate from T25

The primary utility of T25 is its conversion into a human cancer risk estimate through a series of standardized steps [20].

- Determine the Experimental T25: Calculate the T25 value from the most sensitive and relevant species-sex group in the animal bioassay, as described above.

- Interspecies Scaling (T25 to HT25): Convert the animal T25 to a human equivalent dose, the HT25. This is typically done by dividing the T25 by a body surface area scaling factor (e.g., 1 for mg/kg/day scaling, or a factor like 6 for rat-to-human scaling based on comparative metabolic rates): HT25 = T25 / Scaling Factor

- Calculate the Potency Factor (PF): The PF represents the risk per unit daily dose. It is derived by assuming linear extrapolation from the HT25 point: PF = 0.25 / HT25. The value 0.25 represents the 25% tumor risk at the HT25 dose.

- Estimate Lifetime Cancer Risk: The excess lifetime cancer risk from a specific human exposure dose (D_human, in mg/kg/day) is calculated as: Risk = D_human * PF = D_human * (0.25 / HT25).

This method yields risk estimates that have been shown to be in excellent agreement with those from more computationally intensive models like the linearized multistage model [20]. Its simplicity makes it a valuable tool for screening-level assessments and for setting specific concentration limits for carcinogens, as historically practiced within the European Union [20].
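The scaling, potency, and risk steps chain together as in the sketch below. It applies the formulas of this protocol directly; the function name and all numeric inputs are hypothetical illustrations.

```python
def lifetime_cancer_risk(t25, scaling_factor, d_human):
    """Excess lifetime risk via linear extrapolation from the HT25.

    t25 and d_human are in mg/kg/day; scaling_factor is dimensionless.
    """
    ht25 = t25 / scaling_factor    # human equivalent T25
    potency_factor = 0.25 / ht25   # risk per mg/kg/day
    return d_human * potency_factor

# Hypothetical: animal T25 of 20 mg/kg/day, scaling factor 6,
# human exposure of 0.001 mg/kg/day.
risk = lifetime_cancer_risk(20.0, 6.0, 0.001)
print(f"Excess lifetime risk = {risk:.1e}")
```

Because the extrapolation is linear, halving the human exposure halves the estimated risk.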

The Benchmark Dose (BMD) Framework: A Model-Based Approach

Principles and Advantages over NOAEL/LOAEL

The Benchmark Dose (BMD) framework is a more advanced, model-based methodology for identifying a Point of Departure (POD) for risk assessment. The BMD is defined as the dose that corresponds to a specified, low level of adverse effect, known as the Benchmark Response (BMR), derived by statistically fitting a mathematical model to the dose-response data [21]. The BMDL, the lower statistical confidence limit (typically 95%) on the BMD, is often used as the POD to account for uncertainty.

The BMD approach offers significant advantages over the traditional NOAEL/LOAEL method [21]:

- Utilizes All Data: It incorporates the data from all dose groups and the shape of the entire dose-response curve, not just two groups.

- Less Dependent on Study Design: The BMD is less affected by the arbitrary spacing of dose groups, which directly determines which values the NOAEL can take.

- Quantifies Uncertainty: The BMDL provides a consistent statistical measure of uncertainty.

- Standardized Response Level: The BMR (e.g., 10% extra risk) is consistent across studies, allowing for better comparison of potencies, unlike NOAELs which correspond to variable effect levels.

For non-threshold carcinogens, the BMD10 (the dose associated with a 10% extra risk of tumors) is a commonly used descriptor, analogous to the T25 but derived through formal modeling [22].

Protocol for BMD Analysis

A rigorous BMD analysis follows a structured workflow [21]:

- Data Preparation & BMR Selection: Organize the dose-response dataset. For quantal data (e.g., tumor incidence), select a BMR expressed as extra risk (e.g., 0.10 or 10%): Extra Risk = [P(dose) - P(0)] / [1 - P(0)], where P is the response probability.

- Model Fitting: Fit a suite of plausible mathematical dose-response models (e.g., multistage, Weibull, Log-Logistic, Quantal-Linear) to the data. Software like the US EPA's BMDS is typically used.

- Model Selection & Adequacy Check: Select the best-fitting model based on statistical criteria (e.g., lowest Akaike Information Criterion (AIC)), goodness-of-fit p-value (>0.1), and biological plausibility. Visual inspection of the fit is crucial.

- BMD/BMDL Derivation: From the selected model, calculate the dose corresponding to the chosen BMR (the BMD). The BMDL is the lower 95% confidence limit on that dose estimate.

- Sensitivity Analysis: Conduct analyses to ensure the derived BMDL is robust to model choice and BMR selection.
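To make the extra-risk definition and the BMD derivation step concrete, the sketch below uses the quantal-linear model, for which the BMD has a closed form. It assumes the model parameters have already been estimated (the fitting itself and the BMDL calculation, which needs profile-likelihood or bootstrap methods, are not shown); parameter values and function names are illustrative.

```python
import math

def extra_risk(p_dose, p_zero):
    """Extra Risk = [P(dose) - P(0)] / [1 - P(0)]."""
    return (p_dose - p_zero) / (1.0 - p_zero)

def quantal_linear(dose, background, slope):
    """Quantal-linear model: P(d) = g + (1 - g) * (1 - exp(-b * d))."""
    return background + (1.0 - background) * (1.0 - math.exp(-slope * dose))

def bmd_quantal_linear(slope, bmr=0.10):
    """Dose at which extra risk equals the BMR: BMD = -ln(1 - BMR) / b."""
    return -math.log(1.0 - bmr) / slope

# Hypothetical fitted parameters: 5% background, slope 0.01 per mg/kg/day.
bmd10 = bmd_quantal_linear(slope=0.01, bmr=0.10)
check = extra_risk(quantal_linear(bmd10, 0.05, 0.01), 0.05)
print(f"BMD10 = {bmd10:.2f} mg/kg/day, extra risk at BMD10 = {check:.3f}")
```

For this model the background term cancels out of the extra-risk expression, which is why the BMD depends only on the slope and the chosen BMR.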

Advanced Applications: BMD for Chemical Mixtures

Research has extended the BMD paradigm to the complex challenge of assessing chemical mixtures. For two-agent combinations, the concept of a Benchmark Profile (BMP) has been developed [25]. A BMP is a contour line in a two-dimensional dose space where the combined exposure produces the specified BMR. This defines an infinite set of dose pairs (DoseA, DoseB) that are considered equivalent in risk, providing a powerful tool for the risk characterization of low-level exposures to multiple hazardous agents [25].

Comparative Analysis: T25 vs. BMD in Risk Assessment

Table 2: Comparative Analysis of T25 and Benchmark Dose (BMD) Descriptors

| Feature | T25 | Benchmark Dose (BMD) |

|---|---|---|

| Philosophical Basis | Single-point linear extrapolation. Uses one key data point (25% incidence) to anchor a linear risk model [20]. | Full curve modeling. Statistically models the entire dose-response relationship to derive a POD [21]. |

| Data Utilization | Utilizes data from one or two dose groups near the 25% effect level. Does not use the shape of the entire dose-response curve [20]. | Maximally utilizes data from all dose groups. The shape of the curve informs the model fit and the POD [21]. |

| Statistical Robustness | Simpler, less statistically rigorous. No confidence interval on the T25 point estimate itself, though uncertainty is addressed in later assessment factors [20]. | More statistically robust. Provides a confidence interval (BMDL) directly, quantifying uncertainty in the POD [21]. |

| Computational Requirement | Can be performed manually or with simple calculations; does not require specialized software [20]. | Requires specialized statistical software (e.g., US EPA BMDS, PROAST) for model fitting and BMDL calculation [21]. |

| Primary Regulatory Use | Historically used for carcinogen classification and labeling (e.g., EU specific concentration limits) [20]. Screening-level risk assessments. | Increasingly the preferred method for POD derivation by agencies like the US EPA and EFSA. Used for both threshold and non-threshold effects [21]. |

| Relationship | The T25 can be considered a special case of a BMD where the BMR is fixed at 25% extra risk and the dose-response model is assumed to be linear between the data point and the origin. | A BMD10 (10% extra risk) is a more conservative and commonly used POD than T25. The BMD framework can explicitly model sublinear or supralinear shapes. |

Integration into Modern Toxicological Frameworks: The Role of NAMs

The application of T25 and BMD is evolving within next-generation safety assessments. The European Centre for Ecotoxicology and Toxicology of Chemicals (ECETOC) tiered framework for New Approach Methodologies (NAMs) exemplifies this integration [23].

Tiered Workflow for Systemic Toxicity Assessment [23]:

- Tier 0 (Threshold of Toxicological Concern - TTC): A generic screening threshold for very low exposures.

- Tier 1 (In Silico Assessment): Use of (Q)SAR models and expert systems to predict toxicity alerts and metabolites.

- Tier 2 (In Vitro Bioactivity & Toxicokinetics): Integration of high-throughput in vitro assay data (e.g., from ToxCast) for potency (AC50 values) and severity, coupled with in vitro/in silico toxicokinetic models to predict systemic bioavailability (e.g., plasma Cmax) [23].

- Tier 3 (Targeted In Vivo Studies): Traditional studies are triggered only when needed for resolution.

In this framework, BMD values from traditional in vivo studies, curated in databases like ToxValDB, serve as the critical benchmark for validating and calibrating the in vitro bioactivity potency data (AC50) and the predictions from NAMs [24]. The goal is to establish a quantitative relationship between in vitro potency and in vivo PODs (BMDLs), ultimately allowing for the prediction of human-relevant toxicity values without new animal testing.

Risk Assessment Workflow for Non-Threshold Effects

Diagram 1: Comparative workflow for deriving human cancer risk estimates using the T25 (yellow path) and BMD (green path) approaches. Both begin with the same animal data but diverge in the dose-response analysis method before converging on the calculation of a potency factor for risk characterization.

Essential Databases and Computational Tools

The reliable application of T25 and BMD methodologies depends on access to high-quality, curated toxicological data and sophisticated computational tools.

Table 3: The Researcher's Toolkit: Key Databases and Tools for Dose-Response Analysis

| Resource Name | Type | Key Function in Dose-Descriptor Research | Primary Source/Agency |

|---|---|---|---|

| ToxValDB (v9.6.1) | Centralized Database | Curates & standardizes 242,149 records of in vivo toxicity values (NOAEL, LOAEL, BMD) and derived guidance values for ~42,000 chemicals. Essential for benchmarking NAMs and accessing historical POD data [24] [26]. | U.S. EPA Center for Computational Toxicology and Exposure [24]. |

| ToxRefDB | In Vivo Study Database | Contains detailed, structured data from over 6,000 guideline animal toxicity studies. Provides the raw study data underlying many summary values in ToxValDB [26]. | U.S. EPA [26]. |

| CompTox Chemicals Dashboard | Integrative Web Portal | Provides public access to ToxValDB data, chemical structures, properties, and bioactivity data from ToxCast. Enables linked exploration of chemical identity, hazard, and exposure data [26]. | U.S. EPA [26]. |

| Benchmark Dose Software (BMDS) | Statistical Software | The US EPA's primary software suite for performing BMD modeling. It fits multiple models to dose-response data and calculates BMD/BMDL values [21]. | U.S. EPA [21]. |

| ToxCast Database | High-Throughput Screening (HTS) Data | Provides bioactivity profiles (including AC50 potency values) for thousands of chemicals across hundreds of in vitro assay endpoints. Used to inform potency in NAM-based classification matrices [23] [26]. | U.S. EPA [26]. |

| ECETOC Tiered Framework | Methodological Framework | A conceptual workflow for integrating TTC, in silico, in vitro, and TK tools into a holistic assessment. Guides the placement of T25/BMD data in a modern NAM context [23]. | European Centre for Ecotoxicology and Toxicology of Chemicals [23]. |

NAM Tiered Framework for Systemic Toxicity

Diagram 2: The ECETOC tiered framework for New Approach Methodologies (NAMs) [23]. Traditional in vivo data (right) serves to benchmark and calibrate the in vitro and in silico predictions made within the tiered workflow, illustrating the integrative role of established dose descriptors like BMD.

The T25 and Benchmark Dose represent two pivotal, yet philosophically distinct, methodologies for characterizing the potency of non-threshold toxicants. The T25 stands as a transparent, simplified tool for straightforward risk estimation and regulatory screening. In contrast, the BMD framework embodies a more rigorous, data-driven statistical paradigm that is increasingly becoming the benchmark for modern point-of-departure derivation.

The future of toxicological dose descriptor research lies in their seamless integration into next-generation, integrated testing strategies. As frameworks like the ECETOC tiered approach demonstrate, the role of T25 and BMD is expanding from being endpoints of animal studies to becoming anchors for validating new approach methodologies [23] [24]. The continued development and curation of comprehensive databases like ToxValDB are critical for this endeavor, providing the essential bridge between historical animal data and predictive in vitro or in silico potency estimates [24]. Ultimately, the evolution of these descriptors will be characterized by a convergence of traditional risk assessment principles with computational toxicology, enabling more efficient, human-relevant, and mechanistic-based safety evaluations.

Within the broader framework of toxicological dose descriptors research, the quantification of chemical effects and persistence forms the cornerstone of environmental risk assessment. This guide focuses on three pivotal metrics: the median effective concentration (EC50), the no observed effect concentration (NOEC), and the degradation half-life (DT50). These parameters are indispensable for transitioning from hazard identification to a quantitative understanding of risk, informing regulatory standards such as the Predicted No-Effect Concentration (PNEC) for ecosystems and guiding the sustainable development of agrochemicals and pharmaceuticals [1] [27]. Their accurate determination bridges the gap between empirical toxicology and predictive environmental safety models.

EC50 (Median Effective Concentration) is the concentration of a substance estimated to produce a specific, non-lethal effect (e.g., immobilization, growth inhibition) in 50% of a test population over a defined exposure period. It is a standard measure of acute toxic potency in ecotoxicology [1] [28].

NOEC (No Observed Effect Concentration) is the highest tested concentration at which there is no statistically significant adverse effect observed relative to the control group. It is derived from chronic toxicity studies and identifies a threshold below which unacceptable effects are not expected, playing a critical role in defining safe long-term exposure levels [1] [29].

DT50 (Degradation Half-Life) is the time required for the concentration of a substance to be reduced by 50% in a specific environmental compartment (e.g., soil, water). It is a primary indicator of environmental persistence. For degradation following first-order kinetics, DT50 is calculated as ln(2)/k, where k is the first-order rate constant [1] [30] [31].
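For first-order degradation, the relationship between the rate constant and DT50 can be checked with a short sketch. The function names and the rate constant are illustrative; units are assumed to be days and per-day.

```python
import math

def dt50_first_order(k_per_day):
    """DT50 = ln(2) / k for first-order degradation."""
    return math.log(2) / k_per_day

def fraction_remaining(t_days, k_per_day):
    """C(t) / C(0) = exp(-k * t) under first-order kinetics."""
    return math.exp(-k_per_day * t_days)

k = 0.05  # hypothetical rate constant, per day
half_life = dt50_first_order(k)
print(f"DT50 = {half_life:.1f} d, fraction left = {fraction_remaining(half_life, k):.2f}")
```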

Table 1: Core Definitions and Characteristics of Key Ecotoxicological Metrics

| Metric | Full Name | Toxicological Context | Typical Units | Primary Use in Risk Assessment |
|---|---|---|---|---|
| EC50 | Median Effective Concentration | Acute toxicity; sublethal effects | mg/L | Acute hazard classification; calculation of acute PNEC [1]. |
| NOEC | No Observed Effect Concentration | Chronic toxicity; threshold effects | mg/L | Chronic hazard classification; calculation of chronic PNEC [1] [29]. |
| DT50 | Degradation Half-Life | Environmental fate and persistence | Days (d) | Exposure modeling; persistence assessment [1] [30]. |

Detailed Experimental Protocols and Methodologies

Determination of EC50

The EC50 is typically determined using standardized acute toxicity tests with organisms like Daphnia magna (water flea) or Lemna gibba (duckweed) [1] [29]. The foundation is a dose-response experiment.

- Study Design: Test organisms are exposed to a geometrically spaced series of at least five concentrations of the test substance and a control. For Lemna gibba, the OECD Test Guideline 221 is followed, where plants are exposed for seven days, and growth inhibition is measured [29].

- Optimal Design: Statistical optimal design theory suggests that for fitting a 4-parameter log-logistic model, a D-optimal design often requires only a control and three optimally chosen dose levels to estimate the EC50 with maximum precision, improving efficiency over conventional designs [32].

- Data Analysis: The response data (e.g., percent immobilization or growth inhibition) are plotted against the logarithm of concentration. The EC50 and its confidence interval are estimated by fitting a sigmoidal dose-response model (e.g., log-logistic, Weibull) using non-linear regression [32].
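The data-analysis step above can be illustrated with a minimal sketch. It assumes a two-parameter log-logistic model (lower limit 0, upper limit 1) and fits it by ordinary least squares on the logit scale, which is a simplification of the non-linear regression described in the text, not a replacement for it. The function name `fit_log_logistic_2p` and the concentration series are hypothetical, and the response fractions are synthetic values generated from the model itself.

```python
import math

def fit_log_logistic_2p(concs, fractions):
    """Fit p = 1 / (1 + (EC50 / c)**b) by linear least squares on the logit scale.

    logit(p) = b * ln(c) - b * ln(EC50), so a straight-line fit of logit(p)
    against ln(c) yields the slope b and, from the intercept, the EC50.
    Fractions must lie strictly between 0 and 1 (logit is undefined at 0 and 1).
    """
    xs = [math.log(c) for c in concs]
    ys = [math.log(p / (1.0 - p)) for p in fractions]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    ec50 = math.exp(-intercept / slope)
    return ec50, slope

# Hypothetical geometric series of five concentrations (mg/L), factor 2.
concs = [0.5, 1.0, 2.0, 4.0, 8.0]

# Synthetic, noise-free responses generated from assumed "true" parameters.
true_ec50, true_slope = 2.0, 1.5
fractions = [1.0 / (1.0 + (true_ec50 / c) ** true_slope) for c in concs]

ec50, slope = fit_log_logistic_2p(concs, fractions)
```

With real, noisy data that include 0% or 100% responses, the logit transform breaks down, which is one reason dedicated non-linear fitting (with confidence intervals) is used in practice.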

Determination of NOEC

The NOEC is derived from chronic or life-cycle tests, such as those with Chironomus riparius (harlequin fly) following OECD TG 218, 219, or 233 [29].

- Protocol: Organisms are exposed to multiple concentrations of the test substance over a prolonged period, often encompassing most or all of their life cycle. Endpoints include survival, growth, reproduction, and emergence [29].

- Statistical Analysis: Data for each endpoint are analyzed using hypothesis-testing methods (e.g., ANOVA followed by Dunnett's test) to compare each treatment group to the control. The NOEC is the highest tested concentration at which no statistically significant adverse effect is detected (typically at a significance level of α = 0.05) [1].

- Advanced Modeling: As an alternative to the statistically limited NOEC, the Benchmark Dose (BMD) approach can be applied. It uses all dose-response data to model a concentration corresponding to a predefined low effect level (e.g., BMD10) [1] [32].
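As a sketch of the selection logic only, the following assumes the treatment-versus-control p-values have already been obtained elsewhere (for example from Dunnett's test) and simply identifies the LOEC and NOEC from them. The function name `noec_loec` and the p-values are hypothetical, and the sketch assumes the common convention that the NOEC is the tested concentration immediately below the LOEC.

```python
def noec_loec(p_values, alpha=0.05):
    """Return (NOEC, LOEC) from a mapping of concentration -> p-value vs control.

    LOEC: lowest tested concentration with a significant adverse effect.
    NOEC: highest tested concentration below the LOEC.
    If nothing is significant, the NOEC is the highest tested concentration
    and the LOEC is None. If the lowest concentration is already significant,
    the NOEC cannot be determined from this test and is returned as None.
    """
    concs = sorted(p_values)
    significant = [c for c in concs if p_values[c] < alpha]
    if not significant:
        return concs[-1], None
    loec = significant[0]
    below = [c for c in concs if c < loec]
    noec = below[-1] if below else None
    return noec, loec

# Hypothetical p-values for one endpoint (e.g., emergence), concentrations in mg/L.
p_values = {0.1: 0.81, 0.32: 0.44, 1.0: 0.09, 3.2: 0.012, 10.0: 0.0004}
noec, loec = noec_loec(p_values)
```

The example makes the NOEC's dependence on the chosen test concentrations visible: the result can only ever be one of the tested values, which is part of the motivation for the BMD alternative mentioned above.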

Determination of DT50

DT50 is assessed through environmental degradation studies in simulated or natural systems [30].

- Laboratory Incubation: The chemical is introduced into a defined environmental matrix (soil, water, sediment). Conditions (temperature, moisture, microbial activity) are controlled. Samples are taken over time and analyzed for parent compound concentration [30] [33].

- Kinetic Analysis: Data are fit to degradation models. For first-order kinetics, the DT50 is constant and calculated from the rate constant k (DT50 = ln(2)/k) [30] [31]. Many pesticides degrade in a biphasic pattern. The EPA's Representative Half-Life (t_rep) method addresses this by calculating a single first-order equivalent value that best represents the entire curve for use in exposure models [30].

- Estimation Methods: For screening, QSAR estimation software like EPIWIN (with modules AOPWIN, BIOWIN) is used. However, validation against experimental data is critical, as estimations can vary significantly from measured values, especially for persistent chemicals [33].
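For the single first-order case, the kinetic analysis can be sketched as a log-linear regression: ln(C) declines linearly with time, the slope gives -k, and DT50 = ln(2)/k. The function name `first_order_dt50` and the sampling schedule are hypothetical, and the concentrations are synthetic values generated from an assumed rate constant. The sketch does not cover biphasic decay or the t_rep calculation.

```python
import math

def first_order_dt50(times, concs):
    """Estimate the first-order rate constant k and DT50 from time-series data.

    Fits ln(C) = ln(C0) - k * t by ordinary least squares and returns
    (k, DT50) with DT50 = ln(2) / k. Concentrations must be positive.
    """
    ys = [math.log(c) for c in concs]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(ys) / n
    stt = sum((t - t_mean) ** 2 for t in times)
    sty = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, ys))
    k = -sty / stt  # slope of ln(C) vs t is -k
    return k, math.log(2) / k

# Hypothetical sampling days and synthetic residues (% of applied),
# generated with an assumed k of 0.1 per day.
times = [0, 3, 7, 14, 21, 28]
concs = [100.0 * math.exp(-0.1 * t) for t in times]

k, dt50 = first_order_dt50(times, concs)
```

A clearly curved plot of ln(C) against time is a practical sign that single first-order kinetics do not describe the data and that a biphasic model should be considered.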

Experimental Workflow for Key Ecotoxicological Metrics

Data Presentation and Comparative Analysis

Quantitative data for these metrics are chemical- and species-specific. The following table for the herbicide 2,4-D provides a concrete example of the values and their implications [34].

Table 2: Example Ecotoxicological Data for the Herbicide 2,4-D (Acid Form)

| Test Organism | Endpoint | Metric | Reported Value | Interpretation & Use |
|---|---|---|---|---|
| Rat (Oral) | Mortality | LD50 | 639 mg/kg [34] | Classified as low acute toxicity; used for human health risk assessment. |
| Aquatic Plants | Growth Inhibition | EC50 | Varies by study [34] | Determines acute hazard to non-target plants; input for aquatic risk models. |
| Soil Microbes | Degradation in Soil | DT50 | 7-10 days (typical range) [34] | Indicates moderate persistence; used in soil exposure and leaching models. |
| Fish/Daphnia | Chronic Toxicity | NOEC | Data derived from lifecycle tests | Sets the threshold for long-term safe concentration in water. |

Integration in Risk Assessment and Decision-Making

These metrics are not used in isolation but are integrated into comprehensive environmental risk assessment (ERA) frameworks. The Risk Quotient (RQ), calculated as the ratio of the Predicted Environmental Concentration (PEC) to the Predicted No-Effect Concentration (PNEC), is a central output [27]. The PNEC is derived by applying an assessment factor to the most sensitive ecotoxicological endpoint (typically the lowest relevant EC50 or NOEC) [1]. Similarly, DT50 is a critical input for fate models that calculate the PEC. Advanced algorithms now combine monitoring data with DT50 and toxicity values (EC50/NOEC) to prioritize site-specific risk management for pesticides [27].
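The PNEC and RQ arithmetic described above can be sketched in a few lines. All numbers here are hypothetical, including the assessment factor of 1000, which is used only for illustration; the appropriate factor depends on the regulatory framework and the available data set. The function names `derive_pnec` and `risk_quotient` are likewise illustrative.

```python
def derive_pnec(endpoints_mg_per_l, assessment_factor):
    """PNEC = most sensitive (lowest) endpoint divided by an assessment factor."""
    return min(endpoints_mg_per_l.values()) / assessment_factor

def risk_quotient(pec_mg_per_l, pnec_mg_per_l):
    """RQ = PEC / PNEC."""
    return pec_mg_per_l / pnec_mg_per_l

# Hypothetical acute endpoints (mg/L) for three trophic levels.
acute_endpoints = {
    "Daphnia magna EC50": 12.0,
    "Lemna gibba EC50": 0.58,
    "Fish LC50": 25.0,
}

pnec = derive_pnec(acute_endpoints, assessment_factor=1000)  # assumed factor
pec = 0.0002  # hypothetical predicted environmental concentration, mg/L
rq = risk_quotient(pec, pnec)
```

Under the commonly used convention that an RQ of 1 or more flags a potential concern, this hypothetical substance would fall below that trigger. The most sensitive species (here the plant) drives the result.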

Decision Pathway for Selecting Ecotoxicological Metrics

Advanced Tools: The Scientist's Toolkit

Modern research leverages both standardized testing and computational tools.

Table 3: Research Reagent Solutions and Essential Tools

| Tool/Reagent Category | Specific Example | Function in Experimentation |
|---|---|---|
| Standard Test Organisms | Daphnia magna (Cladocera), Lemna gibba (Duckweed), Chironomus riparius (Midge) | Standardized biological models for acute (Daphnia, Lemna) and chronic (Chironomus) aquatic toxicity testing [1] [29]. |
| Software for Dose-Response Analysis | R packages (drc, bmdb) | Statistical fitting of non-linear dose-response models to calculate EC50, Benchmark Doses, and confidence intervals [32]. |
| Degradation Kinetics Software | PestDF, R Executable for EPA SOP [30] | Analyzes time-series degradation data to determine rate constants (k), DT50, and calculate representative half-lives for non-first-order decay [30]. |
| QSAR Prediction Platforms | CORAL software, EPIWIN Suite [33] [29] | Estimates ecotoxicological endpoints (EC50, NOEC) and degradation half-lives from molecular structure using quantitative structure-activity/property relationships [33] [29]. |
| Reference Databases | EFSA OpenFoodTox [29] | Curated database of experimental toxicity values used for model training, validation, and regulatory assessment. |

Future Perspectives and Advanced Modeling

The field is moving beyond standalone metric determination towards integrated computational approaches. Quantitative Structure-Activity Relationship (QSAR) models, built using software like CORAL and the Monte Carlo method on databases such as OpenFoodTox, allow for the rapid in silico prediction of EC50 and NOEC for new compounds or untested species [29]. Furthermore, Bayesian optimal experimental design is being applied to optimize the dose selection and sample allocation in toxicity tests, maximizing information gain while minimizing resource use [32]. The integration of monitoring data, DT50, and toxicity endpoints into advanced algorithms supports dynamic, real-world risk management and the development of safer chemical products [27].

The dose-response relationship is a quantitative principle central to pharmacology and toxicology, describing the change in the magnitude of a biological effect as a function of the exposure level to a chemical or drug [35]. The graphical representation of this relationship, the dose-response curve, is an indispensable tool for determining safe, hazardous, and beneficial exposure levels, forming the basis for public policy and drug development [35]. This guide, framed within the broader thesis on toxicological dose descriptors, details the mathematical foundations, key parameters, experimental derivation, and advanced applications of dose-response analysis for research scientists.

At its core, the relationship is often described by sigmoidal curves when response is plotted against the logarithm of the dose [35]. The most prevalent mathematical model for this sigmoidal shape is the Hill Equation (Hill-Langmuir equation) [35]:

E/Emax = [A]^n / (EC50^n + [A]^n)

Where E is the effect, Emax is the maximal effect, [A] is the drug concentration, EC50 is the concentration producing 50% of Emax, and n is the Hill coefficient denoting steepness [35].

A more generalized form is the Emax model, which includes a parameter for the baseline effect (E0) [35]:

E = E0 + ([A]^n × Emax) / ([A]^n + EC50^n)

This model is the single most common non-linear model for describing dose-response relationships in drug development [35]. It is critical to note that while many curves are monotonic, non-monotonic dose-response relationships (e.g., U-shaped curves) are also observed, particularly with endocrine disruptors, challenging traditional threshold models [35].
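The Emax model above translates directly into a small function. This is a minimal sketch with hypothetical parameter values; setting E0 = 0 and dividing by Emax recovers the Hill equation form given earlier.

```python
def emax_model(conc, e0, emax, ec50, n):
    """Sigmoidal Emax model: E = E0 + ([A]^n * Emax) / ([A]^n + EC50^n)."""
    return e0 + (conc ** n * emax) / (conc ** n + ec50 ** n)

# Hypothetical parameters: baseline 5, maximal increase 80,
# EC50 of 2.0 (concentration units), Hill coefficient 1.5.
params = dict(e0=5.0, emax=80.0, ec50=2.0, n=1.5)

effect_at_zero = emax_model(0.0, **params)   # equals the baseline E0
effect_at_ec50 = emax_model(2.0, **params)   # E0 plus half of Emax
effect_at_high = emax_model(2000.0, **params)  # approaches E0 + Emax
```

Note that in this parameterization Emax is the maximal change from baseline, so the curve plateaus at E0 + Emax rather than at Emax itself.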

Table 1: Core Mathematical Models for Dose-Response Analysis

| Model Name | Formula | Key Parameters | Primary Application |
|---|---|---|---|
| Hill Equation | E/Emax = [A]^n/(EC50^n + [A]^n) | Emax (Efficacy), EC50 (Potency), n (Steepness) | Modelling sigmoidal agonist-receptor relationships [35]. |
| Emax Model | E = E0 + ([A]^n × Emax)/([A]^n + EC50^n) | E0 (Baseline Effect), Emax, EC50, n | General dose-response modelling, especially in drug development [35]. |
| Multiphasic Model | Combination of independent Hill equations | Multiple EC50 and Emax values | Capturing complex curves with multiple inflection points (e.g., inhibition & stimulation) [36]. |

Diagram 1: PK-PD Pathway Linking Dose to Effect

Key Toxicological Descriptors and Curve Interpretation

Dose-response curves are analyzed by extracting quantitative descriptors that inform on potency, efficacy, and safety. Potency refers to the dose required to produce a given effect and is inversely related to values like EC50 or IC50; a more potent drug requires a lower dose [37]. Efficacy (Emax) is the maximum achievable therapeutic response, which is distinct from and often more critical than potency [37] [36].

Table 2: Key Quantitative Descriptors from Dose-Response Analysis

| Descriptor | Definition | Interpretation in Toxicology/Pharmacology |
|---|---|---|
| EC50 | Concentration producing 50% of the maximal stimulatory effect. | Standard measure of an agonist's potency [36]. |
| IC50 | Concentration producing 50% inhibition of a specified process. | Standard measure of an antagonist's or inhibitor's potency [36]. |
| Emax | Maximum possible effect achievable by the agent. | Measure of intrinsic efficacy [37] [36]. |
| LD50 | Dose lethal to 50% of a test population. | Standard comparator for acute toxicity [38]. |
| NOAEL | Highest dose with no statistically significant adverse effect. | Foundational for risk assessment and setting safety limits [36]. |
| LOAEL | Lowest dose producing a statistically significant adverse effect. | Used with NOAEL to define the point of departure for risk assessment [36] [39]. |
| Therapeutic Index | Ratio of toxic dose (e.g., TD50) to effective dose (ED50). | Measure of drug safety; a larger index indicates a wider safety margin [37]. |

The slope of the curve is also critical, indicating the sensitivity of the response to dose changes. A steeper slope suggests a narrow dose range between minimal and maximal effects [37]. Furthermore, the presence or absence of a threshold—a dose below which no effect is observed—is a major consideration in risk assessment for non-carcinogens [40] [38].

The interaction of drugs with receptors fundamentally shapes the curve. A competitive antagonist shifts the agonist's dose-response curve to the right (increasing EC50) without suppressing Emax, while a non-competitive antagonist decreases Emax (suppressing maximal response) [36].
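The two antagonist behaviours can be illustrated with a simplified sketch. It assumes the classical Gaddum relationship for a competitive antagonist (apparent EC50 = EC50 × (1 + [B]/K_B)) and an idealized non-competitive antagonist that scales Emax by 1/(1 + [B]/K_B). These model forms, the function names, and every parameter value are assumptions made for illustration and do not come from the cited sources.

```python
def hill_response(agonist, emax, ec50, n=1.0):
    """Hill-type response to an agonist concentration."""
    return emax * agonist ** n / (ec50 ** n + agonist ** n)

def with_competitive_antagonist(agonist, antagonist, kb, emax, ec50, n=1.0):
    """Competitive antagonism: rightward shift of EC50, Emax unchanged."""
    apparent_ec50 = ec50 * (1.0 + antagonist / kb)
    return hill_response(agonist, emax, apparent_ec50, n)

def with_noncompetitive_antagonist(agonist, antagonist, kb, emax, ec50, n=1.0):
    """Idealized non-competitive antagonism: Emax depressed, EC50 unchanged."""
    apparent_emax = emax / (1.0 + antagonist / kb)
    return hill_response(agonist, apparent_emax, ec50, n)

# Hypothetical values: Emax 100 %, EC50 10 nM, antagonist at its K_B (50 nM).
emax, ec50, kb, antagonist = 100.0, 10.0, 50.0, 50.0

# With [B] = K_B the apparent EC50 doubles, so 20 nM agonist now gives 50 %.
competitive_at_20nM = with_competitive_antagonist(20.0, antagonist, kb, emax, ec50)

# At a saturating agonist concentration the competitive block is surmounted,
# whereas the non-competitive block caps the response near Emax / 2.
competitive_saturating = with_competitive_antagonist(1e6, antagonist, kb, emax, ec50)
noncompetitive_saturating = with_noncompetitive_antagonist(1e6, antagonist, kb, emax, ec50)
```

Comparing the two saturating responses shows the practical distinction: only competitive antagonism can be overcome by raising the agonist concentration.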

Experimental Protocols for Curve Generation

Protocol for In Vitro Concentration-Response Assay

This protocol outlines the generation of a concentration-response curve using a cell-based functional assay, a cornerstone of drug discovery.

Primary Materials:

- Test compound serially diluted in appropriate vehicle (e.g., DMSO, buffer).

- Cell line expressing the target receptor or pathway.

- Assay-specific detection reagents (e.g., fluorescent dye, antibody, substrate).

- Microplates (e.g., 96-well or 384-well), plate reader.

Detailed Methodology:

- Cell Preparation: Seed cells at optimized density in microplates and culture until they reach the desired confluence (e.g., 24-48 hours).

- Compound Dilution: Prepare a serial dilution of the test compound (typically 3- or 10-fold) across a range spanning expected no-effect to maximal-effect concentrations (e.g., 10 pM to 100 µM). Include vehicle-only control (0% effect) and a reference agonist/antagonist control (100% effect).