The Science of Extrapolation: Bridging Biological Scales to Accelerate Discovery and Development

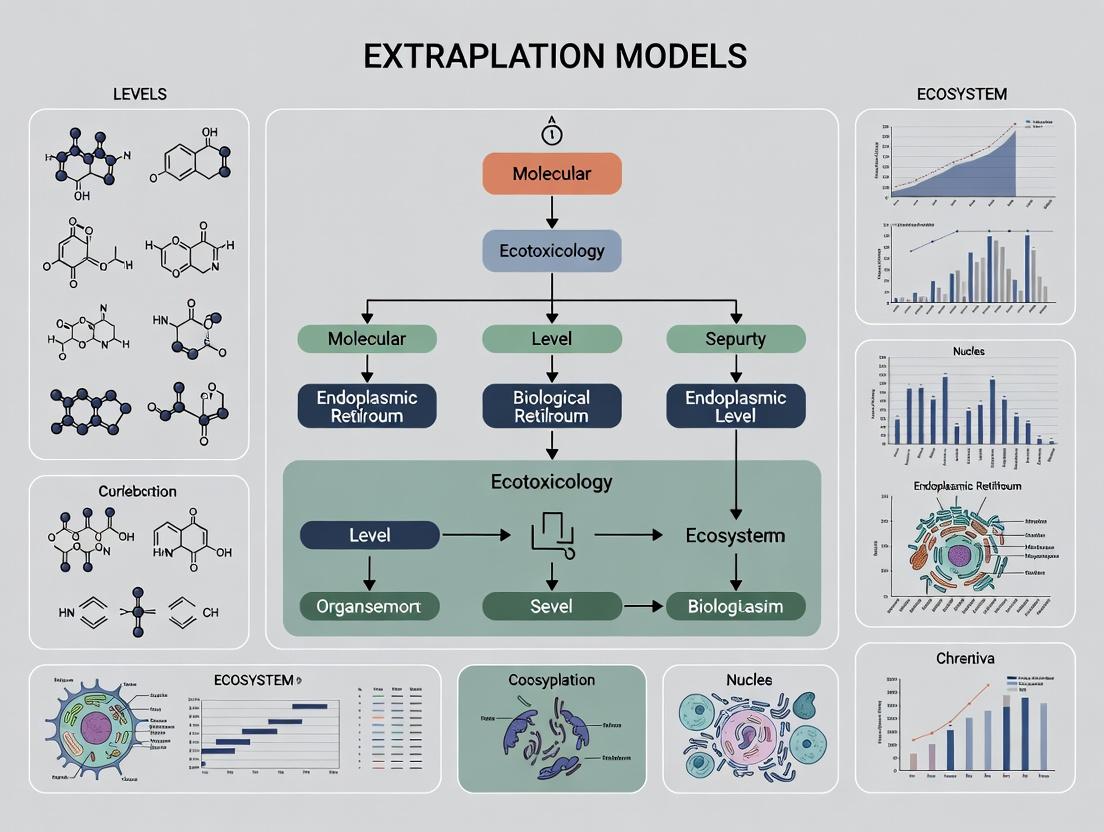

Abstract

This article provides a comprehensive examination of extrapolation models as essential tools for translating biological knowledge across different levels of organization—from molecules and cells to whole organisms and populations. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles that justify cross-scale inferences, surveys key methodological approaches from pharmacokinetic-pharmacodynamic modeling to machine learning on fitness landscapes, and addresses critical challenges in model validation and uncertainty quantification. Through analysis of current applications in drug development, translational research, and ecological forecasting, the review synthesizes strategies for robust extrapolation, evaluates comparative model performance, and outlines future directions for enhancing predictive accuracy in biomedical and clinical research.

The Principles and Justification of Biological Extrapolation: From Molecules to Populations

Core Concepts and Troubleshooting Fundamentals

In biomedical research, extrapolation is the translation or transfer of relationships observed in one experimental setting to another, such as from animal models to humans [1]. The core challenge, the scale-translation problem, arises from the need to predict outcomes across different levels of biological organization (e.g., molecular, cellular, organismal) or between different species [2]. This process is foundational to risk assessment and drug development, where data from controlled experiments must inform understanding of complex, real-world biological systems [1].

The validity of extrapolation hinges on understanding the conservation of biological pathways. A fundamental principle is that animals are reasonable surrogates for humans; for instance, the genetic makeup of mice and rats is more than 95% identical to that of humans [1]. However, subtle differences in metabolic pathways, receptor binding affinities, or organ function can lead to failed predictions. Effective troubleshooting in this field therefore requires a systematic approach to identify whether a problem stems from flawed extrapolation assumptions or from technical experimental errors [3].

Common Technical Issues and Validation Failures:

- Weak or No Signal in Detection Assays: This could indicate a technical protocol failure (e.g., antibody degradation) or a genuine biological difference (e.g., low protein expression in the target species) [3].

- Inconsistent Dose-Response Relationships: Discrepancies between model species and humans often point to differences in toxicokinetics (how the body handles a chemical) or toxicodynamics (how the chemical affects the body) [2].

- High Variability in Replicate Experiments: This may expose uncontrolled variables in the experimental system or highlight greater biological variability in the test subject than in the original model [1].

Systematic Troubleshooting Steps:

- Repeat the Experiment: Rule out simple human error or one-off technical failures [3].

- Validate Your Controls: Ensure positive and negative controls are performing as expected. A failed positive control suggests a protocol-wide issue [3].

- Audit Reagents and Equipment: Check storage conditions, expiration dates, and equipment calibration. Molecular biology reagents are particularly sensitive [3].

- Isolate Variables Methodically: Change only one experimental parameter at a time (e.g., antibody concentration, fixation time) to identify the root cause [3].

- Revisit Biological Assumptions: If technical issues are ruled out, critically reassess the conservation of the target pathway or mechanism between your model and target system [2].

Detailed Troubleshooting Guides

Problem: Inconsistent Results in Cross-Species Protein Expression Analysis (e.g., Western Blot, IHC)

Question: My immunohistochemistry (IHC) staining for a conserved protein shows strong signal in mouse liver tissue but is consistently weak or absent in human liver cell lines. My controls are working. Is this a technical failure or a valid biological difference?

Answer: This discrepancy requires a structured investigation to distinguish between assay failure and a true biological result [3].

Step-by-Step Diagnosis:

- Verify Assay Integrity:

- Positive Control: Run a parallel sample known to express the target protein highly (e.g., a different cell line or tissue lysate). If this fails, the protocol is faulty [3].

- Antibody Validation: Confirm the primary antibody's cross-reactivity for the human epitope. Consult the datasheet and search for published validation in human samples [4].

- Sample Quality: Check RNA-seq or qPCR data from your human cell line to confirm the gene is transcribed. Degraded protein samples can also cause failure [3].

- Optimize Protocol for New System:

- If the assay is valid but signal is weak, the established mouse protocol may need optimization for human cells.

- Perform a Primary Antibody Titration: Test a range of concentrations (e.g., 1:100 to 1:2000) to find the optimal signal-to-noise ratio for the human sample [3].

- Adjust Epitope Retrieval: For IHC on paraffin-embedded cells, the heat-induced epitope retrieval (HIER) time or pH may need adjustment for human vs. mouse tissue morphology [4].

- Consider Fixation: Over-fixation can mask epitopes. Try reducing the fixation time for human cell pellets [4].

- Interpret Biological Meaning:

- If technical optimization fails to yield a strong signal, the result may be valid. The protein may be expressed at lower levels, in a different isoform, or localized to a different subcellular compartment in human cells.

- Next Step: Employ an alternative, more sensitive detection method (e.g., immunofluorescence with signal amplification) to confirm low-level expression [5].

Problem: Divergent Toxicological Response in a Novel Organoid Model

Question: We are developing a human liver organoid model to extrapolate drug-induced toxicity. The organoids show a much higher sensitivity to Drug X compared to primary rat hepatocytes. How do we determine if this is a promising model of human susceptibility or an artifact of the immature organoid system?

Answer: This is a classic scale-translation problem where the in vitro system's predictive value must be rigorously validated [2].

Validation Protocol:

- Establish a Benchmark: Compile known in vivo human and rat toxicity data (e.g., clinical dose, plasma concentration, known adverse outcomes) for Drug X and related compounds [1].

- Correlate Internal Dose: Measure the internal concentration of Drug X and its metabolites in your organoid media and compare it to known human therapeutic or toxic plasma levels. Use techniques like Mass Spectrometry Imaging [2].

- Interrogate the Mechanism:

- Pathway Analysis: Use RNA sequencing or proteomics on treated organoids and compare pathway activation (e.g., apoptosis, oxidative stress) to signatures from human case reports or rat studies [2].

- Functional Conservation Check: Verify that the drug's target (e.g., a specific receptor) is expressed and functional in your organoids at a level comparable to mature human liver [2].

- Refine the Model: If the organoid system is immature, consider prolonging differentiation or using patterning factors to drive maturity. Co-culture with non-parenchymal cells may also provide more realistic metabolic feedback [4].

- Control for Artifacts: Ensure the increased sensitivity is not due to baseline stress (e.g., suboptimal culture conditions, high passage number) by thoroughly characterizing control organoid health (viability, ATP levels, albumin secretion) [4].

Diagram: An Adverse Outcome Pathway (AOP) Framework for Extrapolation Troubleshooting. This framework links a molecular initiating event to an adverse outcome through measurable key events. When extrapolation fails, assays (blue ovals) can pinpoint the conserved key event at which the prediction breaks down [2].

Problem: Failures in Quantitative In Vitro to In Vivo Extrapolation (QIVIVE)

Question: Our in vitro enzyme activity data suggest that a drug should be cleared rapidly, but in vivo pharmacokinetic (PK) studies in rats show a prolonged half-life. Which part of the extrapolation model is likely wrong?

Answer: QIVIVE failures typically originate in the assumptions linking in vitro data to whole-organism physiology [2].

Diagnostic Checklist:

- Toxicokinetic (TK) Parameters: Did your model correctly account for plasma protein binding, blood-to-plasma ratio, and organ-specific blood flow rates in the rat? These factors dramatically affect the free drug concentration available for clearance [2].

- Metabolic Competence: Does your in vitro system (e.g., recombinant enzyme, microsomes) express all relevant Phase I and II metabolizing enzymes at physiological ratios and activities? Co-factor concentrations (e.g., NADPH) must also be optimal [4].

- Transport and Distribution: The in vivo half-life depends on distribution volume and re-absorption processes. Your in vitro clearance assay may not account for transporter-mediated uptake/efflux in the liver or renal reabsorption [2].

- Non-Linear Kinetics: Check if the drug saturates metabolic enzymes or transporters at the in vivo dose, moving away from first-order kinetics assumed in the simple model.

Recommended Action: Develop or apply a Physiologically Based Kinetic (PBK) model. This computational framework incorporates species-specific anatomy, physiology, and biochemistry to mechanistically simulate absorption, distribution, metabolism, and excretion (ADME). Start by populating a rat PBK model with your in vitro data and in vivo PK data to identify which parameters need refinement [2].
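As a rough illustration of why plasma protein binding and hepatic blood flow matter in the checklist above, the sketch below applies the well-stirred liver model. That model is a common simplification which the source does not name, so it is an assumption here. The function names and all parameter values are hypothetical and chosen only to show the direction of the effect.

```python
import math

def hepatic_clearance_well_stirred(q_h, fu, cl_int):
    """Well-stirred liver model: CL_h = Q_h * fu * CL_int / (Q_h + fu * CL_int)."""
    return q_h * fu * cl_int / (q_h + fu * cl_int)

def half_life(vd, cl):
    """Elimination half-life for a one-compartment, first-order model."""
    return math.log(2) * vd / cl

# Hypothetical rat values for illustration only (not taken from the source)
Q_H = 55.0      # hepatic blood flow, mL/min/kg (assumed)
CL_INT = 200.0  # scaled intrinsic clearance, mL/min/kg (assumed)
VD = 1000.0     # volume of distribution, mL/kg (assumed)

# Compare a prediction that ignores binding (fu = 1) with one that
# uses an assumed unbound fraction of 5%.
for fu in (1.0, 0.05):
    cl = hepatic_clearance_well_stirred(Q_H, fu, CL_INT)
    print(f"fu={fu:.2f}: CL_h={cl:.1f} mL/min/kg, t1/2={half_life(VD, cl):.0f} min")
```

With these assumed values, ignoring binding predicts a much shorter half-life than the protein-bound case, which is the same direction as the mismatch described in the question. This is a one-compartment approximation and not a substitute for a PBK model.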

Key Experimental Protocols for Validating Extrapolation

Protocol: Cross-Species Target Conservation Analysis

Objective: To quantitatively compare the binding affinity and functional response of a drug target (e.g., a receptor) between a model species and humans, validating a core assumption of pharmacodynamic extrapolation [2].

Materials:

- Recombinant protein (target) from human and model species (e.g., rat) [4].

- Radiolabeled or fluorescent ligand for the target.

- Appropriate cell lines expressing the target from each species.

- Functional assay kit (e.g., cAMP, calcium flux, reporter gene) compatible with both cell systems [4].

Method:

- Express & Purify: Produce recombinant target proteins or generate stable cell lines expressing the target at comparable levels.

- Saturation Binding:

- Incubate serial dilutions of the labeled ligand with a fixed amount of target protein/cell membrane from each species.

- Determine total, non-specific, and specific binding.

- Calculate Kd (dissociation constant) and Bmax (receptor density) for each species.

- Competition Binding:

- Use a fixed concentration of labeled ligand and increasing concentrations of the unlabeled drug candidate.

- Calculate the IC50 (half-maximal inhibitory concentration) for each species.

- Functional Assay:

- Treat cells expressing the target with a range of drug concentrations.

- Measure the functional output (e.g., cAMP generation).

- Calculate EC50/IC50 for the functional response.

- Data Analysis:

- Compare Kd and EC50/IC50 values between species. A difference greater than 10-fold suggests a significant pharmacodynamic divergence that must be factored into the extrapolation model [2].
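The comparison in the data analysis step can be sketched as follows. The Cheng-Prusoff correction used to convert IC50 to Ki is a standard relation for competitive binding at a single site, but it is not mentioned in the source and is an assumption here. All binding values are hypothetical, and the 10-fold threshold is the one stated in the protocol.

```python
def cheng_prusoff_ki(ic50, ligand_conc, kd):
    """Ki = IC50 / (1 + [L]/Kd), assuming simple competitive binding."""
    return ic50 / (1.0 + ligand_conc / kd)

def fold_difference(a, b):
    """Symmetric fold difference (always >= 1)."""
    return max(a, b) / min(a, b)

# Hypothetical results in nM (illustrative only)
human = {"kd": 2.0, "ic50": 30.0}
rat = {"kd": 5.0, "ic50": 400.0}
LIGAND_NM = 2.0      # labeled-ligand concentration used in the competition assay
THRESHOLD = 10.0     # fold difference flagged in the protocol

ki_human = cheng_prusoff_ki(human["ic50"], LIGAND_NM, human["kd"])
ki_rat = cheng_prusoff_ki(rat["ic50"], LIGAND_NM, rat["kd"])
fold = fold_difference(ki_human, ki_rat)

print(f"Ki human={ki_human:.1f} nM, Ki rat={ki_rat:.1f} nM, fold={fold:.1f}")
if fold > THRESHOLD:
    print("Flag: pharmacodynamic divergence should be built into the model")
```

Correcting IC50 to Ki before comparing species avoids mistaking a difference in labeled-ligand affinity for a difference in drug affinity.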

Protocol: Metabolite Profiling for Toxicokinetic Extrapolation

Objective: To identify and quantify species-specific drug metabolites in in vitro hepatic systems, informing cross-species differences in metabolism that impact toxicity predictions [2].

Materials:

- Cryopreserved hepatocytes or liver microsomes from human and relevant model species (rat, dog) [4].

- Drug candidate (substrate).

- Co-factors (NADPH, UDPGA).

- Liquid Chromatography-Mass Spectrometry (LC-MS/MS) system.

Method:

- Incubation: Incubate the drug with hepatocytes/microsomes and necessary co-factors at physiologically relevant temperature and time [4].

- Sample Collection: Terminate reactions at multiple time points (e.g., 0, 15, 30, 60, 120 min) with an organic solvent (e.g., acetonitrile) to precipitate proteins.

- Sample Preparation: Centrifuge, collect supernatant, and evaporate under nitrogen. Reconstitute in MS-compatible solvent.

- LC-MS/MS Analysis:

- Use a C18 column for separation.

- Employ full-scan and data-dependent MS/MS to detect and identify metabolites.

- Use authentic standards for major suspected metabolites when possible.

- Data Interpretation:

- Compare metabolic stability (half-life) between species.

- Identify qualitative differences (unique metabolites in one species) and quantitative differences (different rates of formation for shared metabolites).

- Integrate these findings into PBK models to improve in vivo predictions [2].
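The metabolic stability comparison can be sketched as a log-linear fit of substrate depletion, assuming first-order kinetics. The time points follow the protocol above; the percent-remaining values, incubation volume, and protein amount are hypothetical, and the helper names are illustrative.

```python
import math

def elimination_rate(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) vs time; returns k in 1/min."""
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    return -sxy / sxx  # depletion gives a negative slope, so k is positive

def in_vitro_half_life(k):
    """Half-life in minutes for first-order depletion."""
    return math.log(2) / k

def intrinsic_clearance(k, incubation_volume_ul, protein_mg):
    """In vitro CLint in uL/min/mg protein."""
    return k * incubation_volume_ul / protein_mg

TIMES = [0, 15, 30, 60, 120]  # min, as in the protocol
# Hypothetical % parent compound remaining (illustrative only)
profiles = {
    "human": [100.0, 85.0, 72.0, 52.0, 27.0],
    "rat": [100.0, 60.0, 36.0, 13.0, 1.7],
}

for species, pct in profiles.items():
    k = elimination_rate(TIMES, pct)
    clint = intrinsic_clearance(k, incubation_volume_ul=500.0, protein_mg=0.25)
    print(f"{species}: t1/2={in_vitro_half_life(k):.0f} min, CLint={clint:.0f} uL/min/mg")
```

If the log-linear plot curves noticeably, the first-order assumption does not hold and the estimated half-life should be treated with caution.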

Diagram: Iterative Workflow for Validating an Extrapolation Model. This workflow emphasizes that extrapolation is a hypothesis-driven, iterative process. Models must be tested with targeted validation experiments in the system of concern and refined based on the outcome [1] [2].

Frequently Asked Questions (FAQs)

Q1: What is the simplest first step when an extrapolation prediction fails? A: Re-examine your fundamental conservation assumptions. Before deep-diving into complex model parameters, verify that the primary drug target, key metabolizing enzyme, or critical pathway is functionally equivalent between your model and the target species. A quick in vitro binding or activity assay comparing the two systems can save significant time [2].

Q2: How do I choose the most appropriate model species for extrapolation to humans? A: There is no universal "best" model. Selection requires a weight-of-evidence approach based on your specific endpoint [1]. The table below compares key considerations:

| Biological Factor | Priority for Pharmacokinetics | Priority for Toxicology | Example |
|---|---|---|---|
| Metabolic Pathway Similarity | Critical | Critical | Use guinea pigs for aspirin metabolism studies (similar hydrolysis) [1]. |
| Target Sequence/Function | High | High | Use transgenic mice expressing the human drug target. |
| Organ System Physiology | Moderate | High | Use dogs for cardiovascular toxicity (similar heart conduction) [1]. |
| Life Stage & Development | Low | Moderate | Use juvenile rats for developmental neurotoxicity. |

Q3: What is a "read-across" approach and when should I use it? A: Read-across is a comparative data gap-filling technique. When you lack toxicity data for "Chemical A" in the target species, you predict its properties based on data from a similar, well-studied "Chemical B" in the same or a different species. It is most defensible when the chemicals are structural analogs with a common mode of action, and the biological system's response is conserved [2]. It is commonly used in environmental safety assessment of pharmaceuticals [2].

Q4: Can in silico (computational) models replace animal testing for extrapolation? A: Not yet, but they are powerful complementary tools. In silico models like Quantitative Structure-Activity Relationship (QSAR) and PBK models are excellent for generating hypotheses, prioritizing chemicals for testing, and exploring mechanisms. However, regulatory decisions still generally require in vivo data to capture the complexity of integrated organismal responses. The future lies in defined Integrated Approaches to Testing and Assessment (IATA) that combine computational, in vitro, and limited in vivo data [2].

The Scientist's Toolkit: Essential Reagents & Materials

| Category | Specific Item | Function in Extrapolation Research | Key Consideration |
|---|---|---|---|
| Biological Systems | Cryopreserved Hepatocytes (Human, Rat, Dog) | Study species-specific drug metabolism and intrinsic clearance [4]. | Verify viability and metabolic competence upon thawing. Lot-to-lot variability can be high. |
| Biological Systems | Recombinant Proteins (Cytochromes P450, Transporters) | Mechanistically dissect individual contributions to PK differences [4]. | Ensure proper post-translational modifications and membrane incorporation for functional assays. |
| Biological Systems | 3D Organoid Culture Kits (e.g., Liver, Kidney) | Create more physiologically relevant human in vitro models for toxicity testing [4]. | Differentiation maturity and batch consistency of basement membrane extract are critical. |
| Assay Technologies | Phospho-Specific Antibody Arrays | Profile activation of conserved signaling pathways across species in response to a stressor [4]. | Confirm antibody cross-reactivity with the model species' protein epitope. |
| Assay Technologies | Multiplex Cytokine/Apoptosis Assays (Luminex, ELISA) | Quantify conserved biomarkers of immune or cellular stress response in different models [4]. | Use identical assay platforms and calibrators for direct cross-species comparison. |
| Assay Technologies | LC-MS/MS System | Identify and quantify species-specific metabolites for TK modeling [2]. | Requires method development and optimization for each new chemical class. |
| Specialized Reagents | Species-Matched Antibody Pairs | Accurately quantify protein biomarkers (e.g., kidney injury molecule-1) in different model species [4]. | Avoid using an antibody against the human protein to measure its rat ortholog unless explicitly validated. |
| Specialized Reagents | Activity-Based Protein Profiling (ABPP) Probes | Directly measure functional enzyme activity (not just expression) in tissue lysates across species. | Probe must be designed for the specific enzyme family of interest. |
| Reference Data | Annotated Genomes & Proteomes (Ensembl, UniProt) | Align sequences to identify orthologs and check for critical amino acid differences in binding sites. | The quality of functional annotation can vary significantly between non-model species. |

Quantitative Data for Extrapolation Planning

Successful extrapolation relies on quantitative understanding of similarities and differences. The following table summarizes core data that should be compiled before building an extrapolation model [1] [2].

| Parameter for Comparison | Typical Range of Variation (Model vs. Human) | Impact if Ignored | How to Obtain |
|---|---|---|---|
| Plasma Protein Binding (%) | Unbound fraction can vary by >2-fold (e.g., 95% vs. 99% bound is a 5-fold difference in free drug). | Drastically mispredicts free, active drug concentration. | Equilibrium dialysis or ultrafiltration with species-specific plasma. |
| Hepatic Intrinsic Clearance (mL/min/kg) | Often differs by an order of magnitude. | Leads to incorrect predictions of half-life and dosing. | In vitro metabolic stability assay using hepatocytes. |
| Receptor Binding Affinity (Kd) | Ideally <3-fold difference for valid PD extrapolation. | Misestimates the effective dose for efficacy or toxicity. | Radioligand binding assays with recombinant receptors. |
| Organ Weight/Body Weight (%) | Relatively conserved among mammals (allometry). | Errors in PBK model structure and dose scaling. | From anatomical textbooks or dedicated studies. |
| Key Enzyme Expression Level | Can vary >50-fold (e.g., CYP3A4 in liver). | Fails to predict metabolic routes and drug-drug interactions. | Proteomics or immunoblotting of tissue samples. |
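Two of the parameters in the table lend themselves to a quick calculation before any model is built. The sketch below converts percent bound to unbound fraction and applies simple allometric scaling of clearance. The exponent of 0.75 is a conventional default that the source does not state, so it is an assumption, as are the body weights and the clearance value.

```python
def unbound_fraction(percent_bound):
    """Fraction of drug free in plasma, from percent bound."""
    return 1.0 - percent_bound / 100.0

def allometric_scale(value_animal, bw_animal_kg, bw_human_kg, exponent=0.75):
    """Scale a whole-body rate (e.g., clearance) by body weight ratio ** exponent."""
    return value_animal * (bw_human_kg / bw_animal_kg) ** exponent

# Binding example from the table: 95% vs 99% bound
fu_model = unbound_fraction(95.0)
fu_human = unbound_fraction(99.0)
print(f"free-fraction ratio (model/human): {fu_model / fu_human:.1f}")

# Hypothetical rat clearance scaled to a 70 kg human (illustrative only)
cl_rat_ml_min = 10.0
cl_human_ml_min = allometric_scale(cl_rat_ml_min, bw_animal_kg=0.25, bw_human_kg=70.0)
print(f"allometric human clearance estimate: {cl_human_ml_min:.0f} mL/min")
```

Allometric scaling alone ignores the species differences in binding and enzyme expression listed in the table, so it is best treated as a starting estimate to be corrected with measured data.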

The Philosophical and Mechanistic Basis for Cross-Level Inference

Philosophical Foundation: From Causation to Prediction

Cross-level inference in biological research is fundamentally a problem of causal explanation. The philosophical "new mechanist" approach provides a critical framework, asserting that explaining a phenomenon involves elucidating the multi-level, organized system of entities and activities responsible for it [6]. A mechanistic explanation does not merely establish a statistical association between an input (e.g., a chemical) and an output (e.g., toxicity); it details the step-by-step causal process across biological scales—from molecular interaction to cellular response to tissue damage [6].

This constitutive, part-whole relationship is key to cross-level inference [6]. The validity of extrapolating from a model system (like an in vitro assay or a rodent model) to a target system (like humans) rests on demonstrating a shared underlying mechanism. The greater the mechanistic similarity—conserved molecular targets, homologous signaling pathways, analogous tissue responses—the more justified the inference [7]. This moves prediction from a black-box statistical exercise to a principled, biologically grounded conclusion.

Technical Support Center: Troubleshooting Cross-Level Inference

Common Experimental Challenges & Solutions

Researchers face specific technical and interpretive hurdles when building and validating cross-level extrapolation models. The following table outlines frequent issues and evidence-based corrective actions.

Table 1: Troubleshooting Guide for Cross-Level Inference Experiments

| Problem Symptom | Potential Root Cause | Diagnostic Checks | Recommended Solution |
|---|---|---|---|
| In vitro bioactivity does not predict in vivo outcome. | Poor toxicokinetic mimicry (absorption, distribution, metabolism, excretion) in the test system [8]. | Check for metabolizing enzyme activity (e.g., CYP450) in cell lines. Compare metabolic profiles of the compound in vitro vs. in vivo. | Use primary cells or co-cultures with hepatocytes. Incorporate physiologically based kinetic (PBK) modeling to bridge concentration differences [8]. |
| High toxicity in model organism but no effect in target species (or vice versa). | Divergent toxicodynamics; the molecular target is absent, non-functional, or has a different physiological role in the target species [8]. | Perform a target conservation analysis (sequence alignment, structural modeling). Validate target engagement and downstream signaling in both systems. | Define the Taxonomic Domain of Applicability for the Adverse Outcome Pathway (AOP). Use phylogenetically closer models or humanized assays [8]. |
| Population-level model (e.g., species sensitivity distribution) is overly protective or under-protective. | Model assumes individual-level endpoints (survival, growth) linearly scale to population impacts, ignoring density-dependence and life-history traits [9]. | Analyze population growth rate (e.g., using matrix models). Test if sensitivity differs across life stages (e.g., juvenile vs. adult). | Use individual-based models (IBMs) that integrate life-cycle data and demographic stochasticity for ecological risk assessment [9]. |
| Omics signatures are inconsistent across biological replicates or levels. | Cytotoxic burst or overwhelming stress response at high concentrations masks specific pathway effects [8]. | Conduct a concentration-response series. Check for markers of general stress/necrosis (e.g., LDH release) alongside specific endpoints. | Use benchmark concentration (BMC) modeling to identify the lowest effective concentration for pathway-specific analysis. |
| Uncertainty in extrapolation is unquantified, reducing regulatory confidence. | Reliance on a single point estimate or default safety factors without probabilistic quantification [7]. | Perform sensitivity analysis on key model parameters (e.g., interspecies metabolic scaling factors). | Use probabilistic risk assessment methods (e.g., Bayesian inference, Monte Carlo simulation) to characterize uncertainty [7] [9]. |
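The last row of the table recommends Monte Carlo simulation in place of a single point estimate. A minimal sketch of that idea follows, assuming two lognormally distributed adjustment factors (interspecies and intraspecies). The distributions, their parameters, and the animal point of departure are hypothetical and are not taken from the source.

```python
import math
import random

def simulate_human_doses(animal_pod, n=10000, seed=0):
    """Propagate uncertainty in two adjustment factors to a human-equivalent dose."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    doses = []
    for _ in range(n):
        # Assumed lognormal factors: medians 4 and 3, geometric SD 2 (illustrative)
        inter = rng.lognormvariate(math.log(4.0), math.log(2.0))
        intra = rng.lognormvariate(math.log(3.0), math.log(2.0))
        doses.append(animal_pod / (inter * intra))
    return sorted(doses)

def percentile(sorted_values, p):
    """Simple rank-based percentile on a pre-sorted list (p in 0..100)."""
    index = min(len(sorted_values) - 1, int(p / 100.0 * len(sorted_values)))
    return sorted_values[index]

doses = simulate_human_doses(animal_pod=100.0)  # hypothetical POD, mg/kg/day
p5, p50, p95 = (percentile(doses, p) for p in (5, 50, 95))
print(f"median={p50:.1f}, 90% interval=({p5:.1f}, {p95:.1f}) mg/kg/day")
```

Reporting the interval alongside the median makes explicit how much of the final estimate depends on the assumed factor distributions, which a single default safety factor hides.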

Frequently Asked Questions (FAQs)

Q: What is the fundamental scientific basis for extrapolating from animals to humans?

- A: The basis is the evolutionary conservation of biological processes. The genetic makeup of key mammalian models (rats, mice) is >95% identical to that of humans, leading to similarity in host defense, metabolic systems, and organ function [7]. For example, renal transport and metabolic functions are conserved, justifying the use of animal models for urinary toxicology unless chemical-specific data indicate otherwise [7].

Q: How do New Approach Methodologies (NAMs) change the extrapolation paradigm?

- A: NAMs (in vitro, in silico, omics) shift the basis of prediction from observed apical endpoints in whole animals (e.g., mortality) to mechanistic perturbations at the molecular and cellular level [8]. The extrapolation challenge becomes one of quantitatively linking a mechanistic perturbation described in an AOP to an adverse outcome in a target species, often using bioinformatic tools to assess pathway conservation [8].

Q: When is an extrapolation from individual-level effects to population-level consequences potentially misleading?

- A: It can be misleading when population dynamics are strongly influenced by density-dependent compensation or when the toxicant affects life-history traits with high elasticity (strong influence on population growth rate). A toxicant reducing juvenile survival in a species with high fecundity and low juvenile survival may have minimal population impact, whereas the same effect on a long-lived species with low fecundity could be catastrophic [9].

Q: What is the role of the Adverse Outcome Pathway (AOP) framework in cross-level inference?

- A: The AOP framework provides a structured, modular knowledge map linking a molecular initiating event to an adverse outcome across biological levels of organization. It explicitly defines key events and the relationships between them, allowing researchers to identify where knowledge is sufficient for extrapolation and where critical gaps exist. Its utility hinges on establishing the taxonomic domain of applicability for each key event relationship [8].

Core Experimental Protocols for Validating Inference

Protocol: Establishing the Taxonomic Domain of Applicability for an AOP

Objective: To determine the range of species across which a postulated Key Event Relationship (KER) in an AOP is conserved.

Materials: Sequence databases (NCBI, Ensembl), protein structure prediction tools (AlphaFold), phylogenetic analysis software, relevant cell lines or tissues from multiple species.

Procedure:

- Identify Molecular Initiating Event (MIE): Precisely define the protein target or DNA binding site of the chemical stressor.

- Perform Conservation Analysis:

- Retrieve amino acid/nucleotide sequences of the target from multiple species.

- Conduct multiple sequence alignment and construct a phylogenetic tree.

- Model the 3D structure of the binding pocket/promoter region in different species.

- Functional Assay: Test target engagement (e.g., receptor binding, enzyme inhibition) in vitro using proteins or cells from species of interest.

- Validate Downstream Key Event: Measure the immediate downstream cellular key event (e.g., phosphorylation, gene expression) in exposed cells/tissues from different species.

- Synthesis: Integrate sequence, structural, and functional data to define the phylogenetic boundary within which the KER is operative [8].
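The sequence part of the conservation analysis can be sketched with a simple percent-identity calculation on sequences that have already been aligned. The two fragments and the binding-site positions below are invented for illustration; in practice the alignment would come from a dedicated alignment tool and the residue positions from structural data.

```python
def percent_identity(aligned_a, aligned_b, gap="-"):
    """Percent identical residues over aligned positions where neither has a gap."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have equal length")
    compared = 0
    matches = 0
    for a, b in zip(aligned_a, aligned_b):
        if a == gap or b == gap:
            continue  # skip gapped columns
        compared += 1
        if a == b:
            matches += 1
    return 100.0 * matches / compared if compared else 0.0

def differing_positions(aligned_a, aligned_b, sites):
    """Alignment columns (0-based) in `sites` where the residues differ."""
    return [i for i in sites if aligned_a[i] != aligned_b[i]]

# Hypothetical aligned fragments of a target protein (illustrative only)
human = "MKTLLVAGH-FWE"
zebrafish = "MKSLLIAGHQFWD"
binding_site = [2, 5, 10]  # hypothetical ligand-contact columns

print(f"identity: {percent_identity(human, zebrafish):.0f}%")
print(f"binding-site differences at columns: {differing_positions(human, zebrafish, binding_site)}")
```

Overall identity can be high while the few residues that contact the ligand differ, so checking the binding-site columns separately is usually more informative for the molecular initiating event than the global score.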

Protocol: Population-Level Extrapolation Using Life-Table Response Experiments (LTRE)

Objective: To translate chemical effects on individual life-cycle traits (survival, growth, reproduction) into impacts on population growth rate (λ).

Materials: Synchronized cohort of test organisms (e.g., Daphnia, insects), controlled exposure system, tools for measuring individual traits.

Procedure:

- Exposure: Randomly allocate organisms to a control and multiple toxicant concentrations. Maintain exposure over a full life cycle.

- Life-Cycle Trait Measurement: For each treatment, longitudinally track age-specific survival (l_x) and fecundity (m_x).

- Population Model Construction: Construct an age- or stage-structured population projection matrix for each treatment (the control and each toxicant concentration) from the measured survival and fecundity data.

- Analysis: Calculate the population growth rate (λ) for each treatment group using the Euler-Lotka equation or matrix projection.

- Elasticity Analysis: Perform elasticity analysis on the control matrix to identify which vital rates (e.g., juvenile survival, adult fecundity) contribute most to λ. Compare the sensitivity of λ to the sensitivity of individual traits [9].

- Modeling: Use the results to parameterize an individual-based model (IBM) to explore long-term and stochastic population outcomes under exposure scenarios [9].
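The analysis and elasticity steps above can be sketched with a small age-structured (Leslie) matrix. The example uses plain power iteration to find λ, which is one of several ways to get the dominant eigenvalue and is chosen here only to keep the sketch dependency-free. The vital rates are hypothetical, and the exposed matrix simply lowers juvenile survival from 0.5 to 0.3.

```python
def dominant_eigen(matrix, iterations=1000):
    """Power iteration: dominant eigenvalue and right eigenvector (sums to 1)."""
    n = len(matrix)
    vec = [1.0 / n] * n
    lam = 0.0
    for _ in range(iterations):
        new = [sum(matrix[i][j] * vec[j] for j in range(n)) for i in range(n)]
        lam = sum(new)  # valid estimate because vec sums to 1
        vec = [x / lam for x in new]
    return lam, vec

def elasticities(matrix):
    """Return lambda and e_ij = (a_ij / lambda) * v_i * w_j / <v, w>."""
    lam, w = dominant_eigen(matrix)                  # stable age distribution
    transposed = [list(col) for col in zip(*matrix)]
    _, v = dominant_eigen(transposed)                # reproductive values
    vw = sum(vi * wi for vi, wi in zip(v, w))
    n = len(matrix)
    elas = [[matrix[i][j] * v[i] * w[j] / (lam * vw) for j in range(n)]
            for i in range(n)]
    return lam, elas

# Hypothetical matrices: row 0 = fecundities, sub-diagonal = survival
control = [[0.0, 1.5, 2.0], [0.5, 0.0, 0.0], [0.0, 0.8, 0.0]]
exposed = [[0.0, 1.5, 2.0], [0.3, 0.0, 0.0], [0.0, 0.8, 0.0]]

lam_control, elas_control = elasticities(control)
lam_exposed, _ = dominant_eigen(exposed)
print(f"lambda control={lam_control:.3f}, exposed={lam_exposed:.3f}")
print(f"elasticity of lambda to juvenile survival: {elas_control[1][0]:.2f}")
```

Elasticities sum to 1 across all matrix entries, so each value can be read as the share of λ attributable to that vital rate. Power iteration converges here because the matrix has reproduction in two consecutive age classes; other matrix structures may need a general eigenvalue solver.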

Table 2: Key Analytical Outputs from LTRE Protocol

| Output Metric | Description | Interpretation for Cross-Level Inference |
|---|---|---|
| Population Growth Rate (λ) | The finite (multiplicative) rate of population increase per time step. λ>1 = growth, λ<1 = decline. | The integrated endpoint linking individual toxicity to population sustainability. |
| Critical Effect Concentration (CEC) | The exposure concentration causing a specified decline in λ (e.g., 10%). | A more ecologically relevant benchmark than an individual NOEC for setting safety thresholds [9]. |
| Elasticity of λ to Vital Rates | The proportional sensitivity of λ to changes in a specific vital rate (e.g., juvenile survival). | Identifies which individual-level endpoints are most critical to measure for accurate population-level prediction. |
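To show how a Critical Effect Concentration might be read off a set of treatment-level λ values, the sketch below uses linear interpolation between tested concentrations. Interpolation is a simplification assumed here for brevity; a fitted concentration-response curve would normally be preferred. The concentrations and λ values are hypothetical.

```python
def critical_effect_concentration(concs, lambdas, decline=0.10):
    """Concentration at which lambda falls to (1 - decline) * control lambda.

    Assumes concs[0] is the control and concentrations are in ascending order.
    Returns None if the target decline is not reached in the tested range.
    """
    target = lambdas[0] * (1.0 - decline)
    pairs = list(zip(concs, lambdas))
    for (c0, l0), (c1, l1) in zip(pairs, pairs[1:]):
        if l0 >= target >= l1 and l0 != l1:
            # Linear interpolation between the two bracketing treatments
            return c0 + (l0 - target) * (c1 - c0) / (l0 - l1)
    return None

# Hypothetical LTRE results (illustrative only)
concentrations = [0.0, 1.0, 2.0, 4.0, 8.0]   # e.g., ug/L
growth_rates = [1.20, 1.18, 1.12, 1.02, 0.90]

cec10 = critical_effect_concentration(concentrations, growth_rates, decline=0.10)
print(f"CEC for a 10% decline in lambda: {cec10:.1f}")
```

In this invented data set λ is still above 1 at the CEC, which illustrates that a 10% decline in λ and the point of population decline (λ<1) are different benchmarks.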

Visualizing Core Concepts: Pathways and Workflows

Adverse Outcome Pathway Logical Structure

Cross-Level Inference & Validation Workflow

Table 3: Key Research Reagent Solutions for Cross-Level Inference Studies

| Tool/Reagent Category | Specific Example(s) | Function in Cross-Level Inference |
|---|---|---|
| Phylogenetically Broad Cell Panels | Primary cells or induced pluripotent stem cell (iPSC)-derived cells from human, primate, rodent, zebrafish. | Enables direct in vitro comparison of toxicodynamic responses across species, grounding extrapolation in empirical data. |
| Pathway-Reporter Assays | Luciferase-based reporters for conserved pathways (NF-κB, Nrf2, p53, ER stress). | Measures specific Key Event activities in a high-throughput format, allowing quantification of pathway perturbation potency. |
| Bioinformatics Databases & Tools | Comparative Toxicogenomics Database (CTD), AOP-Wiki, BLAST, phylogenetic analysis software (MEGA, Phylo.io). | Supports target conservation analysis, AOP development, and identification of homologous genes/pathways across species [8]. |
| Physiologically Based Kinetic (PBK) Modeling Software | GastroPlus, Simcyp, open-source tools like R packages (httk). | Simulates absorption, distribution, metabolism, and excretion to bridge between in vitro effective concentrations and in vivo external or tissue doses [8]. |
| Defined In Vitro Systems | Organ-on-a-chip, 3D spheroids, co-culture systems. | Provides more physiologically relevant tissue context and simple cell-cell interactions, improving the biological relevance of the in vitro starting point for extrapolation. |
| Reference Chemicals | Chemicals with well-characterized, species-specific modes of action (e.g., agonists for non-conserved receptors). | Serves as positive and negative controls to test and validate the performance and domain of applicability of new extrapolation models. |

Technical Support Center: Troubleshooting Extrapolation Across Biological Scales

This support center is framed within a thesis on extrapolation models in biological research. It provides resources for researchers, scientists, and drug development professionals facing challenges when translating experimental findings across the hierarchical levels of biological organization—from molecular and cellular systems to tissues, organs, whole organisms, and populations [10] [11] [12].

Frequently Asked Questions (FAQs)

Q1: What is meant by "translation" and "discontinuity" between biological levels? A1: In extrapolation models, a "point of translation" is a conserved biological mechanism (e.g., a specific protein interaction or metabolic pathway) that functions predictably across different levels, such as from in vitro cell assays to in vivo organ systems. A "discontinuity" is a breakdown in this predictability, where emergent properties, unique tissue microenvironments, or systemic feedback loops cause a mechanism observed at one level (e.g., cellular cytotoxicity) to manifest differently or not at all at a higher level (e.g., organ failure) [13] [14].

Q2: What is the primary scientific basis for extrapolating from animal models to humans? A2: The fundamental principle is the high degree of genetic and physiological conservation among mammals. The genetic makeup of mice or rats is >95% identical to humans, and key host defense, metabolic, and organ systems (like the urinary system) are very similar. This conservation provides a reasonable basis for assuming animals are good surrogates, unless chemical-specific data indicate otherwise [7].

Q3: How can the Adverse Outcome Pathway (AOP) framework help in cross-species extrapolation? A3: The AOP framework organizes knowledge into causal pathways linking a Molecular Initiating Event (MIE) to an adverse outcome at the organism or population level. By defining the Taxonomic Domain of Applicability for each key event in the pathway, researchers can assess whether a biological mechanism is structurally and functionally conserved across species. This allows for informed extrapolation and can reduce redundant animal testing [14].

Q4: What are common sources of variability when moving from cellular to tissue/organ-level experiments? A4: Key discontinuities arise from:

- Tissue Microarchitecture: The 3D organization, extracellular matrix, and cell-cell interactions absent in 2D cultures.

- Pharmacokinetics/Toxicokinetics: Differences in compound absorption, distribution, metabolism, and excretion (ADME).

- Systemic Signaling: Endocrine, immune, and neural communications that modulate local cellular responses.

- Emergent Tissue Functions: Properties like contractility or filtration that only arise from integrated tissue organization [13] [14].

Troubleshooting Guides

Issue 1: In vitro assay result fails to predict in vivo organ toxicity.

- Possible Cause 1: Lack of metabolic competence. Your cellular model may not express the cytochrome P450 enzymes or other metabolizing systems present in the target organ.

- Solution: Use primary cells or co-culture systems that include metabolically active cells (e.g., hepatocytes). Consider validated metabolically competent cell lines or add S9 fractions.

- Possible Cause 2: Absence of tissue-specific microenvironment. The assay misses critical stromal interactions, biomechanical forces, or soluble factors.

- Solution: Implement more complex 3D culture models (spheroids, organoids) or organ-on-a-chip systems that recapitulate tissue-tissue interfaces and fluid flow [14].

Issue 2: Animal model data does not accurately translate to expected human response.

- Possible Cause 1: Species-specific toxicokinetics or toxicodynamics. Differences in ADME or the affinity of a compound for its target protein.

- Solution: Conduct comparative in vitro studies using human and animal hepatocytes or microsomes. Use physiologically based pharmacokinetic (PBPK) modeling to scale dosages.

- Possible Cause 2: The adverse outcome is mediated by a mechanism not conserved in the test species.

Issue 3: Difficulty integrating data from multiple levels of organization (e.g., molecular, cellular, organ) into a coherent prediction.

- Possible Cause: No conceptual framework to logically link events across scales.

- Solution: Develop or use an existing Adverse Outcome Pathway (AOP). Systematically map your molecular and cellular data onto specific Key Events (KEs) and establish Key Event Relationships (KERs) that logically bridge levels of biological organization. This creates a testable, mechanistic hypothesis for extrapolation [14].

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential tools for investigating cross-level translation.

| Tool/Reagent | Primary Function in Cross-Level Research |

|---|---|

| Cross-Species Biomarker Panels (e.g., urinary kidney injury markers) | Quantify conserved functional responses (e.g., tubular damage) across species, bridging organ-level physiology to molecular events [7]. |

| Organoid/3D Tissue Culture Systems | Model tissue- and organ-level complexity (cell diversity, architecture, function) in a controlled in vitro setting, filling the gap between cells and whole organisms [14]. |

| AOP (Adverse Outcome Pathway) Framework | Provides a structured, modular template to formally describe and evaluate the mechanistic sequence of events linking an initial molecular perturbation to an adverse outcome at the organism or population level [14]. |

| PBPK/PD (Physiologically Based Pharmacokinetic/Dynamic) Models | Mathematical models that simulate the absorption, distribution, metabolism, and excretion of compounds across different tissues and species, crucial for quantitative dose and route extrapolation [7]. |

| NAMs (New Approach Methodologies) | An umbrella term for in silico, in chemico, and in vitro assays that provide mechanistic data on toxicokinetics and toxicodynamics, reducing reliance on apical animal testing for extrapolation [14]. |

| Comparative 'Omics Databases (e.g., genomic, proteomic) | Enable analysis of the conservation of genes, proteins, and pathways between model species and humans, informing the domain of applicability for extrapolation [14]. |

Experimental Protocols & Data

Protocol: Developing an Adverse Outcome Pathway (AOP) for Cross-Level Extrapolation

This methodology structures existing knowledge to test extrapolation hypotheses [14].

- Define the Adverse Outcome (AO): Start with a precise phenotypic endpoint at the organism or population level relevant to risk assessment (e.g., liver fibrosis, population decline).

- Identify the Molecular Initiating Event (MIE): Characterize the initial, specific interaction between a chemical/stressor and a biomolecular target (e.g., receptor binding, protein oxidation).

- Map Key Events (KEs): List essential, measurable biological steps bridging the MIE to the AO. Assign each KE to a relevant level of organization (cellular, tissue, organ).

- Establish Key Event Relationships (KERs): For each pair of KEs, describe the scientific evidence supporting a causal or correlative link. Evaluate the strength and consistency of the evidence.

- Define Taxonomic Domain of Applicability: For each MIE, KE, and KER, critically assess and document the species for which there is evidence of structural/functional conservation.

- Quantitative AOP Development: Where possible, use computational models to describe the quantitative relationships between KEs (e.g., dose-response, temporal sequence).
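
The bookkeeping behind the taxonomic domain of applicability step can be sketched in a few lines of Python. This is a minimal illustration, not part of AOP-Wiki or any published tool: the class names, event names, and species sets are hypothetical placeholders. It assumes that a pathway can only be transferred to species for which every key event has documented conservation, so the pathway-level domain is the intersection of the event-level domains.

```python
from dataclasses import dataclass, field


@dataclass
class KeyEvent:
    name: str
    level: str            # e.g. "molecular", "cellular", "organ"
    species: frozenset    # species with evidence of conservation


@dataclass
class AOP:
    events: list = field(default_factory=list)  # ordered MIE -> ... -> AO

    def domain_of_applicability(self):
        """Species for which every event in the pathway is supported."""
        if not self.events:
            return frozenset()
        domain = self.events[0].species
        for ev in self.events[1:]:
            domain = domain & ev.species
        return domain


# Hypothetical example: the species sets are illustrative, not curated evidence.
aop = AOP([
    KeyEvent("receptor binding (MIE)", "molecular",
             frozenset({"human", "rat", "zebrafish"})),
    KeyEvent("hepatocyte injury", "cellular", frozenset({"human", "rat"})),
    KeyEvent("liver fibrosis (AO)", "organ",
             frozenset({"human", "rat", "mouse"})),
])
print(sorted(aop.domain_of_applicability()))
```

A real assessment would also weigh the strength of evidence per species rather than treating conservation as a yes/no property.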

Quantitative Data for Extrapolation Context

Table: Key data informing cross-species and cross-level extrapolation.

| Data Type | Representative Finding | Implication for Extrapolation |

|---|---|---|

| Genetic Similarity | Mouse/rat genome is >95% identical to human; non-human primate >99% [7]. | Provides a strong foundational basis for using mammalian models as human surrogates. |

| ECOTOX Knowledgebase Trend | Since ~2000, a marked increase in molecular/cellular effects data reported, alongside steady apical (growth/mortality) data [14]. | Supports a paradigm shift towards using mechanistic, lower-level data to predict higher-level outcomes via AOPs. |

| Regulatory Animal Use | U.S. EPA directive to eliminate mammalian studies by 2035; EU REACH mandates animal testing as "last resort" [14]. | Drives urgent development and acceptance of NAMs and computational extrapolation models. |

Visualizing Relationships: Pathways and Workflows

The following diagrams illustrate core concepts.

Adverse Outcome Pathway Linking Biological Levels

Workflow for Mechanistic Cross-Species Extrapolation

Historical Precedents and Foundational Case Studies in Biomedical Extrapolation

Welcome to the Technical Support Center for Extrapolation Research. This resource provides targeted troubleshooting guides and FAQs for researchers, scientists, and drug development professionals working on extrapolation models across levels of biological organization. The content is framed within the broader thesis that effective extrapolation is fundamental to translating discoveries from molecular systems to individuals and populations, with a focus on historical precedents that inform contemporary methodologies [15] [16].

Fundamental Principles & Troubleshooting

FAQ 1: My clinical trial results are not being adopted by physicians for a key patient demographic. How can I improve the relevance and acceptance of my data?

- Key Issue: The perceived relevance of trial evidence is not solely dependent on average efficacy but is critically influenced by the representativeness of the trial population [15].

- Troubleshooting Guide:

- Diagnose the Gap: Compare the demographic and clinical characteristics of your trial population to the disease burden in the target treatment population. Significant underrepresentation of any group can limit extrapolation confidence [15].

- Assess Impact: Understand that physicians are more willing to prescribe drugs tested on representative samples. A study found that for physicians treating Black patients, a one standard deviation increase in Black trial participation increased prescribing intention by 0.11 standard deviations—an effect about half the size of the drug's efficacy itself [15].

- Implement Solution: Proactively design trials with inclusive enrollment strategies to ensure the study sample is representative. This improves both regulatory robustness and downstream adoption by healthcare providers and patients [15].

- Thesis Context: This addresses extrapolation from the trial cohort level to the broader patient population level. A model of similarity-based extrapolation shows that evidence is weighted more heavily when the sample is more representative of the group being treated [15].

FAQ 2: I am developing a therapy for a rare disease and cannot run a traditional randomized controlled trial (RCT). What alternative evidentiary approaches are accepted?

- Key Issue: For rare diseases, low prevalence, ethical concerns, and practical limitations often make large RCTs impossible [17].

- Troubleshooting Guide:

- Explore Regulatory Pathways: Engage early with regulators (e.g., FDA, EMA). Statutes allow for approval based on one adequate and well-controlled investigation plus confirmatory evidence [17].

- Gather Alternative & Confirmatory Data (ACD): Develop a robust evidence package using alternative sources. The core strategy is to supplement your primary trial data with external evidence [17].

- Select Appropriate ACD Sources:

- Natural History Studies: These observational studies track the disease course without intervention and are a primary source for external control arms [17] [18]. Example: The approval of omaveloxolone for Friedreich's ataxia used natural history data as a control [17].

- Patient Registries: Organized systems collecting uniform data on a specific disease population. They can inform disease progression, standards of care, and serve as a source for historical controls [17] [18].

- Historical Controls (HC): Data from past patients (from prior studies, charts, or registries) used as a comparator for a new treatment in a single-arm trial [18].

- Follow a Roadmap: When using HCs, follow a structured plan from design to analysis to maintain scientific validity. This involves rigorous assessment of data similarity, accounting for biases, and pre-planning sensitivity analyses [18].

- Thesis Context: This is a critical case of extrapolation across the level of experimental design, using external, real-world data hierarchies to bridge the evidence gap when direct concurrent comparison is not feasible [17] [18].

Foundational Case Studies & Methodologies

FAQ 3: How can I optimize a pharmacokinetic (PK) study in a pediatric rare disease where I can only collect very sparse blood samples?

- Key Issue: Ethical and practical constraints in pediatric rare diseases result in extremely sparse and unbalanced PK data, leading to high risk of biased parameter estimates [19].

- Troubleshooting Guide:

- Employ Bayesian Frameworks: Integrate "prior knowledge" into your analysis. This involves using existing, informative PK parameter estimates (e.g., from adult studies) as a Bayesian prior, which is then updated with the sparse new pediatric data [19].

- Quantify the Benefit: A case study on deferasirox in pediatric hemoglobinopathies demonstrated that using highly informative priors increased the probability of successful model convergence from 12% (with no priors) to 75% [19].

- Optimize Study Design: Use experimental design optimization techniques (e.g., ED-optimality) in conjunction with prior information. For example, increasing the number of samples per subject from 1 to 3, guided by prior knowledge, can reduce the probability of significant parameter bias from >60% to <20% [19].

- Ensure Comparability: The validity of this extrapolation depends on demonstrating similarity in drug disposition processes between the source (adult) and target (pediatric) populations, after accounting for known covariates like body size and organ function [19].

- Thesis Context: This is a prime example of extrapolation across levels of biological development (adult to pediatric) and population size, using mathematical modeling (Bayesian statistics) to formally integrate information across these levels [19].
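
The way an informative prior stabilizes a sparse estimate can be shown with a toy conjugate update. The sketch below is an assumption-laden simplification and not the method of the cited deferasirox study: it treats log-clearance as normally distributed with a known observation SD, whereas real analyses use nonlinear mixed-effects software. All numbers are hypothetical, and the adult prior is assumed to have already been adjusted for body size.

```python
import math


def normal_posterior(prior_mean, prior_sd, obs, obs_sd):
    """Conjugate normal-normal update with known observation SD.

    Returns (posterior_mean, posterior_sd) for the mean parameter.
    """
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = len(obs) / obs_sd ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    obs_mean = sum(obs) / len(obs)
    post_mean = post_var * (prior_prec * prior_mean + data_prec * obs_mean)
    return post_mean, math.sqrt(post_var)


# Hypothetical values: adult-derived prior on log-clearance,
# plus three sparse pediatric observations.
prior_mean, prior_sd = math.log(3.0), 0.3
pediatric_log_cl = [math.log(2.2), math.log(2.9), math.log(2.5)]

informative = normal_posterior(prior_mean, prior_sd, pediatric_log_cl, obs_sd=0.5)
vague = normal_posterior(prior_mean, 10.0, pediatric_log_cl, obs_sd=0.5)
print("informative prior (mean, sd):", informative)
print("vague prior (mean, sd):      ", vague)
```

With the informative prior the posterior SD is smaller and the estimate is pulled toward the adult value, which is only desirable if the comparability condition described above holds.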

Table 1: Case Study Summary: Bayesian Analysis of Sparse Pediatric PK Data (Deferasirox)

| Analysis Scenario | Probability of Successful Convergence | Key Implication |

|---|---|---|

| No use of prior knowledge | 12% | Sparse data alone are highly unreliable. |

| Use of weakly informative priors | 56% | Even limited prior information drastically improves model stability. |

| Use of highly informative priors | 75% | Strong, relevant prior knowledge is most effective for extrapolation. |

Source: Adapted from pediatric deferasirox PK study [19].

Diagram 1: Bayesian Workflow for Pediatric PK Extrapolation [19].

FAQ 4: How do I systematically find hidden connections in existing literature to generate new hypotheses for drug repurposing or mechanism discovery?

- Key Issue: Vast, fragmented scientific literature contains "undiscovered public knowledge"—latent connections between concepts published in non-interactive literatures [20].

- Troubleshooting Guide:

- Define Literature-Based Discovery (LBD): This is the use of computational tools to mine scientific text to generate novel hypotheses by revealing hidden links [20].

- Leverage Existing Successes: The most prominent application is drug repurposing. For example, early in the COVID-19 pandemic, BenevolentAI used LBD on knowledge graphs to identify baricitinib as a candidate from 378 possibilities within days, leading to rapid clinical testing and authorization [20].

- Understand the Methods: Modern LBD uses natural language processing (NLP), machine learning, and large language models (LLMs) on structured knowledge graphs built from literature databases [20].

- Acknowledge the Challenges: LBD has an evaluation problem. Real-world prospective discoveries are rare due to the difficulty of validating computational hypotheses and the inherent noise in literature data [20].

- Thesis Context: LBD is a form of extrapolation across the level of scientific knowledge organization. It connects isolated "islands of knowledge" from disparate subfields to infer new relationships at a higher systems level [20].

Diagram 2: Literature-Based Discovery Connects Disparate Knowledge [20].

Data, Tools & Reagent Management

FAQ 5: How do I know if my historical control data or natural history dataset is too outdated to use for my current trial analysis?

- Key Issue: Data, especially external data used for extrapolation, can lose relevance over time—a concept analogous to "expiration" [16].

- Troubleshooting Guide:

- Assess Data Currency: Evaluate if the conditions captured in the historical data still reflect the current reality. Key factors include changes in: standard of care, diagnostic criteria (e.g., stage migration), supportive care, and disease awareness [18] [16].

- Review Data Immutability Policy: Regulators emphasize data immutability (data is never deleted or altered). "Expiration" here refers to a change in the utility status of the data for a specific Context of Use (COU), not its deletion [16].

- Conduct a Status Review: Periodically re-evaluate foundational datasets. For example, natural history data collected before a new treatment became available may still be useful for understanding the untreated disease trajectory but cannot represent the current patient management landscape [16].

- Document Everything: Clearly document the provenance, limitations, and your assessment of relevance for any external dataset used in your analysis [16].

- Thesis Context: Managing data expiration is crucial for maintaining the validity of longitudinal extrapolations. It ensures that inferences drawn across time levels (past data to present trials) are based on sound and relevant comparisons [16].

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions for Extrapolation

| Tool/Reagent Category | Specific Example | Primary Function in Extrapolation |

|---|---|---|

| Bayesian Priors | PK parameter estimates from an adult population model [19]. | To formally integrate prior knowledge into the analysis of new, sparse data, stabilizing estimates and reducing required sample size. |

| Historical Control Data | Curated data from a natural history study or patient registry [17] [18]. | To serve as an external comparator arm in single-arm trials, enabling efficacy assessment when randomized concurrent controls are not feasible. |

| Real-World Data (RWD) Platforms | Linked EHR and claims databases (e.g., Flatiron, Optum) [21]. | To understand disease epidemiology, standard of care, treatment patterns, and outcomes in broad, heterogeneous populations beyond clinical trials. |

| Literature-Based Discovery Engines | AI-driven knowledge graphs mining PubMed/MEDLINE [20]. | To generate novel hypotheses by revealing hidden connections between concepts across fragmented scientific literatures. |

| Standardized Disease Registries | IAMRARE (NORD) or RARE-X platforms for rare diseases [17]. | To provide structured, longitudinal patient data essential for characterizing rare diseases and serving as a source for external controls. |

FAQ 6: What advanced statistical methods exist to formally integrate external evidence into my survival extrapolations for Health Technology Assessment (HTA)?

- Key Issue: Survival extrapolations based solely on often immature trial data are uncertain. Incorporating external evidence can reduce uncertainty and improve realism for long-term projections [22].

- Troubleshooting Guide:

- Map the Methodology Landscape: A systematic review identified four major thematic approaches [22]:

- Informative Priors: Using Bayesian methods with priors informed by external data (e.g., from other trials or real-world sources).

- Piecewise Methods: Fitting different survival models to different periods (e.g., trial data for short-term, general population life tables for long-term).

- General Population Adjustment: Adjusting trial data using general population mortality statistics.

- Other Complex Approaches: Including mixture cure models or methods leveraging external data on disease progression.

- Select Based on Evidence & Context: The choice depends on the available external data, the disease (most applications are in cancer), and the specific extrapolation question [22].

- Note the Validation Gap: Be aware that while many methods exist, there is a lack of direct comparative studies evaluating their relative performance and accuracy [22].

- Thesis Context: These methods represent sophisticated quantitative frameworks for extrapolation across the time level (short-term trial data to lifetime horizon) and the population level (trial cohort to general or real-world population) [22].
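
As an illustration of the piecewise idea listed above, the following Python sketch joins a trial-period hazard to an externally informed long-term hazard. It is a deliberately simplified assumption (constant hazards in both periods, hypothetical rates and cutoff); published applications use fitted parametric models and age-specific life tables.

```python
import math


def piecewise_survival(t, trial_hazard, cutoff, long_term_hazard):
    """Survival with a constant trial-period hazard up to `cutoff`
    and a constant externally informed hazard afterwards."""
    if t <= cutoff:
        return math.exp(-trial_hazard * t)
    s_cut = math.exp(-trial_hazard * cutoff)
    return s_cut * math.exp(-long_term_hazard * (t - cutoff))


# Hypothetical inputs: 0.20/year during 3 years of follow-up,
# then 0.05/year taken from an external source.
for year in (1, 3, 5, 10, 20):
    print(year, round(piecewise_survival(year, 0.20, 3.0, 0.05), 3))
```

The curve is continuous at the cutoff by construction; the choice of cutoff and of the external hazard are the main sources of uncertainty and should be varied in sensitivity analyses.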

Diagram 3: Methods for Integrating External Evidence in Survival Extrapolation [22].

Extrapolation in Practice: Key Methodologies and Their Applications in Research & Development

Quantitative Pharmacokinetic-Pharmacodynamic (PK-PD) Modeling and Clinical Trial Simulation

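Technical Support Center: Troubleshooting Guide and FAQs

This technical support center is intended to help researchers, scientists, and drug development professionals resolve specific problems encountered in quantitative PK-PD modeling and clinical trial simulation. The content is framed within a broader thesis on extrapolation models across levels of biological organization.

Clarifying Core Concepts

1. What is model identifiability, and why is it especially important when parent drug and metabolite data are analyzed simultaneously?

Model identifiability refers to whether all parameters in a model can be uniquely estimated from the observable data. When PK models are built for a parent drug and its metabolite at the same time, the larger dataset seems to make modeling easier, but the identifiability principle is often overlooked. If the model structure is too complex (for example, multi-compartment models for both species with interconversion rate constants) while the data carry too little information, the parameters cannot be uniquely determined, and the fit fails or yields untrustworthy results. The key is to match model complexity to the information content of the data, which may require fixing some parameters (based on prior knowledge) to achieve identifiability [23].

2. How should steady state be understood, and what does it mean for trial design?

Steady state is reached when the rate of drug administration equals the rate of elimination. The amount of drug in the body (and the plasma concentration) then fluctuates within a fixed range and remains relatively stable. In PK/PD modeling, steady-state data are essential for accurately assessing the exposure-response relationship. When designing multiple-dose clinical trials, simulation should be used to predict the time to steady state and to ensure that PK/PD samples are collected during steady state, so that the resulting parameters reflect long-term treatment effects [23].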
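
A quick way to reason about the time to steady state is the first-order approach formula. The Python sketch below assumes a one-compartment model with first-order elimination, under which the fraction of steady state reached after time t is 1 - exp(-k t) with k = ln 2 / half-life; the 12-hour half-life and dosing interval are hypothetical.

```python
import math


def time_to_fraction_of_steady_state(half_life, fraction=0.9):
    """Time needed to reach `fraction` of steady state (same units as half_life)."""
    k = math.log(2) / half_life
    return -math.log(1.0 - fraction) / k


def accumulation_ratio(half_life, tau):
    """Steady-state to first-dose exposure ratio for dosing interval tau."""
    k = math.log(2) / half_life
    return 1.0 / (1.0 - math.exp(-k * tau))


half_life_h = 12.0  # hypothetical half-life in hours
print("time to 90% of steady state (h):",
      round(time_to_fraction_of_steady_state(half_life_h, 0.9), 1))
print("accumulation ratio, dosing every 12 h:",
      round(accumulation_ratio(half_life_h, 12.0), 2))
```

Under these assumptions, sampling scheduled earlier than roughly three to five half-lives after the first dose would not yet reflect steady-state exposure.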

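3. What role does -2LL (minus twice the log-likelihood), or the log-likelihood ratio, play in model comparison?

-2LL is a measure of goodness of fit used to compare nested statistical models. The smaller the value, the better the model fits the data. When choosing between two nested models (for example, a full model and a reduced model), the difference between their -2LL values approximately follows a chi-square distribution, with degrees of freedom equal to the difference in the number of parameters. This test (the likelihood ratio test) can be used to judge whether additional model parameters (such as a covariate effect) provide a statistically significant improvement in fit [23].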
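
For the common case of one added parameter, the likelihood ratio test can be computed with the standard library alone. The sketch below relies on the identity that the chi-square survival function with 1 degree of freedom equals erfc(sqrt(x / 2)); the -2LL values are hypothetical, and the function name is illustrative rather than taken from any PK software.

```python
import math


def lrt_p_value_1df(neg2ll_reduced, neg2ll_full):
    """P-value of a likelihood ratio test for one extra parameter.

    For 1 degree of freedom, the chi-square survival function
    equals erfc(sqrt(x / 2)).
    """
    delta = neg2ll_reduced - neg2ll_full
    if delta < 0:
        raise ValueError("the full model should not fit worse than the nested reduced model")
    return math.erfc(math.sqrt(delta / 2.0))


# Hypothetical objective function values before and after adding a covariate.
p = lrt_p_value_1df(neg2ll_reduced=1256.4, neg2ll_full=1249.1)
print("drop in -2LL: 7.3, p-value:", round(p, 4))
```

A drop of 3.84 corresponds to p of about 0.05 and a drop of 6.63 to p of about 0.01, which is why these thresholds appear so often in stepwise covariate modeling.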

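Experimental Design and Data Collection

4. Should a "time window" be set for PK blood sampling in clinical studies?

Setting a loose "time window" is not recommended. Best practice is to adhere strictly to the sampling times specified in the protocol. Although PK parameter calculations can correct for the actual sampling times, introducing time windows adds operational complexity and unnecessary data variability. More importantly, it can lead to insufficient sampling density in regions with key PK features (such as around the peak concentration), which compromises accurate characterization of absorption, distribution, and other processes [23].

5. How is PK extrapolation modeling performed in pediatric populations, and what are the key considerations?

The core of pediatric extrapolation is to use data from adults or older children, combined with physiological knowledge, to predict PK behavior in younger children. Key steps include:

- Allometric scaling: Use theoretical exponents (0.75 for clearance CL, 1 for volume of distribution V) or data-based estimates to correct for size differences using body weight [24].

- Maturation function: For infants and neonates, an established Hill or sigmoid Emax model must be used to characterize the development of renal, hepatic, and other organ function. Note that body weight and age are highly collinear, so the maturation function and the allometric exponents should not be estimated simultaneously [24].

- Validation: The final model predictions must be validated in the target pediatric population, even if the data are sparse [24].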
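
The first two steps above can be combined in a short calculation. The Python sketch below is an illustration under stated assumptions: the 70 kg reference weight, the maturation half-time (`tm50_weeks`), the Hill coefficient, and the adult parameter values are hypothetical placeholders, not values from reference [24], and postmenstrual age is assumed as the age metric.

```python
def maturation(pma_weeks, tm50_weeks, hill):
    """Sigmoid (Hill) maturation function; returns the fraction of mature function."""
    return pma_weeks ** hill / (tm50_weeks ** hill + pma_weeks ** hill)


def scale_clearance(cl_adult, weight_kg, pma_weeks, tm50_weeks=50.0, hill=3.0,
                    ref_weight_kg=70.0, exponent=0.75):
    """Adult clearance scaled by body size (fixed exponent) and organ maturation."""
    size = (weight_kg / ref_weight_kg) ** exponent
    return cl_adult * size * maturation(pma_weeks, tm50_weeks, hill)


def scale_volume(v_adult, weight_kg, ref_weight_kg=70.0, exponent=1.0):
    """Adult volume of distribution scaled linearly with body weight."""
    return v_adult * (weight_kg / ref_weight_kg) ** exponent


# Hypothetical infant: 6 kg, postmenstrual age 60 weeks; adult CL 10 L/h, V 50 L.
print("CL (L/h):", round(scale_clearance(10.0, 6.0, 60.0), 2))
print("V (L):   ", round(scale_volume(50.0, 6.0), 2))
```

The allometric exponents are fixed here rather than estimated, in line with the collinearity warning above.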

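6. What are the advantages and disadvantages of cassette dosing for preclinical PK screening?

Advantages: It markedly improves efficiency by evaluating the PK properties of 5-10 compounds simultaneously in a single animal experiment, reducing animal use and study time [23].

Disadvantages: There is a potential risk of drug-drug interactions (for example, competition for metabolizing enzymes or transporters), which can distort the true PK parameters of individual compounds. Cassette dosing is therefore generally used only for early screening and ranking; the preferred compounds still require conventional single-compound PK studies to confirm the results [23].

Modeling, Simulation, and Validation

7. How has machine learning (ML) changed the traditional PK/PD modeling workflow?

Traditional modeling is sequential and stepwise, and it can easily miss interactions between parameters. ML algorithms (such as genetic algorithms) can explore, non-sequentially and simultaneously, a large "model space" containing multiple structural hypotheses (such as different absorption models, elimination models, and covariate relationships), automatically evaluating hundreds of candidate models. A "fitness score" that combines goodness of fit, robustness, and parsimony is used to identify the best model quickly, which greatly improves efficiency and may uncover better model structures [25].

8. How is the credibility of a mechanistic model (such as a PBPK model) assessed?

Model evaluation should follow a verification and validation (V&V) framework. Key activities include:

- Verification: Ensure that the model is implemented correctly (that is, "was the model built right?"). Check the mathematical equations, code, parameter units, and so on.

- Validation: Assess how accurately the model represents the real world (that is, "was the right model built?"). Compare model predictions with independent experimental data not used in model building [24].

- Sensitivity analysis: Identify the parameters with the greatest influence on model outputs, to focus follow-up research on the key uncertainties.

- Uncertainty quantification: Characterize how uncertainty in parameters and model structure affects the predictions.

9. How should the model be built when the PD effect lags behind the PK concentration (that is, a "hysteresis loop" appears)?

This phenomenon usually indicates a distribution delay between plasma and the effect site. The standard modeling approach is to introduce an effect compartment. The effect compartment is a hypothetical compartment linked to the central compartment by a first-order rate constant (ke0). Its concentration is not measured directly but is used to drive the PD model (such as an Emax model). ke0 characterizes the extent to which the effect lags behind the plasma concentration, and its estimate is essential for determining the onset and offset times of the drug effect [26].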
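
The lag introduced by an effect compartment can be made concrete with a closed-form example. The Python sketch below assumes an intravenous bolus into a one-compartment model, so that plasma concentration is C0·exp(-k t) and the effect-site equation dCe/dt = ke0 (Cp - Ce) has an analytical solution; all parameter values are hypothetical.

```python
import math


def plasma_conc(t, c0, k):
    """One-compartment IV bolus plasma concentration."""
    return c0 * math.exp(-k * t)


def effect_site_conc(t, c0, k, ke0):
    """Solution of dCe/dt = ke0 * (Cp - Ce) with Ce(0) = 0 (requires ke0 != k)."""
    return c0 * ke0 / (ke0 - k) * (math.exp(-k * t) - math.exp(-ke0 * t))


def emax_effect(ce, emax, ec50):
    """Emax model driven by the effect-site concentration."""
    return emax * ce / (ec50 + ce)


c0, k, ke0 = 10.0, 0.1, 0.5           # hypothetical values (mg/L, 1/h, 1/h)
t_peak = math.log(ke0 / k) / (ke0 - k)  # time of maximum effect-site concentration
print("plasma peaks at t = 0 h; effect site peaks at t =", round(t_peak, 2), "h")
for t in (0.5, 2.0, t_peak, 12.0):
    ce = effect_site_conc(t, c0, k, ke0)
    print(round(t, 2), round(plasma_conc(t, c0, k), 2), round(ce, 2),
          round(emax_effect(ce, emax=100.0, ec50=3.0), 1))
```

The same plasma concentration is paired with a lower effect before the effect-site peak than after it, which is the hysteresis loop seen when effect is plotted against plasma concentration.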

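Regulatory Considerations and Submissions

10. Which key figures and data should be presented when submitting modeling and simulation results to regulatory agencies?

For PK simulations supporting pediatric dose selection, the EMA recommends providing [24]:

- Continuous-scale plots: Predicted key exposure metrics (such as AUC and C_max) as a function of body weight and age.

- Box plots: For the proposed weight- or age-banded doses, box plots of the predicted exposure range in each subgroup, overlaid with the adult reference range.

- Dose function comparison plot: A comparison of the proposed stepwise dosing regimen with the model-based continuous dose function, to justify the dosing regimen.

- Numerical tables: Summary statistics of the predicted exposure ranges shown in the figures above.

11. How do regulatory agencies view the role of modeling and simulation in predicting first-in-human doses?

Both the FDA and the EMA strongly recommend including modeling and simulation in submissions. For large-molecule biologics in particular, the EMA first-in-human guideline recommends state-of-the-art modeling (such as PK/PD and PBPK) combined with allometric scaling to predict the starting dose. When the dose is determined using the minimal anticipated biological effect level (MABEL) approach, PK/PD models are essential [27].

Data and Protocol Summary

Key PK Parameters and PD Endpoints

The table below summarizes the core metrics commonly used in PK/PD modeling to describe drug behavior and effects.

| Category | Parameter/Endpoint | Symbol | Description and Significance | Typical Method of Determination |

|---|---|---|---|---|

| Pharmacokinetics (PK) | Area under the concentration-time curve | AUC | Reflects total drug exposure in the body; a key metric linking dose to systemic effect. | Non-compartmental analysis (trapezoidal rule) or model-based integration [26]. |

| | Peak concentration | C_max | Highest plasma concentration reached after dosing; related to certain efficacy or safety events. | Direct observation or model prediction. |

| | Apparent clearance | CL | Volume of plasma cleared of drug per unit time; determines the maintenance dose. | Compartmental model or non-compartmental analysis (dose/AUC) [26]. |

| | Apparent volume of distribution | V | Theoretical volume required for the drug to be uniformly distributed; reflects the extent of tissue distribution. | Compartmental model parameter. |

| | Elimination half-life | t_1/2 | Time for the plasma concentration to fall by half; determines the dosing interval. | 0.693/elimination rate constant (λz) [23]. |

| Pharmacodynamics (PD) | Maximum effect | E_max | The maximum effect the drug can produce. | Fitting a sigmoid E_max model to concentration-effect data [26]. |

| | Concentration producing 50% of maximum effect | EC_50 | A measure of drug potency; the smaller the value, the higher the potency. | Fitting a sigmoid E_max model [26]. |

| | Receptor occupancy | RO% | Percentage of the target bound by drug; a key biomarker for many targeted drugs. | Measured experimentally, for example by flow cytometry [28]. |

| | Biomarker change | ΔBiomarker | Change in a specific biomarker (such as cytokines or gene expression) before and after treatment. | ELISA, MSD, qPCR, RNA sequencing, and similar platforms [28]. |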

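Modeling Methods and Experimental Protocols

Protocol for Linking Non-Compartmental Analysis to Compartmental Modeling

- Purpose: Use the standard PK parameters obtained from non-compartmental analysis (NCA) to provide initial estimates for mechanistic compartmental models and speed up model fitting.

- Steps:

a. Data preparation: Collect individual or mean plasma drug concentration-time data.

b. Run NCA: Use validated software to perform the non-compartmental analysis and calculate AUC(0-t), AUC(0-∞), Cmax, tmax, λz, t1/2, CL, Vz, and other parameters [23].

c. Parameter conversion:

* Clearance CL can be used directly as the initial value of the compartmental model's CL parameter.

* The terminal elimination rate constant λz can be used to estimate the compartmental elimination rate constant K_e (K_e ≈ λz).

* The volume of distribution V_z can serve as the initial value of V in a one-compartment model, or as a reference for the central volume V_c in a two-compartment model.

d. Model fitting: Enter these initial values into compartmental modeling software, fit a nonlinear mixed-effects model, optimize the parameters, and evaluate the model [26].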
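
Steps b and c can be sketched with the standard library. This is a minimal illustration and not a substitute for validated NCA software: it assumes intravenous bolus dosing (for extravascular dosing the results would be CL/F and Vz/F), uses the linear trapezoidal rule, and estimates λz from the last three points. The concentration data are synthetic, generated from a one-compartment model with CL = 1 L/h and V = 10 L.

```python
import math


def nca(times, concs, dose, n_terminal=3):
    """Basic non-compartmental estimates to seed a compartmental fit."""
    # AUC(0-t) by the linear trapezoidal rule
    auc_t = sum((t2 - t1) * (c1 + c2) / 2.0
                for t1, t2, c1, c2 in zip(times, times[1:], concs, concs[1:]))
    # lambda_z: negative slope of ln(C) versus t over the last n_terminal points
    ts = times[-n_terminal:]
    ys = [math.log(c) for c in concs[-n_terminal:]]
    t_bar, y_bar = sum(ts) / len(ts), sum(ys) / len(ys)
    slope = (sum((t - t_bar) * (y - y_bar) for t, y in zip(ts, ys))
             / sum((t - t_bar) ** 2 for t in ts))
    lambda_z = -slope
    auc_inf = auc_t + concs[-1] / lambda_z   # extrapolate the tail to infinity
    cl = dose / auc_inf
    return {"auc_t": auc_t, "auc_inf": auc_inf, "lambda_z": lambda_z,
            "t_half": math.log(2) / lambda_z, "cl": cl, "vz": cl / lambda_z}


# Synthetic data: dose 100 mg, C(t) = 10 * exp(-0.1 t)
times = [0, 1, 2, 4, 8, 12, 24]
concs = [10.0 * math.exp(-0.1 * t) for t in times]
res = nca(times, concs, dose=100.0)
initial_estimates = {"CL": res["cl"], "K_e": res["lambda_z"], "V": res["vz"]}
print(initial_estimates)
```

The trapezoidal rule slightly overestimates AUC for a declining exponential with sparse sampling, so the recovered CL is a few percent below the true value, which is acceptable for initial estimates.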

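Standard Workflow for Population PK/PD Model Development and Validation

- Base model development: Based on the drug's mechanism of action and prior knowledge, select a structural model (for example, one- or two-compartment). Without considering covariates, build a base model describing population typical values and inter-individual variability [26].

- Covariate screening: Systematically examine the influence of demographics (body weight, age, sex), laboratory measures (renal function, hepatic function), genetic factors, and other variables on PK/PD parameters. Use a stepwise approach (forward inclusion/backward elimination), deciding on inclusion by statistical criteria (such as the likelihood ratio test) and clinical relevance [25].

- Model validation:

- Internal validation: Use resampling techniques (such as bootstrap or cross-validation) to assess model stability and the reliability of parameter estimates.

- External validation: Test the model's predictive performance on a separate, independent dataset; this is the gold standard for assessing the model's extrapolation ability [24].

- Prediction-corrected visual predictive check: Visually compares model predictions with observations; a commonly used graphical tool for assessing predictive accuracy.

- Simulation and application: Use the final validated model with Monte Carlo simulation to answer key questions such as dose selection and trial design optimization [29].

Key Research Reagents and Platform Solutions for PK/PD Analysis

The table below lists the core technology platforms that support PK/PD experimental analysis and their applications.

| Category | Platform/Reagent | Main Function and Description | Typical Applications |

|---|---|---|---|

| Concentration quantification | LC-MS/MS | Highly sensitive, highly specific gold-standard method for quantifying small-molecule drugs and some large molecules. | Drug concentration determination in nonclinical and clinical biological samples [23]. |

| | ELISA | Based on the antigen-antibody reaction; lower cost, high throughput, and well established. | PK assays for large-molecule drugs (monoclonal antibodies, fusion proteins) and anti-drug antibody screening [28]. |

| | Electrochemiluminescence (MSD) | Based on electrochemiluminescence; high sensitivity, wide dynamic range, multiplexing capability, and small sample volume. | Large-molecule PK assays and multiplex biomarker analysis [28]. |

| PD/biomarker analysis | Flow cytometry | Multiparameter single-cell analysis; detects multiple surface markers and intracellular signals simultaneously. | Immune cell phenotyping, receptor occupancy analysis, and intracellular phospho-signaling detection [28]. |

| | Multiplex immunoassays (Luminex/MSD) | Simultaneously quantify multiple cytokines, chemokines, and other soluble protein markers; high throughput. | Assessment of cytokine storm in immunotherapy and pharmacodynamic biomarker profiling [28]. |

| | Automated Western blot (e.g., JESS) | Automated, quantitative protein blotting; good reproducibility and higher throughput than traditional Western blotting. | Quantification of target protein expression and of phosphorylation of signaling pathway proteins [28]. |

| | qPCR/digital PCR | Highly sensitive, quantitative detection of specific nucleic acid sequences (DNA or RNA). | PK studies of ASO/siRNA drugs (detecting vector- or drug-related nucleic acids) and changes in gene expression [28]. |

| Data integration and modeling | AI/ML-driven modeling tools | Use ML techniques such as genetic algorithms to explore a large model space non-sequentially and automatically identify the best PK/PD model structure [25]. | Handling complex PK/PD data; identifying key parameter interactions that traditional methods may miss. |

| | PBPK modeling software | Integrates physiological, biochemical, and anatomical knowledge to mechanistically predict drug disposition in different human tissues and organs. | First-in-human dose prediction, drug-drug interaction assessment, and extrapolation to special populations [27]. |

Key Workflow and Relationship Diagrams

Integrated PK/PD Modeling Workflow in Drug Development

Diagram: Integrated PK/PD modeling and simulation workflow

Conceptual PK/PD Relationships from Data to Decision

Diagram: Core PK/PD conceptual relationships from data to decision

Integration of Emerging Technologies (AI/ML) with Classical PK/PD Modeling

Diagram: Integration of the AI/ML-enhanced paradigm with classical PK/PD modeling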

Technical Support Center: Troubleshooting & FAQs

This technical support center is designed for researchers and drug development professionals working on extrapolating long-term therapeutic effects from clinical trial data. The guidance is framed within a broader thesis examining the challenges and limitations of extrapolating observations across different levels of biological organization—from cellular mechanisms to patient populations and beyond [30].

Frequently Asked Questions (FAQs)

Q1: What is survival analysis, and why is it critical for long-term extrapolation in drug development? Survival analysis, or time-to-event analysis, is a set of statistical methods used to analyze the time until a predefined event occurs, such as patient death, disease relapse, or progression [31] [32]. In drug development, while clinical trials provide data over a limited period, payers and regulatory bodies require estimates of treatment benefits over a patient's lifetime to assess cost-effectiveness and long-term value [33]. Survival modeling is the primary tool for extrapolating observed trial outcomes beyond the follow-up period to estimate these long-term effects [33].

Q2: What does "censoring" mean in my dataset, and how do survival models handle it? Censoring occurs when the exact time-to-event for some individuals is unknown. This is a fundamental feature of survival data and commonly happens because a patient has not experienced the event by the trial's end, is lost to follow-up, or withdraws [34] [31]. Survival analysis methods, like the Kaplan-Meier estimator, incorporate information from censored patients up to their last known follow-up time, allowing for the valid use of all available data without introducing bias from incomplete observations [34] [35].
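
To show how censored patients contribute information up to their last follow-up, here is a minimal Kaplan-Meier sketch in plain Python. It is illustrative only (five hypothetical patients, no confidence intervals); in practice an established package such as R's survival would be used.

```python
def kaplan_meier(times, events):
    """Return [(t, S(t))] at each distinct event time.

    events[i] is 1 if the event was observed at times[i], 0 if censored.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    surv = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = sum(1 for tt, e in data if tt == t and e == 1)
        removed = sum(1 for tt, e in data if tt == t)
        if deaths > 0:
            # Censored patients still count in the risk set up to time t
            surv *= 1.0 - deaths / at_risk
            curve.append((t, surv))
        at_risk -= removed
        i += removed
    return curve


# Hypothetical follow-up times (months); 0 marks a censored patient.
times = [2, 3, 3, 5, 8]
events = [1, 0, 1, 1, 0]
for t, s in kaplan_meier(times, events):
    print(t, round(s, 3))
```

The patient censored at month 3 is still in the denominator at month 3 but leaves the risk set afterwards, so the month 5 step is computed from two patients rather than three.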

Q3: My Kaplan-Meier curves for two treatment groups separate early but seem to converge later. Is the Log-Rank test still appropriate? The Log-Rank test is most powerful for detecting differences when the hazard rates (instantaneous risk of the event) between groups are proportional over time—meaning the survival curves maintain a consistent separation [31]. If the curves cross or converge, it suggests non-proportional hazards, where the treatment effect changes over time (e.g., a strong initial effect that wanes). In this case, the standard Log-Rank test may be misleading [36]. You should investigate models that accommodate non-proportional hazards, such as stratified Cox models or models with time-dependent covariates [36].

Q4: I have fitted multiple parametric models (Weibull, Gompertz, Log-Normal) to my trial data. They all fit the observed period well but produce wildly different long-term extrapolations. Which one should I choose? This is a central challenge in survival extrapolation [33]. The choice should not be based on statistical fit alone. You must assess the biological and clinical plausibility of the long-term hazard shapes each model implies [33].

- Consult external data sources (disease registries, longer-term trials) for clues about the expected long-term hazard pattern.

- Consider the mechanism of action: Is a "cure" fraction biologically plausible (suggesting a mixture cure model)? Or is the hazard expected to plateau or eventually align with general population mortality? [33]

- Follow health technology assessment (HTA) guidelines, which recommend presenting a range of plausible models to demonstrate uncertainty, rather than selecting a single "best" fit [33].
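
The divergence problem can be reproduced with two models that agree at the end of follow-up. The Python sketch below does not fit anything: as a simplifying assumption it calibrates an exponential and a Weibull model to pass through the same hypothetical observation (70% survival at 2 years) and then compares their extrapolations. The Weibull shape of 0.7 (decreasing hazard) is an arbitrary illustrative choice.

```python
import math


def exponential_surv(t, rate):
    return math.exp(-rate * t)


def weibull_surv(t, shape, scale):
    return math.exp(-((t / scale) ** shape))


def calibrate_to_point(t_obs, s_obs, shape):
    """Return (exponential rate, Weibull scale) that both give S(t_obs) = s_obs."""
    cum_hazard = -math.log(s_obs)
    rate = cum_hazard / t_obs
    scale = t_obs / cum_hazard ** (1.0 / shape)
    return rate, scale


shape = 0.7
rate, scale = calibrate_to_point(t_obs=2.0, s_obs=0.7, shape=shape)
for year in (2.0, 5.0, 10.0, 20.0):
    print(year, round(exponential_surv(year, rate), 3),
          round(weibull_surv(year, shape, scale), 3))
```

Both curves are identical at 2 years, yet at 10 years the decreasing-hazard model predicts roughly twice the survival of the constant-hazard model, which is why plausibility of the hazard shape matters more than within-trial fit.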

Q5: What are mixture cure models, and when should I consider using them? Mixture cure models split the patient population into two groups: those who are theoretically "cured" (and will never experience the event) and those who are "uncured" and remain at risk [33]. They are useful when a treatment modality (e.g., some cell and gene therapies) suggests the potential for long-term remission or functional cure. However, reliably estimating the "cure fraction" from short-term data is difficult and can lead to high uncertainty in predictions [33].
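
The structure of a mixture cure model is compact enough to write down directly. The sketch below assumes an exponential survival function for the uncured group and ignores background mortality for the cured group, which a real analysis would add; the cure fraction and rate are hypothetical.

```python
import math


def mixture_cure_survival(t, cure_fraction, uncured_rate):
    """S(t) = pi + (1 - pi) * S_u(t), with exponential S_u for the uncured."""
    return cure_fraction + (1.0 - cure_fraction) * math.exp(-uncured_rate * t)


# Hypothetical values: 30% cured, uncured hazard 0.5 per year.
for year in (0, 1, 2, 5, 10, 30):
    print(year, round(mixture_cure_survival(year, 0.3, 0.5), 3))
```

The curve plateaus at the cure fraction, so long-term predictions are driven almost entirely by that one parameter, which is the quantity short follow-up identifies least well.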

Q6: How does the concept of "emergence" in biological hierarchies relate to the risk of model misspecification in extrapolation? In biology, higher-level entities (like populations) exhibit properties that are not merely the sum of their lower-level components (like organisms or cells). This is called emergence [30]. A key thesis is that processes validated at one level of organization (e.g., tumor shrinkage in an individual) do not always extrapolate cleanly to another (e.g., population-level progression-free survival over decades) [30]. Similarly, a survival model that perfectly fits observed trial-level aggregate data may be misspecified for predicting long-term outcomes because new, "emergent" factors (late toxicities, changing standards of care, competing risks of mortality) can alter the hazard trajectory in ways not captured by the short-term data [33] [30]. This underscores the need for cautious extrapolation grounded in external evidence.

Troubleshooting Guides

Guide 1: Addressing Poor Model Fit to Observed Trial Data

- Symptoms: The modeled survival curve systematically deviates from the Kaplan-Meier empirical estimates. Goodness-of-fit criteria (such as AIC/BIC comparisons) indicate a poor fit.

- Diagnosis & Solutions:

- Visual Inspection: Always plot the model-predicted survival/hazard function against the non-parametric Kaplan-Meier curve [35].

- Consider a More Flexible Model: Standard parametric models (Exponential, Weibull) assume simple hazard shapes. If the observed hazard is complex (e.g., peaks then declines), switch to a model that can represent that shape.

- Incorporate Time-Dependent Effects: If the treatment effect appears to change over time (non-proportional hazards), extend the Cox model or use a parametric model with a time-dependent coefficient [36].

Guide 2: Handling Immature Data with High Censoring Rates

- Symptoms: A high percentage (>60-70%) of patient records are censored. Extrapolations are highly sensitive to the choice of model, leading to unreliable long-term estimates.

- Diagnosis & Solutions:

- Quantify Uncertainty: Use confidence intervals and simulation (e.g., bootstrapping) to illustrate the wide range of possible extrapolated outcomes. This is more honest than presenting a single, precise estimate [33].

- Leverage External Data: Anchor or inform your model using relevant long-term data from disease registries, historical cohorts, or real-world evidence. This can constrain implausible extrapolations [33].

- Use a Range of Plausible Models: Pre-specify and present results from several models that reflect different clinically plausible scenarios (e.g., continued benefit, waning effect, cure). This practice is recommended by bodies such as NICE [33].

- Clearly Report Limitations: All conclusions should be framed with the data immaturity as a key limitation. Propose a plan for data maturation and model re-assessment.
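To illustrate the "Quantify Uncertainty" step, the sketch below bootstraps an extrapolated survival probability from a heavily censored dataset. It assumes an exponential model purely for simplicity, a hypothetical 20-patient dataset with 75% censoring, and a percentile interval; none of these choices come from the cited sources.

```python
import math
import random

def exp_survival_at(times, events, horizon):
    """Extrapolated S(horizon) under an exponential model fit by MLE."""
    rate = sum(events) / sum(times)
    return math.exp(-rate * horizon)

def bootstrap_interval(times, events, horizon, n_boot=2000, seed=1):
    """Percentile bootstrap interval, resampling patients with replacement."""
    rng = random.Random(seed)
    n = len(times)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(
            exp_survival_at([times[i] for i in idx],
                            [events[i] for i in idx], horizon)
        )
    estimates.sort()
    return estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1]

# Hypothetical immature data: 12 months maximum follow-up, 5 events in 20 patients.
times = [1, 2, 2, 3, 4, 4, 5, 6, 6, 7, 8, 8, 9, 10, 10, 11, 12, 12, 12, 12]
events = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0]

horizon = 60  # months, far beyond observed follow-up
point = exp_survival_at(times, events, horizon)
lower, upper = bootstrap_interval(times, events, horizon)
print(f"S({horizon}) = {point:.3f}, 95% interval {lower:.3f} to {upper:.3f}")
```

Note that this interval reflects sampling uncertainty only. It does not capture structural uncertainty about the model form, which is why presenting several plausible models remains necessary.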

Guide 3: Managing Competing Risks in Non-Mortality Endpoints

- Symptoms: The event of interest (e.g., disease progression) can be precluded by a competing event (e.g., death from an unrelated cause). Using standard survival analysis (which censors competing events) can overestimate the cumulative incidence of the primary event.

- Diagnosis & Solutions:

- Recognize the Problem: Competing risks are common in studies of non-fatal endpoints in elderly populations or aggressive diseases [36].

- Apply Competing Risks Methodology: Instead of the Kaplan-Meier estimator, use the Cumulative Incidence Function (CIF). For regression, use models like the Fine-Gray subdistribution hazard model, which is designed specifically for competing risks analysis [36].
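The overestimation described in the symptoms can be seen directly by computing both quantities on the same data. The sketch below implements a nonparametric cumulative incidence estimator (the Aalen-Johansen form) next to the naive one-minus-Kaplan-Meier estimate that censors competing events. The data and function name are hypothetical, and regression approaches such as Fine-Gray are not covered.

```python
def cumulative_incidence(times, causes, cause_of_interest=1):
    """Return [(t, CIF, naive 1-KM)] for the cause of interest.

    causes: 0 = censored, 1, 2, ... = distinct event types.
    """
    data = sorted(zip(times, causes))
    n_at_risk = len(data)
    overall_surv = 1.0  # probability of being free of ANY event just before t
    naive_surv = 1.0    # Kaplan-Meier that treats competing events as censored
    cif = 0.0
    out = []
    i = 0
    while i < len(data):
        t = data[i][0]
        d_interest = d_any = removed = 0
        while i < len(data) and data[i][0] == t:
            c = data[i][1]
            d_interest += (c == cause_of_interest)
            d_any += (c != 0)
            removed += 1
            i += 1
        if d_any:
            # Increment uses event-free survival BEFORE this time point.
            cif += overall_surv * d_interest / n_at_risk
            naive_surv *= 1.0 - d_interest / n_at_risk
            overall_surv *= 1.0 - d_any / n_at_risk
            out.append((t, cif, 1.0 - naive_surv))
        n_at_risk -= removed
    return out

# Hypothetical data: 1 = progression (of interest), 2 = unrelated death, 0 = censored.
times = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
causes = [1, 2, 1, 0, 2, 1, 0, 2, 1, 0]

result = cumulative_incidence(times, causes)
for t, cif, naive in result:
    print(f"t={t:>2}  CIF={cif:.3f}  naive 1-KM={naive:.3f}")
```

On this toy dataset the naive estimate ends well above the cumulative incidence, because patients who died of the competing cause are treated as if they could still progress.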

Experimental Protocols for Model Development & Validation

Protocol 1: Systematic Workflow for Developing a Survival Extrapolation

| Step | Action | Key Considerations & Tools |
|---|---|---|
| 1. Define Event | Precisely define the event (e.g., “death from any cause,” “radiographic progression”) and the time origin (e.g., date of randomisation) [32]. | Ensure the definition is unambiguous and consistently adjudicated. Document censoring rules [32]. |
| 2. Prepare Data | Create a dataset with one row per patient, containing: time (to event/censoring) and status (1=event, 0=censored) [32] [35]. | Use software commands like stset in Stata or Surv() in R to declare survival data [32] [35]. |
| 3. Explore Data | Generate Kaplan-Meier curves and life tables. Calculate median survival times. Test for differences between key groups (Log-Rank test) [34] [35]. | Visual inspection is crucial. The survminer package in R is excellent for publication-ready plots [35]. |
| 4. Select Candidate Models | Fit a set of standard parametric models (Exponential, Weibull, Gompertz, Log-Logistic, Log-Normal, Generalized Gamma) [33]. | Compare statistical fit using AIC/BIC. Plot fitted curves against KM plots [33]. |
| 5. Assess External Validity | Compare the long-term shape of the extrapolated hazard with external data and clinical/biological rationale [33] [30]. | Ask: Is a rising/falling/constant hazard plausible? Is a cure fraction plausible? This step is critical for credibility [33]. |
| 6. Estimate & Present | Calculate long-term outcomes like lifetime mean survival, restricted mean survival time (RMST), or quality-adjusted life years (QALYs). | Present results from a plurality of plausible models to convey decision uncertainty, as required by many HTA agencies [33]. |
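Step 4 of the workflow can be sketched for two of the listed candidates. The example below fits an exponential and a Weibull model to right-censored data by maximum likelihood and compares AIC and BIC. It is a simplified illustration in plain Python: the Weibull shape is found by bisection on the profile score equation, the dataset is hypothetical, and BIC uses the number of patients as the sample size, which is one convention among several.

```python
import math

def fit_exponential(times, events):
    """Return the maximized log-likelihood of a constant-hazard model."""
    d, total = sum(events), sum(times)
    rate = d / total  # closed-form MLE
    return d * math.log(rate) - rate * total

def fit_weibull(times, events):
    """Return (log-likelihood, shape) with S(t) = exp(-(t / scale) ** shape)."""
    d = sum(events)
    mean_log_event = sum(math.log(t) for t, e in zip(times, events) if e) / d

    def score(k):
        # Profile score for the shape after maximizing over the scale.
        weights = [t ** k for t in times]
        weighted_log = sum(w * math.log(t) for w, t in zip(weights, times))
        return 1.0 / k + mean_log_event - weighted_log / sum(weights)

    lo, hi = 0.01, 20.0
    for _ in range(100):  # score is decreasing in k, so bisection converges
        mid = 0.5 * (lo + hi)
        if score(mid) > 0:
            lo = mid
        else:
            hi = mid
    k = 0.5 * (lo + hi)
    scale_k = sum(t ** k for t in times) / d  # equals scale ** shape at the MLE
    loglik = sum(math.log(k) - math.log(scale_k) + (k - 1) * math.log(t)
                 for t, e in zip(times, events) if e) - d
    return loglik, k

def aic(loglik, n_params):
    return 2 * n_params - 2 * loglik

def bic(loglik, n_params, n_obs):
    return n_params * math.log(n_obs) - 2 * loglik

# Hypothetical toy data: months of follow-up and status (1=event, 0=censored).
times = [2, 3, 3, 5, 6, 8, 9, 12, 14, 15]
events = [1, 1, 0, 1, 1, 0, 1, 0, 1, 0]

ll_exp = fit_exponential(times, events)
ll_wb, shape = fit_weibull(times, events)
n = len(times)
print(f"Exponential: AIC={aic(ll_exp, 1):.2f}  BIC={bic(ll_exp, 1, n):.2f}")
print(f"Weibull (shape={shape:.2f}): AIC={aic(ll_wb, 2):.2f}  BIC={bic(ll_wb, 2, n):.2f}")
```

Because the exponential model is the Weibull with shape fixed at 1, the Weibull log-likelihood can never be lower; the information criteria indicate whether the extra parameter is worth its penalty. As Step 5 stresses, a lower AIC within the observed period says nothing by itself about the plausibility of the extrapolated tail.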

Protocol 2: Validating an Extrapolation Using External Registry Data

- Objective: To test the external validity of a long-term survival extrapolation derived from a short-term Phase 3 trial.

- Materials:

- Index trial dataset (with mature follow-up for the validation time horizon, e.g., 5 years).

- Population-based disease registry data (e.g., SEER, national cancer registry) with long-term follow-up for a comparable patient cohort.

- Procedure:

- a. Using the index trial data only, fit your proposed extrapolation model(s).

- b. Generate predicted survival probabilities and hazard rates for years 5-10 post-diagnosis.

- c. From the external registry data, identify a cohort matched as closely as possible to the trial eligibility criteria.

- d. Calculate the observed (Kaplan-Meier) survival probabilities and hazard rates for the same 5-10 year period in the registry cohort.

- e. Perform a quantitative comparison: Calculate the mean absolute error (MAE) between the model-predicted and registry-observed survival probabilities at years 6, 7, 8, 9, and 10.

- f. Perform a qualitative comparison: Visually overlay the extrapolated curve and the registry-based curve. Do the shapes align? Does the model systematically over- or under-predict?

- Interpretation: A model that shows close alignment (low MAE, consistent visual shape) with the external registry data gains credibility for use in further extrapolation. Significant divergence requires re-evaluation of the model's assumptions [33].
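The quantitative comparison in the procedure reduces to a few lines. The sketch below computes the MAE and, as an added convenience not named in the protocol, the mean signed error, which indicates the direction of any systematic bias. All survival probabilities shown are invented placeholders.

```python
def compare_to_registry(predicted, observed):
    """Return (MAE, mean signed error) over the years present in both inputs."""
    years = sorted(set(predicted) & set(observed))
    if not years:
        raise ValueError("no overlapping time points to compare")
    diffs = [predicted[y] - observed[y] for y in years]
    mae = sum(abs(d) for d in diffs) / len(diffs)
    bias = sum(diffs) / len(diffs)  # positive: model over-predicts survival
    return mae, bias

# Hypothetical survival probabilities keyed by year since diagnosis.
model_predicted = {6: 0.42, 7: 0.38, 8: 0.35, 9: 0.32, 10: 0.30}
registry_observed = {6: 0.40, 7: 0.35, 8: 0.31, 9: 0.27, 10: 0.24}

mae, bias = compare_to_registry(model_predicted, registry_observed)
print(f"MAE = {mae:.3f}, mean signed error = {bias:+.3f}")
```

In this invented example the error widens each year and is always positive, the kind of pattern that would prompt a second look at the assumed long-term hazard.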

Visualization of Key Concepts

Diagram 1: Biological Hierarchy and Extrapolation Challenge

Diagram 2: Survival Extrapolation Model Development Workflow

The Scientist's Toolkit: Essential Materials & Reagents

| Item / Category | Function & Application in Survival Modeling | Example / Specification |
|---|---|---|
| Statistical Software | Platform for performing all survival analyses, from Kaplan-Meier estimation to complex parametric and semi-parametric modeling. Essential for data management, model fitting, and visualization. | R (with survival, survminer, flexsurv packages), Stata, SAS, Python (lifelines, scikit-survival). |
| Clinical Trial Dataset | The primary source of observed time-to-event data. Must include precise event times (or censoring times) and key covariates (treatment arm, age, biomarkers, etc.). | Time variable (days/months), status variable (event=1, censored=0), patient ID, treatment group, other covariates [32]. |
| External Data Source | Provides long-term evidence to inform or validate the shape of the extrapolated hazard function. Critical for assessing model plausibility [33]. | Disease registries (e.g., SEER), long-term follow-up studies, pooled analyses of historical trials, general population life tables. |
| Model Selection Criteria | Quantitative metrics to compare the goodness-of-fit of different statistical models to the observed data. | Akaike Information Criterion (AIC), Bayesian Information Criterion (BIC). Lower values indicate a better fit, penalized for model complexity. |
| Clinical / Biological Rationale | The conceptual framework guiding which long-term hazard shapes are plausible. Informs model choice beyond statistical fit [33] [30]. | Knowledge of disease natural history (chronic, progressive, curable?), mechanism of drug action (continuous effect, time-limited, curative?), and understanding of emergent risks at the population level. |
| Health Technology Assessment (HTA) Guidelines | Documents outlining the expectations of regulatory and reimbursement bodies regarding survival extrapolation methodology, transparency, and presentation of uncertainty. | NICE (UK) DSU Technical Support Document 21, CADTH (Canada) Guidelines, ISPOR Good Practices Reports. |

Exposure-Matching and Extrapolation in Pediatric Drug Development

Data Landscape: Utilization of Extrapolation and Modeling

The table below summarizes key quantitative findings on the application of extrapolation and model-informed strategies in pediatric drug development, based on analyses of regulatory approvals and study designs.

Table 1: Utilization of Extrapolation and Modeling & Simulation (M&S) in Pediatric Drug Development

| Metric | Data | Source / Context |
|---|---|---|
| Drugs approved with pediatric extrapolation (Japan, 2019-2023) [37] | Complete Extrapolation: 43.2%; Partial Extrapolation: 30.5%; No Extrapolation: 26.3% | Survey of 95 pediatric drug products [37] |
| Use of M&S for dose selection/rationale | 60.0% of approved pediatric drugs [37] | Major rationale for pediatric trial dose or approved regimen [37] |
| Range of exposure ratios (Pediatric/Adult) | Mean Cmax Ratio: 0.63 to 4.19; Mean AUC Ratio: 0.36 to 3.60 [38] | Analysis of 31 products (86 trials) with efficacy extrapolation (1998-2012) [38] |
| Trials with pre-defined exposure matching boundaries | 8.1% (7 of 86 trials) [38] | Systematic review of pediatric PK studies [38] |
| Off-label use in intensive care | PICU: Up to 70%; NICU: Up to 90% [39] | Historical context underscoring the need for pediatric development [39] |

Core Experimental Protocols and Methodologies

Protocol 1: Establishing a Pediatric Extrapolation Framework per ICH E11A

This protocol outlines the foundational regulatory and scientific assessment required before designing pediatric studies [40].

- Develop the Pediatric Extrapolation Concept: Systematically compare the reference (adult) and target (pediatric) populations across three pillars:

- Disease: Assess similarities/differences in pathophysiology, diagnostic criteria, and natural history of disease progression [40].

- Drug Pharmacology: Evaluate known or potential differences in Absorption, Distribution, Metabolism, and Excretion (ADME) and mechanism of action [40].

- Treatment Response: Analyze exposure-response relationships and drug target biology across ages [40].

- Formulate the Extrapolation Plan: Based on the concept, define the data package. This plan specifies [40]:

- The extent of efficacy extrapolation (full, partial, or none).

- The need for additional PK, efficacy, or safety studies in pediatric subgroups.

- The modeling and simulation (M&S) analyses to be employed.

- Integrate Adolescents into Development: When disease and drug response are sufficiently similar, include adolescent subjects in adult trials or study them in parallel to accelerate development [40].

Protocol 2: Exposure-Matching via Population PK/PD Modeling

This protocol details the standard methodology for matching pediatric exposures to an established adult therapeutic window [39].

- Develop a PopPK Model: Using sparse data from pediatric trials, build a population pharmacokinetic (PopPK) model with non-linear mixed-effects modeling (e.g., using NONMEM) [39].

- Identify Covariates: Test physiological covariates (e.g., body size via allometric scaling, age, organ function) to explain variability in PK parameters (Clearance, Volume of Distribution) [39].

- Validate the Model: Perform internal (e.g., visual predictive checks, bootstrap) and external validation to ensure robust predictive performance [39].

- Simulate to Match Exposure: Use the validated model to simulate concentration-time profiles in virtual pediatric populations. Iterate on proposed dosing regimens until key exposure metrics (e.g., AUC, Cmax) match the target range derived from adult efficacy/safety data [38].

- Prospective Validation: The final simulated dosing regimen must be tested and challenged in a prospective clinical trial [39].
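The "simulate to match exposure" step can be illustrated with a much simpler stand-in for a full PopPK simulation. The sketch below uses a deterministic typical-value calculation: clearance is scaled from an adult value by allometry, exposure is taken as AUC = dose / clearance (linear PK, with clearance understood as apparent clearance), and the dose closest to the adult target is chosen from a discrete set. The 0.75 exponent, the 70 kg reference weight, and all numeric values are conventional or hypothetical assumptions, not results from the cited studies.

```python
ADULT_WEIGHT_KG = 70.0  # conventional reference weight (assumption)

def pediatric_clearance(cl_adult, weight_kg, exponent=0.75):
    """Allometrically scale an adult clearance (L/h) to a child's body weight."""
    return cl_adult * (weight_kg / ADULT_WEIGHT_KG) ** exponent

def pick_dose(weight_kg, cl_adult, target_auc, dose_options):
    """Choose the available dose (mg) whose predicted AUC is closest to target."""
    cl = pediatric_clearance(cl_adult, weight_kg)
    return min(dose_options, key=lambda dose: abs(dose / cl - target_auc))

# Hypothetical drug: adult clearance 10 L/h, adult dose 500 mg.
cl_adult = 10.0
target_auc = 500.0 / cl_adult  # 50 mg*h/L
dose_options = [50, 100, 150, 200, 250, 300]

for weight in (10, 20, 40):
    dose = pick_dose(weight, cl_adult, target_auc, dose_options)
    auc = dose / pediatric_clearance(cl_adult, weight)
    print(f"{weight} kg: dose {dose} mg, predicted AUC ratio {auc / target_auc:.2f}")
```

A real analysis would replace the typical-value prediction with simulated virtual patients that carry between-subject variability, and would judge the full distribution of exposure ratios rather than a single number per weight.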

Protocol 3: Implementing a PBPK Modeling Workflow for Pediatric Extrapolation

This protocol describes building a mechanistic Physiologically Based Pharmacokinetic (PBPK) model to extrapolate from adults to children [41].

- Gather System and Drug Parameters:

- Organism Parameters: Use software databases for age-specific organ volumes, blood flows, and enzyme expression/ontogeny profiles [41].

- Drug Parameters: Input measured or estimated physicochemical properties (lipophilicity, pKa, molecular weight) and in vitro data (permeability, fraction unbound, metabolic clearance) [41].

- Build and Verify the Adult Model: Assemble the PBPK model structure (compartments for key organs). Verify and refine the model by ensuring it accurately predicts observed adult PK data [41].

- Scale to Pediatric Populations: Replace the adult physiological system parameters with those for the target pediatric age groups (e.g., neonate, infant, child). Incorporate relevant maturation functions for metabolic enzymes and renal function [41].

- Predict and Evaluate: Simulate pediatric PK profiles. Evaluate the prediction against any available pediatric data. Use the model to optimize dosing or assess drug-drug interaction risks in children [42] [41].
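The idea of a maturation function in the scaling step can be shown in isolation. The sketch below is not a PBPK model; it only illustrates one commonly used functional form, a sigmoidal (Hill-type) function of postmenstrual age multiplied by an allometric size term. The parameter values `tm50_weeks` and `hill`, the 0.75 exponent, and the example patients are hypothetical placeholders that would need to be replaced by enzyme- or organ-specific values from the literature or a software database.

```python
def maturation_fraction(pma_weeks, tm50_weeks=45.0, hill=3.0):
    """Fraction of adult function reached at a given postmenstrual age.

    tm50_weeks: age at which half of adult function is reached (placeholder).
    hill: steepness of the maturation curve (placeholder).
    """
    return pma_weeks ** hill / (tm50_weeks ** hill + pma_weeks ** hill)

def scaled_clearance(cl_adult, weight_kg, pma_weeks, exponent=0.75):
    """Adult clearance scaled for body size and for organ/enzyme maturation."""
    size_term = (weight_kg / 70.0) ** exponent
    return cl_adult * size_term * maturation_fraction(pma_weeks)

# Hypothetical patients: (label, weight in kg, postmenstrual age in weeks).
patients = [("term neonate", 3.5, 40), ("6-month infant", 7.5, 66),
            ("5-year child", 18.0, 300)]
for label, weight, pma in patients:
    cl = scaled_clearance(10.0, weight, pma)
    print(f"{label}: maturation {maturation_fraction(pma):.2f}, CL {cl:.2f} L/h")
```

The design point is that size and maturation are separate multipliers: in older children the maturation term is close to 1 and body size dominates, while in neonates immaturity can reduce clearance well below what size alone would predict.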

Protocol 4: Accuracy for Dose Selection (ADS) Evaluation for Study Design

This novel protocol evaluates a pediatric PK study's power to select correct doses, rather than just precisely estimate parameters [43].

- Define Target and Doses: Establish a target exposure (e.g., adult AUC). Define a set of feasible, discrete dose levels (e.g., available tablet strengths) [43].

- Generate Virtual Population: Simulate a large virtual pediatric population with realistic distributions of demographics (age, weight) and PK parameter variability [43].

- Simulate Trials: For many replicates (e.g., 1000):

- Sample a virtual study cohort per the proposed design.

- Assign doses based on initial strategy.

- Generate simulated PK data using a pre-defined model.

- Re-estimate PK parameters from the simulated data.

- Select the final dose for each weight band that is predicted to get closest to the target exposure.