The Essential Roadmap: A Guide to Systematic Review Protocol Registration in Ecotoxicology for Rigorous Evidence Synthesis

Abstract

This article provides a comprehensive guide to systematic review protocol registration specifically tailored for ecotoxicology researchers and professionals. It covers the foundational importance of preregistration for reducing bias and duplication, details the methodological steps for developing and registering a robust protocol on platforms like PROSPERO or with organizations like the Collaboration for Environmental Evidence, and addresses common troubleshooting issues such as managing complex exposure assessments and overcoming barriers like administrative burden. Furthermore, it explores validation through adherence to reporting guidelines and compares ecotoxicology-specific frameworks to clinical standards. The goal is to enhance the methodological rigor, transparency, and policy relevance of evidence synthesis in environmental health and toxicology.

Why Protocol Registration is the Keystone of Rigorous Ecotoxicology Reviews

Defining Systematic Review Protocol Registration and Its Core Principles

Systematic review protocol registration is the formal, public documentation of a systematic review's plan before the review begins. This process entails depositing a detailed protocol in a dedicated registry, making the review's objectives and methodology transparent and accessible to the scientific community [1] [2]. In the context of ecotoxicology research, which assesses the effects of toxic substances on biological organisms and ecosystems, protocol registration is a critical tool for enhancing the rigor, reproducibility, and utility of evidence syntheses in a complex and environmentally vital field.

The practice is anchored in core principles designed to combat methodological challenges inherent in research synthesis. The primary principles are:

- Transparency: Making the review's plan publicly accessible to prevent selective reporting and clarify the rationale for methodological choices [3] [4].

- Bias Reduction: Pre-specifying methods to minimize the influence of the authors' prior knowledge of study results on decisions about study inclusion, data extraction, and synthesis [3] [5].

- Reduction of Duplication: Allowing researchers to identify ongoing reviews to avoid unintentionally replicating effort and wasting resources [6].

- Methodological Rigor: Encouraging the use of explicit, systematic, and reproducible methods from the outset, which is a defining feature of a high-quality systematic review [3].

Table 1: Core Principles of Protocol Registration and Their Rationale

| Core Principle | Primary Rationale | Consequence for Ecotoxicology Research |
|---|---|---|
| Transparency | Prevents selective reporting and outcome switching; clarifies methodological decisions. | Builds trust in reviews that inform chemical risk assessments and environmental policy. |
| Bias Reduction | Minimizes the influence of prior knowledge of study results on review conduct. | Supports objective synthesis of often contentious data on pollutant effects. |
| Reduction of Duplication | Allows identification of ongoing reviews to avoid wasted research effort. | Directs resources efficiently in a field with diverse pollutants and biological endpoints. |
| Methodological Rigor | Promotes the use of explicit, pre-defined, and reproducible methods. | Standardizes approaches for handling heterogeneous data from lab, mesocosm, and field studies. |

The Systematic Review Protocol: Definition and Core Components

A systematic review protocol is a comprehensive, stand-alone document that serves as the detailed work plan and roadmap for the entire review project [1] [2]. It is distinct from a simple registry entry, which contains key information fields; the full protocol provides the complete methodological detail [1].

All major guidelines, including the Cochrane Handbook, the Institute of Medicine Standards, and the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement, treat protocol development as a fundamental step in the systematic review process [1]. In ecotoxicology, a robust protocol is essential for managing the field's complexity: diverse study organisms (from bacteria to vertebrates), varied exposure regimes, and multiple endpoints (mortality, reproduction, behavior, genetic effects).

Table 2: Essential Components of a Systematic Review Protocol (Adapted from PRISMA-P)

| Protocol Section | Key Elements | Ecotoxicology-Specific Considerations |
|---|---|---|
| Administrative Information | Title, authors, affiliations, contributions, funding source, conflicts of interest [6]. | Disclosure of funding from industry or advocacy groups is critical for credibility. |
| Introduction | Rationale, review question, explicit objectives [2] [4]. | Justification should frame the environmental or health problem posed by the toxicant(s). |
| Methods | | |
| Eligibility Criteria | Population/Experimental Unit, Intervention/Exposure, Comparator, Outcomes (PICO/PECO frameworks); study design filters [5] [4]. | "Population" = test species/life stage; "Exposure" = chemical, concentration, duration; "Outcomes" = measured biomarkers or effects. |
| Information Sources | Databases (e.g., PubMed, Web of Science, Environment Complete), grey literature sources, search strategy syntax [5]. | Must include environmental science and toxicology-specific databases beyond biomedical ones. |
| Study Selection & Data Extraction | Process for screening, forms for data extraction, method for resolving disagreements [2]. | Extraction must capture test conditions (e.g., temperature, pH) critical for interpreting ecotoxicity data. |
| Risk of Bias / Quality Assessment | Tool for assessing study methodological rigor (e.g., SYRCLE's RoB for animal studies) [5]. | Use tools tailored for in vivo or in vitro studies, ecological field studies, or environmental fate research. |
| Data Synthesis | Plan for qualitative synthesis and, if applicable, quantitative meta-analysis (statistical methods, heterogeneity investigation) [3] [5]. | Plan for handling different effect size metrics and high heterogeneity common in ecological data. |
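Before submission, a draft protocol can be screened for missing components. The following is a minimal Python sketch, assuming the draft is held as a dictionary keyed by hypothetical section names that mirror the rows of the table above. It is not a PRISMA-P validator; it only checks that each section is present and non-empty.

```python
# Hypothetical section keys mirroring the components listed in the table above.
REQUIRED_SECTIONS = (
    "administrative_information",
    "introduction",
    "eligibility_criteria",
    "information_sources",
    "study_selection_and_data_extraction",
    "risk_of_bias",
    "data_synthesis",
)


def missing_sections(protocol: dict) -> list:
    """Return required sections that are absent or left blank, in checklist order."""
    return [
        name for name in REQUIRED_SECTIONS
        if not str(protocol.get(name, "")).strip()
    ]


draft = {
    "administrative_information": "Authors, funding, conflicts of interest ...",
    "introduction": "Rationale and PECO question ...",
    "eligibility_criteria": "Population: freshwater fish; Exposure: ...",
    "information_sources": "Web of Science, Scopus, ECOTOX ...",
    "study_selection_and_data_extraction": "",  # drafted but still empty
    "risk_of_bias": "SYRCLE's RoB tool",
    # "data_synthesis" not yet written
}

print(missing_sections(draft))  # ['study_selection_and_data_extraction', 'data_synthesis']
```

A presence check is deliberately shallow: whether each section is adequate still depends on the PRISMA-P checklist and review by the team.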

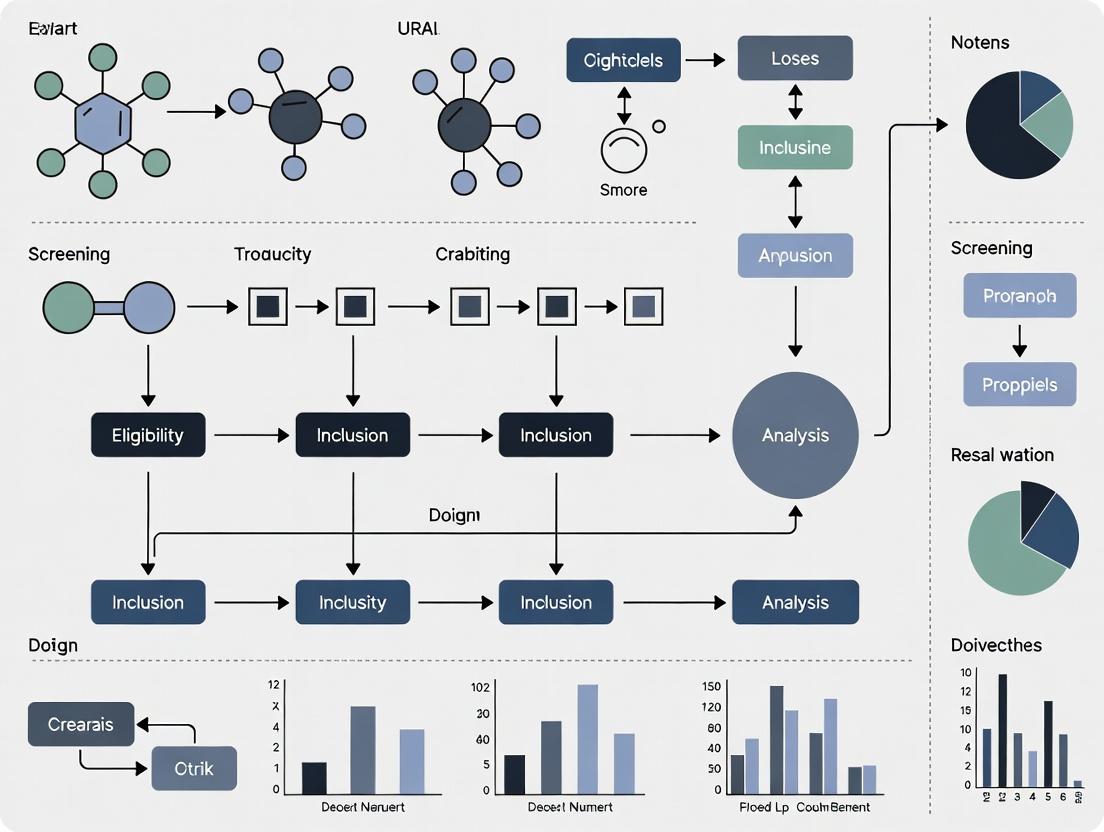

Diagram Title: PECO Framework for an Ecotoxicology Systematic Review Question

The Protocol Registration Process

Registration involves submitting key details of the review plan to a publicly accessible, time-stamped registry. Prospective registration (before formal screening begins) is the gold standard, because it fixes the methodology before results are known and so reduces the scope for outcome-driven decisions [6]. Registries accept protocols at various stages, but all require that the review has not yet been completed [6].

The registration workflow follows a structured path from protocol development to public availability. Major international registries include PROSPERO (the largest for health-related reviews), the Open Science Framework (OSF) Registries, and INPLASY [1] [2]. INPLASY, for example, promises publication of protocols within 48 hours, addressing delays sometimes associated with other registries [6].

Table 3: Comparison of Major Protocol Registration Platforms

| Feature | PROSPERO | OSF Registries | INPLASY |
|---|---|---|---|
| Primary Scope | Health & social care, welfare, education, crime, justice [1]. | All scientific disciplines (generalized templates) [1] [2]. | All systematic review types, emphasizes speed [6]. |
| Cost | Free [2]. | Free [2]. | Publication fee required [6]. |
| Editorial Review | Yes, by moderators [1]. | No, immediate registration [1]. | Yes, rapid editorial check [6]. |
| Accepted Review Types | Systematic reviews (intervention, diagnostic, etc.). Excludes scoping reviews [1]. | Systematic reviews, meta-analyses, scoping reviews [2]. | Systematic reviews (incl. animal studies, prognosis), scoping reviews [6]. |
| Key Advantage | Endorsed by major health organizations; high visibility. | Flexibility; integrates with OSF project workspace. | Rapid publication time (≤48 hrs). |

Diagram Title: Workflow for Registering a Systematic Review Protocol

Detailed Application to Ecotoxicology Research

Ecotoxicology presents unique challenges that a registered protocol helps to address systematically. The field synthesizes evidence from controlled laboratory studies (e.g., OECD guidelines), semi-field mesocosm studies, and field observational studies, each with different strengths and risks of bias. A pre-registered plan is vital for handling this heterogeneity transparently.

Specialized Methodological Guidance: Ecotoxicologists should utilize extensions of generic guidelines tailored to their research. The PRISMA extension for preclinical animal studies is directly relevant for reviews of in vivo toxicity tests [7]. Furthermore, the Collaboration for Environmental Evidence (CEE) provides comprehensive guidelines for systematic reviews in environmental management and conservation, which are directly applicable to ecotoxicology [3].

Critical Experimental and Synthesis Protocols: Two areas demand particular detail in an ecotoxicology protocol:

- Risk of Bias (Quality) Assessment: The protocol must specify the tool for assessing the internal validity of included studies. For animal studies, SYRCLE's Risk of Bias tool is recommended. For ecological studies, the protocol should name the planned tool, such as the CEE Critical Appraisal Tool or ECOCHECK [5].

- Data Extraction and Synthesis: The protocol must detail how to handle complex data (e.g., LC50 values, effect sizes, no-observed-effect concentrations (NOECs)). It should pre-specify rules for extracting data from graphs, harmonizing different units, and converting measures for meta-analysis. The plan for investigating heterogeneity (e.g., through subgroup analysis based on test species class, exposure pathway, or study design) must be stated explicitly [5].
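Pre-specified unit rules can be written down as an explicit conversion table so that every extractor applies the same factors. A minimal Python sketch, assuming aqueous concentrations reported in mass-per-volume units and a hypothetical common unit of µg/L; molar concentrations additionally need the test substance's molecular weight.

```python
# Hypothetical conversion table: multiply a value in each unit by this factor to get µg/L.
TO_UG_PER_L = {
    "ng/L": 1e-3,
    "ug/L": 1.0,
    "\u00b5g/L": 1.0,  # micro sign (U+00B5)
    "\u03bcg/L": 1.0,  # Greek small mu (U+03BC); both appear in extracted text
    "mg/L": 1e3,
    "g/L": 1e6,
}


def to_ug_per_l(value: float, unit: str) -> float:
    """Convert a mass-per-volume concentration to µg/L; unknown units raise an error."""
    try:
        return value * TO_UG_PER_L[unit.strip()]
    except KeyError:
        # Fail loudly so unanticipated units are resolved by the team, not guessed.
        raise ValueError(f"No conversion rule registered for unit {unit!r}") from None


def molar_to_ug_per_l(mol_per_l: float, molecular_weight_g_per_mol: float) -> float:
    """mol/L multiplied by g/mol gives g/L, and 1 g/L equals 1e6 µg/L."""
    return mol_per_l * molecular_weight_g_per_mol * 1e6


extracted = [(0.25, "mg/L"), (180.0, "ug/L"), (950.0, "ng/L")]
print([to_ug_per_l(value, unit) for value, unit in extracted])
```

Raising an error instead of skipping unknown units keeps extraction within the registered rules; any conversion rule added mid-review is a deviation to report.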

The Scientist's Toolkit: Essential Resources for Ecotoxicology Systematic Reviews

| Item Name | Type/Category | Primary Function in Protocol Development & Registration |
|---|---|---|
| PRISMA-P Checklist | Reporting Guideline | Provides a minimum set of items to include in a systematic review protocol to ensure completeness and transparency [2]. |
| PECO Framework | Conceptual Tool | Guides the formulation of a focused, structured research question for ecotoxicology (Population, Exposure, Comparator, Outcome) [5]. |
| CEE Guidelines | Methodological Guideline | Offers detailed standards for conducting and reporting systematic reviews in environmental sciences, directly applicable to ecotoxicology [3]. |
| SYRCLE's RoB Tool | Critical Appraisal Tool | Aids in planning the assessment of risk of bias in animal studies, a common study type in toxicology [5]. |
| PROSPERO/OSF/INPLASY | Registration Platform | Provides the structured form and public repository for registering the review protocol to establish precedence and prevent duplication [1] [6] [2]. |
| Covidence/Rayyan | Software Platform | Facilitates the screening and selection of studies; stating their planned use adds operational detail to the protocol [2] [5]. |
| EndNote/Zotero | Reference Manager | Essential for managing citations from comprehensive searches; search strategy documentation is a core protocol component [5]. |

Ecotoxicology informs critical decisions regarding chemical safety, environmental policy, and the conservation of ecosystems. Traditionally, narrative reviews have synthesized knowledge in this field, but their subjective nature and vulnerability to bias can compromise the reliability of the conclusions drawn [8]. An evidence-based paradigm, anchored by systematic review and meta-analysis, is now a fundamental necessity. This approach employs explicit, pre-defined methods to minimize bias, systematically collate all relevant evidence, and provide quantitative, reproducible estimates of chemical effects [9] [10].

The transition to this rigorous framework is underscored by documented shortcomings in current synthetic practices. A survey of recent meta-analyses in environmental sciences revealed that fewer than half adequately assessed critical factors like heterogeneity or publication bias, and many failed to properly account for statistical non-independence among data points [9]. Such deficiencies can lead to unreliable conclusions, which in turn risk supporting ineffective or potentially harmful environmental policies [9].

Systematic review protocol registration is the cornerstone of this evidence-based shift. Publicly registering a detailed protocol a priori locks the research question, methodology, and analysis plan, preventing subjective, outcome-dependent decisions and enhancing transparency, reproducibility, and scientific integrity. This article provides the essential application notes and protocols to equip researchers with the tools to implement robust, protocol-driven evidence synthesis in ecotoxicology.

Quantitative Assessment: Current Practices vs. Evidence-Based Standards

A quantitative evaluation of recent meta-analytic practices reveals significant gaps between current common procedures and the standards required for reliable evidence-based synthesis. The following table summarizes key findings from a survey of 73 environmental meta-analyses published between 2019 and 2021 [9].

Table 1: Deficiencies in Current Meta-Analytic Practice in Environmental Sciences (based on a survey of 73 studies) [9]

| Synthesis Component | Current Practice Deficiency | Quantitative Prevalence | Evidence-Based Standard Requirement |
|---|---|---|---|
| Heterogeneity Assessment | Failure to report or investigate variation among effect sizes beyond sampling error. | Only ~40% of meta-analyses reported heterogeneity. | Mandatory quantification using metrics like τ² (absolute) and I² (relative) to interpret overall mean effects [9] [8]. |
| Publication Bias Evaluation | Lack of statistical assessment for the preferential publication of positive or significant results. | Assessed in fewer than half of the meta-analyses. | Required application of sensitivity analyses (e.g., funnel plots, trim-and-fill) to test the robustness of conclusions [9] [8]. |
| Data Non-Independence | Use of models that assume statistical independence when multiple effect sizes originate from the same study. | Non-independence was considered in only approximately 50% of cases. | Mandatory use of multilevel meta-analytic models or robust variance estimation to correctly model dependent data structures [9]. |
| Sensitivity Analysis | Absence of supplementary analyses to check the robustness of main findings. | Commonly not performed or reported. | Integral component to confirm that results are not driven by specific studies or analytic choices [8]. |

These gaps directly undermine the reliability of synthesized evidence. For instance, an overall mean effect reported without accounting for high heterogeneity is misleading, because it averages over studies whose true effects differ substantially [9]. Similarly, ignoring publication bias can substantially inflate the estimated effect of a chemical. The following table defines the core terminology required to understand and implement corrective, evidence-based methodologies.

Table 2: Core Terminology for Evidence-Based Synthesis in Ecotoxicology [9] [8]

| Term | Definition in Evidence Synthesis | Role in Moving Beyond Narrative |
|---|---|---|
| Effect Size | A standardized, quantitative measure of the magnitude of an effect (e.g., log response ratio (lnRR), standardized mean difference (SMD)). Serves as the response variable in meta-analysis [9]. | Replaces qualitative descriptions ("chemical X reduced growth") with comparable, unitless metrics for quantitative aggregation. |
| Overall Mean Effect | The weighted average effect size across all studies in a meta-analysis, where weights are typically based on precision (inverse variance) [8]. | Provides a single, objective summary estimate derived from the entire evidence base, superior to selective narrative quoting. |
| Heterogeneity (τ², I²) | The variation in true effect sizes across studies. τ² is the estimated variance, while I² describes the percentage of total variation due to heterogeneity rather than sampling error [9] [8]. | Quantifies consistency (or lack thereof) in the evidence, a critical factor narrative reviews often address only subjectively. |
| Meta-Regression | A statistical extension of meta-analysis that models the association between study-level characteristics (moderators) and effect size to explain heterogeneity [9] [8]. | Systematically tests hypotheses about sources of variation (e.g., species class, exposure pathway), moving from anecdote to tested explanation. |
| Publication Bias | The phenomenon where studies with statistically significant or "favorable" results are more likely to be published than null or "unfavorable" studies [8]. | Formal sensitivity analyses help detect and adjust for this pervasive bias, which narrative reviews cannot account for. |
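To make the "Overall Mean Effect" and "Heterogeneity" entries concrete, the Python sketch below computes an inverse-variance weighted mean, Cochran's Q and I² for a few hypothetical lnRR values. It is a common-effect (fixed-effect) calculation shown only to expose the arithmetic; the random-effects and multilevel models recommended in this article add between-study variance components to the weights.

```python
import math


def fixed_effect_summary(effects, variances):
    """Inverse-variance weighted mean, its standard error, Cochran's Q and I² (%)."""
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    mean = sum(w * y for w, y in zip(weights, effects)) / sum_w
    se = math.sqrt(1.0 / sum_w)
    # Q: weighted squared deviations of each effect from the pooled mean.
    q = sum(w * (y - mean) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I²: share of total variation attributed to heterogeneity, floored at zero.
    i2 = (max(0.0, (q - df) / q) * 100) if q > 0 else 0.0
    return mean, se, q, i2


# Hypothetical lnRR values and sampling variances from three studies.
mean, se, q, i2 = fixed_effect_summary([-0.2, -0.5, -0.1], [0.01, 0.04, 0.02])
print(f"lnRR = {mean:.3f} (SE {se:.3f}), Q = {q:.2f}, I² = {i2:.1f}%")
```

For these numbers the pooled lnRR is about −0.214 (exp(−0.214) ≈ 0.81, roughly a 19% reduction relative to controls), Q is about 2.71 on 2 degrees of freedom, and I² is about 26%.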

Application Notes & Detailed Protocols

Protocol for Prospective Registration of a Systematic Review

Before the formal literature search begins, developing and registering a detailed protocol is mandatory. This commits the research team to a predefined plan, safeguarding against bias.

- Define the PECO/PICO Elements: Formulate the review question with explicit Population (ecosystem and organism), Exposure/Pollutant (chemical or mixture), Comparator (control condition), and Outcome (ecotoxicological endpoint) [8].

- Develop Search Strategy:

- Databases: Plan searches in multiple relevant databases (e.g., Web of Science, Scopus, PubMed, Environment Complete, ECOTOX [10]).

- Search Strings: Construct strings using Boolean operators (AND, OR) combining chemical names, synonyms, organism terms, and outcome terms. Pilot and refine strings.

- Grey Literature: Specify sources for unpublished data (e.g., government reports, thesis repositories, conference proceedings) [10].

- Language & Time Limits: Justify any restrictions.

- Specify Study Screening & Selection Criteria:

- Develop and pre-test inclusion/exclusion criteria based on PECO elements and study design (e.g., experimental lab/field studies).

- Plan for a multi-stage screening process (title/abstract, full-text) by at least two independent reviewers, with a method for resolving conflicts (e.g., consensus, third reviewer).

- Detail Data Extraction & Management:

- Design a standardized, piloted data extraction form (using tools like Microsoft Excel, Google Sheets, or systematic review software).

- Define variables: citation details, PECO details, experimental design (duration, temperature, endpoint), quantitative results (mean, SD, and sample size for control and treatment groups), and any other values needed to calculate effect sizes.

- Plan for Risk of Bias / Critical Appraisal:

- Select a suitable tool for assessing internal study validity (e.g., adapted from Cochrane RoB, SYRCLE's tool for animal studies).

- Define how appraisal results will be used (e.g., sensitivity analysis, descriptive reporting).

- Pre-Specify Data Synthesis Methods:

- Narrative Synthesis: Plan tabulation of study characteristics and results [8].

- Quantitative Synthesis (Meta-analysis): Pre-specify the effect size metric (e.g., lnRR for continuous growth data), the meta-analytic model (e.g., multilevel random-effects), software (e.g., metafor in R [9]), and approaches for handling dependent effect sizes.

- Heterogeneity & Meta-regression: Plan to calculate I² and τ². List potential moderators (e.g., taxonomic group, exposure concentration) for exploratory analysis.

- Sensitivity & Bias Analysis: Mandate tests for publication bias (e.g., funnel plot, Egger's test) and other sensitivity analyses [9].
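The search-string step follows a fixed pattern: synonyms within one concept are joined with OR, and concepts are joined with AND. A minimal Python sketch with illustrative terms; the truncation symbol (*) and double-quoted phrases are assumptions, because syntax differs between databases, and each string should still be adapted and piloted per platform.

```python
def or_block(terms):
    """Join synonyms with OR; quote multi-word phrases so they are searched as phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(concepts):
    """Combine non-empty concept blocks (chemical, organism, outcome, ...) with AND."""
    return " AND ".join(or_block(terms) for terms in concepts.values() if terms)


# Illustrative concept blocks for a hypothetical PECO question.
concepts = {
    "chemical": ["diclofenac", "diclofenac sodium"],
    "organism": ["fish", "Danio rerio", "zebrafish"],
    "outcome": ["mortality", "reproduct*", "LC50"],
}
print(build_query(concepts))
# (diclofenac OR "diclofenac sodium") AND (fish OR "Danio rerio" OR zebrafish) AND (mortality OR reproduct* OR LC50)
```

Keeping the concept blocks as data, rather than hand-editing one long string, makes the registered strategy easier to reproduce and to report verbatim.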

Registration: Submit the finalized protocol to a public registry such as the Open Science Framework (OSF) or PROSPERO.

Protocol for Executing a Multilevel Meta-Analysis

This protocol assumes a registered plan and a complete, extracted dataset.

Objective: To quantitatively synthesize effect sizes from multiple ecotoxicology studies, correctly accounting for non-independence (e.g., multiple endpoints from one study) and quantifying heterogeneity.

Materials & Software: Statistical software capable of multilevel meta-analysis (e.g., R with metafor and clubSandwich packages [9]), a cleaned dataset with calculated effect sizes and their variances.

Methodology:

Calculate Effect Sizes:

- For each comparison, calculate the chosen effect size (e.g., lnRR) and its sampling variance (vᵢ). For lnRR:

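$$\ln RR = \ln\left(\frac{\bar{X}_t}{\bar{X}_c}\right), \qquad v = \frac{SD_t^2}{n_t\,\bar{X}_t^2} + \frac{SD_c^2}{n_c\,\bar{X}_c^2}$$

where X̄ is the group mean, SD is the standard deviation, n is the sample size, and subscripts t and c denote the treatment and control groups [9].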

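The lnRR formula in this step translates directly into code. A minimal Python sketch with hypothetical group summaries; it assumes both means are positive, since the log ratio is undefined otherwise.

```python
import math


def ln_rr(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (lnRR, sampling variance) for one treatment-control comparison."""
    if mean_t <= 0 or mean_c <= 0:
        raise ValueError("lnRR requires positive treatment and control means")
    yi = math.log(mean_t / mean_c)
    vi = sd_t**2 / (n_t * mean_t**2) + sd_c**2 / (n_c * mean_c**2)
    return yi, vi


# Hypothetical growth data: exposed mean 8.0 (SD 2.0, n = 10); control mean 10.0 (SD 2.5, n = 10).
yi, vi = ln_rr(8.0, 2.0, 10, 10.0, 2.5, 10)
print(round(yi, 4), round(vi, 4))  # -0.2231 0.0125
```

Because lnRR is a log ratio, exp(lnRR) gives the proportional change: here exp(−0.2231) = 0.8, a 20% reduction relative to the control.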

Fit a Multilevel Meta-Analytic Model (MLMA):

- Use a random-effects model with multiple random intercepts to model dependency. A common structure is to nest effect sizes within studies.

- Model call (metafor syntax example):

library(metafor)
res <- rma.mv(yi = lnRR, V = v, random = ~ 1 | Study_ID / EffectSize_ID, data = dataset)

- Interpretation: Extract the overall mean effect (β₀) with its confidence interval and significance test.

Quantify Heterogeneity:

- Extract the variance components (τ²) at the study and effect size levels from the MLMA model.

- Calculate the I² statistic to express the percentage of total variance due to true heterogeneity at each level [9].
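Once the variance components have been estimated (for example, the `sigma2` values reported by a metafor `rma.mv` fit), the level-specific I² values can be computed by hand. The Python sketch below uses one widely used formulation for multilevel models: each variance component is divided by the sum of all components plus a "typical" sampling variance derived from the inverse-variance weights. The variance components and sampling variances shown are hypothetical.

```python
def typical_sampling_variance(variances):
    """Typical sampling variance: (k - 1) * sum(w) / (sum(w)^2 - sum(w^2)), with w = 1/v."""
    if len(variances) < 2:
        raise ValueError("At least two effect sizes are needed")
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    return (len(w) - 1) * sum_w / (sum_w**2 - sum(x * x for x in w))


def multilevel_i2(sigma2_by_level, variances):
    """I² (%) for each random-effect level plus the total across levels."""
    v_typical = typical_sampling_variance(variances)
    total_variance = sum(sigma2_by_level.values()) + v_typical
    result = {level: 100 * s2 / total_variance for level, s2 in sigma2_by_level.items()}
    result["total"] = 100 * sum(sigma2_by_level.values()) / total_variance
    return result


# Hypothetical components for the Study_ID / EffectSize_ID nesting and four sampling variances.
i2 = multilevel_i2({"Study_ID": 0.05, "EffectSize_ID": 0.01}, [0.02, 0.02, 0.02, 0.02])
print({level: round(value, 1) for level, value in i2.items()})
```

With equal sampling variances the typical variance equals that common value (0.02), so the study level accounts for about 62.5% of total variance, the effect-size level about 12.5%, and heterogeneity overall about 75%.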

Conduct Meta-Regression:

- To explain heterogeneity, add fixed-effect moderators to the MLMA model.

- Model call (with one moderator):

res_mod <- rma.mv(yi = lnRR, V = v, mods = ~ Moderator, random = ~ 1 | Study_ID / EffectSize_ID, data = dataset)

- Interpretation: Assess the significance and direction of the moderator coefficient (β₁). Calculate an R² analog to indicate the proportion of heterogeneity explained.
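An R² analog is commonly computed by comparing the summed variance components of the intercept-only model with those of the model that includes moderators (for example, the `sigma2` values of the two metafor `rma.mv` fits). A minimal Python sketch of that one definition, noting that others exist; the values are hypothetical, and negative results, which can arise by chance, are truncated at zero.

```python
def r2_analog(sigma2_null, sigma2_moderators):
    """Percentage of the intercept-only model's heterogeneity explained by moderators."""
    total_null = sum(sigma2_null)
    total_mod = sum(sigma2_moderators)
    if total_null == 0:
        return 0.0  # no heterogeneity left to explain
    return max(0.0, (total_null - total_mod) / total_null) * 100


# Hypothetical study- and effect-size-level components before and after adding a moderator.
print(round(r2_analog([0.05, 0.01], [0.02, 0.01]), 1))  # 50.0
```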

Perform Sensitivity and Bias Analyses:

- Publication Bias: Create a funnel plot of effect size against its standard error (or precision, 1/SE). Statistically test for asymmetry using multilevel versions of Egger's regression [9].

- Influence Analysis: Use diagnostics (e.g., Cook's distance) to identify studies exerting undue influence on the results. Refit the model without influential studies to check robustness.

- Subgroup Analysis: If categorical moderators explain substantial heterogeneity, present summary effects for key subgroups (e.g., fish vs. invertebrates).
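The logic of Egger's regression fits in a few lines: the standardized effect (effect / SE) is regressed on precision (1 / SE), and an intercept far from zero indicates funnel asymmetry. The Python sketch below is the classic single-level version with hypothetical inputs. It ignores the nesting of effect sizes within studies, so it is not the multilevel variant called for above and serves only to show how the test works.

```python
import math


def egger_test(effects, standard_errors):
    """Classic Egger regression z_i = b0 + b1 * precision_i, fitted by ordinary least squares.

    Returns the intercept b0, its standard error, the t statistic and degrees of freedom.
    """
    k = len(effects)
    if k < 3:
        raise ValueError("Egger's regression needs at least three effect sizes")
    x = [1.0 / se for se in standard_errors]                 # precision
    y = [e / se for e, se in zip(effects, standard_errors)]  # standardized effect
    x_bar, y_bar = sum(x) / k, sum(y) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    df = k - 2
    se_intercept = math.sqrt(residual_ss / df * (1.0 / k + x_bar**2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, df


b0, se_b0, t, df = egger_test([2.0, 1.5, 1.25, 1.4], [1.0, 0.5, 0.25, 0.2])
print(round(b0, 3), round(se_b0, 3), round(t, 3), df)
```

The t statistic is compared with a t distribution on df degrees of freedom (for example with SciPy) to obtain a p-value. With few effect sizes the test has low power, so a non-significant intercept is weak evidence that bias is absent.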

Visualization of Systematic Review Workflow

The following diagram maps the critical path from protocol registration to evidence synthesis, highlighting decision points and mandatory steps for rigor.

Systematic Review & Evidence Synthesis Workflow

Implementing evidence-based synthesis requires a suite of conceptual, data, and software tools. The following table details key resources.

Table 3: Research Reagent Solutions for Evidence-Based Ecotoxicology Synthesis

| Tool / Resource | Type | Primary Function & Relevance | Source / Reference |
|---|---|---|---|
| ECOTOX Knowledgebase | Curated Database | Provides systematically curated, single-chemical ecotoxicity data from over 50,000 references. Serves as a primary data source and model for systematic curation practices [10]. | U.S. EPA (https://www.epa.gov/ecotox) |
| PRISMA-EcoEvo Guidelines | Reporting Framework | An extension of the PRISMA statement providing a checklist and flow diagram template specifically for reporting systematic reviews and meta-analyses in ecology and evolution. Ensures transparent, complete reporting [9]. | http://prisma-ecoevo.org/ |
| metafor Package (R) | Statistical Software | A comprehensive R package for conducting meta-analyses. Fits multilevel, multivariate, and network meta-analysis models, performs meta-regression, and creates essential plots (forest, funnel) [9]. | CRAN R Repository |
| Collaboration for Environmental Evidence (CEE) Guidelines | Methodology Handbook | Provides authoritative standards and detailed guidance for conducting systematic reviews and systematic maps in environmental management and ecotoxicology [8] [11]. | https://environmentalevidence.org/ |
| Open Science Framework (OSF) | Protocol Registry | A free, open-source platform to preregister systematic review protocols, archive search strategies, manage team workflow, and share data/analyses, enhancing transparency and reproducibility. | Center for Open Science |
| ColorBrewer & Paul Tol Schemes | Visualization Aid | Provides color palettes (sequential, diverging, qualitative) optimized for clarity and accessibility to readers with color vision deficiencies, essential for creating inclusive figures [12]. | https://colorbrewer2.org/ & Paul Tol's Notes |

The move from narrative to evidence-based synthesis in ecotoxicology is not merely a technical upgrade but a fundamental cultural shift towards greater rigor, transparency, and utility for decision-makers. Central to this shift is the prospective registration of systematic review protocols. This practice, embedded within the broader thesis of systematic research synthesis, serves as a public contract that mitigates bias, reduces duplication of effort, and aligns the entire research enterprise—from graduate training to high-stakes regulatory assessment—with the principles of open science.

Journals such as Environmental Evidence now formalize this process by publishing peer-reviewed protocols [11]. Funding agencies and regulatory bodies should incentivize registration to ensure that the evidence used to protect ecosystems is built on the most robust possible foundation. By adopting the detailed protocols, visual workflows, and essential tools outlined here, researchers can lead the field toward a future where ecotoxicological knowledge is reliably synthesized, effectively informing the conservation and management of our planet's biological resources.

Within ecotoxicology, the demand for high-quality, synthesized evidence to inform regulatory decisions is paramount [13]. Systematic review protocol registration—the act of publicly publishing a detailed, fixed plan for a review before it begins—serves as a foundational practice to strengthen the scientific integrity of this field. This procedural safeguard directly addresses three critical challenges in evidence synthesis: cognitive and procedural biases, wasteful duplication of effort, and opaque methodologies. As ecotoxicology integrates diverse data sources, from standardized Good Laboratory Practice (GLP) tests to non-GLP ecological studies, the need for transparent, reproducible, and bias-minimized synthesis becomes a cornerstone for credible science and its application in environmental protection [13] [14]. This document details the application notes and experimental protocols that operationalize these key benefits, providing researchers with actionable frameworks for implementation.

Application Note 1: Combating Bias in Evidence Synthesis

Thesis Context: Pre-registering a systematic review protocol mitigates confirmation bias and selection bias by locking in the research question, eligibility criteria, and analysis plan before data collection and screening begin. This prevents the conscious or unconscious tailoring of methods to fit desired or expected outcomes [15].

Quantitative Analysis of Bias Awareness and Prevalence

A survey of 308 ecology scientists reveals significant awareness gaps and the "bias blind spot," where researchers perceive others' work as more susceptible to bias than their own [15].

Table 1: Researcher Awareness and Perceived Impact of Biases in Ecological Sciences (Survey of 308 Scientists) [15]

| Bias Metric | Survey Result | Implication for Protocol Registration |
|---|---|---|
| Awareness of Bias Importance | 98% of respondents aware | High foundational awareness supports procedural adoption. |
| Self vs. Others Bias Impact | Respondents rated impact on own studies as 'High' 3x less frequently & 'Negligible' 7x more frequently than on peers' work. | Highlights the critical need for mandatory, external safeguards like protocol registration to combat the "bias blind spot." |
| Top Known Bias Types | Observer bias (82%), Publication bias (71%), Selection bias (70%). | Protocol registration directly addresses selection and analysis biases. |
| Key Prevention Methods Supported | Reporting all results (89%), Randomization (78%), Blinding (70%). | Registration works like blinding for the analysis plan: the plan is fixed before results are seen, in line with recognized best practices. |
| Career Stage Difference | Early-career scientists were more concerned about biases and more aware of prevention methods (e.g., confirmation bias) than senior scientists. | Underscores the role of formalized training and institutionalized practices like registration. |

Experimental Protocol: Implementing a Bias-Mitigation Workflow via Registration

Objective: To prospectively minimize confirmation, selection, and reporting bias in an ecotoxicology systematic review through public protocol registration.

Materials: Access to a protocol registry (e.g., PROSPERO, INPLASY, Open Science Framework); team meeting platform; protocol drafting template (e.g., PRISMA-P, ROSES).

Procedure:

- Preliminary Search & Scoping: Conduct initial scoping searches to define the feasible scope of the review. Document all search strings and databases used during this exploratory phase [16].

- Protocol Drafting with Locked-In Elements:

- Define & Justify PECO/PICO: Formulate the Population (e.g., specific aquatic species), Exposure (e.g., pharmaceutical contaminant), Comparator (e.g., control/no exposure), and Outcomes (e.g., LC50, reproductive impairment). Include explicit rationale [6] [16].

- Pre-Specify Eligibility Criteria: Define in detail the inclusion/exclusion criteria for studies (e.g., publication date range, language, study design, minimum reporting standards). No modifications allowed post-registration without public amendment and justification [16].

- Pre-Specify Search Strategy: Document all planned databases (e.g., PubMed, Web of Science, ECOTOX), search strings with all Boolean operators, and contact plans for grey literature [16].

- Pre-Specify Data Extraction & Analysis Plan: Define the exact data to be extracted (e.g., mean, SD, sample size, test conditions) and the statistical models or meta-analytic approaches to be used. Specify how heterogeneity and risk of bias will be assessed [6].

- Protocol Registration: Submit the finalized protocol to a public registry. On platforms like INPLASY, this is typically completed within 48 hours, making the plan public and timestamped [6].

- Conduct Review Against Registered Protocol: Execute the review precisely as registered. Any deviation necessitated during the review (e.g., discovery of an unanticipated confounding variable) must be explicitly documented in the final review's "Differences from Protocol" section [6].
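Deviations are easiest to report completely when the registered plan and the plan actually followed are both kept as structured records. A minimal Python sketch, assuming hypothetical field names, that lists every changed field and flags any change without a recorded justification.

```python
def differences_from_protocol(registered, conducted, justifications):
    """Build 'Differences from Protocol' entries for every field that changed.

    A field present in only one record counts as a change. Changes without a
    justification are flagged rather than silently accepted.
    """
    entries = []
    for field in sorted(set(registered) | set(conducted)):
        planned = registered.get(field, "<not specified>")
        actual = conducted.get(field, "<not specified>")
        if planned != actual:
            reason = justifications.get(field, "JUSTIFICATION MISSING")
            entries.append(f"{field}: planned {planned!r}; conducted {actual!r}; reason: {reason}")
    return entries


registered = {"databases": ["Web of Science", "Scopus", "ECOTOX"], "effect_size": "lnRR"}
conducted = {
    "databases": ["Web of Science", "Scopus", "ECOTOX", "PubMed"],
    "effect_size": "lnRR",
    "moderators": ["water temperature"],  # unanticipated confounder added mid-review
}
justifications = {"databases": "Pilot screening found relevant records indexed only in PubMed."}

for line in differences_from_protocol(registered, conducted, justifications):
    print(line)
```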

Quality Control: Implement dual, independent screening and data extraction by reviewers blinded to each other's assessments at the study selection and risk-of-bias stages. Use pre-piloted forms to ensure consistency [15].
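Dual screening produces two independent sets of decisions that must be reconciled. The Python sketch below lists the records needing conflict resolution and reports Cohen's kappa, a chance-corrected agreement statistic that the text does not prescribe; it is included here as one common way to document screening consistency. Record IDs and decisions are hypothetical (True = include).

```python
import math


def screening_agreement(decisions_a, decisions_b):
    """Return (records needing conflict resolution, Cohen's kappa) for two screeners.

    Each argument maps a record ID to True (include) or False (exclude).
    """
    if set(decisions_a) != set(decisions_b):
        raise ValueError("Both reviewers must screen the same records")
    ids = sorted(decisions_a)
    n = len(ids)
    conflicts = [rid for rid in ids if decisions_a[rid] != decisions_b[rid]]
    p_observed = 1 - len(conflicts) / n
    # Chance agreement from each reviewer's own inclusion rate.
    include_a = sum(decisions_a[rid] for rid in ids) / n
    include_b = sum(decisions_b[rid] for rid in ids) / n
    p_expected = include_a * include_b + (1 - include_a) * (1 - include_b)
    if p_expected == 1:
        # Both screeners gave the same single decision to every record: kappa is undefined.
        return conflicts, math.nan
    return conflicts, (p_observed - p_expected) / (1 - p_expected)


reviewer_a = {"r1": True, "r2": False, "r3": True, "r4": False, "r5": False}
reviewer_b = {"r1": True, "r2": True, "r3": True, "r4": False, "r5": False}
conflicts, kappa = screening_agreement(reviewer_a, reviewer_b)
print(conflicts, round(kappa, 2))  # ['r2'] 0.62
```

Record r2 would then go to consensus discussion or a third reviewer, as the protocol specifies.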

Diagram 1: Systematic Review Workflow with Bias Mitigation Checkpoints

Table 2: Research Reagent Solutions for Bias Mitigation in Ecotoxicology Reviews

| Tool / Resource | Function | Key Feature for Bias Control |
|---|---|---|
| ROSES Checklist (Reporting standards for Systematic Evidence Syntheses) [16] | A detailed form for reporting systematic review and map methods. | Ensures comprehensive, standardized reporting of methods, preventing selective outcome reporting. |
| PRISMA-P Checklist (Preferred Reporting Items for Systematic Review and Meta-Analysis Protocols) | Guidelines for items to include in a systematic review protocol. | Provides a structured framework for pre-specifying all critical elements of the review design. |
| Blind Review & Data Extraction Forms (e.g., in Covidence, Rayyan) | Software features that blind reviewers to each other's decisions and to study identifiers. | Reduces observer and confirmation bias during study selection and data extraction [15]. |
| Protocol Registries (PROSPERO, INPLASY, OSF) [6] | Public platforms to timestamp and publish review protocols. | Creates an immutable, public record of the intended plan, combating hindsight and analysis bias. |

Application Note 2: Preventing Duplication of Research Effort

Thesis Context: Public protocol registration functions as a global "notice of work in progress," enabling researchers to identify ongoing syntheses before initiating redundant reviews. This prevents waste of scientific resources and reduces "review clutter" [6].

Quantitative Analysis of Duplication Causes and Solutions

Data duplication in research synthesis leads to wasted effort, storage inefficiency, and conflicting conclusions [17] [18]. Proactive prevention is more efficient than post-hoc deduplication [19].

Table 3: Deduplication Techniques and Their Application to Systematic Review Protocol Registration [17] [18] [19]

| Deduplication Technique | Core Principle | Analogous Protocol Registration Practice |
|---|---|---|
| Hash-Based Deduplication | Creates a unique digital fingerprint for data; matches indicate duplicates. | A registered protocol with a unique identifier (a registration number and, on some platforms, a DOI) acts as a "fingerprint" for a review project, enabling easy discovery. |
| Content-Aware Deduplication | Analyzes actual content, not just metadata, to identify similar items. | Registries require detailed PECO/PICO descriptions and search strategies, allowing researchers to perform content-aware searches for similar ongoing reviews. |
| In-line (Proactive) Deduplication | Prevents duplicate entry at the point of data ingestion. | Prospective protocol registration prevents the initiation of a duplicate review at the "point of entry" into the research pipeline. |
| Global vs. Local Deduplication | Global searches across the entire system; local searches within subsystems. | Researchers must search multiple global registries (e.g., PROSPERO, INPLASY) before starting, as one platform does not contain all ongoing work [6]. |
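Hash-based deduplication, the first technique in Table 3, is also the usual way to merge overlapping records returned by several registries and databases during a duplication check. The sketch below illustrates it under stated assumptions. Each record is a dictionary with hypothetical `doi`, `title`, and `year` keys. Two records count as duplicates when their normalized DOI hashes to the same SHA-256 fingerprint. Records without a DOI are compared on their normalized title plus year instead.

```python
import hashlib
import re


def fingerprint(record: dict) -> str:
    """SHA-256 of a normalized DOI, or of the normalized title plus year when no DOI is present."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        key = "doi:" + doi.removeprefix("https://doi.org/")
    else:
        # Lowercase and collapse punctuation/whitespace so trivial formatting differences still match.
        title = re.sub(r"[^a-z0-9]+", " ", record["title"].lower()).strip()
        key = f"title:{title}|{record.get('year', '')}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each fingerprint, preserving input order."""
    seen: set[str] = set()
    unique = []
    for record in records:
        fp = fingerprint(record)
        if fp not in seen:
            seen.add(fp)
            unique.append(record)
    return unique
```

The DOI key and the title fallback key differ, so a record with a DOI is not merged with a copy that lacks one. Content-aware comparison or manual checking is still needed for such near-duplicates.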

Experimental Protocol: Pre-Submission Duplication Check and Registration

Objective: To ensure a proposed systematic review is not duplicative of existing or ongoing work by conducting a comprehensive search of review registries and databases.

Materials: Access to protocol registries (PROSPERO, INPLASY, Cochrane); bibliographic databases; reference management software.

Procedure:

- Develop Preliminary Review Question: Formulate a draft PECO/PICO question.

- Registry Search Strategy:

  - Search PROSPERO and INPLASY using key terms from the PECO question, including synonyms and broader/narrower terms [6].

  - Search the Cochrane Database of Systematic Reviews and relevant Campbell Collaboration libraries.

  - Document the search date, platforms, and search strings used.

- Database Search for Published Reviews:

  - Conduct a scoping search in databases like PubMed, Web of Science, and Environmental Evidence for published systematic reviews on the topic [11].

- Analysis of Search Results:

  - Screen titles and abstracts of registered protocols and published reviews.

  - Compare their objectives, eligibility criteria, and populations/exposures to the proposed review.

  - If a similar, recent, or ongoing review is found: Assess whether the proposed review is still justified (e.g., different outcome, updated search, different context). If not justified, abandon or significantly refocus the project.

- Protocol Registration:

  - If no duplication is found, finalize and submit the protocol for registration.

  - Mandatory Field Completion: In registries like INPLASY, authors must confirm they have identified existing and ongoing reviews to avoid duplication as a requirement for submission [6].
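Registry hits can be triaged before their titles and abstracts are read in full. One simple approach scores each hit by how many words it shares with the proposed PECO question, a basic form of the content-aware comparison in Table 3. This sketch uses Jaccard similarity of word sets with an arbitrary threshold of 0.4. The registration IDs and texts are hypothetical. A flag only prompts manual comparison and does not decide duplication. Common words such as "and" also inflate scores.

```python
import re


def word_set(text: str) -> set[str]:
    """Lowercase alphanumeric tokens of a question or registry title."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union (0.0 for two empty sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def flag_similar(proposed: str, registered: dict[str, str], threshold: float = 0.4) -> list[tuple[str, float]]:
    """Return (registration ID, similarity) pairs at or above the threshold, most similar first."""
    query = word_set(proposed)
    scores = [(reg_id, jaccard(query, word_set(text))) for reg_id, text in registered.items()]
    return sorted((s for s in scores if s[1] >= threshold), key=lambda s: s[1], reverse=True)


proposed = "Effects of microplastics exposure on Daphnia magna reproduction"
registered = {  # hypothetical registry records
    "REG-A": "Microplastics exposure and reproduction in Daphnia magna",
    "REG-B": "Noise exposure and hearing impairment in newborns",
}
print(flag_similar(proposed, registered))  # [('REG-A', 0.5)]
```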

Diagram 2: Workflow for Preventing Systematic Review Duplication

Application Note 3: Enhancing Methodological and Interpretive Transparency

Thesis Context: Protocol registration enforces explicit, detailed documentation of methods prior to execution. This enhances reproducibility, allows for critical appraisal of the review's methodological rigor, and provides context for interpreting findings [14] [20].

Quantitative Analysis of Transparency Gaps in Environmental Research

A review of 39 conservation social science papers revealed significant gaps in reporting key methodological and contextual details, undermining transparency and quality assessment [20].

Table 4: Identified Transparency Gaps in Field-Based Environmental Research [20]

| Transparency Category | Specific Gap Identified | Percentage of Papers with Gap | How Protocol Registration Addresses This |
|---|---|---|---|
| Researcher Positionality | Who collected the data. | 43% | Mandates listing all review team members and their roles. |
| Methodological Context | Whether data collectors spoke participants' language. | 46% | Encourages detailed description of search strategy, including language restrictions. |
| Sampling & Recruitment | Participant recruitment strategy. | 56% | Requires pre-specification of study eligibility criteria and search methods. |
| Demographic Reporting | Women's representation in samples. | 41% | Prompts detailed definition of population (P in PECO). |
| Temporal Context | Time spent in the field/data collection period. | 28% | Requires specification of the search date range and planned timeline. |

Experimental Protocol: Establishing a Transparent, Reproducible Data Pipeline

Objective: To create a fully documented and reproducible workflow for data acquisition and curation in an ecotoxicology systematic review, leveraging computational tools.

Materials: R statistical software with ECOTOXr package [14]; GitHub or OSF repository; computational notebook (e.g., RMarkdown, Jupyter).

Procedure:

- Pre-Register Data Acquisition Plan: In the protocol, specify the exact source databases (e.g., EPA ECOTOX database) and the planned methods for data extraction (e.g., manual form, API, or a dedicated package like ECOTOXr) [14].

- Use Scripted Data Retrieval: Instead of manual downloads, use a scripted approach. For example, employ the ECOTOXr R package to programmatically query the EPA ECOTOX database. This script formalizes and documents the entire data curation process [14].

  - Script Components: Include code for setting search parameters (chemical, species, endpoints), executing the query, handling API limits, and performing initial data cleaning (e.g., removing duplicates, standardizing units).

- Version Control and Publishing: Maintain the data retrieval script in a version-controlled repository (e.g., GitHub). Link this repository in the registered protocol and final review.

- Document All Decisions: Use a computational notebook to interweave the code with narrative explanations of each data processing step, any deviations from the planned protocol, and the rationale for decisions (e.g., how borderline studies were handled during screening).

- Publish Code and Data (Where Possible): Submit the final, annotated scripts as supplementary material. Share the cleaned, analysis-ready dataset in a public repository (adhering to FAIR principles), or provide explicit instructions for regenerating it using the published scripts [14].
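The "Script Components" step can also be illustrated outside R. The Python sketch below assumes records have already been exported from ECOTOX into dictionaries with hypothetical keys (`reference_id`, `chemical`, `species`, `endpoint`, `value`, `unit`). It does not reproduce the ECOTOX schema or the ECOTOXr interface. The script filters records to the pre-registered search parameters, converts concentrations to mg/L, and removes duplicates. It also records why each in-scope record was dropped, so the drop log becomes part of the audit trail. Treating records with the same reference, endpoint, and converted value as duplicates is a simplifying assumption.

```python
# Hypothetical conversion factors to a single concentration unit (mg/L).
TO_MG_PER_L = {"mg/L": 1.0, "ug/L": 1e-3, "µg/L": 1e-3, "ng/L": 1e-6}

# Search parameters as they would be pre-registered in the protocol (illustrative values).
SEARCH = {"chemical": "carbamazepine", "species": "Daphnia magna", "endpoint": "EC50"}


def curate(records: list[dict], search: dict = SEARCH) -> tuple[list[dict], list[tuple[dict, str]]]:
    """Filter to the registered search, standardize units, drop duplicates, and log each drop."""
    curated, dropped, seen = [], [], set()
    for record in records:
        if any(record.get(key) != value for key, value in search.items()):
            continue  # outside the registered search parameters
        factor = TO_MG_PER_L.get(record["unit"])
        if factor is None:
            dropped.append((record, "unrecognized unit"))
            continue
        value_mg_l = round(record["value"] * factor, 12)  # round away float noise before comparing
        key = (record["reference_id"], record["endpoint"], value_mg_l)
        if key in seen:
            dropped.append((record, "duplicate"))
            continue
        seen.add(key)
        curated.append({**record, "value": value_mg_l, "unit": "mg/L"})
    return curated, dropped
```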

The Scientist's Toolkit: Resources for Enhancing Transparency

Table 5: Essential Tools for Transparent and Reproducible Ecotoxicology Reviews

| Tool / Resource | Function | Contribution to Transparency |
|---|---|---|
| ECOTOXr R Package [14] | Programmatic access and curation of data from the EPA ECOTOX database. | Formalizes and documents data retrieval, making the process reproducible and auditable. |
| Open Science Framework (OSF) | A free, open-source project management repository. | Provides a centralized, citable hub for protocols, preregistrations, data, code, and materials. |
| GitHub / GitLab | Version control platforms for code and documentation. | Tracks all changes to analysis scripts, ensuring a complete audit trail and facilitating collaboration. |
| RMarkdown / Jupyter Notebooks | Tools for creating dynamic documents that combine code, output, and narrative text. | Produces a complete "research compendium" that links analysis directly to its results, enhancing reproducibility. |
| Positionality Framework [20] | A reflexive tool for researchers to document their background, values, and potential influences on the research. | Adds critical interpretive transparency, allowing readers to understand the context of methodological choices and interpretations. |

Abstract

This application note establishes a formal protocol for integrating systematic review methodology with regulatory environmental risk assessment. It provides researchers, scientists, and drug development professionals with actionable frameworks to enhance the transparency, reproducibility, and policy relevance of ecotoxicology syntheses. The document details procedural workflows for protocol registration and for data extraction aligned with regulatory requirements, such as those of the Toxic Substances Control Act (TSCA), which the U.S. EPA administers. It also covers translating synthesized evidence into actionable risk management options [21] [22].

Systematic Review Protocol Registration Framework

Prospective registration of a systematic review protocol is a critical first step to minimize bias, avoid duplication, and ensure methodological transparency, forming the foundation for credible evidence to inform policy [1] [6].

1.1. Protocol Development & Core Components: A robust protocol must define the rationale, key questions, and detailed methodology before the review commences [1]. For environmental risk assessment, key questions should be structured using modified PECO (Population, Exposure, Comparator, Outcome) elements to align with regulatory needs (e.g., "What is the effect of Chemical X on the fecundity of freshwater fish under chronic, low-dose exposure?"). The protocol must specify inclusion/exclusion criteria, search strategies for published and gray literature, data abstraction and management plans, risk of bias assessment tools for ecological studies, data synthesis methods, and plans for grading the certainty of evidence [1].

1.2. Registration Platforms and Procedures: Protocols should be registered in a publicly accessible repository. Key platforms include:

- PROSPERO: An international register for health-related systematic reviews, often suitable for ecotoxicology reviews with human health outcomes [1].

- Open Science Framework (OSF): A flexible platform that accepts systematic and scoping review protocols across all disciplines, including environmental science [1].

- INPLASY: An international platform offering rapid publication of protocols for various review types, including preclinical and animal studies, within 48 hours [6]. Registration typically requires submitting a structured form with details on the review team, objectives, methodology, and timeline [6].

1.3. Quantitative Data from Registration Practices: The following table summarizes key metrics and characteristics of major systematic review protocol registries relevant to environmental health research [1] [6].

Table 1: Comparative Overview of Systematic Review Protocol Registries

| Registry Name | Primary Scope | Time to Publication | Fee for Registration | Accepts Scoping Reviews | Editorial Review |
|---|---|---|---|---|---|
| PROSPERO | Health-related reviews | Variable; can take several months | No | No | Yes, by staff |
| Open Science Framework (OSF) | All disciplines | Immediate upon submission | No | Yes | No |
| INPLASY | Interventions, prognosis, animal studies, etc. | Within 48 hours | Yes | Yes | Yes, for completeness |
| BioMed Central Journals | All research types | Peer-review timeline | Article Processing Charge | Varies by journal | Yes, full peer review |

Application Protocols for Environmental Policy Development

This section outlines a standardized workflow for conducting systematic reviews whose output directly informs regulatory risk evaluation and management, exemplified by the U.S. EPA’s TSCA process [21] [22].

2.1. Protocol: Aligning Systematic Review with TSCA Risk Evaluation Phases

- Objective: To generate a synthesized evidence base that directly feeds into the scoping, hazard assessment, exposure assessment, and risk characterization phases of a TSCA chemical risk evaluation [22].

- Materials: Access to scientific databases (e.g., PubMed, which now incorporates the former TOXLINE content, and Web of Science), gray literature sources, data extraction software, and risk of bias assessment tools for ecological and human health studies.

- Methodology:

- Scoping Phase: The systematic review team engages with risk assessors to define the "Conditions of Use" and Potentially Exposed or Susceptible Subpopulations (PESS) that will guide the review's PECO framework [22].

- Search & Screening: Execute comprehensive, pre-registered searches. Screen studies against eligibility criteria focused on the defined conditions of use and outcomes relevant to unreasonable risk determinations (e.g., carcinogenicity, reproductive toxicity) [22].

- Data Extraction & Synthesis: Extract quantitative and qualitative data on hazard potency (e.g., LD50, NOAEL, LOAEL) and exposure parameters. Synthesize data using meta-analysis where appropriate or narrative synthesis, documenting consistency and knowledge gaps.

- Certainty Assessment: Assess the certainty (or strength) of the synthesized evidence for each key outcome using a structured framework (e.g., GRADE for health outcomes).

- Reporting: Produce a final report structured to mirror the risk evaluation components: Hazard Assessment, Exposure Assessment, and integrated Risk Characterization [22].
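Where the protocol pre-specifies meta-analysis, a random-effects model is a common choice for ecotoxicity data pooled across species and test conditions. The sketch below implements the DerSimonian-Laird estimator for between-study variance (tau²) and reports I² for heterogeneity. It assumes that effect sizes (e.g., log response ratios) and their sampling variances have already been extracted. This estimator is one option among several; the R metafor package, for example, also offers REML. The registered protocol should therefore name the estimator actually used.

```python
import math


def random_effects_dl(effects: list[float], variances: list[float]) -> dict:
    """DerSimonian-Laird random-effects pooling with a 95% CI, tau^2, and I^2."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies with one variance each")
    w = [1 / v for v in variances]  # fixed-effect (inverse-variance) weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # between-study variance, truncated at zero
    w_re = [1 / (v + tau2) for v in variances]  # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0  # share of variability beyond chance
    return {"pooled": pooled, "ci95": (pooled - 1.96 * se, pooled + 1.96 * se), "tau2": tau2, "I2": i2}
```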

2.2. Protocol: Translating Evidence into Risk Management Options

- Objective: To systematically map synthesized evidence on the efficacy and feasibility of risk management actions (e.g., engineering controls, bans, phase-outs) for chemicals determined to present an unreasonable risk [23].

- Methodology:

- Define a taxonomy of risk management actions (e.g., prohibition, workplace chemical protection programs (WCPP), concentration limits, labeling requirements) [23].

- Conduct a systematic review or evidence map to identify studies evaluating the real-world effectiveness, technical feasibility, and economic impacts of these actions for analogous chemicals or sectors.

- Present findings in a decision matrix format for policymakers, linking specific risk conclusions (e.g., unreasonable risk to workers during industrial use) to a ranked set of potential management options supported by evidence of efficacy.

Systematic Review Workflow for TSCA Decisions [21] [22]

The Scientist's Toolkit: Research Reagent Solutions for Policy-Relevant Synthesis

Conducting policy-relevant systematic reviews requires specific "reagent solutions"—standardized tools and datasets. The following table details essential items for generating reliable evidence for environmental decision-making.

Table 2: Essential Research Reagents for Policy-Informing Ecotoxicology Reviews

| Item Name | Function/Description | Application in Policy Context |
|---|---|---|
| Registered Protocol (e.g., on OSF, INPLASY) | Publicly documents the review's plan, locking in objectives and methods to prevent bias [6]. | Serves as an audit trail for regulatory bodies, demonstrating methodological rigor from the start. |
| Structured Data Extraction Form | A standardized spreadsheet or database template for capturing study characteristics, PECO elements, and outcomes. | Ensures consistent data collection across studies, facilitating direct input into risk assessment models and regulatory dockets [22]. |
| Risk of Bias Tool for Ecotoxicology (e.g., ECOTR, SciRAP) | A checklist to appraise internal validity of individual ecological or toxicological studies. | Allows assessors to weight evidence appropriately, a requirement under "best available science" and "weight-of-evidence" mandates like those in TSCA [21]. |
| Evidence Certainty Framework (e.g., GRADE adapted for environment) | A system to rate confidence in synthesized effect estimates (e.g., high, moderate, low, very low). | Communicates the strength of the science to policymakers, clarifying whether evidence is sufficient for decisive action or indicates a critical knowledge gap. |
| Chemical-Specific Dataset (e.g., EPA CompTox Dashboard) | Curated data on chemical properties, uses, and bioactivity from regulatory sources. | Provides essential context for understanding "conditions of use" and populating exposure assessment models during the review scoping phase [22]. |

Data Synthesis and Visualization for Risk Characterization

Effective data presentation is crucial for translating systematic review findings into clear risk assessment conclusions for decision-makers [24] [25].

4.1. Protocol: Preparing Quantitative Evidence Profiles

- Objective: To create standardized, comparative tables that summarize the strength and magnitude of evidence for each health or ecological endpoint.

- Methodology:

- For each outcome (e.g., rodent liver tumor incidence, fish embryo mortality), create a summary table.

- Include columns for: the condition of use studied, test species, exposure duration, effect size or risk metric (e.g., risk ratio, hazard quotient), confidence interval, number of studies, risk of bias ratings, and the overall certainty of evidence (graded).

- Order outcomes by their regulatory significance (e.g., cancer outcomes precede less severe endpoints).
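The ordering rule in the last step can be pre-specified as data rather than applied ad hoc. In this sketch, the `SEVERITY_RANK` mapping is a hypothetical ranking. Cancer comes first, as the text suggests. The rest of the order is an assumption that the review team would need to justify. Each row carries the columns listed above.

```python
from dataclasses import dataclass

# Hypothetical ranking of regulatory significance (lower = reported first).
SEVERITY_RANK = {"cancer": 0, "reproductive": 1, "developmental": 2, "mortality": 3, "other": 9}


@dataclass
class EvidenceRow:
    """One row of an evidence profile, mirroring the columns listed above."""
    outcome: str
    outcome_class: str
    condition_of_use: str
    species: str
    exposure_duration: str
    effect_metric: str
    estimate: float
    ci_low: float
    ci_high: float
    n_studies: int
    risk_of_bias: str
    certainty: str


def order_profile(rows: list[EvidenceRow]) -> list[EvidenceRow]:
    """Sort by severity rank, then alphabetically by outcome; unknown classes go last."""
    return sorted(rows, key=lambda r: (SEVERITY_RANK.get(r.outcome_class, SEVERITY_RANK["other"]), r.outcome))
```

Keeping the ranking in the protocol, rather than leaving it to the analyst, makes the presentation order itself auditable.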

4.2. Quantitative Data from Regulatory Implementation: The following table illustrates the type of comparative data that emerges from the risk evaluation process and informs subsequent risk management rules, as seen with recent solvent regulations [23].

Table 3: Comparative Risk Metrics and Regulatory Outcomes for Select Chlorinated Solvents

| Chemical | Key Health Endpoint(s) | EPA Inhalation ECEL (ppm) [23] | OSHA PEL (ppm) [23] | Primary Regulatory Action (2025 Rules) [23] |
|---|---|---|---|---|
| Trichloroethylene (TCE) | Cancer, liver toxicity, neurotoxicity | 0.2 | 100 | Ban of all uses with limited exemptions under a WCPP. |
| Perchloroethylene (PCE) | Cancer, neurotoxicity | 0.14 | 100 | Phase-out of most industrial/commercial uses, including dry cleaning. |
| Carbon Tetrachloride (CTC) | Cancer, liver toxicity | 0.03 | 10 | Severe restriction of remaining uses, with WCPP requirements. |

ECEL: Existing Chemical Exposure Limit; PEL: Permissible Exposure Limit; WCPP: Workplace Chemical Protection Program.
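The gap between the two limits in Table 3 is easier to see as a fold difference. The short calculation below uses only the ppm values transcribed from the table. It assumes both limits are expressed on a comparable averaging basis, as their side-by-side presentation implies.

```python
# Inhalation limits transcribed from Table 3 (ppm).
LIMITS_PPM = {
    "TCE": {"epa_ecel": 0.2, "osha_pel": 100},
    "PCE": {"epa_ecel": 0.14, "osha_pel": 100},
    "CTC": {"epa_ecel": 0.03, "osha_pel": 10},
}


def fold_difference(limits: dict[str, float]) -> float:
    """How many times higher the OSHA PEL is than the EPA ECEL."""
    return limits["osha_pel"] / limits["epa_ecel"]


for chemical, limits in LIMITS_PPM.items():
    print(f"{chemical}: PEL / ECEL = {fold_difference(limits):.0f}")
```

This prints roughly 500, 714, and 333 for TCE, PCE, and CTC, respectively. On this comparison, the older OSHA limits are several hundred times higher than the exposure limits EPA derived.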

Pathway from Evidence Synthesis to Regulation [21] [23]

Within the domain of ecotoxicology, systematic reviews are paramount for synthesizing evidence on the effects of environmental contaminants to inform regulatory decisions and policy [26]. However, a significant portion of ecological research, estimated at 82-89%, currently delivers limited or no value to end-users due to inefficiencies such as poor design, lack of publication, and avoidable duplication [27]. A critical contributor to this research waste is the low rate of prospective protocol registration for systematic reviews. Registration creates a public, time-stamped record of a review's intent and methodology before it begins, safeguarding against reporting bias and redundant effort [6]. This document provides application notes and detailed protocols to address this gap, offering actionable guidance for researchers to enhance the rigor, transparency, and impact of systematic evidence synthesis in environmental sciences [11].

The Current Landscape of Registration

Prospective registration of a systematic review protocol is a cornerstone of rigorous research practice. It involves the public deposition of a detailed plan before the review commences, which is associated with higher final report quality and is increasingly mandated by funders and journals [1] [4]. Despite its importance, adoption in ecotoxicology and broader ecology lags behind fields like medicine [27].

The barriers are multifaceted, including a lack of awareness, perceived administrative burden, and uncertainty about the process. The consequences, however, are tangible: duplication of effort, hidden researcher bias, and an overall reduction in the reliability of synthesized evidence used for critical environmental decision-making [27].

Quantitative Analysis of Research Waste and Registration

Table 1: Estimated Research Waste in Ecological and Medical Sciences

| Field of Research | Estimated Proportion of Research with Limited/No Value | Primary Contributing Factors (Related to Non-Registration) |
|---|---|---|
| Ecological Research [27] | 82% - 89% | Avoidable duplication; Non-publication; Poor design |
| Medical Research [27] | ~85% | Avoidable duplication; Non-publication; Poor design |

Comparative Analysis of Protocol Registration Platforms

Table 2: Key Registries for Systematic Review Protocols

| Registry Name | Primary Scope/Accepted Types | Key Features & Turnaround Time | Fee Model |
|---|---|---|---|
| PROSPERO [1] [6] | Intervention reviews, DTA, Prognosis, etc. | Form-based registration; Editorial review; Long delays reported (>6 months) | Free |
| INPLASY [6] | Interventions, DTA, Prognosis, Animal studies, Scoping reviews | Form-based registration; Rapid publication (<48 hours); Broad scope | Fee required |
| Open Science Framework (OSF) [1] | Any study type (Generalized templates) | Flexible, project-based workspace; Can host full protocols & data | Free |
| Journal Submission [1] | Varies by journal (e.g., BMJ Open, Systematic Reviews) | Full peer-review; Formal citation; Associated with the journal's impact factor | Often requires APC |

Detailed Application Notes & Protocols

Protocol for Prospective Registration of an Ecotoxicology Systematic Review

This protocol provides a step-by-step guide to preparing and registering a systematic review protocol, integrating standards from PRISMA-P and major registries [1] [6] [4].

Stage 1: Protocol Development

- Define the Rationale & Question: Clearly articulate the knowledge gap. Formulate the primary question using a structured framework (e.g., PECO/PICO: Population, Exposure/Intervention, Comparator, Outcome).

- Develop Search Strategy: Detail databases (e.g., PubMed, Web of Science, GreenFile), grey literature sources, and a draft search string with keywords and controlled vocabulary.

- Specify Eligibility Criteria: Define explicit inclusion/exclusion criteria based on PECO elements, study design, and context (e.g., laboratory vs. field studies).

- Plan the Review Process: Describe the workflow for study screening (title/abstract, full-text), data extraction (variables, effect metrics), and risk-of-bias assessment (e.g., using tools like SYRCLE's RoB for animal studies).

- Outline Synthesis Methods: Specify plans for data synthesis (narrative, meta-analysis), assessment of heterogeneity, and sensitivity/subgroup analyses.
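A draft search string is usually assembled by combining synonyms within each PECO concept with OR and joining the concepts with AND. The helper below sketches that convention. The terms are hypothetical examples. The `*` truncation symbol and double-quoted phrases follow common database syntax, but the exact syntax varies by platform. The comparator is omitted here, a common practice because control conditions are often poorly indexed.

```python
def or_block(terms: list[str]) -> str:
    """Join synonyms with OR, quoting multi-word phrases."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_search_string(concepts: dict[str, list[str]]) -> str:
    """AND together one OR-block per non-empty PECO concept, in insertion order."""
    return " AND ".join(or_block(terms) for terms in concepts.values() if terms)


concepts = {
    "population": ["Daphnia", "cladoceran*", "water flea"],
    "exposure": ["microplastic*", "nanoplastic*"],
    "outcome": ["mortality", "immobili*", "reproduct*"],
}
print(build_search_string(concepts))
# (Daphnia OR cladoceran* OR "water flea") AND (microplastic* OR nanoplastic*) AND (mortality OR immobili* OR reproduct*)
```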

Stage 2: Platform Selection & Registration

- Choose a Registry: Select a platform based on scope and need. Use INPLASY for speed and broad acceptance [6] or PROSPERO for established recognition in health-related reviews [1]. Scoping reviews may use OSF [1].

- Complete Registration Form: Accurately complete all mandatory fields. For INPLASY, this includes [6]:

  - Title: Informative, and including the phrase "protocol for a systematic review".

  - Review Stage: Must be "Not started" or "Preliminary searches" for prospective registration.

  - Question & Rationale: Precisely state the review question and justification.

  - Methods: Summarize search strategy, eligibility, data extraction, and synthesis.

  - Declarations: Report funding, conflicts of interest, and organizational affiliation.

- Submit & Obtain ID: After payment (for INPLASY) or editorial review (for PROSPERO), receive a unique registration number (e.g., INPLASY2025XXXXX) to cite in all related publications [6].
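A pre-submission check of the mandatory fields listed above can catch an incomplete form before it is submitted (and, for INPLASY, before payment). The field names below are hypothetical stand-ins for the registry's own form. The two rules encoded, the title wording and a prospective review stage, come from the list above.

```python
# Hypothetical field names standing in for the registry's own form.
REQUIRED_FIELDS = ("title", "review_stage", "question", "rationale", "methods",
                   "funding", "conflicts_of_interest", "affiliation")
PROSPECTIVE_STAGES = {"Not started", "Preliminary searches"}


def registration_problems(form: dict[str, str]) -> list[str]:
    """List every problem found; an empty list means the form passes these checks."""
    problems = [f"missing: {name}" for name in REQUIRED_FIELDS if not form.get(name, "").strip()]
    if "protocol for a systematic review" not in form.get("title", "").lower():
        problems.append("title does not include 'protocol for a systematic review'")
    if form.get("review_stage") not in PROSPECTIVE_STAGES:
        problems.append("review stage is not 'Not started' or 'Preliminary searches'")
    return problems
```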

Diagram 1: Protocol Registration and Review Workflow

Experimental Protocol: Integrating Non-Forced Exposure Tests into ERA

To demonstrate how detailed protocolization enhances primary research, this section recasts the Heterogeneous Multi-Habitat Assay System (HeMHAS) as a fully specified test protocol whose results can feed systematic reviews of behavioral ecotoxicology [28].

Title: Protocol for a Non-Forced Exposure Test Assessing Contaminant-Driven Habitat Selection in Daphnia magna Using a HeMHAS Design.

Objective: To quantify the avoidance or preference behavior of D. magna in a chemically heterogeneous landscape, providing an EC50 (Avoidance) endpoint for Environmental Risk Assessment (ERA).

Materials:

- HeMHAS Apparatus: A linear or circular test arena with 4-6 interconnected compartments [28].

- Test Organism: Daphnia magna, neonates (<24h) or adults.

- Test Chemical: Reference toxicant (e.g., potassium dichromate) and pharmaceutical of interest.

- Environmental Control System: For maintaining constant temperature and light conditions.

- Tracking Software: Automated video tracking system (e.g., EthoVision XT).

Procedure:

- Acclimatization: Acclimate organisms in standard culture medium for 48h.

- Gradient Establishment: Create a stable, spatially defined chemical gradient across compartments (e.g., 0%, 25%, 50%, 100% of a known lethal concentration).

- Organism Introduction: Gently introduce a cohort of organisms (n=10-20) into a central compartment or evenly distributed at time zero.

- Behavioral Monitoring: Record the spatial distribution of organisms in each compartment over 24-48 hours using automated tracking.

- Data Collection: Record the number of individuals per compartment at defined intervals (e.g., every 15 minutes). Monitor survival.

Analysis:

- Calculate the Proportion of Avoidance (PA) for each concentration compartment relative to the control.

- Fit a dose-response model to calculate the EC50 (Avoidance) and its confidence intervals.

- Compare the Behavioral Sensitivity Threshold with traditional forced-exposure lethality (LC50) and sub-lethal (EC50) endpoints.
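The following is a minimal sketch of the first two analysis steps, under explicit assumptions. Proportion of Avoidance is computed as (N_control - N_treatment) / N_control from the counts in each compartment. The EC50 (Avoidance) is estimated by fitting a two-parameter log-logistic curve, using linear regression of logit(PA) on ln(concentration). This linearization is a simplification chosen to keep the example dependency-free. A registered analysis would more typically use nonlinear regression or a binomial GLM (for example, the R drc package). Negative PA values, which indicate preference rather than avoidance, are clipped before the logit transform.

```python
import math


def proportion_avoidance(n_control: float, n_treatment: float) -> float:
    """PA relative to the control compartment: 1 = complete avoidance, negative = preference."""
    return (n_control - n_treatment) / n_control


def ec50_avoidance(concentrations: list[float], pa: list[float], eps: float = 1e-3) -> tuple[float, float]:
    """Fit logit(PA) = b*ln(c) - b*ln(EC50) by least squares; return (EC50, slope b)."""
    xs = [math.log(c) for c in concentrations]  # concentrations must be > 0 (control excluded)
    clipped = [min(max(p, eps), 1 - eps) for p in pa]  # logit is undefined at 0 and 1
    ys = [math.log(p / (1 - p)) for p in clipped]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(-intercept / slope), slope
```

The resulting EC50 (Avoidance) can then be compared against forced-exposure LC50 and EC50 values for the same chemical, as the last step requires.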

Diagram 2: Non-Forced Exposure Test System (HeMHAS)

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Advanced Ecotoxicology Protocols

| Tool/Reagent Category | Specific Example/Product | Function in Protocol | Relevance to Registration |
|---|---|---|---|
| Behavioral Tracking | EthoVision XT, Noldus; ANY-maze, Stoelting | Automates quantification of movement, location, and activity in non-forced exposure assays [28]. | Specific software and settings must be pre-specified in a registered protocol to ensure reproducibility. |
| Chemical Standards | Certified Reference Materials (CRMs) for pharmaceuticals (e.g., Carbamazepine, Diclofenac) | Provides accurate, traceable dosing for exposure studies, critical for internal validity [26]. | Batch numbers and supplier details should be documented in the registered methods section. |
| Environmental Control | Precision water bath/recirculating chillers; LED climate chambers | Maintains stable temperature and photoperiod, reducing confounding stress in chronic/sub-lethal tests. | Standard operating conditions are a key element of a pre-registered experimental protocol. |
| High-Throughput Screening | Multi-well plate assays; Automated larval zebrafish platforms | Enables rapid testing of multiple concentrations or compounds, aligning with new ERA data demands [26]. | Registration helps declare the exploratory vs. confirmatory nature of such screens upfront. |
| Data Analysis Software | R (with metafor, ecotoxicology packages); GraphPad Prism | Performs meta-analysis, dose-response modeling, and calculation of summary effect sizes. | The statistical analysis plan, including software choice, is a core component of a review protocol [6]. |

A Step-by-Step Guide to Developing and Registering Your Ecotoxicology Protocol

In environmental health and ecotoxicology, formulating a precise research question is the critical first step in conducting a rigorous systematic review. While the PICO framework (Population, Intervention, Comparator, Outcome) is well-established for clinical intervention studies, it requires adaptation for fields investigating environmental exposures [29]. The PECO framework (Population, Exposure, Comparator, Outcome) has been developed to address this need, shifting the focus from deliberate interventions to unintentional or environmental exposures [29]. This adaptation is formally recognized by major evidence synthesis organizations like the Collaboration for Environmental Evidence [29].

A well-constructed PECO question defines the review's objectives, guides the development of inclusion/exclusion criteria, and shapes the interpretation of findings [29]. Within the context of a thesis on systematic review protocol registration, prospectively defining the PECO question is a foundational protocol element that must be publicly registered to ensure transparency, reduce bias, and prevent unintended duplication of research efforts [6] [1].

The PECO Framework: Components and Scenarios

The PECO framework consists of four pillars. Defining the 'E' (Exposure) and 'C' (Comparator) presents specific challenges in environmental research, differing fundamentally from interventional PICO questions [29].

- Population (P): The subjects of study, which can include humans, specific animal species (e.g., Daphnia magna), plants, or ecosystems. Characteristics like species, sex, age, health status, or habitat are specified.

- Exposure (E): The environmental agent, chemical, or condition under investigation (e.g., a contaminant concentration, noise level, or temperature change). Its timing, duration, and route of exposure must be considered.

- Comparator (C): The scenario against which the exposure is compared. This can be a lower or absent level of exposure, a different chemical, or an alternative environmental condition.

- Outcome (O): The measured endpoint of interest. In ecotoxicology, this includes apical outcomes (e.g., mortality, reproduction, growth) or intermediate biomarkers (e.g., enzyme activity, gene expression).

Research questions can be framed in different ways depending on the state of knowledge and the decision-making context [29]. The framework outlines five paradigmatic scenarios for formulating PECO questions.

Table 1: Scenarios for PECO Question Formulation in Systematic Reviews [29]

| Scenario | Systematic Review Context | Approach | PECO Example (Topic: Hearing Impairment) |
|---|---|---|---|
| 1 | Calculate the health effect from an exposure; describe the dose-response relationship. | Explore the shape of the exposure-outcome relationship. | Among newborns, what is the incremental effect of a 10 dB increase in noise during gestation (E) compared to lower levels (C) on postnatal hearing impairment (O)? |
| 2 | Evaluate the effect of an exposure cut-off on outcomes, informed by the review data. | Use cut-offs (e.g., tertiles, quartiles) defined by the distribution in identified studies. | Among newborns, what is the effect of the highest dB exposure tertile during pregnancy (E) compared to the lowest tertile (C) on hearing impairment (O)? |
| 3 | Evaluate the association using known cut-offs from other populations. | Use mean or standard cut-offs derived from external research or populations. | Among commercial pilots, what is the effect of occupational noise exposure (E) compared to noise exposure in other occupations (C) on hearing impairment (O)? |
| 4 | Identify an exposure cut-off that ameliorates negative health outcomes. | Use existing exposure limits associated with known health outcomes. | Among industrial workers, what is the effect of exposure to < 80 dB (E) compared to ≥ 80 dB (C) on hearing impairment (O)? |
| 5 | Evaluate the effect of a cut-off achievable through an intervention. | Select comparator based on exposure levels achievable via a specific intervention. | Among the general population, what is the effect of an intervention reducing noise by 20 dB (E) compared to no intervention (C) on hearing impairment (O)? |
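Scenario 2 derives its cut-offs from the distribution of exposure levels across the identified studies. The following is a minimal sketch of that step, assuming each included study reports a single representative exposure level in dB (the values are hypothetical) and using Python's `statistics.quantiles` with `method="inclusive"`; other quantile definitions will shift the cut-points slightly, so the chosen method belongs in the protocol.

```python
from statistics import quantiles

def tertile_cutoffs(levels):
    """Return the two cut-points that split exposure levels into tertiles.

    method="inclusive" treats the levels as the full set of included studies
    rather than a sample (an assumption; the default "exclusive" method can
    give different cut-points).
    """
    low_cut, high_cut = quantiles(levels, n=3, method="inclusive")
    return low_cut, high_cut

def assign_tertile(level, cuts):
    """Label a study's exposure as the lowest, middle, or highest tertile."""
    low_cut, high_cut = cuts
    if level <= low_cut:
        return "lowest"   # candidate comparator group (C)
    if level > high_cut:
        return "highest"  # candidate exposure group (E)
    return "middle"

# Hypothetical gestational noise levels (dB) reported by nine included studies.
study_levels = [55, 60, 62, 70, 75, 78, 85, 90, 95]
cuts = tertile_cutoffs(study_levels)
print([round(c, 1) for c in cuts])  # [67.3, 80.3]
labels = {level: assign_tertile(level, cuts) for level in study_levels}
```

Because the cut-points depend on which studies are included, they can only be fixed after screening; the protocol can still pre-specify the quantile rule.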

Protocol Development: From PECO to Detailed Methodology

A systematic review protocol is a detailed plan that minimizes subjectivity and ensures consistency [16]. Following a structured template is essential [30].

Defining Eligibility Criteria

Each PECO element must be translated into explicit, justified eligibility criteria for study inclusion [30].

Table 2: Translating PECO into Eligibility Criteria [30]

| PECO Element | Description of Eligibility Criteria | Example for an Ecotoxicology Review |
|---|---|---|
| Eligible Populations | Species, life stage, sex, health status. | Aquatic invertebrates (e.g., Daphnia spp.), neonatal stage (<24h old). |
| Eligible Exposures | Chemical, concentration range, duration, route. | Exposure to microplastic particles (1-100 µm), via water column, duration ≥48h. |
| Eligible Comparators | Control or alternative exposure scenario. | No microplastic exposure, or exposure to a reference particle (e.g., silica). |
| Eligible Outcomes | Specific apical or intermediate endpoints. | Mortality, immobility (EC50), reproduction rate (number of neonates). |
| Eligible Study Designs | Defined by design features, not labels. | Laboratory-controlled exposure experiments with a concurrent control group. |
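Explicit criteria like these can be applied mechanically to extracted study records, which makes exclusion reasons easy to report. The sketch below encodes the example population, exposure, and design criteria from Table 2; the `StudyRecord` fields are hypothetical, and it assumes that every tested particle size must fall within 1-100 µm. Comparator and outcome checks are left out for brevity.

```python
from dataclasses import dataclass

@dataclass
class StudyRecord:
    """Hypothetical extraction fields for one study (names are illustrative)."""
    genus: str
    age_hours: float          # age of test organisms at the start of exposure
    particle_min_um: float    # smallest particle size tested
    particle_max_um: float    # largest particle size tested
    route: str
    duration_h: float
    concurrent_control: bool

def exclusion_reasons(study):
    """Return the eligibility criteria a study fails (an empty list means eligible)."""
    reasons = []
    if study.genus != "Daphnia":
        reasons.append("population: not Daphnia spp.")
    if study.age_hours >= 24:
        reasons.append("population: not neonates (<24 h old)")
    if study.particle_min_um < 1 or study.particle_max_um > 100:
        reasons.append("exposure: particles outside 1-100 µm")
    if study.route != "water column":
        reasons.append("exposure: route is not the water column")
    if study.duration_h < 48:
        reasons.append("exposure: duration shorter than 48 h")
    if not study.concurrent_control:
        reasons.append("design: no concurrent control group")
    return reasons

eligible = StudyRecord("Daphnia", 12, 5, 50, "water column", 48, True)
ineligible = StudyRecord("Daphnia", 36, 0.5, 20, "water column", 24, True)
print(exclusion_reasons(eligible))    # []
print(exclusion_reasons(ineligible))  # three reasons: age, particle size, duration
```

Returning every failed criterion, rather than stopping at the first, keeps a full audit trail for the full-text exclusion table.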

Experimental Protocol for Systematic Review Execution

The following protocol, adapted from generic environmental health guidelines [30], provides a stepwise methodology.

Phase 1: Preparation & Protocol Registration

- Team Assembly & Competency Assessment: Form a team with expertise in information science, ecotoxicology, statistics, and systematic review methodology. Document competencies [30].

- Conflict of Interest Declaration: All team members must declare financial and non-financial interests using standardized forms (e.g., ICMJE) [30].

- Problem Formulation & PECO Definition: Justify the review's necessity and articulate the scientific rationale. Define the primary PECO question and secondary questions if needed [30].

- Protocol Registration: Prospectively register the finalized protocol in a public registry (e.g., PROSPERO, INPLASY) before screening begins [6] [1].

Phase 2: Search & Screening

- Search Strategy Design: Develop a sensitive, reproducible search strategy for multiple databases. Use controlled vocabulary and keywords for all PECO elements [30].

- Evidence Screening: Conduct screening at title/abstract and full-text levels. Use dual independent screening by two reviewers, with a process for resolving disputes [30].

- PRISMA Flow Diagram: Document the screening process and results using a PRISMA flow diagram [30].
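The protocol calls for dual independent screening but does not prescribe an agreement statistic. Cohen's kappa is one common choice; the sketch below computes it for two reviewers' include ("I") / exclude ("E") decisions on the same records and lists the records needing dispute resolution. The decision lists are hypothetical.

```python
from collections import Counter

def cohens_kappa(decisions_a, decisions_b):
    """Cohen's kappa for two reviewers' decisions on the same records."""
    if len(decisions_a) != len(decisions_b) or not decisions_a:
        raise ValueError("both reviewers must screen the same non-empty set of records")
    n = len(decisions_a)
    observed = sum(a == b for a, b in zip(decisions_a, decisions_b)) / n
    counts_a, counts_b = Counter(decisions_a), Counter(decisions_b)
    # Agreement expected by chance, from each reviewer's marginal proportions.
    categories = set(counts_a) | set(counts_b)
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / n**2
    if expected == 1:
        return 1.0  # both reviewers used one identical category throughout
    return (observed - expected) / (1 - expected)

def disagreements(decisions_a, decisions_b):
    """Indices of records to pass to the dispute-resolution step."""
    return [i for i, (a, b) in enumerate(zip(decisions_a, decisions_b)) if a != b]

reviewer_a = ["I", "I", "E", "E", "E", "I", "E", "E", "I", "E"]
reviewer_b = ["I", "E", "E", "E", "E", "I", "E", "I", "I", "E"]
kappa = cohens_kappa(reviewer_a, reviewer_b)
to_resolve = disagreements(reviewer_a, reviewer_b)
print(f"kappa = {kappa:.2f}; disagreements at records {to_resolve}")
# kappa = 0.58; disagreements at records [1, 7]
```

Reporting kappa after a pilot round of screening is one way to show that the eligibility criteria are being applied consistently before full screening begins.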

Phase 3: Data Extraction & Synthesis

- Data Extraction: Extract data on study characteristics, PECO details, and results using a pre-piloted form. Link multiple reports from the same study [30].

- Risk of Bias Assessment: Assess the internal validity of individual studies using a tool appropriate for ecotoxicology (e.g., a tool based on the COSTER recommendations) [30].

- Data Synthesis: Synthesize findings qualitatively and, if feasible, quantitatively via meta-analysis. Explore sources of heterogeneity (e.g., species, exposure characteristics).
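Where a meta-analysis is feasible, a random-effects model allows true effects to differ across species and exposure regimes. The sketch below uses the DerSimonian-Laird estimator, one of several options (REML is often preferred, and a dedicated meta-analysis package would normally be used in practice). It assumes per-study effect sizes are log response ratios, ln(mean exposed / mean control), with known sampling variances; the numbers are hypothetical.

```python
import math

def random_effects_dl(effects, variances):
    """DerSimonian-Laird random-effects pooling of per-study effect sizes."""
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies with matching variances")
    w = [1 / v for v in variances]                    # fixed-effect weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    df = len(effects) - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c)                     # between-study variance
    w_star = [1 / (v + tau2) for v in variances]      # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    # I2: share of total variation attributed to heterogeneity rather than chance.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return {"pooled": pooled, "se": se,
            "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
            "tau2": tau2, "I2": i2}

# Hypothetical lnRR values and sampling variances from three studies.
result = random_effects_dl([0.2, 0.5, 0.8], [0.04, 0.04, 0.04])
```

A large I2 here would prompt the pre-specified subgroup or meta-regression analyses on sources of heterogeneity such as species or exposure characteristics.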

Protocol Registration in Ecotoxicology

Registering a protocol commits to a plan, reduces bias, and informs the scientific community of ongoing work to avoid duplication [6] [1].

Table 3: Comparison of Systematic Review Protocol Registries

| Feature | INPLASY Registry [6] | PROSPERO [1] | Open Science Framework (OSF) [1] |
|---|---|---|---|
| Primary Scope | Broad (interventions, prognosis, diagnostic accuracy, animal studies, etc.). | Originally health-related reviews; all review types currently use the intervention form pending development of type-specific forms. | General; suitable for all review types, including scoping reviews. |
| Acceptance Time | Within 48 hours of submission and fee payment. | Variable; significant delays reported (can be months) [6]. | Immediate upon submission. |
| Fee | Requires a publication fee. | Free. | Free. |
| Key Requirement | Authors must search for existing or ongoing reviews to avoid duplication. | Must be submitted before data extraction is completed. | Flexible; used for registration and sharing project files. |
| Best For | Researchers needing fast, guaranteed registration. | Health-focused reviews where free registration is required. | All review types, especially scoping reviews and projects needing an open workspace. |

Best Practice: When registering, the title should be informative, including key PECO elements and the phrase "systematic review protocol" [6]. The review question must be clearly stated, typically using the PECO format [6].
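Keeping the PECO elements in one structure lets the registered title and the stated review question be generated from the same source, so they cannot drift apart between drafts. A minimal sketch follows; the `PECO` class and the title template are illustrative assumptions, and the example reuses the 6PPD-quinone question posed later in this section.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PECO:
    """Hypothetical container for the four elements of a review question."""
    population: str
    exposure: str
    comparator: str
    outcome: str

    def question(self):
        """Review question in the PECO format used throughout this guide."""
        return (f"Among {self.population} (P), what is the effect of {self.exposure} (E) "
                f"compared to {self.comparator} (C) on {self.outcome} (O)?")

    def registry_title(self):
        """Informative title with key PECO elements and the recommended phrase."""
        return (f"Effects of {self.exposure} on {self.outcome} in {self.population}: "
                f"a systematic review protocol")

peco = PECO(
    population="marine crustaceans",
    exposure="exposure to 6PPD-quinone",
    comparator="unexposed controls",
    outcome="mortality and growth",
)
print(peco.registry_title())
# Effects of exposure to 6PPD-quinone on mortality and growth in marine crustaceans: a systematic review protocol
```

The comparator is omitted from the title template to keep it readable; it remains explicit in the review question.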

Application in Ecotoxicology: Prioritizing Contaminants of Emerging Concern

The PECO framework underpins the identification and prioritization of research questions. For example, a marine prioritization tool for Contaminants of Emerging Concern (CECs) uses a hazard-based approach to rank chemicals for further study [31]. This directly informs which PECO questions (e.g., "What is the effect of chemical X on marine organism Y?") are most urgent.

Table 4: Application Example: A 3-Step Prioritization Workflow for Marine CECs [31]

| Step | Process | Criteria / Data Used | Output |
|---|---|---|---|
| 1. Filtering | Initial filtering of a large chemical database (~1.13M chemicals). | Persistence & Bioaccumulation (e.g., half-life, BCF), Toxicity (acute/chronic), Persistence & Mobility. | ~8,000 chemicals of potential concern. |
| 2. Scoring | Scoring the filtered chemicals. | Mode of Action (endocrine disruption, genotoxicity), Occurrence data (measured levels), Emission estimates. | A scored list of chemicals. |
| 3. Ranking | Final ranking based on composite scores. | Combined score from Step 2. | A prioritized list (e.g., Top 100). The highest-ranked chemical in one application was 6PPD, a tire antioxidant [31]. |
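The filter-score-rank logic of Table 4 can be sketched in a few lines. Everything concrete below is hypothetical: the chemical names, the boolean criteria flags, the 0-3 sub-scores, and the weights are illustrative and are not the criteria or weights used by the tool in [31]. Only the structure (a hazard filter, then a weighted score, then a ranked cut) follows the table.

```python
# Hypothetical weights for the Step 2 sub-scores (each assumed to be on a 0-3 scale).
WEIGHTS = {"moa": 0.4, "occurrence": 0.4, "emission": 0.2}

def passes_filter(c):
    """Step 1: keep persistent+bioaccumulative, toxic, or persistent+mobile chemicals."""
    return ((c["persistent"] and c["bioaccumulative"]) or c["toxic"]
            or (c["persistent"] and c["mobile"]))

def score(c):
    """Step 2: weighted sum of mode-of-action, occurrence, and emission sub-scores."""
    return sum(weight * c[key] for key, weight in WEIGHTS.items())

def prioritize(chems, top_n=100):
    """Steps 1-3: filter, score, and rank; return (name, score) pairs, highest first."""
    scored = [(c["name"], score(c)) for c in chems if passes_filter(c)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]

def chem(name, p, b, t, m, moa, occ, em):
    """Build a hypothetical chemical record."""
    return {"name": name, "persistent": p, "bioaccumulative": b, "toxic": t,
            "mobile": m, "moa": moa, "occurrence": occ, "emission": em}

chemicals = [
    chem("chemical_A", True, True, False, False, 2, 3, 1),   # PB
    chem("chemical_B", False, False, True, False, 3, 1, 2),  # toxic
    chem("chemical_C", True, False, False, False, 3, 3, 3),  # fails the filter
    chem("chemical_D", True, False, False, True, 1, 2, 3),   # PM
]
for name, s in prioritize(chemicals):
    print(f"{name}: {s:.2f}")
# chemical_A: 2.20
# chemical_B: 2.00
# chemical_D: 1.80
```

Note that chemical_C would have scored highest but never reaches Step 2; this is why the filtering criteria deserve as much scrutiny in a protocol as the scoring weights.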

This prioritization yields specific candidates for systematic review and, in turn, a definable PECO question. Because 6PPD is transformed by ozone into 6PPD-quinone, a review may target this transformation product: "Among marine crustaceans (P), what is the effect of exposure to 6PPD-quinone (E) compared to unexposed controls (C) on mortality and growth (O)?"

Visualizing the Systematic Review Workflow

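The logical workflow runs from initial question formulation through protocol registration to review completion: PECO definition, protocol development and registration, search and dual screening, data extraction and risk-of-bias assessment, and finally synthesis, where the key decision is whether a meta-analysis is feasible.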
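One way to draw this workflow with Graphviz is to emit DOT source from a list of stages. The sketch below is a minimal generator under that assumption; the stage labels paraphrase the phases described in this section, and the single decision node reflects the "if feasible, quantitatively via meta-analysis" step.

```python
def workflow_dot(stages, decision=None):
    """Return Graphviz DOT source for a linear workflow with an optional final branch.

    `decision` is a (question, yes_label, no_label) tuple attached after the last stage.
    """
    lines = ["digraph review_workflow {", "  rankdir=TB;", "  node [shape=box];"]
    ids = [f"s{i}" for i in range(len(stages))]
    for node_id, label in zip(ids, stages):
        lines.append(f'  {node_id} [label="{label}"];')
    for a, b in zip(ids, ids[1:]):          # connect consecutive stages
        lines.append(f"  {a} -> {b};")
    if decision:
        question, yes_label, no_label = decision
        lines.append(f'  decide [label="{question}", shape=diamond];')
        lines.append(f'  quant [label="{yes_label}"];')
        lines.append(f'  narr [label="{no_label}"];')
        lines.append(f"  {ids[-1]} -> decide;")
        lines.append('  decide -> quant [label="yes"];')
        lines.append('  decide -> narr [label="no"];')
    lines.append("}")
    return "\n".join(lines)

stages = [
    "Define PECO question",
    "Develop protocol",
    "Register protocol (e.g., PROSPERO, INPLASY, OSF)",
    "Search & dual screening",
    "Data extraction & risk of bias",
]
dot = workflow_dot(stages, ("Meta-analysis feasible?",
                            "Quantitative synthesis", "Narrative synthesis"))
print(dot)
```

Saving the output as `workflow.dot` and running `dot -Tsvg workflow.dot -o workflow.svg` renders the diagram; keeping the source in text form makes it easy to revise alongside protocol amendments.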

Table 5: Tools and Resources for PECO-Based Systematic Reviews

| Tool / Resource Category | Specific Item / Software | Function / Purpose |
|---|---|---|
| Protocol Registration | INPLASY Registry [6], PROSPERO [1], OSF Registries [1] | Publicly register a review protocol to ensure transparency and prevent duplication. |
| Reporting & Conduct Guidelines | ROSES (Reporting standards for Systematic Evidence Syntheses) [16], PRISMA [16], COSTER Recommendations [30] | Checklists and standards for complete, transparent reporting of the review (ROSES, PRISMA) and for its conduct (COSTER). |
| Reference Management | Zotero, EndNote, Mendeley | Manage and de-duplicate bibliographic records from literature searches. |
| Systematic Review Software | Rayyan, Covidence, EPPI-Reviewer | Facilitate collaborative screening of titles/abstracts and full texts, and data extraction. |
| Ecotoxicology Data Sources | ECOTOXicology Knowledgebase (US EPA), EnviroTox, PikMe Database [31] | Sources of toxicity data, chemical properties, and environmental fate information to inform PECO elements. |
| Visualization & Diagramming | Graphviz (DOT language), PRISMA Flow Diagram Generator | Create clear workflow diagrams and standard study flow charts for publication. |

PRISMA-P Framework: Foundations and Core Components

The Preferred Reporting Items for Systematic Review and Meta-Analysis Protocols (PRISMA-P) is a critical checklist designed to ensure the complete and transparent reporting of systematic review protocols [32] [33]. Published in 2015, it provides a structured framework for authors to detail their planned methodology before the review commences, thereby reducing bias, enhancing reproducibility, and preventing duplication of effort [32] [33].

Within the context of ecotoxicology—a field characterized by complex interventions (e.g., chemical mixtures), diverse outcomes (from molecular to ecosystem-level), and varied study designs—adherence to PRISMA-P is particularly valuable. It compels researchers to pre-specify their methods for handling this heterogeneity.

The PRISMA-P 2015 Checklist

The PRISMA-P 2015 statement provides a 17-item checklist categorized into three main sections: Administrative Information, Introduction, and Methods [32]. A well-developed protocol based on this checklist serves as the definitive guide for the review team and a contract with the scientific community [33].

Table 1: Core Sections and Items of the PRISMA-P 2015 Checklist [32] [33]

| Section/Topic | Item # | Item Description (What to Report) | Criticality for Ecotoxicology |
|---|---|---|---|
| ADMINISTRATIVE INFORMATION | | | |
| Title & Registration | 1-2 | Identification of the report as a systematic review protocol (noting if it updates a previous review); registry name and registration number. | Essential for transparency and avoiding research waste. |
| Amendments | 4 | Amendments: Planned procedures for documenting important protocol amendments. | Crucial for long-term ecological reviews. |
| INTRODUCTION | | | |
| Rationale | 6 | Rationale: Description of the health or environmental problem. | Frame within planetary health and chemical risk assessment. |
| Objectives | 7 | Objectives: Explicit statement of research question(s). | Must address PECO components with ecological relevance. |
| METHODS | | | |
| Eligibility Criteria | 8 | Eligibility Criteria: Specification of study characteristics. | Define population (e.g., species, ecosystem), exposure, comparator, outcome (PECO). |
| Information Sources | 9 | Information Sources: Planned databases, contact with authors. | Must include ecotoxicology-specific databases (e.g., ECOTOX). |
| Search Strategy | 10 | Search Strategy: Draft search strategy for one database. | Requires complex syntax for chemicals, species, and endpoints. |
| Study Records | 11a-c | Data Management, Selection Process, Data Collection Process. | Plan for managing large datasets and diverse data formats. |
| Outcomes & Prioritization | 13 | Outcomes: Definition and prioritization of all outcomes. | Include mechanistic (biomarkers), individual, and population-level outcomes. |
| Risk of Bias | 14 | Risk of Bias Assessment: Planned methods for appraisal. | Adapt tools (e.g., SYRCLE's RoB) for in vivo ecotoxicology studies. |
| Data Synthesis | 15-17 | Data Synthesis, Meta-bias(es), Confidence in Cumulative Evidence. | Plan for narrative synthesis, meta-analysis, and addressing publication bias. |

Implementing PRISMA-P in Ecotoxicology: Application Notes

Translating the general PRISMA-P principles into ecotoxicological practice requires careful consideration of the field's unique challenges.

Formulating the Research Question (Item 7)

A clearly focused research question is the foundation of a rigorous review. In ecotoxicology, the PICO framework is commonly adapted to PECO (Population, Exposure, Comparator, Outcome) [5]. This refinement is necessary as "interventions" are typically environmental exposures (e.g., to a pesticide, heavy metal, or nanoparticle), and the "population" may be a non-human species or an ecosystem component [5].

Table 2: Adapting the PICO Framework for Ecotoxicology Systematic Reviews (PECO) [5]

| PECO Element | Definition | Ecotoxicology-Specific Considerations | Example: Neonicotinoid Exposure in Pollinators |
|---|---|---|---|
| Population (P) | The biological system or species of interest. | Define taxa, life stage, habitat, or specific model organisms. | Apis mellifera (honey bee) and Bombus spp. (bumblebee) foragers. |
| Exposure (E) | The chemical, physical, or biological agent and its regime. | Specify compound(s), concentration/dose, route, duration, and mixture context. | Dietary exposure to clothianidin at field-realistic concentrations (e.g., 1-10 ppb). |
| Comparator (C) | The baseline against which exposure is compared. | Often a control (no exposure), a reference toxicant, or an alternative exposure scenario. | Control diet (no neonicotinoid) or exposure to a reference toxicant (e.g., dimethoate, the toxic reference used in OECD honey bee acute toxicity tests). |
| Outcome (O) | The measured endpoint(s) of toxicological effect. | Can span multiple levels of biological organization. | Primary: Mortality, foraging efficiency. Secondary: Neurological function, colony strength. |

A comprehensive, reproducible search is paramount. Ecotoxicology reviews must search beyond standard biomedical databases (e.g., PubMed/MEDLINE, Embase) to include specialist resources [5]. The search strategy must account for diverse terminology for chemicals, species, and effects.
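A common way to handle diverse terminology is to group synonyms per concept, join them with OR, and combine concepts with AND. The sketch below builds such a string from the pollinator example above; the terms are illustrative, and it assumes `*` truncation and double-quoted phrases, which many but not all database interfaces support, so the syntax must be adapted per database. The comparator is left out, as is often done to keep the search sensitive.

```python
def or_block(terms):
    """Join synonyms for one concept with OR, quoting multi-word phrases."""
    return "(" + " OR ".join(f'"{t}"' if " " in t else t for t in terms) + ")"

def build_query(concepts):
    """Combine one OR-block per searched PECO element with AND."""
    return " AND ".join(or_block(terms) for terms in concepts.values())

concepts = {
    "population": ["Apis mellifera", "honeybee*", "Bombus", "bumblebee*"],
    "exposure": ["clothianidin", "neonicotinoid*"],
    "outcome": ["mortality", "foraging"],
}
print(build_query(concepts))
# ("Apis mellifera" OR honeybee* OR Bombus OR bumblebee*) AND (clothianidin OR neonicotinoid*) AND (mortality OR foraging)
```

Generating the string from a saved term list, rather than typing it into each interface, makes the strategy reproducible and simple to report verbatim in the protocol.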

Table 3: Key Information Sources for Ecotoxicology Systematic Reviews [5]

| Database/Resource | Scope and Coverage | Strategic Importance |
|---|---|---|
| PubMed/MEDLINE | Life sciences and biomedicine [5]. | Foundational; covers mammalian toxicology and some environmental health. |