The CRED Method: A Modern Framework for Evaluating Ecotoxicity Study Reliability in Regulatory Science


Abstract

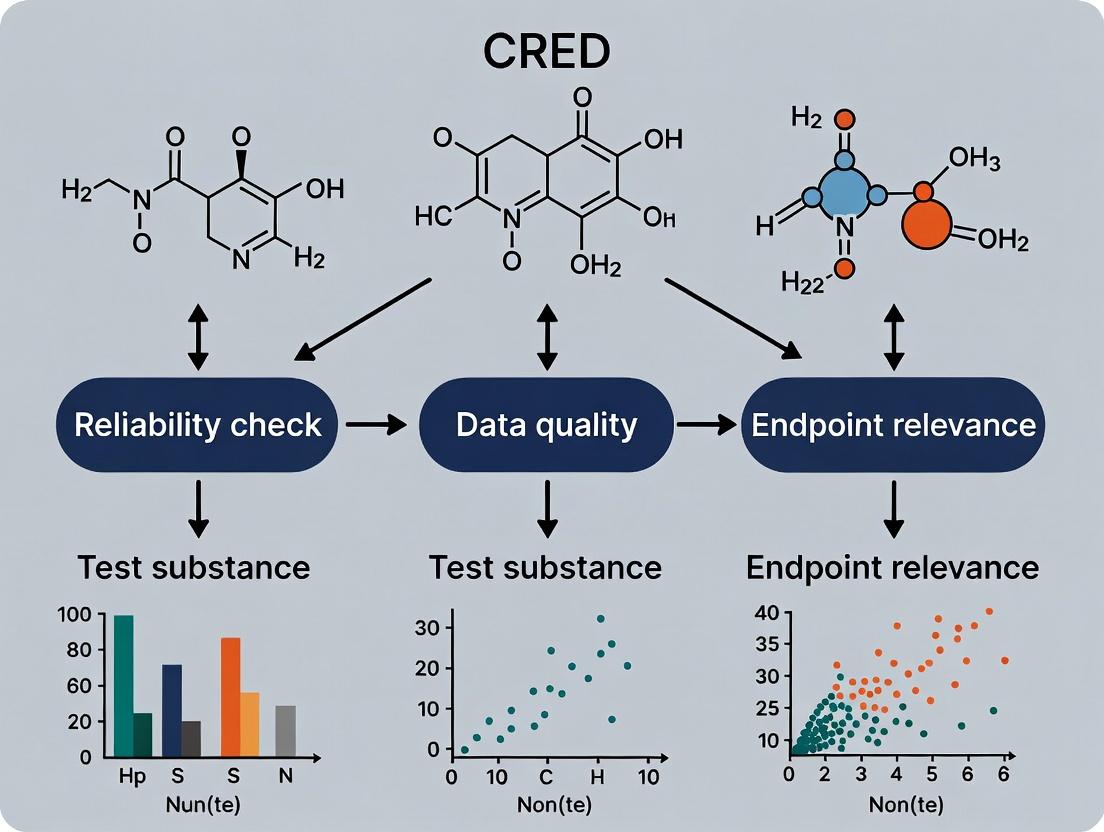

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method. Developed as a transparent and consistent replacement for the outdated Klimisch method, CRED enhances the quality of environmental hazard and risk assessments. The article explores the method's foundations, detailing its 20 reliability and 13 relevance criteria with practical application guidance. It addresses common challenges in study evaluation, examines validation data from international ring tests demonstrating its superior consistency, and discusses its integration into modern workflows, including emerging AI-assisted tools. By synthesizing these aspects, the article underscores CRED's critical role in harmonizing regulatory decisions and incorporating high-quality academic research into chemical safety assessments.

Beyond Klimisch: Why the CRED Method Was Developed for Modern Ecotoxicology

The Critical Need for Reliable Ecotoxicity Data in Chemical Regulation

The derivation of Predicted-No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs) forms the cornerstone of chemical regulation, aiming to protect ecosystems from harmful substances. The integrity of this process is entirely dependent on the reliability and relevance of the underlying ecotoxicity studies [1]. Historically, the evaluation of study quality has been subject to expert judgment, leading to potential bias and inconsistency, where different assessors can reach divergent conclusions from the same data [1]. This inconsistency undermines regulatory transparency and confidence.

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method is a systematic framework designed to overcome these challenges. It rests on the premise that transparent, criteria-driven evaluation is essential for scientific and regulatory integrity [2]. It provides a standardized tool with explicit criteria for assessing reliability (the intrinsic scientific quality of a study) and relevance (its appropriateness for a specific assessment purpose) [1]. Global regulations are moving towards greater stringency and towards the adoption of New Approach Methodologies (NAMs) [3] [4]. In that setting, the principles championed by CRED (consistency, transparency, and robust data utility) matter more than ever for ensuring that chemical management decisions rest on trustworthy science.

Core Application Notes: The CRED Evaluation Framework

The CRED evaluation method was developed to replace the less specific Klimisch method, offering a more detailed, transparent, and consistent system for assessing aquatic ecotoxicity studies [1]. It is structured around two pillars: a set of 20 criteria for reliability and 13 criteria for relevance, each accompanied by extensive guidance to minimize ambiguity [1].

- Reliability focuses on the inherent quality of the study design, performance, and reporting (e.g., test organism health, exposure control, statistical analysis).

- Relevance assesses the applicability of the study's endpoints and conditions to a specific regulatory question (e.g., appropriateness of the species, exposure duration, and measured effect for the hazard being evaluated) [1].

A study is evaluated against each of these criteria. The pattern of fulfilled and unfulfilled criteria then informs the assessor's final classification, so the criteria support expert judgement rather than replace it. A ring-test evaluation demonstrated that the CRED method leads to more consistent and transparent assessments than the older Klimisch method [1].

Table 1: CRED Evaluation Outcome Categories and Criteria Fulfillment (ring-test data) [5]

| Evaluation Category | Description | Mean % of Criteria Fulfilled | SD (percentage points) |
|---|---|---|---|
| Reliable without restrictions | High-quality study with no significant shortcomings. | 93% | 12 |
| Reliable with restrictions | Study is usable but has deficiencies that limit its interpretive power. | 72% | 12 |
| Not reliable | Study has critical flaws precluding its use in formal risk assessment. | 60% | 15 |
| Not assignable | Reporting is insufficient to judge reliability. | 51% | 15 |
| Relevant without restrictions | Study design and endpoints are directly applicable to the assessment. | 84% | 8 |
| Relevant with restrictions | Study is applicable but with caveats (e.g., a surrogate species is used). | 73% | 14 |

Furthermore, CRED includes 50 reporting recommendations across six categories (general information, test design, test substance, test organism, exposure conditions, and statistical design and biological response). These recommendations guide researchers in producing studies that meet regulatory data needs [1].

Experimental & Analytical Protocols

Protocol 1: Systematic Reliability Assessment of an Ecotoxicity Study Using CRED

Objective: To perform a standardized, transparent evaluation of the reliability of an aquatic ecotoxicity study for use in regulatory decision-making.

Materials: The study to be evaluated; CRED evaluation checklist (20 reliability criteria); guidance documents [1].

Procedure:

- Initial Documentation: Record the study identifier, evaluator name, and evaluation date.

- Criterion-by-Criterion Assessment: For each of the 20 reliability criteria, examine the study manuscript for the required information.

- Example Criteria (paraphrased): "Test organism: The test organism is clearly identified (species, life stage, source)." "Exposure concentrations: The measured concentrations are reported and are close to the nominal concentrations, or the reason for a difference is explained."

- Scoring: For each criterion, assign a fulfillment status: "Yes," "No," or "Not Assessable/Not Reported."

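- Overall Classification: Based on the pattern of fulfilled criteria, assign the study to one of four reliability categories: reliable without restrictions, reliable with restrictions, not reliable, or not assignable. The ring-test percentages are averages, not cut-offs. Studies that assessors classified as "Reliable without restrictions" fulfilled about 93% of the criteria on average, whereas those classified as "Not reliable" fulfilled about 60% [5].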

- Transparent Reporting: Document the rationale for each scoring decision and the final classification in an evaluation report. This ensures the assessment is reproducible and auditable.
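The scoring and documentation steps above can be kept as a structured record rather than as free text. The Python sketch below is illustrative only. Its class and field names are hypothetical and are not part of the official CRED Excel tool. It also assumes that "Not Assessable/Not Reported" counts as not fulfilled when the share of fulfilled criteria is computed.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Status(Enum):
    YES = "Yes"
    NO = "No"
    NOT_REPORTED = "Not assessable/Not reported"


@dataclass
class CriterionResult:
    number: int      # reliability criterion number, 1-20
    status: Status
    rationale: str   # documented reason for the status (audit trail)


@dataclass
class ReliabilityEvaluation:
    study_id: str
    evaluator: str
    evaluated_on: date
    results: dict = field(default_factory=dict)  # criterion number -> CriterionResult

    def record(self, number: int, status: Status, rationale: str) -> None:
        """Store one criterion decision; every decision needs a written rationale."""
        if not 1 <= number <= 20:
            raise ValueError(f"criterion {number} is outside 1-20")
        if not rationale.strip():
            raise ValueError(f"criterion {number}: a rationale is required")
        self.results[number] = CriterionResult(number, status, rationale)

    def percent_fulfilled(self) -> float:
        """Share of recorded criteria answered 'Yes' (assumption: not reported = not fulfilled)."""
        if not self.results:
            raise ValueError("no criteria recorded yet")
        yes = sum(r.status is Status.YES for r in self.results.values())
        return 100.0 * yes / len(self.results)
```

For example, 18 "Yes" answers out of 20 recorded criteria give 90%. The percentage is only a descriptive aid. The classification itself remains the assessor's documented judgement.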

Protocol 2: Applying Mass Balance Models for In Vitro Bioavailability Correction

Objective: To predict the freely dissolved (bioavailable) concentration of a test chemical in an in vitro assay from its nominal concentration, improving quantitative in vitro to in vivo extrapolation (QIVIVE) [6].

Materials: Chemical property data (Log KOW, pKa, solubility); assay system parameters (media volume, serum lipid/protein content, cell count, plastic well surface area); computational software (R, Python); mass balance model (e.g., Armitage et al. model) [6].

Procedure:

- Data Compilation: Gather all necessary input parameters for the selected model.

- Model Selection: Choose an appropriate mass balance model. For broad applicability, the Armitage et al. model is recommended as it handles neutral/ionizable chemicals and accounts for partitioning to media, cells, labware, and headspace [6].

- Prediction Execution: Run the model using the compiled inputs to calculate the distribution of the chemical. The key output is the freely dissolved fraction (fu, media) in the exposure medium.

- Concentration Correction: Multiply the reported nominal concentration by the predicted fu, media to estimate the bioavailable concentration driving the biological effect.

- Application to QIVIVE: Use the corrected in vitro bioavailable concentration, rather than the nominal concentration, in subsequent physiologically based kinetic (PBK) modeling for reverse dosimetry to predict an equivalent in vivo dose [6].
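The concentration-correction step rests on a mass balance. The Python sketch below is a deliberately simplified equilibrium-partitioning version and is not the Armitage et al. model. Its assumptions are:

- Partitioning of a neutral chemical is linear and reversible.
- There are no losses to headspace, degradation, or metabolism.
- The nominal concentration equals the total added amount divided by the medium volume.

The sorbent names and partition coefficients are hypothetical placeholders. Real use would need chemical- and system-specific values.

```python
from dataclasses import dataclass


@dataclass
class Sorbent:
    """A compartment that binds the chemical (e.g., serum lipid, cells, plastic)."""
    name: str
    k: float     # partition coefficient: sorbed / freely dissolved concentration
    size: float  # compartment size, in units that make k * size a volume in L


def free_fraction(medium_volume_l: float, sorbents: list) -> float:
    """f_u,media at equilibrium.

    Mass balance: C_nom * V_med = C_free * V_med + sum(K_i * C_free * V_i),
    so f_u,media = C_free / C_nom = V_med / (V_med + sum(K_i * V_i)).
    """
    if medium_volume_l <= 0:
        raise ValueError("medium volume must be positive")
    if any(s.k < 0 or s.size < 0 for s in sorbents):
        raise ValueError("partition coefficients and sizes must be non-negative")
    bound_capacity = sum(s.k * s.size for s in sorbents)
    return medium_volume_l / (medium_volume_l + bound_capacity)


def free_concentration(nominal: float, medium_volume_l: float, sorbents: list) -> float:
    """Estimated freely dissolved concentration = nominal concentration x f_u,media."""
    return nominal * free_fraction(medium_volume_l, sorbents)
```

The more strongly a chemical binds to serum, cells, or plastic (larger `k * size`), the smaller f_u,media becomes. The nominal concentration then overstates the exposure that drives the biological effect.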

Visualization of Workflows and Relationships

CRED Study Evaluation and Regulatory Use Workflow

Model-Based Bioavailability Correction for QIVIVE

Table 2: Key Reagents, Databases, and Tools for Ecotoxicity Research and Data Evaluation

| Tool/Resource | Function in Ecotoxicity Research | Key Features / Notes |
|---|---|---|
| CRED Evaluation Excel Tool [2] | Provides the standardized checklist for evaluating study reliability and relevance. | Contains the 20 reliability and 13 relevance criteria with guidance. Freely available for download. |
| Standard Reference Toxicants | Used to validate the health and sensitivity of test organism cultures and the performance of the test system. | Examples include potassium dichromate (commonly used for Daphnia) and sodium chloride. Must be of high purity. |
| Solvent Controls (e.g., Acetone, DMSO) | Used as a vehicle control when testing poorly water-soluble substances. | Must be tested to ensure no toxic effect at the maximum concentration used. Purity should be >99%. |
| Defined Culture Media & Food | For maintaining and testing standard organisms (e.g., algae, Daphnia). | Supports reproducible organism health and minimizes confounding toxicity from media impurities. |
| ToxValDB (Toxicity Values Database) [7] [8] | Curated database of in vivo toxicity results and derived values for data gap filling and model benchmarking. | Contains over 240,000 records for ~42,000 chemicals in a standardized format [7]. |
| ECOTOX Knowledgebase [8] | Comprehensive repository of single-chemical ecotoxicity test results for aquatic and terrestrial species. | Essential for literature review, weight-of-evidence assessment, and data collection for PNEC derivation. |
| Mass Balance Model Software (e.g., R/HTTK Package) [6] [8] | Predicts free concentrations in in vitro assays to improve quantitative in vitro to in vivo extrapolation (QIVIVE). | The Armitage et al. model is recommended for broad applicability [6]. |
| CompTox Chemicals Dashboard [8] | Integrates chemical properties, hazard, exposure, and risk data from multiple EPA resources. | Provides access to ToxCast, ToxRefDB, and predictive models, serving as a central chemical data hub. |

The regulatory evaluation of ecotoxicity studies is a cornerstone of environmental hazard and risk assessment for chemicals, informing decisions on marketing authorizations and environmental quality standards (EQS) [9] [2]. For decades, this evaluation has predominantly relied on the method established by Klimisch et al. in 1997, which categorizes study reliability into four levels: "reliable without restrictions," "reliable with restrictions," "not reliable," and "not assignable" [9] [10]. While pioneering, this method suffers from critical shortcomings, including a lack of detailed guidance and insufficient criteria, leading to inconsistent evaluations dependent on expert judgement [9] [1] [11]. These inconsistencies can directly impact risk assessment outcomes, potentially leading to underestimated environmental risks or unnecessary mitigation measures [9].

In response, the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method was developed to provide a transparent, consistent, and science-based framework [9] [1]. This section details the shortcomings of the Klimisch method and presents the CRED evaluation method as a robust alternative. It includes application notes and protocols for researchers and assessors working to improve how the reliability of ecotoxicity studies is evaluated.

Core Shortcomings of the Klimisch Method

The Klimisch method's primary flaws stem from its high-level, non-specific approach. It provides only generalized prompts for evaluation rather than a structured set of criteria, making the process highly subjective [11] [10]. This lack of granular guidance is the root cause of inconsistent categorizations of the same study by different risk assessors [9]. Furthermore, the method conflates reporting quality with methodological reliability, often leading to an automatic preference for studies conducted under Good Laboratory Practice (GLP) or according to standardized OECD guidelines, even when such studies may contain methodological flaws [9] [1]. The method also offers no formal criteria or categories for evaluating the relevance of a study to a specific regulatory question, a critical aspect distinct from reliability [9] [1].

Quantitative Comparison: Klimisch vs. CRED Evaluation Outcomes

The CRED method addresses Klimisch's shortcomings with a detailed, criteria-based framework. It employs 20 criteria for reliability and 13 for relevance, each accompanied by extensive guidance to standardize judgement [1] [2]. A major ring test involving 75 risk assessors from 12 countries compared the two methods. It revealed clear differences in consistency and in how participants perceived each method [9].

Table 1: Participant Perception of Klimisch vs. CRED Evaluation Methods [9]

| Evaluation Aspect | Klimisch Method | CRED Method |
|---|---|---|
| Dependency on Expert Judgement | High | Low |
| Perceived Accuracy | Lower | Higher |
| Perceived Consistency | Lower | Higher |
| Practicality (Use of Criteria) | Less Practical | More Practical |
| Transparency of Process | Lower | Higher |

The ring test also allowed for a quantitative analysis of how fulfilled criteria correlate with final reliability categories under the CRED system, demonstrating a clear, measurable gradient.

Table 2: CRED Criteria Fulfillment by Final Reliability Category [5]

| CRED Reliability Category | Mean % of Criteria Fulfilled | SD (percentage points) | Sample Size (n) |
|---|---|---|---|
| Reliable without restrictions | 93% | 12 | 3 |
| Reliable with restrictions | 72% | 12 | 24 |
| Not reliable | 60% | 15 | 58 |
| Not assignable | 51% | 15 | 19 |
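Summary statistics of this kind can be recomputed from a set of completed evaluations. The Python sketch below assumes that each evaluation has already been reduced to a pair of (final reliability category, percentage of criteria fulfilled). That input format is hypothetical. The spread is reported as the sample standard deviation.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarise(evaluations):
    """Group (category, percent_fulfilled) pairs and return {category: (mean, sd, n)}.

    The sample standard deviation needs at least two values, so it is None when n == 1.
    """
    groups = defaultdict(list)
    for category, pct in evaluations:
        groups[category].append(pct)
    return {
        category: (mean(values), stdev(values) if len(values) > 1 else None, len(values))
        for category, values in groups.items()
    }
```

Applied to ring-test evaluations scored the same way, this should produce a mean, SD, and n per category in the form shown in the table. The underlying data are not reproduced here.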

Application Notes & Protocols for the CRED Evaluation Method

Core Protocol: Conducting a CRED Evaluation

The CRED evaluation is a structured, two-phase process assessing reliability and relevance separately. The following protocol is designed for the evaluation of a single aquatic ecotoxicity study for use in deriving an Environmental Quality Standard (EQS).

Phase 1: Reliability Evaluation

- Preparation: Obtain the full text of the study. Use the standardized CRED Excel tool [2].

- Criteria Assessment: Systematically review the study against the 20 reliability criteria. For each criterion, determine if it is "Fulfilled," "Not Fulfilled," or "Not Applicable." Document the rationale for each decision using the guidance notes.

- Overall Judgement: Based on the pattern of fulfilled and unfulfilled criteria, assign an overall reliability category (R1, reliable without restrictions; R2, reliable with restrictions; R3, not reliable; R4, not assignable). A study with all key criteria fulfilled is R1. Specific deficiencies (e.g., lack of a solvent control, statistical limitations) typically lead to R2. Critical flaws (e.g., unacceptable control mortality, a misidentified test species) result in R3. Insufficient reporting that precludes evaluation leads to R4.
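The decision rule described above can be written out explicitly, which makes an assessor's reasoning easier to audit. The Python sketch below is a hypothetical aid, not part of the CRED method. Which criteria count as "critical" is an assessor's input rather than a fixed CRED list, and the extra "Not reported" status is an added assumption used to flag reporting gaps. The function only suggests a category; the final call stays with the assessor.

```python
from enum import Enum


class Answer(Enum):
    FULFILLED = "Fulfilled"
    NOT_FULFILLED = "Not fulfilled"
    NOT_APPLICABLE = "Not applicable"
    NOT_REPORTED = "Not reported"  # assumption: extra status to flag reporting gaps


def suggest_reliability(answers: dict, critical: set) -> str:
    """Suggest R1-R4 from per-criterion answers (criterion number -> Answer).

    `critical` holds the criterion numbers the assessor treats as key (hypothetical input).
    """
    missing = set(critical) - set(answers)
    if missing:
        raise ValueError(f"no answer for critical criteria {sorted(missing)}")
    critical_answers = [answers[c] for c in critical]
    if Answer.NOT_REPORTED in critical_answers:
        return "R4"  # key information missing: reliability cannot be assigned
    if Answer.NOT_FULFILLED in critical_answers:
        return "R3"  # a critical flaw
    if any(a in (Answer.NOT_FULFILLED, Answer.NOT_REPORTED) for a in answers.values()):
        return "R2"  # only non-critical deficiencies
    return "R1"
```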

Phase 2: Relevance Evaluation

- Context Definition: Define the purpose of the assessment (e.g., EQS derivation for a specific water body).

- Criteria Assessment: Evaluate the study against the 13 relevance criteria, considering the defined context. Determine if the test organism, endpoint, exposure duration, and effect concentrations are appropriate ("Fulfilled"/"Not Fulfilled").

- Overall Judgement: Assign a relevance category: C1 (Relevant without restrictions), C2 (Relevant with restrictions), or C3 (Not relevant). A chronic study on a locally relevant species for a chronic EQS would be C1. An acute study used for a chronic assessment might be C2, providing supporting information.

Key Conceptual Relationship: Reliability and relevance are independent. A study on the correct organism (relevant) may be poorly conducted (unreliable). Conversely, a well-conducted study (reliable) on an irrelevant organism or endpoint is not suitable for the specific assessment [1].
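Because the two dimensions are evaluated independently, the intended use of a study depends on the combination of both categories. The Python sketch below encodes one possible convention and is hypothetical. It mirrors the examples in the text: a non-relevant study is not used regardless of quality, and a C2 study serves as supporting information. Real frameworks may map these combinations differently.

```python
RELIABILITY = {"R1", "R2", "R3", "R4"}
RELEVANCE = {"C1", "C2", "C3"}


def intended_use(reliability: str, relevance: str) -> str:
    """Illustrative mapping of an (R, C) pair to a use decision (hypothetical convention)."""
    if reliability not in RELIABILITY or relevance not in RELEVANCE:
        raise ValueError(f"unknown category pair: {reliability}, {relevance}")
    if relevance == "C3":
        return "not used: not relevant to this assessment"
    if reliability in {"R3", "R4"}:
        return "not used as key data: reliability insufficient or not assignable"
    if relevance == "C1":
        return "usable for the assessment"
    return "supporting information"
```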

CRED Evaluation Workflow

Protocol for a Comparative Method Ring Test

To empirically demonstrate differences in consistency between methods, a standardized ring test can be conducted [9].

- Participant Selection: Recruit multiple risk assessors with varying experience from different organizations.

- Study Selection: Choose 4-8 ecotoxicity studies with varying quality (e.g., a GLP guideline study, a well-reported academic study, a poorly reported study).

- Blinded Evaluation: Randomly assign each participant 2 studies to evaluate using the Klimisch method and 2 different studies using the CRED method. Provide identical background context.

- Data Collection: Collect final reliability/relevance categories and the time taken for each evaluation.

- Analysis: Calculate the percentage agreement among assessors for each study under each method. Compare time requirements and gather feedback on method usability via questionnaire.
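The assignment and analysis steps can be sketched as follows. The Python code is illustrative, and two of its choices are assumptions. First, "percentage agreement" is taken as the share of assessors who chose the most common category for a study; other agreement statistics, such as kappa, are also used. Second, the optional seed only makes the random assignment reproducible.

```python
import random
from collections import Counter


def assign_studies(participants, studies, per_method=2, seed=None):
    """Give each participant `per_method` studies for Klimisch and a different set for CRED."""
    studies = list(studies)
    if len(studies) < 2 * per_method:
        raise ValueError("need at least 2 * per_method studies")
    rng = random.Random(seed)
    plan = {}
    for participant in participants:
        picked = rng.sample(studies, 2 * per_method)  # distinct studies, random order
        plan[participant] = {"Klimisch": picked[:per_method], "CRED": picked[per_method:]}
    return plan


def percent_agreement(categories):
    """Share (%) of assessors who chose the modal category for one study."""
    if not categories:
        raise ValueError("no evaluations for this study")
    _, modal_count = Counter(categories).most_common(1)[0]
    return 100.0 * modal_count / len(categories)
```

Comparing `percent_agreement` per study under each method gives the consistency comparison. The time and questionnaire data would be analysed separately.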

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Research Reagent Solutions for Ecotoxicity Testing & Evaluation

| Item / Solution | Function in Ecotoxicity Testing / Evaluation |
|---|---|
| Standardized Test Media (e.g., Elendt M4 or M7 for Daphnia; reconstituted dilution water for fish and fish embryo toxicity tests) | Provides consistent, defined water chemistry for aquatic tests, ensuring reproducibility and comparability of results across studies. Essential for reliability. |
| Reference Toxicants (e.g., K₂Cr₂O₇, NaCl) | Used in periodic laboratory performance checks to confirm healthy test organisms and consistent response. A key reliability criterion. |
| Test Substance Analysis Standards | High-purity chemical standards used to confirm the concentration and stability of the test substance in stock and test solutions via analytical verification. Critical for reliability. |
| Solvent Controls (e.g., acetone, DMSO, methanol) | Required when a solvent is needed to dissolve a hydrophobic test substance. Must be appropriate, non-toxic at the concentration used, and included as an additional control group. A key CRED reliability criterion. |
| Formulated Animal Feed | Specific, consistent diets for cultured test organisms (e.g., algae, Daphnia, fish). Ensures organism health and reduces variability in test response, supporting reliability. |
| Data Evaluation Tool (CRED Excel File) [2] | The standardized checklist tool that guides the assessor through the 20 reliability and 13 relevance criteria, ensuring a structured, transparent, and consistent evaluation process. |
| Guideline Documents (OECD, EPA, ISO) | Provide the standardized methodological protocols against which study design and reporting are compared during the reliability evaluation. |

Pathway to Regulatory-Ready Data

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) initiative was developed to address critical inconsistencies in environmental risk assessment. Its primary objective is to improve the reproducibility, transparency, and consistency of reliability and relevance evaluations for aquatic ecotoxicity studies across different regulatory frameworks, countries, institutes, and individual assessors [1].

The development of CRED was driven by the widespread use of the Klimisch method for study evaluation, which was found to be unspecific, lacking in essential criteria, and subject to considerable interpretational differences, potentially introducing bias into regulatory decisions [1]. In contrast, CRED provides a structured, detailed, and guided framework aimed at minimizing subjective expert judgment. A key complementary aim is to enhance the usability of peer-reviewed ecotoxicity studies for regulatory purposes by providing clear reporting recommendations for researchers, thereby ensuring that published studies contain all information necessary for a high-quality evaluation [1].

Core CRED Evaluation Framework: Criteria and Application

The CRED evaluation method is built on a fundamental distinction between two key concepts:

- Reliability: This assesses the inherent scientific quality of a test report, focusing on the clarity and plausibility of the findings based on the methodology, experimental procedure, and description of results [1].

- Relevance: This evaluates the appropriateness of the data for a specific hazard identification or risk characterization, which depends on the assessment's purpose [1].

A study can be reliable but not relevant for a particular regulatory question, and vice versa. The CRED framework provides separate, detailed criteria for evaluating each aspect.

Quantitative Comparison with the Klimisch Method

The following table summarizes a ring test comparison that highlighted CRED's advantages over the previously dominant Klimisch method [1].

Table 1: Comparison of the Klimisch and CRED Evaluation Methods Based on a Ring Test Analysis [1]

| Evaluation Aspect | Klimisch Method | CRED Method | Implication of CRED's Approach |
|---|---|---|---|
| Core Structure | Four general categories (e.g., "reliable without restriction") | 20 reliability & 13 relevance criteria with extensive guidance | Reduces ambiguity and subjective interpretation. |
| Transparency | Low; limited guidance leads to opaque decisions. | High; explicit criteria and documented reasoning are required. | Improves auditability and understanding of regulatory decisions. |
| Consistency | Low; high variability between different assessors. | High; structured criteria lead to greater agreement. | Promotes harmonization across assessors and jurisdictions. |
| Bias Potential | Criticized for potential bias toward industry & GLP studies [1]. | Designed to be neutral; evaluates intrinsic study quality. | Allows for a fairer evaluation of all relevant scientific literature. |
| User Perception | Considered less accurate and applicable by ring test participants. | Rated as more accurate, applicable, and transparent by participants. | Indicates higher acceptance and usability among professionals. |

Detailed CRED Evaluation Criteria

The CRED method's robustness stems from its comprehensive set of criteria. The tables below categorize the core questions assessors must address.

Table 2: Categories and Examples of CRED Reliability Criteria (20 total criteria) [1]

| Reliability Category | Example Criterion | Purpose of Evaluation |
|---|---|---|
| Test Design | Are the test concentrations appropriate and justified? | Ensures the experimental design can produce meaningful dose-response data. |
| Exposure Control | Was the exposure concentration measured and verified? | Confirms the test organisms were exposed to the reported concentrations. |
| Test Organism | Is the test organism species/life stage clearly identified? | Ensures biological relevance and reproducibility of the test. |
| Control Performance | Were control results within accepted limits? | Validates the health of test organisms and the test system's stability. |
| Statistical Analysis | Are the statistical methods appropriate and clearly described? | Verifies the correctness and robustness of the data analysis. |

Table 3: Categories and Examples of CRED Relevance Criteria (13 total criteria) [1]

| Relevance Category | Example Criterion | Purpose of Evaluation |
|---|---|---|
| Test Substance | Is the tested substance relevant for the assessment (e.g., correct form, purity)? | Ensures the test material corresponds to the substance of regulatory concern. |
| Exposure Regime | Is the exposure duration relevant for the assessment endpoint? | Judges if the test (e.g., acute or chronic) matches the required protection goal. |
| Test Endpoint | Is the observed effect relevant for the protection goal? | Determines if the measured parameter (e.g., mortality, reproduction) is suitable. |
| Test Organism | Is the test species/group relevant for the ecosystem being protected? | Assesses the ecological representativeness of the chosen model organism. |

Application Notes & Experimental Protocols

Protocol for Performing a CRED Evaluation

This protocol provides a stepwise methodology for evaluating an aquatic ecotoxicity study using the CRED framework [1].

Title: Systematic Reliability and Relevance Evaluation of an Aquatic Ecotoxicity Study Using the CRED Method.

Purpose: To transparently determine the scientific reliability and regulatory relevance of a given ecotoxicity study for a defined assessment purpose (e.g., deriving a Predicted-No-Effect Concentration for a specific substance).

Materials:

- The ecotoxicity study to be evaluated (peer-reviewed paper or report).

- CRED evaluation sheet (Excel tool recommended) [12].

- CRED guidance document detailing the 20 reliability and 13 relevance criteria [1].

Procedure:

- Study Preparation: Clearly define the regulatory assessment context (e.g., substance, protected ecosystem, endpoint of concern). Read the study in full.

- Reliability Assessment: For each of the 20 reliability criteria, answer "Yes," "No," or "Unclear" based solely on the information reported in the study. Provide a brief justification for each answer in the comment field. Do not infer missing information.

- Reliability Integration: Synthesize the individual reliability ratings into an overall reliability category (reliable without restrictions, reliable with restrictions, not reliable, or not assignable). The guidance document helps the assessor weigh major versus minor deficiencies.

- Relevance Assessment: For each of the 13 relevance criteria, answer "Yes," "No," or "Partially" based on the study details and the predefined assessment purpose. Justify each answer.

- Relevance Integration: Synthesize the relevance ratings into an overall relevance category (relevant without restrictions, relevant with restrictions, or not relevant) for the specific assessment.

- Final Documentation: The completed CRED sheet, with all answers and justifications, serves as the transparent audit trail for the evaluation.
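A completed evaluation can also be checked for completeness and exported as a plain audit record. The answer options and criterion counts in the Python sketch below come from the protocol above. The column names and CSV layout are hypothetical and do not reproduce the CRED Excel format.

```python
import csv
import io

# Answer options and criterion counts as described in the protocol above.
ALLOWED = {
    "reliability": ({"Yes", "No", "Unclear"}, 20),
    "relevance": ({"Yes", "No", "Partially"}, 13),
}


def export_sheet(rows) -> str:
    """Validate (dimension, criterion, answer, justification) rows and return them as CSV."""
    rows = list(rows)
    for dimension, number, answer, justification in rows:
        if dimension not in ALLOWED:
            raise ValueError(f"unknown dimension: {dimension}")
        if answer not in ALLOWED[dimension][0]:
            raise ValueError(f"{dimension} {number}: invalid answer {answer!r}")
        if not justification.strip():
            raise ValueError(f"{dimension} {number}: justification missing")
    # Every criterion must be answered exactly once, so the sheet is a complete audit trail.
    for dimension, (_, n_criteria) in ALLOWED.items():
        numbers = sorted(r[1] for r in rows if r[0] == dimension)
        if numbers != list(range(1, n_criteria + 1)):
            raise ValueError(f"{dimension}: each criterion 1-{n_criteria} must appear exactly once")
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["dimension", "criterion", "answer", "justification"])
    writer.writerows(rows)
    return buffer.getvalue()
```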

Protocol for Applying CRED Reporting Recommendations

To improve the quality of future studies, CRED also provides 50 reporting criteria across six categories [1]. Researchers can use this as a checklist when designing studies and preparing manuscripts.

Title: Conducting and Reporting an Aquatic Ecotoxicity Study Aligned with CRED Principles.

Purpose: To ensure an ecotoxicity study is performed and documented to a standard that facilitates a high-reliability evaluation and regulatory use.

Procedure (Reporting Phase): Use the CRED reporting checklist to verify that all essential information is included in the manuscript or supplementary data [1].

- General Information: Declare funding, conflicts of interest, and compliance with ethical standards.

- Test Substance: Report source, purity, chemical identity (e.g., CAS number), and characterization (e.g., for nanomaterials or mixtures).

- Test Organism: Specify species, life stage, source, husbandry conditions, and acclimation procedures.

- Test Design: Detail the experimental design, number of replicates, concentrations, controls, and exposure system.

- Exposure Conditions: Document the medium, temperature, pH, light, renewal regime, and measured concentration data (nominal vs. measured).

- Statistical & Biological Response: Describe statistical methods, raw data availability, dose-response analysis, and endpoint observations.
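Authors can run a pre-submission gap check against such a list. The checklist in the Python sketch below is hypothetical and abbreviated. It paraphrases the bullets above and is not the full set of 50 CRED reporting recommendations. In this sketch, "reported" maps each category to the set of items the manuscript already covers.

```python
# Hypothetical, abbreviated checklist paraphrasing the bullets above;
# it is NOT the full set of 50 CRED reporting recommendations.
CHECKLIST = {
    "General information": ["funding", "conflicts of interest", "ethical compliance"],
    "Test substance": ["source", "purity", "CAS number"],
    "Test organism": ["species", "life stage", "source", "husbandry", "acclimation"],
    "Test design": ["replicates", "concentrations", "controls", "exposure system"],
    "Exposure conditions": ["medium", "temperature", "pH", "light", "renewal regime",
                            "measured concentrations"],
    "Statistical design and biological response": ["statistical methods", "raw data",
                                                    "dose-response analysis"],
}


def missing_items(reported: dict) -> dict:
    """Return {category: [items not yet reported]} for every category with gaps."""
    gaps = {}
    for category, items in CHECKLIST.items():
        done = reported.get(category, set())
        absent = [item for item in items if item not in done]
        if absent:
            gaps[category] = absent
    return gaps
```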

Visualizing the CRED Workflow and Its Regulatory Context

Diagram 1: CRED study evaluation workflow for regulatory use

Diagram 2: The development and evolution of the CRED initiative

Table 4: Key Research Reagent Solutions & Materials for CRED Implementation [1] [12] [2]

| Tool / Resource | Function / Purpose | Source / Availability |
|---|---|---|
| CRED Excel Evaluation Tool | A structured spreadsheet with the 33 criteria, guidance pop-ups, and fields for comments. Facilitates standardized, documented evaluations. | Freely available for download from the SciRAP or ECOTOX Centre websites [12] [2]. |
| CRED Reporting Checklist | A list of 50 specific criteria in six categories to guide researchers in preparing comprehensive study reports. | Published within the primary CRED methodology paper [1]. |
| NanoCRED Framework | An adaptation of CRED with modified criteria for evaluating ecotoxicity studies of engineered nanomaterials, addressing nano-specific issues (e.g., particle characterization). | Detailed in a dedicated publication (Hartmann et al., 2017) [12]. |
| EthoCRED Framework | An extension of CRED to guide the evaluation of behavioural ecotoxicity studies, ensuring reliability and relevance of more complex endpoints. | Detailed in a dedicated publication (Bertram et al., 2024) [12]. |
| CRED for Sediment and Soil | Adapted criteria for evaluating studies on terrestrial and sediment organisms, expanding the framework beyond aquatic toxicity. | Described in Casado-Martinez et al., 2024 [12]. |

Within regulatory environmental risk assessment, the derivation of Predicted-No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs) hinges on the quality of underlying ecotoxicity studies [13]. Two cornerstone concepts in evaluating this quality are reliability and relevance. Their precise definition and systematic application are critical for ensuring that regulatory decisions are based on sound, defensible, and pertinent science.

Reliability (also referred to as credibility or internal validity) assesses the methodological soundness of a study. It answers the question: "How trustworthy are the study's data and reported results based on its design, conduct, and reporting?" A reliable study minimizes bias and error, allowing for confidence in its findings.

Relevance (or external validity) assesses the usefulness and applicability of a study's data for a specific regulatory purpose. It answers the question: "Are the test species, endpoints, exposure conditions, and effect concentrations appropriate for the protective goal at hand?" A highly reliable study may have low relevance if, for example, it tests an insensitive species unrelated to the ecosystem being protected.

The CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) method was developed to provide a transparent, consistent, and structured framework for evaluating these two dimensions [13]. It moves beyond older, more subjective evaluation schemes (e.g., the Klimisch method) by offering detailed criteria and explicit guidance, thereby reducing bias and increasing consistency among different assessors [13] [12]. This document provides application notes and protocols for implementing the CRED evaluation within a research or regulatory context.

Quantitative Framework of the CRED Method

The CRED method operationalizes the evaluation of reliability and relevance through a set of explicit criteria. The original framework for aquatic ecotoxicity studies defines 20 criteria for reliability and 13 for relevance [13]. Subsequent developments have extended this framework to nanoecotoxicity (NanoCRED), behavioral studies (EthoCRED), and sediment/soil studies [12].

Table 1: Core CRED Evaluation Criteria for Aquatic Ecotoxicity Studies [13]

| Dimension | Category | Number of Criteria | Example Criteria (Paraphrased) |
|---|---|---|---|
| Reliability | Test Substance Characterization | 4 | Purity, concentration verification, stability, measurement of exposure concentrations. |
| | Test Organism & Design | 6 | Species identification, health, age, randomization, blinding, sample size justification. |
| | Exposure System & Conditions | 5 | Control of physico-chemical parameters, system stability, renewal of test media. |
| | Data Reporting & Analysis | 5 | Clear presentation of raw data, statistical methods, dose-response, control performance. |
| Relevance | Test Species & Endpoint | 5 | Appropriateness of taxonomic group, life-stage, and endpoint for protection goal. |
| | Exposure Pattern | 4 | Match of exposure duration, route, and regime to real-world scenarios. |
| | Ecological Context | 4 | Consideration of sensitive species, population/community-level implications. |

The outcome of a CRED evaluation is not a single numeric score but a structured profile. This profile details which specific criteria are fulfilled, partly fulfilled, or not fulfilled, providing a transparent audit trail for the assessment. A ring test comparing CRED with the Klimisch method illustrates the practical benefits of this approach.

Table 2: Comparison of Method Evaluation from a Ring-Test Study [12]

| Evaluation Aspect | Klimisch Method | CRED Method | Outcome / Preference |
|---|---|---|---|
| Transparency | Low - Provides limited guidance and rationale. | High - Offers explicit criteria and detailed guidance for each. | CRED strongly preferred for transparency [12]. |
| Consistency | Moderate to Low - Relies heavily on expert judgment. | High - Structured criteria reduce subjective variance. | CRED found to improve consistency among assessors [12]. |
| Accuracy | Not directly assessed. | Perceived as more accurate due to comprehensiveness. | Assessors perceived CRED as more accurate [12]. |
| Ease of Use | Initially easier due to simplicity. | Requires more initial effort to learn the criteria. | CRED's added complexity is justified by its benefits [12]. |

Application Notes and Experimental Protocols

Protocol for Conducting a CRED Evaluation

The following step-by-step protocol is adapted from the CRED methodology for evaluating a single aquatic ecotoxicity study [13] [12].

Phase 1: Preparation and Familiarization

- Define the Regulatory Context: Clearly articulate the protection goal (e.g., protecting freshwater fish populations) and the intended use of the data (e.g., deriving a PNEC).

- Acquire the Full Study Manuscript: Secure the complete text, including any supplementary materials. An abstract alone is insufficient for evaluation.

- Select the Appropriate CRED Tool: Choose the evaluation sheet matching your study type (e.g., standard aquatic, NanoCRED for nanomaterials, EthoCRED for behavioral studies) [12].

Phase 2: Systematic Assessment

- Reliability Assessment (Criteria R1-R20):

  - Work through each reliability criterion sequentially.

  - For each criterion, locate the relevant information in the study manuscript.

  - Judge the criterion as "Fulfilled" (Y), "Not Fulfilled" (N), "Partially Fulfilled" (P), or "Not Reported/Unclear" (NR). Base judgments strictly on what is reported.

  - Document the justification for each judgment by citing page numbers, figure/table references, or quoting text. This creates an essential audit trail.

- Relevance Assessment (Criteria Rel1-Rel13):

  - Switch to the relevance criteria. This assessment is inherently more contextual and tied to the protection goal defined in Phase 1.

  - Judge each relevance criterion as "High", "Medium", "Low", or "Not Applicable".

  - Justify each judgment by linking the study's design (species, exposure, endpoint) to the regulatory context. For example, a study on a benthic invertebrate would have "High" relevance for deriving a sediment EQS but potentially "Medium" relevance for a general water column PNEC.
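The record-keeping in Phase 2 lends itself to a simple data model. The Python sketch below is hypothetical: the class names and the "R1".."R20" / "Rel1".."Rel13" keys are illustrative and are not part of the official CRED Excel sheets. It stores one coded judgment per criterion and lists every criterion that is missing, carries an invalid code, or lacks a documented justification, which is the audit trail the protocol asks for.

```python
from dataclasses import dataclass, field

# Judgment codes as listed in the Phase 2 protocol above.
RELIABILITY_CODES = {"Y", "N", "P", "NR"}
RELEVANCE_CODES = {"High", "Medium", "Low", "Not Applicable"}


@dataclass
class Judgment:
    code: str           # e.g. "Y" for reliability or "High" for relevance
    justification: str  # page number, table/figure reference, or quoted text


@dataclass
class CredEvaluation:
    # Hypothetical keys: "R1".."R20" (reliability) and "Rel1".."Rel13" (relevance).
    reliability: dict[str, Judgment] = field(default_factory=dict)
    relevance: dict[str, Judgment] = field(default_factory=dict)

    def problems(self) -> list[str]:
        """List gaps in the audit trail; an empty list means every criterion is documented."""
        expected = [(f"R{i}", self.reliability, RELIABILITY_CODES) for i in range(1, 21)]
        expected += [(f"Rel{i}", self.relevance, RELEVANCE_CODES) for i in range(1, 14)]
        issues = []
        for key, sheet, allowed in expected:
            judgment = sheet.get(key)
            if judgment is None:
                issues.append(f"{key}: not evaluated")
            elif judgment.code not in allowed:
                issues.append(f"{key}: invalid code {judgment.code!r}")
            elif not judgment.justification.strip():
                issues.append(f"{key}: missing justification")
        return issues
```

For example, after recording only `ev.reliability["R1"] = Judgment("Y", "p. 3, Methods")` on a fresh `CredEvaluation()`, `ev.problems()` still lists the remaining 32 criteria as not evaluated.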

Phase 3: Integration and Conclusion

- Synthesize Reliability Findings: Summarize the reliability profile. A study with many "Not Fulfilled" criteria in critical areas (e.g., exposure concentration verification, control performance) has low reliability, and its results should be weighted lightly or excluded.

- Synthesize Relevance Findings: Summarize the relevance profile. A study may be highly reliable but of low relevance to the specific assessment.

- Form an Integrated Conclusion: Weigh both dimensions to determine the study's overall utility for the assessment. The final conclusion might categorize the study as:

  - "Core Study": High reliability and high relevance. Suitable for quantitative analysis (e.g., included in species sensitivity distribution).

  - "Supporting Study": High reliability but moderate/low relevance, or medium reliability and high relevance. Used for qualitative support or uncertainty analysis.

  - "Excluded Study": Low reliability. Not used for decision-making regardless of perceived relevance.
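The Phase 3 categories can be written as a small decision function. This is a sketch under stated assumptions: it presumes the assessor has already summarised the reliability and relevance profiles as "High", "Medium" or "Low", and it returns "Expert judgment required" for combinations the three categories above do not cover (for example, medium reliability with medium relevance). The function name is hypothetical, and it documents the rule rather than replacing the integrated expert conclusion.

```python
def categorize_study(reliability: str, relevance: str) -> str:
    """Map summarised reliability/relevance levels to a study category."""
    levels = {"High", "Medium", "Low"}
    if reliability not in levels or relevance not in levels:
        raise ValueError("levels must be 'High', 'Medium' or 'Low'")
    if reliability == "Low":
        return "Excluded Study"  # excluded regardless of perceived relevance
    if reliability == "High" and relevance == "High":
        return "Core Study"
    if reliability == "High" or relevance == "High":
        # High reliability with medium/low relevance, or medium reliability with high relevance
        return "Supporting Study"
    # Remaining case: medium reliability with medium/low relevance (not covered by the text)
    return "Expert judgment required"
```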

Protocol for AI-Assisted Extraction of Experimental Procedures

The CRED method emphasizes the need for detailed reporting. Recent advances in artificial intelligence (AI) can aid in the structuring and analysis of experimental data from literature. The following protocol, inspired by computational literature mining approaches, outlines how AI can be used to extract and formalize experimental procedures from ecotoxicity study manuscripts [14].

Objective: To automatically parse a published ecotoxicity study's "Materials and Methods" section into a structured, machine-actionable sequence of experimental actions.

Materials:

- Source Documents: Digital manuscripts of ecotoxicity studies in PDF or HTML format.

- Software & Models: A Natural Language Processing (NLP) toolkit (e.g., spaCy, specialized chemical NLP models). Ideally, a pre-trained sequence-to-sequence model (e.g., a Transformer encoder-decoder such as BART) trained on chemical procedures [14].

- Action Ontology: A defined vocabulary of experimental actions relevant to ecotoxicology (e.g., PREPARE_STOCK_SOLUTION, ACCLIMATE_ORGANISMS, MEASURE_PH, RECORD_MORTALITY).

Procedure:

- Text Extraction and Pre-processing:

  - Convert the PDF/HTML manuscript to plain text.

  - Isolate the "Materials and Methods" (or equivalent) section using section headers.

  - Clean the text (remove line breaks, standardize units).

- Named Entity Recognition (NER):

  - Apply an NLP model to identify and tag key entities within the text:

    - Chemicals: Test substance, solvents, formulants.

    - Organisms: Species names, life stages.

    - Apparatus: Test chambers, water quality probes.

    - Parameters: Concentrations, temperatures, durations, pH levels.

- Action Sequence Prediction:

  - Input the processed text or a formalized summary (e.g., a list of identified entities) into a pre-trained action prediction model [14].

  - The model generates a sequence of step-by-step actions. For example:

    - `ACTION: PREPARE_TEST_SOLUTION; INPUT: $TEST_SUBSTANCE$; PARAMETER: CONCENTRATION=100 mg/L; SOLVENT: $DILUTION_WATER$`

    - `ACTION: ACCLIMATE_ORGANISMS; PARAMETER: DURATION=7 days`

    - `ACTION: INITIATE_EXPOSURE; INPUT: $TEST_SOLUTION$; INPUT: $TEST_ORGANISMS$`

- Validation and Curation:

  - A domain expert (ecotoxicologist) reviews the AI-generated action sequence against the original manuscript for accuracy and completeness.

  - Errors are corrected, and the curated sequence is added to a growing database of structured protocols. If the curated sequences are used to retrain or fine-tune the model, this feedback loop can improve its future performance.
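Before expert curation, each predicted action has to be turned into a structured record. The Python sketch below assumes the semicolon-delimited `KEY: value` format used in the example actions above (one action per line, with `$...$` marking placeholders); the `Action` record and the `parse_action` function are hypothetical names, not part of any published tool.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    name: str
    inputs: list[str] = field(default_factory=list)
    parameters: dict[str, str] = field(default_factory=dict)
    other: dict[str, str] = field(default_factory=dict)  # e.g. SOLVENT


def parse_action(line: str) -> Action:
    """Parse one 'ACTION: X; KEY: value; ...' line into an Action record."""
    action = None
    for part in (p.strip() for p in line.split(";")):
        if not part:
            continue
        key, _, value = part.partition(":")  # split on the first colon only
        key, value = key.strip().upper(), value.strip()
        if key == "ACTION":
            action = Action(name=value)
        elif action is None:
            raise ValueError(f"line must start with an ACTION field: {line!r}")
        elif key == "INPUT":
            action.inputs.append(value.strip("$"))  # drop placeholder markers
        elif key == "PARAMETER":
            pname, _, pvalue = value.partition("=")
            action.parameters[pname.strip()] = pvalue.strip()
        else:
            action.other[key] = value.strip("$")
    if action is None:
        raise ValueError(f"no ACTION field in {line!r}")
    return action
```

Keeping units inside the parameter string (e.g. "100 mg/L") defers unit normalisation to a later step, which keeps the parser simple.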

Application to CRED: The resulting structured protocol can be algorithmically checked for completeness against CRED's reporting recommendations (e.g., "Was exposure concentration measured?" corresponds to a MEASURE_CONCENTRATION action). This can provide a preliminary, automated check on study reporting quality.
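Such a completeness check can be sketched as a set comparison. The mapping below is purely illustrative: only MEASURE_CONCENTRATION, MEASURE_PH, ACCLIMATE_ORGANISMS and RECORD_MORTALITY appear in the text, while the other action names and all question labels are hypothetical. A reported gap only means that no matching action was extracted, which may reflect an extraction error rather than a genuine reporting deficit, so the result is a preliminary screen for the assessor.

```python
# Hypothetical mapping: reporting question -> actions that would evidence it.
REQUIRED_ACTIONS: dict[str, set[str]] = {
    "Exposure concentration measured": {"MEASURE_CONCENTRATION"},
    "Water quality (pH) monitored": {"MEASURE_PH"},
    "Organisms acclimated": {"ACCLIMATE_ORGANISMS"},
    "Biological response recorded": {"RECORD_MORTALITY", "RECORD_IMMOBILISATION", "RECORD_GROWTH"},
}


def reporting_gaps(actions: list[str], required: dict[str, set[str]] = REQUIRED_ACTIONS) -> list[str]:
    """Return the reporting questions for which none of the accepted actions was extracted."""
    present = set(actions)
    return [question for question, accepted in required.items() if not (accepted & present)]
```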

Visualizing the Evaluation Workflow and Conceptual Framework

CRED Evaluation Protocol Workflow

Reliability and Relevance in Regulatory Decision-Making

Table 3: Key Resources for CRED Evaluation and Ecotoxicity Study Design

| Resource / Tool | Function / Purpose | Key Features & Notes |
|---|---|---|
| CRED Assessment Sheets (Excel) [12] | The primary tool for conducting evaluations. Provides the structured checklist of 20 reliability and 13 relevance criteria with guided fields for scoring and justification. | Available for aquatic studies. Requires enabling macros for full functionality [12]. |
| NanoCRED Tool [12] | Specialized adaptation of CRED for evaluating ecotoxicity studies of engineered nanomaterials (ENMs). Incorporates criteria specific to ENM characterization (e.g., size, coating, agglomeration state in media). | Addresses the unique reliability challenges posed by nanomaterial testing. |
| EthoCRED Framework [12] | A framework to guide the reporting and evaluation of behavioral ecotoxicity studies. Provides criteria to assess the reliability and relevance of behavioral endpoints. | Helps integrate sensitive behavioral data into regulatory assessments systematically. |
| CRED Reporting Recommendations [13] | A checklist of 50 specific reporting items across 6 categories (general, test design, substance, organism, exposure, stats). | Using this checklist when designing studies or writing manuscripts helps ensure that later evaluators find the information needed to judge each reliability criterion. |
| OECD Test Guidelines | Internationally agreed test protocols (e.g., OECD 201, 210, 211). | Studies conducted in full compliance with a relevant OECD TG typically fulfill many core CRED reliability criteria. |
| AI for Protocol Extraction [14] | Computational models (e.g., transformer-based NLP) that can parse textual methods sections into structured action sequences. | Emerging tool to automate the extraction and formalization of experimental details, aiding in rapid screening and data curation. |

A Step-by-Step Guide to Applying the CRED Evaluation Criteria

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) framework represents a pivotal advancement in the standardized assessment of ecotoxicological studies for regulatory and research purposes. Developed to address the inconsistencies and subjective biases inherent in earlier evaluation methods like the Klimisch approach, CRED provides a transparent, detailed, and systematic tool for judging the reliability and relevance of aquatic ecotoxicity data [1] [2]. Within the broader thesis on methodological rigor in ecotoxicity, the CRED framework is posited as the foundational pillar that enables reproducible and consistent hazard and risk assessments. Its primary aim is to improve the usability of peer-reviewed literature in regulatory processes, such as deriving Predicted-No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs), by ensuring that evaluations are based on the best available and most trustworthy science [1] [12].

Core Components: Reliability and Relevance Criteria

The CRED evaluation method is built on two distinct but complementary pillars: reliability (the inherent scientific quality of a study) and relevance (the appropriateness of the study for a specific assessment purpose) [1]. A study can be highly reliable but irrelevant for a particular regulatory question, and vice-versa. This clear separation is a cornerstone of the framework.

The 20 Reliability Criteria

Reliability assesses the intrinsic soundness of a study's design, conduct, and reporting. The 20 criteria are designed to minimize the risk of bias and ensure the clarity and plausibility of the findings [1].

Table 1: The CRED Framework's 20 Reliability Criteria

| Criterion Group | Specific Criterion | Key Evaluation Question |
|---|---|---|
| Test Substance | 1. Identity and Purity | Is the test substance clearly identified, and is its purity/impurity profile documented? |
| | 2. Stability | Was the stability of the test substance in the medium verified? |
| | 3. Exposure Verification | Was the actual exposure concentration measured and reported? |
| Test Organism | 4. Species Identity | Is the test species clearly identified (preferably to species level)? |
| | 5. Life-stage & Source | Are the life-stage, source (e.g., culture), and health status of organisms reported? |
| | 6. Acclimatization | Were organisms properly acclimatized to test conditions? |
| Test Design | 7. Control Groups | Were appropriate control groups (e.g., negative, solvent) included and performed acceptably? |
| | 8. Replicates | Was the number of replicates and organisms per replicate sufficient and reported? |
| | 9. Randomization | Was the allocation of test organisms to treatments randomized? |
| | 10. Blinding | Was the scoring/evaluation of endpoints performed blindly? |
| | 11. Test Concentrations | Were test concentrations justified and appropriately spaced (e.g., geometric series)? |
| Exposure Conditions | 12. Test Duration | Was the test duration appropriate for the endpoint and species? |
| | 13. Test Medium | Is the composition of the test medium (water, sediment) fully described? |
| | 14. Temperature & Light | Were key physical parameters (temperature, photoperiod) controlled and reported? |
| | 15. Feeding & Renewal | Were feeding (if any) and medium renewal regimes specified and appropriate? |
| Endpoint & Analysis | 16. Endpoint Definition | Is the measured endpoint (e.g., immobility, growth) clearly defined? |
| | 17. Statistical Methods | Were appropriate statistical methods used and clearly reported? |
| | 18. Dose-Response | Is a dose-response relationship demonstrated or discussed? |
| | 19. Raw Data | Is access to raw data provided or are summary data sufficiently detailed? |
| Reporting & Plausibility | 20. Results Consistency | Are the results internally consistent and plausibly linked to the methodology? |

The 13 Relevance Criteria

Relevance determines how fit-for-purpose a study is for a specific regulatory assessment. It depends on the assessment goals (e.g., protecting freshwater vs. marine ecosystems, acute vs. chronic risk) [1].

Table 2: The CRED Framework's 13 Relevance Criteria

| Criterion Category | Specific Criterion | Regulatory Assessment Consideration |
|---|---|---|
| Test Species | 1. Taxonomic Group | Is the species from a relevant trophic level (e.g., algae, invertebrate, fish)? |
| | 2. Protection Goals | Does the species represent a regionally or functionally relevant protection goal? |
| Exposure | 3. Exposure Pathway | Is the tested exposure route (e.g., water, sediment, diet) relevant to the scenario? |
| | 4. Temporal Pattern | Does the exposure duration (acute, chronic, pulsed) match the expected environmental exposure? |
| | 5. Media Characteristics | Are the test medium properties (pH, hardness, organic carbon) relevant to the assessment area? |
| Endpoint | 6. Effect Type | Is the measured endpoint (lethal, sublethal like growth/reproduction, behavioral) relevant to the protection goal? |
| | 7. Ecological Significance | Is the endpoint linked to individual fitness or population-level consequences? |
| Test Substance | 8. Substance Form | Is the tested form (e.g., active ingredient, formulated product, environmental metabolite) relevant? |
| | 9. Fate Considerations | Does the test consider relevant environmental transformation processes? |
| Assessment Context | 10. Regulatory Framework | Does the study meet the specific data requirements of the applicable regulation (e.g., REACH, WFD)? |
| | 11. Assessment Factor Derivation | Is the study suitable for deriving assessment factors (e.g., a chronic NOEC for a PNEC)? |
| | 12. Mode of Action | Does the test endpoint align with the known or suspected mode of action of the substance? |
| | 13. Overall Weight of Evidence | How does the study contribute to the overall body of evidence for the hazard assessment? |

Application Protocol: Implementing the CRED Evaluation Method

The following protocol outlines the step-by-step application of the CRED framework for the systematic evaluation of an aquatic ecotoxicity study.

Protocol: CRED Study Evaluation Workflow

Objective: To consistently and transparently determine the reliability and relevance of an aquatic ecotoxicity study for use in chemical hazard and risk assessment.

Materials: Study manuscript/report, CRED evaluation sheet (Excel tool recommended [2] [12]), access to supplemental data if available.

Procedure:

Study Acquisition and Preliminary Review:

- Obtain the full text of the ecotoxicity study to be evaluated.

- Perform an initial read to understand the study's objectives, design, and key findings.

Systematic Reliability Assessment (Apply 20 Criteria):

- For each of the 20 reliability criteria in Table 1, extract the corresponding information from the study's methods and results sections.

- For each criterion, assign a judgment:

  - "Yes": The criterion is fully met. Information is clearly reported and methodologically sound.

  - "No": The criterion is not met. The reported methodology is flawed or inadequate.

  - "Partly": The criterion is partially met. Information or methodology is addressed but incomplete or unclear.

  - "Not Reported (NR)": The information needed to judge the criterion is absent from the report.

- Document the rationale for each judgment, citing specific lines, tables, or figures from the study.

Overall Reliability Classification:

- Based on the pattern of judgments, assign an overall reliability classification to the study. While CRED encourages a nuanced view, classifications often follow:

  - Reliable without restriction: All key criteria are met ("Yes"). Minor limitations may be present but do not affect the study's core conclusions.

  - Reliable with restrictions: Some criteria are only partly met or specific minor flaws exist, but the study is still scientifically valid for use.

  - Not reliable: Critical flaws (e.g., no controls, unacceptable control performance, unverified exposure concentrations, inappropriate statistics) invalidate the study's findings [1].
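Making the classification rule explicit helps apply it identically across studies. In this Python sketch, which criteria count as "critical" is an assumption (Criterion 3, Exposure Verification; 7, Control Groups; and 17, Statistical Methods, chosen to mirror the examples of critical flaws above), as is the rule that a "No" or "NR" on any critical criterion makes a study not reliable. Requiring every criterion to be "Yes" for an unrestricted rating is a deliberately strict reading of "all key criteria are met". The function name is hypothetical, and expert review can still override its output.

```python
# Hypothetical set of "critical" criteria, numbered as in Table 1:
# 3 = Exposure Verification, 7 = Control Groups, 17 = Statistical Methods.
CRITICAL = {3, 7, 17}
VALID = {"Yes", "No", "Partly", "NR"}


def classify_reliability(judgments: dict[int, str], critical: set[int] = CRITICAL) -> str:
    """Return an overall reliability class from judgments on criteria 1-20."""
    missing = set(range(1, 21)) - set(judgments)
    if missing:
        raise ValueError(f"criteria not judged: {sorted(missing)}")
    invalid = {c: v for c, v in judgments.items() if v not in VALID}
    if invalid:
        raise ValueError(f"invalid judgments: {invalid}")
    # Assumption: a critical criterion that fails or cannot be checked invalidates the study.
    if any(judgments[c] in {"No", "NR"} for c in critical):
        return "Not reliable"
    # Strict reading: every criterion must be fully met for an unrestricted rating.
    if all(v == "Yes" for v in judgments.values()):
        return "Reliable without restriction"
    return "Reliable with restrictions"
```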

Context-Specific Relevance Assessment (Apply 13 Criteria):

- Define the specific purpose of your assessment (e.g., "Derivation of a chronic freshwater PNEC for a pharmaceutical").

- For each of the 13 relevance criteria in Table 2, evaluate the study against your defined assessment goal.

- Judge each criterion as "Relevant", "Not Relevant", or "Potentially Relevant".

- Document the reasoning, linking study details to the protection goals and exposure scenarios of your assessment.

Integration and Final Decision:

- Synthesize the reliability and relevance evaluations.

- Make a final decision on the study's usability:

  - A study must be at least "Reliable with restrictions" to be considered for primary use in quantitative risk assessment.

  - A "Not reliable" study is typically excluded, though it may be noted in a weight-of-evidence discussion.

  - The relevance judgment determines how a reliable study is used (e.g., as a key study, a supporting study, or for a different assessment endpoint).

Documentation and Reporting:

- Complete the CRED evaluation sheet in full, ensuring all judgments and rationales are recorded. This creates a transparent audit trail [1].

Experimental Basis: The CRED Ring Test

The CRED framework was empirically validated through an international ring test [1] [2].

- Design: Risk assessors from industry, academia, and government evaluated multiple ecotoxicity studies, first using the traditional Klimisch method and then using the draft CRED method.

- Outcome: Participants found the CRED method more accurate, applicable, consistent, and transparent than the Klimisch method. The detailed criteria reduced subjectivity and variability between assessors [1].

- Result: The ring test provided the evidence base for fine-tuning the criteria and established CRED as a demonstrably superior tool for harmonized study evaluation.

Specialized Extensions of the CRED Framework

The core CRED principles have been adapted to address specific challenges in emerging areas of ecotoxicology.

EthoCRED for Behavioral Ecotoxicity Studies

Behavioral endpoints are highly sensitive but poorly covered by standard test guidelines. EthoCRED extends the CRED framework with tailored criteria for behavioral studies [15] [16].

- Key Adaptations:

  - Reliability: Adds criteria for behavioral assay validation (e.g., equipment calibration, environmental control in arenas), automated tracking verification, and ethical considerations for behavioral testing.

  - Relevance: Emphasizes the ecological significance of behavioral endpoints (e.g., linking altered foraging to individual fitness, linking predator avoidance to population dynamics) and the temporal resolution of measurements appropriate for behavioral responses [15].

NanoCRED for Nanomaterial Ecotoxicity

Evaluating studies on engineered nanomaterials (ENMs) requires attention to their unique properties. NanoCRED modifies CRED to address nano-specific challenges [12].

- Key Adaptations:

  - Reliability: Stricter criteria for material characterization (size, shape, coating, aggregation state in the test medium) and exposure verification using analytical techniques suitable for ENMs (e.g., ICP-MS, electron microscopy).

  - Relevance: Focuses on the environmental realism of the tested nanoform and the appropriateness of the test media for assessing fate and bioavailability of ENMs [12].

Evolution and Integration: The EcoSR Framework

The Ecotoxicological Study Reliability (EcoSR) framework, proposed in 2025, represents an evolution integrating CRED's strengths with a more formal Risk of Bias (RoB) assessment approach common in human health [17].

Table 3: Comparison of the CRED and EcoSR Frameworks

| Feature | CRED Framework | EcoSR Framework |
|---|---|---|
| Primary Focus | Evaluation of reliability and relevance for regulatory data acceptance. | In-depth assessment of internal validity (risk of bias) for toxicity value development. |
| Structure | Two sets of criteria (20 reliability, 13 relevance). | Two-tiered: Tier 1 (screening) and Tier 2 (full RoB assessment across bias domains). |
| Core Methodology | Criteria-based scoring with expert judgment. | Domain-based RoB judgment (e.g., Low/Medium/High/Unclear risk of bias in selection, exposure, outcome measurement). |
| Output | Classification (e.g., reliable without/with restrictions) and relevance judgment. | A detailed bias profile identifying the most critical weaknesses affecting study validity. |
| Relationship | Serves as the foundational criterion set. | Incorporates and builds upon CRED's reliability concepts for deeper validity appraisal [17]. |

Reporting Guidelines: The CRED Reporting Recommendations

To proactively improve study quality, CRED provides 50 reporting recommendations across six categories [1]. Adherence to these by authors minimizes evaluation ambiguity.

Table 4: CRED Reporting Recommendation Categories

| Category | Number of Recommendations | Purpose |
|---|---|---|
| General Information | 7 | Ensure traceability and context (e.g., authors, funding, regulatory purpose). |
| Test Design | 9 | Fully document experimental setup (e.g., type of test, controls, replicates, randomization). |
| Test Substance | 7 | Provide complete chemical identification, preparation, and analytical verification details. |
| Test Organism | 7 | Specify organism biology, source, husbandry, and acclimation conditions. |
| Exposure Conditions | 11 | Detail all physical, chemical, and temporal aspects of the exposure regime. |
| Statistical & Biological Response | 9 | Clearly present data, statistical methods, results, and dose-response relationships. |

The Scientist's Toolkit: Essential Reagents and Materials for CRED-Evaluated Studies

The following materials are critical for conducting ecotoxicity studies that can meet high reliability standards under CRED evaluation.

Table 5: Research Reagent Solutions for Standard Aquatic Ecotoxicity Tests

| Item | Function in Ecotoxicity Testing | CRED Evaluation Consideration |
|---|---|---|
| Reference Toxicants (e.g., KCl, sodium dodecyl sulfate) | Used in periodic control tests to confirm the consistent sensitivity and health of test organism cultures. | Supports Criterion 7 (Control Groups) by demonstrating laboratory proficiency and organism health. |
| Solvent Controls (e.g., Acetone, Methanol, DMSO) | Vehicles for poorly water-soluble test substances. Must be non-toxic at the concentration used. | Critical for Criterion 7 and Criterion 11 (Test Concentrations); their use and effect must be reported. |
| Reconstituted Standardized Test Media (e.g., OECD, EPA reconstituted freshwater) | Provides a consistent, defined water chemistry matrix for tests, improving inter-laboratory reproducibility. | Directly addresses Criterion 13 (Test Medium); composition must be specified. |
| Analytical Grade Test Substance | The chemical of known identity and high purity used to prepare stock and test solutions. | Fundamental to Criterion 1 (Identity and Purity). Impurities must be characterized. |
| Internal & External Analytical Standards (for chemical analysis) | Used in chromatography (e.g., HPLC, GC) and spectroscopy to quantify the test substance concentration in the exposure medium. | Essential for Criterion 3 (Exposure Verification). The method and frequency of analysis must be reported. |
| Live Algal or Invertebrate Food Cultures (e.g., Pseudokirchneriella subcapitata, Artemia nauplii) | Provide nutrition in chronic tests with fish and invertebrates; algal cultures also serve as the test organism in algal growth inhibition tests. | Relevant to Criterion 5 (Life-stage & Source) of the food organism and Criterion 15 (Feeding). |
| Certified Water Quality Kits/Probes | For monitoring and reporting key water quality parameters (pH, dissolved oxygen, conductivity, temperature, hardness). | Required for Criterion 14 (Temperature & Light) and part of documenting exposure conditions. |

Visual Synthesis: Frameworks and Workflows

CRED Framework Ecosystem and Evolution

CRED Evaluation Decision Workflow

This document provides application notes and detailed protocols for implementing the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method. The content is framed within a broader thesis focused on advancing the evaluation of ecotoxicity study reliability. The CRED method was developed to address significant shortcomings in the widely used Klimisch method, which has been criticized for its lack of detailed guidance, inconsistency among assessors, and insufficient consideration of study relevance [18]. Within the thesis context, CRED represents a pivotal evolution towards a more transparent, consistent, and scientifically robust framework for evaluating data used in environmental hazard and risk assessments for chemicals, including pharmaceuticals [18].

The core thesis posits that adopting a structured, criteria-based workflow—from initial study screening to detailed mechanistic evaluation—enhances the reliability of ecotoxicological risk assessments. This workflow ensures that all available data, including peer-reviewed literature, are consistently and transparently evaluated, thereby supporting more harmonized regulatory decisions [18] [12].

Core Workflow: A Three-Phase CRED Protocol

The practical application of the CRED methodology follows a sequential, three-phase workflow. This structured approach ensures a systematic evaluation of both the reliability (inherent quality of the study) and relevance (appropriateness for the specific assessment) of ecotoxicity data [18].

- Phase 1: Study Screening and Triage

- Phase 2: Detailed Reliability & Relevance Evaluation

- Phase 3: Data Integration and Uncertainty Characterization

Phase 1 Protocol: Study Screening and Triage

Objective: To efficiently identify and acquire ecotoxicity studies that meet minimum thresholds of acceptability for further detailed evaluation.

Procedure:

- Source Identification: Compile studies from regulatory dossiers, scientific databases (e.g., ECOTOX), and peer-reviewed literature [19].

- Initial Application of Acceptance Criteria: Screen each study against a predefined set of minimum criteria. Studies that fail are categorized as "rejected" and excluded from the next phase, though the reason for rejection must be documented.

- Categorization: Based on the screen, categorize studies as:

  - Accepted: For full evaluation (Phase 2).

  - Rejected: Does not meet critical criteria.

  - Other: Requires expert judgment (e.g., preliminary data, unclear reporting) [19].

Key Screening Criteria (Adapted from US EPA Guidelines) [19]:

- The study investigates effects of a single chemical.

- Effects are reported for whole, live aquatic or terrestrial organisms.

- A concurrent control group is used.

- An explicit exposure duration and a measured or nominal concentration/dose are reported.

- A quantitative biological endpoint (e.g., LC50, NOEC, EC50) is presented.

- The study is a primary source (not a review or secondary summary) and is publicly available.

Table 1: Minimum Study Screening Criteria for Aquatic Ecotoxicity Data [19]

| Criterion Category | Description | Accept/Reject Decision |
|---|---|---|
| Test Substance | Single, identifiable chemical of concern. | Reject if mixture or unknown substance. |
| Test Organism | Live, whole aquatic or terrestrial species. | Reject if cell line, microbial assay, or deceased organisms. |
| Experimental Design | Concurrent control group; reported exposure duration. | Reject if control missing or exposure time unclear. |
| Data Reporting | Quantitative endpoint; concentration/dose reported. | Reject if only qualitative effects or no exposure data. |
| Document Type | Primary source, full article, publicly available. | Reject if abstract-only, review, or unavailable document. |
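The screening criteria above translate directly into a triage function. This Python sketch assumes each candidate study has already been summarised into yes/no answers; the `ScreeningRecord` fields and the `screen` function are hypothetical names. Rejection is given precedence over the "Other" (expert judgment) category, which is an assumption, and the reasons for rejection are returned so they can be documented as the protocol requires.

```python
from dataclasses import dataclass


@dataclass
class ScreeningRecord:
    """Hypothetical yes/no summary of a candidate study for Phase 1 triage."""
    single_chemical: bool
    whole_live_organism: bool
    concurrent_control: bool
    exposure_duration_reported: bool
    concentration_reported: bool
    quantitative_endpoint: bool
    primary_full_text: bool
    needs_expert_review: bool = False  # e.g. preliminary data, unclear reporting


def screen(study: ScreeningRecord) -> tuple[str, list[str]]:
    """Return ("Accepted" | "Rejected" | "Other", reasons)."""
    checks = {
        "Test Substance: not a single, identifiable chemical": study.single_chemical,
        "Test Organism: not a whole, live organism": study.whole_live_organism,
        "Experimental Design: no concurrent control": study.concurrent_control,
        "Experimental Design: exposure duration unclear": study.exposure_duration_reported,
        "Data Reporting: no concentration/dose reported": study.concentration_reported,
        "Data Reporting: no quantitative endpoint": study.quantitative_endpoint,
        "Document Type: not a publicly available primary full text": study.primary_full_text,
    }
    reasons = [reason for reason, passed in checks.items() if not passed]
    if reasons:
        return "Rejected", reasons  # keep the reasons for the screening record
    if study.needs_expert_review:
        return "Other", ["flagged for expert judgment"]
    return "Accepted", []
```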

Phase 2 Protocol: Detailed Reliability and Relevance Evaluation

Objective: To perform a transparent and criterion-based assessment of the methodological reliability and assessment-specific relevance of each accepted study.

Procedure:

- Reliability Evaluation: Use the CRED evaluation sheet to assess 20 key criteria across four domains [18]. Each criterion is evaluated (e.g., Yes/No/Partly), and supporting guidance and comments are documented:

  - Test Substance Characterization (e.g., purity, formulation, concentration verification).

  - Test Organism Information (e.g., species, life stage, source, health status).

  - Study Design and Execution (e.g., test system, exposure regimen, temperature, controls, compliance with guidelines like OECD).

  - Data Reporting and Analysis (e.g., raw data, statistical methods, dose-response, clarity of results).

- Relevance Evaluation: Simultaneously evaluate the study against 13 relevance criteria tailored to the specific risk assessment question [18]:

  - Biological Relevance: Appropriateness of the tested species, endpoint (e.g., mortality, growth, reproduction), and exposure pathway.

  - Temporal and Spatial Relevance: Match of exposure duration and scenario to the assessment context.

- Overall Weight-of-Evidence Judgment: Synthesize reliability and relevance evaluations to assign a final confidence rating (e.g., Reliable, Reliable with Restrictions, or Not Reliable) for the study's use in the specific assessment.

Table 2: Comparison of the Klimisch and CRED Evaluation Methods [18]

| Characteristic | Klimisch Method | CRED Method |
|---|---|---|
| Primary Focus | Reliability only. | Reliability and Relevance. |
| Number of Criteria | 12-14 vague criteria. | 20 reliability + 13 relevance detailed criteria. |
| Guidance Provided | Minimal, leading to high expert judgment dependency. | Detailed guidance for each criterion, improving consistency. |
| Basis for Judgment | Often prioritizes GLP and guideline status. | Transparent, criteria-based scoring of reported methods. |
| Outcome Transparency | Low; categorical score only. | High; documented evaluation for each criterion. |

Phase 3 Protocol: Data Integration and Uncertainty Characterization

Objective: To integrate evaluated studies into a coherent dataset for risk characterization and to transparently communicate the overall uncertainty.

Procedure:

- Dataset Compilation: Create a matrix of all reliable studies, organized by species, endpoint, and reliability/relevance rating.

- Mechanistic Modeling (Where Applicable): For refined assessments, use reliable data to calibrate and validate Toxicokinetic-Toxicodynamic (TKTD) models, such as the General Unified Threshold model of Survival (GUTS) [20].

  - Model Calibration: Fit the model to a subset of experimental data (e.g., time-series survival under constant exposure).

  - Model Validation: Test model predictions against an independent dataset (e.g., survival under pulsed exposure).

  - Performance Assessment: Evaluate model fits using a combination of visual assessment and quantitative Goodness-of-Fit (GoF) metrics (e.g., Normalized Root-Mean-Square Error - NRMSE) [20].

- Uncertainty Characterization: Use graphical and tabular approaches to communicate overall confidence in the derived toxicity values or risk estimates. This can include "traffic light" tables or uncertainty factor decomposition plots that illustrate the strengths and limitations of the underlying database and models [21].
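To make the calibration/validation step concrete, the sketch below simulates survival under the reduced "stochastic death" variant of GUTS (GUTS-RED-SD), in which scaled damage follows dD/dt = kd(Cw − D) and the hazard is bw·max(0, D − zw) + hb. It is a minimal Python sketch using explicit Euler steps; the parameter values and exposure profiles are hypothetical, and the fitting itself (which dedicated packages such as morse perform, e.g. by Bayesian inference) is not shown.

```python
import math


def guts_red_sd_survival(conc, dt, kd, bw, zw, hb):
    """Survival probability over time under GUTS-RED-SD (explicit Euler steps).

    conc : external concentrations sampled every dt time units
    kd   : dominant rate constant (1/time)
    bw   : killing rate (1/(concentration * time))
    zw   : damage threshold (concentration units)
    hb   : background hazard rate (1/time)
    Returns one survival probability per entry of conc, starting at 1.0.
    """
    damage = 0.0              # scaled damage; organisms start undamaged
    cumulative_hazard = 0.0
    survival = [1.0]
    for c in conc[:-1]:
        # Hazard uses damage at the start of the step; then damage is advanced.
        hazard = bw * max(0.0, damage - zw) + hb
        cumulative_hazard += hazard * dt
        damage += kd * (c - damage) * dt     # dD/dt = kd * (Cw - D)
        survival.append(math.exp(-cumulative_hazard))
    return survival


# Hypothetical parameters. Calibration would fit them to constant-exposure data;
# validation would compare predictions such as s_pulse with observed pulsed data.
dt = 0.01                                    # days; keep kd * dt well below 1
times = [i * dt for i in range(401)]         # 4-day test
constant = [5.0 for _ in times]
pulsed = [10.0 if t < 1.0 else 0.0 for t in times]
params = dict(kd=0.5, bw=0.2, zw=2.0, hb=0.01)
s_const = guts_red_sd_survival(constant, dt, **params)
s_pulse = guts_red_sd_survival(pulsed, dt, **params)
print(f"Day-4 survival: constant {s_const[-1]:.2f}, pulsed {s_pulse[-1]:.2f}")
```

A small fixed step keeps the example dependency-free; a production implementation would more likely use an ODE solver with adaptive steps.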

Table 3: Goodness-of-Fit Metrics for TKTD (GUTS) Model Evaluation [20]

| Metric | Acronym | Description | Interpretation & Suggested Threshold |
|---|---|---|---|
| Normalized Root-Mean-Square Error | NRMSE | Measures the average magnitude of prediction error over time, normalized by the mean observation. Lower values indicate better fit. | NRMSE < 50% generally indicates a satisfactory fit for survival data [20]. |
| Survival Probability Prediction Error | SPPE | Quantifies the accuracy of predicted survival at the end of the experiment across all treatments. | SPPE < 30% is suggested as a conservative acceptance criterion [20]. |
| Posterior Predictive Check | PPC | Assesses whether observations fall within the Bayesian posterior predictive intervals of the model. | A high percentage (e.g., >80%) of data points within the 95% prediction interval is desirable. |
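Two of the metrics in Table 3 reduce to simple arithmetic once observations and model predictions are paired. The Python sketch below assumes the inputs are survivor counts at each observation time and that the lower/upper 95% bounds come from the fitted model's posterior predictive distribution; the data are hypothetical, and SPPE is omitted because its exact formula is not given here.

```python
import math


def nrmse_percent(observed, predicted):
    """RMSE between paired observations and predictions, divided by the mean observation (%)."""
    if not observed or len(observed) != len(predicted):
        raise ValueError("need non-empty, paired series")
    mse = sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed)
    return 100.0 * math.sqrt(mse) / (sum(observed) / len(observed))


def ppc_percent(observed, lower, upper):
    """Share of observations lying inside their 95% prediction interval (%)."""
    inside = sum(lo <= o <= hi for o, lo, hi in zip(observed, lower, upper))
    return 100.0 * inside / len(observed)


# Hypothetical survivor counts from a validation (pulsed-exposure) test.
observed = [20, 18, 15, 12, 10]
predicted = [20, 17.2, 15.9, 11.0, 9.1]
lower = [20, 15, 12, 8, 6]
upper = [20, 20, 18, 15, 13]
print(f"NRMSE = {nrmse_percent(observed, predicted):.1f}% (satisfactory if < 50%)")
print(f"PPC = {ppc_percent(observed, lower, upper):.0f}% of observations in the 95% band")
```

Both functions are deliberately agnostic about how predictions were generated, so the same checks apply to calibration and validation datasets.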

Diagram 1: The Three-Phase CRED Evaluation Workflow

Advanced Applications and Extended Protocols

The CRED framework is adaptable to specialized areas within ecotoxicology, ensuring its utility for modern research challenges.

3.1 Protocol for Nanomaterial Ecotoxicity (NanoCRED): The basic CRED criteria are extended with specific considerations for nanomaterials [12].

- Test Substance Characterization: Must include particle characterization (size distribution, surface area, charge, aggregation state) in the exposure medium.

- Dosing and Exposure: Documentation of methods to maintain stable and characterized exposures throughout the test (e.g., sonication, use of dispersants, measured concentrations).

- Endpoint Relevance: Inclusion of endpoints specific to nano-effects, such as oxidative stress, particle uptake, or behavioral changes.
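Documenting exposure stability usually means comparing measured with nominal concentrations. Several OECD aquatic test guidelines (for example TG 211) allow results to be based on nominal or initial measured concentrations only if measured values stay within ±20% of them throughout the test, and otherwise call for a time-weighted mean. The minimal Python sketch below implements only that ±20% check; applying the same band to nanomaterial suspensions, and the choice of measured quantity (e.g., total versus dissolved concentration), are assumptions for illustration, and the time-weighted mean is not implemented.

```python
def exposure_within_tolerance(nominal, measured, tolerance=0.20):
    """Check measured concentrations against a +/- tolerance band around nominal.

    Returns (all_within, worst_relative_deviation).
    """
    if nominal <= 0:
        raise ValueError("nominal concentration must be positive")
    if not measured:
        raise ValueError("no measured concentrations supplied")
    deviations = [abs(m - nominal) / nominal for m in measured]
    worst = max(deviations)
    return worst <= tolerance, worst


# Hypothetical measured total concentrations (mg/L) at 0, 24 and 48 h for a
# nominal 1.0 mg/L nanoparticle suspension that sediments over time.
ok, worst = exposure_within_tolerance(1.0, [0.97, 0.78, 0.55])
print(ok, round(worst, 2))   # False 0.45
```

Returning the worst deviation, not just a pass/fail flag, gives the evaluator a number to cite when a study is classified as reliable with restrictions.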

3.2 Protocol for Behavioral Endpoints (EthoCRED): EthoCRED provides a tailored framework for evaluating studies on behavioral changes, a sensitive but methodologically complex endpoint [12].

- Apparatus Validation: Evaluation of the testing apparatus for its ability to accurately measure the claimed behavior (e.g., arena size, sensor calibration, video tracking settings).

- Baseline Behavior: Requirement for documentation of normal behavioral variation in control groups.

- Blinding and Bias Mitigation: Assessment of whether the experiments were conducted with observers blind to treatment.

- Statistical Power: Evaluation of whether the sample size was sufficient to detect a biologically meaningful effect size in behavioral metrics.
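A quick way to judge the power criterion is a sample-size calculation for a standardized effect size (Cohen's d, the difference in means divided by the pooled standard deviation). The Python sketch below uses the normal approximation for a two-sided, two-sample comparison; it slightly underestimates the exact t-test sample size and ignores the multiplicity adjustment of many-to-one tests such as Dunnett's, so its results are best read as lower bounds.

```python
import math
from statistics import NormalDist


def _z_sum(alpha, power):
    # Two-sided critical value plus the standard-normal quantile for the target power.
    z = NormalDist()
    return z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate animals per group needed to detect a given Cohen's d."""
    return math.ceil(2 * (_z_sum(alpha, power) / effect_size) ** 2)


def detectable_effect(n, alpha=0.05, power=0.80):
    """Smallest Cohen's d detectable with n animals per group."""
    return _z_sum(alpha, power) * math.sqrt(2 / n)


print(n_per_group(1.0))                 # 16
print(round(detectable_effect(8), 2))   # 1.4
```

For example, with the minimum of 8 fish per treatment specified in the locomotor protocol in this section, only effects of roughly 1.4 pooled standard deviations are detectable with 80% power, which is large for typically variable behavioral endpoints.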

Diagram 2: Protocol for Evaluating TKTD (GUTS) Model Performance

The Scientist's Toolkit: Essential Tools and Resources

Successful implementation of the CRED workflow requires both conceptual tools and practical resources.

Table 4: Essential Toolkit for CRED-Based Ecotoxicity Evaluation

| Tool/Resource | Function in the Workflow | Source/Example |
|---|---|---|
| CRED Evaluation Sheets | Structured templates for documenting reliability and relevance criteria assessments for each study. | Excel-based tools with macros for visualization [12]. |
| OECD Test Guidelines | Provide the standardized methodological benchmarks against which study reliability is evaluated. | OECD Guidelines 210 (Fish Early-Life), 211 (Daphnia magna), 201 (Algae) [18]. |
| ECOTOX Database | A key source for identifying peer-reviewed ecotoxicity studies for screening (Phase 1). | U.S. EPA ECOTOXicology knowledgebase [19]. |
| QSAR Toolbox | Software for data gap filling via read-across and category formation, useful after data evaluation. | OECD QSAR Toolbox for grouping chemicals and predicting toxicity [22]. |
| TKTD Modeling Software | Tools to calibrate and validate mechanistic models (e.g., GUTS) for refined risk assessment (Phase 3). | morse or GUTS R packages [20]. |
| Uncertainty Characterization Templates | Pre-formatted tables and graphs for transparently communicating data confidence and variability. | Approaches based on IRIS framework (e.g., uncertainty factor plots) [21]. |

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) framework was developed to standardize the assessment of aquatic ecotoxicity studies, replacing loosely structured expert judgment with explicit criteria to promote reproducibility, transparency, and consistency in regulatory decision-making [1]. A core feature of CRED is that it assigns reliability categories, including the pivotal "Reliable with Restrictions" category, on the basis of these explicit criteria. The category itself already existed in the Klimisch scheme; what CRED adds is a documented, criterion-by-criterion justification for it. This classification is essential for nuanced hazard and risk assessment, as it allows the inclusion of valuable scientific data that may have minor flaws or deviations from standardized guidelines but remain scientifically sound and informative.

Within CRED, reliability and relevance are distinct but interconnected concepts. Reliability refers to the inherent scientific quality of a study—its design, performance, and analysis—independent of its intended use. Relevance, however, is defined by the appropriateness of the data for a specific hazard identification or risk characterization purpose [1]. A study can be highly reliable but irrelevant for a particular assessment (e.g., a robust soil ecotoxicity study is irrelevant for setting a water quality standard). Conversely, a study deemed "Reliable with Restrictions" may be highly relevant and provide critical evidence, especially when data on a particular substance or endpoint are scarce.

The "Reliable with Restrictions" category signifies that a study is fundamentally valid and contributes useful evidence, but contains specific, defined limitations. These limitations are not severe enough to invalidate the core findings, but they introduce a degree of uncertainty or reduce the confidence with which the results can be applied. The proper interpretation of this category is therefore critical: it prevents the unnecessary dismissal of valuable research while ensuring that any constraints on the data's use are clearly acknowledged and documented.

Evaluation Criteria for the 'Reliable with Restrictions' Classification

The assignment of the "Reliable with Restrictions" category is based on a detailed evaluation against explicit criteria. The foundational CRED method outlines 20 reliability criteria covering aspects from test substance characterization to statistical analysis [1]. The more recent EthoCRED extension, designed for behavioral ecotoxicity studies, expands this to 29 reliability criteria to address the unique methodologies in this sub-discipline [23]. A study typically falls into the "Reliable with Restrictions" category when it adheres to most core scientific principles but has deficiencies in one or several specific criteria.

Table 1: Common Deficiencies Leading to a 'Reliable with Restrictions' Classification

| Evaluation Category | Specific Criteria Deficiency | Impact on Study Interpretation |
|---|---|---|
| Test Substance & Solution | Incomplete characterization of test substance purity or concentration verification; inadequate description of solvent/dosing vehicle. | Introduces uncertainty in the actual exposure concentration, affecting dose-response accuracy. |
| Test Organism | Organism source or life stage not fully specified; pre-exposure health/holding conditions inadequately reported. | Raises questions about genetic variability, health status, and the reproducibility of the test. |
| Exposure System | Lack of measurement of key water quality parameters (e.g., pH, oxygen, temperature) during the test; insufficient renewal of test media. | Uncertainty over whether effects are due to the toxicant or to stressful or variable environmental conditions. |
| Experimental Design | Number of replicates or organisms per replicate lower than optimal but sufficient to detect a clear effect; randomization procedure not described. | Reduces the statistical power of the study and may raise concerns about systematic bias. |
| Data & Reporting | Raw data not available; statistical methods not fully detailed or suboptimal but conclusions still plausible. | Limits independent re-analysis and verification of the reported effect levels (e.g., EC50). |
| Behavioral Endpoints (EthoCRED) | Inadequate calibration of tracking equipment; insufficient acclimation time for organisms prior to behavioral assay [23]. | May introduce noise or stress artifacts into the behavioral data, potentially obscuring or confounding toxicant-induced effects. |

The transition from "Reliable" to "Reliable with Restrictions" is not merely a tally of flaws. It requires expert judgment within the structured CRED framework to determine if the identified deficiencies materially undermine the study's conclusions. For example, a study might use a slightly sub-optimal number of replicates but demonstrate a very strong, dose-dependent, and statistically significant effect. In such a case, the core finding is robust despite the design limitation.

Experimental Protocols for Key Cited Studies

The practical application of the CRED evaluation is best illustrated through protocols. The following outlines a standardized behavioral assay, a type of study frequently evaluated under the EthoCRED extension, and the subsequent evaluation workflow.

Detailed Protocol: Fish Locomotor Activity Assay (Sub-lethal Endpoint)

This protocol assesses changes in swimming behavior, a sensitive indicator of neurotoxicity or general stress.

Materials:

- Test Organisms: Juvenile zebrafish (Danio rerio), 30 days post-fertilization.

- Exposure System: Semi-static system with 2 L glass aquaria. Test concentrations are prepared from a certified stock solution.

- Behavioral Arena: A rectangular glass tank (20 cm L × 10 cm W × 15 cm H) with a white backdrop.

- Recording Equipment: High-definition camera mounted orthogonally above the arena, connected to tracking software (e.g., EthoVision XT).

- Data Analysis Software: A statistical package (e.g., R or GraphPad Prism) capable of the parametric and non-parametric tests used in the analysis.

Procedure:

- Acclimation: Individually acclimate fish to the behavioral arena filled with clean, aerated water for 30 minutes prior to recording.

- Recording: Record swimming activity for a 10-minute period under consistent, diffuse lighting. Camera settings (frame rate, resolution) must be documented and held constant.

- Exposure Groups: Repeat for fish exposed to a minimum of five concentrations of the test substance, a dilution-water control, and a solvent control (if a solvent is used). Include a minimum of 8 replicate fish per treatment.

- Tracking Analysis: Use tracking software to extract endpoints: Total distance moved, average velocity, time spent mobile, and thigmotaxis (time near walls).

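- Statistics: Perform a one-way ANOVA (if its assumptions are met) or a Kruskal-Wallis test on each endpoint, followed by a many-to-one comparison of each treatment with the control: Dunnett's test after ANOVA, or a non-parametric equivalent (e.g., Steel's or Dunn's test) after Kruskal-Wallis. Derive the No Observed Effect Concentration (NOEC) and Lowest Observed Effect Concentration (LOEC) from these comparisons.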
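The tracking-analysis and NOEC/LOEC steps can be sketched in Python as follows. This is a minimal sketch assuming the tracking software exports per-frame (time, x, y) coordinates in cm with one arena corner at the origin, and that adjusted p-values from the many-to-one comparisons are already available. The function names, the 2 cm wall zone and the 0.5 cm/s mobility threshold are hypothetical; commercial packages such as EthoVision XT compute these endpoints with their own configurable definitions, which an evaluator would expect to see reported.

```python
import math


def locomotor_endpoints(track, arena=(20.0, 10.0), wall_zone=2.0, mobile_speed=0.5):
    """Endpoints from one fish's track: a list of (time_s, x_cm, y_cm) samples.

    arena        : arena length and width in cm (x along length, y along width)
    wall_zone    : distance from a wall (cm) counted as thigmotaxis (hypothetical)
    mobile_speed : speed (cm/s) at or above which a step counts as mobile (hypothetical)
    """
    distance = 0.0
    mobile_time = 0.0
    for (t0, x0, y0), (t1, x1, y1) in zip(track, track[1:]):
        step = math.hypot(x1 - x0, y1 - y0)
        distance += step
        if step / (t1 - t0) >= mobile_speed:
            mobile_time += t1 - t0
    duration = track[-1][0] - track[0][0]
    # Share of samples within wall_zone of any wall; equals share of time
    # only if frames are evenly spaced.
    near_wall = sum(min(x, arena[0] - x, y, arena[1] - y) <= wall_zone
                    for _, x, y in track)
    return {
        "total_distance_cm": distance,
        "mean_velocity_cm_s": distance / duration,
        "time_mobile_s": mobile_time,
        "thigmotaxis_fraction": near_wall / len(track),
    }


def noec_loec(p_values, alpha=0.05):
    """Derive (NOEC, LOEC) from {concentration: adjusted p-value versus control}.

    LOEC: lowest tested concentration differing significantly from the control.
    NOEC: highest tested concentration below the LOEC (None if there is none).
    If nothing is significant, the NOEC is the highest tested concentration.
    """
    concs = sorted(p_values)
    loec = next((c for c in concs if p_values[c] < alpha), None)
    if loec is None:
        return concs[-1], None
    below = [c for c in concs if c < loec]
    return (below[-1] if below else None), loec


# Hypothetical adjusted p-values for total distance moved (concentrations in ug/L).
print(noec_loec({1: 0.91, 3: 0.44, 10: 0.03, 30: 0.002, 100: 0.001}))  # (3, 10)
```

Note that this simple rule takes the lowest significant concentration as the LOEC even if a higher concentration happens to be non-significant; such non-monotonic patterns deserve explicit comment in the evaluation.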

CRED Evaluation Points: An evaluator would check this protocol against criteria such as: Were the test concentrations verified analytically? Was the camera calibrated? Was the acclimation time sufficient to avoid novelty stress? Omission of such details could lead to a "Reliable with Restrictions" classification.

Protocol for CRED Reliability Evaluation Workflow

This is the meta-protocol for applying the CRED method to an ecotoxicity study.

Materials:

- Study manuscript or report.

- CRED evaluation checklist (a spreadsheet or form covering the 20 standard CRED or 29 EthoCRED reliability criteria) [1] [23].

- The relevant standardized test guidelines (e.g., OECD Test Guidelines) as methodological benchmarks.

Procedure:

- Initial Screening: Read the study fully to understand objectives, design, and conclusions.

- Criterion-by-Criterion Assessment: For each reliability criterion, answer "Yes," "No," or "Not Applicable." "Yes" indicates full compliance.

- Document Deficiencies: For every "No," document the exact nature of the deficiency and its location in the text (e.g., "Page 5: water temperature range not reported").

- Weight Deficiencies: Judge the severity of each deficiency. Does it fundamentally invalidate a core part of the study (e.g., no control group), or is it a minor reporting omission (e.g., supplier name missing for a standard strain)?

- Assign Category:

  - Reliable: All key criteria are met ("Yes"). Minor issues are absent or negligible.

  - Reliable with Restrictions: One or more deficiencies are present, but the main study findings are considered valid and not critically compromised.

  - Not Reliable: One or more critical deficiencies are present that invalidate the study's results (e.g., uncontrolled confounding factor, fatal statistical error).
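The documentation and category-assignment steps can be mirrored in a simple data structure. The Python sketch below is illustrative only: the criterion texts and the three-level severity scale ("negligible", "minor", "critical") are hypothetical, and the severity itself is the evaluator's expert judgment from the weighting step, which the code merely records and applies rather than makes.

```python
from dataclasses import dataclass

ANSWERS = {"Yes", "No", "Not Applicable"}
SEVERITIES = {"negligible", "minor", "critical"}   # hypothetical scale


@dataclass
class CriterionResult:
    criterion: str
    answer: str               # "Yes", "No" or "Not Applicable"
    note: str = ""            # location and nature of the deficiency
    severity: str = "minor"   # evaluator's weighting; only used when answer == "No"


def assign_category(results):
    """Map documented, evaluator-weighted deficiencies to a reliability category."""
    for r in results:
        if r.answer not in ANSWERS or r.severity not in SEVERITIES:
            raise ValueError(f"invalid entry for {r.criterion!r}")
    deficiencies = [r for r in results if r.answer == "No"]
    severities = {r.severity for r in deficiencies}
    if "critical" in severities:
        category = "Not Reliable"
    elif "minor" in severities:
        category = "Reliable with Restrictions"
    else:
        category = "Reliable"   # no deficiencies, or only negligible ones
    return category, deficiencies


results = [
    CriterionResult("Control group included", "Yes"),
    CriterionResult("Water quality parameters reported", "No",
                    "Page 5: water temperature range not reported", "minor"),
    CriterionResult("Solvent control included", "Not Applicable"),
]
category, deficiencies = assign_category(results)
print(category)   # Reliable with Restrictions
for d in deficiencies:
    print("-", d.note)
```

Returning the deficiency list together with the category keeps the justification attached to the verdict, which is the transparency CRED asks for.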