Taming Uncertainty: A Comprehensive Guide to Sources and Strategies for Handling Variability in Ecotoxicity Testing


Abstract

This article addresses researchers and scientists in environmental and pharmaceutical development, providing a systematic exploration of variability in ecotoxicity testing. It analyzes the fundamental biological, chemical, and methodological sources of variability, introduces standardized protocols and statistical methods for its control, offers practical troubleshooting strategies for common data issues, and reviews frameworks for enhancing reliability through method validation and model comparison. Together, these sections aim to establish a robust foundation for data quality in both laboratory practice and regulatory submission.

Understanding the Noise: Root Causes and Impacts of Variability in Ecotoxicity Tests

In ecotoxicology, every measurement contains error—the difference between an observed value and the true environmental effect [1]. Correctly classifying this error is the first critical step in diagnosing data issues and ensuring robust risk assessments. Variability is an inherent property of biological and environmental systems, while uncertainty often reflects a lack of knowledge [2]. Errors are traditionally categorized as follows:

- Random Error: A chance difference that causes unpredictable scatter around the true value, affecting the precision (reproducibility) of your measurements [1] [3]. In a dose-response curve, random error appears as increased variation around replicate data points.

- Systematic Error: A consistent, directional difference that skews all measurements away from the true value, affecting the accuracy of your study [1] [4]. This is a form of bias that can lead to false conclusions (Type I or II errors) [1]. An example is a miscalibrated instrument that consistently overestimates chemical concentrations [3].

Table 1: Characteristics of Random and Systematic Error in Ecotoxicology

| Aspect | Random Error | Systematic Error (Bias) |
|---|---|---|
| Definition | Unpredictable fluctuations around the true value [1]. | Consistent, directional deviation from the true value [1]. |
| Impact On | Precision (reproducibility) [1]. | Accuracy (truthfulness) [1]. |
| Direction | Non-directional; equally likely to be higher or lower [1]. | Predictable direction (always higher or lower) [4]. |
| Source Examples | Natural biological variation between organisms [1] [5], minor fluctuations in lab temperature, imprecise instrument resolution [3]. | Miscalibrated equipment [3], non-simultaneous testing of mixtures [6], sampling bias [1], experimenter drift [1]. |
| Statistical Outcome | Increases variance and standard deviation. | Shifts the mean of the dataset. |
| Mitigation Goal | Reduce and quantify. | Identify and eliminate. |
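The contrast in the "Statistical Outcome" row can be illustrated with a small simulation. The sketch below is only an illustration under assumed values: the true concentration, the noise level, and the bias are hypothetical, and `simulate_measurements` is a helper invented for this example.

```python
import random
import statistics

def simulate_measurements(true_value, n, random_sd=0.0, bias=0.0, seed=0):
    """Return n simulated readings: true value + fixed bias + Gaussian scatter."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, random_sd) for _ in range(n)]

true_conc = 10.0  # hypothetical true concentration, mg/L

# Random error only: wide scatter, no offset.
noisy = simulate_measurements(true_conc, 1000, random_sd=1.0, bias=0.0, seed=1)
# Mostly systematic error: tight scatter, constant +1.5 mg/L offset
# (e.g., a miscalibrated instrument).
biased = simulate_measurements(true_conc, 1000, random_sd=0.1, bias=1.5, seed=1)

for label, data in (("random error", noisy), ("systematic error", biased)):
    mean = statistics.mean(data)
    sd = statistics.stdev(data)
    print(f"{label}: mean={mean:.2f} mg/L, sd={sd:.2f} mg/L")
```

In the first dataset the mean stays close to the true value while the standard deviation is large; in the second the scatter is small but the mean is shifted, which replication alone cannot reveal.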

Troubleshooting Guides: Diagnosing and Solving Common Experimental Issues

Scenario 1: Inconsistent Results in Aquatic Toxicity Test Replicates

- Problem: High variability in mortality or growth endpoints among replicate test vessels (e.g., Daphnia reproduction counts vary widely under the same nominal concentration).

- Diagnosis: This primarily indicates high random error. Sources include intrinsic genetic and physiological variability of test organisms [7] [5], slight variations in larval age or health at test initiation, minor differences in food distribution, or imprecise measurement of small-volume stock solutions [3].

- Solution Protocol:

  - Standardize Source: Obtain test organisms from a single, reputable supplier with documented culture conditions to reduce inter-batch genetic variability [5].

  - Increase Replication: Follow test guidelines (e.g., OECD, EPA) for minimum replicates, but consider increasing replicate number (e.g., from 4 to 8) for highly variable endpoints like reproduction [5].

  - Control Environment: Verify and document stability of temperature, photoperiod, and water quality parameters (pH, dissolved oxygen, hardness) daily.

  - Use Historical Control Data (HCD): Compare your control group results to in-lab HCD. If controls are within the historical range, the observed treatment variability is more likely random biological noise than a procedural flaw [5].

Scenario 2: Suspected False Positive in a Chemical Mixture Study

- Problem: A binary mixture is statistically classified as "synergistic," but the result seems biologically implausible or is irreproducible in follow-up tests.

- Diagnosis: High risk of systematic error introduced by non-simultaneous testing. If the individual chemicals and their mixture are tested in separate batches (even weeks apart), inherent temporal variability in test organism sensitivity can be misinterpreted as a toxicological interaction [6].

- Solution Protocol:

  - Mandatory Simultaneous Testing: Design the experiment so that all treatments (Chemical A, Chemical B, Mixture A+B, and controls) are run concurrently from the same batch of organisms, using the same media and reagent stocks [6].

  - Full Concentration-Response: For each test unit (single chemicals and mixture), include a full range of concentrations to properly define the dose-response curve, rather than single concentrations [6].

  - Statistical Power Analysis: Prior to the experiment, conduct a power analysis based on expected variability to ensure the design can reliably detect the minimum relevant effect size, reducing false negative risk [6].
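As a rough illustration of the power analysis step, the sketch below uses the standard normal-approximation formula for comparing two group means, n = 2·((z₁₋α/₂ + z_power)·σ/δ)². The function name and the example values are assumptions for illustration; for small replicate numbers a t-based calculation in a statistics package will give slightly larger answers.

```python
import math
from statistics import NormalDist

def replicates_per_group(sd, min_effect, alpha=0.05, power=0.80):
    """Approximate replicates per group for a two-sided, two-group comparison.

    sd         : expected standard deviation of the endpoint (same units as effect)
    min_effect : smallest difference in means that should be detectable
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) * sd / min_effect) ** 2
    return math.ceil(n)

# Hypothetical example: endpoint SD of 20 units, minimum relevant effect of 20 units.
print(replicates_per_group(sd=20, min_effect=20))   # 16
# Halving the variability cuts the required replication roughly fourfold.
print(replicates_per_group(sd=10, min_effect=20))   # 4
```

The second call shows why reducing random error at the bench is often cheaper than adding replicates.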

Scenario 3: Out-of-Range Control Performance in a Chronic Plant Test

- Problem: Control group biomass or germination rate is significantly lower than typical performance for the species, jeopardizing the validity of the entire test.

- Diagnosis: Potential systematic error from an unidentified stressor affecting all test units (e.g., contaminated control water, incorrect lighting spectrum, improperly prepared nutrient solution) [3].

- Solution Protocol:

  - Immediate HCD Check: Compare results to laboratory HCD. This contextualizes whether the poor performance is a rare but natural event or a clear outlier indicating a problem [5].

  - Triangulation: Use a second, independent method to check a key parameter. For example, if nutrient solution is suspect, verify its pH and electrical conductivity with a freshly calibrated meter and confirm major ion concentrations with a quick test kit [1].

  - Calibration Audit: Recalibrate all instruments used to prepare test solutions (balances, pH meters, pipettes) [1] [3]. Check the expiration dates of all chemical reagents and growth media components.

  - Blinded Assessment: If possible, have endpoints (e.g., root length measurements) assessed by a technician blinded to the treatment groups to eliminate observer bias [1].

Frequently Asked Questions (FAQs)

Q1: Which type of error is more serious in ecotoxicological risk assessment?

A1: Systematic error is generally more problematic. While random error can obscure clear effects and reduce statistical power, systematic error (bias) can lead to consistently inaccurate conclusions [1]. For example, a systematic underestimation of toxicity due to a flawed test method could lead to the approval of a harmful chemical concentration in the environment, causing direct ecological damage [8].

Q2: How can I quantify the different sources of variability and uncertainty in my assessment?

A2: Advanced probabilistic methods like 2D Monte Carlo simulation are specifically designed for this. This technique allows you to separately model uncertainty (e.g., in a QSAR model predicting toxicity) and variability (e.g., in chemical composition across consumer products or in regional dilution rates) [2]. The output distinguishes which factor contributes more to the overall range of predicted environmental impact.

Q3: What is the role of Historical Control Data (HCD), and how should I use it?

A3: HCD is a compiled record of control group performance from previous, high-quality studies using the same species, method, and laboratory [5]. It is a crucial tool for:

- Contextualizing Results: Determining if your current control or treatment response is within the expected "normal" range of biological variability [5].

- Identifying Systematic Error: A control group consistently outside HCD limits suggests a potential systematic issue with the test execution [5].

- Improving Experimental Design: Understanding the typical variance of an endpoint helps in planning future studies with adequate statistical power [5].

Use HCD as a diagnostic reference, not as a statistical replacement for your concurrent control group.

Q4: Can variability ever be useful information?

A4: Yes. While often treated as "noise," variability itself can be an ecologically relevant "signal" [7]. Genetic variability in sensitivity within a population is the raw material for adaptation and evolution. Pollution-induced community tolerance (PICT) studies leverage this principle by using changes in community sensitivity as a biomarker of past contaminant exposure [7]. The key is to design studies that deliberately measure and interpret variability, rather than trying in vain to eliminate all of it.

Essential Methodologies & Protocols

Protocol A: Implementing Historical Control Data (HCD) Collection [5]

- Define Scope: Select key, variable endpoints for tracking (e.g., Daphnia magna neonate production in OECD 211, Lemna frond count in OECD 221).

- Establish Quality Criteria: Only include data from tests that fully complied with standard guidelines (OECD, EPA, ISO) and internal quality assurance/quality control (QA/QC) criteria.

- Record Systematically: For each study, record the control group mean, standard deviation, sample size (number of replicates), and key test conditions (organism source, test medium, analyst).

- Store and Update: Maintain a live, version-controlled database. Periodically (e.g., annually) analyze the HCD to calculate mean, range, and percentiles (e.g., 5th-95th).

- Apply Judiciously: Use HCD plots as a background for new study results. A result outside the 90% HCD interval should trigger investigation but is not an automatic study rejection.
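A minimal sketch of the last two steps of Protocol A, assuming the HCD is simply a list of control-group means from past valid studies. The function names and the example neonate counts are hypothetical; the 5th-95th percentile band corresponds to the 90% interval mentioned above.

```python
from statistics import quantiles

def hcd_interval(control_means, lower_pct=5, upper_pct=95):
    """Return (lower, upper) percentile bounds of historical control means."""
    # quantiles(..., n=100) returns 99 cut points; cuts[k - 1] is the k-th percentile.
    cuts = quantiles(control_means, n=100, method="inclusive")
    return cuts[lower_pct - 1], cuts[upper_pct - 1]

def flag_control(new_mean, control_means):
    """Flag a new control mean that falls outside the 90% HCD interval."""
    low, high = hcd_interval(control_means)
    if low <= new_mean <= high:
        return "within HCD range"
    return "investigate"  # a trigger for review, not an automatic rejection

# Hypothetical historical control means (neonates per adult over 21 days).
historical = [92, 88, 101, 95, 110, 87, 99, 104, 93, 97,
              90, 106, 98, 94, 102, 89, 96, 100, 91, 103]

print(hcd_interval(historical))
print(flag_control(97, historical))   # within HCD range
print(flag_control(60, historical))   # investigate
```

Percentiles are used here rather than mean ± SD so that the band does not depend on the endpoint being normally distributed; that is a design choice of this sketch, not a requirement of the protocol.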

Protocol B: Testing Mixture Toxicity with Concentration Addition [6]

- Experimental Design: Test individual chemicals (A, B) and their mixture (A+B) simultaneously using the same batch of organisms, media, and equipment.

- Concentration Series: For each of the three test units (A, B, A+B), prepare a geometric series of at least 5 concentrations, plus a negative control.

- Effect Measurement: Measure the standard endpoint (e.g., immobilization, growth inhibition) after the prescribed exposure period.

- Data Analysis:

  - Calculate the EC₅₀ for Chemical A and Chemical B.

  - For each mixture concentration, calculate the sum of Toxic Units (ΣTU): ΣTU = (Concentration of A in mixture / EC₅₀ of A) + (Concentration of B in mixture / EC₅₀ of B).

  - Plot the observed mixture effect against ΣTU. If the mixture produces a 50% effect at ΣTU = 1, the result is consistent with additivity. A 50% effect at ΣTU < 1 indicates synergism (the mixture is more toxic than predicted); a 50% effect at ΣTU > 1 indicates antagonism.

  - Use appropriate statistical models (e.g., MIXTOX) to test for significant deviations from additivity.
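The toxic unit arithmetic in the data analysis step can be sketched as follows. The function names, the example concentrations, and especially the `tolerance` band around 1 are assumptions made for illustration; a descriptive label from this sketch does not replace a formal statistical test of deviation from additivity.

```python
def sum_toxic_units(conc_a, conc_b, ec50_a, ec50_b):
    """Sum of toxic units for a binary mixture (all values in the same units)."""
    return conc_a / ec50_a + conc_b / ec50_b

def classify_mixture(tu_sum_at_ec50, tolerance=0.2):
    """Descriptive label for the TU sum at which the mixture causes a 50% effect.

    tolerance is a hypothetical band around 1.0, not a regulatory criterion.
    """
    if abs(tu_sum_at_ec50 - 1.0) <= tolerance:
        return "additive"
    return "synergism" if tu_sum_at_ec50 < 1.0 else "antagonism"

# Hypothetical single-chemical results: EC50_A = 2.0 mg/L, EC50_B = 8.0 mg/L.
# Suppose the mixture reaches 50% effect at 0.5 mg/L of A plus 2.0 mg/L of B.
tu = sum_toxic_units(conc_a=0.5, conc_b=2.0, ec50_a=2.0, ec50_b=8.0)
print(tu, classify_mixture(tu))   # 0.5 synergism
```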

The Scientist's Toolkit: Key Reagents & Materials

Table 2: Essential Research Reagents and Materials

| Item | Function in Ecotoxicology | Notes on Variability Control |
|---|---|---|
| Standard Reference Toxicants (e.g., KCl, NaCl, CdCl₂) | Used to monitor the health and consistent sensitivity of test organism cultures over time. | Regular testing (e.g., monthly) generates HCD for organism sensitivity, helping to detect systematic drift in culture health [5]. |
| Reconstituted Standard Water (e.g., EPA Moderately Hard Water, ISO Daphnia Medium) | Provides a consistent, defined ionic background for tests, eliminating variability from natural water sources. | Must be prepared from high-purity salts and deionized water using a standardized recipe. Batch documentation is critical. |
| Certified Analytical Standards | Used to calibrate instruments (HPLC, GC-MS, ICP-MS) for accurate quantification of test chemical concentrations. | Using improperly calibrated or low-grade standards is a major source of systematic error in exposure characterization [1] [3]. |
| In-Lab Historical Control Database | A curated record of past control group performance. | The single most powerful tool for distinguishing random biological variability from systematic procedural errors [5]. |
| Blinded Sample Coding System | A method to conceal the identity of test samples (control vs. treatment) from personnel conducting measurements or assessments. | Mitigates observer bias, a common systematic error where expectations unconsciously influence readings [1] [8]. |

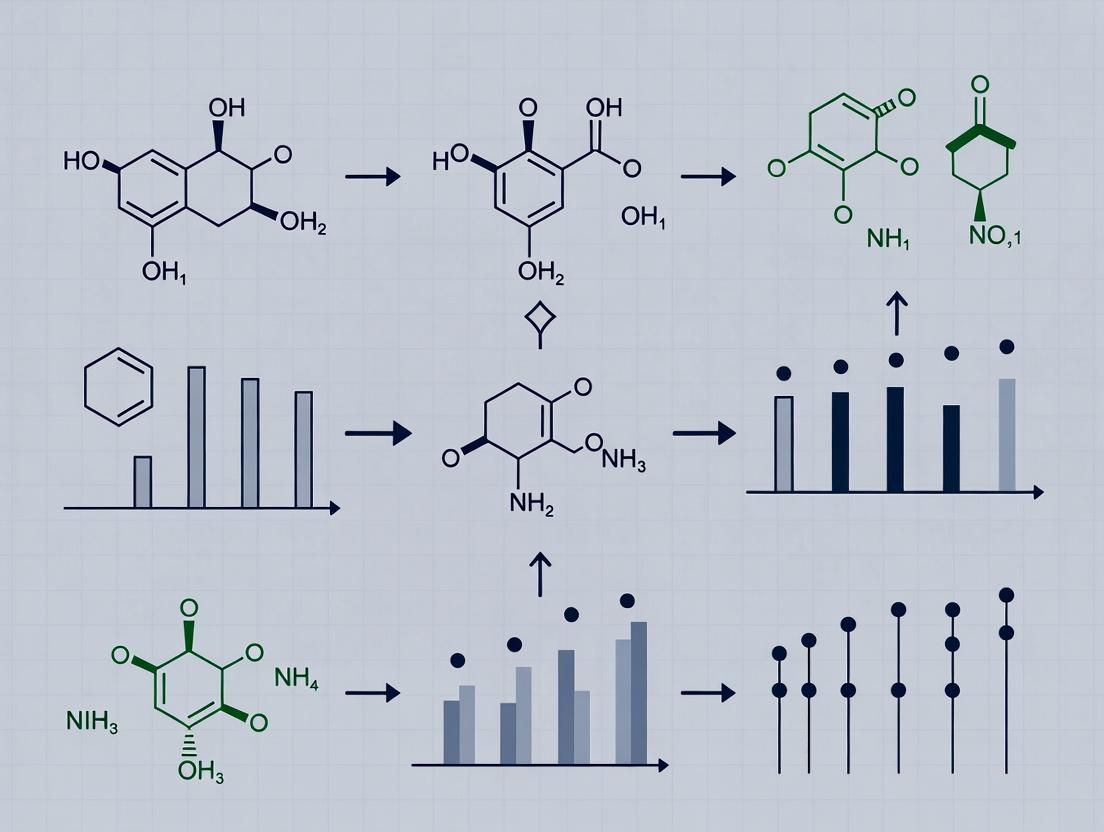

Visualizing Concepts and Workflows

Diagram 1: Conceptual Framework of Error Types and Mitigation

Diagram 2: Decision Workflow for Diagnosing Error Type

Welcome to the technical support center for researchers and scientists focused on managing variability in ecotoxicity testing. This resource is framed within a broader thesis on improving the reproducibility and interpretability of ecotoxicity test results. The core challenge is distinguishing true toxicity signals from "noise" introduced by methodological inconsistencies. This guide provides troubleshooting advice, FAQs, protocols, and visualizations centered on the three primary sources of variability: the Test Organism, the Test Substance, and Procedural Factors.

Troubleshooting Guides & FAQs

The following guides address specific, common issues encountered during ecotoxicity experiments. They are structured by the three main sources of variability.

Test Organism-Related Issues

Troubleshooting Guide: Inconsistent Organism Response

- Problem: High mortality in controls, inconsistent dose-response curves between replicates or test runs.

- Possible Causes & Solutions:

  - Cause: Organisms from different suppliers or batches have varying genetic backgrounds, health status, or age[reference:0].

  - Solution: Source organisms from a single, reputable supplier. Maintain detailed records of organism lineage, age, and culture conditions. Implement a reference toxicant test with each new batch to verify sensitivity[reference:1].

  - Cause: Stress from shipping or acclimation.

  - Solution: Extend acclimation periods. Whenever possible, use in-house cultured organisms to avoid transportation stress[reference:2].

  - Cause: Poor organism health due to suboptimal culture conditions (food, water quality, density).

  - Solution: Strictly standardize and monitor culture conditions. Follow established guidelines for organism husbandry.

FAQs: Test Organism

- Q: How can I tell if my test organisms are the cause of high variability?

- A: Conduct regular reference toxicant tests (e.g., using potassium dichromate for Daphnia). If the LC50/EC50 for the reference toxicant varies significantly (e.g., by more than a factor of 2-3) between batches, organism-related factors are likely a major source of variability[reference:3].

- Q: Are there reporting standards for describing test organisms?

- A: Yes. Frameworks like the CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) recommend reporting the scientific name, life stage, age, weight/length, strain/clone, source, and acclimation procedures[reference:4]. Comprehensive reporting aids in identifying organism-related variability.

Test Substance-Related Issues

Troubleshooting Guide: Unstable or Inconsistent Test Concentrations

- Problem: Measured test concentrations deviate significantly from nominal concentrations, or toxicity appears erratic.

- Possible Causes & Solutions:

  - Cause: Poor solubility or instability of the test chemical (e.g., hydrolysis, photodegradation, volatilization)[reference:5].

  - Solution: Characterize the substance's stability under test conditions. Use appropriate solvents or dispersants sparingly and include solvent controls. For volatile compounds, consider sealed test chambers or flow-through systems.

  - Cause: Adsorption of the substance to test vessel walls or interaction with test media components.

  - Solution: Use appropriate vessel materials (e.g., glass, specific plastics). For nanomaterials or hydrophobic substances, characterize aggregation/agglomeration state in the test medium[reference:6].

  - Cause: Inaccurate preparation of stock and test solutions.

  - Solution: Use validated analytical methods (e.g., chromatography, spectroscopy) to verify concentrations at test initiation and at regular intervals during the exposure.

FAQs: Test Substance

- Q: What is the "factor-of-3" rule in ecotoxicity variability?

- A: Research analyzing large databases of acute aquatic toxicity data found that the standard deviation of intertest variability (differences between tests on the same species-chemical combination) is approximately a factor of 3[reference:7]. This means a three-fold difference in reported effect concentrations can arise from methodological differences alone, even before considering biological variability.

- Q: How should I handle difficult-to-test substances like nanomaterials or poorly soluble compounds?

- A: Follow specific guidance documents (e.g., OECD Guidance Document on Aquatic Toxicity Testing of Difficult Substances). Key considerations include: using appropriate dispersion methods, characterizing the test material in the exposure medium, and including relevant controls (e.g., dispersant controls, ion controls for metallic nanomaterials)[reference:8].
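To make the "factor-of-3" figure more concrete, the sketch below treats it as a geometric standard deviation, that is, a standard deviation computed on log-transformed effect concentrations and back-transformed. That reading is an assumption of this example, and the function names are invented here. Under it, roughly 95% of repeat tests would fall within a multiplicative band of about 3^1.96 on either side of the geometric mean.

```python
import math
import statistics

def geometric_sd(values):
    """Geometric standard deviation: exp of the sample SD of ln(values)."""
    logs = [math.log(v) for v in values]
    return math.exp(statistics.stdev(logs))

def fold_range(gsd, z=1.96):
    """Fold spread on each side of the geometric mean covering ~95% of
    results, assuming log-normally distributed effect concentrations."""
    return gsd ** z

# Hypothetical LC50 values (mg/L) for one chemical-species pair from three tests.
lc50s = [1.0, 3.0, 9.0]
print(round(geometric_sd(lc50s), 2))   # 3.0
print(round(fold_range(3.0), 1))       # 8.6
```

Read this way, two valid tests of the same chemical and species can differ by well over threefold without either being flawed.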

Procedural Factor-Related Issues

Troubleshooting Guide: Abiotic Conditions Causing Aberrant Results

- Problem: Unexplained toxicity or lack of effect, erratic data patterns across replicates.

- Possible Causes & Solutions:

  - Cause: Fluctuations in key water quality parameters: dissolved oxygen (DO), pH, temperature, hardness[reference:9].

  - Solution: Continuously monitor and log abiotic conditions. Use environmental chambers to control temperature and light. Ensure proper aeration without causing stress to organisms.

  - Cause: Inconsistent test execution (e.g., feeding regimes, cleaning schedules, timing of observations) between technicians or test runs.

  - Solution: Implement detailed, written Standard Operating Procedures (SOPs). Conduct regular training and proficiency testing for all laboratory personnel[reference:10].

  - Cause: Inadequate experimental design (e.g., too few replicates, insufficient concentration levels).

  - Solution: Use power analysis to determine appropriate replicate numbers. Include a sufficient range of concentrations to fully define the dose-response relationship.

FAQs: Procedural Factors

- Q: What are the most critical abiotic factors to control in aquatic tests?

- A: Dissolved oxygen and pH are paramount, as they directly affect organism stress and the bioavailability/toxicity of many chemicals (e.g., ammonia toxicity is highly pH-dependent)[reference:11]. Temperature and water hardness are also critical for maintaining organism health and consistent chemical behavior.

- Q: How much variability is considered "acceptable" in a standardized test?

- A: For whole effluent toxicity (WET) tests, variability within a factor of two is often cited as a maximum acceptable range for inter-laboratory comparisons[reference:12]. However, "acceptable" limits depend on the test type and regulatory context. The goal is to minimize variability through rigorous procedural control.

The table below summarizes key quantitative findings on variability in ecotoxicity testing, providing a benchmark for evaluating your own data.

| Variability Source | Typical Magnitude (Factor) | Description & Context | Key Reference |
|---|---|---|---|
| Intertest Variability | ~3x | Standard deviation of effect concentrations (e.g., LC50) for the same chemical-species combination across different studies. Represents combined noise from all sources. | Hickey et al. (2012) - Analysis of a large aquatic toxicity database[reference:13] |
| Intra-laboratory Variability (Target) | ≤2x | Often cited as a benchmark for acceptable reproducibility within a single lab conducting the same test method repeatedly. | Chapman (2000) - Discussion on WET test acceptability[reference:14] |
| Organism Batch Sensitivity | Variable | Can be monitored via reference toxicant tests. A coefficient of variation (CV) of <30% for reference toxicant EC50s is often a quality control goal. | Common laboratory QA/QC practice |
| Abiotic Factor Fluctuation | Situation-dependent | Small changes can have large effects. E.g., a drop in DO below 40% saturation can cause stress; a 1-unit pH shift can change ammonia toxicity tenfold. | Toxicity testing guidance[reference:15] |
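The pH sensitivity of ammonia in the last row can be illustrated with the acid-base equilibrium between ammonium (NH₄⁺) and un-ionized ammonia (NH₃), the form generally regarded as the more toxic one. The sketch assumes a pKa of about 9.25, an approximate value near 25 °C that shifts with temperature and ionic strength; the function name is invented for this example.

```python
def unionized_ammonia_fraction(ph, pka=9.25):
    """Fraction of total ammonia present as un-ionized NH3 at a given pH.

    pka=9.25 is an approximate value near 25 degrees C (assumption).
    """
    return 1.0 / (1.0 + 10 ** (pka - ph))

f7 = unionized_ammonia_fraction(7.0)
f8 = unionized_ammonia_fraction(8.0)
print(f"pH 7: {f7:.4f}, pH 8: {f8:.4f}, ratio: {f8 / f7:.1f}")
# The un-ionized fraction rises roughly ninefold to tenfold per pH unit
# in this range, which is why small pH drifts matter for ammonia-bearing samples.
```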

Experimental Protocols for Minimizing Variability

The following protocols are essential for robust, reproducible ecotoxicity testing. They are adapted from standard OECD and EPA guidelines.

Reference Toxicant Testing Protocol

- Purpose: To monitor the health and sensitivity of a test organism population over time.

- Materials: Healthy test organisms (e.g., Daphnia magna), reference chemical (e.g., potassium dichromate, K₂Cr₂O₇), reconstituted standard freshwater, test vessels, aeration system.

- Procedure:

  - Prepare a geometric series of at least 5 concentrations of the reference toxicant in standard freshwater.

  - Dispense solutions into test vessels, with at least 4 replicates per concentration.

  - Randomly introduce 5-10 organisms (of similar age) into each vessel.

  - Expose for the standard test duration (e.g., 48h for Daphnia acute test) without feeding, under controlled temperature and light.

  - Record immobilization (or other endpoint) at 24h and 48h.

  - Calculate the EC50 using probit or non-linear regression analysis.

- Quality Control: The test is valid if control mortality is <10%. Establish the historical EC50 range for your lab; results for each new batch should fall within an acceptable range of it (e.g., within ±2 standard deviations of the historical mean).
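The quality control check can be sketched as a simple control chart calculation. This is a minimal illustration: the function names and EC50 values are hypothetical, and the limits are taken as the historical mean ± 2 standard deviations. Some laboratories compute such limits on log-transformed EC50s instead, which this sketch does not do.

```python
import statistics

def control_limits(historical_ec50s, k=2.0):
    """Return (lower, upper) limits as historical mean +/- k standard deviations."""
    mean = statistics.mean(historical_ec50s)
    sd = statistics.stdev(historical_ec50s)
    return mean - k * sd, mean + k * sd

def coefficient_of_variation(historical_ec50s):
    """CV in percent: sample SD divided by mean."""
    return 100.0 * statistics.stdev(historical_ec50s) / statistics.mean(historical_ec50s)

def batch_acceptable(new_ec50, historical_ec50s, k=2.0):
    """True if the new reference toxicant EC50 lies within the control limits."""
    low, high = control_limits(historical_ec50s, k)
    return low <= new_ec50 <= high

# Hypothetical 48h EC50 values (mg/L) from past reference toxicant tests.
history = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.3, 1.0, 1.1, 0.9]
print(control_limits(history))
print(f"CV = {coefficient_of_variation(history):.0f}%")
print(batch_acceptable(1.1, history), batch_acceptable(2.0, history))  # True False
```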

Standard Static Non-Renewal Acute Toxicity Test (Fish/Invertebrate)

- Purpose: To determine the acute toxicity of a chemical or effluent.

- Materials: Test organisms, test substance, solvent (if necessary), dilution water, glass or plastic test chambers, water quality meters (DO, pH, temperature), analytical equipment for chemical verification.

- Procedure:

  - Acclimation: Acclimate organisms to test conditions for at least 48h.

  - Solution Preparation: Prepare a stock solution of the test substance. From this, prepare a geometric series of test concentrations (e.g., 100%, 50%, 25%, 12.5%, 6.25%) in dilution water. Include a solvent control if applicable.

  - Exposure: Randomly allocate organisms to test chambers. Begin exposure by transferring organisms to chambers containing the test solutions.

  - Monitoring: Record mortality/immobilization at regular intervals (e.g., 24, 48, 72, 96h). Measure and record DO, pH, and temperature at test initiation and termination.

  - Analytical Verification: Take water samples from at least the high, medium, and low concentrations at test start and end for chemical analysis.

  - Data Analysis: Calculate LC50/EC50 values using appropriate statistical methods.
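For a quick plausibility check of the data analysis step, an LC50 can be estimated by linear interpolation on log concentration between the two test concentrations that bracket 50% mortality. This is a deliberately simple stand-in, not the probit or non-linear regression a final report would normally use, and it yields no confidence interval. The function name and example data are hypothetical.

```python
import math

def lc50_log_interpolation(concs, fraction_affected):
    """Estimate LC50 by interpolating on log10(concentration).

    concs             : positive test concentrations (exclude the control)
    fraction_affected : observed fraction dead/immobilized at each concentration
    """
    pairs = sorted(zip(concs, fraction_affected))
    for (c_lo, p_lo), (c_hi, p_hi) in zip(pairs, pairs[1:]):
        # Find the first adjacent pair whose responses bracket 50%.
        if p_lo <= 0.5 <= p_hi and p_hi > p_lo:
            t = (0.5 - p_lo) / (p_hi - p_lo)
            log_lc50 = math.log10(c_lo) + t * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_lc50
    raise ValueError("responses do not bracket 50% effect")

# Hypothetical effluent series (% effluent) and mortality fractions at 96h.
concs = [6.25, 12.5, 25.0, 50.0, 100.0]
mortality = [0.0, 0.1, 0.3, 0.7, 1.0]
print(round(lc50_log_interpolation(concs, mortality), 1))   # 35.4
```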

Visualizations

This diagram categorizes the primary sources of variability that can influence the outcome of an ecotoxicity test.

Experimental Workflow for a Standard Aquatic Toxicity Test

This flowchart outlines the critical steps in a standard ecotoxicity test, highlighting key decision and quality control points.

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists critical reagents and materials used in ecotoxicity testing to standardize procedures and control variability.

| Item | Function & Rationale | Example(s) |
|---|---|---|
| Reference Toxicants | To verify the sensitivity and health of test organism batches. A standard response ensures biological variability is minimized. | Potassium dichromate (K₂Cr₂O₇) for Daphnia; Sodium chloride (NaCl) for fish; Copper sulfate (CuSO₄) for algae. |
| Standardized Reconstituted Water | Provides a consistent ionic composition, hardness, and pH for tests, eliminating variability from natural water sources. | ASTM, OECD, or ISO standard reconstituted freshwater (e.g., EPA Moderately Hard Water). |
| High-Purity Solvents | To dissolve poorly soluble test substances without introducing toxicity. Must be used at minimal, non-toxic concentrations. | Dimethyl sulfoxide (DMSO), acetone, ethanol. Always include a solvent control. |
| Culture Media & Food | Standardized nutrition for culturing and maintaining test organisms ensures consistent health and growth across batches. | Algal paste (e.g., Selenastrum capricornutum) for Daphnia; specific fish feeds; yeast-cerophyll-trout chow (YCT) for Ceriodaphnia. |
| Buffers & pH Adjusters | To maintain stable pH in test solutions, which is critical for organism health and chemical bioavailability. | Sodium bicarbonate, hydrochloric acid (HCl), sodium hydroxide (NaOH). |
| Water Quality Test Kits/Probes | For real-time monitoring of critical abiotic parameters: Dissolved Oxygen (DO), pH, temperature, conductivity, hardness. | Calibrated DO meters, pH electrodes, digital thermometers. |
| Analytical Standards | To verify the actual concentration of the test substance in exposure media via chemical analysis (e.g., HPLC, GC-MS, ICP-MS). | Certified reference material (CRM) of the test substance. |

Technical Support Center: Troubleshooting Variability in Ecotoxicity Testing

In ecotoxicity testing, variability—the inherent heterogeneity in biological systems, experimental conditions, and environmental contexts—is not merely noise to be reduced. It is a fundamental characteristic that must be understood, quantified, and integrated into the interpretation of test results and subsequent regulatory decisions [9]. This technical support center is designed within the broader thesis that effectively handling variability is central to robust ecotoxicological research and reliable chemical safety assessments. The following troubleshooting guides and FAQs address specific, high-impact sources of variability, providing researchers and drug development professionals with diagnostic frameworks and methodological solutions to enhance the reliability of their data for regulatory submissions [10].

Troubleshooting Guide 1: Erratic or Inverted Dose-Response Curves

Reported Problem: Test results show non-monotonic dose-response relationships, high replicate scatter, or "inverted" curves where higher survival is observed at higher concentrations.

Root Cause Analysis:

The primary culprits often lie in sample integrity or organism health [11].

- Sample Degradation: Volatile toxicants (e.g., chlorine, certain solvents) can evaporate during test setup or the exposure period. Microbial activity in the sample can consume oxygen or alter pH, changing toxicant bioavailability.

- Unhealthy Test Organisms: Organisms stressed by shipment, poor culturing conditions, or improper acclimation exhibit compromised and inconsistent health, leading to erratic responses and chance deaths not directly related to the toxicant [11].

- Abiotic Test Conditions: Fluctuations in dissolved oxygen (DO), pH, or temperature within or between test chambers can drastically alter organism stress and toxicant action [11].

Diagnostic Steps:

- Review Sample Handling: Verify sample holding time did not exceed 36 hours and that samples were kept at 0-4°C with wet ice, not gel packs [11]. Check for signs of sedimentation or algal growth.

- Audit Organism Source & History: Determine if organisms were cultured in-house or shipped. Request records on culturing parameters (food, density, water quality) and acclimation procedures. Younger organisms are generally more sensitive [11].

- Scrutinize Water Quality Logs: Examine chronological data for DO, pH, temperature, and hardness from all test chambers and replicates. Look for correlations between parameter spikes/drops and observed effects.

Solutions & Protocols:

- Protocol for Sample Preparation with Volatiles: Prepare dilutions in a closed system if possible. Use headspace-minimal vessels and begin exposure of replicates in a randomized, staggered fashion to minimize systematic bias from degradation.

- Protocol for In-House Organism Culturing: Establish and maintain in-house cultures of key species (e.g., Ceriodaphnia dubia, Pimephales promelas). This eliminates shipment stress, allows immediate access, and enables pre-test screening of batch health [11]. Standardize food (e.g., algal species, yeast), density (<40 adults/L for C. dubia), and light cycles.

- Protocol for Real-Time Abiotic Monitoring: Implement continuous logging probes for DO and pH in at least one control and one high-concentration chamber. Set alarms for DO < 60% saturation or pH shifts >0.5 units. Manually confirm readings in all chambers at each check point.
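The alarm thresholds in the monitoring protocol could be applied to logged probe data along these lines. The record layout, the function name, and the choice to measure pH shift against the reading at test initiation are assumptions of this sketch; the source does not specify the reference point for the 0.5-unit shift.

```python
def abiotic_alarms(readings, baseline_ph, do_min=60.0, ph_shift_max=0.5):
    """Return (time_h, message) tuples for readings that breach a threshold.

    readings    : list of dicts with keys 'time_h', 'do_pct', 'ph'
    baseline_ph : pH at test initiation (assumed reference for the shift)
    """
    alarms = []
    for r in readings:
        if r["do_pct"] < do_min:
            alarms.append((r["time_h"], f"DO {r['do_pct']}% below {do_min}% saturation"))
        if abs(r["ph"] - baseline_ph) > ph_shift_max:
            alarms.append((r["time_h"], f"pH {r['ph']} shifted more than {ph_shift_max} units"))
    return alarms

# Hypothetical log from one high-concentration chamber.
log = [
    {"time_h": 0, "do_pct": 92.0, "ph": 7.8},
    {"time_h": 24, "do_pct": 71.0, "ph": 7.6},
    {"time_h": 48, "do_pct": 55.0, "ph": 7.1},
]
for time_h, message in abiotic_alarms(log, baseline_ph=7.8):
    print(f"{time_h}h: {message}")
```

Here only the 48h reading triggers alarms, one for dissolved oxygen and one for pH.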

Troubleshooting Guide 2: High Inter-Laboratory Variability in Standardized Tests

Reported Problem: The same reference compound or effluent sample yields significantly different LC50/EC50 values when tested by different laboratories following the same standard guideline (e.g., OECD, EPA).

Root Cause Analysis:

Variability often stems from "flexibilities" within guidelines and differences in technical execution [11].

- Variable Test Organism Phenotype: Age, size, genetic strain, and nutritional state of the test organism can differ between suppliers and internal cultures [10] [11].

- Divergent Technician Technique: Subtle differences in organism handling (pipetting force, net type), feeding, chamber cleaning, and endpoint assessment (e.g., counting live/dead Ceriodaphnia) introduce observer bias [11].

- Sample Manipulation Differences: Pre-test adjustments to sample salinity, pH, or hardness using different reagents or methods can alter toxicant chemistry and bioavailability [11].

Diagnostic Steps:

- Conduct a Ring-Test with Controls: Run a parallel test using a well-characterized reference toxicant (e.g., sodium chloride, potassium dichromate) and a control water. Compare your results to laboratory historical control charts and published inter-laboratory studies.

- Perform a Technical Audit: Have a second analyst within the lab independently count a subset of replicates or review video recordings of test endpoints. Calculate inter-observer error.

- Benchmark Detailed Methods: Compare your detailed standard operating procedures (SOPs) with another lab's for the same guideline. Focus on organism age acceptance window, dilution water source, feeding regimen, and endpoint criteria.

Solutions & Protocols:

- Protocol for Standardized Organism Sourcing & Characterization: If in-house culture is not possible, source from a single, reputable supplier. Characterize each batch by measuring mean body length (for Daphnia) or weight (for fish) and conducting a 24-hour reference toxicant test. Only use batches whose sensitivity falls within pre-established ranges.

- Protocol for Technician Certification: Implement a mandatory certification for new analysts. Require successful completion of a mock test with pre-determined results, demonstrating proficiency in organism handling, dilution preparation, and endpoint determination before running regulatory tests.

- Protocol for Sample Conditioning: For samples requiring pH adjustment, standardize the method: use the same reagent (e.g., ACS grade HCl or NaOH), add dropwise with slow stirring, allow a stabilization period (e.g., 30 minutes), and verify stability over the exposure period.

Advanced Guide: Implementing a 2D Monte Carlo Analysis to Disentangle Uncertainty and Variability

For higher-tier risk assessments (e.g., for a new pharmaceutical ingredient), a quantitative analysis distinguishing uncertainty (lack of knowledge) from variability (true heterogeneity) is critical [9] [2].

- Objective: To quantitatively assess the potential ecotoxicological impact (PEI) of a chemical, separating contributions from data uncertainty (e.g., from QSAR models) and real-world variability (e.g., in formulation, consumer use) [2].

- Principle: A 2D Monte Carlo simulation runs an outer loop sampling from variability distributions (what differs in the real world) and an inner loop sampling from uncertainty distributions (what we are unsure about).

Diagram Title: 2D Monte Carlo Workflow for Uncertainty & Variability Analysis

Step-by-Step Protocol:

- Parameter Identification: List all inputs (e.g., ecotoxicity endpoints, chemical loading, wastewater treatment plant removal efficiency).

- Classification: Tag each as Variability (true spatial/temporal/population difference, like regional use patterns) or Uncertainty (parameter estimate error, like QSAR-predicted toxicity) [2].

- Distribution Definition: Fit appropriate probability distributions (e.g., lognormal for variability, uniform for uncertainty) using literature, empirical data, or expert elicitation.

- Simulation Execution:

- Outer Loop (Variability): Draw one random value from each variability distribution to create a specific "real-world scenario."

- Inner Loop (Uncertainty): For that fixed scenario, run 1,000-10,000 iterations where you draw values from each uncertainty distribution and run your risk model. This generates a distribution of risk outcomes representing knowledge uncertainty for that scenario.

- Repeat the outer loop 1,000+ times to sample the range of real-world variability.

- Output Analysis: Results can be plotted to show, for example, the median risk (from uncertainty) across all variability scenarios, or the 90th percentile risk estimate for the most vulnerable scenario. This explicitly shows whether overall result spread is driven by lack of knowledge or true heterogeneity [2].
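The nested-loop structure of the protocol above can be sketched in a few lines. This is a minimal illustration, not the implementation used in [2]: the risk model (a simple exposure/threshold quotient), the distribution parameters, the iteration counts, and all names are hypothetical.

```python
import math
import random
import statistics

def percentile(sorted_values, q):
    """Approximate percentile: value at index floor(q * (n - 1)) of a sorted list."""
    return sorted_values[int(q * (len(sorted_values) - 1))]

def two_d_monte_carlo(n_outer=500, n_inner=500, seed=1):
    """Return per-scenario median and 90th percentile risk quotients."""
    rng = random.Random(seed)
    medians, p90s = [], []
    for _ in range(n_outer):
        # Outer loop (variability): draw one real-world exposure scenario.
        exposure = rng.lognormvariate(0.0, 1.0)  # hypothetical, ug/L
        quotients = []
        for _ in range(n_inner):
            # Inner loop (uncertainty): draw an uncertain toxicity threshold.
            log10_threshold = rng.uniform(1.0, 3.0)  # hypothetical, log10 ug/L
            quotients.append(exposure / 10 ** log10_threshold)
        quotients.sort()
        medians.append(statistics.median(quotients))
        p90s.append(percentile(quotients, 0.90))
    return medians, p90s

medians, p90s = two_d_monte_carlo()

# Spread of the scenario medians reflects variability between scenarios.
sorted_medians = sorted(medians)
variability_spread = math.log10(
    percentile(sorted_medians, 0.90) / percentile(sorted_medians, 0.10)
)
# Spread within a scenario (90th percentile vs. median) reflects uncertainty.
uncertainty_spread = statistics.mean(
    [math.log10(p / m) for m, p in zip(medians, p90s)]
)
print(f"Variability spread: {variability_spread:.2f} orders of magnitude")
print(f"Uncertainty spread: {uncertainty_spread:.2f} orders of magnitude")
```

Comparing the two printed spreads gives a first indication of whether additional data (reducing uncertainty) or scenario-specific assessment (addressing variability) is likely to narrow the result more.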

Frequently Asked Questions (FAQs)

Q1: How do regulators account for biological variability when setting safe concentration limits (e.g., PNECs)? Regulators apply assessment (uncertainty) factors to account for intra- and inter-species variability. A common default is a factor of 10 to extrapolate from a laboratory species to a generic aquatic community, and another factor of 10 to protect sensitive species within that community [12]. These factors are applied to the most sensitive endpoint from standardized tests (e.g., algal growth, daphnid reproduction) [10]. When more data across species and trophic levels are available, these factors can be reduced using species sensitivity distribution (SSD) models.
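As a worked illustration of the arithmetic, the sketch below divides the most sensitive endpoint by a combined assessment factor. The endpoint values and the function name are hypothetical, and the combined factor of 100 simply multiplies the two default factors of 10 described above; the factor that actually applies depends on the regulatory framework and the data available.

```python
def derive_pnec(endpoints_mg_per_l, assessment_factor):
    """Divide the most sensitive (lowest) endpoint by the assessment factor."""
    if assessment_factor <= 0:
        raise ValueError("assessment factor must be positive")
    test, lowest = min(endpoints_mg_per_l.items(), key=lambda item: item[1])
    return test, lowest / assessment_factor

# Hypothetical chronic endpoints (mg/L) from standardized tests
endpoints = {
    "algal growth": 1.2,
    "daphnid reproduction": 0.4,
    "fish early-life stage": 2.5,
}

driving_test, pnec = derive_pnec(endpoints, assessment_factor=10 * 10)
print(f"Most sensitive endpoint: {driving_test}")
print(f"PNEC: {pnec:.4f} mg/L")
```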

Q2: Our product formulation has minor changes (e.g., a new preservative). How does variability in test results affect the regulatory pathway for this change in the EU? The EU's new Variations Guidelines (2025) use a risk-based classification. The key is demonstrating that variability in critical quality attributes and, consequently, in any required ecotoxicity testing, remains within bounds that do not adversely affect the product's benefit-risk balance [13] [14].

- Type IA (Minimal Impact): Changes where any variability is expected to be negligible. Notification post-implementation.

- Type IB (Notification): Changes where variability is controlled and well-understood; you must notify regulators with supporting data.

- Type II (Major): Changes that could introduce new or greater variability in safety or efficacy, requiring prior approval with comprehensive data [14].

Using a Post-Approval Change Management Protocol (PACMP) pre-agreed with regulators can streamline this process by defining acceptable variability limits and studies upfront [13].

Q3: What are the most common sources of variability in a standard acute effluent toxicity test (Ceriodaphnia dubia 48-hour survival), and which should we investigate first? Based on frequency and impact, prioritize investigation in this order [11]:

- Test Organism Health & Sensitivity: The condition of the daphnids is the single largest factor. Check for shipment stress, age, and culture health.

- Dissolved Oxygen (DO) Management: DO crashes in test chambers, especially in higher concentrations or with high organic load, cause acute stress and death. Review aeration methods and monitoring logs.

- Sample Holding & Preparation: Exceeding the 36-hour holding time or cooling the sample improperly allows sample degradation. Inconsistent temperature during dilution preparation can also be a factor.

- Technician Technique: Inconsistent pipetting during organism transfer or feeding, and subjective determination of "immobile" (dead) endpoints.

Q4: How can New Approach Methodologies (NAMs) help address variability in traditional ecotoxicity testing? NAMs, such as defined in vitro assays or computational models, can reduce the variability that arises in whole-organism tests by using more standardized biological systems (e.g., cell lines, proteins) [10].

- Reducing Biological Variability: An in vitro assay using a specific fish cell line eliminates variability from organism age, nutrition, and genetic drift.

- High-Throughput Screening: Testing multiple concentrations with many replicates becomes feasible, allowing for better characterization of the dose-response relationship itself.

- Mechanistic Insight: Assays targeting specific toxicity pathways (e.g., estrogen receptor binding) can clarify whether variability in whole-organism responses is due to differences in toxicokinetics (uptake/distribution) or toxicodynamics (target interaction).

However, NAMs introduce new uncertainties regarding extrapolation to whole organisms and ecosystems, which is an active area of regulatory research [10].

Table 1: Quantified Influence of Different Variability and Uncertainty Sources in an Ecotoxicological Impact Assessment of Shampoo Ingredients [2]

| Source Category | Specific Source | Magnitude of Influence on Results (Order of Magnitude Spread) | Primary Management Strategy |

|---|---|---|---|

| Uncertainty | Limited toxicity data (only 3 species) | ~7 orders of magnitude (90th/10th percentile) | Increase species data via testing or Interspecies Correlation Estimates (ICE) models |

| Variability | Shampoo product formulation differences | ~3 orders of magnitude | Standardize assessment for worst-case or representative formulation |

| Variability | Regional differences in water use & release | ~1-2 orders of magnitude | Use probabilistic regional exposure modeling |

| Uncertainty | QSAR model prediction error | ~1 order of magnitude | Use multiple QSAR models & evaluate applicability domain |

Table 2: Common Test Organisms and Sources of Associated Variability [11]

| Test Organism | Common Test Types | Key Variability Sources Related to Organism |

|---|---|---|

| Ceriodaphnia dubia (Water flea) | Acute (48-hr) & Chronic (7-day) Survival, Reproduction | Age (<24-hr old for chronic), maternal health, food type (algae vs. yeast), culture density. |

| Pimephales promelas (Fathead minnow) | Acute (96-hr) & Chronic (7-day) Larval Survival & Growth | Larval age (post-hatch), egg quality, temperature during incubation, dissolved oxygen. |

| Cyprinella leedsi (Bannerfin shiner) | Acute (96-hr) Survival | Sensitivity varies with wild vs. cultured source; acclimation stress is critical. |

The Scientist's Toolkit: Key Reagent & Material Solutions

Table 3: Essential Materials for Managing Variability in Aquatic Ecotoxicity Tests

| Item | Function in Managing Variability | Specification & Best Practice |

|---|---|---|

| Culture-Grade Algae (e.g., Pseudokirchneriella subcapitata, formerly Selenastrum capricornutum) | Standardized, nutritious food source for daphnids and other invertebrates. Reduces variability in organism growth and health compared to non-standardized food like yeast or lettuce [11]. | Maintain axenic cultures; use consistent growth phase (e.g., late log) for feeding; verify cell density and vitality. |

| Reference Toxicants (e.g., NaCl, KCl, CdCl₂, CuSO₄) | Quality control for test organism sensitivity. Used to create lab-specific control charts to monitor for temporal drift in organism health and responsiveness [11]. | Use high-purity (ACS grade) stock; prepare fresh solutions regularly; run tests periodically (e.g., monthly) with each species. |

| Dissolved Oxygen & pH Probes | Continuous monitoring of critical abiotic parameters. Prevents confounding toxicity with stress from poor water quality and identifies equipment failures [11]. | Calibrate daily; use probes with fast-stabilizing electrodes; implement in-chamber logging where possible. |

| QSAR Software & Databases | Provides estimated ecotoxicity values for data-poor chemicals, helping prioritize testing and fill data gaps. Addresses uncertainty from missing data [2]. | Use multiple models (e.g., ECOSAR, VEGA) to evaluate prediction consensus; always check the applicability domain of the model for your chemical. |

| Post-Approval Change Management Protocol (PACMP) Template | A strategic regulatory tool to proactively manage variability introduced by post-approval changes. Defines studies and acceptable limits for changes upfront, streamlining regulatory review [13] [14]. | Develop in early dialogue with regulators; align with ICH Q12 principles; cover manufacturing, analytical, and if needed, (eco)toxicological variability. |

Regulatory Decision Pathways Incorporating Variability

Diagram Title: Regulatory Decision Pathway with Variability Checkpoint

Technical Support Center: Troubleshooting Variability in Ecotoxicity Testing

This technical support center is designed within the context of a broader thesis on managing variability in ecotoxicological research. It provides targeted guidance for researchers and professionals facing challenges related to interspecies and life-stage sensitivity differences, which are critical for ecological risk assessment (ERA) and regulatory decision-making [15].

Troubleshooting Guide: Addressing Common Experimental Challenges

Issue 1: High variability in sensitivity between closely related species undermines prediction confidence.

- Root Cause: Low phylogenetic signal for toxicant sensitivity. Closely related species can exhibit marked differences in tolerance due to microevolutionary adaptations to different ecological niches, which inadvertently affect toxicokinetic or toxicodynamic pathways [16].

- Recommended Action: Do not assume similar sensitivity based on taxonomy alone. Incorporate functional trait analysis (e.g., maximum body size, habitat salinity, migration type) alongside phylogenetic data. For predictive modeling, ensure your Species Sensitivity Distribution (SSD) is built with data from a sufficient number of species (ideally >5) across diverse taxa and life histories [17] [16].

Issue 2: Uncertainty in extrapolating laboratory test results to protect field populations and ecosystems.

- Root Cause: Standard test species (e.g., Daphnia magna, rainbow trout) are selected for laboratory practicality, not necessarily because they represent the most sensitive or ecologically critical species in all environments. This creates an "extrapolation gap" [18].

- Recommended Action: For site-specific risk assessment, consider incorporating native test species relevant to the ecosystem of concern [15]. Use SSDs, which explicitly account for interspecies variability, rather than relying solely on arbitrary assessment factors applied to single-species data [19] [18]. Explore trait-based approaches to predict sensitivity for untested species [18].

Issue 3: Inconsistent results between different testing methodologies (e.g., water-only vs. sediment tests).

- Root Cause: Exposure pathways and bioavailability differ fundamentally between test systems. For hydrophobic organic chemicals in sediments, equilibrium partitioning (EqP) theory and spiked-sediment tests can yield differing hazard estimates if based on limited data [17].

- Recommended Action: When deriving sediment quality benchmarks, compile toxicity data from an adequate number of species (≥5). Research indicates that with sufficient data, SSDs based on EqP theory and spiked-sediment tests become comparable, with differences in HC5 values reducing significantly [17]. Always document and standardize sediment characteristics (e.g., organic carbon content) that influence chemical bioavailability.

Issue 4: Early-life stage (ELS) fish tests show common toxicity syndromes, but their environmental relevance is unclear.

- Root Cause: The observed ELS syndrome (e.g., pericardial edema, spinal curvature) can result from either specific receptor-mediated toxicity or non-specific baseline toxicity (narcosis) at concentrations near lethality [20].

- Recommended Action: Differentiate between specific and non-specific mechanisms. Compare the effective concentration to baseline toxicity models. Effects occurring at or near baseline-toxic concentrations likely indicate general membrane disruption affecting multiple cellular functions, which is crucial for interpreting the severity and specificity of risk [20].

Frequently Asked Questions (FAQs)

Q1: What is the single most sensitive species I should use for testing to ensure protection? A1: The concept of a "most sensitive species" is a myth [7]. Sensitivity is chemical- and trait-dependent. A species highly sensitive to one toxicant may be tolerant to another. Relying on a single species introduces significant uncertainty. The modern approach uses SSDs to model the range of sensitivities across a community [19] [18].

Q2: How many test species are needed to build a reliable Species Sensitivity Distribution (SSD)? A2: Regulatory applications typically require toxicity data for at least 5 to 10 species to construct an SSD [17]. Statistically, using five or more species can substantially reduce uncertainty. For example, a comparative study found that using ≥5 species reduced the difference in HC5 estimates between two different testing methodologies from a factor of 129 to a factor of 5.1 [17].

Q3: Can I use open literature toxicity data for my regulatory assessment or SSD model? A3: Yes, but data must be carefully curated. The U.S. EPA's ECOTOX database is a primary resource [19] [21]. Acceptable studies must report: a) single-chemical exposure, b) an explicit duration, c) a concurrent control, d) a calculated endpoint (e.g., LC50, NOEC), and e) verified test species information [21]. Data should be screened for reliability and relevance.

Q4: Why should I consider using non-standard, native test species? A4: Standard test species may not represent the ecological or physiological traits of species in your region of interest. Using native species can improve the environmental relevance of the risk assessment, especially for site-specific evaluations [15]. For instance, research in East Asia has proposed native species like the pale chub (Zacco platypus) or the freshwater shrimp Neocaridina denticulata as promising test species for regional ERA [15] [22].

Q5: What are the key functional traits linked to toxicant sensitivity in fish? A5: A large-scale analysis of 269 fish species identified that maximum body length, migration type, habitat salinity, and air-breathing ability were correlated with sensitivity to toxicants [16]. However, these relationships were not strong after accounting for phylogeny, reinforcing that sensitivity is often driven by species-specific microevolutionary adaptations rather than broad phylogenetic patterns [16].

Detailed Experimental Protocols

Objective: To evaluate the consistency of sediment quality benchmarks derived from SSDs based on EqP theory versus direct spiked-sediment toxicity tests for nonionic hydrophobic organic chemicals (log KOW > 3).

Methodology:

- Data Compilation:

- Spiked-Sediment Data: Collect acute (10-14 day) lethality (LC50) test data for benthic invertebrates (e.g., amphipods, midges) from curated databases (e.g., SETAC SEDAG database) and peer-reviewed literature. Record sediment organic carbon content.

- Water-Only Data: Collect acute water-only LC50 data for pelagic and benthic invertebrates from the EPA ECOTOX database. Include only tests with exposure durations ≥ 10 days or apply appropriate exposure-time correction models.

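- EqP Conversion: Convert water-only LC50 values to sediment-equivalent concentrations (LC50-sed) using the formula: LC50-sed = LC50-water × KOC, where KOC is the organic carbon-water partition coefficient (L/kg organic carbon) for the test chemical. The resulting LC50-sed is normalized to organic carbon (e.g., mg/kg organic carbon), so spiked-sediment results must be expressed on the same basis before comparison.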

- SSD Construction: For each chemical and each approach (EqP-derived and spiked-sediment), fit the collected LC50 data (log-transformed) to a statistical distribution (e.g., log-logistic). Use a minimum of 5 species data points per SSD.

- Benchmark Calculation: Derive the Hazardous Concentration for 5% of species (HC5) and its 95% confidence interval from each SSD.

- Comparison: Statistically compare the HC5 values and their confidence intervals between the two approaches. Assess the factor of difference.

Key Interpretation: If the 95% confidence intervals of the HC5 estimates overlap or the difference is small (e.g., within a factor of 5-10), the approaches are considered comparable for that chemical, supporting the use of data-rich EqP methods to inform sediment assessments [17].
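The conversion and benchmark steps above can be sketched as follows. This is a simplified illustration under stated assumptions: it fits a log-normal SSD by the method of moments rather than the log-logistic distribution named in the protocol, it omits confidence intervals, and the LC50 values, KOC, and function names are all hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev

def eqp_sediment_lc50(lc50_water_mg_per_l, koc_l_per_kg_oc):
    """EqP conversion: LC50-sed (mg/kg organic carbon) = LC50-water * KOC."""
    return lc50_water_mg_per_l * koc_l_per_kg_oc

def hc5_lognormal(lc50_values):
    """HC5 from a log-normal SSD fitted to log10-transformed LC50 values."""
    if len(lc50_values) < 5:
        raise ValueError("at least 5 species are required for the SSD")
    logs = [math.log10(v) for v in lc50_values]
    ssd = NormalDist(mean(logs), stdev(logs))
    return 10 ** ssd.inv_cdf(0.05)  # 5th percentile of the fitted distribution

KOC = 1.0e4  # hypothetical organic carbon-water partition coefficient, L/kg OC

# Hypothetical water-only LC50 values (mg/L), one per species
water_lc50 = [0.02, 0.05, 0.08, 0.03, 0.12, 0.04]
# Hypothetical spiked-sediment LC50 values (mg/kg OC), one per species
spiked_lc50 = [350.0, 600.0, 900.0, 250.0, 1500.0, 700.0]

eqp_lc50 = [eqp_sediment_lc50(v, KOC) for v in water_lc50]
hc5_eqp = hc5_lognormal(eqp_lc50)
hc5_spiked = hc5_lognormal(spiked_lc50)
factor_difference = max(hc5_eqp, hc5_spiked) / min(hc5_eqp, hc5_spiked)

print(f"HC5 (EqP): {hc5_eqp:.0f} mg/kg OC")
print(f"HC5 (spiked sediment): {hc5_spiked:.0f} mg/kg OC")
print(f"Factor of difference: {factor_difference:.1f}")
```

A full comparison would add bootstrap or analytical confidence intervals for each HC5 so that overlap can be assessed as described in the key interpretation.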

Objective: To determine whether observed ELS toxicity (edema, spinal curvature) is due to a specific mechanism of action or non-specific baseline narcosis.

Methodology:

- ELS Toxicity Test: Conduct a standard fish ELS test (e.g., OECD Test Guideline 210) with the chemical of concern. Precisely document all sublethal effect concentrations (e.g., EC10, EC50 for malformations).

- Baseline Toxicity Prediction: Calculate the predicted baseline (narcosis) toxicity concentration for the test chemical. This can be done using quantitative structure-activity relationship (QSAR) models based on the chemical's octanol-water partition coefficient (KOW).

- Mechanistic Comparison: Compare the experimentally observed effect concentrations from Step 1 with the predicted baseline toxicity concentration from Step 2.

- If the observed effect concentration is within a factor of 10-30 of the predicted baseline level, the effects are likely due to non-specific narcosis.

- If the observed effect concentration is substantially lower than the predicted baseline level (e.g., by more than a factor of 100), it suggests a specific, receptor-mediated toxic mechanism.

- Confirmatory Analysis (if specific toxicity is indicated): Pursue targeted endpoints (e.g., gene expression, specific enzyme inhibition, receptor binding assays) aligned with the hypothesized specific mechanism.

Key Interpretation: Distinguishing between these mechanisms is critical for ERA. Baseline toxicity represents the minimum toxicity expected of any chemical that accumulates in membranes, while specific toxicity at much lower concentrations indicates a higher potential hazard and may require different risk management strategies [20].
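The mechanistic comparison step can be expressed as a ratio of the predicted baseline concentration to the observed effect concentration. The sketch below is illustrative only: the cut-offs of 30 and 100 are taken from the ranges quoted in the protocol, the "inconclusive" band between them is an assumption added here, and the function name and example concentrations are hypothetical.

```python
def classify_mechanism(observed_ec, predicted_baseline_ec,
                       narcosis_max=30.0, specific_min=100.0):
    """Compare an observed effect concentration with the predicted baseline level.

    Returns (ratio, label), where ratio = predicted baseline / observed.
    """
    if observed_ec <= 0 or predicted_baseline_ec <= 0:
        raise ValueError("concentrations must be positive")
    ratio = predicted_baseline_ec / observed_ec
    if ratio <= narcosis_max:
        return ratio, "consistent with baseline narcosis"
    if ratio > specific_min:
        return ratio, "suggests a specific mechanism"
    return ratio, "inconclusive"  # assumed band between the two cut-offs

# Hypothetical EC10 values for malformations (mg/L) against a baseline of 10 mg/L
for observed in (2.0, 0.2, 0.01):
    ratio, label = classify_mechanism(observed, predicted_baseline_ec=10.0)
    print(f"observed {observed} mg/L: ratio {ratio:.0f}, {label}")
```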

Quantitative Data on Sensitivity Distributions

Table 1: Summary of Large-Scale Species Sensitivity Distribution (SSD) Modeling Data [19]

| Model Scope | Number of Toxicity Records | Taxonomic Groups Spanned | Key Output | Application |

|---|---|---|---|---|

| Global SSD Model | 3,250 entries | 14 groups across 4 trophic levels (Producers, Primary Consumers, Secondary Consumers, Decomposers) | Predicted HC5 for untested chemicals | Prioritizing 188 high-toxicity compounds from 8,449 screened |

| Specialized SSD for Personal Care Products (PCPs) & Agrochemicals | Subset of main database | Tailored taxonomic groups | Class-specific HC5 estimates | Targeted risk mitigation for high-priority regulatory classes |

Table 2: Comparison of SSD Methods for Sediment Assessment [17]

| Testing Approach | Data Requirement | Typical HC5 Comparison (Insufficient Data) | Typical HC5 Comparison (With ≥5 Species) | Major Uncertainty Source |

|---|---|---|---|---|

| Equilibrium Partitioning (EqP) Theory | Acute water-only toxicity data + Chemical KOC | HC5 values differed by up to a factor of 129 from spiked-sediment HC5 | Difference reduced to a factor of 5.1; 95% CIs often overlap | Accuracy of KOC value; assumption of sensitivity parity between pelagic/benthic species |

| Spiked-Sediment Toxicity Test | Direct sediment toxicity tests with benthic organisms | (Reference method) | (Reference method) | Sediment composition (e.g., organic carbon, particle size); limited standardized test species |

Table 3: Proposed Native Test Species for East Asia and Their Traits [15] [22]

| Species | Common Name | Trophic Role | Key Traits for Ecotoxicity Testing |

|---|---|---|---|

| Zacco platypus | Pale Chub | Secondary Consumer (Fish) | Widespread distribution; sensitive to various pollutants; usable in behavioral and biomarker studies. |

| Misgurnus anguillicaudatus | Pond Loach | Secondary Consumer (Fish) | Benthic, air-breathing; useful for testing sediment-bound and hypoxia-inducing chemicals. |

| Hydrilla verticillata | Hydrilla | Primary Producer (Macrophyte) | Important for nutrient cycling; biomarker for herbicide and heavy metal toxicity. |

| Neocaridina denticulata | Freshwater Shrimp | Primary Consumer (Invertebrate) | Short life cycle; easy lab culture; alternative to Daphnia for regional assessment. |

| Scenedesmus obliquus | Green Algae | Primary Producer (Algae) | Rapid growth; high CO2 fixation; model for algal toxicity and nutrient removal studies. |

Visualization of Concepts and Workflows

Diagram 1: A Researcher's Workflow for Diagnosing and Handling Test Variability

Diagram 2: Development and Application of a Species Sensitivity Distribution (SSD) Model

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials and Resources for Ecotoxicity Variability Research

| Item / Resource | Function in Research | Key Application / Note |

|---|---|---|

| EPA ECOTOX Knowledgebase | A curated database providing single-chemical toxicity data for aquatic and terrestrial species. | The primary source for compiling data to construct SSDs and analyze interspecies sensitivity patterns [19] [21] [16]. |

| Standardized Test Organisms | Well-characterized species (e.g., Daphnia magna, Danio rerio, Pseudokirchneriella subcapitata) with established culturing and testing protocols. | Provide reproducible baseline toxicity data for regulatory compliance and comparative studies [23] [15]. |

| Native/Regional Test Species | Species native to the ecosystem under assessment (e.g., Zacco platypus for East Asia). | Increase ecological relevance of risk assessments for site-specific evaluations or regions under-represented by standard species [15] [22]. |

| Reference Toxicants | Standard chemicals (e.g., potassium dichromate for Daphnia, sodium chloride for algae) used to assess the health and sensitivity of test organism populations. | Essential for quality control and assuring consistency in laboratory test conditions over time. |

| Sediment with Characterized Organic Carbon | Standardized or well-defined natural/synthetic sediment for spiked-sediment toxicity tests. | Critical for normalizing results and applying EqP theory; organic carbon content is a key parameter for hydrophobic chemical bioavailability [17]. |

| QSAR/Baseline Toxicity Models | Computational models that predict a chemical's baseline narcosis toxicity based on its structure (e.g., log KOW). | Used to interpret ELS fish tests and distinguish between specific and non-specific mechanisms of action [20]. |

| Trait Databases (e.g., FishBase) | Databases compiling ecological, morphological, and life-history traits for various species. | Enable trait-based analysis to investigate correlations between functional traits and chemical sensitivity [16] [18]. |

From Theory to Practice: Standardized Protocols and Advanced Methods to Minimize Ecotoxicity Variability

Core Principles of OECD, EPA, and ISO Guidelines for Variability Control

This technical support hub provides guidance for researchers managing variability in ecotoxicity and chemical safety testing. The content is framed within a thesis on improving the reliability and reproducibility of ecotoxicity results through structured control of experimental, environmental, and biological variance.

Core Principles of Variability Control

Controlling variability is fundamental to generating reliable, reproducible ecotoxicity data accepted by regulatory bodies worldwide. The core principles from key organizations are summarized below.

- OECD (Organisation for Economic Co-operation and Development): The foundation is the Mutual Acceptance of Data (MAD) system, which mandates that non-clinical safety data generated in one member country in accordance with OECD Test Guidelines and Good Laboratory Practice (GLP) must be accepted by all others [24]. This eliminates duplicative testing and reduces animal use but requires strict adherence to standardized, validated methods to control inter-laboratory variability. The OECD Test Guidelines are continuously updated to reflect scientific advancements and promote best practices [24].

- EPA (U.S. Environmental Protection Agency): The EPA distinguishes between variability (true heterogeneity in a population or system) and uncertainty (a lack of knowledge) [25]. Its core principle is to explicitly characterize variability using statistical metrics (e.g., variance, confidence intervals) and to reduce uncertainty through better data and study design [25]. Risk assessments must transparently address both to inform decision-makers about the reliability of the results [25].

- ISO (International Organization for Standardization): For laboratory environments, ISO standards (e.g., ISO 14644 for cleanrooms) enforce control over physical and environmental variability [26]. The core principle is the classification of spaces based on permissible airborne particulate concentration, maintained through rigorous protocols for air filtration, garment management, cleaning, and personnel behavior [26].

Table 1: Comparative Overview of Core Principles

| Organization | Primary Focus | Core Mechanism for Variability Control | Key Outcome |

|---|---|---|---|

| OECD | Test Method Standardization | Mutual Acceptance of Data (MAD) via Test Guidelines & GLP [24] | Internationally accepted, reproducible data; reduced trade barriers. |

| EPA | Risk Assessment Integrity | Quantitative separation of variability (characterized) and uncertainty (reduced) [25] | Transparent, defensible risk estimates with known confidence levels. |

| ISO | Environmental Control | Cleanroom classification and contamination control protocols [26] | Minimized environmental interference in sensitive processes (e.g., cell culture, analysis). |

Troubleshooting & FAQ: Common Variability Challenges

Q1: Our laboratory’s replicate ecotoxicity tests (e.g., Daphnia magna acute immobilization) show high variance in EC50 values. What are the first steps we should take to identify the source? A: Follow a systematic tiered-investigation protocol.

- Review Test Organism Source & Health: Check for variability in organism age, size, and genetic strain. Use organisms from a certified supplier and maintain standardized acclimation procedures.

- Audit Test Solution Preparation: Verify precision in serial dilutions, stock solution stability, and analytical confirmation of exposure concentrations. Use calibrated equipment and reference standards.

- Check Environmental Parameters: Review dataloggers for temperature, pH, and dissolved oxygen. High variance often links to fluctuations outside ranges specified in guidelines (e.g., OECD Test Guideline 202).

- Evaluate Observer Bias: If endpoints involve subjective scoring (e.g., immobilization), implement blinding procedures and cross-check scoring between technicians.
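One way to quantify the cross-check between technicians is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is a minimal illustration; the scores are hypothetical (1 = immobile, 0 = mobile), and the choice of kappa as the agreement statistic is an assumption rather than a guideline requirement.

```python
def cohens_kappa(scores_a, scores_b):
    """Cohen's kappa for two raters scoring the same organisms."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("score lists must be non-empty and of equal length")
    n = len(scores_a)
    # Observed proportion of organisms on which the raters agree
    observed = sum(a == b for a, b in zip(scores_a, scores_b)) / n
    # Agreement expected by chance from each rater's category frequencies
    categories = set(scores_a) | set(scores_b)
    expected = sum(
        (scores_a.count(c) / n) * (scores_b.count(c) / n) for c in categories
    )
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical blinded scores for the same 10 daphnids (1 = immobile, 0 = mobile)
technician_a = [1, 1, 0, 0, 1, 0, 0, 1, 0, 0]
technician_b = [1, 1, 0, 0, 0, 0, 0, 1, 0, 1]

kappa = cohens_kappa(technician_a, technician_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

Low kappa values on blinded re-scoring point toward endpoint criteria that need tighter definition or retraining rather than toward a problem with the organisms or test solutions.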

Q2: According to the EPA, what is the practical difference between addressing "variability" and "uncertainty" in our exposure assessment model? [25] A: This is a critical conceptual distinction.

- Variability (e.g., the range of body weights in a population or daily water intake rates) is an inherent property of the system. You cannot reduce it, but you can characterize it better (e.g., by using a probability distribution instead of a single average value) [25].

- Uncertainty (e.g., not knowing the exact degradation rate of a chemical in a specific water type) stems from a lack of knowledge. You can reduce it by obtaining more or higher-quality data (e.g., conducting a site-specific fate study instead of using a default value) [25].

- Action: For variability, use probabilistic techniques like Monte Carlo analysis. For uncertainty, perform sensitivity analysis to identify which uncertain parameters most affect your model's output and target them for refinement [25].

Q3: We are upgrading our cell culture lab for in vitro toxicology assays. What are the minimum ISO cleanroom standards and practices we should implement to control contamination? [26] A: For cell culture, an ISO Class 7 cleanroom is typically the baseline. Key practices include:

- Garment Management: Use dedicated, low-lint coveralls, masks, gloves, and footwear. Implement a validated laundry or rental service with particle testing [26].

- Unidirectional Material Flow: Establish separate paths for clean and waste materials. Use pass-through autoclaves or UV chambers for transferring items.

- Strict Access & Gowning Procedures: Limit access to trained personnel. Design a sequential gowning room with airlocks.

- Continuous Monitoring: Install continuous particle counters with alert thresholds for ISO Class 7 [26]. Log temperature and humidity.

- Validated Cleaning: Use dedicated, residue-free cleaning agents and protocols for walls, floors, and work surfaces.
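For setting alert thresholds on particle counters, the class limits can be approximated with the ISO 14644-1 relationship Cn = 10^N × (0.1/D)^2.08, where N is the ISO class and D the particle size in µm. The sketch below is illustrative: the function names and the example reading are hypothetical, and the tabulated values in the standard itself (which are rounded) remain the reference.

```python
def iso_class_limit(iso_class, particle_size_um):
    """Approximate maximum particles per m^3 at or above the given size."""
    return 10 ** iso_class * (0.1 / particle_size_um) ** 2.08

def exceeds_limit(count_per_m3, iso_class, particle_size_um):
    """True if a measured particle count is above the class limit."""
    return count_per_m3 > iso_class_limit(iso_class, particle_size_um)

limit_05 = iso_class_limit(7, 0.5)
print(f"ISO Class 7 limit at 0.5 um: about {limit_05:,.0f} particles/m^3")

# Hypothetical counter reading (particles/m^3 at >= 0.5 um)
reading = 400_000
print("Alert:", exceeds_limit(reading, 7, 0.5))
```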

Detailed Experimental Protocols for Variability Control

Protocol 1: Implementing a Tiered Approach for Characterizing Exposure Variability (Based on EPA Guidance) [25]

Objective: To systematically characterize and incorporate variability in exposure parameters.

- Tier 1 (Deterministic): Use point estimates (e.g., mean, high-percentile) for all input parameters. This provides a single risk estimate but masks variability.

- Tier 2 (Probabilistic - Partial): Identify 2-3 parameters with the highest expected variability (e.g., body weight, ingestion rate). Replace point estimates with probability distributions based on population data (e.g., from EPA's Exposure Factors Handbook). Use a simple Monte Carlo simulation (1,000-5,000 iterations).

- Tier 3 (Probabilistic - Full): Develop a full probabilistic model where all variable inputs are represented by distributions. Run an advanced Monte Carlo analysis (≥10,000 iterations) to generate a distribution of risk outcomes.

- Output Analysis: Present results as a probability distribution of risk (e.g., a cumulative frequency plot). Key outputs include the mean, median, and specific percentiles (e.g., 95th) of the predicted risk [25].
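The difference between Tier 1 and Tier 2 can be sketched with a simple dose equation, dose = concentration × intake rate / body weight. This is a minimal illustration: the concentration, the lognormal parameters for intake rate and body weight, and the function names are hypothetical and are not taken from the Exposure Factors Handbook.

```python
import math
import random

CONCENTRATION = 0.05  # hypothetical concentration in water, mg/L

def dose(conc_mg_per_l, intake_l_per_day, body_weight_kg):
    """Dose in mg per kg body weight per day."""
    return conc_mg_per_l * intake_l_per_day / body_weight_kg

def tier2_doses(conc_mg_per_l, n_iter=5000, seed=42):
    """Monte Carlo doses with intake rate and body weight as distributions."""
    rng = random.Random(seed)
    doses = []
    for _ in range(n_iter):
        intake = rng.lognormvariate(math.log(2.0), 0.3)        # hypothetical, L/day
        body_weight = rng.lognormvariate(math.log(70.0), 0.2)  # hypothetical, kg
        doses.append(dose(conc_mg_per_l, intake, body_weight))
    doses.sort()
    return doses

# Tier 1: single point estimate from central values
tier1 = dose(CONCENTRATION, 2.0, 70.0)

# Tier 2: distribution of doses across the simulated population
doses = tier2_doses(CONCENTRATION)
median_dose = doses[len(doses) // 2]
p95_dose = doses[int(0.95 * (len(doses) - 1))]

print(f"Tier 1 point estimate: {tier1:.5f} mg/kg/day")
print(f"Tier 2 median: {median_dose:.5f} mg/kg/day")
print(f"Tier 2 95th percentile: {p95_dose:.5f} mg/kg/day")
```

The gap between the Tier 2 median and 95th percentile is the variability that the Tier 1 point estimate hides.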

Protocol 2: Validating a New Analytical Method Under OECD GLP Principles

Objective: To demonstrate that a new method for measuring test chemical concentration is reliable and reproducible.

- Define Criteria: Set targets for accuracy (mean recovery: 70-120%), precision (relative standard deviation <15%), limit of quantification (LOQ), and linearity (R² >0.98).

- Intra-Assay Validation: On one day, prepare and analyze a calibration curve and quality control (QC) samples at low, mid, and high concentrations (n=6 each). Calculate accuracy and precision.

- Inter-Assay Validation: Repeat the analysis of the QC samples on three separate days with fresh preparations. Assess between-day precision.

- Sample Matrix Test: Spike the test medium (e.g., reconstituted water, cell culture medium) with the analyte to check for matrix interference (recovery).

- Documentation: Compile all data, including chromatograms/raw data, calculations, and any deviations, in a validation report. The method is considered validated only if all pre-set criteria are met.
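The acceptance check in this protocol reduces to a few calculations. The sketch below, with hypothetical function names, computes mean recovery and relative standard deviation for one QC level and R² for a linear calibration, then compares them with the example targets listed under Define Criteria. LOQ is left out because the protocol does not give a numeric target for it.

```python
import statistics


def qc_summary(measured, nominal):
    """Mean recovery (%) and relative standard deviation (%) for one QC level."""
    mean = statistics.fmean(measured)
    recovery = 100.0 * mean / nominal
    rsd = 100.0 * statistics.stdev(measured) / mean
    return recovery, rsd


def r_squared(x, y):
    """Squared Pearson correlation, equal to R² for a straight-line fit."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def meets_criteria(recovery, rsd, r2):
    """Targets taken from the example criteria in the protocol text."""
    return 70.0 <= recovery <= 120.0 and rsd < 15.0 and r2 > 0.98


if __name__ == "__main__":
    # Hypothetical data: six mid-level QC replicates at a nominal 10 units.
    qc_mid = [9.6, 10.2, 9.9, 10.4, 9.7, 10.1]
    cal_conc = [1, 2, 5, 10, 20]
    cal_resp = [1.1, 2.0, 5.2, 9.8, 20.3]
    recovery, rsd = qc_summary(qc_mid, nominal=10.0)
    r2 = r_squared(cal_conc, cal_resp)
    print(f"recovery={recovery:.1f}%  RSD={rsd:.1f}%  R2={r2:.4f}")
    print("criteria met:", meets_criteria(recovery, rsd, r2))
```

For the inter-assay step, the same `qc_summary` call can be applied to the pooled QC results from the three days to obtain between-day precision.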

Visual Workflows for Variability Management

Diagram 1: EPA Framework for Variability vs. Uncertainty

Diagram 2: ISO Cleanroom Contamination Control Workflow

The Scientist's Toolkit: Key Reagents & Materials

Table 2: Essential Materials for Variability Control in Ecotoxicology

| Item | Function in Variability Control | Key Consideration |
|---|---|---|
| Certified Reference Standards | Provides an absolute benchmark for calibrating analytical equipment (e.g., HPLC, GC-MS) to ensure accurate chemical concentration measurement. | Source from accredited suppliers with certified purity and concentration. Use same batch for a study series. |
| In-Vitro Grade Water | Serves as the ultra-pure base for reagent, media, and test solution preparation to minimize unknown ionic/organic interference. | Must meet Type I (18.2 MΩ·cm) specifications. System must be regularly maintained to prevent bacterial endotoxin buildup. |
| Characterized Test Organisms | Provides a biologically standardized "reagent" (e.g., Daphnia, algae, fish embryos) with known genetic background, age, and health status. | Use cultures from recognized culture collections or maintain in-house with strict SOPs for feeding and lifecycle control. |
| GLP-Grade Solvents & Reagents | Ensures consistency in chemical properties (e.g., pH, impurity profile) which can affect test chemical solubility, stability, and bioavailability. | Select suppliers that provide comprehensive certificates of analysis. Avoid switching lots mid-study. |
| Validated Cleanroom Garments [26] | Acts as a primary barrier to personnel-sourced particulate and microbial contamination in sensitive assays and cell culture. | Use low-lint, static-dissipative fabrics. Implement a managed program with regular laundering and integrity testing [26]. |
| Environmental Data Loggers | Continuously monitors and records physical parameters (temperature, pH, DO, light) to confirm conditions remained within guideline limits. | Must be calibrated annually against a traceable standard. Data should be irrefutable and time-stamped for GLP compliance. |

This technical support center provides guidance for implementing the core principles of robust experimental design—replication, randomization, and positive controls—specifically within ecotoxicity testing and related fields. In ecotoxicity research, a primary challenge is distinguishing true treatment effects from inherent biological and procedural variability [7]. Experimental design serves as the structured backbone of research, ensuring data is reliable and conclusions are valid [27]. The goal of robust design is to minimize the influence of unwanted variation on the functional output of an experiment, making results insensitive to noise factors [28]. Proper design is not merely a procedural step but a fundamental requirement for scientific rigor, which the NIH defines as the strict application of the scientific method to ensure unbiased design, methodology, and reporting [29]. The following guides and FAQs address common pitfalls and provide actionable protocols to strengthen your experimental work.

Core Principles for Handling Variability

A robust experimental design proactively manages variability to yield reliable, interpretable results. Three pillars are essential for achieving this in ecotoxicity and biomedical research.

Replication: This involves repeating the experiment or measurements under the same conditions to verify consistency [27]. In ecotoxicity, replication is critical for quantifying the inherent intertest variability. For example, a meta-analysis of acute aquatic toxicity data found that the standard deviation of intertest variability is approximately a factor of 3 for a given chemical-species combination [30]. Replication at multiple levels (within-assay, between-experiment) helps separate this noise from true treatment effects and is fundamental for building scientific consensus [27].

Randomization: This is the practice of allocating experimental units (e.g., test organisms, samples) to treatment or control groups entirely by chance [31]. Its primary role is to eliminate systematic bias and distribute unknown confounding variables evenly across groups [27] [32]. This principle must extend beyond the test arena into the laboratory. For instance, the order of DNA extraction and PCR processing for environmental samples should be randomized to prevent batch effects from confounding ecological interpretations [33]. Randomization provides the foundation for valid statistical inference [31].

Positive Controls: A positive control is a treatment with a known, expected effect. It verifies that the experimental system is responsive and functioning as intended. In ecotoxicity, this could be a reference toxicant that consistently induces a specific effect in a test population. The proper functioning of a positive control helps confirm that a negative result (no effect from a test substance) is truly due to the substance's low toxicity and not a failure of the test system.

Table: Summary of Intertest Variability in Ecotoxicity Data [30]

| Aspect of Variability | Quantitative Finding | Implication for Design |
|---|---|---|
| Intertest Variability (Acute Aquatic Toxicity) | Standard deviation ~ a factor of 3 (fold-difference) | A single test is insufficient; replication is required to estimate true mean effect. |
| Common Data Handling | Multiple records aggregated by geometric mean | Highlights need for models that quantify variability, not just central tendency. |
| Impact on Risk Assessment | Unadjusted variability weakens uncertainty quantification | Designs must account for this noise to defend predicted no-effect concentrations (PNECs). |
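To make the "factor of 3" figure concrete, the sketch below treats it as one standard deviation on a log10 scale (an interpretive assumption, as are the function names). It computes a geometric mean and fold-SD from repeated tests, and shows how the fold-uncertainty of the geometric mean shrinks as independent tests are added.

```python
import math
import statistics


def geometric_mean_and_fold_sd(values):
    """Geometric mean and fold standard deviation of positive effect values."""
    logs = [math.log10(v) for v in values]
    geo_mean = 10 ** statistics.fmean(logs)
    fold_sd = 10 ** statistics.stdev(logs)
    return geo_mean, fold_sd


def fold_se_of_geomean(fold_sd, n_tests):
    """One-standard-error fold-uncertainty of a geometric mean of n tests.

    In log space the standard error is sd / sqrt(n); back-transforming
    gives fold_sd ** (1 / sqrt(n)).
    """
    return fold_sd ** (1 / math.sqrt(n_tests))


if __name__ == "__main__":
    # Hypothetical LC50 values (mg/L) from repeated tests of one chemical.
    lc50_values = [1.2, 3.5, 0.8, 2.1]
    gm, fsd = geometric_mean_and_fold_sd(lc50_values)
    print(f"geometric mean = {gm:.2f} mg/L, fold-SD = {fsd:.2f}")
    for n in (1, 4, 9):
        print(f"n={n}: geometric mean uncertain by a factor of "
              f"{fold_se_of_geomean(3.0, n):.2f}")
```

Under these assumptions a single test carries the full factor-of-3 uncertainty, while four independent tests reduce it to about a factor of 1.73.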

Table: Common Sources of Bias and Mitigation through Design [31] [32]

| Source of Bias | Description | Mitigating Principle | Practical Application |
|---|---|---|---|
| Selection Bias | Non-random assignment creating group differences | Randomization | Use random number tables or software to assign organisms to tanks/treatments. |
| Placebo Effect | Response to belief in treatment, not treatment itself | Control Groups | Include unexposed control groups; use blinding where possible. |
| Confounding Variables | Uncontrolled factor correlating with treatment | Control & Randomization | Standardize environmental conditions (temp, light); randomize placement. |
| Batch Effects | Systematic errors from processing in groups | Randomization in Lab | Randomize order of sample processing (extraction, analysis) across all groups [33]. |
| Observer Bias | Researcher's expectations influence measurements | Blinding | Where feasible, keep technician unaware of sample group identity. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: Despite using a standard protocol, my test results are inconsistent between runs. How can I improve reliability?

Issue: High intertest variability obscures the true signal of a treatment effect.

Solution: This is a classic symptom of uncontrolled variability. Implement a tiered strategy:

- Audit Your Replication: Distinguish between technical replicates (multiple measurements from the same sample) and biological replicates (multiple independent organisms or populations). Ensure you have sufficient biological replicates to capture population-level variability. As a planning figure, expect intertest variability of roughly a factor of 3 (one standard deviation) for a given chemical-species combination [30].

- Reinforce Randomization: Randomization must be applied to every stage where discretion exists. This includes:

- Assignment: Randomly assign organisms to test chambers and chambers to treatment positions.

- Processing: Randomize the order of sample feeding, water quality measurement, and analytical processing [33].

- Use a tool like a random number generator and document the scheme used.

- Strengthen Controls: Include both a negative control (clean water/solvent) and a positive control (reference toxicant). If your positive control fails to produce the expected effect, the entire test is invalid, and you must investigate system health. Consistent performance of positive controls across runs is a key indicator of overall experimental stability.
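The assignment step above can be sketched with a seeded random number generator, so that the scheme is both random and documentable. The function name, organism IDs, and treatment labels below are hypothetical.

```python
import random


def assign_organisms(organism_ids, treatments, replicates_per_treatment, seed):
    """Randomly allocate organisms to chambers and chambers to bench positions."""
    rng = random.Random(seed)  # record the seed alongside the study records
    chambers = [(t, r) for t in treatments
                for r in range(1, replicates_per_treatment + 1)]
    if len(organism_ids) % len(chambers) != 0:
        raise ValueError("organisms cannot be divided evenly among chambers")

    shuffled = list(organism_ids)
    rng.shuffle(shuffled)
    per_chamber = len(shuffled) // len(chambers)
    allocation = {
        chamber: shuffled[i * per_chamber:(i + 1) * per_chamber]
        for i, chamber in enumerate(chambers)
    }

    # Shuffle the chambers again: list index = physical position on the bench.
    positions = list(chambers)
    rng.shuffle(positions)
    return allocation, positions


if __name__ == "__main__":
    ids = [f"D{i:02d}" for i in range(40)]
    groups = ["negative_control", "positive_control", "low", "high"]
    allocation, positions = assign_organisms(ids, groups, 2, seed=2024)
    for slot, chamber in enumerate(positions, start=1):
        print(f"position {slot}: {chamber} -> {allocation[chamber]}")
```

Saving the seed and the printed layout with the raw data satisfies the "document the scheme used" step, since the same seed regenerates the same allocation.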

FAQ 2: My molecular assay (e.g., qPCR, RNA-seq) results seem to correlate with the day of processing. What went wrong?

Issue: Batch effects or "nondemonic intrusions" are confounding your results [33].

Solution: This indicates a failure of randomization at the laboratory processing stage, a common oversight in molecular ecology and genomics [33].

- Diagnose: Plot your primary results (e.g., gene expression values, DNA concentration) against the extraction batch, PCR plate, or sequencing run date. A clear block pattern indicates a batch effect.

- Corrective Protocol for Future Runs: For your next experiment, design a randomized processing workflow:

- Label all samples with a unique code.

- Create a processing list where sample codes are in a random order, generated by software.

- Process samples strictly in this random order for DNA/RNA extraction, library preparation, and plating for sequencing.

- Interleave positive and negative controls randomly within the run to monitor for spatial drift on plates.

- Statistical Remediation for Existing Data: If randomization was not done, you may attempt a statistical correction, for example by including "batch" as a random effect in your model. However, this is inferior to a properly randomized design and may not fully correct the problem, particularly when batches coincide with treatment groups [33].
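As a numeric companion to the diagnostic plot, the share of total variance explained by batch (eta-squared from a one-way layout) gives a quick indication of how strongly results track the processing group. This is a rough screening sketch with a hypothetical function name and made-up values. The source gives no threshold, and a high value is only meaningful when batches are not simply the treatment groups under another name.

```python
import statistics
from collections import defaultdict


def variance_explained_by_batch(values, batches):
    """Eta-squared: between-batch sum of squares over total sum of squares."""
    grand_mean = statistics.fmean(values)
    groups = defaultdict(list)
    for value, batch in zip(values, batches):
        groups[batch].append(value)

    ss_total = sum((v - grand_mean) ** 2 for v in values)
    ss_between = sum(
        len(g) * (statistics.fmean(g) - grand_mean) ** 2
        for g in groups.values()
    )
    return ss_between / ss_total if ss_total else 0.0


if __name__ == "__main__":
    # Hypothetical expression values that shift with the processing day.
    expression = [1.0, 1.2, 0.9, 3.0, 3.1, 2.9]
    day = ["day1", "day1", "day1", "day2", "day2", "day2"]
    share = variance_explained_by_batch(expression, day)
    print(f"share of variance explained by batch: {share:.2f}")
```

A value near zero suggests the batch label carries little information about the result; a value near one matches the block pattern described in the diagnostic step.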

FAQ 3: How do I interpret a successful positive control? Does it guarantee the rest of my experiment is valid?

Issue: Misunderstanding the scope and meaning of positive control results.

Solution: A successful positive control is necessary but not sufficient to guarantee overall experimental validity. It confirms one specific function: that the test system is capable of showing a positive response.

- What it validates: The health and responsiveness of the test organisms, the functionality of key reagents, and the correct execution of critical procedural steps.

- What it does not validate: It does not control for errors in random assignment, contamination of specific treatment groups, inaccurate dosing calculations for the test substance, or errors in data recording. A valid experiment requires the correct application of all three principles—replication, randomization, and control—in concert.

Detailed Experimental Protocols

Protocol: Randomized Block Design for Sediment Ecotoxicity Testing with Molecular Endpoints

This protocol, adapted from a lake sediment DNA study, explicitly incorporates randomization to guard against batch effects [33].

Objective: To assess the impact of a contaminant gradient in sediment cores on benthic community structure via DNA metabarcoding, while controlling for variability in core sampling depth and laboratory processing.

Materials: Sediment corer, sterile sampling tools, DNA extraction kits (e.g., Macherey-Nagel NucleoSpin Soil), PCR reagents, sequencing platform, random number generator.

Methodology:

- Field Sampling & Blocking:

- Collect sediment cores from treatment and reference sites.

- Slice each core into depth horizons (e.g., every 0.5 cm). Each horizon is an experimental unit.

- Define "Blocks": Group horizons from similar depths across different cores into blocks. For example, all 0-0.5 cm samples form one block; all 0.5-1.0 cm samples form another. This controls for variability associated with depth.

- Laboratory Randomization:

- Step A - DNA Extraction:

- Assign a unique code to all horizon samples.

- Within each depth block, randomize the order of all samples (from different sites and cores).

- Perform DNA extractions in this randomized order. Include extraction negative controls (blank) randomly within the sequence [33].

- Step B - PCR Amplification:

- After quantification, randomize the DNA templates again.

- Set up PCR reactions in the new random order. Include PCR negative controls (no template) and positive controls (control DNA) randomly within the plate layout [33].

- Analysis:

- Process sequencing data to obtain community metrics (e.g., species richness).

- Use statistical models (e.g., ANOVA) that account for the blocking factor (depth) and the randomized design to test for significant effects of the contamination site.
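The within-block randomization in Step A can be sketched as follows. The sample codes, block labels, and blank naming scheme are hypothetical; the only behavior taken from the protocol is that samples are shuffled within their depth block and that extraction blanks are placed at random positions in the sequence.

```python
import random
from collections import defaultdict


def blocked_processing_order(samples, seed, blanks_per_block=1):
    """Return a processing list randomized within each depth block.

    samples: list of (sample_code, depth_block) tuples.
    """
    rng = random.Random(seed)  # keep the seed with the lab records
    by_block = defaultdict(list)
    for code, block in samples:
        by_block[block].append(code)

    order = []
    for block, codes in by_block.items():  # blocks keep their input order
        blanks = [f"BLANK_{block}_{i + 1}" for i in range(blanks_per_block)]
        run = codes + blanks
        rng.shuffle(run)  # blanks land at random positions in the run
        order.extend((block, code) for code in run)
    return order


if __name__ == "__main__":
    samples = [
        ("SITE-A_core1", "0-0.5cm"), ("SITE-A_core2", "0-0.5cm"),
        ("REF_core1", "0-0.5cm"), ("SITE-A_core1", "0.5-1.0cm"),
        ("SITE-A_core2", "0.5-1.0cm"), ("REF_core1", "0.5-1.0cm"),
    ]
    for step, (block, code) in enumerate(
            blocked_processing_order(samples, seed=11), start=1):
        print(f"{step:2d}. block {block}: {code}")
```

Calling the function a second time with a different seed gives the fresh randomization that Step B asks for before PCR setup.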

Diagram: Workflow for Randomized Laboratory Processing

Protocol: Establishing a Positive Control System for a Chronic Ecotoxicity Test

Objective: To implement and qualify a reference toxicant as a positive control for a 21-day fish early life stage test.

Materials: Healthy, genetically similar cohort of test fish (e.g., zebrafish embryos); reference toxicant (e.g., sodium dodecyl sulfate - SDS); clean dilution water; standard test apparatus.

Methodology:

- Define Acceptance Criteria: Based on historical control data and literature, establish a statistically derived range for the positive control response (e.g., LC50 for SDS should be 5-15 mg/L over 48h for zebrafish embryos).

- Integrate into Test Design:

- Every test run includes a full negative control group (clean water) and a concurrent positive control group exposed to the reference toxicant.

- The positive control concentration is set to reliably produce an effect near the mid-point of its dose-response curve (e.g., the EC50).

- Execution and Qualification: