Strategic Blueprint: Mastering the Planning Phase for Effective Ecological Risk Assessment in Pharmaceutical Development

This article provides a comprehensive guide to the foundational planning phase of ecological risk assessment (ERA), tailored for researchers, scientists, and drug development professionals.


Abstract

This article provides a comprehensive guide to the foundational planning phase of ecological risk assessment (ERA), tailored for researchers, scientists, and drug development professionals. It explores the critical, iterative process of planning and problem formulation, in which risk assessors and risk managers define goals, scope, and methodology [citation:1][citation:6]. Coverage runs from core principles and stakeholder collaboration to the practical methods used to define assessment endpoints and conceptual models. The article also addresses contemporary challenges in pharmaceutical ERA, including new regulatory demands under which an inadequate assessment can now be grounds for refusing marketing authorization [citation:3][citation:8]. Finally, it covers strategies for validating the planning framework, contrasts different regulatory approaches, and synthesizes key takeaways for building robust, defensible, and scientifically sound ERAs that align with both environmental protection and drug development objectives.

Laying the Groundwork: Core Principles and Collaborative Frameworks in ERA Planning

Ecological Risk Assessment (ERA) is a formal, scientific process for evaluating the likelihood that adverse ecological effects may occur or are occurring as a result of exposure to one or more environmental stressors [1]. This process is pivotal for informing environmental decision-making, from pesticide registration and chemical regulation to the remediation of contaminated sites [2]. The ERA framework opens with Planning, which is followed by three primary assessment phases: Problem Formulation, Analysis, and Risk Characterization [1].

The Planning Phase is the critical initial stage that establishes the assessment's foundation. It is defined as a collaborative dialogue between risk assessors and risk managers to define the goals, scope, and boundaries of the assessment before technical work begins [2] [3]. Its primary purpose is to ensure the subsequent scientific assessment is aligned with the needs of environmental decision-makers [4]. This phase determines whether a risk assessment is the appropriate tool, identifies key participants, and secures agreement on fundamental questions of scope, complexity, and resources [3] [4]. Contrary to a linear progression, planning exhibits a dynamic, iterative interaction with Problem Formulation, where initial agreements are refined and clarified as the scientific understanding of the problem deepens [3] [4].

This guide details the core objectives of the Planning Phase, deconstructs its iterative relationship with Problem Formulation, and provides technical methodologies for its execution within contemporary research contexts that integrate Ecosystem Services (ES).

Core Objectives of the Planning Phase

The Planning Phase is governed by four interconnected strategic objectives designed to bridge risk management needs with scientific assessment capabilities.

Table 1: Core Objectives and Outputs of the ERA Planning Phase

| Objective | Key Questions Addressed | Primary Outputs & Agreements |
|---|---|---|
| 1. Define Risk Management Goals & Decisions | What decision needs to be made? What environmental values must be protected? What are the policy and legal drivers? [2] [4] | Clear statement of management goals; Definition of the regulatory action or decision context [4]. |
| 2. Establish Assessment Scope & Complexity | What are the spatial and temporal boundaries? What level of uncertainty is acceptable? What resources (time, budget, expertise) are available? [2] [4] | Agreement on geographic scale, time frame, and tiered approach (e.g., screening-level vs. detailed). Decision on the complexity of analysis warranted [4]. |
| 3. Identify and Engage Key Participants | Who are the risk managers, risk assessors, and necessary scientific experts? Who are the stakeholders with an interest in the outcome? [2] [3] | Defined team roles and responsibilities; Plan for stakeholder involvement and communication [2]. |
| 4. Develop a Planning Summary | What are the specific agreements that will guide the assessment? How will the assessment proceed? [4] | Documented consensus on goals, scope, complexity, and roles. A roadmap into Problem Formulation [4]. |

Objective 1: Defining Management Goals and Decisions. This objective translates broad environmental protection mandates into specific goals for the assessment. Risk managers articulate the decision context, which could range from national rulemaking for a chemical to a site-specific remediation decision [2]. Goals are often derived from statutes (e.g., the Clean Water Act's goal to "restore and maintain the chemical, physical, and biological integrity of the Nation's waters") or public values [4]. The planning dialogue clarifies how the ERA will inform the specific decision, ensuring the science is policy-relevant [2].

Objective 2: Establishing Scope and Complexity. Not all ERAs are equal in scale or detail. Planning determines whether the assessment is local or national, prospective (predicting future effects) or retrospective (diagnosing past effects), and simple or complex [1] [4]. A key outcome is often the choice of a tiered approach, starting with conservative screening-level assessments to identify risks of greatest concern, followed by more refined analyses only where needed. This conserves resources by focusing effort on the most significant risks [2] [4].
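The tiered logic can be illustrated with a short sketch. It assumes the common screening convention of a risk quotient (RQ), the estimated exposure concentration divided by a toxicity benchmark, compared against a level of concern. The function name `screen`, the level of concern of 1.0, and every concentration below are hypothetical values chosen for illustration, not figures from the cited guidance.

```python
from dataclasses import dataclass


@dataclass
class ScreeningResult:
    stressor: str
    risk_quotient: float
    needs_refinement: bool


def screen(stressor: str, exposure: float, benchmark: float,
           level_of_concern: float = 1.0) -> ScreeningResult:
    """Tier 1 screen: RQ = exposure / toxicity benchmark (same units)."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    rq = exposure / benchmark
    # Only stressors at or above the level of concern move to a refined tier.
    return ScreeningResult(stressor, rq, rq >= level_of_concern)


# Hypothetical conservative exposure estimates and benchmarks (ug/L).
candidates = [
    ("compound A", 0.02, 1.5),
    ("compound B", 4.0, 2.0),
]
results = [screen(name, exp, bench) for name, exp, bench in candidates]
refine = [r.stressor for r in results if r.needs_refinement]
print(refine)
```

In this sketch only the stressors that fail the conservative screen are carried forward, which is how a tiered design concentrates refined analysis on the risks of greatest concern.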

Objective 3: Identifying Participants. Effective ERA requires a cross-functional team [2] [3].

- Risk Managers: Individuals with the authority to make or inform the environmental decision (e.g., regulatory agency staff).

- Risk Assessors: Scientists (ecotoxicologists, ecologists, statisticians, modelers) who design and execute the technical assessment.

- Stakeholders: Parties with an interest in the outcome (e.g., industry, community groups, tribal governments, other agencies) [2].

Objective 4: Documenting the Plan. The culmination of planning is a documented summary of agreements. This document aligns expectations, serves as a reference throughout the assessment, and provides the definitive starting point for the Problem Formulation team [4].

The Iterative Interaction Between Planning and Problem Formulation

The relationship between Planning and Problem Formulation is not a discrete handoff but a dynamic, iterative cycle [3] [4]. Problem Formulation is the process of translating the planning agreements into a detailed, scientifically rigorous technical plan [2]. Iteration occurs as scientific investigation during Problem Formulation reveals new information that necessitates refinement of the initial planning assumptions.

This iterative process follows a continuous improvement cycle analogous to models used in software development and design [5] [6]. Each cycle involves planning a direction, implementing a step (e.g., data gathering or model drafting), checking the results against goals, and acting to adjust the approach [5]. This "plan-do-check-act" loop reduces project risk by identifying and correcting course early, preventing wasted effort on misaligned objectives [5] [7].

Table 2: The Iterative Cycle Between Planning and Problem Formulation

| Stage in Cycle | Planning Input/Activity | Problem Formulation Activity | Iterative Feedback Loop |
|---|---|---|---|
| Initialization | Establish broad management goals, scope, and team [4]. | Begin integrating available information on stressors, receptors, and effects [3]. | N/A |
| Hypothesis & Model Development | Provide feedback on the practicality and policy relevance of proposed assessment endpoints and conceptual models. | Develop draft assessment endpoints and conceptual models based on data review [2] [4]. | Risk managers may refine goals based on scientific feasibility. Assessors may request a scope adjustment to address a key pathway. |
| Analysis Planning | Review and agree upon the proposed methods, data requirements, and decision criteria for the analysis phase [4]. | Develop a detailed Analysis Plan specifying measures, models, and data evaluation methods [2] [3]. | Discussions may reveal resource constraints, leading to a simplification of the analysis plan or a decision to pursue a tiered assessment strategy. |
| Formalization | Approve the final Problem Formulation products as the basis for the Analysis Phase. | Finalize the Conceptual Model and Analysis Plan [3]. | The approved documents represent the evolved, consensus-based synthesis of management needs and scientific insight. |

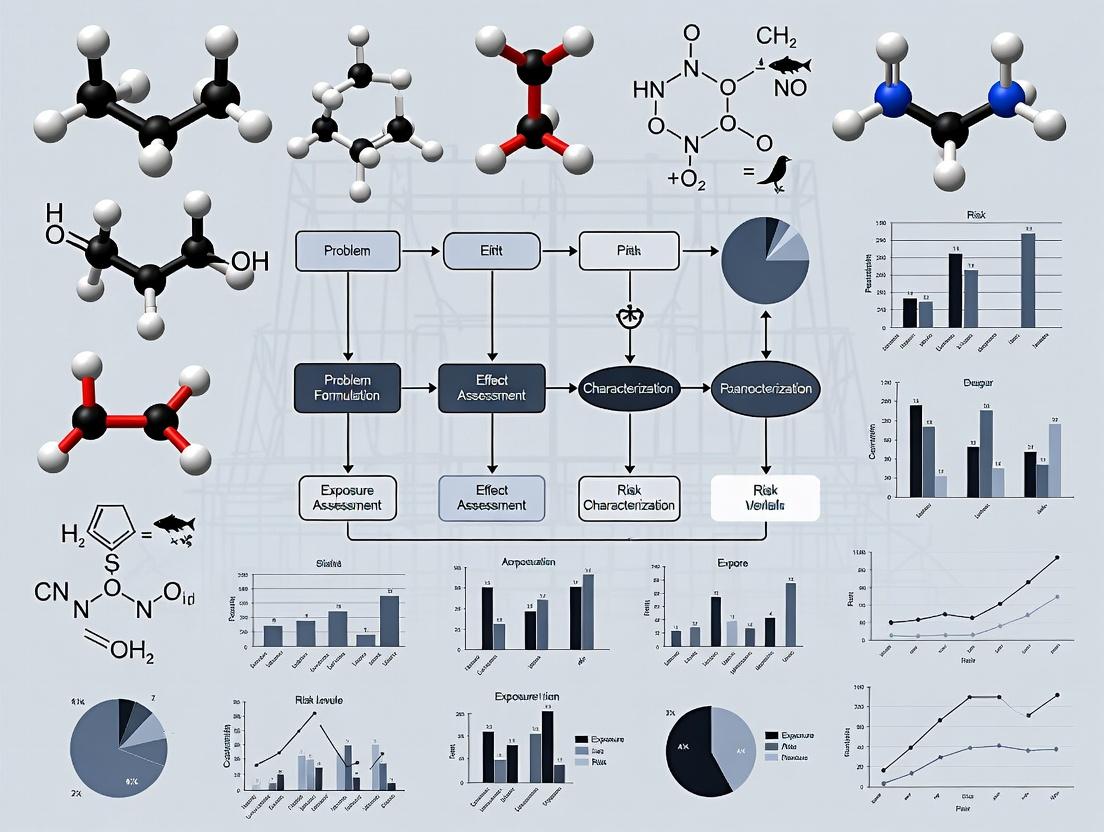

The following diagram illustrates this continuous, feedback-driven relationship.

Diagram 1: Iterative Cycle Linking Planning and Problem Formulation (Max Width: 760px). This workflow shows the non-linear, feedback-driven process where evaluation of Problem Formulation outputs often leads to refinement of initial planning agreements.

Integrating Ecosystem Services into Planning and Problem Formulation

Modern ERA increasingly integrates Ecosystem Services (ES)—the benefits humans derive from nature—as assessment endpoints [8]. This shifts focus from protecting individual species to safeguarding ecological functions that provide services like water purification, flood control, and food provision [2] [8]. This integration profoundly impacts both the Planning and Problem Formulation phases.

Planning Phase Adjustments: When ES are prioritized, management goals are framed in terms of maintaining service supply (e.g., "protect the water filtration capacity of the wetland"). Stakeholder engagement becomes crucial to identify which services are most valued by the public [8]. Defining scope requires considering the spatial scales over which services are provided and used.

Problem Formulation Translation: Assessment endpoints become ES-based (e.g., "maintenance of denitrification rate in sediment for waste remediation service") [8]. Conceptual models must explicitly link stressors to ecological structures/functions and on to the final ES [8]. The analysis plan requires methods to quantify ES supply and identify thresholds for risk and benefit [8].

Table 3: Methodological Comparison: Traditional vs. ES-Integrated ERA Planning

| Aspect | Traditional ERA Approach | ES-Integrated ERA Approach |
|---|---|---|
| Primary Management Goal | Protect ecological entities (species, communities) from adverse effects [2]. | Protect the sustained supply of valued ecosystem services to society [8]. |
| Key Assessment Endpoints | Survival, growth, reproduction of indicator species [2] [4]. | Metrics of ES supply (e.g., ton of carbon sequestered, volume of water purified, yield of fisheries) [8]. |
| Conceptual Model Focus | Stressor → Exposure → Ecological effect on receptor [3]. | Stressor → Effect on ecological structure/function → Change in ES supply → Impact on human well-being [8]. |
| Data & Modeling Needs | Toxicity data, exposure models, population models [4]. | ES quantification models (e.g., InVEST), socio-ecological data, benefit valuation methods [8] [9]. |
| Stakeholder Engagement | Important for context and acceptance [2]. | Critical for identifying and prioritizing which ES to assess [8]. |

Experimental Protocols and Technical Methodologies

Protocol for Developing a Conceptual Model

The conceptual model is a visual hypothesis of how stressors affect assessment endpoints [3]. Its development is a core Problem Formulation activity informed by planning.

- Identify Components: List all potential sources (e.g., pesticide application), stressors (e.g., chemical X), exposure pathways (e.g., spray drift to water, runoff), receptors (e.g., aquatic invertebrates, fish), and assessment endpoints (e.g., survival of mayfly larvae) [3] [4].

- Diagram Relationships: Create a flowchart linking components with arrows indicating influence. Use boxes for entities and arrows for pathways or effects [4].

- Formulate Risk Hypotheses: For each arrow, write a testable hypothesis (e.g., "Runoff from fields will transport Stressor X to the stream, leading to aqueous concentrations that reduce mayfly survival") [3].

- Prioritize and Simplify: Focus the model on major pathways. The final model should guide data collection and analysis planning [4].
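The protocol above maps naturally onto a small directed graph. The following is a minimal sketch, assuming the model is stored as a list of (from, to) edges; the node names reuse the pesticide example from the steps, while the helper names `pathways` and `to_dot` are hypothetical. It enumerates every source-to-endpoint pathway (each one a candidate risk hypothesis) and emits Graphviz DOT text that a diagramming tool can render.

```python
from collections import defaultdict

# Directed edges of a hypothetical conceptual model: (from, to).
edges = [
    ("pesticide application", "spray drift"),
    ("pesticide application", "runoff"),
    ("spray drift", "stream water"),
    ("runoff", "stream water"),
    ("stream water", "mayfly larvae survival"),
]

graph = defaultdict(list)
for src, dst in edges:
    graph[src].append(dst)


def pathways(node, endpoint, path=()):
    """Yield every path from node to endpoint by depth-first search."""
    path = path + (node,)
    if node == endpoint:
        yield path
        return
    for nxt in graph[node]:
        if nxt not in path:  # guard against cycles
            yield from pathways(nxt, endpoint, path)


def to_dot(edge_list):
    """Render the model as Graphviz DOT text, with boxes for entities."""
    lines = ["digraph conceptual_model {", "  node [shape=box];"]
    lines += [f'  "{a}" -> "{b}";' for a, b in edge_list]
    lines.append("}")
    return "\n".join(lines)


all_paths = list(pathways("pesticide application", "mayfly larvae survival"))
for p in all_paths:
    print(" -> ".join(p))
print(to_dot(edges))
```

Listing the pathways explicitly makes the "Prioritize and Simplify" step concrete: the team can review each path and drop the ones judged minor before analysis planning.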

Case Study: Quantitative ES-ERA Methodology

A 2025 study demonstrated a novel method to quantify risks and benefits to ES supply, illustrating advanced Problem Formulation [8].

- Objective: Assess the risk of degradation and potential benefit of enhancement to the waste remediation (denitrification) ES from offshore wind farms (OWF) and mussel aquaculture [8].

- Methodology:

  - Define ES Metric: The ES was "waste remediation," measured as sediment denitrification rate (μmol N m⁻² h⁻¹) [8].

  - Establish Thresholds: A risk threshold (lower bound) and a benefit threshold (upper bound) for denitrification rates were defined based on baseline conditions or management goals [8].

  - Quantify Stressor-Response: Statistical models (e.g., multiple linear regression) linked stressor presence (OWF structures altering sediment) to changes in drivers of denitrification (e.g., Total Organic Matter) [8].

  - Model Exposure & Effect: Using spatial data and the stressor-response relationship, the distribution of post-stressor denitrification rates was predicted across the site [8].

  - Calculate Risk/Benefit Metrics: The probability and magnitude of the denitrification rate falling below the risk threshold (risk) or exceeding the benefit threshold (benefit) were calculated using cumulative distribution functions [8].

- Outcome: The study provided quantitative, probabilistic comparisons of different management scenarios (OWF alone, aquaculture alone, multi-use), directly informing sustainable planning decisions [8].
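The threshold-and-CDF step of this case study can be sketched as follows. This is not the study's own implementation: it assumes an empirical CDF over a small set of predicted rates, and the rates, both thresholds, and the "mean shortfall" magnitude measure are hypothetical choices made for illustration.

```python
from bisect import bisect_left, bisect_right


def ecdf_below(sorted_vals, x):
    """Empirical probability that a value falls strictly below x."""
    return bisect_left(sorted_vals, x) / len(sorted_vals)


def ecdf_above(sorted_vals, x):
    """Empirical probability that a value falls strictly above x."""
    return 1 - bisect_right(sorted_vals, x) / len(sorted_vals)


# Hypothetical predicted denitrification rates (umol N m^-2 h^-1).
predicted = sorted([12.0, 15.5, 18.0, 21.0, 24.5, 27.0, 30.0, 33.5, 36.0, 41.0])
risk_threshold = 20.0     # assumed lower bound
benefit_threshold = 35.0  # assumed upper bound

p_risk = ecdf_below(predicted, risk_threshold)
p_benefit = ecdf_above(predicted, benefit_threshold)

# Magnitude of risk: mean shortfall below the risk threshold, where it occurs.
shortfalls = [risk_threshold - v for v in predicted if v < risk_threshold]
mean_shortfall = sum(shortfalls) / len(shortfalls) if shortfalls else 0.0

print(p_risk, p_benefit, mean_shortfall)
```

Running the same calculation on the predicted distribution for each management scenario would give the kind of side-by-side probabilistic comparison described in the outcome.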

Protocol for Predictive Landscape ERA Using InVEST and PLUS Models

For regional ERAs driven by land-use change, an integrated modeling approach is used [9].

- Scenario Planning (Planning Phase): Define future development scenarios (e.g., Business-As-Usual, Ecological Protection) [9].

- Land Use Prediction (Problem Formulation -> Analysis): Use the Patch-generating Land Use Simulation (PLUS) model to simulate future land use/cover (LULC) maps under each scenario [9].

- Ecosystem Service Quantification (Analysis): Use the Integrated Valuation of Ecosystem Services and Trade-offs (InVEST) model suite to calculate the supply of multiple ES (e.g., carbon storage, water yield, habitat quality) for both current and future LULC maps [9].

- Risk Calculation: Calculate Ecosystem Service Degradation (ESD) by comparing future ES supply to current levels. ESD is used as a metric of the ecological risk caused by land-use/cover change (LUCC) [9].

- Trade-off Analysis: Use statistical methods (e.g., geographically weighted regression) to analyze spatial synergies and trade-offs among the risks to different ES [9].
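The risk calculation step can be sketched under one stated assumption: that ESD is expressed as the relative loss of supply, (current - future) / current, so positive values indicate degradation. The source only says that future supply is compared to current levels, so this formula, the function name `esd`, and all supply values below are hypothetical. In practice the inputs would be per-cell outputs from InVEST runs on the current and PLUS-simulated maps rather than single numbers.

```python
def esd(current: float, future: float) -> float:
    """Relative ecosystem service degradation; positive means loss."""
    if current <= 0:
        raise ValueError("current supply must be positive")
    return (current - future) / current


# Hypothetical region-wide ES supply under current land use.
current = {"carbon storage": 120.0, "water yield": 80.0, "habitat quality": 0.60}

# Hypothetical ES supply under each simulated future scenario.
scenarios = {
    "business_as_usual": {
        "carbon storage": 102.0, "water yield": 84.0, "habitat quality": 0.45,
    },
    "ecological_protection": {
        "carbon storage": 126.0, "water yield": 78.0, "habitat quality": 0.63,
    },
}

risk = {
    name: {svc: esd(current[svc], future[svc]) for svc in current}
    for name, future in scenarios.items()
}

for name, by_service in risk.items():
    print(name, {svc: round(val, 3) for svc, val in by_service.items()})
```

A service that degrades under one scenario while another improves (here, carbon storage versus water yield under the assumed business-as-usual values) is the kind of trade-off the final step would examine spatially.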

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Research Reagents, Models, and Tools for ERA

| Item/Tool Name | Primary Function in ERA | Application Context |
|---|---|---|
| Standardized Toxicity Test Organisms (e.g., Daphnia magna, fathead minnow, algal species) [4] [8] | Provide consistent, regulatory-accepted data on stressor-effects relationships for chemical risk assessment. | Laboratory testing to generate LC50, NOAEC, etc., for use in screening-level risk quotients [4]. |
| EPA EcoBox | An online compendium providing links to guidance, databases, models, and reference materials for conducting ERA [2]. | Used throughout planning and problem formulation to access authoritative protocols, fate models, and ecological data. |
| InVEST (Integrated Valuation of Ecosystem Services and Trade-offs) Model Suite | A set of spatially explicit models for mapping and valuing the supply of multiple ecosystem services (e.g., carbon, water, habitat) [9]. | Quantifying ES supply for baseline conditions and under future scenarios in landscape-scale ERAs [9]. |
| PLUS (Patch-generating Land Use Simulation) Model | A land-use change model that simulates the evolution of landscape patches under different policy scenarios [9]. | Predicting future land-use patterns as the foundational driver for regional ecological risk projections [9]. |
| Cumulative Distribution Function (CDF) Analysis | A statistical method used to characterize the full probability distribution of an exposure or effect metric [8]. | Quantifying the probability of exceeding a risk or benefit threshold in probabilistic ES-ERA [8]. |
| Conceptual Model Diagramming Software (e.g., graphical tools or simple flowchart software) | To create clear visual representations of hypothesized stressor-exposure-effect pathways [3] [4]. | A critical tool during Problem Formulation to synthesize information and communicate risk hypotheses to the team and stakeholders. |

The Planning Phase is the strategic cornerstone of a successful Ecological Risk Assessment. It is defined by its four core objectives—defining decisions, setting scope, engaging teams, and documenting agreements—which collectively ensure scientific assessment is relevant and actionable for environmental protection. Crucially, planning is not a static starting point but engages in an iterative dialogue with Problem Formulation. This cyclical process of hypothesis, evaluation, and refinement allows the assessment to adapt to new scientific insights, ultimately producing a more robust and targeted analysis plan.

The evolution of ERA to incorporate Ecosystem Services frameworks exemplifies this iterative advancement. It expands the focus of planning discussions to include societal benefits and requires more integrated methodologies during problem formulation. By employing structured protocols, modern quantitative tools, and a collaborative, iterative mindset, researchers and risk managers can define planning phases that effectively guide the scientific evaluation of ecological risks in a complex and changing world.

Ecological Risk Assessment (ERA) is a formal, scientific process for evaluating the likelihood that the environment may be adversely affected by exposure to one or more stressors, such as chemicals, land-use changes, or invasive species [1]. This process is fundamentally initiated to inform environmental decision-making, supporting actions ranging from pesticide regulation and hazardous waste site remediation to watershed management [2].

The planning phase is the critical foundation upon which a successful ERA is built. It is during this initial stage that the scope, goals, and trajectory of the entire assessment are established through structured dialogue [1]. The core thesis of this article is that the efficacy, legitimacy, and ultimate utility of an ERA are directly determined by the clarity of roles and the depth of integration among three key groups during this planning phase: risk managers, risk assessors, and stakeholders. This phase ensures the assessment is both scientifically rigorous and decision-relevant, setting clear agreements on management goals, assessment scope, complexity, and the specific roles of each team member [1] [4].

Table 1: Core Objectives and Agreements of the ERA Planning Phase [1] [2] [4].

| Planning Component | Key Questions Addressed | Primary Participants |
|---|---|---|
| Management Goals & Decisions | What environmental values need protection? What decision must be informed? | Risk Managers, Stakeholders |
| Scope & Boundaries | What are the spatial and temporal limits? What stressors and ecological entities are of concern? | Risk Managers, Risk Assessors |
| Assessment Complexity & Iteration | What level of analysis is needed? Should a tiered (screening to refined) approach be used? | Risk Managers, Risk Assessors |
| Role Definition & Resources | Who is responsible for each task? What are the timelines, funding, and expertise required? | All Team Members |
| Stakeholder Engagement Plan | Who are the interested parties? How and when will they be consulted? | Risk Managers, Lead Assessor |

Defining Core Roles and Responsibilities

The ERA process hinges on a clear distinction and close collaboration between two primary roles: Risk Managers and Risk Assessors. This separation is maintained to ensure scientific integrity while aligning the assessment with societal values and legal mandates [10].

The Risk Manager: The Decision-Authority

Risk Managers are individuals or entities with the responsibility and legal authority to act on an identified risk. They are typically staff within regulatory agencies (e.g., EPA, state environmental offices) but can also include corporate environmental leads or resource trustees [2]. Their role is not to conduct the science but to frame the need for it and use its outcomes.

Table 2: Comparative Roles: Risk Manager vs. Risk Assessor [1] [2] [4].

| Aspect | Risk Manager | Risk Assessor |
|---|---|---|
| Primary Objective | Make informed, legally defensible decisions to protect ecological values. | Provide a scientific estimate of the likelihood and magnitude of adverse ecological effects. |
| Key Planning Actions | Define risk management goals and options; set scope, funding, and timeline; articulate policy and legal constraints; determine acceptable uncertainty [2] [4]. | Translate management goals into assessable endpoints; advise on feasible scope and complexity; design the technical approach; identify data needs [4]. |
| Core Responsibilities | Consult with assessors and stakeholders; weigh assessment results with social, economic, and legal factors; select and implement risk management actions (e.g., remediation, regulations); communicate decisions [1] [2]. | Gather and analyze data on exposure and ecological effects; develop conceptual models and risk hypotheses; characterize and quantify risks; document uncertainties; communicate scientific findings clearly [1] [11] [12]. |
| Ultimate Deliverable | A risk management decision or regulation. | A risk assessment report characterizing ecological risk. |

The Risk Assessor: The Scientific Analyst

The Risk Assessor is the scientific expert responsible for executing the technical evaluation. This is a multidisciplinary role demanding expertise in ecology, toxicology, statistics, and chemistry [11] [2]. A Senior Ecological Risk Assessor, as evidenced in industry postings, typically holds an advanced degree (M.S. or Ph.D.) and has over a decade of experience. Their duties extend beyond analysis to include leading projects, mentoring junior staff, advocating for technical findings with regulators, and designing monitoring programs [12].

The assessor’s work during planning is to translate the risk manager’s broad goals into actionable, scientific terms. This involves helping to define which ecological entities (e.g., an endangered species, a fish community, a wetland ecosystem) and their specific attributes (e.g., reproductive success, population abundance) will be the focus of the assessment—these are known as assessment endpoints [4].

Diagram 1: ERA Framework and Key Participant Roles in Planning

The Imperative of Stakeholder Integration

Stakeholders are individuals or groups with an interest in or affected by the environmental issue and the resulting management decision [2]. A stakeholder approach to risk management is not merely a procedural step; it is a strategic orientation that recognizes stakeholders as essential contributors to risk identification, analysis, and response [13].

Identifying and Classifying Stakeholders

Stakeholders in an ERA are diverse. The planning team must "think outside the box" to identify not only primary entities but also secondary groups who may be overlooked until they oppose a decision [14]. Key categories include:

- Government & Regulatory Bodies: Federal, state, tribal, and municipal agencies [2].

- Affected Communities & Landowners: Local residents, indigenous groups, and property owners.

- Economic Interests: Industry representatives, agricultural users, small-business owners, and commercial fishers [2].

- Civil Society: Environmental NGOs, recreational groups, and academic institutions.

- Technical Experts: Scientists from complementary fields not on the core assessment team.

Risks of Poor Stakeholder Integration

Excluding or inadequately engaging stakeholders creates significant project risks [14]:

- Relationship Risks: Loss of trust, active opposition, and resistance to change.

- Reputational Risks: Negative media coverage and erosion of public confidence.

- Financial & Operational Risks: Project delays, scope creep, costly legal conflicts, and retrofitting.

- Substantive Risks: Overlooking critical exposure pathways, ecological values, or local knowledge, leading to a flawed assessment and poor management outcomes [14].

A Framework for Strategic Integration

Effective integration follows a logical sequence from identification to active involvement in risk response planning [13] [14].

Diagram 2: Stakeholder Integration & Risk Management Cycle

Step 1: Identify Stakeholders and Co-Discover Risks. The process begins with broad brainstorming to list all potential stakeholders [14]. Initial consultations with these groups are then used to uncover risks (e.g., unique exposure pathways, valued ecological resources) that the technical team may have missed.

Step 2: Analyze and Prioritize. Stakeholders and risks are analyzed concurrently using both qualitative and quantitative tools [14]:

- Stakeholder Mapping: Classifying stakeholders based on their level of interest, influence, and potential impact from the risk [14].

- Risk Analysis: Evaluating the probability of a risk occurring and the magnitude of its potential ecological, economic, or social impact.

- Stakeholder-Risk Profiling: Merging these analyses to document which stakeholders are most affected by or can most influence specific risks, guiding targeted engagement [14].
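The three analyses in Step 2 can be combined in a short sketch. It assumes a conventional interest/influence grid with four engagement labels and a simple probability-times-impact risk score; neither the labels, the cutoff of 3, nor any of the scores below come from the cited sources, and the stakeholder-to-risk links are hypothetical.

```python
def quadrant(interest: int, influence: int, cutoff: int = 3) -> str:
    """Classify a stakeholder on a 1-5 interest/influence grid."""
    high_interest = interest >= cutoff
    high_influence = influence >= cutoff
    if high_interest and high_influence:
        return "engage closely"
    if high_influence:
        return "keep satisfied"
    if high_interest:
        return "keep informed"
    return "monitor"


# Hypothetical scores on a 1-5 scale: (interest, influence).
stakeholders = {
    "regulatory agency": (5, 5),
    "local community": (5, 2),
    "industry applicant": (2, 4),
    "academic scientists": (2, 2),
}

# Hypothetical risks: (probability 0-1, impact magnitude 1-5).
risks = {
    "overlooked exposure pathway": (0.3, 4),
    "permit challenge": (0.2, 5),
}

# Assumed links: who is affected by, or can influence, each risk.
links = {
    "overlooked exposure pathway": ["local community", "academic scientists"],
    "permit challenge": ["regulatory agency", "industry applicant"],
}

profile = []
for risk, (prob, impact) in risks.items():
    score = prob * impact
    who = [(s, quadrant(*stakeholders[s])) for s in links[risk]]
    profile.append((score, risk, who))
profile.sort(reverse=True)  # highest-scoring risk first

for score, risk, who in profile:
    print(f"{risk} (score {score:.1f}): {who}")
```

The sorted profile gives the planning team a starting order for targeted engagement; in practice the scores would come from consultation rather than being assigned by the technical team alone.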

Step 3: Involve Stakeholders in Risk Planning and Response. High-priority stakeholders should be involved in developing risk management strategies. This can include participating in workshops to review conceptual models, providing feedback on remediation options, or co-designing monitoring programs [13] [14]. This involvement improves the quality and legitimacy of decisions.

Step 4: Execute Tailored Risk Communication. Communication is not one-way dissemination but a dynamic, two-way exchange [13]. It must be tailored to the stakeholder's culture, worldview, and level of engagement. For example, individuals with a hierarchical worldview may trust information from authority figures like government scientists, while those with an egalitarian worldview may respond better to messages emphasizing community equity and environmental justice [15]. Communication serves multiple purposes: education, behavior change, disaster warning, and fostering partnership in decision-making [13].

Table 3: Stakeholder Types and Engagement Considerations [2] [14] [15].

| Stakeholder Category | Primary Interests/Concerns | Potential Engagement Risks if Excluded | Recommended Engagement Approach |
|---|---|---|---|
| Regulatory Agencies | Legal compliance, policy adherence, precedent setting. | Legal challenges, permit denials, enforcement actions. | Formal consultation, technical working groups, iterative review. |
| Local Community | Health, property values, quality of life, aesthetic values. | Public opposition, protests, loss of social license to operate. | Public meetings, community advisory boards, transparent reporting. |
| Industry/Applicant | Operational feasibility, cost, regulatory certainty, liability. | Project delays, increased costs, legal disputes over findings. | Technical dialogue, confidential data review, collaborative problem-solving. |
| Environmental NGOs | Species protection, habitat conservation, precautionary principle. | Campaigns against the project, litigation, media criticism. | Early involvement in scoping, access to independent science, formal comment periods. |
| Academic Scientists | Methodological rigor, data validity, contribution to science. | Public criticism of assessment quality, alternative analyses. | Peer review, collaborative research on key uncertainties, workshops. |

Methodological Protocols and the Scientist's Toolkit

The planning phase concludes with the transition to Problem Formulation, where agreements are solidified into a technical blueprint. This involves developing a conceptual model (a diagram of hypothesized stressor-exposure-effect pathways) and a detailed analysis plan [4] [10].

Experimental & Analytical Protocols

The analysis phase tests the risk hypotheses through two parallel lines of inquiry: exposure assessment and ecological effects assessment [1] [10].

Exposure Assessment Protocol

Objective: To characterize the contact between a stressor and ecological receptors.

Methodology:

- Source Characterization: Quantify the release rate, form, and location of the stressor (e.g., pesticide application rate and method) [4].

- Fate and Transport Modeling: Use models (e.g., fugacity, runoff models) to predict the distribution and concentration of the stressor in environmental media (water, soil, sediment, air) [10].

- Exposure Pathway Analysis: Identify complete pathways (source → medium → receptor). For chemicals, this includes assessing bioavailability, bioaccumulation (uptake > elimination), and biomagnification (increasing concentration up the food web) [2].

- Exposure Estimation: Quantify the dose or concentration at the receptor interface. This may involve direct measurement (environmental monitoring) or model estimation, considering temporal overlap with sensitive life stages (e.g., fish spawning) [2].
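The relationship between uptake and elimination in the bioaccumulation step can be made concrete with a standard one-compartment, first-order kinetic model, in which tissue concentration approaches a steady state equal to the bioconcentration factor (uptake rate constant divided by elimination rate constant) times the water concentration. The sketch below assumes constant water concentration and no growth dilution or dietary uptake; the function name and all parameter values are hypothetical.

```python
import math


def tissue_concentration(c_water: float, k_uptake: float,
                         k_elim: float, t_days: float) -> float:
    """One-compartment model: C(t) = (k1/k2) * Cw * (1 - exp(-k2 * t)).

    c_water  : water concentration (ug/L), assumed constant
    k_uptake : uptake rate constant k1 (L/kg/day)
    k_elim   : elimination rate constant k2 (1/day)
    Returns tissue concentration in ug/kg.
    """
    if k_elim <= 0:
        raise ValueError("k_elim must be positive")
    bcf = k_uptake / k_elim  # steady-state bioconcentration factor (L/kg)
    return bcf * c_water * (1 - math.exp(-k_elim * t_days))


# Hypothetical parameters for a hydrophobic chemical in fish.
c_water, k1, k2 = 0.5, 200.0, 0.1
steady_state = (k1 / k2) * c_water

for day in (0, 7, 28, 365):
    print(day, round(tissue_concentration(c_water, k1, k2, day), 1))
print("steady state:", steady_state)
```

Comparing the time needed to approach steady state with the duration of a sensitive life stage is one simple way to judge whether temporal overlap matters for a given receptor.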

Ecological Effects Assessment (Stressor-Response) Protocol

Objective: To evaluate the relationship between the magnitude of a stressor and the type and severity of ecological effects.

Methodology:

- Toxicity Data Compilation: Gather relevant single-species toxicity data from standardized laboratory tests (e.g., LC50, NOEC for survival, growth, reproduction) [4].

- Dose-Response Modeling: Fit statistical models to toxicity data to estimate effect thresholds across a gradient of exposure.

- Species Sensitivity Distribution (SSD): For chemical assessments, compile toxicity endpoints for multiple species to derive a protective concentration (e.g., HC5 – hazardous concentration for 5% of species).

- Higher-Order Effects Evaluation: Review field studies, mesocosm experiments, or population models to understand effects at the population, community, or ecosystem level (e.g., impacts on predator-prey dynamics, nutrient cycling) [2] [10].
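The SSD step can be illustrated with a minimal sketch that assumes species sensitivities follow a log-normal distribution, which is one common choice among several. The toxicity values are hypothetical, the function name `hazardous_concentration` is an illustration only, and the sketch does not estimate confidence limits or compare alternative distributions, both of which a real assessment would need.

```python
import math
from statistics import NormalDist, mean, stdev


def hazardous_concentration(toxicity_values, p=0.05):
    """Concentration expected to affect a fraction p of species,
    from a log-normal SSD fitted by the moments of log10 values."""
    if len(toxicity_values) < 2:
        raise ValueError("need at least two species values")
    logs = [math.log10(v) for v in toxicity_values]
    ssd = NormalDist(mean(logs), stdev(logs))
    return 10 ** ssd.inv_cdf(p)


# Hypothetical chronic NOEC values for six species (ug/L).
noec = [3.2, 10.0, 18.0, 45.0, 120.0, 300.0]

hc5 = hazardous_concentration(noec, 0.05)
hc50 = hazardous_concentration(noec, 0.50)
print(f"HC5 = {hc5:.2f} ug/L, HC50 = {hc50:.2f} ug/L")
```

Under the log-normal assumption the HC50 equals the geometric mean of the input values, which offers a quick sanity check on the fit.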

The Scientist's Toolkit: Essential Research Reagent Solutions

A robust ERA relies on a suite of standard tools, models, and data sources.

Table 4: Research Reagent Solutions for Ecological Risk Assessment.

| Tool/Reagent Category | Specific Examples | Function & Application in ERA |

|---|---|---|

| Standard Toxicity Test Organisms | Fathead minnow (Pimephales promelas), Daphnia (Daphnia magna), Earthworm (Eisenia fetida), Duckweed (Lemna spp.). | Provide standardized, reproducible toxicity endpoints for chemicals used in screening-level risk assessments and SSDs [4]. |

| Environmental Fate & Exposure Models | PRZM (Pesticide Root Zone Model), EXAMS (Exposure Analysis Modeling System), BASINS (Better Assessment Science Integrating point & Non-point Sources). | Simulate the movement and concentration of stressors in the environment to estimate exposure for ecological receptors [4]. |

| Bioaccumulation Assessment Tools | Field-collected biota (fish, bivalves), Lipid-normalization protocols, BCF/BAF (Bioconcentration/Bioaccumulation Factor) models. | Measure or predict the accumulation of chemicals in organisms, critical for assessing risk to upper-trophic-level wildlife [2]. |

| Ecological Effects Databases | ECOTOX (EPA database), EnviroTox (created by industry collaboration), peer-reviewed literature. | Curated repositories of toxicity data used to populate stressor-response profiles and develop SSDs. |

| Statistical & Risk Calculation Software | R packages (e.g., ssdtools, fitdistrplus), Burrlioz (for SSD modeling), Crystal Ball/@Risk (for probabilistic analysis). | Perform statistical analyses on exposure and effects data, conduct probabilistic risk assessments, and quantify uncertainty [16]. |

| Sediment & Water Sampling Equipment | Ekman/Ponar grabs (sediment), Van Dorn/Niskin bottles (water), Pore water peepers, Passive sampling devices (SPMDs, POCIS). | Collect representative environmental media samples for chemical analysis to characterize exposure conditions. |

| Conceptual Model & Workflow Software | Diagramming tools (e.g., Lucidchart, yEd), Graphviz (DOT language), GIS software (e.g., ArcGIS). | Visualize stressor pathways and ecosystem relationships; map spatial exposure and receptor distributions [4]. |

The planning phase of an Ecological Risk Assessment is a critical exercise in structured collaboration. Its success is not accidental but results from deliberately defining and integrating the distinct contributions of risk managers, risk assessors, and stakeholders.

The risk manager provides the decision-making mandate and societal context. The risk assessor translates this mandate into a scientifically defensible investigation. The stakeholders infuse the process with essential values, local knowledge, and public legitimacy.

Methodological choices made during planning—from defining risk factors to selecting scoring and aggregation rules for data—can significantly influence the assessment's outcome and subsequent management priorities [16]. Therefore, documenting these choices and the rationale behind them is a fundamental component of a transparent and credible ERA process.

Ultimately, a well-executed planning phase ensures the ERA is relevant (answers the risk manager's questions), reliable (uses sound science), and responsive (addresses the concerns of those affected). This triad of relevance, reliability, and responsiveness is the cornerstone of ecological risk assessments that effectively guide environmental protection and sustainable decision-making.

Foundational Role of Agreements in Ecological Risk Assessment Planning

In ecological risk assessment (ERA), the planning phase establishes the necessary foundation for all subsequent scientific and regulatory activities. This initial phase determines the assessment's scope, boundaries, and the ecological entities that will be its focus, ensuring the final product effectively supports environmental decision-making [2]. Documentation of agreements during planning is not merely administrative; it is a critical scientific and management tool that aligns multidisciplinary teams, defines the problem space, and ensures that the assessment's purpose is explicitly tied to actionable environmental decisions, such as chemical regulation or site remediation [2].

The planning process is inherently collaborative, involving risk assessors, risk managers, scientific experts, and stakeholders [2]. Formal agreements crystallize the outcomes of these discussions, translating abstract goals into concrete parameters. Key outputs of planning include the definition of high-level management goals (e.g., "restore native fish populations"), the identification of explicit management options to be evaluated, and specifications regarding the assessment's scope and complexity, often employing an iterative or tiered approach to efficiently allocate resources [2]. This documented plan directly feeds into the problem formulation phase, where assessment endpoints and conceptual models are developed [2].

Taxonomy and Application of Research Agreements

Research in ecological risk assessment, particularly in contexts like pharmaceutical development, involves multiple parties and sensitive materials. Various formal agreements govern these interactions, each serving a distinct purpose in facilitating research while protecting intellectual property, data, and materials.

Table 1: Common Agreement Types in Ecological and Pharmaceutical Research

| Agreement Type | Primary Purpose | Key Elements / Governing Scope | Typical Use Case in ERA/Drug Development |

|---|---|---|---|

| Confidential Disclosure Agreement (CDA)/ Non-Disclosure Agreement (NDA) [17] [18] [19] | To protect proprietary information exchanged for evaluation or collaboration. | Defines confidential information, obligations of receiving party, exclusions, term. | Sharing unpublished ecotoxicology data or proprietary compound structures prior to a formal collaboration. |

| Material Transfer Agreement (MTA) [17] [18] [20] | To govern the transfer of proprietary physical materials for research. | Describes materials, restricts use to research, addresses ownership of modifications, publication rights. | Transferring transgenic animal models, specific cell lines, or environmental contaminant samples for toxicity testing. |

| Data Use Agreement (DUA) [17] [21] [20] | To outline terms for sharing and using confidential or restricted datasets. | Specifies data set, permitted uses, security requirements, publication review, data destruction. | Providing access to sensitive ecological monitoring data or patient-derived data for an environmental health study. |

| Sponsored Research Agreement (SRA) [17] [20] | To define terms for externally funded research projects. | Includes statement of work, budget, payment terms, intellectual property rights, reporting. | A company funding university research on the environmental degradation pathway of a new active pharmaceutical ingredient. |

| Research Collaboration/Collaborative Research Agreement (RCA/CRA) [18] [20] | To memorialize terms of a joint research project between institutions. | Defines roles/responsibilities of each party, management structure, IP ownership (background/foreground). | A multi-institutional consortium studying the cumulative ecological risks of multiple stressors in a watershed. |

| Memorandum of Understanding (MOU) [17] [20] | To express mutual intent for future collaboration without creating binding legal obligations. | Outlines shared goals and preliminary plans; explicitly non-binding. | Documenting an initial agreement between a research institute and a regulatory agency to explore a joint assessment. |

The selection of the appropriate agreement is a critical first step. The following workflow diagram outlines the decision process based on the primary objective of the interaction.

Integrating Agreements with the ERA Scientific Workflow

Agreements are not standalone documents; they are integrated with the scientific methodology of the ecological risk assessment. The planning and problem formulation phases are where the purpose defined in agreements translates into technical assessment design [2].

From Management Goals to Assessment Endpoints

The high-level management goals documented during planning are refined in problem formulation into precise assessment endpoints. These endpoints combine a valued ecological entity (e.g., a species, community, or ecosystem) with a specific attribute of that entity to be protected (e.g., reproduction, population abundance) [2]. The choice of endpoints is guided by ecological relevance, susceptibility to stressors, and relevance to the management goals [2]. Agreements, particularly SRAs or CRAs, often specify these goals, thereby directly influencing the scientific focus.

Designing the Analysis Plan: Exposure and Effects

The analysis phase of ERA involves two core technical components: exposure assessment and ecological effects assessment [2]. Agreements directly enable this work by governing the sharing of critical resources.

Exposure Assessment Protocols: This evaluates the co-occurrence of stressors and ecological receptors. For chemical stressors, this requires data or models concerning the chemical's source, distribution, fate, and bioavailability [2]. MTAs are essential for obtaining proprietary chemical standards or contaminated field samples. DUAs govern the use of environmental monitoring data detailing chemical concentrations in water, soil, or biota.

- Key Protocol (Chemical Bioavailability): A standard protocol involves collecting environmental media (e.g., water, sediment) and using chemical extraction techniques (e.g., solid-phase extraction for water, sequential extraction for sediments) followed by chemical analysis (e.g., GC-MS, LC-MS) to quantify the bioavailable fraction of the stressor. The use of proprietary chemical standards for calibration would be covered under an MTA.

Stressor-Response Assessment Protocols: This evaluates the relationship between the magnitude of exposure and the magnitude or likelihood of an adverse effect [2]. It relies on data from laboratory toxicity tests, mesocosm studies, or field observations.

- Key Protocol (Standardized Aquatic Toxicity Test): A typical protocol involves exposing a test organism (e.g., Daphnia magna, fathead minnow) to a series of concentrations of the chemical stressor in a controlled laboratory environment over a specified duration (e.g., 48 or 96 hours). Endpoints measured include mortality, growth inhibition, or reproduction. The transfer of a proprietary test organism or cell line for such testing would require an MTA [18]. Data from previous, unpublished toxicity studies shared by a collaborator would be covered under a CDA and DUA.

The following diagram illustrates how different agreement types interface with the core phases of the ecological risk assessment framework, from planning through to risk characterization.

The Scientist's Toolkit: Key Reagents and Materials

Conducting robust exposure and effects assessments requires specific, often proprietary, research materials. The transfer and use of these materials are typically governed by MTAs and related agreements [18] [20].

Table 2: Essential Research Materials and Reagents in Ecological Risk Assessment

| Material Category | Specific Examples | Function in ERA | Governance Agreement |

|---|---|---|---|

| Proprietary Chemical Standards | Novel pharmaceutical compound, metabolite, isotopic tracer. | Serves as analytical reference standard for quantifying exposure concentrations in environmental media. | MTA [18] [20] |

| Environmental Test Samples | Contaminated soil, sediment, or water from a field site. | Used in bioavailability studies, toxicity identification evaluations (TIEs), or to validate laboratory tests against field conditions. | MTA [20] |

| Biological Test Organisms | Transgenic or knock-out animal models (e.g., zebrafish, C. elegans), specialized plant cultivars. | Used to study specific molecular toxicity pathways, mode of action, or genetic susceptibility to stressors. | MTA [18] |

| Research Tools & Assays | Proprietary cell lines (e.g., fish gill cells), antibodies for biomarker detection, patented enzymatic assay kits. | Used for high-throughput screening, mechanistic studies, or measuring sub-lethal biological effects (biomarkers). | MTA [18] |

| Human-Derived Materials | Human tissue samples, primary cells, genomic data. | Critical for environmental health assessments linking ecological exposures to potential human health outcomes in pharmaceutical risk assessment. | MTA (with specific attestations) [18] |

Practical Implementation: The Five Safes Framework and Negotiation

Successfully implementing data-sharing agreements, a cornerstone of exposure assessment, can be structured using the Five Safes framework [21]. This risk-proportionate model ensures data security and appropriate use.

- Safe Projects: The DUA must explicitly limit data use to the approved ERA project scope [21].

- Safe People: Researchers accessing data must be qualified and often require institutional affiliation and training [21].

- Safe Settings: The DUA should mandate secure data environments (e.g., encrypted storage, secure servers, limited physical access) [21].

- Safe Data: Data should be de-identified or treated with disclosure limitation methods (e.g., aggregation) before sharing to protect confidentiality [21].

- Safe Outputs: All research outputs (publications, reports) must be reviewed to prevent disclosure of sensitive information [21].

Negotiating these terms, particularly for SRAs and CRAs, requires attention to core scientific and operational priorities [22] [20]. Key negotiation points include preserving the right to publish research results (potentially after a brief sponsor review for confidentiality), clarifying background and foreground intellectual property ownership, ensuring access to original data for validation and collaboration, and defining clear data retention and destruction policies post-project [22] [20]. Establishing these terms in writing is essential, even for informal collaborations, to prevent future disputes regarding authorship, credit, and data use [22].

From Theory to Action: Executing Problem Formulation for Targeted Risk Assessment

Ecological Risk Assessment (ERA) is an iterative, scientific process used to evaluate the likelihood of adverse ecological effects resulting from exposure to one or more stressors [2]. Within this framework, the planning and problem formulation phase serves as the critical foundation, determining the assessment's scope, objectives, and ultimate utility for environmental decision-making [2].

Systematic information gathering during this initial phase ensures the assessment is focused, efficient, and scientifically defensible. It involves the deliberate collection and analysis of data on four interconnected core elements: stressors (physical, chemical, or biological entities that can cause adverse effects), their sources, the exposure pathways that link them to ecological entities, and the receptors (ecological entities that may be adversely affected) [23] [2]. The quality of this initial information dictates the relevance of the conceptual models, the appropriateness of the assessment endpoints, and the effectiveness of the entire risk assessment [24] [25].

This guide provides a technical framework for researchers and scientists to execute this systematic planning, with an emphasis on methodological rigor, data structuring, and the development of actionable conceptual models.

The Framework: Ecological Risk Assessment Phases

The U.S. Environmental Protection Agency's guidelines establish a three-phase framework for ERA [2]. The systematic information gathering detailed in this guide is the essential engine of the first phase, informing all subsequent work.

Table 1: Phases of the Ecological Risk Assessment Framework

| Phase | Primary Objective | Key Activities & Outputs |

|---|---|---|

| Planning & Problem Formulation | To define the scope, goals, and methodology for the assessment. | Systematic information gathering on stressors, sources, exposure, and receptors; stakeholder engagement; development of assessment endpoints and conceptual models; creation of an analysis plan [24] [2]. |

| Analysis | To evaluate exposure and ecological effects. | Characterization of exposure (sources, distribution, contact); development of stressor-response profiles; evaluation of effects at relevant biological levels [23] [2]. |

| Risk Characterization | To integrate exposure and effects information to estimate risk. | Description of risk, its severity, and spatial/temporal extent; discussion of uncertainties; interpretation of ecological adversity for decision-makers [2]. |

A tiered approach is a hallmark of effective ERA, moving from conservative, screening-level assessments (SLRA) to more realistic, detailed-level assessments (DLRA) as needed [25]. The systematic information gathered during planning directly informs the choice of tier and the specific tools employed.

Table 2: Characteristics of Screening-Level vs. Detailed-Level Risk Assessments

| Characteristic | Screening-Level Assessment (SLRA) | Detailed-Level Assessment (DLRA) |

|---|---|---|

| Purpose | Identify stressors and pathways of potential concern; screen out negligible risks. | Refine risk estimates for issues flagged in SLRA; reduce uncertainty and conservatism [25]. |

| Information Use | Generic, conservative data (e.g., default exposure parameters, standardized toxicity values). | Site-specific data, detailed modeling, multiple lines of evidence (e.g., field surveys, bioassays, population models) [25]. |

| Exposure & Effects Estimates | Simple point estimates (e.g., maximum concentration, lowest observed effect level). | Probabilistic distributions (e.g., Monte Carlo simulation), spatially explicit modeling [25]. |

| Output | Hazard Quotient (HQ) or similar screening metric. HQ > 1 triggers further evaluation. | Quantitative risk estimate with defined confidence intervals; detailed understanding of cause-effect relationships [25]. |

Diagram 1: Iterative Ecological Risk Assessment Process with Tiering [2] [25].

Core Components of Systematic Information Gathering

Stressors: Characterization and Identification

A stressor is any physical, chemical, or biological entity that can induce an adverse response in an ecological system [23]. Stressors are not inherently harmful; risk is a function of their interaction with a receptor via exposure.

Key Stressor Characteristics to Document [23]:

- Type: Chemical (e.g., pesticide, metal), Physical (e.g., sedimentation, temperature change), Biological (e.g., invasive species, pathogen).

- Intensity: Concentration (chemical), magnitude (physical), or prevalence (biological).

- Duration & Frequency: Acute (short-term, single event) vs. chronic (long-term, continuous or repeated exposure).

- Timing: Relevance to seasonal cycles or critical life stages of receptors (e.g., application of pesticide during avian breeding season).

- Scale: Spatial extent and heterogeneity of the stressor's presence.

A source is the origin or activity from which a stressor is released into the environment [23] [2]. Accurate source characterization is vital for modeling exposure pathways.

- Point Sources: Discrete, localized origins (e.g., industrial effluent pipe, landfill leachate).

- Non-Point Sources: Diffuse origins (e.g., agricultural runoff, atmospheric deposition).

- Historical vs. Ongoing: Distinguishing between legacy contamination and active releases.

Exposure Pathways: The Linkage

Exposure is defined as the co-occurrence or contact between a stressor and a receptor [23]. An exposure pathway is the complete course a stressor takes from the source to the receptor. A pathway must have all of the following:

- A source and mechanism of release.

- A transport or fate medium (e.g., air, water, groundwater, soil).

- An exposure point/location where the receptor contacts the medium.

- An exposure route at the point of contact (e.g., inhalation, ingestion, dermal absorption, direct habitat alteration) [2].
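The completeness rule above can be captured in a small data structure. This is only a sketch: the class name `ExposurePathway`, its field names, and the example pathways are hypothetical and chosen to mirror the four elements listed.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExposurePathway:
    """One candidate pathway; a field left as None has not been established."""
    source: Optional[str] = None
    transport_medium: Optional[str] = None
    exposure_point: Optional[str] = None
    exposure_route: Optional[str] = None

    def missing_elements(self) -> List[str]:
        # Field order follows the dataclass definition
        return [name for name, value in vars(self).items() if not value]

    def is_complete(self) -> bool:
        return not self.missing_elements()


# Hypothetical pathways for a pharmaceutical residue
effluent = ExposurePathway(
    source="wastewater treatment plant effluent",
    transport_medium="surface water",
    exposure_point="river reach downstream of outfall",
    exposure_route="gill uptake and ingestion",
)
groundwater = ExposurePathway(
    source="landfill leachate",
    transport_medium="groundwater",
)

print(effluent.is_complete())          # complete pathway
print(groundwater.missing_elements())  # elements still to be established
```

An incomplete pathway is usually not carried into the analysis plan, but recording which element is missing documents why it was screened out.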

For chemicals, key concepts include bioavailability (the fraction accessible for uptake), bioaccumulation (uptake faster than elimination), and biomagnification (increasing concentration up the food web) [2].

Receptors: Ecological Entities and Assessment Endpoints

Receptors are the ecological entities potentially exposed to and adversely affected by a stressor. Selecting and defining receptors is a critical scientific and policy decision during problem formulation [2].

An assessment endpoint is an explicit expression of the ecological value to be protected, comprising both a valued receptor and an important attribute of that receptor [2]. For example, "reproductive success (an attribute) of the piping plover (a receptor) at the watershed scale" is an assessment endpoint. Selecting endpoints involves balancing ecological relevance (the entity's role in ecosystem function), susceptibility to known stressors, and relevance to management goals [2].

Diagram 2: Generalized Conceptual Model of Risk Components [23] [2].

Methodologies and Protocols for Information Gathering

The Iterative Tiered Approach

Systematic gathering follows a tiered strategy. The SLRA uses readily available, generic data to calculate conservative hazard quotients (HQs) [25].

- Protocol (SLRA - Hazard Quotient): HQ = Estimated Exposure Concentration (EEC) / Toxicity Reference Value (TRV). An HQ > 1 indicates potential risk requiring further investigation via DLRA [25].

- Data Sources: Maximum reported environmental concentration; lowest published toxicity benchmark (e.g., LC50, NOAEL) from databases like ECOTOX [25].
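A minimal sketch of the screening calculation follows, pairing the maximum reported concentration with the lowest benchmark as described above. The concentrations, benchmarks, and function names are hypothetical, and both inputs are assumed to share the same units.

```python
def hazard_quotient(eec, trv):
    """HQ = estimated exposure concentration / toxicity reference value."""
    if trv <= 0:
        raise ValueError("toxicity reference value must be positive")
    return eec / trv


def screen(concentrations, benchmarks):
    """Conservative screen: maximum concentration against lowest benchmark."""
    eec = max(concentrations)
    trv = min(benchmarks)
    hq = hazard_quotient(eec, trv)
    return hq, hq > 1  # True means the stressor is carried forward to a DLRA


# Hypothetical surface-water concentrations and toxicity benchmarks (ug/L)
measured = [0.8, 2.4, 1.1]
benchmarks = [12.0, 3.0, 45.0]
hq, needs_dlra = screen(measured, benchmarks)
print(f"HQ = {hq:.2f}, further evaluation needed: {needs_dlra}")
```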

If the SLRA indicates potential risk (HQ > 1), a DLRA is initiated to reduce uncertainty [25].

- Protocol (DLRA - Refined Exposure): Replace the generic EEC with site-specific measurements. Use statistically derived estimates (e.g., the 95% upper confidence limit of the mean) rather than single maximum values.

- Protocol (DLRA - Probabilistic Risk): Employ Monte Carlo simulation. Define probability distributions for all input variables (e.g., chemical concentration, food intake rate, toxicity threshold). Run thousands of iterations to produce a distribution of risk values (e.g., probability of exceeding a threshold) [25].
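The probabilistic protocol can be sketched with the standard library alone. Every distribution and parameter below is a hypothetical placeholder chosen for illustration; in a real DLRA each one would be fitted to site data or justified from the literature.

```python
import math
import random


def exceedance_probability(n_iter=10_000, seed=1):
    """Fraction of Monte Carlo iterations in which dose exceeds the threshold."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    exceed = 0
    for _ in range(n_iter):
        # Concentration in food (mg/kg): log-normal, median 2.0 (assumed)
        conc = rng.lognormvariate(math.log(2.0), 0.5)
        # Food intake rate (kg food per kg body weight per day): triangular (assumed)
        intake = rng.triangular(0.05, 0.15, 0.10)
        # Toxicity threshold (mg/kg bw/day): log-normal, median 0.5 (assumed)
        threshold = rng.lognormvariate(math.log(0.5), 0.3)
        if conc * intake > threshold:
            exceed += 1
    return exceed / n_iter


p_exceed = exceedance_probability()
print(f"Estimated probability of exceeding the threshold: {p_exceed:.3f}")
```

Reporting the exceedance probability together with the input distributions keeps the assumptions behind the risk estimate visible to the risk manager.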

Integrating Multiple Lines of Evidence

A robust DLRA employs multiple, independent lines of evidence to strengthen causal inference [25].

- Laboratory Toxicity Tests: Controlled experiments to establish stressor-response relationships for key receptors (e.g., 48-hr Daphnia immobilization test, 96-hr fish lethality test) [2] [25].

- Field Surveys and Bioassessments: In situ measurement of ecological condition (e.g., benthic macroinvertebrate community index, fish tissue contaminant analysis). Provides direct evidence of exposure and effects at the site [25].

- Sediment Quality Triad: An integrative methodology combining chemical analysis (contaminant levels), laboratory sediment toxicity tests, and infaunal community assessment to diagnose sediment contamination [25].

- Population and Ecosystem Modeling: Using demographic models (e.g., matrix models) to project the long-term impact of stressor-induced mortality or reproductive effects on population viability.
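To make the population-modeling line of evidence concrete, the sketch below projects a three-stage matrix model and compares the asymptotic growth rate with and without a stressor-induced drop in fecundity. The vital rates and the 40% reduction are hypothetical; power iteration is used here only to avoid external dependencies.

```python
def growth_rate(matrix, iterations=200):
    """Approximate the dominant eigenvalue (lambda) by power iteration."""
    n = [1.0 / len(matrix)] * len(matrix)  # start from an even stage distribution
    lam = 0.0
    for _ in range(iterations):
        nxt = [sum(a * x for a, x in zip(row, n)) for row in matrix]
        lam = sum(nxt)              # n sums to 1, so the total is the growth factor
        n = [x / lam for x in nxt]  # renormalize to stage proportions
    return lam


def reduce_fecundity(matrix, fraction):
    """Return a copy with first-row (fecundity) entries scaled down."""
    stressed = [row[:] for row in matrix]
    stressed[0] = [f * (1.0 - fraction) for f in stressed[0]]
    return stressed


# Hypothetical stage-structured matrix: row 0 holds fecundities,
# sub-diagonal entries are survival probabilities between stages.
baseline = [
    [0.0, 1.5, 2.0],
    [0.5, 0.0, 0.0],
    [0.0, 0.7, 0.0],
]
stressed = reduce_fecundity(baseline, 0.40)

lam_base = growth_rate(baseline)
lam_stress = growth_rate(stressed)
print(f"lambda baseline = {lam_base:.3f}, lambda stressed = {lam_stress:.3f}")
```

A growth rate above 1 indicates a growing population and below 1 a declining one, so the comparison translates an organism-level reproductive effect into a population-level attribute.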

Exposure Assessment Techniques

- Environmental Sampling: Design statistically sound sampling plans to characterize the spatial and temporal distribution of stressors in media (water, soil, sediment, biota) [2].

- Bioaccumulation Studies: Measure contaminant concentrations in resident or caged organisms at different trophic levels to assess exposure via the food web [2].

- Habitat Use Analysis: For wildlife receptors, analyze home range, territory, and foraging patterns via telemetry or observational studies to quantify co-occurrence with contaminated areas [2].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for ERA Investigations

| Item/Category | Primary Function | Application Example in ERA |

|---|---|---|

| Standard Reference Materials (SRMs) | To calibrate analytical instruments and validate methods for accuracy and precision. | Quantifying trace metals (e.g., NIST SRM 1648a for urban particulate matter) or organic pollutants in environmental samples [26]. |

| Certified Clean Sampling Gear | To prevent sample contamination during collection and handling. | Teflon-lined water samplers, pre-cleaned glass jars for sediment, certified contaminant-free soil corers for organic compound analysis. |

| Laboratory Test Organisms | To provide standardized, sensitive biological units for toxicity testing. | Cultures of cladocerans (Ceriodaphnia dubia), fathead minnows (Pimephales promelas), or algae (Pseudokirchneriella subcapitata) for acute/chronic bioassays [2]. |

| Passive Sampling Devices (e.g., SPMDs, POCIS) | To measure time-weighted average concentrations of bioavailable contaminants in water. | Monitoring hydrophobic organic compounds (via Semi-Permeable Membrane Devices) or polar pesticides (via Polar Organic Chemical Integrative Samplers) over extended periods. |

| Ecological Soil Screening Levels (Eco-SSLs) | To provide risk-based, chemical-specific screening values for soil contaminants. | Initial comparison of site soil concentrations against Eco-SSLs for arsenic, lead, DDT, etc., to identify potential risks to soil-dwelling plants and animals [26]. |

| Stable Isotope Tracers (e.g., ¹⁵N, ¹³C) | To elucidate food web structure, trophic position, and contaminant biomagnification pathways. | Determining the trophic level of a predator species to interpret tissue contaminant concentrations within an ecosystem context. |

| Environmental DNA (eDNA) Extraction & Sequencing Kits | To detect species presence, assess biodiversity, and identify biological stressors from environmental samples. | Screening for the presence of invasive species or pathogens, or conducting community-level assessments without direct observation or capture. |

Ecological Risk Assessment (ERA) is a formal, iterative process for evaluating the likelihood of adverse environmental effects resulting from exposure to one or more stressors, such as chemicals, disease, or invasive species [1]. This process is initiated during the critical Planning Phase, where risk assessors, managers, and stakeholders collaborate to define the assessment's purpose, scope, and objectives [2] [1]. A core output of this planning, further refined in the Problem Formulation phase, is the selection of assessment endpoints [2].

Assessment endpoints are explicit expressions of the actual environmental values to be protected. They consist of both an ecological entity (e.g., a species, community, or ecosystem) and a specific attribute of that entity (e.g., survival, reproduction, community structure) [2]. The choice of these endpoints directly determines the scientific and regulatory trajectory of the entire risk assessment. Therefore, selecting appropriate endpoints requires balancing three principal criteria: ecological relevance, susceptibility to stressors, and relevance to management goals [2]. This guide provides a technical framework for researchers and assessors to navigate this critical, foundational step within the broader ERA planning context.

Core Criteria for Endpoint Selection

The selection of assessment endpoints is a decision informed by scientific judgment and policy needs. The U.S. EPA outlines three principal criteria to guide this choice, ensuring endpoints are both biologically meaningful and decision-relevant [2].

Table 1: Core Criteria for Selecting Assessment Endpoints

| Criterion | Definition | Key Considerations for Evaluators |

|---|---|---|

| Ecological Relevance | The importance of an ecological entity and its attributes to the structure, function, and sustainability of the ecosystem. | Role in energy flow/nutrient cycling; keystone species status; influence on biodiversity; linkage to other valued entities [2]. |

| Susceptibility | The inherent sensitivity of an entity to the identified stressor(s) and its likelihood of exposure. | Toxicological sensitivity; life-stage vulnerability; coincidence of stressor with critical habitat/temporal cycles; potential for bioaccumulation [27] [2]. |

| Relevance to Management Goals | The degree to which the endpoint reflects the societal and regulatory values the assessment aims to protect. | Legal mandates (e.g., Endangered Species Act); economic/recreational value; provision of ecosystem services (flood control, water purification); public or cultural significance [28] [2]. |

The integration of ecosystem services—the benefits humans derive from nature—as assessment endpoints is a contemporary advancement that strengthens the link between ecological risk and management decisions. Assessing risks to services like nutrient cycling, carbon sequestration, or soil formation can highlight valuable endpoints not always considered in conventional assessments focused solely on species survival [28].

Quantitative Frameworks for Risk Estimation

Once assessment endpoints are selected, the analysis phase estimates risk by comparing exposure to effects. For chemical stressors, a widely applied screening-level method is the deterministic risk quotient (RQ) approach [27].

The Risk Quotient (RQ) Methodology

The core formula is: RQ = Exposure Estimate (EEC) / Toxicity Endpoint Value. An RQ > 1 indicates potential risk, triggering further evaluation [27]. The choice of toxicity endpoint is tailored to the assessment endpoint and the organism group.

Table 2: Standard Toxicity Endpoints for Risk Quotient Calculations by Organism Group [27]

| Organism Group | Assessment Type | Typical Toxicity Endpoint |

|---|---|---|

| Terrestrial Animals (Birds/Mammals) | Acute | LD₅₀ (Median Lethal Dose) |

| Terrestrial Animals (Birds/Mammals) | Chronic | NOAEC (No-Observed-Adverse-Effect Concentration) from reproduction studies |

| Aquatic Animals (Fish/Invertebrates) | Acute | LC₅₀ or EC₅₀ (Median Lethal/Effect Concentration) |

| Aquatic Animals (Fish/Invertebrates) | Chronic | NOAEC from life-cycle or early life-stage tests |

| Terrestrial Plants | Acute (Non-listed) | EC₂₅ (Effect Concentration for 25% impact) from seedling emergence/vigor |

| Terrestrial Plants | Acute (Endangered) | NOAEC or EC₀₅ |

| Aquatic Plants (Algae/Vascular) | Acute (Non-listed) | EC₅₀ (growth inhibition) |

| Aquatic Plants (Algae/Vascular) | Acute (Endangered) | NOAEC |
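One way to keep the endpoint choice explicit is to encode the pairings in Table 2 as a lookup and compute the RQ against the selected value. The dictionary keys, function names, and example numbers are hypothetical; the exposure estimate and toxicity value are assumed to be expressed in the same units (dose-based for LD₅₀, concentration-based otherwise).

```python
# Endpoint type by (organism group, assessment type), following Table 2
TOXICITY_ENDPOINT = {
    ("terrestrial_animal", "acute"): "LD50",
    ("terrestrial_animal", "chronic"): "NOAEC",
    ("aquatic_animal", "acute"): "LC50 or EC50",
    ("aquatic_animal", "chronic"): "NOAEC",
    ("terrestrial_plant", "acute_non_listed"): "EC25",
    ("terrestrial_plant", "acute_endangered"): "NOAEC or EC05",
    ("aquatic_plant", "acute_non_listed"): "EC50",
    ("aquatic_plant", "acute_endangered"): "NOAEC",
}


def risk_quotient(eec, toxicity_value):
    """RQ = exposure estimate / toxicity endpoint value."""
    if toxicity_value <= 0:
        raise ValueError("toxicity value must be positive")
    return eec / toxicity_value


# Hypothetical acute screen for an aquatic invertebrate
endpoint = TOXICITY_ENDPOINT[("aquatic_animal", "acute")]
rq = risk_quotient(eec=12.0, toxicity_value=48.0)  # both in ug/L
print(f"Endpoint: {endpoint}; RQ = {rq:.2f}; potential risk: {rq > 1}")
```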

Experimental Protocols for Endpoint Derivation

The toxicity values in Table 2 are derived from standardized testing protocols.

- Avian Acute Oral Toxicity Test (OECD 223): This protocol determines the LD₅₀ for birds. A minimum of 10 healthy birds per dose level are administered a single oral dose of the test substance via gavage. Birds are observed for mortality and signs of toxicity for 14 days. The LD₅₀ is calculated using probit or logistic regression analysis [27].

- Aquatic Invertebrate (Daphnia sp.) Acute Immobilization Test (OECD 202): This protocol determines the EC₅₀ for water fleas. Neonates (<24 hours old) are exposed to a range of test substance concentrations for 48 hours. Immobility (lack of movement after gentle agitation) is recorded. The EC₅₀ is calculated based on immobility at 48 hours [27].

- Fish Early Life-Stage Toxicity Test (OECD 210): This chronic test informs the NOAEC for fish. Fertilized eggs are placed in test solutions shortly after fertilization and exposed through embryonic, larval, and early juvenile development (typically 28-60 days post-hatch). Primary endpoints include survival, hatching success, growth, and morphological development. The NOAEC is the highest tested concentration showing no statistically significant adverse effects compared to controls [27].

Advanced Methodologies: Addressing Ecosystem Complexity

Traditional RQ methods can struggle to capture indirect effects, feedback loops, and cumulative risks in complex ecosystems [29]. Qualitative Network Models (QNMs) offer a complementary, systems-level approach.

Protocol for Qualitative Modeling in ERA

A case study on mine site rehabilitation illustrates the methodology [29]:

- Expert Elicitation Workshop: Ecologists, hydrologists, and site managers convene to define the system boundaries and key components (e.g., native trees, invasive weeds, fire regime, herbivores).

- Signed Digraph Development: Participants construct a conceptual model where components (nodes) are linked by directed edges (arrows) representing interactions (e.g., "-" for negative, "+" for positive). For example, "weeds --competition--> native seedlings."

- Community Matrix Formulation: The digraph is translated into a qualitative (sign) matrix, encoding the direct interactions between all components.

- Perturbation Analysis (Loop Analysis): Loop-analysis algorithms applied to the sign matrix predict the direction of change (increase, decrease, ambiguous) in every network component in response to a sustained "press" perturbation (e.g., increased weed invasion). This reveals direct and indirect cascading effects.

- Bayesian Network Integration: Predictions can be augmented with probabilistic data to quantify uncertainty, forming a Bayesian Network that estimates the likelihood of ultimate impacts on high-level assessment endpoints (e.g., "ecosystem resilience").
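The matrix and perturbation steps above can be sketched in a few lines. The example below is a minimal illustration, not the algorithm used in the cited case study. It uses one common loop-analysis formulation, in which the sign of each entry of the adjugate of the negated community matrix gives the predicted response to a positive press. It assumes a stable system and equal interaction strengths, and a zero entry may hide cancelling pathways. The three-node network and all function names are hypothetical.

```python
def sign_matrix(nodes, edges):
    """Community matrix A: A[i][j] is the sign of the direct effect of
    node j on node i (+1, -1, or 0)."""
    index = {name: k for k, name in enumerate(nodes)}
    matrix = [[0] * len(nodes) for _ in nodes]
    for source, target, sign in edges:
        matrix[index[target]][index[source]] = sign
    return matrix


def determinant(m):
    """Determinant by cofactor expansion (adequate for small models)."""
    if not m:
        return 1
    total = 0
    for col, value in enumerate(m[0]):
        if value:
            minor = [row[:col] + row[col + 1:] for row in m[1:]]
            total += (-1) ** col * value * determinant(minor)
    return total


def adjugate(m):
    """Adjugate (transposed cofactor matrix) of a square matrix."""
    n = len(m)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # Cofactor of entry (j, i): delete row j and column i.
            minor = [row[:i] + row[i + 1:] for k, row in enumerate(m) if k != j]
            adj[i][j] = (-1) ** (i + j) * determinant(minor)
    return adj


def press_response(nodes, edges, pressed):
    """Predicted direction of change in each node for a positive press."""
    negated = [[-v for v in row] for row in sign_matrix(nodes, edges)]
    adj = adjugate(negated)
    j = nodes.index(pressed)
    labels = {1: "increase", -1: "decrease", 0: "no net change or ambiguous"}
    return {name: labels[(adj[i][j] > 0) - (adj[i][j] < 0)]
            for i, name in enumerate(nodes)}


# Hypothetical signed digraph: (source, target, sign); self-loops are self-limitation.
nodes = ["weeds", "native seedlings", "herbivores"]
edges = [
    ("weeds", "weeds", -1),
    ("native seedlings", "native seedlings", -1),
    ("herbivores", "herbivores", -1),
    ("weeds", "native seedlings", -1),       # competition
    ("native seedlings", "herbivores", +1),  # food supply
    ("herbivores", "native seedlings", -1),  # browsing
]
prediction = press_response(nodes, edges, "weeds")
```

For this toy network, a press on weeds predicts a decline in native seedlings and, indirectly, in the herbivores that depend on them.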

Visualizing the Risk Assessment Workflow

The following diagram integrates the planning phase, endpoint selection, and analysis pathways into a coherent ERA workflow.

ERA Workflow Integrating Endpoint Selection

Visualizing a Qualitative Ecosystem Model

The diagram below illustrates the structure of a qualitative network model used to assess cumulative risks from weeds and fire in a terrestrial ecosystem, as applied in a mine rehabilitation study [29].

Qualitative Model of Weed & Fire Stressors in an Ecosystem

The Scientist's Toolkit: Key Research Reagent Solutions

Selecting and implementing assessment endpoints requires specific methodological tools and reagents. The following toolkit details essential materials for key experimental protocols.

Table 3: Research Reagent Solutions for Key ERA Protocols

| Tool/Reagent | Primary Function in ERA | Example Protocol & Application |
|---|---|---|
| Reference Toxicants (e.g., Potassium dichromate, Sodium chloride) | Validates test organism health and response sensitivity. Used in positive control treatments to ensure experimental integrity. | Acute Daphnia test: A potassium dichromate reference confirms neonate sensitivity if the immobilization EC₅₀ falls within the expected range [27]. |
| Standardized Test Media (e.g., Reconstituted hard/soft water, soil formulations) | Provides a consistent, uncontaminated exposure matrix, isolating the stressor's effect from environmental variability. | Fish early life-stage test: Exposure is conducted in standardized, aerated reconstituted water of defined hardness and pH [27]. |
| Formulated Test Substances (with carriers like acetone or solvents if needed) | Ensures accurate and homogenous delivery of the stressor (especially poorly soluble chemicals) to the test system. | Avian oral toxicity test: The test substance is precisely formulated into a capsule or mixed with a vehicle for gavage dosing [27]. |
| Live Culture Organisms (e.g., Ceriodaphnia dubia, Pimephales promelas, Lolium multiflorum) | Provides sensitive, standardized biological receptors for toxicity testing. Requires culturing under strict conditions (light, temperature, diet). | Chronic invertebrate test: C. dubia neonates from lab cultures are used to assess reproduction NOAECs [27]. |
| Environmental DNA (eDNA) Sampling Kits | Enables sensitive, non-invasive detection of species presence (including rare/endangered) for entity identification and exposure pathway analysis. | Problem Formulation: Used in preliminary field surveys to confirm the presence of a protected aquatic species in a watershed [2]. |
| Expert Elicitation Framework (Structured workshops, Delphi method) | Systematically captures and quantifies expert judgment to parameterize models (like QNMs) when empirical data is scarce [29]. | Qualitative Modelling: Used to establish the direction and strength of interactions between ecosystem components for network analysis [29]. |

In the structured planning phase of ecological risk assessment (ERA), problem formulation serves as the critical bridge between management goals and scientific analysis [2]. A key product of this phase is the conceptual model, a graphical and narrative representation that identifies predicted relationships between ecological entities and the stressors to which they may be exposed [30]. This guide details the technical construction of these models, focusing on diagramming risk hypotheses and exposure pathways to establish a clear, defensible foundation for the assessment.

The primary function of a conceptual model is to organize existing knowledge, identify data gaps, and delineate the scope of the risk assessment. For researchers and drug development professionals, particularly when assessing the potential ecological impact of chemical stressors like pharmaceuticals or agrochemicals, a robust conceptual model ensures that the subsequent analysis phase investigates the most plausible and significant exposure scenarios [2]. It transforms a broad management goal—such as protecting aquatic ecosystems—into testable risk hypotheses about specific cause-effect pathways [30].

Core Principles and Definitions

A conceptual model is built from interconnected components that describe the source, movement, and potential impact of a stressor. The U.S. Environmental Protection Agency (EPA) and the Agency for Toxic Substances and Disease Registry (ATSDR) provide complementary frameworks for defining these components [30] [31].

- Stressor Source: The origin of the chemical, physical, or biological agent that can cause adverse effects (e.g., pesticide application, pharmaceutical manufacturing effluent).

- Stressor: The specific agent or change in the environment (e.g., a specific pharmaceutical compound, increased turbidity).

- Exposure Pathway: The course a stressor takes from the source to a receptor, including the environmental media involved (e.g., runoff to surface water, leaching to groundwater, uptake by crops) [2].

- Receptor (Ecological Entity): The ecological component that may be exposed to and adversely affected by the stressor. This can be a species, a functional group, a community, or an ecosystem [2].

- Assessment Endpoint: An explicit expression of the ecological value to be protected, defined by both a valued receptor and its key attribute (e.g., survival of fathead minnow, reproductive success of honeybee colonies) [2].

- Effect Endpoint (Measurement Endpoint): A measurable response to a stressor that is related to the valued attribute of the assessment endpoint (e.g., LC50, reduced egg production).

Effective model development adheres to several principles: it must be site-specific, considering local ecology and geography; realistic, based on plausible scenarios; and comprehensive, evaluating all significant past, present, and future pathways [31].

Methodology for Diagramming Exposure Pathways

The diagramming process translates the conceptual understanding of the system into a visual map. A standard approach, as outlined by the ATSDR, involves tracing the pathway through five sequential elements [31] [32].

1. Identify Contaminant Source & Stressor: Begin with the primary source. For a pesticide, this is the application to crops [30]. The specific chemical compound(s) of concern, including major degradates, are identified as the stressors.

2. Map Environmental Fate and Transport: This step defines the mechanisms by which the stressor moves through the environment from the source. Key media include air (through volatilization and drift), soil, surface water (through runoff), groundwater, and biota (through uptake) [30] [32]. The model must depict which media are relevant based on the stressor's properties.

3. Locate Exposure Points: This is the physical location where a receptor comes into contact with the contaminated medium (e.g., a contaminated pond, treated foliage, soil within a foraging area).

4. Define Exposure Routes: Specify the biological mechanism of contact at the exposure point. For ecological receptors, primary routes are ingestion (of food, water, soil), inhalation, and dermal contact [30].

5. Characterize the Receptors: Finally, identify the specific ecological entities (e.g., aquatic invertebrates, terrestrial pollinators, piscivorous birds) that are present at the exposure point and susceptible via the defined routes [30].

This logical flow from source to receptor forms the backbone of the diagram and directly informs the development of testable risk hypotheses, such as "Oral ingestion of contaminated aquatic invertebrates will lead to adverse reproductive effects in insectivorous birds."
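The five pathway elements and the resulting risk hypothesis can be captured in a small record type, which makes it easy to check that no element has been left out before a pathway is drawn. This is a minimal sketch. The class name, field names, and the `contact_medium` and `effect` fields (added only to phrase the hypothesis) are illustrative assumptions, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExposurePathway:
    source: str             # element 1: contaminant source
    stressor: str           # specific agent released by the source
    transport_media: tuple  # element 2: fate and transport media
    exposure_point: str     # element 3: where contact occurs
    exposure_route: str     # element 4: ingestion, inhalation, dermal contact
    receptor: str           # element 5: exposed ecological entity
    contact_medium: str = ""  # what is ingested, inhaled, or touched
    effect: str = ""          # hypothesized adverse effect

    def is_complete(self):
        """True when all five pathway elements are filled in."""
        return all([self.source, self.transport_media, self.exposure_point,
                    self.exposure_route, self.receptor])

    def risk_hypothesis(self):
        """Phrase the pathway as a testable risk hypothesis."""
        return (f"{self.exposure_route} of {self.contact_medium} will lead to "
                f"{self.effect} in {self.receptor}.")


# Hypothetical pathway matching the example hypothesis in the text.
pathway = ExposurePathway(
    source="pesticide application to crops",
    stressor="hypothetical compound X",
    transport_media=("runoff", "surface water"),
    exposure_point="pond receiving field runoff",
    exposure_route="Oral ingestion",
    receptor="insectivorous birds",
    contact_medium="contaminated aquatic invertebrates",
    effect="adverse reproductive effects",
)
hypothesis = pathway.risk_hypothesis()
```

A pathway for which `is_complete()` returns `False` flags a data gap to resolve before the pathway is carried into the analysis phase.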

Quantitative Criteria for Pathway Inclusion

Not all theoretical pathways are equally significant. The following tables summarize quantitative criteria from EPA guidance used to determine if specific exposure pathways should be included in a chemical-specific conceptual model [30].

Table 1: Criteria for Including Sediment Exposure Pathways for Aquatic Organisms

| Exposure Type | Persistence Requirement (Half-life in sediment) | AND Partitioning Requirement (One of the following) | Trigger for Evaluation |
|---|---|---|---|
| Acute Exposure | ≤ 10 days (aerobic soil or aquatic metabolism) | Kd ≥ 50 L/kg OR log Kow ≥ 3 OR Koc ≥ 1,000 L/kg OC | Conditions met |
| Acute & Chronic Exposure | ≥ 10 days (aerobic soil or aquatic metabolism) | Kd ≥ 50 L/kg OR log Kow ≥ 3 OR Koc ≥ 1,000 L/kg OC | EECsediment > 0.1 of acute LC50/EC50 |

Table 2: Criteria for Including Groundwater Exposure Pathways

| Criterion Number | Description |
|---|---|
| 1 | Detections in groundwater from prospective studies or reliable monitoring data. |
| 2 | Movement to sampled depth in terrestrial field dissipation studies. |
| 3 | Environmental fate properties indicating high mobility (Kd < 5) and persistence (hydrolysis half-life > 30 days OR soil metabolism half-life > 2 weeks). |
| 4 | Use in areas vulnerable to groundwater contamination (e.g., karst topography). |

Table 3: Criteria for Evaluating Bioaccumulation for Piscivorous Wildlife

| Criterion | Requirement |
|---|---|
| Chemical Nature | Non-ionic, organic compound |
| Hydrophobicity | Log Kow between 4 and 8 |
| Potential to Reach Habitat | Likely to reach aquatic habitats via runoff, drift, etc. |

Detailed Experimental Protocols for Pathway Analysis

Protocol 1: Assessing Sediment Exposure Pathway Significance. This protocol determines whether sediment is a meaningful exposure route for aquatic organisms [30].

- Data Compilation: Obtain aerobic soil and aquatic metabolism study data to determine the half-life (DT50) of the parent compound and major degradates in sediment.

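- Persistence Screening: If any relevant half-life is ≥10 days, treat the compound as persistent and proceed to the partitioning analysis in the next step. If all are ≤10 days, the sediment pathway may be relevant only for acute exposure, which is still subject to the partitioning criteria in Table 1.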

- Partitioning Analysis: Calculate or obtain the soil-water distribution coefficient (Kd), the octanol-water partition coefficient (Kow), and the organic carbon normalized coefficient (Koc).

- Application of Criteria: Apply the logic from Table 1. If the compound is persistent (half-life ≥10 days) and has high partitioning potential (meets one criterion from Table 1), model sediment as a primary exposure medium with a solid line. If not, it may be depicted with a dotted line or excluded.
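The screening logic of Protocol 1 can be written as a short decision function. The sketch below applies the thresholds exactly as stated in Table 1. The function name, parameter names, and returned labels are hypothetical, and missing partitioning data are assumed not to satisfy a criterion.

```python
def screen_sediment_pathway(half_lives_days, kd=None, log_kow=None, koc=None,
                            eec_sediment=None, acute_lc50=None):
    """Screen the sediment pathway using the Table 1 thresholds.

    half_lives_days: half-lives (days) for the parent and major degradates.
    kd, koc in L/kg; log_kow unitless; eec_sediment and acute_lc50 must
    share the same units. Missing values (None) do not satisfy a criterion.
    """
    partitions = (
        (kd is not None and kd >= 50)
        or (log_kow is not None and log_kow >= 3)
        or (koc is not None and koc >= 1000)
    )
    persistent = any(h >= 10 for h in half_lives_days)

    if not partitions:
        return {"exposure": None, "line_style": "dotted or omit",
                "chronic_evaluation": False}
    if not persistent:
        return {"exposure": "acute only", "line_style": "dotted or omit",
                "chronic_evaluation": False}

    # Persistent and strongly partitioning: primary exposure medium.
    chronic = (eec_sediment is not None and acute_lc50 is not None
               and eec_sediment > 0.1 * acute_lc50)
    return {"exposure": "acute and chronic", "line_style": "solid",
            "chronic_evaluation": chronic}


# Hypothetical compound: 35-day half-life, log Kow 4.2, EEC 0.5 vs LC50 2.0.
result = screen_sediment_pathway([8, 35], log_kow=4.2,
                                 eec_sediment=0.5, acute_lc50=2.0)
```

Returning the line style alongside the exposure type keeps the screening outcome tied to how the pathway is drawn in the conceptual model.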

Protocol 2: Evaluating Groundwater as a Potential Exposure Route. This qualitative assessment determines if groundwater transport should be represented in the conceptual model [30].

- Literature & Data Review: Compile all available groundwater monitoring data, terrestrial field dissipation studies, and hydrolytic stability data.

- Checklist Application: Evaluate the compound against the four criteria in Table 2.

- Pathway Characterization: If any one of the four criteria is met, include a "Groundwater Transport" pathway in the conceptual model. The EPA notes that quantitative risk assessment for this pathway to aquatic receptors is not yet routine, so its inclusion signals the need for a qualitative discussion of potential relevance.
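The Table 2 checklist lends itself to a function that reports which criteria are met, so the justification for including the pathway is recorded with the decision. This is a minimal sketch. The function and parameter names are hypothetical, "2 weeks" is taken as 14 days, and missing fate data are assumed not to satisfy criterion 3.

```python
def groundwater_criteria_met(detected_in_groundwater, moved_to_sampled_depth,
                             kd, hydrolysis_half_life_days,
                             soil_metabolism_half_life_days,
                             used_in_vulnerable_area):
    """Return the list of Table 2 criterion numbers that are satisfied."""
    mobile = kd is not None and kd < 5
    persistent = (
        (hydrolysis_half_life_days is not None
         and hydrolysis_half_life_days > 30)
        or (soil_metabolism_half_life_days is not None
            and soil_metabolism_half_life_days > 14)  # 2 weeks
    )
    checks = {
        1: bool(detected_in_groundwater),
        2: bool(moved_to_sampled_depth),
        3: mobile and persistent,
        4: bool(used_in_vulnerable_area),
    }
    return [number for number, met in checks.items() if met]


# Hypothetical compound: mobile (Kd = 2) and hydrolytically stable (45 days).
met = groundwater_criteria_met(False, False, 2.0, 45, None, False)
include_groundwater_pathway = len(met) > 0  # any single criterion suffices
```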

Protocol 3: Screening for Bioaccumulation Risk to Piscivorous Wildlife. This protocol uses the KABAM (Kow-based Aquatic BioAccumulation Model) to assess secondary poisoning risk [30].

- Compound Characterization: Confirm the compound is non-ionic and organic. Obtain a reliable log Kow value.

- Initial Screening: If the log Kow is between 4 and 8, proceed. Values outside this range typically indicate low bioaccumulation potential for this model.

- Exposure Potential: Determine if label uses or environmental fate properties suggest the compound will reach aquatic habitats (e.g., through spray drift, runoff).

- Model Implementation: If all three criteria in Table 3 are met, run the KABAM model (version 1.0 or later) to estimate bioaccumulation factors and potential risks to mammals and birds that consume aquatic prey. Include the "consumption of aquatic prey" pathway in the terrestrial conceptual model.
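The three screening criteria that precede a KABAM run can be checked with a short function. The sketch below covers only the screening decision from Table 3, not KABAM itself. The function name is hypothetical, and treating "between 4 and 8" as inclusive of both bounds is an assumption.

```python
def needs_kabam_run(is_nonionic_organic, log_kow, reaches_aquatic_habitat):
    """Return True when all three Table 3 criteria are met.

    Assumption: the log Kow range of 4 to 8 includes both end points.
    """
    in_kow_range = log_kow is not None and 4 <= log_kow <= 8
    return bool(is_nonionic_organic and in_kow_range and reaches_aquatic_habitat)


# Hypothetical compounds.
run_for_a = needs_kabam_run(True, 5.3, True)   # all criteria met
run_for_b = needs_kabam_run(True, 2.1, True)   # log Kow below the range
```

When the function returns `True`, the "consumption of aquatic prey" pathway would be added to the terrestrial conceptual model as described in the final step above.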

Diagrammatic Representation with Graphviz

The following DOT scripts generate standardized diagrams for generic exposure pathways, adhering to visual accessibility rules including maximum width (760px) and sufficient color contrast between text and node backgrounds [33] [34].

Generic Aquatic Exposure Conceptual Model

Generic Terrestrial Exposure Conceptual Model
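As an illustration of how a DOT script for a generic aquatic exposure model could be generated, the sketch below builds DOT text from a list of pathway edges. It does not reproduce the original diagram sources. The node names, the white fill with black text, and the `size` value (about 7.9 inches, roughly 760 px at an assumed 96 dpi) are illustrative assumptions. Primary pathways are drawn solid and uncertain ones dashed.

```python
def to_dot(name, edges):
    """Build DOT source from (source, target, is_primary) edges."""
    lines = [
        f'digraph "{name}" {{',
        "  rankdir=TB;",
        '  size="7.9";  // inches; roughly 760 px at 96 dpi (assumed)',
        # Black text on a white fill for high contrast.
        '  node [shape=box, style=filled, fillcolor="#FFFFFF", fontcolor="#000000"];',
    ]
    for source, target, is_primary in edges:
        style = "solid" if is_primary else "dashed"
        lines.append(f'  "{source}" -> "{target}" [style={style}];')
    lines.append("}")
    return "\n".join(lines)


# Hypothetical generic aquatic pathways: source -> media -> receptors.
aquatic_edges = [
    ("Stressor source", "Runoff", True),
    ("Stressor source", "Spray drift", True),
    ("Runoff", "Surface water", True),
    ("Spray drift", "Surface water", True),
    ("Surface water", "Sediment", False),
    ("Surface water", "Aquatic invertebrates", True),
    ("Sediment", "Benthic invertebrates", False),
    ("Aquatic invertebrates", "Fish", True),
]
dot_source = to_dot("Generic Aquatic Exposure", aquatic_edges)
```

The resulting `dot_source` string can be saved to a `.dot` file and rendered with the Graphviz `dot` command-line tool.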

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Models and Tools for Exposure Pathway Analysis

| Tool/Reagent | Primary Function | Application in Conceptual Modeling |
|---|---|---|
| KABAM (Kow-based Aquatic BioAccumulation Model) | Estimates bioaccumulation of hydrophobic organic pesticides in aquatic food webs and risks to piscivorous wildlife [30]. | Determines if the "consumption of aquatic prey by birds and mammals" pathway must be included in the model (see Protocol 3). |
| Screening Tool for Inhalation Risk (STIR) | Assesses potential acute inhalation risk from airborne pesticide droplets and vapor [30]. | Used to evaluate the significance of the "inhalation" pathway for terrestrial vertebrates, informing whether it is a solid or dashed line in the diagram. |