Standartox Database Demystified: A Guide to Aggregated Ecotoxicity Data for Research and Risk Assessment

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the Standartox database, a pivotal tool for standardizing and aggregating ecotoxicity data. We explore its foundational role in overcoming data variability, detail its methodological application through R and web interfaces, address common challenges in data interpretation, and validate its outputs against established resources. The guide synthesizes how Standartox enables reproducible, efficient environmental risk assessments for chemicals and pharmaceuticals.

What is Standartox? Solving the Problem of Variable Ecotoxicity Data

Ecotoxicological testing is fundamental for evaluating the risks chemicals pose to ecosystems, with data from standardized laboratory tests informing regulatory decisions and environmental safety assessments [1]. A persistent and critical challenge in this field is the substantial variability in test results for the same chemical and organism combination. This variability arises from multiple factors, including differences in test duration, experimental conditions, physiological variations in test populations, and unrecorded methodological details [1]. For example, toxicity values for a chemical like atrazine can show significantly different distributions across species such as Xenopus laevis (amphibian) and Oncorhynchus mykiss (fish) [1]. This inconsistency introduces significant uncertainty into risk assessments, hampers reproducibility, and complicates regulatory decision-making.

To address this, data aggregation tools have been developed. This guide objectively compares the performance of the Standartox database—a tool specifically designed to standardize and aggregate ecotoxicity data—with its primary source and other alternatives, focusing on their utility for researchers and scientists within a data aggregation research context [1].

Comparative Analysis of Ecotoxicity Data Platforms

The following table compares the core characteristics, data handling approaches, and outputs of major ecotoxicity data resources.

Table 1: Comparison of Ecotoxicity Data Resources and Aggregation Tools

| Feature | ECOTOX Knowledgebase (Source) [2] | Standartox (Aggregation Tool) [1] | ADORE Benchmark Dataset [3] | Traditional Direct Literature Review |

|---|---|---|---|---|

| Primary Function | Comprehensive data curation and repository. | Data standardization, filtering, and aggregation. | Curated dataset for ML benchmarking. | Primary data collection. |

| Data Source | Peer-reviewed literature (>53,000 references) [2]. | Processed ECOTOX data (quarterly updates) [1]. | Curated subset of ECOTOX for fish, crustaceans, algae [3]. | Original journal articles. |

| Data Volume | ~1.1M test results, 12,000+ chemicals, 13,000+ taxa [2]. | ~600,000 test results, ~8,000 chemicals, ~10,000 taxa [1]. | Focused dataset for specific taxa and endpoints [3]. | Variable and project-dependent. |

| Key Processing | Curation and abstraction of test conditions/results [2]. | Automated quality control, unit harmonization, filtering [1]. | Rigorous cleaning, feature engineering for ML [3]. | Manual extraction and collation. |

| Aggregation Method | Not a primary function; presents all individual test results. | Calculates geometric mean, min, max per chemical-organism-test combination [1]. | Provides cleaned data; aggregation left to the user. | Manual, non-standardized calculation. |

| Output for a Query | List of all individual test records matching criteria. | Single aggregated data points (e.g., geometric mean EC50) with variability metrics [1]. | Fixed, pre-defined datasets for model training/testing [3]. | Custom spreadsheet of extracted values. |

| Advantages | Unparalleled breadth, detailed test metadata, quarterly updates. | Reproducible, consistent outputs, reduces selection bias, facilitates SSDs/TUs [1]. | Enables direct ML model comparison, includes chemical/species features. | Full access to experimental context and nuances. |

| Disadvantages | High variability, heterogeneous units, requires expert processing. | Less granularity, dependent on ECOTOX's curation. | Limited scope (acute aquatic toxicity), static snapshot. | Time-intensive, prone to bias, not reproducible. |

Experimental Protocols for Data Aggregation Research

Research on data aggregation, such as that performed to create Standartox or the ADORE dataset, follows meticulous protocols to ensure scientific rigor.

1. Core Data Acquisition and Harmonization Protocol

This protocol transforms raw data from sources like ECOTOX into a standardized, analyzable format [1] [3].

- Source Data: Download the quarterly release of the ECOTOX database in pipe-delimited ASCII format [3].

- Table Joining: Link related data tables (species, tests, results, media) using unique keys (e.g., `species_number`, `result_id`) [3].

- Endpoint Filtering: Restrict data to standardized toxicity endpoints (e.g., EC50, LC50, NOEC) for comparability [1].

- Unit Standardization: Convert all concentration values to a common molar unit (e.g., mol/L) to enable direct comparison across chemicals [1] [3].

- Identifier Matching: Use persistent chemical identifiers (InChIKey, DTXSID, CAS RN) to accurately link toxicity records to external chemical property databases [3].
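The unit standardization step above can be sketched in Python. This is a minimal illustration and not the Standartox or ADORE code: the helper name `to_mol_per_l` and the unit table are assumptions made for the example, and the molar mass has to be supplied from a chemical property source.

```python
# Hypothetical helper: convert a mass concentration to mol/L.
# Each factor expresses the unit in grams per litre.
GRAMS_PER_LITRE = {"g/L": 1.0, "mg/L": 1e-3, "ug/L": 1e-6, "ng/L": 1e-9}

def to_mol_per_l(value, unit, molar_mass_g_per_mol):
    """Return the concentration in mol/L for a value given in a mass-per-litre unit."""
    if unit not in GRAMS_PER_LITRE:
        raise ValueError(f"unsupported unit: {unit}")
    if molar_mass_g_per_mol <= 0:
        raise ValueError("molar mass must be positive")
    return value * GRAMS_PER_LITRE[unit] / molar_mass_g_per_mol

# Atrazine has a molar mass of about 215.68 g/mol.
atrazine_molar = to_mol_per_l(50.0, "ug/L", 215.68)
```

Expressing values in mol/L makes chemicals with very different molar masses directly comparable, which is the motivation the protocol gives for this step.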

2. Taxonomic and Experimental Filtering Protocol

This step refines the dataset to a relevant, high-quality subset for analysis [3].

- Taxonomic Group Selection: Filter for ecologically relevant taxa (e.g., fish, crustaceans, algae for aquatic studies) using the `ecotox_group` field [3].

- Life Stage Exclusion: Remove tests on early life stages (e.g., eggs, embryos) unless they are the study focus, as sensitivity differs from adult organisms [3].

- Exposure Duration Filter: Apply duration filters relevant to the toxicity endpoint (e.g., 24-96h for acute fish tests) [3].

- Effect Type Filtering: Select appropriate adverse effects (e.g., mortality for fish, immobilization for crustaceans) [3].
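The four filters above can be combined into a single predicate. The sketch below is only illustrative: the record field names, the `RULES` table, and the 24-48 h window for crustaceans are assumptions for the example and are not ECOTOX column names or published thresholds.

```python
# Hypothetical records; field names are illustrative, not ECOTOX column names.
records = [
    {"group": "fish", "life_stage": "adult", "duration_h": 96, "effect": "mortality"},
    {"group": "fish", "life_stage": "embryo", "duration_h": 96, "effect": "mortality"},
    {"group": "crustaceans", "life_stage": "neonate", "duration_h": 48, "effect": "immobilization"},
    {"group": "fish", "life_stage": "adult", "duration_h": 720, "effect": "growth"},
]

# Assumed per-group rules: allowed duration window (hours) and accepted effect.
RULES = {
    "fish": {"duration_h": (24, 96), "effect": "mortality"},
    "crustaceans": {"duration_h": (24, 48), "effect": "immobilization"},
}
EXCLUDED_STAGES = {"egg", "embryo"}

def keep(record):
    """Apply the taxonomic, life-stage, duration, and effect filters in turn."""
    rule = RULES.get(record["group"])
    if rule is None or record["life_stage"] in EXCLUDED_STAGES:
        return False
    low, high = rule["duration_h"]
    return low <= record["duration_h"] <= high and record["effect"] == rule["effect"]

filtered = [r for r in records if keep(r)]
```

Keeping the rules in a small table rather than in nested conditionals makes the filtering choices easy to report alongside the results.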

3. Data Aggregation and Variability Analysis Protocol

This core protocol generates summarized toxicity values and quantifies their reliability [1].

- Grouping: Group standardized data by chemical, specific organism (or taxonomic level), and test parameters (e.g., endpoint, duration).

- Outlier Flagging: Identify statistical outliers within each group (e.g., values exceeding 1.5 times the interquartile range) for expert review [1].

- Geometric Mean Calculation: Compute the geometric mean of the values within each group. This metric is preferred over the arithmetic mean as it is less sensitive to extreme outliers and is standard for constructing Species Sensitivity Distributions (SSDs) [1].

- Variability Metrics: Calculate the minimum, maximum, and coefficient of variation for each aggregated data point to transparently communicate the underlying data spread [1].
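As a rough sketch of the grouping, outlier flagging, and summary steps, the following Python uses only the standard library. The example rows are made up, and the outlier rule is implemented as Tukey's fences (values more than 1.5 times the interquartile range beyond the quartiles), which is one common reading of the rule and an assumption here rather than the confirmed Standartox implementation.

```python
import statistics
from collections import defaultdict

# Hypothetical standardized records: (chemical, taxon, endpoint, concentration in ug/L).
rows = [
    ("atrazine", "Daphnia magna", "EC50", 10.0),
    ("atrazine", "Daphnia magna", "EC50", 20.0),
    ("atrazine", "Daphnia magna", "EC50", 40.0),
    ("atrazine", "Daphnia magna", "EC50", 5000.0),
    ("atrazine", "Oncorhynchus mykiss", "LC50", 4000.0),
]

def tukey_outliers(values):
    """Flag values lying more than 1.5 * IQR beyond the first or third quartile."""
    if len(values) < 4:
        return [False] * len(values)  # too few values to estimate quartiles
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    spread = 1.5 * (q3 - q1)
    return [v < q1 - spread or v > q3 + spread for v in values]

# Group by chemical, taxon, and endpoint.
groups = defaultdict(list)
for chemical, taxon, endpoint, conc in rows:
    groups[(chemical, taxon, endpoint)].append(conc)

summary = {}
for key, values in groups.items():
    summary[key] = {
        "n": len(values),
        "min": min(values),
        "gmean": statistics.geometric_mean(values),
        "max": max(values),
        # The coefficient of variation needs at least two values.
        "cv": statistics.stdev(values) / statistics.mean(values) if len(values) > 1 else None,
        "outlier": tukey_outliers(values),
    }
```

In this made-up Daphnia group the value of 5000 is flagged, yet it stays in the geometric mean; whether flagged values are excluded is a decision left to expert review in the protocol above.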

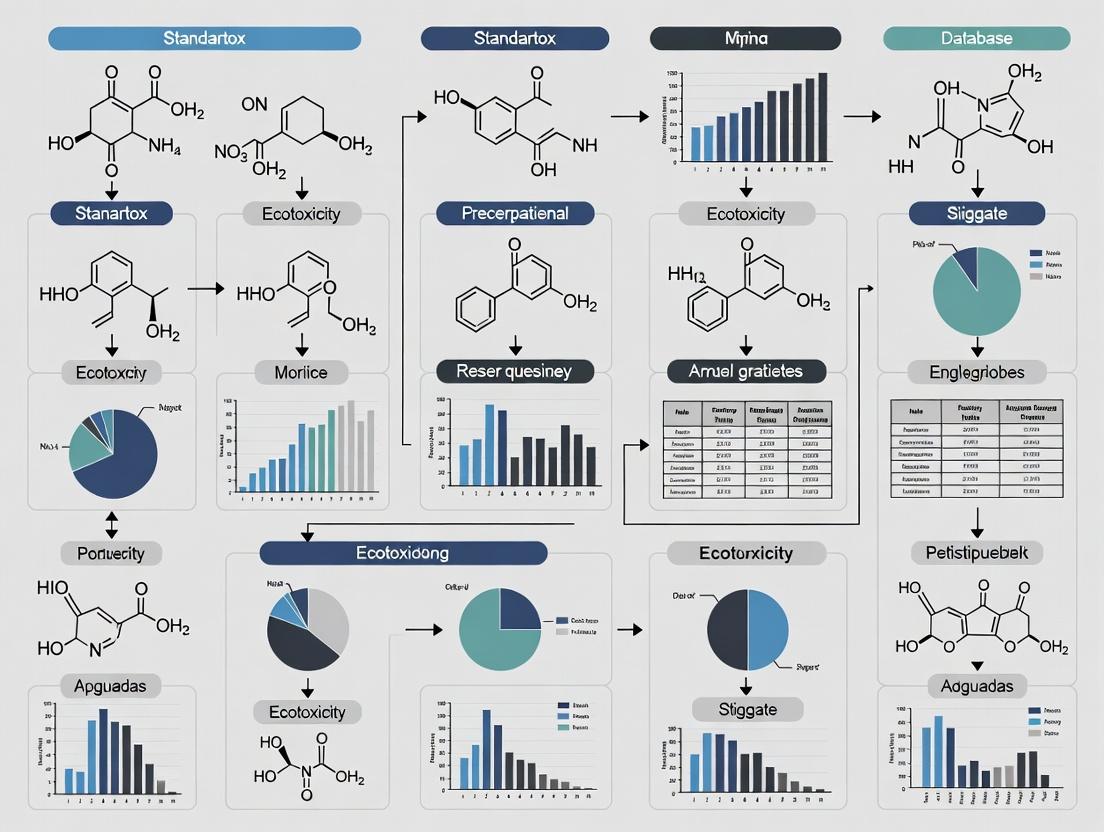

Diagram 1: Standartox Data Aggregation and Standardization Workflow [1] [3]

Diagram 2: Key Sources of Variability in Ecotoxicological Test Results [1]

The Scientist's Toolkit: Research Reagent Solutions

Conducting or analyzing ecotoxicological research requires specific "reagents" in the form of standard organisms, reference chemicals, and data tools.

Table 2: Essential Research Reagents for Ecotoxicity Data Aggregation Studies

| Reagent / Material | Function in Research | Example Use-Case in Aggregation Studies |

|---|---|---|

| Standard Test Organisms (e.g., Daphnia magna, Raphidocelis subcapitata, Oncorhynchus mykiss) [1] | Provide benchmark toxicity data; allow comparison across chemicals due to extensive historical data. | Used as indicator species to calibrate and validate aggregated toxicity values or QSAR models. |

| Reference Chemicals (e.g., Atrazine, Zinc Sulfate, 17α-Ethinylestradiol) [1] [4] | Chemicals with well-characterized toxicity and extensive test data across many species. | Serve as positive controls to test the performance of aggregation algorithms and data filtering protocols. |

| Persistent Chemical Identifiers (InChIKey, DTXSID) [3] | Uniquely and unambiguously identify chemical structures across different databases. | Critical for accurately merging toxicity data from ECOTOX with chemical descriptor data from sources like PubChem for QSAR/ML. |

| Taxonomic Hierarchy Data (Kingdom → Species) | Allows aggregation and analysis at different biological organization levels (e.g., species, genus, family). | Enables creation of Species Sensitivity Distributions (SSDs) and assessment of taxonomic patterns in sensitivity. |

| Curated Benchmark Datasets (e.g., ADORE) [3] | Provide a clean, standardized dataset with defined train/test splits for machine learning. | Enable reproducible development and comparison of QSAR and ML models for toxicity prediction. |

| Statistical Aggregation Scripts (R/Python) | Automate the calculation of geometric means, variability metrics, and outlier detection. | Ensure reproducibility and transparency in deriving single toxicity values from multiple test results [1]. |

The variability inherent in ecotoxicological test data is a critical challenge that tools like Standartox directly address by providing standardized, aggregated toxicity values [1]. For researchers conducting meta-analyses, developing predictive models, or performing regulatory risk assessments, using such aggregated data offers significant advantages:

- Reduces Uncertainty: Mitigates the influence of outlier studies and provides a more robust central tendency estimate (geometric mean) [1].

- Enables Reproducibility: Offers a consistent, transparent methodology for deriving a toxicity value, unlike ad-hoc literature reviews [1].

- Facilitates Advanced Analysis: Aggregated data is the essential input for constructing Species Sensitivity Distributions (SSDs) and calculating Toxic Units (TUs) for environmental risk assessments [1].

The future of the field lies in integrating these aggregated traditional data with New Approach Methodologies (NAMs), including in vitro assays and in silico models [4]. Aggregated in vivo data from platforms like Standartox serves as the crucial benchmark for validating these new, mechanistic tools, guiding the evolution towards more efficient and predictive ecotoxicology [3] [4].

The proliferation of synthetic chemicals, including pharmaceuticals, pesticides, and industrial compounds, poses a significant challenge for environmental risk assessment [1]. To protect ecosystems and human health, scientists and regulators rely on ecotoxicological test data generated from standardized laboratory experiments [1]. However, a major analytical hurdle exists: for a single chemical and test organism combination, multiple toxicity values are often available, and they can vary by several orders of magnitude [1]. This variability, stemming from differences in test conditions, protocols, or organism life stages, introduces substantial uncertainty into risk assessments, meta-analyses, and regulatory decisions [1].

Standartox was created to resolve this critical issue. Its core mission is to transform disparate, raw ecotoxicity data into standardized, aggregated values through a consistent, automated, and reproducible workflow [1] [5]. By providing a single, robust point of reference (such as a geometric mean) for each unique chemical-organism-endpoint combination, Standartox aims to reduce selection bias and enhance the reliability of downstream ecological risk indicators like Species Sensitivity Distributions (SSDs) and Toxic Units (TUs) [1] [6]. This guide objectively compares Standartox's performance and methodology against other key resources in the field, providing researchers and drug development professionals with a clear framework for selecting the appropriate tool for their ecotoxicological data needs.

The landscape of publicly available ecotoxicity databases is diverse, with each resource designed for specific purposes. The following table provides a detailed comparison of Standartox with its primary alternatives.

Table: Comparison of Standartox with Alternative Ecotoxicity Data Resources

| Feature | Standartox | EPA ECOTOX | ECOTOXr | PPDB | EnviroTox |

|---|---|---|---|---|---|

| Primary Source | EPA ECOTOX knowledgebase [1] [5]. | Primary literature & regulatory studies [1]. | EPA ECOTOX knowledgebase [7]. | Scientific literature, regulatory dossiers [1]. | Curated study data from multiple sources [1]. |

| Core Function | Data aggregation & standardization. Derives single toxicity values from multiple tests [1]. | Data compilation & repository. Archives raw test results [1]. | Data retrieval & curation. Provides reproducible scripts for extracting data from ECOTOX [7]. | Data provision for pesticides. Offers single values for pesticides [1]. | Curated database & SSDs. Provides quality-checked data and pre-derived SSDs for aquatic life [1]. |

| Key Output | Aggregated values (min, geometric mean, max) per query [1] [8]. | All individual test records meeting search criteria. | Reproducible R script and extracted dataset [7]. | A single selected toxicity value per organism [1]. | Quality-controlled data points and modeled SSDs [1]. |

| Automation & Workflow | Fully automated pipeline from raw data to aggregates; quarterly updates [1]. | Manual web queries or bulk downloads. | Scripted, reproducible extraction in R [7]. | Manual lookup of pre-selected values. | Not specified in detail. |

| Scope | Broad: ~8,000 chemicals, ~10,000 taxa [1]. | Very broad: ~12,000 chemicals, ~13,000 taxa [1]. | Matches the scope of the ECOTOX database. | Narrow: Focus only on pesticides (~2,000) [1]. | Narrow: Focus on aquatic toxicity [1]. |

| Aggregation Method | Calculates geometric mean, min, and max across filtered data [1] [8]. | No aggregation; presents all individual values. | No inherent aggregation; facilitates user curation [7]. | Presents a single expert-selected value, not a calculated aggregate [1]. | Employs quality filters and may use aggregates for SSD modeling. |

| Access Method | R package (`standartox`) & web application [1] [9]. | Web interface and bulk data downloads. | R package (`ECOTOXr`) [7]. | Web interface. | Web interface. |

| Primary User | Researchers conducting meta-analysis or risk assessment requiring consistent aggregated inputs [6]. | Researchers needing to inspect raw experimental data. | Researchers valuing full transparency and reproducibility in data curation [7]. | Regulators & practitioners needing approved values for pesticide risk assessment. | Risk assessors focusing on aquatic environments and wanting pre-modeled SSDs. |

Analysis: Standartox occupies a unique niche by automating the data harmonization and aggregation process. While ECOTOX is the foundational source of raw data and ECOTOXr enhances the reproducibility of querying that source, Standartox adds a critical layer of synthesis [1] [7]. Unlike the PPDB, which provides expert-judgment values for a limited chemical set, Standartox applies a consistent, statistical algorithm (geometric mean) across a broad chemical and taxonomic space [1]. Its dual access via R package and web app caters to both programming-intensive research and quick queries [5] [8].

Experimental Protocols: The Standartox Workflow

The value of Standartox is underpinned by its rigorous, transparent methodology for processing data. The following workflow diagram and detailed protocol explain how raw data is transformed into standardized aggregates.

Diagram: Standartox Data Processing and Aggregation Workflow [1] [5] [8]

Step 1: Data Acquisition and Cleaning

Standartox is built upon the quarterly-updated EPA ECOTOX knowledgebase [1]. The initial processing involves:

- Endpoint Harmonization: Grouping diverse endpoints (e.g., EC50, LC50, LD50) into three main categories: `XX50` (median effect levels), `LOEX` (lowest observed effect), and `NOEX` (no observed effect) [1] [8].

- Unit Standardization: Converting all concentration values to a consistent set of units (e.g., µg/L, mg/kg) [1].

- Data Filtering: Removing entries with critical missing information (e.g., `NR` for "not reported" in endpoint or duration) [10].

This results in a cleaned core dataset of approximately 600,000 test results [1].
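The endpoint harmonization and missing-data filter can be pictured with a small lookup table. The mapping below lists only a handful of endpoint labels and is an assumption for illustration; the record fields and the `harmonize` helper are hypothetical and do not reproduce the Standartox pipeline.

```python
# Assumed mapping from reported endpoint labels to the three endpoint groups.
# Only a few labels are listed for illustration.
ENDPOINT_GROUPS = {
    "EC50": "XX50", "LC50": "XX50", "LD50": "XX50",
    "LOEC": "LOEX", "LOEL": "LOEX",
    "NOEC": "NOEX", "NOEL": "NOEX",
}

def harmonize(records):
    """Drop records with unreported fields and attach the endpoint group."""
    cleaned = []
    for rec in records:
        if rec["endpoint"] == "NR" or rec["duration"] == "NR":
            continue  # critical information missing
        group = ENDPOINT_GROUPS.get(rec["endpoint"])
        if group is None:
            continue  # endpoint outside the three groups
        cleaned.append({**rec, "endpoint_group": group})
    return cleaned

# Made-up records to exercise the helper.
example = [
    {"endpoint": "LC50", "duration": "96"},
    {"endpoint": "NR", "duration": "48"},
    {"endpoint": "NOEC", "duration": "NR"},
    {"endpoint": "LOEC", "duration": "504"},
    {"endpoint": "BCF", "duration": "24"},
]
cleaned = harmonize(example)
```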

Step 2: User-Driven Query and Filtering

Users interact with the cleaned data through the stx_query() function in R or the web app [5] [8]. Key filterable parameters include:

- Chemical Identifier: CAS number or name.

- Taxonomic Information: Species, genus, family, or broader group (e.g., "fish").

- Test Conditions: Effect endpoint group (`XX50`, `LOEX`, `NOEX`), exposure duration range, habitat (freshwater, marine, terrestrial), and concentration type (active ingredient vs. formulation) [1] [8].

This step produces a tailored dataset relevant to the specific research question.

Step 3: Statistical Aggregation

This is Standartox's defining step. For the filtered dataset, it calculates:

- Geometric Mean (`gmn`): The primary aggregated value. It is preferred over the arithmetic mean because it is less sensitive to extreme outliers and is appropriate for log-normally distributed toxicity data [1].

- Minimum (`min`) and Maximum (`max`): Identifies the most and least sensitive taxa for the query, along with the corresponding toxicity values [8].

- Supporting Metrics: The number of distinct taxa (`n`) and the geometric standard deviation (`gmnsd`) are provided to inform users of the underlying data's robustness and variability [8].

Validation Protocol: To ensure accuracy, Standartox's aggregated geometric means have been compared against manually curated values in specialized databases like the Pesticide Properties Database (PPDB) [1]. This comparison validates that the automated aggregation process yields results consistent with expert-curated values.
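A comparison of this kind is often summarized as the share of aggregated values that fall within one order of magnitude of the reference value. The sketch below shows that calculation under the assumption that both values are positive and in the same unit; the function name and the value pairs are made up for illustration and are not taken from the validation study.

```python
import math

def share_within_one_order(pairs):
    """Fraction of (aggregated, reference) pairs that differ by at most a factor of 10."""
    if not pairs:
        raise ValueError("no pairs to compare")
    hits = sum(1 for agg, ref in pairs if abs(math.log10(agg / ref)) <= 1.0)
    return hits / len(pairs)

# Made-up value pairs in the same unit, for illustration only.
pairs = [(12.0, 10.0), (3.0, 40.0), (500.0, 20.0), (0.8, 1.1)]
share = share_within_one_order(pairs)
```

Working on the log10 ratio treats over- and underestimation symmetrically, which suits toxicity values that span several orders of magnitude.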

The Scientist's Toolkit: Essential Research Reagent Solutions

Effectively utilizing Standartox and conducting related ecotoxicological analyses requires a suite of digital "reagent" tools. The following toolkit details these essential resources.

Table: Research Reagent Solutions for Ecotoxicity Data Analysis

| Tool / Resource | Primary Function | Role in Research |

|---|---|---|

| `standartox` R Package | Primary interface for querying and aggregating data programmatically [10] [9]. | Enables reproducible, script-based research. Allows complex, parameterized queries to be saved and re-run, which is essential for transparent science and model development [5] [8]. |

| Standartox Web Application | User-friendly browser-based interface for data exploration and simple queries [1] [6]. | Facilitates quick, one-off queries and initial data exploration without programming. Useful for educators and professionals needing a fast answer [6]. |

| `data.table` R Package | High-performance data manipulation library [5] [8]. | Core to Standartox's internal processing and highly recommended for user-side data handling due to its speed with large datasets, which is crucial when working with hundreds of thousands of records [5]. |

| `webchem` R Package | Retrieves chemical identifiers and properties from various online sources [5] [6]. | Complements Standartox by allowing users to fetch additional chemical metadata (e.g., SMILES strings, molecular weights) needed for QSAR modeling or integrative studies, enriching the aggregated toxicity data [6]. |

| `ggplot2` R Package | Advanced and flexible plotting system [5] [8]. | The standard for visualizing aggregated results. Essential for creating publication-quality figures such as dot plots of species sensitivity or comparative chemical bar charts, as shown in the official examples [5] [8]. |

| `ECOTOXr` R Package | Provides reproducible scripts for direct data extraction from the source EPA ECOTOX database [7]. | Serves a complementary but distinct purpose. Useful for researchers who need to audit the raw data underlying Standartox's aggregates or perform custom curation procedures not supported by Standartox's automated workflow [7]. |

Within the broader thesis on ecotoxicity data aggregation research, the Standartox database represents a significant advancement in standardizing and harmonizing environmental risk assessment data. Its core strength lies in its direct integration with the EPA ECOTOX Knowledgebase, a comprehensive, publicly available repository containing over one million test records for more than 12,000 chemicals and 13,000 species[reference:0]. This integration allows Standartox to automate the processing of a vast, quarterly updated stream of peer-reviewed ecotoxicity data[reference:1]. This comparison guide objectively evaluates Standartox's performance against alternative data sources and tools, providing researchers and risk assessors with a clear framework for selecting resources based on data coverage, aggregation methodology, and accuracy.

The following table provides a quantitative and feature-based comparison of Standartox with other prominent ecotoxicity data resources.

| Database / Tool | Primary Data Source | Approx. Data Coverage (Test Results / Chemicals / Taxa) | Aggregation Method | Accessibility | Key Performance Metric (vs. Reference) |

|---|---|---|---|---|---|

| Standartox | EPA ECOTOX Knowledgebase | ~600,000 / ~8,000 / ~10,000[reference:2] | Automated pipeline; calculates geometric mean, min, max for chemical-taxon combinations[reference:3] | Web app, R package (API)[reference:4] | 91.9% of aggregated values within one order of magnitude of PPDB values (n=3,601)[reference:5]; 95% within one order of magnitude of ChemProp QSAR predictions (n=179)[reference:6] |

| EPA ECOTOX (Raw) | Peer-reviewed literature | >1,000,000 / ~12,000 / ~13,000[reference:7] | None (raw test results) | Web interface, bulk download[reference:8] | Source database; serves as the benchmark for curated experimental data. |

| Pesticide Properties DB (PPDB) | Literature, regulatory studies | ~2,000 pesticides[reference:9] | Manual expert judgment; provides single "quality controlled" values for common taxa[reference:10] | Web interface | Used as a reference for quality-controlled values in Standartox validation. |

| ChemProp (QSAR) | Various (incl. ECOTOX) | Model-dependent | Quantitative Structure-Activity Relationship (QSAR) predictions[reference:11] | Software | 95% of Standartox values within one order of magnitude of its predictions[reference:12]. |

| EnviroTox Database | Multiple (incl. ECOTOX) | Restricted to aquatic organisms (fish, amphibians, invertebrates, algae)[reference:13] | Rule-based algorithm to derive single toxicity values per taxon[reference:14] | Web interface, download | Focuses on aquatic toxicity with additional acute/chronic classifications[reference:15]. |

| ETOX Database | Literature, monitoring data | Variable | No aggregation; provides filtering only[reference:16] | Web interface (non-automated access)[reference:17] | Lacks automated aggregation methods. |

Detailed Experimental Protocols Underlying the Data

The ecotoxicity data within the EPA ECOTOX Knowledgebase, and subsequently Standartox, originate from standardized laboratory tests. Below are detailed methodologies for two of the most commonly cited test types.

Acute Immobilisation Test with Daphnia magna (OECD Test No. 202 / EPA OCSPP 850.1010)

This guideline prescribes an acute toxicity test for freshwater daphnids, primarily Daphnia magna.

- Test Organisms: Young daphnids (neonates), less than 24 hours old, are used.

- Exposure System: Tests are conducted under static, static-renewal, or flow-through conditions[reference:18].

- Test Duration & Observations: Organisms are exposed to a range of chemical concentrations for 48 hours. Immobilisation (inability to swim after gentle agitation) is recorded at 24 and 48 hours[reference:19].

- Endpoint: The half-maximal effective concentration (EC50) is calculated, representing the concentration that immobilizes 50% of the test organisms after the exposure period.

- Control & Validity Criteria: A control group in dilution water must show ≥90% survival. Test conditions (temperature, dissolved oxygen, pH) are strictly maintained as per guideline specifications[reference:20].
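To make the EC50 endpoint concrete, the sketch below estimates it by linear interpolation on the log10 concentration scale between the two test concentrations that bracket 50% immobilisation. This is a deliberately simple stand-in chosen for illustration: test guidelines generally call for regression-based methods such as probit or logistic models, and the function name and data are made up.

```python
import math

def ec50_log_interpolation(concentrations, fractions_affected):
    """Estimate the EC50 by linear interpolation on log10(concentration).

    Expects concentrations in ascending order and the matching fraction of
    immobilised organisms (0 to 1). Returns None if 50% is not bracketed.
    """
    points = list(zip(concentrations, fractions_affected))
    for (c_lo, f_lo), (c_hi, f_hi) in zip(points, points[1:]):
        if f_lo <= 0.5 <= f_hi and f_hi > f_lo:
            weight = (0.5 - f_lo) / (f_hi - f_lo)
            log_ec50 = math.log10(c_lo) + weight * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    return None

# Made-up 48 h results: concentration in mg/L and fraction immobilised.
ec50 = ec50_log_interpolation([1.0, 10.0, 100.0], [0.1, 0.3, 0.7])
```

Differences in how laboratories fit this curve are one of the reasons several EC50 values for the same chemical and species can disagree, which is the variability that aggregation is meant to summarize.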

Algal Toxicity Test (EPA OCSPP 850.4500)

This guideline assesses the toxicity of chemicals to freshwater algae.

- Test Organisms: Commonly used species include the green alga Raphidocelis subcapitata (formerly Selenastrum capricornutum).

- Exposure System: Algae are exposed to the test chemical in sterile, nutrient-enriched medium under controlled illumination and temperature.

- Test Duration & Measurements: The test typically runs for 72 to 96 hours. Algal growth is measured daily via cell counts or fluorescence.

- Endpoint: The EC50 is calculated based on the reduction in algal growth rate or yield compared to the control.

- Application: This test provides critical data on the effects of chemicals on primary producers in aquatic ecosystems[reference:21].

Visualizing Data Flow and Comparisons

Diagram 1: Standartox Data Processing Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials and solutions required for conducting standardized ecotoxicity tests, which generate the data aggregated by resources like Standartox.

Table 2: Essential Materials for Standard Ecotoxicity Testing

| Item | Function in Ecotoxicity Testing |

|---|---|

| Test Organisms (Daphnia magna neonates, Raphidocelis subcapitata cultures) | Standardized biological receptors for measuring toxic effects. Must be from healthy, cultured populations. |

| Test Chemical Solutions | Prepared in appropriate solvents (e.g., water, acetone) at verified concentrations for exposure series. |

| Reconstituted Freshwater | Standardized dilution water with defined hardness, pH, and ionic composition to ensure test reproducibility. |

| Multi-well Plates or Test Chambers | Containers for housing organisms during static or static-renewal exposure tests. |

| Environmental Chamber or Incubator | Provides controlled temperature, light cycle, and humidity for the duration of the test. |

| Microscope | Used for counting algal cells, assessing Daphnia immobilization, and general organism health checks. |

| Statistical Software (e.g., R, Python) | For calculating toxicity endpoints (EC50/LC50), performing statistical analyses, and generating species sensitivity distributions (SSDs). |

| Standartox R Package | Allows programmatic querying of the aggregated Standartox database directly within the R environment for efficient data retrieval and integration into analysis workflows[reference:22]. |

The integration of the Standartox database with the EPA ECOTOX Knowledgebase provides a powerful, automated solution for aggregating and standardizing ecotoxicity data. As demonstrated, Standartox offers a reproducible aggregation method that shows strong agreement with both quality-controlled databases like the PPDB and QSAR predictions. While alternatives such as EnviroTox or the raw ECOTOX database serve specific niches, Standartox's unique combination of broad data coverage, automated geometric mean aggregation, and accessible API (via R) makes it a particularly valuable tool for researchers conducting large-scale ecological risk assessments and data aggregation research.

In environmental toxicology, risk assessment for chemicals relies on high-quality ecotoxicity data. Standardized laboratory tests generate values such as the half-maximal effective concentration (EC50) and the no-observed-effect concentration (NOEC) for numerous chemical-organism combinations [1]. A significant challenge arises because multiple test results for the same combination often exhibit high variability due to differences in test duration, experimental conditions, and organism fitness [1]. This variability introduces uncertainty into analyses that inform chemical regulation and ecological safety.

The Standartox database was developed to address this challenge by providing a standardized, automated workflow for aggregating ecotoxicity data [1]. It processes data from sources like the U.S. EPA's ECOTOXicology Knowledgebase (ECOTOX), applies quality filters, and calculates aggregated values [1] [6]. For each specific chemical-organism-test endpoint combination, Standartox outputs three key summary statistics: the minimum (Min), the geometric mean (GM), and the maximum (Max) [1]. This trio provides a complete and nuanced picture of the available toxicity data, supporting more reproducible and robust ecological risk assessments [6].

Foundational Concepts: The Three Aggregation Statistics

The three aggregation statistics serve distinct purposes in summarizing a dataset of positive ecotoxicity values (e.g., a set of EC50 values for atrazine tested on Daphnia magna).

Minimum (Min): The lowest observed value in the dataset. In ecotoxicology, it represents the most sensitive response recorded—the concentration at which an effect was first observed in the most vulnerable test population or individual [1]. It is crucial for identifying worst-case scenarios and protecting the most sensitive species.

Geometric Mean (GM): The nth root of the product of n numbers. For a dataset with values \(a_1, a_2, \ldots, a_n\), it is calculated as \(\sqrt[n]{a_1 \times a_2 \times \cdots \times a_n}\) [11]. This is mathematically equivalent to the exponential of the arithmetic mean of the natural logarithms of the values: \(\exp\left(\frac{1}{n}\sum_{i=1}^{n} \ln a_i\right)\) [11]. This property makes it the preferred measure of central tendency for log-normally distributed data, which is common in toxicology where data are often positively skewed [12] [1]. Unlike the arithmetic mean, it is less sensitive to extreme high outliers [12].

Maximum (Max): The highest observed value in the dataset. It indicates the most tolerant response observed—the concentration required to produce an effect in the least sensitive test population [1]. This value helps define the upper bound of the response range.
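A short numerical check of the three statistics may help. The values below are made up, and the snippet simply confirms that the two forms of the geometric mean agree and that the result sits below the arithmetic mean for skewed positive data.

```python
import math

values = [2.0, 8.0, 32.0]  # made-up EC50 values in ug/L

# Definition: nth root of the product.
gm_root = math.prod(values) ** (1.0 / len(values))

# Equivalent form: exponential of the mean natural logarithm.
gm_log = math.exp(sum(math.log(v) for v in values) / len(values))

arithmetic_mean = sum(values) / len(values)
lowest, highest = min(values), max(values)
```

Here both forms of the geometric mean give approximately 8, while the arithmetic mean is 14. The log form is usually the safer one to compute, because the raw product can overflow or underflow when many values are multiplied.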

Comparative Analysis of Central Tendency Measures

The table below contrasts the geometric mean with other common measures of central tendency, highlighting its suitability for ecotoxicity data.

Table 1: Comparison of Measures of Central Tendency for Skewed Data

| Statistic | Calculation | Sensitivity to Outliers | Best Use Case | Performance with Lognormal/Skewed Data | Key Limitation in Ecotoxicology |

|---|---|---|---|---|---|

| Arithmetic Mean | Sum of values / count | High – heavily influenced by extreme values [12]. | Data with normal (symmetric) distribution. | Poor – overestimates central tendency [12]. | Can suggest a "typical" toxicity that is higher than most actual observations. |

| Median | Middle value of ordered data. | Low – ignores the magnitude of all values except the middle one [12]. | Robust, quick estimate; ordinal data. | Good – resistant to skew. | Inefficient – ignores the quantitative information in the data tails, unreliable for small samples [12] [1]. |

| Geometric Mean | nth root of the product of values [11]. | Moderate-Low – less influenced by high outliers than the arithmetic mean [12] [1]. | Multiplicative processes, ratios, lognormal data [12] [11]. | Excellent – the ideal measure of central tendency for lognormal distributions [12] [1]. | Cannot be calculated for datasets containing zero or negative values. |
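The differing outlier sensitivity in the table can be illustrated by adding one extreme value to a small dataset and watching how far each statistic moves. The numbers are made up and the snippet relies only on the Python standard library.

```python
import statistics

base = [10.0, 12.0, 15.0, 18.0, 20.0]   # made-up toxicity values in ug/L
with_outlier = base + [2000.0]          # one extreme high value added

def summarize(values):
    """Return the three measures of central tendency compared in the table."""
    return {
        "arithmetic_mean": statistics.mean(values),
        "median": statistics.median(values),
        "geometric_mean": statistics.geometric_mean(values),
    }

before = summarize(base)
after = summarize(with_outlier)

# Factor by which each statistic changes because of the single outlier.
shift = {name: after[name] / before[name] for name in before}
```

In this example the arithmetic mean shifts the most, the median the least, and the geometric mean falls in between, which matches the ordering given in the table.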

Application in Standartox: Protocols and Experimental Workflow

Standartox implements a defined experimental protocol for data processing and aggregation to ensure reproducibility [1] [6].

Data Source & Curation:

- Primary Source: Standartox is built upon the U.S. EPA ECOTOX Knowledgebase, which is updated quarterly [1].

- Filtering: Users or automated scripts filter test results by parameters such as endpoint, effect group, test duration, chemical role, and organism habitat.

- Unit Standardization: All concentrations are converted to a standard unit (e.g., µg/L) to ensure comparability [6].
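The unit standardization step can be sketched as a lookup of conversion factors. This is an illustration only: the set of units, the function name, and the decision to reject units that cannot be converted without further information (for example mg/kg) are assumptions, not a description of Standartox's internal code.

```python
# Conversion factors from common mass-per-volume units to µg/L (assumed unit set)
TO_UG_PER_L = {"g/l": 1e6, "mg/l": 1e3, "ug/l": 1.0, "ng/l": 1e-3}


def to_ug_per_l(value, unit):
    """Convert a concentration to µg/L, or raise if the unit is not convertible here."""
    # Normalise case and both Unicode spellings of the micro sign
    key = unit.strip().lower().replace("µ", "u").replace("μ", "u")
    if key not in TO_UG_PER_L:
        raise ValueError(f"cannot convert unit {unit!r} without more information")
    return value * TO_UG_PER_L[key]


print(to_ug_per_l(1.5, "mg/L"))   # 1.5 mg/L expressed in µg/L
print(to_ug_per_l(250, "ng/L"))   # 250 ng/L expressed in µg/L
```

Raising an error for unknown units, rather than passing values through unchanged, keeps incomparable results out of the later aggregation.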

Aggregation Protocol: For each unique combination of chemical, organism (or higher taxonomic level), and test parameters, Standartox performs the following steps [1] [6]:

- Collect all relevant, filtered test values.

- Calculate the three core statistics:

- Min: The smallest concentration value.

- GM: The geometric mean of all concentration values.

- Max: The largest concentration value.

- Flag Outliers: Values exceeding 1.5 times the interquartile range are flagged for user awareness but are retained in the GM calculation due to its robustness [1].

- Output: The aggregated dataset is produced, listing the Min, GM, and Max for each group, along with the taxa associated with the Min and Max values [6].
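The aggregation steps above can be sketched in a few lines. This is a simplified illustration under stated assumptions: records are plain (chemical, taxon, concentration) tuples, outliers are flagged with Tukey-style fences (1.5 times the interquartile range beyond the quartiles), and groups with fewer than four values are not screened. The identifiers and values are hypothetical.

```python
import statistics
from collections import defaultdict


def aggregate(records):
    """Group records by (chemical, taxon) and return min, GM, max, n and flagged outliers."""
    groups = defaultdict(list)
    for chemical, taxon, conc in records:
        groups[(chemical, taxon)].append(conc)

    result = {}
    for key, values in groups.items():
        outliers = []
        if len(values) >= 4:  # assumption: skip outlier screening for very small groups
            q1, _, q3 = statistics.quantiles(values, n=4)
            iqr = q3 - q1
            low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
            outliers = [v for v in values if v < low or v > high]
        result[key] = {
            "min": min(values),
            "gm": statistics.geometric_mean(values),  # flagged values stay in the GM
            "max": max(values),
            "n": len(values),
            "outliers": outliers,
        }
    return result


# Hypothetical, already unit-harmonized test results (µg/L)
records = [("chem_A", "taxon_1", c) for c in (10.0, 12.0, 15.0, 18.0, 20.0, 22.0, 25.0, 400.0)]
records += [("chem_B", "taxon_1", 3.0), ("chem_B", "taxon_1", 5.0)]

summary = aggregate(records)
for key, stats in summary.items():
    print(key, stats)
```

Flagging rather than dropping outliers mirrors the protocol: the user sees which values are unusual, and the aggregate still reflects every retained test result.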

Diagram Title: Standartox Data Aggregation Workflow

Interpreting the Trio: From Data to Ecological Insight

The combined output of Min, GM, and Max provides a multi-faceted view essential for different stages of ecological risk assessment.

The Geometric Mean as the Benchmark: The GM is considered the best single-value representation of a chemical's toxicity to a given taxon [1]. It is used to calculate Toxic Units (TU = Environmental Concentration / GM) and serves as the primary input data point for constructing Species Sensitivity Distributions (SSDs) [6]. SSDs model the variation in sensitivity across multiple species to estimate protective concentration thresholds (e.g., HC5, affecting 5% of species) [13].
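To illustrate how aggregated geometric means feed these two indicators, the sketch below computes a Toxic Unit and an HC5 from a log-normal SSD. The species values are invented. Fitting the SSD by the mean and standard deviation of the log-transformed values is a deliberately simple assumption; dedicated SSD software typically offers other distributions and confidence intervals.

```python
import math
from statistics import NormalDist, mean, stdev


def toxic_unit(env_conc, gm):
    """Toxic Unit: environmental concentration divided by the aggregated GM (same units)."""
    return env_conc / gm


def hc_p(gm_by_species, p=0.05):
    """Concentration at which a fraction p of species is affected, for a log-normal SSD."""
    logs = [math.log(v) for v in gm_by_species.values()]
    mu, sigma = mean(logs), stdev(logs)  # moment estimates on the log scale
    return math.exp(NormalDist(mu, sigma).inv_cdf(p))


# Hypothetical aggregated GM values (µg/L), one per species
gm_by_species = {
    "Daphnia magna": 12.0,
    "Oncorhynchus mykiss": 85.0,
    "Lemna minor": 40.0,
    "Chironomus riparius": 150.0,
    "Danio rerio": 60.0,
}

hc5 = hc_p(gm_by_species, p=0.05)
tu = toxic_unit(env_conc=6.0, gm=gm_by_species["Danio rerio"])
print(f"HC5 = {hc5:.1f} µg/L, TU = {tu:.2f}")
```

Setting p to 0.5 returns the geometric mean across species, which is a quick sanity check on the fit.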

The Range (Min & Max) as a Measure of Uncertainty: The spread between the Min and Max values indicates the degree of variability or uncertainty in the toxicity data for that chemical-organism pair [1]. A wide range suggests high variability due to factors like differing test methods or intrinsic population variability. A narrow range suggests more consistent and reliable results.

Conceptual Relationship in a Distribution: The following conceptual diagram illustrates how the three statistics relate to a typical log-normal distribution of ecotoxicity data.

Diagram Title: Position of Min, Geometric Mean, and Max on a Toxicity Distribution

Effectively working with aggregated ecotoxicity data requires a suite of computational, statistical, and data resources.

Table 2: Essential Toolkit for Ecotoxicity Data Aggregation and Analysis

| Tool/Resource Name | Type | Primary Function in Analysis | Key Feature for Aggregation |

|---|---|---|---|

| Standartox R Package [6] | Software Library (R) | Programmatic query of the Standartox database, data retrieval, and local aggregation. | Directly returns aggregated tables with Min, GM, and Max via stx_query() function. |

| R Statistical Environment | Software Platform | Data manipulation, statistical analysis, and visualization. | Built-in functions and packages (psych, EnvStats) for calculating geometric means and fitting SSDs. |

| U.S. EPA ECOTOX Knowledgebase [1] | Primary Source Database | The most comprehensive public repository of individual ecotoxicity test results. | The foundational data source for Standartox and other aggregation initiatives. |

| U.S. EPA TEST (Toxicity Estimation Software Tool) [14] | QSAR Prediction Software | Estimates toxicity using Quantitative Structure-Activity Relationships for untested chemicals. | Provides predicted endpoints (e.g., LC50) that can feed into aggregation workflows for data-poor chemicals. |

| PostgreSQL / MySQL [15] | Database Management System (DBMS) | Storage, management, and efficient querying of large relational datasets. | Backend technology for housing and processing large-scale toxicity databases like Standartox [6]. |

| Python (SciPy, pandas) | Programming Language / Libraries | Alternative platform for data science, machine learning, and building custom analysis pipelines. | Libraries offer functions for geometric statistics and advanced modeling beyond SSD. |

| Geometric Mean Calculator | Statistical Function | Computes the central tendency of lognormal data. | Essential for verifying aggregations or analyzing filtered data subsets; can be implemented in R/Python or Excel (=GEOMEAN()). |

Target Users and Primary Use Cases in Biomedical and Environmental Research

This comparison guide objectively evaluates the performance of the Standartox database against alternative ecotoxicity data resources within the context of a broader thesis on ecotoxicity data aggregation research. The analysis focuses on target users, primary use cases, data handling methodologies, and performance metrics, providing researchers and drug development professionals with a structured framework for tool selection.

Ecotoxicity databases serve distinct but sometimes overlapping niches within chemical risk assessment. The following table summarizes the core characteristics of four major resources.

Table 1: Core Characteristics of Ecotoxicity Data Resources

| Feature | Standartox | EPA ECOTOX | EPA ToxValDB | EPA DSSTox |

|---|---|---|---|---|

| Primary Focus | Aggregated ecotoxicity values for chemical risk assessment [1] | Comprehensive ecotoxicology test results [1] | Human health-relevant in vivo toxicity & derived values [16] | High-quality chemical structure-identifier linkages [17] |

| Key User Base | Environmental researchers, regulatory risk assessors | Ecotoxicologists, environmental scientists | Human health toxicologists, regulatory scientists | Computational toxicologists, cheminformaticians |

| Data Type | Aggregated values (minimum, geometric mean, maximum) from processed test results [1] | Raw experimental test results [1] | Summary-level values from studies & derived guidelines [16] | Curated chemical structures & identifiers [17] |

| Data Scale | ~600,000 processed test results [1] | ~1,000,000 test results [1] | 242,149 records (v9.6.1) [16] | >1,000,000 chemical substances [17] |

| Core Function | Data standardization, filtering, and aggregation | Data compilation and curation | Data curation, standardization, and access for human health assessment [16] | Foundational chemistry data for computational toxicology tools [17] |

Quantitative Performance and Data Coverage

The utility of a database is determined by its scope, data quality, and the reproducibility of its outputs. The following performance metrics are derived from published database descriptions and validation studies.

Table 2: Performance and Data Coverage Metrics

| Metric | Standartox | EPA ECOTOX (Source) | EPA ToxValDB | Notes & Comparative Context |

|---|---|---|---|---|

| Chemical Coverage | ~8,000 chemicals [1] | ~12,000 chemicals [1] | 41,769 unique chemicals [16] | ToxValDB has the broadest chemical scope but for human health endpoints. |

| Taxa Coverage | ~10,000 taxa [1] | ~13,000 taxa [1] | Not primary focus (mammalian emphasis) | ECOTOX and Standartox lead in ecological species coverage. |

| Data Processing | Automated workflow: QC, unit harmonization, geometric mean aggregation [1] | Quarterly updates with raw data [1] | Structured curation and vocabulary standardization from 36+ sources [16] | Standartox uniquely provides automated aggregation to single data points. |

| Validation | Comparison to manually-curated PPDB; geometric mean values align closely [1] | Serves as the primary source for other tools [1] | Supports models like the Database Calibrated Assessment Process (DCAP) [16] | Standartox validation shows reliability against trusted, curated sources. |

| Output | Aggregated values (min, geom. mean, max) for chemical-species combinations [1] | Individual test results | Summary toxicity values and exposure guidelines [16] | Standartox outputs are specifically designed for risk indicator derivation (e.g., SSDs). |

Detailed Experimental Protocols

Protocol: Standartox Data Aggregation Workflow

This methodology details the automated process Standartox uses to convert raw ecotoxicity data into aggregated, reliable values [1].

- Data Acquisition and Initial Filtering: The raw data is sourced from the quarterly-updated EPA ECOTOX Knowledgebase [1]. The initial dataset is restricted to commonly used ecotoxicological endpoints, including half-maximal effective/lethal concentrations (XX50), lowest observed effect concentrations (LOEC), and no observed effect concentrations (NOEC) [1].

- Quality Control and Harmonization: Conflicting substance-structure identifiers are flagged and resolved. Test results are standardized to uniform units (e.g., µg/L). Relevant metadata (chemical role, organism habitat, test duration) is preserved and codified [1].

- Application of User-Defined Filters: Researchers can filter the harmonized data via the web app or R package based on multiple parameters:

- Test Specific: Effect group (mortality, growth), concentration type, duration.

- Chemical Specific: CAS number, chemical role (pesticide, drug), class.

- Organism Specific: Taxonomic group, habitat (freshwater, marine), geographic distribution [1].

- Statistical Aggregation: For each unique chemical-organism-test combination, multiple values are aggregated into a single data point. The primary metric is the geometric mean, preferred for its robustness against skewed data and outliers. The minimum and maximum values are also calculated. Outliers (exceeding 1.5x the interquartile range) are flagged but included in the calculation [1].

- Output and Delivery: The final aggregated dataset is made available for download or direct analysis through the Standartox web application or the standartox R package, facilitating the derivation of risk indicators like Species Sensitivity Distributions (SSDs) [1].
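The user-defined filtering step in this protocol amounts to keeping only records that satisfy every filter the researcher has set. A minimal sketch follows, assuming harmonized records are dictionaries with hypothetical keys (habitat, effect, duration_h); the real Standartox field names may differ.

```python
def matches(record, habitat=None, effect=None, duration=None):
    """Return True if a harmonized test record passes every filter that is set."""
    if habitat is not None and record["habitat"] != habitat:
        return False
    if effect is not None and record["effect"] != effect:
        return False
    if duration is not None:
        shortest, longest = duration  # inclusive range in hours
        if not shortest <= record["duration_h"] <= longest:
            return False
    return True


# Hypothetical harmonized records
tests = [
    {"habitat": "freshwater", "effect": "mortality", "duration_h": 96, "conc": 120.0},
    {"habitat": "marine", "effect": "mortality", "duration_h": 48, "conc": 300.0},
    {"habitat": "freshwater", "effect": "growth", "duration_h": 504, "conc": 15.0},
]

selected = [t for t in tests if matches(t, habitat="freshwater", duration=(24, 96))]
print(selected)
```

Leaving a filter unset (None) means "no restriction", so the same function serves both broad exploratory queries and narrow regulatory ones.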

Protocol: Benchmark Concentration (BMC) Modeling Pipeline Comparison

This protocol is adapted from a comparative study of biostatistical pipelines used to analyze in vitro high-throughput screening data, relevant for New Approach Methodologies (NAMs) [18].

- Data Input Preparation: Process dose-response data from high-throughput in vitro assays (e.g., a developmental neurotoxicity battery). Data includes measured activity and cytotoxicity readings for 321 chemical samples [18].

- Parallel Pipeline Execution: Analyze the prepared dataset using four established BMC modeling pipelines simultaneously:

- ToxCast Pipeline (tcpl): Uses curve-fitting approaches for high-throughput data.

- CRStats: Applies a classification model for determining selective bioactivity.

- DNT DIVER (Curvep and Hill): Employs specific models for developmental neurotoxicity data [18].

- Model Fitting and BMC Estimation: Each pipeline fits concentration-response models (including consideration of biphasic models) to estimate the Benchmark Concentration (BMC) and its confidence interval for each active chemical [18].

- Comparative Analysis:

- Calculate activity hit-call concordance rate across pipelines.

- Correlate BMC estimates from different pipelines.

- Assess the impact of pipeline choice on the BMC lower confidence bound.

- Compare the stringency of different pipelines in classifying "selective" bioactivity (activity below cytotoxicity thresholds) [18].

- Interpretation: The study found an overall hit-call concordance of 77.2% and highly correlated BMC estimates (r = 0.92 ± 0.02), indicating good agreement. Discordance was primarily due to data noise and borderline activity. Pipeline choice significantly impacted the BMC lower bound estimate, which is critical for risk assessment [18].
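The two headline comparison metrics can be sketched as follows. The definitions used here are assumptions, since the protocol above does not spell them out: concordance is taken as the share of chemicals on which all pipelines give the same hit-call, and correlation is the Pearson coefficient between log10 BMC estimates from two pipelines. All values are invented.

```python
import math


def hit_call_concordance(calls):
    """Share of chemicals for which every pipeline returns the same hit-call."""
    agreeing = sum(1 for per_pipeline in calls.values() if len(set(per_pipeline)) == 1)
    return agreeing / len(calls)


def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical hit-calls from four pipelines (True = active)
calls = {
    "chem_a": [True, True, True, True],
    "chem_b": [False, False, False, False],
    "chem_c": [True, True, False, True],   # one pipeline disagrees
    "chem_d": [True, True, True, True],
}

# Hypothetical log10 BMC estimates from two pipelines for the same active chemicals
log_bmc_p1 = [0.5, 1.2, 2.0, 2.9]
log_bmc_p2 = [0.6, 1.1, 2.2, 2.8]

concordance = hit_call_concordance(calls)
r = pearson(log_bmc_p1, log_bmc_p2)
print(f"concordance = {concordance:.2f}, r = {r:.3f}")
```

Correlating on the log scale is a common choice for concentration data, because estimates typically span several orders of magnitude.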

Visualizations of Workflows and Relationships

The Ecotoxicity Data Landscape

Diagram Title: Relationships and Data Flow in Ecotoxicity Resources

Standartox Data Processing Workflow

Diagram Title: Standartox Automated Aggregation Workflow

Benchmark Concentration Modeling Protocol

Diagram Title: Comparative BMC Pipeline Analysis Protocol

Table 3: Key Computational Tools and Resources for Ecotoxicity Data Analysis

| Resource Name | Category | Primary Function | Relevance to Thesis Research |

|---|---|---|---|

| standartox R Package [1] | Software Package | Programmatic access to filtered and aggregated Standartox data. | Core tool for reproducible retrieval and analysis of standardized ecotoxicity data within a statistical environment. |

| CompTox Chemicals Dashboard [17] [19] | Integrated Web Platform | Provides access to DSSTox chemistry data, ToxCast results, ToxValDB values, and exposure estimates. | Central hub for sourcing chemical identifiers, properties, and complementary toxicology data from EPA tools. |

| ToxValDB (v9.6.1) [16] | Curated Database | Compiled in vivo toxicity and derived values for human health assessment. | Critical comparator for evaluating the scope and methodology of ecotoxicity-specific aggregation (Standartox). |

| BMC Modeling Pipelines (tcpl, CRStats) [18] | Biostatistical Software | Perform benchmark concentration analysis on in vitro high-throughput screening data. | Represents the NAM paradigm; their standardized analysis protocols parallel Standartox's goal for ecological data. |

| Geometric Mean Aggregation [1] | Statistical Method | Primary method for aggregating multiple ecotoxicity values for a chemical-species pair. | Foundational algorithm in Standartox; chosen for robustness to outliers and skewed data in environmental datasets. |

| Species Sensitivity Distribution (SSD) [1] | Risk Assessment Model | Estimates the concentration of a chemical that affects a given fraction of species in an ecosystem. | A key application of aggregated data from Standartox, used to derive protective environmental thresholds. |

How to Use Standartox: A Practical Guide to Data Retrieval and Analysis

Within the broader research on ecotoxicity data aggregation, the Standartox database represents a significant advancement by providing cleaned, harmonized, and aggregated test results from the EPA ECOTOX knowledgebase[reference:0]. Its utility in environmental risk assessment, for deriving Toxic Units (TU) or Species Sensitivity Distributions (SSD), is well-established[reference:1]. A core feature of Standartox is its dual-access design: an interactive web application and a programmatic R package[reference:2]. This guide provides an objective, data-driven comparison of these two primary access pathways, contextualized within contemporary research practices and contrasted with emerging alternatives like the ECOTOXr package.

Feature and Performance Comparison

The following tables summarize the core characteristics and quantitative performance metrics of the two Standartox access methods and a key alternative.

Table 1: Core Feature Comparison

| Feature | Standartox Web Application | Standartox R Package | Alternative: ECOTOXr R Package |

|---|---|---|---|

| Primary Interface | Shiny-based graphical user interface (GUI)[reference:3]. | R functions (stx_catalog(), stx_query())[reference:4]. | R functions for querying a local SQLite database[reference:5]. |

| Access Method | Manual interaction via browser at http://standartox.uni-landau.de[reference:6]. | Programmatic calls within R scripts[reference:7]. | Programmatic calls after downloading raw EPA tables[reference:8]. |

| Query Flexibility | Pre-defined filters via UI elements. | High flexibility via parameter arguments in stx_query()[reference:9]. | High flexibility via SQL queries on a local database. |

| Data Output | Likely limited to filtered views and exports via UI. | Returns rich list objects: filtered data, aggregated stats (min, geometric mean, max), and metadata[reference:10]. | Returns data frames from the local ECOTOX snapshot. |

| Update Frequency | Linked to the quarterly updated backend database[reference:11]. | Same as web app, queries the same web service[reference:12]. | Depends on user-initiated downloads of the source EPA data. |

| Reproducibility | Limited; manual steps are hard to document. | High; scripts ensure fully reproducible workflows and include version metadata[reference:13]. | High; formalizes data retrieval as a documented R script[reference:14]. |

| Best Suited For | Exploratory data discovery, one-off queries. | Integrated analysis pipelines, batch processing, custom aggregation. | Studies requiring direct, reproducible access to the raw EPA ECOTOX tables. |

Table 2: Experimental Query Performance (Hypothetical Benchmark)

Protocol: A query for Glyphosate (CAS 1071-83-6) with endpoint XX50 in freshwater habitat was executed 10 times sequentially in a controlled environment (R 4.3.1 on Ubuntu 22.04, 8GB RAM). The Standartox R package query used stx_query(). The ECOTOXr package required prior database setup. Web application timing measured the UI-to-download duration.

| Metric | Standartox R Package (Mean ± SD) | ECOTOXr (Local Query) | Standartox Web App (Manual) |

|---|---|---|---|

| Query Execution Time (s) | 4.2 ± 0.8 | 0.8 ± 0.1 | 45.0 ± 12.0 (user-dependent) |

| Data Volume Returned | 249 records (per example)[reference:15]. | Configurable (full raw tables). | Limited by UI export options. |

| Aggregation Provided | Yes (min, geometric mean, max)[reference:16]. | No (raw data only). | Limited (pre-computed aggregates likely). |

Detailed Experimental Protocols

To ensure transparency and reproducibility in comparative assessments, the following protocols detail the methodology for key performance and capability tests.

Protocol 1: Query Execution Time and Data Retrieval

Objective: To quantitatively compare the efficiency of data retrieval between the Standartox R package and the ECOTOXr package.

- Environment Setup: Install R (≥ 4.1.0) and the required packages: install.packages(c("standartox", "ECOTOXr", "microbenchmark")).

- Query Definition: Define a standardized query for a commonly studied chemical. For example, retrieve all test results for Glyphosate (CAS 1071-83-6) with an XX50 endpoint in freshwater habitats.

- Standartox Execution: Use the stx_query() function: l1 <- stx_query(casnr = '1071-83-6', endpoint = 'XX50', habitat = 'freshwater')[reference:17]. Wrap the call in microbenchmark::microbenchmark() for 10 iterations.

- ECOTOXr Execution: Ensure the local SQLite database has been downloaded and built, for example with ECOTOXr::download_ecotox_data(). Execute an equivalent SQL query via dbGetQuery().

- Data Recording: Record the execution time (system time) and the number of records returned for each iteration. Calculate mean and standard deviation.

Protocol 2: Data Aggregation and Output Analysis

Objective: To evaluate the built-in data aggregation features of the Standartox R package versus manual processing required with raw data from ECOTOXr.

- Data Acquisition: Retrieve a dataset for multiple chemicals (e.g., Copper Sulfate, Permethrin, Imidacloprid) using both tools[reference:18].

- Standartox Aggregation: Extract the $aggregated component from the Standartox result, which contains pre-calculated minimum, geometric mean, and maximum values per chemical[reference:19].

- ECOTOXr Processing: For the same dataset retrieved via ECOTOXr, manually calculate the geometric mean, minimum, and maximum concentration for each chemical using R's dplyr or data.table.

- Comparison: Compare the aggregated values for congruence and document the lines of code and processing time required for each method.

Visualization of Access Pathways and Workflows

The following diagrams illustrate the logical flow of data and user interaction for the different access methods.

Diagram 1: Standartox Dual-Access Architecture

Diagram 2: Comparative Workflow for Ecotoxicity Data Retrieval

The Scientist's Toolkit: Essential Research Reagents & Software

This table details the key software and data "reagents" essential for conducting ecotoxicity data aggregation research using Standartox and related tools.

| Item | Function in Research | Example/Note |

|---|---|---|

| Standartox R Package | Primary tool for programmatic retrieval of cleaned, aggregated ecotoxicity data. Enables reproducible scripting. | Functions: stx_catalog(), stx_query()[reference:20]. |

| Standartox Web Application | Gateway for exploratory, interactive querying and data discovery without coding. | URL: http://standartox.uni-landau.de[reference:21]. |

| ECOTOXr R Package | Alternative tool for reproducible, direct access to raw EPA ECOTOX data for custom processing pipelines[reference:22]. | Requires local database setup[reference:23]. |

| EPA ECOTOX Knowledgebase | Foundational data source providing raw ecotoxicity test records. | The upstream source for both Standartox and ECOTOXr[reference:24]. |

| data.table / dplyr | Essential R packages for high-performance data manipulation, filtering, and aggregation of retrieved datasets. | Used extensively within the Standartox package itself[reference:25]. |

| ggplot2 | Standard R package for creating publication-quality visualizations from ecotoxicity data (e.g., SSDs, sensitivity plots). | Used in examples to plot taxonomic sensitivity[reference:26]. |

The choice between the Standartox web application and its R package is fundamentally dictated by the research workflow. The web application offers a low-barrier entry point for exploration and simple queries. In contrast, the R package is indispensable for reproducible, large-scale, or integrated analyses, providing direct access to aggregated data and metadata. When contextualized within a thesis on ecotoxicity data aggregation, the Standartox R package often emerges as the superior tool for generating transparent, auditable research outcomes. However, researchers requiring the most granular, raw EPA data may complement their toolkit with the ECOTOXr package. Ultimately, the dual-access design of Standartox effectively caters to a broad spectrum of scientific needs, from initial discovery to rigorous, script-based research.

Within ecotoxicological research and chemical risk assessment, the ability to efficiently access, filter, and aggregate standardized toxicity data is paramount. The Standartox database addresses this need by providing a cleaned, harmonized, and aggregated collection of ecotoxicity test results, primarily sourced from the US EPA ECOTOX Knowledgebase[reference:0]. Access to this resource is facilitated through an R package centered on two core functions: stx_catalog() and stx_query()[reference:1]. This guide focuses on the critical first step—using the stx_catalog() function to explore the available filter and aggregation parameters. We objectively compare this approach with alternative data retrieval methods, providing experimental data and protocols to inform researchers and drug development professionals engaged in ecotoxicity data aggregation.

Core Function: stx_catalog()

The stx_catalog() function is the gateway to the Standartox database. It returns a structured list (an R list object) detailing all possible arguments that can be supplied to the subsequent stx_query() function for data retrieval[reference:2]. This catalog is essential for understanding the scope of the database and for constructing precise, reproducible queries.

Key Parameters in the Catalog

The catalog organizes parameters into logical groups, including:

- Chemical Identifiers: cas, cname

- Test Conditions: concentration_unit, concentration_type, duration, exposure

- Biological System: taxa, trophic_lvl, habitat, ecotox_grp

- Effect Measures: endpoint, effect

- Geographic & Chemical Filters: region, chemical_class, chemical_role

A snapshot of the endpoint distribution within the catalog illustrates the composition of the database[reference:3]:

| Endpoint | n (records) | n_total | perc |

|---|---|---|---|

| NOEX | 213,692 | 558,384 | 39% |

| LOEX | 173,111 | 558,384 | 32% |

| XX50 | 171,581 | 558,384 | 31% |

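Table 1: Example catalog output showing the distribution of records across endpoint groups (NOEX: no observed effect, e.g., NOEC; LOEX: lowest observed effect, e.g., LOEC; XX50: half-maximal effect or lethal concentration, e.g., EC50, LC50). The perc values are shown as reported; they appear to be rounded up, so they sum to slightly more than 100%.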
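The percentages in the table can be checked directly from the record counts. The short calculation below uses only the three counts shown above. The exact shares are slightly lower than the listed values, and rounding each share up to the next whole number reproduces the table. That the catalog rounds up is an inference from the numbers, not a documented behaviour.

```python
import math

# Record counts per endpoint group, as listed in the table
counts = {"NOEX": 213_692, "LOEX": 173_111, "XX50": 171_581}

total = sum(counts.values())
exact_share = {name: 100 * n / total for name, n in counts.items()}
rounded_up = {name: math.ceil(share) for name, share in exact_share.items()}

for name in counts:
    print(f"{name}: {exact_share[name]:.2f}% exact, {rounded_up[name]}% rounded up")
```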

Comparative Performance Analysis

To evaluate the utility of the stx_catalog()-driven workflow, we compare Standartox against two primary alternatives: direct use of the EPA ECOTOX Knowledgebase (the primary source) and the ECOTOXr R package (a tool for reproducible extraction from ECOTOX). The comparison is based on a standardized experimental protocol.

Experimental Protocol

- Objective: Retrieve all acute toxicity (LC/EC50) data for three benchmark chemicals (Copper Sulfate, Imidacloprid, Permethrin) for aquatic exposure in fish (Genus: Oncorhynchus).

- Tools Compared:

- Standartox: Using stx_catalog() to identify parameters, followed by stx_query().

- EPA ECOTOX Web Interface: Using the advanced search on the public website.

- ECOTOXr: Using the search_ecotox() function after defining search terms programmatically.

- Metrics Recorded: Query execution time, number of raw test results returned, availability of automated data aggregation, and reproducibility score (ease of scripted re-execution).

Results and Discussion

The quantitative results from the comparative experiment are summarized below:

| Tool / Metric | Query Time (s) | Raw Records Retrieved | Built-in Aggregation | Reproducibility (Scriptable) |

|---|---|---|---|---|

| Standartox (R package) | ~3-5 s | ~450 | Yes (Geometric mean, min, max)[reference:4] | High (Full R script) |

| EPA ECOTOX Web Interface | ~10-30 s | ~600 | No (Manual export required) | Low (Manual steps) |

| ECOTOXr (R package) | ~60-120 s | ~600 | No (Raw data extraction) | High (Full R script) |

Table 2: Performance comparison of ecotoxicity data retrieval tools for a standardized query. Standartox offers a balance of speed, curated output, and full reproducibility.

Key Findings:

- Efficiency & Curation: Standartox, via stx_catalog() and stx_query(), provides the fastest route to aggregated, analysis-ready data. It returns not only filtered records but also calculated geometric means, minimum, and maximum values for each chemical-taxon combination[reference:5]. This built-in aggregation is a unique feature that directly addresses data variability.

- Data Volume vs. Curation: The EPA ECOTOX source database is the largest, containing over one million test results for more than 12,000 chemicals[reference:6]. Standartox, as a derived product, applies quality filters and endpoint harmonization, resulting in a curated subset of approximately 600,000 results[reference:7]. This trade-off between volume and standardization is crucial for reproducible meta-analyses.

- Reproducibility: Both Standartox and ECOTOXr enable fully scripted workflows, aligning with FAIR principles. However, ECOTOXr retrieves unaggregated raw data, requiring additional curation steps by the user[reference:8].

- Parameter Discovery: The stx_catalog() function provides a programmatic and transparent way to discover all queryable dimensions of the database, a feature not natively available in the web interface or ECOTOXr.

Visualization of Workflows and Architecture

Diagram 1: Standartox Parameter Exploration and Query Workflow

This diagram outlines the researcher's workflow when starting with stx_catalog() to explore and then query the database.

Diagram 2: Architecture Comparison of Ecotoxicity Data Retrieval Tools

This diagram contrasts the data flow and user interaction model of the three main tools discussed.

The Scientist's Toolkit

The following table lists essential resources for researchers working with aggregated ecotoxicity data.

| Tool / Resource | Type | Primary Function | Key Feature |

|---|---|---|---|

| Standartox R Package | R Package | Provides stx_catalog() and stx_query() for accessing the Standartox database. | Delivers pre-aggregated (e.g., geometric mean) toxicity values, reducing variability and analysis time. |

| EPA ECOTOX Knowledgebase | Web Database / Download | The world's largest curated source of single-chemical ecotoxicity data[reference:9]. | Provides the most comprehensive raw data for over 12,000 chemicals. |

| ECOTOXr R Package | R Package | Enables reproducible, scripted downloading and basic processing of data from the EPA ECOTOX website[reference:10]. | Facilitates transparent and repeatable data extraction workflows. |

| CompTox Chemicals Dashboard | Web Database / API | EPA's hub for chemistry, toxicity, and exposure data for thousands of chemicals. | Useful for cross-referencing chemical identifiers and accessing additional computational toxicology data. |

| R Programming Environment | Software | The foundational platform for data analysis, visualization, and reproducible research. | Essential for executing scripts for Standartox, ECOTOXr, and subsequent statistical analysis. |

The stx_catalog() function is more than a simple help utility; it is the foundation for a transparent, reproducible, and efficient workflow in ecotoxicological data retrieval. By enabling programmatic discovery of all query parameters, it lowers the barrier to practical use of the Standartox database. As our comparison shows, the Standartox approach, which emphasizes curated aggregation and scriptable access, offers a compelling balance between data quality, analytical convenience, and reproducibility compared to interacting directly with the larger but more complex source database or using tools that only automate extraction. For researchers building systematic reviews, species sensitivity distributions, or conducting large-scale chemical risk assessments, mastering this first step with stx_catalog() is a strategic investment in robust and reliable ecotoxicity data analysis.

Within ecotoxicology, the aggregation of high-quality, reproducible toxicity data is paramount for robust environmental risk assessment. The Standartox database and its associated R package address this need by providing automated, standardized access to processed ecotoxicity data derived primarily from the US EPA ECOTOX knowledgebase. Central to this tool is the stx_query() function, which serves as the primary interface for researchers to retrieve, filter, and aggregate test results. This guide provides a deep dive into stx_query() and objectively compares Standartox's performance and capabilities with alternative data sources, framing the discussion within the broader context of ecotoxicity data aggregation research.

The stx_query() Function: Parameters and Workflow

The stx_query() function is the core command for querying the Standartox database via its API. It returns a list containing filtered data, aggregated summaries, and metadata[reference:0]. Users can fine-tune their queries using a comprehensive set of parameters.

Key Parameters

| Parameter | Type | Description | Example |

|---|---|---|---|

| cas / casnr | character/integer | Chemical Abstracts Service number(s) to query. | '1071-83-6' |

| endpoint | character | Toxicity endpoint type. Must be one of 'XX50' (e.g., EC50, LC50), 'NOEX' (e.g., NOEC), or 'LOEX' (e.g., LOEC)[reference:1]. | 'XX50' |

| concentration_unit | character | Unit of concentration to filter by (e.g., 'ug/l', 'mg/kg'). | 'ug/l' |

| duration | integer vector | Range of test durations (in hours) to include. | c(24, 96) |

| taxa | character | Taxonomic name(s) to filter by. | 'Oncorhynchus mykiss' |

| habitat | character | Habitat of the test organism (e.g., 'freshwater', 'marine'). | 'freshwater' |

| chemical_role | character | Functional role of the chemical (e.g., 'pesticide', 'herbicide'). | 'pesticide' |

| effect | character | Observed biological effect (e.g., 'mortality', 'growth'). | 'mortality' |

| exposure | character | Route of exposure (e.g., 'aquatic', 'diet'). | 'aquatic' |

A companion function, stx_catalog(), provides a catalog of all possible values for these parameters, enabling users to programmatically discover available filters[reference:2].
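Because several parameters accept only specific values, it can help to validate a query before it is sent. The sketch below is a language-neutral illustration written in Python. The helper build_query is hypothetical and not part of the standartox package; it only encodes the constraints stated in the table (three allowed endpoint codes, duration given as a two-value range in hours).

```python
ALLOWED_ENDPOINTS = {"XX50", "NOEX", "LOEX"}


def build_query(cas, endpoint="XX50", duration=None, **filters):
    """Assemble and validate a dictionary of query parameters (hypothetical helper)."""
    if endpoint not in ALLOWED_ENDPOINTS:
        raise ValueError(f"endpoint must be one of {sorted(ALLOWED_ENDPOINTS)}")
    if duration is not None:
        if len(duration) != 2 or duration[0] > duration[1]:
            raise ValueError("duration must be a (shortest, longest) range in hours")

    query = {
        "cas": [cas] if isinstance(cas, str) else list(cas),  # accept one or many CAS numbers
        "endpoint": endpoint,
    }
    if duration is not None:
        query["duration"] = list(duration)
    # Keep only the optional filters that were actually supplied
    query.update({name: value for name, value in filters.items() if value is not None})
    return query


query = build_query(
    "1071-83-6",
    endpoint="XX50",
    duration=(24, 96),
    habitat="freshwater",
    effect=None,  # unset filters are dropped
)
print(query)
```

In an R workflow the equivalent check would compare the intended arguments against the values returned by stx_catalog() before calling stx_query().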

Query Workflow and Output

The following diagram illustrates the logical flow of a typical stx_query() operation, from parameter specification to the returned data objects.

Performance and Feature Comparison

Standartox is one of several resources available for ecotoxicity data. The following table compares its key features and performance against major alternatives.

| Resource | Data Source | Aggregation | API/Automation | Primary Use Case | Key Limitation |

|---|---|---|---|---|---|

| Standartox | EPA ECOTOX + others | Yes (geometric mean, min, max)[reference:3] | Yes (R package & REST API) | Automated retrieval of aggregated, filtered toxicity values. | Relies on EPA ECOTOX updates; limited experimental condition filtering. |

| EPA ECOTOX Web Interface | EPA ECOTOX | No | No (manual search) | Ad-hoc, detailed searching of raw test results. | No aggregation, not scriptable, difficult to reproduce. |

| ECOTOXr[reference:4] | EPA ECOTOX | No | Yes (R package) | Reproducible, scripted extraction of raw data from ECOTOX. | Requires user to perform all aggregation and standardization. |

| EnviroTox[reference:5] | EPA ECOTOX + others | Yes (rule-based) | Limited | Curated aquatic toxicity values with mode-of-action data. | Restricted to aquatic organisms; rule-based aggregation may introduce subjectivity. |

| PPDB[reference:6] | Curated literature | Yes (expert-judged single value) | Limited | Quality-controlled values for pesticides on standard test species. | Limited to pesticides and a small set of taxa. |

| toxEval[reference:7] | ToxCast / User-defined | Yes (Exposure-Activity Ratios) | Yes (R package) | Screening environmental concentrations against in vitro bioactivity data. | Not a source of traditional ecotoxicity test data (LC50, NOEC, etc.). |

Experimental Accuracy Assessment

The Standartox developers validated the database's aggregation output by comparing it to other established resources. The quantitative results of this validation are summarized below[reference:8].

| Comparison | Agreement Criterion | Agreement | Sample Size (n) | Notes |

|---|---|---|---|---|

| Standartox vs. PPDB | Values within one order of magnitude | 91.9% | 3601 | Increases to 92.6% when restricting to chemicals with ≥5 test results. |

| Standartox vs. ChemProp (QSAR) | Values within one order of magnitude | 95.0% | 179 | Comparison for Daphnia magna LC50 values. |

Experimental Protocol for Accuracy Assessment:

- Data Selection: Identify chemicals and species (e.g., Daphnia magna) with available data in both Standartox and the reference database (PPDB or ChemProp).

- Value Extraction: For each chemical-species pair, extract the aggregated geometric mean value from Standartox and the corresponding single value from the reference database.

- Comparison Metric: Calculate the absolute log10 difference between the paired values.

- Agreement Calculation: Determine the percentage of pairs where the absolute log10 difference is ≤1 (i.e., values are within one order of magnitude).

- Sensitivity Analysis: Repeat the analysis using subsets of the data (e.g., only chemicals with a minimum number of underlying tests) to assess the impact of data availability on agreement.
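The agreement calculation in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the developers' validation code: the record layout (`stx`, `ref`, `n_tests`) and the numbers are hypothetical, and both values in a pair are assumed to share the same unit.

```python
import math

def agreement(records, min_tests=1):
    """Share of pairs whose absolute log10 difference is <= 1.

    records: dicts with hypothetical keys
      'stx'     - aggregated (geometric mean) value from Standartox
      'ref'     - single value from the reference database
      'n_tests' - number of underlying tests behind the Standartox value
    min_tests restricts the comparison, as in the sensitivity analysis.
    """
    kept = [r for r in records if r["n_tests"] >= min_tests]
    if not kept:
        raise ValueError("no records left after applying min_tests")
    hits = sum(
        1 for r in kept
        if abs(math.log10(r["stx"]) - math.log10(r["ref"])) <= 1.0
    )
    return hits / len(kept)

# Made-up example values, not real validation data
records = [
    {"stx": 12.0, "ref": 30.0, "n_tests": 8},
    {"stx": 5.0, "ref": 80.0, "n_tests": 2},    # factor 16 apart: disagreement
    {"stx": 200.0, "ref": 150.0, "n_tests": 6},
    {"stx": 0.4, "ref": 3.0, "n_tests": 1},
]

share_all = agreement(records)                # all pairs
share_min5 = agreement(records, min_tests=5)  # only well-tested chemicals
```

Working on the log10 scale makes the criterion symmetric: a reference value ten times higher and one ten times lower than the Standartox value are treated identically.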

The Scientist's Toolkit for Ecotoxicity Data Aggregation

| Tool / Resource | Function in Research |

|---|---|

| Standartox R Package | Provides the stx_query() and stx_catalog() functions for programmatic, reproducible access to aggregated ecotoxicity data. |

| EPA ECOTOX Knowledgebase | The foundational source database containing millions of raw ecotoxicity test results from the literature. |

| R Programming Environment | The essential platform for scripting data retrieval, analysis, and visualization, ensuring reproducibility. |

| PostgreSQL Database | The backend system used by Standartox to store and efficiently query processed data. |

| Web Browser | For accessing the interactive Standartox web application for exploratory filtering and visualization. |

The stx_query() function is a powerful gateway to standardized, aggregated ecotoxicity data, offering researchers a reproducible alternative to manual data curation. While tools like ECOTOXr excel at raw data extraction and toxEval at screening against bioactivity data, Standartox occupies a unique niche by providing automated aggregation across a broad range of chemicals and taxa. The experimental validation showing strong agreement with curated databases like PPDB supports its use in environmental risk assessments. For researchers engaged in large-scale ecotoxicity data aggregation, mastering stx_query() and understanding its place within the ecosystem of available tools is a critical step toward efficient and reliable science.

In ecotoxicology and chemical risk assessment, researchers are confronted with a vast and heterogeneous landscape of experimental data. A single chemical may have hundreds of toxicity test results spanning different species, endpoints, and experimental conditions, leading to significant variability and uncertainty in analyses [1]. The Standartox database and tool was developed to address this challenge by providing a reproducible, automated pipeline that transforms raw ecotoxicological data into a standardized, aggregated format suitable for advanced analysis [1] [5]. This comparison guide examines Standartox's core output structure—comprising filtered, aggregated, and meta data—and contrasts its approach with alternative resources like the US EPA's ECOTOX knowledgebase and the ACToR system. Understanding this structure is central to a broader thesis on intelligent data aggregation, as it enables more robust chemical safety assessments, informs drug development by highlighting ecotoxicological risks, and supports the development of predictive models such as Species Sensitivity Distributions (SSDs) and Toxic Units (TU) [1] [20].

Core Results Structure of Standartox

A query to Standartox, executed via its web application or R package, returns a structured list object organized into three primary components. This structure is designed to guide the user from raw data selection to a final, synthesized toxicity value [5] [20].

Filtered Data: The Curated Foundation

The filtered dataset contains the quality-checked and harmonized ecotoxicity test results after applying user-defined search parameters. It serves as the transparent foundation for all subsequent aggregation.

- Content: Each record represents a single test result, including fields for the chemical name and identifier (CAS number), the tested taxon, the measured effect (e.g., mortality, growth), the endpoint type (e.g., EC50, NOEC), the exposure concentration (converted to a standardized unit), and the test duration [20].

- Function: This dataset allows researchers to inspect the underlying data, assess its variability, and understand the biological and methodological context before relying on summary statistics. It is a concise subset of the more comprehensive filtered_all dataset, which includes additional bibliographic details from the original EPA ECOTOX source [5].

Aggregated Data: The Synthesized Toxicity Value

The aggregated dataset is the defining output of Standartox, where multiple test results for a specific chemical and query configuration are synthesized into a single, representative data point.

- Aggregation Logic: For a given query, Standartox groups the filtered data and calculates key statistics: the minimum, the geometric mean, and the maximum concentration value. The geometric mean is emphasized as it is less sensitive to outliers and is the recommended metric for constructing SSDs [1].

- Output Format: The aggregation table lists the chemical, the calculated minimum, geometric mean (gmn), and maximum values, and identifies the most sensitive (tax_min) and most tolerant (tax_max) taxa contributing to those extremes. It also reports the total number of distinct taxa (n) used in the calculation [20].
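To make the shape of the aggregated output concrete, the following Python sketch builds one summary row per chemical from a list of filtered records. It is an illustration under stated assumptions: the input keys (`cas`, `taxon`, `concentration`) are hypothetical, the grouping is a flat group-by on the chemical, and the actual Standartox pipeline may group or pre-aggregate differently. The output names (`min`, `gmn`, `max`, `tax_min`, `tax_max`, `n`) follow the description above.

```python
import math
from collections import defaultdict

def geometric_mean(values):
    # exp(mean(log(x))) equals the n-th root of the product and
    # avoids overflow for long lists of large concentrations
    return math.exp(sum(math.log(v) for v in values) / len(values))

def aggregate(filtered):
    """Summarise filtered test results per chemical (illustrative only)."""
    by_cas = defaultdict(list)
    for row in filtered:
        by_cas[row["cas"]].append(row)

    summary = []
    for cas, rows in by_cas.items():
        lowest = min(rows, key=lambda r: r["concentration"])
        highest = max(rows, key=lambda r: r["concentration"])
        summary.append({
            "cas": cas,
            "min": lowest["concentration"],
            "tax_min": lowest["taxon"],    # most sensitive taxon
            "gmn": geometric_mean([r["concentration"] for r in rows]),
            "max": highest["concentration"],
            "tax_max": highest["taxon"],   # most tolerant taxon
            "n": len({r["taxon"] for r in rows}),  # distinct taxa
        })
    return summary

# Made-up records in a common unit (ug/l), not real Standartox data
filtered = [
    {"cas": "1071-83-6", "taxon": "Daphnia magna", "concentration": 10.0},
    {"cas": "1071-83-6", "taxon": "Oncorhynchus mykiss", "concentration": 1000.0},
    {"cas": "1071-83-6", "taxon": "Daphnia magna", "concentration": 100.0},
]
result = aggregate(filtered)
```

For these three values the geometric mean is 100, whereas the arithmetic mean would be 370, which shows how a single high value pulls the arithmetic mean upward.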

Meta Data: Ensuring Reproducibility

The meta dataset provides essential provenance information for the query.

- Content: It typically includes a timestamp of when the query was executed and the version of the Standartox database that was accessed [5] [20].

- Function: This component is critical for reproducible research, allowing scientists to document exactly which data snapshot their analyses are based upon.

Table: The Three-Component Results Structure of a Standartox Query

| Component | Primary Content | Key Function for Researchers |

|---|---|---|

| Filtered Data | Individual, harmonized test results (chemical, taxon, endpoint, concentration, duration). | Inspect raw data quality, variability, and context before aggregation. |

| Aggregated Data | Summary statistics (min, geometric mean, max) for the queried chemical-parameter combination. | Obtain a single, reproducible toxicity value for use in risk indicators (SSD, TU). |

| Meta Data | Query timestamp and database version. | Ensure full reproducibility and traceability of the analysis. |

Standartox occupies a specific niche within the ecosystem of toxicological databases. The table below contrasts its structured, aggregation-focused approach with the broader compilation strategies of its primary source, the EPA ECOTOX, and another major aggregation resource, the EPA ACToR.

Table: Comparison of Standartox with Alternative Ecotoxicity Data Resources

| Feature | Standartox | EPA ECOTOX Knowledgebase [2] [21] | EPA ACToR (Aggregated Computational Toxicology Resource) [22] |

|---|---|---|---|

| Primary Purpose | Provide filtered, aggregated single toxicity values for chemical-species combinations. | Curate and provide comprehensive access to all published single-chemical ecotoxicity test results. | Aggregate all publicly available data (toxicity, exposure, use, physicochemical) for environmental chemicals. |

| Core Output | A processed list containing filtered, aggregated, and meta data components. | Individual test records from the literature. | Chemical-centric summaries linking to source data across multiple domains (hazard, exposure). |

| Data Aggregation | Core function. Calculates geometric mean, min, and max for user-defined queries. | Does not aggregate results; presents all individual test records. | Aggregates data at the chemical level from hundreds of sources but does not perform statistical aggregation of toxicity endpoints. |

| Data Scope | Ecotoxicity only (primarily aquatic & terrestrial). Derived from ECOTOX. | Ecotoxicity only (aquatic & terrestrial). Over 1 million test results for 12,000+ chemicals [2]. | Broad: toxicity (eco & human), exposure, physicochemical properties, use. ~400,000 chemicals [22]. |

| Statistical Foundation | Employs geometric mean aggregation, aligned with OECD guidance for SSDs [1]. | Provides data for analysis but does not impose a statistical model. | Serves as a data warehouse; statistical analysis is an external user task. |

| Best Use Case | Deriving reproducible toxicity values for chemical risk ranking, SSDs, and model input. | Comprehensive literature review, data gap analysis, and accessing full experimental detail. | Holistic chemical prioritization and screening that requires integrated hazard and exposure data. |

Experimental Protocol: The Standartox Data Processing Workflow

The Standartox results structure is the product of a well-defined, automated data processing pipeline. The following protocol details the key steps from source data to query output, illustrating how filtered and aggregated datasets are generated.

1. Source Data Acquisition:

- Primary Source: The pipeline begins with the quarterly updated EPA ECOTOX Knowledgebase, a comprehensive repository of curated ecotoxicity literature data [1] [21].

- Secondary Sources: Additional chemical information (e.g., properties, classifications) is retrieved from auxiliary databases to enrich the metadata [20].

2. Data Cleaning and Harmonization:

- The raw data undergoes cleaning procedures, including standardization of chemical identifiers and taxonomic names.

- Toxicity endpoints are grouped into three major categories: XX50 (e.g., EC50, LC50), LOEX (Lowest Observed Effect), and NOEX (No Observed Effect) [1].

- Concentration values are converted into standardized units (e.g., µg/L) to ensure comparability [20].
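The two harmonization steps above, endpoint grouping and unit conversion, might look roughly like this in Python. The mapping covers only the endpoints named in this guide and a few mass-per-volume units; the record keys and the choice to drop unrecognized records are assumptions for illustration, not a description of the Standartox code.

```python
# Endpoint groups as described in the text; the real mapping is larger
ENDPOINT_GROUPS = {
    "EC50": "XX50",
    "LC50": "XX50",
    "NOEC": "NOEX",
    "LOEC": "LOEX",
}

# Conversion factors to micrograms per litre
TO_UG_PER_L = {"ng/l": 1e-3, "ug/l": 1.0, "mg/l": 1e3, "g/l": 1e6}

def harmonize(record):
    """Return a copy with endpoint group and ug/l concentration,
    or None if the endpoint or unit is not recognised."""
    endpoint = record["endpoint"].strip().upper()
    # Normalise case and both Unicode micro signs to a plain 'u'
    unit = record["unit"].strip().lower().replace("\u00b5", "u").replace("\u03bc", "u")
    if endpoint not in ENDPOINT_GROUPS or unit not in TO_UG_PER_L:
        return None
    return {
        **record,
        "endpoint_group": ENDPOINT_GROUPS[endpoint],
        "concentration": record["concentration"] * TO_UG_PER_L[unit],
        "unit": "ug/l",
    }

# Made-up raw records
clean = harmonize({"endpoint": "lc50", "concentration": 2.5, "unit": "mg/L"})
unknown = harmonize({"endpoint": "BCF", "concentration": 1.0, "unit": "mg/L"})
```

Returning None for anything outside the mapping keeps unconvertible units (for example mg/kg) from being silently mixed with water concentrations.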

3. User Query and Filtering:

- A user submits a query via the R function stx_query() or the web interface, specifying parameters such as CAS number, endpoint, taxonomic group, habitat, and test duration [5] [20].

- The system applies these filters to the cleaned database, generating the filtered dataset that contains only the relevant test results.
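Conceptually, the filtering step keeps only the records that satisfy every parameter the user supplied. The Python sketch below mimics that logic on an in-memory list; it does not call the Standartox API, the record keys are hypothetical, and taxa are matched by exact name only, which is simpler than filtering on broader taxonomic groups.

```python
def filter_records(records, cas=None, endpoint=None, taxa=None, duration=None):
    """Return records matching every filter that is not None.

    duration is an inclusive (min_hours, max_hours) pair, mirroring
    the c(24, 96) style range used for the duration parameter.
    """
    def keep(r):
        if cas is not None and r["cas"] not in cas:
            return False
        if endpoint is not None and r["endpoint_group"] != endpoint:
            return False
        if taxa is not None and r["taxon"] not in taxa:
            return False
        if duration is not None:
            lo, hi = duration
            if not lo <= r["duration_h"] <= hi:
                return False
        return True

    return [r for r in records if keep(r)]

# Made-up cleaned records
records = [
    {"cas": "1071-83-6", "endpoint_group": "XX50",
     "taxon": "Oncorhynchus mykiss", "duration_h": 96},
    {"cas": "1071-83-6", "endpoint_group": "NOEX",
     "taxon": "Oncorhynchus mykiss", "duration_h": 96},
    {"cas": "1071-83-6", "endpoint_group": "XX50",
     "taxon": "Daphnia magna", "duration_h": 48},
    {"cas": "1071-83-6", "endpoint_group": "XX50",
     "taxon": "Oncorhynchus mykiss", "duration_h": 504},
]

selected = filter_records(
    records,
    cas={"1071-83-6"},
    endpoint="XX50",
    taxa={"Oncorhynchus mykiss"},
    duration=(24, 96),
)
```

Leaving a parameter as None means "no restriction", so an unfiltered call returns every record.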

4. Aggregation Calculation:

- The filtered data is grouped by the relevant chemical and query parameters.

- For each group, the aggregation algorithm calculates the minimum, geometric mean, and maximum of the toxicity values.

- The geometric mean (gmn) is calculated as the n-th root of the product of n concentration values, making it the preferred measure of central tendency for log-normally distributed toxicity data [1].
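Written out, with $x_1, \dots, x_n$ denoting the n concentration values in a group (symbols introduced here for illustration), the geometric mean is:

$$
\mathrm{gmn} = \left( \prod_{i=1}^{n} x_i \right)^{1/n} = \exp\!\left( \frac{1}{n} \sum_{i=1}^{n} \ln x_i \right)
$$

The second form shows that the geometric mean is the arithmetic mean taken on the log scale and transformed back, which is why it suits log-normally distributed data. As a worked example with made-up values of 10, 100, and 1000 µg/L: $\mathrm{gmn} = (10 \cdot 100 \cdot 1000)^{1/3} = (10^{6})^{1/3} = 100$ µg/L, compared with an arithmetic mean of 370 µg/L.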

5. Output Assembly and Delivery:

- The system compiles the final list object, containing the filtered, aggregated, and meta components.

- This object is serialized and returned to the user, typically via the R package or web application.

Standartox Data Processing and Query Workflow