PSA vs. SAFE: A Comprehensive Validation and Comparative Analysis of Ecological Risk Assessment Methods for Fisheries

Abstract

This article provides a detailed examination and validation of two key ecological risk assessment (ERA) methods used in fisheries management: Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE). Aimed at researchers, scientists, and fisheries managers interested in ecological risk methodologies, the article explores the methods' foundational principles, practical applications, and inherent limitations. It presents a comparative analysis that validates both semi-quantitative tools against data-rich stock assessments, and discusses critical considerations for optimizing their use in prioritizing species for conservation and management within data-limited contexts.

Understanding Ecological Risk Assessment: Core Principles of PSA and SAFE Methods

The Imperative for Ecological Risk Assessment in Fisheries Management

Modern fisheries management has undergone a paradigm shift from single-species approaches to Ecosystem-Based Fisheries Management (EBFM). Traditional management, focused on calculating maximum sustainable yield (MSY) for target species, often neglects broader ecological consequences, including the impact on bycatch species, habitat destruction, and changes to ecosystem structure [1]. This narrow focus has been identified as a potential cause of management failures [1]. EBFM addresses this by adopting a holistic approach that considers the entire ecosystem surrounding a fishery [1].

A critical component of EBFM is the Ecological Risk Assessment for the Effects of Fishing (ERAEF), a hierarchical framework designed to identify and prioritize species at highest risk from fishing pressures [1]. This framework is particularly vital for data-poor scenarios common in global fisheries, especially in developing nations where information on bycatch composition and abundance is scarce [1]. Within the ERAEF toolbox, two principal semi-quantitative tools have emerged for assessing species-level vulnerability: Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) [2]. This guide provides a comparative analysis of these two methodologies, grounded in empirical validation studies, to inform researchers and resource managers on their application, performance, and limitations.

Methodological Comparison: PSA vs. SAFE

PSA and SAFE are screening-level tools designed to estimate the relative vulnerability of species to fishing. Both utilize similar input data concerning a species' life history characteristics (productivity) and its interaction with the fishery (susceptibility). However, they diverge significantly in their data processing and risk calculation algorithms.

- Productivity and Susceptibility Analysis (PSA) is the more precautionary and qualitative tool. It converts quantitative biological and fishery data into an ordinal rank scale (typically 1 to 3) for a series of productivity (e.g., growth rate, age at maturity) and susceptibility (e.g., encounterability, post-capture mortality) attributes [2]. These ranks are combined, often via a Euclidean distance calculation in a two-dimensional plot, to produce a composite vulnerability score that categorizes species as low, medium, or high risk [1] [2].

- Sustainability Assessment for Fishing Effects (SAFE) employs a more quantitative and continuous approach. It uses raw data values directly in mathematical equations at each assessment step without converting them to ordinal ranks [2]. SAFE estimates the fishing mortality rate a population can sustain and compares it to the estimated fishing mortality rate it actually experiences, resulting in a risk metric that is directly interpretable in relation to sustainability benchmarks [2].
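To make the SAFE logic concrete, the following minimal Python sketch compares an estimated fishing mortality with a sustainable reference level using continuous inputs. It is an illustration under stated assumptions, not the published SAFE equations: the `SpeciesInputs` fields, the product form used for the fishing mortality proxy, and the reference point defined as a fraction `omega` of natural mortality are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class SpeciesInputs:
    natural_mortality: float       # M, per year (continuous life-history input)
    overlap_fraction: float        # share of the population's range that is fished
    catchability: float            # probability an encountered animal is caught
    post_capture_mortality: float  # probability a caught animal dies


def estimated_f(sp: SpeciesInputs) -> float:
    """Hypothetical fishing mortality proxy: product of continuous terms."""
    return sp.overlap_fraction * sp.catchability * sp.post_capture_mortality


def sustainable_f(sp: SpeciesInputs, omega: float = 0.5) -> float:
    """Hypothetical reference point: a fixed fraction (omega) of M."""
    return omega * sp.natural_mortality


def risk_ratio(sp: SpeciesInputs, omega: float = 0.5) -> float:
    """Values above 1 mean estimated F exceeds the assumed sustainable F."""
    return estimated_f(sp) / sustainable_f(sp, omega)


# Illustrative species, not data from any cited study.
example = SpeciesInputs(
    natural_mortality=0.4,
    overlap_fraction=0.5,
    catchability=0.6,
    post_capture_mortality=0.8,
)
ratio = risk_ratio(example)
print(f"F / F_ref = {ratio:.2f}")
```

Because no input is collapsed into a 1 to 3 rank, small changes in overlap or natural mortality move the ratio continuously, which is the property that distinguishes this style of calculation from PSA scoring.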

The table below summarizes the core procedural differences between the two methods.

Table 1: Core Methodological Comparison of PSA and SAFE

| Feature | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Data Treatment | Converts quantitative data into ordinal ranks (e.g., 1-3). | Uses continuous, quantitative data directly in calculations. |

| Analytical Approach | Semi-quantitative, risk-scoring based on Euclidean distance in productivity-susceptibility space. | Quantitative, model-based comparison of estimated vs. sustainable fishing mortality. |

| Philosophical Basis | Precautionary principle; designed to err on the side of protecting species. | Aimed at estimating a sustainable level of fishing mortality. |

| Primary Output | Categorical risk ranking (Low, Medium, High). | Quantitative estimate of risk relative to sustainability. |

| Typical Use Case | Rapid screening and prioritization in data-limited situations. | Screening where more robust data are available; closer link to quantitative stock assessment. |

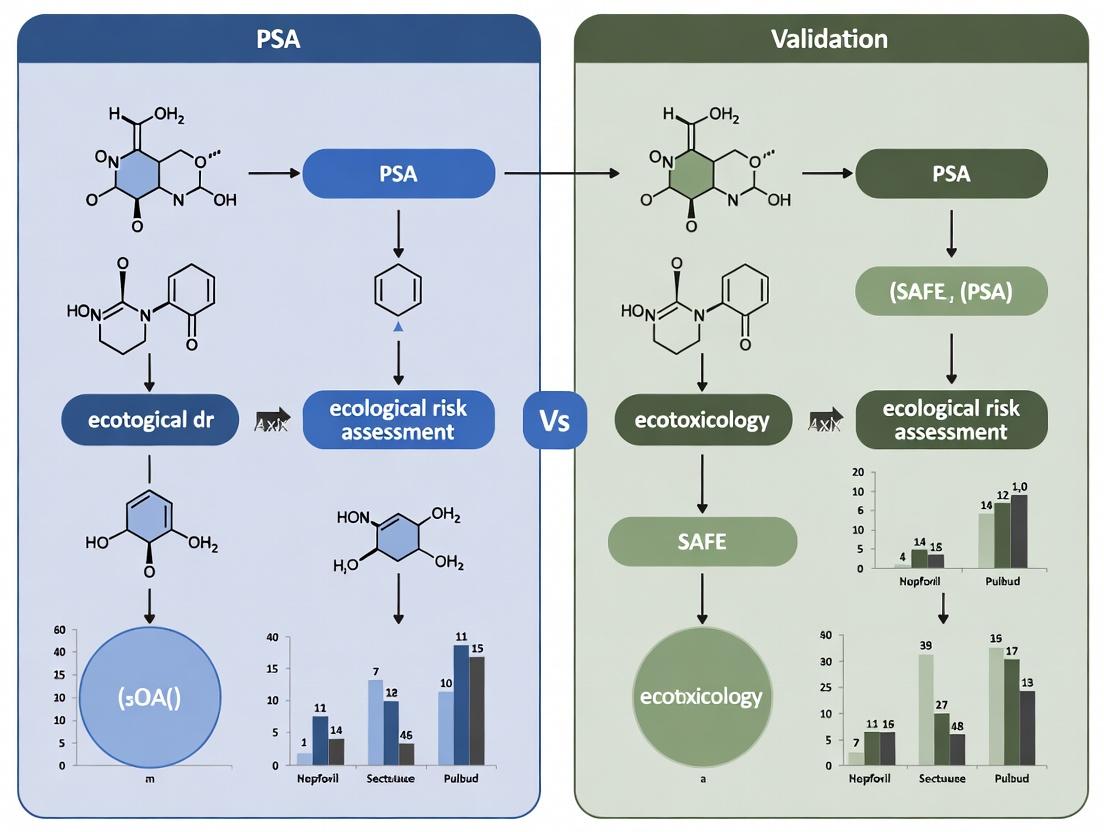

Visualizing the Methodological Workflow

The fundamental difference in how PSA and SAFE process information to arrive at a risk conclusion is illustrated in the following workflow diagram.

Validation Performance: Empirical Comparative Data

The ultimate test of a risk assessment tool is its validated performance against more robust, data-rich assessment methods. A seminal comparison study evaluated both PSA and SAFE against two benchmarks: Fishery Status Reports (FSR) and full quantitative stock assessments [2].

Table 2: Validation Performance of PSA vs. SAFE Against Benchmark Methods [2]

| Validation Benchmark | Number of Stocks Compared | PSA Misclassification Rate | SAFE Misclassification Rate | Notes on Bias |

|---|---|---|---|---|

| Fishery Status Reports (FSR) | 96 (PSA); 59 (SAFE) | 27% (26 stocks) | 8% (5 stocks) | PSA: all misclassifications overestimated risk. SAFE: overestimated risk in 3% and underestimated it in 5% of stocks. |

| Tier 1 Quantitative Stock Assessments | 18 stocks | 50% (9 stocks) | 11% (2 stocks) | All misclassifications by both methods were overestimations of risk. |

The results are consistent across both benchmarks: SAFE demonstrates superior predictive accuracy. PSA’s misclassification rates (27% and 50%) are roughly three to five times SAFE’s (8% and 11%), and its errors are systematically precautionary, always overestimating risk. This is consistent with its design philosophy of prioritizing the avoidance of false negatives (failing to identify an at-risk species) at the cost of a higher rate of false positives (identifying a species as at-risk when it is not) [2]. While this precaution is useful for prioritization in a screening context, it can lead to inefficient allocation of management resources if not interpreted correctly.

Experimental Protocols & Application Contexts

Case Study Protocol: PSA in Amazonian Shrimp Trawl Fishery

A 2025 study applied the ERAEF framework to the industrial bottom trawl fishery for southern brown shrimp on the Amazon Continental Shelf [1]. This protocol exemplifies a typical PSA application in a complex, data-limited fishery.

- Problem Formulation: Define the assessment's scope: to evaluate the vulnerability of bycatch species to the shrimp trawl fishery [1].

- Data Collection: Identify species interacting with the fishery. The study documented 540 species (fish, crustaceans, elasmobranchs) caught as bycatch. For a subset of 47 key species, gather available data on productivity attributes (e.g., fecundity, growth rate) and susceptibility attributes (e.g., geographic overlap with fishery, capture mortality) [1].

- PSA Scoring: Score each of the 47 species on defined productivity and susceptibility attributes using a 1-3 ordinal scale (e.g., low, medium, high vulnerability) [1].

- Vulnerability Calculation & Ranking: Calculate a composite vulnerability score (e.g., via Euclidean distance) and categorize species into risk tiers. The study found 12 species at high vulnerability, 23 at moderate vulnerability, and 12 at low vulnerability [1].

- Risk Characterization & Management Advice: Conclude that high and moderate-risk species require prioritized management action, such as gear modifications (e.g., Bycatch Reduction Devices - BRDs) and targeted data collection programs [1].

Protocol for Comparative Validation Studies

The validation study comparing PSA and SAFE followed a rigorous retrospective analysis protocol [2]:

- Selection of Benchmark: Establish "ground truth" using authoritative management classifications (FSR) or outputs from advanced quantitative stock assessments.

- Retrospective Application: Apply both the PSA and SAFE methodologies to the historical data for each stock in the comparison set.

- Prediction Generation: Generate risk classifications (e.g., "overfished" or "not overfished") from both PSA and SAFE outputs.

- Comparison & Metric Calculation: Compare the tool-derived classifications against the benchmark classifications. Calculate performance metrics, primarily the misclassification rate (percentage of incorrect predictions).

- Bias Analysis: Determine the direction of errors (overestimation or underestimation of risk) for each tool.
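The comparison and bias-analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming each stock has been reduced to a pair of booleans (tool says "at risk", benchmark says "at risk"); the function name and the toy data are hypothetical and are not taken from the validation study.

```python
from collections import Counter


def misclassification_summary(pairs):
    """Summarize agreement between a screening tool and a benchmark.

    pairs: iterable of (tool_at_risk, benchmark_at_risk) booleans.
    """
    counts = Counter()
    for tool_at_risk, benchmark_at_risk in pairs:
        if tool_at_risk == benchmark_at_risk:
            counts["agree"] += 1
        elif tool_at_risk:
            counts["over"] += 1    # tool flags risk, benchmark does not
        else:
            counts["under"] += 1   # benchmark flags risk, tool does not
    n = sum(counts.values())
    if n == 0:
        raise ValueError("no stocks to compare")
    return {
        "n": n,
        "misclassification_rate": (counts["over"] + counts["under"]) / n,
        "overestimation_rate": counts["over"] / n,
        "underestimation_rate": counts["under"] / n,
    }


# Toy data for ten hypothetical stocks.
toy_pairs = (
    [(True, True)] * 3
    + [(False, False)] * 4
    + [(True, False)] * 2
    + [(False, True)] * 1
)
summary = misclassification_summary(toy_pairs)
print(summary)
```

Reporting overestimation and underestimation separately matters here because the two error types have different management costs: one wastes resources, the other misses a stock in trouble.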

Table 3: Research Reagent Solutions for ERA Studies

| Tool/Resource | Primary Function | Application in ERA |

|---|---|---|

| Ecological Risk Assessment (ERA) Guidelines (EPA) [3] [4] | Provides standardized frameworks and best practices for planning, problem formulation, and risk characterization. | Ensures methodological rigor, transparency, and consistency in designing and executing fisheries ERA studies. |

| Aquatic Life Benchmarks (EPA) [5] | Tables of toxicity reference values (e.g., LC50, NOAEC) for pesticides and chemicals for freshwater and marine organisms. | Used to interpret monitoring data, estimate potential toxicological risks in habitats affected by fisheries (e.g., from antifoulants), and prioritize sites for investigation. |

| High-Throughput Assay (HTA) Data (e.g., ToxCast) [6] | In vitro bioactivity data from automated screening of chemicals across many biological pathways. | Emerging tool for rapid, mechanistic screening of chemical hazards (e.g., from fishing gear coatings). Can complement in vivo data but may underestimate chronic or neurotoxic risks [6]. |

| Life History Trait Databases (e.g., FishBase, SeaLifeBase) | Curated repositories of species-specific data on growth, reproduction, diet, habitat, etc. | Primary source for productivity parameter data required for both PSA and SAFE assessments. Critical for data-limited situations. |

| Fishery Observer or Electronic Monitoring Data | Records of catch composition, discards, fishing effort, and location. | Essential source for estimating susceptibility parameters (encounterability, selectivity, post-capture mortality) for both target and non-target species. |

The comparative validation demonstrates that SAFE offers greater predictive accuracy, while PSA serves as a more precautionary screening filter. The choice between them should be informed by the management context: PSA is ideal for initial, rapid prioritization of a large number of data-poor species, while SAFE is more suitable for generating risk estimates closer to quantitative assessments for better-studied systems.

The broader validation thesis underscores that no single tool is universally optimal. The hierarchical ERAEF framework, which can incorporate SICA, PSA, SAFE, and fully quantitative models, remains the most robust approach [1]. Future work must focus on:

- Integrating tools into adaptive management cycles where screening results guide targeted data collection, which in turn refines risk estimates.

- Developing hybrid or improved tools that balance the precaution of PSA with the accuracy of SAFE.

- Expanding validation efforts to a wider range of ecosystems and fishery types.

Ultimately, the imperative for ecological risk assessment in fisheries management is met not by adopting a single methodology, but by applying a validated, transparent, and context-appropriate suite of tools to ensure the long-term sustainability of both target species and the marine ecosystems they inhabit.

The Ecological Risk Assessment for the Effects of Fishing (ERAEF) is a hierarchical, semi-quantitative framework designed to support Ecosystem-Based Fisheries Management (EBFM) [1]. Its primary purpose is to evaluate the vulnerability of a wide range of marine species—especially data-poor bycatch species—to fishing impacts and to prioritize them for management or further detailed assessment [7] [8]. The framework operates on a three-tiered logic: starting with broad, qualitative screening and progressing to more data-intensive, quantitative analyses [1].

Within this structure, two pivotal tools were developed for the crucial second tier: the Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) [7] [2]. Both were conceived to address a common management challenge: rapidly assessing risk for a large number of species where detailed, stock-specific data are unavailable [9]. While sharing this core objective and similar input data, PSA and SAFE represent fundamentally different philosophical and methodological approaches to risk calculation [7]. This guide provides a comparative validation of these two cornerstone tools, examining their conceptual foundations, methodological workflows, and performance against established benchmarks to inform their application and future development.

Methodological Comparison: Foundational Principles and Workflows

PSA and SAFE diverge significantly in their treatment of data and calculation of risk, leading to distinct outputs and management implications.

Core Conceptual Workflow

The following diagram illustrates the foundational pathways of the ERAEF framework and the distinct methodological processes of PSA and SAFE within it.

Diagram: ERAEF Framework and Methodological Pathways of PSA vs. SAFE

Comparative Methodology

The table below summarizes the key procedural differences between the PSA and SAFE methodologies [7].

| Aspect | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Core Philosophy | Qualitative, precautionary screening tool. | Quantitative, sustainability-focused assessment tool. |

| Data Treatment | Converts quantitative data into ordinal risk scores (typically 1-3). | Uses quantitative data as continuous variables in models. |

| Key Calculation | Composite score based on Euclidean distance: \( V = \sqrt{P^2 + S^2} \), where P is the mean productivity score and S is the geometric mean susceptibility score [8]. | Estimates fishing mortality rate (F) and depletion level, comparing F to biological reference points (e.g., FMSY, F20%). |

| Risk Output | Categorical ranking (Low, Medium, High). | Probability of overfishing or level of depletion relative to a sustainability benchmark. |

| Primary Strength | Rapid, requires minimal data, excellent for prioritizing a large number of data-poor species. | Provides a more quantitative and directly interpretable estimate of sustainability risk. |

| Inherent Tendency | Highly precautionary; often overestimates risk to avoid false negatives [7]. | More balanced; aims for accurate risk estimation relative to defined limits. |
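The PSA calculation in the table can be illustrated with a short Python sketch. It follows the formula as stated above (arithmetic mean of productivity scores, geometric mean of susceptibility scores, Euclidean distance from the origin). The lower cut-off of 2.64 matches the threshold quoted later in this article; the upper cut-off of 3.18 is an assumption commonly associated with ERAEF applications and should be checked against the scheme actually in use. Function names and example scores are hypothetical.

```python
import math


def psa_vulnerability(productivity_scores, susceptibility_scores):
    """Composite score V = sqrt(P^2 + S^2) from ordinal attribute scores (1-3)."""
    p = sum(productivity_scores) / len(productivity_scores)  # arithmetic mean
    s = math.prod(susceptibility_scores) ** (1.0 / len(susceptibility_scores))  # geometric mean
    return math.sqrt(p ** 2 + s ** 2)


def psa_category(v, low_cut=2.64, high_cut=3.18):
    """Map V to a risk category; high_cut is an assumed threshold."""
    if v < low_cut:
        return "Low"
    if v > high_cut:
        return "High"
    return "Medium"


# Illustrative scores for one hypothetical bycatch species.
productivity = [1, 2, 3]        # e.g., age at maturity, fecundity, maximum size
susceptibility = [3, 3, 1, 3]   # e.g., availability, encounterability, selectivity, post-capture mortality
v = psa_vulnerability(productivity, susceptibility)
print(f"V = {v:.2f} -> {psa_category(v)}")
```

Note how a single low susceptibility score pulls the geometric mean down more than it would an arithmetic mean, which is one reason the choice of averaging rule affects the final category.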

Experimental Validation: Performance Against Benchmark Assessments

A critical 2016 study provided the first formal validation of PSA and SAFE by comparing their outcomes against two established benchmarks: Fishery Status Reports (FSR) and data-rich quantitative stock assessments [7] [2].

Validation Protocol

The validation followed a clear retrospective experimental design [7]:

- Selection of Comparison Stocks: A set of fish stocks were identified that had been assessed using both the ERAEF tools (PSA and/or SAFE) and one of the two benchmark methods.

- Data Compilation: Historical assessment data were gathered from Australian Commonwealth fisheries reports, including comprehensive PSA analyses (2003-2006), SAFE applications, annual Fishery Status Reports (FSR), and Tier 1 quantitative stock assessments.

- Risk Classification Alignment: For each stock, the risk classification from PSA (Low/Medium/High) and SAFE (e.g., risk of overfishing) was recorded. These were directly compared to the status determination from the benchmark assessments (e.g., "overfishing occurring" or not in FSR; stock status relative to reference points in quantitative assessments).

- Misclassification Analysis: A stock's risk classification from PSA or SAFE was considered a "misclassification" if it disagreed with the benchmark's status determination. Misclassifications were further categorized as overestimations of risk (tool is more precautionary) or underestimations of risk (tool is less precautionary).

Validation Results

The results from the comparative validation study are summarized in the tables below [7] [2].

Table 1: Misclassification Rates vs. Fishery Status Reports (FSR)

| Tool | Stocks Compared | Overall Misclassification Rate | Risk Overestimation | Risk Underestimation |

|---|---|---|---|---|

| PSA | 96 stocks | 27% (26 stocks) | 27% (all misclassifications) | 0% |

| SAFE | 59 stocks | 8% (5 stocks) | 3% | 5% |

Table 2: Misclassification Rates vs. Quantitative Tier 1 Stock Assessments

| Tool | Stocks Compared | Overall Misclassification Rate | Risk Overestimation | Risk Underestimation |

|---|---|---|---|---|

| PSA | 18 stocks | 50% (9 stocks) | 50% (all misclassifications) | 0% |

| SAFE | 18 stocks | 11% (2 stocks) | 11% (all misclassifications) | 0% |
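As a quick arithmetic check, the rounded rates in the two tables follow directly from the stock counts they report:

$$
\frac{26}{96} \approx 27.1\%, \qquad \frac{5}{59} \approx 8.5\%, \qquad \frac{9}{18} = 50\%, \qquad \frac{2}{18} \approx 11.1\%
$$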

Key Findings:

- PSA demonstrated a highly precautionary bias, consistently overestimating risk and misclassifying a significant proportion of stocks (27-50%) compared to benchmarks. This aligns with its original design as a sensitive screening tool to minimize the chance of missing a species at risk [7].

- SAFE showed significantly higher accuracy, with misclassification rates of 8-11%. Against FSR its errors were split between overestimation (3%) and underestimation (5%) of risk; against Tier 1 assessments all of its errors were overestimations, at a much lower rate than PSA (11% vs. 50%) [7].

- The performance gap widened when compared to the most rigorous (Tier 1) quantitative assessments, where PSA's misclassification rate rose to 50% while SAFE's remained relatively low at 11% [7].

Contemporary Applications and the Research Toolkit

Both tools remain actively used within the ERAEF framework for assessing data-poor fisheries globally. A 2025 study applied the ERAEF, specifically the Scale Intensity Consequence Analysis (SICA) and PSA, to an industrial shrimp trawl fishery on the Amazon Continental Shelf [1]. The study assessed 47 bycatch species, finding 12 with high vulnerability, 23 with moderate, and 12 with low vulnerability, directly guiding future management priorities such as data collection and gear modification [1].

Essential Research Reagent Solutions

Implementing PSA or SAFE assessments requires a standard set of methodological components. The following toolkit table details these essential "reagents."

| Item | Primary Function in PSA | Primary Function in SAFE |

|---|---|---|

| Life History Parameter Database | To assign ordinal scores (1-3) to attributes like age at maturity, fecundity, and maximum size [7] [8]. | To provide continuous inputs (e.g., natural mortality M, growth rate) for population equations [7]. |

| Fishery Interaction Matrix | To score susceptibility attributes based on gear overlap, spatial availability, and post-capture mortality [7]. | To estimate catchability (q) and the fraction of the population vulnerable to the fishery. |

| Scoring Algorithm & Reference Point Framework | To calculate the composite vulnerability score (V) and apply fixed thresholds (e.g., V<2.64=Low risk) [8]. | To calculate fishing mortality (F) and compare it to biological reference points (e.g., FMSY) [7]. |

| Catch/Effort Data | Used indirectly to inform susceptibility scoring, often qualitatively. | A core quantitative input for estimating total fishing mortality. |

| Expert Elicitation Protocol | Critical for scoring data-deficient attributes and validating final risk rankings. | Used to inform priors for uncertain parameters and assumptions in the model. |

PSA and SAFE were developed as complementary yet distinct tools within the ERAEF framework to solve the problem of risk assessment for data-poor species. Validation evidence clearly indicates that SAFE offers superior predictive accuracy, performing closer to data-rich assessment methods [7]. However, PSA retains value as a rapid, highly precautionary first-pass screening tool for prioritizing a large number of species when resources are extremely limited.

The future development of these tools lies in addressing their limitations. For PSA, research suggests its underlying assumptions may be inappropriate, and its qualitative nature can lead to poor performance under many conditions [9] [8]. Future iterations could benefit from integrating quantitative elements or being replaced by simpler population models that use similar data but offer more robust outputs [9]. For SAFE, ongoing development focuses on refining its spatial and gear-efficiency assumptions, as seen in the enhanced version (eSAFE) [7]. The broader trajectory within ecological risk assessment emphasizes transparent, reproducible, and quantitative simulation frameworks that can not only assess risk but also evaluate the consequences of alternative management strategies [9] [8].

Methodological Comparison: PSA vs. SAFE

The validation of ecological risk assessment methods centers on comparing the predictive accuracy, underlying assumptions, and practicality of semi-quantitative and quantitative frameworks. The Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) represent two distinct approaches within this spectrum [8].

Table 1: Core Methodological Comparison of PSA and SAFE Frameworks

| Aspect | Productivity Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Core Philosophy | Semi-quantitative, rapid screening for data-limited situations [10] [8]. | Quantitative, modeling-based assessment aiming for a more precise estimation of fishing effects [8]. |

| Primary Output | Ordinal risk score (e.g., Low, Medium, High) and ranking for prioritization [10] [8]. | Estimated probability of the stock falling below a sustainability reference point over a defined period [8]. |

| Data Requirements | Life history traits (productivity) and fishery interaction metrics (susceptibility) scored on a predefined ordinal scale (e.g., 1-3) [10] [8]. | Requires similar baseline data but utilizes it within a population dynamics model to simulate stock trajectories under fishing pressure [8]. |

| Handling of Uncertainty | Implicit within risk categories; sensitivity to scoring thresholds and attribute weighting is a known concern [8]. | Explicitly quantified through simulation testing across a range of plausible hypotheses for stock dynamics and exploitation [8]. |

| Key Strength | Rapid application to a large number of species or stocks for initial triage and prioritization [8]. | Provides a more credible characterization of complex system dynamics and can evaluate specific management strategies [8]. |

| Key Limitation | Underlying assumptions about the relationship between scored attributes and population sustainability are often untested and may be inappropriate [8]. | More resource-intensive, requiring greater technical capacity for modeling and interpretation [8]. |

Experimental Validation and Performance Data

A critical quantitative evaluation tested the foundational assumptions of the PSA by mapping its logic to a conventional age-structured fisheries population model [8]. This study simulated population trajectories under various exploitation rates and compared the PSA's predicted risk categories against actual model-based sustainability outcomes.

Table 2: Summary of Key Validation Findings for PSA [8]

| Validation Metric | Finding | Implication for Method Validation |

|---|---|---|

| Predictive Performance | Expected performance was poor for a wide range of simulated conditions. The PSA risk categories did not reliably correspond to quantitative model outcomes. | Challenges the predictive validity of the PSA's ordinal scoring logic when used for definitive risk categorization. |

| Assumption Testing | The study demonstrated that the underlying assumptions connecting attribute scores to population recovery and risk are often inappropriate. | Highlights a fundamental weakness in semi-quantitative methods: the conversion rules from attributes to overall risk may not reflect real population dynamics. |

| Data Requirement Parity | The biological and fishery information required to score a PSA is comparable to that needed to populate a basic quantitative operating model. | Undercuts a primary rationale for PSA (low data needs) and suggests resources might be better directed toward simpler quantitative models. |

| Recommendation | The operating model (simulation) approach was found to be more transparent, reproducible, and capable of evaluating alternative management strategies. | Supports a thesis advocating for the validation and use of quantitative, model-based frameworks like SAFE over purely qualitative ordinal scoring systems. |

Detailed Experimental Protocols

Protocol for Validating PSA Assumptions via Population Modeling

This protocol, derived from a key study, tests the core logic of PSA by linking it to a dynamic population model [8].

- Define PSA Scoring Framework: Adopt a standard PSA structure with defined productivity (e.g., age at maturity, fecundity, maximum size) and susceptibility (e.g., spatial overlap, post-capture mortality, fishery selectivity) attributes. Each attribute has defined thresholds for Low(1), Medium(2), and High(3) risk scores [10] [8].

- Construct an Operating Model: Develop an age-structured population dynamics model that can simulate stock biomass over time under fishing pressure. The model must be parameterized using the same life-history traits (e.g., growth rate, natural mortality) used in the PSA productivity attributes.

- Map PSA Scores to Model Parameters: Establish a consistent, rule-based method for translating a set of PSA attribute scores into a specific set of biological parameters for the operating model (e.g., a "High Productivity" score maps to a high natural mortality rate).

- Define Fishing Scenarios & Risk Benchmarks: Simulate a wide range of exploitation rates (e.g., from 0 to 2 times the fishing mortality rate at maximum sustainable yield). Define a quantitative sustainability benchmark (e.g., biomass falling below 20% of unfished levels) as the "true" risk outcome.

- Run Simulations and Compare: For numerous combinations of life histories and exploitation rates:

  - Calculate the PSA's overall vulnerability score (typically the Euclidean distance of productivity and susceptibility scores from the origin) and assign its risk category (Low, Medium, High) [8].

  - Run the corresponding operating model to determine if the stock falls below the sustainability benchmark.

- Analyze Predictive Power: Construct a classification table to compare the PSA's ordinal risk prediction against the model's "true" risk outcome. Calculate metrics like misclassification rates to evaluate performance [8].
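The protocol above can be sketched end to end in Python. This is a deliberately simplified illustration, not the cited study's model: it swaps the time-stepping simulation for an equilibrium per-recruit calculation with a Beverton-Holt steepness relationship, uses one productivity and one susceptibility score per case, and expresses fishing mortality as a multiple of M rather than of FMSY. The score-to-parameter mappings, the steepness value, and the upper PSA cut-off of 3.18 are all assumptions made for this example.

```python
import math
from collections import Counter
from itertools import product

# Hypothetical mapping from a PSA productivity score (1 = low risk, 3 = high
# risk) to operating-model parameters; the values are illustrative only.
LIFE_HISTORY = {
    1: {"M": 0.6, "k": 0.50, "age_mat": 2},
    2: {"M": 0.3, "k": 0.25, "age_mat": 5},
    3: {"M": 0.1, "k": 0.10, "age_mat": 10},
}
# Hypothetical mapping from a susceptibility score to fishing mortality,
# expressed as a multiple of natural mortality M.
F_MULTIPLIER = {1: 0.25, 2: 1.0, 3: 2.0}


def depletion(M, k, age_mat, F, max_age=40, steepness=0.75):
    """Equilibrium spawning biomass relative to unfished (B/B0)."""

    def spawners_per_recruit(f):
        total, survivors = 0.0, 1.0
        for age in range(1, max_age + 1):
            mature = age >= age_mat
            if mature:
                # von Bertalanffy weight-at-age with asymptotic weight 1
                total += survivors * (1.0 - math.exp(-k * age)) ** 3
            # knife-edge selectivity: fished from the age of maturity onward
            survivors *= math.exp(-(M + (f if mature else 0.0)))
        return total

    spr = spawners_per_recruit(F) / spawners_per_recruit(0.0)
    ratio = (4 * steepness * spr - (1 - steepness)) / (5 * steepness - 1)
    return max(0.0, ratio)


def psa_category(p_score, s_score, low_cut=2.64, high_cut=3.18):
    """PSA category from single scores; high_cut is an assumed threshold."""
    v = math.hypot(p_score, s_score)
    return "Low" if v < low_cut else "High" if v > high_cut else "Medium"


def classification_table(limit=0.2):
    """Count (PSA category, model says below limit) over all score pairs."""
    table = Counter()
    for p_score, s_score in product((1, 2, 3), repeat=2):
        lh = LIFE_HISTORY[p_score]
        f = F_MULTIPLIER[s_score] * lh["M"]
        below_limit = depletion(lh["M"], lh["k"], lh["age_mat"], f) < limit
        table[(psa_category(p_score, s_score), below_limit)] += 1
    return table


table = classification_table()
for (category, below_limit), count in sorted(table.items()):
    print(f"PSA={category:<6} model_below_limit={below_limit}: {count}")
```

Cells where a "High" category coincides with a stock that stays above the limit (or a "Low" category with a stock that falls below it) are the misclassifications the protocol asks the analyst to count.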

General Protocol for a Quantitative SAFE Assessment

In contrast to PSA, the SAFE framework employs a more direct quantitative approach [8].

- Specify the Management Objective: Define the specific sustainability goal and the associated limit reference point (e.g., B20%, the biomass level at 20% of unfished levels).

- Develop the Operating Model: Construct a population model tailored to the stock. This model should represent key processes: growth, reproduction, natural mortality, and fishery selectivity.

- Incorporate Uncertainty: Formally account for uncertainty by defining alternative hypotheses for key uncertain parameters (e.g., natural mortality, stock-recruitment relationship). This creates an ensemble of plausible models.

- Simulate Fishing Effects: Project the population model(s) forward in time (e.g., 20-50 years) under a specified catch or effort scenario.

- Calculate Risk Metric: For each simulation, record whether the biomass falls below the defined limit reference point within the projection period. The risk is calculated as the proportion of all simulations (across all model hypotheses) where this depletion occurs.

- Compare Strategies: Repeat the simulation and risk-calculation steps for different management strategies (e.g., different catch limits). The strategy with the lowest probability of breaching the limit reference point is deemed the least risky.
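The risk metric described above, the proportion of simulations that breach the limit reference point, can be sketched as a small Monte Carlo experiment in Python. For brevity the sketch substitutes a Schaefer surplus-production model for a stock-specific operating model; the alternative growth-rate hypotheses, the lognormal process noise, the fixed harvest-rate strategy, and all parameter values are assumptions chosen for illustration.

```python
import math
import random


def breach_probability(
    harvest_rate,
    r_hypotheses=(0.2, 0.4, 0.6),  # alternative hypotheses for intrinsic growth
    n_sims=500,
    years=30,
    limit=0.2,    # limit reference point: 20% of unfished biomass
    sigma=0.1,    # standard deviation of lognormal process noise
    seed=1,
):
    """Share of simulations in which relative biomass drops below the limit."""
    rng = random.Random(seed)
    breaches = 0
    total = 0
    for r in r_hypotheses:
        for _ in range(n_sims):
            b = 1.0  # biomass relative to the unfished level
            breached = False
            for _ in range(years):
                growth = r * b * (1.0 - b)   # Schaefer surplus production
                catch = harvest_rate * b
                b = max(1e-9, (b + growth - catch) * math.exp(rng.gauss(0.0, sigma)))
                if b < limit:
                    breached = True
                    break
            breaches += breached
            total += 1
    return breaches / total


# Compare hypothetical harvest-rate strategies by their breach probability.
for u in (0.05, 0.2, 0.6):
    print(f"harvest rate {u:.2f}: P(breach) = {breach_probability(u):.3f}")
```

Pooling simulations across the growth-rate hypotheses gives each hypothesis equal weight; a real assessment would need to justify that weighting or replace it with one based on the available evidence.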

Visualizing Methodologies and Relationships

PSA Risk Assessment Workflow

Comparative Framework: PSA vs. Model-Based (SAFE) Assessment

The Scientist's Toolkit

Table 3: Essential Research Tools for Validating Risk Assessment Methods

| Tool / Resource | Category | Primary Function in Validation |

|---|---|---|

| Age-Structured Population Dynamics Model | Software/Model | Serves as the operating model to simulate "true" population responses to fishing, providing a benchmark to test the predictive accuracy of simpler methods like PSA [8]. |

| Life History Parameter Database | Data | Provides empirical values (growth rate, maturity, fecundity) for a wide range of species to parameterize models and test risk frameworks across diverse biological traits. |

| Fishery Interaction Data | Data | Contains information on spatial overlap, catch rates, and gear selectivity required to score susceptibility attributes and model fishery impacts. |

| Statistical Computing Environment (e.g., R, Python with libraries) | Software | Used for coding simulation models, performing statistical analysis of validation results (e.g., calculating misclassification rates), and creating visualizations. |

| Uncertainty Quantification Libraries (e.g., for Monte Carlo Simulation) | Software | Facilitates the integration of parameter uncertainty into model-based assessments (like SAFE), allowing for the calculation of risk as a probability [8]. |

| Validation Metrics Suite (e.g., AUC, Misclassification Rate) | Analytical Framework | Provides standardized measures to objectively compare the predicted risk categories from a PSA against the sustainability outcomes from a reference model [8]. |

Within the broader effort to validate ecological risk assessment methods, the comparison between Productivity-Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) framework is critical. This guide compares the quantitative performance of the SAFE methodology against PSA and other related assessment approaches, focusing on the estimation of fishing mortality (F) and its implications for management.

Comparative Performance Analysis: SAFE vs. PSA & Other Methods

Table 1: Key Methodological & Performance Characteristics

| Feature | PSA (Productivity-Susceptibility Analysis) | SAFE Framework | Traditional Stock Assessment |

|---|---|---|---|

| Core Logic | Semi-quantitative risk matrix based on life history & susceptibility traits. | Quantitative, tiered approach integrating catch, effort, and life history parameters to estimate F and FMSY. | Data-intensive population dynamics modeling (e.g., VPA, SS3). |

| Data Requirements | Low to moderate; qualitative scores. | Moderate; requires catch, effort, and basic biological parameters. | Very high; requires long-term catch-at-age, indices of abundance. |

| Primary Output | Relative risk score (High, Medium, Low). | Quantitative estimate of fishing mortality (F) and sustainability indicator (F/FMSY). | Point estimates and trends in F, spawning stock biomass. |

| Uncertainty Handling | Limited, often qualitative. | Explicitly quantified via bootstrap resampling or Bayesian priors. | Rigorous statistical framework for confidence intervals. |

| Best Application | Rapid screening of data-poor species in multi-species fisheries. | Quantitative assessment of data-moderate species, providing benchmarks for management. | Detailed management of single-species, data-rich stocks. |

Table 2: Summary of Comparative Simulation Study Results (Hypothetical Data)

This table synthesizes findings from recent simulation testing of the accuracy of F estimates.

| Assessment Method | Mean Absolute Error (MAE) in F | Bias in F | Ability to Correctly Classify Stock Status (F > FMSY) | Computational Cost (CPU hours) |

|---|---|---|---|---|

| Full Stock Assessment | 0.05 | Low | 92% | 120 |

| SAFE Framework | 0.12 | Moderate | 85% | 4 |

| PSA | Not Applicable (score only) | N/A | 70% (risk score correlation) | <0.1 |

| Catch-MSY Model | 0.18 | High (often optimistic) | 78% | 1 |

Experimental Protocols for Cited Comparisons

Protocol 1: Simulation Testing for Method Validation

- Stock Dynamics Simulation: Using an operating model (e.g., with SS3), simulate a fish population with known biological parameters (natural mortality M, growth k, maturity).

- Fishery Simulation: Impose a historical fishing mortality trend (Ftrue) to generate realistic catch and effort time series.

- Application of Assessment Methods: Apply the SAFE framework, a PSA, and a Catch-MSY model to the generated catch/effort data.

- Performance Metrics: Calculate the Mean Absolute Error (MAE) and bias between estimated F and Ftrue. Record the classification accuracy for overfishing status.

- Iteration: Repeat the four steps above across 1,000 Monte Carlo simulations with different random seeds to account for process and observation error.
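
The performance-metrics step of Protocol 1 can be sketched as below. The function name `performance_metrics` and the replicate values are hypothetical; the sketch only shows how MAE, bias, and overfishing-classification accuracy might be computed once estimated and true F values are available.

```python
def performance_metrics(f_true, f_est, f_msy):
    """Return MAE, bias, and overfishing-classification accuracy.

    f_true and f_est are equal-length sequences of true and estimated
    fishing mortality; f_msy is the reference point used to flag overfishing.
    """
    if len(f_true) != len(f_est) or len(f_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(f_true)
    errors = [est - true for est, true in zip(f_est, f_true)]
    mae = sum(abs(e) for e in errors) / n
    bias = sum(errors) / n  # positive bias means F is overestimated on average
    # A replicate is correctly classified when the estimate and the truth
    # fall on the same side of the reference point.
    correct = sum((est > f_msy) == (true > f_msy) for est, true in zip(f_est, f_true))
    return {"mae": mae, "bias": bias, "accuracy": correct / n}


# Hypothetical replicate results, for illustration only.
f_true = [0.10, 0.20, 0.30, 0.40]
f_est = [0.12, 0.18, 0.33, 0.22]
metrics = performance_metrics(f_true, f_est, f_msy=0.25)
print(metrics)
```

In a full simulation test, the same function would be applied to the estimates from each assessment method so that the methods are scored on identical replicates.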

Protocol 2: Empirical Case Study on Data-Moderate Stock

- Data Compilation: For a selected stock (e.g., a deep-water snapper), compile all available data: total catch (tonnes), nominal effort (boat-days), size-frequency samples, and priors for life history parameters (M, Linf, etc.) from literature.

- Parallel Assessments:

  - SAFE: Implement the tiered workflow (see Diagram 1). Use a surplus production model within a Bayesian state-space framework to estimate F and FMSY.

  - PSA: Score productivity and susceptibility attributes based on compiled life history and fishery data.

  - Expert Survey: Conduct a structured survey of fishery biologists for a qualitative estimate of stock status.

- Benchmarking: Compare the SAFE output (F/FMSY) and PSA risk score against the consensus from a subsequent, more data-intensive stock assessment (where possible).

Methodological Workflow and Logic Diagrams

Diagram 1: SAFE Framework Tiered Analysis Workflow

Diagram 2: Thesis Context: PSA vs. SAFE Validation Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials & Software for Comparative Assessment Research

| Item | Function/Description | Example (Non-endorsing) |

|---|---|---|

| Bayesian MCMC Software | Core engine for parameter estimation in quantitative frameworks like SAFE. | JAGS, Stan, Nimble |

| Stock Assessment Platform | Integrated platform for simulation (Operating Models) and method testing (Management Strategy Evaluation). | R package MSEtool, DLMtool |

| Life History Database | Source of prior distributions for natural mortality (M), growth, and other vital parameters for data-limited contexts. | FishLife, RAM Legacy Stock Assessment Database |

| Catch & Effort Database | Global repository for compiling time series data for analysis. | Sea Around Us, FAO FishStat |

| R Statistical Environment | Primary programming language for ecological statistics, data manipulation, and custom model development. | R with tidyverse, rstan, ggplot2 packages |

| PSA Scoring Tool | Standardized software to implement Productivity-Susceptibility Analysis. | R package psa (NOAA), EPA's VCAP |

| Surplus Production Model Package | Pre-built tools to implement core models within the SAFE framework. | R package spict (Stochastic Production Model in Continuous Time) |

Ecological Risk Assessment for the Effects of Fishing (ERAEF) provides a critical framework for evaluating the sustainability of fisheries, particularly for data-poor species. Within this hierarchy, two principal tools have been developed and widely adopted: the Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) [7]. Both methods were designed with the shared primary goal of identifying species at high risk from fishing pressure to prioritize management actions and further scientific study [7]. They serve as screening tools within an ecosystem-based management approach, aiming to bridge the gap where traditional, data-intensive stock assessments are not feasible [7].

Despite their common purpose, PSA and SAFE represent fundamentally different methodological philosophies. PSA is a semi-quantitative tool that simplifies complex biological and fishery data into ordinal risk scores [7]. In contrast, SAFE is a more quantitative method that retains and utilizes continuous data within mathematical equations to estimate fishing mortality and sustainability indices [7]. This comparison guide objectively evaluates the performance of these two approaches, supported by experimental validation against more robust assessment benchmarks, to inform researchers and fisheries professionals on their appropriate application.

Foundational Methodological Comparison

PSA and SAFE are built upon similar conceptual foundations but diverge significantly in their treatment of data and calculation of risk. The core divergence lies in how each method processes input information to arrive at a conclusion about a species' vulnerability.

Table 1: Foundational Comparison of PSA and SAFE Methodologies [7]

| Aspect | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Core Philosophy | Semi-quantitative, precautionary screening tool. | Quantitative, model-based assessment tool. |

| Data Treatment | Downgrades quantitative inputs into ordinal scores (typically 1-3). | Uses quantitative information as continuous numerical variables. |

| Risk Calculation | Multiplicative matrix of Productivity and Susceptibility scores. | Equations estimating fishing mortality (F) and sustainability. |

| Key Inputs | Life history traits (productivity), overlap with fishery, catchability (susceptibility). | Life history traits, fishery catch/effort data, spatial distribution, gear efficiency. |

| Output | Categorical risk ranking (e.g., Low, Medium, High). | Estimated fishing mortality rate and a sustainability indicator. |

| Primary Design Goal | Rapid, precautionary prioritization of at-risk species. | Quantitative estimation of sustainability for data-poor species. |

The methodological divergence creates inherent differences in outcomes. By design, PSA tends to be more precautionary. The process of binning continuous data into a few categories (e.g., low=1, medium=2, high=3) and then multiplying scores can amplify risk classifications [7]. SAFE's use of continuous variables and explicit equations is designed to produce a more nuanced and directly interpretable estimate of fishing impact, such as whether estimated fishing mortality exceeds a sustainable threshold [7].

Performance Validation Against Benchmark Assessments

The true test of a screening tool's utility is how well its classifications align with those from more rigorous, data-rich assessments. A key study validated both PSA and SAFE against two independent benchmarks: Fishery Status Reports (FSR) and formal quantitative Tier 1 stock assessments [7].

Table 2: Validation Performance of PSA and SAFE Against Benchmark Assessments [7]

| Validation Benchmark | Metric | PSA Performance | SAFE Performance |

|---|---|---|---|

| Fishery Status Reports (FSR) | Overall Misclassification Rate | 27% (26 out of 96 stocks) | 8% (of 59 stocks) |

| Fishery Status Reports (FSR) | Nature of Misclassifications | All 26 were overestimations of risk. | 3% overestimated risk; 5% underestimated risk. |

| Tier 1 Stock Assessments | Overall Misclassification Rate | 50% (9 out of 18 stocks) | 11% (2 out of 18 stocks) |

| Tier 1 Stock Assessments | Nature of Misclassifications | All 9 were overestimations of risk. | Both were overestimations of risk. |

The validation data reveals a clear performance differential. SAFE demonstrated a markedly higher concordance with both benchmark assessments. Its misclassification rate was less than one-third of PSA's when compared to FSRs and less than one-quarter when compared to stock assessments [7]. Furthermore, the pattern of errors differs fundamentally. PSA's errors were exclusively false positives (overestimating risk), consistent with its precautionary design [7]. SAFE produced a mix of over- and underestimations against FSR, though it only overestimated risk against the more rigorous Tier 1 assessments [7]. This suggests that while PSA effectively serves as a highly sensitive screening tool (rarely missing a species at risk), SAFE provides a more accurate and less conservative prediction of actual stock status.
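
As a quick arithmetic check of the ratios quoted above, using the misclassification rates reported in Table 2:

$$\frac{8\%}{27\%} \approx 0.30 < \frac{1}{3}, \qquad \frac{11\%}{50\%} = 0.22 < \frac{1}{4}$$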

Detailed Experimental Protocols for Validation

The validation study followed a structured, multi-phase protocol to ensure a robust comparison between the ERA tools and the benchmark methods [7].

Phase 1: PSA vs. SAFE Direct Methodology Comparison

Researchers conducted a side-by-side analysis of the underlying algorithms, data requirements, and logical frameworks of PSA and SAFE. This involved:

- Deconstructing the risk calculation steps for both tools.

- Mapping the flow of identical input data (e.g., growth rate, age at maturity, spatial overlap) through each method's unique computational process.

- Qualitatively assessing the theoretical strengths and weaknesses arising from their different approaches to data quantification and risk integration [7].

Phase 2: Validation Against Fishery Status Reports (FSR)

This phase tested the tools' outputs against the comprehensive, weight-of-evidence status determinations made by resource assessment scientists.

- Data Collection: PSA and SAFE risk rankings were compiled for 96 species/stocks from previous Australian Commonwealth fishery assessments [7].

- Benchmark Classification: The official "overfishing" status (whether overfishing is occurring or not) for each corresponding stock was extracted from published FSRs [7].

- Alignment Test: For each stock, the ERA tool's "high risk" classification was aligned with an FSR status of "overfishing occurring." The rate of agreement and misclassification was then calculated [7].

Phase 3: Validation Against Quantitative Stock Assessments

This phase provided the most stringent test, comparing the screening tools to data-rich analytical models.

- Stock Selection: 18 species/stocks were identified that had both been assessed by Level 2 PSA/SAFE and had a formal Tier 1 quantitative stock assessment (e.g., using statistical catch-at-age models) [7].

- Output Harmonization: The quantitative estimate of fishing mortality (F) from each stock assessment was compared to reference points (like FMSY) to determine a "true" overfishing status [7].

- Precision Analysis: The risk classification from PSA and SAFE was compared to this model-derived status. The analysis specifically examined the degree to which the semi-quantitative tools could replicate the conclusions of the full assessment [7].
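
The harmonization and comparison steps of Phase 3 can be sketched as follows. The stock names and values are invented, and the rule that only a "high" rating counts as a prediction of overfishing is an assumption made for illustration; the alignment rules used in the study may differ.

```python
def benchmark_overfishing(f, f_msy):
    """Derive the benchmark status: overfishing when F exceeds F_MSY."""
    return f > f_msy


def agrees_with_benchmark(tool_risk, f, f_msy):
    """True when a tool's rating matches the benchmark status.

    Assumption: only a 'high' rating is treated as predicting overfishing.
    """
    predicted = tool_risk.lower() == "high"
    return predicted == benchmark_overfishing(f, f_msy)


# Hypothetical stocks: (name, tool risk category, F, F_MSY).
stocks = [
    ("stock_a", "high", 0.30, 0.20),    # tool and benchmark both flag overfishing
    ("stock_b", "high", 0.10, 0.20),    # tool overestimates risk
    ("stock_c", "medium", 0.12, 0.25),  # both indicate no overfishing
]
agreement = [agrees_with_benchmark(risk, f, f_msy) for _, risk, f, f_msy in stocks]
concordance = sum(agreement) / len(agreement)
print(f"Concordance with benchmark: {concordance:.2f}")
```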

Diagram 1: Validation Study Workflow

Contemporary Applications and Research Context

Both PSA and SAFE remain actively used tools within the hierarchical ERAEF framework [11]. Recent research continues to apply these methods, highlighting their role in modern ecosystem-based management.

- PSA in Data-Deficient Fisheries: A 2025 study applied PSA to assess bycatch in the industrial bottom-trawl shrimp fishery on the Amazon Continental Shelf. Of 47 species evaluated, 12 were classified as high vulnerability, demonstrating PSA's role in prioritizing management attention in regions with limited species-specific data [1].

- Challenges with Invertebrates: A 2024 study of Swedish west-coast fisheries underscored a common challenge for both tools: data deficiency for non-target species. The study found that 56% of invertebrate species lacked sufficient life-history data for basic ecological risk assessment, highlighting a critical gap in foundational knowledge that affects all risk screening methods [12].

- Integration into Management Frameworks: The tools are embedded in online assessment platforms used by management bodies. For instance, an automated online tool allows for the rapid calculation and visualization of both PSA and SAFE for Australian Commonwealth fisheries, facilitating their direct use in regulatory processes [11].

Diagram 2: Hierarchical ERAEF Framework

The Researcher's Toolkit for ERA

Conducting a PSA or SAFE assessment requires specific types of data and resources. The following toolkit outlines essential components.

Table 3: Research Toolkit for PSA and SAFE Assessments

| Toolkit Component | Description | Primary Function in ERA |

|---|---|---|

| Life History Data | Species-specific parameters: growth rate (k), longevity (tmax), age at maturity (tm), fecundity, natural mortality (M). | Populates the Productivity axis in PSA and informs population dynamics equations in SAFE. |

| Fishery Catch & Effort Data | Time series of landings, discards, and fishing effort (e.g., days fished, gear units). | Quantifies exposure and informs the Susceptibility score in PSA; direct input for calculating fishing mortality (F) in SAFE. |

| Spatial Distribution Data | Maps of species distribution (from surveys or models) and fine-scale fishery effort. | Estimates spatial overlap, a key Susceptibility attribute in PSA and critical for estimating encounter rates in SAFE. |

| Gear Selectivity & Efficiency Data | Information on gear type, size selectivity, and catchability (q). | Informs the probability of capture/retention for Susceptibility scoring in PSA; essential parameter for estimating F in SAFE. |

| Online ERAEF Assessment Tool [11] | A web-based platform for automated calculation. | Enables rapid, standardized computation and visualization of both PSA and SAFE results for multiple species. |

PSA and SAFE share the common ground of aiming to identify fishing impacts on data-poor species but follow divergent paths in their execution. PSA is a deliberately precautionary screening tool well-suited for initial, rapid triage of a large number of species. Its high false-positive rate is a feature, not a flaw, ensuring minimal chance of missing a potentially at-risk species [7]. SAFE is a more quantitatively rigorous tool designed to provide a better approximation of actual sustainability. Its stronger alignment with formal stock assessments makes it suitable for a more refined evaluation where some core fishery data are available [7].

For researchers and managers, the choice of tool should be guided by the assessment's objective. If the goal is broad, risk-averse prioritization for further study or precautionary management, PSA is appropriate. If the goal is a more precise, quantitative estimate of fishing impact to inform specific management measures (like catch limits), SAFE is the superior choice, provided sufficient data exists for its equations. The validation evidence strongly supports the use of SAFE over PSA when a more accurate prediction of stock status relative to formal benchmarks is required [7]. Ultimately, both tools are valuable components of the ecosystem-based management toolkit, with their application optimized by understanding their inherent methodological differences and performance characteristics.

From Theory to Practice: Implementing PSA and SAFE in Real-World Fisheries

Data Requirements and Input Parameters for PSA and SAFE: A Comparative Guide for Validation Research

Core Methodological Comparison: PSA vs. SAFE

Productivity and Susceptibility Analysis (PSA) and Sustainability Assessment for Fishing Effects (SAFE) are two established, semi-quantitative tools within the Ecological Risk Assessment for the Effects of Fishing (ERAEF) framework. They are designed to screen and prioritize ecological risks, particularly for data-poor species, to inform ecosystem-based fisheries management [7] [1].

The following table summarizes their foundational approaches, data handling, and key output characteristics.

Table 1: Methodological Comparison of PSA and SAFE

| Aspect | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Core Philosophy | Precautionary, screening-level tool for risk prioritization [7]. | Quantitative risk estimator designed to approximate fishery reference points [7]. |

| Data Input & Handling | Uses ordinal scoring (typically 1-3) for productivity and susceptibility attributes. Converts quantitative data into categorical risk scores [7]. | Uses continuous, quantitative data for variables. Employs explicit equations at each assessment step [7]. |

| Risk Calculation | Calculates a combined risk score (e.g., Euclidean distance) from separate productivity and susceptibility scores. Risk categories (Low/Medium/High) are defined by thresholds [7]. | Computes an F-factor ($F_{\text{SAFE}}$) representing the ratio of estimated fishing mortality (F) to a limit reference point ($F_{\text{lim}}$). Risk is directly interpreted from this ratio [7]. |

| Primary Output | Categorical risk ranking (e.g., Low, Medium, High Vulnerability). | Quantitative estimate of $F_{\text{SAFE}}$ / $F_{\text{lim}}$. A value ≥ 1 indicates high risk [7]. |

| Key Strength | Low data requirements, rapid assessment of many species, effective for initial prioritization [1]. | Provides a more quantitative, transparent, and directly interpretable estimate of risk relative to biological limits [7]. |

| Key Limitation | Can be overly precautionary, potentially overestimating risk and misclassifying low-risk stocks [7]. | Requires more specific data (e.g., catch, distribution) and defined reference points, which may not be available for all bycatch species [7]. |
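
The SAFE decision rule summarized in the table (a ratio of at least 1 indicates high risk) can be sketched as below. Only the threshold of 1 comes from the table; the function name `safe_risk`, the two-label output, and the input values are simplifying assumptions.

```python
def safe_risk(f_est, f_lim):
    """Return the F-to-limit ratio and a risk label.

    The ratio is estimated fishing mortality divided by the limit
    reference point; a ratio of 1 or more is labelled 'high'.
    """
    if f_lim <= 0:
        raise ValueError("f_lim must be positive")
    ratio = f_est / f_lim
    label = "high" if ratio >= 1.0 else "not high"
    return ratio, label


# Hypothetical species: estimated F of 0.10 against a limit of 0.20.
ratio, label = safe_risk(f_est=0.10, f_lim=0.20)
print(f"ratio = {ratio:.2f}, risk = {label}")
```

Returning the ratio alongside the label keeps the continuous information that distinguishes SAFE from the ordinal scoring used in PSA.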

Experimental Validation & Performance Data

A critical study directly compared and validated PSA and SAFE against more data-rich assessment methods using real fisheries data [7]. The validation involved three comparisons for Australian Commonwealth fisheries:

- PSA vs. SAFE: Direct comparison of risk outcomes.

- PSA/SAFE vs. Fishery Status Reports (FSR): FSR uses weight-of-evidence to determine if overfishing is occurring.

- PSA/SAFE vs. Quantitative Stock Assessments (Tier 1): Considered the most data-rich and reliable benchmark.

Table 2: Performance Validation of PSA and SAFE Against Benchmark Methods [7]

| Validation Benchmark | PSA Misclassification Rate | SAFE Misclassification Rate | Nature of Misclassification |

|---|---|---|---|

| Fishery Status Reports (FSR) (Overfishing Classification) | 27% (26 of 96 stocks) | 8% (of 59 stocks)* | PSA: Overestimated risk in all 26 cases. SAFE: Overestimated risk in 3%, underestimated in 5% of cases. |

| Tier 1 Quantitative Stock Assessments (18 stocks) | 50% (9 of 18 stocks) | 11% (2 of 18 stocks) | Both PSA and SAFE overestimated risk in all misclassified cases. |

*For SAFE, 59 is the number of stocks compared, not the number misclassified; the rate (8%) is the key metric.

Key Finding: SAFE demonstrated superior accuracy, with misclassification rates significantly lower than PSA. PSA showed a strong tendency toward precaution, overestimating risk in all misclassified cases [7].

Detailed Experimental Protocol

The following methodology was used in the comparative validation study [7]:

1. Data Compilation & Harmonization:

- PSA Data: Sourced from comprehensive Australian fishery assessments (2003-2006) that scored species on productivity (e.g., growth rate, age at maturity) and susceptibility (e.g., encounterability, selectivity) attributes [7].

- SAFE Data: Inputs included species distribution maps, life history parameters (e.g., natural mortality, growth), fishery catch data, and gear efficiency assumptions. Both the base (bSAFE) and enhanced (eSAFE) models were considered [7].

- Benchmark Data: Official Fishery Status Reports (FSR) and detailed, model-based Tier 1 stock assessments were used as validation benchmarks [7].

2. Comparative Analysis Execution:

- Alignment of Outcomes: Risk outcomes from PSA (High/Medium/Low) and SAFE ($F_{\text{SAFE}}$/$F_{\text{lim}}$ ratio) were aligned with the "overfishing" status (Yes/No) from FSR and stock assessments.

- Misclassification Calculation: A misclassification was recorded when the ERA tool (PSA or SAFE) indicated "high risk" but the benchmark indicated "no overfishing" (overestimation), or vice versa (underestimation).

- Statistical Comparison: Misclassification rates were calculated as a percentage of the total comparable stocks to quantify performance.
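
The misclassification calculation described above can be sketched as follows. The function name is hypothetical. The example reproduces the reported PSA pattern against Tier 1 assessments (9 overestimations among 18 stocks); marking the nine agreeing stocks as "no overfishing" is an assumption, since their individual statuses are not given here.

```python
def misclassification_summary(pairs):
    """Summarise over- and underestimation of risk.

    pairs: iterable of (tool_high_risk, benchmark_overfishing) booleans,
    one per stock assessed by both the tool and the benchmark.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("at least one stock is required")
    n = len(pairs)
    # Overestimation: tool says high risk, benchmark says no overfishing.
    over = sum(1 for tool, bench in pairs if tool and not bench)
    # Underestimation: benchmark says overfishing, tool does not flag it.
    under = sum(1 for tool, bench in pairs if bench and not tool)
    return {
        "n": n,
        "overestimated": over / n,
        "underestimated": under / n,
        "misclassified": (over + under) / n,
    }


# 9 overestimations plus 9 agreeing stocks (statuses of the latter assumed).
psa_vs_tier1 = [(True, False)] * 9 + [(False, False)] * 9
summary = misclassification_summary(psa_vs_tier1)
print(summary)
```

Separating the two error types matters because an overestimation triggers unnecessary follow-up work, whereas an underestimation can leave an at-risk stock unnoticed.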

Visualizing Methodological Pathways

Diagram 1: Hierarchical Ecological Risk Assessment (ERAEF) Workflow

Diagram 2: Comparative Risk Calculation Logic in PSA vs. SAFE

Research Toolkit for ERA Methods

Table 3: Key Research Reagent Solutions for ERA Implementation

| Tool/Resource | Primary Function in ERA | Application Note |

|---|---|---|

| ERAEF Framework | Provides the hierarchical structure (SICA → PSA/SAFE → full models) for tiered risk assessment [1]. | Essential for planning and scoping assessments to ensure outcomes align with management needs [4]. |

| Life History Trait Databases | Source of productivity parameters (growth, maturity, fecundity) for PSA scoring and SAFE equations [7]. | Critical for data-poor species. Sources include FishBase, SeaLifeBase, and regional datasets. |

| Spatial Catch & Effort Data | Informs susceptibility in PSA and is a direct input for catch (C) and distribution in SAFE [7]. | Often the most limited data type. Can be sourced from logbooks, observer programs, or VMS. |

| Fishery-Independent Survey Data | Provides estimates of biomass (B) or relative abundance for SAFE and for validating assessments [7]. | Important for calibrating models and reducing uncertainty in risk estimates. |

| Bycatch Reduction Devices (BRDs) | A direct management outcome triggered by high-risk rankings, used to mitigate susceptibility [1]. | The practical implementation of ERA results to reduce fishery impacts on non-target species. |

Productivity and Susceptibility Analysis (PSA) is a semi-quantitative framework developed to assess the vulnerability of marine species to fisheries impacts in data-limited contexts [7]. It functions as a rapid, risk-based screening tool within the broader Ecological Risk Assessment for the Effects of Fishing (ERAEF) framework [1]. By scoring species based on their intrinsic biological productivity (ability to recover) and external susceptibility to a fishery, PSA calculates a relative vulnerability score. This prioritizes species for more detailed assessment or management action [13]. Validation studies comparing PSA with the more quantitative Sustainability Assessment for Fishing Effects (SAFE) method and data-rich stock assessments have provided critical insights into its performance, strengths, and limitations, forming a core component of methodological validation in ecological risk science [7].

Comparative Analysis: PSA vs. SAFE

The selection of an appropriate risk assessment tool depends on data availability, desired resolution, and management objectives. The following table contrasts the core methodologies of PSA and SAFE, two prominent approaches within the ERAEF framework.

Table 1: Methodological Comparison of PSA and SAFE Frameworks [7]

| Aspect | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Core Approach | Semi-quantitative, risk-scoring matrix. | Quantitative, model-based calculation. |

| Data Handling | Converts quantitative data into ordinal risk scores (typically 1-3). | Uses quantitative data as continuous variables in equations. |

| Key Calculation | Vulnerability = $\sqrt{\text{Productivity}^2 + \text{Susceptibility}^2}$, where each axis score is the geometric mean of its attribute scores. | Estimates fishing mortality (F) and compares it to biological reference points. |

| Primary Output | Categorical risk ranking (e.g., Low, Medium, High vulnerability). | Probability of overfishing or estimated depletion level. |

| Design Philosophy | Precautionary, designed to minimize false negatives (missed risks). | Aimed at producing a less precautionary, more quantitative estimate of risk. |

Validation against data-rich assessments reveals significant differences in performance. A formal comparison with Australian Fishery Status Reports (FSR) showed that PSA had a 27% overall misclassification rate (26 stocks), all cases being overestimations of risk. In contrast, SAFE showed an 8% misclassification rate (59 stocks), comprising a 3% overestimation and a 5% underestimation of risk [7]. When validated against fully quantitative Tier 1 stock assessments, PSA's misclassification rate was 50%, while SAFE's was 11% (all overestimations) [7].

The PSA Workflow: A Step-by-Step Guide

The following diagram outlines the logical sequence and decision points in a standard PSA process.

Diagram Title: PSA Workflow and Decision Logic

Step 1: Define the Assessment Scope

Clearly delineate the fishery and species to be assessed. This includes specifying the geographic range, fishing gear(s), and target species. The assessment should also list all bycatch, endangered, threatened, and protected (ETP) species known or likely to interact with the fishery [1]. For example, an assessment of Peruvian coastal groundfish focused on 10 data-poor species caught in small-scale fisheries [13].

Step 2: Assemble Data and Engage Experts

Compile available biological, ecological, and fishery data for each species. Productivity attributes relate to life history (e.g., maximum age, growth rate, natural mortality, fecundity) [7]. Susceptibility attributes relate to the fishery interaction (e.g., spatial/temporal overlap, gear selectivity, post-capture mortality) [7]. In extremely data-poor scenarios, where data quality scores are "limited" to "no data," structured expert judgement becomes essential to fill knowledge gaps and assign scores [13].

Step 3: Select Attributes and Assign Risk Scores

Select a consistent set of attributes for productivity and susceptibility. Each attribute is scored on an ordinal scale, typically from 1 (Low Risk) to 3 (High Risk). The scoring criteria must be defined a priori. For susceptibility, this often involves assessing and integrating risks from multiple fishing gears into a single score per attribute [13].
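
Converting a continuous attribute into an ordinal score can be sketched as below. The cut-offs (5 and 15 years for age at maturity) and the function name `ordinal_score` are illustrative assumptions; an actual assessment would use the criteria defined a priori for that study.

```python
def ordinal_score(value, low_cut, high_cut, higher_is_riskier=True):
    """Map a continuous attribute to an ordinal risk score of 1, 2, or 3."""
    if low_cut >= high_cut:
        raise ValueError("low_cut must be below high_cut")
    if value < low_cut:
        score = 1
    elif value <= high_cut:
        score = 2
    else:
        score = 3
    # For attributes where larger values mean lower risk (e.g. growth rate),
    # flip the scale so that 3 still denotes high risk.
    return score if higher_is_riskier else 4 - score


# Hypothetical ages at maturity (years) scored with assumed cut-offs of 5 and 15.
age_scores = [ordinal_score(age, 5, 15) for age in (3, 6, 14, 20)]
print(age_scores)
```

Species maturing at 6 and at 14 years receive the same score here, which illustrates the loss of resolution that comes with ordinal scoring.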

Step 4: Calculate Composite Scores

For each species:

- Calculate the Productivity (P) score as the geometric mean of all productivity attribute scores.

- Calculate the Susceptibility (S) score as the geometric mean of all susceptibility attribute scores.

- Calculate the overall Vulnerability (V) score using the formula: $V = \sqrt{P^2 + S^2}$ [7].
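
The three calculations above can be sketched as follows. The attribute scores are hypothetical and the function names are introduced only for illustration; the arithmetic follows the formulas as stated in this step.

```python
import math


def geometric_mean(scores):
    """Geometric mean of strictly positive attribute scores."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be non-empty and strictly positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def vulnerability(productivity_scores, susceptibility_scores):
    """Return (P, S, V) with V = sqrt(P^2 + S^2)."""
    p = geometric_mean(productivity_scores)
    s = geometric_mean(susceptibility_scores)
    return p, s, math.sqrt(p**2 + s**2)


# Hypothetical attribute scores for one species (1 = low risk, 3 = high risk).
p, s, v = vulnerability([1, 2, 3], [2, 2])
print(f"P = {p:.2f}, S = {s:.2f}, V = {v:.2f}")
```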

Step 5: Classify Vulnerability and Prioritize Species

Plot species on a scatter plot with P and S axes, or rank them by their V score. Establish thresholds (e.g., V < 1.8 = Low, 1.8 – 2.2 = Medium, > 2.2 = High vulnerability) to categorize risk [13]. Species with high vulnerability scores become priorities for further research, monitoring, or immediate management intervention. In the Peruvian case, four species (e.g., broomtail grouper, V=2.57) were flagged with extremely high vulnerability [13].
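
The classification and ranking step can be sketched as below. The default cut-offs are the example thresholds quoted above; because cut-offs depend on the scoring convention a study adopts, they are exposed as parameters. Apart from the broomtail grouper score, the species names and values are hypothetical.

```python
def classify_vulnerability(v, low_cut=1.8, high_cut=2.2):
    """Label a vulnerability score as Low, Medium, or High."""
    if v < low_cut:
        return "Low"
    if v > high_cut:
        return "High"
    return "Medium"


# Broomtail grouper score from the Peruvian example; other entries are invented.
scores = {"broomtail grouper": 2.57, "species_x": 2.0, "species_y": 1.5}

# Rank species from most to least vulnerable and attach a category.
ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
for name, v in ranked:
    print(f"{name}: V = {v:.2f} ({classify_vulnerability(v)})")
```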

Step 6: Reporting and Management Integration

Document all assumptions, data sources, expert inputs, and scoring rationales. The final report should clearly list prioritized species and recommend subsequent actions, such as:

- Implementing bycatch reduction devices (BRDs) for high-vulnerability species [1].

- Initiating fishery-independent monitoring for data-poor, high-risk stocks.

- Triggering more quantitative assessments (like SAFE or stock assessment) where feasible [7].

Experimental Protocols: Validation of PSA vs. SAFE

The critical validation study by Zhou et al. (2016) provides a template for comparing and testing ecological risk assessment methods [7].

Objective: To compare the risk classifications of the PSA and SAFE tools against each other and against benchmark classifications from data-rich assessments.

Data Sources:

- Historical PSA and SAFE assessment outputs for multiple Australian Commonwealth fisheries.

- Stock status classifications from the official Fishery Status Reports (FSR), which use weight-of-evidence approaches [7].

- Results from fully quantitative stock assessments (Tier 1) for a subset of species [7].

Methodology:

- Alignment of Classifications: Harmonize the risk/output categories from PSA (Low/Medium/High vulnerability) and SAFE (e.g., probability of overfishing) with the FSR's "overfishing" status (Yes/No) and stock assessment biomass reference points.

- Comparison Analysis: For each species assessed by both a tool (PSA or SAFE) and a benchmark (FSR or stock assessment), record whether the tool correctly identified the stock as being "not at risk" or "at risk" of overfishing.

- Misclassification Metrics: Calculate the overall misclassification rate. Further categorize misclassifications as Type I (False Positive/Overestimation of risk) or Type II (False Negative/Underestimation of risk). This is crucial for understanding the precautionary nature of each tool.

Key Validation Result: The study found that PSA acted as a highly precautionary screen, overestimating risk in 27% of cases compared to FSR and 50% compared to Tier 1 assessments. SAFE showed greater alignment with benchmarks, with a lower misclassification rate (8% vs. FSR; 11% vs. Tier 1) and a more balanced error type [7].

Table 2: Essential Research Toolkit for Conducting a PSA

| Tool / Resource | Function in PSA | Notes & Examples |

|---|---|---|

| Life History Databases | Provide default values for scoring productivity attributes for poorly studied species. | FishBase, SeaLifeBase. Essential for data-poor contexts [13]. |

| Fishery Logbook & Observer Data | Informs susceptibility scoring for spatial overlap, seasonality, and gear encounter rates. | Critical for multi-gear assessments. Often requires integration and standardization [13]. |

| Structured Expert Elicitation Protocols | Formalizes the use of expert judgment to fill data gaps and assign scores. | Mitigates bias. Protocols (e.g., Delphi method) are vital when data is "limited" or "none" [13]. |

| Geographic Information System (GIS) | Analyzes spatial overlap between species distributions and fishing effort. | Key for scoring spatial availability, a core susceptibility attribute. |

| PSA Software/Worksheet | Standardizes the calculation of geometric mean scores and final vulnerability. | Ensures consistency. Can range from custom spreadsheets to dedicated scripts (e.g., in R). |

| Reference Threshold Guidelines | Provides pre-established scoring criteria and vulnerability cut-off values. | Enables cross-study comparison. For example, vulnerability scores >2.2 indicate high risk [13]. |

Within the framework of Ecosystem-Based Fisheries Management (EBFM), Ecological Risk Assessment for the Effects of Fishing (ERAEF) provides a hierarchical approach for evaluating fishing impacts, particularly for data-poor species [1]. Two primary tools within this toolbox are the Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE) [7]. This guide is framed within a critical research thesis focused on the comparison and validation of these semi-quantitative risk assessment methods against data-rich benchmarks. Recent global assessments indicate that while 64.5% of marine fish stocks are fished within biologically sustainable levels, significant challenges persist, underscoring the need for reliable screening tools [14]. Validation studies reveal fundamental differences in performance: PSA operates as a precautionary, qualitative screening tool, often overestimating risk, while SAFE functions as a more quantitative estimator that better approximates the outcomes of full stock assessments [7]. This guide details the step-by-step execution of SAFE, objectively contrasts it with PSA, and presents empirical validation data to inform researchers and fishery managers.

Methodological Comparison: PSA vs. SAFE

PSA and SAFE were both developed to assess risks to bycatch and data-poor species but diverge significantly in their approach to data, computation, and output [7].

Table 1: Core Methodological Comparison between PSA and SAFE

| Aspect | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |

|---|---|---|

| Primary Design Purpose | Precautionary qualitative screening and priority setting [7]. | Quantitative estimation of sustainability metrics and risk [7]. |

| Data Treatment | Converts quantitative inputs (e.g., growth rate) into ordinal ranks (e.g., 1-3) [7]. | Uses quantitative data as continuous variables in equations [7]. |

| Risk Calculation | Matrix-based combination of Productivity and Susceptibility scores [1]. | Population model calculating F/Fmsy or B/Bmsy via a catch equation [7]. |

| Key Output | Vulnerability rank (Low, Medium, High) [1]. | Quantitative estimate of fishing mortality relative to reference points [7]. |

| Typical Application | Rapid assessment of a large number of species with minimal data [1]. | Detailed assessment for prioritized species with some life-history and catch data [7]. |
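The "Data Treatment" row can be illustrated with a small sketch. It assumes a hypothetical pair of cut points for the growth coefficient K and an arbitrary convention that rank increases with K; neither is taken from the source or from any published PSA scoring table.

```python
def ordinal_rank(k, cutpoints=(0.15, 0.30)):
    """Map a continuous growth coefficient K onto a 1-3 rank.

    The cut points are hypothetical placeholders, not published PSA criteria.
    """
    low, high = cutpoints
    if k < low:
        return 1
    if k < high:
        return 2
    return 3


# Three hypothetical species with different growth coefficients.
growth_k = {"species_a": 0.16, "species_b": 0.29, "species_c": 0.31}

# PSA-style treatment: continuous values are collapsed into ranks.
ranks = {name: ordinal_rank(k) for name, k in growth_k.items()}

# SAFE-style treatment: the continuous values are used unchanged.
continuous = dict(growth_k)

for name in growth_k:
    print(f"{name}: K = {continuous[name]:.2f}, rank = {ranks[name]}")
```

Under these assumed cut points, species_a and species_b receive the same rank despite a large difference in K, while species_b and species_c are separated by a small one. This is the kind of information loss that ranking introduces and that continuous treatment avoids.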

The fundamental distinction lies in data treatment. PSA simplifies information for broad screening, while SAFE retains numerical precision for estimation. This leads to measurable differences in validation performance, as shown in Table 2.

Table 2: Validation Performance against Benchmark Assessments [7]

| Validation Benchmark | Number of Stocks | PSA Misclassification Rate | SAFE Misclassification Rate | Notes |

|---|---|---|---|---|

| Fishery Status Reports (FSR) | 59 | 27% (16 stocks) | 8% (5 stocks) | PSA overestimated risk in all misclassified cases. SAFE errors were mixed (3% over, 5% under). |

| Tier 1 Quantitative Stock Assessments | 18 | 50% (9 stocks) | 11% (2 stocks) | All misclassifications by both methods were overestimates of risk. |
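The percentages in Table 2 follow directly from the stock counts. The short sketch below recomputes them; the helper name is hypothetical and the counts are copied from the table.

```python
def misclassification_rate(n_misclassified, n_stocks):
    """Share of stocks whose risk class disagrees with the benchmark."""
    if n_stocks <= 0:
        raise ValueError("n_stocks must be positive")
    return n_misclassified / n_stocks


# Counts copied from Table 2.
table2 = {
    "FSR": {"n": 59, "PSA": 16, "SAFE": 5},
    "Tier 1": {"n": 18, "PSA": 9, "SAFE": 2},
}

rates = {
    (benchmark, method): misclassification_rate(row[method], row["n"])
    for benchmark, row in table2.items()
    for method in ("PSA", "SAFE")
}

for (benchmark, method), rate in rates.items():
    print(f"{benchmark:7s} {method:4s} {rate:.0%}")
```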

Step-by-Step SAFE Workflow Protocol

SAFE estimates the ratio of fishing mortality (F) to the fishing mortality rate that produces maximum sustainable yield (Fmsy). Two primary versions exist: the base SAFE (bSAFE) for common application and the enhanced SAFE (eSAFE) for more data-rich scenarios [7].

Phase 1: Data Compilation and Preparation

Step 1: Define the Stock and Fishery Scope

Identify the species (or stock) and the specific fishery or fisheries affecting it. Document gear types, fishing seasons, and spatial effort distribution.

Step 2: Collate Life-History Parameters

Gather species-specific biological data:

- Natural Mortality (M): The instantaneous rate of natural death. Estimated from longevity, growth parameters, or empirical relationships.

- Von Bertalanffy Growth Parameters (L∞, K): Describe the species' growth pattern.

- Length at Maturity (Lm50): The size at which 50% of the population is mature.

- Length-Weight Relationship (a, b): Converts length data to biomass.
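The parameters listed above feed a few standard relationships, sketched below. The von Bertalanffy growth function and the length-weight power law are standard forms. The longevity-based estimator of M uses the coefficients published by Then et al. (2015) and is shown only as one example of an "empirical relationship"; the source does not say which estimator it uses. All numeric inputs are hypothetical.

```python
import math


def vb_length(age, l_inf, k, t0=0.0):
    """Von Bertalanffy length at age: L(t) = L_inf * (1 - exp(-K * (t - t0)))."""
    return l_inf * (1.0 - math.exp(-k * (age - t0)))


def weight_from_length(length, a, b):
    """Length-weight relationship: W = a * L**b."""
    return a * length ** b


def natural_mortality_from_longevity(t_max):
    """Longevity-based M (Then et al. 2015): M = 4.899 * t_max**-0.916.

    One of several empirical estimators; not necessarily the source's choice.
    """
    return 4.899 * t_max ** -0.916


# Hypothetical parameter values for illustration only.
l_inf_cm, k_per_year, t_max_years = 60.0, 0.2, 15.0
a, b = 0.01, 3.0

length_at_5 = vb_length(5.0, l_inf_cm, k_per_year)
weight_at_5 = weight_from_length(length_at_5, a, b)
m = natural_mortality_from_longevity(t_max_years)

print(f"Length at age 5: {length_at_5:.1f} cm")
print(f"Weight at age 5: {weight_at_5:.0f} g")
print(f"Natural mortality M: {m:.2f} per year")
```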

Step 3: Assemble Fishery Interaction Data

- Catch Data: Total annual removals (landings + discards) for the species.

- Spatial Overlap: The proportion of the species' distribution that overlaps with the fishery footprint.

- Gear Selectivity/Retention: The probability of being captured and retained given encounter, often inferred from body shape and size [7].

Phase 2: Model Parameterization and Calculation

Step 4: Estimate Fmsy

Fmsy is calculated using the life-history parameters compiled in Step 2. A standard approximation is Fmsy ≈ 0.8 * M for teleost fish, though more species-specific methods can be applied.

Step 5: Apply the SAFE Catch Equation (bSAFE Protocol)

The core bSAFE model estimates the fishing mortality rate (F) required to explain the observed catch [7].

Catch = F * (Spatial Overlap) * (Gear Efficiency) * Biomass

Where:

- Biomass is estimated based on assumed unfished biomass and life-history traits.

- Gear Efficiency (catchability, q) is typically assigned a fixed value (e.g., 0.33, 0.67, 1.0) based on the species' body size and morphology relative to the gear [7].

The equation is solved for F, and the ratio F/Fmsy is calculated.
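Steps 4 and 5 can be sketched as follows. This is a minimal illustration of rearranging the catch equation as written in this guide, not a reproduction of any published SAFE code. The function names and all input values are hypothetical, and biomass is simply assumed to be known.

```python
def estimate_fmsy(natural_mortality, multiplier=0.8):
    """Step 4 approximation from the text: Fmsy ~ 0.8 * M for teleosts."""
    return multiplier * natural_mortality


def solve_fishing_mortality(catch, biomass, spatial_overlap, gear_efficiency):
    """Step 5: rearrange Catch = F * overlap * q * Biomass to isolate F."""
    exposed_biomass = spatial_overlap * gear_efficiency * biomass
    if exposed_biomass <= 0:
        raise ValueError("overlap, gear efficiency and biomass must be positive")
    return catch / exposed_biomass


# Hypothetical inputs for a single bycatch species (not from the source).
catch_t = 40.0      # annual removals (landings + discards), tonnes
biomass_t = 1000.0  # assumed biomass, tonnes
overlap = 0.5       # proportion of the distribution inside the fishery footprint
q = 0.67            # fixed gear-efficiency value of the kind bSAFE assigns
m = 0.3             # natural mortality, per year

f = solve_fishing_mortality(catch_t, biomass_t, overlap, q)
fmsy = estimate_fmsy(m)
ratio = f / fmsy

print(f"F = {f:.3f}, Fmsy = {fmsy:.3f}, F/Fmsy = {ratio:.2f}")
```

With these assumed inputs the ratio is about 0.5, which Step 7 would read as fishing mortality below the reference point.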

Step 6: Refine with eSAFE (if data permits)

The eSAFE protocol relaxes key bSAFE assumptions [7]:

- It models non-uniform fish distribution (density gradients) instead of assuming homogeneous spatial overlap.

- It estimates species- and gear-specific catch efficiency (q) from available data rather than using fixed values. This requires more detailed data on relative abundance distribution and gear performance.
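The first eSAFE refinement can be illustrated by comparing an area-based overlap with a density-weighted one. The sketch below is one simple way to express the idea, assuming a gridded relative-density layer and a fished/unfished flag per cell; the published eSAFE formulation may differ, and all values are hypothetical.

```python
def uniform_overlap(fished):
    """Area-based overlap: share of occupied cells that are fished."""
    return sum(1 for is_fished in fished if is_fished) / len(fished)


def density_weighted_overlap(density, fished):
    """Share of relative abundance found in fished cells."""
    total = sum(density)
    if total <= 0:
        raise ValueError("total density must be positive")
    exposed = sum(d for d, is_fished in zip(density, fished) if is_fished)
    return exposed / total


# Hypothetical grid: four cells inside the species' range.
density = [10.0, 5.0, 1.0, 4.0]       # relative abundance per cell
fished = [True, False, False, False]  # whether fishing effort occurs in the cell

print(f"Uniform overlap:          {uniform_overlap(fished):.2f}")
print(f"Density-weighted overlap: {density_weighted_overlap(density, fished):.2f}")
```

Here effort covers a quarter of the occupied cells but half of the relative abundance, so assuming a homogeneous distribution would understate exposure in this hypothetical case. The opposite can occur when effort falls in low-density cells.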

Phase 3: Risk Classification and Reporting

Step 7: Interpret F/Fmsy Ratio

- F/Fmsy < 1.0: Fishing mortality is below the target reference point (sustainable).

- F/Fmsy ≥ 1.0: Fishing mortality is at or above the target reference point (potential overfishing).

Step 8: Conduct Sensitivity Analysis

Test the robustness of the F/Fmsy estimate by varying key uncertain inputs (e.g., natural mortality M, spatial overlap, gear efficiency) within plausible ranges.
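One way to carry out this step is a simple Monte Carlo draw over the uncertain inputs, sketched below. The uniform distributions, the ranges, and the fixed catch and biomass are all assumptions made for illustration; the source does not prescribe a particular sampling scheme.

```python
import random


def f_over_fmsy(catch, biomass, overlap, q, m, fmsy_multiplier=0.8):
    """F/Fmsy from the catch equation and the Fmsy ~ 0.8 * M approximation."""
    f = catch / (overlap * q * biomass)
    return f / (fmsy_multiplier * m)


def monte_carlo_ratios(n_draws, ranges, catch, biomass, seed=1):
    """Draw uncertain inputs uniformly within their ranges; return sorted ratios."""
    rng = random.Random(seed)
    ratios = []
    for _ in range(n_draws):
        m = rng.uniform(*ranges["m"])
        overlap = rng.uniform(*ranges["overlap"])
        q = rng.uniform(*ranges["q"])
        ratios.append(f_over_fmsy(catch, biomass, overlap, q, m))
    ratios.sort()
    return ratios


def quantile(sorted_values, p):
    """Nearest-rank style quantile on an already sorted list."""
    index = min(len(sorted_values) - 1, int(p * len(sorted_values)))
    return sorted_values[index]


# Hypothetical plausible ranges for the uncertain inputs.
ranges = {"m": (0.2, 0.4), "overlap": (0.3, 0.7), "q": (0.33, 1.0)}
ratios = monte_carlo_ratios(5000, ranges, catch=40.0, biomass=1000.0)

low, median, high = (quantile(ratios, p) for p in (0.05, 0.5, 0.95))
share_above_one = sum(1 for r in ratios if r >= 1.0) / len(ratios)

print(f"F/Fmsy 5%-50%-95%: {low:.2f} / {median:.2f} / {high:.2f}")
print(f"Share of draws with F/Fmsy >= 1: {share_above_one:.1%}")
```

The share of draws at or above 1.0 gives a rough indication of how sensitive the classification is to the input ranges. It is not a calibrated probability, because the input distributions here are assumed.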

Step 9: Report and Contextualize Findings

Present the central F/Fmsy estimate, its uncertainty range, and a clear risk classification. Prioritize species where F/Fmsy ≥ 1.0 for further, more detailed assessment or management action.
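Steps 7 and 9 amount to a thresholding and ranking exercise, sketched below. The species names, the ratio values, and the report layout are hypothetical; only the 1.0 threshold comes from the text.

```python
def classify(ratio, threshold=1.0):
    """Step 7 rule: at or above the threshold indicates potential overfishing."""
    return "potential overfishing" if ratio >= threshold else "sustainable"


def build_report(estimates, threshold=1.0):
    """Build one row per species, sorted from highest to lowest central F/Fmsy."""
    rows = []
    for species, (low, central, high) in estimates.items():
        rows.append({
            "species": species,
            "f_over_fmsy": central,
            "range": (low, high),
            "classification": classify(central, threshold),
            # Flags cases where the uncertainty range crosses the threshold.
            "range_spans_threshold": low < threshold <= high,
        })
    rows.sort(key=lambda row: row["f_over_fmsy"], reverse=True)
    return rows


# Hypothetical (low, central, high) F/Fmsy estimates.
estimates = {
    "species_a": (0.30, 0.50, 0.90),
    "species_b": (0.80, 1.20, 1.90),
    "species_c": (0.70, 0.95, 1.30),
}

report = build_report(estimates)
priority = [
    row["species"] for row in report
    if row["classification"] == "potential overfishing"
]

for row in report:
    print(row)
print("Priority for further assessment:", priority)
```

Reporting whether the range spans the threshold is a design choice for this sketch. It highlights species such as the hypothetical species_c, whose central estimate is below 1.0 but whose upper bound is not.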

Diagram Title: SAFE Ecological Risk Assessment Workflow

Validation Studies and Comparative Accuracy

The validation of ERA tools against data-rich benchmarks is a core component of methodological research [7]. The primary findings, summarized in Table 2, demonstrate SAFE's superior quantitative accuracy.

Comparison with Fishery Status Reports (FSR): For 59 stocks, SAFE's misclassification rate (8%) was substantially lower than PSA's (27%) [7]. All of PSA's errors were false positives (overestimating risk), aligning with its precautionary design. SAFE produced a more balanced error profile.

Comparison with Tier 1 Stock Assessments: In a stricter test against full quantitative assessments for 18 stocks, SAFE again clearly outperformed PSA, with misclassification rates of 11% and 50%, respectively [7]. Both tools overestimated risk in mismatched cases, but PSA's ordinal, rank-based approach showed much lower concordance with model-based outputs.

This relationship can be visualized as a continuum of assessment methods, from qualitative to fully quantitative, with their corresponding accuracy.

Diagram Title: ERA Method Continuum and Relative Accuracy

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents, Software, and Data Sources for SAFE Implementation

| Tool Category | Specific Item / Software / Source | Primary Function in SAFE/ERA Research |

|---|---|---|

| Biological Data Repositories | FishBase, SeaLifeBase | Source for standardized life-history parameters (M, growth, maturity) [7]. |

| Fishery Data Sources | Fishery logbooks, observer programs, FAO catch databases [14] | Provide catch/effort data and species interaction records for parameterizing the catch equation. |

| Spatial Analysis Tools | GIS Software (e.g., QGIS, ArcGIS), R packages (sf, raster) | Calculate spatial overlap between species distribution (from surveys or models) and fishing effort layers. |

| Statistical & Modeling Software | R, Python (with pandas, numpy), AD Model Builder | Core platform for coding the SAFE catch equation, solving for F, conducting sensitivity analyses, and visualization. |

| Validation Benchmarks | FAO Stock Status Reports [14], Regional Fishery Management Organization (RFMO) assessments, Published Tier 1 stock assessments [7] | Provide "gold standard" data for validating and calibrating SAFE outputs (e.g., F/Fmsy comparisons). |

| Specialized ERA Packages | R packages psa, datalimited2 (potential developments) | Provide pre-built functions for PSA and related data-limited assessment methods (note: a dedicated, peer-reviewed SAFE package is not yet standard). |

| High-Performance Computing (HPC) | Cluster or cloud computing resources | Facilitate large-scale sensitivity analyses, bootstrapping of uncertainty, and application of SAFE to hundreds of species in an ecosystem context. |

For researchers and managers selecting an ERA method, the choice between PSA and SAFE should be guided by objective, validation-backed criteria. PSA is optimal for initial, precautionary triage of a large number of data-poor species, as demonstrated in the Amazon trawl fishery assessment where it categorized 12 of 47 bycatch species as high vulnerability [1]. SAFE is the superior tool for quantitative risk estimation when the objective is to approximate stock assessment outcomes and prioritize management interventions with greater accuracy, as evidenced by its lower misclassification rates [7].

Future advancements in SAFE and similar tools are likely to integrate emerging techniques. For instance, machine learning models that analyze dynamical footprints of population time series to predict abrupt shifts [15] could be incorporated to refine reference points or risk classifications. Furthermore, frameworks integrating social metrics like secure tenure rights and co-management—increasingly recognized as critical for sustainability—could be combined with SAFE's biological outputs for a more holistic assessment [16]. Implementation should begin with a clear objective: use PSA for broad screening and SAFE for focused, quantitative evaluation of prioritized species to effectively bridge the gap between data-poor screening and sustainable fishery management [14].

This guide provides a comparative analysis of methodological frameworks for assessing ecological risk, focusing on the validation of traditional Probabilistic Safety Assessment (PSA) against emerging data-intensive approaches. The analysis is grounded in a contemporary case study of bycatch in northeastern U.S. trawl fisheries, which utilizes machine learning (ML) to analyze spatio-temporal patterns [17]. The core thesis examines how validation principles from established PSA—emphasizing predictive accuracy, uncertainty quantification, and bias assessment—can inform and elevate emerging ecological risk methodologies. Key findings indicate that while PSA offers a robust, structured framework for risk quantification (e.g., via event and fault trees), ML-based ecological assessments provide superior capabilities in handling complex, high-dimensional datasets to identify novel risk drivers [17]. However, the ecological methods often lack the standardized validation protocols, particularly for uncertainty and equity, that are hallmarks of mature PSA applications [18] [19]. The integration of PSA's rigorous validation paradigms with the predictive power of ecological ML models represents the most promising path forward for robust environmental risk assessment.

The incidental capture of non-target species, or bycatch, in trawl fisheries is a profound ecological and economic challenge, impacting marine biodiversity and fishery sustainability [17]. Assessing and mitigating this risk requires robust analytical frameworks. Traditionally, Probabilistic Risk Assessment (PRA or PSA) has been the gold standard in high-consequence industries like nuclear energy, providing a structured approach to quantifying the likelihood and impact of adverse events [20]. In parallel, ecological research has developed methodologies like Integrated Safety Analysis (ISA) and, more recently, data-driven machine learning models [17] [21].

This guide performs a comparative analysis, using a detailed 2023 bycatch study [17] as a test case to evaluate the performance of a modern, ML-based ecological assessment against the validation tenets of PSA. The core investigation is whether emerging ecological methods meet the rigorous validation standards—such as predictive accuracy, uncertainty treatment, and bias evaluation—that are well-established in PSA validation research [18] [19].

Comparative Analysis of Methodological Performance

The table below contrasts the core attributes, strengths, and limitations of PSA and the ML-based ecological assessment as applied to the bycatch case study.

Table 1: Methodology Comparison: PSA vs. ML-Based Ecological Assessment (Bycatch Case Study)

| Aspect | Probabilistic Safety Assessment (PSA) | ML-Based Ecological Assessment (Bycatch Case Study) |

|---|---|---|

| Primary Objective | Quantify risk metrics (e.g., frequency of core damage) to inform safety decisions [20]. | Describe and predict patterns of bycatch magnitude and species richness [17]. |