Protein Sequence Similarity and Susceptibility Prediction: From Foundations to Clinical Applications

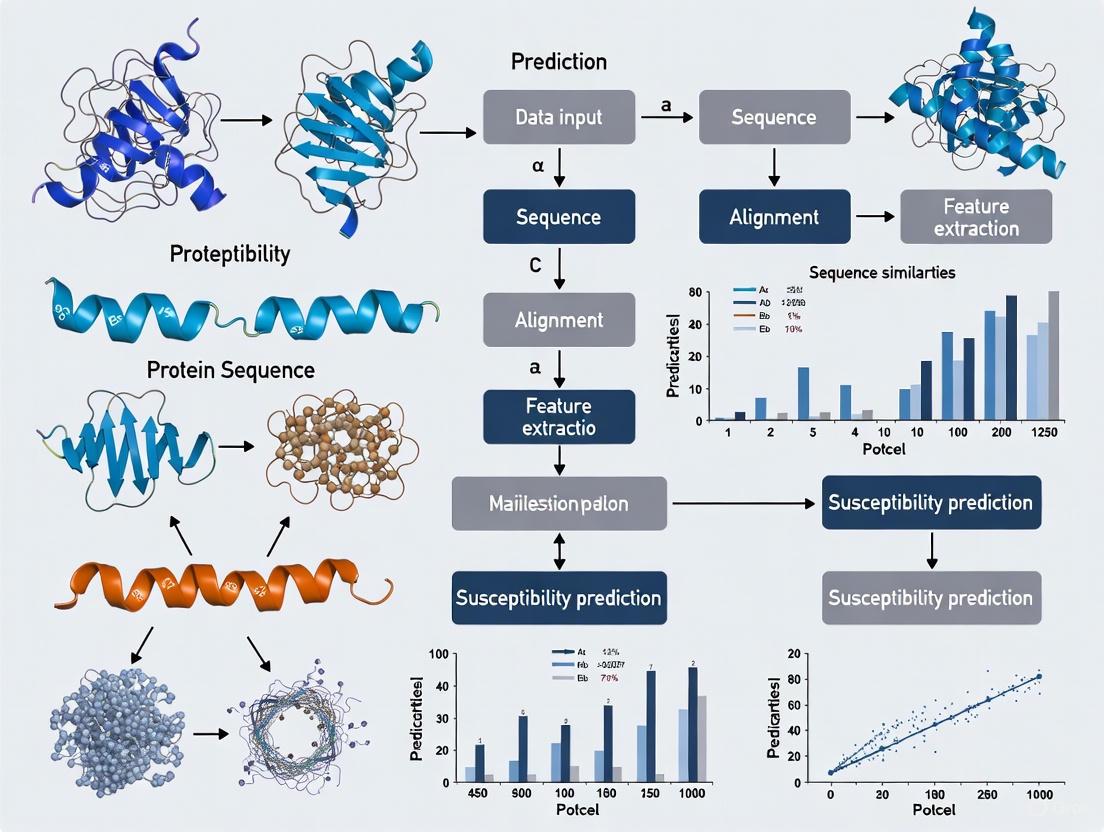

Predicting molecular susceptibility from protein sequences is a cornerstone of modern bioinformatics, crucial for understanding genetic diseases and accelerating drug discovery.

Abstract

Predicting molecular susceptibility from protein sequences is a cornerstone of modern bioinformatics, crucial for understanding genetic diseases and accelerating drug discovery. This article provides a comprehensive resource for researchers and drug development professionals, exploring the foundational principles that link sequence to function and stability. It details cutting-edge computational methodologies, from traditional alignment-based tools to advanced deep learning and protein language models. The content further addresses critical challenges like data variability and performance bias, offering optimization strategies. Finally, it establishes a framework for the rigorous validation and comparative analysis of prediction tools, highlighting their transformative potential in enabling precision medicine approaches for cancer and neurodegenerative disorders.

The Fundamental Link: How Protein Sequence Dictates Stability and Function

The stability of a folded protein is governed by the Gibbs free energy of folding (ΔGfolding), the free energy difference between the folded and unfolded states. A negative ΔGfolding indicates a stable, folded protein. When a mutation is introduced, the resulting change in stability is quantified as ΔΔG (Delta Delta G), defined as the difference in ΔGfolding between the mutant and wild-type protein (ΔΔG = ΔGmutant - ΔGwild-type) [1] [2]. Under this convention, a positive ΔΔG means the mutation destabilizes the protein. This metric is crucial for predicting whether a point mutation will be favorable for protein stability and has profound implications for understanding genetic diseases, protein engineering, and drug development [1] [3].

The calculation of ΔΔG is biophysically antisymmetric: the ΔΔG value for a direct mutation (A → B) should be the exact negative of the value for the reverse mutation (B → A) [4]. However, many computational methods fail to preserve this fundamental property [1]. This guide provides a comparative analysis of major ΔΔG prediction methods, their underlying principles, performance metrics, and experimental validation protocols to inform researchers working on protein sequence similarity and susceptibility prediction.
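The antisymmetry property can be checked numerically for any predictor. The sketch below is a minimal illustration, not taken from any of the cited tools: it computes the correlation between paired direct and inverse predictions and their average bias. The function names and the example values are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def antisymmetry_metrics(direct, inverse):
    """Return (r, bias) for paired direct (A->B) and inverse (B->A) predictions.

    A perfectly antisymmetric predictor gives r = -1 and bias = 0.
    """
    r = pearson(direct, inverse)
    bias = sum(d + i for d, i in zip(direct, inverse)) / (2 * len(direct))
    return r, bias


# Hypothetical predictions in kcal/mol, for illustration only.
direct = [1.2, -0.4, 2.5, 0.3]      # predicted ΔΔG for A -> B
inverse = [-1.0, 0.6, -2.1, -0.5]   # predicted ΔΔG for B -> A
r, bias = antisymmetry_metrics(direct, inverse)
print(f"r = {r:.3f}, bias = {bias:.3f} kcal/mol")
```

A correlation close to -1 together with a bias close to 0 suggests that a method respects antisymmetry on the tested pairs.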

Comparative Analysis of ΔΔG Prediction Methods

Table 1: Comparison of Key ΔΔG Prediction Methods

| Method | Input Requirements | Underlying Principle | Performance (Correlation) | Key Features |

|---|---|---|---|---|

| DDGun/DDGun3D [1] | Sequence (DDGun) or Sequence+Structure (DDGun3D) | Untrained linear combination of evolutionary features | 0.45-0.49 (Pearson's r) | Naturally antisymmetric; handles single & multiple mutations |

| Rosetta cartesian_ddg [5] | Protein structure | Physical force fields & statistical potentials | ~0.73 (Pearson's r on experimental structures) | Robust on homology models (>40% sequence identity) |

| Rosetta ddg_monomer [2] | Protein structure | Optimization with repulsion term weighting & backbone minimization | Strong correlation to experimental ΔΔG | Uses 50 repeats; averages best 3 structures |

| FoldX [5] | Protein structure | Empirical force field combining physical & statistical terms | Comparable to Rosetta on experimental structures | Performance drops with lower template identity |

Table 2: Performance on Homology Models with Varying Sequence Identity

| Sequence Identity to Template | Expected Model Quality | Recommended Method | Performance Trend |

|---|---|---|---|

| >70% | High (1-2 Å RMSD) | Any structure-based method | Minimal performance loss |

| 40-70% | Medium | Rosetta cartesian_ddg | Robust performance |

| <40% ("Twilight Zone") | Low, different structures/functions | Sequence-based methods (DDGun) | Significant performance degradation |
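The guidance in Table 2 can be expressed as a small lookup. This is only a sketch of the table's thresholds; the function name is hypothetical, and placing exactly 70% in the 40-70% bin is an assumption, since the table does not say which bin a boundary value belongs to.

```python
def recommend_ddg_method(seq_identity_pct):
    """Map template sequence identity (percent) to the method suggested in Table 2."""
    if not 0 <= seq_identity_pct <= 100:
        raise ValueError("sequence identity must be between 0 and 100")
    if seq_identity_pct > 70:
        return "any structure-based method"
    if seq_identity_pct >= 40:
        return "Rosetta cartesian_ddg"
    # Below 40% identity, homology models become unreliable.
    return "sequence-based method (e.g. DDGun)"


for identity in (85, 55, 25):
    print(identity, "->", recommend_ddg_method(identity))
```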

Methodologies and Experimental Protocols

DDGun: An Untrained Evolutionary Approach

DDGun predicts ΔΔG through a linear combination of sequence-derived evolutionary features without training on experimental ΔΔG datasets, avoiding overfitting [1]. The method incorporates three core evolutionary scores:

- BLOSUM62 difference (sBl): Captures the difference in evolutionary conservation between wild-type and variant residues [1].

- Skolnick statistical potential (sSk): Quantifies the difference in interaction energy within a 2-residue sequence window [1].

- Hydrophobicity difference (sHp): Calculates the difference in hydrophobicity using the Kyte-Doolittle scale [1].

Each score is weighted by the sequence profile derived from multiple sequence alignments. The structure-based version (DDGun3D) adds a fourth score based on the Bastolla-Vendruscolo statistical potential, which captures the variation of the structural environment within a 5 Å radius [1]. DDGun3D also applies a solvent accessibility modulation factor (1.1 - ac), where ac is the relative solvent accessibility of the mutated residue, to account for reduced mutation effects at exposed residues [1].
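To make one of these terms concrete, the sketch below computes an unweighted Kyte-Doolittle hydrophobicity difference and the (1.1 - ac) accessibility factor. It is a simplified illustration rather than DDGun's implementation: the profile weighting is omitted, the sign convention (mutant minus wild-type) is an assumption, and the function names are hypothetical.

```python
# Kyte-Doolittle hydropathy values for the 20 standard amino acids.
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def hydrophobicity_difference(wild_type, mutant):
    """Unweighted hydropathy change (assumed convention: mutant minus wild-type)."""
    return KYTE_DOOLITTLE[mutant] - KYTE_DOOLITTLE[wild_type]


def accessibility_factor(ac):
    """Modulation factor (1.1 - ac) for a relative solvent accessibility in [0, 1]."""
    if not 0.0 <= ac <= 1.0:
        raise ValueError("relative solvent accessibility must be in [0, 1]")
    return 1.1 - ac


# A buried isoleucine replaced by aspartate: large hydropathy loss, little damping.
score = hydrophobicity_difference("I", "D") * accessibility_factor(0.05)
print(f"modulated hydrophobicity score: {score:.2f}")
```

Because the difference changes sign when wild-type and mutant are swapped, a score built this way is antisymmetric by construction.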

For multiple site variants, DDGun employs a unique combinatorial approach: ΔΔGmultiple = min(ΔΔGsingle) + max(ΔΔGsingle) - mean(ΔΔGsingle), hypothesizing that minimum and maximum values most significantly affect the combined ΔΔG [1].
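The combination rule above translates directly into code. The sketch below applies it to a list of single-site predictions; the function name and the example values are illustrative.

```python
def combine_multiple_site_ddg(single_ddgs):
    """Combine single-site ΔΔG predictions as min + max - mean."""
    if not single_ddgs:
        raise ValueError("at least one single-site ΔΔG value is required")
    mean = sum(single_ddgs) / len(single_ddgs)
    return min(single_ddgs) + max(single_ddgs) - mean


# Hypothetical single-site predictions (kcal/mol) for a triple mutant.
print(combine_multiple_site_ddg([1.0, -0.5, 2.0]))
```

For a single mutation the rule reduces to the single-site value, since the minimum, maximum, and mean coincide.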

Rosetta DDG Protocol

The Rosetta ddg_monomer protocol employs a sophisticated conformational sampling approach [2]. The methodology involves:

- Side chain repacking: Initial optimization of side chain conformations around the mutation site.

- Gradient-based minimization: Performed three times with increasing repulsion term weights (10%, 33%, 100%) to sample through unfavorable transition states.

- Backbone flexibility: Allows minor backbone changes during optimization to accommodate the mutation.

- Statistical analysis: Runs 50 repeats from the same original structure and averages the score of the best three structures [2].

This protocol enables thorough sampling of nearby conformations to identify the optimal energy minimum for both wild-type and mutant structures.
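The final averaging step can be sketched as follows. This is a post-processing illustration only and does not call Rosetta: it assumes the per-repeat total scores have already been collected, that lower scores are more favorable, and that ΔΔG is reported as mutant minus wild-type. The function names and numbers are hypothetical.

```python
def mean_of_best(scores, n_best=3):
    """Average the n_best lowest (most favorable) scores."""
    best = sorted(scores)[:n_best]
    return sum(best) / len(best)


def ddg_from_repeats(wild_type_scores, mutant_scores, n_best=3):
    """Estimate ΔΔG as mean(best mutant scores) - mean(best wild-type scores)."""
    return mean_of_best(mutant_scores, n_best) - mean_of_best(wild_type_scores, n_best)


# Hypothetical scores from a handful of repeats (the protocol uses 50).
wild_type = [-250.0, -251.0, -249.5, -252.0, -248.0]
mutant = [-247.0, -249.0, -248.5, -246.0, -248.0]
print(f"estimated ΔΔG: {ddg_from_repeats(wild_type, mutant):.2f} score units")
```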

Experimental Validation: cDNA Display Proteolysis

Recent advances in high-throughput experimental methods have enabled massive-scale validation of computational ΔΔG predictions. The cDNA display proteolysis method can measure thermodynamic folding stability for up to 900,000 protein domains in a single experiment [6]. The protocol involves:

- DNA library preparation: Synthetic oligonucleotides encoding test protein variants.

- Cell-free translation: Using cDNA display to produce proteins covalently attached to their encoding cDNA.

- Protease incubation: Treatment with different concentrations of trypsin or chymotrypsin.

- Pull-down and sequencing: Isolation of intact (protease-resistant) proteins and deep sequencing to quantify survival rates.

- Energy calculation: Inference of ΔG values from cleavage kinetics using a Bayesian model [6].

This method has demonstrated high consistency with traditional purified protein experiments (Pearson correlations >0.75) while achieving unprecedented scale [6].

Research Reagent Solutions

Table 3: Essential Research Tools for Protein Stability Studies

| Reagent/Resource | Function/Application | Key Features |

|---|---|---|

| DDGun Web Server [1] | ΔΔG prediction from sequence/structure | Untrained method, antisymmetric, handles multiple mutations |

| Rosetta Suite [2] [5] | Structure-based ΔΔG calculations | ddg_monomer and cartesian_ddg protocols |

| FoldX [5] | Empirical force field stability calculations | Fast calculations, user-friendly interface |

| AlphaFold2/3 [7] [8] | Protein structure prediction from sequence | Enables ΔΔG prediction when experimental structures unavailable |

| Modeller [5] | Homology modeling | Generates protein models from templates |

| UniProt/UniRef [1] [7] | Protein sequence databases | Source for multiple sequence alignments |

| cDNA Display Proteolysis [6] | High-throughput experimental ΔG measurement | 900,000 variants per experiment, cost-effective |

The prediction of protein stability changes represents a critical interface between sequence, structure, and function. While structure-based methods like Rosetta generally provide higher accuracy when reliable structures are available, evolutionary-based approaches like DDGun offer robust performance even without structural information and maintain fundamental biophysical properties like antisymmetricity [1] [5]. Recent experimental advances enable validation at unprecedented scales, revealing that protein genetic architectures may be remarkably simple, dominated by additive energetic effects with sparse pairwise couplings [3] [6].

The integration of deep learning approaches with these established methods represents the future of protein stability prediction. As structural coverage expands through tools like AlphaFold2/3 [7] [8], the applicability of structure-based ΔΔG calculations will continue to grow, particularly for human proteome coverage which could quadruple through homology modeling [5]. For the research community, selection of appropriate methods should consider available input data, required accuracy, and the fundamental biophysical properties necessary for their specific application in protein engineering, variant interpretation, and drug development.

Contents

- Mechanisms of Mutation-Induced Destabilization: Explore how single amino acid changes disrupt protein folding and function.

- Computational Prediction Tools: Compare the performance of state-of-the-art stability prediction methods.

- Experimental Validation Workflows: Examine high-throughput protocols for measuring stability changes.

- Clinical Implications & Future Directions: Discuss how stability research informs drug development and personalized medicine.

Protein stability, defined as the thermodynamic favorability of a protein's native folded state over its unfolded state, is a cornerstone of cellular function. The relationship between protein sequence, folded structure, and stability is fundamental to biology, yet this delicate balance can be disrupted by the smallest of changes—a single amino acid substitution. Such missense mutations are a primary cause of human genetic diseases, and a growing body of evidence indicates that protein destabilization is one of their most common molecular mechanisms [9] [10]. When a protein is destabilized, it is more prone to misfolding, degradation by cellular quality control systems, or toxic aggregation, any of which can lead to a loss of normal function and ultimately manifest as disease [10].

Research on protein sequence similarity and susceptibility prediction seeks to understand why some proteins are more vulnerable to mutational destabilization than others. Recent large-scale studies have revealed that the most functionally constrained human proteins, often implicated in dominant disorders, have evolved to be less susceptible to large stability changes from missense mutations. This inherent robustness is mechanistically linked to structural features such as greater intrinsic disorder and increased flexibility in ordered regions [9]. This article provides a comparative guide to the molecular mechanisms, computational predictors, and experimental methods that are illuminating how mutations alter protein stability and drive disease pathogenesis.

Molecular Mechanisms: How Mutations Disrupt Protein Stability

Missense mutations can impact protein function through several mechanisms, with disruption of structural stability being a predominant pathway. A large-scale experimental study that examined 621 known disease-causing mutations found that approximately 61% caused a detectable decrease in protein stability [10]. The thermodynamic principle underlying this effect is quantified by the change in the Gibbs free energy of folding (ΔΔG). A positive ΔΔG value indicates destabilization, reducing the energy difference between the folded and unfolded states and making the protein more likely to populate non-functional, unfolded, or misfolded conformations [9].
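The effect of a positive ΔΔG on the folded population can be illustrated with a two-state model, in which the folded fraction is 1 / (1 + exp(ΔGfolding / RT)). The sketch below assumes two-state folding at 25 °C; the wild-type stability of -3 kcal/mol and the function name are hypothetical.

```python
from math import exp

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)


def fraction_folded(dg_folding, temperature_k=298.15):
    """Folded fraction for a two-state protein with folding free energy dg_folding (kcal/mol)."""
    return 1.0 / (1.0 + exp(dg_folding / (R_KCAL * temperature_k)))


wild_type_dg = -3.0  # hypothetical wild-type ΔGfolding, kcal/mol
for ddg in (0.0, 1.0, 2.0, 3.0):
    folded = fraction_folded(wild_type_dg + ddg)
    print(f"ΔΔG = {ddg:+.1f} kcal/mol -> folded fraction {folded:.3f}")
```

Under these assumptions, a marginally stable protein loses a substantial share of its folded population after a destabilization of only a few kcal/mol.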

Distinguishing Disease Mechanisms: The molecular mechanism of a mutation has important implications for the inheritance pattern of the associated disease. Analyses show that mutations causing recessive disorders are more likely to be highly destabilizing, essentially knocking out the protein's function. In contrast, mutations in dominant disorders often leave the protein stable but alter its functional interactions, for example, by disrupting DNA-binding interfaces without causing global unfolding [10]. For instance, while most mutations in crystallin proteins cause cataracts by destabilization and aggregation, many disease-causing mutations in the MECP2 protein (linked to Rett Syndrome) do not destabilize the protein but instead impair its ability to bind DNA and regulate genes [10].

Quantitative Stability Thresholds: Research has quantified the stability boundaries beyond which missense variants become subject to purifying selection in human populations. Studies of variation in disease-free individuals have identified a tolerated stability range of approximately -0.5 to 0.5 kcal/mol for ΔΔG. Mutations with stability effects falling outside this range are strongly depleted in the most functionally constrained human proteins, indicating they are often pathogenic [9]. The following diagram illustrates the logical relationship between mutations, stability disruption, and disease outcomes.

Computational Tools for Predicting Stability Changes

Accurately predicting the change in protein stability (ΔΔG) resulting from a mutation is a central goal in computational biology, with applications ranging from variant interpretation to protein engineering. A wide array of tools has been developed, employing methodologies from deep learning and statistical potentials to physics-based simulations.

Table 1: Performance Comparison of Select Protein Stability Prediction Tools

| Tool Name | Methodology | Reported Pearson Correlation (ΔΔG) | Key Features / Applicability | Year / Ref |

|---|---|---|---|---|

| QresFEP-2 | Hybrid-topology Free Energy Perturbation (FEP) | ~0.85 (on T4 Lysozyme benchmark) | Physics-based; applicable to protein-ligand binding; high computational efficiency | 2025 [11] |

| UniMutStab | Shared-weight Graph Convolutional Network | Surpasses existing methods on mega-scale dataset | Pure sequence-based; predicts any mutation type (single, multi-point, indel) | 2025 [12] |

| RaSP | Deep Learning (3D CNN with supervised fine-tuning) | 0.57-0.79 (on experimental test sets) | Rapid predictions (<1s/residue); proteome-scale application | 2023 [13] |

| MAESTRO | Machine Learning & Energy Functions | Not specified in results | Used with AlphaFold2 structures for large-scale analyses | 2025 [9] |

| Assessed Tools (27 total) | Various (ML, Statistical, etc.) | 0.20 - 0.53 (on unseen test data) | Benchmark study highlighted general challenge in predicting stabilizing mutations | 2024 [14] |

A recent independent benchmark study assessed 27 different computational tools on a carefully curated dataset of over 4,000 mutations, ensuring no overlap with their training data. The results highlight several points that matter to end users. The accuracy of predictions, as measured by Pearson correlation with experimental ΔΔG, varied widely from 0.20 to 0.53. A consistent and significant finding across multiple studies is that nearly all methods perform better at predicting destabilizing mutations than stabilizing ones. This performance gap persists even for methods that perform well in antisymmetry analyses, suggesting that simply balancing training datasets may not be sufficient to overcome this challenge [14].
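A benchmark of this kind can be approximated by computing the Pearson correlation separately for destabilizing and stabilizing mutations. The sketch below is a minimal illustration, not the cited study's evaluation code: it assumes the convention that positive experimental ΔΔG is destabilizing, and the data and function names are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def pearson_by_class(experimental, predicted):
    """Correlation over all mutations and within each stability class."""
    pairs = list(zip(experimental, predicted))
    destabilizing = [(e, p) for e, p in pairs if e > 0]
    stabilizing = [(e, p) for e, p in pairs if e < 0]
    return {
        "all": pearson(experimental, predicted),
        "destabilizing": pearson(*zip(*destabilizing)),
        "stabilizing": pearson(*zip(*stabilizing)),
    }


# Hypothetical values (kcal/mol) chosen to mimic weaker performance on stabilizing mutations.
experimental = [1.0, 2.0, 3.0, -1.0, -2.0, -3.0]
predicted = [1.1, 2.0, 3.2, -0.5, -0.4, -0.6]
print(pearson_by_class(experimental, predicted))
```

Reporting the per-class values alongside the overall correlation exposes a gap that a single pooled number can hide.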

The choice of tool often depends on the specific application. For high-throughput screening of thousands of variants in the human proteome, fast methods like RaSP are invaluable [13]. For a more detailed, physics-based understanding of a critical mutation, especially in a drug discovery context, more computationally intensive FEP protocols like QresFEP-2 may be warranted [11]. Meanwhile, emerging methods like UniMutStab seek to address the limitation of most tools that are restricted to single-point mutations by offering accurate predictions for multi-point and indel mutations from sequence alone [12].

Experimental Protocols for Measuring Stability Changes

Computational predictions require validation and are ultimately grounded in experimental data. Traditional methods for measuring protein stability, such as circular dichroism (CD) spectroscopy and differential scanning calorimetry (DSC), provide detailed insights into protein folding and thermal stability but are low-throughput and laborious [15] [6]. To address the need for large-scale stability data, new high-throughput experimental methods have been developed.

cDNA Display Proteolysis Protocol

The cDNA display proteolysis method is a powerful high-throughput stability assay that combines cell-free molecular biology with next-generation sequencing. It can measure thermodynamic folding stability for up to 900,000 protein variants in a single experiment [6].

Table 2: Key Research Reagents for cDNA Display Proteolysis

| Research Reagent | Function / Description | Role in Experimental Workflow |

|---|---|---|

| Synthetic DNA Oligo Pool | Library encoding all protein variants to be tested. | Serves as the starting genetic blueprint for the experiment. |

| Cell-free cDNA Display System | For in vitro transcription and translation. | Produces protein-cDNA fusion molecules, linking phenotype to genotype. |

| Proteases (Trypsin/Chymotrypsin) | Enzymes that selectively cleave unfolded proteins. | Acts as the environmental stressor to probe folding stability. |

| PA Tag & Pull-down Beads | Affinity tag (e.g., PA tag) and corresponding magnetic beads. | Enables purification of intact (protease-resistant) protein-cDNA fusions. |

| Next-Generation Sequencer | For deep sequencing of cDNA from surviving proteins. | Quantifies the relative abundance of each variant after proteolysis. |

Detailed Workflow:

- Library Construction: A DNA library is created, with each oligonucleotide encoding one protein variant.

- In Vitro Expression: The DNA library is transcribed and translated using a cell-free cDNA display system, resulting in each protein being covalently attached to its own encoding cDNA molecule.

- Proteolysis Challenge: The pool of protein-cDNA complexes is incubated with different concentrations of protease (e.g., trypsin or chymotrypsin). Folded proteins are resistant to cleavage, while unfolded proteins are cut.

- Selection and Pull-Down: Intact (cleavage-resistant) protein-cDNA fusions are captured using an affinity tag (e.g., an N-terminal PA tag) and magnetic beads.

- Sequencing and Analysis: The cDNA of the surviving proteins is deep-sequenced. The stability of each variant (expressed as ΔG) is inferred from its relative abundance before and after proteolysis, using a Bayesian kinetic model that accounts for the protease susceptibility of the unfolded state [6].

The following diagram visualizes this high-throughput experimental pipeline.

Yeast-Based Stability Assay (Human Domainome)

Another large-scale approach involved the creation of the "Human Domainome," a library of over half a million mutations across 522 human protein domains. The experimental protocol leveraged yeast cells as a living factory and sensor [10]:

- Transformation: Yeast cells are transformed with the variant library so that each cell produces a single mutated variant of a human protein domain.

- Growth Selection: The yeast cultures are grown under conditions that link the stability of the expressed human protein domain to the yeast cell's growth and survival.

- Quantification: By comparing the frequency of each mutation before and after growth selection using deep sequencing, researchers can determine which mutations lead to stable proteins and which cause instability. Unstable proteins impair growth, causing their corresponding variants to be depleted from the population [10].
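The quantification step can be sketched as a log2 enrichment score normalized to the wild-type sequence. This is a generic deep mutational scanning calculation written under stated assumptions, not the published analysis pipeline: the pseudocount, the "WT" label, and the read counts are all hypothetical.

```python
from math import log2


def relative_fitness(pre_counts, post_counts, wild_type="WT", pseudocount=0.5):
    """log2 enrichment of each variant after selection, relative to wild-type."""
    def log_ratio(variant):
        post = post_counts.get(variant, 0) + pseudocount
        pre = pre_counts[variant] + pseudocount
        return log2(post / pre)

    reference = log_ratio(wild_type)
    return {variant: log_ratio(variant) - reference for variant in pre_counts}


# Hypothetical sequencing read counts before and after growth selection.
pre = {"WT": 1000, "A23V": 1000, "L45P": 1000}
post = {"WT": 2000, "A23V": 1900, "L45P": 400}
for variant, score in relative_fitness(pre, post).items():
    print(f"{variant}: {score:+.2f}")
```

Scores near zero indicate wild-type-like behavior, while strongly negative scores flag variants that were depleted during selection.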

Clinical Implications and Future Directions

Understanding the precise molecular mechanism of a disease-causing mutation—whether it is destabilizing the protein or altering its function—enables the development of more precise therapeutic strategies. As noted by Dr. Antoni Beltran, this "could mean the difference between developing drugs that stabilize a protein versus those that inhibit a harmful activity" [10]. For example, pharmacological chaperones are a class of therapeutics designed to bind to and stabilize specific destabilized proteins, potentially treating diseases caused by loss-of-function mutations.

The field is moving toward an even more comprehensive mapping of the protein stability landscape. Future efforts aim to "map the effects of every possible mutation on every human protein," an ambitious goal that would profoundly transform precision medicine [10]. The integration of high-throughput experimental data from methods like cDNA display proteolysis with increasingly accurate AI-powered computational models promises to reveal the fundamental quantitative rules of how amino acid sequences encode folding stability. This will not only improve our ability to interpret human genetic variation but also accelerate the engineering of stable proteins for therapeutic and industrial applications.

In protein sequence similarity and susceptibility prediction research, the strategic integration of specialized databases is fundamental. Three resources form a critical triad for investigating how sequence relates to structure and stability: ProThermDB for experimental thermodynamic parameters, the Protein Data Bank (PDB) for 3D structural information, and UniProt for comprehensive sequence and functional annotation. ProThermDB provides direct measurements of protein stability, cataloging over 32,000 experimental data points including melting temperatures (Tm) and free energy changes (ΔG) for wild-type and mutant proteins [16] [17]. PDB serves as the global repository for experimentally-determined 3D structures of biological macromolecules, with all structures originating from physical samples studied experimentally [18] [19]. UniProt acts as the central hub for protein sequence and functional information, with its manually reviewed UniProtKB/Swiss-Prot section providing high-quality annotation [20]. Together, these databases enable researchers to traverse from sequence to structure to thermodynamic stability, forming a complete pipeline for understanding how genetic variations influence protein function and stability.

Core Characteristics and Applications

Table 1: Fundamental Characteristics of ProThermDB, PDB, and UniProt

| Feature | ProThermDB | Protein Data Bank (PDB) | UniProt |

|---|---|---|---|

| Primary Focus | Experimental protein stability & mutation effects | 3D atomic structures of macromolecules | Protein sequences & functional annotation |

| Key Data Types | Tm, ΔG, ΔΔG, ΔH, ΔCp; mutation effects | Atomic coordinates, experimental data, biological assemblies | Protein sequences, functional domains, PTMs, subcellular location |

| Size/Scope | >32,000 entries; wild-type, single/multiple mutants [17] | >200,000 structures; proteins, nucleic acids, complexes [19] | >245 million sequences; extensive cross-references [20] |

| Stability Data | Direct thermodynamic measurements | Indirect via structure quality metrics (resolution, R-factor) [18] | Stability predictions via cross-links to specialized databases |

| Mutation Coverage | Comprehensive stability data for mutants | Structures of mutant proteins when determined | Sequence variants from literature and databases |

| Experimental Methods | CD, DSC, fluorescence; high-throughput proteomics [16] | X-ray crystallography, NMR, EM [18] | Manual curation, computational analysis, cross-referencing |

Data Content and Accessibility

Table 2: Data Content, Availability, and Integration Capabilities

| Aspect | ProThermDB | Protein Data Bank (PDB) | UniProt |

|---|---|---|---|

| Sequence Data | Limited to proteins with stability data | Sequences of structurally determined proteins | Comprehensive coverage across species |

| Structure Integration | Visualizes mutations on 3D structures; 95% have structural data [16] | Primary source of 3D structural data | Links to PDB structures and AlphaFold predictions [20] |

| Cross-References | PDB, UniProt, PubMed [16] | UniProt, PubMed, enzyme databases [18] | Extensive links to >100 databases including PDB, ProTherm |

| Access Method | Web search by Uniprot/PDB ID, protein name, mutation [17] | Web search, APIs; structure visualization tools [21] | Web search, downloads, API access |

| Update Frequency | Periodic updates with new data (7,000+ recently added) [17] | Weekly updates with new structures | Every 8 weeks with InterPro [20] |

Experimental Methodologies and Workflows

Determining Structural and Stability Data

The experimental pipelines for generating data in these databases involve sophisticated biophysical techniques. For PDB structures, X-ray crystallography (the most common method) involves protein crystallization, data collection at synchrotron facilities, and computational refinement to generate atomic coordinates [18]. The quality metrics include resolution (detail level) and R-factor (model agreement with experimental data), which inform about structural reliability [18] [19]. NMR spectroscopy provides solution-state structures and dynamic information, while electron microscopy (3DEM) reveals structures of large complexes [18].

For ProThermDB stability data, thermal denaturation experiments using Circular Dichroism (CD) or Differential Scanning Calorimetry (DSC) measure melting temperatures (Tm) and enthalpy changes (ΔH) [16]. Denaturant unfolding experiments using chemicals like GdnHCl or urea provide free energy of unfolding (ΔG) [22]. High-throughput methods like Thermal Proteome Profiling (TPP) now enable stability measurements for thousands of proteins in cellular contexts [16].
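The parameters recorded from thermal denaturation (Tm, ΔH, ΔCp) can be related to the unfolding free energy at another temperature through the Gibbs-Helmholtz equation. The sketch below assumes two-state unfolding and a temperature-independent ΔCp; the parameter values and the function name are hypothetical.

```python
from math import log


def unfolding_free_energy(temperature_k, tm_k, delta_h_m, delta_cp):
    """Gibbs-Helmholtz estimate of the unfolding free energy (kcal/mol).

    delta_h_m: unfolding enthalpy at Tm (kcal/mol)
    delta_cp:  heat capacity change on unfolding (kcal/(mol*K)), assumed constant
    """
    enthalpy_term = delta_h_m * (1.0 - temperature_k / tm_k)
    heat_capacity_term = delta_cp * (
        temperature_k - tm_k - temperature_k * log(temperature_k / tm_k)
    )
    return enthalpy_term + heat_capacity_term


# Hypothetical protein: Tm = 60 °C, ΔH = 100 kcal/mol, ΔCp = 1.5 kcal/(mol*K).
dg_25c = unfolding_free_energy(298.15, 333.15, 100.0, 1.5)
print(f"unfolding free energy at 25 °C: {dg_25c:.2f} kcal/mol")
```

By construction the free energy is zero at Tm, where folded and unfolded states are equally populated.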

Integrated Workflow for Stability-Prediction Research

The following workflow diagram illustrates how these databases interact in a typical research pipeline investigating sequence-stability relationships:

Research Reagent Solutions: Essential Tools for Database Navigation

Table 3: Key Research Tools and Resources for Database Utilization

| Tool/Resource | Function | Application Context |

|---|---|---|

| InterPro | Protein family classification via integrated signatures [20] | Functional annotation of sequences from UniProt |

| InterProScan | Tool for scanning sequences against InterPro signatures | Domain identification and functional prediction |

| RCSB PDB APIs | Programmatic access to PDB data and metadata [21] | Large-scale data retrieval for computational studies |

| JSmol | JavaScript-based molecular viewer | Embedded 3D visualization of mutations in ProThermDB [16] |

| PDB Visualization Tools | Structure analysis and visualization (e.g., RasMol) | Exploring biological assemblies and structural contexts [19] |

| SIFTS | Structure Integration with Function, Taxonomy and Sequence | Mapping residues between UniProt and PDB entries [16] |

Practical Applications in Stability Prediction Research

A powerful application emerges when these databases are combined to predict how mutations affect protein stability and function. For example, in drug-target interaction studies, researchers can:

- Identify target protein in UniProt for comprehensive sequence and functional data

- Retrieve 3D structure from PDB to understand binding sites and molecular interactions

- Extract stability data from ProThermDB for similar mutations or homologous proteins

- Correlate structural position with thermodynamic consequences to establish patterns

This approach has proven valuable in studies like PS3N (Protein Sequence-Structure Similarity Network), which leverages both protein sequence and structure similarity to predict novel drug-drug interactions by capturing how drugs sharing similar protein targets might interact [23]. The model achieved high predictive performance (Precision: 91%-98%, AUC: 88%-99%) by directly integrating structural and sequential information rather than relying solely on chemical properties or interaction networks [23].
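The first two retrieval steps of such a workflow can be scripted against the public UniProt and RCSB PDB web services. The sketch below only builds the request URLs and provides a small download helper; the helper names are hypothetical, the accession P04637 and entry 1TUP are used purely as examples, and ProThermDB is left out because no programmatic interface is described here.

```python
from urllib.request import urlopen


def uniprot_fasta_url(accession):
    """URL of the FASTA record for a UniProtKB accession."""
    return f"https://rest.uniprot.org/uniprotkb/{accession}.fasta"


def rcsb_pdb_file_url(pdb_id):
    """URL of the PDB-format coordinate file for an RCSB PDB entry."""
    return f"https://files.rcsb.org/download/{pdb_id.upper()}.pdb"


def rcsb_entry_metadata_url(pdb_id):
    """URL of the JSON metadata record for an RCSB PDB entry."""
    return f"https://data.rcsb.org/rest/v1/core/entry/{pdb_id.upper()}"


def fetch_text(url, timeout=30):
    """Download a URL and return its body as text (requires network access)."""
    with urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


print(uniprot_fasta_url("P04637"))
print(rcsb_pdb_file_url("1tup"))
print(rcsb_entry_metadata_url("1tup"))
```

Calling `fetch_text` on any of these URLs returns the raw record, which can then be parsed with the tools of choice.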

Experimental Validation Workflow

The diagram below illustrates an experimental workflow for validating stability predictions using these database resources:

ProThermDB, PDB, and UniProt each offer unique and complementary capabilities for protein stability and sequence-structure relationship research. ProThermDB provides direct experimental thermodynamic measurements, PDB offers the structural context for interpreting these measurements, and UniProt delivers comprehensive sequence and functional annotation. For researchers investigating protein sequence similarity and susceptibility prediction, the strategic integration of these resources enables a more complete understanding of how genetic variations influence protein stability, function, and interaction networks. This database triad continues to evolve, with ProThermDB incorporating high-throughput proteomics data [16], PDB expanding its structural coverage [19], and UniProt integrating AlphaFold predictions and enhancing family annotations [20]. Together, they form an indispensable foundation for modern computational and experimental research in protein science and drug development.

The Central Dogma of Molecular Biology establishes the fundamental flow of genetic information: DNA is transcribed into RNA, which is then translated into protein [24] [25]. This sequence-based information transfer dictates protein structure and, ultimately, cellular function. Understanding the relationship between protein sequence similarity and functional similarity represents a critical challenge in bioinformatics with profound implications for drug discovery, functional annotation, and evolutionary biology [26] [27].

While the genetic code is universal and redundant, with multiple codons specifying the same amino acid, the relationship between a protein's amino acid sequence and its biological function is considerably more complex [25]. This relationship is particularly crucial for predicting protein-protein interactions (PPIs), which underpin virtually all cellular processes and represent compelling drug targets when aberrant [26]. This guide objectively compares the performance of traditional and emerging computational methods for predicting function from sequence, with particular emphasis on their application in protein sequence similarity and susceptibility prediction research.

Traditional Methods: Sequence Alignment and Its Limitations

Traditional approaches to predicting protein function from sequence rely primarily on sequence alignment algorithms. The most common method involves pairwise sequence comparison to "transfer" function from proteins of known function to unknown proteins based on a minimum threshold of sequence similarity [27].

Established Sequence Similarity Tools

- BLAST (Basic Local Alignment Search Tool): The most popular pairwise alignment approach for identifying homologous sequences in databases [27] [28].

- MMseqs2: Used by RCSB PDB for sequence similarity searches; it runs faster than BLAST at comparable sensitivity levels [28].

- SeqAPASS: EPA tool that extrapolates toxicity information across species by evaluating amino acid sequence and structural similarities [29].

The Sequence-Function Relationship Model

Research has quantitatively modeled the relationship between sequence similarity and function similarity using metrics such as:

- Sequence Similarity: Calculated using Reverse Reciprocal Bit Score (RRBS) from BLAST [27].

- Function Similarity: Quantified using Relative Information Content (RIC) based on Gene Ontology term specificity [27].

Table 1: Relationship Between Sequence Similarity and Function Similarity

| Sequence Similarity Range (RRBS) | Mean Function Similarity (RIC) | Standard Deviation | Prediction Reliability |
|---|---|---|---|
| > 0.6 (High) | 0.93 | 0.22 | High |
| 0.2-0.6 (Moderate) | 0.33 | 0.43 | Low/Variable |
| ≤ 0.2 (Low) | 0.03 | 0.18 | Very Low |

The data show that function similarity generally increases with sequence similarity, but with considerable variability, particularly in the moderate similarity range (0.2-0.6 RRBS), often termed the "twilight zone" of sequence alignment [27] [30]. This variability makes function prediction from sequence alignment alone unreliable in that range, because proteins with moderate sequence similarity can exhibit either very similar or dramatically different functions.
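The thresholds in Table 1 can be read as a simple decision rule for annotation transfer. The sketch below is illustrative only: the function name is hypothetical, and it assumes RRBS is normalized to the interval [0, 1], which the table implies but does not state.

```python
def reliability_band(rrbs: float) -> str:
    """Map an RRBS score to the prediction-reliability band of Table 1.

    Assumption: RRBS is normalized to [0, 1].
    """
    if not 0.0 <= rrbs <= 1.0:
        raise ValueError("RRBS is assumed to lie in [0, 1]")
    if rrbs > 0.6:
        return "High"           # mean RIC 0.93 in Table 1
    if rrbs > 0.2:
        return "Low/Variable"   # twilight zone, largest standard deviation
    return "Very Low"           # RRBS <= 0.2


for score in (0.75, 0.45, 0.10):
    print(score, reliability_band(score))
```

In practice a pipeline might transfer annotations automatically only in the "High" band and route everything else to a more sensitive method.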

Emerging Methods: Embedding-Based Remote Homology Detection

Recent advances in deep learning have produced powerful protein language models (pLMs) that can detect remote homology beyond the capabilities of traditional alignment methods [26] [30].

Protein Language Models

- ProtT5: Transformer-based model trained on UniRef50 using masked language modeling [30].

- ESM-1b: Transformer-based model with 650 million parameters trained on UniRef50 [30].

- ProstT5: Extends ProtT5 by incorporating structural information through Foldseek's 3Di-token encoding [30].

These models generate high-dimensional vector representations (embeddings) for each residue or entire sequences, capturing underlying biological properties without explicit evolutionary information [30].
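To make the distinction between per-residue and whole-sequence embeddings concrete, the following minimal sketch averages residue vectors into one sequence vector and compares two proteins by cosine similarity. The three-dimensional vectors are toy values, not real model output; actual pLM embeddings have hundreds to thousands of dimensions.

```python
import math


def mean_pool(residue_embeddings):
    """Average per-residue vectors into one fixed-length sequence vector."""
    n = len(residue_embeddings)
    dim = len(residue_embeddings[0])
    return [sum(vec[d] for vec in residue_embeddings) / n for d in range(dim)]


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy embeddings: proteins of different length map to vectors of equal size.
protein_a = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
protein_b = [[0.5, 0.5, 0.0], [0.5, 0.5, 0.0], [0.5, 0.5, 0.0]]
similarity = cosine_similarity(mean_pool(protein_a), mean_pool(protein_b))
print(round(similarity, 6))
```

Averaging makes sequences of any length comparable, but it discards the order of residues, which is the limitation residue-level embedding alignment tries to address.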

Advanced Embedding Alignment with Clustering and DDP

State-of-the-art approaches now combine embedding-based similarity with refinement techniques to improve remote homology detection:

Embedding-Based Alignment Refinement Workflow

Performance Comparison: Traditional vs. Embedding-Based Methods

Experimental Protocol for Method Evaluation

Structural Alignment Benchmarking

- Dataset: PISCES dataset (≤30% sequence similarity) [30]

- Evaluation Metric: Spearman correlation between predicted alignment scores and TM-align derived TM-scores [30]

- Purpose: Evaluate structural similarity prediction across remote homologs
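The evaluation metric above, Spearman correlation, is the Pearson correlation of rank-transformed values. A dependency-free sketch follows; the example scores are invented for illustration and are not benchmark data.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman rank correlation between predicted scores and TM-scores."""
    return pearson(average_ranks(x), average_ranks(y))


predicted_scores = [0.10, 0.40, 0.90]   # hypothetical alignment scores
tm_scores = [0.20, 0.50, 0.95]          # hypothetical TM-align TM-scores
print(spearman(predicted_scores, tm_scores))
```

Because only ranks matter, a method is rewarded for ordering protein pairs correctly by structural similarity even when its raw scores are on a different scale from TM-scores.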

Functional Generalization Assessment

- Task: CATH annotation transfer across classification hierarchy (Class, Architecture, Topology, Homology) [30]

- Evaluation: Accuracy of function prediction across different similarity levels

Alignment Quality Benchmarking

- Dataset: HOMSTRAD [30]

- Evaluation: Traditional alignment quality metrics

Comparative Performance Data

Table 2: Method Performance Comparison for Remote Homology Detection

| Method | Type | Twilight Zone Performance | Key Advantage | Primary Limitation |
|---|---|---|---|---|
| BLAST/MMseqs2 | Sequence Alignment | Low | Fast, interpretable | Fails at low sequence similarity |
| Profile HMMs | Sequence Profile | Moderate | More sensitive than pairwise | Difficult with very low similarity |
| Averaged Embeddings | Embedding | Moderate | Captures structural information | Loses residue-level information |
| EBA (Baseline) | Embedding Alignment | High | Residue-level alignment | Noise in similarity matrix |
| EBA + Clustering + DDP | Embedding Alignment | Highest | Best twilight zone performance | Computationally intensive |

The incorporation of K-means clustering and double dynamic programming (DDP) consistently contributes to improved performance in detecting remote homology, outperforming both traditional sequence-based methods and state-of-the-art embedding-based approaches on multiple benchmarks [30].
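The general idea behind residue-level embedding alignment can be sketched as dynamic programming over a similarity matrix computed from per-residue embeddings. The code below is a generic Needleman-Wunsch scorer over dot-product similarities. It is not the published EBA implementation and it omits the clustering and DDP refinement steps entirely; the gap penalty and toy embeddings are assumptions.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def similarity_matrix(emb_a, emb_b):
    """Residue-by-residue similarity from per-residue embeddings."""
    return [[dot(u, v) for v in emb_b] for u in emb_a]


def global_alignment_score(sim, gap=-1.0):
    """Needleman-Wunsch score over a precomputed similarity matrix."""
    n, m = len(sim), len(sim[0])
    score = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        score[i][0] = i * gap
    for j in range(1, m + 1):
        score[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            score[i][j] = max(
                score[i - 1][j - 1] + sim[i - 1][j - 1],  # align residues i and j
                score[i - 1][j] + gap,                    # gap in sequence B
                score[i][j - 1] + gap,                    # gap in sequence A
            )
    return score[n][m]


emb_a = [[1.0, 0.0], [0.0, 1.0]]               # toy 2-residue protein
emb_b = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]   # toy 3-residue protein
print(global_alignment_score(similarity_matrix(emb_a, emb_b)))
```

Noise in the similarity matrix, the limitation listed for the EBA baseline in Table 2, propagates directly into the dynamic programming scores, which is what refinement steps aim to reduce.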

Table 3: Key Research Reagents and Computational Tools for Sequence-Function Studies

| Resource/Tool | Type | Primary Function | Access |
|---|---|---|---|
| SeqAPASS | Web Tool | Predict cross-species susceptibility | https://www.epa.gov/comptox-tools/sequence-alignment-predict-across-species-susceptibility-seqapass-resource-hub [29] |
| RCSB PDB Sequence Search | Database Tool | Find similar protein sequences in PDB | https://www.rcsb.org [28] |
| ProtT5/ESM-1b | Protein Language Model | Generate residue-level embeddings | GitHub repositories |
| Gene Ontology (GO) | Database | Function similarity quantification | http://geneontology.org [27] |
| PISCES Dataset | Benchmark Dataset | Evaluate remote homology detection | Publicly available |
| CATH Database | Database | Protein structure classification | http://www.cathdb.info [30] |

Implications for Drug Discovery and Protein Engineering

The relationship between sequence similarity and function similarity has direct applications in pharmaceutical research and development. Accurate PPI prediction enables:

- Target Identification: Discovering novel drug targets by identifying biologically relevant protein interactions [26]

- Therapeutic Design: Engineering therapeutic peptides and antibodies with specific binding properties [26]

- Cross-Species Susceptibility Prediction: Extrapolating toxicity information from model organisms to species of concern using tools like SeqAPASS [29]

Sequence-based methods provide a broadly applicable alternative to structure-based approaches, particularly given the limited availability of high-quality protein structures and challenges in modeling intrinsically disordered regions [26].

The relationship between protein sequence similarity and function similarity remains complex and context-dependent. Traditional sequence alignment methods provide reliable function prediction at high sequence similarity (RRBS > 0.6 in Table 1), but their performance deteriorates significantly in the twilight zone of moderate similarity (RRBS 0.2-0.6), where function similarity is most variable. Emerging embedding-based approaches, particularly those incorporating clustering and double dynamic programming refinement, demonstrate superior performance for detecting remote homology and predicting function from sequence. These advanced methods show particular promise for drug discovery applications where accurate prediction of protein-protein interactions can streamline target identification and therapeutic design.

Predicting chemical susceptibility and biological function from protein sequences is a cornerstone of modern bioinformatics, with critical applications in toxicology, drug discovery, and ecological risk assessment. This field fundamentally relies on the principle that proteins sharing evolutionary relatedness (homology) often share similar three-dimensional structures and functions [31]. The foundational data for these predictions come from two primary sources: (1) experimentally determined protein structures and interaction measurements, and (2) the vast repositories of protein sequence data. However, both have significant limitations. A profound gap exists between the number of known protein sequences and the number with experimentally validated structures or functions: less than 0.3% of the over 240 million protein sequences in the UniProt database have been experimentally annotated [32]. This discrepancy creates a critical dependency on computational extrapolation. Furthermore, experimental data themselves suffer from variability arising from different methodologies (e.g., X-ray crystallography vs. NMR), experimental conditions, and inherent protein dynamics [33]. These dual challenges of data scarcity and experimental variability define the ultimate accuracy limits and practical constraints of predictive tools, framing a critical research area for scientists and drug development professionals.

Foundational Principles and Inherent Data Limitations

The entire enterprise of predicting protein function and chemical susceptibility from sequence is built upon the inference of homology. The logical framework is that statistically significant sequence similarity implies homology, which in turn implies structural and functional similarity [31]. This sequence-structure-function relationship, while powerful, is not absolute. The core limitation lies in the fact that protein structures are not static; they are dynamic objects with flexible regions that can adopt different conformations under different conditions, leading to inherent variability in experimental measurements [33]. This variability directly impacts the "ground truth" data used to train and validate predictive models.

Compounding this is the challenge of distinguishing true homology from analogy or convergent evolution. For instance, trypsin and subtilisin are both serine proteases with the same catalytic triad but possess completely different overall folds, representing a classic case of convergent evolution rather than descent from a common ancestor [31]. Reliable statistical estimates are crucial for distinguishing such similarities, but as sequence and structure databases grow exponentially, the risk of misinterpreting analogy for homology increases, especially with more sensitive comparison methods [31].

- The Statistical Syllogism of Homology: The inference process follows a logical sequence: (1) Sequence alignment scores for unrelated proteins are indistinguishable from random; (2) Therefore, a statistically significant similarity score means sequences are not unrelated; (3) Therefore, sequences sharing significant similarity are homologous and likely share similar structure and function [31]. This logic breaks down when sequences lack significant similarity yet are still homologous, or when significant similarity arises from convergence.

- The Plateaus of Predictive Accuracy: The limitations of foundational data are reflected in the observed accuracy ceilings for prediction tasks. For protein secondary structure prediction (SSP), the three-state accuracy (Q3) has plateaued at 81-86% for years, with the theoretical limit previously estimated at ~88% [33]. Recent analyses suggest this limit may be closer to 92%, but the gap between current performance and the ultimate ceiling highlights the constraints imposed by structural inconsistencies between homologs used for training [33].
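The statistical step of the syllogism above is usually expressed through the expected number of chance hits. Under the standard Karlin-Altschul relation used by BLAST, a normalized bit score S' in a search space of query length m and database length n gives E = m · n · 2^(-S'). The sketch below applies that relation; the significance cutoff of 0.001 is an illustrative assumption, not a value taken from the source.

```python
def expected_chance_hits(bit_score, query_length, database_length):
    """E-value from a normalized bit score: E = m * n * 2**(-S')."""
    return query_length * database_length * 2.0 ** (-bit_score)


def is_significant(bit_score, query_length, database_length, cutoff=1e-3):
    """True if fewer than `cutoff` chance hits are expected (cutoff is an assumption)."""
    return expected_chance_hits(bit_score, query_length, database_length) < cutoff


# The same bit score becomes less convincing as the database grows.
for db_residues in (1e6, 1e9, 1e11):
    e_value = expected_chance_hits(50, 300, db_residues)
    print(f"database residues={db_residues:.0e}  E={e_value:.2e}")
```

This dependence on search-space size is one reason why the risk of misreading chance or convergent similarity as homology grows with database size unless significance thresholds are applied consistently.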

Comparative Analysis of Predictive Tools and Methodologies

To address the challenges of data scarcity, a diverse ecosystem of computational tools has been developed. These can be broadly categorized into tools designed for specific extrapolation tasks and general-purpose protein structure and interaction predictors. The following experimental protocols and performance data illustrate how different tools grapple with the underlying data limitations.

Experimental Protocol: SeqAPASS for Cross-Species Susceptibility Extrapolation

Objective: To rapidly predict the intrinsic chemical susceptibility of non-target species by evaluating the conservation of protein targets across taxa, overcoming the scarcity of empirical toxicity data [34] [35] [29].

Methodology:

- Input: A known protein sequence (the "query") from a species with established chemical susceptibility (e.g., a human protein or a pest protein targeted by a pesticide).

- Data Retrieval: The tool retrieves homologous sequences from the National Center for Biotechnology Information (NCBI) protein database, which contains over 153 million proteins from more than 95,000 organisms [29].

- Tiered Evaluation:

  - Level 1 (Primary Sequence): Compares primary amino acid sequences to the query, calculating a quantitative metric for sequence similarity and identifying orthologs [34].

  - Level 2 (Functional Domains): Evaluates sequence similarity within specific functional domains (e.g., a ligand-binding domain) crucial for the chemical-protein interaction [34].

  - Level 3 (Key Residues): Compares individual amino acid residues known to be critical for protein conformation or direct chemical binding [34].

- Output: A prediction of relative susceptibility for hundreds or thousands of species, presented through customizable data visualizations and summary reports [35].
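The three evaluation levels can be illustrated with a toy comparison of a pre-aligned query and target. This is a hypothetical sketch of the tiered idea only: it does not reproduce SeqAPASS's actual similarity metrics, ortholog calls, or thresholds, and the sequences, domain boundaries, and key columns are invented.

```python
def percent_identity(aligned_query, aligned_target):
    """Identical positions as a percentage of non-gap query positions."""
    if len(aligned_query) != len(aligned_target):
        raise ValueError("aligned sequences must have equal length")
    columns = [(q, t) for q, t in zip(aligned_query, aligned_target) if q != "-"]
    matches = sum(1 for q, t in columns if q == t)
    return 100.0 * matches / len(columns)


def key_residues_match(aligned_query, aligned_target, key_columns):
    """True if every key alignment column holds the same residue in both sequences."""
    return all(aligned_query[c] == aligned_target[c] != "-" for c in key_columns)


query = "MKTAYIAK"    # hypothetical query, already aligned
target = "MKTA-IAR"   # hypothetical target with one gap and one substitution

level1 = percent_identity(query, target)              # whole sequence
level2 = percent_identity(query[0:4], target[0:4])    # assumed domain: columns 0-3
level3 = key_residues_match(query, target, [0, 5])    # assumed key residues
print(level1, level2, level3)
```

Each level narrows the comparison to the part of the protein most relevant to chemical binding, so a species can score modestly overall yet still be flagged as likely susceptible if the domain and key residues are conserved.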

Logical Workflow: The following diagram illustrates the tiered analytical approach of SeqAPASS, which progressively incorporates more specific biological knowledge to refine its predictions.

Experimental Protocol: DeepSCFold for Protein Complex Structure Prediction

Objective: To accurately model the quaternary structures of protein complexes, a task significantly more challenging than predicting single-chain structures due to the scarcity of experimental data on complexes and the difficulty in capturing inter-chain interactions [7].

Methodology:

- Input: The amino acid sequences of the proteins suspected to form a complex.

- Retrieval-Augmented Modeling:

  - Monomeric MSA Generation: Creates multiple sequence alignments (MSAs) for each individual subunit from various sequence databases [7].

  - Sequence-Based Structure Prediction: Employs deep learning models to predict protein-protein structural similarity (pSS-score) and interaction probability (pIA-score) directly from sequence information [7].

  - Paired MSA Construction: Uses the predicted pIA-scores to systematically concatenate monomeric homologs from different subunits, constructing paired MSAs that infer interaction patterns [7].

  - Complex Structure Prediction: Feeds the series of paired MSAs into a structure prediction engine (e.g., AlphaFold-Multimer) to generate the final quaternary structure model [7].

- Output: A high-accuracy 3D structural model of the protein complex.

Logical Workflow: DeepSCFold uses a retrieval-augmented paradigm to overcome the limited co-evolutionary signals available for protein complexes, especially in challenging cases like antibody-antigen interactions.

Performance Comparison of Protein Modeling Tools

The following table summarizes the performance and characteristics of key tools, highlighting how they address data scarcity.

Table 1: Comparative Performance of Protein Prediction Tools

| Tool Name | Primary Application | Core Methodology | Reported Performance / Advancement | Key Data Limitation Addressed |
|---|---|---|---|---|
| SeqAPASS [34] [29] | Cross-species chemical susceptibility prediction | Tiered sequence/domain/residue alignment | Successfully predicts susceptibility for pollinators, endocrine disruptors; enables screening for thousands of species. | Scarcity of empirical toxicity data for non-target species. |
| DeepSCFold [7] | Protein complex (multimer) structure prediction | Retrieval-augmented deep learning with sequence-derived structure complementarity. | 11.6% and 10.3% improvement in TM-score over AlphaFold-Multimer and AlphaFold3 on CASP15 targets; 24.7% higher success rate for antibody-antigen interfaces. | Scarcity of complex structures and weak inter-chain co-evolution signals. |
| Protriever [36] | General protein fitness prediction | End-to-end differentiable retrieval from sequence databases. | State-of-the-art Spearman correlation (0.479) on ProteinGym benchmark; ~1000x faster retrieval than JackHMMER. | Task-independent, slow homology search that misses distant relationships. |
| xCAPT5 [37] | Protein-protein interaction (PPI) prediction | Deep multi-kernel CNN with ProtT5 embeddings and Siamese architecture. | Outperforms >10 state-of-the-art methods in cross-validation and generalizes across species. | Reliance on hand-designed feature extractors that cannot capture sequence complexity. |

The Scientist's Toolkit: Essential Research Reagent Solutions

The experimental protocols and tools discussed rely on a foundation of key databases, software, and computational resources. The following table details these essential "research reagents" for scientists working in this field.

Table 2: Key Research Reagents and Resources for Protein Susceptibility Prediction

| Resource Name | Type | Function in Research | Relevance to Data Scarcity |
|---|---|---|---|
| NCBI Protein Database [29] | Database | Primary repository for protein sequence data, used for homology searches. | Provides the foundational sequence data (>153 million proteins) for extrapolating beyond experimentally characterized proteins. |
| UniProt [7] [32] | Database | Curated resource of protein sequence and functional information. | Contains millions of unannotated sequences, highlighting the annotation gap and driving the need for prediction tools. |
| AlphaFold-Multimer [7] | Software Tool | Predicts 3D structures of protein complexes from sequences. | Provides structural models for complexes where experimental structures are scarce, though accuracy for complexes is lower than for monomers. |
| Protein Language Models (e.g., ESM-1b, ProtT5) [37] [32] | Computational Model | Deep learning models pre-trained on millions of sequences to generate informative sequence embeddings. | Mine evolutionary and functional information from unannotated sequence data, reducing reliance on handcrafted features and multiple sequence alignments. |
| MMseqs2/JackHMMER [7] [36] | Software Tool | Tools for rapid homology search and multiple sequence alignment construction. | Generate the evolutionary context (MSAs) for a query sequence, which is critical for structure and function prediction. |

The field of protein susceptibility prediction operates within a fundamental constraint: the vast universe of protein sequences dramatically outstrips the capacity of experimental science to characterize them. Tools like SeqAPASS, DeepSCFold, Protriever, and xCAPT5 represent sophisticated computational strategies to navigate this data-scarce landscape. They leverage evolutionary principles, advanced statistics, and deep learning to extrapolate from the limited available data to the vast unknown. However, their performance is ultimately bounded by the quality, variability, and inherent noise of their foundational data. The theoretical accuracy limits for tasks like secondary structure prediction serve as a reminder that some uncertainty is intrinsic due to protein dynamics and experimental disagreement. For researchers and drug development professionals, the choice of tool must be guided by the specific question, whether it is cross-species extrapolation for ecological risk assessment or determining atomic-level interactions for drug design. The continued growth of sequence databases and the advent of more powerful, adaptive retrieval-based models offer a promising path to progressively push back these limits and expand the frontiers of predictive biology.

Computational Tools and Advanced Models for Predicting Susceptibility

Protein sequence similarity search is a fundamental methodology in bioinformatics, enabling researchers to infer protein function, evolutionary relationships, and structural characteristics through homology detection. This capability is particularly crucial in pharmaceutical development, where accurately identifying distant homologs can illuminate potential drug targets and reveal functional domains relevant to therapeutic design [38]. For decades, alignment-based methods have served as the cornerstone of protein sequence comparison, with the Basic Local Alignment Search Tool (BLAST) family representing the traditional standard [39] [40]. As sequence databases have expanded exponentially, next-generation tools like MMseqs2 have emerged to address the computational challenges of searching billions of sequences while maintaining high sensitivity [41] [42]. This comparison guide objectively evaluates these tools' performance characteristics, experimental benchmarks, and methodological approaches within protein sequence similarity prediction research, providing scientists with evidence-based selection criteria for their specific applications.

BLAST and Its Extended Family

The BLAST algorithm employs a heuristic seed-and-extend approach that identifies short matches (seeds) between sequences before performing more computationally intensive extensions to generate full alignments [42]. Its position-specific iterated variant (PSI-BLAST) enhances sensitivity for detecting remote homologs through iterative database searching and position-specific score matrix (PSSM) construction [40]. PSI-BLAST builds these PSSMs from scratch during each search, progressively refining them with each iteration to capture increasingly subtle sequence patterns [38]. Another advanced variant, DELTA-BLAST (Domain Enhanced Lookup Time Accelerated BLAST), further improves remote homology detection by leveraging a database of pre-constructed PSSMs from the Conserved Domain Database (CDD) before searching protein sequence databases [40]. This approach yields significantly better homolog detection compared to standard BLAST and CS-BLAST, with DELTA-BLAST achieving ROC5000 scores 2.2 times higher than CS-BLAST and 3.2 times higher than BLASTP in benchmark tests [40].
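The seed-and-extend heuristic can be illustrated with a deliberately simplified sketch. Unlike real BLASTP, which seeds on neighborhood words scored with a substitution matrix and extends in both directions, this version uses exact k-mer matches, identity scoring, and right-only ungapped extension; all parameter values are assumptions chosen for readability.

```python
def find_seeds(query, target, k=3):
    """Return (query_pos, target_pos) pairs where identical k-mers occur."""
    index = {}
    for j in range(len(target) - k + 1):
        index.setdefault(target[j:j + k], []).append(j)
    return [(i, j)
            for i in range(len(query) - k + 1)
            for j in index.get(query[i:i + k], [])]


def extend_ungapped(query, target, i, j, k=3, match=1, mismatch=-1, x_drop=2):
    """Extend a seed rightward without gaps; stop once the score drops x_drop below its best."""
    score = best = k * match
    length = best_len = k
    while i + length < len(query) and j + length < len(target):
        score += match if query[i + length] == target[j + length] else mismatch
        length += 1
        if score > best:
            best, best_len = score, length
        elif best - score > x_drop:
            break
    return best, query[i:i + best_len]


query = "MKTAYIAKQR"
target = "GGMKTAYLAKQR"
seeds = find_seeds(query, target)
print(seeds)
print(extend_ungapped(query, target, *seeds[0]))
```

Indexing k-mers first means the expensive extension step runs only where a seed already suggests similarity, which is the source of the heuristic's speed.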

MMseqs2 and Its Algorithmic Innovations

MMseqs2 (Many-against-Many sequence searching) implements a cascaded alignment approach that rapidly filters out unrelated sequences through fast k-mer matching before applying more sensitive scoring methods and finally computing optimal gapped alignments [41]. This multi-stage filtering process enables MMseqs2 to achieve remarkable speed while maintaining high sensitivity. The software suite supports both protein and nucleotide sequence clustering and searching, with specialized workflows for common bioinformatics tasks such as taxonomy assignment and profile search [41]. A significant recent advancement is MMseqs2-GPU, which introduces graphics processing unit acceleration through novel gapless filtering and gapped alignment algorithms specifically designed for position-specific scoring matrices [42] [43]. This GPU implementation maps query PSSMs to columns and reference sequences to rows in a matrix, processing each row in parallel while utilizing shared GPU memory to optimize access to PSSMs and packed 16-bit floating-point numbers to maximize throughput [42].

Alignment-Free Comparative Methods

Although they fall outside the alignment-based family, emerging alignment-free approaches provide valuable context for the methodological landscape. These methods extract features from protein sequences, typically amino acid composition, physicochemical properties, or k-mer frequencies, and compute similarity without generating residue-by-residue alignments [44]. Though generally faster and less resource-intensive, they typically trade away some accuracy relative to alignment-based methods and remain most suitable for specific applications such as large-scale phylogenetic analyses or initial database screening [44].
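A minimal example of the alignment-free idea is to represent each protein by its k-mer counts and compare the count vectors directly. The sketch below uses dipeptide (k = 2) counts and cosine similarity; both choices are illustrative assumptions rather than a specific published method.

```python
import math
from collections import Counter


def kmer_profile(sequence, k=2):
    """Count overlapping k-mers in a protein sequence."""
    return Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))


def profile_cosine(p, q):
    """Cosine similarity between two k-mer count profiles."""
    dot = sum(count * q[kmer] for kmer, count in p.items())
    norm_p = math.sqrt(sum(c * c for c in p.values()))
    norm_q = math.sqrt(sum(c * c for c in q.values()))
    return dot / (norm_p * norm_q)


a = kmer_profile("MKTAYIAKQR")
b = kmer_profile("MKTAYLAKQR")   # one substitution relative to a
c = kmer_profile("GGGGSGGGGS")   # unrelated low-complexity sequence
print(round(profile_cosine(a, b), 3), round(profile_cosine(a, c), 3))
```

The comparison costs time linear in sequence length and needs no dynamic programming, but it cannot say which residues correspond, which is the accuracy trade-off noted above.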

Performance Comparison and Experimental Data

Search Speed and Throughput Benchmarks

Comprehensive benchmarking reveals substantial performance differences between tools, particularly as database sizes and query volumes increase. In single-query searches against a ~30-million-sequence database, MMseqs2-GPU on one NVIDIA L40S GPU demonstrated a 6.4× speed advantage over BLAST and a remarkable 177× speedup over JackHMMER [42]. For larger batch searches comprising 6,370 queries, MMseqs2-GPU with eight GPUs performed 2.4× faster than the fastest CPU-based alternative method [42]. The performance advantage of MMseqs2 extends to cost efficiency, with cloud cost estimates showing MMseqs2-GPU on a single L40S instance as the most economical option across all batch sizes [42].

Table 1: Homology Search Speed Benchmarks (Querying against ~30-million-sequence database)

| Tool | Hardware Configuration | Single Query Speed | Batch Query (6,370) Speed | Relative Cost Efficiency |
|---|---|---|---|---|
| MMseqs2-GPU | 1 × L40S GPU | 6.4× faster than BLAST | 2.4× faster than CPU k-mer (8 GPUs) | Most economical |
| MMseqs2-CPU | 2 × 64-core CPU | Reference | 2.2× faster than GPU (1 GPU) | 60.9× more costly for single query |
| BLAST | High-end CPU | Baseline | Not reported | Significantly higher cost |
| JackHMMER | High-end CPU | 177× slower than MMseqs2-GPU | 199× slower for large batches | Least economical |

The GPU acceleration achieves extraordinary computational throughput, with the gapless GPU kernel reaching up to 100 TCUPS (trillions of cell updates per second) across eight L40S GPUs for gapless filtering, outperforming previous acceleration methods by one to two orders of magnitude [43]. This represents a 21.4× speedup on eight L40S GPUs compared to a 2 × 64-core CPU server when processing random amino acid sequences [42].
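The TCUPS figure can be turned into a rough time estimate, since one cell update corresponds to one query residue compared against one database residue. The sketch below is back-of-the-envelope arithmetic only: the average sequence length of 350 residues is an assumption, and the peak rate ignores data transfer, later pipeline stages, and any workload that does not saturate the hardware.

```python
def gapless_filter_seconds(query_length, database_residues, tcups):
    """Idealized kernel time: cell updates divided by the update rate."""
    cell_updates = query_length * database_residues
    return cell_updates / (tcups * 1e12)


database_residues = 30_000_000 * 350   # ~30 million sequences, assumed mean length 350
seconds = gapless_filter_seconds(300, database_residues, tcups=100)
print(f"{seconds * 1000:.1f} ms for one 300-residue query at the peak rate")
```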

Sensitivity and Alignment Accuracy

Sensitivity benchmarks evaluating remote homology detection capabilities show that iterative profile searches with MMseqs2-GPU achieve ROC1 scores of 0.612 and 0.669 after two and three iterations respectively, surpassing PSI-BLAST (0.591) and approaching JackHMMER (0.685) [42]. In terms of alignment quality, DELTA-BLAST produces alignments with significantly greater sensitivity than BLASTP and CS-BLAST, particularly at sequence identities between 5% and 20% where its mean sensitivity exceeds other methods by at least 0.1 [40]. MMseqs2 maintains this high sensitivity while offering tremendous speed advantages, achieving sensitivities better than PSI-BLAST while running over 400 times faster in profile searches with three iterations [43].

Table 2: Sensitivity and Alignment Accuracy Comparison

| Tool | ROC1 Score (3 iterations) | Alignment Sensitivity (5-20% identity range) | Alignment Precision | Key Strengths |
|---|---|---|---|---|
| MMseqs2-GPU | 0.669 | Not reported | Not reported | Excellent balance of speed and sensitivity |
| PSI-BLAST | 0.591 | Moderate | Moderate | Established standard for iterative search |
| JackHMMER | 0.685 | High | High | Highest sensitivity, but very slow |
| DELTA-BLAST | Not reported | Highest (0.1 better than alternatives) | Better precision at low identity | Best for remote homology detection |

Resource Requirements and Scalability

Memory consumption varies significantly between tools, with MMseqs2's k-mer-based filtering traditionally requiring substantial RAM (up to 2 TB for large databases) [42]. The GPU version reduces this memory demand from approximately 7 bytes to 1 byte per residue, supports further reduction via clustered searches, and allows distributing databases across multiple GPUs or streaming from host RAM at 63-65% of in-GPU-memory speed [42]. For context, BLAST-based tools typically have more moderate memory requirements but cannot match the scaling capabilities of MMseqs2 for extremely large databases. MMseqs2 is designed to run on multiple cores and servers with excellent scalability, automatically dividing target databases into memory-friendly segments when needed, with optional manual control over memory usage via the --split-memory-limit parameter [41].

Experimental Protocols and Methodologies

Standardized Homology Detection Benchmarks

Standardized evaluation of homology detection tools typically employs Receiver Operating Characteristic (ROC) analysis based on known protein relationships defined by structural classification databases such as SCOP (Structural Classification of Proteins) [40]. The benchmark process involves:

- Test Set Curation: Selecting a diverse set of protein domains with known structural and evolutionary relationships. A common approach uses a non-redundant set of domains selected by single linkage clustering based on a BLAST P-value threshold (e.g., 10⁻⁷), with domain boundaries identified using algorithms that correlate with SCOP domain definitions [38].

- True Positive Definition: Defining true positives based on structural similarity measures (e.g., VAST algorithm) or curated classification systems (e.g., SCOP family/superfamily/fold) [40].

- Search Execution: Running each tool against a comprehensive sequence database (e.g., 10,569 sequences searched using 4,852 queries) with standardized parameters [40].

- ROC Calculation: Computing ROCₙ scores by pooling alignments from all queries, ordering by E-value, and considering results up to the nth false positive. ROC₅₀₀₀ and ROC₁₀₀₀₀ scores provide standardized sensitivity measures comparable across tools [40].
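The ROCₙ calculation described above can be sketched as follows, using the common definition ROCₙ = (1 / (n · T)) · Σ tᵢ, where T is the total number of true positives and tᵢ is the number of true positives ranked ahead of the i-th false positive. The handling of searches that return fewer than n false positives is one common convention and is an assumption here; the hit list is invented.

```python
def roc_n(hits, n, total_true_positives):
    """ROC_n from pooled hits given as (e_value, is_true_positive) pairs."""
    ranked = sorted(hits, key=lambda hit: hit[0])  # lowest E-value first
    true_seen = 0
    false_seen = 0
    area = 0
    for _, is_true in ranked:
        if is_true:
            true_seen += 1
        else:
            false_seen += 1
            area += true_seen          # t_i for the i-th false positive
            if false_seen == n:
                break
    # Assumed convention: missing false positives count at the final level.
    area += (n - false_seen) * true_seen
    return area / (n * total_true_positives)


pooled_hits = [(1e-10, True), (1e-8, True), (1e-5, False), (1e-3, True), (0.1, False)]
print(round(roc_n(pooled_hits, n=2, total_true_positives=3), 4))
```

A score of 1.0 means every true positive ranks ahead of the first n false positives; lower values indicate that false positives appear earlier in the pooled ranking.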

Alignment Accuracy Assessment

Alignment quality evaluation involves comparing program-generated alignments to reference structure-based alignments using metrics such as:

- Sensitivity: The fraction of the reference structural alignment correctly recovered by the sequence alignment method.

- Precision: The fraction of the sequence alignment that correctly reproduces the structural alignment.

These measures are typically calculated across different ranges of sequence identity (5-10%, 10-20%, 20-30%, etc.) to evaluate performance at varying evolutionary distances [40]. Benchmark sets like the superfamily subset of the SABmark set, which contains 10,006 pairs of 3D domains with reference alignments, provide standardized resources for these evaluations [40].
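Both alignment measures reduce to set overlap when an alignment is represented as a set of aligned residue pairs. The sketch below assumes that representation, with each pair holding a position in the first sequence and a position in the second; the example pairs are invented.

```python
def alignment_quality(predicted_pairs, reference_pairs):
    """Return (sensitivity, precision) of a sequence alignment against a structural reference."""
    predicted = set(predicted_pairs)
    reference = set(reference_pairs)
    correct = len(predicted & reference)
    sensitivity = correct / len(reference)   # share of the reference recovered
    precision = correct / len(predicted)     # share of the prediction that is correct
    return sensitivity, precision


reference = [(0, 0), (1, 1), (2, 2), (3, 3)]   # structure-based alignment
predicted = [(0, 0), (1, 1), (2, 3)]           # sequence-based alignment
sensitivity, precision = alignment_quality(predicted, reference)
print(sensitivity, round(precision, 3))
```

Reporting both values matters because a method can raise sensitivity by aligning more residues at the cost of precision, or the reverse.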

Performance Scaling Experiments

To evaluate computational performance across different usage scenarios, tools are typically benchmarked in both single-query and batch-query modes against databases of varying sizes (e.g., ~30-million-sequence databases and larger metagenomic-scale databases) [42]. Hardware configurations are carefully documented, with comparisons including:

- Single GPU vs. Multi-Core CPU: Comparing MMseqs2-GPU on L40S or similar GPUs against MMseqs2-CPU on high-core-count servers (e.g., 2 × 64-core) [42].

- Cloud Cost Analysis: Estimating computational expenses using cloud provider pricing (e.g., AWS EC2) for different hardware configurations [42].

- Energy Consumption Measurements: Quantifying power efficiency under different workload scenarios [42].

Applications in Protein Structure Prediction and Drug Discovery

Enhancing Structure Prediction Pipelines

MMseqs2 plays a critical role in accelerating multiple sequence alignment (MSA) generation for protein structure prediction pipelines. In comparative benchmarks using 20 CASP14 free-modeling targets, ColabFold with MMseqs2-GPU demonstrated a 1.65× speedup over MMseqs2-CPU and a 31.8× acceleration compared to the standard AlphaFold2 pipeline using JackHMMER and HHblits [42]. This performance improvement is primarily driven by accelerated MSA generation, which MMseqs2-GPU accelerates 5.4× compared to MMseqs2-CPU and 176.3× compared to AlphaFold2's CPU-based MSA step [42]. Remarkably, all methods achieved similar prediction accuracy (0.70 ± 0.05 TM-score), demonstrating that the speed advantages do not compromise result quality [42].

The following workflow diagram illustrates how MMseqs2 integrates with modern protein structure prediction pipelines:

Drug Target Identification and Validation

In pharmaceutical development, sequence similarity tools enable researchers to identify potential drug targets by comparing pathogen proteins to human proteomes to find sufficiently divergent regions for selective targeting [43]. These methods also help pinpoint disease-causing mutations by comparing patient protein sequences to healthy references [43]. The dramatically accelerated search times provided by tools like MMseqs2-GPU enable researchers to perform these analyses at unprecedented scales, potentially scanning entire pathogen proteomes against human references in practical timeframes that were previously impossible [42] [43].

The following diagram illustrates a typical drug target identification workflow leveraging modern sequence search tools:

Essential Research Reagent Solutions

Table 3: Key Research Resources for Protein Sequence Analysis

| Resource Category | Specific Examples | Primary Function in Research |
|---|---|---|
| Sequence Databases | UniRef, NR, NT, PFAM, Conserved Domain Database (CDD) | Provide comprehensive reference sequences for homology searches and functional annotation [41] [40] |
| Structure Databases | PDB, SCOP, CATH | Enable template-based modeling and structural validation of sequence-based predictions [40] |
| Taxonomic Databases | NCBI Taxonomy, SILVA | Support taxonomic classification of search results and evolutionary analyses [41] |
| Benchmark Datasets | SABmark, ASTRAL Compendium | Provide standardized datasets for tool evaluation and method comparison [40] |
| Specialized Hardware | NVIDIA L40S/L4/A100/H100 GPUs | Accelerate computationally intensive searches through parallel processing [42] [43] |

The evolving landscape of protein sequence analysis tools demonstrates a clear trajectory toward increasingly efficient and sensitive methods while maintaining the rigorous alignment principles established by early tools like BLAST. MMseqs2 represents a significant advancement in this field, offering researchers dramatically improved computational efficiency without sacrificing sensitivity, particularly through its GPU-accelerated implementation. For most modern applications involving large-scale database searches or integration with structure prediction pipelines, MMseqs2 provides an optimal balance of performance and sensitivity. Traditional BLAST variants remain valuable for specific applications, with DELTA-BLAST particularly effective for detecting remote homologs when searching against curated domain databases. As protein sequence databases continue to expand exponentially, these advanced sequence alignment workhorses will remain indispensable tools for pharmaceutical researchers seeking to unravel protein function and identify novel therapeutic targets.

In bioinformatics, the analysis of protein sequences is fundamental for understanding evolutionary relationships, predicting protein function, and accelerating drug discovery. Traditional methods that rely on sequence alignment are accurate but computationally expensive, a problem that grows with the rapid expansion of sequence databases. Alignment-free methods have emerged as powerful alternatives, offering robust performance for large-scale analyses. This guide focuses on two advanced alignment-free approaches, one based on fuzzy integrals and Markov chains and one based on physicochemical properties, and compares their performance with other alignment-free and alignment-based techniques.

Methodological Principles and Experimental Protocols

The Fuzzy Integral and Markov Chain Method

This method treats protein sequences as outputs of a Markov process and uses fuzzy integrals to compute similarity.

- Markov Chain Modeling: A protein sequence is viewed as a Markov chain in which each amino acid represents a state. The first step is to estimate the k-step transition probability matrix for each sequence, which contains the probabilities of moving from one amino acid to another after k steps. The initial (1-step) transition probabilities are estimated directly from the sequence by counting the frequencies of all adjacent amino acid pairs. Higher-step transition matrices are calculated using the Chapman-Kolmogorov equation; for a time-homogeneous chain, this reduces to taking the k-th power of the 1-step matrix [45].

- Fuzzy Integral Similarity: The estimated Markov chain parameters are then used to calculate the similarity between two sequences. The method employs a fuzzy measure and the Sugeno fuzzy integral to compute a similarity score within the closed interval [0, 1]. This score integrates the information from the transition matrices into a single, powerful measure of sequence relatedness. The resulting similarity matrix can be used directly for clustering or as input for phylogenetic tree building tools like the neighbor program in the PHYLIP package [45] [46].
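The two steps above can be sketched as follows. This is an illustrative sketch under stated assumptions, not the implementation from [45]: the pseudocount, the element-wise agreement function `1 - |p - q|`, and the cardinality-based fuzzy measure `g(A) = |A| / n` are choices made here for simplicity, and the published method may define its fuzzy measure differently. The example sequences are arbitrary.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def transition_matrix(seq, pseudocount=1e-6):
    """Estimate 1-step transition probabilities from adjacent residue pairs."""
    counts = np.full((20, 20), pseudocount)  # pseudocount avoids empty rows
    for a, b in zip(seq, seq[1:]):
        if a in INDEX and b in INDEX:
            counts[INDEX[a], INDEX[b]] += 1
    return counts / counts.sum(axis=1, keepdims=True)  # each row sums to 1


def k_step_matrix(P, k):
    """Chapman-Kolmogorov for a time-homogeneous chain: the k-step matrix is P^k."""
    return np.linalg.matrix_power(P, k)


def sugeno_similarity(P, Q):
    """Sugeno integral of element-wise agreement, assuming g(A) = |A| / n."""
    h = np.sort(1.0 - np.abs(P - Q).ravel())[::-1]  # agreement values, descending
    g = np.arange(1, h.size + 1) / h.size           # measure of the top-i set
    return float(np.max(np.minimum(h, g)))          # max over i of min(h_i, g_i)


seq_a = "MKTAYIAKQRQISFVKSHFSRQ"
seq_b = "MKTAYIAKQRQISFVKAHFSRQ"  # one substitution relative to seq_a
score = sugeno_similarity(
    k_step_matrix(transition_matrix(seq_a), 2),
    k_step_matrix(transition_matrix(seq_b), 2),
)
print("similarity:", score)
```

Because every agreement value and every measure value lies in [0, 1], the score stays in the closed interval [0, 1], and two identical transition matrices score exactly 1.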

The Physicochemical Properties (PCV) Method

This method numerically characterizes a protein sequence by encoding both the physicochemical properties of amino acids and their positional information.

- Feature Extraction: The method begins by accessing the AAindex database, a comprehensive resource of physicochemical properties. Hundreds of properties are clustered into a manageable number of representative categories to reduce data dimensionality while retaining essential information [44].

- Sequence Block Processing: To enable parallel processing and handle long sequences efficiently, each protein sequence is split into fixed-length blocks [44].

- Vector Encoding: For each block, an encoding vector is generated. This vector incorporates statistical and positional characteristics of the amino acids, such as their moments, based on the clustered physicochemical properties. This step ensures that both the composition and the order of amino acids are captured in the final descriptor [44].

- Distance Calculation: The distance between two sequences is finally calculated by comparing their respective encoded vectors using a standard distance metric, completing the comparative analysis [44].
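The four steps above can be sketched in plain Python. This is a simplified illustration, not the PCV implementation from [44]: a single property (the Kyte-Doolittle hydropathy scale) stands in for the clustered AAindex categories, and the choice of three moments per block, the block length, the fixed block count with zero padding, and the Euclidean distance are all assumptions made here.

```python
import math

# Kyte-Doolittle hydropathy, used as a single stand-in property.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def split_blocks(seq, block_len):
    """Split a sequence into consecutive fixed-length blocks (last may be shorter)."""
    return [seq[i:i + block_len] for i in range(0, len(seq), block_len)]


def encode_block(block):
    """Mean property value plus two position-weighted moments."""
    values = [HYDROPATHY.get(aa, 0.0) for aa in block]  # unknown residues -> 0.0
    n = len(values)
    mean = sum(values) / n
    # Positions are normalized to (0, 1] so residue order affects the descriptor.
    m1 = sum(v * (i + 1) / n for i, v in enumerate(values)) / n
    m2 = sum(v * ((i + 1) / n) ** 2 for i, v in enumerate(values)) / n
    return [mean, m1, m2]


def encode_sequence(seq, block_len=50, n_blocks=4):
    """Concatenate block vectors, zero-padded to a fixed length of 3 * n_blocks."""
    blocks = split_blocks(seq, block_len)[:n_blocks]
    vec = [x for block in blocks for x in encode_block(block)]
    return vec + [0.0] * (3 * n_blocks - len(vec))


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Same composition, different order: the moments separate the two sequences.
v1 = encode_sequence("AAAAKKKK", block_len=8, n_blocks=1)
v2 = encode_sequence("KKKKAAAA", block_len=8, n_blocks=1)
print("distance:", euclidean(v1, v2))
```

Since each block is encoded independently, the per-block step could be distributed across workers, which is the property the block-splitting design is meant to enable.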

The workflow for implementing and benchmarking these methods is systematized as follows:

Performance Comparison and Benchmarking Data

Independent benchmarking studies and original research provide quantitative data on the performance of various alignment-free methods. The following table summarizes key findings, demonstrating how the featured methods compare to alternatives.

Table 1: Performance Comparison of Alignment-Free Methods for Protein Sequence Analysis

| Method | Core Principle | Reported Accuracy / Performance | Key Advantages |
|---|---|---|---|
| Fuzzy Integral & Markov Chain [45] | Markov transition matrices & fuzzy integral similarity | Better clustering performance vs. alignment-free methods; High correlation with ClustalW [45] | Fully automated; No prior homology knowledge needed; Robust [45] |
| PCV (Physicochemical Vector) [44] | Encoding physicochemical properties & positional information | ~94% average correlation with ClustalW; Significant improvement in classification accuracy vs. other AF methods [44] | High speed; Parallel processing capability; Handles multiple mutations [44] |
| K-merNV & CgrDft [47] | K-mer frequency & Chaos Game Representation | Performance similar to multi-sequence alignment for virus taxonomy [47] | Fast and accurate for viral genome classification [47] |
| D2 Statistic & Variants [48] | Normalized count of k-tuple matches | Power increases with sequence length and k; Useful for large k [48] | Well-studied theoretical foundation; Good for regulatory sequences [48] |
| Alignment-Based (ClustalW) [45] [44] | Progressive sequence alignment | Considered a reference for accuracy [45] [44] | High accuracy on alignable sequences; Established standard [45] [44] |

The benchmarking process itself is critical for a fair evaluation. One major community effort, the AFproject, provides a standardized platform for comparing alignment-free tools across diverse tasks like protein classification and phylogenetics. It uses statistical measures like the Correlation Coefficient (CC) and Robinson-Foulds (RF) distance to quantitatively evaluate how well a method's output matches biological benchmarks or results from established alignment-based methods [49].
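The two measures named above can be illustrated with a short sketch. It is a generic illustration rather than the AFproject code: the correlation is computed here as a Pearson coefficient over the upper triangles of two distance matrices, and the Robinson-Foulds distance as the unnormalized count of bipartitions found in only one of two trees. The toy matrices, taxa, and splits are made up for the example.

```python
import math


def upper_triangle(matrix):
    """Flatten the strict upper triangle of a square distance matrix."""
    n = len(matrix)
    return [matrix[i][j] for i in range(n) for j in range(i + 1, n)]


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def normalize_split(side, taxa):
    """Represent a bipartition by the side that excludes a fixed reference taxon."""
    side = frozenset(side)
    reference = min(taxa)
    return side if reference not in side else frozenset(taxa) - side


def robinson_foulds(splits_a, splits_b, taxa):
    """Number of non-trivial bipartitions present in exactly one of the two trees."""
    a = {normalize_split(s, taxa) for s in splits_a}
    b = {normalize_split(s, taxa) for s in splits_b}
    return len(a ^ b)


# Toy distance matrices: alignment-based reference vs. alignment-free method.
d_ref = [[0, 1, 4], [1, 0, 3], [4, 3, 0]]
d_af = [[0, 2, 8], [2, 0, 6], [8, 6, 0]]
cc = pearson(upper_triangle(d_ref), upper_triangle(d_af))

# Toy trees on five taxa, given by their non-trivial splits:
# ((A,B),C,(D,E)) versus ((A,C),B,(D,E)).
taxa = {"A", "B", "C", "D", "E"}
rf = robinson_foulds([{"A", "B"}, {"D", "E"}], [{"A", "C"}, {"D", "E"}], taxa)
print("CC:", cc, "RF:", rf)
```

A correlation near 1 means the alignment-free distances rank sequence pairs like the reference does, while an RF distance of 0 means the two tree topologies are identical.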

Essential Research Reagent Solutions

To implement the described methodologies, researchers can utilize the following key software tools and data resources.

Table 2: Key Research Reagents and Computational Tools

| Tool / Resource Name | Type | Function in Research |
|---|---|---|
| AAindex Database [44] | Database | Repository of physicochemical properties for amino acids, essential for feature extraction in methods like PCV. |
| AFproject [49] | Web Service / Benchmarking Platform | Community resource for standardized benchmarking of alignment-free methods against reference data sets. |
| PHYLIP Package [45] | Software Package | A toolkit containing the 'neighbor' program, used for constructing phylogenetic trees from distance matrices. |
| Custom Python Scripts (e.g., GitHub Repo) [46] | Software / Code | Example implementations of alignment-free methods (k-mer, compression, relative entropy, fuzzy Markov) for practical testing. |
| ClustalW / MUSCLE / MAFFT [45] [47] [44] | Software Package | Standard alignment-based tools used as a reference to validate and assess the accuracy of alignment-free methods. |

Alignment-free methods for protein sequence comparison represent a paradigm shift in bioinformatics, offering the speed and scalability required for modern, data-intensive research. Among them, techniques leveraging fuzzy integrals with Markov chains and physicochemical property encoding have proven to be highly accurate, rivaling the performance of traditional alignment-based methods while being computationally more efficient. As the volume of biological data continues to grow, these and other alignment-free approaches will become increasingly indispensable for researchers in evolutionary biology, drug target identification, and personalized medicine.

The prediction of protein function and behavior from sequence alone represents a cornerstone of modern bioinformatics, with profound implications for drug discovery and protein engineering. Within this field, two distinct families of deep learning architectures have emerged as particularly powerful: large pre-trained models, represented here by protein language models (pLMs) such as ESM and by the structure predictor AlphaFold, and one-dimensional Convolutional Neural Networks (1D-CNNs). These approaches operate on different principles and are often applied to different types of biological questions. Protein language models, inspired by breakthroughs in natural language processing, learn evolutionary patterns from billions of protein sequences through self-supervised pre-training. In contrast, 1D-CNNs typically operate as supervised models trained end-to-end on specific prediction tasks using smaller, curated datasets. This guide provides a structured comparison of these methodologies, focusing on their performance, optimal applications, and implementation requirements within protein sequence similarity and susceptibility prediction research.

Protein Language Models (ESM, AlphaFold)

Protein Language Models have revolutionized computational biology by leveraging transformer architectures pre-trained on massive protein sequence databases. The ESM (Evolutionary Scale Modeling) family, including ESM-2 and ESM-3, applies self-supervised learning to predict masked amino acids in sequences, learning rich representations of evolutionary, structural, and functional constraints. AlphaFold, developed by DeepMind, represents a specialized advancement focusing primarily on protein structure prediction through a novel architecture that integrates multiple sequence alignments (MSAs) and structural templates.

Table 1: Performance Comparison of Prominent Protein Language Models

| Model | Parameter Size | Key Application | Reported Performance | Key Strengths |
|---|---|---|---|---|
| ESM-2 15B | 15 Billion | General-purpose protein representations | Near-state-of-the-art across various downstream tasks [50] | Captures complex sequence relationships |
| ESM-2 650M | 650 Million | Transfer learning on realistic datasets | Competes with larger models when data is limited [50] | Optimal balance of performance and efficiency |
| ESM C 600M | 600 Million | Protein contact prediction | Outperforms much larger ESM-2 15B on contact prediction [50] | Superior training methods and data quality |
| AlphaFold2 | ~93 Million | Protein monomer structure prediction | Median RMSD of 1.0 Å vs. experimental structures [51] | Unprecedented accuracy in tertiary structure |
| AlphaFold3 | Not Specified | Protein complex structure prediction | 10.3% lower TM-score than DeepSCFold on CASP15 multimers [7] | Improved modeling of protein complexes |
| DeepSCFold | Not Specified | Protein complex structure modeling | 11.6% higher TM-score than AlphaFold-Multimer [7] | Leverages sequence-derived structure complementarity |

1D Convolutional Neural Networks (1D-CNNs)

In contrast to pLMs, 1D-CNNs apply convolutional filters across protein sequences to detect local motifs and patterns significant for specific functions. These models are typically trained from scratch on specialized, labeled datasets for tasks like identifying protein-binding DNA sequences or predicting interaction hotspots. A notable hybrid example is the Embed-1dCNN model, which feeds pre-trained protein sequence embeddings into a 1D-CNN architecture to predict protein hotspot residues, achieving an F1 score of 0.82 and an AUC of 0.89 [52]. The strength of 1D-CNNs lies in identifying localized, sequence-based features without requiring extensive pre-training or evolutionary information.
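To make the idea of a convolutional filter acting as a motif detector concrete, the following sketch runs the forward pass of a single 1D convolutional layer over a one-hot encoded sequence. It is not the Embed-1dCNN architecture from [52]: the filter weights are set by hand to match the motif "RGD" purely for illustration, whereas a trained model learns its filters from labeled data. The sketch assumes sequences containing only the 20 standard residues.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seq):
    """Encode a sequence as a (length, 20) one-hot matrix."""
    x = np.zeros((len(seq), 20))
    for i, aa in enumerate(seq):
        x[i, AMINO_ACIDS.index(aa)] = 1.0
    return x


def conv1d(x, filters, bias):
    """Valid 1D convolution with ReLU.

    x: (L, 20); filters: (F, W, 20); bias: (F,) -> output (L - W + 1, F).
    """
    n_filters, width, _ = filters.shape
    out = np.empty((x.shape[0] - width + 1, n_filters))
    for pos in range(out.shape[0]):
        window = x[pos:pos + width]  # (W, 20) slice of the sequence
        out[pos] = np.tensordot(filters, window, axes=([1, 2], [0, 1])) + bias
    return np.maximum(out, 0.0)  # ReLU keeps only positive activations


# Hand-set filter that responds only to a full "RGD" match.
motif = "RGD"
filters = np.zeros((1, len(motif), 20))
for i, aa in enumerate(motif):
    filters[0, i, AMINO_ACIDS.index(aa)] = 1.0
bias = np.array([-2.0])  # a partial match (score <= 2) is zeroed by ReLU

activations = conv1d(one_hot("MKTRGDLLA"), filters, bias)
pooled = activations.max(axis=0)  # global max pooling: one value per filter
print("motif start:", int(activations[:, 0].argmax()), "pooled:", pooled)
```

In a full model, many such filters of several widths would be learned jointly, and the pooled activations would feed a dense layer that outputs the prediction.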

Experimental Protocols and Workflows

Standard pLM Transfer Learning Protocol

The application of pLMs like ESM for downstream prediction tasks typically follows a standardized transfer learning protocol via feature extraction. The established methodology, as systematically evaluated in recent studies, involves several key stages [50]:

- Embedding Extraction: For a given protein sequence, the last hidden layer representations (embeddings) are extracted from the pre-trained pLM. Each residue is typically represented as a high-dimensional vector (e.g., 1280 dimensions for ESM-1b).

- Embedding Compression: The per-residue embeddings are compressed into a fixed-length representation for the entire sequence. Mean pooling (averaging embeddings across all sequence positions) has been shown to consistently outperform other compression methods such as max pooling, iDCT, or PCA, particularly when the input sequences are widely diverged [50].

- Downstream Model Training: The compressed embeddings are used as input features to train a supervised model, such as a regularized regression (e.g., LassoCV) or a shallow neural network, to predict specific targets (e.g., stability, function).

- Performance Evaluation: Model performance is evaluated on held-out test data using task-relevant metrics (e.g., R², accuracy, F1-score).
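The stages above can be sketched end to end with synthetic data. This is a minimal sketch under several assumptions: random matrices stand in for real pLM per-residue embeddings (no model is loaded), the target is simulated, the embedding width of 16 is a toy value, and a closed-form ridge regression is swapped in for LassoCV to keep the example dependent on NumPy only.

```python
import numpy as np


def mean_pool(residue_embeddings):
    """Compress a (length, dim) per-residue matrix into one (dim,) vector."""
    return residue_embeddings.mean(axis=0)


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression with an intercept (stand-in for LassoCV)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w


def r_squared(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


rng = np.random.default_rng(0)
dim = 16  # toy width; real pLM embeddings are far wider
# Synthetic stand-ins for per-residue embeddings of 200 variable-length proteins.
proteins = [rng.normal(size=(rng.integers(50, 300), dim)) for _ in range(200)]
X = np.stack([mean_pool(p) for p in proteins])         # (200, dim) fixed-length features
true_w = rng.normal(size=dim)
y = X @ true_w + rng.normal(scale=0.01, size=len(X))   # simulated target property

train, test = slice(0, 150), slice(150, 200)           # simple held-out split
w, b = fit_ridge(X[train], y[train], alpha=1e-3)
r2 = r_squared(y[test], X[test] @ w + b)
print("held-out R^2:", r2)
```

Mean pooling is what makes variable-length proteins comparable here: every sequence, whatever its length, is reduced to one vector of the same width before the downstream model sees it. With real data, the split should also control for sequence similarity between training and test proteins.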

This workflow is depicted in the following diagram:

1D-CNN Workflow for Hotspot Prediction

The protocol for training a 1D-CNN for specific predictive tasks, such as identifying protein hotspot residues, involves a distinct, end-to-end process [52]: