Problem Formulation in Ecological Risk Assessment: The Foundational Step for Scientific Rigor and Decision-Making

This article provides a comprehensive guide to problem formulation, the critical first phase of ecological risk assessment (ERA) that determines the scientific validity and regulatory utility of the entire process.

Abstract

This article provides a comprehensive guide to problem formulation, the critical first phase of ecological risk assessment (ERA) that determines the scientific validity and regulatory utility of the entire process. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of defining management goals and engaging in planning dialogue. It details the methodological framework for selecting assessment endpoints, developing conceptual models, and creating analysis plans. The article further addresses troubleshooting common challenges like data gaps and stakeholder conflicts and discusses validation techniques and comparative analysis with other assessment frameworks. By synthesizing current EPA guidelines and scientific literature, this resource aims to equip professionals with the knowledge to design robust, actionable, and defensible ecological risk assessments [1] [2] [3].

The Cornerstone of Assessment: Defining Scope and Goals in Problem Formulation

Problem Formulation (PF) is the critical first phase of an Ecological Risk Assessment (ERA), a structured scientific process used to evaluate the likelihood of adverse effects on plants and animals from exposure to stressors such as chemical contaminants [1]. It functions as the strategic planning and scoping stage where risk assessors and managers collaboratively define the assessment's purpose, scope, and methodology [2] [3]. Within a broader thesis on ERA research, PF is the keystone that ensures scientific inquiry remains focused, relevant, and aligned with regulatory and management needs. Its primary role is to transform broad environmental concerns into a testable analytical plan, thereby preventing misallocation of resources and providing clarity for subsequent phases of analysis and risk characterization [4].

The regulatory landscape governing PF is dynamic, as evidenced by ongoing revisions to frameworks like the U.S. Toxic Substances Control Act (TSCA) Risk Evaluation rule. Recent proposals emphasize shifting from a "whole chemical" risk determination to making individual determinations for each condition of use, highlighting how regulatory interpretations directly influence the scope and boundaries established during PF [5]. Furthermore, definitions of key terms, such as "potentially exposed or susceptible subpopulation," are under active review, underscoring the need for precise terminology from the outset of the assessment [5].

Core Components and Process of Problem Formulation

Problem formulation is an iterative, collaborative process involving risk assessors, risk managers, and stakeholders [3]. It integrates available information to produce three essential products: assessment endpoints, a conceptual model, and an analysis plan [2] [3]. The process systematically evaluates stressors, exposure pathways, and ecological receptors to define the problem with scientific and operational rigor.

The following table summarizes the key informational elements integrated during problem formulation:

Table: Key Informational Elements Integrated During Problem Formulation [2] [3]

| Factor | Core Considerations | Example Questions for Assessment |
|---|---|---|
| Stressors | Type, characteristics, mode of action, toxicity, frequency, duration, distribution, intensity. | Is the stressor chemical, physical, or biological? Is it acute, chronic, bioaccumulative, or persistent? |
| Sources | Status (active/inactive), background levels, spatial scale. | What is the geographic extent of the source? What are the baseline environmental conditions? |
| Exposure | Media (air, water, soil), timing, pathways. | When does exposure occur relative to critical life cycles? What are the routes of exposure (ingestion, inhalation, dermal)? |
| Receptors | Types (species, communities), life history characteristics, sensitivity, trophic level. | What keystone, endangered, or commercially valuable species are present? Are there sensitive life stages? |

Defining Assessment Endpoints

Assessment endpoints are explicit expressions of the environmental values to be protected, operationally defined by an ecological entity and its important attributes [2] [3]. They are derived directly from management goals (e.g., "maintain a sustainable aquatic community") and bridge policy with science. For example, a management goal to protect biodiversity may be translated into an assessment endpoint of "reproductive success of a resident fish population," where the fish population is the entity and reproduction is the critical attribute [4].

Developing the Conceptual Model

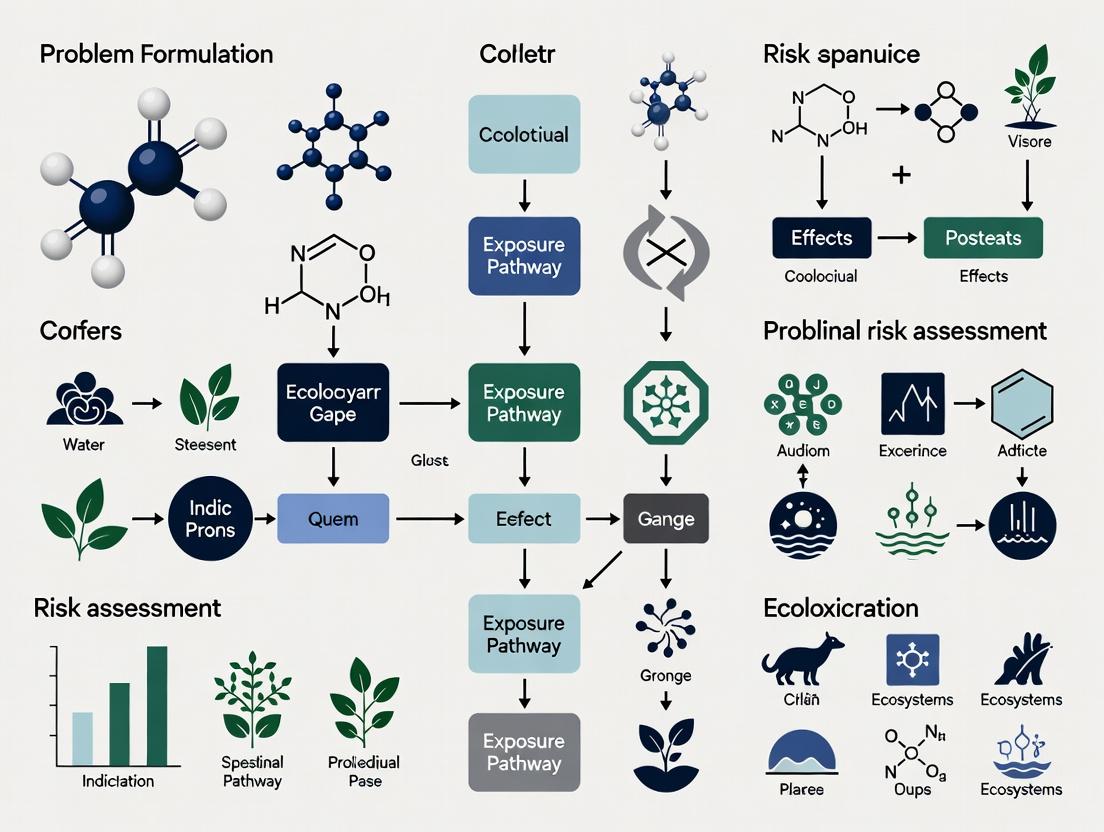

The conceptual model is a written description and visual representation (typically a diagram) of the predicted relationships between stressors, exposure pathways, and assessment endpoints [2]. It illustrates the risk hypotheses—tentative explanations about how an effect might occur—and is vital for identifying data gaps and ranking components by uncertainty [2]. The diagram below outlines the logical flow and primary components of a standard ERA conceptual model.

Diagram 1: Generalized Conceptual Model for Ecological Risk Assessment

Crafting the Analysis Plan

The final stage of PF is developing the analysis plan, which details how the risk hypotheses will be evaluated. It specifies the assessment design, data requirements, analytical methods, and measurement endpoints (e.g., LC50, NOAEC) that will be used [2]. This plan ensures the subsequent analysis phase is structured to effectively inform the risk manager's decision [3].

The ERA Framework: Problem Formulation in Context

Problem formulation is the foundation of the tripartite ERA framework, which proceeds to the Analysis phase and concludes with Risk Characterization [6]. The diagram below depicts this phased structure and the iterative relationship between planning and problem formulation.

Diagram 2: The ERA Framework Phases with Feedback

The analysis phase is divided into two parallel lines of inquiry: exposure characterization and ecological effects characterization [6]. These are synthesized in the risk characterization phase to produce an estimate of risk, which directly informs risk management decisions [1]. A poorly executed PF can compromise the entire ERA, leading to requests for irrelevant data, inappropriate risk mitigation, and delays in decision-making that may themselves cause environmental harm [4].

Practical Application: A Tiered Approach and Decision Logic

A common strategy to manage resource constraints is a tiered evaluation approach, which begins with simple, conservative screening assessments and proceeds to more complex, site-specific analyses only as needed [2]. The logic flow for initiating and scoping an ERA, particularly in a regulatory context like pesticide registration, is illustrated below.

Diagram 3: Decision Logic for Initiating and Tiering an ERA
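The tiered logic described above can be sketched in a few lines of Python. This is a minimal illustration rather than a regulatory procedure: it assumes each tier produces an exposure estimate that is divided by a toxicity value to give a risk quotient (RQ), and that an RQ at or above a level of concern (LOC) triggers the next, more refined tier. The function name `run_tiers`, the `TierResult` record, and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class TierResult:
    tier: int
    risk_quotient: float
    exceeds_loc: bool


def run_tiers(
    tiers: List[Callable[[], float]],
    toxicity_value: float,
    level_of_concern: float,
) -> List[TierResult]:
    """Evaluate tiers in order and stop at the first one below the LOC.

    Each entry in `tiers` is a function returning an exposure estimate, so a
    costly refined estimate is only computed if the earlier tier requires it.
    """
    results = []
    for number, estimate_exposure in enumerate(tiers, start=1):
        rq = estimate_exposure() / toxicity_value  # RQ = exposure / toxicity
        exceeds = rq >= level_of_concern
        results.append(TierResult(number, rq, exceeds))
        if not exceeds:
            break  # risk screened out; no further refinement needed
    return results


# Hypothetical exposure estimates (same units as the toxicity value),
# ordered from conservative screening to site-specific refinement.
tiers = [lambda: 50.0, lambda: 12.0, lambda: 3.0]
for result in run_tiers(tiers, toxicity_value=20.0, level_of_concern=0.5):
    print(result)
```

Passing the tiers as functions keeps the sketch faithful to the idea that complex analyses are carried out only as needed.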

The Scientist's Toolkit: Essential Reagents and Materials

The experimental work within an ERA, particularly in the analysis phase, relies on standardized tools and models. The following table details key research solutions used in ecological effects and exposure characterization.

Table: Key Research Reagent Solutions and Materials for ERA Experiments [2] [3]

| Category / Item | Primary Function in ERA | Specific Application Example |
|---|---|---|
| Standardized Test Organisms | Serve as surrogate species for broad taxonomic groups to assess toxicity. | Laboratory rat (Rattus norvegicus) as a surrogate for mammals; Fathead minnow (Pimephales promelas) for freshwater fish. |
| Toxicity Testing Benchmarks | Quantitative measurement endpoints derived from controlled laboratory tests. | LC50 (Lethal Concentration for 50% of population): Used in acute risk quotients. NOAEC (No Observed Adverse Effect Concentration): Used in chronic risk assessments. |
| Environmental Fate Models | Predict the distribution and persistence of a stressor in the environment. | Pesticide in Water Calculator (PWC): Estimates pesticide concentrations in surface water bodies based on use patterns and environmental parameters. |
| Site Characterization Tools | Identify ecological receptors and exposure pathways at a specific location. | Geographic Information Systems (GIS): Maps habitats, species distributions, and stressor sources to define exposure scenarios. |
| Analytical Reference Standards | Enable accurate quantification of stressor concentrations in environmental media. | Certified chemical reference standards for target analytes (e.g., specific pesticide active ingredients) used in mass spectrometry for water/soil analysis. |

Current Challenges and Evolving Considerations

Problem formulation continues to evolve in response to scientific and regulatory pressures. A significant contemporary challenge is defining the scope of conditions of use within chemical risk evaluations. Recent regulatory proposals debate whether EPA should have discretion to exclude certain de minimis or non-central uses from the assessment scope to focus resources [5]. Furthermore, incorporating considerations for potentially exposed or susceptible subpopulations and overburdened communities adds necessary complexity to defining receptors and exposure scenarios, though the specific regulatory language remains contentious [5].

The principle of using "best available science" and a "weight of scientific evidence" approach, mandated under statutes like TSCA, must be operationalized during PF. This involves planning to evaluate each piece of information based on its quality, relevance, study design, and reliability before integration [5]. Ultimately, a rigorous problem formulation process is the best defense against an ERA that is inefficient, irrelevant, or uncertain, ensuring that the resulting science is actionable for environmental protection [4].

Within ecological risk assessment (ERA) research, problem formulation is not merely a preliminary step but the critical thesis that determines the scientific and managerial validity of the entire endeavor [7]. Planning represents the active, structured process through which this thesis is developed, creating the indispensable bridge between risk assessment (the scientific analysis of potential adverse effects) and risk management (the decisions and actions taken to mitigate those risks) [8]. For researchers and drug development professionals, this phase establishes the scope, endpoints, and methodologies, ensuring that the resulting data is actionable and decision-relevant [2]. This guide posits that rigorous planning, centered on a well-articulated problem formulation, is the principal determinant of an assessment's efficacy in informing environmental protection and sustainable development.

The Core of Planning: Problem Formulation in Ecological Risk Assessment

The U.S. Environmental Protection Agency (EPA) framework identifies problem formulation as the first technical phase following planning dialogues, where the assessment's foundation is built [7] [2]. This stage translates broad management goals into a concrete, testable scientific plan.

Key Components of Problem Formulation

The process integrates available information to define the nature of the problem and create a roadmap for analysis [2].

- Assessment Endpoints: These are explicit expressions of the ecological values to be protected, combining a valued entity (e.g., a fish species, an aquatic community) with a specific attribute of concern (e.g., survival, reproduction, community structure). They are derived directly from management goals [2].

- Conceptual Model: A diagram and narrative describing hypothesized relationships between a stressor (e.g., a pharmaceutical residue) and the assessment endpoint. It identifies potential exposure pathways and ecological effects [2].

- Analysis Plan: A detailed protocol specifying the data requirements, metrics, and methods to be used for the exposure and effects analyses, and the criteria for risk characterization [2].

Experimental Protocol: Developing a Conceptual Model

Objective: To create a conceptual model that diagrams the plausible causal pathways linking a stressor of concern to ecological assessment endpoints.

Procedure:

- Identify Stressor Characteristics: Define the source, magnitude, timing, and spatial distribution of the stressor (e.g., effluent concentration, metabolite toxicity) [2].

- Characterize the Ecosystem: Describe the relevant ecological receptors (species, communities, habitats) and the key environmental processes in the exposure setting.

- Diagram Exposure Pathways: Map the potential routes through which the stressor may reach the receptor (e.g., direct contact, dietary ingestion, biomagnification). Use boxes for entities (stressor, receptor, ecosystem component) and arrows for pathways and effects.

- Articulate Risk Hypotheses: For each pathway, formulate a clear, testable statement predicting the nature and likelihood of an adverse effect (e.g., "Chronic exposure to Compound X via surface water will reduce fecundity in Species Y").

- Peer Review and Iteration: Subject the draft model to review by risk managers and scientific experts to ensure completeness and relevance before finalizing the analysis plan [2].

Diagram: Problem Formulation Conceptual Model for Ecological Risk
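The boxes-and-arrows structure of the protocol above maps naturally onto a directed graph. The sketch below is one possible representation, not a prescribed format: the node names, the `CONCEPTUAL_MODEL` dictionary, and the `exposure_pathways` helper are all hypothetical. It enumerates every pathway from a stressor source to an assessment endpoint, which gives one candidate risk hypothesis per pathway.

```python
# Hypothetical conceptual model: keys are boxes, values are the boxes that
# their outgoing arrows point to.
CONCEPTUAL_MODEL = {
    "Effluent discharge": ["Surface water"],
    "Surface water": ["Aquatic invertebrates", "Fish (direct contact)"],
    "Aquatic invertebrates": ["Fish (dietary ingestion)"],
    "Fish (direct contact)": ["Reduced fecundity in Species Y"],
    "Fish (dietary ingestion)": ["Reduced fecundity in Species Y"],
}


def exposure_pathways(model, source, endpoint, path=None):
    """Return every acyclic path from `source` to `endpoint` as a list of nodes."""
    path = (path or []) + [source]
    if source == endpoint:
        return [path]
    pathways = []
    for next_node in model.get(source, []):
        if next_node not in path:  # guard against cycles in a draft model
            pathways.extend(exposure_pathways(model, next_node, endpoint, path))
    return pathways


for pathway in exposure_pathways(
    CONCEPTUAL_MODEL, "Effluent discharge", "Reduced fecundity in Species Y"
):
    print(" -> ".join(pathway))
```

Listing pathways explicitly makes it easier to check, during peer review, that each arrow in the diagram has a corresponding testable statement.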

Quantitative Methodologies for Risk Estimation

A robust problem formulation guides the selection of analytical methodologies. Moving beyond qualitative judgments, advanced quantitative models enable probabilistic risk estimation, which is crucial for managing uncertainty.

Multi-State Fuzzy Bayesian Networks (MFBN)

One recent approach to ecological risk assessment is the MFBN, which addresses data scarcity and uncertainty by integrating Fuzzy Set Theory (FST) and Bayesian Networks (BN) [9]. Traditional binary-state models (normal/failure) are often insufficient for ecological systems where degradation is gradual. MFBNs allow nodes (e.g., a population health metric) to exist in multiple states (e.g., healthy, stressed, severely degraded), providing a more nuanced risk picture [9].

Core Technical Components:

- Fuzzy Set Theory: Handles the inherent vagueness in expert judgments about ecological conditions by using membership functions to quantify the degree to which a condition belongs to a particular state (e.g., "moderately impaired") [9].

- Bayesian Network: A probabilistic graphical model (Directed Acyclic Graph) representing causal relationships among variables. It uses Conditional Probability Tables (CPT) to quantify these relationships and can update probabilities (perform "inference") when new evidence is obtained [9].

- Improved Similarity Aggregation Method (SAM): A technique to reliably aggregate opinions from multiple experts, a common necessity in ecological assessments where empirical data is limited. Improvements may account for expert reliability and reduce aggregation bias [9].
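To make the role of membership functions concrete, the following sketch assigns degrees of membership in three ordered states to a single observation. It assumes triangular membership functions and a hypothetical impairment index scaled from 0 to 1; the state boundaries in `STATES` are illustrative choices, not values taken from [9].

```python
def triangular(x, a, b, c):
    """Degree of membership of x in a triangular fuzzy set.

    The set has feet at a and c and its peak at b (assumes a < b < c).
    """
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


# Hypothetical fuzzy states on an impairment index running from 0 to 1.
STATES = {
    "healthy": (-0.5, 0.0, 0.5),
    "stressed": (0.0, 0.5, 1.0),
    "severely degraded": (0.5, 1.0, 1.5),
}


def memberships(index_value):
    """Return the membership degree of an index value in each state."""
    return {
        state: triangular(index_value, *params) for state, params in STATES.items()
    }


print(memberships(0.3))
```

An index value between two peaks belongs partly to both neighbouring states, which is how the fuzzy layer expresses a judgment such as "moderately impaired" without forcing a hard cut-off.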

Table 1: Comparison of Risk Factor States in Binary vs. Multi-State Frameworks

| Risk Factor (Example) | Binary State Model | Multi-State (MFBN) Model |
|---|---|---|
| Population Abundance | Viable / Collapsed | High, Moderate, Low, Critically Low |
| Habitat Quality | Suitable / Unsuitable | Optimal, Suitable, Degraded, Lost |
| Water Quality Index | Passing / Failing | Excellent, Good, Fair, Poor |
| Advantage | Simplicity in analysis and communication. | Captures continuum of degradation; enables more sensitive detection of change and refined management triggers. |

Experimental Protocol: Constructing an MFBN for ERA

Objective: To quantitatively estimate the probability of an adverse ecological endpoint by modeling causal relationships under uncertainty.

Procedure [9]:

- Develop a Causal Diagram (DAG): Based on the conceptual model, define the network nodes (variables) and directed arcs (causal links). The top node is the assessment endpoint (e.g., "Aquatic Community Integrity").

- Define Node States: For each node, specify 3-5 discrete, ordered states (e.g., for "Dissolved Oxygen": Hypoxic, Low, Adequate, High).

- Elicit Conditional Probabilities: Use expert judgment, informed by literature and data, to populate Fuzzy Conditional Probability Tables (FCPTs) for each child node. The "fuzzy" component translates linguistic expert estimates (e.g., "low probability") into numerical ranges.

- Aggregate Expert Judgments: Apply an Improved SAM to combine inputs from multiple experts into a single, weighted FCPT for each node, enhancing reliability.

- Parameterize and Validate: Use software (e.g., Netica, GeNIe) to build the network. Validate logic by entering extreme evidence and checking if predictions align with expected outcomes.

- Perform Probabilistic Inference: Enter observed or hypothesized evidence for precursor nodes (e.g., "Increased Nutrient Load = High") to compute the updated probability distribution for the assessment endpoint.
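The inference step can be illustrated without dedicated software. The sketch below hard-codes a three-node chain (nutrient load, dissolved oxygen, community integrity) with multi-state nodes and computes the endpoint distribution by summing over the intermediate node. It is a simplified stand-in for a full MFBN: the conditional probabilities are crisp, hypothetical numbers rather than aggregated fuzzy expert estimates, and the function `endpoint_distribution` is an illustrative name.

```python
# Hypothetical prior and conditional probability tables (each row sums to 1).
P_NUTRIENT = {"Low": 0.7, "High": 0.3}

P_DO_GIVEN_NUTRIENT = {
    "Low": {"Hypoxic": 0.05, "Low": 0.15, "Adequate": 0.80},
    "High": {"Hypoxic": 0.40, "Low": 0.35, "Adequate": 0.25},
}

P_INTEGRITY_GIVEN_DO = {
    "Hypoxic": {"Healthy": 0.05, "Stressed": 0.25, "Degraded": 0.70},
    "Low": {"Healthy": 0.30, "Stressed": 0.50, "Degraded": 0.20},
    "Adequate": {"Healthy": 0.80, "Stressed": 0.15, "Degraded": 0.05},
}


def endpoint_distribution(nutrient_evidence=None):
    """Distribution of 'Aquatic Community Integrity', optionally given evidence.

    With no evidence the prior on nutrient load is used; with evidence the
    observed state is assigned probability 1.
    """
    nutrient_probs = {nutrient_evidence: 1.0} if nutrient_evidence else P_NUTRIENT
    result = {"Healthy": 0.0, "Stressed": 0.0, "Degraded": 0.0}
    for nutrient_state, p_nutrient in nutrient_probs.items():
        for do_state, p_do in P_DO_GIVEN_NUTRIENT[nutrient_state].items():
            for integrity_state, p_int in P_INTEGRITY_GIVEN_DO[do_state].items():
                result[integrity_state] += p_nutrient * p_do * p_int
    return result


print("No evidence:          ", endpoint_distribution())
print("Nutrient load = High: ", endpoint_distribution("High"))
```

Entering extreme evidence such as a high nutrient load and confirming that the probability of the degraded state rises is the same kind of logic check described in the validation step.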

Table 2: Characteristics of Key Methodologies for Quantitative Ecological Risk Estimation

| Methodology | Key Feature | Primary Utility in ERA | Data Requirements | Major Challenge |
|---|---|---|---|---|
| Multi-State Fuzzy Bayesian Network (MFBN) | Integrates expert knowledge with probabilistic reasoning under uncertainty. | Predicting endpoint likelihoods from incomplete or qualitative data; diagnostic analysis. | Moderate (Expert elicitation, some empirical data for validation). | Complexity in constructing and validating conditional probability tables. |
| Fault Tree Analysis (FTA) | Deductive, top-down analysis of pathways to system failure. | Identifying combinations of events leading to a specific ecological disaster (e.g., fish kill). | High (Requires reliable failure probabilities for basic events). | Can become unwieldy for complex systems; often static. |
| Probabilistic Risk Assessment (PRA) | Uses distributions for exposure and effects to produce a risk distribution. | Characterizing variability and uncertainty in risk estimates (e.g., risk curves). | High (Substantial empirical data to define distributions). | Computationally intensive; requires robust statistical expertise. |
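As a small illustration of the PRA row in the table above, the sketch below draws exposure concentrations and effect thresholds from two distributions and estimates how often exposure exceeds the threshold. It assumes both quantities are log-normally distributed, which is a common modelling choice but not one stated in the source; the function name and all parameter values are hypothetical.

```python
import random


def exceedance_probability(
    mu_exposure, sigma_exposure, mu_effect, sigma_effect, n_draws=10_000, seed=0
):
    """Monte Carlo estimate of P(exposure > effect threshold).

    Both quantities are drawn from log-normal distributions whose parameters
    are the mean and standard deviation of the natural logarithm.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    exceedances = 0
    for _ in range(n_draws):
        exposure = rng.lognormvariate(mu_exposure, sigma_exposure)
        threshold = rng.lognormvariate(mu_effect, sigma_effect)
        if exposure > threshold:
            exceedances += 1
    return exceedances / n_draws


# Hypothetical parameters on the natural-log scale of concentration.
risk = exceedance_probability(
    mu_exposure=0.0, sigma_exposure=1.0, mu_effect=2.0, sigma_effect=0.5
)
print(f"Estimated probability that exposure exceeds the effect threshold: {risk:.3f}")
```

Repeating the calculation for a range of effect magnitudes would trace out the kind of risk curve mentioned in the table, at the cost of needing enough empirical data to justify the input distributions.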

From Assessment to Action: Components of the Risk Management Plan

The planning process culminates in a Risk Management Plan (RMP)—the strategic document that translates assessment findings into actionable protocols [10] [11]. An effective RMP is dynamic and contains several key components.

Risk Response Planning: For each identified risk, a planned response must be developed. The four primary strategies are [10] [11]:

- Avoidance: Changing plans to eliminate the risk or protect objectives from its impact.

- Mitigation: Taking steps to reduce the probability and/or impact of the risk.

- Transfer: Shifting the risk to a third party (e.g., through insurance).

- Acceptance: Acknowledging the risk without active pursuit of other strategies, often for low-priority risks.

Risk Monitoring and Control: This ongoing process involves tracking identified risks, monitoring residual risks, identifying new risks, and evaluating the effectiveness of response plans throughout the project lifecycle [10]. The use of key risk indicators (KRIs) and regular review cycles is essential.

Diagram: Integrated ERA & Risk Management Planning Workflow

The Scientist's Toolkit: Essential Reagents for Risk Research

Table 3: Research Reagent Solutions for Ecological Risk Assessment & Management

| Tool/Reagent Category | Specific Example/Product | Primary Function in ERA Research |
|---|---|---|
| Bioassay Test Organisms | Ceriodaphnia dubia (Water flea), Pimephales promelas (Fathead minnow), Lemna minor (Duckweed). | Standardized surrogate species for measuring acute and chronic toxicity endpoints (e.g., survival, growth, reproduction) of chemical stressors [2]. |
| Environmental Sampling & Stabilization | Niskin bottles, Van Dorn samplers, acid-washed vials, preservatives and containers (HNO₃ for metals, amber glass for organics). | Collection and preservation of water, sediment, and tissue samples for contaminant analysis without degradation or contamination. |
| Analytical Reference Standards | Certified reference materials (CRMs) for target analytes (e.g., specific pharmaceuticals, pesticides, metabolites). | Calibration of analytical instrumentation (GC-MS, LC-MS/MS) to ensure accurate quantification of stressor concentrations in environmental samples. |
| Data Analysis & Modeling Software | R packages (ecotoxicology, bayesPOP), Bayesian network software (Netica, AgenaRisk), probabilistic tools (Crystal Ball). | Statistical analysis of dose-response data, population modeling, and implementation of quantitative risk models (e.g., MFBNs, PRA). |
| Risk Tracking & Management Platform | Enterprise Risk Management (ERM) software (e.g., LogicManager, other GRC platforms). | Documenting the risk register, tracking mitigation actions, assigning ownership, and reporting on risk status to stakeholders [11]. |

Within the structured paradigm of ecological risk assessment (ERA), the planning phase is not merely an administrative prelude but the foundational scientific activity that determines the validity, relevance, and utility of the entire assessment [7] [3]. This initial dialogue, focused on identifying and engaging the correct participants, is the cornerstone of effective problem formulation—a phase described as the process of generating and evaluating preliminary hypotheses about why ecological effects have occurred or may occur from human activities [3]. The quality of this planning dialogue directly dictates whether the subsequent scientific assessment will yield decision-relevant outcomes or become an academically rigorous but practically irrelevant exercise [4].

Framed within a broader thesis on problem formulation research, this guide posits that the systematic identification and integration of risk managers, risk assessors, and stakeholders constitute the first critical test of a sound methodological approach. A poorly conceived or executed planning phase can compromise the entire ERA, leading to requests for irrelevant data, misallocation of resources, miscommunication of findings, and ultimately, delayed environmental decision-making [4]. Conversely, a rigorously planned dialogue ensures the assessment is focused on relevant exposure scenarios and plausible consequences, thereby assuring the relevance of ERA outcomes for environmental protection and resource management [7] [4].

The Planning Dialogue: Core Participants and Their Mandates

The planning dialogue is a collaborative, interdisciplinary exercise that defines the goals, scope, and boundaries of the ecological risk assessment [12]. The participants bring distinct but complementary perspectives, knowledge, and authorities to the table. Their early agreement is essential for aligning scientific inquiry with management needs [2].

Table 1: Core Participants in the Ecological Risk Assessment Planning Dialogue

| Participant Role | Primary Responsibility | Typical Affiliation | Key Contribution to Planning |
|---|---|---|---|
| Risk Manager | Has the authority to make or require action to mitigate an identified risk [12]. | Government agencies (e.g., EPA, state environmental departments), regulatory bodies [12]. | Defines regulatory action and management goals; sets scope, funding, and timeline; determines acceptable level of uncertainty [2] [12]. |
| Risk Assessor | Provides scientific and technical expertise to conduct the risk assessment [12]. | Scientists, ecologists, toxicologists, modelers within agencies, consultancies, or academia [13]. | Translates management goals into assessment endpoints; advises on scientific feasibility, data needs, and methodological approach; identifies uncertainties [2] [3]. |
| Stakeholders (Interested Parties) | Represent societal, economic, or ecological interests affected by the decision [12]. | Industry, environmental NGOs, tribal nations, landowners, the scientific community, and the public [12] [3]. | Provide local knowledge, values, and concerns; help identify valued ecological resources and exposure pathways; ensure the assessment considers all relevant issues [12] [1]. |

The interaction between these groups is governed by a need for clear communication. Risk managers must articulate the regulatory need and the decisions they face, while risk assessors must explain what science can and cannot deliver within constraints [2] [3]. Stakeholders ensure the process remains grounded in real-world ecological and social values [1].

Diagram 1: Interaction of Core Participants in the Planning Dialogue

From Dialogue to Protocol: The Problem Formulation Phase

The planning dialogue flows directly into the formal problem formulation phase, where agreements are translated into a concrete scientific protocol [3]. This phase is highly iterative, often circling back to planning as new information emerges [3]. For researchers, this phase involves several key experimental and analytical protocols.

Protocol for Developing Assessment Endpoints

Assessment endpoints operationalize the management goals into measurable ecological entities and their attributes [2] [3].

- Entity Identification: Review planning agreements and stakeholder input to select the ecological entity (e.g., endangered species, keystone species, commercial fish stock, wetland ecosystem) [12].

- Attribute Selection: Choose a relevant attribute of the entity (e.g., survival, reproductive success, population abundance, community structure) that is both ecologically significant and susceptible to the stressor [12] [3].

- Criteria Application: Filter potential endpoints through three criteria: ecological relevance (role in ecosystem function), susceptibility to the stressor, and relevance to management goals [12].

- Endpoint Specification: Explicitly state the endpoint (e.g., "reproductive success of the fathead minnow (Pimephales promelas) population in Lake X") [2] [4].
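The four steps above can be captured in a small data structure that pairs an entity with an attribute, records the three screening criteria, and produces the explicit endpoint statement. This is only an illustrative sketch: the class `CandidateEndpoint`, its field names, and the yes/no scoring of each criterion are assumptions, since in practice these judgments are made through discussion rather than booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateEndpoint:
    entity: str  # ecological entity, e.g. a population or community
    attribute: str  # attribute of concern, e.g. survival or reproduction
    ecologically_relevant: bool
    susceptible_to_stressor: bool
    relevant_to_management_goals: bool

    def passes_criteria(self) -> bool:
        """An endpoint is retained only if it meets all three criteria."""
        return (
            self.ecologically_relevant
            and self.susceptible_to_stressor
            and self.relevant_to_management_goals
        )

    def statement(self, location: str) -> str:
        """Explicit endpoint wording: attribute of the entity in a location."""
        return f"{self.attribute} of the {self.entity} in {location}"


# Hypothetical candidates for a single assessment.
candidates = [
    CandidateEndpoint(
        "fathead minnow (Pimephales promelas) population",
        "reproductive success",
        True, True, True,
    ),
    CandidateEndpoint("shoreline vegetation", "percent cover", True, False, True),
]

for endpoint in candidates:
    if endpoint.passes_criteria():
        print(endpoint.statement("Lake X"))
```

Keeping the criteria alongside each candidate documents why an endpoint was retained or dropped, which helps when the planning dialogue is revisited.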

Protocol for Conceptual Model Development

The conceptual model is a visual and narrative hypothesis of risk [2].

- Information Integration: Compile data on stressor characteristics (source, intensity, timing), potential exposure pathways (e.g., runoff, bioaccumulation), and the ecosystem at risk (habitat, species present, trophic structure) [3].

- Risk Hypothesis Generation: For each assessment endpoint, draft a statement predicting the relationship between stressor exposure and ecological effect (e.g., "Runoff of pesticide Y to the lake will lead to aqueous concentrations that cause reduced fecundity in fathead minnows") [2].

- Diagram Construction: Create a flow diagram (see Diagram 2) linking stressor sources to receptors via exposure pathways, culminating in the assessment endpoint [3]. This identifies data gaps and critical uncertainties.

Protocol for Analysis Plan Design

The analysis plan is the final product of problem formulation, detailing how the risk hypotheses will be evaluated [2].

- Measures and Metrics Selection: Choose specific measurement endpoints (e.g., LC50, NOAEC, modeled environmental concentration) that are quantitatively linked to each assessment endpoint [2] [4].

- Assessment Design: Decide on the scope and complexity (tiered approach), specifying whether to use existing data, models (e.g., exposure simulation), or new field/lab studies [2].

- Data Quality and Uncertainty Plan: Outline required data quality objectives and a framework for characterizing and reporting uncertainties (e.g., variability in model outputs, incomplete knowledge of parameter values) [4] [14].

Diagram 2: Iterative Workflow of the Problem Formulation Phase

Table 2: Key Components and Outputs of Problem Formulation

| Component | Description | Research Protocol Consideration | Output |
|---|---|---|---|
| Assessment Endpoints | Explicit expressions of the environmental values to be protected, defined by an ecological entity and its attributes [3]. | Must be measurable, ecologically relevant, and linked to management goals. Often require surrogate species or proxies in testing [2] [12]. | A prioritized list of endpoints (e.g., survival of aquatic invertebrates; sustainable timber yield). |
| Conceptual Model | A written description and visual representation (diagram) of predicted relationships between stressors, exposures, and assessment endpoints [2]. | Serves as the primary testable hypothesis for the ERA. Development requires interdisciplinary input (ecology, chemistry, hydrology) [3]. | A diagram and narrative detailing risk hypotheses, exposure pathways, and ecosystem interactions. |
| Analysis Plan | A detailed plan for the data analysis and risk characterization phase [2]. | Specifies measurement endpoints, data sources (existing studies, models, new experiments), statistical methods, and uncertainty analysis framework [2] [14]. | A documented protocol guiding the Analysis and Risk Characterization phases of the ERA. |

The Scientist's Toolkit: Essential Research Reagent Solutions

For researchers conducting the problem formulation and subsequent analysis, specific tools and resources are indispensable.

Table 3: Research Reagent Solutions for Ecological Risk Assessment

| Tool/Resource Category | Specific Example or Name | Function in Problem Formulation & ERA |
|---|---|---|
| Guidance & Framework Documents | EPA Guidelines for Ecological Risk Assessment (1998) [12]; International Life Sciences Institute (ILSI) Problem Formulation Framework for GM Plants [4]. | Provide standardized protocols, definitions, and conceptual frameworks to ensure consistency, regulatory compliance, and scientific rigor. |
| Ecological Effects Databases | ECOTOX Knowledgebase (EPA); scientific literature repositories (e.g., PubMed, Web of Science). | Source for toxicity data (e.g., LC50, NOAEC) for surrogate and endpoint species to support effects assessment and endpoint selection [2]. |
| Exposure & Fate Models | Pesticide in Water Calculator (PWC); Exposure Analysis Modeling System (EXAMS); AQUATOX ecosystem model [14]. | Simulate environmental fate, transport, and predicted exposure concentrations (PECs) of stressors to inform conceptual models and analysis plans [2] [14]. |
| Species Sensitivity Distributions (SSD) Tools | Bayesian matbugs calculator; SSD-fitting software (e.g., ETX 2.0) [14]. | Model the distribution of toxicity sensitivity across multiple species to derive protective concentration thresholds and characterize ecological risk [14]. |
| Structured Decision Support Tools | Multicriteria Decision Analysis (MCDA) frameworks [14]. | Help integrate technical risk estimates with socio-economic values and management alternatives during planning and risk management phases [14]. |
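The SSD row in the table above can be illustrated with a short calculation. The sketch below fits a log-normal distribution to a set of species toxicity values and derives the HC5, the concentration expected to be hazardous to 5% of species. It is a simplified point estimate using only the Python standard library, not a reproduction of any tool named in the table, and the toxicity values are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def hc5_lognormal(toxicity_values):
    """Point estimate of the HC5 from a log-normal species sensitivity distribution.

    The distribution is fitted to log10-transformed toxicity values using the
    sample mean and sample standard deviation; the HC5 is its 5th percentile.
    """
    logs = [math.log10(value) for value in toxicity_values]
    fitted = NormalDist(mean(logs), stdev(logs))
    return 10 ** fitted.inv_cdf(0.05)


# Hypothetical chronic toxicity values for six species, in micrograms per litre.
species_values = [12.0, 30.0, 45.0, 80.0, 150.0, 400.0]
print(f"HC5 point estimate: {hc5_lognormal(species_values):.1f} ug/L")
```

A point estimate like this ignores sampling uncertainty, which matters with so few species; reporting confidence limits around the HC5 is the usual way to address that.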

Articulating Management Goals and Regulatory Context for the Assessment

This technical guide provides a structured framework for explicitly articulating management goals and regulatory contexts within ecological risk assessment (ERA), specifically tailored for pharmaceutical development. Effective problem formulation—the critical first phase of ERA—requires the integration of compliance obligations, corporate sustainability objectives, and methodological rigor to define the scope and acceptability of risk. We dissect contemporary regulatory paradigms, including recent proposed modifications to the U.S. Toxic Substances Control Act (TSCA) process [15] and international management system standards like ISO 37302 [16] [17]. The guide presents standardized protocols for assessment design, data evaluation, and decision-making, incorporating quantitative and qualitative methodologies [18]. Visual workflows and a curated research toolkit are provided to equip scientists and risk assessors with the practical resources necessary to align scientific analysis with strategic organizational and regulatory imperatives.

In ecological risk assessment for drug development, the problem formulation phase transcends mere technical scoping. It is a strategic exercise that translates disparate inputs—corporate environmental goals, regulatory mandates, stakeholder concerns, and scientific uncertainty—into a coherent assessment plan. A poorly articulated foundation here can lead to regulatory delays, misallocated resources, and incomplete risk characterization. This guide posits that explicit documentation of management goals and regulatory context is not ancillary but central to scientifically defensible and decision-relevant ERA. It frames this articulation within the broader thesis that robust problem formulation is the primary determinant of an assessment's efficiency, credibility, and utility for risk management.

Conceptual Foundations: Interlinking Goals, Regulation, and Assessment

Ecological risk assessment operates at the nexus of science and policy. Management goals (e.g., "minimize aquatic impact," "achieve zero non-compliance") provide the value-based endpoints for what constitutes acceptable risk. The regulatory context provides the legal and procedural boundaries and often defines specific assessment requirements. These two elements inform the assessment goals, which are the specific, technical questions the ERA must answer.

Table 1: Core Components of Problem Formulation in ERA

| Component | Definition | Source/Driver | Example in Pharmaceutical ERA |

|---|---|---|---|

| Management Goals | Strategic objectives related to environmental stewardship, sustainability, and corporate responsibility. | Corporate strategy, ESG commitments, internal policies. | "Prevent API (Active Pharmaceutical Ingredient) discharge into surface water from manufacturing sites." |

| Regulatory Context | Laws, regulations, guidelines, and accepted standards governing chemical safety and environmental protection. | Agencies (e.g., US EPA, EMA), International Standards (ISO). | TSCA requirements for existing chemicals [15], FDA regulations on drug environmental assessments. |

| Assessment Goals | The specific, answerable scientific questions derived from management goals and regulatory context. | Synthesis of the above during problem formulation. | "Determine the chronic risk quotient for fish exposed to effluent containing Compound X under realistic worst-case conditions." |

Analysis of the Regulatory and Management Standards Landscape

The regulatory environment for chemical assessment is dynamic. Recent proposals, such as the U.S. Environmental Protection Agency's (EPA) 2025 changes to the TSCA risk evaluation process, exemplify shifts in regulatory philosophy that directly impact problem formulation [15].

Key Regulatory Developments (TSCA Example):

- Conditions of Use (COU) Focus: The EPA proposes returning to evaluating individual COUs separately, reversing a 2024 rule that required a single determination. This allows for more granular risk evaluations [15].

- Consideration of Occupational Controls: The proposed rule would permit the EPA to consider existing occupational exposure controls (e.g., PPE, engineering controls) in the risk evaluation itself, potentially reducing findings of "unreasonable risk" in controlled settings [15].

- Weight of Scientific Evidence: The proposal incorporates a formal definition emphasizing transparent integration of information based on quality and relevance, guiding data evaluation protocols [15].

Concurrently, international management system standards provide a framework for systematically articulating and achieving goals. The ISO 37302:2025 standard for compliance management system effectiveness offers a directly applicable model [16] [17].

ISO 37302 "Three-Dimension" Evaluation Model: This model evaluates effectiveness not just by written rules, but by holistic performance [17]:

- Policies & Procedures: The design and documentation of the management system.

- Behavior & Culture: The implementation and internalization of rules by people.

- Results & Impact: The tangible outcomes and risk control achieved.

For ERA problem formulation, this model underscores that a goal like "comply with TSCA" must be broken down into: having a procedure for ERA, ensuring staff have the competence and culture to execute it properly, and measuring the result in terms of successful regulatory submissions and risk mitigation.

Table 2: Comparison of Regulatory and Management Frameworks Impacting ERA

| Framework | Primary Focus | Relevance to ERA Problem Formulation | Key Concept for Goal Articulation |

|---|---|---|---|

| TSCA (U.S. EPA) [15] | Chemical substance risk to health/environment. | Defines scope (COUs), required data, risk evaluation methodology. | "Conditions of Use," "Potentially Exposed Subpopulations." |

| ISO 37302:2025 [16] [17] | Effectiveness of compliance management systems. | Provides a structure to ensure the ERA process itself is effective and achieves goals. | "Policies-Procedures-Behavior-Results" linkage. |

| OKR (Objectives & Key Results) [19] | Goal-setting and organizational alignment. | Translates high-level management goals into measurable assessment outcomes. | "Objectives" (qualitative goals) linked to "Key Results" (quantitative metrics). |

Methodological Approaches for Integrated Assessment Design

Articulating goals and context must lead to actionable science. This requires selecting and defining appropriate methodologies.

Integrating Quantitative and Qualitative Lines of Evidence: A robust ERA relies on a weight-of-evidence approach [15], combining:

- Quantitative Data: Numerical, statistical analysis (e.g., LC50 values, predicted exposure concentrations). Ideal for testing hypotheses, measuring effects, and generalizing from samples [18].

- Qualitative Data: Descriptive, contextual information (e.g., field observational notes, stakeholder interview transcripts). Essential for understanding complex phenomena, providing depth, and exploring underlying reasons [18].

Experimental and Assessment Protocols:

- Systematic Review Protocol for Data Collection: A pre-defined, transparent plan to identify, select, appraise, and synthesize all relevant existing scientific evidence on the substance of concern. This directly addresses the "weight of scientific evidence" requirement [15].

- Tiered Toxicity Testing Protocol:

  - Tier 1 (Standardized Assays): Conduct standardized acute (e.g., 48 h Daphnia immobilization) and chronic (e.g., 21 d Daphnia reproduction, fish early-life stage) tests under Good Laboratory Practice (GLP).

  - Tier 2 (Mechanistic & Specialized): If risks are indicated, proceed to endocrine disruption assays, sediment toxicity tests, or multi-generational studies to refine the risk characterization.

- Environmental Exposure Modeling Protocol: Define model scenarios (e.g., EU M3/M4 emission scenarios for pharmaceuticals), input parameters (e.g., log Kow, biodegradation half-life), and conduct probabilistic modeling to estimate predicted environmental concentrations (PECs).
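
The probabilistic exposure step can be sketched as a small Monte Carlo simulation. The example below assumes a simple per-capita dilution formula for the surface-water PEC (daily dose times market penetration, divided by wastewater volume per inhabitant and a dilution factor). The formula choice, the 100 mg/day dose, and every input distribution are illustrative assumptions, not values from the cited scenarios.

```python
import math
import random

def pec_surface_water(dose_mg_per_day, f_pen, wastewater_l_per_inh_day, dilution):
    """Screening PEC in ug/L from a simple per-capita dilution formula (assumed)."""
    pec_mg_per_l = dose_mg_per_day * f_pen / (wastewater_l_per_inh_day * dilution)
    return pec_mg_per_l * 1000.0  # mg/L -> ug/L

def simulate_pec(dose_mg_per_day=100.0, n=10_000, seed=1):
    """Draw hypothetical input distributions and return sorted PEC samples."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        f_pen = rng.uniform(0.001, 0.01)                    # market penetration (assumed range)
        wastewater = rng.triangular(150.0, 250.0, 200.0)    # L per inhabitant per day
        dilution = rng.lognormvariate(math.log(10.0), 0.5)  # receiving-water dilution
        samples.append(pec_surface_water(dose_mg_per_day, f_pen, wastewater, dilution))
    samples.sort()
    return samples

pecs = simulate_pec()
p50 = pecs[len(pecs) // 2]
p95 = pecs[int(0.95 * len(pecs))]
print(f"median PEC = {p50:.3f} ug/L, 95th percentile = {p95:.3f} ug/L")
```

Reporting an upper percentile alongside the median gives risk managers a sense of how conservative a "realistic worst-case" point estimate is.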

Diagram 1: Integrative Framework for ERA Problem Formulation

Practical Implementation: Tools, Visualization, and the Research Toolkit

The Researcher's Toolkit: Essential Reagent & Material Solutions

Table 3: Key Research Reagents and Materials for ERA Protocols

| Item/Category | Function in ERA | Example/Specification |

|---|---|---|

| Standard Test Organisms | Represent trophic levels in aquatic/terrestrial ecotoxicity tests. | Daphnia magna (cladoceran), Danio rerio (zebrafish embryo), Eisenia fetida (earthworm). Must be from certified, culture-stable sources. |

| Reference Toxicants | Validate test organism health and response sensitivity. | Potassium dichromate (for Daphnia), Copper sulfate (for fish). Used in periodic positive control tests. |

| Formulation Vehicle Controls | Ensure test substance effects are not confounded by delivery agent. | HPLC-grade water, acetone, dimethyl sulfoxide (DMSO) at minimal, non-toxic concentrations (e.g., <0.1%). |

| Environmental Matrices | For fate and bioavailability studies. | Standard natural soils/sediments, synthetic surface waters. Characterized for pH, OC%, particle size. |

| Analytical Standards | Quantify test substance concentration and degradation products. | Certified reference material (CRM) of the Active Pharmaceutical Ingredient (API) and major metabolites. |

| Enzymatic/Molecular Assay Kits | Assess sub-organismal, mechanistic endpoints (e.g., oxidative stress, genotoxicity). | Comet assay kit, EROD activity assay, Lipid peroxidation (MDA) assay. |

Visualizing the Assessment Workflow: A clear, staged workflow is critical for project management and regulatory transparency.

Diagram 2: Staged Ecological Risk Assessment Workflow

Diagram Specification for Scientific Communication: All diagrams should adhere to visual accessibility principles. The specified color palette (#4285F4, #EA4335, #FBBC05, #34A853, #FFFFFF, #F1F3F4, #202124, #5F6368) provides sufficient differentiation between elements. Arrow and text colors are set explicitly against node backgrounds (e.g., white text on dark blue, dark text on light yellow) to preserve contrast [20] [21]. For figures intended for publication, compliance with the WCAG 2.1 contrast standard (minimum 4.5:1 for normal text) should be verified with a contrast-checking tool [20] [22].
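
The contrast check can be automated with the relative-luminance formula published in WCAG 2.1. The sketch below computes the contrast ratio for any pair of hex colors from the palette. The function names and the particular pairs checked are illustrative choices.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.1 definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(foreground, background):
    """WCAG contrast ratio, always >= 1 regardless of argument order."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)

# Check a few text/background pairs from the palette against the 4.5:1 minimum.
for text, node in [("#202124", "#FBBC05"), ("#FFFFFF", "#4285F4"), ("#202124", "#F1F3F4")]:
    ratio = contrast_ratio(text, node)
    print(f"{text} on {node}: {ratio:.2f}:1 {'pass' if ratio >= 4.5 else 'check'}")
```

Pairs that fall below 4.5:1 may still be acceptable for large text or non-text elements, which have a lower threshold under WCAG 2.1.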

The future of problem formulation in ERA lies in greater dynamic integration and predictive capability. Emerging trends include:

- Real-Time Data Integration: Leveraging environmental monitoring data from sensors (IoT) to dynamically update exposure scenarios.

- Adoption of New Assessment Criteria (NAC): Incorporating endpoints related to biodiversity, ecosystem services, and climate resilience into management goals.

- Advanced Predictive Tools: Increased use of (Q)SAR, read-across, and mechanistic pathway-based models (e.g., AOPs) to prioritize testing and fill data gaps intelligently.

In conclusion, articulating management goals and regulatory context is a deliberate, structured process that forms the bedrock of a credible and useful ecological risk assessment. By employing the frameworks, protocols, and tools outlined in this guide—from ISO effectiveness models [17] and TSCA compliance strategies [15] to integrated quantitative-qualitative methods [18] and structured visual workflows—researchers and drug development professionals can ensure their scientific assessments are precisely aligned with the strategic and regulatory imperatives that ultimately define success. This alignment is the core of sophisticated problem formulation and the key to defensible environmental risk management.

Within the discipline of ecological risk assessment (ERA), problem formulation is the critical, foundational phase that determines the entire trajectory and feasibility of a study. It is the process of defining the nature, scope, and boundaries of the assessment based on the interplay between management goals and scientific inquiry [7]. For researchers, scientists, and drug development professionals, this phase is not merely an academic exercise; it is a strategic planning activity that directly aligns the assessment's ambitions with the practical constraints of available resources—including time, budget, personnel, and technological access.

The central thesis of this guide is that a rigorously defined problem formulation, executed with resource constraints as a guiding parameter, is the most effective mechanism for ensuring scientific robustness and regulatory relevance without overextending capabilities. This document provides a technical framework for making informed decisions on assessment scope, scale, and complexity, integrating traditional ERA principles with modern New Approach Methodologies (NAMs) to optimize resource efficiency [23]. As regulatory landscapes evolve, such as the recent EU pharmaceutical legislation that expands requirements to cover the entire product lifecycle and legacy substances, the pressure to conduct thorough yet efficient assessments has never been greater [24].

The EPA Framework: A Scaffold for Scoping Decisions

The U.S. Environmental Protection Agency's (EPA) ecological risk assessment framework provides a well-established, three-phase structure that inherently accommodates resource-based scoping decisions [7]. The process begins with Planning, a collaborative stage involving risk assessors, risk managers, and stakeholders to define the assessment's purpose and constraints [25]. This leads directly into the formal Problem Formulation phase, where the specific questions, endpoints, and analysis plans are defined [7]. The subsequent Analysis (exposure and effects) and Risk Characterization phases are then designed and executed within the boundaries established at the outset.

This framework emphasizes that the interaction between risk assessors and managers at the beginning and end of the process is critical for ensuring the assessment's output is actionable and its scale is appropriate [25]. The following diagram illustrates this iterative framework, highlighting the key decision points where resource availability directly influences the pathway and tools selected.

Diagram: The Iterative Ecological Risk Assessment Framework with Resource Constraints. Resources act as both constraints and enablers at key decision points, shaping the problem formulation and methodological choices.

A Tiered Approach to Defining Scope and Complexity

A tiered, or phased, approach is the most pragmatic strategy for managing resources. It allows an assessment to begin with a conservative, screening-level evaluation using readily available data and models, progressing to more complex and costly studies only if initial results indicate potential risk.

Table 1: Tiered Assessment Approach Aligned with Resource Investment

| Assessment Tier | Typical Scope & Complexity | Key Resource Requirements | Output & Decision Point |

|---|---|---|---|

| Tier 1: Screening | Initial, conservative evaluation. Uses generic exposure models (e.g., EPI Suite), published or QSAR-predicted toxicity data, and default safety factors [26] [27]. | Low. Relies on literature, free software, and existing data. Minimal personnel time. | Identification of potential risk. If risk is indicated, proceed to Tier 2. If no risk, assessment may stop. |

| Tier 2: Refined | More realistic, site- or product-specific assessment. Uses measured or modeled environmental concentrations, species-specific toxicity data, and refined safety factors [27]. | Moderate to High. Requires field sampling, chemical analysis, or standardized toxicity testing. Significant personnel and lab resources. | Quantified risk estimate. Determines if risk is confirmed and whether mitigation or further study (Tier 3) is needed. |

| Tier 3: Comprehensive | Detailed, definitive risk characterization. May involve multi-species or mesocosm studies, probabilistic modeling, and investigation of complex endpoints (e.g., endocrine disruption, population-level effects) [24]. | Very High. Demands specialized experimental setups, long-term studies, and advanced analytical or modeling expertise. | Definitive risk characterization. Supports complex regulatory decisions (e.g., market authorization refusal based on environmental risk [24]). |

Integrating New Approach Methodologies (NAMs) for Resource Efficiency

NAMs, which include in vitro assays, computational models, and 'omics technologies, offer a paradigm shift for conducting robust assessments under resource constraints. They can reduce reliance on costly and time-consuming whole-organism vertebrate testing while providing deeper mechanistic understanding [23]. Their integration is a central theme in modern problem formulation.

For instance, in pharmaceutical development, human-relevant cardiac NAMs like human-induced pluripotent stem cell-derived cardiomyocytes (hiPSC-CMs) are being validated to screen for toxicity earlier in the pipeline, preventing costly late-stage attrition [28]. In environmental assessment, a framework integrating in vitro bioassays, Quantitative Structure-Activity Relationship (QSAR) models, and historical in vivo data can identify the most sensitive species based on evolutionary conservation of biological targets, streamlining testing focus [23].

The strategic integration of NAMs into a tiered assessment workflow is illustrated below. This pathway demonstrates how traditional and novel tools can be sequenced to maximize information gain while responsibly allocating resources.

Diagram: Strategic Integration of NAMs into a Tiered Assessment Workflow. Dashed lines show how NAMs inform and refine traditional testing, optimizing resource use across tiers.

Quantitative Decision Points: Data Quality and Uncertainty Factors

A resource-conscious assessment requires clear criteria for deciding when available data are sufficient. This involves evaluating Data Quality and transparently applying Uncertainty (Safety) Factors.

Table 2: Data Quality Objectives (DQOs) for Resource Planning

| Data Quality Tier | Description | Suitable for Assessment Tier | Implications for Resource Planning |

|---|---|---|---|

| Tier 1 (Screening) | Estimated data. QSAR predictions, read-across from analogues, conservative generic models (e.g., 100% release to water). | Tier 1 Screening Assessments. | Minimal resource expenditure. Allows for rapid prioritization of substances or sites. |

| Tier 2 (Refined) | Verified or measured data. Validated laboratory studies, site-specific monitoring data under representative conditions, measured physicochemical properties. | Tier 2 Refined Assessments and higher. | Requires investment in analytical chemistry, standardized testing, or curated database access. |

| Tier 3 (Definitive) | High-resolution, definitive data. GLP-compliant studies, field-validated measurements, probabilistic exposure models, multi-generational or community-level effects data. | Tier 3 Comprehensive Assessments, critical regulatory decisions. | Demands significant resources for complex study execution and expert statistical analysis. |

Uncertainty factors (UFs) are applied to account for gaps in knowledge, such as extrapolating from laboratory to field conditions or from limited species data to an entire ecosystem [27]. Historically, default factors (e.g., 10, 100) have been used, but a resource-efficient strategy is to replace default UFs with data wherever feasible. Investing in a key study to reduce a major uncertainty can be more scientifically defensible and, in the long run, more efficient than applying a large, conservative UF that may trigger unnecessary Tier 3 testing [27]. The choice is a direct trade-off between the cost of additional research and the cost (or risk) of potential over- or under-protection.
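
The trade-off can be illustrated with a small worked calculation. The sketch below derives a predicted no-effect concentration (PNEC) by dividing a toxicity value by a UF and compares it with an exposure estimate as a risk quotient (RQ = PEC / PNEC). All concentrations are hypothetical, and pairing a UF of 100 with acute data and 10 with chronic data is an assumption made only for illustration.

```python
def pnec(toxicity_value_ug_per_l, uncertainty_factor):
    """Predicted no-effect concentration: toxicity value divided by the UF."""
    return toxicity_value_ug_per_l / uncertainty_factor

def risk_quotient(pec_ug_per_l, pnec_ug_per_l):
    """RQ above 1 indicates potential risk at this tier."""
    return pec_ug_per_l / pnec_ug_per_l

pec = 0.5  # ug/L, hypothetical predicted exposure concentration

# Screening: only an acute EC50 (30 ug/L, hypothetical) is available, so a large UF applies.
rq_screening = risk_quotient(pec, pnec(30.0, 100))

# Refined: a chronic NOEC (10 ug/L, hypothetical) from a new study justifies a smaller UF.
rq_refined = risk_quotient(pec, pnec(10.0, 10))

print(f"screening RQ = {rq_screening:.2f}, refined RQ = {rq_refined:.2f}")
```

In this invented case the screening RQ exceeds 1 while the refined RQ does not, so one chronic study resolves a concern that the default factor alone would have escalated.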

Experimental Protocols for Key Methodologies

Adopting standardized protocols ensures data quality and interoperability, which is crucial when integrating data from various sources under limited resources. Below are detailed methodologies for two pivotal techniques: one for exposure assessment (Solid Phase Extraction for pharmaceuticals in water) and one for effects assessment (a guideline for implementing NAMs).

Protocol 1: Solid Phase Extraction (SPE) and HPLC-MS Analysis for Pharmaceutical Compounds in Water [26]

- Objective: To extract, concentrate, and quantify trace levels of pharmaceutical compounds from aqueous environmental samples (e.g., wastewater, surface water).

- Materials: Hydrophilic-Lipophilic-Balanced (HLB) SPE cartridges (200 mg, 6 mL), vacuum manifold, HPLC system coupled with Diode Array Detector and Mass Spectrometer (HPLC-DAD-MS), 0.45 μm PTFE filters.

- Procedure:

  - Sample Collection & Preservation: Collect water samples in pre-rinsed amber glass bottles. Acidify to pH ~2 (if required for target analytes) and store at 4°C. Process within 24 hours.

  - Sample Preparation: Filter 500 mL of water through a 0.45 μm PTFE membrane to remove suspended solids.

  - SPE Cartridge Conditioning: Condition the HLB cartridge with 5 mL of methanol, followed by 5 mL of reagent water (pH adjusted to match sample). Do not let the sorbent dry.

  - Sample Loading: Pass the filtered sample through the cartridge at a controlled flow rate of 1-10 mL/min.

  - Cartridge Washing: Wash with 5-10 mL of a mild aqueous solution (e.g., 5% methanol in water) to remove interfering matrix components.

  - Elution: Dry the cartridge under vacuum for 10-20 minutes. Elute analytes into a collection vial with 5-10 mL of an organic solvent (e.g., methanol or acetonitrile).

  - Concentration: Gently evaporate the eluate to near dryness under a stream of nitrogen and reconstitute in a smaller volume (e.g., 200 μL) of injection solvent compatible with HPLC.

  - Analysis: Inject onto HPLC-DAD-MS. Quantify using external calibration curves prepared from authentic standards.
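
The quantification step can be sketched as follows: fit a straight line to the external calibration standards, read the extract concentration from a sample's peak area, then correct for the SPE enrichment (500 mL of sample reduced to 200 μL is a 2500-fold concentration). The calibration points, the peak area, and the optional recovery correction are hypothetical illustrations, not part of the cited protocol.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for a calibration curve."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def sample_concentration_ng_per_l(peak_area, slope, intercept,
                                  sample_volume_ml=500.0,
                                  final_volume_ul=200.0,
                                  recovery=1.0):
    """Back-calculate the concentration in the original water sample (ng/L)."""
    extract_ug_per_l = (peak_area - intercept) / slope          # standards are in ug/L
    enrichment = (sample_volume_ml * 1000.0) / final_volume_ul  # 500 mL -> 200 uL = 2500x
    return extract_ug_per_l / enrichment / recovery * 1000.0    # ug/L -> ng/L

# Hypothetical calibration standards (ug/L) and detector responses (area units).
standards = [1.0, 5.0, 10.0, 50.0, 100.0]
areas = [250.0, 1050.0, 2050.0, 10050.0, 20050.0]
slope, intercept = fit_line(standards, areas)

result = sample_concentration_ng_per_l(10050.0, slope, intercept)
print(f"sample concentration = {result:.1f} ng/L")
```

In practice the recovery term would come from spiked samples or isotope-labeled surrogates, and the sample's peak area should fall within the calibrated range.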

Protocol 2: Framework for Integrating NAMs into an Ecological Effects Assessment [23]

- Objective: To utilize mechanistic, non-animal data to inform and potentially reduce the need for whole-organism vertebrate ecotoxicity tests.

- Materials: In vitro bioassay kits (e.g., for estrogen receptor binding), computational toxicology software (e.g., for QSAR), access to curated toxicity databases (e.g., ECOTOX), historical in vivo data.

- Procedure:

  - Define the Adverse Outcome Pathway (AOP): For the stressor of concern, review literature to establish a plausible AOP, identifying the Molecular Initiating Event (MIE) and Key Events leading to an adverse ecological outcome.

  - Select Appropriate NAMs: Choose in vitro assays that measure the MIE or early Key Events (e.g., receptor activation, gene expression). Select in silico tools (QSAR) to predict physicochemical properties and baseline toxicity.

  - Generate and Gather Mechanistic Data: Run the selected in vitro assays and in silico models. Concurrently, compile all available historical in vivo toxicity data for the stressor and related chemicals.

  - Perform Cross-Species Concordance Analysis: Assess the evolutionary conservation of the molecular target (e.g., receptor, enzyme) across taxa (fish, amphibians, invertebrates, algae). High conservation suggests broader taxonomic sensitivity.

  - Integrated Data Analysis (Weight of Evidence): Combine all lines of evidence: AOP concordance, in vitro potency, in silico predictions, and existing in vivo data. Determine if the NAM data robustly identifies the most sensitive taxonomic group and predicts the critical effect.

  - Decision Point: If the weight of evidence is strong, the assessment may proceed using the NAM-derived point of departure with a defined uncertainty factor. If significant uncertainty remains, targeted in vivo testing on the predicted most sensitive species is recommended, thereby focusing resources efficiently.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Resource-Conscious ERA

| Item / Solution | Primary Function in ERA | Application Context & Resource Advantage |

|---|---|---|

| HLB (Hydrophilic-Lipophilic Balanced) SPE Cartridges [26] | Broad-spectrum extraction of polar and non-polar organic contaminants from water samples. | Enables efficient monitoring of complex mixtures (e.g., pharmaceuticals in wastewater) with a single, robust extraction method, saving time and sample volume. |

| Certified Reference Standards | Provides accurate quantification and method validation during chemical analysis (e.g., HPLC-MS). | Essential for generating Tier 2/3 quality data. Investing in key standards for parent compounds and major metabolites improves data reliability, reducing uncertainty. |

| Ready-to-Use In Vitro Bioassay Kits (e.g., estrogen receptor transactivation) | Screens for specific mechanistic activity (e.g., endocrine disruption) in a high-throughput format. | A low-resource, rapid alternative to early-tier in vivo fish screening tests. Can prioritize which chemicals require full testing [23]. |

| QSAR Software & Databases (e.g., EPI Suite, OECD QSAR Toolbox) [26] | Predicts physicochemical properties, environmental fate, and baseline toxicity from molecular structure. | Provides critical Tier 1 data with virtually no experimental testing cost. Fundamental for prioritization and screening assessments. |

| Cultured Test Organisms (e.g., Daphnia magna, algae clones) | Provides standardized, reliable organisms for acute and chronic toxicity testing. | Maintaining in-house cultures reduces cost and increases flexibility for Tier 2 testing compared to purchasing aged specimens for each assay. |

| Environmental DNA (eDNA) Sampling Kits | Allows for sensitive, non-invasive detection of species presence in field communities. | Can reduce the resource burden of traditional ecological surveys for baseline characterization or post-remediation monitoring. |

Structured Methodology: The Step-by-Step Process of Effective Problem Formulation

The initial phase of ecological risk assessment (ERA), problem formulation, is a critical planning and scoping exercise that determines the entire trajectory and relevance of the assessment [7]. Its primary purpose is to translate broad management goals into a specific, actionable analysis plan [2]. At the heart of this phase lies the essential task of integrating available information on three core elements: the characteristics of stressors, the structure and function of potentially exposed ecosystems, and the potential effects of the stressor on ecological entities [2]. This synthesis is not merely a data-collection step but a foundational analytical process that defines the assessment endpoints, informs the conceptual model, and determines the methodology for the subsequent analysis and risk characterization phases [4].

Effective integration ensures the ERA is focused, scientifically defensible, and capable of supporting environmental decision-making. A failure to adequately integrate this information can lead to assessments that are misdirected, overlook significant risks, or become mired in irrelevant detail, ultimately compromising their utility for risk managers [4]. This guide details the technical frameworks, data sources, and methodological approaches for systematically executing this integration within the problem formulation step.

Problem formulation is an interactive process where risk assessors and managers collaboratively define the scope based on available information [7]. The U.S. Environmental Protection Agency (EPA) outlines key information categories that must be integrated [2]:

- Stressor Characteristics: Source, magnitude, timing, frequency, and duration of the stressor (e.g., chemical properties, application rates of a pesticide, physical habitat alteration).

- Ecosystem Characteristics: The biotic and abiotic attributes of the potentially affected environment, including species composition, habitat types, and key ecosystem processes.

- Ecological Effects: Data on the toxicity or adverse impacts of the stressor on ecological entities, derived from laboratory studies, field observations, or models.

Information for integration originates from multiple lines of evidence. Registrant-submitted guideline studies are a primary source for chemical stressors [29]. Crucially, open literature from scientific journals provides a vital supplement, offering data on a wider range of species, field conditions, and novel endpoints [29]. Resources like the EPA's ECOTOX database are systematically searched to gather this literature, with studies screened for relevance and quality based on criteria such as explicit exposure duration, use of appropriate controls, and clear reporting of biological effects [29]. Furthermore, monitoring data and existing models (e.g., for chemical fate or population dynamics) provide critical context on exposure scenarios and ecosystem dynamics.

Frameworks for Structured Integration

Moving beyond data compilation, advanced frameworks structure the integration of stressors, ecosystems, and effects to enhance ecological realism.

The VORS Framework for Ecosystem Health: Recent research advances the "Vigor-Organization-Resilience-Stress" (VORS) model, which explicitly integrates ecosystem stress into health assessments [30]. This framework is operationalized through a composite Ecosystem Health Index (EHI), mathematically combining metrics representing:

- Vigor: Productivity and activity (e.g., Net Primary Productivity).

- Organization: Structure and complexity (e.g., landscape connectivity).

- Resilience: Capacity for recovery.

- Stress: External pressures (e.g., land use intensity, pollution load, fragmentation).

Integrating "Stress" as a core component ensures that the assessment of ecosystem state is directly informed by the magnitude of anthropogenic and natural pressures, providing a more diagnostic evaluation of risk [30].
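
As a rough illustration of how such a composite index might be assembled, the sketch below combines four normalized metrics into a single score. The aggregation rule (geometric mean of vigor, organization, and resilience, discounted by stress) is an assumption chosen for simplicity and is not necessarily the formula used in [30]. The function name and input values are hypothetical.

```python
def ecosystem_health_index(vigor, organization, resilience, stress):
    """Combine four metrics, each normalized to [0, 1], into one score in [0, 1].

    Assumed rule: geometric mean of V, O, R multiplied by (1 - S).
    """
    metrics = {"vigor": vigor, "organization": organization,
               "resilience": resilience, "stress": stress}
    for name, value in metrics.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be normalized to [0, 1]")
    condition = (vigor * organization * resilience) ** (1.0 / 3.0)
    return condition * (1.0 - stress)

# Hypothetical landscape unit under low and high external pressure.
low_pressure = ecosystem_health_index(0.8, 0.6, 0.7, stress=0.1)
high_pressure = ecosystem_health_index(0.8, 0.6, 0.7, stress=0.5)
print(f"EHI low stress = {low_pressure:.2f}, high stress = {high_pressure:.2f}")
```

A geometric mean penalizes a unit that scores poorly on any one dimension, which is one reason multiplicative forms are often preferred to simple averages in composite indices.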

Dynamic-Probabilistic Synthesis: For complex systems like shelf ecosystems, a synthesis of dynamic simulation models and probabilistic risk models has been proposed [31]. This approach integrates information by:

- Using a dynamic ecosystem model to simulate intra-annual variations in key components (e.g., phytoplankton, zooplankton, nutrient concentrations).

- Feeding these time-variant outputs into a probabilistic risk model, alongside observation data, to compute the likelihood of adverse effects from stressors like oil spills [31].

This method directly couples the natural dynamics of the ecosystem (its seasonal cycles and productivity) with stressor exposure, demonstrating that risk is not static but varies with ecological cycles [31].

Unified Environmental Scenarios: A pivotal concept for prospective ERA is the development of "unified environmental scenarios" that combine exposure and ecological parameters [32]. An exposure scenario predicts chemical fate in space and time using data on use patterns, chemical properties, and landscape configuration. An ecological scenario includes information on ecosystem structure, species traits, ecological interactions, and relevant abiotic factors [32]. Integrating these into a unified scenario ensures that exposure predictions and effects assessments are grounded in a consistent and realistic ecological context.

Quantitative Methods for Data Integration and Analysis

Bayesian Integration of Multiple Lines of Evidence: A powerful quantitative method for integrating disparate data types is Bayesian Markov Chain Monte Carlo (MCMC) [33]. This approach is used to combine multiple lines of evidence—such as risk assessments, biomonitoring data, and epidemiological studies—into a single, updated probability distribution for a risk metric (e.g., Risk Quotient, RQ). The process involves:

- Defining a prior probability distribution based on initial data or expert belief.

- Using MCMC sampling to update this prior with likelihood functions derived from new, disparate studies.

- Generating a posterior distribution that represents the integrated estimate of risk and its uncertainty [33].

This method allows risk assessors to quantitatively answer questions like, "What is the probability that the risk exceeds a level of concern, given all available evidence?" [33]
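
The three steps above can be sketched with a basic random-walk Metropolis sampler. The example treats log(RQ) as the unknown, places a normal prior on it, and updates it with three study estimates that each carry a standard error. The prior, the study values, and the normal likelihood are all hypothetical assumptions. This is not the model or the data behind [33].

```python
import math
import random

def log_normal_density(x, mean, sd):
    """Log of a normal density, omitting the constant term."""
    return -0.5 * ((x - mean) / sd) ** 2 - math.log(sd)

def log_posterior(theta, prior, studies):
    """theta = log(RQ); prior = (mean, sd); studies = [(log_rq_estimate, se), ...]."""
    total = log_normal_density(theta, prior[0], prior[1])
    for estimate, se in studies:
        total += log_normal_density(estimate, theta, se)
    return total

def metropolis(prior, studies, n_iter=20000, burn_in=2000, step=0.3, seed=0):
    """Random-walk Metropolis sampler returning post-burn-in draws of log(RQ)."""
    rng = random.Random(seed)
    theta = prior[0]
    current = log_posterior(theta, prior, studies)
    samples = []
    for i in range(n_iter):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, prior, studies)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        if i >= burn_in:
            samples.append(theta)
    return samples

prior = (math.log(1.0), 1.0)  # vague prior centered on RQ = 1 (assumed)
studies = [(math.log(0.50), 0.4),   # e.g., a risk assessment (hypothetical)
           (math.log(0.35), 0.3),   # e.g., biomonitoring (hypothetical)
           (math.log(0.45), 0.5)]   # e.g., epidemiology (hypothetical)

samples = metropolis(prior, studies)
posterior_median_rq = math.exp(sorted(samples)[len(samples) // 2])
prob_exceeds_one = sum(s > 0.0 for s in samples) / len(samples)
print(f"posterior median RQ = {posterior_median_rq:.3f}, P(RQ > 1) = {prob_exceeds_one:.4f}")
```

Because this toy model is conjugate, the posterior could also be computed in closed form. MCMC becomes necessary when the lines of evidence have different likelihood shapes or hierarchical structure.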

Table 1: Bayesian MCMC Integration of Multiple Evidence Lines for Insecticide Risk [33]

| Insecticide | Type of Studies Integrated | Mean Posterior Risk Quotient (RQ) | Variance | Probability (RQ > 1.0) |

|---|---|---|---|---|

| Malathion | Risk Assessments, Biomonitoring, Epidemiology | 0.4386 | 0.0163 | < 0.0001 |

| Permethrin | Risk Assessments, Biomonitoring, Epidemiology | 0.3281 | 0.0083 | < 0.0001 |

Dynamic Energy Budget (DEB) Modeling: At the organism-to-population level, DEB theory provides a mechanistic framework for integrating stressor effects with environmental conditions [32]. DEB models mathematically describe an organism's energy acquisition and allocation to maintenance, growth, and reproduction. The core integration step involves modeling how a toxicant alters these energy allocation rules. When coupled with Individual-Based Models (IBMs) to form DEB-IBMs, they can extrapolate individual-level effects—informed by both toxicant exposure and environmental factors like temperature and food availability—to population-level outcomes such as biomass or extinction risk [32]. This represents a deep integration of stressor mechanisms and ecosystem dynamics.

Table 2: Components of a DEB-IBM for Integrating Stressors and Environmental Factors [32]

| Model Component | Description | Role in Integration |
|---|---|---|
| DEB Core | Mathematical rules governing energy uptake from food, and allocation to maintenance, growth, reproduction, and maturation. | Provides the physiological baseline; toxicant effects are modeled as perturbations to these rules. |
| Toxicant Module | Links internal toxicant concentration to sub-lethal effects on DEB parameters (e.g., increased maintenance costs, reduced assimilation). | Integrates the chemical stressor's mechanism of action into the organism's life history. |
| Environmental Driver | Inputs for time-varying conditions like temperature, food density, and habitat quality. | Integrates key abiotic and biotic ecosystem factors that modulate energy intake and expenditure. |
| IBM Population Layer | Simulates a population of individual DEB organisms, each with unique traits and experiences, interacting in a space. | Scales integrated individual-level responses to predict ecological endpoints at the population level. |
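How the four components fit together can be shown with a deliberately simplified toy model. It is not a standard DEB parameterization: it omits the reserve compartment, maturation, mortality, temperature, and interactions between individuals, and every parameter value and function name below is a hypothetical choice made for illustration only.

```python
import random


def stress_from_concentration(internal_conc, threshold=1.0, slope=0.5):
    """Toxicant module: linear-with-threshold stress (hypothetical values)."""
    return max(0.0, slope * (internal_conc - threshold))


def simulate_individual(food_level, stress, days=1000, dt=1.0,
                        kappa=0.8, p_am=1.0, p_m=0.2, e_g=1.0, length0=0.1):
    """Energy-budget core: Euler simulation of one organism.

    Assimilation scales with surface area (length**2), maintenance with
    volume (length**3). The toxicant raises maintenance costs by (1 + stress).
    A fraction kappa of assimilated energy goes to maintenance plus growth,
    the remainder to reproduction.
    """
    length = length0
    repro_energy = 0.0
    for _ in range(int(days / dt)):
        assimilation = food_level * p_am * length ** 2
        maintenance = p_m * (1.0 + stress) * length ** 3
        growth_flux = max(kappa * assimilation - maintenance, 0.0)
        volume = length ** 3 + dt * growth_flux / e_g
        length = volume ** (1.0 / 3.0)
        repro_energy += dt * (1.0 - kappa) * assimilation
    return length, repro_energy


def simulate_population(internal_conc, n_individuals=100, seed=42):
    """Population layer: independent individuals with varying food levels."""
    rng = random.Random(seed)
    stress = stress_from_concentration(internal_conc)
    lengths, reproduction = [], []
    for _ in range(n_individuals):
        food = rng.uniform(0.6, 1.0)  # environmental driver (hypothetical range)
        final_length, repro = simulate_individual(food, stress)
        lengths.append(final_length)
        reproduction.append(repro)
    return {"mean_length": sum(lengths) / len(lengths),
            "total_reproduction": sum(reproduction)}


control = simulate_population(internal_conc=0.0)
exposed = simulate_population(internal_conc=2.0)
reduction = 100 * (1 - exposed["total_reproduction"] / control["total_reproduction"])
print(f"reduction in total reproductive output: {reduction:.1f}%")
```

Because both runs use the same seed, each exposed individual is compared with a control individual that experienced the same food level, so the difference in output reflects the toxicant module alone. In this toy formulation the ultimate length is `kappa * food_level * p_am / (p_m * (1 + stress))`, so a higher maintenance cost lowers both final size and cumulative reproduction.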

Visualization and Communication of Integrated Information

Effective communication of integrated information is crucial. Beyond traditional conceptual model diagrams, advanced graphical tools are used.

Prevalence Plots: This method visualizes the output of integrated, probabilistic assessments [32]. A prevalence plot displays an effect size (e.g., percent reduction in population biomass) on the y-axis against its cumulative prevalence (e.g., proportion of water bodies affected) on the x-axis. The curve is generated by running many model simulations (e.g., a DEB-IBM) across a range of realistic environmental scenarios and exposure levels. This single figure communicates both the severity and the spatial (or temporal) frequency of potential effects, offering a more informative and risk-based perspective than a simple ratio of predicted environmental concentration to predicted no-effect concentration (PEC/PNEC) [32].
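The data behind such a curve can be assembled in a few lines once per-scenario effect sizes are available. The sketch below assumes one effect size per simulated scenario and orders scenarios from most to least affected; the function names and example values are hypothetical, and the plotting step itself is left out.

```python
def prevalence_curve(effect_sizes):
    """Return (prevalence, effect) points for a prevalence plot.

    Each point reads: a fraction `prevalence` of scenarios shows an effect
    at least as large as `effect`. Prevalence goes on the x-axis, effect
    size on the y-axis.
    """
    ordered = sorted(effect_sizes, reverse=True)
    n = len(ordered)
    return [((i + 1) / n, effect) for i, effect in enumerate(ordered)]


def prevalence_at_or_above(effect_sizes, threshold):
    """Fraction of scenarios whose effect size reaches the threshold."""
    return sum(e >= threshold for e in effect_sizes) / len(effect_sizes)


# Hypothetical output: percent reduction in biomass for five water bodies.
simulated_effects = [0.0, 5.0, 10.0, 20.0, 40.0]
for prevalence, effect in prevalence_curve(simulated_effects):
    print(f"{prevalence:.1f} of scenarios show an effect of at least {effect}%")
print(prevalence_at_or_above(simulated_effects, 10.0))  # 0.6
```

Reading the curve at a chosen effect size gives the share of scenarios that reach it, which is the severity-plus-frequency information that a single ratio cannot convey.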

Conceptual Model Diagrams: A cornerstone of problem formulation is the development of a conceptual model diagram [2]. This visual tool integrates knowledge by illustrating hypothesized relationships between stressors, exposure pathways, ecosystem components, and assessment endpoints. It serves to identify key data gaps, prioritize analysis, and ensure a shared understanding among the assessment team.

Integrated Analysis Workflow: The following diagram synthesizes the major steps and iterative feedback involved in integrating information during problem formulation.

Table 3: Key Research Reagents and Resources for Information Integration in Problem Formulation

| Tool / Resource | Primary Function | Application in Integration |
|---|---|---|
| ECOTOX Database | A curated, publicly available database summarizing single-chemical toxicity test results for aquatic and terrestrial species [29]. | The primary source for sourcing and screening open literature effects data to complement guideline studies [29]. |
| Dynamic Energy Budget (DEB) Toolbox | A suite of software tools and libraries for constructing DEB models. | Provides the mechanistic framework to integrate toxicant effects with environmental drivers on organism physiology [32]. |
| Bayesian MCMC Software (e.g., JAGS, Stan) | Software platforms for performing Bayesian analysis using Markov Chain Monte Carlo sampling. | Enables the quantitative integration of disparate lines of evidence into a unified probabilistic risk estimate [33]. |
| Geographic Information System (GIS) | Software for capturing, managing, analyzing, and presenting spatial data. | Integrates spatial data on stressor sources, land use, habitat types, and species distributions to define exposure scenarios and ecosystem boundaries. |
| Unified Environmental Scenario Templates | Standardized, region-specific descriptions of environmental parameters (hydrology, climate, land use, species lists). | Provides a consistent ecological context for both exposure and effects modeling, ensuring they are realistically coupled [32]. |

The selection of assessment endpoints represents the critical bridge between scientific investigation and environmental decision-making within the ecological risk assessment (ERA) process. This step, embedded in the problem formulation phase, translates broad management goals into specific, measurable entities that direct the entire technical assessment [2] [7]. For a thesis focused on advancing problem formulation methodologies, this step is where abstract regulatory concerns are operationalized into testable scientific hypotheses. Effective endpoint selection ensures that the subsequent analysis and risk characterization address questions that are both ecologically significant and policy-relevant, thereby maximizing the utility of the risk assessment for risk managers and stakeholders [34] [2]. This guide details the technical principles, protocols, and decision frameworks for selecting endpoints that are defensible, actionable, and integral to a robust problem formulation strategy.

Foundational Principles: The Role of Assessment Endpoints in Problem Formulation

Assessment endpoints are explicit expressions of the environmental values to be protected, derived from management goals established during the planning dialogue between risk assessors and risk managers [2]. They consist of two mandatory elements: 1) the ecological entity (e.g., a species, functional group, community, or ecosystem process), and 2) the specific attribute of that entity worthy of protection (e.g., survival, reproduction, biodiversity, nutrient cycling) [2].

Within the problem formulation framework, assessment endpoints serve multiple essential functions [2] [7]:

- Directing the Scope of the Assessment: They set the spatial, temporal, and biological boundaries for the investigation.

- Guiding Conceptual Model Development: They are the final receptors in the cause-effect pathways illustrated in conceptual models, which link stressors to potential effects.

- Informing the Analysis Plan: They determine what measures of exposure and effect (known as measurement endpoints) are necessary for the analysis phase.

- Connecting Science to Policy: By aligning with societal values and management goals, they ensure the assessment's conclusions support actionable decisions.

A Systematic Protocol for Endpoint Selection

The following step-by-step protocol operationalizes the endpoint selection process within a problem formulation workflow.

Step 1: Elicit and Analyze Management Goals & Regulatory Context. Begin by reviewing the formal planning summary, which documents agreements on management goals, regulatory actions, and the scope of the assessment [2]. Interview risk managers and stakeholders to understand the core ecological values of concern. For example, a goal may be "maintaining a sustainable aquatic community" under the Clean Water Act [2].

Step 2: Identify Potential Ecological Entities. List the species, habitats, or ecosystem processes that embody the management goals. Consider entities at multiple levels of biological organization (e.g., endangered species, keystone species, critical habitat types, essential nutrient cycles).

Step 3: Identify Protectable Attributes for Each Entity. For each ecological entity, identify the specific attribute whose impairment would constitute an unacceptable adverse effect. Common attributes include survival, growth, reproduction (for species), species richness and composition (for communities), and primary productivity or decomposition rates (for ecosystem functions).

Step 4: Apply Selection Criteria for Scientific Defensibility. Evaluate each candidate "Entity-Attribute" pair against the following criteria [2] [7]:

- Susceptibility to the Stressor: Is the entity likely to be exposed and sensitive to the stressor (e.g., a specific pesticide)?

- Relevance to Ecosystem Structure/Function: Does the attribute play a key role in maintaining the ecosystem? Impairment should signal broader ecological consequences.

- Availability of Measurement Endpoints: Can the attribute be quantified or estimated using available or obtainable tools (e.g., toxicity tests, population models, field surveys)?

- Unambiguous Interpretation: Can changes in the measured response be clearly linked to stressor exposure and ecological effect?

Step 5: Apply Selection Criteria for Policy Relevance. Evaluate the remaining candidates against policy-driven criteria [34]:

- Linkage to Societal Values & Ecosystem Services: Does the endpoint represent a service with value to society (e.g., crop pollination, water purification, carbon sequestration) [34]?

- Regulatory and Statutory Relevance: Is the entity or attribute explicitly protected by law (e.g., Endangered Species Act, Clean Water Act) [2]?

- Importance to Stakeholders: Is the endpoint recognized as valuable by the public or specific stakeholder groups?

Step 6: Finalize and Document Endpoint Selection. Select the final set of assessment endpoints that best satisfy both scientific and policy criteria. Document the rationale for selection and for the exclusion of other potential endpoints. These finalized endpoints now anchor the development of the conceptual model and the analysis plan [2].
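Steps 4 through 6 can be sketched as a small screening routine that records a rationale for every candidate. The criterion labels, the rule requiring at least three scientific criteria, and the example candidates are all hypothetical; a real assessment relies on professional judgment, and a candidate with strong policy relevance but weak measurability may still be retained if modeling can fill the gap.

```python
from dataclasses import dataclass, field

# Short labels for the Step 4 and Step 5 criteria (names are illustrative).
SCIENCE_CRITERIA = ("susceptible", "ecologically_relevant",
                    "measurable", "unambiguous")
POLICY_CRITERIA = ("ecosystem_service", "statutory_protection",
                   "stakeholder_value")


@dataclass
class Candidate:
    entity: str      # ecological entity, e.g. a species or community
    attribute: str   # protectable attribute of that entity
    met: set = field(default_factory=set)  # labels of criteria judged as met


def select_endpoints(candidates, min_science=3):
    """Screen candidates and document why each was kept or dropped."""
    selected, excluded = [], []
    for c in candidates:
        science = [k for k in SCIENCE_CRITERIA if k in c.met]
        policy = [k for k in POLICY_CRITERIA if k in c.met]
        record = {"endpoint": f"{c.entity}: {c.attribute}",
                  "science_met": science, "policy_met": policy}
        if len(science) < min_science:
            record["rationale"] = "excluded: too few scientific criteria met"
            excluded.append(record)
        elif not policy:
            record["rationale"] = "excluded: no policy criterion met"
            excluded.append(record)
        else:
            record["rationale"] = "selected: meets scientific and policy criteria"
            selected.append(record)
    # Rank retained endpoints by the number of policy criteria they satisfy.
    selected.sort(key=lambda r: len(r["policy_met"]), reverse=True)
    return selected, excluded


# Hypothetical candidates for illustration only.
candidates = [
    Candidate("Fish population", "reproductive rate",
              {"susceptible", "ecologically_relevant", "measurable",
               "unambiguous", "ecosystem_service"}),
    Candidate("Benthic invertebrate community", "species richness",
              {"susceptible", "ecologically_relevant", "measurable",
               "ecosystem_service", "statutory_protection"}),
    Candidate("Riparian songbirds", "nest success",
              {"susceptible", "stakeholder_value"}),
]
selected, excluded = select_endpoints(candidates)
for record in selected + excluded:
    print(record["endpoint"], "->", record["rationale"])
```

Keeping the excluded records alongside the selected ones mirrors the documentation requirement in Step 6: the rationale for dropping a candidate is preserved, not just the final list.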

Table 1: Evaluation of Candidate Assessment Endpoints for a Pesticide Risk Assessment

| Ecological Entity | Protectable Attribute | Scientific Defensibility (Susceptibility/Measurability) | Policy Relevance (Ecosystem Service/Regulatory Link) | Selection Priority |
|---|---|---|---|---|
| Fathead Minnow (Pimephales promelas) | Reproductive success (fecundity) | High: Standard test species; chronic toxicity data available [2]. | Medium: Supports fishery resources; indicator of aquatic community health. | High (Measurable Surrogate) |
| Colonization Rate of Leaf Litter by Microbes | Decomposition rate | Medium: Can be measured in mesocosms; sensitive to toxicants. | High: Directly linked to nutrient cycling ecosystem service [34]. | Medium (Process-Based) |
| Adult Bald Eagle (Haliaeetus leucocephalus) | Adult survival | Low: Difficult to measure directly; exposure pathway complex. | Very High: Protected under Bald and Golden Eagle Protection Act; high societal value. | High (Requires Modeling) |
| Soil Arthropod Diversity | Species richness & evenness | Medium: Can be measured but taxonomically intensive; response is integrative. | Medium: Supports soil formation service [34]. | Low (Secondary Endpoint) |

Expanding Endpoint Selection to Incorporate Ecosystem Services

A contemporary advancement in problem formulation is the explicit incorporation of ecosystem services as assessment endpoints [34]. This approach directly links ecological risk to human well-being, making assessments more relevant for cost-benefit analyses and stakeholder communication [34].

Table 2: Linking Traditional Ecological Entities to Ecosystem Service Endpoints

| Ecosystem Service Category | Example Service | Related Ecological Entity & Attribute | Potential Measurement Endpoint |
|---|---|---|---|
| Provisioning | Sustainable fisheries | Fish population → reproductive rate | Juvenile fish growth and survival |
| Regulating | Water purification | Riparian wetland plant community → nutrient uptake capacity | Nitrate removal rate in soil cores |
| Supporting | Soil formation & fertility | Soil invertebrate community → biomass & diversity | Litter decomposition rate; earthworm abundance [34] |
| Cultural | Recreational birdwatching | Bird community → species diversity & abundance | Point count surveys of key species |

Experimental & Methodological Guidance for Endpoint Analysis

The selection of assessment endpoints directly informs the experimental and analytical methods required in the subsequent Analysis phase of ERA [7].

5.1. Exposure Assessment Protocols

Exposure profiles must be developed for each selected endpoint entity.

- For Chemical Stressors: Use empirical data (e.g., monitoring of pesticide concentrations in water [2]) or model estimates (e.g., EPA's PRZM/EXAMS models) to derive an Estimated Environmental Concentration (EEC) relevant to the entity's habitat [2].

- For Biological Stressors (e.g., invasive species): Quantify pressure metrics such as population density of the invader, rate of spatial spread, or hybridization frequency with native species.

- Key Protocol: Standardized water column and sediment sampling methods for chemical monitoring (for example, EPA Method 1669, which covers ambient water sampling for trace metals); geographic information system (GIS) tracking and population surveys for invasive species.

5.2. Effects Assessment Protocols

Effects data quantify the relationship between stressor magnitude and the endpoint attribute's response.

- Toxicity Testing: For standard test species (e.g., laboratory rat for mammals, rainbow trout for fish), analyze data from guideline-accepted acute studies (e.g., LC50, the median lethal concentration) and chronic studies (e.g., NOAEC, the No Observed Adverse Effect Concentration) [2].

- Modeled or Extrapolated Effects: For non-standard species or ecosystem attributes, use tools like Species Sensitivity Distributions (SSD) or process-based models (e.g., ecosystem nutrient cycling models).
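A Species Sensitivity Distribution can be sketched by fitting a log-normal distribution to toxicity values from several species and reading off the concentration expected to affect a chosen fraction of them. The example assumes a log-normal shape, and the six toxicity values are hypothetical; regulatory SSD work typically adds goodness-of-fit checks and confidence limits that are omitted here.

```python
import math
from statistics import NormalDist, mean, stdev


def fit_lognormal_ssd(toxicity_values):
    """Fit a normal distribution to log10-transformed toxicity values."""
    logs = [math.log10(v) for v in toxicity_values]
    return NormalDist(mu=mean(logs), sigma=stdev(logs))


def hazardous_concentration(ssd, fraction=0.05):
    """Concentration at which the given fraction of species is affected
    (fraction=0.05 gives the commonly used HC5)."""
    return 10 ** ssd.inv_cdf(fraction)


def fraction_affected(ssd, concentration):
    """Fraction of species expected to be affected at a concentration."""
    return ssd.cdf(math.log10(concentration))


# Hypothetical chronic toxicity values (ug/L) for six species.
hypothetical_noaec = [1.2, 3.5, 8.0, 15.0, 40.0, 110.0]
ssd = fit_lognormal_ssd(hypothetical_noaec)
hc5 = hazardous_concentration(ssd)
print(f"HC5: {hc5:.2f} ug/L")
print(f"fraction affected at 10 ug/L: {fraction_affected(ssd, 10.0):.2f}")
```

Comparing an Estimated Environmental Concentration with the HC5, or evaluating `fraction_affected` at that concentration, links the exposure profile from Section 5.1 to a community-level effects estimate.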