Navigating Uncertainty: A Critical Analysis of Uncertainty Factors in Ecological Risk Assessment Quotients for Pharmaceutical Development

Abstract

This article provides a comprehensive analysis of uncertainty factors (UFs) within the hazard quotient (HQ) method for ecological risk assessment (ERA), tailored for researchers and drug development professionals. It explores the foundational principles and historical application of UFs, revealing inconsistencies in their implementation and a reliance on often-arbitrary default values[citation:1][citation:8]. The review details contemporary methodological approaches, including probabilistic and data-driven techniques to derive chemical-specific adjustment factors, moving beyond traditional defaults[citation:2][citation:6]. It addresses significant challenges in applying and validating UFs, such as issues with data quality, non-commutability in external quality assessment, and model selection[citation:3][citation:5][citation:7]. Finally, the article compares validation strategies and uncertainty quantification methods, emphasizing the critical need for transparency and scientific rigor. This synthesis aims to enhance the reliability and defensibility of environmental safety assessments for pharmaceuticals.

Understanding the Bedrock: Core Concepts and Historical Evolution of Uncertainty Factors in Risk Quotients

Defining the Hazard Quotient (HQ) and the Role of Uncertainty Factors (UFs)

Technical Support & Troubleshooting Hub

Welcome to the Technical Support Center for Hazard Quotient and Uncertainty Factor applications. This resource is designed for researchers, scientists, and drug development professionals engaged in ecological and human health risk assessment quotient research. The following guides and FAQs address common calculation, interpretation, and methodological challenges.

Core Concepts & Calculation Protocols

The Hazard Quotient (HQ) is a primary screening-level tool used to characterize noncancer risk. It is defined as the ratio of a single substance's potential exposure to a level at which no adverse effects are expected [1] [2].

Fundamental HQ Equation:

HQ = Exposure / Reference Value

An HQ less than or equal to 1 indicates that adverse effects are not likely to occur, while an HQ greater than 1 suggests potential risk; the HQ itself is not a statistical probability of harm [3] [1].

Key Reference Values:

Reference values, such as the Reference Dose (RfD) or Reference Concentration (RfC), are derived from toxicological points of departure (e.g., NOAEL, LOAEL, BMDL) divided by a composite Uncertainty Factor (UF) [3] [2].

RfD = NOAEL / (UF₁ × UF₂ × ... × UFₙ) [4]

The product of Uncertainty Factors (UFs) is applied to account for various extrapolations and data gaps, including interspecies differences (animal-to-human) and intraspecies variability (within humans) [4] [5].
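The two relationships above can be sketched in Python as follows. This is a minimal illustration that assumes exposure and the point of departure share units (e.g., mg/kg-day). The function names and the NOAEL, UF, and exposure values are hypothetical.

```python
from math import prod
from typing import Sequence


def reference_dose(pod: float, ufs: Sequence[float]) -> float:
    """RfD = point of departure (NOAEL, LOAEL or BMDL) / product of the UFs."""
    if pod <= 0 or any(uf <= 0 for uf in ufs):
        raise ValueError("POD and every UF must be positive")
    return pod / prod(ufs)


def hazard_quotient(exposure: float, reference_value: float) -> float:
    """HQ = exposure / reference value; HQ <= 1 means adverse effects are not likely."""
    return exposure / reference_value


# Hypothetical NOAEL of 50 mg/kg-day with default 10x interspecies and 10x intraspecies UFs.
rfd = reference_dose(50.0, [10, 10])
hq = hazard_quotient(0.2, rfd)  # hypothetical exposure of 0.2 mg/kg-day
print(f"RfD = {rfd} mg/kg-day, HQ = {hq:.2f}, HQ > 1: {hq > 1}")
```

The UFs are multiplied rather than added, which is why two default factors of 10 yield a composite of 100.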

Comparison of Common Quotient Methods: The table below summarizes the primary quotient methods used in human health and ecological risk assessments.

| Assessment Aspect | Hazard Quotient (HQ) for Human Health [4] [3] [1] | Risk Quotient (RQ) for Ecological Risk [1] |
|---|---|---|
| Primary Use | Assessing health risks of air toxics, contaminants, and industrial chemicals. | Assessing ecological risks of pesticides and environmental contaminants. |
| Core Equation | HQ = Exposure Concentration / Reference Concentration (RfC) or Reference Dose (RfD). | RQ = Estimated Environmental Concentration (EEC) / Toxicity Endpoint (e.g., LC50, NOEC). |
| Point of Departure | Derived from a human-equivalent reference value (RfD/RfC), which already incorporates UFs. | Uses a raw ecotoxicity endpoint from studies on algae, invertebrates, or fish. |
| Risk Threshold | HQ ≤ 1: Negligible hazard likely. HQ > 1: Potential for adverse effects increases. | Compared to a Level of Concern (LOC). E.g., for chronic risk, RQ must be < LOC of 1.0 [1]. |
| Role of UFs | UFs are embedded within the RfD/RfC used in the denominator. | UFs or Assessment Factors may be applied separately to the toxicity endpoint or are considered within the LOC. |

Detailed Methodological Guides

1. Protocol for Probabilistic Derivation of Chemical-Specific Uncertainty Factors

This data-driven protocol aims to replace default UFs with chemical-specific assessment factors where sufficient data exist [5].

- Objective: To derive empirical distributions for specific UF components (e.g., interspecies, intraspecies, LOAEL-to-NOAEL) using existing toxicity data for a chemical category.

- Materials: A curated database of toxicity values (e.g., NOAELs, LOAELs, LD₅₀s) for the chemical(s) of interest, ideally from standardized studies.

- Procedure:

  a. Data Compilation: Gather all relevant paired toxicity data (e.g., acute LD₅₀ and chronic NOAEL pairs for acute-to-chronic ratios; subchronic and chronic NOAEL pairs for duration extrapolation) [5].

  b. Ratio Calculation: For each pair of data points, calculate the required ratio (e.g., LOAEL:NOAEL).

  c. Distribution Fitting: Use statistical software to fit a distribution (e.g., log-normal) to the calculated ratios.

  d. Percentile Selection: Identify a protective percentile (e.g., 95th or 99th) of the distribution. The value at this percentile may be proposed as a data-derived UF [5] [6].

  e. Uncertainty Analysis: Perform Monte Carlo simulations to characterize uncertainty in the derived UF and calculate confidence intervals [5].

- Troubleshooting: If the dataset is too small for reliable distribution fitting, consider read-across from a similar chemical category or revert to a relevant default UF [5].
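Steps c to e can be sketched in pure Python as follows. This is a minimal illustration, not a validated implementation. It assumes the ratios are positive and roughly log-normal, and it fits the distribution from the mean and standard deviation of the log-ratios. For the uncertainty interval it uses a nonparametric bootstrap, one form of Monte Carlo resampling. The ratio values are hypothetical.

```python
import math
import random
from statistics import NormalDist, fmean, stdev


def lognormal_percentile(ratios, pct=0.95):
    """Fit a log-normal via the mean and SD of ln(ratio) and return its pct quantile."""
    logs = [math.log(r) for r in ratios]
    z = NormalDist().inv_cdf(pct)
    return math.exp(fmean(logs) + z * stdev(logs))


def bootstrap_interval(ratios, pct=0.95, n_boot=2000, alpha=0.05, seed=1):
    """Percentile bootstrap interval for the data-derived UF (resampling with replacement)."""
    rng = random.Random(seed)
    estimates = sorted(
        lognormal_percentile(rng.choices(ratios, k=len(ratios)), pct)
        for _ in range(n_boot)
    )
    lower = estimates[int(alpha / 2 * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Hypothetical LOAEL:NOAEL ratios compiled for a chemical category.
ratios = [2.0, 3.0, 3.0, 3.3, 4.0, 5.0, 5.0, 6.0, 10.0, 3.0, 2.5, 4.0]
uf_95 = lognormal_percentile(ratios, 0.95)
ci_low, ci_high = bootstrap_interval(ratios)
print(f"Data-derived UF (95th percentile): {uf_95:.1f} (95% CI {ci_low:.1f}-{ci_high:.1f})")
```

A wide bootstrap interval signals the small-dataset problem described in the troubleshooting note.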

2. Protocol for Calculating Aggregate Risk with Hazard Index (HI) for Mixtures

This protocol assesses cumulative risk from exposure to multiple chemicals affecting the same target organ [1] [2].

- Objective: To calculate a Hazard Index (HI) to evaluate the combined risk from a mixture of chemicals.

- Principle: The HI is the sum of the individual HQs for chemicals that share a common mechanism of toxicity or affect the same critical organ system [7] [2].

HI = HQ₁ + HQ₂ + ... + HQₙ = Σ HQᵢ

- Procedure:

  a. Grouping: Identify all chemicals in the mixture for which exposure data exist. Group them based on a common critical adverse effect or mode of action [7].

  b. Individual HQ Calculation: Calculate the HQ for each chemical as described in the Core Concepts.

  c. Summation: Sum the HQs for all chemicals within the defined group.

  d. Interpretation: An HI ≤ 1 suggests negligible risk from the mixture. An HI > 1 indicates potential for additive effects and requires further, more refined assessment [2].

- Critical Note: A significant limitation arises when adding HQs based on Reference Doses (RfDs) derived from different critical adverse effects. This can lead to misleading risk estimates [7]. The Adversity Specific Hazard Index (HIA) approach is recommended, where summation is only performed for chemicals whose RfDs are based on the identical critical effect [7].
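The adversity-specific grouping can be sketched as follows: HQs are summed only among chemicals whose RfDs share a critical effect. The chemical names, exposures, RfDs, and effect labels are hypothetical.

```python
from collections import defaultdict


def adversity_specific_hi(chemicals):
    """Sum HQs only among chemicals whose RfDs share the same critical effect.

    `chemicals` holds (name, exposure, rfd, critical_effect) tuples with
    exposure and RfD in the same units (e.g., mg/kg-day).
    """
    indices = defaultdict(float)
    for _name, exposure, rfd, critical_effect in chemicals:
        indices[critical_effect] += exposure / rfd
    return dict(indices)


# Hypothetical mixture: two liver toxicants and one developmental toxicant.
mixture = [
    ("chem_A", 0.03, 0.05, "liver"),
    ("chem_B", 0.02, 0.04, "developmental"),
    ("chem_C", 0.01, 0.10, "liver"),
]
hia = adversity_specific_hi(mixture)
for effect, value in hia.items():
    verdict = "refine the assessment" if value > 1 else "negligible"
    print(f"{effect}: HI = {value:.2f} ({verdict})")
```

In this example, a naive HI summed over all three chemicals would be 1.2 and would exceed 1. Neither effect-specific index does (liver 0.7, developmental 0.5).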

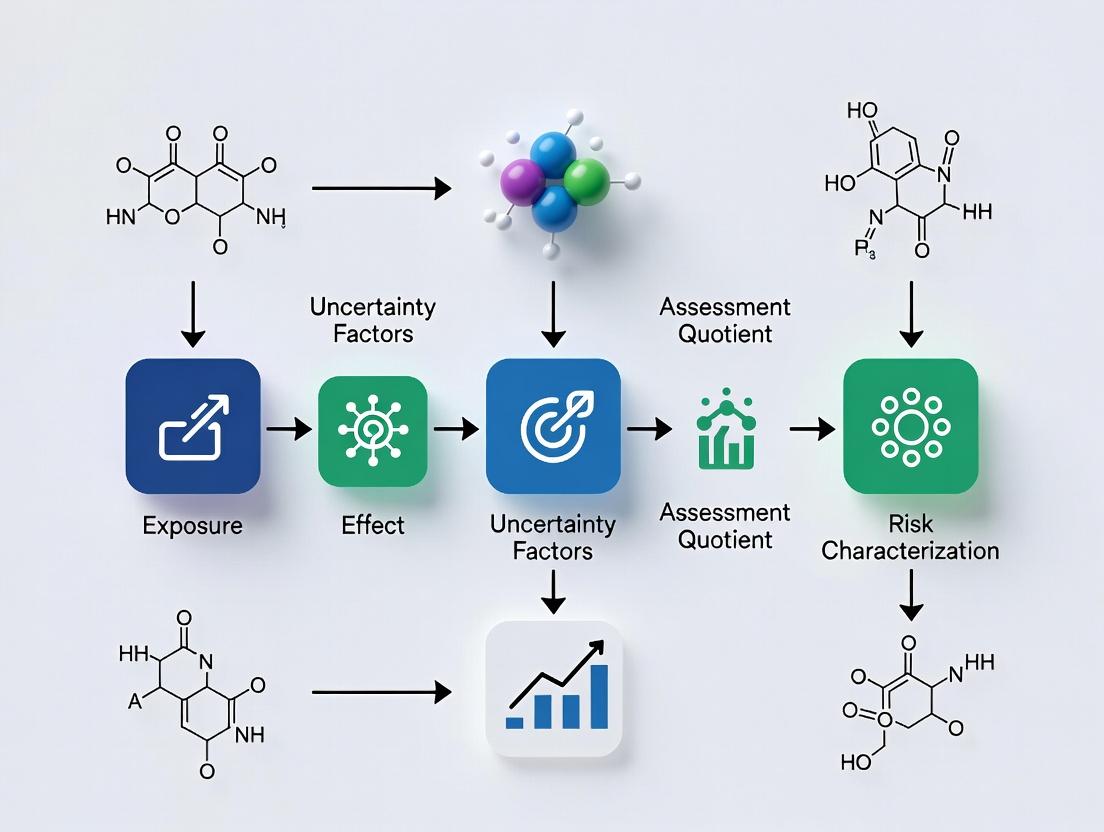

Troubleshooting: Visualization of Workflows and Relationships

Diagram 1: Workflow for HQ Calculation & Risk Characterization

Diagram 2: Role & Composition of Uncertainty Factors (UFs)

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Hazard & Risk Assessment Research |
|---|---|
| Toxicological Points of Departure (NOAEL, LOAEL, BMDL) | Serve as the experimental benchmark from animal or in vitro studies from which safe human limits are extrapolated [5] [3]. |
| Chemical-Specific Toxicity Databases | Curated databases (e.g., for LD₅₀, NOAEL values) are essential raw materials for probabilistic UF derivation and Threshold of Toxicological Concern (TTC) approaches [5]. |
| Probabilistic Distribution Software | Tools for Monte Carlo simulation and fitting log-normal distributions are required to empirically derive UFs from toxicity data ratios and quantify uncertainty [5]. |
| Exposure Assessment Models | Models to generate Estimated Environmental Concentrations (EECs) or Estimated Human Exposures (EHEs) are needed for the numerator in HQ/RQ calculations [1]. |
| Consensus Reference Values (RfD, RfC, ADI) | These are the finalized "reagents" for risk characterization, integrating the toxicological POD with standard or chemical-specific UFs [3] [2]. |

Frequently Asked Questions (FAQs)

Q1: My calculated HQ is 2.5. Does this mean there is a 250% chance of adverse effects? A: No. The HQ is not a probabilistic measure of risk [3] [1]. An HQ > 1 indicates that the exposure level exceeds the reference level (RfD/RfC). It is a signal that potential for risk exists and that further investigation or more refined assessment is warranted. It does not quantify the likelihood or severity of the effect.

Q2: When should I use a chemical-specific UF instead of the default 10x factors? A: Use chemical-specific or data-derived UFs when you have robust, quantitative data to inform a specific extrapolation [5] [6]. For example:

- Toxicokinetic Data: If you have in vivo or in vitro data comparing metabolism or clearance between test species and humans.

- Toxicodynamic Data: If you understand the mode of action and have comparative data on target receptor sensitivity.

- Chemical Category Data: If you have sufficient toxicity data across a group of similar chemicals to perform a probabilistic analysis as described in the protocols above [5].

If such data are lacking, the default factors (e.g., 10 for interspecies, 10 for intraspecies) should be applied, because they are considered protective.

Q3: How do I handle HQ calculations for a chemical mixture from a single source (e.g., pesticides in one food item)? A: The standard Hazard Index (HI) approach may underestimate risk if it doesn't account for aggregate exposure from all sources [7]. For single-source assessments, consider the Source-Related Hazard Quotient (HQs) approach [7]:

- Calculate HQs normally (exposure from the source / ADI).

- Determine a Correction Factor (CF) representing the maximum permitted contribution of that source to total dietary exposure.

- Compare the HQs to the CF, not to 1. A risk is indicated if HQs > CF [7].

This method provides a more realistic risk characterization when only single-source data are available.
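The source-related check reduces to one division and one comparison, sketched below. The intake, ADI, and CF values are hypothetical. The CF of 0.2 stands for an assumed rule that this source may contribute at most 20% of total dietary exposure.

```python
def source_related_hq(source_exposure, adi, correction_factor):
    """Return the source-related HQ (exposure from one source / ADI) and whether it exceeds the CF."""
    hq_s = source_exposure / adi
    return hq_s, hq_s > correction_factor


# Hypothetical values in mg/kg bw/day; CF = maximum permitted share of this source.
hq_s, flagged = source_related_hq(source_exposure=0.004, adi=0.01, correction_factor=0.2)
print(f"HQs = {hq_s:.2f}, risk indicated: {flagged}")
```

Compared to 1, an HQs of 0.4 would look acceptable. Compared to the CF, it indicates that this single source uses more than its permitted share of the ADI.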

Q4: What is the most common error in interpreting the Hazard Index (HI) for mixtures? A: The most common error is summing HQs for chemicals whose Reference Doses are based on different critical adverse effects [7]. For example, adding an HQ for a chemical with an RfD based on liver toxicity to an HQ for a chemical with an RfD based on developmental toxicity is not scientifically supportable. Always verify the "critical effect" for each chemical's RfD and sum HQs only for chemicals that affect the same target organ or system, ideally via a similar mode of action. The Adversity Specific Hazard Index (HIA) methodology is designed to correct this error [7].

This technical support center provides resources for researchers conducting Ecological Risk Assessment (ERA) quotient research, a field grounded in deterministic methods but evolving towards structured uncertainty analysis. For decades, risk quotients (RQs)—calculated by dividing a point estimate of exposure by a point estimate of effect—have served as a primary screening-level tool [8] [9]. To account for unknowns, default uncertainty factors (UFs), often 10-fold values, have been applied [5]. This practice originated in the 1950s with food and pesticide safety assessments [10].

However, this "one-size-fits-all" approach contains significant, unquantified uncertainty and may not reflect specific chemical or ecological contexts [9]. Modern research emphasizes moving beyond default factors to structured uncertainty analysis. This involves probabilistic methods, chemical-specific adjustment factors (CSAFs), and frameworks like the Scenario–Model–Parameter (SMP) approach, which systematically accounts for multiple uncertainty sources [5] [11]. This evolution forms the thesis of modern ERA: transitioning from generic safety margins to transparent, quantitative, and hypothesis-driven uncertainty characterization.

Core Methodologies & Technical Reference

Standard Risk Quotient (RQ) Calculations

The deterministic RQ method is the baseline for screening-level assessments. The core formula is RQ = Exposure / Toxicity [8]. The specific parameters vary by assessed organism and exposure scenario.

Table: Standard Risk Quotient Formulas by Organism Type [8]

| Organism Group | Assessment Type | Exposure Estimate (EEC) | Toxicity Endpoint | Formula |
|---|---|---|---|---|
| Terrestrial Animals (Birds/Mammals) | Acute Dietary | Dietary EEC converted to a dose-based EEC (mg/kg-bw) | Lowest LD50 (oral, mg/kg-bw) | Acute RQ = Dose-based EEC / LD50 |
| Terrestrial Animals (Birds/Mammals) | Chronic Dietary | EEC in mg/kg-diet | Lowest NOAEL (mg/kg-diet) | Chronic RQ = EEC / NOAEL |
| Aquatic Animals | Acute | Peak Water Concentration | Most sensitive LC50 or EC50 | Acute RQ = Peak Concentration / LC50 |
| Aquatic Animals | Chronic (Fish) | 56- or 60-day Avg. Water Concentration | Early Life-Stage NOAEC | Chronic RQ = Avg. Concentration / NOAEC |
| Terrestrial Plants | Acute (Non-listed) | EEC from Runoff + Spray Drift | EC25 (Seedling Emergence) | RQ = EEC / EC25 |
| Aquatic Plants | Acute (Non-listed) | EEC in water | Lowest EC50 (Algae/Vascular) | RQ = EEC / EC50 |
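Every formula in the table has the same structure, exposure divided by a toxicity endpoint in matching units, followed by comparison against a Level of Concern. A minimal sketch follows. All inputs are hypothetical, and the LOC values are placeholders for whatever the applicable framework specifies, not regulatory guidance.

```python
from dataclasses import dataclass


@dataclass
class RQCase:
    organism: str
    assessment: str
    exposure: float   # EEC or water concentration, in the endpoint's units
    endpoint: float   # LD50, NOAEL, LC50/EC50, NOAEC or EC25
    loc: float        # Level of Concern set by the applicable framework

    @property
    def rq(self) -> float:
        return self.exposure / self.endpoint

    @property
    def exceeds_loc(self) -> bool:
        return self.rq > self.loc


# Hypothetical inputs in ug/L; the LOC values are placeholders.
cases = [
    RQCase("Aquatic animals", "Acute", exposure=12.0, endpoint=40.0, loc=0.5),
    RQCase("Aquatic animals (fish)", "Chronic", exposure=3.0, endpoint=2.0, loc=1.0),
    RQCase("Aquatic plants", "Acute (non-listed)", exposure=5.0, endpoint=25.0, loc=1.0),
]
for case in cases:
    print(f"{case.organism}, {case.assessment}: RQ = {case.rq:.2f}, exceeds LOC: {case.exceeds_loc}")
```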

Uncertainty Factor (UF) Application Protocol

When a Risk Quotient (RQ) indicates potential risk, Uncertainty Factors (UFs) are applied to derive a "safe" concentration or dose. The general equation is: Adjusted Reference Value = Point of Departure (NOAEL, BMDL, etc.) / (UF₁ × UF₂ × ... × UFₙ) [10].

Table: Common Uncertainty Factors and Their Rationale [5] [10]

| Uncertainty Factor (UF) | Area of Uncertainty Addressed | Typical Default Value | Purpose & Notes |
|---|---|---|---|
| UFA | Interspecies (Animal to Human) | 10 | Accounts for differences in toxicokinetics/toxicodynamics between test species and humans. Can be subdivided (e.g., 4.0 for TK, 2.5 for TD) [5]. |
| UFH | Intraspecies (Human Variability) | 10 | Protects sensitive human subpopulations. May be partitioned similarly to UFA [10]. |
| UFS | Subchronic to Chronic Extrapolation | 1-10 | Applied when the Point of Departure is from a less-than-lifetime study. |
| UFL | LOAEL to NOAEL Extrapolation | 1-10 | Applied when the critical study identifies a LOAEL instead of a NOAEL. |
| UFD | Database Incompleteness | 1-10 | Reflects deficiencies in the overall toxicity database (e.g., missing studies, endpoints). |
| MF | Modifying Factor | >0 to 10 (default 1) | A professional judgment factor accounting for additional scientific uncertainties not covered by standard UFs [10]. |
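The general equation above can be sketched as follows. The example splits UFA into its TK (4.0) and TD (2.5) subfactors and shows how replacing one subfactor with a chemical-specific value changes the result. The NOAEL, the UFS of 3, and the refined TK factor of 1.5 are hypothetical.

```python
from math import prod


def composite_uf(factors, modifying_factor=1.0):
    """Multiply the individual UFs and an optional modifying factor (MF)."""
    if not 0 < modifying_factor <= 10:
        raise ValueError("MF must be greater than 0 and at most 10")
    if any(value <= 0 for value in factors.values()):
        raise ValueError("every UF must be positive")
    return prod(factors.values()) * modifying_factor


def adjusted_reference_value(pod, factors, modifying_factor=1.0):
    """Point of departure divided by the composite UF (and MF)."""
    return pod / composite_uf(factors, modifying_factor)


# Interspecies UF split into TK (4.0) and TD (2.5) subfactors; UFS = 3 is a hypothetical choice.
default_ufs = {"UFA_TK": 4.0, "UFA_TD": 2.5, "UFH": 10.0, "UFS": 3.0}
# Hypothetical chemical-specific TK factor replacing the 4.0 default.
refined_ufs = {**default_ufs, "UFA_TK": 1.5}

pod = 20.0  # hypothetical subchronic NOAEL, mg/kg-day
for label, ufs in (("default", default_ufs), ("CSAF-refined", refined_ufs)):
    print(f"{label}: composite UF = {composite_uf(ufs)}, reference value = "
          f"{adjusted_reference_value(pod, ufs):.3f} mg/kg-day")
```

Keeping the subfactors as named entries makes it explicit which default a chemical-specific value replaces.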

Scenario-Model-Parameter (SMP) Uncertainty Analysis Protocol

The SMP framework is an advanced, multi-step procedure for cumulative risk assessment that moves beyond parameter-only uncertainty [11].

Experimental Protocol: SMP Uncertainty Analysis [11]

1. Identify toxic effects and endpoints for all chemicals sharing a common mechanism of toxicity.

2. Identify exposure scenarios of concern (e.g., multiple routes, populations, timeframes).

3. Develop dose models for each exposure route (inhalation, ingestion, dermal).

4. Estimate exposure, dose, and risk using the selected models and scenarios.

5. Perform uncertainty analyses:

   - Parameter Uncertainty: Use Monte Carlo simulation to vary input parameters (e.g., body weight, ingestion rate).

   - Model Uncertainty: Run steps 1-4 using two or more equally plausible mathematical models.

   - Scenario Uncertainty: Run steps 1-4 under two or more equally plausible exposure scenarios.

6. Characterize risk by comparing the probability distributions of risk estimates generated from parameter-only uncertainty versus the combined SMP uncertainty. This identifies whether ignoring model and scenario sources meaningfully underestimates total uncertainty.
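The uncertainty analysis and risk characterization steps above can be illustrated with a small Monte Carlo sketch. It is not the published SMP implementation. The two dose models, the two scenario concentrations, the parameter distributions, and the RfD are all hypothetical. The sketch compares the 5th-95th percentile HQ range from a parameter-only run with the range from a run that also samples model and scenario.

```python
import random
from statistics import quantiles

RFD = 0.01  # hypothetical reference dose, mg/kg-day

# Two hypothetical, equally plausible dose models (model uncertainty).
MODELS = {
    "full_absorption": lambda conc, intake, bw: conc * intake / bw,
    "half_absorption": lambda conc, intake, bw: 0.5 * conc * intake / bw,
}
# Two hypothetical, equally plausible exposure scenarios: water concentration, mg/L.
SCENARIOS = {"typical_site": 0.05, "high_use_site": 0.15}


def simulate_hq(n, include_model_and_scenario, seed=42):
    """Monte Carlo HQs; parameters always vary, model/scenario vary only in the SMP run."""
    rng = random.Random(seed)
    hqs = []
    for _ in range(n):
        intake = rng.lognormvariate(0.0, 0.3)     # drinking-water intake, L/day
        bw = max(rng.gauss(70.0, 10.0), 30.0)     # body weight, kg (truncated at 30)
        if include_model_and_scenario:
            model = MODELS[rng.choice(list(MODELS))]
            conc = SCENARIOS[rng.choice(list(SCENARIOS))]
        else:
            model = MODELS["full_absorption"]
            conc = SCENARIOS["typical_site"]
        hqs.append(model(conc, intake, bw) / RFD)
    return hqs


def p5_p95(values):
    cuts = quantiles(values, n=20)  # 19 cut points: 5th, 10th, ..., 95th percentiles
    return cuts[0], cuts[-1]


param_low, param_high = p5_p95(simulate_hq(5000, include_model_and_scenario=False))
smp_low, smp_high = p5_p95(simulate_hq(5000, include_model_and_scenario=True))
print(f"Parameter-only HQ 5th-95th: {param_low:.3f}-{param_high:.3f}")
print(f"Combined SMP HQ 5th-95th:   {smp_low:.3f}-{smp_high:.3f}")
```

A markedly wider SMP range is the signal, described in the risk characterization step, that model and scenario uncertainty cannot be ignored.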

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for ERA & Uncertainty Research

| Item / Reagent | Primary Function in ERA Research | Application Notes |
|---|---|---|
| Standard Toxicity Test Organisms (e.g., Fathead minnow, Daphnia magna, Rat, Quail) | Generate foundational LC50, EC50, NOAEL, and LOAEL data for RQ calculation [8]. | Required for regulatory submissions. Choice of species is critical for extrapolation relevance. |
| Chemical-Specific Toxicokinetic/Toxicodynamic (TK/TD) Data | Enables replacement of default UFs with Chemical-Specific Adjustment Factors (CSAFs) [5] [10]. | Obtained from in vitro assays, PBPK models, or biomarker studies. Key for modern, data-driven assessments. |
| Exposure Modeling Software (e.g., T-REX, TerrPlant [8]) | Generates Estimated Environmental Concentrations (EECs) for RQ calculation. These screening models return point estimates; probabilistic exposure tools are needed to generate exposure distributions. | Models are scenario-driven. Understanding model algorithms and input requirements is essential for uncertainty analysis. |
| Statistical Software for Dose-Response Modeling (e.g., for Benchmark Dose (BMD) analysis) | Determines a Point of Departure (POD) with associated confidence intervals; unlike a NOAEL, this POD is not restricted to one of the tested doses [10]. | BMD modeling uses all dose-response data, providing a more robust and quantitative POD for UF application. |
| Monte Carlo Simulation Software | Propagates variability and uncertainty in exposure and toxicity parameters to generate a probability distribution of risk [11]. | Core tool for implementing parameter uncertainty analysis in the SMP framework and probabilistic ecological risk assessment. |

Troubleshooting Guides & FAQs

FAQ 1: When should I use a default Uncertainty Factor (UF) versus developing a Chemical-Specific Adjustment Factor (CSAF)?

- Issue: Concern that a default 10-fold UF may be overly conservative or, conversely, insufficiently protective for a specific chemical.

- Solution: Use default UFs during initial screening-level assessments or when chemical-specific data are lacking [10]. Develop and apply a CSAF when data exist to characterize interspecies differences (e.g., in vitro metabolism studies) or human variability (e.g., genetic polymorphism data) [5]. The trend in regulatory science is to replace defaults with CSAFs whenever possible to increase transparency and scientific rigor [10].

FAQ 2: My Risk Quotient (RQ) is marginally above the Level of Concern (LOC). What are the most defensible refinements?

- Issue: A screening-level RQ that exceeds the LOC (e.g., 1.0 for chronic risk) triggers regulatory concern, but it may rest on overly conservative assumptions.

- Solution: Follow a tiered refinement strategy:

- Refine Exposure: Replace generic estimates with chemical-specific fate data or monitored environmental concentrations. Use probabilistic modeling to represent exposure distributions [9].

- Refine Effects: Consider using a Benchmark Dose (BMD) instead of a NOAEL, or evaluate toxicity for a more relevant, locally present species [10].

- Refine the Assessment Framework: For chronic or population-level risks, consider moving from the RQ to a mechanistic population model (e.g., following Pop-GUIDE) that integrates life-history traits and ecological relevance [9].

FAQ 3: How do I quantitatively incorporate model and scenario uncertainty, not just parameter uncertainty?

- Issue: Traditional Monte Carlo analysis only addresses parameter uncertainty, potentially underestimating total uncertainty.

- Solution: Implement the Scenario-Model-Parameter (SMP) Uncertainty Analysis [11].

- Protocol: See the Scenario-Model-Parameter (SMP) Uncertainty Analysis Protocol above. Systematically run your assessment using alternate, equally plausible models (e.g., different exposure algorithms) and scenarios (e.g., different land-use or behavioral assumptions).

- Outcome: Compare the risk distribution from the parameter-only analysis to the combined SMP analysis. If the SMP distribution is significantly broader, model/scenario uncertainty is critical and must be reported to risk managers.

FAQ 4: What are the major pitfalls in interpreting a probabilistic risk assessment?

- Issue: Misinterpreting the output of a Monte Carlo simulation or probabilistic population model.

- Solution:

- Do not treat the mean or median risk estimate as a deterministic "answer." The entire distribution (e.g., the 5th to 95th percentile range) characterizes uncertainty.

- Clearly communicate that the assessment is based on a set of defined scenarios and models. Different assumptions will yield different results.

- Ensure transparency by fully documenting all input distributions, model choices, and scenario definitions. The value lies in the comparative analysis of assumptions, not a single "true" risk number [11] [9].

FAQ 5: The historical default of a 10-fold safety factor seems arbitrary. What is its scientific basis and is it still valid?

- Issue: The origin and justification for the 10x UF are unclear, leading to questions about its applicability.

- Solution: Recognize its historical and policy context. The 100-fold factor (10 each for inter- and intra-species) was first proposed in 1954 by Lehman and Fitzhugh as a pragmatic "margin of safety" for food additives [10]. Subsequent analyses, such as Renwick's work in the 1990s, provided a partial scientific basis by partitioning the interspecies factor of 10 into sub-factors for toxicokinetics (4.0) and toxicodynamics (2.5) [5]. Its validity today is conditional. It remains a necessary, health-protective default in the absence of data, but it is not a substitute for chemical-specific investigation. Modern best practice is to use the default UF as a starting point to be reduced or replaced as data permit [5] [10].


FAQ & Troubleshooting Guide: Applying Uncertainty Factors (UFs) in Ecological Risk Assessment Quotients

Q1: I am extrapolating from a mammalian lab species (e.g., rat) to a non-target wildlife species (e.g., bird) in my risk quotient calculation. Which Interspecies UF should I apply, and what are the common pitfalls? A: The standard default Interspecies UF is 10. It is typically split into subfactors for toxicokinetic (TK, 4.0) and toxicodynamic (TD, 2.5) differences (4.0 × 2.5 = 10). A common error is applying the full UF of 10 when chemical-specific adjustment factors (CSAFs) are available. Troubleshooting: If you have in vitro metabolism data (Vmax, Km) or protein-binding data for both species, you can derive a chemical-specific TK factor to replace the default of 4.0. Failing to use available data may overstate uncertainty. Consult the IPCS framework for CSAFs.

Q2: My toxicity study was only 28 days long, but I need to assess lifetime exposure for a long-lived species. How do I correctly apply the Duration Extrapolation UF? A: The default Duration UF for subchronic-to-chronic extrapolation is 10. The primary issue is misapplication when the available study is actually of chronic duration. Troubleshooting: First, confirm the study duration relative to the species' lifespan. A 28-day rodent study is shorter than even a standard 90-day subchronic study, so it is not chronic. For fish early life-stage tests, a UF may still be needed to account for full life-cycle effects. Ensure your exposure scenario justifies chronic extrapolation. If multiple subchronic studies show consistent effects, consider a reduced UF (e.g., 3-5) with justification.

Q3: I only have a LOAEL from my key study, not a NOAEL. What is the correct methodology for applying the LOAEL-to-NOAEL UF? A: The standard default UF is 10. The core problem is arbitrary application without assessing the "severity" of the LOAEL. Troubleshooting: Analyze the dose-response gradient. If the LOAEL is associated with only minimal, adaptive effects (e.g., slight, transient enzyme induction), a lower factor (e.g., 3) may be scientifically defensible. Alternatively, you can use benchmark dose (BMD) modeling on the raw data to derive a point of departure, which may circumvent the need for this UF entirely. Always document the severity of the effect at the LOAEL.

Q4: My toxicity database for the chemical has gaps (e.g., missing reproductive toxicity data for aquatic invertebrates). How do I quantify and apply the Database Insufficiency UF? A: There is no single default value; it is based on a weight-of-evidence assessment. The error is applying an arbitrary factor (e.g., 10) without structured analysis. Troubleshooting: Use a Modified Scoring System. Evaluate missing taxa (e.g., algae, daphnia, fish) and missing endpoints (acute, chronic, reproduction). Assign scores for each gap (see Table 1). The composite score guides the UF magnitude. A partial UF (e.g., 2-5) is often used for a specific, critical gap rather than a blanket factor.

Table 1: Database Insufficiency UF Scoring Guidance

| Database Gap | Severity Score | Recommended UF Range | Notes |
|---|---|---|---|
| Missing a major trophic level (e.g., no aquatic plant data) | High (3) | 3 - 10 | Critical for herbicides; UF at higher end. |
| Missing chronic data for a key representative species | High (3) | 3 - 10 | Required for long-term risk assessment. |
| Only acute data for all taxa | Moderate (2) | 2 - 5 | Limits capacity for chronic RQ derivation. |
| Missing a specific endpoint (e.g., reproduction) in an otherwise robust chronic study | Low (1) | 1 - 3 | May apply a small additional factor. |
| Complete data for all standard laboratory taxa | None (0) | 1 | No additional UF warranted. |
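Table 1 gives a score and a UF range per gap but does not state how multiple gaps combine. The sketch below transcribes the table and applies one assumed aggregation rule: the composite score is the sum of the gap scores, and the suggested range is that of the most severe gap. The rule and the gap keys are illustrative, not prescribed by the source.

```python
# Severity scores and UF ranges transcribed from Table 1.
GAP_TABLE = {
    "missing_trophic_level": (3, (3, 10)),
    "missing_key_chronic_data": (3, (3, 10)),
    "acute_data_only": (2, (2, 5)),
    "missing_specific_endpoint": (1, (1, 3)),
}


def database_uf_guidance(gaps):
    """Return (composite score, suggested UF range) for the identified gaps.

    Assumed aggregation rule (not prescribed by Table 1): the composite score is
    the sum of gap scores, and the UF range is that of the most severe gap.
    """
    if not gaps:
        return 0, (1, 1)  # complete database: no additional UF
    worst = max(gaps, key=lambda gap: GAP_TABLE[gap][0])
    return sum(GAP_TABLE[gap][0] for gap in gaps), GAP_TABLE[worst][1]


score, uf_range = database_uf_guidance(["missing_trophic_level", "missing_specific_endpoint"])
print(f"Composite score {score}; suggested Database UF between {uf_range[0]} and {uf_range[1]}")
```

The final value within the range remains a documented weight-of-evidence judgment.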

Experimental Protocols for Key Studies

Protocol 1: Deriving Chemical-Specific Adjustment Factors (CSAFs) for Interspecies Extrapolation

Objective: To replace default TK/TD UFs with data-derived values.

Methodology:

- Toxicokinetics (TK):

- Conduct in vitro metabolism studies using hepatic S9 or microsomal fractions from the laboratory species (rat) and the target wildlife species (e.g., bird liver).

- Determine kinetic parameters (Km, Vmax) for the chemical's major metabolic pathway.

- Calculate the Relative Metabolic Rate = (Vmax/Km in the target species) / (Vmax/Km in the rat).

- The inverse of this ratio can inform the TK component of the interspecies UF when the parent compound, rather than a metabolite, is the toxic moiety.

- Toxicodynamics (TD):

- Identify the molecular target (e.g., enzyme, receptor).

- Compare in vitro sensitivity (e.g., IC50, Ki) of the target protein between species using purified proteins or cell lines.

- The ratio of sensitivities informs the TD component.

Analysis: The composite CSAF = TK factor × TD factor. This replaces the default 10.
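The arithmetic of Protocol 1 can be sketched as follows. This is a simplification. It treats Vmax/Km as intrinsic clearance, ignores in vitro-to-in vivo scaling (microsomal protein content, liver weight, blood flow), assumes the parent compound is the toxic moiety, and requires matching units across species. All kinetic and IC50 values are hypothetical.

```python
def intrinsic_clearance(vmax, km):
    """In vitro intrinsic clearance, approximated as Vmax / Km."""
    return vmax / km


def interspecies_csaf(vmax_lab, km_lab, vmax_target, km_target, ic50_lab, ic50_target):
    """Return (TK factor, TD factor, composite CSAF) for lab -> target species extrapolation."""
    relative_metabolic_rate = (intrinsic_clearance(vmax_target, km_target)
                               / intrinsic_clearance(vmax_lab, km_lab))
    # Inverse of the relative rate; assumes the parent compound is the toxic moiety.
    tk_factor = 1.0 / relative_metabolic_rate
    # Greater than 1 when the target species' protein is more sensitive (lower IC50).
    td_factor = ic50_lab / ic50_target
    return tk_factor, td_factor, tk_factor * td_factor


# Hypothetical in vitro data: rat vs. bird hepatic microsomes and target-protein IC50s.
tk, td, csaf = interspecies_csaf(vmax_lab=2.0, km_lab=10.0, vmax_target=0.5, km_target=10.0,
                                 ic50_lab=5.0, ic50_target=2.5)
print(f"TK factor = {tk:.2f}, TD factor = {td:.2f}, CSAF = {csaf:.2f} (default: 10)")
```

Here the bird clears the compound four times more slowly and its target protein is twice as sensitive. The resulting CSAF of 8 sits close to, but below, the default of 10.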

Protocol 2: Benchmark Dose (BMD) Modeling to Replace LOAEL-to-NOAEL Extrapolation

Objective: To derive a POD without relying on the LOAEL/NOAEL dichotomy.

Methodology:

- Obtain the raw dose-response data from the toxicity study.

- Select a suite of mathematical models (e.g., log-logistic, quantal-linear, Weibull) in software (e.g., EPA BMDS, PROAST).

- Fit all models to the data, specifying a critical benchmark response (BMR), typically a 10% extra risk for quantal data or a 1 standard deviation change for continuous data.

- Calculate the BMD confidence interval (BMDL, BMDU).

- Select the BMDL (lower confidence limit) as the POD.

Analysis: The BMDL is used directly in the risk quotient (RQ = PEC / BMDL, where PEC is the predicted environmental concentration). Because this approach uses the full dose-response curve, it often eliminates the need for the LOAEL-to-NOAEL UF.
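Protocol 2 can be illustrated with a minimal pure-Python sketch. It is not a substitute for EPA BMDS or PROAST, which fit and compare several models and handle background response. The sketch fits a single two-parameter log-logistic model with zero background response (an assumption) by grid-search maximum likelihood. It takes the BMDL as the lower end of the one-sided 95% profile-likelihood interval. The quantal data are hypothetical, and the coarse grids limit precision.

```python
import math

BMR = 0.10                               # benchmark response: 10% extra risk
LOGIT_BMR = math.log(BMR / (1 - BMR))
CHI2_CRIT = 2.705543                     # chi-square(1 df) at 0.90 -> one-sided 95% bound


def logistic(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def log_likelihood(a, b, doses, n, y):
    """Binomial log-likelihood of a log-logistic model with zero background response."""
    ll = 0.0
    for dose, n_i, y_i in zip(doses, n, y):
        p = 0.0 if dose == 0 else logistic(a + b * math.log(dose))
        p = min(max(p, 1e-12), 1 - 1e-12)
        ll += y_i * math.log(p) + (n_i - y_i) * math.log(1 - p)
    return ll


def bmd_and_bmdl(doses, n, y, b_grid, bmd_grid):
    """Profile the likelihood over BMD; a = LOGIT_BMR - b*ln(BMD) puts the BMR exactly at BMD."""
    profile = [
        max(log_likelihood(LOGIT_BMR - b * math.log(bmd), b, doses, n, y) for b in b_grid)
        for bmd in bmd_grid
    ]
    ll_max = max(profile)
    bmd_hat = bmd_grid[profile.index(ll_max)]
    bmdl = min(bmd for bmd, ll in zip(bmd_grid, profile) if 2 * (ll_max - ll) <= CHI2_CRIT)
    return bmd_hat, bmdl


# Hypothetical quantal data: dose (mg/kg-day), animals per group, responders.
doses = [0, 10, 30, 100, 300]
n = [50, 50, 50, 50, 50]
y = [0, 2, 8, 25, 45]
b_grid = [0.05 * k for k in range(1, 201)]                        # slopes 0.05 to 10
bmd_grid = [0.1 * (3000 / 0.1) ** (i / 299) for i in range(300)]  # log-spaced 0.1 to 3000
bmd, bmdl = bmd_and_bmdl(doses, n, y, b_grid, bmd_grid)
print(f"BMD10 = {bmd:.1f}, BMDL10 = {bmdl:.1f} mg/kg-day")
```

With zero background, extra risk equals the modeled response probability. Fixing that probability at 0.10 at the candidate BMD is therefore what ties the reparameterization to the benchmark response.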

Visualizations

Title: Uncertainty Factor Application Decision Workflow

Title: Interspecies UF Covers TK and TD Variability

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in UF-Related Research |
|---|---|
| Hepatic S9 Fractions or Microsomes (from human, rat, bird, fish) | Used in in vitro metabolism studies to derive chemical-specific toxicokinetic data for Interspecies CSAF calculation. |
| Species-Specific Target Proteins (e.g., recombinant enzymes, receptors) | For comparing toxicodynamic sensitivity between species via IC50/Ki assays, informing the TD component of the Interspecies UF. |
| Benchmark Dose (BMD) Software (e.g., EPA BMDS, R package drc) | Enables dose-response modeling to derive a BMDL, eliminating the need for the LOAEL-to-NOAEL UF and providing a more robust POD. |
| Standardized Test Organism Cultures (e.g., Daphnia magna, Pseudokirchneriella subcapitata, fathead minnow embryos) | Essential for filling database gaps. Chronic life-cycle tests with these species can reduce or eliminate the Database Insufficiency UF. |
| High-Throughput Screening (HTS) Assays (e.g., ToxCast/Tox21 assays) | Provides preliminary data on multiple biological pathways across taxa, helping to identify critical data gaps and prioritize testing for Database UF assessment. |
| Physiologically Based Toxicokinetic (PBTK) Modeling Software (e.g., GastroPlus, Simcyp) | Allows extrapolation of internal dose across species and life stages using physiological parameters, refining the Interspecies and Duration UF application. |

Technical Support Center: Troubleshooting Default Values in Ecological Risk Assessment (ERA)

This technical support center assists researchers, scientists, and drug development professionals in navigating the establishment, application, and inherent limitations of default values within Ecological Risk Assessment (ERA) quotient methods. Set within the broader context of uncertainty factors in ecological risk assessment research, this guide provides targeted troubleshooting for common experimental and interpretive challenges [9].

Frequently Asked Questions (FAQs)

FAQ 1: What is a default value in ERA, and why is it used? A default value is a standardized, conservative parameter used in screening-level ecological risk assessments when chemical- or species-specific data are lacking [9]. Default values typically feed the most common screening tool, the Risk Quotient (RQ), calculated as the ratio of an Estimated Environmental Concentration (EEC) to a Toxicity Endpoint (e.g., LC50, NOAEC) [8]. Defaults provide a consistent starting point for prioritizing the chemicals and scenarios that require more refined, resource-intensive assessment [9].

FAQ 2: My Risk Quotient (RQ) exceeds the Level of Concern (LOC). What are my next steps? An RQ > LOC indicates a potential risk at the screening level. Your next steps involve tiered refinement to reduce uncertainty [9]:

- Refine Exposure Estimates: Replace generic model defaults with site-specific data (e.g., local application rates, soil types, water bodies).

- Refine Effects Data: If available, use toxicity data for more relevant, sensitive local species or life stages instead of standard test species.

- Consider Probabilistic Approaches: Move beyond single-point RQs to use full exposure and effects distributions for a probabilistic risk estimate [9].

- Consult Higher-Tier Models: Explore the use of mechanistic population models (e.g., following Pop-GUIDE) to understand ecological relevance [9].

FAQ 3: How are the specific toxicity endpoint defaults (e.g., LC50, NOAEC) selected for different species? Regulatory guidelines prescribe standard test species and endpoints for consistency. The selected default is typically the most sensitive endpoint (lowest value) from a suite of required toxicity tests for a given assessment type [8]. See the table of standard Risk Quotient formulas by organism type for standard endpoints.

FAQ 4: What are the major sources of uncertainty when using default RQ methods? Key uncertainties are inherent in both components of the RQ [9]:

- Exposure (EEC): Temporal/spatial averaging obscures peak exposures; point estimates ignore variable exposure profiles [9].

- Effects (Toxicity): Laboratory-to-field extrapolation; single-species tests to community/population impacts; acute-to-chronic extrapolation [9].

- The Quotient Itself: A scalar RQ cannot quantify probability, magnitude of effect, or recoverability. It conflates different exposure/effect distribution shapes into a single number [9].

FAQ 5: How can I effectively communicate the limitations of default-value assessments in my research reports? Adhere to the TCCR principles (Transparent, Clear, Consistent, Reasonable) for risk characterization [8]. Explicitly:

- State that the assessment is a screening-level tool.

- List all default values and assumptions used.

- Qualify conclusions by describing the conservative nature of defaults.

- Recommend specific avenues for refinement (e.g., "A probabilistic assessment is recommended to characterize the likelihood of effects given the variable exposure pattern") [9].

Troubleshooting Guide: Common Experimental & Analytical Issues

Problem 1: Inconsistent or Unclear Risk Characterization Conclusions

- Symptoms: Difficulty determining "pass/fail"; contradictory interpretations of the same RQ value; feedback indicates results are not useful for decision-making [8].

- Root Cause: Lack of adherence to a structured risk characterization framework.

- Solution:

- Ensure your risk characterization integrates exposure and effects analyses clearly [8].

- Explicitly describe all uncertainties, assumptions, and the strengths/limitations of the data [8].

- Synthesize a clear conclusion about risk using the TCCR principles [8].

- Use a standardized report structure (Title, Abstract, Introduction, Methods, Results, Discussion, References) to ensure completeness [12] [13].

Problem 2: The Default Toxicity Endpoint Seems Ecologically Irrelevant for My Assessment Scenario

- Symptoms: The standard lab test species is phylogenetically or functionally distant from the species of concern; the endpoint (e.g., mortality) doesn't align with the assessment goal (e.g., population sustainability).

- Root Cause: Defaults are standardized for broad regulatory application, not specific ecological scenarios [9].

- Solution:

- Document the Limitation: Clearly state this uncertainty in your assessment [8].

- Propose a Refined Endpoint: If data exist, justify and use a more relevant endpoint (e.g., a sub-lethal reproduction NOAEC for a population-level assessment) [8].

- Advocate for Modeling: For higher-tier assessment, propose using a mechanistic population model that can translate individual-level effects to population-relevant metrics [9].

Problem 3: My Calculated RQ is Borderline Relative to the LOC, Making Risk Management Decisions Difficult

- Symptoms: The RQ is slightly above or below the LOC; small changes in input assumptions flip the conclusion.

- Root Cause: Deterministic point estimates do not account for natural variability or measurement uncertainty [9].

- Solution:

- Conduct Sensitivity Analysis: Systematically vary key input parameters (e.g., application rate, toxicity value) within plausible ranges to see how the RQ changes.

- Perform Probabilistic Analysis: Use available data to model the EEC and/or toxicity endpoint as distributions (e.g., using @RISK or Crystal Ball software). Calculate the probability that exposure exceeds toxicity.

- Present a Risk Profile: Instead of a single "pass/fail," present a range of plausible outcomes to support more nuanced decision-making [9].
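A minimal sketch of the one-at-a-time sensitivity check described above, assuming a deterministic RQ = EEC / toxicity endpoint and a single LOC. The function names, input values, plausible ranges, and the LOC of 0.5 are hypothetical placeholders chosen to show how a borderline RQ can flip across the LOC; they are not regulatory values.

```python
# One-at-a-time (OAT) sensitivity check for a borderline risk quotient.
# All numbers below are hypothetical placeholders for illustration.

def risk_quotient(eec, endpoint):
    """Deterministic RQ = EEC / toxicity endpoint (same units)."""
    return eec / endpoint


def oat_sensitivity(base, ranges, loc):
    """Vary one input at a time across its plausible range, holding the
    others at their base values; record the RQ and whether it exceeds the LOC."""
    results = {}
    for name, (low, high) in ranges.items():
        rows = []
        for value in (low, base[name], high):
            inputs = dict(base, **{name: value})
            rq = risk_quotient(inputs["eec"], inputs["endpoint"])
            rows.append((value, round(rq, 3), rq > loc))
        results[name] = rows
    return results


base = {"eec": 4.5, "endpoint": 10.0}                   # e.g., ug/L (hypothetical)
ranges = {"eec": (3.0, 6.0), "endpoint": (8.0, 14.0)}  # plausible ranges (hypothetical)
loc = 0.5                                               # hypothetical LOC

for name, rows in oat_sensitivity(base, ranges, loc).items():
    for value, rq, exceeds in rows:
        print(f"{name} = {value}: RQ = {rq}, exceeds LOC: {exceeds}")
```

With these inputs the base RQ (0.45) sits below the LOC, but the upper EEC or the lower toxicity value pushes it above, which is the signal that a probabilistic analysis or a risk profile is more informative than a single pass/fail call.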

Experimental Protocols & Methodologies

Protocol 1: Standardized Calculation of Acute and Chronic Risk Quotients (RQs) This protocol outlines the deterministic quotient method as per EPA guidelines [8].

- 1. Objective: To derive a screening-level estimate of risk by comparing a point estimate of exposure to a point estimate of toxicity.

- 2. Materials: See "The Scientist's Toolkit" table.

- 3. Procedure:

- Step 1 - Exposure Characterization (EEC): Using a fate & transport model (e.g., T-REX for terrestrial, PRZM/EXAMS for aquatic), calculate the relevant EEC. For acute RQs, this is typically a peak concentration (e.g., peak water concentration). For chronic RQs, this is a time-weighted average (e.g., 21-day average for invertebrates, 60-day for fish) [8].

- Step 2 - Effects Characterization: Obtain the appropriate toxicity endpoint from guideline studies. For acute RQs, this is typically the LC50 or EC50 (for the most sensitive standard test species). For chronic RQs, this is the No Observed Adverse Effect Concentration (NOAEC) [8].

- Step 3 - Risk Quotient Calculation: Apply the formula: RQ = EEC / Toxicity Endpoint.

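- Step 4 - Risk Estimation: Compare the calculated RQ to the pre-defined Levels of Concern (LOCs). LOCs vary by regulatory agency, taxon, and assessment type (e.g., USEPA's pesticide program uses an LOC of 0.5 for acute risk to non-listed species, 0.1 for acute risk to listed (endangered) birds and mammals, 0.05 for acute risk to listed aquatic animals, and 1.0 for chronic risk).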

- 4. Reporting: Report the RQ, the LOC, and a clear statement of potential risk. All input values, models, and assumptions must be documented [8] [12].
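Steps 3 and 4 of the protocol can be sketched as below. This is an illustrative sketch only: the `RQResult` structure and `screening_rq` helper are hypothetical names, the EEC and endpoint values are invented, and the LOCs are passed in as parameters (0.5 acute, 1.0 chronic here) so they can be set to whatever the applicable agency guidance specifies.

```python
from dataclasses import dataclass


@dataclass
class RQResult:
    assessment: str
    eec: float
    endpoint: float
    rq: float
    loc: float

    @property
    def exceeds_loc(self) -> bool:
        return self.rq > self.loc


def screening_rq(assessment, eec, endpoint, loc):
    """Step 3: RQ = EEC / toxicity endpoint. Step 4: compare with the LOC."""
    if endpoint <= 0:
        raise ValueError("toxicity endpoint must be positive")
    return RQResult(assessment, eec, endpoint, eec / endpoint, loc)


# Hypothetical aquatic inputs (ug/L): peak EEC vs. LC50 for the acute RQ,
# 60-day average EEC vs. fish NOAEC for the chronic RQ.
acute = screening_rq("acute, fish", eec=12.0, endpoint=40.0, loc=0.5)
chronic = screening_rq("chronic, fish", eec=3.0, endpoint=2.5, loc=1.0)

for r in (acute, chronic):
    status = "exceeds" if r.exceeds_loc else "below"
    print(f"{r.assessment}: RQ = {r.rq:.2f} (LOC {r.loc}) -> {status} LOC")
```

Keeping the inputs, RQ, and LOC together in one record makes the reporting step easier, since every value that must be documented travels with the result.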

Protocol 2: Refinement Using a Probabilistic Risk Assessment (PRA) Approach This advanced protocol addresses limitations of deterministic RQs by incorporating variability [9].

- 1. Objective: To estimate the probability and magnitude of adverse ecological effects by integrating distributions of exposure and toxicity.

- 2. Materials: Statistical software (R, Python), Monte Carlo simulation add-ins (@RISK), datasets of exposure concentrations and/or toxicity values.

- 3. Procedure:

- Step 1 - Develop Distributions: Fit appropriate statistical distributions to exposure data (e.g., modeled or monitored concentrations over time/space) and/or species sensitivity distributions (SSDs) to toxicity data.

- Step 2 - Monte Carlo Simulation: Run a simulation (e.g., 10,000 iterations). For each iteration, randomly select a value from the exposure distribution and a value from the effects distribution. Calculate a "probabilistic RQ" for that iteration.

- Step 3 - Analyze Output: The result is a distribution of RQs. Analyze the proportion of iterations where RQ > 1 (or another threshold). This proportion represents the probability of exceeding the effects threshold.

- Step 4 - Create a Risk Curve: Plot the cumulative probability of effects against the exposure concentration or RQ magnitude.

- 4. Reporting: Report the probability of adverse effects (e.g., "There is a 12% probability that the chronic exposure concentration exceeds the NOAEC for the most sensitive 10% of species"). Include the developed distributions and simulation parameters [9].
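A minimal Monte Carlo sketch of Steps 1 to 4, assuming log-normal distributions for both exposure and species sensitivity. The geometric means, geometric standard deviations, seed, and thresholds are hypothetical; in practice they would come from fitted exposure data and an SSD. The risk curve is printed as points rather than plotted.

```python
import numpy as np

rng = np.random.default_rng(seed=42)
n_iter = 10_000

# Step 1 (hypothetical fits): log-normal exposure concentrations (ug/L)
# with geometric mean 2.0 and geometric standard deviation 2.5 ...
exposure = rng.lognormal(mean=np.log(2.0), sigma=np.log(2.5), size=n_iter)
# ... and a log-normal SSD of chronic NOAECs with geometric mean 20 ug/L, GSD 4.
toxicity = rng.lognormal(mean=np.log(20.0), sigma=np.log(4.0), size=n_iter)

# Step 2: one "probabilistic RQ" per iteration from paired random draws.
rq = exposure / toxicity

# Step 3: proportion of iterations in which exposure exceeds toxicity.
p_exceed = np.mean(rq > 1.0)

# Step 4: points on a simple exceedance (risk) curve over RQ thresholds.
thresholds = np.array([0.01, 0.1, 0.5, 1.0, 2.0])
exceedance = [(t, np.mean(rq > t)) for t in thresholds]

print(f"P(RQ > 1) = {p_exceed:.3f}")
for t, p in exceedance:
    print(f"P(RQ > {t:g}) = {p:.3f}")
```

For these assumed distributions the analytical exceedance probability is roughly 8%, so the simulated value should land close to that; the seed is fixed only to make the run reproducible.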

Data Presentation: Standard Default Values & Endpoints

Table 1: Standard Toxicity Endpoints Used as Defaults in Screening-Level Risk Quotient Calculations [8]

| Assessment Type | Receptor Group | Standard Toxicity Endpoint (Default) |
|---|---|---|
| Acute Assessment | Terrestrial Birds & Mammals | Lowest available LD₅₀ (oral) or LC₅₀ (dietary) |
| Chronic Assessment | Terrestrial Birds & Mammals | Lowest available NOAEC from reproduction test |
| Acute Assessment | Aquatic Fish & Invertebrates | Lowest available LC₅₀ or EC₅₀ (from acute tests) |
| Chronic Assessment | Aquatic Invertebrates | Lowest available NOAEC from life-cycle test |
| Chronic Assessment | Aquatic Fish | Lowest available NOAEC from early life-stage or full life-cycle test |
| Plant Assessment | Terrestrial & Aquatic Plants | EC₂₅ (for non-listed species) or NOAEC/EC₀₅ (for listed species) |

Table 2: Key Uncertainties and Limitations Associated with Default Risk Quotient Methodology [9]

| Component | Source of Uncertainty | Consequence for Risk Estimation |
|---|---|---|
| Exposure (EEC) | Use of a single high-percentile point estimate (e.g., 90th percentile). | Obscures exposure frequency, duration, and timing relative to species life cycles. May be under- or over-conservative. |
| Exposure (EEC) | Lack of spatial explicitness. | Cannot identify risk in critical habitats if they don't coincide with the highest average exposure. |
| Effects (Toxicity) | Use of limited surrogate species. | May not protect all real-world species. Laboratory conditions do not reflect field stressors. |
| Effects (Toxicity) | Use of individual-level endpoints (mortality, growth). | Difficult to extrapolate to population-level consequences (abundance, extinction risk). |
| Risk Quotient (RQ) | Scalar, deterministic calculation. | Provides no information on the probability, severity, or reversibility of effects. Two scenarios with the same RQ can have vastly different risks. |

Visualizations: Workflows and Relationships

Diagram 1: Tiered Ecological Risk Assessment Workflow with Default Path

Diagram 2: The Science-Policy Interface in Ecological Decision-Making

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Ecological Risk Assessment Research

| Item | Function in ERA Research | Key Considerations |
|---|---|---|
| Standard Test Organisms (e.g., Fathead minnow, Rainbow trout, Water flea (Daphnia), Earthworm, Zebrafish) | Surrogate species for generating regulatory-accepted toxicity endpoints (LC50, NOAEC) [8]. | Maintain cultures under guideline conditions (OECD, EPA). Use consistent age/size classes for test reproducibility. |
| Formulated Chemical Test Substance | The agent of concern for which toxicity is being characterized. | Use highest purity available. Characterize stability and solubility in test media. Prepare fresh stock solutions as needed. |
| Reconstituted Water / Standard Soil | Provides a consistent, defined medium for aquatic or terrestrial toxicity tests. | Follow standard recipes (e.g., EPA reconstituted water). Monitor and document key parameters (pH, hardness, temperature, organic matter). |
| Analytical Grade Solvents & Reagents | For chemical extraction, cleanup, and analysis of test concentrations. | Essential for verifying exposure concentrations (Verification of Exposure). Use appropriate blanks and spikes. |
| Data Analysis Software (e.g., R, Python, SigmaPlot, ToxCalc) | For calculating toxicity endpoints, running statistical analyses, and performing probabilistic simulations [9]. | Use validated scripts or procedures. For probabilistic assessment, ensure sufficient iterations (e.g., >10,000) for stable outputs. |
| Fate & Transport Models (e.g., T-REX, TerrPlant, PRZM/EXAMS) | Predict environmental concentrations (EECs) of chemicals in various compartments for exposure assessment [8]. | Calibrate and validate models with local data when possible. Understand and document all default assumptions within the model. |

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center addresses common experimental and methodological challenges within ecological and human health risk assessment (ERA/HHRA), framed within a thesis investigating uncertainty factors in risk quotient research. The guides below provide targeted solutions for researchers, scientists, and drug development professionals.

Core Concepts: Variability vs. Uncertainty

Q1: What is the fundamental difference between variability and uncertainty, and why does it matter for my risk assessment? [14]

- A: Variability refers to true heterogeneity in a population or system—differences in body weight, species sensitivity, or seasonal chemical concentrations. It cannot be reduced, only better characterized. Uncertainty stems from a lack of knowledge—measurement errors, model simplifications, or data gaps. It can be reduced with better data. Confusing these leads to poor study design; for instance, misinterpreting natural variation (variability) as a measurement flaw (uncertainty).

Q2: In the context of risk quotient (RQ) methods, where do variability and uncertainty most commonly originate? [14] [8] [15]

- A: In deterministic RQ calculations (RQ = Exposure / Toxicity), key sources are:

- Exposure Estimation: Variability in environmental fate, organism behavior, and spatial/temporal concentration gradients. Uncertainty from using modeled versus measured data, or improper scaling.

- Toxicity Endpoint Selection: Variability among species, life stages, and test populations. Uncertainty from extrapolating laboratory data to field conditions, or from subchronic to chronic effects.

- Assessment Assumptions: Uncertainty from professional judgment in defining exposure scenarios, aggregating disparate data, or selecting inappropriate models.

Troubleshooting Experimental Design & Data Collection

Q3: My environmental sampling results show high unexplained scatter. How can I design a campaign to better characterize variability and limit uncertainty? [14] [16]

- Problem: Unrepresentative sampling masks true spatial/temporal patterns and inflates uncertainty.

- Solution: Implement a tiered, hypothesis-driven design.

- Pilot Study: Conduct preliminary sampling to gauge expected variance.

- Stratified Sampling: Divide the study area into homogeneous strata (e.g., by soil type, proximity to source, land use) based on pilot data or GIS analysis.

- Composite vs. Grab Sampling: For integrated exposure, use composite sampling. For peak or episodic events, use frequent grab samples. Passive samplers can provide time-weighted averages but require careful calibration [16].

- Quality Assurance/Quality Control (QA/QC): Include field blanks, duplicates, and certified reference materials to quantify and control analytical uncertainty.

Table 1: Common Sampling Uncertainties and Mitigation Strategies [16]

| Uncertainty Source | Potential Impact | Recommended Mitigation Strategy |
|---|---|---|
| Spatial Heterogeneity | Unrepresentative point samples, biased mean estimates. | Implement systematic or stratified random sampling design; increase sample density in high-gradient zones. |
| Temporal Variability | Missed peak exposures or seasonal trends. | Increase sampling frequency; align timing with hypothesized driver (e.g., rainfall, application season). |
| Method Detection Limit | Censored data (non-detects), biased low-end distribution. | Use most sensitive analytical method available; apply robust statistical methods for left-censored data. |
| Sample Preservation & Handling | Analyte degradation, contamination. | Strict adherence to chain-of-custody; preserve and chill samples immediately after collection. |

Q4: I am assessing a novel emerging contaminant with scarce toxicity data. What is a robust methodological pathway for developing a preliminary risk quotient? [17] [18]

- Problem: Lack of substance-specific data leads to high uncertainty in hazard identification and dose-response.

- Solution: Follow a tiered workflow integrating New Approach Methodologies (NAMs) and conservative uncertainty factors (UFs).

- Hazard Identification: Use QSAR models and read-across from structurally similar compounds for initial hazard flagging [18].

- Dose-Response: If in vivo data is absent, consider in vitro bioassay data with IVIVE (in vitro to in vivo extrapolation) to estimate a point of departure [18].

- Apply Uncertainty Factors: Use standardized UFs to account for interspecies, intraspecies, and duration extrapolations (see Table 2). Document all assumptions transparently.

- Iterative Refinement: The preliminary RQ should trigger a "data call-in" or targeted testing strategy to replace UFs with data.

Table 2: Common Uncertainty Factors (UFs) in Screening-Level Assessments [15]

| Extrapolation Type | Typical UF | Rationale and Application Notes |
|---|---|---|
| Laboratory to Field | 1 - 100 | Accounts for differences between controlled lab conditions and variable environmental conditions. Highly context-dependent. |
| Interspecies (e.g., rat to human) | 10 | Default factor for extrapolating toxicity data from test species to a different target species. |
| LOAEL to NOAEL | 1 - 10 | Applied when only a Lowest Observed Adverse Effect Level is available, to estimate a No Observed Adverse Effect Level. |
| Subchronic to Chronic | 1 - 10 | Extrapolates from shorter-term study results to predict chronic, long-term effects. |

Troubleshooting Data Analysis & Model Application

Q5: My probabilistic risk assessment model yields highly uncertain outputs. How can I diagnose the source of this uncertainty? [14]

- Problem: Output uncertainty can stem from input variability, model structure, or parameter uncertainty.

- Solution: Conduct a comprehensive uncertainty and sensitivity analysis.

- Parameter Uncertainty: Use Monte Carlo simulation with defined probability distributions for each input parameter (e.g., log-normal for body weight, uniform for degradation rates). This quantifies how input variability propagates to the output.

- Model Sensitivity: Perform sensitivity analysis (e.g., Morris method, Sobol indices) to identify which input parameters drive the majority of output variance. Focus data refinement efforts on these "high-leverage" parameters.

- Model Structure/Scenario Uncertainty: Test alternative, plausible model formulations or exposure scenarios (e.g., different fate models, receptor behaviors). Compare outcomes to gauge structural uncertainty.
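A minimal sketch of the first two diagnostic steps, assuming a simple dose model (dose = C × IR / BW) with hypothetical input distributions. For ranking inputs it swaps in Spearman rank correlation between each sampled input and the output, a simpler screening technique than the Morris method or Sobol indices named above; it ranks influence but does not decompose variance the way Sobol indices do.

```python
import numpy as np

rng = np.random.default_rng(seed=1)
n = 10_000

# Hypothetical input distributions for a simple dose model: dose = C * IR / BW.
inputs = {
    "C_mg_per_L": rng.lognormal(mean=np.log(0.05), sigma=1.0, size=n),  # concentration
    "IR_L_per_day": rng.uniform(1.0, 3.0, size=n),                       # intake rate
    "BW_kg": rng.lognormal(mean=np.log(70.0), sigma=0.2, size=n),        # body weight
}

# Monte Carlo propagation: one model output per joint draw of the inputs.
dose = inputs["C_mg_per_L"] * inputs["IR_L_per_day"] / inputs["BW_kg"]


def ranks(x):
    """Rank transform (0..n-1); ties are not expected for continuous draws."""
    return np.argsort(np.argsort(x))


def spearman(x, y):
    """Spearman rank correlation: the Pearson correlation of the ranks."""
    return np.corrcoef(ranks(x), ranks(y))[0, 1]


sensitivity = {name: spearman(values, dose) for name, values in inputs.items()}

# Inputs with the largest |rho| are candidate "high-leverage" parameters.
for name, rho in sorted(sensitivity.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>14}: rho = {rho:+.2f}")
```

With these assumed spreads the concentration term dominates, so refining concentration data would reduce output uncertainty more than refining body weight.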

Diagram Title: Workflow for Diagnosing Model Output Uncertainty

Q6: How should I handle non-detect or data-below-detection-limit values in my exposure dataset when calculating an EEC (Estimated Environmental Concentration)? [16]

- Problem: Substituting non-detects with zero, the detection limit (DL), or DL/2 can bias the EEC and misrepresent variability.

- Solution: Use robust statistical methods tailored to the proportion of non-detects.

- <20% Non-Detects: Use simple substitution (e.g., DL/√2) or maximum likelihood estimation.

- 20-50% Non-Detects: Use Kaplan-Meier or robust regression on order statistics methods designed for censored data.

- >50% Non-Detects: The dataset may be inadequate for defining a concentration distribution. Consider reporting as "< DL" or using the percentile bootstrap method to estimate confidence limits on the mean.

- Critical Step: Always conduct the final risk calculation under multiple plausible substitution scenarios to bound the uncertainty.
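The "Critical Step" of bounding the result can be sketched as below: the same summary statistics are recomputed under several substitution rules for non-detects. The monitoring values and detection limit are hypothetical. This illustrates only the bounding step; with 30% non-detects, as in this example, the guidance above points to Kaplan-Meier or ROS for the primary estimate.

```python
import math

import numpy as np

# Hypothetical monitoring data (ug/L); None marks a non-detect.
detection_limit = 0.10
raw = [0.42, None, 0.18, 0.25, None, 0.61, 0.33, None, 0.15, 0.27]

SUBSTITUTIONS = {
    "zero": 0.0,
    "DL/2": detection_limit / 2,
    "DL/sqrt(2)": detection_limit / math.sqrt(2),
    "DL": detection_limit,
}


def eec_summary(values, nd_value):
    """Replace non-detects with nd_value; return (mean, 90th percentile)."""
    data = np.array([nd_value if v is None else v for v in values], dtype=float)
    return data.mean(), np.percentile(data, 90)


nd_fraction = sum(v is None for v in raw) / len(raw)
print(f"Non-detect fraction: {nd_fraction:.0%}")
for label, nd_value in SUBSTITUTIONS.items():
    mean, p90 = eec_summary(raw, nd_value)
    print(f"{label:>11}: mean = {mean:.3f}, P90 = {p90:.3f}")
```

If the risk conclusion is the same at both the "zero" and "DL" bounds, the choice of substitution rule does not drive the decision; if it flips, the censored-data method matters and should be reported.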

Advanced Topics: Emerging Contaminants & Human Health Integration

Q7: My ecological risk assessment for an emerging contaminant (e.g., microplastics, pharmaceuticals) is criticized for using inappropriate endpoints. What's a valid framework? [19] [16]

- Problem: Conventional endpoints (mortality, growth) may not capture sublethal or novel mechanisms of action.

- Solution: Adopt a multiple-lines-of-evidence and weight-of-evidence approach.

- Expand Endpoints: Incorporate biochemical (biomarkers), behavioral, or reproductive endpoints relevant to the contaminant's suspected mode of action.

- Use Composite Indices: For complex contaminants like microplastics, calculate indices like the Pollution Load Index (PLI), Polymer Hazard Index (PHI), and Ecological Risk Index (ERI) to integrate polymer toxicity and abundance [19] (see Table 3).

- Field Validation: Where possible, correlate laboratory-based RQs with field-based metrics of ecosystem condition (e.g., benthic community diversity).

Table 3: Example Risk Indices for Microplastic Contamination in a Shipbreaking Yard [19]

| Matrix | MP Abundance | Dominant Polymer | Pollution Load Index (PLI) | Polymer Hazard Index (PHI) | Ecological Risk Index (ERI) | Interpretation |
|---|---|---|---|---|---|---|
| Sediment | 73.54 ± 8.61 items/kg | PET (25%), PP (25%) | 1.02 - 1.433 | Up to 254.37 | Up to 312.88 | Moderate to considerable contamination risk. |
| Surface Water | 218.56 ± 19.12 items/m³ | PET (37.5%), PS (25%) | 1.02 - 1.68 | Up to 265.68 | Up to 433.06 | Moderate to considerable contamination risk. |

Q8: How do I coherently integrate variability and uncertainty from both ecological and human health assessments for a single stressor? [14] [20] [17]

- Problem: Ecological and human health assessments run in parallel but are often disconnected, missing shared exposure pathways and compounding uncertainties.

- Solution: Develop an integrated conceptual site model that unifies the assessments.

- Shared Exposure Pathways: Map pathways (e.g., soil → plant → invertebrate → bird; same soil → plant → human). This identifies common nodes (e.g., soil concentration) where uncertainty reduction benefits both assessments.

- Unified Uncertainty Analysis: Perform a coordinated probabilistic assessment where possible, using shared input distributions for common exposure parameters.

- Risk Description: Present results using parallel risk curves or comparative risk quotients, explicitly stating where uncertainty is joint (shared parameters) versus separate (toxicological responses).

Diagram Title: Integrated Exposure Pathways for Ecological and Human Health Risk

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Materials and Models for Risk Assessment Research

| Tool Category | Specific Item / Model | Primary Function in Risk Assessment | Key Reference / Source |
|---|---|---|---|
| Analytical Instrumentation | GC-MS (Gas Chromatography-Mass Spectrometry) | Identification and quantification of organic contaminants (e.g., PAHs, PCBs) in environmental samples. | [21] [16] |
| | ICP-MS (Inductively Coupled Plasma Mass Spectrometry) | High-sensitivity quantification of trace metals and elements in water, soil, and tissue samples. | [22] |
| Exposure & Fate Models | T-REX (Terrestrial Residue Exposure) model | EPA model for estimating pesticide exposure and calculating risk quotients for birds and mammals. | [8] |
| | TerrPlant model | EPA model for estimating exposure and risk quotients for non-target terrestrial plants. | [8] |
| Statistical & Uncertainty Analysis | Monte Carlo Simulation Software (e.g., @RISK, Crystal Ball) | Propagates input variability through models to produce probabilistic risk estimates. | [14] |
| | Positive Matrix Factorization (PMF) model | A receptor model used for quantitative source apportionment of contaminants (e.g., metals). | [22] |
| New Approach Methodologies (NAMs) | QSAR (Quantitative Structure-Activity Relationship) models | Predicts physicochemical and toxicological properties of chemicals based on molecular structure. | [18] |
| | In vitro high-throughput screening (HTS) assays | Provides rapid, mechanistic toxicity data for hazard identification and prioritization. | [18] |

From Theory to Practice: Modern Methodologies for Applying and Calculating Uncertainty Factors

This technical support center is designed to assist researchers in implementing the standard calculation for composite Uncertainty Factors (UFs) within Hazard Quotient (HQ) frameworks. The HQ is a fundamental ratio used in ecological and human health risk assessments to compare exposure levels to a toxicity reference value [23] [2]. A critical component of deriving these reference values is the application of UFs, which account for scientific uncertainties when extrapolating from experimental data to protective human or ecological health benchmarks [10]. This resource, framed within broader thesis research on refining UF application, provides targeted troubleshooting and methodologies to ensure robust, transparent, and reproducible risk assessments.

Troubleshooting & FAQ

This section addresses common operational challenges encountered when calculating Hazard Quotients with composite Uncertainty Factors.

Q1: What is the most common source of error when selecting a Point of Departure (PoD) for the HQ calculation?

A: The most frequent error is the misapplication of the PoD to an incompatible exposure scenario. The PoD (e.g., NOAEL, LOAEL, BMD) is duration- and route-specific [10] [23].

- Troubleshooting Steps:

- Identify Exposure Context: Precisely define your assessment's exposure duration (acute, intermediate, chronic) and route (oral, inhalation) [23].

- Match to PoD: Select a toxicity study (and its corresponding PoD) that most closely matches this context. A chronic HQ calculation requires a PoD from a chronic study [23].

- Apply Duration Extrapolation UF (UFS): If a perfect match is unavailable (e.g., using a subchronic study for a chronic assessment), you must apply the appropriate UFS factor (often between 2 and 10) to account for the uncertainty [10].

- Document Justification: Clearly state the rationale for your PoD selection and any applied UFS in your methodology [10].

Q2: My composite UF (UFc) seems disproportionately large. How can I justify its magnitude to reviewers?

A: A large UFc often results from the multiplicative application of multiple default 10-fold factors [10]. The key to justification is transparency and data-driven adjustment.

- Troubleshooting Steps:

- Audit Each Factor: List each individual UF (UFA, UFH, UFL, UFS, UFD) and its default value (often 10) [10].

- Seek Chemical-Specific Data: For each factor, ask if chemical-specific data exists to replace the default.

- Example: Pharmacokinetic data might show the interspecies difference (UFA) is only 4-fold, not 10 [10].

- Replace Defaults: Substitute default values with chemical-specific adjustment factors where possible. This is the established trend to increase scientific rigor [10].

- Present Rationale: In your report, create a table justifying each factor's final value, citing either the default convention or the specific study used for refinement [10].
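The audit-and-replace steps above can be sketched as a small function that builds the justification table alongside the composite factor. The `composite_uf` name, the 10-fold defaults, and the refined interspecies value of 4 (mirroring the pharmacokinetic example above) are illustrative assumptions, not values taken from a specific guideline.

```python
from math import prod

# Default values following the common 10-fold convention described above.
DEFAULTS = {"UFA": 10, "UFH": 10, "UFL": 10, "UFS": 10, "UFD": 10}


def composite_uf(applicable, refinements=None):
    """Multiply the applicable factors; chemical-specific values override defaults.

    applicable  -- names of the factors relevant to this assessment
    refinements -- {factor: (value, justification)} from chemical-specific data
    Returns (UFc, audit_rows), each row being (factor, value, basis).
    """
    refinements = refinements or {}
    rows = []
    for name in applicable:
        if name in refinements:
            value, basis = refinements[name]
        else:
            value, basis = DEFAULTS[name], "default convention"
        rows.append((name, value, basis))
    return prod(v for _, v, _ in rows), rows


applicable = ["UFA", "UFH", "UFS"]  # e.g., a subchronic NOAEL used as the PoD
ufc_default, _ = composite_uf(applicable)
ufc_refined, audit = composite_uf(
    applicable,
    # Hypothetical PK-based refinement, as in the 4-fold example above.
    refinements={"UFA": (4, "chemical-specific PK data (hypothetical)")},
)

print(f"Default UFc = {ufc_default}, refined UFc = {ufc_refined}")
for name, value, basis in audit:
    print(f"{name}: {value}  [{basis}]")
```

Returning the audit rows with the product keeps the reported justification table and the number actually used in the calculation from drifting apart.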

Q3: When calculating an HQ for a mixture, should I apply UFs before or after summing component HQs?

A: Apply UFs to each component's toxicity value before calculating individual HQs, then sum the HQs. The Hazard Index (HI) is the sum of HQs for substances with similar toxicological effects [2].

- Troubleshooting Protocol:

- For each chemical in the mixture, derive its toxicity reference value (e.g., Reference Dose, RfD): RfD = PoD / UFc [10] [2].

- Calculate the HQ for each chemical: HQ = Exposure Dose / RfD [23] [2].

- Sum the HQs for chemicals affecting the same target organ or system to obtain the Hazard Index (HI): HI = Σ HQ [2].

- Interpretation: An HI < 1 suggests that adverse effects from the combined exposure are unlikely [2].
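The ordering in this protocol (UFs applied per component, then HQs summed by target organ) can be sketched as follows. The chemical names, doses, PoDs, UFc values, and organ groupings are all hypothetical.

```python
from collections import defaultdict

# Hypothetical mixture; doses and PoDs in mg/kg/day.
# (chemical, exposure_dose, pod, ufc, target_organ)
MIXTURE = [
    ("chem_A", 0.002, 1.0, 100, "liver"),
    ("chem_B", 0.0015, 5.0, 1000, "liver"),
    ("chem_C", 0.0004, 2.0, 100, "kidney"),
]


def hazard_indices(components):
    """Per chemical: RfD = PoD / UFc, then HQ = dose / RfD.
    Per target organ: HI = sum of the HQs in that group."""
    hi = defaultdict(float)
    for _name, dose, pod, ufc, organ in components:
        rfd = pod / ufc            # UFs are applied to each component first
        hi[organ] += dose / rfd    # then the component HQs are summed
    return dict(hi)


for organ, hi in hazard_indices(MIXTURE).items():
    flag = "HI > 1: evaluate further" if hi > 1 else "HI <= 1"
    print(f"{organ}: HI = {hi:.2f} ({flag})")
```

Grouping by target organ keeps chemicals with unrelated effects out of the same sum, which is the condition for adding HQs in the first place.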

Q4: I have an HQ result slightly above 1. What are the next analytical steps before concluding a significant hazard?

A: An HQ > 1 indicates the exposure estimate exceeds the protective reference value [23]. The next steps involve uncertainty analysis and sensitivity testing.

- Troubleshooting Protocol:

- Deconstruct the UFc: Analyze which individual UFs contribute most to the denominator. Is the uncertainty driven by database insufficiency (UFD) or interspecies extrapolation (UFA)? [10].

- Test Exposure Parameters: Re-examine exposure assumptions (e.g., ingestion rate, exposure frequency) using probabilistic methods instead of conservative point estimates.

- Benchmark Dose (BMD) Modeling: If the PoD was a NOAEL/LOAEL, consider re-analyzing the critical toxicity study using BMD modeling. A BMD-derived PoD is often more robust and may reduce the need for a UFL factor [10].

- Report Probabilistic Output: Instead of a single HQ value, present a distribution of HQ results based on Monte Carlo simulation of input variables. This shows the probability that HQ exceeds 1.
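A minimal sketch of the probabilistic HQ output, assuming the oral ADD formula from Protocol 2 below and hypothetical distributions for concentration, intake rate, and body weight. The RfD, distribution parameters, fixed exposure frequency and duration, and the 30 kg body-weight floor are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(seed=7)
n = 10_000
rfd = 0.005  # mg/kg/day, hypothetical reference dose

# Hypothetical input distributions replacing conservative point estimates.
c = rng.lognormal(mean=np.log(0.1), sigma=0.8, size=n)         # mg/L in water
ir = rng.lognormal(mean=np.log(1.5), sigma=0.4, size=n)        # L/day
bw = rng.normal(loc=70.0, scale=12.0, size=n).clip(min=30.0)   # kg, floored at 30
ef, ed = 350.0, 30.0   # days/year and years, held fixed here
at = ed * 365.0        # averaging time in days for non-cancer effects

add = (c * ir * ef * ed) / (bw * at)   # average daily dose, mg/kg/day
hq = add / rfd

print(f"median HQ = {np.median(hq):.2f}, "
      f"95th percentile HQ = {np.percentile(hq, 95):.2f}")
print(f"P(HQ > 1) = {np.mean(hq > 1):.3f}")
```

Reporting the median, an upper percentile, and P(HQ > 1) together shows reviewers whether a deterministic HQ slightly above 1 reflects a typical or only an upper-tail exposure.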

Q5: How do I handle a chemical with a non-threshold mode of action (e.g., a genotoxic carcinogen) in the HQ framework?

A: The standard HQ/UF framework is not typically applied to non-threshold chemicals. These require a different risk characterization approach [10].

- Troubleshooting Guidance:

- Identify Mode of Action (MOA): Review toxicological data to determine if the chemical's critical effect is presumed to have a biological threshold [10].

- Shift Methodology: For non-threshold carcinogens, low-dose extrapolation models (e.g., linear dose-response) are used to estimate cancer risk, resulting in a Cancer Slope Factor (oral) or Inhalation Unit Risk [23].

- Calculate Cancer Risk: The calculation becomes Risk = Exposure Dose × Cancer Slope Factor, with results interpreted as a probability of excess cancer risk [23].

- Do Not Apply UFs: The composite UF process described here is for deriving threshold-based reference values like RfDs and RfCs [10].
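The contrast with the HQ can be shown in a few lines: the exposure dose is multiplied by a slope factor rather than divided by a UF-derived reference value, and the result is a probability rather than a ratio. The dose and slope factor values below are hypothetical.

```python
def excess_cancer_risk(lifetime_add, slope_factor):
    """Risk = exposure dose (mg/kg/day, lifetime average) x CSF ((mg/kg/day)^-1).
    The result is an excess lifetime probability of cancer, not a quotient."""
    return lifetime_add * slope_factor


risk = excess_cancer_risk(lifetime_add=2.0e-5, slope_factor=0.5)  # hypothetical inputs
print(f"Excess lifetime cancer risk = {risk:.1e}")  # -> 1.0e-05
```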

Experimental Protocols & Data Presentation

Protocol 1: Deriving a Reference Dose/Concentration with Composite UFs

This protocol details the standard method for deriving a health-based toxicity reference value, which serves as the denominator in the HQ calculation.

Methodology:

- Identify Critical Study & PoD: Select the pivotal toxicological study identifying the most sensitive adverse effect. Determine the PoD: either a No-Observed-Adverse-Effect Level (NOAEL), Lowest-Observed-Adverse-Effect Level (LOAEL), or Benchmark Dose (BMD) [10].

- Select Individual Uncertainty Factors: Choose and justify the value for each relevant UF based on data quality and assessment context [10].

- UFA (Interspecies): Default 10. Can be refined with toxicokinetic/toxicodynamic data [10].

- UFH (Intraspecies): Default 10. Accounts for human variability [10].

- UFL (LOAEL-to-NOAEL): Apply if PoD is a LOAEL (default 10). Not needed for NOAEL or BMD [10].

- UFS (Subchronic-to-Chronic): Apply if extrapolating from shorter to longer duration (default up to 10) [10].

- UFD (Database Deficiencies): Applied for gaps in the overall database (e.g., missing reproductive toxicity study) [10].

- Calculate Composite UF (UFc): Multiply the applicable individual factors: UFc = UFA × UFH × UFL × UFS × UFD [10].

- Derive Reference Value: RfD or RfC = PoD / UFc [10].
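The protocol can be condensed into one function, sketched below under stated assumptions: the `derive_reference_value` name is hypothetical, UFS and UFD default to 1 (not applied) unless passed, UFL is applied only when the PoD is a LOAEL, and the PoD values in the usage lines are invented.

```python
from math import prod


def derive_reference_value(pod, pod_type, *, ufa=10, ufh=10, ufs=1, ufd=1,
                           ufl_if_loael=10):
    """RfD (or RfC) = PoD / UFc, with UFc = UFA x UFH x UFL x UFS x UFD.

    UFL applies only when the PoD is a LOAEL; it is 1 for a NOAEL or BMD.
    Returns (reference_value, ufc).
    """
    pod_type = pod_type.upper()
    if pod_type not in {"NOAEL", "LOAEL", "BMD"}:
        raise ValueError(f"unsupported PoD type: {pod_type}")
    ufl = ufl_if_loael if pod_type == "LOAEL" else 1
    ufc = prod([ufa, ufh, ufl, ufs, ufd])
    return pod / ufc, ufc


# Hypothetical chronic NOAEL of 2.0 mg/kg/day: UFc = 10 x 10 = 100.
rfd_noael, ufc_noael = derive_reference_value(2.0, "NOAEL")
# Hypothetical subchronic LOAEL of 6.0 mg/kg/day with UFS = 3: UFc = 3000.
rfd_loael, ufc_loael = derive_reference_value(6.0, "LOAEL", ufs=3)

print(f"NOAEL route: UFc = {ufc_noael}, RfD = {rfd_noael:.3g} mg/kg/day")
print(f"LOAEL route: UFc = {ufc_loael}, RfD = {rfd_loael:.3g} mg/kg/day")
```

The second case shows how quickly stacked factors inflate UFc, which is why replacing a LOAEL with a BMD-derived PoD, or a default with a chemical-specific value, can change the reference value substantially.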

Table: Representative Default Uncertainty Factors from Major Organizations. Default values illustrate variability in application; chemical-specific data should replace defaults when available [10].

| Uncertainty Factor | ECHA (EU) | ECETOC | TNO/RIVM | Common Default |
|---|---|---|---|---|
| UFA (Animal to Human) | Allometric Scaling | Allometric Scaling | Allometric Scaling | 10 |
| UFH (Human Variability) | 5 | 3 | 3 | 10 |
| UFL (LOAEL to NOAEL) | 1 (or use BMD) | 3 (or use BMD) | 1-10 (or use BMD) | 10 |
| UFS (Duration) | 2-6 | 2-6 | 10-100 | 10 |
| UFD (Database) | 1 | Not Applied | 1 | 1-10 |

Protocol 2: Standard Hazard Quotient Calculation Workflow

This protocol outlines the step-by-step calculation of the Hazard Quotient, integrating the composite UF-derived reference value.

Methodology:

- Exposure Assessment (Numerator): Calculate the Average Daily Dose (ADD) for oral exposure or the Exposure Concentration (EC) for inhalation. This involves modeling or measuring contaminant concentration, intake rates, and exposure duration [23] [2].

- Formula (Oral): ADD = (C × IR × EF × ED) / (BW × AT), where C=concentration, IR=intake rate, EF=exposure frequency, ED=exposure duration, BW=body weight, AT=averaging time.

- Toxicity Assessment (Denominator): Obtain or derive the appropriate Reference Dose (RfD) or Reference Concentration (RfC) for the same exposure duration and route, using Protocol 1 [23] [2].

- HQ Calculation: HQ = ADD / RfD (for oral) or HQ = EC / RfC (for inhalation) [23] [24] [2].

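- Interpretation: An HQ ≤ 1 indicates that the exposure estimate does not exceed the protective reference value, so adverse non-cancer effects are unlikely; an HQ > 1 indicates an exceedance that calls for further evaluation [23].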

Table: Example HQ Calculation from a Contaminated Water Scenario [23]. HQ calculations for different age groups using a chronic oral MRL of 0.005 mg/kg/day for 1,2,3-trichloropropane and exposure-specific doses (HQ = exposure dose / MRL).

| Exposure Group | Exposure Dose (mg/kg/day) | Hazard Quotient (HQ) | Interpretation |
|---|---|---|---|
| Birth to <1 year | 0.50 | 100 | HQ >> 1. Significant exceedance; requires in-depth analysis. |
| Adult | 0.14 | 28 | HQ > 1. Exceedance confirmed across lifespan. |
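The Protocol 2 arithmetic can be sketched as two small functions: one reproduces the HQ values in the example table from its doses and MRL, the other applies the ADD formula to a hypothetical adult drinking-water scenario. The scenario inputs (concentration, intake rate, exposure frequency and duration, body weight) are assumptions, and the MRL is reused as the reference value only for illustration.

```python
def average_daily_dose(c, ir, ef, ed, bw, at):
    """ADD (mg/kg/day) = (C x IR x EF x ED) / (BW x AT).
    c: mg/L, ir: L/day, ef: days/year, ed: years, bw: kg, at: days."""
    return (c * ir * ef * ed) / (bw * at)


def hazard_quotient(exposure, reference_value):
    """HQ = ADD / RfD (oral) or EC / RfC (inhalation); units must match."""
    return exposure / reference_value


# Reproduce the example table: HQ = exposure dose / chronic oral MRL.
MRL = 0.005  # mg/kg/day, 1,2,3-trichloropropane (from the table caption)
doses = {"Birth to <1 year": 0.50, "Adult": 0.14}
table_hqs = {group: hazard_quotient(dose, MRL) for group, dose in doses.items()}
print({group: round(hq) for group, hq in table_hqs.items()})
# -> {'Birth to <1 year': 100, 'Adult': 28}

# Hypothetical adult scenario built from the ADD formula; for non-cancer
# effects the averaging time is taken here as ED x 365 days.
ed_years = 30
add = average_daily_dose(c=0.05, ir=2.0, ef=350, ed=ed_years,
                         bw=70.0, at=ed_years * 365)
print(f"ADD = {add:.2e} mg/kg/day, HQ = {hazard_quotient(add, MRL):.2f}")
```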

Visual Workflows

Diagram 1: Hazard Quotient Calculation with Composite UFs

This diagram illustrates the logical workflow for calculating a Hazard Quotient, highlighting the role of individual Uncertainty Factors in deriving the protective toxicity value.

Diagram 2: Relationship of Uncertainty Factors in Risk Assessment

This diagram shows how different categories of uncertainty factors relate to the extrapolations made from experimental data to a protective human exposure limit.

The Scientist's Toolkit

Essential research reagents and resources for conducting studies related to uncertainty factors and hazard quotient calculations.

| Item Name | Function & Application in UF/HQ Research |
|---|---|
| Benchmark Dose (BMD) Modeling Software | Used to derive a more robust PoD from dose-response data, reducing reliance on NOAEL/LOAEL and the associated UFL factor [10]. |
| Toxicokinetic/Toxicodynamic (TK/TD) Data | Chemical-specific data used to replace default interspecies (UFA) and intraspecies (UFH) uncertainty factors with evidence-based values [10]. |
| Published UF Databases & Guidelines | Reference documents from organizations like ECHA, ECETOC, and TNO/RIVM that provide default values and guidance for applying UFs in specific regulatory contexts [10]. |
| Probabilistic Exposure Assessment Tools | Software for modeling exposure distributions (e.g., Monte Carlo simulation) to generate a range of ADD/EC inputs, moving beyond conservative point estimates in the HQ numerator. |
| Hazard Index (HI) Calculation Framework | A standardized methodology for summing HQs of chemicals with additive effects, essential for cumulative risk assessment of mixtures [2]. |
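The Hazard Index entry can be illustrated with a minimal sketch. It assumes HQs have already been calculated and tags each chemical with a critical target organ (all values hypothetical). A screening HI sums every HQ in the mixture, while a refined HI sums only HQs that share a target, which is where the assumption of additive effects is most defensible.

```python
from collections import defaultdict


def screening_hi(mixture):
    """Screening Hazard Index: sum of all HQs in the mixture."""
    return sum(hq for hq, _ in mixture.values())


def target_organ_hi(mixture):
    """Refined Hazard Index: sum HQs separately for each shared target organ."""
    totals = defaultdict(float)
    for hq, target in mixture.values():
        totals[target] += hq
    return dict(totals)


# Hypothetical mixture: chemical -> (HQ, critical target organ)
mixture = {"chem_A": (0.4, "liver"), "chem_B": (0.7, "liver"), "chem_C": (0.3, "kidney")}

print(f"Screening HI = {screening_hi(mixture):.2f}")
for target, hi in target_organ_hi(mixture).items():
    print(f"{target}: HI = {hi:.2f}{' (exceeds 1)' if hi > 1 else ''}")
```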

In ecological risk assessment (ERA) and human health evaluation, a risk quotient (RQ) is a fundamental screening-level tool. It is calculated by dividing a point estimate of exposure by a point estimate of toxicity (e.g., an LC50 or NOAEC) [8]. To account for variability and for gaps in knowledge when extrapolating from test data to the protection target, traditional uncertainty factors (UFs) are applied. These are default factors, typically 10-fold, that address key areas of uncertainty such as extrapolating from animals to humans or protecting sensitive subpopulations [10].

However, the field is evolving. The reliance on these generic default factors is increasingly viewed as a source of imprecision, potentially leading to over- or under-protective risk estimates. The trend is shifting toward Chemical-Specific Adjustment Factors (CSAFs), which replace default values with data-derived factors tailored to a chemical's unique toxicokinetic and toxicodynamic properties [10]. This transition from a "one-size-fits-all" to a "chemical-specific" paradigm forms the core thesis of modern, refined risk assessment, aiming to reduce uncertainty and increase the scientific robustness and transparency of safety decisions [9].

Technical Support Center: Troubleshooting CSAF Development & Application

This section addresses common technical challenges researchers face when developing or applying CSAFs within ecological and human health risk assessment frameworks.

Troubleshooting Guide: Common Experimental & Analytical Issues

Problem: High Variability in Toxicokinetic (TK) Data Obtained from In Vitro Systems

- Question: Our in vitro metabolism data (e.g., intrinsic clearance) shows high inter-replicate variability, making it difficult to derive a reliable chemical-specific adjustment factor for interspecies differences (CSAF-A). How can we improve experimental consistency?

- Investigation & Solution:

- Audit Cell Health & Passage Number: Confirm the metabolic competency of your hepatocyte or cell line system. Use viability assays (e.g., Trypan Blue, MTT) immediately before the experiment. Limit experiments to cells within a validated passage range.

- Standardize Protein Content: Normalize metabolic rates to total protein content (e.g., via Bradford assay) rather than just cell count, as protein yield can vary.

- Include Positive Controls: Run a parallel experiment with a prototypical substrate (e.g., 7-ethoxycoumarin for CYP450 activity) to verify system functionality in each experimental batch.

- Pre-incubation Stability: Ensure the test chemical is stable in the incubation medium without cells over the experimental timeframe to distinguish degradation from metabolism.
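The consistency checks above can be sketched as a small batch-QC helper. It assumes per-replicate depletion rates, per-replicate protein measurements (e.g., from a Bradford assay) and a positive-control activity are already available. The 20% CV limit and the positive-control acceptance window are hypothetical laboratory criteria, not values from the source.

```python
from statistics import mean, stdev


def protein_normalized(rates, protein_mg):
    """Divide each replicate's rate by its measured protein content (per mg)."""
    return [r / p for r, p in zip(rates, protein_mg)]


def cv_percent(values):
    """Coefficient of variation (%) across replicates."""
    return 100 * stdev(values) / mean(values)


def batch_acceptable(rates, protein_mg, control_activity, control_window, max_cv=20.0):
    """Accept a batch if the positive control is in range and the normalized CV is low."""
    low, high = control_window
    control_ok = low <= control_activity <= high
    return control_ok and cv_percent(protein_normalized(rates, protein_mg)) <= max_cv


# Hypothetical replicates: rates in pmol/min per well, protein in mg per well
rates = [12.0, 18.0, 9.0]
protein = [0.40, 0.60, 0.30]
print(f"Raw CV = {cv_percent(rates):.1f}%")
print(f"Protein-normalized CV = {cv_percent(protein_normalized(rates, protein)):.1f}%")
print("Batch accepted:", batch_acceptable(rates, protein, control_activity=45.0, control_window=(30.0, 60.0)))
```

In this made-up example the apparent replicate spread disappears once rates are expressed per mg protein, which is the situation the protein-normalization step is meant to catch.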

Problem: Translating In Vitro Point-of-Departure (POD) to an In Vivo Equivalent Dose

- Question: We have a robust in vitro benchmark concentration (BMC) for a key event, but we are uncertain how to convert this to a predicted in vivo POD for risk quotient calculation.

- Investigation & Solution: Implement in vitro to in vivo extrapolation (IVIVE).

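- Apply a TK Model: Scale in vitro intrinsic clearance to in vivo hepatic clearance with a simple well-stirred or parallel-tube liver model, then reverse-estimate the equivalent human oral dose at steady state. The formula often takes the form:

- Predicted Dose = (In Vitro BMC × Hepatic Clearance × Plasma Protein Binding Factor) / Bioavailability, where the binding factor is typically 1/fu (the reciprocal of the fraction unbound in plasma) when the BMC is treated as a free concentration.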

- Use Published IVIVE Tools: Leverage established software tools (such as the R package httk or EPA's IVIVE tools) that incorporate physiological parameters (e.g., liver blood flow, microsomal protein per gram of liver).

- Sensitivity Analysis: Conduct a sensitivity analysis on all input parameters (e.g., fraction unbound in plasma, intrinsic clearance) to identify which variables most influence your final CSAF and to prioritize their accurate measurement.
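The IVIVE steps above can be sketched in plain Python. This is a minimal illustration, not the httk API. It assumes the well-stirred model with plasma fu (blood-to-plasma partitioning ignored), adds renal clearance as GFR × fu, and uses approximate human physiology assumed for illustration: 40 mg microsomal protein per g liver, 21.4 g liver per kg body weight, hepatic blood flow 1.24 L/h/kg and GFR 0.11 L/h/kg. The chemical inputs (BMC, molecular weight, CLint, fu) are hypothetical.

```python
def hepatic_clearance_well_stirred(clint_ul_min_mg, fu, mppgl=40.0,
                                   liver_g_per_kg=21.4, qh_l_h_kg=1.24):
    """Well-stirred model: CLh = Qh*fu*CLint / (Qh + fu*CLint), in L/h/kg bw.

    CLint is scaled from uL/min/mg microsomal protein to L/h/kg bw using
    microsomal protein per gram liver (MPPGL) and liver weight per kg bw.
    """
    clint_l_h_kg = clint_ul_min_mg * mppgl * liver_g_per_kg * 60 / 1e6
    return qh_l_h_kg * fu * clint_l_h_kg / (qh_l_h_kg + fu * clint_l_h_kg)


def oral_equivalent_dose(bmc_um, mw, clint_ul_min_mg, fu, f_oral=1.0, gfr_l_h_kg=0.11):
    """Steady-state reverse dosimetry (mg/kg/day).

    Dose rate = (BMC / fu) * CL_total / F: the BMC is treated as a free
    concentration (1/fu is the protein-binding factor) and CL_total is
    hepatic plus renal (GFR * fu) clearance.
    """
    bmc_mg_l = bmc_um * mw / 1000.0  # uM -> mg/L
    cl_total = hepatic_clearance_well_stirred(clint_ul_min_mg, fu) + gfr_l_h_kg * fu
    return (bmc_mg_l / fu) * cl_total * 24.0 / f_oral  # L/h/kg x 24 h/day


# Hypothetical chemical inputs
base = {"bmc_um": 5.0, "mw": 250.0, "clint_ul_min_mg": 20.0, "fu": 0.1}
oed = oral_equivalent_dose(**base)
print(f"Oral equivalent dose = {oed:.2f} mg/kg/day")

# One-at-a-time normalized sensitivity: % change in dose per % change in input (+10%)
for name in ("clint_ul_min_mg", "fu"):
    bumped = dict(base, **{name: base[name] * 1.1})
    sensitivity = (oral_equivalent_dose(**bumped) / oed - 1) / 0.1
    print(f"{name}: normalized sensitivity = {sensitivity:.2f}")
```

A one-at-a-time perturbation like this is only a first screen. If parameters interact strongly, a global (e.g., Monte Carlo-based) sensitivity analysis is the more defensible choice.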


Problem: Integrating Multiple Lines of Evidence for a Sensitive Subpopulation

- Question: We have conflicting data regarding susceptibility for a life stage (e.g., juvenile animals). Some studies show higher sensitivity, while others do not. How do we decide whether to apply a CSAF for intraspecies variability (CSAF-H)?

- Investigation & Solution:

- Systematic Review: Follow a pre-defined protocol to weight the evidence. Prioritize studies based on reliability (e.g., conducted according to OECD Test Guidelines and GLP principles), relevance (model organism, endpoint), and the statistical power to detect differences.

- Mode-of-Action (MOA) Analysis: Determine if the chemical's MOA (e.g., disruption of a specific neurodevelopmental pathway) is plausibly more active or less compensable in the sensitive subpopulation. This mechanistic understanding can support or refute the need for an adjusted factor.

- Use a Data-Informed Default: If chemical-specific data are insufficient for a full CSAF-H but evidence suggests potential vulnerability, consider using a data-informed default factor (e.g., a factor of 3 instead of the default 10), and clearly document the rationale.

Problem: The Derived CSAF Results in an RQ that Conflicts with Population Model Outputs

- Question: Our refined risk assessment using a CSAF suggests a low risk (RQ < 1), but a mechanistic population model for an endangered species indicates potential long-term decline. Which result should we trust?

- Investigation & Solution:

- Scope Reconciliation: Verify that both assessments are evaluating the same spatial-temporal scale and ecological endpoint. The RQ/CSAF approach may assess individual-level risk over a defined period, while the population model assesses viability over generations.

- Identify the Driver: Analyze the population model to identify the critical parameter causing decline (e.g., reduced juvenile survival, impaired fecundity). Check if your toxicity endpoint and CSAF derivation adequately protect this specific critical effect.

- Use the Model to Inform the Factor: This is a key advancement. Use the population model in an iterative way to determine what level of protection (i.e., what magnitude of adjustment factor) would be sufficient to prevent unacceptable population-level consequences. This represents a move beyond the quotient method toward more ecologically relevant risk characterization [9].
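One way to use a population model to inform the factor is to search over candidate factors and keep the smallest one whose protective concentration (PoD / factor) still keeps population growth acceptable. The sketch below is illustrative only. It assumes a hypothetical two-stage (juvenile/adult) Leslie matrix, a hypothetical log-logistic effect of concentration on juvenile survival (standing in for the critical parameter identified in the previous step), and a hypothetical criterion of no more than a 5% drop in the asymptotic growth rate λ. None of these values come from the source.

```python
import math


def lambda_two_stage(s_juv, s_adult, fecundity):
    """Asymptotic growth rate of the Leslie matrix [[0, F], [s_juv, s_adult]].

    Positive root of lambda**2 - s_adult*lambda - F*s_juv = 0.
    """
    return (s_adult + math.sqrt(s_adult ** 2 + 4 * fecundity * s_juv)) / 2


def juvenile_survival(conc, s0=0.3, ec50=20.0, slope=2.0):
    """Hypothetical log-logistic reduction of juvenile survival with concentration."""
    return s0 / (1 + (conc / ec50) ** slope)


def smallest_protective_factor(pod, candidates, max_decline=0.05, s_adult=0.6, fecundity=2.0):
    """Smallest factor whose protective level (pod / factor) keeps lambda within
    max_decline of the unexposed lambda; None if no candidate is sufficient."""
    lam0 = lambda_two_stage(juvenile_survival(0.0), s_adult, fecundity)
    for factor in sorted(candidates):
        lam = lambda_two_stage(juvenile_survival(pod / factor), s_adult, fecundity)
        if lam >= (1 - max_decline) * lam0:
            return factor
    return None


# Hypothetical PoD (e.g., a NOAEC in ug/L) and candidate adjustment factors
factor = smallest_protective_factor(pod=50.0, candidates=[1, 3, 10, 30, 100])
print(f"Smallest candidate factor meeting the population criterion: {factor}")
```

A real application would replace the toy matrix with the assessment's own population model and would typically evaluate the criterion probabilistically rather than on a single deterministic λ.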

Frequently Asked Questions (FAQs)

Q1: When is it mandatory to develop a CSAF, and when can we use default UFs?

- A: Regulatory mandates vary. Under frameworks like the EPA's TSCA risk evaluation, the use of the "best available science" and a "weight-of-scientific-evidence" approach is required [25]. This increasingly pressures assessments to move beyond defaults when credible chemical-specific data exist. Default UFs remain acceptable and necessary for screening-level assessments or when specific data are lacking [10].

Q2: What is the minimum data requirement to justify replacing a default 10-fold interspecies UF (UFA) with a CSAF-A?

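- A: A robust CSAF-A typically requires comparative toxicokinetic data. At a minimum, this includes in vitro metabolism data (e.g., intrinsic clearance in human and test-animal liver microsomes or S9) to quantify species differences in clearance. More robust justifications include in vivo data on absorption, distribution, metabolism, and excretion (ADME) in both species. The kinetic CSAF-A is often calculated as the ratio of the relevant internal-dose metric, for example animal clearance / human clearance (equivalently, human AUC / animal AUC at the same external dose), so that faster clearance in the test animal yields a factor greater than 1 [10]. Under the IPCS framework, such kinetic data replace only the toxicokinetic subfactor (default 4.0) of the 10-fold UFA; the toxicodynamic subfactor (default 2.5) is retained unless species-specific dynamic data are also available.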
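The ratio and subfactor logic can be expressed as a small helper. This is a minimal sketch assuming body-weight-normalized clearances (e.g., L/h/kg) for both species are already available. The clearance values are hypothetical; the 4.0 and 2.5 defaults are the IPCS interspecies toxicokinetic and toxicodynamic subfactors, whose product is the familiar 10-fold UFA.

```python
DEFAULT_TK_SUBFACTOR = 4.0  # IPCS default interspecies toxicokinetic subfactor
DEFAULT_TD_SUBFACTOR = 2.5  # IPCS default interspecies toxicodynamic subfactor


def interspecies_tk_factor(cl_animal, cl_human):
    """Kinetic CSAF-A: animal clearance / human clearance (same units, per kg bw)."""
    return cl_animal / cl_human


def interspecies_factor(cl_animal=None, cl_human=None, td_factor=None):
    """Composite interspecies factor: TK part x TD part.

    Falls back to the IPCS default for whichever part lacks chemical-specific data.
    """
    if cl_animal is None or cl_human is None:
        tk = DEFAULT_TK_SUBFACTOR
    else:
        tk = interspecies_tk_factor(cl_animal, cl_human)
    td = DEFAULT_TD_SUBFACTOR if td_factor is None else td_factor
    return tk * td


print(interspecies_factor())                           # defaults only
print(interspecies_factor(cl_animal=1.8, cl_human=0.9))  # hypothetical clearances in L/h/kg
```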

Q3: How do we handle uncertainty within our newly derived CSAF?

- A: A CSAF does not eliminate uncertainty; it aims to characterize it better. You must quantify and report the uncertainty around your CSAF:

- Propagate Measurement Uncertainty: Use statistical methods (e.g., Monte Carlo simulation) to propagate confidence intervals from your primary data (e.g., clearance rates, BMC values) through to the final CSAF.

- Apply a Modifying Factor (MF): Some frameworks recommend applying an additional, small (e.g., <10) MF to account for residual uncertainty in the CSAF derivation process or database limitations [10].

- Transparent Documentation: Clearly state all assumptions, data sources, and computational steps in an audit trail.
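The propagation step can be illustrated with a simple Monte Carlo sketch. It assumes each species' clearance is uncertain and described by an independent lognormal distribution with a geometric mean and geometric standard deviation (e.g., fitted to replicate measurements). The numbers below are hypothetical. The same pattern extends to BMC values or any other input to the CSAF.

```python
import math
import random
from statistics import median, quantiles


def simulate_csaf(gm_animal, gsd_animal, gm_human, gsd_human, n=10_000, seed=1):
    """Draw clearances from independent lognormal distributions and return
    the simulated kinetic CSAF-A values (animal clearance / human clearance)."""
    rng = random.Random(seed)  # seeded so the result is reproducible for the audit trail
    draws = []
    for _ in range(n):
        cl_animal = rng.lognormvariate(math.log(gm_animal), math.log(gsd_animal))
        cl_human = rng.lognormvariate(math.log(gm_human), math.log(gsd_human))
        draws.append(cl_animal / cl_human)
    return draws


# Hypothetical geometric means (L/h/kg) and geometric standard deviations
draws = simulate_csaf(gm_animal=1.8, gsd_animal=1.3, gm_human=0.9, gsd_human=1.5)
cuts = quantiles(draws, n=40)  # 39 cut points; first = 2.5th, last = 97.5th percentile
p2_5, p97_5 = cuts[0], cuts[-1]
print(f"CSAF-A median = {median(draws):.2f}, 95% interval = {p2_5:.2f} to {p97_5:.2f}")
```

Reporting the interval alongside the central value makes it explicit how much residual uncertainty any additional modifying factor is meant to cover.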


Q4: Can CSAFs be applied to the ecological risk assessment of pesticides under EPA guidelines?

- A: Yes, the principles are applicable. While the core EPA ecological risk assessment models (e.g., T-REX for birds/mammals, TerrPlant) currently use deterministic RQs with standard assessment factors [8], there is a strong scientific push to refine these methods. Higher-tier assessments can and do incorporate more refined, chemical-specific data to replace conservative defaults, especially through the use of population models, which inherently account for variability in ways that simple quotients cannot [9].

Data Presentation: Comparing Default and Chemical-Specific Approaches

Table 1: Standard Default Uncertainty Factors (UFs) and Their CSAF Counterparts [10]

| Uncertainty Factor Acronym | Area of Uncertainty Addressed | Typical Default Value | Basis for Chemical-Specific Adjustment (CSAF) |
|---|---|---|---|
| UFA | Interspecies (Animal to Human) | 10 | Ratio of toxicokinetic (e.g., clearance) or toxicodynamic (e.g., receptor affinity) parameters between test species and humans. |
| UFH | Intraspecies (Human Variability) | 10 | Data on differential susceptibility in potentially susceptible subpopulations (e.g., genetic polymorphisms in metabolizing enzymes, life stage-specific sensitivity). |
| UFL | LOAEL to NOAEL Extrapolation | 10 | Use of a benchmark dose (BMD) modeling approach, which uses the full dose-response curve, or chemical-specific data on the slope of the curve. |
| UFS | Subchronic to Chronic Exposure | 10 | Data from toxicity studies of varying durations showing the relationship between exposure time and effect severity for the chemical. |
| UFD | Database Incompleteness | 1-10 | Expert judgment on the quality and completeness of the overall toxicological database, potentially reduced by new testing (e.g., high-throughput screening). |

Table 2: Example Risk Quotient (RQ) Calculations Using Default vs. CSAF Approaches [8]

| Assessment Scenario | Exposure Estimate (EEC) | Toxicity Point (POD) | Applied Adjustment Factor | Risk Quotient (RQ) Calculation | Interpretation |
|---|---|---|---|---|---|
| Avian Acute (Default) | Dose-based EEC = 25 mg/kg-bw | LD50 = 500 mg/kg-bw | Default UF = 10 (safety factor) | RQ = EEC / (LD50 / 10) = 25 / 50 = 0.5 | Further evaluation may be triggered. |
| Avian Acute (CSAF) | Dose-based EEC = 25 mg/kg-bw | LD50 = 500 mg/kg-bw | CSAF-A (Kinetic) = 3 (based on species-specific metabolism) | RQ = EEC / (LD50 / 3) = 25 / 167 ≈ 0.15 | Risk estimate is lower, reflecting refined kinetic data. |
| Aquatic Chronic (Default) | 21-day Avg EEC = 4.2 µg/L | Invertebrate NOAEC = 10 µg/L | Assessment Factor = 10 (per guideline) | RQ = EEC / (NOAEC / 10) = 4.2 / 1 = 4.2 | Exceeds Level of Concern (LOC = 1). |
| Aquatic Chronic (CSAF/Pop Model) | Exposure distribution (see Fig. 1) | Population-level metric (e.g., r, lambda) | Variability integrated in model | Probabilistic Output: 15% probability of population decline >20% over 10 years. | Provides ecologically relevant risk characterization [9]. |
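The deterministic rows of Table 2 can be reproduced with a few lines of Python. This is a minimal sketch: it assumes the EEC and POD in each row share units, and it applies an LOC only where the table states one (LOC = 1 for the aquatic chronic row). The probabilistic population-model row is not a quotient and is not reproduced here.

```python
def risk_quotient(eec, pod, factor=1.0):
    """RQ = EEC / (POD / factor); EEC and POD must be in the same units."""
    return eec / (pod / factor)


# (scenario, EEC, POD, adjustment factor, LOC or None if the table states none)
scenarios = [
    ("Avian acute (default)", 25.0, 500.0, 10.0, None),
    ("Avian acute (CSAF)", 25.0, 500.0, 3.0, None),
    ("Aquatic chronic (default)", 4.2, 10.0, 10.0, 1.0),
]

for name, eec, pod, factor, loc in scenarios:
    rq = risk_quotient(eec, pod, factor)
    verdict = "" if loc is None else (" exceeds LOC" if rq > loc else " below LOC")
    print(f"{name}: RQ = {rq:.2f}{verdict}")
```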

Experimental Protocols for Key CSAF-Related Data Generation

Protocol 1: Deriving a CSAF for Interspecies Differences (CSAF-A) from In Vitro Metabolism Data

Objective: To quantify the difference in hepatic intrinsic clearance (CLint) of a chemical between a standard test species (e.g., rat) and humans.

Materials: Pooled liver microsomes or S9 fractions from human and rat; test chemical; NADPH regeneration system; appropriate analytical equipment (LC-MS/MS); incubation buffer.

Procedure:

- Prepare microsomal incubations containing test chemical at a concentration below Km (to ensure first-order kinetics) and NADPH cofactor.

- Incubate at 37°C. Remove aliquots at multiple time points (e.g., 0, 5, 15, 30, 60 min).

- Terminate the reaction and quantify the parent chemical concentration remaining via LC-MS/MS.

- Plot the natural logarithm of the remaining parent compound concentration versus time. The slope of the linear fit equals -k, where k is the first-order depletion (elimination) rate constant.

- Calculate in vitro CLint = k × incubation volume / microsomal protein amount.
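The last two steps can be sketched as follows. The sampling times follow the protocol; the depletion curves, incubation volume (0.5 mL) and protein amount (0.25 mg, i.e., 0.5 mg/mL) are hypothetical. Note that the rat/human ratio of unscaled in vitro CLint is only a first indication: before forming a CSAF-A, each CLint would normally be scaled to in vivo clearance per kg body weight using species-specific physiology.

```python
import math


def depletion_rate_constant(times_min, conc):
    """First-order depletion constant k (1/min): negative least-squares slope of ln(C) vs t."""
    y = [math.log(c) for c in conc]
    n = len(times_min)
    t_bar = sum(times_min) / n
    y_bar = sum(y) / n
    sxy = sum((t - t_bar) * (yi - y_bar) for t, yi in zip(times_min, y))
    sxx = sum((t - t_bar) ** 2 for t in times_min)
    return -sxy / sxx


def intrinsic_clearance(k_per_min, volume_ul, protein_mg):
    """In vitro CLint (uL/min/mg protein) = k x incubation volume / microsomal protein."""
    return k_per_min * volume_ul / protein_mg


times = [0, 5, 15, 30, 60]  # sampling times from the protocol (min)
# Hypothetical, noise-free depletion curves (fraction of parent remaining)
rat = [math.exp(-0.03 * t) for t in times]
human = [math.exp(-0.01 * t) for t in times]

volume_ul, protein_mg = 500.0, 0.25  # hypothetical: 0.5 mL at 0.5 mg/mL protein
clint_rat = intrinsic_clearance(depletion_rate_constant(times, rat), volume_ul, protein_mg)
clint_human = intrinsic_clearance(depletion_rate_constant(times, human), volume_ul, protein_mg)
print(f"CLint rat = {clint_rat:.1f}, human = {clint_human:.1f} uL/min/mg")
print(f"Rat/human in vitro CLint ratio (before in vivo scaling) = {clint_rat / clint_human:.2f}")
```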