Mastering Systematic Reviews in Toxicology: A Step-by-Step Guide for Researchers and Drug Developers

This comprehensive guide provides researchers, scientists, and drug development professionals with a practical framework for conducting rigorous and reliable systematic reviews in toxicology.

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a practical framework for conducting rigorous and reliable systematic reviews in toxicology. It moves beyond clinical review models to address the unique challenges of toxicological evidence, such as integrating multiple evidence streams (in vivo, in vitro, in silico) and extrapolating from animal studies to human health. The article covers the foundational principles of evidence-based toxicology, details a methodological workflow from protocol development to data synthesis, offers solutions for common pitfalls in search strategy and bias assessment, and explores advanced validation techniques and future methodological directions. By synthesizing current guidance from authoritative sources like the NTP/OHAT handbook and recent methodological research, this article equips professionals to produce transparent, reproducible reviews that can robustly inform regulatory decisions and safety assessments.

The Why and What: Building the Foundation for Evidence-Based Toxicology Reviews

In toxicology, the traditional approach to synthesizing evidence has historically been the narrative review, where an expert summarizes a field based on a selective, often non-transparent, examination of the literature [1]. While such reviews can provide valuable perspectives, they are intrinsically susceptible to bias, lack reproducibility, and may lead to conflicting conclusions about the same chemical, as seen in historical assessments of substances like Bisphenol A [1]. This undermines consistent, evidence-based decision-making in public health and regulation.

A systematic review is defined as a scholarly synthesis that uses explicit, pre-defined, and reproducible methods to identify, select, appraise, and summarize all available evidence on a clearly formulated question [2] [3]. This methodology, pioneered in clinical medicine, is now recognized as a cornerstone of Evidence-Based Toxicology (EBT), aiming to improve the transparency, objectivity, and reliability of toxicological assessments [1].

The core distinction lies in the methodology. Narrative reviews often employ an implicit process, while systematic reviews are characterized by a rigorous, protocol-driven workflow that minimizes bias and enables independent verification [1] [4]. The following table summarizes the fundamental differences:

Table 1: Comparison of Narrative and Systematic Reviews in Toxicology [1]

| Feature | Narrative Review | Systematic Review |
|---|---|---|
| Research Question | Broad, often informal or implicit. | Specified, precise, and explicit. |
| Literature Search | Sources and strategy usually not specified; potentially selective. | Comprehensive, multi-database search with explicit, documented strategy. |
| Study Selection | Criteria usually not specified; subjective. | Explicit, pre-defined inclusion/exclusion criteria applied consistently. |
| Quality Assessment | Often absent or informal. | Critical appraisal using explicit, standardized tools (e.g., risk of bias). |
| Synthesis | Qualitative summary. | Structured synthesis (qualitative and, where possible, quantitative meta-analysis). |
| Time & Resources | Generally lower (months). | Substantially higher (often >1 year). |
| Expertise Required | Subject matter expertise. | Subject expertise + systematic review methodology, search, and analysis. |
| Output | Expert opinion summary. | Transparent, auditable evidence synthesis suitable for informing decisions. |

Systematic reviews in toxicology face complexities not always present in clinical medicine, including multiple evidence streams (e.g., in vitro, animal, human observational), diverse species and strains, complex exposure scenarios, and a focus on hazard identification rather than on assessing therapeutic benefit [1]. Adapting the systematic review framework to address these challenges is the central task of modern evidence-based toxicology.

Core Methodology: A Stepwise Protocol for Toxicological Systematic Reviews

Conducting a rigorous systematic review in toxicology follows a structured, multi-stage process. Adherence to this protocol is essential to ensure the review’s validity and reliability.

1. Formulating the Research Question & Protocol Development

The process begins with a precisely framed research question. The PICO framework (Population, Intervention/Exposure, Comparison, Outcome) is a standard tool for structuring questions in evidence-based research [5] [3]. In toxicology, this adapts to: Population/Species (e.g., human, rodent, in vitro system), Exposure (chemical, dose, duration, route), Comparator (control or alternative exposure), and Outcome (specific adverse effect or biomarker) [1]. Before beginning the search, a detailed protocol must be written and registered on a platform like PROSPERO. This pre-defines the methods, including eligibility criteria and analysis plans, to reduce bias and prevent arbitrary decision-making during the review [5] [1].

2. Systematic Search & Study Selection

A comprehensive, unbiased search is critical. It involves searching multiple electronic databases (e.g., PubMed/MEDLINE, Embase, Scopus, and TOXLINE, whose content has been accessible through PubMed since NLM retired TOXNET in 2019) with a tailored, sensitive search strategy [5] [3]. The strategy should include controlled vocabulary (e.g., MeSH terms) and keywords, and may be supplemented by scanning reference lists and grey literature [3]. Search results are imported into review management software. At least two reviewers then independently screen titles/abstracts and subsequently full-text articles against the pre-defined inclusion/exclusion criteria. Disagreements are resolved through discussion or by a third reviewer [5] [4]. This process is documented in a PRISMA flow diagram.
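The record counts that feed a PRISMA flow diagram are easy to get wrong when screening is shared among several reviewers. As a minimal sketch (the class name, field names, and example numbers are illustrative assumptions, not part of PRISMA itself), the arithmetic behind the diagram can be checked like this:

```python
from dataclasses import dataclass


@dataclass
class PrismaCounts:
    """Record counts for a PRISMA-style flow diagram (hypothetical field names)."""
    identified: int               # records retrieved from all databases and other sources
    duplicates_removed: int       # records dropped during de-duplication
    excluded_title_abstract: int  # records excluded at title/abstract screening
    excluded_full_text: int       # reports excluded at full-text review

    @property
    def screened(self) -> int:
        return self.identified - self.duplicates_removed

    @property
    def full_text_assessed(self) -> int:
        return self.screened - self.excluded_title_abstract

    @property
    def included(self) -> int:
        return self.full_text_assessed - self.excluded_full_text

    def validate(self) -> None:
        """Raise if any derived count is negative, which signals a bookkeeping error."""
        for name in ("screened", "full_text_assessed", "included"):
            if getattr(self, name) < 0:
                raise ValueError(f"{name} is negative; check the recorded counts")


# Made-up numbers for illustration only.
counts = PrismaCounts(identified=1200, duplicates_removed=200,
                      excluded_title_abstract=850, excluded_full_text=110)
counts.validate()
print(counts.screened, counts.full_text_assessed, counts.included)  # 1000 150 40
```

Deriving each downstream count from the recorded exclusions, rather than typing every box by hand, keeps the diagram internally consistent when screening decisions are revised.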

3. Data Extraction & Critical Appraisal (Risk of Bias Assessment)

Data from included studies are extracted into standardized forms by two independent reviewers. Extracted information typically includes study design, sample characteristics, exposure details, outcome measures, results, and funding sources [5]. Concurrently, the methodological quality and risk of bias of each study are critically appraised. For animal toxicology studies, tools such as SYRCLE’s risk of bias tool or the NTP/OHAT risk of bias rating are employed [1]. This step evaluates internal validity by assessing elements like randomization, blinding, allocation concealment, and handling of incomplete data [5]. The overall quality of evidence across studies for a specific outcome may be graded using systems like GRADE [5].

4. Evidence Synthesis & Interpretation

The final stage involves synthesizing the extracted data. A qualitative synthesis summarizes the findings, often tabulating results and describing patterns across studies. Where studies are sufficiently homogeneous in design, exposure, and outcome, a quantitative synthesis (meta-analysis) can be performed. This uses statistical methods to calculate a pooled effect estimate (e.g., standardized mean difference, relative risk) [5] [3]. Heterogeneity among studies is statistically assessed (e.g., using I²). The synthesis must transparently relate the strength and limitations of the evidence (considering risk of bias, inconsistency, and indirectness) to the final conclusions [1].
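To make the standardized mean difference concrete, the sketch below computes Hedges' g (a small-sample-corrected SMD) and its approximate variance from group means, standard deviations, and sample sizes, using the usual pooled-SD formulas. The function name and the example values (hypothetical serum ALT in treated versus control rats) are assumptions for illustration only.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g) for a treated-versus-control comparison."""
    df = n_t + n_c - 2
    # Pooled standard deviation across the two groups.
    pooled_sd = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / df)
    d = (mean_t - mean_c) / pooled_sd           # Cohen's d
    j = 1 - 3 / (4 * df - 1)                    # small-sample correction factor
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    return j * d, j ** 2 * var_d


# Hypothetical data: ALT of 60 vs 50 U/L, SD 10 in both groups, n = 10 per group.
g, var_g = hedges_g(60.0, 10.0, 10, 50.0, 10.0, 10)
print(round(g, 3), round(var_g, 3))  # 0.958 0.206
```

Each study's (g, variance) pair is the input a meta-analysis needs; animal studies with small group sizes are the case where the correction factor matters most.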

Table 2: Key Steps and Methodological Considerations in a Toxicology Systematic Review

| Review Stage | Core Action | Toxicology-Specific Considerations & Tools |
|---|---|---|
| Planning | Define PICO question; Write/register protocol. | Adapt PICO for exposure; Use PROSPERO for registration. |
| Searching | Execute comprehensive, multi-database search. | Include toxicology-specific databases (e.g., TOXLINE); Account for complex chemical nomenclature. |
| Screening | Apply inclusion/exclusion criteria via dual independent review. | Manage large volumes of in vitro and in vivo studies; Use software for efficiency. |
| Appraisal | Assess risk of bias/study quality. | Use specialized tools (e.g., SYRCLE's RoB for animal studies; OHAT tool). |
| Extraction | Systematically extract relevant data. | Design forms for diverse endpoints (histopathology, clinical chemistry, omics data). |
| Synthesis | Qualitatively and/or quantitatively synthesize evidence. | Address high heterogeneity across species, strains, and designs; Consider dose-response. |

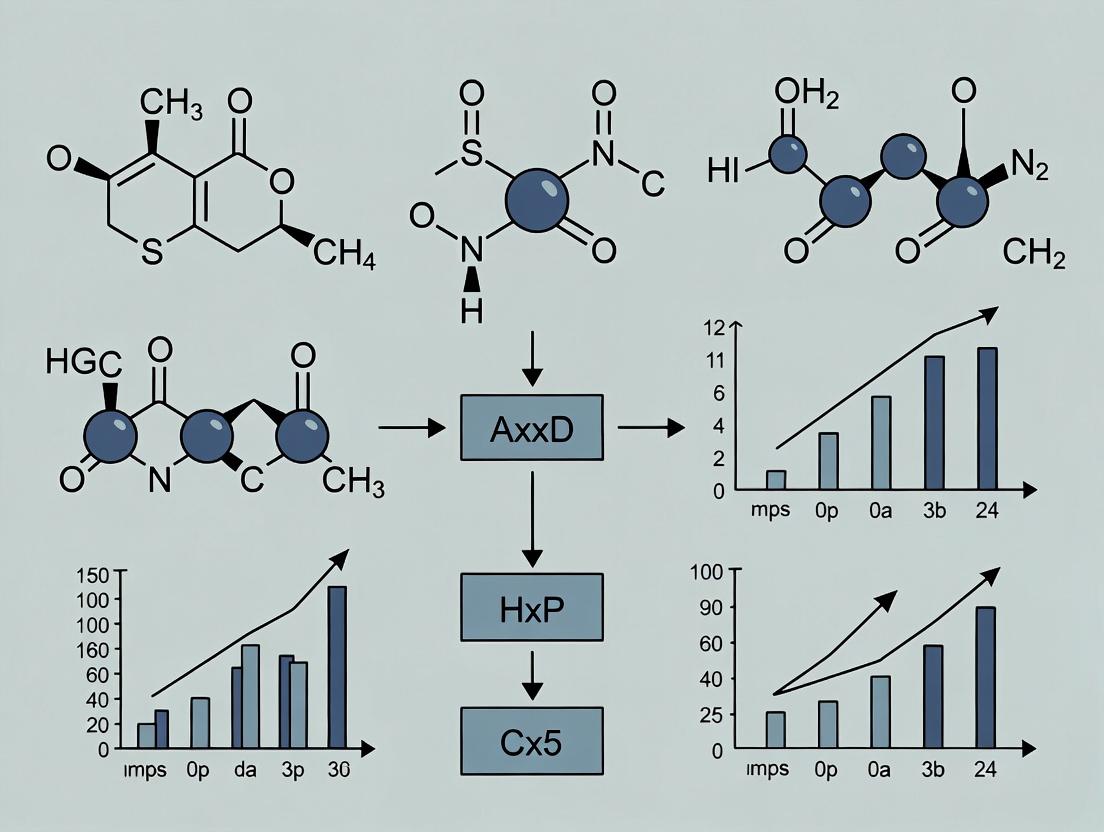

Visualization of Systematic Review Workflows

[Figure: Systematic Review Workflow in Toxicology]

[Figure: Adapting the PICO Framework for Toxicology Questions]

The Scientist's Toolkit: Essential Software for Systematic Reviews

Modern systematic reviews are supported by specialized software that manages the workflow, from reference screening to data synthesis. The choice of tool depends on project scale, budget, and specific needs [6] [7].

Table 3: Key Software Tools for Managing Systematic Reviews [8] [6] [7]

| Tool Name | Primary Function & Key Features | Cost Model | Best For |
|---|---|---|---|
| CADIMA | A free, web-based platform supporting the entire review process: protocol writing, literature screening, data extraction, and reporting. | Free | Academic researchers and projects with limited funding. |
| Covidence | Streamlines title/abstract screening, full-text review, risk-of-bias assessment (Cochrane RoB), and data extraction. Features machine learning to prioritize records. | Subscription (Institutional licenses common) | Medical and health science reviews; teams valuing an intuitive, guided workflow. |
| Rayyan | AI-powered tool focused on efficient and collaborative blind screening of abstracts and titles. Uses machine learning to suggest inclusion/exclusions. | Freemium (Free with paid upgrades) | Rapid screening phases; collaborative teams needing a low-cost entry point. |
| DistillerSR | An enterprise-level platform with high configurability, advanced workflow automation, and robust audit trails. Strong API for integration. | Subscription (Higher cost) | Large-scale projects (e.g., regulatory agencies, large research consortia) requiring compliance and customization. |
| EPPI-Reviewer | A comprehensive tool for complex data synthesis, supporting meta-analysis, textual data coding, and diverse review types (mixed methods, qualitative). | Subscription | Reviews requiring deep qualitative or complex quantitative synthesis beyond basic meta-analysis. |
| SUMARI (JBI) | Supports the entire lifecycle for 10+ review types (effectiveness, qualitative, economic, scoping). Integrated with JBI methodology. | Subscription | Researchers aligned with Joanna Briggs Institute (JBI) methodology for evidence synthesis. |
| RevMan 5 | The standard software for preparing and maintaining Cochrane Reviews. Includes tools for meta-analysis and generation of 'Summary of Findings' tables. | Free for non-commercial use | Teams conducting Cochrane-style reviews or requiring rigorous meta-analysis. |

For toxicology-specific assessments, the Health Assessment Workspace Collaborative (HAWC) is a notable open-source platform designed to support the entire workflow of chemical health assessments, including systematic review, data extraction, dose-response analysis, and evidence visualization [8] [9].

The discipline of toxicology is undergoing a foundational shift from a reliance on traditional, often siloed data assessment toward a rigorous, transparent, and reproducible Evidence-Based Toxicology (EBT) paradigm. This transition is critical for addressing modern challenges, including the evaluation of novel chemical substances, integrating New Approach Methodologies (NAMs), and maintaining public trust in regulatory decisions [10]. At the core of EBT lies the systematic review, a methodological process designed to minimize bias and subjectivity by comprehensively identifying, appraising, and synthesizing all relevant evidence on a specific question [11].

Systematic reviews provide the essential scientific foundation for credible hazard identification, dose-response assessment, and ultimately, risk-informed regulation. Their formal adoption by agencies like the U.S. National Toxicology Program (NTP) underscores their role as a gold standard for evidence integration [12]. This guide details the procedural framework for conducting a systematic review within toxicology, providing researchers and regulatory professionals with the methodological toolkit necessary to generate defensible, high-quality evidence assessments.

Methodological Framework: The Systematic Review Workflow

The conduct of a systematic review is a multi-stage, iterative process. Adherence to a predefined, peer-reviewed protocol is essential to ensure objectivity and reproducibility. The following workflow outlines the critical phases, emphasizing steps specific to toxicological evidence.

Table 1: Key Phases of a Systematic Review in Toxicology

| Phase | Core Activities | Key Outputs & Tools |
|---|---|---|
| 1. Problem Formulation & Protocol | Define the scope using PECO; develop and register the review protocol. | PECO statement; pre-registered protocol [13]. |
| 2. Systematic Search | Execute comprehensive, multi-database searches; manage records. | Search strategy document; de-duplicated library (EndNote, Covidence) [11]. |
| 3. Study Screening & Selection | Apply PECO criteria via title/abstract and full-text screening in duplicate. | Flow diagram of included/excluded studies; inter-reviewer agreement metrics. |
| 4. Data Extraction & Quality Assessment | Extract predefined data using standardized forms; assess risk of bias/study reliability. | "Characteristics of Included Studies" table; risk-of-bias ratings [14]. |
| 5. Evidence Synthesis & Integration | Synthesize data qualitatively or via meta-analysis; grade confidence in the body of evidence. | Narrative synthesis; forest plots; evidence profile tables (e.g., OHAT approach) [12]. |
| 6. Reporting & Application | Draft final report following PRISMA guidelines; articulate conclusions for hazard assessment or regulation. | Published systematic review; summary for regulatory docket (e.g., EPA SNUR analysis) [15]. |

Phase 1: Problem Formulation and Protocol Development

The initial and most critical step is crafting a precise and actionable research question, typically structured using the PECO framework (Population, Exposure, Comparator, Outcome) [13]. In toxicology:

- Population: The organism (e.g., human, rodent, zebrafish embryo).

- Exposure: The chemical agent, its dose, route, and duration.

- Comparator: The control group (e.g., vehicle control, low-dose cohort).

- Outcome: The measured health effect (e.g., hepatocellular adenoma, serum ALT elevation).

A narrowly scoped PECO question enhances specificity but may limit generalizability, while a broad question increases resource demands [11]. Recent discussions highlight the value of an iterative approach to problem formulation, where preliminary screening results can inform refinements to PECO criteria to streamline the assessment without compromising its objectives [13]. The finalized question forms the basis of a detailed protocol, which should be registered in a public platform to enhance transparency and reduce bias.
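A PECO statement becomes easier to apply consistently when it is written down as structured eligibility criteria. The sketch below is one possible representation; the class, field names, and example values are hypothetical, and real screening decisions still rest on reviewer judgement rather than exact string matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECO:
    """Eligibility criteria; all terms are stored in lower case."""
    populations: frozenset
    exposures: frozenset
    comparators: frozenset
    outcomes: frozenset


def exclusion_reasons(study, peco):
    """Return the PECO elements a study fails; an empty list means eligible."""
    reasons = []
    if study["population"].lower() not in peco.populations:
        reasons.append("population")
    if study["exposure"].lower() not in peco.exposures:
        reasons.append("exposure")
    if study["comparator"].lower() not in peco.comparators:
        reasons.append("comparator")
    # A study qualifies on outcome if it reports at least one outcome of interest.
    if not {o.lower() for o in study["outcomes"]} & peco.outcomes:
        reasons.append("outcome")
    return reasons


# Hypothetical criteria for "Chemical X" and liver effects in rodents.
peco = PECO(
    populations=frozenset({"rat", "mouse"}),
    exposures=frozenset({"chemical x"}),
    comparators=frozenset({"vehicle control"}),
    outcomes=frozenset({"serum alt elevation", "hepatocellular adenoma"}),
)
study = {"population": "zebrafish", "exposure": "Chemical X",
         "comparator": "vehicle control", "outcomes": ["body weight"]}
print(exclusion_reasons(study, peco))  # ['population', 'outcome']
```

Recording which element failed, rather than a bare include/exclude flag, supplies the exclusion reasons needed later for the flow diagram.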

Phase 2: Comprehensive Literature Search and Management

A systematic search aims to capture all potentially relevant evidence, mitigating publication bias. This requires searching multiple bibliographic databases (e.g., PubMed/MEDLINE, Embase, Scopus, and TOXLINE content, now reached through PubMed) with tailored syntax [11]. Searches must be supplemented by reviewing reference lists of included studies and key reviews, and by searching for gray literature (e.g., regulatory reports, thesis repositories). Retrieved records are imported into reference management software, and duplicates are removed using tools like EndNote, Covidence, or Rayyan [11].
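De-duplication is normally handled by the reference manager, but the underlying logic is simple enough to sketch. The example below treats two records as duplicates if they share a DOI or a normalized title plus year. The record layout, function names, and DOIs are made up for illustration and do not describe how EndNote, Covidence, or Rayyan work internally.

```python
import re


def normalise_title(title):
    """Lower-case a title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record for each DOI or (normalized title, year) match."""
    seen = set()
    unique = []
    for rec in records:
        keys = {("title", normalise_title(rec["title"]), rec.get("year"))}
        doi = (rec.get("doi") or "").strip().lower()
        if doi:
            keys.add(("doi", doi))
        if keys & seen:
            continue  # matches an earlier record on at least one key
        seen |= keys
        unique.append(rec)
    return unique


# Hypothetical exports from two databases.
records = [
    {"title": "Hepatotoxicity of Chemical X in rats", "year": 2019, "doi": "10.1000/abc"},
    {"title": "Hepatotoxicity of chemical X in rats.", "year": 2019, "doi": None},
    {"title": "Renal effects of Chemical X", "year": 2020, "doi": "10.1000/XYZ"},
    {"title": "Renal effects of Chemical X: a pilot", "year": 2020, "doi": "10.1000/xyz"},
]
unique = deduplicate(records)
print(len(records) - len(unique), "duplicates removed")  # 2 duplicates removed
```

The number of duplicates removed should be logged, since it is one of the counts reported in the flow diagram.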

Phase 3: Study Screening and Selection

Studies are screened in two sequential stages (title/abstract, then full-text) against the pre-defined PECO eligibility criteria. This process should be conducted independently by at least two reviewers, with conflicts resolved through discussion or a third adjudicator [14]. The screening process, including reasons for exclusion at the full-text stage, should be documented in a flow diagram.
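Agreement between the two screeners is commonly summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. A minimal sketch follows; the function name and the example decisions are illustrative.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two reviewers rating the same records."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both reviewers must rate the same non-empty set of records")
    n = len(ratings_a)
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    # Chance agreement: product of each reviewer's marginal proportions, summed over categories.
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / n ** 2
    if expected == 1:
        return 1.0  # both reviewers used a single identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical title/abstract decisions for ten records.
reviewer_1 = ["include", "include", "exclude", "exclude", "exclude",
              "include", "exclude", "exclude", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude", "exclude",
              "include", "exclude", "exclude", "include", "exclude"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 3))  # 0.524
```

Here raw agreement is 80%, but because both reviewers exclude most records, chance agreement is already 58%, which is why kappa is noticeably lower than the raw figure.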

Phase 4: Data Extraction and Risk of Bias Assessment

Data from included studies are extracted using standardized, pilot-tested forms [14]. Extraction should also be performed in duplicate to ensure accuracy. Key data points include study design, exposure parameters, participant/subject characteristics, outcome data, and funding sources.

Concurrently, the methodological risk of bias (internal validity) or reliability of each study is evaluated using established tools. For animal studies, tools like the OHAT Risk of Bias Rating or SYRCLE's tool are common. For human epidemiological studies, the Newcastle-Ottawa Scale may be used [11]. This assessment is crucial for interpreting findings and weighting studies during synthesis.

Phase 5: Evidence Synthesis and Integration

Extracted data are synthesized to answer the PECO question. For quantitative data on a common outcome, a meta-analysis can be performed using statistical software (e.g., R, RevMan) to calculate a pooled effect estimate [11]. Heterogeneity between studies must be assessed (e.g., via the I² statistic). Where statistical pooling is inappropriate, a structured narrative synthesis is conducted.
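As an illustration of what such software computes, the sketch below pools study-level effects with inverse-variance weights, derives Cochran's Q and I², and applies the DerSimonian-Laird estimate of between-study variance for a random-effects result. This is one common estimator chosen here for brevity, not necessarily the method a given review or package uses; the function name and inputs are assumptions.

```python
import math


def random_effects_meta(effects, variances):
    """DerSimonian-Laird random-effects pooling with Q and I-squared."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies with matching variances")
    w = [1 / v for v in variances]                        # fixed-effect weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0       # between-study variance
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0   # % of variation due to heterogeneity
    w_re = [1 / (v + tau2) for v in variances]            # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    return {"pooled": pooled, "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
            "tau2": tau2, "q": q, "i2": i2}


# Two hypothetical studies with very different effects and equal precision.
result = random_effects_meta([0.0, 1.0], [0.1, 0.1])
print(round(result["pooled"], 2), round(result["tau2"], 2), round(result["i2"], 1))
```

With inputs such as Hedges' g and its variance per study, a high I² like the one in this toy example would argue for exploring species, strain, or dose as sources of heterogeneity before reporting a single pooled value.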

The final step is grading the confidence in the body of evidence. Frameworks like OHAT or GRADE evaluate factors such as risk of bias, consistency, directness, and precision across studies to categorize confidence as high, moderate, low, or very low [12]. This graded confidence directly informs the strength of the hazard conclusion.

Phase 6: Reporting and Regulatory Application

The review should be reported following the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines. The final output provides a transparent, auditable evidence base. This evidence directly supports regulatory actions, such as the U.S. EPA's development of Significant New Use Rules (SNURs), where the systematic review substantiates the identification of potential unreasonable risk and the need for exposure controls [15]. It also informs the integration of NAMs into next-generation risk assessments by establishing a robust baseline of traditional evidence for comparison [10].

Experimental Protocols & The Scientist's Toolkit

A pivotal application of systematic reviews in toxicology is to determine whether existing data are sufficient for safety assessment or if new, targeted research is required. The following protocol exemplifies a hypothesis-driven in vivo study designed to fill a specific evidence gap identified through a systematic review.

Targeted Experimental Protocol: 28-Day Repeated Dose Oral Toxicity Study

- Objective: To characterize the dose-response relationship for hepatic and renal effects of Chemical X, identified as a data gap for repeated-dose endpoints.

- Test System: Young adult Sprague-Dawley rats (e.g., n=10/sex/group).

- Test Article & Dose Selection: Chemical X, administered via oral gavage in a suitable vehicle. Doses are selected based on a systematic review of existing short-term data (e.g., the No Observed Adverse Effect Level [NOAEL] from a 14-day study) and may include that NOAEL, a mid-dose, and a higher effect-level dose, alongside a concurrent vehicle control group.

- Core Measurements:

  - Clinical Observations: Twice daily for morbidity/mortality; detailed weekly physical examinations.

  - Body Weight & Food Consumption: Measured and recorded at least twice weekly.

  - Clinical Pathology: At termination, collect blood for hematology and clinical chemistry (e.g., ALT, AST, BUN, Creatinine). Collect urine for urinalysis.

  - Necropsy & Histopathology: Full gross necropsy. Preserve liver, kidneys, and other target organs (as indicated by the literature) for microscopic examination by a board-certified veterinary pathologist.

- Statistical Analysis: Data analyzed using appropriate parametric or non-parametric methods. Dose-response trends evaluated, and a benchmark dose (BMD) may be modeled for critical endpoints.

- Reporting: Results reported per OECD Test Guideline 407 principles, ensuring compatibility for inclusion in future systematic reviews and regulatory dossiers.

Table 2: Research Reagent Solutions for Core Toxicological Assays

| Research Reagent / Material | Primary Function in Toxicology Studies |
|---|---|
| Formalin (10% Neutral Buffered) | Standard fixative for preserving tissue architecture for histopathological evaluation. |
| ALT (Alanine Aminotransferase) & AST (Aspartate Aminotransferase) Assay Kits | Colorimetric or kinetic measurement of these enzymes in serum as sensitive biomarkers of hepatocellular injury. |
| Creatinine Assay Kit & BUN (Blood Urea Nitrogen) Assay Kit | Key diagnostic reagents for assessing renal function by measuring filtration and waste product concentration. |
| Hematology Analyzer Controls & Calibrators | Essential for ensuring accuracy and precision in complete blood count (CBC) analysis, assessing effects on hematopoiesis and immune cells. |
| RNA Stabilization Reagent (e.g., RNAlater) | Preserves RNA integrity in tissues for subsequent transcriptomic analysis, a key component in NAMs and mechanistic toxicology. |
| CYP450 Enzyme Activity Assay Substrates | Fluorescent or luminescent probes used to measure the activity of specific cytochrome P450 isoforms, indicating potential for metabolic induction or inhibition. |
| LC-MS/MS Grade Solvents and Standards | Critical for the accurate quantification of chemical concentrations in dosing formulations, serum, and tissues via liquid chromatography-tandem mass spectrometry. |

Visualizing Workflows and Relationships

[Figure: Systematic Review Methodology in EBT]

[Figure: PECO Framework for Problem Formulation]

The systematic review is not merely a literature summary but a rigorous, transparent scientific investigation in its own right. Its disciplined application is imperative for advancing EBT, resolving "dueling assessments" through methodological clarity, and building a robust, credible foundation for chemical safety decisions [13]. As toxicology evolves with NAMs and complex data streams, the principles of systematic review—structured problem formulation, comprehensive evidence collection, critical appraisal, and transparent synthesis—will remain the indispensable bedrock for trustworthy science that effectively informs public health protection.

Systematic reviews in toxicology and environmental health represent a distinct methodological paradigm from clinical medical reviews, primarily due to their fundamental purpose: hazard identification and risk assessment. While clinical reviews typically evaluate the efficacy and safety of interventions within controlled settings, toxicological systematic reviews assess whether an environmental agent, chemical, or mixture causes an adverse effect under specific exposure conditions [16]. This core objective necessitates the integration of multiple evidence streams—including human epidemiological studies, controlled animal toxicology experiments, and mechanistic in vitro data—to reach a causal conclusion about hazard [17]. The process demands tailored frameworks, such as the OHAT (Office of Health Assessment and Translation) approach or the COSTER (Conduct of Systematic Reviews in Toxicology and Environmental Health Research) recommendations, which extend traditional systematic review methodology to handle this breadth and complexity [12] [18]. This guide delineates the key methodological distinctions, with a focus on evidence integration and hazard conclusion formulation, providing researchers with a technical roadmap for conducting rigorous toxicological systematic reviews.

Foundational Methodological Distinctions

The conduct of a systematic review in toxicology diverges from its clinical counterpart at every stage, from problem formulation to conclusion. These differences stem from the nature of the research questions, the available evidence, and the intended use of the output for public health protection and regulatory decision-making.

Table 1: Core Differences Between Clinical and Toxicology Systematic Reviews

| Aspect | Clinical Systematic Review (e.g., Therapeutic Intervention) | Toxicology Systematic Review (e.g., Hazard Identification) |
|---|---|---|
| Primary Objective | Determine efficacy and safety of an intervention (therapy, prevention). | Determine whether an agent causes an adverse health effect (hazard identification) [16]. |
| Key Question Framework | PICO (Population, Intervention, Comparator, Outcome). | PECOTS (Population, Exposure, Comparator, Outcome, Timing, Setting) [16]. |
| Primary Evidence Streams | Human studies only (RCTs as gold standard, observational studies). | Integrated streams: Human (observational), Animal (experimental), Mechanistic (in vitro, in silico) [16] [17]. |
| Common Study Designs | Randomized Controlled Trials (RCTs), cohort, case-control. | Cohort, case-control (human); controlled laboratory experiments (animal); biochemical, cell-based assays (mechanistic). |
| Exposure Assessment | Controlled, known dose/intervention. | Often estimated, historical, or measured with error; wide range of doses, including environmentally relevant levels. |
| Outcome Assessment | Clinical endpoints, patient-reported outcomes. | Broad range of pathological, physiological, and molecular endpoints across species and systems. |
| Risk of Bias Tools | Cochrane RoB (for RCTs), ROBINS-I (for observational). | Domain-based tools specific to evidence stream (e.g., OHAT Risk of Bias Tool for human & animal studies) [16]. |
| Evidence Synthesis Goal | Quantitative meta-analysis of effect measures (e.g., RR, OR). | Qualitative weight-of-evidence integration; quantitative synthesis may be performed within a stream if studies are sufficiently similar [17]. |
| Final Output | Summary of clinical effect, often with a quantitative estimate. | Hazard identification conclusion (e.g., "known to be a hazard," "suspected hazard," "not classifiable") [12]. |

The Seven-Step Framework for Toxicology Systematic Reviews

The OHAT framework provides a standardized, seven-step procedure for conducting systematic reviews that integrate multiple evidence streams to reach hazard identification conclusions [16].

Step 1: Problem Formulation & Protocol Development

This critical first stage involves defining the PECOTS criteria, which explicitly frame the review around Exposure rather than a clinical intervention [16]. A detailed, publicly registered protocol is developed a priori, specifying the methods for all subsequent steps, including how different evidence streams will be identified and integrated.

Step 2: Search & Study Selection

A comprehensive search is executed across multidisciplinary databases (e.g., PubMed/MEDLINE, Embase, Scopus, and the former TOXNET resources, which NLM has redistributed to PubMed and its other services since 2019) to capture literature from medical, toxicological, and environmental sciences [16]. Study selection is documented in a PRISMA flow diagram, with eligibility criteria applied independently by two reviewers to minimize bias [19] [20].

Step 3: Data Extraction

Structured forms are used to extract detailed data on study design, population/exposure characteristics, outcomes, and results. Data extraction is typically performed by one reviewer and verified by a second to ensure accuracy [21]. Data from different streams (human, animal, mechanistic) are often extracted into separate, tailored forms.
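The verification pass can be supported by a simple field-by-field comparison of the two reviewers' extraction forms. The sketch below assumes each form is a flat dictionary; the field names and values are hypothetical.

```python
def extraction_discrepancies(primary, verifier, numeric_tolerance=1e-9):
    """Return {field: (primary value, verifier value)} for every mismatch."""
    diffs = {}
    for field in sorted(set(primary) | set(verifier)):
        a, b = primary.get(field), verifier.get(field)
        both_numeric = all(
            isinstance(v, (int, float)) and not isinstance(v, bool) for v in (a, b)
        )
        if both_numeric:
            same = abs(a - b) <= numeric_tolerance  # tolerate floating-point noise
        else:
            same = a == b
        if not same:
            diffs[field] = (a, b)
    return diffs


# Hypothetical extraction of one animal study by two reviewers.
primary = {"species": "rat", "strain": "Sprague-Dawley",
           "dose_mg_per_kg": 50.0, "n_per_group": 10}
verifier = {"species": "rat", "strain": "Wistar",
            "dose_mg_per_kg": 50.0, "n_per_group": 10, "route": "gavage"}
diffs = extraction_discrepancies(primary, verifier)
for field, (a, b) in diffs.items():
    print(f"{field}: primary={a!r} verifier={b!r}")
```

Fields missing from one form appear as `None` on that side, so omissions are flagged alongside disagreements and can be resolved against the source publication.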

Step 4: Risk of Bias Assessment of Individual Studies

The credibility of each study is evaluated using evidence-stream-specific tools. For human studies, tools assess domains like confounding and exposure characterization. For animal studies, domains include randomization, blinding, and attrition. Mechanistic studies are evaluated for reliability and relevance [16]. This step is distinct from clinical reviews, which may not assess laboratory-based evidence.
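Domain-level ratings are usually tabulated across studies so that recurring weaknesses stand out. The sketch below assumes a four-level rating scale of the kind used in domain-based tools (the labels, study names, and domain names here are illustrative) and tallies ratings per domain.

```python
from collections import Counter, defaultdict

# Assumed four-level scale; adjust the labels to the tool named in the protocol.
RATINGS = ("definitely low", "probably low", "probably high", "definitely high")


def summarise_by_domain(assessments):
    """Count how many studies received each rating in each domain."""
    summary = defaultdict(Counter)
    for study, domains in assessments.items():
        for domain, rating in domains.items():
            if rating not in RATINGS:
                raise ValueError(f"{study}/{domain}: unknown rating {rating!r}")
            summary[domain][rating] += 1
    return {domain: dict(counts) for domain, counts in summary.items()}


def studies_with_rating(assessments, rating):
    """List studies that received the given rating in at least one domain."""
    return sorted(s for s, d in assessments.items() if rating in d.values())


# Hypothetical ratings for two animal studies.
assessments = {
    "Study A": {"randomization": "probably low", "blinding": "probably high",
                "attrition": "definitely low"},
    "Study B": {"randomization": "definitely high", "blinding": "probably high",
                "attrition": "probably low"},
}
summary = summarise_by_domain(assessments)
print(summary["blinding"])                                 # {'probably high': 2}
print(studies_with_rating(assessments, "definitely high"))  # ['Study B']
```

A per-domain summary like this is the data behind the familiar risk-of-bias heat map and feeds directly into the body-of-evidence rating that follows.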

Step 5: Rate Confidence in the Body of Evidence

The overall reliability of the evidence for a specific outcome within each stream (e.g., human evidence for liver toxicity, animal evidence for liver toxicity) is rated. Systems like GRADE (Grading of Recommendations Assessment, Development and Evaluation) or its adaptations are used, considering risk of bias, consistency, directness, precision, and other factors [16].
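GRADE-style ratings start from an initial confidence level and move it down or up according to the factors listed above. The sketch below reduces that to simple bookkeeping so the logic is visible; in practice each adjustment is a documented expert judgement, not arithmetic, and the level names, factor names, and step sizes here are assumptions for illustration.

```python
LEVELS = ["very low", "low", "moderate", "high"]  # ordered from weakest to strongest


def rate_confidence(initial, downgrades, upgrades):
    """Shift the initial level by the net number of steps, clamped to the scale."""
    if initial not in LEVELS:
        raise ValueError(f"unknown initial level: {initial!r}")
    steps = sum(upgrades.values()) - sum(downgrades.values())
    index = LEVELS.index(initial) + steps
    return LEVELS[max(0, min(index, len(LEVELS) - 1))]


# Hypothetical body of animal evidence for one outcome.
rating = rate_confidence(
    initial="high",
    downgrades={"risk of bias": 1, "inconsistency": 0, "indirectness": 0, "imprecision": 1},
    upgrades={"dose-response": 1},
)
print(rating)  # moderate
```

Keeping the per-factor steps in named dictionaries makes it straightforward to reproduce them in an evidence profile table alongside the written justification.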

Step 6: Translate Confidence Ratings into Levels of Evidence

The confidence ratings are converted into discrete levels of evidence for each stream (e.g., "high," "moderate," "low," or "evidence of no effect") [16]. This creates a standardized input for the final integration step.

Step 7: Integrate Evidence Streams to Develop Hazard Identification Conclusions

This is the most distinctive step. Using a predefined method (e.g., the OHAT approach or a visual integration tool), the levels of evidence from all streams are weighed together [17]. The process is deliberative and consensus-based, considering the strengths and limitations of each stream: human data provide direct relevance but often have exposure uncertainty, animal data provide controlled exposure but require cross-species extrapolation, and mechanistic data support biological plausibility but may not predict apical outcomes. The final output is a hazard conclusion (e.g., "known," "presumed," or "suspected" to be a hazard, "not classifiable," or "not identified" as a hazard) [12] [16].
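When the integration method is predefined, it can be recorded as an explicit lookup from stream-level evidence to a conclusion, which makes the mapping auditable. The matrix below is a hypothetical placeholder written for this sketch; its cell values are assumptions and should be replaced with the scheme specified in the review protocol or the relevant handbook. Mechanistic evidence is carried along only as a free-text note, since how it adjusts a conclusion is a matter of judgement.

```python
# Hypothetical mapping: (human level, animal level) -> hazard conclusion.
# The cell values are illustrative assumptions, not an official scheme.
ILLUSTRATIVE_MATRIX = {
    ("high", "high"): "known",
    ("high", "moderate"): "known",
    ("high", "low"): "known",
    ("moderate", "high"): "presumed",
    ("moderate", "moderate"): "presumed",
    ("moderate", "low"): "suspected",
    ("low", "high"): "presumed",
    ("low", "moderate"): "suspected",
    ("low", "low"): "not classifiable",
}


def integrate(human, animal, matrix=ILLUSTRATIVE_MATRIX, mechanistic_note=None):
    """Look up the conclusion for a pair of stream-level evidence ratings."""
    try:
        conclusion = matrix[(human, animal)]
    except KeyError:
        raise ValueError(f"no entry for human={human!r}, animal={animal!r}") from None
    return {"human": human, "animal": animal,
            "conclusion": conclusion, "mechanistic_note": mechanistic_note}


outcome = integrate("low", "high",
                    mechanistic_note="in vitro data support biological plausibility")
print(outcome["conclusion"])  # presumed (under the illustrative matrix above)
```

Passing the matrix as a parameter keeps the code independent of any one framework, so the same function can document whichever integration scheme the protocol commits to.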

[Figure: OHAT Framework: Evidence Integration Workflow]

Detailed Methodological Protocols for Key Experiments

Implementing the systematic review framework requires precise protocols for handling different evidence streams. The following table outlines the core methodological considerations for each.

Table 2: Methodological Protocols for Evidence Streams in Toxicology Reviews

| Evidence Stream | Core Study Designs | Key Data Extraction Elements | Risk of Bias Assessment Domains | Special Considerations for Synthesis |
|---|---|---|---|---|
| Human (Epidemiological) | Cohort, Case-Control, Cross-Sectional | Exposure assessment method & metric; outcome definition & ascertainment; confounder adjustment & statistical model; effect estimate (RR, OR, HR) with CI. | (1) Participant selection; (2) Exposure characterization; (3) Outcome assessment; (4) Confounding control; (5) Incomplete data; (6) Selective reporting [16]. | Meta-analysis often limited by heterogeneity in exposure/outcome measurement; emphasis on consistency, dose-response, and temporal relationship. |
| Animal (Toxicology) | Controlled Laboratory Experiments (in vivo) | Species, strain, sex, age; exposure route, duration, frequency, dose levels; detailed outcome data (incidence, severity, time-to-onset); historical control data. | (1) Sequence generation (randomization); (2) Allocation concealment; (3) Blinding; (4) Incomplete outcome data; (5) Selective outcome reporting [16]. | Quantitative synthesis possible for similar studies (e.g., benchmark dose modeling); critical evaluation of study relevance to human exposure scenarios (e.g., dose, route). |
| Mechanistic (Other Relevant Data) | in vitro assays, ex vivo studies, in silico models, read-across. | Test system (cell line, primary cells, tissue); biological endpoint (cytotoxicity, genotoxicity, receptor binding); concentration/dose-response relationship; relevance to hypothesized Adverse Outcome Pathway (AOP). | (1) Reliability (e.g., protocol adherence, replication); (2) Relevance (biological/chemical similarity to human case); (3) Consistency (within and across test systems) [16]. | Not used in isolation for hazard identification; serves to support biological plausibility, explain concordance/discordance between human and animal data, or fill data gaps. |

Conducting a high-quality toxicology systematic review requires leveraging specialized tools and databases beyond those used in clinical medicine.

Table 3: Research Reagent Solutions for Toxicology Systematic Reviews

| Tool/Resource Category | Specific Examples | Function & Utility |

|---|---|---|

| Specialized Literature Databases | TOXNET (via PubMed), Scopus, Embase, Web of Science, ISTA (Index to Scientific & Technical Abstracts). | Broad coverage of toxicological, pharmacological, and environmental science literature not fully indexed in MEDLINE [16]. |

| Systematic Review Management Software | Covidence, Rayyan, DistillerSR. | Platforms for collaborative title/abstract screening, full-text review, data extraction, and generation of PRISMA flow diagrams [21] [20]. |

| Risk of Bias / Study Quality Tools | OHAT Risk of Bias Tool, SYRCLE's RoB tool for animal studies, Klimisch Score for in vitro studies. | Standardized, evidence-stream-specific tools to evaluate internal validity of individual studies [16]. |

| Data Extraction & Management | Custom forms in Excel or Google Sheets, systematic review software modules, electronic lab notebooks. | Structured templates to consistently capture critical data from heterogeneous study designs across multiple streams [21]. |

| Evidence Integration & Visualization | The UK COC/COT Visualisation Tool [17], OHAT evidence profile tables, AOP (Adverse Outcome Pathway) knowledgebase. | Frameworks and graphical tools to transparently document the weight-of-evidence judgment and communicate how different streams contributed to the final hazard conclusion [17]. |

| Chemical & Toxicological Data Repositories | EPA CompTox Chemicals Dashboard, NTP CEBS (Chemical Effects in Biological Systems), OECD eChemPortal. | Sources for chemical identifiers, properties, and curated toxicological data to inform problem formulation and data extraction. |

Visualizing Evidence Integration: A Weight-of-Evidence Approach

A major challenge is transparently communicating the integration process. Frameworks like the one proposed by the UK Committees on Toxicity and Carcinogenicity advocate for visual synthesis tools [17]. The following diagram conceptualizes this deliberative, qualitative process, where the strength and consistency of evidence within each stream, along with considerations of biological plausibility and concordance across streams, inform a final expert judgment on the probability of causation.

Weight of Evidence Integration Process

Executive Summary

Within toxicology research, a field that directly informs chemical safety, regulatory decisions, and public health, the robustness of evidence is paramount. Systematic reviews and meta-analyses represent the pinnacle of the evidence hierarchy, providing synthesized conclusions from all available studies [11]. The validity of these conclusions and their utility for risk assessment depend entirely on the rigorous application of three core principles: transparency, reproducibility, and minimizing bias. This guide provides a technical roadmap for embedding these principles into every phase of a systematic review in toxicology, from question formulation to data synthesis. Adherence to this framework ensures that reviews produce reliable, actionable evidence capable of withstanding scientific and regulatory scrutiny.

Foundational Framework: The Systematic Review Protocol

A pre-registered, detailed protocol is the bedrock of a transparent, reproducible, and unbiased systematic review. It commits the research team to a predetermined plan, safeguarding against selective reporting and data-driven analysis.

1.1 Formulating a Structured Research Question

The process begins with a precisely defined research question, commonly structured using the PICO(TTS) framework, adapted for toxicology [11].

- Population (P): The biological system (e.g., "in vivo mammalian models," "primary human hepatocytes").

- Intervention/Exposure (I/E): The toxicant or chemical of interest, including dose, route, and duration.

- Comparator (C): The control group (e.g., vehicle control, low-dose exposure, an alternative chemical).

- Outcome (O): The measured toxicological endpoint (e.g., mortality, tumor incidence, serum ALT level, gene expression change).

- Time, Type of Study, Setting (TTS): Specifies the relevant exposure windows, preferred study designs (e.g., randomized controlled trials, cohort studies), and experimental settings [11].

Table 1: Application of PICO(TTS) to a Toxicology Research Question

| PICO(TTS) Element | Generic Definition | Example: Hepatotoxicity of Compound X |

|---|---|---|

| Population (P) | The biological system under investigation. | Adult Sprague-Dawley rats |

| Intervention/Exposure (I/E) | The toxicant, its dose, route, and duration. | Oral gavage of Compound X, ≥ 28 days |

| Comparator (C) | The control condition for comparison. | Vehicle control (e.g., corn oil) |

| Outcome (O) | The measured toxicological endpoint(s). | Serum alanine aminotransferase (ALT) activity, histopathological liver score |

| Type of Study (T) | The preferred experimental design. | Randomized controlled trials, controlled cohort studies |

| Time & Setting (TS) | Relevant exposure time and lab environment. | Not specified for this question |

1.2 Protocol Registration & Reporting

The finalized protocol should be registered on a public platform such as PROSPERO or the Open Science Framework. Reporting must follow established guidelines like PRISMA-P (Preferred Reporting Items for Systematic Review and Meta-Analysis Protocols), ensuring all methodological choices are documented before literature screening begins.

The Systematic Review Workflow: A Phase-by-Phase Application of Core Principles

The following workflow diagram outlines the major stages of a systematic review, highlighting the critical actions required to uphold transparency, reproducibility, and bias minimization at each step.

Diagram 1: Core Principles in the Systematic Review Workflow

2.1 Phase 1: Comprehensive Literature Search (Transparency, Reproducibility)

The goal is to identify all relevant evidence, minimizing selection bias. A reproducible search strategy is mandatory [11].

- Databases: Search multiple relevant databases (e.g., PubMed/MEDLINE, Embase, Scopus, TOXLINE) [11].

- Search Strategy: Develop a structured syntax using controlled vocabulary (e.g., MeSH terms) and free-text keywords, tailored for each database. The full strategy must be included in the review's supplement.

- Gray Literature: Include unpublished studies, theses, and conference abstracts from sources like clinical trial registries (e.g., ClinicalTrials.gov) to combat publication bias [11].

2.2 Phase 2: Study Screening & Selection (Minimizing Bias)

This phase filters search results to identify studies meeting the PICO(TTS) criteria.

- Blinded Screening: Use dedicated software (e.g., Rayyan, Covidence) to have at least two independent reviewers screen titles/abstracts and full texts based on pre-defined eligibility criteria [11]. Discrepancies are resolved by consensus or a third reviewer.

- Documentation: A PRISMA flow diagram must document the number of records at each stage, with explicit reasons for exclusions at the full-text level.
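The dual-reviewer screening step above can be sketched in a few lines. This is a minimal illustration, not the behavior of Rayyan or Covidence: the record IDs, the decision labels, and the `reconcile` helper are all hypothetical, and it assumes both reviewers have screened the same set of records.

```python
from collections import Counter


def reconcile(reviewer_a, reviewer_b):
    """Split records into agreed decisions and conflicts needing adjudication.

    Both arguments map a record ID to "include" or "exclude" (hypothetical labels).
    """
    agreed, conflicts = {}, []
    for record_id in sorted(reviewer_a):
        decision_a = reviewer_a[record_id]
        decision_b = reviewer_b[record_id]
        if decision_a == decision_b:
            agreed[record_id] = decision_a
        else:
            # Disagreements go to consensus discussion or a third reviewer.
            conflicts.append(record_id)
    return agreed, conflicts


# Hypothetical title/abstract decisions from two independent reviewers.
reviewer_a = {"rec1": "include", "rec2": "exclude", "rec3": "include", "rec4": "exclude"}
reviewer_b = {"rec1": "include", "rec2": "exclude", "rec3": "exclude", "rec4": "exclude"}

agreed, conflicts = reconcile(reviewer_a, reviewer_b)
counts = Counter(agreed.values())

print("Agreed includes:", counts["include"])
print("Agreed excludes:", counts["exclude"])
print("Needs adjudication:", conflicts)
```

Counts like these feed directly into the PRISMA flow diagram once the conflicts have been resolved.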

2.3 Phase 3: Data Extraction & Critical Appraisal (Minimizing Bias, Reproducibility)

- Standardized Extraction: Data is extracted by independent reviewers using piloted, electronic forms. Extracted information includes study design, population characteristics, exposure details, outcome data, and funding sources.

- Risk of Bias (RoB) Assessment: The methodological rigor of each included study is evaluated using tools appropriate to its design. For animal studies, SYRCLE's RoB tool is standard. For human observational studies, the Newcastle-Ottawa Scale is often used [11]. This assessment directly informs the synthesis and grading of evidence.

Table 2: Common Risk of Bias Assessment Tools for Toxicology Research

| Tool Name | Primary Study Type | Key Domains Assessed | Role in Minimizing Bias |

|---|---|---|---|

| SYRCLE's RoB Tool | Animal Intervention Studies | Selection, performance, detection, attrition, reporting bias. | Identifies methodological flaws in preclinical data that may lead to overestimated effects. |

| Newcastle-Ottawa Scale (NOS) | Observational (Cohort, Case-Control) | Selection of groups, comparability, outcome/exposure assessment. | Evaluates susceptibility to confounding and measurement error in human studies. |

| Cochrane RoB 2.0 | Randomized Controlled Trials (Human) | Randomization, deviations, missing data, outcome measurement, selective reporting. | Assesses the internal validity of human clinical trials included in the review. |

2.4 Phase 4: Data Synthesis & Analysis (All Principles)

Synthesis integrates findings from the included studies and can be qualitative, quantitative (meta-analysis), or both [22].

- Qualitative Synthesis: A structured narrative summary that explores patterns, relationships, and heterogeneity across studies. It should link findings to the RoB assessment [22].

- Quantitative Synthesis (Meta-Analysis): A statistical method for combining numerical results from multiple studies to produce an overall effect estimate [11].

- Feasibility: Requires sufficient studies with clinically and methodologically similar designs and outcomes [22].

- Statistical Methods: Software like R (with the metafor package) or RevMan is used to calculate pooled effect sizes and confidence intervals and to assess statistical heterogeneity (e.g., the I² statistic) [11].

- Investigating Heterogeneity & Bias: Sources of variation are explored via subgroup analysis (e.g., by species, dose). Publication bias is assessed visually (funnel plots) and statistically (e.g., Egger's regression) [11].
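To make the pooling and heterogeneity calculations concrete, the sketch below implements inverse-variance pooling with the DerSimonian-Laird estimate of between-study variance, along with Cochran's Q and I². It is written in plain Python for illustration only and is not a substitute for metafor or RevMan. The choice of the DerSimonian-Laird estimator is an assumption made here, not something the guide prescribes, and the effect sizes and variances are invented.

```python
import math


def random_effects_pool(effects, variances):
    """Pool study effects with a DerSimonian-Laird random-effects model."""
    k = len(effects)
    weights = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect estimate.
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = k - 1

    # Between-study variance (tau^2), truncated at zero.
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    # I^2: percentage of total variation attributed to heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0

    # Random-effects weights add tau^2 to each study's variance.
    re_weights = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1.0 / sum(re_weights))
    ci = (pooled - 1.96 * se, pooled + 1.96 * se)  # normal-approximation 95% CI
    return {"pooled": pooled, "se": se, "ci": ci, "tau2": tau2, "Q": q, "I2": i2}


# Hypothetical standardized mean differences and their variances from three studies.
effects = [0.2, 0.5, 0.8]
variances = [0.04, 0.04, 0.04]

result = random_effects_pool(effects, variances)
print(f"Pooled effect: {result['pooled']:.3f}")
print(f"95% CI: {result['ci'][0]:.3f} to {result['ci'][1]:.3f}")
print(f"tau^2: {result['tau2']:.3f}, I^2: {result['I2']:.1f}%")
```

In a real review the same quantities would come from an established package, with the analysis script shared alongside the review so that results can be independently verified.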

The following diagram illustrates the decision pathway and methods for data synthesis and bias analysis.

Diagram 2: Data Synthesis and Bias Analysis Pathway

Adhering to the core principles requires leveraging specific tools and reagents throughout the review process.

Table 3: Research Toolkit for Systematic Reviews in Toxicology

| Tool Category | Specific Tool/Resource | Primary Function in Upholding Principles |

|---|---|---|

| Protocol & Registration | PROSPERO, Open Science Framework | Transparency, Reproducibility: Creates a public, time-stamped record of the review plan before commencement. |

| Literature Management | EndNote, Zotero, Mendeley | Reproducibility: Manages citations, removes duplicates, and maintains a searchable library of all identified records [11]. |

| Screening & Selection | Rayyan, Covidence | Minimizing Bias, Reproducibility: Enables blinded, independent screening by multiple reviewers with conflict resolution features [11]. |

| Risk of Bias Assessment | SYRCLE's RoB Tool, Newcastle-Ottawa Scale | Minimizing Bias: Provides a structured, standardized framework to critically appraise study validity, informing analysis and conclusions. |

| Data Extraction & Management | Custom electronic forms (e.g., Google Forms, REDCap), Covidence | Reproducibility, Minimizing Bias: Ensures consistent and accurate capture of data from studies by independent extractors. |

| Statistical Synthesis | R (metafor, meta packages), RevMan, Stata | Transparency, Reproducibility: Performs meta-analyses with code/scripts that can be shared, allowing full independent verification of results [11]. |

| Reporting Guidelines | PRISMA, ARRIVE (for animal studies) | Transparency: Provides a checklist to ensure all critical methodological and result information is reported completely. |

In toxicology, where research outcomes guide decisions with significant societal and health implications, systematic reviews must be bastions of scientific integrity. The principles of transparency, reproducibility, and bias minimization are not abstract ideals but practical necessities. By rigorously implementing the protocol-driven framework, methodological safeguards, and specialized tools outlined in this guide, toxicologists can produce synthesized evidence that is reliable, auditable, and fit for purpose. This elevates the standard of evidence in the field, ultimately strengthening the foundation for chemical risk assessment and public health protection.

The field of toxicology is increasingly adopting evidence-based approaches to improve the transparency, objectivity, and reproducibility of hazard and risk assessments [1]. This shift addresses the limitations of traditional narrative reviews, which often suffer from implicit selection processes, potential for bias, and lack of reproducibility [1]. Evidence synthesis methodologies provide structured frameworks to comprehensively and systematically identify, evaluate, and summarize scientific evidence. Within this ecosystem, Systematic Reviews (SRs), Scoping Reviews, and Evidence Maps serve distinct but complementary purposes. The choice of methodology is pivotal and must be driven by the specific research question, whether it demands a definitive answer on toxicity (suited for an SR), seeks to map the breadth of literature on a broad topic (suited for a Scoping Review), or aims to catalog and characterize existing evidence to identify gaps (suited for an Evidence Map). This guide details the technical specifications, protocols, and applications of each review type within the context of toxicology and environmental health research.

Comparative Analysis of Review Types

The following table summarizes the core characteristics, purposes, and methodological distinctions between Systematic Reviews, Scoping Reviews, and Evidence Maps, drawing from established guidance in clinical epidemiology and toxicology [23] [1] [24].

Table 1: Core Characteristics of Evidence Synthesis Methodologies

| Feature | Systematic Review (SR) | Scoping Review | Evidence Map (Mapping Review) |

|---|---|---|---|

| Primary Goal | To answer a focused research question by synthesizing evidence, often to inform a specific decision or conclusion. | To map the extent, range, and nature of research activity on a broad topic; to clarify key concepts [24]. | To systematically catalog and characterize existing evidence on a broad field to identify gaps and inform future research priorities [23] [24]. |

| Typical Research Question Framework | PICO (Population, Intervention/Exposure, Comparator, Outcome) or adaptations for toxicology (e.g., Population, Exposure, Comparator, Outcome) [1]. | PCC (Population, Concept, Context) [23] [24]. | Often uses PICO or similar frameworks focused on effectiveness or presence of evidence [23] [24]. |

| Scope of Question | Narrow and specific. | Broad and exploratory. | Broad and cataloging. |

| Study Selection & Inclusion Criteria | Strict, pre-defined criteria focused on relevance to the specific question. | Broad and inclusive to cover the conceptual scope; may include diverse study designs. | Broad but focused on coding for specific characteristics (e.g., intervention type, population, study design). |

| Critical Appraisal (Risk of Bias) | Mandatory. Formal quality assessment of included studies is a defining feature. | Optional. Not required, as the aim is mapping, not weighted synthesis. | Typically not conducted. Focus is on characterizing the evidence base, not appraising it. |

| Data Extraction | Comprehensive and detailed to enable synthesis and analysis. | Charts key information relevant to mapping the field. | Limited to coding of predefined study characteristics and interventions [23]. |

| Synthesis | Qualitative and/or quantitative (meta-analysis). Aims to generate a summary of findings with an assessed strength of evidence. | Descriptive summary or thematic analysis. No synthesis in the SR sense; results in a narrative and tabular presentation. | Descriptive and visual. Results are presented in searchable databases, tables, and graphical maps (e.g., bubble plots). |

| Key Output | Answer to a specific question; often used for risk assessment or guideline development. | Map of the literature, identification of research gaps, clarification of concepts/definitions. | Visual map and inventory of evidence; clear identification of clusters and gaps to guide research funding or commissioning [23]. |

| Time & Resource Intensity | High (often >1 year) [1]. | Moderate to High. | Moderate. |

Table 2: Application in Toxicology & Environmental Health Research

| Review Type | Best Use Cases in Toxicology | Example Toxicology Research Question |

|---|---|---|

| Systematic Review | Hazard identification, dose-response assessment, evaluating efficacy of an antidote or therapeutic intervention, supporting regulatory decision-making. | "In adult mammalian animal models, does chronic oral exposure to chemical X compared to control increase the incidence of hepatocellular carcinoma?" |

| Scoping Review | Exploring how a toxicological concept (e.g., "endocrine disruption," "non-monotonic dose response") is defined and measured across disciplines; identifying all reported health outcomes associated with a broad class of chemicals. | "What is the scope and nature of research on the neurodevelopmental effects of per- and polyfluoroalkyl substances (PFAS) in epidemiological studies?" |

| Evidence Map | Identifying what primary and secondary research exists on a large family of chemicals (e.g., pesticides, flame retardants) to prioritize substances for future SRs or targeted testing. | "What is the volume and distribution of evidence from in vivo and in vitro studies on the genotoxicity of substituted phenols?" |

Methodological Protocols

Systematic Review Protocol (Based on COSTER Recommendations)

The Conduct of Systematic Reviews in Toxicology and Environmental Health Research (COSTER) guidelines provide a consensus standard for SRs in this field [18]. The protocol must be registered (e.g., in PROSPERO) prior to commencement.

- Planning & Team Assembly: Form a multidisciplinary team including subject matter experts, information specialists, and review methodologists. Declare and manage conflicts of interest [18].

- Framing the Question: Define the review question using a structured framework (e.g., PECO: Population, Exposure, Comparator, Outcome). Precisely specify the toxicological agent, population(s) (species, strain, cell type), outcomes, and study designs of interest.

- Developing the Search Strategy: Work with an information specialist. Search multiple bibliographic databases (e.g., PubMed, Embase, TOXLINE, Web of Science), trial registers, and grey literature sources. Use a comprehensive list of search terms and chemical synonyms/CAS numbers. Document the full strategy.

- Study Selection: Screen titles/abstracts and full texts independently by two reviewers against pre-defined eligibility criteria, using software (e.g., Rayyan, Covidence). Resolve conflicts via consensus or third-party adjudication.

- Data Extraction: Use a piloted, standardized form. Extract study design, population characteristics, exposure details, outcome data, and key results. Perform extraction in duplicate.

- Risk of Bias / Quality Assessment: Assess internal validity of each study using a domain-based tool appropriate to the design (e.g., SYRCLE's RoB tool for animal studies, OHAT/NTP tool for human and animal studies) [1].

- Evidence Synthesis: Tabulate study characteristics and results. Conduct a qualitative synthesis. If studies are sufficiently homogeneous, perform a meta-analysis to calculate summary effect estimates. Address heterogeneity through subgroup or sensitivity analyses.

- Rating the Confidence in Evidence: Use a framework (e.g., GRADE for human studies, adapted GRADE for animal studies) to rate the overall body of evidence for each key outcome as high, moderate, low, or very low confidence.

- Reporting: Adhere to the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement. For environmental health SRs, the COSTER recommendations provide additional reporting guidance [18].

- Interpretation & Knowledge Translation: Discuss the strength and limitations of the evidence, implications for research and policy, and relevance to risk assessment.

Scoping Review Protocol (Based on Arksey & O'Malley Framework)

Scoping reviews follow an iterative, flexible framework [24].

- Identifying the Research Question: Establish a broad question based on the PCC (Population, Concept, Context) framework.

- Identifying Relevant Studies: Conduct comprehensive searches across multiple databases and grey literature. The search strategy may be refined iteratively as reviewers become more familiar with the literature.

- Study Selection: Apply inclusion/exclusion criteria to map the literature. Selection is typically performed in two stages (title/abstract, full-text), often with a second reviewer verifying a subset.

- Charting the Data: Develop a data-charting form to extract relevant information about the study design, methods, concepts, and key findings relevant to the mapping objective. The form may be updated during the process.

- Collating, Summarizing, and Reporting the Results: Analyze the extracted data quantitatively (e.g., counts of study designs, years, geographic locations) and qualitatively (thematic analysis). Present results in tables, charts, and a narrative summary.

- Consultation (Optional): Engage with stakeholders (e.g., researchers, policymakers) to inform the review process or validate findings.

Evidence Map Protocol

The protocol for an Evidence Map shares steps with Scoping and SR protocols but has a distinct analytical focus [23] [24].

- Question Formulation: Often uses a PICO-style question focused on the existence and characteristics of evidence (e.g., "What evidence exists on the health effects of chemical class Y?").

- Search & Selection: Conducts a systematic search with broad inclusion criteria to capture all relevant evidence on the topic. Study selection is documented via a PRISMA flow diagram.

- Data Extraction & Coding: Extracts a standardized set of descriptive data about each study (e.g., chemical, population, study type, outcome domain, funding source). This creates a coded database.

- Critical Appraisal: Usually omitted, as the goal is descriptive mapping.

- Synthesis & Visualization: Analyzes the coded database to quantify the volume and distribution of research. Results are presented as:

- A searchable database or evidence inventory.

- Structured tables summarizing evidence volume by key dimensions.

- Visual maps (e.g., bubble plots) where axes represent two key dimensions (e.g., chemical vs. outcome), bubble size represents number of studies, and color may represent study type.
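The coding and tabulation steps behind an evidence map can be sketched as follows. The study records, chemical names, and outcome labels are hypothetical placeholders. The resulting cell counts are the values that would set bubble size in a chemical-by-outcome plot, and empty cells flag candidate evidence gaps.

```python
from collections import Counter

# Hypothetical coded database: one record per included study.
studies = [
    {"chemical": "Chemical A", "outcome": "genotoxicity", "design": "in vitro"},
    {"chemical": "Chemical A", "outcome": "genotoxicity", "design": "in vivo"},
    {"chemical": "Chemical A", "outcome": "hepatotoxicity", "design": "in vivo"},
    {"chemical": "Chemical B", "outcome": "genotoxicity", "design": "in vitro"},
    {"chemical": "Chemical C", "outcome": "hepatotoxicity", "design": "in vivo"},
    {"chemical": "Chemical C", "outcome": "hepatotoxicity", "design": "in vivo"},
]

# Count studies in each chemical x outcome cell.
cell_counts = Counter((s["chemical"], s["outcome"]) for s in studies)

chemicals = sorted({s["chemical"] for s in studies})
outcomes = sorted({s["outcome"] for s in studies})

# Cells with no studies are candidate evidence gaps.
gaps = [(c, o) for c in chemicals for o in outcomes if cell_counts[(c, o)] == 0]

# Print a simple text grid of study counts.
print("chemical".ljust(12) + "".join(o.ljust(16) for o in outcomes))
for c in chemicals:
    print(c.ljust(12) + "".join(str(cell_counts[(c, o)]).ljust(16) for o in outcomes))
print("Gaps:", gaps)
```

The same counts can be passed to a plotting library to draw the bubble plot, with study design mapped to color.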

Figure 1: Decision Pathway for Selecting a Review Methodology [23] [24].

Table 3: Research Reagent Solutions for Evidence Synthesis in Toxicology

| Item / Resource | Function / Purpose | Key Examples & Notes |

|---|---|---|

| Protocol Registries | To pre-register the review plan, reduce duplication of effort, and minimize reporting bias. | PROSPERO (International prospective register of systematic reviews). |

| Reporting Guidelines | To ensure transparent and complete reporting of the review process and findings. | PRISMA (Systematic Reviews & Meta-Analyses) [1], PRISMA-ScR (Scoping Reviews) [24], COSTER (Environmental Health SRs) [18]. |

| Toxicology-Specific Guidance | To address methodological challenges unique to toxicology (e.g., multiple evidence streams, species extrapolation). | COSTER Recommendations [18], OHAT/NTP Handbook [1], EFSA Guidance [1]. |

| Information Sources | To ensure a comprehensive search for toxicological evidence. | Bibliographic Databases: PubMed/MEDLINE, Embase, TOXLINE, Web of Science. Chemical Databases: PubChem, ChemIDplus. Grey Literature: government reports (EPA, EFSA), dissertations, conference abstracts [18]. |

| Study Selection & Data Extraction Tools | To manage the screening process and extract data in a standardized, reproducible manner. | Rayyan, Covidence, DistillerSR, EPPI-Reviewer. |

| Risk of Bias Tools | To critically appraise the internal validity of included studies. | Animal Studies: SYRCLE's RoB tool, OHAT/NTP tool. Human Observational Studies: ROBINS-I, Newcastle-Ottawa Scale. In Vitro Studies: (Emerging tools, often adapted from other designs). |

| Evidence Grading Frameworks | To rate the confidence in the body of evidence for a given outcome. | GRADE (Grading of Recommendations, Assessment, Development, and Evaluations) and its adaptations for pre-clinical research. |

| Data Synthesis & Visualization Software | To perform meta-analysis and create informative graphs. | Statistical: R (metafor, meta packages), Stata, RevMan. Visualization: R (ggplot2), Python (matplotlib, seaborn), standard graphing software. |

Figure 2: Core Systematic Review Workflow in Toxicology [1] [18].

Selecting the appropriate review methodology is a critical first step in any evidence synthesis project in toxicology. Systematic Reviews are the gold standard for answering focused questions to support hazard characterization and decision-making but are resource-intensive. Scoping Reviews provide the necessary breadth to explore under-researched or complex topics and clarify definitions. Evidence Maps offer a strategic overview of a research landscape, efficiently pinpointing where sufficient evidence exists for a full SR and where critical knowledge gaps remain.

For toxicologists and environmental health scientists, the emergence of field-specific guidance like the COSTER recommendations provides a crucial toolkit for navigating the unique challenges of integrating heterogeneous evidence streams [18]. Ultimately, the choice hinges on a clear articulation of the review's purpose: to answer, to explore, or to map. By applying these methodologies rigorously, the toxicology community can produce more transparent, reliable, and actionable syntheses of evidence to inform both science and policy.

The How-To: A Stepwise Protocol for Executing Your Toxicology Systematic Review

The formulation of a precise and answerable research question is the foundational step of any systematic review in toxicology [1] [25]. This initial step defines the scope, determines the methodology for the subsequent search and synthesis, and directly impacts the review's validity and utility for decision-making [26]. Unlike traditional narrative reviews, which often address broad topics, a systematic review requires a tightly focused question that can be addressed through a transparent and reproducible process of evidence identification, evaluation, and synthesis [1].

The PECO framework (Population, Exposure, Comparator, Outcome) is the toxicological adaptation of the PICO (Population, Intervention, Comparator, Outcome) model used in clinical medicine [26]. Its primary function is to structure a review question with unambiguous components, which then translate directly into the review's inclusion/exclusion criteria and literature search strategy [27]. A well-constructed PECO question minimizes bias, enhances reproducibility, and ensures the review efficiently targets the most relevant evidence [28].

Table 1: Key Distinctions Between Narrative and Systematic Reviews in Toxicology

| Feature | Narrative Review | Systematic Review |

|---|---|---|

| Research Question | Broad and often not explicitly specified [1] | Specific and structured using frameworks like PECO [1] |

| Literature Search | Not typically specified or systematic [1] | Comprehensive, from multiple databases, with explicit search strategy [1] |

| Study Selection | Implicit, based on expert knowledge [1] | Explicit, based on pre-defined inclusion/exclusion criteria [1] |

| Quality Assessment | Usually informal or absent [1] | Critical appraisal using explicit risk-of-bias tools [27] |

| Evidence Synthesis | Qualitative summary [1] | Structured qualitative and/or quantitative (meta-analysis) summary [1] |

| Time & Resources | Generally lower (months) [1] | Substantially higher (often >1 year) [1] |

| Output | Expert opinion, state-of-the-science overview [1] | Transparent, reproducible evidence base for decision-making [25] |

Deconstructing the PECO/PICOS Framework for Toxicology

The PECO framework provides the necessary structure for toxicological questions, which differ from clinical questions by focusing on hazardous exposures rather than therapeutic interventions.

Population (P): This defines the subject of study, which in toxicology can include humans (specific populations, e.g., workers, children), experimental animal models (species, strain, sex, life stage), in vitro systems (cell lines, primary cultures), or environmental species [1]. Clarity here is crucial for defining the biological context and applicability of the evidence.

Exposure (E): This is the toxicological agent or condition of interest. It must be precisely defined, including the specific chemical or stressor, its form, route of exposure (oral, inhalation, dermal), duration (acute, chronic), and timing (e.g., developmental window) [28]. For complex mixtures, the definition becomes more challenging and must be carefully considered.

Comparator (C): This defines the reference against which exposure is evaluated. In animal or in vitro studies, this is typically a control group (e.g., vehicle-treated, sham-exposed). In human epidemiology, it may be a population with lower exposure levels or background exposure [29]. The choice of comparator influences the interpretation of the effect.

Outcome (O): This specifies the adverse health effect or endpoint under investigation. Outcomes in toxicology span multiple levels of biological organization, from molecular initiating events (e.g., receptor binding) and key cellular events (e.g., oxidative stress, proliferation) to organ-level effects (e.g., steatosis, fibrosis) and apical disease outcomes (e.g., cancer, reproductive dysfunction) [26] [28]. Defining relevant outcomes is key to linking mechanistic data to adverse effects.

Study Design (S - optional): Sometimes included as "S" in PICOS, this component can restrict evidence to specific methodological approaches (e.g., randomized controlled trials, cohort studies, controlled laboratory studies). In toxicology, specifying evidence streams (epidemiological, in vivo, in vitro) at the question stage can help manage the complexity of integrating diverse data types [28].

Constructing a High-Quality PECO Question: A Stepwise Guide

Constructing an effective PECO question is an iterative process that requires balancing specificity with feasibility.

Step 1: Define the Core Problem

Begin with a broad problem statement (e.g., "Concerns about the potential hepatotoxicity of Chemical X"). Engage stakeholders, including subject matter experts, to understand the decision-making context and key uncertainties [27].

Step 2: Specify Each PECO Element with Precision

- Population: Avoid overly broad definitions. Instead of "mammals," specify "adult female Sprague-Dawley rats" or "human occupational cohorts."

- Exposure: Provide chemical identifiers (CAS RN), define relevant real-world exposure scenarios (e.g., "oral exposure, ≥ 90 days"), and consider metabolites if relevant.

- Comparator: State the exact reference (e.g., "vehicle control (corn oil)," "population with exposure below the 10th percentile").

- Outcome: Use standardized terminology where possible. Define the outcome operationally (e.g., "hepatocellular hypertrophy diagnosed by histopathology," "serum alanine aminotransferase activity increased ≥ 2-fold over control").

Step 3: Evaluate and Refine the Question

Test the question for feasibility (is there likely to be sufficient evidence?), clarity (would different reviewers interpret it the same way?), and relevance (does it address the core problem?) [25]. A question that is too narrow may yield no evidence; one that is too broad becomes unmanageable.

Step 4: Align with the Adverse Outcome Pathway (AOP) Framework (Where Applicable)

For mechanism-focused reviews, the PECO question can be structured around elements of an Adverse Outcome Pathway. This is particularly powerful for integrating New Approach Methodologies (NAMs). The Molecular Initiating Event (MIE) or a Key Event (KE) can serve as the Outcome in a PECO question aimed at collecting evidence for a specific segment of the AOP [26].
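The four steps above can be made concrete by holding the question in a small structured record, so that a missing or empty element is caught before the protocol is drafted. The following is a minimal sketch only: the class name `PecoQuestion`, its methods, and the example values are illustrative assumptions, not part of any published tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PecoQuestion:
    """Hypothetical container for one PECO question (illustrative only)."""
    population: str
    exposure: str
    comparator: str
    outcome: str

    def missing_elements(self) -> list[str]:
        """Return the names of PECO elements that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]

    def as_sentence(self) -> str:
        """Render the question in the 'In P, does E, compared to C, ... O?' form."""
        return (
            f"In {self.population} (P), does {self.exposure} (E), "
            f"compared to {self.comparator} (C), affect {self.outcome} (O)?"
        )


# Example values taken from the Step 2 bullets; "Chemical X" is a placeholder.
question = PecoQuestion(
    population="adult female Sprague-Dawley rats",
    exposure="oral exposure to Chemical X for >= 90 days",
    comparator="vehicle control (corn oil)",
    outcome="hepatocellular hypertrophy diagnosed by histopathology",
)
print(question.as_sentence())
```

Keeping the four elements as separate fields, rather than one free-text sentence, makes it easier to reuse them later for eligibility criteria and search terms.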

From Question to Protocol: Operationalizing PECO

The finalized PECO question is the cornerstone of the systematic review protocol, a publicly registered document that pre-specifies the review's methods to minimize bias [29].

Protocol Development: The protocol explicitly translates each PECO element into operational criteria.

- Population becomes the eligibility criteria for test systems.

- Exposure defines the search terms and chemical identifiers.

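- Comparator specifies the control or reference group (e.g., concurrent vehicle control, lowest-exposure category) that a study must report to be eligible.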

- Outcome lists the exact endpoints and measurement methods that will be extracted from studies [27].
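One way to picture this translation is as a function that checks a candidate study against each operational criterion and reports which PECO element it fails. The sketch below is an assumption-laden illustration: the `StudyRecord` fields, the specific thresholds (rat, oral, 90 days), and the endpoint names are hypothetical examples borrowed from the Step 2 bullets, not criteria from any actual protocol.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    """Minimal, hypothetical description of a candidate study."""
    species: str
    route: str
    duration_days: int
    has_vehicle_control: bool
    endpoints: set[str]


# Hypothetical eligible outcomes for an illustrative hepatotoxicity review.
ELIGIBLE_ENDPOINTS = {"hepatocellular hypertrophy", "serum ALT"}


def eligibility_failures(study: StudyRecord) -> list[str]:
    """Return the PECO criteria a study fails; an empty list means 'include'."""
    failures = []
    if study.species.lower() != "rat":                      # Population
        failures.append("P: test system is not rat")
    if study.route != "oral" or study.duration_days < 90:   # Exposure
        failures.append("E: not oral exposure of >= 90 days")
    if not study.has_vehicle_control:                       # Comparator
        failures.append("C: no concurrent vehicle control")
    if not study.endpoints & ELIGIBLE_ENDPOINTS:            # Outcome
        failures.append("O: no eligible liver endpoint reported")
    return failures


included = StudyRecord("Rat", "oral", 90, True, {"serum ALT", "body weight"})
excluded = StudyRecord("mouse", "inhalation", 28, False, {"body weight"})
print(eligibility_failures(included))
print(eligibility_failures(excluded))
```

Recording the reason for exclusion by PECO element, rather than a bare yes/no, supports the transparent reporting of screening decisions.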

Search Strategy: A biomedical librarian or information specialist should be involved. The search strategy uses controlled vocabulary (e.g., MeSH terms) and free-text words derived from the PECO elements, combined with Boolean operators. It should be designed for sensitivity (to capture all relevant evidence) across multiple databases (e.g., PubMed, Web of Science, Embase, ToxLine) [29].
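As a rough illustration of how PECO concepts become a Boolean query, the sketch below joins synonyms within a concept using OR and joins concepts using AND, in PubMed-style syntax with the `[MeSH Terms]` and `[tiab]` field tags. The function names and the example term lists are assumptions for demonstration; a real strategy should be drafted and tested with an information specialist and adapted to each database's syntax.

```python
def or_block(terms: list[str], field: str) -> str:
    """Join synonyms with OR, quoting each and appending a PubMed field tag."""
    return "(" + " OR ".join(f'"{term}"[{field}]' for term in terms) + ")"


def build_query(concepts: dict[str, dict[str, list[str]]]) -> str:
    """AND together one block per concept; each block ORs vocabulary and free text."""
    blocks = []
    for concept in concepts.values():
        parts = []
        if concept.get("mesh"):
            parts.append(or_block(concept["mesh"], "MeSH Terms"))
        if concept.get("free_text"):
            parts.append(or_block(concept["free_text"], "tiab"))
        if parts:  # skip concepts with no terms rather than emitting "()"
            blocks.append("(" + " OR ".join(parts) + ")")
    return " AND ".join(blocks)


# Illustrative terms only; "Chemical X" is a placeholder, not a real substance.
concepts = {
    "exposure": {"free_text": ["Chemical X"]},
    "outcome": {
        "mesh": ["Chemical and Drug Induced Liver Injury"],
        "free_text": ["hepatotoxicity", "liver injury"],
    },
}
print(build_query(concepts))
```

Searches built for sensitivity often leave the Comparator, and sometimes the Population, out of the query and apply those elements at screening instead.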

Screening and Data Extraction: The PECO framework is used to create standardized forms for title/abstract screening and full-text review. At least two independent reviewers screen studies, with conflicts resolved by consensus or a third reviewer [29]. Data extraction templates are structured to capture detailed information pertinent to each PECO element from every included study.
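The dual-reviewer step can be sketched as a simple reconciliation routine that separates agreed decisions from conflicts needing consensus or a third reviewer. This is a minimal sketch under stated assumptions: the function name `reconcile`, the record identifiers, and the "include"/"exclude" labels are hypothetical, and screening platforms such as Covidence or Rayyan handle this internally in their own way.

```python
def reconcile(
    reviewer_a: dict[str, str], reviewer_b: dict[str, str]
) -> tuple[dict[str, str], list[str]]:
    """Split records into agreed decisions and conflicts needing resolution."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same set of records")
    agreed: dict[str, str] = {}
    conflicts: list[str] = []
    for record_id in sorted(reviewer_a):
        if reviewer_a[record_id] == reviewer_b[record_id]:
            agreed[record_id] = reviewer_a[record_id]
        else:
            conflicts.append(record_id)  # goes to consensus or a third reviewer
    return agreed, conflicts


# Hypothetical title/abstract decisions from two independent reviewers.
decisions_a = {"rec1": "include", "rec2": "exclude", "rec3": "include"}
decisions_b = {"rec1": "include", "rec2": "include", "rec3": "include"}
agreed, conflicts = reconcile(decisions_a, decisions_b)
print(agreed, conflicts)
```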

Table 2: Key Components of a Systematic Review Protocol Derived from PECO

| Protocol Section | Description | Direct Link to PECO |

|---|---|---|

| Review Question | Statement of the primary question. | The fully articulated PECO question. |

| Eligibility Criteria | Detailed rules for including/excluding studies. | Operational definitions of P, E, C, and O. |

| Information Sources | List of databases and other resources to be searched. | Strategy to capture all evidence for the defined PECO. |

| Search Strategy | Complete, reproducible search query. | Translates PECO concepts into search syntax. |

| Study Selection | Process for screening references. | Application of eligibility criteria based on PECO. |

| Data Extraction | Items to be collected from each study. | Detailed characterization of P, E, C, O, and study design. |

Case Study: PECO in Action

The SYRINA framework for Endocrine Disrupting Chemicals (EDCs) provides a clear case study [28]. To evaluate whether a chemical is an EDC per the WHO/IPCS definition, three evidence needs must be met. A series of linked systematic reviews, each with its own PECO question, can be conducted:

- Question for Adverse Effect: "In female rats (P), does in utero exposure to Chemical Z (E), compared to vehicle control (C), increase the incidence of reproductive tract malformations (O)?"

- Question for Endocrine Activity: "In estrogen receptor alpha transactivation assays (P), does Chemical Z (E), compared to solvent control (C), demonstrate agonist activity (O)?"

- Question for Plausible Link: This involves an integrated assessment of evidence from the first two reviews, examining biological plausibility and coherence [28].

Table 3: Tools and Resources for Systematic Review Implementation

| Tool / Resource | Category | Function in PECO-Based Review |

|---|---|---|

| PROSPERO Registry | Protocol Repository | Public registration of review protocol to enhance transparency and reduce bias. |

| Cochrane Risk-of-Bias (RoB) Tools | Quality Assessment | Structured tools to evaluate internal validity of randomized trials (RoB 2) and non-randomized studies of interventions (ROBINS-I). |

| OHAT / Navigation Guide RoB Tool | Quality Assessment | Tool adapted for environmental health studies, assessing selection, performance, detection, attrition, and reporting bias [27]. |

| EndNote, Covidence, Rayyan | Reference Management & Screening | Software platforms to manage search results, enable blinded screening by multiple reviewers, and track decisions. |

| GRADE (Grading of Recommendations Assessment, Development and Evaluation) | Evidence Grading | Framework for rating the overall certainty (high, moderate, low, very low) of a body of evidence across studies. |

| AOP-Wiki (aopwiki.org) | Knowledge Organization | Repository of Adverse Outcome Pathways; useful for defining mechanistic outcomes and contextualizing evidence [26]. |

Formulating a focused question using the PECO framework is a non-negotiable first step in conducting a rigorous, reproducible, and unbiased systematic review in toxicology. It transforms a general concern into a structured, investigable query that guides every subsequent methodological choice [1]. As evidence-based toxicology matures, mastery of PECO question formulation remains a fundamental skill for researchers and professionals aiming to produce syntheses that reliably inform scientific understanding, risk assessment, and public health policy [25].

The Critical Role of a Protocol in Toxicology Systematic Reviews

In evidence-based toxicology, the systematic review is the cornerstone for synthesizing data to inform risk assessments and regulatory decisions [1]. A meticulously developed protocol is the essential foundation of any rigorous systematic review. It serves as a pre-defined roadmap, minimizing arbitrariness in decision-making and safeguarding against selective reporting bias, which is crucial when evaluating potentially hazardous substances [30]. Unlike traditional narrative reviews, which may lack transparency, a protocol ensures the review process is explicit, reproducible, and methodologically sound [1].

The development and registration of a protocol are particularly vital in toxicology due to the field's unique complexities. Reviews must often integrate evidence from multiple streams, including human observational studies, in vivo animal models, in vitro assays, and in silico models [1]. Furthermore, challenges such as assessing multiple species, strains, and diverse adverse outcome endpoints necessitate a priori planning to ensure consistency and objectivity [1]. A publicly registered protocol also prevents unnecessary duplication of effort and allows the scientific community to scrutinize the planned methods, thereby enhancing the credibility of the eventual review [31] [30].

PRISMA-P: The Reporting Guideline for Protocols

The Preferred Reporting Items for Systematic reviews and Meta-Analyses Protocols (PRISMA-P) is an evidence-based guideline developed to ensure the complete and transparent reporting of systematic review protocols [32] [30]. Published in 2015, its primary objective is to improve the quality of systematic review protocols by providing a minimum set of items that should be addressed in the protocol document [30].

It is critical to distinguish PRISMA-P from protocol registries. PRISMA-P is a reporting guideline—it dictates what information should be included in a protocol document to make it complete [30]. In contrast, a registry like PROSPERO is a public database where key information about the planned review is recorded for the world to see [30]. The two tools are complementary: authors should use the PRISMA-P checklist to develop a robust, detailed protocol and then register the key details from that protocol in a registry [31] [30].

Table 1: The PRISMA-P 2015 Checklist (17-Item Summary)

| Section | Item # | Item Description |

|---|---|---|

| Administrative Information | 1 | Title: Identify the report as a protocol of a systematic review (1a); if it updates a previous review, identify it as such (1b). |

| | 2 | Registration: Name of the registry (e.g., PROSPERO) and registration number, if registered. |

| | 3 | Authors: Names, affiliations, and contact details of protocol authors (3a); contributions of each author and the guarantor of the review (3b). |

| | 4 | Amendments: Changes from a previous protocol version, or the procedure for documenting and reporting protocol changes. |

| | 5 | Support: Sources of financial or other support (5a), the sponsor or funder (5b), and their role in developing the protocol (5c). |

| Introduction | 6 | Rationale: Description of the health problem and rationale for the review. |

| | 7 | Objectives: Explicit statement of the primary and secondary review questions, with reference to PICO/PECO elements. |

| Methods | 8 | Eligibility Criteria: PICO/PECO elements (Population, Intervention/Exposure, Comparator, Outcome), study designs, and report characteristics. |

| | 9 | Information Sources: Planned databases, trial registers, websites, journals, contact with experts. |

| | 10 | Search Strategy: Draft search strategy for at least one primary database (e.g., MEDLINE). |

| | 11 | Study Records: Data management (11a), selection process (11b), data collection process (11c). |

| | 12 | Data Items: List and define all variables for extraction (outcomes, exposures, effect modifiers). |

| | 13 | Outcomes & Prioritization: Define and prioritize all primary and secondary outcomes. |

| | 14 | Risk of Bias in Individual Studies: Tools and process for assessing methodological quality of individual studies. |

| | 15 | Data Synthesis: Criteria for quantitative synthesis (meta-analysis); statistical methods; heterogeneity investigation. |

| | 16 | Meta-bias(es): Plans for assessing publication/reporting bias across studies (e.g., funnel plots). |

| | 17 | Confidence in Cumulative Evidence: Planned approach for assessing the overall strength/certainty of the body of evidence (e.g., GRADE). |

Developing a Toxicology Systematic Review Protocol: A Stepwise Methodology

Defining the Review Question and Eligibility Criteria (PECO)

The foundation of a toxicology systematic review is a precisely framed research question, commonly structured using the PECO framework (Population, Exposure, Comparator, Outcome) [1]. This framework is adapted from clinical medicine's PICO, replacing "Intervention" with "Exposure" to reflect toxicological inquiry.

- Population: Define the biological system (e.g., human, specific animal species/strain, cell line). For human studies, specify relevant demographics [1].

- Exposure: Specify the chemical agent(s), including details on form, dose, duration, and route of administration (e.g., oral gavage, inhalation) [1].

- Comparator: Define the appropriate control (e.g., vehicle control, placebo, low-dose or background exposure group).

- Outcome: Clearly state the adverse health outcomes or toxicological endpoints of interest (e.g., mortality, tumor incidence, reproductive toxicity, biomarker change). Predefine primary and secondary outcomes [30].

Designing a Comprehensive Search Strategy

A systematic search must be designed to maximize sensitivity (finding all relevant studies) while maintaining manageable precision [30]. The strategy should be peer-reviewed, often by a research librarian [31].

Database Selection: Search multiple bibliographic databases beyond PubMed/MEDLINE. Toxicology-specific databases are essential.

Table 2: Key Information Sources for Toxicology Systematic Reviews

| Database/Resource | Scope and Relevance |

|---|---|

| PubMed/MEDLINE | Core biomedical literature. |

| Embase | Strong coverage of pharmacology and toxicology, including conference abstracts. |

| TOXLINE | Specialized in toxicology, environmental health, and chemical safety. |

| SciFinderⁿ / CAS | Covers chemical literature, including patents and obscure journals. |

| Web of Science Core Collection | Multidisciplinary science citation index. |

| EPA WebFIRE / IRIS | Source for regulatory reports and risk assessments. |

| Government & Agency Websites (EFSA, NTP, IARC) | Grey literature, technical reports, and monographs. |

Search String Development: Use controlled vocabulary (e.g., MeSH terms for PubMed) combined with free-text keywords for the PECO elements. Include synonyms, related terms, and chemical registry numbers (e.g., CAS RN) [33].
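Because the same study is commonly indexed in more than one of these sources, merged search exports usually need de-duplication before screening. The sketch below shows one simple approach, matching on DOI or on a normalized title; the record fields (`title`, `doi`, `source`), the function names, and the placeholder DOIs are assumptions for illustration, and reference managers apply their own, often more elaborate, matching rules.

```python
import re


def normalize_title(title: str) -> str:
    """Lowercase a title and collapse punctuation/whitespace for matching."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each DOI or normalized title."""
    seen: set[tuple[str, str]] = set()
    unique = []
    for record in records:
        keys = {("title", normalize_title(record["title"]))}
        doi = (record.get("doi") or "").strip().lower()
        if doi:
            keys.add(("doi", doi))
        if seen & keys:      # matches an earlier record on DOI or title
            continue
        seen |= keys
        unique.append(record)
    return unique


# Made-up records; the DOIs are placeholders, not real identifiers.
records = [
    {"title": "Hepatotoxicity of Chemical X in rats", "doi": "10.1000/example.1", "source": "PubMed"},
    {"title": "Hepatotoxicity of Chemical X in Rats.", "doi": None, "source": "Embase"},
    {"title": "A retitled conference version", "doi": "10.1000/EXAMPLE.1", "source": "Web of Science"},
    {"title": "Unrelated in vitro assay", "doi": "", "source": "Embase"},
]
print([r["source"] for r in deduplicate(records)])
```

Title-only matching can merge distinct studies that share a title, so automated matches are best treated as candidates for manual confirmation.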

Planning Study Selection, Data Extraction, and Risk of Bias Assessment

The protocol must detail a reproducible, unbiased process for handling studies [30].

- Study Selection Process: Describe a two-stage screening (title/abstract, then full-text) conducted independently by at least two reviewers. Define and pilot the eligibility criteria using the PECO framework. Use tools like Rayyan or Covidence for management [33].

- Data Extraction: Specify the variables to be extracted into a standardized form. These include study identifiers, PECO details, experimental design, funding source, and quantitative results (e.g., mean, SD, N for each group) [30].

- Risk of Bias (RoB) Assessment: Selecting an appropriate tool is critical. The protocol must state the chosen tool and justify its use. For animal studies, tools like SYRCLE's RoB tool or the NTP/OHAT Risk of Bias Rating Tool are relevant [1] [34]. For in vitro studies, adapted tools or criteria-based checklists are used [34]. Like study selection, the assessment should be performed independently by at least two reviewers.
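To show why the extraction form asks for the mean, SD, and N of each group, the sketch below stores those values in a validated record and derives a mean difference with its standard error, which is the input a later meta-analysis needs. The class and function names and the numeric values are hypothetical; the standard error uses the usual unpooled formula for two independent groups.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupSummary:
    """Extracted summary statistics for one study group (hypothetical form field)."""
    mean: float
    sd: float
    n: int

    def __post_init__(self) -> None:
        # Basic plausibility checks applied at data entry.
        if self.n < 2:
            raise ValueError("n must be at least 2 for an SD to be defined")
        if self.sd < 0:
            raise ValueError("sd must be non-negative")


def mean_difference(treated: GroupSummary, control: GroupSummary) -> tuple[float, float]:
    """Return (mean difference, standard error) for treated minus control."""
    md = treated.mean - control.mean
    se = math.sqrt(treated.sd ** 2 / treated.n + control.sd ** 2 / control.n)
    return md, se


# Made-up serum ALT values (U/L), for illustration only.
treated = GroupSummary(mean=60.0, sd=10.0, n=10)
control = GroupSummary(mean=40.0, sd=10.0, n=10)
md, se = mean_difference(treated, control)
print(f"MD = {md:.1f}, SE = {se:.2f}")
```

Validating values at entry catches common extraction slips, such as a standard error typed into the SD field with an implausible sign or a group size of one.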

Planning Data Synthesis and Evidence Integration

The protocol must pre-specify the approach for synthesizing findings from the included studies [30].

- Qualitative Synthesis: Plan a structured summary of study characteristics and findings, often presented in tables.

- Quantitative Synthesis (Meta-analysis): State the preconditions for performing a meta-analysis (e.g., sufficient homogeneity in PECO, available statistical data). Specify the statistical models (e.g., random-effects vs. fixed-effect), effect measures (e.g., odds ratio, mean difference), and methods for assessing heterogeneity (I² statistic) [30].

- Assessing Confidence in the Evidence: Describe the planned method for grading the overall strength or certainty of the evidence for each key outcome. The GRADE (Grading of Recommendations Assessment, Development and Evaluation) framework, adapted for toxicology, is widely recommended for this purpose [1] [33]. This involves rating confidence (High, Moderate, Low, Very Low) based on RoB, consistency, directness, precision, and other factors.
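The quantitative synthesis plan above can be illustrated with a small random-effects calculation. The sketch below uses the DerSimonian-Laird estimator of between-study variance, which is one common choice and an assumption here rather than a method prescribed by the cited guidance, and reports the I² statistic alongside the pooled estimate. The function name and the input values are illustrative.

```python
def random_effects(effects: list[float], variances: list[float]) -> dict[str, float]:
    """Pool study effects with a DerSimonian-Laird random-effects model."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("Need at least two studies with matching variances")

    # Fixed-effect (inverse-variance) estimate, needed for Cochran's Q.
    w = [1.0 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1

    # Between-study variance (tau^2), truncated at zero.
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)

    # I^2: percentage of total variability attributed to heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0

    # Random-effects weights add tau^2 to each within-study variance.
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = (1.0 / sum(w_re)) ** 0.5
    return {"pooled": pooled, "se": se, "tau2": tau2, "i2": i2}


# Two made-up studies with equal variance and different effects.
result = random_effects(effects=[0.0, 2.0], variances=[1.0, 1.0])
print(result)
```

With very few studies, tau² is estimated imprecisely, which is one reason protocols often pre-specify a minimum number of sufficiently similar studies before pooling.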