LD50 vs LC50 Decoded: A Toxicology Guide for Drug Development and Research

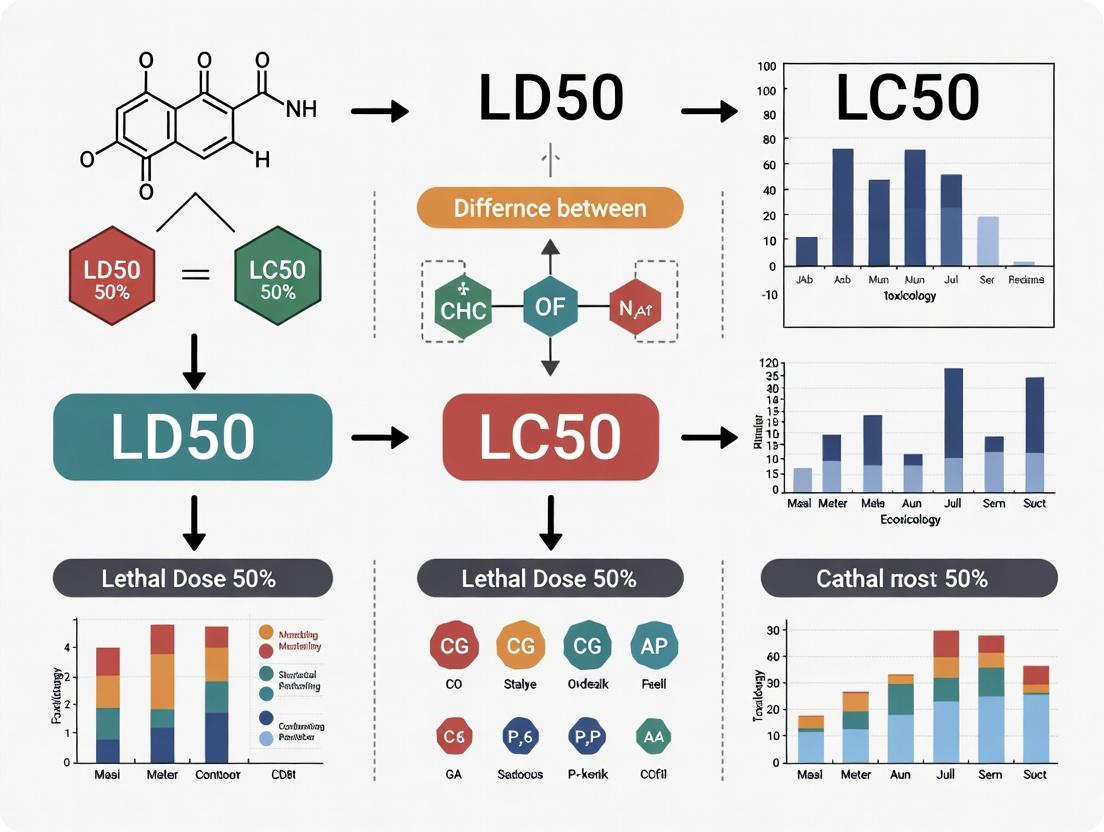


Abstract

This article provides a comprehensive comparison of LD50 (Lethal Dose 50%) and LC50 (Lethal Concentration 50%), two foundational metrics in toxicology. Aimed at researchers, scientists, and drug development professionals, it explores their core definitions, historical origins, and distinct applications in measuring acute toxicity. The guide details standardized testing methodologies, critical limitations including species variability, and strategies for data optimization. Furthermore, it contextualizes these acute measures within a modern toxicological framework by comparing them with chronic and environmental dose descriptors like NOAEL and EC50. The synthesis offers essential insights for interpreting toxicity data, designing safer studies, and informing robust risk assessment in biomedical research.

LD50 and LC50 Defined: Unpacking the Core Concepts and History of Acute Toxicity Metrics

In toxicology, the quantitative assessment of a substance's potential to cause harm is paramount for risk assessment in chemical safety, pharmaceutical development, and environmental protection. Two of the most critical metrics for evaluating acute toxicity—the adverse effects occurring shortly after a single or brief exposure—are the Lethal Dose 50 (LD50) and the Lethal Concentration 50 (LC50) [1]. These values represent a fundamental dose-response relationship, providing a standardized point of comparison for the intrinsic toxicity of diverse chemical entities.

The core distinction lies in their units of measurement. LD50 is a measure of dose, expressed as the mass of a substance administered per unit body weight of the test animal (e.g., milligrams per kilogram, mg/kg). It is applicable to routes such as oral, dermal, or injection [1] [2]. In contrast, LC50 is a measure of concentration, expressed as the amount of a substance present in a given volume of air (e.g., milligrams per cubic meter, mg/m³, or parts per million, ppm) or water, to which test subjects are exposed for a defined period, typically via inhalation [1] [3].

This technical guide delineates the definition, experimental derivation, and application of these endpoints, framing them within the broader context of toxicological research and the critical differences that govern their use.

Core Definitions and Conceptual Differentiation

LD50 (Lethal Dose 50): The statistically or experimentally derived single dose of a substance required to kill 50% of a test animal population within a specified observation period (typically up to 14 days) [1] [4]. As a dose metric, it is normalized to the body weight of the test subject, allowing for comparison across individuals and species. Its value is fundamentally dependent on the total amount of the active agent that enters the organism [2].

LC50 (Lethal Concentration 50): The calculated concentration of a substance in an environmental medium (air or water) that is expected to kill 50% of a test population during a controlled exposure period (e.g., 1 or 4 hours for inhalation) [1] [3]. Unlike a dose, a concentration describes the density of the toxicant in the exposure environment. The total internal dose received by the organism depends on this external concentration, the duration of exposure, and the subject's respiratory or uptake rate [2].
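To make this dependence concrete, the following Python sketch estimates an inhaled dose from an air concentration. It uses a common first-order approximation (concentration × minute volume × duration ÷ body weight) and assumes complete uptake of the inhaled substance. The function name and the example values for minute volume and body weight are illustrative assumptions, not figures from the cited sources.

```python
def inhaled_dose_mg_per_kg(conc_mg_m3, minute_volume_l_min, duration_min, body_weight_kg):
    """First-order estimate of inhaled dose in mg/kg, assuming 100% uptake."""
    # Convert minute volume from L/min to m^3/min (1 m^3 = 1000 L),
    # then multiply by the exposure time to get the total air volume inhaled.
    air_inhaled_m3 = (minute_volume_l_min / 1000.0) * duration_min
    return conc_mg_m3 * air_inhaled_m3 / body_weight_kg


# Hypothetical example: 500 mg/m^3 for 4 h, 0.2 L/min minute volume, 0.25 kg animal.
dose = inhaled_dose_mg_per_kg(500.0, 0.2, 240.0, 0.25)
print(f"Estimated inhaled dose: {dose:.1f} mg/kg")
```

Doubling either the concentration or the exposure time doubles the estimate, which is why an LC50 is only meaningful when its exposure duration is stated.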

Table 1: Core Conceptual Differences Between LD50 and LC50

| Aspect | LD50 | LC50 |
|---|---|---|
| Core Definition | Lethal Dose for 50% of a population | Lethal Concentration for 50% of a population |
| What it Measures | Amount of substance administered per unit body weight. | Amount of substance per unit volume of exposure medium (air/water). |
| Typical Units | mg/kg body weight (or g/kg, µg/kg) | mg/m³, µg/L (air); mg/L, ppm (water) |
| Primary Route | Oral, Dermal, Injection (intravenous, intraperitoneal) | Inhalation, Aquatic Immersion |
| Key Variable | Administered dose (mass/body weight). | Environmental concentration and exposure time. |
| Expression | LD50 (oral, rat) = 5 mg/kg [1] | LC50 (rat, 4h) = 1000 ppm [1] |

Historical Context and Theoretical Basis

The concept of the median lethal dose was pioneered by J.W. Trevan in 1927 in an effort to standardize the evaluation of the relative potency of drugs and poisons [1] [2]. By using death as an unambiguous, quantal (all-or-nothing) endpoint, it became possible to compare chemicals that cause toxicity through vastly different biological mechanisms [1].

The determination of LD50/LC50 relies on the dose-response relationship, a cornerstone principle in toxicology. This relationship assumes a monotonic increase in the measured effect (mortality) with increasing dose or concentration [5]. When mortality data from groups exposed to different doses are plotted, they typically form a sigmoidal curve. The LD50/LC50 is derived from the midpoint of this curve, which represents the point of greatest sensitivity to changes in dose [5].
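One way to see why the midpoint is the most dose-sensitive point is to write the sigmoid explicitly. The log-logistic form below is one common parameterization, chosen here for illustration; the slope parameter h is an assumed symbol, and probit models behave analogously.

```latex
% Log-logistic dose-response model (illustrative parameterization)
P(d) = \frac{1}{1 + \left( \dfrac{\mathrm{LD}_{50}}{d} \right)^{h}},
\qquad P(\mathrm{LD}_{50}) = \tfrac{1}{2}

% Sensitivity of mortality to log-dose
\frac{\mathrm{d}P}{\mathrm{d}\ln d} = h \, P \, (1 - P),
\qquad \text{maximal at } P = \tfrac{1}{2}, \text{ where it equals } \tfrac{h}{4}
```

Because the product P(1 − P) peaks at P = 1/2, a small change in log-dose shifts mortality most near the LD50 and least in the tails of the curve.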

Table 2: Advantages and Limitations of LD50/LC50 Endpoints

| Advantages | Limitations |
|---|---|
| Provides a standardized, quantitative value for acute toxicity comparison [1]. | Measures only acute lethality, not chronic, sublethal, or specific organ toxicity [1]. |
| Enables hazard classification and labeling (e.g., GHS, OSHA categories) [6]. | High inter-species variability; results in rodents may not accurately predict human toxicity [2]. |
| Informs initial safety guidelines for human exposure limits and protective equipment [7]. | Ethical concerns due to animal use and potential suffering; pushes field towards alternative methods [8]. |
| Serves as a starting point for determining other toxicological thresholds (e.g., NOAEL) [6]. | Experimental variability based on strain, sex, age, and laboratory conditions [2]. |

Detailed Experimental Protocols

OECD Guideline-Based Testing for Acute Oral Toxicity (LD50)

The Organisation for Economic Co-operation and Development (OECD) provides standardized test guidelines to ensure reliability and reproducibility. The OECD Test Guideline 425: Up-and-Down Procedure (UDP) is a widely accepted method that uses sequential dosing to reduce animal use.

1. Test System:

- Animals: Young adult rats (or other rodents), healthy and acclimatized.

- Housing: Standard laboratory conditions with controlled temperature, humidity, and light cycles.

- Fasting: Animals are fasted prior to dosing (e.g., food withheld overnight for rats or for 3-4 hours for mice; water remains available).

2. Test Substance Administration:

- The substance is administered in a single dose by oral gavage.

- A starting dose is chosen based on prior information. If the animal survives, the next animal receives a higher dose; if it dies, the next receives a lower dose.

- The test proceeds sequentially until a pre-defined stopping criterion is met (typically after testing 4-6 animals).

3. Observation Period:

- Animals are observed intensely for the first 4 hours, then at least daily for a total of 14 days for signs of toxicity, morbidity, and mortality [1].

- All clinical observations, time of death, and body weight changes are recorded.

4. Data Analysis and LD50 Calculation:

- The LD50 value and its confidence intervals are calculated using a maximum likelihood statistical program (e.g., the AOT425StatPgm software, developed for the U.S. EPA to support the OECD guideline).

- The result is expressed as LD50 (oral, rat) = X mg/kg body weight [1].
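The sequential dosing logic described above can be sketched in Python. This is a simplified illustration only: it does not implement the guideline's stopping criteria or the maximum likelihood estimate. The progression factor of 3.2, the 175 mg/kg starting dose, and the 2000 mg/kg cap are commonly cited defaults treated here as assumptions, and the function name is hypothetical.

```python
def up_and_down_doses(start_dose, outcomes, factor=3.2, max_dose=2000.0):
    """Return the dose given to each animal in a simplified up-and-down sequence.

    outcomes[i] is True if animal i died and False if it survived.
    """
    doses = [start_dose]
    # The outcome of each animal except the last determines the next animal's dose.
    for died in outcomes[:-1]:
        current = doses[-1]
        # Step down after a death; step up after survival, capped at max_dose.
        next_dose = current / factor if died else min(current * factor, max_dose)
        doses.append(next_dose)
    return doses


# Hypothetical outcomes for five animals: survive, survive, die, survive, die.
sequence = up_and_down_doses(175.0, [False, False, True, False, True])
print([round(d, 1) for d in sequence])
```

The doses oscillate around the region where outcomes reverse, which is what allows an LD50 estimate from only a handful of animals.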

Protocol for Acute Inhalation Toxicity (LC50)

OECD Test Guideline 403 outlines the standard method for determining acute inhalation toxicity.

1. Generation of Exposure Atmosphere:

- The test substance (gas, vapor, aerosol, or dust) is mixed with air in an exposure chamber to achieve a stable, homogenous concentration [1].

- Concentration is continuously monitored using analytical methods (e.g., gravimetric, spectroscopic).

2. Test System and Exposure:

- Animals: Young adult rats or mice are commonly used.

- Groups of animals (typically 5 per sex per concentration) are placed in the chamber and exposed to a fixed concentration of the test substance for a defined period, usually 4 hours [1].

- Multiple groups are exposed to a series of at least three concentrations to generate a dose-response.

3. Post-Exposure Observation:

- Following exposure, animals are monitored for 14 days.

- Observations are identical to the oral study, with particular attention to respiratory distress.

4. Data Analysis and LC50 Calculation:

- Mortality data at each concentration are used to generate a concentration-mortality curve.

- The LC50 is calculated using probit or logit analysis and expressed as LC50 (rat, 4h) = Y mg/m³ or Z ppm [1].

Diagram 1: Generalized Workflow for Acute Toxicity Testing (LD50/LC50)

Data Interpretation and Toxicity Classification

A fundamental principle is that a lower LD50 or LC50 value indicates a more toxic substance [7] [2]. These numerical values are used to classify chemicals into toxicity categories for labeling and risk communication purposes, such as the Globally Harmonized System of Classification and Labelling of Chemicals (GHS).

Table 3: Toxicity Classification Based on LD50 (Oral, Rat) and LC50 (Inhalation, Rat) [1] [7]

| Toxicity Category | Common Term | Oral LD50 (mg/kg) | Inhalation LC50 (gases, ppm/4h) | Probable Lethal Dose for 70 kg Human |
|---|---|---|---|---|
| Category 1 | Extremely Toxic | ≤ 5 | ≤ 100 | A taste (< 7 drops) |
| Category 2 | Highly Toxic | >5 – ≤ 50 | >100 – ≤ 500 | 1 teaspoon (5 ml) |
| Category 3 | Moderately Toxic | >50 – ≤ 300 | >500 – ≤ 2500 | 1 ounce (30 ml) |
| Category 4 | Slightly Toxic | >300 – ≤ 2000 | >2500 – ≤ 20000 | 1 pint (500 ml) |
| Not Classified | Low Acute Toxicity | > 2000 | > 20000 | > 1 pint |
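The oral LD50 bands in Table 3 translate directly into a lookup. The sketch below is a minimal Python illustration that assumes the thresholds exactly as tabulated; the function name `ghs_oral_category` is hypothetical and not part of any official tool.

```python
def ghs_oral_category(ld50_mg_per_kg):
    """Map a rat oral LD50 (mg/kg) to the category bands listed in Table 3."""
    # Each tuple is (inclusive upper bound in mg/kg, category label).
    bands = [
        (5, "Category 1"),
        (50, "Category 2"),
        (300, "Category 3"),
        (2000, "Category 4"),
    ]
    for upper_bound, label in bands:
        if ld50_mg_per_kg <= upper_bound:
            return label
    return "Not Classified"


for value in (3, 25, 300, 2500):
    print(value, "mg/kg ->", ghs_oral_category(value))
```

Upper bounds are inclusive, matching the "≤" notation in the table, so a value of exactly 300 mg/kg falls in Category 3.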

Critical Considerations in Interpretation:

- Route Dependency: A substance can have vastly different toxicities by different routes (e.g., oral vs. inhalation) [1].

- Species Differences: LD50 values can vary significantly between species (e.g., rat vs. dog) [1] [2]. Extrapolation to humans requires caution and the use of assessment factors.

- Mechanistic Insight: The LD50/LC50 provides no information on the mechanism of toxicity, time course of effects, or the shape of the dose-response curve at lower, non-lethal doses.

Advanced Context: Integration with Other Toxicological Endpoints

LD50 and LC50 are starting points in a comprehensive toxicological assessment. They feed into the determination of other critical safety thresholds used in risk assessment [6].

- NOAEL (No Observed Adverse Effect Level): The highest tested dose at which no adverse effects are observed. It is derived from longer-term, repeated-dose studies and is central to establishing safe exposure limits for humans [6].

- LOAEL (Lowest Observed Adverse Effect Level): The lowest tested dose at which an adverse effect is observed [6].

- Therapeutic Index (TI): In pharmacology, the ratio of LD50 to the Effective Dose 50 (ED50) provides a measure of a drug's safety margin (TI = LD50/ED50) [2].
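As a small worked example of the therapeutic index, the Python sketch below applies TI = LD50/ED50. The numeric values are hypothetical and chosen only to show the arithmetic.

```python
def therapeutic_index(ld50, ed50):
    """Return TI = LD50 / ED50; both values must use the same units (e.g., mg/kg)."""
    if ed50 <= 0:
        raise ValueError("ED50 must be positive")
    return ld50 / ed50


# Hypothetical drug: LD50 = 500 mg/kg, ED50 = 10 mg/kg.
print(therapeutic_index(500.0, 10.0))
```

A larger ratio means a wider gap between the median effective dose and the median lethal dose.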

Diagram 2: Relationship of LD50/LC50 to Other Toxicological Thresholds

Modern Innovations and Computational Alternatives

Traditional LD50/LC50 testing requires significant numbers of animals. Driven by ethical principles (the "3Rs": Replacement, Reduction, Refinement) and regulatory pushes (e.g., from the European Union), the field is rapidly adopting New Approach Methodologies (NAMs) [8].

Computational Toxicology (In Silico Models):

- Quantitative Structure-Activity Relationship (QSAR): Models that predict toxicity based on the chemical structure's physicochemical properties [8].

- Machine Learning (ML) and Artificial Intelligence: Advanced algorithms trained on large historical datasets of in vivo LD50 values can predict acute toxicity for novel chemicals with high accuracy. Initiatives like the Collaborative Acute Toxicity Modeling Suite (CATMoS) provide consensus models that are becoming accepted for screening and priority-setting [8].

- These computational tools must comply with OECD validation principles, ensuring they have a defined endpoint, a clear algorithm, and a documented domain of applicability [8].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for Acute Toxicity Testing

| Item | Function in LD50/LC50 Testing |
|---|---|
| Pure Test Substance | Nearly all tests are performed using a pure form of the chemical to ensure accurate dosing and attribution of effects [1]. |
| Vehicle (e.g., Corn Oil, Methyl Cellulose, Water) | An inert substance used to dissolve or suspend the test chemical for consistent administration via gavage or injection. |
| Exposure Chamber (Inhalation) | A sealed, dynamic airflow chamber designed to maintain a precise and homogenous concentration of gas, vapor, or aerosol for inhalation studies [1]. |
| Analytical Concentration Monitor | A device (e.g., real-time gas monitor, filter sampler with gravimetric/chemical analysis) to verify and maintain the target concentration in an inhalation chamber [1]. |
| Gavage Needle (Oral Dosing) | A blunt-tipped, graduated syringe needle used for the safe and accurate oral administration of liquid test substances to rodents. |
| Clinical Chemistry & Hematology Kits | Used during the observation period to assess sublethal toxic effects on organ function (e.g., liver, kidney) and blood components. |
| Histopathology Reagents | Fixatives (e.g., formalin), stains, and supplies for post-mortem tissue examination to identify target organs and morphological damage. |
| Statistical Analysis Software | Specialized software (e.g., for probit analysis, OECD UDP calculation) required to transform mortality data into the final LD50/LC50 estimate with confidence intervals [5]. |

The median lethal dose (LD₅₀) and median lethal concentration (LC₅₀) are foundational toxicological metrics for quantifying acute toxicity. Introduced by J.W. Trevan in 1927, the LD₅₀ was conceived to solve a critical problem in pharmacology and toxicology: the need for a standardized, reproducible method to compare the relative poisoning potency of diverse substances, irrespective of their specific mechanisms of action [1]. By defining the dose that causes death in 50% of a test population, Trevan established a common biological endpoint that enabled quantitative hazard ranking [2]. This whitepaper delineates the technical core of Trevan's innovation, places it within the comparative context of LD₅₀ versus LC₅₀, details the evolution of its experimental protocols, and surveys the modern in silico and in vitro methodologies that are refining and replacing this classical paradigm. The thesis underpinning this analysis is that while LD₅₀ and LC₅₀ share a common statistical foundation for comparing acute lethal potency, their fundamental distinction lies in the dimension of measurement—mass of substance per unit body weight (dose) versus concentration in an environmental medium (air or water)—which dictates their specific applications in safety science.

The Core Innovation: Standardizing the “Lethal Dose”

Prior to 1927, comparing the toxicity of different drugs and chemicals was fraught with inconsistency. Toxic effects vary widely (e.g., neurotoxicity, hepatotoxicity), making direct comparison of non-identical endpoints scientifically dubious [1]. J.W. Trevan’s seminal contribution was the proposition of a single, unambiguous biological endpoint—death—and the statistical precision of the median (50%) response [2].

The selection of the 50% lethality point was a pragmatic masterstroke. It avoids the high variability and extreme dose requirements associated with measuring responses at the tails of the population distribution (e.g., LD₁ or LD₉₉) and provides a stable, reproducible point on the sigmoidal dose-response curve [2]. This innovation transformed toxicology from a qualitative descriptive science into a quantitative comparative one. The resulting LD₅₀ value, expressed as mass of substance per unit body mass of the test animal (e.g., mg/kg), became a universal currency for acute toxicity [1].

LD₅₀ vs. LC₅₀: A Dimensional Distinction within a Unified Framework

Trevan’s concept of median lethality provides the unifying framework for both LD₅₀ and LC₅₀. The critical difference is not conceptual but dimensional, relating to the route of exposure and how the amount of toxicant is quantified.

- LD₅₀ (Lethal Dose 50%): Measures a dose—a specific amount of substance administered directly to an organism per unit body weight. Common routes include oral, dermal, or intravenous [1]. It answers: "How much of this substance, given directly, kills half the test population?"

- LC₅₀ (Lethal Concentration 50%): Measures a concentration—the amount of substance present in a unit volume of environmental medium (air or water) to which an organism is exposed for a defined period [1]. It answers: "At what concentration in the air/water does this substance kill half the test population over a set exposure time?"

This distinction is paramount for application. LD₅₀ is pivotal for assessing hazards from ingestion, dermal contact, or injection (e.g., pharmaceuticals, consumer products) [1]. LC₅₀ is essential for evaluating inhalation risks in occupational settings or aquatic toxicity in environmental assessments [1]. The exposure duration is a critical, stated parameter for LC₅₀ (e.g., 4-hour exposure) but is typically implicit for a single administered LD₅₀ dose [2].

Table 1: Core Distinctions Between LD₅₀ and LC₅₀

| Parameter | LD₅₀ (Lethal Dose 50%) | LC₅₀ (Lethal Concentration 50%) |
|---|---|---|
| Definition | Dose causing death in 50% of test population | Concentration causing death in 50% of test population |
| Primary Dimension | Mass of substance per unit body weight (mg/kg) | Concentration in medium: mg/m³ (air) or mg/L (water) |
| Typical Route | Oral, Dermal, Injection | Inhalation, Aquatic Immersion |
| Key Variable | Body weight of recipient | Duration of exposure (must be specified) |
| Primary Use Case | Drug toxicity, accidental ingestion, hazard labeling | Occupational inhalation safety, environmental toxicology |

Experimental Protocol: The Traditional LD₅₀ Determination

The classical LD₅₀ test, now largely refined or replaced, followed a rigorous protocol to determine the precise dose-mortality relationship [9].

Detailed Methodology:

- Test System Selection: Healthy, young adult animals (typically rats or mice) of a defined strain, sex, and weight range are acclimatized [1].

- Dose Group Formation: Animals are randomly allocated into several groups (e.g., 5-6). Each group is assigned a specific dose level of the test substance [9].

- Dose Administration: The substance, in a pure form, is administered in a single bolus via the chosen route (oral gavage is common). Doses are spaced logarithmically (e.g., half-log intervals) to adequately bracket the expected median lethal dose [9].

- Observation Period: Animals are monitored closely for 24-48 hours for signs of acute toxicity (e.g., lethargy, convulsions) and then daily for a standard period of 7 to 14 days [1].

- Endpoint Recording: The primary endpoint is death within the observation period. Time-to-death and morbid symptoms are also recorded [9].

- Data Analysis: The mortality data (proportion dead in each dose group) are plotted against the logarithm of the dose. The LD₅₀ is then calculated statistically, often using probit analysis or the method of Reed and Muench, to find the dose corresponding to 50% mortality [9].
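The data analysis step can be illustrated with a simplified probit calculation in Python. This sketch fits an unweighted least-squares line to probit-transformed mortality versus log10(dose), which is a rough approximation of formal probit analysis (the formal method uses iteratively weighted maximum likelihood). The function name and the mortality counts are hypothetical.

```python
import math
from statistics import NormalDist


def probit_ld50(doses, n_dead, n_total):
    """Estimate LD50 by unweighted least squares of probit(mortality) on log10(dose).

    Groups with 0% or 100% mortality are skipped because their probits are infinite.
    """
    xs, ys = [], []
    for dose, dead, total in zip(doses, n_dead, n_total):
        p = dead / total
        if 0.0 < p < 1.0:
            xs.append(math.log10(dose))
            # Probit = inverse standard normal CDF (without the historical +5 offset).
            ys.append(NormalDist().inv_cdf(p))
    if len(xs) < 2:
        raise ValueError("need at least two groups with partial mortality")
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    # A probit of 0 corresponds to 50% mortality; solve for the dose at that point.
    return 10 ** (-intercept / slope)


# Hypothetical study: five dose groups of 10 animals each.
doses = [10, 20, 40, 80, 160]
dead = [0, 2, 5, 8, 10]
total = [10, 10, 10, 10, 10]
print(f"Estimated LD50: {probit_ld50(doses, dead, total):.1f} mg/kg")
```

Dedicated statistical software additionally weights each group by its size and expected variance and reports confidence intervals, which this sketch omits.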

Diagram 1: Classical LD50 Test Protocol Flow

Application & Interpretation: From Animal Data to Hazard Classification

The primary output of an LD₅₀/LC₅₀ study is a quantitative value used for hazard ranking and classification. A fundamental rule is that a lower LD₅₀/LC₅₀ value indicates higher acute toxicity [1] [10].

These values are categorized into toxicity classes to guide safety communication and regulatory decision-making. It is critical to note that different classification scales exist, such as the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale, which can assign different descriptive terms to the same numerical value [1].

Table 2: Acute Toxicity Classification Based on LD₅₀ (Oral, Rat)

| Toxicity Class | Oral LD₅₀ (Rat) (mg/kg) | Probable Lethal Dose for Humans (70 kg) | Example Substances [2] [10] |
|---|---|---|---|
| Super/Extremely Toxic | < 5 | A taste (< 7 drops) | Botulinum toxin, Arsenic trioxide |
| Highly Toxic | 5 – 50 | < 1 teaspoon (5 mL) | Strychnine, Sodium cyanide |
| Moderately Toxic | 50 – 500 | < 1 ounce (30 mL) | Phenol, Caffeine |
| Slightly Toxic | 500 – 5000 | < 1 pint (500 mL) | Aspirin, Sodium chloride (salt) |
| Practically Non-Toxic | 5000 – 15000 | < 1 quart (1 L) | Ethanol, Acetone |
| Relatively Harmless | > 15000 | > 1 quart | Water, Sucrose (table sugar) |

Crucial Limitations for Interpretation:

- Interspecies Variation: LD₅₀ can vary dramatically between species (e.g., rat vs. dog), making direct human extrapolation uncertain [2].

- Route Dependency: A substance’s toxicity can differ vastly by route (e.g., oral vs. inhalation), necessitating route-specific testing for relevant hazard assessment [1].

- Single-Endpoint Focus: LD₅₀ reveals nothing about non-lethal toxicity, mechanisms of action, long-term chronic effects, or carcinogenic potential [1].

Evolution and Modern Alternatives: The 3Rs and New Approach Methodologies (NAMs)

The classical LD₅₀ test used large numbers of animals (e.g., 40-100) [9]. Since the late 20th century, driven by ethical (3Rs: Replacement, Reduction, Refinement) and scientific imperatives, regulatory bodies have endorsed alternative methods.

Refined & Reduced In Vivo Tests:

- Fixed Dose Procedure (OECD TG 420): Uses fewer animals (e.g., 5-10/step) and seeks to identify a dose causing clear signs of toxicity without necessarily causing lethal toxicity [9].

- Acute Toxic Class Method (OECD TG 423): Uses small, sequential groups of animals (3/step) to assign a substance to a broad toxicity class [9].

- Up-and-Down Procedure (OECD TG 425): Doses animals sequentially one at a time, adjusting the dose up or down based on the previous outcome, dramatically reducing animal use [9].

Replacement In Vitro and In Silico Methods:

- Cytotoxicity Assays: e.g., 3T3 Neutral Red Uptake (NRU) assay. Predicts starting points for acute systemic toxicity by measuring damage to cultured mammalian cells [11] [9].

- Microphysiological Systems (MPS): "Organs-on-chips" that mimic human organ tissue complexity and interactions for more relevant toxicity screening [11].

- Computational (In Silico) Models:

- Quantitative Structure-Activity Relationship (QSAR): Predicts toxicity based on a chemical's structural similarity to compounds with known data [8] [12].

- Machine Learning (ML) Models: Trained on large curated datasets (e.g., from EPA, ChEMBL) to classify toxicity or predict LD₅₀ values. The Collaborative Acute Toxicity Modeling Suite (CATMoS) is a leading example of a consensus model achieving high predictive accuracy for rat oral LD₅₀ [8].

- Read-Across: Uses data from a well-studied "source" chemical to predict the toxicity of a similar "target" chemical [12].

These New Approach Methodologies (NAMs) are increasingly integrated into Integrated Approaches to Testing and Assessment (IATA), forming a weight-of-evidence for safety decisions without sole reliance on animal data [12].

The Scientist’s Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagents & Solutions for Traditional Acute Toxicity Testing

| Item | Function & Specification |
|---|---|
| Test Substance | High-purity (>95%) chemical or formulated product of interest. The core agent whose toxicity is being quantified [1]. |
| Vehicle/Solvent | A physiologically compatible agent (e.g., saline, carboxymethylcellulose, corn oil) to dissolve or suspend the test substance for accurate dosing [1]. |
| Anesthetics/Analgesics | Used during refinement protocols for invasive procedures (e.g., implantation) to minimize animal pain and distress, adhering to 3R principles [9]. |
| Fixatives (e.g., 10% Neutral Buffered Formalin) | For post-mortem preservation of tissues from deceased animals for potential histopathological examination to investigate cause of death [11]. |
| Cell Culture Media & Reagents (for in vitro NAMs) | Supports growth of cell lines (e.g., BALB/c 3T3, human keratinocytes) used in cytotoxicity assays like the NRU assay [11] [9]. |
| Neutral Red Dye | A vital dye used in the 3T3 NRU assay. Taken up by viable lysosomes; its uptake inhibition is a measure of cytotoxicity [11]. |
| S9 Metabolic Fraction | Liver homogenate containing Phase I metabolizing enzymes. Added to in vitro assays to approximate mammalian metabolic activation of pro-toxins [11]. |
| Chemical Descriptors & Modeling Software | Digital tools and molecular fingerprint databases (e.g., ECFP6) required for building and running QSAR and machine learning prediction models [8]. |

Abstract

This technical guide provides an in-depth analysis of the fundamental units of measure in acute toxicity assessment: milligrams per kilogram (mg/kg) for the median lethal dose (LD50) and parts per million (ppm) or milligrams per cubic meter (mg/m³) for the median lethal concentration (LC50). Framed within a broader thesis on the critical distinctions between LD50 and LC50 in toxicology research, this whitepaper elucidates the conceptual basis, experimental derivation, and practical application of these metrics. The content details standardized testing protocols, presents comparative data, and explores the implications of unit selection for hazard classification, risk assessment, and regulatory decision-making, serving as a comprehensive resource for researchers and drug development professionals.

In toxicology, quantifying the acute lethal potency of a substance is paramount for safety evaluation. The median lethal dose (LD50) and the median lethal concentration (LC50) are the cornerstone metrics for this purpose, representing statistically derived points on a dose-response or concentration-response curve [1] [2]. The LD50 is defined as the dose of a substance required to kill 50% of a test animal population within a specified period, typically administered via oral, dermal, or injection routes [1]. Its value is universally expressed as the mass of substance per unit mass of the test subject, most commonly as milligrams per kilogram (mg/kg) of body weight [2]. This normalization allows for the comparison of toxicity across substances and accounts for variations in animal size [2].

Conversely, the LC50 applies specifically to exposure via inhalation or, in ecotoxicology, aquatic environments. It is defined as the atmospheric or aqueous concentration of a substance that kills 50% of the test population during a controlled exposure period [1] [3]. The units for LC50 are context-dependent: parts per million (ppm) is used for gases and vapors as a volume-to-volume ratio, while milligrams per cubic meter (mg/m³) is used for dusts, mists, and vapors as a mass-to-volume measure [3] [13]. A critical distinction is that ppm and mg/m³ are not universally equivalent; their conversion depends on the molecular weight of the substance and environmental conditions [14].

The genesis of these standardized measures is attributed to J.W. Trevan, who introduced the LD50 concept in 1927 to enable consistent comparison of the relative poisoning potency of diverse chemical agents by using death as a common, unambiguous endpoint [1] [2]. Both LD50 and LC50 are primary indicators of acute toxicity: the ability of a chemical to cause adverse effects soon after a single administration or a short-term exposure, where "soon" typically means within minutes, hours, or up to about 14 days [1]. Their interpretation follows an inverse relationship: a lower numerical value indicates higher toxicity [2] [15].

Quantitative Data and Toxicity Classification

The numerical values of LD50 and LC50 span orders of magnitude, from the highly toxic to the practically non-toxic. To standardize communication of hazard, these values are categorized within formal toxicity classification systems.

Table 1: Hodge and Sterner Toxicity Classification Scale [1]

| Toxicity Rating | Commonly Used Term | Oral LD50 (mg/kg in rats) | Inhalation LC50 (ppm/4hr in rats) | Dermal LD50 (mg/kg in rabbits) |
|---|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | ≤ 10 | ≤ 5 |
| 2 | Highly Toxic | 1 - 50 | 10 - 100 | 5 - 43 |
| 3 | Moderately Toxic | 50 - 500 | 100 - 1,000 | 44 - 340 |
| 4 | Slightly Toxic | 500 - 5,000 | 1,000 - 10,000 | 350 - 2,810 |
| 5 | Practically Non-toxic | 5,000 - 15,000 | 10,000 - 100,000 | 2,820 - 22,590 |
| 6 | Relatively Harmless | > 15,000 | > 100,000 | > 22,600 |

Table 2: Comparative Acute Toxicity of Selected Substances

| Substance | Test Model & Route | LD50 / LC50 Value | Toxicity Class (Per Table 1) | Reference |
|---|---|---|---|---|
| Botulinum Toxin | Oral, various | ~1 ng/kg | Extremely Toxic (Rating 1) | [2] |
| Dichlorvos (Insecticide) | Rat, Inhalation (4hr) | 1.7 ppm | Extremely Toxic (Rating 1) | [1] |
| Sodium Cyanide | Rat, Oral | 6.4 mg/kg | Highly Toxic (Rating 2) | - |
| Aspirin | Rat, Oral | 200 mg/kg | Moderately Toxic (Rating 3) | [15] |
| Table Salt (Sodium Chloride) | Rat, Oral | 3,000 mg/kg | Slightly Toxic (Rating 4) | [2] [15] |
| Ethanol | Rat, Oral | 7,060 mg/kg | Practically Non-toxic (Rating 5) | [2] |
| Sucrose | Rat, Oral | 29,700 mg/kg | Relatively Harmless (Rating 6) | [2] |

The data illustrate the vast range of chemical potency. They also show that toxicity can vary dramatically with the route of exposure: dichlorvos is extremely toxic via inhalation but only moderately toxic via the oral route in rats [1]. Furthermore, differences between species are common; a substance deemed moderately toxic in rats may have a very different potency in humans or other animals [1] [2].
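The ratings in Table 2 can be reproduced from the Table 1 thresholds with a short lookup. The Python sketch below assumes that a value falling exactly on a boundary is assigned to the more toxic class, a choice Table 1 leaves ambiguous; the names used are illustrative.

```python
from bisect import bisect_left

RATINGS = [
    "Extremely Toxic",
    "Highly Toxic",
    "Moderately Toxic",
    "Slightly Toxic",
    "Practically Non-toxic",
    "Relatively Harmless",
]

# Upper bounds of ratings 1-5 from Table 1; anything above the last is rating 6.
ORAL_RAT_MG_PER_KG = [1, 50, 500, 5000, 15000]
INHALATION_RAT_PPM_4H = [10, 100, 1000, 10000, 100000]


def hodge_sterner_rating(value, upper_bounds):
    """Return (rating number, descriptive term) for an LD50 or LC50 value."""
    # bisect_left places a value equal to a bound in the lower (more toxic) class.
    index = bisect_left(upper_bounds, value)
    return index + 1, RATINGS[index]


print(hodge_sterner_rating(6.4, ORAL_RAT_MG_PER_KG))     # sodium cyanide, oral
print(hodge_sterner_rating(1.7, INHALATION_RAT_PPM_4H))  # dichlorvos, inhalation
print(hodge_sterner_rating(29700, ORAL_RAT_MG_PER_KG))   # sucrose, oral
```

Keeping one threshold list per route makes the route dependency explicit: the same numeric value can map to different ratings depending on which list is used.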

Unit Conversion and Technical Relationships

Understanding the mathematical relationship between common units for LC50 is essential for accurate data interpretation and regulatory compliance.

Table 3: Key Unit Conversions for LC50

| Conversion | Formula | Notes & Application |
|---|---|---|
| ppm to mg/m³ (for gases/vapors) | mg/m³ = (ppm × Molecular Weight) / 24.5 | Applies at standard temperature (25°C) and pressure (1 atm). 24.5 is the molar volume (L/mol) under these conditions [14]. |
| mg/L to ppm (for gases/vapors in air) | ppm = [mg/L × 1000 × 24.5] / Molecular Weight | Used for converting regulatory toxic endpoints [14]. |
| mg/L (in air) to mg/m³ | 1 mg/L = 1000 mg/m³ | A direct volumetric conversion, where 1 cubic meter = 1000 liters. |
| Aquatic LC50 (mg/L) | Typically reported as mg/L | For substances dissolved in water (e.g., pesticides, industrial chemicals) [16]. |

A critical point is that mg/L (mass/volume) is not inherently equivalent to ppm (a ratio) [14]. In aquatic contexts, due to water's density of 1 kg/L, 1 mg/L is approximately equal to 1 ppm by weight. For gases in air, however, this simple equivalence does not hold, and the formulas in Table 3 must be used.
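The Table 3 formulas can be expressed as small Python helpers. This is a minimal sketch that assumes 25°C and 1 atm (molar volume 24.5 L/mol, as in the table). The function names are illustrative, and the molecular weight used for dichlorvos (about 220.98 g/mol) is supplied here as an assumption rather than taken from the text.

```python
MOLAR_VOLUME_L_PER_MOL = 24.5  # ideal gas at 25 °C and 1 atm, per Table 3


def ppm_to_mg_m3(ppm, molecular_weight):
    """Convert a gas/vapor concentration from ppm (v/v) to mg/m^3."""
    return ppm * molecular_weight / MOLAR_VOLUME_L_PER_MOL


def mg_m3_to_ppm(mg_m3, molecular_weight):
    """Convert a gas/vapor concentration from mg/m^3 to ppm (v/v)."""
    return mg_m3 * MOLAR_VOLUME_L_PER_MOL / molecular_weight


def mg_per_l_air_to_ppm(mg_per_l, molecular_weight):
    """Convert mg/L in air to ppm: first to mg/m^3 (x1000), then to ppm."""
    return mg_m3_to_ppm(mg_per_l * 1000.0, molecular_weight)


# Dichlorvos LC50 (rat, 4h) = 1.7 ppm; molecular weight ~220.98 g/mol (assumed).
print(f"{ppm_to_mg_m3(1.7, 220.98):.1f} mg/m^3")
```

At other temperatures or pressures the molar volume changes, so the constant would need to be recalculated before these helpers are reused.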

Detailed Experimental Protocols

The determination of LD50 and LC50 values follows standardized guidelines, such as those from the Organisation for Economic Co-operation and Development (OECD), to ensure reproducibility and scientific validity [1].

Protocol for Oral or Dermal LD50 Determination

- Test Substance: High-purity chemical [1].

- Animal Models: Typically, groups of healthy young adult rats or mice of a defined strain. Other species (rabbits, guinea pigs) may be used for specific endpoints (e.g., dermal) [1].

- Experimental Design:

- Animals are randomly divided into several dose groups (e.g., 5-6), plus a vehicle control group.

- A range of geometrically increasing doses (e.g., 5, 10, 20, 40, 80 mg/kg) is selected based on preliminary range-finding tests.

- The substance, often suspended or dissolved in a vehicle, is administered in a single bolus. For oral tests, this is via gavage; for dermal, it is applied to shaved skin under a semi-occlusive covering [1].

- Observation Period: Animals are clinically observed intensively for the first 24 hours and at least daily for a total of 14 days [1]. Observations include mortality, changes in skin, eyes, respiration, nervous system function, and behavior.

- Data Analysis: The dose causing 50% mortality within the observation period is calculated using statistical probit or logit analysis [15]. The final result is reported as, for example, LD50 (oral, rat) = 56 mg/kg [1].
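The data-analysis step can be illustrated with a logit fit of mortality against log10 dose. The sketch below is a simplified, standard-library-only example and not a validated statistical package; the mortality counts are hypothetical, the function name is an assumption, and confidence limits (which a real analysis would report) are omitted.

```python
import math


def fit_logit_ld50(doses, group_sizes, deaths, max_iter=50, tol=1e-10):
    """Fit P(death) = 1 / (1 + exp(-(a + b*log10(dose)))) by Newton-Raphson
    maximum likelihood and return (ld50, a, b)."""
    x = [math.log10(d) for d in doses]
    a, b = 0.0, 0.0
    for _ in range(max_iter):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for xi, ni, di in zip(x, group_sizes, deaths):
            p = 1.0 / (1.0 + math.exp(-(a + b * xi)))
            resid = di - ni * p          # observed minus expected deaths
            w = ni * p * (1.0 - p)       # binomial variance weight
            g_a += resid
            g_b += resid * xi
            h_aa += w
            h_ab += w * xi
            h_bb += w * xi * xi
        # Solve the 2x2 Newton system (information matrix times step = score).
        det = h_aa * h_bb - h_ab * h_ab
        step_a = (h_bb * g_a - h_ab * g_b) / det
        step_b = (h_aa * g_b - h_ab * g_a) / det
        a += step_a
        b += step_b
        if abs(step_a) + abs(step_b) < tol:
            break
    # The LD50 is the dose at which a + b*log10(dose) = 0.
    return 10 ** (-a / b), a, b


# Hypothetical study: geometric doses (mg/kg), 10 animals per group.
doses = [5, 10, 20, 40, 80]
group_sizes = [10, 10, 10, 10, 10]
deaths = [0, 2, 5, 8, 10]
ld50, intercept, slope = fit_logit_ld50(doses, group_sizes, deaths)
print(f"Estimated LD50: {ld50:.1f} mg/kg")
```

The fit requires at least some partial mortality between 0% and 100%. If every group shows either no deaths or complete mortality, the maximum-likelihood estimate does not exist and a different design or method is needed.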

Protocol for Inhalation LC50 Determination

- Exposure System: A dynamic inhalation chamber of known volume where the test atmosphere (gas, vapor, or aerosol) is generated and maintained at a constant concentration [1].

- Animal Models: Groups of rodents (rats, mice) are placed in restraint tubes or whole-body exposure chambers.

- Exposure Regime:

- Animals are exposed to a fixed concentration of the test substance for a set duration, most commonly 4 hours [1]. Other durations (e.g., 1 hour) may be specified.

- Multiple concentration groups are tested to bracket the expected LC50.

- Post-Exposure Observation: Following exposure, animals are monitored for mortality and clinical signs for up to 14 days [1].

- Data Analysis: The concentration resulting in 50% mortality is calculated statistically. The result must specify species, exposure time, and units: e.g., LC50 (rat, 4h) = 1.7 ppm [1].

Protocol for Aquatic LC50 Determination (e.g., Fish)

- Test System: Static or flow-through aquaria containing standardized, aerated water [16].

- Test Organisms: Juvenile or adult fish of a sensitive species (e.g., Rainbow Trout, Zebrafish) [16].

- Experimental Design:

- Fish are acclimated and then exposed to a logarithmic series of concentrations of the chemical dissolved in water.

- A minimum of five concentrations and a control are used, with multiple fish per tank.

- Exposure Duration: Typically 96 hours (4 days), with observations for mortality [16].

- Data Analysis: The 96-hour LC50 is calculated using statistical methods. The result is reported as, for example, 96-h LC50 (Rainbow Trout) = 0.246 mg/L [16].
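The logarithmic (geometric) concentration series mentioned in the experimental design can be generated as follows. This is a small sketch; the starting concentration and spacing factor are hypothetical and would in practice come from a range-finding test.

```python
def geometric_series(lowest, factor, n_levels):
    """Return n_levels concentrations, each `factor` times the previous one."""
    if lowest <= 0 or factor <= 1 or n_levels < 1:
        raise ValueError("need lowest > 0, factor > 1 and n_levels >= 1")
    return [lowest * factor ** i for i in range(n_levels)]


# Hypothetical fish test: five concentrations (mg/L) spaced by a factor of 2.
test_concentrations = geometric_series(0.05, 2.0, 5)
print(test_concentrations)
```

The same helper reproduces the oral dose example given earlier (5, 10, 20, 40, 80 mg/kg) with `geometric_series(5, 2, 5)`.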

Visualizing Testing Workflows and Toxicological Relationships

The following diagrams illustrate the generalized experimental workflow and the conceptual relationship between key toxicological descriptors on a dose-response curve.

Flowchart: General Workflow for Acute Lethality Testing

Conceptual Diagram: Dose-Response Curve with Key Descriptors [6]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials for Acute Toxicity Testing

| Item Category | Specific Items | Function & Explanation |

|---|---|---|

| Test Substance | High-purity chemical, radiolabeled compound, formulated product. | The agent whose toxicity is being evaluated. Purity is critical to avoid confounding effects [1]. Radiolabels allow for pharmacokinetic tracking. |

| Animal Models | Specific Pathogen-Free (SPF) rodents (rats, mice), rabbits, zebrafish (Danio rerio), Rainbow Trout. | Provide a biological system to model toxic effects. Species and strain selection is based on regulatory guidelines, sensitivity, and relevance [1] [16]. |

| Dosing/Exposure Apparatus | Oral gavage needles, dermal application chambers, dynamic inhalation exposure chambers, static/flow-through aquatic tanks. | Enable precise and controlled administration of the test substance via the intended route (oral, dermal, inhalation, aquatic) [1] [16]. |

| Vehicle/Control Agents | Carboxymethylcellulose (CMC), corn oil, saline, dimethyl sulfoxide (DMSO). | Used to dissolve or suspend the test substance for administration. Vehicle control groups are essential to distinguish the substance's effects from those of the carrier. |

| Analytical Equipment | Gas Chromatography (GC), High-Performance Liquid Chromatography (HPLC), aerosol particle sizers. | Used to verify and monitor the actual concentration of the test substance in inhalation chambers or aqueous solutions, which is more reliable than relying on nominal (calculated) concentrations [16]. |

| Clinical Pathology Kits | Hematology analyzers, clinical chemistry analyzers, histopathology supplies. | For quantifying biomarkers of organ damage (e.g., liver enzymes, kidney markers) during the observation period, providing mechanistic insights beyond lethality. |

Discussion: Applications, Limitations, and Modern Context

LD50 and LC50 data are indispensable for hazard classification and labeling under systems like the Globally Harmonized System (GHS), directly influencing the use of signal words (e.g., "Danger" or "Warning") on safety data sheets (SDS) and product labels [15]. They are also a starting point for quantitative risk assessment. Safety thresholds such as Reference Doses (RfD) or Derived No-Effect Levels (DNEL), however, are established by applying assessment factors (uncertainty factors) to a NOAEL or LOAEL, which is typically identified in repeat-dose studies rather than in the acute lethality tests that yield LD50 data [6].

However, significant limitations must be acknowledged. The tests traditionally require a substantial number of animals and involve significant suffering, raising ethical concerns. Consequently, the 3Rs principle (Replacement, Reduction, Refinement) is actively driving change. In vitro assays (e.g., cell-based cytotoxicity), computational (in silico) toxicology models (e.g., QSAR), and the use of lower phylogenetic organisms are increasingly accepted alternatives or supplements [2]. Furthermore, LD50/LC50 values are specific to the tested species, strain, sex, and age, and extrapolation to humans is not always linear or predictable [2]. They measure only acute lethality and provide no information on chronic toxicity, carcinogenicity, mutagenicity, or organ-specific toxic mechanisms.

In modern toxicology, while the LD50 and LC50 remain regulatory staples, the focus has shifted toward acquiring more mechanistic data from animal studies and developing Integrated Approaches to Testing and Assessment (IATA). The objective is to understand the biological pathway of toxicity rather than relying solely on a single mortality endpoint, enabling more predictive and human-relevant safety evaluations.

The units of measure—mg/kg for LD50 and ppm or mg/m³ for LC50—are far more than technical conventions; they are the foundational language for communicating acute toxicological potency. Their proper use and understanding are critical for comparing chemical hazards, classifying risks, and establishing protective exposure limits. While evolving ethical standards and scientific practices are refining the role of traditional lethality testing, the conceptual and quantitative framework provided by LD50 and LC50 continues to underpin global chemical safety paradigms. For the research and drug development professional, mastery of these concepts, including their derivation, limitations, and correct application, remains an essential component of toxicological science.

In toxicology and pharmacology, the median lethal dose (LD₅₀) and median lethal concentration (LC₅₀) are foundational metrics for quantifying and comparing the acute toxicity of substances [1]. These values represent the dose or concentration estimated to kill 50% of a tested population within a defined period [1]. Their development and enduring use are rooted in a specific statistical rationale that addresses the core challenge of variability in biological response.

The choice of the 50% lethality point as a benchmark is not arbitrary. Testing at the extremes of a dose-response curve (e.g., LD₁ or LD₉₉) requires substantially more animals to achieve statistical precision, as responses in these regions are highly variable [2]. The 50% point, located at the steepest, most linear portion of the sigmoidal dose-response curve, provides the greatest precision and reproducibility with the smallest number of test subjects [17] [2]. This efficiency was the driving force behind J.W. Trevan's introduction of the LD₅₀ concept in 1927, moving the field away from less reliable measures like the "minimal lethal dose" [1] [18].

This whitepaper explores the statistical and practical foundations of the 50% benchmark, delineates the critical methodological distinctions between LD₅₀ (dose-based) and LC₅₀ (concentration-based) determinations, and provides a technical guide for their application in modern research and drug development.

Theoretical Foundations: Dose-Response Relationships and the 50% Point

The quantal dose-response relationship is a cornerstone concept for understanding LD₅₀ and LC₅₀. A quantal response is an all-or-nothing event, such as death or the occurrence of a specific lesion, which either happens or does not for each individual in a population [1] [19]. This contrasts with a graded response, which measures a continuous change in effect within a single organism (e.g., enzyme inhibition or blood pressure change) [17] [19].

When the proportion of a population exhibiting a quantal response is plotted against the logarithm of the dose or concentration, a sigmoidal (S-shaped) curve is typically produced [20] [17]. This curve can be described by the Hill equation or a probit model [20]. The 50% response point lies at the inflection point of this curve, where the slope is steepest.

Figure 1: The 50% point (LD₅₀/LC₅₀) is situated at the steepest part of the sigmoidal dose-response curve. This region provides the most precise and reproducible measurement, avoiding the high variability associated with extreme doses (e.g., LD₁ or LD₉₉) and optimizing experimental efficiency [17] [2].
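The claim that the curve is steepest at the 50% point can be checked numerically. The sketch below assumes a Hill-type (log-logistic) quantal curve with a hypothetical LD₅₀ and Hill slope; the function names are illustrative only.

```python
def hill_response(dose, ld50, hill_slope):
    """Fraction of the population responding at a given dose (Hill form)."""
    return 1.0 / (1.0 + (ld50 / dose) ** hill_slope)


def dose_at_fraction(ld50, hill_slope, fraction):
    """Invert the Hill curve: dose producing the given response fraction."""
    return ld50 * (fraction / (1.0 - fraction)) ** (1.0 / hill_slope)


def slope_per_log10_dose(dose, ld50, hill_slope, h=1e-4):
    """Central-difference slope of the response with respect to log10(dose)."""
    low = hill_response(dose * 10 ** (-h), ld50, hill_slope)
    high = hill_response(dose * 10 ** h, ld50, hill_slope)
    return (high - low) / (2.0 * h)


# Hypothetical substance: LD50 = 100 mg/kg, Hill slope = 3.
LD50, HILL = 100.0, 3.0
for fraction in (0.01, 0.10, 0.50, 0.90, 0.99):
    dose = dose_at_fraction(LD50, HILL, fraction)
    slope = slope_per_log10_dose(dose, LD50, HILL)
    print(f"LD{round(fraction * 100):>2}: dose = {dose:8.1f} mg/kg, slope = {slope:.3f}")
```

Under this model the slope per log10 unit of dose equals ln(10) × Hill slope × p × (1 − p), which is largest at p = 0.5 and falls toward zero in the tails. A small error in observed mortality therefore translates into a much larger error in the estimated dose near LD₁ or LD₉₉ than near the median.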

Key Statistical and Practical Advantages of the 50% Benchmark

- Precision and Reproducibility: The central point of the curve is less susceptible to random biological variation than the tails, yielding more consistent results across experiments [2].

- Experimental Efficiency: Identifying a median requires fewer test subjects than precisely defining a threshold or a low-incidence response point, aligning with ethical principles of reduction in animal testing [21] [2].

- Comparative Utility: A single, standardized point allows for the direct ranking of relative toxic potency across diverse chemical classes with different mechanisms of action [1].

LD₅₀ vs. LC₅₀: Fundamental Distinctions in Methodology and Application

While both LD₅₀ and LC₅₀ describe median lethal values, their technical definitions and experimental determinations differ fundamentally, reflecting distinct routes of exposure.

LD₅₀ (Lethal Dose 50%) is the amount of a substance administered in a single dose that is expected to cause death in 50% of the treated animals [1]. It is expressed as the mass of substance per unit mass of test subject (e.g., milligrams per kilogram of body weight) [1]. The route of administration (oral, dermal, intravenous) must be specified, as toxicity can vary dramatically [1]. For example, dichlorvos has an oral LD₅₀ in rats of 56 mg/kg but an intraperitoneal LD₅₀ of 15 mg/kg [1].

LC₅₀ (Lethal Concentration 50%) is the concentration of a substance in an exposure medium (typically air for inhalation studies, or water for aquatic toxicology) that is expected to cause death in 50% of a test population over a specified exposure period [1] [6]. It is expressed as concentration (e.g., parts per million in air, milligrams per liter in water) and must be qualified by the exposure duration (e.g., 4-hour LC₅₀) [1].

Figure 2: LD₅₀ and LC₅₀ share the core statistical rationale of the median response but differ fundamentally in their units, routes of exposure, and consequent experimental methodologies. LD₅₀ focuses on administered dose, while LC₅₀ focuses on environmental concentration over time [1] [6].

Quantitative Data: Toxicity Classification and Substance Comparison

The numerical value of an LD₅₀ or LC₅₀ is inversely related to acute toxicity: a lower value indicates a more toxic substance [1] [7]. To standardize communication of hazard, chemicals are often categorized into toxicity classes based on these values.

Table 1: Toxicity Classification Based on Oral LD₅₀ (Rat) [1] [7]

| Toxicity Class | Oral LD₅₀ (mg/kg body weight, rat) | Probable Lethal Dose for a 70 kg Human | Examples |

|---|---|---|---|

| Super Toxic | < 5 | A taste (< 7 drops) | Botulinum toxin [7] |

| Extremely Toxic | 5 – 50 | < 1 teaspoon (≈ 5 mL) | Arsenic trioxide, Strychnine [7] |

| Very Toxic | 50 – 500 | < 1 ounce (≈ 30 mL) | Phenol, Caffeine [7] |

| Moderately Toxic | 500 – 5,000 | < 1 pint (≈ 470 mL) | Aspirin, Sodium chloride [7] |

| Slightly Toxic | 5,000 – 15,000 | < 1 quart (≈ 950 mL) | Ethanol, Acetone [7] |
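The bands in Table 1 can be expressed as a simple lookup. This is an illustrative sketch: the function name is hypothetical, and the treatment of values that fall exactly on a boundary (assigned here to the less toxic class) is an assumption, since the table does not specify it.

```python
# Upper bounds (mg/kg, oral, rat) and labels taken from Table 1.
TOXICITY_CLASSES = [
    (5.0, "Super Toxic"),
    (50.0, "Extremely Toxic"),
    (500.0, "Very Toxic"),
    (5000.0, "Moderately Toxic"),
    (15000.0, "Slightly Toxic"),
]


def toxicity_class(oral_ld50_mg_per_kg):
    """Return the Table 1 class for an oral LD50, or a note if out of range."""
    for upper_bound, label in TOXICITY_CLASSES:
        if oral_ld50_mg_per_kg < upper_bound:
            return label
    return "Above the range covered by Table 1"


for value in (1.0, 25.0, 192.0, 3000.0, 7060.0):
    print(value, "->", toxicity_class(value))
```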

Table 2: Comparative Acute Toxicity of Common Substances (Oral LD₅₀, Rat) [1] [15] [2]

| Substance | Approximate Oral LD₅₀ (mg/kg) | Relative Toxicity (vs. NaCl) | Notes / Context |

|---|---|---|---|

| Botulinum toxin | 0.000001 | ~3 billion times | Most potent known neurotoxin [2]. |

| Sodium cyanide | 6.4 | ~470 times | Classic rapid-acting poison [2]. |

| Strychnine | ~5 | ~600 times | Extremely toxic alkaloid [7]. |

| Dichlorvos | 56 | ~54 times | Insecticide; toxicity varies by route [1]. |

| Aspirin | 200 | ~15 times | Common drug; demonstrates therapeutic index [15] [2]. |

| Caffeine | 192 | ~16 times | Common stimulant [2]. |

| Table Salt (NaCl) | 3,000 | Baseline | Reference for "low" oral toxicity [15]. |

| Ethanol | 7,060 | ~0.4 times | Less acutely toxic than salt by this measure [2]. |

| Water (H₂O) | >90,000 | <0.03 times | Practically non-toxic via oral route [2]. |
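The "Relative Toxicity (vs. NaCl)" column is simply the ratio of the sodium chloride LD₅₀ to the LD₅₀ of each substance. A short sketch using values from Table 2 (the function name is hypothetical):

```python
NACL_LD50_MG_PER_KG = 3000.0  # reference value from Table 2


def relative_toxicity(ld50_mg_per_kg, reference_ld50=NACL_LD50_MG_PER_KG):
    """How many times more acutely toxic a substance is than the reference."""
    return reference_ld50 / ld50_mg_per_kg


table_2_values = {
    "Sodium cyanide": 6.4,
    "Dichlorvos": 56.0,
    "Caffeine": 192.0,
    "Ethanol": 7060.0,
}
for substance, ld50 in table_2_values.items():
    print(f"{substance}: {relative_toxicity(ld50):.2f} times NaCl")
```

Because a lower LD₅₀ means higher toxicity, the reference value goes in the numerator. A ratio below 1 (as for ethanol) indicates a substance less acutely toxic than salt by this measure.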

Detailed Experimental Protocols

This protocol follows standard acute oral toxicity testing guidelines.

1. Test System Preparation:

- Animals: Typically rodents (rats or mice). A defined strain, age, sex, and weight range is selected. Healthy, acclimatized animals are used.

- Test Substance: Administered in a pure, stable form. For insoluble substances, an appropriate vehicle (e.g., corn oil, aqueous suspension) is used.

- Group Assignment: Animals are randomly assigned to several dose groups (typically 4-6) and a vehicle control group (n=5-10 per group).

2. Dosing and Observation:

- Dose Selection: Doses are selected based on a range-finding study to bracket the expected median. Doses are usually spaced logarithmically (e.g., factor of 1.5 to 2.0).

- Administration: The substance is administered in a single dose via oral gavage using a calibrated syringe and feeding needle.

- Clinical Observation: Animals are observed intensively for the first 4-8 hours, then at least daily for a total of 14 days [1]. Observations include mortality, clinical signs (lethargy, tremors, etc.), and body weight changes.

3. Data Analysis and Calculation:

- Endpoint: The primary endpoint is death within the 14-day observation period.

- Statistical Analysis: Mortality data are analyzed using an appropriate statistical method, such as probit or logit analysis, to calculate the LD₅₀ and its confidence intervals.

4. Reporting: The final report must specify the species, strain, sex, body weight range, vehicle, dosing volume, LD₅₀ value with confidence limits, and any observed toxic signs [1]. The result is reported as, for example, "LD₅₀ (oral, rat) = 250 mg/kg (95% C.I.: 195 – 320 mg/kg)."

This protocol assesses acute inhalation toxicity in a controlled atmosphere.

1. Test System and Atmosphere Generation:

- Animals: Rodents (rats or mice) housed in individual restraining tubes within an inhalation chamber.

- Atmosphere Generation: The test substance (gas, vapor, or aerosol) is mixed with conditioned air in a dynamic airflow chamber. Concentration is continuously monitored and verified analytically (e.g., via UV spectroscopy, gas chromatography).

2. Exposure and Observation:

- Exposure Period: Animals are exposed to a fixed concentration for a defined period, most commonly 4 hours [1].

- Post-Exposure Observation: Animals are removed, returned to their cages, and observed for mortality and clinical signs for up to 14 days post-exposure [1].

3. Data Analysis and Calculation:

- Multiple chambers running simultaneously with different concentrations, or sequential runs at different concentrations, are used to generate mortality data across a range.

- The LC₅₀ and its confidence limits are calculated using probit or similar analyses on concentration vs. mortality data.

4. Reporting: The result specifies species, strain, exposure duration, and analytical method for confirming concentration. It is reported as, for example, "LC₅₀ (rat, 4-hr) = 2.5 mg/L (95% C.I.: 1.9 – 3.3 mg/L)."

Figure 3: A generalized experimental workflow for determining median lethal values. The process is methodologically rigorous, proceeding from study design through controlled treatment and observation to final statistical analysis and reporting [1] [21].

The Scientist's Toolkit: Essential Research Reagents and Materials

Conducting rigorous LD₅₀/LC₅₀ studies requires specialized materials and equipment to ensure accuracy, reproducibility, and humane treatment of test subjects.

Table 3: Essential Research Reagents and Materials for LD₅₀/LC₅₀ Studies

| Item | Function & Specification | Critical Notes |

|---|---|---|

| Defined Animal Models | Well-characterized rodent strains or stocks (e.g., Sprague-Dawley rats, CD-1 mice, both outbred stocks). A defined genetic background helps reduce response variability [1]. | Species, strain, sex, age, and weight must be standardized and reported. Health status monitoring (SPF) is essential. |

| Test Substance & Vehicle | High-purity chemical of interest. Appropriate vehicle (e.g., sterile saline, corn oil, 0.5% methylcellulose) for solubilization/suspension [1]. | Purity must be verified. Vehicle must be non-toxic and not interact with test substance. Dose formulations require stability assessment. |

| Dosing Apparatus (LD₅₀) | Calibrated syringes, oral gavage needles (ball-tipped for safety), topical application chambers, or infusion pumps for IV/IP routes [1]. | Precision in delivered volume/mass is critical. Needle size must be appropriate for animal size to prevent injury. |

| Inhalation Chamber (LC₅₀) | Dynamic flow whole-body or nose-only exposure chamber. Allows precise control of aerosol/vapor concentration and duration [1]. | Must provide uniform test atmosphere. Chamber material must not adsorb the test substance. Real-time concentration monitoring is required. |

| Analytical Equipment (LC₅₀) | Gas chromatograph (GC), UV spectrophotometer, or real-time aerosol monitor. Used to verify and maintain target chamber concentration [1]. | Regular calibration against primary standards is mandatory. Analytical method must be validated for the test substance. |

| Clinical Observation Tools | Standardized scoring sheets, video recording equipment, scales for body weight, thermometers. | Observations must be blinded to avoid bias. Clear, objective criteria for clinical signs must be established a priori. |

Advanced Applications and Critical Limitations in Drug Development

The Therapeutic Index (TI)

In drug development, the LD₅₀ is rarely viewed in isolation. Its primary utility is in calculating the Therapeutic Index (TI), a measure of a drug's safety margin [18].

TI = LD₅₀ / ED₅₀

where ED₅₀ is the median effective dose for the desired therapeutic effect. A higher TI indicates a wider margin of safety [18]. However, the TI is a population-based ratio and does not account for differences in the slopes of the dose-response curves for efficacy and toxicity [18].
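The ratio and its main caveat can be illustrated numerically. The sketch below assumes Hill-type dose-response curves for both efficacy and lethality; all doses and slopes are hypothetical, and the LD₁/ED₉₉ ratio is included only as one common way to express the effect of curve slope.

```python
def therapeutic_index(ld50, ed50):
    """TI = LD50 / ED50 (both in the same units)."""
    if ld50 <= 0 or ed50 <= 0:
        raise ValueError("LD50 and ED50 must be positive")
    return ld50 / ed50


def dose_at_fraction(median_dose, hill_slope, fraction):
    """Dose producing a given response fraction on a Hill-type curve."""
    return median_dose * (fraction / (1.0 - fraction)) ** (1.0 / hill_slope)


def margin_ld1_ed99(ld50, ed50, tox_slope, eff_slope):
    """Ratio of the dose lethal to 1% to the dose effective in 99%."""
    return dose_at_fraction(ld50, tox_slope, 0.01) / dose_at_fraction(ed50, eff_slope, 0.99)


# Two hypothetical drugs with the same TI but different curve steepness.
ld50, ed50 = 250.0, 10.0
print("TI:", therapeutic_index(ld50, ed50))
print("Steep curves   LD1/ED99:", round(margin_ld1_ed99(ld50, ed50, 8.0, 8.0), 2))
print("Shallow curves LD1/ED99:", round(margin_ld1_ed99(ld50, ed50, 2.0, 2.0), 2))
```

With shallow curves the LD₁/ED₉₉ ratio drops below 1, meaning the dose needed for near-complete efficacy already overlaps with doses lethal to some individuals, even though the TI is identical in both cases.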

Key Limitations and Modern Context

While foundational, the classical LD₅₀/LC₅₀ test has significant limitations that guide its modern application:

- Animal to Human Extrapolation: Interspecies differences in metabolism, physiology, and pharmacokinetics make direct extrapolation to humans uncertain [1] [2]. LD₅₀ values are best used for comparative hazard ranking, not absolute human risk prediction.

- Ethical Constraints: The classical test using death as an endpoint raises significant ethical concerns. International guidelines (OECD, EPA) now mandate the use of alternative methods where possible, such as the Fixed Dose Procedure or Acute Toxic Class method, which use fewer animals and less severe endpoints [2].

- Narrow Biological Insight: The test yields a single number but provides no information on mechanism of toxicity, target organs (beyond lethality), or effects of repeated low-dose exposure (chronic toxicity) [1]. It is strictly a measure of acute toxicity.

- Regulatory Use: LD₅₀/LC₅₀ data remain a key component for hazard classification and labeling under systems like the Globally Harmonized System (GHS) [6]. However, they are increasingly supplemented by data from in vitro assays and quantitative structure-activity relationship (QSAR) models.

The LD₅₀ and LC₅₀ endure as central concepts in toxicology because the 50% benchmark is statistically robust, experimentally efficient, and provides a standardized point for comparing acute lethal potency. The critical distinction between dose (LD₅₀) and concentration (LC₅₀) dictates fundamentally different experimental approaches for oral/dermal versus inhalation hazards.

For today's researcher, these median values are not endpoints in themselves but tools for calculating safety margins like the Therapeutic Index and for performing initial hazard characterization within a broader, more mechanistically driven safety assessment paradigm. Their determination must be carried out with rigorous methodology, a clear understanding of their limitations, and a firm commitment to the principles of replacement, reduction, and refinement (the 3Rs) in animal testing. As toxicology evolves, the statistical rationale underpinning the 50% point remains valid, even as the methods for deriving it continue to advance.

In toxicology, the median lethal dose (LD50) and median lethal concentration (LC50) are foundational, quantal metrics for evaluating the intrinsic acute toxicity of chemical substances [1]. The LD50 is defined as the amount of a material, administered in a single dose, that causes the death of 50% of a group of test animals within a specified observation period [1]. Similarly, the LC50 represents the concentration of a chemical in air (or water) that is lethal to 50% of the test population during a set exposure duration, typically 4 hours for inhalation studies [1]. Developed by J.W. Trevan in 1927, these metrics were designed to standardize the comparison of poisoning potency between chemicals that cause harm through disparate biological mechanisms by using death as a common, unambiguous endpoint [1] [22].

Fundamentally, LD50 and LC50 are measures of acute toxicity, which is the ability of a chemical to cause adverse effects relatively soon after a single administration or short-term exposure [1] [6]. This timeframe is generally defined as minutes, hours, or days, with observation periods rarely exceeding 14 days [1] [23]. This stands in direct contrast to chronic toxicity, which arises from repeated or continuous exposure over months to years and is associated with delayed, often irreversible health outcomes such as cancer, organ damage, or neurological disorders [23]. The primary value of LD50/LC50 lies in hazard identification, classification, and labeling—providing a critical first tier of data for setting safety limits for single or short-term exposures, informing emergency response protocols, and establishing a basis for more comprehensive, chronic toxicity testing [6] [22].

Core Concepts: Acute vs. Chronic Exposure and Toxicity

Understanding the distinction between acute and chronic exposure is essential for contextualizing the role and limitations of LD50/LC50 data within a complete toxicological risk assessment.

Acute Exposure is characterized by a single event or series of closely repeated events involving contact with a chemical over a period of less than 14 days [23]. It is typically high in intensity but short in duration, often resulting from accidents, spills, or brief operational tasks [23]. The effects are usually immediate or rapid in onset (within hours) and can include irritation, chemical burns, systemic poisoning, respiratory distress, and neurological symptoms [23]. Many acute effects are reversible with prompt medical intervention [23].

Chronic Exposure involves repeated or continuous contact with a chemical at lower concentrations over a much longer period, defined as more than one year [23]. This pattern is common in occupational or environmental settings. The associated health effects are significantly delayed, potentially manifesting years or decades after initial exposure, and are frequently irreversible [23]. Examples include carcinogenesis, pulmonary fibrosis, neurodegenerative diseases, and reproductive toxicity [23].

Table 1: Core Characteristics of Acute vs. Chronic Exposure and Toxicity

| Characteristic | Acute Exposure/Toxicity | Chronic Exposure/Toxicity |

|---|---|---|

| Timeframe | Short-term; single dose or exposure ≤ 14 days [1] [23]. | Long-term; repeated exposure > 1 year [23]. |

| Typical Intensity | High concentration/dose. | Low to moderate concentration/dose. |

| Onset of Effects | Immediate or rapid (minutes to days). | Delayed (months to years). |

| Nature of Effects | Often reversible (unless lethal). | Often irreversible and progressive. |

| Primary Toxicological Metrics | LD50, LC50 (for lethality) [1]. | NOAEL, LOAEL, BMD (for non-lethal adverse effects) [6]. |

| Regulatory Focus | Hazard classification, emergency planning, short-term exposure limits (STELs) [23]. | Permissible Exposure Limits (PELs), cancer risk assessment, lifetime health advisories [23]. |

Quantitative Data: Interpreting LD50/LC50 Values

The numerical value of an LD50 or LC50 provides a direct indicator of acute toxicity potency. A fundamental rule is that a lower LD50/LC50 value indicates a higher degree of acute toxicity [1] [24]. These values are used to classify chemicals into toxicity categories for labeling and risk communication under systems like the Globally Harmonized System (GHS) [23].

For example, an oral LD50 of ≤ 5 mg/kg in rats places a substance in GHS acute toxicity Category 1 (the most severe), whereas an LD50 above 2000 mg/kg and up to 5000 mg/kg falls in Category 5 (the least severe) [23]. It is critical to note that LD50 values can vary substantially based on the route of administration (oral, dermal, inhalation) and on the animal species, strain, sex, and age used in the test [1]. Therefore, the specific test conditions must always be reported alongside the value (e.g., LD50 (oral, rat) = 5 mg/kg) [1].
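A GHS-style category assignment for the oral route can be sketched as a threshold lookup. The intermediate cut-offs (50, 300, and 2000 mg/kg) and the 5000 mg/kg upper limit of Category 5 are the standard GHS oral values and are not all stated in the text above; the function name is hypothetical, and dermal and inhalation routes use different cut-offs that are not covered here.

```python
# GHS acute oral toxicity cut-offs (mg/kg body weight): category upper limits.
GHS_ORAL_CUTOFFS = [
    (5.0, 1),
    (50.0, 2),
    (300.0, 3),
    (2000.0, 4),
    (5000.0, 5),
]


def ghs_oral_category(ld50_mg_per_kg):
    """Return the GHS acute oral toxicity category (1-5), or None if the
    LD50 is above 5000 mg/kg and the substance is not classified."""
    for upper_limit, category in GHS_ORAL_CUTOFFS:
        if ld50_mg_per_kg <= upper_limit:
            return category
    return None


for value in (5.0, 56.0, 192.0, 3000.0, 7060.0):
    print(value, "->", ghs_oral_category(value))
```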

Table 2: Example LD50 Values and Associated Toxicity Classification

| Substance | Approximate Oral LD50 (Rat) | Toxicity Class (Illustrative) | Probable Lethal Dose for 70 kg Human [24] |

|---|---|---|---|

| Botulinum Toxin | < 0.00001 mg/kg* | Super Toxic | A taste (less than 7 drops) |

| Sodium Cyanide | ~5 mg/kg [24] | Extremely Toxic | < 1 teaspoonful |

| Nicotine | ~50 mg/kg [22] | Very Toxic | < 1 ounce |

| Caffeine | ~190 mg/kg [22] | Very Toxic | < 1 ounce |

| Aspirin | ~200 mg/kg [22] | Very Toxic | < 1 ounce |

| Table Salt (NaCl) | ~3000 mg/kg [22] | Moderately Toxic | < 1 pint |

| Ethanol | ~7000 mg/kg [22] | Slightly Toxic | < 1 quart |

*Note: Value for botulinum toxin is illustrative from common literature; specific rodent LD50 may vary.

Experimental Protocols for Determining LD50 and LC50

Standardized protocols, such as those established by the Organisation for Economic Co-operation and Development (OECD), govern the conduct of these tests to ensure reliability and comparability of data [1].

1. Test Substance and Animal Model:

- The test is performed using a pure form of the chemical [1].

- Young, healthy adult animals of a defined strain (most commonly rats or mice) are acclimatized prior to testing [1].

- Animals are randomly assigned to treatment and control groups, typically with 5-10 animals per sex per dose level.

2. Route of Administration and Dosing:

- Oral (Gavage): The substance is directly administered into the stomach via a feeding needle [1].

- Dermal: The substance is applied uniformly to a shaved area of skin (~10% of body surface) and covered with a porous dressing for a fixed period (usually 24 hours) [1].

- Inhalation: Animals are placed in an inhalation chamber where they are exposed to a known, controlled concentration of the test article (gas, vapor, or aerosol) for a set period, traditionally 4 hours [1].

3. Dose Selection and Study Design:

- A preliminary range-finding study is conducted to estimate the approximate lethal dose range.

- For the main study, at least three dose levels are chosen, spaced by a consistent geometric factor (e.g., 2), intended to produce mortality between 0% and 100%.

- A vehicle control group is included.

4. Observation and Data Analysis:

- Animals are observed intensively for the first 4-6 hours after dosing and at least daily thereafter for a total of 14 days [1] [22].

- All signs of toxicity, time of onset, duration, and mortality are recorded.

- At the end of the observation period, the LD50/LC50 value and its confidence intervals are calculated using standardized statistical methods (e.g., probit analysis, logistic regression) [22].

Diagram 1: Standard Workflow for an LD50/LC50 Study

Beyond LD50: Chronic Toxicity and the Broader Risk Assessment Framework

While LD50/LC50 are critical for acute hazard assessment, they do not predict the outcomes of chronic, low-level exposure [1] [22]. Chronic risk assessment relies on different studies and metrics derived from repeat-dose toxicity studies (e.g., 28-day, 90-day, or lifelong studies) [6].

Key metrics for chronic toxicity include:

- NOAEL (No Observed Adverse Effect Level): The highest tested dose at which no adverse effects are observed [6].

- LOAEL (Lowest Observed Adverse Effect Level): The lowest tested dose at which a statistically or biologically significant adverse effect is observed [6].

- BMD (Benchmark Dose): The dose estimated from a fitted dose-response model to produce a predetermined level of change in response (e.g., a 10% increase in tumor incidence, or BMD10). Its statistical lower confidence limit (BMDL) is commonly used as the point of departure [6].

These values are used to derive human safety thresholds, such as Reference Doses (RfDs) or Occupational Exposure Limits (OELs), by applying assessment (uncertainty) factors to account for interspecies differences and human variability [6].
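The derivation of a safety threshold from a point of departure can be sketched as a simple division by the product of the assessment factors. The default factors of 10 for interspecies differences and 10 for human variability are conventional values assumed here for illustration, and the NOAEL and LOAEL used are hypothetical.

```python
def reference_dose(point_of_departure, uf_interspecies=10.0,
                   uf_intraspecies=10.0, additional_factor=1.0):
    """Divide a NOAEL, LOAEL, or BMDL (mg/kg/day) by the combined
    assessment (uncertainty) factors to obtain a reference dose."""
    combined = uf_interspecies * uf_intraspecies * additional_factor
    if point_of_departure <= 0 or combined <= 0:
        raise ValueError("inputs must be positive")
    return point_of_departure / combined


# Hypothetical 90-day study NOAEL of 50 mg/kg/day.
print(reference_dose(50.0))  # mg/kg/day

# Starting from a LOAEL instead, with an assumed extra factor of 10.
print(reference_dose(50.0, additional_factor=10.0))
```

The actual factors applied in a given assessment depend on the regulatory framework and the quality of the underlying data.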

Diagram 2: Integration of Acute & Chronic Data in Risk Assessment

The Scientist's Toolkit: Methods and Emerging Approaches

Table 3: Key Reagents, Models, and Methods in Toxicity Testing

| Tool/Category | Specific Item/Model | Function and Application |
|---|---|---|
| In Vivo Animal Models | Rat (Rattus norvegicus), Mouse (Mus musculus), Rabbit [1]. | The standard biological system for determining classical LD50/LC50, providing a whole-organism response. |
| Exposure/Dosing Systems | Oral gavage needles, dermal occlusion chambers, whole-body inhalation chambers [1]. | Enable precise, controlled administration of the test substance via the intended route of exposure. |
| Computational (In Silico) Models | QSAR Models: CATMoS, VEGA, TEST [25]. | Predict acute toxicity and LD50 based on chemical structure, reducing animal testing. Used for screening and prioritization. |
| Advanced TKTD Models | GUTS Framework: GUTS-RED, BufferGUTS [26]. | Toxicokinetic-Toxicodynamic models that simulate uptake, internal damage, and survival over time under variable exposure scenarios, moving beyond single-point estimates. |
| Consensus Strategies | Conservative Consensus Model (CCM) [25]. | Combines predictions from multiple QSAR models to generate a health-protective toxicity estimate, minimizing under-prediction risk. |

The field is actively evolving toward New Approach Methodologies (NAMs) that aim to reduce, refine, or replace (the 3Rs) animal testing [26]. Computational toxicology, exemplified by Quantitative Structure-Activity Relationship (QSAR) models, is now a mature alternative. A 2025 study demonstrated that a Conservative Consensus Model (CCM) integrating predictions from CATMoS, VEGA, and TEST achieved an under-prediction rate of only 2% for acute oral toxicity classification, making it a robust, health-protective tool for regulatory screening [25].
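The idea of a health-protective consensus can be illustrated with a short sketch. It assumes the consensus simply takes the lowest (most toxic) predicted LD50 among the available models; the published CCM may differ in detail, and the function name and prediction values below are hypothetical.

```python
def conservative_consensus(predictions_mg_per_kg):
    """Return (model, value) for the lowest predicted LD50.

    A value of None marks a model that returned no prediction,
    e.g. because the compound is outside its applicability domain.
    """
    available = {m: v for m, v in predictions_mg_per_kg.items() if v is not None}
    if not available:
        raise ValueError("no model returned a prediction")
    model = min(available, key=available.get)
    return model, available[model]


# Hypothetical predicted rat oral LD50 values (mg/kg) for one compound
predictions = {"CATMoS": 820.0, "VEGA": 450.0, "TEST": None}
model, ld50 = conservative_consensus(predictions)
print(model, ld50)
```

Choosing the minimum trades accuracy for protectiveness: it lowers the chance of under-predicting toxicity at the cost of over-predicting it more often.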

Furthermore, toxicokinetic-toxicodynamic (TKTD) models like the Generalized Unified Threshold Model of Survival (GUTS) represent a paradigm shift [26]. Unlike the static LD50, GUTS models dynamically simulate how an organism absorbs, distributes, and is damaged by a chemical over time, allowing for survival prediction under complex, time-variable exposure patterns relevant to real-world environmental risk assessment [26]. The recent development of BufferGUTS, which incorporates an intermediate buffer compartment (e.g., representing residues on an insect exoskeleton), extends this powerful framework to event-based terrestrial exposures [26].
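To make the contrast with a static LD50 concrete, the following is a minimal sketch of the reduced stochastic-death variant (GUTS-RED-SD), integrated with simple Euler steps. It is not BufferGUTS and not a calibrated model: the parameter values, the exposure profile, and the function name are hypothetical, and real applications would fit the parameters to survival data using dedicated software.

```python
import math


def guts_red_sd_survival(conc, dt, kd, threshold, killing_rate,
                         background_hazard=0.0):
    """Return survival probabilities over time.

    conc: external concentrations, one per time step of length dt.
    """
    damage = 0.0
    cumulative_hazard = 0.0
    survival = [1.0]
    for c in conc:
        # Scaled damage follows the external concentration at rate kd.
        damage += kd * (c - damage) * dt
        # Hazard grows linearly once damage exceeds the threshold.
        hazard = killing_rate * max(0.0, damage - threshold) + background_hazard
        cumulative_hazard += hazard * dt
        survival.append(math.exp(-cumulative_hazard))
    return survival


# Hypothetical pulse: 2 days at 10 concentration units, then 8 days clean
dt = 0.01  # days
profile = [10.0] * 200 + [0.0] * 800
curve = guts_red_sd_survival(profile, dt, kd=0.5, threshold=2.0, killing_rate=0.3)
print(f"Predicted survival after 10 days: {curve[-1]:.3f}")
```

Because damage decays only gradually after the pulse ends, mortality continues to accumulate for a while in clean medium, which a single-point estimate cannot represent.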

In toxicology research, the quantitative assessment of a substance's acute lethal potential is foundational for hazard identification, risk assessment, and safety classification. The median lethal dose (LD50) and median lethal concentration (LC50) serve as the principal benchmarks for this purpose, providing a standardized point of comparison for the acute toxicity of diverse chemicals [1]. The LD50 is defined as the dose required to kill 50% of a test population, while the LC50 refers to the ambient concentration (typically in air or water) that achieves the same effect over a specified exposure period [1] [2].

However, the full dose-response relationship extends beyond this median point. Terms such as LD01, LD100, and LDLO describe critical boundaries of this relationship, offering insights into threshold effects and maximum responses [1]. Understanding the distinctions between these metrics, and their relationship to the central LD50/LC50 values, is crucial for a nuanced interpretation of toxicity data, particularly in drug development where the therapeutic index (the ratio between lethal and effective doses) is paramount [2]. This guide details these key terminologies, their experimental derivation, and their integrated application in safety science.

Comparative Analysis of Key Lethality Metrics

The following table defines and contrasts the core lethality metrics, illustrating their specific roles within a toxicity assessment.

Table 1: Definitions and Applications of Core Lethality Metrics

| Term | Full Name | Definition | Primary Role in Risk Assessment |
|---|---|---|---|
| LD01 | Lethal Dose 1% | The dose estimated to be lethal to 1% of the test population [1]. | Identifies a low-incidence response threshold; can inform the derivation of safety factors for sensitive sub-populations. |
| LD50 | Median Lethal Dose | The dose estimated to be lethal to 50% of the test population. It is the standard measure of acute toxicity potency [1] [2]. | Serves as the primary benchmark for classifying and comparing the acute toxicity of substances. |
| LD100 | Lethal Dose 100% | The lowest dose estimated to be lethal to 100% of the test population [1]. | Represents the dose above which lethality is assured under test conditions; indicates the upper boundary of the dose-response curve. |
| LDLO | Lowest Lethal Dose | The lowest dose administered in experimental or case studies that has been reported to cause death [1]. | Provides an empirical observation of a lethal effect, crucial for identifying absolute minimum hazard levels from case reports. |
| LC50 | Median Lethal Concentration | The concentration of a substance in air (or water) that is lethal to 50% of the test population over a specified exposure period (e.g., 4 hours) [1] [27]. | The standard measure for assessing inhalation or aquatic toxicity, essential for setting occupational exposure limits and environmental standards. |

Experimental Protocols for Determining Lethality Metrics

The determination of LD50, and by extension LD01 and LD100 values, follows standardized in vivo protocols. The following workflow details a standard acute oral toxicity test, which is the most common format.

Standard Protocol for Acute Oral LD50 Determination (OECD Guideline 401/423)

1. Test System Preparation:

- Animals: Healthy young adult rodents (typically rats or mice) of a defined strain and sex are acclimatized to laboratory conditions. A typical test uses 5-10 animals per dose group [1].

- Test Substance: Administered in a single dose via oral gavage. The substance is often dissolved or suspended in a suitable vehicle (e.g., water, corn oil) [1].

2. Experimental Design:

- Dose Selection: A pilot range-finding study is conducted to inform the selection of 3-5 fixed doses for the main test that are expected to span mortality from 0% to 100%.

- Administration: Animals are fasted prior to dosing. The test substance is administered at a constant volume per unit body weight (e.g., 10 mL/kg).

- Control Group: A concurrent control group receives the vehicle only.

3. Observation and Data Collection:

- Observation Period: Animals are observed intensively for the first 4-8 hours post-dosing and at least daily for a total of 14 days [1].

- Clinical Observations: All signs of toxicity (e.g., lethargy, tremors, dyspnea), time of onset, duration, and mortality are recorded.

- Necropsy: All animals, including those found dead and survivors sacrificed at termination, undergo gross necropsy to identify target organs.

4. Data Analysis and Calculation:

- Mortality Data: The number of animals dying in each dose group at the end of the observation period is recorded.

- Statistical Analysis: The LD50, along with its confidence interval and the LD01 and LD100, is calculated using an appropriate statistical model. The Bliss probit method and the Litchfield-Wilcoxon method are historically common [27]. Modern practice often employs software that fits the data to logistic or probit regression models, which generate the complete dose-response curve and allow the other lethal dose values (LD01, LD100) to be estimated alongside the LD50 [27].

- Reporting: The final LD50 is expressed as milligrams of substance per kilogram of animal body weight (mg/kg), with specifications of species, sex, route, and vehicle (e.g., "Oral LD50 (rat, male): 250 mg/kg") [1].
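The data-analysis step can be sketched as a two-parameter logistic regression of mortality on log10 dose, fitted by Newton-Raphson using only the standard library. This is an illustrative sketch, not a validated implementation: the mortality counts are hypothetical, confidence intervals are omitted, and the function names are assumptions. Because a logistic or probit curve only approaches 100% mortality asymptotically, the sketch reports an LD99 in place of an LD100.

```python
import math


def fit_logistic(doses, n_animals, n_dead, iterations=50):
    """Fit P(death) = 1 / (1 + exp(-(a + b*z))) by Newton-Raphson,
    where z is log10(dose) centred on its mean.
    Returns (a, b, x_mean)."""
    x = [math.log10(d) for d in doses]
    x_mean = sum(x) / len(x)
    z = [xi - x_mean for xi in x]
    a, b = 0.0, 0.0
    for _ in range(iterations):
        p = [1.0 / (1.0 + math.exp(-(a + b * zi))) for zi in z]
        # Gradient of the binomial log-likelihood
        g_a = sum(d - n * pi for d, n, pi in zip(n_dead, n_animals, p))
        g_b = sum((d - n * pi) * zi
                  for d, n, pi, zi in zip(n_dead, n_animals, p, z))
        # Fisher information matrix (2 x 2)
        w = [n * pi * (1.0 - pi) for n, pi in zip(n_animals, p)]
        h_aa = sum(w)
        h_ab = sum(wi * zi for wi, zi in zip(w, z))
        h_bb = sum(wi * zi * zi for wi, zi in zip(w, z))
        det = h_aa * h_bb - h_ab * h_ab
        step_a = (h_bb * g_a - h_ab * g_b) / det
        step_b = (h_aa * g_b - h_ab * g_a) / det
        a, b = a + step_a, b + step_b
        if abs(step_a) + abs(step_b) < 1e-10:
            break
    return a, b, x_mean


def lethal_dose(fraction, a, b, x_mean):
    """Dose at which the fitted model predicts the given mortality."""
    logit = math.log(fraction / (1.0 - fraction))
    return 10 ** (x_mean + (logit - a) / b)


# Hypothetical results: 10 animals per group, 14-day mortality
doses = [50, 100, 200, 400, 800]      # mg/kg
dead = [0, 2, 5, 8, 10]
a, b, x_mean = fit_logistic(doses, [10] * 5, dead)
for frac in (0.01, 0.50, 0.99):
    print(f"LD{int(frac * 100):02d} = {lethal_dose(frac, a, b, x_mean):.0f} mg/kg")
```

The example data are deliberately symmetric around 200 mg/kg on the log scale, so the fitted LD50 lands at 200 mg/kg. Real data sets are rarely this tidy, and a full analysis would also report the confidence interval and check the goodness of fit.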

Diagram 1: Dose-Response Curve & Key Lethality Metrics

Protocol for Inhalation LC50 Determination

For LC50, the protocol differs primarily in the exposure system [1] [27].

- Exposure Chamber: Animals are placed in an inhalation chamber where the test substance (gas, vapor, or aerosol) is generated and maintained at a constant, analytically verified concentration.

- Exposure Duration: A standard test involves a 4-hour exposure period, followed by a 14-day observation period [1].

- Concentration Gradients: Multiple groups of animals are exposed to a geometric series of concentrations.

- Analysis: The LC50 (e.g., in ppm or mg/m³) and associated values (LC01, LC100) are calculated similarly to the LD50, with the exposure duration always reported (e.g., "4-hour LC50 (rat): 1000 ppm") [1].
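Two small calculations recur when planning such a study: spacing the target concentrations geometrically and converting between ppm and mg/m³ for a gas or vapor. The sketch below assumes 25 °C and 1 atm (molar volume 24.45 L/mol); the starting concentration, spacing factor, and function names are hypothetical, and the molar mass used is that of ammonia purely as an example.

```python
def geometric_series(lowest, factor, count):
    """Return `count` concentrations, each `factor` times the previous one."""
    return [lowest * factor ** i for i in range(count)]


def ppm_to_mg_per_m3(ppm, molar_mass_g_per_mol, molar_volume_l_per_mol=24.45):
    """Convert a gas/vapor concentration; 24.45 L/mol assumes 25 °C, 1 atm."""
    return ppm * molar_mass_g_per_mol / molar_volume_l_per_mol


# Hypothetical target concentrations with a spacing factor of 2
targets_ppm = geometric_series(250.0, 2.0, 4)
for c in targets_ppm:
    print(f"{c:.0f} ppm = {ppm_to_mg_per_m3(c, 17.03):.0f} mg/m3")
```

The ppm conversion applies to gases and vapors only; aerosol concentrations are measured and reported directly in mass per volume.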

Diagram 2: Acute Systemic Toxicity Testing Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Essential Materials for Acute Lethality Testing

| Item | Function in Research | Example/Specification |
|---|---|---|
| Defined Animal Models | Provide the in vivo biological system for assessing systemic toxicity. Strain, sex, age, and health status must be standardized to minimize variability [1]. | Specific-pathogen-free (SPF) Sprague-Dawley rats or CD-1 mice, 8-12 weeks old. |
| Test Substance & Vehicle | The chemical agent under investigation. Purity must be known and documented. A vehicle is required for solubilizing or suspending the agent for accurate dosing [1]. | Pure chemical (e.g., >98% purity). Common vehicles: sterile water, saline, 0.5% carboxymethylcellulose, corn oil. |
| Dosing Apparatus | Ensures precise and accurate delivery of the correct dose volume to each animal, which is critical for data reliability. | Oral gavage needles (ball-tipped for safety), calibrated syringes, precision micropipettes for small volumes. |
| Inhalation Exposure System | For LC50 studies, it generates and maintains a constant, homogenous atmosphere of the test agent at target concentrations [27]. | Whole-body or nose-only exposure chambers, aerosol generators, real-time air concentration monitors (e.g., PID, GC). |
| Statistical Analysis Software | Fits mortality data to dose-response models (probit, logit) to calculate LD/LC values and their confidence intervals with statistical rigor [27]. | Commercial software (e.g., SAS, GraphPad Prism) or validated OECD-funded tools. |
| Reference Toxins | Serve as positive controls to validate the sensitivity and performance of the test system. | Standardized chemicals with well-characterized LD50 values (e.g., potassium cyanide, dioxin). |
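For dosing at a constant volume per unit body weight (the oral protocol gives 10 mL/kg as an example), the formulation concentration and the volume drawn up for each animal follow from simple arithmetic. The sketch below is illustrative only; the function name, the target dose, and the body weight are hypothetical.

```python
def gavage_plan(dose_mg_per_kg, body_weight_g, dose_volume_ml_per_kg=10.0):
    """Return (formulation concentration in mg/mL, volume to give in mL)."""
    concentration = dose_mg_per_kg / dose_volume_ml_per_kg
    volume = dose_volume_ml_per_kg * body_weight_g / 1000.0
    return concentration, volume


# Hypothetical: 250 mg/kg target dose for a 220 g rat
conc, vol = gavage_plan(250.0, 220.0)
print(f"Prepare at {conc} mg/mL and administer {vol} mL")
```

Keeping the volume per kilogram constant means each dose group needs its own formulation concentration, while the volume per animal varies only with body weight.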

Contextual Application: From LD50 to Regulatory Classification

The LD50 value is the primary datum used for the hazard classification and labeling of chemicals. The following scale demonstrates how numerical LD50 ranges translate into regulatory hazard categories and estimated human risk.

Table 3: Toxicity Classification Based on LD50 Values [1] [7]

| Toxicity Class | Oral LD50 in Rats (mg/kg) | Probable Oral Lethal Dose for a 70 kg Human | Example Substances |
|---|---|---|---|
| Super Toxic | < 5 | A taste (< 7 drops) | Botulinum toxin, tetanospasmin [7]. |
| Extremely Toxic | 5 – 50 | < 1 teaspoonful (< 5 mL) | Arsenic trioxide, strychnine, sodium cyanide [7]. |
| Very Toxic | 50 – 500 | < 1 ounce (< 30 mL) | Phenol, caffeine, warfarin [7]. |
| Moderately Toxic | 500 – 5,000 | < 1 pint (< 500 mL) | Aspirin, sodium chloride (table salt) [7]. |
| Slightly Toxic | 5,000 – 15,000 | < 1 quart (< 1 L) | Ethanol (grain alcohol), acetone [7]. |
| Practically Non-Toxic | > 15,000 | > 1 quart | Water, sucrose (table sugar) [2]. |
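The scale in Table 3 can be expressed as a simple lookup. This is a sketch only: the table does not say how a value lying exactly on a class boundary should be assigned, so placing it in the less toxic class is an assumption here, as are the names used.

```python
# Upper bounds (mg/kg, rat oral LD50) taken from Table 3
TOXICITY_CLASSES = [
    (5.0, "Super Toxic"),
    (50.0, "Extremely Toxic"),
    (500.0, "Very Toxic"),
    (5000.0, "Moderately Toxic"),
    (15000.0, "Slightly Toxic"),
]


def classify_oral_ld50(ld50_mg_per_kg):
    """Map a rat oral LD50 to its toxicity class."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper_bound, label in TOXICITY_CLASSES:
        # Assumption: a boundary value falls into the less toxic class.
        if ld50_mg_per_kg < upper_bound:
            return label
    return "Practically Non-Toxic"


print(classify_oral_ld50(250.0))
```

With the reporting example used earlier in this guide ("Oral LD50 (rat, male): 250 mg/kg"), the lookup returns "Very Toxic".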

Critical Interpretation Note: A substance's LD100 is always higher than its LD50, and the LDLO may fall at or below the LD01. A narrow spread between LD01 and LD100 suggests a steep dose-response curve, where small increases in dose lead to large increases in mortality. This matters for risk management because the margin between a dose that kills almost no animals and one that kills nearly all of them is small. Conversely, a wide spread indicates a shallower curve. The LDLO, as an observed value from case reports, is particularly valuable for forensic and human health risk assessment as it represents a real-world lethal exposure, not a statistical estimate [1].

From Lab to Label: Standard Test Methods, Data Interpretation, and Practical Applications

The quantitative assessment of acute toxicity, fundamental to chemical safety evaluation and regulatory classification, hinges on two pivotal metrics: the median lethal dose (LD₅₀) and the median lethal concentration (LC₅₀). The LD₅₀ represents the amount of a substance, administered in a single dose, that causes the death of 50% of a test animal population [1]. It is expressed as the weight of chemical per unit body weight of the animal (e.g., mg/kg) [1]. In contrast, the LC₅₀ denotes the concentration of a substance in an environmental medium—typically air for inhalation studies or water for aquatic toxicity—that is lethal to 50% of the test population over a specified exposure period, often 4 hours [1]. This fundamental distinction between a delivered dose (LD₅₀) and an environmental concentration (LC₅₀) is critical, as it dictates the experimental design, exposure methodology, and interpretation of hazard for different routes of entry.

The OECD Guidelines for the Testing of Chemicals provide the internationally recognized, standardized protocols for deriving these values [28]. As a collection of approximately 150 agreed-upon methods, they are instrumental for regulatory safety testing, chemical registration, and hazard identification [29]. Recent comprehensive updates in 2025 underscore the OECD's commitment to incorporating state-of-the-art science, promoting animal welfare through the 3Rs (Replacement, Reduction, Refinement), and facilitating the Mutual Acceptance of Data across member countries [28] [30]. These guidelines ensure that toxicity data generated for oral, dermal, and inhalation routes are robust, reproducible, and applicable for global chemical safety assessments.

Foundational Concepts: Interpreting LD₅₀ and LC₅₀ Values

The core principle in interpreting LD₅₀ and LC₅₀ data is that a lower value indicates higher acute toxicity [1] [15]. For instance, a chemical with an oral LD₅₀ of 5 mg/kg is lethal at one-hundredth the dose of one with an LD₅₀ of 500 mg/kg, making it about 100 times more potent by this measure. These values allow for the comparison of toxic potency across different substances but are specific to the tested species, sex, age, and route of exposure [1].

Table 1: Toxicity Classification Based on LD₅₀ and LC₅₀ Values (Hodge and Sterner Scale) [1]

| Toxicity Rating | Commonly Used Term | Oral LD₅₀ (Rat, mg/kg) | Inhalation LC₅₀ (Rat, 4-hr, ppm) | Dermal LD₅₀ (Rabbit, mg/kg) |