Inside the ECOTOX Knowledgebase: A Guide to the Systematic Data Curation Process for Chemical Risk Assessment

This article provides a comprehensive guide to the data curation process of the ECOTOX Knowledgebase, the world's largest compilation of curated ecotoxicity data.

Abstract

This article provides a comprehensive guide to the data curation process of the ECOTOX Knowledgebase, the world's largest compilation of curated ecotoxicity data. We detail the systematic, multi-stage pipeline—from literature search to final entry—that transforms raw scientific studies into a reliable, FAIR-compliant resource. Aimed at researchers, scientists, and drug development professionals, this guide explores ECOTOX's foundational role in regulatory science, offers practical methodologies for data extraction and application, addresses common challenges in data evaluation, and validates its use through real-world examples in chemical safety assessment and New Approach Methodologies (NAMs).

Understanding ECOTOX: The Foundation of Curated Ecotoxicity Data for Regulatory Science

What is the ECOTOX Knowledgebase? Defining the World's Largest Curated Ecotoxicity Resource

The exponential growth of chemicals in commerce necessitates efficient, reliable methods for ecological hazard assessment. In response, the U.S. Environmental Protection Agency (USEPA) developed the ECOTOXicology Knowledgebase (ECOTOX). Initiated in the 1980s and continuously refined, ECOTOX has evolved into the world's largest, publicly available compilation of curated single-chemical ecotoxicity data. It serves as a critical resource for regulators, researchers, and risk assessors, supporting chemical safety evaluations, ecological research, and the development of New Approach Methodologies (NAMs). This whitepaper provides a technical deep-dive into ECOTOX, framing its significance within a broader thesis on systematic data curation processes in environmental toxicology.

ECOTOX is a living database, updated quarterly with new data curated from the scientific literature. Its scale is a direct result of decades of systematic review and data abstraction. The following table summarizes the quantitative scope of the knowledgebase as of its latest release.

Table 1: Quantitative Scope of the ECOTOX Knowledgebase (Version 5)

| Metric | Value | Source |
|---|---|---|
| Compiled References | Over 53,000 peer-reviewed and grey literature sources | [reference:0] |
| Total Test Records | Over 1 million curated toxicity test results | [reference:1] |
| Unique Chemicals | Approximately 12,000 single chemical stressors | [reference:2] |
| Ecological Species | More than 13,000 aquatic and terrestrial species | [reference:3] |
| Data Fields per Record | Over 100 structured fields for search and export | [reference:4] |

The Data Curation Process: A Thesis Perspective

The integrity and utility of ECOTOX are rooted in its transparent, standardized curation pipeline. This process aligns with contemporary systematic review practices and FAIR (Findable, Accessible, Interoperable, Reusable) data principles[reference:5]. The workflow is governed by detailed Standard Operating Procedures (SOPs) covering literature search, data abstraction, and maintenance[reference:6].

The core of the curation logic is a PECO (Population, Exposure, Comparator, Outcome) framework, which defines strict inclusion criteria for studies[reference:7].

- Population (P): Ecologically relevant, whole organisms (aquatic and terrestrial). Bacteria, viruses, and humans are excluded.

- Exposure (E): Single-chemical exposure with a verifiable CAS number, reported concentration, and duration. Air pollution studies (CO₂, ozone) are excluded.

- Comparator (C): A documented control treatment (e.g., vehicle-only) is required.

- Outcome (O): Measured biological effects concurrent with exposure.
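The PECO criteria above amount to a checklist applied to each candidate study. A minimal sketch of that checklist follows; the `StudyScreen` fields and the `peco_failures` helper are hypothetical names chosen for illustration, not part of any ECOTOX tooling, and the sketch does not model every exclusion (for example, air pollution studies).

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical summary of what a reviewer records during full-text screening.
@dataclass
class StudyScreen:
    organism_kind: str             # e.g. "fish", "plant", "bacteria", "human"
    whole_organism: bool           # True for live, whole-organism tests
    n_chemicals: int               # number of chemicals in the exposure
    casrn: Optional[str]           # CAS number, if one could be verified
    concentration_reported: bool
    duration_reported: bool
    has_control: bool
    effect_measured: bool          # biological effect measured with exposure

# Organism types the PECO statement excludes.
EXCLUDED_ORGANISMS = {"bacteria", "virus", "human"}

def peco_failures(s: StudyScreen) -> List[str]:
    """Return the PECO elements a study fails; an empty list means it passes."""
    failures = []
    if s.organism_kind in EXCLUDED_ORGANISMS or not s.whole_organism:
        failures.append("Population")
    exposure_ok = (
        s.n_chemicals == 1
        and bool(s.casrn)
        and s.concentration_reported
        and s.duration_reported
    )
    if not exposure_ok:
        failures.append("Exposure")
    if not s.has_control:
        failures.append("Comparator")
    if not s.effect_measured:
        failures.append("Outcome")
    return failures
```

Returning the list of failed elements, rather than a single pass/fail flag, keeps the reason for exclusion on record, which fits the transparency goal of a systematic review.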

Experimental Protocols: Standard Operating Procedures for Data Curation

The ECOTOX team's methodology for transforming primary literature into structured data can be considered a meta-experimental protocol. The key phases are:

1. Literature Search & Citation Identification: Comprehensive searches are conducted across open and grey literature databases using chemical-specific terms. Retrieved citations are initially screened by title and abstract[reference:8].

2. Applicability & Acceptability Screening: Full-text articles are reviewed against the PECO criteria. Studies must report essential details like chemical purity, species verification, test method (e.g., OECD guidelines), and appropriate controls to be deemed acceptable for data extraction[reference:9][reference:10].

3. Data Abstraction: For each accepted study, trained reviewers extract detailed information into over 100 structured fields. This includes chemical properties, species taxonomy, test conditions (media, duration, temperature), and quantitative results (e.g., LC50, NOEC, effect measurements)[reference:11].

4. Quality Control & Data Maintenance: Extracted data undergo rigorous quality checks. The underlying controlled vocabularies and SOPs are reviewed and updated quarterly to incorporate new efficiencies and maintain consistency[reference:12].
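The abstraction and quality-control phases can be pictured as filling a structured record and checking it against controlled vocabularies. The sketch below assumes a drastically reduced record with six fields and two tiny vocabularies; the field names, vocabulary contents, and `qc_errors` helper are illustrative assumptions, whereas the real knowledgebase uses over 100 fields and much larger code lists.

```python
from dataclasses import dataclass
from typing import List

# Illustrative vocabularies only (assumed for this sketch).
ENDPOINT_VOCAB = {"LC50", "EC50", "NOEC", "LOEC"}
EXPOSURE_TYPE_VOCAB = {"static", "renewal", "flow-through"}

@dataclass(frozen=True)
class ToxRecord:
    casrn: str
    species: str
    endpoint: str
    exposure_type: str
    duration_h: float
    value_mg_per_l: float

def qc_errors(rec: ToxRecord) -> List[str]:
    """Return a list of quality-control problems; empty means the record is clean."""
    errors = []
    if rec.endpoint not in ENDPOINT_VOCAB:
        errors.append(f"unknown endpoint: {rec.endpoint}")
    if rec.exposure_type not in EXPOSURE_TYPE_VOCAB:
        errors.append(f"unknown exposure type: {rec.exposure_type}")
    if rec.duration_h <= 0:
        errors.append("duration must be positive")
    if rec.value_mg_per_l <= 0:
        errors.append("effect concentration must be positive")
    return errors
```

Rejecting free-text variants such as "LC-50" at entry time is what makes later queries by endpoint reliable.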

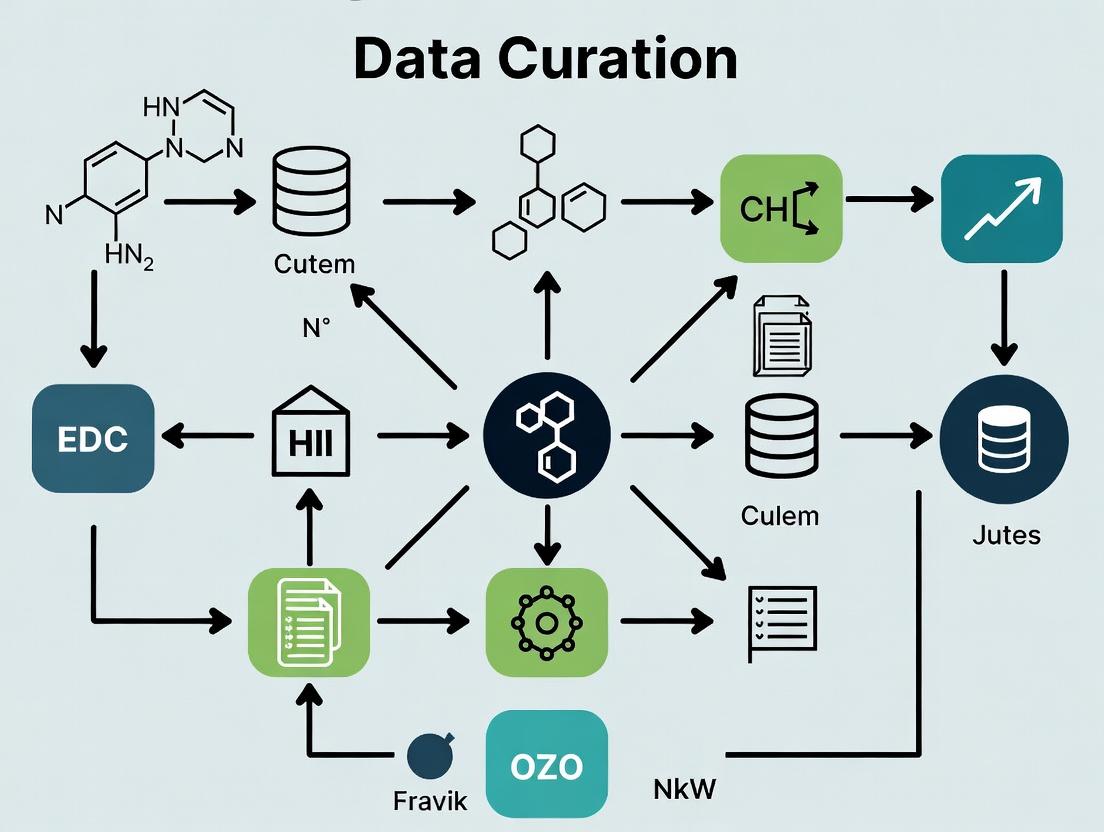

Visualizing the Curation Pipeline and Data Model

The ECOTOX data curation workflow and the logical relationships within its structured database can be visualized as follows.

Diagram 1: ECOTOX Data Curation Pipeline

A systematic, multi-stage process for identifying, reviewing, and ingesting ecotoxicity data.

Diagram 2: ECOTOX Core Data Model

The fundamental entity-relationship structure organizing chemical, species, test, and effect data.

The Scientist's Toolkit: Essential Reagents and Tools for Ecotoxicity Research

While ECOTOX itself is a data resource, the experimental studies it curates rely on a standardized set of materials and tools. The following table details key reagents and solutions fundamental to generating the ecotoxicity data that populates the knowledgebase.

Table 2: Essential Research Reagents & Tools for Ecotoxicity Testing

| Item/Category | Example | Primary Function in Ecotoxicity Studies |
|---|---|---|
| Reference Toxicants | Potassium dichromate, Copper sulfate, Sodium chloride | Used as positive controls to validate test organism health and assay sensitivity. |
| Standardized Test Media | ASTM reconstituted hard water, OECD algal test medium | Provides consistent, defined water chemistry for aquatic tests, ensuring reproducibility. |
| Endpoint Assay Kits | MTT assay (cell viability), ELISA kits (biomarker detection), Chlorophyll a extraction kits | Quantifies specific biological effects, from cytotoxicity in vitro to growth inhibition in algae. |
| Chemical Analysis Standards | Certified reference materials (CRMs) for metals, PAHs, pesticides | Verifies measured exposure concentrations in test solutions, critical for dose-response analysis. |
| Statistical Software | R (with packages like drc for dose-response modeling), USEPA's ToxRStat | Analyzes toxicity data, calculates EC/LC values, and generates species sensitivity distributions (SSDs). |

The ECOTOX Knowledgebase represents a monumental achievement in environmental data curation. Its value extends far beyond being a simple repository; it is the product of a rigorous, systematic, and transparent process that transforms dispersed scientific literature into a structured, interoperable, and reusable resource. As the demand for rapid chemical safety assessments grows, the role of curated databases like ECOTOX becomes increasingly central. It provides the essential empirical foundation for risk assessment, model development, and the validation of alternative testing strategies, ultimately supporting the protection of ecological health in the face of global chemical challenges.

The continuous introduction of new chemicals into commerce, coupled with expanding regulatory mandates for environmental safety, has created an unprecedented demand for assembled and accessible toxicity data [1]. This need catalyzed the development of the ECOTOXicology Knowledgebase (ECOTOX) by the U.S. Environmental Protection Agency (USEPA) in the early 1980s [1]. Originally conceived as a collection of ecosystem-specific databases for regulatory offices, ECOTOX has evolved into the world’s largest curated compilation of single-chemical ecotoxicity data [1]. Its transformation from a simple archival database to a modern, interactive systematic review platform reflects broader paradigm shifts in toxicology—including the move toward high-throughput in vitro assays, computational modeling, and the adoption of systematic review methods for transparent evidence synthesis [1] [2]. This evolution is central to a thesis on ECOTOX's data curation process, which demonstrates how rigorous, standardized methodologies are critical for generating reliable, reusable data that supports chemical risk assessments, regulatory decisions, and the development of New Approach Methodologies (NAMs) [1] [3].

Historical Development and Architectural Evolution

The development of ECOTOX was driven by practical regulatory needs under statutes like the Clean Water Act and the Toxic Substances Control Act, requiring rapid access to ecological effects data for risk characterization [1]. Its initial architecture in the 1980s consisted of decentralized, taxa-specific databases. The pivotal shift began with the formalization of its data curation pipeline and the adoption of controlled vocabularies, which standardized the extraction of methodological details and results from the literature [1]. The release of ECOTOX Version 5 marks the most significant architectural and philosophical modernization. It introduced a completely redesigned user interface, enhanced query capabilities, and embedded data visualization tools [1] [4]. This version explicitly aligns the database with the FAIR principles (Findable, Accessible, Interoperable, and Reusable), ensuring data can be effectively integrated with other computational toxicology resources and tools [1] [5].

Table 1: The Growth of the ECOTOX Knowledgebase: Key Metrics

| Metric | Historical Scope (1980s-2000s) | Current Scope (ECOTOX Ver 5, 2022-2025) | Data Source |
|---|---|---|---|
| Number of Chemicals | Not specified (focus on pesticides & priority pollutants) | >12,000 chemicals [1] | Peer-reviewed & grey literature |
| Number of Species | Limited, ecosystem-specific | >13,000 aquatic & terrestrial species [4] | Peer-reviewed & grey literature |
| Test Results (Records) | Not specified | >1,000,000 curated test results [1] | >50,000 references [1] |
| Primary Use Case | Internal USEPA regulatory support | Public resource for global research, risk assessment, & model development [1] [4] | N/A |
| Guiding Principles | Data aggregation | FAIR principles & systematic review framework [1] [5] | N/A |

The Systematic Review Framework: Core Methodology

ECOTOX's data curation process is a rigorous, multi-stage pipeline designed to mirror contemporary systematic review practices, ensuring transparency, objectivity, and consistency [1]. The process is governed by detailed Standard Operating Procedures (SOPs) for literature search, citation identification, data abstraction, and data maintenance [1].

The initial phase involves comprehensive searches of both open and "grey" literature (e.g., government reports) for ecologically relevant toxicity studies [1]. Identified references undergo a two-stage screening process: first by title and abstract, followed by a full-text review [1]. For a study to be accepted, it must meet strict applicability and acceptability criteria, which are summarized in Table 2.

Following screening, trained reviewers extract pertinent data from accepted studies using well-established controlled vocabularies. Over 100 data fields are captured, encompassing chemical and species verification, detailed test conditions (exposure duration, concentration, temperature), methodological endpoints, and results [1] [6]. This structured extraction is critical for enabling complex queries and reproducible analyses. The entire workflow, from initial search to data entry, follows a PRISMA-like flow (see Diagram 1), enhancing transparency and minimizing selection bias [1].

Table 2: ECOTOX Study Acceptance Criteria for Data Curation [1] [6]

| Criterion Category | Specific Requirement | Purpose of Criterion |
|---|---|---|
| Test Substance | Single chemical exposure | Ensures clarity of cause-effect relationship |
| Test Organism | Live, whole aquatic or terrestrial plant/animal species | Focus on ecologically relevant endpoints |
| Experimental Design | Reported concurrent environmental concentration/dose & explicit exposure duration | Allows for quantitative dose-response analysis |
| Experimental Design | Documented, acceptable control group | Ensures observed effects are treatment-related |
| Data Reporting | Calculated toxicity endpoint (e.g., LC50, NOEC) is reported or can be derived | Enables data standardization and comparison |
| Data Reporting | Study is primary source (not a review) and is publicly available | Ensures data verifiability and traceability |
| Reporting Standards | Species identified and verified; Test location (lab/field) reported | Assesses relevance and reliability of test conditions |

Diagram 1: ECOTOX Systematic Literature Review and Data Curation Pipeline [1]. The process follows a PRISMA-like flow, with critical screening stages applying the standardized acceptance criteria outlined in Table 2.

Experimental Protocols for Data Generation and Validation

The utility of ECOTOX relies on the quality of the underlying studies from which data is extracted. While ECOTOX itself is a repository, its content generation depends on standardized experimental protocols from primary ecotoxicity research. Key traditional and emerging protocols are highlighted below.

Traditional Whole-Organism Bioassays: The majority of data in ECOTOX comes from standardized in vivo tests, such as the 48-hour acute immobilisation test with Daphnia magna (OECD Test Guideline 202) or the fish early-life stage test (OECD TG 210) [2]. These protocols involve exposing organisms to a range of chemical concentrations under controlled conditions to determine lethal or sub-lethal (e.g., growth, reproduction) effects. Key methodological requirements for data inclusion in ECOTOX include specification of exposure medium, temperature, pH, dissolved oxygen, use of appropriate controls, and statistical derivation of endpoints like LC50 (median lethal concentration) [6].
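To make the LC50 endpoint concrete, the sketch below estimates it by linear interpolation of mortality against log10 concentration between the two test concentrations that bracket 50% mortality. This is a deliberately simple stand-in: published studies usually fit a probit or log-logistic model instead, and the function name and input layout are assumptions made for illustration.

```python
import math
from typing import Sequence

def lc50_log_interpolation(concs: Sequence[float], mortality: Sequence[float]) -> float:
    """Estimate LC50 by interpolating mortality fraction against log10(concentration).

    concs     -- positive test concentrations (any consistent unit)
    mortality -- fraction dead (0..1) observed at each concentration
    """
    pairs = sorted(zip(concs, mortality))
    # Walk adjacent concentration pairs and find the one that brackets 50%.
    for (c_lo, m_lo), (c_hi, m_hi) in zip(pairs, pairs[1:]):
        if m_lo <= 0.5 <= m_hi and m_hi > m_lo:
            frac = (0.5 - m_lo) / (m_hi - m_lo)
            log_lc50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_lc50
    raise ValueError("mortality data do not bracket 50%")
```

Interpolating on the log scale reflects the usual geometric spacing of test concentrations. If no pair of concentrations brackets 50% mortality, the function raises an error rather than extrapolating.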

High-Throughput and New Approach Methodologies (NAMs): To address the backlog of untested chemicals, high-throughput screening (HTS) paradigms are emerging [2]. One seminal example is the automated duckweed (Lemna sp.) growth inhibition test. This assay leverages automated image recording and processing to rapidly quantify frond number and area, providing a high-throughput phytotoxicity endpoint [2]. Another advancing area is the use of microfluidic Lab-on-a-Chip (LOC) technologies for small model organisms (e.g., Daphnia, nematodes). These platforms automate animal loading, exposure, and behavioral phenotyping, increasing test throughput while reducing manual labor and animal use [2]. Data from such standardized NAMs, when publicly available, are increasingly curated into repositories like ECOTOX to support model development and validation.

Data Retrieval and Reproducibility Protocols: A critical modern "experimental" protocol is the reproducible retrieval of data from ECOTOX itself. The ECOTOXr R package formalizes this process [5]. The protocol involves: 1) installing the ECOTOXr package in R, 2) using its functions to build targeted API queries (e.g., by chemical CASRN, species name, or effect endpoint), 3) retrieving datasets directly into the R environment, and 4) documenting the entire script for full reproducibility [5]. This tool transforms ad hoc data gathering into a transparent, programmable workflow that aligns with the FAIR principles.
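The idea of a scripted, repeatable query can be illustrated without the R package itself. The Python sketch below filters a small in-memory table by CASRN, species, or endpoint. It does not use ECOTOXr or any ECOTOX interface; the column names are hypothetical, and the sample rows are invented placeholders, not real ECOTOX results.

```python
import csv
import io
from typing import Dict, List, Optional

# Placeholder rows with made-up values, for illustration only.
SAMPLE_EXPORT = """casrn,species,endpoint,duration_h,value_mg_per_l
7440-50-8,Daphnia magna,EC50,48,0.05
7440-50-8,Pimephales promelas,LC50,96,0.30
50-00-0,Daphnia magna,EC50,48,12.0
"""

def query(export_text: str,
          casrn: Optional[str] = None,
          species: Optional[str] = None,
          endpoint: Optional[str] = None) -> List[Dict[str, str]]:
    """Return rows matching every filter that was supplied (None means 'any')."""
    filters = {"casrn": casrn, "species": species, "endpoint": endpoint}
    active = {k: v for k, v in filters.items() if v is not None}
    rows = csv.DictReader(io.StringIO(export_text))
    return [row for row in rows if all(row[k] == v for k, v in active.items())]

# The query parameters live in the script, so the same call reproduces the same subset.
copper_rows = query(SAMPLE_EXPORT, casrn="7440-50-8")
```

Keeping the filter arguments in version-controlled code, rather than in clicks on a web form, is what makes the retrieval step reproducible.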

Table 3: The Scientist's Toolkit: Key Reagents and Platforms for Ecotoxicity Research

| Tool/Reagent Category | Specific Example | Primary Function in Ecotoxicity Research | Relevance to ECOTOX & Systematic Review |
|---|---|---|---|
| High-Throughput Bioassay Platforms | Automated imaging systems for Lemna (duckweed) tests [2] | Enables rapid, quantitative assessment of phytotoxicity via frond count and area. | Generates consistent, digital endpoint data suitable for curation and modeling. |
| Microfluidic & Automation Systems | Lab-on-a-Chip (LOC) for Daphnia or nematode bioassays [2] | Automates organism handling, exposure, and real-time behavioral phenotyping. | Increases throughput of in vivo data; provides high-content endpoints for AOP development. |
| Computational Data Access Tools | ECOTOXr R package [5] | Provides programmable, reproducible access to ECOTOX data via API queries. | Embodies FAIR principles; enables transparent and reproducible meta-analysis. |
| Study Evaluation Frameworks | Critical Appraisal Tools (CATs) based on CRED criteria [3] | Provides a structured checklist to assess the reliability and relevance of individual studies. | Supports the systematic review phase of data curation and quality assurance. |
| Reference Chemical Sets | Curated lists of compounds with well-characterized toxicity profiles | Serves as positive controls and benchmarks for calibrating new assay systems. | Provides anchor points for validating NAMs against traditional data within ECOTOX. |

Data Visualization, Interoperability, and the FAIR Framework

ECOTOX Version 5 significantly advanced data accessibility through integrated visualization tools and explicit interoperability features. Users can generate interactive data plots (e.g., scatter plots of effect concentrations) directly within the web interface, allowing for exploratory analysis and identification of trends or outliers [4].

The platform's commitment to the FAIR principles is demonstrated by its interoperability with other major databases. A key integration is with the USEPA's CompTox Chemicals Dashboard, providing seamless linking from a chemical in ECOTOX to rich supplemental data on physicochemical properties, bioactivity, and ongoing toxicological assessments [4]. Furthermore, the development of the ECOTOXr package exemplifies the "Reusable" principle by providing a standardized, script-based method for data retrieval that ensures computational reproducibility [5]. This ecosystem of connected tools (Diagram 2) transforms ECOTOX from a siloed database into a central hub within a broader computational toxicology network, directly supporting the development of Quantitative Structure-Activity Relationship (QSAR) models, species sensitivity distributions (SSDs), and Adverse Outcome Pathways (AOPs) [1] [7].

Diagram 2: ECOTOX Interoperability within the Modern Computational Toxicology Ecosystem. ECOTOX functions as a core data provider, interoperating with chemical (CompTox), mechanistic (AOP), and modeling (QSAR) resources via APIs and linked identifiers. It directly feeds applications in risk assessment and method validation [1] [4] [5].

Future Trajectories: Integrating High-Throughput Data and Advanced Review Methods

The future evolution of ECOTOX will be shaped by two dominant trends in toxicology. First, the expansion of high-throughput and high-content ecotoxicity testing will necessitate the curation of new data types [2]. This includes results from genomic, transcriptomic, and other -omic assays, as well as high-content phenotypic data from automated in vivo platforms [2] [7]. Incorporating such data will require extending controlled vocabularies and developing new modules to capture mechanistic key events, aligning ECOTOX more closely with the Adverse Outcome Pathway (AOP) framework [7].

Second, the systematic review foundation of ECOTOX will be deepened through greater integration of automated screening tools and artificial intelligence. While current screening is manual, future iterations may employ machine learning for title/abstract prioritization and natural language processing to assist in data extraction [2]. Furthermore, the adoption of structured Critical Appraisal Tools (CATs), like those developed by EFSA based on the CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) approach, could be more formally embedded into the curation pipeline to standardize and transparently document reliability and relevance assessments for each study [3].

ECOTOX has completed a transformative evolution from a 1980s internal database to a modern, public systematic review platform. This journey reflects a broader scientific shift towards transparency, reproducibility, and data-driven assessment. Its core strength lies in a rigorous, documented curation process—a systematic review pipeline that applies consistent criteria to identify, evaluate, and extract high-quality ecotoxicity data. By embracing FAIR principles, developing interoperable tools like ECOTOXr, and preparing for next-generation data streams, ECOTOX has established itself as an indispensable infrastructure. It supports not only traditional regulatory risk assessment but also the innovative development and validation of predictive toxicological models and New Approach Methodologies, thereby directly addressing the 21st-century challenge of efficiently evaluating environmental chemical safety.

The systematic assessment of chemical hazards is a cornerstone of environmental protection. For over two decades, the Ecotoxicology (ECOTOX) Knowledgebase has served as a critical infrastructure, transforming dispersed scientific evidence into curated, accessible data to inform regulatory decisions [4]. Its core purpose is to provide a comprehensive, publicly available source of single-chemical toxicity data for ecologically relevant species, thereby supporting the scientific foundation of U.S. environmental statutes [1].

This function is framed within a broader thesis on data curation process research, where ECOTOX exemplifies the application of systematic review principles to ecological toxicology. By implementing a rigorous, transparent pipeline for literature search, study evaluation, and data extraction, ECOTOX ensures that regulatory mandates under laws like the Toxic Substances Control Act (TSCA) and the Clean Water Act (CWA) are met with high-quality, reproducible evidence [1]. For researchers and drug development professionals, understanding this curated data source is vital for designing safer chemicals, evaluating environmental risks of new entities, and developing non-animal New Approach Methodologies (NAMs) that rely on robust historical data for validation [4].

The Regulatory Landscape: TSCA, CWA, and the Need for Curated Data

The regulatory mandates driving chemical assessment are complex and data-intensive. TSCA, as amended by the Lautenberg Act, requires the U.S. Environmental Protection Agency (EPA) to evaluate and manage risks from existing and new chemicals in commerce [8]. Concurrently, the CWA mandates the development of Ambient Water Quality Criteria to protect aquatic life, a process fundamentally reliant on species toxicity data [4]. These laws create a continuous demand for curated, reliable ecotoxicity data.

The ECOTOX Knowledgebase is engineered to meet this demand. It is directly used to inform ecological risk assessments for chemical registration and re-registration, aid in the prioritization and assessment of chemicals under TSCA, and develop numeric criteria for water and sediment quality under the CWA [4]. Recent regulatory proposals, such as the 2025 TSCA Risk Evaluation Framework rule, emphasize efficiency and the use of the best available science, further underscoring the value of centralized, high-quality data repositories like ECOTOX [8].

The ECOTOX Data Curation Pipeline: A Model for Systematic Review

The integrity of ECOTOX is anchored in its meticulous data curation process, which aligns with contemporary systematic review (SR) and evidence-based toxicology practices [1]. This pipeline ensures that the database is not merely a collection of studies but a refined resource of relevant and acceptable toxicity information.

3.1 Experimental Protocol: Literature Search and Study Selection

The curation pipeline begins with comprehensive searches of the peer-reviewed and grey literature. Identified references undergo a multi-tiered screening process based on pre-defined applicability and acceptability criteria [1].

Table 1: Key Applicability and Acceptability Criteria for ECOTOX Study Inclusion

| Criterion Category | Description | Example |
|---|---|---|
| Applicability | Relevance to ecological risk assessment. | Test organism is an ecologically relevant aquatic or terrestrial species. |
| Applicability | Study design suitability. | Exposure is to a single, verified chemical stressor. |
| Applicability | Data reporting completeness. | Exposure concentration and duration are explicitly reported. |
| Acceptability | Study reliability and internal validity. | Documented control group is present. |
| Acceptability | Endpoint relevance. | Effect endpoint is clearly defined and measurable (e.g., LC50, NOEC). |

3.2 Data Abstraction and Quality Control

Studies passing screening have key details methodically extracted using controlled vocabularies. This includes data on the chemical, test species, exposure conditions, measured effects, and test methodology. Species and chemical identities are verified against authoritative taxonomy and chemistry databases to ensure consistency and interoperability [1]. This rigorous abstraction process transforms narrative journal articles into structured, computable data fields.
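One inexpensive first step in verifying a chemical identity is checking that a reported CAS Registry Number is well formed. CAS numbers carry a check digit: the digits before it are weighted 1, 2, 3, ... from right to left, summed, and the sum modulo 10 must equal the final digit. The sketch below implements only that format check. It is not a registry lookup, and the source does not state that ECOTOX curators apply this exact step.

```python
import re

def is_valid_casrn(casrn: str) -> bool:
    """Check the format and check digit of a CAS Registry Number such as '7732-18-5'."""
    match = re.fullmatch(r"(\d{2,7})-(\d{2})-(\d)", casrn)
    if not match:
        return False
    body = match.group(1) + match.group(2)   # all digits except the check digit
    check_digit = int(match.group(3))
    # Weight digits 1, 2, 3, ... starting from the rightmost digit of the body.
    total = sum(weight * int(d) for weight, d in enumerate(reversed(body), start=1))
    return total % 10 == check_digit
```

For example, `is_valid_casrn("7732-18-5")` (water) passes, while a single mistyped final digit fails. A checksum like this catches transcription errors before a record is matched against a chemistry database.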

3.3 Workflow Visualization

The following diagram illustrates the sequential stages of the ECOTOX curation pipeline, from initial search to public data release.

ECOTOX Data Curation and Literature Review Pipeline [1]

Quantitative Scope and Interoperability of the ECOTOX Resource

The scale of curated data within ECOTOX directly reflects its capacity to support broad regulatory and research needs. The knowledgebase is a living resource, updated quarterly with new data [4].

Table 2: Quantitative Summary of the ECOTOX Knowledgebase Scope

| Data Category | Volume | Regulatory and Research Utility |
|---|---|---|
| Scientific References | Over 53,000 compiled references [4]. | Provides an auditable evidence trail for regulatory decisions. |
| Unique Test Records | Over 1,000,000 curated test results [4] [1]. | Enables robust dose-response analysis and meta-analysis. |
| Ecological Species | More than 13,000 aquatic and terrestrial species [4]. | Supports species sensitivity distributions (SSDs) for CWA criteria and ecological risk assessment. |
| Chemical Stressors | Data for over 12,000 chemicals [1]. | Informs assessments across a wide chemical space under TSCA and other statutes. |

ECOTOX enhances its utility through interoperability. It is linked to the EPA CompTox Chemicals Dashboard, which provides additional physicochemical, hazard, and exposure data [4]. This connectivity allows researchers to move seamlessly from a toxicity endpoint in ECOTOX to a chemical's structure, predicted properties, and associated bioassay data, facilitating integrated approaches to safety assessment.

Application in Risk Assessment and New Approach Methodologies (NAMs)

For risk assessors and researchers, ECOTOX data are applied in several critical frameworks. They are fundamental for developing Species Sensitivity Distributions (SSDs), which are used to derive protective concentration thresholds for aquatic life [1]. The database also supplies the empirical toxicity data needed to validate and calibrate computational toxicology models, such as Quantitative Structure-Activity Relationship (QSAR) models and ecological thresholds predicted via in vitro to in vivo extrapolation [4].
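A common way to build an SSD is to fit a log-normal distribution to one toxicity value per species and read off a low percentile, often the 5th (the HC5, the concentration expected to be hazardous to 5% of species). The sketch below does this with the Python standard library. The log-normal form and the `hc_p` helper name are assumptions for illustration; regulatory derivations typically add data-selection rules, goodness-of-fit checks, and confidence limits that are omitted here.

```python
import math
from statistics import NormalDist, mean, stdev
from typing import Sequence

def hc_p(toxicity_values: Sequence[float], p: float = 0.05) -> float:
    """Hazardous concentration for a fraction p of species under a log-normal SSD.

    toxicity_values -- one positive toxicity value per species (e.g. LC50s),
                       all in the same unit; at least two distinct values.
    """
    logs = [math.log10(v) for v in toxicity_values]
    mu, sigma = mean(logs), stdev(logs)       # sample mean and SD on the log10 scale
    # The p-th quantile of the fitted normal, back-transformed to concentration.
    return 10 ** NormalDist(mu, sigma).inv_cdf(p)
```

Because the fit is made on log-transformed values, the result is always positive and scales correctly when concentrations span several orders of magnitude, as they often do across species.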

This role is increasingly important in the context of NAMs. As regulatory science shifts toward reducing vertebrate animal testing, historical in vivo data from ECOTOX become essential for anchoring and interpreting high-throughput screening and pathway-based assay results [1]. The database helps identify data gaps, prioritize chemicals for testing, and provide the biological context needed to make mechanistic data ecologically relevant.

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental studies curated within ECOTOX rely on standardized tools and materials to ensure reproducibility and relevance. The following table details key items central to generating reliable ecotoxicity data.

Table 3: Research Reagent Solutions for Ecotoxicity Testing

| Item | Function in Ecotoxicology | Role in ECOTOX Curation |
|---|---|---|
| Standard Reference Toxicants | (e.g., Sodium chloride, KCl). Used to validate the health and sensitivity of test organisms in a laboratory bioassay. | Studies using reference toxicants for quality control are flagged for higher reliability. |
| Renewal Test Chambers | Flow-through or static-renewal exposure systems for aquatic tests. Control exposure concentration and water quality. | Test system type (static, renewal, flow-through) is a critical extracted field for interpreting exposure dynamics. |
| Formulated Synthetic Water | (e.g., EPA Reconstituted Hard Water). Provides a consistent, defined medium for aquatic toxicity tests, eliminating variability from natural sources. | Water chemistry parameters (hardness, pH, temperature) are extracted as key test condition modifiers. |
| Control Sediments | Defined, uncontaminated sediments for benthic organism testing. Serve as a baseline for assessing toxicity in spiked or field-collected sediments. | The use of appropriate control sediments is a key acceptability criterion for sediment toxicity studies. |
| Standardized Nutrient Media | For algal and aquatic plant toxicity tests (e.g., AAP, OECD media). Ensures consistent growth not limited by nutrients. | Growth medium composition is captured to assess test validity and cross-study comparability. |

Visualizing the Regulatory and Scientific Integration Pathway

The ultimate value of ECOTOX is realized when curated data directly informs regulatory decisions and scientific advancements. The following diagram maps this integration pathway, showing how raw data from controlled studies flow through the knowledgebase to support core regulatory mandates and research initiatives.

Integration of ECOTOX Data into Regulatory and Research Workflows [4] [1] [8]

The ECOTOXicology Knowledgebase (ECOTOX) stands as the world's most comprehensive repository of curated, single-chemical ecotoxicity data [1]. Managed by the U.S. Environmental Protection Agency, this resource is foundational for ecological risk assessment, regulatory decision-making, and environmental research. Its evolution from separate databases in the 1980s to a unified, systematic knowledgebase reflects a commitment to FAIR principles (Findable, Accessible, Interoperable, and Reusable) in toxicological data science [1]. This whitepaper details the technical framework, data curation pipeline, and research applications of ECOTOX, contextualizing its immense scale—over one million test results from more than 12,000 chemicals and 13,000 species—within the rigorous methodology that ensures its reliability and utility for the scientific community [4].

Core Database Metrics and Composition

The ECOTOX Knowledgebase is an ever-expanding resource, updated quarterly with new data extracted from the peer-reviewed and gray literature [4] [9]. Its scale and diversity are summarized in the following tables.

Table 1: Core Quantitative Metrics of the ECOTOX Knowledgebase

| Metric | Count | Description and Source |
|---|---|---|
| Test Records (Results) | >1,000,000 | Individual toxicity test results from acceptable studies [4] [1]. |
| Unique Chemicals | >12,000 | Single, verified chemical stressors, including pesticides, PFAS, and industrial compounds [4] [9]. |
| Ecological Species | >13,000 | Taxonomically verifiable aquatic and terrestrial species [4]. |
| Scientific References | >50,000 | Source publications, including journal articles and technical reports [1]. |

Table 2: Taxonomic Distribution of Test Records (Representative Groups)

| Species Group | Approximate % of Total Records | Key Examples |
|---|---|---|
| Fish | 25.6% | Rainbow trout, Zebrafish, Fathead minnow |
| Flowering Plants/Trees | 18.7% | Duckweed, Soybean, Ryegrass |
| Insects & Spiders | 14.2% | Honey bee, Midges |
| Crustaceans | 9.3% | Water flea (Daphnia magna), Amphipods |
| Mammals | 7.5% | Rat, Mouse, Voles |
| Algae | 5.9% | Green algae, Diatoms |
| Birds | 3.8% | Mallard duck, Bobwhite quail |
| Amphibians | 2.5% | Frog, Toad, Salamander |

Table 3: Diversity of Measured Effects in ECOTOX

| Effect Group | % of Records | Example Endpoints |
|---|---|---|
| Mortality | 26.9% | LC50 (Lethal Concentration to 50%), LD50 |
| Growth | 14.6% | Biomass change, Root elongation inhibition |
| Population | 16.9% | Abundance, Population growth rate |
| Biochemical | 13.8% | Enzyme activity (e.g., AChE inhibition), Hormone levels |
| Physiology | 6.7% | Respiration rate, Photosynthesis efficiency |
| Reproduction | 4.9% | Fecundity, Hatchability, Number of offspring |
| Genetics | 5.2% | Chromosomal aberration, Micronucleus formation |
| Behavior | 3.5% | Avoidance, Feeding rate, Locomotor activity |
| Accumulation | 4.6% | Bioconcentration Factor (BCF), Tissue concentration |

The Data Curation Pipeline: A Systematic Review Protocol

The integrity of ECOTOX is maintained through a formal, multi-stage literature review and data curation pipeline. This process aligns with systematic review methodologies and is governed by detailed Standard Operating Procedures (SOPs) [1].

Experimental Protocol: Literature Search and Screening

The curation workflow is a defined sequence of planning, screening, and extraction.

1. Chemical Identification and Search Strategy: The process begins with the verification of the chemical of interest using its CAS Registry Number (CASRN). Curators compile a comprehensive list of synonyms, trade names, and related chemical forms from sources like the CompTox Chemicals Dashboard and STN [9]. A tailored Boolean search string is constructed and executed across multiple academic databases (e.g., Web of Science, PubMed, Agricola, ProQuest) [10] [1].

2. Screening with PECO Criteria: Identified citations undergo a two-stage screening process against formal PECO (Population, Exposure, Comparator, Outcome) criteria [9].

- Population: Test organisms must be taxonomically verifiable, ecologically relevant species (e.g., fish, plants, invertebrates). Studies on bacteria, viruses, yeast, or human-focused research are excluded [9].

- Exposure: The study must involve exposure to a single, verifiable chemical at quantified concentrations or doses. The route of exposure (e.g., water, diet, injection) and a clear exposure duration must be reported [9].

- Comparator: The study must include an appropriate control treatment for comparison [9].

- Outcome: A measurable biological effect (e.g., mortality, growth reduction) must be reported in relation to the exposure. The source must be a primary research article (not a review) published in English [9].

Studies excluded at the title/abstract or full-text stage are tagged with a specific reason (e.g., "Mixture," "No Concentration," "Review") to ensure transparency and aid process refinement [9].
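The PECO screen and its exclusion tagging can be sketched in a few lines of Python. This is a minimal illustration, not the ECOTOX team's tooling: the `Citation` fields and the `screen` function are hypothetical, and only the tags "Mixture", "No Concentration", and "Review" come from the text above; the remaining tags are placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Citation:
    """Hypothetical screening form filled in by a reviewer for one citation."""
    taxonomically_verifiable_species: bool
    single_chemical: bool
    concentration_reported: bool
    duration_reported: bool
    has_control: bool
    effect_reported: bool
    is_primary_article: bool
    in_english: bool


# Ordered checks: (attribute name, exclusion tag applied when the check fails).
# Tags other than "Mixture", "No Concentration", and "Review" are illustrative.
PECO_CHECKS = [
    ("taxonomically_verifiable_species", "No Species"),
    ("single_chemical", "Mixture"),
    ("concentration_reported", "No Concentration"),
    ("duration_reported", "No Duration"),
    ("has_control", "No Control"),
    ("effect_reported", "No Effect"),
    ("is_primary_article", "Review"),
    ("in_english", "Non-English"),
]


def screen(citation: Citation) -> Optional[str]:
    """Return the first exclusion tag that applies, or None if the study passes."""
    for attribute, tag in PECO_CHECKS:
        if not getattr(citation, attribute):
            return tag
    return None
```

Recording a single, explicit reason per excluded citation is what makes the screening auditable: the tags can later be tallied to see which criteria remove the most studies.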

For studies that pass screening, detailed data are extracted into structured fields using controlled vocabularies to ensure consistency [1].

Key Abstraction Fields:

- Chemical & Species Identifiers: CASRN, DTXSID (EPA's substance identifier), and taxonomic IDs (NCBI Taxonomy, ITIS) [9].

- Test Conditions: Exposure medium, duration, temperature, pH, and test type (acute/chronic).

- Toxicity Results: Endpoint values (e.g., LC50, NOEC, LOEC), effect concentrations, statistical measures, and the author-reported observed duration [10] [9].

- Study Metadata: Reference details, including DOI and ECOTOX-specific reference number (ECOREF).

A single primary study may yield multiple ECOTOX records if it reports results for different species, life stages, or endpoints [9]. All extracted data undergo quality assurance checks before being integrated into the master database and published to the public website in quarterly updates [1] [9].
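To make the one-study-to-many-records relationship concrete, the sketch below models a curated result as a small Python dataclass. The field names, the `records_from_study` helper, the reference number "REF-0001", and all numeric values are invented for illustration and do not reflect the actual ECOTOX schema or real test results.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ToxRecord:
    """One curated result; field names are illustrative, not the ECOTOX schema."""
    ecoref: str                 # reference number of the source study
    casrn: str                  # chemical identifier
    species: str                # verified scientific name
    life_stage: Optional[str]   # None when the study does not report it
    endpoint: str               # e.g. "LC50", "NOEC"
    value: float
    unit: str
    duration_h: float           # observed duration in hours


def records_from_study(ecoref: str, casrn: str, results: List[dict]) -> List[ToxRecord]:
    """Expand one study into one record per reported result."""
    return [
        ToxRecord(
            ecoref=ecoref,
            casrn=casrn,
            species=r["species"],
            life_stage=r.get("life_stage"),
            endpoint=r["endpoint"],
            value=float(r["value"]),
            unit=r["unit"],
            duration_h=float(r["duration_h"]),
        )
        for r in results
    ]


# Made-up example: one paper reporting two species and two endpoints.
study_results = [
    {"species": "Daphnia magna", "endpoint": "LC50", "value": 1.2,
     "unit": "mg/L", "duration_h": 48},
    {"species": "Pimephales promelas", "life_stage": "larva", "endpoint": "LC50",
     "value": 3.4, "unit": "mg/L", "duration_h": 96},
    {"species": "Pimephales promelas", "life_stage": "larva", "endpoint": "NOEC",
     "value": 0.5, "unit": "mg/L", "duration_h": 96},
]
records = records_from_study("REF-0001", "80-05-7", study_results)
print(len(records))
```

Every record carries the same reference and chemical identifiers, so results can always be traced back to the source study.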

Integration in Research and Regulatory Applications

Researchers leverage ECOTOX data through a suite of software tools and interoperable resources.

Table 4: Key Research Tools and Resources for ECOTOX Data Analysis

| Tool/Resource Name | Type | Primary Function | Interoperability with ECOTOX |
|---|---|---|---|
| ECOTOXr | R Software Package | Programmatic, reproducible retrieval and curation of ECOTOX data [5]. | Directly queries and processes ECOTOX data exports within the R environment, formalizing the data cleaning pipeline. |
| CompTox Chemicals Dashboard | Interactive Web Application | Provides physicochemical properties, hazard, exposure, and bioactivity data for ~1 million chemicals [11]. | ECOTOX toxicity data is integrated into chemical profiles; linked via DTXSID and CASRN for seamless cross-referencing [11]. |
| USEtox | Scientific Consensus Model | Global model for characterizing human and ecotoxicological impacts in Life Cycle Assessment (LCA) [12]. | ECOTOX data is a critical input for calculating freshwater ecotoxicity characterization factors, particularly for deriving species sensitivity distributions (SSDs) [12]. |
| EPA SSD Toolbox / Web-ICE | Statistical Software Tools | Generate Species Sensitivity Distributions (SSDs) to estimate hazardous concentrations affecting a portion of species [9]. | ECOTOX is a primary data source for constructing SSDs to derive environmental benchmarks like Predicted No-Effect Concentrations (PNECs). |
| R Project & RStudio | Programming Environment | Open-source platform for statistical computing and graphics [10]. | ECOTOX's "Data to R Plot" export function provides customized R scripts and data to regenerate and tailor visualizations from the Explore module [10]. |

Application Workflow: From Data Retrieval to Risk Assessment

A typical research workflow using ECOTOX involves data retrieval, filtration, and synthesis for modeling.

Primary Use Cases:

- Ecological Risk Assessment (ERA): Regulatory bodies use ECOTOX to develop Aquatic Life Criteria, Soil Screening Levels, and Toxicity Reference Values (TRVs) to protect ecosystems [4] [9].

- Chemical Prioritization & Screening: Under laws like the Toxic Substances Control Act (TSCA), data density and potency information from ECOTOX help identify chemicals requiring greater scrutiny [4] [1].

- Model Development and Validation: The curated in vivo data is essential for developing and validating Quantitative Structure-Activity Relationship (QSAR) models, New Approach Methodologies (NAMs), and adverse outcome pathways (AOPs) [4] [1].

- Life Cycle Impact Assessment (LCIA): Models like USEtox rely on ECOTOX data to calculate ecotoxicity characterization factors, translating chemical emissions into potential ecosystem impact scores [12].

Technical Access and Data Export

ECOTOX provides multiple pathways for data access tailored to different user needs [10] [4].

1. Interactive Web Interface:

- Search: For targeted queries with known parameters (specific chemical, species, or endpoint). Users can filter by 19+ parameters, including newly added observed duration filters [10] [4].

- Explore: For open-ended data discovery. Users can browse chemicals, species, or effects and utilize interactive Data Visualization tools with plot exports [10] [4].

- Plot View & R Export: A key feature allows export of plot data paired with an R script to regenerate and customize high-quality graphs externally, facilitating reproducible research [10].

2. Bulk Data Download: The entire database is available for download as pipe-delimited ASCII files, enabling advanced, large-scale analyses [10]. This complete dataset is essential for systematic evidence mapping, large-scale meta-analyses, and integration into other computational platforms.

3. Programmatic Access: The development of the ECOTOXr R package represents a significant advancement toward reproducible and transparent data retrieval, allowing researchers to formally script and document every step of their data curation process [5].
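As a rough illustration of working with the pipe-delimited bulk files, the Python sketch below parses such a file with the standard `csv` module and filters it by chemical and endpoint. The column names and all values in `SAMPLE` are hypothetical stand-ins; the headers of the real download should be substituted before use.

```python
import csv
import io

# Tiny in-memory stand-in for one downloaded file. The column names and
# values are hypothetical; replace them with the headers of the real export.
SAMPLE = (
    "cas_number|species_name|endpoint|conc_value|conc_unit\n"
    "80057|Daphnia magna|LC50|10.2|mg/L\n"
    "80057|Danio rerio|NOEC|0.8|mg/L\n"
    "50293|Daphnia magna|LC50|0.004|mg/L\n"
)


def read_pipe_delimited(handle):
    """Yield one dict per row of a pipe-delimited text file."""
    yield from csv.DictReader(handle, delimiter="|")


def filter_rows(rows, cas_number, endpoint):
    """Keep rows for one chemical and endpoint, converting the value to float."""
    selected = []
    for row in rows:
        if row["cas_number"] == cas_number and row["endpoint"] == endpoint:
            selected.append({**row, "conc_value": float(row["conc_value"])})
    return selected


rows = list(read_pipe_delimited(io.StringIO(SAMPLE)))
lc50_rows = filter_rows(rows, cas_number="80057", endpoint="LC50")
print(lc50_rows)
```

For a real file, `io.StringIO(SAMPLE)` would be replaced by `open(path, newline="", encoding=...)`, with the encoding chosen to match the download.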

The ECOTOX Knowledgebase is a critical infrastructure component for modern ecotoxicology and environmental chemistry. Its authoritative value stems not merely from its scale—over one million test records for 12,000+ chemicals—but from its rigorous, systematic curation pipeline that adheres to systematic review principles. By implementing FAIR data practices, providing advanced user interfaces, and fostering interoperability with tools like the CompTox Dashboard and USEtox, ECOTOX transforms dispersed literature into actionable, computational-ready knowledge. For researchers and risk assessors, it remains an indispensable resource for deriving protective environmental benchmarks, validating predictive models, and informing the sustainable management of chemicals worldwide. Future developments will continue to enhance its interoperability, computational accessibility, and alignment with evolving paradigms in toxicological assessment.

The ECOTOX Pipeline: A Step-by-Step Guide to Systematic Literature Review and Data Curation

Within the ECOTOXicology Knowledgebase (ECOTOX) data curation process, Stage 1: Systematic Literature Searching and Acquisition is the foundational first phase. ECOTOX is the world's largest curated database of single-chemical ecotoxicity data, supporting chemical safety assessments and ecological research [1]. Its authority and reliability depend on a comprehensive, transparent, and systematic approach to identifying all available evidence. This process is designed to mitigate publication bias, the well-documented tendency for studies showing significant or positive effects to be published more readily than those showing null or negative results [13]. Much of the evidence that never reaches journals survives only as "grey literature" such as agency reports, theses, and unpublished study reports. For a definitive resource like ECOTOX, which informs regulatory decisions under statutes like the Clean Water Act and the Toxic Substances Control Act [4], failing to capture that material would result in a skewed, non-representative dataset. This guide details the technical methodology of this initial stage, framing it as an essential component of a robust, evidence-based data curation pipeline that ensures the ECOTOX knowledgebase remains a FAIR (Findable, Accessible, Interoperable, and Reusable) resource for the global scientific and regulatory community [1].

Defining the Search Universe: Open and Grey Literature

A systematic search for ECOTOX data curation explicitly targets two broad domains: traditional open literature and grey literature.

Open Literature: This refers to commercially published, peer-reviewed scientific material typically indexed in major bibliographic databases (e.g., PubMed, Scopus, Web of Science). It includes journal articles, published reviews, and academic monographs.

Grey Literature: Defined as literature produced by entities outside of traditional commercial or academic publishing channels [14]. For ecotoxicology, this encompasses:

- Government and Agency Reports: Technical reports from environmental protection agencies (e.g., U.S. EPA, Environment Canada), health departments, and international bodies like the World Health Organization (WHO) [14].

- Academic Work: Doctoral dissertations and master's theses, which often contain extensive original data [14].

- Conference Proceedings: Abstracts, posters, and full papers presented at scientific conferences [13].

- Regulatory and Trial Data: Unpublished or ongoing study reports from chemical manufacturers, and records from clinical and ecological trial registries [14] [13].

- Preprints: Preliminary versions of research articles shared on servers like bioRxiv and arXiv prior to peer review [14].

The inclusion of grey literature is not optional; it is a scientific imperative. Studies suggest that papers with "interesting" results are three times more likely to be published [13]. Relying solely on open literature risks creating a "file-drawer" problem, where an incomplete and positively biased evidence base leads to inaccurate hazard assessments [13]. A classic example is the antidepressant Agomelatine, where a review of both published and unpublished trials revealed a more modest efficacy profile and underreported safety concerns than the published literature alone suggested [13].

Quantitative Scope of ECOTOX Data Curation

The scale of the ECOTOX knowledgebase underscores the importance of a rigorous Stage 1 search protocol. The following table summarizes the current quantitative scope of the curated data, which is the direct product of systematic literature searching and acquisition [4] [1].

Table 1: Quantitative Scope of the ECOTOX Knowledgebase (as of 2025)

| Data Category | Metric | Description |
|---|---|---|
| Total References | Over 53,000 | The number of individual source documents (from both open and grey literature) from which data has been curated [4] [1]. |
| Curated Test Records | Over 1,000,000 | Individual toxicity test results extracted and entered into the knowledgebase [4]. |
| Chemical Coverage | Over 12,000 | Unique single chemical stressors with associated toxicity data [4] [1]. |
| Species Coverage | Over 13,000 | Ecologically relevant aquatic and terrestrial species represented in the database [4]. |

Experimental Protocol: The ECOTOX Literature Review Pipeline

The ECOTOX team employs a documented, multi-stage pipeline for literature review and data curation that aligns with systematic review principles [1]. The workflow for Stage 1 and initial screening is visualized below.

Diagram 1: ECOTOX Literature Search and Screening Workflow

Detailed Methodological Steps

Strategy Development (Protocol): For each chemical or project, a structured search protocol is defined. This includes:

- Population/Test System: Ecologically relevant species (aquatic and terrestrial).

- Stressor: Single, verified chemical substances.

- Outcome: Measured toxicity endpoints (e.g., mortality, growth, reproduction).

- Search Strings: Boolean logic-based queries incorporating chemical names, synonyms, CAS numbers, and broad toxicity terms, tailored for each database [1].
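A Boolean search string of the kind described above can be assembled programmatically. The Python sketch below is a simplified assumption about how such a query might be built: the helper names are hypothetical, and real bibliographic databases each have their own field tags and syntax, which is why the text notes that strings are tailored per database.

```python
def quote(term: str) -> str:
    """Wrap multi-word terms in double quotes so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def or_group(terms) -> str:
    """Join terms with OR inside parentheses, dropping duplicates but keeping order."""
    unique = list(dict.fromkeys(terms))
    return "(" + " OR ".join(quote(t) for t in unique) + ")"


def build_query(chemical_terms, effect_terms) -> str:
    """Combine a chemical-name group and a toxicity-term group with AND."""
    return or_group(chemical_terms) + " AND " + or_group(effect_terms)


query = build_query(
    chemical_terms=["bisphenol A", "BPA", "80-05-7"],
    effect_terms=["toxicity", "LC50", "mortality"],
)
print(query)
# ("bisphenol A" OR BPA OR 80-05-7) AND (toxicity OR LC50 OR mortality)
```

Keeping the synonym list and the effect terms as separate inputs makes it easy to reuse the same effect vocabulary across chemicals while documenting exactly which names were searched.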

Search Execution: Searches are performed across multiple sources concurrently [1].

- Open Literature Databases: PubMed/MEDLINE, Scopus, Web of Science, Environmental Sciences and Pollution Management.

- Grey Literature Sources: Targeted searches in government repositories, thesis databases, and trial registries, as cataloged in Table 2 (Research Reagent Solutions for Grey Literature Acquisition).

Title/Abstract Screening: Retrieved references are independently screened by two reviewers against pre-defined applicability criteria. These criteria determine if a study is within scope (e.g., original ecotoxicity data, relevant species and chemical, controlled experiment) [1]. Conflicts are resolved by consensus or a third reviewer.
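The dual-reviewer step can be illustrated with a short Python sketch that separates agreed decisions from conflicts and then merges in the adjudicated outcomes. The function names and the "include"/"exclude" labels are assumptions made for this example, not a description of the software the ECOTOX team uses.

```python
def reconcile(reviewer_a: dict, reviewer_b: dict):
    """Split references into agreed decisions and conflicts needing adjudication.

    Each input maps a reference ID to "include" or "exclude".
    """
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same set of references")
    agreed, conflicts = {}, []
    for ref_id in sorted(reviewer_a):
        if reviewer_a[ref_id] == reviewer_b[ref_id]:
            agreed[ref_id] = reviewer_a[ref_id]
        else:
            conflicts.append(ref_id)
    return agreed, conflicts


def resolve(agreed: dict, conflicts: list, adjudicated: dict) -> dict:
    """Merge agreed decisions with consensus or third-reviewer decisions."""
    missing = [r for r in conflicts if r not in adjudicated]
    if missing:
        raise ValueError(f"Unresolved conflicts: {missing}")
    return {**agreed, **{r: adjudicated[r] for r in conflicts}}


a = {"ref1": "include", "ref2": "exclude", "ref3": "include"}
b = {"ref1": "include", "ref2": "include", "ref3": "include"}
agreed, conflicts = reconcile(a, b)
final = resolve(agreed, conflicts, adjudicated={"ref2": "exclude"})
print(conflicts, final)
```

Refusing to produce a final decision set while conflicts remain unresolved mirrors the requirement that every disagreement be settled by consensus or a third reviewer.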

Full-Text Review and Acceptability Screening: The full text of potentially applicable studies is obtained and assessed against more detailed acceptability criteria. This quality assessment evaluates study reliability, focusing on factors like documented methodology, appropriate controls, and clear reporting of results and raw data [1]. Studies failing to meet minimum quality thresholds are excluded.

Data Extraction Ready Set: The final output of Stage 1 is a vetted set of high-quality, relevant studies that proceed to the next stage: structured data abstraction into the ECOTOX knowledgebase.

Success in grey literature search requires knowing where to look. The following table catalogs essential resources and their function within the ecotoxicology data curation context [14] [13].

Table 2: Research Reagent Solutions for Grey Literature Acquisition

| Resource Category | Resource Name | Function in ECOTOX Data Curation |
|---|---|---|
| Theses & Dissertations | ProQuest Dissertations & Theses Global [14] | Locates foundational academic research containing extensive raw data not always published elsewhere. |
| Theses & Dissertations | EThOS (British Library) [14] | Provides access to UK doctoral theses. (Note: Temporarily offline as of 2023) [14]. |
| Theses & Dissertations | Open Access Theses and Dissertations (OATD) [13] | Searches globally for freely available graduate theses. |
| Government & Agency Repositories | WHO IRIS (Institutional Repository) [14] | Sources international technical reports and policy documents on chemical safety and health. |
| Government & Agency Repositories | U.S. EPA Web Portal [4] | Primary source for EPA technical reports, risk assessments, and data relevant to U.S. regulations. |
| Government & Agency Repositories | World Bank Open Knowledge Repository [14] | Provides reports on environmental projects and chemical impacts in developing regions. |
| Clinical & Ecological Trial Registries | ClinicalTrials.gov [14] | Identifies unpublished, ongoing, or completed studies on chemical effects, including non-human subjects. |
| Clinical & Ecological Trial Registries | WHO ICTRP (Intl. Clinical Trials Registry) [14] | A global portal searching across national trial registries. |
| Clinical & Ecological Trial Registries | EU Clinical Trials Register [14] | Source for trial information within the European Union. |
| Preprint Servers | bioRxiv [14] | Discovers cutting-edge, non-peer-reviewed research in biology and toxicology. |
| Preprint Servers | arXiv [14] | Covers quantitative biology, physics, and related computational fields relevant to model development. |
| Specialized Grey Lit Databases | Grey Matters (CADTH) [14] | A practical checklist and tool for identifying health-related grey literature sources. |
| Specialized Grey Lit Databases | Global Index Medicus (WHO) [14] | Focuses on biomedical literature from low- and middle-income countries. |

Data Flow and Interoperability Post-Acquisition

The conclusion of Stage 1 initiates the critical data curation and integration phases. The relationships between acquired data, the ECOTOX knowledgebase, and downstream applications are complex and bidirectional, supporting both regulatory assessment and predictive modeling.

Diagram 2: Data Curation Flow and Interoperability from Search to Application

As shown, the acquired and curated data serves multiple high-value purposes:

- Direct Query and Visualization: Through the ECOTOX interface, users can search, filter, and visualize data via interactive plots [4].

- Support for New Approach Methodologies (NAMs): Curated in vivo data is essential for developing and validating computational toxicology models, such as Quantitative Structure-Activity Relationships (QSARs) and Species Sensitivity Distributions (SSDs) [4] [1].

- Informing Regulatory Standards: Data directly feeds into the derivation of chemical benchmarks, water quality criteria, and ecological risk assessments [4].

- Closing the Research Loop: The use of ECOTOX in modeling and assessment continuously identifies data gaps for specific chemicals or species, which in turn informs and prioritizes future systematic search efforts (Stage 1), creating an iterative, evidence-driven cycle of knowledge refinement [1].

Stage 1: Systematic Literature Searching and Acquisition is a meticulously engineered process that underpins the scientific integrity of the ECOTOX knowledgebase. By employing a protocol-driven, dual-path approach that rigorously targets both open and grey literature, the ECOTOX curation pipeline actively combats publication bias and strives for comprehensiveness. This results in a foundational dataset that is not only massive in scale—exceeding one million test records—but also balanced and reliable [4] [1]. As outlined, this stage is the critical first link in a chain that transforms disparate research findings into a structured, interoperable, and FAIR resource. This resource, in turn, accelerates ecological risk assessment, fuels the development of predictive toxicological models, and ultimately supports informed decision-making to protect environmental and public health.

Within the ECOTOXicology Knowledgebase (ECOTOX) data curation process, Stage 2 is the juncture at which the literature identified in Stage 1 is systematically evaluated for inclusion. This stage transforms a collection of candidate references into a curated body of evidence suitable for ecological risk assessment and regulatory decision-making. As the world's largest compilation of curated ecotoxicity data, ECOTOX relies on a transparent and repeatable screening protocol to ensure the quality, consistency, and relevance of its more than one million test results from more than 50,000 references [1]. This guide details the technical execution of Stage 2, giving researchers, scientists, and drug development professionals an in-depth look at the applicability and acceptability criteria, experimental protocols, and quality control measures that underpin this authoritative resource.

Defining Applicability and Acceptability Criteria

The screening process bifurcates into two sequential assessments: applicability (relevance) and acceptability (quality). These criteria are derived from standardized evaluation guidelines and are fundamental to systematic review practices [1] [6].

Core Applicability Criteria

Applicability determines if a study investigates a question relevant to the knowledgebase's scope. A study must meet all of the following minimum criteria to be considered applicable [1] [6]:

- Single Chemical Exposure: Effects must be attributable to exposure to a single, identifiable chemical.

- Ecologically Relevant Species: Test subjects must be whole, live aquatic or terrestrial plants or animals.

- Measured Biological Effect: The study must report a quantifiable biological effect on the organism.

- Reported Exposure Concentration/Dose: A concurrent environmental chemical concentration, dose, or application rate must be specified.

- Explicit Exposure Duration: The duration of chemical exposure must be clearly stated.

Core Acceptability Criteria

Acceptability assesses the methodological soundness and reporting quality of an applicable study. These criteria ensure data verifiability and robustness [6].

Table 1: Quantitative Summary of ECOTOX Knowledgebase (as of 2022 Publication)

| Metric | Count | Description |
|---|---|---|
| Curated Chemicals | >12,000 | Unique chemical substances with ecotoxicity data. |
| Ecological Species | Not separately reported (thousands implied) | Aquatic and terrestrial species represented. |
| Test Results | >1,000,000 | Individual toxicity endpoint records. |
| Source References | >50,000 | Scientific papers, reports, and studies curated. |

The Stage 2 Screening Workflow: A Systematic Protocol

The screening process follows a defined pipeline with sequential gates, ensuring efficiency and consistency. The workflow is visually summarized in Diagram 1.

Diagram 1: ECOTOX Literature Screening and Data Curation Workflow

Experimental Protocol for Screening

The protocol is executed by trained reviewers following detailed Standard Operating Procedures (SOPs) [1].

Title and Abstract Screen:

- Objective: To rapidly exclude clearly irrelevant literature.

- Method: Reviewers assess the title and abstract against the five core applicability criteria. Studies not involving ecologically relevant species, single-chemical toxicity, or reporting of an effect and exposure are rejected.

Full-Text Acquisition and Initial Review:

- Objective: To obtain the complete study for definitive evaluation.

- Method: The full text of all potentially relevant studies is retrieved. An initial review confirms basic relevance and checks for major exclusion factors (e.g., non-English language, not a full primary article) [6].

Formal Applicability Assessment:

- Objective: To definitively judge if the study falls within ECOTOX's scope.

- Method: Using a standardized checklist, reviewers verify the presence of all five core applicability elements within the full text. Studies failing any criterion are documented and excluded.

Formal Acceptability Assessment:

- Objective: To evaluate the methodological quality and reporting completeness of applicable studies.

- Method: Reviewers apply the acceptability criteria, focusing on:

- Control Groups: Verification of concurrent, untreated or vehicle control groups for comparison [6].

- Endpoint Calculation: Confirmation that a quantitative toxicity endpoint (e.g., LC50, NOEC) is reported or can be calculated from provided data [6].

- Reporting Standards: Assessment of whether key test conditions (species identification, temperature, pH, test location) are unambiguously reported.

Documentation and Resolution:

- All decisions are recorded in a tracking system. Uncertain cases are escalated for consensus review by senior curators to maintain consistency.
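The sequential-gate structure of this protocol, together with its decision tracking, can be sketched in Python as follows. The gate names follow the steps above, but the dictionary keys describing each reference are hypothetical fields a reviewer might fill in, and the acceptability gate is reduced to two of the listed checks for brevity.

```python
from collections import Counter

# Gate order follows the protocol above. The keys read by each predicate are
# hypothetical reviewer-entered fields, not an actual ECOTOX data structure.
GATES = [
    ("title_abstract", lambda ref: ref["abstract_relevant"]),
    ("full_text_initial", lambda ref: ref["english"] and ref["primary_article"]),
    ("applicability", lambda ref: ref["applicability_met"] == 5),
    ("acceptability", lambda ref: ref["has_control"] and ref["endpoint_reported"]),
]


def run_gates(ref: dict) -> str:
    """Return the name of the first gate the reference fails, or 'accepted'."""
    for name, passes in GATES:
        if not passes(ref):
            return name
    return "accepted"


def screen_batch(refs: list):
    """Return a per-reference decision log and a tally of outcomes per gate."""
    log = {ref["id"]: run_gates(ref) for ref in refs}
    return log, Counter(log.values())


refs = [
    {"id": "A", "abstract_relevant": False},
    {"id": "B", "abstract_relevant": True, "english": True,
     "primary_article": True, "applicability_met": 4},
    {"id": "C", "abstract_relevant": True, "english": True,
     "primary_article": True, "applicability_met": 5,
     "has_control": True, "endpoint_reported": True},
]
log, counts = screen_batch(refs)
print(log)
print(counts)
```

Because evaluation stops at the first failed gate, a reference rejected at the title and abstract stage never needs the fields that later gates read, which reflects how early screening saves full-text review effort.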

The Scientist's Toolkit: Research Reagent Solutions

The following tools and resources are essential for executing or understanding the Stage 2 screening process.

Table 2: Essential Toolkit for ECOTOX Data Screening and Curation

| Item | Function in Screening Process | Relevance for Researchers |
|---|---|---|
| Controlled Vocabularies & Taxonomies | Standardize terminology for species, chemicals, and endpoints during data extraction, ensuring interoperability and searchability [1]. | Critical for aligning in-house data with ECOTOX structure for comparison or submission. |
| Chemical Verification Tools (e.g., CAS RN, InChIKey) | Unambiguously identify the tested chemical, separating it from metabolites or mixtures, a key applicability criterion [1]. | Prevents misidentification in literature reviews; essential for QSAR and computational modeling. |
| Species Verification Databases | Confirm the taxonomic identity and ecological relevance of test organisms [1]. | Ensures accurate extrapolation of toxicity data across related species in risk assessment. |
| Systematic Review Software (e.g., for PRISMA) | Manage the flow of references, track screening decisions, and generate audit trails, as reflected in ECOTOX's internal SOPs [1]. | Provides transparency and reproducibility for independent systematic reviews in ecotoxicology. |
| EPA ECOTOX Knowledgebase (Public Interface) | Serves as the public portal for accessing the final curated data output of the screening process [1]. | Primary source for retrieving quality-controlled ecotoxicity data for chemical assessments and research. |

Integration with Broader Assessment Frameworks

The output of Stage 2 screening directly feeds into higher-order ecological risk assessments. A primary application is in developing Species Sensitivity Distributions (SSDs), which are used to derive protective benchmarks like Ecological Soil Screening Levels (Eco-SSLs) [1] [15].

Diagram 2: Pathway from Literature Screening to Protective Benchmarks

The rigorous screening in Stage 2 ensures that only relevant and reliable data populate the SSD, leading to scientifically defensible environmental safety values. This underscores the critical role of structured curation in supporting regulatory science and the assessment of chemical safety under mandates like the Endangered Species Act [6].
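To show how screened data feed an SSD, the Python sketch below fits a log-normal distribution to one toxicity value per species and returns the concentration expected to affect a chosen fraction of species (commonly 5%, the HC5). This is a simplified assumption: dedicated SSD tools support several distributions, goodness-of-fit checks, and confidence limits, and `hc_p` is a hypothetical helper rather than part of any EPA software.

```python
import math
from statistics import NormalDist, mean, stdev


def hc_p(toxicity_values, p=0.05):
    """Estimate the concentration hazardous to a fraction p of species.

    Fits a normal distribution to log10-transformed values (one value per
    species) and returns the p-th quantile back-transformed to the input unit.
    """
    if len(toxicity_values) < 2:
        raise ValueError("At least two species values are needed")
    if any(v <= 0 for v in toxicity_values):
        raise ValueError("Toxicity values must be positive")
    logs = [math.log10(v) for v in toxicity_values]
    # stdev() is the sample standard deviation; identical inputs give
    # sigma = 0, for which inv_cdf() raises StatisticsError.
    fitted = NormalDist(mu=mean(logs), sigma=stdev(logs))
    return 10 ** fitted.inv_cdf(p)
```

Calling `hc_p(values)` with a list of species-level LC50 or NOEC values in the same unit returns the HC5 in that unit; passing `p=0.5` returns the geometric mean of the inputs, which is a quick sanity check on the fit.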

Within the ECOTOX Knowledgebase data curation process, Stage 3 is the implementation phase in which systematic review principles are operationalized. The Ecotoxicology (ECOTOX) Knowledgebase, maintained by the United States Environmental Protection Agency (USEPA), is the world's largest curated repository of single-chemical toxicity data for ecological species [1]. Its utility in regulatory risk assessments, chemical prioritization under statutes like the Toxic Substances Control Act (TSCA), and ecological research hinges on the consistency, reliability, and findability of its more than one million test records [4].

This stage transforms the screened and accepted scientific evidence, identified through exhaustive literature searches, into structured, computable data. Data abstraction is the meticulous process of extracting pertinent information from source studies, while controlled vocabulary is the standardized language that ensures uniform entry and retrieval. Together, they form the backbone of a FAIR (Findable, Accessible, Interoperable, and Reusable) data resource, enabling sophisticated queries, interoperability with tools like the CompTox Chemicals Dashboard, and support for quantitative modeling such as species sensitivity distributions (SSDs) and quantitative structure-activity relationship (QSAR) models [4] [1]. This guide details the technical protocols and systems that underpin this transformation, providing a framework for high-fidelity data curation in ecotoxicology.

Data abstraction is the targeted extraction of specific data points and metadata from a primary research article into a structured field-based format. In ECOTOX, this moves beyond simple digitization to capture the nuanced context of each toxicity test. The process is governed by detailed Standard Operating Procedures (SOPs) designed to minimize curator subjectivity and maximize consistency [1]. Key abstracted elements include:

- Chemical Identity: Verified substance name, CAS Registry Number, and molecular details.

- Species Taxonomy: Verified organism identity, including Latin binomial, life stage, and source.

- Experimental Design: Exposure pathway, duration, concentration/dose levels, media, and temperature.

- Control Conditions: Description and performance of control groups.

- Endpoint Results: Quantitative toxicity measures (e.g., LC50, EC10, NOEC) with reported values, statistical significance, and units.

Controlled Vocabulary: The Architecture of Consistency

A controlled vocabulary is a prescriptive, organized set of terms and phrases used for indexing and retrieval, where one preferred term is designated for each concept [16]. Its primary function is vocabulary control, which suppresses the "anarchy of natural language" by managing synonyms, distinguishing homographs, and identifying semantic relationships between terms (e.g., broader, narrower, related) [16] [17].

Types of Controlled Vocabularies Relevant to ECOTOX:

- Simple Term Lists (Pick Lists): Used for fields with a limited set of unambiguous options (e.g., exposure media: "Freshwater," "Saltwater," "Sediment") [16].

- Thesauri: Provide semantic relationships and are ideal for complex concepts like toxicological effects (e.g., linking "Mortality" to related terms like "Lethality") [17].

- Taxonomies & Ontologies: Hierarchical systems that may be used for structuring species kingdoms or chemical classes, supporting more advanced computational reasoning.

The implementation of a controlled vocabulary ensures that all curators describe the same concept using the same term (e.g., "Salmo salar" instead of "Atlantic salmon" for the species, and a single preferred life-stage term instead of free-text variants such as "young adult" or "smolt"), enabling precise data collocation and retrieval [16].
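Synonym control of this kind can be sketched as a lookup from free-text variants to one preferred term. In the Python example below, the authority file contents and function names are illustrative assumptions, not the actual ECOTOX vocabulary or tooling.

```python
# Illustrative authority file: preferred term -> accepted variants.
# The entries are examples, not the actual ECOTOX vocabulary.
SPECIES_AUTHORITY = {
    "Salmo salar": ["atlantic salmon", "salmo salar"],
    "Oncorhynchus mykiss": ["rainbow trout", "oncorhynchus mykiss"],
    "Danio rerio": ["zebrafish", "zebra fish", "danio rerio"],
}


def normalize(term: str) -> str:
    """Lower-case a term and collapse runs of whitespace."""
    return " ".join(term.lower().split())


def build_lookup(authority: dict) -> dict:
    """Invert the authority file into a normalized-variant -> preferred-term map."""
    lookup = {}
    for preferred, variants in authority.items():
        for variant in variants:
            key = normalize(variant)
            if key in lookup and lookup[key] != preferred:
                raise ValueError(f"Ambiguous variant: {variant!r}")
            lookup[key] = preferred
    return lookup


def to_preferred(term: str, lookup: dict) -> str:
    """Map a free-text term to its preferred term; unknown terms raise KeyError."""
    return lookup[normalize(term)]


LOOKUP = build_lookup(SPECIES_AUTHORITY)
print(to_preferred("  Atlantic   Salmon ", LOOKUP))
```

Raising an error for unknown or ambiguous terms, rather than guessing, forces a curator decision and keeps the vocabulary prescriptive.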

The ECOTOX Data Curation Pipeline: A Stage 3 Protocol

The following workflow details the sequential steps for abstracting data and applying controlled vocabulary, from the receipt of an accepted study to its entry into the knowledgebase.

Diagram: Sequential Workflow for Data Abstraction and Vocabulary Curation. This protocol ensures systematic processing from an accepted study to a validated database record.

Step 1: Full-Text Review & Critical Appraisal. The curator reads the complete study in detail to understand the experimental design and results. This step verifies that the study meets all Phase II acceptability criteria, including the use of an appropriate control, clear reporting of results, and a defensible endpoint calculation [6]. Studies are also classified by their potential use in risk assessment (e.g., definitive screening, limit test).

Step 2: Chemical Verification & Standardization. The chemical stressor is identified and linked to a verified, unique identifier. This process typically involves:

- Matching the reported chemical name to a master chemical list (e.g., via the EPA CompTox Chemicals Dashboard).

- Resolving ambiguities (e.g., "BPA" to "Bisphenol A").

- Recording the definitive CASRN (Chemical Abstracts Service Registry Number) and structure information. This creates interoperability with other chemical databases [1].
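
One inexpensive check during chemical verification is the CASRN check digit. The last digit of a CAS Registry Number equals the weighted sum of the preceding digits (weights 1, 2, 3, ... counted from the right) modulo 10. The sketch below applies that rule. The function name `is_valid_casrn` is an assumption for illustration, and the source does not state whether ECOTOX runs this specific check.

```python
import re

# CASRN layout: 2 to 7 digits, hyphen, 2 digits, hyphen, 1 check digit.
CASRN_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_casrn(casrn: str) -> bool:
    """Check the format and check digit of a CAS Registry Number."""
    match = CASRN_PATTERN.match(casrn.strip())
    if match is None:
        return False
    body = match.group(1) + match.group(2)
    check_digit = int(match.group(3))
    # Weight the digits 1, 2, 3, ... starting from the rightmost body digit.
    total = sum(w * int(d) for w, d in enumerate(reversed(body), start=1))
    return total % 10 == check_digit


print(is_valid_casrn("80-05-7"))  # Bisphenol A
print(is_valid_casrn("80-05-8"))  # same body, wrong check digit
```

A passing check digit only shows that the number is well formed. Confirming that it refers to the intended substance still requires the master chemical list.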

Step 3: Species Verification & Taxonomic Alignment. The test organism is verified against authoritative taxonomic databases (e.g., the Integrated Taxonomic Information System, ITIS). The Latin binomial (genus and species) is standardized, and relevant life stage, age, or sex data are captured. This ensures that results reported under different names, such as "rainbow trout" or the older synonym "Salmo gairdneri," are all filed under "Oncorhynchus mykiss," regardless of the name used in the source paper.

Step 4: Experimental Data Abstraction. Quantitative and qualitative data are extracted into predefined fields. These include [1]:

- Test Conditions: Exposure duration, concentrations/doses, media chemistry (pH, hardness), temperature.

- Endpoint & Statistical Results: The specific toxicity metric (e.g., LC50 value, its confidence intervals, the statistical test used).

- Effect Measurement: The observed biological effect (e.g., mortality, growth inhibition, reproduction impairment) linked to the endpoint.

Step 5: Application of Controlled Vocabulary. The curator translates the author's narrative into the knowledgebase's standardized language using pick lists, thesauri, and authority files [16]. For example:

- An author's description of "eggs didn't hatch" is coded as the effect "Hatching Success."

- A test described as a "96-hour flow-through acute test" is tagged with the terms "Acute," "Flow-Through," and the duration "96 h."

- The species life stage "juvenile" is mapped to a precise term like "Juvenile (specified)."
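
The tagging of a free-text test description, as in the "96-hour flow-through acute test" example, can be sketched with small pick lists and a duration pattern. Only the terms "Acute," "Flow-Through," and "96 h" come from the text. The other pick-list entries, the field names, and the `tag_test_description` function are assumptions, and in practice this translation is a curator's judgment rather than a pattern match.

```python
import re

# Hypothetical pick lists mapping author phrasing to preferred terms.
TEST_TYPE_TERMS = {"acute": "Acute", "chronic": "Chronic"}
EXPOSURE_TERMS = {"flow-through": "Flow-Through", "static": "Static"}
UNIT_CODES = {"hour": "h", "hr": "h", "h": "h", "day": "d", "d": "d"}
DURATION_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*-?\s*(hour|hr|h|day|d)s?\b")


def tag_test_description(text: str) -> dict:
    """Map a free-text test description to controlled-vocabulary tags."""
    lowered = text.lower()
    tags = {"test_type": None, "exposure_type": None, "duration": None}
    for phrase, term in TEST_TYPE_TERMS.items():
        if phrase in lowered:
            tags["test_type"] = term
    for phrase, term in EXPOSURE_TERMS.items():
        if phrase in lowered:
            tags["exposure_type"] = term
    match = DURATION_PATTERN.search(lowered)
    if match:
        tags["duration"] = f"{match.group(1)} {UNIT_CODES[match.group(2)]}"
    return tags


print(tag_test_description("96-hour flow-through acute test"))
```

Fields left as `None` mark descriptions the pick lists could not resolve, which a curator would then code by hand.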

Step 6: Quality Assurance & Validation Check. The abstracted record undergoes automated validation, peer validation, or both. Automated checks may flag outliers or missing required fields. A second curator may review a subset of records to ensure adherence to SOPs and consistency in vocabulary application. Failed records are flagged and returned to Step 1 for correction [1].
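
The two automated checks named in Step 6, missing required fields and outliers, might look like the sketch below. The required-field list is hypothetical. The outlier rule is the median/MAD "modified z-score" applied to log10 values, a common robust heuristic chosen here for illustration and not a method the source attributes to ECOTOX.

```python
import math
import statistics

# Hypothetical set of required fields for an abstracted record.
REQUIRED_FIELDS = ("casrn", "species", "endpoint", "value", "unit", "duration_h")


def missing_fields(record: dict) -> list:
    """List required fields that are absent or empty in a record."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]


def flag_outliers(values: list, threshold: float = 3.5) -> list:
    """Return indices whose log10 value has a modified z-score above threshold."""
    logs = [math.log10(v) for v in values]
    median = statistics.median(logs)
    mad = statistics.median([abs(x - median) for x in logs])
    if mad == 0:
        return []  # no spread to measure against
    return [
        i for i, x in enumerate(logs)
        if 0.6745 * abs(x - median) / mad > threshold
    ]


# Illustrative values only, not ECOTOX data.
print(missing_fields({"casrn": "80-05-7", "species": "Danio rerio",
                      "endpoint": "LC50", "value": 4.6, "unit": ""}))
print(flag_outliers([1.0, 1.2, 0.9, 1.1, 1000.0]))
```

A flagged value is a prompt for review, not an automatic rejection: a thousand-fold difference may be a unit transcription error or a real difference in sensitivity.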

Core Controlled Vocabulary Structures in ECOTOX

The knowledgebase employs a multi-layered vocabulary system to describe the core entities and concepts.

Chemical Hierarchy & Identity

Chemicals are organized by identity, not function. The primary vocabulary includes preferred chemical names, synonyms, and unique identifiers (CASRN, DTXSID from CompTox). A chemical ontology may classify them by structure (e.g., "Polycyclic Aromatic Hydrocarbons") to support QSAR modeling [4].

Diagram: Hierarchical Vocabulary Structure for Chemical Identity Standardization.

Taxonomic & Biological Effect Vocabularies

- Species Vocabulary: A taxonomy-driven hierarchy (Kingdom > Phylum > Class > Order > Family > Genus > Species) ensures precise organism identification. Common names are included as non-preferred terms pointing to the Latin binomial [16].

- Effect/Endpoint Vocabulary: A thesaurus organizes biological responses. Broader terms (e.g., "Reproduction") have narrower terms (e.g., "Fecundity," "Hatching Success"). Related terms (e.g., "Growth" and "Development") are cross-linked to aid discovery [17].
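
The broader/narrower structure of the effect thesaurus lends itself to query expansion: a search on a broad term also retrieves records coded with its narrower terms. The sketch below uses only the example terms from the text. The dictionary layout and the `expand_term` function are assumptions, not the ECOTOX data model.

```python
# Hypothetical thesaurus fragment: broader term -> narrower terms.
NARROWER = {
    "Reproduction": ["Fecundity", "Hatching Success"],
}
# Related terms are cross-links for discovery, not part of the hierarchy.
RELATED = {"Growth": ["Development"], "Development": ["Growth"]}


def expand_term(term: str) -> set:
    """Return the term plus every narrower term beneath it."""
    found, stack = set(), [term]
    while stack:
        current = stack.pop()
        if current not in found:
            found.add(current)
            stack.extend(NARROWER.get(current, []))
    return found


print(sorted(expand_term("Reproduction")))
print(RELATED.get("Growth", []))
```

Keeping related terms in a separate mapping avoids silently widening a search. They can be offered to the user as suggestions instead.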

Quantitative Framework: Acceptance Criteria and Data Scale

The curation process is governed by explicit, binary criteria that determine a study's acceptability for abstraction.

Table 1: Phase I Minimum Acceptance Criteria for Data Abstraction [6]

| Criterion Number | ECOTOX Acceptance Requirement | Rationale for Curation |
|---|---|---|
| 1 | Single chemical exposure | Maintains focus on causative agent for use in chemical-specific assessments. |
| 2 | Effect on aquatic/terrestrial plant or animal | Ensures ecological relevance. |
| 3 | Biological effect on live, whole organism | Excludes in vitro cellular studies (though relevant for NAMs context). |
| 4 | Concurrent chemical concentration/dose reported | Essential for dose-response modeling and benchmark derivation. |
| 5 | Explicit exposure duration reported | Critical for distinguishing acute from chronic effects. |
| 6 | Chemical of concern to OPP (for regulatory assessments) | Ensures regulatory utility. |
| 7 | Article published in English | Practical limitation for curation. |
| 8 | Study presented as a full article | Ensures sufficient methodological detail is available. |
| 9 | Publicly available document | Promotes transparency and verifiability. |
| 10 | Paper is the primary source of data | Avoids duplication and potential transcription errors. |
| 11 | A calculated endpoint is reported (e.g., LC50, NOEC) | Provides a standardized, quantitative metric for comparison. |
| 12 | Treatment(s) compared to an acceptable control | Establishes a baseline for determining treatment-related effects. |
| 13 | Study location (lab/field) reported | Provides context for interpreting environmental relevance. |
| 14 | Tested species is reported and verified | Fundamental for species-specific analysis and SSD development. |
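
Because the criteria in Table 1 are binary, a screening record can be modeled as a set of yes/no flags, with acceptance requiring every flag to pass. The sketch below covers only a subset of the fourteen criteria, and the `StudyScreen` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StudyScreen:
    """Yes/no answers for a subset of the Table 1 criteria (illustrative)."""
    single_chemical: bool
    whole_organism: bool
    concentration_reported: bool
    duration_reported: bool
    calculated_endpoint: bool
    acceptable_control: bool
    species_verified: bool


def failed_criteria(screen: StudyScreen) -> list:
    """Names of the criteria the study did not meet, in declaration order."""
    return [name for name, passed in vars(screen).items() if not passed]


def is_acceptable(screen: StudyScreen) -> bool:
    """A study is accepted only if no criterion fails."""
    return not failed_criteria(screen)


screen = StudyScreen(True, True, True, False, True, False, True)
print(is_acceptable(screen), failed_criteria(screen))
```

Recording which criteria failed, rather than only the final verdict, keeps the rejection reason traceable.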

Table 2: Scale of Curated Data in ECOTOX Knowledgebase (as of 2025) [4] [1]

| Data Category | Quantitative Scale | Significance for Research |
|---|---|---|
| Total References | > 53,000 | Represents the comprehensive scope of the systematically searched literature. |
| Total Test Results | > 1,000,000 | Indicates the granularity of data available for meta-analysis and modeling. |
| Unique Chemicals | ~12,000 - 13,000 | Demonstrates broad chemical coverage for comparative hazard assessment. |
| Ecological Species | > 13,000 (aquatic & terrestrial) | Enables the development of robust Species Sensitivity Distributions (SSDs). |
| Data Update Frequency | Quarterly | Ensures the knowledgebase remains current (an "evergreen" resource). |

Table 3: Key Research Reagent Solutions and Curation Tools

| Category | Tool / Resource | Primary Function in Curation/Research |
|---|---|---|
| Chemical Identity | EPA CompTox Chemicals Dashboard | Authoritative source for chemical verification, CASRN/DTXSID mapping, and obtaining related physicochemical properties [4] [1]. |
| Taxonomic Verification | Integrated Taxonomic Information System (ITIS) | Standard reference for validating species nomenclature and taxonomic hierarchy [1]. |
| Bibliographic Management | Reference Databases (e.g., PubMed, Web of Science) | Sources for conducting systematic literature searches using controlled vocabulary (e.g., MeSH terms) and Boolean operators [17]. |
| Controlled Vocabulary | Custom ECOTOX Thesauri & Pick Lists | Internal standardized lists for effects, endpoints, test conditions, and other critical fields to ensure curator consistency [1]. |
| Data Quality & Modeling | Quantitative Structure-Activity Relationship (QSAR) Software | Uses curated toxicity data from ECOTOX to develop and validate predictive models for chemical prioritization [4]. |
| Statistical Analysis | Species Sensitivity Distribution (SSD) Generators (e.g., ETX 2.0, SSD Master) | Analyzes curated toxicity data across multiple species to derive protective environmental thresholds (e.g., HC5) [1]. |
| Accessibility & Compliance | Color Contrast Checkers (e.g., based on WCAG 2.2 guidelines) | Ensures that any visualizations or interfaces developed for data presentation meet enhanced contrast requirements (≥7:1 for standard text) for accessibility [18] [19]. |
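
To make the HC5 mentioned in Table 3 concrete: one common approach fits a log-normal distribution to one toxicity value per species and takes its 5th percentile as the concentration expected to be hazardous to 5% of species. The sketch below follows that approach under stated assumptions. It is not the algorithm of any tool listed in the table, the `hc5_lognormal` name is hypothetical, and the input values are illustrative rather than ECOTOX data.

```python
import math
from statistics import NormalDist, mean, stdev


def hc5_lognormal(toxicity_values: list) -> float:
    """Estimate the HC5 as the 5th percentile of a fitted log-normal SSD.

    Assumes one positive toxicity value per species, all in the same unit.
    """
    logs = [math.log10(v) for v in toxicity_values]
    fitted = NormalDist(mu=mean(logs), sigma=stdev(logs))
    return 10 ** fitted.inv_cdf(0.05)


# Illustrative species values (e.g., LC50 in mg/L), not real data.
values = [1.0, 10.0, 100.0]
print(round(hc5_lognormal(values), 3))
```

With so few species the estimate is highly uncertain. Dedicated SSD tools add confidence limits and goodness-of-fit checks, which this sketch omits.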

Stage 3 of the ECOTOX curation pipeline, which pairs data abstraction with rigorous controlled vocabulary application, transforms peer-reviewed literature into a robust, computable knowledge asset. This process, framed within systematic review practices, directly supports toxicology's shift toward predictive methods. The resulting high-quality, standardized data is indispensable for validating New Approach Methodologies (NAMs), training machine learning models, and conducting transparent chemical safety assessments. Because curation adheres to the technical protocols and principles outlined in this guide, the knowledgebase both preserves the value of legacy animal testing and provides the empirical foundation required to advance predictive ecotoxicology in the 21st century.

The data curation process for the ECOTOX Knowledgebase is a systematic, multi-stage operation designed to transform raw ecotoxicological literature into a FAIR (Findable, Accessible, Interoperable, and Reusable) scientific resource. This process is the core subject of a broader research thesis on scalable environmental data management [20]. Stage 4, encompassing Data Maintenance, Quarterly Updates, and Public Release, represents the final, continuous cycle of this pipeline. It is where curated data achieves operational utility and public accessibility. In ECOTOX Version 5, this stage has been significantly enhanced to support the needs of modern chemical risk assessment and research, which demand timely, transparent, and interoperable data [20].

The primary functions of Stage 4 are threefold: to maintain the integrity and accuracy of over one million existing test records; to integrate newly curated data from the ongoing literature review process on a quarterly schedule; and to publicly release this data through a redesigned web interface and API, ensuring it is actionable for regulatory decision-makers, researchers, and model developers [4] [20].

Core Components of Data Maintenance

Data maintenance is the foundational activity that ensures the long-term reliability and consistency of the knowledgebase. It involves systematic processes to preserve data quality and adapt to evolving scientific standards.

Back-End Data Management and Version Control: The core ECOTOX data is maintained in a relational database structure, where tables for chemicals, species, tests, and results are linked via unique identifiers [21]. A rigorous version control system tracks all changes to the underlying data. This is critical for reproducibility, allowing users to reference specific data releases (e.g., the September 2022 release used to build the ADORE machine learning benchmark dataset) [21]. Archived versions of related databases, such as ToxValDB, are also maintained to provide a historical record [22].
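
The relational layout described above (chemicals, species, tests, and results linked by unique identifiers) can be sketched with an in-memory SQLite database. Table and column names are assumptions for illustration and do not reproduce the actual ECOTOX schema. The inserted row is made-up example data, and the DTXSID value is a placeholder.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE chemicals (chemical_id INTEGER PRIMARY KEY,
                        casrn TEXT, dtxsid TEXT, name TEXT);
CREATE TABLE species   (species_id INTEGER PRIMARY KEY, latin_name TEXT);
CREATE TABLE tests     (test_id INTEGER PRIMARY KEY,
                        chemical_id INTEGER REFERENCES chemicals(chemical_id),
                        species_id INTEGER REFERENCES species(species_id),
                        duration_h REAL);
CREATE TABLE results   (result_id INTEGER PRIMARY KEY,
                        test_id INTEGER REFERENCES tests(test_id),
                        endpoint TEXT, value REAL, unit TEXT);

-- Illustrative rows only; the DTXSID is a placeholder, not a real identifier.
INSERT INTO chemicals VALUES (1, '80-05-7', 'DTXSID-PLACEHOLDER', 'Bisphenol A');
INSERT INTO species   VALUES (1, 'Danio rerio');
INSERT INTO tests     VALUES (1, 1, 1, 96.0);
INSERT INTO results   VALUES (1, 1, 'LC50', 4.6, 'mg/L');
""")

# Follow the identifiers from a result back to its chemical and species.
rows = conn.execute("""
    SELECT c.name, s.latin_name, r.endpoint, r.value, r.unit
    FROM results AS r
    JOIN tests     AS t ON t.test_id = r.test_id
    JOIN chemicals AS c ON c.chemical_id = t.chemical_id
    JOIN species   AS s ON s.species_id = t.species_id
""").fetchall()
print(rows)
```

Storing each chemical and species once and referencing it by identifier means that a correction, such as an updated taxonomic name, propagates to every linked test and result.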

Vocabulary and Standardization Maintenance: Consistency is enforced through the use of controlled vocabularies for key fields such as chemical names, species taxonomy, test media, and measured effects [20] [6]. Maintenance involves curating these vocabularies, adding new terms as needed by emerging science, and mapping legacy terms to current standards. This standardization is what enables precise searching and large-scale data aggregation.

Linkage and Interoperability Updates: A key maintenance task is updating and validating links to external resources. Each chemical is associated with identifiers like the DSSTox Substance ID (DTXSID), which links directly to the EPA's CompTox Chemicals Dashboard for rich chemical property data [4] [21]. Maintaining these linkages ensures ECOTOX remains an interoperable node within a larger network of computational toxicology resources [22] [23].

Protocol for Quarterly Update Cycles

The quarterly update is a scheduled, structured process for expanding the knowledgebase with newly curated information. The protocol ensures that each update is consistent, traceable, and seamlessly integrated.

1. Literature Acquisition and Curation Window: Prior to each quarterly release, a defined period (e.g., the previous six months) is established for processing newly published literature. The ECOTOX team performs comprehensive searches of scientific databases, identifies relevant studies on single-chemical toxicity to ecological species, and applies established systematic review procedures for study evaluation and data extraction [20].

2. Data Validation and Integration Batch Processing: Extracted data from newly accepted studies undergoes a multi-tier validation check. This includes automated checks for format and required fields, as well as expert manual review for scientific accuracy and proper application of controlled vocabularies. Validated data is then formatted into standard batches for integration into the main database tables [6] [21].

3. Pre-Release Quality Assurance (QA): Before public deployment, the updated database undergoes a comprehensive QA process. This involves running automated test queries to verify data integrity, checking a sample of new entries for accuracy, and ensuring that all search, filtering, and visualization functions perform correctly with the new data. The system's interoperability with linked tools like the CompTox Chemicals Dashboard is also verified [4].

4. Version Documentation and Release Notes: Each quarterly update is assigned a discrete version identifier. Detailed release notes are generated, documenting the number of new references, tests, and chemicals added, as well as any changes to the user interface, underlying vocabularies, or API functionality [20]. This mirrors the transparent update practices of other EPA data tools [24].
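
The counts reported in release notes (step 4) can be derived by comparing totals between two releases. The sketch below assumes each release is summarized as a dictionary of totals. The `release_summary` function, the version label, and the numbers are all hypothetical.

```python
def release_summary(previous: dict, current: dict, version: str) -> str:
    """Build a plain-text summary of what a release added."""
    lines = [f"Release {version}"]
    for key in ("references", "tests", "chemicals"):
        added = current[key] - previous[key]
        lines.append(
            f"- {key}: {current[key]} total ({added:+d} since previous release)"
        )
    return "\n".join(lines)


# Made-up totals for two consecutive releases.
previous = {"references": 100, "tests": 2000, "chemicals": 50}
current = {"references": 104, "tests": 2100, "chemicals": 50}
print(release_summary(previous, current, "EXAMPLE-Q1"))
```

Generating the summary from stored totals, rather than writing it by hand, keeps the published notes consistent with the versioned data.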

The following diagram illustrates this cyclical workflow: