Improving LD50 Reproducibility: Strategies for Robust and Reliable Acute Toxicity Testing

This article provides a comprehensive framework for researchers and drug development professionals aiming to enhance the reproducibility of LD50 determinations.

Abstract

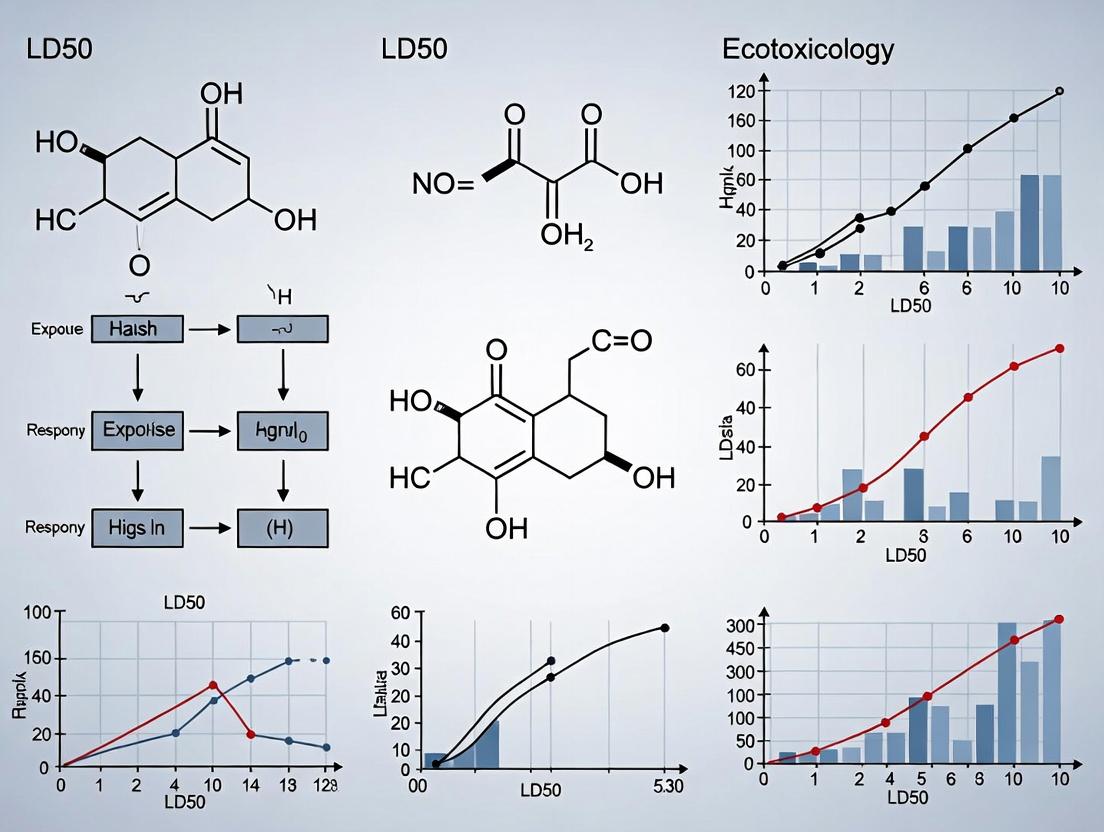

This article provides a comprehensive framework for researchers and drug development professionals aiming to enhance the reproducibility of LD50 determinations. We begin by exploring the foundational concepts and inherent challenges of variability in acute toxicity studies [citation:2][citation:8]. The discussion then progresses to methodological advancements, including refined in vivo protocols like the Improved Up-and-Down Procedure (iUDP) and the principles of New Approach Methodologies (NAMs) [citation:1][citation:4][citation:8]. A dedicated troubleshooting section identifies major sources of experimental variability and offers optimization strategies for study design, animal models, and data reporting. Finally, we establish a framework for method validation, detailing comparative analyses with traditional methods, techniques for establishing confidence intervals, and the creation of robust reference datasets. This end-to-end guide synthesizes current best practices to support more reliable, efficient, and ethically conscious toxicity assessments.

Understanding LD50: Foundational Concepts and the Critical Challenge of Reproducibility

Defining LD50, LC50, and Acute Toxicity in Regulatory Science

This technical support center provides resources to standardize acute toxicity testing methodologies, directly supporting a broader thesis on improving the reproducibility of LD50 research. Consistent and reliable determination of median lethal doses (LD50) and concentrations (LC50) is foundational to chemical safety assessment, product labeling, and regulatory decision-making across pharmaceuticals, agrochemicals, and industrial compounds [1] [2].

LD50 (Lethal Dose 50) is defined as the amount of a substance administered in a single dose that causes the death of 50% of a test animal population within a specified observation period [1]. It is a standardized measure of acute toxicity, which refers to adverse effects occurring within a short time (minutes to about 14 days) after exposure [1] [2]. The value is typically expressed in milligrams of substance per kilogram of animal body weight (mg/kg) [1].

LC50 (Lethal Concentration 50) is the analogous measure for airborne substances, defined as the concentration of a chemical in air (or water in environmental studies) that kills 50% of test animals during a set exposure period (commonly 4 hours) [1] [3]. It is expressed in parts per million (ppm) or milligrams per cubic meter (mg/m³) [1] [3].
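Because LC50 values are reported in either ppm or mg/m³, comparing studies often requires a unit conversion. The sketch below assumes a gas or vapor at 25 °C and 1 atm (molar volume of about 24.45 L/mol); the function names are illustrative, and the conversion does not apply to particulate aerosols.

```python
# Molar volume of an ideal gas at 25 °C and 1 atm (assumed reference conditions).
MOLAR_VOLUME_L_PER_MOL = 24.45


def ppm_to_mg_per_m3(ppm, molecular_weight_g_per_mol):
    """Convert a gas/vapor concentration from ppm (v/v) to mg/m³."""
    return ppm * molecular_weight_g_per_mol / MOLAR_VOLUME_L_PER_MOL


def mg_per_m3_to_ppm(mg_per_m3, molecular_weight_g_per_mol):
    """Convert a gas/vapor concentration from mg/m³ to ppm (v/v)."""
    return mg_per_m3 * MOLAR_VOLUME_L_PER_MOL / molecular_weight_g_per_mol


if __name__ == "__main__":
    # Molecular weight of ~221 g/mol is an assumed example value.
    print(round(ppm_to_mg_per_m3(1.7, 221.0), 1))
```

Reporting the temperature and pressure used for the conversion alongside the LC50 avoids a hidden source of disagreement between laboratories.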

These metrics were developed to enable the comparison of toxic potency between different chemicals by using death as a common, unambiguous endpoint [1]. In regulatory science, they are crucial for classifying chemicals into hazard categories, which dictate required safety warnings on labels and safety data sheets (SDS) [2].

Table 1: Standard Toxicity Classification Systems

| Toxicity Rating | Common Term (Hodge & Sterner Scale) | Oral LD50 in Rats (mg/kg) | Probable Lethal Dose for a 70 kg Human |
|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | A taste, a drop (~1 grain) |
| 2 | Highly Toxic | 1 – 50 | 1 teaspoon (~4 ml) |
| 3 | Moderately Toxic | 50 – 500 | 1 ounce (~30 ml) |
| 4 | Slightly Toxic | 500 – 5000 | 1 pint (~600 ml) |
| 5 | Practically Non-toxic | 5000 – 15000 | > 1 quart (> 1 liter) |
| 6 | Relatively Harmless | ≥ 15000 | > 1 quart (> 1 liter) |

Note: A separate scale by Gosselin, Smith, and Hodge is also used, which can lead to different numerical ratings for the same LD50 value [1]. Always reference the scale applied.
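As a minimal sketch, the oral-rat column of Table 1 can be encoded as a lookup so that ratings are assigned consistently. The table's ranges share their endpoints (for example, 50 mg/kg appears in two rows), so this example assumes that a boundary value belongs to the more toxic class, except that 15000 mg/kg is rated 6 as the table states. The function name is hypothetical.

```python
# Upper bounds (mg/kg, inclusive by assumption) for ratings 1-4 of the
# Hodge & Sterner oral-rat scale shown in Table 1.
_INCLUSIVE_UPPER_BOUNDS = [
    (1.0, 1, "Extremely Toxic"),
    (50.0, 2, "Highly Toxic"),
    (500.0, 3, "Moderately Toxic"),
    (5000.0, 4, "Slightly Toxic"),
]


def hodge_sterner_rating(oral_ld50_mg_per_kg):
    """Return (rating, common term) for an oral rat LD50 in mg/kg."""
    if oral_ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper, rating, term in _INCLUSIVE_UPPER_BOUNDS:
        if oral_ld50_mg_per_kg <= upper:
            return rating, term
    if oral_ld50_mg_per_kg < 15000.0:
        return 5, "Practically Non-toxic"
    return 6, "Relatively Harmless"


if __name__ == "__main__":
    print(hodge_sterner_rating(56))
```

Whatever boundary convention a laboratory adopts, it should be recorded together with the name of the scale.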

Technical Support Center: Troubleshooting LD50/LC50 Reproducibility

A core challenge in acute toxicity testing is the variability of results. The following guides address common sources of irreproducibility and provide evidence-based best practices to enhance data reliability.

FAQ 1: Why do LD50 values for the same compound vary between studies, and how can this be minimized?

Problem: Reported LD50 values for a single chemical can vary significantly due to differences in experimental parameters. For example, the insecticide dichlorvos shows variable oral LD50 values: 56 mg/kg in rats, 10 mg/kg in rabbits, and 157 mg/kg in pigs [1]. This inter-species and inter-study variability complicates hazard classification and risk assessment.

Root Causes & Solutions:

- Species, Strain, and Sex: Toxicological responses are inherently species-specific. Always compare values generated in the same species, strain, and sex. For regulatory submissions, the rat is the most commonly used species [1].

- Route of Administration: Toxicity can change dramatically with the exposure route (e.g., oral, dermal, inhalation). Values must be reported with the route specified (e.g., LD50 (oral, rat)) [1].

- Environmental & Husbandry Factors: Non-standardized housing, diet, and animal health status introduce noise. Implement strict, documented Standard Operating Procedures (SOPs) for animal care and handling to ensure consistency [4].

- Dosing Formulation & Vehicle: The compound's purity, particle size, and the vehicle used (e.g., saline, methylcellulose) affect bioavailability. Use pharmaceutical-grade materials and characterize formulations thoroughly.

- Protocol Adherence: Deviations from OECD or EPA test guidelines (e.g., exposure duration, observation period) alter outcomes. Strictly follow the prescribed regulatory test guideline (e.g., OECD 425 for the Up-and-Down Procedure) [3].

FAQ 2: What are the best practices for designing a study to determine a reliable LD50 or LC50?

Problem: Poorly designed studies yield unreliable data that cannot be replicated or used for confident regulatory classification.

Solution: Adopt a rigorous, pre-defined study protocol.

Table 2: Key Parameters for Acute Oral Toxicity Study Design

| Parameter | Standard Requirement | Rationale for Reproducibility |
|---|---|---|
| Animal Model | Healthy, young adult rodents (e.g., Sprague-Dawley rats). Consistent strain, age, and weight range. | Minimizes biological variability in metabolic and physiological response. |
| Group Size & Dosing | According to guideline (e.g., 5 animals of one sex per dose level for the fixed-dose procedure; classical LD50 designs used 5-10 animals per sex per dose). | Provides enough animals to support the classification or median lethal dose estimate the guideline is designed to produce. |
| Fasting | Typically overnight fasting before oral gavage. | Standardizes gastrointestinal content and ensures consistent absorption. |
| Observation Period | At least 14 days post-administration [1]. | Captures delayed toxic effects and ensures mortality counts are complete. |
| Clinical Observations | Systematic, timed checks for signs of toxicity (e.g., piloerection, ataxia, labored breathing). | Provides crucial supportive data on the compound's effects beyond mortality. |
| Necropsy & Histopathology | Full gross necropsy on all animals; histopathology on target organs. | Identifies target organs and provides mechanistic context for lethality. |
| Data Analysis | Use appropriate statistical method (e.g., probit analysis, up-and-down method) as per guideline. | Ensures accurate and mathematically sound calculation of the LD50 value. |

Experimental Protocol: Fixed-Dose Procedure (OECD Guideline 420)

This method aims to identify the dose causing clear signs of toxicity rather than death, reducing animal use.

- Select a Starting Dose: Based on existing data or a sighting study, choose from predefined doses (5, 50, 300, 2000 mg/kg).

- Dose Administration: Administer the test substance via oral gavage to a single group of animals (one sex, typically 5 animals).

- Observation: Observe animals meticulously for 14 days for clinical signs of toxicity and mortality.

- Decision Tree:

  - If no toxicity or mortality is observed, test a higher dose in a new group.

  - If clear toxicity but no mortality is observed, this dose may be used for classification.

  - If mortality occurs, the test may be repeated at a lower dose or the result is used with appropriate classification.

- Classification: The study result places the substance into one of the Globally Harmonized System (GHS) toxicity categories based on the discriminatory dose [2].
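The decision tree above can be written down as a small function, which makes the stepping rule explicit and auditable. This is a simplified restatement of the bullets in this section, not the full flow chart of the guideline; the outcome labels and the function name are hypothetical.

```python
# Predefined fixed dose levels (mg/kg) listed in the protocol above.
FIXED_DOSES_MG_PER_KG = (5, 50, 300, 2000)


def next_fixed_dose_step(current_dose, outcome):
    """Return (action, dose) for one main-study step.

    outcome is one of:
      "none"      - no toxicity or mortality observed
      "toxicity"  - clear toxicity but no mortality
      "mortality" - at least one death
    """
    idx = FIXED_DOSES_MG_PER_KG.index(current_dose)
    if outcome == "none":
        if idx + 1 < len(FIXED_DOSES_MG_PER_KG):
            return "test_higher", FIXED_DOSES_MG_PER_KG[idx + 1]
        return "classify", current_dose  # already at the highest fixed dose
    if outcome == "toxicity":
        return "classify", current_dose
    if outcome == "mortality":
        if idx > 0:
            return "test_lower", FIXED_DOSES_MG_PER_KG[idx - 1]
        return "classify", current_dose  # already at the lowest fixed dose
    raise ValueError(f"unknown outcome: {outcome!r}")


if __name__ == "__main__":
    print(next_fixed_dose_step(300, "none"))
```

Logging each returned action next to the observed outcome gives a traceable record of why every dose group was run.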

FAQ 3: How can we improve reproducibility when dealing with highly toxic or volatile compounds (LC50 testing)?

Problem: Testing airborne compounds (LC50) introduces variability from chamber design, aerosol generation, and analytical chemistry.

Solutions:

- Exposure Chamber Calibration: Regularly verify and document chamber parameters: uniform concentration distribution, temperature, humidity, and flow rates. Use calibrated analytical equipment (e.g., real-time gas monitors) to confirm chamber concentration [3].

- Particle Size Characterization: For aerosols, the respirable particle size fraction determines toxicity. Use equipment (e.g., cascade impactors) to measure and report mass median aerodynamic diameter (MMAD).

- Use of Negative Controls: Include a control group exposed only to clean air or the vehicle aerosol under identical chamber conditions.

- Personal Protective Equipment (PPE) & SOPs: For researcher safety and to prevent cross-contamination, enforce strict PPE (gloves, lab coats, eye protection) and conduct all work in a certified chemical fume hood for volatile liquids [4].

Workflow for Reliable LC50 Inhalation Study

FAQ 4: What computational and modern methods can supplement or reduce the need for traditional LD50 tests?

Problem: Traditional in vivo LD50 tests are resource-intensive, subject to ethical concerns, and can show variability.

Solutions:

- Quantitative Structure-Activity Relationship (QSAR) Models: Use validated in silico models to predict acute toxicity based on chemical structure. Consensus models that combine predictions from multiple platforms (e.g., CATMoS, VEGA, TEST) offer more reliable and health-protective estimates, especially for data-poor chemicals [5]. A 2025 study showed a conservative consensus model had an under-prediction rate of only 2%, minimizing the risk of missing a truly toxic chemical [5].

- New Approach Methodologies (NAMs): Integrate in vitro assays and omics technologies. For example, transcriptomic analysis in short-term (5-28 day) in vivo studies can identify molecular points of departure (PODs) that precede overt toxicity, offering a more sensitive and mechanistically informative endpoint [6]. The U.S. EPA's Transcriptomic Assessment Product (ETAP) program uses this approach [6].

- Tiered Testing Strategies: Implement a weight-of-evidence approach. Start with in silico predictions and in vitro assays to flag high-hazard compounds. This refines and reduces the need for definitive in vivo testing, aligning with the principles of the 3Rs (Replacement, Reduction, Refinement) [7].
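One way to make a consensus estimate health-protective is to take the lowest (most toxic) LD50 predicted by any available model. The sketch below assumes that rule; it is not necessarily the method used in the cited study, and the prediction values in the example are invented placeholders rather than real model outputs.

```python
def conservative_consensus_ld50(predictions_mg_per_kg):
    """Return the lowest predicted LD50 (mg/kg) across models.

    predictions_mg_per_kg maps a model name to a predicted LD50, or to
    None when that model could not make a prediction for the chemical.
    """
    available = [v for v in predictions_mg_per_kg.values() if v is not None]
    if not available:
        raise ValueError("no model produced a prediction")
    return min(available)


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    example = {"CATMoS": 320.0, "VEGA": 150.0, "TEST": None}
    print(conservative_consensus_ld50(example))
```

Taking the minimum trades some over-prediction of toxicity for a lower chance of missing a hazardous chemical, which suits an early screening tier.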

Modern Tiered Strategy for Acute Toxicity Assessment

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Acute Toxicity Studies

| Item | Function & Importance for Reproducibility |
|---|---|
| Certified Reference Standards | High-purity test substance is critical. Impurities can significantly alter toxicity. Use certificates of analysis (CoA) to document purity, identity, and stability. |
| Standardized Vehicle/Formulation | Consistent vehicles (e.g., 0.5% methylcellulose, corn oil) ensure uniform suspension/emulsion and reproducible bioavailability between studies and dosing days. |
| Analytical Grade Solvents & Reagents | For formulation, cleaning, and analytical verification. Reduces confounding toxicity from contaminants. |
| Calibrated Dosing Equipment | Syringe pumps, calibrated pipettes, and intubation needles ensure accurate and precise delivery of the intended dose volume. Regular calibration is mandatory. |
| Clinical Pathology Kits | Validated commercial kits for hematology and clinical chemistry provide standardized, comparable data on systemic toxicity (e.g., liver, kidney injury). |
| Histology Fixatives & Stains | Standardized fixatives (e.g., 10% neutral buffered formalin) and staining protocols (H&E) ensure consistent tissue preservation and pathological evaluation. |
| Personal Protective Equipment (PPE) | Nitrile gloves (4-mil minimum), safety goggles, and 100% cotton or flame-resistant lab coats [4]. Protects personnel, prevents contamination, and is a core element of laboratory SOPs [4]. |
| Validated Software | For statistical analysis (e.g., probit) and data management. Reduces calculation errors and maintains data integrity for audits. |

Future Directions: Enhancing Reproducibility Through Innovation

The field is moving toward methodologies that provide more reproducible and human-relevant data:

- Omics-Defined Molecular Points of Departure (PODs): Using transcriptomic or metabolomic data from short-term studies to derive a transcriptomic POD (tPOD). This molecular benchmark is often more sensitive and less variable than traditional mortality-based LD50s [6].

- Standardized Bioinformatics Pipelines: Initiatives like the Regulatory Omics Data Analysis Framework (R-ODAF) aim to harmonize data processing, reducing variability in omics-based studies [6].

- Regulatory Adoption of NAMs: Frameworks like Next Generation Risk Assessment (NGRA) are being developed to formally integrate data from in silico, in vitro, and targeted in vivo studies, creating a more robust and reproducible basis for safety decisions [7].

The LD50 (Lethal Dose, 50%) test, introduced by J.W. Trevan in 1927, was a landmark innovation for standardizing the comparison of acute toxicity for potent drugs like digitalis and insulin [1] [8]. Its original purpose was to provide a statistically derived, reproducible point for biological assay standardization [9]. However, its subsequent codification into regulatory guidelines for a vast array of chemicals has exposed significant challenges in achieving consistent and reproducible results across different labs, species, and experimental conditions [8]. This technical support center is designed within the thesis that improving the reproducibility of traditional in vivo LD50 data is a critical step for robust historical comparison and validation as the field transitions toward more human-relevant, mechanistic New Approach Methodologies (NAMs) and computational toxicology [10] [11].

Troubleshooting Guide: Common Pitfalls in LD50 Determination

This guide addresses frequent issues that compromise the reproducibility and reliability of acute toxicity studies.

F1. Fundamental Reproducibility Challenges

- Q: Our calculated LD50 value for a reference compound differs significantly from literature values. What are the most common sources of this variability?

- A: Inter-laboratory variability is well-documented [8]. Key factors include:

  - Species & Strain Differences: Sensitivity can vary drastically between species (e.g., rat vs. mouse) and between genetic strains of the same species [1] [8].

  - Animal Husbandry: Diet, fasting state, bedding material, cage type, and environmental conditions (temperature, humidity, light cycles) can influence results [8].

  - Compound Administration: The route of administration (oral, dermal, intravenous) yields different LD50 values [1]. Variations in dosing technique, vehicle used, and concentration of the test substance introduce error.

  - Biological Variables: The age, sex, and microbiological status of the animals are critical. A compound may be highly toxic to one sex but not the other [8] [9].

F2. Experimental Design & Protocol

- Q: How can we design an LD50 study that minimizes animal use while still generating reliable data for regulatory submission?

- A: The traditional use of large numbers of animals (e.g., 10 per dose group) for precise LD50 calculation is increasingly seen as unnecessary [9]. Streamlined protocols are recommended:

  - Up-and-Down Procedure (UDP): This sequential method uses a single animal at a time, adjusting the dose for the next animal based on the previous outcome. It can estimate an LD50 with 6-10 animals total [9].

  - Fixed Dose Method (FDM): Focuses on identifying signs of evident toxicity rather than death, using fewer animals and causing less suffering.

  - Acute Toxic Class Method: Uses a step-wise procedure with 3 animals per step to classify a substance into a toxicity class, not a precise LD50.

- A: Always consult the latest OECD or ICH guidelines. Regulatory acceptance of alternative methods has grown, and these guidelines specify the minimum animal numbers and protocols required [10].
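The sequential logic of the Up-and-Down Procedure can be sketched in a few lines: the dose goes down after a death and up after survival. The progression factor of 3.2 (about half a log unit) and the 2000 mg/kg ceiling are assumed defaults here, and the 1 mg/kg floor is purely illustrative; the applicable guideline should be checked for the actual limits and stopping rules, which this example does not implement.

```python
def next_udp_dose(current_dose, animal_died, factor=3.2,
                  min_dose=1.0, max_dose=2000.0):
    """Dose (mg/kg) for the next animal, clamped to [min_dose, max_dose]."""
    proposed = current_dose / factor if animal_died else current_dose * factor
    return min(max(proposed, min_dose), max_dose)


def udp_dose_sequence(start_dose, outcomes, **kwargs):
    """Doses given to each animal, plus the dose proposed for the next one.

    outcomes is a list of booleans (True = animal died), one per animal
    already tested, in dosing order.
    """
    doses = [start_dose]
    for died in outcomes:
        doses.append(next_udp_dose(doses[-1], died, **kwargs))
    return doses


if __name__ == "__main__":
    # Hypothetical run: survive, die, survive.
    print([round(d, 1) for d in udp_dose_sequence(175.0, [False, True, False])])
```

Because each dose depends only on the previous outcome, recording the full outcome sequence is enough for another laboratory to reconstruct the dosing history.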

F3. Data Analysis & Interpretation

- Q: Is it necessary to calculate a precise LD50 with confidence intervals, or is a toxicity range sufficient for safety assessment?

- A: For most industrial and pharmaceutical safety decisions, knowing the approximate lethal dose range and the slope of the dose-response curve is more informative than a precise LD50 [9]. A steep slope indicates small changes in dose cause large changes in mortality, which is a critical safety concern. Regulatory focus is shifting from the single-point LD50 value toward a more comprehensive understanding of acute toxicity, including clinical observations, time to onset, and pathology [8].

Frequently Asked Questions (FAQs)

Q1: What does an LD50 value not tell us about a chemical?

A: The LD50 is a measure of acute lethality only. It does not predict [1] [8]:

- Long-term (chronic) toxicity from repeated low-dose exposure.

- Specific organ toxicity (e.g., hepatotoxicity, neurotoxicity).

- Carcinogenic, mutagenic, or teratogenic potential.

- The mechanism of toxicity.

- Pain and distress experienced by animals at sublethal doses.

Q2: Why is there a push to replace the classical LD50 test?

A: The drive for replacement is based on the 3Rs (Replacement, Reduction, Refinement) and scientific limitations [10] [8]:

- Ethical Concerns: The test causes severe suffering and death [8].

- Poor Human Relevance: Species differences make extrapolation to humans uncertain [8].

- High Variability: As noted in the troubleshooting guide, results are highly variable.

- Resource-Intensive: It is time-consuming, expensive, and uses many animals.

- Mechanistically Blind: It provides no data on how a substance causes toxicity.

Q3: What are the modern alternatives to the in vivo LD50 test?

A: The field is transitioning to a combination of in silico and in vitro methods [10] [11] [12]:

- Computational (Q)SAR Models: Predict toxicity based on chemical structure.

- AI-Based Toxicity Prediction: Machine learning models trained on large databases can predict multiple toxicity endpoints, including acute toxicity [11] [12].

- In Vitro Cytotoxicity Assays: Use cell lines (e.g., human cell lines) to measure general cell death, providing a screening tool to estimate starting doses for in vivo studies or to rank compound toxicity [8].

- Mechanistic Toxicity Pathways: Assessing specific pathways (e.g., mitochondrial dysfunction, specific receptor activation) in human-derived cells provides more human-relevant data than lethality in rodents.

Detailed Experimental Protocols

The following table compares a traditional protocol with a modern, refined approach aimed at improving reproducibility and reducing animal use.

Table 1: Comparison of Traditional and Refined Acute Oral Toxicity Test Protocols

| Protocol Aspect | Traditional LD50 (OECD 401, Deleted) | Refined Fixed Dose Procedure (OECD 420) | Rationale for Improvement |
|---|---|---|---|
| Objective | Determine precise median lethal dose (LD50) and confidence intervals. | Identify the dose that causes clear signs of toxicity (evident toxicity) without lethal effects, and classify the substance. | Shifts focus from mortality to observable toxicity, reducing suffering. |
| Animals per Group | Typically 5-10 of each sex. | 5 animals of a single sex (usually females), sequentially. If clear toxicity is seen, a second group of 5 of the other sex may be used. | Significantly reduces total animal numbers (up to 70%). |
| Dose Levels | At least 3 doses, ideally spanning the expected LD50. | A starting dose is selected (5, 50, 300, 2000 mg/kg). Subsequent steps depend on the presence or absence of "evident toxicity." | Uses a step-wise approach to find a toxicity range, not a precise lethal point. |
| Endpoint | Death within 14 days. | Detailed clinical observations (signs of toxicity, morbidity) for 14 days. | Generates more informative data on the nature and progression of acute toxicity. |
| Statistical Analysis | Complex probit or logit analysis to calculate LD50 and slope. | Simple classification into predefined toxicity classes based on the dose causing evident toxicity. | Simplifies analysis and aligns with the Globally Harmonized System (GHS) of classification. |

Modern Computational & Mechanistic Approaches

The evolution from a single lethal endpoint to pathway-based understanding is key to modern toxicology. The diagram below illustrates this paradigm shift.

Case Study: Beyond Lethality – ZBP1 and Interferon Therapy

Research on SARS-CoV-2 provides a powerful example of why mechanistic understanding surpasses simple lethality metrics. Studies found that delayed treatment with interferon (IFN-β), intended as an antiviral therapy, actually increased lethality in infected mice [13]. The mechanism was traced to IFN-β inducing ZBP1-dependent inflammatory cell death (PANoptosis) in macrophages. This illustrates that a therapy altering a biological pathway (IFN signaling) can have opposite effects on survival depending on timing and context. That complexity is completely invisible to an LD50 test, which would only measure the final lethal outcome of the combined virus+drug exposure [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Modern Acute Toxicity Assessment

| Item Category | Specific Examples | Function & Rationale |
|---|---|---|
| In Vivo Test Substances | Certified Reference Compounds (e.g., KBrO₃, Dichlorvos) [1] | Positive controls to validate experimental protocol and compare inter-laboratory performance. |
| Alternative Test Systems | Human Cell Lines (e.g., HepG2, HEK293), 3D Tissue Models, Zebrafish Embryos | Provide human-relevant toxicity data for screening; reduce and replace animal use (3Rs) [10]. |
| Computational Tools | ADMET Prediction Platforms (e.g., ADMETlab), QSAR Software, Open-Tox APIs [11] | Enable in silico prediction of acute toxicity and other endpoints from chemical structure, prioritizing compounds for testing. |
| Public Toxicity Databases | Tox21/TOXCAST: High-throughput screening data. ChEMBL: Bioactivity data. PubChem: Assay results [11] [12]. | Source of large-scale data for training and validating computational AI/ML models. Critical for modern predictive toxicology. |
| Biomarker Assay Kits | Kits for ALT/AST (liver), Creatinine (kidney), LDH (cytotoxicity), Caspase-3 (apoptosis) | Move beyond death as an endpoint. Quantify specific organ damage or mechanistic pathways in in vitro or in vivo studies. |

Technical Support Center: FAQs & Troubleshooting

This technical support center provides targeted guidance for researchers facing the critical challenge of variability in LD50 determinations. Consistent and reproducible results are foundational to chemical safety assessment, drug development, and regulatory decision-making. The following FAQs and protocols are designed to help you identify, document, and mitigate sources of variability within your experimental framework [10].

Frequently Asked Questions (FAQs)

Q1: Our lab has generated an oral LD50 for a compound in rats that differs significantly from a literature value. What are the most common sources of such inter-laboratory variability?

A1: Discrepancies often stem from poorly controlled experimental parameters. Key factors to audit include:

- Animal Model Specifications: Strain, sex, age, and weight of the test animals significantly influence results [14]. For example, a chemical may have different toxicity in Sprague-Dawley versus Wistar rats. Always document these parameters precisely.

- Test Substance Formulation: The LD50 of the pure active ingredient can differ dramatically from its formulated product (e.g., a pesticide mixed with adjuvants) [15]. Variability in vehicle (e.g., water, oil, methylcellulose) and concentration can alter bioavailability.

- Experimental Protocol: The specific route of administration (oral, dermal, intravenous), fasting state of animals, volume of dose administered, and duration of observation can all affect the outcome [1].

- Environmental & Husbandry Conditions: Diet, water quality, bedding, light cycles, and stress levels are often overlooked sources of physiological variation that can impact toxicity.

Q2: How should we design an LD50 study to properly quantify and document variability, rather than just report a single median value?

A2: Move beyond the point estimate by implementing these practices:

- Use Adequate Group Sizes and Dose Groups: Smaller group sizes lead to wider confidence intervals. Use statistical power calculations to determine group size. Include more than the minimum number of dose levels to better define the slope of the dose-response curve.

- Calculate Confidence Intervals: Always report the LD50 value with its 95% confidence interval (e.g., LD50 = 250 mg/kg, 95% CI: 215-295 mg/kg). This range quantitatively communicates the precision of your estimate.

- Report Complete Data: Publish or archive the full dataset: the number of animals per dose, the exact mortality at each dose, and the statistical method used for calculation (e.g., probit analysis, Spearman-Karber).

- Standardize and Document Everything: Use a detailed, written SOP for the entire process. Document anomalies, such as an unexpected death not clearly related to toxicity.
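The effect of group size on precision can be illustrated with a confidence interval for the mortality proportion at a single dose. The sketch below uses the Wilson score interval, chosen here as an assumption because it behaves reasonably for small groups; it describes uncertainty in one dose group's mortality, not in the LD50 itself.

```python
import math
from statistics import NormalDist


def wilson_interval(deaths, n, confidence=0.95):
    """Wilson score interval for a mortality proportion deaths/n."""
    if n <= 0 or not 0 <= deaths <= n:
        raise ValueError("need n > 0 and 0 <= deaths <= n")
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p = deaths / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


if __name__ == "__main__":
    # Same observed mortality (40%) with 5 versus 10 animals per group.
    for deaths, n in [(2, 5), (4, 10)]:
        low, high = wilson_interval(deaths, n)
        print(f"{deaths}/{n}: {low:.2f} to {high:.2f}")
```

Running the example shows the interval narrowing as the group grows, which is the trade-off to weigh against animal use when choosing group sizes.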

Q3: We are under pressure to reduce animal testing. Are there validated alternative methods that provide reproducible acute toxicity data without the variability associated with whole-animal studies?

A3: Yes. New Approach Methodologies (NAMs) are being actively developed and validated to address both ethical concerns and reproducibility issues [10]. While traditional LD50 tests measure a complex organismal outcome (death), NAMs focus on specific, mechanistically defined toxicity pathways. These can be more reproducible as they control for systemic animal variation.

- In Vitro Cytotoxicity Assays: Tests like the basal cytotoxicity assay (e.g., using 3T3 mouse fibroblasts) can categorize chemicals into broad systemic toxicity bands (e.g., very toxic, toxic) with good reliability.

- Pathway-Based Assays: These target specific mechanisms of acute toxicity, such as mitochondrial dysfunction or activation of apoptotic pathways, using human-derived cells.

- Computational (In Silico) Models: Quantitative Structure-Activity Relationship (QSAR) models predict toxicity based on a compound's chemical structure. A unified, cross-industry framework for validating and accepting these methods is a current priority in regulatory science [10].

Q4: How do we interpret an LD50 value for human risk assessment when the test data shows high variability across species or laboratories?

A4: Highly variable data is a major red flag and necessitates extreme caution. Follow this risk-assessment strategy:

- Identify the Most Sensitive Relevant Species: Do not default to the rat data if rabbit or dog data shows greater sensitivity. Consider the relevance of the route of exposure (e.g., dermal for occupational settings) [1].

- Apply Larger Uncertainty Factors: Regulatory toxicology uses uncertainty factors (often 10-fold) to extrapolate from animal to human and to account for human variability. With high variability, the scientific justification for using an additional "database uncertainty factor" increases.

- Emphasize Mode of Action: Investigate why the variability exists. Is it due to metabolic differences? Understanding the mechanism helps determine which animal data, if any, is most relevant to humans.

- Clearly Communicate Limitations: Any risk assessment derived from highly variable data must explicitly state the uncertainty in its conclusions. It may indicate a need for further, more standardized testing.

Experimental Protocols for Quantifying Variability

Protocol 1: Establishing a Robust Traditional LD50 Test with Variability Metrics

This protocol extends the standard OECD-style test to explicitly capture variability [1] [14].

Pre-Test Documentation:

- Test Substance: Record source, purity, lot number, and detailed formulation method. For a formulation, document all "inert" ingredients if available [15].

- Animals: Specify species, strain, supplier, age range (e.g., 8-9 weeks), weight range (e.g., 200-220g), and sex. Acclimatize for at least 5 days. Randomize animals into weight-matched groups.

- Dose Selection: Based on a pilot range-finding test, select at least 5 dose levels with a logarithmic spacing (e.g., 10, 25, 50, 100, 200 mg/kg) expected to yield 0-100% mortality.
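A strictly logarithmic (geometric) dose series keeps a constant ratio between adjacent doses. The helper below is a minimal sketch with a hypothetical name; in practice the computed doses are often rounded to convenient values, as in the example series above.

```python
def log_spaced_doses(lowest, highest, n_levels):
    """Return n_levels doses from lowest to highest with a constant ratio."""
    if n_levels < 2 or lowest <= 0 or highest <= lowest:
        raise ValueError("need n_levels >= 2 and 0 < lowest < highest")
    ratio = (highest / lowest) ** (1 / (n_levels - 1))
    return [lowest * ratio ** i for i in range(n_levels)]


if __name__ == "__main__":
    # Five levels spanning 10 to 200 mg/kg.
    print([round(d, 1) for d in log_spaced_doses(10, 200, 5)])
```

Documenting the ratio, and any rounding applied afterwards, lets others reproduce the exact dose levels.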

Experimental Procedure:

- Administer the test substance in a single, precise volume (e.g., 10 mL/kg) via the chosen route (oral gavage recommended for accuracy) to fasted animals.

- House animals individually post-dosing for observation.

- Observe clinically at 0.5, 1, 2, 4, 6, and 24 hours post-dosing, then daily for a total of 14 days [1].

- Record precise time of death, all clinical signs (lethargy, ataxia, tremors), and body weight changes.
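Dosing at a fixed volume per kilogram means the formulation concentration, not the volume, changes between dose groups. The arithmetic can be sketched as follows; the function name and the example animal are hypothetical.

```python
def gavage_dose(body_weight_g, dose_mg_per_kg, volume_ml_per_kg=10.0):
    """Per-animal amount, dosing volume, and required formulation strength."""
    body_weight_kg = body_weight_g / 1000.0
    return {
        "amount_mg": dose_mg_per_kg * body_weight_kg,
        "volume_ml": volume_ml_per_kg * body_weight_kg,
        "concentration_mg_per_ml": dose_mg_per_kg / volume_ml_per_kg,
    }


if __name__ == "__main__":
    # A 200 g rat in a 50 mg/kg group, dosed at 10 mL/kg.
    print(gavage_dose(200, 50))
```

Computing these values from the body weight recorded on the day of dosing, rather than from the group mean, removes one avoidable source of dose error.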

Data Analysis & Variability Quantification:

- Tabulate final mortality at each dose level.

- Use probit analysis, or an equivalent method, in validated statistical software to calculate the LD50 and its 95% confidence limits.

- Key Variability Outputs: The LD50 value (mg/kg), the 95% Confidence Interval, and the slope of the probit line. A steeper slope indicates less variability in individual animal sensitivity.
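For readers who want to see what a probit fit involves, here is a minimal, dependency-free sketch. It assumes a probit model on log10 dose fitted by Fisher scoring, with an approximate confidence interval from the delta method; validated statistical software may use other interval methods (for example, Fieller's theorem). The mortality data in the example are invented for illustration.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def probit_ld50(doses, n_animals, n_dead, max_iter=100, tol=1e-10):
    """Fit P(death) = Phi(a + b*log10(dose)); return LD50, 95% CI, slope."""
    x = [math.log10(d) for d in doses]
    b = 2.0                      # starting slope (arbitrary but reasonable)
    a = -b * sum(x) / len(x)     # start with the LD50 at the mean log dose
    for _ in range(max_iter):
        s_a = s_b = i_aa = i_ab = i_bb = 0.0
        for xi, ni, ri in zip(x, n_animals, n_dead):
            eta = a + b * xi
            p = min(max(_STD_NORMAL.cdf(eta), 1e-10), 1 - 1e-10)
            phi = _STD_NORMAL.pdf(eta)
            score = (ri - ni * p) * phi / (p * (1 - p))
            weight = ni * phi * phi / (p * (1 - p))
            s_a += score
            s_b += score * xi
            i_aa += weight
            i_ab += weight * xi
            i_bb += weight * xi * xi
        det = i_aa * i_bb - i_ab * i_ab
        step_a = (i_bb * s_a - i_ab * s_b) / det
        step_b = (i_aa * s_b - i_ab * s_a) / det
        a += step_a
        b += step_b
        if abs(step_a) + abs(step_b) < tol:
            break
    # Inverse Fisher information (from the final iteration) as covariance.
    var_a, var_b, cov_ab = i_bb / det, i_aa / det, -i_ab / det
    log_ld50 = -a / b
    se = math.sqrt(var_a + 2 * log_ld50 * cov_ab
                   + log_ld50 ** 2 * var_b) / abs(b)
    z = _STD_NORMAL.inv_cdf(0.975)
    return {
        "ld50": 10 ** log_ld50,
        "ci_low": 10 ** (log_ld50 - z * se),
        "ci_high": 10 ** (log_ld50 + z * se),
        "slope": b,
    }


if __name__ == "__main__":
    # Invented data: 10 animals per dose, deaths symmetric around 40 mg/kg.
    result = probit_ld50([10, 20, 40, 80, 160], [10] * 5, [0, 2, 5, 8, 10])
    print({k: round(v, 2) for k, v in result.items()})
```

Because the example data are symmetric around 40 mg/kg on the log scale, the fitted LD50 lands at about 40 mg/kg, which makes the sketch easy to sanity-check before applying it to real data.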

Protocol 2: In Vitro Cytotoxicity Screening as a Precursor to Animal Testing

This NAM helps predict acute systemic toxicity range and can reduce animal use by informing better dose selection for any subsequent in vivo test [10].

- Cell Culture: Maintain a standardized cell line (e.g., 3T3 mouse fibroblasts or human-derived HepG2 cells) under controlled conditions.

- Compound Exposure: Prepare a logarithmic series of at least 8 concentrations of the test substance in culture medium. Include solvent controls.

- Viability Assessment: After 24-48 hours of exposure, measure cell viability using a robust assay like Neutral Red Uptake (NRU) or MTT.

- Data Analysis:

- Calculate the concentration that reduces viability by 50% (IC50).

- Correlate the in vitro IC50 with known in vivo LD50 values from a database to place the new substance into a Globally Harmonized System (GHS) acute toxicity category (e.g., Category 1: ≤ 5 mg/kg).
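The IC50 step can be sketched with a simple interpolation on the log-concentration scale between the two tested concentrations that bracket 50% viability. This is an assumption made for brevity; a fitted four-parameter logistic curve is a common alternative. The viability values in the example are invented.

```python
import math


def ic50_log_interpolation(concentrations, viability_percent):
    """Estimate IC50 by interpolating viability against log10 concentration."""
    pairs = sorted(zip(concentrations, viability_percent))
    for (c_low, v_low), (c_high, v_high) in zip(pairs, pairs[1:]):
        # Look for the first adjacent pair where viability crosses 50%.
        if v_low >= 50.0 >= v_high and v_low != v_high:
            fraction = (v_low - 50.0) / (v_low - v_high)
            log_ic50 = math.log10(c_low) + fraction * (
                math.log10(c_high) - math.log10(c_low))
            return 10 ** log_ic50
    raise ValueError("viability does not cross 50% in the tested range")


if __name__ == "__main__":
    # Invented data: concentrations in µg/mL, viability in % of control.
    print(round(ic50_log_interpolation([1, 10, 100], [90, 60, 40]), 1))
```

If the curve never crosses 50%, the function raises an error instead of extrapolating, which signals that the concentration range should be extended.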

Data Presentation: Understanding the Landscape of Variability

Table 1: Examples of Oral LD50 Values Illustrating Intrinsic Toxicity and Potential for Variability [1] [16]

| Chemical | Approximate Oral LD50 (rat, mg/kg) | GHS Toxicity Category (Estimated) | Notes on Potential Variability |
|---|---|---|---|
| Nicotine | 50 | Category 2/3 boundary (cutoff at 50 mg/kg) | Highly dependent on formulation and pH (affects absorption). |
| Glyphosate (acid) | 5,600 | Not Classified (> 5,000 mg/kg) | Formulation is critical: Commercial herbicides can be 10-125x more toxic [15]. |
| Sodium Chloride (Table Salt) | 3,000 | Category 5 (May be harmful) | Low variability expected due to simple mechanism and ubiquitous exposure. |
| Ethanol | 7,000 | Not Classified | Variability influenced by metabolic rate, diet, and genetic factors. |
| Dichlorvos (Insecticide) | 56 | Category 3 (Toxic) | Major route-dependent variability: the inhalation LC50 is reported as 1.7 ppm [1], a concentration-based value that cannot be compared directly with an oral dose in mg/kg. |

Table 2: Documented Inter-Species Variability for Selected Substances [1]

| Chemical | Species | Oral LD50 (mg/kg) | Implication for Research |

|---|---|---|---|

| Dichlorvos | Rat | 56 | Default test species. |

| Dichlorvos | Rabbit | 10 | ~5.6x more sensitive than rat. Highlights risk of single-species testing. |

| Dichlorvos | Dog | 100 | ~1.8x less sensitive than rat. |

| Dichlorvos | Pigeon | 23.7 | ~2.4x more sensitive than rat. Critical for environmental risk assessment. |
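
The fold differences quoted in the table follow directly from the ratio of each species' LD50 to the rat value. A small sketch of that arithmetic, using the dichlorvos figures from the table (the helper name is illustrative only):

```python
rat_ld50 = 56.0  # mg/kg, reference species
other_species = {"Rabbit": 10.0, "Dog": 100.0, "Pigeon": 23.7}


def fold_vs_reference(ld50, reference):
    """Return (fold, direction), with fold always >= 1."""
    if ld50 < reference:
        return reference / ld50, "more sensitive"
    return ld50 / reference, "less sensitive"


folds = {name: fold_vs_reference(value, rat_ld50) for name, value in other_species.items()}
for name, (fold, direction) in folds.items():
    print(f"{name}: ~{fold:.1f}x {direction} than rat")
```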

Visualizing Workflows and Concepts

Traditional In Vivo LD50 Test Workflow

NAM Framework for Predicting Acute Toxicity

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for LD50 Studies and Variability Mitigation

| Item | Function & Specification | Rationale for Reducing Variability |

|---|---|---|

| Defined Animal Strain | Specific Pathogen-Free (SPF) rats or mice from a reliable supplier (e.g., Crl:CD(SD), C57BL/6). | Minimizes inter-individual and inter-batch differences in genetics, microbiota, and health status. |

| Analytical Grade Test Substance | High-purity (>98%) active ingredient with certificate of analysis. Lot number must be documented. | Ensures the toxic agent is consistent, free from impurities that may alter toxicity [15]. |

| Standardized Vehicle | Pharmacopeia-grade materials (e.g., 0.5% Methylcellulose, Corn Oil). Prepare fresh with documented SOP. | Controls for variability in solubility, absorption, and potential vehicle toxicity. |

| Precision Dosing Equipment | Calibrated positive-displacement pipettes or syringes for oral gavage. | Eliminates dose volume as a source of error, critical for accurate mg/kg calculation. |

| Clinical Observation Checklist | Standardized digital form for recording time-stamped signs (e.g., piloerection, labored breathing). | Reduces observer bias and ensures consistent, quantifiable data capture across technicians. |

| Statistical Software | Software capable of probit/logit analysis (e.g., EPA BMDS, SAS PROC PROBIT, R packages). | Enables consistent calculation of the LD50, confidence intervals, and slope—the key metrics of variability. |

Implications of Poor Reproducibility for Hazard Classification and Risk Assessment

This section is a technical support resource for researchers navigating reproducibility challenges in toxicological testing, specifically the determination of the median lethal dose (LD₅₀) and its critical role in hazard classification and risk assessment. Poor reproducibility in LD₅₀ results directly undermines the reliability of the Globally Harmonized System (GHS) of Classification and Labelling of Chemicals, leading to potential misclassification of substances and flawed safety decisions [17]. This center provides actionable guidance, framed within the broader thesis of improving the reproducibility of LD₅₀ research, to help scientists and drug development professionals enhance the rigor, transparency, and trustworthiness of their acute toxicity studies [18] [19].

Troubleshooting Guide: Common LD₅₀ Reproducibility Issues

This section addresses specific, frequently encountered problems that compromise the reproducibility of acute toxicity studies and their subsequent use in hazard classification.

Q1: Why does the same substance get assigned different GHS hazard categories in different databases or safety sheets?

- Problem: Inconsistent GHS classification for a single compound.

- Primary Cause: The foundational LD₅₀ value is highly variable. Reproducibility is poor due to factors like animal strain, sex, age, fasting state, and procedural differences between labs [17]. An LD₅₀ can vary by 10-fold or more between species and strains, and significant interlaboratory differences are common [17].

- Solution: Do not rely on a single literature LD₅₀ value for definitive classification. Consult multiple authoritative sources (e.g., PubChem, manufacturer SDS). For critical assessments, consider conducting a fixed-dose procedure or up-and-down procedure, which are recommended by OECD for animal welfare and can provide more consistent results for classification purposes [17]. Always report the exact value, confidence intervals, and experimental conditions alongside any classification.

Q2: Why is our in-house LD₅₀ result statistically different from a published study, even using the same species?

- Problem: Failure to replicate a previously reported LD₅₀.

- Primary Cause: Insufficient methodological detail in the original publication. Missing information on vehicle, dosing volume, animal supplier, housing conditions, and exact statistical method prevents true replication [18].

- Solution: Implement and request detailed, structured reporting. Use checklists like the ARRIVE guidelines for animal research to ensure all critical parameters are documented [18]. For your own work, pre-register protocols and share full methodological details, raw data, and analysis code in public repositories [20].

Q3: How can a small change in statistical analysis alter the LD₅₀ enough to shift GHS categories?

- Problem: Sensitivity of hazard classification to analytical choices.

- Primary Cause: The use of different statistical methods (probit, logit, Spearman-Karber, moving average) can yield different LD₅₀ point estimates and confidence intervals [17]. Classical methods requiring many animals are discouraged, but newer alternatives may not estimate slope or confidence intervals well [17].

- Solution: Justify and transparently report the statistical method. Follow current OECD test guidelines (e.g., Test No. 425: Up-and-Down Procedure). Clearly state if confidence intervals were calculated and how. Understand that p-values and statistical significance do not measure the size or importance of an effect; the precise LD₅₀ estimate and its variability are more critical for risk assessment than crossing an arbitrary threshold [18].

Q4: Why does our lab get different LD₅₀ results for a reference standard over time?

- Problem: Intra-laboratory variability for a controlled substance.

- Primary Cause: Uncontrolled environmental or procedural drift. Subtle changes in animal microbiota, feed composition, staff technique, or reagent quality can affect outcomes.

- Solution: Implement a rigorous quality assurance system. Maintain detailed standard operating procedures (SOPs), use certified reference materials, ensure staff training, and conduct regular positive control experiments with a reference compound. Document all deviations from SOPs.

Standard Operating Protocols for Enhanced Reproducibility

Adherence to detailed, transparent protocols is fundamental to generating reliable and reproducible LD₅₀ data.

Protocol 1: Conducting a Reproducible Fixed-Dose Procedure (Based on OECD Guideline 420)

Objective: To identify a "discriminating dose" that causes clear signs of toxicity but low mortality, suitable for hazard classification while using fewer animals [17].

Detailed Methodology:

- Selection of Starting Dose: Choose from four predefined dose levels (5, 50, 300, 2000 mg/kg). Use existing information to select the dose most likely to produce toxic signs but not severe mortality.

- Animal Assignment: Use a single sex (typically females) of a healthy, young adult rodent strain. House under standardized conditions. Fast animals overnight prior to dosing.

- Dosing: Administer the test substance in a constant volume by oral gavage to a single group of 5 animals.

- Observation: Observe animals meticulously for 14 days, recording clinical signs, time of onset, severity, and mortality.

- Decision Tree:

- If mortality is 0% or ≥ 50%, the test is concluded, and the LD₅₀ is estimated to be above or below that dose level, respectively.

- If mortality is <50%, a second dose level is tested using 5 new animals (higher if no toxicity seen, lower if toxicity seen).

- Analysis & Reporting: The study provides an estimate of the LD₅₀ within a broad range (e.g., between 50 and 300 mg/kg) for classification. Must report: strain, supplier, age, weight, fasting details, vehicle, dosing volume, all clinical observations by animal and day, and individual animal outcomes.

Protocol 2: Implementing a Computational Reproducibility Pipeline for Dose-Response Analysis

Objective: To ensure statistical analysis of dose-response data is fully transparent, executable, and reproducible by independent researchers.

Detailed Methodology:

- Environment Capture: Use containerization software (e.g., Docker) to create a snapshot of the exact computational environment, including operating system, R or Python version, and all package dependencies [21].

- Code Organization: Write analysis scripts (e.g., for probit analysis) in a documented, modular fashion. Use version control (e.g., GitHub) to track all changes [21] [20].

- Data-Code Linkage: Keep raw data immutable. Scripts should read raw data, perform cleaning/transformation (in documented steps), and generate outputs (tables, figures, LD₅₀ estimate).

- Automated Documentation: Use tools like R Markdown or Jupyter Notebooks to interweave narrative text, code, and results into a single, compilable document.

- Archiving: Deposit the final dataset, code, and computational environment file in a public, FAIR-compliant repository (e.g., Zenodo, Figshare) and link it to the published manuscript [20].
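
As a lightweight complement to the steps above (not a replacement for a container image), an analysis script can record a checksum of the raw data together with the interpreter and package versions it ran under. The sketch below uses only the Python standard library; the manifest fields, the function names, and the package list are assumptions chosen for illustration.

```python
import hashlib
import json
import platform
import sys
from importlib import metadata


def sha256_hex(data: bytes) -> str:
    """Checksum used to show that the raw data were not altered."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(raw_data: bytes, packages=()):
    """Collect data checksum and environment details for archiving."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return {
        "raw_data_sha256": sha256_hex(raw_data),
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


# Hypothetical raw data; in practice, read the immutable raw file as bytes.
raw = b"dose_mg_per_kg,n_animals,n_dead\n10,10,1\n100,10,5\n1000,10,9\n"
manifest = build_manifest(raw, packages=("numpy", "scipy"))
print(json.dumps(manifest, indent=2))
```

Depositing such a manifest alongside the data and code lets a later reader confirm they are analysing the same file with a comparable environment.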

Key Data for Hazard Classification

The following table summarizes the GHS hazard categories for acute oral toxicity based on LD₅₀ values, which are directly impacted by the reproducibility of the underlying experiments [17].

Table 1: GHS Hazard Categories for Acute Oral Toxicity

| GHS Hazard Category | Criteria: Oral LD₅₀ (mg/kg body weight) | Hazard Statement Example |

|---|---|---|

| Category 1 | ≤ 5 | Fatal if swallowed |

| Category 2 | >5 and ≤ 50 | Fatal if swallowed |

| Category 3 | >50 and ≤ 300 | Toxic if swallowed |

| Category 4 | >300 and ≤ 2000 | Harmful if swallowed |

| Category 5 | >2000 and ≤ 5000 | May be harmful if swallowed |
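
The category boundaries in the table translate into a short lookup. The sketch below is illustrative (function names are not from any standard library) and also shows how a confidence interval can be checked for straddling a boundary, which is where reproducibility problems turn into classification problems.

```python
# Upper LD50 bound (mg/kg body weight) for each GHS acute oral category, per the table.
GHS_ORAL_UPPER_BOUNDS = [(1, 5.0), (2, 50.0), (3, 300.0), (4, 2000.0), (5, 5000.0)]


def ghs_oral_category(ld50_mg_per_kg):
    """Return the GHS category number, or None if above 5000 mg/kg."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for category, upper in GHS_ORAL_UPPER_BOUNDS:
        if ld50_mg_per_kg <= upper:
            return category
    return None  # not classified under these criteria


def ci_straddles_boundary(ci_low, ci_high):
    """True if the two ends of a confidence interval fall in different categories."""
    return ghs_oral_category(ci_low) != ghs_oral_category(ci_high)


print(ghs_oral_category(636))             # 4
print(ci_straddles_boundary(1500, 2500))  # True
```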

Table 2: Impact of LD₅₀ Variability on Drug Classification

| Drug | Reported LD₅₀ in Rats (mg/kg) | GHS Category (Based on value) | Clinical Acute Toxicity Concern | Illustration of Classification Issue |

|---|---|---|---|---|

| Ibuprofen | 636 | Category 4 | Gastrointestinal lesions | A value about 2.1-fold lower (≤ 300 mg/kg) would shift it to Category 3. |

| Paracetamol (Acetaminophen) | 1944 | Category 4 | Hepatotoxicity | A value only about 3% higher (> 2000 mg/kg), well within 1.3-fold variability, would shift it to Category 5. |

| Tramadol | 228-300 | Category 3 (or conflicting 1/2) | Central nervous system depression | High variability leads to conflicting hazard codes (H300 vs. H301) in public databases [17]. |
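
How close a point estimate sits to a category boundary can be computed directly from the GHS cut-offs. The sketch below is a minimal illustration (the function name is hypothetical) that returns the fold change needed to reach the next more toxic and the next less toxic category.

```python
GHS_BOUNDARIES = [5.0, 50.0, 300.0, 2000.0, 5000.0]  # mg/kg body weight


def fold_to_boundaries(ld50):
    """Return (fold_down, fold_up).

    fold_down: factor by which the LD50 must fall to reach the next
               more toxic category (None if already in Category 1).
    fold_up:   factor the LD50 must exceed to leave its category on the
               less toxic side (None if already above 5000 mg/kg).
    """
    lower = max((b for b in GHS_BOUNDARIES if b < ld50), default=None)
    upper = min((b for b in GHS_BOUNDARIES if b >= ld50), default=None)
    fold_down = ld50 / lower if lower is not None else None
    fold_up = upper / ld50 if upper is not None else None
    return fold_down, fold_up


for drug, value in {"Ibuprofen": 636.0, "Paracetamol": 1944.0}.items():
    down, up = fold_to_boundaries(value)
    print(f"{drug}: {down:.2f}-fold lower or {up:.3f}-fold higher changes category")
```

A value whose fold margin is smaller than the typical inter-laboratory variability of the test should be treated as a borderline classification.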

Visualizing Workflows and Relationships

Diagram 1: LD50 Determination & GHS Classification Workflow

Diagram 2: Framework for Improving Reproducibility

This table lists key resources, guidelines, and tools to support reproducible research in toxicology and hazard assessment.

Table 3: Research Reagent Solutions for Reproducible Toxicology

| Item / Resource | Function / Purpose | Key Feature for Reproducibility |

|---|---|---|

| ARRIVE Guidelines [18] | A 20-point checklist for reporting animal research. | Ensures all critical methodological details (sample size, allocation, animal strain) are included in publications, enabling replication [18]. |

| OECD Test Guidelines (e.g., 420, 423, 425) | Internationally agreed test methods for chemical safety assessment. | Provide standardized protocols for acute toxicity testing, reducing inter-laboratory variability. Promote Fixed-Dose Procedure to use fewer animals [17]. |

| FAIR Data Repositories (e.g., Zenodo, Figshare) | Platforms for public data archiving. | Ensure experimental data is Findable, Accessible, Interoperable, and Reusable (FAIR), a core tenet of open science and reproducibility [20]. |

| Containerization Software (e.g., Docker) [21] | Tool to package code and its environment into a container. | Captures the exact computational environment (OS, libraries, versions), guaranteeing others can re-run the exact same analysis [21]. |

| Version Control Systems (e.g., Git, GitHub) [21] [20] | Systems for tracking changes in code and documents. | Documents the evolution of analysis scripts, allows collaboration, and links specific code versions to specific results. |

| The MDAR Checklist [20] | A framework for reporting materials, design, analysis, and results. | Helps systematically detail critical research resources (antibodies, cell lines, chemicals) and analytical procedures in life sciences [20]. |

| Statistical Training Resources [18] | Education on proper statistical inference. | Addresses the misuse of p-values and statistical significance, a major source of non-reproducibility. Emphasizes estimation and confidence intervals [18]. |

The Ethical and Economic Imperative for Improved Methods (The 3Rs Principles)

Technical Support Center: Troubleshooting LD50 Research & 3Rs Implementation

This technical support center addresses common challenges in acute toxicity testing, focusing on improving the reproducibility of LD50 results while implementing the 3Rs principles (Replacement, Reduction, and Refinement). The guidance is structured within a broader thesis that enhancing methodological rigor and adopting New Approach Methodologies (NAMs) are ethical and economic necessities for sustainable, reliable research [22] [23].

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: Why are our LD50 values inconsistent between studies, and how can we improve reproducibility?

- Problem: High variability in LD50 results undermines study reliability and violates the Reduction principle by potentially wasting animals and resources [17].

- Primary Causes & Solutions:

- Cause: Biological Variability. Differences in animal strain, sex, age, and microbiome can significantly alter toxicological responses [17].

- Solution: Strictly standardize and document all animal husbandry conditions. Use isogenic strains where possible and consider this variability a key factor in sample size calculation.

- Cause: Methodological Discrepancies. Variations in dosing procedure, vehicle used, fasting state, and observation period lead to inconsistent data [17].

- Solution: Adopt a Standard Operating Procedure (SOP) aligned with OECD guidelines (e.g., TG 425: Up-and-Down Procedure) and ensure consistent training for all technicians.

- Cause: Statistical Method Limitations. The classical LD50 test, requiring large group sizes, has been criticized for poor reproducibility and ethical concerns [17].

- Solution: Transition to alternative OECD-approved methods like the Fixed Dose Procedure (FDP) or the Up-and-Down Procedure (UDP). These methods are designed to Reduce animal use (typically by 50-70%) and focus on observing clear signs of toxicity rather than just mortality, which also aligns with Refinement [17].

FAQ 2: Which alternative method for acute toxicity assessment should we use to replace the classical LD50 test?

- Problem: Regulatory guidelines now discourage the classical LD50 test, but the choice of alternative can be unclear [17].

Decision Support: The choice depends on your specific goal (screening vs. regulatory submission) and the available compound quantity.

Table 1: Comparison of Alternative Acute Oral Toxicity Test Methods [17]

| Method | OECD TG | Key Principle | Typical Animal Use | Primary Outcome | Advantage for 3Rs |

|---|---|---|---|---|---|

| Fixed Dose Procedure (FDP) | 420 | Identifies a dose that produces clear signs of toxicity (not mortality). | ~15-20 animals | Hazard classification, evident toxicity dose. | Reduction & Refinement: Uses fewer animals, avoids death as an endpoint. |

| Acute Toxic Class Method | 423 | Uses stepwise dosing with 3 animals per step to assign a toxicity class. | ~6-18 animals | Hazard classification range. | Reduction: Minimizes numbers via a sequential design. |

| Up-and-Down Procedure (UDP) | 425 | Adjusts dose up or down for each subsequent animal based on the previous outcome. | ~6-12 animals | LD50 estimate with confidence intervals. | Significant Reduction: Dramatically lowers animal use for point estimate. |

FAQ 3: How can we integrate New Approach Methodologies (NAMs) into our non-clinical pipeline to reduce animal use?

- Problem: Researchers are unsure how to initiate the Replacement of animal models with emerging human-relevant methods [22] [24].

- Troubleshooting Steps:

- Engage Regulators Early: Utilize consultation forums like the FDA's Innovative Science and Technology Approaches for New Drugs (ISTAND) pilot program or the EMA's Innovation Task Force (ITF). These provide pathways to discuss the acceptability of specific NAMs for a given context of use [22] [24].

- Start with Supplemental Data: Initially, use NAMs (e.g., in vitro cytotoxicity assays, organ-on-chip models) to inform and refine your animal study design (dose selection, endpoint monitoring). This is a form of Reduction and Refinement [25].

- Target Specific Endpoints: Consult agency-specific tables (e.g., from FDA CDER) that identify where NAMs are accepted. For example, a battery of in silico and in chemico tests is now accepted for skin sensitization assessment, replacing the traditional guinea pig or mouse tests [25] [24].

- Adopt a Weight-of-Evidence Approach: For endpoints like carcinogenicity or developmental toxicity, build a case using existing data, in vitro assays, and mechanistic understanding. This may Reduce or eliminate the need for certain long-term animal studies [25].

Detailed Experimental Protocols

Protocol 1: OECD Guideline 420 - Fixed Dose Procedure (FDP)

The FDP aims to identify the dose that causes clear signs of evident toxicity, moving away from mortality as the primary endpoint [17].

- Selection of Starting Dose: Choose from one of four fixed dose levels (5, 50, 300, or 2000 mg/kg body weight) based on preliminary information.

- Dosing and Observation: Administer the test substance orally to a single group of animals (typically 5 animals of one sex). Observe meticulously for 14 days, recording clinical signs of toxicity, their time of onset, severity, and duration.

- Decision Criteria:

- If evident toxicity is observed, the test is concluded at that dose level.

- If mortality occurs, the procedure is stopped, and a lower dose may be tested.

- If no evident toxicity or mortality is seen, a higher fixed dose is tested in a new group of animals.

- Outcome: The study identifies the dose level causing evident toxicity, which is used for hazard classification (e.g., under the Globally Harmonized System - GHS) without calculating a precise LD50.
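
The decision criteria listed above can be written as a small state function. This sketch encodes only the simplified three-outcome logic described in this protocol, not the full flow chart of the OECD guideline; the outcome labels and the function name are assumptions for illustration.

```python
FIXED_DOSES = [5, 50, 300, 2000]  # mg/kg body weight


def fdp_next_step(dose, outcome):
    """Return ("conclude", dose) or ("test", next_dose).

    outcome is one of: "evident_toxicity", "mortality", "no_effect".
    """
    i = FIXED_DOSES.index(dose)
    if outcome == "evident_toxicity":
        return ("conclude", dose)
    if outcome == "mortality":
        # Step down if a lower fixed dose exists; otherwise stop at the lowest dose.
        return ("test", FIXED_DOSES[i - 1]) if i > 0 else ("conclude", dose)
    if outcome == "no_effect":
        # Step up if a higher fixed dose exists; otherwise stop at the highest dose.
        return ("test", FIXED_DOSES[i + 1]) if i < len(FIXED_DOSES) - 1 else ("conclude", dose)
    raise ValueError(f"Unknown outcome: {outcome!r}")


print(fdp_next_step(300, "no_effect"))  # ('test', 2000)
print(fdp_next_step(50, "mortality"))   # ('test', 5)
```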

Protocol 2: Integrated Testing Strategy for Skin Sensitization (A Replacement Model)

This strategy exemplifies Replacement by using a defined in vitro and in silico approach accepted by regulators [24].

- In Silico Assessment: Use a validated (Q)SAR model to predict the protein reactivity and skin sensitization potential of the chemical.

- In Chemico Assay: Perform the Direct Peptide Reactivity Assay (DPRA) to measure the chemical's reactivity with model peptides.

- In Vitro Assays: Conduct cell-based assays like the ARE-Nrf2 Luciferase Test (KeratinoSens or LuSens) to measure the activation of the Keap1-Nrf2 pathway, a key event in skin sensitization.

- Data Integration: Combine the results from these three non-animal sources using a predefined Weight-of-Evidence or Integrated Testing Strategy (ITS) approach to reach a prediction of skin sensitization hazard and potency (Category 1A/1B or no category).

Key Data and Regulatory Classifications

Table 2: GHS Hazard Categories for Acute Oral Toxicity Based on LD50 Values [17]

| Hazard Category | Oral LD50 (mg/kg body weight) | Hazard Statement | Example Pharmaceutical (Approx. Rat Oral LD50) |

|---|---|---|---|

| Category 1 | ≤ 5 | Fatal if swallowed | Highly potent compounds (e.g., some cytotoxics) |

| Category 2 | >5 and ≤ 50 | Fatal if swallowed | |

| Category 3 | >50 and ≤ 300 | Toxic if swallowed | Tramadol (~228 mg/kg) [17] |

| Category 4 | >300 and ≤ 2000 | Harmful if swallowed | Ibuprofen (~636 mg/kg), Paracetamol (~1944 mg/kg) [17] |

| Category 5 | >2000 and ≤ 5000 | May be harmful if swallowed | Substances with low acute toxicity |

Note: The table highlights a key limitation of using LD50 alone for classification. Ibuprofen and paracetamol have different LD50 values and toxicological profiles but fall into the same GHS category, demonstrating why mechanistic data from NAMs is crucial for a complete safety assessment [17].

Pathways and Workflows

Ethical Decision Pathway for Animal Research

Workflow: From Traditional LD50 to Modern 3Rs Approach

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Implementing 3Rs in Toxicity Testing

| Tool Category | Specific Item/Technique | Function & Role in 3Rs |

|---|---|---|

| In Vitro Systems | Primary hepatocytes, 3D organoids (e.g., liver spheroids), Microphysiological Systems (Organs-on-a-Chip) | Replacement/Reduction: Model human tissue responses for mechanistic toxicity screening, reducing animal use in early phases. |

| In Silico Tools | (Quantitative) Structure-Activity Relationship [(Q)SAR] software, Physiologically Based Kinetic (PBK) models, AI-based toxicity predictors. | Replacement: Predict toxicity based on chemical structure. Prioritize compounds for testing, eliminating unsafe candidates early. |

| Specialized Assay Kits | Mitochondrial toxicity assay, high-content screening apoptosis/cytotoxicity kits, cytokine release assay panels. | Reduction/Refinement: Provide standardized, sensitive endpoints for in vitro studies, reducing the need for in vivo confirmatory tests. |

| Reference Standards & Vehicles | Certified reference compounds for assay validation, standardized dosing vehicles (e.g., 0.5% methylcellulose). | Refinement/Reduction: Ensure consistency between studies, reducing experimental noise and the need for repeat experiments. |

| Statistical Software Modules | Software packages with modules for Bayesian sequential design, up-and-down analysis, and low-n statistical power calculation. | Reduction: Enable robust study design and analysis with minimized animal numbers, directly implementing Reduction principles. |

Advanced Methodologies for Reliable LD50 Determination: From iUDP to NAMs

This technical support center is established within the context of a broader thesis dedicated to improving the reproducibility of LD₅₀ results. Reproducibility in acute toxicity testing is challenged by methodological variability, resource constraints, and ethical considerations [26]. This guide provides researchers, scientists, and drug development professionals with targeted troubleshooting and detailed protocols for two principal methods: the Modified Karber Method (mKM) and the Up-and-Down Procedure (UDP), including its improved variant (iUDP) [27]. By structuring support around common experimental hurdles, we aim to standardize practices, reduce operational errors, and enhance the reliability of median lethal dose determinations.

The choice between traditional and sequential testing paradigms involves balancing precision, resources, and ethical guidelines. The table below summarizes the core characteristics of each method [27] [28] [26].

| Feature | Modified Karber Method (mKM) | Traditional Up-and-Down (UDP) | Improved UDP (iUDP) |

|---|---|---|---|

| Core Principle | Fixed-dose, parallel group design. Multiple groups of animals dosed simultaneously at different levels. | Sequential, adaptive dosing. The dose for the next animal depends on the outcome (death/survival) of the previous one. | Sequential dosing with a shortened observation interval between animals (e.g., 24 hours) [28]. |

| Typical Animals Used | ~50-80 animals per substance [28]. | 4-15 animals [28]. | Approximately 6-8 animals [27]. |

| Experimental Duration | ~14 days (including final observation) [27]. | 20-42 days (due to 48-hour intervals between doses) [28]. | ~7-10 days [27]. |

| Compound Required | Higher amount (e.g., ~1.24g for sinomenine HCl) [27]. | Lower amount. | Very low amount (e.g., ~0.114g for sinomenine HCl), ideal for scarce/valuable compounds [27]. |

| Primary Advantage | Well-established, simple calculation, provides a precise LD₅₀ under ideal conditions. | Significant reduction in animal use (ethical 3Rs principle). | Retains animal reduction benefits while dramatically shortening time and minimizing compound use [27]. |

| Key Challenge | High animal and compound use; lower ethical alignment. | Very long experimental timeline. | Requires careful management of shortened observation windows. |

Troubleshooting Guide: A Three-Phase Approach

Effective troubleshooting follows a structured process: understanding the problem, isolating the cause, and implementing a fix [29]. The following guide adapts this framework to specific issues in acute toxicity testing.

Phase 1: Understanding the Problem

Begin by gathering complete information. Ask specific questions and request raw data [29] [30].

- If the reported LD₅₀ has high variability, ask: What was the exact dosing preparation protocol? Can you share the mortality data for each group/animal?

- If an experiment fails to reach a stopping point, ask: What are the defined stopping rules? What is the complete dosing sequence and outcome log?

Phase 2: Isolating the Issue

Simplify and test variables one at a time [29].

- Problem: Inconsistent results between technicians.

- Action: Standardize the animal fasting procedure, dosing technique, and criteria for recording "death" vs. "moribund." Implement a single, validated scoring sheet for clinical observations.

- Problem: mKM confidence interval is excessively wide.

- Action: Check if the dose progression (e.g., ratio of 0.7-0.8) is appropriate for the compound's suspected toxicity slope. Verify that mortality spans the required 0% to 100% range. Recalculate using the correct formula:

LD₅₀ = Dm - Σ(a * b), where Dm is the lowest dose causing 100% mortality, a is the interval between adjacent doses, and b is the mean mortality of the two adjacent groups [26].

- Problem: UDP/iUDP oscillates without converging.

- Action: (1) Confirm the dose progression factor (typically 1.3-3.2 [28]) is not too small. (2) Verify that the observation period (e.g., 24h for iUDP) is sufficient for the compound's toxicokinetics. (3) Ensure the starting dose is reasonably close to the true LD₅₀; consult preliminary range-finding data.

Phase 3: Implementing a Fix and Verifying

Test the solution and document the outcome for future use [29].

- Fix for Wide mKM CI: Redesign the study with a revised, narrower dose range based on literature or a new pilot study. Verify by running a small confirmatory group at the predicted LD₅₀.

- Fix for Non-converging UDP: Restart the sequence using a revised starting dose and a larger progression factor. Use software (e.g., AOT425StatPgm) to confirm parameters [28]. Verify by completing the new sequence and confirming it meets standard stopping rules (e.g., 5 reversals in 6 consecutive animals).

Experimental Protocols for Key Methods

Protocol 1: Improved Up-and-Down Procedure (iUDP)

- Animals: ICR female mice (7-8 weeks old, 26-30 g). House under standard conditions (12h light/dark, 20-22°C).

- Pre-test: Fast animals for 4 hours (water ad libitum) before dosing.

- Dosing (Oral): Administer a volume of 0.2 mL per 10 g body weight for nicotine/sinomenine and 0.4 mL per 10 g for berberine HCl. Continue fasting for 1 hour post-dose.

- Dosing Sequence:

- Estimate starting dose (e.g., 175 mg/kg for sinomenine HCl).

- Define dose progression using software (sigma=0.2, slope=5, progression factor=1.6).

- Dose first animal. Observe for 24 hours for poisoning symptoms and mortality.

- Based on outcome, dose next animal at the next higher (if survived) or lower (if died) dose in the sequence.

- Stopping Rules: Experiment concludes when: (a) 3 consecutive animals survive at the highest dose, (b) 5 reversals occur in any 6 consecutive animals, or (c) statistical likelihood-ratios are met.

- Endpoint: Humane euthanasia of survivors after a 14-day observation. Necropsy and organ inspection.
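
The dosing sequence and stopping rules (a) and (b) above can be sketched as follows. The sketch assumes the OECD TG 425 convention that the progression factor is the antilog of sigma (with sigma equal to 1/slope), and it interprets "5 reversals in any 6 consecutive animals" as six consecutive outcomes that alternate at every step. The likelihood-ratio rule (c) is not implemented, and the dose bounds and outcome sequence are hypothetical.

```python
def progression_factor(sigma):
    """Dose progression factor as the antilog of sigma (sigma = 1 / slope)."""
    return 10 ** sigma


def next_dose(current, died, factor, lower_bound, upper_bound):
    """Step down after a death, up after survival, within the dose bounds."""
    proposed = current / factor if died else current * factor
    return min(max(proposed, lower_bound), upper_bound)


def has_five_reversals_in_six(outcomes):
    """Rule (b): any window of 6 consecutive animals with 5 outcome changes."""
    for start in range(len(outcomes) - 5):
        window = outcomes[start:start + 6]
        reversals = sum(a != b for a, b in zip(window, window[1:]))
        if reversals == 5:
            return True
    return False


def should_stop(doses, outcomes, upper_bound):
    """Apply rule (a) (3 consecutive survivors at the top dose) and rule (b)."""
    last3 = list(zip(doses, outcomes))[-3:]
    rule_a = len(last3) == 3 and all(d == upper_bound and not died for d, died in last3)
    return rule_a or has_five_reversals_in_six(outcomes)


# Parameters from the protocol: sigma = 0.2 gives a factor of about 1.6.
factor = progression_factor(0.2)
doses, outcomes = [175.0], []
hypothetical_deaths = [False, True, False, True, False, True]  # illustrative only
for died in hypothetical_deaths:
    outcomes.append(died)
    if should_stop(doses, outcomes, upper_bound=2000.0):
        break
    doses.append(next_dose(doses[-1], died, factor, lower_bound=1.0, upper_bound=2000.0))

print(f"factor = {factor:.2f}, animals dosed = {len(outcomes)}")
```

In practice the dose sequence and the final estimate should come from the dedicated software named in this guide; the sketch only makes the bookkeeping explicit.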

Protocol 2: Modified Karber Method (mKM)

- Animals & Pre-test: As per Protocol 1.

- Experimental Design:

- Select a minimum of 5 dose levels, with a constant ratio between successive doses (e.g., 0.7).

- Randomly allocate animals (typically 10 per group) to each dose level and one vehicle control group.

- Administer all doses simultaneously.

- Observation: Monitor animals closely for 24 hours, then daily for 14 days. Record time of death and all clinical signs.

- Calculation:

- Determine the lowest dose causing 100% mortality (Dm).

- Calculate LD₅₀ using the formula: LD₅₀ = Dm - Σ(a * b).

- Calculate standard error: S.E. = a * √(Σ(b - b²)/(n-1)), where n is animals per group.
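
A minimal sketch of the LD₅₀ calculation above, applying the formula on the arithmetic dose scale exactly as written, with a taken as the interval between adjacent doses and b as the mean mortality fraction of the two adjacent groups. The function name and the example data are hypothetical; the standard error is not computed here, and whether doses should first be log-transformed depends on the variant of the method being followed.

```python
def karber_ld50(doses, mortality_fraction):
    """LD50 = Dm - sum(a * b) over adjacent dose groups up to Dm.

    doses: dose levels (mg/kg); mortality_fraction: deaths / animals per group.
    Requires 0% mortality at the lowest dose and 100% at some dose.
    """
    pairs = sorted(zip(doses, mortality_fraction))
    if pairs[0][1] != 0.0:
        raise ValueError("Lowest dose must show 0% mortality")
    idx_full = next((i for i, (_, p) in enumerate(pairs) if p >= 1.0), None)
    if idx_full is None:
        raise ValueError("No dose group shows 100% mortality")
    used = pairs[: idx_full + 1]  # ignore groups above the lowest 100% dose
    d_m = used[-1][0]
    correction = sum(
        (d2 - d1) * (p1 + p2) / 2  # a * b for each adjacent pair
        for (d1, p1), (d2, p2) in zip(used, used[1:])
    )
    return d_m - correction


# Hypothetical five-group study with 10 animals per group.
example_doses = [100, 200, 300, 400, 500]
example_mortality = [0.0, 0.2, 0.5, 0.8, 1.0]
ld50 = karber_ld50(example_doses, example_mortality)
print(f"LD50 is approximately {ld50:.0f} mg/kg")
```

The input checks mirror the requirement that mortality must span 0% to 100% before the formula is applied.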

Visual Guide: iUDP Experimental Workflow and Decision Logic

iUDP Experimental Workflow

UDP Stopping Rules Decision Logic

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function & Specification | Critical Note for Reproducibility |

|---|---|---|

| Test Compounds (Alkaloids) | Nicotine (high toxicity), Sinomenine HCl (medium), Berberine HCl (low). Serve as model compounds for method validation [27]. | Use high-purity (>99%) from certified suppliers (e.g., Sigma). Document CAS number (e.g., 54-11-5 for Nicotine) and lot number [28]. |

| Vehicle Solvents | Normal saline, distilled water, carboxymethyl cellulose (CMC) suspension. Used to dissolve/suspend test compounds for administration. | The choice and concentration of vehicle must be consistent across all studies and reported in detail, as it can affect bioavailability. |

| AOT425StatPgm Software | Statistical program to generate the dose progression sequence for UDP/iUDP based on initial parameters [28]. | Use the same software version across the lab. Document input parameters (estimated LD₅₀, sigma, slope, progression factor) for exact replication. |

| Clinical Observation Checklist | A standardized sheet for recording symptoms (e.g., piloerection, ataxia, convulsions) and times. | Essential for consistent endpoint assessment between technicians. Links clinical signs to dose levels for a richer dataset than mortality alone. |

| Precision Analytical Balance | For accurate weighing of small quantities of valuable test substances (critical for iUDP). | Must be regularly calibrated. Document weighing protocol to minimize loss. |

Frequently Asked Questions (FAQs)

Method Selection & Design

Q1: I have a very limited amount of a novel compound. Which method should I use?

A: The Improved UDP (iUDP) is explicitly designed for this scenario [27]. It can provide a reliable LD₅₀ estimate using approximately 6-8 animals and consumes less than 10% of the compound required for an mKM test, as demonstrated with alkaloids [27].

Q2: How do I choose a starting dose and progression factor for a UDP with no prior data?

A: Conduct a small range-finding test using 2-3 animals at logarithmically spaced doses (e.g., 10, 100, 1000 mg/kg) [26]. Observe for 24-48 hours to identify a dose that causes minimal toxicity and one that causes severe toxicity. Start the main UDP at a dose between these bounds. A default progression factor of 3.2 is often a safe starting point [28].

Experimental Execution

Q3: During a UDP, an animal dies very quickly (<30 minutes). Should I immediately proceed with the next animal? A: No. Adhere to the defined observation period (e.g., 24h for iUDP) before dosing the next animal. A very rapid death is critical data that informs the toxicodynamics of the compound but does not alter the procedural interval. Proceeding too quickly may miss delayed effects in the next animal.

Q4: In an mKM test, one animal in the mid-dose group died late (Day 5). Should it count as "dead" for the LD₅₀ calculation? A: This depends on your pre-defined observation period protocol. Standard mKM protocol uses a fixed observation period (typically 14 days) [28]. Any death occurring within that period should be counted. The protocol must specify this timeframe, and any deviation must be scientifically justified and reported.

Data Analysis & Interpretation

Q5: My UDP yielded an LD₅₀, but the confidence interval is wider than from a similar mKM test. Is this acceptable? A: Yes, this is expected and reflects a fundamental trade-off. UDP methods use fewer animals, which typically results in wider confidence intervals compared to mKM [28]. The key is whether the precision is sufficient for your classification or decision-making purpose. For many regulatory classifications (e.g., GHS hazard categories), the UDP's precision is adequate [27].

Q6: How do I calculate the LD₅₀ and its confidence interval from a completed UDP test? A: Do not use the mKM formula. The LD₅₀ from a UDP is calculated using maximum likelihood estimation (MLE), which accounts for the sequential dependency of the data. Use specialized software like the EPA's AOT425StatPgm or the OECD's dedicated statistical tool to ensure correct calculation.
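To make the idea behind the maximum likelihood calculation in Q6 concrete, here is a minimal Python sketch. It assumes a probit dose-response model on log10 dose with a fixed, user-supplied sigma, and it locates the log LD₅₀ by a plain grid search. The function names, the grid search, and the dose/outcome sequence are illustrative assumptions; this is not the algorithm or output of AOT425StatPgm, and it does not compute a confidence interval.

```python
import math

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def log_likelihood(mu, doses, died, sigma):
    """Log-likelihood of the observed outcomes for a candidate log10(LD50) = mu."""
    total = 0.0
    for dose, dead in zip(doses, died):
        p_death = normal_cdf((math.log10(dose) - mu) / sigma)
        p_death = min(max(p_death, 1e-12), 1.0 - 1e-12)  # guard against log(0)
        total += math.log(p_death) if dead else math.log(1.0 - p_death)
    return total

def estimate_ld50(doses, died, sigma, steps=20000):
    """Grid-search the log10 dose axis for the value that maximizes the likelihood."""
    lo = math.log10(min(doses)) - 1.0
    hi = math.log10(max(doses)) + 1.0
    candidates = (lo + (hi - lo) * i / steps for i in range(steps + 1))
    best_mu = max(candidates, key=lambda mu: log_likelihood(mu, doses, died, sigma))
    return 10.0 ** best_mu

# Hypothetical up-and-down sequence (mg/kg), invented for illustration only.
example_doses = [12.6, 20, 32, 20, 32, 20, 32]
example_died = [False, False, True, False, True, False, True]

estimate = estimate_ld50(example_doses, example_died, sigma=0.2)
print(f"Illustrative LD50 estimate: {estimate:.1f} mg/kg")
```

Each animal's dose depends on the previous outcome, which is why the outcomes are evaluated jointly in one likelihood. For reported results, use the validated software named above.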

Technical Support Center: Troubleshooting iUDP Experiments

This technical support center provides solutions for common challenges encountered when implementing the Improved Up-and-Down Procedure (iUDP) for acute toxicity testing. The guidance is framed within the critical goal of improving the reproducibility of LD50 results, a cornerstone of reliable drug safety assessment [31].

Troubleshooting Guide: Common iUDP Experimental Issues

Problem 1: Experiment Duration is Still Too Long

- Symptoms: The main test phase exceeds an average of 22 days [32] [28].

- Potential Cause & Solution:

  - Cause: The observation window between administering doses to sequential animals is longer than the optimized 24-hour period [32].

  - Solution: Strictly adhere to the 24-hour observation window. The core refinement of the iUDP is reducing the inter-dosing observation time from 48 hours (in traditional UDP) to 24 hours, based on evidence that outcomes are typically clear within this period for the tested alkaloids [32] [28]. Ensure animal monitoring is consistent and frequent within this first 24 hours post-administration.

Problem 2: High Compound/Test Article Consumption

- Symptoms: You are using more of a valuable or limited compound than expected.

- Potential Cause & Solution:

  - Cause: Using a traditional fixed-dose group method (like mKM) instead of the sequential iUDP. The iUDP is explicitly designed to minimize compound use [32] [28].

  - Solution: Transition to the iUDP protocol. Comparative data shows dramatic reductions in compound use. For example, testing nicotine required 0.0082 g with iUDP versus 0.0673 g with mKM, an 88% reduction [32] [28]. For berberine hydrochloride, use dropped from 12.7 g to 1.9 g [32].

Problem 3: Inconsistent or Wide Confidence Intervals in LD50 Estimate

- Symptoms: The calculated 95% confidence interval for your LD50 is very wide, suggesting low precision.

- Potential Cause & Solution:

  - Cause 1: The experiment was stopped prematurely, before meeting the formal stopping rules [32] [28].

  - Solution: Continue the sequential testing until one of these stopping criteria is met: (a) 3 consecutive animals survive at the highest dose; (b) 5 reversals occur in any 6 consecutive animals; or (c) at least 4 animals follow the first reversal and the specified likelihood ratios exceed the critical value [32] [28].

  - Cause 2: Incorrect estimation of the initial dose or of the dose progression series (Sigma and Slope factors).

  - Solution: Use the AOT425StatPgm software to calculate the dose progression series before starting the experiment. Use appropriate Sigma (e.g., 0.2 for high/medium toxicity, 0.5 for low toxicity) and Slope factors (e.g., 5 or 2) based on prior knowledge of the compound's toxicity class [32] [28].

Problem 4: Excessive Animal Use

- Symptoms: The number of animals used is approaching that of traditional methods (e.g., ~50-80 for mKM) [32] [28].

- Potential Cause & Solution:

  - Cause: Not applying the iUDP's sequential, outcome-dependent dosing strategy. The iUDP typically requires only 6-10 animals to reach a stopping point, compared to the large, fixed-number groups used in traditional methods [32] [28].

  - Solution: Implement the iUDP correctly. The method inherently aligns with the "Reduction" principle of the 3Rs. In the validation study, the iUDP used 23 mice total to test three compounds, while the mKM used 240 mice [32] [28].

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the iUDP compared to the traditional UDP? A1: The primary refinement is the reduction of the observation period between dosing sequential animals from 48 hours to 24 hours. This change cuts the average total experimental time from 20-42 days (UDP) down to approximately 22 days (iUDP), without compromising the reliability of the LD50 estimate [32] [28].

Q2: Is the iUDP less accurate than traditional methods like the Modified Karber Method (mKM)? A2: No. Validation studies show that the iUDP produces LD50 values with high reliability and comparability to the mKM. For example, the LD50 for sinomenine hydrochloride was 453.54 ± 104.59 mg/kg (iUDP) vs. 456.56 ± 53.38 mg/kg (mKM) [32] [28]. The iUDP achieves this with far fewer animals and less compound.

Q3: For what type of compounds is the iUDP particularly advantageous? A3: The iUDP is especially suitable for testing valuable, rare, or difficult-to-synthesize compounds. In the validation study it reduced the amount of test substance required by roughly 85-91% relative to the mKM [32] [28].

Q4: How do I determine the starting dose and the series of doses for a new compound? A4: Use established software, specifically the AOT425StatPgm program. Input an estimated LD50 (based on literature or similar compounds) and select appropriate Sigma and Slope factors to generate a predefined geometric series of doses (e.g., 2000, 1260, 800, 500... mg/kg). The first animal receives a dose close to the estimated LD50; in the nicotine protocol, an estimate of 20 mg/kg led to a first dose of 12.6 mg/kg, one step below the estimate [32] [28].

Q5: How does using the iUDP improve the reproducibility of LD50 research? A5: The iUDP enhances reproducibility by: 1) Reducing procedural variability through a standardized, software-driven dosing series, 2) Minimizing inter-animal variability by focusing testing on the critical dose-response region near the LD50, and 3) Providing clear, statistically defined stopping rules to terminate experiments consistently, preventing under- or over-testing [32] [28] [31].

Experimental Protocols & Data

The following protocols are derived from the seminal study validating the iUDP using three model alkaloids [32] [28].

- Animals: ICR female mice (7-8 weeks old, 26-30 g). Fasted for 4 hours before dosing, water available ad libitum.

- Dosing & Observation: Oral administration (gavage). Volumes: 0.2 ml per 10g body weight for nicotine/sinomenine; 0.4 ml per 10g for berberine. Observe animal for 24 hours to determine survival/death before dosing the next animal.

- Stopping Rules: The test ends when one of these criteria is met [32] [28]:

  - Three consecutive animals survive at the highest tested dose.

  - Five reversals (survival-death or death-survival sequences) occur in any six consecutive animals.

  - At least four animals have been tested after the first reversal, and specified statistical likelihood-ratios are exceeded.
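The first two stopping rules are simple enough to check mechanically. The Python sketch below is one possible reading of them, not code from the validation study: it assumes a "reversal" means that two consecutive animals had different outcomes, so five reversals in six animals corresponds to strictly alternating outcomes. The third rule is left out because it depends on likelihood ratios computed by the statistical software. All function names are hypothetical.

```python
def count_reversals(died):
    """Count adjacent pairs of animals whose outcomes differ."""
    return sum(1 for a, b in zip(died, died[1:]) if a != b)

def three_survivors_at_upper_bound(doses, died, upper_bound):
    """Rule 1: the last three animals were all dosed at the top dose and survived."""
    last_three = list(zip(doses, died))[-3:]
    return len(last_three) == 3 and all(
        dose == upper_bound and not dead for dose, dead in last_three
    )

def five_reversals_in_six(died):
    """Rule 2: some window of six consecutive animals contains five reversals."""
    return any(count_reversals(died[i:i + 6]) == 5 for i in range(len(died) - 5))

def should_stop(doses, died, upper_bound):
    """True if rule 1 or rule 2 is met (the likelihood-ratio rule is not checked)."""
    return (three_survivors_at_upper_bound(doses, died, upper_bound)
            or five_reversals_in_six(died))

# Illustrative outcome sequence: True = died, False = survived.
alternating = [False, True, False, True, False, True]
print(should_stop([20, 32, 20, 32, 20, 32], alternating, upper_bound=2000))
```

Evaluating the rules after every animal, rather than at the end of the day or week, helps different technicians terminate a test at the same point.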

Protocol for a Highly Toxic Compound (e.g., Nicotine):

- Estimated LD50: 20 mg/kg.

- Parameters for AOT425StatPgm: Sigma=0.2, Slope=5, T=1.6.

- Generated Dose Series (mg/kg): 2000, 1260, 800, 500, 320, 200, 126, 80, 50, 32, 20, 12.6, 8, 5, 3.2, 2.

- First Animal Dose: 12.6 mg/kg.

- Procedure: Administer dose. If animal survives after 24h, administer next higher dose (20 mg/kg) to next animal. If it dies, administer next lower dose (8 mg/kg). Continue until stopping rule is triggered.
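The dose series and the up/down rule in this protocol can be sketched in a few lines of Python. The sketch assumes the series is geometric with a step of 10^Sigma (about 1.585 for Sigma = 0.2, consistent with T = 1.6) and that the published values are rounded for practical dosing. The function names are hypothetical, and the code illustrates the logic only; it is not a replacement for AOT425StatPgm.

```python
import math

# Dose series (mg/kg) as listed in the nicotine protocol above.
LISTED_SERIES = [2000, 1260, 800, 500, 320, 200, 126, 80, 50, 32, 20, 12.6, 8, 5, 3.2, 2]

def geometric_series(top, bottom, sigma):
    """Unrounded descending series from top to bottom with step factor 10**sigma."""
    n_steps = round(math.log10(top / bottom) / sigma)
    return [top / 10 ** (sigma * k) for k in range(n_steps + 1)]

def next_dose(series, current, died):
    """Step down one level after a death, up one level after survival.

    The series is ordered from highest to lowest dose; the result is
    clamped at both ends of the series.
    """
    i = series.index(current)
    i = i + 1 if died else i - 1
    i = min(max(i, 0), len(series) - 1)
    return series[i]

unrounded = geometric_series(top=2000, bottom=2, sigma=0.2)
for listed, exact in zip(LISTED_SERIES, unrounded):
    print(f"listed {listed:>7} mg/kg   unrounded {exact:9.2f} mg/kg")

print(next_dose(LISTED_SERIES, 12.6, died=False))  # next higher dose
print(next_dose(LISTED_SERIES, 12.6, died=True))   # next lower dose
```

Recording the rounded series actually used, rather than regenerating it from Sigma, avoids small dosing discrepancies between laboratories.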

Quantitative Performance Data: iUDP vs. Traditional Method

Table 1: Comparative Efficiency of iUDP vs. Modified Karber Method (mKM) [32] [28]

| Metric | Improved UDP (iUDP) | Modified Karber Method (mKM) | Advantage for iUDP |
|---|---|---|---|
| Total Animals Used (for 3 compounds) | 23 | 240 | ~90% Reduction |
| Average Time to Complete Test | ~22 days | ~14 days | Protocol is longer but uses far fewer animals. |
| Compound Used: Nicotine | 0.0082 g | 0.0673 g | 87.8% Reduction |
| Compound Used: Sinomenine HCl | 0.114 g | 1.24 g | 90.8% Reduction |
| Compound Used: Berberine HCl | 1.9 g | 12.7 g | 85.0% Reduction |
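The reduction percentages in Table 1 follow directly from the reported quantities. The short Python check below recomputes them; the function name is illustrative, and the input values are those given in the table.

```python
def percent_reduction(iudp_value, mkm_value):
    """Percentage by which the iUDP value is lower than the mKM value."""
    return 100.0 * (1.0 - iudp_value / mkm_value)

# (iUDP, mKM) pairs taken from Table 1.
table_1 = {
    "Animals (3 compounds)": (23, 240),
    "Nicotine (g)": (0.0082, 0.0673),
    "Sinomenine HCl (g)": (0.114, 1.24),
    "Berberine HCl (g)": (1.9, 12.7),
}

for metric, (iudp, mkm) in table_1.items():
    print(f"{metric}: {percent_reduction(iudp, mkm):.1f}% reduction")
```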

Table 2: Comparison of LD50 Results (mg/kg) from iUDP and mKM [32] [28]

| Test Compound | iUDP LD50 ± SD | mKM LD50 ± SD | Reliability Assessment |
|---|---|---|---|
| Nicotine | 32.71 ± 7.46 mg/kg | 22.99 ± 3.01 mg/kg | Values are of the same order of magnitude; the iUDP spread is larger, but the test used ~90% fewer animals. |
| Sinomenine Hydrochloride | 453.54 ± 104.59 mg/kg | 456.56 ± 53.38 mg/kg | Excellent agreement. Core LD50 values are nearly identical. |
| Berberine Hydrochloride | 2954.93 ± 794.88 mg/kg | 2825.53 ± 1212.92 mg/kg | Strong agreement. The mKM shows a much larger spread. |
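Because the three compounds differ in LD50 by two orders of magnitude, comparing the spread relative to the estimate is easier than comparing raw values. The Python sketch below computes that ratio for each entry in Table 2, assuming the ± values are standard deviations; the function name is illustrative.

```python
def relative_sd_percent(ld50, sd):
    """Spread expressed as a percentage of the LD50 point estimate."""
    return 100.0 * sd / ld50

# (LD50, SD) in mg/kg, taken from Table 2.
results = {
    "Nicotine": {"iUDP": (32.71, 7.46), "mKM": (22.99, 3.01)},
    "Sinomenine HCl": {"iUDP": (453.54, 104.59), "mKM": (456.56, 53.38)},
    "Berberine HCl": {"iUDP": (2954.93, 794.88), "mKM": (2825.53, 1212.92)},
}

for compound, methods in results.items():
    for method, (ld50, sd) in methods.items():
        print(f"{compound:15s} {method:5s} {relative_sd_percent(ld50, sd):5.1f}%")
```

On these figures the iUDP relative spread stays between roughly 23% and 27% across all three compounds, while the mKM ranges from about 12% to 43%.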

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for iUDP Acute Toxicity Testing

| Item | Function / Role in iUDP | Critical Specification / Note |
|---|---|---|
| AOT425StatPgm Software | Generates the standardized, logarithmic series of test doses based on an initial LD50 estimate. This ensures consistency and correct progression between animals. | Mandatory. Using a pre-calculated series is a foundational step for a valid iUDP [32] [28]. |
| Test Compound (High Purity) | The substance whose acute oral toxicity (LD50) is being determined. | Purity >99% is recommended to ensure results are attributable to the compound itself [32] [28]. |
| Vehicle for Dosing | Used to dissolve or suspend the test compound for accurate oral gavage administration. | Common examples: Saline, carboxymethylcellulose (CMC), vegetable oil. Must be non-toxic at administered volumes. |
| Laboratory Animals (e.g., ICR Mice) | The in vivo model for assessing systemic acute toxicity. | Strain, sex, age, and weight should be standardized (e.g., 7-8 week old female ICR mice, 26-30 g) [32] [28]. Ethical approval is required. |
| Precision Dosing Equipment | For accurate oral gavage administration of the test compound solution. | Includes appropriate syringes and gavage needles. Calibrated for volumes like 0.2 ml per 10 g body weight [32] [28]. |
| Statistical Analysis Tool | To calculate the final LD50 value and its 95% confidence interval from the sequence of doses and outcomes. | The AOT425StatPgm or equivalent specialized software can perform this calculation. |
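Dosing by volume per body weight means the solution concentration, not the volume, is what changes from one dose level to the next. The Python sketch below shows the arithmetic for the 0.2 ml per 10 g volume stated in the protocol. The 28 g body weight and the function names are illustrative assumptions.

```python
def gavage_volume_ml(body_weight_g, ml_per_10g):
    """Volume to administer to one animal, given a fixed volume per 10 g body weight."""
    return ml_per_10g * body_weight_g / 10.0

def required_concentration_mg_per_ml(dose_mg_per_kg, ml_per_10g):
    """Solution concentration that delivers the target dose at the fixed dosing volume."""
    ml_per_kg = ml_per_10g * 100.0  # 10 g is one hundredth of a kilogram
    return dose_mg_per_kg / ml_per_kg

# Illustrative case: a 28 g mouse dosed at 12.6 mg/kg with 0.2 ml per 10 g.
volume = gavage_volume_ml(body_weight_g=28, ml_per_10g=0.2)
concentration = required_concentration_mg_per_ml(dose_mg_per_kg=12.6, ml_per_10g=0.2)
print(f"Administer {volume:.2f} ml of a {concentration:.2f} mg/ml solution")
```

Recording both numbers for every animal makes it possible to reconstruct the delivered dose later.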

Technical Support Center: Method Selection and Troubleshooting

This technical support center provides guidance for researchers selecting and implementing the Improved Up-and-Down Procedure (iUDP) and Modified Karber Method (mKM) for acute oral toxicity testing. The content is framed within the critical goal of improving the reproducibility of LD₅₀ results, emphasizing robust protocols, ethical compliance, and transparent reporting [33].

Comparative Analysis: iUDP vs. mKM

The following table summarizes the core quantitative differences between the iUDP and mKM based on a direct comparative study using three model alkaloids [28] [32].

| Comparison Metric | Improved Up-and-Down Procedure (iUDP) | Modified Karber Method (mKM) | Implications for Reproducibility |
|---|---|---|---|
| Animals Used (per compound) | ~6-8 mice (total of 23 for 3 compounds) [28] | ~80 mice (total of 240 for 3 compounds) [28] | iUDP offers a ~90% reduction, in line with the Reduction principle of the 3Rs [34] [35]. Because each animal carries more weight in the estimate, consistent handling and endpoint assessment become more important. |
| Compound Consumption | Significantly lower (e.g., 0.0082 g vs. 0.0673 g for nicotine) [28] | Roughly 7-11 times higher than iUDP [28] | iUDP is superior for scarce/valuable compounds. Lower consumption makes it easier to run a whole study from a single lot, which limits batch-to-batch variability and cost. |
| Experimental Duration | ~22 days (average) [28] | ~14 days (average) [28] | mKM is faster. The longer iUDP timeline requires stringent environmental and husbandry control over time to ensure stable baselines [36]. |
| Reported LD₅₀ (mg/kg) ± SD | Nicotine: 32.71 ± 7.46; Sinomenine HCl: 453.54 ± 104.59; Berberine HCl: 2954.93 ± 794.88 [28] | Nicotine: 22.99 ± 3.01; Sinomenine HCl: 456.56 ± 53.38; Berberine HCl: 2825.53 ± 1212.92 [28] | Accuracy is comparable. Point estimates are close for sinomenine and berberine and of the same order of magnitude for nicotine; the spread differs. mKM showed a lower SD for nicotine and sinomenine but a much higher SD for berberine, indicating iUDP may offer more consistent precision for certain compounds. |