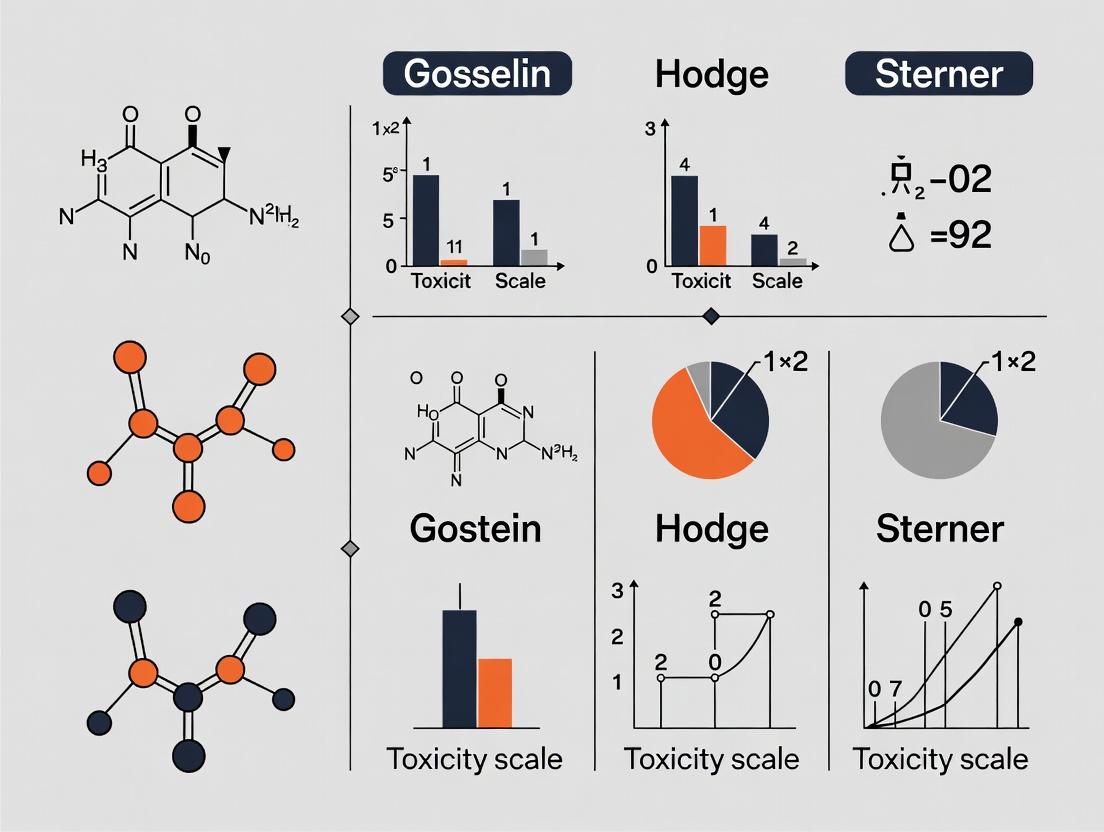

Gosselin vs. Hodge & Sterner: A Comparative Guide to Toxicity Scales for Drug Development and Risk Assessment


Abstract

This article provides a detailed, practical comparison of the Gosselin (Gosselin, Smith and Hodge) and Hodge and Sterner toxicity classification scales, two foundational systems used to categorize acute chemical hazards based on LD50/LC50 values. Tailored for researchers and drug development professionals, the analysis covers their historical origins, core methodological differences in numerical rating and terminology, and implications for labeling, safety data sheets (SDS), and regulatory communication. It further addresses common points of confusion in application, explores modern computational and animal-alternative methods that complement these classical scales, and provides a framework for validation and selection based on specific project needs in biomedical and clinical research.

Defining the Scales: Historical Origins and Core Principles of Gosselin and Hodge & Sterner

The Origin of LD50 and the Need for Standardized Classification

The concept of the median lethal dose (LD₅₀), defined as the dose of a substance required to kill 50% of a test population under specified conditions, was introduced in 1927 by J.W. Trevan [1] [2]. His objective was to establish a standardized, reproducible method for comparing the relative poisoning potency of drugs and chemicals, which, until then, lacked a consistent benchmark [2]. The selection of the 50% mortality point was strategic; it avoided the statistical extremes and variability associated with measuring doses that kill either very few or nearly all test subjects, thereby reducing the amount of testing required while providing a stable central measure [1].

This innovation provided toxicology with its first widely adopted quantal measure, where the effect (death) either occurs or does not [2]. The LD₅₀ value, typically expressed as mass of substance per unit mass of test subject (e.g., mg/kg), allows for the comparison of different substances and normalizes results across animals of varying sizes [1]. However, the inherent variability of biological systems means that a single LD₅₀ value can be influenced by species, strain, age, sex, route of administration, and environmental conditions [1] [3]. Consequently, while the LD₅₀ provides a crucial snapshot of acute toxicity, its interpretation and application demand careful contextualization. This necessity directly led to the development of formal toxicity classification scales, which translate numerical LD₅₀ values into standardized hazard categories for labeling, safety protocols, and regulatory decision-making [2].

Comparative Analysis of Major Toxicity Classification Scales

To standardize the communication of hazards, several classification systems have been developed. The two most commonly referenced scales are the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale [2] [3]. While both serve the same fundamental purpose, they differ significantly in their structure, terminology, and the probable lethal dose estimates they provide for humans, leading to potential confusion if the applied scale is not explicitly referenced [2].

The following tables detail the specific criteria for each scale, highlighting their contrasting approaches.

Table 1: The Hodge and Sterner Toxicity Scale [2]. This scale uses a numerical rating from 1 (most toxic) to 6 (least toxic) and provides criteria for oral, inhalation, and dermal routes of exposure.

| Toxicity Rating | Commonly Used Term | Oral LD₅₀ (Single Dose to Rats) (mg/kg) | Inhalation LC₅₀ (4-Hour Exposure in Rats) (ppm) | Dermal LD₅₀ (Single Application to Rabbits) (mg/kg) | Probable Lethal Dose for an Average Human (70 kg) |
|---|---|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | ≤ 10 | ≤ 5 | A taste (< 7 drops) |
| 2 | Highly Toxic | 1 – 50 | 10 – 100 | 5 – 43 | 1 teaspoon (4 ml) |
| 3 | Moderately Toxic | 50 – 500 | 100 – 1,000 | 44 – 340 | 1 ounce (30 ml) |
| 4 | Slightly Toxic | 500 – 5,000 | 1,000 – 10,000 | 350 – 2,810 | 1 pint (600 ml) |
| 5 | Practically Non-toxic | 5,000 – 15,000 | 10,000 – 100,000 | 2,820 – 22,590 | 1 quart (1 liter) |
| 6 | Relatively Harmless | ≥ 15,000 | ≥ 100,000 | ≥ 22,600 | > 1 quart |

Table 2: The Gosselin, Smith and Hodge Toxicity Scale [2]. This scale uses a reverse numerical class system (6 is most toxic) and focuses primarily on the probable oral lethal dose for humans.

| Toxicity Class | Probable Oral Lethal Dose (Human) | For a 70-kg Person (150 lbs) |
|---|---|---|
| 6: Super Toxic | < 5 mg/kg | A taste (< 7 drops) |
| 5: Extremely Toxic | 5 – 50 mg/kg | 7 drops – 1 tsp (up to about 4 ml) |
| 4: Very Toxic | 50 – 500 mg/kg | 1 tsp – 1 oz (4 – 30 ml) |
| 3: Moderately Toxic | 0.5 – 5 g/kg | 1 oz – 1 pint (30 – 600 ml) |
| 2: Slightly Toxic | 5 – 15 g/kg | 1 pint – 1 quart (600 ml – 1 L) |
| 1: Practically Non-Toxic | > 15 g/kg | > 1 quart (> 1 L) |
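The human-dose column rests on simple arithmetic: the per-kilogram dose is multiplied by the 70-kg reference body weight, and the resulting mass is read as a liquid volume. The Python sketch below makes that step explicit. The density of 1 g/ml and the figure of about 0.05 ml per drop are illustrative assumptions, not values from the scale.

```python
# Convert a per-kilogram oral dose to a whole-body dose and an approximate
# liquid volume for the 70-kg reference adult.

BODY_WEIGHT_KG = 70.0    # reference adult used by the scale
DENSITY_G_PER_ML = 1.0   # assumption: water-like liquid
ML_PER_DROP = 0.05       # assumption: roughly 20 drops per millilitre


def total_dose_mg(dose_mg_per_kg: float, body_weight_kg: float = BODY_WEIGHT_KG) -> float:
    """Scale a per-kilogram dose (mg/kg) to a whole-body dose (mg)."""
    return dose_mg_per_kg * body_weight_kg


def dose_volume_ml(dose_mg_per_kg: float, density_g_per_ml: float = DENSITY_G_PER_ML) -> float:
    """Approximate volume (ml) of liquid that delivers the whole-body dose."""
    grams = total_dose_mg(dose_mg_per_kg) / 1000.0
    return grams / density_g_per_ml


# Class boundaries of the scale, in mg/kg.
for boundary in (5, 50, 500, 5000, 15000):
    ml = dose_volume_ml(boundary)
    print(f"{boundary:>6} mg/kg -> {ml:8.2f} ml (about {ml / ML_PER_DROP:.0f} drops)")
```

At the 5 mg/kg boundary the whole-body dose is 350 mg, about 0.35 ml or roughly 7 drops, which matches the "taste (< 7 drops)" landmark; at 15 g/kg it is about 1.05 L, close to a quart. Real substances differ in density, so the household measures are only coarse landmarks.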

Key Comparative Insights: A direct comparison reveals that the same substance can receive different hazard descriptors under each system. For example, a chemical with an oral LD₅₀ of 2 mg/kg in rats falls in the 1–50 mg/kg band and is therefore classified as "2: Highly Toxic" on the Hodge and Sterner Scale, but as "6: Super Toxic" on the Gosselin scale [2] [3]. This discrepancy underscores the critical importance of always citing which scale is being used. The Hodge and Sterner Scale offers a more comprehensive, multi-route framework, while the Gosselin scale provides a simplified, human-focused estimate derived from animal data. The choice between them often depends on the specific regulatory or safety communication context.
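Because the two scales number their classes in opposite directions, a lookup that reports both ratings side by side makes the discrepancy explicit. The Python sketch below assumes inclusive upper bounds for Hodge and Sterner (matching "≤ 1 mg/kg" for Class 1) and inclusive lower bounds for Gosselin, Smith and Hodge (matching "< 5 mg/kg" for Class 6), since the published ranges share their endpoints. It also follows the practice described above of reading a rat oral LD₅₀ directly against the Gosselin dose bands. The function names are illustrative.

```python
from bisect import bisect_left, bisect_right

# Hodge and Sterner oral ratings 1..6: upper bounds of ratings 1-5
# (rat oral LD50, mg/kg). Assumption: upper bounds are inclusive.
HS_ORAL_UPPER = [1, 50, 500, 5000, 15000]
HS_TERMS = ["Extremely Toxic", "Highly Toxic", "Moderately Toxic",
            "Slightly Toxic", "Practically Non-toxic", "Relatively Harmless"]

# Gosselin, Smith and Hodge classes 6..1: upper bounds of classes 6-2 (mg/kg).
# Assumption: lower bounds are inclusive.
GSH_UPPER = [5, 50, 500, 5000, 15000]
GSH_TERMS = ["Super Toxic", "Extremely Toxic", "Very Toxic",
             "Moderately Toxic", "Slightly Toxic", "Practically Non-Toxic"]


def _check(ld50: float) -> None:
    if ld50 <= 0:
        raise ValueError("LD50 must be a positive dose in mg/kg")


def hodge_sterner_oral(ld50: float) -> tuple[int, str]:
    """Return (rating, term); rating 1 is the most toxic."""
    _check(ld50)
    idx = bisect_left(HS_ORAL_UPPER, ld50)  # first bound >= ld50
    return idx + 1, HS_TERMS[idx]


def gosselin_oral(ld50: float) -> tuple[int, str]:
    """Return (class, term); class 6 is the most toxic."""
    _check(ld50)
    idx = bisect_right(GSH_UPPER, ld50)  # number of bounds <= ld50
    return 6 - idx, GSH_TERMS[idx]


for value in (2, 413, 10000):
    print(value, hodge_sterner_oral(value), gosselin_oral(value))
```

For 2 mg/kg the sketch returns Hodge and Sterner Class 2 ("Highly Toxic") and Gosselin Class 6 ("Super Toxic"); for 413 mg/kg it returns Class 3 and Class 4 respectively.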

Experimental Protocols for Determining Acute Toxicity

The determination of LD₅₀ values has evolved significantly since Trevan's original protocols. Modern guidelines, such as those from the Organisation for Economic Co-operation and Development (OECD), emphasize reducing animal use, minimizing suffering, and improving statistical reliability [4]. The following are key methodological approaches.

Conventional OECD Acute Oral Toxicity Test (Test Guideline 401, now deleted)

This traditional method involved administering a fixed series of doses (e.g., 50, 500, 5000 mg/kg) to groups of animals (typically 5-10 rats or mice per sex per dose) [5]. The animals were observed meticulously for 14 days for signs of toxicity and mortality [2]. The LD₅₀ was calculated by statistical interpolation from the dose-response curve. While robust, this method required a relatively large number of animals (40-80) and has been largely superseded by more efficient alternatives [4].

The Up-and-Down Procedure (UDP - OECD Guideline 425)

This sequential method uses significantly fewer animals, typically 6-10 animals of one sex [4]. Testing begins with a single animal administered a dose just below the best estimate of the LD₅₀. Depending on the outcome (survival or death), the dose for the next animal is increased or decreased by a predetermined factor (e.g., 3.2 times). This "up-and-down" progression continues until a pre-defined stopping criterion is met. The LD₅₀ and its confidence intervals are then calculated using maximum likelihood estimation. Studies show that the UDP provides consistent hazard classification with the conventional method while drastically reducing animal use [4].
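The dosing rule lends itself to a short simulation. The Python sketch below is a simplified illustration, not an implementation of OECD TG 425: the 175 mg/kg starting dose, the 2000 mg/kg upper bound, stopping after a fixed six animals, and the fixed probit slope parameter (sigma = 0.5 in log10 units) used in the maximum-likelihood step are all assumptions of this sketch, and the deterministic "animal" is hypothetical. Only the 3.2 step factor comes from the text.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def run_udp(dies, start_dose=175.0, factor=3.2, n_animals=6, upper_bound=2000.0):
    """Dose one animal at a time: step down after a death, up after survival.

    Simplification: stops after a fixed number of animals instead of
    applying the guideline's stopping criteria.
    Returns a list of (dose_mg_per_kg, died) tuples.
    """
    history = []
    dose = start_dose
    for _ in range(n_animals):
        died = bool(dies(dose))
        history.append((dose, died))
        dose = dose / factor if died else min(dose * factor, upper_bound)
    return history


def mle_ld50(history, sigma=0.5, step=0.001):
    """Grid-search maximum-likelihood LD50 under a probit model in log10(dose)
    with a fixed, assumed spread parameter sigma (log10 units)."""
    eps = 1e-12
    logs = [math.log10(dose) for dose, _ in history]
    mu, upper = min(logs) - 1.0, max(logs) + 1.0
    best_mu, best_ll = mu, -math.inf
    while mu <= upper:
        ll = 0.0
        for x, (_, died) in zip(logs, history):
            # Probability of death at log dose x if log10(LD50) == mu.
            p = min(max(normal_cdf((x - mu) / sigma), eps), 1.0 - eps)
            ll += math.log(p if died else 1.0 - p)
        if ll > best_ll:
            best_mu, best_ll = mu, ll
        mu += step
    return 10.0 ** best_mu


# Hypothetical, deterministic animal: dies at any dose >= 100 mg/kg.
history = run_udp(lambda dose: dose >= 100.0)
print([died for _, died in history])  # [True, False, True, False, True, False]
print(round(mle_ld50(history)))       # 98
```

The sequence alternates between 175 and about 54.7 mg/kg, and the likelihood peaks at their geometric mean, about 98 mg/kg. Confidence intervals, which the guideline also calls for, are omitted.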

The Fixed Dose Procedure (FDP - OECD Guideline 420)

The FDP abandons the objective of determining a precise LD₅₀ in favor of identifying a dose that produces clear signs of non-lethal toxicity. It tests pre-defined fixed doses (5, 50, 300, 2000 mg/kg). A starting dose is selected, and a small group of animals (typically 5 of one sex) is treated. If no clear signs of toxicity are observed, the next higher dose is tested with a new group. If clear toxicity is observed, the test may stop, classifying the substance based on that dose. The goal is to identify the dose that causes evident toxicity but not mortality, thereby classifying the substance without requiring lethal endpoints [4] [5].
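The step-up and step-down logic described above can be sketched as a small walk over the four fixed doses. This Python sketch is a simplification: it assumes a 300 mg/kg starting dose and a caller-supplied outcome function (hypothetical) that reports "none", "evident" or "death" for each group, and it does not model the guideline's sighting study or its full rules for mapping results to a hazard class.

```python
FIXED_DOSES = [5, 50, 300, 2000]  # mg/kg, the fixed levels listed above
VALID_OUTCOMES = {"none", "evident", "death"}


def fixed_dose_walk(outcome, start_dose=300):
    """Simplified fixed-dose walk.

    outcome(dose) returns 'none', 'evident' (clear non-lethal toxicity) or
    'death' for the group dosed at that level. Step up after 'none', step
    down after 'death', stop at the first dose producing evident toxicity.
    Returns (log, discriminating_dose); the dose is None when no level
    produced evident toxicity without deaths.
    """
    i = FIXED_DOSES.index(start_dose)
    log = []
    while 0 <= i < len(FIXED_DOSES):
        dose = FIXED_DOSES[i]
        if any(d == dose for d, _ in log):
            break  # already tested: the walk is bouncing between two levels
        result = outcome(dose)
        if result not in VALID_OUTCOMES:
            raise ValueError(f"unexpected outcome {result!r}")
        log.append((dose, result))
        if result == "evident":
            return log, dose
        i += 1 if result == "none" else -1
    return log, None


# Hypothetical substance: no signs below 50 mg/kg, evident toxicity
# from 50 mg/kg, deaths from 300 mg/kg.
def example(dose):
    if dose < 50:
        return "none"
    return "evident" if dose < 300 else "death"


print(fixed_dose_walk(example))  # ([(300, 'death'), (50, 'evident')], 50)
```

For the hypothetical substance, the walk records deaths at 300 mg/kg, steps down, and stops at 50 mg/kg, the first dose producing evident toxicity without mortality. When the walk bounces between two levels or runs off either end of the dose list, the sketch returns None and leaves interpretation to the guideline's decision rules.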

Diagram 1: Alternative Testing Methodologies Flowchart

Data Interpretation and Application in Hazard Classification

The application of LD₅₀ data within a regulatory framework follows a structured logic to ensure consistency and safety. Regulatory bodies, such as those adopting the Globally Harmonized System of Classification and Labelling of Chemicals (GHS), use data from validated test methods (like those described above) to place substances into hazard categories [6]. The process is test-method neutral, prioritizing scientifically validated data regardless of its source [6].

The classification is performed using a weight-of-evidence approach, considering all available data, including animal studies, in vitro tests, and human experience [6]. For acute oral toxicity, the GHS establishes five categories based on experimentally derived LD₅₀ values (or their estimated equivalents from other tests), with Category 1 being the most toxic (LD₅₀ ≤ 5 mg/kg) and Category 5 representing lower acute hazard (LD₅₀ between 2000 and 5000 mg/kg) [6]. The GHS categories thus serve a similar function to the older Hodge and Sterner or Gosselin scales but are designed for global standardization in labeling and safety data sheets.
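The oral category assignment reduces to a threshold lookup. In this Python sketch, the 5, 2000 and 5000 mg/kg cut-offs are those stated above, while the intermediate cut-offs (50 and 300 mg/kg) are taken from the GHS acute oral criteria rather than from this text. The sketch handles a single substance's LD₅₀ and ignores the acute toxicity estimate (ATE) calculations GHS uses for mixtures.

```python
# GHS acute oral toxicity categories: (inclusive upper bound in mg/kg, category).
GHS_ORAL = [(5, 1), (50, 2), (300, 3), (2000, 4), (5000, 5)]


def ghs_acute_oral_category(ld50_mg_per_kg: float):
    """Return the GHS acute oral category (1-5), or None above 5000 mg/kg."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be a positive dose in mg/kg")
    for upper, category in GHS_ORAL:
        if ld50_mg_per_kg <= upper:
            return category
    return None  # not classified for acute oral toxicity


for value in (2, 413, 2500, 10000):
    print(value, ghs_acute_oral_category(value))
```

A value of 413 mg/kg, for example, falls in Category 4. Category 5 is optional within the GHS and is not adopted by every jurisdiction, so a result of 5 should be read against the applicable regulation.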

Diagram 2: Classifying LD50 with Different Scales

The Scientist's Toolkit: Essential Research Reagents and Materials

Conducting robust acute toxicity studies requires specific materials and reagents. This toolkit details essential items for a standard test, referencing both classical rodent models and common educational alternatives.

Table 3: Essential Research Reagents and Materials for Acute Toxicity Testing

| Item | Function | Example/Note |
|---|---|---|
| Test Substance | The chemical agent whose toxicity is being evaluated. Must be of known and high purity for reproducible results [2]. | Pure compound; mixtures are rarely studied in foundational LD₅₀ tests [2]. |
| Vehicle/Solvent | A non-toxic medium to dissolve or suspend the test substance for accurate dosing. | Examples include distilled water, saline, corn oil, or carboxymethyl cellulose (CMC) [5]. |
| Laboratory Animals | The biological model for the assay. Species and strain selection significantly impact results [1] [2]. | Typically rats or mice; other species include rabbits, guinea pigs, or dogs. Brine shrimp (Artemia) are used in educational bioassays [7]. |
| Dosing Apparatus | Tools for precise administration of the test substance via the chosen route. | Oral gavage needles (for rodents), syringes, micropipettes, inhalation chambers [2], or calibrated droppers for aquatic tests [7]. |
| Housing & Caging | Standardized environment to house test subjects before, during, and after dosing. | Individually ventilated cages with controlled temperature, humidity, and light cycles. Culture dishes for aquatic organisms [7] [5]. |
| Diet & Water | Standardized nutrition provided ad libitum (except prior to dosing) to minimize variability. | Certified commercial rodent diet. For brine shrimp, specific hatching salts are required [7]. |
| Analytical Balance | For accurately weighing the test substance and the test animals to calculate precise dose (mg/kg). | High-precision balance (e.g., 0.1 mg sensitivity). |
| Data Collection Sheets/Software | For systematic recording of clinical observations, mortality, body weights, and other parameters over the observation period [5]. | Standardized templates or electronic data capture systems. |
| Statistical Software | To calculate the LD₅₀/LC₅₀ value, confidence intervals, and other statistical parameters from the experimental data. | Tools like the AAT Bioquest LD₅₀ calculator or commercial software (e.g., SAS, GraphPad Prism) [8]. |

The LD₅₀, since its inception by Trevan, has served as an indispensable, if imperfect, cornerstone of quantitative toxicology. Its true utility is unlocked not by the raw numerical value alone, but through its integration into standardized classification systems like those developed by Hodge and Sterner and by Gosselin, Smith and Hodge. These frameworks translate experimental data into actionable hazard communication, despite their differing terminologies and scales. Modern toxicology continues to refine the underlying experimental protocols, prioritizing methods that reduce animal use and refine endpoints while maintaining scientific integrity. The ongoing evolution from simple lethality testing toward more nuanced, mechanism-based safety assessments does not diminish the historical and practical importance of the LD₅₀ and its associated classification scales. They remain fundamental tools for researchers, regulators, and safety professionals in the ongoing effort to understand and mitigate chemical risks.

This comparison guide objectively analyzes the structure and application of the Hodge and Sterner Scale for acute toxicity classification, with direct comparison to the Gosselin, Smith and Hodge Scale. The content is framed within a broader research thesis examining the comparative utility, numerical logic, and contextual application of these two predominant classification systems in toxicology and drug development [2] [3].

Comparative Scale Structures and Classification Criteria

The Hodge and Sterner Scale and the Gosselin, Smith and Hodge (GSH) Scale are the two most common systems for classifying acute toxicity based on lethal dose (LD₅₀) or lethal concentration (LC₅₀) values [2] [9]. They share the same foundational data but differ significantly in their class numbering, terminology, and the implied risk to humans.

Table 1: Comparative Structure of Hodge & Sterner (H&S) vs. Gosselin, Smith & Hodge (GSH) Scales [2]. Rows are paired by rank, from most to least toxic; paired rows do not cover the same dose ranges.

| H&S Rating | H&S Commonly Used Term | H&S Oral LD₅₀ (rat, mg/kg) | H&S Probable Lethal Dose for Man | GSH Toxicity Class | GSH Probable Oral Lethal Dose (Human) |
|---|---|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | 1 grain (a taste, a drop) | 6 (Super Toxic) | < 5 mg/kg (A taste, < 7 drops) |
| 2 | Highly Toxic | 1 – 50 | 4 ml (1 tsp) | 5 (Extremely Toxic) | 5 – 50 mg/kg (7 drops – 1 tsp) |
| 3 | Moderately Toxic | 50 – 500 | 30 ml (1 fl. oz.) | 4 (Very Toxic) | 50 – 500 mg/kg (1 tsp – 1 oz.) |
| 4 | Slightly Toxic | 500 – 5000 | 600 ml (1 pint) | 3 (Moderately Toxic) | 0.5 – 5 g/kg (1 oz. – 1 pint) |
| 5 | Practically Non-toxic | 5000 – 15000 | 1 litre (or 1 quart) | 2 (Slightly Toxic) | 5 – 15 g/kg (1 pint – 1 quart) |
| 6 | Relatively Harmless | ≥ 15000 | > 1 litre | 1 (Practically Non-Toxic) | > 15 g/kg (> 1 quart) |

Core Differences and Research Implications:

- Inverse Numerical Logic: The most critical distinction is the inverse numbering system. Hodge and Sterner assign the most toxic substances a Class 1, while GSH assigns them Class 6 [2]. This is a fundamental point of potential confusion in interdisciplinary research.

- Human Lethal Dose Correlation: Both scales provide an estimated probable lethal dose for a 70 kg human, bridging animal data to human risk [2]. The descriptive terms (e.g., "Extremely Toxic" vs. "Super Toxic") differ, which can impact the perceived severity in regulatory or safety communications.

- Comprehensiveness: The Hodge and Sterner Scale provides specific criteria for three routes of administration (oral, inhalation LC₅₀, dermal), making it more comprehensive for occupational and environmental hazard assessment [2]. The GSH scale data shown focuses primarily on the oral route.
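Because the Hodge and Sterner thresholds differ by route, a per-route lookup is a natural way to apply them. The Python sketch below encodes the oral, inhalation and dermal upper bounds from the Hodge and Sterner table. It assumes inclusive upper bounds and, as a labelled assumption, assigns values falling in the small gaps of the dermal column (for example between 43 and 44 mg/kg) to the next, less toxic rating.

```python
from bisect import bisect_left

# Upper bounds of Hodge and Sterner ratings 1-5 per route; rating 6 lies
# above the last bound.
HS_UPPER = {
    "oral": [1, 50, 500, 5000, 15000],             # rat, single dose, mg/kg
    "inhalation": [10, 100, 1000, 10000, 100000],  # rat, 4-h LC50, ppm
    "dermal": [5, 43, 340, 2810, 22590],           # rabbit, single application, mg/kg
}
HS_TERMS = ["Extremely Toxic", "Highly Toxic", "Moderately Toxic",
            "Slightly Toxic", "Practically Non-toxic", "Relatively Harmless"]


def hodge_sterner_rating(route: str, value: float) -> tuple[int, str]:
    """Return (rating, term) for one route; rating 1 is the most toxic.

    Assumption: upper bounds are inclusive; values in the dermal column's
    gaps (e.g. 43.5 mg/kg) go to the next, less toxic rating.
    """
    if route not in HS_UPPER:
        raise ValueError(f"unknown route {route!r}")
    if value <= 0:
        raise ValueError("value must be positive")
    idx = bisect_left(HS_UPPER[route], value)  # first bound >= value
    return idx + 1, HS_TERMS[idx]


print(hodge_sterner_rating("inhalation", 444))  # (3, 'Moderately Toxic')
print(hodge_sterner_rating("dermal", 20))       # (2, 'Highly Toxic')
```

A 4-hour rat LC₅₀ of 444 ppm returns rating 3 ("Moderately Toxic"), and a rabbit dermal LD₅₀ of 20 mg/kg returns rating 2. Combining per-route ratings into one overall rating is a policy choice the sketch leaves to the caller.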

Experimental Protocols for Acute Toxicity Testing and Classification

The classification under either scale depends on high-quality experimental determination of the LD₅₀ (Lethal Dose, 50%) or LC₅₀ (Lethal Concentration, 50%).

Standard Protocol for Determining LD₅₀ [2]:

- Test Substance: Typically a pure chemical. Mixtures are rarely studied.

- Animal Models: Most tests use rats or mice. Other species (rabbits, guinea pigs, dogs) may be used. Species, strain, age, and sex must be documented.

- Routes of Administration:

  - Oral (Gavage): Most common and cost-effective.

  - Dermal: Applied to shaved skin for assessing absorption toxicity.

  - Inhalation: Animals are exposed to a chemical concentration in air for a set period (usually 4 hours).

  - Parenteral (e.g., intravenous, intraperitoneal): For specific pharmacokinetic studies.

- Dosing: Animals are grouped and administered a range of single doses. The doses are selected based on preliminary range-finding studies to bracket the expected LD₅₀.

- Observation Period: Animals are clinically observed for signs of toxicity for up to 14 days after administration.

- Data Analysis: The LD₅₀ value is calculated using statistical methods (e.g., probit analysis, Karber method [10]) as the dose that causes lethality in 50% of the test population. It is expressed as mass of chemical per unit body weight (e.g., mg/kg).

- Classification: The calculated LD₅₀ value is compared to the numerical ranges in the chosen toxicity scale (e.g., Table 1) to assign a toxicity class and descriptive rating.
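The probit and Karber-type calculations named above turn grouped mortality counts into a single LD₅₀. The Python sketch below uses the untrimmed Spearman-Kärber estimator on log10 doses, a close relative of the Karber method cited in [10]. It omits confidence intervals, requires 0% mortality at the lowest dose and 100% at the highest, and runs on hypothetical range-finding data.

```python
import math


def spearman_karber_ld50(doses, deaths, group_sizes):
    """Untrimmed Spearman-Karber estimate of the LD50 from grouped data.

    Doses are analysed on a log10 scale. Requires strictly increasing doses,
    non-decreasing mortality proportions, 0% mortality at the lowest dose
    and 100% at the highest.
    """
    if not (len(doses) == len(deaths) == len(group_sizes)) or len(doses) < 2:
        raise ValueError("need matching lists with at least two dose groups")
    x = [math.log10(d) for d in doses]
    p = [k / n for k, n in zip(deaths, group_sizes)]
    if any(b <= a for a, b in zip(x, x[1:])):
        raise ValueError("doses must be strictly increasing")
    if any(b < a for a, b in zip(p, p[1:])):
        raise ValueError("mortality must not decrease with dose")
    if p[0] != 0 or p[-1] != 1:
        raise ValueError("untrimmed method needs 0% and 100% mortality at the ends")
    # Mean of interval midpoints, weighted by the rise in mortality.
    log_ld50 = sum((p[i + 1] - p[i]) * (x[i] + x[i + 1]) / 2 for i in range(len(x) - 1))
    return 10 ** log_ld50


# Hypothetical range-finding data: five rats per group.
doses = [10, 20, 40, 80, 160]  # mg/kg
deaths = [0, 1, 2, 4, 5]
print(round(spearman_karber_ld50(doses, deaths, [5] * 5), 1))  # 42.9
```

The resulting value (about 42.9 mg/kg for these hypothetical data) would then be compared with the bands of the chosen scale.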

Protocol for Determining LC₅₀ (Inhalation) [2]:

- Exposure Chamber: Test animals are placed in a chamber where the air concentration of a chemical (gas, vapor, aerosol) is precisely controlled and monitored.

- Concentration & Duration: Groups of animals are exposed to a series of concentrations for a fixed period, most commonly 4 hours, as per OECD guidelines.

- Observation: Similar to LD₅₀, animals are observed post-exposure for up to 14 days.

- Calculation: The LC₅₀ is the concentration in air (ppm or mg/m³) that causes death in 50% of animals during the observation period. The exposure duration must always be reported with the value (e.g., LC₅₀ (rat) = 1000 ppm/4hr).
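When LC₅₀ values are reported in ppm but compared with limits in mg/m³ (or vice versa), the standard ideal-gas conversion applies to gases and vapours. The Python sketch below assumes 25 °C and 1 atm (molar volume 24.45 L/mol); it does not apply to aerosols or particulates, whose concentrations are measured directly by mass.

```python
MOLAR_VOLUME_L = 24.45  # litres per mole of ideal gas at 25 degC and 1 atm


def ppm_to_mg_per_m3(ppm: float, molecular_weight: float) -> float:
    """Convert a gas/vapour concentration from ppm (by volume) to mg/m3."""
    return ppm * molecular_weight / MOLAR_VOLUME_L


def mg_per_m3_to_ppm(mg_per_m3: float, molecular_weight: float) -> float:
    """Inverse conversion from mg/m3 to ppm (by volume)."""
    return mg_per_m3 * MOLAR_VOLUME_L / molecular_weight


# Hydrogen sulfide (molecular weight about 34.08 g/mol) at 444 ppm.
print(round(ppm_to_mg_per_m3(444, 34.08)))  # 619
```

At other temperatures or pressures the molar volume changes, and the exposure duration still has to be reported alongside either unit.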

Example Application in Research: A study on copper nanoparticles determined an oral LD₅₀ of 413 mg/kg in mice. Using the Hodge and Sterner Scale, this value (falling between 50-500 mg/kg) classified the material as Class 3, Moderately Toxic [11].

Pathway Diagrams for Testing and Classification Workflows

Acute Toxicity Testing and Classification Workflow

Decision Pathway: Classifying Toxicity Using Different Scales

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Acute Toxicity Studies

| Item | Function in Research | Example/Note |
|---|---|---|
| Pure Test Chemical | The substance whose acute toxicity is being characterized. Testing is nearly always done with pure compounds, not mixtures [2]. | Essential for reproducible dose calculation (mg/kg). |
| Laboratory Animals (in vivo) | Biological models for quantifying systemic toxic response. Rats and mice are most common [2]. | Species, strain, age, and sex must be standardized and reported. |
| Vehicle/Solvent | To dissolve or suspend the test chemical for accurate administration via gavage, injection, or dermal application. | e.g., Carboxymethylcellulose, saline, corn oil. Must be non-toxic at administered volumes. |
| Gavage Needles (Oral) | For precise oral administration of the test substance directly to the stomach [2]. | Various sizes calibrated for animal weight. |
| Inhalation Exposure Chamber | For LC₅₀ studies, it maintains a precise and stable concentration of test chemical (gas, aerosol) in air [2]. | Must have calibrated analytical monitoring. |
| Clinical Observation Checklist | Standardized sheet for recording signs of toxicity (lethargy, convulsions, respiratory distress, etc.) over the observation period [2]. | Critical for consistent data collection. |
| Statistical Analysis Software | To calculate the LD₅₀/LC₅₀ value from mortality data using probit, logit, or Karber methods [10]. | Required for deriving the final numerical value used in scaling. |
| Reference Toxicity Scale | The classification framework (e.g., Hodge and Sterner table) used to interpret the calculated LD₅₀/LC₅₀ value [2]. | Must be explicitly cited to avoid confusion from inverse class numbering. |

Application in Contemporary Research and Regulatory Context

The Hodge and Sterner Scale remains actively used in modern research to communicate the severity of acute toxicity findings. For example, a study on an herbal preparation (Somina) calculated an oral LD₅₀ >10,000 mg/kg in rats, classifying it as "Practically non-toxic" (Class 5) according to the Hodge and Sterner Scale [10].

However, the role of simple acute toxicity classification is evolving within a broader toxicological and regulatory framework:

- Beyond Acute Effects: LD₅₀ measures acute toxicity but does not inform about chronic, carcinogenic, or organ-specific long-term effects [2]. Modern assessments, like the FDA's Post-market Assessment Prioritization Tool (2025), evaluate multiple toxicity data types (carcinogenicity, neurotoxicity, etc.) for a comprehensive risk score [12].

- New Approach Methodologies (NAMs): Regulatory science is increasingly using in vitro high-throughput screening and computational toxicology to prioritize chemicals for testing and group them into categories based on structure and predicted activity [13] [14].

- Therapeutic Index (TI) in Drug Development: In pharmacology, the LD₅₀ is contextualized with the effective dose (ED₅₀) to calculate the Therapeutic Index (TI = LD₅₀/ED₅₀), a crucial metric for determining a drug's safety window and weight-based dosing regimens [15].
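The ratio is simple to compute. The Python sketch below uses made-up values for two hypothetical compounds and only checks that both doses are positive; it assumes the LD₅₀ and ED₅₀ come from the same species, route and units, which the ratio itself cannot verify.

```python
def therapeutic_index(ld50_mg_per_kg: float, ed50_mg_per_kg: float) -> float:
    """TI = LD50 / ED50; larger values mean a wider margin between effect and lethality."""
    if ld50_mg_per_kg <= 0 or ed50_mg_per_kg <= 0:
        raise ValueError("both doses must be positive")
    return ld50_mg_per_kg / ed50_mg_per_kg


# Hypothetical compounds (illustrative numbers only).
print(therapeutic_index(400.0, 20.0))  # 20.0
print(therapeutic_index(30.0, 10.0))   # 3.0
```

A TI of 20 indicates a wider margin between the effective and lethal doses than a TI of 3.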

Within the thesis comparing the Gosselin and Hodge and Sterner scales, key distinctions emerge:

- The Hodge and Sterner Scale offers a multi-route perspective (oral, dermal, inhalation), making it particularly valuable for occupational and environmental health research where exposure pathways are diverse [2]. Its intuitive system, where Class 1 denotes the highest hazard, aligns with common risk ranking paradigms.

- The Gosselin, Smith and Hodge Scale, with its inverse numbering, provides a focused oral toxicity classification that correlates directly with estimated human lethal dose [2].

The choice between scales is not a matter of accuracy but of context and convention. Consistency in application and explicit citation of the chosen scale are paramount to prevent misinterpretation, especially in interdisciplinary teams. While these acute toxicity scales provide a vital foundational hazard classification, they represent the initial step in a much more comprehensive modern risk assessment strategy that integrates chronic data, mechanistic insights, and human exposure information [12] [13].

Core Concepts of Acute Toxicity and the Role of Classification Scales

The systematic evaluation of acute toxicity is foundational to chemical safety, pharmaceutical development, and environmental risk assessment. The median lethal dose (LD₅₀) and median lethal concentration (LC₅₀) are cornerstone metrics for this purpose. An LD₅₀ represents the amount of a material, given all at once, which causes the death of 50% of a group of test animals, while an LC₅₀ refers to the concentration in air or water that achieves the same effect [2]. Developed by J.W. Trevan in 1927, these values provide a standardized method to compare the toxic potency of diverse chemicals whose specific toxic effects may differ [2] [9].

The fundamental principle is that a smaller LD₅₀/LC₅₀ value indicates a more toxic substance [2] [9]. However, raw numerical data requires interpretation for practical use, such as labeling, safety protocol design, and regulatory decision-making. This is where classification scales are essential. By grouping ranges of LD₅₀/LC₅₀ values into descriptive categories (e.g., "highly toxic," "practically non-toxic"), these scales translate experimental data into actionable hazard information. The two most prevalent systems are the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale [2] [3]. While both serve the same ultimate purpose, their structural differences in class numbering, terminology, and human dose estimation lead to distinct classifications for the same chemical, underscoring the critical importance of specifying which scale is being referenced [2].

Structural Comparison: Hodge and Sterner vs. Gosselin, Smith and Hodge

The primary distinction between the two scales lies in their organizational logic and intended application. The Hodge and Sterner Scale is a multi-route, species-specific tool that provides a unified toxicity rating based on separate thresholds for oral, dermal, and inhalation exposures, primarily for rats and rabbits [2]. In contrast, the Gosselin, Smith and Hodge Scale is a human-centric, oral-focused system that directly estimates a probable oral lethal dose for humans based on animal data [2].

Table 1: Structural Comparison of Toxicity Classification Scales

| Feature | Hodge and Sterner Scale | Gosselin, Smith and Hodge Scale |
|---|---|---|
| Rating System | Numerical classes 1 (most toxic) to 6 (least toxic) [2]. | Numerical classes 6 (most toxic: "Super Toxic") to 1 (least toxic) [2]. |
| Scope | Evaluates oral (rat), inhalation (rat), and dermal (rabbit) LD₅₀/LC₅₀ in a single integrated table [2]. | Focuses primarily on translating animal oral LD₅₀ to a probable oral lethal dose for a 70 kg human [2]. |
| Common Terms | Extremely Toxic, Highly Toxic, Moderately Toxic, etc. [2]. | Super Toxic, Extremely Toxic, Very Toxic, etc. [2]. |
| Key Output | A single toxicity rating (1-6) applicable to defined experimental routes and species [2]. | An estimated human lethal dose range (e.g., "1 grain – less than 7 drops") alongside the toxicity class [2]. |
| Primary Utility | Standardizing hazard classification for chemical labeling and safety data sheets based on standardized animal tests [2]. | Risk communication and emergency response planning by providing a tangible estimate of human lethality [2]. |

Table 2: Comparative Classification of a Hypothetical Chemical (Oral LD₅₀ = 2 mg/kg, Rat)

| Scale | Assigned Class | Descriptive Term | Basis for Classification | Implied Human Lethal Dose (Estimate) |
|---|---|---|---|---|
| Hodge and Sterner | 2 | Highly Toxic | Oral LD₅₀ (rat) of 1–50 mg/kg falls into Class 2 [2]. | 4 ml (1 tsp) [2]. |
| Gosselin, Smith & Hodge | 6 | Super Toxic | Oral LD₅₀ (rat) of less than 5 mg/kg falls into Class 6 [2]. | A taste (less than 7 drops) [2]. |

Applied Case Study: Classifying Hydrogen Sulfide (H₂S)

The practical implications of these structural differences are illustrated by classifying a real compound like hydrogen sulfide (H₂S). H₂S is a highly toxic gas with variable reported lethal concentrations. Historical data suggests concentrations of 500–1,000 ppm can be fatal within minutes [16]. Using a reported 4-hour LC₅₀ for rats of 444 ppm [16], we can apply both scales.

Table 3: Toxicity Classification of Hydrogen Sulfide (H₂S) Using Different Scales

| Scale & Route | Experimental Value | Class & Term | Rationale |
|---|---|---|---|
| Hodge & Sterner (Inhalation) | LC₅₀ ≈ 444 ppm (4h, rat) [16] | Class 3: "Moderately Toxic" | Falls within the 100–1000 ppm range for Class 3 [2]. |
| Gosselin, Smith & Hodge (Oral Estimate) | Requires extrapolation from inhalation data. | Speculatively Class 5 or 6 ("Extremely" to "Super Toxic") | Assumes the extreme inhalation toxicity implies a correspondingly high oral toxicity class; the scale itself defines only oral doses. |

This case reveals a critical insight: the Hodge and Sterner Scale classifies H₂S as "Moderately Toxic" based purely on the numerical inhalation range. This may seem counterintuitive given its notoriety as a potent asphyxiant, highlighting how a rigid classification system can sometimes obscure a chemical's true hazard potential without expert interpretation. The Gosselin scale, by focusing on the implication for human lethality, might convey the acute danger more effectively, though it requires an extrapolation step not directly designed for inhalation data.

Experimental Methodologies in Toxicity Assessment

Classical In Vivo LD₅₀ Protocol

The traditional determination of LD₅₀ follows established guidelines (e.g., OECD). A standard protocol involves [2]:

- Test Substance: A pure form of the chemical is used [2].

- Animal Models: Groups of healthy, young adult animals (typically rats or mice) of a defined strain and sex are acclimatized [2].

- Dose Administration: Animals are divided into several groups. Each group receives a single dose of the test substance via the route of interest (oral gavage, dermal application, intraperitoneal injection). Dose levels are spaced at roughly constant ratios, i.e., approximately evenly on a logarithmic scale (e.g., 10, 50, 200, 1000 mg/kg) [2].

- Observation Period: Animals are closely monitored for signs of toxicity (morbidity) and mortality for a period of 14 days following administration [2].

- Data Analysis: The number of deaths in each dose group is recorded at the end of the observation period. The LD₅₀ value and its confidence interval are calculated using a statistical probit analysis or other suitable method (e.g., Spearman-Karber) [2].

Modern In Silico QSAR Prediction Protocol

Quantitative Structure-Activity Relationship (QSAR) models offer a computational alternative to estimate toxicity. A standard workflow, as applied to predict the oral LD₅₀ of sulfur mustard breakdown products, includes [17]:

- Dataset Curation: A training set of chemicals with reliable experimental LD₅₀ values is assembled [17].

- Descriptor Calculation: Numerical representations (descriptors) capturing the molecular structure and properties (e.g., molecular weight, logP, topological indices) are computed for each chemical [17].

- Model Development & Validation: A mathematical model (e.g., using multiple linear regression, random forest) is built to correlate descriptors with LD₅₀. The model is validated using internal (cross-validation) and external test sets [17].

- Prediction & Applicability Domain: For a new chemical, its descriptors are calculated and fed into the validated model to predict an LD₅₀. The prediction is only considered reliable if the new chemical falls within the model's "applicability domain" (structural and parametric space of the training set) [17].
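The four steps above can be illustrated end to end on a toy problem. This Python sketch is deliberately minimal and uses synthetic numbers: a single hypothetical descriptor, ordinary least squares in place of the multiple linear regression or random forest models mentioned above, leave-one-out cross-validation as the internal check, and the descriptor range of the training set as a simple applicability domain (one of several definitions in use). It is not a validated toxicity model.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = b0 + b1 * x (one descriptor)."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b1 = sxy / sxx
    return my - b1 * mx, b1


def loo_q2(xs, ys):
    """Leave-one-out cross-validated q^2 = 1 - PRESS / total sum of squares."""
    press = 0.0
    for i in range(len(xs)):
        b0, b1 = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (b0 + b1 * xs[i])) ** 2
    my = mean(ys)
    return 1.0 - press / sum((y - my) ** 2 for y in ys)


def predict(model, x_new, train_xs):
    """Predict log10(LD50) and flag whether x_new lies inside the training range."""
    b0, b1 = model
    in_domain = min(train_xs) <= x_new <= max(train_xs)
    return b0 + b1 * x_new, in_domain


# Synthetic training set: one hypothetical descriptor vs. log10(LD50, mg/kg).
descriptor = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
log_ld50 = [3.00, 2.67, 2.47, 2.16, 1.96, 1.68]

model = fit_line(descriptor, log_ld50)
print(f"slope={model[1]:.3f}, LOO q2={loo_q2(descriptor, log_ld50):.3f}")
for x in (2.2, 5.0):
    pred, ok = predict(model, x, descriptor)
    status = "inside" if ok else "OUTSIDE applicability domain"
    print(f"x={x}: predicted LD50 ~ {10 ** pred:.0f} mg/kg ({status})")
```

The prediction at a descriptor value of 2.2 is flagged as inside the domain, while the one at 5.0 is flagged as outside and should not be trusted, even though the model still returns a number.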

Diagram 1: Experimental workflow from LD₅₀ determination to toxicity classification.

Diagram 2: In silico QSAR methodology for LD₅₀ prediction and classification.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagents and Materials for Toxicity Assessment Research

| Item | Function in Research | Typical Use Case |
|---|---|---|
| Purified Test Compound | The substance whose toxicity is being evaluated. Must be of known purity and stability to ensure reliable results [2]. | Foundation for all in vivo dosing solutions and in silico descriptor calculation. |
| Standardized Animal Models (e.g., Sprague-Dawley rats, CD-1 mice) | Provide a consistent biological system for in vivo toxicity testing. Strain, age, and sex are controlled variables [2]. | Oral, dermal, and inhalation LD₅₀/LC₅₀ studies [2]. |
| Vehicle (e.g., Carboxymethylcellulose, Corn Oil, Saline) | A solvent or suspension agent used to prepare accurate and administrable dosing formulations of the test compound. | Ensuring uniform delivery of the test substance via gavage, dermal application, or injection [2]. |
| Molecular Descriptor Software (e.g., RDKit, PaDEL) | Computes quantitative numerical representations of molecular structures from their chemical notation (e.g., SMILES) [18] [17]. | Generating input features for QSAR model development and prediction [18] [17]. |
| Curated Toxicity Databases (e.g., T3DB, RTECS) | Repositories of experimental toxicological data used to train, validate, and benchmark predictive models [18] [17]. | Sourcing reliable LD₅₀ data for QSAR training sets and validating model predictions. |

The Hodge and Sterner and Gosselin, Smith and Hodge scales are not mutually exclusive but are complementary tools born from different perspectives. The Hodge and Sterner Scale excels as a standardized hazard communication tool, providing a clear, consistent rubric for classifying chemicals based on standardized animal tests. Its strength is its reproducibility and direct link to common experimental protocols. The Gosselin, Smith and Hodge Scale serves as a translational risk assessment tool, bridging the gap between animal data and human risk perception by providing tangible, if estimated, human lethal doses [2].

In modern practice, the two scales are increasingly used together. For chemicals with existing data, applying both scales offers a more comprehensive view. For new chemicals, especially in early drug development, in silico QSAR methods can provide predicted LD₅₀ values to feed into these classification systems, flagging potential hazards before resource-intensive animal testing [18] [17]. A sound understanding of both scales' structures, limitations, and appropriate contexts is therefore essential for researchers and safety professionals making decisions in chemical risk assessment and therapeutic development.

In toxicology and drug development, a fundamental task is classifying and communicating the hazard level of chemical substances. The Lethal Dose 50 (LD₅₀) and Lethal Concentration 50 (LC₅₀), which represent the dose or concentration required to kill 50% of a test population, serve as the primary quantitative benchmarks for acute toxicity [2]. However, translating these numerical values into a standardized hazard class presents a significant challenge due to the coexistence of two major classification systems: the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale [2]. These systems are in direct conflict, using inverted numerical ratings and differing descriptive terminology for the same chemical potency. This creates substantial risk for misinterpretation in scientific literature, safety data sheets, and regulatory communications. This guide provides an objective, data-driven comparison of these scales, details the experimental protocols for generating the underlying LD₅₀/LC₅₀ data, and frames the discussion within ongoing research efforts to refine toxicity assessment.

Quantitative Comparison of Toxicity Classification Scales

The core discrepancy between the two major toxicity scales lies in their opposing approaches to numbering severity classes. The Hodge and Sterner Scale assigns the lowest number (1) to the most toxic category, while the Gosselin, Smith and Hodge Scale assigns the highest number (6) to its most toxic category [2]. This inversion, coupled with differing descriptive terms, can lead to dangerous confusion if the scale used is not explicitly referenced.

Table 1: Comparison of Acute Oral Toxicity Classification Systems (Rat) [2]

| Hodge and Sterner Scale | Gosselin, Smith and Hodge Scale | Oral LD₅₀ (mg/kg) | Probable Lethal Dose for a 70 kg Human |
|---|---|---|---|
| 1 (Extremely Toxic) | 6 (Super Toxic) | ≤ 1 (Hodge and Sterner); < 5 (Gosselin) | A taste, less than 7 drops (about 1 grain) |
| 2 (Highly Toxic) | 5 (Extremely Toxic) | 1 – 50 (Hodge and Sterner); 5 – 50 (Gosselin) | 4 ml (1 teaspoon) |
| 3 (Moderately Toxic) | 4 (Very Toxic) | 50 – 500 | 30 ml (1 fl. oz.) |
| 4 (Slightly Toxic) | 3 (Moderately Toxic) | 500 – 5000 | 600 ml (1 pint) |
| 5 (Practically Non-toxic) | 2 (Slightly Toxic) | 5000 – 15000 | 1 litre (1 quart) |
| 6 (Relatively Harmless) | 1 (Practically Non-Toxic) | ≥ 15000 | > 1 litre |

The practical impact of this discrepancy is significant. For example, the insecticide dichlorvos has an oral LD₅₀ (rat) of 56 mg/kg [2]. According to Table 1, this value falls in the 50-500 mg/kg range. Under the Hodge and Sterner Scale it is classified as "3 - Moderately Toxic"; under the Gosselin, Smith and Hodge Scale the same value corresponds to "4 - Very Toxic" [2]. The reverse confusion is just as easy: a "Class 3" or "Moderately Toxic" label means 50-500 mg/kg on the Hodge and Sterner Scale but 0.5-5 g/kg on the Gosselin scale. This underscores the absolute necessity of declaring which scale is being used in any assessment.

Table 2: Multi-Route Toxicity Profile of Dichlorvos (Example Chemical) [2]

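| Route of Exposure | Test Species | LD₅₀ / LC₅₀ Value | Hodge & Sterner Classification | Gosselin et al. Classification |
|---|---|---|---|---|
| Oral | Rat | 56 mg/kg | 3 (Moderately Toxic) | 4 (Very Toxic) |
| Dermal | Rat | 75 mg/kg | 3 (Moderately Toxic)* | 4 (Very Toxic)† |
| Inhalation (4-hr) | Rat | 1.7 ppm | 1 (Extremely Toxic) | Not defined (oral scale only) |
| Intraperitoneal | Rat | 15 mg/kg | 2 (Highly Toxic)† | 5 (Extremely Toxic)† |

*The Hodge and Sterner dermal ranges are defined for rabbits; the rat value is compared against them by analogy.
†Classified by applying the oral LD₅₀ ranges by analogy; neither scale defines intraperitoneal criteria, and the Gosselin scale covers only oral exposure.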

Experimental Protocols for Acute Toxicity Testing

The reliability of any toxicity classification rests on the robustness of the underlying experimental data. The following outlines the standard methodology for determining LD₅₀ and LC₅₀ values, primarily based on OECD guidelines [2].

Protocol for Oral and Dermal LD₅₀ Testing

- Test Substance: A pure form of the chemical is used [2].

- Test Animals: Young, healthy adult rodents (rats or mice are most common). A typical test uses 40-50 animals, divided into 4-5 dose groups and a control group [2].

- Dose Administration:

- Oral (Gavage): The substance is directly introduced into the stomach via a tube.

- Dermal: The substance is applied to a shaved area of skin under a porous dressing for a fixed period (usually 24 hours) to assess absorption toxicity.

- Dose Selection: Doses are selected based on prior range-finding studies so that the series spans 0% to 100% mortality, with partial mortality in the intermediate groups.

- Observation Period: Animals are clinically observed for signs of toxicity (e.g., lethargy, convulsions) and mortality for a minimum of 14 days [2].

- Pathology: Deceased animals, and survivors at termination, undergo necropsy to identify target organ damage.

- Data Analysis: The LD₅₀ value and its confidence interval are calculated using statistical probit analysis or logistic regression on the dose-mortality data.
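The statistical step in the last bullet can be sketched as a two-parameter logistic (logit) model of mortality against log10 dose, fitted by maximum likelihood with Newton-Raphson iterations. The 95% confidence interval below uses a delta-method approximation on the log scale. This is one common choice; Fieller's theorem is another. The function names, dose groups, and death counts are hypothetical, and a validated statistics package remains the appropriate tool for regulatory work.

```python
import math
from typing import List, Tuple

def _score_and_information(a: float, b: float, x: List[float],
                           n: List[int], dead: List[int]):
    """Gradient of the log-likelihood and Fisher information for the logit model."""
    g0 = g1 = i00 = i01 = i11 = 0.0
    for xi, ni, yi in zip(x, n, dead):
        p = 1.0 / (1.0 + math.exp(-(a + b * xi)))   # predicted mortality
        w = ni * p * (1.0 - p)                        # binomial weight
        g0 += yi - ni * p
        g1 += xi * (yi - ni * p)
        i00 += w
        i01 += w * xi
        i11 += w * xi * xi
    return (g0, g1), (i00, i01, i11)

def logit_ld50(doses: List[float], n: List[int], dead: List[int],
               max_iter: int = 100) -> Tuple[float, float, float]:
    """Return (LD50, lower 95% limit, upper 95% limit) in the units of `doses`."""
    logs = [math.log10(d) for d in doses]
    centre = sum(logs) / len(logs)
    x = [v - centre for v in logs]          # centring stabilizes the iterations
    a = b = 0.0
    for _ in range(max_iter):
        (g0, g1), (i00, i01, i11) = _score_and_information(a, b, x, n, dead)
        det = i00 * i11 - i01 * i01
        da = (i11 * g0 - i01 * g1) / det    # Newton step = inverse information x score
        db = (i00 * g1 - i01 * g0) / det
        a, b = a + da, b + db
        if abs(da) < 1e-10 and abs(db) < 1e-10:
            break
    # Covariance of (a, b) = inverse Fisher information at the final estimates
    _, (i00, i01, i11) = _score_and_information(a, b, x, n, dead)
    det = i00 * i11 - i01 * i01
    v_aa, v_ab, v_bb = i11 / det, -i01 / det, i00 / det
    m = -a / b                              # centred log10 LD50 (where a + b*x = 0)
    # Delta method: gradient of m with respect to (a, b) is (-1/b, a/b**2)
    var_m = v_aa / b**2 - 2 * a * v_ab / b**3 + a**2 * v_bb / b**4
    se = math.sqrt(var_m)
    log_ld50 = centre + m
    return 10 ** log_ld50, 10 ** (log_ld50 - 1.96 * se), 10 ** (log_ld50 + 1.96 * se)

# Hypothetical study: 5 dose groups of 10 rats each
ld50, lower, upper = logit_ld50([10, 20, 40, 80, 160], [10] * 5, [1, 3, 5, 7, 9])
print(f"LD50 = {ld50:.1f} mg/kg (95% CI {lower:.1f}-{upper:.1f})")
```

Because these hypothetical mortality counts are symmetric around the middle dose on the log scale, the estimate falls at 40 mg/kg. A probit model would replace the logistic function with the standard normal CDF and generally gives a very similar LD₅₀.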

Protocol for Inhalation LC₅₀ Testing

- Exposure Chamber: Animals are placed in a sealed, temperature-controlled chamber where the atmospheric concentration of the test chemical (gas, vapor, or aerosol) is carefully monitored and maintained [2].

- Exposure Regimen: A standard exposure period is 4 hours, though other durations may be used [2]. Animals are not provided food or water during exposure.

- Concentration Determination: Multiple concentration groups are tested (e.g., 3-5). The concentration is measured in parts per million (ppm) or milligrams per cubic meter (mg/m³) [2].

- Observation & Analysis: A 14-day post-exposure observation period follows. The LC₅₀ is calculated similarly to the LD₅₀, based on the concentration-mortality relationship [2].
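LC₅₀ values are reported in either ppm or mg/m³, so converting between the two before comparing against a scale is a routine step. For a gas or vapour, the usual conversion at 25 °C and 1 atm is mg/m³ = ppm × molecular weight / 24.45, where 24.45 L/mol is the ideal-gas molar volume under those conditions. The conversion does not apply to aerosols. The sketch also maps a 4-hour rat LC₅₀ in ppm onto the Hodge and Sterner inhalation ranges as they are commonly tabulated (≤10, 10-100, 100-1,000, 1,000-10,000, 10,000-100,000 ppm, and above). Treating each upper limit as inclusive is our assumption, and the function names are illustrative. The dichlorvos molecular weight (about 221 g/mol) is included only as an example.

```python
MOLAR_VOLUME_L = 24.45  # litres per mole of ideal gas at 25 °C and 1 atm

def ppm_to_mg_per_m3(ppm: float, molecular_weight: float) -> float:
    """Convert a gas/vapour concentration from ppm (v/v) to mg/m3 at 25 °C, 1 atm."""
    return ppm * molecular_weight / MOLAR_VOLUME_L

def mg_per_m3_to_ppm(mg_m3: float, molecular_weight: float) -> float:
    """Inverse conversion, mg/m3 to ppm (v/v) at 25 °C, 1 atm."""
    return mg_m3 * MOLAR_VOLUME_L / molecular_weight

# Hodge and Sterner inhalation ranges: (upper limit in ppm, rating, term)
HS_INHALATION = [
    (10, 1, "Extremely Toxic"),
    (100, 2, "Highly Toxic"),
    (1_000, 3, "Moderately Toxic"),
    (10_000, 4, "Slightly Toxic"),
    (100_000, 5, "Practically Non-toxic"),
]

def hodge_sterner_inhalation(lc50_ppm: float):
    """Return (rating, term) for a 4-hour rat LC50 expressed in ppm."""
    for upper, rating, term in HS_INHALATION:
        if lc50_ppm <= upper:
            return rating, term
    return 6, "Relatively Harmless"

dichlorvos_mw = 220.98  # g/mol, C4H7Cl2O4P
print(round(ppm_to_mg_per_m3(1.7, dichlorvos_mw), 1))  # 15.4
print(hodge_sterner_inhalation(1.7))                    # (1, 'Extremely Toxic')
```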

Diagram 1: Acute Toxicity Testing Workflow

Diagram 2: Classification Conflict from a Single LD₅₀ Value

The Scientist's Toolkit: Essential Research Reagents & Materials

Conducting standardized acute toxicity studies requires specific, high-quality materials to ensure reproducible and regulatory-acceptable results.

Table 3: Key Research Reagent Solutions for Acute Toxicity Testing

| Item | Function & Specification | Rationale |
|---|---|---|
| Defined Test Substance | High-purity (>95%) chemical of interest. Must be characterized for stability under dosing conditions [2]. | Using a pure substance isolates the toxic effect from impurities. Mixtures are rarely studied for definitive LD₅₀ [2]. |
| Vehicle/Formulation Agent | Sterile water, saline, corn oil, methylcellulose, or other non-toxic solvent appropriate for the test substance. | Ensures accurate dosing and delivery of the test substance via the chosen route (oral gavage, dermal application). |
| Clinical Observation Tools | Standardized scoring sheets for clinical signs (e.g., piloerection, ataxia, labored breathing). | Enables objective, consistent monitoring of animal health and identification of onset and progression of toxicity. |
| Analytical Grade Dosing Equipment | Calibrated syringes, gavage needles, precision micropipettes, porous gauze dressing for dermal tests. | Essential for the accurate and precise administration of the exact dose volumes required for statistical analysis. |
| Histopathology Reagents | Neutral buffered formalin (10%), hematoxylin and eosin (H&E) stain, paraffin embedding materials. | Used for tissue fixation, processing, and staining during necropsy to identify and document target organ pathology. |
| Reference Control Articles | Known toxicants (e.g., sodium cyanide) and vehicle-only controls. | Serves as a positive control to validate test system sensitivity and a negative control to confirm vehicle safety. |

The conflict between the Hodge and Sterner and Gosselin scales highlights a historical fragmentation in hazard communication. This comparison guide underscores that no toxicity classification is meaningful without explicit reference to the scale employed. For researchers and drug developers, this necessitates rigorous documentation practices. The field is evolving beyond this binary conflict. Modern research, such as the development of novel toxicity scoring systems that treat toxicity as a quasi-continuous variable by integrating multiple graded adverse events, seeks to utilize more information than a single lethal endpoint [19]. Furthermore, standardized grading systems like the Common Terminology Criteria for Adverse Events (CTCAE) provide a structured lexicon for severity in clinical trials [20]. The future of toxicity assessment lies in integrating robust, standardized acute data (like LD₅₀) with more nuanced, multi-parameter scoring systems to achieve a comprehensive and unambiguous safety profile for chemical entities.

The median lethal dose (LD50) is a foundational concept in toxicology, representing the dose of a substance required to kill 50% of a test population within a specified time [2] [1]. First developed by J.W. Trevan in 1927, this metric was established to provide a standardized, quantal measure for comparing the acute poisoning potency of diverse chemicals whose mechanisms of toxic effect differ widely [2] [9]. By using death as a common endpoint, researchers can rank substances based on their inherent hazard.

The critical translational step—extrapolating an animal LD50 value to a probable lethal dose for humans—is not straightforward. It requires systematic frameworks to interpret the numerical data. This is where established toxicity classification scales, primarily the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale, provide essential context [2] [3]. These scales categorize chemicals based on animal LD50 ranges and pair these categories with estimated human lethal doses. However, they differ significantly in their class terminology and numerical ratings, leading to potential confusion if the applied scale is not explicitly referenced [2]. Understanding the comparative structure, application, and limitations of these scales is vital for toxicologists, regulatory scientists, and drug development professionals who rely on historical and contemporary animal data to assess human health risks.

Comparative Analysis of Toxicity Classification Scales

The Hodge and Sterner and Gosselin scales serve the same primary function but are structured differently. Their direct comparison reveals how the same raw data can be categorized under divergent systems.

Table 1: Comparison of Hodge and Sterner vs. Gosselin, Smith and Hodge Toxicity Scales

| Hodge and Sterner Scale (Rat Oral LD50) | Gosselin, Smith and Hodge Scale | Probable Oral Lethal Dose for a 70-kg Human |
|---|---|---|
| 1: Extremely Toxic (≤1 mg/kg) | 6: Super Toxic (<5 mg/kg) [2] | A taste, less than 7 drops [2] |
| 2: Highly Toxic (1-50 mg/kg) | 5: Extremely Toxic (5-50 mg/kg) [2] | < 1 teaspoonful [21] |
| 3: Moderately Toxic (50-500 mg/kg) | 4: Very Toxic (50-500 mg/kg) [2] | < 1 ounce (30 mL) [2] [21] |
| 4: Slightly Toxic (500-5000 mg/kg) | 3: Moderately Toxic (0.5-5 g/kg) [2] | < 1 pint (~600 mL) [2] [21] |
| 5: Practically Non-toxic (5000-15,000 mg/kg) | 2: Slightly Toxic (5-15 g/kg) [21] | < 1 quart (~1 L) [2] |
| 6: Relatively Harmless (≥15,000 mg/kg) | 1: Practically Non-Toxic (>15 g/kg) [2] | > 1 quart [2] |

Key Difference: The most notable discrepancy is the inverse numbering system. A chemical with an oral LD50 of 2 mg/kg is rated "2" (Highly Toxic) on the Hodge and Sterner scale, whose Class 1 covers only values of 1 mg/kg or less, but "6" (Super Toxic) on the Gosselin scale [2] [3]. This underscores the critical importance of always citing the scale used when classifying a compound.

Core Experimental Protocol: Determining the LD50

The determination of an LD50 value follows a standardized, though resource-intensive, experimental protocol designed to generate a dose-response curve.

Standard OECD-Inspired Protocol

The traditional method involves the following key steps [2] [9]:

- Test Substance Preparation: The chemical is typically tested in a pure form, not as a mixture.

- Animal Model Selection: Healthy, young adult animals of a defined strain (most commonly rats or mice) are acclimatized. Species and strain must be documented.

- Dose Administration: Animals are divided into several groups (usually 4-6). Each group receives a specific single dose of the test substance via the chosen route (oral gavage, dermal application, intravenous injection, etc.). A control group receives the vehicle only.

- Observation Period: Following administration, animals are clinically observed for signs of toxicity for a period of up to 14 days, with mortality as the primary endpoint [2].

- Data Analysis: The mortality data (percentage of animals dead in each dose group) is plotted against the logarithm of the dose. The LD50 is estimated statistically from this sigmoidal curve as the dose corresponding to 50% mortality.

Modern Statistical Estimation Methods

Due to animal welfare concerns (the "3Rs" – Replacement, Reduction, Refinement) and statistical critique, the classic large-group design is often replaced or supplemented by refined methods [22]:

- Fixed Dose Procedure (FDP): Focuses on identifying doses causing evident toxicity rather than death, using fewer animals.

- Acute Toxic Class (ATC) Method: Uses stepwise testing in predefined toxicity classes with small group sizes.

- Up-and-Down Procedure (UDP): Doses one animal at a time; the next dose is increased or decreased based on the previous outcome, efficiently targeting the LD50 zone.

- Statistical Techniques: Maximum likelihood estimation of a parametric dose-response model (e.g., probit or logit analysis) is now considered best practice, as it makes efficient use of all data and provides confidence intervals for the LD50 estimate [22].
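The dose-sequencing rule of the Up-and-Down Procedure can be sketched as a walk along a fixed ladder of doses: one step down after a death, one step up after survival. The ladder below (1.75 to 2000 mg/kg, a progression factor of about 3.2, starting at 175 mg/kg) reflects our understanding of the OECD TG 425 defaults and should be checked against the guideline itself. The guideline's stopping rules and its maximum-likelihood LD50 calculation are deliberately omitted. The function name and the outcome sequence are hypothetical.

```python
from typing import List

# Assumed default dose ladder (mg/kg) and starting dose; verify against OECD TG 425
DOSE_LADDER = [1.75, 5.5, 17.5, 55.0, 175.0, 550.0, 2000.0]
START_DOSE = 175.0

def udp_dose_sequence(outcomes: List[bool]) -> List[float]:
    """Return the dose given to each animal, where outcomes[i] is True if animal i died.

    Each animal's outcome sets the next animal's dose: one step down after a death,
    one step up after survival, clamped at the ends of the ladder.
    """
    level = DOSE_LADDER.index(START_DOSE)
    doses = []
    for died in outcomes:
        doses.append(DOSE_LADDER[level])
        if died:
            level = max(level - 1, 0)
        else:
            level = min(level + 1, len(DOSE_LADDER) - 1)
    return doses

# Hypothetical outcomes for six animals dosed one at a time
print(udp_dose_sequence([False, True, False, True, True, False]))
# [175.0, 550.0, 175.0, 550.0, 175.0, 55.0]
```

Because doses oscillate around the region where outcomes switch between survival and death, most animals end up dosed near the LD50. The resulting dose-outcome pairs are then analysed with the maximum-likelihood approach described in the last bullet.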

Comparative Data: Species and Route Dependence

A core challenge in extrapolation is that a single chemical's toxicity varies dramatically based on the species tested and the route of exposure. This variability directly impacts how scales are applied and underscores the need for cautious human translation.

Table 2: Species & Route Variability: Example of Dichlorvos (Insecticide) [2]

| Test Subject | Route of Exposure | LD50 Value | Toxicity Classification (Gosselin Scale) |
|---|---|---|---|
| Rat | Oral | 56 mg/kg | Very Toxic (Class 4) |
| Rat | Dermal | 75 mg/kg | Very Toxic (Class 4)* |
| Rat | Intraperitoneal | 15 mg/kg | Extremely Toxic (Class 5)* |
| Rat | Inhalation (4-hr LC50) | 1.7 ppm | Not defined (oral scale only); Hodge and Sterner Class 1: Extremely Toxic |
| Rabbit | Oral | 10 mg/kg | Extremely Toxic (Class 5) |
| Dog | Oral | 100 mg/kg | Very Toxic (Class 4) |
| Pig | Oral | 157 mg/kg | Very Toxic (Class 4) |

*Oral ranges applied by analogy; the Gosselin scale defines oral criteria only.

This table illustrates that for dichlorvos: 1) inhalation exposure falls in the most severe Hodge and Sterner class (Extremely Toxic); 2) intraperitoneal injection is roughly four times more toxic than oral ingestion in rats (15 vs. 56 mg/kg); and 3) oral sensitivity varies about 15-fold among mammalian species, with rabbits the most sensitive and pigs the least (10 vs. 157 mg/kg) [2].

Table 3: Comparison of Acute Oral Toxicity Across Diverse Substances

| Substance | Approx. Oral LD50 (Rat) | Gosselin Class | Hodge & Sterner Class | Probable Human Lethal Dose (70 kg) |
|---|---|---|---|---|
| Botulinum Toxin | ~0.000001 mg/kg* | 6: Super Toxic | 1: Extremely Toxic | A taste [21] |
| Sodium Cyanide | ~5-10 mg/kg* | 5: Extremely Toxic | 2: Highly Toxic | <1 tsp [21] |
| Arsenic (inorganic) | 763 mg/kg [1] | 3: Moderately Toxic | 4: Slightly Toxic | <1 pint [21] |
| Aspirin | 1,600 mg/kg [1] | 3: Moderately Toxic | 4: Slightly Toxic | <1 pint [21] |
| Table Salt (Sodium Chloride) | 3,000 mg/kg [1] | 3: Moderately Toxic | 4: Slightly Toxic | <1 pint [21] |
| Ethanol | ~7,000 mg/kg [1] | 2: Slightly Toxic | 5: Practically Non-toxic | <1 quart [21] |
| Water | >90,000 mg/kg [1] | 1: Practically Non-Toxic | 6: Relatively Harmless | >1 quart |

*Approximate values for well-known toxins placed in context; exact published values may vary.

Translating Animal LD50 to Human Lethal Dose: Principles and Modern Research

The fundamental principle for using animal LD50 data is that if a chemical shows consistent high toxicity across several animal species, it should be considered highly toxic to humans [9]. The scales in Table 1 provide the initial, generalized translation. However, modern research aims to refine this process with quantitative models.

A pivotal 2021 study by Dearden et al. quantitatively examined the correlation between rodent LD50 and human lethal doses for 36 chemicals from the Multicentre Evaluation of In Vitro Cytotoxicity (MEIC) study [23]. The key findings were:

- Strong correlations exist, particularly for intraperitoneal (i.p.) administration data.

- The best predictive model used mouse i.p. LD50 values, achieving a high correlation (r² = 0.838) with human lethal dose.

- This demonstrates that historical rodent LD50 data, even "uncurated," can be leveraged in quantitative activity-activity relationship (QAAR) models to predict human toxicity with good accuracy, offering a valuable application for existing data [23].
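In its simplest form, a QAAR of the kind described is an ordinary least-squares regression of log human lethal dose on log rodent LD50, with r² measuring how much of the variance the rodent data explain. The sketch below shows only that calculation. The function names and paired values are hypothetical placeholders, not data from the MEIC chemicals, and the published model may differ in its transformations, units, and descriptors.

```python
import math
from typing import List, Tuple

def log_log_fit(rodent_ld50: List[float], human_ld: List[float]) -> Tuple[float, float, float]:
    """OLS fit of log10(human lethal dose) on log10(rodent LD50).

    Returns (slope, intercept, r_squared).
    """
    x = [math.log10(v) for v in rodent_ld50]
    y = [math.log10(v) for v in human_ld]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    syy = sum((yi - my) ** 2 for yi in y)
    slope = sxy / sxx
    intercept = my - slope * mx
    r_squared = sxy ** 2 / (sxx * syy)   # squared Pearson correlation for simple OLS
    return slope, intercept, r_squared

def predict_human_ld(rodent_ld50: float, slope: float, intercept: float) -> float:
    """Back-transform the fitted line to a predicted human lethal dose."""
    return 10 ** (intercept + slope * math.log10(rodent_ld50))

# Hypothetical paired values (mg/kg): mouse i.p. LD50 vs. human lethal dose
mouse_ip = [5.0, 40.0, 150.0, 600.0, 2500.0]
human = [1.0, 10.0, 20.0, 150.0, 400.0]
slope, intercept, r2 = log_log_fit(mouse_ip, human)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, r2={r2:.3f}")
print(f"predicted human lethal dose at LD50 = 100 mg/kg: "
      f"{predict_human_ld(100.0, slope, intercept):.1f} mg/kg")
```

Working on the log scale reflects the fact that lethal doses span several orders of magnitude. In practice, a fitted model would also be validated on chemicals held out of the fit before being used to predict new ones.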

This relationship and the role of modern analysis can be visualized as a translational workflow.

Limitations and Complementary Approaches

While foundational, the LD50 and its associated scales have significant limitations that researchers must acknowledge:

- Mechanistic Insight: LD50 reveals nothing about the mechanism of toxicity or sublethal effects (toxicodynamics) [22].

- Inter-Species Extrapolation: Differences in metabolism, physiology, and pharmacokinetics between rodents and humans can lead to inaccurate predictions [1].

- Variability: LD50 values can vary substantially between labs due to animal strain, age, sex, and environmental conditions [1] [22].

- Acute Focus: It is a measure of acute toxicity only and does not address chronic exposure, carcinogenicity, or reproductive toxicity [2].

Consequently, the field is moving toward Integrated Testing Strategies that combine:

- Tiered in vivo testing (using refined methods like FDP).

- In vitro assays (cell-based toxicity screens).

- "Omics" technologies (toxicogenomics, metabolomics) to identify mechanistic biomarkers.

- Computational toxicology (QSAR, read-across, and physiologically based pharmacokinetic (PBPK) modeling) to reduce animal use and improve human relevance [24] [23].

Table 4: Key Research Reagent Solutions and Resources

| Tool / Resource | Function & Relevance in LD50 & Human Dose Extrapolation |
|---|---|
| Standardized Animal Models (e.g., Sprague-Dawley Rat, CD-1 Mouse) | Provide consistent, reproducible biological systems for generating baseline acute toxicity data. Strain must be documented. |
| Reference Toxicants (e.g., Sodium Chloride, Potassium Cyanide) | Used as positive controls in assay validation to ensure test system responsiveness and inter-laboratory comparability. |
| OECD Test Guidelines (e.g., TG 420, 423, 425) | Provide internationally accepted protocols for conducting acute oral toxicity studies, ensuring regulatory acceptance of data. The classical LD50 guideline, TG 401, was deleted in 2002 but remains relevant for interpreting historical data. |
| Statistical Analysis Software (e.g., for Probit/Logit analysis) | Essential for calculating the LD50 and its confidence intervals, and for performing modern regression analyses as recommended by Finney [22]. |
| Toxicity Databases (e.g., EPA ACToR, NIH PubChem) | Repositories of historical animal toxicity data (LD50, LC50) crucial for read-across, model building, and initial hazard assessment [23]. |
| Computational Toxicology Platforms (e.g., OECD QSAR Toolbox) | Allow for the application of QAAR models, read-across, and chemical category formation to predict human toxicity from existing data, reducing animal testing [23]. |

The Hodge and Sterner and Gosselin toxicity scales provide the essential, albeit imperfect, shared foundation for converting quantitative animal LD50 data into qualitative and semi-quantitative estimates of probable human lethal dose. Their comparative analysis highlights that consistent scale application is critical for clear communication. While these traditional frameworks remain embedded in safety data sheets and regulatory classifications, modern toxicology is augmenting them with quantitative statistical models and integrated testing strategies. For the researcher, the optimal approach involves using the scales for initial hazard ranking and communication, while actively leveraging historical data through contemporary computational models and targeted, mechanistic studies to achieve a more precise, humane, and predictive assessment of human health risk.

From Data to Decision: Applying Toxicity Scales in Research and Regulatory Contexts

A foundational task in toxicology and drug development is the standardized assessment and communication of a substance's acute lethal potency. The median lethal dose (LD₅₀), defined as the amount of a material that causes death in 50% of a group of test animals, serves as the primary quantitative metric for this purpose [2]. However, the raw LD₅₀ value (e.g., 5 mg/kg) requires interpretation within a classification framework to convey its practical hazard level. This is where established toxicity scales, primarily the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale, become essential [3].

These scales provide a critical bridge between experimental data and hazard communication. They translate numerical LD₅₀ results into descriptive toxicity classes (e.g., "Highly Toxic," "Super Toxic"), which are used for safety labeling, transport regulations, and occupational exposure guidelines [2]. A persistent challenge for researchers is that these two common scales use different numerical rating systems and descriptive terminologies for similar LD₅₀ ranges. A compound classified as "Class 1" on one scale may be "Class 6" on the other, leading to potential confusion if the scale used is not explicitly referenced [2].

This guide provides a step-by-step methodology for classifying a novel compound using both scales. It is framed within the broader research context of comparing their applications, advantages, and limitations, thereby equipping scientists with the knowledge to apply and report toxicity data accurately and consistently.

Comparative Analysis of the Gosselin and Hodge-Sterner Scales

The Hodge and Sterner (H&S) and Gosselin, Smith and Hodge (GSH) scales are the two most prevalent systems for classifying acute oral toxicity [2]. They differ in how they number their classes, in the descriptive terms attached to each dose range, and in the upper limit of their most toxic class. Both use six classes, but H&S numbers them so that the class number rises as toxicity falls (1 = most toxic), whereas GSH numbers them in the opposite direction (6 = most toxic) [2] [9].

Table 1: Comparison of the Hodge & Sterner and Gosselin, Smith & Hodge Toxicity Scales for Oral LD₅₀ (Rat)

| Toxicity Class | Hodge & Sterner Scale | Gosselin, Smith & Hodge Scale | Probable Lethal Dose for 70 kg Human |
|---|---|---|---|
| Most Toxic | 1: Extremely Toxic (≤1 mg/kg) | 6: Super Toxic (<5 mg/kg) | A taste, less than 7 drops (~1 grain) [2] |
| | 2: Highly Toxic (1-50 mg/kg) | 5: Extremely Toxic (5-50 mg/kg) | 4 ml (1 teaspoon) [2] |
| | 3: Moderately Toxic (50-500 mg/kg) | 4: Very Toxic (50-500 mg/kg) | 30 ml (1 fluid ounce) [2] |
| | 4: Slightly Toxic (500-5000 mg/kg) | 3: Moderately Toxic (0.5-5 g/kg) | 600 ml (1 pint) [2] |
| | 5: Practically Non-toxic (5-15 g/kg) | 2: Slightly Toxic (5-15 g/kg) | 1 litre (1 quart) [2] |
| Least Toxic | 6: Relatively Harmless (>15 g/kg) | 1: Practically Non-toxic (>15 g/kg) | >1 litre [2] |

Key Distinctions and Research Implications:

- Inverted Classification Logic: The most critical difference is the inverted class numbering. The most toxic category is Class 1 on the H&S scale but Class 6 on the GSH scale [2]. This is a primary source of error in reporting.

- Descriptive Terminology: The descriptive terms for similar dose ranges differ. For example, an LD₅₀ of 100 mg/kg is "Moderately Toxic" (Class 3) on the H&S scale but "Very Toxic" (Class 4) on the GSH scale [2].

- Human Toxicity Correlation: The GSH scale is framed around the "Probable Oral Lethal Dose in Humans," so its dose ranges give a direct, albeit estimated, translation of animal data to human risk [2]. The H&S scale instead defines its classes by rat LD₅₀ ranges and lists a probable lethal dose for humans alongside each class.

- Scope of Application: The H&S scale provides specific thresholds for inhalation (LC₅₀) and dermal LD₅₀ routes alongside oral data, making it slightly more comprehensive for multi-route hazard assessment [2].

Step-by-Step Classification Protocol

This protocol outlines the process from experimental determination of an oral LD₅₀ in rats to final classification on both scales. The case study of the polyherbal formulation KWAPF01 (LD₅₀ = 2225 mg/kg) [25] will be used as a running example.

Phase 1: Experimental Determination of LD₅₀

The following acute oral toxicity study design is adapted from OECD guidelines and contemporary research [25].

Table 2: Key Experimental Parameters for an Acute Oral LD₅₀ Study

| Parameter | Specification | Rationale & Reference |
|---|---|---|
| Test System | Healthy young adult rats (e.g., Wistar or Sprague-Dawley). | Standardized species with well-characterized responses [2] [25]. |
| Group Size | Minimum of 5 animals per dose group and at least 3 dose groups (typically 3-5). | Provides robust data for statistical analysis of mortality dose-response [26]. |
| Dose Selection | Based on a pilot "range-finding" study. Doses are often spaced geometrically (logarithmically), though evenly spaced series are also used (e.g., 1000, 1500, 2000, 2500, 3000 mg/kg) [25]. | Ensures the main test includes doses that cause 0% to 100% mortality. |
| Administration | Single oral gavage (feeding tube). Volume adjusted by individual animal body weight. | Ensures precise delivery of the test substance [25]. |
| Observation Period | At least 14 days, with intensive monitoring for the first 4-6 hours and daily thereafter [2]. | Captures delayed onset of toxicity and mortality. |
| Endpoint Data | Mortality, time to death, and detailed clinical observations (e.g., piloerection, tremors, motility) [25]. | Informs on the nature and progression of toxicity. |
| LD₅₀ Calculation | Use of statistical methods such as the Probit Analysis (Miller-Tainter) or Karber's method [26]. | Provides a precise LD₅₀ value with confidence intervals. |
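The arithmetic Karber method named in the table is usually written as LD₅₀ = D_max - Σ(a × b) / n. Here D_max is the highest dose, a is the difference between successive doses, b is the mean number of deaths in those two successive groups, and n is the number of animals per group. This sketch assumes equal group sizes and that the highest dose killed every animal. The function name and mortality counts are hypothetical. Unlike probit analysis, this method yields no confidence interval.

```python
from typing import List

def karber_ld50(doses: List[float], deaths: List[int], group_size: int) -> float:
    """Arithmetic Karber estimate: LD50 = D_max - sum(a * b) / n.

    doses must be in ascending order and the highest dose must kill every animal.
    """
    if deaths[-1] != group_size:
        raise ValueError("highest dose group must show 100% mortality")
    total = 0.0
    for i in range(1, len(doses)):
        a = doses[i] - doses[i - 1]              # dose interval
        b = (deaths[i] + deaths[i - 1]) / 2      # mean deaths in the two groups
        total += a * b
    return doses[-1] - total / group_size

# Hypothetical mortality (5 rats per group) at the dose series listed in the table
print(karber_ld50([1000, 1500, 2000, 2500, 3000], [0, 1, 2, 4, 5], 5))  # 2050.0
```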

Diagram: Workflow for acute oral toxicity testing (sequential steps of an LD₅₀ study).

Phase 2: Classification Using Both Scales

Once the LD₅₀ value (e.g., 2225 mg/kg for KWAPF01) and its confidence interval are determined, follow this decision logic to classify it on both scales.

Diagram: Decision logic for dual-scale classification (matching an experimental LD₅₀ value to the correct class on each scale).

Applying the Protocol to KWAPF01:

- Obtain LD₅₀: The experimental result is 2225 mg/kg [25].

- Consult Hodge & Sterner Scale: 2225 mg/kg falls within the range of 500-5000 mg/kg. This corresponds to Class 4: Slightly Toxic.

- Consult Gosselin, Smith & Hodge Scale: 2225 mg/kg (or 2.225 g/kg) falls within the range of 0.5-5 g/kg. This corresponds to Class 3: Moderately Toxic.

- Report Dual Classification: For KWAPF01, researchers must report: H&S Class 4 (Slightly Toxic) and GSH Class 3 (Moderately Toxic). The LD₅₀ value must always be included (2225 mg/kg).
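The classification steps above can be sketched as two threshold lookups followed by the reporting format recommended later in this guide. Class boundaries follow Table 1. The scales do not say which class a value exactly on a boundary (for example 50 mg/kg) belongs to. Treating each upper limit as inclusive, and the Gosselin 5 mg/kg limit as exclusive per its "less than 5 mg/kg" wording, is our assumption. The function and constant names are illustrative.

```python
import math
from typing import List, Tuple

# Each band: (upper limit in mg/kg, is the upper limit inclusive?, class number, term)
Band = Tuple[float, bool, int, str]

HODGE_STERNER: List[Band] = [
    (1, True, 1, "Extremely Toxic"),
    (50, True, 2, "Highly Toxic"),
    (500, True, 3, "Moderately Toxic"),
    (5_000, True, 4, "Slightly Toxic"),
    (15_000, True, 5, "Practically Non-toxic"),
    (math.inf, True, 6, "Relatively Harmless"),
]

GOSSELIN_SMITH_HODGE: List[Band] = [
    (5, False, 6, "Super Toxic"),          # "less than 5 mg/kg"
    (50, True, 5, "Extremely Toxic"),
    (500, True, 4, "Very Toxic"),
    (5_000, True, 3, "Moderately Toxic"),
    (15_000, True, 2, "Slightly Toxic"),
    (math.inf, True, 1, "Practically Non-toxic"),
]

def classify(ld50_mg_kg: float, bands: List[Band]) -> Tuple[int, str]:
    """Return (class number, descriptive term) for an oral LD50 in mg/kg."""
    if not 0 < ld50_mg_kg < math.inf:
        raise ValueError("LD50 must be a positive, finite number of mg/kg")
    for upper, inclusive, cls, term in bands:
        if ld50_mg_kg < upper or (inclusive and ld50_mg_kg == upper):
            return cls, term
    raise ValueError("bands do not cover the given LD50")

def dual_report(ld50_mg_kg: float) -> str:
    """Format the raw LD50 followed by both classes, naming each scale."""
    hs_cls, hs_term = classify(ld50_mg_kg, HODGE_STERNER)
    gsh_cls, gsh_term = classify(ld50_mg_kg, GOSSELIN_SMITH_HODGE)
    return (f"LD₅₀ = {ld50_mg_kg:g} mg/kg "
            f"(H&S Class {hs_cls}: {hs_term}; GSH Class {gsh_cls}: {gsh_term})")

print(dual_report(2225))
# LD₅₀ = 2225 mg/kg (H&S Class 4: Slightly Toxic; GSH Class 3: Moderately Toxic)
```

Keeping the bands as data rather than nested `if` statements keeps both scales side by side, which makes the inverted numbering easy to audit.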

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Reagents and Materials for Acute Toxicity Studies

| Item | Typical Specification/Example | Primary Function in LD₅₀ Protocol |
|---|---|---|
| Test Animals | Specific-pathogen-free (SPF) rats (e.g., Wistar, Sprague-Dawley), 8-12 weeks old. | Standardized biological system for assessing systemic toxicity [25]. |
| Test Substance | Pure compound or formulated product, accurately weighed. | The agent whose acute toxicity is being characterized [2]. |
| Vehicle | Distilled water, saline, methylcellulose, or corn oil. | Medium for dissolving or suspending the test substance for administration [25]. |
| Oral Gavage Needle | Stainless steel, ball-tipped, of appropriate length and gauge for the animal size. | Ensures safe and accurate intragastric delivery of the test substance [26]. |
| Clinical Observation Tools | Standardized scoring sheets, stopwatch, thermometer, weighing scale. | For systematic recording of behavioral, neurological, and autonomic responses [25]. |
| Analytical Balance | Precision to 0.1 mg. | Accurate weighing of test substance and dose preparation [25]. |
| Statistical Software | Packages capable of Probit analysis (e.g., SPSS, GraphPad Prism). | For calculating the LD₅₀ value and its confidence intervals from mortality data [26]. |

Implications for Research and Development

The dual-classification exercise highlights critical considerations for scientific communication and drug development.

1. Unambiguous Reporting is Non-Negotiable: A toxicity classification is meaningless without stating which scale was used. The preferred practice is to report the raw LD₅₀ value followed by the class in parentheses, specifying the scale: e.g., "LD₅₀ = 2225 mg/kg (H&S Class 4: Slightly Toxic; GSH Class 3: Moderately Toxic)."

2. Informing the Therapeutic Index (TI): The LD₅₀ is a key component in preclinical safety assessment. It is used with the median effective dose (ED₅₀) to calculate the Therapeutic Index (TI = LD₅₀/ED₅₀) [15]. A higher TI indicates a wider safety margin. The toxicity class helps contextualize this margin; a drug with a low ED₅₀ but classified as "Slightly Toxic" (high LD₅₀) may have an excellent TI.

3. Guiding Safety Protocols: The classification directly influences hazard communication. A material classified as "Highly Toxic" or "Extremely Toxic" on the H&S scale (Classes 2 and 1) or "Super Toxic" on the GSH scale (Class 6) mandates stringent handling procedures, specific packaging for transport, and clear warning labels on Safety Data Sheets (SDS) [2].

4. Scale Selection in a Research Context: The choice of scale may depend on the field and regional regulations.

- The Hodge and Sterner Scale is often cited in occupational health and environmental toxicology due to its inclusion of inhalation and dermal routes [2].

- The Gosselin, Smith and Hodge Scale, with its direct human lethal dose estimates, is frequently encountered in forensic toxicology and pharmaceutical safety evaluation.
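The therapeutic index in point 2 is a simple ratio. This sketch assumes the LD₅₀ and ED₅₀ were measured in the same species, by the same route, and in the same units. The function name is illustrative, and the ED₅₀ value is hypothetical; it is paired with the KWAPF01 LD₅₀ purely for illustration.

```python
def therapeutic_index(ld50_mg_kg: float, ed50_mg_kg: float) -> float:
    """TI = LD50 / ED50; both doses must share units, species, and route."""
    if ld50_mg_kg <= 0 or ed50_mg_kg <= 0:
        raise ValueError("doses must be positive")
    return ld50_mg_kg / ed50_mg_kg

# Hypothetical ED50 of 25 mg/kg paired with an LD50 of 2225 mg/kg
print(therapeutic_index(2225, 25))  # 89.0
```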

In conclusion, a rigorous, stepwise approach to determining and classifying acute toxicity is fundamental to product safety evaluation. By applying both major classification scales and always reporting the raw LD₅₀ alongside each class, researchers make their findings transparent and interpretable across the global scientific and regulatory community.

The assessment of chemical toxicity is a cornerstone of product safety evaluation in pharmaceutical development, chemical manufacturing, and environmental health. A fundamental principle in this field is that the hazard posed by a substance is intrinsically linked to the route of exposure. A compound deemed safe for dermal application may prove highly toxic if inhaled or ingested, owing to differences in absorption, distribution, metabolism, and excretion (ADME) across these pathways [27]. The primary quantitative measures for acute toxicity are the Lethal Dose 50 (LD₅₀) for oral and dermal routes and the Lethal Concentration 50 (LC₅₀) for inhalation [2]. These values represent the dose or concentration estimated to cause death in 50% of a tested animal population and serve as critical benchmarks for classifying chemical hazards.

Historically, J.W. Trevan introduced the LD₅₀ concept in 1927 to standardize the comparison of poisoning potency across diverse substances [2] [3]. To interpret these numerical values, scientists developed classification scales. Among these, the Hodge and Sterner Scale and the Gosselin, Smith and Hodge Scale are the two most commonly referenced frameworks [2] [3]. However, they differ significantly in their class boundaries and descriptive terminology, which can cause confusion. For instance, an oral rat LD₅₀ of 2 mg/kg falls in the 1–50 mg/kg band and is classified as "2 - Highly Toxic" on the Hodge and Sterner Scale, but as "6 - Super Toxic" on the Gosselin et al. scale [2]. This guide compares these classification systems in the context of route-specific toxicity data, giving researchers a clear framework for interpreting experimental results.

Comparative Analysis of Major Toxicity Classification Scales

The Hodge and Sterner Scale

The Hodge and Sterner Scale is a multi-route toxicity classification system. It provides a unified framework for oral, inhalation, and dermal exposure data, assigning a "Toxicity Rating" from 1 to 6 [2]. A key feature is its inclusion of a probable lethal dose for humans, offering a translational perspective from animal data [2].

The Gosselin, Smith and Hodge Scale

In contrast, the Gosselin, Smith and Hodge (GSH) scale focuses primarily on the probable oral lethal dose for a human. It uses a reversed class numbering system (6 to 1) and descriptive terms like "Super Toxic" for the most hazardous category [2].

Quantitative Comparison of Scale Classifications

The following table juxtaposes the two scales, highlighting their differing thresholds and terminologies.

Table 1: Comparative Classification of Toxicity Scales for Oral Exposure

| Hodge & Sterner Rating | Hodge & Sterner Common Term | Oral LD₅₀ (Rat) mg/kg | Gosselin, Smith & Hodge Rating | Gosselin, Smith & Hodge Common Term | Probable Oral Lethal Dose for 70 kg Human |
|---|---|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | 6 | Super Toxic | A taste, less than 7 drops (< 5 mg/kg) |
| 2 | Highly Toxic | 1 – 50 | 5 | Extremely Toxic | 4 ml (1 tsp) |
| 3 | Moderately Toxic | 50 – 500 | 4 | Very Toxic | 30 ml (1 fl. oz.) |
| 4 | Slightly Toxic | 500 – 5000 | 3 | Moderately Toxic | 600 ml (1 pint) |
| 5 | Practically Non-toxic | 5000 – 15000 | 2 | Slightly Toxic | 1 litre (or 1 quart) |
| 6 | Relatively Harmless | ≥ 15000 | 1 | Practically Non-Toxic | > 1 litre |

Source: Adapted from CCOHS [2]

The divergence between the scales is critical. A chemical with an oral LD₅₀ of 3 mg/kg is "Highly Toxic (Rating 2)" per Hodge and Sterner but "Super Toxic (Rating 6)" per Gosselin et al., because it falls below the 5 mg/kg Super Toxic bound [2]. The same numeric rating therefore carries opposite meanings on the two scales, which is why the scale must always be cited when classifying a compound.
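The cut-off logic behind Table 1 can be sketched in a few lines of Python. This is a minimal illustration, not a validated classifier. The Hodge and Sterner oral bounds come from Table 1, but Table 1 gives only the "< 5 mg/kg" Super Toxic bound for the Gosselin et al. scale; the remaining Gosselin bounds (5, 50, 500, 5000 and 15,000 mg/kg) are the commonly published values and are an assumption to verify against [2]. The Gosselin scale is defined on probable human oral dose, so feeding it a rat LD₅₀ mirrors the examples in this article rather than a formal equivalence. Because the tables share end points, a value exactly on a cut-off is assigned here to the more toxic class, which is also an assumption. The function names are hypothetical.

```python
from bisect import bisect_left

# Upper bounds (mg/kg) for every class except the least toxic, most toxic first.
# Hodge & Sterner oral (rat) bounds as listed in Table 1.
HS_ORAL_BOUNDS = [1, 50, 500, 5000, 15000]
HS_CLASSES = [
    (1, "Extremely Toxic"), (2, "Highly Toxic"), (3, "Moderately Toxic"),
    (4, "Slightly Toxic"), (5, "Practically Non-toxic"), (6, "Relatively Harmless"),
]

# Gosselin, Smith & Hodge bounds (probable human oral lethal dose, mg/kg).
# ASSUMPTION: only "< 5 mg/kg" appears in Table 1; the others are the commonly
# published cut-offs and should be checked against the primary source.
GSH_BOUNDS = [5, 50, 500, 5000, 15000]
GSH_CLASSES = [
    (6, "Super Toxic"), (5, "Extremely Toxic"), (4, "Very Toxic"),
    (3, "Moderately Toxic"), (2, "Slightly Toxic"), (1, "Practically Non-Toxic"),
]


def classify(ld50_mg_per_kg, bounds, classes):
    """Return (rating, term) for one dose; hypothetical helper."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    # bisect_left puts a value equal to a bound in the lower, more toxic bin
    # (ASSUMPTION: the tables do not say which side a shared end point belongs to).
    return classes[bisect_left(bounds, ld50_mg_per_kg)]


def compare_scales(ld50_mg_per_kg):
    """Classify one oral LD50 on both scales side by side."""
    return {
        "Hodge & Sterner": classify(ld50_mg_per_kg, HS_ORAL_BOUNDS, HS_CLASSES),
        "Gosselin et al.": classify(ld50_mg_per_kg, GSH_BOUNDS, GSH_CLASSES),
    }


if __name__ == "__main__":
    for dose in (2, 3, 56, 500):
        print(dose, compare_scales(dose))
```

For 3 mg/kg the sketch returns Hodge and Sterner rating 2 ("Highly Toxic") and Gosselin et al. rating 6 ("Super Toxic"): the numbers run in opposite directions, so a bare "2" or "6" means nothing without the scale name.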

Route-Specific Toxicity: Data, Discrepancies, and Implications

A substance's toxicity can vary dramatically based on the exposure route due to differences in bioavailability, first-pass metabolism, and direct tissue damage [27]. The following table illustrates this using real experimental data.

Table 2: Route-Specific Acute Toxicity Data for Dichlorvos (Insecticide)

| Exposure Route | Test Species | LD₅₀ / LC₅₀ Value | Hodge & Sterner Classification | Gosselin et al. Classification (Oral) |
|---|---|---|---|---|
| Oral | Rat | 56 mg/kg | Moderately Toxic (3) | Very Toxic (4) |
| Dermal | Rat | 75 mg/kg | Moderately Toxic (3) | N/A |
| Inhalation (4-hr) | Rat | 1.7 ppm | Extremely Toxic (1) | N/A |
| Intraperitoneal | Rat | 15 mg/kg | Highly Toxic (2)† | N/A |

Source: Adapted from CCOHS [2]

† The Hodge and Sterner Scale defines criteria only for oral, dermal, and inhalation exposure; this rating applies the oral cut-offs by analogy.

The data reveal that dichlorvos is most hazardous via inhalation, where it is classified as "Extremely Toxic" [2]. This has profound implications for occupational safety, where inhalation is a primary exposure route [2]. A comparative analysis of 335 substances found low concordance between oral and dermal hazard classifications; using oral data to predict dermal hazard would misclassify the majority of substances, often over-classifying the risk [28].
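Route-specific classification works the same way, with a separate set of cut-offs per route. The sketch below reproduces the Hodge and Sterner ratings in Table 2 for dichlorvos and picks the most hazardous route. ASSUMPTIONS: the dermal (rabbit, mg/kg) and inhalation (rat, 4-hour, ppm) cut-offs are not given in this section; they are the values commonly reproduced from the Hodge and Sterner scale and should be verified against [2]. Table 2's dermal value comes from rats while the scale's dermal criteria assume rabbits, and the scale has no intraperitoneal criteria, so that route is omitted. Names are hypothetical.

```python
from bisect import bisect_left

# Hodge & Sterner upper bounds per route, most toxic class first.
# ASSUMPTION: dermal and inhalation bounds are the commonly reproduced values.
HS_BOUNDS = {
    "oral": [1, 50, 500, 5000, 15000],             # rat, mg/kg
    "dermal": [5, 43, 340, 2810, 22590],           # rabbit, mg/kg
    "inhalation": [10, 100, 1000, 10000, 100000],  # rat, 4-h LC50, ppm
}
HS_TERMS = ["Extremely Toxic", "Highly Toxic", "Moderately Toxic",
            "Slightly Toxic", "Practically Non-toxic", "Relatively Harmless"]


def hs_rating(route, value):
    """Return (rating, term) for one route; raises KeyError for unsupported routes."""
    index = bisect_left(HS_BOUNDS[route], value)  # ties go to the more toxic class
    return index + 1, HS_TERMS[index]


def most_hazardous_route(data):
    """Classify each route and return the one with the lowest (most toxic) rating."""
    ratings = {route: hs_rating(route, value) for route, value in data.items()}
    worst = min(ratings, key=lambda r: ratings[r][0])
    return worst, ratings


# Dichlorvos values from Table 2 (intraperitoneal omitted: no H&S criteria).
dichlorvos = {"oral": 56, "dermal": 75, "inhalation": 1.7}
route, ratings = most_hazardous_route(dichlorvos)
print(route, ratings)
```

The ranking matches Table 2's conclusion that inhalation is the critical route for dichlorvos. Comparing integer ratings across routes is itself a simplification, since each route has its own units and cut-offs.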

The complexity of multi-route exposure is central to environmental risk assessment. A study on metals in soil incorporated oral, inhalation, and dermal bioaccessibility and found risk contributions varied significantly by pathway. For non-carcinogenic risk, the oral and dermal pathways dominated, while inhalation contribution was low [27].
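One common way to express pathway contributions to non-carcinogenic risk is the hazard quotient (HQ = dose / reference dose), summed across pathways into a hazard index (HI); scaling each dose by its bioaccessible fraction gives the route-specific picture described above. The sketch assumes that formulation. It is not necessarily the method used in [27], every number in it is hypothetical, and expressing inhalation as a dose with a reference dose (rather than a concentration with a reference concentration) is a simplification.

```python
from dataclasses import dataclass


@dataclass
class Pathway:
    name: str
    dose: float           # average daily dose from total concentration, mg/kg-day
    bioaccessible: float  # fraction (0-1) soluble in a simulated body fluid
    rfd: float            # reference dose for this route, mg/kg-day


def hazard_index(pathways):
    """Return per-pathway HQs, the HI, and each pathway's percentage of the HI."""
    hq = {}
    for p in pathways:
        if not 0.0 <= p.bioaccessible <= 1.0:
            raise ValueError(f"{p.name}: bioaccessible fraction must be in [0, 1]")
        # Only the bioaccessible fraction of the dose is counted as absorbable.
        hq[p.name] = p.dose * p.bioaccessible / p.rfd
    hi = sum(hq.values())
    if hi == 0:
        raise ValueError("hazard index is zero; contributions are undefined")
    share = {name: 100.0 * value / hi for name, value in hq.items()}
    return hq, hi, share


# Hypothetical inputs for one metal; not data from the cited study.
example = [
    Pathway("oral", dose=1e-3, bioaccessible=0.4, rfd=3e-4),
    Pathway("dermal", dose=2e-4, bioaccessible=0.5, rfd=6e-5),
    Pathway("inhalation", dose=1e-6, bioaccessible=1.0, rfd=1e-4),
]
hq, hi, share = hazard_index(example)
print({k: round(v, 1) for k, v in share.items()}, round(hi, 2))
```

An HI above 1 is conventionally read as a potential concern. In this hypothetical case the oral and dermal pathways dominate while inhalation contributes well under 1% of the HI.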

Diagram: Route-Specific Toxicity Assessment Pathways

Experimental Protocols for Generating Route-Specific Data

Standard In Vivo Acute Toxicity Testing

Traditional protocols for determining LD₅₀/LC₅₀ involve administering the pure chemical to groups of laboratory animals (typically rats or mice) via the route of interest [2].

- Oral LD₅₀ Test: The chemical is administered via gavage or in feed. Animals are observed for 14 days for mortality and clinical signs. The LD₅₀ is calculated statistically [2].

- Dermal LD₅₀ Test: The chemical is applied to the shaved skin of animals (often rabbits) under an occlusive dressing for 24 hours to ensure absorption, then observed for 14 days [2].

- Inhalation LC₅₀ Test: Animals are placed in an inhalation chamber and exposed to a known concentration of a chemical gas, vapor, or aerosol for a set period (traditionally 4 hours). Mortality is observed for up to 14 days [2].

The result is expressed with the route and species (e.g., LD₅₀ (oral, rat) = 5 mg/kg) [2].
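The protocols above say only that the LD₅₀ is "calculated statistically". One classical option is the Spearman–Kärber estimator, which averages the log-dose midpoints of adjacent dose groups, weighted by the rise in mortality between them. The sketch illustrates that estimator only; it is not the procedure prescribed by any particular guideline (current OECD acute oral guidelines such as TG 420, 423 and 425 use different designs). It assumes strictly ascending doses, non-decreasing mortality, and 0% and 100% mortality at the lowest and highest doses. The study data are hypothetical.

```python
import math


def spearman_karber_ld50(doses, mortality):
    """Estimate LD50 (same units as doses) with the untrimmed Spearman-Karber method.

    doses: strictly ascending positive doses, e.g. mg/kg.
    mortality: fraction of animals dying at each dose (0-1), non-decreasing,
    starting at 0 and ending at 1.
    """
    if len(doses) != len(mortality) or len(doses) < 2:
        raise ValueError("need matching dose and mortality lists of length >= 2")
    if doses[0] <= 0 or any(b <= a for a, b in zip(doses, doses[1:])):
        raise ValueError("doses must be positive and strictly ascending")
    if mortality[0] != 0 or mortality[-1] != 1:
        raise ValueError("mortality must run from 0 to 1")
    if any(b < a for a, b in zip(mortality, mortality[1:])):
        raise ValueError("mortality must be non-decreasing")
    x = [math.log10(d) for d in doses]
    # Weight each log-dose interval midpoint by the mortality increase across it.
    log_ld50 = sum(
        (mortality[i + 1] - mortality[i]) * (x[i] + x[i + 1]) / 2
        for i in range(len(x) - 1)
    )
    return 10 ** log_ld50


# Hypothetical oral study: 4 rats per group, deaths 0, 1, 2, 3, 4.
doses = [10, 20, 40, 80, 160]  # mg/kg
mortality = [d / 4 for d in (0, 1, 2, 3, 4)]
ld50 = spearman_karber_ld50(doses, mortality)
print(f"LD50 (oral, rat) = {ld50:.0f} mg/kg")
```

The printed line follows the route-and-species convention described above. The untrimmed method needs the 0% and 100% end points; data without them call for a trimmed variant or a model-based (probit or logit) fit.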

In Silico Toxicity Estimation Protocol

Computational methods like the EPA's Toxicity Estimation Software Tool (TEST) use QSAR models to predict endpoints like oral rat LD₅₀ [29].

Protocol Workflow:

- Input: Define the chemical structure via SMILES string, CAS number, or a drawing tool [29].

- Model Selection: Choose a prediction methodology (e.g., Hierarchical, Single Model, Group Contribution, Consensus) [29].

- Calculation: The software estimates the LD₅₀ value based on structural similarity and fragment contributions [29].

- Classification: The predicted LD₅₀ is mapped to a toxicity scale (e.g., Hodge and Sterner) for interpretation [29].

This protocol was applied to phytoconstituents of Euphorbia hirta, with predicted LD₅₀ values ranging from 153.2 mg/kg (labeled "Highly Toxic" in that study) to >23,000 mg/kg (labeled "Practically Non-toxic") [29].
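The classification step of this workflow can be sketched independently of the software. The code below does not call TEST. It assumes that several model predictions are available on a -log10(mol/kg) scale, forms a consensus as their arithmetic mean (an assumption about how a consensus might be built; the TEST documentation describes its actual procedure), converts the result to mg/kg using the molecular weight, and maps it onto the Hodge and Sterner oral cut-offs from Table 1. The prediction values and molecular weight are hypothetical.

```python
from bisect import bisect_left
from statistics import mean

HS_ORAL_BOUNDS = [1, 50, 500, 5000, 15000]  # mg/kg, from Table 1
HS_TERMS = ["Extremely Toxic", "Highly Toxic", "Moderately Toxic",
            "Slightly Toxic", "Practically Non-toxic", "Relatively Harmless"]


def neglog_molkg_to_mgkg(value, mw_g_per_mol):
    """Convert -log10(LD50 in mol/kg) to mg/kg: mol/kg x g/mol = g/kg, x1000 = mg/kg."""
    return 10 ** (-value) * mw_g_per_mol * 1000.0


def consensus_classification(predictions, mw_g_per_mol):
    """Average log-scale predictions, convert to mg/kg and assign an H&S rating."""
    if not predictions:
        raise ValueError("at least one prediction is required")
    ld50 = neglog_molkg_to_mgkg(mean(predictions), mw_g_per_mol)
    index = bisect_left(HS_ORAL_BOUNDS, ld50)  # ties go to the more toxic class
    return ld50, index + 1, HS_TERMS[index]


# Hypothetical outputs of three QSAR models for a compound of MW 200 g/mol.
ld50, rating, term = consensus_classification([2.0, 2.2, 2.4], 200.0)
print(f"consensus LD50 = {ld50:.0f} mg/kg -> Hodge & Sterner {rating} ({term})")
```

Averaging on the log scale is equivalent to taking a geometric mean of the mg/kg predictions, which keeps a single high prediction from dominating the consensus.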

Diagram: Experimental Workflow for Acute Toxicity Data Generation

Modern Innovations: AI and Integrated Approaches to Toxicity Prediction

A significant challenge is the poor translatability of preclinical toxicity findings to humans [30]. Modern approaches address this by incorporating biological complexity and multi-route data.

- Genotype-Phenotype Difference (GPD) Models: Advanced machine learning frameworks now integrate biological differences between test models and humans. These models analyze disparities in gene essentiality, tissue expression, and network connectivity of drug targets. A GPD-based Random Forest model significantly outperformed chemical-only models (AUROC 0.75 vs. 0.50) in predicting human-specific drug failures, especially for neurotoxicity and cardiotoxicity [30].

- Adverse Outcome Pathways (AOPs): The AOP framework provides a mechanistic bridge between molecular initiating events (e.g., a chemical binding to a receptor) and adverse organism-level outcomes. This supports the integration of data from various sources and exposure routes [31].

- Multi-Pathway Exposure Assessment: For environmental risk, studies now integrate bioaccessibility (the fraction of a contaminant that is soluble and available for absorption) for oral, dermal, and inhalation routes. This yields a more accurate, route-specific risk characterization than using total contaminant concentration alone [27].
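To read the AUROC figures in the first bullet: AUROC is the probability that a randomly chosen positive case (here, a drug that failed for human-specific toxicity) receives a higher model score than a randomly chosen negative case, so 0.50 corresponds to chance-level ranking. The pairwise sketch below computes it straight from that definition, counting ties as half. The scores are hypothetical, and the code is not the cited GPD model.

```python
def auroc(scores_pos, scores_neg):
    """Probability that a positive outranks a negative; ties count as 0.5."""
    if not scores_pos or not scores_neg:
        raise ValueError("need at least one positive and one negative score")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical risk scores: failed drugs (positives) vs. approved drugs (negatives).
print(auroc([0.8, 0.4], [0.6, 0.2]))  # 3 of 4 pairs ranked correctly
print(auroc([0.3, 0.3], [0.3]))       # uninformative scores
```

The double loop is O(n·m) and fine for illustration; rank-based formulas equivalent to the Mann-Whitney U statistic scale better to large datasets.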

Diagram: AI-Driven Framework for Predictive Toxicology

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Route-Specific Toxicity Research

| Item | Function in Toxicity Assessment | Primary Application Route |
|---|---|---|
| Standard Test Animal Models (e.g., Sprague-Dawley Rats, Swiss-Webster Mice, New Zealand White Rabbits) | Provide in vivo biological systems for determining lethal doses (LD₅₀) and observing clinical signs of toxicity. Strain, sex, and age are controlled variables [2]. | Oral, Dermal, Inhalation |
| Gavage Needles & Syringes | Enable precise oral administration of liquid test substances directly into the stomach of rodents for oral LD₅₀ studies [2]. | Oral |
| Occlusive Dressing Materials (e.g., semi-occlusive bandages) | Used in dermal toxicity tests to hold the test substance in contact with shaved skin and prevent ingestion, ensuring accurate assessment of dermal absorption [2]. | Dermal |
| Whole-Body Inhalation Exposure Chambers | Controlled environments for exposing animals to precise concentrations of gaseous, vapor, or aerosolized test substances for inhalation LC₅₀ studies [2]. | Inhalation |
| In Vitro Bioaccessibility Fluids (e.g., Simulated Gastric, Lung, or Sweat Fluids) | Chemically simulate human physiological conditions to measure the fraction of a contaminant (e.g., from soil) that is soluble and available for absorption by the body [27]. | Oral, Inhalation, Dermal |
| Toxicity Estimation Software Tool (TEST) | EPA software that uses Quantitative Structure-Activity Relationship (QSAR) methodologies to predict toxicity endpoints (e.g., oral LD₅₀) from chemical structure, reducing animal testing [29]. | In silico Screening |
| Common Terminology Criteria for Adverse Events (CTCAE) | A standardized lexicon and grading scale (Grades 1-5) for reporting the severity of adverse drug reactions in humans, crucial for translating preclinical findings to clinical risk [32]. | Clinical Translation |

Conceptual Foundations and Historical Context

The median lethal dose (LD₅₀), defined as the amount of a substance required to kill 50% of a test population under standardized conditions, serves as a cornerstone for evaluating acute toxicity [2] [3]. First developed by J.W. Trevan in 1927, this metric provides a consistent basis for comparing the toxic potency of diverse chemicals by using death as a universal endpoint [2] [9]. Lethal Concentration 50 (LC₅₀) is the analogous measure for airborne or aqueous substances, typically based on a 4-hour exposure period [2]. A fundamental principle is that a smaller LD₅₀ value indicates higher toxicity, while a larger value indicates lower toxicity [2] [3] [33].
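LC₅₀ values for gases and vapors are reported either in ppm or in mg/m³, and converting between the two is a frequent source of error. A minimal sketch, assuming ideal-gas behavior at 25 °C and 1 atm (molar volume 24.45 L/mol); the conversion does not apply to aerosols or dusts, whose concentrations are inherently mass-based. The example uses the dichlorvos inhalation LC₅₀ of 1.7 ppm from Table 2 and a molecular weight of about 220.98 g/mol computed from its formula, C4H7Cl2O4P.

```python
MOLAR_VOLUME_L = 24.45  # L/mol for an ideal gas at 25 °C and 1 atm


def ppm_to_mg_per_m3(ppm, mw_g_per_mol):
    """Convert a gas/vapor concentration from ppm (v/v) to mg/m3."""
    return ppm * mw_g_per_mol / MOLAR_VOLUME_L


def mg_per_m3_to_ppm(mg_per_m3, mw_g_per_mol):
    """Inverse conversion, mg/m3 to ppm (v/v)."""
    return mg_per_m3 * MOLAR_VOLUME_L / mw_g_per_mol


# Dichlorvos, C4H7Cl2O4P, MW ~220.98 g/mol; 4-h rat LC50 of 1.7 ppm (Table 2).
lc50_mg_m3 = ppm_to_mg_per_m3(1.7, 220.98)
print(f"{lc50_mg_m3:.1f} mg/m3")
```

The result, roughly 15.4 mg/m³ (0.0154 mg/L), is the same exposure expressed on a mass basis; classification schemes that specify ppm, such as the Hodge and Sterner inhalation criteria, should be applied to the ppm value.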

Raw LD₅₀/LC₅₀ data alone, however, are not directly actionable for hazard communication or regulation. To translate these quantitative values into practical safety information, toxicity classification scales were developed. The most widely used systems are the Hodge and Sterner Scale (1949) and the Gosselin, Smith and Hodge Scale [2] [3] [34]. These scales differ fundamentally in their structure and application. The Hodge and Sterner Scale assigns chemicals to one of six classes (1=Extremely Toxic to 6=Relatively Harmless) based on defined thresholds for oral, dermal, and inhalation exposure routes [2]. Conversely, the Gosselin Scale focuses primarily on probable oral lethal dose in humans, using a reversed numbering system where Class 6 denotes "Super Toxic" substances [2]. The selection of scale directly impacts the hazard signal communicated to users on labels and Safety Data Sheets (SDSs).

Comparative Analysis of Toxicity Classification Scales

The following table provides a direct comparison of the two primary classification systems, highlighting their differing structures and the resultant classifications for the same chemical.

Table 1: Comparison of Hodge & Sterner and Gosselin Toxicity Classification Scales

| Scale Feature | Hodge & Sterner Scale [2] | Gosselin, Smith & Hodge Scale [2] |
|---|---|---|
| Primary Focus | Classification based on experimental animal data (rat, rabbit) for three exposure routes. | Estimation of probable oral lethal dose for a 70 kg human. |
| Toxicity Classes | 1 to 6 (1 = Extremely Toxic). | 1 to 6 (6 = Super Toxic). |
| Classification Basis | Rigid LD₅₀/LC₅₀ ranges for oral (rat), dermal (rabbit), and inhalation (rat) routes. | Broad estimated dose ranges for humans (e.g., <5 mg/kg for Class 6). |
| Example: Oral LD₅₀ of 2 mg/kg (Rat) | Class 2: "Highly Toxic". | Class 6: "Super Toxic" (probable human oral lethal dose < 5 mg/kg). |
| Example: Oral LD₅₀ of 500 mg/kg (Rat) | Class 3: "Moderately Toxic". | Class 4: "Very Toxic". |
| Key Output for Labeling | Standardized hazard class (e.g., "Highly Toxic") based on animal test. | Direct translation to a plausible human lethal dose quantity. |
| Regulatory Context | Often used in occupational and industrial chemical hazard communication systems. | Frequently cited in clinical, pharmaceutical, and forensic toxicology contexts. |