Geometric Mean vs. Median in Ecotoxicity: The Scientific Rationale for Robust Data Aggregation in Research and Risk Assessment

Abstract

This article provides a comprehensive guide for researchers and toxicology professionals on selecting appropriate statistical methods for aggregating ecotoxicity data, a critical step in chemical hazard assessment and life cycle impact analysis. We explore the foundational principles, methodological applications, troubleshooting strategies, and comparative validation of the geometric mean and the median. The analysis reveals a strong scientific consensus favoring the geometric mean, which is less influenced by outliers than the arithmetic mean and suits the skewed, approximately log-normal distributions common in ecotoxicity datasets. In contrast, the median's limitations, particularly its disregard for distribution tails, make it less reliable for building sensitive models like Species Sensitivity Distributions (SSDs). The article synthesizes current best practices from major databases and regulatory frameworks, offering clear guidance to enhance the reproducibility, accuracy, and regulatory acceptance of ecotoxicity characterizations in biomedical and environmental research.

Why Aggregation Matters: Core Concepts of Geometric Mean and Median in Ecotoxicology

Ecotoxicology faces a fundamental challenge: a single chemical can yield a wide range of toxicity values (e.g., LC50, NOEC) for the same species, with intertest variability approximating a factor of 3 [1]. This variability stems from differences in experimental conditions, analytical methods, and organism life stages [2] [3]. For regulatory decisions, risk assessments, and life cycle impact analyses, researchers must aggregate multiple data points into a single, robust value [4] [5]. The choice of aggregation method—geometric mean or median—is not merely statistical but profoundly influences derived environmental quality standards, predicted no-effect concentrations (PNECs), and characterization factors in models like USEtox [4] [6].

This comparison guide evaluates the performance of the geometric mean and median in managing ecotoxicity data variability. It provides experimental evidence and methodological protocols to inform researchers, scientists, and drug development professionals engaged in ecological hazard assessment and chemical safety evaluation.

Understanding variability requires examining its key sources. Experimental conditions significantly influence measured outcomes, while the inherent dispersion in aggregated datasets defines the challenge for statistical summarization.

Key Experimental Factors Influencing Variability

Meta-analyses reveal that specific test conditions can systematically affect reported concentrations. For persistent chemicals like perfluorooctanoic acid (PFOA) and perfluorooctane sulfonate (PFOS), the agreement between nominal (intended) and measured concentrations is generally high but can diverge under certain conditions [7].

Table 1: Impact of Experimental Conditions on PFOA/PFOS Concentration Agreement [7]

| Experimental Condition | Impact on Nominal vs. Measured Agreement | Key Evidence |

|---|---|---|

| Test Vessel Material | Minimal systematic influence observed. | Glass and plastic showed similar high correlations (>0.98 for PFOA freshwater). |

| Presence of Substrate | Can increase discrepancy. | PFOS freshwater tests with substrate showed greater deviation from the 1:1 line. |

| Water Type (Salt vs. Fresh) | Higher discrepancy in saltwater tests. | Saltwater tests showed lower correlation coefficients (e.g., ~0.84 for PFOS). |

| Feeding Regime & Solvent Use | Little to no consistent influence found. | No strong association with concentration discrepancies in the meta-analysis. |

Furthermore, basic test design choices like sample size directly impact the precision and reliability of point estimates like the LC50. Research demonstrates that the common default of n=7 organisms per concentration group may be insufficient, particularly for tests with shallow dose-response slopes or LC50 values near the concentration range edges [8]. Larger sample sizes (e.g., n=10-23/group) reduce error and yield more robust estimates for critical regulatory studies [8].

Quantifying Intertest Variability

A foundational analysis of acute aquatic ecotoxicity data concluded that the standard deviation of intertest variability is approximately a factor of 3 [1]. This means that for the same chemical-species combination, one test result could reasonably be three times higher or lower than another due to unexplained experimental noise. This high degree of variability creates significant uncertainty when building Species Sensitivity Distributions (SSDs) or deriving PNECs, underscoring the critical need for a justified and transparent aggregation method [1].
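To make the "factor of 3" concrete, the following minimal sketch treats it as a geometric (multiplicative) standard deviation of a log-normal distribution. That reading, the central LC50 of 10 mg/L, and the function name are illustrative assumptions, not values taken from [1].

```python
from statistics import NormalDist

GSD = 3.0            # assumption: "factor of 3" read as a geometric standard deviation
CENTRAL_LC50 = 10.0  # hypothetical central LC50 in mg/L


def multiplicative_interval(center, gsd, coverage):
    """Return (lower, upper, factor) for a log-normal spread around center."""
    # z-score that leaves (1 - coverage) / 2 in each tail of a normal distribution
    z = NormalDist().inv_cdf(0.5 + coverage / 2.0)
    factor = gsd ** z
    return center / factor, center * factor, factor


low68, high68, factor68 = multiplicative_interval(CENTRAL_LC50, GSD, 0.6827)
low95, high95, factor95 = multiplicative_interval(CENTRAL_LC50, GSD, 0.95)

print(f"~68% of repeat tests: {low68:.2f} to {high68:.2f} mg/L (factor {factor68:.2f})")
print(f"~95% of repeat tests: {low95:.2f} to {high95:.2f} mg/L (factor {factor95:.2f})")
```

Under this assumption, about two thirds of repeat tests would fall within a factor of 3 of the central value, while a 95% range would span roughly a factor of 8.6 in each direction.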

Comparison of Aggregation Methods: Geometric Mean vs. Median

The European Union's REACH guidance recommends aggregating multiple toxicity records for a single chemical-species combination using the geometric mean [1]. However, the median is also a common robust measure of central tendency. Their performance differs in key aspects relevant to ecotoxicology.

Table 2: Performance Comparison of Geometric Mean vs. Median for Ecotoxicity Data Aggregation

| Characteristic | Geometric Mean | Median | Implication for Ecotoxicology |

|---|---|---|---|

| Mathematical Definition | The nth root of the product of n numbers. | The middle value in an ordered list. | Geometric mean accounts for multiplicative relationships common in biological systems. |

| Sensitivity to Outliers | Less sensitive than arithmetic mean, but influenced by extreme values. | Highly robust; insensitive to extreme high or low values. | Median may ignore valid but extreme high-toxicity or low-toxicity values, potentially under-representing risk [5]. |

| Use in Small Datasets | Can be calculated for any dataset with positive values. | Reliable even for very small datasets. | For very limited data (n<5), the median's stability is an advantage, but it may not represent the central tendency of the sample well [5]. |

| Theoretical Justification | Justifiable for log-normally distributed data (common in toxicity values). | Makes no distributional assumptions. | Species sensitivity and within-species toxicity data often follow log-normal distributions, favoring the geometric mean [5]. |

| Regulatory Adoption | Recommended by EU REACH guidance [1]; used in Standartox database [5]. | Less commonly prescribed in formal guidance. | The geometric mean facilitates consistency and reproducibility across regulatory assessments. |

| Data Utilization | Uses the magnitude of all data points. | Ignores the magnitude of all data except the central one(s). | The geometric mean maximizes the use of available experimental information, which is critical given testing costs and ethical considerations [5]. |

The geometric mean is generally preferred in contemporary frameworks. Tools like the Standartox database explicitly use the geometric mean for aggregation, arguing it is less influenced by outliers than the arithmetic mean and preferable to the median because the median "completely ignores the tails of the data distribution, making it unreliable for small data sets" [5]. This approach maximizes the value of existing data within a reproducible workflow.
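The contrast in Table 2 can be illustrated with a small numerical sketch. The LC50 values below are hypothetical and chosen only to include one high result; they are not drawn from any cited dataset.

```python
from statistics import geometric_mean, mean, median

# Hypothetical LC50 values (mg/L) for one chemical-species pair, one high result
lc50_values = [1.2, 1.5, 2.0, 2.4, 48.0]
# Same dataset with the high result made ten times larger
lc50_more_extreme = [1.2, 1.5, 2.0, 2.4, 480.0]


def summarize(values):
    """Return the three central-tendency measures compared in the text."""
    return {
        "arithmetic_mean": mean(values),
        "geometric_mean": geometric_mean(values),
        "median": median(values),
    }


base = summarize(lc50_values)
extreme = summarize(lc50_more_extreme)

for label, stats in (("base", base), ("more extreme", extreme)):
    print(label, {name: round(value, 2) for name, value in stats.items()})
```

In this sketch the median does not move when the highest value changes, the arithmetic mean moves the most, and the geometric mean sits in between: it registers the magnitude of the extreme result without being dominated by it.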

Protocols for Data Generation, Curation, and Aggregation

Experimental Protocol for Acute Fish Toxicity Testing (OECD TG 203)

A core source of variability is the test protocol itself. Adherence to standardized methods is crucial.

- Test Organisms: Use healthy, juvenile fish of a standard species (e.g., Oncorhynchus mykiss, Danio rerio). Acclimate to test conditions for at least 7 days [8].

- Exposure System: Semi-static or flow-through systems in glass or plastic vessels. Confirm chemical-specific adsorption properties (e.g., PFAS may adsorb to plastics [7]).

- Concentration Verification: Measure test chemical concentrations in treatment vessels at start, during, and at end of test. Maintain measured concentrations within ±20% of nominal or time-weighted mean [7].

- Sample Size Determination: Move beyond the default of n=7/group. For critical studies, use n=10 to 23 fish per concentration to reduce confidence interval width and improve LC50 estimation accuracy, especially for challenging scenarios (shallow slope, LC50 near range boundary) [8].

- Endpoint Measurement: Record mortality at 24h, 48h, 72h, and 96h. Calculate LC50 using appropriate probit or non-linear regression methods.

Protocol for Data Curation and Reliability Scoring

Before aggregation, data must be quality-checked. A Multi-Criteria Decision Analysis (MCDA) framework provides a quantitative, transparent method [3].

- Step 1: Assess Reliability (Data Integrity): Score criteria like test guideline adherence, concentration verification, control survival, and statistical reporting. Use a scoring system (e.g., 0-3) per criterion. Data failing a critical "red criterion" (e.g., unacceptable control performance) are excluded [3].

- Step 2: Assess Relevance (Fit-for-Purpose): Score criteria related to the specific assessment, including organism ecological relevance, endpoint (acute vs. chronic), and exposure duration matching the scenario.

- Step 3: Calculate Weighted Score: Aggregate reliability and relevance scores using predefined weights. Studies can be ranked or thresholded for inclusion. This weighted score can later inform a Species Sensitivity Weighted Distribution (SSWD) [3].
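The three steps above can be sketched as follows. The criterion names, the 0-3 scale bounds, and the 0.6/0.4 weights are hypothetical placeholders for illustration; the framework in [3] defines its own criteria and weights.

```python
from dataclasses import dataclass

MAX_SCORE = 3             # assumption: each criterion is scored 0-3
RELIABILITY_WEIGHT = 0.6  # hypothetical weight
RELEVANCE_WEIGHT = 0.4    # hypothetical weight


@dataclass
class Study:
    study_id: str
    reliability: dict  # criterion name -> score
    relevance: dict    # criterion name -> score
    red_flag: bool     # True if a critical "red criterion" failed


def normalized(scores):
    """Scale a set of criterion scores to the 0-1 range."""
    return sum(scores.values()) / (MAX_SCORE * len(scores))


def weighted_score(study):
    """Return the combined 0-1 score, or None if the study is excluded."""
    if study.red_flag:
        return None
    return (RELIABILITY_WEIGHT * normalized(study.reliability)
            + RELEVANCE_WEIGHT * normalized(study.relevance))


studies = [
    Study("A", {"guideline": 3, "verification": 2, "controls": 3, "statistics": 2},
          {"organism": 3, "endpoint": 2, "duration": 3}, red_flag=False),
    Study("B", {"guideline": 1, "verification": 0, "controls": 0, "statistics": 1},
          {"organism": 2, "endpoint": 2, "duration": 1}, red_flag=True),
]
scores = {s.study_id: weighted_score(s) for s in studies}
print(scores)
```

Studies scored `None` are dropped before aggregation; the remaining scores can be used to rank studies or to apply an inclusion threshold.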

Protocol for Aggregating Data Points

After curating a final dataset for a specific chemical-species-endpoint combination:

- Transform Data: Log-transform all toxicity values (e.g., LC50, EC50). All values must be positive and expressed in the same unit.

- Calculate Central Tendency: Calculate the arithmetic mean of the log-transformed values.

- Back-Transform: Exponentiate the result, using the same base as the log transformation, to obtain the geometric mean on the original scale.

- Report Variability: Always report the associated measure of dispersion (e.g., geometric standard deviation, factor range, or individual data points).
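The aggregation steps above can be sketched in a few lines. The function name and the example values are illustrative assumptions; the geometric standard deviation is computed here from the sample standard deviation of the log values, which is one common convention.

```python
import math


def aggregate_geometric(values):
    """Aggregate positive toxicity values via log-transform, mean, back-transform."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("all toxicity values must be positive")
    logs = [math.log(v) for v in values]          # step 1: log-transform
    n = len(logs)
    mean_log = sum(logs) / n                      # step 2: arithmetic mean of logs
    gm = math.exp(mean_log)                       # step 3: back-transform
    if n > 1:
        # step 4: dispersion as a geometric standard deviation (sample SD of logs)
        var_log = sum((x - mean_log) ** 2 for x in logs) / (n - 1)
        gsd = math.exp(math.sqrt(var_log))
    else:
        gsd = None
    return {
        "n": n,
        "geometric_mean": gm,
        "geometric_sd": gsd,
        "factor_range": max(values) / min(values),
    }


# Hypothetical LC50 values (mg/L) for one chemical-species-endpoint combination
result = aggregate_geometric([2.0, 4.0, 8.0])
print(result)
```

For the example values the geometric mean is 4 mg/L with a geometric standard deviation of 2 and a factor range of 4, so the reported value comes with an explicit statement of its spread.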

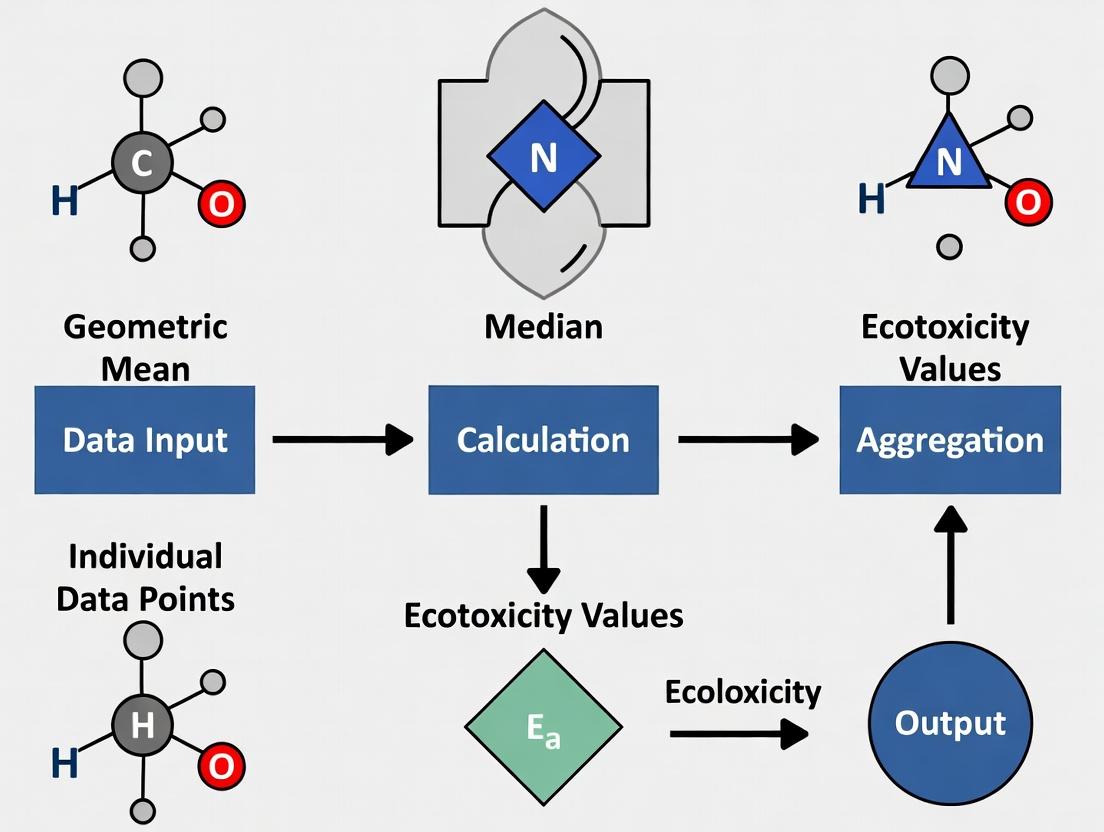

Diagram 1: From Data Variability to Informed Decision-Making

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Organisms, and Tools for Ecotoxicity Research

| Item | Function & Description | Typical Use Case |

|---|---|---|

| Standard Test Organisms | Freshwater Algae (Raphidocelis subcapitata): Primary producer. Crustacean (Daphnia magna): Primary consumer. Fish (Danio rerio, fathead minnow): Vertebrate predator. | Constituting the base set for aquatic hazard assessment according to OECD guidelines [2]. |

| Reference Toxicants | Potassium dichromate, sodium chloride, copper sulfate. | Routine checking of organism health and sensitivity, ensuring laboratory consistency over time. |

| Solvent Carriers | Acetone, dimethyl sulfoxide (DMSO), ethanol. | Dissolving poorly water-soluble test chemicals, with concentration kept low (e.g., ≤0.1%) to avoid solvent toxicity. |

| Analytical Standards | Certified reference materials (CRMs) for target chemicals (e.g., PFOA, PFOS). | Verifying analytical method accuracy and quantifying measured vs. nominal concentration discrepancies [7]. |

| Data Aggregation Tools | Standartox R Package/Web App: Automates retrieval, filtering, and geometric mean aggregation of ecotoxicity data from ECOTOX [5]. | Deriving single, reproducible toxicity values for risk assessment and model input. |

| QSAR Prediction Tools | ECOSAR: Predicts toxicity based on chemical class. US EPA TEST: Estimates toxicity using multiple computational methodologies [6]. | Filling data gaps for chemicals lacking experimental values; used with caution due to varying predictive performance [9] [6]. |

| Curated Databases | ECOTOX Knowledgebase: Comprehensive repository of primary study results. REACH Database: High-quality study summaries submitted under EU regulation. CompTox/ToxValDB: Aggregates data from multiple public sources [5] [6]. | Sourcing experimental data for chemical assessments and meta-analyses. |

Advanced Models and Data Gap Filling

When experimental data are scarce, models provide essential estimates, though with limitations.

Machine Learning and QSARs

Machine learning models, such as random forest algorithms trained on chemical properties and mode of action, can estimate hazardous concentrations (HC50) for chemicals missing data in life cycle assessment (LCA) models like USEtox [9]. Such models can explain a significant portion of variability (R² ~0.63) and outperform traditional QSARs like ECOSAR in some cases [9]. However, a 2024 comparison showed that effect factors (EFs) calculated from estimated QSAR data (ECOSAR, TEST) correlated poorly with those derived from experimental data, underscoring the need for caution and transparency when using predicted values [6].

From Data to Policy: USEtox and Hazard Values

The USEtox model employs SSDs to calculate characterization factors for LCA. Research comparing hazard value derivation methods using REACH data found that:

- Chronic NOEC-equivalent data produced hazard values that aligned best with the EU's Classification, Labelling and Packaging (CLP) regulation [4].

- The commonly used acute-to-chronic extrapolation factor of 2 was found to be simplistic. Calculated geometric mean ratios were higher: 10.64 for fish, 10.90 for crustaceans, and 4.21 for algae [4].

- Reliance solely on acute EC50 data or the standard USEtox method may underestimate the number of chemicals classified as "very toxic to aquatic life" [4].

Diagram 2: Deriving an Effect Factor via Species Sensitivity Distribution

Table 4: Performance of Different Data Sources for Effect Factor Calculation [6]

| Data Source | Number of Substances with Calculated EFs | Correlation with USEtox Benchmark EFs | Key Advantage | Key Limitation |

|---|---|---|---|---|

| Experimental (REACH/CompTox) | 8,869 (added to 2,426 existing) | High | High confidence; based on measured biological effects. | Data unavailable for many thousands of chemicals. |

| QSAR (ECOSAR) | 6,029 | Low | Fills data gaps for organic chemicals; readily available. | High uncertainty; poor correlation with experimental benchmarks. |

| QSAR (US EPA TEST) | 6,762 | Low | Fills data gaps; uses consensus modeling. | High uncertainty; predictive performance varies by chemical class. |

Managing ecotoxicity data variability is a multi-step process requiring sound experimental design, rigorous data curation, and a justified statistical approach to aggregation. Based on the comparative analysis:

- Recommend the geometric mean over the median for aggregating multiple toxicity values for the same test combination. It makes better use of all data, is theoretically justifiable for log-normal distributions, and supports reproducible, consistent decision-making [1] [5].

- Implement robust experimental protocols, including adequate sample sizes (n>7/group where possible) [8] and measured concentration verification [7], to reduce variability at its source.

- Apply transparent data quality scoring (e.g., MCDA frameworks) before aggregation to weight or exclude unreliable data [3].

- Use estimated QSAR and machine learning data with clear caveats. They are essential for filling gaps but are not substitutes for high-quality experimental data [9] [6].

- Select hazard derivation methods aligned with assessment goals. For policy alignment, chronic NOEC-based values may be most appropriate, while the standard USEtox method provides consistency for comparative LCA [4].

The geometric mean, embedded within a workflow that prioritizes data quality and transparency, remains the most robust tool for navigating the inherent variability in ecotoxicity data and translating complex experimental results into actionable scientific insights and protective policies.

Mathematical Foundations and Core Properties

The geometric mean is a measure of central tendency, distinct from the arithmetic mean, that calculates the central value of a set of numbers by using the product of their values rather than their sum [10]. For a dataset of n positive values (𝑥₁, 𝑥₂, ..., 𝑥ₙ), the geometric mean is defined as the nth root of the product of all values [11]:

GM = (𝑥₁ · 𝑥₂ · ... · 𝑥ₙ)^(1/𝑛)

A key mathematical property is its relationship with logarithms. The geometric mean can be equivalently calculated by taking the exponential of the arithmetic mean of the natural logarithms of the values [10]:

GM = exp[(ln(𝑥₁) + ln(𝑥₂) + ... + ln(𝑥ₙ)) / 𝑛]

This logarithmic transformation is particularly useful for handling datasets with wide ranges or multiplicative relationships and helps avoid computational issues like arithmetic overflow [10].
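A short sketch can show both points: the two formulas agree on ordinary data, and the log form stays finite where the raw product does not. The input values are artificial and chosen only to trigger floating-point overflow and underflow.

```python
import math


def gm_by_product(values):
    """Geometric mean as the nth root of the product (prone to overflow)."""
    product = 1.0
    for v in values:
        product *= v  # float overflow yields inf, underflow yields 0.0
    return product ** (1.0 / len(values))


def gm_by_logs(values):
    """Geometric mean as exp of the arithmetic mean of natural logs."""
    return math.exp(math.fsum(math.log(v) for v in values) / len(values))


ordinary = [2.0, 8.0]
huge = [1e200, 1e200, 1e200]     # product exceeds the float range
tiny = [1e-200, 1e-200, 1e-200]  # product underflows to zero

print(gm_by_product(ordinary), gm_by_logs(ordinary))
print(gm_by_product(huge), gm_by_logs(huge))
print(gm_by_product(tiny), gm_by_logs(tiny))
```

The product form returns infinity or zero for the extreme inputs, while the log form recovers values close to 1e200 and 1e-200.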

- Inequality Relationship: For any dataset of positive numbers, the harmonic mean is never greater than the geometric mean, which is never greater than the arithmetic mean [10]. Formally, HM ≤ GM ≤ AM, with equality only when all values are identical.

- Minimization Property: The geometric mean is the minimizer of the sum of squared logarithmic deviations [10]. For a dataset, the value 𝑎 that minimizes ∑ (log 𝑥ᵢ − log 𝑎)² is the geometric mean.
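Both properties can be checked numerically. The sketch below uses an arbitrary example dataset and a small grid of candidate values around the geometric mean; it is a spot check, not a proof.

```python
import math
from statistics import geometric_mean, harmonic_mean, mean

values = [0.5, 2.0, 8.0, 32.0]  # arbitrary positive example data

hm = harmonic_mean(values)
gm = geometric_mean(values)
am = mean(values)
print(f"HM = {hm:.3f}, GM = {gm:.3f}, AM = {am:.3f}")


def sum_squared_log_deviations(a):
    """Sum over the dataset of (log x_i - log a)^2 for a candidate value a."""
    return sum((math.log(x) - math.log(a)) ** 2 for x in values)


# Candidate central values: the geometric mean scaled by several factors
candidates = [gm * f for f in (0.5, 0.9, 1.0, 1.1, 2.0)]
best = min(candidates, key=sum_squared_log_deviations)
print("minimizer among candidates equals GM:", best == gm)
```

For this dataset the three means are ordered as expected, and the unscaled geometric mean gives the smallest sum of squared log deviations among the candidates.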

Diagram: Relationship Between Pythagorean Means

Comparative Analysis of Central Tendency Measures

The geometric mean exhibits distinct advantages and limitations compared to the arithmetic mean and median, especially in contexts relevant to toxicology, such as analyzing species sensitivity or chemical concentration data.

Table: Comparative Properties of Central Tendency Measures

| Property | Geometric Mean | Arithmetic Mean | Median |

|---|---|---|---|

| Mathematical Definition | nth root of the product of n values [10]. | Sum of values divided by n. | Middle value in an ordered list. |

| Data Relationship | Multiplicative [11]. | Additive. | Ordinal. |

| Sensitivity to Extreme Values | Less sensitive to high outliers (right-skew), but can be sensitive to values near zero. | Highly sensitive to extreme values (outliers). | Robust, insensitive to extreme values. |

| Typical Use Case in Ecotoxicology | Averaging log-normally distributed data (e.g., species sensitivity values, concentration ratios) [12]. Deriving HC50 in models like USEtox [13]. | Averaging normally distributed data. | Reporting typical value for highly skewed data or data with non-detects. |

| Data Requirement | All values must be positive ( >0 ) [14]. | Can handle positive and negative values. | No restriction on value sign. |

| Handling Zero Values | Cannot be calculated directly; requires adjustment (e.g., adding a constant). | Can be calculated. | Can be calculated. |

In ecological risk assessment, the geometric mean is often applied to aggregate toxicity data (e.g., multiple EC50 values for the same species-chemical pair) because species sensitivity distributions (SSDs) and many environmental concentration data are approximately lognormal [12]. This makes the geometric mean a more representative "average" than the arithmetic mean for such data. The median is favored when the dataset is small, contains non-detect values, or is highly skewed, as it provides a more robust central value unaffected by extreme outliers [12].

Role in Species Sensitivity Distribution (SSD) Modeling: Experimental Protocols

A primary application of the geometric mean in ecotoxicity research is within Species Sensitivity Distribution (SSD) modeling, a core method for deriving environmental safety thresholds like the Hazardous Concentration for 5% of species (HC5) [12].

Core SSD Workflow Protocol: This protocol is adapted from methodologies used to compare model-averaging and single-distribution approaches for HC5 estimation [12].

Data Curation and Geometric Mean Aggregation:

- Source: Collect acute (e.g., EC50, LC50) or chronic toxicity data from curated databases like EnviroTox [12] or U.S. EPA ECOTOX [15].

- Selection: Filter data based on reliability, relevance of endpoints, and water solubility limits (e.g., excluding concentrations >5x solubility) [12].

- Aggregation: For a given chemical, if multiple toxicity values exist for a single species, aggregate them using the geometric mean to obtain one representative value per species [12].

Reference HC5 Calculation (For Validation):

- For chemicals with large datasets (>50 species from diverse taxonomic groups), a reference HC5 can be calculated non-parametrically as the 5th percentile of the aggregated geometric mean values [12].

Subsampling Simulation:

- To simulate typical data-poor conditions, create subsampled datasets by randomly selecting toxicity data for 5-15 species from the full dataset [12].

SSD Fitting and HC5 Estimation:

- Fit parametric statistical distributions (e.g., log-normal, log-logistic, Burr Type III) to the log-transformed toxicity data from the subsample.

- Alternative Approach (Model Averaging): Fit multiple distributions and use a weighted average (based on goodness-of-fit criteria like AIC) of their HC5 estimates [12].

Performance Evaluation:

- Compare the HC5 estimates from the subsampled SSDs (from both single-distribution and model-averaging approaches) against the reference HC5. Assess performance by calculating the deviation or error [12].
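The workflow above can be sketched with the standard library alone. This is a simplified illustration, not the procedure used in [12]: the "full dataset" is synthetic (drawn from a log-normal distribution with arbitrary parameters), only a single log-normal SSD is fitted, and all function names are hypothetical.

```python
import math
import random
from collections import defaultdict
from statistics import NormalDist, geometric_mean, mean, quantiles, stdev


def per_species_geometric_means(records):
    """Collapse (species, value) records to one geometric mean per species."""
    by_species = defaultdict(list)
    for species, value in records:
        by_species[species].append(value)
    return {sp: geometric_mean(vals) for sp, vals in by_species.items()}


def reference_hc5(species_values):
    """Non-parametric 5th percentile of the per-species values."""
    return quantiles(species_values, n=20)[0]


def lognormal_hc5(species_values):
    """HC5 from a log-normal SSD fitted by the mean and sample SD of ln values."""
    logs = [math.log(v) for v in species_values]
    z05 = NormalDist().inv_cdf(0.05)
    return math.exp(mean(logs) + z05 * stdev(logs))


# Aggregation step on a few hypothetical records
aggregated = per_species_geometric_means(
    [("Daphnia magna", 1.0), ("Daphnia magna", 4.0), ("Danio rerio", 9.0)]
)

# Synthetic stand-in for a data-rich chemical: 60 per-species values
rng = random.Random(42)
full_dataset = [rng.lognormvariate(2.0, 1.5) for _ in range(60)]

ref = reference_hc5(full_dataset)
subsample = rng.sample(full_dataset, 10)      # simulate a data-poor case
estimate = lognormal_hc5(subsample)
log10_error = math.log10(estimate / ref)      # deviation on a log10 scale
print(f"reference HC5 = {ref:.3f}, subsample HC5 = {estimate:.3f}, "
      f"log10 error = {log10_error:+.2f}")
```

Repeating the subsampling many times and summarizing the log10 errors would give the kind of performance comparison described in the final step; model averaging would add further fitted distributions and weight their HC5 estimates.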

Diagram: SSD-Based HC5 Estimation Workflow

Table: Key Findings from SSD Method Comparison Studies

| Method | Typical Input Data | Key Finding | Reference/Context |

|---|---|---|---|

| Single Distribution (Log-Normal) | Log-transformed EC50/LC50 values (geometric means per species). | HC5 estimates showed comparable precision to more complex model-averaging approaches in simulation tests [12]. | Iwasaki & Yanagihara (2025) comparison study [12]. |

| Model-Averaging (Multiple Distributions) | Log-transformed EC50/LC50 values (geometric means per species). | Did not guarantee reduced prediction error over single best-fit distribution; HC5 estimates could be insensitive to new data [12]. | Iwasaki & Yanagihara (2025) [12]. |

| Non-Parametric (Reference) | Full dataset of geometric mean toxicity values (>50 species). | Serves as a benchmark ("reference HC5") for validating parametric models under data-limited scenarios [12]. | Used for validation in methodological studies [12]. |

Computational and Predictive Modeling Applications

Beyond direct calculation, the geometric mean is foundational in computational tools for predicting ecotoxicity.

USEtox Effect Factors: In the life cycle assessment model USEtox, the geometric mean is central to calculating the ecotoxicity effect factor (EF). For a chemical, the logarithm of the hazardous concentration for 50% of species (log HC50) is calculated as the arithmetic mean of the logarithmized geometric means of species-specific chronic toxicity values [13]. Back-transforming this average gives an HC50 equal to the geometric mean of the per-species geometric means, ensuring consistency with the expected log-normal distribution of species sensitivity.
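The equivalence between log-scale averaging and a geometric mean of geometric means can be sketched as follows. The species labels and chronic values are hypothetical, and the sketch covers only the HC50 step, not the full USEtox effect factor calculation.

```python
import math
from statistics import geometric_mean, mean

# Hypothetical chronic toxicity values (mg/L), several tests per species
chronic_values = {
    "species A": [1.0, 4.0],
    "species B": [10.0],
    "species C": [50.0, 200.0],
}

# One geometric mean per species
species_gm = {sp: geometric_mean(vals) for sp, vals in chronic_values.items()}

# Arithmetic mean of the log10-transformed per-species values, then back-transform
log10_hc50 = mean([math.log10(g) for g in species_gm.values()])
hc50 = 10 ** log10_hc50

# Same quantity expressed directly as a geometric mean of geometric means
hc50_direct = geometric_mean(list(species_gm.values()))

print(f"log10 HC50 = {log10_hc50:.4f}, HC50 = {hc50:.3f} mg/L")
print(f"geometric mean of per-species geometric means = {hc50_direct:.3f} mg/L")
```

Both routes give the same HC50 (about 12.6 mg/L for these values), which is why aggregating within species first and across species second keeps every species at equal weight regardless of how many tests it has.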

Machine Learning for Data Gap Filling: Machine learning models are trained to predict HC50 or HC5 values using chemical descriptors. A 2023 study used a random forest model to estimate HC50 values for USEtox, achieving an average coefficient of determination (R²) of 0.630 on test sets [9]. The geometric mean of repeated experimental measurements for the same endpoint often forms the high-quality training data for such models [15] [6].

Table: Performance of Predictive Models in Ecotoxicity

| Model Type | Prediction Target | Key Performance Metric | Context & Implication |

|---|---|---|---|

| Random Forest (ML) | HC50 for characterization factors [9]. | Avg. Test Set R² = 0.630 [9]. | Outperformed traditional QSAR models (e.g., ECOSAR) in explaining variability [9]. Useful for filling data gaps. |

| Quantitative Structure-Activity Relationship (QSAR) | e.g., EC50 estimates from ECOSAR [6]. | Lower correlation with experimental USEtox factors vs. experimental data [6]. | Highlights caution needed when using estimated data; different QSAR tools can yield varied results [6]. |

| Global SSD Models | pHC5 for untested chemicals [15]. | Built on 3250 toxicity records across 14 taxonomic groups [15]. | Allows prioritization of high-toxicity chemicals from large inventories (e.g., 188 out of 8449) [15]. |

Table: Key Research Reagent Solutions and Databases for Ecotoxicity Aggregation

| Resource Name | Type | Primary Function in Ecotoxicity Research | Relevance to Geometric Mean |

|---|---|---|---|

| EnviroTox Database [12] | Curated Database | Provides quality-controlled acute and chronic ecotoxicity data for numerous chemicals and species. | Serves as a primary source for obtaining species-specific toxicity values, which are aggregated using the geometric mean for SSD modeling. |

| U.S. EPA ECOTOX Knowledgebase [15] | Comprehensive Database | A publicly available repository of single-chemical toxicity data for aquatic and terrestrial life. | Used to gather experimental toxicity endpoints for building and validating SSDs and machine learning models. |

| USEtox Model [13] | Consensus Model | The scientific consensus model for calculating characterization factors for human and ecotoxicity in life cycle assessment. | Its underlying methodology uses the geometric mean (via log-averaging) to derive the central effect factor (HC50) for chemicals. |

| OpenTox SSDM Platform [15] | Computational Tool | An open-access platform providing SSD modeling tools and data. | Facilitates the application of SSD modeling, where the geometric mean is a standard pre-processing step for per-species data. |

| REACH & CompTox Databases [6] | Regulatory & Research Databases | Large collections of experimental and predicted chemical property and toxicity data. | Sources for extracting ecotoxicity data to calculate effect factors, where intra-species data aggregation via geometric mean is often required. |

In ecotoxicity data aggregation, selecting the appropriate summary statistic is a foundational decision that directly impacts the robustness of risk assessments and regulatory conclusions. This analysis compares the mathematical properties of the median against its primary alternative, the geometric mean, within the context of contemporary research on species sensitivity distributions (SSDs) and life cycle impact assessment (LCIA). While the median is a familiar measure of central tendency, its performance relative to the geometric mean in handling the skewed, log-normal distributions typical of ecotoxicity data is a critical point of debate[reference:0]. This guide objectively compares these contenders, presenting experimental data and methodologies to inform researchers, scientists, and drug development professionals.

Mathematical Definitions and Core Properties

- Median: The middle value of an ordered dataset. It is the 50th percentile, effectively splitting the data into two equal halves.

- Geometric Mean: The n-th root of the product of n numbers, (𝑥₁ · 𝑥₂ · ... · 𝑥ₙ)^(1/𝑛). It is equivalent to the exponential of the arithmetic mean of the log-transformed data, making it the natural measure of central tendency for log-normally distributed data.

The fundamental difference lies in their treatment of data distribution: the median is based solely on data rank, while the geometric mean incorporates the magnitude of all values, giving it a direct algebraic relationship with multiplicative processes.

Comparative Properties Table

The following table summarizes the key mathematical and practical properties of common aggregation statistics in ecotoxicity.

| Property | Median | Geometric Mean | Arithmetic Mean | Minimum | Maximum |

|---|---|---|---|---|---|

| Definition | 50th percentile | n-th root of the product of n values | Sum of values divided by n | Smallest value | Largest value |

| Sensitivity to Outliers | Robust (ignores extreme values) | Moderately Robust (less sensitive than arithmetic mean) | Highly Sensitive | Not Applicable | Not Applicable |

| Suitability for Skewed Data | Good | Excellent (inherently for log-normal data)[reference:1] | Poor | Poor | Poor |

| Use in Small Datasets | Can be unreliable (ignores distribution tails)[reference:2] | Preferred over median for small n[reference:3] | Unreliable | Conservative (protective) | Worst-case |

| Data Requirements | Ordinal scale | Ratio scale (positive values only) | Interval scale | Any scale | Any scale |

| Primary Use in Ecotoxicity | Descriptive statistic | Standard for SSD aggregation[reference:4] | Rarely recommended | Deriving conservative thresholds | Identifying extreme values |

Experimental Evidence and Performance Data

Evidence from Standartox: Geometric Mean as the Standard

The Standartox database, which standardizes toxicity data from the US EPA ECOTOXicology Knowledgebase, explicitly advocates for the geometric mean. Its automated workflow aggregates multiple test results for a chemical-species combination by calculating the minimum, geometric mean, and maximum, but not the median[reference:5]. The rationale is that the geometric mean is less influenced by outliers than the arithmetic mean and, critically, is preferable to the median because "the median completely ignores the tails of the data distribution, making it unreliable for small data sets"[reference:6]. Validation showed that 91.9% of Standartox's geometric mean values lie within one order of magnitude of manually curated values from the Pesticide Properties DataBase (PPDB)[reference:7].

Quantitative Comparison in Acute-to-Chronic Extrapolation

A comparative study on deriving chemical ecotoxicity hazard values provides direct numerical comparison between the median and geometric mean. The research calculated acute-to-chronic ratios (ACRs) using REACH data, presenting both statistics side-by-side[reference:8]. The data, summarized below, show that the geometric mean is consistently higher than the median for these ratios: in the typically right-skewed data, the geometric mean reflects the magnitude of the large ratios in the upper tail, whereas the median depends only on rank.

Table: Median vs. Geometric Mean of Acute-to-Chronic Ratios (ACRs) from REACH Data[reference:9]

| Taxon & Endpoint | n | Median | Geometric Mean |

|---|---|---|---|

| Fish (EC50eq to chronic EC50eq) | 96 | 2.64 | 3.74 |

| Crustacean (EC50eq to chronic EC50eq) | 389 | 4.58 | 5.45 |

| Fish (EC50eq to chronic NOECeq) | 322 | 8.93 | 10.64 |

| Crustacean (EC50eq to chronic NOECeq) | 876 | 8.77 | 10.90 |

| Algae (EC50eq to chronic NOECeq) | 2342 | 3.40 | 4.22 |

Contemporary Adoption in Life Cycle Assessment

Recent methodological advancements continue to solidify the geometric mean's role. A 2025 framework for calculating ecotoxicity effects in Life Cycle Assessment (LCA) utilized a geometric mean-based aggregation process, generating over 79,000 aggregated effect concentration datapoints at the species level[reference:10]. This approach is central to deriving extrapolation factors for models like USEtox, underscoring its acceptance as the standard for handling heterogeneous ecotoxicity data in regulatory and comparative impact contexts[reference:11].

Detailed Experimental Protocols

Protocol 1: Standartox Data Aggregation and Validation

- Data Source: Quarterly downloads of the US EPA ECOTOX database[reference:12].

- Harmonization: Filtering to common endpoints (e.g., EC50, NOEC) and unit standardization.

- Aggregation: For each chemical-organism combination, calculate the minimum, geometric mean, and maximum of all available test results. Outliers beyond 1.5×IQR are flagged but included[reference:13].

- Validation: Aggregated geometric means are compared to authoritative values from the PPDB and QSAR-based estimates from ChemProp. Agreement is measured as the percentage of values within one order of magnitude[reference:14].
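The aggregation step of this protocol can be sketched as follows. This is not the Standartox implementation: the function name `aggregate_with_flags` is hypothetical, and the sketch assumes that "beyond 1.5×IQR" means Tukey fences computed on the raw (untransformed) values.

```python
from statistics import geometric_mean, quantiles

def aggregate_with_flags(values):
    """Return min, geometric mean, max and the values flagged as outliers.

    Assumption: outliers are flagged with Tukey fences (1.5 x IQR beyond the
    quartiles) on the raw values; flagged values stay in the aggregate.
    """
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    flagged = [v for v in values if v < lower or v > upper]
    return {
        "min": min(values),
        "gmean": geometric_mean(values),
        "max": max(values),
        "flagged": flagged,
    }

# Hypothetical EC50 values (mg/L) for one chemical-organism combination.
result = aggregate_with_flags([1.0, 1.2, 1.5, 2.0, 50.0])
print(result)
```

Here the value 50.0 is flagged but still contributes to the geometric mean, which matches the "flagged but included" rule described above.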

Protocol 2: Deriving Acute-to-Chronic Ratios (ACRs) for Hazard Values

- Data Source: Ecotoxicity data from EU REACH registration dossiers[reference:15].

- Data Preparation: Extract acute EC50 and chronic NOEC (or EC50) values. Calculate ratio (acute/chronic) for each species-chemical data pair.

- Statistical Aggregation: For each taxon (fish, crustacean, algae), calculate descriptive statistics (n, min, max, median, geometric mean, percentiles) of the ratios, typically excluding ratios less than 1[reference:16].

- Hazard Value Calculation: Use the geometric mean of the ratios (or the median for comparison) as an extrapolation factor to convert acute data to chronic equivalents for use in Species Sensitivity Distributions (SSDs)[reference:17].
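The four steps of Protocol 2 can be illustrated with a short sketch. The data pairs and the helper `acute_to_chronic_equivalent` are hypothetical and serve only to show the arithmetic; they are not values or code from the cited study.

```python
from statistics import geometric_mean, median

# Hypothetical (acute EC50, chronic NOEC) pairs in mg/L,
# one per species-chemical combination.
pairs = [(10.0, 1.0), (8.0, 2.0), (5.0, 0.25), (3.0, 6.0)]

# Step 1: acute/chronic ratio for each pair.
ratios = [acute / chronic for acute, chronic in pairs]

# Step 2: drop ratios below 1, following the exclusion rule described above.
kept = [r for r in ratios if r >= 1.0]

# Step 3: summarize the retained ratios.
acr_gm = geometric_mean(kept)
acr_median = median(kept)

# Step 4: use the geometric mean as an extrapolation factor.
def acute_to_chronic_equivalent(acute_value, factor=acr_gm):
    """Convert an acute value to a chronic equivalent by dividing by the ACR."""
    return acute_value / factor

print(f"GM ACR={acr_gm:.2f}, median ACR={acr_median:.2f}")
print(f"chronic equivalent of 5 mg/L: {acute_to_chronic_equivalent(5.0):.3f} mg/L")
```

Swapping `acr_gm` for `acr_median` in the last step gives the median-based comparison mentioned in the protocol.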

Visualizing Workflows and Key Concepts

Diagram 1: Standartox Data Aggregation Workflow

Diagram 2: Median vs. Geometric Mean Sensitivity

| Item | Function/Description | Relevance to Aggregation |

|---|---|---|

| US EPA ECOTOX KB | Comprehensive database of ecotoxicological test results. | Primary source of raw, multi-study data requiring aggregation. |

| REACH Dossiers | EU regulatory database with extensive submitted toxicity data. | Key source for deriving hazard values and extrapolation factors. |

| Standartox R Package | Tool for automated data download, harmonization, and geometric mean aggregation. | Implements the standardized workflow comparing min/geom/max. |

| R with SSD packages | Statistical environment (e.g., fitdistrplus, ssdtools). | Used to fit Species Sensitivity Distributions (SSDs) based on aggregated geometric means. |

| USEtox Model | UNEP/SETAC consensus model for toxicity characterization in LCA. | End-user of aggregated ecotoxicity data (e.g., HC50 values) for impact assessment. |

| Geometric Mean Formula | \( \exp\left(\frac{1}{n}\sum_{i=1}^{n} \ln(x_i)\right) \) | The essential calculation for aggregating log-normal ecotoxicity data. |

The geometric mean emerges as the mathematically and practically superior contender for aggregating ecotoxicity data, particularly within the frameworks of SSDs and LCIA. Its property of dampening, rather than ignoring, the influence of extreme values makes it more reliable than the median for the typical small, skewed datasets in the field[reference:18]. Experimental evidence from standardization efforts like Standartox and contemporary research confirms its adoption as the benchmark method. While the median remains a useful descriptive statistic, its inability to incorporate information from the tails of the distribution limits its utility for deriving robust, reproducible aggregate values in ecotoxicological risk assessment and comparative guidance.

A central question in ecotoxicity data aggregation is whether the geometric mean or the median offers greater robustness and sensitivity for deriving hazard values. This guide provides an objective, data-driven comparison of these two central tendency estimators, framed within the broader question of optimal data aggregation for species sensitivity distributions (SSDs) and life cycle impact assessment (LCIA).

Data Presentation: Quantitative Comparison of Aggregation Methods

The following table synthesizes key findings from recent studies comparing the geometric mean and the median in ecotoxicity data processing.

Table 1: Comparison of Geometric Mean and Median in Ecotoxicity Data Aggregation

| Study (Year) | Data Context | Key Finding Regarding Geometric Mean vs. Median | Quantitative Outcome (Where Available) | Source |

|---|---|---|---|---|

| GM-troph (2007) | HC50EC50 estimation for LCIA effect indicators. | The geometric mean is the most robust average estimator, especially for limited data (≤3 data points). The median was less favored. | Qualitative assessment based on theoretical and real-data tests. | [reference:0] |

| Standartox (2020) | Standardized aggregation of multiple ecotoxicity values for chemical-organism pairs. | The geometric mean is preferable over the median because the median "completely ignores the tails of the data distribution, making it unreliable for small data sets." | 91.9% of Standartox geometric mean values were within one order of magnitude of manually curated PPDB values (n=3601). | [reference:1][reference:2] |

| Saouter et al. (2019) | Acute-to-chronic extrapolation (ACE) ratios from EU REACH data. | Provides direct numerical comparison of median and geometric mean for ACE ratios across taxa. | Fish ACE ratios (n=96): Median = 2.64, Geometric Mean = 3.74. Crustacean ACE ratios (n=389): Median = 4.58, Geometric Mean = 5.45. | [reference:3] |

| Extrapolation Factors (2025) | Harmonization of ecotoxicity data for LCA. | The geometric mean-based aggregation process was used to produce tens of thousands of aggregated datapoints, facilitating derived extrapolation factors. | Process yielded 79,001 aggregated effect concentration datapoints at the species level. | [reference:4] |

Experimental Protocols

GM-troph Robustness Testing Protocol

The foundational GM-troph study established a methodology for comparing aggregation robustness[reference:5].

- Objective: To determine the most robust average estimator (arithmetic mean, geometric mean, or median) for the hazardous concentration for 50% of species (HC50EC50) under low-data conditions.

- Data: Real ecotoxicity effect data for eleven substances representing seven different toxic modes of action.

- Procedure:

- Data Subsampling: For each substance, multiple HC50EC50 values were simulated or derived from available species data.

- Aggregation Calculation: The arithmetic mean, geometric mean, and median were calculated for each subsample.

- Stability Assessment: The variability of each estimator was examined when the number of data points was limited (e.g., as few as three points, mirroring common LCIA data scarcity).

- Performance Evaluation: Estimators were ranked based on their statistical stability and resistance to bias from skewed data or outlier values.

- Outcome: The geometric mean demonstrated superior robustness, leading to its recommendation as the preferred estimator for HC50EC50 in LCIA[reference:6].
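The idea behind the stability assessment can be illustrated with a small simulation. This is a sketch under stated assumptions, not the GM-troph procedure: it draws synthetic three-point samples from a log-normal distribution (the parameter `sigma` and the function name `spread_of_estimators` are hypothetical choices) and records how much each estimator varies across draws on the log10 scale.

```python
import math
import random
from statistics import geometric_mean, mean, median, stdev

random.seed(0)  # fixed seed so the run is repeatable

def spread_of_estimators(n_points=3, n_draws=5000, sigma=1.0):
    """Standard deviation of log10(estimate) across repeated small samples."""
    logs = {"arithmetic": [], "geometric": [], "median": []}
    for _ in range(n_draws):
        # Synthetic log-normal effect concentrations (assumed distribution).
        sample = [random.lognormvariate(0.0, sigma) for _ in range(n_points)]
        logs["arithmetic"].append(math.log10(mean(sample)))
        logs["geometric"].append(math.log10(geometric_mean(sample)))
        logs["median"].append(math.log10(median(sample)))
    return {name: stdev(vals) for name, vals in logs.items()}

spread = spread_of_estimators()
print(spread)
```

Under these assumptions the geometric mean varies less between three-point samples than the median does, which is consistent with the recommendation above. Real data with other distributions may behave differently.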

Standartox Data Aggregation Workflow

The Standartox tool implements a standardized pipeline for aggregating ecotoxicity data[reference:7].

- Objective: To automatically harmonize multiple ecotoxicity test results for a given chemical-organism combination into a single, reproducible aggregated value.

- Data Source: Quarterly releases of the US EPA ECOTOX knowledgebase.

- Procedure:

- Data Retrieval & Filtering: The pipeline downloads the ECOTOX database, and users can filter results based on taxonomic, chemical, and endpoint parameters.

- Outlier Flagging: Values exceeding 1.5 times the interquartile range are flagged (though not automatically excluded).

- Aggregation: For each unique chemical-organism-endpoint combination, the geometric mean of all available values is calculated. The minimum and maximum are also computed for context.

- Validation: Aggregated geometric means are compared to values from curated databases (e.g., PPDB) to assess accuracy, defined as falling within one order of magnitude.

- Rationale for Geometric Mean: This method was selected over the median due to its relative robustness against outliers and its incorporation of all data points, which is deemed critical for reliable aggregation, particularly with small datasets[reference:8].
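The grouping logic of the aggregation step can be sketched in a few lines. The records and identifiers below are hypothetical, and the sketch uses only the Python standard library rather than the Standartox package itself.

```python
from collections import defaultdict
from statistics import geometric_mean

# Hypothetical harmonized records:
# (chemical, organism, endpoint, concentration in mg/L).
records = [
    ("chem_A", "Daphnia magna", "EC50", 1.0),
    ("chem_A", "Daphnia magna", "EC50", 4.0),
    ("chem_A", "Danio rerio", "EC50", 10.0),
    ("chem_B", "Daphnia magna", "EC50", 0.5),
]

# Collect all values that share the same chemical-organism-endpoint key.
groups = defaultdict(list)
for chemical, organism, endpoint, value in records:
    groups[(chemical, organism, endpoint)].append(value)

# One entry per key: minimum, geometric mean, maximum, and number of tests.
aggregated = {
    key: {"min": min(v), "gmean": geometric_mean(v), "max": max(v), "n": len(v)}
    for key, v in groups.items()
}

for key, stats in aggregated.items():
    print(key, stats)
```

Reporting the minimum, maximum, and count next to the geometric mean keeps the spread of the underlying tests visible to the user.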

Visualization

Diagram 1: Ecotoxicity Data Aggregation & Comparison Workflow

This diagram, titled "Workflow for Comparing Geometric Mean and Median in Ecotoxicity", outlines the logical workflow for processing ecotoxicity data and comparing the geometric mean and median estimators.

This table details key materials and digital resources essential for conducting research in ecotoxicity data aggregation.

Table 2: Key Research Reagent Solutions for Ecotoxicity Aggregation Studies

| Item / Resource | Function in Research | Example / Source |

|---|---|---|

| ECOTOX Knowledgebase | The primary public repository of curated aquatic and terrestrial ecotoxicity test results, serving as the fundamental data source for aggregation studies. | US EPA ECOTOX [reference:9] |

| Standartox Tool & R Package | Provides an automated, reproducible pipeline for downloading, filtering, and aggregating ECOTOX data using the geometric mean, enabling standardized analysis. | standartox R package [reference:10] |

| Model Test Organisms | Standard species used in toxicity testing, whose data forms the basis for aggregating chemical-specific sensitivity. | Daphnia magna (crustacean), Raphidocelis subcapitata (algae), Danio rerio (fish) [reference:11] |

| Statistical Software (R/Python) | Essential for implementing aggregation algorithms, calculating SSDs, and performing robustness simulations (e.g., bootstrap, Monte Carlo). | R with packages like fitdistrplus, ssdtools; Python with SciPy, pandas. |

| Reference Datasets (PPDB, EnviroTox) | Manually curated databases used as benchmarks to validate the accuracy and reliability of automated aggregation methods. | Pesticide Properties DataBase (PPDB), EnviroTox database [reference:12][reference:13] |

| SSD Fitting Tools | Software routines used to fit statistical distributions to aggregated toxicity data and derive protective concentrations (e.g., HC5). | R package ssdtools; tools such as the US EPA's SSD Generator. |

From Theory to Practice: Implementing Data Aggregation in Ecotoxicity Workflows

The foundation of robust ecotoxicity research lies in the quality and comparability of underlying data. The first critical step is sourcing and harmonizing raw toxicity information from large-scale repositories such as the US EPA's ECOTOX knowledgebase and the EU's REACH database. This process directly impacts downstream analyses, including the pivotal debate on whether to aggregate species-level data using the geometric mean or the median. This guide objectively compares leading tools and methodologies for this task, providing researchers with a clear framework for selecting the optimal approach for their work.

Comparison of Data Sourcing and Harmonization Tools

The landscape of ecotoxicity data resources varies widely in scope, automation, and aggregation philosophy. The following table summarizes key alternatives, with Standartox presented as a benchmark for automated, reproducible harmonization.

Table 1: Comparison of Ecotoxicity Data Resources and Harmonization Tools

| Tool / Database | Primary Data Source | Coverage (Approx.) | Aggregation Method | Key Validation / Performance Metric | Access & Usability |

|---|---|---|---|---|---|

| Standartox | ECOTOX (quarterly updates)[reference:0] | ~600,000 test results, ~8,000 chemicals, ~10,000 taxa[reference:1] | Geometric mean (preferred), min, max[reference:2] | 91.9% of aggregated values within one order of magnitude of PPDB reference values (n=3,601)[reference:3] | R package & web application; fully automated pipeline[reference:4] |

| ECOTOX Knowledgebase (Raw) | Primary literature & regulatory studies | ~1.1 million entries, >12,000 chemicals, ~14,000 species[reference:5] | None (raw data) | N/A | Web interface; bulk download available; requires manual curation |

| REACH Database (Raw) | Industry submissions under EU regulation | Initial: 305,068 records; Usable after QC: 54,353 records[reference:6] | None (raw data) | ~82% of initial data excluded due to quality/reporting issues[reference:7] | ECHA portal; complex structure; requires significant cleaning[reference:8] |

| EnviroTox Database | ECOTOX & other sources | Limited to aquatic organisms (fish, amphibians, invertebrates, algae)[reference:9] | Rule-based algorithm for single toxicity value per taxon[reference:10] | N/A (focused on quality-controlled values for aquatic SSDs) | Curated dataset; less taxonomic breadth than Standartox[reference:11] |

| PPDB (Pesticides Properties DB) | Literature & regulatory data | ~2,000 pesticides[reference:12] | Manual expert judgment for single values per species[reference:13] | Used as a quality benchmark for other tools[reference:14] | Focused resource for pesticides only; not automated |

Experimental Protocols for Aggregation Validation

The superiority of a harmonization tool is demonstrated through rigorous validation against independent benchmarks. The following protocols detail key experiments that quantitatively assess performance.

Protocol 1: Validation Against Curated Reference Values (PPDB)

- Objective: To assess the accuracy of Standartox's automated geometric mean aggregation against manually curated reference values.

- Data Source: Standartox aggregated values and corresponding ecotoxicity data for the same chemical-species combinations from the Pesticide Properties Database (PPDB)[reference:15].

- Methodology:

- Overlapping chemical-species records between Standartox and PPDB were identified (n=3,601).

- For each record, the Standartox geometric mean value was compared to the PPDB reference value.

- The comparison metric was the percentage of Standartox values lying within one order of magnitude (i.e., a factor of 10) of the PPDB value[reference:16].

- Outcome: 91.9% of Standartox aggregated values met the acceptance criterion, validating the automated pipeline's reliability[reference:17].
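The acceptance criterion (agreement within a factor of 10) is a simple log-ratio check. The following sketch uses made-up value pairs; the function name `share_within_one_order` is hypothetical.

```python
import math

def share_within_one_order(aggregated, reference):
    """Fraction of pairs whose values differ by at most a factor of 10."""
    hits = [
        abs(math.log10(a / r)) <= 1.0
        for a, r in zip(aggregated, reference)
    ]
    return sum(hits) / len(hits)

# Hypothetical aggregated vs. reference values (mg/L)
# for four chemical-species records.
agg = [1.0, 5.0, 0.2, 300.0]
ref = [2.0, 4.0, 0.5, 2.0]

share = share_within_one_order(agg, ref)
print(f"{share:.1%} of values within one order of magnitude")
```

In this toy example three of the four pairs agree within a factor of 10; the last pair differs by a factor of 150 and fails the criterion.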

Protocol 2: Comparison with QSAR Model Predictions (ChemProp)

- Objective: To evaluate the consistency of harmonized experimental data with in silico predictions.

- Data Source: Standartox geometric mean LC50 values for Daphnia magna and predicted LC50 values from the QSAR software ChemProp[reference:18].

- Methodology:

- Daphnia magna LC50 data were extracted from Standartox.

- Corresponding predictions were obtained from ChemProp for the same chemicals (n=179).

- Agreement was measured as the percentage of Standartox values within one order of magnitude of the ChemProp prediction[reference:19].

- Outcome: 95% of Standartox values were within one order of magnitude of ChemProp predictions, demonstrating alignment between harmonized experimental data and computational models[reference:20].

Protocol 3: Geometric Mean vs. Median Aggregation

- Objective: To empirically justify the choice of geometric mean over median for species-level aggregation, a core thesis in ecotoxicity data analysis.

- Data Source: Multi-test data for chemical-species combinations within the ECOTOX database.

- Methodology:

- For a given chemical and species, all available toxicity values (e.g., EC50) are collected.

- Both the geometric mean and the median are calculated for the dataset.

- The robustness and representativeness of each statistic are evaluated, particularly for small sample sizes, where the median's disregard for the distribution tails is a critical flaw[reference:21].

- Theoretical Basis: The geometric mean is less influenced by outliers than the arithmetic mean and, crucially, incorporates information from the entire data distribution (including tails), unlike the median. This makes it more reliable for deriving representative toxicity values, especially with limited data[reference:22].
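The statement that the median ignores the tails can be made concrete with two hypothetical three-test datasets that differ only in their largest value. The numbers are illustrative and do not come from ECOTOX.

```python
from statistics import geometric_mean, median

# Two hypothetical three-test datasets (EC50, mg/L)
# that differ only in their largest value.
tight = [1.0, 2.0, 3.0]
wide = [1.0, 2.0, 300.0]

for name, data in (("tight", tight), ("wide", wide)):
    print(
        name,
        "median:", median(data),
        "geometric mean:", round(geometric_mean(data), 2),
    )
```

The median is 2.0 in both cases, so it carries no information about the third test. The geometric mean moves from about 1.8 to about 8.4: it registers the high value, but on a log scale, so the shift is far smaller than the hundredfold change in that single result.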

Visualizing Workflows and Methodologies

Diagram 1: Ecotoxicity Data Harmonization Pipeline

This diagram outlines the generalized workflow for sourcing raw data from major repositories and processing it into a harmonized, analysis-ready format.

Diagram 2: Geometric Mean vs. Median Aggregation Logic

This diagram contrasts the underlying logic of the two primary aggregation methods within the context of species sensitivity data.

Successfully executing data sourcing and harmonization requires a combination of software tools, data resources, and methodological knowledge.

Table 2: Essential Toolkit for Ecotoxicity Data Harmonization Research

| Category | Item | Function / Purpose | Example / Note |

|---|---|---|---|

| Core Software | R Programming Environment | Provides the statistical foundation and scripting capability for reproducible data cleaning, analysis, and automation[reference:24]. | Essential for running packages like standartox. |

| | Standartox R Package / API | Enables programmatic access to the pre-harmonized Standartox database and its aggregation functions[reference:25]. | Facilitates integration into custom analysis workflows. |

| Primary Data Sources | EPA ECOTOX Knowledgebase | The largest public repository of curated ecotoxicity test results, serving as the primary input for many harmonization tools[reference:26]. | Downloaded quarterly for updates. |

| | ECHA REACH Database | A vast source of regulatory ecotoxicity data for chemicals in the EU market, requiring extensive processing to be usable[reference:27]. | Useful for regulatory alignment studies. |

| Reference & Validation | PPDB (Pesticide Properties DB) | A manually curated database providing high-quality reference values for pesticide toxicity, used as a validation benchmark[reference:28]. | Serves as a "gold standard" for validation protocols. |

| | QSAR Software (e.g., ChemProp) | Provides in silico toxicity predictions used to compare against and complement harmonized experimental data[reference:29]. | Helps assess data plausibility and fill gaps. |

| Methodological Guidance | Species Sensitivity Distribution (SSD) Theory | The conceptual framework for aggregating species-level data to estimate hazardous concentrations (HCx) for ecosystems. | Underpins the use of geometric mean aggregation. |

| | Geometric Mean Aggregation Protocol | The standardized statistical method for deriving a single representative toxicity value from multiple tests, preferred over median[reference:30]. | A critical step in the harmonization pipeline. |

The derivation of robust environmental safety thresholds, such as Predicted-No-Effect Concentrations (PNECs) or Environmental Quality Standards (EQS), fundamentally relies on the aggregation of ecotoxicity data [16]. Within the research context of comparing geometric mean versus median aggregation methods for species sensitivity distributions (SSDs), the initial step of applying stringent quality filters is not merely preparatory—it is determinative. The choice between geometric mean and median for summarizing multiple toxicity values for a single species-chemical combination, or for estimating hazardous concentrations (e.g., HC5) from an SSD, is secondary to the foundational quality of the input data [12].

Inconsistent or biased reliability evaluations can directly alter the dataset used for aggregation, thereby influencing the final hazard assessment and potentially leading to underestimated environmental risks or unnecessary mitigation costs [17]. This guide objectively compares the predominant quality evaluation frameworks—the established Klimisch method and the modern CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) criteria. It details their application, supported by experimental ring-test data, to provide researchers and risk assessors with a clear basis for selecting a method that ensures transparency, consistency, and scientific rigor in the data foundation upon which all subsequent aggregation decisions are made [16] [17].

Comparative Analysis of Klimisch and CRED Evaluation Frameworks

The Klimisch method, developed in 1997, has been a regulatory cornerstone for evaluating study reliability but has faced criticism for its lack of detail and guidance [17]. The CRED method was developed to address these shortcomings, providing a more structured and transparent framework [16].

Table 1: Core Structural Comparison of the Klimisch and CRED Evaluation Methods

| Feature | Klimisch Method (1997) | CRED Method (2016) |

|---|---|---|

| Primary Scope | Reliability evaluation only. | Combined evaluation of Reliability (20 criteria) and Relevance (13 criteria) [16] [17]. |

| Reliability Categories | 4-point scale: Reliable without restrictions (R1), Reliable with restrictions (R2), Not reliable (R3), Not assignable (R4) [17]. | Detailed criteria-based evaluation leading to the same 4-category conclusion, but with explicit, guided justification [17]. |

| Relevance Evaluation | No formal criteria or categories provided [17]. | Formal criteria and 3 categories: Relevant without restrictions (C1), Relevant with restrictions (C2), Not relevant (C3) [16] [17]. |

| Guidance & Specificity | Limited, high-level criteria. Lacks detailed guidance, leaving significant room for expert judgment [16]. | Extensive guidance for each criterion, reducing ambiguity. Includes specific reporting recommendations for authors [16]. |

| Bias Consideration | Criticized for potential bias towards industry-sponsored Guideline/GLP studies, potentially overlooking valid non-standard research [16] [17]. | Designed for neutral application to all studies, whether guideline or peer-reviewed literature, based solely on scientific merit [17]. |

| Tool Format | Descriptive text. | Supported by structured Excel tools for systematic evaluation and documentation [18] [19]. |

Experimental Data and Performance Comparison

A pivotal international ring test involving 75 risk assessors from 12 countries was conducted to directly compare the two methods [17]. Participants evaluated aquatic ecotoxicity studies using both frameworks. The results quantitatively demonstrate CRED's advantages in consistency and transparency.

Table 2: Quantitative Ring-Test Results Comparing Evaluation Consistency [17]

| Evaluation Aspect | Klimisch Method Performance | CRED Method Performance | Implication |

|---|---|---|---|

| Inter-assessor Consistency (Reliability) | Low. Assessments for the same study frequently spanned multiple categories (e.g., R1 to R3). | High. Majority consensus on the final reliability category was significantly more frequent. | CRED reduces arbitrariness, leading to more reproducible hazard identification. |

| Handling of Relevance | Not systematically addressed, leading to inconsistent consideration of study fitness-for-purpose. | Enabled structured, purpose-driven evaluation, improving alignment between data and assessment goals. | Ensures aggregated data (e.g., for SSDs) is appropriate for the specific regulatory context. |

| Perceived Dependence on Expert Judgment | Rated as high by participants. | Rated as substantially lower. | Promotes objectivity and reduces the potential for evaluator bias in the data screening phase. |

| Perceived Transparency | Rated as low; evaluation rationale often opaque. | Rated as high due to requirement for criterion-specific documentation. | Creates an audit trail, crucial for defending data choices in geometric mean vs. median aggregation research. |

| Time Requirement | Perceived as faster due to simplicity. | Perceived as slightly more time-consuming but worthwhile due to increased rigor and reduced need for re-evaluation. | Initial investment in quality filtering saves time during later data analysis and dispute resolution. |

Detailed Experimental Protocol: The CRED vs. Klimisch Ring Test

The methodology of the comparative ring test provides a model for validating quality assessment frameworks [17].

- Phase 1 (Klimisch Evaluation): Each participant evaluated the reliability and (where possible) relevance of two out of eight preselected aquatic ecotoxicity studies using the Klimisch method. Relevance was summarized ad-hoc using three categories (C1-C3).

- Phase 2 (CRED Evaluation): Each participant evaluated two different studies from the same set using a draft version of the CRED Excel tool, which included specific criteria for both reliability and relevance.

- Study Design: To ensure independence, the studies evaluated in Phases 1 and 2 were different, and participants from the same institute did not evaluate the same study.

- Contextualization: Participants were instructed to evaluate studies for the purpose of deriving Environmental Quality Criteria under the EU Water Framework Directive, standardizing the relevance perspective [17].

- Data Collection: After each phase, participants completed a questionnaire assessing the method's practicality, their confidence in the evaluation, and any missing criteria.

- Analysis: Consistency was measured by the degree of consensus on the final reliability/relevance category for each study under each method. Questionnaire responses were analyzed thematically.
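One simple way to quantify "degree of consensus" is the share of assessors who chose the most frequent category for a study. The sketch below assumes this modal-share measure and uses invented ratings; the ring test itself may have used a different consistency metric.

```python
from collections import Counter

def consensus_share(ratings):
    """Share of assessors who chose the most frequent category for a study."""
    counts = Counter(ratings)
    _, top_count = counts.most_common(1)[0]
    return top_count / len(ratings)

# Hypothetical reliability ratings for one study under each method
# (R1-R3 follow the category labels used in Table 1).
klimisch_ratings = ["R1", "R2", "R3", "R2", "R1", "R3"]
cred_ratings = ["R2", "R2", "R2", "R3", "R2", "R2"]

klimisch_consensus = consensus_share(klimisch_ratings)
cred_consensus = consensus_share(cred_ratings)

print(f"Klimisch: {klimisch_consensus:.2f}, CRED: {cred_consensus:.2f}")
```

A value near 1 means nearly all assessors agreed on the category; a value near 1/k for k categories means the ratings were spread almost evenly.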

Integration with Data Aggregation Research: From Filtered Data to SSDs

Applying rigorous quality filters via CRED directly impacts downstream aggregation research. A dataset curated with CRED will consist of studies where experimental conditions, statistical reporting, and biological relevance are clearly documented and validated [16]. This high-quality input is essential for robust SSD modeling, where the choice between parametric (e.g., log-normal, log-logistic) and non-parametric approaches, or between using geometric means versus medians for intra-species data, becomes a purely statistical decision rather than one confounded by data quality issues [12].

Recent research on SSD modeling confirms that with a sufficient number of high-quality, reliable data points, the choice of statistical distribution (e.g., for model averaging vs. a single-distribution approach) has a more nuanced impact on the HC5 estimate than the underlying data quality itself [12]. Furthermore, computational toxicology frameworks that integrate heterogeneous biological data (e.g., knowledge graphs linking chemicals to genes and pathways) for toxicity prediction depend on reliable experimental data for training and validation, underscoring the foundational role of quality evaluation across traditional and New Approach Methodologies (NAMs) [20].

Diagram: Workflow for Quality Filtering in Ecotoxicity Data Aggregation Research

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Tools and Resources for Quality Evaluation and Data Aggregation

| Tool/Resource | Function in Research | Relevance to Aggregation Studies |

|---|---|---|

| CRED Excel Evaluation Tool [18] [19] | Provides a standardized worksheet to systematically score the 20 reliability and 13 relevance criteria for an ecotoxicity study. | Ensures transparent, documented quality filtering, creating a defensible curated dataset for geometric mean/median comparisons. |

| EnviroTox Database [12] | A curated database of ecotoxicity studies with pre-applied quality filters (e.g., excluding data above water solubility). | A primary source for high-quality, pre-screened toxicity data used in SSD modeling and aggregation method research. |

| OpenTox SSDM Platform [15] | An open-access platform for building and analyzing Species Sensitivity Distribution models. | Enables testing of how different data aggregation methods (e.g., geometric mean input) affect HC5 estimates across statistical models. |

| Toxicological Knowledge Graph (ToxKG) [20] | A structured database integrating chemicals, genes, pathways, and assay data to inform mechanistic toxicity. | Provides biological context which can help assess the relevance of studies for specific modes of action, influencing data inclusion for aggregation. |

| CREED for Exposure Data [21] | A sister framework to CRED for evaluating the reliability and relevance of environmental monitoring (exposure) datasets. | Critical for the complementary exposure side of risk assessment, ensuring high-quality concentration data for risk quotient calculations. |

Diagram: Logical Framework: Quality Filtering's Role in Data Aggregation Research

The comparative analysis demonstrates that the CRED evaluation method offers a superior framework for applying quality filters in ecotoxicity research compared to the traditional Klimisch method. Its structured criteria, explicit guidance, and proven higher consistency make it the recommended choice for constructing datasets intended for advanced aggregation research, such as comparing geometric mean and median approaches.

For researchers focused on data aggregation methodologies, the following application pathway is recommended:

- Primary Filtering: Employ the CRED criteria as the primary quality filter to build a foundation dataset. The Excel tool facilitates transparent documentation [19].

- Contextual Relevance: Clearly define the assessment purpose (e.g., derivation of chronic PNEC for a specific mode of action) when applying relevance criteria to ensure aggregated data is fit-for-purpose [16].

- Aggregation on Clean Data: Conduct geometric mean vs. median comparisons using the data curated through CRED. This isolates the statistical question from data quality confounders.

- Sensitivity Analysis: Perform sensitivity analyses to determine how the inclusion of studies rated "reliable/relevant with restrictions" under CRED affects the outcomes of different aggregation methods.
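The sensitivity analysis in the last step can be sketched as a comparison of both statistics with and without the "with restrictions" studies. The values, category assignments, and the helper `summarize` are hypothetical.

```python
from statistics import geometric_mean, median

# Hypothetical EC50 values (mg/L) for one species-chemical pair,
# each tagged with a CRED reliability category (R1 or R2).
studies = [
    (2.0, "R1"),
    (3.0, "R1"),
    (4.5, "R1"),
    (20.0, "R2"),
    (0.4, "R2"),
]

def summarize(values):
    """Geometric mean, median, and count for one set of values."""
    return {"gmean": geometric_mean(values), "median": median(values), "n": len(values)}

strict = summarize([v for v, cat in studies if cat == "R1"])
inclusive = summarize([v for v, cat in studies if cat in ("R1", "R2")])

# Fold change of each statistic when "with restrictions" studies are added.
shift = {k: inclusive[k] / strict[k] for k in ("gmean", "median")}
print(strict, inclusive, shift)
```

In this toy case the median does not move at all when the two R2 studies are added, while the geometric mean shifts slightly. Repeating the comparison across many species-chemical pairs would show how often the inclusion rule changes the aggregated value under each method.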

By adopting the CRED framework, the research community can ensure that the ongoing scientific discourse on optimal data aggregation techniques is built upon a consistent, transparent, and high-quality data foundation, ultimately leading to more reliable environmental safety standards.

Within the broader research on geometric mean versus median aggregation of ecotoxicity data, computing the geometric mean correctly is a critical step in deriving robust hazard values. Aggregation reduces multiple toxicity data points for a single chemical and species to a single representative value, which forms the foundation for higher-order calculations like Species Sensitivity Distributions (SSDs) and Hazardous Concentrations (HCp) [12]. The choice of aggregation method directly influences the outcome of environmental risk assessments and life cycle impact evaluations [4] [5].

While the median is a measure of central tendency less sensitive to outliers, the geometric mean is the established standard in ecotoxicology [5]. It is preferred because toxicity data are typically log-normally distributed, and the geometric mean provides a more accurate central value for multiplicative processes. Critically, for small datasets, the median can be unreliable as it ignores the distribution's tails, whereas the geometric mean incorporates all data points while dampening the influence of extreme values [5]. This guide provides a detailed, procedural framework for correctly executing geometric mean aggregation within contemporary research and regulatory workflows.

Foundational Principles and Comparative Framework

Rationale for Geometric Mean over Arithmetic Mean and Median

The geometric mean is defined as the nth root of the product of n numbers. Its application in ecotoxicology is justified by several key principles [22] [5]:

- Log-Normal Data: Species sensitivity to chemicals and the distribution of repeated toxicity tests for the same endpoint often follow a log-normal distribution. The geometric mean accurately reflects the central tendency of such data.

- Robustness to Skew: It is less influenced by exceptionally high values than the arithmetic mean. Because the geometric mean never exceeds the arithmetic mean, it yields a lower, and therefore more conservative, effect concentration for right-skewed data.

- Multiplicative Processes: Toxicological effects often relate to concentrations in a multiplicative manner (e.g., dose-response), making the geometric mean a mathematically appropriate descriptor.
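The definition and its equivalent log-scale forms can be written out explicitly. For positive values \(x_1, \dots, x_n\), the identities below are standard results and are not specific to any one of the cited studies:

```latex
\[
\mathrm{GM}(x_1,\dots,x_n)
  = \left(\prod_{i=1}^{n} x_i\right)^{1/n}
  = \exp\!\left(\frac{1}{n}\sum_{i=1}^{n}\ln x_i\right)
  = 10^{\frac{1}{n}\sum_{i=1}^{n}\log_{10} x_i},
\qquad
\mathrm{GM}(x_1,\dots,x_n) \le \frac{1}{n}\sum_{i=1}^{n} x_i .
\]
```

As a worked example, three test results of 1, 10, and 100 mg/L have a geometric mean of \((1 \cdot 10 \cdot 100)^{1/3} = 10\) mg/L, whereas their arithmetic mean is 37 mg/L. The base of the logarithm does not matter as long as the back-transformation uses the same base.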

A comparison of central tendency measures is summarized in the table below.

Table 1: Comparison of Central Tendency Measures for Ecotoxicity Data Aggregation

| Measure | Calculation | Best Use Case | Sensitivity to Outliers | Suitability for SSDs |
|---|---|---|---|---|
| Geometric Mean | (Π xᵢ)^(1/n) | Log-normally distributed data (standard for toxicity values) | Low | High (Recommended) [5] |
| Arithmetic Mean | (Σ xᵢ)/n | Normally distributed data | High | Low (Can overestimate safe levels) |
| Median | Middle value of ordered dataset | Data with severe, non-physical outliers | Very Low | Low for small datasets (ignores distribution tails) [5] |
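To make the comparison in Table 1 concrete, the following minimal sketch computes all three measures for one small group of test results. The EC50 values are hypothetical and chosen only to include one unusually high result; the functions come from Python's standard `statistics` module.

```python
from statistics import geometric_mean, mean, median

# Hypothetical EC50 values (mg/L) for one chemical-species-endpoint group.
# The last value is an unusually high result, included to show its influence.
ec50_values = [1.0, 2.2, 5.1, 48.0]

summary = {
    "arithmetic_mean": mean(ec50_values),
    "geometric_mean": geometric_mean(ec50_values),
    "median": median(ec50_values),
}

for name, value in summary.items():
    print(f"{name}: {value:.2f} mg/L")
```

For this illustrative sample the arithmetic mean is pulled far toward the high value, while the geometric mean and the median stay close to the bulk of the data. The median, however, would not change at all if the highest value were replaced by any other value above 5.1 mg/L, which is the sense in which it ignores the tails.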

Quantitative Basis from Major Databases

Large-scale analyses of regulatory data provide the empirical foundation for using geometric means. Key studies have calculated critical toxicity ratios using geometric mean aggregation [4] [23]:

- Analysis of the EU REACH database derived acute-to-chronic ratios for major taxonomic groups: 10.64 for fish, 10.90 for crustaceans, and 4.21 for algae [4].

- A 2025 study harmonizing data from REACH and CompTox databases used geometric mean aggregation to produce 79,001 species-level effect concentration datapoints for 10,668 chemicals, which were subsequently used to derive extrapolation factors [23].

- The Standartox database and tool automates the aggregation of ecotoxicity data from the US EPA ECOTOX knowledgebase, using the geometric mean as its primary aggregation method to produce standardized, reproducible toxicity values for chemical risk assessment [5].

Step-by-Step Protocol for Geometric Mean Aggregation

The following workflow details the standardized procedure for calculating a geometric mean value from a set of ecotoxicity data. This protocol aligns with methodologies employed by major databases and research initiatives [23] [6] [5].

Protocol Title: Geometric Mean Aggregation for Ecotoxicity Data

Objective: To aggregate multiple ecotoxicity test results (e.g., EC50, NOEC) for a specific chemical, species, and endpoint into a single, robust representative value using the geometric mean.

Materials:

- Dataset of curated ecotoxicity test results.

- Statistical software (e.g., R, Python) or calculation tool.

Procedure:

- Data Grouping: Assemble all valid test results for the identical combination of chemical, species, and toxicological endpoint (e.g., Daphnia magna 48-hour EC50 for Chemical X) [5].

- Log-Transformation: Convert each concentration value \(x_i\) in the group to its base-10 logarithm, \(y_i = \log_{10}(x_i)\). This stabilizes variance and normalizes skewed data.

- Calculate Mean of Logs: Compute the arithmetic mean \(\bar{y}\) of the log-transformed values: \(\bar{y} = \frac{\sum_{i=1}^{n} y_i}{n}\), where \(n\) is the number of data points.

- Back-Transformation: Calculate the geometric mean (GM) by back-transforming the result: \(GM = 10^{\bar{y}}\).

- Optional - Robustness Check: Identify potential outliers using the Interquartile Range (IQR) method on the log-transformed data. Values below \(Q_1 - 1.5 \times IQR\) or above \(Q_3 + 1.5 \times IQR\) can be flagged for review [5]. (Note: Expert judgment is required for exclusion; the geometric mean is often calculated including flagged points due to its inherent robustness [5].)
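The optional robustness check can be sketched as follows. This is an illustration under stated assumptions rather than the procedure of any particular database: the function name `flag_log_iqr_outliers` is hypothetical, the data are invented, and the quartiles use the "inclusive" interpolation of Python's `statistics.quantiles`, which is only one of several quartile conventions. The function flags values for review; it does not remove them.

```python
import math
from statistics import quantiles


def flag_log_iqr_outliers(values, k=1.5):
    """Return values whose log10 lies outside [Q1 - k*IQR, Q3 + k*IQR]."""
    logs = [math.log10(v) for v in values]
    # Quartiles of the log-transformed data (assumed convention: inclusive).
    q1, _, q3 = quantiles(logs, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v, y in zip(values, logs) if y < lower or y > upper]


# Hypothetical EC50 values (mg/L); 400.0 is far above the rest on a log scale.
ec50_values = [1.0, 1.5, 2.2, 3.0, 5.1, 400.0]
flagged = flag_log_iqr_outliers(ec50_values)
print(flagged)
```

With very few data points the quartiles themselves are poorly determined, so a flag from this check is a prompt for expert review rather than a decision.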

Example Calculation: For three Daphnia magna EC50 values: 1.0 mg/L, 2.2 mg/L, and 5.1 mg/L.

- Log-transformed: 0.000, 0.342, 0.708.

- Arithmetic mean of logs: (0.000 + 0.342 + 0.708) / 3 = 0.350.

- Geometric Mean: \(10^{0.350} \approx 2.24\) mg/L.
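The grouping, log-transformation, averaging, and back-transformation steps can be sketched in a few lines of Python. The record layout, the helper name `geometric_mean_log10`, and the second species entry are assumptions made for illustration; the three Daphnia magna values are those of the worked example.

```python
import math
from collections import defaultdict


def geometric_mean_log10(values):
    """Geometric mean via the mean of base-10 logarithms."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geometric mean needs at least one value, all > 0")
    mean_log = sum(math.log10(v) for v in values) / len(values)
    return 10 ** mean_log


# (chemical, species, endpoint, concentration in mg/L)
records = [
    ("Chemical X", "Daphnia magna", "EC50 48 h", 1.0),
    ("Chemical X", "Daphnia magna", "EC50 48 h", 2.2),
    ("Chemical X", "Daphnia magna", "EC50 48 h", 5.1),
    ("Chemical X", "Danio rerio", "LC50 96 h", 12.0),  # hypothetical entry
]

# Step 1: group results by the identical chemical-species-endpoint combination.
groups = defaultdict(list)
for chemical, species, endpoint, concentration in records:
    groups[(chemical, species, endpoint)].append(concentration)

# Steps 2-4: log-transform, average, and back-transform within each group.
aggregated = {key: geometric_mean_log10(vals) for key, vals in groups.items()}

for key, gm in aggregated.items():
    print(key, round(gm, 2))
```

Rejecting zero or negative concentrations up front is a design choice in this sketch: the logarithm is undefined for them, and silently dropping such records would change the result without the analyst noticing.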

Decision Protocol for Data Inclusion/Exclusion

A critical pre-aggregation step is determining which data points to include. There is no universal regulatory guideline for this [22]. The following decision logic, synthesized from current practice, should be applied during the data curation phase (Step 1 of the workflow).

Experimental Validation & Comparative Performance

Validation in Species Sensitivity Distribution (SSD) Estimation

The geometric mean's performance is validated in advanced SSD modeling. A 2025 study comparing SSD estimation methods used the geometric mean to aggregate multiple toxicity values for a single species before fitting distributions [12]. The study, analyzing 35 chemicals with extensive data (>50 species), found that SSD-derived hazardous concentrations (HC5) were reliable when based on geometric mean-aggregated inputs. This supports its use as a precursor step to community-level risk estimation [12].

Comparison with Model-Averaging and QSAR Data

Geometric mean aggregation also serves as a benchmark for evaluating predictive data.

- Model-Averaging SSDs: Research indicates that HC5 estimates from SSDs based on geometric mean-aggregated data show comparable precision to those from more complex model-averaging approaches that combine multiple statistical distributions [12].

- QSAR Prediction Aggregation: When using Quantitative Structure-Activity Relationship (QSAR) models like ECOSAR, which generate multiple predictions per chemical, the geometric mean is a standard method to derive a single point estimate. One large-scale study calculated the geometric mean of all applicable QSAR model outputs for a chemical to produce a consolidated prediction for use in hazard assessment [24].
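The QSAR consolidation step described above amounts to the same calculation applied to model outputs instead of test results. The sketch below assumes a simple mapping from model name to predicted LC50; the model names and values are hypothetical, and `None` stands in for a model that is not applicable to the chemical. It does not reproduce the filtering rules of the cited study.

```python
from statistics import geometric_mean

# Hypothetical predicted LC50 values (mg/L) from several QSAR models.
# None marks a model whose applicability domain excludes the chemical.
predictions = {
    "model_a": 3.2,
    "model_b": 8.0,
    "model_c": None,
    "model_d": 5.0,
}

# Keep only usable predictions, then consolidate them with the geometric mean.
valid = [v for v in predictions.values() if v is not None and v > 0]
consensus = geometric_mean(valid)

print(f"Consensus LC50 from {len(valid)} models: {consensus:.2f} mg/L")
```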

Table 2: Application of Geometric Mean in Key Ecotoxicological Contexts

| Context | Data Input | Aggregation Action | Purpose & Outcome | Supporting Study |
|---|---|---|---|---|
| SSD Development | Multiple EC50/LC50 values for one species & chemical. | Compute species mean acute value (SMAV) as the geometric mean. | Creates the data points for fitting the SSD curve to estimate HC5. | [12] |
| Database Curation (e.g., Standartox) | All test results for a chemical-species-endpoint from ECOTOX. | Outputs the geometric mean as the standard aggregated value. | Provides reproducible, single toxicity values for risk indicators. | [5] |
| Extrapolation Factor Derivation | Paired acute-chronic data for many chemicals. | Calculate the geometric mean of acute:chronic ratios. | Derives generic assessment factors (e.g., acute-to-chronic ratio = 10). | [4] [23] |
| QSAR Prediction Reconciliation | Multiple model predictions from different QSAR classes. | Calculate geometric mean of all valid predictions. | Provides a consensus, single-point estimate from in silico tools. | [6] [24] |

The Scientist's Toolkit: Research Reagent Solutions

Implementing geometric mean aggregation requires access to curated data and specialized tools. The following toolkit lists essential resources for researchers.

Table 3: Research Reagent Solutions for Ecotoxicity Data Aggregation

| Item / Resource | Type | Primary Function in Aggregation | Key Reference / Source |
|---|---|---|---|
| Standartox Database & R Package | Software Tool / Database | Automates the curation, filtering, and geometric mean aggregation of ecotoxicity data from the EPA ECOTOX database. | [5] |
| REACH Ecotoxicity Database | Regulatory Database | Source of high-volume, curated experimental data for deriving aggregated hazard values and extrapolation factors. | [4] [23] |
| US EPA ECOTOX Knowledgebase | Comprehensive Database | Primary source of empirical ecotoxicity test results for tools like Standartox. Provides raw data for aggregation. | [5] [24] |
| CompTox Chemicals Dashboard | Integrated Database | Source of experimental toxicity data (via ToxValDB) used alongside REACH data for large-scale harmonization and factor derivation. | [23] [6] |
| R or Python Statistical Environment | Programming Language | Platform for executing custom data curation, log-transformation, and geometric mean calculation scripts. Essential for reproducible research. | Common Practice |
| USEtox Model & Database | Consensus Model | Uses aggregated chronic EC50 values (often derived via geometric mean) to calculate characterization factors for life cycle assessment. | [9] [6] |
| ECOSAR, VEGA, TEST | QSAR Software | Generate predicted toxicity values. Outputs from multiple models are often aggregated via geometric mean to fill data gaps. | [6] [24] |

Calculating the geometric mean is a foundational, technically well-defined step in ecotoxicity data aggregation. Its superiority over the median and arithmetic mean for log-normal toxicity data is well supported by theory and large-scale empirical practice [4] [5]. The protocol outlined here, encompassing data curation, log-transformation, and back-transformation, provides a standardized workflow that aligns with methods used by major regulatory databases and research consortia [23] [12] [5].

The resulting aggregated values are not an endpoint but a critical input for higher-order decision-making models, including SSDs for environmental quality standard setting and USEtox for comparative life cycle assessment. Mastery of this step ensures that subsequent assessments of chemical hazard and environmental risk are built upon a robust and representative foundation.

Within the broader research on geometric mean versus median aggregation for ecotoxicity data, the derivation of Species Sensitivity Distributions (SSDs) and Hazard Concentrations (HCs) represents the critical translational step. This phase transforms aggregated, chemical-specific toxicity values (e.g., geometric mean EC50 for a species) into robust, ecosystem-level estimates of risk [5]. SSDs model the variation in sensitivity among species, allowing regulators and scientists to determine concentrations predicted to affect a specified percentage (e.g., 5% or 20%) of species—the HC5 or HC20 [12] [23]. The choice of data aggregation method (geometric mean vs. median) directly influences the input values for SSD construction, thereby propagating uncertainty or robustness into these final protective benchmarks. This guide compares the performance of contemporary approaches for building SSDs and deriving HCs, framing them within the ongoing methodological evolution from simple distribution fitting to model-averaging and machine-learning-assisted techniques.

Performance Comparison of SSD and Hazard Concentration Derivation Methods

The selection of methodology for constructing SSDs significantly impacts the resulting hazard concentration estimates. The table below compares the core performance metrics, data requirements, and optimal use cases for the primary contemporary approaches.

Table: Comparison of Methods for Deriving Species Sensitivity Distributions (SSDs) and Hazard Concentrations (HCs)

| Method | Core Description | Key Performance Metrics | Data Requirements | Best Suited For |
|---|---|---|---|---|
| Single Parametric Distribution (e.g., Log-Normal) [12] | Fits a single statistical distribution (e.g., log-normal, log-logistic) to aggregated species sensitivity data to estimate the HC5. | Accuracy: Can produce large deviations from reference HC5 with limited data (<15 species) [12]. Precision: Comparable to model-averaging when using log-normal/log-logistic distributions [12]. Simplicity: Straightforward to implement and interpret. | Minimum of ~8-10 species from multiple taxonomic groups recommended; performance improves with >15 species [12]. | Initial screening, assessments with well-established data where a suitable distribution is known. |
| Model-Averaging Approach [12] | Fits multiple statistical distributions, weights them by goodness-of-fit (e.g., AIC), and calculates a weighted-average HC estimate. | Accuracy: Does not guarantee reduced error compared to single-distribution approach; deviations comparable to log-normal/log-logistic [12]. Robustness: Incorporates model selection uncertainty, making HC estimates less sensitive to adding new data points [12]. | Requires sufficient data to fit multiple models reliably; benefits from >10 species [12]. | Regulatory applications seeking conservative, stable estimates that account for model uncertainty. |
| Non-Parametric / Direct Percentile [12] | Directly calculates the HC5 as the 5th percentile of the empirical distribution of aggregated toxicity data. | Accuracy: Provides a direct "reference" HC5 when extensive data are available [12]. Bias: Unreliable with small datasets (<15-20 species) [12]. | Requires large datasets (>50 species) for a reliable estimate [12]. | Validation of parametric methods or assessments for chemicals with exceptionally rich toxicity datasets. |
| Machine Learning (ML)-Predicted HC50 [9] | Uses ML models (e.g., Random Forest) trained on chemical properties to directly predict the Hazardous Concentration for 50% of species (HC50). | Predictive Power: Random Forest models can explain ~63% (R²=0.630) of variability in USEtox HC50 [9]. Coverage: Can generate estimates for thousands of data-poor chemicals [9] [6]. Speed: Enables rapid screening. | Requires a training set of chemicals with known HC50 and associated physicochemical property data [9]. | Life Cycle Assessment (LCA) and high-throughput screening where effect factors for many chemicals are needed [9] [6]. |
| QSAR-Estimated Inputs for SSD [6] | Uses Quantitative Structure-Activity Relationship models to predict base toxicity endpoints (e.g., fish LC50), which are then aggregated and used in SSD construction. | Confidence: Low correlation with experimental data-based effect factors; high uncertainty [6]. Coverage: Provides data for otherwise data-less chemicals (e.g., ECOSAR covered 6029 chemicals) [6]. | Dependent on the applicability domain and quality of the QSAR model. | Filling data gaps for preliminary or prioritization assessments, with clear acknowledgment of uncertainty. |
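As an illustration of the single parametric distribution row, the sketch below fits a log-normal SSD by the method of moments on log10-transformed species values and reads off the HC5. It is a simplified sketch, not the fitting procedure of the cited studies: the species-level values are hypothetical, the function name `hc_lognormal` is invented here, and no confidence interval or goodness-of-fit check is included.

```python
import math
from statistics import NormalDist, mean, stdev


def hc_lognormal(species_values, fraction=0.05):
    """Hazard concentration for `fraction` of species under a log-normal SSD."""
    logs = [math.log10(v) for v in species_values]
    # Normal distribution on the log10 scale (sample mean and sample SD).
    ssd = NormalDist(mean(logs), stdev(logs))
    # Quantile on the log scale, back-transformed to concentration units.
    return 10 ** ssd.inv_cdf(fraction)


# Hypothetical species-level geometric mean EC50 values (mg/L), one per species.
species_means = [0.8, 1.5, 2.4, 3.1, 4.7, 6.0, 9.5, 14.0, 22.0, 35.0]

hc5 = hc_lognormal(species_means, fraction=0.05)
hc50 = hc_lognormal(species_means, fraction=0.50)
print(f"HC5 = {hc5:.2f} mg/L, HC50 = {hc50:.2f} mg/L")
```

Under this model the HC50 equals the geometric mean of the species-level inputs, so the choice of aggregation method at the species level carries directly into the fitted distribution.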

Detailed Experimental Protocols

Protocol for Comparing Model-Averaging and Single-Distribution SSD Approaches

This protocol, based on a 2025 study, provides a framework for empirically evaluating HC estimation methods [12].

- Reference Dataset Curation: Select chemicals with acute toxicity data (EC50/LC50) for >50 species from at least three taxonomic groups (e.g., algae, invertebrates, fish). Use a quality-controlled database like EnviroTox [12].

- Reference HC5 Calculation: For each chemical, compute a reference HC5 as the 5th percentile of the complete, aggregated dataset (geometric mean per species) [12].