From Raw Reads to Regulatory Readiness: A Complete Guide to Ecotoxicology Data Archivation Protocols

This article provides a comprehensive framework for the systematic archiving of raw data in ecotoxicology, a field increasingly defined by high-throughput methodologies like transcriptomics.

Abstract

This article provides a comprehensive framework for the systematic archiving of raw data in ecotoxicology, a field increasingly defined by high-throughput methodologies like transcriptomics. It is designed for researchers, scientists, and drug development professionals navigating the dual demands of scientific rigor and regulatory compliance. We begin by establishing the critical importance of raw data as the foundational asset for scientific reproducibility and regulatory submissions. The guide then details actionable, step-by-step protocols for archiving diverse data types, from sequencing reads to traditional assay results. To address real-world challenges, we offer solutions for common issues such as managing large datasets and ensuring metadata completeness. Finally, the article presents robust methods for validating archived data's quality, integrity, and reusability, enabling cross-study comparisons and fulfilling the stringent requirements of agencies like the FDA and EPA. This end-to-end resource empowers scientists to transform raw data into a durable, accessible, and compliant scientific asset.

The Cornerstone of Credibility: Why Raw Data Archiving is Non-Negotiable in Modern Ecotoxicology

The term 'raw data' is foundational to scientific integrity and regulatory compliance in ecotoxicology, yet its definition is often nuanced and context-dependent. Within regulated environments, two primary interpretations exist. From a Good Laboratory Practice (GLP) perspective, raw data is traditionally viewed as the "original observations" of a study [1] [2]. Conversely, under Good Manufacturing Practice (GMP), the emphasis shifts to records that "are used to create other records" [1] [2]. This divergence can lead to inconsistency and regulatory risk if not properly reconciled.

The U.S. FDA's GLP regulations (21 CFR 58.3(k)) provide a pivotal definition, describing raw data as "any laboratory worksheets, records, memoranda, notes, or exact copies thereof, that are the result of original observations and activities of a nonclinical laboratory study and are necessary for the reconstruction and evaluation of the report of that study" [1] [2]. A critical, often overlooked clause is the necessity for reconstruction and evaluation, which expands the scope beyond simple instrument output. Modern proposed updates to this definition further clarify its application to computerized systems and explicitly include final reports, such as the signed pathology report, within the raw data umbrella [1] [2].

In the European Union's GMP Chapter 4, the term is used but not explicitly defined, creating ambiguity. The guidance states that "records include the raw data which is used to generate other records" and crucially advises that "at least, all data on which quality decisions are based should be defined as raw data" [1] [2]. For ecotoxicology, this underscores that raw data is not a single file but a complete data trail—encompassing everything from the initial sample and its metadata, instrument acquisition files and contextual parameters, through to processed results, calculations, and the final interpreted report [1]. This holistic view ensures transparency, traceability, and the ability to audit from a final conclusion back to the original observation, or from a sample forward to a result.

Categories of Raw Data in Modern Ecotoxicology

Modern ecotoxicology employs diverse methodologies, each generating distinct but equally critical forms of raw data. These can be broadly categorized, with their defining characteristics summarized in the table below.

Table 1: Categories and Characteristics of Raw Data in Ecotoxicology

| Data Category | Core "Original Observation" | Essential Contextual Metadata & Activities | Primary Archiving Format(s) |
|---|---|---|---|
| Sequencing & Transcriptomics | Binary base call (BCL) or FASTQ files from sequencer [3] [4]. | Sample RNA Integrity Number (RIN), library prep protocol, sequencer model/run ID, reference genome/transcriptome used for alignment. | FASTQ, BAM/SAM alignment files, processed count matrices with associated sample metadata files. |
| Analytical Chemistry & Environmental Sampling | Instrument-specific spectral/chromatographic data files (e.g., .D, .RAW, .MS). | Sampling GPS coordinates/depth, sample handling logs, instrument calibration records, acquisition method file, processing parameters (integration, calibration curves). | Vendor-specific raw files, documented processing scripts, final concentration tables with quality flags. |
| Dose-Response & Apical Endpoint Bioassays | Original, time-stamped observations of mortality, growth, reproduction, or behavior. | Test organism source/life stage, exposure regime (concentration, duration, media), solvent/control details, water quality parameters, raw measurements for derived endpoints (e.g., body weights, counts). | Laboratory notebook scans, electronic data capture system exports, original images or videos, raw calculation spreadsheets. |

Sequencing Reads and Transcriptomic Data

The rawest form of data in next-generation sequencing is the binary base call (BCL) files generated directly by the sequencer's image analysis [4]. These are routinely converted into FASTQ files, which contain the sequence reads and their associated per-base quality scores. As demonstrated in the EcoToxChip project, where samples yielded between 13 and 58 million raw reads each [4], these files constitute the fundamental, immutable original observation. The raw data, however, extends to include all metadata necessary for interpretation: the RNA extraction protocol, RNA Integrity Number (RIN), details of library preparation, sequencer configuration, and the specific reference genome or transcriptome used for subsequent alignment [4]. Public repositories like the Sequence Read Archive (SRA) mandate the submission of this raw data and alignment information to ensure reproducibility [3].
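To make the FASTQ structure described above concrete, here is a minimal sketch of a reader for the standard four-line record layout. It assumes uncompressed, unwrapped records with Phred+33 quality encoding (the usual encoding for current Illumina output); the function names and the two example reads are illustrative only and are not part of the EcoToxChip pipeline.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, phred_scores) from a 4-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:  # end of file
            return
        sequence = handle.readline().rstrip("\n")
        handle.readline()  # '+' separator line, ignored
        quality = handle.readline().rstrip("\n")
        if not header.startswith("@") or len(sequence) != len(quality):
            raise ValueError(f"Malformed FASTQ record near {header!r}")
        # Phred+33 encoding: score = ASCII code of the character minus 33
        yield header[1:], sequence, [ord(c) - 33 for c in quality]


def summarize(handle):
    """Count reads and bases and compute the mean per-base quality score."""
    n_reads = n_bases = q_sum = 0
    for _, seq, quals in read_fastq(handle):
        n_reads += 1
        n_bases += len(seq)
        q_sum += sum(quals)
    mean_q = q_sum / n_bases if n_bases else 0.0
    return {"reads": n_reads, "bases": n_bases, "mean_quality": mean_q}


# Two made-up reads: 'I' encodes Q40 and '!' encodes Q0 under Phred+33.
example = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nGGCA\n+\n!!II\n")
summary = summarize(example)
print(summary)
```

A summary like this reads the archived FASTQ without modifying it, so it can be stored next to the file as a record of what was deposited.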

Analytical Chemistry and Environmental Monitoring Data

For chemical analysis, raw data originates from analytical instruments such as mass spectrometers, chromatographs, and spectrometers. This includes the vendor-specific proprietary data files that capture the instrument's output signal over time [1] [2]. Crucially, the "original observation" is inseparable from the contextual metadata required for its reconstruction: the analytical method file, instrument calibration logs, sequence file defining the run order, and the sample preparation records [1]. In environmental fate studies, the raw data chain begins even earlier, with field sampling records, GPS coordinates, chain-of-custody documentation, and sample preservation logs. As highlighted in geochemical studies, both raw concentration data and compositionally transformed data (e.g., centered log-ratio transformed) are necessary for a complete spatial interpretation of contamination [5].
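The centered log-ratio (clr) transform mentioned above subtracts the mean of the log-values from each log-value, which is the same as dividing each part by the geometric mean of the composition before taking logs. The sketch below uses hypothetical concentrations and assumes all parts are strictly positive; how non-detects are replaced before transformation is a separate decision that should be documented with the archive.

```python
import math


def clr(composition):
    """Centered log-ratio transform: ln(x_i) minus the mean of ln(x) over all parts."""
    if any(x <= 0 for x in composition):
        raise ValueError("clr requires strictly positive parts")
    logs = [math.log(x) for x in composition]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]


# Hypothetical concentrations of three elements in one sample (same units).
sample = [10.0, 100.0, 1000.0]
transformed = clr(sample)
print(transformed)
```

Because clr values always sum to zero, the transformed table cannot be converted back to concentrations without the raw values, which is one reason both forms are worth archiving.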

Dose-Response and Apical Endpoint Data

The foundational raw data for traditional toxicity testing are the direct, time-stamped observations of organismal responses. This includes manual or automated records of mortality, sub-lethal effects (e.g., paralysis, discoloration), reproductive output (egg counts), and growth measurements (individual length/weight) [6]. For data to be considered acceptable by authoritative databases like the ECOTOX knowledgebase, these observations must be linked to a concurrent exposure concentration or dose and an explicit duration [7] [6]. The raw data encompasses the original worksheet or electronic record where these observations were first recorded, along with all supporting information on test organism husbandry, exposure solution verification (e.g., analytical chemistry results), and environmental conditions (pH, temperature, dissolved oxygen) [6].

Protocols for Raw Data Generation and Archiving

Robust protocols are essential to ensure raw data is generated and archived in a manner that satisfies both scientific and regulatory definitions, supporting the FAIR (Findable, Accessible, Interoperable, Reusable) principles [7] [8].

Protocol for Transcriptomic Raw Data Generation and Curation (Based on EcoToxChip Project)

This protocol outlines steps for generating and documenting RNA-sequencing raw data suitable for public deposition and reuse [4].

- Sample Preservation & RNA Extraction:

- Preserve tissue immediately in RNAlater or similar. Document tissue mass, preservation method, and storage time/temperature.

- Extract total RNA using a validated kit (e.g., Qiagen RNeasy). Perform on-column DNase I digestion.

- Raw Data Output: Laboratory notebook entry detailing extraction batch, kit lot numbers, and deviations.

- RNA Quality Control (QC):

- Quantify RNA concentration using a spectrophotometer or fluorometer (e.g., the QIAxpert spectrophotometer).

- Assess integrity with a Bioanalyzer or TapeStation to obtain an RNA Integrity Number (RIN).

- Acceptance Criterion: Proceed only with samples meeting a pre-defined threshold (e.g., RIN ≥ 7.5) [4].

- Raw Data Output: Digital QC reports (e.g., Bioanalyzer electropherogram files) and a QC summary table.

- Library Preparation & Sequencing:

- Use a standardized library prep kit. Record all protocol versions and modifications.

- Perform final library QC (size distribution, concentration).

- Sequence on an Illumina platform (e.g., NovaSeq) to a minimum depth (e.g., 12 million paired-end reads per sample).

- Raw Data Output: Binary Base Call (BCL) files from the sequencer [4].

- Primary Data Conversion & Metadata Assembly:

- Convert BCL files to FASTQ files using `bcl2fastq` or equivalent. Do not apply quality filtering at this stage.

- Compile a sample metadata table including: species, life stage, tissue, chemical exposure (name, concentration, duration), control/solvent information, RNA extraction ID, RIN, library prep batch, and sequencer lane.

- Raw Data Output: FASTQ files and a comprehensive metadata file in a structured format (e.g., .csv).
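The metadata assembly and RIN acceptance steps above can be checked automatically before deposition. The following is a minimal sketch, not the EcoToxChip project's own tooling: the column names are hypothetical, and the threshold of 7.5 is simply the example value quoted in the protocol.

```python
import csv
import io

# Hypothetical column names; adapt to the laboratory's own metadata schema.
REQUIRED_COLUMNS = [
    "sample_id", "species", "tissue", "chemical", "concentration",
    "duration", "rin", "library_batch", "sequencer_lane",
]
RIN_THRESHOLD = 7.5  # example acceptance criterion from the protocol


def validate_metadata(handle, rin_threshold=RIN_THRESHOLD):
    """Return a list of human-readable problems found in a sample metadata CSV."""
    reader = csv.DictReader(handle)
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        raise ValueError(f"Missing required columns: {missing}")
    problems = []
    for row in reader:
        sample = row["sample_id"] or "<unnamed>"
        empty = [c for c in REQUIRED_COLUMNS if not (row[c] or "").strip()]
        for col in empty:
            problems.append(f"{sample}: empty field '{col}'")
        if "rin" in empty:
            continue  # already reported as empty
        try:
            rin = float(row["rin"])
        except ValueError:
            problems.append(f"{sample}: RIN '{row['rin']}' is not numeric")
            continue
        if rin < rin_threshold:
            problems.append(f"{sample}: RIN {rin} below threshold {rin_threshold}")
    return problems


example_csv = io.StringIO(
    "sample_id,species,tissue,chemical,concentration,duration,rin,library_batch,sequencer_lane\n"
    "S1,Danio rerio,liver,chem_A,1.0,96h,8.2,LB1,1\n"
    "S2,Danio rerio,liver,chem_A,10.0,96h,6.9,LB1,1\n"
)
problems = validate_metadata(example_csv)
print(problems)
```

Failing samples are reported rather than silently dropped, so the decision to exclude a sample stays with the analyst and can be recorded as part of the raw data trail.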

Protocol for Curating Legacy Dose-Response Data (ECOTOX Model)

This protocol, based on the systematic review procedures of the ECOTOX database, details how to extract and structure raw data from published literature for archiving and reuse [7] [6].

- Systematic Literature Search & Screening:

- Define search strings using chemical names and CAS numbers combined with ecotoxicity keywords.

- Search multiple databases (e.g., PubMed, Web of Science, Scopus). Document the exact search string, date, and number of hits.

- Apply acceptability criteria during screening: a) Single chemical exposure, b) Effect on live whole organism, c) Concentration/dose and duration reported, d) Comparison to a control [6].

- Raw Data Output: A PRISMA-style flow diagram documenting the screening process [7].

- Data Extraction & Harmonization:

- Extract data using a controlled vocabulary to ensure consistency. Key fields include:

- Test Organism: Species name (verified), life stage, source.

- Exposure: Chemical form, concentration/dose (with units), duration, route, media.

- Endpoint: Type (e.g., LC50, EC50, NOEC), value, units, statistical significance.

- Study Conditions: Temperature, pH, light cycle, feeding regime.

- Control Data: Mean response and variability in control group.

- Raw Data Output: A structured data table (e.g., .csv) populated with extracted values, linked directly to the source PDF and page number.

- Quality Assurance & Documentation:

- Perform a second-person review of all extracted entries against the source material.

- Document any uncertainties or assumptions made during extraction.

- Raw Data Output: A curation log file noting corrections, reviewer initials, and dates.
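The four acceptability criteria applied during screening lend themselves to a simple, auditable check. The sketch below is illustrative only and is not the ECOTOX curation software; the `CandidateStudy` record and its field names are assumptions introduced for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CandidateStudy:
    """Hypothetical screening record for one literature reference."""
    reference: str
    n_chemicals: int
    whole_live_organism: bool
    concentration: Optional[float]
    duration_h: Optional[float]
    has_control: bool


def screen(study: CandidateStudy) -> List[str]:
    """Return the reasons for exclusion; an empty list means the study passes."""
    reasons = []
    if study.n_chemicals != 1:
        reasons.append("not a single-chemical exposure")
    if not study.whole_live_organism:
        reasons.append("effect not measured on a live whole organism")
    if study.concentration is None or study.duration_h is None:
        reasons.append("concentration/dose or duration not reported")
    if not study.has_control:
        reasons.append("no comparison to a control")
    return reasons


accepted = CandidateStudy("ref_001", 1, True, 2.5, 48.0, True)
rejected = CandidateStudy("ref_002", 3, True, None, 48.0, True)
accepted_reasons = screen(accepted)
rejected_reasons = screen(rejected)
print(accepted_reasons, rejected_reasons)
```

Returning explicit reasons rather than a bare pass/fail makes it straightforward to populate the exclusion counts in the screening flow diagram.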

Protocol for Mixture Risk Assessment Data Compilation

This protocol supports the compilation of raw data needed for component-based methods (CBMs) of mixture risk assessment, such as the summation of Toxic Units (TU) or the calculation of the multi-substance potentially affected fraction (msPAF) [9].

- Compile Single-Chemical Toxicity Data:

- For each chemical in the mixture, gather relevant dose-response raw data (preferably EC50/LC50 values) for the target species or taxonomic group. Source from curated databases (e.g., ECOTOX) [7] or primary literature.

- Raw Data Input: The original data table showing concentration-response relationships (individual organism or replicate responses) used to derive the point estimate.

- Gather or Predict Environmental Concentrations:

- Obtain measured environmental concentration (MEC) data from monitoring studies. Retain the full data set, including non-detects (with detection limits) and sampling location/time metadata.

- Alternatively, use predicted environmental concentration (PEC) data from fate models. Archive the model input parameters and version.

- Calculate Toxic Units (TUs) and Apply Mixture Model:

- For each chemical $i$, calculate $\mathrm{TU}_i = \mathrm{MEC}_i / \mathrm{EC50}_i$.

- Raw Data Output: A spreadsheet showing the calculation of each TU, explicitly linking the MEC and EC50 values to their primary sources.

- Apply the chosen mixture model (e.g., Concentration Addition: $\mathrm{TU}_{\mathrm{mix}} = \sum_i \mathrm{TU}_i$). The raw data for the assessment is the entire linked spreadsheet, not just the final sum.
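As a worked illustration of the Toxic Unit calculation under Concentration Addition, the sketch below divides each measured concentration by the corresponding EC50 and sums the results. The chemical names and values are hypothetical, and MEC and EC50 are assumed to be expressed in the same units.

```python
def toxic_units(mec, ec50):
    """Return {chemical: MEC / EC50}; both inputs are dicts in identical units."""
    result = {}
    for chemical, concentration in mec.items():
        if chemical not in ec50:
            raise KeyError(f"No EC50 available for {chemical}")
        if ec50[chemical] <= 0:
            raise ValueError(f"EC50 for {chemical} must be positive")
        result[chemical] = concentration / ec50[chemical]
    return result


# Hypothetical measured environmental concentrations and EC50 values (same units).
mec = {"chem_A": 2.0, "chem_B": 0.5}
ec50 = {"chem_A": 20.0, "chem_B": 5.0}

tus = toxic_units(mec, ec50)
mixture_tu = sum(tus.values())  # Concentration Addition: sum of component TUs
print(tus, mixture_tu)
```

Keeping the per-chemical values alongside the sum mirrors the requirement that the whole linked calculation, not only the final mixture TU, is archived.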

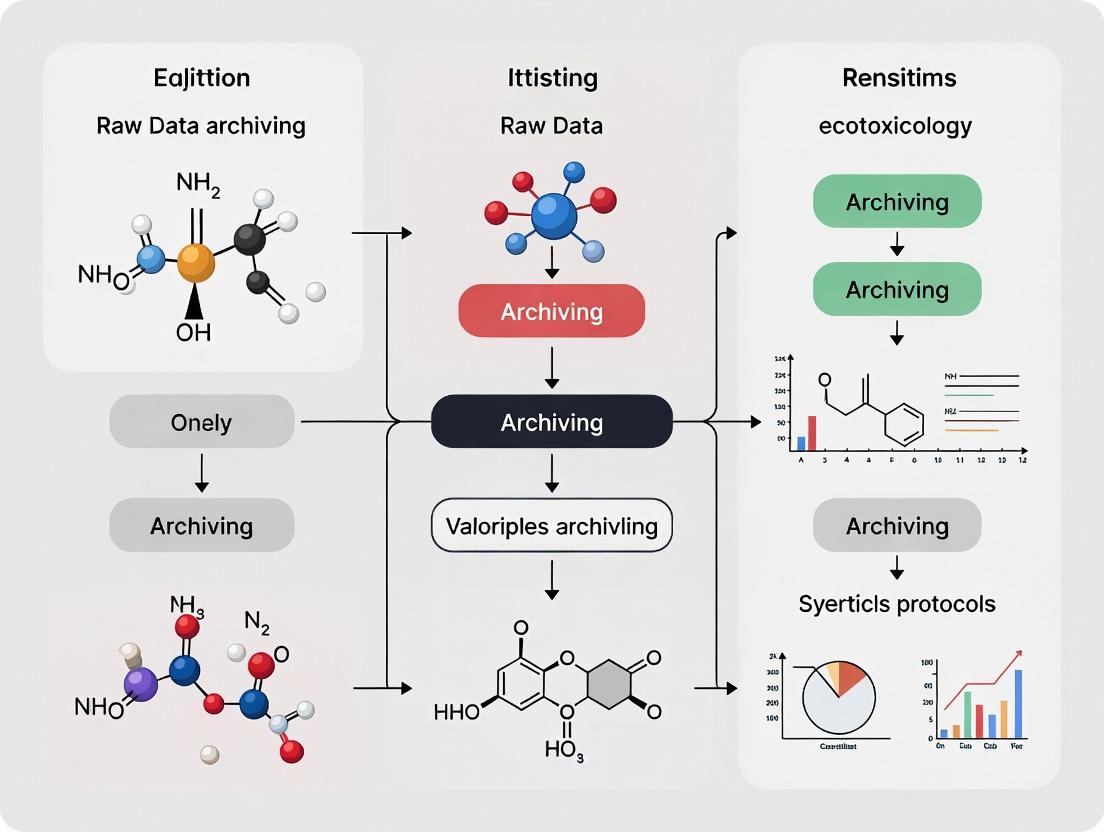

Visualizing the Raw Data Lifecycle and Workflows

Figure: Raw Data Lifecycle from Sample to FAIR Archive

Figure: Transcriptomics Raw Data Workflow from Tissue to Counts

Figure: Dose-Response Curve Derivation from Raw Observations

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Essential Research Reagent Solutions for Ecotoxicology Raw Data Generation

| Item | Function in Raw Data Generation | Critical Raw Data Linkage |
|---|---|---|
| RNA Stabilization Reagent (e.g., RNAlater) | Preserves RNA integrity in tissue samples immediately post-collection to prevent degradation. | Directly determines the RNA Integrity Number (RIN), a key metadata attribute for sequencing raw data validity [4]. |
| Validated RNA Extraction Kit with DNase I | Isolates high-quality, genomic DNA-free total RNA for transcriptomic analysis. | Kit lot number and protocol version are essential contextual metadata. On-column DNase treatment prevents confounding signals in sequencing reads [4]. |
| Internal Standards & Reference Toxicants | Certified chemical standards used for instrument calibration (analytical chemistry) and as positive controls in bioassays. | Their use and resulting calibration curves are raw data activities required to reconstruct reported environmental concentrations or to validate test organism sensitivity [1] [6]. |
| Standardized Test Media (e.g., OECD Reconstituted Water) | Provides a consistent, defined exposure matrix for aquatic toxicity tests. | Media preparation records, including source water chemistry and recipe, are raw data necessary to interpret exposure conditions and reproduce the study [6]. |
| High-Fidelity Data Capture Tools | Electronic Laboratory Notebooks (ELNs), barcode scanners, and automated instrument data systems. | These tools generate time-stamped, attributable primary records, forming the core of the "original observation" with embedded contextual metadata, enhancing data integrity [1] [2]. |

Implementing a Raw Data Archiving Strategy: The ATTAC Workflow

Effective archiving requires moving beyond simple storage to ensure data reusability. The ATTAC workflow (Access, Transparency, Transferability, Add-ons, Conservation sensitivity) provides a structured framework aligned with FAIR principles [8].

- Access: Define how data will be made findable and accessible. This involves depositing raw data in public, persistent repositories like the NCBI SRA for sequences [3] [4] or institutional data portals. The ECOTOX database exemplifies this by providing open access to curated toxicity data [7].

- Transparency: Document every step from sampling to analysis. Use standard operating procedures (SOPs) and provide clear readme files with archived data. This meets the regulatory requirement for "reconstruction and evaluation" [1] [2].

- Transferability: Ensure data is in non-proprietary, machine-readable formats (e.g., .csv, .txt, .fastq) accompanied by detailed metadata schemas. This enables interoperability and reuse in meta-analyses or modeling, as seen in mixture risk assessment [9].

- Add-ons: Provide auxiliary data that increases utility. For example, alongside a dose-response LC50, archive the raw survival counts per replicate and the statistical script used for calculation. This aligns with the GLP view of activities as raw data [1].

- Conservation Sensitivity: For studies involving sensitive or endangered species, implement controlled access protocols to balance ethical data sharing with conservation needs, while still adhering to archiving principles [8].

By implementing such a workflow, ecotoxicology laboratories can ensure their raw data—from the first sequencing read to the final dose-response model—is preserved as a complete, authoritative, and reusable digital object, fulfilling both scientific and regulatory imperatives.
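The Add-ons item suggests archiving raw survival counts per replicate together with the script that turns them into an LC50. The sketch below shows what such a script might look like under a deliberately simple assumption: it pools replicates and interpolates linearly on log10 concentration between the two treatments that bracket 50% mortality. Regulatory analyses typically fit probit or logistic models or use the trimmed Spearman-Karber method instead, and the counts here are invented.

```python
import math


def mortality_fractions(replicates):
    """replicates: {concentration: [(n_exposed, n_dead), ...]} -> pooled fraction dead."""
    fractions = {}
    for concentration, counts in replicates.items():
        exposed = sum(n for n, _ in counts)
        dead = sum(d for _, d in counts)
        fractions[concentration] = dead / exposed
    return fractions


def lc50_log_interpolation(fractions):
    """Interpolate the 50% mortality point on a log10 concentration scale."""
    points = sorted((c, f) for c, f in fractions.items() if c > 0)  # skip control
    for (c_lo, f_lo), (c_hi, f_hi) in zip(points, points[1:]):
        if f_lo <= 0.5 <= f_hi and f_hi > f_lo:
            t = (0.5 - f_lo) / (f_hi - f_lo)
            log_lc50 = math.log10(c_lo) + t * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_lc50
    raise ValueError("50% mortality is not bracketed by the tested concentrations")


# Invented raw counts: (organisms exposed, organisms dead) per replicate.
raw_counts = {
    0.0: [(10, 0), (10, 0)],    # control
    1.0: [(10, 1), (10, 3)],    # 20% pooled mortality
    10.0: [(10, 7), (10, 9)],   # 80% pooled mortality
}
fractions = mortality_fractions(raw_counts)
lc50 = lc50_log_interpolation(fractions)
print(round(lc50, 3))
```

Archiving the counts and the script together lets a reviewer rerun the calculation or substitute a different model without returning to the laboratory notebook.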

Within ecotoxicology, the reliable identification of chemical threats to ecosystems depends on the integrity, transparency, and longevity of primary research data [10]. Raw data archiving is not an administrative endpoint but a foundational scientific and regulatory activity. It directly underpins the three pillars of modern environmental science: 1) Scientific Reproducibility, enabling the validation of reported effects and the re-analysis underlying ecological risk assessments; 2) Regulatory Compliance, meeting stringent mandates for data quality, traceability, and auditability as per Good Laboratory Practice (GLP) and OECD test guidelines [11]; and 3) Long-Term Knowledge Preservation, ensuring that valuable and often irreplaceable data on chemical effects remain accessible and interpretable for future generations, policy shifts, and emerging analytical techniques.

The move towards standardized testing under frameworks such as the OECD test guidelines and GLP sets a high bar for data management [11]. These frameworks mandate detailed planning, performance, monitoring, recording, archiving, and reporting [11]. Consequently, a raw data archiving protocol must be engineered to satisfy these procedural requirements while also serving the broader scientific need for data discoverability and reuse. This document provides detailed application notes and actionable protocols to institutionalize robust data archiving within ecotoxicology research programs.

Detailed Experimental and Archival Protocols

The following protocols delineate a seamless pipeline from experimental execution to final data deposition, ensuring fidelity to both scientific and regulatory standards.

Protocol 2.1: Standardized Ecotoxicity Test Execution and Primary Data Capture

- Objective: To generate reliable, guideline-compliant raw data for environmental effects assessment [11].

- Materials: Test substance, validated test organisms (e.g., Daphnia magna, algae, fish embryos), calibrated environmental chambers, water quality probes (DO, pH, conductivity), analytical equipment for test substance concentration verification, data logging software.

- Procedure:

- Study Plan Activation: Initiate the study per the approved, version-controlled study plan and corresponding Standard Operating Procedures (SOPs). Log the initiation.

- Test System Preparation: Prepare dilution water, reconstitute test organisms if required, and acclimate all biological materials. Document all preparation steps and initial measurements (e.g., water quality, organism health).

- Exposure Regime: Apply the test substance to treatment replicates according to the predefined design. Include solvent and negative controls. Record exact timings, volumes, and nominal concentrations.

- In-Experiment Monitoring & Data Capture: At prescribed intervals, record mortality, sublethal endpoints (e.g., growth, reproduction, mobility), and environmental parameters (temperature, pH, DO). Capture concentration verification samples. All raw observations must be recorded directly into bound notebooks or electronic systems with audit trails. No data should be transcribed from temporary notes.

- Sample Archiving: Preserve physical samples (e.g., water, tissue) as specified in the study plan, labeling them with unique, persistent identifiers linked to the electronic metadata.

- Data Integrity Check: Perform immediate, daily reviews of captured data for obvious errors or omissions. Annotate any deviations from the protocol contemporaneously.

Protocol 2.2: Raw Data Curation, Metadata Assignment, and Packaging for Archive

- Objective: To transform primary data files into a self-describing, preserved package.

- Pre-requisite: Protocol 2.1 data.

- Procedure:

- Data Consolidation: Gather all digital raw data (instrument outputs, spreadsheet readings, digital images) and physical data (notebook scans, sample logs) into a designated staging directory.

- File Renaming and Organization: Apply a consistent naming convention (e.g., `[StudyID]_[Endpoint]_[Date]_[Run].csv`). Organize files in a logical hierarchy (e.g., `/raw_data/[assay_type]/`).

- Metadata Generation: Create a machine- and human-readable metadata file (preferably in XML or JSON format). Mandatory fields include:

- Study Identifier and Title

- Principal Investigator and Personnel

- Test Guideline (e.g., OECD 202, 211) [11]

- Test Organism (species, life stage, source)

- Test Substance (identifier, CAS, purity)

- Detailed Experimental Design (replicates, concentrations, exposure regime)

- Dates of Experiment Start and End

- Instrumentation and Software (with versions)

- Definitions of all recorded variables and units

- Fixity and Description: Generate a checksum (e.g., SHA-256) for every digital file to ensure future integrity. Write a brief `readme.txt` file describing the package structure.

- Package Creation: Compress the data files, metadata file, and `readme.txt` into a preservation-friendly format (e.g., `.zip` or `.tar.gz`).
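The metadata, fixity, and packaging steps of Protocol 2.2 can be scripted with the Python standard library alone. The sketch below is one possible arrangement rather than a prescribed tool: the study ID, file names, metadata keys, and the `manifest-sha256.txt` file name are all hypothetical, and the manifest uses the `<hash>  <path>` line format produced by `sha256sum`.

```python
import hashlib
import json
import tarfile
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_package(staging_dir, metadata, archive_path):
    """Write metadata, readme and checksum manifest, then create a .tar.gz archive."""
    staging = Path(staging_dir)
    (staging / "metadata.json").write_text(json.dumps(metadata, indent=2), encoding="utf-8")
    (staging / "readme.txt").write_text(
        "raw_data/: primary data files\nmetadata.json: study description\n"
        "manifest-sha256.txt: SHA-256 checksum per file\n",
        encoding="utf-8",
    )
    files = sorted(
        p for p in staging.rglob("*")
        if p.is_file() and p.name != "manifest-sha256.txt"
    )
    lines = [f"{sha256_of(p)}  {p.relative_to(staging).as_posix()}" for p in files]
    (staging / "manifest-sha256.txt").write_text("\n".join(lines) + "\n", encoding="utf-8")
    # The archive is written outside the staging directory so it does not include itself.
    with tarfile.open(archive_path, "w:gz") as tar:
        tar.add(staging, arcname=staging.name)
    return lines


with tempfile.TemporaryDirectory() as tmp:
    staging = Path(tmp) / "STUDY001"  # hypothetical study ID
    data_dir = staging / "raw_data" / "daphnia_acute"
    data_dir.mkdir(parents=True)
    (data_dir / "STUDY001_mortality_20240101_run1.csv").write_text(
        "replicate,day,alive\nA,0,10\n", encoding="utf-8"
    )
    archive = Path(tmp) / "STUDY001.tar.gz"
    manifest = build_package(
        staging,
        {"study_id": "STUDY001", "test_guideline": "OECD 202"},
        archive,
    )
    archive_ok = tarfile.is_tarfile(str(archive))
    print(len(manifest), archive_ok)
```

On systems with GNU coreutils, running `sha256sum -c manifest-sha256.txt` from inside the unpacked study directory re-verifies every file against the manifest.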

Protocol 2.3: Submission to a Trusted Digital Repository and Linkage to Publication

- Objective: To deposit the data package into a preserved, publicly accessible repository.

- Pre-requisite: Protocol 2.2 data package; completed data embargo decisions.

- Procedure:

- Repository Selection: Identify a suitable trusted repository (e.g., Zenodo, Dryad, ERA, or a discipline-specific archive). Criteria should include: persistence policy, unique identifier assignment (DOI), open access, and recognition by target journals [10].

- Upload and Description: Upload the data package. Complete the repository's submission form, re-using and expanding upon the embedded metadata. Specify licensing (e.g., CC0, CC-BY).

- Post-Deposition: Upon acceptance, the repository will issue a permanent DOI. This DOI must be cited in the associated manuscript's Data Availability Statement [10].

- Internal Record Update: Log the DOI, repository link, and deposit date in the laboratory's internal data management ledger.

Visualization of Workflows and Pathways

Figure: Ecotoxicology Data Lifecycle from Experiment to Archive

Figure: Systematic Reliability Assessment for Ecotoxicology Data [11]

Quantitative Data Presentation Standards

Adherence to clear tabular presentation is critical for accurate communication and regulatory review [12] [13]. Tables should be self-explanatory [13].

Table 1: Summary of Key Data Quality and Reporting Requirements

| Requirement Category | Specific Parameter | Target Standard | Reporting Format in Archive |
|---|---|---|---|
| Test Organism | Species & Strain | Certified, taxonomically confirmed | Text (Genus, species, strain code) |
| | Life Stage | Guideline-specified [11] | Text (e.g., < 24h neonate) |
| | Source & Cultivation | Laboratory culture details | Text / Protocol DOI |
| Test Substance | Identification | CAS RN, IUPAC name, purity | Text, Analytical Certificate |
| | Concentration Verification | Measured vs. Nominal | Table with timestamps, values, % recovery |
| Experimental Conditions | Temperature | Guideline range ± tolerance [11] | Time-series data log (mean ± SD) |
| | Photoperiod | Guideline-specified | Text (e.g., "16h:8h L:D") |
| | Water Quality (pH, DO, etc.) | Guideline range [11] | Time-series data log (mean ± SD) |
| Control Performance | Solvent Control Response | Acceptability limits (e.g., mortality <10%) | Table with endpoint values |
| | Negative Control Response | Baseline variability | Table with endpoint values |
| Endpoint Data (Raw) | Mortality / Survival | Counts per replicate | Table: [Replicate_ID, Day, Count_Alive] |
| | Growth / Biomass | Individual measurements | Table: [Replicate_ID, Organism_ID, Weight/Length] |
| | Reproduction (e.g., Daphnia) | Offspring counts per female | Table: [Replicate_ID, Female_ID, Day, Offspring] |
| Statistical Results | Effect Concentration (ECx) | Point estimate with 95% CI | Value, Confidence Interval, Model used |
| | No Observed Effect Concentration (NOEC) | Statistical test result | Value, Statistical test (e.g., Dunnett's) |
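Two of the quantities in the table, percent recovery for concentration verification and control mortality against an acceptability limit, are simple ratios that can be computed directly from the archived raw values. The sketch below uses invented numbers; the 10% limit is the example from the table, and treating the limit as inclusive is an assumption that should be checked against the applicable test guideline.

```python
def percent_recovery(measured, nominal):
    """Measured concentration as a percentage of the nominal concentration."""
    if nominal <= 0:
        raise ValueError("nominal concentration must be positive")
    return 100.0 * measured / nominal


def control_mortality(n_start, n_alive_end, limit_percent=10.0):
    """Return (mortality in percent, whether it is within the limit).

    The limit is treated as inclusive here (assumption); guidelines differ.
    """
    if n_start <= 0:
        raise ValueError("n_start must be positive")
    mortality = 100.0 * (n_start - n_alive_end) / n_start
    return mortality, mortality <= limit_percent


# Invented example values.
recovery = percent_recovery(measured=0.92, nominal=1.0)
mortality, acceptable = control_mortality(n_start=20, n_alive_end=19)
print(round(recovery, 1), mortality, acceptable)
```

Storing the measured and nominal values, rather than only the derived percentage, keeps the calculation reproducible from the archive.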

Table 2: CRED Reliability Assessment Scoring for a Sample Study [11]

| CRED Evaluation Criteria [11] | Fulfilled? | Supporting Evidence Location in Archive | Reliability Impact |
|---|---|---|---|
| 1. Test substance properly identified | Yes | `metadata.json`, `/certs/` folder | Fundamental |
| 2. Concentration verification reported | Yes | `chem_analysis_summary.csv` | Fundamental |
| 3. Control performance within limits | Yes | `bio_control_data.csv` | Fundamental |
| 4. Test organism details specified | Yes | `metadata.json` | Fundamental |
| 5. Exposure duration adhered to guideline | Yes | `study_timeline.log` | Fundamental |
| ... | ... | ... | ... |
| 18. Raw data available for all endpoints | Yes | `raw_growth_measurements.csv` | Critical for re-analysis |
| 19. Statistical methods clearly described | Partial | `analysis_protocol.pdf` (method stated, code not provided) | May restrict score |
| 20. Study funded without vested interest | Yes | `funding_declaration.txt` | Contextual |
| PRELIMINARY ASSESSMENT | 17/20 Criteria Met | Reliability Score: 2 (Reliable with Restrictions) | |

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item Category | Specific Item / Solution | Primary Function in Archiving Context |
|---|---|---|
| Data Capture & Integrity | Electronic Lab Notebook (ELN) with audit trail | Ensures immutable, timestamped primary data recording, replacing paper notebooks for enhanced traceability [11]. |
| | Checksum generation tool (e.g., `sha256sum`) | Creates unique digital fingerprints for files to detect corruption and verify integrity post-transfer or over time. |
| Metadata & Packaging | Standardized metadata template (JSON-LD, XML) | Provides structured, machine-actionable description of the experiment, enabling discovery and interoperability. |
| | Data curation software (e.g., OpenRefine) | Cleans, transforms, and annotates raw data tables, ensuring consistency and readiness for archiving. |
| Preservation & Access | Trusted Digital Repository (e.g., Zenodo, Dryad) | Provides long-term storage, unique DOI minting, public access control, and preservation stewardship [10]. |
| | Version control system (e.g., Git) | Manages versions of analysis scripts and documentation, linking specific code to specific data packages. |
| Quality Assurance | CRED Evaluation Checklist [11] | Systematic tool to assess the reliability of one's own or legacy studies based on 20 transparent criteria. |
| | Internal QA/QC audit protocol | Regular, scheduled reviews of data management practices against GLP and internal SOPs to ensure continuous compliance [11]. |

The Data, Information, Knowledge, Wisdom (DIKW) framework provides a critical lens for understanding the value chain in ecotoxicology research, where the archival of raw data is the foundational step for generating actionable insights. In this field, raw data—from high-throughput screening assays, in vivo toxicity studies, and environmental monitoring—must be systematically preserved to be transformed into information (structured data with context), knowledge (understanding of patterns and mechanisms), and ultimately wisdom (principles for decision-making and risk assessment) [14]. This document details application notes and standardized protocols for navigating this pipeline, emphasizing the role of public archives like the U.S. EPA's ECOTOX and ToxCast databases as essential repositories that enable this progression [15] [16].

Data: The Foundation of Archival and Curation

In the DIKW hierarchy, Data are the discrete, objective facts and numbers without context. In ecotoxicology, this encompasses the primary outputs from experiments and environmental sensors.

1.1 Key Quantitative Data from Major Ecotoxicology Archives

The scale of available raw data is illustrated by major public archives.

Table 1: Scale of Raw Data in Public Ecotoxicology Archives [15] [16] [14]

| Data Archive | Primary Data Type | Approximate Scale | Key Metric for Archiving |
|---|---|---|---|
| ECOTOX Knowledgebase | Single-chemical toxicity test results | >1,000,000 results; >12,000 chemicals; >13,000 species [15] | Results linked to chemical, species, endpoint, and reference |
| ToxCast/Tox21 | High-throughput screening (HTS) assay data | ~12,000 chemicals tested in up to ~1,200 assays [16] [17] | Assay hit-calls and dose-response data |
| Typical RNA-Seq Experiment | Transcriptomic sequencing reads | 100s of GB to TB per study; ~$100/sample [14] | Raw FASTQ files, sample metadata |
| CompTox Chemicals Dashboard | Physicochemical properties & descriptors | ~1.2 million chemicals [16] | Curated structure (SMILES, InChIKey), property values |

1.2 Protocol: Archival of Raw Experimental Data

Objective: To ensure raw data are preserved in a reusable, FAIR (Findable, Accessible, Interoperable, Reusable) state.

Workflow:

- Pre-Submission Documentation: Assign a unique, persistent identifier (e.g., DTXSID from CompTox for chemicals) [16]. Document all experimental conditions (species, dose, duration, endpoint) using controlled vocabularies, such as those defined in the ECOTOX Terms Appendix [15].

- Data Packaging: For bioassays, package raw instrument outputs, normalized values, and a complete metadata sheet. For transcriptomics, preserve raw FASTQ files alongside sample information and sequencing platform details [14].

- Submission to Public Repository: Submit to the appropriate curated database.

- For ecological toxicity data: The ECOTOX Knowledgebase, which accepts data from published literature and conducts targeted literature searches [15].

- For chemical screening data: ToxCast or related resources within the EPA's Computational Toxicology Data resources [16].

- For 'omics data: Repositories like the Gene Expression Omnibus (GEO) [18].

- Quality Assurance: The repository curates data, standardizes units (e.g., to ppm), and links entries to authoritative chemical and species lists [15].

Information: Organizing Data into Structured Context

Information is data that has been processed, organized, and structured to provide context and meaning. This step involves analysis and visualization.

2.1 Protocol: From Raw Sequencing Reads to Biological Information

Objective: Transform raw RNA-Seq reads into a list of differentially expressed genes (DEGs), a key informational output [14].

Workflow:

- Quality Control & Trimming: Assess raw FASTQ files using tools (e.g., FastQC) and trim low-quality bases/adapters.

- Read Mapping & Quantification:

- For model species: Map reads to a reference genome; count reads per gene.

- For non-model species: Use a de novo transcriptome assembly or a species-agnostic tool like Seq2Fun, which maps reads to functional ortholog groups across species [14].

- Differential Expression Analysis: Using statistical packages (e.g., edgeR, limma), compare gene counts between treatment and control groups. Apply corrections for multiple testing. Note: Different statistical approaches can yield varying DEG lists, highlighting the interpretive nature of this step [14].

- Visualization (Information Presentation): Generate plots to convey information:

- Principal Component Analysis (PCA) Plot: Shows overall sample similarity and outliers.

- Volcano Plot: Highlights statistically significant and highly dysregulated genes.

- Heatmap: Clusters genes and samples by expression patterns [14].

Diagram 1: Workflow from raw sequencing data to informational DEG list.
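The multiple-testing correction in the differential expression step can be illustrated with a minimal sketch. It assumes the Benjamini-Hochberg false discovery rate procedure, which is one common choice; the source does not name a specific method, and packages such as edgeR and limma provide their own implementations. The gene names, p-values, and the 0.05 cutoff are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical per-gene p-values from a treatment-vs-control comparison.
p_by_gene = {"geneA": 0.001, "geneB": 0.008, "geneC": 0.020,
             "geneD": 0.200, "geneE": 0.600}
genes = list(p_by_gene)
q_values = benjamini_hochberg([p_by_gene[g] for g in genes])

# Genes passing an assumed 5% false discovery rate cutoff.
degs = [g for g, q in zip(genes, q_values) if q < 0.05]
print(degs)
```

Archiving the adjusted values alongside the raw p-values, together with the name of the correction used, lets a later reader re-derive the DEG list under a different cutoff.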

Knowledge: Synthesizing Information into Understanding

Knowledge arises when information is synthesized, interpreted, and integrated with prior understanding to reveal patterns, relationships, and mechanisms.

3.1 Protocol: Pathway and Network Analysis for Mechanistic Insight

Objective: Interpret a DEG list to uncover perturbed biological pathways and hypothesize modes of action.

Workflow:

- Functional Enrichment Analysis: Input DEGs into tools (e.g., Enrichr, DAVID) to identify over-represented Gene Ontology (GO) terms, KEGG, or Reactome pathways. This transforms a gene list into a biological narrative.

- Dose-Response Analysis: For transcriptomics data across multiple concentrations, apply Transcriptomic Dose-Response Analysis (TDRA) to derive point-of-departure (PoD) estimates, allowing comparison with apical endpoint PoDs [14].

- Integration with Adverse Outcome Pathways (AOPs): Map dysregulated genes and pathways to known AOP frameworks. This links molecular initiating events to potential adverse organismal or population outcomes.

- Knowledge Visualization: Create pathway diagrams or network graphs illustrating the interaction between key dysregulated genes and their role in broader biological processes.
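To make "over-represented" concrete, the sketch below computes a one-sided hypergeometric p-value for a single pathway. This is the textbook over-representation test and is offered as an assumption about what enrichment tools compute; individual tools such as Enrichr or DAVID may use variants of it. All counts are hypothetical.

```python
from math import comb


def enrichment_p_value(n_background, n_in_pathway, n_degs, n_overlap):
    """One-sided hypergeometric probability of seeing at least n_overlap
    pathway genes in a DEG list drawn from the background gene set."""
    max_overlap = min(n_in_pathway, n_degs)
    total = comb(n_background, n_degs)
    tail = sum(
        comb(n_in_pathway, k) * comb(n_background - n_in_pathway, n_degs - k)
        for k in range(n_overlap, max_overlap + 1)
    )
    return tail / total


# Hypothetical counts: 20 background genes, 5 annotated to the pathway,
# 4 DEGs in total, 3 of which fall in the pathway.
p = enrichment_p_value(n_background=20, n_in_pathway=5, n_degs=4, n_overlap=3)
print(round(p, 4))
```

The result depends directly on the background gene set, so the background used should be archived with the enrichment output. When many pathways are tested, these p-values also need a multiple-testing correction.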

Table 2: Tools for Generating Knowledge from Ecotoxicology Information

| Tool/Resource | Function | Input | Knowledge Output |

|---|---|---|---|

| Seq2Fun / ExpressAnalyst [14] | Functional profiling for non-model species | Raw reads or gene counts | Table of dysregulated functional ortholog groups |

| Enrichment Analysis Tools | Identifies over-represented biological themes | List of DEGs | Enriched pathways, GO terms, and p-values |

| AOP-Wiki | Framework for mechanistic knowledge | Molecular initiating event data | Hypothesis linking chemical exposure to adverse outcome |

| Interpretable ML (IML) Models [18] [17] | Identifies chemical features driving toxicity predictions | Chemical structure or ToxCast data | Mechanistic insights (e.g., toxicophores, important assays) |

Wisdom: Applying Knowledge for Decision-Making

Wisdom is the judicious application of knowledge to inform decisions, policies, and actions. In ecotoxicology, this is the domain of risk assessment and resource prioritization.

4.1 Protocol: Informing Risk Assessment with Integrated Evidence

Objective: Synthesize data, information, and knowledge to support regulatory and scientific decisions.

Workflow:

- Evidence Integration: Weigh and integrate evidence across the DIKW hierarchy. For a chemical, this includes its data (e.g., ToxCast assay hits, ECOTOX LC50 values), information (e.g., dose-response curves, DEG lists), and knowledge (e.g., activation of an AOP linked to reproductive toxicity) [14] [18].

- Model Application for Prioritization: Use Interpretable Machine Learning (IML) models built on resources like ToxCast. These models predict toxicity and, crucially, explain which chemical substructures or in vitro assay results drive the prediction, providing wisdom for prioritizing chemicals for further testing [18] [17].

- Uncertainty Characterization: Explicitly document uncertainties at each stage—from biological variability in raw data to statistical confidence in DEGs and model predictions. Wisdom involves understanding these limits [14].

- Decision Support: Formulate clear, evidence-based conclusions. Examples include proposing a chemical for regulatory restriction based on integrated evidence, or recommending a specific molecular endpoint for a standardized test guideline.

Diagram 2: The DIKW pyramid applied to ecotoxicological decision-making.

Table 3: Key Resources for DIKW-Driven Ecotoxicology Research

| Item | Function in DIKW Pipeline | Example/Source |

|---|---|---|

| RNA Extraction Kits & Sequencers | Generates raw transcriptomic data (FASTQ files). | Various commercial suppliers; Illumina, Nanopore platforms. |

| ECOTOX Knowledgebase [15] | Archives curated data and provides tools to create information (plots, filtered results). | U.S. EPA database. |

| Seq2Fun Algorithm [14] | Transforms raw reads from non-model species into information (functional gene count table). | Available via ExpressAnalyst. |

| R/Bioconductor Packages | Tools (edgeR, limma) for creating information (DEG lists) from count data. | Open-source software. |

| Functional Enrichment Tools | Synthesizes information (DEG lists) into knowledge (perturbed pathways). | Enrichr, g:Profiler, DAVID. |

| AOP Wiki | Framework for organizing mechanistic knowledge. | OECD. |

| Interpretable ML (IML) Models [18] [17] | Applies knowledge from chemical data to generate wisdom for prioritization and risk assessment. | Models built on ToxCast/Tox21 data. |

| CompTox Chemicals Dashboard [16] | Provides authoritative chemical identifiers and properties, linking data across all stages. | U.S. EPA resource. |

The integrity, reliability, and archival of raw data in ecotoxicology research are governed by a complex framework of regulatory standards and scientific guidelines. These frameworks ensure that data submitted to regulatory agencies for product safety and environmental risk assessments are of sufficient quality to support critical decision-making. The foundational Good Laboratory Practices (GLPs) enforced by the U.S. Food and Drug Administration (FDA) establish the core principles for study conduct, data recording, and archiving [19]. Concurrently, the U.S. Environmental Protection Agency (EPA) provides specific guidelines for evaluating ecological toxicity data, particularly from open literature, to inform pesticide registration and ecological risk assessments [6]. A pivotal modern development is the emergence of structured data standards like SENDIG-GeneTox v1.0, which mandates the standardized electronic submission of genetic toxicology study data to the FDA, enhancing review efficiency and long-term data usability [20] [21].

This article provides detailed application notes and protocols within the context of a broader thesis on raw data archiving. It examines the synergistic and sometimes divergent requirements of these regulatory drivers, offering a practical guide for researchers and drug development professionals to navigate compliance while ensuring the scientific robustness and archival fidelity of ecotoxicological data.

Comparative Analysis of Regulatory and Guideline Frameworks

The landscape of regulatory compliance is defined by several key frameworks, each with distinct origins, scopes, and data requirements. The following table provides a structured comparison of the FDA GLPs, EPA Ecological Toxicity Guidelines, and the SENDIG-GeneTox standard.

Table 1: Comparative Overview of Key Regulatory and Standardization Frameworks

| Framework | Primary Authority | Core Scope & Objective | Key Data & Archiving Requirements | Typical Application Context |

|---|---|---|---|---|

| FDA Good Laboratory Practices (GLPs) [19] [22] | U.S. Food and Drug Administration (FDA) | Ensure the quality and integrity of nonclinical laboratory studies supporting FDA-regulated product safety. Focus on study conduct, reporting, and archiving. | - Raw data defined as all original laboratory records. - Mandated Study Director responsibility. - Independent Quality Assurance Unit audits. - Archival of all raw data, specimens, and final reports for specified periods. | Safety studies for drugs, biologics, medical devices, and certain food additives. |

| EPA Guidelines for Ecological Toxicity Data [6] | U.S. Environmental Protection Agency (EPA), Office of Pesticide Programs | Evaluate and incorporate open literature and guideline studies for ecological risk assessments of pesticides. Focus on data relevance and reliability. | - Use of the ECOTOX database as primary search tool [6]. - Screening criteria for study acceptance (e.g., single chemical exposure, reported concentration/dose, exposure duration) [6]. - Completion of Open Literature Review Summaries (OLRS) for tracking [6]. | Pesticide registration, Registration Review, and endangered species risk assessments. |

| SENDIG-GeneTox v1.0 [20] [21] | CDISC (Standard); Required by FDA | Standardize the electronic submission structure for in vivo genetic toxicology studies (micronucleus and comet assays). Focus on data format and interoperability. | - Submission of structured datasets per SDTM v1.5/SENDIG v3.1.1 [21]. - Use of controlled terminology. - Creation of a new GV domain for genetic toxicology results [20]. - Requires preparation of define.xml and reviewer's guide. | Regulatory submissions to the FDA for new drugs involving in vivo genetic toxicology studies. |

Application Notes & Protocols

Protocol: EPA Evaluation of Open Literature Toxicity Data for Raw Data Archiving

This protocol outlines the steps for screening, evaluating, and archiving supporting data from open literature studies according to EPA guidelines [6]. This process is critical for justifying the use of non-guideline data in ecological risk assessments.

1.0 Objective: To systematically identify, evaluate, categorize, and archive ecotoxicity data from the published open literature for use in EPA ecological risk assessments, ensuring the traceability and reliability of the incorporated data.

2.0 Materials:

- Access to the EPA ECOTOXicology database (ECOTOX) [6].

- Institutional access to scientific journals and publication databases.

- Open Literature Review Summary (OLRS) template (per EPA format) [6].

- Secure, dedicated digital storage for archived PDFs and data extracts.

3.0 Procedure:

3.1 Literature Search & Initial Screening:

- Conduct a search for the target chemical in the ECOTOX database, which serves as EPA's primary search engine for this purpose [6].

- Apply Phase I acceptability criteria to retrieved citations. A study must meet all of the following minimum criteria to be accepted for further review [6]:

- Effects are due to single chemical exposure.

- Test subject is an aquatic or terrestrial plant or animal.

- A biological effect on live, whole organisms is reported.

- A concurrent environmental concentration/dose is reported.

- An explicit exposure duration is reported.

- Categorize papers as: (1) Accepted by ECOTOX/OPP, (2) Accepted by ECOTOX but not OPP, (3) Rejected, or (4) "Other" [6].
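The all-or-nothing logic of the Phase I screen can be sketched as a small record with one flag per criterion. The class and field names below are hypothetical labels for the five criteria listed above, not EPA terminology; the point is that recording which criterion failed, rather than only pass/fail, gives the archive a traceable reason for each rejection.

```python
from dataclasses import dataclass, fields


@dataclass
class PhaseOneScreen:
    """One flag per Phase I minimum criterion (field names are illustrative)."""
    single_chemical_exposure: bool
    aquatic_or_terrestrial_organism: bool
    effect_on_live_whole_organism: bool
    concurrent_concentration_reported: bool
    explicit_duration_reported: bool


def failed_criteria(screen):
    """Return the names of all criteria the study does not meet."""
    return [f.name for f in fields(screen) if not getattr(screen, f.name)]


def passes_phase_one(screen):
    """A study passes only if every criterion is met."""
    return not failed_criteria(screen)


# Hypothetical citation that omits the exposure duration.
study = PhaseOneScreen(True, True, True, True, False)
print(passes_phase_one(study), failed_criteria(study))
```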

3.2 Full-Text Review & Data Extraction:

- Obtain full texts of accepted and "Other" category papers.

- Evaluate studies against Phase II, higher-tier criteria [6]:

- Chemical relevance to OPP concern.

- Study design robustness (e.g., acceptable control groups, measured exposure concentrations).

- Clarity and statistical reporting of endpoints.

- For accepted studies, extract key quantitative data (e.g., LC50, NOEC, LOEC, exact p-values) and critical study metadata (species, test conditions, exposure regime) into a standardized data table.

3.3 Completion of Open Literature Review Summary (OLRS):

- For each evaluated paper, complete an OLRS documenting [6]:

- Citation and retrieval source.

- Application of acceptance/rejection criteria.

- Summary of methods and key results.

- Assessment of relevance and reliability for the specific risk assessment.

- Final classification (e.g., "Core," "Supplementary," "Rejected").

- Submit the finalized OLRS to the designated tracking system (e.g., EPA's Storage Area Network drive) for archival [6].

3.4 Raw Data Archiving Protocol:

- Archive the primary source: Save a digital copy of the full-text publication (PDF) in the project's permanent raw data repository.

- Archive the processed data: Save the finalized data extraction tables and the completed OLRS in the same repository, with clear file-naming conventions linking them to the source PDF.

- Document the provenance: Maintain a master log that links the risk assessment conclusion back to the archived OLRS, extracted data, and original publication, creating an immutable audit trail.

Diagram 1: EPA Open Literature Evaluation & Archiving Workflow

Protocol: Conducting a SENDIG-GeneTox Compliant In Vivo Micronucleus Assay

This protocol details the experimental and data management procedures for conducting an in vivo micronucleus assay that complies with both OECD/EPA test guidelines and the SENDIG-GeneTox v1.0 electronic submission requirements [20].

1.0 Objective: To assess the potential of a test article to induce chromosomal damage in rodent hematopoietic cells by measuring the frequency of micronucleated polychromatic erythrocytes (MN-PCE), and to generate all raw and standardized data required for a regulatory submission.

2.0 Materials:

- Animals: Healthy, young adult rodents (typically mice or rats).

- Test Article: Well-characterized chemical substance.

- Positive Control: A known clastogen (e.g., Cyclophosphamide).

- Vehicle Control: Appropriate solvent.

- Reagents: May-Grünwald stain, Giemsa stain, or acridine orange for slide preparation.

- Equipment: Microscope, automated slide scanner, balance.

- Software: A SEND-compliant data management system capable of generating the GV domain and related files [20].

3.0 Procedure:

3.1 Study Design & Animal Administration:

- Design the study with concurrent vehicle (negative) control, multiple test article dose levels, and positive control groups [22].

- Randomize and assign animals to groups. Record all raw data on animal body weights, dose formulations, and administration details in real-time into a validated electronic data capture system.

3.2 Sample Collection & Slide Preparation:

- At predetermined sampling times (e.g., 24-48 hours post-treatment), collect bone marrow (typically from femurs).

- Prepare bone marrow smears on slides. Stain slides appropriately (e.g., with May-Grünwald/Giemsa to distinguish polychromatic erythrocytes (PCE) from normochromatic erythrocytes (NCE)).

3.3 Microscopic Analysis & Raw Data Capture:

- For each animal, score a minimum number of PCEs (e.g., 2000 PCEs per animal) for the presence of micronuclei.

- Simultaneously score the percentage of PCE among total erythrocytes (PCE/NCE ratio) as a measure of bone marrow toxicity.

- Critical Archiving Step: The primary raw data are the analyst's signed paper scoring sheets or the direct electronic capture from a validated automated scanner. These must be preserved in their original format.

3.4 Data Processing & SEND Dataset Creation:

- Calculate the mean frequency of MN-PCE per group and statistically compare treatment groups to the concurrent vehicle control.

- Translate the raw study data into SEND-compliant datasets:

- Demographics (DM) and Comments (CO) domains for animal and study info.

- Body Weight (BW) and Clinical Observations (CL) domains for body weights and clinical signs.

- Exposure (EX) domain for dose administration data.

- New Genetic Toxicology (GV) Domain: Populate with micronucleus test-specific variables. This includes the count of PCEs scored, the count of MN-PCEs observed, the PCE/NCE ratio, and the derived result (e.g., "MN-PCE FREQUENCY") for each animal [20].

- Generate the accompanying define.xml and Study Data Reviewer's Guide (SDRG) to describe the datasets.
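The per-animal derived values and group means described in this step can be sketched as follows. The record layout, group labels, and counts are hypothetical and are not SEND variable names. The statistical comparison against the vehicle control is deliberately left out, since the choice of test belongs in the study protocol.

```python
from statistics import mean


def mn_pce_frequency(mn_pce_count, pce_scored):
    """Micronucleated PCEs as a percentage of the PCEs scored."""
    return 100.0 * mn_pce_count / pce_scored


def pce_proportion(pce_count, nce_count):
    """Proportion of PCEs among total erythrocytes (bone marrow toxicity)."""
    return pce_count / (pce_count + nce_count)


# Hypothetical scoring results: (group, MN-PCE count, PCEs scored).
scores = [
    ("vehicle", 2, 2000), ("vehicle", 4, 2000),
    ("high_dose", 10, 2000), ("high_dose", 14, 2000),
]

# Collect per-animal frequencies by group, then average within each group.
by_group = {}
for group, mn_count, scored in scores:
    by_group.setdefault(group, []).append(mn_pce_frequency(mn_count, scored))
group_means = {group: mean(values) for group, values in by_group.items()}
print(group_means)
```

Keeping the raw counts in the archive, rather than only the derived percentages, allows the frequencies to be recomputed and checked against the submitted datasets.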

3.5 Archiving for SEND Compliance:

- Archive the native SEND datasets (e.g., .xpt files), define.xml, and SDRG as the definitive electronic submission records.

- Maintain a clear, documented link between the submitted SEND datasets and the original analytical raw data (scoring sheets/scanner images), ensuring the entire data lineage is preserved.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful and compliant ecotoxicology and toxicology research requires specific materials and tools. The following table details key reagents and their functions in the context of the discussed protocols and regulatory frameworks.

Table 2: Essential Research Reagents and Materials for Regulatory Toxicology Studies

| Item Category | Specific Item / Solution | Function in Regulatory Science | Associated Quality/Archiving Consideration |

|---|---|---|---|

| Reference & Control Substances | Certified Positive Control (e.g., Cyclophosphamide) | Validates the sensitivity and proper conduct of the test system (e.g., micronucleus assay) [22]. | Must have a Certificate of Analysis (CoA) documenting identity, purity, and stability. CoA is part of the raw data archive. |

| Reference & Control Substances | EPA Aquatic Life Benchmark Reference Toxicants [23] | Used to calibrate or validate test organisms' sensitivity in ecological assays. | Source (e.g., EPA benchmark table) and preparation records must be archived [23]. |

| Data Management | SEND-Compatible Data System [20] | Software platform to structure, manage, and export nonclinical study data in SENDIG v3.1.1 and SENDIG-GeneTox formats. | System must be validated for regulatory use. Audit trails and electronic raw data exports are archived. |

| Sample Processing | Micronucleus Staining Kit (e.g., Acridine Orange) | Facilitates the differentiation and scoring of polychromatic erythrocytes for the micronucleus assay. | Lot numbers and preparation dates of staining solutions must be recorded as part of method raw data. |

| Literature Search | EPA ECOTOX Database Access [6] | The primary tool for identifying open literature ecotoxicity studies for EPA assessments. | Search strategy, dates, and results (citation lists) should be documented and saved in the project archive. |

Integration of Frameworks for Comprehensive Raw Data Archiving

The ultimate goal for a research organization is to create a seamless raw data archiving protocol that satisfies the core requirements of all applicable frameworks. The diagram below illustrates how data from different study types flows through evaluation and processing pipelines into a unified archival system that supports both scientific review and regulatory submission.

Diagram 2: Integrated Raw Data Archiving for Regulatory Compliance

Synthesis and Forward Look: Effective raw data archiving in ecotoxicology is not merely a retrospective filing exercise but a proactive data governance strategy. It requires understanding that FDA GLPs provide the foundational principles for data traceability and audit trails [19] [22], EPA guidelines define the criteria for evaluating and incorporating external data sources [6], and SENDIG-GeneTox and similar standards dictate the future-state format for electronic data interoperability and reuse [20] [21]. As evidenced by ongoing debates about data quality evaluation [22] and the continuous update of tools like the EPA Aquatic Life Benchmarks [23], these frameworks are dynamic. Therefore, a robust archival protocol must be built on flexibility, clear provenance tracking, and a commitment to preserving data in both its original raw form and its standardized, submission-ready state. This integrated approach ensures that data remains accessible, verifiable, and meaningful for the lifetime of a product and beyond, fulfilling both regulatory obligations and the scientific ethic of transparency.

Quantified Risks of Inadequate Archiving in Ecotoxicology

The integrity of ecotoxicology research, a field critical to environmental regulation and chemical safety, is fundamentally dependent on the quality of its underlying data and the reproducibility of its studies [24]. Failures in raw data archiving directly undermine scientific credibility and carry significant, measurable costs.

Table 1: Documented Prevalence of Integrity Issues Related to Data and Documentation [24]

| Issue Category | Reported Prevalence | Primary Consequence |

|---|---|---|

| Admitting to falsification of data | 0.3% of surveyed scientists | Fraud, retraction, invalidated policy |

| Failure to present conflicting evidence | 6% of surveyed scientists | Bias, misleading conclusions |

| Changing design/method/results due to funder pressure | 16% of surveyed scientists | Compromised objectivity, irreproducibility |

| Personal knowledge of colleagues' detrimental practices | >70% of surveyed scientists | Erosion of trust within the scientific community |

Table 2: Impact of Poor Data Management on Regulatory Submissions

| Challenge | Consequence | Source / Context |

|---|---|---|

| Lack of data collection standards | Only ~20% of studies meet deadlines; significant delays and costs [25]. | Pharmaceutical clinical trials |

| Unstructured evidence, gaps in traceability | Delays, additional regulator questions, or rejection of submissions [26]. | FDA regulatory submissions |

| Siloed teams, inconsistent processes | Difficulty maintaining consistency, leading to conflicting interpretations [26]. | Evidence synthesis for regulatory dossiers |

These quantified risks highlight that poor archiving is not merely an administrative concern. It is a primary contributor to scientific irreproducibility, which fuels public distrust and complicates the translation of science into policy [24]. Furthermore, it creates substantial regulatory inefficiency, delaying the approval of vital environmental technologies or therapeutics [25] [26].

Application Notes & Protocols for Raw Data Archiving

A robust archiving protocol transforms raw data from a vulnerable project artifact into a trustworthy, reusable scientific asset. The following framework is designed for ecotoxicology research, encompassing field studies, laboratory ecotoxicology, and computational modeling.

Protocol: Implementing a FAIR Archiving Workflow

This protocol ensures data is Findable, Accessible, Interoperable, and Reusable (FAIR).

1. Pre-Archive Data Curation & Validation:

- Action: Before archiving, perform a final quality control check. This includes verifying data against original laboratory or field notebooks, confirming the consistency of units, and ensuring no data points are missing without documented justification (e.g., instrument failure, sample loss).

- Documentation: Create a README.txt file and a data dictionary. The README must describe the experiment, personnel, dates, and any processing steps applied. The data dictionary must define every column/variable, including units, detection limits, and codes for missing data.
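A simple pre-archive check is to confirm that every column in a data file has a data dictionary entry. The sketch below assumes the dictionary is held as a Python mapping and that each entry needs a description, units, and a missing-data code; the column names and required fields are hypothetical and should be adapted to the dataset (for example, by adding detection limits where they apply).

```python
import csv
import io

# Fields assumed to be mandatory for every data dictionary entry.
REQUIRED_FIELDS = ("description", "units", "missing_code")


def check_data_dictionary(csv_text, data_dictionary):
    """List columns that are undefined or incompletely defined."""
    header = next(csv.reader(io.StringIO(csv_text)))
    problems = []
    for column in header:
        entry = data_dictionary.get(column)
        if entry is None:
            problems.append(f"{column}: not defined in data dictionary")
            continue
        for field in REQUIRED_FIELDS:
            if not entry.get(field):
                problems.append(f"{column}: missing '{field}'")
    return problems


# Hypothetical data file and a dictionary that omits one column.
csv_text = "sample_id,conc_mg_per_L,mortality_pct\nS01,0.5,10\n"
data_dictionary = {
    "sample_id": {"description": "Unique sample label",
                  "units": "none", "missing_code": "NA"},
    "conc_mg_per_L": {"description": "Measured exposure concentration",
                      "units": "mg/L", "missing_code": "NA"},
}
problems = check_data_dictionary(csv_text, data_dictionary)
print(problems)
```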

2. Selection of Archival Storage Medium:

- Criteria: Choose based on data volume, required retrieval speed, budget, and longevity needs [27].

- For active, high-value datasets: Use multi-cloud or hybrid cloud storage for redundancy and to avoid vendor lock-in [27].

- For long-term, infrequent access (e.g., raw instrument files): Consider cloud archiving services (cold storage) or magnetic tape systems for cost-effective, high-capacity preservation [27].

- Critical Note: Never rely on a single point of failure. The 3-2-1 rule is a minimum standard: 3 total copies, on 2 different media, with 1 copy off-site [27].
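The 3-2-1 rule is easy to encode as a check against a storage inventory. The sketch below is illustrative only; the class name, locations, and media labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArchiveCopy:
    location: str   # where the copy is held
    medium: str     # e.g., "disk", "tape", "cloud"
    off_site: bool  # True if stored away from the primary site


def satisfies_3_2_1(copies):
    """At least 3 copies, on at least 2 media, with at least 1 off-site."""
    return (
        len(copies) >= 3
        and len({c.medium for c in copies}) >= 2
        and any(c.off_site for c in copies)
    )


# Hypothetical inventory for one dataset.
inventory = [
    ArchiveCopy("lab server", "disk", off_site=False),
    ArchiveCopy("institutional tape library", "tape", off_site=False),
    ArchiveCopy("cloud cold storage", "cloud", off_site=True),
]
print(satisfies_3_2_1(inventory))
```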

3. File Format Standardization:

- Principle: Use open, non-proprietary, and widely supported formats to mitigate future software obsolescence [27].

- Ecotoxicology-Specific Recommendations:

- Tabular Data: CSV (comma-separated values) or TSV (tab-separated values) with a clear header row.

- Instrument Data: Convert proprietary formats to open standards where possible (e.g., mzML for mass spectrometry, NetCDF for chromatography).

- Metadata & Protocols: PDF/A for documents, XML or JSON for structured metadata.

4. Integrity Assurance & Provenance Logging:

- Action: Generate a cryptographic hash (e.g., SHA-256) for each archived file. Record this checksum in the metadata.

- Action: Document the complete provenance: from sample collection through all analytical derivations to the final archived dataset. This includes software name, version, and parameters used for any analysis.

5. Scheduled Integrity Audits and Media Refreshment:

- Action: Establish an annual review. Verify file integrity by re-computing and comparing checksums [27].

- Action: Plan for data migration every 3-5 years to new storage media or systems to combat hardware obsolescence [27]. Refreshing (copying to new media of the same type) is insufficient long-term; migration to contemporary systems is essential [27].
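Steps 4 and 5 can be sketched together: a manifest of SHA-256 checksums is built at archive time, and a later audit recomputes the checksums and reports any file that is missing or changed. The function names and the demonstration file are hypothetical; only Python's standard `hashlib`, `pathlib`, and `tempfile` modules are used.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large raw files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(archive_dir):
    """Map each file's relative path to its SHA-256 checksum."""
    root = Path(archive_dir)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*")) if p.is_file()
    }


def audit(archive_dir, manifest):
    """Return manifest entries that are now missing or have changed.
    Files added after the manifest was built are not reported."""
    current = build_manifest(archive_dir)
    return sorted(name for name, checksum in manifest.items()
                  if current.get(name) != checksum)


# Demonstration on a throwaway directory with a hypothetical file name.
with tempfile.TemporaryDirectory() as tmp:
    raw_file = Path(tmp) / "sample01_reads.fastq"
    raw_file.write_bytes(b"@read1\nACGT\n+\nIIII\n")
    manifest = build_manifest(tmp)
    clean_audit = audit(tmp, manifest)                 # nothing changed yet
    raw_file.write_bytes(b"@read1\nACGA\n+\nIIII\n")   # simulate corruption
    failed_audit = audit(tmp, manifest)

print(clean_audit, failed_audit)
```

Storing the manifest separately from the data it describes means a single storage failure cannot silently damage both.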

Protocol: Ensuring Reproducibility for Computational Ecotoxicology

Computational studies (e.g., QSAR, population modeling) require archiving of the digital environment.

1. Archive the Code & Explicit Dependencies:

- Action: Use version control (e.g., Git) and archive the final repository. Include a requirements.txt (Python), DESCRIPTION (R), or equivalent file that explicitly lists all package names and version numbers.
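For Python analyses, one way to capture exact versions is to read them from the running environment with the standard library's `importlib.metadata`. This is a minimal sketch and the function name is hypothetical. It lists every installed distribution, which may be more than the analysis strictly needs; established tools such as `pip freeze` serve the same purpose.

```python
from importlib import metadata


def pinned_requirements():
    """Return 'name==version' lines for every installed distribution."""
    pins = set()
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions with unreadable metadata
            pins.add(f"{name}=={dist.version}")
    return sorted(pins, key=str.lower)


pins = pinned_requirements()
# Writing the lines to a file archived with the code would look like:
# Path("requirements.txt").write_text("\n".join(pins) + "\n")
print(len(pins), "packages pinned")
```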

2. Containerize the Analysis Environment:

- Action: Use container technology (e.g., Docker, Singularity) to capture the complete operating system, software, and library state. Push the container image to a permanent repository and record its unique identifier.

3. Document Seed Values for Random Number Generators:

- Action: Any stochastic simulation must have the pseudo-random number generator seed value clearly documented and archived to allow exact replication of results.
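The effect of archiving the seed can be shown with a toy stochastic simulation. The survival model and parameter values below are hypothetical. Using a dedicated `random.Random(seed)` instance rather than the module-level generator keeps the result independent of any other code that draws random numbers.

```python
import random


def simulate_survivors(seed, n_organisms=100, survival_probability=0.8):
    """Count survivors in a toy exposure simulation with a fixed seed."""
    rng = random.Random(seed)  # private generator seeded from the archive
    return sum(rng.random() < survival_probability
               for _ in range(n_organisms))


ARCHIVED_SEED = 20240131  # hypothetical value recorded in the metadata

first_run = simulate_survivors(ARCHIVED_SEED)
second_run = simulate_survivors(ARCHIVED_SEED)
print(first_run == second_run)
```

Exact replication also depends on the language and library versions, which is why the seed should be archived together with the pinned environment.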

Visualizing the Archiving Workflow and Consequences

The following diagrams map the logical relationship between poor archiving practices and their consequences, as well as a standardized archival workflow.

Consequences of Poor Archiving in Ecotoxicology

FAIR Raw Data Archiving Workflow for Ecotoxicology

The Scientist's Toolkit: Essential Reagents & Solutions for Data Archiving

Table 3: Research Reagent Solutions for Data Archiving

| Tool / Solution | Function in Archiving Protocol | Key Considerations for Ecotoxicology |

|---|---|---|

| Cryptographic Hash Generator (e.g., sha256sum) | Creates a unique digital fingerprint for a file to verify its integrity has not changed over time or during transfer. | Essential for validating raw instrument files and large genomic or imagery datasets before and after archival. |

| Open File Format Converters | Transforms proprietary data formats (e.g., .D from mass spectrometers) into open, documented standards to ensure long-term accessibility [27]. | Critical for analytical chemistry data. Must be validated to ensure no loss of critical metadata during conversion. |

| Containerization Software (e.g., Docker, Singularity) | Packages code, software dependencies, and environment settings into a single, reproducible unit for computational analyses [27]. | Vital for QSAR, toxicokinetic modeling, and bioinformatics pipelines to guarantee exact reproducibility. |

| Systematic Literature Review Platforms | Provides structured workflows for managing, screening, and documenting evidence from scientific literature, creating an audit trail [26]. | Supports regulatory dossiers for chemical approval by ensuring transparent, reproducible evidence synthesis. |

| WORM (Write Once, Read Many) Storage | Prevents modification or deletion of archived data for a defined retention period, crucial for regulatory compliance [27]. | Applicable for final study data submitted to support chemical registration under regulations like REACH or FIFRA. |

| Persistent Identifier (PID) System (e.g., DOI) | Assigns a permanent, unique identifier to a dataset, making it citable and permanently findable. | Enables proper citation of ecotoxicological datasets, linking publications directly to their evidence base. |

| Rich Metadata Schema (e.g., EML - Ecological Metadata Language) | Provides a structured framework to describe dataset content, context, and structure using standardized terms. | Improves discovery and interoperability of ecotoxicology data across studies on contaminants, species, and endpoints. |

Building Your Archive: Step-by-Step Protocols for Ecotoxicology Data Curation and Storage

Foundational Documentation for Ecotoxicology Data

Effective raw data archiving in ecotoxicology is a prerequisite for scientific integrity, reproducibility, and the reusability of valuable datasets for secondary analyses, meta-analyses, and computational modeling [28] [7]. The cornerstone of this process is comprehensive documentation, which encompasses both detailed metadata and the complete experimental context.

Core Metadata Requirements

Metadata provides the essential "who, what, when, where, and how" of a dataset, enabling its discovery, understanding, and proper use long after the original research team has moved on [28]. Adherence to the FAIR principles (Findable, Accessible, Interoperable, and Reusable) is the modern standard for data stewardship [29].

The following table outlines the essential metadata categories for an ecotoxicology dataset, structured to ensure compliance with repository requirements and alignment with systems like the ECOTOXicology Knowledgebase (ECOTOX) [7].

Table 1: Essential Metadata Checklist for Ecotoxicology Data Archiving

| Metadata Category | Specific Elements Required | Format & Standards | Purpose/Importance |

|---|---|---|---|

| 1. Dataset Identification | Persistent Unique Identifier (e.g., DOI); Descriptive Title; Principal Investigator & Affiliations; Funding Source(s); Keywords | Dublin Core terms; Repository-assigned DOI; CRediT contributor roles | Ensures findability, attribution, and links to publications and grants. |

| 2. Temporal & Spatial Context | Date(s) of Data Collection; Date of Dataset Publication/Version; Geographic Coordinates of Study Site (if field-based); Lab Location | ISO 8601 date format (YYYY-MM-DD); Decimal degrees (WGS84) | Critical for ecological relevance, understanding environmental context, and temporal trend analyses. |

| 3. Taxonomic Descriptors | Accepted Species Name (Genus, species); Authority; Higher Taxonomy (Family, Order, Class); Species ID Verification Method; Life Stage & Sex of Test Organisms | Integrated Taxonomic Information System (ITIS); World Register of Marine Species (WoRMS); Darwin Core Standard [29] | Enables data integration across studies, phylogenetic analyses, and modeling of species sensitivity [30]. |

| 4. Chemical Descriptors | Chemical Name; CAS Registry Number (RN); DSSTox Substance ID (DTXSID); SMILES String / InChIKey; Purity & Source of Test Substance | EPA CompTox Chemicals Dashboard IDs [7]; IUPAC nomenclature | Essential for linking to chemical properties, use in QSAR models, and interoperability with toxicology databases [7] [30]. |

| 5. Data Provenance | Description of Raw vs. Processed Data Files; Software & Version Used for Analysis; Algorithm or Script Name/ID; Detailed Data Transformation Steps | Use of version control (e.g., Git) IDs for scripts; Narrative description of cleaning/normalization rules | Guarantees transparency, enables reproducibility of results from raw data, and prevents misinterpretation [28]. |

| 6. Access & Licensing | License Type (e.g., CC0, CC-BY); Embargo Date (if applicable); Contact for Data Access Requests | Creative Commons designations; Clearly stated in README file | Defines terms of reuse, fulfilling funder mandates and enabling collaborative research [31]. |
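Several of the formats in the checklist can be validated automatically before submission. The sketch below checks three of them: ISO 8601 calendar dates, WGS84 decimal-degree ranges, and the CAS Registry Number check digit (the final digit equals the weighted sum of the preceding digits, counted from the right, modulo 10). The function names are hypothetical, and the checks confirm format only, not that a value is correct for the study.

```python
import re
from datetime import date


def is_iso_date(text):
    """True for a real calendar date written as YYYY-MM-DD."""
    if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", text):
        return False
    try:
        date.fromisoformat(text)
    except ValueError:
        return False
    return True


def is_valid_wgs84(latitude, longitude):
    """True if decimal-degree coordinates fall within valid ranges."""
    return -90.0 <= latitude <= 90.0 and -180.0 <= longitude <= 180.0


def is_valid_cas_rn(cas_rn):
    """True if the string has CAS RN format and a correct check digit."""
    match = re.fullmatch(r"(\d{2,7})-(\d{2})-(\d)", cas_rn)
    if not match:
        return False
    body = match.group(1) + match.group(2)
    # Weight digits 1, 2, 3, ... starting from the rightmost body digit.
    weighted = sum(int(digit) * weight
                   for weight, digit in enumerate(reversed(body), start=1))
    return weighted % 10 == int(match.group(3))


print(is_iso_date("2024-02-29"), is_valid_wgs84(45.5, -122.7),
      is_valid_cas_rn("7732-18-5"))
```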

Archiving Workflow and Decision Logic

Before archiving, data must be prepared and validated. The following diagram outlines the critical pre-archiving workflow and the decision logic for ensuring data quality and completeness.

Comprehensive Experimental Context Documentation

The experimental context transforms a standalone dataset into a reusable scientific resource. For ecotoxicology, this requires meticulous detail on the test system, exposure conditions, and measured outcomes [6].

Experimental Design and Conditions

Ecotoxicology data is highly sensitive to methodological parameters. Archiving must capture the full experimental design to allow for correct interpretation and use in regulatory risk assessments or species sensitivity distributions (SSDs) [6] [30].

Table 2: Essential Experimental Context Documentation

| Aspect | Minimum Required Information | Example / Standard | Rationale for Inclusion |
|---|---|---|---|
| Test System | Test Type (Acute/Chronic, Lab/Field); Test Guideline Followed (e.g., OECD 203); Test Duration & Frequency of Observation; Test Chamber/Vessel Type & Volume | OECD Test Guidelines 203 (Fish), 202 (Daphnia), 201 (Algae) [30] | Defines the fundamental nature of the study and its regulatory relevance. |
| Exposure Regime | Exposure Concentration Units (e.g., mg/L, ppm); Number & Values of Test Concentrations; Control Type(s) (Negative, Solvent, Positive); Renewal Frequency (Static, Static-renewal, Flow-through) | Must report explicit duration and concurrent concentration [6]. | Critical for dose-response modeling and determining LC50/EC50 values. |
| Environmental Medium | Full Medium Composition (e.g., reconstituted water recipe); Temperature, pH, Dissolved Oxygen, Hardness; Photoperiod & Light Intensity; Feeding Regime (if applicable) | EPA standard test media; measurements should be reported. | Water quality profoundly affects toxicity (e.g., metal speciation) and organism health. |
| Organism Source & Health | Source of Test Organisms (Supplier, Wild Collection); Acclimation Period & Conditions; Age/Life Stage, Weight/Length (Mean, SD); Pre-test Mortality Threshold (e.g., <10%) | Organism health and life stage must be reported and verified [6] [30]. | Ensures test organism suitability and helps explain variability in response. |
| Endpoint Quantification | Primary Endpoint (e.g., Mortality, Growth, Immobilization); Method of Measurement (e.g., visual count, instrument); Raw Data for Each Replicate; Method for Calculated Value (e.g., LC50, probit analysis) | ECOTOX encodes effects (MOR, GRO, ITX) and endpoints (LC50, EC50) [7] [30]. | Raw replicate data allows for alternative analyses; the calculated endpoint enables comparison. |

Systematic Review and Data Curation Protocol

The U.S. EPA's ECOTOX database exemplifies a high-standard protocol for curating ecotoxicology data from the literature [7]. The following protocol, modeled on this process, provides a reproducible methodology for researchers to prepare their own data for curation or direct archiving.

Protocol 1: Data Curation for Archiving Based on Systematic Review Principles

Objective: To systematically extract, validate, and structure experimental data and metadata from an ecotoxicology study into a standardized format suitable for public archiving or submission to a curated database like ECOTOX.

Materials: Primary research records (electronic raw data, lab notebooks), manuscript draft/final report, metadata schema (Table 1), experimental context checklist (Table 2), spreadsheet or database software.

Procedure:

- Study Identification & Screening:

  - Assemble all digital and physical records related to the study.

  - Screen materials against a pre-defined applicability checklist. A study must meet all of the following criteria to proceed [6] [7]:

    - Effects are due to a single, verified chemical exposure.

    - Test subject is an aquatic or terrestrial plant or animal.

    - A concurrent concentration/dose and an explicit exposure duration are reported.

    - A biological effect on live organisms is measured.

    - Treatment groups are compared to an acceptable control group.

    - The test species is identified and verifiable.

- Full-Text Review & Data Extraction:

  - Using a pre-populated data extraction form (based on Tables 1 & 2), systematically enter all relevant metadata and experimental context.

  - Extract raw data at the level of individual replicate responses (e.g., survival status of each organism per tank, individual growth measurements). Do not extract only summary statistics [28].

  - Record all data as presented, including any notes on outlier treatment or missing data.

- Verification and Harmonization:

  - Chemical Verification: Cross-check chemical identifiers (CAS RN, name) against the EPA CompTox Chemicals Dashboard. Use the DSSTox ID (DTXSID) as the authoritative link [7] [30].

  - Taxonomic Verification: Verify the reported species name against an authoritative database (e.g., ITIS, WoRMS). Record the verified canonical name and higher taxonomy [29].

  - Unit Standardization: Convert all measurements to standard SI units or a project-defined consistent unit system (e.g., all concentrations in mg/L, all times in hours).

  - Vocabulary Control: Use a controlled vocabulary for key fields (e.g., for "effect," use terms like "MOR," "ITX," "GRO" as defined in ECOTOX) [7].

- Generation of Structured Data Files:

  - Create a "raw_data.csv" file containing the verified, harmonized replicate-level data.

  - Create a "metadata_context.csv" file containing all information from Tables 1 and 2. Each row should correspond to a single test or treatment series, linkable to the raw data via a unique `test_id`.

  - Create a "README.txt" file describing the relationship between files, any abbreviations, the extraction protocol, and contact information.

- Quality Control: Perform independent double-entry of a random 10% of data points to ensure extraction accuracy. The final dataset should pass the logic check in the workflow diagram (Section 1.2).
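
Three steps of Protocol 1 lend themselves to a short script: the applicability screen, unit standardization, and the random 10% draw for double entry. The sketch below is illustrative only. The criterion keys, the conversion table, and the function names are assumptions made for this example; they are not part of ECOTOX or any published tool, and the unit table would need to be extended for a real project.

```python
import random

# Hypothetical keys, one per applicability criterion in the screening step.
CRITERIA = (
    "single_verified_chemical",
    "plant_or_animal_subject",
    "concentration_and_duration_reported",
    "effect_on_live_organisms",
    "acceptable_control",
    "species_verifiable",
)

# Multipliers to the project unit mg/L (an assumed, deliberately short table).
TO_MG_PER_L = {"g/L": 1e3, "mg/L": 1.0, "ug/L": 1e-3, "ng/L": 1e-6}


def screen_study(answers: dict) -> list:
    """Return the criteria that are not met; an empty list means the study proceeds."""
    return [name for name in CRITERIA if not answers.get(name, False)]


def to_mg_per_l(value: float, unit: str) -> float:
    """Convert a concentration to mg/L, refusing units that are not in the table."""
    if unit not in TO_MG_PER_L:
        raise ValueError(f"no conversion defined for unit {unit!r}")
    return value * TO_MG_PER_L[unit]


def double_entry_sample(row_ids, fraction: float = 0.10, seed: int = 0) -> list:
    """Pick a reproducible random subset of row ids for independent re-entry."""
    ids = list(row_ids)
    if not ids:
        return []
    count = max(1, round(len(ids) * fraction))
    return sorted(random.Random(seed).sample(ids, count))


answers = {name: True for name in CRITERIA}
answers["acceptable_control"] = False
print(screen_study(answers))  # prints ['acceptable_control']
```

Raising an error on an unknown unit, rather than passing the value through, keeps unconverted concentrations from entering `raw_data.csv` silently. Fixing the random seed makes the double-entry sample itself reproducible and therefore documentable in the README.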

The data curation process used by authoritative databases involves a rigorous, multi-stage pipeline to ensure quality and consistency. The following diagram visualizes this systematic protocol from initial search to final archived record.

The Scientist's Toolkit for Archiving

Preparing data for archiving requires specific tools and resources to ensure the process is efficient and the output is robust. The following toolkit lists essential solutions for ecotoxicology researchers.

Table 3: Research Reagent Solutions for Data Archiving

| Tool / Resource | Category | Primary Function | Relevance to Ecotoxicology Archiving |
|---|---|---|---|
| ECOTOX Knowledgebase [7] | Curated Database | Repository of single-chemical ecotoxicity data. | Serves as the gold-standard model for data structure, controlled vocabularies, and metadata fields. Use as a template for your own data formatting. |
| EPA CompTox Chemicals Dashboard | Chemical Registry | Authoritative source for chemical identifiers, properties, and linkages. | Critical for verifying and standardizing chemical information (DTXSID, CAS RN, SMILES) in metadata [7] [30]. |
| Darwin Core Standard (DwC) [29] | Data Standard | A vocabulary for biodiversity data, including taxonomy and measurements. | Provides standardized terms (e.g., scientificName, taxonRank) for describing test species, ensuring global interoperability. |
| Integrated Taxonomic Information System (ITIS) | Taxonomic Authority | Verified database of species names and hierarchical classification. | Used to validate reported species names and populate higher taxonomy fields (family, order) in metadata. |
| Git / GitHub / GitLab | Version Control System | Tracks changes to code and small data files, enables collaboration. | Essential for managing scripts used for data cleaning/analysis, documenting provenance, and maintaining version history of processed data [31]. |
| Zenodo / Figshare | General Repository | FAIR-aligned, public data repositories that issue Digital Object Identifiers (DOIs). | Suitable for archiving final datasets, supplementary materials, and code, linking them to publications for long-term preservation and citation. |
| R / Python (pandas, tidyverse) | Programming Language / Library | Environments for reproducible data cleaning, transformation, and analysis. | Creating documented scripts for data processing is a best practice that transforms a manual analysis into a reproducible, archivable workflow [29]. |

Implementation and Validation Protocol

The final step involves packaging the documented data and validating its readiness for archiving. This protocol ensures the dataset is self-contained and reusable.

Protocol 2: Final Dataset Assembly and Validation for Repository Submission

Objective: To assemble all components of a research project into a coherent, well-documented, and validated data package suitable for deposit into a public archive.

Materials: Outputs from Protocol 1 (raw_data.csv, metadata_context.csv, README.txt), final manuscript/study report, any analysis scripts, and a chosen repository's submission guidelines.

Procedure:

- Create a Project Directory: Establish a master folder with the naming convention `ProjectTitle_PI_Year`.

- Populate Directory Structure: Place each file in a clearly named subfolder (the README example below refers to `processed/` and `metadata/`).

- Write a Comprehensive README: This is the most critical documentation file. It must include [31]:

  - Project title, abstract, and funding.

  - A file manifest describing every file in the directory.

  - Definitions for all column headers in data files and all codes/abbreviations used.

  - A description of any relationships or linkages between files (e.g., "`test_id` in `processed/experiment_results.csv` corresponds to the `test_id` in `metadata/test_conditions.csv`").

  - A step-by-step narrative of how to go from the raw data to the key results (potentially referencing scripts).

  - The data license (e.g., "CC0 1.0 Universal").

- Perform a Final FAIR Check:

  - Findable: Are all files logically named? Does the metadata contain rich, searchable keywords?

  - Accessible: Is the data to be stored in a trusted repository with persistent identifiers? Are access conditions clear?

  - Interoperable: Are common, non-proprietary file formats used (CSV, TXT, PDF)? Are terms from controlled vocabularies (DwC, ECOTOX) used where possible?

  - Reusable: Is the experimental context (Table 2) thoroughly documented? Are the provenance and processing steps clear?

- Repository Submission: Upload the entire directory structure to your chosen repository. Complete the repository's metadata submission form, which often draws information directly from your `README.txt` and `metadata_context.csv` files.
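
The directory layout for Protocol 2 is not spelled out in full, so the following sketch assumes one: four subfolders named `raw`, `processed`, `metadata`, and `scripts` (only `processed` and `metadata` appear in the README example; the other two are hypothetical). It also generates the file manifest the README calls for, with a SHA-256 checksum per file so that a later download can be verified. The manifest name `MANIFEST.csv` and its column names are likewise assumptions.

```python
import csv
import hashlib
from pathlib import Path

# Assumed layout; adapt to the conventions of your lab or repository.
SUBFOLDERS = ("raw", "processed", "metadata", "scripts")


def create_project(root: Path) -> None:
    """Create the master folder and its subfolders if they do not exist yet."""
    for name in SUBFOLDERS:
        (root / name).mkdir(parents=True, exist_ok=True)


def sha256_of(path: Path) -> str:
    """Compute a SHA-256 checksum without loading the whole file into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(root: Path, manifest_name: str = "MANIFEST.csv") -> Path:
    """List every file under root (except the manifest itself) with size and checksum."""
    manifest = root / manifest_name
    files = sorted(p for p in root.rglob("*") if p.is_file() and p != manifest)
    with manifest.open("w", newline="") as out:
        writer = csv.writer(out)
        writer.writerow(["relative_path", "size_bytes", "sha256"])
        for path in files:
            writer.writerow(
                [path.relative_to(root).as_posix(), path.stat().st_size, sha256_of(path)]
            )
    return manifest
```

A typical call would be `create_project(Path("ProjectTitle_PI_Year"))`, followed by copying the Protocol 1 outputs into place and running `write_manifest` as the last step before upload, so the checksums describe exactly what is deposited.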

Modern ecotoxicology increasingly relies on high‑throughput sequencing (HTS) to unravel molecular responses of organisms to environmental contaminants. A single RNA‑Seq experiment can generate hundreds of gigabytes of raw sequencing reads[reference:0]. These primary data (typically stored as FASTQ files) represent the full evidentiary record of the experiment; their loss or incomplete archiving severely limits reproducibility, future re‑analysis, and the long‑term value of the study[reference:1]. This protocol provides a detailed, actionable guide for archiving HTS data within the framework of a broader thesis on raw‑data preservation for ecotoxicology research. It integrates best‑practice recommendations from ancient genomics[reference:2], cost‑effective storage strategies[reference:3], and the specific data‑generation realities of transcriptomics in ecotoxicology[reference:4].

Step‑by‑Step Archiving Protocol

Pre‑archiving Quality Control

Before archiving, assess the technical quality of the raw reads to ensure they meet minimal standards for reuse.

- Tool: FastQC (https://www.bioinformatics.babraham.ac.uk/projects/fastqc/).

- Procedure: Run FastQC on each FASTQ file (or `fastq.gz` compressed file). The software calculates a set of quality metrics (Table 1). Generate a summary report for all samples using MultiQC[reference:5].

- Acceptance Criteria: Per‑base sequence quality score ≥ Q30 for the majority of cycles; low adapter content (<5%); low N‑content (<1%). Failed samples should be flagged in the metadata.
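
For a quick independent sanity check alongside the FastQC report, two of the acceptance criteria can be approximated directly from a FASTQ file. The sketch below is a simplification and not a substitute for FastQC: it assumes Phred+33 quality encoding and four-line records, reports the overall fraction of bases at or above Q30 (not the per-cycle profile), and does not assess adapter content. The function names and the 0.5 "majority" threshold are assumptions made for this example.

```python
import gzip
from pathlib import Path


def open_fastq(path: Path):
    """Open a plain or gzip-compressed FASTQ file in text mode."""
    if path.suffix == ".gz":
        return gzip.open(path, "rt")
    return path.open("rt")


def summarize_fastq(path: Path, q_threshold: int = 30) -> dict:
    """Count reads, the fraction of bases >= q_threshold, and the fraction of N bases."""
    reads = bases = n_bases = high_q = 0
    with open_fastq(Path(path)) as handle:
        while True:
            header = handle.readline()
            if not header.strip():
                break  # end of file (or a trailing blank line)
            seq = handle.readline().strip()
            handle.readline()  # the '+' separator line
            qual = handle.readline().strip()
            reads += 1
            bases += len(seq)
            n_bases += seq.upper().count("N")
            # Phred+33: quality score = ASCII code minus 33.
            high_q += sum(1 for ch in qual if ord(ch) - 33 >= q_threshold)
    if bases == 0:
        raise ValueError(f"no sequence data found in {path}")
    return {
        "reads": reads,
        "fraction_q30": high_q / bases,
        "fraction_n": n_bases / bases,
    }


def passes_qc(summary: dict, min_q30: float = 0.5, max_n: float = 0.01) -> bool:
    """Apply the simplified thresholds; failing samples should be flagged in metadata."""
    return summary["fraction_q30"] >= min_q30 and summary["fraction_n"] <= max_n
```

The returned dictionary can be written into the sample-level metadata next to the FastQC/MultiQC verdict, which keeps the QC flag traceable to concrete numbers.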

File Compression

Compression is essential to reduce long‑term storage costs. Lossless compression of paired‑end fastq.gz files can achieve ratios up to 1:6[reference:6].

- Recommended Tools: DRAGEN ORA or Genozip for highest compression ratios; `gzip` for universal compatibility.

- Procedure: For each sample, compress the paired‑end FASTQ files, for example with `gzip`. The resulting `sample_R1.fastq.gz` and `sample_R2.fastq.gz` are the files to be archived.
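
The original command example for this step did not survive, so the following is a stand-in sketch using Python's standard `gzip` module, not the author's exact procedure. It compresses each FASTQ file next to the original and then confirms that decompressing the archive reproduces the input byte for byte. The helper names are hypothetical, and the file names follow the `sample_R1.fastq` / `sample_R2.fastq` pattern used above.

```python
import gzip
import hashlib
import shutil
from pathlib import Path


def _sha256_stream(handle) -> str:
    """Hash a binary stream in 1 MiB chunks."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: handle.read(1 << 20), b""):
        digest.update(chunk)
    return digest.hexdigest()


def gzip_fastq(src: Path) -> Path:
    """Write src + '.gz' alongside the original and return the new path."""
    dest = src.with_name(src.name + ".gz")
    with src.open("rb") as fin, gzip.open(dest, "wb") as fout:
        shutil.copyfileobj(fin, fout)
    return dest


def verify_roundtrip(src: Path, dest: Path) -> bool:
    """Return True if the decompressed archive is identical to the original file."""
    with src.open("rb") as raw, gzip.open(dest, "rb") as packed:
        return _sha256_stream(raw) == _sha256_stream(packed)


def compress_pair(sample_dir: Path, sample: str = "sample") -> list:
    """Compress both mates of a paired-end sample and verify each archive."""
    archived = []
    for mate in ("R1", "R2"):
        src = sample_dir / f"{sample}_{mate}.fastq"
        dest = gzip_fastq(src)
        if not verify_roundtrip(src, dest):
            raise RuntimeError(f"round-trip check failed for {src}")
        archived.append(dest)
    return archived
```

The uncompressed originals are deliberately left in place; deleting them only after the round-trip check has passed, and after checksums have been recorded, avoids losing the sole copy of the raw reads.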

Metadata Preparation

Comprehensive metadata is critical for FAIR (Findable, Accessible, Interoperable, Reusable) data. For submission to the International Nucleotide Sequence Database Collaboration (INSDC) archives (SRA, ENA, DDBJ), prepare the following:

- BioProject: A single overarching project description.

- BioSample: One record per unique biological sample, detailing organism, tissue, treatment, time point, etc.[reference:7].

- SRA Experiment Metadata: Links samples to library preparation details (library strategy, layout, instrument model) and the compressed data files[reference:8].