From Raw Data to Reliable Models: A Step-by-Step Guide to Curating Benchmark Datasets for Ecotoxicity Studies

This article provides a comprehensive guide to building robust raw data curation workflows essential for modern ecotoxicology, particularly for machine learning applications.

Abstract

This article provides a comprehensive guide to building robust raw data curation workflows essential for modern ecotoxicology, particularly for machine learning applications. Aimed at researchers, scientists, and drug development professionals, it addresses the critical need for high-quality, reproducible data to overcome the ethical and logistical limitations of traditional animal testing [citation:1][citation:2]. The guide covers the full scope, from foundational concepts (defining data curation and identifying key sources like the US EPA ECOTOX database) to methodological best practices for extraction, cleaning, and structuring [citation:1]. It further details troubleshooting for common pitfalls such as data leakage and offers strategies for validation through benchmark datasets and comparative model analysis [citation:1][citation:2]. The synthesis provides an actionable framework for generating regulatory-ready computational toxicology insights.

Building the Bedrock: Understanding the Why and Where of Ecotoxicology Data Curation

Effective data curation transforms disparate, raw ecotoxicological measurements into reliable, interoperable datasets ready for risk assessment and research. This process is a cornerstone of modern computational toxicology, enabling the development of New Approach Methodologies (NAMs) and supporting regulatory decisions[reference:0]. This technical support center, framed within a thesis on raw data curation workflows, provides troubleshooting guidance and essential resources for researchers, scientists, and drug development professionals navigating this critical field.

Technical Support Center: Troubleshooting Guides & FAQs

Data Acquisition & Formatting

Q: My raw data files (e.g., from plate readers, LC-MS) are in various proprietary formats. How can I standardize them for curation?

- A: Begin by exporting raw instrument data to open, non-proprietary formats (e.g., .csv, .txt). Develop a standardized template for metadata, capturing essential details: chemical identifier (preferably with CAS RN), species, exposure duration, endpoint measured (e.g., LC50, EC50), units, and test conditions. Automated scripts (Python/R) can be written to parse and reformat recurring data exports into this template.
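A reformatting script of the kind described in this answer could look like the minimal sketch below. It is illustrative only: the export headers (`Compound`, `Hours`, and so on), the template field names, and the example row with its EC50 value are hypothetical and would need to match your own instrument exports.

```python
import csv
import io

# Hypothetical mapping from one instrument's export headers to template fields.
COLUMN_MAP = {
    "Compound": "chemical_name",
    "CAS": "cas_rn",
    "Organism": "species",
    "Hours": "exposure_duration_h",
    "Endpoint": "endpoint",
    "Value": "value",
    "Unit": "unit",
}
# The template also carries a field that this export does not provide.
TEMPLATE_FIELDS = list(COLUMN_MAP.values()) + ["test_conditions"]


def to_template(raw_csv_text):
    """Rename export columns to template fields; absent fields become empty strings."""
    rows = []
    for raw in csv.DictReader(io.StringIO(raw_csv_text)):
        row = {field: "" for field in TEMPLATE_FIELDS}
        for src, dst in COLUMN_MAP.items():
            row[dst] = (raw.get(src) or "").strip()
        rows.append(row)
    return rows


# Invented example export (the numeric value is not a real measurement).
example = (
    "Compound,CAS,Organism,Hours,Endpoint,Value,Unit\n"
    "Formaldehyde,50-00-0,Daphnia magna,48,EC50,1.0,mg/L\n"
)
records = to_template(example)
```

Keeping the column mapping in a single dictionary per instrument makes it easy to add a new export format without touching the parsing logic.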

Q: How do I handle inconsistent or missing units (e.g., mM vs. µg/mL) across different studies I am compiling?

- A: Implement a unit harmonization step. First, map all synonymous unit terms to a controlled vocabulary (e.g., "ug/ml", "µg/mL", "microgram per ml" → "µg/mL"). Then, apply conversion factors to standardize all values to a single unit per measurement type (e.g., all concentrations in µM). Document all conversions performed[reference:1].
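The two harmonization steps (synonym mapping, then conversion) can be sketched as follows. This is a simplified example under stated assumptions: the synonym table is a small hypothetical subset, the target unit is µM, and mass-based units are converted with a molecular weight in g/mol that the caller supplies.

```python
# Hypothetical controlled vocabulary: lowercased raw unit -> canonical unit.
# Lowercasing is a simplification that is safe only because no two units
# in this small table differ by case alone.
UNIT_SYNONYMS = {
    "ug/ml": "µg/mL",
    "µg/ml": "µg/mL",
    "microgram per ml": "µg/mL",
    "mg/l": "mg/L",
    "mm": "mM",
    "um": "µM",
    "µm": "µM",
}


def to_micromolar(value, unit, mol_weight=None):
    """Convert a concentration to µM; mol_weight (g/mol) is needed for mass units."""
    canonical = UNIT_SYNONYMS.get(unit.strip().lower())
    if canonical is None:
        raise ValueError(f"Unmapped unit: {unit!r}")
    if canonical == "µM":
        return float(value)
    if canonical == "mM":
        return value * 1000.0
    # Remaining units are mass-based; µg/mL is numerically equal to mg/L.
    if mol_weight is None:
        raise ValueError("Molecular weight required for mass-to-molar conversion")
    # (mg/L) / (g/mol) = mmol/L, so multiply by 1000 to reach µmol/L.
    return value / mol_weight * 1000.0
```

For instance, 10 µg/mL of a compound with a molecular weight of 200 g/mol corresponds to 50 µM. Raising an error on unmapped units, rather than passing values through, forces every new unit spelling to be added to the documented vocabulary.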

Data Integration & Quality Control

Q: When integrating data from multiple literature sources, I encounter conflicting toxicity values for the same chemical-species pair. Which one should I use?

- A: Do not arbitrarily choose. Flag all records for manual expert review. Assess study quality based on reporting completeness (e.g., adherence to OECD test guidelines), test organism life stage, solvent controls, and statistical methods. Retain the higher-quality data and document the rationale for exclusion in your curation log[reference:2].

Q: How can I ensure the chemical identifiers in my dataset are accurate and consistent?

- A: Use authoritative chemical databases for verification and mapping. Cross-reference chemical names and CAS numbers with the EPA CompTox Chemicals Dashboard or PubChem. Resolve discrepancies (e.g., synonyms, spelling variants) by aligning all records to a preferred identifier (e.g., DTXSID from CompTox) to enable reliable linking with other resources[reference:3].
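Before querying an external database, a local format and check-digit test can catch many transcription errors in CAS numbers. The sketch below uses the standard CAS check-digit rule (each digit is weighted by its position counted from the right, and the sum modulo 10 must equal the final digit). Passing this test does not confirm that the number belongs to the intended chemical, so the cross-referencing described above is still required.

```python
import re

# 2-7 digits, hyphen, 2 digits, hyphen, 1 check digit.
CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_cas(cas_rn):
    """Return True if the string has CAS RN format and a correct check digit."""
    match = CAS_PATTERN.match(cas_rn.strip())
    if not match:
        return False
    digits = match.group(1) + match.group(2)
    check = int(match.group(3))
    # Weight 1 applies to the digit immediately left of the check digit.
    total = sum(int(d) * w for w, d in enumerate(reversed(digits), start=1))
    return total % 10 == check
```

Records that fail this check can be routed to manual review instead of being sent to the identifier mapping step.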

Analysis & Reporting

Q: My curated dataset is ready. What are the best practices for sharing it to ensure usability?

- A: Adhere to the FAIR principles (Findable, Accessible, Interoperable, Reusable). Publish your dataset in a trusted repository (e.g., Zenodo, Figshare) with a persistent DOI. Include a detailed data descriptor file explaining the curation pipeline, all fields, controlled vocabularies, and any quality flags used[reference:4].

Q: I need to perform a meta-analysis on curated ecotoxicity data. What are the key statistical considerations?

- A: Account for data hierarchy (multiple records per chemical-species). Use appropriate models (e.g., mixed-effects models) that can handle within-study and between-study variance. Sensitivity analysis is crucial; test how results change if certain study types or quality tiers are excluded.

Table 1: Scale of Major Ecotoxicology Data Resources

| Resource | Primary Content | Record Count | Species Covered | Chemicals Covered | Key Use |
|---|---|---|---|---|---|
| ECOTOX Knowledgebase | Curated literature toxicity data | >1 million test records[reference:5] | >13,000 aquatic & terrestrial[reference:6] | ~12,000[reference:7] | Regulatory benchmarks, risk assessment[reference:8] |
| ICE (Integrated Chemical Environment) | Curated in vivo, in vitro, in silico data | Not specified | Primarily mammalian | Thousands | NAM development & validation[reference:9] |
| Curated Aquatic MoA Dataset (Kramer et al., 2024) | Effect concentrations & Mode of Action (MoA) | 3,387 compounds[reference:10] | Algae, crustaceans, fish[reference:11] | 3,387 environmentally relevant chemicals[reference:12] | Chemical grouping, AOP-informed assessment[reference:13] |

Table 2: Composition of a Curated Aquatic Ecotoxicity Dataset (Example)

| Data Category | Count | Percentage of Total | Notes |
|---|---|---|---|
| Total Compounds | 3,387 | 100% | Environmentally relevant list[reference:14] |
| Parent Substances | 2,890 | ~85.3% | [reference:15] |
| Transformation Products (TP) | 374 | ~11.0% | [reference:16] |
| Both Parent & TP | 96 | ~2.8% | [reference:17] |
| Unassigned | 27 | ~0.8% | Mainly industrial chemicals[reference:18] |

Experimental Protocol: Data Curation Workflow for Ecotoxicity Studies

This protocol outlines a generalized workflow for curating ecotoxicity data from raw sources into an analysis-ready format, synthesizing approaches from major resources[reference:19][reference:20].

1. Planning & Scope Definition

- Define Objectives: Determine the intended use of the curated data (e.g., QSAR modeling, chemical grouping, specific risk assessment).

- Identify Sources: Select primary data sources (e.g., ECOTOX, literature search, in-house experiments). Define inclusion/exclusion criteria for studies (e.g., test guideline compliance, species relevance).

2. Data Acquisition & Extraction

- Automated Harvesting: Where possible, use APIs (e.g., ECOTOX API) or scripts to download data programmatically.

- Manual Extraction: For literature, systematically extract data into a standardized template. Capture numeric results, metadata (species, chemical, endpoint, duration), and study design details.

3. Harmonization & Standardization

- Vocabulary Control: Map all free-text terms (chemical names, endpoints, species) to controlled vocabularies or ontologies (e.g., ChEBI, OBA).

- Unit Conversion: Convert all measurements to standardized units (e.g., molarity for concentrations, hours for time).

- Identifier Resolution: Validate and align chemical identifiers using authoritative databases like CompTox.

4. Quality Control & Expert Review

- Automated Checks: Run scripts to flag outliers, missing values, and logical inconsistencies (e.g., effect concentration > solubility).

- Manual Curation: Expert review of flagged records, conflicting data, and complex cases to adjudicate based on study quality and reporting clarity[reference:21].
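The automated checks in step 4 can be expressed as a function that returns flags instead of deleting records, so that flagged entries flow into the expert review. The sketch below is a minimal illustration; the field names (`value_mg_l`, `solubility_mg_l`) and flag strings are hypothetical, and it assumes the effect concentration and the water solubility are already in the same unit.

```python
# Hypothetical fields that every record must carry.
REQUIRED_FIELDS = ("chemical_id", "species", "endpoint", "value_mg_l")


def qc_flags(record):
    """Return a list of flag strings for one record (empty list = nothing found)."""
    flags = [f"missing_{f}" for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    value = record.get("value_mg_l")
    solubility = record.get("solubility_mg_l")
    if isinstance(value, (int, float)):
        if value <= 0:
            # Effect concentrations must be strictly positive.
            flags.append("non_positive_value")
        if isinstance(solubility, (int, float)) and value > solubility:
            # Logical inconsistency: reported effect above water solubility.
            flags.append("exceeds_solubility")
    return flags
```

Storing the returned flags in a dedicated column keeps the original values untouched and makes the later manual adjudication traceable.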

5. Integration & Formatting

- Merge Datasets: Combine data from all sources into a unified structure.

- Format for Output: Structure the final dataset into tidy formats (e.g., one record per observation) suitable for analysis. Create comprehensive data descriptor documentation.

6. Publication & Sharing

- Apply Metadata: Describe the dataset with rich metadata following community standards.

- Deposit in Repository: Publish in a FAIR-aligned repository with a DOI and usage license.

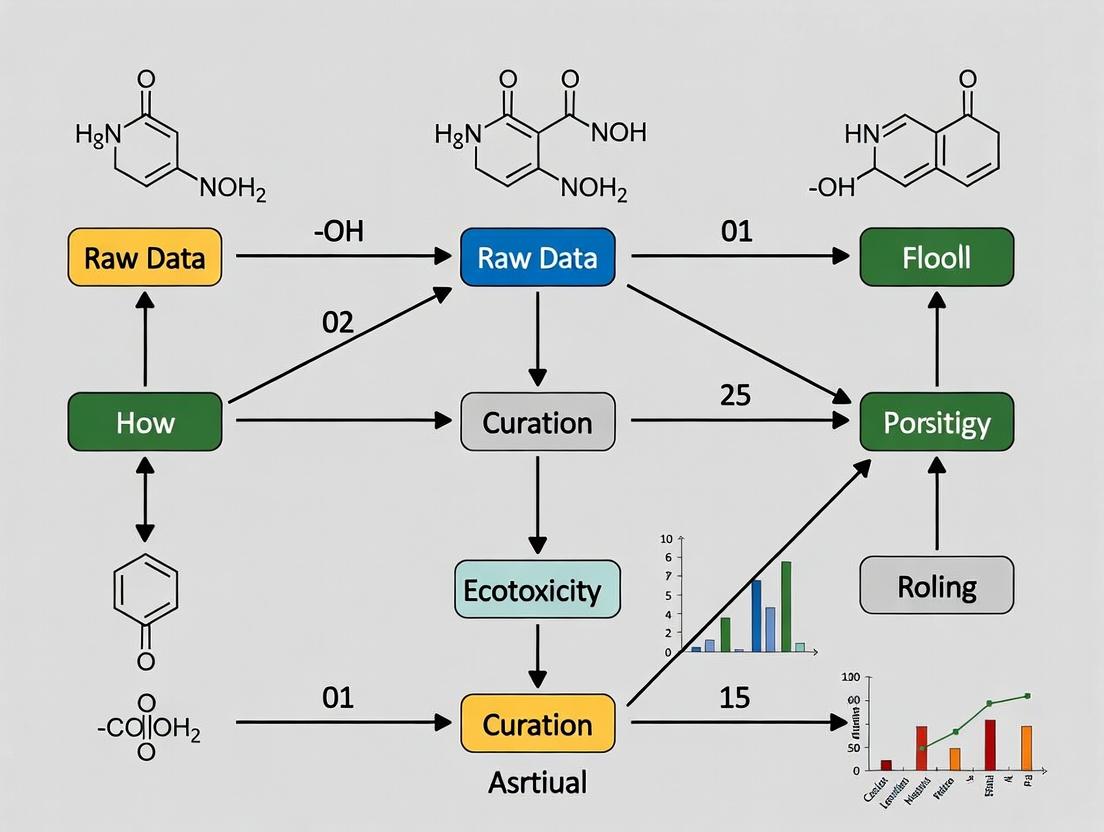

Workflow Visualization

Diagram 1: Ecotoxicology Data Curation Workflow

Table 3: Key Research Reagent Solutions for Ecotoxicology Data Curation

| Tool/Resource | Category | Function in Curation | Example/Note |
|---|---|---|---|
| ECOTOX Knowledgebase | Primary Data Source | Provides curated, literature-derived single-chemical toxicity data for aquatic and terrestrial species. The starting point for many compilations[reference:22]. | Use EPA website or API for data harvesting[reference:23]. |
| CompTox Chemicals Dashboard | Chemical Registry | Authoritative source for chemical identifiers, properties, and links to toxicity data. Critical for verifying and standardizing chemical names[reference:24]. | Resolve CAS RN to DTXSID for consistent linking. |
| R / Python (pandas, tidyverse) | Data Processing | Scripting languages for automating data cleaning, transformation, harmonization, and quality control checks. Essential for handling large datasets. | Develop reproducible scripts for each curation step. |
| OECD Test Guidelines | Reporting Standard | Define standardized methods for toxicity testing. Used as a criterion for assessing study quality and data reliability during curation. | References like OECD 201 (Algae), 202 (Daphnia). |
| FAIR Principles | Data Management Framework | Guiding principles (Findable, Accessible, Interoperable, Reusable) to ensure curated data is maximally useful for the community[reference:25]. | Implement via rich metadata and repository deposit. |
| Zenodo / Figshare | Data Repository | Trusted platforms for publishing curated datasets with DOIs, ensuring long-term preservation and access. | Include a data descriptor file with submission. |
| Adverse Outcome Pathway (AOP) Wiki | Conceptual Framework | Organizes mechanistic knowledge. Curated MoA data can be linked to AOPs to support pathway-based assessment[reference:26]. | Useful for interpreting and grouping chemicals. |

Technical Support Center: Data Curation for Ecotoxicity Studies

Troubleshooting Common Data Curation Issues

This section addresses specific, technical problems researchers encounter when curating raw ecotoxicity data for integration into reusable databases or models.

Issue 1: Inconsistent Endpoint Terminology Across Studies

- Problem: The same biological effect (e.g., mortality, immobilization) is reported using different terms (e.g., "LC50," "LE50," "EC50 (mortality)") or units (mg/L vs. µM), preventing automated aggregation [1] [2].

- Solution:

- Establish a Controlled Vocabulary: Adopt standardized terms from existing resources. For aquatic toxicity, align with the ECOTOX Knowledgebase's effect codes (e.g., "MOR" for mortality, "ITX" for intoxication/immobilization) [2] [3].

- Expert Harmonization: A subject matter expert must map synonymous terms from primary literature to the controlled vocabulary. Document this mapping rationale for provenance [1].

- Unit Standardization: Implement automated scripts to convert all concentration values to a standard unit (e.g., molarity for biological relevance, mg/L for environmental relevance), flagging any entries where molecular weight is missing for conversion [2].
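Once an expert has agreed on the term mapping, applying it is mechanical. The sketch below shows one possible shape for that step. The mapping entries are hypothetical examples of expert decisions (only the `MOR` and `ITX` codes come from the text above), and any term without an entry is returned for review, not guessed.

```python
import re

# Hypothetical expert-approved mapping: normalized raw term -> effect code.
EFFECT_MAP = {
    "lc50": "MOR",
    "ec50 (mortality)": "MOR",
    "ec50 (immobilization)": "ITX",
    "ec50 (immobilisation)": "ITX",
}


def normalize_term(raw):
    """Lowercase, trim, and collapse internal whitespace."""
    return re.sub(r"\s+", " ", raw.strip().lower())


def map_effects(raw_terms):
    """Split raw terms into a mapped dict and a list needing expert review."""
    mapped, unmapped = {}, []
    for term in raw_terms:
        code = EFFECT_MAP.get(normalize_term(term))
        if code is None:
            unmapped.append(term)
        else:
            mapped[term] = code
    return mapped, unmapped
```

Keeping the original term as the dictionary key preserves provenance: the curation log can show exactly which literature wording was assigned to which controlled code.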

Issue 2: Missing Critical Metadata in Aggregated Datasets

- Problem: Data obtained from large repositories often lack essential metadata (e.g., test species sex, life stage, or specific test guideline), making quality assessment and proper interpretation difficult [1].

- Solution:

- Expert Inference and Annotation: Use regulatory test guidelines to infer missing details. For example, if a Hershberger assay result lacks sex metadata, an expert can confidently annotate "male" based on the standardized protocol [1].

- Apply Quality Flags: Implement a data quality flagging system. Curated data in the Integrated Chemical Environment (ICE) receives flags to inform users about the completeness and reliability of metadata [1].

- Proactive Sourcing: Prioritize data extraction from primary study reports over pre-aggregated sources when high-quality metadata is essential for the research question [1].

Issue 3: Data Quality Variability in Open Literature

- Problem: Studies from the open literature vary in reliability. Using all data without assessment can introduce noise and bias into computational models [4] [3].

- Solution - Implement a Screening Protocol:

Follow a structured workflow based on EPA evaluation guidelines [4] [3]:

- Acceptance Screening: Filter studies based on minimum criteria: single-chemical exposure, whole organism effect, reported concentration/dose and exposure duration, use of a control group, and a calculable endpoint (e.g., LC50).

- Quality Review: Classify accepted studies based on adherence to guideline standards (e.g., OECD Test Guidelines 201, 202, 203), reporting clarity, and statistical rigor [2] [4].

- Curation Decision: For building benchmark datasets, include only studies passing the highest quality tier. For broader hazard assessment, include lower-tier studies with appropriate uncertainty annotations [1] [2].
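The acceptance screening step lends itself to a simple rule table. The following sketch encodes the minimum criteria listed above as boolean checks and reports which ones a study fails. The dictionary keys describing a study (`n_chemicals`, `whole_organism`, and so on) are hypothetical field names, not part of any EPA schema.

```python
def acceptance_failures(study):
    """Return the names of the minimum acceptance criteria that a study fails."""
    checks = {
        "single_chemical_exposure": study.get("n_chemicals") == 1,
        "whole_organism_effect": study.get("whole_organism") is True,
        "concentration_reported": study.get("concentration") is not None,
        "duration_reported": study.get("duration_h") is not None,
        "control_group_used": study.get("has_control") is True,
        "calculable_endpoint": study.get("endpoint") not in (None, ""),
    }
    # Dict order is preserved, so failures come back in criteria order.
    return [name for name, passed in checks.items() if not passed]
```

A study passes acceptance screening when the returned list is empty. Recording the failed criteria, not just a pass/fail value, documents why each study was excluded.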

Issue 4: Preparing Data for Machine Learning (ML) Benchmarking

- Problem: Model performance is incomparable across studies due to different data cleaning, splitting, and feature selection strategies [2].

- Solution - Create a Standardized ML-Ready Dataset:

The methodology for creating the ADORE benchmark dataset provides a protocol [2]:

- Core Data Extraction: Extract experimental results from a trusted curated source (e.g., ECOTOX), focusing on specific taxonomic groups (fish, crustaceans, algae) and acute lethal endpoints.

- Feature Expansion: Augment core data with chemical descriptors (e.g., SMILES, molecular weight), physicochemical properties, and species-specific phylogenetic data.

- Define Splits: Pre-define training and test splits based on chemical scaffolds to evaluate model performance on novel chemistries and prevent data leakage. Provide these splits publicly alongside the dataset.
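A scaffold-based split keeps every record of a given scaffold on the same side of the train/test boundary. The sketch below shows one simple greedy way to do this and is not the ADORE procedure itself. It assumes each record already carries a precomputed `scaffold` key (for example a scaffold string generated with a cheminformatics toolkit), and it assigns whole scaffold groups to training, largest first, until the training share is full.

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Return (train_indices, test_indices) with no scaffold shared between them."""
    groups = defaultdict(list)
    for idx, rec in enumerate(records):
        groups[rec["scaffold"]].append(idx)
    # Largest groups first; ties broken by scaffold key for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    train_cap = (1.0 - test_fraction) * len(records)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= train_cap:
            train.extend(members)
        else:
            # A group that does not fit goes to the test set as a whole.
            test.extend(members)
    return train, test
```

Because groups are never divided, the realized test fraction only approximates `test_fraction`. Publishing the resulting index lists with the dataset lets other groups reproduce exactly the same partition.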

Frequently Asked Questions (FAQ) on Curation Workflows

Q1: What are the first steps in designing a data curation workflow for ecotoxicity data? A1: Begin by identifying stakeholder needs and defining explicit use cases (e.g., risk assessment, QSAR model training, chemical prioritization) [1]. This determines which data and metadata to extract, the required quality threshold, and the formatting of the final output. The core requirement is to structure data to be both human-readable and machine-actionable [1].

Q2: How do I ensure my curated data is FAIR (Findable, Accessible, Interoperable, Reusable)? A2:

- Findable: Use persistent, unique chemical identifiers (DTXSID, InChIKey) and species taxonomies, not just names or CAS numbers [2] [5].

- Accessible: Use standard, non-proprietary data formats (e.g., CSV, JSON) for storage and sharing.

- Interoperable: Adopt controlled vocabularies and ontologies (e.g., from OBO Foundry) to describe assays, effects, and experimental conditions [6].

- Reusable: Provide rich, structured metadata and detailed documentation on all curation and provenance steps [1] [3].

Q3: What is the difference between data aggregation and expert-driven curation? A3: Aggregation is the automated collection of data from various sources with minimal processing. Expert-driven curation involves subject matter experts who assess data relevance and quality, harmonize terminology, infer missing metadata from context, and apply quality flags based on regulatory or scientific criteria. Curation transforms aggregated data into a reliable, high-confidence resource [1] [3].

Q4: Where can I access high-quality curated toxicology data to start my analysis? A4: Several publicly available, expertly curated resources exist:

- Integrated Chemical Environment (ICE): Curated in vivo, in vitro, and in silico data organized by regulatory endpoints, with tools for analysis [1] [6].

- ECOTOX Knowledgebase: The world's largest curated collection of single-chemical ecotoxicity data for aquatic and terrestrial species [5] [3].

- CompTox Chemicals Dashboard: Provides access to multiple EPA data streams, including ToxCast assay data, physicochemical properties, and exposure information [5] [6].

Table 1: Scale of Major Publicly Available Curated Toxicology Data Resources

| Resource Name | Primary Focus | Number of Chemicals | Number of Data Points/Records | Key Feature |
|---|---|---|---|---|
| ECOTOX Knowledgebase [5] [3] | Ecological toxicity | >12,000 | >1,000,000 test results | Curated aquatic & terrestrial ecotoxicity data from >50,000 references. |
| ICE (Integrated Chemical Environment) [1] [6] | Data for NAMs development | Varies by endpoint | Not specified (aggregated) | Harmonized data curated by toxicity endpoint with integrated analysis tools. |
| ADORE Benchmark Dataset [2] | Acute aquatic toxicity (ML) | 3,376 | 47,210 experiments | Curated for machine learning, includes chemical & phylogenetic features. |

Detailed Methodologies for Key Curation Protocols

Protocol 1: ICE Data Curation Workflow [1]

Objective: To integrate diverse toxicity data into a harmonized, quality-controlled resource for chemical safety assessment.

Steps:

- Data Collection: Gather data from primary literature, large aggregations (e.g., PubChem), and computational models.

- Expert Assessment: Subject matter experts evaluate data for relevance and quality. For example, for uterotrophic assay data, only results meeting all six criteria based on OECD/EPA guidelines are selected.

- Data Cleanup: Standardize spelling, capitalization, and units. Remove special characters that hinder computational analysis.

- Harmonization: Map all endpoint and methodology terms to a consistent controlled vocabulary.

- Formatting & Loading: Structure data into predefined, machine-readable schemas and load into the ICE database with version control.
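The data cleanup step (spelling, capitalization, special characters) is usually the easiest part of such a workflow to automate. The sketch below is a generic illustration, not ICE's actual code. It uses Unicode NFKC normalization, which also folds look-alike characters such as the non-breaking space into their plain equivalents, and applies a "Genus species" capitalization convention to species names.

```python
import re
import unicodedata


def clean_text(value):
    """Normalize Unicode, drop control characters, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", value)
    text = re.sub(r"[\x00-\x1f\x7f]", " ", text)  # control characters -> space
    return re.sub(r"\s+", " ", text).strip()


def standardize_species(name):
    """Format a binomial name as 'Genus species' (capitalized genus, lowercase rest)."""
    parts = clean_text(name).split(" ")
    if not parts or parts == [""]:
        return ""
    return " ".join([parts[0].capitalize()] + [p.lower() for p in parts[1:]])
```

Note that NFKC also changes some characters that matter in units (the micro sign becomes a Greek mu), so unit strings are better normalized with a dedicated, documented mapping.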

Protocol 2: Building a Benchmark Ecotoxicity ML Dataset [2]

Objective: To create a standardized, reusable dataset for training and comparing machine learning models.

Steps:

- Source Core Data: Download the ECOTOX database flat files. Filter for three taxonomic groups (fish, crustacea, algae) and acute effects (mortality, immobilization, growth inhibition) within standard test durations (≤96h).

- Filter and Clean: Remove entries with missing critical metadata (species, chemical identifier). Standardize chemical identifiers using DTXSID or InChIKey.

- Expand Features: Enrich each record by joining with chemical descriptor data (e.g., SMILES from PubChem) and species phylogenetic data.

- Define Splits: Partition the data into training and test sets using chemical structure-based splitting (e.g., by molecular scaffold) to rigorously assess predictive performance on unseen chemistries.

- Package and Document: Release the dataset with clear documentation of all filtering steps, feature definitions, and the rationale for data splits.

Visualizations of Curation Workflows and Relationships

ICE Data Curation and Integration Workflow

The Data Integration Challenge in Toxicology

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Resources for Ecotoxicity Data Curation and Analysis

| Resource / Solution | Function in Curation Workflow | Key Utility |
|---|---|---|
| ECOTOX Knowledgebase [5] [3] | Primary source for curated ecological toxicity test data. | Provides pre-extracted, quality-screened data from the open literature, saving initial collection effort. Uses standardized vocabularies. |
| CompTox Chemicals Dashboard [5] [6] | Authoritative source for chemical identifiers, structures, and properties. | Resolves chemical ambiguity via DTXSID. Provides SMILES, molecular weight, and links to associated assay data (ToxCast). Essential for joining chemical and bioactivity data. |
| Integrated Chemical Environment (ICE) [1] [6] | Platform for accessing curated data and integrated analysis tools. | Offers not just data, but tools for IVIVE, PBPK, and chemical characterization. Data is curated by regulatory endpoint. |
| EPA ToxValDB [5] | Aggregated database of summary-level in vivo toxicity values. | Provides a curated collection of derived toxicity values (e.g., Benchmark Doses) from multiple sources, formatted for comparison. |
| OECD Test Guidelines [2] [4] | International standard for test methodologies. | The gold-standard reference for evaluating the reliability and relevance of experimental methods reported in primary studies. |
| Controlled Vocabularies & Ontologies (e.g., from OBO Foundry) [6] | Terminology systems for standardizing metadata. | Enable interoperability by providing machine-readable definitions for biological effects, anatomical terms, and assay components. |

Within a thesis focusing on raw data curation workflows for ecotoxicity studies, understanding the role and characteristics of primary data repositories is fundamental. ECOTOX, EnviroTox, and ACToR serve as critical pillars for data acquisition, each with distinct architectures and curation philosophies. Their effective use is a prerequisite for robust secondary data analysis and modeling.

Table 1: Key Characteristics of Ecotoxicity Data Repositories

| Repository | Primary Maintainer | Primary Scope | Data Source | Key Data Types | Access Method |
|---|---|---|---|---|---|
| ECOTOX | U.S. EPA | Ecotoxicology effects of chemicals on aquatic and terrestrial life. | Peer-reviewed literature, government reports. | LC50, EC50, NOEC, LOEC, mortality, growth, reproduction. | Public web interface, bulk download. |
| EnviroTox | Health & Environmental Sciences Institute (HESI) | Curated in vivo ecotoxicity data for regulatory applications. | High-quality published studies (selected). | Chronic toxicity endpoints for fish, invertebrates, algae. | Web platform, downloadable datasets. |
| ACToR | U.S. EPA (Computational Toxicology) | Aggregated data from ~1,000 public sources on chemical toxicity and exposure. | Multiple databases (including ECOTOX), literature. | Toxicity, exposure, hazard, physicochemical properties. | Web interface, API. |

Table 2: Quantitative Data Scope (Approximate Figures as of 2023-2024)

| Repository | Number of Chemicals | Number of Species | Number of Records | Temporal Coverage |
|---|---|---|---|---|
| ECOTOX | ~12,000 | ~13,000 | ~1,000,000 | 1900s - Present |
| EnviroTox | ~1,200 | ~300 | ~45,000 (curated) | 1970s - Present |
| ACToR | ~900,000 | N/A (Chemical-centric) | ~500 million data points | Varies by source |

Technical Support Center: Troubleshooting & FAQs

Common Access & Data Retrieval Issues

Q1: My chemical search in ECOTOX returns no results or fewer results than expected. What should I check? A: Work through the following checks.

- Verify Systematic Name: Use the chemical's CAS RN (Chemical Abstracts Service Registry Number) if available. This is the most reliable identifier.

- Check the Synonym List: ECOTOX has a built-in synonym finder. Use the "Chemical Search" tab and try entering different common names.

- Broaden Filters: Ensure your "Test Location" and "Effect" filters are not overly restrictive. Start broad, then narrow down.

- Database Update Window: Be aware of scheduled maintenance periods (typically announced on the EPA website).

Q2: When comparing data from EnviroTox and ECOTOX for the same chemical, I see discrepancies. Which one is correct? A: Discrepancies arise from different curation protocols. This is a core consideration for your raw data curation workflow.

- EnviroTox employs a highly stringent curation process with explicit quality criteria (e.g., test duration, controls, solvent use). It may exclude studies that do not meet these benchmarks.

- ECOTOX aims for comprehensiveness, including a wider array of studies with varying quality.

- Troubleshooting Action: In your workflow, document the source and its curation policy. For regulatory analysis, EnviroTox's curated set may be preferred. For a comprehensive ecological risk assessment, you may need to merge and quality-check data from both, applying your own consistency filters.

Q3: The ACToR database is vast. How can I efficiently extract relevant ecotoxicity data without being overwhelmed? A: Use ACToR as a chemical index and gateway, not a primary ecotoxicity data source.

- Start with the "Chemical Dashboard": Search by CAS RN or name.

- Identify Relevant Data Sources: On the chemical summary page, review the "Related Databases" section. Look for direct links to ECOTOX and ToxRefDB (for in vivo toxicity).

- Use the "Assays" Tab: Filter assay results by endpoint type (e.g., "mortality," "growth").

- Key Tip: For deep ecotoxicity analysis, use the links to jump directly to the record in the specialized database (ECOTOX), which offers more sophisticated ecologically relevant filters.

Data Quality & Curation Challenges

Q4: How do I handle missing critical metadata (e.g., pH, water hardness) for an aquatic toxicity record in ECOTOX? A: This is a frequent curation challenge.

- Source Tracking: Note the ECOTOX "Source ID." Use this to locate the original publication through platforms like PubMed or DOI link.

- Extract from Primary Literature: Manually retrieve the missing parameters from the original study.

- Document Assumptions: If the original study also lacks the data, document this gap. For your thesis workflow, you may need to apply data gap-filling rules (e.g., using study-average values for that laboratory, or stated reference conditions). Clearly state any assumptions made.

- Flag the Record: In your curated dataset, create a quality flag (e.g., "CriticalMetadataMissing") to inform later analysis sensitivity.

Q5: I have downloaded a dataset from EnviroTox. What do the "Quality Scores" and "Flags" mean, and how should I use them? A: EnviroTox's quality assessment is central to its value.

- Quality Score (typically 1-4): A numeric score where a higher number indicates better adherence to predefined quality criteria (e.g., test guideline compliance, control performance).

- Quality Flags: Textual descriptors (e.g., "Acceptable," "Deficient") summarizing the score.

- Protocol: In your curation workflow, define a quality threshold for inclusion. For example, "Include only records with a Quality Score ≥ 3" or "Include 'Acceptable' and 'Guideline' studies." Consistency in applying this filter across all chemicals is crucial.

Experimental Protocols for Cited Studies

Protocol 1: Data Extraction and Curation for a Systematic Review (Referencing ) Objective: To systematically collate and curate raw ecotoxicity data from multiple repository sources for a meta-analysis. Materials: Access to ECOTOX, EnviroTox, and ACToR; reference management software (e.g., Zotero, EndNote); structured spreadsheet or database (e.g., SQLite, Microsoft Excel with predefined columns). Methodology:

- Define Inclusion/Exclusion Criteria: Establish criteria for species, chemical, endpoint (e.g., LC50 for fish), exposure duration, and study quality a priori.

- Parallel Repository Search: Execute identical search queries across ECOTOX and EnviroTox using CAS RN. Use ACToR to identify additional potential sources.

- Data Harvesting: Download full result sets or manually extract records meeting criteria. Record the Repository Source ID for each entry.

- Metadata Harmonization: Map all extracted fields to a common data schema (e.g., transform all concentrations to µg/L, standardize species names to ITIS taxonomy).

- De-duplication: Compare records based on Title, Source ID, and Author to remove duplicates present in multiple repositories.

- Quality Filtering: Apply the predefined quality criteria (e.g., applying EnviroTox scores, or manual check for ECOTOX studies).

- Gap Documentation: Log any missing critical data fields for each record.

- Curation Output: Generate a single, harmonized, and quality-flagged dataset for statistical analysis.
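The de-duplication step can be sketched as a keyed merge. The example below is illustrative and makes one assumption that goes beyond the protocol text: because source IDs are repository-specific, the match key here uses normalized title and author plus species and endpoint, while the repository name and source ID of every matching entry are kept as provenance. All field names are hypothetical.

```python
def deduplicate(records, key_fields=("title", "author", "species", "endpoint")):
    """Merge records sharing the same normalized key; keep all source IDs."""
    merged = {}
    for rec in records:
        # Case and surrounding whitespace are ignored when comparing keys.
        key = tuple(str(rec[f]).strip().lower() for f in key_fields)
        provenance = (rec["repository"], rec["source_id"])
        if key in merged:
            merged[key]["sources"].append(provenance)
        else:
            # The first occurrence supplies the record's field values.
            merged[key] = {**rec, "sources": [provenance]}
    return list(merged.values())
```

Near-duplicates with differing values (for example after unit conversion in one repository) will share a key and be merged under the first value seen, so it is worth comparing the numeric results of merged entries before discarding anything.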

Protocol 2: Building a Curated Dataset for QSAR Modeling (Referencing ) Objective: To create a high-quality, consistent dataset suitable for developing Quantitative Structure-Activity Relationship (QSAR) models for ecotoxicity prediction. Materials: EnviroTox database (primary source); chemical structure drawing software; cheminformatics toolkit (e.g., RDKit, OpenBabel); curation scripting environment (e.g., Python, R). Methodology:

- Source Selection: Prioritize the EnviroTox database due to its high curation standard, ensuring biological consistency.

- Endpoint Selection: Extract data for a single, well-defined endpoint (e.g., 48h Daphnia magna immobilization EC50).

- Structural Standardization: For each chemical in the dataset:

- Retrieve SMILES notation from EnviroTox or linked sources (e.g., EPA CompTox Chemicals Dashboard).

- Standardize structures: remove salts, neutralize charges, generate canonical tautomers.

- Calculate and verify key molecular descriptors (e.g., log P, molecular weight).

- Data Point Curation:

- Outlier Detection: Use statistical methods (e.g., IQR, Dixon's Q) to identify and review outliers within congeneric series.

- Resolve Replicates: For multiple tests on the same chemical, apply a pre-defined rule (e.g., use geometric mean, or select the study with the highest quality score).

- Mechanistic Consistency Check: Ensure all chemicals in the final set are likely to act via a similar mode of action (MoA) for the model's applicability domain.

- Final Dataset Assembly: Produce a table whose first two columns are the standardized SMILES (Column A) and the curated toxicity value in log units (Column B), with associated metadata in subsequent columns.
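Two of the data point curation rules above, IQR-based outlier detection and replicate resolution by geometric mean, can be illustrated with the Python standard library. This is a sketch under stated assumptions: the 1.5 × IQR fence is the conventional default and not a value from the protocol, quartiles use the inclusive method, and the SMILES strings and toxicity values in any example are invented.

```python
import math
import statistics
from collections import defaultdict


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] for expert review."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


def resolve_replicates(records):
    """Collapse (smiles, value) replicates to log10 of their geometric mean."""
    by_smiles = defaultdict(list)
    for smiles, value in records:
        by_smiles[smiles].append(value)
    return {
        smiles: math.log10(statistics.geometric_mean(vals))
        for smiles, vals in by_smiles.items()
    }
```

The geometric mean is the natural average for concentration data because it is equivalent to averaging on the log scale, which is also the scale the final table reports. Flagged outliers should be reviewed, not removed automatically, since a true activity cliff can look like an outlier.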

Visualizations

Diagram: Raw Data Curation Workflow from Repositories

Diagram: Repository Selection Based on Research Goal

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Ecotoxicity Data Curation Workflow

| Item | Function in the Curation Workflow |
|---|---|
| Chemical Identifier Resolver (e.g., EPA CompTox Dashboard, PubChem) | Converts between chemical names, CAS RN, SMILES, and InChIKeys, ensuring unambiguous substance identification across databases. |
| Taxonomic Name Resolver (e.g., ITIS, WoRMS) | Standardizes species names to accepted scientific nomenclature, resolving synonyms and common name variations from different data sources. |
| Structured Data Schema (e.g., custom SQL database, ISA-Tab format) | Provides a pre-defined template for data entry, ensuring consistency, completeness, and machine-readability of the curated dataset. |
| Cheminformatics Toolkit (e.g., RDKit, CDK) | Standardizes chemical structures, calculates molecular descriptors, and helps assess chemical similarity for defining model applicability domains. |
| Scripting Environment (e.g., Python with Pandas, R with tidyverse) | Automates repetitive curation tasks: data cleaning, unit conversion, merging tables, and applying logical quality filters at scale. |
| Quality Flagging System (e.g., predefined codes in a data column) | A consistent method to tag records with issues (e.g., "missing control data," "concentration units unclear") for transparent decision-making. |

This technical support center provides troubleshooting guidance for researchers navigating the data curation and analysis workflow in ecotoxicology. The content is framed within the DIKW (Data, Information, Knowledge, Wisdom) hierarchy, a conceptual model for understanding how raw observations are transformed into actionable understanding [7]. The following guides and FAQs address common issues at each stage of this journey, supporting a robust raw data curation workflow for ecotoxicity studies.

Data Layer: Acquisition & Curation Troubleshooting

This layer involves the collection and initial organization of raw, unprocessed facts and figures from experiments and monitoring.

FAQs & Troubleshooting Guides

Q1: My chemical toxicity data is scattered across literature and in-house studies. How can I systematically compile it for analysis?

- A: Utilize curated public repositories. The ECOTOX Knowledgebase is a comprehensive source, containing over one million test records for more than 12,000 chemicals and 13,000 species [8] [3]. For a more specialized dataset, a 2024 resource provides curated mode-of-action (MoA) data and effect concentrations for 3,387 environmentally relevant chemicals [9].

- Troubleshooting Tip: If you cannot find data for a specific chemical-species pair in ECOTOX, check the chemical's use group (e.g., pesticide, pharmaceutical, industrial chemical) in curated lists [9]. This can guide read-across strategies or help identify analogous chemicals with existing data.

Q2: I've downloaded a large dataset, but the formats and terminology are inconsistent. How do I standardize it?

- A: Implement a data curation pipeline following established principles. The ECOTOX team uses a systematic review and data curation pipeline with standard operating procedures (SOPs) to ensure consistency [3]. Adopt the ATTAC workflow principles (Access, Transparency, Transferability, Add-ons, Conservation sensitivity) to guide the homogenization and integration of scattered data [10].

Q3: How do I verify the quality and relevance of toxicity data from literature sources?

- A: Apply systematic review criteria. During curation, ECOTOX evaluates studies based on predefined applicability (e.g., relevant species, reported exposure) and acceptability (e.g., documented controls) criteria [3]. Develop and document similar internal criteria for your workflow to ensure data quality.

Quantitative Data Summary

The table below summarizes key statistics from major curated data sources to inform your data acquisition strategy.

| Data Source | Number of Chemicals | Number of Species | Test Records | Key Focus | Citation |

|---|---|---|---|---|---|

| ECOTOX Knowledgebase | >12,000 | >13,000 (aquatic & terrestrial) | >1,000,000 | Comprehensive single-chemical toxicity | [8] [3] |

| Curated MoA Dataset (2024) | 3,387 | Algae, Crustaceans, Fish (key groups) | Not specified | Mode of action & effect concentrations | [9] |

Experimental Protocol: Systematic Literature Curation

This methodology is adapted from the ECOTOX systematic review pipeline [3].

- Define Scope: Identify the chemical(s) and taxonomic groups of interest.

- Search Strategy: Conduct comprehensive searches in scientific databases (e.g., Web of Science, PubMed) using defined chemical names and toxicity-related terms.

- Screen References: Perform title/abstract screening followed by full-text review against pre-defined eligibility criteria (e.g., original data, controlled study, relevant endpoint).

- Data Abstraction: Extract pertinent details (chemical, species, exposure conditions, test results, endpoints) using a standardized template or controlled vocabulary.

- Data Verification: Implement a quality check step (e.g., double entry) to ensure accuracy and consistency of the abstracted data.

Information Layer: Processing & Contextualization

Here, curated data is organized, structured, and given context to make it meaningful and useful.

FAQs & Troubleshooting Guides

Q4: How can I effectively explore and filter a large toxicity database to find relevant information?

- A: Use advanced database features. The ECOTOX Knowledgebase offers a SEARCH feature that allows filtering by 19 parameters (chemical, species, effect, endpoint) and an EXPLORE feature for less defined queries [8]. Its DATA VISUALIZATION tools enable interactive plotting to identify trends and outliers [8].

Q5: I have chemical concentration data, but how do I contextualize it biologically?

- A: Classify chemicals by their Mode of Action (MoA). A curated dataset categorizes chemicals into MoAs (e.g., narcosis, acetylcholinesterase inhibition) [9]. Linking your chemical to an MoA provides immediate biological context and enables grouping with chemicals that act similarly.

Visualization: The DIKW Workflow in Ecotoxicology The diagram below maps the foundational journey from raw data to wisdom, outlining key questions and tasks at each stage.

Knowledge Layer: Analysis & Synthesis

At this stage, information from multiple sources is analyzed, synthesized, and modeled to identify patterns, relationships, and principles.

FAQs & Troubleshooting Guides

Q6: How can I use existing toxicity data to predict effects for untested chemicals?

- A: Employ Quantitative Structure-Activity Relationship (QSAR) models. The ECOTOX database is explicitly used to build and validate QSAR models that predict toxicity based on a chemical's physical structure [8] [3]. Curated MoA data is also crucial for developing structure-based classification schemes [9].

Q7: How do I move from single-chemical toxicity to assessing mixture risks?

- A: Use Mechanistic Grouping. Knowledge of a chemical's MoA allows for grouping into "assessment groups" for cumulative risk assessment [9]. This is vital because diverse pesticides, pharmaceuticals, and industrial chemicals can share the same MoA (e.g., acting on the nervous system), posing a combined risk to non-target organisms [9].

Q8: My analysis requires integrating different data types (in vivo, in vitro, in silico). What framework can help?

- A: Structure your analysis using the Adverse Outcome Pathway (AOP) framework. AOPs organize mechanistic knowledge from molecular interaction to adverse ecological outcome, facilitating cross-species extrapolation and the integration of data from New Approach Methodologies (NAMs) [9].

Visualization: Systematic Data Curation Pipeline The following diagram details the experimental protocol for transforming raw literature into a curated, reusable knowledge base, as practiced by the ECOTOX team [3].

Wisdom Layer: Application & Decision-Making

Wisdom involves using knowledge to make informed judgments, decisions, and predictions within a broader ethical and practical context.

FAQs & Troubleshooting Guides

Q9: How can my curated data and analysis best support environmental regulation and chemical safety?

- A: Ensure data is FAIR and actionable. Regulators use databases like ECOTOX to develop water quality criteria, inform ecological risk assessments, and prioritize chemicals under review [8]. Applying the ATTAC principles ensures data supports conservation-sensitive decision-making [10].

- Troubleshooting Tip: If your research conclusions are not being adopted, audit your workflow against the ATTAC principles—specifically Transparency in methods and Transferability of data formats—to bridge the gap between research and regulation [10].

Q10: How do we responsibly share sensitive or unpublished ecotoxicology data to advance the field?

- A: Adopt a collaborative workflow framework. The ATTAC guiding principles provide a structure for sharing data that balances openness with conservation sensitivity (e.g., protecting location data for endangered species) [10]. This promotes reuse and maximizes the value of existing data for integrative meta-analyses.

Visualization: ATTAC Principles for Data Sharing This diagram outlines the five guiding principles for openly and collaboratively sharing wildlife ecotoxicology data to transform knowledge into wise conservation action [10].

The Scientist's Toolkit: Research Reagent Solutions

This table details key resources and their functions in the ecotoxicological data workflow.

| Item / Resource | Primary Function | Relevance to DIKW Workflow | Citation |

|---|---|---|---|

| ECOTOX Knowledgebase | Comprehensive, curated repository of single-chemical toxicity test results. | Data/Information Source: Foundational resource for acquiring and contextualizing toxicity data. | [8] [3] |

| Curated MoA Dataset | Provides assigned modes of action and curated effect concentrations for 3,387 chemicals. | Information/Knowledge: Critical for contextualizing data biologically and enabling chemical grouping. | [9] |

| ATTAC Workflow Principles | Guidelines (Access, Transparency, etc.) for sharing and reusing wildlife ecotoxicology data. | Wisdom: Framework for ethical, effective application of knowledge to support regulation. | [10] |

| Adverse Outcome Pathway (AOP) Framework | Organizes mechanistic knowledge linking molecular initiation to adverse ecological outcomes. | Knowledge Synthesis: Provides structure for integrating data across biological levels and test systems. | [9] |

| Systematic Review Protocol | Standardized method for literature search, screening, and data extraction. | Data Curation: Essential methodology for transforming raw literature into reliable, structured data. | [3] |

| QSAR Modeling Tools | Use chemical structure descriptors to predict toxicity properties and MoA. | Knowledge Generation: Leverages curated data to build predictive models for data-poor chemicals. | [9] [8] |

Technical Support Center: Troubleshooting Guides & FAQs

This support center addresses common data curation challenges within the ecotoxicity raw data workflow, focusing on the accurate handling of chemical identifiers and experimental results.

FAQ: Chemical Identifier Curation

Q1: My dataset has CAS Registry Numbers (CAS RN) with hyphens in the wrong place or missing check digits. How can I validate and correct them?

A: CAS RNs follow a specific format: [##...##]-[##]-[#]. The final digit is a check digit calculated using a specific algorithm. To troubleshoot:

- Validate Format: Use a regular expression (e.g., `^\d{2,7}-\d{2}-\d$`) to check the basic pattern: two to seven digits, a hyphen, two digits, a hyphen, and a single check digit.

- Verify Check Digit: Implement the checksum algorithm. Leaving out the check digit itself, multiply each remaining digit (including the two digits before the final hyphen) by its position counted from right to left (1, 2, 3, ...). Sum the products. The check digit is the sum modulo 10. Tools like the NIH/CACTUS CAS Checker can automate this.

- Cross-Reference: For invalid entries, use the chemical name or structure to search authoritative databases (PubChem, ChemSpider) to retrieve the correct CAS RN.
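The format and check-digit steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `is_valid_cas` is hypothetical, and the pattern assumes the first segment of a CAS RN holds two to seven digits.

```python
import re

# Two to seven digits, two digits, one check digit, separated by hyphens.
CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_cas(cas: str) -> bool:
    """Return True if `cas` has a valid CAS RN format and check digit.

    Hypothetical helper for illustration only.
    """
    match = CAS_PATTERN.match(cas.strip())
    if match is None:
        return False
    body = match.group(1) + match.group(2)  # all digits except the check digit
    check_digit = int(match.group(3))
    # Weight digits 1, 2, 3, ... from right to left and sum the products.
    total = sum(pos * int(d) for pos, d in enumerate(reversed(body), start=1))
    return total % 10 == check_digit


if __name__ == "__main__":
    for candidate in ("151-21-3", "151-21-4", "15121-3"):
        print(candidate, is_valid_cas(candidate))
```

For sodium dodecyl sulfate (151-21-3), the weighted sum is 1·1 + 2·2 + 1·3 + 5·4 + 1·5 = 33, and 33 mod 10 = 3 matches the check digit. A passing check only shows that the number is well formed; it does not confirm that the number belongs to the intended substance, so the cross-reference step is still needed.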

Q2: I have a mixture or a substance with multiple stereoisomers. Which SMILES or InChIKey should I use?

A: This is a critical curation decision impacting reproducibility.

- For a racemic mixture or undefined stereochemistry, use the SMILES or InChIKey without stereochemical specifications.

- For a specific stereoisomer, use the isomeric SMILES or the full InChIKey (which includes stereochemistry in the second block). The standard InChIKey's first block (hash) is the same for all stereoisomers.

- Protocol: Always document the choice in the experimental metadata. Use the `isomericSmiles` tag in data files and note if the compound was a defined isomer or a mixture.

Q3: An InChIKey collision is theoretically possible. How should I address this in my curated database?

A: While extremely rare for the first block (14 characters), it is a known limitation.

- Do not rely solely on InChIKey. Always store the full InChI string as the authoritative identifier.

- Use a composite key. In your database, create a unique identifier that pairs the InChIKey with another property (e.g., molecular formula) to guarantee uniqueness for collision handling.

- Verification Step: As part of curation, for any new compound, generate and store the full InChI string alongside the InChIKey.

FAQ: Toxicity Endpoint & Metadata Curation

Q4: How do I normalize toxicity endpoints (like LC50) from studies that use different exposure times (24h, 48h, 96h)?

A: Direct numerical normalization across time points is scientifically invalid.

- Curation Protocol: Treat LC50/EC50 values as distinct data points linked to their specific exposure duration. Store them in a structured table.

- Metadata is Key: The exposure time must be an immutable, searchable metadata field (`exposure_duration` with unit `hours`).

- Data Presentation: When summarizing, present values in a table grouped by organism, endpoint, and exposure time.

Table 1: Example Curation of Fish Acute Toxicity Data

| Chemical Name | CAS RN | SMILES | Organism | Endpoint | Value (mg/L) | Exposure (h) | Confidence Score |

|---|---|---|---|---|---|---|---|

| Sodium dodecyl sulfate | 151-21-3 | CCCCCCCCCCCCOS(=O)(=O)[O-].[Na+] | Danio rerio | LC50 | 12.5 | 96 | High |

| Sodium dodecyl sulfate | 151-21-3 | CCCCCCCCCCCCOS(=O)(=O)[O-].[Na+] | Daphnia magna | EC50 (immobilization) | 8.2 | 48 | High |

Q5: How should I handle non-numeric or qualitative results (e.g., ">100 mg/L" or "No observed effect at 10 mg/L") in a quantitative database?

A: Preserve the original information while making it computationally usable.

- Structured Fields: Create three linked data fields:

  - `effect_value`: The numeric part (e.g., 100, 10).
  - `effect_operator`: The qualitative modifier (e.g., `>`, `<`, `~`, `NOEC`).
  - `effect_comment`: The original text string.

- Query Logic: This allows for advanced filtering (e.g., "find all LC50 values < 1 mg/L").
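As a rough sketch of the three-field approach, the Python snippet below splits a reported result string into `effect_value`, `effect_operator`, and `effect_comment`. The function name `parse_effect`, the use of `=` for plain numeric values, and the handled patterns are assumptions made for illustration; real literature strings are more varied and will need additional rules and manual review.

```python
import re
from typing import NamedTuple, Optional


class ParsedEffect(NamedTuple):
    effect_value: Optional[float]  # numeric part, or None if no number found
    effect_operator: str           # ">", "<", ">=", "<=", "~", "NOEC", "=" or ""
    effect_comment: str            # original text, preserved verbatim


_NUMBER = re.compile(r"\d+(?:\.\d+)?")
_OPERATOR = re.compile(r"^\s*(>=|<=|>|<|~)")


def parse_effect(text: str) -> ParsedEffect:
    """Split a reported result into value, operator, and original text.

    Hypothetical helper: it takes the first number in the string, so
    strings that embed other numbers (e.g. "LC50 > 100") need extra rules.
    """
    number = _NUMBER.search(text)
    value = float(number.group()) if number else None
    operator_match = _OPERATOR.match(text)
    if operator_match:
        operator = operator_match.group(1)
    elif "no observed effect" in text.lower():
        operator = "NOEC"
    else:
        operator = "=" if value is not None else ""
    return ParsedEffect(value, operator, text)


if __name__ == "__main__":
    for raw in (">100 mg/L", "No observed effect at 10 mg/L", "12.5 mg/L"):
        print(parse_effect(raw))
```

Keeping the untouched source string in `effect_comment` means any parsing mistake can be detected and corrected later without returning to the publication.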

Q6: What is the minimum experimental metadata required for FAIR (Findable, Accessible, Interoperable, Reusable) data curation in ecotoxicity?

A: A core set of metadata should accompany every data point.

- Chemical Identity: Preferred IUPAC Name, CAS RN, isomeric SMILES, InChI/InChIKey.

- Biological System: Organism (with taxonomic ID), life stage, sex (if applicable).

- Experimental Design: Endpoint (e.g., LC50, NOEC), exposure duration, temperature, pH, endpoint measurement method.

- Result: Numeric value, unit, statistical measure (e.g., mean, 95% CI), sample size (n).

- Source: Citation (Digital Object Identifier - DOI).
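One way to enforce such a core set is to encode it as a record type and check each incoming row against it. The sketch below is illustrative only: the field names and the split between required and optional fields are assumptions, not a published schema.

```python
from dataclasses import MISSING, dataclass, fields
from typing import List, Optional


@dataclass
class EcotoxRecord:
    """Hypothetical minimal record; field names are illustrative."""
    # Chemical identity
    cas_rn: str
    inchikey: str
    # Biological system
    organism: str
    taxon_id: str
    # Experimental design
    endpoint: str
    exposure_duration_h: float
    # Result
    value: float
    unit: str
    # Source
    doi: str
    # Optional context (may be absent in older studies)
    isomeric_smiles: Optional[str] = None
    life_stage: Optional[str] = None
    temperature_c: Optional[float] = None
    ph: Optional[float] = None
    sample_size: Optional[int] = None


# Fields declared without a default value are treated as required.
REQUIRED_FIELDS = [f.name for f in fields(EcotoxRecord) if f.default is MISSING]


def missing_required(row: dict) -> List[str]:
    """List required fields that are absent or empty in a raw row."""
    return [name for name in REQUIRED_FIELDS if row.get(name) in (None, "")]
```

A row that returns an empty list from `missing_required` can be passed to `EcotoxRecord(**row)`; any other row can be flagged rather than silently dropped.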

Experimental Protocol: Curation Workflow for Ecotoxicity Data

Title: Standard Operating Procedure for Manual Curation of Ecotoxicity Data Points from Literature.

Objective: To extract, validate, and structure chemical, toxicity, and metadata from published ecotoxicity studies into a standardized format.

Materials: Access to scientific literature (PDFs), chemical identifier resolver tools (e.g., PubChem, OPSIN, ChemAxon), a spreadsheet or database with controlled vocabularies.

Methodology:

- Source Identification & Extraction:

- From the selected paper, identify the specific data table or result paragraph containing the toxicity endpoint.

- Extract the chemical name as reported, the test organism, the endpoint abbreviation (LC50, EC50, etc.), the numeric value, unit, exposure time, and any relevant experimental conditions (pH, temp).

- Chemical Identifier Curation:

- Input the reported chemical name into a structure-based database (PubChem, ChemSpider).

- Verify the structure matches the intended compound in the study.

- Retrieve and record the validated CAS RN, isomeric SMILES, and InChIKey. Cross-check between two sources if uncertain.

- If the substance is a mixture, note this and curate identifiers for the major active component(s) with appropriate annotations.

- Toxicity Endpoint Normalization:

- Record the endpoint using a controlled vocabulary (e.g., "LC50" for lethal concentration, "EC50_immobilization" for effect concentration).

- Convert all values to a standard unit (e.g., mg/L, μM). Document the original unit and conversion factor.

- Apply the structured field approach for qualitative results (see FAQ A5).

- Metadata Annotation:

- Populate fields for organism (using e.g., NCBI Taxonomy ID), exposure duration, test medium, temperature, and any other parameters reported as critical.

- Add the full citation and DOI of the source publication.

- Quality Control & Entry:

- A second curator reviews a subset of entries for accuracy in identifier mapping and data transcription.

- Enter the fully curated record into the target database or structured file (e.g., CSV, JSON-LD).

Workflow & Relationship Diagrams

Title: Ecotoxicity Data Curation Workflow

Title: Relationship Between Chemical Identifier Types

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Chemical Data Curation

| Tool / Resource | Type | Primary Function in Curation |

|---|---|---|

| PubChem | Database | Authoritative source for chemical structures, properties, and validated identifiers (CID, CAS, SMILES, InChIKey). |

| ChemSpider | Database | Community-resourced chemical structure database with extensive links to other resources and spectral data. |

| OPSIN (Open Parser for Systematic IUPAC Nomenclature) | Software | Converts IUPAC chemical names into chemical structures (SMILES, InChI), automating a key curation step. |

| RDKit | Cheminformatics Library | Open-source toolkit for working with chemical structures (SMILES/InChI conversion, fingerprinting, standardization). |

| NIH/CACTUS CAS Check Digit Calculator | Web Tool | Validates the format and check digit of CAS Registry Numbers. |

| ChEMBL / ECOTOX | Database | Curated databases of bioactivity and ecotoxicity data, providing models for data structure and metadata. |

| JSON-LD | Data Format | A lightweight Linked Data format ideal for embedding structured metadata (chemical IDs, experimental conditions) alongside toxicity data. |

| OpenBabel | Software Tool | Converts between numerous chemical file formats, useful for standardizing structural data from various sources. |

The Curator's Toolkit: A Practical Pipeline for Processing Ecotoxicity Data

Technical Framework: Principles and Workflow

Strategic data harmonization is the foundational process of unifying data from diverse origins into a coherent, standardized dataset ready for analysis. In ecotoxicity studies, this is critical for integrating data from varied sources like scientific literature, laboratory information management systems (LIMS), and public databases such as the US EPA's ECOTOX Knowledgebase [8] [3].

The core challenge lies in reconciling inconsistencies in formats, structures, and semantics (e.g., differing units of measurement, species nomenclature, or effect endpoint terminology) to create a reliable, single source of truth for chemical hazard assessment [11] [9].

A structured, multi-phase workflow is essential for success. The following diagram outlines the key stages from data assessment to maintenance, adapted for ecotoxicity data curation.

Diagram: Six-Stage Workflow for Ecotoxicity Data Harmonization

Table 1: Core Phases of Strategic Data Harmonization for Ecotoxicity Studies [11] [3]

| Phase | Primary Objective | Key Activities for Ecotoxicity Data | Typical Timeline |

|---|---|---|---|

| 1. Assessment & Preparation | Understand data landscape and project scope. | Inventory sources (e.g., ECOTOX, in-house studies); Assess quality of species IDs, concentration units; Define required endpoints (LC50, NOEC, etc.). | Weeks 1-2 |

| 2. Framework Design | Establish the rules for standardization. | Adopt controlled vocabularies (e.g., from EPA/ECOTOX); Define rules for unit conversion (ppm to μM); Set criteria for data acceptability. | Weeks 3-4 |

| 3. Data Mapping | Align source data elements to the target model. | Map source spreadsheet columns to a unified schema; Link synonymous chemical names (CAS RN as anchor); Align varied test duration descriptions. | Weeks 5-8 |

| 4. Data Transformation | Convert and integrate data into the harmonized set. | Clean species names; Convert all concentrations to molar units; Merge data from different sources into a master table. | Weeks 9-12 |

| 5. Quality Assurance & Validation | Ensure integrity and accuracy of harmonized data. | Verify random records against original sources; Run statistical checks for outliers; Validate model with a known chemical dataset. | Weeks 13-14 |

| 6. Maintenance & Monitoring | Ensure data remains accurate and relevant. | Schedule quarterly updates from ECOTOX; Monitor for new data types; Refine transformation rules based on user feedback. | Ongoing |

Experimental Protocol: Harvesting and Curating Data from the ECOTOX Knowledgebase

This protocol details the methodology for systematically extracting, harmonizing, and curating raw ecotoxicity data from the US EPA's ECOTOX Knowledgebase, a primary source for constructing a research-ready dataset [8] [3].

Materials and Pre-Experiment Planning

- Primary Data Source: US EPA ECOTOX Knowledgebase (Version 5+) [8] [3].

- Chemical List: A predefined list of target chemicals, ideally with validated Chemical Abstracts Service Registry Numbers (CAS RN).

- Software Tools: A data processing environment (e.g., R with `tidyverse`, Python with `pandas`), a tool for managing semantic vocabulary (e.g., simple thesaurus or ontology manager), and a database or spreadsheet application for the final curated dataset.

- Reference Standards: Access to the relevant literature or standard operating procedures (SOPs) for endpoint definitions and test guideline criteria (e.g., OECD, ASTM guidelines referenced in ECOTOX).

Step-by-Step Procedure

Step 1: Targeted Data Extraction from ECOTOX

- Use the ECOTOX Search or Explore feature to query your target chemicals by CAS RN or name [8].

- Apply relevant filters (e.g., species group: `Fish`, `Crustaceans`, `Algae`; effect: `Mortality`, `Growth`) to focus the dataset.

- Use the customizable output options to select all necessary data fields (e.g., Chemical Name, CAS Number, Species Name, Effect, Endpoint, Concentration Value/Unit, Exposure Duration, Reference). Export the data in a structured format (CSV, XLSX).

Step 2: Initial Assessment and Cleaning

- Inventory: Load the extracted data. Document the number of records, unique chemicals, and species.

- Quality Screening: Flag records missing critical information (e.g., no CAS RN, no numeric concentration value, missing test duration).

- De-duplication: Identify and merge duplicate entries that may arise from overlapping searches.
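The screening and de-duplication steps might look like the following sketch, which works on plain dictionaries. The column names in `CRITICAL_FIELDS` are placeholders, not actual ECOTOX export headers, and only exact duplicates are removed here; near-duplicates need a separate rule.

```python
from typing import Dict, List

# Placeholder column names; adjust to the headers of the actual export.
CRITICAL_FIELDS = ("cas_rn", "conc_value", "exposure_duration")


def screen_records(records: List[Dict]) -> List[Dict]:
    """Drop exact duplicate rows and attach quality flags to the rest."""
    seen = set()
    screened = []
    for record in records:
        # Rows with identical keys and values count as duplicates.
        fingerprint = tuple(sorted(record.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        flags = [
            f"missing_{name}"
            for name in CRITICAL_FIELDS
            if record.get(name) in (None, "")
        ]
        # Flag rather than delete, so the decision stays reviewable.
        screened.append({**record, "quality_flags": flags})
    return screened


if __name__ == "__main__":
    raw = [
        {"cas_rn": "151-21-3", "conc_value": 12.5, "exposure_duration": 96},
        {"cas_rn": "151-21-3", "conc_value": 12.5, "exposure_duration": 96},
        {"cas_rn": "", "conc_value": 8.2, "exposure_duration": 48},
    ]
    for row in screen_records(raw):
        print(row)
```

Recording flags in a dedicated column keeps the inventory counts from the first step reproducible: every record removed or retained can be traced to a rule.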

Step 3: Harmonization Framework Application

- Chemical Standardization: Convert all chemical identifiers to a single, authoritative system (e.g., standardize names using CAS RN as the primary key).

- Unit Conversion: Identify all concentration units (e.g., ppb, mg/L, μM). Apply conversion factors to transform all values into a single, consistent unit (e.g., μM for organic chemicals) using chemical-specific molecular weights.

- Vocabulary Alignment: Map all reported effect and endpoint terms to a controlled internal vocabulary. For example, map `LC50`, `Lethal concentration 50`, and `50% lethal conc` to a single term `LC50`.

- Species Harmonization: Validate scientific names against a taxonomic authority (e.g., ITIS) and standardize to accepted binomial nomenclature.
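Vocabulary alignment can be expressed as a lookup table that is maintained by hand. The sketch below uses the three LC50 synonyms from the example above; the dictionary name, the normalization rules, and the choice to return `None` for unknown terms are assumptions for illustration.

```python
from typing import Optional

# Curated synonym table: keys are lower-cased, whitespace-normalized terms.
ENDPOINT_SYNONYMS = {
    "lc50": "LC50",
    "lethal concentration 50": "LC50",
    "50% lethal conc": "LC50",
}


def normalize_endpoint(term: str) -> Optional[str]:
    """Map a reported endpoint term to the controlled vocabulary.

    Returns None for unmapped terms so they can be routed to a human
    curator instead of being guessed.
    """
    key = " ".join(term.lower().split()).rstrip(".")
    return ENDPOINT_SYNONYMS.get(key)


if __name__ == "__main__":
    for reported in ("LC50", "Lethal  concentration 50", "50% lethal conc.", "reduced spawning"):
        print(repr(reported), "->", normalize_endpoint(reported))
```

Unmapped terms are deliberately left unresolved: deciding whether a new phrase belongs to an existing endpoint is a semantic judgment that should be documented when the table is extended.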

Step 4: Integration and Validation

- Merge Data: Integrate the harmonized ECOTOX data with other curated sources (e.g., in-house experimental data, other public databases) into a unified schema.

- Logical Validation: Perform sanity checks. For example, ensure chronic NOEC values are generally lower than acute LC50 values for the same chemical-species pair.

- Source Verification: For a random sample of records (e.g., 5%), trace the harmonized data back to the original source abstract in ECOTOX or the cited publication to verify accuracy [3].
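The logical validation step can be automated as a flagging rule. The sketch below lists chemical-species pairs whose highest chronic NOEC is not below their lowest acute LC50. The record keys (`cas_rn`, `species`, `endpoint`, `value_um`) are hypothetical, and values are assumed to be already converted to a common unit.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def flag_noec_not_below_lc50(records: List[Dict]) -> List[Tuple[str, str]]:
    """Return (cas_rn, species) pairs where max NOEC >= min LC50.

    A flagged pair is a candidate for review, not proof of an error:
    the expectation only holds "generally".
    """
    lc50 = defaultdict(list)
    noec = defaultdict(list)
    for record in records:
        key = (record["cas_rn"], record["species"])
        if record["endpoint"] == "LC50":
            lc50[key].append(record["value_um"])
        elif record["endpoint"] == "NOEC":
            noec[key].append(record["value_um"])
    return sorted(
        key for key in lc50
        if key in noec and max(noec[key]) >= min(lc50[key])
    )


if __name__ == "__main__":
    data = [
        {"cas_rn": "A", "species": "Danio rerio", "endpoint": "LC50", "value_um": 10.0},
        {"cas_rn": "A", "species": "Danio rerio", "endpoint": "NOEC", "value_um": 2.0},
        {"cas_rn": "B", "species": "Daphnia magna", "endpoint": "LC50", "value_um": 5.0},
        {"cas_rn": "B", "species": "Daphnia magna", "endpoint": "NOEC", "value_um": 8.0},
    ]
    print(flag_noec_not_below_lc50(data))
```

Flagged pairs are good candidates to include in the sample that is traced back to the original source.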

Step 5: Final Curation and Documentation

- Format the final dataset according to FAIR principles (Findable, Accessible, Interoperable, Reusable) [3].

- Create comprehensive metadata documenting all steps: source extraction date, harmonization rules applied, conversion factors, assumptions made, and version information.

Table 2: Common Data Discrepancies and Harmonization Actions in Ecotoxicity Data [11] [9] [3]

| Data Element | Common Discrepancy | Harmonization Action |

|---|---|---|

| Chemical Identifier | Multiple common names, trade names, or spelling variants for one chemical. | Use CAS RN as the primary, immutable key. Map all names to a single preferred name. |

| Concentration | Values reported in mass/volume (mg/L), molarity (μM), or parts-per (ppm, ppb). | Convert all values to a standard molar unit (μM) using the molecular weight for organic chemicals. Document conversion factor. |

| Test Duration | "48-h", "2 day", "48 hr", "Acute (48h)". | Standardize to a numeric value in hours (e.g., 48) and a separate category (e.g., Acute). |

| Effect Endpoint | "LC50", "EC50 (mortality)", "50% Lethal Concentration". | Map to controlled vocabulary: LC50. Differentiate from EC50 (for sublethal effects). |

| Species Name | Common name vs. scientific name; outdated or misspelled scientific name. | Standardize to current accepted binomial nomenclature (e.g., Oncorhynchus mykiss) using a taxonomic database. |
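As an example of one harmonization action from the table, the sketch below converts the test-duration variants listed above into a numeric value in hours. The function name and the set of recognized unit spellings are assumptions; anything it cannot parse is returned as `None` so that it can be flagged for manual review.

```python
import re
from typing import Optional

# A number, an optional hyphen or spaces, then an hour or day unit.
_DURATION = re.compile(
    r"(\d+(?:\.\d+)?)\s*-?\s*(h|hr|hrs|hour|hours|d|day|days)\b",
    re.IGNORECASE,
)
_HOURS_PER_UNIT = {
    "h": 1, "hr": 1, "hrs": 1, "hour": 1, "hours": 1,
    "d": 24, "day": 24, "days": 24,
}


def duration_to_hours(text: str) -> Optional[float]:
    """Parse strings such as '48-h', '2 day', or 'Acute (48h)' into hours."""
    match = _DURATION.search(text)
    if match is None:
        return None  # leave unparsed values for manual review
    amount = float(match.group(1))
    unit = match.group(2).lower()
    return amount * _HOURS_PER_UNIT[unit]


if __name__ == "__main__":
    for raw in ("48-h", "2 day", "48 hr", "Acute (48h)", "96"):
        print(repr(raw), "->", duration_to_hours(raw))
```

All four variants from the table resolve to 48.0 hours, while a bare "96" with no unit is left unresolved rather than assumed to be hours.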

Table 3: Key Research Reagent Solutions & Tools for Ecotoxicity Data Curation

| Item / Resource | Primary Function | Relevance to Harmonization Workflow |

|---|---|---|

| US EPA ECOTOX Knowledgebase [8] [3] | Comprehensive, curated source of single-chemical toxicity data for aquatic and terrestrial species. | The primary external data source for extraction. Provides over 1 million test records with structured fields, serving as a model for schema design. |

| Controlled Vocabulary/Thesaurus | A predefined list of standardized terms for effects, endpoints, and test conditions. | Critical for Phase 2 (Framework Design) and Phase 3 (Mapping). Ensures semantic consistency across disparate sources [3]. |

| Chemical Registry (e.g., CAS RN, CompTox Dashboard) | Authoritative source for unique chemical identifiers and properties. | The anchor for chemical standardization (Phase 3). Used to resolve chemical name conflicts and obtain molecular weights for unit conversion. |

| Taxonomic Database (e.g., ITIS, WoRMS) | Authoritative source for validated species names and taxonomy. | Essential for standardizing organism identities in the dataset, ensuring accurate cross-study comparison. |

| Scripting Environment (R/Python) | Programming environment for data manipulation, analysis, and automation. | Used to automate the transformation, cleaning, and integration steps (Phase 4), making the process reproducible and scalable. |

| Data Validation & Profiling Tools | Software or scripts to statistically profile data and identify outliers or inconsistencies. | Supports Phase 5 (Validation). Used to run automated quality checks on the harmonized dataset. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: What is the single most important step to ensure successful data harmonization?

A1: The most critical step is the initial Framework Design (Phase 2), specifically establishing a clear, documented set of controlled vocabularies and transformation rules before processing any data [11]. Investing time here prevents inconsistent decisions later and ensures all team members process data identically.

Q2: How do I handle a chemical that has multiple CAS Registry Numbers or where the CAS RN is missing from the source data?

A2: This is a common issue. First, use the EPA CompTox Chemicals Dashboard (linked from ECOTOX) to verify the correct identifier [8]. For records with missing IDs, a manual literature search based on the provided chemical name and study details is necessary. Document all such cases and decisions in your project metadata. Never guess or assume a CAS RN.

Q3: Can I automate the entire harmonization process?

A3: While core transformation tasks (unit conversion, format changes) can and should be automated using scripts, complete automation is not advisable. Human oversight is essential for semantic mapping (e.g., deciding if "reduced spawning" maps to "Reproduction" endpoint) and for validating complex cases flagged by automated quality checks [12].

Q4: How often should I update my harmonized dataset with new data from sources like ECOTOX?

A4: ECOTOX is updated quarterly [8]. For a living review or ongoing monitoring project, a quarterly or biannual update cycle is recommended. Implement a versioning system for your curated dataset (e.g., v2.1_2025-Q2) to track changes over time [11].

Troubleshooting Guide

Table 4: Common Issues and Solutions in Ecotoxicity Data Harmonization

| Problem | Possible Cause | Recommended Solution |

|---|---|---|

| Extracted data has inconsistent date formats or unclear study years. | Source data entries may use different formats (DD/MM/YYYY, YYYY, "Unpublished"). | During mapping (Phase 3), create a rule to extract only the publication year. Mark "Unpublished" or incomplete dates as NA and flag for later review. |

| After merging two sources, I find conflicting toxicity values for the same chemical-species pair. | This may be due to genuine experimental variation, differences in test conditions (e.g., water hardness, temperature), or one value being an error. | Do not automatically average or delete. Preserve both values but add new columns for Test_Conditions and Notes. This allows for later sensitivity analysis or the application of data quality weighting schemes. |

| My unit conversion for concentrations is producing extreme outliers. | The wrong molecular weight was used, or the original unit was misidentified (e.g., ppb assumed to be μg/L for water, but it could be μg/kg for sediment). | Audit the conversion logic. Verify the molecular weight for each chemical. Check the original context in ECOTOX: the "Media" field indicates if it's a water or sediment study, which clarifies the mass basis. |

| The harmonized dataset is much smaller than the sum of my extracted records. | Aggressive filtering during quality screening may have removed too many records. Transformation rules (e.g., requiring a CAS RN) may be too strict. | Review the records removed at each stage. Adjust your acceptability criteria if they are unnecessarily stringent. It is often better to retain a record with a minor issue (with a flag) than to lose the data entirely. |

Troubleshooting Guides & FAQs

Q1: During the curation of ecotoxicity endpoints (e.g., LC50), I frequently encounter missing values in key fields like exposure duration or chemical concentration. What is the most statistically sound method to handle this?

A1: For ecotoxicity data, simple deletion or mean imputation is discouraged because either can introduce bias. The recommended protocol is Multiple Imputation by Chained Equations (MICE). First, assess whether the data are Missing Completely at Random (MCAR) using Little's test. If they are not MCAR, use MICE to create 5-10 imputed datasets. The protocol involves: 1) Loading the `mice` package in R and reading your dataset into a data frame (`df`). 2) Specifying the imputation model (e.g., predictive mean matching for continuous variables). 3) Running the imputation: `imp <- mice(df, m=5, maxit=50, method='pmm', seed=500)`. 4) Fitting the analysis model to each imputed dataset with `with()` and pooling the results using `pool()`. For specific chemical parameters, use Quantitative Structure-Activity Relationship (QSAR) models as predictors within the MICE framework to improve imputation accuracy.

Q2: My dataset from public repositories has duplicate entries for the same test organism and chemical, but with slight variations in reported effect values. How do I resolve this?

A2: This is common in aggregated ecotoxicity databases. Follow this deduplication protocol: 1) Fuzzy Matching: Identify duplicates not just on exact matches, but on core identifiers (Chemical CAS, Species, Endpoint) using string distance functions (e.g., agrep in R). 2) Priority Hierarchy: Establish a pre-defined hierarchy for source reliability (e.g., GLP studies > peer-reviewed articles > grey literature). 3) Variance-Based Selection: For entries from equal-priority sources, calculate the coefficient of variation (CV). If CV < 50%, retain the geometric mean. If CV ≥ 50%, flag the entry for expert review. 4) Documentation: Create an audit trail log recording all merged records and the rule applied.
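The variance-based selection rule in step 3 can be sketched as follows. The function name, the return format, and the use of the sample standard deviation for the CV are assumptions; the 50% threshold comes from the protocol above.

```python
import statistics
from typing import List, Optional, Tuple


def consolidate_duplicates(
    values: List[float], cv_threshold: float = 0.5
) -> Tuple[Optional[float], str]:
    """Apply the CV rule to effect values from equal-priority sources.

    Returns (consolidated_value, rule_applied); the rule label is meant
    to be written to the audit trail.
    """
    if not values:
        raise ValueError("no values to consolidate")
    if len(values) == 1:
        return values[0], "single_value"
    # Coefficient of variation: sample standard deviation over the mean.
    cv = statistics.stdev(values) / statistics.mean(values)
    if cv < cv_threshold:
        return statistics.geometric_mean(values), "geometric_mean"
    return None, "expert_review"


if __name__ == "__main__":
    print(consolidate_duplicates([10.0, 12.0, 11.0]))  # low spread
    print(consolidate_duplicates([1.0, 100.0]))        # high spread
```

Returning the rule label alongside the value makes it straightforward to populate the audit trail described in step 4. `statistics.geometric_mean` requires Python 3.8 or later and strictly positive inputs, which suits concentration data.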

Q3: How do I standardize units across decades of ecotoxicity studies that report concentrations in ppm, ppb, µg/L, mg/L, and mol/L?

A3: Implement a two-stage automated unit standardization workflow. Stage 1: Conversion to Molarity. Convert all mass-based units to a common molarity (mol/L) using the chemical-specific molecular weight, treating ppm as mg/L and ppb as µg/L (an equivalence that holds only for dilute aqueous solutions, not for sediment or tissue concentrations). Always retrieve molecular weight from a trusted source like PubChem via its API to ensure accuracy. Stage 2: Logical Validation. Post-conversion, run logic checks: Is the converted value within the plausible solubility limit for that chemical? For example, a reported 1000 mg/L concentration for a poorly soluble compound should be flagged. Use a lookup table of solubility data (from sources like EPA's CompTox) for automated flagging.
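A minimal sketch of the Stage 1 conversion is shown below, using micromolar (µM) as the target since it differs from mol/L only by a factor of 10^6. The function name and unit tables are assumptions, the molecular weight is expected to be supplied by the caller (for example, from a PubChem lookup), and ppm/ppb are treated as mg/L and µg/L, which is only appropriate for water-based tests.

```python
from typing import Optional

MICRO = "\u00b5"      # micro sign, as in "µg/L"
GREEK_MU = "\u03bc"   # Greek small mu, often used interchangeably

# Mass-based units expressed as a factor to mg/L (aqueous assumption).
MASS_TO_MG_PER_L = {
    "g/L": 1e3, "mg/L": 1.0, "ppm": 1.0,
    MICRO + "g/L": 1e-3, "ug/L": 1e-3, "ppb": 1e-3,
}
# Molar units expressed as a factor to micromolar.
MOLAR_TO_UM = {
    "mol/L": 1e6, "M": 1e6, "mmol/L": 1e3, "mM": 1e3,
    MICRO + "mol/L": 1.0, MICRO + "M": 1.0, "uM": 1.0,
}


def to_micromolar(value: float, unit: str,
                  mol_weight: Optional[float] = None) -> float:
    """Convert a concentration to µM; mol_weight is in g/mol."""
    normalized = unit.strip().replace(GREEK_MU, MICRO)
    if normalized in MOLAR_TO_UM:
        return value * MOLAR_TO_UM[normalized]
    if normalized in MASS_TO_MG_PER_L:
        if mol_weight is None or mol_weight <= 0:
            raise ValueError("a positive molecular weight is required")
        mg_per_l = value * MASS_TO_MG_PER_L[normalized]
        # mg/L divided by g/mol gives mmol/L; multiply by 1000 for µmol/L.
        return mg_per_l / mol_weight * 1000.0
    raise ValueError(f"unrecognized unit: {unit!r}")


if __name__ == "__main__":
    print(to_micromolar(1.0, "mg/L", 100.0))
    print(to_micromolar(500.0, "ppb", 250.0))
```

As a worked check, 1 mg/L of a compound with a molecular weight of 100 g/mol is 0.01 mmol/L, or 10 µM. Unrecognized units raise an error instead of passing through silently, which avoids the extreme outliers that arise from misidentified units.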

Q4: After cleaning, how can I visually and quantitatively confirm the integrity of my curated dataset before proceeding to meta-analysis?

A4: Implement a validation protocol consisting of: 1) Summary Statistics Table: Generate pre- and post-cleaning summaries for key numeric fields (see Table 1). 2) Range Plots: Create boxplots for key endpoints (e.g., LC50) by taxonomic group before and after cleaning to identify outlier removal impact. 3) Missingness Map: Use the naniar package in R to create a visualization of the missing data pattern post-imputation to ensure no systematic bias remains. 4) Unit Consistency Check: Script a check to confirm that 100% of concentration values in the final dataset are in the standardized unit (e.g., µM).

Table 1: Example Data Summary Pre- and Post-Cleaning for an Ecotoxicity Dataset

| Metric | Pre-Cleaning Raw Data | Post-Cleaning Curated Data |
|---|---|---|
| Total Records | 12,450 | 10,112 |
| Records with Missing Critical Fields | 1,844 (14.8%) | 0 (0%)* |
| Duplicate Entries (by unique study ID) | 325 potential groups | 0 (consolidated to 112 records) |
| Concentration Units Standardized | 5 different units | 1 unit (µM) |
| Mean LC50 (µM) for Cadmium, Fish | 45.2 ± 120.1 (SD) | 38.7 ± 22.4 (SD) |

*After applying MICE imputation to partially missing records and removing entries where critical fields were entirely unreportable.
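The quantitative parts of this validation protocol (the summary statistics and the unit consistency check) can be scripted. The sketch below is one possible shape for those checks, assuming each record is a dictionary with hypothetical `value` and `unit` fields; it does not reproduce the figures in Table 1.

```python
from statistics import mean, stdev


def summarize(values):
    """Return count, mean, and sample SD for one numeric field."""
    sd = stdev(values) if len(values) > 1 else 0.0
    return {"n": len(values), "mean": mean(values), "sd": sd}


def nonstandard_unit_rows(rows, expected_unit="µM"):
    """Return the indices of rows whose unit differs from the standard unit."""
    return [i for i, row in enumerate(rows) if row["unit"] != expected_unit]


# Hypothetical curated rows; the second one was missed by unit conversion.
rows = [
    {"value": 2.0, "unit": "µM"},
    {"value": 4.0, "unit": "mg/L"},
    {"value": 6.0, "unit": "µM"},
]
summary = summarize([row["value"] for row in rows])
bad_rows = nonstandard_unit_rows(rows)
```

An empty `bad_rows` list corresponds to the "100% of concentration values" criterion in step 4; any returned index points at a record to re-convert or exclude.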

Experimental Protocol: Multiple Imputation for Missing Ecotoxicity Data

Objective: To generate statistically valid imputations for missing numeric ecotoxicity endpoints (e.g., NOEC) and categorical covariates (e.g., test temperature category).

Materials: R software (v4.0+), mice package, tidyverse package, dataset in CSV format.

Procedure:

- Load and Prepare Data: `library(mice); df <- read.csv("ecotox_data.csv")`. Identify variables with >5% missingness.

- Configure Imputation Methods: Specify `method` for each column. For numeric LC50/NOEC, use `method='pmm'` (predictive mean matching). For categorical variables (e.g., 'Water_type'), use `method='polyreg'`.

- Run Imputation: Execute `imp <- mice(df, m=5, maxit=20, seed=123)`. The `m=5` creates 5 imputed datasets.

- Diagnostics: Check convergence with `plot(imp)`. The lines for mean and SD of imputed variables should be intermingled without trends.

- Analyze and Pool: Perform your analysis (e.g., linear regression) on each dataset: `fit <- with(imp, lm(logLC50 ~ logP + Taxon))`. Pool results: `pooled_fit <- pool(fit); summary(pooled_fit)`.

- Export the Completed Dataset: Select one of the imputed datasets as the final, cleaned dataset for downstream workflow steps: `final_df <- complete(imp, 1)`.
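The pooling step combines the per-dataset results using Rubin's rules. As a worked illustration of that arithmetic for a single regression coefficient, the Python sketch below computes the pooled estimate and its standard error; it omits the degrees-of-freedom calculation that `pool()` also performs, and the coefficient values are hypothetical.

```python
from statistics import mean, variance


def pool_rubin(estimates, std_errors):
    """Pool one coefficient across m imputed datasets with Rubin's rules."""
    m = len(estimates)
    pooled_estimate = mean(estimates)
    within_var = mean([se ** 2 for se in std_errors])  # average sampling variance
    between_var = variance(estimates)                  # variance across imputations
    total_var = within_var + (1 + 1 / m) * between_var
    return pooled_estimate, total_var ** 0.5


# Hypothetical slope estimates for logP from m = 3 imputed datasets.
estimate, pooled_se = pool_rubin([1.0, 2.0, 3.0], [0.5, 0.5, 0.5])
```

The pooled standard error is larger than any single-dataset standard error here because it includes the between-imputation variance, which is the uncertainty a single completed dataset does not carry.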

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Data Cleaning |
|---|---|
| R `mice` Package | Primary tool for performing Multiple Imputation by Chained Equations (MICE) to handle missing data with statistical rigor. |
| PubChemPy (Python) / webchem (R) | Libraries to programmatically fetch authoritative chemical identifiers (CAS, InChIKey) and molecular weights for unit standardization. |
| OpenRefine Software | A powerful, open-source tool for exploring datasets, applying cluster algorithms to find "fuzzy" duplicates, and transforming data formats. |
| EPA CompTox Chemicals Dashboard API | Source for validating chemical names, obtaining solubility data, and other physicochemical properties for logic checks during unit conversion. |
| UNITSNAKE Python Library | A specialized library for parsing and converting complex scientific units, invaluable for standardizing legacy data. |

Visualization: Ecotoxicity Data Cleaning Workflow

Data Cleaning and Curation Workflow for Ecotoxicity

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My phylogenetic tree construction fails due to sequence alignment errors. What are the common causes and fixes?

A: This is often due to non-homologous sequences or poor-quality input data.

- Cause 1: Input FASTA files contain sequences of vastly different lengths or non-overlapping gene regions.

- Fix: Use a sequence trimming tool (e.g., TrimAl) to ensure consistent regions. Manually verify the gene target (e.g., cytochrome P450, 16S rRNA) is consistent across all specimens.

- Cause 2: The alignment algorithm parameters are unsuitable for your gene family.

- Fix: For conserved genes, use MUSCLE or ClustalW. For divergent sequences, use MAFFT with the L-INS-i algorithm. Always visualize the raw alignment (e.g., in AliView) to check for obvious errors.

- Protocol (MAFFT Alignment):

  - Prepare a FASTA file (`sequences.fasta`) with all target protein or nucleotide sequences.

  - Run MAFFT: `mafft --localpair --maxiterate 1000 sequences.fasta > aligned_sequences.aln`

  - Assess alignment quality with GUIDANCE2 or by calculating the average percentage of gaps.
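The gap-based quality check in the last step can be computed directly from the aligned FASTA output. The sketch below uses only the Python standard library and a tiny hypothetical alignment; it assumes gaps are written as `-` and that every aligned sequence has the same length. It is a quick screen, not a substitute for GUIDANCE2 scoring.

```python
def read_fasta(text):
    """Parse FASTA-formatted text into {sequence_name: sequence}."""
    parts, name = {}, None
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            name = line[1:].strip()
            parts[name] = []
        elif name is not None:
            parts[name].append(line)
    return {seq_name: "".join(chunks) for seq_name, chunks in parts.items()}


def average_gap_percentage(alignment):
    """Mean percentage of gap characters per aligned sequence."""
    lengths = {len(seq) for seq in alignment.values()}
    if len(lengths) != 1:
        raise ValueError("Aligned sequences must all have the same length")
    per_sequence = [100.0 * seq.count("-") / len(seq) for seq in alignment.values()]
    return sum(per_sequence) / len(per_sequence)


# Hypothetical three-sequence alignment for illustration.
alignment_text = """>sp1
ATG-CATT
>sp2
ATGGCATT
>sp3
A--GCATT
"""
avg_gaps = average_gap_percentage(read_fasta(alignment_text))
```

To apply this to real output, read the contents of `aligned_sequences.aln` into a string and pass it to `read_fasta`. What counts as an acceptable gap percentage depends on the gene and taxa, so any cutoff should be chosen and documented per project.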

Q2: How do I handle missing chemical descriptor data for proprietary compounds in my ecotoxicity dataset?

A: Use a tiered approach to descriptor generation.

- Step 1 (2D Descriptors): If the SMILES string is available, use open-source tools like RDKit or CDK to compute 2D molecular descriptors (e.g., molecular weight, logP, topological polar surface area). This covers ~85% of common descriptors.

- Step 2 (3D Descriptors): For missing 3D descriptors (e.g., quantum chemical properties), use a geometry optimization and calculation workflow.

- Protocol (RDKit & DFT Minimization):

  - Generate initial 3D conformation from SMILES using RDKit's `EmbedMolecule` function.

  - Optimize geometry using a semi-empirical method (e.g., PM6) via MOPAC or a Density Functional Theory (DFT) method (e.g., B3LYP/6-31G*) using Gaussian or ORCA.

  - Extract descriptors (e.g., HOMO/LUMO energies, dipole moment) from the output log file.

- Step 3 (Read-Across): For completely proprietary structures with no SMILES, a read-across hypothesis using a similar, publicly described compound from the same class may be necessary, clearly documented as an estimated value.

Q3: The integrated phylogeny-chemical model shows overfitting. How can I reduce model complexity?

A: Overfitting is likely when the number of descriptors approaches or exceeds the number of data points.

- Cause: Including hundreds of chemical descriptors without feature selection.

- Fix: Apply rigorous feature selection before model building.

- Protocol (Random Forest Feature Selection):

  - Compute all available chemical descriptors (e.g., using PaDEL-Descriptor).

  - Train a preliminary Random Forest model (`sklearn.ensemble.RandomForestRegressor`).

  - Rank features by their `feature_importances_` attribute.

  - Select the top N features, where N is less than 10% of your total number of ecotoxicity data points.

  - Retrain your final model (e.g., Phylogenetic Generalized Least Squares - PGLS) using only the selected descriptors. Cross-validate the model performance, repeating the feature selection inside each training fold so that held-out data do not leak into the selection.
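The ranking and selection steps reduce to a small amount of logic once importances are available. The sketch below assumes the importances have already been read from a fitted model (for example by pairing descriptor names with `feature_importances_`) and are passed in as a plain dictionary; the function name and the example values are hypothetical. It uses only the standard library so that the 10% rule itself is easy to inspect.

```python
def select_top_features(importances, n_samples):
    """Keep the top-ranked features, with N strictly below 10% of n_samples."""
    # Largest integer strictly less than n_samples / 10.
    max_features = (n_samples - 1) // 10
    if max_features < 1:
        raise ValueError("Too few data points to select any feature under the 10% rule")
    ranked = sorted(importances.items(), key=lambda item: item[1], reverse=True)
    return [name for name, _ in ranked[:max_features]]


# Hypothetical importances from a preliminary Random Forest.
importances = {"logP": 0.40, "MW": 0.25, "TPSA": 0.15, "nHBDon": 0.12, "nRotB": 0.08}
selected = select_top_features(importances, n_samples=30)
```

With 30 data points the cap is two descriptors, so only the two highest-ranked features are kept for the final model.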

- Recommended Workflow:

- Protocol (R ggtree):

  - Construct your tree (see Q1) and save in Newick format (`tree.nwk`).

  - Create a CSV file (`data.csv`) with columns: `species_name`, `chemical_id`, `lc50_value`.

  - Use the `ggtree` package in R.

Table 1: Common Phylogenetic Distance Metrics & Software

| Metric | Description | Use Case | Typical Software |
|---|---|---|---|
| Patristic Distance | Sum of branch lengths connecting two taxa. | Quantitative trait evolution, PGLS. | RAxML, BEAST, ape (R) |
| Node Count | Number of nodes between two taxa. | Simple topological comparison. | Any tree viewer |
| Robinson-Foulds | Topological dissimilarity between two trees. | Comparing tree outputs from different methods. | phangorn (R), PAUP* |
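To make the patristic distance concrete, the sketch below computes it on a toy rooted tree. The tree is represented as a hypothetical child-to-parent map with branch lengths rather than parsed from a Newick file, and the taxon names are placeholders; in practice a package such as ape would do this on the real tree.

```python
def path_to_root(node, parents):
    """Return {ancestor: distance from node}, including the node itself."""
    distances, total = {node: 0.0}, 0.0
    while node in parents:
        parent, branch_length = parents[node]
        total += branch_length
        distances[parent] = total
        node = parent
    return distances


def patristic_distance(taxon_a, taxon_b, parents):
    """Sum of branch lengths on the path connecting two taxa."""
    from_a = path_to_root(taxon_a, parents)
    node, from_b = taxon_b, 0.0
    # Climb from taxon_b until reaching an ancestor shared with taxon_a.
    while node not in from_a:
        parent, branch_length = parents[node]
        from_b += branch_length
        node = parent
    return from_a[node] + from_b


# Toy tree equivalent to the Newick string ((A:1.0,B:2.0)n1:0.5,C:3.0)root;
parents = {
    "A": ("n1", 1.0),
    "B": ("n1", 2.0),
    "n1": ("root", 0.5),
    "C": ("root", 3.0),
}
dist_ab = patristic_distance("A", "B", parents)
dist_ac = patristic_distance("A", "C", parents)
```

Here A and B are separated by 3.0 branch-length units through their shared parent, while A and C are separated by 4.5 because the path passes through the root.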

Table 2: Essential Chemical Descriptor Categories for Ecotoxicity QSAR

| Descriptor Category | Examples | Relevance to Ecotoxicity | Source Tool |
|---|---|---|---|
| Hydrophobicity | LogP (Octanol-water partition coeff.) | Membrane permeability, baseline toxicity. | RDKit, ChemAxon |
| Topological | Molecular connectivity indices, Bond counts. | Molecular size & branching. | PaDEL, Dragon |
| Electronic | HOMO/LUMO energies, Polar Surface Area. | Reactivity, interaction with biological targets. | Gaussian (DFT), RDKit |
| Constitutional | Molecular weight, Heavy atom count. | Dose-response scaling. | All cheminformatics suites |

Experimental Protocol: Integrated Phylogenetic and Chemical Enrichment

Title: Protocol for Constructing a Phylogenetically-Informed Chemical Dataset for Ecotoxicity Analysis.

Objective: To curate a dataset where each ecotoxicity endpoint (e.g., LC50 for fish) is linked to both the species' phylogenetic position and the chemical's molecular descriptors.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Species & Toxicity Data Compilation:

  - Extract species names and corresponding toxicity values (NOEC, LC50, etc.) from literature into a structured table (CSV format).

  - Standardize species names to their binomial Latin format using the ITIS or NCBI Taxonomy database.

- Phylogenetic Tree Construction:

  - Retrieve protein-coding gene sequences (e.g., cytochrome P450 1A) for all species from GenBank using the `rentrez` R package or Biopython.

  - Perform multiple sequence alignment (MSA) using MAFFT v7 (Protocol in Q1).

  - Build a maximum-likelihood tree using IQ-TREE 2 with model finder: `iqtree2 -s alignment.phy -m MFP -B 1000 -alrt 1000`.

  - Root the tree using an appropriate outgroup species.

- Chemical Descriptor Calculation:

  - For each chemical in the dataset, obtain the canonical SMILES string from PubChem.

  - Use the RDKit Python library to compute a standard set of 2D/3D descriptors. A sample script calculates 200+ descriptors.

- Data Fusion:

  - Merge the three data sources: (1) Toxicity Table, (2) Phylogenetic Tree (Newick), (3) Chemical Descriptor Table (CSV).

  - The final enriched dataset is ready for Phylogenetic Generalized Least Squares (PGLS) or similar comparative analyses in R using `caper` or `nlme`.
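The data fusion step amounts to a join across the three sources plus a check that every record can be placed on the tree. The sketch below is one way to express that in Python under several assumptions: tip labels in the Newick string use underscores in place of spaces and are unquoted, the toxicity table uses the `species_name`, `chemical_id`, and `lc50_value` columns, and descriptors are keyed by `chemical_id`. All data values and helper names are hypothetical.

```python
import re


def newick_tip_labels(newick):
    """Extract unquoted tip labels, i.e. names that directly follow '(' or ','."""
    return set(re.findall(r"[(,]\s*([^():,;\s]+)", newick))


def fuse(toxicity_rows, descriptors, tip_labels):
    """Join toxicity rows with descriptors, keeping only species on the tree."""
    fused, unmatched = [], []
    for row in toxicity_rows:
        tip = row["species_name"].replace(" ", "_")
        chem = descriptors.get(row["chemical_id"])
        if tip in tip_labels and chem is not None:
            fused.append({**row, **chem, "tree_tip": tip})
        else:
            # Keep rejected rows so the gap can be documented or repaired.
            unmatched.append(row)
    return fused, unmatched


# Hypothetical inputs for illustration only.
newick = "((Danio_rerio:1.0,Oryzias_latipes:2.0):0.5,Daphnia_magna:3.0);"
toxicity_rows = [
    {"species_name": "Danio rerio", "chemical_id": "chem_A", "lc50_value": 12.0},
    {"species_name": "Daphnia magna", "chemical_id": "chem_B", "lc50_value": 3.5},
    {"species_name": "Pimephales promelas", "chemical_id": "chem_A", "lc50_value": 8.0},
]
descriptors = {"chem_A": {"logP": 2.1, "mol_weight": 180.0}}

fused, unmatched = fuse(toxicity_rows, descriptors, newick_tip_labels(newick))
```

Returning the unmatched rows alongside the fused table makes it explicit which records were dropped because a species is absent from the tree or a chemical lacks descriptors, which supports the audit trail used elsewhere in the workflow.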

Visualizations

Diagram Title: Workflow for Phylogenetic and Chemical Data Enrichment

Diagram Title: Chemical Interaction Leading to Ecotoxicity Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Enrichment Workflow |
|---|---|
| IQ-TREE 2 | Software for maximum likelihood phylogenetic tree inference with robust branch support metrics. |
| RDKit | Open-source cheminformatics library for calculating chemical descriptors from SMILES strings. |
| MAFFT | Multiple sequence alignment program for accurate nucleotide/protein alignments. |
| CURATED | A public database for curating environmental toxicity data, aiding initial data collection. |
| PaDEL-Descriptor | Software to calculate 2D/3D molecular descriptors and fingerprints for QSAR. |
| `ggtree` (R pkg) | Visualization package for annotating phylogenetic trees with associated data (e.g., toxicity). |
| `caper` (R pkg) | Implements Phylogenetic Generalized Least Squares (PGLS) for comparative analysis. |
| Gaussian/ORCA | Quantum chemistry software for computing high-accuracy 3D molecular descriptors. |

Core Concepts and Importance

Feature engineering is the process of transforming curated raw data into informative inputs (features) that machine learning (ML) algorithms can effectively use to build predictive models. In ecotoxicology, this step is critical for bridging the gap between standardized, high-quality data—prepared through prior curation steps—and the successful application of New Approach Methodologies (NAMs) for chemical hazard assessment [1].

The goal is to create features that capture the underlying biological and chemical mechanisms of toxicity, such as mode of action (MoA), thereby improving model accuracy, interpretability, and regulatory acceptance [9] [13]. Effective feature engineering directly supports the development of robust models that can predict outcomes like acute aquatic toxicity (e.g., LC50 values) for diverse chemicals and species [2].