From Prediction to Proof: A Strategic Framework for Validating Computational Toxicity Models with Experimental Data

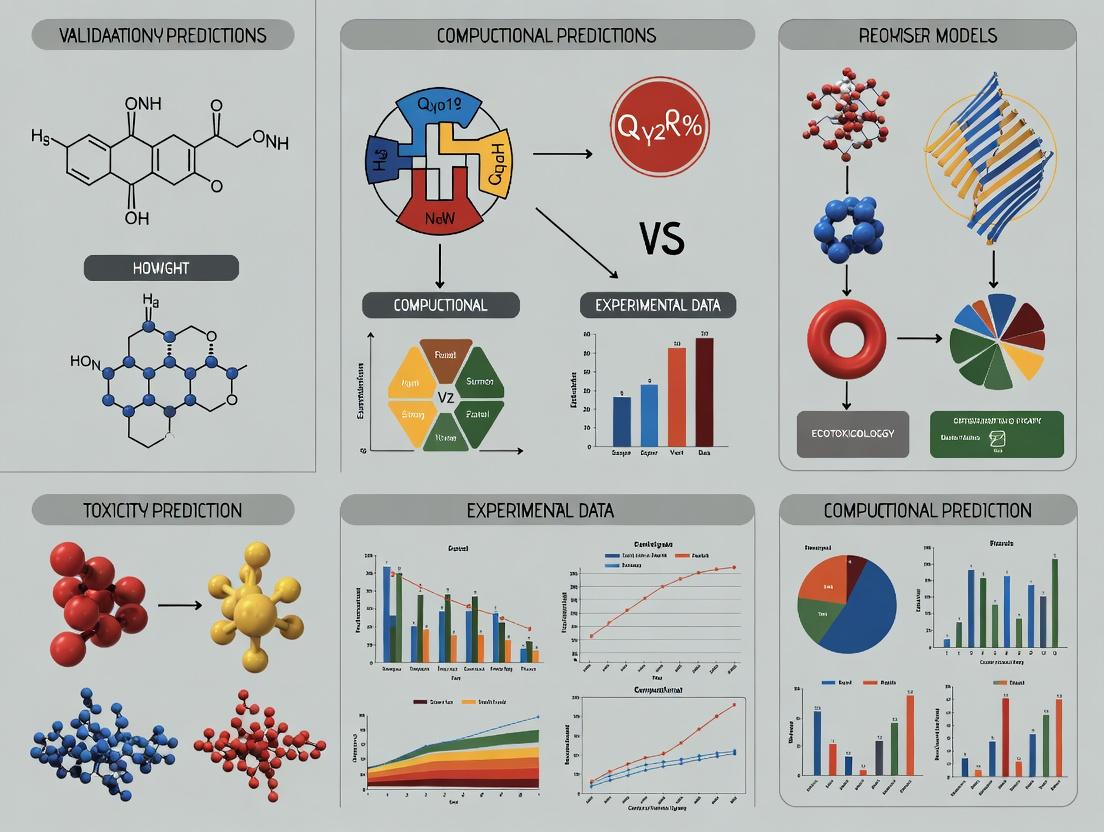

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive roadmap for establishing the scientific credibility of computational toxicity models through rigorous experimental validation. As toxicity-related failures remain a primary cause of drug candidate attrition, the integration of predictive in silico models with robust experimental data is critical for modern drug discovery [citation:1]. We explore the foundational principles of model validation, detailing methodological frameworks such as Integrated Approaches to Testing and Assessment (IATA) [citation:2]. The article addresses common challenges in data quality and model interpretability, offering troubleshooting and optimization strategies [citation:1][citation:4]. Finally, we present a comparative analysis of validation protocols and metrics, using case studies from organ-specific Quantitative Systems Toxicology (QST) to illustrate best practices for bridging the in silico-in vivo gap and enhancing regulatory confidence [citation:2][citation:4].

The Imperative for Validation: Core Concepts in Computational Toxicology

The High Stakes of Toxicity in Drug Development and the Rise of In Silico Models

The Critical Need for Predictive Toxicology in Drug Development

Drug toxicity remains a primary cause of failure in pharmaceutical research and development (R&D), leading to costly late-stage clinical trial attrition and market withdrawals. For example, drug-induced liver injury (DILI) alone accounts for a significant portion of market withdrawals (up to 32% of drug recalls) [1]. Cardiac side effects are another major concern. This high-stakes environment necessitates a paradigm shift from reactive to predictive safety assessment [2].

Traditionally, toxicity evaluation has relied heavily on animal models and standardized in vitro assays. While providing essential data, these methods are often low-throughput, expensive, ethically challenging, and can suffer from poor translatability to human outcomes. To address these limitations, in silico (computational) toxicity models have risen as indispensable tools for early, rapid, and cost-effective risk assessment [1] [3]. These models leverage artificial intelligence (AI), machine learning (ML), and systems biology to predict adverse effects from chemical structure and biological data.

The core thesis of modern computational toxicology is that model credibility is contingent on rigorous validation with high-quality experimental data. This guide compares leading in silico approaches, focusing on their predictive performance, underlying methodologies, and the experimental frameworks essential for their development and validation.

Comparison of In Silico Toxicity Prediction Models

The landscape of in silico models is diverse, ranging from broad, data-driven AI models to mechanism-focused quantitative systems. The table below provides a structured comparison of three primary categories based on recent literature and tools.

Table 1: Comparison of In Silico Toxicity Prediction Model Categories

| Model Category | Core Methodology | Primary Prediction Endpoints | Reported Performance Metrics | Key Validation/Data Source | Major Advantage |
|---|---|---|---|---|---|
| AI/ML Data-Driven Models [1] | Machine Learning (e.g., Random Forest, SVM), Deep Learning (e.g., Graph Neural Networks) | hERG blockage, DILI, Ames mutagenicity, Carcinogenicity, Acute toxicity (LD50), Skin sensitization. | Variable; many models report accuracy/AUC >0.8 for specific endpoints. Performance for DILI and some complex toxicities can be lower [1]. | Public datasets (e.g., Tox21, PubChem), published literature compilations. | High throughput and scalability; excellent for early-stage screening and prioritization of large compound libraries. |
| Quantitative Systems Toxicology (QST) Models [2] | Mechanistic, multi-scale mathematical modeling integrating physiology, PK/PD, and molecular pathways. | Organ-specific injury (e.g., liver, heart, kidney, GI), functional disturbances, biomarker dynamics. | Quantitative prediction of dose-response and time-course effects. Evaluated by fit to in vitro and in vivo data. | Data from in vitro assays, in vivo preclinical studies, and clinical biomarkers. | Provides mechanistic insight and human-relevant, quantitative risk assessment; supports dose selection and trial design. |
| Commercial Integrated Platforms (e.g., Leadscope Model Applier) [3] | Curated databases with hybrid (statistical + expert rule) models, often OECD QSAR principle compliant. | Regulatory-focused: ICH M7 mutagenicity, skin sensitization, acute oral toxicity, pharmaceutical impurities. | Promotes high predictivity and reliability for specific regulatory endpoints; offers transparency into predictions. | Proprietary database of >200,000 chemicals and >600,000 toxicity studies, often developed with regulatory agencies [3]. | "Regulatory-ready" reporting, model transparency, and integration of vast high-quality data for robust assessments. |

Detailed Experimental Protocols for Model Development and Validation

The performance of the models compared above is intrinsically linked to the quality and design of the experimental data used to build and validate them. Below are detailed protocols for two critical experimental approaches.

3.1 High-Content Screening (HCS) for Mechanistic DILI Prediction [4]

This protocol generates multiparametric cellular data ideal for training and validating both AI and QST models for hepatotoxicity.

- Objective: To quantitatively assess multiple mechanistic endpoints of drug-induced liver injury in a single assay using live-cell imaging.

- Cell Model: HepG2 cells or primary human hepatocytes. For bile duct toxicity, sandwich-cultured hepatocytes forming 3D biliary structures are used [4].

- Compound Treatment: A test set of 100+ compounds with known clinical DILI severity (e.g., no, moderate, severe) is recommended. Compounds are tested across a range of concentrations (e.g., up to 100x the human maximum plasma concentration) [4].

- Multiplexed Fluorescent Probing: After compound exposure, cells are stained with a panel of fluorescent dyes:

  - Hoechst 33342: Labels cell nuclei for viability and morphological analysis.

  - TMRM or JC-1: Measures mitochondrial membrane potential (MMP).

  - Fluo-4 AM: Detects changes in intracellular calcium concentration.

  - BODIPY dyes: Label lipids for steatosis assessment.

  - CLF (Cholyl-Lysyl-Fluorescein): A fluorescent bile acid analog used to assess biliary export function in 3D cultures [4].

- Image Acquisition & Analysis: Automated fluorescence microscopy (HCS system) captures high-resolution images. Image analysis software segments individual cells and quantifies >10 parameters (e.g., nuclear size, cell count, MMP intensity, cytoplasmic calcium flux, lipid droplet count, biliary export rate).

- Data Integration & Model Training: The multi-parameter output for each compound creates a phenotypic "fingerprint." These fingerprints are used as input features to train ML classifiers to predict DILI severity or to calibrate QST models simulating mitochondrial dysfunction or bile acid accumulation.
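
The fingerprint-to-classifier step can be illustrated with a minimal sketch. It assumes each well yields three HCS parameters, normalizes them as z-scores against vehicle-control wells, and assigns a DILI class with a nearest-centroid rule. The readout values, the class labels, and the choice of a nearest-centroid classifier are illustrative assumptions, not part of the protocol in [4]; a real study would use the full parameter panel and a properly validated ML model.

```python
from statistics import mean, stdev

# Hypothetical readouts per well: [cell count, MMP intensity, lipid droplet count].
VEHICLE_WELLS = [
    [1000.0, 200.0, 10.0],
    [980.0, 210.0, 12.0],
    [1020.0, 190.0, 8.0],
]

# Hypothetical training compounds grouped by known clinical DILI label.
TRAINING = {
    "no_dili": [[990.0, 205.0, 11.0], [1010.0, 195.0, 9.0]],
    "severe_dili": [[400.0, 80.0, 45.0], [350.0, 60.0, 50.0]],
}


def fingerprint(raw, controls):
    """Z-score each parameter against the vehicle-control wells."""
    columns = list(zip(*controls))
    return [(x - mean(col)) / stdev(col) for x, col in zip(raw, columns)]


def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    return [mean(component) for component in zip(*vectors)]


def predict(fp, centroids):
    """Return the label whose centroid is nearest (squared Euclidean distance)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(fp, centroids[label]))
    return min(centroids, key=distance)


# One centroid per DILI class, computed in fingerprint (z-score) space.
centroids = {
    label: centroid([fingerprint(raw, VEHICLE_WELLS) for raw in wells])
    for label, wells in TRAINING.items()
}

# Classify a hypothetical test compound from its raw readouts.
test_fp = fingerprint([420.0, 90.0, 40.0], VEHICLE_WELLS)
predicted = predict(test_fp, centroids)
print(predicted)
```

Normalizing against vehicle controls keeps parameters with very different raw scales (cell counts versus dye intensities) comparable before any distance is computed.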

3.2 Gene Expression Profiling for Target Toxicity Validation [5]

This protocol, derived from a patented method, uses transcriptomics to identify and validate toxicity mechanisms linked to specific drug targets.

- Objective: To determine if inhibition of a novel drug target gene leads to a gene expression signature associated with organ toxicity.

- Genetic Perturbation: Use CRISPR-Cas9 gene editing or RNA interference (RNAi) to knock down or knock out the target gene in relevant human cell lines (e.g., hepatocytes, cardiomyocytes).

- Transcriptomic Data Collection: Perform RNA sequencing (RNA-seq) on both the genetically perturbed cells and control cells.

- Toxicity Signature Database: Construct or access a reference database of gene expression profiles from cells or tissues treated with known toxicants (e.g., liver toxicants, cardiotoxicants).

- Bioinformatics Analysis:

  - Identify differentially expressed genes (DEGs) in the target knockout cells.

  - Perform enrichment analysis (GO, KEGG) on the DEGs to find perturbed biological pathways.

  - Compare the DEG signature against the reference toxicity database using statistical methods (e.g., gene set enrichment analysis - GSEA). A significant overlap indicates the target's mechanism is associated with a known toxic outcome.

  - Prioritize key toxicity-related genes from the overlap for further validation.

- Experimental Cross-Validation: The predicted toxicity risk from the in silico analysis is then validated using targeted in vitro assays (e.g., measuring caspase-3 activation for apoptosis, or reactive oxygen species for oxidative stress).
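
The signature-comparison step can be sketched with a simple over-representation test. The example below uses a hypergeometric tail probability as a lighter stand-in for GSEA: it asks how likely it is to see at least the observed overlap between the DEG list and a reference toxicity signature by chance. The gene identifiers and set sizes are placeholders invented for illustration.

```python
from math import comb


def overlap_p_value(universe_size, signature_size, deg_count, overlap):
    """P(X >= overlap) when deg_count genes are drawn at random from a
    universe containing signature_size toxicity-signature genes."""
    total = comb(universe_size, deg_count)
    upper = min(signature_size, deg_count)
    tail = sum(
        comb(signature_size, k) * comb(universe_size - signature_size, deg_count - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total


# Placeholder gene universe and sets (hypothetical identifiers).
universe = {f"GENE_{i}" for i in range(20)}
tox_signature = {"GENE_0", "GENE_1", "GENE_2", "GENE_3", "GENE_4"}
degs = {"GENE_0", "GENE_1", "GENE_2", "GENE_10"}

shared = degs & tox_signature
p_value = overlap_p_value(len(universe), len(tox_signature), len(degs), len(shared))
print(sorted(shared), round(p_value, 4))
```

In practice the universe would be all genes detected in the RNA-seq experiment, and p-values from many reference signatures would need multiple-testing correction.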

Key Workflows and Conceptual Frameworks in Computational Toxicology

The development and application of advanced models like QST follow a rigorous, iterative process.

Diagram 1: QST Model Development & Application Workflow [2]

Diagram 2: Model Application Across Drug Development Stages

The Scientist's Toolkit: Essential Research Reagent Solutions

Building and validating computational toxicity models requires integrated use of software, data, and physical reagents.

Table 2: Key Research Reagent Solutions for Computational Toxicology

| Category | Item/Resource | Function in Model R&D | Example/Source |
|---|---|---|---|
| Software & Platforms | Leadscope Model Applier | Provides ready-to-use, validated QSAR models for regulatory endpoints like mutagenicity and skin sensitization, with access to a massive toxicity database [3]. | Instem [3] |
| Software & Platforms | QST Modeling Software (e.g., DILIsym) | Platform for building mechanism-based, quantitative models of organ-specific toxicity to simulate human outcomes [2]. | DILI-sim Initiative [2] |
| Software & Platforms | AI/ML Libraries (e.g., Scikit-learn, PyTorch) | Open-source libraries for developing custom deep learning and machine learning prediction models from toxicity datasets. | Publicly available |
| Databases | Toxicity Reference Databases | Provide curated experimental data for model training, testing, and validation. | Tox21 [1], Leadscope DB (>600k studies) [3] |
| Databases | Bioinformatics Databases | Used for target identification, pathway analysis, and gene signature comparison. | GO, KEGG, PubMed |
| Experimental Reagents | Multiplexed HCS Assay Kits | Pre-configured fluorescent probe sets for live-cell imaging of cytotoxicity, MMP, oxidative stress, etc. | Commercial vendors (e.g., Thermo Fisher) |
| Experimental Reagents | 3D Cell Culture Systems | Provide more physiologically relevant in vitro models (e.g., for liver bile transport) for generating high-quality training data [4]. | Various commercial matrices and plates |
| Experimental Reagents | CRISPR-Cas9 Gene Editing Kits | Enable target validation studies by creating specific gene knockouts to link target modulation to toxicity signatures [5]. | Commercial vendors |

The integration of in silico models into drug safety assessment is no longer optional but a strategic imperative to de-risk development. As this guide illustrates, a synergistic approach is most effective: high-throughput AI models enable early triaging, mechanism-rich QST models support quantitative human-relevant decision-making, and transparent commercial platforms facilitate regulatory compliance.

The future of the field hinges on closing the loop between prediction and experiment. This involves generating more predictive in vitro data (e.g., from complex organoids and time-series omics) specifically designed to feed and challenge computational models [2]. Furthermore, advancing explainable AI (XAI) and fostering interdisciplinary collaboration among toxicologists, data scientists, and clinicians will be crucial to enhance model transparency, build trust, and fully realize the potential of in silico methods to deliver safer medicines faster [1] [2].

This comparison guide objectively evaluates the performance of contemporary computational toxicity models against experimental data. Framed within the critical thesis of model validation, it compares emerging artificial intelligence (AI)-driven approaches with established quantitative structure-activity relationship (QSAR) methodologies. The analysis focuses on quantitative performance, underlying experimental protocols, and the integration of biological mechanistic data as a cornerstone for establishing scientific credibility, regulatory relevance, and predictive reliability [6] [7].

Performance Comparison of Computational Toxicity Models

The predictive performance of toxicity models varies significantly based on their architecture, the data they incorporate, and the specific toxicological endpoint. The following tables summarize key quantitative findings from recent benchmarking studies and novel model developments.

Table 1: Performance of Graph Neural Network (GNN) Models on the Tox21 Dataset with Knowledge Graph Integration [6]

| Model Type | Model Name | Key Description | Average AUC (Range across tasks) | Best Performance (Task: AUC) |
|---|---|---|---|---|
| Heterogeneous GNN | GPS | Graph Positioning System with ToxKG | 0.927 | NR-AR: 0.956 |
| Heterogeneous GNN | HGT | Heterogeneous Graph Transformer with ToxKG | 0.915 | SR-ARE: 0.942 |
| Heterogeneous GNN | HRAN | Heterogeneous Representation Aggregation Network with ToxKG | 0.909 | NR-AR: 0.939 |
| Homogeneous GNN | GAT | Graph Attention Network (Fingerprints only) | 0.881 | SR-ARE: 0.914 |
| Homogeneous GNN | GCN | Graph Convolutional Network (Fingerprints only) | 0.869 | NR-Aromatase: 0.905 |

Table 2: Benchmarking of QSAR Tools for Physicochemical (PC) and Toxicokinetic (TK) Property Prediction [8]

| Property Category | Example Endpoints | Average Performance (Top Tools) | Key Finding |
|---|---|---|---|
| Physicochemical (PC) | LogP, Water Solubility, pKa | R² = 0.717 (Regression) | PC property models generally show higher predictivity than TK models. |
| Toxicokinetic (TK) | CYP450 Inhibition, Plasma Protein Binding | Balanced Accuracy = 0.780 (Classification) | Performance is endpoint-dependent; models for human hepatic clearance showed lower accuracy. |
| Overall | 17 PC & TK endpoints across 12 tools | Varied | No single tool was optimal for all properties; selection must be endpoint-specific. |
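
The two headline metrics in this benchmark, R² for regression endpoints and balanced accuracy for classification endpoints, are straightforward to compute. The following is a minimal sketch with made-up observed and predicted values; it is not the benchmarking code from [8].

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def balanced_accuracy(labels, predictions):
    """Mean of sensitivity and specificity for binary labels (1 = active)."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 0)
    positives = sum(labels)
    negatives = len(labels) - positives
    return 0.5 * (tp / positives + tn / negatives)


# Hypothetical logP values (regression endpoint).
logp_observed = [1.0, 2.0, 3.0, 4.0]
logp_predicted = [1.5, 2.0, 2.5, 4.0]
r2 = r_squared(logp_observed, logp_predicted)

# Hypothetical CYP450 inhibition calls (classification endpoint).
cyp_labels = [1, 1, 0, 0, 0, 0]
cyp_predictions = [1, 0, 0, 0, 0, 1]
bac = balanced_accuracy(cyp_labels, cyp_predictions)

print(round(r2, 3), bac)
```

Balanced accuracy is preferred over plain accuracy here because TK classification sets are often imbalanced, and a model that always predicts the majority class would otherwise look deceptively good.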

Table 3: Performance of Multi-Modal and Fusion Models on Diverse Toxicity Tasks

| Study & Model | Data Modality / Strategy | Toxicity Endpoint | Key Metric & Result |
|---|---|---|---|
| Multi-Modal Deep Learning [9] | Vision Transformer (images) + MLP (tabular data) | Multi-label toxicity | Accuracy: 0.872; F1-score: 0.86 |
| Fusion QSAR Model [10] | Ensemble of in vitro & in vivo data (Weight-of-Evidence) | Genotoxicity (Mutagenicity) | Accuracy: 83.4% (RF Fusion Model); AUC: 0.897 (SVM Fusion Model) |
| AI Review Highlights [7] | GNNs on molecular graphs | Various (hERG, DILI, etc.) | GNNs consistently match or outperform fingerprint-based models. |

Detailed Experimental Protocols for Model Validation

A rigorous validation framework is essential for assessing model credibility. The following protocols are representative of contemporary practices.

This protocol outlines the evaluation of knowledge graph-enhanced GNN models.

- Dataset Curation: Use the publicly available Tox21 dataset, containing assay results for 7,831 compounds across 12 nuclear receptor and stress response targets. Filter out compounds with missing or uncertain labels.

- Knowledge Graph (KG) Construction: Build a Toxicological Knowledge Graph (ToxKG) by integrating data from ComptoxAI, PubChem (for chemical structures), Reactome (for pathways), and ChEMBL (for compound-gene interactions). The final graph contains ~19k chemical, ~17.5k gene, and ~4.5k pathway entities [6].

- Feature Integration: For each compound, combine traditional molecular fingerprints (e.g., ECFP4, a Morgan-type circular fingerprint) with heterogeneous features extracted from ToxKG (e.g., connected genes and pathways).

- Model Training & Evaluation:

  - Implement six GNN models: GCN, GAT, R-GCN, HRAN, HGT, and GPS.

  - Address class imbalance using a reweighting strategy that assigns higher loss weights to the minority (toxic) class.

  - Split data into training/validation/test sets. Evaluate performance using Area Under the ROC Curve (AUC), F1-score, Accuracy (ACC), and Balanced Accuracy (BAC).

  - The primary finding is that heterogeneous GNNs (e.g., GPS) leveraging KG data significantly outperform homogeneous models using only structural fingerprints [6].
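
Two pieces of the evaluation step above, class reweighting and AUC, can be sketched without any ML framework. The weighting formula is an assumption: the source does not state which scheme was used in [6], so the common inverse-frequency ("balanced") weighting is shown. The labels and scores are invented for illustration.

```python
def class_weights(labels):
    """Inverse-frequency weights so both classes contribute equally to the loss.
    Assumed scheme; the exact reweighting used in the study is not specified."""
    n = len(labels)
    positives = sum(labels)
    negatives = n - positives
    return {0: n / (2 * negatives), 1: n / (2 * positives)}


def roc_auc(labels, scores):
    """AUC as the probability that a random toxic compound is scored above
    a random non-toxic one (ties count as half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical assay labels (1 = toxic) and model scores for one Tox21-style task.
labels = [0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.10, 0.20, 0.30, 0.40, 0.35, 0.60, 0.70, 0.50]

weights = class_weights(labels)
auc = roc_auc(labels, scores)
print(weights, round(auc, 3))
```

With two toxic compounds out of eight, the minority class receives a weight of 2.0 against roughly 0.67 for the majority class, which is the effect the reweighting step is meant to achieve.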

This protocol is based on a weight-of-evidence approach aligning with ICH guidelines.

- Data Compilation and Grouping: Collect mutagenicity experimental results for the same compounds from multiple databases (GENE-TOX, CPDB, CCRIS). Group data according to ICH-recommended testing combinations:

  - Y1: Ames test (in vitro bacterial) result.

  - Y2: In vitro mammalian cell assay (e.g., micronucleus) result.

  - Y3: In vivo assay (e.g., rodent micronucleus) result.

- Fusion Rule Definition: Apply the decision rule: a compound is classified as mutagenic if any of the three experimental groups (Y1, Y2, or Y3) returns a positive result.

- Model Development:

  - Build separate sub-models for predicting Y1, Y2, and Y3 outcomes using algorithms like Random Forest (RF) and Support Vector Machine (SVM).

  - Create a fusion model where the predictions from the three sub-models serve as inputs. The final classification follows the defined fusion rule.

- Validation: Assess performance via 5-fold cross-validation and external test sets. The fusion model demonstrates superior accuracy and robustness compared to single-endpoint models [10].
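
The fusion rule described above reduces to a logical OR over the three sub-model calls. The sketch below applies it to hypothetical sub-model outputs and scores the fused call against hypothetical experimental labels; the compound names and all values are placeholders, and the sub-models themselves (RF or SVM in [10]) are not reproduced here.

```python
def fuse(y1, y2, y3):
    """Mutagenic if any of the Ames (Y1), in vitro mammalian (Y2),
    or in vivo (Y3) sub-model predictions is positive."""
    return bool(y1 or y2 or y3)


def accuracy(truth, predicted):
    """Fraction of compounds whose fused call matches the experimental label."""
    hits = sum(1 for name in truth if truth[name] == predicted[name])
    return hits / len(truth)


# Hypothetical sub-model predictions per compound: (Y1, Y2, Y3).
sub_model_calls = {
    "cmpd_A": (1, 0, 0),
    "cmpd_B": (0, 0, 0),
    "cmpd_C": (0, 1, 1),
    "cmpd_D": (0, 0, 1),
}

# Hypothetical experimental weight-of-evidence labels.
experimental = {"cmpd_A": True, "cmpd_B": False, "cmpd_C": True, "cmpd_D": False}

fused = {name: fuse(*calls) for name, calls in sub_model_calls.items()}
fused_accuracy = accuracy(experimental, fused)
print(fused, fused_accuracy)
```

An OR rule is conservative by design: it raises sensitivity at the cost of specificity, as the false positive for `cmpd_D` in this toy example shows.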

Visualizing Validation Workflows and Model Architectures

Diagram 1: Validation workflow for toxicity models.

Diagram 2: Integration of toxicological knowledge graphs.

Table 4: Key Research Reagent Solutions for Computational Toxicity Validation

| Category | Resource Name | Key Function in Validation | Source / Example |
|---|---|---|---|
| Benchmark Datasets | Tox21 | Provides standardized, high-quality experimental data for 12 toxicity endpoints to train and benchmark models [6] [7]. | NIH/EPA [6] |
| Benchmark Datasets | ToxCast | Offers high-throughput screening data for thousands of chemicals across hundreds of biological pathways for mechanistic model development [11] [12]. | U.S. EPA [11] |
| Reference Data | ToxValDB v9.6 | A large compilation of in vivo toxicology data and derived toxicity values, used as a gold standard for external validation [11]. | U.S. EPA [11] |
| Knowledge Sources | ComptoxAI / Reactome / ChEMBL | Provide structured biological knowledge (chemicals, genes, pathways, bioactivities) to build mechanistic graphs and improve model interpretability [6]. | Multiple consortia [6] |
| Software & Tools | OECD QSAR Toolbox | A widely accepted regulatory tool for grouping chemicals, read-across, and (Q)SAR model application, central to defining applicability domains [13]. | OECD |
| Software & Tools | OPERA | An open-source battery of QSAR models with built-in applicability domain assessment, used for benchmarking physicochemical and toxicokinetic properties [8]. | NIEHS [8] |
| Software & Tools | RDKit | Open-source cheminformatics library essential for standardizing chemical structures, calculating descriptors, and handling molecular data during curation [8]. | Open Source |
| Validation Frameworks | ICH M7 Guidelines | Provide a regulatory framework for assessing mutagenic impurities, including criteria for the use of (Q)SAR models and weight-of-evidence approaches [10]. | International Council for Harmonisation |

Understanding Multi-Scale Toxicity Mechanisms as a Basis for Model Building

The high attrition rate of drug candidates due to unforeseen toxicity remains a critical bottleneck in pharmaceutical development, with approximately 30% of preclinical candidates failing for safety reasons [14]. This reality underscores a fundamental challenge: accurately predicting complex biological adverse outcomes from chemical structure alone. Traditional in vivo toxicity assessment, while historically informative, is costly, time-consuming, and faces increasing ethical scrutiny, driving the urgent need for reliable in silico alternatives [14].

The core thesis of modern computational toxicology is that predictive accuracy is contingent upon a mechanistic, multi-scale understanding of toxicological pathways. Toxicity is not a single event but an emergent property arising from interactions across scales—from molecular initiating events (e.g., protein binding, metabolic activation) to cellular stress responses (e.g., oxidative stress, mitochondrial dysfunction), and ultimately to tissue and organ damage [14]. Therefore, building robust models requires frameworks that integrate these scales and, crucially, are rigorously validated against high-quality experimental data [15]. This guide compares current computational modeling paradigms by evaluating their ability to capture multi-scale mechanisms and their corresponding validation through experimental benchmarks, providing a roadmap for researchers to select and develop models with greater translational confidence.

Comparison of Computational Modeling Paradigms

The landscape of computational toxicity prediction is diverse, ranging from traditional statistical models to advanced deep learning architectures. The choice of model significantly impacts interpretability, data requirements, and ability to capture mechanistic complexity. The following table compares the core methodologies.

Table 1: Comparison of Computational Toxicity Modeling Approaches

| Modeling Paradigm | Typical Algorithms | Mechanistic Interpretability | Data Requirements & Scalability | Key Strength | Primary Limitation |
|---|---|---|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Linear Regression, PLS, Support Vector Machines (SVM) | Moderate to Low. Relies on descriptive molecular features; causal links are often obscure. | Lower; works well with hundreds to thousands of compounds. | Simple, fast, and well-established for congeneric series. | Struggles with complex, non-linear relationships and novel chemical spaces [9]. |
| Machine Learning (ML) with Molecular Descriptors | Random Forest, Gradient Boosting, Multi-Layer Perceptron (MLP) | Low to Moderate. Feature importance can be derived, but biological mechanism is not explicit. | Moderate; requires curated feature sets for thousands of compounds. | High predictive accuracy for specific endpoints; handles non-linear data well. | Risk of overfitting; predictions are often a "black box" lacking biological insight [14]. |
| Graph-Based & Deep Learning Models | Graph Neural Networks (GNN), Graph Convolutional Networks | Inherently Low. Learns complex structural patterns but offers limited direct biological explanation. | High; requires large datasets (>10k compounds) for robust training. | Superior at capturing intricate structural relationships without manual feature engineering. | Extremely data-hungry; outputs are difficult to validate mechanistically [14] [9]. |
| Network Toxicology & Systems Biology Models | Pathway enrichment analysis, protein-protein interaction network analysis | High. Explicitly maps chemicals to targets, pathways, and phenotypic outcomes. | Moderate; depends on quality of underlying ontological and interaction databases. | Provides holistic, mechanism-rich hypotheses about multi-target, multi-pathway effects. | Predictive output is often qualitative or probabilistic; requires downstream experimental confirmation [16]. |
| Multimodal Deep Learning | Vision Transformers (ViT) fused with MLPs, hybrid architectures | Low. Although it integrates diverse data types, the fusion logic is complex and opaque. | Very High; needs large, aligned multimodal datasets (images, descriptors, bioassays). | Leverages complementary data sources (e.g., structure images + properties) for potentially greater accuracy. | High computational cost; integration and interpretation of multimodal features is challenging [9]. |

The evolution from QSAR to deep learning has primarily increased predictive power for data-rich endpoints, often at the expense of interpretability. A critical trend is the move towards multi-endpoint joint modeling and the integration of multimodal features, including biological assay data from high-throughput screening (HTS) programs like the U.S. EPA's ToxCast [14] [12]. The most promising frameworks for mechanistic understanding are those that combine the pattern recognition strength of AI with the causal, knowledge-based structure of systems biology [15].

Experimental Validation: From In Silico Prediction to In Vitro/In Vivo Confirmation

A computational model's true value is determined by its performance in guiding and being validated by empirical experiments. The following experimental paradigms are essential for this validation loop.

Table 2: Key Experimental Protocols for Model Validation

| Validation Tier | Experimental Protocol | Measured Endpoints | Role in Model Validation | Typical Data Output for Model Refinement |
|---|---|---|---|---|
| Tier 1: In Vitro High-Throughput Screening (HTS) | ToxCast/Tox21 assay batteries: Cell-free and cell-based assays (e.g., nuclear receptor activation, stress response pathways). | Fluorescence, luminescence, cell viability (IC50). | Provides high-volume biological activity data to train and benchmark predictive models for specific pathways [12]. | Concentration-response data across hundreds of targets, used as biological feature input for models. |
| Tier 2: In Vitro Mechanism-Focused Assays | Cytotoxicity assays (MTT, LDH release); High-Content Screening (HCS) for imaging-based cytopathology; Transcriptomics (RNA-Seq, qPCR arrays). | Cell viability, organelle integrity, morphological changes, gene expression signatures. | Confirms predicted organ-specific toxicity (e.g., hepatotoxicity) and elucidates subcellular mechanisms (e.g., oxidative stress, apoptosis). | Dose-dependent phenotypic and gene expression profiles that anchor predictions to specific mechanistic pathways. |
| Tier 3: In Vivo & Ex Vivo Validation | Repeated-dose toxicity studies in rodent models; Histopathology of target organs (liver, kidney, heart); Clinical chemistry (e.g., ALT, AST, BUN, creatinine). | Organ weight changes, tissue necrosis/inflammation, serum biomarkers of injury. | The gold standard for confirming model predictions of systemic, organ-level toxicity and for determining no-observed-adverse-effect levels (NOAEL). | Histological scores and clinical chemistry values that provide the ultimate benchmark for model accuracy. |
| Tier 4: Specialized Mechanistic Models | Molecular docking & dynamics simulations; Stem cell-derived organoids or microphysiological systems (e.g., liver-on-a-chip); Ex vivo tissue explants. | Binding affinity, conformational changes, tissue-specific functionality, metabolite formation. | Provides deep mechanistic insight into molecular initiating events (e.g., protein binding) and human-relevant tissue-level responses, bridging Tiers 2 and 3. | Atomic-level interaction data and human-relevant tissue response data, reducing reliance on animal extrapolation. |
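
The concentration-response data listed for Tier 1 are usually condensed into a potency value such as an IC50 before they are used as model features. The sketch below estimates an IC50 by log-linear interpolation between the two tested concentrations that bracket 50% viability. This is a simplification chosen for brevity; fitting a Hill (four-parameter logistic) curve is the more common practice. The concentrations and viability values are hypothetical.

```python
from math import log10


def ic50_by_interpolation(concentrations, viability_percent):
    """Estimate IC50 by interpolating on log10(concentration) at the first
    point where viability drops below 50%.

    Assumes concentrations are positive and sorted in ascending order.
    Returns None if viability never crosses 50% in the tested range.
    """
    points = list(zip(concentrations, viability_percent))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 > v_hi:
            fraction = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = log10(c_lo) + fraction * (log10(c_hi) - log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical cell-viability readout (percent of vehicle control).
conc_um = [0.1, 1.0, 10.0, 100.0]
viability = [98.0, 90.0, 60.0, 20.0]

ic50 = ic50_by_interpolation(conc_um, viability)
print(round(ic50, 2))
```

Returning `None` for compounds that never reach 50% inhibition keeps inactive compounds distinguishable from potent ones, rather than forcing an extrapolated value outside the tested range.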

A representative integrated workflow for developing and validating a toxicity model, particularly for a complex endpoint like neurodevelopmental toxicity, is shown below. This workflow synthesizes computational and experimental tiers into a cohesive validation pipeline.

Diagram 1: Integrated Computational-Experimental Validation Workflow

Case Study Comparison: Neurodevelopmental Toxicity of PBDE-47

To illustrate the practical application of these principles, we compare the methodologies and findings of two studies investigating the neurodevelopmental toxicant 2,2′,4,4′-Tetrabromodiphenyl ether (PBDE-47).

Table 3: Case Study Comparison: Computational & Experimental Analysis of PBDE-47 Neurotoxicity

| Aspect | Network Toxicology & Bioinformatics Study [16] | AI-Based Multimodal Deep Learning Study (General Analogue) [9] |
|---|---|---|
| Primary Objective | Elucidate multi-target, multi-pathway mechanisms of neurodevelopmental toxicity. | Achieve high predictive accuracy for classifying chemicals as toxic/non-toxic. |
| Computational Methodology | 1. Target prediction from chemical structure. 2. Protein-protein interaction (PPI) network construction & topology analysis (core target identification: TP53, AKT1, MAPK1). 3. Pathway enrichment analysis (HIF-1, Thyroid hormone signaling). 4. Molecular docking validation of key targets. | 1. Multimodal data integration: Molecular structure images (processed by Vision Transformer) + numerical chemical descriptors (processed by MLP). 2. Joint fusion mechanism of image and numerical features. 3. Multi-label classification model training. |
| Experimental Validation Protocol | Sequential & mechanistic: 1. Expression analysis (qPCR/Western blot) of core targets. 2. Single-cell RNA-seq to localize target gene expression in neural cell types. 3. Immunohistochemistry on brain tissue to visualize protein expression in neurons/glia. | Primarily performance-based: 1. Model performance evaluated on held-out test sets using metrics (Accuracy: 0.872, F1-score: 0.86). 2. Validation relies on the quality and diversity of the pre-existing curated dataset. |
| Key Output | A mechanistic hypothesis: PBDE-47 disrupts HIF-1/Thyroid hormone signaling crosstalk via TP53/AKT1/MAPK1, leading to neuronal and glial dysfunction. | A high-accuracy classifier capable of predicting toxicity for new chemicals based on structure and properties. |
| Strength for Model Building | Provides causal, interpretable insights into multi-scale mechanisms (molecular target → pathway → cellular phenotype), directly informing the biology behind model predictions. | Demonstrates technical prowess in pattern recognition; can screen vast chemical libraries rapidly once trained. |
| Limitation | The hypothesized mechanism, while rich, requires extensive further experimental causal testing (e.g., knock-out/rescue studies) for full validation. | Offers little direct mechanistic insight; acts as a sophisticated "black box," making it difficult to understand why a prediction was made. |

The network toxicology approach exemplifies the deductive, hypothesis-driven strategy central to understanding multi-scale mechanisms. It starts with a chemical, predicts its bio-interactions, builds a network model of affected biology, and then designs targeted experiments to confirm each layer of the model [16]. The molecular initiating event and subsequent pathway perturbations can be visualized as a simplified signaling cascade.

Diagram 2: Multi-Scale Toxicity Pathway for PBDE-47 Neurotoxicity
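
The topology-analysis step of the network toxicology workflow, in which core targets are picked out of a protein-protein interaction network, can be sketched with degree centrality. The edge list below is a toy network invented for illustration: only the names TP53, AKT1, and MAPK1 come from the study summary above, the `PROTEIN_*` nodes are placeholders, and none of the edges are taken from [16]. Degree centrality is also an assumed choice; the study's actual topology metric is not stated here.

```python
from collections import defaultdict

# Toy, hypothetical PPI edges (not data from the cited study).
TOY_EDGES = [
    ("TP53", "AKT1"),
    ("TP53", "MAPK1"),
    ("AKT1", "MAPK1"),
    ("TP53", "PROTEIN_A"),
    ("AKT1", "PROTEIN_B"),
    ("MAPK1", "PROTEIN_C"),
    ("PROTEIN_C", "PROTEIN_D"),
]


def degree_centrality(edges):
    """Fraction of the other nodes each node is directly connected to."""
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    return {node: len(adjacent) / (n - 1) for node, adjacent in neighbors.items()}


def core_targets(edges, top_n=3):
    """Rank nodes by degree centrality (ties broken alphabetically)."""
    scores = degree_centrality(edges)
    return sorted(scores, key=lambda node: (-scores[node], node))[:top_n]


print(core_targets(TOY_EDGES))
```

Highly connected nodes are only candidates: as the case study notes, each core target still has to be confirmed experimentally, for example by expression analysis.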

The Scientist's Toolkit: Essential Research Reagent Solutions

Building and validating mechanistic toxicity models requires a suite of experimental tools. The following table details key reagents and platforms critical for this research.

Table 4: Key Research Reagent Solutions for Mechanistic Toxicity Studies

| Tool/Reagent Category | Specific Example(s) | Primary Function in Model Validation | Relevant Experimental Protocol Tier |
|---|---|---|---|
| High-Throughput Screening (HTS) Assay Platforms | ToxCast/Tox21 assay library (Attagene, CellSensor, etc.); Biochemical enzyme inhibition kits. | Generates large-scale, multi-target bioactivity data to train and test computational models for biological space coverage [12]. | Tier 1 (In Vitro HTS) |
| Cell-Based Viability & Toxicity Assays | MTT, CellTiter-Glo (ATP quantitation), LDH-Glo (cytotoxicity), Caspase-Glo (apoptosis). | Quantifies general or specific modes of cell death and metabolic dysfunction, confirming predicted cytotoxicity. | Tier 2 (In Vitro Mechanism) |
| High-Content Screening (HCS) Reagents | Multiplex fluorescent dyes (e.g., for mitochondria, ROS, lysosomes, nuclei); Automated imaging systems (e.g., ImageXpress). | Provides multiplexed, subcellular phenotypic data (cytological profiles) to identify mechanistic signatures of toxicity. | Tier 2 (In Vitro Mechanism) |
| Transcriptomics & Pathway Analysis Suites | RNA-Seq kits; qPCR arrays for stress pathways; Enrichment analysis software (DAVID, Metascape). | Measures genome-wide expression changes to derive mechanistic signatures and validate predicted pathway perturbations. | Tier 2 & 4 (In Vitro Mechanism, Ex Vivo) |
| Molecular Docking & Simulation Software | AutoDock Vina, Schrödinger Suite, GROMACS (for dynamics). | Predicts and visualizes the molecular initiating event, i.e., the physical binding interaction between a toxicant and a protein target [16]. | Tier 4 (Specialized Mechanistic) |
| Organoid & Microphysiological System (MPS) Kits | Stem cell-derived hepatocyte/organoid kits; Commercial "organ-on-a-chip" systems (e.g., Emulate, Mimetas). | Provides human-relevant, tissue-structured models for functional toxicity assessment (e.g., albumin production, barrier integrity), bridging in vitro and in vivo gaps. | Tier 4 (Specialized Mechanistic) |
| In Vivo Biomarker Assay Kits | ELISA kits for serum ALT, AST, BUN, Creatinine; Tissue homogenization & histology reagents. | Measures clinically relevant biomarkers of organ damage in animal models, providing the ultimate systemic validation of model predictions. | Tier 3 (In Vivo & Ex Vivo) |

The comparative analysis reveals a fundamental trade-off: predictive power versus mechanistic insight. Advanced AI models excel at identifying complex patterns and achieving high statistical accuracy, making them powerful tools for high-throughput prioritization [9]. Conversely, network and systems biology approaches provide the causal, multi-scale understanding that is essential for building scientifically credible models, interpreting adverse outcome pathways, and informing risk assessment [16].

The future of reliable model building lies in hybrid integrative frameworks. The most robust strategy is to use high-accuracy, data-driven models (like multimodal deep learning) as sensitive filters to flag potential toxicants, and then employ mechanistic, network-based models to generate testable hypotheses about how toxicity occurs [15]. This hypothesis is then rigorously interrogated using the tiered experimental validation protocols outlined here. This continuous loop of in silico prediction, targeted experimental validation, and model refinement, grounded in multi-scale biology, is the cornerstone of advancing computational toxicology from a correlative tool to a causal, predictive science that can confidently accelerate the development of safer chemicals and therapeutics.

The validation of computational toxicity models with experimental data represents a fundamental paradigm shift in drug development. Approximately 30% of preclinical candidate compounds fail because of toxicity, which is also the leading cause of drug withdrawal from the market, so the need for accurate early prediction has never been greater [14]. Traditional animal-based testing is constrained by ethical concerns, high costs (often exceeding millions per compound), and protracted timelines (6–24 months), creating a pressing need for reliable in silico alternatives [14]. This guide evaluates key toxicological databases against that backdrop, examining how these resources underpin the training and validation of models that seek to bridge computational predictions with experimental reality.

The evolution of computational toxicology is intrinsically linked to the availability and quality of data. Modern artificial intelligence (AI) and machine learning (ML) models do not operate in a vacuum; their predictive power is a direct function of the data from which they learn [14] [17]. Consequently, databases serve as the foundational bedrock for developing models capable of predicting endpoints such as acute toxicity, hepatotoxicity, cardiotoxicity, and carcinogenicity [14] [12]. This guide provides a comparative analysis of the primary database types, their specific applications in model workflows, and their inherent limitations, offering researchers and drug development professionals a structured framework for selecting and utilizing these essential resources.

Comparative Analysis of Key Database Types

Toxicological databases can be categorized by their core content and primary application in the modeling pipeline. The following tables provide a comparative overview of the major types, highlighting their scope, common uses, and key limitations.

Table 1: Chemical Structure and Generic Toxicity Databases

| Database Name | Primary Content & Scale | Primary Use in Modeling | Key Limitations |

|---|---|---|---|

| DSSTox (EPA) [11] | Curated chemical structures, identifiers, and properties for ~1.2 million substances. | Provides high-quality, curated chemical identifiers and structures for featurization (e.g., generating molecular descriptors, fingerprints). | Limited direct toxicity data; primarily a chemistry foundation for other resources. |

| PubChem [17] | Massive repository of chemical structures, bioactivities, and toxicity data from literature and high-throughput screens. | Source for chemical structures, bioactivity data, and literature-extracted toxicity information for model training. | Data heterogeneity and variable quality require extensive curation; not specifically tailored for toxicology. |

| ChEMBL [17] | Manually curated bioactive molecules with drug-like properties, including ADMET data. | Training models for bioactivity and early-stage ADMET property prediction in drug discovery. | Focus on drug-like molecules; may lack data on environmental or industrial chemicals. |

| OCHEM [17] | Platform with ~4 million records for building QSAR models. | Hosts existing models and data for training custom QSAR models for various endpoints. | Requires user expertise to build and validate models; data sourced from varying origins. |

Table 2: Experimental Toxicity Databases (In Vivo & In Vitro)

| Database Name | Primary Content & Scale | Primary Use in Modeling | Key Limitations |

|---|---|---|---|

| ToxValDB (v9.6.1) (EPA) [11] [18] | Standardized summary-level in vivo toxicity data (e.g., LOAEL, NOAEL) and derived values for 41,769 chemicals from 36 sources. | Gold-standard data for validating computational model predictions against traditional animal studies; training models for specific toxicological endpoints. | Data are summary-level, not detailed study data; legacy study designs may not reflect modern protocols. |

| ToxRefDB (EPA) [11] | Detailed in vivo animal toxicity study data from guideline studies for ~1,000 chemicals. | Training and benchmarking models with rich, well-characterized animal study outcomes. | Limited chemical space (mostly pesticides and herbicides); data access can be complex. |

| ToxCast/Tox21 (invitroDB) (EPA) [11] [19] [20] | High-throughput in vitro screening data for ~10,000 chemicals across ~1,500 assay endpoints. | Training models to link chemical structure to biological pathway perturbation; developing New Approach Method (NAM) signatures. | In vitro to in vivo extrapolation (IVIVE) is challenging; assays may not capture systemic toxicity. |

| ECOTOX (EPA) [11] | Ecotoxicology data for aquatic and terrestrial species. | Training models for environmental risk assessment and ecological toxicity. | Limited relevance for direct human health toxicity prediction. |

Table 3: Specialized & Multi-Omics/Biological Databases

| Database Name | Primary Content & Scale | Primary Use in Modeling | Key Limitations |

|---|---|---|---|

| DrugBank [17] | Comprehensive drug data with detailed ADMET information, target pathways, and clinical data. | Enhancing model interpretability by linking predictions to known biological targets and pathways. | Focus only on approved or investigational drugs, not broader chemical space. |

| ICE (Integrated Chemical Environment) [17] | Integrates chemical properties, toxicity data (e.g., LD50, IC50), and environmental fate from multiple sources. | One-stop resource for curated data to train models on diverse endpoints. | Integrated nature can obscure original data source quality and context. |

| TOXRIC [17] | Focused toxicity database for intelligent computation, covering multiple toxicity types and species. | Provides pre-filtered toxicity data specifically intended for computational model development. | Scope and update frequency not as clearly defined as major government resources. |

| CPDat (Consumer Product Database) [11] | Maps chemicals to their use in consumer products (e.g., shampoo, soap). | Informing exposure assessment for risk-based prioritization and modeling. | Contains use/function data, not toxicity data. |

Experimental Protocols for Model Training and Validation

The utility of databases is realized through structured experimental protocols for building and validating models. Here, we detail two key methodologies central to computational toxicology research.

Protocol for Building a Multi-Modal Deep Learning Model

A 2025 study demonstrated a protocol for a multi-modal model achieving an accuracy of 0.872 and an F1-score of 0.86 by integrating chemical structure images with property data [9]. This approach addresses the limitation of single-data-type models.

Data Curation and Integration:

- Image Data: Molecular structure images for compounds are programmatically collected from databases like PubChem using CAS numbers. Images are standardized to a resolution of 224x224 pixels [9].

- Tabular Data: Numerical chemical property descriptors (e.g., molecular weight, logP) are compiled from sources like the CompTox Chemicals Dashboard [11].

- Toxicity Labels: Binary or multi-label toxicity endpoints are assigned from curated sources like ToxValDB or ToxCast, ensuring alignment between the chemical identifier, image, and property data [18] [19].

Model Architecture and Training:

- Image Processing Branch: A pre-trained Vision Transformer (ViT) model, fine-tuned on molecular images, extracts a 128-dimensional feature vector from the structure image [9].

- Descriptor Processing Branch: A Multi-Layer Perceptron (MLP) processes the tabular property data into a separate 128-dimensional vector [9].

- Fusion and Prediction: The two feature vectors are concatenated. This fused representation is passed through a final classification layer to predict toxicity endpoints. The model is trained using a binary cross-entropy loss function, with separate validation and test sets to monitor for overfitting [9].
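The fusion step described above can be illustrated with a minimal, dependency-free sketch. It is not the architecture from [9]: the two 128-dimensional vectors are random stand-ins for the ViT and MLP branch outputs, the classifier is a single untrained logit, and all function names are hypothetical. It only shows how concatenation produces a 256-dimensional fused representation and how binary cross-entropy scores a prediction.

```python
import math
import random

def sigmoid(z):
    """Map a logit to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fuse_and_predict(image_vec, descriptor_vec, weights, bias):
    """Concatenate both branch embeddings and apply a single-logit classifier."""
    fused = list(image_vec) + list(descriptor_vec)  # 128 + 128 = 256 features
    logit = sum(w * x for w, x in zip(weights, fused)) + bias
    return fused, sigmoid(logit)

def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """BCE for one example; eps guards against log(0)."""
    p = min(max(p_pred, eps), 1.0 - eps)
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1.0 - p))

# Placeholder embeddings (assumption: stand-ins for the ViT and MLP outputs).
rng = random.Random(0)
image_vec = [rng.uniform(-1, 1) for _ in range(128)]
descriptor_vec = [rng.uniform(-1, 1) for _ in range(128)]

# Untrained classifier: zero weights give a logit of 0 and a probability of 0.5.
weights = [0.0] * 256
fused, prob = fuse_and_predict(image_vec, descriptor_vec, weights, bias=0.0)
loss = binary_cross_entropy(1, prob)
```

In a real pipeline the weights would be learned by gradient descent in a deep learning framework; the sketch fixes them at zero so the behavior is easy to verify by hand.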

Protocol for Validating NAMs Using ToxCast and ToxValDB

This protocol is essential for establishing the credibility of New Approach Methodologies (NAMs) by benchmarking them against traditional in vivo data, a core requirement for regulatory acceptance.

Define the Toxicity Endpoint: Select a specific endpoint for validation (e.g., hepatotoxicity, endocrine disruption).

Construct a Benchmark Dataset:

- Identify chemicals with high-quality in vivo data in ToxValDB (e.g., a clear point of departure like a BMDL10) [18].

- For the same chemicals, extract relevant in vitro bioactivity profiles from the ToxCast database (invitroDB) [19] [20].

- This creates a paired dataset:

[Chemical Structure -> *In Vitro* Bioactivity Signature -> *In Vivo* Toxicity Outcome].

Develop and Validate the Predictive Model:

- Train a machine learning model (e.g., Random Forest, Gradient Boosting) to predict the in vivo endpoint using the in vitro bioactivity signature as input features.

- Use stringent k-fold cross-validation on the paired dataset to evaluate the model's performance metrics (e.g., accuracy, sensitivity, specificity).

- The performance quantitatively indicates how well the NAM (in vitro data) can predict the traditional in vivo outcome, thereby validating its utility [12] [20].
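As a rough illustration of this protocol, the sketch below pairs in vivo labels with in vitro profiles by chemical identifier and runs k-fold cross-validation. The chemical IDs, activity values, and labels are invented for the example, and the "model" is a deliberately simple threshold on mean bioactivity standing in for a Random Forest or Gradient Boosting classifier; none of it reflects actual ToxValDB or invitroDB content.

```python
from statistics import mean

def build_paired_dataset(in_vivo_labels, in_vitro_profiles):
    """Keep only chemicals present in both sources (inner join on identifier)."""
    shared = sorted(set(in_vivo_labels) & set(in_vitro_profiles))
    return [(cid, in_vitro_profiles[cid], in_vivo_labels[cid]) for cid in shared]

def k_fold_indices(n_items, k):
    """Yield (train, test) index lists; fold f tests every item with i % k == f."""
    for fold in range(k):
        test = [i for i in range(n_items) if i % k == fold]
        train = [i for i in range(n_items) if i % k != fold]
        yield train, test

def fit_threshold(rows):
    """Toy learner: midpoint between the class means of the mean-activity score."""
    pos = [mean(features) for _, features, label in rows if label == 1]
    neg = [mean(features) for _, features, label in rows if label == 0]
    return (mean(pos) + mean(neg)) / 2

def cross_validate(paired, k=3):
    """Pool test-fold predictions and report sensitivity, specificity, accuracy."""
    tp = tn = fp = fn = 0
    for train, test in k_fold_indices(len(paired), k):
        threshold = fit_threshold([paired[i] for i in train])
        for i in test:
            _, features, label = paired[i]
            predicted = 1 if mean(features) > threshold else 0
            if predicted == 1 and label == 1:
                tp += 1
            elif predicted == 0 and label == 0:
                tn += 1
            elif predicted == 1:
                fp += 1
            else:
                fn += 1
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
    }

# Hypothetical data: 1 = toxic in vivo, 0 = not toxic; profiles are assay scores.
in_vivo = {"C1": 1, "C2": 0, "C3": 1, "C4": 0, "C5": 1, "C6": 0, "C7": 1}
in_vitro = {
    "C1": [0.9, 0.8], "C2": [0.1, 0.2], "C3": [0.7, 0.9], "C4": [0.2, 0.1],
    "C5": [0.8, 0.6], "C6": [0.3, 0.2], "C8": [0.5, 0.5],
}
paired = build_paired_dataset(in_vivo, in_vitro)  # C7 and C8 drop out
metrics = cross_validate(paired, k=3)
```

The toy data are cleanly separable, so the metrics here say nothing about real-world performance; the point is the pairing step and the strict separation of training and test folds.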

Visualizing Workflows and Relationships

Database Curation and Integration Workflow

Diagram Title: Data Curation Pipeline for Toxicology Databases

Model Validation Process with Experimental Data

Diagram Title: Model Validation Loop with Experimental Benchmarks

Multi-Modal AI Prediction Framework

Diagram Title: Multi-Modal AI Framework for Toxicity Prediction

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key computational reagents—databases and software tools—that are essential for conducting research in computational toxicology and model validation.

Table 4: Essential Computational Reagents for Model Development & Validation

| Tool/Resource | Type & Provider | Primary Function in Research | Typical Application in Experiment |

|---|---|---|---|

| CompTox Chemicals Dashboard | Integrated Web Application (U.S. EPA) [11] [21] | Central hub for accessing chemical identifiers, properties, and linked toxicity data (ToxValDB, ToxCast). | First stop for chemical look-up to gather all available EPA-curated data for a compound set. |

| ToxCast Pipeline (tcpl/tcplfit2) | R Software Package (U.S. EPA) [19] | Processes, models, and visualizes high-throughput screening dose-response data from invitroDB. | Used to re-analyze ToxCast data, apply custom hit-calling algorithms, and generate potency estimates for modeling. |

| CTX Application Programming Interfaces (APIs) | Programming Interface (U.S. EPA) [19] [21] | Enables programmatic access to CompTox data, allowing integration into automated workflows and custom applications. | Used to batch query thousands of chemicals for properties and bioactivity data directly within a modeling script. |

| RDKit | Open-Source Cheminformatics Library | Calculates molecular descriptors, generates fingerprints, and handles chemical I/O operations. | Standard for converting SMILES strings to numerical features for QSAR and ML model training. |

| invitroDB | MySQL Database (U.S. EPA) [19] [20] | The backend relational database storing all ToxCast assay and response data. | Source for extracting high-throughput in vitro bioactivity matrices to use as predictive features or for benchmark validation. |

| ToxValDB R Package | R Software Package (U.S. EPA) [18] | Facilitates direct access and analysis of the curated in vivo toxicity values database. | Used to retrieve standardized LOAEL/NOAEL values for a list of chemicals to create a gold-standard validation set. |

Blueprints for Trust: Methodological Frameworks and Validation Workflows

The validation of computational toxicity models stands as a cornerstone for modern chemical safety assessment and drug development. With international regulatory pressure to reduce animal testing and the exponential growth of chemicals requiring evaluation, Quantitative Structure-Activity Relationship (QSAR) models and other New Approach Methodologies (NAMs) have become indispensable [22]. Their regulatory acceptance, however, is critically contingent upon demonstrating scientific rigor and reliability through robust validation frameworks. This guide provides a comparative analysis of the foundational and emerging validation paradigms, centered on the OECD Principles and the newer OECD QSAR Assessment Framework (QAF), and evaluates the performance of leading computational tools against experimental data. The discussion is framed within the essential thesis that experimental validation is the non-negotiable benchmark for establishing confidence in in silico predictions, bridging the gap between computational promise and regulatory application [23].

Core Validation Frameworks: Principles and Evolution

The validation landscape is governed by established principles that ensure models are scientifically credible and fit for regulatory purpose.

The Foundational OECD QSAR Validation Principles

The OECD principles provide a five-point checklist for regulatory consideration of QSAR models [23]:

- A defined endpoint.

- An unambiguous algorithm.

- A defined domain of applicability.

- Appropriate measures of goodness-of-fit, robustness, and predictivity.

- A mechanistic interpretation, if possible.

These principles emphasize transparency and reproducibility, ensuring that a model's predictions can be understood and verified. Principle 3 (Applicability Domain - AD) and Principle 4 (Performance Metrics) are particularly crucial for evaluating a model's reliability for a specific chemical of interest [24].
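One simple way to make Principle 3 concrete is a descriptor-range (bounding-box) check: a query chemical is treated as in-domain only if each of its descriptors falls within the range seen in the training set. The sketch below assumes this particular AD definition, which is only one of several in use (leverage- and similarity-based definitions are common alternatives); descriptor names and values are illustrative.

```python
def fit_descriptor_ranges(training_descriptors):
    """Record the min and max of each descriptor across the training set."""
    names = training_descriptors[0].keys()
    return {
        name: (
            min(d[name] for d in training_descriptors),
            max(d[name] for d in training_descriptors),
        )
        for name in names
    }

def in_applicability_domain(query, ranges):
    """Return (is_inside, list of descriptors that fall outside the ranges)."""
    outside = [name for name, (lo, hi) in ranges.items() if not lo <= query[name] <= hi]
    return (not outside), outside

# Illustrative training descriptors (assumed values, not from any cited dataset).
training = [
    {"mol_weight": 120.0, "logp": 0.5},
    {"mol_weight": 310.0, "logp": 3.2},
    {"mol_weight": 250.0, "logp": 1.8},
]
ranges = fit_descriptor_ranges(training)

inside, _ = in_applicability_domain({"mol_weight": 200.0, "logp": 2.0}, ranges)
flagged, reasons = in_applicability_domain({"mol_weight": 450.0, "logp": 2.0}, ranges)
```

A range check is easy to audit but coarse: it treats the training space as a filled box, so sparse regions inside the box still count as in-domain.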

The OECD QSAR Assessment Framework (QAF): A Modern Expansion

Building upon the original principles, the OECD QSAR Assessment Framework (QAF) provides detailed guidance for regulators to evaluate models and their predictions consistently [22]. It translates the principles into actionable assessment elements, explicitly addressing the confidence and uncertainty in predictions. The QAF is particularly significant for facilitating the use of multiple predictions and consensus modeling, acknowledging that a single model is rarely sufficient for complex regulatory decisions. Its development signals an evolution from principle-based guidance to a more prescriptive framework aimed at increasing regulatory uptake [22].

Table 1: Comparison of Foundational and Modern Validation Frameworks

| Framework Aspect | OECD QSAR Principles (Foundational) | OECD QSAR Assessment Framework (QAF) (Modern) |

|---|---|---|

| Primary Purpose | Provide criteria for regulatory consideration of a QSAR model. | Guide regulatory assessment of both QSAR models and individual predictions. |

| Scope | Model-centric evaluation. | Holistic evaluation of the model, its predictions, and the use of multiple predictions. |

| Key Emphasis | Transparency, reproducibility, defined boundaries (AD). | Confidence, uncertainty, consistency, and transparency in the assessment process. |

| Regulatory Utility | Determines if a model is potentially acceptable. | Enables a consistent and transparent decision on the validity of a prediction for a specific case. |

| Evolution | Foundational checklist. | Operational guide with assessment elements for implementers. |

Comparative Analysis of Tools and Performance

The practical value of validation frameworks is demonstrated through the performance of software tools that implement QSAR models.

Performance Benchmarking of QSAR/QSPR Software

A 2024 benchmarking study of twelve software tools for predicting physicochemical (PC) and toxicokinetic (TK) properties provides a broad performance overview. The study, which rigorously curated 41 external validation datasets, found that models for PC properties generally outperformed those for TK properties [8].

Table 2: Benchmarking Summary of Computational Tool Performance [8]

| Property Category | Average Performance (R²) | Notable Finding | Key Challenge |

|---|---|---|---|

| Physicochemical (PC) | 0.717 | Models show adequate to good predictive performance for standard organic chemicals. | Performance drops for "difficult" chemical classes (e.g., PFAS, multifunctional compounds). |

| Toxicokinetic (TK) | 0.639 (Regression) | Balanced accuracy for classification models averaged 0.780. | Complex biological endpoints introduce higher variability and modeling difficulty. |

| Overall Trend | - | Freely available tools (e.g., OPERA) often perform comparably to commercial tools. | Defining and respecting the Applicability Domain (AD) is critical for reliable application. |

A focused 2025 study compared three QSPR packages (IFSQSAR, OPERA, and EPI Suite) for predicting partition ratios (log KOW, KOA, KAW). It highlighted the importance of quantifying prediction uncertainty. The study found IFSQSAR's 95% prediction interval (PI95) captured 90% of external experimental data. To achieve similar coverage, the uncertainty bounds for OPERA and EPI Suite required broadening by a factor of at least 4 and 2, respectively [24]. This underscores that accuracy metrics alone are insufficient; an understanding of uncertainty is vital for informed decision-making.
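The idea of checking interval coverage and estimating a broadening factor can be sketched as follows. This is an assumption-laden illustration, not the procedure used in [24]: intervals are taken to be symmetric (prediction plus or minus a half-width), the numbers are invented, and the function names are hypothetical.

```python
import math

def coverage(observed, predicted, half_widths, factor=1.0):
    """Fraction of observations inside prediction +/- factor * half-width."""
    hits = sum(
        abs(o - p) <= factor * h
        for o, p, h in zip(observed, predicted, half_widths)
    )
    return hits / len(observed)

def broadening_factor(observed, predicted, half_widths, target=0.90):
    """Smallest multiplier on the half-widths that reaches the target coverage."""
    ratios = sorted(
        abs(o - p) / h for o, p, h in zip(observed, predicted, half_widths)
    )
    # Number of points that must fall inside; small offset avoids float round-up.
    needed = math.ceil(target * len(ratios) - 1e-9)
    return max(1.0, ratios[needed - 1])  # 1.0 means no broadening is required

# Invented log-unit values; each stated interval is prediction +/- 0.5.
predicted = [1.0, 2.0, 3.0, 4.0, 5.0, 1.0, 2.0, 3.0, 4.0, 5.0]
observed = [1.125, 1.75, 3.25, 3.625, 5.5, 1.75, 1.0, 4.0, 2.5, 7.5]
half_widths = [0.5] * 10

stated = coverage(observed, predicted, half_widths)
factor = broadening_factor(observed, predicted, half_widths, target=0.90)
widened = coverage(observed, predicted, half_widths, factor=factor)
```

In this toy case the stated intervals capture only half of the observations, and they must be widened threefold to reach 90% coverage, which mirrors the kind of comparison the study reports.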

Validation of Profilers for Hazard Assessment: The OECD QSAR Toolbox

The OECD QSAR Toolbox is widely used for grouping chemicals and filling data gaps via read-across. Its performance depends on the "profilers" (structural alerts and rules) used to form categories. Validation studies reveal variable performance:

- Genotoxicity Profilers: A 2025 validation against a pesticide database showed accuracies ranging from 41–78% for in vivo micronucleus (MNT) profilers and 62–88% for Ames mutagenicity profilers. Incorporating metabolism simulations boosted accuracy by 4–16% [25].

- Carcinogenicity & Sensitization Profilers: A 2024 assessment revealed that while many structural alerts are fit-for-purpose, others have low positive predictive value (PPV < 0.5), leading to over-prediction. The study concluded that refining or excluding such alerts is imperative for reliable use [26].
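A minimal sketch of how low-precision alerts might be screened is shown below. The alert names and counts are invented for illustration, and the 0.5 cutoff simply reuses the PPV threshold mentioned above; PPV is computed as true positives divided by all chemicals that triggered the alert.

```python
def positive_predictive_value(true_pos, false_pos):
    """PPV = TP / (TP + FP); None when the alert never fired."""
    fired = true_pos + false_pos
    return true_pos / fired if fired else None

def flag_low_ppv_alerts(alert_counts, cutoff=0.5):
    """Return alerts whose PPV falls below the cutoff, with their PPV."""
    flagged = {}
    for name, (true_pos, false_pos) in alert_counts.items():
        ppv = positive_predictive_value(true_pos, false_pos)
        if ppv is not None and ppv < cutoff:
            flagged[name] = round(ppv, 3)
    return flagged

# Hypothetical counts: (experimentally positive, experimentally negative)
# among the chemicals that triggered each alert.
alert_counts = {
    "alert_A": (40, 10),  # PPV 0.80, retained
    "alert_B": (6, 14),   # PPV 0.30, flagged for refinement or exclusion
    "alert_C": (0, 0),    # never fired, cannot be evaluated
}
flagged = flag_low_ppv_alerts(alert_counts)
```

Alerts that never fire are left unflagged rather than scored, since a PPV cannot be estimated without any positive calls.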

Table 3: Performance Metrics of Selected OECD QSAR Toolbox Profilers [25] [26]

| Endpoint | Profiler / Alert Type | Reported Accuracy | Key Insight for Reliable Use |

|---|---|---|---|

| Mutagenicity (Ames) | DNA binding alerts | 62% - 88% | Incorporate metabolism simulation to improve accuracy. |

| Genotoxicity (MNT) | In vivo MNT alerts | 41% - 78% | Negative predictions (no alert) are highly reliable for screening. |

| Carcinogenicity | Oncologic Primary Class. | Varies by alert | Some structural alerts have low precision (PPV < 0.5) and require expert review. |

| Skin Sensitization | Protein binding alerts | Good sensitivity | Requires mechanistic compatibility for read-across. |

Experimental Validation Protocols and Data Curation

The credibility of any model comparison rests on the quality of the experimental data used for validation.

Protocol for Curating High-Quality Validation Datasets

A rigorous data curation protocol, as detailed in recent literature, is essential to avoid the "garbage in, garbage out" paradigm [23]. The following workflow is recommended:

Key Protocol Steps:

- Multi-Source Data Aggregation: Compile data from curated public databases (e.g., eChemPortal, AqSolDB) and literature [23].

- Structural Standardization: Use toolkits (e.g., RDKit) to neutralize salts, remove duplicates, and filter out organometallics or mixtures [8].

- Identifier Harmonization: Ensure consistent mapping between chemical names, CAS numbers, and structural identifiers (SMILES, InChIKey) [23].

- Experimental Data Cleaning: Apply statistical filters (e.g., removing data points with an absolute Z-score greater than 3) and reconcile conflicting values for the same compound across different sources [8].

- Applicability Domain Consideration: Document the chemical space (e.g., functional groups, property ranges) covered by the final dataset to contextualize validation results [8].
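Two of the steps above, identifier harmonization with value reconciliation and Z-score filtering, can be sketched with the standard library alone. The keys are placeholder strings standing in for InChIKeys, the values are invented, and taking the median of conflicting values is just one possible reconciliation rule; structural standardization with a toolkit such as RDKit is deliberately left out.

```python
from collections import defaultdict
from statistics import mean, median, pstdev

def reconcile_by_identifier(records):
    """Normalize identifiers, then keep the median where sources disagree."""
    grouped = defaultdict(list)
    for identifier, value in records:
        grouped[identifier.strip().upper()].append(value)
    return {key: median(values) for key, values in grouped.items()}

def drop_outliers(values_by_key, z_cutoff=3.0):
    """Remove entries whose absolute Z-score exceeds the cutoff."""
    values = list(values_by_key.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return dict(values_by_key)
    return {
        key: value
        for key, value in values_by_key.items()
        if abs(value - mu) / sigma <= z_cutoff
    }

# Hypothetical (identifier, measured value) records from several sources.
records = [(f"KEY-{i:02d}", 2.0) for i in range(2, 12)]  # ten consistent entries
records += [("key-01 ", 1.9), ("KEY-01", 2.1)]           # one compound, two sources
records += [("KEY-12", 50.0)]                            # implausible value

reconciled = reconcile_by_identifier(records)
cleaned = drop_outliers(reconciled)
```

Note that a Z-score filter needs a reasonably large dataset to be meaningful: with the population standard deviation, no point in a set of ten or fewer values can exceed an absolute Z-score of 3.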

Protocol for External Validation and Uncertainty Quantification

Once a curated dataset is prepared, the following protocol should be used to benchmark models:

- Strict Train-Test Separation: Ensure chemicals in the validation set are external to all models' training data [24].

- Generate Predictions: Run the validation set chemicals through the software, recording the prediction, any applicability domain flag, and any provided uncertainty metric (e.g., prediction interval) [24].

- Calculate Performance Metrics: For regression, calculate R² and root mean squared error (RMSE). For classification, calculate sensitivity, specificity, accuracy, and Matthews Correlation Coefficient (MCC) [26].

- Quantify Uncertainty Reliability: Assess if the model's reported prediction intervals (e.g., PI95) actually contain the stated percentage of experimental data points (e.g., 95%) [24].

- Analyze by Chemical Domain: Stratify performance analysis for specific chemical classes (e.g., PFAS, ionizable compounds) to identify model weaknesses [24].
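The performance metrics named in this protocol follow standard definitions, sketched below in plain Python. The observed and predicted values and the confusion-matrix counts are invented; in practice these functions would be applied to the external validation set, and again to each chemical class when stratifying.

```python
import math

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def rmse(observed, predicted):
    """Root mean squared error."""
    squared = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    return math.sqrt(squared / len(observed))

def classification_metrics(tp, tn, fp, fn):
    """Sensitivity, specificity, accuracy, and MCC from confusion counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }

# Invented regression example (e.g., a log-scale property).
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.0, 2.5, 4.0]
r2 = r_squared(observed, predicted)
error = rmse(observed, predicted)

# Invented classification example.
scores = classification_metrics(tp=40, tn=45, fp=5, fn=10)
```

MCC is worth reporting alongside accuracy because it stays informative when the toxic and non-toxic classes are imbalanced.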

Implementing these validation protocols requires a set of key resources.

Table 4: Essential Research Reagent Solutions for Model Validation

| Tool/Resource | Function in Validation | Example/Source |

|---|---|---|

| Curated Experimental Databases | Provide high-quality reference data for training and, crucially, external validation. | AqSolDB (water solubility) [23]; MultiCASE Genotoxicity DB [25]; OECD eChemPortal [23]. |

| Chemical Standardization Tools | Ensure consistent structural representation, which is foundational for reproducible modeling. | RDKit (Open-source); Pipeline Pilot (Commercial). |

| Software with AD & Uncertainty Metrics | Enable reliable application by signaling when predictions are extrapolative and quantifying their confidence. | OPERA (leverage & vicinity) [8]; IFSQSAR (prediction intervals) [24]. |

| The OECD QSAR Toolbox | A multifunctional platform for applying profilers, forming categories, and performing read-across predictions. | Freely available software integrating databases and models [26]. |

| Benchmarking & Validation Scripts | Automated scripts for calculating performance metrics and generating comparative visualizations. | Custom Python/R scripts implementing Cooper statistics [26] and uncertainty validation [24]. |

Integrated Validation Workflow: From Principles to Decision

For a researcher or regulator, validating a computational prediction involves integrating all discussed elements into a logical workflow. The following diagram synthesizes the OECD Principles, the QAF assessment elements, and experimental benchmarking into a coherent process for building confidence in a prediction.

Workflow Explanation: The process begins by ensuring the prediction request aligns with a model's defined endpoint and transparent algorithm (OECD Principles 1 & 2). The chemical must then be checked against the model's Applicability Domain (Principle 3), and the model's historical performance metrics (Principle 4) must be reviewed. These predictions must be benchmarked against curated experimental data—the gold standard. Concurrently, the mechanistic interpretation (Principle 5) is considered. Following QAF guidance, the uncertainty of the prediction is quantified, and, where possible, a consensus from multiple models is sought. This integrated analysis of principles, experimental evidence, and framework elements culminates in a transparent confidence assessment to inform the final decision [22] [24] [23].
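One part of this workflow, combining several model predictions while respecting each model's applicability domain, could be sketched as a simple majority vote. This is an illustrative assumption rather than a rule prescribed by the QAF: the model names, the dictionary layout, and the tie-handling are all hypothetical.

```python
def consensus_prediction(model_outputs):
    """Majority vote over predictions that fall inside each model's AD."""
    votes = [out["toxic"] for out in model_outputs if out["in_domain"]]
    if not votes:
        return {"call": "no in-domain prediction", "agreement": None}
    toxic = sum(votes)
    nontoxic = len(votes) - toxic
    if toxic == nontoxic:
        call = "equivocal"
    else:
        call = "toxic" if toxic > nontoxic else "non-toxic"
    # Agreement is the share of in-domain models backing the majority call.
    return {"call": call, "agreement": max(toxic, nontoxic) / len(votes)}

# Hypothetical outputs for one chemical from four models.
model_outputs = [
    {"model": "model_A", "toxic": True, "in_domain": True},
    {"model": "model_B", "toxic": True, "in_domain": True},
    {"model": "model_C", "toxic": False, "in_domain": True},
    {"model": "model_D", "toxic": False, "in_domain": False},  # excluded: outside AD
]
result = consensus_prediction(model_outputs)
```

The agreement fraction gives a crude confidence signal; a fuller assessment would also weigh each model's validated performance and reported uncertainty.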

The validation of computational toxicity models is a dynamic field anchored by the OECD Principles and increasingly operationalized by the QAF. As demonstrated, no single tool is universally superior; performance is endpoint- and chemical-dependent. The consistent theme across studies is that transparent, experimental validation is non-negotiable for establishing trust. Future progress hinges on:

- Improved Uncertainty Characterization: Moving beyond simple accuracy to provide reliable, quantitative uncertainty estimates for every prediction [24].

- Targeted Model Development: Building models and refining profilers for "difficult" chemical classes like PFAS and ionizable organic compounds [24].

- Integration of AI and NAMs: Leveraging artificial intelligence to integrate QSAR predictions with other new approach methodologies (e.g., in vitro bioassays) within defined validation frameworks [22] [8].

For researchers and regulators, the path forward involves a judicious, case-by-case application of validation frameworks, leveraging consensus predictions from rigorously benchmarked tools, and grounding all conclusions in high-quality experimental evidence.

Integrated Approaches to Testing and Assessment (IATA) are defined frameworks that combine multiple sources of information to reach a conclusion on the toxicity of chemicals [27]. They are developed to address specific regulatory or decision-making contexts, moving beyond reliance on any single test method [27]. The core principle of IATA is the iterative integration of existing data, drawn from scientific literature, (Q)SAR predictions, or chemical databases, with targeted new information generated from in vitro, in chemico, or in silico methods [27]. This strategy is designed to be flexible and fit-for-purpose, aiming to provide robust hazard and risk assessments while minimizing, and often eliminating, the need for traditional animal testing [28] [29].

IATA is closely related to, but distinct from, several other key concepts in modern toxicology. Defined Approaches (DAs) are structured, reproducible components within an IATA that use a fixed data interpretation procedure on a defined set of information sources to produce an objective, rule-based prediction [28] [29]. Adverse Outcome Pathways (AOPs) provide a mechanistic framework for organizing toxicological data across different biological levels (molecular, cellular, organ, organism) and are highly useful for designing and interpreting IATAs, though they are not a mandatory component [27]. The overarching category of New Approach Methodologies (NAMs) encompasses the modern tools—including high-throughput screening, omics, microphysiological systems, and artificial intelligence—that are frequently employed within IATA frameworks [30].
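To make the notion of a fixed data interpretation procedure concrete, the sketch below applies a majority rule to three binary assay calls, in the spirit of "2 out of 3" style defined approaches for skin sensitization. It is a simplified assumption: real defined approaches also specify how borderline results and assay applicability limits are handled, which is not modeled here, and the function name is hypothetical.

```python
def two_out_of_three(dpra, keratinosens, hclat):
    """Rule-based hazard call from three assay results.

    Each argument is True (positive), False (negative), or None (not tested).
    Two concordant results decide the outcome; otherwise it is inconclusive.
    """
    available = [call for call in (dpra, keratinosens, hclat) if call is not None]
    positives = sum(available)
    negatives = len(available) - positives
    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"

# Two concordant positives settle the call without running the third assay.
call_a = two_out_of_three(dpra=True, keratinosens=True, hclat=None)
# One positive is outvoted by two negatives.
call_b = two_out_of_three(dpra=False, keratinosens=True, hclat=False)
# Discordant results with no third assay leave the question open.
call_c = two_out_of_three(dpra=True, keratinosens=False, hclat=None)
```

Because the rule is fixed in advance, any two assessors given the same assay results reach the same call, which is what distinguishes a defined approach from an expert-led weight-of-evidence judgment.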

The rationale for adopting IATA is multifaceted. It directly addresses the critical limitations of traditional animal-centric testing, which is characterized by high costs, low throughput, ethical concerns, and challenges in extrapolating results to humans [31]. Furthermore, IATA provides a systematic solution for evaluating the vast number of "data-poor" chemicals for which little or no toxicity information exists [27]. By leveraging advances in biotechnology and computational science, IATA enables faster, more cost-effective, and more human-relevant safety assessments [27] [31].

The following diagram illustrates the logical workflow and decision-making process within a typical IATA.

Performance Comparison: IATA vs. Traditional and Standalone Methods

The validation of IATA hinges on its performance relative to established approaches. The following tables compare IATA-based strategies with traditional animal tests and standalone non-animal methods across critical endpoints where IATA has been formally adopted or extensively validated.

Table 1: Comparison of Skin Sensitization Assessment Approaches

| Approach Type | Specific Method/Strategy | Key Components | Accuracy (vs. LLNA/Max Human) | Throughput & Cost | Animal Use | Regulatory Status |

|---|---|---|---|---|---|---|

| Traditional In Vivo | Murine Local Lymph Node Assay (LLNA) | Animal test measuring lymphocyte proliferation | Gold Standard (Reference) | Low throughput, High cost, Weeks | ~30 mice/chemical | OECD TG 429 |

| Standalone NAM | In chemico DPRA (Direct Peptide Reactivity Assay) | Single assay measuring peptide reactivity | ~75-80% concordance [28] | High throughput, Low cost, Days | None | OECD TG 442C |

| Defined Approach (within IATA) | OECD TG 497 DA for Skin Sensitization | Fixed combination of DPRA, KeratinoSens, h-CLAT + DIP | 89-93% concordance for hazard; Provides potency estimation [28] | Medium-High throughput, Medium cost, Days | None | Adopted OECD TG (2021, updated 2025) [28] |

| IATA (Expert-led) | Weight-of-Evidence using AOP & multiple NAMs | Integrates (Q)SAR, in chemico, in vitro KE assays, exposure | High (context-dependent), Enables potency and risk assessment [27] [29] | Flexible, Variable | Minimal to None | Case-by-case acceptance under various regulations [27] |

Table 2: Comparison of Eye Irritation/Serious Eye Damage Assessment Approaches

| Approach Type | Specific Method/Strategy | Key Components | Ability to Discern UN GHS Categories | Throughput & Cost | Animal Use | Regulatory Status |
|---|---|---|---|---|---|---|
| Traditional In Vivo | Rabbit Draize Eye Test | Animal test applying substance to rabbit eye | Reference standard (Categories 1, 2, No Cat.) | Low throughput, High cost, Days-Weeks | 1-3 rabbits/chemical | OECD TG 405 |
| Standalone NAM | Bovine Corneal Opacity & Permeability (BCOP) | Isolated bovine cornea | Does not fully discriminate Cat. 1 vs. Cat. 2 [32] | Medium throughput, Medium cost, Days | Ex vivo tissue | OECD TG 437 |
| Defined Approach (within IATA) | OECD TG 467 DA for Eye Hazard | Fixed battery of in vitro tests (e.g., RhCE, BCOP) + DIP | High accuracy for Cat. 1 & No Cat.; accepted for specified drivers of classification [28] [32] | Medium-High throughput, Medium cost, Days | None | Adopted OECD TG (2022, updated 2025) [28] |
| IATA (Sequential Testing) | OECD GD 263 for Eye IATA | Tiered strategy using RhCE, BCOP, other tests with decision points | High, allows for definitive classification for many substances [32] | Flexible, Optimized to reduce testing | Minimal to None | OECD Guidance Document [32] |

Table 3: Comparison of Endocrine Disruption Screening for Estrogen/Androgen Pathway Activity

| Approach Type | Specific Method/Strategy | Key Components | Performance (Sensitivity/Specificity) | Throughput & Cost | Animal Use | Mechanistic Insight |
|---|---|---|---|---|---|---|
| Traditional In Vivo | EPA EDSP Tier 1 Battery (e.g., uterotrophic, Hershberger assays) | Suite of in vivo assays | High but variable, Reference standard | Very low throughput, Very high cost, Months | Hundreds of animals/chemical | Low (organism-level endpoint) |
| Standalone NAM | Single in vitro ER/AR Binding or Transcriptional Activation Assay | e.g., ERα CALUX, AR CALUX | Good for single molecular event, misses other KEs | High throughput, Low cost, Days | None | High but narrow |
| Defined Approach (within IATA) | EPA/NICEATM ER/AR Pathway Model | Computational model integrating 11-18 HTS assay outputs | ~95% concordance with relevant in vivo outcomes for model chemicals [28] | Very high throughput, Low cost (after model built) | None | High (covers multiple KEs in pathway) |
| IATA (Optimized DA) | Streamlined ER/AR DA | Optimized subset of 4-5 key HTS assays + model | Similar performance to full model with reduced resource use [28] | Very high throughput, Low cost | None | High |
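
The concordance, sensitivity, and specificity figures reported in the tables above are all derived from a confusion matrix of method calls against reference calls. The following minimal sketch shows one way to compute them; the function name, the `Performance` container, and the example data are hypothetical and are not taken from any of the cited validation studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Performance:
    sensitivity: float        # true positives / all reference positives
    specificity: float        # true negatives / all reference negatives
    concordance: float        # overall agreement with the reference
    balanced_accuracy: float  # mean of sensitivity and specificity


def compare_to_reference(predicted: list[bool], reference: list[bool]) -> Performance:
    """Score binary hazard calls against reference (e.g. in vivo) calls."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("predicted and reference must be non-empty and equal in length")

    pairs = list(zip(predicted, reference))
    tp = sum(1 for p, r in pairs if p and r)
    tn = sum(1 for p, r in pairs if not p and not r)
    fp = sum(1 for p, r in pairs if p and not r)
    fn = sum(1 for p, r in pairs if not p and r)

    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("the reference set must contain both positives and negatives")

    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return Performance(
        sensitivity=sensitivity,
        specificity=specificity,
        concordance=(tp + tn) / len(pairs),
        balanced_accuracy=(sensitivity + specificity) / 2,
    )


# Hypothetical example: 10 chemicals, 6 reference positives and 4 negatives.
reference_calls = [True] * 6 + [False] * 4
method_calls = [True] * 5 + [False] + [False] * 3 + [True]
print(compare_to_reference(method_calls, reference_calls))
```

Reporting balanced accuracy alongside concordance is a common precaution when the reference set is dominated by one class, since overall agreement alone can then look deceptively high.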

Practical Applications and Worked Examples

IATA frameworks are applied to diverse and complex toxicological challenges. Two prominent examples demonstrate their utility in modern risk assessment.

1. Grouping and Read-Across of Nanomaterials (NMs): Assessing every unique nanoform is impractical. An IATA for NMs in aquatic systems uses a tiered strategy with decision nodes focused on dissolution, dispersion stability, and transformation processes [33]. By testing these functional fate properties, different NMs that share similar behavior can be grouped. Hazard data from a "data-rich" NM within the group can then be read across to "data-poor" members. A worked example for metal oxide NMs showed that by applying dissolution rate thresholds, materials could be successfully grouped, significantly reducing the need for extensive ecotoxicity testing for each variant [33].

2. Integrated Bioaccumulation Assessment: A systematic IATA for bioaccumulation moves beyond reliance on a single in vivo fish bioconcentration factor (BCF) test. It integrates multiple lines of evidence (LoE) [34]:

- LoE 1: Existing experimental BCF data.

- LoE 2: In vitro metabolism data (to account for biotransformation).

- LoE 3: Read-across from structurally analogous chemicals.

- LoE 4: Physiologically-Based Kinetic (PBK) modelling to simulate absorption, distribution, metabolism, and excretion [27].

- LoE 5: Empirical descriptors like log Kow (octanol-water partition coefficient).

The IATA provides a transparent weight-of-evidence methodology to evaluate and integrate these LoEs, allowing for a robust conclusion even for data-poor chemicals [34]. The process is visualized in the following diagram, which shows how the AOP framework supports the integration of data from different biological levels within an IATA.
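
To make the idea of integrating lines of evidence more concrete, the sketch below combines several LoEs into a single weighted support score. It is a deliberately simplified illustration: the weights, the decision margins, the verdict labels, and the example inputs are all hypothetical, and a real weight-of-evidence assessment relies on documented expert judgment rather than a fixed formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineOfEvidence:
    name: str
    indicates_concern: bool  # True if this LoE points towards bioaccumulation
    weight: float            # hypothetical reliability/relevance weight, > 0


def weigh_evidence(lines: list[LineOfEvidence], margin: float = 0.2) -> tuple[float, str]:
    """Return the weighted fraction of evidence indicating concern and a verdict.

    The verdict is 'uncertain' when the support fraction lies within
    `margin` of 0.5, signalling that more data may be needed.
    """
    total = sum(line.weight for line in lines)
    if total <= 0:
        raise ValueError("at least one line of evidence with positive weight is required")

    support = sum(line.weight for line in lines if line.indicates_concern) / total
    if support >= 0.5 + margin:
        verdict = "concern"
    elif support <= 0.5 - margin:
        verdict = "low concern"
    else:
        verdict = "uncertain"
    return support, verdict


# Hypothetical data-poor chemical with no experimental BCF (LoE 1 unavailable).
evidence = [
    LineOfEvidence("in vitro metabolism", indicates_concern=False, weight=0.8),
    LineOfEvidence("read-across", indicates_concern=False, weight=0.5),
    LineOfEvidence("PBK modelling", indicates_concern=False, weight=0.6),
    LineOfEvidence("log Kow", indicates_concern=True, weight=0.3),
]
score, verdict = weigh_evidence(evidence)
print(f"support for concern = {score:.2f} -> {verdict}")
```

Keeping the individual weights and calls explicit, rather than reporting only the final verdict, is what makes this kind of integration auditable.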

Methodological Protocols for Core IATA Experiments

The reliability of an IATA depends on the standardized execution of its constituent methods. Below are detailed protocols for key experimental components commonly integrated into IATAs.

Protocol 1: Defined Approach for Skin Sensitization Potency (OECD TG 497)

- Objective: To classify a chemical's skin sensitization potency (Weak vs. Strong/Extreme) without animal testing.

- Principle: A fixed data interpretation procedure (DIP) is applied to results from three validated in chemico and in vitro assays representing key events (KEs) in the skin sensitization AOP [28].

- Procedure:

- KE1: Protein Binding. Perform the Direct Peptide Reactivity Assay (DPRA) (OECD TG 442C). Incubate test chemical with cysteine- and lysine-containing peptides. Measure depletion via HPLC.

- KE2: Keratinocyte Response. Perform the KeratinoSens assay (OECD TG 442D). Expose recombinant KeratinoSens cells to the chemical. Measure activation of the antioxidant response element (ARE) via luciferase reporter gene expression.

- KE3: Dendritic Cell Activation. Perform the human Cell Line Activation Test (h-CLAT) (OECD TG 442E). Expose THP-1 cells to the chemical. Measure surface expression of CD86 and CD54 via flow cytometry.

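- Data Integration: Input the quantitative results (e.g., % peptide depletion, EC values, fluorescence indices) into the TG 497 data interpretation procedure. The DIPs adopted in TG 497 are fixed rule- and score-based schemes: the "2 out of 3" rule for hazard identification, and the Integrated Testing Strategy (ITS), which scores DPRA and h-CLAT results together with an in silico prediction, for potency sub-categorisation. Because the procedure is fixed, identical inputs always yield the same prediction [28].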

- Output: A definitive prediction of "Weak" or "Strong/Extreme" sensitizer, which can be used directly in specific regulatory classifications [28].
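
As an illustration of how a fixed DIP turns individual assay calls into a single prediction, the sketch below implements a simple majority ("2 out of 3") rule over the three key-event assays. It is a simplified sketch that covers hazard identification only: it does not estimate potency, and it omits the borderline-range and applicability-domain checks that a full implementation would need. The function name and the returned labels are hypothetical.

```python
from typing import Optional


def two_out_of_three(
    dpra: Optional[bool],
    keratinosens: Optional[bool],
    hclat: Optional[bool],
) -> str:
    """Combine binary assay calls (True = positive) by majority vote.

    A value of None means the assay was not run or gave no usable call.
    """
    calls = [call for call in (dpra, keratinosens, hclat) if call is not None]
    positives = sum(calls)
    negatives = len(calls) - positives

    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"  # fewer than two concordant calls available


print(two_out_of_three(dpra=True, keratinosens=True, hclat=False))
print(two_out_of_three(dpra=False, keratinosens=None, hclat=False))
print(two_out_of_three(dpra=True, keratinosens=None, hclat=False))
```

Because the rule is fixed in advance, two laboratories with the same assay calls will always reach the same conclusion, which is the property that distinguishes a Defined Approach from an expert-led weight-of-evidence assessment.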

Protocol 2: Quantitative High-Throughput Screening (qHTS) for Pathway Activity

- Objective: To generate robust concentration-response data for thousands of chemicals across a battery of toxicity pathway assays.

- Principle: Compounds are tested across a range of concentrations (e.g., 4-15 points over 4 log units) in cell-based or biochemical assays using robotic platforms, generating high-quality data suitable for computational modeling [31].

- Procedure:

- Assay Selection: Choose cell lines (e.g., HepG2, primary hepatocytes) or enzyme systems relevant to the molecular target (e.g., nuclear receptor, kinase).

- Plate Format & Dispensing: Use 1536-well plates. A robotic liquid handler dispenses assay reagents and serially dilutes test chemicals directly into the assay plates [31].

- Multiplexed Readout: Incubate as required. Measure endpoint(s) using fluorescence, luminescence, or absorbance. High-content imaging can be incorporated for multiplexed readouts (e.g., cytotoxicity + specific reporter activation).

- Data Processing: Raw data is normalized to plate controls. Concentration-response curves are fitted for each chemical-assay pair. Key parameters (e.g., AC50, efficacy, curve shape) are extracted [31].

- Output: A large-scale, high-quality dataset linking chemical structure to quantitative biological activity, forming the core training data for predictive in silico models used in DAs and IATAs [31].
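
The data-processing step above can be sketched in a few lines: build the dilution series, normalize raw signals to plate controls, and fit a concentration-response curve to extract AC50, efficacy, and slope. The sketch below uses a three-parameter Hill model and a coarse grid search so that it needs only the standard library; production pipelines typically use nonlinear least squares and curve-class filtering instead. All function names, parameter grids, and example values are hypothetical.

```python
import math


def dilution_series(top_conc_um: float, n_points: int, log_span: float) -> list[float]:
    """Concentrations (ascending) spanning `log_span` log10 units below the top."""
    step = log_span / (n_points - 1)
    return [top_conc_um * 10 ** (-step * i) for i in range(n_points)][::-1]


def normalize(raw: list[float], neutral_mean: float, positive_mean: float) -> list[float]:
    """Percent activity: 0 % = vehicle control, 100 % = positive control."""
    span = positive_mean - neutral_mean
    return [100.0 * (value - neutral_mean) / span for value in raw]


def hill(conc: float, ac50: float, slope: float, top: float) -> float:
    """Three-parameter Hill model with the baseline fixed at zero."""
    return top / (1.0 + (ac50 / conc) ** slope)


def fit_hill(concs: list[float], responses: list[float]) -> tuple[float, float, float]:
    """Grid search minimising squared error; returns (ac50, slope, top)."""
    log_lo, log_hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(81):  # AC50 candidates, evenly spaced on a log scale
        ac50 = 10 ** (log_lo + (log_hi - log_lo) * i / 80)
        for slope in (0.5, 1.0, 1.5, 2.0, 3.0):
            for top in range(20, 121, 10):  # efficacy candidates in percent
                sse = sum(
                    (hill(c, ac50, slope, top) - r) ** 2
                    for c, r in zip(concs, responses)
                )
                if best is None or sse < best[0]:
                    best = (sse, ac50, slope, float(top))
    return best[1], best[2], best[3]


# Hypothetical example: 9 concentrations over 4 log units, noise-free responses.
concs = dilution_series(top_conc_um=100.0, n_points=9, log_span=4.0)
responses = [hill(c, ac50=1.0, slope=1.0, top=100.0) for c in concs]
ac50, slope, top = fit_hill(concs, responses)
print(f"AC50 = {ac50:.2f} uM, slope = {slope}, efficacy = {top} %")
```

Fitting in log-concentration space matters here because the dilution series is geometric; a linear grid would waste most of its resolution on the top decade.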

The following diagram conceptualizes the iterative cycle of computational model development, validation, and refinement within the IATA paradigm, which is central to the thesis of validating in silico tools with experimental data.

The Scientist's Toolkit: Essential Research Reagent Solutions

The implementation of IATA relies on a suite of specialized tools and platforms. The following table details key solutions and their functions in modern toxicity testing and assessment.

Table 4: Essential Research Reagent and Platform Solutions for IATA

| Tool Category | Specific Solution/Platform | Primary Function in IATA | Key Characteristics |
|---|---|---|---|
| Bioassay Platforms | Quantitative High-Throughput Screening (qHTS) Robotic Systems [31] | Generates concentration-response data for thousands of chemicals across multiple toxicity pathways. | Enables testing at multiple concentrations; high reproducibility (r² > 0.87) [31]; forms backbone of Tox21 program data generation. |
| Tissue Models | Reconstructed Human Epidermis (RhE) Models (e.g., EpiDerm, SkinEthic) [29] | Used in DAs for skin corrosion/irritation and eye irritation; provides a human-relevant, organotypic tissue response. | 3D culture of human keratinocytes; reproducible and validated; can be adapted for phototoxicity testing [29]. |
| Tissue Models | Microphysiological Systems (MPS) / Organs-on-a-Chip [30] [29] | Models complex organ-level physiology and interactions for repeated-dose or systemic toxicity assessment within IATA. | Incorporates fluid flow, mechanical cues, and multiple cell types; emerging tool for addressing chronic toxicity endpoints. |
| In Chemico Assays | Direct Peptide Reactivity Assay (DPRA) Reagents [28] | Measures the molecular initiating event (covalent protein binding) for skin sensitization. | Standardized HPLC-based assay; provides quantitative input for the OECD TG 497 DA. |
| Cell-Based Assays | Reporter Gene Cell Lines (e.g., KeratinoSens, ER/AR CALUX) [28] | Measures specific cellular key events, such as keratinocyte activation or nuclear receptor pathway perturbation. | Genetically engineered for sensitive, specific, and high-throughput readout of pathway activity. |
| Computational Tools | (Q)SAR and Expert System Software (e.g., OECD QSAR Toolbox) [27] | Provides in silico predictions for various endpoints and supports grouping/read-across hypothesis formation. | Essential for compiling existing information and filling data gaps without testing. |
| Computational Tools | Bayesian Network / Machine Learning Models [28] [29] | Serves as the fixed Data Interpretation Procedure (DIP) in Defined Approaches to integrate multiple assay results. | Produces objective, probabilistic predictions from complex input data (e.g., skin sensitization potency). |
| Data Reporting | OECD Harmonized Templates (QMRF, QPRF, Omics Template) [27] | Ensures standardized, transparent reporting of information sources (QSAR models, predictions, omics data) within an IATA. | Critical for regulatory acceptance and reproducibility of the assessment. |

The failure of approximately 30% of preclinical drug candidates due to toxicity issues underscores a critical challenge in pharmaceutical development [14]. Computational toxicology has emerged as a transformative field, leveraging machine learning (ML) and artificial intelligence (AI) to predict adverse effects, thereby offering a faster, more cost-effective, and ethically favorable alternative to traditional animal testing [14] [35]. However, the transition from a promising in silico model to a reliable tool for decision-making hinges on a robust, systematic validation workflow. This guide provides a comparative framework for this essential process, from initial conceptualization to final performance reporting, ensuring models are not only predictive but also transparent, interpretable, and trustworthy for researchers and regulatory evaluators alike [36].

Model Conceptualization and Development

The foundation of a reliable computational toxicology model is a clearly defined purpose and a rigorously curated dataset.

Define the Predictive Task: The endpoint must be specific, measurable, and biologically relevant. Common tasks include binary classification (toxic/non-toxic), multi-class toxicity grading (e.g., using GHS classes) [37], regression for potency values (e.g., LD₅₀ or TD₅₀) [36], or predicting specific organ toxicities like hepatotoxicity or cardiotoxicity [14].

Curate a High-Quality Dataset: Model performance is intrinsically linked to data quality. Key steps involve:

- Source Diverse Data: Integrate data from public databases (e.g., Tox21 [38]), proprietary sources, and literature. A study on ToxinPredictor utilized a manually curated set of 14,064 unique molecules (7550 toxic, 6514 non-toxic) [38].

- Ensure Chemical and Biological Applicability Domain: The dataset should represent the chemical space and biological mechanisms relevant to the model's intended use [36].

- Address Data Imbalances: Employ techniques like stratified sampling or synthetic data generation to handle uneven class distributions, which is common in toxicity data [35].
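
Of the imbalance-handling techniques mentioned above, stratified sampling is the simplest to illustrate. The sketch below splits a labelled dataset into training and test indices while preserving the class ratio in both partitions. The function name and default parameters are hypothetical, and the example labels are placeholders that only reproduce the class counts cited above for the ToxinPredictor dataset, not its actual molecules.

```python
import random
from collections import defaultdict


def stratified_split(
    labels: list[int], test_fraction: float = 0.2, seed: int = 0
) -> tuple[list[int], list[int]]:
    """Return (train_indices, test_indices) with class proportions preserved."""
    by_class: dict[int, list[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    train, test = [], []
    for label in sorted(by_class):
        members = by_class[label]
        rng.shuffle(members)
        n_test = round(len(members) * test_fraction)
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return sorted(train), sorted(test)


# Placeholder labels matching the cited class counts: 7550 toxic, 6514 non-toxic.
labels = [1] * 7550 + [0] * 6514
train_idx, test_idx = stratified_split(labels, test_fraction=0.2, seed=42)

toxic_share_all = sum(labels) / len(labels)
toxic_share_test = sum(labels[i] for i in test_idx) / len(test_idx)
print(f"toxic share overall: {toxic_share_all:.3f}, in test set: {toxic_share_test:.3f}")
```

For toxicity data it is often worth going one step further and splitting by chemical scaffold or cluster as well, since a purely random stratified split can place close structural analogues in both partitions and inflate apparent performance.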

Select Molecular Descriptors and Algorithms: The choice of features and model architecture is critical.

- Descriptor Extraction: Tools such as RDKit and PaDEL are used to compute molecular fingerprints, physicochemical properties (e.g., log P, molecular weight), and topological descriptors [38] [35].

- Algorithm Selection: Options range from traditional ML to deep learning. Studies show Support Vector Machines (SVM) and Random Forests (RF) often achieve state-of-the-art performance for classification tasks, while Deep Neural Networks (DNNs) excel with complex, high-dimensional data [38] [35]. The table below compares common approaches.

Table 1: Comparison of Common Algorithmic Approaches in Computational Toxicology

| Algorithm Type | Example Models | Typical Use Case | Strengths | Key Considerations |

|---|---|---|---|---|

| Traditional ML | SVM, Random Forest, Gradient Boosting [38] [35] | Binary/Multi-class Toxicity Classification | High interpretability, performs well on structured descriptor data, less computationally demanding | Feature engineering is crucial; may plateau with very complex data |