From Molecules to Ecosystems: A Comprehensive Guide to Ecological Risk Assessment Across Levels of Biological Organization

This article provides a critical synthesis for researchers, scientists, and drug development professionals on the methodologies, challenges, and integration of ecological risk assessment (ERA) across hierarchical biological scales.

Abstract

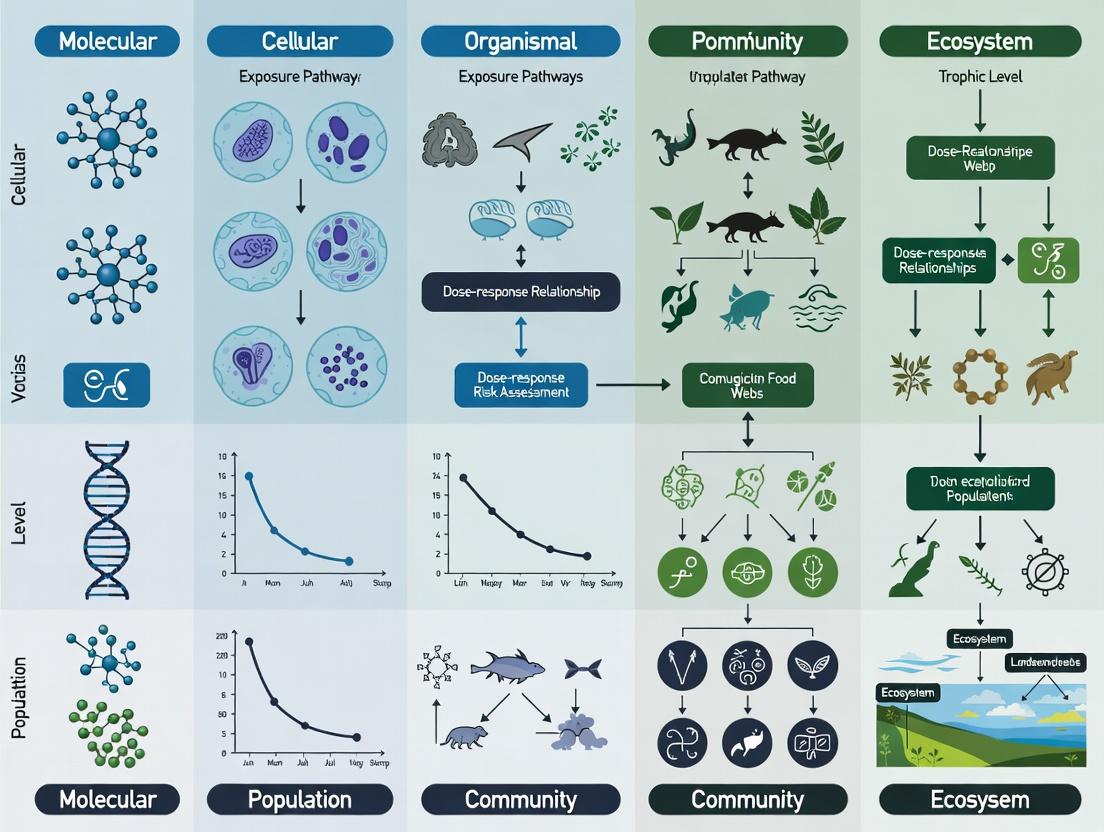

This article provides a critical synthesis for researchers, scientists, and drug development professionals on the methodologies, challenges, and integration of ecological risk assessment (ERA) across hierarchical biological scales. It explores the foundational principles differentiating sub-organismal, individual, population, community, and ecosystem-level assessments, highlighting the persistent gap between molecular measurement endpoints and regulatory protection goals [citation:1][citation:5]. The review details advanced methodological frameworks, including Adverse Outcome Pathways (AOPs), population modeling, and probabilistic scenario-based approaches, that aim to bridge these levels [citation:3][citation:4][citation:9]. It further examines key troubleshooting issues such as accounting for genetic diversity, multi-stressor interactions, and ecological complexity within problem formulation [citation:7][citation:8]. Finally, the article presents a comparative validation of assessment approaches, evaluating their predictive power, uncertainty, and utility for decision-making in biomedical and environmental contexts. The goal is to equip professionals with the knowledge to design more ecologically relevant and predictive risk assessments.

Foundations of Multi-Scale Assessment: Defining Endpoints and Navigating the Biological Hierarchy

Ecological Risk Assessment (ERA) is the formal process of evaluating the likelihood and significance of adverse environmental impacts resulting from exposure to stressors such as chemicals, disease, or invasive species [1]. The overarching goal is to protect valued ecological entities, ultimately expressed as the sustained delivery of ecosystem services such as clean water, soil productivity, pollination, and sustainable fisheries [2] [1]. However, a persistent and core challenge limits the efficacy of ERA: the disconnect between what is commonly measured and what society aims to protect [2] [3].

Modern toxicology has made significant advances in high-throughput in vitro systems and molecular biomarkers that can rapidly identify Molecular Initiating Events (MIEs), the initial interactions between a stressor and a biological target [2] [3]. While these tools allow for efficient screening of many chemicals with reduced vertebrate testing, their results are confined to low levels of biological organization [4]. Regulatory protection goals, in contrast, concern higher-order ecological structures (populations, communities, and entire ecosystems) and their associated functions and services [1] [4]. The scientific and predictive linkage between an early molecular perturbation and a consequential shift in an ecosystem service remains complex and poorly quantified [2].

This creates a critical gap in risk assessment. Decisions are often based on data from standardized single-species laboratory tests (e.g., LC50 for Daphnia magna), which are then extrapolated, with substantial uncertainty, to predict effects on diverse field communities and ecosystem endpoints [4]. This mismatch between measurement endpoints and assessment endpoints can lead to both under-protection of the environment and inefficient allocation of management resources [4]. This guide provides a comparative analysis of ERA methodologies across biological scales, framed within the thesis that integrative, multi-scale modeling is essential for bridging this gap and achieving predictive next-generation ecological risk assessment [3] [5].

Comparative Analysis of ERA Across Biological Organization Levels

The choice of biological organization level for an ERA involves significant trade-offs. Each level offers distinct advantages and limitations in terms of methodological ease, ecological relevance, and extrapolative power [4]. The following table synthesizes these key characteristics, providing a framework for selecting appropriate assessment strategies based on specific risk assessment goals.

Table 1: Comparison of Ecological Risk Assessment Methodologies Across Levels of Biological Organization

| Level of Organization | Key Measurement Endpoints | Strengths | Weaknesses | Primary Use Case |

|---|---|---|---|---|

| Molecular/Cellular | Gene expression, protein binding, enzyme inhibition, in vitro cytotoxicity [2] [3]. | High-throughput, cost-effective, mechanistic insight, reduces animal testing, excellent for screening many chemicals [4] [3]. | Greatest distance from ecological protection goals; difficult to extrapolate to organismal and higher-level effects; misses systemic feedback [4] [3]. | Early hazard identification & screening; MIE characterization for Adverse Outcome Pathways (AOPs). |

| Individual Organism | Survival (LC50/EC50), growth, reproduction, development, behavior [4] [6]. | Standardized, reproducible, direct measure of toxicity, cornerstone of regulatory testing (Tier I) [4] [6]. | Limited ecological realism; ignores population dynamics (e.g., recovery, compensation) and species interactions [4]. | Core regulatory testing; derivation of protective thresholds (e.g., PNEC) for single species. |

| Population | Population growth rate, age/stage structure, extinction risk, spatial distribution models [4] [3]. | More ecologically relevant than individual-level data; can incorporate life-history traits and density dependence; closer to some protection goals (e.g., threatened species) [4] [3]. | More resource-intensive; requires complex modeling; species-specific, making multi-species assessments challenging [4]. | Assessing risks to specific valued populations (e.g., endangered species); refining risk for chemicals failing Tier I screens. |

| Community & Ecosystem | Species richness, abundance, diversity indices, functional group metrics, ecosystem process rates (e.g., decomposition, primary production) [4]. | High ecological relevance; captures species interactions (competition, predation) and emergent properties; can directly link to some ecosystem services [4]. | Highly complex, variable, and costly to study (e.g., mesocosms, field studies); results are context-dependent and difficult to generalize [4]. | Higher-tier (Tier III/IV) assessment for chemicals of high concern; site-specific risk evaluation; validation of lower-tier predictions [4]. |

| Landscape/Ecosystem Service | Habitat connectivity, service delivery metrics (e.g., crop yield, water filtration), integrated socio-ecological models [2] [1]. | Directly addresses societal protection goals (ecosystem services); integrates multiple stressors and ecological compartments [2] [1]. | Maximum complexity; requires extensive transdisciplinary data; models are highly uncertain and difficult to validate [2]. | Strategic environmental management; cost-benefit analysis of regulatory actions; watershed or regional planning [1]. |

The trends are clear: as one moves up the biological hierarchy, methodological ease and throughput decrease, while ecological relevance, system complexity, and context-dependence increase [4]. Conversely, lower-level assays are efficient for screening but suffer from a large extrapolation distance to meaningful ecological outcomes [4]. No single level is sufficient for a comprehensive ERA. Therefore, the modern paradigm emphasizes a weight-of-evidence approach that integrates data from multiple levels, connected through conceptual frameworks (like AOPs) and quantitative models [2] [3].

Detailed Experimental Protocols for Key ERA Methodologies

Protocol for High-Throughput In Vitro Screening (Molecular/Sub-Organismal Level)

This protocol is designed to identify Molecular Initiating Events and early cellular responses for rapid chemical prioritization.

- Test System Preparation: Select appropriate in vitro systems (e.g., fish gill cell line, zebrafish embryo, yeast-based estrogen screen). Culture cells or embryos according to standardized guidelines (e.g., OECD TG 236 for the fish embryo acute toxicity test, or OECD TG 249 for the RTgill-W1 fish gill cell line assay). Ensure consistency in passage number, growth medium, and incubation conditions [3].

- Chemical Exposure: Prepare a logarithmic dilution series of the test chemical in an appropriate solvent, with solvent controls. For water-soluble chemicals, use culture medium as the diluent. For hydrophobic chemicals, use a carrier solvent (e.g., DMSO) at a concentration not exceeding 0.1% v/v. Expose test systems in multi-well plates for a defined period (e.g., 24, 48, 96 hours) [3].

- Endpoint Measurement: Quantify relevant sublethal endpoints.

- Cytotoxicity: Measure using standard assays (e.g., Alamar Blue, MTT, neutral red uptake) to determine a benchmark concentration for cell viability.

- Specific Mechanistic Endpoints: Use reporter gene assays for receptor activation (e.g., estrogen receptor), measure enzyme activity (e.g., acetylcholinesterase inhibition), or quantify oxidative stress markers (e.g., glutathione levels) [3].

- Transcriptomics/Proteomics: For mode-of-action discovery, use high-throughput RNA sequencing or protein arrays on exposed versus control samples [7].

- Data Analysis: Calculate effect concentrations (e.g., EC50 for cytotoxicity or receptor activation). For omics data, perform pathway enrichment analysis to identify perturbed biological processes. The resulting data are used to rank chemical potency and inform the development of Adverse Outcome Pathways [3].
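
The EC50 calculation in the data analysis step can be illustrated with a minimal sketch. It assumes a two-parameter log-logistic concentration-response model and fits it by ordinary least squares on the logit scale. The function name `fit_ec50` and the example data are hypothetical, and dedicated dose-response software would normally be preferred for regulatory work.

```python
import math


def fit_ec50(concs, frac_affected):
    """Estimate (EC50, Hill slope) for a log-logistic curve.

    Model (an assumption of this sketch): p = 1 / (1 + (EC50 / c) ** h),
    so logit(p) = h * (ln c - ln EC50) is linear in ln c.
    """
    # The logit is undefined at 0 and 1, so only partial responses are used.
    points = [
        (math.log(c), math.log(p / (1.0 - p)))
        for c, p in zip(concs, frac_affected)
        if c > 0 and 0.0 < p < 1.0
    ]
    if len(points) < 2:
        raise ValueError("need at least two partial responses")
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx                      # Hill slope h
    intercept = mean_y - slope * mean_x    # equals -h * ln(EC50)
    return math.exp(-intercept / slope), slope


# Hypothetical logarithmic dilution series (mg/L) with synthetic responses
# generated from EC50 = 10 and h = 1.5, so the fit can be checked by eye.
concs = [0.1, 1.0, 10.0, 100.0, 1000.0]
frac = [1.0 / (1.0 + (10.0 / c) ** 1.5) for c in concs]
ec50, hill = fit_ec50(concs, frac)
print(f"EC50 = {ec50:.3g} mg/L, Hill slope = {hill:.3g}")
```

Fitting on the logit scale keeps the example dependency-free, at the cost of discarding 0% and 100% responses. A maximum-likelihood fit would use those observations as well.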

Protocol for Population-Level ERA Using Individual-Based Models (IBMs)

This protocol uses modeling to extrapolate individual-level toxicity data to population-level consequences.

- Model Parameterization:

- Toxicokinetic-Toxicodynamic (TKTD) Model: Fit a model (e.g., General Unified Threshold model of Survival - GUTS) to standard organismal toxicity data (e.g., survival over time at different concentrations) to characterize internal dose and damage dynamics [3].

- Individual Life History: Collate data on the species' life cycle: growth rates, age/size at maturation, fecundity schedules, and background mortality. These data can come from the literature or control treatments of chronic tests [4] [3].

- Environmental Context: Define key environmental variables for the assessment scenario (e.g., temperature, resource availability, habitat structure) [3].

- Model Integration: Construct an IBM where simulated individuals each follow the defined life-history rules and are subject to stressor-induced mortality and/or impaired reproduction as predicted by the TKTD model. Incorporate stochasticity in individual processes and, if relevant, spatial explicitness (e.g., landscape grids) [3].

- Simulation & Analysis: Run the model for multiple generations under a range of exposure scenarios (constant, pulsed, spatially variable). Record population-level endpoints: intrinsic growth rate (r), time to recovery after a pulse, quasi-extinction risk, and changes in spatial distribution.

- Risk Characterization: Compare population metrics under exposure scenarios to those in a reference (unexposed) simulation. Determine the exposure concentration or pattern that leads to an unacceptable population-level effect, as defined by protection goals (e.g., population decline >20%) [4] [3].
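
The protocol steps above can be sketched as a deliberately small individual-based simulation. This is an illustration under strong assumptions, not a GUTS-coupled model: each individual survives a time step with a fixed probability, the stressor is represented by a constant extra mortality fraction (`stress_mortality`), and reproduction is density dependent through a hypothetical carrying capacity. All parameter values are invented for the example.

```python
import random


def simulate_population(n0, steps, survival, birth_prob, capacity,
                        stress_mortality=0.0, seed=0):
    """Return the population size at each time step (length steps + 1)."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    n = n0
    trajectory = [n]
    # Stressor effect: background survival reduced by a fixed fraction.
    p_survive = survival * (1.0 - stress_mortality)
    for _ in range(steps):
        # Each individual survives or dies independently (stochasticity).
        survivors = sum(1 for _ in range(n) if rng.random() < p_survive)
        # Density dependence: reproduction declines as capacity is approached.
        crowding = max(0.0, 1.0 - survivors / capacity)
        births = sum(1 for _ in range(survivors)
                     if rng.random() < birth_prob * crowding)
        n = survivors + births
        trajectory.append(n)
    return trajectory


def mean_final_size(replicates=20, **kwargs):
    """Average final abundance over replicate runs with different seeds."""
    finals = [simulate_population(seed=s, **kwargs)[-1]
              for s in range(replicates)]
    return sum(finals) / replicates


scenario = dict(n0=100, steps=100, survival=0.8, birth_prob=0.5, capacity=500)
reference = mean_final_size(**scenario)
exposed = mean_final_size(stress_mortality=0.1, **scenario)
decline = 1.0 - exposed / reference
print(f"relative decline vs. reference: {decline:.0%}")
print("exceeds 20% protection threshold:", decline > 0.20)
```

The final comparison mirrors the risk characterization step: the exposed scenario is judged against an unexposed reference run rather than against an absolute abundance.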

Protocol for Community-Level Assessment Using Aquatic Mesocosms

This protocol provides high-tier, ecologically realistic data on chemical effects on complex multi-species systems.

- Mesocosm Design and Establishment: Use outdoor pond systems (e.g., 1000-10,000 L) or large indoor flow-through tanks. Standardize sediment, water source, and nutrient levels. Introduce a diverse, representative community: phytoplankton, periphyton, zooplankton (cladocerans, copepods), macroinvertebrates (insects, snails), and often plants and fish. Allow the system to stabilize and develop trophic interactions for 2-3 months prior to dosing [4].

- Experimental Design and Dosing: Employ a randomized block design. Establish a gradient of treatments: controls (no chemical), solvent controls, and multiple concentrations of the test chemical (typically 3-5), often replicating each treatment 3-4 times. Apply the chemical to simulate a realistic exposure scenario (e.g., a single pesticide spray event, chronic low-level input) [4].

- Monitoring and Sampling: Conduct intensive monitoring before and after dosing (e.g., weekly for 8-12 weeks).

- Abiotic: Measure temperature, pH, dissolved oxygen, nutrient levels, and chemical concentration (to characterize fate and exposure).

- Biotic: Sample phytoplankton (chlorophyll a, cell counts), zooplankton (abundance, species ID), macroinvertebrates (emergence traps, benthic samples). Measure functional endpoints like leaf litter decomposition rates or primary productivity [4].

- Statistical and Ecological Effect Analysis: Analyze data using multivariate statistics (e.g., Principal Response Curves) to visualize community trajectory differences between treatments and controls over time. Calculate No Observed Effect Concentrations (NOECs) and/or Effect Concentrations (ECx) for key structural (species richness) and functional endpoints. The goal is to identify a community-level threshold where significant and potentially irreversible shifts occur [4].
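
Structural endpoints such as species richness are simple to compute once taxa have been counted. The sketch below computes richness and the Shannon diversity index for one mesocosm sample. The Shannon index is used here as one common example of a diversity index and is an assumption, since the protocol does not name a specific index. The taxon counts are hypothetical.

```python
import math


def species_richness(counts):
    """Number of taxa with at least one individual in the sample."""
    return sum(1 for c in counts.values() if c > 0)


def shannon_diversity(counts):
    """Shannon index H' = -sum(p_i * ln p_i) over taxa present."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log(c / total)
                for c in counts.values() if c > 0)


# Hypothetical zooplankton counts from a control and a dosed mesocosm.
control = {"Daphnia": 120, "Bosmina": 80, "Cyclops": 60, "Keratella": 40}
dosed = {"Daphnia": 5, "Bosmina": 0, "Cyclops": 150, "Keratella": 45}

for name, sample in (("control", control), ("dosed", dosed)):
    print(name, species_richness(sample), round(shannon_diversity(sample), 3))
```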

Visualizing Pathways and Workflows

Diagram 1: The Core Ecological Risk Assessment Process

Diagram 2: A Framework for Predictive Cross-Scale Modeling in ERA

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Multi-Scale Ecological Risk Assessment Research

| Category | Item/Solution | Function in ERA Research |
|---|---|---|
| Molecular/In Vitro Tools | Stable Reporter Gene Cell Lines (e.g., ER-CALUX, AR-EcoScreen) | High-throughput screening for specific receptor-mediated toxicity pathways (endocrine disruption). |
| | Fluorescent Viability/Cytotoxicity Assay Kits (e.g., Alamar Blue, CFDA-AM) | Rapid, plate-based quantification of general cellular health and membrane integrity in in vitro systems. |
| | qPCR/PCR Assays & Microarrays/RNA-Seq Kits | Profiling gene expression changes to identify molecular biomarkers of exposure and effect and elucidate modes of action [7]. |
| Organismal Testing | Standardized Test Organisms (e.g., Daphnia magna, Ceriodaphnia dubia, Fathead minnow embryos, Chironomus riparius) | Providing consistent, reproducible biological material for regulatory toxicity testing across trophic levels (algae, invertebrate, fish). |
| | Reconstituted Water & Certified Reference Sediments | Providing consistent, contaminant-free aqueous and substrate media for aquatic and sediment toxicity tests, ensuring reproducibility. |
| | Precise Chemical Dosing Solutions (e.g., from neat compound) | Accurate preparation of exposure concentrations for laboratory bioassays, critical for dose-response modeling. |
| Population & Community Studies | Environmental DNA (eDNA) Sampling & Extraction Kits | Non-invasive biodiversity monitoring for mesocosm and field studies; tracks species presence/absence and community composition [7]. |
| | Standardized Artificial Substrates (e.g., Hester-Dendy samplers, leaf packs) | Uniform sampling of colonizing macroinvertebrate communities in field and mesocosm studies for consistent metric calculation. |
| | Fluorescent Tracking Dyes or Stable Isotope Enrichment | Tracing nutrient or contaminant flow through food webs in experimental ecosystems to understand trophic transfer. |
| Data Integration & Modeling | Bioinformatics Pipelines & Databases (e.g., ECOTOX, BOLD, GBIF, ELIXIR resources) [6] [7] | Curating, standardizing, and analyzing molecular sequence data, species occurrence data, and ecotoxicity literature data for model parameterization and validation. |
| | Mechanistic Modeling Software/Platforms (e.g., R packages for TKTD/GUTS, NetLogo for IBMs, AQUATOX) | Providing the computational environment to develop and run integrative models that link processes across biological scales [3]. |

Defining Assessment Endpoints vs. Measurement Endpoints Across Scales

Fundamental Concepts and Distinctions

In ecological risk assessment (ERA), the clear distinction between assessment endpoints and measurement endpoints is foundational to scientifically defensible and socially relevant environmental protection. An assessment endpoint is an explicit expression of the actual environmental value to be protected, defined by societal goals and management objectives [4]. Examples include "the sustainability of a commercial fish population" or "the biodiversity of a wetland community." In contrast, a measurement endpoint is a measurable response to a stressor that is quantitatively linked to the assessment endpoint [4]. Common measurement endpoints include the 96-hour LC50 (median lethal concentration) for a standard test species or a biochemical biomarker of exposure.

The relationship between these endpoints is hierarchical. Assessment endpoints, often defined at higher levels of biological organization like populations or communities, are the ultimate targets of protection. Measurement endpoints, which are practical to quantify in experiments (often at the individual or suborganismal level), serve as quantitative proxies for predicting effects on those assessment endpoints [8]. A central challenge in ERA is the frequent mismatch between what is easily measured in controlled laboratory tests and the complex, valued ecological entities we aim to protect [4]. This guide compares how endpoint selection and utility shift across scales of biological organization, from molecules to landscapes.

Endpoint Selection Across Levels of Biological Organization

The choice and feasibility of endpoints are intrinsically linked to the level of biological organization at which an assessment is focused. Each level offers distinct advantages and trade-offs between ecological relevance, methodological practicality, and certainty in cause-effect relationships [4] [9].

Comparison of Endpoint Characteristics by Organizational Level

Table 1: Comparative analysis of endpoint utility across levels of biological organization.

| Level of Biological Organization | Typical Assessment Endpoint Examples | Common Measurement Endpoint Examples | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Suborganismal (Biomarker) | Population viability, Ecosystem health | Gene expression, Enzyme inhibition, Protein biomarkers | High-throughput screening; Clear mechanistic link to stressor; Low cost per assay [4] [9]. | Large extrapolation distance to protected entities; Ecological relevance uncertain [4]. |

| Individual (Organismal) | Survival of key species, Individual health | LC50/EC50, Growth rate, Reproduction (e.g., Daphnia 21-day test) [4] | Standardized, reproducible tests; Strong cause-effect certainty; Extensive historical database [4]. | Misses population-level dynamics (e.g., compensation); Ignores species interactions [4]. |

| Population | Abundance, Production, Persistence of a species | Population growth rate, Age/size structure, Extinction risk models [10] | Directly relevant to conservation goals; Can integrate individual-level effects over time [11] [10]. | Data-intensive; Requires complex modeling; Less amenable to high-throughput testing [4]. |

| Community & Ecosystem | Biodiversity, Trophic structure, Ecosystem function (e.g., decomposition) | Species richness, Biomass spectra, Nutrient cycling rates [12] | Captures emergent properties and species interactions; High ecological relevance [4] [12]. | Highly complex and variable; Low repeatability; High cost and resource needs [4]. |

| Landscape/Region | Habitat connectivity, Meta-population persistence, Regional water quality | Land cover change, Patch size distribution, Material export [8] | Addresses large-scale management issues; Incorporates spatial dynamics [8]. | Extremely complex modeling; Validation is difficult; Often lacks established protocols [8]. |

Trends and Trade-offs

A synthesis of the research reveals two primary opposing trends across the biological hierarchy [4] [9]:

- Negatively Correlated with Level: Ease of establishing cause-effect relationships, ease of high-throughput screening, and methodological certainty generally decrease at higher organizational levels.

- Positively Correlated with Level: Ecological relevance, inclusion of system feedbacks and context dependencies, and the ability to capture recovery processes generally increase at higher organizational levels.

Some factors, such as ethical considerations regarding vertebrate testing and the ability to screen many species, show no consistent trend across levels [4]. Furthermore, key metrics like the repeatability of assays and comprehensive cost analyses (e.g., cost per species assessed) lack sufficient comparative data to draw definitive conclusions [4].

Tiered Assessment Frameworks and Endpoint Evolution

ERA is typically conducted in a tiered framework, where the sophistication of endpoints, exposure scenarios, and effects analysis escalates with each tier [4]. This structure efficiently allocates resources by using simple, conservative endpoints for initial screening.

Table 2: Evolution of endpoints within a tiered ecological risk assessment framework.

| Tier | Assessment Philosophy | Typical Assessment Endpoint | Dominant Measurement Endpoints | Risk Metric |

|---|---|---|---|---|

| I (Screening) | Conservative "screen out" of negligible risks. | Generic protection of aquatic life, wildlife. | Standard single-species toxicity values (LC50, NOAEC) [4]. | Deterministic Hazard Quotient (HQ) [4]. |

| II (Refined) | Incorporates variability and uncertainty. | Protection of specific, valued populations. | Probabilistic species sensitivity distributions (SSDs); refined exposure models. | Probability of exceeding effects threshold [4]. |

| III (Advanced) | Site-specific, biologically and spatially explicit. | Sustainability of local community structure/function. | Multi-species micro/mesocosm responses; population model outputs [4]. | Risk estimates for complex endpoints [4]. |

| IV (Field Verification) | Direct measurement under real-world conditions. | Status of ecosystem at a particular site. | Field monitoring data (e.g., invertebrate community indices) [4]. | Multiple lines of evidence [4]. |

As the assessment tier escalates, measurement endpoints evolve from simple, standardized laboratory responses to complex, system-level attributes that more closely approximate the desired assessment endpoint [4].
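
The deterministic Hazard Quotient used at Tier I is a simple ratio of an exposure estimate to an effect threshold. The sketch below shows the arithmetic and a screening decision. The level of concern of 1.0 is an illustrative default, since regulatory schemes set their own values, and the function names and concentrations are hypothetical.

```python
def hazard_quotient(exposure_conc, effect_threshold):
    """HQ = estimated exposure concentration / toxicity threshold.

    Both arguments must be in the same units (e.g., ug/L).
    """
    if effect_threshold <= 0:
        raise ValueError("effect threshold must be positive")
    return exposure_conc / effect_threshold


def tier1_screen(exposure_conc, effect_threshold, level_of_concern=1.0):
    """Conservative screen: anything at or above the level of concern
    is passed on for refinement rather than declared a risk."""
    hq = hazard_quotient(exposure_conc, effect_threshold)
    decision = "refine at higher tier" if hq >= level_of_concern else "screened out"
    return hq, decision


# Hypothetical values: predicted exposure 2 ug/L, Daphnia LC50 10 ug/L.
print(tier1_screen(2.0, 10.0))
# Hypothetical values: predicted exposure 15 ug/L against the same LC50.
print(tier1_screen(15.0, 10.0))
```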

Experimental and Modeling Protocols for Endpoint Bridging

Mesocosm Experiments for Community-Level Endpoints

Protocol Overview: Multi-species mesocosm studies (Tier III) are a critical methodology for generating measurement endpoints closer to community and ecosystem assessment endpoints [4].

- Objective: To evaluate the effects of a stressor (e.g., pesticide concentration) on a semi-natural, contained ecosystem, measuring structural (species abundance) and functional (process rates) endpoints.

- Design: A typical aquatic mesocosm consists of outdoor ponds or large tanks (1,000-10,000 L) seeded with a natural assemblage of plankton, invertebrates, macrophytes, and sometimes fish. Treatments (e.g., different chemical concentrations) are replicated and randomly assigned.

- Key Measurement Endpoints: Phytoplankton and zooplankton species richness and abundance (structural); leaf litter decomposition rate (functional); chlorophyll-a concentration; and dissolved oxygen diurnal flux.

- Duration: Typically lasts for months to over a year to capture seasonal dynamics and recovery potential [4].

- Analysis: Data are analyzed using multivariate statistics (e.g., Principal Response Curves) to visualize community-level treatment effects over time and determine no-observed-effect concentrations (NOECs) for the system.
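
Principal Response Curves require a dedicated multivariate package, so the sketch below uses a much simpler stand-in to convey the idea of tracking community divergence from the control over time: the Bray-Curtis dissimilarity between a treated and a control community at each sampling date. This is not PRC, and the taxa, counts, and sampling weeks are hypothetical.

```python
def bray_curtis(sample_a, sample_b):
    """Bray-Curtis dissimilarity between two abundance dictionaries.

    0.0 means identical abundances; 1.0 means no shared taxa.
    """
    taxa = set(sample_a) | set(sample_b)
    diff = sum(abs(sample_a.get(t, 0) - sample_b.get(t, 0)) for t in taxa)
    total = sum(sample_a.get(t, 0) + sample_b.get(t, 0) for t in taxa)
    return diff / total if total else 0.0


# Hypothetical invertebrate counts; the control is held fixed for simplicity.
control = {"Daphnia": 40, "Cyclops": 30, "Chironomus": 30}
treated_by_week = {
    1: {"Daphnia": 10, "Cyclops": 30, "Chironomus": 20},
    8: {"Daphnia": 30, "Cyclops": 30, "Chironomus": 30},
}

for week, treated in sorted(treated_by_week.items()):
    print(f"week {week}: dissimilarity to control = "
          f"{bray_curtis(treated, control):.2f}")
```

A dissimilarity that rises after dosing and later falls back toward the pre-dosing level is the kind of pattern that would be read as effect followed by recovery.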

Population Modeling from Individual-Level Data

Protocol Overview: Mechanistic population models bridge individual-level measurement endpoints (e.g., survival, reproduction) to population-level assessment endpoints (e.g., abundance, extinction risk) [10].

- Objective: To translate toxicant effects on individuals into projections for population trajectory.

- Model Types: Common models include Individual-Based Models (IBMs), which simulate the fate of each organism, and Matrix Projection Models, which use stage- or age-classified vital rates [10].

- Data Requirements: Models require control and treatment data on age/size-specific survival, fecundity, and growth. These are derived from life-cycle toxicity tests. Models also require information on density-dependence and life-history traits.

- Process: The model is first parameterized and validated with control (no stressor) data. Toxicant effects are then incorporated by altering the relevant vital rates in the treatment simulations (e.g., reducing juvenile survival by 30% where the concentration-response data indicate a 30% effect at the exposure concentration of interest).

- Output: The primary model outputs are population growth rate (λ), probability of quasi-extinction, and time to recovery. A reduction in λ below 1.0 indicates a declining population, directly addressing a population-level assessment endpoint [10].
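
For a matrix projection model, the population growth rate λ is the dominant eigenvalue of the projection matrix. The sketch below estimates it by power iteration for a hypothetical three-age-class Leslie matrix, then repeats the calculation with juvenile survival reduced by 30% to mimic a toxicant effect. The vital rates are invented for illustration, and the method assumes a primitive matrix (more than one reproductive age class with coprime ages) so that the iteration converges.

```python
def growth_rate(matrix, max_iter=10_000, tol=1e-12):
    """Dominant eigenvalue (lambda) of a projection matrix by power iteration."""
    n = len(matrix)
    v = [1.0 / n] * n          # stage distribution, normalized to sum to 1
    lam = 0.0
    for _ in range(max_iter):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        new_lam = sum(w)       # growth of total abundance in one time step
        if new_lam == 0.0:
            return 0.0         # population goes extinct in one step
        v = [x / new_lam for x in w]
        if abs(new_lam - lam) < tol:
            return new_lam
        lam = new_lam
    return lam


def leslie(fecundity, survival):
    """Build a Leslie matrix from age-specific fecundity and survival."""
    n = len(fecundity)
    m = [[0.0] * n for _ in range(n)]
    m[0] = list(fecundity)
    for age, s in enumerate(survival):
        m[age + 1][age] = s
    return m


# Hypothetical vital rates: fecundity per age class, survival between classes.
control = leslie([0.0, 1.5, 2.0], [0.5, 0.8])
exposed = leslie([0.0, 1.5, 2.0], [0.5 * 0.7, 0.8])  # juvenile survival -30%

lam_control = growth_rate(control)
lam_exposed = growth_rate(exposed)
print(f"lambda control = {lam_control:.3f}, exposed = {lam_exposed:.3f}")
```

Comparing the two values against 1.0 shows whether the simulated effect is large enough to turn a growing population into a declining one, which is the interpretation described in the Output step.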

AOP to Population Assessment Framework: Illustrates how suborganismal and individual measurement endpoints (Key Events, Adverse Outcomes) feed into models to predict effects on population-level assessment endpoints. [10]

Integrated Frameworks and Future Perspectives

No single level of biological organization provides a perfect suite of endpoints. The future of ERA lies in integrated, weight-of-evidence approaches that combine data from multiple levels [9] [12]. The Adverse Outcome Pathway (AOP) framework is a pivotal organizing tool that facilitates this integration [10]. An AOP is a conceptual model that maps a direct, causal pathway from a Molecular Initiating Event (a measurement endpoint) through intermediate Key Events to an Adverse Outcome relevant to risk assessment (an assessment endpoint, often at the individual or population level) [10]. This framework explicitly links mechanistic data from high-throughput in vitro assays to outcomes of regulatory concern, guiding targeted testing and reducing uncertainty in extrapolation.

The most robust ERAs will employ a dual "top-down" and "bottom-up" strategy [9]. A top-down approach starts with monitoring data from field systems (high-level assessment endpoints) to identify potential impairments, which then guides targeted, lower-level investigation to diagnose causes. The bottom-up approach uses traditional toxicity testing and AOPs to predict potential higher-order effects. System-scale modeling, incorporating food web interactions and ecosystem processes, is essential for synthesizing these lines of evidence [12].

The Scientist's Toolkit: Essential Reagent Solutions

Table 3: Key research reagents and materials for endpoint measurement across scales.

| Tool Category | Specific Item / Solution | Primary Function in Endpoint Measurement |

|---|---|---|

| Model Organisms | Daphnia magna (Cladoceran), Danio rerio (Zebrafish), Lemna spp. (Duckweed) | Standardized test species for deriving individual-level measurement endpoints (mortality, growth, reproduction) in regulatory assays [4]. |

| Biomarker Assays | Acetylcholinesterase (AChE) Activity Kit, Vitellogenin ELISA Kit, CYP450 Reporter Gene Assay | Quantifies suborganismal key events in an AOP (e.g., enzyme inhibition, endocrine disruption), serving as early-warning measurement endpoints [10]. |

| Mesocosm Components | Sediment & Water from Reference Site, Standardized Invertebrate Inoculum, Macrophyte Transplants | Creates replicated semi-natural systems for measuring community and ecosystem-level endpoints (e.g., biodiversity, functional rates) [4]. |

| Environmental DNA (eDNA) | eDNA Extraction Kits, Universal Primer Sets for Metabarcoding | Enables non-invasive, high-throughput measurement of community composition and biodiversity (a community-level measurement endpoint). |

| Population Modeling Software | RAMAS Ecology, META-X, NetLogo with IBMs | Platform for integrating individual-level toxicity data with life-history information to project population-level assessment endpoints like extinction risk [10]. |

Endpoint Selection Framework: Outlines the logical flow from broad societal goals to the selection of specific measurement endpoints at different biological scales. [4] [8]

This guide provides a comparative analysis of methodological approaches for ecological risk assessment (ERA) across the biological hierarchy, from molecular biomarkers to landscape-scale processes. It is structured within the broader thesis that a multi-level assessment is critical for comprehensive environmental protection, integrating acute toxicity data with chronic, systemic ecological impacts [1].

Comparative Analysis of Assessment Approaches Across Biological Levels

Ecological risk assessment (ERA) is a formal process for evaluating the likelihood of adverse environmental impacts from exposure to stressors like chemicals or land-use change [1]. The choice of assessment method is dictated by the level of biological organization of concern, each offering distinct advantages and limitations in sensitivity, spatial relevance, and managerial utility.

Table 1: Comparison of ERA Methodologies Across Biological Organization Levels

| Organization Level | Primary Assessment Method/Indicator | Typical Endpoints Measured | Spatial Scale | Temporal Sensitivity | Key Advantages | Major Limitations |

|---|---|---|---|---|---|---|

| Sub-Organismal | Biochemical Biomarkers (e.g., enzyme inhibition, DNA damage) | Molecular/cellular function | Point source to local | Immediate to short-term | High sensitivity, early warning, mechanistic insight | Difficult to extrapolate to higher-level effects |

| Organismal | Standardized Toxicity Tests (e.g., LC50, EC50) | Survival, growth, reproduction | Local | Short to medium-term | Standardized, reproducible, strong regulatory foundation [13] | May not reflect complex field conditions or interspecies interactions |

| Population | Species Sensitivity Distributions (SSD) [14] | Population viability, HC5 (Hazard Concentration for 5% of species) | Local to regional | Medium to long-term | Community-relevant, probabilistic risk estimation [14] | Requires extensive toxicity data for multiple species |

| Community & Ecosystem | Biotic Indices (e.g., Nematode Community Indices [15]) | Diversity, structure, functional metrics (e.g., maturity index) | Local to landscape | Medium to long-term | Integrates cumulative stress, reflects ecosystem function | Complex to interpret, requires taxonomic expertise |

| Landscape & Regional | Ecosystem Service Supply-Demand Analysis [16] [17] | Service flow (e.g., water yield, carbon sequestration), risk bundles | Regional to continental | Long-term | Directly links ecology to human well-being, informs land-use policy [16] | Data-intensive, complex modeling required |
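
The HC5 listed for Species Sensitivity Distributions can be illustrated with a short calculation. The sketch assumes a log-normal SSD fitted by the sample mean and standard deviation of log10-transformed toxicity values, which is one common choice among several. The species values are hypothetical, and the sketch omits confidence limits on the HC5, which a real assessment would report.

```python
import math
from statistics import NormalDist, mean, stdev


def hazard_concentration(toxicity_values, fraction=0.05):
    """Concentration expected to affect `fraction` of species (HC5 by default).

    Assumes species sensitivities follow a log-normal distribution.
    """
    if len(toxicity_values) < 2:
        raise ValueError("need toxicity values for at least two species")
    logs = [math.log10(v) for v in toxicity_values]
    ssd = NormalDist(mean(logs), stdev(logs))
    return 10 ** ssd.inv_cdf(fraction)


# Hypothetical chronic toxicity values (ug/L) for eight species.
values = [3.2, 5.6, 12.0, 18.0, 40.0, 75.0, 150.0, 310.0]
hc5 = hazard_concentration(values)
print(f"HC5 = {hc5:.2f} ug/L")
```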

Experimental Protocols for Key Assessment Tiers

Protocol for Organismal-Level Assessment: Deriving Aquatic Life Benchmarks

This protocol outlines the standard process for generating the toxicity data used to establish regulatory benchmarks, such as the EPA's Aquatic Life Benchmarks [13].

- Test Organism Selection: Select representative species of freshwater vertebrates (e.g., fish), invertebrates (e.g., Daphnia), and plants (algae) as mandated by guidelines (40 CFR 158) [13].

- Exposure Regime: Conduct acute (short-term, e.g., 48-96 hour) and chronic (long-term, e.g., full life-cycle) laboratory tests under controlled conditions.

- Endpoint Measurement: For acute tests, determine the concentration lethal to 50% of organisms (LC50). For chronic tests, determine the no-observed-adverse-effect concentration (NOAEC) or EC50 for endpoints like growth or reproduction [13].

- Data Evaluation: Assess the quality and utility of studies using the EPA's Evaluation Guidelines for Ecological Toxicity Data.

- Benchmark Derivation: The most sensitive, scientifically acceptable endpoint for each taxonomic group is established as the benchmark (e.g., Acute = LC50/EC50, Chronic = NOAEC) [13].
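The data evaluation and benchmark derivation steps above can be sketched as a filter followed by a minimum per taxonomic group and test type. The record layout, values, and function name are hypothetical placeholders for illustration, not data or code from [13].

```python
# Hypothetical study records:
# (taxonomic group, test type, endpoint, value in ug/L, passed data evaluation?)
studies = [
    ("fish", "acute", "LC50", 120.0, True),
    ("fish", "acute", "LC50", 85.0, True),
    ("fish", "chronic", "NOAEC", 9.5, True),
    ("invertebrate", "acute", "EC50", 4.2, True),
    ("invertebrate", "acute", "EC50", 1.1, False),  # rejected during data evaluation
    ("invertebrate", "chronic", "NOAEC", 0.6, True),
]

def derive_benchmarks(records):
    """Return the most sensitive acceptable endpoint per (group, test type)."""
    benchmarks = {}
    for group, test_type, endpoint, value, acceptable in records:
        if not acceptable:
            continue  # only scientifically acceptable studies may set a benchmark
        key = (group, test_type)
        if key not in benchmarks or value < benchmarks[key][1]:
            benchmarks[key] = (endpoint, value)
    return benchmarks

benchmarks = derive_benchmarks(studies)
for (group, test_type), (endpoint, value) in sorted(benchmarks.items()):
    print(f"{group:13s} {test_type:8s} {endpoint:6s} {value:g} ug/L")
```

Note that the rejected invertebrate study has the lowest value but does not set the benchmark, which is the point of evaluating data quality before derivation.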

Protocol for Community-Level Assessment: Nematode-Based Indices for Soil Health

This protocol details a method for assessing soil contamination effects using nematode communities as bioindicators, as employed in studies of coal mining areas [15].

- Field Sampling: Collect soil cores from target sites (e.g., near pollution sources) and reference sites. Sampling should account for spatial heterogeneity and seasonal variation [15].

- Nematode Extraction: Extract nematodes from soil samples using a combination of sieving and centrifugal-flotation techniques.

- Taxonomic Identification: Identify nematodes to genus or family level under a microscope and assign them to trophic groups (bacterial feeders, fungal feeders, omnivores, predators) and colonizer-persister (c-p) values.

- Index Calculation:

  - Maturity Index (MI): Abundance-weighted mean of c-p values, indicating ecosystem disturbance (lower MI = greater disturbance).

  - Nematode Channel Ratio (NCR): Proportion of bacterial feeders among bacterial- plus fungal-feeding nematodes, NCR = B / (B + F), indicating the dominant decomposition pathway (values near 1 indicate a bacterial channel, values near 0 a fungal channel).

  - Structure Index (SI): Reflects the complexity of the soil food web [15].

- Dose-Response & Modeling: Analyze relationships between Potentially Toxic Element (PTE) concentrations and nematode indices using models like Bayesian Kernel Machine Regression (BKMR). Use indices to predict ecological risk via machine learning models (e.g., Random Forest) [15].
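As a minimal sketch of the index calculation step, the code below computes the Maturity Index as the abundance-weighted mean c-p value and the Nematode Channel Ratio in its commonly used form B / (B + F), where B and F are the counts of bacterial and fungal feeders. The genus counts and c-p assignments are hypothetical, and the Structure Index is omitted because it needs guild-specific weightings not given here.

```python
# Hypothetical sample: genus -> (count, trophic group, c-p value)
sample = {
    "Rhabditis": (40, "bacterial", 1),
    "Cephalobus": (30, "bacterial", 2),
    "Aphelenchus": (20, "fungal", 2),
    "Aporcelaimellus": (10, "omnivore", 5),
}

def maturity_index(counts):
    """Abundance-weighted mean of c-p values (lower = more disturbed)."""
    total = sum(n for n, _, _ in counts.values())
    return sum(n * cp for n, _, cp in counts.values()) / total

def nematode_channel_ratio(counts):
    """B / (B + F): 1 = bacterial decomposition channel, 0 = fungal channel."""
    b = sum(n for n, group, _ in counts.values() if group == "bacterial")
    f = sum(n for n, group, _ in counts.values() if group == "fungal")
    return b / (b + f)

mi = maturity_index(sample)
ncr = nematode_channel_ratio(sample)
print(f"MI = {mi:.2f}, NCR = {ncr:.2f}")
```

Comparing these values between contaminated and reference sites gives the response variables for the dose-response step.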

Protocol for Landscape-Level Assessment: Ecosystem Service Supply-Demand Risk Bundling

This protocol is used to identify regional ecological risks based on mismatches between ecosystem service supply and human demand [16].

- Service Quantification: Use models like the InVEST (Integrated Valuation of Ecosystem Services and Trade-offs) model to map and quantify the supply of key services (e.g., Water Yield, Carbon Sequestration, Soil Retention) over time [16] [17].

- Demand Quantification: Map demand for the same services based on socio-economic data (e.g., population density, agricultural land, carbon emissions).

- Supply-Demand Ratio Calculation: For each service and grid cell, calculate a supply-demand ratio (SDR) or difference to identify surplus and deficit areas [16].

- Trend Analysis: Calculate supply and demand trend indices (STI, DTI) over a multi-year period to understand dynamics.

- Risk Classification & Bundling: Classify areas into risk levels based on SDR and trends. Use unsupervised clustering algorithms, like Self-Organizing Feature Maps (SOFM), to identify recurring combinations (bundles) of service risks across the landscape (e.g., "Water-Soil-Carbon High-Risk Bundle") [16].
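The supply-demand ratio step can be sketched for a handful of grid cells. The source does not give the exact formula, so the normalized form below, (S - D) divided by the mean of the maximum supply and maximum demand, is an assumption. The cell values, tolerance, and class labels are likewise hypothetical.

```python
# Hypothetical per-cell supply and demand for one service (same units).
supply = [120.0, 80.0, 40.0, 10.0]
demand = [20.0, 80.0, 90.0, 60.0]

def supply_demand_ratio(supply, demand):
    """Assumed form: (S - D) / ((S_max + D_max) / 2), computed per grid cell."""
    scale = (max(supply) + max(demand)) / 2.0
    return [(s - d) / scale for s, d in zip(supply, demand)]

def classify(sdr, tolerance=0.05):
    """Label a cell as surplus, deficit, or balanced (tolerance is illustrative)."""
    if sdr > tolerance:
        return "surplus"
    if sdr < -tolerance:
        return "deficit"
    return "balanced"

sdr = supply_demand_ratio(supply, demand)
classes = [classify(value) for value in sdr]
for value, label in zip(sdr, classes):
    print(f"SDR = {value:+.3f} -> {label}")
```

The per-service class labels for each cell are what a clustering step would then group into risk bundles.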

Diagram 1: Landscape-Level ERA Workflow [16] [17]

The Scientist's Toolkit: Essential Reagents & Materials for Multi-Scale ERA

Table 2: Research Reagent Solutions and Essential Materials

| Item/Category | Function in ERA | Example Use Case / Relevant Level |

|---|---|---|

| Standardized Test Organisms (Daphnia magna, fathead minnow, algae cultures) | Provide reproducible biological units for toxicity testing. | Determining acute LC50/EC50 values for pesticide registration [13]. (Organismal) |

| Chemical Standards & Analytical Reagents (HPLC-grade solvents, certified reference materials for PTEs/OPEs) | Enable precise quantification of stressor concentrations in environmental matrices (water, soil, tissue). | Measuring Potentially Toxic Element (PTE) concentrations in soil [18] [15]. (All levels) |

| DNA/RNA Extraction Kits & PCR Reagents | Isolate and amplify genetic material for biomarker analysis (e.g., gene expression, metagenomics). | Assessing sub-organismal stress responses or microbial community changes. (Sub-organismal, Community) |

| Taxonomic Identification Guides & Databases | Allow accurate classification of biota (e.g., nematodes, benthic macroinvertebrates). | Calculating Nematode Community Indices (MI, SI) for soil health assessment [15]. (Community) |

| Geographic Information System (GIS) Software | Enables spatial data management, analysis, and visualization of landscape-scale patterns. | Mapping ecosystem service supply, demand, and risk bundles [16] [17]. (Landscape) |

| Remote Sensing Data & Indices (Landsat, Sentinel imagery, NDVI) | Provide synoptic, repeated measurements of land cover and vegetation health. | Input for LULC classification and ecosystem service modeling with InVEST [18] [16]. (Landscape) |

| Statistical & Modeling Software (R, Python with scikit-learn, BKMR packages) | Perform dose-response analysis, fit SSDs, run machine learning algorithms (RF, Ridge regression). | Developing multimodal SSDs for community risk [14] or predicting risk indices from biotic data [15]. (Population, Community) |

Integration of Hierarchical Data for Comprehensive Risk Characterization

The final phase of ERA integrates data from multiple levels to characterize risk [1]. For instance, a risk assessment for a pesticide might integrate organismal-level benchmark exceedances [13] with landscape-level models predicting exposure to non-target habitats. A study on mining contamination successfully combined sub-organismal (PTE concentration), community (nematode indices), and landscape (remote sensing) data to create a holistic risk picture [18] [15].

Diagram 2: Integration of Hierarchical Data in ERA

Ecological Risk Assessment (ERA) is the formal process for evaluating the safety of manufactured chemicals, pesticides, and other anthropogenic stressors to the environment [4]. A central, enduring challenge in ERA is that sensitivity, ecological relevance, and predictive uncertainty trade off against one another, and the balance shifts with the level of biological organization. Assessments conducted at lower biological levels (e.g., suborganismal, individual) typically offer high sensitivity, methodological control, and capacity for high-throughput screening. However, they suffer from a large inferential gap between the measured endpoint and the ecological values society aims to protect, leading to high predictive uncertainty when extrapolating to real-world systems [4]. Conversely, assessments at higher biological levels (e.g., community, ecosystem) provide greater ecological relevance by capturing emergent properties, feedback loops, and recovery processes, but are often less sensitive, more variable, and more resource-intensive [4].

This guide objectively compares contemporary ERA approaches through the lens of this trade-off. It is framed within the broader thesis that no single level of biological organization is ideal; rather, a robust assessment strategy employs a tiered framework that integrates information from multiple levels, using mechanistic models to extrapolate across scales, thereby balancing sensitivity, relevance, and managed uncertainty [4] [19].

Comparative Performance of ERA Approaches Across Biological Levels

The performance of ERA is intrinsically linked to the level of biological organization at which it is conducted. The following table synthesizes the comparative advantages and limitations of key approaches, drawing from empirical reviews and case studies [4] [20] [19].

Table 1: Performance Comparison of Ecological Risk Assessment Approaches Across Levels of Biological Organization

| Assessment Level & Example Method | Relative Sensitivity | Ecological Relevance & Context | Key Sources of Predictive Uncertainty | Primary Use Case & Throughput |

|---|---|---|---|---|

| Suborganismal/ Biomarker (e.g., genomic, proteomic assays) | Very High. Detects molecular initiating events long before overt toxicity. | Very Low. Far removed from protection goals; lacks biological integration and recovery mechanisms. | High extrapolation uncertainty to higher-level effects; unknown relationship to population fitness. | Screening/ prioritization of chemicals; Very High throughput. |

| Individual Organism (e.g., standard lab toxicity tests, LC50/NOEC) | High. Measures overt toxicity on standard test organisms under controlled conditions. | Low. Based on single species; ignores species interactions, demographic structure, and environmental mediation. | Interspecies extrapolation; laboratory-to-field extrapolation; ignores population recovery. | Regulatory cornerstone for deriving toxicity thresholds; High throughput. |

| Population (e.g., demographic or matrix models, in-situ population studies) | Moderate. Integrates individual-level effects on survival, growth, reproduction into population metrics (e.g., growth rate λ). | Moderate. Captures demographic processes critical to species persistence but often lacks multi-species interactions. | Parameter uncertainty for vital rates; density-dependent feedbacks; spatial structure often omitted. | Refined risk assessment for listed or keystone species; Medium throughput with models. |

| Community & Ecosystem (e.g., mesocosm studies, field monitoring, trait-based models) | Variable/Low. May miss subtle effects but can detect emergent, indirect effects. | High. Captures species interactions, functional diversity, and ecosystem processes directly relevant to protection goals. | High natural variability; structural uncertainty of model choice; costly, limiting replication. | Higher-tier, site-specific validation; Low throughput. |

| Landscape/Scenario (e.g., agent-based models, integrated exposure scenarios) [21] [20] | Context-Dependent. Sensitivity is a function of model complexity and parameterization. | Very High. Explicitly incorporates spatial dynamics, habitat heterogeneity, and meta-population processes. | Complex uncertainty propagation (initial conditions, drivers, process error) [22]; computational intensity. | Prospective risk forecasting and management strategy evaluation; Low throughput. |

Experimental Protocols for Multi-Level Method Comparison and Validation

Validating and comparing ERA methods across biological levels requires robust experimental design. The following protocols are synthesized from established guidelines for method comparison and ecological modeling [23] [24].

Protocol for Comparative Analysis of Toxicity Test vs. Population Model Endpoints

Objective: To quantify the systematic error (bias) and predictive uncertainty introduced when using standard individual-level toxicity endpoints (e.g., NOAEC) to infer population-level risk, compared to estimates from a validated population model [19] [24].

Design:

- Test System: Select a well-studied model species (e.g., Daphnia magna) with existing high-quality individual toxicity data and a published demographic model.

- Sample (Scenario) Selection: A minimum of 40 different exposure scenarios should be modeled [24]. These should cover a wide range of realistic exposure profiles, including constant exposure, pulsed events, and chronic low-level exposure, with concentrations spanning from no-effect to severe effect levels.

- Methods Comparison:

  - Test Method (Individual-level): For each scenario, calculate the standard Risk Quotient (RQ = PEC/NOAEC) or apply a species sensitivity distribution (SSD).

  - Comparative Method (Population-level): For the same scenario, run the demographic model to simulate population trajectory over a defined period (e.g., 2-5 years). The primary endpoint is the simulated finite population growth rate (λ) or the probability of decline below a quasi-extinction threshold.

  - Both methods should be run in "duplicate" using stochastic model runs or bootstrapped parameter estimates to account for variability [24].

- Data Analysis:

  - Graphical Analysis: Create a comparison plot with the individual-level risk metric (RQ) on the x-axis and the population-level risk metric (e.g., change in λ) on the y-axis [24].

  - Statistical Analysis: Perform regression analysis (if the range of RQ is wide) to derive a slope and intercept, quantifying the proportional and constant bias. Calculate the systematic error at critical management decision points (e.g., RQ = 1) [24].

  - Uncertainty Partitioning: Use variance decomposition techniques to partition the total uncertainty in the population forecast into components attributable to toxicity parameter uncertainty, demographic parameter uncertainty, and model structure error [22].
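For the population-level side of this comparison, one way to obtain the growth rate λ is as the dominant eigenvalue of an age-structured (Leslie) projection matrix. The sketch below is a minimal illustration under stated assumptions: the vital rates, the 40% fecundity reduction standing in for a toxicant effect, and the use of power iteration are all hypothetical choices, not the model of [19] or [24].

```python
def leslie_matrix(fecundity, survival):
    """Build a Leslie matrix from age-specific fecundities and survival rates."""
    n = len(fecundity)
    matrix = [[0.0] * n for _ in range(n)]
    matrix[0] = list(fecundity)          # first row: offspring per individual
    for age, s in enumerate(survival):   # sub-diagonal: survival to next age class
        matrix[age + 1][age] = s
    return matrix

def growth_rate(matrix, iterations=500):
    """Dominant eigenvalue (lambda) by power iteration on a primitive matrix."""
    n = len(matrix)
    v = [1.0 / n] * n                    # age distribution, kept summing to 1
    lam = 1.0
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        lam = sum(w)                     # one-step growth factor
        v = [x / lam for x in w]
    return lam

# Hypothetical vital rates for a three-age-class population.
fecundity = [0.0, 1.5, 2.0]
survival = [0.5, 0.8]

lam_control = growth_rate(leslie_matrix(fecundity, survival))
# Assumed toxicant effect: 40% reduction in fecundity at every age.
lam_exposed = growth_rate(leslie_matrix([f * 0.6 for f in fecundity], survival))
print(f"lambda control = {lam_control:.3f}, exposed = {lam_exposed:.3f}")
```

Running each exposure scenario through such a model yields the change in λ that is plotted against RQ in the graphical analysis.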

Protocol for Validating a Prospective Scenario-Based ERA Method

Objective: To evaluate the performance of a prospective, scenario-based assessment tool (e.g., the ERA-EES for mining areas) [20] against traditional, measurement-intensive retrospective indices.

- Study Sites: Select a diverse set of >40 field sites (e.g., 67 metal mining areas in China [20]) representing a gradient of predicted risk.

- Method Application:

  - Prospective Test Method: Apply the scenario-based method (e.g., ERA-EES using Analytic Hierarchy Process and Fuzzy Comprehensive Evaluation) [20]. Inputs are exclusively pre-existing geospatial and operational data (e.g., mine type, ecosystem sensitivity, climate). Output is a categorical risk level (Low/Medium/High).

  - Retrospective Comparative Method: Conduct traditional field sampling and chemical analysis at each site. Calculate a quantitative risk index (e.g., Potential Ecological Risk Index - PERI) [20] [25] and classify sites into the same risk categories.

- Performance Evaluation:

  - Construct a confusion matrix comparing the classifications from both methods.

  - Calculate accuracy (proportion of correctly classified sites) and the kappa coefficient (measure of agreement beyond chance).

  - Assess conservatism by examining the rate at which the prospective method classifies risk equal to or higher than the retrospective method [20].
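The three performance measures above can be computed directly from paired site classifications. In this sketch the eight site labels are hypothetical, and agreement beyond chance is measured with Cohen's kappa, which is an assumption about which kappa variant is intended.

```python
from collections import Counter

LEVELS = ["Low", "Medium", "High"]

# Hypothetical classifications for eight sites (same site order in both lists).
prospective = ["Low", "Medium", "High", "High", "Medium", "Low", "High", "Medium"]
retrospective = ["Low", "Medium", "High", "Medium", "Medium", "Low", "High", "Low"]

def confusion_matrix(pred, ref):
    """Rows: retrospective (reference) class; columns: prospective class."""
    counts = Counter(zip(ref, pred))
    return [[counts[(r, p)] for p in LEVELS] for r in LEVELS]

def accuracy(pred, ref):
    """Proportion of sites given the same class by both methods."""
    return sum(p == r for p, r in zip(pred, ref)) / len(ref)

def cohens_kappa(pred, ref):
    """Agreement beyond chance: (p_o - p_e) / (1 - p_e)."""
    n = len(ref)
    p_observed = accuracy(pred, ref)
    pred_counts, ref_counts = Counter(pred), Counter(ref)
    p_expected = sum(pred_counts[l] * ref_counts[l] for l in LEVELS) / n**2
    return (p_observed - p_expected) / (1 - p_expected)

def conservatism(pred, ref):
    """Share of sites where the prospective class is equal to or higher."""
    rank = {level: i for i, level in enumerate(LEVELS)}
    return sum(rank[p] >= rank[r] for p, r in zip(pred, ref)) / len(ref)

matrix = confusion_matrix(prospective, retrospective)
acc = accuracy(prospective, retrospective)
kappa = cohens_kappa(prospective, retrospective)
cons = conservatism(prospective, retrospective)
print(matrix, f"accuracy={acc:.2f} kappa={kappa:.2f} conservatism={cons:.2f}")
```

A method can score modestly on accuracy yet fully on conservatism, as here, when every disagreement errs toward the higher risk class.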

Visualizing Methodological Relationships and Decision Frameworks

The Tiered ERA Decision Framework and Uncertainty Cascade

Diagram Title: Tiered ERA framework showing uncertainty flow [4] [19].

Trade-offs Across Levels of Biological Organization

Diagram Title: Trade-offs between sensitivity, ecological relevance, and uncertainty across biological levels [4].

The Scientist's Toolkit: Key Research Reagent Solutions

Selecting appropriate tools and models is critical for designing robust multi-level ERA studies. This toolkit details essential resources for addressing the core trade-offs [21] [20] [22].

Table 2: Essential Research Toolkit for Multi-Level Ecological Risk Assessment

| Tool/Reagent Category | Specific Example or Model Type | Primary Function in Addressing Trade-offs | Key Reference/Application |

|---|---|---|---|

| Mechanistic Effect Models | Pop-GUIDE-aligned Population Models [19], Agent-Based Models (ABMs) [21] | Bridge individual effects to population/community outcomes. Reduce extrapolation uncertainty by incorporating life history, density-dependence, and spatial structure. | Used to refine risk beyond screening quotients; e.g., predicting fish population resilience to pesticide exposure [19]. |

| Uncertainty Quantification Software | R ecoforecast packages, Bayesian calibration tools (e.g., Stan, JAGS) | Propagate and partition uncertainty from multiple sources (initial conditions, parameters, process error). Informs where new data most reduces predictive uncertainty [22]. | Essential for probabilistic risk characterization and Value of Information (VoI) analysis [21] [22]. |

| Multi-Criteria Decision Analysis (MCDA) Frameworks | Analytic Hierarchy Process (AHP), Fuzzy Comprehensive Evaluation (FCE) [20] | Integrate diverse, often qualitative, data from exposure and ecological scenarios into a structured risk ranking. Manages linguistic and epistemic uncertainty in complex systems. | Applied in prospective ERA for mining sites (ERA-EES) to classify risk prior to costly sampling [20]. |

| Standardized Toxicity Test Organisms & Protocols | EPA Ecological Effects Test Guidelines (e.g., OCSPP 850 series), ISO/DIN standards. | Provide controlled, reproducible sensitivity data at individual/organism level. The foundational "reagent" for all higher-tier extrapolations. | Used globally to generate regulatory endpoints (LC50, NOAEC). |

| Mesocosm/Field Study Components | Outdoor stream channels, experimental ponds, standardized field sampling kits. | Deliver high ecological relevance by testing effects under realistic environmental conditions with complex communities. | Higher-tier validation for pesticides in Europe (e.g., EU ERA under EFSA) [4]. |

| Geospatial & Scenario Data | Land use/cover maps, soil/climate grids, chemical fate model outputs. | Feed exposure and landscape context into spatial models (ABMs, meta-population models). Critical for driver uncertainty assessment [22]. | Inputs for landscape-level risk forecasts and invasive species spread models [21] [22]. |

Historical and Regulatory Context for Tiered Risk Assessment Paradigms

Tiered risk assessment represents a structured, hierarchical approach to evaluating potential hazards, where simpler, cost-effective screening methods are employed first, progressing to more complex and resource-intensive analyses only as needed. This paradigm is foundational across regulatory science, designed to efficiently allocate resources by quickly identifying low-risk scenarios while focusing detailed scrutiny on substances or situations of greater concern [4]. The historical development of these frameworks is deeply intertwined with growing regulatory needs to manage chemical exposures in the environment, food supply, and pharmaceutical products in a scientifically defensible yet pragmatic manner.

The theoretical underpinning of a tiered approach lies in its sequential decision-making logic. An initial assessment (Tier I) uses conservative assumptions and readily available data to screen for clear cases of "no risk." If potential risk is indicated, the assessment proceeds to higher tiers (II, III, IV), which incorporate more refined data, probabilistic methods, and site- or population-specific considerations to reduce uncertainty and generate a more precise risk estimate [4]. This approach is evident in frameworks ranging from ecological risk assessment (ERA) for pesticides [4] to next-generation risk assessment (NGRA) for combined chemical exposures [26] and pharmacovigilance system evaluations [27].

Within the context of a broader thesis comparing ecological risk assessment across levels of biological organization, tiered paradigms offer a critical lens. The choice of biological level—from suborganismal biomarkers to individual organisms, populations, communities, and entire ecosystems—profoundly influences the feasibility, uncertainty, and ecological relevance of the assessment [4]. Lower-tier assessments often rely on data from standardized tests on individual organisms, which are high-throughput and reproducible but may poorly predict effects at the population or ecosystem level, which are typically the ultimate assessment endpoints. Higher-tier assessments may incorporate population modeling or field studies (mesocosms) that better capture ecological complexity and recovery processes but are more costly and variable [4]. Thus, the tiered framework serves as the operational bridge connecting measurable endpoints at one level of biological organization to protective goals defined at another.

Regulatory Evolution and Current Frameworks

The formalization of tiered risk assessment is a product of evolving regulatory mandates aimed at protecting human health and the environment. A cornerstone in the United States is the Toxic Substances Control Act (TSCA), as administered by the Environmental Protection Agency (EPA). The EPA is developing a tiered data reporting rule to inform the three-stage TSCA process of prioritization, risk evaluation, and risk management [28]. This regulatory tiering begins with using the Chemical Data Reporting (CDR) database for basic screening to identify candidate chemicals. As substances move to high-priority evaluation, the rule triggers requirements for more detailed reporting on health and safety studies, exposure monitoring, and supply chain information under TSCA authorities [28]. This exemplifies a regulatory-driven tiered approach where data requirements escalate in parallel with the level of regulatory scrutiny.

Globally, similar tiered logic structures diverse regulatory domains. In the European Union, the AI Act establishes a four-tier risk framework categorizing applications as having unacceptable, high, limited, or minimal risk, with regulatory obligations escalating accordingly [29]. In food safety, quantitative tiered methods are employed to prioritize hazards, such as using exposure-based screening followed by Margin of Exposure (MOE)-based probabilistic risk ranking for mycotoxins in infant food [30].

The table below summarizes key tiered frameworks across regulatory domains, highlighting their shared hierarchical logic and domain-specific applications.

Table: Comparison of Tiered Frameworks Across Regulatory Domains

| Regulatory Domain | Framework Name/Example | Core Tiers & Logic | Primary Regulatory Goal |

|---|---|---|---|

| Industrial Chemicals (U.S.) | TSCA Existing Chemicals Process [28] | 1. Identification/Prioritization → 2. Risk Evaluation → 3. Risk Management. Data requirements tier to each stage. | Identify and mitigate risks from chemicals in commerce. |

| Artificial Intelligence (EU) | EU AI Act [29] | Unacceptable → High → Limited → Minimal Risk. Compliance demands increase with risk level. | Ensure safe and ethical deployment of AI systems. |

| Food Safety | Hazard-Prioritization & Risk-Ranking [30] | 1. Exposure-based screening → 2. Probabilistic MOE-based risk ranking. Filters out low-risk agents for focused assessment. | Prioritize resources for managing chemical contaminants in food. |

| Pharmacovigilance | Assessment Tools (IPAT, WHO GBT) [27] | Use of core vs. supplementary indicators; maturity levels (1-4). Tools assess system functionality with increasing granularity. | Evaluate and strengthen national drug safety monitoring systems. |

In pharmacovigilance, the tiered concept is embedded within assessment tools rather than a prescribed regulatory process. The Indicator-Based Pharmacovigilance Assessment Tool (IPAT), the WHO Pharmacovigilance Indicators, and the WHO Global Benchmarking Tool (GBT) Vigilance Module all employ structured indicators to evaluate the maturity and functionality of national systems [27]. These tools implicitly tier assessments by distinguishing between core and complementary indicators or by assigning maturity levels, guiding authorities from basic functionality toward advanced practice [27].

Comparative Analysis of Tiered Ecological Risk Assessment Across Biological Levels

Ecological Risk Assessment (ERA) provides a clear case study for examining the trade-offs inherent in a tiered approach across different levels of biological organization. The fundamental challenge in ERA is the frequent mismatch between measurement endpoints (what is easily measured, e.g., individual survival in a lab test) and assessment endpoints (what society values and aims to protect, e.g., population viability or ecosystem function) [4]. Tiers in ERA navigate this gap by starting with simple, standardized tests and progressing toward more ecologically complex and relevant studies.

The relationship between the level of biological organization and key assessment characteristics is not linear but presents distinct advantages and disadvantages at each level. Suborganismal and individual-level endpoints are advantageous for high-throughput screening and establishing clear cause-effect relationships but suffer from high uncertainty when extrapolating to protect populations or ecosystems. In contrast, community- and ecosystem-level studies (e.g., mesocosms) are more ecologically relevant and can capture recovery dynamics and indirect effects but are highly complex, costly, and variable [4].

Table: Advantages and Disadvantages of ERA at Different Levels of Biological Organization [4]

| Level of Biological Organization | Key Advantages | Key Disadvantages |

|---|---|---|

| Suborganismal (e.g., biomarkers) | High-throughput screening; strong mechanistic insight; low cost per study. | Largest gap to assessment endpoints; high extrapolation uncertainty; ecological relevance unclear. |

| Individual | Standardized, reproducible tests; clear dose-response; regulatory acceptance. | Misses population-level processes (e.g., compensation, recruitment); may over- or under-estimate population risk. |

| Population | Direct link to assessment endpoints for many species; can model demographic recovery. | Data-intensive; models require simplification; interspecies variability. |

| Community & Ecosystem | Captures indirect effects & species interactions; measures functional endpoints; evaluates recovery. | Very high cost and complexity; high natural variability; difficult to establish causality. |

The tiered framework formally addresses these trade-offs. Tier I typically uses conservative, quotient-based methods comparing individual-level toxicity values (e.g., LC50) to exposure estimates [4]. If a risk is indicated, higher tiers (II-IV) may employ probabilistic risk models, population models, or ultimately field studies to refine the assessment using data from more complex biological levels [4]. Adverse Outcome Pathways (AOPs) provide a conceptual framework to link mechanistic data at lower levels of organization (molecular, cellular) to outcomes at the individual and population level, thereby informing and strengthening quantitative models used in higher-tier assessments [10].
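The Tier I quotient logic can be sketched in a few lines. The level of concern used as the decision threshold, the example concentrations, and the function names are hypothetical. Regulatory programs define their own thresholds, which are not given in this text.

```python
def risk_quotient(exposure, toxicity_value):
    """RQ = exposure estimate / toxicity value (both in the same units)."""
    if toxicity_value <= 0:
        raise ValueError("toxicity value must be positive")
    return exposure / toxicity_value

def tier_one_screen(exposure, toxicity_value, level_of_concern=1.0):
    """Return the RQ and whether the case is screened out or escalated.

    level_of_concern is an assumed placeholder threshold.
    """
    rq = risk_quotient(exposure, toxicity_value)
    decision = "proceed to higher tier" if rq >= level_of_concern else "screened out"
    return rq, decision

# Hypothetical cases: conservative exposure estimate vs. an LC50, both in ug/L.
low_case = tier_one_screen(exposure=2.0, toxicity_value=10.0)
high_case = tier_one_screen(exposure=25.0, toxicity_value=10.0)
print(low_case, high_case)
```

Only cases like the second one, where the conservative quotient reaches the threshold, would justify the cost of probabilistic or population-level refinement.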

Experimental Protocols & Data in Next-Generation Risk Assessment

Next-Generation Risk Assessment (NGRA) exemplifies the modern evolution of tiered paradigms, integrating New Approach Methodologies (NAMs)—including in vitro assays and computational toxicokinetic (TK) modeling—to assess safety, particularly for combined chemical exposures. A 2025 case study on pyrethroid insecticides provides a detailed experimental protocol for a tiered NGRA framework [26].

Table: Tiered NGRA Framework Protocol for Pyrethroid Assessment [26]

| Tier | Objective | Key Methodology & Data Sources | Outcome/Decision Point |

|---|---|---|---|

| Tier 1 | Hazard identification & bioactivity profiling. | Gather bioactivity data (AC50 values) from ToxCast in vitro assays. Categorize by gene pathway and tissue type. | Establish bioactivity indicators; generate hypotheses on mode of action. |

| Tier 2 | Explore combined risk assessment. | Calculate relative potencies from AC50s; compare with relative potencies from traditional points of departure (NOAELs, ADIs). | Test hypothesis of similar mode of action; identify inconsistencies between in vitro and traditional data. |

| Tier 3 | Risk screening using internal dose. | Apply TK modeling to convert external exposures to internal concentrations. Calculate Margin of Exposure (MoE) based on internal dose. | Screen risks based on target tissue concentrations; identify critical pathways. |

| Tier 4 | Refine bioactivity assessment. | Use TK models to estimate interstitial concentrations in vitro; compare bioactivity concentrations between in vitro and in vivo systems. | Improve quantitative in vitro to in vivo extrapolation (QIVIVE); refine bioactivity-based effect assessment. |

| Tier 5 | Integrated risk characterization. | Calculate final bioactivity MoEs for combined dietary exposure; compare to safety thresholds and in vivo MoEs. | Conclude on risk level for combined exposure; identify any data gaps for non-dietary pathways. |

This NGRA protocol demonstrates a tiered shift from relying solely on apical endpoints from animal studies toward using mechanistic bioactivity data and TK modeling to estimate internal target-site concentrations. This allows for a more nuanced assessment of combined exposures from chemicals with similar molecular targets. The study concluded that while dietary exposure to the pyrethroid mixture was below levels of concern for adults, the combined Margin of Exposure was insufficient to cover additional non-dietary exposures, a nuance potentially missed by conventional, single-chemical risk assessment [26].
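To make the Margin of Exposure arithmetic concrete, the sketch below computes per-chemical MoEs as point of departure divided by exposure, and combines them with the reciprocal-sum form used for chemicals assumed to act by dose addition. Whether [26] combines MoEs in exactly this way is an assumption, and all numbers are hypothetical.

```python
def margin_of_exposure(point_of_departure, exposure):
    """MoE = point of departure / exposure (same dose metric and units)."""
    return point_of_departure / exposure

def combined_moe(moes):
    """Combined MoE under assumed dose addition: 1 / sum(1 / MoE_i)."""
    return 1.0 / sum(1.0 / m for m in moes)

# Hypothetical internal-dose points of departure and exposures for two chemicals.
points_of_departure = [10.0, 5.0]
exposures = [0.01, 0.02]

moes = [margin_of_exposure(p, e) for p, e in zip(points_of_departure, exposures)]
total = combined_moe(moes)
print(f"individual MoEs = {moes}, combined MoE = {total:.0f}")
```

The combined value is always smaller than the smallest individual MoE, which is why a mixture can fall short of a safety threshold that each chemical meets on its own.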

The experimental workflow integrates diverse data streams: high-throughput in vitro bioactivity, existing regulatory toxicology data (NOAELs, ADIs), human biomonitoring or food monitoring exposure data, and physiological TK models. The tiered approach ensures that resource-intensive TK modeling and refinement steps are reserved for substances that pass initial screening tiers.

Tiered NGRA Workflow Integrating NAMs and TK Modeling

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing tiered risk assessment, particularly next-generation frameworks, relies on a suite of specialized research reagents, tools, and data sources.

Table: Essential Research Toolkit for Tiered Risk Assessment Studies

| Tool/Reagent Category | Specific Examples | Function in Tiered Assessment | Typical Application Tier |

|---|---|---|---|

| High-Throughput In Vitro Assay Platforms | ToxCast/Tox21 assay batteries; reporter gene assays; high-content screening. | Provides mechanistic bioactivity data (AC50, efficacy) for hazard identification & potency ranking. | Tier 1 (Screening), Tier 2 (Potency Comparison). |

| Reference Toxicological Data | Regulatory study NOAELs/LOAELs; published in vivo toxicity databases; EFSA/ECHA assessment reports. | Serves as anchor points for validating NAMs and calculating traditional risk metrics (ADI, MoE). | Tier 2 (Comparison), Tier 5 (Benchmarking). |

| Physiological Toxicokinetic (TK) Models | Generic PBPK models (e.g., in GastroPlus, Simcyp); chemical-specific PBPK models; high-throughput TK (HTTK) models. | Translates external exposure to internal target-site concentration for in vitro-in vivo extrapolation and risk refinement. | Tier 3 (Internal Dose), Tier 4 (Refinement). |

| Bioanalytical Standards & Kits | Certified reference materials for target chemicals; ELISA kits for biomarkers; qPCR kits for gene expression. | Enables precise quantification of chemicals in exposure media or biomarkers in biological samples. | All Tiers (Exposure & Effect Measurement). |

| Computational Data Integration & Modeling Software | R/Bioconductor packages (e.g., httk); Bayesian modeling tools (e.g., Stan); probabilistic risk software. | Performs statistical dose-response modeling, uncertainty analysis, and integrated risk calculation. | Tier 2-5 (Data Analysis & Risk Characterization). |

Synthesis and Future Directions in Tiered Assessment

The historical trajectory of tiered risk assessment demonstrates a consistent drive toward greater efficiency, mechanistic understanding, and ecological relevance. Future directions are shaped by several converging trends. First, the integration of NAMs and AOPs into regulatory-tiered frameworks will continue to accelerate, moving beyond case studies like the pyrethroid NGRA toward broader acceptance [26]. This requires standardized protocols for qualifying NAMs for specific regulatory purposes and developing associated uncertainty frameworks.

Second, data integration and computational power are enabling more sophisticated higher-tier assessments. The use of population models informed by AOPs, as proposed for ecological risk assessment [10], and the probabilistic risk ranking used in food safety [30] exemplify this trend. In pharmacovigilance, the emphasis on Real-World Data (RWD) and advanced analytics from sources like clinical registries promises to create more dynamic, evidence-based post-market surveillance tiers [31].

Finally, the scope of tiered assessment is expanding into new domains like Artificial Intelligence and Environmental, Social, and Governance (ESG) criteria. The EU AI Act's risk tiers mandate conformity assessments for high-risk applications [29], while ESG frameworks require companies to tier their reporting and due diligence based on materiality and risk exposure [32]. This expansion underscores the versatility of the tiered paradigm as a logic model for managing complexity and uncertainty across diverse fields.

The enduring relevance of the tiered paradigm lies in its fundamental alignment with the scientific method: it is a hypothesis-driven, iterative process that allocates investigational effort proportionally to the level of indicated concern. Whether bridging the gap from molecular perturbations to population-level ecological effects or from in vitro bioactivity to public health guidance for chemical mixtures, the tiered structure provides a robust scaffold for transparent, defensible, and progressively refined decision-making.

Bridging AOPs and Population Models to Inform Tiered ERA

Bridging the Scales: Methodological Frameworks for Cross-Level Prediction and Integration

The Adverse Outcome Pathway (AOP) framework is a conceptual model that organizes scientific knowledge into a sequential chain of causally linked events, starting from a Molecular Initiating Event (MIE) at the molecular level and leading to an Adverse Outcome (AO) relevant to regulatory decision-making, which can occur at the individual, population, or community level [33] [34]. In the context of ecological risk assessment (ERA), this framework provides a powerful tool for bridging data gaps across different levels of biological organization—from subcellular biomarkers to population consequences [4] [10].

Traditional ERA often struggles with a fundamental mismatch: measurement endpoints (e.g., cell death or enzyme inhibition from laboratory tests) are frequently distant from the assessment endpoints society aims to protect (e.g., population viability or ecosystem function) [4]. The AOP framework addresses this by creating a structured, mechanistic bridge. It logically connects measurable key events (KEs) at lower biological levels (e.g., binding to a receptor, cellular inflammation) to predictions about outcomes at higher levels (e.g., impaired reproduction, population decline) [33] [10]. This facilitates the use of data from efficient, high-throughput in vitro assays (New Approach Methodologies, or NAMs) to inform on risks to whole organisms and populations, thereby supporting regulatory decisions while aiming to reduce reliance on traditional animal testing [34] [35].
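One way to picture the "structured, mechanistic bridge" described above is as a small directed graph, with key events as nodes and key event relationships as edges. The sketch below is illustrative only: the `AOP` class, its method names, and the four-event pathway are assumptions made for this example, not an AOP-Wiki schema or a pathway taken from the cited sources.

```python
from dataclasses import dataclass, field


@dataclass
class AOP:
    """Toy AOP: key events are nodes, key event relationships (KERs) are directed edges."""
    events: dict = field(default_factory=dict)  # event id -> (biological level, description)
    kers: dict = field(default_factory=dict)    # upstream event id -> list of downstream ids

    def add_event(self, event_id, level, description):
        self.events[event_id] = (level, description)
        self.kers.setdefault(event_id, [])

    def add_ker(self, upstream, downstream):
        if upstream not in self.events or downstream not in self.events:
            raise KeyError("both events must be added before linking them")
        self.kers[upstream].append(downstream)

    def paths(self, start, end, _prefix=()):
        """Return every acyclic chain of event ids leading from start to end."""
        prefix = _prefix + (start,)
        if start == end:
            return [list(prefix)]
        found = []
        for nxt in self.kers[start]:
            if nxt not in prefix:  # skip events already on this path (cycle guard)
                found.extend(self.paths(nxt, end, prefix))
        return found


# Hypothetical pathway, for illustration only (not an AOP-Wiki entry).
aop = AOP()
aop.add_event("MIE", "molecular", "binding to a receptor")
aop.add_event("KE1", "cellular", "altered gene expression")
aop.add_event("KE2", "individual", "impaired reproduction")
aop.add_event("AO", "population", "population decline")
aop.add_ker("MIE", "KE1")
aop.add_ker("KE1", "KE2")
aop.add_ker("KE2", "AO")

# Print each MIE-to-AO chain with the biological level of every event.
for path in aop.paths("MIE", "AO"):
    print(" -> ".join(f"{e} [{aop.events[e][0]}]" for e in path))
```

Storing the biological level alongside each event makes the cross-scale character of the pathway explicit: every path from the MIE to the AO can be read as a sequence of levels, which is where an assay result at a lower level can be attached.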

This guide compares the AOP framework with other established ERA approaches, evaluating their performance, data requirements, and utility for extrapolating effects across biological scales.

Comparative Analysis of ERA Approaches Across Biological Scales

Ecological risk assessment can be conducted at various levels of biological organization, each with distinct advantages, limitations, and appropriate applications. The table below provides a structured comparison.

Table 1: Comparison of Ecological Risk Assessment Approaches Across Levels of Biological Organization [34] [4]

| Level of Biological Organization | Primary Measurement Endpoints | Key Advantages | Key Limitations | Best Suited For |

|---|---|---|---|---|

| Sub-Organismal (Biomarker/Cellular) | Molecular initiating events, key events (e.g., receptor binding, gene expression, protein damage) [33] [36]. | High mechanistic clarity; strong cause-effect relationships; amenable to high-throughput screening; reduces animal use [4] [35]. | Largest extrapolation distance to population/ecosystem effects; may miss compensatory biological feedback [4]. | Screening & prioritization of chemicals; mode-of-action identification; building blocks for AOPs. |

| Individual Organism | Survival, growth, reproduction (e.g., LC50, NOEC) in standardized test species [4]. | Regulatory familiarity and acceptance; directly measures integrated organism health; relatively reproducible [4]. | Limited ecological realism; high cost and time per test; uses vertebrate animals; ignores species interactions [4]. | Tiered hazard assessment; derivation of protective thresholds (e.g., PNEC) for single species. |

| Population | Population growth rate, extinction risk, age/size structure [37] [10]. | Directly relevant to protection goals (population sustainability); integrates individual-level effects over time [10]. | Complex, data-intensive models required; difficult to validate empirically for many species [10]. | Risk refinement for chemicals with known individual-level effects; assessment of endangered species. |

| Community/Ecosystem | Species diversity, functional endpoints (e.g., primary production, decomposition), mesocosm studies [4]. | High ecological realism; captures indirect effects and species interactions [4]. | Extremely high cost and complexity; highly variable results; difficult to establish causality for specific stressors [4]. | Higher-tier, site-specific risk assessment for chemicals with wide-scale use. |

| AOP Framework (Cross-Level) | Modular sequence of KEs from MIE to AO [33] [34]. | Provides mechanistic bridge across biological scales; supports use of NAMs; chemical-agnostic; identifies knowledge gaps [33] [34]. | Is not a risk assessment itself (does not address exposure); requires substantial mechanistic knowledge to develop [34]. | Integrating data across testing methods; hypothesis-driven testing; supporting extrapolation (e.g., cross-species, to populations) [34] [10]. |

The AOP framework does not replace assessments at any single level but serves as a translational and integrative scaffold. It enhances the utility of data from lower levels (sub-organismal, individual) by explicitly defining their causal relationship to outcomes at higher levels (population), which are of greater regulatory and ecological relevance [10].
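To make the link from individual-level effects to a population-level endpoint concrete, the sketch below projects a simple age-structured population and estimates its asymptotic growth rate with and without a reduction in fecundity. It is a minimal illustration under stated assumptions: the three age classes, the vital rates, and the 40% fecundity reduction are hypothetical values chosen for the example, and the function names are not from any cited model.

```python
def project(fecundity, survival, n0, steps):
    """Project an age-structured population; return total size at each time step.

    fecundity[i]: offspring per individual in age class i per step.
    survival[i]:  fraction of age class i surviving into class i + 1
                  (one entry fewer than fecundity; the last class dies out).
    """
    n = list(n0)
    totals = [sum(n)]
    for _ in range(steps):
        births = sum(f * x for f, x in zip(fecundity, n))
        n = [births] + [s * x for s, x in zip(survival, n[:-1])]
        totals.append(sum(n))
    return totals


def asymptotic_growth_rate(fecundity, survival, steps=200):
    """Estimate the long-run growth rate (lambda) as the ratio of the last two totals."""
    n0 = [1.0] * len(fecundity)
    totals = project(fecundity, survival, n0, steps)
    return totals[-1] / totals[-2]


# Hypothetical vital rates for a three-age-class species (illustration only).
survival = [0.5, 0.8]
fecundity_control = [0.0, 1.5, 2.0]

# Assumed individual-level effect: a 40% reduction in fecundity under exposure.
fecundity_exposed = [0.6 * f for f in fecundity_control]

lam_control = asymptotic_growth_rate(fecundity_control, survival)
lam_exposed = asymptotic_growth_rate(fecundity_exposed, survival)
print(f"lambda control: {lam_control:.3f}")
print(f"lambda exposed: {lam_exposed:.3f}")
```

With these assumed rates, the unexposed population grows (lambda above 1) while the exposed one slowly declines (lambda below 1), which shows how a sublethal effect measured on individuals can translate into a change in the population-level endpoint.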

Performance Evaluation: AOPs vs. Traditional ERA

When evaluated against the core objectives of modern ecological risk assessment, the AOP framework demonstrates distinct strengths and complementarities with traditional methods.

Table 2: Performance Comparison of AOP Framework vs. Traditional Single-Level ERA Methods [34] [4] [10]

| Evaluation Criterion | Traditional ERA (Organism/Population Level) | AOP Framework | Supporting Evidence & Notes |

|---|---|---|---|

| Mechanistic Understanding | Low to Moderate. Often relies on correlative, descriptive toxicity endpoints (e.g., mortality) [4]. | High. Explicitly maps the chain of mechanistic events from molecular perturbation to adverse outcome [33] [34]. | AOPs organize knowledge on how toxicity occurs, moving beyond whether it occurs. |

| Extrapolation Across Biological Levels | Weak. Requires separate models (e.g., individual to population models) with significant uncertainty [10]. | Strong (Core Function). The framework's structure is designed for cross-level extrapolation by linking KEs [34] [10]. | AOPs provide the qualitative causal roadmap required for quantitative extrapolation models. |

| Use of NAMs / Animal Replacement | Limited. Heavily reliant on standard whole-organism toxicity tests [4]. | High (Core Function). Designed to incorporate data from in vitro and in chemico assays aligned with KEs [33] [35]. | Projects like Methods2AOP explicitly map high-throughput assays to AOP KEs [33]. |

| Regulatory Acceptance | High. Well-established through decades of use and guidelines (e.g., OECD test guidelines) [4]. | Growing. Actively supported by OECD and US EPA; used for chemical prioritization and hypothesis-testing [33] [34]. | Formal OECD endorsement of AOPs is increasing; used to support Integrated Approaches to Testing and Assessment (IATA) [36]. |

| Handling of Chemical Mixtures | Difficult. Typically uses additive models (e.g., concentration addition) based on similar toxicological endpoints [4]. | Promising. AOP networks can identify shared KEs; chemicals converging on the same KE may be predicted to have additive effects [34]. | This provides a mechanistic basis for grouping mixture components, moving beyond simple endpoint similarity. |

| Cross-Species Extrapolation | Uncertain. Often uses arbitrary assessment factors or limited phylogenetic comparisons [4]. | Mechanistically Informed. Tools like SeqAPASS can assess conservation of MIEs and KEs (e.g., protein targets) across species [34]. | If the MIE (e.g., binding to a conserved estrogen receptor) is conserved, the AOP may be extrapolated with greater confidence [34]. |

| Speed & Cost for Screening | Slow & Expensive. Whole-organism tests are resource-intensive [4]. | Fast & Cost-Effective (Potential). Enables screening based on high-throughput KE assays, prioritizing chemicals for higher-tier testing [34]. | This addresses the critical problem of assessing thousands of "data-poor" chemicals in the environment [38]. |

| Quantitative Prediction | Direct. Provides measured toxicity values (e.g., LC50) for the test organism [4]. | Indirect. An AOP itself is a qualitative knowledge framework; however, it facilitates the development of quantitative AOP (qAOP) models [34]. | The strength of an AOP lies in defining what to quantify. Quantitative understanding is a key evidence type for Key Event Relationships (KERs) [34]. |
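The qAOP idea in the last row of Table 2 can be sketched as a chain of response-response functions, one per key event relationship, composed from exposure concentration through to the adverse outcome. The example below is a toy illustration: the Hill-type form, every parameter value, and the three named relationships are assumptions made for this sketch rather than fitted values from any study.

```python
def hill(x, top, half, slope):
    """Hill-type response: rises from 0 toward `top`, reaching top / 2 at x == half."""
    if x <= 0:
        return 0.0
    return top * x**slope / (half**slope + x**slope)


# Hypothetical KERs; all parameters are invented for illustration.
def mie_from_concentration(conc):
    """Receptor occupancy (%) as a function of exposure concentration."""
    return hill(conc, top=100.0, half=10.0, slope=1.0)


def ke_from_mie(occupancy):
    """Reduction in a downstream cellular response (%) given receptor occupancy (%)."""
    return hill(occupancy, top=80.0, half=40.0, slope=2.0)


def ao_from_ke(cellular_effect):
    """Reduction in fecundity (%) given the cellular-level effect (%)."""
    return hill(cellular_effect, top=60.0, half=30.0, slope=2.0)


def predict_ao(conc):
    """Compose the KERs: concentration -> MIE -> KE -> AO."""
    return ao_from_ke(ke_from_mie(mie_from_concentration(conc)))


for conc in (0.0, 1.0, 10.0, 100.0):
    print(f"concentration {conc:6.1f} -> predicted fecundity reduction {predict_ao(conc):5.1f} %")
```

Each function stands in for one quantified KER, so replacing a single relationship with a better-supported one leaves the rest of the chain unchanged. That modularity is the practical reason for quantifying KERs individually.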

Case Study & Experimental Data: The Oxidative DNA Damage AOP Network

AOP #296, "Oxidative DNA Damage Leading to Mutations and Chromosomal Aberrations," provides a well-characterized example of linking a molecular stressor to adverse genetic outcomes [36]. This case study illustrates the experimental data that underpins a robust AOP.

Table 3: Experimental Data and Measurement Methods for Key Events in AOP #296 (Oxidative DNA Damage) [36]

| Event Type | Event Title | Description | Key Measurement Methods (Experimental Protocols) |

|---|---|---|---|

| Molecular Initiating Event (MIE) | Increases in Oxidative DNA Damage | Initial lesions caused by reactive oxygen/nitrogen species, including oxidized bases (e.g., 8-oxo-dG) and direct strand breaks [36]. | 1. Modified Comet Assay: Cells are embedded in agarose on a slide, lysed, and treated with a lesion-specific enzyme (e.g., Fpg or hOGG1 for 8-oxo-dG). The enzyme creates breaks at damage sites, which are visualized via electrophoresis and fluorescence staining. DNA migration ("tail moment") is quantified as damage level [36]. 2. LC-MS/MS: DNA is isolated, enzymatically hydrolyzed to nucleosides, and analyzed via liquid chromatography coupled with tandem mass spectrometry. This provides absolute quantification of specific oxidative lesions like 8-oxo-dG [36]. |

| Key Event (KE1) | Inadequate DNA Repair | Failure of cellular repair mechanisms (e.g., Base Excision Repair) to repair oxidative lesions correctly, completely, or in a timely manner [36]. | 1. Indirect Measurement: Time-course analysis of oxidative lesions (using comet assay or LC-MS/MS) post-exposure. Persistence of lesions indicates inadequate repair [36]. 2. Direct Reporter Assays: Transfection of cells with a fluorescent reporter plasmid containing a specific oxidative lesion (e.g., 8-oxo-dG). Measurement of fluorescence after a set period assesses the cell's ability to repair the lesion and restore gene function [36]. |

| Key Event (KE2) | Increases in DNA Strand Breaks | Accumulation of single-strand breaks (SSBs) and double-strand breaks (DSBs), which can be direct lesions or intermediates of faulty repair [36]. | 1. Alkaline/Neutral Comet Assay: Standard comet assay (without lesion-specific enzymes) detects SSBs (alkaline) and DSBs (neutral) [36]. 2. γ-H2AX Immunofluorescence: DSBs trigger phosphorylation of histone H2AX (γ-H2AX). Cells are fixed, stained with fluorescent anti-γ-H2AX antibody, and foci are counted per cell via microscopy or flow cytometry [36]. |

| Adverse Outcome (AO1) | Increases in Mutations | Permanent changes in DNA sequence (e.g., base substitutions, frameshifts) [36]. | 1. In Vitro Gene Mutation Assays: Use of cell lines with reporter genes (e.g., HPRT, TK, PIG-A). Exposure to a stressor can inactivate the gene, and mutants are selected using a toxic agent (e.g., 6-thioguanine for HPRT). Mutation frequency is calculated from surviving clones [36]. |

| Adverse Outcome (AO2) | Increases in Chromosomal Aberrations | Microscopically visible damage to chromosomes (e.g., gaps, breaks, exchanges) [36]. | 1. In Vitro Micronucleus Assay: Cells are exposed, then treated with cytochalasin-B to block cytokinesis. Binucleated cells are scored for the presence of micronuclei (small, extranuclear bodies containing chromosome fragments or whole chromosomes), indicating chromosomal damage or loss [36]. |
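For the gene mutation assays listed under AO1, mutation frequency is commonly computed by correcting the fraction of mutant colonies for cloning efficiency measured on parallel non-selective plates. The sketch below shows that arithmetic. The function names and all colony counts are hypothetical, and the exact plating scheme in any given protocol may differ.

```python
def cloning_efficiency(colonies, cells_plated):
    """Fraction of plated cells that form colonies without selection."""
    if cells_plated <= 0:
        raise ValueError("cells_plated must be positive")
    return colonies / cells_plated


def mutant_frequency(mutant_colonies, cells_selected, ce_colonies, ce_cells_plated):
    """Mutant colonies per viable cell plated under selection.

    The raw mutant fraction is divided by cloning efficiency so that
    treatment-related cytotoxicity does not mask or inflate the result.
    """
    ce = cloning_efficiency(ce_colonies, ce_cells_plated)
    if ce <= 0:
        raise ValueError("cloning efficiency must be positive")
    return (mutant_colonies / cells_selected) / ce


# Hypothetical counts, for illustration only.
mf = mutant_frequency(
    mutant_colonies=12,         # colonies surviving selection (e.g., 6-thioguanine)
    cells_selected=2_000_000,   # cells plated under selection
    ce_colonies=160,            # colonies on non-selective plates
    ce_cells_plated=200,        # cells plated without selection
)
print(f"mutant frequency: {mf * 1e6:.1f} per 10^6 viable cells")
```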

Experimental Protocol for a Key AOP-Based Investigation

Objective: To test the key event relationship between the MIE (Oxidative DNA Damage) and KE2 (DNA Strand Breaks) using an in vitro human cell model.

- Cell Culture & Exposure: Human hepatocellular carcinoma (HepG2) cells are maintained and seeded into multi-well plates. At ~70% confluence, cells are exposed to a range of concentrations of a test chemical (e.g., potassium bromate, a known oxidant) and a negative control (vehicle only) for 2-24 hours [36].

- MIE Measurement (Oxidative DNA Damage):

  - A subset of cells is harvested.

  - DNA is extracted and analyzed for 8-oxo-dG using a validated LC-MS/MS protocol. Data is expressed as lesions per 10⁶ normal deoxyguanosine bases [36].

- KE2 Measurement (DNA Strand Breaks):

  - A parallel set of harvested cells is analyzed using the alkaline comet assay.

  - Cells are embedded in agarose, lysed, subjected to alkaline electrophoresis, neutralized, and stained with a DNA-binding fluorescent dye.

  - 50-100 randomly selected cells per treatment are scored using image analysis software to determine the % tail DNA [36].
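The two readouts in the measurement steps above can each be reduced to one summary value per treatment before the MIE and KE2 are compared. The sketch below shows one possible way to do this. The function names, the use of the median as the per-treatment comet summary, the 50-cell minimum, and all input values are assumptions made for illustration rather than details of the cited protocol.

```python
from statistics import median


def lesions_per_million_dg(pmol_8oxodg, nmol_dg):
    """Convert LC-MS/MS amounts into 8-oxo-dG lesions per 10^6 dG."""
    if nmol_dg <= 0:
        raise ValueError("dG amount must be positive")
    mol_ratio = (pmol_8oxodg * 1e-12) / (nmol_dg * 1e-9)  # mol lesion per mol dG
    return mol_ratio * 1e6


def percent_tail_dna_summary(cell_scores, min_cells=50):
    """Median % tail DNA for one treatment; rejects under-sampled treatments."""
    if len(cell_scores) < min_cells:
        raise ValueError(f"need at least {min_cells} scored cells, got {len(cell_scores)}")
    return median(cell_scores)


# Hypothetical measurements for a single treatment group.
mie_value = lesions_per_million_dg(pmol_8oxodg=0.05, nmol_dg=10.0)
comet_scores = [5.0 + 0.1 * i for i in range(50)]  # placeholder % tail DNA values
ke2_value = percent_tail_dna_summary(comet_scores)

print(f"MIE: {mie_value:.2f} 8-oxo-dG per 10^6 dG")
print(f"KE2: {ke2_value:.2f} % tail DNA (median of {len(comet_scores)} cells)")
```

Repeating this for each concentration yields paired MIE and KE2 values, which can then be examined for concordance across the concentration range.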