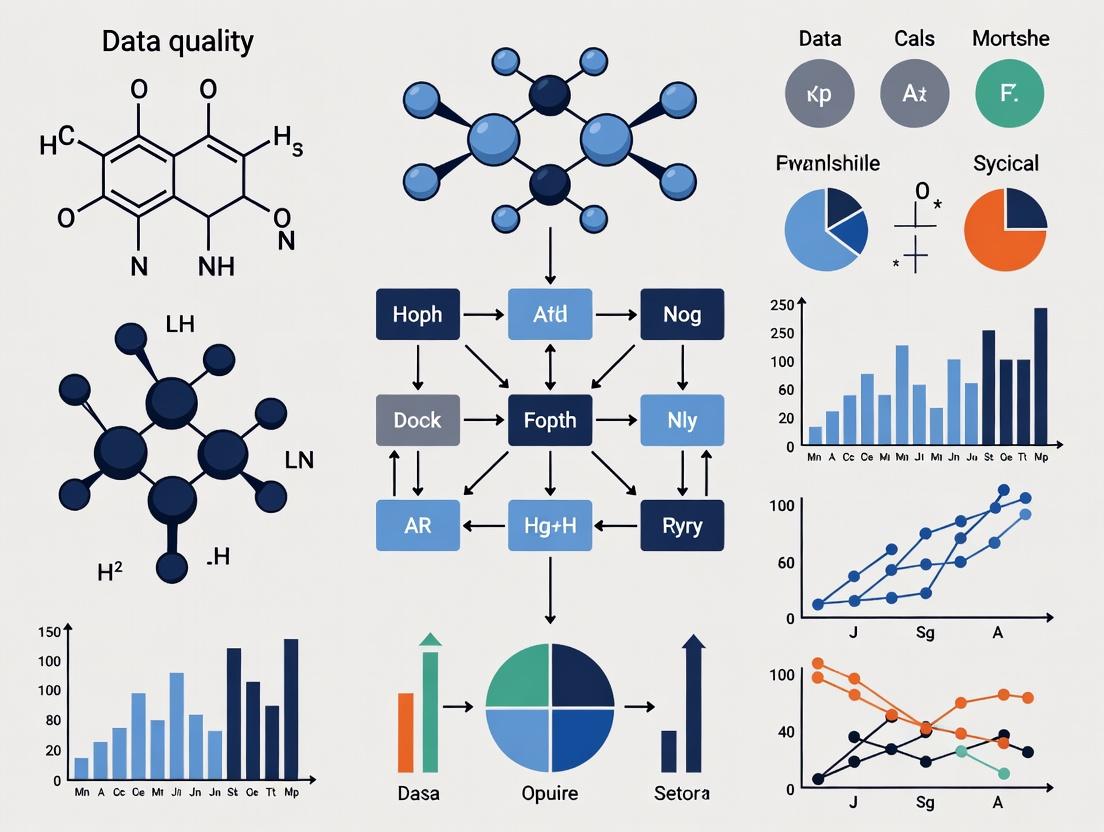

From Data Gaps to Robust Predictions: Confronting the Core Data Quality Challenges in Ecotoxicology Machine Learning

Abstract

Machine learning (ML) promises to revolutionize chemical safety assessment, yet its effective application in ecotoxicology is fundamentally constrained by data quality. This article provides a comprehensive analysis for researchers, scientists, and drug development professionals. We first explore the foundational data challenges, including the scarcity of high-quality experimental data for most marketed chemicals and the prevalence of small, heterogeneous datasets[citation:1][citation:2]. We then examine methodological approaches for constructing predictive models from imperfect data, highlighting the role of benchmark datasets like ADORE and the integration of multi-dimensional features[citation:4][citation:9]. The third section focuses on troubleshooting strategies to address specific data flaws such as noise, imbalance, and data leakage. Finally, we discuss critical frameworks for model validation and comparative analysis to ensure reliability, reproducibility, and regulatory acceptance, concluding with a pathway toward more robust and interpretable predictive toxicology[citation:3][citation:6].

The Data Desert: Mapping Foundational Quality Gaps in Ecotoxicology ML

The development of reliable machine learning (ML) models in ecotoxicology is fundamentally constrained by the severe scarcity of high-quality, curated experimental data. While over 350,000 chemicals are in commerce [1], only a tiny fraction have sufficient empirical toxicity data for robust model training and validation. This disparity creates a foundational data quality challenge, where models are asked to predict outcomes for a vast chemical space represented by only a sparse set of data points [2]. This technical support center is designed to help researchers, scientists, and drug development professionals navigate these specific data scarcity and quality issues, providing troubleshooting guides and FAQs framed within the critical thesis that data quality is the paramount bottleneck in ecotoxicological ML research.

Troubleshooting Common Data Scarcity & Quality Problems

Problem 1: Identifying and Accessing Existing Ecotoxicity Data

A primary challenge is locating and aggregating reliable experimental data from scattered sources.

Step-by-Step Solution:

- Start with Curated Repositories: Begin your search with comprehensive, publicly available knowledgebases like the U.S. EPA's ECOTOX Knowledgebase. It contains over one million test records for more than 12,000 chemicals and 13,000 species, compiled from peer-reviewed literature [3].

- Utilize Recently Curated Datasets: Leverage newer, purpose-built datasets that have already done the curation work. For example, a curated dataset published in 2024 provides mode-of-action and effect concentration data for over 3,300 environmentally relevant chemicals [1].

- Perform Strategic Literature Mining: For chemicals not covered in major databases, run targeted searches in bibliographic databases (e.g., Web of Science, PubMed). Combine compound names with specific terms such as "toxicity," "mode of action," or "adverse outcome pathway" [1].

- Check Regulatory Data Sources: Investigate data from regulatory programs such as the EPA's Chemical Data Reporting (CDR) rule under TSCA. CDR collects information on the manufacturing volumes and uses of chemicals, which is useful for exposure estimates [4].

Problem 2: Prioritizing Chemicals for Testing When Data is Poor

With thousands of data-poor chemicals, you need a systematic method to identify which ones pose the greatest potential risk and merit scarce experimental resources.

Step-by-Step Solution (Prioritization Workflow):

- Compile a Candidate List: Gather a list of chemicals of concern, such as active pharmaceutical ingredients (APIs) or high-production volume chemicals. A study prioritizing 1,402 pharmaceuticals is a relevant example [5].

- Gather or Predict Exposure Data: For each chemical, obtain a Measured Environmental Concentration (MEC) or calculate a Predicted Environmental Concentration (PEC) [5].

- Gather or Predict Effect Data: Obtain a Predicted No-Effect Concentration (PNEC). Use available experimental data (e.g., from ECOTOX) or derive a conservative estimate using in silico tools like Quantitative Structure-Activity Relationship (QSAR) models [1] [5].

- Calculate a Risk Quotient (RQ): For each chemical, compute RQ = PEC / PNEC. An RQ > 1 indicates potential risk [5].

- Screen and Finalize Priority List: Check the top-ranking chemicals against data repositories. Drop any that already have adequate experimental data so that testing effort is not duplicated. The final output is a shortlist of high-priority, data-poor chemicals for targeted testing [5].
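
The RQ screening steps above can be sketched in a few lines of pandas. This is a minimal illustration, not the method of the cited study [5]. The column names (`pec_ug_per_l`, `pnec_ug_per_l`) are hypothetical. So is the optional `already_tested` set, which stands in for the repository cross-check. PEC and PNEC are assumed to share a unit.

```python
import pandas as pd


def prioritize_by_risk_quotient(
    df: pd.DataFrame,
    already_tested: frozenset = frozenset(),
    threshold: float = 1.0,
) -> pd.DataFrame:
    """Return chemicals with RQ = PEC / PNEC above `threshold`, highest RQ first.

    Hypothetical schema: columns 'chemical', 'pec_ug_per_l', 'pnec_ug_per_l'
    (PEC and PNEC must share a unit so the ratio is dimensionless).
    """
    required = {"chemical", "pec_ug_per_l", "pnec_ug_per_l"}
    missing = required - set(df.columns)
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    if (df["pnec_ug_per_l"] <= 0).any():
        raise ValueError("PNEC values must be positive")

    out = df.copy()
    out["rq"] = out["pec_ug_per_l"] / out["pnec_ug_per_l"]
    # Keep potential risks (RQ above the threshold) ...
    flagged = out[out["rq"] > threshold]
    # ... and drop chemicals that already have experimental data in a repository.
    flagged = flagged[~flagged["chemical"].isin(list(already_tested))]
    return flagged.sort_values("rq", ascending=False).reset_index(drop=True)
```

The threshold is a parameter, so a more protective cut-off can be tried without changing the code.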

Problem 3: Managing Data Quality for Machine Learning

Poor data quality—such as sparsity, noise, and inconsistency—directly leads to reduced model accuracy, biased predictions, and poor generalizability [2] [6].

Step-by-Step Solution (Data Quality Pipeline):

- Cleanse and Impute: Address incomplete (sparse) data using techniques like mean/median imputation or K-Nearest Neighbors (KNN) imputation. Remove or correct duplicate entries and irrelevant noise [2] [6].

- Validate and Standardize: Perform consistency checks to unify data formats and units. Validate data against predefined schemas and domain rules (e.g., effect concentrations must be positive numbers) [7] [6].

- Conduct Exploratory Data Analysis (EDA): Use visualizations (histograms, scatter plots) and statistical summaries to identify outliers, understand distributions, and detect potential biases before model training [2] [6].

- Implement Continuous Monitoring: Deploy automated checks to monitor for data drift, sudden statistical changes, or new anomalies in incoming data. This is critical for maintaining model performance over time [2] [6].

- Update and Retrain Models: Regularly retrain models with new, high-quality data to adapt to changing data patterns and prevent performance decay [2] [6].
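
A compact version of the cleanse, validate and impute steps is sketched below. It assumes a pandas table with hypothetical column names. Two choices here are assumptions rather than prescriptions from the cited sources:

- Only descriptor columns are imputed. Toxicity labels that are missing or non-positive are dropped, not filled.
- The KNN step uses scikit-learn's `KNNImputer`.

```python
import pandas as pd
from sklearn.impute import KNNImputer


def clean_toxicity_table(
    df: pd.DataFrame,
    descriptor_cols: list,
    label_col: str = "ec50_mg_per_l",
    n_neighbors: int = 3,
) -> pd.DataFrame:
    """Deduplicate, validate labels, and KNN-impute missing descriptors."""
    # Cleanse: exact duplicate rows add no information and can leak across splits.
    out = df.drop_duplicates().copy()

    # Validate: an effect concentration must be present and strictly positive.
    valid = out[label_col].notna() & (out[label_col] > 0)
    out = out[valid].reset_index(drop=True)

    # Impute: fill missing descriptor values from the n most similar rows,
    # where similarity is measured on the descriptors that are present.
    imputer = KNNImputer(n_neighbors=n_neighbors)
    out[descriptor_cols] = imputer.fit_transform(out[descriptor_cols])
    return out
```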

Key Data Comparisons: The Scale of Scarcity

Table 1: The Gap Between Marketed Chemicals and Available Ecotoxicological Data [1] [5]

| Data Category | Estimated Number | Key Detail | Implication for ML |
|---|---|---|---|
| Chemicals in Commerce | > 350,000 | Includes industrial chemicals, pesticides, pharmaceuticals, etc. | Vast prediction space with extreme sparsity. |
| Environmentally Relevant Chemicals (curated list) | 3,387 | Focus on substances likely found in freshwater. | A targeted but still large subset for modeling. |
| With Curated Mode-of-Action (MoA) Data | 3,387 | MoA categorized for all chemicals in the list. | Enables models based on mechanistic understanding. |
| With Curated Effect Concentration Data | Subset of above | Compiled from ECOTOX for algae, crustaceans, fish. | Provides essential quantitative labels for supervised learning. |
| Active Pharmaceutical Ingredients (APIs) | > 3,500 | On global market for human/veterinary use. | A major, structurally diverse class of contaminants. |
| APIs with Prioritization Data | 1,402 | Studied for environmental risk using PEC/PNEC. | Example of using in silico tools to triage testing. |

Table 2: Common Data Quality Issues in Ecotoxicology ML & Solutions [2] [7] [6]

| Issue | Description | Potential Impact on ML Model | Recommended Mitigation Strategy |
|---|---|---|---|
| Sparse/Incomplete Data | Missing toxicity endpoints or chemical descriptors for many compounds. | Reduced accuracy, failure to generalize to under-represented chemical classes. | Imputation techniques (mean, KNN), active learning to target testing [2]. |
| Noisy Data | Irrelevant, duplicate, or erroneous entries in databases. | Obscures true signal, leads to inaccurate or biased predictions. | Deduplication, outlier treatment, robust statistical validation [6]. |
| Inconsistent Data | Variability in test protocols, units, or reporting standards across studies. | Model confusion, poor integration of data from multiple sources. | Standardization, curation pipelines, schema validation [7]. |
| Biased Data | Over-representation of certain chemical classes (e.g., pesticides) or taxa. | Models that perform poorly on under-represented groups (e.g., pharmaceuticals, invertebrates). | Exploratory Data Analysis (EDA), bias correction algorithms, strategic data acquisition [6]. |

Frequently Asked Questions (FAQs)

Q1: Where can I find high-quality, ready-to-use ecotoxicity data for machine learning projects? Start with the U.S. EPA ECOTOX Knowledgebase, a comprehensive, curated source [3]. For data that includes mechanistic information, look for recently published curated datasets. One example is the 2024 dataset that provides mode-of-action and effect data for thousands of chemicals [1]. Always check the curation methodology to make sure it matches your project's needs.

Q2: How do I approach a machine learning project for a chemical with little to no experimental data? Use a prioritization and read-across strategy. First, use available tools (such as QSAR models from the EPA's CompTox Dashboard) to estimate properties and toxicity for the data-poor chemical [3]. Then identify similar chemicals (analogues) that do have experimental data, based on structure and these predicted properties. Data from these analogues can support informed estimates, a process central to regulatory "read-across" [1]. A model can be trained to automate both the similarity search and the prediction.
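
The analogue search and estimate can be sketched as a nearest-neighbor read-across. This sketch rests on several illustrative assumptions, not a regulatory read-across procedure:

- Fingerprints are hypothetical sets of "on" bit indices. In practice they would come from a toolkit such as RDKit.
- Similarity is the Tanimoto coefficient.
- The estimate is a similarity-weighted mean of log10 toxicity values over the top-k analogues above a cut-off.
- The weighting scheme, `k` and `min_similarity` are free choices.

```python
from math import log10


def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for two sets of on-bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def read_across(query_bits: set, analogues: list, k: int = 3, min_similarity: float = 0.5):
    """Estimate toxicity from the k most similar analogues with data.

    `analogues` is a list of (name, on_bits, lc50_mol_per_l) tuples.
    Returns (estimate or None, names of analogues used).
    """
    scored = [(tanimoto(query_bits, bits), name, value) for name, bits, value in analogues]
    # Keep only sufficiently similar analogues, most similar first.
    scored = sorted((s for s in scored if s[0] >= min_similarity), reverse=True)[:k]
    if not scored:
        return None, []  # No defensible analogue: report a data gap, not a guess.
    total = sum(sim for sim, _, _ in scored)
    # Average on a log scale, because toxicity values span orders of magnitude.
    log_estimate = sum(sim * log10(value) for sim, _, value in scored) / total
    return 10 ** log_estimate, [name for _, name, _ in scored]
```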

Q3: What are the most critical data quality checks to perform before training an ecotoxicity ML model? The non-negotiable checks are: 1) Completeness: Identify missing values for key features and labels. 2) Consistency: Standardize units (e.g., all concentrations in µM) and taxonomic nomenclature. 3) Outlier Detection: Use statistical methods (IQR, Z-score) or visualization to flag anomalous effect concentrations that could be errors. 4) Bias Assessment: Analyze the distribution of your data across chemical use classes (e.g., pesticides vs. pharmaceuticals) to understand model limitations [2] [6].
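
Checks 1, 3 and 4 can be scripted as a small report. Unit standardization (check 2) is assumed to be done already, so the label column is in µM. The column names are hypothetical. The log10 transform before outlier screening and the 1.5 × IQR and |z| > 3 cut-offs are conventional choices, used here as assumptions.

```python
import numpy as np
import pandas as pd


def quality_report(df: pd.DataFrame, label_col: str = "ec50_um",
                   group_col: str = "use_class", z_cut: float = 3.0) -> dict:
    """Completeness, outlier, and class-balance summary for a toxicity table."""
    # Toxicity values are screened on a log scale; labels must already be > 0.
    log_vals = np.log10(df[label_col])

    # IQR rule: flag values more than 1.5 * IQR outside the quartiles.
    q1, q3 = log_vals.quantile([0.25, 0.75])
    iqr = q3 - q1
    iqr_flag = (log_vals < q1 - 1.5 * iqr) | (log_vals > q3 + 1.5 * iqr)

    # Z-score rule: flag values more than z_cut standard deviations from the mean.
    z = (log_vals - log_vals.mean()) / log_vals.std(ddof=0)
    z_flag = z.abs() > z_cut

    return {
        "missing_fraction": df.isna().mean().to_dict(),  # completeness
        "iqr_outliers": df.index[iqr_flag.to_numpy()].tolist(),
        "z_outliers": df.index[z_flag.to_numpy()].tolist(),
        "class_counts": df[group_col].value_counts().to_dict(),  # bias check
    }
```

Consider a toy table of seven values where one entry is 1000 µM and the rest lie between 1 and 3 µM. The IQR rule flags the extreme entry, but the z-score rule flags nothing. With n observations and the population standard deviation, |z| can never exceed √(n − 1), which is about 2.45 for n = 7. A fixed |z| > 3 cut-off therefore cannot fire in very small groups.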

Q4: For legacy pharmaceuticals approved before modern ERA requirements, how can I assess risk with limited data? Follow a tiered prioritization framework as demonstrated in recent research [5]. Combine the simplest available exposure estimate (e.g., a default PEC) with an effect estimate from a QSAR model or the most sensitive species data from a close analogue. Calculate a risk quotient to flag high-priority candidates. This conservative, screening-level approach efficiently narrows the list for subsequent, more costly testing.

Q5: How can I make my ecotoxicity ML model more robust and interpretable? Incorporate mechanistic information. Using curated Mode-of-Action (MoA) or Adverse Outcome Pathway (AOP) data as features can guide the model towards biologically plausible relationships, improving extrapolation and interpretability [1]. Furthermore, applying model-agnostic interpretation tools (like SHAP values) to highlight which structural or mechanistic features drove a prediction can build trust in the model's outputs.
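
SHAP requires the separate `shap` package. As a dependency-light stand-in, this sketch uses scikit-learn's `permutation_importance`, which is also model-agnostic. It measures how much held-out performance drops when one feature's values are shuffled. The feature names and the random forest model are hypothetical choices for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split


def rank_features(X: np.ndarray, y: np.ndarray, feature_names: list, seed: int = 0) -> list:
    """Rank features by mean drop in held-out R^2 when each one is permuted."""
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=seed)
    model = RandomForestRegressor(n_estimators=200, random_state=seed).fit(X_tr, y_tr)
    # Importance is computed on held-out data, so it reflects generalization, not memorization.
    result = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=seed)
    order = np.argsort(result.importances_mean)[::-1]
    return [(feature_names[i], float(result.importances_mean[i])) for i in order]
```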

Table 3: Key Research Reagents & Tools for Ecotoxicology ML [1] [3] [5]

| Tool/Resource | Category | Primary Function | Use Case in Ecotox ML |
|---|---|---|---|
| EPA ECOTOX Knowledgebase | Database | Repository of curated single-chemical toxicity test results. | Source of experimental effect concentrations (labels) for model training and validation [3]. |
| Curated MoA Dataset (e.g., 2024) | Dataset | Provides assigned mode-of-action categories for thousands of environmental chemicals. | Enables development of classification models and use of MoA as a predictive feature [1]. |
| QSAR Toolkits (e.g., from EPA CompTox) | Software | Predicts chemical properties and toxicity based on molecular structure. | Generates features (molecular descriptors) and fills data gaps for initial prioritization [1] [5]. |
| Active Learning Algorithms | ML Technique | Selects the most informative data points for which to acquire labels (e.g., test data). | Optimizes limited testing budget by identifying chemicals whose experimental data would most improve the model [2]. |
| Data Profiling & Validation Libraries (e.g., pandas-profiling, now ydata-profiling; Great Expectations) | Software | Automates data quality assessment (completeness, consistency, anomalies). | Critical first step in the ML pipeline to diagnose and remediate issues in raw ecotoxicity data [6]. |
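
The active-learning row can be made concrete with uncertainty sampling, one common query strategy among several. This sketch uses the spread of per-tree predictions in a random forest as the uncertainty estimate. It then proposes the untested chemicals on which the model is least sure. The function name, batch size and forest settings are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def select_for_testing(X_labeled, y_labeled, X_pool, batch_size=5, seed=0):
    """Return indices into X_pool of the `batch_size` most uncertain chemicals."""
    forest = RandomForestRegressor(n_estimators=100, random_state=seed)
    forest.fit(X_labeled, y_labeled)
    # Disagreement between trees is a cheap proxy for predictive uncertainty.
    per_tree = np.stack([tree.predict(X_pool) for tree in forest.estimators_])
    uncertainty = per_tree.std(axis=0)
    return np.argsort(uncertainty)[::-1][:batch_size]
```

After the selected chemicals are tested, their results join the labeled set and the loop repeats.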

Core Challenges in Ecotoxicological Data for Machine Learning

The advancement of machine learning (ML) in ecotoxicology is critically hampered by inherent data heterogeneity. This heterogeneity arises from the integration of diverse biological endpoints, multiple species with varying physiological responses, and inconsistent experimental conditions across studies [8]. In the context of a broader thesis on data quality challenges, these inconsistencies create significant barriers to developing robust, generalizable predictive models. A primary issue is the lack of standardized benchmark datasets, which makes direct comparison of model performances across different studies nearly impossible [8]. Furthermore, regulatory databases, which are key data sources, often contain known inconsistencies and migration errors that can compromise data integrity if not carefully addressed [9]. Researchers must navigate these challenges by implementing rigorous data curation, harmonization, and splitting strategies to prevent data leakage and build trustworthy ML applications for chemical risk assessment [8] [10].

Table: Key Quantitative Data on Ecotoxicological Data Heterogeneity

| Data Aspect | Scale/Example | Source/Note |
|---|---|---|
| Registered Chemicals | >350,000 chemicals and mixtures worldwide [8] | Creates vast prediction space for models. |
| Taxonomic Groups in ADORE | Fish, Crustaceans, Algae [8] | Together cover 41% of entries in the ECOTOX database. |
| Standard Test Durations | Fish: 96h; Crustaceans: 48h; Algae: 72h [8] | OECD guidelines; heterogeneity in timing affects endpoint comparison. |
| Primary Acute Endpoints | LC50 (Fish), EC50 (Immobilization for Crustaceans), Growth Inhibition (Algae) [8] | Different measures of "toxicity" across species. |
| Top Data Integrity Challenge | Cited by 64% of organizations [10] | Context from broader data science; underscores universal difficulty. |

Troubleshooting Guides

Problem Category: Data Collection & Curation

- Problem: Inconsistent or missing metadata (e.g., life stage, exposure time) from large public databases like ECOTOX.

- Solution: Establish a pre-processing pipeline that filters data based on standardized criteria. For instance, filter entries to specific taxonomic groups (fish, crustacea, algae) and exclude non-standard life stages (e.g., eggs, embryos) and exposure durations beyond guideline limits (e.g., >96 hours) [8]. Always retain multiple chemical identifiers (CAS, DTXSID, InChIKey) to facilitate merging with other data sources [8].

- Preventative Step: Before analysis, document all filtering decisions and assumptions in a data provenance log. Use resources like the TAME 2.0 toolkit for training on robust data management practices [11].
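
The filtering rules and provenance log described above can be sketched with pandas. The column names (`taxon_group`, `life_stage`, `duration_h`, `cas`, `dtxsid`, `inchikey`) are hypothetical and do not reproduce the ECOTOX export schema. The 96-hour ceiling follows the example in the text. The returned dictionary is a minimal stand-in for a provenance log.

```python
import pandas as pd

TARGET_GROUPS = {"fish", "crustacea", "algae"}
EXCLUDED_LIFE_STAGES = {"egg", "embryo"}
ID_COLS = ["cas", "dtxsid", "inchikey"]  # keep all identifiers for later merges


def filter_records(df: pd.DataFrame, max_duration_h: float = 96.0):
    """Apply taxon, life-stage, and duration filters; return (kept rows, log)."""
    missing_ids = [c for c in ID_COLS if c not in df.columns]
    if missing_ids:
        raise ValueError(f"identifier columns missing: {missing_ids}")

    group_ok = df["taxon_group"].str.lower().isin(TARGET_GROUPS)
    # Blank life stages are kept here; only explicitly excluded stages are dropped.
    stage_ok = ~df["life_stage"].fillna("").str.lower().isin(EXCLUDED_LIFE_STAGES)
    duration_ok = df["duration_h"] <= max_duration_h  # NaN durations fail this test

    kept = df[group_ok & stage_ok & duration_ok].copy()
    log = {
        "input_rows": len(df),
        "dropped_taxon": int((~group_ok).sum()),
        "dropped_life_stage": int((group_ok & ~stage_ok).sum()),
        "dropped_duration": int((group_ok & stage_ok & ~duration_ok).sum()),
        "kept_rows": len(kept),
        "max_duration_h": max_duration_h,
    }
    return kept, log
```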

Problem Category: Experimental Design for ML Readiness

- Problem: Historical data is not directly usable for ML due to variability in experimental conditions.

- Solution: Harmonize endpoints to a common basis. Convert all concentration values to molar units (mol/L) to enhance biological relevance for model learning [8]. For algae, group related effects (mortality, growth, population, physiology) into a collective "growth inhibition" endpoint to increase data volume and consistency [8].

- Preventative Step: When designing new experiments, adhere to OECD Test Guidelines (e.g., 203 for fish, 202 for Daphnia, 201 for algae) and report all critical parameters (temperature, pH, solvent controls) in a structured, machine-readable format [8].
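
The two harmonization steps can be sketched as small helper functions. The conversion uses mol/L = (mg/L × 10⁻³ g/mg) ÷ molecular weight in g/mol. The effect labels and the mapping rules are hypothetical stand-ins for a curated vocabulary. They follow the grouping described in this section, with crustacean immobilization treated as analogous to mortality.

```python
ALGAE_GROWTH_EFFECTS = {"mortality", "growth", "population", "physiology"}


def mg_per_l_to_mol_per_l(conc_mg_per_l: float, mw_g_per_mol: float) -> float:
    """Convert a mass concentration (mg/L) to a molar concentration (mol/L)."""
    if mw_g_per_mol <= 0:
        raise ValueError("molecular weight must be positive")
    return conc_mg_per_l * 1e-3 / mw_g_per_mol


def harmonize_effect(taxon_group: str, effect: str) -> str:
    """Map reported effects onto a shared endpoint vocabulary (illustrative rules)."""
    group, eff = taxon_group.lower(), effect.lower()
    if group == "algae" and eff in ALGAE_GROWTH_EFFECTS:
        return "growth_inhibition"
    if group == "crustacea" and eff == "immobilization":
        return "mortality"  # treated as analogous to fish mortality
    return eff
```

For example, 5 mg/L of a compound with a molecular weight of 250 g/mol is 2 × 10⁻⁵ mol/L (20 µM).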

Problem Category: Model Training & Evaluation

- Problem: Overoptimistic model performance due to data leakage, often from inappropriate splitting of training and test sets.

- Solution: Implement splitting strategies based on chemical similarity (e.g., molecular scaffolds) rather than random splits. This tests a model's ability to extrapolate to new chemical classes, providing a more realistic performance estimate [8]. Use benchmark datasets like ADORE that provide predefined splits for fair comparison [8].

- Preventative Step: Never use data from the same chemical or highly similar chemicals in both training and testing sets. Validate model performance on external, temporally separated, or structurally distinct datasets.
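
A scaffold-grouped split can be sketched with scikit-learn's `GroupShuffleSplit`, which keeps every member of a group on the same side of the split. The sketch assumes scaffold strings have already been computed; RDKit's `MurckoScaffold.MurckoScaffoldSmiles` is one option. The example scaffold labels are hypothetical. Note that a float `test_size` is the fraction of groups, not of rows.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit


def scaffold_split(scaffolds: list, test_size: float = 0.2, seed: int = 0):
    """Split row indices so that no scaffold appears in both train and test."""
    splitter = GroupShuffleSplit(n_splits=1, test_size=test_size, random_state=seed)
    placeholder_X = np.zeros((len(scaffolds), 1))  # features are not needed to split
    train_idx, test_idx = next(splitter.split(placeholder_X, groups=scaffolds))
    return train_idx, test_idx
```

Because identical chemicals share a scaffold, this also keeps repeated records of one chemical together. Chemicals without rings typically reduce to an empty scaffold and so form one large group, which may need separate handling.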

Problem Category: Interpretation & Extrapolation

- Problem: Model predictions are difficult to interpret biologically or extrapolate to untested species or ecosystems.

- Solution: Integrate mechanistic biological features (e.g., from ToxCast assays for endocrine disruption or hepatotoxicity) alongside chemical descriptors [12]. This can improve interpretability. For species extrapolation, incorporate phylogenetic data or species-specific traits as model features to help bridge knowledge gaps [8].

- Preventative Step: Frame the ML problem within an ecological risk assessment context. Collaborate with ecologists and regulatory scientists from the project's start to ensure research questions and model outputs address real-world protection goals [13].

Frequently Asked Questions (FAQs)

Q1: How do I standardize toxicity endpoints (e.g., LC50, EC50, NOEC) from different species and tests for a unified machine learning analysis? A1: The first step is categorical harmonization. Group biologically similar endpoints: treat crustacean "immobilization" as analogous to fish "mortality" [8]. Next, convert all numeric concentration values to a common unit, preferably molarity (mol/L), to reflect the molecular basis of toxic action and enable direct comparison [8]. For no-observed-effect concentrations (NOEC), be aware they are statistically less robust than EC/LC values and may introduce noise.

Q2: What is the most critical step in preprocessing ecotoxicological data for ML to avoid biased models? A2: The most critical step is the strategic splitting of data into training and test sets. A random split is inadequate as it often leads to data leakage and inflated performance. You must split based on chemical scaffolds to ensure the model is tested on structurally distinct compounds, or split by taxonomic group to evaluate extrapolation capability [8]. This tests the model's generalizability, which is the ultimate goal for predicting new chemicals.

Q3: I'm using data from the EPA's ECOTOX database. What are common data quality issues I should check for? A3: Common issues include: 1) Inconsistent reporting of test conditions (e.g., pH, temperature) [9]; 2) Missing or uninformative life stage data (many entries are blank, and stages are not comparable across fish, algae, and crustaceans) [8]; 3) Historical data migration errors, as noted in EPA's known data problems for programs like the Clean Water Act [9]. Always cross-check critical toxicity values and chemical identifiers against other sources like the CompTox Chemicals Dashboard where possible.

Q4: How can I make my ecotoxicology ML model more interpretable and useful for risk assessors? A4: Move beyond black-box models by: 1) Using model-agnostic interpretation tools (e.g., SHAP values) to identify which chemical structural features or ToxCast assay outcomes drive predictions [12]. 2) Incorporating established Adverse Outcome Pathways (AOPs) into your feature set or as a framework to interpret results. 3) Validating model outputs against mechanistic toxicology data (e.g., specific receptor binding assays) to provide biological plausibility [13] [12].

Q5: Where can I find high-quality, curated datasets to benchmark my ecotoxicology ML model? A5: The ADORE dataset is a benchmark dataset specifically designed for this purpose. It provides curated acute toxicity data for fish, crustaceans, and algae, expanded with chemical and phylogenetic features, and includes proposed train-test splits [8]. For in vitro bioactivity data, the ToxCast/Tox21 database is the primary resource for developing models that link chemical structure to biological pathways [12].

Q6: Our lab studies chemical mixtures, but most public data is for single substances. How can we build ML models for mixture toxicity? A6: This is a frontier challenge. Current strategies include: 1) Using single-substance data as a base and applying mixture models (e.g., Concentration Addition, Independent Action) to predict combined effects as features [14]. 2) Generating targeted mixture data for high-priority combinations (e.g., common co-pollutants at Superfund sites) to build specific datasets [14]. 3) Applying advanced deep learning architectures (e.g., graph neural networks) that can theoretically represent multi-chemical interactions, though they require substantial mixture data for training.
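
The two reference models named in strategy 1 can be written down compactly.

- Concentration Addition: if the components make up fractions p_i of the total concentration, the mixture EC50 is EC50_mix = (Σ p_i / EC50_i)⁻¹.
- Independent Action: E_mix = 1 − Π(1 − E_i(c_i)).

Independent Action needs a full concentration-response curve for each component. The two-parameter log-logistic curve used below is an assumption, as are the example values.

```python
def ca_ec50(fractions: list, ec50s: list) -> float:
    """Concentration Addition: mixture EC50 from component fractions and EC50s."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("mixture fractions must sum to 1")
    return 1.0 / sum(p / e for p, e in zip(fractions, ec50s))


def log_logistic_effect(conc: float, ec50: float, slope: float) -> float:
    """Assumed concentration-response curve: fraction affected at `conc`."""
    if conc <= 0:
        return 0.0
    return 1.0 / (1.0 + (ec50 / conc) ** slope)


def ia_effect(concs: list, ec50s: list, slopes: list) -> float:
    """Independent Action: combine component effects as independent probabilities."""
    unaffected = 1.0
    for c, e, s in zip(concs, ec50s, slopes):
        unaffected *= 1.0 - log_logistic_effect(c, e, s)
    return 1.0 - unaffected
```

The resulting CA and IA predictions can serve directly as engineered features for a mixture model, as strategy 1 suggests.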

Table: Summary of Experimental Protocols from Key Guidelines

| Taxonomic Group | OECD Test Guideline | Primary Endpoint | Standard Duration | Key Experimental Conditions to Record |
|---|---|---|---|---|
| Fish | TG 203 [8] | Mortality (LC50) | 96 hours | Temperature, water hardness, pH, dissolved oxygen, species/strain, age/weight. |
| Crustaceans (Daphnia) | TG 202 [8] | Immobilization (EC50) | 48 hours | Temperature, light cycle, number of neonates per test vessel, test medium composition. |
| Algae | TG 201 [8] | Growth Inhibition (EC50) | 72 hours | Light intensity & quality, media composition, shaking speed, initial cell density. |
| General Principle | - | - | - | Always report solvent/vehicle type and concentration, test concentration verification method (nominal vs. measured), and control group performance. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Resources for Integrated Ecotoxicology ML Research

| Item / Resource | Function / Purpose | Notes for Data Integration |
|---|---|---|
| ADORE Dataset [8] | Benchmark dataset for acute aquatic toxicity ML. Provides curated data, chemical features, and predefined splits. | Use as a standard to compare your model's performance against community benchmarks. |
| ECOTOX Database [8] | Primary source of in vivo ecotoxicological effects data from peer-reviewed literature. | Requires extensive curation. Filter for standard guidelines, endpoints, and exposure times. |
| CompTox Chemicals Dashboard | Provides authoritative chemical identifiers, structures, and properties. | Use DTXSID or InChIKey for reliable merging of chemical data from different sources [8]. |
| ToxCast/Tox21 Database [12] | High-throughput screening data on thousands of chemicals across hundreds of biological pathways. | Use as source of "biological descriptor" features to augment chemical descriptors for ML. |
| TAME 2.0 Toolkit [11] | Online data science training resource with modules specific to environmental health and toxicology. | Reference for training on data management, machine learning applications, and database mining. |
| Alternative Model Organisms (e.g., C. elegans) [14] | Provide cost-effective, high-throughput mechanistic toxicity data. | Data can inform on specific pathways (e.g., mitochondrial toxicity) but requires careful extrapolation to ecological endpoints. |

This technical support center addresses the pervasive data quality challenges in ecotoxicology machine learning (ML), where models must make reliable predictions from limited experimental data within a vast chemical space. The central hurdle is the "curse of dimensionality": as the number of chemical descriptors (features) grows, the data becomes sparse, and the statistical power of a small sample plummets [15]. This guide provides troubleshooting, standard protocols, and resources to help researchers navigate these challenges, enhance model reliability, and contribute to the development of robust, non-animal testing methods for chemical safety assessment [16] [17].

Troubleshooting Guides & FAQs

This section addresses common experimental and analytical problems encountered when building predictive ecotoxicology models with small, high-dimensional datasets.

Data Curation & Feature Engineering

Q1: My dataset has fewer than 100 compounds, but I’ve calculated over 1,000 molecular descriptors. My model fits the training data perfectly but fails on new compounds. What's wrong? A: You are experiencing severe overfitting, a direct consequence of the small-sample, high-dimensionality problem [15]. With more features than observations, models can memorize noise instead of learning generalizable patterns.

- Diagnostic Check: Compare training and validation performance. A high R² (>0.9) on training with near-zero or negative R² on hold-out test data confirms overfitting.

- Solution Path:

- Apply Feature Selection: Use domain knowledge to filter irrelevant descriptors. Employ statistical filter methods (e.g., correlation analysis) or embedded methods like Lasso (L1) regularization, which drives coefficients of unimportant features to zero [15].

- Use Dimensionality Reduction: Apply Principal Component Analysis (PCA) or non-linear methods like UMAP to transform your high-dimensional descriptors into a lower-dimensional latent space that retains essential information [18].

- Implement Rigorous Validation: Use external test sets from a different chemical library or apply a Leave-One-Library-Out (LOLO) cross-validation scheme to ensure your model generalizes beyond its training data [18].
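
The overfitting diagnosis and the Lasso remedy can be reproduced on synthetic data where only two of several hundred descriptors matter. The sketch contrasts two models, both scored by 5-fold cross-validation:

- Ordinary least squares, which can interpolate the training set when descriptors outnumber compounds.
- A standardized `LassoCV` pipeline.

The data generator and sizes used to exercise it are assumptions.

```python
import numpy as np
from sklearn.linear_model import LassoCV, LinearRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def overfitting_check(X: np.ndarray, y: np.ndarray) -> dict:
    """Compare training fit with cross-validated R^2 for OLS and Lasso."""
    ols = LinearRegression().fit(X, y)
    lasso = make_pipeline(StandardScaler(), LassoCV(cv=5, max_iter=5000))
    report = {
        "ols_train_r2": ols.score(X, y),  # near 1.0 when features outnumber samples
        "ols_cv_r2": cross_val_score(LinearRegression(), X, y, cv=5, scoring="r2").mean(),
        "lasso_cv_r2": cross_val_score(lasso, X, y, cv=5, scoring="r2").mean(),
    }
    # Refit on all data to see which descriptors survive the L1 penalty.
    lasso.fit(X, y)
    report["selected"] = np.flatnonzero(lasso[-1].coef_)
    return report
```

A near-perfect training R² paired with a much lower cross-validated R² is the overfitting signature described in the diagnostic check.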

Q2: I am using a public ecotoxicology database like ECOTOX, but my data is messy, with multiple entries for the same chemical-species pair. How should I preprocess it to avoid data leakage? A: Duplicate or highly similar data points randomly split between training and test sets cause data leakage, artificially inflating model performance [19].

- Diagnostic Check: Perform a chemical similarity check (e.g., using Tanimoto similarity on fingerprints) between your training and test set compounds. High similarity indicates risk of leakage.

- Solution Path:

- Apply a Data Splitting Strategy Based on Chemical Structure: Use the "scaffold split" method. Group chemicals by their molecular backbone (Bemis-Murcko scaffold) and split these groups into sets, ensuring structurally novel compounds are in the test set [16].

- Use a Benchmark Dataset with Pre-defined Splits: Leverage resources like the ADORE dataset, which provides curated aquatic toxicity data with explicit, non-leaky train-test splits designed for meaningful benchmarking [16] [19].

- Aggregate Data: For true duplicates, average the toxicity endpoints. For varying experimental results, consider the reliability of the source study or treat them as separate data points only if they can be clearly distinguished by documented experimental conditions.
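
The aggregation step can be sketched with a pandas group-by. This sketch averages replicate values on a log scale (a geometric mean), which is an assumption rather than a rule from the cited sources. It also records the log10 spread between replicates. Pairs whose values disagree by more than a chosen tolerance (1 log unit here, also an assumption) can then be reviewed against their experimental conditions rather than silently averaged.

```python
import numpy as np
import pandas as pd


def aggregate_replicates(df: pd.DataFrame, keys=("chemical_id", "species"),
                         value_col: str = "lc50_mol_per_l",
                         max_spread_log10: float = 1.0) -> pd.DataFrame:
    """Collapse repeated chemical-species records into one row per pair."""
    grouped = df.groupby(list(keys))[value_col]
    out = grouped.agg(
        n_records="count",
        geomean=lambda v: float(np.exp(np.log(v).mean())),
        spread_log10=lambda v: float(np.log10(v).max() - np.log10(v).min()),
    ).reset_index()
    # Large disagreement suggests different test conditions, not simple replicates.
    out["needs_review"] = out["spread_log10"] > max_spread_log10
    return out
```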

Model Training & Validation

Q3: I want to visualize my chemical space to check for clusters or outliers, but a simple 2D plot loses too much information. What is the best method for visualizing high-dimensional chemical data? A: Linear methods like PCA are common but may not preserve local chemical similarities. Your goal is neighborhood preservation for a trustworthy visual inspection [18].

- Diagnostic Check: Calculate neighborhood preservation metrics (e.g., PNNₖ, trustworthiness) for your 2D projection to quantify information loss [18].

- Solution Path:

- For Exploring Local Clusters: Use t-SNE. It excels at revealing local structures and clusters within your data, though global distances are not interpretable [18] [15].

- For a Balance of Local and Global Structure: Use UMAP. It is generally faster than t-SNE and often does a better job of preserving some of the broader layout of the chemical space while maintaining local neighborhoods [18].

- For a Quantifiable, Linear Projection: Use PCA. It provides the most statistically rigorous linear projection, and the principal components are interpretable as directions of maximum variance in your descriptor space [18].

Q4: How can I predict toxicity for a completely new chemical that is structurally different from anything in my training set? A: This is an extrapolation problem, the most difficult challenge in small-sample settings. Models can only reliably interpolate within the chemical space defined by the training data.

- Diagnostic Check: Calculate the distance to model (e.g., leverage, Hotelling's T²) for the new chemical. If it falls outside the applicability domain of your training data, any prediction is highly uncertain.

- Solution Path:

- Define and Adhere to an Applicability Domain (AD): Explicitly define the chemical space your model is valid for (e.g., ranges of descriptors, similarity thresholds). Report when predictions are made outside this AD with appropriate uncertainty warnings.

- Use a More Diverse Training Set: Incorporate data from related toxicity endpoints or species using transfer learning or multi-task learning approaches to broaden the learned chemical representation [17].

- Leverage External Knowledge: Integrate features from adverse outcome pathways (AOPs) or use pre-trained chemical language models that have learned general chemical representations from vast molecular databases, potentially allowing for better generalization [17].
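
One common applicability-domain check is leverage, h = xᵀ(XᵀX)⁻¹x. It is computed on the training descriptor matrix X with an intercept column. It is paired with the warning threshold h* = 3(p + 1)/n used in Williams plots, where p is the number of descriptors and n the number of training compounds. The sketch assumes a small, already-selected descriptor set. With more descriptors than compounds, XᵀX is singular and the pseudo-inverse used here no longer yields a meaningful distance.

```python
import numpy as np


def leverage(X_train: np.ndarray, X_query: np.ndarray):
    """Return (leverages of query compounds, warning threshold h*)."""
    n, p = X_train.shape
    # Add an intercept column, as in the regression model whose domain is checked.
    A = np.column_stack([np.ones(n), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    core = np.linalg.pinv(A.T @ A)
    # h_i = q_i^T (A^T A)^-1 q_i for each query row q_i.
    h = np.einsum("ij,jk,ik->i", Q, core, Q)
    h_star = 3 * (p + 1) / n
    return h, h_star
```

Compounds with h > h* fall outside the descriptor space covered by the training set. Their predictions should carry the uncertainty warning described above.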

Dimensionality Reduction Method Comparison

Selecting the right technique is critical for analyzing and visualizing high-dimensional chemical data. The table below summarizes key performance metrics from a benchmark study on ChEMBL chemical subsets [18].

Table 1: Performance Comparison of Dimensionality Reduction (DR) Techniques for Chemical Space Analysis.

| Method | Type | Key Strength | Neighborhood Preservation (PNN₅₀ Score Range) | Best For | Computational Cost |
|---|---|---|---|---|---|
| PCA [18] | Linear | Maximizes variance, interpretable components, deterministic. | Lower (Varies by dataset) | Initial exploration, noise reduction, linear data. | Low |
| t-SNE [18] | Non-linear | Excellent preservation of local neighborhoods/clusters. | High (0.74 - 0.91) | Visualizing distinct chemical clusters in detail. | High |
| UMAP [18] | Non-linear | Balances local and global structure, faster than t-SNE. | High (0.71 - 0.92) | General-purpose chemical space visualization. | Medium |
| GTM [18] | Non-linear | Generative model; provides a probabilistic projection. | Moderate to High (Benchmarked) | Creating interpretable, probability-based landscape maps. | High |

Key Metric Explained: The PNNₖ (Percentage of Nearest Neighbors preserved) score measures how well the k closest neighbors of a compound in the original high-dimensional space remain neighbors in the 2D/3D projection. A score of 1.0 represents perfect preservation [18].

Experimental Protocols

Protocol 1: Evaluating Dimensionality Reduction for Chemical Visualization

This protocol outlines the steps to objectively compare DR methods, as performed in benchmark studies [18].

Objective: To generate and evaluate a 2D map of a chemical library that faithfully represents the high-dimensional relationships between compounds.

Materials:

- Dataset: A set of chemical structures (e.g., from ChEMBL, your in-house library).

- Software: Python with RDKit (rdkit.org), scikit-learn, openTSNE or umap-learn.

- Descriptors: Morgan fingerprints (radius=2, nBits=1024) or other molecular representations.

Procedure:

- Descriptor Calculation: For each compound in your set, compute a high-dimensional feature vector (e.g., Morgan fingerprint) [18].

- Data Standardization: Remove zero-variance features and standardize the remaining features (mean=0, variance=1).

- Hyperparameter Optimization (Grid Search):

  - Define a grid of key parameters for each DR method (e.g., `perplexity` for t-SNE, `n_neighbors` and `min_dist` for UMAP).

  - For each parameter combination, perform DR and calculate the PNN₂₀ score (preservation of the top 20 nearest neighbors).

  - Select the parameter set that yields the highest average PNN₂₀ score.

- Model Training & Projection: Train the optimized DR model on your full dataset and project the data into 2D coordinates.

- Evaluation: Calculate a suite of neighborhood preservation metrics on the final projection using the co-ranking matrix framework [18]. Key metrics include:

  - PNNₖ for various k.

  - Trustworthiness: Measures whether neighbors in the 2D projection were also neighbors in the original space.

  - Continuity: Measures whether original neighbors remain neighbors in the projection.

- Visualization & Interpretation: Create a scatter plot of the 2D projection, colored by a property of interest (e.g., toxicity class, source library). Use the quantitative metrics, not just visual appeal, to judge the map's quality.
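The standardization, grid-search and evaluation steps can be sketched with scikit-learn as follows. This is a minimal illustration, not the benchmark code from [18]. Several things are assumptions:

- Random binary vectors stand in for real Morgan fingerprints.
- The grid covers only PCA and two t-SNE perplexities; a UMAP entry would be added the same way if umap-learn is installed.
- PNNₖ is computed with plain k-nearest-neighbor sets rather than a full co-ranking matrix.
- Trustworthiness comes from `sklearn.manifold.trustworthiness`.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.feature_selection import VarianceThreshold
from sklearn.manifold import TSNE, trustworthiness
from sklearn.neighbors import NearestNeighbors
from sklearn.preprocessing import StandardScaler


def knn_sets(X, k):
    """k-nearest-neighbour index sets; kneighbors() without a query excludes the point itself."""
    nn = NearestNeighbors(n_neighbors=k).fit(X)
    return [set(row) for row in nn.kneighbors(return_distance=False)]


def pnn_k(X_high, X_low, k=20):
    """Mean fraction of each compound's k original neighbours kept in the projection."""
    high, low = knn_sets(X_high, k), knn_sets(X_low, k)
    return float(np.mean([len(h & l) / k for h, l in zip(high, low)]))


def compare_projections(X_raw, k=20, perplexities=(5, 30), seed=0):
    # Data standardization: drop zero-variance bits, then scale to mean 0, variance 1
    X = VarianceThreshold(threshold=0.0).fit_transform(X_raw)
    X = StandardScaler().fit_transform(X)

    # Small illustrative grid: PCA is deterministic, t-SNE is seeded for repeatability
    candidates = {"PCA": PCA(n_components=2)}
    for p in perplexities:
        candidates[f"t-SNE perplexity={p}"] = TSNE(n_components=2, perplexity=p, random_state=seed)

    results = {}
    for name, model in candidates.items():
        X_2d = model.fit_transform(X)
        results[name] = {
            "PNN": pnn_k(X, X_2d, k=k),
            "trustworthiness": float(trustworthiness(X, X_2d, n_neighbors=k)),
        }
    # Keep the configuration with the best neighbourhood preservation
    best = max(results, key=lambda name: results[name]["PNN"])
    return best, results


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-in for 150 compounds x 64-bit fingerprints (illustration only)
    fingerprints = (rng.random((150, 64)) < 0.2).astype(float)
    fingerprints[:, 0] = 0.0  # a constant bit that the variance filter removes
    best, results = compare_projections(fingerprints)
    for name, scores in results.items():
        print(name, scores)
    print("best:", best)
```

Both metrics are reported side by side because a projection can score well on one and poorly on the other. The selection here uses PNNₖ only, mirroring the protocol above.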

Protocol 2: Constructing a Benchmark-Quality Dataset for Ecotoxicology ML

This protocol is based on the methodology used to create the ADORE dataset [16].

Objective: To curate a reproducible, well-split dataset for training and fairly comparing ML models predicting acute aquatic toxicity.

Materials:

- Primary Data Source: US EPA ECOTOX database (cfpub.epa.gov/ecotox/).

- Taxonomic Focus: Fish, crustaceans, and algae.

- Endpoint Focus: Acute mortality (LC50/EC50 values for exposures ≤96 hours).

- Chemical Identifiers: CAS RN, DTXSID, InChIKey for cross-referencing.

Procedure:

- Data Extraction & Filtering:

  - Extract all records for target taxa and acceptable endpoints (e.g., Mortality for fish, Mortality/Immobilization for crustaceans) [16].

  - Filter for standardized test durations (e.g., 48h for Daphnia, 96h for fish).

  - Exclude data from non-standard life stages (e.g., embryos) or in vitro tests.

- Data Aggregation & Deduplication:

  - Aggregate multiple entries for the same chemical, species, and experimental condition by taking the geometric mean of the toxicity values.

  - Retain critical metadata (species, chemical, endpoint value, exposure time).

- Feature Expansion:

  - Chemical Features: Calculate molecular descriptors (e.g., Mordred), multiple fingerprints (Morgan, MACCS, ToxPrints), and retrieve physicochemical properties [19].

  - Biological Features: Add species-specific traits (e.g., phylogeny, life history, ecological traits) to inform cross-species predictions [16].

- Data Splitting (Critical Step): Avoid random splitting. Implement:

  - Scaffold Split: Group chemicals by molecular scaffold. Place entire scaffold groups into training, validation, or test sets to assess generalization to novel chemotypes [16].

  - Temporal/Hold-out Library Split: Reserve data from a specific source or later time period as the final test set.

- Documentation & Sharing: Clearly document all filtering steps, feature calculations, and split definitions. Publish the dataset with unique identifiers (e.g., DOI) to serve as a community benchmark.
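The aggregation step can be sketched in plain Python. This is an illustration, not the ADORE curation code. The record fields (`inchikey`, `species`, `duration_h`, `value_mg_per_L`) and the example values are hypothetical, and "experimental condition" is reduced to exposure duration for brevity. The geometric mean is used because toxicity values are commonly treated as log-normally distributed, so it equals the arithmetic mean in log space.

```python
from collections import defaultdict
from statistics import geometric_mean


def aggregate_replicates(records):
    """Collapse repeated tests of the same chemical, species and exposure duration
    into one row holding the geometric mean of the (strictly positive) LC50/EC50 values."""
    groups = defaultdict(list)
    for rec in records:
        key = (rec["inchikey"], rec["species"], rec["duration_h"])
        groups[key].append(rec["value_mg_per_L"])

    aggregated = []
    for (inchikey, species, duration_h), values in sorted(groups.items()):
        aggregated.append({
            "inchikey": inchikey,
            "species": species,
            "duration_h": duration_h,
            "value_mg_per_L": geometric_mean(values),
            "n_replicates": len(values),  # kept so users can filter or weight later
        })
    return aggregated


# Hypothetical records; "KEY-A" is a placeholder, not a real InChIKey
rows = [
    {"inchikey": "KEY-A", "species": "Daphnia magna", "duration_h": 48, "value_mg_per_L": 1.0},
    {"inchikey": "KEY-A", "species": "Daphnia magna", "duration_h": 48, "value_mg_per_L": 4.0},
    {"inchikey": "KEY-A", "species": "Danio rerio", "duration_h": 96, "value_mg_per_L": 10.0},
]
for row in aggregate_replicates(rows):
    print(row)
```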

The Scientist's Toolkit

Table 2: Essential Research Reagents & Resources for High-Dimensional Ecotoxicology ML.

| Item / Resource | Function & Utility | Key Consideration |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit for calculating molecular descriptors (e.g., Morgan fingerprints), handling chemical I/O, and basic operations [18]. | The standard for programmable chemical informatics. Essential for feature generation. |
| ADORE Dataset | A curated benchmark dataset for acute aquatic toxicity prediction, featuring curated data, multiple molecular representations, species traits, and pre-defined splits to prevent data leakage [16] [19]. | Use as a gold standard for method development and benchmarking against published work. |
| UMAP / t-SNE Algorithms | Non-linear dimensionality reduction libraries for visualizing high-dimensional chemical data in 2D/3D, helping to identify clusters and outliers [18] [15]. | UMAP is generally preferred for speed and balance; t-SNE for detailed cluster inspection. Hyperparameter tuning is essential. |
| L1 (Lasso) Regularization | A modeling technique that performs automatic feature selection by penalizing the absolute size of coefficients, driving irrelevant feature weights to zero [15]. | Highly effective for combating overfitting in small-sample, high-dimensional scenarios. |
| Applicability Domain (AD) Methods | A set of techniques (e.g., leverage, distance-based, range-based) to define the chemical space where a model's predictions are considered reliable [17]. | Critical for responsible prediction. Always report when a query compound falls outside the model's AD. |
| Adverse Outcome Pathway (AOP) Knowledge | Conceptual frameworks linking a molecular initiating event to an adverse ecological outcome. Provides mechanistic insight for feature engineering and model interpretation [17]. | Helps move beyond black-box models by informing which biological or chemical features may be most relevant. |

Workflow & Conceptual Diagrams

Diagram 1: Workflow for Navigating the Small-Sample Hurdle in Ecotoxicology ML. This diagram outlines the journey from raw data to a reliable model, highlighting the core problem (curse of dimensionality) and the interconnected solution pathways.

Diagram 2: Data Splitting Strategies: Random vs. Scaffold-Based. This diagram contrasts two data splitting methods, illustrating how random splitting can lead to data leakage and overoptimistic performance, while scaffold-based splitting provides a more rigorous test of a model's ability to generalize to new chemical structures [16] [19].

The integration of machine learning (ML) into ecotoxicology marks a profound shift from traditional, hypothesis-driven research to a data-driven paradigm. This transition promises to accelerate hazard assessment and reduce animal testing, but its success hinges on overcoming significant data quality challenges[reference:0]. Insufficient data reporting, improper experimental splitting leading to data leakage, and a lack of standardized benchmarks severely hinder model reproducibility and comparability[reference:1]. This article provides a technical support framework to help researchers navigate these informational demands, ensuring robust and reliable ML applications in ecotoxicology.

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: My ML model shows excellent performance on the test set but fails completely on new, external data. What went wrong?

Issue: This is a classic symptom of data leakage, where information from the test set inadvertently influences the model training process, leading to inflated and non-generalizable performance estimates[reference:2].

Solution:

- Audit Your Data Splits: Ensure no chemical (or chemical-species pair) appears in both the training and test sets. For datasets with repeated experiments, a simple random split is insufficient[reference:3].

- Implement Rigorous Splitting Strategies: Use domain-informed splits, such as splitting by unique chemical compound or by chemical occurrence, to rigorously test a model's ability to generalize to truly unseen examples[reference:4].

- Validate with External Sets: Always reserve a completely independent external validation set that is never used during any model development or hyperparameter tuning phase.
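The split audit can be sketched as a set intersection over the keys that must not be shared. The field names and toy rows below are hypothetical. The point is that a naive random split over rows usually places replicate tests of the same chemical on both sides.

```python
import random


def leaked_keys(train_rows, test_rows, key_fields):
    """Return the keys (e.g. chemical, or chemical-species pair) found in both sets."""
    def key_set(rows):
        return {tuple(row[f] for f in key_fields) for row in rows}
    return key_set(train_rows) & key_set(test_rows)


# Hypothetical toy data: 3 chemicals x 2 species x 3 replicate tests = 18 rows
rows = [
    {"chemical_id": chem, "species": sp, "replicate": r}
    for chem in ("chem_A", "chem_B", "chem_C")
    for sp in ("D. magna", "O. mykiss")
    for r in range(3)
]
random.Random(0).shuffle(rows)
train, test = rows[:12], rows[12:]  # naive random split over rows

print("chemicals in both sets:", leaked_keys(train, test, ["chemical_id"]))
print("pairs in both sets:", leaked_keys(train, test, ["chemical_id", "species"]))
```

An empty result for the key you care about is the pass condition. Run the check on every split you publish.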

Issue: Heterogeneous data sources introduce variability in experimental conditions, units, taxonomic nomenclature, and reporting standards, creating noise that confounds ML models.

Solution:

- Standardize Features and Endpoints: Convert all toxicity values (e.g., LC50, EC50) to consistent units (e.g., log10(mol/L)). Use controlled vocabularies for species names (e.g., integrating with ITIS or NCBI taxonomy).

- Curate Molecular Representations: Use standardized cheminformatics tools (e.g., Mordred, RDKit) to generate consistent molecular descriptors or fingerprints for all chemicals, rather than relying on manually reported properties[reference:5].

- Document a Transparent Curation Pipeline: Publish a detailed, step-by-step protocol of all filtering, transformation, and merging steps applied to the raw data to ensure full reproducibility.
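Converting to log10(mol/L) requires each chemical's molecular weight. The arithmetic is mol/L = (mg/L ÷ 1000 mg per g) ÷ (g/mol). Molar units make potencies comparable across chemicals of different molecular size. The sketch below assumes concentrations arrive as mass per volume in one of four units. Anything else, such as ppm or mol-based units, is rejected rather than silently guessed.

```python
import math

# Factors that convert each supported unit into mg/L (assumed unit spellings)
TO_MG_PER_L = {"g/L": 1e3, "mg/L": 1.0, "ug/L": 1e-3, "ng/L": 1e-6}


def to_log10_molar(value, unit, mol_weight):
    """Convert a mass concentration to log10(mol/L).

    mol/L = (value in mg/L) / 1000 [mg per g] / molecular weight [g/mol]
    """
    if unit not in TO_MG_PER_L:
        raise ValueError(f"unsupported unit {unit!r}; extend TO_MG_PER_L explicitly")
    if value <= 0 or mol_weight <= 0:
        raise ValueError("concentration and molecular weight must be positive")
    mg_per_L = value * TO_MG_PER_L[unit]
    return math.log10(mg_per_L / 1000.0 / mol_weight)


# Hypothetical example: 1 mg/L of a chemical with MW 100 g/mol is 1e-5 mol/L
print(to_log10_molar(1.0, "mg/L", 100.0))  # approximately -5
```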

FAQ 3: My dataset is relatively small and imbalanced (e.g., many more entries for common species like D. magna). Will ML still work?

Issue: Data scarcity and class/target imbalance are major barriers in ecotoxicology ML, often leading to models that are biased toward well-represented chemicals or species[reference:6].

Solution:

- Consider Small-Data ML (SDML) Methods: Explore techniques specifically designed for limited data, such as Bayesian learning, Gaussian processes, or few-shot learning, which can be more valuable than conventional ML in these scenarios[reference:7].

- Employ Strategic Data Augmentation: For molecular data, use validated in-silico methods to generate analogous, non-identical training examples. For ecological data, leverage phylogenetic information to inform similarity-based augmentations[reference:8].

- Utilize Benchmark Challenges: Frame your research within the context of established benchmark dataset challenges (e.g., the ADORE dataset's tiered challenges), which are designed to objectively assess model performance under data-limiting conditions[reference:9].
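As one example of the small-data methods listed above, the sketch below fits a Gaussian process regressor with scikit-learn. A GP returns a predictive standard deviation alongside each prediction, which is useful when training data are scarce. The 30 compounds, 4 descriptors and synthetic linear response are assumptions for illustration only. The kernel choice (anisotropic RBF plus a white-noise term) is one reasonable default, not a recommendation over Bayesian or few-shot alternatives.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, WhiteKernel


def fit_gp(X_train, y_train):
    """Fit a GP with one length scale per descriptor plus a noise term."""
    n_features = X_train.shape[1]
    kernel = RBF(length_scale=np.ones(n_features)) + WhiteKernel(noise_level=0.1)
    # normalize_y centres and scales the target (e.g. log10 LC50) before fitting
    gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                                  n_restarts_optimizer=3, random_state=0)
    return gp.fit(X_train, y_train)


rng = np.random.default_rng(0)
# 30 hypothetical compounds x 4 hypothetical descriptors, noisy linear response
X_train = rng.normal(size=(30, 4))
y_train = X_train[:, 0] - 0.5 * X_train[:, 1] + rng.normal(scale=0.1, size=30)

gp = fit_gp(X_train, y_train)
X_query = np.vstack([X_train[:1], np.full((1, 4), 5.0)])  # one familiar, one distant compound
mean, std = gp.predict(X_query, return_std=True)
print("mean:", mean, "std:", std)
```

The predictive standard deviation is typically larger for the distant query than for the familiar one. That is the behavior you want to surface when a model is trained on few data points.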

FAQ 4: How can I make my ecotoxicology ML study reproducible and comparable to others?

Issue: A lack of common benchmarks and inconsistent reporting makes it nearly impossible to compare models across different studies[reference:10].

Solution:

- Use a Public Benchmark Dataset: Train and evaluate your models on a curated, publicly available dataset like ADORE. This provides a common ground for performance comparison[reference:11].

- Adhere to Reporting Checklists: Follow established guidelines for reporting ML studies in life sciences, such as the QSAR-specific checklist or extensions for ML-based QSARs[reference:12].

- Share Code, Data, and Splits: Publish not just the model code, but the exact data splits (training/validation/test) used. Repositories like GitLab or Zenodo are ideal for this.
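A simple way to publish exact splits is to store split membership as record identifiers in a JSON file and quote its checksum alongside the results. This is a minimal sketch. The identifier scheme and file layout are assumptions. Identifiers are used instead of row positions so the split stays valid if the table is later re-sorted or re-exported.

```python
import hashlib
import json


def export_split(split, path):
    """Write split membership to JSON and return its SHA-256 checksum.

    `split` maps a split name ("train", "test", ...) to a collection of record
    identifiers (e.g. chemical IDs). Sorting makes the file byte-for-byte stable.
    """
    payload = json.dumps({name: sorted(ids) for name, ids in split.items()},
                         indent=2, sort_keys=True)
    with open(path, "w", encoding="utf-8") as fh:
        fh.write(payload)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def load_split(path):
    """Read a split file back into sets of identifiers."""
    with open(path, encoding="utf-8") as fh:
        return {name: set(ids) for name, ids in json.load(fh).items()}
```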

| Dataset | Primary Focus | Scale (Data Points) | Chemicals | Species/Groups | Key Feature |
|---|---|---|---|---|---|
| ADORE (Schür et al., 2023)[reference:13] | Acute aquatic toxicity (LC50/EC50) | ~26,000[reference:14] | ~2,000[reference:15] | Fish, Crustaceans, Algae | Integrated chemical, species-specific, and phylogenetic features; defined train-test splits to avoid leakage. |
| ECOTOX (US EPA) | Broad ecotoxicological effects | >1.1 million entries[reference:16] | >12,000[reference:17] | ~14,000 species | Comprehensive but raw database; requires extensive curation for ML. |
| EnviroTox | Hazard assessment for ecological risk | Not specified | Not specified | Multiple | Curated for regulatory use; less focused on ML-ready feature engineering. |

Detailed Experimental Protocol: Curating an ML-Ready Dataset from ECOTOX

This protocol outlines the steps to create a reproducible, ML-ready dataset from the public ECOTOX database, following the principles used in creating the ADORE benchmark.

1. Data Acquisition & Initial Filtering:

- Source: Download the latest release of the ECOTOX database from the US EPA website[reference:18].

- Endpoint Selection: Filter entries to retain only those reporting quantitative acute toxicity endpoints (LC50 or EC50) for fish, crustaceans, and algae.

- Quality Filter: Remove entries with missing critical information (e.g., chemical CAS number, species name, exposure duration, concentration value/unit).

2. Data Standardization & Curation:

- Unit Conversion: Convert all concentration values to a standardized unit (e.g., log10(mol/L)) using molecular weights.

- Species Harmonization: Map all species names to a standard taxonomy (e.g., NCBI Taxonomy IDs) to resolve synonyms and spelling variants.

- Chemical Identifier Consolidation: Standardize chemical identifiers using PubChem CID or InChIKey.

3. Feature Engineering:

- Molecular Descriptors: For each unique chemical, calculate a set of molecular descriptors (e.g., using the Mordred calculator) or generate molecular fingerprints (e.g., Morgan fingerprints)[reference:19].

- Species Features: Annotate each species with available traits (e.g., trophic level, habitat, maximum body size) and phylogenetic distance matrices[reference:20].

- Experimental Context: Encode experimental conditions (e.g., exposure duration, water temperature) as categorical or continuous features where available.

4. Data Splitting for ML Evaluation:

- Avoiding Leakage: Do not perform a simple random split of all data points. Instead, split the data by unique chemical compound ID to ensure no chemical in the test set is seen during training[reference:21].

- Creating Challenges: Define specific prediction challenges (e.g., "fish-only," "cross-species extrapolation") and create corresponding train/test splits for each.

5. Documentation & Sharing:

- Record all filtering criteria, transformation formulas, and software versions used.

- Publish the final curated dataset, the exact splitting indices, and the curation code in a persistent repository.
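Splitting by unique chemical ID (step 4) can be sketched with scikit-learn's `GroupShuffleSplit`, using the chemical identifier as the group label. The chemical IDs below are hypothetical. With `GroupShuffleSplit`, a float `test_size` is a proportion of groups (chemicals), not of rows. The realized row fraction therefore depends on how many tests each chemical has.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit


def split_by_chemical(chemical_ids, test_size=0.2, seed=42):
    """Return train/test row indices such that no chemical ID occurs in both."""
    chemical_ids = np.asarray(chemical_ids)
    splitter = GroupShuffleSplit(n_splits=1, test_size=test_size, random_state=seed)
    # Only the number of rows matters here, so a placeholder feature column suffices
    placeholder = np.zeros((len(chemical_ids), 1))
    train_idx, test_idx = next(splitter.split(placeholder, groups=chemical_ids))
    return train_idx, test_idx


# Hypothetical rows: one entry per (chemical, species) test
chemical_ids = ["chem_A", "chem_A", "chem_B", "chem_C", "chem_C", "chem_C", "chem_D", "chem_E"]
train_idx, test_idx = split_by_chemical(chemical_ids)
print("train rows:", train_idx, "test rows:", test_idx)
```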

Visualizing the Workflow and Key Challenges

Diagram 1: The Ecotoxicology ML Data Workflow & Leakage Risks

This diagram illustrates the steps from raw data to model evaluation, highlighting critical points where improper practices can introduce data leakage.

Diagram 2: Paradigm Shift in Ecotoxicology Research

This diagram contrasts the traditional hypothesis-driven approach with the emerging data-driven ML paradigm.

| Item | Category | Function / Purpose | Example / Source |
|---|---|---|---|
| ADORE Dataset | Benchmark Data | Provides a curated, ML-ready benchmark for acute aquatic toxicity with defined splits to enable fair model comparison and avoid data leakage[reference:22]. | Scientific Data publication; open access repository. |
| ECOTOX Database | Primary Data Source | The US EPA's comprehensive database of ecotoxicological test results; the foundational raw material for curating new datasets[reference:23]. | cfpub.epa.gov/ecotox/ |
| Mordred Descriptor Calculator | Cheminformatics Tool | Calculates a comprehensive set (>1,000) of molecular descriptors directly from chemical structure, essential for featurizing chemicals for ML[reference:24]. | Open-source Python package. |
| Mol2vec | Cheminformatics Tool | Provides molecular embeddings (vector representations) learned from large chemical corpora, capturing latent structural similarities. | Open-source Python package. |
| Phylogenetic Distance Data | Biological Context | Informs models about the evolutionary relatedness between species, based on the premise that closely related species may have similar chemical sensitivities[reference:25]. | Integrated from sources like TimeTree. |
| Toxicity Prediction Models (e.g., Random Forest, XGBoost) | ML Algorithm | Tree-based ensemble methods have shown strong performance in predicting continuous toxicity values (e.g., logLC50) from chemical and biological features[reference:26]. | Scikit-learn, XGBoost libraries. |
| Active Learning Frameworks | ML Strategy | A technique to iteratively and strategically select the most informative data points for experimental testing, optimizing resource use in data-scarce settings[reference:27]. | Custom implementation or libraries like modAL. |

Building on Imperfect Foundations: Methodological Strategies for Quality-Impacted Data

This technical support center is designed for researchers and scientists developing machine learning (ML) models in ecotoxicology. A core thesis in this field posits that data quality and availability are the primary constraints on model reliability and regulatory adoption [20]. While ML offers a powerful tool to fill data gaps for chemical toxicity characterization, its systematic application is limited by inconsistent data, non-standardized benchmarks, and a lack of clear frameworks for prioritizing which data gaps to address first [20] [19]. This resource provides troubleshooting guidance and methodologies to navigate these challenges, framed within the context of building robust, reproducible ML models for predicting ecotoxicological outcomes.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: My model performs well on the training set but fails on new chemicals. How can I diagnose and fix this?

Answer: This is a classic sign of overfitting or data leakage, where the model memorizes training data rather than learning generalizable patterns. It is a critical issue in ecotoxicology where chemical space is vast and diverse [19].

Troubleshooting Steps:

- Audit Your Train-Test Split: The most common cause is an improper data split. For ecotoxicology data, a simple random split is often inadequate because the same chemical or species appears in multiple entries. Use a chemical split, so that no chemical appears in both sets. A stricter scaffold split (grouping by molecular backbone) also ensures that test chemicals are structurally distinct from those in training [16] [8]. Where a benchmark dataset such as ADORE provides predefined splits, use them [8].

- Check for Feature Leakage: Ensure no feature in your training data contains direct or indirect information about the target value (e.g., using a calculated property that is a direct function of toxicity).

- Simplify the Model: Reduce model complexity. With limited data, a simpler model (e.g., a Random Forest rather than a deep neural network) often generalizes better.

- Validate with External Data: Test your final model on a completely external, hold-out dataset from a different source to assess real-world generalizability.

Related Experimental Protocol: Implementing a Scaffold Split

The goal is to split data so that no molecular scaffold in the test set appears in the training set.

- Input: A dataset containing chemical structures (e.g., as SMILES strings) [16].

- Preprocessing: For each chemical, generate its molecular scaffold (the core framework after removing side chains) using a cheminformatics library like RDKit.

- Grouping: Group all data entries (including different toxicity values for the same chemical across species) by their calculated scaffold.

- Splitting: Perform the split on the scaffold groups, not on the individual data points. This ensures all entries sharing a scaffold are contained within either the training or the test set, preventing data leakage [8].

- Output: Training and test sets with distinct chemical spaces.
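The grouping and splitting steps can be sketched in plain Python. The sketch assumes scaffold keys were already computed upstream, one per data row, for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`. With that function, acyclic chemicals typically all receive an empty scaffold and so form a single group. The assignment rule shown is one common convention, not necessarily the one used for ADORE: fill the training set with the largest scaffold groups first and leave the rarer scaffolds for testing.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Assign whole scaffold groups to train or test.

    `scaffolds[i]` is the scaffold key of data row i. Groups are visited from
    largest to smallest and added to training while they still fit the target
    size; everything else goes to test, so no scaffold is shared.
    """
    groups = defaultdict(list)
    for i, scaffold in enumerate(scaffolds):
        groups[scaffold].append(i)

    # Largest groups first; ties broken by key so the split is deterministic
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    n_train_target = len(scaffolds) - round(test_fraction * len(scaffolds))

    train_idx, test_idx = [], []
    for _, members in ordered:
        if len(train_idx) + len(members) <= n_train_target:
            train_idx.extend(members)
        else:
            test_idx.extend(members)
    return sorted(train_idx), sorted(test_idx)


# Hypothetical rows: 10 toxicity entries over three scaffolds
scaffolds = ["c1ccccc1"] * 5 + ["C1CCCCC1"] * 3 + ["c1ccncc1"] * 2
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
print(train_idx, test_idx)  # [0, 1, 2, 3, 4, 5, 6, 7] [8, 9]
```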

Answer: Use a prioritization framework to objectively rank data gaps. Adapt product management frameworks like RICE or the Impact-Effort Matrix to a research context [21] [22].

Troubleshooting Steps:

- Define Your "Features": List the potential data acquisition projects (e.g., "Run toxicity assays for chemical class X on species Y," "Curate legacy data from source Z").

- Apply a Framework:

  - RICE Scoring: Score each project on:

    - Reach (R): How many other chemicals or species could this data inform? (e.g., a model species vs. a niche one) [21] [22].

    - Impact (I): How much would this data reduce model uncertainty or regulatory risk? Use a scale (e.g., 3=Massive, 0.25=Minimal) [21].

    - Confidence (C): Your confidence (as a percentage) in the Reach and Impact estimates [21].

    - Effort (E): Person-months or resource cost required [21].

    - Calculate: RICE Score = (Reach × Impact × Confidence) / Effort, with Confidence entered as a fraction (e.g., 0.8 for 80%). Prioritize higher scores.

  - Impact-Effort Matrix: Plot projects on a 2x2 grid [21] [23].

    - High Impact, Low Effort (Quick Wins): Example: Digitizing and cleaning a small, high-quality legacy dataset that is readily available.

    - High Impact, High Effort (Major Projects): Example: Launching a new experimental campaign for a critical data gap.

    - Low Impact, Low Effort (Fill-Ins): Only do these after higher-priority items.

    - Low Impact, High Effort (Money Pits): Avoid these [21].

- Review and Re-prioritize: Reassess priorities as projects are completed or new information emerges [21].
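The RICE calculation is simple enough to keep in code next to the project backlog. In this sketch the two projects and all their scores are hypothetical. The impact scale in the comment is the one usually quoted for RICE (3, 2, 1, 0.5, 0.25), and confidence is entered as a fraction.

```python
from dataclasses import dataclass


@dataclass
class DataGapProject:
    name: str
    reach: float       # e.g. number of chemicals/species the new data would inform
    impact: float      # 3 massive, 2 high, 1 medium, 0.5 low, 0.25 minimal
    confidence: float  # fraction, e.g. 0.8 for 80 %
    effort: float      # person-months

    @property
    def rice(self) -> float:
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical backlog entries
projects = [
    DataGapProject("Curate legacy dataset Z", reach=40, impact=1, confidence=0.8, effort=1),
    DataGapProject("New assays: class X on species Y", reach=120, impact=2, confidence=0.5, effort=12),
]
ranked = sorted(projects, key=lambda p: p.rice, reverse=True)
for p in ranked:
    print(f"{p.name}: RICE = {p.rice:.1f}")
```

With these inputs the legacy-data project scores 32.0 and the assay campaign scores 10.0. The cheap curation task therefore ranks first even though the assay campaign has larger reach and impact.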

Table: Prioritization Framework Comparison for Research Data Gaps

| Framework | Core Principle | Best Use Case in Ecotoxicology ML | Key Consideration |
|---|---|---|---|
| RICE Scoring [21] [22] | Quantitative score based on Reach, Impact, Confidence, and Effort. | Prioritizing a backlog of diverse data curation or experimental tasks with mixed resource needs. | Requires good estimates for effort and confidence; can be time-consuming to set up. |
| Impact-Effort Matrix [21] [23] | Visual 2x2 plot of value vs. cost. | Initial, high-level sorting of potential projects during team discussions. | Can be subjective; doesn't distinguish between two "High Impact" projects. |
| MoSCoW Method [21] [22] | Categorization into Must-haves, Should-haves, Could-haves, Won't-haves. | Defining the minimum data requirements for a model to be viable (the "Must-haves") for a specific regulatory question. | Teams often overload the "Must-have" category, making it ineffective. |

FAQ 3: How do I handle inconsistent or missing toxicity values for the same chemical-species pair?

Answer: This is a fundamental data quality issue in aggregated databases like ECOTOX [16]. A systematic, documented approach is required.

Troubleshooting Steps:

- Don't Average Immediately: First, investigate the source of inconsistency. Group duplicate entries and examine experimental variables.

- Filter by Reliability Flags: If the database includes data quality or reliability flags (e.g., from Klimisch scores), prioritize data with higher flags.

- Examine Experimental Conditions: Significant variation can be due to differences in water temperature, pH, life stage of organism, or exposure time [16] [8]. Determine if you can normalize values based on these factors (e.g., standardizing to 96h for fish).

- Apply Domain-Knowledge Rules: Establish explicit criteria for resolving conflicts before aggregating values, and apply them consistently across the dataset.

- Document Decisions: Create a transparent audit trail of all decisions made during data cleaning for full reproducibility.
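The "don't average immediately" and "document decisions" steps can be combined into a review table. Replicates are grouped per chemical-species pair, and any group whose values span more than a chosen number of orders of magnitude is flagged for manual inspection. The field names and the one-order-of-magnitude threshold are assumptions for illustration, not a published rule. The returned list doubles as the audit trail.

```python
import math
from collections import defaultdict
from statistics import median


def review_duplicates(records, max_spread_log10=1.0):
    """Group replicate values per chemical-species pair and flag wide spreads.

    Each returned dict is one audit-trail entry: the pair, the number of values,
    their spread and median in log10 units, and the proposed action.
    """
    groups = defaultdict(list)
    for rec in records:
        groups[(rec["chemical_id"], rec["species"])].append(rec["value_mg_per_L"])

    decisions = []
    for pair, values in sorted(groups.items()):
        logs = [math.log10(v) for v in values]  # values must be positive
        spread = max(logs) - min(logs)
        decisions.append({
            "pair": pair,
            "n": len(values),
            "spread_log10": spread,
            "median_log10": median(logs),
            "action": "review" if spread > max_spread_log10 else "accept",
        })
    return decisions
```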

Related Experimental Protocol: Data Curation Pipeline for Ecotoxicology Data

This protocol outlines steps to create a clean, machine-learning-ready dataset from raw ecotoxicology database exports (e.g., from ECOTOX) [16] [8].

- Filter by Taxonomic Group: Select entries for your taxa of interest (e.g., Fish, Crustaceans, Algae).

- Filter by Endpoint and Effect: Select relevant measurements (e.g., LC50, EC50) and consistent observed effects (e.g., Mortality for fish, Immobilization for crustaceans) [16].

- Standardize Units: Convert all concentrations to a common unit (e.g., mg/L) and log-transform.

- Handle Duplicates: Implement the conflict-resolution logic described in the troubleshooting steps above to produce a single value per unique chemical-species pair.

- Merge with Feature Data: Join the curated toxicity data with chemical descriptors (e.g., molecular fingerprints, physicochemical properties) and species traits (e.g., phylogenetic data, ecological traits) [16] [19].

- Apply Splits: Apply a scaffold-based or other appropriate split to prevent data leakage before model training.

FAQ 4: My model deployment fails with a "Container Can't Be Scheduled" or "CrashLoopBackOff" error. What should I do?

Answer: These are common errors when deploying models to cloud or containerized environments, often related to resource constraints or code errors in the scoring script [24].

Troubleshooting Steps:

- Check Resource Requests (Kubernetes/AKS): The error `0/3 nodes are available: 3 Insufficient nvidia.com/gpu` means your deployment is requesting GPUs, but the cluster nodes don't have them available [24].

  - Fix: Modify your deployment configuration to either remove GPU requests, add GPU-enabled nodes to your cluster, or change the node pool SKU.

- Debug the Scoring Script Locally: A `CrashLoopBackOff` often indicates an uncaught exception in the model's initialization (`init()` function) or scoring (`run()` function) code [24].

  - Fix: Deploy the model as a local web service first. Use the Azure ML Inference HTTP Server or similar tools to test your `score.py` script locally, which makes debugging much easier [24].

  - Code Check: Ensure paths to model files are correct using `Model.get_model_path()` and wrap your `run(input_data)` logic in a try-except block to return descriptive error messages during debugging [24].

- Inspect Logs: Always retrieve the detailed deployment and container logs. The command `az ml service get-logs` (or its equivalent in other platforms) is the first step to diagnose any deployment failure [24].

FAQ 5: How can I assess if my data is sufficient to build a reliable ML model for a new chemical class?

Answer: Perform a chemical space analysis to evaluate the applicability domain of your model and identify extrapolation risks [20].

- Troubleshooting Steps:

- Define Descriptors: Choose meaningful molecular representations for your analysis, such as Morgan fingerprints or Mordred descriptors [19].

- Map the Chemical Space: Use dimensionality reduction techniques (e.g., PCA, t-SNE) to project both your training data chemicals and the new chemicals into the same 2D/3D space.

- Calculate Distance: For each new chemical, calculate its distance to its nearest neighbor in the training set or to the centroid of the training set cluster. Typical choices are Euclidean distance on descriptors, or Tanimoto distance (1 minus Tanimoto similarity) on fingerprints.

- Set a Threshold: Establish a distance threshold based on the distribution of distances within your training data. New chemicals falling outside this threshold are in a region of chemical space where the model's predictions are highly uncertain (extrapolation).

- Report Coverage: A study found that for many toxicity parameters, ML models could potentially predict for 8–46% of marketed chemicals based on data available for 1–10% of chemicals [20]. Quantify your model's expected coverage in these terms.
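The distance and threshold steps can be sketched in plain Python, with fingerprints represented as sets of on-bit indices. The percentile rule (nearest-rank 95th percentile of each training compound's distance to its nearest other training compound) is one simple choice, assumed here rather than taken from [20]. The pairwise loop is O(n²). For large libraries, RDKit's `DataStructs.BulkTanimotoSimilarity` is the usual faster route.

```python
import math


def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def nn_distance(query, reference):
    """Tanimoto distance (1 - similarity) from query to its nearest reference."""
    return min(1.0 - tanimoto(query, ref) for ref in reference)


def ad_threshold(train_fps, percentile=95):
    """Threshold from training compounds' distances to their nearest *other* compound."""
    dists = sorted(
        nn_distance(fp, train_fps[:i] + train_fps[i + 1:]) for i, fp in enumerate(train_fps)
    )
    rank = max(1, math.ceil(percentile / 100 * len(dists)))  # nearest-rank percentile
    return dists[rank - 1]


def in_domain(query_fp, train_fps, threshold):
    """True if the query is no further from the training set than the threshold."""
    return nn_distance(query_fp, train_fps) <= threshold


# Hypothetical toy fingerprints
train = [{1, 2, 3}, {1, 2, 4}, {1, 2, 3, 4}, {5, 6}]
thr = ad_threshold(train, percentile=75)
print(thr, in_domain({1, 2, 3}, train, thr), in_domain({7, 8}, train, thr))  # 0.25 True False
```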

Visualizations

Diagram: Workflow for Prioritizing & Addressing Data Gaps in Ecotox ML

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Ecotoxicology ML Research

| Item/Resource | Function & Relevance | Example/Source |
|---|---|---|
| Benchmark Datasets (ADORE) | Provides a curated, standardized dataset for fair model comparison. Includes toxicity data, chemical descriptors, and species traits for fish, crustaceans, and algae [16] [8]. | ADORE (Acute Aquatic Toxicity Dataset) on Figshare/Scientific Data [8]. |
| Chemical Identification Tools | Critical for merging data from different sources. Uses unique identifiers to link chemical structures to toxicity data [16]. | CompTox Chemicals Dashboard (DTXSID), PubChem (CID, SMILES), InChI/InChIKey [16]. |
| Molecular Representation Libraries | Generates numerical features from chemical structures for ML model input [19]. | RDKit (for fingerprints like Morgan), Mordred (for 2D/3D descriptors), mol2vec (for embeddings). |
| Prioritization Framework Templates | Provides structured approaches to rank research tasks and data acquisition projects objectively [21] [22]. | RICE scoring spreadsheet, Impact-Effort Matrix whiteboard template. |
| Model Deployment & Debugging Tools | Allows testing of model scoring scripts locally to catch errors before cloud deployment [24]. | Azure ML Inference HTTP Server (azmlinfsrv), Docker for local containerization. |
| Chemical Space Visualization Tools | Assesses model applicability domain and identifies regions of extrapolation risk [20]. | PCA/t-SNE implementations (scikit-learn), cheminformatics libraries for similarity calculation. |

Ecotoxicology is undergoing a paradigm shift toward machine learning (ML) to reduce animal testing, accelerate chemical safety assessments, and manage vast numbers of untested substances[reference:0]. However, progress is hampered by persistent data quality challenges: inconsistent experimental reporting, heterogeneous data sources, and a lack of standardized benchmarks that allow for direct model comparison[reference:1]. This environment creates a critical need for community-endorsed, high-quality datasets. The ADORE (A benchmark Dataset for machine learning in Ecotoxicology) dataset emerges as a direct response to this need, establishing a common ground for researchers to train, benchmark, and compare models in a reproducible manner[reference:2].

The following tables summarize the core composition and scope of the ADORE dataset, providing a clear snapshot of its scale and structure.

Table 1: ADORE Core Dataset Statistics

| Component | Description | Source/Note |
|---|---|---|
| Primary Source | ECOTOX database (US EPA), September 2022 release. | Contains over 1.1 million entries for >12,000 chemicals and close to 14,000 species[reference:3]. |
| Taxonomic Focus | Fish, Crustaceans, Algae. | These groups represent ~41% of all ECOTOX entries and are of key regulatory importance[reference:4]. |
| Core Endpoint | Acute mortality (LC50/EC50). | Lethal/Effective Concentration for 50% of population, standardized to mg/L and mol/L[reference:5]. |
| Experimental Duration | 24, 48, 72, 96 hours. | Aligned with OECD test guidelines (e.g., 96h for fish, 48h for crustaceans, 72h for algae)[reference:6]. |
| Additional Data Layers | Chemical properties, molecular representations, species ecology, life-history, phylogenetic distances. | Curated to provide informative features for ML modeling beyond simple toxicity values[reference:7]. |

Table 2: Common Data Quality Challenges & ADORE's Curational Response

| Challenge | Manifestation in Raw Data | ADORE Curation Strategy |
|---|---|---|
| Inconsistent Reporting | Variable units, missing metadata, non-standardized effect descriptions. | Unified units (mg/L, mol/L, hours), filtered to retain only common exposure types (Static, Flow-through, Renewal) and media (fresh/salt water)[reference:8]. |
| Data Scarcity vs. Noise | Trade-off between large, diverse but noisy data versus small, clean but limited data. | Prioritized a cleaner, well-curated dataset with expanded feature space (chemical, phylogenetic) over raw volume[reference:9]. |
| Repeated Experiments | Multiple entries for same chemical-species pair, causing data leakage if split randomly. | Implemented structured train-test splits based on chemical occurrence and molecular scaffolds to prevent leakage[reference:10]. |
| Sparse Biological Features | Lack of standardized species descriptors for ML input. | Integrated ecological data (climate zone, migration), life-history traits (lifespan, body length), and phylogenetic distances from TimeTree[reference:11]. |
| Chemical Representation | SMILES strings are not directly usable by most ML algorithms. | Provided multiple molecular representations: MACCS, PubChem, Morgan, and ToxPrint fingerprints; Mordred descriptors; and mol2vec embeddings[reference:12]. |

Technical Support Center: FAQs & Troubleshooting

FAQs on Dataset Access and Structure

Q1: Where can I access the ADORE dataset and its documentation? A: The dataset is freely available via repositories like Renku and is described in detail in the original Scientific Data article[reference:13]. The publication includes a full glossary of features (Supplementary Table 1) and describes all provided data files[reference:14].

Q2: What is the difference between the "core" dataset and the "challenge" splits? A: The core dataset contains all curated acute mortality experiments. The challenge splits are predefined subsets (e.g., single species, single taxonomic group, or all three groups) with specific train-test partitions designed to test model generalization across chemicals or taxa, preventing data leakage[reference:15].

Q3: Which molecular representation should I use for my model? A: Which representation works best is itself an open research question, which is why ADORE provides six of them for comparison. For baseline studies, Morgan fingerprints (radius 2, 2048 bits) are a robust starting point. For toxicity-specific features, consider ToxPrint fingerprints. The mol2vec embedding offers a learned, continuous representation[reference:16].

Troubleshooting Common Experimental & Modeling Issues

Issue 1: My model performs exceptionally well on the test set, but fails on external validation.

- Likely Cause: Data leakage due to random splitting that placed data from repeated experiments in both training and test sets.

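- Solution: Use the provided occurrence-based or scaffold-based splits. Both keep all experiments on a given chemical on one side of the split: occurrence-based splits assign less frequently tested chemicals to the test set, and scaffold-based splits ensure that test chemicals are structurally distinct from training chemicals. This gives a more realistic measure of generalization[reference:17].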
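ADORE ships ready-made splits, and those should be preferred when working with it. For custom datasets, the idea behind both split types can be sketched as a group-aware holdout: every record is keyed by a group (a chemical identifier for an occurrence-style split, a precomputed scaffold string for a scaffold-style split), and whole groups are moved to the test set, smallest first. This is an illustrative assumption, not ADORE's own splitting code, and the record fields (`chemical`, `species`, `lc50_mg_l`) are hypothetical.

```python
from collections import Counter


def group_holdout_split(records, group_of, test_fraction=0.2):
    """Assign whole groups to train or test so that no group straddles the split.

    Groups are moved to the test set smallest-first (rarest chemicals or
    scaffolds first) until adding the next group would exceed the target size.
    """
    sizes = Counter(group_of(r) for r in records)
    target = test_fraction * len(records)
    test_groups, n_test = set(), 0
    # Ascending by size; ties broken by key for a deterministic result.
    for group, size in sorted(sizes.items(), key=lambda kv: (kv[1], str(kv[0]))):
        if n_test + size > target:
            break  # every remaining group is at least this large
        test_groups.add(group)
        n_test += size
    train = [r for r in records if group_of(r) not in test_groups]
    test = [r for r in records if group_of(r) in test_groups]
    return train, test


# Hypothetical records; chemical "A" includes a repeated experiment on fish1.
records = [
    {"chemical": "A", "species": "fish1", "lc50_mg_l": 1.2},
    {"chemical": "A", "species": "fish2", "lc50_mg_l": 0.9},
    {"chemical": "A", "species": "fish1", "lc50_mg_l": 1.1},
    {"chemical": "B", "species": "fish1", "lc50_mg_l": 5.0},
    {"chemical": "B", "species": "fish3", "lc50_mg_l": 4.1},
    {"chemical": "C", "species": "fish2", "lc50_mg_l": 0.3},
    {"chemical": "D", "species": "fish1", "lc50_mg_l": 7.7},
]

train, test = group_holdout_split(records, lambda r: r["chemical"], test_fraction=0.3)
print(sorted({r["chemical"] for r in test}))  # ['C', 'D']
```

For a scaffold-style split, the same function can be called with `lambda r: r["scaffold"]` after precomputing Bemis-Murcko scaffold SMILES, for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`.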

Issue 2: Model performance is poor for algae predictions compared to fish.

- Likely Cause: Inherent biological differences and potentially sparser feature coverage for algae (e.g., lack of DEB theory-based pseudo-data)[reference:18].

- Solution:

- Verify feature availability for your algal species.

- Consider using a challenge split restricted to a single taxonomic group to first build a performant model before attempting cross-taxa extrapolation.

- Incorporate chemical properties (e.g., logP, pKa) that may differentially affect autotrophs.

Issue 3: Handling missing values in ecological or life-history features.

- Recommendation: The dataset intentionally includes incomplete tables for maximal flexibility[reference:19]. For modeling, you can:

- Use only the "long core dataset", which maximizes data points but has fewer features.

- Employ imputation techniques suitable for your model, noting that life-history traits like lifespan have full coverage, while others may not[reference:20].

Issue 4: Are the functional use categories (e.g., "biocide") safe to use as model features?

- Critical Warning: No. These categories are provided only for interpreting results. Using them as input features constitutes data leakage, as a label like "biocide" directly correlates with toxicity without the model learning from chemical structure[reference:21].

Experimental Protocols: Key Methodologies

1. Core Data Curation from ECOTOX:

The raw ECOTOX tables (species, tests, results, media) were harmonized and joined using unique keys (result_id, species_number). Entries were filtered to the three taxonomic groups, standardized exposure types, and freshwater/saltwater media only. Effect concentrations were unified to mg/L and converted to mol/L. Only tests with explicit mean LC50/EC50 values within 24-96 hour durations were retained[reference:22].

2. Chemical Feature Engineering: For each chemical, properties (MW, logP, pKa, etc.) were fetched from DSSTox and PubChem. Six molecular representations were computed: (1) MACCS (166-bit), (2) PubChem (881-bit), (3) Morgan (2048-bit, radius 2), (4) ToxPrint (729-bit), (5) Mordred descriptors (719), and (6) mol2vec (300-dim embedding)[reference:23].

3. Species Feature Integration: Ecological data (ecozone, climate, migration, food type) and life-history traits (lifespan, body lengths, reproductive rate) were extracted from the AmP collection. Phylogenetic distances were calculated from a TimeTree-derived tree and converted to a distance matrix[reference:24].

4. Train-Test Splitting Strategy: To prevent leakage from repeated experiments, splits are based on chemical occurrence (placing rare chemicals in the test set) and molecular scaffolds (ensuring test chemicals are structurally distinct from training chemicals). This mimics a realistic extrapolation scenario[reference:25].
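The filters and unit harmonization of step 1 can be sketched in a few lines. The mass-to-molar conversion divides by 1000 (mg to g) and then by the molecular weight in g/mol; for example, 10 mg/L of a 200 g/mol compound is 5e-5 mol/L. The `ToxRecord` fields and category labels below are simplified, hypothetical stand-ins for the ECOTOX columns, not the database's actual schema, and the three taxonomic groups are assumed to be fish, crustaceans, and algae.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical category labels mirroring the filters described in step 1.
TAXON_GROUPS = {"fish", "crustaceans", "algae"}
EXPOSURE_TYPES = {"Static", "Flow-through", "Renewal"}
MEDIA = {"freshwater", "saltwater"}
ENDPOINTS = {"LC50", "EC50"}


@dataclass
class ToxRecord:
    taxon_group: str
    exposure_type: str
    media: str
    endpoint: str
    duration_h: float
    mean_conc_mg_l: Optional[float]  # None when no explicit mean value is reported
    mol_weight_g_mol: float


def mg_l_to_mol_l(conc_mg_l: float, mol_weight_g_mol: float) -> float:
    """mg/L -> g/L (divide by 1000) -> mol/L (divide by g/mol)."""
    return conc_mg_l / 1000.0 / mol_weight_g_mol


def curate(records):
    """Keep records that pass every filter; return (record, conc_mol_l) pairs."""
    kept = []
    for r in records:
        if (r.taxon_group in TAXON_GROUPS
                and r.exposure_type in EXPOSURE_TYPES
                and r.media in MEDIA
                and r.endpoint in ENDPOINTS
                and 24 <= r.duration_h <= 96
                and r.mean_conc_mg_l is not None):
            kept.append((r, mg_l_to_mol_l(r.mean_conc_mg_l, r.mol_weight_g_mol)))
    return kept


records = [
    ToxRecord("fish", "Static", "freshwater", "LC50", 96, 10.0, 200.0),
    ToxRecord("fish", "Static", "freshwater", "LC50", 120, 10.0, 200.0),      # too long
    ToxRecord("algae", "Field", "freshwater", "EC50", 72, 2.0, 150.0),        # exposure type
    ToxRecord("crustaceans", "Renewal", "saltwater", "EC50", 48, None, 90.0),  # no mean value
]
curated = curate(records)
print(len(curated), curated[0][1])
```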

Visualizing Workflows & Relationships

Diagram 1: ADORE Dataset Construction Workflow

Diagram 2: Preventing Data Leakage via Structured Splitting

Table 3: Key Research Reagent Solutions for ADORE-Based Studies

| Item / Resource | Function / Purpose | Notes |
|---|---|---|
| ECOTOX Database | Primary source of in vivo ecotoxicology data. | U.S. EPA quarterly-updated database. ADORE uses the September 2022 release[reference:26]. |
| RDKit | Open-source cheminformatics toolkit. | Used to compute molecular fingerprints (MACCS, Morgan) and descriptors for chemicals in the dataset[reference:27]. |
| PubChemPy | Python interface to PubChem. | Facilitates retrieval of canonical SMILES and PubChem fingerprints for chemical curation[reference:28]. |
| TimeTree | Resource for phylogenetic timescales. | Used to generate phylogenetic distance matrices as a feature for species relatedness[reference:29]. |
| AmP (Add-my-Pet) Collection | Database of species-level ecological and life-history parameters. | Source for species-specific traits (e.g., lifespan, body size) integrated into ADORE[reference:30]. |
| Mordred | Molecular descriptor calculation software. | Provides a comprehensive set of 2D/3D molecular descriptors for chemical representation[reference:31]. |
| mol2vec | Word2vec-style molecular embedding. | Offers a learned, continuous vector representation of chemicals based on substructure patterns[reference:32]. |
| OECD QSAR Toolbox | Software for predicting chemical properties. | Used to estimate pKa values for chemicals in the dataset via SMILES input[reference:33]. |
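The TimeTree entry refers to turning a dated phylogeny into a pairwise distance matrix. A minimal sketch, assuming the tree has already been parsed into a child-to-(parent, branch length) mapping: the patristic distance between two species is the summed branch length from each species up to their most recent common ancestor. Species names and branch lengths are placeholders, not TimeTree values; for real Newick files, a parser such as Biopython's `Bio.Phylo` (whose trees provide a `distance` method) is an alternative.

```python
from itertools import combinations

# Placeholder dated tree: child -> (parent, branch length in million years).
TREE = {
    "species_A": ("node_AB", 100.0),
    "species_B": ("node_AB", 100.0),
    "node_AB": ("root", 300.0),
    "species_C": ("root", 400.0),
}


def ancestors_with_depth(node, tree):
    """Map `node` and each of its ancestors to the path length from `node`."""
    depth = {node: 0.0}
    total = 0.0
    while node in tree:
        parent, length = tree[node]
        total += length
        depth[parent] = total
        node = parent
    return depth


def patristic_distance(a, b, tree):
    da, db = ancestors_with_depth(a, tree), ancestors_with_depth(b, tree)
    # Path lengths only grow above the most recent common ancestor,
    # so the minimum over shared ancestors is reached at the MRCA.
    return min(da[n] + db[n] for n in da if n in db)


def distance_matrix(species, tree):
    mat = {s: {t: 0.0 for t in species} for s in species}
    for s, t in combinations(species, 2):
        mat[s][t] = mat[t][s] = patristic_distance(s, t, tree)
    return mat


species = ["species_A", "species_B", "species_C"]
mat = distance_matrix(species, TREE)
print(mat["species_A"]["species_B"], mat["species_A"]["species_C"])  # 200.0 800.0
```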

Troubleshooting Guide & FAQ

Context: This support content addresses common pitfalls in feature engineering for ecotoxicological machine learning, in keeping with this article's focus on data quality. Most issues arise when integrating heterogeneous data sources (chemical, biological, ecological) that differ in scale, format, and sparsity.

FAQ: Common Data & Model Issues

Q1: My model performs well on training data but fails to generalize to new chemical classes or species. What could be the cause? A: This is a classic sign of data leakage or non-representative training data. Ensure your data splitting strategy accounts for chemical structural similarity and phylogenetic relationships: use Tanimoto similarity and taxonomic distance to group related chemicals and species, then assign whole groups to either the training or the test set instead of splitting randomly.
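One way to build splits that respect structural similarity is a greedy leader (sphere-exclusion) clustering on Tanimoto similarity, followed by holding out entire clusters. The sketch below represents fingerprints as sets of on-bit indices and uses a 0.4 similarity threshold; both choices are illustrative assumptions. On real fingerprints, RDKit offers `DataStructs.TanimotoSimilarity` and Butina clustering (`rdkit.ML.Cluster.Butina`) for the same purpose. Species can be grouped analogously by taxonomic family or phylogenetic distance.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def leader_clusters(fps: dict, threshold: float = 0.4) -> dict:
    """Assign each chemical to the first leader it resembles (>= threshold);
    otherwise it becomes a new leader. Returns a chemical -> leader mapping."""
    leaders, assignment = [], {}
    for chem in sorted(fps):  # fixed order keeps the result reproducible
        for leader in leaders:
            if tanimoto(fps[chem], fps[leader]) >= threshold:
                assignment[chem] = leader
                break
        else:
            leaders.append(chem)
            assignment[chem] = chem
    return assignment


# Toy fingerprints with hypothetical bit indices.
fps = {
    "chem1": frozenset({1, 2, 3, 4}),
    "chem2": frozenset({1, 2, 3, 5}),
    "chem3": frozenset({10, 11, 12}),
    "chem4": frozenset({10, 11, 13}),
}
clusters = leader_clusters(fps)
held_out = {"chem3"}  # hold out this whole cluster
test_chems = sorted(c for c, lead in clusters.items() if lead in held_out)
train_chems = sorted(c for c, lead in clusters.items() if lead not in held_out)
print(train_chems, test_chems)  # ['chem1', 'chem2'] ['chem3', 'chem4']
```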

Q2: How do I handle missing ecological trait data (e.g., species lifespan, trophic level) for many species in my dataset? A: Avoid simple deletion. Implement a tiered imputation strategy:

- Taxonomic Imputation: Fill missing values with the mean/median from the same genus or family.

- K-Nearest Neighbors Imputation: Use measured traits from phylogenetically similar species.

- Flag as Missing: Add a binary indicator column for each imputed trait to signal uncertainty to the model.
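A minimal sketch of the taxonomic tiers plus the missing-value flag, assuming each species row carries `genus` and `family` fields and a trait that is `None` when unmeasured (all field names are hypothetical). When the imputed values feed a model, the medians should be computed from training species only. The k-nearest-neighbors tier is left out; scikit-learn's `KNNImputer`, which also accepts `add_indicator=True`, is one option for it.

```python
from statistics import median


def tiered_impute(rows, trait):
    """Fill missing `trait` values with the genus median, then the family
    median, then the global median, and add a binary `<trait>_missing` flag."""
    def medians_by(level):
        groups = {}
        for r in rows:
            if r[trait] is not None:
                groups.setdefault(r[level], []).append(r[trait])
        return {key: median(vals) for key, vals in groups.items()}

    by_genus, by_family = medians_by("genus"), medians_by("family")
    global_median = median([r[trait] for r in rows if r[trait] is not None])

    filled = []
    for r in rows:
        new = dict(r)  # copy so the input rows are left unchanged
        new[f"{trait}_missing"] = int(r[trait] is None)
        if r[trait] is None:
            new[trait] = by_genus.get(r["genus"], by_family.get(r["family"], global_median))
        filled.append(new)
    return filled


rows = [
    {"species": "sp1", "genus": "G1", "family": "F1", "lifespan": 4.0},
    {"species": "sp2", "genus": "G1", "family": "F1", "lifespan": 6.0},
    {"species": "sp3", "genus": "G1", "family": "F1", "lifespan": None},  # genus tier
    {"species": "sp4", "genus": "G2", "family": "F1", "lifespan": None},  # family tier
    {"species": "sp5", "genus": "G3", "family": "F1", "lifespan": 10.0},
    {"species": "sp6", "genus": "G4", "family": "F2", "lifespan": None},  # global tier
    {"species": "sp7", "genus": "G5", "family": "F3", "lifespan": 20.0},
]
filled = tiered_impute(rows, "lifespan")
print([r["lifespan"] for r in filled])  # [4.0, 6.0, 5.0, 6.0, 10.0, 8.0, 20.0]
```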

Q3: My chemical descriptor vectors and bioassay results have vastly different scales. Which normalization method is most appropriate? A: The choice depends on data distribution and sparsity. See the protocol below.

Protocol 1: Data Normalization and Scaling for Integrated Ecotox Features

- Objective: Bring heterogeneous feature sets to comparable scales without distorting the shape of each feature's distribution or creating spurious correlations.

- Materials: Raw feature matrix (chemical descriptors, species traits, ecological indices).

- Procedure:

- Split: Partition your data into training and test sets using a chemical/scaffold-split method.

- Handle Zeros: For sparse data (e.g., molecular fingerprints), apply MaxAbs Scaling (x / max(|x|)). It scales each feature to [-1, 1] without centering, preserving sparsity.

- For Continuous, Dense Data: If features are approximately normally distributed, apply Standard Scaling (Z-score: (x - mean) / std). If not (e.g., toxicity endpoints), apply Robust Scaling ((x - median) / IQR) to mitigate outlier influence.

- Fit & Transform: Fit the chosen scaler only on the training set, then transform both training and test sets.

- Validation: Check that no feature has zero variance (or a zero maximum absolute value or IQR) in the training set, since the scaler would divide by zero, and that the scaled training data has a mean ~0 and std ~1 (for Standard Scaling).
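The three scalers and the fit-on-training-only rule can be sketched in plain Python, one feature column at a time. scikit-learn's `MaxAbsScaler`, `StandardScaler`, and `RobustScaler` implement the same transforms for whole matrices; this sketch only illustrates the arithmetic. Quartiles use `statistics.quantiles(..., method="inclusive")`, which interpolates linearly and should agree with NumPy's default percentile; returning unscaled (or merely centered) values for a zero-spread column is an assumption that mirrors the validation check above.

```python
from statistics import mean, median, pstdev, quantiles


def fit_maxabs(train_col):
    """x / max(|x|): zeros stay zero, so sparse columns stay sparse."""
    m = max(abs(v) for v in train_col)
    return lambda x: x / m if m else x


def fit_standard(train_col):
    """(x - mean) / std, using the population std as StandardScaler does."""
    mu, sd = mean(train_col), pstdev(train_col)
    return lambda x: (x - mu) / sd if sd else x - mu


def fit_robust(train_col):
    """(x - median) / IQR, which limits the pull of outliers."""
    q1, _, q3 = quantiles(train_col, n=4, method="inclusive")
    med, iqr = median(train_col), q3 - q1
    return lambda x: (x - med) / iqr if iqr else x - med


# Each scaler is fitted on training values only, then applied to either split.
train_bits, test_bits = [0, 2, 4, 0], [0, 8]               # sparse count-style feature
train_logp, test_logp = [1.0, 2.0, 3.0, 4.0], [2.5, 6.0]   # roughly symmetric feature
train_tox = [1.0, 2.0, 3.0, 4.0, 100.0]                    # skewed, with an outlier

scale_bits = fit_maxabs(train_bits)
scale_logp = fit_standard(train_logp)
scale_tox = fit_robust(train_tox)

print([scale_bits(x) for x in test_bits])  # [0.0, 2.0]
print(scale_logp(test_logp[0]))            # 0.0
print([scale_tox(x) for x in train_tox])   # [-1.0, -0.5, 0.0, 0.5, 48.5]
```

Note that test values can fall outside the training range (here 2.0 after MaxAbs scaling); that is expected, because the test set must not influence the fitted parameters.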

Q4: The integration of high-dimensional chemical descriptors (e.g., from QSAR) with lower-dimensional ecological data causes my model to ignore the ecological features. How can I balance their influence? A: This is a feature dominance problem. Before concatenation, apply dimensionality reduction (e.g., PCA) to the chemical descriptor block, or use dedicated feature networks in a multimodal architecture. Alternatively, apply feature selection (like mutual information) across all integrated features to select the most informative ones from each domain.
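A sketch of the first option, compressing the chemical block with PCA before concatenation, using NumPy's SVD. The block sizes (2048 fingerprint bits reduced to 10 components, six ecological traits) and the random placeholder matrices are assumptions for illustration; `sklearn.decomposition.PCA` performs the same projection. As with scaling, the projection is fitted on training rows only.

```python
import numpy as np


def pca_fit(block: np.ndarray, k: int):
    """Return the column means and the top-k principal axes of `block`."""
    mean = block.mean(axis=0)
    # Rows of vt are principal axes, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(block - mean, full_matrices=False)
    return mean, vt[:k]


def pca_transform(block: np.ndarray, mean: np.ndarray, axes: np.ndarray) -> np.ndarray:
    """Project rows onto the fitted axes."""
    return (block - mean) @ axes.T


rng = np.random.default_rng(0)
chem_train = rng.integers(0, 2, size=(50, 2048)).astype(float)  # placeholder fingerprints
chem_test = rng.integers(0, 2, size=(10, 2048)).astype(float)
eco_train = rng.normal(size=(50, 6))  # placeholder, already-scaled ecological traits
eco_test = rng.normal(size=(10, 6))

mean, axes = pca_fit(chem_train, k=10)  # fitted on training rows only
X_train = np.hstack([pca_transform(chem_train, mean, axes), eco_train])
X_test = np.hstack([pca_transform(chem_test, mean, axes), eco_test])
print(X_train.shape, X_test.shape)  # (50, 16) (10, 16)
```

The component scores can still have larger variances than the ecological columns, so rescaling the combined matrix (Protocol 1) before training is advisable.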

Q5: What is the best way to encode categorical ecological data (e.g., habitat type: freshwater, marine, terrestrial) for machine learning? A: One-Hot Encoding is adequate for low-cardinality variables such as habitat type, but it becomes high-dimensional for variables with many levels (e.g., taxonomic family). For ordinal categories (e.g., trophic level: producer, primary consumer, secondary consumer), use Ordinal Encoding. For high-cardinality, non-ordinal categories, consider Target Encoding (replacing each category with a smoothed mean of the target variable for that category, computed on the training set with out-of-fold estimates to prevent leakage) or Entity Embeddings for deep learning models.
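Smoothed target encoding with out-of-fold statistics can be sketched as follows. Each category's encoding blends its mean target value with a prior (the overall mean), weighted by a smoothing strength `m`: (n · mean_c + m · prior) / (n + m). Training rows are encoded only with statistics from the other folds, so a row's own target never leaks into its feature. The index-modulo fold assignment and the value of `m` are simplifying assumptions; scikit-learn 1.3+ provides `TargetEncoder`, which applies cross fitting in `fit_transform`.

```python
from collections import defaultdict


def smoothed_means(cats, ys, prior, m):
    """Per-category (n * mean_c + m * prior) / (n + m)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for c, y in zip(cats, ys):
        sums[c] += y  # sums[c] equals n * mean_c
        counts[c] += 1
    return {c: (sums[c] + m * prior) / (counts[c] + m) for c in counts}


def target_encode(train_cats, train_ys, n_folds=5, m=10.0):
    """Return out-of-fold encodings for training rows, plus a full-data table
    and prior for encoding test rows (unseen categories fall back to the prior)."""
    n = len(train_cats)
    encoded = [0.0] * n
    for fold in range(n_folds):
        fit = [i for i in range(n) if i % n_folds != fold]
        prior = sum(train_ys[i] for i in fit) / len(fit)
        table = smoothed_means([train_cats[i] for i in fit],
                               [train_ys[i] for i in fit], prior, m)
        for i in range(fold, n, n_folds):  # rows belonging to this fold
            encoded[i] = table.get(train_cats[i], prior)
    full_prior = sum(train_ys) / n
    return encoded, smoothed_means(train_cats, train_ys, full_prior, m), full_prior


habitat = ["freshwater", "freshwater", "marine", "marine"]
target = [1.0, 3.0, 10.0, 14.0]  # hypothetical target values
enc, table, prior = target_encode(habitat, target, n_folds=2, m=1.0)
print(enc)  # [5.75, 3.25, 11.25, 7.75]
test_codes = [table.get(h, prior) for h in ["marine", "terrestrial"]]
```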

Key Data Quality Metrics & Benchmarks

The following table summarizes quantitative benchmarks for assessing data quality in integrated ecotoxicity datasets, derived from recent literature reviews.

Table 1: Data Quality Benchmarks for Ecotoxicological ML

| Metric | Recommended Threshold | Purpose |
|---|---|---|
| Chemical Space Coverage | ≥0.3 Tanimoto similarity to nearest neighbor in training set for any test compound | Ensures model interpolation, not extreme extrapolation. |
| Taxonomic Breadth | Data from ≥3 distinct orders per phylum represented | Reduces phylogenetic bias in species sensitivity predictions. |
| Endpoint Consistency | Coefficient of Variation (CV) < 35% for replicated toxicity measurements (e.g., LC50) | Identifies highly variable, less reliable experimental endpoints. |
| Feature Sparsity | < 30% missing values per feature column; < 15% per instance (species-chemical pair) | Guides decisions on imputation vs. feature/instance removal. |
| Data Balance (Class) | Minority class represents ≥ 10% of total samples for classification tasks | Prevents model bias toward the majority class (e.g., "non-toxic"). |
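The thresholds in Table 1 translate into simple checks. A minimal sketch, assuming fingerprints are sets of on-bit indices, replicate endpoints are plain lists, and missing values are `None`; the CV uses the sample standard deviation, which is an assumption since the table does not specify it. Taxonomic breadth is omitted because it requires a taxonomy lookup.

```python
from collections import Counter
from statistics import mean, stdev


def tanimoto(a, b):
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def nearest_train_similarity(test_fp, train_fps):
    """Highest Tanimoto similarity between a test compound and any training compound."""
    return max(tanimoto(test_fp, fp) for fp in train_fps)


def cv_percent(replicates):
    """Coefficient of variation (%) of replicated endpoint values."""
    return 100.0 * stdev(replicates) / mean(replicates)


def missing_fraction(values):
    return sum(v is None for v in values) / len(values)


def minority_fraction(labels):
    return min(Counter(labels).values()) / len(labels)


train_fps = [frozenset({1, 2, 3, 4}), frozenset({5, 6, 7})]
checks = {
    "chemical space coverage": nearest_train_similarity(frozenset({1, 2, 3, 9}), train_fps) >= 0.3,
    "endpoint consistency": cv_percent([1.0, 1.2, 0.8]) < 35.0,
    "feature sparsity (column)": missing_fraction([1.0, None, 3.0, 4.0]) < 0.30,
    "feature sparsity (instance)": missing_fraction([0.2, 1.0, None, 5.0, 2.0, 3.0, 1.0]) < 0.15,
    "class balance": minority_fraction(["toxic"] + ["non-toxic"] * 9) >= 0.10,
}
for name, ok in checks.items():
    print(f"{name}: {'pass' if ok else 'fail'}")
```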

Experimental Protocols

Protocol 2: Building an Integrated Chemical-Species Feature Matrix

- Objective: Create a unified feature matrix for an ML model by combining descriptors from chemical structures, species biology, and exposure ecology.

- Materials:

- Chemical SMILES strings

- Species taxonomic IDs

- Ecological trait database (e.g., Ecotox, TRIAD)

- Procedure:

- Chemical Descriptor Generation: For each compound, compute 200+ 2D molecular descriptors (e.g., using RDKit) and Morgan fingerprints (radius=2, nbits=2048).

- Species Trait Aggregation: For each species, map taxonomic ID to traits: body mass, trophic level, habitat, generation time. Resolve missing data via Tiered Imputation (see FAQ A2).

- Pairwise Combination: For each chemical-species pair in your toxicity dataset, concatenate the chemical descriptor vector, the species trait vector, and computational cross-features (e.g., the octanol-water partition coefficient, log P, multiplied by the species' average lipid fraction of body mass).

- Quality Filter: Remove instances where >15% of concatenated features are missing.

- Normalization: Apply Protocol 1 to the final combined matrix.

- Validation: Perform Principal Component Analysis (PCA) on the final matrix. A 2D PCA plot should show overlap, not complete separation, of points from different chemical classes, indicating integration.
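The pairwise-combination and quality-filter steps can be sketched as below. Feature vectors are plain lists with `None` for missing values; the key names (`descriptors`, `traits`, `log_p`, `lipid_fraction`) and all numbers are hypothetical, and the single cross-feature is the log P × lipid-fraction product named in the procedure.

```python
def combine_pair(chem, species):
    """Concatenate chemical descriptors, species traits, and one cross-feature
    (log P x lipid fraction); the cross-feature is None if either input is missing."""
    log_p, lipid = chem["log_p"], species["lipid_fraction"]
    cross = None if log_p is None or lipid is None else log_p * lipid
    return list(chem["descriptors"]) + list(species["traits"]) + [cross]


def passes_quality_filter(row, max_missing=0.15):
    """Keep a row unless more than `max_missing` of its features are missing."""
    return sum(v is None for v in row) / len(row) <= max_missing


chemicals = {
    "chemA": {"descriptors": [1.0, 2.0, 3.0, 4.0, 5.0], "log_p": 2.0},
    "chemB": {"descriptors": [1.0, None, 3.0, 4.0, 5.0], "log_p": None},
}
species_traits = {
    # traits: body mass, trophic level, habitat code, generation time (hypothetical)
    "sp1": {"traits": [0.5, 2.0, 1.0, 30.0], "lipid_fraction": 0.05},
    "sp2": {"traits": [None, 3.0, 1.0, None], "lipid_fraction": None},
}
pairs = [("chemA", "sp1"), ("chemB", "sp2")]  # pairs present in the toxicity data

matrix = {}
for c, s in pairs:
    row = combine_pair(chemicals[c], species_traits[s])
    if passes_quality_filter(row):
        matrix[(c, s)] = row
print(list(matrix))  # [('chemA', 'sp1')]
```

The second pair is dropped because 4 of its 10 features (40%) are missing, well above the 15% limit.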

Visualizations

Integrated Feature Engineering Workflow

Common Model Failure Diagnosis Path

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Integrated Ecotox Feature Engineering

| Resource Name | Type / Category | Primary Function & Application |
|---|---|---|
| RDKit | Software Library | Open-source cheminformatics for calculating molecular descriptors and fingerprints from chemical structures (SMILES). |
| ECOTOXicology Knowledgebase (EPA) | Database | Curated source of single-chemical toxicity data for aquatic and terrestrial species, used for labeling and trait association. |
| PubChem | Database | Provides chemical identifiers, structures, and biological activity data for feature generation and validation. |
| CATMoS / CERAPP | Consensus Modeling Projects | Collaborative QSAR consensus efforts (acute oral toxicity and estrogen receptor activity, respectively); useful for benchmarking QSAR models and informing chemical descriptor selection and performance targets. |
| ECOlogical TRAit database (ECOTRAIT) | Database | Aggregates species ecological traits (e.g., body size, feeding type) for non-taxonomic feature engineering. |
| scikit-learn | Software Library | Python library for data preprocessing (scaling, imputation), feature selection, and implementing basic ML models. |
| mol2vec | Algorithm / Resource | Unsupervised machine learning approach to generate molecular embeddings, useful as an alternative to fingerprints. |
| Kronecker Regularized Least Squares (KRLS) | Modeling Algorithm | Specifically designed for two-input (chemical × species) problems, directly integrating chemical and biological data. |