Extracting Insights from Ecosystems: A Comprehensive Guide to Data Extraction Methods for Ecotoxicology Systematic Reviews



Abstract

Systematic reviews in ecotoxicology are crucial for evidence-based decision-making in environmental protection and chemical risk assessment, yet their execution is hindered by complex, labor-intensive data extraction processes. This article provides a comprehensive guide for researchers, scientists, and drug development professionals, analyzing the evolving landscape of data extraction methods. It covers the foundational principles and specialized frameworks unique to ecotoxicology and examines the progression from manual extraction to semi-automated techniques, including the emerging role of Large Language Models (LLMs). The article also addresses common challenges and optimization strategies for ensuring data integrity, and it reviews validation criteria for comparing methodological approaches. By integrating key findings and future directions, this guide aims to equip professionals with the knowledge to enhance the efficiency, reproducibility, and scientific rigor of their systematic reviews.

Building the Blueprint: Foundational Frameworks and Core Concepts for Ecotoxicology Data Extraction

The Imperative for Systematic Reviews in Ecotoxicology and Environmental Health

The fields of ecotoxicology and environmental health (EH) are defined by complex questions concerning the effects of chemical, physical, and biological agents on ecosystems and human health. The evidence base is vast, heterogeneous, and rapidly expanding, driven by scientific advancement and regulatory demands [1]. In this context, systematic reviews (SRs) and related evidence synthesis methodologies have transitioned from a novel approach to an imperative scientific practice. They provide a structured, transparent, and bias-minimizing framework to navigate this complexity, forming the cornerstone of evidence-based decision-making for chemical risk assessment, policy formulation, and public health protection [2] [3]. This article details the application notes and protocols essential for conducting robust systematic reviews and evidence maps within this domain, framed within a broader thesis on advancing data extraction methodologies.

Scientific and Policy Imperative

The conduct of SRs in toxicology and EH has increased dramatically, with publications in toxicology approximately doubling from 2016 to 2020 [3]. This growth is propelled by a paradigm shift toward evidence-based approaches within major regulatory and public health bodies worldwide, including the U.S. Environmental Protection Agency (EPA) and the European Food Safety Authority (EFSA) [1]. These frameworks mandate rigorous, unbiased synthesis of all available evidence to inform risk assessments and policies.

However, the unique nature of EH evidence poses distinct challenges. Data is often highly connected (e.g., linking a chemical, its metabolic pathway, a molecular endpoint, and an ecological outcome), heterogeneous (spanning in vitro, animal, epidemiological, and field studies), and complex [1]. Traditional, narrative reviews are susceptible to selection and confirmation bias, making them unsuitable for definitive conclusions. Systematic methodologies are therefore not merely beneficial but essential to produce credible, high-value syntheses that can withstand scrutiny and guide sound decisions [2] [3].

Methodological Foundations: Reviews, Maps, and Protocols

Systematic reviews and systematic evidence maps (SEMs) serve complementary purposes. A Systematic Review aims to answer a specific, narrowly focused research question (e.g., "Does exposure to chemical X cause effect Y in organism Z?") through critical appraisal and synthesis, potentially including meta-analysis [4]. In contrast, a Systematic Evidence Map aims to catalogue and characterize a broader evidence base (e.g., "What is known about the ecotoxicological effects of chemical class A?") to identify trends, gaps, and clusters for further research or review [1] [4]. SEMs are particularly valuable for problem formulation and prioritization in chemicals policy [1].

The foundation of both is a publicly accessible protocol. The protocol is a detailed, prospective work plan that locks in the rationale, objectives, and methods, guarding against bias arising from post-hoc changes in approach [5] [6]. Key registries include PROSPERO (health-focused), the Collaboration for Environmental Evidence (CEE) library, and INPLASY, which offers rapid publication [5] [6] [7].

Table 1: Core Elements of a Systematic Review Protocol for Ecotoxicology/EH

| Protocol Section | Key Components & Frameworks | Purpose & Notes |
|---|---|---|
| Introduction | Rationale, Background, Objectives [6] | Justifies the review, states its aims, and identifies knowledge gaps. |
| Research Question | PECO/PICO Framework [8]: Population/Organism; Exposure/Intervention; Comparator; Outcome | Defines the review's scope with precision. PECO (Population, Exposure, Comparator, Outcome) is often more applicable than PICO in EH [8]. |
| Methods: Eligibility | Inclusion & Exclusion Criteria [5] [6] | Explicitly states which studies will be selected based on PECO elements, study design, language, date, etc. |
| Methods: Search | Information Sources, Search Strategy, Grey Literature [6] | Ensures reproducibility and comprehensiveness. Must detail databases, search strings, and efforts to find unpublished data. |
| Methods: Study Selection | Screening Process, Conflict Resolution [5] | Describes the title/abstract and full-text screening phases, often using tools like Covidence or Rayyan [9]. |
| Methods: Data Extraction | Data Collection Process, Forms, Management [5] | Specifies what data will be extracted (e.g., study design, exposure details, outcomes, effect sizes) and how. |
| Methods: Risk of Bias | Quality Assessment Tools [5] | Details tools for evaluating study reliability (e.g., OHAT, SYRCLE for animal studies). |
| Methods: Synthesis | Data Synthesis Plan [6] | Outlines plans for narrative, qualitative, or quantitative (meta-analysis) synthesis. |

Detailed Protocol for Systematic Evidence Mapping

Systematic Evidence Mapping is a critical first step for navigating broad EH topics. The following protocol, derived from CEE guidance and adapted for EH complexity, focuses on creating a queryable database of evidence [1].

Objective: To systematically catalogue and characterize the available scientific literature on [Broad Chemical Class/Stressors] and their [Broad Category of Ecological or Health Outcomes] to visualize the distribution and types of evidence, identify knowledge clusters and gaps, and inform future research prioritization and specific systematic review questions.

Experimental Protocol:

Define Scope & Develop Codebook: Engage stakeholders to finalize the map's breadth. Develop a hierarchical codebook for data extraction. This includes controlled vocabularies (ontologies) for key entities:

- Stressors: Chemical names, CAS numbers, classes.

- Organisms/Populations: Species, taxonomic groups, human populations.

- Outcomes: Biochemical, physiological, pathological, population-level, ecosystem-level endpoints.

- Study Design: In silico, in vitro, in vivo (acute/chronic), observational, field study.

Search & Screen: Execute a comprehensive search across multiple databases (e.g., PubMed, Web of Science, Scopus, GreenFile, TOXLINE). Search strings will combine terms for the stressor and broad outcome domains. Grey literature will be sought from regulatory agency websites and thesis repositories. Screening will follow the PRISMA flow diagram, performed independently by two reviewers.

Data Extraction & Coding: For each included study, extract metadata (authors, year) and code data according to the codebook. The recommended advanced method is to structure this data as a knowledge graph, not a flat table. In a graph, entities (e.g., "Atrazine," "Xenopus laevis," "Vitellogenin") are stored as "nodes," and their relationships (e.g., "causes increase in," "is a metabolite of") are stored as "edges." This schemaless, schema-on-read approach is well suited to EH's interconnected data [1].

Database Development & Validation: Implement the knowledge graph using graph database technology (e.g., Neo4j). Develop a user-friendly front-end interface that allows users to query the map visually (e.g., "Show all studies on amphibians and endocrine disruption"). Validate the coding consistency through dual independent extraction on a subset of studies.

Analysis & Visualization: Analyze and report the map descriptively. Use interactive visualizations to show evidence volume by year, species, outcome, or study type. Critical gaps appear where otherwise well-studied stressors have no data for key species or outcomes.
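The node-and-edge model described in the coding step can be illustrated without a graph database. The following is a minimal sketch in plain Python, assuming each coded relationship is stored as a (subject, relation, object, study ID) tuple; the study IDs, the `node_types` lookup, and both helper functions are hypothetical and are not taken from the cited guidance or from Neo4j.

```python
# Illustrative coded relationships: (subject, relation, object, study_id).
# Entity names echo the examples in the text; the study IDs are invented.
edges = [
    ("Atrazine", "causes increase in", "Vitellogenin", "study_001"),
    ("Atrazine", "tested in", "Xenopus laevis", "study_001"),
    ("Atrazine", "tested in", "Pimephales promelas", "study_002"),
    ("Deethylatrazine", "is a metabolite of", "Atrazine", "study_003"),
]

# Node types follow the codebook categories (Stressors, Organisms, Outcomes).
node_types = {
    "Atrazine": "Stressor",
    "Deethylatrazine": "Stressor",
    "Xenopus laevis": "Organism",
    "Pimephales promelas": "Organism",
    "Vitellogenin": "Outcome",
}


def studies_linking(edges, node_types, stressor, target_type):
    """Return sorted study IDs with an edge from `stressor` to a node of `target_type`."""
    return sorted({
        study
        for subject, _relation, obj, study in edges
        if subject == stressor and node_types.get(obj) == target_type
    })


def stressors_without(edges, node_types, target_type):
    """Return stressors that have no edge to any node of `target_type` (a candidate gap)."""
    covered = {
        subject
        for subject, _relation, obj, _study in edges
        if node_types.get(obj) == target_type
    }
    return sorted(
        name for name, kind in node_types.items()
        if kind == "Stressor" and name not in covered
    )


organism_studies = studies_linking(edges, node_types, "Atrazine", "Organism")
outcome_gaps = stressors_without(edges, node_types, "Outcome")
```

In a production map the same two questions would be expressed as queries against the graph database; the sketch only shows why storing relationships explicitly makes gap analysis a simple traversal.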

Systematic Evidence Mapping Workflow

Specialized Considerations for Ecotoxicology & EH

Conducting SRs in this field requires adaptation of general biomedical standards. The COSTER recommendations provide a consensus-based, cross-sector guide covering 70 practices across eight domains specific to toxicology and EH [2]. Key considerations include:

- Problem Formulation: Framing a reviewable question from a broad policy or research need.

- Handling Grey Literature: Regulatory reports and industry studies are crucial for unbiased assessment but require careful appraisal [2].

- Risk of Bias/Quality Assessment: Using tools validated for different study types (e.g., in vivo toxicology, epidemiology, ecological field studies) is critical. The OHAT (Office of Health Assessment and Translation) and SYRCLE (SYstematic Review Centre for Laboratory animal Experimentation) tools are widely used.

- Dealing with Complexity: Evidence often involves multiple stressors, species, and endpoints with non-linear relationships. Narrative synthesis and evidence mapping are frequently as important as meta-analysis.

Journals like Environment International now enforce stringent, specialized submission criteria for evidence syntheses, requiring adherence to PRISMA or ROSES reporting standards and triage using tools like CREST_Triage [4]. This underscores the field's commitment to methodological rigor.

Innovation in Data Extraction: Automation & Knowledge Graphs

Data extraction is the most time-consuming and labor-intensive stage of a review [10]. Advancements in automation and data structuring are therefore central to the thesis of improving SR efficiency and scalability in EH.

(Semi-)Automated Data Extraction: A living systematic review of methods up to 2024 shows a growing field, with 117 identified publications [10]. While most early efforts focused on extracting PICO elements from clinical trial texts using classical NLP, three recent trends stand out:

- Shift to Relation Extraction: Moving from extracting isolated entities (e.g., a chemical name, an outcome) to extracting the precise relationship between them (e.g., "chemical X increases biomarker Y") [10].

- Rise of Large Language Models (LLMs): LLMs are emerging as powerful tools for data extraction. However, current applications show a trend of decreasing reporting quality for quantitative performance metrics (like recall) and lower reproducibility compared to earlier, model-specific approaches [10].

- Focus on Interoperability: There is a push for shared datasets and code (available in ~45% of recent publications) to facilitate comparison and tool development [10].

Protocol for (Semi-)Automated Data Extraction Pilot:

- Task Definition: Define a specific extraction task (e.g., extract chemical name, species, dose, and reported effect size from results sections).

- Training Data Creation: Manually annotate a corpus of 50-100 full-text PDFs relevant to the review topic.

- Tool Selection & Prompt Engineering: Choose an LLM API (e.g., GPT-4, Claude) or a specialized tool (e.g., EPPI-Reviewer's ML features). For LLMs, iteratively develop and refine prompts (instructions) using the training set.

- Execution & Validation: Run the automated tool on a new set of studies. Have two human reviewers independently extract data from the same set. Compare all three outputs (Human1, Human2, AI).

- Performance Metrics & Integration: Calculate inter-rater agreement between the two humans and between each human and the AI (Cohen's kappa for each pair of raters, or Fleiss' kappa across all three). Use AI output as a "first pass" to accelerate human review, not replace it.
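Alongside agreement statistics, the AI output from the pilot can be scored against the reconciled human extraction using precision and recall. The sketch below assumes each extracted data point is reduced to a (study, field, value) tuple and that only exact matches count; both the tuple layout and the example values are illustrative assumptions, not part of the cited review.

```python
def precision_recall(ai_items, reference_items):
    """Score AI-extracted items against a human consensus set using exact matching."""
    ai, reference = set(ai_items), set(reference_items)
    true_positives = len(ai & reference)
    precision = true_positives / len(ai) if ai else 0.0
    recall = true_positives / len(reference) if reference else 0.0
    return precision, recall


# Hypothetical consensus extraction agreed by the two human reviewers.
reference = {
    ("study_A", "species", "Daphnia magna"),
    ("study_A", "NOEC_mg_L", "0.32"),
    ("study_B", "species", "Danio rerio"),
    ("study_B", "NOEC_mg_L", "1.5"),
}

# Hypothetical AI output with one wrong value (a dropped decimal point).
ai_output = {
    ("study_A", "species", "Daphnia magna"),
    ("study_A", "NOEC_mg_L", "0.32"),
    ("study_B", "species", "Danio rerio"),
    ("study_B", "NOEC_mg_L", "15"),
}

precision, recall = precision_recall(ai_output, reference)
missed = sorted(reference - ai_output)    # items the AI failed to extract
spurious = sorted(ai_output - reference)  # items the AI extracted incorrectly
```

Listing the missed and spurious items, not just the two summary numbers, shows reviewers which fields need the closest checking when AI output is used as a first pass.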

Knowledge Graphs as an Extraction Goal: The ultimate output of extraction should be a structured, computable format. A knowledge graph is ideal for EH data, as it preserves the complex relationships inherent in toxicological pathways [1]. It transforms the review from a static document into a dynamic, queryable knowledge base.

Knowledge Graph Data Structure for EH Evidence

Table 2: Performance Metrics of Data Extraction Methods (Representative Data from Literature) [10]

| Extraction Method | Typical Precision | Typical Recall | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Manual Extraction (Dual Review) | Very High (~98-100%) | Very High (~98-100%) | Gold standard for accuracy; handles complexity. | Extremely time/resource intensive. |
| Classical NLP (e.g., SVM, Rules) | Moderate-High (75-90%) | Moderate (70-85%) | Reproducible; good for defined entities. | Requires technical expertise; poor generalizability. |
| Deep Learning (e.g., BERT) | High (80-95%) | High (80-95%) | Better context understanding; state-of-the-art for specific tasks. | Requires large training datasets; computationally intensive. |
| Large Language Models (LLMs) | Variable (60-95%)* | Variable (65-90%)* | No task-specific training needed; flexible. | Output can be non-deterministic; metrics often under-reported; risk of hallucination. |

Note: Performance is highly dependent on prompt engineering, task complexity, and the specific LLM used. Recent trends indicate declining completeness in reporting these metrics [10].

The Researcher's Toolkit

Table 3: Essential Research Reagent Solutions for Ecotoxicology/EH Systematic Reviews

| Tool / Resource | Category | Primary Function | Relevance to Data Extraction Thesis |
|---|---|---|---|
| Covidence, Rayyan | Review Management | Streamlines screening, full-text review, and manual data extraction via collaborative web platforms. | Primary interface for human-driven extraction; some are beginning to integrate basic AI functions for prioritization [5] [9]. |
| EPPI-Reviewer | Review Management & Automation | Advanced tool supporting machine learning for screening and custom data extraction forms. | Features built-in classifiers and NLP tools to (semi-)automate the extraction of study characteristics and outcomes [10]. |
| RevMan, R (metafor, meta) | Statistical Synthesis | Software for performing meta-analysis and generating forest/funnel plots. | The endpoint for extracted quantitative data. Requires clean, structured numerical data from the extraction phase. |
| Neo4j, GraphXR | Graph Database & Visualization | Platforms to create, query, and visualize knowledge graphs. | Core innovation. Enables implementation of a graph-based data model for SEMs and complex reviews, turning extracted data into an interactive knowledge base [1]. |
| Python (spaCy, Transformers) | Programming / NLP | Libraries for building custom natural language processing and machine learning pipelines. | Enables the development of tailored, automated data extraction systems for specific EH concepts (e.g., chemical, species, endpoint) from text [10]. |
| PRISMA, ROSES | Reporting Guidelines | Checklists and flow diagrams for transparent reporting of reviews and maps. | Ensures the methods and results of the data extraction process are fully documented and reproducible [4]. |
| COSTER Guidelines | Conduct Guidelines | Domain-specific recommendations for planning and conducting EH SRs [2]. | Informs the design of the extraction protocol, especially for handling grey literature and assessing risk of bias in diverse study types. |
| PROSPERO, INPLASY | Protocol Registries | Public repositories for registering review protocols prospectively. | Guards against bias in the extraction and synthesis plan; a mandatory step for rigorous reviews [7]. |

The imperative for systematic reviews in ecotoxicology and environmental health is unequivocal, driven by the demands of evidence-based policy and the intrinsic complexity of the field. Mastering the detailed protocols for systematic reviews and evidence maps is fundamental. The future of scalable, efficient, and insightful evidence synthesis lies in the innovative convergence of two paths: the structured, relationship-rich data model provided by knowledge graphs and the advancing power of (semi-)automated extraction technologies, particularly LLMs. Researchers who integrate these advanced methodologies with foundational rigor will be best positioned to synthesize the evidence needed to protect environmental and human health.

Within the rigorous process of evidence synthesis, data extraction serves as the critical translational step where information from primary studies is systematically captured into a structured format for analysis and synthesis [11]. In the specific domain of ecotoxicology systematic reviews, this involves distilling complex experimental data on chemical effects, environmental concentrations, and biological endpoints from diverse study reports. The fidelity of this process directly determines the validity of subsequent meta-analyses and the strength of environmental risk assessments. Current methodologies are evolving from manual, error-prone spreadsheet approaches toward more structured, hierarchical, and semi-automated systems to handle the inherent complexity of ecological data, which often includes multiple species, life stages, endpoints, and exposure scenarios [12].

Current Practices and Quantitative Insights

A 2022 survey of systematic reviewers (n=162) found heterogeneous approaches to data extraction: spreadsheet software remains the dominant tool, while independent duplicate extraction is regarded as the most appropriate method for minimizing error [13].

Table 1: Survey Results on Current Data Extraction Practices (2022, n=162) [13]

| Practice or Opinion | Percentage of Respondents | Key Insight |
|---|---|---|
| Use of spreadsheet software | 83% | Indicates widespread reliance on flexible but error-prone tools. |
| Use of adapted or newly developed forms | 65% (adapted), 62% (new) | Most teams customize extraction tools for each review. |
| Piloting of extraction forms | 74% | A majority validate their forms before full extraction. |
| Independent duplicate extraction as most appropriate | 64% | Considered best practice to reduce errors. |
| Perceived top research gap: Reducing errors | 60% | Highlights concern over data accuracy. |
| Perceived top research gap: Support tools/(semi-)automation | 46% | Strong interest in technological assistance. |

Concurrently, a living systematic review on automation methods (2025, n=117 publications) shows a rapidly advancing field, though practical application lags [10].

Table 2: Status of (Semi)Automated Data Extraction Research (Living Review, 2025) [10]

| Aspect | Finding | Implication for Ecotoxicology |
|---|---|---|
| Primary study type targeted | 96% focus on Randomized Controlled Trials (RCTs) | A significant gap exists for automating extraction from diverse ecotoxicology study designs (e.g., chronic toxicity tests, field studies). |
| Most extracted entities | PICO elements (Population, Intervention, Comparator, Outcome) | Suggests frameworks like PECO (Population, Exposure, Comparator, Outcome) could be targeted for automation in environmental health. |
| Data availability | 45% of publications share data | Promotes reproducibility and model training. |
| Code availability | 42% of publications share code | Essential for validating and adapting tools. |
| Publicly available tools | Only 8% of publications result in an accessible tool | Highlights a major translation barrier between research and usable software for reviewers. |
| Emerging trend | Use of Large Language Models (LLMs) | LLMs show promise but current trends indicate challenges with reproducibility and accuracy of quantitative data extraction. |

Experimental Protocols for Ecotoxicology Systematic Reviews

Protocol 1: Hierarchical Data Extraction (HDE) for Complex Ecotoxicological Data

Hierarchical Data Extraction is designed to manage nested, repeating data sets common in ecotoxicology (e.g., multiple endpoints measured across several species and exposure concentrations) [12].

Methodology:

- Form Configuration: Create discrete electronic forms for each data type level.

  - Root Form: Captures study-level metadata (e.g., author, year, test substance, test type [acute/chronic]).

  - Child Form 1 - Test Organism: Created for each species/life stage, linked to the root. Captures species name, age, source.

  - Child Form 2 - Exposure Scenario: Created for each concentration/duration tested, linked to a Test Organism form. Captures concentration, duration, medium.

  - Child Form 3 - Endpoint Result: Created for each measured endpoint (e.g., LC50, growth inhibition, reproduction), linked to an Exposure Scenario form. Captures endpoint type, mean value, standard deviation, sample size.

- Piloting and Key Definition: Pilot the form structure on 5-10% of included studies. Designate a "key" question on each form (e.g., "Species name" for Test Organism form) whose answer will appear in the navigation tree for easy identification [12].

- Data Extraction: Reviewers navigate a dynamic tree interface. They create new child form instances on-the-fly as they encounter new data layers in a paper, with software automatically managing parent-child links.

- Output Generation: Data exports in an analysis-ready vertical format, preserving the hierarchy (e.g., each row represents a unique endpoint, with columns for its associated concentration, species, and study metadata) [12].
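The parent-child form structure and the vertical export can be sketched with nested Python dataclasses. This is a minimal illustration under stated assumptions, not the data model of any particular review platform: the class names, field names, and example values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EndpointForm:  # Child Form 3: one measured endpoint
    endpoint_type: str
    mean_value: float
    standard_deviation: Optional[float] = None
    sample_size: Optional[int] = None


@dataclass
class ExposureForm:  # Child Form 2: one concentration/duration
    concentration: float
    unit: str
    duration_h: float
    medium: str
    endpoints: List[EndpointForm] = field(default_factory=list)


@dataclass
class OrganismForm:  # Child Form 1: one species/life stage
    species: str  # the "key" answer shown in the navigation tree
    life_stage: str
    exposures: List[ExposureForm] = field(default_factory=list)


@dataclass
class StudyForm:  # Root form: study-level metadata
    author: str
    year: int
    test_substance: str
    test_type: str  # "acute" or "chronic"
    organisms: List[OrganismForm] = field(default_factory=list)


def to_vertical_rows(study):
    """Flatten the hierarchy so that each row is one endpoint plus its parent context."""
    rows = []
    for organism in study.organisms:
        for exposure in organism.exposures:
            for endpoint in exposure.endpoints:
                rows.append({
                    "author": study.author,
                    "year": study.year,
                    "test_substance": study.test_substance,
                    "test_type": study.test_type,
                    "species": organism.species,
                    "life_stage": organism.life_stage,
                    "concentration": exposure.concentration,
                    "unit": exposure.unit,
                    "duration_h": exposure.duration_h,
                    "medium": exposure.medium,
                    "endpoint_type": endpoint.endpoint_type,
                    "mean_value": endpoint.mean_value,
                    "standard_deviation": endpoint.standard_deviation,
                    "sample_size": endpoint.sample_size,
                })
    return rows


# Invented example: one species, two concentrations, three endpoint results.
study = StudyForm(
    author="Example et al.", year=2020, test_substance="Chemical X", test_type="chronic",
    organisms=[
        OrganismForm(
            species="Daphnia magna", life_stage="neonate",
            exposures=[
                ExposureForm(10.0, "ug/L", 504.0, "water", [
                    EndpointForm("reproduction", 85.2, 6.1, 10),
                    EndpointForm("growth", 3.9, 0.3, 10),
                ]),
                ExposureForm(100.0, "ug/L", 504.0, "water", [
                    EndpointForm("reproduction", 41.7, 9.8, 10),
                ]),
            ],
        )
    ],
)
rows = to_vertical_rows(study)
```

Each resulting row repeats the study, organism, and exposure context, which is the shape most meta-analysis tools expect as input.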

Protocol 2: Validation and Piloting of Data Extraction Forms

Piloting is essential to ensure consistency, clarity, and completeness of the extraction process [11].

Methodology:

- Develop Draft Forms: Based on the review question (e.g., "What is the ecotoxicity of Chemical X to aquatic invertebrates?"), draft forms using the HDE principle or a standard template.

- Select Pilot Sample: Randomly select a representative sample (typically 10-15%) of the included studies, ensuring a mix of study designs and report formats [11].

- Independent Dual Extraction: Two reviewers independently extract data from the pilot sample using the draft forms.

- Calculate Inter-Rater Reliability (IRR): For categorical items (e.g., risk of bias judgments), calculate Cohen's Kappa. For continuous data (e.g., LC50 values), calculate intraclass correlation coefficients or simple percent agreement.

- Consensus Meeting & Form Revision: Reviewers discuss and resolve all discrepancies. The extraction form is revised to clarify ambiguous items, add missing fields, or remove redundant ones. This process is iterated until high IRR (>0.8) is achieved.

- Procedure Documentation: All decisions, form versions, and piloting results are documented in the review's methodology section [13].
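For the categorical items in the IRR step, Cohen's kappa can be computed directly from the two reviewers' judgments. The sketch below is a plain-Python implementation of the standard two-rater formula, kappa = (p_o - p_e) / (1 - p_e); the risk-of-bias ratings are invented for illustration.

```python
from collections import Counter


def percent_agreement(ratings_a, ratings_b):
    """Share of items on which both reviewers gave the same rating."""
    return sum(a == b for a, b in zip(ratings_a, ratings_b)) / len(ratings_a)


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two raters judging the same items on a categorical scale."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both raters must rate the same non-empty set of items.")
    n = len(ratings_a)
    observed = percent_agreement(ratings_a, ratings_b)
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    # Chance agreement: product of each rater's marginal proportions, summed over categories.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical risk-of-bias judgments for ten pilot studies.
reviewer_1 = ["low", "low", "high", "high", "unclear", "low", "high", "low", "low", "high"]
reviewer_2 = ["low", "low", "high", "low", "unclear", "low", "high", "low", "high", "high"]

agreement = percent_agreement(reviewer_1, reviewer_2)
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

In this invented example the reviewers agree on 8 of 10 items, yet kappa is roughly 0.66 once chance agreement is removed, which is below the 0.8 threshold and would trigger another round of form revision.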

Protocol 3: Implementing Semi-Automated Extraction with LLMs

This protocol outlines a human-in-the-loop approach using LLMs to augment manual extraction.

Methodology:

- Task Definition & Prompt Engineering: Define a discrete, structured extraction task (e.g., "Extract all reported NOEC (No Observed Effect Concentration) values and their associated test species from this paragraph"). Develop and iteratively refine precise prompts with clear instructions on output format (e.g., JSON) [10].

- Document Chunking: For full-text processing, split PDF documents into manageable sections (e.g., by heading, or fixed token-size chunks) respecting logical boundaries like "Materials and Methods" or "Results."

- Model Querying & Output Generation: Use an API to submit prompts with text chunks to a capable LLM. Critical: Always use a consistent model version and temperature setting (e.g., temperature=0) for reproducibility [10].

- Human Verification & Curation: Treat all LLM outputs as preliminary data. A trained reviewer must verify every extracted data point against the source text. This step is non-negotiable due to model tendencies for "hallucination" or misrepresentation of quantitative data [10].

- Integration into Workflow: The verified data is then integrated into the master extraction database (e.g., the HDE system). The process should be documented, including prompt versions, model used, and verification rate.
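The chunking, prompting, and verification steps can be sketched as follows. The sketch deliberately avoids any vendor API: `call_llm` is a hypothetical callable supplied by the user (a real client should pin the model version and set the temperature to 0), and `fake_llm` is a stub that stands in for a model response. The heading list, prompt wording, and record keys are likewise assumptions made for illustration.

```python
import json
import re

# Assumed section headings; real papers will need a broader, tested pattern.
HEADING_PATTERN = re.compile(
    r"^(Abstract|Introduction|Materials and Methods|Results|Discussion)[ \t]*$",
    re.MULTILINE,
)

PROMPT_TEMPLATE = (
    "Extract all reported NOEC values and their test species from the text below. "
    'Respond only with a JSON list of objects with keys "species", "noec_value", "unit". '
    "Return [] if none are reported.\n\nTEXT:\n{chunk}"
)

REQUIRED_KEYS = {"species", "noec_value", "unit"}


def chunk_by_heading(text):
    """Split plain text into a {heading: body} mapping at known section headings."""
    matches = list(HEADING_PATTERN.finditer(text))
    chunks = {}
    for i, match in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        chunks[match.group(1)] = text[match.end():end].strip()
    return chunks


def extract_noec(chunk, call_llm):
    """Query the model for one chunk and return records flagged for human verification."""
    raw = call_llm(PROMPT_TEMPLATE.format(chunk=chunk))
    try:
        items = json.loads(raw)
    except json.JSONDecodeError:
        return []  # malformed output is discarded rather than guessed at
    if not isinstance(items, list):
        return []
    records = []
    for item in items:
        if isinstance(item, dict) and REQUIRED_KEYS.issubset(item):
            records.append({
                "species": item["species"],
                "noec_value": item["noec_value"],
                "unit": item["unit"],
                # Aid for the reviewer only: does the value literally appear in the source?
                "found_in_source": str(item["noec_value"]) in chunk,
                "verified": False,  # set to True by a human reviewer, never by the model
            })
    return records


def fake_llm(prompt):
    """Stub standing in for a real model call."""
    return '[{"species": "Daphnia magna", "noec_value": 0.32, "unit": "mg/L"}]'


sample_text = (
    "Materials and Methods\nNeonates were exposed for 21 days.\n"
    "Results\nThe 21-day NOEC for Daphnia magna was 0.32 mg/L.\n"
)
chunks = chunk_by_heading(sample_text)
records = extract_noec(chunks["Results"], fake_llm)
```

The `found_in_source` flag is only a triage aid that helps a reviewer spot likely hallucinations quickly; it does not replace checking every extracted value against the source text.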

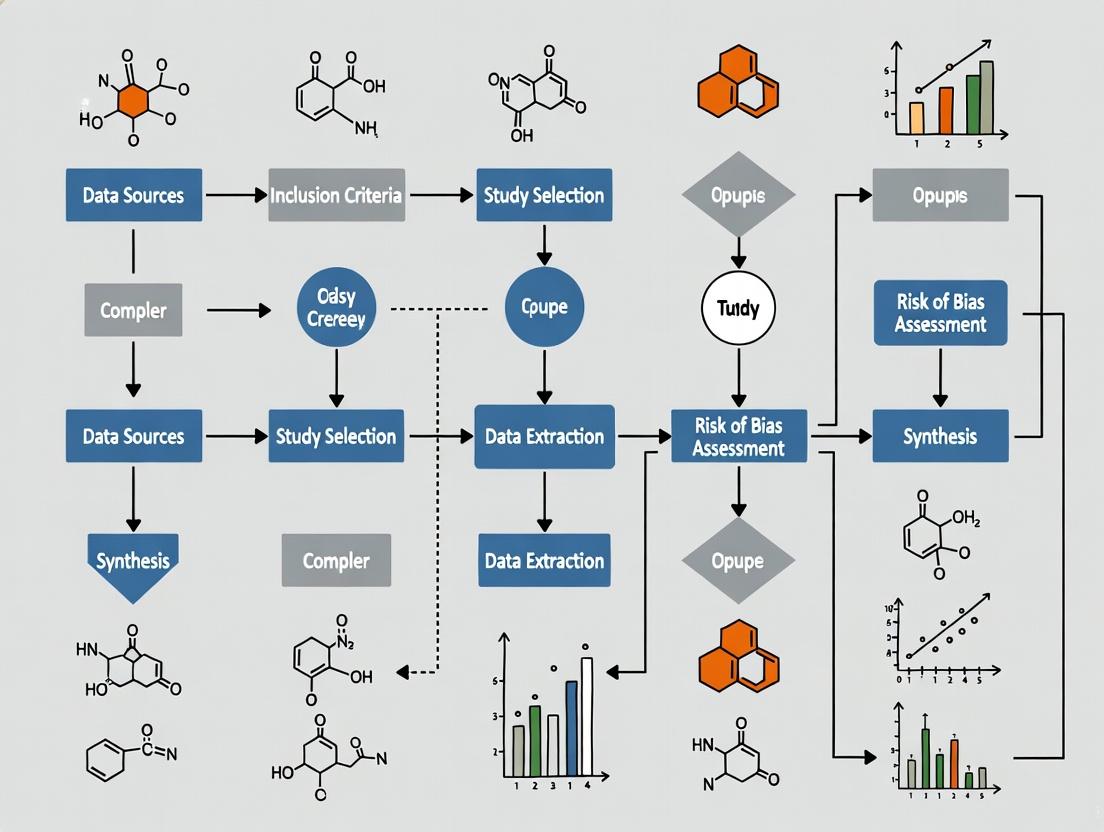

Visualizing the Data Extraction Workflow

The following diagram illustrates the integrated workflow for manual and semi-automated data extraction within an ecotoxicology systematic review.

Systematic Review Data Extraction Workflow

The structure of Hierarchical Data Extraction (HDE) is key to managing complex ecotoxicology data, as shown in the following conceptual diagram.

Hierarchical Data Extraction Form Structure

The Scientist's Toolkit: Essential Materials for Data Extraction

Table 3: Essential Tools and Resources for Systematic Data Extraction

| Tool/Resource Category | Specific Item or Software | Function in Data Extraction | Considerations for Ecotoxicology |
|---|---|---|---|
| Structured Extraction Software | DistillerSR, Covidence, Rayyan [11] | Provides platform for creating, piloting, and executing dual-reviewer extraction with built-in conflict resolution. Some support HDE [12]. | Evaluate support for non-PICO frameworks (e.g., PECO) and complex, nested data outputs. |
| Flexible & Ubiquitous Tools | Microsoft Excel, Google Sheets [13] [11] | Highly accessible for custom form creation. Useful for initial prototyping and simple reviews. | Prone to error, lacks audit trails, and becomes unmanageable with complex hierarchical data [12]. |
| Specialized Systematic Review Tools | RevMan (Cochrane) [11] | Integrated tool for full review production, including extraction and meta-analysis. | Best suited for clinical/intervention data; may be less flexible for ecological endpoints. |
| Reference & Text Management | EndNote, Zotero, Mendeley | Manage and annotate included study PDFs. Essential for organizing the corpus of literature. | Integration with extraction software (e.g., direct PDF import) streamlines the workflow. |
| (Semi)Automation & NLP Tools | Large Language Model APIs (e.g., GPT-4, Claude), Custom NLP scripts [10] | Assist in locating and drafting extractions for specific data points from text, speeding up the reviewer's work. | Require extensive human verification [10]. Effectiveness depends on prompt engineering and model suitability for scientific text. |
| Validation Instruments | Pre-designed piloting protocol, IRR calculation scripts (e.g., in R or Python) [11] | Ensure consistency and reliability between extractors before full-scale extraction begins. | Critical for training reviewer teams and ensuring the extraction form captures ecotoxicological data accurately. |
| Reporting Guidelines | PRISMA checklist, INCREASE checklist (under development) [13] | Guide the transparent reporting of the data extraction methods, enhancing reproducibility. | Using such checklists is a best practice for methodological rigor. |

The systematic review has become a cornerstone of evidence-based decision-making, initially formalized in clinical medicine through frameworks like PICO (Population, Intervention, Comparator, Outcome) [10]. This structured approach is fundamental for developing focused research questions and for the subsequent data extraction phase, where key study characteristics are captured in a standardized form [10]. However, the direct application of PICO to ecological and ecotoxicological research presents significant conceptual challenges. In environmental health, the "intervention" is often an unintentional exposure, and the "population" may encompass non-human species or entire ecosystems [14]. Consequently, the PECO framework (Population, Exposure, Comparator, Outcome) has emerged as a critical adaptation, reframing the question to better suit the assessment of environmental exposures and their effects [14].

This evolution from PICO to what can be termed the ECO framework (expanding to encompass Ecosystem, Contaminant/stressor, and Outcome) is not merely a change in acronym but a fundamental shift in perspective. It is situated within a broader thesis on advancing data extraction methods for ecotoxicology systematic reviews. The goal is to develop rigorous, transparent, and repeatable protocols that can handle the complexity of ecological data, which is often heterogeneous and contextual [15] [16]. The Collaboration for Environmental Evidence (CEE) explicitly recommends using PICO or PECO structures to guide the design of data coding and extraction forms, underscoring the framework's operational importance [15]. This article details the application notes and protocols for implementing and adapting these extraction frameworks to answer pressing ecological questions reliably and efficiently.

Deconstructing the Framework: From PICO to ECO Components

Adapting the data extraction framework requires a clear understanding of how each component transforms from a clinical to an ecological context. This translation ensures that systematic reviews in ecotoxicology capture the necessary information for a robust synthesis.

- Population (P) to Ecosystem/Community/Population: In clinical PICO, the Population is a defined patient group. In ECO, this expands to the biological unit of interest. This could be a specific species (e.g., Pimephales promelas, the fathead minnow), a functional group, an ecological community, or an entire ecosystem. Defining this requires specifying taxonomic details, life stage, health status, and relevant habitat descriptors [17] [18].

- Intervention (I) to Exposure/Contaminant/Stressor (E): The active "Intervention" becomes the "Exposure." This is a passive environmental stressor to which the population is subjected [14]. It must be defined with precision, including:

  - Identity: The specific chemical (e.g., benzo[a]pyrene), physical agent, or biological stressor.

  - Magnitude: Concentration or intensity (e.g., 50 μg/L).

  - Duration & Timing: Acute (e.g., 96-hour) vs. chronic exposure, and life-stage specificity [17].

  - Route: Waterborne, dietary, sediment, etc.

- Comparator (C): This element remains crucial but its nature changes. In therapy, it's often a placebo or standard care. In ECO, the comparator is typically defined by the exposure gradient. Key approaches include [14]:

  - Incremental Comparison: Evaluating the effect of a unit increase in exposure (e.g., per 10 dB increase in noise) [14].

  - High vs. Low: Comparing effects at the highest versus lowest observed or measured exposure levels.

  - Exposed vs. Reference/Unexposed: Comparing a contaminated site to a clean reference site.

  - Before-After/Control-Impact (BACI): A robust design comparing changes over time between impacted and control populations.

- Outcome (O): Outcomes in ecotoxicology are diverse measures of effect. These can range from molecular and biochemical responses (e.g., CYP1A1 enzyme induction, vitellogenin mRNA expression) to physiological, histological, individual fitness (growth, reproduction, mortality), and population- or community-level endpoints [17] [16]. A single study may report multiple outcomes.

Table: Comparative Analysis of Framework Components

| Framework Component | Clinical PICO Context | Ecological ECO Context | Key Extraction Variables for ECO |

|---|---|---|---|

| Population (P) | Human patients (age, sex, disease status) | Species, community, or ecosystem; Life stage; Habitat type; Health status | Taxonomic identification; Life stage; Sex; Habitat descriptors; Sample size |

| Exposure/Intervention (E/I) | Therapeutic drug, procedure | Chemical contaminant, physical stressor, non-native species | Stressor identity; Measured concentration/intensity; Exposure duration & frequency; Exposure matrix (water, soil, etc.) |

| Comparator (C) | Placebo, standard therapy, alternative drug | Reference site, lower exposure level, pre-exposure state, experimental control | Type of comparator (e.g., spatial reference, dose-control); Comparator exposure level; Characteristics of control/reference system |

| Outcome (O) | Clinical endpoint (mortality, symptom score) | Biological effect at sub-organismal, individual, or population level | Endpoint type (mortality, growth, reproduction, biomarker); Measurement method; Units; Time of measurement |

| Additional Context | Study design (RCT) | Field monitoring, mesocosm, lab experiment; Ecological relevance | Study design type; Test system scale (lab/field); Temperature, pH, other abiotic factors; Geographic location |
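
The extraction variables in the table above can be sketched as a typed record. This is a minimal illustration, not a prescribed schema: the class name `EcoRecord`, its field names, and the example values are assumptions chosen to mirror the ECO columns, and a real review would derive its fields from the piloted codebook.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EcoRecord:
    """One extracted row organized by ECO element (hypothetical field names)."""
    study_id: str
    # Population
    species: str
    life_stage: Optional[str] = None
    # Exposure
    stressor: str = ""
    concentration: Optional[float] = None
    concentration_unit: Optional[str] = None
    duration_hours: Optional[float] = None
    route: Optional[str] = None
    # Comparator
    comparator_type: Optional[str] = None
    # Outcome
    endpoint: Optional[str] = None
    endpoint_value: Optional[float] = None
    endpoint_unit: Optional[str] = None

    def missing_fields(self) -> list[str]:
        """Names of fields left as None or empty, to flag incomplete reporting."""
        return [name for name, value in vars(self).items() if value in (None, "")]


# Illustrative values only; they do not come from a real study.
record = EcoRecord(
    study_id="S001",
    species="Pimephales promelas",
    life_stage="adult",
    stressor="benzo[a]pyrene",
    concentration=50.0,
    concentration_unit="ug/L",
    duration_hours=96.0,
    route="waterborne",
    comparator_type="experimental control",
    endpoint="mortality",
)
print(record.missing_fields())
```

Keeping unreported values as `None` rather than guessing them makes gaps in primary-study reporting visible at the synthesis stage.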

Data Extraction Protocols for ECO-Based Systematic Reviews

Implementing the ECO framework requires a meticulous, multi-stage process to ensure data integrity, transparency, and reproducibility. The following protocol, synthesized from systematic review guidance, provides a detailed roadmap [15].

Stage 1: Protocol Development & Form Pilot-Testing

- Action: Based on the a priori ECO question, design a detailed data coding and extraction form. This is typically a spreadsheet or database with predefined fields for all ECO elements, study metadata (author, year, location), and critical appraisal criteria [15].

- Protocol: Pilot-test the form on a representative subset (e.g., 5-10%) of the included full-text articles. This must involve at least two independent reviewers. The goal is to identify ambiguities in coding instructions, missing data fields, and inconsistencies in interpretation [15].

- Output: A finalized, piloted extraction form and a detailed codebook documenting rules for handling all variables. This form should be included in the systematic review protocol.

Stage 2: Data Coding and Extraction Execution

- Action: Reviewers systematically apply the finalized form to all included studies. Data coding records study characteristics (meta-data), while data extraction records quantitative results and findings [15].

- Protocol:

- Extract and record all relevant ECO variables as defined in the codebook.

- For quantitative outcomes, extract raw data or summary statistics (e.g., mean, standard deviation, sample size for control and exposed groups) necessary for effect size calculation [15].

- Document the precise location (page, figure, table) of the extracted data within the source article.

- Note any assumptions or transformations applied during extraction (e.g., converting standard error to standard deviation).

- Output: A complete, populated database of coded study characteristics and extracted results.
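
As one example of a documented transformation in Stage 2, a standard error of the mean can be converted to a standard deviation with SD = SE × √n. The sketch below assumes the reported value is a standard error of the mean for a single group; the dictionary keys and example values are hypothetical.

```python
import math


def se_to_sd(standard_error: float, n: int) -> float:
    """Convert a standard error of the mean to a standard deviation: SD = SE * sqrt(n)."""
    if n < 1:
        raise ValueError("sample size must be at least 1")
    return standard_error * math.sqrt(n)


# Hypothetical extracted value with its source location and a transformation note.
extracted = {
    "study_id": "S001",
    "endpoint": "growth",
    "reported_se": 0.5,
    "n": 16,
    "source_location": "Table 2, p. 7",
}
extracted["sd"] = se_to_sd(extracted["reported_se"], extracted["n"])
extracted["transformation_note"] = "SD derived from reported SE and n"
print(extracted["sd"])  # 2.0
```

Storing the note and the source location alongside the derived value keeps the transformation auditable.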

Stage 3: Quality Assurance and Agreement Assessment

- Action: Ensure the reliability and repeatability of the extraction process.

- Protocol: At minimum, a random subset (e.g., 10-20%) of studies should be extracted independently by two reviewers. Inter-reviewer agreement is then assessed (e.g., using Cohen's kappa for categorical variables and correlation for continuous variables). Discrepancies are resolved through discussion or adjudication by a third reviewer. This process validates the extraction rules and minimizes human error [15].

- Output: A measure of inter-reviewer reliability and a final, consensus dataset for analysis.
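
The agreement statistic named in Stage 3 can be computed directly. The following is a minimal sketch of unweighted Cohen's kappa for two reviewers coding one categorical variable; the function name and the example codes are illustrative assumptions.

```python
from collections import Counter


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Unweighted Cohen's kappa for two raters coding the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical study-design codes assigned by two reviewers to four studies.
reviewer_1 = ["lab", "lab", "field", "field"]
reviewer_2 = ["lab", "lab", "field", "lab"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 3))  # 0.5
```

In this example the reviewers agree on three of four studies (0.75 observed) against 0.5 expected by chance, giving a kappa of 0.5.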

Stage 4: Data Transformation and Synthesis Preparation

- Action: Prepare extracted data for statistical synthesis or qualitative analysis.

- Protocol: Transform extracted summary statistics into a common effect size metric appropriate for the data (e.g., log response ratio, Hedges' g, odds ratio). Impute missing statistics only using justified, pre-specified methods (e.g., algebraic conversion, contact with authors), and conduct sensitivity analyses to assess the impact of imputation [15].

- Output: A standardized dataset ready for meta-analysis or other evidence synthesis methods.
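
Two of the effect size metrics named in Stage 4 can be computed from extracted means, standard deviations, and sample sizes. This sketch uses the standard textbook formulas for the log response ratio (with its approximate sampling variance) and Hedges' g; the function names and example numbers are assumptions, and a real synthesis would normally rely on an established meta-analysis package.

```python
import math


def log_response_ratio(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (lnRR, sampling variance) for exposed (t) vs. control (c) groups."""
    if mean_t <= 0 or mean_c <= 0:
        raise ValueError("lnRR requires positive means")
    lnrr = math.log(mean_t / mean_c)
    variance = sd_t**2 / (n_t * mean_t**2) + sd_c**2 / (n_c * mean_c**2)
    return lnrr, variance


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return Hedges' g: standardized mean difference with small-sample correction."""
    df = n_t + n_c - 2
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    d = (mean_t - mean_c) / pooled_sd
    correction = 1 - 3 / (4 * df - 1)
    return d * correction


# Hypothetical summary statistics: exposed mean 5 (SD 1), control mean 10 (SD 2), n = 10 each.
lnrr, lnrr_var = log_response_ratio(5.0, 1.0, 10, 10.0, 2.0, 10)
g = hedges_g(5.0, 1.0, 10, 10.0, 2.0, 10)
print(round(lnrr, 4), round(lnrr_var, 4), round(g, 4))
```

The log response ratio suits ratio-scale outcomes with positive means (e.g., biomass), whereas Hedges' g can be used when outcomes are measured on different scales across studies.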

The following diagram illustrates this integrated workflow.

The Scientist's Toolkit: Essential Reagents and Methods

Implementing ecotoxicological systematic reviews and the associated frameworks relies on a suite of established and emerging methodologies. The following table details key research tools and their functions.

Table: Key Research Reagent Solutions and Methodologies

| Item/Method | Primary Function in Ecotoxicology | Relevance to ECO Framework & Data Extraction |

|---|---|---|

| In situ Caged Bioassays (e.g., caged fathead minnows) [17] | Provides a controlled measure of biological effects in real-world environments, linking exposure to outcome at the organism level. | Directly generates data for Population (species) and Outcome (individual-level effects) under field-based Exposure. Critical for weight-of-evidence assessments. |

| High-Throughput In Vitro Assays (e.g., T47D-kBluc estrogen receptor assay, Attagene Factorial assays) [17] | Screens for chemical activity against specific biological pathways (endocrine disruption, xenobiotic metabolism, cytotoxicity). | Provides mechanistic Outcome data. Used in prioritization frameworks to flag chemicals with high ecotoxicological potential even when traditional toxicity data is limited [17]. |

| Molecular Biomarkers (e.g., CYP1a1, Vtg mRNA quantification via RT-qPCR) [17] | Measures sub-organismal, early biological responses to exposure, indicating specific mechanisms of action. | Sensitive Outcome measures that provide evidence of biological activity before higher-level effects manifest. Useful for diagnosing exposure and effect. |

| Chemical Analysis (LC/MS/MS, GC/MS) | Identifies and quantifies specific contaminants in environmental matrices (water, sediment, tissue). | Defines the Exposure component with precision (identity and magnitude). Essential for establishing dose-response relationships and exposure gradients (Comparator). |

| Adverse Outcome Pathways (AOPs) | Organizes knowledge linking a molecular initiating event to an adverse outcome at the organism/population level. | A conceptual framework that helps structure Outcome data, supports extrapolation, and strengthens weight-of-evidence assessments for causality [16] [19]. |

| Weight-of-Evidence (WoE) Integration Software/Procedures | Systematically combines lines of evidence from different sources (chemistry, in vitro, in vivo, field) to reach a conclusion. | The overarching method for synthesizing extracted ECO data. Provides transparent, structured decision-making for hazard identification and prioritization [16] [17] [19]. |

Application in Weight-of-Evidence and Chemical Prioritization

The ECO framework provides the structured data necessary for advanced synthesis methods like Weight-of-Evidence (WoE) analysis. A practical application is the prioritization of contaminants detected in environmental monitoring. As demonstrated in a study of the Milwaukee Estuary, multiple lines of evidence—each aligned with ECO components—can be integrated to rank chemicals for further action [17].

Protocol for a WoE-Based Chemical Prioritization Framework [17]:

- Assemble Evidence Lines: For each detected chemical, gather data on:

- Exposure: Detection frequency, concentration, and environmental distribution.

- Ecotoxicological Potential (Outcome): Data from in vivo toxicity tests, in vitro bioactivity assays, and QSAR predictions.

- Environmental Fate: Persistence and bioaccumulation potential.

- Score and Weight Evidence: Assign quantitative or semi-quantitative scores to each evidence line based on reliability and relevance (e.g., Klimisch scores) [16]. Weights can reflect the perceived strength of each line.

- Integrate and Prioritize: Combine scores, often using predefined algorithms or decision rules, to generate an overall prioritization score. Chemicals are then binned into categories (e.g., High, Medium, Low Priority), with clear delineation between data-sufficient and data-limited substances [17].

- Recommend Actions: High-priority, data-sufficient chemicals are flagged for definitive risk assessment. High-priority, data-limited chemicals are flagged for targeted ecotoxicological testing.
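
The scoring, integration, and recommendation steps above can be sketched as a simple weighted sum with binning. Everything numeric here is a placeholder: the weights, the 0-1 score scale, and the bin thresholds are hypothetical and are not taken from the Milwaukee Estuary study [17], which defines its own scoring rules.

```python
# Hypothetical weights for the three evidence lines; they must sum to 1.
WEIGHTS = {"exposure": 0.4, "ecotox_potential": 0.4, "fate": 0.2}


def priority_score(scores: dict) -> float:
    """Weighted sum of evidence-line scores, each assumed to lie on a 0-1 scale."""
    return sum(WEIGHTS[line] * scores[line] for line in WEIGHTS)


def prioritize(scores: dict, data_sufficient: bool, high: float = 0.66, low: float = 0.33):
    """Return (score, priority bin, recommended action) using placeholder thresholds."""
    score = priority_score(scores)
    if score >= high:
        priority = "High"
    elif score >= low:
        priority = "Medium"
    else:
        priority = "Low"
    if priority == "High" and data_sufficient:
        action = "definitive risk assessment"
    elif priority == "High":
        action = "targeted ecotoxicological testing"
    else:
        action = "consider for future monitoring"
    return score, priority, action


# Illustrative scores for an unnamed, data-limited chemical.
score, priority, action = prioritize(
    {"exposure": 0.9, "ecotox_potential": 0.8, "fate": 0.5}, data_sufficient=False
)
print(priority, "->", action)
```

Separating data sufficiency from the score itself preserves the distinction the protocol draws between chemicals that need assessment and chemicals that need more testing.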

Table: Example Prioritization Output from Milwaukee Estuary Study [17]

| Priority Bin | Data Sufficiency | Number of Chemicals (Example) | Recommended Management Action |

|---|---|---|---|

| High Priority | Sufficient | 4 (e.g., fluoranthene, benzo[a]pyrene) | Candidate for definitive risk assessment and potential regulatory action. |

| High/Medium Priority | Limited | 21 | Candidate for targeted ecotoxicological research to fill data gaps. |

| Medium/Low Priority | Varies | 34 | Lower immediate concern; consider for future monitoring. |

| Low Priority | Sufficient | 1 (2-methylnaphthalene) | Likely minimal risk; requires no further action. |

This WoE process, fueled by systematically extracted ECO data, transforms a list of detected chemicals into a risk-informed management strategy. The following diagram visualizes this integration process.

The transition from PICO to ECO is a necessary evolution for generating reliable evidence in ecotoxicology. Successful implementation hinges on several key practices. First, clearly define the adapted ECO elements at the protocol stage, using specific scenarios (e.g., incremental exposure vs. high-low comparison) to guide what data will be extracted [14]. Second, invest in rigorous pilot-testing and dual review of the extraction process to ensure consistency, as the heterogeneity of ecological studies makes this step more critical than in clinical reviews [15]. Third, plan for data transformation and synthesis from the outset, acknowledging that extracting raw or summary statistics is essential for meaningful meta-analysis [15]. Finally, employ the extracted ECO data within structured WoE frameworks to move beyond simple narrative synthesis to transparent, defensible conclusions that can effectively inform environmental risk assessment and management decisions [16] [17] [19]. By adhering to these detailed application notes and protocols, researchers can leverage the power of systematic review to address complex ecological questions with greater rigor and impact.

This application note details the methodological challenges of data extraction for systematic reviews (SRs) in ecotoxicology, framed within the broader thesis of advancing (semi)automated data extraction methods. Ecotoxicology presents unique obstacles compared to clinical research, including profound study heterogeneity (multiple species, endpoints, and experimental designs) and pervasive data scarcity (incomplete reporting, limited datasets) [20]. These challenges complicate the implementation of standardized, automated extraction tools that are increasingly used in evidence synthesis [10]. We provide detailed protocols for managing these issues, from designing piloted extraction forms [21] to implementing semi-automated tools like Dextr [22]. Supported by comparative data tables and workflow visualizations, this note equips researchers with practical strategies to enhance the rigor, efficiency, and reproducibility of data extraction in ecotoxicological evidence synthesis.

Within evidence-based toxicology, the systematic review is a core tool for transparently and rigorously synthesizing research [20]. Data extraction is the critical bridge between identified primary studies and synthesized evidence, involving the systematic capture of study characteristics, interventions/exposures, and outcomes into structured forms [23]. In ecotoxicology, this stage is particularly burdensome due to the field's inherent complexity. The broader thesis of this work posits that adapting and developing data extraction methodologies—both manual and (semi)automated—is essential to overcome the field's specific barriers [10] [22].

Traditional narrative reviews in toxicology, while useful for expert commentary, often lack transparency and methodological rigor, increasing the risk of bias and irreproducibility [20]. SR methodology, adapted from clinical research, addresses these shortcomings but requires significant adaptation. The central challenges are twofold: 1) Study Heterogeneity: Ecotoxicological evidence derives from diverse streams (in vivo, in vitro, in silico), involves myriad species and strains, and assesses a wide array of toxicological endpoints and outcomes [20]. 2) Data Scarcity: Primary studies frequently suffer from incomplete reporting of essential methodological details and quantitative results, and there is a lack of large, standardized, publicly available datasets to train machine learning (ML) models [10] [22]. These challenges necessitate tailored protocols and tools, moving beyond frameworks designed for clinical trials.

Core Challenge 1: Profound Study Heterogeneity

Ecotoxicological reviews must integrate evidence from fundamentally different study types, each with variable data reporting standards. This heterogeneity complicates the creation of universal data extraction templates and automated tools.

Table 1: Manifestations and Data Extraction Implications of Study Heterogeneity in Ecotoxicology

| Aspect of Heterogeneity | Manifestation in Literature | Implication for Data Extraction |

|---|---|---|

| Evidence Streams | In vivo (animal), in vitro (cell), in chemico, in silico (QSAR) models, and field observational studies [20]. | Requires extraction forms with distinct modules for each study type; complicates direct comparison and synthesis. |

| Experimental Subjects | Multiple species (e.g., Daphnia magna, Danio rerio, Rattus norvegicus), strains, sexes, and life stages [20]. | Necessitates detailed extraction of organism taxonomy, genetic strain, and husbandry conditions as potential effect modifiers. |

| Exposure Regimens | Variable routes (dietary, waterborne, injection), durations (acute, chronic), doses/concentrations, and use of complex mixtures. | Extracting dose-response data requires capturing exact values, units, and temporal patterns, often from text, tables, or figures. |

| Measured Endpoints | Lethality (LC50), sub-lethal effects (growth, reproduction, behavior), biochemical markers (gene expression, enzyme activity) [20]. | Extraction must accommodate diverse outcome types (continuous, dichotomous, ordinal) and their associated statistical measures (mean, SD, N). |

Protocol 2.1: Designing Hierarchical Extraction Forms for Heterogeneous Studies

Objective: To create a flexible, piloted data extraction form that captures complex, nested data relationships common in ecotoxicology.

Materials: Systematic review protocol, access to representative primary studies, form-building software (e.g., REDCap, Microsoft Excel, specialized SR tool) [24] [23].

Procedure:

- Define Core & Modular Fields: Based on the review PECO/PICO question, define core fields applicable to all studies (e.g., author, year, test substance). Create modular sections for specific evidence streams (e.g., an "in vivo mammalian toxicokinetics" module) [21] [23].

- Incorporate Entity Linking: Design the form to explicitly connect related entities. For example, a single study may report multiple experiments; each experiment may have multiple dose groups; each dose group links to specific outcome measures. Tools like Dextr are explicitly built to support this hierarchical data structure [22].

- Pilot and Revise: Two independent reviewers extract data from a sample (e.g., 5-10) of the most heterogeneous included studies [21] [23]. The pilot tests:

- Clarity: Are instructions unambiguous?

- Completeness: Does the form capture all relevant data?

- Consistency: Do reviewers record data identically?

- Resolve Discrepancies & Refine: Reviewers compare extractions, resolve disagreements through discussion or third-party arbitration, and use insights to refine the form and its guidance [21] [23].

- Proceed with Dual Extraction: Implement the final form, with all studies extracted in duplicate by two independent reviewers to minimize error [23].
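
The entity linking described in Protocol 2.1 (study, experiment, dose group, outcome) can be sketched as nested records. The class and field names below are illustrative assumptions and do not reflect the internal data model of Dextr or any other tool.

```python
from dataclasses import dataclass, field


@dataclass
class Outcome:
    endpoint: str
    mean: float
    sd: float
    n: int


@dataclass
class DoseGroup:
    dose: float
    unit: str
    outcomes: list[Outcome] = field(default_factory=list)


@dataclass
class Experiment:
    species: str
    route: str
    dose_groups: list[DoseGroup] = field(default_factory=list)


@dataclass
class Study:
    study_id: str
    experiments: list[Experiment] = field(default_factory=list)


def flatten(study: Study) -> list[dict]:
    """Turn the nested structure into one row per outcome, keeping parent links."""
    rows = []
    for exp_index, exp in enumerate(study.experiments):
        for group in exp.dose_groups:
            for outcome in group.outcomes:
                rows.append({
                    "study_id": study.study_id,
                    "experiment": exp_index,
                    "species": exp.species,
                    "route": exp.route,
                    "dose": group.dose,
                    "unit": group.unit,
                    "endpoint": outcome.endpoint,
                    "mean": outcome.mean,
                    "sd": outcome.sd,
                    "n": outcome.n,
                })
    return rows


# Hypothetical study with one experiment, a control group, and one exposed group.
study = Study("S001", [Experiment("Danio rerio", "waterborne", [
    DoseGroup(0.0, "mg/L", [Outcome("body length", 10.0, 2.0, 10)]),
    DoseGroup(5.0, "mg/L", [Outcome("body length", 8.0, 1.5, 10)]),
])])
rows = flatten(study)
print(len(rows))  # 2
```

The flattened rows are convenient for spreadsheets and meta-analysis software, while the nested form preserves which outcome belongs to which dose group.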

Core Challenge 2: Pervasive Data Scarcity

Data scarcity in ecotoxicology manifests as both incomplete reporting within primary studies and a lack of large, annotated public datasets. This limits the performance and applicability of automated extraction tools.

Table 2: Comparison of Manual vs. Semi-Automated Data Extraction Workflows

Performance data adapted from the evaluation of the Dextr tool on environmental health animal studies [22].

| Performance Metric | Manual Extraction Workflow (n=51 studies) | Semi-Automated Workflow (Dextr Tool, n=51 studies) | Statistical Significance & Implication |

|---|---|---|---|

| Median Extraction Time per Study | 933 seconds | 436 seconds | p < 0.01. Semi-automation substantially reduces time burden, a key advantage given data scarcity. |

| Precision Rate | 95.4% | 96.0% | p = 0.38. No significant difference. Automation does not increase error rates for extracted items. |

| Recall Rate | 97.0% | 91.8% | p < 0.01. Small but significant reduction. Highlights the tool may miss some relevant data points, underscoring the need for user verification [22]. |
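
Precision and recall, as reported in the table above, compare extracted items against a reference ("gold standard") set. The sketch below uses the general definitions with exact matching of (field, value) pairs; the matching rule and the example data are assumptions, and the Dextr evaluation [22] may have scored items differently.

```python
def precision_recall(extracted: set, gold: set) -> tuple[float, float]:
    """Precision = correct extracted / all extracted; recall = correct extracted / all gold."""
    true_positives = len(extracted & gold)
    precision = true_positives / len(extracted) if extracted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    return precision, recall


# Hypothetical reference annotations and tool output for one study.
gold = {
    ("species", "Danio rerio"),
    ("dose", "10 mg/L"),
    ("duration", "96 h"),
    ("endpoint", "LC50"),
}
extracted = {
    ("species", "Danio rerio"),
    ("dose", "10 mg/L"),
    ("duration", "48 h"),  # wrong value: lowers precision
}
precision, recall = precision_recall(extracted, gold)
print(round(precision, 3), round(recall, 3))
```

Here two of three extracted items are correct (precision 2/3), while only two of the four reference items were captured (recall 0.5), the same kind of gap the table reports for the semi-automated workflow.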

A 2024 living systematic review on automated data extraction found that, while tools are advancing, only 8% of publications described publicly available tools and only 45% made their data available [10]. This "scarcity of data about data" hinders tool development and validation for ecotoxicology.

Protocol 3.1: Implementing and Validating a Semi-Automated Extraction Tool (Dextr)

Objective: To integrate a semi-automated tool into the review workflow to improve efficiency while maintaining accuracy, specifically for complex, hierarchically structured data.

Materials: The Dextr web application (or similar tool), a set of PDFs for included studies, a predefined data extraction schema [22].

Procedure:

- Tool Training & Schema Upload: Configure the tool by uploading the review's data extraction schema. Dextr uses this schema to define its prediction targets.

- User-Verified Prediction Cycle:

- Machine Prediction: The tool processes a study PDF, highlighting text spans it predicts correspond to schema items (e.g., a dose value, a species name).

- Human Verification: The reviewer accepts, rejects, or corrects each prediction. This critical step ensures accuracy and creates new annotated data for the tool's continuous learning [22].

- Entity Connection: The reviewer explicitly links verified entities (e.g., linking a specific dose to a specific outcome measure) within the tool's interface.

- Export and Quality Control: Export the machine-readable, annotated data. A second reviewer should perform quality control on a subset (e.g., 20%) of studies processed by the first reviewer to ensure consistency [22] [23].

- Handling Missing Data: Document all instances where critical data (e.g., standard deviation, exposure duration) is unreported. Develop and document a priori rules for handling such missingness (e.g., imputation, exclusion) in the SR protocol [23].
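
The user-verified prediction cycle in Protocol 3.1 can be sketched in a tool-agnostic way. This is not Dextr's API: the `Prediction` class, the `apply_review` function, and the three action names are hypothetical and only illustrate how accept, reject, and correct decisions turn machine predictions into verified data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Prediction:
    field_name: str
    text: str


def apply_review(predictions: list[Prediction],
                 decisions: list[tuple[str, Optional[str]]]) -> list[Prediction]:
    """Apply one reviewer decision per prediction and return the verified items.

    Each decision is (action, correction), where action is "accept", "reject",
    or "correct" and correction is required only for "correct".
    """
    if len(predictions) != len(decisions):
        raise ValueError("every prediction needs exactly one decision")
    verified = []
    for prediction, (action, correction) in zip(predictions, decisions):
        if action == "accept":
            verified.append(Prediction(prediction.field_name, prediction.text))
        elif action == "correct":
            if correction is None:
                raise ValueError("a 'correct' decision needs replacement text")
            verified.append(Prediction(prediction.field_name, correction))
        elif action != "reject":
            raise ValueError(f"unknown action: {action!r}")
    return verified


# Hypothetical machine predictions for one study and the reviewer's decisions.
predictions = [
    Prediction("species", "Danio rerio"),
    Prediction("dose", "10 mg"),
    Prediction("endpoint", "Figure 3"),
]
decisions = [("accept", None), ("correct", "10 mg/L"), ("reject", None)]
verified = apply_review(predictions, decisions)
print([(p.field_name, p.text) for p in verified])
```

Requiring exactly one decision per prediction enforces the rule that no machine output enters the dataset unreviewed.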

Figure: Semi-Automated Data Extraction with Human Verification

Integrated Data Extraction Protocol for Ecotoxicology SRs

This protocol synthesizes best practices to address heterogeneity and scarcity simultaneously.

Phase 1: Planning & Form Design (Pre-Extraction)

- Develop Detailed, Piloted Forms: Follow Protocol 2.1. Include fields for: PECO elements, funding source, organism details, exposure parameters, all outcome data with measures of variance, and risk-of-bias indicators [21] [23].

- Plan for Data Types: Pre-specify how different effect measures (e.g., odds ratios, mean differences, LC50 values) will be handled, converted, or harmonized for synthesis [23].

- Define Automation Strategy: Decide if and where (screening, extraction of specific fields) (semi)automation will be used. Document the choice of tool and its validation process [10] [23].

Phase 2: Execution & Quality Assurance

- Dual Independent Extraction: Two reviewers extract data independently for all studies to minimize error [23].

- Implement Tool Integration: If using a tool like Dextr, follow Protocol 3.1. The "human-in-the-loop" verification step is mandatory for ensuring reliability [22].

- Resolve Discrepancies: Reviewers compare extractions. All disagreements are resolved by consensus or third-party adjudication. The process and final decisions are documented [21] [23].

- Contact Authors: Systematically contact corresponding authors to request missing or unclear critical data (e.g., exact p-values, baseline data). Document all contact attempts and responses [23].
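
The comparison step in Phase 2 can be partly mechanized by listing the fields on which two independent extractions differ. The sketch below assumes each reviewer's extraction for a study is a flat dictionary; the function name and the example values are hypothetical.

```python
def find_discrepancies(extraction_a: dict, extraction_b: dict) -> dict:
    """Return {field: (value_a, value_b)} for every field where the reviewers differ.

    A field recorded by only one reviewer is reported with None for the other.
    """
    fields = sorted(set(extraction_a) | set(extraction_b))
    return {
        name: (extraction_a.get(name), extraction_b.get(name))
        for name in fields
        if extraction_a.get(name) != extraction_b.get(name)
    }


# Hypothetical extractions of the same study by two reviewers.
reviewer_1 = {"species": "Daphnia magna", "duration_h": 48, "endpoint": "immobilization"}
reviewer_2 = {"species": "Daphnia magna", "duration_h": 24, "endpoint": "immobilization", "n": 20}
discrepancies = find_discrepancies(reviewer_1, reviewer_2)
print(discrepancies)
```

The resulting list gives reviewers a concrete agenda for consensus discussion and a record of what was adjudicated.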

Phase 3: Data Processing & Reporting

- Convert and Calculate: Transform extracted data into the desired format for synthesis (e.g., calculate effect sizes, standardize units). Use reliable tools (e.g., Campbell Collaboration's effect size calculator) [23].

- Populate Evidence Tables: Create clear summary tables (e.g., Characteristics of Included Studies, Summary of Findings) to present extracted data [23].

- Archive and Share: Publish the final extraction forms and, where possible, the extracted datasets in publicly accessible repositories to combat data scarcity for future reviews and tool training [10] [23].
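
Unit standardization, mentioned under "Convert and Calculate", can be sketched for mass-per-volume concentrations. The conversion table below covers only four units and the target unit (µg/L) is an arbitrary choice for illustration; molar units, sediment or tissue concentrations, and dietary doses would need additional rules.

```python
# Multipliers to convert a mass-per-volume concentration to micrograms per litre.
TO_UG_PER_L = {"ng/L": 1e-3, "ug/L": 1.0, "mg/L": 1e3, "g/L": 1e6}


def to_ug_per_l(value: float, unit: str) -> float:
    """Convert a concentration to ug/L, refusing units that are not in the table."""
    try:
        factor = TO_UG_PER_L[unit]
    except KeyError:
        raise ValueError(f"unsupported unit: {unit!r}") from None
    return value * factor


print(to_ug_per_l(2.0, "mg/L"))  # 2000.0
```

Raising an error for unknown units, rather than passing the value through, prevents silently mixing scales in the synthesis dataset.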

Figure: Integrated Data Extraction Workflow for Ecotoxicology

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Tools and Resources for Ecotoxicology Data Extraction

| Tool/Resource Name | Type/Category | Primary Function in Data Extraction | Key Consideration for Ecotoxicology |

|---|---|---|---|

| Dextr [22] | Semi-Automated Extraction Software | Provides ML-powered predictions for data points with mandatory user verification; supports hierarchical entity linking. | Specifically evaluated on environmental health animal studies; excels at capturing complex study designs. |

| Cochrane Handbook [20] [23] | Methodological Guidance | The gold-standard reference for SR conduct, including data extraction processes, form design, and bias reduction. | Requires adaptation for non-clinical study types (e.g., toxicological assays, ecological field studies). |

| PRISMA & PRISMA-P [23] | Reporting Guidelines | Checklists for reporting protocols (PRISMA-P) and final reviews (PRISMA), including mandatory items on data extraction processes. | Ensures transparent reporting of how heterogeneity and data scarcity were handled. |

| Effect Size Converter (e.g., Campbell Collaboration) [23] | Statistical Utility | Calculates or converts between different effect size metrics (e.g., Cohen's d, odds ratios). | Critical for synthesizing outcomes reported in diverse metrics across studies. |

| Systematic Review Management Software (e.g., Rayyan, Covidence, EPPI-Reviewer) | Workflow Management | Platforms that often include structured data extraction form builders, dual-reviewer workflows, and discrepancy resolution modules. | Check for flexibility to create custom forms that capture ecotoxicology-specific data. |

| REDCap [24] | Electronic Data Capture Platform | Secure, web-based application for building and managing customized data collection forms and databases. | Highly customizable for complex, hierarchical extraction forms; good for team-based projects. |

The Critical Role of Data Extraction in Ecotoxicology Systematic Reviews

Within the framework of a broader thesis on advancing data extraction methods for ecotoxicology systematic reviews, the development and execution of a robust extraction plan is a foundational imperative. Systematic reviews are complex, methodology-driven projects designed to minimize bias and maximize transparency when synthesizing existing evidence to answer specific research questions [3]. In ecotoxicology, this evidence base is vast and heterogeneous, encompassing studies on diverse stressors—from classic chemical toxicants and endocrine disruptors like 17α-ethinylestradiol (EE2) [25] to engineered nanomaterials (ENMs) [26]—across multiple species and levels of biological organization.

The number of systematic reviews in toxicology approximately doubled between 2016 and 2020 [3]. However, their scientific quality is variable, with common shortcomings in conduct and reporting undermining their reliability [3]. At the heart of these quality issues lies data extraction: the critical process of systematically capturing and organizing quantitative results, study characteristics, and methodological details from included primary studies. A poorly designed or executed extraction phase introduces systematic error, compromises synthesis, and can lead to misleading conclusions that misinform policy and future research. Therefore, a robust, pre-defined extraction plan is not merely a procedural step but a non-negotiable pillar of scientific integrity and utility in evidence-based ecotoxicology.

Table 1: The Imperative for Robust Extraction: Growth and Challenges in Ecotoxicology Systematic Reviews

| Aspect | Quantitative Data & Trends | Implications for Data Extraction |

|---|---|---|

| Growth in Volume | Number of toxicology systematic reviews in Web of Science ~doubled from 2016 to 2020 [3]. | Increases the demand for and reliance on synthesized evidence, making extraction accuracy paramount. |

| Identified Quality Shortcomings | Reviews of systematic reviews in environmental health consistently find important methodological shortcomings [3]. | Highlights a systemic need for standardized, rigorous protocols to improve reliability. |

| Data Heterogeneity | Ecotoxicity data for ENMs is characterized by inconsistent reporting, varying test preparations, and diverse biological endpoints [26]. | Extraction plans must be meticulously detailed to capture complex, non-standardized data (e.g., physicochemical properties, exposure conditions). |

| Regulatory Reliance | New Approach Methodologies (NAMs) and Integrated Approaches to Testing and Assessment (IATA) depend on reliable data for read-across and grouping strategies [27] [26]. | Flawed extraction creates "garbage in, garbage out" scenarios, compromising safety assessments and the 3Rs (Replacement, Reduction, Refinement) agenda [27]. |

Application Notes: Core Principles and Protocol Development

A robust extraction plan is a detailed, prospectively developed protocol that serves as the operational blueprint for the review team. Its development is guided by core principles aimed at ensuring accuracy, consistency, completeness, and clarity.

- Pre-Definition is Paramount: Every element to be extracted must be defined before the process begins. This includes the specific outcome metrics (e.g., LC50, NOEC, biomarker expression level), study design descriptors, and risk-of-bias criteria.

- Pilot Testing is Essential: The extraction form and guidelines must be piloted on a sample of 5-10 included studies by all reviewers. This uncovers ambiguities, ensures common understanding, and refines the protocol [3].

- Independent Duplication Mitigates Error: Data should be extracted independently by at least two reviewers, with a pre-defined process for resolving discrepancies through discussion or third-party adjudication [28]. This is a key guard against human error and subjective interpretation.

- Transparency Enhances Reproducibility: The final extraction protocol, including the blank form and coding guidelines, should be made publicly available, such as in a supplemental file, aligning with FAIR (Findable, Accessible, Interoperable, and Reusable) data principles [26].

Protocol Development Workflow: The following workflow, derived from best practices and case studies [25] [3] [28], outlines the key stages in creating a robust extraction plan:

Detailed Extraction Protocols from Ecotoxicology Case Studies

The theoretical framework above is best understood through practical examples. The following table compares extraction approaches from published systematic reviews on different ecotoxicology topics, illustrating both commonalities and context-specific adaptations.

Table 2: Comparison of Data Extraction Protocols from Ecotoxicology Systematic Reviews

| Review Focus & Citation | Key Extracted Data Elements | Specialized Protocol Notes | Handling of Complex Data |

|---|---|---|---|

| Reproductive Effects of Micro-pollutants [28] | Study design, population/model, pollutant type & exposure metric, reproductive outcome (e.g., sperm conc., hormone level), effect size (OR, SMD), confidence intervals, covariates. | Followed PRISMA guidelines. Extracted data to perform meta-analysis, requiring precise numeric effects and measures of variance. | Managed heterogeneity in exposure metrics (e.g., urine vs. air concentration) via subgroup analysis and sensitivity analysis. |

| Persistence & Toxicity of EE2 [25] | Sample type (water, soil, biota), concentration (influent/effluent), detection method, removal efficiency, reported toxicity endpoints. | Used PRISMA framework. Extraction focused on environmental fate and occurrence data alongside toxicological results. | Compiled concentration ranges across matrices (water, soil, crop) for comparative environmental risk assessment. |

| Grouping Strategies for Nanomaterials [26] | Pristine material properties: Core element, size, surface area, purity. System-dependent properties: Hydrodynamic size (with/without BSA). Ecotoxicological endpoints: Algal growth inhibition, D. magna mortality, cell viability. | Emphasized extraction of both inherent and measured physicochemical properties. Highlighted the critical need for standardized reporting of ENM characteristics. | Addressed the challenge of "autocorrelated" properties (e.g., size and surface area) by extracting a full suite of parameters for multivariate analysis. |

Detailed Protocol: Extracting Data for Engineered Nanomaterial (ENM) Ecotoxicity Reviews

ENM studies call for a specialized extraction protocol. The following steps are based on the NanoReg2 project [26]:

- Extract Inherent Physicochemical Properties: Record the core composition (e.g., TiO2, ZnO), primary particle size, specific surface area, shape, and purity as reported by the material supplier.

- Extract Dispersion Protocol Details: Document the dispersion medium (e.g., water, BSA solution), sonication energy and time, and the final concentration of stabilizers (e.g., BSA %). Note if a standardized protocol like NanoGENOTOX SOP was used.

- Extract System-Dependent Characterization: Capture the measured properties in the exposure medium: hydrodynamic diameter (e.g., via DLS), polydispersity index, and zeta potential.

- Extract Ecotoxicological Data: Record the test organism, exposure duration, measured endpoint (e.g., % inhibition, LC50), and its value with variance. Note the exposure medium composition.

- Cross-Reference and Flag: Create a link between the specific material batch, its dispersion protocol, its characterized state in the test system, and the resulting biological endpoint. Flag studies missing any of these critical data clusters.
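
The flagging rule in the last step can be sketched as a completeness check over the four data clusters. The cluster and field names below are hypothetical shorthand for the items listed in the protocol, not a NanoReg2 schema, and the example record is invented.

```python
# Hypothetical required fields for each data cluster named in the protocol.
REQUIRED_CLUSTERS = {
    "inherent": ["core_composition", "primary_size_nm", "surface_area_m2_g"],
    "dispersion": ["medium", "sonication_time_s"],
    "system_dependent": ["hydrodynamic_diameter_nm", "zeta_potential_mV"],
    "ecotox": ["organism", "duration_h", "endpoint", "value"],
}


def missing_clusters(record: dict) -> list[str]:
    """Return the clusters in which at least one required field is absent or None."""
    flagged = []
    for cluster, fields in REQUIRED_CLUSTERS.items():
        values = record.get(cluster, {})
        if any(values.get(name) is None for name in fields):
            flagged.append(cluster)
    return flagged


# Invented record: dispersion details are partial and no in-medium
# characterization was reported at all.
record = {
    "inherent": {"core_composition": "TiO2", "primary_size_nm": 21.0, "surface_area_m2_g": 50.0},
    "dispersion": {"medium": "BSA solution", "sonication_time_s": None},
    "ecotox": {"organism": "Daphnia magna", "duration_h": 48, "endpoint": "mortality", "value": 12.5},
}
print(missing_clusters(record))
```

Flagging at the cluster level, rather than per field, matches the protocol's intent of identifying studies whose material, dispersion, characterization, and endpoint data cannot be linked.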

The decision-making pathway during extraction, especially for complex or poorly reported data, can be visualized as follows:

Table 3: Research Reagent Solutions & Essential Tools for Data Extraction

| Item / Tool | Function in the Extraction Process | Example from the Cited Reviews |

|---|---|---|

| Standardized Reporting Guideline (PRISMA) | Provides a minimum set of items to report, ensuring the extraction plan and its results are fully transparent [25] [28]. | Used as the foundational framework in reviews on EE2 [25] and micro-pollutants [28]. |

| Pilot-Tested Extraction Form (Digital) | The structured instrument (e.g., Google Form, MS Form, REDCap) used to record extracted data consistently. Must be piloted. | Implicitly required for independent duplicate extraction and resolving discrepancies [3] [28]. |

| Reference Management Software | Manages the flow of studies from search to screening to extraction, tracking decisions and reviewer assignments. | Essential for handling the hundreds to thousands of records identified [25] [28]. |

| Bovine Serum Albumin (BSA) | A critical biochemical reagent used to create stable, reproducible dispersions of nanomaterials in ecotoxicity testing [26]. | Its use or absence must be extracted as a key methodological variable, as it significantly influences ENM behavior and toxicity [26]. |

| FAIR Data Principles Framework | A guiding concept (Findable, Accessible, Interoperable, Reusable) for planning extraction to ensure the resulting dataset supports future reuse and integration [26]. | Cited as necessary to overcome barriers to grouping and read-across for ENMs by creating interoperable datasets [26]. |

Implementing the Plan: From Protocol to Actionable Dataset

Implementation begins with training all reviewers on the finalized protocol and using the piloted extraction form. The process of independent duplicate extraction followed by discrepancy resolution is non-negotiable for quality assurance [28]. A third reviewer should adjudicate unresolved disagreements.

The final output is a curated, high-quality dataset ready for synthesis. This dataset is the direct product of the extraction plan and determines all subsequent conclusions. In ecotoxicology, this may enable dose-response meta-analysis [28], grouping of materials based on extracted properties [26], or a weight-of-evidence assessment for environmental risk [27] [25]. A robust extraction plan thus sets the stage for a systematic review that is not only methodologically sound but also capable of informing regulatory science, advancing the 3Rs, and contributing to a more sustainable and ethical toxicological paradigm [27].

From Manual to Machine: A Practical Guide to Data Extraction Methods and Tools

This document establishes a definitive protocol for manual data extraction and double-review processes within ecotoxicology systematic reviews (SRs). Adherence to these practices is critical for ensuring the credibility, reproducibility, and regulatory acceptance of synthesized evidence used in environmental risk assessment and chemical safety evaluation. The guidelines synthesize established SR methodology [11] with field-specific standards such as the COSTER recommendations for toxicology and environmental health research [2]. The core mandate is the implementation of independent dual extraction followed by formalized consensus to minimize random error and cognitive bias, thereby protecting the integrity of the review's conclusions [11] [29].

In ecotoxicology, systematic reviews are foundational for hazard identification, dose-response assessment, and ultimately, the derivation of safe exposure limits. The data extraction phase is where the empirical evidence from primary studies is translated into a structured format for synthesis; it is consequently one of the most labour-intensive and error-prone stages of the SR process [29]. Errors introduced during extraction—whether from oversight, misinterpretation, or subjective judgment—propagate directly into the meta-analysis and final conclusions, potentially jeopardizing chemical safety decisions.

The "gold standard" of dual independent review with consensus is not merely a recommendation but a methodological imperative. It functions as a quality control system, dramatically reducing the rate of data entry mistakes and improving the consistency of subjective judgments (e.g., risk-of-bias assessments). This protocol provides a detailed, actionable framework for implementing this standard, emphasizing planning, piloting, and documentation tailored to the complex data hierarchies common in ecotoxicology (e.g., multiple species, endpoints, exposure durations).

Comprehensive Protocols for Dual-Review Data Extraction

The following protocol is structured into three sequential phases, incorporating a 10-step guideline adapted for ecotoxicology [29].

Phase 1: Planning and Tool Development

Objective: To design a pilot-tested, detailed data extraction form and a structured relational database that accurately reflects the review question and the hierarchical nature of ecotoxicological data.

Step 1 – Determine Data Items: Assemble a development team including content experts, methodologists, and a data manager. Define the full set of data items required to answer the review question(s), assess risk of bias, and perform potential meta-analyses. For ecotoxicology, this typically includes:

- Study Identifiers & Context: Author, year, source, funding, chemical identity (CAS RN), exposure medium.

- Test System: Species (Latin name), life stage, sex, source, housing.

- Exposure Regime: Duration, route, concentration(s) (with units), frequency, vehicle/control.

- Endpoint & Outcome: Effect category (mortality, growth, reproduction), specific measurement, time of assessment, data type (continuous, dichotomous), summary statistics (mean, SD, SE, N, response rate).

- Risk of Bias: Items aligned with tools like SciRAP or other ecological risk-of-bias instruments [2].

Step 2 – Define Data Structure (Entity Grouping): Organize data items into logical entities to prevent data redundancy. A hierarchical structure is essential.

- Root Entity (STUDY): Data reported once per study (e.g., author, year, test substance).

- Branch Entity (TEST GROUP): Data repeated for each exposure concentration or experimental group (e.g., dose level, mean response).

- Branch Entity (OUTCOME): Data repeated for each measured endpoint within a group (e.g., specific biomarker, survival count).

This structure efficiently manages studies reporting multiple concentrations and effects [29].
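One way to realize this three-level hierarchy is a small relational schema. The sketch below uses Python's built-in `sqlite3` module; the table names, column names, and inserted values are illustrative assumptions, not a schema prescribed by the cited guideline.

```python
import sqlite3

# Hypothetical schema: one row per study, per test group, and per outcome.
SCHEMA = """
CREATE TABLE study (
    study_id       INTEGER PRIMARY KEY,
    author         TEXT NOT NULL,
    year           INTEGER NOT NULL,
    test_substance TEXT NOT NULL
);
CREATE TABLE test_group (
    group_id           INTEGER PRIMARY KEY,
    study_id           INTEGER NOT NULL REFERENCES study(study_id),
    concentration      REAL NOT NULL,
    concentration_unit TEXT NOT NULL
);
CREATE TABLE outcome (
    outcome_id INTEGER PRIMARY KEY,
    group_id   INTEGER NOT NULL REFERENCES test_group(group_id),
    endpoint   TEXT NOT NULL,
    mean       REAL,
    sd         REAL,
    n          INTEGER
);
"""


def build_database(path=":memory:"):
    """Create the three-level STUDY -> TEST GROUP -> OUTCOME structure."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")  # enforce parent-child links
    conn.executescript(SCHEMA)
    return conn


conn = build_database()

# Study-level data are entered once (placeholder values, not real data).
conn.execute(
    "INSERT INTO study VALUES (1, 'Example et al.', 2020, 'hypothetical substance')"
)
# Two exposure groups within the study: control and one concentration.
conn.executemany(
    "INSERT INTO test_group VALUES (?, 1, ?, 'mg/L')",
    [(1, 0.0), (2, 1.0)],
)
# Two endpoints measured in each group.
conn.executemany(
    "INSERT INTO outcome VALUES (?, ?, ?, ?, ?, ?)",
    [
        (1, 1, "growth", 10.0, 1.0, 5),
        (2, 1, "reproduction", 20.0, 2.0, 5),
        (3, 2, "growth", 8.0, 1.2, 5),
        (4, 2, "reproduction", 15.0, 2.5, 5),
    ],
)

# Joining the three tables rebuilds a flat analysis table on demand.
rows = conn.execute(
    """
    SELECT s.author, g.concentration, o.endpoint, o.mean
    FROM outcome AS o
    JOIN test_group AS g USING (group_id)
    JOIN study AS s USING (study_id)
    ORDER BY o.outcome_id
    """
).fetchall()
```

Because author, year, and test substance live only in the `study` table, correcting a study-level value touches one row rather than every outcome row derived from it.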

Step 3 – Build and Pilot the Extraction Form: Develop the form in the chosen software (see Toolkit). It must include clear instructions for every field. A critical pilot phase is then conducted:

- At least two reviewers independently extract data from an identical subset (e.g., 5-10%) of included studies.

- The team meets to compare extractions item-by-item, calculating inter-rater reliability (e.g., percent agreement, Cohen's kappa for categorical items).

- The form and instructions are refined iteratively to resolve ambiguities until acceptable agreement (>90% or kappa >0.8) is achieved [11].
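The two agreement statistics named in the pilot step can be computed with a few lines of standard-library Python. This is a minimal sketch for two reviewers rating the same items on a categorical scale; the function names and example ratings are illustrative, and a production analysis might prefer an established statistics package.

```python
from collections import Counter


def percent_agreement(ratings_a, ratings_b):
    """Fraction of items on which two reviewers recorded the same value."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both reviewers must rate the same non-empty item set.")
    matches = sum(a == b for a, b in zip(ratings_a, ratings_b))
    return matches / len(ratings_a)


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(ratings_a)
    p_observed = percent_agreement(ratings_a, ratings_b)
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    categories = counts_a.keys() | counts_b.keys()
    # Chance agreement from each reviewer's marginal category frequencies.
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both reviewers used a single identical category throughout
    return (p_observed - p_expected) / (1.0 - p_expected)


# Illustrative risk-of-bias ratings for four pilot studies.
reviewer_1 = ["low", "low", "high", "high"]
reviewer_2 = ["low", "low", "high", "low"]

agreement = percent_agreement(reviewer_1, reviewer_2)  # 3 of 4 items match
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

In this example the reviewers agree on 75% of items, but kappa is only 0.5 because half of that agreement would be expected by chance. Both values fall short of the targets above, so the form would go through another refinement round.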

Phase 2: Independent Dual Data Extraction

Objective: To execute a blinded, independent extraction of data from all included studies by two separate reviewers.

- Step 4 – Reviewer Training: All reviewers complete training using the finalized form and pilot studies. They must be calibrated on interpreting subjective criteria.

- Step 5 – Independent Extraction: Reviewers extract data without consultation. Software should facilitate blinding to each other's entries where possible [11]. Documentation of uncertainties and decisions is mandatory.

Phase 3: Consensus, Validation, and Data Locking

Objective: To identify and resolve discrepancies systematically, resulting in a single, accurate dataset.

- Step 6 – Discrepancy Identification: The extraction software or a manual comparison flags disagreements for each data point.

- Step 7 – Consensus Process: The two original reviewers discuss each discrepancy, referring back to the source document. They document the rationale for the final decision.

- Step 8 – Adjudication: If consensus cannot be reached, a third reviewer (an arbiter or methodologist) makes the final decision.

- Step 9 – Data Validation & Locking: The finalized dataset undergoes a final quality check (e.g., range checks for outliers, unit consistency). The database is then locked, and an audit trail of all changes is preserved.
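When the extraction platform does not flag disagreements automatically, Steps 6 and 9 can be approximated with simple comparisons. The sketch below assumes each reviewer's extraction is held as a dictionary keyed by `(study_id, field)`; that layout, the function names, the field names, and the plausibility bounds are all assumptions made for illustration.

```python
def find_discrepancies(extraction_a, extraction_b):
    """List every data point on which the two reviewers differ.

    A data point recorded by only one reviewer counts as a discrepancy,
    with None standing in for the missing entry.
    """
    keys = sorted(set(extraction_a) | set(extraction_b))
    return [
        (key, extraction_a.get(key), extraction_b.get(key))
        for key in keys
        if extraction_a.get(key) != extraction_b.get(key)
    ]


def out_of_range(records, field, low, high):
    """Return finalized records whose value for `field` lies outside [low, high]."""
    return [
        record
        for record in records
        if record.get(field) is not None and not (low <= record[field] <= high)
    ]


# Illustrative extractions; reviewer B appears to have misplaced a decimal point.
reviewer_a = {
    ("S1", "lc50_mg_l"): 5.2,
    ("S1", "species"): "Daphnia magna",
    ("S2", "lc50_mg_l"): 1.1,
}
reviewer_b = {
    ("S1", "lc50_mg_l"): 52.0,
    ("S1", "species"): "Daphnia magna",
    ("S2", "lc50_mg_l"): 1.1,
}
discrepancies = find_discrepancies(reviewer_a, reviewer_b)

# Range check on the post-consensus dataset (bounds are hypothetical).
final_records = [
    {"study_id": "S1", "exposure_h": 48},
    {"study_id": "S2", "exposure_h": 4800},
]
suspect = out_of_range(final_records, "exposure_h", 1, 720)
```

Each flagged tuple carries both reviewers' values, which gives the consensus discussion a concrete starting point. The range check does not correct anything by itself; it only returns entries that deserve a second look against the source document.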

Table 1: Quantitative Performance Benchmarks for the Double-Review Process

| Performance Metric | Target Benchmark | Measurement Method | Rationale |
|---|---|---|---|
| Pilot Phase Agreement | >90% item agreement or κ >0.8 | Percent agreement; Cohen's Kappa for categorical items | Ensures the extraction form is unambiguous and reviewers are calibrated before full extraction [11]. |
| Full Extraction Discrepancy Rate | <15% of all data items | (Number of discrepant items / Total items extracted) × 100 | A manageable rate indicates good initial reviewer alignment; a very low rate may suggest lack of independence. |
| Time for Consensus | Documented per study | Record time spent resolving discrepancies for each study | Provides metrics for project planning and identifies complex studies. |
| Final Data Error Rate | <1% (Post-consensus) | Random audit of a subset of finalized entries against source documents | Validates the overall accuracy of the locked dataset. |
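The numeric benchmarks in Table 1 lend themselves to a simple automated check. The following sketch applies the discrepancy-rate formula from the table and compares three metrics against their targets; the function names, dictionary keys, and example counts are hypothetical, and time for consensus is left out because it is documented rather than thresholded.

```python
def discrepancy_rate(n_discrepant, n_total):
    """(Number of discrepant items / total items extracted) x 100."""
    if n_total <= 0:
        raise ValueError("Total number of extracted items must be positive.")
    return 100.0 * n_discrepant / n_total


def check_benchmarks(pilot_agreement_pct, discrepancy_pct, audit_error_pct, kappa=None):
    """Compare project metrics against the Table 1 targets.

    The pilot target is met by >90% item agreement or, when a kappa value
    is supplied, by kappa >0.8.
    """
    pilot_ok = pilot_agreement_pct > 90.0 or (kappa is not None and kappa > 0.8)
    return {
        "pilot_agreement": pilot_ok,
        "discrepancy_rate": discrepancy_pct < 15.0,
        "final_error_rate": audit_error_pct < 1.0,
    }


# Illustrative project: 30 discrepant items among 400 extracted.
rate = discrepancy_rate(30, 400)
status = check_benchmarks(
    pilot_agreement_pct=92.0,
    discrepancy_pct=rate,
    audit_error_pct=0.5,
)
```

A discrepancy rate near zero passes this check but, as the table notes, may itself warrant a look at whether the two extractions were truly independent.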

Visual Workflow for Data Extraction and Consensus

The following diagram illustrates the formal workflow and decision points for the dual-review extraction and consensus process.

Dual-Review Data Extraction and Consensus Workflow

The Scientist's Toolkit: Essential Materials and Software

Table 2: Research Reagent Solutions for Data Extraction

| Tool / Resource | Primary Function | Application Notes |
|---|---|---|
| Systematic Review Software (e.g., Covidence, DistillerSR) | Provides integrated platform for screening, extraction (with side-by-side PDF view), automatic discrepancy highlighting, and consensus management [11]. | Ideal for teams; enforces process rigor and maintains an audit trail. Subscription cost is a consideration. |
| Relational Database (e.g., Microsoft Access, Epi Info) | Enforces structured data entry and manages complex, hierarchical data (e.g., multiple outcomes nested within doses, nested within studies) more effectively than flat tables [29]. | Essential for large, complex reviews. Requires upfront design time but minimizes downstream data cleaning. |
| Flat-file Database (e.g., Microsoft Excel, Google Sheets) | Accessible and flexible for simple reviews or as a preliminary tool. Can incorporate data validation (drop-downs, range checks) [11]. | Prone to errors in complex data structures. Manual discrepancy checking is time-consuming. Best for small-scale projects. |
| Reference Management Software (e.g., EndNote, Zotero) | Organizes PDFs, facilitates sharing and annotation among the review team. | Integrates with some SR software. Critical for managing large libraries of included studies. |
| Project Management Tool (e.g., MS Teams, Trello) | Coordinates team tasks, timelines, and communication. Documents meeting minutes and key decisions. | Vital for maintaining project schedule and transparency, especially for distributed teams. |

Validation and Reporting Protocols

Inter-Rater Reliability (IRR) Assessment: Quantify agreement at two points, during the pilot and across the full extraction, in both cases before discrepancies are resolved by consensus. Report percent agreement for all items and Cohen’s Kappa for categorical judgments (e.g., risk-of-bias ratings) [11].

Audit Trail Documentation: Maintain a living log of all decisions, including:

- Modifications to the extraction form post-pilot.

- Rationale for resolving each discrepancy during consensus.

- Justifications for arbiter decisions.

This log is a critical appendix to the final review.
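A living decision log can be kept as an append-only list of structured entries and exported as one JSON object per line. The sketch below is one possible layout: the three entry kinds mirror the items listed above, while the function names, field names, and reviewer labels are assumptions.

```python
import json
from datetime import datetime, timezone

# Entry kinds corresponding to the three logged decision types.
ALLOWED_KINDS = {"form_modification", "consensus_resolution", "arbiter_decision"}


def log_decision(log, kind, detail, decided_by):
    """Append one timestamped decision to the audit log and return it."""
    if kind not in ALLOWED_KINDS:
        raise ValueError(f"Unknown decision kind: {kind!r}")
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "kind": kind,
        "detail": detail,
        "decided_by": decided_by,
    }
    log.append(entry)
    return entry


def export_log(log):
    """Serialize the log as JSON Lines text for inclusion as an appendix."""
    return "\n".join(json.dumps(entry, sort_keys=True) for entry in log)


audit_log = []
log_decision(
    audit_log,
    kind="consensus_resolution",
    detail="S1 LC50: reviewer B misread a decimal point; source table confirms 5.2 mg/L.",
    decided_by="reviewers A and B",
)
exported = export_log(audit_log)
```

Entries are only ever appended, never edited, so the exported file preserves the order in which decisions were taken.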

Reporting in the Manuscript: The method section must detail:

- The number of extractors and their expertise.

- The use of independent extraction and consensus.

- The piloting process and IRR results.

- The method for handling disagreements.

- A statement confirming data were locked post-consensus.

Systematic reviews (SRs) represent the most trusted form of evidence, positioned at the top of the evidence pyramid [30]. In fields like ecotoxicology, they are crucial for synthesizing evidence on the effects of chemicals, pollutants, and stressors on ecosystems and organisms. However, the traditional SR process is notoriously resource-intensive, often taking a team approximately a year to complete [30]. The exponential growth of scientific literature further intensifies this burden, creating a significant bottleneck for timely, evidence-based decision-making in environmental protection and chemical risk assessment.

This urgency has catalyzed a paradigm shift toward semi-automation and full automation of SR workflows. The integration of digital tools, artificial intelligence (AI), and machine learning (ML) promises to enhance the efficiency, reproducibility, and scalability of evidence synthesis [30] [31]. For ecotoxicology, this transition is particularly pertinent. Research questions often involve complex, multi-faceted data from diverse study types (in vivo, in vitro, field studies) reported across heterogeneous sources, making manual extraction and synthesis exceptionally challenging.

This article, framed within a broader thesis on data extraction methods for ecotoxicology SRs, provides detailed application notes and protocols. It focuses on the software, workflows, and experimental methodologies enabling the transition to (semi)automated evidence synthesis. The content is designed for researchers, scientists, and drug development professionals seeking to implement these advanced techniques in their review processes.

Levels of Automation and Key Software Tools

Automation in systematic reviews is not a binary state but a spectrum. Tools offer varying levels of assistance, from purely manual workflow management to fully autonomous extraction and synthesis. The choice of tool depends on the review's scope, complexity, and the team's technical capacity.

The "Big Four" Comprehensive Platforms