Evidence-Based Toxicology: Integrating Modern Approaches for Safer Chemical & Drug Assessments


Abstract

This article provides a comprehensive overview of evidence-based toxicology (EBT), a discipline that applies structured, transparent, and objective methods to evaluate scientific evidence for toxicological decision-making. We trace EBT's evolution from its foundations in evidence-based medicine to its current role in modernizing risk assessment. The scope encompasses foundational principles and systematic review methodologies; the application of advanced predictive tools, including New Approach Methods (NAMs), high-throughput screening, and multi-omics integration; strategies for troubleshooting common challenges in data integration and validation; and a comparative analysis of traditional versus emerging frameworks. Aimed at researchers, scientists, and drug development professionals, this synthesis highlights how EBT principles are critical for enhancing the reliability, efficiency, and human relevance of toxicological evaluations in biomedical and regulatory contexts[citation:2][citation:5][citation:8].

Foundations of Evidence-Based Toxicology: From Medicine to Modern Risk Assessment

Evidence-based toxicology (EBT) is a disciplined process for transparently, consistently, and objectively assessing available scientific evidence to answer questions in toxicology [1]. Its primary goal is to address long-standing concerns within the toxicological community regarding the limitations of traditional approaches to synthesizing science, which often lack transparency, are prone to bias, and yield irreproducible conclusions [1] [2]. By providing a structured framework for evaluating evidence, EBT strives to strengthen the scientific foundation of decision-making in chemical safety, risk assessment, and public health protection [2].

The core impetus for EBT's development was the recognized need to improve the performance assessment of new toxicological test methods [1]. This need aligns with the vision set forth by the U.S. National Research Council's landmark 2007 report, "Toxicity Testing in the 21st Century," which advocated for a shift from traditional animal-based observations to human biology-based, mechanistic understanding [1]. EBT provides the essential tools to evaluate and integrate evidence from these new approach methodologies (NAMs), ensuring they are validated and accepted based on rigorous, objective standards [3].

At its heart, EBT is characterized by three foundational principles [1] [2]:

- Transparency: Making all decision-making processes, criteria, and data interpretations open and accessible.

- Objectivity: Minimizing bias through predefined, systematic protocols.

- Consistency: Applying standardized methods to ensure results are reproducible and reliable over time.

Historical Context and Evolution

The evolution of evidence-based toxicology is directly rooted in the longer history of evidence-based medicine (EBM). The EBM movement, catalyzed by the work of Scottish epidemiologist Archie Cochrane in the 1970s, was a response to widespread inconsistencies in clinical practice, where medical decisions were frequently based on anecdote or tradition rather than a rigorous synthesis of available research [1]. The establishment of the Cochrane Collaboration in 1993 institutionalized the use of systematic reviews to inform healthcare decisions [1].

The formal translation of these evidence-based principles to toxicology began in the mid-2000s. Seminal papers published in 2005 and 2006 proposed that the tools of EBM could serve as a prototype for evidence-based decision-making in toxicology [1]. This concept gained significant momentum at the First International Forum Toward Evidence-Based Toxicology in Cernobbio, Italy, in 2007, which convened over 170 scientists from more than 25 countries to explore its implementation [1] [2].

Subsequent workshops, including a key 2010 meeting titled "21st Century Validation for 21st Century Tools," led to the formation of the Evidence-based Toxicology Collaboration (EBTC) in 2011 [1] [2]. The EBTC, a non-profit comprising scientists from government, industry, and academia, has been instrumental in driving the methodology forward, conducting pilot studies, and promoting the use of systematic reviews in toxicology [2] [4].

Table 1: Key Milestones in the Development of Evidence-Based Toxicology

| Year | Milestone Event | Significance |
|---|---|---|
| 2005-2006 | Publication of foundational papers [1] | Proposed adapting evidence-based medicine principles to toxicology. |
| 2007 | First International Forum Toward EBT (Cernobbio, Italy) [1] [2] | Brought global scientific community together to launch formal EBT initiative. |
| 2010 | "21st Century Validation for 21st Century Tools" Workshop [1] | Inspired the creation of a collaborative organization to advance EBT. |
| 2011 | Launch of Evidence-based Toxicology Collaboration (EBTC) [1] [2] | Established a sustained, organized effort to develop and promote EBT methodologies. |
| 2012 | EBTC Workshop: "Evidence-based Toxicology for the 21st Century" [4] | Clarified approaches and set priority activities, including pilot studies and education. |
| 2014 | EBTC Workshop on Systematic Reviews [1] | Addressed challenges and called for collaboration to enable widespread adoption. |
| 2016+ | Adoption by regulatory bodies (e.g., NTP Office of Health Assessment and Translation) [1] | Systematic review methodology applied to formal chemical risk assessments. |

Core Methodologies and Frameworks

The methodological engine of evidence-based toxicology is the systematic review, a highly structured approach to identifying, selecting, appraising, and synthesizing all relevant studies on a specific question [1] [5]. This stands in contrast to traditional narrative reviews, which are often subjective and non-transparent [1]. The systematic review process is designed explicitly to minimize bias and enhance reproducibility [5].

A critical early step is framing the research question using structured formats like PECO (Population, Exposure, Comparator, Outcome) or PICO (Population, Intervention, Comparator, Outcome), which define the scope with precision [6] [5]. For example, in toxicology, "Population" could be a specific animal model or human cohort, "Exposure" a defined chemical, and "Outcome" a measurable adverse event [6].
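As an illustration, a PECO statement can be held as a small structured record so that search, screening, and extraction all refer to the same four fields. The sketch below is only one possible representation: the class name `PECO`, its method, and the example values are assumptions made for illustration and do not come from any cited guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECO:
    """Structured review question: Population, Exposure, Comparator, Outcome."""
    population: str
    exposure: str
    comparator: str
    outcome: str

    def as_question(self) -> str:
        # Render the four elements as a single answerable question.
        return (
            f"In {self.population}, does exposure to {self.exposure}, "
            f"compared with {self.comparator}, affect {self.outcome}?"
        )


# Hypothetical example values, chosen only for illustration.
question = PECO(
    population="adult rats",
    exposure="oral bisphenol A",
    comparator="vehicle-only controls",
    outcome="liver weight",
)
print(question.as_question())
```

Freezing the record mirrors the idea that the question is fixed before the review starts and should not drift afterwards.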

A pivotal component of study appraisal is the Risk-of-Bias (RoB) assessment. This evaluates the internal validity of individual studies—the degree to which their design and conduct are likely to have prevented systematic error [5]. Common bias domains assessed in toxicology include selection bias, performance bias, detection bias, attrition bias, and reporting bias [5].

Toxicology presents a unique challenge compared to medicine: it must integrate evidence from multiple, distinct evidence streams [1]. These streams include:

- Human Evidence: Observational studies (e.g., cohort, case-control).

- Animal Evidence: In vivo toxicology studies.

- Mechanistic Evidence: In vitro assays, 'omics data, and in silico models [1].

EBT provides frameworks for synthesizing evidence within and across these streams to form a cohesive weight-of-evidence conclusion [5]. This often involves applying established causation criteria, such as the Hill criteria (e.g., strength, consistency, specificity, biological gradient), to assess whether an exposure is causally linked to an adverse outcome [5].

Table 2: Characteristics of Major Evidence Streams in Toxicology

| Evidence Stream | Typical Study Designs | Key Strengths | Inherent Limitations |
|---|---|---|---|
| Human Epidemiological | Cohort, case-control, cross-sectional studies. | Direct human relevance; can identify real-world associations. | Confounding difficult to control; exposure assessment often imprecise; ethical constraints. |
| Traditional In Vivo | Controlled animal studies (e.g., OECD guideline tests). | Controlled exposure; full organismal response; established historical data. | Interspecies extrapolation uncertainty; high cost and time; ethical concerns [7]. |
| New Approach Methodologies (NAMs) | In vitro assays, organ-on-a-chip, high-throughput screening, in silico models [7] [8]. | Human-relevant biology; high-throughput; mechanistic insight; addresses 3Rs [7] [3]. | May not capture complex organ interactions; ongoing validation for regulatory use [3]. |

Applications in Modern Toxicology

Assessment of New Approach Methodologies (NAMs)

The rise of NAMs—including advanced in vitro models, organs-on-chips, and computational toxicology—creates a pressing need for robust evaluation frameworks [7] [3]. EBT, through systematic review, is uniquely positioned to assess the validity, reliability, and human relevance of these novel tests [3] [4]. By objectively evaluating performance metrics (e.g., sensitivity, specificity, predictive capacity) against defined toxicological outcomes, EBT can accelerate the regulatory acceptance and deployment of NAMs, facilitating the shift away from traditional animal testing [7] [3].
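The performance metrics mentioned above follow directly from a two-by-two comparison of a test method's calls against a reference classification. The following is a minimal sketch with made-up counts; the function name and the example numbers are assumptions for illustration, and it presumes every denominator is nonzero.

```python
def performance_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Binary test-method performance against a reference classification.

    tp/fn: reference-positive chemicals called positive/negative by the test.
    tn/fp: reference-negative chemicals called negative/positive by the test.
    """
    return {
        "sensitivity": tp / (tp + fn),   # share of true toxicants detected
        "specificity": tn / (tn + fp),   # share of non-toxicants cleared
        "ppv": tp / (tp + fp),           # positive predictive value
        "npv": tn / (tn + fn),           # negative predictive value
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


# Hypothetical validation set of 100 reference chemicals.
metrics = performance_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Predictive values depend on the proportion of positives in the reference set, so they should be reported together with that proportion.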

Development of Adverse Outcome Pathways (AOPs)

The Adverse Outcome Pathway (AOP) framework is a conceptual model that describes a sequence of causally linked key events from a molecular initiating event to an adverse organism- or population-level outcome [6]. EBT methodologies are critical for the robust development and assessment of AOPs. Specifically, systematic review can be applied to evaluate the evidence supporting each Key Event Relationship (KER) within an AOP [6]. This ensures that the causal connections depicted in the AOP are based on a transparent and comprehensive analysis of the available literature, strengthening their utility for regulatory decision-making and chemical prioritization [6].

Informing Regulatory and Human Health Risk Assessment

Regulatory agencies worldwide are increasingly adopting evidence-based methods. For instance, the U.S. National Toxicology Program's Office of Health Assessment and Translation (OHAT) employs systematic review methodology to evaluate the effects of environmental exposures [1]. This approach is particularly valuable for substances with large, complex, or conflicting bodies of literature, as it provides a clear, auditable path to a conclusion. It moves beyond the traditional practice of selecting a single "lead" study, enabling a more holistic and defensible integration of all relevant evidence [1] [5].

Table 3: Criteria for Assessing a Key Event Relationship (KER) within an AOP

| Assessment Dimension | Key Questions for Systematic Evaluation |
|---|---|
| Biological Plausibility | Is there a well-understood mechanistic basis for the inferred causal relationship? |
| Essentiality | If the upstream key event is prevented, is the downstream key event also prevented? |
| Empirical Evidence | What is the weight, consistency, and concordance of experimental data supporting the relationship? |
| Quantitative Understanding | Is the relationship dose- and time-responsive? Are there known modulating factors? |
| Uncertainties and Inconsistencies | What data gaps, contradictory findings, or alternative explanations exist? |

Experimental Protocols in Evidence-Based Toxicology

Protocol for Conducting a Systematic Review

A systematic review in toxicology follows a strict, pre-defined protocol to ensure objectivity [5].

- Protocol Development & Registration: Develop a detailed protocol specifying the PECO question, search strategy, inclusion/exclusion criteria, and analysis plan. Register the protocol on a public platform.

- Systematic Search: Execute comprehensive searches across multiple bibliographic databases (e.g., PubMed, Web of Science, Embase, Toxline) using a structured search strategy developed with an information specialist [5].

- Study Screening & Selection: Screen retrieved records (titles/abstracts, then full text) against the inclusion/exclusion criteria, typically performed by two independent reviewers with conflicts resolved by consensus [5].

- Data Extraction & Risk-of-Bias Assessment: Extract predefined data from included studies into standardized forms. Perform a Risk-of-Bias assessment using tools tailored to toxicology (e.g., adapted from Cochrane or OHAT tools) to evaluate study reliability [5].

- Evidence Synthesis & Integration: Synthesize findings descriptively and, where appropriate, statistically via meta-analysis. Integrate evidence across studies and evidence streams using a weight-of-evidence approach, considering strength, consistency, and biological plausibility [5].

- Reporting: Document and report the entire process and findings transparently, following guidelines like PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses).

Risk-of-Bias Assessment for In Vivo Toxicology Studies

Assessing the internal validity of animal studies is crucial. Key domains include [5]:

- Selection Bias: Was allocation to control and treatment groups random and adequately concealed?

- Performance Bias: Were experimental personnel blinded to the treatment groups during the study?

- Detection Bias: Were outcome assessors blinded to the treatment groups during outcome assessment?

- Attrition Bias: Were all animals accounted for, and were data reported for all predefined outcomes?

- Reporting Bias: Is there evidence of selective reporting of outcomes based on results?

Each domain is judged as having "Low," "High," or "Unclear" risk of bias, providing a profile for each study.
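A per-study risk-of-bias profile of this kind can be recorded as a simple mapping from domain to judgment. The sketch below is a hypothetical data structure, not the format of the OHAT or Cochrane tools; the domain keys, function name, and example judgments are assumptions for illustration.

```python
from collections import Counter

# The five domains listed above and the three permitted judgments.
DOMAINS = ("selection", "performance", "detection", "attrition", "reporting")
RATINGS = ("Low", "High", "Unclear")


def rob_profile(judgments: dict) -> dict:
    """Validate one study's per-domain judgments and return them in domain order."""
    missing = [d for d in DOMAINS if d not in judgments]
    if missing:
        raise ValueError(f"missing domains: {missing}")
    for domain in DOMAINS:
        if judgments[domain] not in RATINGS:
            raise ValueError(f"invalid rating for {domain}: {judgments[domain]!r}")
    return {d: judgments[d] for d in DOMAINS}


# Hypothetical judgments for a single animal study.
study_a = rob_profile({
    "selection": "Unclear",
    "performance": "High",
    "detection": "Low",
    "attrition": "Low",
    "reporting": "Unclear",
})
summary = Counter(study_a.values())
print(study_a)
print(dict(summary))
```

The profile is kept per domain rather than collapsed into one score, so a reader can see where a study's weaknesses lie.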

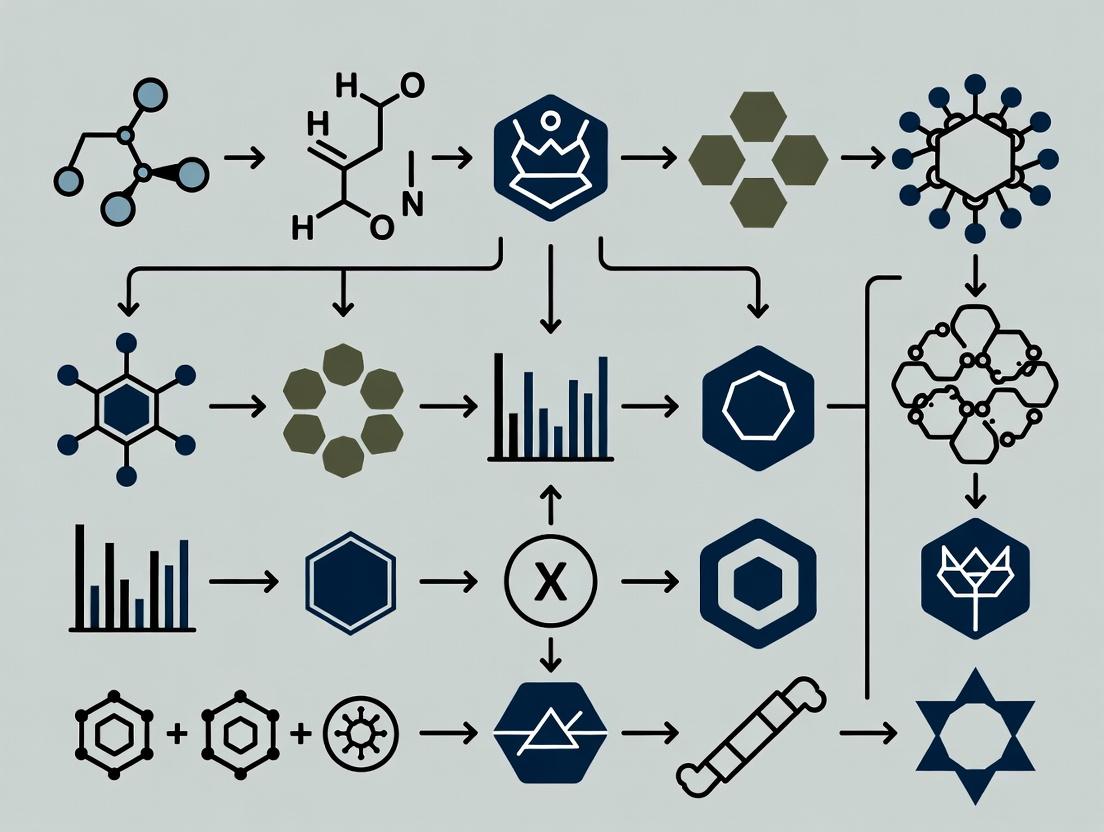

Figure: Systematic Review & Evidence Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Tools and Reagents for Evidence-Based Toxicology

| Tool/Reagent Category | Specific Examples | Primary Function in EBT |
|---|---|---|
| Reference Chemicals | Certified pure analytical standards (e.g., Bisphenol A, Benzo[a]pyrene). | Serve as positive controls and benchmark substances for validating test methods and ensuring reproducibility across studies [7]. |
| In Vitro Model Systems | Primary human hepatocytes; induced pluripotent stem cell (iPSC)-derived neurons; 3D bioprinted tissue constructs [7]. | Provide human-relevant biological substrates for mechanistic toxicity testing, generating data for the mechanistic evidence stream [7] [3]. |
| High-Content Screening Assays | Multiplexed fluorescence assays for cell health parameters (viability, apoptosis, oxidative stress). | Enable high-throughput, quantitative data generation on key events, supporting dose-response analysis and AOP development [7]. |
| Computational Toxicology Software | QSAR toolkits; molecular docking software; machine learning platforms (e.g., for ADMET prediction) [8]. | Generate in silico predictions of toxicity and pharmacokinetics for prioritization and hypothesis generation, enriching the mechanistic evidence stream [8]. |
| Systematic Review Software | DistillerSR, Rayyan, Covidence. | Facilitate the management of the systematic review process, including reference screening, data extraction, and RoB assessment, ensuring protocol adherence [5]. |

Figure: Systematic Review Process Steps

Evidence-based toxicology represents a fundamental shift toward greater scientific rigor, transparency, and accountability in evaluating chemical safety. By adopting and adapting the structured methodologies of systematic review, it provides a powerful framework for integrating complex, multi-stream evidence—from human epidemiology to cutting-edge in silico models [1] [5].

The future trajectory of EBT is inextricably linked to the advancement of 21st-century toxicology. As defined by the NRC vision, this future relies on human-relevant, mechanistic data from NAMs [7] [3]. EBT is the essential "quality control" system that will validate these new tools and build confidence in their application for regulatory decision-making [3] [4]. Key challenges remain, including the resource-intensive nature of full systematic reviews and the need for further development of risk-of-bias tools tailored to diverse study types in toxicology [1]. However, ongoing efforts to develop semi-automated tools and machine learning approaches for evidence retrieval and synthesis promise to increase efficiency [6]. The continued collaboration fostered by organizations like the EBTC will be crucial in refining EBT methodologies and ensuring their widespread adoption, ultimately leading to more robust protection of public health and the environment [2] [4].

The field of toxicology is undergoing a fundamental transformation, shifting from a reliance on narrative expert judgment toward structured, transparent, and reproducible evidence-based approaches. Central to this evolution is the systematic review methodology, a rigorous process for identifying, selecting, appraising, and synthesizing all available research relevant to a precisely framed question [9]. Within evidence-based toxicology (EBT), systematic reviews serve as the primary engine for objective evidence synthesis, designed to minimize bias, maximize transparency, and provide reliable foundations for regulatory decision-making and risk assessment [9] [5].

The adoption of systematic reviews in toxicology addresses critical limitations inherent in traditional narrative reviews. Narrative reviews often employ implicit, non-transparent processes for literature identification and selection, raising risks of selective citation and the perpetuation of bias [9]. This lack of rigor can lead to conflicting conclusions from the same evidence base, undermining stakeholder trust and potentially jeopardizing public health [9]. In contrast, systematic reviews explicitly define their methods a priori in a published protocol, ensuring the process is fully documented and reproducible [9] [10].

The push for systematic reviews is driven by regulatory and public health agencies worldwide, including the U.S. National Toxicology Program (NTP), the Environmental Protection Agency (EPA), the European Food Safety Authority (EFSA), and the European Chemicals Agency (ECHA) [9] [6]. These organizations recognize that as the volume and complexity of toxicological data grow (encompassing human observational studies, animal testing, in vitro assays, and in silico models), a standardized method for evidence integration is not just beneficial but essential [9]. The number of systematic reviews in toxicology has risen sharply, approximately doubling from 2016 to 2020, reflecting this paradigm shift [11].

Table: Core Differences Between Narrative and Systematic Reviews in Toxicology

| Feature | Narrative (Traditional) Review | Systematic Review |
|---|---|---|
| Research Question | Broad, often not explicitly specified [9]. | Focused and specific, framed using PECO/PICO [9] [12]. |
| Literature Search | Sources and strategy usually not specified; risk of selective citation [9]. | Comprehensive, multi-database search with explicit, documented strategy [9] [10]. |
| Study Selection | Implicit, based on reviewer expertise [9]. | Explicit, pre-defined inclusion/exclusion criteria applied by multiple reviewers [9] [5]. |
| Quality/Risk of Bias Assessment | Often absent or informal [9]. | Critical appraisal using explicit tools (e.g., OHAT, Cochrane) [9] [5]. |
| Evidence Synthesis | Typically qualitative summary [9]. | Structured qualitative synthesis; may include quantitative meta-analysis [9] [10]. |
| Time & Resource Commitment | Generally lower (months) [9]. | Substantially higher (often >1 year) [9]. |
| Output | Expert opinion summary. | Transparent, reproducible evidence synthesis for decision-making. |

The Systematic Review Methodology: A Step-by-Step Technical Protocol

Conducting a systematic review is a complex, multi-stage project requiring a distinct methodological skill set. The following protocol, synthesized from established guidance, details the essential steps [9] [13] [10].

Problem Formulation & Protocol Development

The process begins with a meticulously crafted problem formulation. In toxicology, this is typically expressed as a PECO statement: Population (e.g., a specific organism, cell type), Exposure (the chemical or stressor), Comparator, and Outcome (the measured adverse effect) [6] [12]. A precise PECO is critical for guiding all subsequent steps and preventing "dueling reviews" where different teams reach opposite conclusions from the same literature due to differing initial questions [12].

Developing and publicly registering a detailed protocol before the review begins is an essential step. The protocol pre-specifies the research question, search strategy, inclusion/exclusion criteria, data extraction methods, risk-of-bias assessment tools, and synthesis plans. This practice locks in the methodology, preventing biased post-hoc decisions and allowing for peer review of the plan before work begins [9] [11]. Protocols can be published in journals or registered on platforms such as PROSPERO [10].

Comprehensive Search & Study Selection

A systematic search aims to identify all potentially relevant studies across multiple published and unpublished sources. Information specialists design search strings using a mix of controlled vocabulary (e.g., MeSH terms) and keywords, tailored for databases like PubMed/MEDLINE, Embase, Web of Science, and ToxLine [10]. Grey literature—including government reports, theses, and conference proceedings—is also searched to mitigate publication bias [14].
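To make the structure of such a search string concrete, the sketch below assembles one Boolean block per PECO concept, combining controlled vocabulary and free-text keywords with OR and joining the blocks with AND. It uses PubMed-style field tags (`[MeSH Terms]`, `[Title/Abstract]`); the helper names are hypothetical, and the example terms are placeholders that have not been checked against the MeSH thesaurus. A real strategy would be developed and tested by an information specialist.

```python
def concept_block(mesh, keywords):
    """OR together controlled-vocabulary terms and keywords for one concept."""
    tagged = [f'"{term}"[MeSH Terms]' for term in mesh]
    tagged += [f'"{term}"[Title/Abstract]' for term in keywords]
    return "(" + " OR ".join(tagged) + ")"


def build_query(concepts):
    """AND together one block per concept (e.g., exposure AND outcome)."""
    return " AND ".join(concept_block(c["mesh"], c["keywords"]) for c in concepts)


# Placeholder concepts; terms are illustrative only.
concepts = [
    {"mesh": [], "keywords": ["bisphenol A", "BPA"]},      # exposure
    {"mesh": ["Liver"], "keywords": ["hepatotoxicity"]},   # outcome
]
query = build_query(concepts)
print(query)
```

Storing the concepts as data rather than as a hand-typed string makes it easier to document the strategy in the protocol and to adapt it to each database's syntax.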

The search results are imported into specialized systematic review software (e.g., Covidence, Rayyan, DistillerSR). Using the pre-defined criteria, at least two independent reviewers screen titles/abstracts and then full texts. Disagreements are resolved through discussion or a third reviewer. This dual screening process minimizes error and bias in study selection [5].
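Agreement between the two screeners is often summarized with a chance-corrected statistic such as Cohen's kappa, and the records on which they disagree are passed to conflict resolution. The sketch below shows both steps on invented decisions; the function name and the example data are assumptions, and the source does not prescribe a particular agreement statistic.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of records")
    n = len(rater_a)
    labels = set(rater_a) | set(rater_b)
    # Observed agreement: fraction of records with identical decisions.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if the raters decided independently at their own rates.
    expected = sum((rater_a.count(l) / n) * (rater_b.count(l) / n) for l in labels)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical title/abstract decisions for ten records.
reviewer_1 = ["inc", "inc", "exc", "exc", "exc", "inc", "exc", "exc", "exc", "exc"]
reviewer_2 = ["inc", "exc", "exc", "exc", "exc", "inc", "exc", "exc", "inc", "exc"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
# Indices of records that need discussion or a third reviewer.
conflicts = [i for i, (a, b) in enumerate(zip(reviewer_1, reviewer_2)) if a != b]
print(f"kappa = {kappa:.3f}; conflicts at records {conflicts}")
```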

Data Extraction & Risk-of-Bias Assessment

Relevant data from included studies are extracted into standardized forms. Key items include study design, population/exposure details, outcome measures, results, and funding sources. Independent dual extraction is recommended for accuracy [5].

Concurrently, each study's internal validity is evaluated using a risk-of-bias (RoB) tool tailored to the study type. For animal studies, tools like the OHAT Risk of Bias Rating are used to assess domains such as randomization, blinding, selective reporting, and other sources of bias [13] [5]. For in vitro studies, specific tools like INVITES-IN are being developed and validated [14]. This assessment determines the confidence placed in each study's results and informs the overall strength of the evidence.

Evidence Synthesis & Integration

Synthesis involves collating and summarizing findings. A qualitative synthesis categorizes and describes results thematically. When studies are sufficiently homogeneous in their PECO, a quantitative synthesis (meta-analysis) can be performed to statistically combine effect estimates, providing a more precise overall measure of association [10].
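One common way to combine effect estimates is inverse-variance weighting, in which each study is weighted by the reciprocal of its squared standard error. The sketch below implements a fixed-effect pooled estimate together with Cochran's Q and the I² heterogeneity statistic. It is a simplified illustration on invented numbers; the function name is hypothetical, and a real toxicological meta-analysis would usually also consider a random-effects model and use a dedicated statistics package.

```python
import math


def fixed_effect_meta(effects, std_errors):
    """Inverse-variance fixed-effect pooling with Cochran's Q and I^2."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need matching lists with at least two studies")
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Cochran's Q: weighted squared deviations from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of variability attributed to heterogeneity, floored at zero.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return {
        "pooled": pooled,
        "se": pooled_se,
        "ci95": (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se),
        "Q": q,
        "I2": i_squared,
    }


# Hypothetical effect estimates (e.g., standardized mean differences).
result = fixed_effect_meta(effects=[0.2, 0.4, 0.3], std_errors=[0.1, 0.2, 0.1])
print(result)
```

The less precise second study receives a quarter of the weight of the other two, which is the behavior inverse-variance weighting is meant to produce.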

The final, critical step is weight-of-evidence integration. This assesses the overall body of evidence, considering the quantity, quality, and consistency of studies, the strength of measured associations, and biological plausibility. Frameworks like GRADE (Grading of Recommendations Assessment, Development and Evaluation) are being adapted for toxicology to rate confidence in the evidence and translate it into clear conclusions [9] [14].

Figure: Systematic Review Workflow in Evidence-Based Toxicology

Executing a high-quality systematic review requires leveraging a suite of specialized tools and resources. The following table details key components of the modern systematic reviewer's toolkit.

Table: Essential Toolkit for Conducting Systematic Reviews in Toxicology

| Tool/Resource Category | Specific Examples & Functions | Application in Toxicology |
|---|---|---|
| Protocol & Reporting Guidelines | PRISMA-P (Protocols), PRISMA (Reporting), GRADE [10]. | Ensures complete, transparent reporting of methods and findings. GRADE is adapted for toxicology to rate evidence confidence [14]. |
| Search & Screening Software | Covidence, Rayyan, DistillerSR, EPPI-Reviewer. | Manages de-duplication, dual screening, and data extraction workflows; essential for team collaboration [5]. |
| Risk-of-Bias (RoB) Tools | OHAT RoB Tool, Cochrane RoB Tool, INVITES-IN (in vitro) [14] [5]. | Assesses internal validity of included studies. Tool selection depends on study design (animal, human, in vitro). |
| Evidence Integration Frameworks | GRADE for Toxicology, Hill's Criteria, AOP Framework [6] [14] [5]. | Provides structured process to move from individual study results to a body-of-evidence conclusion regarding hazard or risk. |
| Data Sources & Repositories | PubMed, Embase, Web of Science, ToxLine, AOP-Wiki [6] [10]. | AOP-Wiki is crucial for linking mechanistic data to adverse outcomes within the review context [6]. |

Advanced Applications and Integrations: AOPs, AI, and Quality Assurance

Systematic Reviews in Adverse Outcome Pathway (AOP) Development

The AOP framework, a central pillar in modern mechanistic toxicology, provides a structured representation of causal pathways from a molecular initiating event (MIE) to an adverse outcome [6]. Systematic review methodology is increasingly recognized as vital for robust AOP development. Individual Key Event Relationships (KERs)—the causal links between two key events in an AOP—can be treated as mini-systematic review questions. Applying PECO-like frameworks to KERs ensures the transparent and comprehensive gathering of mechanistic evidence supporting each causal link [6].
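An AOP can be pictured as a directed graph in which nodes are key events and edges are KERs, each edge carrying its own evidence assessment. The sketch below is a hypothetical in-memory model, not the AOP-Wiki data format; the class, the event names, and the evidence labels are all assumptions for illustration.

```python
from collections import defaultdict


class AOP:
    """Directed graph of key events; each edge is a Key Event Relationship."""

    def __init__(self):
        self._downstream = defaultdict(list)
        self.ker_evidence = {}  # (upstream, downstream) -> evidence label

    def add_ker(self, upstream, downstream, evidence="not assessed"):
        self._downstream[upstream].append(downstream)
        self.ker_evidence[(upstream, downstream)] = evidence

    def paths(self, start, end, _seen=()):
        """All event sequences from start to end, ignoring cycles."""
        if start == end:
            return [[end]]
        found = []
        for nxt in self._downstream.get(start, []):
            if nxt in _seen:
                continue
            for tail in self.paths(nxt, end, _seen + (start,)):
                found.append([start] + tail)
        return found


def kers_needing_review(aop, path):
    """KERs along a path that have no evidence assessment yet."""
    return [pair for pair in zip(path, path[1:])
            if aop.ker_evidence[pair] == "not assessed"]


# Hypothetical pathway from a molecular initiating event to an adverse outcome.
aop = AOP()
aop.add_ker("MIE: receptor activation", "KE1: altered gene expression", "strong")
aop.add_ker("KE1: altered gene expression", "KE2: cell proliferation")
aop.add_ker("KE2: cell proliferation", "AO: tumor formation", "moderate")

path = aop.paths("MIE: receptor activation", "AO: tumor formation")[0]
todo = kers_needing_review(aop, path)
print(path)
print(todo)
```

Each pair returned by `kers_needing_review` corresponds to one focused review question of the kind described above.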

This integration is particularly valuable for endocrine disruptor assessment, where regulators require evidence of an adverse effect, an endocrine-mediated mode of action, and a plausible causal link [6]. Systematic reviews can strengthen the evidence base for these components, moving AOPs from qualitative descriptions to quantitatively supported pathways suitable for regulatory use.

Figure: Systematic Review Evidence Informs Key Event Relationships in an AOP

The Evolving Role of Artificial Intelligence

AI and machine learning tools are being explored to increase the efficiency and scalability of systematic reviews. Potential applications include automating citation screening, data extraction, and even risk-of-bias assessments [12]. However, significant challenges remain. Current sentiment among experts is cautiously skeptical, with concerns about AI "hallucinations," difficulty identifying negative results, and a lack of transparency in automated decisions [12].

The emerging consensus favors a "human-in-the-loop" model. In this hybrid approach, AI handles initial, high-volume tasks (e.g., ranking search results by relevance), while human reviewers make final judgments on inclusion, extraction, and appraisal [12]. This balances efficiency with the necessary accuracy and expert judgment, ensuring the review remains truly systematic.
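The division of labor in such a hybrid workflow can be sketched as follows: an automated step only reorders the screening queue, and every inclusion decision is still recorded by a person. In this illustration a plain keyword count stands in for a trained relevance model; the function names, records, and reviewer decisions are all hypothetical.

```python
import re


def relevance_score(text, terms):
    """Toy relevance score: number of occurrences of the query terms."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return sum(tokens.count(term) for term in terms)


def rank_records(records, terms):
    """Order records from most to least likely relevant; ties keep input order."""
    return sorted(records,
                  key=lambda r: relevance_score(r["abstract"], terms),
                  reverse=True)


# Hypothetical search results.
records = [
    {"id": "r1", "abstract": "Dietary fiber and gut transit time in adults."},
    {"id": "r2", "abstract": "Bisphenol A exposure and liver injury in rats: liver histology."},
    {"id": "r3", "abstract": "Bisphenol analogues in river water."},
]
ranked = rank_records(records, terms=["bisphenol", "liver"])

# Decisions come from a human reviewer; the ranking never includes or excludes.
human_decisions = {"r2": "include", "r3": "exclude", "r1": "exclude"}
screening_log = [(r["id"], human_decisions[r["id"]]) for r in ranked]
print(screening_log)
```

Because the ranking changes only the order of review, a reviewer who works through the whole queue reaches the same set of included studies as without it.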

Ensuring Quality: The Critical Role of Editorial Standards

The rapid increase in published systematic reviews has raised concerns about methodological quality and reporting completeness. Reviews have identified frequent shortcomings in conduct and documentation [11]. Journal editors play a critical gatekeeper role in upholding standards.

Initiatives led by the Evidence-Based Toxicology Collaboration (EBTC) advocate for concrete editorial actions [11]. Key recommendations include:

- Mandating protocol registration prior to review commencement.

- Requiring adherence to PRISMA reporting guidelines.

- Employing reviewers with specific methodological expertise in systematic reviews, not just subject matter knowledge.

- Promoting registered reports, where the protocol undergoes peer review and in-principle acceptance before the review is conducted, safeguarding against publication bias [11].

These measures aim to ensure that the label "systematic review" signifies a truly rigorous and trustworthy evidence synthesis.

Systematic review methodology has become an indispensable component of evidence-based toxicology, providing a structured and transparent alternative to narrative reviews. Its role in informing regulatory decisions, supporting AOP development, and integrating diverse evidence streams will only grow in importance.

Key challenges for the future include:

- Methodological Harmonization: Continued development of toxicology-specific guidelines for evidence assessment and integration, building on frameworks like GRADE and OHAT [13] [14].

- Balancing Rigor with Efficiency: Developing "right-sized" or tiered approaches, such as systematic evidence maps, that match the method's depth to the assessment's needs without compromising core principles of transparency [12].

- Effective Technology Integration: Establishing best practices for incorporating AI tools to augment—not replace—human expertise, ensuring gains in speed do not come at the cost of validity [12].

- Education and Training: Building systematic review competency into toxicology graduate curricula and professional training to build capacity within the field [12].

Ultimately, the systematic review is more than a literature summarization tool; it is a fundamental research methodology for testing hypotheses using existing evidence. By committing to its rigorous and transparent application, the toxicology community can strengthen the scientific foundation of public health and environmental protection decisions worldwide.

Traditional toxicological risk assessment, reliant on animal testing and simplistic in vitro models, faces critical limitations including prolonged timelines, high costs, interspecies translational uncertainty, and ethical concerns [15]. This whitepaper delineates the key evidence-based drivers revolutionizing the field: computational artificial intelligence (AI), New Approach Methodologies (NAMs), and integrated data ecosystems. These paradigms shift toxicology from observational, apical endpoint-driven science to a predictive, mechanistic, and human-relevant discipline. We provide a technical guide to the core methodologies, experimental protocols, and essential tools underpinning this transformation, framing them within the overarching thesis that future chemical safety assessment will be driven by the convergence of in silico prediction, in vitro mechanistics, and curated in vivo evidence.

The Foundational Shift: From Traditional to Evidence-Based Toxicology

Traditional toxicology has operated on a paradigm of high-dose, long-term animal studies (e.g., 90-day or 2-year rodent bioassays) to identify adverse effects like organ pathology or tumor formation [16]. The statistical analysis of such data relies on established methods for comparing dose groups, with the choice between parametric (e.g., Williams, Dunnett tests) and non-parametric (e.g., Shirley-Williams, Steel tests) approaches depending on data distribution and study design [17]. However, this framework is increasingly misaligned with modern needs for human relevance, speed, and mechanistic depth [15].

The core limitations driving change are:

- Translational Uncertainty: Interspecies differences compromise human risk prediction.

- Time and Cost: Assessing a single compound can require years and millions of dollars [15].

- Throughput Inadequacy: The chemical universe contains tens of thousands of data-poor substances that cannot be evaluated with traditional methods [18].

- Mechanistic Opacity: Apical endpoints provide limited insight into molecular initiating events and toxicity pathways.

The emergent thesis of evidence-based toxicology integrates three pillars to overcome these hurdles: (1) AI-driven computational models for prioritization and prediction, (2) human-relevant in vitro and short-term in vivo NAMs for mechanistic insight, and (3) curated, accessible data repositories to fuel and validate the first two pillars.

Computational Toxicology: AI and Knowledge Graphs

Computational models offer a high-throughput, cost-effective alternative for hazard prioritization and risk prediction [15]. Moving beyond traditional Quantitative Structure-Activity Relationship (QSAR) models, Graph Neural Networks (GNNs) and knowledge graphs represent the cutting edge.

Methodological Advance: Knowledge Graph-Enhanced Graph Neural Networks

Recent breakthroughs involve integrating heterogeneous biological knowledge graphs with GNNs. A 2025 study constructed a Toxicological Knowledge Graph (ToxKG) from ComptoxAI, PubChem, Reactome, and ChEMBL, encompassing entities like chemicals, genes, pathways, and assays [19]. This graph, rich with relationships such as CHEMICAL-BINDS-GENE and GENE-IN-PATHWAY, provides mechanistic context that pure molecular structure lacks.
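To make the idea of relationship-based context concrete, the following minimal sketch stores a handful of triples in the CHEMICAL-BINDS-GENE and GENE-IN-PATHWAY style and collects the neighborhood of one compound. It is an illustration only: the node identifiers, the `TRIPLES` list, and the helper names `build_index` and `extract_subgraph` are hypothetical and are not taken from ToxKG or ComptoxAI, which are stored in Neo4j rather than in Python lists.

```python
from collections import defaultdict

# Hypothetical toy triples in (head, relation, tail) form; all identifiers are placeholders.
TRIPLES = [
    ("chem:A", "CHEMICAL-BINDS-GENE", "gene:AR"),
    ("chem:A", "CHEMICAL-BINDS-GENE", "gene:ESR1"),
    ("gene:AR", "GENE-IN-PATHWAY", "pathway:P1"),
    ("gene:ESR1", "GENE-IN-PATHWAY", "pathway:P2"),
    ("chem:B", "CHEMICAL-BINDS-GENE", "gene:ESR1"),
]


def build_index(triples):
    """Index outgoing edges by head node for fast neighbor lookup."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[head].append((relation, tail))
    return index


def extract_subgraph(index, start, max_hops=2):
    """Return all triples reachable from `start` within `max_hops` edges."""
    frontier, seen, edges = [start], {start}, []
    for _ in range(max_hops):
        next_frontier = []
        for node in frontier:
            for relation, tail in index.get(node, []):
                edges.append((node, relation, tail))
                if tail not in seen:
                    seen.add(tail)
                    next_frontier.append(tail)
        frontier = next_frontier
    return edges


if __name__ == "__main__":
    for edge in extract_subgraph(build_index(TRIPLES), "chem:A"):
        print(edge)
```

A two-hop neighborhood is used here because it reaches from a chemical through its genes to their pathways; a real pipeline would choose the hop limit and relation types to match the graph schema.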

Experimental Protocol: Knowledge Graph-Enhanced Toxicity Prediction [19]

- Data Curation: Obtain the Tox21 dataset (7,831 compounds across 12 assay targets covering nuclear receptor and stress response pathways). Filter compounds to those with definitive labels and corresponding PubChem IDs.

- Knowledge Graph (ToxKG) Construction:

- Import and extend the ComptoxAI knowledge graph into a Neo4j database.

- Standardize chemical identifiers to PubChem CIDs.

- Enrich pathway data from Reactome and compound-gene interactions from ChEMBL.

- Prune redundant relationships to optimize graph structure.

- Feature Integration: For each compound, extract its subgraph from ToxKG (including connected gene and pathway nodes). Combine this relational information with traditional molecular fingerprints (e.g., ECFP4, Morgan).

- Model Training & Evaluation:

- Implement and train heterogeneous GNN models (e.g., R-GCN, HGT, GPS) as well as homogeneous GNN baselines (e.g., GCN, GAT).

- Address class imbalance via a reweighting strategy, assigning higher loss weights to the minority (toxic) class.

- Evaluate using stratified k-fold cross-validation, reporting AUC-ROC, F1-score, Accuracy (ACC), and Balanced Accuracy (BAC).

This approach outperformed structure-only baselines in the cited study. For instance, the GPS model achieved an AUC of 0.956 on the NR-AR (androgen receptor) task, well above models using only structural features [19]. This underscores the value of biological mechanism information.
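The class reweighting and Balanced Accuracy steps of the protocol can be sketched in a few lines. The example below assumes inverse-frequency weights, which is one common reweighting scheme and not necessarily the one used in [19]; the function names are illustrative.

```python
def class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count).

    Assumption: this is one common reweighting scheme, chosen for illustration.
    """
    counts = {}
    for y in labels:
        counts[y] = counts.get(y, 0) + 1
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * c) for cls, c in counts.items()}


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (1 = toxic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    pos = sum(1 for t in y_true if t == 1)
    neg = len(y_true) - pos
    return 0.5 * (tp / pos + tn / neg)


def accuracy(y_true, y_pred):
    """Plain accuracy, shown for contrast with balanced accuracy."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)
```

On an imbalanced set, a model that predicts "non-toxic" for every compound can score high on plain accuracy while its balanced accuracy stays at 0.5, which is why both metrics are reported.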

Quantitative Performance of AI Models

Table 1: Performance Comparison of Toxicological Prediction Models

| Model Type | Key Features | Reported Performance (AUC-ROC) | Primary Advantage |

|---|---|---|---|

| Traditional QSAR/RF [15] | Molecular descriptors, fingerprints | Variable (often 0.7-0.85) | Established, interpretable |

| Graph Neural Network (GCN) [20] | Molecular graph structure | Baseline ~0.73 | Captures structural topology |

| GNN with Few-Shot Learning [20] | Structural data + adversarial augmentation | 0.816 (11.4% improvement) | Effective with limited data |

| Heterogeneous GNN (GPS) with ToxKG [19] | Integrated chemical-gene-pathway knowledge | 0.956 (NR-AR task) | Mechanistic interpretability, high accuracy |

Diagram 1: Knowledge Graph-Enhanced GNN for Predictive Toxicology

New Approach Methodologies (NAMs): In Vitro and Short-Term In Vivo Systems

NAMs encompass non-animal and human-relevant testing strategies, including microphysiological systems and omics technologies [15].

Organ-on-a-Chip (OoC) Platforms

OoC devices emulate human organ-level physiology, cellular microenvironment, and multi-organ crosstalk, offering a more predictive alternative to static 2D cell cultures [15]. These platforms can model the dynamic exposure and metabolic responses seen in humans.

Omics-Enhanced Short-Term Studies

Integrating transcriptomics, proteomics, and metabolomics into short-term (5-28 day) in vivo studies enables the detection of molecular perturbations long before the onset of traditional apical pathology [16]. This allows for the derivation of Molecular Points of Departure (mPODs), which are often within a factor of 2-3 of traditional PODs, demonstrating strong concordance [16].

Experimental Protocol: Deriving a Transcriptomic Point of Departure (tPOD) [16]

- Study Design: Conduct a 5-day repeated oral dose study in rats (per EPA's Transcriptomic Assessment Product (ETAP) program framework). Include a vehicle control and multiple dose groups.

- Tissue Sampling & Analysis: Harvest relevant target organs (e.g., liver, kidney). Extract total RNA for RNA-sequencing (RNA-seq).

- Bioinformatics Processing:

- Map sequence reads to a reference genome and quantify gene expression.

- Identify differentially expressed genes (DEGs) for each dose group vs. control using a standardized pipeline (e.g., Regulatory Omics Data Analysis Framework - R-ODAF).

- Perform pathway enrichment analysis (e.g., GO, KEGG) on DEGs.

- Benchmark Dose (BMD) Modeling:

- For significant pathways or key genes, fit dose-response models using software like BMDExpress.

- Calculate the Benchmark Dose (BMD) for a predefined Benchmark Response (BMR), typically a 1 standard deviation change.

- The tPOD is defined as the lowest median BMD (lower 95% confidence limit) across the set of sensitive, toxicologically relevant pathways.

- Validation: Compare the derived tPOD to PODs from traditional endpoints (e.g., histopathology, clinical chemistry) from parallel or historical studies to assess concordance.
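The final aggregation and concordance steps can be illustrated with a short sketch. It assumes that gene-level BMD values have already been produced by dose-response software, takes the median per pathway, and selects the lowest pathway median as the tPOD. The `min_genes` filter, the pathway names, and all numbers are hypothetical, and the treatment of confidence limits (BMDL) is deliberately left out.

```python
from statistics import median


def pathway_median_bmds(gene_bmds_by_pathway, min_genes=3):
    """Median gene-level BMD per pathway, skipping sparsely populated pathways.

    `min_genes` is an illustrative filter, not a value taken from the source.
    """
    return {
        pathway: median(bmds)
        for pathway, bmds in gene_bmds_by_pathway.items()
        if len(bmds) >= min_genes
    }


def derive_tpod(gene_bmds_by_pathway, min_genes=3):
    """Return (pathway, BMD) for the lowest pathway-level median BMD."""
    medians = pathway_median_bmds(gene_bmds_by_pathway, min_genes)
    if not medians:
        raise ValueError("no pathway passed the minimum gene count filter")
    pathway = min(medians, key=medians.get)
    return pathway, medians[pathway]


def fold_difference(tpod, apical_pod):
    """Symmetric fold difference between a tPOD and a traditional POD."""
    return max(tpod, apical_pod) / min(tpod, apical_pod)


if __name__ == "__main__":
    # Hypothetical gene-level BMDs in mg/kg/day.
    example = {
        "pathway_A": [4.0, 6.0, 10.0],
        "pathway_B": [2.0, 3.0, 9.0],
        "pathway_C": [1.0],  # dropped: fewer than min_genes
    }
    name, tpod = derive_tpod(example)
    print(name, tpod, fold_difference(tpod, 7.5))
```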

Diagram 2: Multi-Omics Workflow for Mechanistic Point of Departure

Quantitative Data on NAMs Performance

Table 2: Characteristics of Advanced Toxicological Testing Modalities

| Methodology | Typical Duration | Key Endpoint | Human Relevance | Primary Application |

|---|---|---|---|---|

| Traditional 90-day Rodent Study [16] | 3+ months | Apical pathology (organ weight, histology) | Low (interspecies extrapolation) | Regulatory requirement, chronic hazard ID |

| Organ-on-a-Chip (OoC) [15] | Days to weeks | Cellular function, barrier integrity, cytokine release | High (human cells, tissue mechanics) | Mechanistic screening, ADME/tox |

| Omics-Enhanced Short-Term In Vivo [16] | 5-28 days | Genome-wide expression changes, pathway perturbation | Moderate-High (bridging animal to human pathways) | Deriving mPODs, mode-of-action analysis |

| In vitro Assay Battery [18] | Hours to days | Specific target activity (e.g., receptor binding) | Variable (depends on assay) | High-throughput screening, prioritization |

Integrated Data Ecosystems: The Fuel for Innovation

The advancement of computational and experimental NAMs is contingent upon access to high-quality, curated toxicological data. Fragmented and non-standardized data remains a major bottleneck [18].

Curated Databases: ToxValDB

The Toxicity Values Database (ToxValDB) v9.6.1 exemplifies the necessary data infrastructure. It is a curated compilation of in vivo toxicity study results (e.g., LOAEL, NOAEL), derived toxicity values, and exposure guidelines [18].

- Scale: Contains 242,149 records covering 41,769 unique chemicals from 36 sources [18].

- Structure: A two-phase process ensures quality: a "Curation" phase preserving original data and a "Standardization" phase mapping all entries to a common vocabulary and format [18].

- Utility: Serves as a critical resource for chemical screening, QSAR model training and validation, and benchmarking NAMs against traditional data [18].

Table 3: Contents and Applications of the ToxValDB Database (v9.6.1) [18]

| Data Category | Record Count (Example) | Key Fields | Primary Research Applications |

|---|---|---|---|

| In Vivo Toxicity Results | Summary values from animal studies | Chemical ID, Dose, Effect, Target Organ, LOAEL/NOAEL | Benchmarking NAMs, Read-across, Chemical prioritization |

| Derived Toxicity Values | Human-equivalent reference doses/values | Value, Uncertainty Factors, Critical Effect | Risk assessment, Screening-level safety evaluation |

| Media Exposure Guidelines | Regulatory limits (e.g., MCLs in water) | Medium, Guideline Value, Authority | Exposure context, Comparative risk analysis |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Resources for Modern Toxicology Research

| Item | Category | Function in Research | Example/Source |

|---|---|---|---|

| Tox21 Dataset | Curated Biological Data | Provides standardized in vitro bioactivity data across 12 targets for model training and validation [19]. | NIH/EPA Tox21 Program |

| Toxicological Knowledge Graph (ToxKG) | Data/Software Resource | Supplies structured mechanistic prior knowledge (chemical-gene-pathway) to enhance AI model accuracy and interpretability [19]. | Extended from ComptoxAI [19] |

| ToxValDB | Curated Database | Offers standardized, searchable in vivo and derived toxicity data for benchmarking, modeling, and assessment [18]. | U.S. EPA Center for Computational Toxicology |

| BMDExpress Software | Bioinformatics Tool | Performs benchmark dose modeling on transcriptomic or other high-throughput data to derive quantitative points of departure [16]. | U.S. National Toxicology Program |

| Organ-on-a-Chip Kits | In Vitro System | Emulates human organ/tissue physiology for mechanistic toxicity and efficacy testing in a controlled microenvironment [15]. | Commercial providers (e.g., Emulate, Mimetas) |

| Ultra-high-throughput RNA-seq Kits | Omics Reagent | Enables scalable, cost-effective transcriptomic profiling from low-input samples (e.g., from short-term studies or microtissues) [16]. | e.g., DRUG-seq, BRB-seq protocols |

The limitations of traditional toxicological assessment are being decisively addressed by a synergistic triad of key drivers: (1) AI and Knowledge Graphs for predictive and interpretative computational modeling, (2) NAMs (OoC, omics) for human-relevant, mechanistic biological insight, and (3) Integrated Data Ecosystems (ToxValDB) that provide the foundational evidence for training and validation. The future lies in precision toxicology, where these elements converge within tiered testing and probabilistic risk assessment frameworks [15]. This will enable safety decisions based on a mechanistic understanding of human biology, drastically reducing time, cost, and animal use while improving the accuracy of human health protection. Success requires continued interdisciplinary collaboration, harmonization of bioinformatics pipelines, and proactive engagement with regulatory bodies to translate scientific innovation into accepted practice [15] [16].

The field of toxicology is undergoing a paradigm shift, moving from observational hazard identification towards a predictive, mechanism-based science. This evolution is fundamentally supported by the integration of two powerful methodological frameworks: evidence-based toxicology (EBT) and mechanistic validation constructs like the Adverse Outcome Pathway (AOP) framework [21]. For researchers and drug development professionals, this convergence provides a robust scaffold for assessing diagnostic tests and validating toxicological mechanisms with greater transparency, reproducibility, and regulatory acceptance.

Evidence-based methods, adapted from clinical medicine, introduce systematic review, evidence mapping, and structured certainty assessment (e.g., GRADE) to toxicology [21]. Concurrently, the AOP framework organizes knowledge into a sequence of measurable Key Events (KEs), from a Molecular Initiating Event (MIE) to an Adverse Outcome (AO), facilitating the integration of in vitro, in silico, and traditional in vivo data [21]. This whitepaper details the core methodologies for diagnostic test assessment within this integrated paradigm, providing technical protocols and frameworks essential for advancing new approach methodologies (NAMs) in regulatory decision-making [22].

Conceptual Framework: Integrating Systematic Review with Mechanistic Pathways

The synergistic integration of systematic review methodology and the AOP framework creates a rigorous foundation for mechanistic validation. The process is not linear but iterative, where systematic evidence synthesis informs and refines the biological pathway, and the pathway, in turn, guides targeted evidence gathering.

Table: Core Components of the Integrated Evidence-Mechanism Framework

| Component | Definition | Role in Assessment |

|---|---|---|

| Systematic Review (SR) | A structured, protocol-driven method to identify, select, appraise, and synthesize all available evidence on a specific question. | Ensures comprehensiveness, minimizes bias, and provides a transparent audit trail for the evidence base supporting an AOP or test method [21]. |

| Adverse Outcome Pathway (AOP) | A conceptual framework that describes a sequential chain of causally linked key events at different biological levels leading to an adverse outcome. | Serves as the organizing template for mechanistic data, defining measurable KEs that become targets for diagnostic test development [21]. |

| Certainty Assessment (e.g., GRADE) | A system to rate the confidence in the body of evidence (e.g., high, moderate, low, very low) based on risk of bias, consistency, directness, and precision. | Applied to the evidence supporting each Key Event Relationship (KER) within an AOP, quantifying the confidence in the proposed mechanistic linkage [21]. |

| Context of Use (CoU) | A precise description of the manner and purpose of use for a test method or AOP within a regulatory decision process. | Defines the specific boundaries and applicability of a validated method or pathway, which is critical for regulatory qualification [22]. |

The integrated workflow begins with defining a precise problem formulation (e.g., "Does chemical X induce liver fibrosis via activation of the PPARα receptor?"). A systematic evidence map is then created to identify all relevant studies. Key findings are mapped onto a proposed AOP, linking the molecular interaction (MIE) to cellular, organ, and organism-level events. The strength and weight of evidence for each KER are formally evaluated. This structured, evidence-anchored AOP directly informs the development and validation of diagnostic tests—such as in vitro assays or biomarker measurements—that are designed to measure specific, critical KEs within the pathway [21].

Diagram 1: Integrated Framework for Evidence-Based Mechanistic Validation [21]

Methodologies for Diagnostic Test Assessment and Statistical Evaluation

Foundational Statistical Principles for Toxicity Data Analysis

The assessment of data generated by diagnostic tests in toxicology requires careful statistical planning from the experimental design stage. A core principle is the selection between parametric and nonparametric methods, which hinges on the distribution of the data [17].

Table: Guide to Statistical Method Selection for Quantitative Data in Toxicology

| Study Design & Purpose | Parametric Method (Assumes Normal Distribution) | Nonparametric Method (No Distribution Assumption) | Key Considerations |

|---|---|---|---|

| Compare one control vs. multiple dose groups, expecting a monotonic dose-response. | Williams' Test (step-down test for monotonic trends) | Shirley-Williams Test | Powerful for detecting dose-related trends. Preferred over pairwise comparisons if a monotonic trend is biologically plausible. |

| Compare one control vs. multiple dose groups, with no expectation of a dose-response direction. | Dunnett's Test (compares each treatment to a common control) | Steel's Test | Controls the experiment-wise Type I error rate when the only comparisons of interest are to the control. |

| All pairwise comparisons among all groups. | Tukey's Honestly Significant Difference (HSD) Test | Steel-Dwass Test | Appropriate when interest lies in comparing every group to every other group. More conservative for control comparisons than Dunnett's. |

| A small number of pre-specified, planned comparisons. | Bonferroni-adjusted t-test | Bonferroni-adjusted Wilcoxon Test | Simple method. Can be overly conservative (low power) if the number of comparisons is large. |

Parametric methods (e.g., Student's t-test, ANOVA) assume data follow a normal (bell-shaped) distribution and are generally more powerful when this assumption holds. Nonparametric methods (e.g., Wilcoxon rank-sum, Kruskal-Wallis) convert data to ranks and make no distributional assumptions. They are robust to outliers and skewed data (common in toxicology for endpoints like serum enzyme levels) and can be applied to ordinal categorical data (e.g., histopathology severity scores: -, +, ++, +++). A critical disadvantage of nonparametric methods is a severe loss of statistical power with very small sample sizes (n < 7 per group), making them less suitable for studies using large animals like dogs or non-human primates [17].

Managing Multiplicity and Experimental Workflow

A critical methodological error is the repeated application of simple tests (like multiple t-tests) without adjustment, which inflates the family-wise Type I error rate (false positive rate). For example, three independent unadjusted comparisons, each at α=0.05, together carry an approximately 14% chance (1 − 0.95³ ≈ 0.143) of at least one false-positive result [17]. Multiple comparison procedures, as listed in the table above, control this experiment-wise error rate.
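The inflation figure quoted above follows from the formula 1 − (1 − α)^k for k independent tests. The short sketch below reproduces it and shows the effect of a Bonferroni adjustment; independence of the comparisons is an assumption of the formula, and comparisons that share a control group are not strictly independent.

```python
def familywise_error_rate(alpha, n_comparisons):
    """Probability of at least one false positive across independent tests."""
    return 1.0 - (1.0 - alpha) ** n_comparisons


def bonferroni_alpha(alpha, n_comparisons):
    """Per-comparison significance level under a Bonferroni adjustment."""
    return alpha / n_comparisons


if __name__ == "__main__":
    k = 3
    unadjusted = familywise_error_rate(0.05, k)
    adjusted = familywise_error_rate(bonferroni_alpha(0.05, k), k)
    print(f"unadjusted: {unadjusted:.4f}, Bonferroni-adjusted: {adjusted:.4f}")
```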

The experimental workflow for validating a diagnostic test, such as a novel in vitro assay for a KE, must be pre-defined and locked in a protocol. The following diagram outlines a robust, phase-based workflow that aligns with best practices for test development and qualification [23] [22].

Diagram 2: Phased Experimental Workflow for Diagnostic Test Validation

Protocols for Mechanistic Validation within an AOP Context

Validating the mechanistic role of a diagnostic test's target requires demonstrating its place within a causal biological sequence. The following provides a detailed protocol for experimental validation of a Key Event Relationship (KER).

Protocol: Establishing a Causal Key Event Relationship

- Objective: To provide empirical evidence that modulation of Key Event A (KEA) directly and predictably alters Key Event B (KEB), supporting a hypothesized KER within an AOP.

- Experimental Design: Utilize a minimum of two complementary intervention strategies (e.g., chemical inhibitor, genetic knockdown/knockout, activating agent) to modulate KEA in a relevant in vitro or in vivo test system. Include appropriate negative and positive controls.

- Measurement: Employ the diagnostic test(s) to quantitatively measure KEA. Use a separate, orthogonal method to quantitatively measure KEB.

- Analysis:

- Dose-Response/Temporal Concordance: Demonstrate that the magnitude or timing of change in KEB is concordant with the change in KEA across doses or time points.

- Statistical Correlation: Calculate correlation coefficients (e.g., Pearson's or Spearman's based on data distribution) between KEA and KEB measurements.

- Essentiality Assessment: If KEA is blocked, KEB should be prevented or significantly attenuated. If KEA is activated, KEB should be induced.

- Acceptance Criteria: The relationship meets the Bradford Hill considerations for causality (e.g., strength, consistency, specificity, temporality, biological gradient). Statistical significance (p < 0.05, using appropriate multiple comparison adjustment) should be achieved for the essentiality tests.
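For the statistical correlation step, a rank-based coefficient is often the safer choice when the relationship between two key events is monotonic but not linear. The sketch below implements Spearman's coefficient as Pearson's coefficient computed on average ranks, using only the standard library. The KEA/KEB readouts in the usage example are hypothetical.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson product-moment correlation coefficient."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    # Hypothetical readouts for KEA and KEB across five dose levels.
    kea = [1.0, 2.0, 3.0, 4.0, 5.0]
    keb = [2.0, 4.0, 8.0, 16.0, 32.0]
    print(pearson(kea, keb), spearman(kea, keb))
```

With a monotonic but nonlinear response such as this one, Spearman's coefficient reaches 1 while Pearson's stays below it, which illustrates why the choice should follow the data distribution.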

This empirical validation is embedded within the broader AOP, which can be modeled as a network. For example, a simplified AOP for receptor-mediated liver fibrosis illustrates how validated diagnostic tests for each KE form the basis of an integrated testing strategy [21].

Diagram 3: Example AOP for Liver Fibrosis with Associated Diagnostic Tests

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing these methodologies requires a suite of reliable research tools. The following table details essential reagent solutions for experimental work in mechanistic toxicology and diagnostic test validation.

Table: Key Research Reagent Solutions for Mechanistic Validation Studies

| Reagent / Solution Category | Specific Examples | Primary Function in Validation |

|---|---|---|

| Validated Reference Chemicals | Prototypical agonists/antagonists for target receptors (e.g., WY-14643 for PPARα), cytotoxicants, genotoxicants. | Serve as positive and negative controls in assays to establish expected response, demonstrate assay sensitivity and specificity, and anchor results to known biology [22]. |

| Stable Engineered Cell Lines | Reporter gene cells (e.g., luciferase under control of a stress response element), isogenic knockout lines (CRISPR/Cas9), cells overexpressing a human metabolizing enzyme. | Provide consistent, reproducible test systems to isolate and study specific MIEs or KEs (e.g., receptor activation, essentiality of a gene) [21] [22]. |

| High-Quality Antibodies & Probes | Phospho-specific antibodies, monoclonal antibodies for biomarkers (e.g., α-SMA for stellate cells), fluorescent activity-based probes. | Enable precise, quantitative measurement of specific molecular KEs (e.g., protein phosphorylation, biomarker expression, enzyme activity) in immunoassays or imaging. |

| Standardized In Vitro Systems | 3D reconstructed human epidermis (OECD TG 439), liver spheroids, microphysiological systems (organ-on-a-chip). | Provide more physiologically relevant models for assessing higher-level KEs (e.g., tissue barrier disruption, organ-level toxicity) as alternatives to animal models [22]. |

| Quantitative PCR & NGS Assays | TaqMan assays for stress response genes, RNA-Seq panels for pathway analysis, digital PCR for low-abundance targets. | Measure transcriptional KEs, validate pathway modulation, and provide mechanistic anchoring for phenotypic observations. |

| Software for Data Analysis & Modeling | Statistical packages (e.g., R, SAS JMP), pathway mapping tools (e.g., AOP-Wiki), computational toxicology suites (e.g., for QSAR, read-across). | Perform rigorous statistical analysis (multiple comparisons, dose-response modeling), manage AOP knowledge, and support in silico validation and extrapolation [21] [17] [22]. |

Regulatory Implementation and Qualification Pathways

The ultimate goal of these methodologies is to generate credible evidence for use in regulatory decision-making. Agencies like the U.S. FDA have established formal qualification programs for new alternative methods. Qualification is a voluntary, collaborative process where a test developer works with the agency to demonstrate and agree that a method is scientifically valid for a specific Context of Use (CoU) [22].

Key FDA programs include the ISTAND (Innovative Science and Technology Approaches for New Drugs) Pilot Program for novel drug development tools and the Medical Device Development Tool (MDDT) program [22]. A successful qualification submission is built upon the methodologies described in this document: a clearly defined CoU anchored in a mechanistic framework (AOP), comprehensive validation data following phased experimental protocols, and a robust statistical analysis plan. This rigorous, evidence-based approach is essential for gaining regulatory acceptance and transitioning new diagnostic tests and mechanistic assays from research tools to trusted components of product safety and risk assessment [23] [22].

Applied Methodologies: Predictive Tools, NAMs, and Integrative Omics in Practice

Modern toxicology is undergoing a paradigm shift from descriptive, observation-based animal studies toward predictive, mechanistically anchored frameworks. This evolution is driven by the ethical imperative of the 3Rs (Replacement, Reduction, and Refinement), the need for human-relevant data, and the demand for faster, more cost-effective safety assessments [24] [25]. New Approach Methodologies (NAMs) represent this new paradigm, encompassing a broad suite of non-animal methods including advanced in vitro assays, complex tissue models, and in silico computational tools [25].

The core thesis of evidence-based toxicology posits that hazard and risk assessment should be built upon a robust, transparent, and mechanistically sound understanding of biological pathways. NAMs are the practical implementation of this thesis. They move beyond merely documenting adverse outcomes to elucidating molecular initiating events within adverse outcome pathways (AOPs). This whitepaper provides a technical guide for implementing integrated NAM strategies, detailing foundational in vitro assays, advanced complex models, predictive in silico tools, and the frameworks for their synthesis into a cohesive Integrated Approach to Testing and Assessment (IATA) [24] [26].

Foundational In Vitro Assays: Endpoints and Best Practices

Classical in vitro cytotoxicity assays form the methodological bedrock of cellular toxicology. They provide quantitative, reproducible data on fundamental cellular health parameters and continue to serve as regulatory benchmarks [24]. Their proper execution and interpretation are critical for any NAM-based testing strategy.

Table 1: Core Classical Cytotoxicity Assays and Protocols

| Assay Name | Primary Endpoint | Key Protocol Steps | Common Pitfalls & Mitigation |

|---|---|---|---|

| MTT/Tetrazolium Reduction | Mitochondrial dehydrogenase activity (metabolic capacity) [24]. | 1. Seed cells (5×10³–2×10⁴/well). 2. Apply test agent for treatment period. 3. Add MTT reagent (0.5 mg/mL), incubate 2-4 hours. 4. Solubilize formazan crystals (DMSO, isopropanol). 5. Measure absorbance at 570 nm [24]. | Non-specific reduction by test compounds; use "no-cell" blanks. Formazan insolubility; optimize solubilization protocol [24]. |

| LDH Release | Plasma membrane integrity (cytotoxicity) [24]. | 1. Treat cells in serum-free or low-serum medium. 2. Collect supernatant post-treatment. 3. Mix supernatant with NADH and pyruvate. 4. Monitor conversion of pyruvate to lactate (absorbance decrease at 340 nm) [24]. | High LDH background in serum; use serum-free media or heat-inactivated serum controls. Spontaneous leakage from stressed cells [24]. |

| Neutral Red Uptake (NRU) | Lysosomal function and cell viability [24]. | 1. Treat cells. 2. Incubate with Neutral Red dye (40 µg/mL) for 3 hours. 3. Rapidly wash cells. 4. Extract dye with destain solution (50% ethanol, 1% acetic acid). 5. Measure absorbance at 540 nm [24]. | pH sensitivity; maintain medium pH. False positives if test agent targets lysosomes [24]. |

| Resazurin Reduction (AlamarBlue) | Cellular metabolic activity (non-destructive) [24]. | 1. Treat cells. 2. Add resazurin reagent (10% v/v). 3. Incubate 1-4 hours, protected from light. 4. Measure fluorescence (Ex560/Em590) or absorbance (600 nm) [24]. | Signal saturation from high metabolic activity; shorten incubation time. Compound fluorescence interference [24]. |

Best Practice Guidelines: A key evidence-based principle is the use of multiparametric assessment. No single assay is universally reliable; combining at least two independent endpoints (e.g., MTT for metabolism and LDH for membrane integrity) mitigates assay-specific artifacts and provides a more robust viability profile [24]. Essential reporting standards include cell seeding density, passage number, medium composition, precise incubation times, and detailed data normalization methods (e.g., to untreated control and maximal lysis controls) [24]. For novel materials like nanomaterials, checking for assay interference—such as adsorption of chromogenic dyes—is mandatory [24].
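The normalization step mentioned above can be made explicit. The sketch below shows two common conventions, stated here as assumptions: blank-corrected viability relative to the untreated control (e.g., for MTT), and LDH-based cytotoxicity scaled between spontaneous release and maximal lysis. Function names and readings are illustrative. The LDH inputs are assumed to be activity values, not raw 340 nm absorbances.

```python
def percent_viability(sample_abs, untreated_abs, blank_abs):
    """Blank-corrected signal as a percentage of the untreated control."""
    return 100.0 * (sample_abs - blank_abs) / (untreated_abs - blank_abs)


def percent_cytotoxicity(sample_ldh, spontaneous_ldh, max_lysis_ldh):
    """LDH release scaled between spontaneous release (0%) and maximal lysis (100%).

    Inputs are LDH activity values (e.g., reaction rates), an assumption of this sketch.
    """
    return 100.0 * (sample_ldh - spontaneous_ldh) / (max_lysis_ldh - spontaneous_ldh)


if __name__ == "__main__":
    # Hypothetical plate-reader values for one treated well.
    print(percent_viability(sample_abs=0.55, untreated_abs=1.05, blank_abs=0.05))
    print(percent_cytotoxicity(sample_ldh=0.6, spontaneous_ldh=0.2, max_lysis_ldh=1.0))
```

Reporting both values for the same treatment is a simple form of the multiparametric assessment described above: a drop in metabolic signal without a matching rise in LDH release may point to an assay artifact or a cytostatic effect.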

Advanced In Vitro Models: Physiological Relevance through Complexity

To bridge the gap between simple monolayer cultures and human physiology, NAMs employ Complex In Vitro Models (CIVMs). These systems introduce critical elements like three-dimensional (3D) architecture, multiple cell types, and dynamic microenvironments, enabling more accurate modeling of tissue-specific functions and toxicities [27].

Organoids and 3D Culture Systems

Organoids are self-organizing 3D structures derived from pluripotent stem cells (PSCs) or adult stem cells (ASCs) that recapitulate key aspects of in vivo organ microanatomy and function [27]. Their generation hinges on three fundamental elements: cells, matrix, and media composition [27].

- Cell Sources: Pluripotent Stem Cells (PSCs, including iPSCs) are used to model embryonic development and can generate organoids from all germ layers. Adult Stem Cells (ASCs), like intestinal Lgr5+ cells, generate organoids that model tissue homeostasis and regeneration [27].

- Extracellular Matrix (ECM): Matrigel or other hydrogel scaffolds provide the necessary biophysical and biochemical cues for 3D growth and polarization [27].

- Directed Differentiation: Media is supplemented with precise combinations of growth factors and pathway modulators (e.g., Wnt agonists, BMP4, FGFs) to guide stem cell fate toward the target tissue [27].

Protocol: Hepatic Organoid Generation from iPSCs

- Maintenance: Culture human iPSCs in feeder-free conditions using mTeSR1 medium.

- Definitive Endoderm Induction: Dissociate iPSCs and aggregate into embryoid bodies. Culture for 3 days in RPMI with 100 ng/mL Activin A and 50 ng/mL Wnt3a.

- Hepatic Specification: Transfer aggregates to Matrigel-coated plates. Culture for 5 days in KnockOut DMEM with 20 ng/mL BMP4 and 10 ng/mL FGF2.

- Hepatoblast Expansion: Switch to HCM medium with 20 ng/mL HGF and 10 ng/mL FGF for 5 days.

- 3D Maturation: Embed cell clusters in Matrigel domes and culture in hepatocyte maturation medium (William's E medium with OSM, dexamethasone) for 10+ days to form functional, bile canaliculi-containing organoids [27].

Organ-on-a-Chip (OoC) Microphysiological Systems

OoC technology uses microfluidics to culture living cells in continuously perfused, micrometer-sized chambers, simulating the physiological mechanics and tissue-tissue interfaces of human organs [24]. A liver-on-a-chip, for example, may co-culture hepatocytes and endothelial cells in separate but connected channels, subjecting them to fluid shear stress and allowing for the analysis of metabolite exchange [24] [27].

Table 2: Advanced In Vitro Models for Target Organ Toxicity

| Model Type | Key Technical Features | Primary Toxicological Applications | Considerations |

|---|---|---|---|

| Patient-Derived Organoids (PDOs) | 3D culture from patient biopsies; retains genetic and phenotypic traits of the tumor/tissue [27]. | Personalized drug efficacy and toxicity screening; modeling inter-individual variability. | Throughput can be limited; variable success rates for establishment. |

| Liver-on-a-Chip | Microfluidic perfusion; often includes Kupffer and stellate cell co-culture; fluid shear stress [24]. | Hepatotoxicity, chronic toxicity (steatosis, fibrosis), metabolite-mediated toxicity. | Higher technical complexity and cost than static cultures. |

| Kidney Proximal Tubule-on-a-Chip | Porous membrane separating luminal and interstitial channels; active fluid flow and shear [24]. | Nephrotoxicity, drug transporter interactions, biomarker release (e.g., KIM-1). | Requires specialized equipment for pumping and flow control. |

In Silico Prediction Models: Computational Toxicology Tools

In silico NAMs use computational tools to predict toxicity from chemical structure, existing data, or in vitro results. They are essential for high-throughput prioritization, mechanism elucidation, and quantitative extrapolation [28] [8].

Core Methodologies and Protocols

Quantitative Structure-Activity Relationship (QSAR): QSAR models correlate numerical descriptors of molecular structure (e.g., logP, molecular weight, topological indices) with a quantitative biological activity [28]. The OECD QSAR Validation Principles provide a standard development framework [29].

- Protocol for QSAR Model Development & Validation:

a. Curate Data: Assemble a high-quality dataset of chemicals with associated toxicity endpoint data.

b. Calculate Descriptors: Use software (e.g., PaDEL, Dragon) to compute molecular descriptors.

c. Split Data: Divide into a training set (≥70%) and an external test set (≤30%).

d. Model Building: Apply algorithms (e.g., Partial Least Squares, Random Forest) to the training set.

e. Internal Validation: Use cross-validation on the training set to detect and limit overfitting.

f. External Validation: Apply the finalized model to the held-out test set to assess predictive power [28] [26].
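Steps c through f of the protocol above can be illustrated with a minimal Python sketch. It is not a production QSAR workflow: it assumes a single descriptor (logP), a synthetic endpoint generated purely for illustration, and ordinary least squares standing in for the Partial Least Squares or Random Forest algorithms named in the protocol. All function and variable names are hypothetical.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (one descriptor)."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def r_squared(observed, predicted):
    """Coefficient of determination relative to the mean of the observed values."""
    mean_obs = statistics.fmean(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def cross_validated_q2(xs, ys, k=5):
    """Step e: k-fold cross-validation using the training set only."""
    predicted = [0.0] * len(xs)
    for fold in range(k):
        held_out = set(range(fold, len(xs), k))
        fit_x = [x for i, x in enumerate(xs) if i not in held_out]
        fit_y = [y for i, y in enumerate(ys) if i not in held_out]
        intercept, slope = fit_line(fit_x, fit_y)
        for i in held_out:
            predicted[i] = intercept + slope * xs[i]
    return r_squared(ys, predicted)


# Synthetic stand-in for steps a and b (NOT real data): logP vs. a made-up endpoint.
rng = random.Random(0)
logp = [rng.uniform(-1.0, 6.0) for _ in range(100)]
endpoint = [1.5 + 0.6 * x + rng.gauss(0.0, 0.3) for x in logp]

# Step c: random 70/30 split into training and external test sets.
indices = list(range(len(logp)))
rng.shuffle(indices)
train_idx, test_idx = indices[:70], indices[70:]
x_train = [logp[i] for i in train_idx]
y_train = [endpoint[i] for i in train_idx]
x_test = [logp[i] for i in test_idx]
y_test = [endpoint[i] for i in test_idx]

# Steps d and e: fit on the training set and cross-validate there.
intercept, slope = fit_line(x_train, y_train)
q2_internal = cross_validated_q2(x_train, y_train)

# Step f: the finalized model is applied once to the held-out test set.
r2_external = r_squared(y_test, [intercept + slope * x for x in x_test])
print(f"Q2 (5-fold CV) = {q2_internal:.2f}, R2 (external) = {r2_external:.2f}")
```

The point of the structure is that the test set is never touched until the model is final; a large gap between the cross-validated and external statistics would suggest overfitting or a test set outside the model's applicability domain.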

Physiologically Based Pharmacokinetic (PBPK) Modeling & QIVIVE: PBPK models are mathematical representations of the absorption, distribution, metabolism, and excretion (ADME) of a chemical in the body. When coupled with in vitro bioactivity data, they enable Quantitative In Vitro to In Vivo Extrapolation (QIVIVE) [24] [29].

- Protocol for High-Throughput Toxicokinetics (HTTK) with QIVIVE:

a. Obtain In Vitro Bioactivity: Determine the AC50 or LOAEL in a relevant human cell assay.

b. Estimate TK Parameters: Use in vitro assays or QSAR models to obtain parameters such as intrinsic hepatic clearance (Clint) and the fraction unbound in plasma (fup) [29].

c. Configure PBPK Model: Use a generic human HTTK model (e.g., in the R package httk).

d. Run Reverse Dosimetry: Input the in vitro bioactive concentration. The model iteratively calculates the external human equivalent dose (HED) required to produce that tissue concentration [29].
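The reverse dosimetry step can be sketched in Python under simplifying assumptions. This is not the httk package's API or its PBPK model: it uses the simple steady-state plasma concentration equation commonly applied in high-throughput reverse dosimetry, assumes complete oral absorption, and relies on linear kinetics so that a single scaling replaces the iterative search. The physiological constants (glomerular filtration rate, liver blood flow, body weight) are round illustrative values, and the chemical parameters in the example are hypothetical.

```python
def css_um_per_unit_dose(clint_l_per_h, fup, mw_g_per_mol,
                         gfr_l_per_h=6.7, q_liver_l_per_h=90.0,
                         body_weight_kg=70.0):
    """Steady-state plasma concentration (uM) for a dose of 1 mg/kg/day.

    Assumes 100% oral absorption and whole-body clearances in L/h.
    """
    dose_rate_mg_per_h = 1.0 * body_weight_kg / 24.0
    renal_clearance = gfr_l_per_h * fup
    hepatic_clearance = (q_liver_l_per_h * fup * clint_l_per_h) / (
        q_liver_l_per_h + fup * clint_l_per_h
    )
    css_mg_per_l = dose_rate_mg_per_h / (renal_clearance + hepatic_clearance)
    # mg/L divided by g/mol gives mmol/L; multiply by 1000 for umol/L.
    return css_mg_per_l / mw_g_per_mol * 1000.0


def oral_equivalent_dose(ac50_um, css_um_per_mgkgday):
    """Dose (mg/kg/day) whose steady-state plasma level equals the AC50.

    Valid only while kinetics are linear, so concentration scales with dose.
    """
    return ac50_um / css_um_per_mgkgday


# Hypothetical chemical: values chosen for illustration only.
css = css_um_per_unit_dose(clint_l_per_h=20.0, fup=0.1, mw_g_per_mol=300.0)
hed = oral_equivalent_dose(ac50_um=10.0, css_um_per_mgkgday=css)
print(f"Css per 1 mg/kg/day = {css:.2f} uM; equivalent dose = {hed:.2f} mg/kg/day")
```

A full PBPK model would replace `css_um_per_unit_dose` with a simulated plasma or tissue concentration, but the inversion logic (find the external dose that reproduces the bioactive concentration) stays the same.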

Consensus Modeling to Improve Predictivity

A major challenge is discordance between different in silico tools. Consensus or ensemble modeling combines predictions from multiple individual models to generate a single, often more accurate and robust prediction [26].

Methodology: For a given chemical and endpoint (e.g., estrogen receptor binding), predictions are gathered from several models (e.g., VEGA, ADMETLab, Danish QSAR). The final call is determined by a weighted average or majority vote, where weights can be based on each model's performance metrics (e.g., sensitivity, applicability domain coverage) [30] [26]. This approach smooths individual model errors and expands the covered chemical space [26].
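The weighted-vote scheme described above can be sketched as follows. This is an illustrative Python example, not the procedure used in the cited studies: the weights, the 0.5 decision threshold, and the handling of out-of-domain predictions (simply excluded from the vote) are assumptions, and the example calls are invented.

```python
def consensus_call(predictions, weights, threshold=0.5):
    """Combine binary model calls into one weighted consensus call.

    predictions: model name -> True (active), False (inactive), or None
                 (chemical outside that model's applicability domain).
    weights:     model name -> non-negative weight, e.g. a performance metric.
    Returns (call, score), where score is the weighted fraction of "active" votes.
    """
    usable = {name: call for name, call in predictions.items() if call is not None}
    if not usable:
        return None, 0.0  # no model covers this chemical
    total_weight = sum(weights[name] for name in usable)
    active_weight = sum(weights[name] for name, call in usable.items() if call)
    score = active_weight / total_weight
    return score >= threshold, score


# Hypothetical estrogen receptor binding calls and weights (illustration only).
example_predictions = {"VEGA": True, "ADMETLab": False, "Danish QSAR": True, "Opera": None}
example_weights = {"VEGA": 0.8, "ADMETLab": 0.7, "Danish QSAR": 0.9, "Opera": 0.85}

call, score = consensus_call(example_predictions, example_weights)
print(f"Consensus call: {call} (weighted active fraction = {score:.2f})")
```

Setting all weights to the same value reduces this to a simple majority vote, and returning the score alongside the call keeps borderline cases visible for expert review.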

Table 3: Selected In Silico Tools for Toxicity Prediction

| Tool/Model | Methodology | Typical Endpoints | Reported Performance (Example) |
|---|---|---|---|
| VEGA | QSAR and SAR | Mutagenicity, carcinogenicity, endocrine disruption [30]. | For ER/AR activity, efficiency of 43-100%; correct calls 50-100% [30]. |
| ProTox-II | Machine Learning (ML) | Acute toxicity, hepatotoxicity, endocrine disruption [30]. | Performed well in comparative study for ER/AR and aromatase inhibition [30]. |
| Opera | QSAR & ML, integrated into EPA CompTox Dashboard [30]. | Physicochemical properties, environmental fate, toxicity [30]. | Demonstrated strong overall performance for ER and AR effects [30]. |
| ADMETLab | Machine Learning-based QSAR | Comprehensive ADMET properties [30]. | Reliable for predicting ER, AR effects and aromatase inhibition [30]. |
| HTTK (R Package) | PBPK/IVIVE | High-throughput toxicokinetics, plasma concentration prediction [29]. | Predicts AUC with RMSLE ~0.9 using in vitro inputs, ~0.6-0.8 using QSPR inputs [29]. |

Integrated Strategies: IATA and NGRA Frameworks

The true power of NAMs is realized through their integration within a tiered, hypothesis-testing framework. An Integrated Approach to Testing and Assessment (IATA) logically combines multiple information sources (e.g., in silico, in vitro, existing data) to inform a regulatory decision on a specific hazard [24] [26].

Workflow of an IATA for Skin Sensitization:

- Tier 1 - In Silico & Chemistry: Apply QSAR models (e.g., the OECD QSAR Toolbox) to identify structural alerts for electrophilicity (the key molecular initiating event). Perform read-across from similar, data-rich chemicals.

- Tier 2 - In Vitro Mechanistic Assays: Test negative/equivocal chemicals from Tier 1 in the Direct Peptide Reactivity Assay (DPRA) to measure covalent binding to peptides. Follow with the KeratinoSens assay to assess activation of the Keap1/Nrf2 pathway.

- Tier 3 - In Vitro Integrated Testing: Use a human reconstructed epidermis model (e.g., EpiSensA) to combine penetration, reactivity, and inflammatory response.

- Integrated Decision: A weight-of-evidence analysis across all tiers classifies the substance without animal testing [24].

Next-Generation Risk Assessment (NGRA) is a consumer exposure-led, hypothesis-driven framework that integrates NAM-derived hazard data with targeted exposure assessments to establish a margin of safety (MoS) [30]. It is particularly vital for product categories such as cosmetics, where animal testing is banned [30].

Diagram 1: Next-Gen Risk Assessment (NGRA) workflow integrating in silico, in vitro, and exposure data within a PBPK model for safety decision-making.

Case Study: Integrated Assessment of Endocrine Disruption Potential

A 2023 study provides a template for implementing an integrated NAM strategy to assess endocrine disruption, a complex endpoint involving multiple mechanisms [30].

Objective: Evaluate the estrogenic (ER), androgenic (AR), and steroidogenic (aromatase inhibition) potential of 10 chemicals using a suite of in vitro assays and in silico models, comparing results to the EPA's ToxCast database [30].

Integrated Methodology:

- Tier 1 - Initial Screening: Perform Yeast Estrogen/Androgen Screen (YES/YAS) assays for high-sensitivity detection of receptor activation. Run multiple in silico models (Danish QSAR, Opera, ADMETLab, ProTox-II) [30].

- Tier 2 - Mechanistic Confirmation: Test positives/negatives in human cell-based CALUX transactivation assays (ER/AR) and recombinant aromatase enzyme inhibition assays. Include metabolic activation using liver S9 fractions for relevant chemicals (e.g., benzyl butyl phthalate) [30].

- Data Integration & QIVIVE: Compare all in vitro and in silico results to ToxCast classifications. For positive findings, use in vitro potency (AC50) and human exposure estimates in a PBPK model to calculate a bioactivity-exposure ratio and assess risk [30].
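The bioactivity-exposure ratio mentioned in the final step is a simple quotient: the external dose equivalent to the in vitro bioactive concentration divided by the estimated human exposure. A minimal Python sketch follows; the chemical names and all numeric values are hypothetical placeholders, not results from the cited study, and the function name is an assumption.

```python
def bioactivity_exposure_ratio(equivalent_dose_mg_kg_day, exposure_mg_kg_day):
    """BER = dose equivalent to the in vitro AC50 / estimated human exposure.

    Both inputs must share the same units (here mg/kg/day).
    """
    if exposure_mg_kg_day <= 0:
        raise ValueError("exposure estimate must be positive")
    return equivalent_dose_mg_kg_day / exposure_mg_kg_day


# Hypothetical inputs: (PBPK-derived equivalent dose, exposure estimate), mg/kg/day.
chemicals = {
    "chemical_A": (2.0, 0.01),
    "chemical_B": (0.5, 0.1),
    "chemical_C": (15.0, 0.003),
}

ratios = {
    name: bioactivity_exposure_ratio(dose, exposure)
    for name, (dose, exposure) in chemicals.items()
}

# A smaller ratio means exposure is closer to bioactive levels: review it first.
priority_order = sorted(ratios, key=ratios.get)
for name in priority_order:
    print(f"{name}: BER = {ratios[name]:.0f}")
```

Sorting in ascending order puts the chemicals whose exposure sits closest to bioactive levels at the top of the list; the ratio that counts as acceptable is a risk-management choice and is not set by the calculation itself.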

Key Findings: The YES/YAS assays showed high sensitivity for ER effects. In silico final calls were mostly concordant with in vitro results, with Danish QSAR, Opera, ADMETLab, and ProTox-II showing the best overall performance for ER/AR effects. This study validated a strategy in which Tier 1 in silico and YES/YAS screening can reliably inform the need for, and the design of, more complex Tier 2 mechanistic assays within an NGRA framework [30].

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for NAM Implementation

| Reagent/Material | Function/Description | Key Application in NAMs |
|---|---|---|
| Basement Membrane Matrix (e.g., Matrigel, Cultrex) | A gelatinous protein mixture mimicking the mammalian extracellular matrix (ECM). | Provides scaffold for 3D organoid growth and differentiation; essential for establishing complex morphology [27]. |
| Defined Media Kits for Stem Cell/Organoid Culture | Serum-free media formulations containing precise growth factors, cytokines, and inhibitors (e.g., Wnt3a, Noggin, R-spondin). | Directs the differentiation and maintenance of PSC-derived and adult stem cell-derived organoids [27]. |
| Microfluidic Organ-on-a-Chip Devices | Pre-fabricated polymer chips containing micro-channels and chambers, often with integrated porous membranes. | Creates dynamic, perfused culture environments with physiological shear stress and tissue-tissue interfaces [24] [27]. |
| Metabolic Activation System (e.g., Rat Liver S9 Fractions + Cofactors) | A subcellular liver fraction containing Phase I and II metabolic enzymes, supplemented with NADPH, UDPGA, etc. | Incorporates xenobiotic metabolism into in vitro assays (e.g., CALUX), identifying pro-toxins or detoxified compounds [30]. |
| High-Content Imaging (HCI) Dye Sets | Multiplexed fluorescent dyes for labeling nuclei, mitochondria, lysosomes, ROS, calcium flux, etc. | Enables multiparametric, mechanistic cytotoxicity screening in complex in vitro models, moving beyond single endpoints [24]. |
| QSAR-Ready Chemical Structures (Standardized SMILES) | Canonical, curated molecular representations that remove salts and standardize tautomers. | Essential input for reliable in silico predictions; ensures consistency across different computational tools [26] [29]. |

Diagram 2: The iterative, data-informed workflow of an Integrated Approach to Testing and Assessment (IATA).

Leveraging High-Throughput Screening and Computational Toxicology Databases

The field of toxicology is undergoing a foundational transformation from observational, animal-centric studies to a predictive, evidence-based discipline. This paradigm shift is powered by the strategic integration of high-throughput screening (HTS) and computational toxicology databases. HTS employs automated, miniaturized assays to rapidly evaluate thousands of chemicals for biological activity, generating vast volumes of in vitro hazard data [31]. Concurrently, computational toxicology organizes and interprets this data through public databases and predictive models, creating a structured knowledge base for safety assessment [32]. Together, these approaches form the core of next-generation risk assessment (NGRA), which aims to provide more human-relevant, mechanistic, and efficient evaluations of chemical safety while reducing reliance on traditional animal testing [31] [33]. This technical guide details the methodologies, tools, and integrated workflows that define this modern, evidence-based approach to toxicological research and drug development.

High-Throughput Screening: Technologies and Market Landscape

HTS is a cornerstone technology for generating the empirical data required for computational modeling. It leverages automation, sensitive detection systems, and informatics to test large chemical libraries against biological targets.

2.1 Core Technologies and Assay Formats

The technology segment is diverse, with cell-based assays leading due to their physiological relevance. As of 2024, this segment held a dominant 45.14% market share [34]. These assays utilize advanced models like 3-D organoids and organs-on-chips to replicate human tissue physiology, addressing the high clinical failure rates linked to inadequate preclinical models [34]. Key technological segments include:

- Cell-Based Assays: Dominant for functional and phenotypic screening.

- Lab-on-a-Chip & Microfluidics: Projected for rapid growth (10.69% CAGR), enabling ultra-miniaturization and complex tissue modeling [34].

- Label-Free Technologies: Gaining traction for safety-toxicology workflows by minimizing assay interference [34].

2.2 Market Drivers and Economic Context

The global HTS market is experiencing robust growth, valued at an estimated USD 32.0 billion in 2025 and projected to reach USD 82.9 billion by 2035 at a compound annual growth rate (CAGR) of 10.0% [35]. This growth is driven by multiple interrelated factors:

Table 1: Key Drivers and Restraints in the High-Throughput Screening Market [34]

| Factor | Impact on CAGR Forecast | Primary Geographic Relevance | Key Rationale |
|---|---|---|---|
| Advances in Robotic & Imaging Systems | +2.1% | Global (North America & EU lead) | Increases throughput and reproducibility; reduces experimental variability. |
| Rising Pharma/Biotech R&D Spending | +1.8% | Global (major pharma hubs) | Fuels investment in screening for precision medicine and pipeline growth. |
| Adoption of 3-D & Cell-Based Assays | +1.5% | North America, EU, expanding to APAC | Improves predictive accuracy for human physiology, reducing late-stage attrition. |
| Integration of AI/ML for Triage | +1.3% | Global (Silicon Valley, Boston clusters) | Shrinks physical screening libraries by up to 80%, improving cost efficiency. |
| High Capital Expenditure | -1.4% | Global (impacts small firms most) | High upfront costs (up to ~USD 5M per workcell) create adoption barriers. |
| Shortage of Skilled Specialists | -0.8% | North America, EU, emerging in APAC | Interdisciplinary expertise in biology, robotics, and data science is scarce. |
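The headline market projection quoted in this section (USD 32.0 billion in 2025 growing to USD 82.9 billion by 2035 at a 10.0% CAGR) can be checked with the standard compound-growth formula. The short Python sketch below is only an arithmetic consistency check on those three figures; the function names are hypothetical.

```python
def project_value(start_value, annual_rate, years):
    """Future value under constant compound annual growth."""
    return start_value * (1.0 + annual_rate) ** years


def implied_cagr(start_value, end_value, years):
    """Constant annual growth rate that links the start and end values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures as quoted in the text: USD billions, 2025 to 2035.
projected_2035 = project_value(32.0, 0.10, 10)
cagr_from_endpoints = implied_cagr(32.0, 82.9, 10)
print(f"Projected 2035 value at 10% CAGR: USD {projected_2035:.1f} billion")
print(f"CAGR implied by the two endpoints: {cagr_from_endpoints:.1%}")
```

The per-factor percentages in Table 1 are a separate attribution from source [34] and should not be summed and compared against the figure computed here.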

The application of HTS is also evolving. While primary screening remains the largest application segment (42.70% share in 2024), the fastest growth is in toxicology and ADME (Absorption, Distribution, Metabolism, Excretion) applications, forecast at a 13.82% CAGR [34]. This reflects a strategic industry shift towards "fail early, fail cheaply" by identifying safety liabilities during early candidate selection.

Computational Toxicology Databases: Curated Knowledge Repositories

Computational toxicology databases provide the essential infrastructure to store, organize, and disseminate the data generated by HTS and traditional studies. These resources transform raw data into accessible, structured knowledge.

3.1 Key Public Databases and Resources

Several public databases, notably those maintained by the U.S. Environmental Protection Agency (EPA), are critical to the field. All EPA computational toxicology data is publicly available as "open data," free for commercial and non-commercial use [31].

Table 2: Essential Public Computational Toxicology Databases and Resources [31]

| Database/Resource | Primary Content | Key Utility |

|---|---|---|