Ensuring Reliability in Environmental Risk Assessment: A Comprehensive Guide to Interlaboratory Comparison of Ecotoxicity Tests

Abstract

This article provides a detailed guide to interlaboratory comparison (ILC) studies for ecotoxicity testing, designed for researchers and regulatory professionals. It explores the foundational principles behind ILCs as essential tools for method standardization and validation. The article then examines the practical methodologies for designing and executing robust comparison studies, followed by a troubleshooting analysis of common sources of variability and strategies for optimization. Finally, it offers a framework for critically assessing test performance, validating results, and comparing different methods. Together, these four parts deliver actionable insights for enhancing the precision, reproducibility, and regulatory acceptance of ecotoxicity data in environmental and biomedical research.

The Critical Role of Interlaboratory Comparisons in Standardizing Ecotoxicity Testing

Introduction

Interlaboratory comparisons (ILCs) are systematic exercises in which multiple laboratories perform measurements or tests on the same or similar items [1]. These are foundational tools for establishing the reliability, comparability, and validity of analytical data, especially in fields like ecotoxicity testing where regulatory decisions and scientific conclusions depend on reproducible results [2]. This guide compares the two primary ILC types, Proficiency Testing (PT) and Test Performance Studies (TPS, commonly known as Ring Trials or Ring Tests), within the context of ecotoxicity research. By examining their distinct purposes, standardized protocols, and key statistical outcomes, this article provides a framework for researchers and drug development professionals to select and implement the appropriate comparison to ensure data quality and method fitness-for-purpose.

1. Definition and Core Purpose

An interlaboratory comparison is defined as the organization, performance, and evaluation of measurements or tests on the same or similar items by two or more laboratories in accordance with predetermined conditions [1]. The overarching purpose is to assess and improve the quality of laboratory results, which is critical for the uniform implementation of legislation, the free movement of goods, and the protection of consumer and environmental health [2].

Within this broad scope, ILCs serve two principal, distinct objectives:

- Assessing Laboratory Competence (Proficiency Testing): To evaluate a laboratory's technical competence and the accuracy of its routine testing operations [3] [4].

- Assessing Method Performance (Test Performance Studies/Ring Trials): To evaluate the performance characteristics (e.g., accuracy, reproducibility) of a specific analytical or diagnostic method to determine if it is fit-for-purpose [2] [4].

Table 1: Comparative Overview of Proficiency Testing and Ring Trials (Test Performance Studies)

| Aspect | Proficiency Testing (PT) | Ring Trial / Test Performance Study (TPS) |

|---|---|---|

| Primary Objective | Evaluation of a laboratory's technical competence and ongoing performance [3] [5]. | Validation, harmonization, and evaluation of an analytical method's performance [2] [4]. |

| Typical Use Case | Mandatory for laboratory accreditation (ISO/IEC 17025), external quality assurance [2] [1]. | Pre-normative research, method development, standardization by bodies like CEN or ISO [2] [6]. |

| Reference Values | Pre-established and concealed from participants; often derived from a reference laboratory [3] [1]. | May be derived from participant results (consensus mean) or from a reference method [3] [7]. |

| Experimental Conditions | Laboratories use their own routine methods, equipment, and reagents [3]. | Strictly standardized protocol is followed by all participants to minimize variability [3] [5]. |

| Sample Preparation | Samples with known/assigned values are provided by a PT provider [3]. | Samples are typically prepared and distributed by the organizing reference laboratory [3]. |

| Frequency | Regular and periodic (e.g., quarterly, biannually) [3]. | Occasional, conducted when validating a new method or for standardization purposes [3]. |

| Governance Standard | ISO/IEC 17043 [1]. | Guidelines such as EPPO PM 7/122 or ISO 13528 [4]. |

2. Types and Experimental Protocols

2.1 Proficiency Testing (PT)

PT is a formal exercise where a coordinating body provides test items to laboratories for analysis. The reported results are compared against pre-established criteria, such as values from a reference laboratory, to evaluate participant performance [1]. In ecotoxicity testing, PT schemes are crucial for demonstrating a laboratory's continued competence in conducting standardized bioassays (e.g., Daphnia magna acute immobilization test).

Common PT Schemes:

- Sequential Schemes (e.g., Round Robin): A single test item (artifact) is successively circulated from one participant to the next. This is suitable for stable reference materials [1].

- Simultaneous Schemes: Homogenized sub-samples from a single batch are distributed simultaneously to all participants. This is common for perishable biological materials used in ecotoxicity tests [1].

Protocol Workflow:

- A PT provider prepares homogeneous and stable samples with characterized properties.

- Samples are distributed to participating laboratories.

- Each laboratory analyzes the sample using its routine standardized method (e.g., OECD Test Guideline 201) and reports the result (e.g., EC50 value).

- The provider statistically evaluates all results, calculates performance scores (e.g., z-score, En number), and issues confidential reports to each lab [1].
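The statistical evaluation in the last step often starts from a consensus value when no reference value is available. The sketch below shows one common robust choice, the median with a scaled median absolute deviation (MAD). The EC50 values and the function name `robust_consensus` are hypothetical and are not taken from any scheme cited here; a real PT provider may use a different robust estimator.

```python
import statistics

def robust_consensus(results):
    """Return (median, scaled MAD) for a list of participant results.

    The factor 1.4826 makes the MAD comparable to a standard deviation
    when the data are approximately normal.
    """
    med = statistics.median(results)
    abs_dev = [abs(x - med) for x in results]
    mad = statistics.median(abs_dev)
    return med, 1.4826 * mad

# Hypothetical EC50 values (mg/L) reported by seven laboratories;
# one lab (2.40) is far from the rest.
ec50_results = [1.10, 1.25, 0.95, 1.18, 1.05, 2.40, 1.12]

consensus, robust_sd = robust_consensus(ec50_results)
print(f"consensus = {consensus:.2f} mg/L, robust SD = {robust_sd:.3f} mg/L")
```

Because both statistics are medians, the single outlying result barely shifts the consensus value, which is the reason robust estimators are preferred over a plain mean and standard deviation for this purpose.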

2.2 Test Performance Studies / Ring Trials

Ring Trials are collaborative method validation studies. Their goal is to assess the reproducibility and precision of a specific method across different laboratories, operators, and equipment [4] [6]. In ecotoxicity research, Ring Trials are essential for validating new or modified test protocols before they are adopted as standard methods.

Protocol Workflow:

- An organizing laboratory (often a National Reference Laboratory) develops a detailed, standardized protocol and prepares identical test kits, including reagents, reference toxicants, and test organisms (if possible).

- Participating laboratories receive the kits and strictly follow the prescribed protocol.

- All laboratories analyze the same samples under the defined conditions.

- Results are collected centrally. The analysis focuses on estimating between-laboratory reproducibility and identifying sources of systematic bias [4].

3. Key Outcomes and Data Analysis

The outcomes of ILCs are quantified using specific statistical metrics that inform laboratories about their performance and inform method developers about robustness.

Table 2: Key Statistical Metrics for Evaluating ILC Outcomes

| Metric | Formula / Description | Interpretation in Proficiency Testing | Interpretation in Ring Trials |
|---|---|---|---|
| z-score | $z = \frac{x_{lab} - X}{\hat{\sigma}}$, where $x_{lab}$ = lab result, $X$ = assigned value, $\hat{\sigma}$ = standard deviation for proficiency assessment [1]. | $\lvert z \rvert \le 2$: Satisfactory; $2 < \lvert z \rvert < 3$: Questionable; $\lvert z \rvert \ge 3$: Unsatisfactory [1] | Used to identify outlier laboratories whose results are excluded from consensus calculations. |
| Normalized Error ($E_n$) | $E_n = \frac{x_{lab} - X}{\sqrt{U_{lab}^2 + U_{ref}^2}}$, where $U_{lab}$ and $U_{ref}$ are the expanded uncertainties of the lab and reference value, respectively [1]. | $\lvert E_n \rvert \le 1$: Satisfactory (result agrees with reference within uncertainty); $\lvert E_n \rvert > 1$: Unsatisfactory [1] | Critical for comparisons where measurement uncertainty is a declared competence, assessing if results are metrologically compatible. |
| Consensus Mean | The mean or robust average of all participant results after outlier exclusion. | Used as the assigned value if a reference method value is not available [8]. | The primary outcome representing the best estimate of the "true value"; used to calculate each lab's bias. |
| Standard Deviation for Proficiency Assessment ($\hat{\sigma}$) | Determined from prior data, predefined fitness-for-purpose criteria, or from participant results [1]. | Scales the z-score; defines the acceptable range of results. | Not typically used as a primary outcome; between-laboratory reproducibility is more relevant. |
| Between-Laboratory Reproducibility Standard Deviation ($s_R$) | Calculated from the one-way ANOVA of all participant results. | Not a typical PT outcome. | The key outcome. Quantifies the method's precision under interlaboratory conditions. A lower $s_R$ indicates a more robust, transferable method [4]. |

Recent research emphasizes refining these evaluations. For instance, the simple |En| ≤ 1 criterion may be inconclusive if the comparison uncertainty is large [9]. Advanced statistical models, such as the Rocke-Lorenzato model for calibration data, provide more accurate confidence intervals for consensus values, especially for low-concentration analytes common in ecotoxicity [10].
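As a minimal sketch of how the z-score and normalized error from Table 2 could be computed and classified, the following uses the thresholds stated in the table. The function names and the numerical inputs are illustrative assumptions, not values from any cited study.

```python
import math

def z_score(x_lab, assigned, sigma_pt):
    """z = (x_lab - X) / sigma, with sigma the SD for proficiency assessment."""
    return (x_lab - assigned) / sigma_pt

def en_number(x_lab, x_ref, u_lab, u_ref):
    """E_n = (x_lab - X) / sqrt(U_lab^2 + U_ref^2), using expanded uncertainties."""
    return (x_lab - x_ref) / math.sqrt(u_lab ** 2 + u_ref ** 2)

def classify_z(z):
    """Apply the |z| bands from Table 2."""
    a = abs(z)
    if a <= 2:
        return "satisfactory"
    if a < 3:
        return "questionable"
    return "unsatisfactory"

def classify_en(en):
    """Apply the |E_n| <= 1 criterion from Table 2."""
    return "satisfactory" if abs(en) <= 1 else "unsatisfactory"

# Hypothetical lab result of 1.30 mg/L against an assigned value of 1.12 mg/L.
z = z_score(1.30, 1.12, 0.10)
en = en_number(1.30, 1.12, 0.15, 0.08)
print(f"z = {z:.2f} ({classify_z(z)}), En = {en:.2f} ({classify_en(en)})")
```

In this made-up case the same result is satisfactory by z-score but not by $E_n$, which illustrates why the two metrics answer different questions: the z-score compares against a scheme-wide tolerance, while $E_n$ compares against the uncertainties the laboratory and reference actually declare.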

4. The Scientist's Toolkit for ILCs

Organizing or participating in a robust ILC requires specific materials and reagents.

Table 3: Essential Research Reagent Solutions for Ecotoxicity ILCs

| Item | Function in ILC | Critical Consideration |

|---|---|---|

| Certified Reference Material (CRM) | Provides a traceable, stable artifact with defined property values. Serves as the foundation for assigning values in PT or verifying accuracy in Ring Trials [2]. | Homogeneity and long-term stability are paramount. Availability for specific ecotoxicants can be limited. |

| Reference Toxicant | A standardized chemical (e.g., potassium dichromate, sodium chloride) used to assess the sensitivity and health of test organisms. | Must be of high purity. Its dose-response curve in a standardized test is well-characterized and reproducible. |

| Control Sample | A sample with a known, consistent response (e.g., negative control, solvent control). Monitors baseline organism health and procedural correctness. | Essential for distinguishing test substance effects from background procedural variability. |

| Homogenized Test Media/Matrix | The substrate (e.g., reconstituted water, soil, sediment) containing the toxicant. Provided to ensure all labs test an identical material. | Achieving and verifying homogeneity across all distributed units is the most critical step in ILC organization [3] [4]. |

| Live Test Organisms | Biological indicators (e.g., algae, daphnids, fish embryos). Their consistent sensitivity is crucial. | May be provided as eggs/neonates or as cultures from a designated supplier. Age, health, and genetic strain must be standardized [4]. |

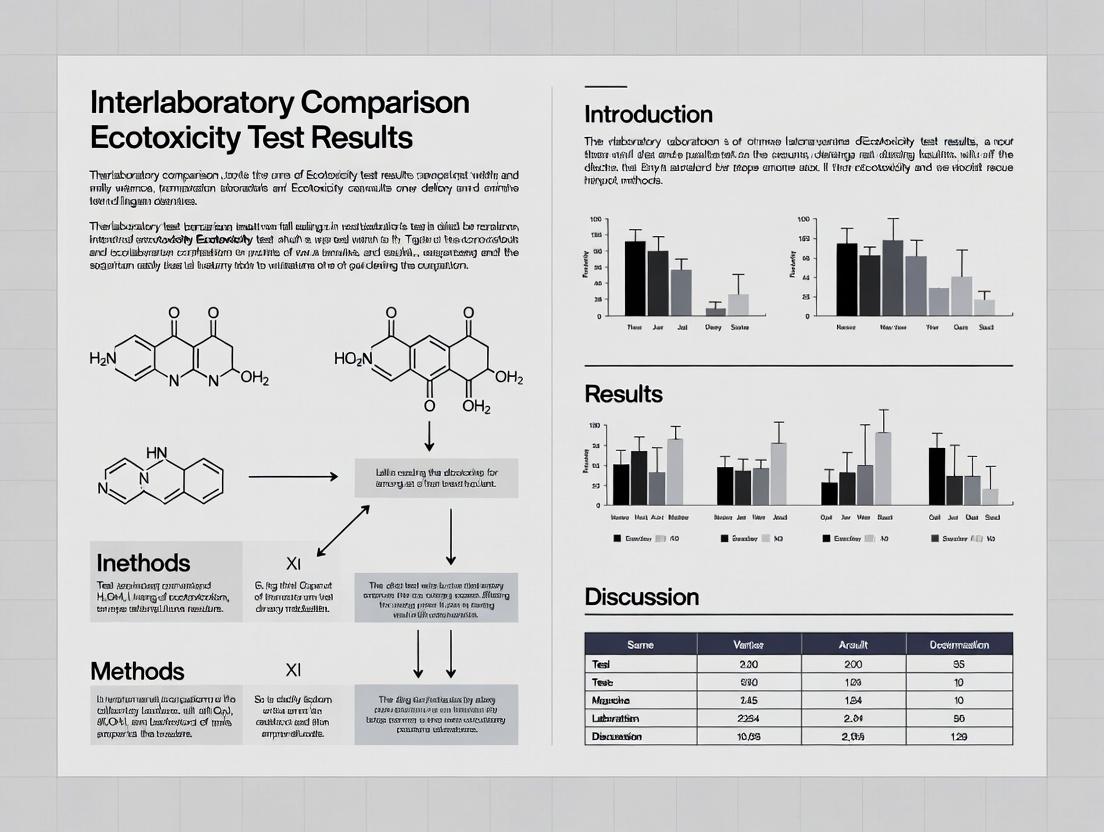

5. Visualization of ILC Structures and Workflows

Conceptual Relationship Between ILC Types and Outcomes

Sequential Workflow for a Proficiency Testing Scheme

Conclusion

Within ecotoxicity research, interlaboratory comparisons are indispensable for building a body of reliable and comparable data. Proficiency Testing and Ring Trials serve as complementary tools: PT is the ongoing monitor of a laboratory's ability to produce valid data, while Ring Trials are the crucible in which new methods are validated and standardized. By understanding their distinct purposes, implementing their specific protocols, and correctly interpreting their statistical outcomes (such as z-scores for competence and between-laboratory reproducibility for method robustness), researchers and regulatory professionals can significantly enhance the quality and credibility of ecotoxicity assessments. The continuous refinement of statistical approaches, like better uncertainty handling [9] and advanced modeling for low-concentration data [10], promises even more powerful ILCs to meet future challenges in environmental safety and drug development.

A significant majority of researchers in science, technology, engineering, and mathematics believe the scientific community is facing a reproducibility crisis, a situation exacerbated by high-profile retractions stemming from data falsification [11]. In ecotoxicology and related fields, this crisis manifests as unacceptable variability in interlaboratory test results, undermining both regulatory decisions and scientific progress. This variability often originates from seemingly minor, unstandardized experimental parameters—from the type of laboratory lighting to the precise protocols for sample preparation [12] [13].

The urgency for standardization has been elevated to a matter of national policy. The 2025 U.S. Executive Order on "Restoring Gold Standard Science" mandates that federal agencies base decisions on transparent, rigorous, and impartial scientific evidence [11]. This "Gold Standard Science" framework is built upon nine core tenets, including reproducibility, transparency, and the communication of error and uncertainty [14] [15]. For researchers and regulators, this translates to a non-negotiable requirement: experimental data must be generated through harmonized, standardized methods to ensure they are reliable, comparable, and fit for purpose in protecting public health and the environment.

Comparative Analysis: Standardized vs. Non-Standardized Methodologies

This section provides a direct comparison of experimental outcomes, highlighting how standardization—or the lack thereof—critically impacts data reliability and interlaboratory consistency.

Comparison Guide: Light Source in Whole Effluent Toxicity (WET) Testing

The global transition from fluorescent to LED lighting presents a practical challenge for laboratories. A 2025 interlaboratory study investigated whether this change introduces a significant source of variability in standardized Whole Effluent Toxicity (WET) tests [12] [16].

- Experimental Protocol: Two independent laboratories (ASUERF and GEI Consultants) performed acute and chronic toxicity tests using sodium chloride as a reference toxicant. Test organisms (Ceriodaphnia dubia, Daphnia magna, Daphnia pulex, Pimephales promelas) were cultured and tested under identical conditions except for the light source: traditional fluorescent lights versus full-spectrum LED lights. Tests were conducted across different seasons to account for temporal biological variation [16].

- Key Findings & Data Comparison: The study concluded that LED lights are a viable alternative for most tests but identified critical exceptions, underscoring that not all experimental parameters can be universally swapped without validation.

Table 1: Comparison of WET Test Performance Under Fluorescent vs. LED Lighting [12] [16]

| Test Organism | Test Type | Performance Under LED vs. Fluorescent | Key Notes & Interlab Consistency |

|---|---|---|---|

| Ceriodaphnia dubia | Acute & Chronic | No significant difference | LED color temperature (warm vs. cool white) did not affect results. |

| Daphnia pulex | Acute | No significant difference | Performance was consistent. |

| Daphnia magna | Acute | No significant difference | Performance was consistent. |

| Daphnia magna | Chronic | Potential difference | Data suggested a potential impact, warranting further study. |

| Pimephales promelas (Fathead minnow) | Chronic | Significant difference | LED lights were not a suitable alternative for this chronic test. |

| Interlab Variability | - | Observed | Time-of-year differences were found, with inconsistencies between the two laboratories, highlighting that even controlled studies face unseen variables. |

- Implications for Standardization: This comparison demonstrates that method details matter. Regulatory test guidelines (e.g., from the USEPA) that specify light intensity but not the light source type can inadvertently introduce variability. The study provides the empirical data needed to update standards, explicitly endorsing LEDs for specific tests while cautioning against their use for others [16]. This is a direct application of the Gold Standard tenet of communicating uncertainty—clearly defining the boundaries of a validated method [14].

Comparison Guide: Protocols for Measuring Oxidative Potential (OP) of Aerosols

Oxidative Potential (OP) is a promising health-relevant metric for air pollution, but its adoption has been hampered by a proliferation of laboratory-specific protocols. A 2025 interlaboratory comparison (ILC) involving 20 global labs quantified this variability using the dithiothreitol (DTT) assay [13].

- Experimental Protocol: A core group of experts developed a simplified, harmonized Standard Operating Procedure (SOP)—the RI-URBANS DTT SOP. Participating laboratories each received identical liquid samples to eliminate variability from sample collection and extraction. Labs then analyzed the samples using both their own "home" protocols and the new harmonized SOP [13].

- Key Findings & Data Comparison: The exercise measured the coefficient of variation (CV) among labs as a key metric of reproducibility.

Table 2: Interlaboratory Variability in Oxidative Potential (DTT Assay) Measurements [13]

| Measurement Condition | Key Finding | Coefficient of Variation (CV) Among Labs | Implication for Standardization |

|---|---|---|---|

| Using Labs' "Home" Protocols | High variability | Extremely High CV | Results from different studies are not directly comparable, limiting the metric's regulatory utility. |

| Using Harmonized SOP | Variability significantly reduced | Substantially Lower CV | A common protocol dramatically improves interlab reproducibility. |

| Major Source of Variability | Instrumentation and analysis timing | Not quantified | Specifics of spectrophotometer type and exact reaction timing were key drivers of difference. |

- Implications for Standardization: This ILC provides a clear, quantitative argument for protocol harmonization. The study acts as a model for the Gold Standard tenets of collaboration and unbiased peer review, using a community-driven exercise to pressure-test and refine a method [14]. It indicates that, without a standardized SOP, OP data remain difficult to compare across studies and are of limited use for environmental regulation or health studies.
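The among-lab coefficient of variation used as the reproducibility metric in this exercise can be sketched as follows. The numbers are hypothetical placeholders chosen only to show the calculation; they are not results from the study in [13], which are summarized qualitatively in Table 2.

```python
import statistics

def cv_percent(values):
    """Coefficient of variation (%) = sample standard deviation / mean * 100."""
    return statistics.stdev(values) / statistics.mean(values) * 100

# HYPOTHETICAL oxidative potential results (arbitrary units) for one shared
# sample, one value per laboratory. Not data from the cited study.
home_protocol = [0.12, 0.31, 0.08, 0.22, 0.45, 0.17]
harmonized_sop = [0.19, 0.22, 0.18, 0.24, 0.21, 0.20]

cv_home = cv_percent(home_protocol)
cv_sop = cv_percent(harmonized_sop)
print(f"CV with home protocols: {cv_home:.1f}%")
print(f"CV with harmonized SOP: {cv_sop:.1f}%")
```

Because every lab measures the same distributed sample, any drop in CV between the two conditions can be attributed to the protocol rather than to sample collection or extraction.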

Comparison Guide: Duckweed Root Regrowth vs. Standard Frond Test

The common duckweed (Lemna minor) is a standardized test organism for phytotoxicity. A novel 72-hour root regrowth test was developed to offer a faster alternative to the standard 7-day frond growth test and was validated through an ILC with 10 international institutes [17].

- Experimental Protocol: In the new test, roots are excised from duckweed fronds at the start. Fronds are then exposed to the test substance (e.g., copper sulfate, wastewater) in 24-well plates for 72 hours, after which new root growth is measured. This was compared against the conventional ISO test measuring frond number increase over 7 days [17].

- Key Findings & Data Comparison: The ILC calculated repeatability (within-lab precision) and reproducibility (between-lab precision) metrics.

Table 3: Performance Comparison of Duckweed Toxicity Test Methods [17]

| Test Method & Endpoint | Duration | Sensitivity to 3,5-DCP | Repeatability (Within-Lab) | Reproducibility (Between-Lab) |

|---|---|---|---|---|

| Novel Root Regrowth Test (Root length) | 72 hours | Statistically identical to ISO method | 21.3% (CuSO₄); 21.3% (Wastewater) | 27.2% (CuSO₄); 18.6% (Wastewater) |

| Standard ISO Test (Frond number) | 7 days | Reference standard | Assumed within accepted levels (<30-40%) | Assumed within accepted levels (<30-40%) |

- Implications for Standardization: The root regrowth test demonstrates that innovation and standardization can coexist. The rigorous ILC validation, showing reproducibility well within the generally accepted threshold of <30-40%, provides the evidence base required for standards bodies (ISO, OECD, USEPA) to consider adopting this faster method [17]. This aligns with the Gold Standard principle of accepting negative results as positive outcomes, as the validation process required publishing precision data that confirmed the method's reliability [15].

Foundational Principles for Standardized Research

The comparative data underscores a clear need for systematic change. Successful standardization is built upon both overarching philosophical frameworks and practical, implementable best practices.

The "Gold Standard Science" Framework

The 2025 U.S. Executive Order provides a high-level framework for ensuring scientific integrity, directly relevant to method standardization [11] [14]. Its nine tenets are interdependent pillars.

Diagram 1: The pillars of Gold Standard Science [14] [15].

For ecotoxicology, this means:

- Reproducibility is achieved through detailed, publicly available Standard Operating Procedures (SOPs), like the one developed for the OP DTT assay [13].

- Transparency requires publishing full experimental data, including negative or ambiguous results from tests like the chronic LED study on fathead minnows [12] [15].

- Collaboration is exemplified by large-scale ILCs that bring together global experts to solve measurement problems [13] [17].

Best Practices from Data Science

Parallel trends in data governance offer practical strategies for implementing standardization in the lab [18].

- Define a Common Data Model (CDM): In science, this is a harmonized protocol (e.g., the RI-URBANS DTT SOP) that ensures all labs structure their data the same way [18] [13].

- Maintain a Centralized Data Dictionary: This translates to a living, curated repository of SOPs, controlled vocabulary for endpoints (e.g., "EC50"), and standardized units of measurement [18].

- Enforce Validation Rules at Source: Implement quality control (QC) checks during experimentation. This includes using reference toxicants (like sodium chloride in WET tests) to ensure organism health and instrument performance is within acceptable ranges before data is generated [16] [18].

- Continuous Monitoring & Improvement: Standardization is not static. As shown by the light source study, new technologies and scientific understanding require periodic re-validation and updating of standards through coordinated research [12] [18].
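The "validation rules at source" idea can be sketched as a simple reference-toxicant control check. The approach below, accepting a new EC50 only if it falls within the historical mean plus or minus two standard deviations, is a common control-chart convention and is an assumption here, not a rule quoted from the cited sources. The historical values and function names are hypothetical.

```python
import statistics

def control_limits(historical_ec50, k=2.0):
    """Return (lower, upper) limits as mean -/+ k sample standard deviations."""
    mean = statistics.mean(historical_ec50)
    sd = statistics.stdev(historical_ec50)
    return mean - k * sd, mean + k * sd

def passes_qc(new_ec50, historical_ec50, k=2.0):
    """True if the new reference-toxicant EC50 lies inside the control limits."""
    lower, upper = control_limits(historical_ec50, k)
    return lower <= new_ec50 <= upper

# Hypothetical NaCl EC50 history (g/L) from previous reference-toxicant tests.
history = [1.9, 2.1, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9]

lower, upper = control_limits(history)
print(f"control limits: {lower:.2f} to {upper:.2f} g/L")
print("2.15 g/L accepted:", passes_qc(2.15, history))
print("2.60 g/L accepted:", passes_qc(2.60, history))
```

Running such a check before test data are reported means an out-of-range organism batch is caught at the bench rather than during later data review.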

The Scientist's Toolkit: Essential Reagents for Standardized Ecotoxicity Testing

The following materials are fundamental to executing the standardized protocols discussed and ensuring data comparability.

Table 4: Key Research Reagent Solutions for Ecotoxicity Testing

| Reagent/Material | Function in Standardized Testing | Example from Studies |

|---|---|---|

| Reference Toxicant (e.g., Sodium Chloride, 3,5-Dichlorophenol) | Validates test organism health and laboratory performance. Serves as a quality control benchmark for interlaboratory comparison [16] [17]. | Used to compare lab light sources [16] and validate the duckweed root test [17]. |

| Standardized Test Organisms (e.g., C. dubia, D. magna, L. minor) | Provides a consistent, sensitive biological model with known response characteristics. Culturing must follow strict protocols to ensure genetic and physiological uniformity [16] [17]. | Cultured under specific light, temperature, and feeding regimes for WET and duckweed tests [12] [17]. |

| Dithiothreitol (DTT) | The key probe molecule in the acellular DTT assay. It acts as a surrogate for lung antioxidants to measure the oxidative potential of particulate matter [13]. | The central reagent in the 20-lab intercomparison to harmonize the OP assay protocol [13]. |

| Defined Culture Media & Food (e.g., Moderately Hard Synthetic Water, YCT, Algae) | Eliminates nutritional variability as a confounding factor. Ensures organisms are healthy and responsive solely to the tested toxicant [16]. | Precisely formulated diets and waters used for zooplankton culturing in light source studies [16]. |

| Leaching Solvents (e.g., 1mM CaCl₂, Deionized Water) | Standardizes the extraction of contaminants from solid waste for ecotoxicity testing, allowing for comparable leachate preparation across labs [19]. | Highlighted as a variable needing harmonization in waste leachate ecotoxicity reviews [19]. |

The path forward for reliable regulatory science is unequivocal. The comparative data presented here demonstrates that interlaboratory variability is not an inevitable artifact of biological testing but a manageable consequence of methodological inconsistency. The solution is a concerted, systemic commitment to the development, validation, and enforcement of standardized methods.

Future efforts must focus on:

- Targeted Harmonization: Prioritizing standardization for emerging metrics like Oxidative Potential, which show high health relevance but currently poor comparability [13].

- Dynamic Standards: Creating processes for the rapid validation and incorporation of improved methods (like the duckweed root test) into regulatory frameworks, balancing rigor with agility [17].

- Global Alignment: Addressing the inconsistent waste leaching methods and ecotoxicity criteria across the EU, U.S., and Asia to facilitate international waste management and chemical regulation [19].

- Infrastructure Investment: Supporting centralized repositories for SOPs, reference materials, and interlaboratory comparison programs that are accessible to academic, regulatory, and commercial labs alike.

By embedding the principles of Gold Standard Science—reproducibility, transparency, and collaboration—into the fabric of environmental and biomedical research, the scientific community can transform data from a point of controversy into a pillar of public trust and effective decision-making [11] [14]. The imperative for standardization is, fundamentally, an imperative for science that reliably serves society.

The transition to New Approach Methodologies (NAMs) in regulatory ecotoxicology demands rigorous validation to ensure data reliability[reference:0]. A cornerstone of this validation is the interlaboratory comparison, or ring trial, which assesses a method's robustness across different operators, equipment, and environments[reference:1]. At the heart of these assessments are quantitative metrics of precision: the Coefficient of Variation (CV), Repeatability (CVr), and Reproducibility (CVR). This guide elucidates these core concepts, provides a comparative analysis of their application in ecotoxicity testing, and outlines standard protocols for their determination, all framed within the critical context of ensuring reliable interlaboratory data.

Defining the Core Metrics

Precision metrics quantify the scatter or dispersion of measurement results. The following table summarizes the three key concepts.

Table 1: Core Precision Metrics in Interlaboratory Studies

| Metric | Definition | Key Condition | Formula (as %) | Primary Use |
|---|---|---|---|---|
| Coefficient of Variation (CV) | The ratio of the standard deviation to the mean, expressing relative dispersion. | Any set of repeated measurements. | $CV = (s / \bar{x}) \times 100$ | General gauge of method or laboratory imprecision. |
| Repeatability (CVr) | The coefficient of variation under repeatability conditions: same lab, same operator, same equipment, short time interval. | Within-laboratory variability[reference:2]. | $CV_r = (s_r / \bar{x}) \times 100$ | Assesses the intrinsic precision (random error) of a method within a single lab. |
| Reproducibility (CVR) | The coefficient of variation under reproducibility conditions: different labs, operators, equipment. | Between-laboratory variability[reference:3]. | $CV_R = (s_R / \bar{x}) \times 100$ | Assesses the method's robustness and transferability across labs. |
| Coefficient of Variation Ratio (CVR)* | A performance metric comparing a laboratory's CV to the consensus CV of a peer group. | Interlaboratory comparison programs[reference:4]. | $CVR_{lab} = CV_{lab} / CV_{group}$ | Benchmarks a lab's imprecision against its peers (target = 1.0). |

Note: The acronym "CVR" is context-dependent. In ISO 5725, it denotes Reproducibility CV. In proficiency testing (e.g., Bio-Rad's Unity program), it denotes the Coefficient of Variation Ratio[reference:5].

Comparative Guide: Applying CVr and CVR in Ecotoxicity Test Evaluation

The practical value of CVr and CVR lies in comparing the performance of different test methods, kits, or laboratories. The following table synthesizes data from published interlaboratory studies to benchmark typical precision expectations in ecotoxicity testing.

Table 2: Comparative Precision Performance in Ecotoxicity Assays

| Test Method / Analyte | Mean CVr (Repeatability) | Mean CVR (Reproducibility) | Study Context & Key Findings |

|---|---|---|---|

| Daphnia magna acute immobilization (Reference toxicant: K₂Cr₂O₇) | 5‑10% | 15‑25% | Classic assay shows good within-lab consistency but moderate between-lab variability, highlighting the need for strict SOP adherence. |

| Spirodela duckweed growth inhibition | 8‑12% | 20‑30% | Interlaboratory comparisons reveal CVR is highly dependent on endpoint measurement technique (frond count vs. image analysis)[reference:6]. |

| Quantification of Trifluoroacetic Acid (TFA) in water | <10% (CVr) | ~15% (CVR) | A 2024 interlaboratory study of 12 labs demonstrated that standardized ISO 5725-2 protocols yield excellent reproducibility for emerging contaminants[reference:7]. |

| Microtox bacterial bioluminescence inhibition | 6‑9% | 10‑18% | Commercial kit-based tests generally exhibit lower CVR due to supplied standardized reagents and protocols. |

| Fish embryo toxicity (FET) test (e.g., Zebrafish) | 10‑15% | 25‑40% | Higher variability reflects complexities in biological model handling and endpoint scoring (mortality, malformation). |

Key Insight: Commercial, kit-based tests (e.g., Microtox) often achieve lower CVR values than complex whole-organism assays (e.g., FET), underscoring a trade-off between standardization and biological relevance. A CVR consistently below 20-25% is generally considered acceptable for most regulatory ecotoxicity tests, while values above 30% indicate a need for method refinement or enhanced training.

Experimental Protocols: Determining CVr and CVR

The following protocols are based on the international standard ISO 5725-2:2019, which provides the definitive framework for estimating the repeatability and reproducibility standard deviations ($s_r$ and $s_R$)[reference:8].

Protocol 1: Basic Repeatability (CVr) Study

- Objective: Estimate the within-laboratory precision of a method.

- Design: A single laboratory performs n replicate analyses (typically n ≥ 6) on a homogeneous test sample (e.g., a reference toxicant solution) under repeatability conditions (same analyst, same instrument, same day, without recalibration).

- Calculation:

  - Calculate the mean ($\bar{x}$) and standard deviation ($s$) of the n results.

  - The repeatability standard deviation is $s_r = s$.

  - $CV_r = (s_r / \bar{x}) \times 100$.

- Reporting: Report CVr alongside the mean concentration and number of replicates.
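Protocol 1 can be sketched in a few lines. The replicate values below are hypothetical, and the function name `repeatability_summary` is an illustrative choice; the calculation itself follows the steps listed above.

```python
import statistics

def repeatability_summary(replicates):
    """Summarize a single-lab repeatability study.

    Returns the number of replicates, their mean, the repeatability
    standard deviation s_r (sample SD), and CVr in percent.
    """
    n = len(replicates)
    if n < 2:
        raise ValueError("at least two replicates are needed to estimate s_r")
    mean = statistics.mean(replicates)
    s_r = statistics.stdev(replicates)
    return {"n": n, "mean": mean, "s_r": s_r, "cv_r_percent": s_r / mean * 100}

# Hypothetical EC50 values (mg/L) from six replicate tests run by one analyst
# on one instrument on the same day.
replicate_ec50 = [1.02, 0.98, 1.05, 0.95, 1.00, 1.00]

summary = repeatability_summary(replicate_ec50)
print(f"n = {summary['n']}, mean = {summary['mean']:.3f} mg/L, "
      f"s_r = {summary['s_r']:.4f}, CVr = {summary['cv_r_percent']:.2f}%")
```

Returning the mean and replicate count together with CVr mirrors the reporting step, since a CV is hard to interpret without knowing the concentration level and sample size behind it.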

Protocol 2: Interlaboratory Reproducibility (CVR) Study (Ring Trial)

- Objective: Estimate the method's precision across multiple laboratories.

- Design:

- Organization: A coordinating body selects p participating laboratories (typically p ≥ 8) and prepares identical, homogeneous test samples.

- Testing: Each lab receives q test samples (q ≥ 2) and analyzes them according to a common, detailed SOP. Labs report their results for each sample.

- Data Analysis (ISO 5725-2):

- For each sample, screen for outliers using Cochran's test (applied to within-laboratory variances) and Grubbs' test (applied to laboratory means).

- Calculate the cell mean for each lab-sample combination.

- Perform a nested analysis of variance (ANOVA) to partition variance into:

- Within-lab variance \(s_r^2\): Estimate of repeatability.

- Between-lab variance \(s_L^2\): Estimated from the variance of laboratory means after subtracting the within-lab contribution \(s_r^2 / n\) (n replicates per laboratory).

- Calculate reproducibility standard deviation: \(s_R = \sqrt{s_r^2 + s_L^2}\).

- Calculate \(CV_R = (s_R / \bar{x}) \times 100\), where \(\bar{x}\) is the grand mean of all valid results.

- Reporting: The final report should present \(s_r\), \(s_R\), CVr, CVR, and the number of labs and replicates, providing a complete picture of method precision.
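The variance partitioning in Protocol 2 can be illustrated with a short Python sketch for a single sample level. It assumes a balanced design (the same number of replicates n in every laboratory) and that outliers have already been removed. The function name `precision_components` and the data are hypothetical. The between-lab variance is taken as the variance of laboratory means minus \(s_r^2 / n\), floored at zero.

```python
import math
import statistics

def precision_components(results_by_lab):
    """Estimate s_r, s_L, s_R, CVr and CVR for one level of a balanced ring trial."""
    n_values = {len(v) for v in results_by_lab.values()}
    if len(n_values) != 1:
        raise ValueError("this sketch assumes equal replicates per laboratory")
    n = n_values.pop()
    if n < 2 or len(results_by_lab) < 2:
        raise ValueError("need at least two labs and two replicates per lab")

    lab_means = [statistics.mean(v) for v in results_by_lab.values()]
    grand_mean = statistics.mean(lab_means)

    # Repeatability variance: average of the within-lab variances
    s_r2 = statistics.mean([statistics.variance(v) for v in results_by_lab.values()])
    # Variance of lab means still contains s_r2 / n, so subtract it
    s_L2 = max(0.0, statistics.variance(lab_means) - s_r2 / n)
    s_R2 = s_r2 + s_L2

    return {
        "grand_mean": grand_mean,
        "s_r": math.sqrt(s_r2),
        "s_L": math.sqrt(s_L2),
        "s_R": math.sqrt(s_R2),
        "CV_r": 100.0 * math.sqrt(s_r2) / grand_mean,
        "CV_R": 100.0 * math.sqrt(s_R2) / grand_mean,
    }

# Hypothetical EC50 results (mg/L): four labs, three replicates each
ring_trial = {
    "Lab A": [1.0, 1.1, 0.9],
    "Lab B": [1.2, 1.3, 1.1],
    "Lab C": [0.8, 0.9, 0.7],
    "Lab D": [1.0, 1.2, 0.8],
}
out = precision_components(ring_trial)
print({k: round(v, 3) for k, v in out.items()})
```

Flooring the between-lab variance at zero avoids a negative estimate when laboratory means happen to agree more closely than repeatability alone would predict. In that case \(s_R\) equals \(s_r\).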

Visualizing the Concepts and Workflow

Diagram 1: Components of Measurement Precision

This diagram decomposes total measurement variability into its core components, as defined by ISO 5725.

Diagram 2: Interlaboratory Comparison (Ring Trial) Workflow

This flowchart outlines the standardized steps for conducting a ring trial to estimate CVR.

The Scientist's Toolkit: Essential Reagents for Ecotoxicity Precision Studies

Table 3: Key Research Reagent Solutions for Interlaboratory Ecotoxicity Tests

| Item | Function in Precision Studies | Example / Specification |

|---|---|---|

| Reference Toxicant | Serves as a positive control and benchmark material to calculate CVr/CVR across labs. Must be stable, pure, and yield a consistent response. | Potassium dichromate (K₂Cr₂O₇) for Daphnia; Sodium dodecyl sulfate (SDS) for fish cells. |

| Standardized Culture Media | Provides a uniform, defined environment for test organisms, minimizing variability in growth and health that could affect endpoint measurements. | ISO or OECD reconstituted water for algae/daphnia; specific cell culture media for in vitro assays. |

| Certified Reference Material (CRM) | A material with a certified property value (e.g., concentration) used to validate analytical accuracy and calibrate instruments, supporting trueness assessments. | CRM for heavy metals in water or sediment. |

| Quality Control (QC) Sample | A stable, internally prepared sample with a known expected range. Used in daily repeatability checks (Levey-Jennings charts) to monitor ongoing lab performance. | A mid-range concentration of the reference toxicant aliquoted and stored frozen. |

| Enzyme/Substrate for Kit-Based Assays | Standardized components in commercial kits (e.g., Microtox, ToxTrak) that reduce protocol variability, leading to lower CVR values. | Lyophilized luminescent bacteria and reconstitution solution. |

In the framework of interlaboratory comparison for ecotoxicity tests, CVr and CVR are not merely abstract statistics but critical indicators of a method's reliability and readiness for regulatory application. A low CVr demonstrates that a method can be executed consistently within a lab, while an acceptable CVR proves it can be transferred successfully between labs—a fundamental requirement under principles like the OECD's Mutual Acceptance of Data (MAD)[reference:9]. By rigorously applying the protocols of ISO 5725 and benchmarking performance against typical values for their assay type, researchers can quantitatively strengthen the credibility of ecotoxicity data, thereby supporting more robust and reproducible chemical safety decisions.

Within the field of ecotoxicology, interlaboratory comparisons (ILCs) serve as the cornerstone for establishing the reliability, precision, and reproducibility of toxicity test methods. These exercises are mandated and shaped by a complex ecosystem of international and national regulatory and standards-setting organizations. The harmonization of test protocols across laboratories is not merely an academic exercise but a regulatory necessity for chemical registration, environmental monitoring, and safety assessment worldwide [20]. The frameworks established by the International Organization for Standardization (ISO), the Organisation for Economic Co-operation and Development (OECD), ASTM International (formerly the American Society for Testing and Materials), and the United States Environmental Protection Agency (USEPA) provide the authoritative structure within which ILCs are designed, validated, and implemented. This guide objectively compares the roles and influences of these key bodies in mandating ILCs, supported by experimental data from validation studies, to provide researchers and regulatory professionals with a clear understanding of the current ecotoxicological testing landscape.

Comparative Analysis of Regulatory and Standards Frameworks

The four primary organizations differ in their geographic scope, regulatory authority, and the nature of the documents they produce. Their collective work ensures that ecotoxicity data generated in one laboratory can be trusted and used by regulators and scientists globally.

Table 1: Core Characteristics of Key Standard-Setting Organizations

| Organization | Primary Role & Scope | Nature of Documents | Key Authority/Influence | Example Ecotoxicity Test Methods |

|---|---|---|---|---|

| ISO | International, non-governmental standards body. Develops consensus-based standards for various industries, including water quality and ecotoxicology [17]. | International Standards (IS). Provide detailed, globally harmonized test protocols and precision data (e.g., acceptable CV% for ILCs) [21]. | Global market acceptance; referenced in EU and other regional regulations. | ISO 20079 (Lemna growth inhibition), ISO 6341 (Daphnia acute immobilization). |

| OECD | International intergovernmental economic organization. Develops guidelines for chemical safety testing to support mutual acceptance of data (MAD) among member countries. | Test Guidelines (TG). Define agreed-upon methods for safety testing of chemicals and chemical products. Focus on hazard assessment [17]. | Regulatory requirement for chemical registration in ~40 OECD member and partner countries. | OECD TG 201 (Freshwater Alga Growth Inhibition), OECD TG 202 (Daphnia sp. Acute Immobilization). |

| ASTM | International non-profit standards organization. Develops technical standards for materials, products, systems, and services, including environmental assessment. | Standard Test Methods, Practices, and Guides. Often very detailed and prescriptive; widely used in North America and internationally [22]. | Recognized by US regulators and industry; often cited in USEPA permits and regulations. | ASTM E1193 (Daphnia magna Life-Cycle Toxicity Tests), ASTM E1676 (Soil Toxicity or Bioaccumulation Tests with Earthworms and Potworms). |

| USEPA | United States federal government agency. Mandated to protect human health and the environment. Develops and enforces regulations. | Regulatory Test Methods & Guidelines. Can be legally binding (e.g., for compliance monitoring under the Clean Water Act) [23]. Categories range from promulgated (Category A) to informational (Category C) [23]. | Legal authority in the United States. Methods are mandatory for compliance testing under specific US regulations. | EPA Method 1003.0 (Green Alga, Selenastrum capricornutum, Growth Test), OPPTS 850.4400 (Aquatic Plant Toxicity Test). |

A critical interaction exists between these organizations, particularly regarding method updates. For instance, while ASTM and ISO frequently update their test methods, the USEPA maintains that its approvals are specific to a given method version. If a laboratory wishes to use a revised ASTM or ISO standard, it must seek new formal approval from the EPA, unless the revision is deemed inconsequential to accuracy and precision [24].

Performance Comparison: ILC Outcomes Under Different Frameworks

The ultimate measure of a standardized method's utility is its performance in interlaboratory validation studies. These studies quantify the repeatability (within-lab variance) and reproducibility (between-lab variance) of a test protocol. The following table compiles key performance metrics from recent ILCs conducted under the auspices of these frameworks.

Table 2: Performance Metrics from Selected Ecotoxicity ILCs

| Test Organism & Endpoint | Standard Framework | Reference Toxicant | Key Performance Metric (Coefficient of Variation - CV%) | Study Outcome & Citation |

|---|---|---|---|---|

| Marine Copepod (Tigriopus fulvus) - Acute Mortality (LC50) | ISO | Copper | CV = 6.32% (24h), 6.56% (48h), 35.3% (96h) | The method was validated as simple and precise, with CVs for 24h and 48h well within ISO precision expectations. The higher 96h CV suggests greater technical challenge for longer exposure [21]. |

| Duckweed (Lemna minor) - Root Regrowth Inhibition | Novel protocol (aligned with ISO/OECD principles) | Copper Sulfate (CuSO₄) | Reproducibility = 27.2% (CuSO₄), 18.6% (Wastewater) | The 72-hour root regrowth test demonstrated reproducibility within the generally accepted threshold of <30-40%, validating it as a reliable rapid screening tool [17]. |

| Bioluminescence Bacteria (Vibrio fischeri) - Luminescence Inhibition | ISO/DIN | Various | Review of multiple ILCs indicated it is the most developed and best-implemented group of rapid toxicity tests. | Despite widespread use, the article notes that literature reporting final ILC results for even this common test is "very rare," highlighting a gap in published validation data [20]. |

| Reliability Assessment of Ecotoxicity Data | Based on USEPA, OECD, ASTM [25] | N/A (Method Evaluation) | Matches 22/37 OECD evaluation criteria (Durda & Preziosi method) | A comparison of four reliability evaluation methods ranked one based on USEPA/OECD/ASTM standards as covering the highest number of OECD criteria, indicating its comprehensiveness [25]. |

The data show that well-designed ILCs for established and novel methods can achieve high inter-laboratory reproducibility (CVs often <30%). The framework (ISO, OECD) provides the benchmark for acceptable precision, while the actual ILC study generates the performance data that validates the method for regulatory or scientific use.

Detailed Experimental Protocols for ILCs

The reliability of the data in Table 2 is rooted in stringent, standardized experimental protocols. Below are detailed methodologies for two cited ILCs that exemplify different testing approaches.

Protocol 1: Acute Toxicity Test with the Marine Copepod Tigriopus fulvus (ISO Framework) [21]

- Test Organism Preparation: Cultures of T. fulvus are maintained in synthetic seawater. For the test, synchronized nauplii (larvae <24 hours old) are selected to ensure population uniformity.

- Reference Toxicant Exposure: A copper solution (e.g., CuCl₂) is prepared in a geometric series of concentrations. Ten nauplii are exposed to each concentration and a control in static conditions.

- Test Conditions: Temperature, salinity, and photoperiod are strictly controlled as per the ISO-standardized protocol. The endpoint is immobility/mortality after 24, 48, and 96 hours of exposure.

- Statistical Analysis: The LC50 (concentration lethal to 50% of organisms) is calculated for each laboratory using probit analysis. The assigned value (e.g., the robust mean or median of all laboratory LC50s) is determined.

- Performance Assessment: Each laboratory's z-score is calculated as z = (laboratory LC50 − assigned value) / σ, where σ is the standard deviation used for performance assessment. A |z| ≤ 2 is typically considered satisfactory. The coefficient of variation (CV%) across all laboratories is calculated to assess overall method precision [21].
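The performance assessment step can be sketched as follows. This is an illustrative example rather than the cited study's procedure: the LC50 values are hypothetical, the assigned value is assumed to be the median of participant results, and the standard deviation of those results is used as the denominator. The "questionable" (2 < |z| < 3) and "unsatisfactory" (|z| ≥ 3) bands follow the convention commonly used in proficiency testing and are an addition to the single |z| ≤ 2 criterion stated above.

```python
import statistics

def z_scores(lc50_by_lab, assigned_value=None, sigma=None):
    """Return {lab: z} using z = (lab LC50 - assigned value) / sigma."""
    values = list(lc50_by_lab.values())
    if assigned_value is None:
        assigned_value = statistics.median(values)  # assumption: consensus median
    if sigma is None:
        sigma = statistics.stdev(values)  # assumption: SD of participant results
    return {lab: (x - assigned_value) / sigma for lab, x in lc50_by_lab.items()}

def classify(z):
    """Map a z-score to the usual proficiency-testing performance bands."""
    if abs(z) <= 2:
        return "satisfactory"
    if abs(z) < 3:
        return "questionable"
    return "unsatisfactory"

# Hypothetical 48 h LC50 values (µg/L copper) from five laboratories
lc50 = {"Lab1": 100, "Lab2": 110, "Lab3": 95, "Lab4": 105, "Lab5": 160}
z = z_scores(lc50)
cv_percent = 100.0 * statistics.stdev(lc50.values()) / statistics.mean(lc50.values())

for lab, score in z.items():
    print(f"{lab}: z = {score:+.2f} ({classify(score)})")
print(f"CV across labs = {cv_percent:.1f}%")
```

With few participants, a standard deviation computed from the same results limits how large any single z-score can become, so coordinators often fix σ in advance from method precision data or a fitness-for-purpose criterion.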

Protocol 2: Lemna minor Root Regrowth Test (Novel Rapid Method) [17]

- Plant Pre-treatment: Healthy Lemna minor fronds are selected from axenic cultures. Immediately before testing, roots are excised using a sterile scalpel.

- Microplate Setup: A single 2-3 frond colony is placed in each well of a 24-well cell culture plate containing 3 mL of test solution (toxicant or control).

- Exposure: Plates are incubated under controlled light (100 μmol m⁻² s⁻¹) and temperature (25°C) for 72 hours.

- Endpoint Measurement: After incubation, the length of the newly regrown roots is measured for each frond using a digital microscope or calibrated ocular.

- Data Analysis: Percent inhibition of root growth relative to the control is calculated for each concentration. An IC50 is derived. For the ILC, repeatability (within-lab variance) and reproducibility (between-lab variance) are calculated using analysis of variance (ANOVA) for data from all participating laboratories [17].
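The data analysis step can be illustrated with a small Python sketch. The cited protocol does not state how the IC50 is derived, so linear interpolation on log-transformed concentration is used here as a simple stand-in for a fitted dose-response model. All function names and root-length values are hypothetical.

```python
import math

def percent_inhibition(control_mean, treatment_mean):
    """Percent inhibition of root regrowth relative to the control."""
    return 100.0 * (control_mean - treatment_mean) / control_mean

def ic50_log_interpolation(concs, inhibitions):
    """Interpolate the IC50 on log10(concentration) between the bracketing points."""
    pairs = sorted(zip(concs, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(pairs, pairs[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    raise ValueError("50% inhibition is not bracketed by the tested concentrations")

# Hypothetical mean regrown root lengths (mm) after 72 h
control_length = 20.0
root_length = {0.1: 18.0, 0.3: 14.0, 1.0: 8.0, 3.0: 3.0}  # mg/L -> mm

concs = list(root_length)
inhib = [percent_inhibition(control_length, root_length[c]) for c in concs]
ic50 = ic50_log_interpolation(concs, inhib)
print(f"Inhibition (%): {inhib}")
print(f"IC50 = {ic50:.2f} mg/L")
```

Each laboratory's IC50 obtained this way would then feed into the repeatability and reproducibility calculation across participants.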

The Scientist's Toolkit: Essential Reagents and Materials

Conducting standardized ecotoxicity tests requires specific, high-quality materials. The following table details key research reagent solutions and essential items for the protocols discussed.

Table 3: Essential Research Reagents and Materials for Ecotoxicity ILCs

| Item Name | Function & Description | Critical Quality Attributes |

|---|---|---|

| Reference Toxicant (e.g., CuCl₂, CuSO₄, 3,5-Dichlorophenol) | A standardized toxic chemical used to assess the sensitivity and consistent performance of the test organisms and laboratory procedures over time [21] [17]. | High purity (≥98%), traceable certification, stable under storage conditions. |

| Synthetic Test Medium (e.g., ISO/EPA Algal Medium, Reconstituted Fresh/Salt Water) | Provides essential nutrients and maintains water chemistry (hardness, pH) for the test organism without introducing toxic contaminants. | Consistent formulation, prepared with high-purity water (e.g., Milli-Q), chelated metals to prevent precipitation. |

| Axenic Biological Cultures (Lemna minor, Tigriopus fulvus, Daphnia magna) | Provides a uniform, healthy, and contaminant-free population of test organisms to ensure sensitivity and reduce background variability. | Verified species/strain, age-synchronized, free from disease and parasites, maintained under standardized conditions. |

| 24-Well Cell Culture Plates (for Lemna root test) | Provides a sterile, multi-chamber vessel for high-throughput, small-volume toxicity testing with minimal test solution requirement [17]. | Tissue-culture treated, sterile, polystyrene, with flat, clear bottoms for microscopy. |

| Sorbent Tubes/Canisters (e.g., for VOC analysis per ASTM/ISO) [22] | Used in sampling and preparing environmental samples (air, water) for chemical analysis alongside toxicity testing, as per methods like ASTM D6196 or ISO 16017. | Certified clean, specific sorbent material (e.g., Tenax TA), sealed to prevent pre-sampling contamination. |

Regulatory Framework and ILC Validation Workflow

The pathway from test method development to regulatory acceptance is structured and iterative. The following diagram illustrates the typical workflow for validating a test method through ILCs within the existing regulatory framework.

Interaction of Regulatory Bodies in Shaping Ecotoxicity Testing

The landscape of ecotoxicity testing is shaped by the dynamic interactions between standard developers, validators, and regulators. The following diagram maps these key relationships and their influence on the practice of ILCs.

In ecotoxicology, the comparability of data across different laboratories is fundamental for regulatory decision-making, chemical safety assessment, and environmental protection. Interlaboratory comparison (ILC) studies serve as critical tools for validating test methods, identifying sources of variability, and ensuring that toxicity endpoints—the measurable indicators of adverse effects—are reliable and reproducible [12] [13]. The choice of endpoint, ranging from acute lethality to subtle sublethal impairments in growth or reproduction, directly influences the sensitivity, ecological relevance, and interpretative power of a test. Framed within broader research on harmonizing ecotoxicity test results, this guide objectively compares the performance of common endpoints used in ILCs. It synthesizes current experimental data to illustrate how endpoint selection, alongside factors like test organism and protocol, shapes the outcome and reliability of toxicity assessments.

Defining and Comparing Core Ecotoxicity Endpoints

Toxicity endpoints are quantitative descriptors that link a specific effect to a dose or concentration of a chemical. Their values are statistically derived from dose-response experiments and form the basis for hazard classification and environmental risk assessment [26] [27].

Table 1: Definitions and Applications of Common Ecotoxicity Dose Descriptors

| Dose Descriptor | Full Name | Definition | Typical Application & Notes |

|---|---|---|---|

| LC50 | Lethal Concentration 50 | The concentration of a chemical in water or air that causes death in 50% of a test population over a specified time (e.g., 96 hours) [26] [27]. | Acute toxicity testing for hazard classification. A lower LC50 indicates higher acute toxicity. |

| LD50 | Lethal Dose 50 | The administered dose (e.g., mg per kg body weight) that causes death in 50% of a test population [26] [27]. | Used for oral, dermal, or injection routes of exposure in mammalian toxicology. |

| EC50 | Effective Concentration 50 | The concentration that causes a specified non-lethal effect (e.g., immobilization, growth inhibition) in 50% of the test population [27]; for continuous responses such as growth rate, the concentration causing a 50% reduction relative to the control. | Used for both acute (e.g., daphnid immobilization) and chronic sublethal endpoints (e.g., algal growth rate). |

| NOEC/NOAEL | No Observed Effect Concentration / No Observed Adverse Effect Level | The highest tested concentration at which there are no statistically significant or biologically adverse effects compared to the control [27]. | Used in chronic studies to establish a toxicity threshold for risk assessment. |

| LOAEL | Lowest Observed Adverse Effect Level | The lowest tested concentration at which statistically significant or biologically adverse effects are observed [27]. | Used as the point of departure when a NOAEL cannot be determined. |

The fundamental relationship between these descriptors on a dose-response curve progresses from no effect (NOEC) to the lowest observable effect (LOAEL), to effective concentrations (EC50), and finally to lethal concentrations (LC50) [27].
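The threshold descriptors defined above can be made concrete with a small sketch. It assumes each tested concentration has already been compared with the control by an appropriate statistical test, and it reports the lowest observed effect concentration (LOEC, the concentration-based counterpart of the LOAEL) alongside the NOEC. The function name `noec_loec` and the data are hypothetical.

```python
def noec_loec(results):
    """Return (NOEC, LOEC) from (concentration, significant_effect) pairs.

    LOEC: lowest tested concentration with a significant effect.
    NOEC: highest tested concentration below the LOEC with no significant effect.
    Either value is None when it cannot be determined from the tested range.
    """
    ordered = sorted(results)
    loec = next((conc for conc, significant in ordered if significant), None)
    below = [
        conc for conc, significant in ordered
        if not significant and (loec is None or conc < loec)
    ]
    noec = max(below) if below else None
    return noec, loec

# Hypothetical chronic test: concentrations in mg/L with significance flags
chronic = [(0.10, False), (0.32, False), (1.0, True), (3.2, True)]
noec, loec = noec_loec(chronic)
print(f"NOEC = {noec} mg/L, LOEC = {loec} mg/L")

# When every tested concentration shows an effect, no NOEC can be reported
print(noec_loec([(0.10, True), (0.32, True)]))
```

Because both values must be tested concentrations, they depend on the chosen concentration series, which is one reason harmonized test designs matter in interlaboratory comparisons.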

Interlaboratory Variability: Factors Influencing Endpoint Reliability

A core finding from recent ILCs is that endpoint reliability is highly dependent on standardized protocols. Key sources of interlaboratory variability include:

- Test Organism Culturing Conditions: A 2025 study demonstrated that switching from traditional fluorescent lights to Light Emitting Diodes (LEDs) for culturing Whole Effluent Toxicity (WET) test organisms did not significantly affect most acute and chronic endpoints for Ceriodaphnia dubia and Daphnia pulex. However, it did cause inconsistencies for chronic tests with the fathead minnow (Pimephales promelas) and potentially for Daphnia magna, highlighting species- and endpoint-specific sensitivities to environmental conditions [12] [16].

- Temporal and Laboratory Effects: The same study found significant "time of year" differences in test results, and the direction of these seasonal effects was not consistent between participating laboratories. This underscores that uncontrolled environmental or operational factors can introduce noise that complicates the comparison of endpoints like LC50 or reproductive output across labs [16].

- Protocol Harmonization: A major ILC on oxidative potential (OP) measurements in aerosols concluded that differences in experimental procedures, equipment, and techniques led to significant variability in results. The development and use of a simplified, harmonized protocol substantially improved inter-laboratory agreement [13]. This principle is directly applicable to aquatic ecotoxicity, where standardized guidelines (OECD, EPA, ISO) are designed to minimize such variability for core endpoints.

Comparative Analysis of Endpoint Performance in Recent ILCs

Case Study: Traditional Acute vs. Alternative Chronic Endpoints in Marine Testing

A 2025 study directly compared the sensitivity of traditional fish larval tests with alternative methods using fish embryos and mysid shrimp for two contaminants: nickel (Ni) and phenanthrene (Phe) [28].

Table 2: Sensitivity Comparison of Test Methods and Endpoints for Nickel and Phenanthrene [28]

| Test Method (Organism) | Primary Endpoint | Relative Sensitivity (Ni) | Relative Sensitivity (Phe) | Key Finding |

|---|---|---|---|---|

| Mysid Survival & Growth (Americamysis bahia) | Acute mortality, Chronic growth | Most Sensitive | Most Sensitive | More sensitive than fish larval tests for acute toxicity; comparable or greater sensitivity for chronic toxicity. |

| Fish Larval Growth & Survival - LGS (Menidia beryllina) | Larval survival, Growth | More sensitive | More sensitive | The more sensitive of the two standardized fish tests for both chemicals. |

| Fish Larval Growth & Survival - LGS (Cyprinodon variegatus) | Larval survival, Growth | Less sensitive | Less sensitive | The less sensitive of the two standardized fish tests. |

| Fish Embryo Toxicity - FET (Menidia beryllina) | Embryo mortality, Hatchability, Edema | Less sensitive | Less sensitive | Less sensitive than the most sensitive fish LGS test. However, adding sublethal endpoints (pericardial edema, hatchability) increased overall test sensitivity. |

| Fish Embryo Toxicity - FET (Cyprinodon variegatus) | Embryo mortality, Hatchability, Edema | Least sensitive | Least sensitive | Less sensitive than the most sensitive fish LGS test. |

Conclusion: The mysid test, which incorporates both lethal and sublethal (growth) endpoints, consistently showed the highest sensitivity. Importantly, for the fish embryo tests (a proposed alternative to reduce vertebrate use), the inclusion of sublethal morphological endpoints increased test sensitivity, narrowing the gap with traditional larval tests [28].

Case Study: Sublethal Endpoint Sensitivity in a Model Invertebrate

Research on the nematode Caenorhabditis elegans assessed the sensitivity of four sublethal endpoints to heavy metals (Pb, Cu, Cd) over different exposure durations [29].

Table 3: Comparison of Sublethal Endpoint Sensitivity in C. elegans [29]

| Endpoint | Exposure Duration | Key Finding on Sensitivity | Implication for ILCs |

|---|---|---|---|

| Movement | 24-hour | No significant difference in sensitivity compared to feeding, growth, or reproduction EC50s for Pb, Cu, or Cd. | At standard test durations, multiple sublethal endpoints may show similar reliability for ranking metal toxicity. |

| Feeding | 24-hour | No significant difference in sensitivity compared to other endpoints. | |

| Growth | 24-hour | No significant difference in sensitivity compared to other endpoints. | |

| Reproduction | 72-hour | No significant difference in sensitivity compared to 24-hr lethal/movement EC50s. | |

| Movement vs. Feeding | 4-hour (at high concentrations) | Movement was reduced significantly more by Pb than by Cu, while feeding was reduced equally. | At shorter, high-concentration exposures, different endpoints can reveal distinct mechanisms of toxicity. This highlights that variability in exposure design in ILCs can affect endpoint comparison. |

Conclusion: While different sublethal endpoints may show comparable sensitivity in standardized tests, deviations in protocol (e.g., exposure time) can alter their relative performance, potentially revealing different toxic mechanisms [29].

Case Study: Validation of a Rapid Sublethal Phytotoxicity Endpoint

An interlaboratory validation of a 72-hour Lemna minor (duckweed) root regrowth test demonstrated its reliability as a rapid alternative to the standard 7-day frond growth test. Ten laboratories achieved reproducibility values (between-laboratory variability) of 27.2% for CuSO₄ and 18.6% for wastewater testing, both within the accepted validity criteria (<30-40%) [17].

Conclusion: This study successfully validated a rapid sublethal endpoint (root growth) through a formal ILC, proving it can be standardized and is suitable for rapid toxicity screening, thereby expanding the toolkit for efficient and reliable ecotoxicological assessment [17].

Practical Guidance: Protocols, Visualization, and Toolkit

Experimental Protocol for a Standard Acute vs. Chronic Endpoint Comparison

The following workflow, based on the marine toxicity comparison study [28], outlines key steps for comparing traditional and alternative test methods.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Ecotoxicity ILCs

| Item Name | Function in Ecotoxicity Testing | Example Use Case & Citation |

|---|---|---|

| Reference Toxicant (e.g., NaCl, KCl) | A standard chemical used to assess the health and consistent sensitivity of test organism cultures over time and across labs. | Used to monitor performance of Ceriodaphnia dubia, Daphnia spp., and Pimephales promelas cultures under different light types [12] [16]. |

| Synthetic Freshwater/Saltwater Media | Provides a consistent, uncontaminated water matrix for culturing organisms and conducting tests, eliminating variability from natural water sources. | Moderately hard synthetic water was used for culturing daphnids [16]; synthetic saltwater at 22 ppt for marine species [28]. |

| Algal Food (Raphidocelis subcapitata) | A standardized, nutritious food source for filter-feeding invertebrate test organisms (e.g., cladocerans). | Fed daily to C. dubia, D. magna, and D. pulex in culturing and chronic tests [16]. |

| Yeast-Cerophyl-Trout Chow (YCT) | A supplemental, nutritious food suspension for invertebrate test organisms. | Combined with algae as a daily diet for daphnid cultures and tests [16]. |

| Artemia nauplii (Brine Shrimp) | Live food for carnivorous/omnivorous test organisms in culture. | Fed to mysid shrimp (Americamysis bahia) broodstock and juveniles [28]. |

| Chemical-Specific Stock Solutions | High-purity, accurately prepared solutions of the test chemicals for spiking exposure chambers. | Used to create precise concentration series for Ni, phenanthrene, and 3,5-dichlorophenol testing [28] [17]. |

| Dithiothreitol (DTT) | A biochemical probe used in acellular assays to measure the oxidative potential (OP) of particles, a sublethal toxicity pathway. | The key reagent in the DTT assay harmonized across 20 labs in an OP ILC [13]. |

Synthesis and Future Perspectives in Endpoint Selection for ILCs

The integration of data from recent studies reveals a clear trajectory in ecotoxicity testing within an ILC framework. There is a discernible shift from relying solely on apical acute endpoints (LC50) toward incorporating more sensitive and mechanistically informative sublethal measures. The case for this shift is strong: mysid growth was more sensitive than fish mortality [28], fish embryo deformity enhanced test sensitivity [28], and rapid sublethal plant endpoints were successfully validated [17].

Future work will focus on further harmonizing protocols for these next-generation endpoints to reduce interlaboratory variability, as demonstrated in oxidative potential testing [13]. Furthermore, the development of computational toxicology tools, such as Quantitative Structure-Activity Relationship (QSAR) models, aims to predict chronic endpoints like the fish early life stage (FELS) NOEC, potentially reducing vertebrate testing. However, their current applicability is limited and requires further validation against reliable experimental ILC data [30]. Ultimately, a robust ecotoxicity assessment strategy will employ a weight-of-evidence approach, leveraging data from standardized acute lethality tests and increasingly sensitive, standardized sublethal endpoints, all strengthened by rigorous interlaboratory comparison studies.

Designing and Executing Rigorous Interlaboratory Comparison Studies

A well-designed interlaboratory comparison (ILC) study is a cornerstone of data reliability and regulatory acceptance in ecotoxicity testing. ILCs are essential for validating new test methods, identifying sources of inter-laboratory variability, and building confidence in data used for environmental risk assessments [17] [13]. As regulatory needs evolve and new approach methodologies (NAMs) emerge, the demand for robust, reproducible ecotoxicity data is greater than ever [31] [32]. A successful ILC harmonizes practices across diverse laboratories, transforming isolated data points into a cohesive, reliable evidence base for scientific and regulatory decision-making.

Phase 1: Strategic Planning and Objective Setting

The foundation of any successful ILC is meticulous planning with clearly defined objectives. This phase determines the study's scope, endpoints, and logistical framework.

- Define Clear Objectives: Objectives must be specific and measurable. Examples include validating a novel rapid bioassay (e.g., the 72-hour Lemna minor root regrowth test) [17], assessing the impact of a procedural variable (e.g., LED vs. fluorescent lighting) [16], or harmonizing measurements for a complex health-relevant metric (e.g., the oxidative potential (OP) of aerosols) [13].

- Select the Test System and Endpoint: The choice depends on the objective. For method validation, sensitivity and reproducibility are key. The Lemna root regrowth test, for instance, demonstrated precision estimates for copper sulfate (21.3-27.2%, with a between-laboratory reproducibility of 27.2%) within accepted limits (<30-40%) [17]. For harmonization studies, a widely used but variable assay, like the dithiothreitol (DTT) assay for OP, is a suitable candidate [13].

- Develop a Robust Protocol: A detailed, unambiguous Standard Operating Procedure (SOP) is critical. The SOP must cover all aspects, from sample preparation and test organism handling to endpoint measurement and data reporting. For complex assays, a "core group" of experienced laboratories can develop a simplified, harmonized protocol to serve as the ILC benchmark [13].

Phase 2: Participant Recruitment and Laboratory Qualification

The quality of participants directly impacts the ILC's credibility. Recruitment should be targeted and criteria-based.

- Targeted Recruitment: Identify laboratories through professional networks, published literature, and regulatory bodies. For ecotoxicity, engaging labs affiliated with organizations like the International Organization for Standardization (ISO) or the U.S. Environmental Protection Agency (USEPA) ensures familiarity with standardized practices [31] [17].

- Establish Qualification Criteria: Laboratories should demonstrate relevant expertise. Key criteria include:

- Accreditation (e.g., ISO/IEC 17025).

- Proven experience with the specific test organism or method.

- Availability of necessary equipment and controlled culturing facilities, as organism health is paramount [16].

- Manage Commitments: Clearly communicate the expected timeline, costs, and data submission requirements to secure serious commitment.

Phase 3: Sample Preparation and Distribution

Consistency in test materials is non-negotiable for isolating laboratory performance from sample variability.

- Centralized Production: A single, central facility should prepare the test samples. This includes synthesizing or sourcing the reference toxicant (e.g., sodium chloride, 3,5-dichlorophenol, copper sulfate) [16] [17], culturing organisms under standardized conditions, and preparing any required reagents [13].

- Blind Coding and Randomization: Samples should be blind-coded and their order randomized for each participant to prevent measurement bias.

- Stable and Traceable Shipment: Samples must be shipped using a reliable, timely carrier with appropriate environmental controls (e.g., temperature) to ensure stability. Packaging should include inert materials and detailed handling instructions.
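Blind coding and per-participant randomization can be sketched as below. This is one possible scheme, not a prescribed procedure: the function name, the four-digit code format, and the fixed seed are illustrative assumptions. The decoding key would be held by the coordinating body only.

```python
import random

def blind_code_samples(labs, samples, seed=2024):
    """Assign unique blind codes and a randomized sample order for each lab.

    Returns (shipments, key): shipments maps lab -> ordered list of codes,
    key maps code -> (lab, true sample identity) for the coordinator.
    """
    rng = random.Random(seed)  # fixed seed so the allocation can be audited
    codes = iter(rng.sample(range(1000, 10000), len(labs) * len(samples)))
    shipments, key = {}, {}
    for lab in labs:
        order = list(samples)
        rng.shuffle(order)  # independent presentation order per participant
        shipments[lab] = []
        for sample in order:
            code = f"S{next(codes)}"
            key[code] = (lab, sample)
            shipments[lab].append(code)
    return shipments, key

labs = ["Lab A", "Lab B", "Lab C"]
samples = ["control", "CuSO4 low", "CuSO4 high", "wastewater"]
shipments, key = blind_code_samples(labs, samples)

for lab, coded in shipments.items():
    print(lab, coded)
```

Drawing the codes without replacement guarantees that no two vials share a label, and recording the seed lets the allocation be reproduced when results are decoded.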

Phase 4: Data Analysis, Reporting, and Harmonization

This phase transforms raw data into actionable insights on method performance and laboratory proficiency.

- Standardized Data Submission: Use predefined templates to collect data (e.g., raw absorbance values, calculated LC50/EC50, positive control results) alongside critical metadata (e.g., equipment model, reagent lots, deviations) [13].

- Statistical Analysis for Performance Assessment:

- Calculate Summary Statistics: Determine the consensus value (e.g., robust mean or median) and measures of dispersion (standard deviation, robust standard deviation).

- Evaluate Participant Performance: Use z-scores or similar metrics to compare each lab's result to the consensus value. The table below summarizes statistical outcomes from recent ILCs.

Table 1: Performance Metrics from Recent Ecotoxicology ILC Studies

| Test System / Focus | Reference Material | Key Performance Metric | Reported Value | Implication |
|---|---|---|---|---|
| Lemna minor Root Regrowth [17] | Copper Sulfate (CuSO₄) | Reproducibility (Among Labs) | 27.2% | Within accepted limits (<30-40%), confirming method reliability. |
| Lemna minor Root Regrowth [17] | Wastewater | Reproducibility (Among Labs) | 18.6% | Method shows high consistency even with complex environmental samples. |
| Oxidative Potential (DTT assay) [13] | Liquid Quinone Standard | Variability (CV) of Results | >50% (Home Protocols) | Highlights significant pre-harmonization variability across labs. |
| Whole Effluent Toxicity [16] | Sodium Chloride (NaCl) | Seasonality Effect | Inconsistencies Found | Underscores need to control for temporal factors in ILC design. |

- Generate the Final Report: The report should present the consensus results, statistical analysis of laboratory performance, and sources of identified variability (e.g., instrument type, reagent source) [13]. It forms the basis for methodological refinements and recommendations.
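The summary statistics and z-score evaluation described in this phase can be illustrated with a short sketch. It assumes the median as the consensus value and the scaled median absolute deviation (MAD × 1.4826) as the robust standard deviation. The classification bands (|z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, |z| ≥ 3 unsatisfactory) follow the convention commonly used in proficiency testing and are not specified by the studies cited here. Function names and the example data are hypothetical.

```python
import statistics


def robust_summary(values):
    """Return (median, scaled MAD). MAD * 1.4826 estimates the standard
    deviation for normally distributed data while resisting outliers."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    return med, 1.4826 * mad


def z_scores(results):
    """results: {lab: reported value}. Returns {lab: (z, verdict)}."""
    consensus, spread = robust_summary(list(results.values()))
    if spread == 0:
        raise ValueError("robust SD is zero; z-scores are undefined")
    scored = {}
    for lab, value in results.items():
        z = (value - consensus) / spread
        if abs(z) <= 2:
            verdict = "satisfactory"
        elif abs(z) < 3:
            verdict = "questionable"
        else:
            verdict = "unsatisfactory"
        scored[lab] = (z, verdict)
    return scored


# Hypothetical EC50 values (mg/L) reported by five laboratories.
example = {"A": 10.0, "B": 11.0, "C": 9.0, "D": 10.5, "E": 20.0}
scores = z_scores(example)
```

A robust estimator is used so that a single extreme laboratory (lab E in the hypothetical data) does not inflate the spread and mask its own deviation.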

The following diagram illustrates the complete management workflow of a successful ILC, integrating all four phases from planning to final reporting.

Experimental Protocols from Featured ILCs

1. Protocol for the Lemna minor Root Regrowth Test ILC [17]

- Test Organism: Lemna minor (common duckweed) from axenic cultures.

- Pre-test Preparation: Roots are excised from 2-3 frond colonies under a stereomicroscope prior to exposure.

- Exposure: Colonies are placed in 24-well cell plates, each well containing 3 mL of test solution (e.g., CuSO₄ in standard nutrient medium or wastewater).

- Incubation: Plates are incubated at 25°C under continuous illumination (100 μmol m⁻² s⁻¹) for 72 hours.

- Endpoint Measurement: After incubation, the length of the newly regrown roots is measured for each frond.

- Data Analysis: Percent inhibition of root growth relative to controls is calculated. The ILC supported the method's reliability (21.3% repeatability and 27.2% reproducibility for CuSO₄).
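The percent-inhibition calculation in the final step is simple arithmetic, shown here as a minimal sketch. The function name and the root lengths are illustrative assumptions, not data from the ILC.

```python
from statistics import mean


def percent_inhibition(control_lengths, treated_lengths):
    """Percent inhibition of root regrowth relative to the control mean.

    Both arguments are lists of regrown root lengths in the same unit (e.g. mm).
    """
    control_mean = mean(control_lengths)
    if control_mean <= 0:
        raise ValueError("controls show no regrowth; inhibition is undefined")
    return 100.0 * (1.0 - mean(treated_lengths) / control_mean)


# Hypothetical root lengths (mm): control mean 20, treated mean 10.
inhibition = percent_inhibition([20.0, 22.0, 18.0], [10.0, 11.0, 9.0])
```

A negative result would indicate that treated roots grew longer than the controls, i.e. stimulation rather than inhibition.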

2. Protocol for the Oxidative Potential (DTT) Assay Harmonization ILC [13]

- Principle: Measures the rate of oxidation of dithiothreitol (DTT) to its disulfide form by redox-active components in particulate matter (PM) extracts.

- Sample Preparation: Participants received identical liquid samples of a stable quinone solution to bypass variability from PM extraction.

- Reaction: An aliquot of sample is mixed with DTT in a phosphate buffer (pH 7.4) and incubated at 37°C.

- Measurement: At timed intervals, an aliquot of the reaction mixture is removed, quenched with trichloroacetic acid, and mixed with 5,5'-dithio-bis(2-nitrobenzoic acid) (DTNB) to form a yellow product. The absorbance is read at 412 nm.

- Data Reporting: Labs reported the DTT consumption rate (nmol DTT min⁻¹). The ILC identified instrument type and protocol details as major variability sources.
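As an illustration of how a DTT consumption rate might be derived from the timed absorbance readings, the sketch below fits a least-squares line to remaining DTT versus time and reports the negative slope. It assumes that absorbance at 412 nm is proportional to remaining DTT and that the initial DTT amount is known. Both are simplifying assumptions for this example, and the function names are hypothetical rather than part of the harmonized protocol.

```python
def absorbance_to_dtt(absorbances, initial_dtt_nmol):
    """Convert absorbance readings to remaining DTT (nmol), assuming the
    signal is proportional to DTT and the first reading is taken at t = 0."""
    a0 = absorbances[0]
    if a0 <= 0:
        raise ValueError("initial absorbance must be positive")
    return [initial_dtt_nmol * a / a0 for a in absorbances]


def dtt_consumption_rate(times_min, dtt_nmol):
    """Least-squares slope of remaining DTT vs. time, sign-flipped so that
    consumption is a positive rate in nmol DTT per minute."""
    n = len(times_min)
    if n != len(dtt_nmol) or n < 2:
        raise ValueError("need at least two matched time points")
    t_mean = sum(times_min) / n
    d_mean = sum(dtt_nmol) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    if sxx == 0:
        raise ValueError("time points must not all be identical")
    sxy = sum((t - t_mean) * (d - d_mean) for t, d in zip(times_min, dtt_nmol))
    return -sxy / sxx


# Hypothetical readings at 0, 10, 20 and 30 minutes.
times = [0, 10, 20, 30]
remaining = absorbance_to_dtt([0.80, 0.72, 0.64, 0.56], initial_dtt_nmol=100.0)
rate = dtt_consumption_rate(times, remaining)
```

In practice a laboratory would typically also subtract the rate measured in a blank; that step is omitted here because the source does not describe it.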

The detailed workflow for the acellular DTT assay, as implemented in a harmonization ILC, is shown below.

The Scientist's Toolkit: Essential Reagents and Materials for Ecotoxicity ILCs

The following table details key reagents and materials crucial for executing standardized tests in an ILC context, drawing from the featured studies.

Table 2: Essential Research Reagent Solutions for Ecotoxicity ILCs

| Category/Item | Function in ILCs | Example from Featured Studies | Critical for ILC Consistency |
|---|---|---|---|
| Reference Toxicants | Standardized positive controls to assess organism health and lab performance. | Sodium chloride (NaCl) for WET tests [16]; Copper sulfate (CuSO₄) for duckweed tests [17]. | Provides a benchmark for comparing results across all participating labs. |
| Culture Media & Food | Sustains test organisms before and during assay; variability affects sensitivity. | Moderately hard synthetic water, Algae (Raphidocelis subcapitata), Yeast-Cerophyl-Trout Chow (YCT) for zooplankton [16]. | Must be identical or strictly standardized to eliminate nutritional confounders. |
| Redox/Antioxidant Probes | Core reagents in acellular assays measuring oxidative stress potential. | Dithiothreitol (DTT) and DTNB in the OP-DTT assay [13]. | Purity, concentration, and source of these reagents are major identified sources of inter-lab variability. |
| Standardized Test Organisms | The biological sensor; genetic and health status directly impact results. | Clone-cultured organisms: Ceriodaphnia dubia, Lemna minor clones [16] [17]. | Centralized culture supply or strict criteria for lab cultures are essential to minimize biological variability. |
| Environmental Control Systems | Maintains precise physical conditions for organisms or reactions. | Incubators with controlled lighting (LED vs. fluorescent study) [16]; Temperature-controlled water baths (37°C for DTT assay) [13]. | Calibration and monitoring data for these systems are critical metadata for explaining result variability. |

A meticulously executed ILC, following the blueprint from planning through sample distribution to analysis, is an indispensable tool for advancing reliable ecotoxicology. It moves research from generating isolated data points to establishing robust, community-verified methods. As the field increasingly adopts rapid bioassays and complex mechanistic endpoints, the role of ILCs in validating and harmonizing these approaches becomes ever more critical [31] [32]. The ultimate goal is to produce data that seamlessly supports high-quality research, informed regulatory decisions, and effective environmental protection.

Biological and Regulatory Foundations for Model Selection

The selection of organisms for ecotoxicity testing is governed by a combination of biological principles and regulatory requirements. Biologically, the animal kingdom is divided into vertebrates, which possess a backbone and complex organ systems, and invertebrates, which lack a backbone and often have simpler, though highly adaptable, biological structures [33] [34]. Invertebrates constitute approximately 97% of all animal species and play critical roles in ecosystems, such as pollination and nutrient cycling [33]. Vertebrates, while fewer in number, are often used as models for higher-order biological effects due to their complex internal systems, including closed circulatory systems and advanced nervous systems [33] [34].

From a regulatory standpoint, agencies like the U.S. EPA, FDA, and ECHA require standardized ecotoxicity data to assess environmental hazards from chemicals, pharmaceuticals, and pesticides [35]. These tests have traditionally relied on live vertebrate and invertebrate organisms to evaluate endpoints like survival, growth, and reproduction. However, there is a strong and growing regulatory drive to implement New Approach Methodologies (NAMs) that reduce, refine, or replace animal testing. This shift is motivated by ethical considerations, the need for higher-throughput testing, and advances in scientific understanding [35] [36]. The choice of a model organism, therefore, must balance its biological relevance to the ecosystem, its sensitivity to contaminants, its practical utility in the laboratory, and its alignment with the "3Rs" framework (Replacement, Reduction, Refinement) [37] [36].

Comparative Analysis of Standard Test Organisms

The following table provides a quantitative comparison of the standard model organisms used across the major taxonomic groups, based on common test guidelines and interlaboratory studies.

Table 1: Performance Comparison of Standard Ecotoxicity Test Organisms

| Organism Category | Example Species | Key Endpoints Measured | Typical Test Duration | Approx. Cost (Relative) | Key Advantages | Primary Limitations |
|---|---|---|---|---|---|---|
| Vertebrates (Fish) | Rainbow trout (Oncorhynchus mykiss), Zebrafish (Danio rerio) | Mortality, growth, reproductive success, teratogenicity [35] | 48-96 h (acute); 28+ d (chronic) [35] | High | High regulatory acceptance; complex systemic responses; models for vertebrate biology [35] | High ethical concern; expensive; requires significant space and resources [35] [36] |
| Invertebrates (Aquatic) | Water flea (Daphnia magna), Amphipod (Hyalella azteca) | Mortality, immobilization, reproduction, growth [35] | 24-48 h (acute); 7-21 d (chronic) [35] | Low | Rapid life cycle; high sensitivity; low cost; high throughput [35] | Less complex physiology than vertebrates; may not predict vertebrate-specific toxicity [35] |
| Plants (Aquatic) | Duckweed (Lemna minor) | Frond number, biomass, root growth inhibition [38] | 72 h – 7 d [38] | Very Low | Rapid; simple culture; low volume required; key primary producer [38] | Limited to phytotoxicity; less relevant for animal health endpoints |
| Emerging NAMs | Fish Embryo (FET test), In vitro assays | Embryo mortality, teratogenicity, gene expression (transcriptomics) [39] | 24-96 h [39] | Medium | Addresses 3Rs (non-protected life stage); mechanistic data; potential for high-throughput [39] [37] | Regulatory acceptance varies; may not capture chronic or reproductive effects [36] |

Detailed Experimental Protocols

1. Lemna minor Root Regrowth Test (Interlaboratory Validated)

This protocol is designed for rapid toxicity screening of water samples [38].

- Plant Preparation: Cultivate Lemna minor in sterile nutrient medium under controlled light (100 μmol m⁻² s⁻¹) at 25°C. Select healthy colonies with 2-3 fronds.

- Root Excision: Using fine forceps and a dissecting microscope, carefully excise all roots from the selected fronds.

- Exposure: Place one colony per well in a 24-well cell culture plate. Add 3 mL of the test solution (e.g., wastewater, chemical dilution) or control medium to each well [38].

- Incubation: Maintain plates under the same controlled conditions for 72 hours.

- Endpoint Measurement: After exposure, measure the length of all newly regrown roots per frond using calipers or image analysis software.

- Data Analysis: Calculate percent inhibition of root regrowth compared to controls. Determine EC₅₀ (effective concentration for 50% inhibition) values. In an interlaboratory comparison, this test demonstrated reproducibility standard deviations of 18.6-27.2% for different sample types, validating its reliability [38].
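To make the EC₅₀ step concrete, the sketch below estimates it by linear interpolation on log-transformed concentration between the two tested concentrations that bracket 50% inhibition. This is a simplified stand-in: guideline analyses typically fit a full concentration-response model (for example log-logistic or probit), and the source does not state which method was used. The function name and example values are hypothetical.

```python
import math


def ec50_by_interpolation(concentrations, inhibitions):
    """Estimate EC50 by interpolating on log10(concentration).

    concentrations: positive test concentrations in ascending order
    inhibitions:    percent inhibition observed at each concentration
    Returns None when no adjacent pair of points brackets 50% inhibition.
    """
    pairs = list(zip(concentrations, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(pairs, pairs[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            # Fraction of the way from the lower to the upper point.
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ec50 = math.log10(c_lo) + frac * (
                math.log10(c_hi) - math.log10(c_lo)
            )
            return 10 ** log_ec50
    return None


# Hypothetical CuSO4 series (mg/L) with percent inhibition of root regrowth.
ec50 = ec50_by_interpolation([0.1, 1.0, 10.0], [10.0, 30.0, 70.0])
```

Returning `None` when 50% is not bracketed signals that the concentration range needs to be widened, rather than extrapolating beyond the tested range.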

2. Enhanced Fish Embryo Toxicity (FET) Test with Transcriptomics

This protocol refines the standard OECD FET test by adding mechanistic depth [39].

- Embryo Collection: Obtain fertilized zebrafish (Danio rerio) eggs. Visually inspect and select normally developing embryos at the 4-6 cell stage.

- Exposure: Distribute embryos into individual wells of a multi-well plate (e.g., one embryo per well in 2 mL of solution). Expose them to a logarithmic series of test chemical concentrations, along with a water control. Each concentration and the control should include a minimum of 20 embryos [39].

- Incubation & Observation: Incubate plates at 26 ± 1°C for 96 hours. Monitor daily for lethal endpoints (coagulation, lack of somite formation, non-detached tail, lack of heartbeat) as per the standard FET guideline.

- Sample Collection for Transcriptomics: At 48 or 96 hours, pool surviving embryos from each treatment group (e.g., 10-15 embryos). Homogenize the pool in an RNA-stabilizing reagent.

- RNA Sequencing & Analysis: Extract total RNA. Perform RNA sequencing (RNA-seq). Bioinformatic analysis identifies differentially expressed genes (DEGs) and perturbed biological pathways (e.g., oxidative stress, endocrine disruption) [39].

- Integrated Data Analysis: Calculate traditional LC₅₀ based on mortality. Integrate the transcriptomic Lowest Effective Concentration (LEC) and pathway analysis to provide a mechanistic understanding of the toxic effect, potentially enhancing the test's predictive power for chronic outcomes [39].
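Before an LC₅₀ can be calculated, the daily observations have to be reduced to percent mortality per concentration. The sketch below shows one way to do this, assuming that an embryo is scored as dead when any one of the four lethal endpoints listed above is observed. The field names and the record layout are hypothetical and chosen only for illustration.

```python
LETHAL_ENDPOINTS = (
    "coagulation",
    "no_somites",
    "tail_not_detached",
    "no_heartbeat",
)


def is_dead(observation):
    """An embryo counts as dead if any lethal endpoint is recorded as True."""
    return any(observation.get(name, False) for name in LETHAL_ENDPOINTS)


def mortality_by_concentration(records):
    """records: iterable of (concentration, observation dict), one per embryo.
    Returns {concentration: percent mortality}, ordered by concentration."""
    totals = {}
    dead = {}
    for conc, obs in records:
        totals[conc] = totals.get(conc, 0) + 1
        dead[conc] = dead.get(conc, 0) + (1 if is_dead(obs) else 0)
    return {c: 100.0 * dead[c] / totals[c] for c in sorted(totals)}


# Hypothetical 96 h observations: four control embryos, four exposed embryos.
records = [(0.0, {}) for _ in range(4)] + [
    (1.0, {"coagulation": True}),
    (1.0, {"no_heartbeat": True}),
    (1.0, {}),
    (1.0, {"no_somites": False}),
]
mortality = mortality_by_concentration(records)
```

The resulting concentration-mortality pairs are the input for whichever LC₅₀ estimation method the study protocol specifies.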

Visualizing Pathways and Workflows

Logical Workflow for Test Organism Selection

[Diagram: Decision Workflow for Selecting Ecotoxicity Test Organisms]

Signaling Pathway for Enhanced Fish Embryo Test

This diagram illustrates the integrated biological and molecular process underlying the enhanced Fish Embryo Toxicity (FET) test [39].

[Diagram: Integrated Pathway of the Enhanced Fish Embryo Toxicity (FET) Test]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Ecotoxicity Testing

| Item | Function in Experiments | Example Use Case |
|---|---|---|
| Standardized Nutrient Media (e.g., OECD, ISO recipes) | Provides essential, consistent nutrients for culturing test organisms, ensuring health and reducing background variability. | Culturing algae, duckweed (Lemna minor), and daphnids prior to and during tests [38]. |
| Reference Toxicants (e.g., 3,5-Dichlorophenol, CuSO₄, K₂Cr₂O₇) | Validates the health and sensitivity of biological test populations. Used in interlaboratory comparisons to assess protocol reproducibility [38]. | Routine laboratory quality control; establishing sensitivity baselines in methods like the Lemna root regrowth test [38]. |
| RNA Stabilization Reagents (e.g., TRIzol, RNAlater) | Preserves RNA integrity immediately upon sample collection by inhibiting RNases. Critical for obtaining high-quality material for transcriptomic analysis. | Preserving fish embryos or tissue samples in enhanced FET tests for subsequent RNA sequencing [39]. |