Ecotoxicology Data Management: Best Practices for Robust Research, Regulatory Compliance, and Future-Proof Science

Abstract

This article provides a comprehensive guide to modern ecotoxicology data management, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of data quality and standardized curation, as exemplified by authoritative resources like the EPA's ECOTOX Knowledgebase. The guide details methodological approaches for integrating multi-omics data, utilizing environmental data management systems (EDMS), and leveraging cloud-based solutions. It further addresses common challenges in statistical analysis, data interoperability, and regulatory alignment, offering optimization strategies. Finally, it explores validation frameworks for new approach methodologies (NAMs) and compares leading data platforms to support informed tool selection. The goal is to equip professionals with actionable strategies to enhance data integrity, streamline workflows for assessments like REACH, and foster innovation in ecological safety science.

Laying the Groundwork: Core Principles and Authoritative Sources for Ecotoxicology Data Integrity

High-quality ecotoxicity data are the foundation of reliable environmental risk assessments. In an era of growing data volume, establishing rigorous and consistent criteria for data acceptability is a cornerstone of effective ecotoxicology data management. This framework ensures that only scientifically sound studies inform regulatory decisions for chemicals, pharmaceuticals, and pesticides. This guide details the essential criteria for evaluating ecotoxicity studies, providing researchers and drug development professionals with a structured approach to data quality assurance.

Core Data Quality Evaluation Frameworks

Several established frameworks are used to assess the reliability and relevance of ecotoxicity studies. The choice of framework can significantly impact a study's regulatory acceptability.

U.S. EPA Acceptance Criteria for Open Literature Data

The U.S. Environmental Protection Agency (EPA) sets minimum criteria that a study must meet to be accepted into its ECOTOX Knowledgebase and considered for risk assessment[reference:0]. These criteria ensure data verifiability and relevance to regulatory needs.

Table 1: U.S. EPA Minimum Acceptance Criteria for ECOTOX

| Criterion Category | Specific Requirement |
|---|---|
| Exposure & Effect | Toxic effects must result from single-chemical exposure. |
| Test System | Effects must be on live, whole aquatic or terrestrial plants/animals. |
| Reporting | A concurrent environmental concentration/dose and explicit exposure duration must be reported. |
| Data Quality | Treatment(s) must be compared to an acceptable control. |
| Transparency | The study location (lab/field) and tested species must be reported and verified. |
| Accessibility | The study must be a publicly available, full article in English, serving as the primary data source. |
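The criteria in Table 1 lend themselves to a simple checklist at the screening stage. The following is a minimal sketch, not the EPA's actual tooling: the `StudyRecord` class and all of its field names are hypothetical and simply mirror the rows of the table.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class StudyRecord:
    """Hypothetical screening record; field names are illustrative only."""
    single_chemical_exposure: bool
    live_whole_organism: bool
    concentration_reported: bool
    duration_reported: bool
    acceptable_control: bool
    location_reported: bool
    species_verified: bool
    public_full_text_english: bool
    primary_source: bool


def failed_criteria(study: StudyRecord) -> list[str]:
    """Return the names of the minimum criteria the study does not meet."""
    return [name for name, met in vars(study).items() if not met]


def is_acceptable(study: StudyRecord) -> bool:
    """A study passes only if every minimum criterion is met."""
    return not failed_criteria(study)
```

Returning the list of failed criteria, rather than a bare pass/fail flag, keeps the reason for each rejection on record, which supports the transparency goal of the framework.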

The Klimisch Score: A Traditional Reliability Check

The Klimisch scoring system is a widely used method for categorizing study reliability, particularly within EU regulatory schemes like REACH[reference:1]. It assigns a score based on adherence to guidelines and documentation quality.

Table 2: Klimisch Reliability Score Categories

| Score | Category | Description |
|---|---|---|
| 1 | Reliable without restriction | Conducted according to internationally accepted guidelines (preferably GLP). |
| 2 | Reliable with restriction | Not fully GLP-compliant but sufficiently documented and scientifically acceptable. |
| 3 | Not reliable | Insufficient documentation or major methodological flaws. |
| 4 | Not assignable | Lacks sufficient experimental details (e.g., abstracts only). |

Generally, only scores of 1 or 2 are considered reliable for primary regulatory use, while scores 3 and 4 may serve as supporting information[reference:2].
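In a data management system, the score and its consequence for use can be stored together so that the rule is applied consistently. The sketch below encodes Table 2 and the usage rule stated above; the names `Klimisch` and `regulatory_role` are illustrative assumptions, not part of any official tool.

```python
from enum import IntEnum


class Klimisch(IntEnum):
    """Klimisch reliability categories as listed in Table 2."""
    RELIABLE_WITHOUT_RESTRICTION = 1
    RELIABLE_WITH_RESTRICTION = 2
    NOT_RELIABLE = 3
    NOT_ASSIGNABLE = 4


def regulatory_role(score: Klimisch) -> str:
    """Scores 1 and 2 are treated as primary data; 3 and 4 as supporting information."""
    if score <= Klimisch.RELIABLE_WITH_RESTRICTION:
        return "primary"
    return "supporting"
```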

The CRED Framework: A Modern, Comprehensive System

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method was developed to address limitations of earlier systems. It provides a more detailed, transparent, and consistent evaluation of both reliability and relevance for aquatic ecotoxicity studies[reference:3].

Table 3: Comparison of Klimisch and CRED Evaluation Methods

| Characteristic | Klimisch Method | CRED Method |
|---|---|---|
| Data Type | General toxicity & ecotoxicity | Aquatic ecotoxicity |
| Reliability Criteria | 12–14 | 20 (with 50 reporting criteria) |
| Relevance Criteria | 0 | 13 |
| OECD Reporting Criteria | 14 of 37 included | 37 of 37 included |
| Guidance Material | No | Yes |
| Evaluation Summary | Qualitative (reliability only) | Qualitative (reliability & relevance) |

The CRED method's structured criteria and guidance aim to reduce subjectivity and promote harmonization across different regulatory frameworks[reference:4].

Experimental Protocol: The Fish Acute Toxicity Test (OECD TG 203)

The fish acute toxicity test is a standard guideline study often used as a benchmark for data quality. The following protocol outlines its key methodological steps.

Test Principle: Juvenile fish are exposed to a range of concentrations of the test substance, usually for 96 hours. The primary endpoint is the median lethal concentration (LC50).

Detailed Methodology:

- Test System: Healthy juvenile fish of a defined species (e.g., Danio rerio, Oncorhynchus mykiss) are acclimated to laboratory conditions.

- Exposure Design: At least five concentrations of the test substance and a control (with or without solvent) are prepared. A minimum of seven fish per concentration is recommended, with random assignment.

- Test Conditions: Tests are conducted under static, semi-static, or flow-through conditions. Water quality parameters (temperature, pH, dissolved oxygen) are monitored and maintained within specified limits.

- Observations: Mortalities are recorded at 24, 48, 72, and 96 hours. Any abnormal behavior or morphological signs of toxicity are noted.

- Validity Criteria: The test is considered valid only if control mortality does not exceed 10% (or one fish if fewer than ten are used)[reference:5].

- Data Analysis: The LC50 and its confidence intervals are calculated using appropriate statistical methods (e.g., probit analysis, trimmed Spearman-Karber).
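The validity rule and the LC50 calculation can be sketched in a few lines. This is an illustration under stated assumptions, not the guideline's prescribed procedure: it implements the untrimmed Spearman-Karber estimator (the trimmed variant named above additionally discards the tails), it requires mortality to rise monotonically from 0% to 100%, and it does not compute confidence intervals. The function names are hypothetical.

```python
import math


def control_is_valid(n_control: int, n_dead: int) -> bool:
    """Apply the control-mortality validity rule quoted in the text."""
    if n_control < 10:
        return n_dead <= 1           # at most one fish when fewer than ten are used
    return n_dead * 10 <= n_control  # at most 10% mortality, in integer arithmetic


def spearman_karber_lc50(concs, n_exposed, n_dead):
    """Untrimmed Spearman-Karber estimate of the LC50.

    Assumes concentrations are positive and ascending, and that observed
    mortality rises monotonically from 0% to 100% across the series.
    """
    p = [d / n for d, n in zip(n_dead, n_exposed)]
    if p[0] != 0.0 or p[-1] != 1.0 or any(b < a for a, b in zip(p, p[1:])):
        raise ValueError("mortality must rise monotonically from 0 to 1")
    x = [math.log10(c) for c in concs]
    # Weight each log-concentration midpoint by the mortality increment it spans.
    log_lc50 = sum(
        (p[i + 1] - p[i]) * (x[i] + x[i + 1]) / 2
        for i in range(len(x) - 1)
    )
    return 10 ** log_lc50
```

For example, with made-up counts of 0, 1, 3, 6, and 7 dead out of 7 fish at 1, 2, 4, 8, and 16 mg/L, `spearman_karber_lc50([1, 2, 4, 8, 16], [7] * 5, [0, 1, 3, 6, 7])` gives an LC50 of roughly 4.2 mg/L.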

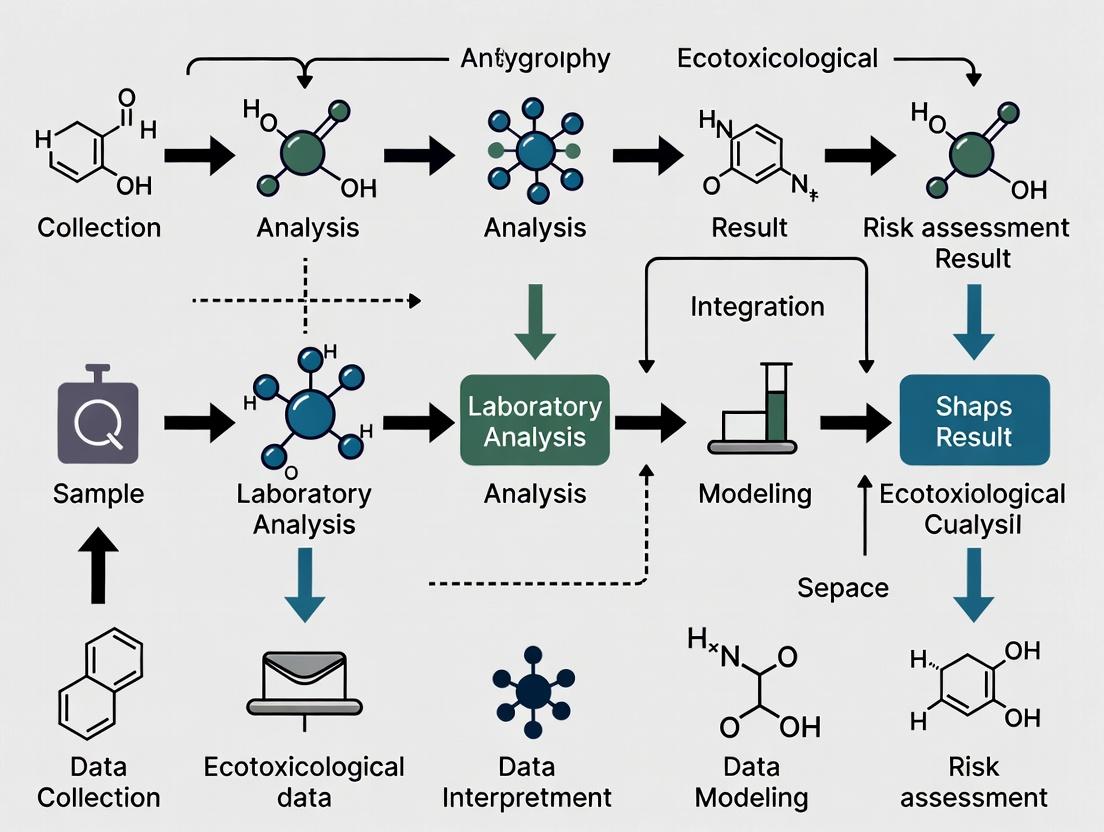

Visualizing Data Quality Assessment

A standardized evaluation workflow is critical for consistent data management. The following diagram maps the logical decision process for assessing an ecotoxicity study's acceptability.

Diagram 1: Logical workflow for ecotoxicity data quality assessment.

The Scientist's Toolkit: Essential Reagents & Materials

Conducting a high-quality ecotoxicity study requires standardized materials. The following table lists key reagent solutions and their functions in a typical aquatic test.

Table 4: Essential Research Reagent Solutions for Aquatic Ecotoxicity Testing

| Item | Function | Example / Specification |
|---|---|---|
| Reconstituted Freshwater | Provides a standardized, contaminant-free aqueous medium for tests. | Prepared according to ISO or OECD standards (e.g., ISO 6341). |
| Culture Media for Algae | Supports the growth and maintenance of algal test species. | OECD TG 201 medium, containing essential nutrients. |
| Eluent/Solvent Control | Verifies that any solvent used to dissolve the test substance is not toxic. | Acetone, dimethyl sulfoxide (DMSO), or ethanol, typically at ≤0.1% v/v. |
| Reference Toxicant | Assesses the sensitivity and health of the test organisms over time. | Potassium dichromate (for Daphnia), sodium chloride, or copper sulfate. |
| Buffering Solution | Maintains stable pH in the test medium, critical for chemical stability and organism health. | Sodium bicarbonate or HEPES buffer. |
| Anaesthetic Solution | Humanely immobilizes fish for handling or terminal procedures. | Tricaine methanesulfonate (MS-222), buffered to test water pH. |
| Fixative/Preservative | Preserves tissue or organism samples for subsequent histological or chemical analysis. | Formalin, RNAlater, or glutaraldehyde. |
| Enzyme/Specific Biomarker Assay Kits | Quantifies sublethal effects (e.g., oxidative stress, neurotoxicity). | Acetylcholinesterase (AChE) assay kit, glutathione (GSH) assay kit. |

Defining and applying essential data quality criteria is not a bureaucratic hurdle but a fundamental scientific practice. Frameworks like the EPA criteria, Klimisch score, and the more comprehensive CRED method provide the necessary structure to distinguish reliable, relevant studies from those that are not fit for purpose. Integrating these evaluations into a systematic data management workflow, as visualized, ensures transparency and consistency. For researchers and drug developers, adherence to these criteria from the study design phase is the most effective strategy for generating ecotoxicity data that will withstand regulatory scrutiny and contribute meaningfully to environmental protection.

The Role of Systematic Review and Curation in Building Reliable Knowledgebases

The discipline of ecotoxicology is tasked with a critical mandate: to understand and predict the impacts of chemical stressors on ecosystems to inform protective regulations and sustainable practices. This mandate relies on a vast, heterogeneous, and ever-growing body of primary research. The fundamental challenge lies not in a scarcity of data, but in effectively synthesizing disparate studies into a coherent, reliable evidence base for decision-making. Unsystematic, narrative literature reviews are vulnerable to selection bias and may yield inconsistent or misleading conclusions [1]. In contrast, systematic review and rigorous data curation provide a structured, transparent, and reproducible framework to overcome these limitations.

Within the context of ecotoxicology data management best practices, systematic methodologies transform raw data from individual studies into actionable knowledge. They establish a clear chain of evidence—from formulating a precise research question to grading the certainty of the synthesized findings. This process is paramount for supporting chemical risk assessments, validating New Approach Methodologies (NAMs), and identifying critical data gaps [2]. Furthermore, the curated output of systematic reviews forms the core of authoritative knowledgebases, such as the U.S. EPA's ECOTOX database, which serves as an indispensable resource for researchers and regulators globally [3] [2]. This guide details the technical execution of systematic review and curation, framing them as essential, interdependent pillars for building reliable ecological knowledgebases.

Foundational Methodologies: Frameworks and Protocols

A high-quality systematic review is built upon explicit, pre-defined frameworks that ensure rigor and mitigate bias from the outset.

Formulating the Research Question and Analytic Framework

The first and most critical step is developing a focused, structured research question. In biological and health sciences, the PICO framework (Population, Intervention/Exposure, Comparator, Outcome) is most common [1]. For ecotoxicology, this is effectively adapted to:

- Population: The ecological receptor (e.g., Daphnia magna, fathead minnow, a soil invertebrate community).

- Intervention/Exposure: The chemical stressor, its concentration, duration, and route of exposure.

- Comparator: The control group (e.g., no chemical exposure, vehicle control).

- Outcome: The measured apical or sub-organismal endpoint (e.g., LC50, reproduction, growth, gene expression).

For broader questions involving qualitative evidence or mixed-methods research, alternative frameworks like SPIDER (Sample, Phenomenon of Interest, Design, Evaluation, Research type) may be more appropriate [1]. Developing an analytic framework visually maps the linkages between these components, clarifying the logic of evidence required to connect an exposure to an ecological outcome and guiding subsequent review steps [4].

Developing and Registering the Review Protocol

A detailed protocol is the review's operational blueprint, essential for transparency and reproducibility. Key elements include [1] [5]:

- Rationale and explicit research questions.

- Pre-specified inclusion/exclusion criteria for studies.

- Comprehensive search strategy (databases, search strings, grey literature sources).

- Plans for study selection, data extraction, and risk-of-bias assessment.

- Data synthesis methods.

- Strategy for assessing the certainty of evidence (e.g., GRADE).

Protocol registration on platforms like PROSPERO is considered a hallmark of best practice, reducing duplication of effort and mitigating reporting bias [5].

Critical Appraisal and Risk of Bias Assessment

Not all studies contribute equally valid evidence. Critical appraisal evaluates the methodological quality of each included study, assessing the degree to which its design, conduct, and analysis have minimized the risk of systematic error (bias) [4]. In ecotoxicology, this involves evaluating factors such as:

- Reporting clarity on test substance, organism, and conditions.

- Appropriateness of controls.

- Statistical methods and reporting of variability.

- Adherence to relevant test guidelines (e.g., OECD, EPA) [6].

Checklists and domain-specific tools (e.g., for in vivo or in vitro studies) are used rather than generic quality scores [4]. The outcome informs both the synthesis of results and the grading of the overall evidence. Common biases and mitigation strategies are summarized in Table 1.

Table 1: Common Biases in Primary Ecotoxicology Studies and Mitigation Strategies in Systematic Review

| Bias Type | Description | Mitigation Strategy in Review |
|---|---|---|
| Selection Bias | Systematic differences in baseline characteristics between compared groups. | Assess random allocation and allocation concealment methods [5]. |
| Performance Bias | Systematic differences in care provided apart from the intervention. | Evaluate blinding of researchers/care-takers during the experiment [4]. |
| Detection Bias | Systematic differences in outcome assessment. | Evaluate blinding of outcome assessors [5]. |
| Attrition Bias | Systematic differences in withdrawal from the study. | Analyze completeness of outcome data and use of intention-to-treat analysis [5]. |
| Reporting Bias | Selective reporting of some outcomes but not others. | Compare outcomes in protocol vs. published report; seek unpublished data [5]. |

Execution: From Search to Synthesis

Comprehensive Literature Search and Screening

A systematic search aims to identify all relevant evidence. This requires searching multiple bibliographic databases (e.g., PubMed, Scopus, Web of Science, Environment Complete) using a sensitive search strategy crafted from the PICO elements [1]. The strategy employs Boolean operators, controlled vocabularies (e.g., MeSH terms), and careful text-word searching. Grey literature (theses, government reports, conference proceedings) should also be sought to counteract publication bias [5].

The screening process, typically conducted in two phases (title/abstract, then full-text), employs the pre-defined inclusion/exclusion criteria. Dual, independent screening with consensus resolution is the gold standard to minimize error [5]. The flow of studies through this process is best reported according to the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement, using a flow diagram [1].
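Dual, independent screening produces two sets of decisions that must be reconciled before the next phase. The sketch below shows one way to separate agreed decisions from conflicts that need consensus resolution; the function name and the record identifiers are hypothetical, and dedicated screening tools handle this step internally.

```python
from __future__ import annotations


def reconcile(screener_a: dict[str, bool], screener_b: dict[str, bool]):
    """Split records into agreed inclusions, agreed exclusions, and conflicts.

    Each dict maps a record ID to that screener's include (True) or
    exclude (False) decision.
    """
    if screener_a.keys() != screener_b.keys():
        raise ValueError("both screeners must decide on the same records")
    included, excluded, conflicts = [], [], []
    for record_id in sorted(screener_a):
        a, b = screener_a[record_id], screener_b[record_id]
        if a != b:
            conflicts.append(record_id)  # goes to consensus discussion
        elif a:
            included.append(record_id)
        else:
            excluded.append(record_id)
    return included, excluded, conflicts
```

The lengths of the three lists give the counts needed for the corresponding boxes of a PRISMA flow diagram once conflicts have been resolved.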

Data Extraction and Management

Data is extracted from included studies using standardized, piloted forms. Essential extraction fields for ecotoxicology include:

- Study identifiers and source.

- Chemical and test substance details (identity, purity, formulation).

- Test organism (species, life stage, source).

- Experimental design (exposure system, concentrations/doses, duration, controls, replication).

- Results (endpoint values, measures of variability, statistical significance).

- Key study evaluation factors (guideline compliance, reporting quality).

Dual independent extraction is recommended for critical fields. Data is ideally managed in structured formats (e.g., spreadsheets, specialized software like Covidence or SysRev) to facilitate analysis and sharing [5].
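A fixed record structure makes extracted data easier to compare between extractors and to export. The following sketch defines a hypothetical `ExtractionRecord` covering a subset of the fields listed above and writes records to CSV; the field names are assumptions, not a published schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExtractionRecord:
    """Hypothetical extraction form; one row per reported endpoint."""
    study_id: str
    chemical_name: str
    species: str
    life_stage: str
    exposure_system: str
    duration_h: float
    endpoint: str
    value: float
    unit: str
    guideline: str


def to_csv(records) -> str:
    """Serialize records to CSV text with a fixed, documented column order."""
    buffer = io.StringIO()
    columns = [f.name for f in fields(ExtractionRecord)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()
```

Deriving the column order from the dataclass means the export format changes only when the extraction form itself changes.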

Evidence Synthesis and Certainty Assessment

Synthesis integrates findings across studies. Narrative synthesis involves a structured summary, often tabulating studies and exploring relationships between study characteristics and findings. Quantitative synthesis (meta-analysis) statistically combines effect size estimates from comparable studies, providing a more precise summary estimate and quantifying heterogeneity [4].
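As a concrete illustration of quantitative synthesis, the sketch below pools effect sizes with a fixed-effect, inverse-variance model and reports Cochran's Q and the I² heterogeneity statistic. This is one common approach chosen for brevity, not one prescribed by the sources cited here. Given how heterogeneous ecotoxicity data usually are, a random-effects model would often be preferred in practice.

```python
import math


def fixed_effect_meta(effects, variances):
    """Inverse-variance fixed-effect pooling.

    Returns (pooled estimate, standard error, Cochran's Q, I-squared in %).
    """
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    standard_error = math.sqrt(1.0 / total_weight)
    # Q sums the weighted squared deviations of each study from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of total variation attributed to heterogeneity, floored at 0.
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, standard_error, q, i_squared
```

The effect sizes would typically be on a log scale (for example, log response ratios), with each study's sampling variance taken from the data extraction step.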

The final step is grading the overall certainty (or confidence) in the body of evidence for each key outcome. The GRADE (Grading of Recommendations, Assessment, Development, and Evaluations) framework is increasingly adopted. It starts with a baseline certainty (e.g., high for randomized trials, low for observational studies) and is then downgraded for limitations in: risk of bias, inconsistency, indirectness, imprecision, and publication bias [1] [7]. This provides end-users with a transparent understanding of the strength of the conclusions.
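The downgrading logic can be expressed compactly. The sketch below is a simplification for illustration: it covers only the five downgrading domains named above, omits the upgrading factors that GRADE also defines, and uses hypothetical function and key names.

```python
from __future__ import annotations

LEVELS = ["very low", "low", "moderate", "high"]
DOMAINS = {
    "risk_of_bias",
    "inconsistency",
    "indirectness",
    "imprecision",
    "publication_bias",
}


def grade_certainty(baseline: str, downgrades: dict[str, int]) -> str:
    """Lower the baseline certainty by the number of levels given per domain."""
    unknown = set(downgrades) - DOMAINS
    if unknown:
        raise ValueError(f"unknown GRADE domains: {sorted(unknown)}")
    level = LEVELS.index(baseline) - sum(downgrades.values())
    return LEVELS[max(level, 0)]  # certainty cannot fall below "very low"
```

For example, `grade_certainty("high", {"risk_of_bias": 1, "imprecision": 1})` returns `"low"`.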

Diagram: Systematic Review & Evidence Synthesis Workflow

Curation in Practice: Building and Maintaining the ECOTOX Knowledgebase

Systematic review methodologies are operationalized at scale in curated toxicology knowledgebases. The U.S. EPA's ECOTOXicology Knowledgebase (ECOTOX) exemplifies this application, serving as the world's largest curated repository of single-chemical ecological toxicity data [2].

The ECOTOX Curation Pipeline

The ECOTOX workflow is a standardized, systematic curation pipeline aligned with systematic review principles [2]:

- Search: Systematic literature searches are conducted for target chemicals using major scientific databases.

- Screening & Acceptance: Studies are screened against defined scientific and reporting criteria. Minimum criteria for inclusion require that effects are from single-chemical exposure on live organisms, with reported concentrations/doses, exposure durations, and a concurrent control [3].

- Data Extraction & Curation: Accepted studies undergo detailed data extraction. Information is captured using controlled vocabularies for test organisms, endpoints, and effects, ensuring consistency and interoperability.

- Quality Assurance: Extracted data undergoes rigorous multi-level quality control checks.

- Integration & Release: Curated data is added to the public database quarterly, with over one million test results for more than 12,000 chemicals [2].

Data Evaluation Guidelines

To ensure reliability, ECOTOX and regulatory assessors apply specific evaluation criteria to open literature studies, which extend beyond basic acceptance to assess usability in risk assessment [3]. Key criteria include:

- The study is a primary source (not a review).

- A calculable toxicity endpoint (e.g., LC50, NOEC) is reported.

- The test substance and species are clearly identified.

- The study design (lab/field) is documented.

This rigorous evaluation allows risk assessors to differentiate between data that is available and data that is usable for deriving robust toxicity values.

Diagram: Knowledgebase Curation & Integration Process

Specialized Considerations and Tools for Ecotoxicology

Statistical Analysis of Ecotoxicity Data

The quantitative synthesis of ecotoxicity data presents unique challenges, often involving dose-response modeling and analysis of censored data (e.g., no observed effect concentrations). Authoritative guidance, such as that from the OECD, outlines appropriate statistical methods for deriving summary endpoints (e.g., EC50, NOEC, LOEC) from standard test data, which is a prerequisite for meta-analysis [8].

The Scientist's Toolkit: Essential Reagents and Materials for Standardized Testing

Reliable, reproducible ecotoxicity data—the foundational input for systematic reviews—depends on standardized methodologies and high-quality materials. Key research reagent solutions include:

Table 2: Key Research Reagent Solutions in Standardized Ecotoxicity Testing

| Reagent/Material | Primary Function | Role in Standardization |
|---|---|---|
| Reconstituted Hard Water | Provides consistent ionic composition and hardness for freshwater aquatic tests (e.g., OECD 202, Daphnia sp.). | Eliminates variability in natural water sources, ensuring reproducibility across labs. |
| Elendt M4 or M7 Culture Media | Defined media for culturing and testing Daphnia (e.g., OECD 202, OECD 211). | Supports consistent organism health and baseline sensitivity. |
| Dimethyl Sulfoxide (DMSO) | Common solvent carrier for poorly water-soluble test chemicals. | Standardizes bioavailability; requires solvent control groups to isolate chemical effects. |
| Artificial Sediment | Standardized mixture of quartz sand, kaolin clay, peat, and calcium carbonate for benthic organism tests (e.g., OECD 218/219). | Provides a consistent substrate, controlling variables like organic carbon content and particle size. |
| Reference Toxicants (e.g., Potassium dichromate, Sodium chloride, Copper sulfate) | Positive control substances with well-characterized toxicity. | Verifies the sensitivity and health of test organisms in each assay batch. |

Overcoming Domain-Specific Challenges

Ecotoxicology systematic reviews face distinct hurdles:

- Heterogeneity: Extreme variability in test species, life stages, endpoints, and exposure scenarios. A clear analytic framework and narrative synthesis are often more feasible than meta-analysis [9].

- Non-Standard Reporting: Academic studies may not follow guideline formats, complicating data extraction and quality appraisal. Tools like the ECOTOX acceptability criteria provide a critical screening layer [3].

- Integrating Regulatory and Academic Evidence: Bridging the divide between guideline studies (designed for regulation) and mechanistic academic research requires careful consideration of relevance and reliability within the review question [10].

Systematic review and expert curation are not merely academic exercises; they are essential engineering processes for constructing reliable knowledgebases in ecotoxicology. By adhering to structured protocols—from precise question formulation through transparent evidence grading—these methods convert fragmented data into trustworthy, synthesized evidence.

This evidence directly feeds into FAIR (Findable, Accessible, Interoperable, Reusable) knowledgebases like ECOTOX, which in turn power regulatory risk assessments, computational toxicology models, and the identification of critical data needs. As chemical testing paradigms evolve toward greater use of high-throughput and in silico methods (NAMs), the role of systematically curated in vivo data becomes even more vital for validation and anchoring [2]. Therefore, advancing and institutionalizing systematic review and curation practices is a cornerstone of robust ecotoxicology data management, ensuring that scientific knowledge is not only accumulated but also effectively integrated and translated into protective decisions for environmental and public health.

Within the framework of advancing ecotoxicology data management best practices, the EPA ECOTOX Knowledgebase stands as a cornerstone resource. It addresses a fundamental challenge in the field: the efficient aggregation, standardization, and accessibility of high-quality toxicity data across a vast spectrum of chemicals and species [11]. As ecotoxicology evolves to assess emerging contaminants like PFAS, nanoplastics, and pharmaceuticals, the need for robust, curated data repositories has never been greater [11]. The ECOTOX Knowledgebase meets this need by providing a comprehensive, publicly available application that compiles information on the adverse effects of single chemical stressors to ecologically relevant aquatic and terrestrial species, directly supporting the development of chemical safety benchmarks and ecological risk assessments [12].

Core Database Metrics and Scope

The ECOTOX Knowledgebase is distinguished by its extensive scale and rigorous curation process. Data are systematically abstracted from the peer-reviewed scientific literature using an exhaustive search and review protocol [12]. The following table quantifies the current scope of the database.

Table: Quantitative Scope of the EPA ECOTOX Knowledgebase

| Data Category | Metric | Description and Significance |
|---|---|---|
| Scientific References | Over 54,000 references [13] | Compiled from open literature; forms the evidence base for all records. |
| Total Test Records | Over 1.1 million records [13] | Individual data points from toxicity tests, including effects, concentrations, and experimental conditions. |
| Unique Species | Nearly 14,000 species [13] | Covers ecologically relevant aquatic and terrestrial organisms, supporting broad ecological extrapolation. |
| Unique Chemicals | Approximately 13,000 chemicals [13] | Includes traditional and emerging contaminants, with recent additions for PFAS and 6-PPD quinone [13]. |
| User Engagement | ~16,000 avg. monthly users [13] | Indicates high utility within the global research and regulatory community. |

Technical Architecture and Data Relationships

Understanding the relational structure of the database is critical for effective data mining and integration into research workflows. The ECOTOX database is built on a structured schema where key data tables are linked through unique identifiers [14].

Diagram: ECOTOX Knowledgebase Core Relational Schema

The central tables are tests (describing experimental setup) and results (containing the measured outcomes), linked by a unique test_id [14]. This relational design allows for complex queries linking chemical properties, experimental conditions, and observed biological effects, which is essential for meta-analysis and model development.
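The join between the two central tables can be illustrated with a toy in-memory database. Only the table names `tests` and `results` and the key `test_id` come from the description above. Every other column name, and all of the inserted values, are placeholders invented for this sketch and do not reflect the real ECOTOX schema or data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE tests (
        test_id    INTEGER PRIMARY KEY,
        chemical   TEXT,
        species    TEXT,
        duration_h REAL
    );
    CREATE TABLE results (
        result_id INTEGER PRIMARY KEY,
        test_id   INTEGER REFERENCES tests(test_id),
        endpoint  TEXT,
        conc_mg_l REAL
    );
    """
)
conn.executemany(
    "INSERT INTO tests VALUES (?, ?, ?, ?)",
    [(1, "chemical A", "species X", 96.0), (2, "chemical A", "species Y", 48.0)],
)
conn.executemany(
    "INSERT INTO results VALUES (?, ?, ?, ?)",
    [(10, 1, "LC50", 4.2), (11, 1, "NOEC", 1.0), (12, 2, "LC50", 0.8)],
)

# One row per measured outcome, enriched with its experimental setup.
rows = conn.execute(
    """
    SELECT t.chemical, t.species, r.endpoint, r.conc_mg_l
    FROM tests AS t
    JOIN results AS r ON r.test_id = t.test_id
    WHERE r.endpoint = ?
    ORDER BY r.conc_mg_l
    """,
    ("LC50",),
).fetchall()
```

Because one test can yield several results, the join runs from `tests` to `results` as one-to-many, and filtering on the endpoint keeps only the outcomes of interest.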

The value of ECOTOX lies in its rigorous data curation, which transforms disparate literature findings into a standardized, computable format.

Primary Data Source: The sole source is the peer-reviewed, open scientific literature [12]. No unpublished or proprietary data are included.

Curation Workflow:

- Literature Identification: Comprehensive searches are conducted using standardized vocabularies and taxonomies.

- Data Abstraction: Trained curators extract all pertinent information from each study into a controlled, structured format. This includes:

  - Chemical Data: Identifier (CAS RN), name, purity, and formulation details. Chemical structures are accurately mapped via the DSSTox database to resolve identifier conflicts [15].

  - Species Data: Scientific name, habitat, life stage, age, and source.

  - Experimental Design: Test type (e.g., acute, chronic), location (lab/field), exposure methodology, duration, and control type.

  - Environmental Media Parameters: For relevant tests, details like water hardness, pH, sediment texture, and organic matter content are recorded [14].

  - Results: The observed effect, its quantitative measurement (with mean, standard deviation, etc.), statistical significance, and the dose/concentration at which it was observed.

Quality Assurance: The use of controlled vocabularies and linkage to the high-quality DSSTox chemical database ensures consistency and minimizes errors in chemical mapping [12] [15]. The database is updated quarterly with new data and revisions [12].

Research Applications and Regulatory Utility

The ECOTOX Knowledgebase is engineered to support specific, high-impact applications within environmental science and regulation.

Table: Primary Applications of ECOTOX Data

| Application Domain | Specific Use Case | Role of ECOTOX Data |
|---|---|---|
| Ecological Risk Assessment & Regulation | Development of Aquatic Life Criteria [12] | Provides the species sensitivity distributions required to derive protective water quality standards. |
| Ecological Risk Assessment & Regulation | Chemical Registration/Reregistration (e.g., EPA, TSCA) [12] | Informs hazard assessments by aggregating existing toxicity data for the chemical of concern across species. |
| Predictive Modeling | Quantitative Structure-Activity Relationship (QSAR) Models [12] | Serves as a source of high-quality experimental toxicity data for model training and validation. |
| Predictive Modeling | Cross-Species Extrapolation & New Approach Methods (NAMs) [12] | Enables the development and validation of models that extrapolate from in vitro to in vivo or across taxa. |
| Advanced Research & Analysis | Data Gap and Meta-Analysis [12] | Allows researchers to identify taxa or chemicals lacking sufficient toxicity data and to synthesize trends across studies. |
| Advanced Research & Analysis | Assessment of Emerging Contaminants [11] [13] | Curated data on PFAS, cyanotoxins, and other contaminants of concern accelerates research and regulatory response. |
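The species sensitivity distributions mentioned in the table are often summarized by the HC5, the concentration expected to be hazardous to 5% of species. The sketch below fits a log-normal distribution to per-species toxicity values and reads off its 5th percentile. The log-normal form is one common modeling choice assumed here for illustration, and the function name is hypothetical.

```python
import math
import statistics


def hc5_lognormal(toxicity_values):
    """Estimate the HC5 from per-species toxicity values (e.g., LC50s in mg/L).

    Fits a normal distribution to the log10-transformed values and returns
    the 5th percentile, back-transformed to the original units.
    """
    logs = [math.log10(v) for v in toxicity_values]
    mu = statistics.mean(logs)
    sigma = statistics.stdev(logs)  # sample standard deviation
    fitted = statistics.NormalDist(mu, sigma)
    return 10 ** fitted.inv_cdf(0.05)
```

A real assessment would also check the goodness of fit, require a minimum number of species and taxonomic groups, and report a confidence interval around the estimate.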

Effective utilization of the knowledgebase requires leveraging a suite of interconnected tools and resources provided by the EPA.

Table: Essential Research Toolkit for ECOTOX Navigation

| Tool/Resource Name | Type | Primary Function & Utility |
|---|---|---|
| CompTox Chemicals Dashboard | Interactive Database | Provides detailed chemical information (properties, identifiers, related data) and is directly linked from ECOTOX chemical searches [12] [16]. |
| DSSTox Database | Chemical Curation Backbone | Ensures accurate chemical identification and structure mapping, which is fundamental for reliable data querying and modeling [15]. |
| ECOTOX Quick Guide | User Documentation | Provides updated, step-by-step guidance for conducting queries and using the interface effectively [17]. |
| EPA Tools Webinar Series | Training Resource | Offers recorded and live training sessions (e.g., Dec 2024 session on ECOTOX) for in-depth learning [18]. |
| Abstract Sifter | Literature Mining Tool | An Excel-based tool to enhance PubMed searches, useful for understanding the literature landscape prior to or after querying ECOTOX [16]. |
| ToxValDB & ToxRefDB | Supplemental Toxicity Data | Provide additional in vivo toxicology data (ToxValDB) and detailed guideline study data (ToxRefDB) for broader context [16]. |

Access and Analytical Workflow

Access to the ECOTOX Knowledgebase is public and free via the EPA website [12]. The interface offers three primary pathways for data retrieval, each suited to different researcher needs:

- Search: For targeted queries using known chemical, species, or effect parameters. Results can be filtered by 19 different parameters [12].

- Explore: A more flexible interface for discovery when search parameters are not precisely defined [12].

- Data Visualization: Interactive plotting tools allow users to visualize dose-response trends and patterns directly within the interface [12].

For advanced analysis, users can leverage the ECOTOXr R package to run custom SQL queries against a local copy of the database, enabling complex joins and analyses beyond what the web interface supports [14]. This is particularly valuable for constructing large datasets for meta-analysis or model development.

Current Challenges and Future Directions

Despite its robustness, the use of ECOTOX and similar databases must evolve with the science. A key challenge is the need to modernize statistical practices in ecotoxicology. Current regulatory guidelines often rely on outdated statistical methods, and there is a pressing call for closer collaboration between ecotoxicologists and statisticians to implement state-of-the-art analysis techniques [19]. Furthermore, the field must address knowledge gaps identified through resources like ECOTOX, including the need for more long-term, multigenerational, and multi-stressor studies to fully understand the impacts of complex contaminant mixtures in the environment [11]. The ongoing quarterly updates and expansion of the knowledgebase to include critical emerging contaminants like PFAS demonstrate its commitment to addressing these future challenges [13].

The exponential growth in the volume, complexity, and generation speed of ecotoxicological data necessitates a foundational shift in data management practices [20]. This whitepaper provides a technical guide for implementing the FAIR Guiding Principles—Findable, Accessible, Interoperable, and Reusable—within ecotoxicology and environmental risk assessment [20]. Framed within a broader thesis on data management best practices, this document articulates how FAIR principles address critical challenges in data discovery, integration, and reuse. Using the U.S. EPA ECOTOXicology Knowledgebase (ECOTOX) as a primary case study, we demonstrate the practical application of these principles through a detailed examination of its systematic curation pipeline, enhanced user interface, and interoperability features [21] [12]. We further present a generalized FAIRification workflow, a toolkit of essential research software, and a contemporary case study on chemical mode-of-action data curation [22]. Adopting FAIR principles is imperative for enhancing the reproducibility, credibility, and collaborative potential of research that underpins chemical safety assessments and the protection of ecological health.

Ecotoxicology is a data-intensive field central to global chemical risk assessment and environmental protection. Researchers and regulators are tasked with evaluating the safety of thousands of chemicals, a process that relies on synthesizing vast amounts of existing toxicity data [21]. However, this data is often fragmented across systems and formats, described with inconsistent or missing metadata, and stored in ways that are not machine-actionable, creating significant barriers to efficient reuse and integration [23].

The FAIR data principles, formally defined in 2016, provide a robust framework to overcome these barriers by ensuring data and metadata are optimally prepared for both human and computational use [20] [23]. It is critical to distinguish FAIR data from open data: FAIR is concerned with the technical and descriptive infrastructure that enables data to be easily processed by machines, regardless of whether access is open or restricted [23]. In regulated and competitive fields like drug development and chemical safety, data can be highly FAIR while remaining securely accessible only to authorized personnel.

The transition towards New Approach Methodologies (NAMs), including high-throughput in vitro assays and computational toxicology models, further amplifies the need for FAIR data [21]. These approaches depend on high-quality, well-curated, and interoperable existing data for development, validation, and regulatory acceptance. Implementing FAIR principles is therefore not merely an academic exercise but a practical necessity to accelerate scientific discovery, ensure reproducibility, and maximize return on investment in data generation [23].

The Core FAIR Principles: A Technical Breakdown for Ecotoxicology

The FAIR principles provide specific guidance for data producers, curators, and repository managers. The following breakdown interprets each principle within the context of ecotoxicological data management.

Table 1: Core FAIR Principles and Ecotoxicology-Specific Requirements

| FAIR Principle | Core Technical Requirement | Ecotoxicology Implementation Example |
|---|---|---|
| Findable | Data and metadata are assigned a globally unique and persistent identifier (PID) (e.g., DOI, UUID). Metadata are rich, machine-readable, and indexed in a searchable resource [20] [23]. | A toxicity dataset receives a DOI upon publication in a repository. Its metadata includes standardized terms for chemical (via InChIKey), species (via ITIS TSN), and measured endpoints. |
| Accessible | Data are retrievable by their identifier using a standardized, open, and free communication protocol. Access control and authentication/authorization are clearly defined where necessary [20] [23]. | Data can be accessed via HTTPS protocol. Restricted data for pre-publication research have clear access instructions and authentication via institutional login. |
| Interoperable | Data and metadata use formal, accessible, shared, and broadly applicable languages and vocabularies for knowledge representation (ontologies, controlled vocabularies) [20] [23]. | Toxicity data is annotated with terms from the ECOTOX controlled vocabulary and chemicals are linked to the EPA CompTox Chemicals Dashboard for consistent identification [21]. |
| Reusable | Data and metadata are richly described with multiple relevant attributes, clear provenance, and usage licenses to enable replication and reuse in new studies [20] [23]. | A dataset includes detailed experimental conditions (temperature, pH, exposure duration), a full description of the data curation process, and a Creative Commons license. |

The ECOTOX Knowledgebase: A FAIR-Compliant Model in Ecotoxicology

The U.S. Environmental Protection Agency's ECOTOXicology Knowledgebase (ECOTOX) stands as a leading exemplar of FAIR-aligned data management in environmental science. As the world's largest curated compilation of single-chemical ecotoxicity data, it supports chemical safety assessments and ecological research through transparent, systematic review procedures [21] [12].

Table 2: ECOTOX Knowledgebase Statistics and FAIR Alignment

| Metric | Volume | FAIR-Relevant Feature |
|---|---|---|
| Test Results | >1 million records [21] [12] | Supports large-scale data mining and meta-analysis. |
| Chemical Substances | >12,000 [21] [12] | Linked to authoritative chemical information via the CompTox Dashboard for interoperability. |
| Species | >13,000 aquatic & terrestrial [12] | Species names verified and standardized using integrated taxonomic tools. |
| References | >53,000 [12] | Each record is traceable to a source, ensuring provenance (Reusable). |

Systematic Curation Pipeline: Ensuring Quality and Reusability

The reliability of ECOTOX data stems from a well-documented, protocol-driven curation pipeline that mirrors systematic review methodologies [21]. This process ensures data is Reusable by capturing comprehensive context and provenance.

Experimental Protocol: ECOTOX Data Curation Workflow

- Literature Search & Acquisition: Comprehensive searches are conducted across multiple scientific databases using structured queries for chemicals and ecologically relevant taxa. Both open and "grey" literature (e.g., government reports) are included [21].

- Citation Screening & Review: Titles and abstracts are screened for applicability (e.g., single chemical test on relevant species). Eligible studies undergo full-text review against predefined criteria for acceptability (e.g., documented controls, reported effect concentrations) [21].

- Data Abstraction: Trained reviewers extract pertinent study data using standardized electronic forms. A controlled vocabulary governs the entry of all key fields—including chemical identity, species, test method, endpoint, and effect concentration—ensuring Interoperability [21].

- Quality Assurance & Entry: Extracted data undergoes rigorous quality checks before being entered into the master database. The system performs automatic validation (e.g., unit conversions, value range checks) [21].

- Quarterly Updates & Maintenance: The database is updated quarterly with new records. Standard Operating Procedures (SOPs) for all steps are maintained and available, ensuring the process remains transparent and consistent [21].
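The automatic validation mentioned in the quality assurance step (unit conversions, value range checks, controlled vocabulary) can be sketched roughly as follows. This is not the ECOTOX implementation: the field names, the accepted units, the endpoint list, and the upper bound are assumptions chosen only to make the example concrete.

```python
# Conversion factors to a common unit (ug/L); mass-based units only.
# The set of accepted units is an assumption for this sketch.
TO_UG_PER_L = {"ug/L": 1.0, "mg/L": 1_000.0, "ng/L": 0.001, "g/L": 1_000_000.0}

# Hypothetical controlled vocabulary for the endpoint field.
ALLOWED_ENDPOINTS = {"EC50", "LC50", "NOEC", "LOEC"}

def validate_record(record, max_ug_per_l=1e9):
    """Return (normalized_record, problems) for one abstracted test result."""
    problems = []
    normalized = dict(record)
    unit = record.get("unit")
    value = record.get("concentration")

    if unit not in TO_UG_PER_L:
        problems.append(f"unknown unit: {unit!r}")
    elif not isinstance(value, (int, float)) or value <= 0:
        problems.append(f"non-positive or missing concentration: {value!r}")
    else:
        converted = value * TO_UG_PER_L[unit]
        if converted > max_ug_per_l:
            problems.append(f"concentration out of range: {converted} ug/L")
        # Store the value in the common unit so records are comparable.
        normalized["concentration"] = converted
        normalized["unit"] = "ug/L"

    if record.get("endpoint") not in ALLOWED_ENDPOINTS:
        problems.append(f"endpoint not in controlled vocabulary: {record.get('endpoint')!r}")

    return normalized, problems

clean, issues = validate_record({"concentration": 1.5, "unit": "mg/L", "endpoint": "EC50"})
```

Returning the list of problems instead of raising an exception lets a curator review all issues for a record at once, which fits a human-in-the-loop curation process.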

Enhanced User Interface and Interoperability Features

The release of ECOTOX Version 5 introduced significant advancements in Findability and Accessibility [21].

- Search and Explore: Users can perform targeted searches or explore data through intuitive filters for chemical, species, or effect. Results can be customized and exported for external analysis [12].

- Data Visualization: Integrated interactive plotting tools allow users to visualize effect data distributions, aiding in rapid data exploration and interpretation [12].

- Interoperability Links: Direct links to the EPA CompTox Chemicals Dashboard provide immediate access to authoritative chemical identifiers, properties, and related data, a key feature for machine-actionable Interoperability [21] [12].

Implementing a FAIR Data Workflow: From Generation to Repository

The following diagram and accompanying description outline a generalized, community-centric workflow for making ecotoxicological data FAIR, drawing on successful implementations in environmental science [24].

Workflow Description:

- Plan and Use Reporting Formats: Prior to data generation, researchers should adopt or develop community reporting formats—templates that standardize (meta)data structure for specific data types (e.g., toxicity test results, chemical measurements) [24]. This upfront planning ensures consistency and interoperability.

- Apply Controlled Vocabularies: Data should be annotated using shared, machine-readable vocabularies and ontologies (e.g., for chemical identifiers, taxonomic names, anatomical terms). This is the core of achieving Interoperability [24] [25].

- Process with Reproducible Scripts: Data processing and quality control should be performed using documented, version-controlled scripts (e.g., in R or Python) to ensure transparency and reproducibility, key aspects of Reusability [26].

- Describe with Rich Metadata and a PID: A persistent identifier (PID) must be assigned. Comprehensive metadata, following a standard schema (e.g., DataCite), must describe the who, what, when, where, and how of the dataset, making it Findable and Reusable [24] [23].

- Deposit in a FAIR-Aligned Repository: Data should be deposited in a trusted repository that provides persistent storage, assigns PIDs, and ensures Accessibility. Domain-specific repositories (e.g., for environmental science) often provide the best alignment with community standards [24].
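A minimal sketch of the metadata step in this workflow is shown below: a record is checked for required descriptive fields and for a well-formed chemical identifier before deposit. The field names are hypothetical and only loosely inspired by DataCite-style metadata; the DOI and taxonomic serial number are placeholders, and the InChIKey shown is that of water, used purely as a stand-in.

```python
import re

# Hypothetical required fields for a deposit-ready ecotoxicity dataset.
REQUIRED_FIELDS = (
    "identifier", "title", "creators", "publication_year",
    "license", "chemical_inchikey", "species_itis_tsn",
)

# A standard InChIKey has the shape 14 letters, 10 letters, 1 letter.
INCHIKEY_PATTERN = re.compile(r"^[A-Z]{14}-[A-Z]{10}-[A-Z]$")

def is_inchikey(value):
    """Check only the format of an InChIKey, not whether it resolves to a structure."""
    return bool(INCHIKEY_PATTERN.match(value))

def fair_gaps(metadata):
    """List the problems that would make a record harder to find or reuse."""
    gaps = [f"missing: {name}" for name in REQUIRED_FIELDS if not metadata.get(name)]
    key = metadata.get("chemical_inchikey")
    if key and not is_inchikey(key):
        gaps.append("chemical_inchikey is not a well-formed InChIKey")
    return gaps

example = {
    "identifier": "doi:10.0000/placeholder",  # placeholder, not a registered DOI
    "title": "Acute toxicity of a test chemical to a freshwater invertebrate",
    "creators": ["Example Lab"],
    "publication_year": 2025,
    "license": "CC-BY-4.0",
    "chemical_inchikey": "XLYOFNOQVPJJNP-UHFFFAOYSA-N",  # water, as a stand-in
    "species_itis_tsn": "000000",  # placeholder TSN
}

gaps = fair_gaps(example)
```

Running a check like this in a version-controlled script before deposit keeps the metadata review itself reproducible.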

Implementing FAIR principles is supported by a growing ecosystem of software tools, databases, and standards.

Table 3: Research Reagent Solutions for FAIR Ecotoxicology Data Management

| Tool/Resource Name | Type | Primary Function in FAIR Context |
|---|---|---|
| ECOTOXr [26] | R Software Package | Enables reproducible, programmatic retrieval and curation of data from the ECOTOX Knowledgebase, directly supporting Reusability and traceability in meta-analyses. |
| EPA CompTox Chemicals Dashboard | Database / Tool | Provides authoritative chemical identifiers, properties, and links to toxicity data. Serves as a central hub for chemical interoperability, crucial for integrating data from different sources [21] [12]. |
| ECOTOX Controlled Vocabulary | Vocabulary | The standardized set of terms used within the ECOTOX database for species, endpoints, and test conditions. Using this vocabulary promotes Interoperability with this key resource [21]. |
| ESS-DIVE Reporting Formats [24] | Community Standards | A set of guidelines and templates for formatting diverse environmental (meta)data types (e.g., water chemistry, sample metadata). Adopting these facilitates structured, interoperable data submission. |
| EPA Data Standards [25] | Policy & Standards | Documents EPA's agreed-upon representations, formats, and definitions for data. Following these standards promotes efficient sharing, transparency, and reuse of environmental information. |

Case Study: Curating a FAIR Dataset for Chemical Mode-of-Action and Toxicity

A 2024 study by Neale et al. provides a contemporary, real-world example of applying FAIR principles to create a high-value resource for chemical risk assessment [22].

Objective: To develop a curated, FAIR dataset containing mode-of-action (MoA) information and effect concentrations for thousands of environmentally relevant chemicals to support hazard assessment, chemical grouping, and the development of New Approach Methodologies (NAMs) [22].

Experimental Protocol:

- Chemical List Curation: A list of 3,387 compounds was compiled from regulatory directives and environmental monitoring suspect lists. Each was classified as a parent substance or transformation product [22].

- Systematic Data Harvesting:

  - MoA Data: For each chemical, a systematic search was performed across multiple scientific databases (e.g., PubChem, AOP-Wiki) and literature to identify documented mechanisms of toxic action. Information was categorized into standardized MoA classes [22].

  - Toxicity Data: Effect concentrations for algae, crustaceans, and fish were harvested from the ECOTOX Knowledgebase using a standardized query and filtering pipeline to ensure data quality and relevance [22].

- Data Integration and Curation: Collected MoA and toxicity data were merged into a single, structured dataset. Inconsistent terminology was harmonized, and data gaps were explicitly noted [22].

- FAIR Publication: The final dataset was published on the Zenodo repository, where it was assigned a persistent DOI, rich machine-readable metadata, and a clear usage license, making it Findable, Accessible, and Reusable. The data is formatted for immediate use in computational modeling and regulatory assessment [22].

Outcome: This study produced the first comprehensive collection of MoA for environmental chemicals paired with curated toxicity data. By building upon the FAIR-aligned ECOTOX database and publishing its output with FAIR principles, the dataset directly enables more efficient, evidence-based ecological risk assessment and exemplifies the virtuous cycle of FAIR data reuse [22].

Establishing a FAIR data foundation is a critical strategic imperative for advancing ecotoxicology and environmental risk assessment. As demonstrated by the ECOTOX Knowledgebase and supporting case studies, implementing these principles transforms data from a static output into a dynamic, interoperable resource that accelerates scientific discovery, enhances reproducibility, and maximizes research investment [21] [23].

The path forward requires concerted action across multiple fronts:

- Community Adoption: Widespread adoption of community-developed reporting formats and vocabularies is essential to overcome data fragmentation [24].

- Tool Development: Continued support for open-source software tools (like ECOTOXr) that automate and standardize data retrieval, curation, and analysis will lower the technical barrier to FAIR practices [26].

- Training and Incentives: Integrating FAIR data management into graduate training and establishing institutional incentives for data sharing are crucial for cultural change.

- Integration with New Methods: FAIR data pipelines must be designed to integrate seamlessly with emerging NAMs and computational modeling approaches, providing the high-quality data needed for their validation and acceptance [21].

By embedding FAIR principles into the core of ecotoxicology research workflows, the scientific community can build a more collaborative, transparent, and efficient foundation for protecting human health and the environment in the face of global chemical challenges.

From Data to Insight: Implementing Effective Management Systems and Analytical Workflows

Modern ecotoxicology and drug development research generate complex, multi-dimensional data from diverse sources, including field samples, high-throughput laboratory assays, and computational models. Effective management of this data is not merely an operational concern but a scientific and regulatory imperative. The forthcoming revision of the EU's REACH regulation (“REACH 2.0”), as highlighted at the recent Ecotox REACH 2025 Conference, underscores this shift. Key changes, such as the introduction of a Mixture Assessment Factor (MAF) for high-tonnage substances and the mandatory notification of polymers, will demand more sophisticated, transparent, and accessible data streams [27]. Furthermore, the push towards digital Safety Data Sheets (SDS) and alignment with the European Digital Product Passport (DPP) signals a broader regulatory trend demanding fully digital, traceable data workflows [27].

Within this context, a well-structured data pipeline serves as the critical infrastructure for transforming raw, dispersed observations into credible, analysis-ready knowledge. It ensures data integrity, facilitates reproducible research, and enables the complex, integrative analyses required to understand chemical effects across biological scales—from molecular initiating events to population-level outcomes. This guide details a framework for building such pipelines, tailored to the specific challenges and standards of ecotoxicological research.

Architectural Framework for Ecotoxicology Data Pipelines

A data pipeline is a methodical process for ingesting data from various sources, transforming it, and loading it into a repository for analysis [28]. In ecotoxicology, this architecture must handle heterogeneous data types—from genetic sequences and spectral data to ecological field observations—while enforcing rigorous quality and metadata standards.

Core Pipeline Components and Ecotoxicology Applications

The architecture comprises sequential, automated stages [29] [28]:

- Data Ingestion: The process of collecting raw data from source systems. For ecotoxicology, this includes automated feeds from High-Content Screening (HCS) systems, manual uploads of field sampling logs, instrument outputs (e.g., mass spectrometers), and public database APIs (e.g., CompTox Chemicals Dashboard).

- Data Transformation: The stage where data is cleansed, validated, and reformatted. This is crucial for standardizing units (e.g., converting nM to µg/L), applying quality flags to outlier measurements, annotating compounds with persistent identifiers (e.g., InChIKeys), and structuring data according to defined schemas (e.g., ISA-Tab format for omics data).

- Data Storage: The loading of processed data into a centralized repository (e.g., a data warehouse or lake) optimized for querying and analysis [30].

- Data Analysis & Visualization: The consumption layer, where researchers access data for statistical analysis, modeling, and generating visual reports.
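The first three stages can be sketched end to end with the Python standard library. The CSV layout, column names, sample values, and table name below are invented for illustration. The one domain-specific step is the unit conversion, which relies on the relation µg/L = nM × molecular weight (g/mol) / 1000.

```python
import csv
import io
import sqlite3

# Invented raw export; a real pipeline would read instrument or LIMS files.
RAW_CSV = """sample_id,chemical,conc_nM,mol_weight_g_mol
S1,CHEM-A,100,250.0
S2,CHEM-A,,250.0
S3,CHEM-B,2000,180.0
"""

def ingest(text):
    """Ingestion: parse raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transformation: drop incomplete rows and convert nM to ug/L."""
    clean = []
    for row in rows:
        if not row["conc_nM"]:
            continue  # missing concentration; a real pipeline would log or flag this
        conc_ug_l = float(row["conc_nM"]) * float(row["mol_weight_g_mol"]) / 1000.0
        clean.append((row["sample_id"], row["chemical"], conc_ug_l))
    return clean

def store(records):
    """Storage: load transformed records into a queryable table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE measurements (sample_id TEXT, chemical TEXT, conc_ug_l REAL)"
    )
    conn.executemany("INSERT INTO measurements VALUES (?, ?, ?)", records)
    conn.commit()
    return conn

conn = store(transform(ingest(RAW_CSV)))
loaded = conn.execute(
    "SELECT sample_id, conc_ug_l FROM measurements ORDER BY sample_id"
).fetchall()
```

Keeping each stage as a separate function makes it straightforward to test the stages independently or to swap the in-memory database for a shared repository later.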

Pipeline Typology: Selecting the Right Model

The choice of pipeline type depends on data velocity and use case [29] [28].

Table 1: Data Pipeline Types and Their Applications in Ecotoxicology Research

| Pipeline Type | Processing Mode | Ideal Ecotoxicology Use Case | Example Tools/Platforms |
|---|---|---|---|
| Batch Processing | Data is collected and processed in discrete chunks at scheduled intervals [28]. | Processing end-of-day results from automated toxicity assays; monthly aggregation of environmental monitoring data. | Apache Airflow, Cron jobs, ETL tools (e.g., Talend). |
| Streaming | Data is processed in real-time as it is generated [29] [28]. | Continuous monitoring of effluent toxicity via online biosensors; real-time telemetry from tagged organisms in mesocosm studies. | Apache Kafka, Apache Flink, AWS Kinesis. |
| Cloud-Native | Pipeline runs on scalable cloud infrastructure (AWS, GCP, Azure) [29]. | Collaborative, multi-institutional projects requiring elastic compute for large-scale omics data analysis or complex PBPK modeling. | AWS Glue, Google Cloud Dataflow, Azure Data Factory. |

Diagram 1: Generalized Data Pipeline Architecture for Ecotoxicology

Foundational Data Generation: Experimental Protocols

The quality of the pipeline is contingent on the quality of the data it ingests. Standardized experimental protocols are therefore the critical first step.

Protocol: High-Throughput In Vitro Toxicity Screening

This protocol generates concentration-response data for rapid hazard assessment [27].

1. Objective: To determine the concentration of a test chemical that induces a 50% effect (EC₅₀) on a defined cellular endpoint (e.g., viability, receptor activation).

2. Materials: See "The Scientist's Toolkit" below.

3. Procedure:

a. Plate Preparation: Dispense cells into a 384-well microplate. Allow to adhere overnight.

b. Compound Serial Dilution: Prepare a 1:3 serial dilution of the test chemical in assay medium across 10 concentrations, plus vehicle controls.

c. Exposure: Remove cell culture medium and add compound dilutions. Incubate for 24 hours.

d. Endpoint Measurement: Add a luminescent viability reagent, incubate for 10 minutes, and read luminescence on a plate reader.

e. Data Capture: The plate reader software outputs a raw data file (e.g., .csv or .xlsx) containing luminescence values for each well.

4. Data Output: A matrix linking well identifiers to test chemical ID, concentration, raw luminescence signal, and calculated viability percentage.
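The arithmetic behind this protocol can be sketched as follows: the 1:3 dilution series, normalization of luminescence to the vehicle control, and a rough EC₅₀ estimate. The EC₅₀ here is obtained by log-linear interpolation between the two concentrations that bracket 50% viability, a deliberately simple stand-in; a fitted concentration-response model (for example, a four-parameter logistic curve) would normally be preferred. All function names and the top concentration are assumptions for this sketch.

```python
import math

def serial_dilution(top_conc, factor=3.0, n_points=10):
    """Concentrations for a 1:factor serial dilution, highest first."""
    return [top_conc / factor**i for i in range(n_points)]

def percent_viability(signal, vehicle_mean, background=0.0):
    """Luminescence expressed as a percentage of the vehicle-control mean."""
    return 100.0 * (signal - background) / (vehicle_mean - background)

def ec50_by_interpolation(concs, viabilities):
    """Estimate EC50 by log-linear interpolation between the two points
    that bracket 50% viability. Returns None if the curve never crosses 50%."""
    pairs = sorted(zip(concs, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(pairs, pairs[1:]):
        crosses = (v_lo - 50.0) * (v_hi - 50.0) <= 0
        if crosses and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ec50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    return None

# Ten concentrations starting from a hypothetical top concentration of 100 (e.g., µM).
concentrations = serial_dilution(100.0)
```

Interpolating on the log-concentration scale matches the geometric spacing of a serial dilution, so the estimate does not depend on which end of the series is denser on a linear axis.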

Protocol: Environmental Sample Collection for Metabolomics

This protocol captures field samples for subsequent analysis of exposure biomarkers.

1. Objective: To collect and preserve aquatic organism samples for untargeted metabolomic profiling to identify exposure-related biochemical perturbations.

2. Procedure:

a. Site Selection & Collection: At the sampling site, collect target organisms (e.g., 5 individuals of a specific fish species) using standardized methods.

b. Immediate Preservation: Euthanize each organism immediately. Dissect and flash-freeze the tissue of interest (e.g., liver) in liquid nitrogen within 2 minutes to halt metabolic activity.

c. Metadata Recording: Record critical metadata using a structured digital form: Sample ID, GPS coordinates, date/time, water chemistry parameters (pH, temperature, dissolved oxygen), and photographic documentation.

d. Storage & Transport: Maintain samples at -80°C during transport to the laboratory.

3. Data Output: A set of paired data: (1) the physical frozen samples, and (2) a structured metadata table documenting the sampling context.

Diagram 2: Workflow for High-Throughput Screening Data Generation

Data Transformation & Standardization

Raw data is rarely analysis-ready. The transformation stage ensures consistency, quality, and interoperability.

Key Transformation Steps for Ecotoxicology Data:

- Metadata Annotation: Linking datasets with detailed experimental metadata (species, exposure regime, endpoint) using controlled vocabularies (e.g., ECOTOXicology Knowledgebase terms).

- Unit Standardization: Converting all concentrations to a standard unit (e.g., molarity).

- Quality Control Filtering: Applying statistical rules (e.g., |Z-score| > 3) to flag or remove unreliable data points, and control-based thresholds (e.g., Z'-factor < 0.5) to flag unreliable plates.

- Chemical Identifier Harmonization: Resolving compound names to standard identifiers (CAS RN, InChIKey, DSSTox Substance ID) to enable linking across disparate datasets.

- Data Structuring: Mapping data into predefined schemas suitable for the target repository, such as a relational schema for a data warehouse or a nested JSON document for a data lake.
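The two quality-control rules named in the list above can be sketched with the standard library. The Z'-factor is computed with the usual definition, Z' = 1 − 3(σ₊ + σ₋) / |μ₊ − μ₋|, from positive- and negative-control wells. Function names and the default threshold are assumptions for this sketch.

```python
from statistics import mean, stdev

def z_scores(values):
    """Standard scores using the sample mean and sample standard deviation."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def flag_outliers(values, threshold=3.0):
    """True for each value whose absolute z-score exceeds the threshold.

    Note: with the sample standard deviation, a single point's |z| cannot
    exceed (n - 1) / sqrt(n), so a threshold of 3 can only trigger when
    there are at least 11 values.
    """
    return [abs(z) > threshold for z in z_scores(values)]

def z_prime(positive_controls, negative_controls):
    """Plate-level assay quality: Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3.0 * (stdev(positive_controls) + stdev(negative_controls))
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - spread / window

# Toy control wells: well-separated controls give a Z' close to 1.
plate_quality = z_prime([100.0, 102.0, 98.0], [10.0, 12.0, 8.0])
```

The z-score rule acts on individual measurements, whereas Z' summarizes a whole plate, so a pipeline would typically apply the plate-level check first and only then screen wells on plates that pass.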

Centralized Repository Selection and Management

The choice of repository dictates how data is stored, accessed, and analyzed [30].

Table 2: Comparison of Centralized Data Repository Types

| Repository Type | Data Structure | Primary Strength | Primary Weakness | Ideal Ecotoxicology Use Case |
|---|---|---|---|---|
| Relational Database (Data Warehouse) | Structured, schema-on-write. Tabular format with enforced relationships [30]. | Excellent for complex queries, joins, and ensuring ACID compliance for transactional integrity [30]. | Inflexible; poor handling of semi/unstructured data. Requires upfront schema design. | Storing and querying finalized, curated data from standardized assays for regulatory reporting [27]. |
| Data Lake | Raw data in native format (structured, semi-structured, unstructured) [30]. | High flexibility and scalability. Cost-effective for storing vast, diverse raw data (e.g., genomic sequences, microscopy images). | Risk of becoming a "data swamp" without strict governance. Not optimized for fast queries [30]. | Archiving all raw data from multi-omics projects (genomics, transcriptomics, metabolomics) for future re-analysis. |
| Data Lakehouse | Hybrid: Raw data storage of a lake with management/optimization features of a warehouse [30]. | Supports both flexible storage and performant SQL analytics. Enables BI and ML on the same platform. | Emerging technology; tooling and best practices are still evolving. | A modern research platform supporting both exploratory analysis of raw HCS images and production of standardized summary reports. |

Data Visualization for Analysis and Communication

Effective visualization translates complex results into actionable insights [31] [32]. The choice of technique must match the analytical goal and audience.

Table 3: Key Data Visualization Techniques for Ecotoxicology

| Visualization Goal | Recommended Technique | Ecotoxicology Application Example | Design Consideration |
|---|---|---|---|
| Compare Categories | Bar/Column Chart [31] [33]. | Comparing the toxicity (EC₅₀) of several chemicals for a single endpoint. | Ensure the y-axis starts at zero to accurately represent proportional differences [33]. |
| Show Trend Over Time | Line Chart [31] [32]. | Plotting the change in a biomarker level in organisms over a 28-day exposure period. | Use clear markers for data points and avoid cluttering with too many lines. |
| Display Distribution | Box & Whisker Plot [31]. | Showing the distribution of species sensitivity values for a particular chemical. | Effective for highlighting median, quartiles, and potential outliers across groups. |
| Reveal Relationships | Scatter Plot [31] [32]. | Exploring the correlation between the log P (lipophilicity) of chemicals and their measured bioaccumulation factor. | Add a trend line (linear regression) and R² value to quantify the relationship. |
| Map Spatial Data | Choropleth Map [31]. | Visualizing the geographic distribution of pesticide concentrations in surface water across a region. | Use a logical, sequential color scale and provide a clear legend. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing a robust data pipeline requires both digital and physical tools. Below is a table of essential materials and solutions for generating high-quality ecotoxicology data at the bench.

Table 4: Key Research Reagent Solutions for Ecotoxicology Assays

| Item | Function | Example in Practice |
|---|---|---|
| Cell-Based Viability Assay Kits | Quantify live cells after chemical exposure by measuring ATP content, enzyme activity, or membrane integrity. | A luminescent ATP assay (e.g., CellTiter-Glo) is used in high-throughput screening to generate concentration-response data for cytotoxicity [27]. |
| Biomarker ELISA Kits | Detect and quantify specific proteins (biomarkers) indicative of exposure or effect, such as vitellogenin or stress response proteins. | Used in environmental monitoring to measure endocrine disruption in fish plasma samples collected from the field. |
| Metabolite Extraction & Derivatization Kits | Standardize the extraction and preparation of small molecules from biological samples for mass spectrometry analysis. | Critical for ensuring reproducibility in untargeted metabolomics studies aimed at discovering novel exposure biomarkers. |
| Standard Reference Materials (SRMs) | Certified materials with known analyte concentrations used for instrument calibration and quality control. | Essential for ensuring the accuracy of environmental chemistry measurements, such as PFAS concentrations in water samples [27]. |
| Robotic Liquid Handling Systems | Automate precise dispensing of cells, compounds, and reagents into microplates, increasing throughput and reproducibility. | Enables the setup of large-scale chemical screening campaigns with minimal human error and inter-plate variability. |
| Data Integration & ETL Software | Software platforms designed to automate the extract, transform, load (ETL) process from instruments to databases. | Tools like KNIME or Pipeline Pilot can be configured to automatically process plate reader files, apply QC rules, and push curated results to a lab database. |

Leveraging Environmental Data Management Systems (EDMS) for Compliance and Analysis

The systematic handling of complex environmental data is a critical determinant of research quality and regulatory compliance in ecotoxicology. Environmental Data Management Systems (EDMS) are specialized software platforms designed to automate the collection, processing, analysis, and reporting of environmental metrics, ensuring data integrity and streamlining workflows [34]. For researchers, scientists, and drug development professionals, these systems are indispensable for managing the multifaceted data generated from studies on the effects of toxic chemicals on populations, communities, and ecosystems [35].

The evolution of ecotoxicology toward more sophisticated, mechanistic understanding and the integration of advanced statistical methodologies necessitates a robust data management framework [36]. An EDMS provides the necessary infrastructure to support this progression, moving beyond simple data storage to become an active component in environmental risk assessment and hypothesis testing [37]. By offering a centralized repository for diverse data types—from chemical fate measurements and laboratory ecotoxicity results to field monitoring and omics-based biomarkers—an EDMS enables the synthesis of information required for credible scientific analysis and defensible regulatory submissions [38] [39].

Core Components and Architecture of an EDMS for Ecotoxicology

Implementing an EDMS requires careful planning aligned with specific programmatic needs. Key decisions involve defining the necessary data for target analyses and reporting, determining the appropriate data model, and establishing how users will access and utilize the information [38]. A well-architected EDMS for ecotoxicology must accommodate the inherent complexity of environmental data, which is characterized by nested relationships and multiple levels of sampling and analysis.

Foundational Data Models

At its core, an environmental data model must accurately represent real-world entities and their relationships. A basic model revolves around three primary entities: locations, samples, and measurements. However, ecotoxicological studies often require expanded models to capture intricate details [38]. For instance, a single sampling location (e.g., a lake) may involve multiple gear deployments (e.g., trawls). The collected material may be organized into a collection (e.g., a bucket of fish), from which interpretive samples (e.g., pooled groups of small fish or individual large fish fillets) are derived for specific analyses. These interpretive samples are then subdivided into analytical samples sent to laboratories [38]. This hierarchical structure is crucial for maintaining the chain of custody, understanding replication levels, and ensuring that statistical analysis is performed on the correct data units.

The choice of data model has direct implications for data quality and usability. A model that cannot faithfully represent all relevant entities and relationships risks data loss, the need for complex workarounds, or a loss of data integrity [38]. Furthermore, data models must be extensible to cover diverse ecotoxicology endpoints, such as species abundance, toxicity test results, bioaccumulation factors, and histopathology observations, each potentially requiring tailored data structures [38].
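One way to picture the hierarchy described above (location, gear deployment, collection, interpretive sample, analytical sample) is as a set of nested record types. The sketch below uses Python dataclasses; the class and attribute names are assumptions, not a published schema, and a production EDMS would more likely express the same relationships as relational tables with foreign keys.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class AnalyticalSample:
    sample_id: str
    analysis: str  # e.g., the requested laboratory method

@dataclass
class InterpretiveSample:
    sample_id: str
    description: str  # e.g., "pooled small fish" or "individual fillet"
    analytical_samples: List[AnalyticalSample] = field(default_factory=list)

@dataclass
class Collection:
    collection_id: str
    interpretive_samples: List[InterpretiveSample] = field(default_factory=list)

@dataclass
class GearDeployment:
    deployment_id: str
    gear: str  # e.g., "trawl"
    collections: List[Collection] = field(default_factory=list)

@dataclass
class Location:
    location_id: str
    name: str
    deployments: List[GearDeployment] = field(default_factory=list)

def chain_of_custody(location):
    """Yield one tuple of IDs per analytical sample, from location down to the lab aliquot."""
    for dep in location.deployments:
        for col in dep.collections:
            for interp in col.interpretive_samples:
                for ana in interp.analytical_samples:
                    yield (location.location_id, dep.deployment_id,
                           col.collection_id, interp.sample_id, ana.sample_id)

# Hypothetical example: one lake, one trawl, one bucket, one pooled sample, two aliquots.
lake = Location("LOC-1", "Example Lake", [
    GearDeployment("DEP-1", "trawl", [
        Collection("COL-1", [
            InterpretiveSample("INT-1", "pooled small fish", [
                AnalyticalSample("ANA-1", "metals"),
                AnalyticalSample("ANA-2", "organics"),
            ]),
        ]),
    ]),
])

paths = list(chain_of_custody(lake))
```

Because both aliquots trace back to the same interpretive sample, a statistician can see from the paths that they are subsamples of one unit, not independent replicates.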

System Capabilities and Functionalities

A modern EDMS extends beyond a passive database to offer active project management and analytical support. Key functionalities include:

- Data Gathering and Storage: Centralized import and storage of data from any source or format, including direct capture from remote field sensors and laboratory information management systems (LIMS), providing 24/7 access [40].

- Analysis and Planning: Tools for querying data, running "what-if" scenarios for remediation strategies, performing unit conversions, and supporting statistical analysis workflows [40].

- Compliance and Reporting: Automated tracking of data against regulatory limits, generation of compliance reports, environmental impact assessments, and scheduling tools for report submissions [39] [40].

- Visualization and Interfacing: Seamless export to visualization tools (e.g., GIS, 3D contouring software) and integration with other business or scientific platforms for enhanced communication and decision-making [40].

- Real-Time Monitoring: Integration with continuous monitoring equipment to provide live data feeds on parameters like water quality, with automated alerting for threshold exceedances [39].
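The compliance-tracking and alerting functions listed above reduce, at their simplest, to comparing incoming readings against configured limits. The sketch below assumes a hypothetical `Reading` record and a hypothetical `LIMITS` table; the numeric limits are placeholders for illustration and are not regulatory values.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Reading:
    station: str
    parameter: str
    value: float


# Hypothetical (lower, upper) limits; None means no bound on that side.
LIMITS: Dict[str, Tuple[Optional[float], Optional[float]]] = {
    "dissolved_oxygen_mg_per_L": (5.0, None),
    "pH": (6.5, 9.0),
}


def find_exceedances(readings: List[Reading],
                     limits: Dict[str, Tuple[Optional[float], Optional[float]]]) -> List[str]:
    """Return one alert message per reading that falls outside its limits."""
    alerts = []
    for r in readings:
        if r.parameter not in limits:
            continue  # no limit configured for this parameter
        lower, upper = limits[r.parameter]
        if lower is not None and r.value < lower:
            alerts.append(f"{r.station}: {r.parameter}={r.value} below limit {lower}")
        elif upper is not None and r.value > upper:
            alerts.append(f"{r.station}: {r.parameter}={r.value} above limit {upper}")
    return alerts


readings = [
    Reading("ST-01", "pH", 7.2),
    Reading("ST-01", "dissolved_oxygen_mg_per_L", 4.1),
    Reading("ST-02", "pH", 9.4),
    Reading("ST-02", "turbidity_NTU", 12.0),  # no limit configured, so ignored
]

alerts = find_exceedances(readings, LIMITS)
for message in alerts:
    print(message)
```

A production system would typically also handle units, timestamps, and notification routing; those are left out here to keep the comparison logic visible.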

Table 1: Comparative Analysis of EDMS Functionalities for Ecotoxicology

| Functionality Category | Core Features | Benefit for Ecotoxicology Research |
|---|---|---|
| Data Management | Centralized repository, automated ingestion, audit trails, version control. | Ensures data integrity, traceability, and reproducibility for long-term studies and regulatory audits [38] [34]. |
| Compliance Tracking | Regulatory library, automated limit checks, pre-formatted report templates. | Streamlines preparation of dossiers for agencies like EPA or ECHA, reducing administrative burden [39]. |
| Statistical Integration | Direct connection to statistical software (e.g., R, Python), data export for dose-response modeling. | Facilitates advanced analyses like benchmark dose (BMD) modeling and species sensitivity distributions (SSDs) [41]. |
| Collaboration Tools | Role-based access controls, shared workspaces, annotation features. | Supports teamwork among field scientists, laboratory analysts, and statisticians [39]. |

Experimental Protocols and Data Management Integration

Robust ecotoxicology is built on standardized yet adaptable experimental protocols. Integrating these protocols directly into the EDMS framework ensures data consistency and enhances analytical power.

Protocol for a Hazard Assessment Hackathon

A contemporary pedagogical and research approach involves collaborative "hackathons" focused on real-world chemical risk problems [37]. The following protocol outlines how an EDMS supports each phase:

- Problem Definition & Hypothesis Formulation: A real-world case (e.g., a pesticide authorization question) is loaded into the EDMS. Relevant historical data on similar compounds, environmental fate parameters, and preliminary toxicity data are centralized for team access.

- Experimental Design: Teams design their study within the EDMS, using its tools to define test organisms, exposure concentrations, replication schemes, and endpoints. The system enforces data model rules so that the design captures the necessary metadata (e.g., replicate samples are correctly linked to the same interpretive sample) [38].

- Sampling & Data Collection: Field or lab sampling data is entered directly via mobile interfaces or uploaded from instruments. The EDMS logs GPS coordinates, timestamps, and personnel, creating an immutable record. For blind laboratory submissions, the system can manage sample coding to hide treatment groups from analysts [38].

- Analysis & Curation: Laboratory results are imported electronically via standardized formats (e.g., Electronic Data Deliverables). The EDMS automatically performs initial quality checks, flags outliers against control limits, and links results back to the correct experimental units.

- Statistical Analysis & Dissemination: Researchers export clean, well-structured datasets to statistical software. The EDMS documents the final datasets and analysis scripts, enabling full reproducibility. Results and reports are disseminated through the system's collaboration portals [37].
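The blind sample coding mentioned in the sampling step can be illustrated with a short sketch. The function names, the `BL-` code format, and the sample identifiers are hypothetical; the seeded shuffle is used here only so the example is reproducible, and a real system would keep the key under access control.

```python
import random
from typing import Dict, List


def assign_blind_codes(sample_ids: List[str], seed: int) -> Dict[str, str]:
    """Map each sample ID to a neutral code in shuffled order."""
    rng = random.Random(seed)
    codes = [f"BL-{i:03d}" for i in range(1, len(sample_ids) + 1)]
    rng.shuffle(codes)  # break any link between code order and treatment
    return dict(zip(sample_ids, codes))


def lab_manifest(key: Dict[str, str]) -> List[str]:
    """Codes only, sorted, so the list order reveals nothing about treatment."""
    return sorted(key.values())


def unblind(lab_results: Dict[str, float], key: Dict[str, str]) -> Dict[str, float]:
    """Re-attach laboratory results to the original sample IDs."""
    code_to_sample = {code: sample_id for sample_id, code in key.items()}
    return {code_to_sample[code]: value for code, value in lab_results.items()}


# Hypothetical sample IDs that encode treatment group and replicate.
samples = ["CTRL-R1", "CTRL-R2", "LOW-R1", "LOW-R2", "HIGH-R1", "HIGH-R2"]

key = assign_blind_codes(samples, seed=2024)   # stays inside the EDMS
manifest = lab_manifest(key)                   # sent to the laboratory

# Placeholder results returned by the laboratory, keyed by blind code.
lab_results = {code: float(i) for i, code in enumerate(manifest)}
restored = unblind(lab_results, key)
print(manifest)
print(sorted(restored))
```

The laboratory sees only the manifest; the key that links codes to treatments never leaves the system until results are imported and unblinded.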

Advanced Statistical Analysis Workflow

Modern ecotoxicology is moving beyond outdated statistical methods like the No-Observed-Effect Concentration (NOEC) toward more powerful regression-based models [41]. An EDMS is critical in preparing data for these advanced analyses. The workflow begins with data extraction and preparation from the EDMS, where users select relevant endpoints and associated covariates. The EDMS ensures the correct hierarchical level of data (e.g., interpretive sample level) is used. Data is then formatted for analysis in platforms like R, which offers packages for advanced dose-response modeling [41]. Analysts fit a range of models, such as generalized linear models (GLMs) or non-linear models (e.g., 4-parameter log-logistic), to estimate critical values like ECx (Effect Concentration for x% effect) or the Benchmark Dose (BMD). Model selection is guided by information criteria (e.g., AIC). Finally, the fitted model parameters, plots, and derived values are uploaded back to the EDMS, linking the statistical output directly to the raw data and experimental metadata for a complete, auditable record.
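To make the ECx and AIC steps concrete, the sketch below uses one common parameterization of the 4-parameter log-logistic model, with slope `b`, lower limit `c`, upper limit `d`, and midpoint `e`. The parameter values and the observations are hypothetical and are treated as if a fitting routine had already returned them; no fitting is performed here. The AIC form assumes Gaussian errors and least-squares estimation, and counts the residual variance as one extra parameter.

```python
import math
from typing import List


def ll4(conc: float, b: float, c: float, d: float, e: float) -> float:
    """Four-parameter log-logistic response at concentration conc (>= 0)."""
    return c + (d - c) / (1.0 + (conc / e) ** b)


def ecx(x: float, b: float, e: float) -> float:
    """Concentration giving an x% change from the upper toward the lower limit."""
    p = x / 100.0
    return e * (p / (1.0 - p)) ** (1.0 / b)


def aic_least_squares(observed: List[float], predicted: List[float], n_params: int) -> float:
    """AIC up to an additive constant, assuming Gaussian errors."""
    n = len(observed)
    rss = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    return n * math.log(rss / n) + 2 * (n_params + 1)  # +1 for the error variance


# Hypothetical parameters and data (response as % of control).
b, c, d, e = 2.0, 0.0, 100.0, 10.0
concentrations = [1.0, 3.0, 10.0, 30.0, 100.0]
observed = [98.0, 91.0, 52.0, 11.0, 2.0]

ec50 = ecx(50, b, e)
ec10 = ecx(10, b, e)

# Compare the log-logistic curve with a constant (no-effect) model.
pred_ll4 = [ll4(x, b, c, d, e) for x in concentrations]
mean_response = sum(observed) / len(observed)
pred_null = [mean_response] * len(observed)

aic_ll4 = aic_least_squares(observed, pred_ll4, n_params=4)
aic_null = aic_least_squares(observed, pred_null, n_params=1)
print(ec50, ec10, aic_ll4 < aic_null)
```

With this parameterization the EC50 equals the midpoint `e`, and any other ECx follows in closed form from `b` and `e`. The lower AIC for the log-logistic curve reflects its much smaller residual error on these illustrative data; with so few observations, a small-sample correction would normally be considered.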

Diagram 1: Statistical Analysis Workflow with EDMS Integration

The Scientist's Toolkit: Essential Research Reagent Solutions

Effective ecotoxicology research relies on a suite of standardized reagents, materials, and tools. When managed within an EDMS, inventory, usage, and quality control data for these items become traceable assets.

Table 2: Key Research Reagent Solutions and Materials in Ecotoxicology

| Item Category | Specific Examples | Function & Importance in EDMS |
|---|---|---|
| Reference Toxicants | Potassium dichromate, Copper sulfate, Sodium chloride. | Used for periodic validation of test organism health and laboratory performance. EDMS tracks batch numbers, expiration dates, and associated control response data for quality assurance [37]. |
| Standardized Test Media | Reconstituted hard water, ASTM/ISO standard dilution water, sediment formulations. | Ensures consistency and reproducibility across tests. EDMS can link specific media batches to test runs and record preparation logs [38]. |
| Biomarker Assay Kits | ELISA kits for vitellogenin, Oxidative stress assay kits (e.g., CAT, SOD), EROD assay reagents. | Used for mechanistic studies at the sub-organism level. EDMS manages kit lot numbers, standard curve data, and calculated results for integrative analysis with apical endpoints [36]. |
| Chemical Analysis Standards | Certified reference materials (CRMs), Internal standards, Surrogate recovery standards. | Critical for calibrating analytical instruments and confirming accuracy of chemical concentration data (e.g., for test solutions or tissue residues). EDMS links CRM certificates and recovery rates directly to sample results [38]. |
| Live Test Organisms | Daphnia magna, Danio rerio (zebrafish), Lemna minor, Aliivibrio fischeri. | The foundation of bioassays. EDMS can track organism source, age, acclimation conditions, and culturing parameters to account for variability in test sensitivity [37]. |

Modernizing Analysis: Statistical Innovations Supported by EDMS

The field of ecotoxicology is undergoing a significant transformation in its statistical practices, moving away from fragmented and outdated methods toward a more unified, model-based framework [41]. An EDMS is pivotal in supplying the high-quality, well-structured data required for these modern techniques.

The historical dichotomy between "hypothesis testing" (using ANOVA on categorized concentrations) and "dose-response modeling" (using regression) is now seen as artificial, because ANOVA and regression are both forms of linear models [41]. Contemporary analysis favors treating concentration as a continuous predictor using generalized linear models (GLMs), non-linear mixed-effects models, and generalized additive models (GAMs). These provide more robust estimates of effect concentrations (ECx) and better account for data variability and nested experimental structures [41]. Emerging metrics like the Benchmark Dose (BMD) and the No-Significant-Effect Concentration (NSEC) offer advantages over traditional NOECs and are more amenable to probabilistic risk assessment [41]. An EDMS facilitates this evolution in two ways. It keeps data organized so that these models can be fitted readily, for example by correctly structuring replication and linking covariates. It also provides a repository for the resulting model objects and scripts, ensuring full transparency and reusability.

Table 3: Evolution of Key Statistical Metrics in Ecotoxicology

| Metric | Traditional Approach | Modern & Emerging Approaches | Role of EDMS |
|---|---|---|---|
| Threshold Estimation | NOEC/LOEC (Hypothesis testing on categorical concentrations). | ECx, Benchmark Dose (BMD), No-Significant-Effect Concentration (NSEC) (Regression-based, model-averaged). | Provides the continuous concentration-response data required for regression. Archives model outputs and confidence intervals for audit [41]. |
| Data Analysis Framework | ANOVA, data transformation to meet assumptions. | Generalized Linear Models (GLMs), Nonlinear models, Mixed-effects models. | Manages complex data hierarchies (e.g., nested replicates) essential for mixed-effects modeling [38] [41]. |
| Uncertainty Quantification | Standard error of the mean, post-hoc test p-values. | Confidence/credible intervals around ECx/BMD, model selection uncertainty. | Stores raw replicate data necessary for bootstrap or Bayesian methods to calculate intervals [41]. |
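The reason raw replicate data matter for uncertainty quantification can be shown with a percentile bootstrap. The sketch below is a simplified illustration: it resamples hypothetical replicate responses from a single treatment and computes an interval for their mean. In a full analysis the same resampling idea would usually be applied by refitting the concentration-response model on each resample to obtain an interval for ECx or BMD.

```python
import random
import statistics
from typing import Callable, List, Tuple


def bootstrap_ci(values: List[float],
                 stat: Callable[[List[float]], float] = statistics.mean,
                 n_boot: int = 2000,
                 alpha: float = 0.05,
                 seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap interval for a statistic of replicate data."""
    rng = random.Random(seed)  # seeded only to make the example reproducible
    n = len(values)
    estimates = sorted(
        stat([rng.choice(values) for _ in range(n)])  # resample with replacement
        for _ in range(n_boot)
    )
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Hypothetical replicate responses (e.g., neonates per adult) in one treatment.
replicates = [12.0, 15.0, 11.0, 14.0, 13.0, 16.0]

ci_low, ci_high = bootstrap_ci(replicates)
print(statistics.mean(replicates), ci_low, ci_high)
```

If only treatment means were archived, this kind of resampling would not be possible, which is why the table lists storage of raw replicates as the role of the EDMS.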

Diagram 2: Evolution of Statistical Analysis in Ecotoxicology

The integration of a robust Environmental Data Management System is no longer a mere administrative convenience but a cornerstone of rigorous, reproducible, and compliant ecotoxicology research. By providing a structured framework for data from inception through to analysis and reporting, an EDMS directly addresses core challenges in the field: managing complex data relationships, ensuring quality and integrity, and enabling the adoption of modern statistical methodologies. For researchers and professionals engaged in drug development and chemical safety assessment, leveraging an EDMS is a strategic imperative. It transforms data from a passive record into an active, accessible asset that fuels advanced analysis, supports transparent regulatory decision-making, and ultimately contributes to a more robust understanding of chemical impacts on environmental and human health. The ongoing evolution of statistical best practices, as outlined in forthcoming revisions to key guidance documents, will further underscore the need for sophisticated data management systems as the foundational platform for 21st-century ecotoxicology [41].

The management of ecotoxicology data is undergoing a fundamental transformation, driven by the proliferation of high-throughput screening (HTS) and toxicogenomics. These advanced data types represent a shift from traditional apical endpoint observations to a predictive, mechanism-based science focused on early biological perturbations [42] [43]. This evolution is central to fulfilling the vision for toxicity testing in the 21st century, which advocates for a greater reliance on in vitro data and in silico methodologies to increase efficiency and reduce animal testing [42].

Eco-toxicogenomics integrates functional genomics—including transcriptomics, proteomics, and metabolomics—to study systemic molecular responses in organisms exposed to environmental chemicals [42]. When combined with HTS, which allows for the parallel testing of thousands of compounds against biological targets, these approaches generate vast, complex datasets. The core challenge and opportunity for modern ecotoxicology lie in developing robust data management frameworks that can unify these diverse data streams. Effective integration enables the identification of mechanisms of action, supports hazard identification for data-poor chemicals, and informs dose-response assessments, thereby strengthening ecological and human health risk assessments [42]. Success in this area requires harmonizing experimental protocols, adopting advanced statistical and computational workflows, and adhering to data visualization and accessibility best practices.

The effective management of advanced ecotoxicology data begins with a clear understanding of the primary data sources, their scale, structure, and inherent challenges. The two pillars are large-scale public HTS programs and targeted toxicogenomic screening studies.

High-Throughput Screening (HTS) Programs: ToxCast/Tox21

The U.S. EPA's ToxCast program and the collaborative Tox21 consortium are foundational resources. They employ a wide array of in vitro cell-free (biochemical) and cell-based assays to test chemicals across a broad biological space [42].

Table 1: Key Statistics for ToxCast/Tox21 HTS Data (as of 2019) [42]

| Data Category | Metric | Description |
|---|---|---|
| Chemical Coverage | 9,076 compounds | Selected based on toxicity data availability, exposure significance, and regulatory interest. |
| Assay Composition | 1,192 assay endpoints | Derived from 763 assay components and 360 distinct in vitro assays. |
| Biological Targets | Diverse | Includes enzyme activities, nuclear receptor binding, cell proliferation, death, and genotoxicity. |