Ecotoxicity Study Relevance Evaluation: A Comprehensive Framework for Robust Environmental Risk Assessment

Abstract

This article provides a systematic guide for researchers and drug development professionals to evaluate the reliability and relevance of ecotoxicity studies for regulatory decision-making. We begin with foundational principles, differentiating between reliability (intrinsic scientific quality) and relevance (appropriateness for a specific assessment)[citation:1]. The methodological section explores standardized frameworks like the CRED criteria, modern OECD test guidelines, and computational tools such as the EPA's ECOTOX Knowledgebase[citation:1][citation:2][citation:6]. We then address common challenges in data appraisal, study design, and alignment with evolving regulations like REACH 2.0 and PFAS restrictions[citation:3][citation:5]. Finally, the article compares validation frameworks, including the EcoSR and human relevance workflows, to establish robust, transparent evidence for risk assessment[citation:4][citation:5][citation:9]. The goal is to enhance the consistency, transparency, and scientific defensibility of using ecotoxicity data in biomedical and environmental safety contexts.

Understanding the Core Principles: Defining Reliability and Relevance in Ecotoxicity Data

In regulatory ecotoxicology, deriving safe chemical thresholds, such as Predicted No-Effect Concentrations (PNECs) or Environmental Quality Standards (EQSs), depends on the critical evaluation of individual scientific studies [1]. Historically, this evaluation has relied heavily on expert judgment, which invites bias and inconsistency: different assessors can reach divergent conclusions about the same data [1]. The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) framework was developed to address this problem by promoting reproducibility, transparency, and consistency [1]. At the heart of this framework, and of sound scientific assessment, lies the separate consideration of two pillars: reliability and relevance. Confusing these concepts undermines the integrity of risk assessment. Reliability concerns the intrinsic scientific quality of a study's methodology and reporting, while relevance pertains to its applicability and appropriateness for a specific regulatory question [1]. A study can be meticulously performed and reported (reliable) but irrelevant for a given assessment (e.g., a soil toxicity test for an aquatic standard), and vice versa. This guide delineates this distinction and gives researchers and assessors a comparative framework for evaluation.

Defining the Pillars: Reliability vs. Relevance

Reliability and relevance are separate attributes that answer different questions about an ecotoxicity study. Their independent assessment is crucial for transparent and defensible regulatory decision-making.

Reliability is defined as "the inherent quality of a test report or publication relating to preferably standardized methodology and the way the experimental procedure and results are described to give evidence of the clarity and plausibility of the findings" [1]. It is an intrinsic property of the study itself. Evaluation focuses on whether the experiment was well-designed, properly conducted, correctly analyzed, and clearly reported. A reliability deficit means the results are not scientifically trustworthy.

Relevance is defined as "the extent to which data and tests are appropriate for a particular hazard identification or risk characterization" [1]. It is an extrinsic, purpose-dependent property. Evaluation focuses on the alignment between the study's parameters (e.g., test species, endpoint, exposure duration) and the specific needs of the risk assessment. A relevance deficit means the study, however well-performed, does not suitably address the regulatory question.

The Critical Distinction: A reliable study is not automatically relevant, and a relevant study is not automatically reliable. They must be evaluated on their own merits. For instance, a chronic fish reproduction study (potentially relevant for a long-term water quality standard) may be deemed unreliable due to poorly controlled water chemistry, while a flawless acute Daphnia mortality test (highly reliable) may be irrelevant for assessing a chemical with a chronic mode of action [1].

Table: Core Differences Between Reliability and Relevance Evaluation

| Aspect | Reliability (Scientific Trustworthiness) | Relevance (Regulatory Applicability) |

|---|---|---|

| Core Question | Was the study well-conducted and reported? | Is the study appropriate for the specific assessment? |

| Nature | Intrinsic, immutable property of the study. | Extrinsic, depends on the assessment context and goals. |

| Primary Focus | Experimental design, protocol adherence, statistical analysis, data reporting clarity. | Test organism, endpoint measured, exposure scenario, ecological realism. |

| Evaluation Outcome | Determines if the data are scientifically credible. | Determines if the credible data are fit for the intended purpose. |

| Dependency | Independent of the regulatory question. | Entirely dependent on the regulatory question. |

The CRED Evaluation Framework: A Comparative Analysis

The CRED method provides a structured, criteria-based system that supersedes older, less transparent methods like the Klimisch score. It was rigorously tested in an international ring-test, where evaluators found it more accurate, applicable, consistent, and transparent [1]. The framework consists of two distinct checklists: one for reliability (20 criteria) and one for relevance (13 criteria), each with detailed guidance to minimize subjective judgment [1].

Experimental Protocol for Study Evaluation Using CRED: The following step-by-step protocol is derived from the CRED methodology [1]:

- Study Selection & Initial Screening: Identify potentially useful studies from the literature based on title and abstract.

- Independent Dual Evaluation: Two trained assessors independently evaluate the same study using the full CRED criteria checklists.

- Reliability Assessment: Each assessor works through the 20 reliability criteria, judging each as "yes," "no," or "not applicable." Criteria cover six categories: Test Substance Characterization, Test Organism, Exposure Design, Experimental Design, Statistical & Analytical Methods, and Reporting & Data Presentation.

- Relevance Assessment: Independently, each assessor works through the 13 relevance criteria, judging them for the specific assessment context. Criteria cover Test Organism Relevance, Endpoint Relevance, Exposure Relevance (duration, pathway), and Environmental Relevance.

- Resolution & Consensus: Assessors compare their independent evaluations. Any discrepancies are discussed with reference to the CRED guidance text until a consensus score for each criterion is reached.

- Overall Classification: The study's results are classified based on the consensus:

  - Reliable: All or most critical reliability criteria are fulfilled. Minor limitations are documented.

  - Not Reliable: One or more critical reliability criteria are not fulfilled, casting substantial doubt on the results.

  - Relevant: The study's parameters align well with the regulatory protection goal and assessment scenario.

  - Not Relevant: There is a fundamental mismatch between the study and the assessment needs.

- Documentation: The final evaluation, including rationale for all judgments, is documented transparently for audit and review purposes.
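The dual-evaluation and consensus steps above can be sketched in a few lines of Python. This is a minimal illustration, not part of the CRED method itself: the function names, the numbering of criteria from 1 to 20, and the choice of which criteria count as "critical" are all assumptions made for the example.

```python
# Hypothetical sketch of the dual-evaluation and consensus steps.
# Criterion numbers and the "critical" set are illustrative assumptions.
VALID_JUDGEMENTS = {"yes", "no", "not applicable"}


def find_discrepancies(eval_a, eval_b):
    """Return the criterion numbers on which two independent evaluations differ."""
    if eval_a.keys() != eval_b.keys():
        raise ValueError("both assessors must judge the same criteria")
    for judgement in list(eval_a.values()) + list(eval_b.values()):
        if judgement not in VALID_JUDGEMENTS:
            raise ValueError(f"unknown judgement: {judgement!r}")
    return sorted(c for c in eval_a if eval_a[c] != eval_b[c])


def classify_reliability(consensus, critical_criteria):
    """Return 'Not reliable' if any critical criterion is judged 'no'."""
    failed = [c for c in critical_criteria if consensus.get(c) == "no"]
    return "Not reliable" if failed else "Reliable"


# Two assessors judge the 20 reliability criteria independently.
assessor_a = {n: "yes" for n in range(1, 21)}
assessor_b = dict(assessor_a)
assessor_b[7] = "no"
assessor_b[12] = "not applicable"

to_discuss = find_discrepancies(assessor_a, assessor_b)  # [7, 12]

# After discussion the assessors agree that criterion 7 is not fulfilled.
consensus = dict(assessor_a)
consensus[7] = "no"
overall = classify_reliability(consensus, critical_criteria={3, 7})  # "Not reliable"
```

Keeping the per-criterion judgements in a plain mapping makes the rationale for the final classification easy to store alongside the study record, which supports the documentation step.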

Table: Comparison of Evaluation Methods for Ecotoxicity Data

| Feature | Traditional Klimisch Method | CRED Evaluation Framework |

|---|---|---|

| Basis | Broad, four-category score (1-4) with minimal guidance [1]. | Detailed checklists with 20 reliability and 13 relevance criteria [1]. |

| Transparency | Low; heavily reliant on unexplained expert judgment [1]. | High; requires explicit justification for each criterion [1]. |

| Distinction of R&R | Often blurred; reliability frequently dominates the score [1]. | Explicitly separates and requires independent evaluation of reliability and relevance [1]. |

| Consistency | Poor; high variability between different evaluators [1]. | Good; ring-testing showed improved consistency among assessors [1]. |

| Primary Criticism | Non-specific, biased toward industry guideline studies, allows for interpretation [1]. | More objective, balanced, and provides a clear audit trail [1]. |

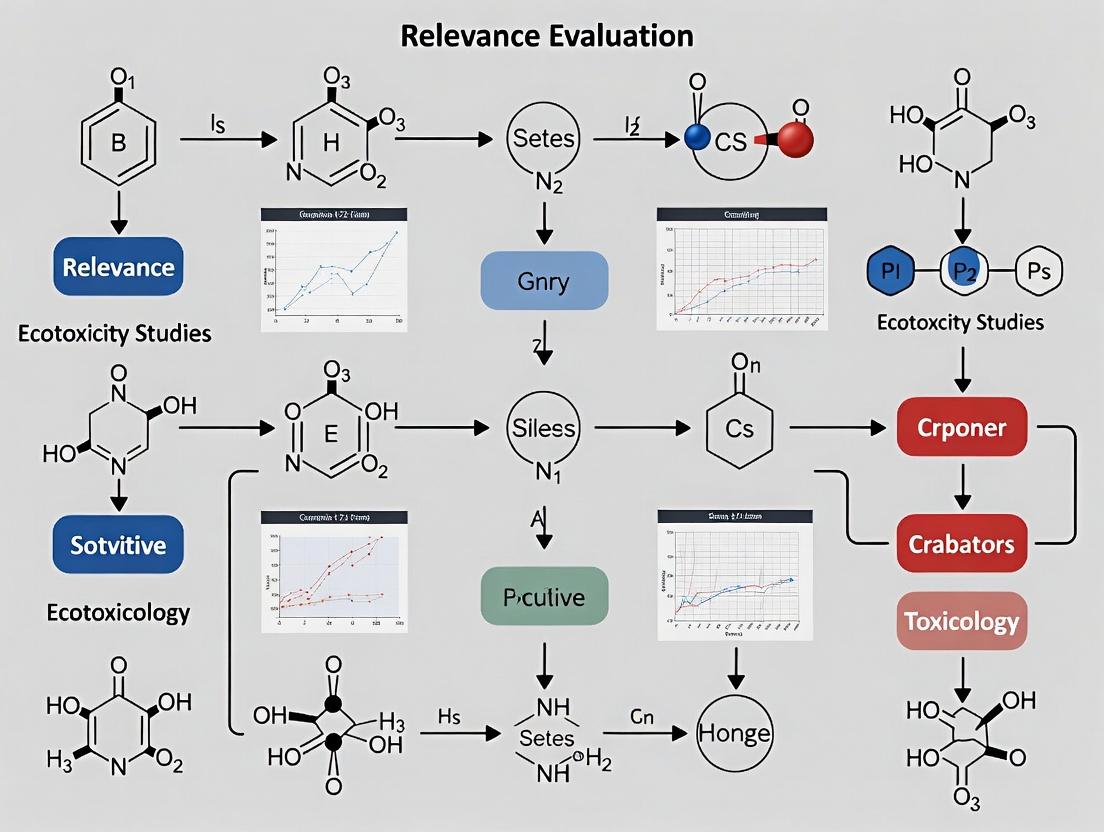

Visualizing the Evaluation Workflow

The following diagram illustrates the sequential yet independent decision pathways for evaluating reliability and relevance within a regulatory assessment context, as advocated by the CRED framework.

Evaluating Ecotoxicity Studies: Reliability and Relevance Pathways

The Scientist's Toolkit: Essential Research Reagents & Materials

High-quality, reliable ecotoxicity research requires standardized materials and informed selection of test systems. The following toolkit outlines key components for conducting studies that meet rigorous evaluation criteria.

Table: Essential Research Reagent Solutions for Aquatic Ecotoxicity Testing

| Item | Function & Importance | Considerations for Reliability/Relevance |

|---|---|---|

| Reference Toxicants (e.g., Potassium dichromate, Sodium chloride) | Used to confirm the consistent sensitivity and health of the test organism batch. A core reliability check [1]. | Must produce a consistent, known effect within an acceptable range. Failure invalidates the test's reliability. |

| Standardized Test Organisms (e.g., Daphnia magna, Pseudokirchneriella subcapitata, Danio rerio embryos) | Provides a reproducible biological model with known genetics, life history, and baseline responses. | Choice of organism directly determines relevance for protecting specific trophic levels (e.g., algae, invertebrates, fish) [1]. |

| Well-Characterized Test Substance | The chemical or material being evaluated. Accurate characterization is fundamental. | Purity, stability, solubility, and verified concentration (analytical chemistry) are critical reliability criteria. Physical form (e.g., nanoparticle) affects relevance [1]. |

| Reconstituted Standardized Test Water (e.g., ISO or OECD medium) | Provides a consistent, defined chemical environment, minimizing confounding variables from water quality. | Essential for reliability (reproducibility). May be adjusted for relevance (e.g., different water hardness) to simulate specific environments. |

| Positive & Negative (Solvent) Controls | Validates the experimental setup. Negative control establishes baseline effect; positive control confirms system responsiveness. | Mandatory for reliability assessment. Unacceptable control performance renders study results unreliable [1]. |

| High-Fidelity Exposure System (e.g., flow-through, semi-static chambers) | Maintains stable, measured concentrations of the test substance throughout the exposure duration. | Central to reliability. The choice of static vs. flow-through can affect relevance for simulating real-world exposure scenarios [1]. |

The evaluation of ecotoxicity study reliability and relevance is a cornerstone of environmental hazard and risk assessment, underpinning the derivation of Predicted No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs) [1]. For decades, the method established by Klimisch and colleagues in 1997 served as the regulatory backbone for this task [2]. While it introduced a systematic approach, its limitations became increasingly apparent: a lack of detailed guidance, inconsistency among assessors, and a perceived bias toward industry-sponsored guideline studies [3] [4]. This created a need for a more robust, transparent, and consistent framework.

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) project emerged to address these shortcomings [5]. Developed through international collaboration, CRED provides a detailed, criterion-based method for evaluating both the reliability (internal scientific quality) and relevance (fitness for a specific assessment purpose) of aquatic ecotoxicity studies [1]. This guide provides a comparative analysis of the Klimisch and CRED frameworks, supported by experimental data from a major ring test, and situates this evolution within the broader thesis of improving the relevance evaluation of ecotoxicity studies for regulatory science [3] [2].

Framework Comparison: Klimisch vs. CRED

The following table summarizes the fundamental architectural differences between the Klimisch and CRED evaluation methods.

Table: Core Architectural Comparison of the Klimisch and CRED Frameworks

| Feature | Klimisch Method (1997) | CRED Method (2016) |

|---|---|---|

| Primary Scope | Reliability evaluation only. | Integrated evaluation of both reliability and relevance [1]. |

| Evaluation Categories | Four reliability categories: Reliable without restrictions (R1), Reliable with restrictions (R2), Not reliable (R3), Not assignable (R4) [2]. | Three explicit relevance categories (Relevant with/without restrictions, Not relevant) alongside refined reliability categories [2]. |

| Number of Criteria | Limited, non-explicit criteria for reliability; no formal criteria for relevance [1]. | 20 detailed reliability criteria and 13 detailed relevance criteria, each with extensive guidance [1] [5]. |

| Guidance & Transparency | Minimal guidance; heavily reliant on expert judgement, leading to low transparency [3]. | Comprehensive guidance for each criterion; designed to structure and document expert judgement for greater transparency [1]. |

| Handling of Test Standards | Heavily favors Good Laboratory Practice (GLP) and OECD guideline studies, potentially overlooking flaws [2]. | Criteria-based; evaluates the actual conduct and reporting of a study regardless of its guideline status [1]. |

| Output | A single reliability score (R1-R4). | A dual score for reliability and relevance, plus documented rationale for all criteria assessments [2]. |

Experimental Comparison: The CRED Ring Test

Experimental Protocol

A two-phased international ring test was conducted to empirically compare the performance of the Klimisch and CRED methods [3] [2].

- Phase 1 (Klimisch): 75 risk assessors from 12 countries evaluated the reliability and relevance of two out of eight selected ecotoxicity studies using the Klimisch method [2].

- Phase 2 (CRED): The same pool of assessors evaluated two different studies from the same set using a draft version of the CRED evaluation method [2].

- Participant Profile: Assessors represented industry, academia, consultancy, and government, with most having over five years of experience [1].

- Task: For each method, assessors categorized study reliability and relevance and completed a questionnaire on their perception of the method's accuracy, consistency, and practicality [2].

Results and Performance Data

The ring test generated quantitative and perceptual data demonstrating CRED's advantages.

Table: Key Quantitative Results from the CRED Ring Test [3] [2]

| Evaluation Metric | Klimisch Method Outcome | CRED Method Outcome | Interpretation |

|---|---|---|---|

| Consistency Among Assessors | Low | Higher | CRED's detailed criteria reduced variability in study categorization between different experts. |

| Perceived Accuracy | Lower | 85% of participants rated CRED as "more accurate" | Assessors trusted CRED evaluations to better reflect the true scientific quality of a study. |

| Perceived Consistency | Lower | 90% of participants rated CRED as "more consistent" | The structured criteria led to more reproducible evaluations across different users. |

| Dependence on Expert Judgement | High | Participants perceived CRED as "less dependent" on subjective judgement | The explicit guidance reduced the scope for arbitrary or biased decisions. |

| Transparency of Process | Low | High | The requirement to document evaluations against specific criteria made the process auditable and clear. |
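One simple way to put a number on "consistency among assessors" is the share of assessors who assign the most common category to a study. The sketch below illustrates that idea only; it is an assumption that such a metric is useful here, it is not necessarily the statistic used in the ring test, and the category lists are invented placeholders rather than ring-test data.

```python
from collections import Counter


def modal_agreement(categories):
    """Fraction of assessors who chose the most frequently assigned category."""
    if not categories:
        raise ValueError("need at least one evaluation")
    counts = Counter(categories)
    return counts.most_common(1)[0][1] / len(categories)


# Invented placeholder evaluations of one study under two hypothetical methods.
evaluations_method_a = ["R1", "R2", "R2", "R3", "R2", "R4"]
evaluations_method_b = ["R2", "R2", "R2", "R3", "R2", "R2"]

agreement_a = modal_agreement(evaluations_method_a)  # 3 of 6 assessors agree
agreement_b = modal_agreement(evaluations_method_b)  # 5 of 6 assessors agree
```

A higher value means the assessors converged on the same category more often; chance-corrected statistics would be a natural refinement.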

Diagram 1: Workflow of the Klimisch-CRED Comparative Ring Test

The CRED Ecosystem: Expansion and Specialization

The core CRED principles have spawned a family of specialized tools, illustrating the framework's adaptability and ongoing evolution.

Table: The Expanding Family of CRED-Based Evaluation Tools

| Tool Name | Focus Area | Key Innovation | Status/Application |

|---|---|---|---|

| CRED (Core) | Aquatic ecotoxicity studies [1]. | Integrated reliability & relevance criteria. | Piloted in EU EQS revision; used in literature evaluation tools [5] [6]. |

| EthoCRED | Behavioural ecotoxicity studies [7]. | Adapts criteria for unique challenges of behavioural endpoints (e.g., arenas, tracking software). | Proposed extension; addresses gap in standard guidelines [7]. |

| NanoCRED | Ecotoxicity of nanomaterials [6]. | Incorporates criteria specific to nano-materials (e.g., characterization, dosing). | Framework published to assess regulatory adequacy of nanoecotoxicity data [6]. |

| EFSA CATs | Non-standard higher-tier studies (e.g., bees, birds) [8]. | Critical Appraisal Tools (CATs) for regulatory use, based on CRED approach. | Developed for EFSA to harmonize evaluation of studies in pesticide peer-review [8]. |

| CREED | Environmental exposure datasets [9]. | Applies the CRED paradigm to chemical monitoring data (e.g., water, soil). | New framework (2024) to evaluate reliability/relevance of exposure data for risk assessments [9]. |

Diagram 2: Evolution and Expansion of Study Appraisal Frameworks

The Scientist's Toolkit: Essential Research Reagents and Materials

Beyond evaluation criteria, robust study appraisal depends on access to well-reported information. The CRED project also developed reporting recommendations to improve the utility of primary studies [1]. The following are key "research reagents" – the essential data and metadata that should be clearly reported in any ecotoxicity study to facilitate its evaluation.

Table: Essential Research Reagents for Ecotoxicity Study Reporting and Evaluation

| Item Category | Specific Item/Reagent | Critical Function in Appraisal |

|---|---|---|

| Test Substance | Certified Reference Material with documented purity and stability. | Enables assessment of relevance (correct substance) and reliability (exposure consistency) [1]. |

| Test Organism | Species and Strain designation; Source and Life Stage details. | Critical for evaluating relevance to assessment endpoint and reliability of biological response [1]. |

| Exposure System | Dosing Solution preparation protocol; Analytical Verification data of actual concentrations. | Fundamental for reliability; confirms the test organism was exposed to the intended concentration [1]. |

| Control Reagents | Solvent/Carrier Controls (type and concentration); Positive Control Data (if applicable). | Allows assessment of reliability by checking for solvent effects and system responsiveness [1]. |

| Endpoint Measurement | Validated Assay Kits or standardized protocols for measuring mortality, growth, reproduction, etc. | Ensures reliability of the biological effect data used for hazard quantification [1]. |

| Statistical Package | Software and Methods for calculating EC/LC values, variance, and significance. | Essential for reliability evaluation of data analysis and reported conclusions [1]. |

The evolution from Klimisch to CRED represents a shift from reliance on authority and tradition (GLP, guidelines) to a principled, criteria-based appraisal of scientific merit [4]. The ring-test data indicate that this structured approach enhances consistency, transparency, and perceived accuracy in determining which studies are fit for regulatory purpose [3] [2].

This evolution directly addresses the core thesis of improving relevance evaluation. CRED explicitly separates reliability from relevance, providing tools to systematically determine if a scientifically sound study is also appropriate for a specific regulatory question (e.g., deriving a chronic water quality standard vs. an acute hazard classification) [1]. The framework's expansion into specialized areas like behavioral toxicology (EthoCRED) and exposure science (CREED) demonstrates its utility in bringing emerging, relevant science into the regulatory fold [7] [9].

The trajectory points toward even more integrated assessment frameworks, such as the recent EcoSR framework, which seeks to combine the strengths of CRED with risk-of-bias approaches from human health assessment [10]. The ultimate goal remains constant: to ensure environmental decisions are based on the best possible, most critically appraised science, with a clear and transparent line of evidence from the laboratory to the regulatory standard.

The establishment of Predicted No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs) represents the cornerstone of modern chemical regulation, designed to protect aquatic and terrestrial ecosystems from harmful substances. These regulatory thresholds are not arbitrary; they are quantitative expressions of environmental safety, derived from empirical toxicity data and ecological theory. However, their scientific validity and protective capacity are intrinsically tied to the quality, relevance, and reliability of the underlying ecotoxicity data. Within the broader thesis on the relevance evaluation of ecotoxicity studies, this analysis argues that data quality is the non-negotiable prerequisite for robust PNECs and EQSs. As regulatory science evolves to incorporate New Approach Methodologies (NAMs)—including in vitro assays, (Q)SAR models, and omics technologies—the frameworks for assessing the relevance of these novel data streams become increasingly critical [11] [12]. This guide provides a comparative analysis of the methodologies and tools that generate and evaluate the data underpinning these essential regulatory values.

Comparative Analysis of Data Generation and Modeling Platforms

The derivation of PNECs and EQSs relies on data from diverse sources, ranging from standardized animal tests to computational models. The choice of platform significantly influences the resulting data quality and, consequently, the reliability of the derived safety threshold.

Comparison of (Q)SAR Model Performance for Environmental Fate Prediction

(Q)SAR models are pivotal for filling data gaps, especially under legislative bans on animal testing (e.g., for cosmetics). Their performance varies by the predicted property and the chemical domain [13].

Table 1: Performance Comparison of Freely Available (Q)SAR Platforms for Key Environmental Fate Parameters [13]

| Environmental Fate Parameter | Recommended Model/Platform | Key Strength | Reported Reliability Consideration |

|---|---|---|---|

| Persistence (Ready Biodegradability) | Ready Biodegradability IRFMN (VEGA) | High performance for qualitative classification | Qualitative predictions are more reliable than quantitative ones against REACH/CLP criteria. |

| Persistence (Ready Biodegradability) | Leadscope Model (Danish QSAR) | Suitable for cosmetic ingredients dataset | |

| Persistence (Ready Biodegradability) | BIOWIN (EPISUITE) | Relevant prediction results | |

| Bioaccumulation (Log Kow) | ALogP (VEGA) | High performance for prediction | Applicability Domain (AD) is critical for evaluating reliability. |

| Bioaccumulation (Log Kow) | ADMETLab 3.0 | Appropriate for cosmetic ingredients | |

| Bioaccumulation (Log Kow) | KOWWIN (EPISUITE) | Relevant for Log Kow estimation | |

| Bioaccumulation (BCF) | Arnot-Gobas (VEGA) | Best for BCF prediction | |

| Bioaccumulation (BCF) | KNN-Read Across (VEGA) | Best for BCF prediction | |

| Mobility (Log Koc) | OPERA v.1.0.1 (VEGA) | Relevant model for prediction | |

| Mobility (Log Koc) | KOCWIN-Log Kow (VEGA) | Relevant model for prediction | |

Comparison of PNEC Derivation Approaches and Tools

PNECs can be derived using generic assessment factors or more sophisticated, bioavailability-adjusted tools. The method chosen impacts the site-specificity and accuracy of the standard.

Table 2: Comparison of Approaches for Deriving Predicted No-Effect Concentrations (PNECs)

| Approach | Description | Typical Use Case | Key Advantage | Key Limitation | Example/Platform |

|---|---|---|---|---|---|

| Empirical Assessment Factors | Application of safety factors (e.g., 10–1000) to the lowest reliable toxicity endpoint. | Initial screening, priority setting for diverse substances. | Simple, requires minimal data. | Conservative; may not account for species sensitivity or bioavailability. | NORMAN "Lowest PNEC" list for prioritization [14]. |

| Species Sensitivity Distribution (SSD) | Statistical distribution of toxicity data from multiple species to estimate a protective concentration. | Higher-tier assessment with robust chronic toxicity dataset. | Ecologically representative, defines a specific protection level (e.g., HC5). | Requires high-quality data for many species. | Standard method in EU EQS derivation [15]. |

| Bioavailability Modeling (BLM) | Uses site-specific water chemistry (e.g., pH, DOC) to model metal bioavailability and toxicity. | Site-specific risk assessment for metals (Cu, Ni, Zn, Pb). | Reduces over-protection, enables compliance checking for specific water bodies. | Complex, requires detailed input data. | PNEC-pro tool (endorsed by Dutch authorities and EU CIS) [16]. |

| QSAR-Estimated PNEC (P-PNEC) | Uses QSAR predictions of toxicity when empirical data are insufficient or absent. | Preliminary screening for data-poor substances. | Enables a first-tier assessment for virtually any chemical. | High uncertainty; requires clear marking as provisional. | Method described in NORMAN workflow [14]. |
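The first two approaches in Table 2 reduce to short calculations, sketched below under stated assumptions. The assessment-factor route divides the lowest reliable endpoint by a safety factor. The SSD route here fits a log-normal distribution to the toxicity values by simple moment matching and reads off the 5th percentile (HC5). The function names and the toxicity values are hypothetical, and regulatory SSD derivations involve data-quality checks, goodness-of-fit testing, and possibly a further assessment factor on the HC5 that this sketch does not attempt.

```python
import math
from statistics import NormalDist, mean, stdev


def pnec_assessment_factor(endpoints_ug_per_l, assessment_factor):
    """PNEC = lowest reliable toxicity endpoint divided by an assessment factor."""
    if assessment_factor < 1:
        raise ValueError("assessment factor must be >= 1")
    return min(endpoints_ug_per_l) / assessment_factor


def hc5_lognormal(endpoints_ug_per_l):
    """5th percentile of a log-normal SSD fitted by moments to log10 endpoints."""
    if len(endpoints_ug_per_l) < 2 or min(endpoints_ug_per_l) <= 0:
        raise ValueError("need at least two positive endpoint values")
    logs = [math.log10(value) for value in endpoints_ug_per_l]
    fitted = NormalDist(mean(logs), stdev(logs))
    return 10 ** fitted.inv_cdf(0.05)


# Hypothetical chronic endpoints for six species, in micrograms per litre.
chronic_endpoints = [12.0, 35.0, 80.0, 150.0, 400.0, 900.0]

pnec_af = pnec_assessment_factor(chronic_endpoints, assessment_factor=10)
hc5 = hc5_lognormal(chronic_endpoints)
```

The two numbers answer different questions: the assessment-factor PNEC depends only on the single most sensitive result, while the HC5 uses the spread of the whole dataset, which is why the SSD route needs reliable data for many species.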

The Emerging Role of Behavioral Endpoints in Regulation

Behavioral studies offer sensitive, ecologically relevant endpoints but face challenges in regulatory acceptance. Their use depends heavily on demonstrating relevance to population-level effects [17].

Table 3: Regulatory Consideration of Behavioral Endpoints in EU Frameworks [17]

| EU Regulatory Framework | Status of Behavioral Endpoints | Reported Use Cases | Key Requirement for Acceptance |

|---|---|---|---|

| REACH | Not prohibited; can be used as supportive evidence. Not a standard endpoint. | Sediment avoidance, burrowing activity mentioned in guidance. | Must be backed by studies on traditional endpoints (mortality, growth, reproduction). |

| Water Framework Directive (EQS) | Can be used if relevant at population level. | Limited known cases in regulatory dossiers. | Study must be robust, well-designed, and transparently link behavior to population fitness. |

| Plant Protection Products (PPP) & Biocidal Products (BPR) | Not standard but can contribute to weight-of-evidence. | — | Relevance for decision-making must be clearly argued by risk assessors. |

Experimental Protocols: Methodologies Underpinning Key Data

The reliability of data feeding into PNECs and EQSs is determined by rigorous, standardized experimental protocols. Recent updates to OECD Test Guidelines (TGs) reflect the integration of modern, mechanistic endpoints into traditional frameworks [18].

Protocol for QSAR Modeling and Validation (Based on OECD Principles)

The development of a QSAR model for predicting regulatory endpoints, such as Acute Exposure Guideline Levels (AEGLs) or stability constants, follows a standardized workflow to ensure scientific validity [19] [20].

1. Objective Definition: Define the specific regulatory endpoint to be predicted (e.g., acute fish toxicity LC50, biodegradability half-life).

2. Data Curation and Preparation:
   - Collect a high-quality dataset of chemical structures and associated experimental endpoint values from reliable sources (e.g., EPA databases, OECD-NEA).
   - Calculate molecular descriptors (e.g., physicochemical properties, topological indices) for each compound.
   - Divide the dataset into a training set (~80%) for model building and a hold-out test set (~20%) for external validation.

3. Model Development and Training:
   - Select machine learning algorithms (e.g., Gradient Boosting (GBDT/XGBoost), Support Vector Regressor (SVR), CatBoost).
   - Use the training set to build models, optimizing hyperparameters via techniques like genetic algorithms or Bayesian optimization.
   - Perform internal validation using bootstrapping or cross-validation.

4. Model Validation and Applicability Domain (AD) Definition:
   - External Validation: Predict the endpoint for the unseen test set. Calculate performance metrics (R², RMSE, MAE). A robust model for AEGL prediction achieved R² > 0.95 on the test set [19].
   - Applicability Domain Analysis: Define the chemical space where the model makes reliable predictions. Use methods like leverage (Williams plots) and descriptor ranges to identify outliers [19] [20].
   - Y-Randomization: Confirm the model is not based on chance correlation by shuffling endpoint values and re-training [20].

5. Reporting and Use: Document the model according to OECD QSAR validation principles for regulatory use, clearly stating its intended purpose and limitations.
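The validation steps in this workflow can be illustrated with a deliberately small, standard-library-only sketch. It swaps the machine learning algorithms named above for a one-descriptor least-squares fit so that the external validation, leverage-based applicability domain check, and y-randomization are easy to follow. The data are synthetic, all names are hypothetical, and the warning leverage h* = 3(p + 1)/n (p descriptors, n training compounds) is a commonly used convention assumed here rather than taken from the cited studies.

```python
import math
import random


def fit_ols(x, y):
    """Least-squares slope and intercept for a single descriptor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return slope, my - slope * mx


def r2_rmse(y_true, y_pred):
    """Coefficient of determination and root-mean-square error."""
    my = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - my) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot, math.sqrt(ss_res / len(y_true))


def leverage(x_train, x_new):
    """Leverage of a query compound for a one-descriptor model with intercept."""
    n = len(x_train)
    mx = sum(x_train) / n
    sxx = sum((xi - mx) ** 2 for xi in x_train)
    return 1 / n + (x_new - mx) ** 2 / sxx


rng = random.Random(0)
# Synthetic dataset: one descriptor and a noisy linear endpoint.
descriptor = [rng.uniform(0, 6) for _ in range(50)]
endpoint = [0.8 * d + 1.0 + rng.gauss(0, 0.3) for d in descriptor]

# Roughly 80/20 split into training and hold-out test sets.
order = list(range(50))
rng.shuffle(order)
train, test = order[:40], order[40:]
x_tr, y_tr = [descriptor[i] for i in train], [endpoint[i] for i in train]
x_te, y_te = [descriptor[i] for i in test], [endpoint[i] for i in test]

# External validation on the unseen test set.
slope, intercept = fit_ols(x_tr, y_tr)
r2_ext, rmse_ext = r2_rmse(y_te, [slope * x + intercept for x in x_te])

# Applicability domain: flag a query far outside the training descriptor range.
h_star = 3 * (1 + 1) / len(x_tr)
outside_ad = leverage(x_tr, 12.0) > h_star

# Y-randomization: refit on shuffled endpoints; the fit should collapse.
y_shuffled = y_tr[:]
rng.shuffle(y_shuffled)
s_rand, i_rand = fit_ols(x_tr, y_shuffled)
r2_random, _ = r2_rmse(y_shuffled, [s_rand * x + i_rand for x in x_tr])
```

A large gap between `r2_ext` and `r2_random` is the pattern one hopes to see: the real model explains the hold-out data, while the model trained on shuffled endpoints explains almost nothing.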

Protocol for Integrating 'Omics Endpoints into Standard Fish Toxicity Tests (OECD TG Updates)

The September 2025 updates to OECD TGs 203 (Fish Acute), 210 (Fish Early-Life Stage), and 236 (Fish Embryo) permit the optional collection of samples for mechanistic analysis [18].

1. Standard Toxicity Test Execution: Conduct the fish toxicity test according to the base OECD TG protocol (e.g., exposure concentrations, duration, endpoints like mortality or growth).

2. Sample Collection for 'Omics:
   - At test termination (or at interim time points), humanely euthanize specified organisms.
   - Excise target tissues (e.g., liver, gill, brain) known to be toxicological targets.
   - Immediately preserve tissues in RNAlater or flash-freeze in liquid nitrogen. Store at -80°C.

3. Transcriptomic Analysis (Example Workflow):
   - Extract total RNA from preserved tissue samples.
   - Perform RNA sequencing (RNA-Seq) or quantitative PCR (qPCR) for targeted genes.
   - Analyze gene expression changes relative to control groups.
   - Map differentially expressed genes to known Adverse Outcome Pathways (AOPs) to infer mode of action.

4. Data Integration and Point of Departure (POD) Derivation:
   - Determine the Transcriptomic Point of Departure (tPOD), the lowest exposure concentration that induces a statistically significant, biologically relevant change in gene expression.
   - Compare the tPOD with the traditional POD (e.g., based on mortality). The tPOD often serves as a more sensitive, mechanistic benchmark for risk assessment [12].

Purpose: This protocol modernizes standard tests by embedding mechanistic insight, supporting the development of AOPs and next-generation risk assessments.
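The tPOD definition in step 4 can be read as a simple selection rule, sketched below. The thresholds (adjusted p-value below 0.05, absolute log2 fold change of at least 1, at least five responsive genes) and the input layout are assumptions chosen for illustration, and the gene statistics are invented. Published tPOD workflows often rely on benchmark-dose modelling of gene-level concentration-response curves instead, which this sketch does not attempt.

```python
def derive_tpod(gene_stats_by_conc, alpha=0.05, min_abs_log2fc=1.0, min_genes=5):
    """Lowest concentration at which enough genes respond, or None.

    gene_stats_by_conc maps an exposure concentration to a list of
    (adjusted_p_value, log2_fold_change) pairs, one pair per gene.
    """
    for conc in sorted(gene_stats_by_conc):
        responsive = sum(
            1
            for p_adj, log2fc in gene_stats_by_conc[conc]
            if p_adj < alpha and abs(log2fc) >= min_abs_log2fc
        )
        if responsive >= min_genes:
            return conc
    return None


# Invented example: 20 genes measured at three concentrations (mg/L).
gene_stats = {
    0.1: [(0.50, 0.2)] * 20,
    1.0: [(0.01, 1.5)] * 3 + [(0.60, 0.1)] * 17,
    10.0: [(0.001, 2.0)] * 8 + [(0.30, 0.4)] * 12,
}

tpod = derive_tpod(gene_stats)  # 10.0
apical_pod = 100.0  # hypothetical mortality-based POD in the same units
sensitivity_ratio = apical_pod / tpod  # 10.0
```

Requiring both a significance threshold and a minimum effect size mirrors the "statistically significant, biologically relevant" wording; the ratio to the apical POD indicates how much earlier the molecular response appears.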

Visualizing Workflows and Relationships

The processes of data generation, relevance assessment, and standard derivation involve complex, interconnected steps. The following diagrams clarify these workflows.

Workflow for PNEC Derivation and Data Source Integration

This diagram outlines the decision-making process for deriving a PNEC, highlighting the hierarchy and integration of different data sources.

Workflow for Human Relevance Assessment of AOPs and NAMs

This diagram illustrates the refined workflow for systematically evaluating whether an Adverse Outcome Pathway (AOP) and its associated New Approach Methodologies (NAMs) are relevant to humans [11].

Generic QSAR Modeling Workflow for Ecotoxicity Prediction

This diagram depicts the standardized steps in building and validating a QSAR model for regulatory ecotoxicology, as applied in recent studies [19] [20].

Generating and evaluating high-quality data for regulation requires a suite of specialized tools and resources.

Table 4: Key Research Reagent Solutions and Tools for Ecotoxicity Data Generation and Evaluation

| Tool/Resource Category | Specific Example(s) | Primary Function in PNEC/EQS Context | Relevance to Data Quality |

|---|---|---|---|

| Bioavailability Modeling Software | PNEC-pro [16] | Calculates site-specific, bioavailability-corrected PNECs for metals (Cu, Ni, Zn, Pb) using Biotic Ligand Models (BLMs). | Enhances relevance by accounting for local water chemistry, moving from overly conservative generic standards to protective, site-specific values. |

| QSAR Model Platforms | VEGA, EPI Suite, Danish QSAR Models [13] | Predicts missing ecotoxicity endpoints and environmental fate parameters (persistence, bioaccumulation, mobility). | Addresses data gaps for prioritization; reliability is contingent on the model's Applicability Domain (AD) and proper validation. |

| Transcriptomics & Omics Tools | EPA's ETAP, tPOD derivation workflows [12] | Analyzes gene expression changes to derive mechanistic Points of Departure (tPODs) and inform AOPs. | Provides sensitive, human-relevant mechanistic data that can support or refine hazard assessment, improving biological relevance. |

| Standardized Test Guidelines | Updated OECD TGs (203, 210, 236, 254) [18] | Provides internationally recognized protocols for generating reliable ecotoxicity data. | Ensures reliability and reproducibility of experimental data, fostering Mutual Acceptance of Data (MAD). |

| Adverse Outcome Pathway (AOP) Resources | AOP-Wiki, AOP-KB (Knowledge Base) | Frameworks for organizing mechanistic toxicology knowledge from a Molecular Initiating Event (MIE) to an Adverse Outcome (AO). | Structures biological relevance assessment; essential for evaluating the mechanistic basis of both traditional and NAM-derived data [11]. |

| Regulatory Database & Lists | NORMAN Ecotoxicology Database (Lowest PNECs) [14] | Provides curated, screening-level PNEC values for a wide range of substances, often based on empirical data or QSAR. | Serves as a prioritization tool; flags when measured concentrations exceed a provisional safety threshold, triggering more robust assessment. |

| Weight-of-Evidence & Relevance Assessment Frameworks | Refined HR Assessment Workflow [11] | Provides structured guidance and templates for assessing the human (or ecological) relevance of toxicological pathways (AOPs) and associated NAM data. | Critical for systematically evaluating the relevance of novel data streams before they can be confidently used in regulation. |
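The screening use of lowest PNECs described in the table (flagging measured concentrations that exceed a provisional threshold) amounts to computing a risk quotient, RQ = measured concentration / PNEC. The sketch below assumes both values share the same unit; substance names and numbers are placeholders, not database values.

```python
def risk_quotient(measured_conc, pnec):
    """RQ = measured environmental concentration / PNEC (same units)."""
    if pnec <= 0:
        raise ValueError("PNEC must be positive")
    return measured_conc / pnec


def flag_exceedances(measured, screening_pnecs):
    """Return {substance: RQ} for substances whose RQ exceeds 1.

    Substances without a screening PNEC are skipped rather than guessed.
    """
    flagged = {}
    for substance, conc in measured.items():
        pnec = screening_pnecs.get(substance)
        if pnec is None:
            continue
        rq = risk_quotient(conc, pnec)
        if rq > 1.0:
            flagged[substance] = rq
    return flagged


# Placeholder inputs in ug/L.
MEASURED = {"substance_a": 2.0, "substance_b": 0.25, "substance_c": 1.0}
SCREENING_PNECS = {"substance_a": 0.5, "substance_b": 1.0}
FLAGGED = flag_exceedances(MEASURED, SCREENING_PNECS)
```

A flagged substance is a candidate for a more robust assessment, not a conclusion of risk.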

Within ecotoxicity studies, expert judgment is an indispensable yet double-edged tool. Researchers routinely rely on it to design experiments, interpret complex mixture effects, and evaluate environmental risk when definitive data are scarce [21]. However, this reliance can systematically introduce bias and inconsistency, potentially distorting scientific conclusions and regulatory decisions. A critical review of bee ecotoxicology studies reveals a telling pattern: of 60 studies on binary chemical mixtures examined, only two used multiple total concentrations and ratios to explore a broad spectrum of possible interactions. In contrast, 26 studies tested only a single concentration of each chemical, leading to incomplete and potentially biased interpretations of interactive effects [22]. This mirrors findings from broader decision science, where experts evaluating the same evidence (for example, the feasibility of manufacturing jet engine parts) show striking variability: no two experts make identical judgments, and the majority exhibit internal inconsistency in their evaluations [23]. This article frames these pitfalls within the context of relevance evaluation in ecotoxicology, comparing individual expert judgment against more systematic, aggregated alternatives. It presents experimental data and methodologies that quantify these issues, giving researchers evidence-based strategies to enhance the objectivity and reliability of their assessments.

Comparative Analysis of Expert Judgment Performance

The following tables synthesize quantitative findings from empirical studies, comparing the performance of individual expert judgment against aggregated approaches and highlighting specific sources of bias.

Table 1: Comparison of Individual vs. Aggregate Expert Judgment Performance

| Performance Metric | Individual Expert Judgment | Aggregate Expert Judgment (Pooled) | Experimental Context & Source |

|---|---|---|---|

| Inter-expert Agreement | Low (No two experts identical) [23] | High (Forms consistent decision rules) [23] | Feasibility of producing parts with Metal Additive Manufacturing (MAM) [23] |

| Internal Consistency (Intransitivity) | Frequently inconsistent (Majority exhibit some intransitivity) [23] | Greater internal consistency [23] | Feasibility rankings for jet engine parts via MAM [23] |

| Relation to Ground Truth | Variable; high inconsistency on ambiguous cases [24] | More robust; wisdom of crowds effect [23] | Diagnosis of mammograms and spinal images [24] |

| Confidence-Accuracy Calibration | Confidence drops as consensus decreases, even unconsciously [24] | Aggregate confidence more stable [23] | Repeated two-alternative diagnostic tasks [24] |

| Key Implication | Relying on 1-2 experts risks considerable divergence from reliable knowledge [23] | Capturing and scaling aggregate knowledge accelerates reliable decision-making [23] | Technical frontier assessment [23] |

Table 2: Sources and Manifestations of Bias & Inconsistency in Ecotoxicology and Expert Judgment

| Source of Bias/Inconsistency | Manifestation in Expert Judgment | Manifestation in Ecotoxicology Study Design | Impact on Relevance Evaluation |

|---|---|---|---|

| Limited Sampling of Conditions | Judging based on limited experience or a narrow set of mental models [21]. | Testing chemical mixtures without multiple total concentrations and ratios (58 of 60 studies); 26 studies tested only a single concentration of each chemical [22]. | Leads to overgeneralization; interactions (synergistic/antagonistic) may be mischaracterized across untested environmental conditions. |

| Case Ambiguity & Cue Conflict | Inconsistency increases, and confidence drops, for cases where cues are ambiguous or conflict [24]. | Interactive effects vary significantly with concentration, ratio, and effect magnitude (e.g., LC10 vs. LC50), often unaddressed [22]. | Undermines extrapolation of lab results to field relevance; the "true" interaction for a given environmental exposure remains unknown. |

| Overreliance on Tacit Knowledge | Dependence on unarticulated, subjective experience leading to information asymmetry [23]. | Preference for familiar model organisms or endpoints without justifying ecological relevance. | Obscures rationale for study design, making it difficult for the community to assess the applicability of findings. |

| Lack of Structured Elicitation | Unstructured judgments are more prone to cognitive biases (e.g., anchoring, availability) [21]. | Ad hoc selection of test concentrations based on precedent rather than systematic spacing or probabilistic design. | Introduces arbitrary elements into the foundational data, affecting all downstream risk assessment conclusions. |

Detailed Experimental Protocols

To understand the evidence behind the comparisons above, the methodologies of two key experiments are detailed below.

Protocol 1: Eliciting and Analyzing Inconsistency in Technical Expert Judgment [23]

- Objective: To codify expert decision-making at the technological frontier and measure the prevalence and impact of inconsistency.

- Expert Panel: 65 experts from industry, academia, and government (27 completed the full survey) in metal additive manufacturing (MAM) for aerospace.

- Elicitation Method:

- Experts were presented with detailed profiles of jet engine parts, varying in complexity, material, and criticality.

- For each part, they performed a series of paired-comparison tasks, judging which of two parts was more feasible to produce with MAM.

- They also provided direct feasibility ratings on a quantitative scale.

- Analysis of Inconsistency:

- Intransitivity Detection: An expert's pairwise comparisons were checked for logical transitivity (if A > B and B > C, then A > C). Violations indicated internal inconsistency.

- Inter-Expert Agreement: Judgments across all experts were compared to calculate the level of consensus for each part.

- Aggregation Simulation: Judgments from random subsets of experts (of varying sizes) were aggregated to create a "group" feasibility score. The divergence of individual experts from this aggregate score was measured.

- Key Outcome: The majority of experts showed some intransitivity. The divergence of individual judgments from the aggregate knowledge decreased sharply as the sample size of experts increased, demonstrating the value of pooling judgments [23].
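The intransitivity check and the pooling step in Protocol 1 can be sketched as follows. Each expert's paired comparisons are stored as a set of `(more_feasible, less_feasible)` tuples. The pooling rule (ranking parts by total pairwise wins) is an assumption chosen for illustration; the source does not specify the aggregation method used in [23]. Part labels are placeholders.

```python
from itertools import combinations


def count_intransitive_triads(prefers, items):
    """Count triads (a, b, c) whose pairwise judgments form a cycle."""
    cycles = 0
    for a, b, c in combinations(items, 3):
        forward = (a, b) in prefers and (b, c) in prefers and (c, a) in prefers
        backward = (a, c) in prefers and (c, b) in prefers and (b, a) in prefers
        if forward or backward:
            cycles += 1
    return cycles


def aggregate_by_wins(expert_prefs, items):
    """Pool experts: rank items by total pairwise wins (ties broken alphabetically)."""
    wins = {item: 0 for item in items}
    for prefs in expert_prefs:
        for winner, _loser in prefs:
            wins[winner] += 1
    return sorted(items, key=lambda item: (-wins[item], item))


ITEMS = ["A", "B", "C"]
EXPERT_1 = {("A", "B"), ("B", "C"), ("A", "C")}  # transitive: A > B > C
EXPERT_2 = {("A", "B"), ("B", "C"), ("C", "A")}  # cyclic: A > B > C > A
EXPERT_3 = {("A", "B"), ("B", "C"), ("A", "C")}  # transitive: A > B > C
POOLED_RANKING = aggregate_by_wins([EXPERT_1, EXPERT_2, EXPERT_3], ITEMS)
```

In this toy panel one expert is internally inconsistent, yet the pooled ranking still recovers a clear order, which is the effect the protocol's aggregation simulation is designed to measure.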

Protocol 2: Measuring the Confidence-Consistency Link in Diagnostic Expertise [24]

- Objective: To empirically test the theoretical relationship between expert confidence, internal consistency, and case difficulty.

- Theoretical Model (Self-Consistency Model - SCM):

- Assumes an expert samples "cues" from a case (e.g., features in a mammogram) to reach a decision.

- The true probability (p) that a sampled cue points to the correct answer defines case difficulty (p = 0.5 is maximally ambiguous).

- The model predicts that for a single expert, inconsistency across two viewings of the same case is highest for ambiguous cases and lower for clear cases. Confidence should track the clarity of the evidence.

- Empirical Test:

- Datasets: (a) Radiologists interpreting the same mammograms twice, months apart. (b) Doctors interpreting lumbar spine MRI images twice.

- Ground Truth: Subsequent clinical follow-up (biopsy, treatment outcome).

- Measures: For each case, researchers calculated (i) expert consensus (inter-rater agreement), (ii) individual expert confidence (when available), and (iii) individual expert inconsistency (intra-rater agreement).

- Key Outcome: Empirical data confirmed the model's predictions. As consensus among experts decreased (indicating a more ambiguous case), individual experts were less confident and more likely to be inconsistent in their repeated judgments, regardless of whether the consensus was correct [24].
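The qualitative prediction of the model can be reproduced with a simplified sketch. It assumes an expert samples a fixed, odd number of independent cues (`n_cues`, an illustrative choice), each pointing to the correct answer with probability `p`, and decides by majority. Two viewings are treated as independent samples, so the chance of giving different answers is 2q(1 - q), where q is the probability of a correct majority. This is a reduced version of the model in [24], not its full specification.

```python
from math import comb


def p_majority_correct(p, n_cues=7):
    """Probability that the majority of n_cues sampled cues point to the correct answer."""
    if n_cues % 2 == 0:
        raise ValueError("use an odd number of cues to avoid ties")
    return sum(
        comb(n_cues, k) * p**k * (1 - p) ** (n_cues - k)
        for k in range(n_cues // 2 + 1, n_cues + 1)
    )


def p_inconsistent(p, n_cues=7):
    """Probability that two independent viewings of the same case yield different decisions."""
    q = p_majority_correct(p, n_cues)
    return 2 * q * (1 - q)


# Inconsistency across a range of case difficulties (0.5 = maximally ambiguous).
INCONSISTENCY_BY_DIFFICULTY = {p: p_inconsistent(p) for p in (0.5, 0.6, 0.75, 0.9, 1.0)}
```

Inconsistency peaks at p = 0.5 and falls to zero as the case becomes unambiguous, matching the pattern the empirical test looked for.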

Visualizing Key Concepts and Workflows

The following diagrams illustrate the core theoretical model and the experimental workflow for analyzing expert judgment.

- Diagram 1 Title: How Case Difficulty Drives Expert Inconsistency and Confidence

Diagram 1 Summary: This flowchart visualizes the Self-Consistency Model (SCM) [24], a theoretical framework for understanding expert judgment. The central pathway (black/blue arrows) shows the process: an expert samples cues from a case, makes a decision based on the majority, and derives confidence. The probabilistic nature of cue sampling (the dotted yellow loop) means a second viewing can lead to a different outcome. The key insight is that the property of the case itself—its inherent difficulty (p)—governs this process. Ambiguous cases (p≈0.5) lead to high inconsistency and low confidence, while clear cases (p→1 or 0) lead to consistent, confident judgments.

- Diagram 2 Title: A Systematic Workflow to Address Judgment Pitfalls

Diagram 2 Summary: This diagram outlines a practical, evidence-based workflow for researchers to manage the pitfalls of expert judgment. Moving beyond ad-hoc opinion, the process begins with structured elicitation from multiple experts. Three key metrics are then measured: disagreement between experts, inconsistency within individual experts, and their confidence levels [23] [24]. Analyzing these patterns reveals the source of unreliability, guiding the choice of mitigation strategy: aggregating judgments to overcome individual bias [23], flagging ambiguous cases for further scrutiny [24], or redesigning the experiment itself—for example, by testing a wider range of chemical concentrations to reduce ambiguity in interaction assessments [22].

This table details key methodological tools and principles researchers can employ to minimize bias and enhance consistency in evaluations, particularly within ecotoxicology.

Table 3: Research Reagent Solutions for Mitigating Judgment Pitfalls

| Tool/Resource Category | Specific Item or Principle | Function & Rationale | Application Context |

|---|---|---|---|

| Structured Elicitation Frameworks | Delphi Method [21] | Anonymously aggregates expert opinions over iterative rounds, reducing dominance bias and converging on a reasoned group judgment. | Prioritizing research questions, setting testing guidelines, or defining criteria for study relevance. |

| Experimental Design Reagents | Full Factorial or Concentration-Ratio Matrix Design [22] | Forces systematic testing across a defined chemical mixture space (multiple concentrations and ratios), replacing ad-hoc selection with empirical coverage. | Designing ecotoxicology studies for binary or ternary chemical mixtures to characterize interactions without bias. |

| Bias Detection Metrics | Intransitivity Check [23] | A logical test (e.g., on paired comparisons) to identify internal inconsistency within a single expert's judgments, flagging unreliable evaluations. | Quality control during peer review or data validation when expert scores are used. |

| Data Visualization & Communication | Principles of Effective Data Display [25] [26] | Rules (e.g., simplify, use correct chart type, provide context) to present data in a way that minimizes cognitive burden and misinterpretation by experts. | Preparing reports, dashboards, or figures for risk assessment panels or stakeholder meetings to ensure clear, unbiased interpretation. |

| Formal Decision Support Models | Self-Consistency Model (SCM) Framework [24] | A theoretical model that predicts and explains the link between case ambiguity, expert confidence, and judgment inconsistency. | Diagnosing why expert evaluations for certain environmental scenarios (e.g., novel pollutant mixtures) show high disagreement. |

| Visualization Accessibility Tools | Color Contrast Analyzer (e.g., WebAIM) [27] [28] | Software to verify that color choices in graphs and interfaces meet WCAG contrast ratios, ensuring information is accessible to all and not misread. | Creating inclusive and unambiguous charts for publications and presentations, avoiding reliance on color alone [29] [28]. |
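The concentration-ratio matrix design listed in the table can be sketched as a full crossing of total mixture concentrations with mixing ratios for a binary mixture. The function name, the choice of levels, and the units are illustrative assumptions; an actual design would anchor the levels to the single-substance toxicity of each chemical.

```python
from itertools import product


def mixture_design(total_concs, fractions_a):
    """Cross every total concentration with every fraction of chemical A.

    Returns one dict per treatment with the concentrations of A and B.
    """
    design = []
    for total, frac_a in product(total_concs, fractions_a):
        if not 0.0 <= frac_a <= 1.0:
            raise ValueError("fraction of A must lie between 0 and 1")
        design.append(
            {
                "total": total,
                "fraction_a": frac_a,
                "conc_a": total * frac_a,
                "conc_b": total * (1.0 - frac_a),
            }
        )
    return design


# Three total concentrations x three ratios = nine mixture treatments
# (controls and single-substance treatments would be added separately).
DESIGN = mixture_design(total_concs=[1.0, 10.0, 100.0], fractions_a=[0.25, 0.5, 0.75])
```

Compared with testing one concentration of each chemical, this grid lets interaction estimates be checked across both dose level and mixing ratio.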

Modern Tools and Frameworks: Applying CRED Criteria and OECD Guidelines

In environmental risk assessment, the derivation of predicted-no-effect concentrations (PNECs) and environmental quality standards (EQSs) relies on the critical evaluation of available ecotoxicity studies[reference:0]. Historically, this evaluation has often depended on expert judgment, leading to potential bias and inconsistency among assessors[reference:1]. The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) project was developed to address this problem by providing a transparent, consistent, and science-based method for evaluating both the reliability and relevance of aquatic ecotoxicity studies[reference:2]. This guide details the implementation of CRED's 20 reliability and 13 relevance criteria, frames its performance within a comparative analysis against the established Klimisch method, and highlights its evolving role in modern ecotoxicology.

The CRED evaluation method is built on two pillars: a set of 20 criteria for reliability and 13 criteria for relevance. This structure provides a systematic checklist that moves beyond the Klimisch method's focus solely on reliability[reference:3].

- Reliability (20 criteria): Assesses the inherent quality of a test report, examining experimental methodology, documentation clarity, and result plausibility. These criteria are derived from OECD reporting recommendations and cover aspects from test design to statistical analysis[reference:4].

- Relevance (13 criteria): Evaluates the appropriateness of data for a specific hazard identification or risk characterization. This includes the biological relevance of the test organism and endpoint, and the exposure relevance to the scenario being assessed[reference:5].

The method is supported by extensive guidance material, which was a key factor in its preference by risk assessors during validation[reference:6].

Comparative Performance: CRED vs. the Klimisch Method

A pivotal two-phased ring test, involving 75 risk assessors from 12 countries, directly compared the CRED and Klimisch methods[reference:7]. The quantitative results demonstrate CRED's advantages in consistency, transparency, and comprehensiveness.

Table 1: Methodological Comparison of Klimisch and CRED

| Characteristic | Klimisch Method | CRED Method |

|---|---|---|

| Primary Data Type | Toxicity & ecotoxicity | Aquatic ecotoxicity |

| Number of Reliability Criteria | 12–14 (ecotoxicity) | 20 (evaluating) / 50 (reporting) |

| Number of Relevance Criteria | 0 | 13 |

| OECD Reporting Criteria Included | 14 of 37 | 37 of 37 |

| Additional Guidance Provided | No | Yes |

| Evaluation Summary | Qualitative (reliability only) | Qualitative (reliability & relevance) |

Source: Kase et al. (2016)[reference:8]

Table 2: Ring Test Results - Reliability Categorization Outcomes

| Reliability Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |

|---|---|---|

| Reliable without restrictions (R1) | 8% | 2% |

| Reliable with restrictions (R2) | 45% | 24% |

| Not reliable (R3) | 42% | 54% |

| Not assignable (R4) | 6% | 20% |

Source: Ring test data analysis[reference:9].

Key Findings from the Comparison:

- Increased Stringency & Consistency: CRED resulted in a higher proportion of studies categorized as "not reliable" or "not assignable," suggesting a more critical and systematic appraisal that reduces the acceptance of flawed studies[reference:10]. For specific studies, CRED led to significantly different (typically more conservative) reliability categorizations, uncovering issues like exposure concentrations exceeding substance solubility that were missed by Klimisch assessors[reference:11].

- Superior User Perception: Participants perceived CRED as less dependent on expert judgment, more accurate, consistent, and practical regarding the use of criteria[reference:12].

- Comprehensive Relevance Assessment: Unlike Klimisch, CRED provides structured criteria for evaluating relevance, addressing a critical gap in ecological risk assessment[reference:13].
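The shift in stringency can be made concrete with simple arithmetic on the Table 2 percentages. The grouping of R1 and R2 as "accepted" and R3 and R4 as "not accepted" is an assumption made here for illustration. The Klimisch column sums to 101% because of rounding in the reported values.

```python
# Percentages of evaluations per reliability category, as reported in Table 2.
RING_TEST_PCT = {
    "Klimisch": {"R1": 8, "R2": 45, "R3": 42, "R4": 6},
    "CRED": {"R1": 2, "R2": 24, "R3": 54, "R4": 20},
}


def accepted_share(pcts):
    """Share of evaluations rated reliable with or without restrictions (R1 + R2)."""
    return pcts["R1"] + pcts["R2"]


def not_accepted_share(pcts):
    """Share of evaluations rated not reliable or not assignable (R3 + R4)."""
    return pcts["R3"] + pcts["R4"]


ACCEPTED = {method: accepted_share(p) for method, p in RING_TEST_PCT.items()}
NOT_ACCEPTED = {method: not_accepted_share(p) for method, p in RING_TEST_PCT.items()}
```

Under this grouping, the share of evaluations rated reliable falls from 53% with Klimisch to 26% with CRED.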

Experimental Protocol: The CRED Ring Test Methodology

The comparative data presented above were generated through a robust, two-phased ring test designed to minimize bias.

1. Study Selection: Eight ecotoxicity studies were selected, covering various taxonomic groups (algae, higher plants, crustaceans, fish) and chemical classes (insecticides, antibiotics, pharmaceuticals, industrial chemicals)[reference:14].

2. Participant Recruitment: 75 risk assessors from regulatory agencies, consultancies, industry, and academia across 12 countries participated[reference:15].

3. Phased Evaluation:

- Phase I: Participants evaluated two studies using the Klimisch method[reference:16].

- Phase II: Participants evaluated two different studies from the same set using the draft CRED method[reference:17].

4. Data Collection: For each evaluation, participants recorded reliability/relevance categories, time taken, and completed questionnaires on their perception of the method's accuracy, consistency, and usability[reference:18].

5. Analysis: Consistency between assessors, differences in categorization outcomes, and participant feedback were statistically analyzed to compare the two methods[reference:19].

The Evolving CRED Toolkit: Extensions for Modern Challenges

The core CRED framework has been adapted to address specific sub-disciplines within ecotoxicology, ensuring its continued relevance.

- NanoCRED: A framework tailored for assessing the reliability and relevance of ecotoxicity data for nanomaterials, accounting for their unique properties[reference:20].

- EthoCRED: A recently developed extension for evaluating behavioural ecotoxicity studies. It provides criteria to accommodate the wide array of experimental designs in this sensitive and growing field[reference:21].

- CRED for Sediment and Soil: The original aquatic CRED criteria have been adapted to include sediment- and soil-specific aspects, such as bioavailability and porewater chemistry, for terrestrial and benthic risk assessments[reference:22].

The relationship between these tools is illustrated in the following diagram:

Diagram: The CRED framework and its specialized extensions for nanomaterials, behavioural studies, and sediment/soil ecotoxicology.

Implementation Workflow: Applying the CRED Criteria

A structured approach is key to implementing CRED effectively. The following workflow diagrams the evaluation process for a single study.

Diagram: A stepwise workflow for implementing the CRED criteria to evaluate an ecotoxicity study.
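One way to keep such an evaluation transparent is to record the outcome for each of the 20 reliability and 13 relevance criteria in a structured form. The sketch below is a hypothetical record-keeping aid, not the official CRED Excel tool: the class, the status labels, and the method names are assumptions. It deliberately does not compute a final category, since the source describes the summary as qualitative and assigned by the assessor.

```python
from collections import Counter
from dataclasses import dataclass, field

CRITERIA_COUNTS = {"reliability": 20, "relevance": 13}
# Hypothetical status labels for illustration.
STATUSES = ("fulfilled", "not fulfilled", "not reported", "not applicable")


@dataclass
class CredEvaluation:
    study_id: str
    scores: dict = field(default_factory=lambda: {"reliability": {}, "relevance": {}})

    def record(self, pillar, number, status):
        """Store the status of one numbered criterion under one pillar."""
        if pillar not in CRITERIA_COUNTS:
            raise ValueError(f"unknown pillar: {pillar}")
        if not 1 <= number <= CRITERIA_COUNTS[pillar]:
            raise ValueError(f"{pillar} criterion {number} is out of range")
        if status not in STATUSES:
            raise ValueError(f"unknown status: {status}")
        self.scores[pillar][number] = status

    def missing(self, pillar):
        """Criteria numbers not yet evaluated for the given pillar."""
        done = self.scores[pillar]
        return [n for n in range(1, CRITERIA_COUNTS[pillar] + 1) if n not in done]

    def summary(self, pillar):
        """Count of criteria per status, to support the assessor's qualitative conclusion."""
        return Counter(self.scores[pillar].values())
```

A reviewer could call `missing("reliability")` before signing off to confirm that no criterion was skipped.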

Successfully applying the CRED criteria often requires access to specific reagents, organisms, and tools. The following table details key resources for generating and evaluating data within this framework.

Table 3: Essential Research Reagent Solutions for Ecotoxicity Testing & Evaluation

| Item Category | Specific Example(s) | Function in CRED Context |

|---|---|---|

| Standard Test Organisms | Daphnia magna (Cladocera), Danio rerio (Zebrafish), Desmodesmus subspicatus (Algae) | Provides biologically relevant endpoints. CRED criteria assess the appropriateness (species, life-stage) of the test organism for the regulatory question. |

| Reference Toxicants | Potassium dichromate, Sodium chloride, Copper sulfate | Used in routine laboratory proficiency testing to demonstrate organism health and test system validity—a key reliability criterion. |

| Culture Media & Reagents | OECD Reconstituted Freshwater, ISO Algal Growth Medium, Elendt M4/M7 for Daphnia | Standardized media ensure test reproducibility. CRED evaluates whether exposure conditions (including medium) are adequately reported and appropriate. |

| Analytical Grade Test Substances | High-purity pesticides, pharmaceuticals, industrial chemicals | Necessary for defining accurate exposure concentrations. CRED reliability criteria heavily weigh the reporting and verification of exposure metrics (e.g., measured vs. nominal concentrations). |

| Analytical Equipment | HPLC-MS, GC-MS, ICP-OES, Photometric analyzers | Enables the measurement of actual exposure concentrations in test media, which is critical for fulfilling key CRED reliability criteria. |

| Data Evaluation Software | CRED Excel Assessment Sheet, Statistical packages (R, PRISM) | The official CRED Excel tool guides the evaluator through the criteria. Statistical software is needed to re-analyze original data if required for relevance assessment. |

| Guidance Documents | OECD Test Guidelines, ISO Standards, CRED Guidance PDFs | Provide the standardized methodology against which study reliability is judged using the CRED checklist. |

The CRED evaluation method, with its structured set of 20 reliability and 13 relevance criteria, represents a significant advancement over the traditional Klimisch approach. Empirical data from a large ring test confirm that CRED promotes greater consistency, transparency, and thoroughness in ecotoxicity study evaluation[reference:23]. Its ongoing expansion into nanomaterials, behavioural ecotoxicology, and sediment/soil systems demonstrates its adaptability and enduring value for researchers and regulatory professionals[reference:24]. By implementing the practical workflow and utilizing the essential tools outlined in this guide, the scientific community can contribute to more robust, reproducible, and relevant environmental risk assessments.

The Organisation for Economic Co-operation and Development (OECD) Test Guidelines are the globally recognized standard for generating reliable, regulatory-grade data on chemical safety. A significant update in June 2025 saw the publication of 56 new, updated, or corrected guidelines, reflecting a concerted effort to align testing strategies with modern scientific principles [30]. These revisions are not merely procedural; they represent a strategic shift towards more predictive, mechanistic, and ethically conscious ecotoxicity research. This evolution is critically examined through the lens of relevance evaluation, a core component of modern ecotoxicology that assesses how well test data predict real-world ecological outcomes.

A key driver of the 2025 updates is the strengthened commitment to the 3Rs principles (Replacement, Reduction, and Refinement of animal testing), promoting the use of alternative methods and maximizing information from necessary studies [18] [31]. Furthermore, the updates facilitate the generation of data that supports Next-Generation Risk Assessment (NGRA), which relies on mechanistic understanding and early biomarkers of effect. This forward-looking approach is contextualized by emerging scientific frameworks like EthoCRED, a new tool for evaluating the relevance and reliability of behavioural ecotoxicity data—an endpoint still largely outside formal test guidelines but recognized for its high ecological relevance [32] [33]. The following analysis compares the updated and legacy guidelines for fish toxicity and environmental fate testing, detailing the experimental shifts and their implications for the relevance of ecotoxicity studies.

Comparative Analysis of Updated vs. Legacy Guidelines

The 2025 revisions introduce targeted, science-driven enhancements to specific test guidelines. The changes can be categorized into two main groups: methodological clarifications for environmental fate studies and substantive modernizations for ecotoxicity tests, particularly for fish.

Table 1: Overview of Key Updated OECD Test Guidelines (June 2025)

| Test Guideline Number | Test Guideline Title | Core Update in 2025 | Primary Impact |

|---|---|---|---|

| TG 111 | Hydrolysis as a Function of pH | Correction of radioactive labelling guidance [18]. | Improves accuracy and consistency of tracking degradation. |

| TG 307 | Aerobic/Anaerobic Transformation in Soil | Correction of radioactive labelling guidance [18] [34]. | Ensures reliable tracking of degradation product formation. |

| TG 308 | Aerobic/Anaerobic Transformation in Aquatic Sediment | Correction of radioactive labelling guidance [18]. | Enhances reliability of persistence data for sediments. |

| TG 316 | Phototransformation in Water | Correction of radioactive labelling guidance [18]. | Standardizes assessment of light-driven degradation. |

| TG 203 | Fish, Acute Toxicity Test | (1) Allowed tissue sampling for 'omics' analysis. (2) Major update to 1992 guideline: guidance on testing UVCBs, flow-through systems [18] [34]. | Enables mechanistic insight; modernizes testing of difficult substances. |

| TG 210 | Fish, Early-life Stage Toxicity Test | Allowed tissue sampling for 'omics' analysis [18] [31] [34]. | Links sub-lethal effects to molecular initiating events. |

| TG 236 | Fish Embryo Acute Toxicity (FET) Test | Allowed tissue sampling for 'omics' analysis [18] [31]. | Enhances mechanistic data from a 3R-aligned alternative. |

| TG 254 | Mason Bees, Acute Contact Toxicity Test | New Guideline: Introduces a test for solitary bee species [18] [34]. | Expands pollinator risk assessment beyond honeybees. |

Environmental Fate & Persistence Testing: Enhanced Precision

The updates to the environmental fate guidelines (TG 111, 307, 308, 316) focus on improving methodological rigor rather than altering the fundamental test design. The primary change is the clarification of requirements for radioactive labelling of test substances [18] [34]. Accurate labelling is essential in simulation tests (e.g., TG 307, 308) to reliably track the parent compound's transformation into degradation products and non-extractable residues, enabling a definitive mass balance and calculation of degradation half-lives (DT~50~) [35]. These half-lives are directly compared to regulatory persistence criteria (P/vP) under frameworks like REACH [35].
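The step from measured parent-compound residues to a half-life can be sketched under the assumption of single first-order kinetics, where C(t) = C0·exp(-k·t) and DT50 = ln(2)/k. The rate constant is estimated by least squares on log-transformed data. The default thresholds (120 and 180 days) are the soil P and vP half-life criteria as understood from REACH Annex XIII and should be checked against the current legal text and the compartment tested; the data are synthetic. Real studies may require biphasic kinetic models.

```python
import math


def fit_first_order_rate(times_d, parent_pct):
    """Estimate k (per day) from a log-linear least-squares fit of ln(C) against time."""
    ys = [math.log(c) for c in parent_pct]
    n = len(times_d)
    mean_t = sum(times_d) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in times_d)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_d, ys))
    return -sxy / sxx  # the slope is -k for first-order decay


def dt50_from_rate(k_per_day):
    """Half-life in days for single first-order decay."""
    return math.log(2) / k_per_day


def classify_persistence(dt50_d, p_threshold_d=120.0, vp_threshold_d=180.0):
    """Compare a half-life with P/vP thresholds (defaults are assumed soil values)."""
    if dt50_d > vp_threshold_d:
        return "vP"
    if dt50_d > p_threshold_d:
        return "P"
    return "not P"


# Synthetic data: parent compound as % of applied radioactivity, generated with k = 0.01/day.
TIMES_D = [0, 7, 14, 30, 60, 120]
PARENT_PCT = [100.0 * math.exp(-0.01 * t) for t in TIMES_D]
K_EST = fit_first_order_rate(TIMES_D, PARENT_PCT)
DT50_EST = dt50_from_rate(K_EST)  # about 69 days for this synthetic data set
```

Accurate radiolabelling matters here because the fit is only as good as the mass balance behind each parent-compound measurement.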

Table 2: Key Changes in Environmental Fate Test Guidelines

| Aspect | Legacy Guideline Approach | 2025 Updated Guideline Approach | Impact on Data Relevance |

|---|---|---|---|

| Radioactive Labelling | Guidance on label position was less explicit [18]. | Corrected and clarified guidance on label position and protocol [18] [34]. | Increases accuracy and consistency of degradation tracking across labs, leading to more reliable P/vP classification. |

| Test Scope | Standard protocols for well-soluble substances. | Implicitly supports tailored strategies for challenging substances (UVCBs, volatile, adsorbing) as per industry practice [36]. | Promotes scientifically justified adaptations to generate relevant data for all substance types. |

| Integration with Assessment | Data used for single-parameter half-life estimation. | Data feeds tiered testing strategies (Ready > Inherent > Simulation) and complex exposure models (e.g., FOCUS) [35] [36]. | Enables more environmentally realistic risk assessments through higher-tier testing and modelling. |

Fish Toxicity Testing: A Step Toward Mechanistic Toxicology

The updates to fish toxicity guidelines represent a more transformative shift. The most significant change across TG 203, 210, and 236 is the formal allowance for the collection and cryopreservation of tissue samples for subsequent 'omics' analysis (e.g., transcriptomics, metabolomics) [18] [31] [34]. This change bridges traditional apical endpoint observation (mortality, growth) with molecular biomarker discovery and mode-of-action investigation.

Furthermore, TG 203 (Fish Acute Toxicity Test) has undergone its first major update since 1992. It now includes specific guidance for testing poorly soluble substances, UVCBs (Unknown or Variable composition, Complex reaction products or Biological materials), and the use of flow-through systems [18]. This addresses long-standing practical challenges and improves the test's applicability to a wider range of industrial chemicals.

Table 3: Key Changes in Fish Toxicity Test Guidelines

| Aspect | Legacy Guideline Approach | 2025 Updated Guideline Approach | Impact on Data Relevance |

|---|---|---|---|

| Endpoint Measurement | Apical endpoints only: Mortality, growth, development [37] [38]. | Apical + Mechanistic: Optional 'omics' sampling from same organisms [18] [34]. | Enables linking adverse outcomes to molecular pathways, greatly enhancing mechanistic relevance and predictive power. |

| Test Substance Scope | Limited guidance for difficult-to-test substances. | Explicit guidance for UVCBs, poorly soluble substances, and flow-through testing (TG 203) [18]. | Increases methodological robustness and relevance for modern chemical portfolios. |

| 3Rs Alignment | FET test (TG 236) as a stand-alone alternative. | FET test enhanced with omics potential, strengthening its role in a weight-of-evidence approach to reduce juvenile fish testing [18] [31]. | Refines and potentially reduces higher-tier testing by extracting more data from 3R-aligned methods. |

Methodological Innovations and Experimental Protocols

Protocol for Integrating 'Omics' into Fish Toxicity Tests

The 2025 update does not prescribe a specific omics protocol; it provides a framework for sample collection and preservation that is harmonized with standard test execution.

1. Experimental Workflow:

- Test Conduct: The fish toxicity test (e.g., TG 210, Early-Life Stage) is performed as standard, with exposure to a geometric series of test concentrations and controls [37].

- Tissue Sampling: At test termination (or at a predefined interim time point), target tissues (e.g., liver, brain, gonad, or whole embryo/larvae) are dissected from a subset of surviving organisms. Sampling should be performed rapidly to minimize stress-induced gene expression changes.

- Cryopreservation: Tissues are immediately snap-frozen in liquid nitrogen and stored at -80°C or below to preserve RNA/DNA and metabolite integrity for future analysis.

- Data Integration: Apical results (NOEC, LOEC, EC~x~) are reported alongside the availability of archived tissues for omics. Subsequent transcriptomic or metabolomic analysis can reveal biomarker profiles or perturbed pathways associated with specific effect concentrations.

2. Relevance to NGRA: This integrated design allows researchers to connect a Molecular Initiating Event (e.g., receptor binding) detected via omics with Key Events (e.g., altered histology) and the Adverse Outcome (e.g., reduced growth) within a single study, directly supporting Adverse Outcome Pathway (AOP) development and application.

Diagram: Workflow for Integrating Omics Analysis into Updated Fish Toxicity Tests
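The test-conduct and archiving steps above can be sketched in a few lines of Python. This is only an illustrative sketch: the function and field names (`geometric_series`, `ArchivedSample`, `build_manifest`), the spacing factor of 2, and the concentration values are assumptions chosen for the example and are not taken from any OECD guideline.

```python
from dataclasses import dataclass


def geometric_series(lowest, factor, n_levels):
    """Return n_levels test concentrations, each `factor` times the previous one."""
    if lowest <= 0 or factor <= 1 or n_levels < 1:
        raise ValueError("need lowest > 0, factor > 1 and n_levels >= 1")
    return [lowest * factor ** i for i in range(n_levels)]


@dataclass(frozen=True)
class ArchivedSample:
    """One cryopreserved tissue sample (hypothetical record layout)."""
    sample_id: str
    concentration_mg_l: float  # 0.0 denotes the control group
    tissue: str
    storage_temp_c: float


def build_manifest(concentrations, tissues, storage_temp_c=-80.0):
    """List one archived sample per treatment level (control first) and tissue."""
    manifest = []
    for level, conc in enumerate([0.0, *concentrations]):
        for tissue in tissues:
            manifest.append(
                ArchivedSample(f"C{level}-{tissue}", conc, tissue, storage_temp_c)
            )
    return manifest


# Illustrative values only: five levels spaced by a factor of 2.
concentrations = geometric_series(lowest=0.1, factor=2.0, n_levels=5)
manifest = build_manifest(concentrations, tissues=["liver", "brain"])
```

Keeping the manifest keyed to the same treatment levels as the apical results is one way to make later omics findings traceable to a specific effect concentration.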

Tiered Strategy for Persistence Assessment

The updated environmental fate guidelines operate within a well-established tiered testing strategy for biodegradation and persistence. The simulation tests (TG 307, 308) are high-tier studies triggered when lower-tier screens suggest a substance may be persistent [35].

1. Tiered Testing Logic:

- Tier 1 (Screening): Ready Biodegradability tests (OECD 301 series). A pass (e.g., >60% mineralization in 28 days) generally indicates that the substance is not persistent in the environment.

- Tier 2 (Inherent Potential): If the substance fails the Tier 1 screen, Inherent Biodegradability tests (OECD 302 series) determine whether it has any degradative potential under optimized conditions.

- Tier 3 (Simulation): If inherent potential is shown, Simulation Tests (updated TG 307 for soil, TG 308 for sediment) are conducted. These use radiolabelled material under environmentally realistic conditions to determine a definitive degradation half-life (DT~50~) for comparison with regulatory P/vP criteria [35].

2. Role of Updated TG 307/308: These simulation tests are critical for definitive persistence classification. The 2025 clarifications on radiolabelling ensure the mass balance is accurate, which is essential for distinguishing between true degradation and mere sorption or volatilization losses.

Diagram: Tiered Testing Strategy for Environmental Persistence Assessment
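The tiered logic above, and the half-life it ultimately produces, can be illustrated with a short Python sketch. Several things here are assumptions made for the example: the function names, the handling of a substance that shows no inherent potential (the text does not specify it), the use of single first-order (SFO) kinetics, and the 120-day comparison value, which is a placeholder to be replaced by the criterion in the applicable regulation.

```python
import math
from typing import Optional


def next_persistence_step(ready_pass: bool,
                          inherent_potential: Optional[bool] = None) -> str:
    """Return the next step under the three-tier logic described in the text."""
    if ready_pass:
        return "screened out: generally regarded as not persistent"
    if inherent_potential is None:
        return "run Tier 2 inherent biodegradability test"
    if inherent_potential:
        return "run Tier 3 simulation test (TG 307 or TG 308)"
    # Assumption: the text does not say what follows when no inherent
    # potential is shown, so this branch is only a placeholder.
    return "no inherent potential shown: refer for expert review"


def sfo_rate_constant(c0: float, ct: float, t_days: float) -> float:
    """Rate constant k (1/day) assuming C(t) = C0 * exp(-k * t)."""
    if c0 <= 0 or ct <= 0 or ct >= c0 or t_days <= 0:
        raise ValueError("need 0 < ct < c0 and t_days > 0")
    return math.log(c0 / ct) / t_days


def dt50_from_rate(k: float) -> float:
    """Degradation half-life under SFO kinetics: DT50 = ln(2) / k."""
    return math.log(2) / k


# Illustrative numbers: parent compound falls from 100% to 50% in 30 days.
k = sfo_rate_constant(c0=100.0, ct=50.0, t_days=30.0)
dt50 = dt50_from_rate(k)

# Placeholder threshold; use the value from the relevant P/vP criteria.
threshold_days = 120.0
exceeds_threshold = dt50 > threshold_days
```

In practice a rate constant would be fitted to the full time series rather than two points, and other kinetic models may describe the data better than SFO.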

The Scientist's Toolkit: Key Research Reagent Solutions

The implementation of the updated OECD guidelines relies on specific, high-quality materials and reagents. The following toolkit is essential for generating reliable, guideline-compliant data.

Table 4: Essential Research Toolkit for Implementing Updated OECD Guidelines

| Tool/Reagent | Primary Use Case | Function & Importance | Associated Updated TG |
|---|---|---|---|
| Radiolabelled Test Substance (e.g., ¹⁴C, ³H) | Environmental fate simulation studies. | Enables precise mass balance tracking of parent compound transformation into CO₂, metabolites, and non-extractable residues. Critical for calculating valid DT~50~ [35]. | 307, 308, 316 |
| RNA Stabilization Reagent (e.g., RNAlater) | Fish tissue sampling for transcriptomics. | Immediately stabilizes cellular RNA at the moment of sampling, preserving the gene expression profile and preventing degradation during handling and storage. | 203, 210, 236 |
| Cryogenic Storage Vials & LN₂ | Archiving biotic samples. | Provides long-term, stable storage of frozen tissues at -80°C or in liquid nitrogen vapor, preserving biomolecule integrity for future 'omics' analysis. | 203, 210, 236 |
| Defined Solitary Bee Test Species (e.g., Osmia cornuta) | Pollinator ecotoxicology. | Provides a standardized, relevant test organism for solitary bee acute contact toxicity testing, expanding risk assessment beyond social bees [18] [34]. | 254 (New) |
| Reference Toxicants (e.g., KCl, 3,4-DCA) | Fish toxicity test validation. | Serves as a positive control to confirm the health and sensitivity of the test organisms, ensuring the reliability and reproducibility of the test system. | 203, 210, 236 |
| Sorbent Materials (e.g., XAD resins) | Fate studies with volatile compounds. | Traps volatile organic compounds in test systems to account for losses and complete the mass balance, especially important for challenging substances [36]. | 307, 308 |
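The mass balance that radiolabelled material and sorbent traps make possible is, at its core, a sum of recovered fractions. The Python sketch below shows one way to check it. The compartment names, the example percentages, and the 90 to 110% acceptance window are assumptions for illustration; the window in particular should be confirmed against the guideline being followed.

```python
def total_recovery(fractions):
    """Sum recovered radioactivity across compartments (% of applied dose)."""
    if any(value < 0 for value in fractions.values()):
        raise ValueError("fractions must be non-negative")
    return sum(fractions.values())


def recovery_acceptable(total, low=90.0, high=110.0):
    """Check recovery against an acceptance window (assumed default: 90-110%)."""
    return low <= total <= high


# Made-up example values, expressed as % of applied radioactivity.
fractions = {
    "parent_compound": 35.2,
    "metabolites": 20.1,
    "co2_mineralized": 25.4,
    "non_extractable_residues": 14.0,
    "volatiles_trapped_on_sorbent": 2.3,
}

total = total_recovery(fractions)
acceptable = recovery_acceptable(total)
```

If the volatile fraction were not trapped and counted, the same study would show a lower recovery and the missing material could be mistaken for degradation.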

The June 2025 OECD updates signify a pivotal evolution from observation-based testing to mechanism-informed hazard assessment. By permitting 'omics integration into fish tests, the guidelines directly address a key dimension of relevance: the ability to connect molecular perturbations to adverse outcomes, thereby improving the scientific and predictive basis for risk assessment. Similarly, the refinements to environmental fate testing bolster the reliability of persistence data, a critical factor in long-term environmental protection.

These changes align with broader scientific movements, such as the EthoCRED framework, which seeks to standardize the evaluation of sensitive behavioural endpoints currently outside formal guidelines [32] [33]. While behavioural ecotoxicity is not yet incorporated into OECD TGs, the direction is clear: the future of ecotoxicity research lies in embracing more informative, human-relevant, and ecologically meaningful endpoints.

For researchers and regulators, these updates necessitate an adaptive approach. Successful navigation will require familiarity with advanced analytical techniques (omics, radiotracer analysis) and a deeper engagement with AOP frameworks to fully exploit the mechanistic data these updated guidelines are designed to generate. The ultimate result will be chemical safety decisions that are not only robust and internationally harmonized but also more predictive of real-world ecological impacts.

The evaluation of chemical safety across diverse species presents a fundamental challenge in ecotoxicology and environmental risk assessment. Traditional whole-animal toxicity testing, while informative, is resource-intensive, time-consuming, and ethically charged, creating a critical gap between the vast number of chemicals in commerce and the limited availability of empirical toxicity data [39]. This gap is especially pronounced for non-target species, including pollinators and endangered organisms, for which direct testing is often impractical or impossible [40].

Framed within a broader thesis on the relevance evaluation of ecotoxicity studies, this guide examines two pivotal digital resources developed by the U.S. Environmental Protection Agency (EPA): the ECOTOX Knowledgebase and the SeqAPASS tool. These tools represent complementary pillars of a modern, data-driven approach. ECOTOX serves as a comprehensive repository of curated empirical toxicity data from the published literature, encompassing over one million test records for more than 13,000 species and 12,000 chemicals [41]. In contrast, SeqAPASS is a predictive screening tool that uses protein sequence and structural similarity to extrapolate known toxicological susceptibilities from data-rich model species to thousands of data-poor species [40] [42].

The integration of these tools addresses core challenges in relevance evaluation: maximizing the utility of existing data, providing mechanistic insights for extrapolation, and prioritizing future testing efforts. Their use is driven by the need for robust, efficient, and humane New Approach Methodologies (NAMs) to support chemical safety evaluations in an era of limited testing resources and increasing regulatory demand [40] [39].

Tool Comparison: ECOTOX Knowledgebase vs. SeqAPASS

The ECOTOX Knowledgebase and SeqAPASS are designed for distinct but interconnected purposes within the ecotoxicology workflow. The following table summarizes their core characteristics, highlighting their complementary roles.

Table 1: Core Comparison of the ECOTOX Knowledgebase and SeqAPASS Tool

| Feature | ECOTOX Knowledgebase | SeqAPASS Tool |
|---|---|---|
| Primary Purpose | Curated archive of empirical toxicity test results [41]. | Predictive screening for cross-species chemical susceptibility based on protein target conservation [40]. |
| Core Function | Data retrieval, synthesis, and visualization of measured effects [41]. | Computational extrapolation via sequence/structure alignment and susceptibility prediction [42]. |
| Type of Data | Experimental results from published literature (e.g., LC50, NOEC, EC50) [41]. | Protein sequences, structural models, and bioinformatic similarity metrics [40] [42]. |
| Key Inputs | Chemical, species, or effect of interest [41]. | Protein sequence/accession of a known molecular target from a sensitive species [39]. |
| Methodological Basis | Literature curation and data abstraction [41]. | Comparative bioinformatics (BLASTp, COBALT, I-TASSER) [39]. |
| Typical Output | Tabulated toxicity values, concentration-response data, interactive plots [41]. | Prediction of susceptible species, alignment scores, 3D protein models, and summary reports [40] [39]. |
| Temporal Scope | Retrospective (existing studies). | Prospective (predictions for untested species). |
| Domain of Applicability | Chemicals with existing in vivo or in vitro toxicity data [41]. | Chemicals with a known protein target or Molecular Initiating Event (MIE) [40]. |
| Regulatory Application | Deriving water quality criteria, ecological risk assessment, supporting chemical assessments [41]. | Prioritizing testing for endangered species, extrapolating high-throughput assay data, screening-level risk assessment [40] [43]. |

Experimental Workflows and Visualization

The SeqAPASS Tiered Analysis Workflow

SeqAPASS operates through a tiered, hypothesis-driven workflow that progresses from broad sequence comparisons to specific structural evaluations. This multi-level approach allows users to refine predictions based on available knowledge about the chemical-protein interaction [39].

Diagram: SeqAPASS Tiered Bioinformatics Workflow

Level 1: Primary Amino Acid Sequence Comparison. The analysis begins by comparing the full-length query protein sequence against all sequences in the National Center for Biotechnology Information (NCBI) protein database using BLASTp. This provides a broad list of potential orthologs across species and an initial, conservative susceptibility prediction based on overall sequence identity [39].

Level 2: Functional Domain Conservation Analysis. This level focuses alignment and comparison on specific functional domains of the protein (e.g., ligand-binding domain) obtained from the NCBI Conserved Domain Database. It refines the prediction by considering conservation in regions critical for the protein's function [39].

Level 3: Critical Amino Acid Residue Comparison. The most granular level requires prior knowledge of specific amino acid residues essential for chemical binding or protein function. SeqAPASS evaluates the conservation of these exact residues across species, offering the highest taxonomic resolution for susceptibility predictions [39].

Level 4: Protein Structure Conservation (Version 8). The latest version incorporates protein structural modeling using I-TASSER, allowing users to generate and compare 3D protein models. This adds a crucial line of evidence for understanding functional conservation when sequence similarity is moderate [42].
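To make the ideas behind Level 1 and Level 3 concrete, the toy Python sketch below computes percent identity over a pre-aligned pair of sequences and checks whether selected query residues are conserved in the target. It does not reproduce SeqAPASS itself: the real tool relies on BLASTp, COBALT, and its own matching and cutoff rules, whereas the function names, the exact-match rule, and the short sequences here are invented for illustration.

```python
def percent_identity(query_aln, target_aln):
    """Percent of ungapped query positions whose aligned target residue is identical."""
    if len(query_aln) != len(target_aln):
        raise ValueError("aligned sequences must have equal length")
    compared = matches = 0
    for q, t in zip(query_aln, target_aln):
        if q == "-":  # gap in the query: no query residue to compare
            continue
        compared += 1
        if q == t:
            matches += 1
    if compared == 0:
        raise ValueError("query alignment contains no residues")
    return 100.0 * matches / compared


def critical_residues_conserved(query_aln, target_aln, query_positions):
    """Map each 1-based ungapped query position to True if the target matches exactly."""
    if len(query_aln) != len(target_aln):
        raise ValueError("aligned sequences must have equal length")
    column_of = {}  # ungapped query position -> alignment column
    position = 0
    for column, residue in enumerate(query_aln):
        if residue != "-":
            position += 1
            column_of[position] = column
    return {
        pos: query_aln[column_of[pos]] == target_aln[column_of[pos]]
        for pos in query_positions
    }


# Invented, pre-aligned toy sequences ("-" marks an alignment gap).
query = "MKT-LLVAG"
target = "MKTALIVAG"

identity = percent_identity(query, target)
conserved = critical_residues_conserved(query, target, query_positions=[4, 5])
```

The example shows why the levels can disagree: overall identity is high, yet one of the two residues designated as critical differs in the target, which is the kind of detail a residue-level comparison is meant to surface.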

The ECOTOX Knowledgebase Data Retrieval Pathway

The ECOTOX workflow is centered on retrieving and synthesizing existing experimental data from its curated repository.

Diagram: ECOTOX Knowledgebase Data Retrieval Pathway

Users can initiate queries via two primary pathways. The SEARCH feature is used when specific parameters (chemical name, species, effect endpoint) are known, allowing for precise data retrieval. The EXPLORE feature is designed for more open-ended discovery when search parameters are less defined [41]. Results can be filtered by over 19 parameters (e.g., exposure duration, test medium, effect measurement) and visualized through interactive plots before export for further analysis.
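Once results are exported, the same kind of filtering can be repeated offline. The Python sketch below assumes a simplified CSV layout: the column names (`chemical`, `species`, `endpoint`, `duration_h`, `value_mg_l`) are hypothetical and do not match the actual ECOTOX export headers, and every record is an invented placeholder rather than real toxicity data.

```python
import csv
import io
import statistics

# Invented placeholder records in a hypothetical export layout.
SAMPLE_EXPORT = """\
chemical,species,endpoint,duration_h,value_mg_l
chemical_x,Species A,LC50,96,1.0
chemical_x,Species A,LC50,96,4.0
chemical_x,Species A,NOEC,96,0.5
chemical_x,Species B,LC50,48,9.0
chemical_x,Species B,LC50,240,3.0
"""


def load_rows(text):
    """Parse CSV text into a list of dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def filter_rows(rows, endpoint, max_duration_h):
    """Keep rows with the requested endpoint and an exposure within the limit."""
    return [
        row for row in rows
        if row["endpoint"] == endpoint
        and float(row["duration_h"]) <= max_duration_h
    ]


def geomean_by_species(rows):
    """Geometric mean of the effect values for each species."""
    grouped = {}
    for row in rows:
        grouped.setdefault(row["species"], []).append(float(row["value_mg_l"]))
    return {
        species: statistics.geometric_mean(values)
        for species, values in grouped.items()
    }


rows = load_rows(SAMPLE_EXPORT)
acute = filter_rows(rows, endpoint="LC50", max_duration_h=96)
summary = geomean_by_species(acute)
```

Summarizing replicate values per species with a geometric mean is a common convention, but whether it is appropriate depends on the assessment framework in use.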

Practical Applications and Case Studies

Case Study 1: Predicting Pollinator Risk from Insecticide Targets

Challenge: To assess the potential risk of neonicotinoid insecticides to non-target pollinators, specifically honey bees (Apis mellifera) and other bee species, based on the known molecular target.

SeqAPASS Application: Scientists used the nicotinic acetylcholine receptor (nAChR) subunit from a sensitive insect model as the query protein. The tiered analysis evaluated the conservation of this target across bee species and other insects [40].

Integrated ECOTOX Validation: Predictions of high susceptibility from SeqAPASS for honey bees were consistent with empirical toxicity data curated in ECOTOX (e.g., acute contact LD50 values), supporting the tool's predictive utility. Furthermore, SeqAPASS identified other bee species with conserved targets but lacking toxicity data, highlighting priority candidates for future testing or monitoring [40].

Case Study 2: Evaluating NSAID Toxicity to Aquatic Crustaceans