Ecotoxicity Data Systematic Reviews: Updated Methods, Applications, and Integration for Biomedical and Regulatory Science

Abstract

This article provides a comprehensive guide to modern systematic review (SR) methods for ecotoxicity data, tailored for researchers and professionals in drug development and environmental health. It addresses the urgent need to update statistical practices in ecotoxicology, highlighted by the ongoing revision of key guidelines like OECD No. 54 [citation:1][citation:2]. The scope covers foundational concepts, advanced methodologies like PRISMA-guided reviews and dose-response modeling using Generalized Linear Models (GLMs) [citation:1][citation:5], strategies for troubleshooting data heterogeneity and quality assessment, and frameworks for validating and integrating evidence into regulatory decision-making [citation:3]. The article synthesizes current initiatives and offers a practical roadmap for implementing robust, transparent, and scientifically defensible SRs to support chemical risk assessment and biomedical research.

The Evolving Landscape of Ecotoxicology: Why Systematic Reviews and Modern Statistics Are Now Essential

The field of ecotoxicology stands at a critical juncture. Regulatory hazard assessment, which relies heavily on extensive animal testing, faces escalating ethical, financial, and practical pressures [1]. With over 350,000 chemicals and mixtures registered globally, the traditional paradigm of toxicity testing is unsustainable [1]. Concurrently, the statistical and methodological foundations of the discipline have been identified as fragmented, inconsistent, and outdated [2]. For decades, regulatory risk assessments have been based on statistical principles that are no longer considered state-of-the-art, creating a significant gap between available scientific innovation and applied regulatory practice [2].

This whitepaper frames this challenge within the context of systematic review methods for ecotoxicity data research. Systematic review provides a framework for identifying, evaluating, and synthesizing evidence with transparency, objectivity, and consistency [3]. The evolution of curated databases like the ECOTOXicology Knowledgebase (ECOTOX) demonstrates a long history of applying systematic principles to data curation [3]. However, the full potential of systematic review is hindered by persistent use of outdated analytical practices and barriers to integrating contemporary academic research into regulatory decision-making [4] [2].

The push for change is driven by a confluence of factors: the urgent need for alternatives to animal testing, the advent of powerful computational and machine learning tools, and a growing consensus within the scientific community that methodological overhaul is essential for robust chemical safety assessments [2] [1]. This document provides an in-depth technical guide to the core outdated practices, the contemporary methods poised to replace them, and the integrated systems—encompassing data curation, statistical analysis, and computational modeling—required to realize a modernized ecotoxicology.

The Legacy Framework: Outdated Practices and Their Limitations

The historical framework of ecotoxicology is characterized by methodological conventions that have become outmoded. A central and protracted debate exemplifies this stagnation: the use of the No-Observed-Effect Concentration (NOEC), which has been contested for over 30 years [2]. The NOEC approach relies on statistical hypothesis testing to identify the highest test concentration showing no significant difference from controls, and it is fundamentally flawed. The resulting value depends critically on study design (e.g., the chosen test concentrations and sample size), the underlying tests often lack statistical power, and the NOEC provides no information on the concentration-response relationship or the magnitude of effects [2].

The regulatory guidance anchoring many of these practices is the Organisation for Economic Co-operation and Development (OECD) document No. 54, "Current approaches in the statistical analysis of ecotoxicity data: A guidance to application," published in 2006 [2]. This document is now widely recognized as no longer reflective of contemporary statistical methods or the computational platforms available to scientists [2]. Its proposed dichotomy between "hypothesis testing" (e.g., ANOVA-type models) and "dose-response modeling" (regression models) neglects the underlying unity of these approaches as variants of linear models [2]. This artificial separation has hindered the adoption of more sophisticated and informative analysis techniques.

Beyond statistics, the evaluation of data quality for use in assessments relies on various reliability evaluation methods. These methods are inconsistently applied and vary in their criteria, as summarized in Table 1 [5].

Table 1: Comparison of Reliability Evaluation Methods for Ecotoxicity Data [5]

| Method (Source) | Klimisch et al. | Durda & Preziosi | Hobbs et al. | Schneider et al. (ToxRTool) |
|---|---|---|---|---|
| Primary Data Types | Toxicity & ecotoxicity | Ecotoxicity | Ecotoxicity (acute & chronic) | Toxicity (in vivo & in vitro) |
| Evaluation Categories | Reliable without/with restrictions, Not reliable, Not assignable | High, Moderate, Low quality, Not reliable, Not assignable | High, Acceptable, Unacceptable quality | Reliable without/with restrictions, Not reliable, Not assignable |
| Number of Criteria | 12 (acute) / 14 (chronic) | 40 | 20 | 21 |
| Guidance & Summarization | Limited guidance; summarization not stated | Detailed guidance; summarization stated | Limited guidance; summarization stated | Detailed guidance; automated calculation |
| Regulatory Association | Recommended in REACH guidance | Based on US EPA, OECD, ASTM standards | For Australasian database | - |

Furthermore, a systems-based analysis of European chemical assessment reveals deep-seated barriers to the uptake of modern academic research. Stakeholders are divided on the extent to which available evidence is used, citing issues of reliability, transparency, and a fundamental misalignment between the goals of academic knowledge production and the demands of regulatory decision-making [4]. These technical and social factors are interconnected, requiring a systems-level approach to overcome [4].

The Contemporary Statistical Toolbox: Moving Beyond OECD No. 54

The revision of OECD No. 54, planned for 2026, represents a pivotal opportunity to align regulatory guidance with modern statistical science [2]. Contemporary practice moves beyond the simple ANOVA vs. regression dichotomy to a unified framework based on Generalized Linear Models (GLMs) and their extensions [2].

GLMs handle non-normal data (e.g., binomial mortality counts, Poisson counts) through link functions, eliminating the need for problematic data transformations. Mixed-effect GLMs (hierarchical models) can account for nested data structures and variability, such as individuals within test chambers or multiple studies on the same chemical [2]. For more complex, nonlinear response patterns, Generalized Additive Models (GAMs) allow the relationship between concentration and response to be described by smooth, data-driven curves [2].
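To make the link-function idea concrete, the following minimal sketch fits a binomial GLM with a logit link to mortality counts using Newton-Raphson, which coincides with iteratively reweighted least squares for this canonical link. The data, the function name `fit_logit_glm`, and the choice of log concentration as the predictor are illustrative assumptions, not taken from the cited guidance. A real analysis would normally rely on an established GLM routine.

```python
import math

def fit_logit_glm(x, dead, n, max_iter=50, tol=1e-10):
    """Fit logit(p) = b0 + b1 * x to binomial counts by Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, di, ni in zip(x, dead, n):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))  # inverse logit link
            w = ni * p * (1.0 - p)   # binomial variance, used as the weight
            r = di - ni * p          # observed minus expected deaths
            g0 += r
            g1 += r * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        # Solve the 2x2 system (information matrix) * step = score.
        det = h00 * h11 - h01 * h01
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0 += step0
        b1 += step1
        if abs(step0) + abs(step1) < tol:
            break
    return b0, b1

# Hypothetical test: 20 organisms per concentration (illustrative numbers only).
conc = [1.0, 2.0, 4.0, 8.0, 16.0]
dead = [1, 4, 10, 16, 19]
n = [20] * 5

b0, b1 = fit_logit_glm([math.log(c) for c in conc], dead, n)
lc50 = math.exp(-b0 / b1)  # concentration at which predicted mortality is 50%
print(f"slope = {b1:.3f}, LC50 = {lc50:.3f}")
```

The counts are modelled on their natural binomial scale, so no arcsine or probit-style transformation of the proportions is needed before fitting.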

The benchmark for analysis is now continuous dose-response modeling to derive point estimates like the ECx (the concentration causing an x% effect). Modern software, particularly the open-source R environment and packages like drc, has made fitting multi-parameter models (e.g., two- to five-parameter logistic models) routine [2]. Furthermore, advanced metrics are gaining traction:

- Benchmark Dose (BMD) Modeling: Promoted by the European Food Safety Authority, this method fits a dose-response model to estimate the dose associated with a predetermined benchmark response (e.g., a 10% effect) and reports the lower confidence limit of that dose (the BMDL), offering advantages in statistical stability and biological relevance [2].

- No-Significant Effect Concentration (NSEC): A recently proposed metric designed to offer a more statistically robust alternative to the NOEC [2].
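Once a dose-response curve has been fitted, an ECx follows directly from its parameters. The sketch below assumes one common two-parameter log-logistic form, in which the response relative to control is 1 / (1 + (c / EC50)^b), so that ECx = EC50 · (x / (100 − x))^(1/b). The function name `ecx` and the parameter values are hypothetical, and other parameterizations would give a different formula.

```python
def ecx(ec50, slope, x):
    """Concentration causing an x% reduction relative to control.

    Assumes relative response = 1 / (1 + (c / ec50) ** slope), slope > 0.
    """
    if not 0.0 < x < 100.0:
        raise ValueError("x must be strictly between 0 and 100")
    if ec50 <= 0.0 or slope <= 0.0:
        raise ValueError("ec50 and slope must be positive")
    return ec50 * (x / (100.0 - x)) ** (1.0 / slope)

# Hypothetical fitted parameters (illustrative only).
ec50, slope = 4.0, 2.0
ec10 = ecx(ec50, slope, 10)
ec20 = ecx(ec50, slope, 20)
print(f"EC10 = {ec10:.3f}, EC20 = {ec20:.3f}, EC50 = {ecx(ec50, slope, 50):.3f}")
```

Unlike a NOEC, these values are not restricted to the concentrations that happened to be tested.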

Table 2: Evolution of Key Statistical Practices in Ecotoxicology

| Aspect | Legacy/Outdated Practice | Contemporary/Recommended Practice | Key Advantage of Contemporary Practice |
|---|---|---|---|
| Core Analysis | Dichotomy: Hypothesis Testing (ANOVA) vs. Dose-Response | Unified framework of Generalized Linear Models (GLMs) | Unified theory, handles non-normal data correctly via link functions. |
| Primary Metric | No-Observed Effect Concentration (NOEC) | Effective Concentration (ECx) or Benchmark Dose (BMD) | Quantifies effect magnitude, accounts for full dose-response curve, more statistically robust. |
| Model Flexibility | Fixed-parameter, pre-defined curves (e.g., standard logistic) | Flexible models (GAMs), multi-parameter models | Can describe complex, non-standard response patterns observed in real data. |
| Data Structure | Ignores hierarchical data structure | Mixed-effects models (Hierarchical GLMs) | Accounts for variability at multiple levels (e.g., within study, between labs), more accurate uncertainty estimates. |
| Inferential Framework | Almost exclusively Frequentist | Inclusion of Bayesian methods | Allows for incorporation of prior knowledge, direct probability statements about parameters. |
| Software & Accessibility | Proprietary software, limited methods | Open-source platforms (e.g., R with extensive packages) | Democratizes access to cutting-edge methods, ensures reproducibility. |

A critical "wish list" for the OECD No. 54 revision includes clarifying the connection between hypothesis testing and dose-response, making continuous regression the default, incorporating GLMs and Bayesian methods, and advocating for better training in statistical design for ecotoxicologists [2].

Foundational Data Curation: Systematic Review and the ECOTOX Model

Robust contemporary analysis is impossible without high-quality, consistently curated data. The ECOTOXicology Knowledgebase (ECOTOX) is the world's largest curated ecotoxicity database and serves as a premier model for systematic data curation in the field [3]. Its methodology aligns closely with systematic review and PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines [3].

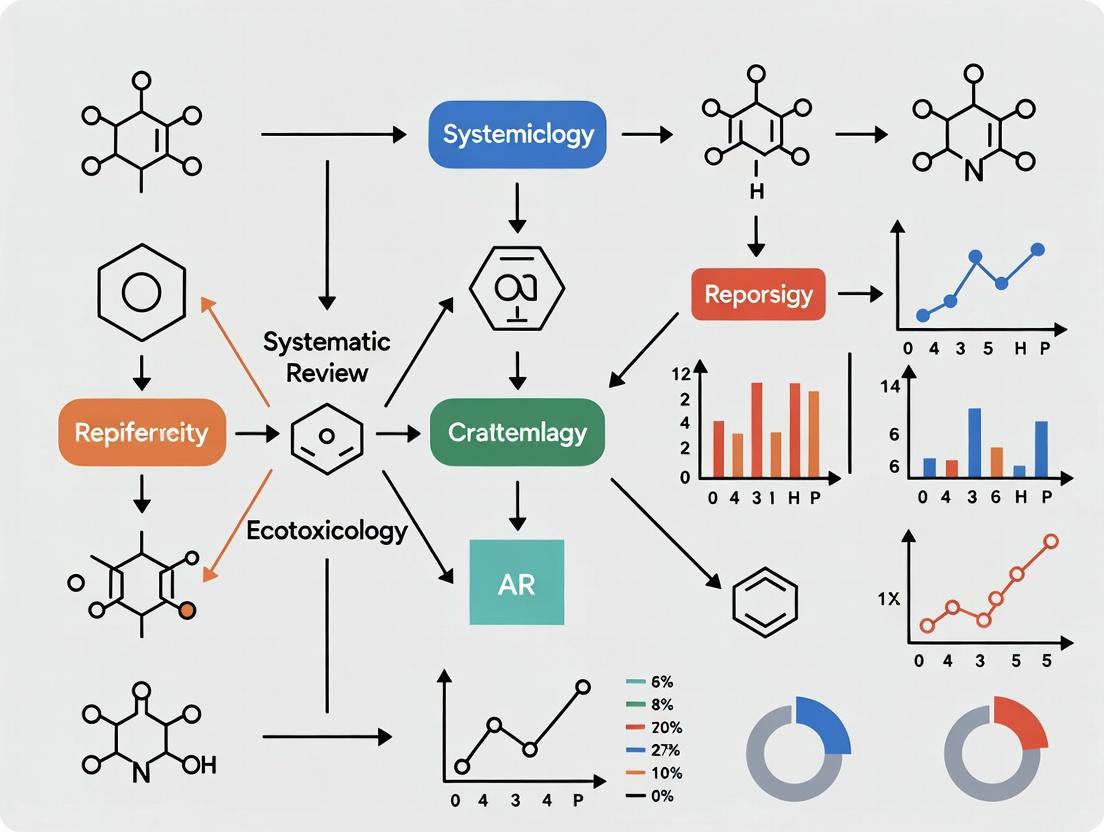

The ECOTOX workflow is a rigorous, multi-stage pipeline, as illustrated in the following diagram.

The process begins with comprehensive literature searches for a chemical of interest, spanning both open and "grey" literature (e.g., government reports) [3]. References are screened in two phases: first for applicability (ecologically relevant species, correct chemical, reported exposure details), then for acceptability (documented controls, reported endpoints) [3]. Accepted studies undergo detailed data extraction using well-established controlled vocabularies to ensure consistency. Key extracted information includes chemical identity (CAS, DTXSID, structure), species taxonomy, detailed experimental design (exposure duration, media, endpoints like LC50/EC50), and test conditions [3]. Following quality assurance, the data is added to the publicly accessible database, which is updated quarterly [3].
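The two-phase screening logic described above can be sketched as a simple filter. The `Reference` fields and the `screen` function are hypothetical simplifications of the applicability and acceptability criteria named in the text. They are not the actual ECOTOX schema or curation rules.

```python
from dataclasses import dataclass

@dataclass
class Reference:
    ref_id: str
    # Phase 1: applicability
    relevant_species: bool
    correct_chemical: bool
    exposure_reported: bool
    # Phase 2: acceptability
    has_control: bool
    endpoint_reported: bool

def screen(ref):
    """Return the screening outcome for one reference."""
    if not (ref.relevant_species and ref.correct_chemical and ref.exposure_reported):
        return "rejected: applicability"
    if not (ref.has_control and ref.endpoint_reported):
        return "rejected: acceptability"
    return "accepted"

# Hypothetical references (illustrative only).
refs = [
    Reference("ref-001", True, True, True, True, True),
    Reference("ref-002", True, False, True, True, True),   # wrong chemical
    Reference("ref-003", True, True, True, False, True),   # no documented control
]
outcomes = {r.ref_id: screen(r) for r in refs}
print(outcomes)
```

Recording the phase at which a reference is rejected keeps the exclusion reasons auditable, which is what a PRISMA-style flow diagram reports.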

This systematic, transparent process transforms disparate primary literature into FAIR data (Findable, Accessible, Interoperable, and Reusable), creating the essential feedstock for modern computational toxicology, model development, and integrated risk assessment [3].

Driving Innovation: Machine Learning and Benchmark Datasets

The curated data from systematic repositories like ECOTOX enables the next frontier: predictive computational toxicology. Machine Learning (ML) offers a powerful paradigm for predicting ecotoxicological outcomes, potentially reducing reliance on animal testing [1]. However, its adoption has been hampered by the lack of standardized, high-quality benchmark datasets, which are essential for fairly comparing model performance and advancing the field [1].

The ADORE (Aquatic Toxicity) dataset has been introduced to meet this need [1]. It is a curated benchmark dataset for acute aquatic toxicity focusing on fish, crustaceans, and algae. The dataset is constructed from ECOTOX data and enriched with chemical descriptors (e.g., molecular fingerprints), phylogenetic data, and species-specific traits [1].

Table 3: The ADORE Benchmark Dataset for Machine Learning [1]

| Aspect | Description | Purpose & Significance |
|---|---|---|
| Core Source | Curated data from the US EPA ECOTOX Knowledgebase. | Provides a high-quality, toxicity-focused foundation. |
| Taxonomic Groups | Fish, Crustaceans, Algae. | Covers key aquatic trophic levels with substantial available data. |
| Key Endpoints | Acute mortality & comparable effects (LC50, EC50). | Aligns with standard regulatory endpoints (OECD Test Guidelines 203, 202, 201). |
| Data Enrichment | Chemical features (e.g., molecular representations, properties), phylogenetic features, species-specific traits. | Provides the multidimensional "feature space" necessary for ML algorithms to learn complex relationships. |
| Defined Splits | Provides predefined training/test splits based on chemical occurrence and molecular scaffolds. | Prevents data leakage; enables direct, fair comparison of different ML models on identical data partitions. |
| Primary Challenge | To predict toxicity values (regression) or classes (classification) for new chemicals or across taxonomic groups. | Drives community progress toward robust, generalizable predictive models for chemical hazard. |

The ADORE dataset embodies the transition to contemporary methods by addressing a major barrier to ML adoption. It provides the community with a common benchmark to develop models for Quantitative Structure-Activity Relationships (QSARs) and beyond, facilitating exploration of complex questions like extrapolation across taxonomic groups [1].
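The leakage problem that predefined splits address can be illustrated with a minimal sketch of a split by chemical occurrence: every record for a given chemical is assigned to the same partition, so a model is never tested on a chemical it saw in training. This is not ADORE's actual splitting procedure. The hashing scheme, the `assign_split` function, and the placeholder records are assumptions made for illustration.

```python
import hashlib

def assign_split(chemical_id, test_fraction=0.2):
    """Deterministically map a chemical identifier to 'train' or 'test'."""
    digest = hashlib.sha256(chemical_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 16**8  # value in [0, 1)
    return "test" if bucket < test_fraction else "train"

# Hypothetical records: several tests per chemical (placeholder identifiers).
records = [
    {"chemical": "chem-A", "taxon": "fish"},
    {"chemical": "chem-A", "taxon": "crustacean"},
    {"chemical": "chem-B", "taxon": "algae"},
    {"chemical": "chem-C", "taxon": "fish"},
    {"chemical": "chem-C", "taxon": "fish"},
    {"chemical": "chem-D", "taxon": "crustacean"},
]

splits = {"train": [], "test": []}
for rec in records:
    splits[assign_split(rec["chemical"])].append(rec)

train_chems = {r["chemical"] for r in splits["train"]}
test_chems = {r["chemical"] for r in splits["test"]}
print(sorted(train_chems), sorted(test_chems))
```

A purely random split over records would instead scatter repeated tests of one chemical across both partitions and inflate apparent performance.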

Transitioning to contemporary methods requires familiarity with a new set of core resources and tools. The following table details key solutions spanning data, software, and methodological guidance.

Table 4: Research Reagent Solutions for Contemporary Ecotoxicology

| Item / Resource | Type | Function / Purpose | Key Features / Relevance |
|---|---|---|---|
| ECOTOX Knowledgebase | Curated Database | The world's largest source of curated single-chemical ecotoxicity data [3]. | Provides systematically reviewed data for ~12,000 chemicals and ~14,000 species; essential for assessment, modeling, and systematic review [3]. |
| ADORE Dataset | Benchmark ML Dataset | A curated, feature-enriched dataset for developing and benchmarking ML models in aquatic toxicology [1]. | Includes defined train/test splits; enables fair model comparison and focuses research on prediction challenges [1]. |
| R Statistical Environment | Software Platform | An open-source language and environment for statistical computing and graphics [2]. | The de facto standard for implementing contemporary statistical methods (GLMs, mixed-models, dose-response analysis, Bayesian inference) in ecotoxicology [2]. |
| ToxRTool | Evaluation Tool | Toxicological data Reliability assessment Tool for evaluating study reliability [5]. | Provides a structured, transparent method with 21 criteria to score and categorize study reliability for use in assessments [5]. |
| OECD No. 54 (Future Revision) | Guidance Document | The forthcoming updated guidance on the statistical analysis of ecotoxicity data (revision planned for 2026) [2]. | Will be the central regulatory guidance promoting modern statistical methods (GLMs, BMD, Bayesian) as standard practice [2]. |
| BMD Methodology | Statistical Approach | Benchmark Dose modeling for identifying a lower confidence limit on a predefined effect size [2]. | Supported by EFSA; offers a more stable and biologically informative alternative to NOEC/LOEC and point estimates like EC10 [2]. |

Synthesis and Future Directions: Implementing a Systems-Level Change

The historical context of ecotoxicology reveals a field constrained by outdated statistical paradigms, inconsistent data evaluation, and a socio-technical system that limits the flow of academic innovation into regulatory practice [4] [2]. The push for change is now unequivocal, driven by the confluence of ethical imperatives, regulatory needs, and scientific advancement.

The path forward requires a systems-level approach that simultaneously addresses technical, social, and training dimensions [4]. The following diagram synthesizes the key barriers and intervention points within the socio-technical system of regulatory toxicology [4].

Technical modernization is underway through the statistical toolbox update, the systematic curation of FAIR data, and the development of ML-ready benchmarks. However, as the diagram illustrates, these technical factors are interdependent with social factors [4]. Lasting change requires:

- Finalizing and adopting modernized guidance, specifically the OECD No. 54 revision, to align regulatory standards with scientific best practices [2].

- Embracing open science and benchmarking through resources like the ADORE dataset to accelerate and standardize computational model development [1].

- Investing in targeted training to elevate statistical and data science literacy within the ecotoxicology community, moving beyond sporadic courses to embedded expertise [2].

- Fostering sustained collaboration between academic statisticians, ecotoxicologists, and regulatory scientists to align goals, clarify assessment objectives, and co-develop solutions [4] [2].

By integrating robust systematic review for data curation, contemporary statistical and computational methods for analysis, and a collaborative systems mindset for implementation, the field of ecotoxicology can fully transition from its outdated practices to a more predictive, efficient, and evidence-based future.

Within the context of developing rigorous systematic review methods for ecotoxicity data research, three core challenges persistently hinder progress: fragmented and outdated statistical guidelines, the long‑standing debate over the use of the no‑observed‑effect concentration (NOEC), and the limited integration of academic research into regulatory decision‑making. This whitepaper examines each challenge in detail, presents quantitative data from recent studies, outlines key experimental methodologies, and provides visual workflows to guide researchers and risk assessors.

Fragmented Statistical Guidelines

The statistical methods used in regulatory ecotoxicology have evolved in a fragmented, inconsistent, and often outdated manner, creating a landscape that is difficult to navigate[reference:0]. A prominent illustration of this fragmentation is the decades‑long debate over whether NOECs should be retained in or banned from regulatory use[reference:1]. The key reference document, OECD No. 54 (“Current approaches in the statistical analysis of ecotoxicity data”), published in 2006, is no longer considered reflective of contemporary statistical methods or computational platforms[reference:2]. A revision is planned for 2026, coordinated by the German Environment Agency (UBA)[reference:3].

This fragmentation is not limited to hypothesis‑testing versus dose‑response modeling. The evaluation of study reliability itself relies on inconsistent criteria. The widely used Klimisch method (1997) has been criticized for lacking detail and guidance, leading to evaluations that depend heavily on expert judgment and yield inconsistent results among assessors[reference:4][reference:5]. In response, the Criteria for Reporting and Evaluating ecotoxicity Data (CRED) method was developed to provide a more transparent, consistent, and science‑based framework for evaluating both reliability and relevance[reference:6].

The NOEC Debate

The NOEC, derived from hypothesis testing (e.g., ANOVA), identifies the highest tested concentration at which no statistically significant effect is observed. Its critics argue that the NOEC is inherently dependent on study design (e.g., chosen concentration spacing, sample size) and does not estimate a true threshold of effect. Alternatives such as the concentration causing an x% effect (ECx), the benchmark dose (BMD), and the no‑significant‑effect concentration (NSEC) have been proposed as more robust, regression‑based metrics[reference:7].

A recent meta‑analysis of chronic freshwater toxicity tests quantified the actual effect levels associated with traditional hypothesis‑testing endpoints[reference:8]. The findings are summarized in Table 1.

Table 1. Median percent effect and adjustment factors for hypothesis‑based toxicity endpoints (Justice et al., 2025)

| Endpoint | Median percent effect occurring at the endpoint | Median adjustment factor to approximate EC5 |
|---|---|---|
| NOEC | 8.5% | 1.2 |
| LOEC | 46.5% | 2.5 |
| MATC | 23.5% | 1.8 |
| EC20 | – | 1.7 |
| EC10 | – | 1.3 |

Source: [reference:9]

The data show that a concentration reported as having no observed effect corresponded to a median effect of 8.5%, which highlights the gap between statistical significance and biological effect size. The derived adjustment factors allow hypothesis‑based results to be converted to a common scale (e.g., EC5), facilitating their use in screening‑level risk assessments[reference:10].
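As a rough illustration of how such factors might be applied, the sketch below converts reported endpoints to an approximate EC5 using the median adjustment factors from Table 1. It assumes each factor is the ratio endpoint/EC5 (the form given in Protocol 1 as NOEC/EC5), so the conversion divides by the factor. The function name `approx_ec5` and the input concentrations are hypothetical.

```python
# Median adjustment factors from Table 1 (endpoint / EC5, by assumption).
ADJUSTMENT_FACTOR = {
    "NOEC": 1.2,
    "LOEC": 2.5,
    "MATC": 1.8,
    "EC20": 1.7,
    "EC10": 1.3,
}

def approx_ec5(value, endpoint):
    """Screening-level EC5 estimate from a reported endpoint value."""
    if endpoint not in ADJUSTMENT_FACTOR:
        raise KeyError(f"no adjustment factor for endpoint {endpoint!r}")
    return value / ADJUSTMENT_FACTOR[endpoint]

# Hypothetical reported values in mg/L (illustrative only).
reported = [("NOEC", 12.0), ("LOEC", 5.0), ("EC10", 2.6)]
converted = [(name, approx_ec5(value, name)) for name, value in reported]
for name, ec5 in converted:
    print(f"{name}: approx. EC5 = {ec5:.2f} mg/L")
```

Because the factors are medians across many tests, the converted values are approximations suited to screening and do not replace a fitted dose-response curve.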

Integrating Academic Research

Academic peer‑reviewed studies are a vital source of evidence for chemical assessment, yet they face significant barriers to use in regulatory decision‑making[reference:11]. A systems‑based analysis of the European regulatory toxicology system identified deep divisions among stakeholders regarding the extent to which available academic evidence is used[reference:12]. Barriers are not merely technical (e.g., perceptions of variable reliability and transparency) but are often interdependent with social factors, such as misaligned goals between academic and regulatory knowledge production[reference:13]. Overcoming these barriers requires a coordinated, systems‑level approach that considers both technical and social dimensions[reference:14].

Experimental Protocols

Protocol 1: Meta‑Analysis of Hypothesis‑Based vs. Point‑Estimate Toxicity Values

Based on Justice et al. (2025)[reference:15]

- Literature search: Systematic search of chronic freshwater toxicity tests reporting NOEC, LOEC, MATC, EC10, and EC20 values.

- Inclusion criteria: Studies must report both hypothesis‑based endpoints (NOEC/LOEC/MATC) and point‑estimate endpoints (EC10/EC20) for the same test.

- Data extraction: For each test, record the reported endpoint values, test organism, chemical, and experimental design.

- Statistical analysis:

  - Calculate the percent effect corresponding to each NOEC, LOEC, and MATC by comparing the response at that concentration to the control response.

  - Derive adjustment factors for each endpoint by determining the multiplier needed to equate the endpoint to an EC5 value (e.g., NOEC/EC5).

  - Analyze the influence of chemical class and taxon (invertebrate vs. vertebrate) on the percent effect and adjustment factors using robust statistical tests (e.g., Kruskal‑Wallis).
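The first two analysis steps can be sketched as follows. The percent effect is taken here as the relative reduction from the control response, and the adjustment factor as the ratio NOEC/EC5. The records are invented for illustration and are not data from Justice et al. (2025), and the field names are hypothetical.

```python
from statistics import median

def percent_effect(control_response, treatment_response):
    """Percent reduction in response relative to the control."""
    return 100.0 * (control_response - treatment_response) / control_response

# Hypothetical tests: mean control response, mean response at the NOEC,
# and the reported NOEC and EC5 concentrations (illustrative only).
tests = [
    {"control": 100.0, "at_noec": 92.0, "noec": 10.0, "ec5": 8.0},
    {"control": 50.0, "at_noec": 45.0, "noec": 6.0, "ec5": 5.0},
    {"control": 80.0, "at_noec": 76.0, "noec": 3.0, "ec5": 3.0},
]

effects = [percent_effect(t["control"], t["at_noec"]) for t in tests]
factors = [t["noec"] / t["ec5"] for t in tests]

median_effect = median(effects)
median_factor = median(factors)
print(f"median percent effect at NOEC = {median_effect:.1f}%")
print(f"median NOEC/EC5 adjustment factor = {median_factor:.2f}")
```

Medians are used because effect levels and ratios of this kind tend to be skewed, which is also why a rank-based test such as Kruskal-Wallis suits the group comparisons.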

Protocol 2: Ring Test for Evaluating Ecotoxicity Study Reliability (CRED Method)

Based on Kase et al. (2016)[reference:16]

- Participant recruitment: Assemble a diverse group of risk assessors (e.g., 75 assessors from 12 countries) representing regulatory agencies, industry, and academia.

- Study selection: Provide a set of aquatic ecotoxicity study reports covering a range of qualities and test types.

- Evaluation procedure:

  - Phase 1: Each assessor evaluates the same set of studies using the traditional Klimisch method.

  - Phase 2: Assessors evaluate the same studies using the new CRED method, which includes detailed criteria for reliability (e.g., test validity, reporting completeness) and relevance (e.g., ecological realism, endpoint appropriateness).

- Consistency assessment: Compare the evaluation outcomes (reliable/not reliable, relevance score) within and between the two methods. Measure agreement using statistical metrics (e.g., Cohen’s kappa for pairs of assessors, or Fleiss’ kappa when many assessors rate the same studies).

- Perception survey: Collect feedback from participants on the practicality, transparency, and perceived accuracy of each method.
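For the consistency assessment, agreement between two assessors can be quantified with Cohen's kappa, which corrects the observed agreement for the agreement expected by chance: kappa = (p_o − p_e) / (1 − p_e). The sketch below is a plain-Python illustration with invented ratings. It covers only the two-rater case, and the function name `cohens_kappa` is hypothetical.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who categorized the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportions per category.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical reliability calls for eight studies (R = reliable, N = not reliable).
assessor_1 = ["R", "R", "R", "N", "N", "N", "R", "N"]
assessor_2 = ["R", "R", "N", "N", "N", "R", "R", "N"]

kappa = cohens_kappa(assessor_1, assessor_2)
print(f"kappa = {kappa:.2f}")
```

Here the assessors agree on six of eight studies (75%), but half of that agreement is expected by chance, which kappa removes.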

Visualization of Workflows and Relationships

Diagram 1: Systematic Review Workflow for Ecotoxicity Data

Diagram 2: Decision Tree for Selecting Ecotoxicity Statistical Endpoints

Diagram 3: Interrelationship of Core Challenges

The Scientist’s Toolkit

Table 2. Essential materials and tools for ecotoxicity research and systematic review

| Item | Function/Description |
|---|---|
| Test organisms (e.g., Daphnia magna, Danio rerio) | Standardized model species for acute/chronic toxicity testing; provide reproducible biological responses. |
| Exposure systems (static, renewal, flow‑through) | Control and maintain precise chemical concentrations during tests; flow‑through systems best mimic environmental conditions. |
| Chemical analysis tools (LC‑MS, GC‑MS, spectrophotometry) | Verify exposure concentrations, measure chemical degradation, and assess metabolite formation. |
| Statistical software (R) with packages (drc, bmd, ggplot2) | Fit dose‑response models (ECx, BMD), perform meta‑analyses, and create publication‑quality graphics. |
| Reliability assessment framework (CRED checklist) | Standardized tool to evaluate the reliability and relevance of individual ecotoxicity studies for inclusion in reviews. |
| Curated ecotoxicity database (e.g., US EPA ECOTOX Knowledgebase) | Centralized repository of published toxicity data; essential for systematic literature searches and data extraction. |

In ecotoxicity research, the volume and complexity of data—from standardized laboratory assays to complex field studies—present a significant challenge for risk assessment and regulatory decision-making. The critical need to distinguish signal from noise, to reconcile conflicting studies on a chemical's environmental impact, and to build a reliable evidence base for policy underscores the importance of rigorous evidence synthesis methodologies [6].

This whitepaper defines and contrasts the core methodologies of evidence synthesis: the narrative review, the systematic review, and the quantitative meta-analysis, with a specific focus on their application within ecotoxicity data research. Systematic reviews, which minimize bias through explicit, pre-defined methods, have become the standard for providing confident evidence, increasingly supplanting traditional narrative reviews [6] [7]. Furthermore, we explore the principles of evidence integration, which govern how synthesized findings are incorporated into broader scientific narratives and decision-making frameworks. For researchers and drug development professionals, mastering these concepts is not merely academic; it is essential for validating ecotoxicological models, supporting chemical safety assessments, and ensuring that environmental health protections are built on a foundation of transparent, reproducible, and robust science.

Defining the Core Methodologies

A narrative review (or traditional literature review) provides a broad, qualitative summary of the literature on a topic by an expert in the field. It is characterized by its flexibility in scope and methodology, which is not pre-specified or systematic. The author selectively cites literature to provide an experiential perspective, track the development of a concept, or explore existing debates and knowledge gaps [6] [7]. While valuable for offering a foundational overview or generating novel hypotheses, its major limitation is the absence of objective, systematic selection criteria. This can introduce selection bias, where the review reflects the author's predispositions, leading to different narrative reviews on the same topic reaching conflicting conclusions [6]. As such, while they may be evidence-informed, narrative reviews are not considered rigorous scientific evidence on their own.

Systematic Review: The Structured, Bias-Minimizing Synthesis

A systematic review is a high-level research project that answers a specific, focused research question by systematically identifying, selecting, appraising, and synthesizing all relevant studies [7]. Its paramount objective is to minimize bias and error at every stage, ensuring transparency and reproducibility. This is achieved through a pre-published protocol that details the research question (often formulated using frameworks like PICO—Population, Intervention, Comparator, Outcome), explicit inclusion/exclusion criteria, a comprehensive search strategy across multiple databases, a critical appraisal of study quality, and a structured data synthesis [7]. The synthesis can be qualitative (descriptive) or quantitative (meta-analysis). Systematic reviews are the cornerstone of evidence-based practice, designed to provide the most valid evidence to guide decision-making in fields like clinical medicine and, increasingly, environmental policy [6] [7].

Meta-Analysis: The Quantitative Statistical Synthesis

Meta-analysis is a statistical methodology for quantitatively combining the results of multiple independent studies that address a common research question [8]. It is often, but not always, a core component of a systematic review. The process involves extracting a common effect size (e.g., standardized mean difference, risk ratio) and its variance from each study, then calculating a pooled (combined) effect estimate across studies [8] [9].

- Primary Purpose: To improve the precision of an effect estimate, resolve uncertainties from conflicting studies, and investigate sources of variation (heterogeneity) across studies [9].

- Key Statistical Models:

  - Fixed-Effect Model: Assumes all studies estimate a single, common underlying effect. The inverse of each study's variance is used as its weight [9].

  - Random-Effects Model: Acknowledges and models that the true effect may vary across studies (heterogeneity). It incorporates this between-study variation into the weighting, which redistributes weight more equally among studies, especially when heterogeneity is high [8] [9].

The results are typically displayed in a forest plot, which visually presents the effect size and confidence interval for each study alongside the pooled estimate [9].
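The difference between the two weighting schemes can be seen in a short sketch. It uses inverse-variance weights for the fixed-effect estimate and, as one common choice that the source does not specify, the DerSimonian-Laird estimator of the between-study variance (tau²) for the random-effects estimate. The effect sizes and variances are invented for illustration.

```python
def inverse_variance_pool(effects, variances, tau2=0.0):
    """Pooled estimate and normalized weights; tau2 = 0 gives the fixed-effect model."""
    weights = [1.0 / (v + tau2) for v in variances]
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    return pooled, [w / total for w in weights]

def dersimonian_laird_tau2(effects, variances):
    """Method-of-moments estimate of between-study variance (needs >= 2 studies)."""
    if len(effects) < 2:
        raise ValueError("at least two studies are required")
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    c = sw - sum(wi * wi for wi in w) / sw
    return max(0.0, (q - (len(effects) - 1)) / c)

# Hypothetical study-level effect sizes and variances (illustrative only).
effects = [0.2, 0.5, 0.8]
variances = [0.01, 0.04, 0.09]

fixed_est, fixed_w = inverse_variance_pool(effects, variances)
tau2 = dersimonian_laird_tau2(effects, variances)
random_est, random_w = inverse_variance_pool(effects, variances, tau2)

print(f"fixed = {fixed_est:.3f}, weights = {[round(w, 2) for w in fixed_w]}")
print(f"tau2 = {tau2:.4f}")
print(f"random = {random_est:.3f}, weights = {[round(w, 2) for w in random_w]}")
```

With these numbers the most precise study dominates the fixed-effect estimate, while adding tau² to every study's variance pulls the weights closer together and shifts the pooled estimate toward the less precise studies.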

Table 1: Comparative Analysis of Narrative and Systematic Review Methodologies [6] [7].

| Feature | Narrative (Traditional) Review | Systematic Review |
|---|---|---|
| Research Question | Broad, exploratory, often not explicitly stated. | Narrow, specific, and pre-defined (e.g., using PICO). |
| Search Strategy | Not systematic or comprehensive; selection may be subjective. | Comprehensive, explicit, and reproducible across multiple databases. |
| Study Selection | No pre-defined criteria; prone to selection bias. | Based on explicit, pre-specified inclusion/exclusion criteria. |
| Quality Appraisal | Variable, often not formalized. | Rigorous critical appraisal of study validity (risk of bias). |
| Data Synthesis | Qualitative, narrative summary. | Structured synthesis, which can be qualitative or quantitative (meta-analysis). |
| Conclusions | Based on author's interpretation, potentially subjective. | Evidence-based, derived directly from the analyzed data. |
| Reproducibility | Low, due to lack of methodological transparency. | High, due to documented protocol and methods. |
| Primary Utility | Background, hypothesis generation, expert perspective. | Answering focused questions, supporting evidence-based decisions and policy. |

The Systematic Review Workflow: A Step-by-Step Protocol for Ecotoxicity Data

The following protocol, adapted from guidelines like Cochrane and PRISMA, provides a detailed roadmap for conducting a systematic review in ecotoxicity.

Pre-Protocol Phase: Scoping & Team Assembly

- Define the Rationale: Clearly articulate the environmental or regulatory problem (e.g., "Uncertainty in the chronic aquatic toxicity of Chemical X").

- Assemble a Team: Include subject matter experts (ecotoxicologists), a librarian/information specialist for search design, and a statistician if a meta-analysis is anticipated.

Phase 1: Protocol Development & Registration

- Formulate the Research Question: Use a structured framework. For ecotoxicity: Population (e.g., Daphnia magna), Exposure (Chemical X concentration/duration), Comparator (Control/solvent control), Outcome (e.g., 48-hr LC50, reproduction NOEC) [7].

- Develop & Register the Protocol: Document the methods before starting. Key elements include:

- Eligibility Criteria: Define included species, exposure regimes, study designs (e.g., OECD guideline tests, microcosm studies), language, and publication status (including "gray literature" like regulatory reports) [8].

- Search Strategy: Design with an information specialist. Use controlled vocabulary (e.g., MeSH, Emtree) and keywords. Pre-specify databases (e.g., PubMed, TOXLINE, Web of Science, Scopus) and other sources [8].

- Study Selection Process: Outline how titles/abstracts and full texts will be screened, typically by two reviewers independently, with conflicts resolved by consensus or a third reviewer.

- Data Extraction Plan: Design a standardized form to capture study characteristics (test organism, exposure conditions, endpoint, effect size data, study funding) and risk of bias items.

- Risk of Bias Assessment Plan: Specify the tool (e.g., adapted from Cochrane RoB, SYRCLE's RoB tool for animal studies) to evaluate study reliability.

- Synthesis Strategy: State plans for qualitative synthesis and the conditions under which a meta-analysis will be performed (e.g., sufficient data, comparable outcomes).

Phase 2: Study Identification & Selection

- Execute the Search: Run the search across all planned sources. Record the number of records identified from each source.

- Manage Records & Screen: Use review management software (e.g., DistillerSR, Rayyan). Perform deduplication. Screen titles/abstracts, then full texts, against eligibility criteria. Document reasons for exclusion at the full-text stage.

- Diagram the Flow: Create a PRISMA flow diagram to transparently report the study selection process.
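
The record-keeping in Phase 2 can be sketched in a few lines of Python. This is a minimal illustration, not a replacement for dedicated review software: the record fields (`source`, `doi`, `title`) and the matching rule (DOI first, then normalized title) are assumptions made for the example.

```python
import re
from collections import Counter

def record_key(record):
    """Deduplication key: the DOI if present, otherwise a normalized title."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    title = re.sub(r"[^a-z0-9]+", " ", record["title"].lower()).strip()
    return ("title", title)

def deduplicate(records):
    """Keep the first record seen for each key; return (unique, n_removed)."""
    seen, unique = set(), []
    for rec in records:
        key = record_key(rec)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique, len(records) - len(unique)

def prisma_counts(records, unique, title_abstract_excluded, full_text_exclusions):
    """Tally the numbers that a PRISMA flow diagram reports."""
    full_text_assessed = len(unique) - title_abstract_excluded
    return {
        "identified_by_source": dict(Counter(r["source"] for r in records)),
        "duplicates_removed": len(records) - len(unique),
        "screened": len(unique),
        "full_text_assessed": full_text_assessed,
        "full_text_excluded_by_reason": dict(Counter(full_text_exclusions)),
        "included": full_text_assessed - len(full_text_exclusions),
    }

# Hypothetical search export from three databases.
records = [
    {"source": "PubMed", "doi": "10.1000/abc", "title": "Chronic toxicity of Chemical X"},
    {"source": "Scopus", "doi": "10.1000/ABC", "title": "Chronic Toxicity of Chemical X."},
    {"source": "Scopus", "doi": "", "title": "Acute effects of Chemical X on Daphnia"},
    {"source": "Web of Science", "doi": None, "title": "Acute effects of chemical X on daphnia"},
]
unique, n_dupes = deduplicate(records)
counts = prisma_counts(records, unique, title_abstract_excluded=0,
                       full_text_exclusions=["wrong endpoint"])
```

A rule this simple misses pairs where only one copy carries a DOI, so automated deduplication is usually followed by a manual check.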

Diagram 1: Systematic Review & Meta-Analysis Workflow

Phase 3: Data Collection & Critical Appraisal

- Data Extraction: Use the pre-designed form. Extract numeric data needed for effect size calculation (means, SDs, sample sizes) and descriptive data. Contact study authors for missing data if necessary.

- Assess Risk of Bias (RoB): Have at least two reviewers independently apply the chosen RoB tool to each study. This evaluates internal validity (e.g., random allocation, blinding of outcome assessment, completeness of outcome data).

Phase 4: Synthesis & Reporting

- Qualitative Synthesis: Systematically describe and summarize the findings from the included studies, often grouped by key characteristics (e.g., species, exposure pathway).

- Meta-Analysis (if appropriate):

- Calculate Effect Sizes: Transform extracted data into a common, comparable effect size (e.g., log-transformed ratio of treated to control mean for continuous outcomes).

- Choose a Statistical Model: Assess statistical heterogeneity (e.g., using I² statistic). A random-effects model is often appropriate for ecotoxicity data due to expected methodological and biological variation [8] [9].

- Conduct Analysis & Assess Heterogeneity: Perform the meta-analysis to generate a pooled effect estimate with a confidence interval. Investigate sources of high heterogeneity via subgroup analysis (e.g., by test type, species class) or meta-regression.

- Report Findings: Follow reporting guidelines (PRISMA). Present results with tables, forest plots, and a clear discussion of the strength of evidence, limitations, and implications for research and regulation.
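
As an illustration of the effect-size step, the sketch below computes the log response ratio (the log-transformed ratio of treated to control mean) and its approximate sampling variance from extracted means, SDs, and sample sizes. The function name and the example numbers are hypothetical; the variance uses the standard delta-method approximation, which assumes both means are positive.

```python
import math

def log_response_ratio(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (lnRR, variance) for one treated-versus-control comparison.

    lnRR = ln(mean_t / mean_c); the variance is the delta-method
    approximation and requires both means to be positive.
    """
    if mean_t <= 0 or mean_c <= 0:
        raise ValueError("log response ratio needs positive means")
    lnrr = math.log(mean_t / mean_c)
    var = sd_t**2 / (n_t * mean_t**2) + sd_c**2 / (n_c * mean_c**2)
    return lnrr, var

# Hypothetical extraction: offspring per female, treated versus control.
lnrr, var = log_response_ratio(mean_t=40.0, sd_t=8.0, n_t=10,
                               mean_c=80.0, sd_c=8.0, n_c=10)

# Approximate 95% confidence interval, back-transformed to the ratio scale.
ci_low = math.exp(lnrr - 1.96 * math.sqrt(var))
ci_high = math.exp(lnrr + 1.96 * math.sqrt(var))
```

Working on the log scale keeps the sampling distribution closer to normal; results are back-transformed to a ratio only for reporting.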

Meta-Analysis: Statistical Foundations and Application

Meta-analysis translates the qualitative question "What does the body of evidence show?" into a quantitative answer. Its validity hinges on the quality of the preceding systematic review steps [9].

Core Statistical Models and Selection

Table 2: Key Statistical Models in Meta-Analysis [8] [9].

| Model | Underlying Assumption | Weighting of Studies | Interpretation of Result | When to Use in Ecotoxicity |

|---|---|---|---|---|

| Fixed-Effect | All studies estimate one single, true effect size. Differences are due to sampling error alone. | Weight is inverse of study's within-study variance (1/SE²). Larger studies get more weight. | The pooled estimate is the best estimate of that single common effect. | Rarely appropriate. Only if studies are near-identical (e.g., same species, same lab protocol). |

| Random-Effects | The true effect size varies across studies (heterogeneity). Studies estimate different, related effects. | Weight is inverse of (within-study variance + between-study variance (τ²)). Weight is distributed more equally. | The pooled estimate is the mean of the distribution of true effects. | Almost always appropriate. Accounts for real variation due to species sensitivity, test conditions, etc. |
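
The weighting difference in Table 2 can be made concrete with a short sketch. It assumes the DerSimonian-Laird moment estimator for τ², which is one common choice among several and is not prescribed by the sources cited here; the four effect sizes and variances are hypothetical.

```python
import math

def pool(effects, variances, tau2=0.0):
    """Inverse-variance pooled estimate, its standard error, and the weights."""
    weights = [1.0 / (v + tau2) for v in variances]
    estimate = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    return estimate, math.sqrt(1.0 / sum(weights)), weights

def dersimonian_laird(effects, variances):
    """Return Cochran's Q, the DL estimate of tau^2, and I^2 (percent)."""
    k = len(effects)
    fixed, _, w = pool(effects, variances)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)
    i2 = max(0.0, (q - (k - 1)) / q) * 100 if q > 0 else 0.0
    return q, tau2, i2

# Hypothetical log response ratios and variances from four studies.
effects = [-0.70, -0.20, -0.90, -0.35]
variances = [0.005, 0.020, 0.010, 0.040]

q, tau2, i2 = dersimonian_laird(effects, variances)
fixed_est, fixed_se, fixed_w = pool(effects, variances)
random_est, random_se, random_w = pool(effects, variances, tau2)
```

With heterogeneous inputs like these, the random-effects weights are closer to one another than the fixed-effect weights, and the pooled standard error is larger.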

Diagram 2: Meta-Analysis Statistical Model Selection Process

Investigating Heterogeneity and Sensitivity Analysis

In ecotoxicity, heterogeneity is the rule, not the exception. Key sources include:

- Biological: Differences in species, life stage, genetic strain.

- Methodological: Differences in test duration, exposure medium, endpoint measurement.

- Statistical: Differences in study quality and design.

Subgroup analysis and meta-regression are used to explore if these factors explain heterogeneity. For example, a meta-regression might test if log-transformed toxicity values are predicted by the octanol-water partition coefficient (log Kow) of the test chemicals. Sensitivity analyses test the robustness of results, for instance, by repeating the meta-analysis excluding studies with a high risk of bias or those published only as abstracts.
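
A meta-regression of this kind reduces, in its simplest form, to a weighted least-squares line. The sketch below is a fixed-effect version with inverse-variance weights and one covariate; it deliberately omits a between-study variance term and standard errors, and all data values are hypothetical.

```python
def weighted_linear_fit(x, y, weights):
    """Weighted least-squares fit of y = intercept + slope * x."""
    sw = sum(weights)
    x_bar = sum(w * xi for w, xi in zip(weights, x)) / sw
    y_bar = sum(w * yi for w, yi in zip(weights, y)) / sw
    sxx = sum(w * (xi - x_bar) ** 2 for w, xi in zip(weights, x))
    sxy = sum(w * (xi - x_bar) * (yi - y_bar)
              for w, xi, yi in zip(weights, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

# Hypothetical data: one pooled toxicity value per chemical.
log_kow = [1.5, 2.5, 3.5, 4.5, 5.5]
log_lc50 = [2.1, 1.2, 0.5, -0.4, -1.0]     # log10 LC50
variances = [0.04, 0.02, 0.05, 0.03, 0.06]

weights = [1.0 / v for v in variances]      # inverse-variance weights
intercept, slope = weighted_linear_fit(log_kow, log_lc50, weights)
```

A negative slope here would indicate that more hydrophobic chemicals tend to have lower LC50 values, that is, higher toxicity. A full analysis would also report the uncertainty of the slope and the residual heterogeneity.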

Evidence Integration: From Synthesis to Application

Evidence integration is the process of critically evaluating and incorporating synthesized evidence into a broader context, such as a research paper, a risk assessment report, or a regulatory guideline [10]. It moves beyond simply reporting a pooled effect size to interpreting its meaning, credibility, and relevance.

Principles of Effective Evidence Integration

- Credibility Assessment: Evaluate the reliability of the synthesized evidence itself. Consider the rigor of the systematic review (Was the protocol followed? Was the search comprehensive?), the consistency of findings across studies, and the risk of bias in the included studies [10].

- Contextualization: Relate the findings to the specific problem at hand. Does the pooled EC50 for a chemical from laboratory species predict effects in a specific local ecosystem? Discuss biological plausibility and relevance.

- Transparency in Uncertainty: Clearly communicate the limitations of the evidence. This includes statistical uncertainty (confidence intervals), methodological limitations (risk of bias, publication bias), and applicability concerns (e.g., laboratory-to-field extrapolation).

- Balanced Interpretation: Avoid "cherry-picking" only evidence that supports a pre-determined conclusion. Acknowledge and interpret conflicting evidence or indeterminate results [10].

Types of Evidence for Integration

Table 3: Hierarchy and Integration of Evidence Types in Ecotoxicological Risk Assessment [10].

| Evidence Type | Description | Role in Integration | Example in Ecotoxicity |

|---|---|---|---|

| Primary Research Data | Original data from individual experiments or studies (e.g., a toxicity test). | The raw material for systematic review and meta-analysis. | A published paper reporting the LC50 of Chemical Y to fathead minnow. |

| Synthesized Quantitative Evidence | The output of meta-analysis (pooled effect estimates, confidence intervals). | Provides the most precise and statistically powerful summary of the available primary data. | A pooled no-observed-effect concentration (NOEC) for invertebrates exposed to Chemical Z. |

| Synthesized Qualitative Evidence | Systematic descriptive summaries of study findings, often where meta-analysis is not feasible. | Identifies patterns, knowledge gaps, and contextual factors not captured by numbers. | A systematic review categorizing the types of sub-lethal behavioral effects observed across fish studies. |

| Expert Judgment/Testimony | Informed opinions from recognized authorities in the field. | Provides interpretation, identifies plausible mechanisms, and helps bridge gaps where direct evidence is lacking. Must be used transparently. | A panel interpreting mechanistic toxicology data to assess the relevance of a tumor finding in rodents for aquatic species. |

Table 4: Essential Toolkit for Conducting Systematic Reviews & Meta-Analyses in Ecotoxicity.

| Tool / Resource Category | Specific Examples | Primary Function |

|---|---|---|

| Protocol & Reporting Guidelines | PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses); ROSES (Reporting standards for Systematic Evidence Syntheses in environmental research). | Ensure completeness, transparency, and quality of reporting. |

| Review Management Software | DistillerSR, Rayyan, Covidence. | Streamline and manage the collaborative process of screening references, data extraction, and quality appraisal. |

| Specialized Databases | PubMed, TOXLINE, Web of Science, Scopus, ECOTOX (US EPA). | Enable comprehensive, structured literature searches for primary ecotoxicity studies. |

| Statistical Software for Meta-Analysis | R (packages: metafor, meta), RevMan (Cochrane), Comprehensive Meta-Analysis. | Perform complex meta-analyses, create forest and funnel plots, conduct subgroup and meta-regression analyses. |

| Risk of Bias / Quality Assessment Tools | SYRCLE's RoB tool (for animal studies), NIH Quality Assessment Tool, custom tools based on OECD test guideline criteria. | Systematically evaluate the internal validity and reliability of included primary studies. |

| Gray Literature Sources | Government and regulatory agency reports (e.g., US EPA, ECHA), university theses, conference proceedings. | Reduce publication bias by including studies not published in commercial journals. |

In the data-rich and high-stakes field of ecotoxicity research, the disciplined application of systematic review and meta-analysis methodologies is paramount. Moving from traditional, selective narrative summaries to transparent, protocol-driven syntheses represents a fundamental shift toward greater scientific rigor and reliability. As shown, a systematic review provides the essential structural framework to minimize bias, while meta-analysis offers powerful statistical tools to quantify overall effects and explore variability. Finally, the principled integration of this synthesized evidence into the broader scientific and regulatory context ensures that conclusions are not only statistically sound but also relevant and actionable.

For researchers and drug development professionals, mastery of these concepts enables the creation of defensible, high-quality environmental safety assessments. It empowers them to build a compelling evidence base that can effectively inform substance regulation, guide the development of safer chemicals, and ultimately contribute to more robust protection of environmental health.

The robust assessment of chemical hazards to ecosystems hinges on the transparent, reproducible, and statistically sound analysis of ecotoxicity data. In recent years, the scientific community has increasingly adopted systematic review (SR) methodologies to minimize bias, ensure comprehensive evidence synthesis, and support regulatory decision-making[reference:0]. This whitepaper frames the ongoing revision of OECD Document No. 54 and concurrent global initiatives within this broader thesis: the modernization of statistical and methodological frameworks is a regulatory imperative for advancing ecotoxicity data research. These coordinated efforts aim to replace outdated practices with contemporary, evidence-based approaches, ultimately enhancing the reliability and global harmonization of environmental risk assessments.

The Pivotal Revision: OECD Document No. 54

OECD Document No. 54, “Current approaches in the statistical analysis of ecotoxicity data: a guidance to application” (2006), has been the cornerstone for statistical analysis in regulatory ecotoxicology. However, it is no longer considered reflective of modern statistical methods or computational platforms[reference:1].

Key Drivers and Timeline

A consensus has emerged that the document requires a substantial overhaul. The revision is formally planned for 2026, with the German Environment Agency (UBA) coordinating scientific contributions[reference:2]. The process was launched with the “1st UBA expert workshop on the OECD No. 54 revision” in September 2024, which focused on updating chapters concerning hypothesis testing, dose-response models, and biological effect models[reference:3].

Core Statistical Upgrades

The revision addresses critical limitations in the current guidance:

- Moving Beyond the Hypothesis Testing vs. Dose-Response Dichotomy: The existing distinction between ANOVA-type "hypothesis testing" and regression-based "dose-response modeling" is artificial, as both are variants of linear models[reference:4]. The revision advocates for continuous regression-based models as the default.

- Incorporating Modern Statistical Tools: The update will integrate contemporary methods such as Generalized Linear Models (GLMs), mixed-effect models, Generalized Additive Models (GAMs), and Bayesian frameworks into the ecotoxicologist's toolbox[reference:5][reference:6].

- Embracing Open-Source Software: The guidance will promote the use of open-source platforms like R, which have made advanced statistical methodologies widely accessible[reference:7].

- Evaluating Modern Metrics: The revision will consider alternative effect metrics like the Benchmark Dose (BMD) and the No-Significant Effect Concentration (NSEC), alongside traditional ECx values, evaluating their properties for use in higher-tier assessments[reference:8].
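
The claim that hypothesis testing and dose-response modeling are variants of the same model can be written out. In the sketch below (notation chosen here for illustration), y_ij is the response of replicate j at concentration level i, and c_i > 0 is the tested concentration; the log-concentration covariate and the link function g are illustrative choices.

```latex
% Both analyses are special cases of the linear model y = X\beta + \varepsilon;
% they differ only in how concentration enters the design matrix X.
\begin{aligned}
\text{ANOVA-type (concentration as a factor):}\quad
  & y_{ij} = \mu + \alpha_i + \varepsilon_{ij} \\
\text{Regression (concentration as a covariate):}\quad
  & y_{ij} = \beta_0 + \beta_1 \log c_i + \varepsilon_{ij} \\
\text{GLM extension (link function } g\text{):}\quad
  & g\bigl(\mathrm{E}[y_{ij}]\bigr) = \beta_0 + \beta_1 \log c_i
\end{aligned}
```

The factor coding estimates one parameter per concentration level, whereas the covariate coding uses the ordering and spacing of the concentrations, which is what allows interpolation to an ECx.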

Concurrent Global Regulatory Initiatives

The push for modernization extends beyond the OECD, manifesting in parallel initiatives across major regulatory bodies.

European Food Safety Authority (EFSA)

In June 2024, EFSA received a mandate to review its Guidance Document on Terrestrial Ecotoxicology and to develop guidance for assessing indirect effects on biodiversity[reference:9]. This revision aims to strengthen the methodological foundation for pesticide and chemical risk assessment in the EU.

U.S. Environmental Protection Agency (EPA)

The EPA is advancing the systematic review of ecotoxicity data through two key pillars:

- The ECOTOX Knowledgebase: This is the world's largest curated database of single-chemical toxicity data for aquatic and terrestrial species, providing foundational data for SR processes[reference:10]. As of 2023, it contained data from over 54,000 references, comprising 1.1 million test records on nearly 14,000 species and 13,000 chemicals[reference:11].

- Standard Operating Procedures (SOPs): The EPA has developed formal SOPs for the Systematic Review of Ecological Toxicity Data to support the development of Ambient Water Quality Criteria, ensuring consistency and transparency in data evaluation[reference:12].

World Health Organization (WHO)

In February 2024, WHO launched a Repository of Systematic Reviews on interventions in environment, climate change, and health[reference:13]. While broader in scope, this repository underscores the global institutional shift towards evidence-based assessment and directly intersects with ecotoxicity through its coverage of chemicals and environmental health.

International Standardization (ISO/OECD)

A large battery of standardized microbial toxicity tests (e.g., ISO 8192, OECD 209) is globally accepted for assessing chemical impacts on microbial communities, particularly in wastewater treatment and biodegradation testing[reference:14]. These standardized methods provide the reliable, reproducible experimental data that feed into higher-level systematic reviews and risk assessments.

| Initiative | Leading Organization | Primary Focus | Current Status/Timeline |

|---|---|---|---|

| Statistical Analysis Guidance | OECD | Modernizing statistical methods for ecotoxicity data analysis. | Revision ongoing; planned publication in 2026[reference:15]. |

| Terrestrial Ecotoxicology Guidance | EFSA | Updating risk assessment guidance for terrestrial organisms and indirect effects. | Revision mandated in June 2024; outline published[reference:16]. |

| Systematic Review SOPs | U.S. EPA | Standardizing procedures for reviewing ecological toxicity data. | SOPs published in September 2024[reference:17]. |

| Evidence Repository | WHO | Cataloging systematic reviews on environmental health interventions. | Repository launched in February 2024[reference:18]. |

| Microbial Toxicity Tests | ISO/OECD | Standardizing tests for bacterial and microbial toxicity. | Multiple guidelines actively maintained and applied[reference:19]. |

Table 2: Scale of the U.S. EPA ECOTOX Knowledgebase (2023)

| Data Category | Volume | Description |

|---|---|---|

| Scientific References | >54,000 | Curated sources from the peer-reviewed literature. |

| Test Records | >1.1 million | Individual toxicity test results. |

| Species Covered | ~14,000 | Aquatic and terrestrial test organisms. |

| Chemicals Covered | ~13,000 | Unique chemical substances. |

Detailed Experimental Protocols

Protocol for a Standard Aquatic Ecotoxicity Test (Algal Growth Inhibition)

This protocol is based on the principles of OECD Test Guideline 201.

1. Test Organism and Pre-culture:

- Organism: Use a validated freshwater algal species (e.g., Pseudokirchneriella subcapitata).

- Culture Conditions: Maintain stock cultures in sterile OECD TG 201 standard growth medium (e.g., AAP medium) under continuous cool-white fluorescent light (60-120 µE/m²/s) at 21±2°C with shaking.

2. Test Chemical Preparation:

- Prepare a stock solution of the test chemical in sterile medium, using a solvent if necessary (e.g., acetone, DMSO ≤ 0.01% v/v). Prepare a series of at least 5 geometrically spaced concentrations (e.g., dilution factor of 1.8-2.2).

3. Test Initiation and Design:

- Inoculate fresh medium with algae from an exponentially growing pre-culture to an initial cell density of ~10⁴ cells/mL.

- Dispense 50-100 mL of inoculated medium into sterile Erlenmeyer flasks. Add the appropriate volume of test solution or solvent control.

- Include at least three replicates per concentration and controls (solvent and negative).

4. Incubation and Monitoring:

- Incubate flasks under the same conditions as the pre-culture for 72 hours.

- Measure algal growth (cell density or fluorescence) at 0, 24, 48, and 72 hours.

5. Data Analysis and Endpoint Calculation:

- Calculate the average specific growth rate for each replicate.

- Fit a dose-response model (e.g., a 4-parameter log-logistic model) to the mean growth rate inhibition data.

- Calculate the EC₅₀ (concentration causing 50% growth inhibition) or EC₁₀/EC₂₀ with confidence intervals.
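
The arithmetic in steps 2 and 5 can be sketched as follows. The growth-rate and inhibition formulas follow the standard definitions for this test type. The EC₅₀ step, however, swaps in a simple log-linear interpolation for the log-logistic regression named above, so it yields a point estimate only and no confidence interval. All cell densities and concentrations are hypothetical.

```python
import math
from statistics import mean

def concentration_series(lowest, factor, n):
    """Geometric series of n test concentrations starting at `lowest`."""
    return [lowest * factor**i for i in range(n)]

def specific_growth_rate(n0, nt, days):
    """Average specific growth rate (per day) from day 0 to day `days`."""
    return (math.log(nt) - math.log(n0)) / days

def percent_inhibition(mu_control, mu_treated):
    """Growth-rate inhibition relative to the control mean, in percent."""
    return (mu_control - mu_treated) / mu_control * 100.0

def ec50_log_interpolation(concs, inhibitions):
    """EC50 by linear interpolation on log10(concentration) between the two
    concentrations that bracket 50% inhibition; None if not bracketed."""
    points = list(zip(concs, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ec50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    return None

n0, days = 1.0e4, 3                          # initial cells/mL; 72 h = 3 days
concs = concentration_series(1.0, 2.0, 5)    # hypothetical mg/L, factor 2
control_72h = [1.00e6, 0.95e6, 1.05e6]       # three control replicates
treated_72h = [                              # three replicates per concentration
    [8.0e5, 8.2e5, 7.8e5],
    [5.0e5, 5.2e5, 4.8e5],
    [2.0e5, 2.1e5, 1.9e5],
    [3.0e4, 3.2e4, 2.8e4],
    [1.2e4, 1.3e4, 1.1e4],
]

# Growth rate per replicate, then the mean per treatment.
mu_control = mean(specific_growth_rate(n0, nt, days) for nt in control_72h)
inhibitions = [
    percent_inhibition(mu_control,
                       mean(specific_growth_rate(n0, nt, days) for nt in reps))
    for reps in treated_72h
]
ec50 = ec50_log_interpolation(concs, inhibitions)
```

For regulatory reporting, the interpolation would be replaced by a fitted dose-response model so that the ECx values come with confidence intervals.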

Protocol for Systematic Review of Ecotoxicity Data

This protocol aligns with the U.S. EPA's SOPs for systematic review[reference:20].

1. Problem Formulation & Protocol Registration:

- Define a clear, structured review question (PECO: Population, Exposure, Comparator, Outcome).

- Develop and register a detailed review protocol outlining search strategy, inclusion/exclusion criteria, and analysis plan.

2. Comprehensive Literature Search:

- Search multiple electronic databases (e.g., Web of Science, PubMed, Scopus).

- Use chemical names, CAS numbers, and relevant ecotoxicity keywords.

- Supplement with searches of regulatory dossiers, grey literature, and backward/forward citation chasing.

3. Study Screening & Selection:

- Screen titles/abstracts and then full texts against pre-defined eligibility criteria.

- Perform screening in duplicate, with conflicts resolved by a third reviewer.

- Document the flow of studies (PRISMA diagram).
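
The duplicate-screening rule in step 3 can be sketched as a small comparison of two reviewers' decisions. The data layout (a mapping from record ID to "include" or "exclude") and the function names are assumptions made for illustration.

```python
def screening_conflicts(reviewer_a, reviewer_b):
    """Return the record IDs on which two independent reviewers disagree."""
    shared = reviewer_a.keys() & reviewer_b.keys()
    return sorted(rid for rid in shared if reviewer_a[rid] != reviewer_b[rid])

def resolve(reviewer_a, reviewer_b, third_reviewer):
    """Final decisions: agreements stand; conflicts take the third reviewer's call."""
    conflicts = set(screening_conflicts(reviewer_a, reviewer_b))
    return {
        rid: third_reviewer[rid] if rid in conflicts else reviewer_a[rid]
        for rid in sorted(reviewer_a.keys() & reviewer_b.keys())
    }

# Hypothetical title/abstract screening decisions.
reviewer_a = {"rec1": "include", "rec2": "exclude", "rec3": "include"}
reviewer_b = {"rec1": "include", "rec2": "include", "rec3": "exclude"}
third_reviewer = {"rec2": "exclude", "rec3": "include"}

conflicts = screening_conflicts(reviewer_a, reviewer_b)
final = resolve(reviewer_a, reviewer_b, third_reviewer)
```

Keeping the conflict list alongside the final decisions preserves an audit trail of where and how disagreements were resolved.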

4. Data Extraction & Quality Assessment:

- Extract relevant data (test organism, exposure conditions, endpoints, results) into a standardized form.

- Assess the reliability/risk of bias of each study using a validated tool (e.g., adapted from Klimisch scores or the EPA's Data Evaluation Record system).

5. Evidence Synthesis & Integration:

- For quantitative synthesis (meta-analysis), calculate summary effect estimates (e.g., pooled EC₅₀) where appropriate.

- For narrative synthesis, organize findings by taxon, endpoint, or exposure scenario.

- Assess the overall strength and confidence in the body of evidence.

Visualizing Workflows and Relationships

Diagram 1: Systematic Review Workflow for Ecotoxicity Data

Diagram 2: Interplay of Global Regulatory Initiatives

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Standard Ecotoxicity Testing

| Item | Function & Explanation | Example/Standard |

|---|---|---|

| Standard Growth Medium | Provides essential nutrients for test organisms in a reproducible, defined matrix. Critical for controlling test conditions. | OECD TG 201 AAP medium for algae; OECD TG 202 Daphnia medium. |

| Reference Toxicant | A chemical with known, stable toxicity used to validate the health and sensitivity of test organisms across different test runs. | Potassium dichromate (for Daphnia), Copper sulfate (for algae). |

| Solvent Control | A vehicle (e.g., acetone, DMSO) used to dissolve poorly water-soluble test chemicals. Ensures any observed effects are due to the chemical, not the solvent. | Concentration typically ≤ 0.01% v/v to avoid solvent toxicity. |

| Culture Organisms | Standardized, genetically defined strains of test species that ensure reproducibility and inter-laboratory comparison. | Pseudokirchneriella subcapitata (algae), Daphnia magna (cladocera). |

| Biomass Indicator | A measurement or assay used to quantify organism growth or viability as the test endpoint. | In vivo chlorophyll a fluorescence (for algae), cell counting. |

| Quality Control Buffers & Standards | Used to calibrate and verify the performance of analytical equipment (pH meters, dissolved oxygen probes, spectrophotometers). | pH buffer solutions (4.0, 7.0, 10.0), DO calibration standards. |

| Data Analysis Software | Open-source statistical platforms essential for applying modern analysis methods recommended in revised guidelines. | R with packages (e.g., drc for dose-response, metafor for meta-analysis). |

The concurrent revision of OECD No. 54 and the advancement of complementary initiatives by the EFSA, U.S. EPA, WHO, and ISO represent a cohesive global regulatory imperative. This movement is fundamentally rooted in the principles of systematic review, aiming to replace fragmented and outdated practices with transparent, statistically robust, and evidence-based methodologies. For researchers and drug development professionals, engagement with these evolving frameworks is not merely compliance but an opportunity to contribute to and leverage higher-quality, globally harmonized ecotoxicity assessments that better protect environmental and public health.

Conducting Rigorous Systematic Reviews: A Step-by-Step Guide from Protocol to Synthesis

In the evolving landscape of ecotoxicology, the shift towards evidence-based environmental decision-making necessitates robust, transparent, and reproducible systematic review methodologies [3]. The foundation of any high-quality systematic review is a precisely formulated research question, which dictates the entire process—from study identification and selection to evidence synthesis and risk characterization [11]. Within the context of systematic review methods for ecotoxicity data research, the Population, Exposure, Comparator, Outcome (PECO) and Population, Stressor, Outcome (PSO) frameworks provide this critical scaffolding [11].

These frameworks are not merely academic exercises; they address a tangible challenge in environmental science. The proliferation of contaminants, including contaminants of emerging concern (CECs), has created a data-rich but often fragmented landscape [12]. Without a clearly defined question, systematic reviews risk being inefficient, non-transparent, or misaligned with regulatory and research needs [11]. The PECO framework, an adaptation of the clinical PICO (Population, Intervention, Comparator, Outcome) model, is increasingly accepted for structuring questions about the association between environmental exposures and ecological outcomes [11]. It is specifically designed to address the complexities of unintentional exposure scenarios, which are fundamental to environmental and occupational health [11]. Concurrently, frameworks like PSO serve in contexts where defining a precise comparator is less critical than characterizing the stressor-effect relationship itself. This technical guide details the operationalization of these frameworks, their integration into systematic review workflows, and their application in advanced ecotoxicological research, positioning them as the cornerstone of a rigorous, defensible, and decision-relevant evidence assessment process.

Core Frameworks: PECO and PSO in Detail

The PECO Framework: Operationalizing Exposure-Based Questions

The PECO framework deconstructs a research question into four interdependent pillars. A well-constructed PECO question defines the review's objectives and directly informs study design, inclusion/exclusion criteria, and the interpretation of findings [11].

- Population (P): The ecological entity of interest. This must be specified with taxonomic, life-stage, and contextual precision (e.g., "adult fathead minnows (Pimephales promelas) in freshwater lotic systems," "the earthworm Eisenia fetida in agricultural soils") [12] [13]. The population defines the biological domain of the review.

- Exposure (E): The chemical, physical, or biological agent under investigation, with detailed characterization of its form, magnitude, duration, and route. This is a critical differentiator from clinical PICO, as environmental exposures are often passive and complex [11]. Examples include "underwater exposure to ≥ 80 dB anthropogenic noise" or "dietary exposure to a mixture of acetamiprid and chlorpyrifos at a 1:1 concentration ratio" [11] [13].

- Comparator (C): The reference against which the exposure is evaluated. Defining an appropriate comparator is a noted challenge in environmental health [11]. It may be a different exposure level (e.g., "exposure to < 80 dB"), a background condition, or a different substance. The comparator is essential for estimating effect size and understanding dose-response relationships.

- Outcome (O): The measurable ecological or toxicological endpoint. Outcomes should be biologically relevant, measurable, and tiered where possible (e.g., from molecular initiating events like "CYP1A1 gene expression" to apical endpoints like "96-hour mortality" or "reproductive success") [12].

Research and regulatory contexts dictate how these elements are combined. A seminal framework outlines five paradigmatic PECO scenarios, moving from exploratory association to intervention-based questions [11].

Table 1: PECO Framework Scenarios for Systematic Review [11]

| Scenario & Context | Approach | Example PECO Question |

|---|---|---|

| 1. Explore dose-effect relationship | Explore the shape of the exposure-outcome relationship. | Among freshwater amphipods, what is the effect of a 10 μg/L incremental increase in waterborne triclosan on 48-hour mortality? |

| 2. Evaluate data-driven exposure cut-offs | Use cut-offs (e.g., tertiles or quartiles) identified from the distribution within retrieved studies. | Among soil nematodes, what is the effect of exposure to the highest versus lowest quartile of cadmium concentration in soil on reproductive rate? |

| 3. Evaluate externally defined cut-offs | Use cut-offs (e.g., regulatory limits) known from other populations or standards. | Among coastal marine fish, what is the effect of sediment PAH concentrations exceeding EPA sediment quality guidelines compared to those below guidelines on liver somatic index? |

| 4. Identify a protective exposure cut-off | Use an existing health-based exposure limit as the comparator. | Among honeybee colonies, what is the effect of exposure to neonicotinoid levels below the LD₅₀ compared to levels at or above the LD₅₀ on colony collapse? |

| 5. Evaluate an intervention | Select a comparator based on an achievable exposure reduction. | In an urban watershed, what is the effect of implementing a constructed wetland (reducing effluent pyrene by 50%) compared to no intervention on the genetic diversity of benthic macroinvertebrate populations? |

The PSO and Related Frameworks

The Population, Stressor, Outcome (PSO) framework is a streamlined variant used when a formal comparator is either implicit or not the focus of the review. It is particularly useful for hazard identification and screening-level assessments. For instance, a question may be: "In larval zebrafish (Danio rerio), what is the effect of microplastic exposure (particle size 1-10 μm) on deformity rate?" The comparator here is implicit (no exposure). PSO is often applied in prioritization frameworks that screen chemicals based on their intrinsic Persistence (P), Bioaccumulation (B), and Toxicity (T) potential [14].

Complementary frameworks like qAOP (Quantitative Adverse Outcome Pathway) provide a mechanistic structure for organizing evidence [15]. While not a direct substitute for PECO/PSO, a qAOP framework quantifies the relationships along a pathway from a Molecular Initiating Event (MIE) to an Adverse Outcome (AO), directly informing the 'O' in PECO and enabling prediction of outcomes for novel exposure scenarios [15].

Table 2: Comparison of Question Framing Frameworks in Ecotoxicology

| Framework | Primary Purpose | Key Components | Typical Application Context |

|---|---|---|---|

| PECO | To structure questions about the association or effect of an exposure relative to a comparator. | Population, Exposure, Comparator, Outcome | Systematic reviews for risk assessment, dose-response analysis, intervention evaluation [11]. |

| PSO | To structure questions for hazard identification and stressor-effect characterization. | Population, Stressor, Outcome | Screening-level reviews, hazard ranking, PBT assessments [14]. |

| qAOP | To organize mechanistic, quantitative evidence across biological scales for predictive toxicology. | Molecular Initiating Event, Key Events, Adverse Outcome, Quantitative Relationships | Developing predictive models, integrating NAMs (e.g., in vitro, in silico), supporting read-across [15]. |

Integrating PECO/PSO into Systematic Review Workflows

Framing the question is the inaugural and defining step in a systematic review. Its integration into the subsequent workflow is critical for maintaining focus and rigor. The following diagram illustrates this integration, highlighting decision points informed by the PECO/PSO structure.

Diagram 1: Systematic Review Workflow with Integrated PECO/PSO Framing

The PECO question directly shapes the review protocol. Inclusion and exclusion criteria are explicit translations of the PECO elements. For example, a PECO specifying "chronic toxicity in freshwater fish" excludes acute toxicity studies and studies on terrestrial species. The search strategy is built using controlled vocabularies (e.g., MeSH, Emtree) and keywords derived from each PECO component [3].
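As a rough illustration of how PECO elements translate into a search string and into eligibility checks, the sketch below uses a hypothetical `PECO` container and a hypothetical `screen` helper. The field names, the example terms, and the record layout are assumptions made for this example, not part of any cited tool; real search strings also need database-specific syntax and controlled vocabulary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECO:
    """Hypothetical container for one review question."""
    population: tuple
    exposure: tuple
    comparator: tuple
    outcome: tuple

    def search_string(self):
        """OR the synonyms within a component, AND across P, E and O.

        The comparator is left out of the string here (an assumption of this
        sketch), since it is rarely indexed in titles or abstracts.
        """
        blocks = []
        for terms in (self.population, self.exposure, self.outcome):
            blocks.append("(" + " OR ".join(f'"{t}"' for t in terms) + ")")
        return " AND ".join(blocks)


def screen(record, criteria):
    """Return (include, reasons) for one coded study record."""
    reasons = [
        f"{key}: expected {wanted!r}, found {record.get(key)!r}"
        for key, wanted in criteria.items()
        if record.get(key) != wanted
    ]
    return (not reasons, reasons)


# Illustrative question: chronic toxicity of cadmium in freshwater fish.
question = PECO(
    population=("freshwater fish", "Danio rerio"),
    exposure=("cadmium",),
    comparator=("unexposed control",),
    outcome=("chronic toxicity", "reproduction"),
)

# Eligibility criteria written as explicit translations of the PECO elements.
criteria = {"habitat": "freshwater", "taxon": "fish", "test_type": "chronic"}

# Hypothetical coded records, not real studies.
records = [
    {"id": "S1", "habitat": "freshwater", "taxon": "fish", "test_type": "chronic"},
    {"id": "S2", "habitat": "freshwater", "taxon": "fish", "test_type": "acute"},
    {"id": "S3", "habitat": "terrestrial", "taxon": "earthworm", "test_type": "chronic"},
]

print(question.search_string())
for rec in records:
    include, reasons = screen(rec, criteria)
    print(rec["id"], "include" if include else "exclude", reasons)
```

Recording the reason for each exclusion, as `screen` does, keeps the screening decisions traceable back to the protocol.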

During study screening and data extraction, PECO acts as a consistent benchmark. Tools like the ECOTOXicology Knowledgebase (ECOTOX), which houses over one million curated test results, exemplify the application of systematic, PECO-informed review principles for data curation [3] [16]. Furthermore, the rise of New Approach Methodologies (NAMs), including high-throughput in vitro assays, toxicogenomics, and in silico models, generates data that must be integrated within this structured framework [12] [3]. A PECO-defined question ensures that NAM data relevant to specific key events or outcomes are appropriately incorporated into a Weight-of-Evidence (WoE) assessment [12].

Advanced Applications and Quantitative Methodologies

Quantitative Analysis Informing PECO Comparators

Quantitative toxicological methods provide the data needed to define meaningful 'E' and 'C' elements, especially in Scenarios 2-5 of the PECO framework [11]. Two key methodologies are:

- Benchmark Dose (BMD) Modeling: This approach is superior to traditional NOEC/LOEC methods because it models the full dose-response curve to estimate the dose associated with a predetermined level of change (e.g., BMD₁₀, the dose associated with a 10% effect) [13] [2]. BMD is also valuable for quantifying the toxicity of chemical mixtures. A study on pesticide mixtures (acetamiprid, carbendazim, chlorpyrifos, cyhalothrin) found that mixture toxicity to earthworms could be up to 40 times higher than that of individual components, a finding critical for defining relevant exposure levels in a PECO question about mixture effects [13].

- Species Sensitivity Distributions (SSDs): SSDs model the variation in sensitivity of multiple species to a single stressor. They are used to derive Predicted No-Effect Concentrations (PNECs), which can serve as scientifically robust comparators (the 'C') in PECO questions focused on protective thresholds for ecosystems [3].
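To make the SSD-to-PNEC step concrete, the following is a minimal sketch that assumes a log-normal SSD fitted by the sample mean and standard deviation of log10-transformed toxicity values. The species values, the function name `hazardous_concentration`, and the assessment factor of 5 are illustrative assumptions, not data or settings from the cited sources; regulatory derivations typically also require minimum species counts, goodness-of-fit checks, and confidence limits.

```python
import math
from statistics import NormalDist, mean, stdev


def hazardous_concentration(values, fraction=0.05):
    """Concentration expected to affect `fraction` of species (log-normal SSD)."""
    logs = [math.log10(v) for v in values]
    mu, sigma = mean(logs), stdev(logs)      # sample mean and SD on the log10 scale
    z = NormalDist().inv_cdf(fraction)       # standard normal quantile for the fraction
    return 10 ** (mu + z * sigma)


# Hypothetical chronic toxicity values (mg/L), one per species; not real data.
chronic_values = {
    "Raphidocelis subcapitata": 0.42,
    "Daphnia magna": 0.15,
    "Danio rerio": 1.90,
    "Chironomus riparius": 0.66,
    "Lemna minor": 3.10,
    "Hyalella azteca": 0.09,
}

values = list(chronic_values.values())
hc5 = hazardous_concentration(values, fraction=0.05)
hc50 = hazardous_concentration(values, fraction=0.50)

assessment_factor = 5        # assumed value chosen for illustration only
pnec = hc5 / assessment_factor

print(f"HC5  = {hc5:.4f} mg/L")
print(f"HC50 = {hc50:.4f} mg/L")
print(f"PNEC = {pnec:.4f} mg/L (HC5 / {assessment_factor})")
```

The HC5 is the concentration below which 95% of species in the fitted distribution are expected to be protected; dividing it by an assessment factor is one common way to arrive at a PNEC.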

Statistical Best Practices for Ecotoxicity Data Analysis

The statistical analysis of data underlying PECO-based reviews is undergoing significant modernization. There is a strong movement away from hypothesis-testing methods based on NOEC/LOEC towards continuous regression-based modeling [2]. Contemporary best practices recommend:

- Using generalized linear models (GLMs) and non-linear dose-response models (e.g., two- to five-parameter models) to estimate ECₓ or BMD values [2].

- Employing generalized additive models (GAMs) to explore non-linear response patterns without pre-specified shapes [2].

- Considering Bayesian methods for incorporating prior knowledge and quantifying uncertainty [2].

These advanced statistical practices yield more reliable and informative toxicity estimates, which are the fundamental data points for answering PECO questions and conducting robust evidence synthesis [2].
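The regression-based approach can be sketched with a binomial GLM using a logit link on log10 concentration, fitted here by Newton-Raphson (equivalent to iteratively reweighted least squares) in plain Python. The data are invented for illustration, the function names are hypothetical, and the ECₓ below is defined as the concentration at which the predicted affected fraction equals x, with no correction for control response; a real analysis would use an established statistics package and report confidence intervals.

```python
import math


def fit_logit_glm(log_conc, n_exposed, n_affected, max_iter=50, tol=1e-10):
    """Fit logit(p) = b0 + b1 * log10(conc) to grouped binomial data."""
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, n, y in zip(log_conc, n_exposed, n_affected):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = n * p * (1.0 - p)            # binomial working weight
            g0 += y - n * p                  # score (gradient) components
            g1 += (y - n * p) * x
            h00 += w                         # information matrix entries
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        d0 = (h11 * g0 - h01 * g1) / det     # Newton step: inverse(H) times gradient
        d1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1


def ec_x(b0, b1, x):
    """Concentration at which the fitted affected fraction equals x (0 < x < 1)."""
    return 10 ** ((math.log(x / (1.0 - x)) - b0) / b1)


# Hypothetical immobility data: concentration (mg/L), organisms exposed, organisms affected.
conc = [1.0, 3.2, 10.0, 32.0, 100.0]
exposed = [20, 20, 20, 20, 20]
affected = [1, 3, 9, 16, 19]

b0, b1 = fit_logit_glm([math.log10(c) for c in conc], exposed, affected)
ec10, ec50 = ec_x(b0, b1, 0.10), ec_x(b0, b1, 0.50)
print(f"intercept = {b0:.3f}, slope = {b1:.3f}")
print(f"EC10 = {ec10:.2f} mg/L, EC50 = {ec50:.2f} mg/L")
```

Unlike a NOEC, the resulting ECₓ does not depend on which concentrations happened to be tested, which is one reason regression-based estimates are easier to compare across studies.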

The qAOP Modeling Workflow: From Mechanistic Data to Quantitative Predictions

The development of a Quantitative Adverse Outcome Pathway (qAOP) is a paradigm for integrating diverse data streams to support predictive toxicology. It directly operationalizes mechanistic evidence within a quantifiable framework [15]. The workflow, depicted below, shows how data curated under a PECO/PSO structure can feed into predictive models.

Diagram 2: Quantitative AOP (qAOP) Development Workflow

This process requires identifying and extracting reliable quantitative data for key events, which is facilitated by a clear initial question framing the relationship of interest [15]. Resources like the ECOTOX Knowledgebase are essential for sourcing curated in vivo data for model calibration and validation [3].
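One simple way to picture the quantitative part of a qAOP is as a chain of response functions, where the output of each key event relationship feeds the next. The sketch below assumes Hill-type relationships with invented parameters; the function names, the three-step pathway, and every numeric value are hypothetical and serve only to show how a pathway composes, not how any published qAOP is parameterized.

```python
def hill(x, top, half_max, slope):
    """Hill-type response: 0 at x = 0, approaching `top` as x grows."""
    if x <= 0:
        return 0.0
    return top / (1.0 + (half_max / x) ** slope)


def predict_adverse_outcome(concentration):
    """Propagate an exposure concentration through a three-step pathway."""
    # Molecular Initiating Event: fraction of target engaged (hypothetical parameters).
    mie = hill(concentration, top=1.0, half_max=5.0, slope=1.5)
    # Key Event: downstream cellular response driven by the MIE.
    key_event = hill(mie, top=1.0, half_max=0.4, slope=2.0)
    # Adverse Outcome: probability of the apical effect driven by the Key Event.
    return hill(key_event, top=0.9, half_max=0.5, slope=3.0)


for conc in (0.0, 1.0, 10.0, 100.0):
    print(f"{conc:6.1f} mg/L -> predicted AO probability {predict_adverse_outcome(conc):.3f}")
```

In practice each relationship would be calibrated against curated data for the corresponding key events, and uncertainty would be propagated along the chain rather than passing point estimates.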

Detailed Experimental Protocols

Protocol 1: Aquatic Ecotoxicity Screening for Polymers (Adapted from OECD TG) [17]

This protocol assesses the intrinsic toxicity of polymer filtrates and suspensions.

- Test Organisms: Algae (Raphidocelis subcapitata), daphnids (Daphnia magna), and zebrafish embryos (Danio rerio).

- Test Substance Preparation: Polymers (e.g., alginate, chitosan) are prepared as both filtrates (0.45 μm filtered to assess intrinsic toxicity) and suspensions (to assess physical effects) in reconstituted standard test waters.

- Exposure Design: A concentration range of 1–100 mg/L is tested. For algae, a 72-hour growth inhibition test (OECD TG 201) is conducted. For daphnids, a 48-hour immobility test (OECD TG 202, miniaturized) is used. For zebrafish embryos, a 96-hour exposure (adapted from OECD TG 236) is performed, followed by transcriptomic analysis.

- Endpoint Measurement: Algal growth (cell density/biomass), daphnid immobility, zebrafish embryo mortality/morphology, and differential gene expression via RNA sequencing.

- Data Analysis: Determine LOEC/NOEC. For transcriptomics, identify significantly differentially expressed genes (DEGs) and perform pathway enrichment analysis to infer mode of action [17].

Protocol 2: Benchmark Dose Analysis for Pesticide Mixtures in Earthworms [13]

This protocol quantifies mixture toxicity using the BMD approach.

- Test Organism: Adult earthworms (Eisenia fetida) with developed clitellum, cultured in artificial soil.

- Test Chemicals: Individual pesticides (acetamiprid, carbendazim, chlorpyrifos, cyhalothrin) and their mixtures at environmentally relevant ratios.

- Exposure Design: Two tests: (i) Acute toxicity test: Exposure to a range of concentrations in artificial soil for 14 days; endpoint is mortality. (ii) Avoidance response test: Dual-chamber test with contaminated vs. control soil for 48 hours; endpoint is the effective avoidance rate.

- Data Analysis: Fit dose-response models to mortality and avoidance data for each chemical and mixture. Calculate the Benchmark Dose (BMD) for a specified Benchmark Response (BMR), e.g., 10% extra risk (BMD₁₀). Compute the mixture toxicity index by comparing the BMD of the mixture to the BMDs of individual components [13].
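The BMD calculation in the data analysis step can be illustrated for one assumed model form. The sketch below takes a log-logistic model with background response, P(d) = g + (1 - g) / (1 + exp(-(a + b ln d))), and treats the parameters as already fitted; the parameter values and function names are hypothetical, and the cited study may have used different models or software.

```python
import math


def response(dose, g, a, b):
    """Log-logistic dose-response with background response g."""
    if dose <= 0:
        return g
    return g + (1.0 - g) / (1.0 + math.exp(-(a + b * math.log(dose))))


def extra_risk(dose, g, a, b):
    """Extra risk: (P(d) - P(0)) / (1 - P(0))."""
    p0 = response(0.0, g, a, b)
    return (response(dose, g, a, b) - p0) / (1.0 - p0)


def benchmark_dose(a, b, bmr=0.10):
    """Dose at which extra risk equals the BMR.

    For this model the extra risk reduces to 1 / (1 + exp(-(a + b ln d))),
    so the BMD has a closed form that does not depend on the background g.
    """
    return math.exp((math.log(bmr / (1.0 - bmr)) - a) / b)


# Hypothetical fitted parameters for a single pesticide (dose in mg/kg soil).
g, a, b = 0.02, -6.0, 2.0

bmd10 = benchmark_dose(a, b, bmr=0.10)
print(f"BMD10 = {bmd10:.2f} mg/kg")
print(f"extra risk at BMD10 = {extra_risk(bmd10, g, a, b):.3f}")
```

Repeating the calculation for each single chemical and for the mixture gives the BMD values that the protocol then compares.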

Research Reagent Solutions & Essential Materials

Table 3: Key Research Reagents and Materials for Featured Ecotoxicity Studies

| Item | Function/Description | Example Study/Use Case |
|---|---|---|
| Reconstituted Standard Test Water | Provides a consistent, defined ionic composition and hardness for aquatic toxicity tests, minimizing confounding water quality variables. | Used in OECD TG tests for algae, daphnids, and fish [17]. |
| Artificial Soil | A standardized matrix of peat, kaolin clay, and sand for terrestrial toxicity tests, ensuring reproducibility. | Used in earthworm acute and avoidance tests per OECD guidelines [13]. |
| Polymer Test Substances (e.g., Chitosan, Alginate) | Biopolymers of interest as "green" substitutes; tested as both filtrates (intrinsic toxicity) and suspensions (physical effects). | Central test materials in aquatic ecotoxicity screening of biopolymers [17]. |
| Pesticide Active Ingredients (e.g., Chlorpyrifos, Carbendazim) | High-purity analytical standards used to spike soils or waters at precise concentrations for dose-response testing. | Used to generate dose-response data for BMD modeling of single and mixed pesticides [13]. |
| RNA Sequencing Kits | For library preparation and next-generation sequencing of transcriptomes from exposed organisms (e.g., zebrafish embryos). | Enables transcriptomic analysis to identify differentially expressed genes and infer toxic modes-of-action [17]. |
| T47D-kBluc or Attagene Factorial Assay Kits | In vitro cell-based bioreporter assays used in effects-based monitoring. They detect activation of specific biological pathways (e.g., estrogen receptor, stress response). | Used in WoE prioritization frameworks to screen environmental water extracts for biological activity [12]. |

In the field of ecotoxicology, researchers and regulators are confronted with a complex and ever-expanding body of data on the effects of chemicals, pharmaceuticals, and novel materials like nanoplastics on ecosystems [reference:0]. Systematic reviews are essential for distilling this evidence into reliable conclusions that can inform risk assessment and policy. However, the credibility of any systematic review hinges on the transparency and a priori planning of its methods, which mitigate bias and ensure reproducibility. This is where the development and registration of a detailed protocol becomes paramount. This section describes how to create and register a robust protocol for ecotoxicity reviews, using the PRISMA 2020 framework as its cornerstone.

The PRISMA 2020 Framework and Protocol Registration

The Preferred Reporting Items for Systematic reviews and Meta-Analyses (PRISMA) 2020 statement is the contemporary guideline for reporting systematic reviews, designed to facilitate complete and transparent reporting[reference:1]. It comprises a 27-item checklist. While PRISMA 2020 guides the reporting of a completed review, its Item 24 explicitly addresses the protocol: "Provide the register name and registration number" or "Indicate where the review protocol can be accessed"[reference:2]. This requirement underscores that a publicly available protocol is not an optional extra but an "essential element" of a trustworthy systematic review[reference:3].

A protocol acts as the project's roadmap, detailing the rationale, question (often structured as PICO or, for exposure questions, PECO), inclusion/exclusion criteria, search strategy, data management, risk-of-bias assessment, and synthesis plans before the review begins [reference:4]. Registering this protocol on a public platform locks in these plans, reducing opportunities for selective reporting and outcome switching, thereby enhancing the review's validity.

Developing a Detailed Protocol: A PRISMA-P Guided Methodology

The dedicated guideline for protocol development is the PRISMA extension for Protocols (PRISMA-P) 2015 statement[reference:5]. The following steps outline a robust methodology for ecotoxicity reviews:

Problem Formulation & Rationale: Clearly define the ecotoxicological problem, the populations (e.g., specific species, ecosystems), interventions/exposures (e.g., chemical contaminants), comparators, and outcomes (e.g., mortality, sublethal biomarkers like oxidative stress)[reference:6]. Justify the review in the context of existing knowledge and regulatory needs.

Eligibility Criteria: Pre-specify detailed inclusion and exclusion criteria. For ecotoxicity, this includes study designs (in vivo, in vitro, field studies), organism models (e.g., Danio rerio, earthworms), exposure durations, and measured endpoints.

Information Sources & Search Strategy: Identify all databases to be searched (e.g., Web of Science, Scopus, PubMed, specialized databases like ECOTOX). Draft a full, reproducible search strategy for each, including keywords, controlled vocabulary (e.g., MeSH terms), and filters[reference:7]. Specify dates of search and plans for identifying grey literature.

Study Selection Process: Describe the process for deduplication and a two-stage screening (title/abstract, then full-text) using tools like Rayyan or Covidence. Specify that screening will be performed independently by at least two reviewers, with conflicts resolved by consensus or a third reviewer[reference:8].

Data Extraction & Management: Design a standardized data extraction form to capture study characteristics, exposure details, outcome data, and key conclusions. Define the process for independent extraction and management of data.

Risk of Bias Assessment: Select and justify appropriate tools for assessing the methodological quality or risk of bias of individual ecotoxicity studies (e.g., SYRCLE's risk of bias tool for animal studies, adapted tools for in vitro research).

Data Synthesis Plan: Outline the approach for synthesis. If a meta-analysis is plausible, describe the effect measures, heterogeneity assessment (I² statistic), and model (fixed/random effects). For narrative synthesis, describe the planned grouping and comparison of studies.

Protocol Amendments: Establish a process for documenting and reporting any deviations from the registered protocol, with justifications.
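The synthesis plan described in the Data Synthesis step can be made concrete before any data are extracted. The sketch below computes an inverse-variance fixed-effect estimate, Cochran's Q, the I² statistic, and a random-effects estimate using the DerSimonian-Laird estimator of between-study variance. The choice of estimator, the function name, and the effect sizes (imagined log response ratios) are assumptions for illustration; a protocol should name the estimator and software it actually intends to use.

```python
import math


def random_effects_meta(effects, variances):
    """Inverse-variance meta-analysis with DerSimonian-Laird tau^2 and I^2."""
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect estimate.
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0

    # DerSimonian-Laird estimate of the between-study variance.
    c = sum_w - sum(w * w for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c)

    re_weights = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1.0 / sum(re_weights))
    return {"fixed": fixed, "pooled": pooled, "se": se,
            "q": q, "i2": i2, "tau2": tau2}


# Hypothetical log response ratios and their sampling variances; not real studies.
effects = [-0.10, -0.80, -0.40, -1.20]
variances = [0.020, 0.030, 0.025, 0.040]

result = random_effects_meta(effects, variances)
low = result["pooled"] - 1.96 * result["se"]
high = result["pooled"] + 1.96 * result["se"]
print(f"I2 = {result['i2']:.1f}%, tau2 = {result['tau2']:.3f}")
print(f"pooled effect = {result['pooled']:.3f} (95% CI {low:.3f} to {high:.3f})")
```

Ecotoxicity studies often differ in species, exposure duration, and endpoint, so the protocol should also state in advance how high heterogeneity will be handled, for example through planned subgroup analyses.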

Registering the Protocol: Platforms and Procedures

Registration makes the protocol publicly accessible and discoverable. The two primary platforms are:

- PROSPERO: An international, free, open-access register for systematic review protocols, maintained by the University of York. It requires completion of a minimum dataset of key methodological details[reference:9]. Registration is prospective (before data extraction begins) and provides a unique registration number (e.g., CRD420XXXXXX).

- Open Science Framework (OSF) Registries: A flexible, open-source platform that allows for the registration and archiving of systematic review protocols, including the upload of full protocol documents and associated materials[reference:10].

Table 1: Comparison of Protocol Registration Platforms

| Feature | PROSPERO | OSF Registries |
|---|---|---|
| Primary Purpose | Dedicated registry for systematic review protocols | General registry for any research study, including protocols |
| Cost | Free | Free |
| Required Fields | Pre-defined minimum dataset for systematic reviews | Flexible; user-defined structure |
| Peer Review | Administrative check only, no methodological review[reference:11] | No editorial review before acceptance[reference:12] |