Ecological Risk Assessment Principles: A Strategic Framework for Ecosystem Protection in Biomedical and Regulatory Science

Abstract

This article provides a comprehensive framework for ecological risk assessment (ERA) tailored to researchers, scientists, and drug development professionals. It begins by establishing the foundational principles and regulatory context of ERA, including the core three-phase EPA framework. It then details methodological applications, from problem formulation and exposure assessment to integrating novel endpoints like ecosystem services. The guide also addresses critical troubleshooting aspects such as managing uncertainty, incorporating climate change stressors, and navigating regulatory challenges. Finally, it explores validation and comparative strategies through advanced modeling, case study analysis, and adaptive management. The synthesis offers a forward-looking perspective on applying ERA principles to protect ecosystems while advancing biomedical innovation.

Foundations of Ecological Risk Assessment: Core Principles and Regulatory Frameworks for Ecosystem Protection

Ecological Risk Assessment (ERA) is a formal, scientific process for evaluating the likelihood that adverse ecological effects are occurring or may occur as a result of exposure to one or more environmental stressors [1] [2]. These stressors include chemicals, land-use changes, disease, invasive species, and physical alterations to habitats. Framed within the broader thesis on principles of ecosystem protection research, ERA's primary objective is to generate scientifically robust information that directly informs environmental decision-making and risk management. Its scope systematically spans from molecular-level impacts on individual organisms to population dynamics, community structure, and the integrity of entire ecosystems and the services they provide [3] [4].

The Core Phases and Objectives of Ecological Risk Assessment

The United States Environmental Protection Agency (EPA) framework outlines a structured, phased process for ERA, initiated by a critical planning stage [1] [3]. The core objective of this process is to bridge the gap between ecological science and environmental management, providing a transparent basis for regulatory actions, remediation, and conservation planning.

Planning and Problem Formulation

The Planning phase establishes the assessment's foundation through dialogue between risk managers, assessors, and stakeholders. The team identifies the risk management goals and the natural resources of concern, and agrees on the assessment's scope, complexity, and roles [1]. This phase answers strategic questions: What decision needs to be made? What is the spatial scope (e.g., local watershed, national)? What level of uncertainty is acceptable? [3]

Problem Formulation refines these plans into an actionable scientific strategy. Its central objective is to define the specific problem by identifying assessment endpoints and developing a conceptual model [3].

- Assessment Endpoints: These are explicit expressions of the actual environmental values to be protected, defined by an ecological entity and a key attribute of that entity. Selection criteria include ecological relevance, susceptibility to stressors, and relevance to management goals [3].

- Conceptual Model: A diagram and narrative that illustrates the hypothesized relationships between stressors, exposure pathways, and the assessment endpoints. It forms the basis for generating risk hypotheses about how effects might occur [3].

The output is an Analysis Plan, specifying the data, methods, and models to be used in the next phase [1].
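One way to keep a conceptual model explicit is to record it as a small data structure. The sketch below is illustrative only: the class names `AssessmentEndpoint` and `ConceptualModel` and the wording of the generated hypotheses are assumptions, not part of the EPA framework. The example values are drawn from the TBT case study later in this article.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AssessmentEndpoint:
    """An ecological entity plus the attribute of it to be protected."""
    entity: str
    attribute: str


@dataclass
class ConceptualModel:
    """Hypothetical container linking a stressor to endpoints via exposure pathways."""
    stressor: str
    pathways: dict = field(default_factory=dict)  # pathway name -> list of endpoints

    def add_pathway(self, pathway: str, endpoint: AssessmentEndpoint) -> None:
        self.pathways.setdefault(pathway, []).append(endpoint)

    def risk_hypotheses(self) -> list:
        """Return one plain-language risk hypothesis per pathway/endpoint pair."""
        return [
            f"{self.stressor} reaching {ep.entity} via {pathway} may impair {ep.attribute}"
            for pathway, endpoints in self.pathways.items()
            for ep in endpoints
        ]


model = ConceptualModel(stressor="Tributyltin (TBT)")
model.add_pathway(
    "waterborne exposure near marinas",
    AssessmentEndpoint("marine gastropod populations", "reproductive development"),
)
hypotheses = model.risk_hypotheses()
for hypothesis in hypotheses:
    print(hypothesis)
```

Each generated hypothesis can then be matched to a data need in the Analysis Plan.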

Analysis Phase

The objective of the Analysis phase is to evaluate two key components: exposure and ecological effects. It characterizes the stressor, its distribution in the environment, and the concentration/dose-response relationship [1] [3].

- Exposure Assessment: Describes the contact or co-occurrence of the stressor with the ecological receptors. It evaluates sources, release mechanisms, environmental transport and fate, and the extent, frequency, and duration of contact. Key considerations for chemicals include bioavailability, bioaccumulation (uptake faster than elimination), and biomagnification (increasing concentrations up the food web) [3].

- Effects Assessment (Stressor-Response): Evaluates the relationship between the magnitude of exposure and the type and severity of ecological effects. Data is gathered from laboratory toxicity tests, field observations, and previously published studies. This assessment links measurable effects endpoints (e.g., mortality, growth reduction, reproduction impairment) to the assessment endpoints identified in problem formulation [3].
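The description of bioaccumulation as uptake outpacing elimination can be illustrated with a one-compartment, first-order kinetic model. This is a common textbook simplification rather than a method prescribed by the cited guidance, and the rate constants and water concentration below are hypothetical.

```python
import math


def tissue_concentration(c_water, k_uptake, k_elim, t_days):
    """Tissue concentration at time t for constant water exposure.

    Solves dC/dt = k_uptake * c_water - k_elim * C with C(0) = 0.
    Assumed units: c_water in ug/L, k_uptake in L/kg/day, k_elim in 1/day,
    giving tissue concentration in ug/kg.
    """
    return (k_uptake / k_elim) * c_water * (1.0 - math.exp(-k_elim * t_days))


def steady_state_bcf(k_uptake, k_elim):
    """Kinetic bioconcentration factor (L/kg): ratio of uptake to elimination."""
    return k_uptake / k_elim


# Hypothetical parameter values, for illustration only.
K_UPTAKE = 500.0   # L/kg/day
K_ELIM = 0.05      # 1/day
C_WATER = 0.01     # ug/L

bcf = steady_state_bcf(K_UPTAKE, K_ELIM)
c_day_28 = tissue_concentration(C_WATER, K_UPTAKE, K_ELIM, 28)
c_steady = bcf * C_WATER
days_to_95_percent = math.log(20.0) / K_ELIM  # time to reach 95% of steady state

print(f"BCF = {bcf:.0f} L/kg")
print(f"Tissue concentration at day 28 = {c_day_28:.1f} ug/kg")
print(f"Days to 95% of steady state = {days_to_95_percent:.0f}")
```

A slow elimination rate raises the steady-state tissue concentration and also lengthens the exposure needed to reach it, which matters when choosing test duration.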

Risk Characterization

Risk Characterization synthesizes the exposure and effects analyses to estimate and describe risk. Its objectives are to interpret the ecological significance of the results, articulate the associated uncertainties, and provide conclusions to the risk manager [1].

- Risk Estimation: Compares the measured or estimated exposure concentrations with the effects data (e.g., toxicity benchmarks) to generate a risk quotient or probabilistic estimate [1].

- Risk Description: Integrates the evidence to evaluate whether adverse effects on the assessment endpoints are likely. It describes the nature, severity, and spatial/temporal scale of potential effects, and discusses recovery potential and lines of evidence supporting the estimates. A critical component is the explicit discussion of uncertainties arising from data gaps, variability, and model assumptions [3].

This phase concludes the scientific assessment, providing the input needed for the risk manager to weigh alternatives, communicate with stakeholders, and select a course of action [1].
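The two forms of risk estimation mentioned above, a deterministic risk quotient and a probabilistic estimate, can be sketched as follows. The log-normal assumption, the function names, and all input values are illustrative assumptions; a real assessment would justify the distributions from data.

```python
import math
import random


def risk_quotient(exposure, benchmark):
    """Deterministic risk quotient: exposure concentration / effects benchmark."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    return exposure / benchmark


def exceedance_probability(exp_median, exp_gsd, eff_median, eff_gsd,
                           n_draws=20000, seed=1):
    """Monte Carlo estimate of P(exposure > effect threshold).

    Both quantities are ASSUMED log-normal, each described by a median and a
    geometric standard deviation (GSD) in the same concentration units.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_draws):
        exposure = rng.lognormvariate(math.log(exp_median), math.log(exp_gsd))
        effect = rng.lognormvariate(math.log(eff_median), math.log(eff_gsd))
        if exposure > effect:
            hits += 1
    return hits / n_draws


# Hypothetical inputs (ug/L), for illustration only.
rq = risk_quotient(exposure=0.5, benchmark=0.25)
p_exceed = exceedance_probability(exp_median=1.0, exp_gsd=2.0,
                                  eff_median=5.0, eff_gsd=2.0)
print(f"RQ = {rq:.1f}")
print(f"P(exposure > effect) = {p_exceed:.3f}")
```

The quotient gives a single screening number, while the probabilistic estimate carries variability in both exposure and sensitivity through to the result.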

Table 1: Core Phases and Objectives of Ecological Risk Assessment

| Phase | Primary Objectives | Key Outputs |
|---|---|---|
| Planning [1] [3] | Establish risk management goals, scope, and team roles; ensure assessment will support decision-making. | Defined management goals, assessment scope, and team agreements. |
| Problem Formulation [1] [3] | Translate goals into actionable science; define what is at risk and how. | Assessment endpoints, conceptual model, analysis plan. |
| Analysis [3] | Evaluate the magnitude of exposure and the relationship between stressor and effect. | Exposure profile (sources, pathways, levels) and stressor-response profile. |
| Risk Characterization [1] [3] | Integrate analysis to estimate risk, describe ecological significance, and summarize uncertainties. | Risk estimate (quantitative or qualitative), description of adversity, uncertainty analysis. |

Quantitative Assessment Endpoints and Measurement Protocols

Selecting appropriate assessment endpoints and associated measurement protocols is critical for a scientifically defensible ERA. Endpoints must be both ecologically relevant and practically measurable [3].

Endpoints Across Levels of Biological Organization

Effects can be measured at different hierarchical levels, from sub-organismal to ecosystem. Higher-level endpoints (population, community) are more ecologically relevant but often more complex and variable to measure. Lower-level endpoints (biochemical, individual) are more precise and commonly used in standardized tests but require careful extrapolation [4].

Table 2: Ecological Assessment Endpoints and Measurement Protocols

| Level of Organization | Example Assessment Endpoints | Common Measurement Protocols & Endpoints | Typical Experiments/Studies |
|---|---|---|---|
| Sub-organismal/Biochemical | Enzyme function, genetic integrity, physiological stress. | Cholinesterase inhibition assay [5], ethoxyresorufin-O-deethylase (EROD) activity, DNA strand break assays (Comet assay), stress protein (e.g., Hsp) induction. | Controlled laboratory exposures of individual organisms; cell culture assays. |
| Individual | Survival, growth, reproduction, development, behavior. | Acute lethality (LC50/LD50) [5], chronic no-observed-effect concentration (NOEC), reproductive output (fecundity), growth rate, behavioral avoidance tests. | Standardized EPA/ASTM/OECD guideline toxicity tests (e.g., 96-hr fish LC50, Daphnia reproduction test). |
| Population | Population abundance, density, growth rate, age/size structure, extinction risk. | Mark-recapture studies, population modeling (e.g., matrix models), field census/survey data, estimation of critical effect levels for population growth rate. | Long-term field monitoring, demographic studies, population-level modeling based on individual effects data. |
| Community | Species diversity, richness, evenness, trophic structure, community composition. | Multivariate analysis (e.g., ordination), index of biotic integrity (IBI), species sensitivity distributions (SSDs) [6]. | Field mesocosm or microcosm studies, comparative field surveys at contaminated vs. reference sites. |
| Ecosystem | Primary productivity, nutrient cycling, decomposition rates, ecosystem metabolism. | Dissolved oxygen dynamics (for eutrophication), litter bag decomposition studies, nutrient flux measurements, gross primary production/respiration. | Whole-ecosystem or large-scale enclosure experiments, long-term ecological research (LTER). |
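The population-level row mentions matrix models and effects on population growth rate. A minimal sketch of that idea follows: an age-structured (Leslie) matrix whose dominant eigenvalue, estimated here by power iteration, is the asymptotic growth rate λ. The fecundity and survival values and the 30% fecundity reduction are hypothetical.

```python
def dominant_growth_rate(matrix, iterations=500):
    """Estimate the dominant eigenvalue (lambda) of a projection matrix
    by power iteration on a stage-abundance vector normalized to sum 1."""
    n = len(matrix)
    vec = [1.0 / n] * n
    lam = 0.0
    for _ in range(iterations):
        nxt = [sum(matrix[i][j] * vec[j] for j in range(n)) for i in range(n)]
        lam = sum(nxt)               # growth factor of total abundance this step
        vec = [v / lam for v in nxt]  # renormalize to avoid overflow/underflow
    return lam


def leslie_matrix(fecundities, survivals):
    """Build a Leslie matrix: fecundities on the first row, survivals on the sub-diagonal."""
    n = len(fecundities)
    matrix = [[0.0] * n for _ in range(n)]
    matrix[0] = list(fecundities)
    for i, s in enumerate(survivals):
        matrix[i + 1][i] = s
    return matrix


# Hypothetical three-age-class population.
baseline = leslie_matrix(fecundities=[0.0, 1.5, 2.0], survivals=[0.5, 0.4])
# Hypothetical stressor scenario: fecundity reduced by 30% in every age class.
stressed = leslie_matrix(fecundities=[0.0, 1.05, 1.4], survivals=[0.5, 0.4])

lambda_baseline = dominant_growth_rate(baseline)
lambda_stressed = dominant_growth_rate(stressed)
print(f"lambda (baseline) = {lambda_baseline:.3f}")
print(f"lambda (stressed) = {lambda_stressed:.3f}")
```

A λ above 1 indicates a growing population and a λ below 1 a declining one, so an individual-level effect on reproduction can be translated into a population-level consequence.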

Detailed Experimental Protocol: A Retrospective Case Study

The case of Tributyltin (TBT)-induced imposex in marine gastropods provides a classic example of a field-observed effect leading to regulatory action [5]. The following protocol details the key methodologies used to establish causality.

1. Protocol Title: Retrospective Assessment of Tributyltin (TBT) Exposure and Imposex in Marine Gastropods.

2. Problem Formulation:

- Stressor: Tributyltin (TBT) leached from antifouling paints on vessel hulls.

- Assessment Endpoint: Sustained population health of native marine gastropod species (ecological entity) as measured by normal reproductive development and function (attribute).

- Observed Effect: Increased incidence of imposex (development of male sexual characteristics in females) in snails near marinas, leading to population declines [5].

3. Analysis Phase Methodologies:

- Field Survey & Correlation:

  - Method: Systematic collection of gastropods (e.g., Nucella lapillus, Ilyanassa obsoleta) from reference sites and gradients away from marinas/harbors.

  - Measurement: For each female, the degree of imposex is quantified using the Relative Penis Size Index (RPSI) or Vas Deferens Sequence Index (VDSI). Concurrently, sediment and water samples are collected for TBT analysis.

  - Data Analysis: Statistical correlation (e.g., regression) is performed between tissue TBT concentration (or environmental concentration) and the imposex index severity [5].

- Laboratory Toxicity & Dose-Response:

  - Method: Controlled laboratory exposure of sensitive mollusk species (e.g., Crassostrea gigas oysters) to a range of waterborne TBT concentrations.

  - Exposure System: Flow-through or semi-static systems with verified TBT concentrations. Exposure spans critical developmental and reproductive life stages.

  - Measurement: Endpoints include shell thickening (in oysters), induction of imposex, mortality, and growth. The effective concentration causing a 50% response (EC50) or no-observed-effect concentration (NOEC) is derived [5].

- Chemical Fate and Exposure Analysis:

  - Method: Analysis of TBT concentrations in environmental matrices (water, sediment, biota) using gas chromatography coupled with mass spectrometry (GC-MS).

  - Measurement: Determination of TBT's spatial distribution, its partitioning into sediments (a major sink), and its bioaccumulation factor in mollusk tissues. This builds the exposure profile linking marina sources to biotic receptors [5].

4. Risk Characterization:

- Risk Estimation: Comparison of measured environmental concentrations (MECs) in marinas (found to be in the ng/L to µg/L range) with laboratory-derived chronic effect levels (as low as 2 ng/L for some species). High risk quotients (MEC/NOEC >> 1) were consistently calculated [5].

- Risk Description: The weight of evidence—from strong field correlations, consistent laboratory dose-response, and a plausible mechanistic pathway (TBT acting as an endocrine disruptor)—confirmed a causal relationship. The risk was characterized as widespread in harbors, severe (causing population declines), and irreversible for affected individuals, leading to risk management actions restricting TBT use [5].
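The field-survey calculations in this protocol can be sketched in a few lines. RPSI is commonly computed as the cubed ratio of mean female to mean male penis length, times 100, and VDSI as the mean vas deferens stage across females. These definitions come from the wider imposex literature rather than from this article, and every data value below is hypothetical.

```python
from statistics import mean


def rpsi(female_penis_mm, male_penis_mm):
    """Relative Penis Size Index: 100 * (mean female length)^3 / (mean male length)^3."""
    return 100.0 * mean(female_penis_mm) ** 3 / mean(male_penis_mm) ** 3


def vdsi(stages):
    """Vas Deferens Sequence Index: mean imposex stage across sampled females."""
    return mean(stages)


def least_squares(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical site data: log10 tissue TBT (ng/g) and site-level VDSI.
log_tbt = [0.5, 1.0, 1.5, 2.0, 2.5]
site_vdsi = [0.4, 1.6, 2.9, 3.8, 4.9]

slope, intercept = least_squares(log_tbt, site_vdsi)
example_rpsi = rpsi(female_penis_mm=[1.0, 1.2, 0.8], male_penis_mm=[2.0, 2.1, 1.9])
print(f"VDSI increases by {slope:.2f} per tenfold increase in tissue TBT")
print(f"Example RPSI = {example_rpsi:.1f}")
```

A positive, consistent slope along a gradient away from marinas is one line of evidence; it supports causality only in combination with the laboratory dose-response and mechanistic evidence described above.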

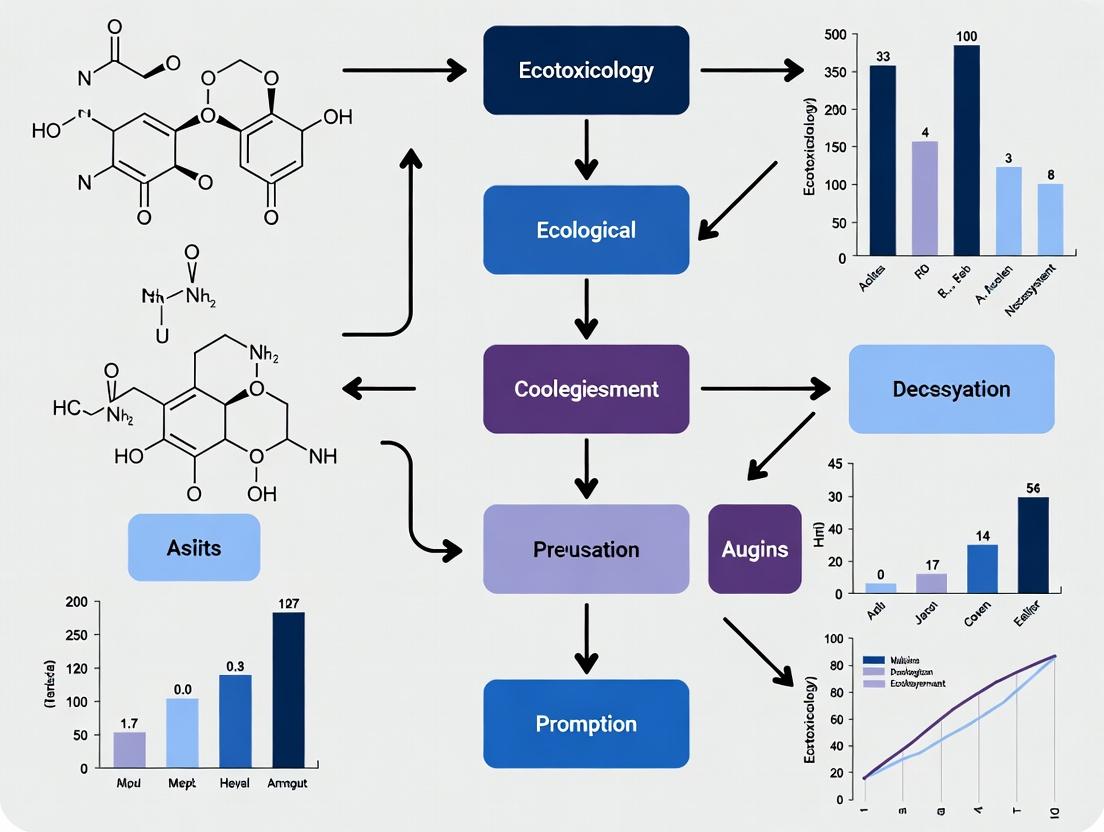

Ecological Risk Assessment Core Workflow

Conducting a modern ERA requires access to a suite of standardized tools, databases, and models. The U.S. EPA's EcoBox serves as a primary compendium of these resources [6] [2].

Table 3: Key Research Reagent Solutions and Tools for Ecological Risk Assessment

| Tool/Resource Name | Type | Primary Function & Application | Key Features/Outputs |
|---|---|---|---|
| ECOTOX Knowledgebase [6] [7] | Database | A curated database summarizing peer-reviewed toxicity data for aquatic and terrestrial species. Used to gather effects data for chemicals during problem formulation and analysis. | Contains single-chemical toxicity results for thousands of species. Essential for developing species sensitivity distributions (SSDs). |
| KABAM (Kow-based Aquatic BioAccumulation Model) [6] | Simulation Model | Predicts bioaccumulation of non-ionic organic chemicals in freshwater aquatic food webs. Used in exposure assessment for pesticides and persistent organics. | Estimates chemical concentrations in water, sediment, and multiple trophic levels (plankton, invertebrates, fish). |
| T-REX (Terrestrial Residue Exposure Model) [6] | Simulation Model | Estimates exposure of terrestrial wildlife (birds and mammals) to pesticides through dietary and incidental soil ingestion. | Calculates expected environmental concentrations (EECs) in food items and risk quotients for screening-level assessments. |
| Wildlife Exposure Factors Handbook [6] [8] | Guidance/Handbook | Provides best-practice data on physiological and behavioral parameters (e.g., ingestion rates, home range, body weight) for wildlife species. | Used to parameterize exposure models and translate environmental concentrations into species-specific daily doses. |
| CADDIS (Causal Analysis/Diagnosis Decision Information System) [6] | Decision Support System | A structured framework for identifying causes of biological impairment in aquatic systems (including stressors other than chemicals). | Guides users through source, exposure, and effects evidence to determine probable causes of community degradation. |
| Species Sensitivity Distributions (SSDs) [6] | Statistical Method | Models the variation in sensitivity of multiple species to a single stressor (usually a chemical). Used to derive protective benchmarks. | Outputs a concentration protective of a specified percentage of species (e.g., HC5: Hazardous Concentration for 5% of species). |
| Aquatic Life Benchmarks (Pesticides) [6] | Regulatory Benchmark | Provides EPA Office of Pesticide Programs' approved toxicity thresholds (acute and chronic) for freshwater species. | Used as effects concentrations in risk quotients for regulatory decision-making on pesticide registration. |
| Ecological Soil Screening Levels (Eco-SSLs) [8] | Regulatory Benchmark | Provides risk-based soil concentration guidelines for protection of terrestrial plants, soil invertebrates, and wildlife. | Used for screening-level evaluation and initial focus at contaminated sites (e.g., Superfund). |
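The HC5 output of a species sensitivity distribution can be illustrated with a simple parametric fit. The sketch below assumes species toxicity values are log-normally distributed and fits the distribution by the sample mean and standard deviation of log10 values. This is one common choice, not necessarily the method used by any EPA tool, and the toxicity values are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def hazardous_concentration(toxicity_values, fraction=0.05):
    """Concentration expected to affect `fraction` of species (HC5 by default),
    assuming a log-normal species sensitivity distribution."""
    logs = [math.log10(v) for v in toxicity_values]
    mu, sigma = mean(logs), stdev(logs)
    z = NormalDist().inv_cdf(fraction)  # standard normal quantile
    return 10 ** (mu + z * sigma)


# Hypothetical chronic toxicity values (ug/L), one per species.
species_noec = [3.2, 8.5, 12.0, 25.0, 40.0, 95.0, 150.0, 310.0]

hc5 = hazardous_concentration(species_noec, 0.05)
hc50 = hazardous_concentration(species_noec, 0.50)
print(f"HC5  = {hc5:.2f} ug/L")
print(f"HC50 = {hc50:.2f} ug/L")
```

In practice the fit should be checked against the data and reported with confidence limits, since HC5 estimates from small species sets are highly uncertain.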

The protection of ecosystems from chemical stressors is a multidisciplinary endeavor grounded in the principles of ecological risk assessment (ERA). This scientific process evaluates the likelihood that exposure to one or more environmental stressors, such as chemical substances, will cause adverse effects on plants, animals, and entire ecosystems [1]. In the United States, this scientific framework is operationalized through key regulatory statutes administered by the Environmental Protection Agency (EPA) and the Food and Drug Administration (FDA). The Toxic Substances Control Act (TSCA), the Federal Food, Drug, and Cosmetic Act (FD&C Act), and EPA's Guidelines for Ecological Risk Assessment form a complementary network designed to assess and manage risks across a chemical's lifecycle—from industrial production and use to incorporation into food and food contact materials [9] [10] [11]. For researchers and drug development professionals, understanding these intertwined frameworks is critical for designing environmentally sound products, anticipating regulatory requirements, and contributing to the protection of susceptible ecological receptors and subpopulations.

The U.S. Environmental Protection Agency (EPA): Foundational Risk Assessment Frameworks

The EPA's approach to ecological protection is codified in its Guidelines for Ecological Risk Assessment, which provide a flexible, three-phase structure for evaluating potential impacts [9] [1]. This framework is the scientific bedrock upon which many regulatory actions, including those under TSCA, are built.

Core Principles and Process: The ERA process is characterized by an iterative dialogue between risk assessors, risk managers, and interested parties, particularly during planning and risk characterization [9]. The formal phases are:

- Problem Formulation: This planning phase establishes the scope, goals, and methodology of the assessment. It identifies the environmental stressors of concern, the specific ecological entities (e.g., endangered species, keystone populations, critical habitats) to be protected, and the assessment endpoints (e.g., survival, reproduction, community structure) [1].

- Analysis: This phase consists of two parallel components: an exposure assessment (estimating the extent of contact ecological receptors have with the stressor) and an effects assessment (evaluating the relationship between stressor levels and adverse ecological outcomes) [1].

- Risk Characterization: This final phase integrates the exposure and effects analyses to estimate the likelihood and severity of adverse ecological effects. It explicitly describes risks, discusses uncertainties, and summarizes conclusions for risk managers [9] [1].

Application and Evolution: EPA uses ERA to support a wide range of actions, from regulating pesticides and hazardous waste sites to managing watersheds [1]. The science continues to evolve, with recent reports in July 2025 providing new information for performing assessments at complex urban, industrial, and waterway sites [12]. Furthermore, EPA is advancing methodologies for Cumulative Risk Assessment (CRA), which evaluates the combined risks from multiple stressors, pathways, and populations, as demonstrated in its framework for assessing phthalates [12] [11].

The diagram below illustrates the iterative, three-phase workflow of the EPA's Ecological Risk Assessment framework.

The Toxic Substances Control Act (TSCA): Chemical Risk Evaluation and Management

The 2016 amendments to TSCA established a mandatory, science-based process for the EPA to evaluate and manage risks from existing chemicals in commerce [11]. The TSCA risk evaluation process is a primary regulatory application of ecological risk assessment principles.

The Risk Evaluation Process: The purpose of a TSCA risk evaluation is to determine whether a chemical presents an unreasonable risk to health or the environment under its conditions of use, without consideration of cost [13] [11]. The rigorous process includes scope publication, hazard and exposure assessment, risk characterization, and a final risk determination, and must be completed within a 3 to 3.5-year timeframe [11]. As of September 2025, EPA has proposed significant amendments to its procedural framework rule to rescind or revise certain 2024 changes, aiming to ensure timely completion of evaluations and effective protection of health and the environment [13] [14].

Current Implementation and Case Studies:

- Phthalates and Cumulative Risk: EPA is currently conducting risk evaluations for five phthalates (DBP, DEHP, BBP, DIBP, DCHP). A key scientific advancement is the development of a draft cumulative risk assessment for these chemicals, which was peer-reviewed by the Science Advisory Committee on Chemicals (SACC) in August 2025 [13]. This reflects a move towards assessing combined effects from multiple, structurally related substances.

- Octamethylcyclotetrasiloxane (D4): In September 2025, EPA released its draft risk evaluation for D4, preliminarily finding it poses an unreasonable risk to human health and the environment, primarily from certain conditions of use [13]. This draft is undergoing public comment and peer review.

- Risk Management Rules: When unreasonable risk is found, EPA must propose risk management rules. The final rule for trichloroethylene (TCE), prohibiting most uses, is currently under litigation, with an administrative stay on exemption requirements until November 2025 [15]. Similarly, the risk management rule for carbon tetrachloride is open for comment until November 10, 2025 [13].

The TSCA risk evaluation process is a systematic sequence from initiation to final determination, as shown in the workflow below.

Table 1: Key Quantitative Metrics and Timelines in EPA/TSCA Processes

| Process/Requirement | Quantitative Metric | Description & Regulatory Context |
|---|---|---|
| TSCA Risk Evaluation Timeline [11] | 3 - 3.5 years | Statutory deadline to complete a final risk evaluation after a chemical is designated as high-priority. |
| Public Comment Period (Draft Scope) [11] | ≥ 45 days | Minimum docket open period for public comment on the draft scope of a risk evaluation. |
| Public Comment Period (Draft Risk Evaluation) [11] | ≥ 60 days | Minimum docket open period for public comment on the draft risk evaluation. |
| Toxics Release Inventory (TRI) Reporting Penalties [13] | $22,900 - $63,800 | Range of penalties from recent (Sep 2025) EPA settlements with four companies for TRI reporting failures. |
| PFAS Subject to TRI Reporting [13] | 206 substances | Total number of per- and polyfluoroalkyl substances subject to TRI reporting as of October 2025, following the addition of sodium perfluorohexanesulfonate (PFHxS-Na). |

The Food and Drug Administration (FDA): Safety of Chemicals in Food

The FDA ensures the safety of chemicals in the U.S. food supply, encompassing both intentionally added substances and environmental contaminants, under the FD&C Act [10]. Its approach combines pre-market authorization and post-market surveillance, with a focus on population-level exposure and safety.

Regulatory Authorities and Pathways:

- Premarket Approval: Food additives and color additives require pre-market review and approval via a petition process demonstrating safety [10]. Food contact substances are often authorized through a Food Contact Notification (FCN) process [10]. Substances Generally Recognized as Safe (GRAS) may be used without formal FDA approval, but the agency maintains a voluntary notification program [10].

- Post-Market Surveillance and Monitoring: The FDA monitors contaminant levels through programs like the Total Diet Study, establishes action levels (e.g., for lead in foods for babies), and enforces pesticide tolerances set by the EPA [10]. Its Closer to Zero initiative focuses on reducing exposure to environmental contaminants from foods for young children [10] [16].

Integration of Modern Science and Current Priorities: The newly formed Human Foods Program (HFP), established in October 2024, has made food chemical safety a core risk management area [16]. FY 2025 priorities include:

- Systematic Post-Market Assessment: Developing an updated framework for re-evaluating the safety of chemicals based on new science [16].

- Advanced Analytical Methods: Implementing tools like the Expanded Decision Tree (EDT) for screening chemical toxicity and using AI-driven tools like the Warp Intelligent Learning Engine (WILEE) for post-market signal detection [16].

- Targeted Contaminant Research: Expanding methods to better understand exposure to PFAS from food and issuing guidance on action levels for environmental contaminants [16].

Table 2: Comparison of Core Regulatory Frameworks for Chemical Assessment

| Aspect | EPA Ecological Risk Assessment [9] [1] | TSCA Risk Evaluation [13] [11] | FDA Food Chemical Safety [10] [16] |
|---|---|---|---|
| Primary Legislative Authority | Multiple statutes (e.g., FIFRA, CERCLA) guiding agency practice. | Toxic Substances Control Act (TSCA). | Federal Food, Drug, and Cosmetic Act (FD&C Act). |
| Core Objective | Assess likelihood of adverse effects on plants, animals, and ecosystems. | Determine "unreasonable risk" to health or the environment from existing chemicals. | Ensure safety of chemical exposure from food and food contact articles. |
| Key Scientific Focus | Ecosystem-level endpoints, population sustainability, habitat quality. | Hazard, exposure, and risk characterization under "conditions of use". | Human health exposure assessment, toxicology, dietary intake. |
| Assessment Trigger | Site-specific contamination, pesticide registration, chemical review. | Prioritization as a High-Priority Substance or manufacturer request. | New additive petition, FCN, GRAS notice, or contaminant concern. |
| Risk Management Outcome Examples | Cleanup levels, use restrictions, remediation goals. | Use prohibitions, workplace controls, recordkeeping rules. | Use authorizations, action levels, enforcement actions, recalls. |

Experimental Protocols for Regulatory Science

Adhering to standardized methodologies is essential for generating data acceptable to regulatory agencies. Below are detailed protocols for key testing paradigms relevant to ecological and human health risk assessment under these frameworks.

Protocol for a Tiered Aquatic Toxicity Test (EPA/OCSPP 850.1000 Series):

- Purpose: To determine the acute and chronic toxicity of a chemical to freshwater and marine organisms, supporting hazard classification and risk evaluation under TSCA and other statutes.

- Test Organisms: Select based on trophic level and sensitivity (e.g., fathead minnow (Pimephales promelas) for fish, Daphnia magna for invertebrate, green alga (Raphidocelis subcapitata) for plant).

- Procedure:

  - Exposure System Preparation: Prepare a geometric series of at least five test concentrations and appropriate controls (e.g., dilution water, solvent) in replicate test chambers.

  - Acute Toxicity (e.g., 96-hr Fish Test): Randomly allocate healthy organisms to each chamber. Observe mortality at 24, 48, 72, and 96 hours. Do not feed during test. Measure dissolved oxygen, pH, temperature, and chemical concentration.

  - Chronic Toxicity (e.g., Daphnia Reproduction Test): Expose young female daphnids (<24 hr old) for 21 days. Renew test solutions three times weekly. Record adult survival and the number of live offspring produced per adult.

  - Data Analysis: Calculate LC50/EC50 values (acute) or NOEC/LOEC values (chronic) using appropriate statistical methods (e.g., Probit, Spearman-Karber, or hypothesis testing).

- Regulatory Application: Data directly informs the effects assessment phase of an ERA and the hazard assessment for a TSCA risk evaluation [1] [11].
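The concentration series and LC50 calculation in this protocol can be sketched as follows, using the untrimmed Spearman-Karber estimator named in the data-analysis step. The function names and mortality counts are hypothetical. The untrimmed form needs 0% mortality at the lowest concentration and 100% at the highest, and assumes control mortality has already been accounted for.

```python
import math


def geometric_series(lowest, factor, n=5):
    """Test concentrations spaced by a constant multiplicative factor."""
    return [lowest * factor ** i for i in range(n)]


def spearman_karber_lc50(concentrations, n_exposed, n_dead):
    """Untrimmed Spearman-Karber LC50 from mortality counts per concentration."""
    props = [dead / total for dead, total in zip(n_dead, n_exposed)]
    if props[0] != 0.0 or props[-1] != 1.0:
        raise ValueError("needs 0% mortality at the lowest and 100% at the highest concentration")
    if any(later < earlier for earlier, later in zip(props, props[1:])):
        raise ValueError("mortality proportions must be non-decreasing")
    logs = [math.log10(c) for c in concentrations]
    # Mean of the tolerance distribution on the log10 scale:
    # each interval midpoint weighted by the increase in mortality across it.
    log_lc50 = sum(
        (p_next - p) * (x + x_next) / 2.0
        for p, p_next, x, x_next in zip(props, props[1:], logs, logs[1:])
    )
    return 10 ** log_lc50


# Hypothetical 96-hr test: five concentrations (mg/L), 10 fish per concentration.
concs = geometric_series(lowest=1.0, factor=2.0, n=5)
lc50 = spearman_karber_lc50(concs, n_exposed=[10] * 5, n_dead=[0, 2, 5, 8, 10])
print(f"Concentrations: {concs}")
print(f"LC50 = {lc50:.2f} mg/L")
```

When mortality does not span 0% to 100%, or is not monotonic, a trimmed Spearman-Karber or probit analysis is the usual alternative.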

Protocol for a GLP-Compliant 28-Day Repeated Dose Oral Toxicity Study (OECD 407):

- Purpose: To identify adverse health effects from repeated chemical exposure, providing critical data for establishing points of departure (PODs) for human health risk assessment under TSCA and FDA reviews.

- Test System: Young adult rodents (typically rats), assigned to control and at least three dose groups (at least 5/sex/group).

- Procedure:

  - Dose Administration: Administer the test article daily via oral gavage at constant volumes for 28 days. The high dose should induce toxicity but not severe mortality; the low dose should elicit no adverse effects.

  - In-life Observations: Record daily clinical signs, weekly body weights, and food consumption. Conduct detailed clinical examinations (e.g., sensory reactivity, grip strength) prior to dosing and near study end.

  - Terminal Procedures: At study end, collect blood for hematology and clinical chemistry. Perform a full necropsy, record organ weights (e.g., liver, kidneys, adrenals), and preserve tissues in fixative for histopathological examination of all high-dose and control animals, and target organs in all groups.

  - Statistical Analysis: Analyze continuous data (body weight, organ weight, clinical pathology) using ANOVA followed by Dunnett's test. Analyze incidence data (pathology) using Fisher's Exact Test.

- Regulatory Application: Serves as a core study for identifying target organ toxicity, establishing a No Observed Adverse Effect Level (NOAEL), and is a standard requirement for FDA food additive petitions and TSCA hazard assessments [10] [11].
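The incidence analysis named in the statistical step can be illustrated with a two-sided Fisher's exact test written from the hypergeometric distribution. The lesion counts are hypothetical, and a validated statistics package would normally be used for a regulatory study.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Rows are groups (e.g., dosed, control); columns are affected / unaffected.
    Sums the probabilities of all tables, with the same margins, that are no
    more likely than the observed table.
    """
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    total = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))
    return min(1.0, total)


# Hypothetical liver lesion incidence: 6/10 high-dose animals vs 0/10 controls.
p_value = fisher_exact_two_sided(a=6, b=4, c=0, d=10)
print(f"Two-sided p = {p_value:.4f}")
```

Each dose group is typically compared against the control in this way, so the number of comparisons should be kept in mind when interpreting borderline p-values.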

Protocol for Residue Migration Testing for Food Contact Notifications (FDA):

- Purpose: To quantify the migration of substances from food contact materials into food simulants, ensuring exposures remain within safe limits.

- Test Articles: Food contact material (e.g., polymer pellets, finished film) in its intended use form.

- Procedure:

  - Selection of Food Simulants: Assign simulants per FDA guidelines: 10% ethanol (aqueous foods), 50% ethanol (high-alcohol foods), vegetable oil (fatty foods). Use time-temperature conditions that mimic the intended use (e.g., 121°C for 2 hours for high-temperature heat sterilization).

  - Migration Cell Setup: Place the test article in contact with the simulant in a migration cell, ensuring total immersion. Use appropriate controls.

  - Extraction & Analysis: After exposure, analyze the simulant using validated analytical methods (e.g., GC-MS, HPLC-MS) with sufficient sensitivity (often in the ppb range). Calculate the concentration of the migrant.

  - Dietary Exposure Estimation: Use migration concentration, food consumption factors (e.g., FDA's Consumption Factor), and food-type distribution data to calculate an Estimated Daily Intake (EDI).

- Regulatory Application: The calculated EDI is compared to an Acceptable Daily Intake (ADI) to support the safety determination in an FCN or food additive petition [10].
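The dietary exposure step can be sketched as a short calculation: weight the migration into each simulant by a food-type distribution factor, scale by a consumption factor, and multiply by total daily food intake. The 3 kg/person/day intake is assumed here as the conventional FDA default and should be confirmed against current guidance; all migration values, factors, and the ADI are hypothetical.

```python
def weighted_migration(migration_mg_per_kg, distribution_factors):
    """Food-type-weighted migration <M> (mg/kg food).

    Both arguments map a food type to a value; the distribution factors are
    the fraction of packaged food of each type and are expected to sum to 1.
    """
    if abs(sum(distribution_factors.values()) - 1.0) > 1e-9:
        raise ValueError("distribution factors must sum to 1")
    return sum(distribution_factors[k] * migration_mg_per_kg[k] for k in distribution_factors)


def estimated_daily_intake(mean_migration, consumption_factor, daily_food_kg=3.0):
    """EDI (mg/person/day) = <M> * consumption factor * daily food intake.

    daily_food_kg=3.0 is an ASSUMED default intake of food and drink per person.
    """
    return mean_migration * consumption_factor * daily_food_kg


# Hypothetical inputs, for illustration only.
migration = {"aqueous": 0.02, "alcoholic": 0.05, "fatty": 0.10}   # mg/kg simulant
distribution = {"aqueous": 0.6, "alcoholic": 0.1, "fatty": 0.3}   # fraction by food type
CONSUMPTION_FACTOR = 0.05           # fraction of the diet contacting this material
ADI_MG_PER_PERSON_DAY = 6.0         # e.g., 0.1 mg/kg bw/day * 60 kg body weight

mean_m = weighted_migration(migration, distribution)
edi = estimated_daily_intake(mean_m, CONSUMPTION_FACTOR)
print(f"<M> = {mean_m:.3f} mg/kg food")
print(f"EDI = {edi:.5f} mg/person/day")
print(f"EDI below ADI: {edi < ADI_MG_PER_PERSON_DAY}")
```

Keeping the factors as explicit inputs makes it easy to show how the safety conclusion changes if the material's market share or intended food types change.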

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Regulatory Testing

| Item/Category | Function in Regulatory Science | Example Application & Notes |
|---|---|---|
| Certified Reference Standards | Provide the basis for accurate chemical quantification and method validation. | Used to calibrate instruments and verify accuracy in residue migration testing (FDA), environmental fate studies (EPA), and impurity profiling for TSCA submissions. Must be of known high purity and traceable to a primary standard. |
| OECD-Compliant Test Organisms | Standardized, sensitive biological models for reproducible toxicity testing. | Includes specific strains of Daphnia magna (e.g., Clone 5), fathead minnows from certified breeders, and algal cultures. Their use is mandated in EPA and OECD test guidelines to ensure data reliability and inter-lab comparability. |
| Food Simulants | Chemical substitutes for food that mimic the extractive properties of different food types. | 10% ethanol, 50% ethanol, and vegetable oil are standard simulants for aqueous, alcoholic, and fatty foods, respectively, in FDA migration testing. Their use is defined in 21 CFR Part 177. |
| Metabolically Activated S9 Fraction | Provides the exogenous metabolic activation system (microsomal enzymes) for in vitro genotoxicity assays. | A critical component of the Ames test (OECD 471) and in vitro micronucleus assay (OECD 487) to detect promutagens. S9 is typically derived from rodent livers induced with Aroclor 1254 or phenobarbital/β-naphthoflavone. |
| Stable Isotope-Labeled Analogs (Internal Standards) | Correct for matrix effects and analyte loss during sample preparation in advanced analytical chemistry. | Essential for accurate quantification of PFAS, phthalates, or pesticide residues in complex matrices (food, biological tissue, soil) via LC-MS/MS or GC-MS/MS, as required for environmental monitoring and exposure assessment. |

Ecological Risk Assessment (ERA) is a formal, scientific process used to evaluate the likelihood that adverse ecological effects may occur or are occurring as a result of exposure to one or more environmental stressors [1]. These stressors can be chemical (e.g., pesticides, industrial contaminants), physical (e.g., habitat alteration), or biological (e.g., invasive species) [1]. For researchers and drug development professionals, understanding this framework is critical for anticipating and mitigating the unintended environmental consequences of chemical products, from novel active pharmaceutical ingredients to agrochemicals.

The process is inherently iterative and is built upon a collaborative foundation between risk assessors (scientists who evaluate the data) and risk managers (decision-makers who use the assessment to inform actions) [17]. The core sequence of an ERA, as defined by the U.S. Environmental Protection Agency (EPA), consists of three primary phases: Problem Formulation, Analysis, and Risk Characterization, preceded by a critical Planning stage [3] [1]. This guide details the technical principles and methodologies underpinning this sequence, providing a roadmap for designing robust, defensible ecological safety evaluations within ecosystem protection research.

Foundational Phase: Planning and Scoping

Before technical assessment begins, a planning dialogue establishes the assessment's purpose, scope, and boundaries [17] [3]. This stage ensures the scientific evaluation will directly support subsequent environmental decision-making [3].

Key participants include risk managers (e.g., regulatory agency staff), risk assessors (ecologists, toxicologists, statisticians), and other stakeholders (e.g., industry representatives, community groups, resource trustees) [3] [18]. Together, they reach agreement on several foundational elements [17]:

- Regulatory Action & Management Goals: The specific decision context (e.g., approval of a new pesticide) and the high-level environmental values to protect (e.g., "maintaining a sustainable aquatic community") [17].

- Management Options: The potential risk management actions (e.g., use restrictions) that the assessment will inform [17].

- Scope and Complexity: Decisions on spatial/temporal scales, ecological entities to consider, resources required, and the level of uncertainty that can be tolerated [17]. A tiered approach, starting with conservative screening-level assessments, is often employed to focus resources on risks of greatest concern [17] [3].

The product of planning is a clear summary that guides the transition into the first technical phase: Problem Formulation [17].

Phase 1: Problem Formulation – Defining the Assessment

Problem formulation is the pivotal phase that translates the agreements from planning into a concrete, scientifically defensible plan for analysis. It "provides the foundation for the risk assessment" by defining the problem, selecting assessment endpoints, and developing a conceptual model [17].

Integrating Available Information

The process begins with a systematic compilation and evaluation of available data across four key domains [17] [18]:

Table 1: Key Information Domains Integrated During Problem Formulation

| Domain | Considerations | Example Data Sources |

|---|---|---|

| Stressor Characteristics | Identity, chemical/physical properties, toxicity, mode of action, persistence, frequency, and intensity of release [18]. | Product chemistry data, fate and transport studies, published literature. |

| Source Characteristics | Status (active/inactive), spatial scale, and background environmental levels [18]. | Site maps, release inventories, monitoring data from reference areas. |

| Exposure Context | Environmental media affected, exposure pathways (ingestion, inhalation, dermal), and timing relative to sensitive life cycles [18]. | Environmental fate models, habitat use studies, life-history data. |

| Ecosystem & Receptor Characteristics | Identification of species, communities, or habitats present; their life history traits, susceptibility, and ecological/social value [3] [18]. | Ecological surveys, endangered species lists, database queries. |

Selecting Assessment Endpoints

Assessment endpoints are explicit expressions of the environmental values to be protected. They combine a specific ecological entity (the what to protect) with a relevant attribute of that entity (the characteristic to protect) [17] [18].

- Ecological Entities can range from a single species (e.g., an endangered bird) to a functional group, community, or entire ecosystem [3].

- Attributes are measurable characteristics critical to the entity's sustainability, such as survival, growth, reproduction, or community structure [17].

Endpoints are selected based on ecological relevance, susceptibility to the stressor, and relevance to management goals [3]. For screening-level pesticide assessments, typical endpoints include reduced survival (acute effects) and impaired reproduction or growth (chronic effects) in surrogate species [17].
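One way to keep the entity/attribute pairing and the three selection criteria explicit is to record each candidate endpoint as a small data structure. The sketch below is illustrative only: the class name, field names, and candidate endpoints are hypothetical, and in practice each criterion is a judgment documented in the assessment rather than a simple true/false flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssessmentEndpoint:
    entity: str                        # what to protect
    attribute: str                     # characteristic of the entity to protect
    ecologically_relevant: bool
    susceptible_to_stressor: bool
    relevant_to_management_goal: bool

    def meets_selection_criteria(self) -> bool:
        """True only when all three selection criteria are satisfied."""
        return (self.ecologically_relevant
                and self.susceptible_to_stressor
                and self.relevant_to_management_goal)

    def describe(self) -> str:
        return f"{self.attribute} of {self.entity}"


# Hypothetical candidates for a stream receiving agricultural runoff
candidates = [
    AssessmentEndpoint("brown trout population", "reproductive success",
                       True, True, True),
    AssessmentEndpoint("ornamental pond fish", "survival",
                       False, True, False),
]

selected = [c.describe() for c in candidates if c.meets_selection_criteria()]
print(selected)
```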

Developing a Conceptual Model

A conceptual model is a visual and narrative tool that illustrates the hypothesized relationships between stressors, exposure pathways, receptors, and assessment endpoints [17] [18]. It consists of:

- Risk Hypotheses: Statements predicting how a stressor leads to an ecological effect.

- A Diagram: A flow chart linking sources, stressors, exposure routes, and receptors [17].

The model identifies known relationships, highlights data gaps, and ranks components by uncertainty [17]. It serves as the direct blueprint for the analysis phase.
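The diagram component of a conceptual model can be treated as a directed graph, which makes it straightforward to list every source-to-endpoint pathway that needs a risk hypothesis. The following sketch assumes a simple acyclic graph; the node names are hypothetical and do not come from any specific assessment.

```python
# Hypothetical conceptual model: each key lists the nodes it can affect
# (source -> stressor -> medium -> receptor -> assessment endpoint attribute).
conceptual_model = {
    "field application": ["pesticide in runoff", "spray drift"],
    "pesticide in runoff": ["surface water"],
    "spray drift": ["surface water", "field-margin vegetation"],
    "surface water": ["aquatic invertebrates", "fish"],
    "field-margin vegetation": ["terrestrial plants"],
    "aquatic invertebrates": ["invertebrate survival"],
    "fish": ["fish reproduction"],
    "terrestrial plants": ["plant growth"],
}


def enumerate_pathways(graph, start):
    """Return every complete path from `start` to a terminal node.

    Assumes the graph has no cycles, as in a simple source-to-endpoint diagram.
    """
    children = graph.get(start, [])
    if not children:
        return [[start]]
    paths = []
    for child in children:
        for tail in enumerate_pathways(graph, child):
            paths.append([start] + tail)
    return paths


pathways = enumerate_pathways(conceptual_model, "field application")
for path in pathways:
    print(" -> ".join(path))
```

Each printed pathway corresponds to one candidate risk hypothesis, so pathways with little supporting data are easy to flag as gaps.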

The Analysis Plan

The final product of problem formulation is a detailed analysis plan. This plan specifies the methods for evaluating the risk hypotheses, including the measures of exposure and effect, the assessment design, data quality objectives, and approaches for addressing uncertainty [17] [3]. It ensures the analysis will yield results applicable to the risk management decisions [17].

Diagram: Conceptual Model Development Workflow

Diagram 1: Sequential flow of Problem Formulation, from planning input to the creation of a technical analysis plan.

Phase 2: Analysis – Evaluating Exposure and Effects

The analysis phase is divided into two parallel, complementary components: exposure assessment and ecological effects assessment [3] [1].

Exposure Assessment

The exposure assessment describes the contact or co-occurrence of the stressor with ecological receptors. It develops an exposure profile detailing:

- Source & Release: How the stressor enters the environment.

- Fate & Transport: How it moves and distributes through media (air, water, soil, sediment).

- Contact & Bioavailability: The intensity, frequency, duration, and route of contact, considering whether the stressor is in a form organisms can absorb [3].

For chemicals, key considerations include bioaccumulation (uptake faster than elimination) and biomagnification (increasing concentration up the food web) [3]. Exposure can be estimated using models (e.g., predicting environmental concentrations) or measured via field monitoring [17].
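The balance between uptake and elimination can be illustrated with a one-compartment, first-order kinetic model, a common simplification for uptake from water. This is a minimal sketch: the rate constants and water concentration are hypothetical, and the model ignores dietary uptake, growth dilution, and metabolism.

```python
import math


def tissue_concentration(c_water, k_uptake, k_elim, t_days):
    """Analytical solution of dC/dt = k_uptake * c_water - k_elim * C, with C(0) = 0.

    c_water:  dissolved concentration (mg/L), assumed constant
    k_uptake: uptake rate constant (L/kg/day)
    k_elim:   elimination rate constant (1/day)
    """
    return (k_uptake / k_elim) * c_water * (1.0 - math.exp(-k_elim * t_days))


def steady_state_bcf(k_uptake, k_elim):
    """Kinetic bioconcentration factor (L/kg) at steady state."""
    return k_uptake / k_elim


# Hypothetical parameters, for illustration only
c_water = 0.002   # mg/L
k_u = 50.0        # L/kg/day
k_e = 0.1         # 1/day

bcf = steady_state_bcf(k_u, k_e)
for day in (1, 7, 28, 100):
    conc = tissue_concentration(c_water, k_u, k_e, day)
    print(f"day {day:3d}: {conc:.4f} mg/kg")
print(f"steady-state BCF = {bcf:.0f} L/kg")
```

In this model the tissue concentration rises toward the product of the BCF and the water concentration, and the elimination rate constant controls how quickly that plateau is approached.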

Ecological Effects Assessment

The ecological effects assessment, or stressor-response profile, evaluates the relationship between the magnitude of exposure and the likelihood or severity of adverse effects [3]. It involves:

- Evaluating Toxicity Data: Using data from standardized laboratory tests (e.g., LC50, NOAEC/NOAEL), field studies, or mesocosm experiments. Surrogate species (e.g., laboratory rat for mammals) often represent broader taxonomic groups [17].

- Linking Effects to Endpoints: Determining how a measured effect (e.g., reduced body weight in a fish) translates to an impact on the assessment endpoint (e.g., population sustainability) [3].

Table 2: Standard Toxicity Endpoints Used in Screening-Level Risk Quotient Calculations [19]

| Receptor Group | Assessment Type | Typical Toxicity Endpoint (Point Estimate) |

|---|---|---|

| Terrestrial Animals | Acute Avian/Mammalian | Lowest LD₅₀ (median lethal dose, single oral) |

| Terrestrial Animals | Chronic Avian | Lowest NOAEC from 21-week avian reproduction test |

| Terrestrial Animals | Chronic Mammalian | Lowest NOAEC from two-generation rat reproduction test |

| Aquatic Animals | Acute Fish/Invertebrate | Lowest LC₅₀ or EC₅₀ (median lethal/effect concentration) |

| Aquatic Animals | Chronic Fish | Lowest NOAEC from early life-stage or full life-cycle test |

| Aquatic Animals | Chronic Invertebrate | Lowest NOAEC from life-cycle or partial life-cycle test |

| Terrestrial Plants | Acute (Non-listed) | EC₂₅ (effective concentration for 25% effect) from seedling emergence or vegetative vigor tests |

| Aquatic Plants | Acute (Non-listed) | EC₅₀ for vascular plants and algae |
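Because the screening endpoints in the table are defined as the lowest value across available tests, selecting the point estimate amounts to taking a minimum within each receptor group. The sketch below shows that selection on hypothetical test results; the species values and field names are placeholders, not data from any study.

```python
# Hypothetical acute aquatic test results (values are placeholders)
acute_aquatic_tests = [
    {"species": "fathead minnow", "group": "fish",
     "endpoint": "LC50", "value_ug_per_l": 1200.0},
    {"species": "rainbow trout", "group": "fish",
     "endpoint": "LC50", "value_ug_per_l": 850.0},
    {"species": "Daphnia magna", "group": "invertebrate",
     "endpoint": "EC50", "value_ug_per_l": 430.0},
]


def most_sensitive(tests, group):
    """Return the test with the lowest endpoint value for a receptor group."""
    candidates = [t for t in tests if t["group"] == group]
    if not candidates:
        raise ValueError(f"no toxicity data for group: {group}")
    return min(candidates, key=lambda t: t["value_ug_per_l"])


fish_endpoint = most_sensitive(acute_aquatic_tests, "fish")
invert_endpoint = most_sensitive(acute_aquatic_tests, "invertebrate")
print(fish_endpoint["species"], fish_endpoint["value_ug_per_l"])
print(invert_endpoint["species"], invert_endpoint["value_ug_per_l"])
```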

Phase 3: Risk Characterization – Synthesizing and Interpreting

Risk characterization integrates the exposure and effects analyses to estimate and describe risk. It has two major components: risk estimation and risk description [19] [1].

Risk Estimation (The Risk Quotient Method)

For deterministic, screening-level assessments, risk is commonly estimated by calculating a Risk Quotient (RQ) [19].

RQ = Exposure Estimate (EEC) / Toxicity Endpoint

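An RQ above the applicable level of concern (LOC) indicates potential risk and triggers further evaluation or refinement. A value of 1.0 is the commonly cited benchmark, although regulatory LOCs can be lower for some receptors and effect types. The specific formulas vary by receptor and exposure scenario [19].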
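A screening-level RQ calculation is simple enough to express directly. The sketch below uses hypothetical exposure and toxicity values; the function names and the `level_of_concern` parameter are assumptions introduced for illustration, with the default set to the 1.0 benchmark mentioned above.

```python
def risk_quotient(exposure_estimate, toxicity_endpoint):
    """RQ = exposure estimate / toxicity endpoint (both in the same units)."""
    if toxicity_endpoint <= 0:
        raise ValueError("toxicity endpoint must be positive")
    return exposure_estimate / toxicity_endpoint


def screen(rq, level_of_concern=1.0):
    """Compare an RQ with a benchmark; the default of 1.0 is an assumption."""
    if rq > level_of_concern:
        return "potential risk: refine assessment"
    return "below level of concern"


# Hypothetical values, for illustration only
peak_eec_ug_per_l = 12.0   # estimated peak water concentration
lc50_ug_per_l = 430.0      # most sensitive acute endpoint

rq = risk_quotient(peak_eec_ug_per_l, lc50_ug_per_l)
print(f"RQ = {rq:.3f} -> {screen(rq)}")
```

Passing the benchmark as a parameter keeps the arithmetic separate from the policy choice of which threshold applies to a given receptor.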

Table 3: Example Risk Quotient Formulas for Pesticide Applications [19]

| Application Scenario | Receptor | Risk Quotient Formula |

|---|---|---|

| Spray (Dietary) | Birds/Mammals | Acute RQ = EEC (mg/kg-diet) / LD₅₀ (mg/kg-diet) |

| Spray (Dose-Based) | Birds/Mammals | Acute RQ = (Ingestion Rate-Adjusted EEC) / (Weight Class-Scaled LD₅₀) |

| Granular | Birds/Mammals | Acute RQ = (mg a.i./ft²) / LD₅₀ (mg/kg-bw) |

| Aquatic | Fish/Invertebrates | Acute RQ = Peak Water Concentration (μg/L) / LC₅₀ (μg/L) |

Risk Description and Principles (TCCR)

Risk description interprets the quantitative estimates. It evaluates the lines of evidence, discusses the adequacy and quality of data, explains uncertainties, and interprets the adversity of effects in ecological context [19] [3]. The overall characterization must adhere to the TCCR principles: being Transparent, Clear, Consistent, and Reasonable to be useful for risk managers [19].

Diagram: Risk Characterization Integration Process

Diagram 2: Integration of exposure and effects analyses during Risk Characterization to produce a final, interpreted assessment.

The Scientist's Toolkit: Essential Reagents and Materials

Implementing a technically sound ERA requires specialized materials and methodological knowledge. The following toolkit outlines key resources.

Table 4: Research Reagent Solutions for Ecological Risk Assessment

| Item Category | Specific Item/Reagent | Function in ERA | Technical Notes |

|---|---|---|---|

| Standard Test Organisms | Fathead minnow (Pimephales promelas), Daphnids (Ceriodaphnia dubia), Earthworms (Eisenia fetida), Northern bobwhite (Colinus virginianus), laboratory rat (Rattus norvegicus). | Serve as surrogate species for toxicity testing to represent broader groups of aquatic animals, soil invertebrates, birds, and mammals [17]. | Must be from certified culture laboratories to ensure genetic consistency and health status. Testing follows standardized OPPTS, OECD, or ASTM guidelines. |

| Reference Toxicants | Sodium chloride (NaCl), potassium chloride (KCl), sodium dodecyl sulfate (SDS), copper sulfate (CuSO₄). | Used in range-finding tests and as positive controls in definitive toxicity tests to confirm organism sensitivity and test validity. | Preparation of stock solutions requires high-purity (>99%) analytical grade chemicals and precise gravimetric/volumetric techniques. |

| Environmental Matrix Simulants | Synthetic fresh/saltwater (e.g., reconstituted fresh water, substitute ocean water per ASTM D1141), artificial soil (e.g., OECD soil), defined sediment. | Provide a standardized, reproducible medium for laboratory toxicity tests, controlling for variables like pH, hardness, and organic matter. | Recipes require reagent-grade salts (CaCl₂, MgSO₄, NaHCO₃, KCl). Must be verified for ion concentration and pH before use. |

| Analytical Standards & Spiking Solutions | High-purity (>98%) analytical standard of the stressor compound, internal standards (deuterated or ¹³C-labeled analogs for mass spectrometry). | Used to calibrate analytical instrumentation (GC-MS, LC-MS/MS, ICP-MS) for quantifying stressor concentrations in environmental media and tissue (bioaccumulation) samples. | Critical for exposure assessment. Requires preparation in appropriate solvents (e.g., methanol, acetonitrile) with serial dilution to create calibration curves. |

| Sample Preservation Reagents | Nitric acid (HNO₃, trace metal grade), hydrochloric acid (HCl), sulfuric acid (H₂SO₄), sodium hydroxide (NaOH), chemical preservatives (e.g., ascorbic acid for chlorine). | Used to preserve water, soil, sediment, and tissue samples for chemical analysis post-collection, preventing degradation or transformation of the analyte. | Handling requires appropriate PPE and safety protocols. Choice of preservative is analyte-specific (e.g., HNO₃ for metals). |

| Model Input Data | Local crop and land use data, soil property databases (texture, organic carbon), climate data (rainfall, temperature), pesticide application rates and timings. | Serve as inputs for exposure simulation models (e.g., T-REX, TerrPlant, PRZM-EXAMS) to predict environmental concentrations (EECs) [19]. | Sourced from public databases (USDA, NOAA), product labels, or site-specific measurements. Data quality directly impacts model uncertainty. |

Within the principles of ecological risk assessment for ecosystem protection, effective environmental stewardship is not achieved by any single entity. It is the product of a tripartite collaborative framework integrating the distinct yet interdependent roles of risk assessors, risk managers, and regulators [1]. This guide articulates the operational protocols, decision-making structures, and collaborative mechanisms that define this partnership. The ultimate goal is to translate scientific evidence into protective actions, balancing ecological integrity with societal needs [4].

The foundation of this collaboration is a shared process model. The U.S. Environmental Protection Agency (EPA) formalizes ecological risk assessment into three sequential, iterative phases: Problem Formulation, Analysis, and Risk Characterization, preceded by a critical collaborative planning stage [1]. This structure provides the common workflow within which distinct stakeholder roles interact, ensuring scientific rigor is aligned with regulatory mandates and management feasibility.

Defining Core Stakeholder Roles and Responsibilities

The efficacy of the ecological risk assessment process hinges on the clear definition and understanding of each stakeholder's function. Confusion or overlap in roles can lead to procedural delays, compromised scientific integrity, or ineffective management outcomes.

Table 1: Core Stakeholder Roles in Ecological Risk Assessment

| Stakeholder | Primary Function | Key Responsibilities | Governance Analogy [20] [21] |

|---|---|---|---|

| Risk Assessor | Provides scientific and technical analysis. | Conducts exposure and effects assessments; characterizes risk magnitude and uncertainty; presents objective findings [1] [22]. | The analyst and modeler; provides evidence for decision-making. |

| Risk Manager | Makes decisions on actions to mitigate risk. | Defines the problem and assessment goals with assessors; weighs assessment results with social, legal, and economic factors; selects and implements regulatory or remedial actions [1]. | The decision-maker and strategist; balances risk with organizational or program objectives. |

| Regulator | Establishes and enforces the legal and policy framework. | Sets protection goals (e.g., water quality standards, species protection); mandates assessment procedures; reviews and approves management plans; ensures compliance [23]. | The rule-setter and auditor; ensures processes and outcomes align with statutory mandates. |

The transition of the risk assessor from a purely technical role to a strategic partner is critical [22]. This elevation involves moving beyond checklist compliance to developing business- or ecosystem-focused risk management strategies. It requires assessors to communicate complex data in terms of operational impact and resilience, thereby directly informing management choices [22].

The Role of Risk Appetite and Tolerance

A key collaborative task is defining risk capacity, appetite, and tolerance [20]. In an ecological context:

- Risk Capacity: The maximum ecological stress an ecosystem can absorb without irreversible change (e.g., loss of a keystone species).

- Risk Appetite: The level of potential ecological impact a society or regulator is willing to accept to secure benefits (e.g., agricultural use of pesticides).

- Risk Tolerance: Specific, quantifiable thresholds (e.g., a pollutant concentration in water that must not be exceeded) [20] [22].

Managers and regulators must articulate these parameters during the Planning and Problem Formulation phases to guide the scope and depth of the scientific assessment [1].

Diagram 1: The 3-Phase Ecological Risk Assessment Workflow & Stakeholder Interaction [1]. This diagram illustrates the iterative EPA process and the primary points of engagement for each stakeholder. The regulator sets the overarching framework, the manager defines the problem and makes the final decision, and the assessor leads the technical analysis.

Operational Workflows and Collaborative Mechanisms

Collaboration must be structured and intentional to be effective. Best practices involve established protocols for communication, data sharing, and joint problem-solving at defined milestones.

The Collaborative Planning and Problem Formulation Phase

This initial stage is the most critical for alignment. The team must co-create the assessment's foundation [1]:

- Define Management Goals & Options: Managers, with regulatory input, articulate the desired ecological outcome (e.g., "protect spawning fish populations") and potential actions.

- Identify Assessment Endpoints: Assessors translate management goals into measurable entities (e.g., "survival and reproductive success of brown trout").

- Develop Conceptual Model: The team collaboratively diagrams the hypothesized relationships between stressors, exposure pathways, and ecological receptors.

- Agree on Analysis Plan: The scope, complexity, methods, models, and data requirements are finalized, ensuring they are fit for purpose [1].

Communication During Analysis and Risk Characterization

During the technical phases, interaction shifts but remains essential:

- Analysis Phase: Assessors conduct exposure and effects studies. Managers and regulators should be updated on methodological challenges or preliminary findings that might alter the plan [22].

- Risk Characterization Phase: Assessors synthesize data to estimate risk. The output must clearly separate scientific conclusions (the risk estimate) from science policy choices (e.g., which uncertainty factors to apply). This transparency is crucial for manager and regulator review [1] [24].

Decision-Making and Feedback Loops

Risk characterization informs, but does not dictate, the management decision. Managers integrate the risk assessment with other factors (cost, technical feasibility, social equity) [1]. After the decision, monitoring establishes a feedback loop: the resulting data are used to check the assessment's predictions and the effectiveness of the chosen action, closing the iterative cycle and allowing for adaptive management [25].

Table 2: Mechanisms for Effective Stakeholder Engagement [26] [22]

| Mechanism | Description | Best Practice Implementation |

|---|---|---|

| Structured Joint Meetings | Formal meetings at process milestones (e.g., after Problem Formulation). | Use co-chaired working groups; prepare shared agendas; document action items and decisions [26]. |

| Technical Working Groups | Small groups focusing on specific scientific or methodological issues. | Include assessors, relevant managers, and external experts; task with resolving technical uncertainties. |

| Stakeholder Reviews | Formal review of draft assessment documents or management plans. | Provide clear charge questions; allow sufficient time; respond to all comments substantively [26]. |

| Shared Digital Platforms | Centralized systems for document sharing, data access, and comment tracking. | Use platforms that maintain version control, audit trails, and role-based access to improve visibility [23] [25]. |

Advanced Methodological Protocols for Next-Generation Assessment

Modern ecological risk assessment is evolving towards predictive, systems-based approaches. These protocols require deep collaboration from the outset, as they demand integrated problem formulation and interdisciplinary expertise [24].

Protocol: Developing an Adverse Outcome Pathway (AOP)-Informed Assessment

An AOP links a molecular initiating event to an adverse ecological outcome through a series of measurable key events [24].

1. Objective: To create a mechanistic framework that organizes existing knowledge, identifies data gaps, and supports the use of alternative (non-animal) toxicity data in risk assessment.

2. Materials & Team:

- Team: AOP knowledge (toxicologists, molecular biologists), exposure science, ecological modeling, risk management, regulatory policy.

- Data: Systematic literature review tools (e.g., HAWC), AOP-Wiki access, computational modeling software.

3. Methodology:

a. Problem Formulation: Define the regulatory problem and the relevant apical ecological endpoint (e.g., fish population decline).

b. AOP Development/Utilization:

i. Search existing AOP networks (e.g., in the AOP-Wiki) for pathways linking candidate stressors to the endpoint.

ii. If no AOP exists, assemble a team to draft one, identifying the Molecular Initiating Event (MIE), Key Events (KEs), and Key Event Relationships (KERs).

iii. Assess the weight of evidence for the AOP using OECD guidelines.

c. Assessment Strategy:

i. Design tests to measure KEs (e.g., in vitro assays for MIE, omics for early KEs).

ii. Develop quantitative key event relationship models to predict progression between KEs.

iii. Integrate AOP model with exposure models to predict likelihood of the adverse outcome.

4. Output: A mechanistically supported risk hypothesis that can guide targeted testing and inform a more predictive risk characterization [24].
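The idea of chaining quantitative key event relationships can be sketched as a sequence of response functions, each taking the level of the upstream event as input. The example below is purely illustrative: the Hill-type function is a stand-in for whatever fitted relationship an assessment would actually use, and the event names and parameter values are hypothetical.

```python
def hill(x, half_max, slope):
    """Hill-type response between 0 and 1; a placeholder for a fitted qKER."""
    if x <= 0:
        return 0.0
    return x**slope / (half_max**slope + x**slope)


# Hypothetical chain: (event name, half-maximal input level, slope).
# The first entry takes exposure concentration (ug/L) as input; later
# entries take the fractional response of the preceding event.
qkers = [
    ("MIE: receptor binding", 5.0, 1.0),
    ("KE1: reduced hormone synthesis", 0.4, 2.0),
    ("AO: reduced fecundity", 0.5, 3.0),
]


def propagate(exposure_ug_per_l, chain):
    """Pass an exposure level through each relationship in order."""
    level = exposure_ug_per_l
    trace = []
    for name, half_max, slope in chain:
        level = hill(level, half_max, slope)
        trace.append((name, level))
    return trace


for exposure in (1.0, 5.0, 10.0):
    trace = propagate(exposure, qkers)
    summary = ", ".join(f"{name} = {level:.2f}" for name, level in trace)
    print(f"{exposure:4.1f} ug/L: {summary}")
```

Structuring the chain as data rather than nested function calls makes it easier to swap in a better-supported relationship for a single key event as evidence accumulates.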

Protocol: Population Modeling for Endangered Species Assessment

This protocol uses dynamic models to extrapolate from organism-level toxicity data to population-level consequences.

1. Objective: To predict the risk of a pesticide or chemical to the long-term viability of a listed fish species in a specific watershed.

2. Materials & Team:

- Team: Population ecologists, ecotoxicologists, habitat specialists, risk assessors, species managers.

- Data: Species life-history data (survival, fecundity, age structure), toxicity data (LC50, effects on growth/reproduction), habitat quality and carrying capacity data, chemical exposure profiles.

3. Methodology:

a. Model Selection: Choose an appropriate model structure (e.g., individual-based model, matrix model) based on data availability and management questions.

b. Parameterization:

i. Calibrate the baseline model with field data to ensure it accurately reflects undisturbed population dynamics.

ii. Integrate dose-response functions from toxicity tests to translate exposure concentrations into effects on vital rates (e.g., reduced juvenile survival).

c. Scenario Simulation:

i. Run simulations under various exposure scenarios (e.g., pulsed exposures from runoff).

ii. Compare endpoints like population growth rate (λ), extinction probability, or time to recovery against management benchmarks.

d. Uncertainty Analysis: Perform sensitivity and Monte Carlo analyses to identify critical data gaps and quantify uncertainty in predictions.

4. Output: Quantitative estimates of population-level risk and recovery potential, informing species-specific management options like buffer zones or use restrictions [24].
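The parameterization and scenario steps can be illustrated with a small stage-structured matrix model in which a dose-response function reduces juvenile survival and the population growth rate (λ) is recomputed for each exposure level. This is a minimal sketch under stated assumptions: the three-stage structure, all vital rates, and the dose-response parameters are hypothetical, and λ is estimated by simple power iteration rather than a dedicated modeling package.

```python
def hill_effect(concentration, ec50, slope):
    """Fractional reduction in a vital rate (0 = no effect, 1 = complete loss)."""
    if concentration <= 0:
        return 0.0
    return concentration**slope / (ec50**slope + concentration**slope)


def projection_matrix(juvenile_survival):
    """Three-stage matrix (juvenile, subadult, adult); all rates are hypothetical."""
    return [
        [0.0, 1.5, 3.0],                # fecundity of subadults and adults
        [juvenile_survival, 0.0, 0.0],  # juvenile -> subadult transition
        [0.0, 0.6, 0.7],                # subadult -> adult, adult survival
    ]


def growth_rate(matrix, iterations=500):
    """Estimate lambda (the dominant eigenvalue) by power iteration."""
    stage_vector = [1.0] * len(matrix)
    lam = 0.0
    for _ in range(iterations):
        projected = [sum(a * n for a, n in zip(row, stage_vector))
                     for row in matrix]
        lam = sum(projected) / sum(stage_vector)
        total = sum(projected)
        stage_vector = [n / total for n in projected]
    return lam


BASELINE_JUVENILE_SURVIVAL = 0.3
results = {}
for conc_ug_per_l in (0.0, 5.0, 20.0):
    effect = hill_effect(conc_ug_per_l, ec50=5.0, slope=2.0)
    survival = BASELINE_JUVENILE_SURVIVAL * (1.0 - effect)
    results[conc_ug_per_l] = growth_rate(projection_matrix(survival))

for conc, lam in results.items():
    status = "growing" if lam > 1.0 else "declining"
    print(f"{conc:5.1f} ug/L: lambda = {lam:.3f} ({status})")
```

A fuller analysis would repeat this calculation with vital rates drawn from distributions, which is the Monte Carlo step described in the protocol.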

Diagram 2: AOP-Based Risk Assessment & Predictive Modeling Framework [24]. This diagram shows how an Adverse Outcome Pathway (bottom chain) provides a mechanistic link from molecular stress to ecological harm. Exposure and population models (blue ovals) are integrated to translate laboratory data into predictive, population-relevant risk estimates, guided by stakeholder-defined protection goals.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Modern Ecological Risk Assessment

| Tool/Reagent Category | Specific Example(s) | Primary Function in Assessment | Relevance to Stakeholder Collaboration |

|---|---|---|---|

| High-Throughput In Vitro Assays | Transcriptomic arrays, receptor-binding assays, high-content screening [24]. | Identify Molecular Initiating Events (MIEs) and early Key Events for AOP development; screen many chemicals rapidly. | Provides mechanistic data for assessors; helps regulators prioritize chemicals for further testing; informs managers on emerging threats. |

| Omics Technologies | Metabolomics, proteomics, transcriptomics (e.g., EcoToxChips) [24]. | Reveal sub-lethal stress responses and mode of action; discover biomarkers of exposure and effect. | Assessors use for diagnostic evidence in retrospective assessments. Critical for developing predictive AOPs. |

| Stable Isotope Tracers | ¹⁵N, ¹³C, deuterated compound spikes. | Quantify trophic transfer of contaminants; measure bioaccumulation and biomagnification in food webs. | Provides key exposure parameters for assessment models. Essential for assessing risks to higher trophic levels (e.g., birds, mammals). |

| Passive Sampling Devices | SPMDs, POCIS, Chemcatchers. | Measure time-weighted average concentrations of bioavailable contaminants in water, sediment, or air. | Generates realistic exposure data for assessors, superior to grab sampling. Data directly feeds into exposure models. |

| Toxicity Identification Evaluation (TIE) Materials | Solid phase extraction columns, toxicity-directed fractionation equipment, organism-specific culturing. | Identify the specific chemical(s) causing observed toxicity in a complex environmental mixture. | Crucial for assessors in retrospective (cause identification) assessments. Directly guides managers on which contaminants to target for remediation. |

| Environmental DNA (eDNA) Kits | Species-specific qPCR assays, metabarcoding primer sets. | Sensitive detection of species presence (including invasive or endangered); assess community composition. | Provides assessors with efficient biodiversity data. Aids regulators in monitoring protected species and managers in evaluating restoration success. |

| Process-Based Simulation Software | AQUATOX, BEEHAVE, individual-based model platforms [24]. | Simulate fate, transport, and effects of stressors on populations, communities, or ecosystem functions. | Allows assessors to extrapolate laboratory data to field conditions and predict recovery. Enables managers to simulate scenarios of different intervention strategies. |

Fostering a Culture of Effective Collaboration

Sustained collaboration requires more than protocols; it demands a supportive culture and structured engagement strategies. Research indicates that stakeholder relationships are strongest when engagement is two-way, trusted, and timely, allowing for genuine co-creation [26].

Key principles for fostering this culture include:

- From "Tick-Box" to Co-Creation: Move beyond perfunctory consultation. Involve managers and regulators in shaping the assessment questions from the start, and involve assessors in discussing the practical implications of their findings [26] [22].

- Managing Power Imbalances: Regulators and large agencies must actively mitigate perceived power imbalances. This can involve co-chairing meetings, transparently sharing internal constraints, and demonstrating how stakeholder input influenced outcomes [26].

- Building Institutional Memory: Staff rotation can disrupt relationships and lose critical knowledge. Organizations should implement structured onboarding and offboarding for key roles and maintain centralized repositories of decision rationales and project histories [26] [25].

- Integrating Feedback Loops: Establish clear channels for stakeholders to provide feedback on the assessment process itself, and commit to being a "learning organization" that acts on that insight [26].

The future of ecosystem protection lies in strengthening this collaborative triad. By embracing structured workflows, advanced predictive methodologies, and a culture of trusted engagement, risk assessors, managers, and regulators can ensure that ecological risk assessment fulfills its vital role as the scientific backbone of informed environmental stewardship [4] [24].

Within the framework of principles for ecological risk assessment (ERA) aimed at ecosystem protection, the methodologies of prospective and retrospective assessment serve as two fundamental, complementary pillars [27]. This whitepaper establishes that an integrated application of these approaches is critical for advancing sustainable chemical and pharmaceutical development. Prospective risk assessment is defined as a predictive exercise, estimating the likelihood of future adverse ecological effects before a chemical is approved for use or release [27] [1]. Conversely, retrospective risk assessment is a diagnostic tool used to evaluate whether observed ecological impacts are or were caused by past or ongoing exposure to environmental stressors [1] [28].

The overarching thesis is that effective ecosystem protection research requires a dynamic, iterative loop between these two paradigms. Prospective assessments, while essential for prevention, are inherently burdened with assumptions and uncertainties regarding real-world exposure and ecological complexity [27]. Retrospective assessments provide the critical "ecological reality check" [29], grounding predictions in observable effects and enabling the refinement of models and regulatory guidelines. This integrated approach is paramount for transitioning from simplistic, deterministic hazard quotients to robust, ecologically relevant risk characterizations that account for species life histories, population-level consequences, and the complexities of chemical mixtures [27] [30].

Core Principles and Quantitative Comparison

The distinction between prospective and retrospective ERA is rooted in their temporal direction, primary objectives, and the nature of the data they employ. The following table synthesizes their core characteristics.

Table 1: Core Characteristics of Prospective and Retrospective Ecological Risk Assessments

| Characteristic | Prospective ERA | Retrospective ERA |

|---|---|---|

| Temporal Direction | Future-oriented (predictive) | Past- and present-oriented (diagnostic) |

| Primary Objective | To predict likelihood and magnitude of adverse effects before they occur; to inform pre-market authorization and risk management [27] [1]. | To determine causality between observed ecological impacts and past/current exposures; to inform remediation and validate predictions [1] [28]. |

| Typical Triggers | New chemical/product application, regulatory review, planned land-use change [27]. | Observed population decline, community shift, toxic incident, monitoring data indicating impairment [28]. |

| Exposure Data | Predicted Environmental Concentration (PEC) based on models of use, fate, and transport [29] [31]. | Measured Environmental Concentration (MEC) from field monitoring of water, soil, sediment, or biota [29]. |

| Effects Data | Derived from standardized laboratory toxicity tests on surrogate species (e.g., LC50, NOEC) [27] [31]. | Derived from field observations, biomarkers in resident species, and community-level bioassessments (e.g., species diversity indices) [28]. |

| Risk Characterization | Often uses deterministic Risk Quotients (RQ = PEC/PNEC) compared to Levels of Concern (LOC) [27] [30]. | Involves weight-of-evidence approaches, cause-effect analysis (e.g., Toxicant Identification Evaluations), and spatial/temporal co-occurrence analysis [28]. |

| Key Uncertainty | Extrapolation from lab to field, individual to population, and across species [27]. | Confounding stressors, establishing definitive causal links, and historical baseline data [28]. |

A pivotal concept in both frameworks, especially for chemical mixtures like wastewater effluent, is the Cumulative Risk Characterization Ratio (cumRCR). This metric sums the risk quotients of individual chemicals to evaluate the potential risk of the whole mixture [32] [29]. A case study on domestic wastewater treatment demonstrated that while no single chemical exceeded a hazard quotient of 1.0 for certain treatment types, the cumulative risk (cumRCR) indicated a potential need for further assessment [29]. This highlights a critical limitation of single-substance prospective assessment and underscores the value of retrospective monitoring to validate mixture risk predictions.
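As a purely illustrative piece of arithmetic, the summation behind cumRCR can be written out as follows. The three risk quotients (0.5, 0.4, 0.3) are hypothetical values chosen only to show the pattern; they are not taken from the cited case study.

```latex
\[
\mathrm{cumRCR} \;=\; \sum_{i=1}^{n} \mathrm{RQ}_i
\;=\; \sum_{i=1}^{n} \frac{\mathrm{PEC}_i}{\mathrm{PNEC}_i},
\qquad
\text{e.g. } 0.5 + 0.4 + 0.3 = 1.2 > 1.0 .
\]
```

In this hypothetical case every individual quotient is below 1.0, yet the mixture as a whole exceeds the threshold.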

Methodological Protocols and Experimental Workflows

Integrated Prospective-Retrospective Framework for Wastewater Assessment

A definitive example of linking both methodologies is a tiered framework for assessing risks from "down-the-drain" chemicals in treated wastewater [32] [29]. The protocol is designed to prioritize resources and provide an ecological reality check.

Table 2: Tiered Framework for Assessing Chemical Mixtures in Wastewater [29]

| Tier | Assessment Type | Key Actions | Decision Criteria |

|---|---|---|---|

| Tier 1 | Prospective Screening | Compare Predicted Effluent Concentrations (PEC) of multiple chemicals to lowest available Predicted No-Effect Concentration (PNEC). Calculate cumulative RCR (cumRCR). | If cumRCR < 1.0, risk deemed unlikely. If cumRCR > 1.0, proceed to Tier 2. |

| Tier 2 | Refined Prospective | Refine PNECs based on trophic level (algae, invertebrate, fish). Recalculate cumRCR with more specific effect data. | If refined cumRCR < 1.0, risk unlikely. If > 1.0, proceed to Retrospective assessment. |

| Retrospective | Field Validation | Conduct site-specific monitoring: 1) Chemical analysis to verify PECs, 2) Ecological surveys (e.g., invertebrate communities), 3) In-situ bioassays. | Test hypotheses from Tiers 1/2. Determine if predicted risk drivers are linked to observed effects. Inform risk management. |
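The decision criteria in Table 2 can be sketched as a small routing function. This is a minimal illustration, not part of the cited framework: the function name, the tier labels, and the returned strings are hypothetical, and the table does not say what happens when cumRCR equals exactly 1.0, so the sketch conservatively assumes escalation in that case.

```python
def next_step(tier: str, cum_rcr: float, threshold: float = 1.0) -> str:
    """Map a tier and its cumRCR value to the next action in the tiered scheme.

    Assumption: a cumRCR exactly equal to the threshold escalates,
    because Table 2 leaves that boundary case unspecified.
    """
    escalation = {
        "tier1": "proceed to Tier 2",
        "tier2": "proceed to retrospective assessment",
    }
    if tier not in escalation:
        raise ValueError(f"unknown tier: {tier!r}")
    if cum_rcr < 0:
        raise ValueError("cumRCR cannot be negative")
    if cum_rcr < threshold:
        return "risk unlikely"
    return escalation[tier]


if __name__ == "__main__":
    print(next_step("tier1", 0.8))
    print(next_step("tier1", 1.4))
    print(next_step("tier2", 1.1))
```

Keeping the threshold as a parameter makes it easy to test how sensitive the routing is to the chosen level of concern.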

Detailed Tier 1 Experimental Protocol:

- Scenario Definition: Define population served (e.g., 10,000 people), wastewater daily flow (e.g., 2,000 m³/day), and receiving water dilution factor (e.g., 10x) [29].

- Chemical Selection: Compile a list of representative "down-the-drain" chemicals (pharmaceuticals, personal care products) with known per capita consumption and removal rates in different treatment types (Trickling Filter, Activated Sludge, Advanced Oxidation) [29].

- PEC Calculation: For each chemical i, calculate:

  PEC_effluent(i) = (Daily Load per Capita(i) * Population) / Daily Flow Volume

  PEC_surface_water(i) = PEC_effluent(i) / Dilution Factor

- PNEC Derivation: Obtain lowest chronic toxicity endpoint (e.g., NOEC, EC10) from literature for three trophic levels. Apply appropriate assessment factors (e.g., 10-1000) to derive a PNEC [29].

- Risk Quotient & CumRCR Calculation:

  RQ(i) = PEC_surface_water(i) / PNEC(i)

  cumRCR = Σ RQ(i) for all chemicals

- Interpretation: A cumRCR > 1.0 suggests potential risk, triggering Tier 2.
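The Tier 1 calculation steps can be sketched in a few lines of Python. The scenario values (10,000 people, 2,000 m³/day, 10x dilution) come from the protocol above; everything else is an assumption made for illustration. In particular, the three chemicals and their loads, endpoints, and assessment factors are invented placeholders, and the optional `removal_fraction` field is an added convenience that the protocol's PEC formula does not include explicitly.

```python
from dataclasses import dataclass


@dataclass
class Chemical:
    name: str
    load_mg_per_capita_day: float        # daily load per capita entering treatment
    lowest_chronic_endpoint_ug_l: float  # e.g. lowest NOEC or EC10 across trophic levels
    assessment_factor: float             # e.g. 10 to 1000
    removal_fraction: float = 0.0        # assumed extension: fraction removed in treatment


def pec_effluent(chem, population, flow_m3_day):
    """PEC in effluent in ug/L (1 mg per m3 equals 1 ug per L)."""
    remaining = 1.0 - chem.removal_fraction
    return chem.load_mg_per_capita_day * remaining * population / flow_m3_day


def pec_surface_water(chem, population, flow_m3_day, dilution):
    """PEC in the receiving water after dilution, in ug/L."""
    return pec_effluent(chem, population, flow_m3_day) / dilution


def pnec(chem):
    """PNEC = lowest chronic endpoint divided by the assessment factor."""
    return chem.lowest_chronic_endpoint_ug_l / chem.assessment_factor


def risk_quotient(chem, population, flow_m3_day, dilution):
    return pec_surface_water(chem, population, flow_m3_day, dilution) / pnec(chem)


def cum_rcr(chemicals, population, flow_m3_day, dilution):
    """Sum of the individual risk quotients."""
    return sum(risk_quotient(c, population, flow_m3_day, dilution) for c in chemicals)


# Scenario values from the protocol; the three chemicals are invented placeholders.
POPULATION, FLOW_M3_DAY, DILUTION = 10_000, 2_000.0, 10.0
chemicals = [
    Chemical("chem_A", 0.2, 10.0, 10.0),
    Chemical("chem_B", 1.0, 100.0, 100.0),
    Chemical("chem_C", 0.6, 5.0, 10.0),
]

for c in chemicals:
    print(c.name, round(risk_quotient(c, POPULATION, FLOW_M3_DAY, DILUTION), 3))
total = cum_rcr(chemicals, POPULATION, FLOW_M3_DAY, DILUTION)
print("cumRCR:", round(total, 3), "-> Tier 2" if total > 1.0 else "-> risk unlikely")
```

With these placeholder inputs each individual RQ stays below 1.0 while the sum is about 1.2, which reproduces the pattern that motivates the cumulative metric.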

Prospective ERA in Veterinary Drug Development

For veterinary medicinal products (VMPs), the European Medicines Agency mandates a tiered prospective ERA [31]. This protocol is crucial for preventing incidents like the diclofenac-induced vulture population collapse [31].

Phase II, Tier B Experimental Protocol (Triggered if PEC/PNEC > 1 in Tier A):

- Refined Exposure Assessment (PEC refinement):

  - Conduct environmental fate studies to determine degradation rates (hydrolysis, photolysis, biodegradation) in water and soil.

  - Perform adsorption/desorption studies to derive a Kd or Koc, modeling leaching potential.

  - Use simulation models (e.g., FOCUS) to generate more realistic PECs accounting for climate, soil type, and timing of application [31].

- Refined Effects Assessment (PNEC refinement):

  - Execute extended-duration chronic toxicity tests (e.g., 28-day reproduction test in crustaceans, early life-stage test in fish).

  - Investigate effects on additional, possibly more sensitive, species relevant to the exposure compartment.

  - Consider testing of key ecosystem processes (e.g., organic matter decomposition in soil) [31].

- Updated Risk Characterization: Recalculate PEC/PNEC ratios with refined data. If ratio remains >1, proceed to Tier C (e.g., microcosm/mesocosm studies or proposal of risk mitigation measures).
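To make the refinement steps more concrete, the sketch below shows how fate-study outputs might feed a recalculated ratio. It is a simplification under stated assumptions and is not the FOCUS modelling or the regulatory procedure itself: it assumes single first-order degradation (so the rate constant is ln 2 / DT50), normalises Kd to organic carbon as Koc = Kd / f_oc, and uses invented input values. The function names and the wording of the non-escalation outcome are hypothetical.

```python
import math


def koc_from_kd(kd_l_per_kg: float, fraction_organic_carbon: float) -> float:
    """Normalise a sorption coefficient Kd to organic carbon: Koc = Kd / f_oc."""
    if not 0.0 < fraction_organic_carbon <= 1.0:
        raise ValueError("fraction_organic_carbon must be in (0, 1]")
    return kd_l_per_kg / fraction_organic_carbon


def rate_constant_from_dt50(dt50_days: float) -> float:
    """First-order rate constant (per day), assuming simple first-order decay."""
    if dt50_days <= 0:
        raise ValueError("dt50_days must be positive")
    return math.log(2.0) / dt50_days


def fraction_remaining(dt50_days: float, elapsed_days: float) -> float:
    """Fraction of the initial concentration left after elapsed_days."""
    return math.exp(-rate_constant_from_dt50(dt50_days) * elapsed_days)


def refined_rq(initial_pec: float, dt50_days: float,
               elapsed_days: float, refined_pnec: float) -> float:
    """Recalculate PEC/PNEC using a degradation-adjusted PEC (illustrative only)."""
    refined_pec = initial_pec * fraction_remaining(dt50_days, elapsed_days)
    return refined_pec / refined_pnec


def tier_b_outcome(rq: float) -> str:
    """Escalate to Tier C only if the refined ratio remains above 1."""
    return "proceed to Tier C" if rq > 1.0 else "no further refinement indicated"


if __name__ == "__main__":
    # Invented inputs: PEC 4.0 ug/L, DT50 10 days, 20 days elapsed, PNEC 2.0 ug/L.
    rq = refined_rq(initial_pec=4.0, dt50_days=10.0, elapsed_days=20.0, refined_pnec=2.0)
    print(round(rq, 3), tier_b_outcome(rq))
    print(round(koc_from_kd(kd_l_per_kg=2.0, fraction_organic_carbon=0.02), 1))
```

A real Tier B assessment would replace the single decay term with scenario-based simulation output; the sketch only shows where degradation and sorption data enter the calculation.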

Visualizing Assessment Workflows and Signaling Pathways

Integrated Prospective and Retrospective ERA Workflow

Pathway Description: This diagram illustrates the synergistic relationship between prospective and retrospective ERA. The prospective pathway (yellow/green) is a predictive, forward-looking process culminating in a risk quotient (RQ) and a regulatory decision. If the decision is "No" (risk unacceptable), it triggers a retrospective assessment (blue), which begins with an observed ecological effect. The retrospective pathway diagnoses cause and effect, leading to risk management. Crucially, outcomes from both pathways feed into a validation and refinement step, creating a feedback loop that improves the initial problem formulation and predictive models, embodying the iterative, learning-by-doing principle of advanced ERA [27] [29] [28].

Application in Pharmaceutical Development: A One Health Perspective

The integration of prospective ERA into drug development is both an ethical and a regulatory necessity, particularly under the One Health framework linking human, animal, and environmental health [31]. The environmental release of active pharmaceutical ingredients (APIs) is of particular concern because APIs are designed to be biologically active and may act on evolutionarily conserved targets in non-target organisms [31].

Current Regulatory Context: In the European Union, an ERA is mandatory for new veterinary medicinal products and for human pharmaceuticals where the predicted aquatic concentration exceeds 0.01 μg/L or the API has specific hazardous properties [31]. However, a significant gap exists for "legacy" drugs approved before these requirements, resulting in a lack of chronic ecotoxicity data for the majority of pharmaceuticals on the market [31].

Critical Need for Advanced Models: Traditional prospective ERA for pharmaceuticals often relies on standard algal, daphnid, and fish toxicity tests. For APIs with specific modes of action (e.g., endocrine disruption, neurotoxicity), these tests may lack sensitivity or ecological relevance [27]. This is where mechanistic effect models like population models are critical. For instance, a model incorporating the life history of a fish species, its vulnerability to endocrine disruption during specific life stages, and population recovery dynamics provides a far more robust risk characterization than a simple RQ based on a 21-day fish survival test [27] [30].
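A minimal sketch of the kind of matrix population model referred to here is given below. It is an assumption-laden illustration rather than a Pop-GUIDE-compliant model: the three-age-class structure, the survival and fecundity values, and the 50% fecundity reduction standing in for an endocrine-mediated effect are all hypothetical. The asymptotic population growth rate is estimated by simple power iteration in pure Python.

```python
def build_leslie(fecundities, survivals):
    """Build an age-structured (Leslie) matrix.

    fecundities: offspring per individual for each age class (first row).
    survivals: probability of surviving from class k to class k + 1.
    """
    n = len(fecundities)
    if len(survivals) != n - 1:
        raise ValueError("need exactly one survival value per age transition")
    matrix = [[0.0] * n for _ in range(n)]
    matrix[0] = [float(f) for f in fecundities]
    for k, s in enumerate(survivals):
        matrix[k + 1][k] = float(s)
    return matrix


def project(matrix, vector):
    """One time step: multiply the matrix by the age-class abundance vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def growth_rate(matrix, iterations=500):
    """Estimate the dominant eigenvalue (lambda) by power iteration."""
    abundance = [1.0 / len(matrix)] * len(matrix)  # normalised to sum to 1
    lam = 0.0
    for _ in range(iterations):
        nxt = project(matrix, abundance)
        lam = sum(nxt)                      # growth factor, since abundance sums to 1
        abundance = [x / lam for x in nxt]  # renormalise to avoid overflow
    return lam


# Hypothetical life history: reproduction in age classes 2 and 3 only.
FECUNDITY = [0.0, 1.5, 2.0]
SURVIVAL = [0.5, 0.6]
EFFECT_ON_FECUNDITY = 0.5  # assumed 50% reduction in reproduction under exposure

baseline = growth_rate(build_leslie(FECUNDITY, SURVIVAL))
exposed = growth_rate(
    build_leslie([f * (1.0 - EFFECT_ON_FECUNDITY) for f in FECUNDITY], SURVIVAL)
)
print("baseline lambda:", round(baseline, 3))
print("exposed lambda:", round(exposed, 3))
```

With these invented parameters the baseline population grows (lambda above 1) while the exposed population declines (lambda below 1), a population-level signal that a survival-only endpoint would not reveal.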

Table 3: Key Challenges and Advanced Approaches for ERA in Drug Development

| Challenge | Traditional Approach Limitation | Advanced/Integrated Approach |

|---|---|---|

| Legacy Data Gaps | Lack of chronic ecotoxicity data for most APIs [31]. | Use of New Approach Methodologies (NAMs) like QSAR, in vitro assays, and omics to fill data gaps and prioritize testing [31]. |

| Sub-lethal & Chronic Effects | Standard tests may miss population-relevant endpoints like reproduction, behavior, or multi-generational effects. | Application of mechanistic population models (e.g., following Pop-GUIDE) to translate sub-organism effects to population-level consequences [27] [30]. |

| Mixture Exposure | Drugs are present in the environment as complex mixtures; single-substance assessment is insufficient. | Retrospective monitoring to identify mixture risk drivers and validate prospective mixture assessment models [32] [29]. |

| Real-World Exposure Scenarios | PECs often based on simplistic, worst-case scenarios. | Use of environmental fate modeling and refined exposure scenarios informed by post-market environmental monitoring (retrospective data) [31]. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Materials for ERA Experiments

| Item | Function in ERA | Example Application Context |

|---|---|---|

| Standard Test Organisms (e.g., Daphnia magna, Pseudokirchneriella subcapitata, Danio rerio embryos) | Surrogate species for deriving acute and chronic toxicity endpoints (LC50, NOEC, EC10) used to calculate PNECs [31]. | Base set of assays in prospective Tier A/B assessments for pharmaceuticals and chemicals. |

| In-situ Bioassay Chambers (e.g., riverine deployment cages for mussels or amphipods) | Allow for controlled exposure of test organisms in the actual environment, integrating all site-specific conditions (water quality, mixture exposure) [29]. | Retrospective validation in wastewater effluent studies to confirm laboratory-based PNECs. |

| Environmental Fate Simulation Systems (e.g., soil lysimeters, water-sediment microcosms) | Experimental systems to measure degradation rates (DT50), leaching potential, and bioavailability of chemicals under controlled but environmentally realistic conditions [31]. | Refined exposure assessment (PEC refinement) in Phase II Tier B of veterinary drug ERA. |

| Molecular Biomarker Kits (e.g., for vitellogenin, CYP450 enzyme activity, DNA damage) | Tools for Biological Effect Monitoring (BEM), detecting early sub-lethal responses in organisms exposed to contaminants, indicating specific modes of action [28] [31]. | Used in both prospective (to identify sensitive endpoints) and retrospective (as evidence of exposure/effect) assessments. |

| Activated Sludge & Advanced Oxidation Process (AOP) Reactors | Bench-scale models of wastewater treatment to empirically determine chemical-specific removal rates, which are critical for accurate PEC calculation for "down-the-drain" chemicals [29]. | Prospective exposure modeling for pharmaceuticals and personal care products. |

| Population Model Software Platforms (e.g., individual-based model frameworks, matrix population model code) | Computational tools to implement mechanistic effect models that translate individual-level toxicity data to population growth rate or extinction risk [27] [30]. | Higher-tier prospective assessment for chemicals with specific life-stage effects or for endangered species assessments. |

The protection of ecosystems from the unintended consequences of chemical and drug development demands a robust, scientifically advanced ERA paradigm. Reliance solely on prospective assessment with deterministic risk quotients is inadequate, as it fails to capture ecological complexity, mixture effects, and population-level dynamics [27] [30]. Retrospective assessment is not merely a tool for addressing past mistakes but an indispensable feedback mechanism for validating and refining predictive models.

The future of ERA lies in the explicit integration of these approaches: using prospective models to generate testable hypotheses about risk, and employing targeted retrospective monitoring to provide the ecological validation necessary for continuous learning and model improvement [29]. This iterative cycle, supported by advanced tools like mechanistic population models and New Approach Methodologies, is essential for realizing the principles of One Health and achieving sustainable ecosystem protection in the face of ongoing chemical innovation.

Methodological Applications in ERA: From Exposure Analysis to Ecosystem Service Endpoints

Within the structured framework of ecological risk assessment (ERA) for ecosystem protection, Problem Formulation is the critical first phase that determines the entire direction, scope, and utility of the assessment [17]. It serves as the essential bridge between broad management goals and the subsequent technical analysis. This phase is a collaborative, iterative planning dialogue between risk assessors and risk managers to ensure the assessment yields information relevant for informed environmental decision-making [17].

The process establishes the "rules of engagement" for the ERA, defining what needs to be protected and how potential risks will be evaluated. Successful problem formulation integrates available information about stressors and ecosystems, defines clear assessment endpoints aligned with management goals, develops predictive conceptual models, and creates a pragmatic analysis plan [17]. This guide details the core technical components of this phase, framed within the overarching principles of ecological risk assessment research.

Table 1: Core Components and Outcomes of Problem Formulation