Dose-Response Curve Decoded: From Fundamental Principles to AI-Driven Optimization in Modern Drug Development



Abstract

This article provides a comprehensive guide to dose-response curves for researchers and drug development professionals, bridging classic pharmacological theory with contemporary application challenges. It begins by establishing the core principles of potency, efficacy, and curve interpretation, including metrics like EC50 and the therapeutic index [citation:1][citation:9]. The discussion then advances to modern methodological applications, highlighting the paradigm shift from maximum tolerated dose (MTD) to optimal biological dose (OBD) for targeted therapies, as championed by initiatives like the FDA's Project Optimus [citation:2][citation:7]. The article tackles common troubleshooting issues, such as non-sigmoidal curves and clinical translation failures, and explores innovative solutions, including randomized dose optimization designs and backfill cohorts [citation:4][citation:2]. Finally, it examines advanced validation and comparative techniques, featuring cutting-edge machine learning models like Multi-Output Gaussian Processes (MOGP) for predictive biomarker discovery and dose-response prediction [citation:3]. This end-to-end analysis synthesizes foundational knowledge with the latest regulatory and computational strategies to inform safer, more effective therapeutic development.

What is a Dose-Response Curve? Core Principles, Curve Anatomy, and Pharmacological Interpretation

Defining the Dose-Response Relationship and Its Central Role in Pharmacology and Toxicology

The dose-response relationship is the most fundamental concept in pharmacology and toxicology, describing the quantitative change in effect on a biological system resulting from differing levels of exposure to a chemical, physical, or biological agent [1]. This relationship forms the bedrock of therapeutic drug development, toxicological risk assessment, and regulatory decision-making [1]. At its core, the concept rests on three critical assumptions: that the agent interacts with a specific molecular target or receptor, that the magnitude of the response is correlated with the concentration of the agent at that site, and that this local concentration is, in turn, related to the administered dose [1].

Quantifying this relationship involves graphing the dose (or a function of the dose, such as log10 dose) on the x-axis against the measured effect on the y-axis [2]. The resulting dose-response curve is characterized by several key parameters: potency (the location of the curve along the dose axis), maximal efficacy or ceiling effect (the greatest attainable response), and slope (the change in response per unit dose) [2]. Furthermore, dose-response analysis determines the therapeutic index—the ratio between the toxic and effective doses—which is a crucial indicator of a drug's safety window [2].
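As a small worked example of the therapeutic index described above, the sketch below divides a median toxic dose by a median effective dose. The function name and the dose values are hypothetical and chosen only for illustration; they do not come from any cited study.

```python
def therapeutic_index(td50: float, ed50: float) -> float:
    """Ratio of the median toxic dose to the median effective dose."""
    if td50 <= 0 or ed50 <= 0:
        raise ValueError("doses must be positive")
    return td50 / ed50


# Hypothetical doses in mg/kg, for illustration only.
ti = therapeutic_index(td50=200.0, ed50=10.0)
print(f"Therapeutic index: {ti:.1f}")
```

A larger ratio corresponds to a wider separation between the effective and toxic dose ranges.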

A foundational distinction is made between two primary types of responses. A graded (or gradual) dose-response describes a continuous, measurable effect in an individual system, such as the contraction of muscle tissue or the inhibition of an enzyme [1]. In contrast, a quantal (or all-or-none) dose-response tracks the distribution of a specific, discrete outcome (like death or the achievement of a therapeutic cure) across a population [1]. The profile of these curves also varies based on the agent's mechanism; most chemicals exhibit a threshold dose below which no observable effect occurs, while for carcinogens and mutagens, a linear, non-threshold relationship is often assumed [1].

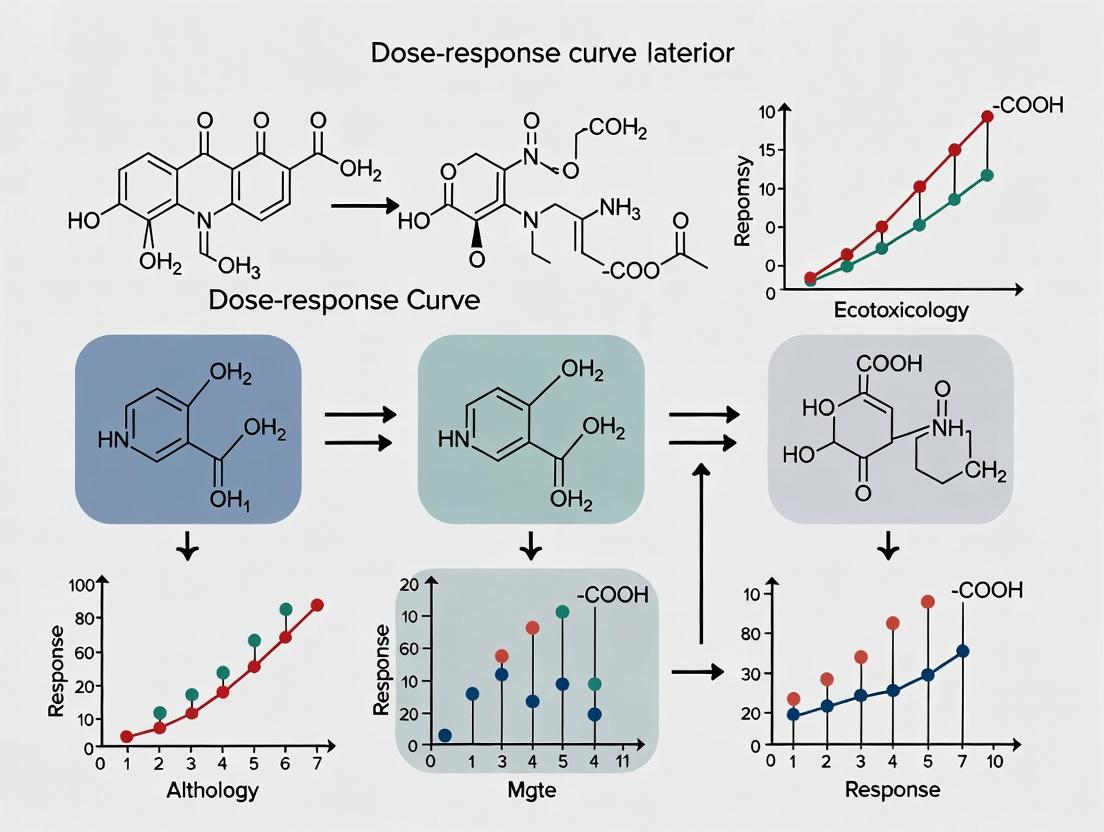

The following diagram illustrates the core concepts, components, and analytical outputs derived from a standard dose-response relationship.

Diagram: Core Logic of Dose-Response Analysis

Quantitative Parameters and Comparative Analysis

The quantitative characterization of dose-response curves allows for the precise comparison of different drugs or toxicants. As illustrated in the hypothetical comparison of Drugs X, Y, and Z, Drug X is the most potent (its curve is left-shifted, indicating it achieves its effect at a lower dose), while Drugs X and Z share the same maximal efficacy, both reaching a higher plateau than Drug Y [2]. Potency is often expressed as the ED₅₀ or EC₅₀ (the dose or concentration producing 50% of the maximal effect), a key parameter derived from curve fitting [3].

Understanding the difference between graded and quantal responses is essential for experimental design and data interpretation. The table below summarizes their key characteristics and applications.

Table 1: Comparative Analysis of Graded vs. Quantal Dose-Response Relationships

| Characteristic | Graded (Gradual) Response | Quantal (All-or-None) Response |
|---|---|---|
| Nature of Measured Effect | Continuous, measurable effect in a single biological unit (e.g., organ, tissue, cell). | Discrete event occurring or not occurring in individual members of a population. |
| Typical Experimental Output | Magnitude of response (e.g., mmHg of blood pressure change, percentage of enzyme inhibition). | Proportion or percentage of subjects exhibiting the defined effect (e.g., death, seizure, cure). |
| Primary Analysis Goal | Determine relationship between dose and intensity of effect; calculate EC₅₀. | Determine relationship between dose and probability of effect; calculate ED₅₀ (median effective dose) or LD₅₀ (median lethal dose). |
| Common Use Cases | In vitro assays, receptor pharmacology studies, mechanistic toxicology. | Preclinical toxicity studies (LD₅₀), clinical trials measuring binary outcomes (response/no response). |
| Example | Contraction of isolated ileum by carbachol; inhibition of cholinesterase by an organophosphate [1]. | Lethality in an acute toxicity study; percentage of patients achieving pain relief in a clinical trial. |

Advanced Methodological Approaches and Statistical Modeling

Modern dose-response analysis employs sophisticated statistical models to derive robust parameters and make causal inferences. For binary outcomes (e.g., response/no response), logistic regression within a generalized linear model (GLM) framework is a standard approach for analyzing both subject-level and grouped data [3]. For more complex relationships, non-linear models like the Emax model are used, defined by the equation: Effect = E₀ + (Emax × Dose)/(ED₅₀ + Dose), where E₀ is the baseline effect, Emax is the maximum effect, and ED₅₀ is the dose producing 50% of Emax [3].
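The Emax equation above can be evaluated directly to see how its parameters shape the curve. The following is a minimal sketch; the function name and the parameter values are assumptions made for illustration, not values from the cited source.

```python
def emax_effect(dose: float, e0: float, emax: float, ed50: float) -> float:
    """Hyperbolic Emax model: Effect = E0 + (Emax * Dose) / (ED50 + Dose)."""
    return e0 + (emax * dose) / (ed50 + dose)


# Hypothetical parameters: baseline 2, maximum drug effect 10, ED50 of 25 dose units.
E0, EMAX, ED50 = 2.0, 10.0, 25.0

for dose in (0.0, 25.0, 250.0, 2500.0):
    print(f"dose={dose:7.1f}  effect={emax_effect(dose, E0, EMAX, ED50):.2f}")
```

At zero dose the function returns the baseline E₀, at Dose = ED₅₀ it returns E₀ + Emax/2, and at very high doses it approaches E₀ + Emax without exceeding it.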

A 2025 systematic review of dose-response methodologies for complex interventions categorized advanced approaches into three groups [4]:

- Multilevel and Longitudinal Modelling: Useful for capturing heterogeneity and repeated measures but limited in establishing causality due to potential confounding [4].

- Non-parametric Regression (e.g., Smoothing Splines, Kernel Regression): Flexible for estimating curve shape without assuming a specific functional form but requires careful assumption checking [4].

- Causal Inference Methods (e.g., Instrumental Variables, G-estimation): Promising for addressing confounding in randomized controlled trials (RCTs) to estimate causal dose-response curves, though they often require a priori assumptions about the functional form [4].
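To make the non-parametric option in the list above concrete, here is a minimal sketch of kernel regression (a Nadaraya-Watson estimator with a Gaussian kernel) written with NumPy. The data points and the bandwidth are invented for illustration, and the review cited above does not prescribe this particular estimator or bandwidth.

```python
import numpy as np


def kernel_smooth(x_obs, y_obs, x_grid, bandwidth):
    """Nadaraya-Watson estimate of the mean response using a Gaussian kernel."""
    x_obs = np.asarray(x_obs, dtype=float)
    y_obs = np.asarray(y_obs, dtype=float)
    x_grid = np.asarray(x_grid, dtype=float)
    # weights[i, j] is the influence of observation j on grid point i.
    z = (x_grid[:, None] - x_obs[None, :]) / bandwidth
    weights = np.exp(-0.5 * z**2)
    return (weights @ y_obs) / weights.sum(axis=1)


# Hypothetical log10(dose) values and mean responses (illustrative only).
log_dose = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0])
response = np.array([3.0, 6.0, 15.0, 42.0, 71.0, 88.0, 93.0])

grid = np.linspace(0.0, 3.0, 7)
smoothed = kernel_smooth(log_dose, response, grid, bandwidth=0.4)
print(np.round(smoothed, 1))
```

No functional form is assumed here; the bandwidth plays the role of the smoothing parameter, and choosing it is the "careful assumption checking" step noted above.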

The experimental workflow for establishing a dose-response relationship, from design to regulatory application, involves a structured sequence of stages.

Diagram: Experimental Workflow for Dose-Response Studies

Table 2: Summary of Statistical Modeling Approaches for Dose-Response Analysis

| Methodological Category | Example Techniques | Key Strengths | Primary Limitations | Ideal Application Context |
|---|---|---|---|---|
| Generalized Linear/Non-Linear Models | Logistic Regression, Emax Model [3]. | Standard, well-understood, provides direct parameter estimates (ED₅₀, Emax). Works with grouped/ungrouped binary data [3]. | Assumes a specific functional form (S-shape for logistic, hyperbolic for Emax). May not capture complex curve shapes. | Primary analysis of dose-ranging RCTs for binary or continuous endpoints. |
| Multilevel & Longitudinal Modelling | Mixed-effects models, Growth curve models [4]. | Accounts for within-subject correlation and heterogeneity across individuals or sites. | Cannot inherently establish causal relationships from observational data due to unmeasured confounding [4]. | Analyzing sessional data from psychotherapy trials or repeated-measures preclinical data [4]. |
| Non-parametric Regression | Smoothing splines, Kernel regression [4]. | Highly flexible; does not assume a predefined mathematical model for the dose-response shape. | Requires careful selection of smoothing parameters; inferences can be less straightforward than parametric models. | Exploratory analysis to identify the shape of a dose-response relationship without strong prior assumptions. |
| Causal Inference Methods | Instrumental Variables, G-estimation, Propensity Score Matching [4]. | Can control for unmeasured confounding under certain conditions, providing stronger evidence for causal effects. | Often requires strong, untestable assumptions (e.g., valid instrumental variable). May need a priori specification of dose-response function [4]. | Re-analysis of RCT data with non-adherence to estimate causal dose-response, or analysis of high-quality observational data. |

Experimental Protocols and the Scientist's Toolkit

Establishing a robust dose-response relationship requires rigorous experimental protocols. A standard protocol for a preclinical in vivo efficacy or toxicity study involves several key phases [1]. First, a range-finding study is conducted with a small number of animals across broad dose levels (e.g., log intervals) to identify the approximate dose causing the target effect (e.g., LD₅₀ range). This is followed by a definitive study, where a larger cohort of animals (e.g., 10 per sex per group) is randomly assigned to a vehicle control group and 4-5 geometrically spaced dose groups based on the range-finding results. Animals are dosed via the relevant route (oral gavage, intravenous, etc.), closely monitored for clinical signs, and euthanized at a predefined endpoint for necropsy and histopathology. Data analysis involves plotting mortality or response incidence against dose (often log-transformed), fitting a probit or logistic model, and calculating the median effective/lethal dose (ED₅₀/LD₅₀) with 95% confidence intervals.
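The final analysis step of this protocol (fitting a logistic model to incidence against log-dose and reading off the median lethal dose) can be sketched as follows. This is an illustrative Newton-Raphson fit written with NumPy, not a validated statistical routine; the dose groups and death counts are hypothetical, and the confidence-interval calculation mentioned in the protocol is deliberately left out to keep the sketch short.

```python
import numpy as np


def fit_logistic_grouped(log_dose, n_responders, n_total, n_iter=25):
    """Fit logit(p) = b0 + b1 * log_dose to grouped quantal data by Newton-Raphson."""
    log_dose = np.asarray(log_dose, dtype=float)
    n_responders = np.asarray(n_responders, dtype=float)
    n_total = np.asarray(n_total, dtype=float)
    design = np.column_stack([np.ones_like(log_dose), log_dose])
    beta = np.zeros(2)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-design @ beta))
        gradient = design.T @ (n_responders - n_total * p)
        weights = n_total * p * (1.0 - p)
        hessian = design.T @ (design * weights[:, None])
        beta = beta + np.linalg.solve(hessian, gradient)
    return beta


# Hypothetical definitive study: five geometrically spaced dose groups, 10 animals each.
dose_mg_per_kg = np.array([10.0, 20.0, 40.0, 80.0, 160.0])
deaths = np.array([1, 2, 5, 8, 9])
animals = np.array([10, 10, 10, 10, 10])

b0, b1 = fit_logistic_grouped(np.log10(dose_mg_per_kg), deaths, animals)
ld50 = 10 ** (-b0 / b1)  # dose at which the fitted probability equals 0.5
print(f"slope = {b1:.2f} per log10 unit, LD50 = {ld50:.1f} mg/kg")
```

Because the invented counts are symmetric around the middle dose group, the fitted LD₅₀ lands at about 40 mg/kg. With real data, a vetted statistics package would normally be used so that confidence intervals and diagnostics come with the estimate.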

For clinical dose-response characterization, a Phase II dose-ranging RCT is the gold standard [3]. A typical design randomizes several hundred participants to a placebo arm and 3-5 active dose arms. The primary efficacy endpoint and safety are monitored over the treatment period. Statistical analysis often employs an Emax model or linear contrast tests to establish a dose-response relationship and identify the minimum effective dose and optimal therapeutic dose for Phase III trials [3].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and materials essential for conducting dose-response experiments.

Table 3: Research Reagent Solutions for Dose-Response Studies

| Item / Reagent | Function in Dose-Response Studies | Key Considerations |
|---|---|---|
| Reference Standard Agonist/Antagonist | Serves as a positive control to validate the experimental system and benchmark the potency (EC₅₀/IC₅₀) and efficacy (Emax) of novel test compounds. | Purity and stability are critical. Should be pharmacologically well-characterized for the target of interest. |
| Vehicle Controls | Ensures that any observed biological effect is due to the active agent and not the solvent used for dissolution (e.g., DMSO, saline, cyclodextrin). | Must be compatible with the biological system and not elicit effects of its own at the concentrations used. |
| Cell Lines with Engineered Receptors/Reporters | Provide a consistent, high-throughput in vitro system for quantifying graded responses (e.g., calcium flux, cAMP production) to agonists/antagonists. | Requires validation of target expression and signal transduction functionality. Clonal selection ensures uniformity. |
| Radioligands or Fluorescent Probes | Enable the direct measurement of receptor occupancy in binding assays, allowing for the determination of binding affinity (Ki), a component of potency. | Specific activity and selectivity for the target receptor are paramount. Requires facilities for handling radioactivity or appropriate fluorescence detectors. |
| Specific Enzyme Substrates/Inhibitors | Used in enzymatic assays to measure the inhibitory dose-response (IC₅₀) of test compounds, a key parameter in drug discovery for kinases, proteases, etc. | Substrates should be selective and generate a detectable signal (e.g., fluorescent, colorimetric) upon conversion. |
| Formulated Drug Product for In Vivo Studies | The test article administered to animals, formulated to ensure accurate dosing, bioavailability, and stability. Different from the raw active pharmaceutical ingredient (API). | Formulation (suspension, solution in specific vehicle) can significantly impact pharmacokinetics and thus the observed dose-response relationship [2]. |

Data Analysis, Interpretation, and Visualization Pipeline

The analysis of dose-response data follows a logical pipeline from raw data processing to final visualization and interpretation. For binary data from a clinical trial, a common workflow in R begins with data preparation, followed by fitting both a linear logistic model and an Emax model using packages like gnm or nlme [3]. Model selection may be based on goodness-of-fit criteria (e.g., AIC). The ED₅₀ is then extracted from the chosen model, and its confidence interval is computed to assess estimation precision [3]. The final step involves creating publication-quality visualizations, such as a scatter plot of the observed response proportions with the fitted model curve overlaid.
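The workflow above is described for R. The sketch below mirrors its model-comparison step in Python with SciPy instead, which is a substitution made here for illustration and not the cited workflow itself. It fits a linear logistic model and an Emax model to grouped binary data by maximum likelihood and compares them by AIC. The response counts are hypothetical, and placing the Emax term on the logit scale is an assumption of this sketch.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import expit

# Hypothetical grouped binary data: responders out of 50 participants per dose arm.
dose = np.array([0.0, 5.0, 10.0, 20.0, 40.0, 80.0])
n_total = np.array([50, 50, 50, 50, 50, 50])
n_resp = np.array([5, 12, 19, 27, 33, 36])


def binomial_nll(prob):
    """Negative binomial log-likelihood, dropping the constant combinatorial term."""
    prob = np.clip(prob, 1e-9, 1.0 - 1e-9)
    return -np.sum(n_resp * np.log(prob) + (n_total - n_resp) * np.log(1.0 - prob))


def nll_linear_logistic(theta):
    b0, b1 = theta
    return binomial_nll(expit(b0 + b1 * dose))


def nll_emax_logit(theta):
    # ED50 is parameterized on the log scale so that it stays positive.
    e0, emax, log_ed50 = theta
    return binomial_nll(expit(e0 + emax * dose / (np.exp(log_ed50) + dose)))


def aic(nll, n_params):
    """Akaike information criterion from a minimized negative log-likelihood."""
    return 2.0 * n_params + 2.0 * nll


start_logistic = np.array([-1.0, 0.05])
start_emax = np.array([-2.0, 3.0, np.log(20.0)])
fit_logistic = minimize(nll_linear_logistic, start_logistic, method="Nelder-Mead")
fit_emax = minimize(nll_emax_logit, start_emax, method="Nelder-Mead")

aic_logistic = aic(fit_logistic.fun, 2)
aic_emax = aic(fit_emax.fun, 3)
ed50_emax = float(np.exp(fit_emax.x[2]))
print(f"AIC logistic = {aic_logistic:.1f}, AIC Emax = {aic_emax:.1f}")
print(f"ED50 from the Emax fit = {ed50_emax:.1f} dose units")
```

The model with the lower AIC would be preferred under this criterion. The confidence interval for ED₅₀ mentioned above is not computed here and would require, for example, a profile-likelihood or bootstrap step.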

A critical challenge in interpreting dose-response curves is distinguishing between association and causation, particularly with observational data. As highlighted in recent methodological reviews, traditional analyses of non-randomized data (e.g., patients receiving different doses of psychotherapy) cannot confirm if the dose caused the outcome due to potential confounding (e.g., severity of illness) [4]. Advanced methods like instrumental variable analysis are being explored in RCT contexts to estimate causal dose-response functions, even when participants deviate from their assigned dose [4].

This analysis pipeline and the interplay between statistical modeling and causal inference are summarized in the following diagram.

Diagram: Dose-Response Data Analysis Pipeline

Applications and Contemporary Challenges in Research

The dose-response relationship is pivotal across the lifecycle of a compound. In drug discovery, it is used for high-throughput screening to rank compound potency and efficacy [3]. In preclinical development, it establishes proof of concept, defines the therapeutic index in animal models, and identifies a safe starting dose for clinical trials [1]. In clinical development, Phase II dose-ranging trials are explicitly designed to characterize this relationship to inform optimal dosing for Phase III [3]. Finally, in regulatory toxicology and environmental health, it is used to determine points of departure (e.g., NOAEL - No Observed Adverse Effect Level, BMD - Benchmark Dose) for risk assessment and setting exposure limits [1].

Contemporary research faces several challenges. In the context of complex interventions like psychotherapy, standard pharmaceutical models are difficult to apply, and methodological reviews note a scarcity of robust statistical methods for causal dose-response evaluation in RCTs [4]. Furthermore, there is a recognized gap in translating advanced statistical methods (e.g., causal inference, non-parametric regression) from methodological literature into routine pharmacometric and clinical trial practice [4] [3]. Future directions emphasize the need for novel methodologies that can handle high dropout rates, individualized dosing, and make valid causal inferences with fewer assumptions, ultimately aiming to personalize dosing strategies for optimal therapeutic outcomes.

The sigmoidal dose-response curve is a fundamental graphical model in pharmacology and drug discovery that depicts the relationship between the dose or concentration of a drug and the magnitude of its biological effect [5] [6]. Characterized by its distinctive S-shape, this curve is not merely a descriptive tool but a critical analytical framework for understanding drug behavior. It visualizes core pharmacological principles, including potency, efficacy, and therapeutic window, which are indispensable for translating preclinical findings into safe and effective clinical therapies [5].

In dose-response research, deconstructing the sigmoidal curve into its constituent phases (lag, linear, and plateau) provides a powerful lens for mechanistic investigation. Each phase corresponds to distinct underlying biological and molecular events, from initial receptor binding and signal transduction to system saturation [6]. This phased analysis moves beyond simple parameter estimation (e.g., EC₅₀) to offer insights into a drug's mechanism of action, the presence of spare receptors, cooperative binding effects, and the potential for toxicological thresholds [5] [7]. Consequently, a rigorous understanding of the sigmoidal curve is foundational for researchers and drug development professionals aiming to optimize lead compounds, predict clinical outcomes, and navigate regulatory evaluations of efficacy and safety [5].

Mathematical Foundation: The Hill Equation and Four-Parameter Logistic (4PL) Model

The canonical sigmoidal curve is most commonly described by the Hill-Langmuir equation and its generalized form, the Four-Parameter Logistic (4PL) model. These mathematical frameworks transform empirical dose-response data into quantifiable parameters that define the curve's shape and position [5] [8].

The standard Hill Equation is expressed as:

E = (E_max * [D]^n) / (EC₅₀^n + [D]^n)

Where:

- E is the observed effect.

- E_max is the maximum possible effect (efficacy).

- [D] is the drug dose or concentration.

- EC₅₀ is the concentration producing 50% of E_max (potency).

- n is the Hill slope coefficient, which describes the steepness of the curve.
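A direct transcription of the Hill equation makes the role of each symbol easy to check. This is a minimal sketch; the function name and the example values are assumptions made for illustration.

```python
def hill_effect(conc: float, e_max: float, ec50: float, n: float) -> float:
    """Hill equation: E = E_max * [D]^n / (EC50^n + [D]^n)."""
    return e_max * conc**n / (ec50**n + conc**n)


# Hypothetical drug with E_max = 100 (% of maximal response) and EC50 = 10 nM.
for n in (0.5, 1.0, 2.5):
    at_ec50 = hill_effect(10.0, 100.0, 10.0, n)
    tenfold_above = hill_effect(100.0, 100.0, 10.0, n)
    print(f"n={n}: effect at EC50 = {at_ec50:.1f}, at 10x EC50 = {tenfold_above:.1f}")
```

Whatever the value of n, the effect at [D] = EC₅₀ is half of E_max; n only changes how quickly the response rises around that point.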

The more versatile 4PL model expands on this [8] [9]:

Y = Bottom + (Top - Bottom) / (1 + 10^((LogEC₅₀ - X) * HillSlope))

Where:

- Y is the response.

- X is the logarithm of the concentration.

- Bottom is the response of the system in the absence of drug (lower asymptote).

- Top is the maximum response of the system (upper asymptote).

- LogEC₅₀ is the log-concentration at the inflection point where 50% of the effect is observed.

- HillSlope defines the sigmoidicity and steepness.

Non-linear regression analysis is used to fit experimental data to this model, iteratively determining the best-fit values for these parameters [8]. The logarithmic transformation of the dose axis is critical, as it compresses a wide range of concentrations (e.g., nanomolar to millimolar) into a manageable scale, linearizing the central portion of the sigmoid and allowing for accurate visualization and analysis [5] [8].
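As an illustration of the non-linear regression step, the sketch below fits the 4PL equation to synthetic data with `scipy.optimize.curve_fit`. The "true" parameters, the noise level, and the starting guesses are all assumptions chosen for the example; real assay data would replace the simulated `response` array.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(x, bottom, top, log_ec50, hill_slope):
    """4PL model: Y = Bottom + (Top - Bottom) / (1 + 10^((LogEC50 - X) * HillSlope))."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ec50 - x) * hill_slope))


# Synthetic data: 11 log10(molar) concentrations from 1 nM to 100 uM.
rng = np.random.default_rng(0)
log_conc = np.linspace(-9.0, -4.0, 11)
true_params = (5.0, 95.0, -6.5, 1.2)  # hypothetical Bottom, Top, LogEC50, HillSlope
response = four_pl(log_conc, *true_params) + rng.normal(0.0, 1.0, log_conc.size)

# Starting guesses taken from the data range; HillSlope starts at 1.
p0 = [response.min(), response.max(), float(np.median(log_conc)), 1.0]
params, _ = curve_fit(four_pl, log_conc, response, p0=p0)

bottom, top, log_ec50, hill_slope = params
print(f"Bottom={bottom:.1f} Top={top:.1f} LogEC50={log_ec50:.2f} HillSlope={hill_slope:.2f}")
print(f"EC50 = {10 ** log_ec50:.2e} M")
```

Fitting on the log-concentration axis, as here, matches the parameterization of the equation above and keeps the optimizer working on a well-scaled variable.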

Deconstructing the Three Phases

The sigmoidal curve can be systematically deconstructed into three sequential phases, each with distinct biological and mathematical interpretations.

Lag Phase (Low-Dose Region):

- Description: The initial, shallow portion of the curve where increases in dose produce minimal to no measurable biological response [6] [10].

- Biological Interpretation: This phase represents the period where drug concentration is insufficient to occupy a critical threshold of target receptors or enzymes required to initiate a detectable signal above background noise. It may also reflect early pharmacokinetic processes like distribution to the site of action [5].

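- Mathematical Interpretation: The system operates near the lower asymptote (Bottom parameter). The local slope of the curve (the change in response per unit log-dose) is close to zero, so the response is largely insensitive to dose changes in this range. The HillSlope parameter itself is a single fitted constant and does not vary along the curve.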

Linear Phase (Mid-Dose, Log-Linear Region):

- Description: The central, steepest portion of the sigmoid where the response increases approximately linearly with the logarithm of the dose [6] [10]. This phase contains the inflection point.

- Biological Interpretation: This is the therapeutically critical range. Small changes in dose yield significant changes in effect. The system is exquisitely sensitive as spare receptors are engaged and signal amplification cascades are fully activated. The EC₅₀ value, a key measure of potency, is located here [5] [8].

- Mathematical Interpretation: The inflection point occurs at LogEC₅₀. The local slope of the curve is maximal here, and its magnitude is proportional to the HillSlope parameter, which therefore describes the sensitivity of the response to dose changes. A steeper slope suggests cooperative binding or a highly efficient signaling system [8].

Plateau Phase (High-Dose Region):

- Description: The final, shallow portion where increasing the dose yields no further increase in response; the curve approaches a horizontal upper limit [6] [10].

- Biological Interpretation: The system has reached saturation. All target receptors are occupied, or a downstream physiological limit has been reached (e.g., maximal heart rate or contractile force, complete enzyme inhibition). This defines the drug's intrinsic efficacy (Emax or Top parameter) [5]. Further dose increases only increase the risk of off-target effects and toxicity without therapeutic benefit.

- Mathematical Interpretation: The system is at the upper asymptote (Top). The local slope of the curve approaches zero, indicating a loss of dose-dependency.
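The three phases can be checked numerically by differentiating the 4PL model with respect to X (the log-concentration). Writing u = 10^((LogEC₅₀ - X) × HillSlope), the derivative is dY/dX = (Top - Bottom) × ln(10) × HillSlope × u / (1 + u)², which peaks at u = 1, that is, at X = LogEC₅₀. The sketch below evaluates this for hypothetical parameter values.

```python
import math


def four_pl(x, bottom, top, log_ec50, hill_slope):
    """4PL model evaluated at log-concentration x."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ec50 - x) * hill_slope))


def local_slope(x, bottom, top, log_ec50, hill_slope):
    """Analytic derivative dY/dX of the 4PL model with respect to log-concentration."""
    u = 10.0 ** ((log_ec50 - x) * hill_slope)
    return (top - bottom) * math.log(10.0) * hill_slope * u / (1.0 + u) ** 2


# Hypothetical curve: Bottom 0, Top 100, EC50 of 1 uM (LogEC50 = -6), HillSlope 1.
BOTTOM, TOP, LOG_EC50, HILL = 0.0, 100.0, -6.0, 1.0

for phase, x in (("lag", -9.0), ("linear", -6.0), ("plateau", -3.0)):
    y = four_pl(x, BOTTOM, TOP, LOG_EC50, HILL)
    dy_dx = local_slope(x, BOTTOM, TOP, LOG_EC50, HILL)
    print(f"{phase:8s} X={x:5.1f}  Y={y:6.2f}  dY/dX={dy_dx:7.3f}")
```

The local slope is near zero in the lag and plateau regions and largest at the inflection point, where it equals (Top - Bottom) × ln(10) × HillSlope / 4. The HillSlope parameter is the same constant in all three rows; only the local steepness changes.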

Table 1: Quantitative Parameters of the Sigmoidal Dose-Response Curve

| Parameter | Symbol (4PL) | Phase Association | Pharmacological Interpretation | Typical Experimental Determination |
|---|---|---|---|---|
| Potency | EC₅₀ or IC₅₀ | Linear (Inflection Point) | Concentration for 50% of maximal effect. Lower value = higher potency. | X-value at midpoint between Top and Bottom plateaus from curve fit [5] [8]. |
| Efficacy | Top (Emax) | Plateau | Maximum possible effect of the drug system. Defines therapeutic ceiling. | Upper asymptote from curve fit [5] [8]. |
| Hill Slope | HillSlope | Linear (Steepness) | Coefficient describing cooperativity & sensitivity. >1 = steeper, <1 = shallower. | Slope at inflection point from curve fit [8]. |
| Baseline | Bottom | Lag | System response in absence of drug. | Lower asymptote from curve fit or control measurements [8]. |
| Therapeutic Index | N/A | Entire Curve | Ratio between toxic (TD₅₀) and effective (ED₅₀) doses. Measure of safety window. | Calculated from separate dose-response curves for efficacy and toxicity [5]. |

Experimental Protocols and Data Analysis

Generating a robust sigmoidal dose-response curve requires careful experimental design, execution, and analytical validation.

Core Experimental Protocol (Cell-Based Assay Example):

- Compound Dilution Series: Prepare a serial dilution (e.g., 1:3 or 1:10) of the test compound across a range typically spanning 3-5 orders of magnitude (e.g., 1 nM to 100 µM). Use 8-12 non-zero concentrations for reliable curve fitting [8].

- Cell Seeding and Treatment: Seed target cells (e.g., tumor cell line) into multi-well plates. After adherence, treat cells with the compound dilution series. Each concentration should be tested in replicates (n=3-6) [8].

- Incubation and Endpoint Measurement: Incubate for a defined period (e.g., 48-72 hours). Measure the chosen endpoint (e.g., cell viability via ATP content, fluorescence, or absorbance).

- Control Wells: Include untreated control wells (100% viability) and wells with a maximum inhibitor (e.g., 0% viability control) to define the Top and Bottom plateaus biologically [8].

- Data Normalization: Normalize raw data from each well as a percentage of the control response: % Response = 100 * (Raw - Mean_Max_Inhibition) / (Mean_Untreated - Mean_Max_Inhibition) [8].
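Two of the protocol steps above, building the dilution series and normalizing raw signals, reduce to simple arithmetic. The sketch below shows both; the function names, the 3-fold scheme starting at 100 µM, and the plate-reader values are hypothetical choices for illustration.

```python
import math


def serial_dilution(top_conc: float, fold: float, n_points: int) -> list:
    """Concentrations from top_conc downward, each step diluted by `fold`."""
    return [top_conc / fold**i for i in range(n_points)]


def percent_response(raw: float, mean_untreated: float, mean_max_inhibition: float) -> float:
    """% Response = 100 * (Raw - Mean_Max_Inhibition) / (Mean_Untreated - Mean_Max_Inhibition)."""
    return 100.0 * (raw - mean_max_inhibition) / (mean_untreated - mean_max_inhibition)


# Hypothetical 10-point, 3-fold series starting at 100 uM (expressed in molar).
concs = serial_dilution(top_conc=1e-4, fold=3.0, n_points=10)
span = math.log10(concs[0] / concs[-1])
print(f"lowest concentration = {concs[-1]:.2e} M, span = {span:.1f} orders of magnitude")

# Hypothetical luminescence readings: control means and one treated well.
MEAN_UNTREATED, MEAN_MAX_INHIBITION = 100000.0, 2000.0
print(f"treated well: {percent_response(51000.0, MEAN_UNTREATED, MEAN_MAX_INHIBITION):.1f} %")
```

With these numbers the series runs from 100 µM down to roughly 5 nM, which falls inside the 3-5 orders of magnitude suggested above.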

Data Analysis and Curve Fitting Workflow:

- Log Transformation: Convert compound concentrations to their logarithmic values [8].

- Non-Linear Regression: Fit the normalized data to the 4PL model using specialized software (e.g., GraphPad Prism, R). The software iteratively adjusts Bottom, Top, LogEC₅₀, and HillSlope to minimize the sum of squares between the data and the model [8].

- Model Validation: Assess the goodness-of-fit.

  - Visual Inspection: Ensure the curve aligns with the data points, particularly through the linear phase.

  - Residual Analysis: Check that residuals are randomly scattered.

  - Parameter Confidence Intervals: Verify that the 95% confidence intervals for key parameters (EC₅₀, Hill Slope) are not excessively wide [8].

- Outlier and Ambiguity Handling: Investigate, but do not automatically exclude, outlier points. For incomplete curves (missing plateaus), constraints may be applied to Bottom or Top parameters based on control values [8].
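The validation checks in this workflow can be sketched with SciPy as follows. The example simulates triplicate data, fits the 4PL model, and derives residuals and approximate 95% confidence intervals from the covariance matrix returned by `curve_fit`. The simulated parameters and noise level are assumptions, and the t-based interval is one common approximation, not necessarily the method used by any particular software package.

```python
import numpy as np
from scipy.optimize import curve_fit
from scipy.stats import t as student_t


def four_pl(x, bottom, top, log_ec50, hill_slope):
    """4PL model evaluated at log-concentration x."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ec50 - x) * hill_slope))


# Simulated normalized data: 11 concentrations in triplicate (hypothetical values).
rng = np.random.default_rng(1)
log_conc = np.repeat(np.linspace(-9.0, -4.0, 11), 3)
response = four_pl(log_conc, 0.0, 100.0, -6.5, 1.0) + rng.normal(0.0, 3.0, log_conc.size)

params, pcov = curve_fit(four_pl, log_conc, response, p0=[0.0, 100.0, -6.5, 1.0])

# Residual analysis: these should scatter around zero without a trend.
residuals = response - four_pl(log_conc, *params)

# Approximate 95% confidence intervals from the parameter covariance matrix.
dof = log_conc.size - params.size
std_err = np.sqrt(np.diag(pcov))
t_crit = student_t.ppf(0.975, dof)
ci_low, ci_high = params - t_crit * std_err, params + t_crit * std_err

for name, est, lo, hi in zip(("Bottom", "Top", "LogEC50", "HillSlope"), params, ci_low, ci_high):
    print(f"{name:9s} {est:8.3f}  95% CI [{lo:8.3f}, {hi:8.3f}]")
print(f"mean residual = {residuals.mean():.3f}")
```

A very wide interval on LogEC₅₀ or HillSlope usually signals that a plateau is poorly defined by the data, which is the situation where constraining Bottom or Top to control values, as noted above, becomes relevant.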

Table 2: The Scientist's Toolkit: Essential Reagents & Materials

| Item/Category | Function in Dose-Response Experiment | Key Considerations |
|---|---|---|
| Test Compound | The molecule whose biological activity is being quantified. | Purity (>95%), stability in buffer/DMSO, accurate solubilization [8]. |
| Vehicle (e.g., DMSO) | Solvent for compound dissolution. Must be biologically inert at final concentration. | Final concentration in assay typically ≤0.1-1.0% to avoid vehicle toxicity [8]. |
| Biological System | Cells, tissue, or enzyme expressing the target of interest. | Use a relevant, well-characterized model (e.g., stable cell line). Passage number and viability are critical [5]. |
| Assay Reagent Kit | Provides optimized components for measuring endpoint (viability, apoptosis, cAMP, etc.). | Choose based on sensitivity, dynamic range, and compatibility with detection instrument. |
| Multi-Well Plates | Platform for hosting the biological system during treatment and measurement. | Tissue culture-treated, clear-bottom for absorbance/fluorescence assays. |
| Liquid Handler | For accurate and reproducible serial dilutions and compound transfers. | Reduces manual error, essential for high-throughput screening [5]. |
| Microplate Reader | Instrument to detect assay signal (absorbance, fluorescence, luminescence). | Must be calibrated and have the appropriate optical filters/excitation for the assay. |
| Curve-Fitting Software | Performs non-linear regression to fit data to the 4PL/Hill model. | Essential for calculating EC₅₀, Hill slope, and statistical confidence intervals [8] [9]. |

Advanced Interpretations and Complexities

While the monophasic sigmoid is common, biological systems often exhibit more complex behaviors that necessitate advanced modeling.

Multiphasic and Non-Monotonic Curves: A significant proportion (approximately 28% in one large screen) of dose-response relationships cannot be accurately captured by a simple sigmoid [5]. Multiphasic curves may show two or more inflection points, indicating multiple independent effects (e.g., stimulation at low dose followed by inhibition at high dose, or two distinct inhibitory phases). These are modeled by summing multiple independent Hill equations [5]. U-shaped or inverted U-shaped curves indicate hormesis or dual mechanisms, requiring specialized piecewise or polynomial fitting methods [7].
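The idea of summing independent Hill equations can be illustrated with a two-phase inhibition curve. The sketch below only evaluates such a model; it does not fit one, and the amplitudes, EC₅₀ values, and function names are hypothetical.

```python
def hill_term(conc: float, amplitude: float, ec50: float, n: float) -> float:
    """One Hill component; a negative amplitude represents inhibition."""
    return amplitude * conc**n / (ec50**n + conc**n)


def multiphasic_response(conc: float, baseline: float, phases) -> float:
    """Baseline plus the sum of independent Hill components (amplitude, ec50, n)."""
    return baseline + sum(hill_term(conc, a, ec50, n) for a, ec50, n in phases)


# Hypothetical viability curve (% of control), concentrations in nM:
# a first inhibitory phase around 10 nM and a second around 10 uM.
PHASES = [(-40.0, 10.0, 1.0), (-50.0, 10000.0, 1.0)]

for conc in (0.0, 10.0, 300.0, 10000.0, 1e6):
    print(f"{conc:10.0f} nM -> {multiphasic_response(conc, 100.0, PHASES):6.1f} %")
```

Between the two EC₅₀ values the curve passes through an intermediate shelf near 60% before falling toward 10%, a shape that a single sigmoid cannot reproduce.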

Confounding Factors in Interpretation:

- Confounding by Indication: In observational clinical studies, sicker patients may receive higher doses, creating a spurious association between high dose and poor outcome that reflects disease severity, not drug effect [7].

- Measurement Error & Model Mis-specification: Random error in dose measurement attenuates observed relationships. Forcing a linear or simple sigmoidal model onto a complex relationship yields misleading parameters [7].

- System-Dependent Nonlinearities: Factors like receptor desensitization, negative feedback loops, metabolic saturation, or drug-induced toxicity at high doses can distort the classic sigmoidal shape, leading to bell-shaped or truncated curves [5] [7].

The deconstruction of the sigmoidal curve into its lag, linear, and plateau phases provides an indispensable framework for rational drug development. This analysis directly informs critical decisions: the lag phase helps define the threshold for biological activity and potential sub-therapeutic doses; the linear phase dictates the therapeutically relevant dosing range and establishes a compound's potency (EC₅₀); and the plateau phase defines its intrinsic efficacy (Emax) and the point of diminishing returns [5] [6].

For the drug development professional, this phased understanding is operationalized in determining the therapeutic index, predicting and managing toxicity, and designing optimal dosing regimens for clinical trials [5]. Furthermore, recognizing deviations from the classic sigmoid—through multiphasic or complex curve analysis—can reveal novel mechanisms of action, off-target effects, or unique biological properties of a compound, guiding medicinal chemistry efforts and patient stratification strategies [5] [7]. Therefore, mastering the interpretation of the sigmoidal dose-response curve remains a cornerstone of translational research, enabling the precise navigation from molecular discovery to clinical therapy.

The dose-response relationship is the cornerstone of quantitative pharmacology and drug development, defining the correlation between the amount of a drug administered and the magnitude of its biological effect [2]. Within this framework, two distinct yet equally critical parameters emerge: potency and efficacy. Potency, quantified by metrics such as EC50 (half-maximal effective concentration) or IC50 (half-maximal inhibitory concentration), describes the concentration of a drug required to produce a defined effect [11] [12]. In contrast, maximal efficacy (Emax) defines the ceiling of a drug's action—the greatest possible effect it can produce, regardless of dose [13] [11].

This whitepaper provides an in-depth technical guide for researchers and drug development professionals on distinguishing between these parameters. Understanding their independent roles is not an academic exercise; it is essential for rational drug design, predicting therapeutic utility, and optimizing clinical doses. A highly potent drug (low EC50) may still be clinically useless if its maximal effect (Emax) is insufficient to treat the disease [14] [5]. This document situates these concepts within the broader thesis of dose-response curve analysis, moving from theoretical foundations to experimental protocols and practical applications in modern drug discovery.

Theoretical Foundations: Defining Core Parameters and Models

Key Parameter Definitions

The following table summarizes the core parameters that define the properties of a drug in a dose-response system.

| Parameter | Symbol | Definition | Key Interpretation |
|---|---|---|---|
| Maximal Efficacy | Emax | The maximum possible effect a drug can produce in a given system [13] [11]. | Defines the therapeutic ceiling. A higher Emax indicates greater intrinsic ability to activate a response pathway [15]. |
| Half-Maximal Effective Concentration | EC50 | The concentration of an agonist or stimulator that produces 50% of its own maximal effect (Emax) [16] [12]. | A standard measure of agonist potency. A lower EC50 value indicates higher potency [14] [17]. |
| Half-Maximal Inhibitory Concentration | IC50 | The concentration of an antagonist or inhibitor that reduces a defined biological response by 50% [16] [5]. | A standard measure of inhibitor potency. A lower IC50 indicates a more potent inhibitor. |
| Equilibrium Dissociation Constant | Kd | The concentration of a drug at which 50% of its target receptors are occupied [14] [17]. | A pure measure of binding affinity, independent of functional response. A lower Kd indicates higher affinity. |
| Hill Coefficient | n | A dimensionless parameter that describes the steepness of the dose-response curve [13] [12]. | Indicates cooperativity in binding or signaling. n > 1 suggests positive cooperativity. |

The Emax and Sigmoid Emax Models

The fundamental mathematical model describing the relationship between drug concentration ([A]) and effect (E) is the Emax model [13]:

E = (Emax × [A]) / (EC50 + [A])

This hyperbolic equation, derived from the law of mass action, assumes one drug molecule binding to one receptor. When drug concentration is plotted on a logarithmic scale, this relationship transforms into the more familiar sigmoidal curve, which linearizes the central portion for easier analysis [13].

Many biological systems exhibit steeper or shallower curves than predicted by the simple Emax model. This is captured by the Sigmoid Emax Model (or Hill Equation) [13] [12]:

E = (Emax × [A]^n) / (EC50^n + [A]^n)

The Hill coefficient (n) modifies the curve's slope. When n=1, the model simplifies to the classic Emax model. An n > 1 indicates a steeper curve, often seen with positive cooperativity in multi-subunit proteins (the classic example being oxygen binding to hemoglobin), while an n < 1 indicates a shallower curve [13].
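As a minimal sketch of the two equations above, the function below evaluates the sigmoid Emax (Hill) model in plain Python. The function name and the example parameter values (Emax = 100, EC50 = 10) are illustrative assumptions, not values from the source.

```python
def hill_effect(conc, emax, ec50, n=1.0):
    """Sigmoid Emax (Hill) model: E = Emax * [A]^n / (EC50^n + [A]^n).

    With n = 1 this reduces to the simple hyperbolic Emax model.
    """
    if conc < 0 or ec50 <= 0:
        raise ValueError("conc must be >= 0 and ec50 must be > 0")
    return emax * conc**n / (ec50**n + conc**n)


# Illustrative values only: compare shallow, standard, and steep curves.
for slope in (0.5, 1.0, 2.0):
    effects = [hill_effect(c, emax=100.0, ec50=10.0, n=slope) for c in (1.0, 10.0, 100.0)]
    print(slope, [round(e, 1) for e in effects])
```

Whatever the Hill coefficient, the effect at [A] = EC50 is Emax/2; only the steepness around that midpoint changes.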

Experimental Protocols: Generating and Analyzing Dose-Response Data

Core Assay Workflow for Dose-Response Analysis

The accurate determination of EC50/IC50 and Emax requires a standardized experimental workflow. The following table outlines the key steps, from plate design to data analysis [16] [14].

| Step | Protocol Detail | Purpose & Rationale |

|---|---|---|

| 1. Assay Design | Use a multi-well plate. Prepare a serial dilution (typically 1:3 or 1:10) of the test compound to span a range from no effect to maximal effect (e.g., 8-12 concentrations). Include control wells: vehicle-only (0%) and a maximal stimulator/inhibitor (100%) [16]. | To generate a full concentration range that will define the top (Emax) and bottom plateaus of the curve. |

| 2. Treatment & Incubation | Apply the compound dilutions to the biological system (cells, enzymes, tissues). Incubate for a defined, physiologically relevant time under controlled conditions (temperature, CO₂). | The effect is time-dependent [12]. Standardized incubation allows the system to reach equilibrium for reliable measurement. |

| 3. Response Measurement | Quantify the biological response using an appropriate readout (e.g., fluorescence, luminescence, absorbance, imaging, contraction force). Perform technical replicates (n≥3). | To obtain a continuous, graded measurement of effect for a graded dose-response curve [17]. |

| 4. Data Normalization | Normalize raw data from each well relative to the control-defined plateaus: % Effect = (Raw - Mean 0%) / (Mean 100% - Mean 0%) * 100 [16]. | Places all data on a common 0-100% scale, enabling direct comparison between experiments and compounds. |

| 5. Curve Fitting | Fit normalized data to the sigmoid Emax (Hill) model using non-linear regression software (e.g., GraphPad Prism). The model solves for Emax, EC50/IC50, and the Hill slope (n). | To derive the key pharmacodynamic parameters mathematically. The fit must be constrained by the defined 0% and 100% controls for accuracy [16]. |

| 6. Quality Control | Assess the R² value and confidence intervals of the fitted parameters. Visually inspect the curve fit. The data should clearly define the lower asymptote, the linear phase, and approach the upper asymptote. | To ensure the estimated parameters are reliable. Data that do not define plateaus lead to unreliable EC50/IC50 estimates [16]. |
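Steps 4 and 5 of the workflow can be sketched in plain Python as follows. The normalization follows the formula in the table; the fitting routine is a deliberately coarse grid search used here as a stand-in for dedicated non-linear regression software, and all names, control values, and the synthetic data are assumptions made for illustration.

```python
import math


def normalize(raw, mean_0, mean_100):
    """% Effect = (Raw - Mean 0%) / (Mean 100% - Mean 0%) * 100."""
    span = mean_100 - mean_0
    if span == 0:
        raise ValueError("0% and 100% controls must differ")
    return (raw - mean_0) / span * 100.0


def hill(conc, emax, ec50, n):
    """Sigmoid Emax (Hill) model."""
    return emax * conc**n / (ec50**n + conc**n)


def grid_fit(concs, effects, emax=100.0):
    """Coarse least-squares grid search over log10(EC50) and Hill slope.

    Assumption: the top plateau is fixed at `emax`; a real analysis would
    use proper non-linear regression and report confidence intervals.
    """
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(201):
        ec50 = 10 ** (lo + (hi - lo) * i / 200)
        for j in range(1, 61):
            n = j * 0.1  # Hill slopes from 0.1 to 6.0
            sse = sum((e - hill(c, emax, ec50, n)) ** 2 for c, e in zip(concs, effects))
            if best is None or sse < best[0]:
                best = (sse, ec50, n)
    return {"ec50": best[1], "hill_slope": best[2], "sse": best[0]}


# Synthetic, noise-free example: 8-point 1:3 dilution, true EC50 = 1.0, slope = 1.0.
concs = [0.01 * 3**k for k in range(8)]
raw = [200.0 + 2000.0 * hill(c, 1.0, 1.0, 1.0) for c in concs]  # hypothetical raw signal
effects = [normalize(r, mean_0=200.0, mean_100=2200.0) for r in raw]
fit = grid_fit(concs, effects)
print(fit)
```

A grid search is easy to read and hard to get stuck in a poor local minimum with, but it only resolves EC50 to the grid spacing; it is meant to show the logic of the fit, not to replace regression software.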

Critical Distinction: Relative vs. "Absolute" IC50

A crucial nuance in inhibitor studies is the definition of IC50 [16]:

- Relative IC50: The standard definition. It is the concentration that reduces the response to a point halfway between the top and bottom plateaus of the tested compound's own curve. This is the pharmacologically relevant measure of a drug's potency.

- "Absolute" IC50: Sometimes used (e.g., GI50 in oncology). It is the concentration that reduces the response to 50% of the control (vehicle) response, ignoring the compound's own bottom plateau. This value does not cleanly represent potency if the compound cannot achieve 100% inhibition.

Experiments must clearly report which definition is used. The "relative IC50," derived from a complete curve fit, is preferred for pharmacological analysis [16].
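The difference between the two definitions can be made concrete with a small sketch. It assumes a four-parameter inhibitory curve (top and bottom plateaus, relative IC50, Hill slope) and a control response of 100%; the function names and example numbers are hypothetical.

```python
def inhibition_response(conc, top, bottom, ic50_rel, n=1.0):
    """Four-parameter inhibitory curve: response falls from `top` to `bottom`."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50_rel) ** n)


def absolute_ic50(top, bottom, ic50_rel, n=1.0, control=100.0):
    """Concentration at which the response equals 50% of the control response.

    Returns None when the curve never crosses that level, for example when
    the compound's bottom plateau sits above 50% of control.
    """
    target = 0.5 * control
    if bottom >= target or top <= target:
        return None
    ratio = (top - bottom) / (target - bottom) - 1.0
    return ic50_rel * ratio ** (1.0 / n)


# Hypothetical compound that plateaus at 20% residual response.
print(absolute_ic50(top=100.0, bottom=20.0, ic50_rel=1.0))
# Complete inhibitor: both definitions coincide.
print(absolute_ic50(top=100.0, bottom=0.0, ic50_rel=1.0))
# Weak inhibitor that never reaches 50% of control: no absolute IC50 exists.
print(absolute_ic50(top=100.0, bottom=60.0, ic50_rel=1.0))
```

The two values agree only when the compound achieves complete inhibition, which is why reports need to state which definition was used.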

The Scientist's Toolkit: Essential Research Reagents and Materials

Accurate dose-response analysis relies on high-quality, specific reagents. The following table details essential materials and their functions [14].

| Category | Item | Function in Dose-Response Experiments |

|---|---|---|

| Test Compounds | Agonists/Antagonists (high-purity small molecules, biologics) | The experimental variables. Their serial dilutions create the concentration gradient to probe the biological system's sensitivity and capacity. |

| Biological System | Cell lines, Primary cells, Enzymes, Tissue preparations (e.g., isolated heart) [14] | The responsive unit containing the drug target (receptor, enzyme). System choice heavily influences observed Emax and EC50 [11]. |

| Assay Reagents | Fluorescent/Luminescent probes, Enzyme substrates, Radioligands, Antibodies | Enable quantification of the biological response (e.g., calcium flux, cell viability, cAMP levels, receptor occupancy). |

| Controls | Vehicle (e.g., DMSO, buffer), Reference Agonist (full agonist), Reference Antagonist (full inhibitor) | Define the 0% and 100% effect boundaries for data normalization, which is critical for accurate EC50/IC50 and Emax calculation [16]. |

| Instrumentation | Plate reader (fluorescent/luminescent), FLIPR system [5], Imaging system, Tissue bath apparatus | Hardware to deliver stimuli and precisely measure the kinetic or endpoint biological response. |

| Analysis Software | Non-linear regression software (e.g., GraphPad Prism, Dr-Fit [5]) | Essential for fitting the sigmoidal model to normalized data to calculate key parameters with statistical confidence intervals. |

Data Interpretation and Clinical & Pharmacological Implications

Separating Affinity, Potency, and Efficacy

It is critical to understand that EC50 is not equivalent to Kd (affinity), and potency is not efficacy.

- Affinity (Kd) is the strength of drug-receptor binding [14] [17].

- Potency (EC50) depends on both affinity and efficacy. A drug with high affinity but low efficacy (a partial agonist) can have a similar EC50 to a drug with lower affinity but very high efficacy [12] [18].

- Efficacy (Emax) is the intrinsic ability of a drug, once bound, to activate the receptor and downstream signaling to produce a response [11] [15].

The concept of "spare receptors" (receptor reserve) exemplifies this: a system has spare receptors if a maximal biological response (Emax) can be achieved when only a fraction of receptors are occupied. In such systems, the EC50 will be significantly lower than the Kd [17].
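One way to see why EC50 falls below Kd when receptor reserve exists is the Black and Leff operational model of agonism. The source does not name a model, so its use here is an assumption, as are the function names and the example values; `tau` is the model's transducer ratio, which grows with receptor density and coupling efficiency. With a Hill slope of 1, the model gives EC50 = Kd / (1 + tau) and Emax = Em × tau / (1 + tau).

```python
def operational_effect(conc, kd, tau, em=100.0):
    """Black-Leff operational model with slope 1.

    E = Em * tau * [A] / (Kd + [A] * (1 + tau))
    """
    return em * tau * conc / (kd + conc * (1.0 + tau))


def operational_ec50(kd, tau):
    """Observed potency: EC50 = Kd / (1 + tau)."""
    return kd / (1.0 + tau)


def operational_emax(tau, em=100.0):
    """Observed maximal effect: Emax = Em * tau / (1 + tau)."""
    return em * tau / (1.0 + tau)


def occupancy(conc, kd):
    """Fractional receptor occupancy from simple mass-action binding."""
    return conc / (kd + conc)


# Hypothetical high-efficacy agonist in a system with large receptor reserve.
kd, tau = 100.0, 99.0
ec50 = operational_ec50(kd, tau)
print(ec50, operational_emax(tau), occupancy(ec50, kd))
```

In this hypothetical case the half-maximal response is reached with roughly 1% of receptors occupied, which is the quantitative meaning of "spare receptors". A low-efficacy ligand (small `tau`) in the same system would show an EC50 close to its Kd and a reduced Emax.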

Implications for Drug Selection and Development

The distinction guides critical decisions:

- Therapeutic Utility: Efficacy (Emax) often trumps potency. A drug must achieve sufficient Emax to produce a clinically meaningful effect [2] [5]. A highly potent drug with low Emax (e.g., a partial agonist) may be chosen for its safety profile in certain contexts [18].

- Dosing Regimen: Potency (EC50) informs the starting dose, while Emax and the curve's slope inform the therapeutic window and risk of toxicity from over-dosing [13] [14].

- Mechanism of Action: The shape of the curve (Hill slope) and changes in Emax/EC50 in the presence of other drugs reveal if an antagonist is competitive (rightward shift of curve, no change in Emax) or non-competitive (depression of Emax) [5].

Advanced Concepts and Applications in Modern Research

Multiphasic and Non-Monotonic Dose-Response Curves

Not all dose-response relationships follow a simple sigmoid model. Advanced pharmacological systems can exhibit multiphasic curves, which may combine stimulatory and inhibitory phases [5]. These can arise from:

- Drugs acting on multiple receptor subtypes with different affinities and effects.

- Negative feedback mechanisms becoming engaged at higher concentrations.

- Off-target effects manifesting at high doses.

Analyzing such curves requires specialized fitting algorithms (e.g., Dr-Fit software) that use multiple independent Hill equations to model distinct phases [5]. A significant study of over 11,000 dose-response curves in cancer cell lines found that 28% were best fit by multiphasic models [5], highlighting the importance of moving beyond standard sigmoid analysis in complex systems.
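The idea of combining independent Hill equations can be illustrated with a two-phase sketch: one stimulatory term and one inhibitory term that engages at higher concentrations. This is not the Dr-Fit parameterization, which the source does not describe; the function names and parameter values are assumptions chosen only to produce a bell-shaped curve.

```python
def hill_term(conc, amplitude, midpoint, n=1.0):
    """Single Hill phase with a given amplitude and midpoint concentration."""
    return amplitude * conc**n / (midpoint**n + conc**n)


def biphasic_effect(conc, stim_amp, stim_ec50, inhib_amp, inhib_ic50,
                    stim_n=1.0, inhib_n=1.0):
    """Net effect = stimulatory Hill phase minus inhibitory Hill phase."""
    return (hill_term(conc, stim_amp, stim_ec50, stim_n)
            - hill_term(conc, inhib_amp, inhib_ic50, inhib_n))


# Hypothetical compound: stimulates around 1 unit, inhibits around 1000 units.
params = dict(stim_amp=100.0, stim_ec50=1.0, inhib_amp=100.0, inhib_ic50=1000.0)
for c in (0.01, 1.0, 30.0, 1000.0, 1e6):
    print(c, round(biphasic_effect(c, **params), 2))
```

The net effect rises, peaks at intermediate concentrations, and then declines. Forcing a single sigmoid through such data would misreport both EC50 and Emax.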

Regulatory and Toxicological Applications

Dose-response principles are fundamental to risk assessment [5].

- Therapeutic Index (TI): The ratio between the toxic dose (TD50) and effective dose (ED50). It is a direct application of dose-response analysis for safety assessment [2].

- No Observed Adverse Effect Level (NOAEL): The highest dose in a study causing no harmful effect. It is determined from dose-response data on toxicity and is used by regulatory agencies to establish safe exposure limits [5].

The precise distinction between potency (EC50/IC50) and maximal efficacy (Emax) is non-negotiable for rigorous pharmacological research and effective drug development. Potency indicates the concentration required for an effect, while efficacy defines the ultimate limit of that effect. As explored in this guide, these independent parameters are derived from robust experimental protocols, interpreted within the context of biological system and receptor theory, and applied to everything from lead compound optimization to clinical dosing and regulatory safety assessment.

Future directions in dose-response analysis will increasingly integrate multiphasic modeling, high-throughput kinetic data from systems like FLIPR [5], and in silico predictions to handle complex biological realities. A deep understanding of the foundational principles outlined here will remain essential for navigating this complexity and translating quantitative biological data into successful therapeutic outcomes.

The dose-response relationship is a fundamental principle in pharmacology that describes the quantitative correlation between the dose or concentration of a drug and the magnitude of its biological effect [19]. Within this framework, the classification of drugs as agonists or antagonists based on their dominant mechanism of action provides critical insight into their therapeutic potential and safety profile [20]. An agonist is defined as a ligand that binds to a receptor and alters its state to produce a biological response, while an antagonist inhibits or blocks the response produced by an agonist [21]. The interplay between these drug classes directly shapes the dose-response curve, influencing key parameters such as potency (ED₅₀/EC₅₀) and efficacy (Emax) [19]. This guide, framed within ongoing research to elucidate dose-response paradigms, provides a technical examination of these concepts for researchers and drug development professionals.

Agonists are further subclassified based on their intrinsic efficacy. A full agonist produces the maximal response that a biological system is capable of, even if it does not occupy all available receptors (a concept known as spare receptors) [21]. A partial agonist, in contrast, cannot elicit the system's maximal response even at full receptor occupancy [21]. An inverse agonist reduces the fraction of receptors in an active conformation, producing an effect opposite to that of a conventional agonist in systems with constitutive receptor activity [21].

Antagonists are classified by their mechanism of inhibition. A competitive antagonist binds reversibly to the same site as the agonist, shifting the agonist's dose-response curve to the right (decreased potency) without altering its maximal response [19]. A non-competitive antagonist inhibits the response through a mechanism that does not involve direct competition for the agonist binding site, for example by binding an allosteric site or by interfering with the second messenger system. This results in a decrease in the maximal response (Emax) and can also cause a rightward shift [19]. An irreversible antagonist forms a permanent or long-lasting bond with the receptor, effectively reducing the pool of available receptors and acting as a special case of non-competitive antagonism [19].

Table: Fundamental Characteristics of Drug Classes

| Drug Class | Receptor Binding | Intrinsic Efficacy | Effect on Agonist Curve (Potency/Emax) | Clinical Example |

|---|---|---|---|---|

| Full Agonist | Orthosteric Site | High (Maximum System Response) | N/A (Defines baseline curve) | Morphine (μ-opioid receptor) |

| Partial Agonist | Orthosteric Site | Intermediate (Sub-maximal Response) | In the presence of a full agonist, acts as a competitive antagonist; shifts the full agonist's curve rightward | Buprenorphine (μ-opioid receptor) |

| Inverse Agonist | Orthosteric/Allosteric | Negative (Reduces Basal Activity) | Suppresses agonist curve | Rimonabant (CB1 cannabinoid receptor) [22] |

| Competitive Antagonist | Orthosteric Site | Zero (No Activation) | Rightward shift (Decreased Potency); Emax unchanged | Naloxone (Opioid receptors) |

| Non-Competitive Antagonist | Allosteric Site or downstream | Zero (Blocks Function) | Decreased Emax; possible rightward shift | Ketamine (NMDA receptor channel blocker) |

Quantitative Analysis of Dose-Response Curves

The sigmoidal log dose-response curve is the standard graphical representation for analyzing drug effects. Plotting response against the logarithm of dose (or concentration) linearizes the central, most informative portion of the relationship [19]. Two primary parameters are derived from this curve: potency and efficacy.

Potency is quantified by the ED₅₀ or EC₅₀, the dose or concentration required to produce 50% of the maximal effect. It is an index of a drug's affinity for its receptor and the efficiency of the coupling of receptor occupancy to response. A lower ED₅₀ indicates greater potency [19].

Efficacy (Intrinsic Activity) is quantified by Emax, the maximal response a drug can elicit. Efficacy is a property of the drug-receptor complex and reflects the ability of the drug, once bound, to activate the receptor and subsequent signaling cascade. A full agonist has high efficacy, while a partial agonist has lower efficacy [21].

The therapeutic index, calculated as the ratio of the toxic dose (e.g., TD₅₀) to the effective dose (ED₅₀), is a critical safety parameter derived from quantal dose-response curves (which plot the frequency of a specified effect in a population against dose).

Table: Quantitative Parameters from Dose-Response Curves

| Parameter | Symbol | Definition | Interpretation | Determined By |

|---|---|---|---|---|

| Potency | ED₅₀ / EC₅₀ | Dose/Concentration for 50% of Emax | Strength of drug effect; depends on affinity & efficacy | Position of curve on X-axis |

| Efficacy | Emax | Maximal possible response | Drug's ability to activate receptor; "power" of drug | Height of curve plateau (Y-axis) |

| Slope | Hill Coefficient | Steepness of linear phase | Cooperativity; sensitivity to dose changes | Steepness of central linear region |

| Therapeutic Index | TI | TD₅₀ / ED₅₀ (for quantal curves) | Margin of safety; higher is safer | Comparison of separate dose-effect curves |

The effect of antagonists on these curves is diagnostic. As shown in Figure 1, increasing concentrations of a competitive antagonist cause parallel rightward shifts of the agonist curve, demonstrating a surmountable blockade where sufficient agonist can overcome inhibition. In contrast, a non-competitive antagonist suppresses the Emax, as the blockade is insurmountable by increasing agonist concentration [19]. This graphical distinction is foundational for in vitro characterization of lead compounds.

Figure 1: Antagonist-Induced Shifts in Agonist Dose-Response Curves
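The two diagnostic patterns can be sketched numerically. The competitive case uses the Gaddum relationship, in which the apparent EC50 is multiplied by (1 + [B]/KB); the non-competitive case uses a deliberately simplified model in which Emax is divided by the same factor and EC50 is left unchanged. Both simplifications, the function names, and the example values are assumptions for illustration.

```python
def agonist_effect(agonist, emax, ec50, n=1.0):
    """Hill model for the agonist alone."""
    return emax * agonist**n / (ec50**n + agonist**n)


def with_competitive_antagonist(agonist, emax, ec50, antagonist, kb, n=1.0):
    """Surmountable block: EC50 shifts right by (1 + [B]/KB), Emax unchanged."""
    shifted_ec50 = ec50 * (1.0 + antagonist / kb)
    return agonist_effect(agonist, emax, shifted_ec50, n)


def with_noncompetitive_antagonist(agonist, emax, ec50, antagonist, kb, n=1.0):
    """Insurmountable block (simplified): Emax is depressed, EC50 unchanged."""
    depressed_emax = emax / (1.0 + antagonist / kb)
    return agonist_effect(agonist, depressed_emax, ec50, n)


# Hypothetical system: Emax = 100, EC50 = 1, antagonist present at [B] = 10 * KB.
saturating = 1e6
print(with_competitive_antagonist(saturating, 100.0, 1.0, antagonist=10.0, kb=1.0))
print(with_noncompetitive_antagonist(saturating, 100.0, 1.0, antagonist=10.0, kb=1.0))
```

At a saturating agonist concentration the competitive case still approaches the full Emax, whereas the non-competitive case plateaus at a fraction of it, which is the distinction the curves are used to diagnose.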

Experimental Methodologies for Characterization

In Vitro Functional Assays: The [³⁵S]GTPγS Binding Assay

This assay measures the functional efficacy of agonists and antagonists at G protein-coupled receptors (GPCRs) by quantifying the activation of Gα subunits. Upon receptor activation, the agonist-bound receptor catalyzes the exchange of GDP for GTP on the Gα subunit. Using the radioactively labeled, non-hydrolyzable GTP analog [³⁵S]GTPγS allows for the quantification of this event as a direct marker of receptor activation [22].

Detailed Protocol (Adapted from [22]):

- Membrane Preparation: Harvest CHO cells stably transfected with the target receptor (e.g., mouse μ-opioid receptor, mMOR-CHO). Homogenize cells in membrane buffer (50 mM Tris, 3 mM MgCl₂, 1 mM EGTA, pH 7.4) and centrifuge at high speed (e.g., 50,000×g). Store aliquots at -80°C.

- Assay Setup: Thaw membranes and homogenize in assay buffer. In each reaction well, combine:

- Membrane preparation (e.g., 5-20 µg protein).

- [³⁵S]GTPγS (e.g., 0.1 nM).

- A range of concentrations of the test agonist (e.g., fentanyl), antagonist (e.g., naltrexone), or fixed-proportion mixtures.

- GDP (e.g., 10 µM) to suppress basal G-protein activity.

- Any required salts or protease inhibitors.

- Incubation: Incubate the reaction for 60-90 minutes at 30°C to reach equilibrium.

- Termination and Quantification: Terminate reactions by rapid vacuum filtration through GF/B filter plates. Wash filters to remove unbound radioactivity. Measure bound radioactivity via liquid scintillation counting.

- Data Analysis: Non-specific binding is determined in the presence of excess unlabeled GTPγS. Specific binding is total minus non-specific. Data are fit to a sigmoidal concentration-response model to determine EC₅₀ and Emax values for agonists. For antagonist studies, Schild regression analysis is used to determine the pA₂ value (negative log of the antagonist concentration that necessitates a 2-fold increase in agonist concentration to achieve the same effect), which quantifies antagonist potency.
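The Schild analysis in the final step can be sketched as an ordinary least-squares line through log10(dose ratio − 1) versus log10[B], where the dose ratio is the agonist EC50 in the presence of antagonist divided by the EC50 in its absence. The pA₂ is the negative of the x-intercept. The function name and the example concentrations are hypothetical, and the data are constructed for an ideal competitive antagonist with KB = 1 nM.

```python
import math


def schild_regression(antagonist_concs, dose_ratios):
    """Return (slope, pA2) from a Schild plot.

    x = log10([B]), y = log10(dose_ratio - 1); pA2 = -x at y = 0.
    A slope close to 1 is consistent with simple competitive antagonism.
    """
    if any(dr <= 1.0 for dr in dose_ratios):
        raise ValueError("each dose ratio must exceed 1")
    xs = [math.log10(b) for b in antagonist_concs]
    ys = [math.log10(dr - 1.0) for dr in dose_ratios]
    count = len(xs)
    mean_x, mean_y = sum(xs) / count, sum(ys) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    pa2 = intercept / slope  # equals -(x-intercept)
    return slope, pa2


# Ideal competitive antagonist with KB = 1e-9 M: dose ratio = 1 + [B]/KB.
concs_molar = [1e-9, 1e-8, 1e-7]
ratios = [1.0 + b / 1e-9 for b in concs_molar]
slope, pa2 = schild_regression(concs_molar, ratios)
print(round(slope, 3), round(pa2, 3))
```

When the slope is 1, the pA₂ equals −log10(KB), so the plot yields an affinity estimate for the antagonist that does not depend on which agonist was used.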

In Vivo Behavioral Assays: Thermal Nociception

In vivo efficacy is contextual and integrates pharmacokinetics and systems-level physiology. The warm water tail-withdrawal assay in rodents is a classic model for evaluating analgesic (antinociceptive) efficacy of agents like opioid agonists and antagonists [22].

Detailed Protocol (Adapted from [22]):

- Animal Preparation: House mice or rats under standard conditions with ad libitum food/water. Acclimate animals to handling and the testing environment.

- Baseline Measurement: Gently restrain the animal and immerse the distal portion of its tail into a water bath maintained at a noxious but non-damaging temperature (e.g., 50°C or 55°C). Record the latency (in seconds) for the animal to vigorously flick or withdraw its tail. Establish a baseline latency (typically 2-4 sec) and a cut-off latency (e.g., 10-15 sec) to prevent tissue damage.

- Drug Administration: Administer the test compound(s) (e.g., subcutaneous or intraperitoneal injection of fentanyl/naltrexone mixtures). Include vehicle control groups.

- Post-Treatment Testing: At predetermined time points post-injection (e.g., 15, 30, 60 minutes), repeat the tail immersion test. The test is usually performed multiple times with different sections of the tail.

- Data Analysis: Calculate the Percent Maximum Possible Effect (%MPE) for each animal/time point: %MPE = [(Post-drug latency – Baseline latency) / (Cut-off latency – Baseline latency)] × 100. Plot group mean %MPE against drug dose or time to generate dose-response or time-course curves. Fit data to determine ED₅₀ values for analgesic effect. Comparisons between agonist-alone and agonist/antagonist mixture curves allow for quantification of in vivo antagonist potency and efficacy requirements [22].
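The %MPE formula in the final step translates directly into code. The sketch below caps the post-drug latency at the cut-off, which is an assumption that follows from the cut-off being the longest exposure allowed; the function name and latencies are illustrative.

```python
def percent_mpe(post_latency, baseline_latency, cutoff_latency):
    """%MPE = (post - baseline) / (cut-off - baseline) * 100.

    Latencies are in seconds. The post-drug latency is capped at the
    cut-off because the tail is removed from the bath at that time.
    """
    if cutoff_latency <= baseline_latency:
        raise ValueError("cut-off must exceed baseline latency")
    capped = min(post_latency, cutoff_latency)
    return (capped - baseline_latency) / (cutoff_latency - baseline_latency) * 100.0


# Hypothetical animal: 3 s baseline, 15 s cut-off.
print(percent_mpe(9.0, 3.0, 15.0))   # partial antinociception
print(percent_mpe(15.0, 3.0, 15.0))  # animal reached the cut-off
```

Expressing each animal's response relative to its own baseline and the shared cut-off puts all animals on a common 0 to 100% scale before group means are fitted for ED₅₀.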

Table: Core Experimental Assays for Pharmacodynamic Profiling

| Assay Type | Measured Endpoint | Key Output Parameters | Application in Agonist/Antagonist Research | Key Advantage |

|---|---|---|---|---|

| [³⁵S]GTPγS Binding [22] | G-protein activation | Agonist: EC₅₀, Emax; Antagonist: pA₂, KB | Quantifies intrinsic efficacy & potency at receptor level; tests fixed-ratio mixtures. | Direct, biochemical measure of receptor coupling; high throughput. |

| cAMP Accumulation | 2nd messenger level | EC₅₀, Emax (Gs); IC₅₀ (Gi) | For receptors modulating adenylyl cyclase. | Functional readout for key signaling pathway. |

| Beta-Arrestin Recruitment | Receptor trafficking | EC₅₀, Emax | Assesses "biased agonism" (G-protein vs. arrestin signaling). | Critical for understanding differential drug effects. |

| Calcium Mobilization (FLIPR) | Intracellular Ca²⁺ flux | EC₅₀, Emax | For receptors coupled to Gq or Ca²⁺ release. | Very high throughput; sensitive. |

| Radioligand Binding | Receptor affinity | Ki (inhibition constant) | Measures binding affinity, not function. | Distinguishes affinity from efficacy. |

| In Vivo Nociception [22] | Pain response latency | In vivo ED₅₀, %MPE, duration of action | Translational efficacy and therapeutic window. | Integrates PK/PD; clinically relevant endpoint. |

Figure 2: Integrated Workflow for Characterizing Agonists & Antagonists

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Reagents for Agonist/Antagonist Pharmacology Studies

| Reagent / Material | Example(s) from Literature | Function in Research | Critical Notes |

|---|---|---|---|

| Stable Cell Lines | mMOR-CHO, mCB1R-CHO cells [22] | Express the recombinant target receptor at consistent, measurable levels for in vitro assays. | Essential for reproducible functional and binding studies. |

| Radioactive Ligands | [³⁵S]GTPγS, [³H]Naloxone, [³H]Rimonabant [22] | Enable highly sensitive quantification of specific molecular events: G-protein activation ([³⁵S]GTPγS) or receptor occupancy (tritiated antagonists). | Require specialized safety protocols and licensing for use and disposal. |

| Reference Agonists & Antagonists | DAMGO (MOR agonist), Fentanyl, CP55,940 (CB1R agonist), Naltrexone, Rimonabant [22] | Serve as standard, well-characterized controls to benchmark the activity of novel test compounds in assays. | Purity and proper storage are critical for reliable reference data. |

| Assay Buffers & Cofactors | Tris/MgCl₂/EGTA buffer, GDP, Guanosine-5'-(γ-thio)triphosphate (GTPγS) [22] | Provide the optimal ionic and biochemical environment for receptor-G-protein coupling and function. | Mg²⁺ concentration is crucial for GPCR coupling; GDP reduces basal noise. |

| Filtration Equipment | Whatman GF/B glass fiber filters, vacuum filtration manifold [22] | Separate receptor-bound radioactive ligand from free ligand in binding and GTPγS assays. | Pre-soaking filters in buffer with PEI or BSA can reduce non-specific binding. |

| Liquid Scintillation Counter | N/A (Standard Equipment) | Measures the radioactivity from bound radioactive isotopes on filter plates or in vials as counts per minute, which are converted to disintegrations per minute. | Calibration and efficiency correction are necessary for accurate quantification. |

Advanced Concepts and Research Frontiers

Controlling Net Receptor Efficacy with Fixed-Proportion Mixtures

A sophisticated application of receptor theory is the use of fixed-proportion agonist/antagonist mixtures to precisely control the net efficacy of a treatment. As demonstrated with μ-opioid receptor ligands, mixtures of a high-efficacy agonist (fentanyl) and a competitive antagonist (naltrexone) produce graded maximal effects in vitro and in vivo depending on the agonist proportion [22]. The agonist proportion required to produce a half-maximal effect (A₅₀) provides a quantitative metric for comparing efficacy requirements across different biological endpoints (e.g., analgesia vs. respiratory depression). This approach offers a strategic pathway to design therapeutics with a ceiling effect for safety, exemplified clinically by the partial agonist buprenorphine [22].

Computational Cheminformatics and QSAR

Quantitative Structure-Activity Relationship (QSAR) modeling, powered by deep learning (DL), is transforming the prediction of agonist/antagonist activity. Studies on datasets like the Tox21 10K library employ methods such as matched molecular pair (MMP) analysis and scaffold decomposition to identify structural features discriminating agonists from antagonists for targets like the androgen receptor [23]. Modern DL-QSAR systems, like improved DeepSnap-DL, generate high-performance prediction models by using 3D molecular structures as input, automatically extracting features to predict activity for molecular initiating events in toxicity pathways [24]. This in silico frontier aids in the rational design of ligands with desired efficacy profiles and the identification of "MOA-cliffs"—where minimal structural changes flip a compound from agonist to antagonist [23].

Biased Agonism and Allosteric Modulation

Biased agonists (or functionally selective agonists) stabilize unique receptor conformations that preferentially activate specific downstream signaling pathways (e.g., G-protein vs. β-arrestin recruitment). This can lead to drugs with dissociated therapeutic and adverse effects. The study of allosteric modulators, which bind at sites distinct from the orthosteric (endogenous ligand) site, represents another frontier. Positive allosteric modulators (PAMs) can enhance the effect of an endogenous agonist, offering greater spatial and temporal specificity, while negative allosteric modulators (NAMs) act as non-competitive antagonists [21]. These mechanisms add layers of complexity and opportunity for fine-tuning dose-response relationships beyond classical models.

The transition of a therapeutic agent from laboratory discovery to clinical application is fundamentally governed by one principle: the relationship between the dose administered and the biological effect elicited. This dose-response relationship forms the bedrock of pharmacology, providing the quantitative framework necessary to understand a drug's efficacy and safety [2] [25]. Within this framework, two interrelated concepts are paramount for clinical decision-making: the therapeutic index (TI) and the therapeutic window. The therapeutic index is a quantitative measure comparing the dose (or exposure) that causes toxicity to the dose that produces the desired therapeutic effect [26] [27]. The therapeutic window describes the range of doses between the minimum effective concentration and the minimum toxic concentration, optimizing benefit while minimizing risk [26].

This guide details the derivation, interpretation, and application of these critical safety parameters. Framed within the broader thesis of dose-response research, we will explore how classical pharmacological analysis is being transformed by Quantitative and Systems Pharmacology (QSP) and Model-Informed Drug Development (MIDD). These advanced approaches integrate mechanistic data across biological scales—from molecular interactions to whole-organism physiology—to predict clinical outcomes and optimize dosing strategies with greater precision than traditional methods allow [28] [29]. For researchers and drug development professionals, a nuanced understanding of these concepts is essential for designing safer, more effective therapies and navigating the complex journey from preclinical models to patient care.

Foundational Concepts: Dose-Response, Efficacy, and Toxicity

The Dose-Response Curve: Parameters and Interpretation

A dose-response curve graphically represents the relationship between the magnitude of a drug's effect and its dose or concentration [25]. When plotted with the dose (often on a logarithmic scale) on the x-axis and the measured effect on the y-axis, the curve typically assumes a sigmoidal shape. This shape reveals several key pharmacological parameters [2]:

- Potency: Reflected by the horizontal position of the curve. It is often quantified by the ED₅₀. On a graded curve, this is the dose required to produce half of the maximum response; on a quantal (population) curve, it is the dose that produces the specified effect in 50% of the population. A drug with a lower ED₅₀ is more potent.

- Maximal Efficacy (Ceiling Effect): The greatest attainable response, represented by the upper plateau of the curve. It indicates the intrinsic activity of the drug.

- Slope: The steepness of the linear portion of the curve, indicating how rapidly the response changes with dose. A steeper slope suggests a narrower dose range between ineffective and maximal effects.
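The link between slope and dose range can be quantified if the curve is assumed to follow the Hill model with coefficient n, which is an assumption added here for illustration. Under that model, the dose producing a fraction f of the maximal effect is ED₅₀ × (f / (1 − f))^(1/n), so the ratio of the doses giving 90% and 10% of the maximal effect is 81^(1/n). The function names are hypothetical.

```python
def dose_for_fraction(fraction, ed50, n=1.0):
    """Dose giving `fraction` of the maximal effect under the Hill model."""
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must lie strictly between 0 and 1")
    return ed50 * (fraction / (1.0 - fraction)) ** (1.0 / n)


def ed90_to_ed10_ratio(n):
    """Fold-change in dose needed to go from 10% to 90% of maximal effect."""
    return dose_for_fraction(0.9, 1.0, n) / dose_for_fraction(0.1, 1.0, n)


for slope in (0.5, 1.0, 2.0, 4.0):
    print(slope, round(ed90_to_ed10_ratio(slope), 1))
```

With n = 1 the dose must rise about 81-fold to move from 10% to 90% of the maximal effect, while with n = 2 a 9-fold increase is enough, which is why steep curves leave little room for dosing error.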

Table: Key Parameters Derived from Dose-Response Curves [2] [25]

| Parameter | Abbreviation | Definition | Pharmacological Significance |

|---|---|---|---|

| Median Effective Dose | ED₅₀ | Dose required to produce a specified therapeutic effect in 50% of the population. | Primary measure of a drug's potency. |

| Median Toxic Dose | TD₅₀ | Dose required to produce a particular toxic effect in 50% of the population. | Measure of a drug's toxicity profile. |

| Median Lethal Dose | LD₅₀ | Dose required to cause death in 50% of a population (primarily used in preclinical animal studies). | Historic measure of acute safety risk. |

| Maximal Efficacy | E_max | The greatest attainable response, regardless of dose. | Measure of a drug's intrinsic activity. |

Defining the Therapeutic Index and Therapeutic Window

The therapeutic index (TI) provides a numerical estimate of a drug's relative safety. Classically, it is calculated as the ratio of a toxic dose to an effective dose. Two primary formulations are used [26]:

- Safety-based TI (Preclinical Focus): TI = LD₅₀ / ED₅₀. A higher value indicates a wider margin of safety.

- Efficacy-based TI (Clinical Focus): TI = TD₅₀ / ED₅₀. This is more clinically relevant, as toxic effects often occur at doses far below lethal levels. For this ratio, a higher value also indicates a safer drug.

The protective index (PI), calculated as TD₅₀/ED₅₀, is mathematically identical to the efficacy-based TI, and the two terms are often used synonymously to express the same safety margin; it differs from the safety-based TI only in using TD₅₀ in place of LD₅₀ [26].

The therapeutic window (or safety window) is the dose range between the minimum effective concentration (MEC) and the minimum toxic concentration (MTC). Doses within this window are most likely to produce the desired therapeutic effect without causing unacceptable adverse events [26]. A drug with a narrow therapeutic window (e.g., digoxin, warfarin, lithium) requires careful dose titration and, often, therapeutic drug monitoring (TDM) to ensure plasma concentrations remain within this narrow safe range [26].
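As a minimal sketch of these definitions, the functions below compute a therapeutic index from a median toxic (or lethal) dose and a median effective dose, and check whether a measured concentration lies inside a window bounded by the MEC and MTC. All names and numbers are hypothetical and do not describe any real drug.

```python
def therapeutic_index(toxic_dose_50, effective_dose_50):
    """TI = TD50 / ED50 (or LD50 / ED50 for the preclinical form)."""
    if toxic_dose_50 <= 0 or effective_dose_50 <= 0:
        raise ValueError("doses must be positive")
    return toxic_dose_50 / effective_dose_50


def in_therapeutic_window(concentration, mec, mtc):
    """True when MEC <= concentration < MTC."""
    if mec >= mtc:
        raise ValueError("MEC must be below MTC")
    return mec <= concentration < mtc


# Hypothetical narrow-index drug: TD50 = 200 mg, ED50 = 100 mg.
print(therapeutic_index(200.0, 100.0))
# Hypothetical window of 0.5 to 2.0 concentration units.
for level in (0.3, 1.1, 2.4):
    print(level, in_therapeutic_window(level, mec=0.5, mtc=2.0))
```

The ratio is a population-level summary, whereas the window check is applied to an individual measurement, which is the role therapeutic drug monitoring plays for narrow-window drugs.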

Diagram: Dose-Response Relationship and Therapeutic Window. This diagram illustrates the fundamental sigmoidal curves for therapeutic efficacy (green) and toxicity (red). Key parameters like ED₅₀ and TD₅₀ are shown, with the Therapeutic Window (blue dashed line) representing the safe dose range between the Minimum Effective Concentration (MEC) and the Minimum Toxic Concentration (MTC) [2] [26] [25].

Quantitative Analysis: Calculating and Interpreting the Therapeutic Index

Comparative Therapeutic Indices of Common Drugs

The therapeutic index varies dramatically between different substances. A high TI indicates a wide margin of safety, whereas a low TI signifies a narrow therapeutic window and a greater risk of toxicity with small dosing errors [26].

Table: Representative Therapeutic Indices for Selected Drugs [26]

| Drug | Class/Therapeutic Use | Approximate Therapeutic Index (TD₅₀/ED₅₀) | Clinical Implication |
|---|---|---|---|
| Remifentanil | Opioid Analgesic | 33,000 : 1 | Exceptionally wide safety margin for intended effect. |
| Diazepam | Benzodiazepine (Sedative) | 100 : 1 | Relatively wide margin, but overdose risk exists. |
| Morphine | Opioid Analgesic | 70 : 1 | Requires careful dosing to manage pain vs. respiratory depression. |
| Warfarin | Oral Anticoagulant | 2 : 1 | Very narrow window; requires frequent INR monitoring. |
| Digoxin | Cardiac Glycoside | 2 : 1 | Narrow window; toxicity (arrhythmia) can be fatal. TDM essential. |
| Lithium | Mood Stabilizer | 2 - 3 : 1 | Narrow window; TDM required to maintain serum levels of 0.6-1.2 mEq/L. |

Note on Calculation: It is critical to recognize that TI is a population-derived statistic. It does not account for individual variability due to genetics (pharmacogenomics), age, organ function, or drug-drug interactions [27]. For drugs with a narrow TI, this inter-individual variability is a major clinical challenge, making personalized dosing strategies and monitoring imperative.

Beyond the Classical Ratio: Exposure-Based TI and its Determinants

In modern drug development, TI is increasingly calculated using drug exposure (e.g., plasma concentration-time profile, AUC, C_max) rather than administered dose. This is because the pharmacological and toxicological effects are driven by the concentration of the drug at the target site over time [26]. An exposure-based TI accounts for pharmacokinetic (PK) variability between individuals. Key factors influencing exposure and, consequently, the effective TI in a patient include [2] [26]:

- Metabolic Polymorphisms: Genetic variations in cytochrome P450 enzymes (e.g., CYP2C9, CYP2D6) can cause individuals to be poor or ultrarapid metabolizers, drastically altering drug exposure.

- Drug-Drug Interactions (DDIs): Concomitant medications can inhibit or induce metabolic enzymes or drug transporters, increasing or decreasing exposure.

- Organ Function: Impaired renal or hepatic function reduces clearance, leading to drug accumulation and a higher risk of toxicity at standard doses.

- Body Weight/Composition: Affects the volume of distribution and clearance.
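To make the link between clearance and exposure concrete, the sketch below uses the standard linear-pharmacokinetics relation AUC = F × Dose / CL. The exposure thresholds, dose, and clearance values are hypothetical numbers chosen only to show how reduced clearance can push a standard dose above a toxic exposure level.

```python
def auc(dose_mg: float, clearance_l_per_h: float, bioavailability: float = 1.0) -> float:
    """AUC in mg*h/L under linear pharmacokinetics: AUC = F * Dose / CL."""
    if clearance_l_per_h <= 0:
        raise ValueError("clearance must be positive")
    return bioavailability * dose_mg / clearance_l_per_h


# Hypothetical exposure thresholds (mg*h/L), assumed for illustration.
AUC_EFFECTIVE = 20.0  # exposure associated with the desired effect
AUC_TOXIC = 60.0      # exposure associated with toxicity

exposure_ti = AUC_TOXIC / AUC_EFFECTIVE  # exposure-based analogue of TD50/ED50

dose = 100.0                                  # same standard dose for both patients
typical = auc(dose, clearance_l_per_h=4.0)    # normal clearance
impaired = auc(dose, clearance_l_per_h=1.5)   # e.g., hepatic or renal impairment

print(f"exposure-based TI: {exposure_ti:.1f}")
for label, value in (("typical clearance", typical), ("impaired clearance", impaired)):
    status = "above" if value > AUC_TOXIC else "below"
    print(f"{label}: AUC = {value:.1f} mg*h/L ({status} the toxic threshold)")
```

The population-level ratio is unchanged, but the patient with impaired clearance no longer sits inside it at the standard dose, which is the practical argument for exposure-based reasoning.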

Modern Methodologies: QSP and MIDD for Predictive Safety

The Rise of Quantitative and Systems Pharmacology (QSP)

Traditional, empirical dose-finding is being augmented by Quantitative and Systems Pharmacology (QSP), an integrative approach that combines computational modeling with multiscale biological data. QSP models the dynamic interactions between a drug and a biological system as a whole—from molecular targets and signaling pathways to tissue-level physiology and population-level outcomes [28].

The power of QSP lies in its dual integration [28]:

- Horizontal Integration: Simultaneously considers multiple receptors, cell types, metabolic pathways, and signaling networks, acknowledging that drug effects do not occur in isolation but within complex, homeostatic biological networks.

- Vertical Integration: Spans multiple temporal and spatial scales—from rapid receptor-ligand binding (seconds) to long-term disease progression (months/years) and from subcellular compartments to whole-organism physiology.

QSP operates on a "learn and confirm" paradigm, where experimental data are continuously integrated into mechanistic mathematical models (often systems of ordinary differential equations). These models generate testable hypotheses, which then guide the design of new, more precise experiments [28].

Diagram: The QSP 'Learn and Confirm' Workflow in Drug Development. This flowchart outlines the iterative, hypothesis-driven cycle of Quantitative and Systems Pharmacology. It begins with defining objectives, integrates multiscale data into mechanistic models, runs predictive simulations to optimize development decisions, and refines the models based on new experimental results [28].

Application in Defining the Therapeutic Window

QSP and related Model-Informed Drug Development (MIDD) approaches are particularly valuable for drugs with a narrow therapeutic index. They enable [28] [29]:

- Preclinical-to-Clinical Translation: Predicting human pharmacokinetics and pharmacodynamics (PK/PD) and estimating a first-in-human safe starting dose and therapeutic range.

- Optimizing Clinical Trial Design: Simulating virtual patient populations to predict dose-response, identify responsive subpopulations, and optimize dose selection for Phase II/III trials.

- Evaluating Combination Therapies: Modeling drug-drug interactions and synergistic effects to design safe and effective combination regimens, especially in oncology.

- Personalized Dosing Strategies: Incorporating individual patient factors (genetics, biomarkers, organ function) into models to tailor dosing within the therapeutic window.
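The virtual-population idea in the list above can be illustrated with a very small Monte Carlo sketch. It assumes log-normally distributed clearance, linear pharmacokinetics (AUC = Dose / CL), and hypothetical exposure thresholds; none of these values come from a real compound, and a real MIDD analysis would use a fitted population PK/PD model instead.

```python
import math
import random

# Hypothetical population and exposure assumptions (illustrative only).
TYPICAL_CL = 4.0      # median clearance, L/h
CL_SD_LOG = 0.3       # between-subject variability on the log scale
AUC_EFFECTIVE = 20.0  # minimum exposure for efficacy, mg*h/L
AUC_TOXIC = 60.0      # exposure associated with toxicity, mg*h/L


def fraction_in_window(dose_mg: float, n_patients: int = 2000, seed: int = 0) -> float:
    """Fraction of virtual patients whose AUC falls between the two thresholds."""
    rng = random.Random(seed)  # fixed seed so every dose sees the same patients
    in_window = 0
    for _ in range(n_patients):
        clearance = rng.lognormvariate(math.log(TYPICAL_CL), CL_SD_LOG)
        exposure = dose_mg / clearance
        if AUC_EFFECTIVE <= exposure <= AUC_TOXIC:
            in_window += 1
    return in_window / n_patients


for dose in (50.0, 100.0, 160.0, 250.0, 400.0):
    print(f"dose {dose:5.0f} mg: {fraction_in_window(dose):.1%} of virtual patients in window")
```

Scanning doses this way gives a first impression of which dose keeps the largest share of a variable population inside the window, before any covariates or mechanistic detail are added.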

Experimental Protocols: From Classical Designs to QSP Implementation

Optimal Experimental Design for Dose-Response Studies

Robust estimation of ED₅₀, TD₅₀, and the dose-response curve slope requires careful experimental design. Statistical optimal design theory provides a framework to select dose levels and allocate experimental units (animals, cell culture wells) to minimize the number of subjects needed while maximizing the precision of parameter estimates [30].

For standard dose-response models (e.g., log-logistic, Weibull), D-optimal designs often require only a control group and three carefully chosen dose levels. These dose levels are typically placed near the estimated ED₁₀, ED₅₀, and ED₉₀, which provides the most information for defining the sigmoidal curve [30]. This is a more efficient design than traditional protocols using evenly spaced doses with equal animal allocation per group.
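Given pilot estimates of ED₅₀ and the Hill slope, the candidate dose levels named above can be computed by inverting the log-logistic model. The sketch assumes a response normalized to the 0-1 range with zero baseline, and the parameter values are hypothetical. It only locates ED₁₀, ED₅₀, and ED₉₀; it does not itself perform a D-optimality calculation.

```python
def effective_dose(p: float, ed50: float, hill: float) -> float:
    """Dose giving fraction p of maximal effect when E(d) = d^h / (ED50^h + d^h)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    if ed50 <= 0 or hill <= 0:
        raise ValueError("ED50 and Hill slope must be positive")
    return ed50 * (p / (1.0 - p)) ** (1.0 / hill)


def response(dose: float, ed50: float, hill: float) -> float:
    """Normalized log-logistic (Hill) response, used here to check the inversion."""
    return dose ** hill / (ed50 ** hill + dose ** hill)


# Hypothetical pilot estimates (mg/kg and unitless slope).
pilot_ed50 = 10.0
pilot_hill = 2.0

for p in (0.10, 0.50, 0.90):
    d = effective_dose(p, pilot_ed50, pilot_hill)
    print(f"ED{int(round(p * 100))}: {d:.2f} mg/kg (check: response = {response(d, pilot_ed50, pilot_hill):.2f})")
```

A steeper slope pulls ED₁₀ and ED₉₀ closer to ED₅₀, so the spacing of design doses depends on the pilot slope estimate as much as on ED₅₀ itself.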

Detailed Protocol: In Vivo Determination of ED₅₀ and TD₅₀ for TI Calculation

- Objective: To establish the dose-response relationship for both efficacy and a key toxicity endpoint in a relevant animal model.

- Model Selection: Choose a pharmacologically relevant disease model (e.g., rodent model of inflammation for an NSAID).

- Dose Selection (Optimal Design): Based on pilot studies, select a vehicle control and three to four dose levels expected to span the range from no effect to maximum effect. A D-optimal design suggests doses near the anticipated ED₁₀, ED₅₀, and ED₉₀ [30].

- Randomization & Group Allocation: Randomly assign animals to dose groups (n=8-10 per group is common). Use power analysis to determine sample size based on expected effect size and variability.

- Dosing & Measurement:

  - Administer compound via the intended clinical route.

  - For Efficacy (ED₅₀): Measure the primary therapeutic endpoint (e.g., reduction in paw swelling) at a predefined peak-effect time.

  - For Toxicity (TD₅₀): In parallel or in a separate study, measure a relevant toxicity endpoint (e.g., gastric lesion score, serum marker of organ injury).

- Data Analysis:

  - Fit individual animal response data to a nonlinear regression model (e.g., four-parameter log-logistic model) [30].

  - Use software (e.g., GraphPad Prism, R) to calculate the best-fit values for ED₅₀, TD₅₀, and their confidence intervals.

  - Calculate TI as TD₅₀ / ED₅₀ and report the 95% confidence limits for the ratio.
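One common way to obtain confidence limits for the TD₅₀/ED₅₀ ratio is to work on the log scale, where the ratio becomes a difference. The sketch below assumes that the two estimates are independent (for example, from separate dose groups or studies), that their logarithms are approximately normally distributed, and that standard errors are available on the natural-log scale. The numeric inputs are hypothetical.

```python
import math


def ratio_ci(td50: float, se_log_td50: float,
             ed50: float, se_log_ed50: float,
             z: float = 1.96) -> tuple[float, float, float]:
    """Return (TI, lower, upper) for TD50/ED50 using a log-scale normal approximation.

    Assumes independent estimates; standard errors are on the natural-log scale.
    """
    log_ratio = math.log(td50) - math.log(ed50)
    # Variances add for the difference of two independent log estimates.
    se = math.sqrt(se_log_td50 ** 2 + se_log_ed50 ** 2)
    return (math.exp(log_ratio),
            math.exp(log_ratio - z * se),
            math.exp(log_ratio + z * se))


# Hypothetical fit results (mg/kg) with log-scale standard errors.
ti, lower, upper = ratio_ci(td50=40.0, se_log_td50=0.15, ed50=5.0, se_log_ed50=0.10)
print(f"TI = {ti:.1f} (95% CI {lower:.1f} to {upper:.1f})")
```

If efficacy and toxicity are measured in the same animals, the estimates are correlated and the independence assumption no longer holds; a joint model or a bootstrap over animals would be a more appropriate choice in that case.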

Building and Validating a QSP Model

Detailed Protocol: Developing a Minimal QSP Model for Glucose Homeostasis (Example) [28]

- Define Scope and States: Objective: Predict plasma glucose response to a drug affecting insulin secretion. Key system "states" to track: Plasma Glucose (G), Plasma Insulin (I), and Remote Compartment Insulin (X).

- Diagram Mechanisms: Create a schematic of inputs and outputs for each state.

  - Inputs to G: Exogenous glucose injection, endogenous hepatic glucose production.

  - Outputs from G: Insulin-independent clearance, insulin-dependent clearance (a function of X).

  - Input to I: Pancreatic secretion (a function of G).

  - Transfer between I and X: Define rates of insulin movement between compartments.

- Translate to Mathematics: Express the diagram as a system of Ordinary Differential Equations (ODEs). For example:

  - dG/dt = Rate of appearance - (Insulin-Independent Clearance) - (Insulin-Dependent Clearance * X)

  - dI/dt = Secretion(G) - (k1 * I) + (k2 * X)

  - dX/dt = (k1 * I) - (k2 * X)

- Parameterize: Assign values to rate constants (k1, k2) and functions (Secretion(G)) from literature or fit to experimental data (e.g., from an Intravenous Glucose Tolerance Test).

- Simulate and Validate:

  - Use computational software (MATLAB, Julia, Berkeley Madonna) to simulate the system's behavior under control conditions and compare the output against validation data.

  - Introduce the "drug effect" as a modulator of a specific parameter (e.g., increasing insulin secretion rate).

  - Run virtual dose-response simulations to predict the drug's effect on glucose time profiles and estimate the dose range that lowers glucose without causing hypoglycemia.

Diagram: Core Workflow for Developing a Minimal QSP Model. This diagram illustrates the five key steps in constructing a mechanistic QSP model, using a simplified glucose-insulin system as a canonical example [28].
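The protocol above can be sketched as a small simulation in plain Python. This is an illustration under several assumptions that are not part of the protocol: clearance terms are taken as proportional to glucose, secretion is a linear function of glucose above a threshold, a first-order insulin elimination term (KE) is added so that the system has a steady state, and every parameter value is hypothetical and chosen so that the untreated baseline sits at 90 mg/dL. The drug is represented as a multiplier on insulin secretion.

```python
# Hypothetical parameters (per-minute rates); none are fitted to real data.
HEPATIC_PRODUCTION = 1.35  # glucose rate of appearance, mg/dL/min
SG = 0.01                  # insulin-independent glucose clearance
SI = 0.0005                # insulin-dependent clearance per unit of X
BETA = 0.05                # secretion per mg/dL of glucose above threshold
G_THRESHOLD = 60.0         # glucose level below which secretion stops, mg/dL
K1, K2 = 0.05, 0.05        # insulin transfer I -> X and X -> I
KE = 0.15                  # insulin elimination (assumption added for this sketch)
HYPOGLYCEMIA = 70.0        # illustrative safety limit, mg/dL


def simulate(drug_gain: float, minutes: float = 600.0, dt: float = 0.05):
    """Integrate the three-state model with forward Euler.

    Returns (final glucose, minimum glucose) starting from the untreated steady state.
    """
    g, i, x = 90.0, 10.0, 10.0  # steady state when drug_gain == 1
    min_g = g
    for _ in range(round(minutes / dt)):
        secretion = drug_gain * BETA * max(g - G_THRESHOLD, 0.0)
        dg = HEPATIC_PRODUCTION - SG * g - SI * x * g
        di = secretion - KE * i - K1 * i + K2 * x
        dx = K1 * i - K2 * x
        g, i, x = g + dt * dg, i + dt * di, x + dt * dx
        min_g = min(min_g, g)
    return g, min_g


# Virtual dose-response scan: each gain stands in for a dose level.
for gain in (1.0, 2.0, 4.0, 6.0, 8.0):
    final_g, lowest_g = simulate(gain)
    flag = "hypoglycemia risk" if lowest_g < HYPOGLYCEMIA else "ok"
    print(f"secretion x{gain:.0f}: final G = {final_g:.1f}, lowest G = {lowest_g:.1f} mg/dL ({flag})")
```

For real work, a dedicated ODE solver and parameters estimated from data such as an Intravenous Glucose Tolerance Test would replace the fixed-step integrator and the hand-picked constants used here.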

Table: Key Research Reagent Solutions for Therapeutic Index Studies

| Category | Specific Item/Resource | Function in TI/Window Research | Key Considerations |
|---|---|---|---|
| In Vivo Models | Pharmacologically relevant disease models (e.g., transgenic, xenograft, induced pathology). | Provide an integrated system to evaluate dose-dependent efficacy and toxicity endpoints simultaneously. | Species relevance, endpoint translatability to humans, and ethical compliance (3Rs). |
| In Vitro Assays | High-content screening (HCS) assays; target-specific reporter assays; cytotoxicity assays (e.g., MTT, CellTiter-Glo). | Enable high-throughput screening of efficacy (IC₅₀) and toxicity (CC₅₀) in cell lines, informing early TI estimates. | Cell line relevance, assay robustness (Z'), and mechanistic alignment with in vivo effects. |
| Biomarker Kits | Validated ELISA, MSD, or Luminex kits for PD biomarkers and toxicity markers (e.g., troponin, ALT/AST, cytokines). | Quantify molecular efficacy signals and organ injury in biofluids, linking exposure to effect and toxicity. | Assay sensitivity, specificity, and correlation with histological or clinical outcomes. |
| PK/PD Analysis Software | Phoenix WinNonlin, NONMEM, Monolix, R (with nlme/mrgsolve packages). | Perform nonlinear fitting of dose-response data, calculate ED₅₀/TD₅₀, and build PK/PD models for exposure-response analysis. | Modeling flexibility, statistical rigor, and regulatory acceptance. |
| QSP/Modeling Platforms | MATLAB/SimBiology, Julia/SciML, DBSolve, commercial platforms (e.g., Certara's QSP Platform). | Build, simulate, and validate mechanistic multiscale models to predict therapeutic windows and optimize dosing. | Ease of ODE implementation, parameter estimation tools, and visualization capabilities. |
| Reference Materials | Certified chemical reference standards; control biomatrices (plasma, tissue homogenates). | Ensure accuracy and reproducibility of analytical methods (LC-MS/MS) used to measure drug concentrations for exposure-based TI. | Purity, stability, and traceability to primary standards. |

The therapeutic index and therapeutic window remain indispensable concepts for translating pharmacological effect into safe clinical practice. While rooted in classic dose-response curve analysis, their determination and application are undergoing a significant evolution. The future lies in moving beyond static, population-average ratios toward dynamic, individualized exposure-response models.

The integration of QSP and MIDD into the drug development pipeline represents a paradigm shift. These approaches allow for the prospective prediction of therapeutic windows, the identification of sensitive subpopulations, and the design of smarter, more efficient clinical trials [28] [29]. For drugs with a narrow therapeutic index, these tools are becoming critical for risk management and personalized therapy.

Future advancements will likely focus on the deeper integration of real-world data (RWD) and digital health technologies (e.g., continuous biosensors) into pharmacological models, creating a closed-loop "learn and confirm" system that extends from the lab through all phases of clinical use. Furthermore, regulatory science is adapting to embrace these quantitative approaches, as evidenced by FDA workshops and guidances on MIDD [29]. For researchers and developers, mastering both the foundational principles of dose-response and the modern computational tools to analyze it is essential for bridging the gap between laboratory discovery and patient safety.

From Theory to Trial: Applying Dose-Response Principles in Modern Drug Development and Regulatory Strategy

The dose-response relationship is a cornerstone concept in pharmacology, toxicology, and drug development, describing the magnitude of a biological effect as a function of exposure to a stimulus [31]. Historically, the dominant model for dose-finding in oncology, particularly for cytotoxic chemotherapies, has been the identification of the Maximum Tolerated Dose (MTD). This paradigm operates on a "more is better" principle, rooted in the steep, monotonic dose-response curves typical of cytotoxic agents, where efficacy and toxicity both increase with dose [32] [33]. The primary objective of a traditional Phase I trial was to establish the MTD, defined by a target incidence of Dose-Limiting Toxicities (DLTs), which then became the Recommended Phase II Dose (RP2D) [34] [35].