Deterministic vs. Probabilistic Risk Assessment: A Strategic Guide for Biomedical Research and Drug Development


Abstract

This article provides a comprehensive comparison of deterministic and probabilistic risk assessment methodologies for researchers, scientists, and drug development professionals. It explores the foundational definitions, core philosophical differences, and historical contexts of both approaches. The article details practical methodological tools, computational techniques (including Monte Carlo simulations and fault/event tree analyses), and specific applications in drug development, such as Quality by Design (QbD) and bioequivalence studies. It addresses common challenges in implementation, data requirements, and strategies for optimizing risk models. Furthermore, the article covers validation frameworks, comparative analyses of outputs and regulatory acceptance, and the emerging paradigm of risk-informed decision-making. The conclusion synthesizes key insights on selecting and integrating both methods to enhance the robustness, efficiency, and predictive power of risk assessments in biomedical and clinical research.

Core Concepts and Historical Evolution: Defining Deterministic and Probabilistic Risk Paradigms

In the scientific assessment of risk, two foundational philosophies guide analysis and decision-making. Deterministic risk assessment evaluates the impact of a single, specified scenario, producing a precise point estimate of risk [1]. In contrast, probabilistic risk assessment models all plausible scenarios, their likelihoods, and consequences to generate a spectrum of possible outcomes [1]. This guide objectively compares these methodologies within the context of drug development and environmental health, providing researchers and professionals with a clear analysis of their performance, supported by experimental data and standardized protocols.

Philosophical and Methodological Foundations

The divide between these approaches is philosophical and practical. The deterministic model treats the probability of an event as finite, relying on known inputs to yield an observed outcome [1]. It is often used to test specific plans, such as an evacuation strategy or a worst-case failure mode [1]. However, it does not quantify the likelihood of outcomes and may underestimate risk by ignoring the full range of possibilities [1].

Probabilistic risk assessment was developed to resolve the limitations of historical data by simulating the physics of phenomena to generate a catalogue of synthetic future events [1]. It incorporates inherent uncertainties from natural randomness and incomplete knowledge [1]. Its core output is a probability distribution, providing a more complete picture of the full spectrum of future risks [1].

Table: Foundational Characteristics of Risk Assessment Approaches

| Aspect | Deterministic Risk Assessment | Probabilistic Risk Assessment |

|---|---|---|

| Core Definition | Considers the impact of a single, specified risk scenario [1]. | Considers all possible scenarios, their likelihood, and associated impacts [1]. |

| Primary Output | A single point estimate (e.g., a specific risk value or loss amount) [2]. | A distribution of possible estimates (e.g., a range of risk values with probabilities) [2]. |

| Treatment of Uncertainty | Does not quantitatively characterize uncertainty; may discuss it qualitatively [2]. | Explicitly characterizes variability and uncertainty through statistical distributions [2]. |

| Typical Application Stage | Used in initial screening-level assessments and for analyzing specific scenarios [2]. | Generally reserved for higher-tier, refined assessments [2]. |

| Model Behavior | The same inputs always produce the same output [1]. | Incorporates randomness; the same inputs can lead to a group of different outputs [1]. |

Core Comparison: Inputs, Tools, and Results

The practical implementation of these philosophies differs significantly in data requirements, analytical tools, and the interpretation of results.

Table: Comparative Analysis of Assessment Implementation

| Factor | Deterministic Assessment | Probabilistic Assessment |

|---|---|---|

| Inputs | Single point estimates (e.g., high-end or central tendency values) [2]. | Probability distributions for key parameters (e.g., lognormal, uniform) [2]. |

| Key Tools | Simple models, standardized equations, sensitivity analysis with point estimates [2]. | Complex models, Monte Carlo simulation, sensitivity analysis with distributions [2]. |

| Results & Communication | A single, easy-to-communicate number useful for prioritization and screening [2]. | A distribution (e.g., CDF) that is more robust but complex to communicate [2]. |

| Characterization of Extremes | Can calculate a "worst-case" or "reasonable maximum" using conservative point inputs [2]. | Can identify the exact percentile (e.g., 95th, 99th) of the exposure or risk distribution [2]. |

| Resource Intensity | Relatively economical and straightforward, requiring less expertise [2]. | Resource-intensive, requiring more data, expertise, and computational power [2]. |

| Best For | Compliance, auditability, and situations requiring binary certainty [3]. | Pattern recognition, handling fragmented data, and modeling evolving systems [3]. |

Experimental Data and Case Comparisons

4.1 Case Study: Contaminated Site Risk Assessment

A comparative study of sites contaminated with polycyclic aromatic hydrocarbons (PAHs) provides quantitative data on the outputs of both methods [4].

- Method: The probabilistic approach used Monte Carlo simulation to propagate uncertainty in exposure factors (e.g., ingestion rate, exposure frequency) and toxicity via Toxic Equivalency Factors (TEFs) [4].

- Finding: Variability in exposure factors contributed more to uncertainty in risk estimates than sample heterogeneity or toxicity estimates [4].

- Result: The deterministic method produced a single risk estimate. The probabilistic method generated a range, showing that the deterministic point estimate corresponded to a specific percentile (e.g., 50th, 75th, 95th) of the full probabilistic distribution, revealing its degree of conservatism [4].

Table: Representative Risk Estimate Comparison from PAH Study [4]

| Population | Deterministic Risk Estimate | Probabilistic Risk Range (5th-95th Percentile) | Percentile of Deterministic Point in Probabilistic Distribution |

|---|---|---|---|

| Adult | e.g., 2.1 x 10⁻⁶ | e.g., 3.0 x 10⁻⁷ to 8.7 x 10⁻⁶ | ~80th Percentile |

| Child | e.g., 1.7 x 10⁻⁵ | e.g., 2.5 x 10⁻⁶ to 5.4 x 10⁻⁵ | ~75th Percentile |
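The "percentile of the deterministic point" column can be reproduced by locating a point estimate within a simulated risk distribution. The sketch below is illustrative only: the lognormal distribution, its parameters, and the point estimate are synthetic assumptions, not values from the PAH study [4].

```python
import math
import random


def percentile_rank(simulated, point_estimate):
    """Percentage of simulated values at or below the point estimate."""
    at_or_below = sum(1 for value in simulated if value <= point_estimate)
    return 100.0 * at_or_below / len(simulated)


# Synthetic stand-in for a Monte Carlo risk distribution (assumed lognormal).
rng = random.Random(42)
simulated_risks = [rng.lognormvariate(math.log(1e-6), 1.0) for _ in range(20_000)]

# Hypothetical deterministic point estimate to be located in the distribution.
deterministic_estimate = 2.5e-6
rank = percentile_rank(simulated_risks, deterministic_estimate)
print(f"The point estimate sits near the {rank:.0f}th percentile")
```

A rank well above 50 suggests the point estimate is conservative relative to the simulated population; a rank near 50 suggests it behaves like a central-tendency estimate.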

4.2 Case Study: Generic Drug Development

An analysis of Abbreviated New Drug Application (ANDA) deficiencies reveals areas where deterministic quality standards meet probabilistic real-world outcomes [5].

- Finding: The majority of major deficiencies are in Manufacturing (31%) and Drug Product (27%), areas governed by deterministic Current Good Manufacturing Practice (cGMP) rules [5].

- Implication: This indicates that a purely deterministic, compliance-focused check may not prevent all failure modes. A probabilistic, quality-by-design approach that understands process variability could mitigate these prevalent risks [5].

Table: Common Deficiencies in ANDA Submissions (First Cycle) [5]

| Source of Major Deficiency | Percentage of Total |

|---|---|

| Manufacturing (Primarily Facility-Related) | 31% |

| Drug Product-Related | 27% |

| Bioequivalence | 18% |

| Drug Substance-Related | 9% |

| Pharmacology/Toxicology | 6% |

| Other | 5% |

Experimental Protocols

5.1 Protocol for Deterministic (Point Estimate) Risk Assessment

This protocol is typical for a screening-level assessment of chemical exposure [2].

- Define Scenario: Identify a single, specific exposure scenario (e.g., "reasonable maximum exposure" for a resident adjacent to a site) [2].

- Select Point Values: For each exposure parameter (concentration, ingestion rate, exposure frequency, body weight), select a single health-protective value. These are often 95th percentile values for intake and central tendency for body weight [2].

- Calculate: Use a standard risk equation:

  Risk = (Concentration × Intake Rate × Exposure Frequency × Duration) / (Body Weight × Averaging Time) × Toxicity Value

- Interpret: Compare the single resulting risk number to a regulatory benchmark (e.g., 1 x 10⁻⁶).
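The point-estimate calculation can be sketched in a few lines. This is a minimal illustration of the equation in the protocol; every input value and unit below is a hypothetical placeholder chosen for the example, not a recommended default from [2].

```python
def point_estimate_risk(concentration, intake_rate, exposure_frequency,
                        exposure_duration, body_weight, averaging_time,
                        toxicity_value):
    """Risk = (C x IR x EF x ED) / (BW x AT) x toxicity value."""
    dose = (concentration * intake_rate * exposure_frequency * exposure_duration) / (
        body_weight * averaging_time
    )
    return dose * toxicity_value


# Hypothetical inputs for illustration only.
risk = point_estimate_risk(
    concentration=1.0,        # mg contaminant per kg soil
    intake_rate=1e-4,         # kg soil per day (100 mg/day)
    exposure_frequency=350,   # days per year
    exposure_duration=30,     # years
    body_weight=70.0,         # kg
    averaging_time=70 * 365,  # days (lifetime averaging)
    toxicity_value=1.0,       # slope factor, (mg/kg-day)^-1
)

BENCHMARK = 1e-6  # example regulatory benchmark from the protocol
print(f"risk = {risk:.2e}; exceeds benchmark: {risk > BENCHMARK}")
```

Because every input is fixed, rerunning the calculation always returns the same number, which is what makes the result easy to audit but silent about likelihood.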

5.2 Protocol for Probabilistic Risk Assessment via Monte Carlo Simulation

This protocol is used for a refined assessment to characterize variability and uncertainty [2] [6].

- Model Development: Develop an exposure or risk equation (e.g., the same as used in the deterministic model).

- Define Input Distributions: Replace key point estimates with probability distributions. For example, model body weight as a normal distribution and ingestion rate as a lognormal distribution based on population data [2].

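- Run Monte Carlo Simulation: Repeatedly draw a random value from each input distribution, evaluate the risk equation with the sampled values, and store the result. Many iterations (typically thousands) are used so that the stored results approximate the output distribution.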

- Analyze Output: Analyze the resulting risk distribution. Key outputs include the mean, median, and percentiles (e.g., 50th, 95th, 99th). Sensitivity analysis identifies which input parameters contribute most to the output variance [2].
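The steps above can be sketched with the Python standard library. This is a minimal sketch under stated assumptions: the distribution families follow the protocol's examples (lognormal intake, normal body weight), but all parameter values, the uniform exposure frequency, and the helper names `simulate_risk` and `percentile` are hypothetical.

```python
import math
import random
import statistics


def simulate_risk(n_iter=10_000, seed=1):
    """Propagate input variability through the risk equation by random sampling."""
    rng = random.Random(seed)
    risks = []
    for _ in range(n_iter):
        intake = rng.lognormvariate(math.log(5e-5), 0.6)  # kg soil/day (assumed)
        body_weight = max(rng.gauss(70.0, 12.0), 30.0)    # kg, clamped at 30 kg
        frequency = rng.uniform(200, 350)                 # days/year (assumed)
        # Fixed inputs: concentration 1.0 mg/kg, duration 30 years,
        # averaging time 70 years, toxicity value 1.0 (all hypothetical).
        dose = (1.0 * intake * frequency * 30) / (body_weight * 70 * 365)
        risks.append(dose * 1.0)
    return risks


def percentile(sorted_values, p):
    """Simple empirical percentile by index lookup on sorted data."""
    index = min(len(sorted_values) - 1, int(p / 100 * len(sorted_values)))
    return sorted_values[index]


risks = sorted(simulate_risk())
print(f"mean   = {statistics.mean(risks):.2e}")
print(f"median = {percentile(risks, 50):.2e}")
print(f"95th   = {percentile(risks, 95):.2e}")
print(f"99th   = {percentile(risks, 99):.2e}")
```

Fixing the seed makes a run reproducible for review, while changing it shows how much the upper percentiles move with sampling noise, which is a quick check on whether the iteration count is adequate.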

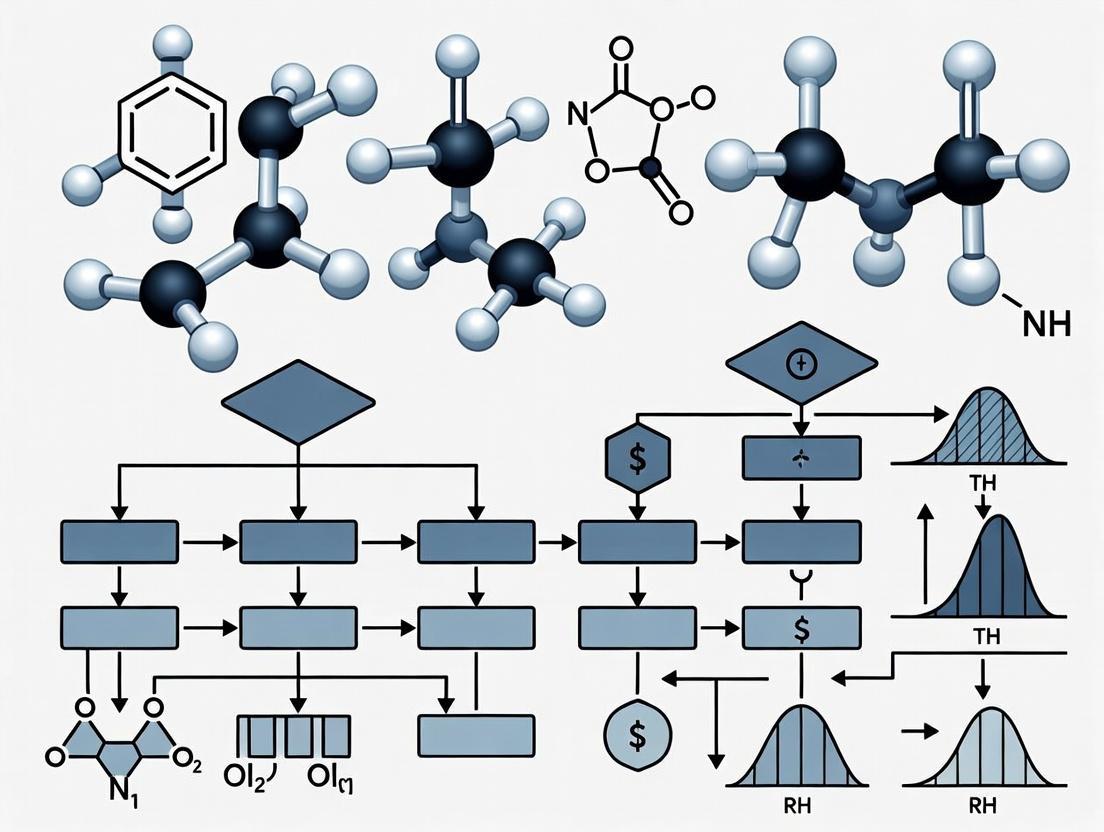

Visualizing the Relationship and Workflow

Risk Assessment Philosophy and Model Outputs

Probabilistic Risk Assessment Monte Carlo Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Materials and Tools for Comparative Risk Studies

| Item | Function in Experiment | Typical Source/Example |

|---|---|---|

| Probabilistic Software | Runs Monte Carlo simulations and handles statistical distributions. | @Risk, Crystal Ball, R with mc2d package [2]. |

| Exposure Factor Database | Provides population-based statistical distributions for parameters (e.g., body weight, intake rates). | EPA Exposure Factors Handbook, NHANES data [2]. |

| Toxic Equivalency Factors (TEFs) | Allows summation of risks from congener mixtures by converting concentrations to a common toxic potency. | Used for PAHs, dioxins, and PCBs in probabilistic studies [4]. |

| Sensitivity Analysis Tools | Quantifies the contribution of each input variable to output variance. | Built-in functions in probabilistic software (e.g., Spearman correlation) [2]. |

| High-Performance Point Estimates | Serves as inputs for deterministic comparison or as anchors (e.g., 95th percentile) for probabilistic distributions. | Derived from the same databases used to build distributions (e.g., 95th percentile intake rate) [2]. |
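The Spearman-based sensitivity analysis listed in the table can be illustrated without specialized software. The sketch below ranks inputs by their rank correlation with the simulated risk; the input distributions and the simplified risk expression are assumptions made for the example, and the rank formula used here is only valid when there are no tied values.

```python
import math
import random


def ranks(values):
    """Rank positions (1 = smallest); assumes no tied values."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman(x, y):
    """Spearman rank correlation via the no-ties difference formula."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


rng = random.Random(3)
inputs = {"intake_rate": [], "body_weight": [], "exposure_frequency": []}
risk = []
for _ in range(2_000):
    intake = rng.lognormvariate(math.log(5e-5), 0.6)  # hypothetical distribution
    weight = max(rng.gauss(70.0, 12.0), 30.0)         # hypothetical distribution
    frequency = rng.uniform(200, 350)                 # hypothetical distribution
    inputs["intake_rate"].append(intake)
    inputs["body_weight"].append(weight)
    inputs["exposure_frequency"].append(frequency)
    risk.append(intake * frequency / weight)          # simplified risk expression

sensitivity = {name: spearman(values, risk) for name, values in inputs.items()}
for name, rho in sorted(sensitivity.items(), key=lambda item: -abs(item[1])):
    print(f"{name:20s} rho = {rho:+.2f}")
```

Inputs with the largest absolute correlation are the first candidates for better data collection, since narrowing their distributions narrows the output distribution most.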

Application in Regulatory and Drug Development Contexts

The choice between approaches is context-dependent. Deterministic models are valued for compliance and auditability, where transparent, reproducible decisions are required [3]. Probabilistic models are essential for strategic planning and resource allocation, where understanding the likelihood of outcomes is critical [1].

In drug development, deterministic rules (cGMP) ensure baseline quality, but probabilistic thinking (Quality by Design) is used to control process variability and predict potential failure modes [5]. Regulatory bodies like the European Medicines Agency's PRAC integrate both in pharmacovigilance, assessing specific safety signals (deterministic) within a framework of ongoing risk management (probabilistic) [7].

In spectrum policy, the U.S. Federal Communications Commission has moved from deterministic worst-case interference analysis to probabilistic assessments, allowing for more efficient spectrum sharing by quantifying the low likelihood of worst-case scenarios [8]. This shift underscores the trend toward probabilistic thinking in complex, modern systems.

Conceptual Foundations and Methodological Comparison

In deterministic risk assessment, a single, fixed value (a point estimate) is used for each input parameter to produce a single output, such as a clinical success probability or a net present value. This approach treats uncertainty as knowable and fixed [1]. In contrast, probabilistic risk assessment uses probability distribution functions (PDFs) to represent input parameters, accounting for their variability and uncertainty. This generates a range of possible outcomes, quantifying the likelihood of each [1] [4].

The core difference lies in how they handle uncertainty. Deterministic models provide a snapshot based on a specific scenario (e.g., best-case, worst-case, or most likely), but do not quantify the likelihood of that scenario occurring [1]. Probabilistic models explicitly incorporate randomness and uncertainty, allowing for a more comprehensive view of the full spectrum of potential risks and their associated probabilities [1] [9]. In drug development, this translates to moving from a single, often optimistic, efficacy estimate to a distribution that reflects the true uncertainty based on available data.

Table 1: Comparison of Deterministic and Probabilistic Risk Assessment Methodologies

| Aspect | Deterministic (Point Estimate) Approach | Probabilistic (Distribution) Approach |

|---|---|---|

| Core Input | Single, fixed value for each parameter [1]. | Probability distribution for each parameter (e.g., PDF) [4]. |

| Treatment of Uncertainty | Implicit, often ignored or subsumed into a "conservative" estimate [1]. | Explicitly quantified and propagated through the model [1]. |

| Typical Output | A single-point outcome (e.g., one success probability, one financial value) [10]. | A range of possible outcomes with associated probabilities (e.g., a distribution of success probabilities) [4]. |

| Interpretation of Output | The result is often interpreted as a definitive prediction [11]. | Results express likelihood (e.g., "80% chance that final efficacy is >X") [12]. |

| Decision-Making Insight | Provides a scenario-specific answer. May underestimate risk by not considering the full outcome range [1]. | Informs on the chance of success/failure and magnitude of risk, supporting more nuanced decisions [12] [10]. |

| Common Drug Development Applications | Traditional sample size calculation using a fixed effect size; simple rNPV models [12] [10]. | Probability of Success (PoS), Probability of Pharmacological Success (PoPS), and value estimation using clinical trial simulations [12] [13]. |

Application in Drug Development: Quantitative Insights

The transition from deterministic to probabilistic thinking is pronounced in assessing a drug candidate's chance of success. Historical aggregate success rates, often used as deterministic benchmarks, show that overall likelihood of approval from Phase I is approximately 6.2% [14]. However, this point estimate masks significant variability across therapeutic areas and development strategies.

Table 2: Clinical Trial Success Rates (Deterministic Benchmarks vs. Probabilistic Considerations)

| Development Phase / Scenario | Historic Point Estimate (Deterministic Benchmark) | Probabilistic Consideration & Impact |

|---|---|---|

| Overall Likelihood of Approval (Phase I to Approval) | 6.2% [14] | The distribution around this mean is wide; for example, oncology success rates have fluctuated from 1.7% to 8.3% in recent years [14]. |

| Phase II to Phase III Transition | Often planned with a fixed, optimistic effect size from Phase II [12]. | Using a Probability of Success (PoS) with a "design prior" distribution for the effect size prevents overestimation. PoS can be calculated from Phase II data or by incorporating external data [12]. |

| Trials Using Biomarkers | Binary classification: study uses a biomarker or not. | Trials that use biomarkers for patient selection have a higher overall success probability than those without [14]. A probabilistic model can quantify the improvement in success distribution. |

| Asset Valuation (rNPV) | Single-value output conflates decision quality with outcome [10]. | Tripartite models separate the observed data, true drug potential, and decision tool quality, generating a distribution of asset value that reflects predictive validity [10]. |

Experimental Protocols for Key Probabilistic Assessments

Protocol for Calculating Probability of Success (PoS) for a Phase III Trial

This protocol outlines the calculation of PoS when Phase II data on the clinical endpoint is available, as defined in recent methodological reviews [12].

Define the Design Prior: Specify a probability distribution (the "design prior") for the true treatment effect size (δ) in the target Phase III population. This prior can be:

- Directly from Phase II Data: Use the Phase II estimate of effect size and its standard error to form a prior distribution (e.g., a normal or t-distribution).

- Incorporating External Data: Use a meta-analytic-predictive or similar approach to combine the Phase II data with relevant real-world data (RWD) or historical trial data to form a more robust prior [12].

Specify the Success Criteria: Define the statistical success criterion for the planned Phase III trial. This is typically rejecting the null hypothesis (H₀: δ ≤ 0) at a one-sided alpha level (e.g., 0.025).

Perform the Predictive Calculation: Calculate the predictive probability that the future Phase III trial will meet the success criterion, given the design prior. This is often computed as:

$$\text{PoS} = \int P(\text{Reject } H_0 \mid \delta)\, p(\delta)\, d\delta$$

where $P(\text{Reject } H_0 \mid \delta)$ is the power function of the Phase III trial for a given δ, and $p(\delta)$ is the density of the design prior. This integral represents the average power over the distribution of plausible effect sizes [12].

Incorporate Alternative Endpoints: If Phase II used a biomarker or surrogate endpoint, an additional step is required to quantify the relationship between that endpoint and the clinical endpoint. External data (e.g., from published studies or RWD) is used to model this association and translate the Phase II findings into a prior for the clinical effect [12].
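The PoS integral can be evaluated numerically. The sketch below assumes a normal design prior and a normally distributed Phase III effect estimate with known standard error, which is a common simplification rather than the specific method of [12]; the function names and all numeric inputs are hypothetical. Under these assumptions a closed form exists and is used as a cross-check.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def power(delta, se_phase3, alpha=0.025):
    """P(reject H0 | delta) for a one-sided z-test on the Phase III estimate."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    return STD_NORMAL.cdf(delta / se_phase3 - z_crit)


def probability_of_success(prior_mean, prior_sd, se_phase3, alpha=0.025, n_grid=4001):
    """Average the power curve over a normal design prior (trapezoidal rule)."""
    prior = NormalDist(prior_mean, prior_sd)
    lower = prior_mean - 8 * prior_sd
    step = 16 * prior_sd / (n_grid - 1)
    total = 0.0
    for i in range(n_grid):
        delta = lower + i * step
        weight = 0.5 if i in (0, n_grid - 1) else 1.0
        total += weight * power(delta, se_phase3, alpha) * prior.pdf(delta)
    return total * step


def probability_of_success_closed_form(prior_mean, prior_sd, se_phase3, alpha=0.025):
    """Closed form that holds only under the normal-normal assumptions above."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    return STD_NORMAL.cdf(
        (prior_mean - z_crit * se_phase3) / sqrt(prior_sd ** 2 + se_phase3 ** 2)
    )


# Hypothetical Phase II result: effect estimate 0.30 with standard error 0.15;
# planned Phase III standard error 0.10.
pos = probability_of_success(0.30, 0.15, 0.10)
pos_exact = probability_of_success_closed_form(0.30, 0.15, 0.10)
naive_power = power(0.30, 0.10)
print(f"power at point estimate = {naive_power:.3f}")
print(f"PoS (numerical)         = {pos:.3f}")
print(f"PoS (closed form)       = {pos_exact:.3f}")
```

In this example the PoS falls below the power computed at the Phase II point estimate, which illustrates why planning on a fixed, optimistic effect size tends to overstate the chance of success.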

Protocol for Calculating Probability of Pharmacological Success (PoPS)

PoPS is applied earlier in development to inform candidate selection and first-in-human dosing decisions [13]. The following Monte Carlo simulation protocol is based on the established framework [13].

Define Success Criteria: Specify thresholds for pharmacological efficacy (K_eff) and safety (K_safe), and the required percentage (n%) of a virtual population achieving them. Acknowledge uncertainty in these criteria by assigning them probability distributions (e.g., K_eff ~ d(λ)) [13].

Develop Pharmacometric Models: Build pharmacokinetic/pharmacodynamic (PK/PD) models for the key efficacy and safety endpoints using in vitro, in vivo, or early clinical data. Define the statistical model for parameters (θ, Ω), capturing inter-individual variability and parameter estimation uncertainty [13].

Translate to Human Population: Translate the models from preclinical species or in vitro systems to predict outcomes in a human virtual population. This step incorporates "translation uncertainty."

Execute Monte Carlo Simulation:

a. Outer Loop (Uncertainty): Simulate M (e.g., 1000) sets of model parameters and success criteria from their respective distributions [13].

b. Inner Loop (Variability): For each parameter set i, simulate a virtual population of N (e.g., 1000) subjects and calculate their PK/PD endpoints [13].

c. Check Success: For each virtual population i, determine if the proportion of subjects meeting the efficacy and safety criteria satisfies the success rule.

Compute PoPS: Calculate PoPS as the proportion of the M simulated virtual populations that meet the combined success criteria: PoPS = M' / M, where M' is the count of successful populations [13].
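The nested outer/inner loop structure can be sketched as follows. This is a toy illustration, not the framework of [13]: the one-parameter exposure model, the Emax-type effect model, every distribution and parameter value, and the rule that each subject must meet both criteria are assumptions chosen to keep the example short.

```python
import math
import random


def simulate_pops(dose=100.0, m_outer=200, n_inner=200,
                  required_fraction=0.8, seed=7):
    """PoPS = M' / M, estimated with a nested Monte Carlo simulation."""
    rng = random.Random(seed)
    successful_populations = 0
    for _ in range(m_outer):
        # Outer loop: draw uncertain population parameters and success criteria.
        typical_clearance = rng.lognormvariate(math.log(10.0), 0.2)
        typical_ec50 = rng.lognormvariate(math.log(3.0), 0.3)
        k_eff = rng.uniform(0.60, 0.70)   # minimum fractional effect
        k_safe = rng.uniform(18.0, 22.0)  # maximum tolerated exposure
        subjects_ok = 0
        for _ in range(n_inner):
            # Inner loop: draw inter-individual variability for one subject.
            clearance = typical_clearance * rng.lognormvariate(0.0, 0.25)
            ec50 = typical_ec50 * rng.lognormvariate(0.0, 0.30)
            exposure = dose / clearance
            effect = exposure / (ec50 + exposure)  # Emax model with Emax = 1
            if effect >= k_eff and exposure <= k_safe:
                subjects_ok += 1
        # Success rule: enough of the virtual population meets both criteria.
        if subjects_ok / n_inner >= required_fraction:
            successful_populations += 1
    return successful_populations / m_outer


pops = simulate_pops()
print(f"PoPS at 100 units = {pops:.2f}")
```

Running the function across a grid of candidate doses gives a PoPS-versus-dose curve, which is one way such a calculation could inform dose selection.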

Diagram 1: Decision Framework for Drug Development Milestones

Visualizing Probabilistic Workflows and Integrations

Diagram 2: Probability of Success (PoS) Calculation Workflow

Diagram 3: Integrating Biomarker Data into Clinical Effect Estimation

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing probabilistic risk assessments requires specific conceptual and computational tools. The following toolkit details essential components for designing and executing these analyses.

Table 3: Essential Toolkit for Probabilistic Risk Assessment in Drug Development

| Tool / Reagent | Category | Function in Probabilistic Assessment |

|---|---|---|

| Design Prior [12] | Statistical Construct | A probability distribution representing uncertainty in a key parameter (e.g., true treatment effect). It is the foundational input that differentiates probabilistic from deterministic calculation. |

| External Data (RWD/Historical) [12] | Data Source | Real-world data or historical clinical trial data used to inform and strengthen the design prior, reducing reliance on single, potentially biased, internal datasets. |

| Pharmacometric (PK/PD) Model [13] | Quantitative Model | A mathematical model describing the relationship between drug exposure, biological activity, and effect. It is used to simulate virtual patient populations for PoPS calculations. |

| Monte Carlo Simulation Engine | Computational Method | Software that performs repeated random sampling from input distributions to numerically approximate the distribution of outcomes. Core to computing PoPS and other metrics. |

| Biomarker Validation Data [12] [14] | Translational Bridge | Data establishing the quantitative relationship between a biomarker (used in early phases) and the clinical endpoint. Critical for translating Phase II signals to Phase III predictions. |

| Uncertainty Distribution Library | Parameter Specification | A curated collection of potential distributions (Normal, Log-Normal, Beta, etc.) and their parameters to represent uncertainty in inputs, success criteria, and translation factors. |

The evolution of risk assessment reflects a fundamental transformation from static, rule-based systems to dynamic, data-driven predictive frameworks. Traditional prescriptive approaches established fixed safety margins based on historical data and conservative assumptions, providing clear compliance boundaries but limited adaptability to novel or complex scenarios [15]. In contrast, modern predictive risk modeling utilizes statistical algorithms and probabilistic reasoning to forecast potential risks, quantify uncertainties, and optimize mitigation strategies proactively [15] [9]. This paradigm shift is particularly evident in fields ranging from environmental science and drug development to construction and finance, where the limitations of deterministic "one-size-fits-all" safety factors have become increasingly apparent in managing complex, interdependent risks [16] [9].

This comparative guide objectively analyzes the performance of deterministic (prescriptive) and probabilistic (predictive) risk assessment methodologies within the broader thesis of risk research evolution. The transition is characterized by the integration of advanced data analytics, artificial intelligence, and formal uncertainty quantification, moving from asking "Are we compliant with the rules?" to "What is likely to happen, and what is the best action to take?" [15] [17].

Foundational Methodologies: A Comparative Framework

Deterministic (Prescriptive) Risk Assessment

Deterministic risk assessment relies on fixed equations, categorical ratings, and predefined thresholds to yield a single, often conservative, risk estimate [16] [18]. Its core philosophy is the application of standardized safety margins to ensure protection under worst-case or typical scenarios.

- Key Characteristics: It uses predefined formulas where input variables are fixed values (e.g., maximum observed concentration, shortest distance). The output is commonly a categorical risk level (e.g., High, Medium, Low) or a pass/fail determination against a regulatory threshold [16] [18].

- Typical Applications: This method is foundational in regulatory compliance, initial screening-level assessments, and engineering design where rules must be simple, transparent, and universally applicable [9] [18].

- Inherent Limitations: A primary critique is its inability to propagate uncertainty. It often overlooks the variability and interdependence of input factors, which can lead to significant over- or under-estimation of risk. For instance, a study on combined sewer overflows found that deterministic models frequently overestimated risk for outfalls near water intakes by overemphasizing proximity while neglecting other factors like overflow frequency and duration [16].

Probabilistic (Predictive) Risk Assessment

Probabilistic risk assessment explicitly quantifies uncertainty by representing inputs and outputs as probability distributions. It employs statistical and machine learning models to simulate a range of possible outcomes and their likelihoods [19] [9].

- Key Characteristics: Input parameters are defined by distributions (e.g., normal, log-normal) rather than single values. Models like Bayesian Networks (BNs), Monte Carlo simulations, and predictive algorithms are used to compute a probability distribution of risk [16] [9].

- Core Advantages: This approach facilitates more informed decision-making under uncertainty. It allows for sensitivity analysis to identify dominant risk factors, accommodates sparse or missing data through probabilistic inference, and supports the evaluation of multiple complex scenarios simultaneously [16] [19].

- Evolution to Prescriptive Analytics: Predictive models answer "what is likely to happen?" The advanced stage, prescriptive analytics, builds upon this by using optimization and simulation to recommend actions that optimize outcomes, answering "what should we do about it?" [15] [17].

Table 1: Foundational Comparison of Deterministic vs. Probabilistic Risk Assessment [16] [19] [9]

| Aspect | Deterministic (Prescriptive) Approach | Probabilistic (Predictive) Approach |

|---|---|---|

| Philosophical Basis | Rule-based compliance; safety margins. | Forecasting and uncertainty management. |

| Uncertainty Handling | Implicit, buried in safety factors; not quantified. | Explicitly quantified and propagated through the model. |

| Output Format | Single-point estimate or categorical risk level. | Probability distribution of risk (e.g., likelihood of exceeding a threshold). |

| Data Requirements | Fixed input values; often less data-intensive. | Data to characterize variability and define distributions. |

| Model Complexity | Generally lower; uses formulas and lookup tables. | Higher; employs statistical, simulation, or AI models. |

| Decision Support | Provides a clear compliance check. | Supports risk-informed decisions by evaluating trade-offs and probabilities. |

| Primary Limitation | Can be overly conservative or non-conservative; inflexible. | Computationally intensive; requires more sophisticated expertise. |

Performance Comparison: Experimental Data and Case Studies

Case Study: Microbial Risk from Combined Sewer Overflows

A direct comparative study evaluated 89 combined sewer overflows (CSOs) upstream of drinking water intakes using both a deterministic equation and a Bayesian network [16].

- Deterministic Method: A single equation combined factors like distance to intake and pipe diameter to assign one of five static risk levels.

- Probabilistic Method: A Bayesian network modeled probabilistic relationships between population, overflow frequency/duration, intake vulnerability, and meteorological data.

Results and Performance Insights: The deterministic method failed to assess 42 of the 89 overflow structures due to data gaps. Where comparisons were possible, the deterministic model overestimated risk for CSOs close to the intake, as distance dominated the calculation, and underestimated risk for infrequent but long-duration overflows [16]. The Bayesian network (probabilistic) successfully handled data gaps through inference and provided a nuanced prioritization of structures. Sensitivity analysis revealed that population, frequency, and duration were more influential than distance—insights obscured by the deterministic formula [16].

Table 2: Performance Outcomes from CSO Risk Assessment Comparative Study [16]

| Performance Metric | Deterministic Equation | Bayesian Network (Probabilistic) |

|---|---|---|

| Structure Assessability | Failed to assess 47% (42/89) of structures due to missing data. | Assessed 100% of structures, using inference for missing data. |

| Risk Prioritization | Coarse; assigned many structures to the same risk level. | Fine-grained; enabled effective prioritization for intervention. |

| Key Driving Factors | Over-emphasized distance to intake. | Correctly identified overflow frequency, duration, and population. |

| Handling Uncertainty | No uncertainty output. | Provided full probability distribution across risk levels. |

Validation in Clinical and Industrial Settings

The principles of method comparison are rigorously applied in clinical laboratories, providing a template for validating predictive methods against established ones [20]. Key experimental protocols include:

- Specimen Selection: Using 40-100 patient specimens covering the entire analytical range of interest [20].

- Data Analysis: Employing difference plots, correlation analyses, and regression statistics to quantify systematic error (bias) and random error between new (predictive) and comparative (deterministic or reference) methods [20].

In industrial risk management, studies comparing classical risk matrices (deterministic) with graphical uncertainty-aware methods (probabilistic) show that the latter provides a more honest representation of expert judgment and reduces the false precision of forcing experts to choose a single likelihood/impact value [19]. This leads to better-informed resource allocation for risk mitigation.

Experimental Protocols for Method Comparison and Validation

This protocol provides a standardized framework for objectively comparing a new predictive model or method against an established one.

- Define Objective and Scope: Clearly state the medical or risk decision concentration (Xc) at which performance is critical.

- Select Comparative Method: Choose the best available reference or current standard method against which to compare.

- Specimen Collection & Preparation:

  - Collect a minimum of 40 unique samples spanning the full reportable range.

  - Ensure sample stability and analyze by both methods within a tight time window (e.g., 2 hours) to avoid degradation.

- Experimental Execution:

  - Analyze all samples by both the Test (predictive) and Comparative (deterministic/reference) methods.

  - Perform measurements over multiple days (≥5) to capture inter-day variability.

  - Ideally, perform duplicate measurements to identify outliers and ensure repeatability.

- Data Analysis & Interpretation:

  - Graphical Analysis: Create a difference plot (Test result – Comparative result vs. Comparative result) or a comparison scatter plot.

  - Statistical Calculation:

    - For wide range data: Perform linear regression (Y = a + bX). Calculate systematic error (SE) at critical decision points: SE = (a + b*Xc) – Xc.

    - For narrow range data: Calculate mean difference (bias) and standard deviation of differences.

  - Interpretation: Quantify bias (inaccuracy) and assess whether it is constant or proportional. Determine if the predictive method's performance is clinically or operationally acceptable relative to the standard.
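The statistical calculations in the protocol can be sketched as below. The paired results and the decision level are hypothetical values used only to exercise the formulas, and ordinary least squares is assumed for the regression step; a real study would use at least 40 specimens as the protocol states.

```python
from statistics import mean, stdev


def compare_methods(comparative, test, decision_level):
    """Regression (Y = a + bX), systematic error at Xc, and difference statistics."""
    x_bar, y_bar = mean(comparative), mean(test)
    sxx = sum((x - x_bar) ** 2 for x in comparative)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(comparative, test))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    differences = [y - x for x, y in zip(comparative, test)]
    return {
        "slope": slope,
        "intercept": intercept,
        # SE = (a + b*Xc) - Xc, as defined in the protocol
        "systematic_error_at_xc": (intercept + slope * decision_level) - decision_level,
        "mean_bias": mean(differences),
        "sd_of_differences": stdev(differences),
    }


# Hypothetical paired results (illustration only).
comparative_results = [52.0, 75.0, 101.0, 148.0, 203.0, 251.0, 298.0, 352.0]
test_results = [55.0, 79.0, 104.0, 155.0, 210.0, 262.0, 309.0, 367.0]

summary = compare_methods(comparative_results, test_results, decision_level=126.0)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

A slope different from 1 points to proportional error and a nonzero intercept to constant error, which is the distinction the interpretation step asks for.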

This protocol details the development of a probabilistic model, here a Bayesian network (BN), for complex risk systems.

- Problem Formulation & Variable Selection: Define the risk event (e.g., "Microbial contamination at intake"). Identify all contributing factors (nodes): hazards (pathogen load), exposures (flow, dilution), vulnerabilities (treatment barrier), and consequences.

- Network Structure Development: Establish cause-and-effect relationships between variables to create a directed acyclic graph (DAG). This can be based on literature, expert elicitation, or data.

- Parameterization: Populate the Conditional Probability Tables (CPTs) for each node. Use historical data, expert judgment, or derived statistical distributions.

- Model Validation:

  - Training/Testing Split: If data is sufficient, split the dataset to train and test the model.

  - Sensitivity Analysis: Identify which input parameters have the greatest influence on the output risk probability (e.g., using variance reduction or entropy reduction metrics).

  - Predictive Performance: Test the model's output probabilities against observed outcome frequencies in historical data (calibration).

- Scenario Analysis & Decision Support: Use the validated BN to run "what-if" scenarios (e.g., increased rainfall frequency, population growth) and calculate the resulting shift in risk probability to inform management decisions.
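The calibration check in the validation step can be illustrated with a small sketch. It assumes the model emits one predicted probability per historical case and that each case has a binary observed outcome; the function name `calibration_table`, the bin count, and the four data points are hypothetical.

```python
import numpy as np

def calibration_table(pred_prob, observed, n_bins=5):
    """Bin predicted probabilities and compare them with observed frequencies.

    Returns a list of rows (bin_low, bin_high, count, mean_predicted,
    observed_frequency) for non-empty bins, plus the Brier score.
    """
    pred_prob = np.asarray(pred_prob, dtype=float)
    observed = np.asarray(observed, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges only, so indices run from 0 to n_bins - 1
    idx = np.digitize(pred_prob, edges[1:-1])
    rows = []
    for k in range(n_bins):
        mask = idx == k
        if mask.any():
            rows.append((float(edges[k]), float(edges[k + 1]), int(mask.sum()),
                         float(pred_prob[mask].mean()), float(observed[mask].mean())))
    brier = float(np.mean((pred_prob - observed) ** 2))
    return rows, brier

# Hypothetical predictions and outcomes (1 = event occurred)
rows, brier = calibration_table([0.1, 0.1, 0.9, 0.9], [0, 0, 1, 1])
for row in rows:
    print(row)
print("Brier score:", brier)
```

In a well-calibrated model the mean predicted probability in each bin is close to the observed frequency, and a lower Brier score indicates better overall agreement.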

Diagram 1: Predictive Risk Modeling and Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Tools, and Resources for Risk Assessment Research

| Tool/Resource Category | Specific Item / Platform | Primary Function in Risk Research | Key Reference/Context |

|---|---|---|---|

| Statistical Computing | R (with packages like 'bnlearn', 'rjags'), Python (with PyMC3, scikit-learn, pandas) | Platform for building probabilistic models (BNs, regression), performing statistical analysis, and data visualization. | Essential for Bayesian network development and analysis [16]. |

| Uncertainty Quantification Tools | Monte Carlo Simulation software (@RISK, Crystal Ball), specialized libraries (Chaospy, UQpy). | Propagates input uncertainties through complex models to generate probability distributions of output risk. | Core to probabilistic risk assessment [19] [9]. |

| Systematic Review Platforms | DistillerSR, Rayyan, Covidence. | Facilitates evidence-based risk assessment by managing systematic literature reviews and evidence mapping for hazard identification and data synthesis. | Critical for transparent, objective data gathering per evidence-based frameworks [21]. |

| Reference Databases & Repositories | SEER (cancer), NHANES (health), regulatory agency databases (EPA, ECHA), project historical databases. | Provides population-level data, historical incident rates, and chemical/toxicity data essential for model parameterization and validation. | Used for model calibration and validation (e.g., breast cancer risk models) [22]. |

| Validation & Comparison Software | Method validation modules in LIMS, EP Evaluator, CBstat. | Performs standardized statistical comparisons between methods (e.g., Bland-Altman, regression) to quantify bias and imprecision. | Embodies protocols for comparison of methods experiments [20]. |

| Expert Elicitation Platforms | MATCH Uncertainty Elicitation Tool, online Delphi survey platforms. | Structures the process of gathering and aggregating probabilistic judgments from domain experts to inform model priors and parameters. | Addresses data gaps by formalizing expert knowledge input [19]. |

Diagram 2: Methodological Shift from Prescriptive to Predictive

The comparative analysis demonstrates that deterministic and probabilistic risk assessments are not mutually exclusive but are complementary parts of a robust risk management strategy [15] [18]. Deterministic methods provide essential, tractable benchmarks and compliance foundations, while probabilistic methods offer the granularity and flexibility needed for optimized decision-making in complex, uncertain environments [16] [9].

The future of risk research lies in hybrid or integrated frameworks. A promising approach involves using probabilistic models for comprehensive system assessment and sensitivity analysis, then deriving context-specific, simplified deterministic rules or safety factors for routine application [19] [18]. Furthermore, the full potential is realized when predictive analytics (forecasting what will happen) is coupled with prescriptive analytics (recommending what should be done), closing the loop from risk identification to optimized mitigation [15] [17]. For researchers and drug development professionals, this evolution necessitates building competency in data science, statistical modeling, and uncertainty quantification alongside deep domain expertise, to develop more resilient and effective risk assessment strategies.

Comparative Analysis of Deterministic and Probabilistic Risk Assessment

Risk assessment methodologies are foundational to scientific and industrial decision-making. Deterministic approaches provide single-point estimates of risk, while probabilistic methods generate a distribution of possible outcomes, explicitly accounting for uncertainty and variability [23] [2]. This comparison guide evaluates their performance within the broader research thesis of quantifying and managing risk in complex systems.

Deterministic Risk Assessment (DRA) relies on fixed, point-value inputs (e.g., a specific exposure concentration, a defined body weight) to produce a single risk estimate [2]. It is often employed for screening-level analyses due to its simplicity, straightforward implementation, and ease of communication. A common deterministic output is a point estimate of exposure or risk, which can be designed to represent a central tendency (e.g., average) or a health-protective high-end value (e.g., reasonable maximum exposure) [2].

Probabilistic Risk Assessment (PRA), in contrast, replaces key point estimates with probability distributions for inputs (e.g., a range of possible exposure frequencies or ingestion rates) [23] [2]. Through techniques like Monte Carlo simulation, the model runs thousands of iterations, each sampling from the input distributions, to produce a distribution of possible risk outcomes [2]. PRA provides a more robust characterization of the range and likelihood of risks, enabling a quantitative understanding of variability within a population and uncertainty in the estimate itself [23].
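As a minimal sketch of the contrast between the two approaches, the example below computes a soil-ingestion dose once with fixed point values and once by Monte Carlo sampling. The dose equation is deliberately simplified, and every number and distribution (concentration, intake rate, body weight) is an illustrative assumption rather than a value from the cited sources.

```python
import numpy as np

def daily_dose(conc_mg_per_kg, intake_kg_per_day, body_weight_kg):
    """Simplified ingestion dose in mg per kg body weight per day."""
    return conc_mg_per_kg * intake_kg_per_day / body_weight_kg

# Deterministic arm: one high-end intake and one low-end body weight (hypothetical)
point_estimate = daily_dose(10.0, 200e-6, 15.0)

# Probabilistic arm: replace the point values with assumed distributions
rng = np.random.default_rng(42)
n_iter = 10_000
intake = rng.lognormal(mean=np.log(50e-6), sigma=0.8, size=n_iter)
body_weight = np.clip(rng.normal(18.0, 2.5, size=n_iter), 8.0, None)
doses = daily_dose(10.0, intake, body_weight)

p50, p95 = np.percentile(doses, [50, 95])
print(f"point estimate: {point_estimate:.2e}")
print(f"median: {p50:.2e}, 95th percentile: {p95:.2e}")
```

The deterministic arm returns one number, while the probabilistic arm returns 10,000 doses whose percentiles describe how exposure varies across the simulated population.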

The core comparative differences are summarized in the table below.

Table: Core Characteristics of Deterministic vs. Probabilistic Risk Assessments

| Aspect | Deterministic Assessment | Probabilistic Assessment |

|---|---|---|

| Philosophy & Inputs | Uses single, point estimates for all input variables [2]. | Uses probability distributions for key input variables to reflect variability/uncertainty [23] [2]. |

| Primary Tools | Simple models and equations; standardized methods [2]. | Complex models; Monte Carlo simulation; sensitivity analysis based on distributions [2]. |

| Key Results & Output | A single point estimate (e.g., of exposure or risk) [2]. | A distribution of possible estimates (e.g., a risk curve); measures of central tendency and tails [23] [2]. |

| Characterization of Uncertainty & Variability | Limited; typically addressed qualitatively or via multiple scenario runs [2]. | Explicit and quantitative; can separate variability (true differences in population) from uncertainty (lack of knowledge) [23]. |

| Best Use Cases | Screening, prioritization, situations with limited data or resources, communicating simple messages [2]. | Refined assessments, informing decisions where the magnitude of uncertainty is critical, cost-benefit analysis [2] [4]. |

| Resource & Expertise Demand | Lower; easier to implement and explain [2]. | Higher; requires more data, computational power, and statistical expertise [2]. |

Experimental Performance Data and Protocols

Direct comparisons in scientific literature highlight the quantitative differences in outputs and insights generated by these two paradigms.

Experimental Protocol: Comparative Risk Assessment for PAH-Contaminated Sites

A seminal study compared DRA and PRA for two sites contaminated with Polycyclic Aromatic Hydrocarbons (PAHs) [4].

1. Objective: To compare risk estimates from deterministic and probabilistic methods and to evaluate the impact of using Toxic Equivalency Factors (TEFs) for PAH mixtures [4].

2. Methodology:

- Site Characterization: Soil samples were collected from two contaminated sites. PAH concentrations were analyzed for multiple species [4].

- Exposure Scenario: Standard EPA models for soil ingestion, dermal contact, and inhalation were used for both child and adult receptors [4].

- Deterministic Arm: Point estimates for exposure factors (e.g., ingestion rate, exposure frequency) were selected, typically using health-protective upper-percentile values (e.g., 95th percentile). A single Hazard Index (HI) was calculated for the PAH mixture [4].

- Probabilistic Arm: Probability distributions (e.g., lognormal, uniform) were assigned to exposure factors based on published data. Monte Carlo simulation (e.g., 10,000 iterations) was performed to generate a distribution of HI values [4].

- TEF Integration: For both arms, TEFs were applied to convert concentrations of various PAHs into an equivalent concentration of a reference compound (e.g., Benzo[a]pyrene), allowing for a combined risk assessment of the mixture [4].

- Uncertainty Analysis: The contribution of different parameters (exposure factors, sample heterogeneity, toxicity) to overall uncertainty in the probabilistic output was analyzed [4].

3. Key Performance Findings:

- Higher Risk Estimates: The use of TEFs produced higher risk estimates for both adults and children at both sites under both methodologies, as it accounted for the toxicity of more PAH species [4].

- Primary Uncertainty Driver: Exposure factor variability was identified as a greater contributor to uncertainty in the final risk estimate than either sample heterogeneity or toxicity estimates [4].

- Probabilistic Enhancement: The PRA provided a range of risk values and an explicit measure of the degree of conservatism inherent in the deterministic point estimate [4]. For instance, it could show that a deterministic "reasonable maximum exposure" estimate corresponded to the 95th or 99th percentile of the probabilistic distribution.
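Two ideas from this study lend themselves to a short sketch: converting a PAH mixture to a Benzo[a]pyrene-equivalent concentration with TEFs, and locating a deterministic estimate within the probabilistic output. The TEF values, site concentrations, and the two lognormal exposure factors below are illustrative placeholders, not data from the study [4].

```python
import numpy as np

# Illustrative TEFs relative to benzo[a]pyrene (placeholder values)
TEF = {"benzo[a]pyrene": 1.0, "benz[a]anthracene": 0.1, "chrysene": 0.01}

def bap_equivalent(concentrations):
    """Sum of concentration * TEF across the PAH species in a sample."""
    return sum(TEF[name] * conc for name, conc in concentrations.items())

def percentile_rank(samples, value):
    """Percentage of simulated values at or below the given value."""
    return 100.0 * float(np.mean(np.asarray(samples) <= value))

site = {"benzo[a]pyrene": 2.0, "benz[a]anthracene": 5.0, "chrysene": 10.0}
bap_eq = bap_equivalent(site)

# Two hypothetical, independent lognormal exposure factors
rng = np.random.default_rng(0)
sigma, z95 = 0.5, 1.645  # z95: 95th percentile of the standard normal
factor_1 = rng.lognormal(0.0, sigma, 10_000)
factor_2 = rng.lognormal(0.0, sigma, 10_000)
simulated = bap_eq * factor_1 * factor_2

# Deterministic arm: each factor fixed at its own 95th percentile
point = bap_eq * np.exp(z95 * sigma) ** 2
rank = percentile_rank(simulated, point)
print(f"BaP-equivalent: {bap_eq:.2f}; point estimate sits near percentile {rank:.1f}")
```

Because both inputs are set to their individual 95th percentiles, the combined point estimate lands near the 99th percentile of the simulated distribution, which is the kind of compounding conservatism a probabilistic run makes visible.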

Experimental Protocol: Modern PRA with Machine Learning for Fire Risk

Contemporary research illustrates the evolution of PRA through the integration of advanced computational techniques.

1. Objective: To identify key fire input parameters, quantify their contribution to uncertainty, and use machine learning (ML) to guide efficient data collection for fire PRA in nuclear power plants [24].

2. Methodology:

- Parameter Selection: Identify variable fire model inputs, from general (heat release rate) to detailed (material pyrolysis kinetics) [24].

- Uncertainty Quantification: Use Monte Carlo simulation to propagate uncertainty from input parameter distributions through the fire model to the output distribution of conditions (e.g., temperature, pressure) [24].

- Sensitivity Analysis: Employ global sensitivity analysis (e.g., Sobol indices) to rank the influence of each input parameter on output uncertainty [24].

- Machine Learning Integration: Train artificial neural network (ANN) surrogate models on the Monte Carlo simulation data. Use the ANN for rapid prediction and further analysis [24].

- Framework Development: Develop a decision framework that uses sensitivity results to recommend which new fire experiments would most effectively reduce overall model uncertainty and improve risk estimates [24].

3. Key Performance Findings:

- This advanced PRA protocol moves beyond simple risk quantification to optimize resource allocation for risk reduction [24].

- ML-based surrogate models dramatically increase computational efficiency, enabling more complex simulations and deeper uncertainty exploration [24].

- The output directly informs experimental design, prioritizing tests on parameters identified as the largest contributors to predictive uncertainty [24].
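The global sensitivity step can be sketched with a plain NumPy estimator of first-order Sobol indices. This is a hedged illustration: the "pick-freeze" estimator used here is one common choice, the inputs are assumed independent and uniform on [0, 1], and `toy_model` is a hypothetical stand-in for a fire model, not the model used in [24].

```python
import numpy as np

def first_order_sobol(model, n_inputs, n_samples=50_000, seed=0):
    """Estimate first-order Sobol indices with a pick-freeze scheme.

    model takes an (n_samples, n_inputs) array and returns n_samples outputs.
    """
    rng = np.random.default_rng(seed)
    a = rng.random((n_samples, n_inputs))
    b = rng.random((n_samples, n_inputs))
    y_a, y_b = model(a), model(b)
    var_y = np.var(np.concatenate([y_a, y_b]), ddof=1)
    indices = np.empty(n_inputs)
    for i in range(n_inputs):
        ab = a.copy()
        ab[:, i] = b[:, i]  # matrix A with column i taken from B
        indices[i] = np.mean(y_b * (model(ab) - y_a)) / var_y
    return indices

def toy_model(x):
    """Hypothetical response: strong effect of x0, weaker x1, no effect of x2."""
    return 2.0 * x[:, 0] + x[:, 1] + 0.0 * x[:, 2]

sobol = first_order_sobol(toy_model, n_inputs=3)
print(np.round(sobol, 2))
```

For this additive toy model the analytical indices are 0.8, 0.2, and 0, so the ranking directly indicates which input would be most worth measuring more precisely.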

Visualizing Methodologies and Relationships

Diagram 1: Comparative Workflow for PAH Risk Assessment Study [4]

Diagram 2: Modern Probabilistic Risk Assessment with Machine Learning [24]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Tools and Materials for Advanced Risk Assessment Research

| Tool/Reagent | Primary Function in Research | Context & Application |

|---|---|---|

| Monte Carlo Simulation Engine | The core computational technique for PRA. It performs repeated random sampling from input distributions to numerically generate an output probability distribution [2] [24]. | Used to propagate uncertainty and variability through complex models in environmental risk [4], catastrophe modeling [25], and engineering safety [24]. |

| Sensitivity Analysis Software | Quantifies how the variation in the model's output can be apportioned to different sources of variation in its inputs. Identifies key drivers of uncertainty [2] [24]. | Critical following Monte Carlo simulation to determine which parameters most affect risk outcomes, guiding focused research or data collection [24]. |

| Probabilistic Catastrophe Model | Integrated software that combines event, hazard, vulnerability, and financial modules to estimate loss distributions from rare, high-impact events [25]. | Used by insurers and researchers to calculate metrics such as average annual loss (AAL) and return-period losses for natural perils [26] [25]. |

| Machine Learning Libraries (e.g., for ANNs) | Used to develop surrogate models that approximate complex physical simulations. Dramatically accelerates analysis and enables new pattern discovery in high-dimensional data [24]. | Applied to create efficient emulators for fire progression [24] or other phenomena, facilitating more extensive uncertainty quantification and scenario testing. |

| Toxic Equivalency Factors (TEFs) | A set of multiplicative factors used to convert concentrations of various compounds in a mixture into an equivalent concentration of a single reference compound [4]. | Essential reagent in chemical mixture risk assessment (e.g., for PAHs, dioxins) to calculate a combined risk index for both deterministic and probabilistic assessments [4]. |

| High-Performance Computing (HPC) Cloud Platform | Provides the scalable computational resources required for large-scale Monte Carlo simulations, complex model runs, and training machine learning models [24] [25]. | Enables modern, high-definition catastrophe modeling and advanced PRA that would be infeasible on local computing resources [25]. |

The Central Role of Uncertainty in All Risk Assessments

The systematic evaluation of risk is foundational to scientific fields ranging from environmental protection to drug development and aerospace engineering. At the core of this practice lies a fundamental methodological divide: deterministic versus probabilistic risk assessment. Deterministic approaches use single, fixed values (point estimates) as inputs to produce a single output, offering simplicity and clarity at the cost of obscuring variability and uncertainty [2]. In contrast, probabilistic approaches explicitly incorporate distributions of data, using tools like Monte Carlo simulations or Bayesian networks to generate a range of possible outcomes, thereby quantifying uncertainty and variability [2] [23].

This guide provides a comparative analysis of these two paradigms, focusing on their treatment of uncertainty—the central, inescapable element in all risk assessments. We objectively compare their performance through experimental data, detailed protocols, and visualizations, framed within the broader thesis that effectively characterizing uncertainty is not merely an optional refinement but a critical determinant of assessment accuracy, utility, and decision-support capability.

Methodological Comparison: Deterministic vs. Probabilistic Assessment

The choice between deterministic and probabilistic methodologies dictates how uncertainty is represented, propagated, and communicated. The table below summarizes their core technical distinctions.

Table 1: Core Technical Comparison of Deterministic and Probabilistic Risk Assessments

| Aspect | Deterministic Assessment | Probabilistic Assessment |

|---|---|---|

| Fundamental Inputs | Single point estimates (e.g., high-end, typical, or bounding values) [2]. | Probability distributions for key parameters (e.g., normal, lognormal) [2]. |

| Core Analytical Tools | Simple equations and models; standardized methods; sensitivity analysis via changing point estimates [2]. | Complex models and simulations (e.g., Monte Carlo, Bayesian networks); sensitivity analysis based on probability distributions [16] [2]. |

| Nature of Outputs | A single point estimate of risk or exposure (e.g., a "high-end" value) [2]. | A distribution of possible risk estimates (e.g., a probability curve across a risk spectrum) [2] [23]. |

| Treatment of Uncertainty & Variability | Limited quantitative characterization; approximated by running multiple deterministic scenarios [2]. | Explicitly quantifies variability in populations and uncertainty in knowledge through output distributions [2] [23]. |

| Primary Use Cases | Screening-level assessments, prioritization, situations with limited data or resources [2]. | Refined assessments, decision-making under uncertainty, cost-benefit analysis, and prioritization of complex systems [16] [2]. |

| Communication & Interpretation | Straightforward and easy to communicate (a single number or category) [2]. | Requires more effort to explain; provides richer context (e.g., likelihood of exceeding a threshold) [2]. |

The following diagram illustrates the fundamental logical and procedural differences between the two assessment workflows.

Deterministic vs Probabilistic Risk Assessment Workflow

Experimental Data & Comparative Performance

A comparative study on microbial risk from combined sewer overflows (CSOs) provides clear experimental data on the performance differences between the two approaches [16].

Table 2: Comparative Experimental Results from Microbial Risk Assessment Study [16]

| Assessment Aspect | Deterministic Equation Approach | Probabilistic Bayesian Network Approach |

|---|---|---|

| Study Object | 89 combined sewer overflows (CSOs) upstream of four drinking water intakes. | Same 89 CSOs. |

| Primary Output | Single risk rating per CSO (Very Low, Low, Medium, High, Very High). | Probability distribution across the five risk levels for each CSO. |

| Scenario Analysis Capability | Evaluates one scenario at a time. Limited ability to integrate multiple factors simultaneously. | Can simultaneously consider and integrate multiple, complex scenarios (e.g., varying rainfall, population). |

| Data Gap Handling | Left 42 out of 89 CSOs unassessed due to missing input data. | Assessed all 89 CSOs by inferring missing data through probabilistic relationships. |

| Risk Prioritization | Assigned the same risk level to many CSOs, limiting prioritization for action plans. | Provided differentiated risk probabilities, enabling effective ranking and cost-effective prioritization. |

| Identified Key Drivers | Over-emphasized distance to water intake, neglecting other factors like overflow duration. | Sensitivity analysis revealed population in drainage basin, overflow frequency, and duration as top risk drivers. |

| Tendency for Bias | Often overestimated risk for CSOs close to the intake and underestimated risk for infrequent overflows. | Provided a more balanced assessment by weighing all contributing factors probabilistically. |

The probabilistic approach in this study used a Bayesian Network, a graphical model that represents cause-and-effect relationships between variables (nodes) and uses conditional probability tables to quantify their dependencies. The structure of such a model for the CSO study is conceptualized below.

Bayesian Network for Probabilistic Microbial Risk Assessment

Detailed Experimental Protocols

To replicate or build upon the comparative findings, researchers require detailed methodologies. Below are protocols derived from the cited studies.

Protocol A: Deterministic Risk Scoring via Equation

This protocol is based on the deterministic method used for CSO risk [16].

- Parameter Identification & Point Estimate Selection: For each risk source (e.g., a CSO), identify required parameters (e.g., distance to receptor, pipe diameter, population served). For each parameter, select a single, health-protective point estimate (e.g., a high-end or reasonable maximum exposure value) [2].

- Equation Application: Input the selected point estimates into a predefined deterministic risk equation. This equation is typically a weighted sum or product of the normalized parameter values.

- Categorization: Map the calculated numerical score to a predefined risk category (e.g., on a scale from "Very Low" to "Very High").

- Sensitivity Analysis (Deterministic): Conduct a one-at-a-time sensitivity check. Vary each input parameter by a fixed percentage (e.g., ±10%) while holding others constant, and observe the change in the final risk score to identify influential parameters [2].
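Protocol A can be sketched as follows. The weights, category cut-offs, and parameter names are hypothetical and chosen only to make the example run; the actual equation in the cited study [16] may differ.

```python
# Hypothetical weights for parameters already normalized to the range [0, 1]
WEIGHTS = {"proximity": 0.5, "population": 0.3, "overflow_frequency": 0.2}

# Hypothetical upper bounds for each risk category
CATEGORIES = [(0.2, "Very Low"), (0.4, "Low"), (0.6, "Medium"),
              (0.8, "High"), (1.0, "Very High")]

def risk_score(params):
    """Weighted sum of normalized point estimates."""
    return sum(WEIGHTS[name] * params[name] for name in WEIGHTS)

def categorize(score):
    """Map a numerical score to the first category whose upper bound covers it."""
    for upper, label in CATEGORIES:
        if score <= upper:
            return label
    return CATEGORIES[-1][1]

def one_at_a_time(params, delta=0.10):
    """Vary each input by +/- delta while holding the others fixed."""
    effects = {}
    for name in WEIGHTS:
        high = dict(params, **{name: min(1.0, params[name] * (1 + delta))})
        low = dict(params, **{name: params[name] * (1 - delta)})
        effects[name] = risk_score(high) - risk_score(low)
    return effects

cso = {"proximity": 0.9, "population": 0.5, "overflow_frequency": 0.3}
score = risk_score(cso)
effects = one_at_a_time(cso)
print(round(score, 2), categorize(score), effects)
```

The one-at-a-time check shows how strongly the weighting of a single parameter can dominate the score, which is one way a fixed equation can end up over-emphasizing one factor.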

Protocol B: Probabilistic Assessment via Bayesian Network Construction

This protocol outlines the construction of a Bayesian Network (BN) model, as implemented in the comparative study [16].

- Node Definition & Structural Development: Define key variables as network nodes (e.g., "Overflow Frequency," "Population," "Final Risk"). Engage domain experts to structure the nodes in a Directed Acyclic Graph (DAG) that represents causal relationships (e.g., "Population" influences "Hazard Level").

- Parameterization with Probability Distributions:

  - For input nodes (root causes), define probability distributions based on historical data or literature (e.g., lognormal distribution for population served).

  - For child nodes, create Conditional Probability Tables (CPTs) that quantify the likelihood of each state given the states of its parent nodes. These can be informed by data, expert elicitation, or mechanistic models.

- Model Validation & Calibration: Use a portion of available data to validate the model. Compare BN predictions with observed outcomes. Calibrate CPTs to ensure the model accurately reflects the system's behavior.

- Probabilistic Inference & Analysis:

  - Risk Calculation: Enter evidence for a specific case into the BN. Use an inference algorithm (e.g., exact methods such as variable elimination or junction tree, or approximate sampling such as Markov chain Monte Carlo) to compute the posterior probability distribution of the "Final Risk" node.

  - Sensitivity Analysis (Probabilistic): Use variance reduction or other probabilistic measures to rank the contribution of each input node to the uncertainty in the final risk output. This quantitatively identifies the most influential factors [16].
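The inference step can be illustrated with a tiny hand-built network solved by exact enumeration. The structure (two root nodes feeding one risk node), the state names, and all probabilities are hypothetical, and real BN software would use more scalable algorithms than brute-force enumeration.

```python
from itertools import product

# Prior distributions for the root nodes (hypothetical values)
P_FREQ = {"high": 0.3, "low": 0.7}     # overflow frequency
P_POP = {"large": 0.4, "small": 0.6}   # population in drainage basin

# CPT: P(Final Risk = high | overflow frequency, population)
P_RISK_HIGH = {
    ("high", "large"): 0.9,
    ("high", "small"): 0.6,
    ("low", "large"): 0.5,
    ("low", "small"): 0.1,
}

def prob_risk_high(evidence=None):
    """P(Final Risk = high | evidence), summing over any unobserved root node."""
    evidence = evidence or {}
    numerator = 0.0
    denominator = 0.0
    for freq, pop in product(P_FREQ, P_POP):
        # Skip parent-state combinations that contradict the entered evidence
        if evidence.get("freq", freq) != freq or evidence.get("pop", pop) != pop:
            continue
        weight = P_FREQ[freq] * P_POP[pop]
        numerator += weight * P_RISK_HIGH[(freq, pop)]
        denominator += weight
    return numerator / denominator

print(prob_risk_high())                                  # no data for this CSO
print(prob_risk_high({"freq": "high"}))                  # population unknown
print(prob_risk_high({"freq": "high", "pop": "large"}))  # fully observed
```

The second call shows how a case with a missing input can still be assessed: the unobserved node is marginalized using its prior instead of causing the assessment to fail.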

The Scientist's Toolkit: Research Reagent Solutions

Conducting robust comparative risk assessments requires specialized tools and conceptual frameworks.

Table 3: Essential Research Toolkit for Comparative Risk Assessment

| Tool / Solution | Type | Primary Function in Risk Assessment | Key Application |

|---|---|---|---|

| Bayesian Network Software (e.g., Netica, AgenaRisk) | Software Platform | Provides an environment to build, parameterize, run inferences, and perform sensitivity analysis on graphical probabilistic models. | Implementing probabilistic risk models that handle data gaps and complex variable interactions [16]. |

| Monte Carlo Simulation Add-ins (e.g., @RISK, Crystal Ball) | Software / Library | Adds iterative random sampling from defined probability distributions to spreadsheet models (like Excel), generating output distributions. | Conducting probabilistic exposure and risk assessments where models are equation-based [2]. |

| RStudio with Bayesian Packages (e.g., 'bnlearn', 'rstan') | Programming Environment | Offers open-source, reproducible frameworks for statistical computing, including advanced Bayesian analysis and custom probabilistic model building. | Flexible development and analysis of risk assessment models; used in recent comparative studies [16]. |

| Read-Across / Category Approach | Methodological Framework | Uses data from structurally or functionally similar substances (analogues) to predict properties of a target substance with missing data [27]. | Filling data gaps in chemical risk assessment for drug development, reducing uncertainty when direct data is absent [27]. |

| Medical Extensible Dynamic PRA Tool (MEDPRAT) | Specialized Model | A dynamic probabilistic risk assessment tool that forecasts medical event timelines and treatment efficacy for crew health in space missions [28]. | Informing medical kit design and research investment by quantifying health risk under resource constraints—analogous to clinical trial risk planning [28]. |

Discussion: Implications for Drug Development and Research

The comparative data underscores that probabilistic frameworks are superior for decision-making in complex, data-limited environments. In drug development, this is highly relevant.

- Optimizing Development Strategy: Similar to NASA's use of PRA for medical kit optimization [28], pharmaceutical companies can use these methods to quantify the risk of clinical trial failure due to safety or efficacy uncertainties, informing smarter portfolio investment.

- Filling Preclinical Data Gaps: The read-across approach [27] is a data-gap-filling technique used in chemical safety assessment to infer a compound's toxicity from structurally or functionally similar analogues. It is not probabilistic in itself, but it directly addresses uncertainty when novel compounds lack data.

- Prioritizing Risk Mitigation: Just as the Bayesian network prioritized which sewer overflows to address first [16], probabilistic methods can help prioritize which drug candidate safety risks require additional testing or which manufacturing process variables are most critical to control.

The central role of uncertainty mandates a shift from seeking a single, definitive risk answer to characterizing a risk profile. While deterministic methods offer speed for initial screening, probabilistic assessment provides the necessary depth for the high-stakes, uncertainty-rich landscape of modern scientific research and development.

Tools, Techniques, and Real-World Applications in Drug Development and Research

In the rigorous landscape of modern drug development, risk assessment is the cornerstone of ensuring patient safety and therapeutic efficacy. This process systematically evaluates potential hazards, requiring methodologies that are both scientifically robust and decision-ready. The central thesis of this guide is that a pragmatic, “fit-for-purpose” strategy—one that strategically employs deterministic tools for clarity and speed, while reserving probabilistic methods for characterizing irreducible uncertainty—is essential for efficient and defensible risk-based decision-making [29]. This discussion is framed within the broader, ongoing scholarly comparison of deterministic and probabilistic risk assessment paradigms, a debate highlighted by studies in environmental health and complex engineering systems [30] [4].

Deterministic assessment provides a fixed, reproducible output for a given set of inputs, often yielding a single point estimate such as a “worst-case” risk value. Its strength lies in simplicity, transparency, and regulatory familiarity, making it indispensable for establishing clear safety boundaries, performing screening-level analyses, and communicating baseline conclusions [31] [32]. In contrast, probabilistic risk assessment (PRA) embraces variability and uncertainty explicitly. By describing inputs with probability distributions (e.g., probability density functions, PDFs), it generates a distribution of possible outcomes, quantifying the likelihood of different risk levels [31] [4]. This approach is critical for understanding the confidence in estimates, optimizing resource allocation, and making informed decisions when variability is significant.

The U.S. Food and Drug Administration (FDA) recognizes the growing integration of advanced modeling, including AI and quantitative frameworks like Model-Informed Drug Development (MIDD), across the drug lifecycle [33] [29]. The choice between deterministic and probabilistic tools is not a matter of superiority but of contextual appropriateness. This guide provides a comparative analysis, supported by experimental data and protocols, to equip researchers and drug development professionals with the knowledge to deploy a deterministic toolkit effectively and understand when to advance to a probabilistic analysis.

Conceptual Comparison of Deterministic and Probabilistic Paradigms

At their core, deterministic and probabilistic models represent fundamentally different approaches to handling knowledge and uncertainty in complex systems.

Deterministic models operate on fixed rules and inputs to produce a single, repeatable output. In computing, a deterministic function like getWeather(“NYC”) fetches data in the exact same way every time [34]. In risk assessment, this translates to using discrete, often conservative values (e.g., 95th percentile exposure, maximum measured concentration) to calculate a singular risk quotient or hazard index. The logic is transparent and auditable, as every step from input to conclusion can be traced [32]. This makes deterministic models highly reliable within their defined scope and ideal for establishing compliance with bright-line safety standards, conducting initial hazard rankings, or in scenarios where input variability is negligible [31] [35].

Probabilistic (or stochastic) models, however, incorporate randomness and variability. They use inputs defined by distributions (e.g., body weight in a population, chemical concentration across a site) and run numerous simulations—often thousands—to produce an ensemble of possible outcomes [31]. A prime example is the Monte Carlo simulation, used in everything from financial forecasting to the risk analysis of NASA's Cassini mission [30]. The result is not a single number but a risk curve, showing the probability of exceeding a given risk level. This provides a more realistic picture of real-world scenarios, where multiple variable factors interact [4]. It is particularly valuable for identifying and prioritizing key sources of uncertainty, quantifying confidence, and supporting optimized, risk-informed decisions when the deterministic "worst-case" may be overly conservative and economically burdensome.
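The risk curve described above is simply the empirical probability of exceeding each threshold. A minimal sketch, assuming the simulation outputs are already available as an array (the five sample values and the function name `exceedance_curve` are made up for illustration):

```python
import numpy as np

def exceedance_curve(samples, thresholds):
    """Fraction of simulated outcomes that exceed each threshold."""
    samples = np.asarray(samples, dtype=float)
    return np.array([float((samples > t).mean()) for t in thresholds])

# Hypothetical simulated risk quotients from a probabilistic run
simulated = np.array([0.2, 0.4, 0.6, 0.8, 1.2])
thresholds = [0.5, 1.0]
curve = exceedance_curve(simulated, thresholds)
for t, p in zip(thresholds, curve):
    print(f"P(risk > {t}) = {p:.1f}")
```

Plotting these fractions against a fine grid of thresholds gives the exceedance curve, from which a decision-maker can read the probability of crossing any chosen risk level.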

The following table summarizes the key philosophical and practical distinctions between these two paradigms.

Table: Foundational Comparison of Deterministic and Probabilistic Assessment Paradigms

| Aspect | Deterministic Assessment | Probabilistic Assessment |

|---|---|---|

| Core Philosophy | Fixed rules, predictable outcomes, "worst-case" focus. | Incorporates randomness and variability to model a range of possible realities. |

| Uncertainty Handling | Typically addressed implicitly through conservative input assumptions. | Explicitly quantified and characterized using probability distributions. |

| Output | Single point estimate (e.g., Risk Quotient = 0.5). | Probability distribution of outcomes (e.g., 90% of simulations show Risk < 0.7). |

| Key Strength | Simplicity, transparency, speed, regulatory familiarity, clear audit trail. | Realism, quantifies likelihoods, identifies key uncertainty drivers, supports robust decision-making. |

| Primary Limitation | Can be overly simplistic, may misrepresent true variability, "worst-case" can be misleading. | Computational complexity, requires more data and expertise, results can be harder to communicate. |

| Ideal Use Context | Screening-level assessments, compliance checks against fixed standards, stable systems with low variability, communicating clear safety boundaries. | Refined analysis for critical decisions, optimizing designs or interventions, systems with high input variability, cost-benefit analysis. |

Comparative Analysis in Pharmaceutical and Environmental Risk Assessment

The theoretical distinctions between deterministic and probabilistic approaches manifest concretely in scientific practice. Comparative studies highlight how methodological choice directly impacts risk estimates and their interpretation.

A seminal 2007 study directly compared both methods at sites contaminated with polycyclic aromatic hydrocarbons (PAHs) [4]. The deterministic approach used single, conservative values for exposure factors (e.g., soil ingestion rate, body weight) and chemical concentration to calculate a hazard index (HI). The probabilistic approach used probability distributions for these same inputs in a Monte Carlo simulation, running 10,000 iterations to generate a distribution of possible HI values.

The findings were revealing. The deterministic “worst-case” estimate was consistently higher than the central tendency (e.g., the median) of the probabilistic distribution. However, the probabilistic output showed a range of risk, with a measurable probability that the true risk could exceed the deterministic estimate. Furthermore, the study demonstrated that incorporating Toxic Equivalency Factors (TEFs) to account for the combined effect of multiple PAHs raised risk estimates under both methods while providing a more complete and defensible toxicological basis [4].

Table: Comparative Results from a PAH-Contaminated Site Risk Assessment [4]

| Metric | Deterministic Assessment | Probabilistic Assessment (10,000 Iterations) | Implication |
|---|---|---|---|
| Output Form | Single Hazard Index (HI) = 2.1 | Distribution of HI values (Mean: 0.8, 95th Percentile: 2.3) | Deterministic result aligned with a high percentile of the probabilistic output, confirming its "bounding" nature. |
| Key Finding | HI > 1, indicating a potential concern. | 22% of simulated HI values were > 1. | Probabilistic analysis quantifies the likelihood of exceedance, aiding in priority setting. |
| Uncertainty Insight | Uncertainty is hidden within conservative point values. | Sensitivity analysis identified exposure factor variability (e.g., ingestion rate) as the largest contributor to output variance. | Guides targeted data collection to reduce overall uncertainty most effectively. |
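The "bounding" behaviour of a deterministic estimate can be sketched by computing where it falls within a simulated distribution. The example below does not reproduce the cited study: the input distributions, the reference dose, and the simplified hazard index formula are all hypothetical. It combines a conservative percentile of each input into one point estimate and then locates that estimate in the Monte Carlo output.

```python
import random
import statistics

rng = random.Random(7)
N = 10_000
RFD = 3e-5  # mg/kg-day, hypothetical reference dose

# Hypothetical input distributions; none of these values come from the cited study.
conc = [rng.lognormvariate(3.0, 0.6) for _ in range(N)]        # mg/kg soil
ingestion = [rng.lognormvariate(-9.2, 0.5) for _ in range(N)]  # kg soil/day
weight = [max(rng.gauss(70.0, 12.0), 30.0) for _ in range(N)]  # kg


def hazard_index(c: float, ir: float, bw: float) -> float:
    """Simplified HI: daily dose (mg/kg-day) divided by the reference dose."""
    return c * ir / bw / RFD


def pct(values: list[float], p: int) -> float:
    """p-th percentile (1..99) using statistics.quantiles cut points."""
    return statistics.quantiles(values, n=100)[p - 1]


# Probabilistic: one HI per simulated individual.
hi_mc = [hazard_index(c, ir, bw) for c, ir, bw in zip(conc, ingestion, weight)]

# Deterministic: stack a conservative value for every input
# (high concentration, high ingestion, low body weight).
hi_det = hazard_index(pct(conc, 95), pct(ingestion, 95), pct(weight, 5))

# Where does the point estimate sit in the simulated distribution?
rank = sum(h <= hi_det for h in hi_mc) / N
print(f"Deterministic HI: {hi_det:.2f}")
print(f"Probabilistic median HI: {statistics.median(hi_mc):.2f}")
print(f"Share of simulated HI values at or below the point estimate: {rank:.1%}")
```

Because conservative inputs compound when multiplied together, the stacked point estimate in this toy setup lands above the 95th percentile of the simulated output, even though each individual input was set at only its own 95th (or 5th) percentile.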

In drug development, this translates to the use of Model-Informed Drug Development (MIDD) tools. A Physiologically Based Pharmacokinetic (PBPK) model can be run deterministically to predict a maximum plasma concentration (C~max~) for a "virtual average" patient, which is useful for dose selection [29]. When run probabilistically—by varying physiological parameters (organ weights, blood flows, enzyme levels) across a virtual population—it generates a predicted distribution of C~max~ across thousands of virtual patients. This can be used to assess the probability of exceeding a toxic threshold in subpopulations (e.g., patients with renal impairment), a nuanced insight impossible to gain from a single point estimate.
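The contrast between a "virtual average" patient and a virtual population can be sketched with a deliberately simplified stand-in for a PBPK model: a one-compartment oral absorption model. This is an assumption made to keep the example short; a real PBPK model has many physiological compartments. The dose, rate constants, variability, toxic threshold, and the clearance scaling used to mimic renal impairment are all hypothetical.

```python
import math
import random

DOSE_MG = 100.0
BIOAVAILABILITY = 1.0
KA_PER_H = 1.0             # absorption rate constant (assumed)
TOXIC_CMAX_MG_PER_L = 2.0  # hypothetical toxicity threshold


def cmax_oral(clearance_l_per_h: float, volume_l: float) -> float:
    """Peak concentration for a one-compartment model with first-order absorption."""
    ka = KA_PER_H
    ke = clearance_l_per_h / volume_l  # elimination rate constant
    if math.isclose(ka, ke):
        ke *= 0.999  # avoid division by zero in the degenerate ka == ke case
    t_max = math.log(ka / ke) / (ka - ke)
    scale = BIOAVAILABILITY * DOSE_MG * ka / (volume_l * (ka - ke))
    return scale * (math.exp(-ke * t_max) - math.exp(-ka * t_max))


def simulate_population(n: int, clearance_scale: float, seed: int = 1) -> list[float]:
    """Cmax for n virtual patients; clearance_scale < 1 mimics impaired elimination."""
    rng = random.Random(seed)
    results = []
    for _ in range(n):
        clearance = 5.0 * clearance_scale * rng.lognormvariate(0.0, 0.3)  # L/h
        volume = 50.0 * rng.lognormvariate(0.0, 0.3)                      # L
        results.append(cmax_oral(clearance, volume))
    return results


def prob_toxic(cmax_values: list[float]) -> float:
    return sum(c > TOXIC_CMAX_MG_PER_L for c in cmax_values) / len(cmax_values)


# Deterministic run: a single "typical" patient.
cmax_typical = cmax_oral(5.0, 50.0)

# Probabilistic runs: the same virtual individuals with normal and reduced clearance.
p_normal = prob_toxic(simulate_population(5_000, clearance_scale=1.0))
p_impaired = prob_toxic(simulate_population(5_000, clearance_scale=0.3))

print(f"Typical-patient Cmax: {cmax_typical:.2f} mg/L")
print(f"P(Cmax > threshold), normal clearance:  {p_normal:.1%}")
print(f"P(Cmax > threshold), reduced clearance: {p_impaired:.1%}")
```

Using the same seed for both runs means each virtual patient is compared with a reduced-clearance version of themselves, so the difference between the two probabilities reflects the clearance change rather than sampling noise.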

Experimental Protocols and Methodological Workflows

The validity of any risk assessment hinges on a rigorous, documented methodology. Below are generalized protocols for implementing deterministic and probabilistic analyses, drawing from established practices in environmental and engineering risk assessment [30] [4].

Protocol for a Deterministic Point Estimate Risk Assessment

Objective: To derive a single, health-protective risk estimate for initial screening or compliance purposes.

- Problem Formulation & Conceptual Model: Define the source, receptors (e.g., human populations, ecosystems), and exposure pathways (e.g., ingestion, inhalation). Create a diagrammatic conceptual site model.

- Data Collection & Parameter Selection: Gather contaminant concentration data. For each exposure parameter (e.g., exposure frequency, body weight, ingestion rate), select a single, health-protective value. This is typically a reasonable maximum exposure (RME) value, such as the 95th percentile from a population survey or the maximum detected concentration.

- Risk Calculation: Apply a standard risk equation (e.g., Hazard Quotient = Daily Dose / Reference Dose). Use the selected point values for all parameters.

- Uncertainty Discussion: Qualitatively discuss the conservatisms embedded in the selected input values and acknowledge that the true risk is likely lower than the calculated value, but its magnitude is unknown.

Figure: Deterministic Risk Assessment Workflow
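The risk calculation step of the deterministic protocol can be written out as a short worked example. The Hazard Quotient definition follows the protocol above; the average daily dose formula and all input values (a child receptor, a maximum detected concentration, a reference dose) are illustrative assumptions.

```python
def hazard_quotient(
    conc_mg_per_kg: float,          # contaminant concentration in soil
    ingestion_kg_per_day: float,    # soil ingestion rate
    exposure_freq_days_per_year: float,
    body_weight_kg: float,
    reference_dose: float,          # mg/kg-day
) -> float:
    """Hazard Quotient = Daily Dose / Reference Dose, using single point values."""
    daily_dose = (
        conc_mg_per_kg
        * ingestion_kg_per_day
        * (exposure_freq_days_per_year / 365.0)
        / body_weight_kg
    )
    return daily_dose / reference_dose


# Hypothetical reasonable-maximum-exposure (RME) inputs for a child receptor.
hq = hazard_quotient(
    conc_mg_per_kg=50.0,              # maximum detected concentration (assumed)
    ingestion_kg_per_day=2e-4,        # 200 mg soil/day (assumed upper-end value)
    exposure_freq_days_per_year=350.0,
    body_weight_kg=15.0,
    reference_dose=0.003,             # hypothetical RfD
)
print(f"Hazard Quotient = {hq:.2f}")
print("Potential concern" if hq > 1 else "Below the level of concern")
```

The output is one number with no accompanying likelihood, which is why the protocol's final step asks for a qualitative discussion of the conservatism built into each input.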

Protocol for a Probabilistic Monte Carlo Risk Assessment

Objective: To characterize the distribution of possible risk outcomes and quantify the influence of input uncertainty.

- Problem Formulation & Conceptual Model: Identical to the first step of the deterministic protocol above.

- Data Analysis & Distribution Fitting: For variable parameters (e.g., concentration, body weight), fit appropriate probability distribution functions (PDFs). Common choices include lognormal, normal, or triangular distributions. This requires robust datasets.

- Model Implementation & Simulation: Program the risk equation in software capable of Monte Carlo simulation (e.g., R, @RISK, Crystal Ball). For each iteration (typically 10,000+), the software randomly samples a value from each input PDF and calculates a corresponding risk value.

- Output Analysis & Interpretation: Analyze the resulting distribution of risk outcomes. Report key statistics: mean, median, and percentiles (e.g., 5th, 50th, 95th). Perform a sensitivity analysis (e.g., rank-order correlation) to identify which input variables contribute most to output variance.

- Probabilistic Decision-Making: Use the output distribution to inform decisions. For example, "The probability of risk exceeding the target is 5%," or "Reducing uncertainty in Parameter X will most effectively refine our risk estimate."

Figure: Probabilistic Risk Assessment Workflow
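The probabilistic protocol above can be sketched end to end in a few dozen lines. The example uses only Python's standard library rather than the packages named in the protocol. The measurement data, distribution choices, and reference dose are hypothetical, and the lognormal fit is a simple moment fit on log-transformed data, not a full goodness-of-fit analysis.

```python
import math
import random
import statistics

rng = random.Random(2024)
N = 10_000
RFD = 5e-5  # mg/kg-day, hypothetical reference dose

# Distribution fitting: lognormal fitted to hypothetical concentration data (mg/kg).
measured = [12.1, 8.4, 30.2, 15.7, 9.9, 22.3, 18.0, 11.5, 41.0, 14.2]
logs = [math.log(x) for x in measured]
mu, sigma = statistics.mean(logs), statistics.stdev(logs)

# Simulation: sample every input on each iteration and compute a risk value.
inputs = {"concentration": [], "ingestion_rate": [], "body_weight": []}
risks = []
for _ in range(N):
    c = rng.lognormvariate(mu, sigma)
    ir = rng.triangular(5e-5, 4e-4, 1e-4)   # kg/day: low, high, mode (assumed)
    bw = max(rng.gauss(70.0, 12.0), 30.0)   # kg, truncated normal (assumed)
    inputs["concentration"].append(c)
    inputs["ingestion_rate"].append(ir)
    inputs["body_weight"].append(bw)
    risks.append(c * ir / bw / RFD)

# Output analysis: summary statistics and percentiles.
cuts = statistics.quantiles(risks, n=100)
p5, p50, p95 = cuts[4], cuts[49], cuts[94]


def ranks(values):
    """Rank positions; ties broken by order, adequate for continuous inputs."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0] * len(values)
    for rank, idx in enumerate(order):
        result[idx] = rank
    return result


def spearman(x, y):
    """Spearman rank-order correlation (Pearson correlation of the ranks)."""
    rx, ry = ranks(x), ranks(y)
    mx, my = statistics.mean(rx), statistics.mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)


sensitivity = {name: spearman(vals, risks) for name, vals in inputs.items()}

# Decision support: probability of exceeding the target risk level of 1.
p_exceed = sum(r > 1.0 for r in risks) / N

print(f"Mean {statistics.mean(risks):.2f}, 5th {p5:.2f}, median {p50:.2f}, 95th {p95:.2f}")
print(f"P(risk > 1) = {p_exceed:.1%}")
for name, rho in sorted(sensitivity.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: rank correlation {rho:+.2f}")
```

Inputs with the largest absolute rank correlation are the ones whose variability drives the output most strongly, and are therefore the natural targets for additional data collection.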

Validation: As demonstrated in high-stakes fields, a key practice is validating one methodology against another. In the Cassini Mission PRA, a complex Monte Carlo and event-tree analysis was validated using an independent Discrete Dynamic Event Tree (DDET) methodology, confirming that different dynamic probabilistic methods yield consistent results given the same assumptions [30].

The Scientist's Toolkit: Research Reagent Solutions for Risk Assessment

Selecting the right "reagents"—the models, software, and data sources—is critical for conducting a sound assessment. The following table details essential components of a modern risk assessment toolkit, aligned with the "fit-for-purpose" principle [29].

Table: Research Reagent Solutions for Risk Assessment

| Tool Category | Specific Tool / Technique | Function & Purpose | Deterministic or Probabilistic Utility |
|---|---|---|---|
| Conceptual Model | Pathway Diagram, Influence Diagram | Visually maps sources, exposure routes, receptors, and key relationships to ensure a complete assessment framework. | Foundational for both. |
| Exposure Assessment | EPA Exposure Factors Handbook, Bioavailability Studies | Provides validated point estimates (e.g., average soil ingestion) or data to develop distributions for exposure parameters. | D: Source for RME values. P: Source for distribution parameters. |
| Toxicology | EPA Integrated Risk Information System (IRIS), WHO Toxic Equivalency Factors (TEFs) | Provides critical health-based benchmarks: Reference Doses (RfD), Cancer Slope Factors (CSF), and relative potency factors for mixtures. | Core input for risk equations in both. |
| Fate & Transport | Jury Model, PRZM, MODFLOW | Predicts the movement and concentration of chemicals in the environment (air, water, soil). | D: Used with conservative scenarios. P: Input parameters can be distributed. |
| Pharmacokinetics | Physiologically Based Pharmacokinetic (PBPK) Modeling [29] | Mechanistically simulates the absorption, distribution, metabolism, and excretion of a drug or chemical in the body. | D: Predicts exposure for a "typical" individual. P: Virtual population simulations. |
| Statistical & Simulation Software | R (with mc2d or fitdistrplus packages), @RISK, Crystal Ball, Python (NumPy, SciPy) | Performs distribution fitting, executes Monte Carlo simulations, and conducts sensitivity/uncertainty analysis. | Primarily for probabilistic analysis. |
| Quantitative Decision Support | Two-Dimensional Monte Carlo, Bayesian Belief Networks | Separates variability (between-individual differences) from uncertainty (lack of knowledge) or combines prior evidence with new data. | Advanced probabilistic methods for high-stakes decisions. |
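The separation of variability from uncertainty in a two-dimensional Monte Carlo can be sketched with two nested loops. In this minimal example the outer loop samples what is not known about the population (its parameters), and the inner loop samples differences between individuals given those parameters. All distributions and values are hypothetical.

```python
import random
import statistics

rng = random.Random(11)
N_OUTER = 200    # uncertainty draws (lack of knowledge about the population)
N_INNER = 1_000  # variability draws (differences between individuals)

p95_by_outer = []
for _ in range(N_OUTER):
    # Uncertainty: the population's log-mean and log-sd are only known imprecisely.
    mu = rng.gauss(0.0, 0.15)
    sigma = rng.uniform(0.4, 0.6)
    # Variability: individual exposures for this candidate population.
    exposures = [rng.lognormvariate(mu, sigma) for _ in range(N_INNER)]
    p95_by_outer.append(statistics.quantiles(exposures, n=100)[94])

# Uncertainty band around the 95th-percentile individual's exposure.
bounds = statistics.quantiles(p95_by_outer, n=20)  # cut points at 5%, 10%, ..., 95%
lower, median, upper = bounds[0], bounds[9], bounds[18]
print(f"95th-percentile exposure: {median:.2f} (90% uncertainty interval {lower:.2f} to {upper:.2f})")
```

The result is a statement about a high-end individual (variability) together with an interval expressing how well that value is known (uncertainty), two quantities that a one-dimensional simulation would blend together.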

The deterministic toolkit, centered on simple models and bounding estimates, remains an indispensable component of the risk assessor's arsenal. Its power lies in providing clear, auditable, and health-protective answers to well-defined questions, making it ideal for screening, compliance, and initial hazard characterization [31] [32]. As evidenced in drug development, deterministic applications of PBPK or dose-response models are crucial for establishing initial safe doses and designing early-stage trials [29].

However, the scientific and regulatory landscape is evolving. The FDA and other agencies are increasingly receptive to probabilistic and quantitative approaches like MIDD that provide a richer, more informative evidence base for decision-making [33] [29]. The key is strategic deployment.

Strategic Guidance for Drug Development Professionals:

- Start Deterministic: Use deterministic models for "bounding" analyses, rapid prototype testing of risk hypotheses, and in contexts where regulations demand a fixed standard. They are excellent tools for internal go/no-go decisions at early stages [34] [35].

- Advance to Probabilistic to Inform: When facing significant variability (e.g., in patient physiology, disease progression, or market conditions), high-stakes decisions, or the need to optimize a strategy (e.g., clinical trial design, dose selection), employ probabilistic methods. They quantify confidence and likelihood, turning uncertainty from a liability into a measurable variable [30] [4].

- Adopt a "Fit-for-Purpose" Mindset: No single tool is universally best. The choice must be driven by the Question of Interest (QOI) and the Context of Use (COU) [29]. Ask: "What decision does this analysis support, and what level of certainty is required?"

- Seek Hybrid Approaches: The most powerful modern systems often combine both. A deterministic rule-based "guardrail" can ensure safety and compliance, while a probabilistic engine explores optimized strategies within those safe boundaries [32] [35]. Similarly, a probabilistic overall assessment may use deterministic sub-models for specific components.
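One way to picture the hybrid pattern described in the last point is a dose-selection toy problem: a fixed rule caps the dose, and a Monte Carlo estimate chooses the best option within that cap. The sketch below is purely illustrative. The dose cap, toxic exposure threshold, acceptable exceedance probability, and patient sensitivity distribution are all hypothetical names and values.

```python
import random

MAX_PERMITTED_DOSE_MG = 100.0  # deterministic guardrail: a hard cap (assumed)
TOXIC_EXPOSURE = 150.0         # hypothetical exposure threshold
MAX_EXCEEDANCE_PROB = 0.05     # acceptable probability of exceeding the threshold
CANDIDATE_DOSES_MG = [25.0, 50.0, 75.0, 100.0, 125.0, 150.0]

rng = random.Random(3)
# Between-patient sensitivity: exposure = dose * factor (assumed lognormal).
sensitivity = [rng.lognormvariate(0.0, 0.35) for _ in range(10_000)]


def exceedance_probability(dose_mg: float) -> float:
    """Monte Carlo estimate of P(exposure > toxic threshold) at a given dose."""
    return sum(dose_mg * s > TOXIC_EXPOSURE for s in sensitivity) / len(sensitivity)


# Step 1 (deterministic): discard anything above the fixed cap.
allowed = [d for d in CANDIDATE_DOSES_MG if d <= MAX_PERMITTED_DOSE_MG]

# Step 2 (probabilistic): among allowed doses, keep those with acceptable risk.
acceptable = [d for d in allowed if exceedance_probability(d) <= MAX_EXCEEDANCE_PROB]
chosen_dose = max(acceptable) if acceptable else None

for dose in allowed:
    print(f"{dose:.0f} mg: P(exceed) = {exceedance_probability(dose):.1%}")
print(f"Chosen dose: {chosen_dose}")
```

The guardrail is never overridden by the simulation: the probabilistic step only ranks options that the fixed rule has already admitted.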

In conclusion, mastery of the deterministic toolkit is fundamental. Its judicious application, coupled with a clear understanding of when to transition to more sophisticated probabilistic analyses, defines the modern, effective risk assessor and drug development scientist. By framing deterministic tools not as the endpoint but as a vital step in a tiered, fit-for-purpose strategy, researchers can build more efficient, defensible, and impactful development programs.

Within the broader comparison of deterministic and probabilistic risk assessment, probabilistic methods provide a structured approach to quantify uncertainty, model complex interactions, and estimate the likelihood of adverse outcomes. Unlike deterministic approaches that often rely on conservative, single-point estimates, probabilistic tools such as Monte Carlo Simulation (MCS), Event Tree Analysis (ETA), Fault Tree Analysis (FTA), and Human Reliability Analysis (HRA) incorporate variability and randomness, offering a more realistic and informative risk profile [36] [37]. This section compares the performance, application, and experimental validation of these four core techniques, focusing on their utility for researchers and professionals in fields like drug development, where understanding low-probability, high-consequence events is critical.

Methodological Comparison and Performance Data

The four primary probabilistic tools differ in their foundational logic, direction of analysis, and output. The following table summarizes their core characteristics [38] [37] [39].

Table 1: Core Characteristics of Probabilistic Risk Assessment Methods

| Feature | Monte Carlo Simulation (MCS) | Fault Tree Analysis (FTA) | Event Tree Analysis (ETA) | Human Reliability Analysis (HRA) |
|---|---|---|---|---|
| Methodological Approach | Simulation-based; relies on repeated random sampling [40]. | Deductive, logic-based (Boolean & dynamic gates) [39]. | Inductive, sequence-based [38]. | Performance-shaping factor-based; often uses expert judgment [41]. |
| Analytical Direction | Not applicable (samples a defined model). | Top-down (from system failure to root causes) [37]. | Bottom-up (from initiating event to consequences) [37]. | Task-focused (analysis of human actions within a system context). |
| Primary Output | Probability distributions, sensitivity analyses, performance metrics (bias, MSE) [42] [43]. | Probability of top event, critical failure paths (minimal cut sets) [36]. | Probabilities and severities of various consequence scenarios [38]. | Human Error Probability (HEP) for a specific action or diagnosis [41]. |
| Quantification Basis | Input probability distributions and system model [40]. | Failure probabilities of basic components/events [36]. | Probabilities of success/failure of safety functions [38]. | Performance Influencing Factors (PIFs), generic error rates, expert judgment [41] [44]. |