Data Validation and Qualification in Ecotoxicology: A Modern Framework for Reliable NAMs and Regulatory Acceptance

Abstract

This article provides a comprehensive guide to data validation and method qualification within modern ecotoxicology, tailored for researchers and drug development professionals. It covers the foundational principles distinguishing validation from usability assessments and explores the evolving regulatory landscape driven by New Approach Methodologies (NAMs). The scope includes practical methodologies for applying validation qualifiers, troubleshooting common data integrity challenges, and optimizing workflows. It also compares validation strategies for traditional methods and for NAMs, including AI-powered tools, and outlines pathways for regulatory qualification. By synthesizing current guidelines, trends, and best practices, this resource aims to equip scientists with the knowledge to generate defensible, high-quality ecotoxicological data that meets stringent regulatory standards and advances the 3Rs principles.

Understanding Data Validation and Qualification: Core Principles and the Evolving Ecotoxicology Landscape

Technical Support Center: Troubleshooting Data Quality in Ecotoxicology Research

This technical support center is designed for researchers and scientists navigating data quality assessment in ecotoxicology. It provides clear guidelines to troubleshoot common issues encountered during Data Validation (DV) and Data Usability Assessments (DUA), ensuring your data is both technically sound and fit for its intended purpose in regulatory decision-making or scientific publication.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between Data Validation (DV) and a Data Usability Assessment (DUA)? Data Validation (DV) is a formal, technical review of analytical data against specific methodological and contractual requirements. It determines the analytical quality and assigns standardized qualifiers (e.g., "J" for estimated) to individual data points based on quality control (QC) performance [1]. In contrast, a Data Usability Assessment (DUA) is a scientific and strategic evaluation that occurs after validation. It asks, "Can we use this data for our specific project objectives?" A DUA interprets validation flags in the context of your study's goals, such as whether a bias is significant relative to a regulatory threshold [1] [2].

Q2: When is a full DV required versus a limited DV or a DUA? The choice depends on your project's Data Quality Objectives (DQOs) and regulatory context [1].

- Full DV is often required for definitive, decision-making data in regulated programs (e.g., EPA Superfund sites). It involves intensive checks of all raw data and QC [1].

- Limited DV or a DUA may be sufficient for screening studies, research, or when data is used in a weight-of-evidence approach. These are less resource-intensive and focus on key parameters affecting project objectives [1].

Q3: How do I interpret common validation qualifiers in my dataset? Validation qualifiers are standardized codes appended to data values. Key examples include [1]:

- UJ: The analyte was not detected at or above the reported quantitation limit, and that limit is itself an estimate.

- J: The analyte was detected, but QC criteria were not fully met. The value is an estimated concentration.

- R: The data is rejected due to serious QC failure and should not be used.

Always consult the specific validation report for the exact definitions applied to your data.

Q4: A key QC sample in my batch failed. Does this invalidate all my experimental data? Not necessarily. This is a primary function of DV. The validator will assess the nature and extent of the failure and apply qualifiers (like "J") only to the specific samples and analytes affected by that batch. Other data in the batch that met all criteria may be considered usable [1]. The DUA will then evaluate the impact of those qualified data points on your study's conclusions.

Q5: Our DUA concluded some data has "high bias." How should we proceed in our ecotoxicological risk assessment? A DUA finding of "high bias" means the reported concentrations are likely higher than the true value [1]. In a risk assessment context, this can be conservatively protective. You should:

- Document the finding and its potential magnitude.

- Determine if the bias affects contaminants driving the risk.

- Consider running a sensitivity analysis with and without the biased data, or using the data as an upper-bound estimate in your models.
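
The sensitivity analysis suggested in the last step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the sample IDs, concentrations, the `high_bias` field, and the 14.0 µg/L threshold are all hypothetical values chosen for the example.

```python
from statistics import mean

# Hypothetical validated results (µg/L). `high_bias` marks values that the
# DUA flagged as likely overestimates; none of these numbers come from a study.
results = [
    {"sample": "SW-01", "conc": 12.0, "high_bias": False},
    {"sample": "SW-02", "conc": 18.0, "high_bias": True},
    {"sample": "SW-03", "conc": 9.0, "high_bias": False},
    {"sample": "SW-04", "conc": 21.0, "high_bias": True},
]


def sensitivity_summary(rows, threshold):
    """Compare the mean concentration with and without high-bias results."""
    all_values = [r["conc"] for r in rows]
    unbiased = [r["conc"] for r in rows if not r["high_bias"]]
    mean_all = mean(all_values)
    mean_unbiased = mean(unbiased) if unbiased else None
    return {
        "mean_all": mean_all,
        "mean_without_biased": mean_unbiased,
        "exceeds_with_all": mean_all > threshold,
        "exceeds_without_biased": (
            mean_unbiased > threshold if mean_unbiased is not None else None
        ),
    }


# Assumed screening threshold of 14.0 µg/L, for illustration only.
summary = sensitivity_summary(results, threshold=14.0)
print(summary)
```

If the conclusion flips between the two cases, as it does with these made-up numbers, the biased results are driving the decision and the bias deserves explicit discussion in the risk assessment.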

Troubleshooting Guide: Common Data Quality Issues

| Problem | Likely Cause | Recommended Action | Related Concept |
|---|---|---|---|
| Inconsistent qualifiers across similar datasets. | Different reviewers, labs, or validation guidelines were used. | Standardize protocols upfront. Request a re-review under a unified guideline. Perform a DUA to harmonize interpretation for your project. | DV Standardization |
| Data meets technical DV criteria but seems biologically implausible. | Matrix interference, sample contamination, or incorrect DQOs. | Review field and lab metadata. Consult a subject matter expert (e.g., toxicologist). The DUA should flag this as a usability concern. | DUA: Fitness-for-Purpose |
| Unclear how to proceed with "J"-qualified (estimated) data. | The implications of the estimation on the study goal are not defined. | Refer to the DUA. It should explicitly state whether "J" data can be used quantitatively, as a screening tool, or not at all for your specific objective. | DUA Interpretation |
| Critical project decision point is reached, but DV is incomplete. | Poor project planning or delayed lab deliverables. | Prevention is key: Involve data reviewers early. If late, a rapid DUA on available QC can inform interim decisions, pending full DV. | Project Planning |

Case Study: DUA in Action – Improving Pharmacotherapy Safety

A 2021 study demonstrated the practical power of usability assessment. Researchers linked patient laboratory data (e.g., kidney function) directly to outpatient drug prescriptions. Community pharmacists, performing a form of DUA, used this linked data to check prescription appropriateness [3].

- Result: Prescription inquiries related to lab data surged from 4 per year to over 640 annually, with 132-224 inquiries per year leading to critical prescription changes [3].

- Impact: Over four years, this process avoided 153 contraindicated prescriptions and 84 exacerbations of adverse drug reactions [3].

Ecotoxicology Parallel: This mirrors how a DUA uses validated environmental data (e.g., chemical concentration "J") alongside site-specific data (e.g., sensitive species presence) to "flag" and prevent inappropriate risk management decisions.

Experimental Protocols for Key Assessments

Protocol 1: Conducting a Tiered Analytical Data Validation

- Objective: To assign definitive quality qualifiers to analytical chemistry data.

- Method:

- Verification: Check data package completeness, correct transcription, and compliance with chain-of-custody [2].

- Performance Evaluation: Review QC samples (blanks, spikes, duplicates) against method-specified criteria for precision and accuracy [1].

- Raw Data Review (Full DV): Recalculate a subset of results from raw instrument data to verify final reported values [1].

- Qualifier Assignment: Apply standardized qualifiers (UJ, J, R) to each datum based on the QC evaluation [1].

- Deliverable: A validation report listing qualified data and an explanation of all assigned qualifiers.
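
The performance-evaluation and qualifier-assignment steps above can be illustrated with a short Python sketch. The recovery and relative percent difference (RPD) formulas are the standard ones. The acceptance limits (70-130% recovery, RPD of 20% or less) and the rule of assigning "J" on any QC exceedance are assumptions for the example, since real limits come from the analytical method and the project's validation guideline.

```python
def percent_recovery(measured, native, spiked):
    """Matrix spike recovery (%) = (measured - native) / spiked * 100."""
    return (measured - native) / spiked * 100.0


def relative_percent_difference(a, b):
    """RPD (%) between duplicate results = |a - b| / mean(a, b) * 100."""
    return abs(a - b) / ((a + b) / 2.0) * 100.0


def evaluate_batch_qc(recovery, rpd,
                      recovery_limits=(70.0, 130.0),  # assumed limits
                      rpd_limit=20.0):                # assumed limit
    """Return (qualifier, findings) for the samples tied to this QC batch."""
    findings = []
    low, high = recovery_limits
    if not (low <= recovery <= high):
        findings.append("spike recovery outside limits")
    if rpd > rpd_limit:
        findings.append("duplicate RPD above limit")
    # Simplification: any exceedance yields "J" (estimated). Real guidelines
    # may call for "R" or other qualifiers depending on severity.
    qualifier = "J" if findings else ""
    return qualifier, findings


# Hypothetical QC results, all in µg/L.
rec = percent_recovery(measured=16.0, native=4.0, spiked=20.0)
rpd = relative_percent_difference(10.0, 11.0)
qualifier, findings = evaluate_batch_qc(rec, rpd)
print(f"recovery={rec:.1f}%  RPD={rpd:.1f}%  qualifier={qualifier!r}  {findings}")
```

Keeping the limits as function arguments lets the same check be reused across methods with different acceptance criteria.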

Protocol 2: Performing a Data Usability Assessment

- Objective: To determine the fitness of a validated dataset for a specific project decision.

- Method:

- Review Project DQOs: Re-state the original decision-making goals and action levels [2].

- Integrate Validation Findings: Summarize the types, magnitudes, and frequencies of validation qualifiers [1].

- Contextual Evaluation: For each major qualifier, assess its impact relative to the DQOs. Example: Is an estimated ("J") concentration for Compound X at 5 µg/L usable if the regulatory screening level is 100 µg/L? [1].

- Make a Usability Determination: Categorize data as: (a) Usable as-is, (b) Usable with stated limitations (e.g., for screening only), or (c) Not usable for the intended purpose.

- Deliverable: A DUA report that translates technical qualifiers into clear, actionable guidance for the project team.
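
As a rough illustration of the contextual evaluation step, the sketch below classifies a single result against an action level, using the three usability categories listed above. The function name, the `uncertainty_factor` of 2, and the rule that an estimated value is usable when the decision does not change across that uncertainty band are hypothetical simplifications. A real DUA weighs many more factors.

```python
def usability(value, qualifier, action_level, uncertainty_factor=2.0):
    """Return (category, rationale) for one result against one action level.

    `uncertainty_factor` is an assumed multiplicative band applied to
    estimated values; it is not taken from any guideline.
    """
    if qualifier == "R":
        return "not usable", "rejected during validation"
    if not qualifier:
        return "usable as-is", "no validation qualifier"
    lower = value / uncertainty_factor
    upper = value * uncertainty_factor
    if upper < action_level or lower > action_level:
        return "usable as-is", "decision unchanged across the assumed uncertainty band"
    return ("usable with limitations",
            "estimate straddles the action level; screening use only")


# The example from the protocol: a "J" value of 5 µg/L against a 100 µg/L level.
print(usability(5.0, "J", 100.0))
# An estimated value close to the action level is treated more cautiously.
print(usability(80.0, "J", 100.0))
```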

The Scientist's Toolkit: Essential Reagents & Materials

| Item | Function in DV/DUA Context | Key Consideration for Ecotoxicology |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide known, traceable analyte quantities to establish accuracy and calibrate instruments during analysis, forming the basis for DV. | Ensure CRMs match your sample matrix (e.g., sediment, tissue) to account for extraction efficiency. |
| Laboratory Control Samples (LCS) / Matrix Spikes | Measured alongside actual samples to quantify accuracy and precision within the specific sample batch, a core element of DV. | Spike with relevant analyte suites (e.g., specific PFAS compounds, pesticide mixtures) expected at the site. |
| Method Blanks & Field Blanks | Detect contamination from laboratory procedures or field activities. Findings directly lead to "R" or "J" qualifiers in DV. | Critical for ultra-trace analysis of compounds like dioxins or metals where background contamination is common. |
| Electronic Data Deliverable (EDD) Template | Standardized format for labs to report data and QC. Enables efficient electronic verification and reduces transcription errors [2]. | Must be aligned with your data management system and include all required metadata (e.g., detection limits, dilution factors). |
| Data Quality Assessment Software | Automates screening of large datasets against PARCCS (Precision, Accuracy, Representativeness, Comparability, Completeness, Sensitivity) criteria, streamlining the verification step [2]. | Software should be configurable with project-specific DQOs and regulatory thresholds to support the DUA. |

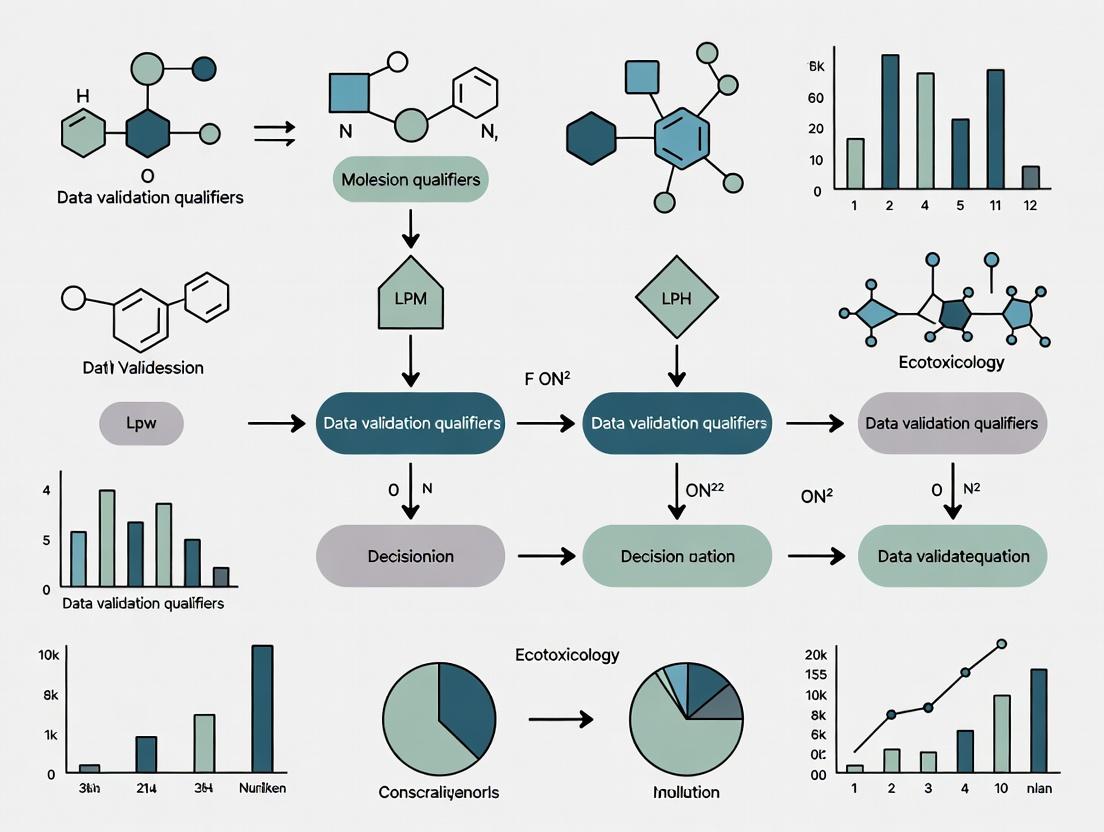

Visualizing Workflows and Relationships

Diagram 1: Data Assessment Workflow in Ecotoxicology

Diagram 2: Factors Informing a Data Usability Assessment (DUA)

Technical Support Center: Data Validation & Qualifiers

Welcome to the technical support center for data validation in ecotoxicology and regulatory toxicology research. This resource is designed to help researchers, scientists, and drug development professionals interpret data quality flags, troubleshoot common analytical issues, and implement robust validation protocols to ensure the defensibility of data used for critical decisions.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between data verification and data validation in the context of analytical chemistry data? Data verification is the initial process of evaluating a data set's completeness, correctness, and conformance against method, procedural, or contractual requirements [2]. This includes reviewing chains of custody and comparing data deliverables. Validation is a subsequent, more formal process that determines the analytical quality of the data and documents how any failures to meet requirements impact the data's usability [2]. Verification checks if criteria are met; validation qualifies how misses affect the data.

Q2: What do the common EPA data qualifiers 'J', 'U', and 'B' specifically indicate? These qualifiers flag specific data quality conditions [4]:

- J (Estimated Value): The analyte is identified but the concentration is between the Estimated Detection Limit (EDL) and the Reporting Detection Limit (RDL) [4].

- U (Not Detected): The compound was analyzed for but not found. The reported value is the EDL [4].

- B (Blank Contamination): The analyte was found in both the sample and the associated blank, indicating potential contamination [4].
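
The three definitions above translate into a simple decision rule. The following sketch is one possible reading, assuming a result at or below the EDL is reported as a non-detect at the EDL, and that a blank counts as contaminated when its result exceeds the EDL. The function name and the example limits are hypothetical.

```python
def assign_flags(result, edl, rdl, blank_result=None):
    """Return (reported_value, flags) using the J/U/B definitions above.

    result       -- measured concentration, or None if nothing was found
    edl, rdl     -- Estimated and Reporting Detection Limits (same units)
    blank_result -- concentration in the associated blank, if measured
    """
    if result is None or result <= edl:
        # Not detected: report the EDL with a "U" flag.
        return edl, ["U"]
    flags = []
    if result < rdl:
        flags.append("J")  # detected, but between the EDL and the RDL
    if blank_result is not None and blank_result > edl:
        flags.append("B")  # analyte also present in the associated blank
    return result, flags


# Hypothetical limits: EDL = 0.5, RDL = 2.0 (µg/L).
print(assign_flags(0.2, 0.5, 2.0))                    # non-detect
print(assign_flags(1.0, 0.5, 2.0))                    # estimated
print(assign_flags(1.0, 0.5, 2.0, blank_result=0.8))  # estimated, blank hit
```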

Q3: Why is third-party data validation recommended even when not explicitly required by a regulator? Independent validation identifies reporting errors common even in accredited laboratories [5]. Since project data directly drive remediation decisions and associated costs, ensuring data are qualitatively and quantitatively correct is fundamental. Early identification of problems saves significant time, money, and frustration during investigation and remediation [5].

Q4: What are the unique analytical challenges for ecotoxicology studies, such as fish toxicity tests? Key challenges include achieving extremely low Limits of Quantification (LOQ) to measure trace concentrations (ppb/ppt) and quantifying unstable test items in aqueous media over exposure periods (e.g., 24-96 hours) [6]. Test item solubility issues and the risk of contamination causing ghost peaks further complicate analysis [6].

Q5: How is the final usability of a validated data set determined? Data usability is a fitness-for-purpose assessment made by the project team after verification and validation are complete [2]. It considers whether the known quality of the data is sufficient for its intended use, factoring in project objectives, the conceptual site model, and regulatory decision rules [2].

Troubleshooting Guides

Problem 1: Potential False Positive or False Negative Results

- Symptoms: A compound of concern is reported present (or absent) in samples, but the result is inconsistent with other lines of evidence or seems anomalous.

- Investigation & Resolution:

- Review Chromatograms/MS Spectra: Re-examine the raw data for correct qualitative identification. False negatives can occur if a detected compound is not reported by the analyst [5]. False positives can arise from non-target compounds with interfering masses [5].

- Check for Blank Contamination (B Flag): If the analyte is also present in blanks, the sample result may be due to systemic contamination from labware, reagents, or the sample preparation environment [5] [4].

- Assess QC Failures (Q Flag): Investigate any failed quality control criteria (e.g., surrogate spike recovery). A 'Q' flag indicates potential inaccuracy for that analyte across all associated samples [4].

- Action: For interference, request re-quantitation using alternate mass ions or reanalysis with an alternate technique [5]. For blank contamination, the lab should perform root cause analysis. Sample results may be negated, or the set may need re-extraction [5] [4].

Problem 2: Sample Results Rejected Due to Holding Time or Preservation Issues

- Symptoms: Sample data is invalidated due to exceeded analytical holding times or inappropriate field preservation methods [5].

- Investigation & Resolution:

- Audit Chain of Custody & Field Logs: Verify sample collection dates/times, preservation methods used, and shipment conditions against the required protocol.

- Determine Responsibility: Identify if the cause was a field error (e.g., wrong preservative) or a laboratory delay.

- Action: Resampling is typically required. The responsible party (sampling consultant or laboratory) should bear the cost for re-analysis [5].

Problem 3: Inconsistent or Unreliable Results at Very Low Concentrations (Common in Ecotox)

- Symptoms: High variability, inability to quantify degradation products, or frequent non-detects near the LOQ in aquatic toxicity studies.

- Investigation & Resolution:

- Evaluate Method Scope: Confirm that the validated method's LOQ is sufficiently low, often several-fold below the lowest test concentration, to track analyte degradation over the exposure period [6].

- Check for Matrix Effects: In complex media (e.g., reconstituted water with carriers), matrix components can suppress or enhance signal. Method validation must account for this [6].

- Review Instrument Sensitivity: Analysis at ppt levels may require high-end LC-MS/MS or GC-MS/MS systems and specialized, contamination-free sample preparation labs [6].

- Action: Re-develop or optimize the method to improve sensitivity and specificity, potentially using advanced sample concentration techniques like SPE [6].

Problem 4: Handling 'J' and 'U' Flags During Data Interpretation

- Symptoms: Uncertainty in how to treat estimated ('J') or non-detect ('U') values in statistical analysis or risk assessment.

- Investigation & Resolution:

- Understand the Limits: Clarify the laboratory's EDL (or MDL) and RDL for the analyte/method. A 'J' flag falls between these limits; a 'U' is at or below the EDL [4].

- Apply Project-Specific Rules: Follow pre-established Data Quality Assessment (DQA) plans. Common practices include treating 'U' as zero, half the detection limit, or the detection limit itself. 'J' values are often used as-is but with noted uncertainty.

- Action: Document all assumptions used in interpreting qualified data for full transparency in the decision-making record.
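
To see how much the choice of non-detect substitution rule matters, it can help to compute the same summary statistic under each rule. The sketch below does this for a small, invented dataset. The function name and the records are hypothetical, and 'U' results are assumed to carry the EDL as their reported value, as described above.

```python
from statistics import mean

# Multipliers applied to the reported detection limit of 'U' results.
SUBSTITUTION_FACTORS = {"zero": 0.0, "half": 0.5, "full": 1.0}


def substitute_non_detects(records, rule="half"):
    """Replace 'U' results with 0, half, or the full detection limit.

    records -- list of (reported_value, flag) pairs; for 'U' results the
               reported value is assumed to be the detection limit.
    """
    if rule not in SUBSTITUTION_FACTORS:
        raise ValueError(f"unknown rule: {rule!r}")
    factor = SUBSTITUTION_FACTORS[rule]
    # 'J' and unqualified values are used as reported.
    return [value * factor if flag == "U" else value for value, flag in records]


# Hypothetical dataset (µg/L): two non-detects, one estimate, one clean result.
records = [(0.5, "U"), (1.2, "J"), (4.0, ""), (0.5, "U")]

means = {rule: mean(substitute_non_detects(records, rule))
         for rule in SUBSTITUTION_FACTORS}
for rule, value in means.items():
    print(f"{rule:>4}: mean = {value:.3f} µg/L")
```

Reporting the spread across rules alongside the chosen rule is one way to satisfy the documentation step: it shows reviewers how sensitive the conclusion is to that assumption.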

Data Validation Qualifiers: Reference Table

The table below summarizes key EPA data qualifiers, their triggers, and implications for data use [4].

| Qualifier Flag | Meaning | Typical Trigger Condition | Implication for Data Use |
|---|---|---|---|
| U | Not Detected | Analyte not found. Reported value is the Estimated Detection Limit (EDL). | Indicates analyte concentration is at or below the detection threshold. Requires careful statistical handling. |
| J | Estimated Value | Analyte is identified, but concentration is between the EDL and the Reporting Detection Limit (RDL). | The numeric value is an estimate. Uncertainty is higher than for unqualified results above the RDL. |
| B | Blank Contamination | Analyte is detected in both the sample and its associated method blank. | Suggests possible lab or procedural contamination. The sample result may be unreliable or require negation. |
| Q | QC Failure | One or more quality control criteria (e.g., surrogate recovery, calibration) fell outside acceptable limits. | Questions the accuracy of the reported analyte concentration for all samples in the affected batch. |
| E | Exceeds Calibration | The analyte concentration is above the highest point in the instrument's calibration curve (i.e., above calibration standard CS5). | The reported concentration is a minimum estimate. Sample may require dilution and re-analysis for accurate quantitation. |
| R | Rejected Data | Sample is compromised (e.g., holding time exceeded, improper preservation, fatal sampling error). | Data should not be used for decision-making. Resampling is typically required [5]. |

Experimental Protocols for Key Analyses

Protocol 1: Validating an Analytical Method for Unstable Test Items in Aquatic Ecotoxicology Studies

This protocol ensures an analytical method can accurately track the declining concentration of a test item in water over a study's exposure period [6].

- Objective: To develop and validate a sensitive, stability-indicating method for quantifying test item concentration in aqueous test media (e.g., for a 96-hour static or 24-hour renewal fish toxicity test).

- Materials: Test item reference standard, appropriate vehicle/carrier, reconstituted water, high-sensitivity LC-MS/MS or GC-MS/MS system, dedicated glassware.

- Procedure:

- Method Development: Prepare fortified solutions in test media at concentrations spanning from the planned high dose to a level ~30% below the low dose. Spike with analyte and serially dilute to establish a calibration curve. Optimize extraction (e.g., liquid-liquid extraction, SPE) and instrument parameters to achieve a peak signal-to-noise ratio >10 for the lowest required concentration [6].

- Forced Degradation: Stress the fortified medium (e.g., via pH change, heat, light) to generate degradation products and confirm the method separates and quantifies the parent compound without interference.

- Validation Parameters:

- LOQ Determination: The LOQ must be sufficiently low (often several-fold below the low dose) to quantify the test item at the end of the stability period (e.g., 96 hours) [6].

- Stability Testing: Fortify test media at low, mid, and high concentrations. Analyze replicates immediately (T=0) and after 24, 48, and 96 hours under test conditions. Calculate mean recovery and percent deviation.

- Homogeneity Testing (for suspensions): Prepare a bulk dose formulation, sample from top, middle, and bottom, and analyze. The relative standard deviation (RSD) between samples should be ≤10%.

- Acceptance Criteria: Mean recovery within 85-115%; RSD ≤15% for precision; demonstrated stability over the required exposure period.
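
The stability calculations and acceptance criteria above (mean recovery within 85-115%, RSD of 15% or less) can be checked with a few lines of Python. This is a sketch only: the replicate values and the 10 µg/L nominal concentration are invented, and the sample standard deviation (n - 1) is assumed for the RSD.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return stdev(values) / mean(values) * 100.0


def meets_acceptance(measured, nominal,
                     recovery_range=(85.0, 115.0), max_rsd=15.0):
    """Return (mean_recovery_pct, rsd_pct, passed) for one set of replicates."""
    mean_recovery = mean(m / nominal * 100.0 for m in measured)
    rsd = rsd_percent(measured)
    low, high = recovery_range
    passed = low <= mean_recovery <= high and rsd <= max_rsd
    return mean_recovery, rsd, passed


# Hypothetical replicates (µg/L) for test media fortified at 10 µg/L,
# keyed by hours elapsed under test conditions.
NOMINAL = 10.0
timepoints = {
    0: [9.8, 10.1, 10.0],
    24: [9.5, 9.7, 9.6],
    96: [8.0, 8.3, 8.2],
}

stability = {hours: meets_acceptance(reps, NOMINAL)
             for hours, reps in timepoints.items()}
for hours, (recovery, rsd, passed) in stability.items():
    status = "pass" if passed else "FAIL"
    print(f"T={hours:>2} h  recovery={recovery:6.2f}%  RSD={rsd:5.2f}%  {status}")
```

With these made-up numbers the 96-hour recovery falls below 85%, which is the kind of result that would point toward a renewal design or a shorter stability claim.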

Protocol 2: Conducting a Data Usability Assessment (DUA)

This formal assessment determines if validated data is fit for its intended purpose [2].

- Objective: To systematically evaluate the verified and validated data against project Data Quality Objectives (DQOs) to support a defensible decision.

- Materials: Final validated data set with qualifiers, project QAPP or DQOs, laboratory data packages, conceptual site model.

- Procedure [2]:

- Review Project Objectives & Sampling Design: Re-affirm the decision the data must support (e.g., "Determine if concentration exceeds regulatory threshold X").

- Evaluate Conformance to Criteria: Review validation reports and qualifier summaries. For each DQO parameter (Precision, Accuracy, etc.), note if it was met and, if not, the severity and extent of the deficiency as indicated by qualifiers.

- Assess Impact on Decision: Using the conceptual model, determine if qualified data (e.g., 'J'-estimated or 'B'-contaminated) affect the ability to make the decision. Can the decision be made conservatively despite the qualification?

- Document Conclusion: Formally state whether the data set is usable, partially usable, or not usable for its intended purpose, citing specific evidence from the validation process.

Workflow Visualizations

Title: Data Quality Review and Usability Assessment Workflow

Title: Troubleshooting Logic for Common Data Qualifiers

The Scientist's Toolkit: Essential Research Reagents & Materials

The following items are critical for ensuring data quality in sensitive ecotoxicology and analytical validation work [6].

| Item | Function & Importance |
|---|---|
| High-Sensitivity LC-MS/MS or GC-MS/MS System | Essential for achieving the low Limits of Quantification (LOQ) required for trace-level analysis (ppb/ppt) in ecotoxicology studies. High-end systems provide the necessary specificity and signal-to-noise ratio [6]. |
| Certified Reference Standards & Materials | Provides the known quantity of analyte essential for accurate instrument calibration, method development, and establishing traceability for quantitative results. |
| Dedicated, Contamination-Free Glassware & Lab Space | Prevents false positives ('B' flags) from systemic contamination. Separate labs and glassware for ultra-trace analysis are a best practice [6]. |
| Stable Isotope-Labeled Surrogate Standards | Added to every sample prior to extraction. Their recovery is monitored ('Q' flag trigger) to correct for and track analyte-specific losses during sample preparation. |
| Commutable Quality Control (QC) Materials | Materials that behave like real study samples are critical for reliable External Quality Assessment (EQA) to detect analytical errors unrelated to the sample matrix [7]. |
| Appropriate Sample Preservation Supplies | Correct preservatives (e.g., acids, buffers) and refrigerated shipping containers are vital to prevent analyte degradation and avoid sample rejection for holding time violations [5]. |

Foundational Concepts: Validation, Qualification, and Verification

In the context of ecotoxicology research and regulatory submission, understanding the precise definitions and applications of validation, qualification, and verification is critical for data integrity and agency acceptance. These terms are often used interchangeably but describe distinct phases of the method lifecycle [8].

- Validation is a formal, documented process that provides a high degree of assurance that a method will consistently produce results meeting predetermined acceptance criteria for its intended use [8]. It is required for methods used in final product release, stability studies, or batch quality assessments [8]. Regulatory agencies like the FDA require full validation to support the identity, strength, quality, purity, and potency of drug substances and products [9].

- Qualification is an early-stage evaluation conducted during preclinical or early clinical development. It assesses key performance parameters to determine if a method is likely to be reliable before committing to the resource-intensive full validation process [8]. In ecotoxicology, this may involve initial testing of a New Approach Methodology (NAM) with a small set of reference chemicals.

- Verification confirms that a previously validated method performs as expected in a new laboratory or under modified conditions (e.g., a different sample matrix) [8]. It is not a re-validation but a demonstration of suitability in a new operational context. This is common when transferring a standardized ecotoxicity assay to a new research facility.

The following table summarizes the core distinctions:

Table: Core Distinctions Between Validation, Qualification, and Verification

| Aspect | Validation | Qualification | Verification |
|---|---|---|---|
| Primary Goal | Demonstrate suitability for definitive intended use [8]. | Early assessment of method performance during development [8]. | Confirm a validated method works in a new setting [8]. |
| Stage of Use | Late-stage development, regulatory submission, QC release [8]. | Early research, preclinical, Phase I trials [8]. | Post-validation, method transfer between labs [10]. |
| Regulatory Expectation | Mandatory for regulatory decision-making [9] [8]. | Informational; guides future validation protocol [8]. | Required for adoption of compendial or transferred methods [10]. |
| Documentation Rigor | Comprehensive, definitive report for agency review [8]. | Informal or preliminary documentation [8]. | Limited report against established criteria [8]. |

The Regulatory Framework and Pathways

Agency Roles and Requirements

The Interagency Coordinating Committee on the Validation of Alternative Methods (ICCVAM) and the U.S. Food and Drug Administration (FDA) play complementary roles in driving method qualification.

ICCVAM is a permanent committee established by statute to increase the efficiency of federal agency test method review, eliminate duplication, and promote the development of alternatives to animal testing [11]. Its mandate is to ensure new methods are validated to meet the needs of federal agencies [11]. For ecotoxicology, ICCVAM's dedicated Ecotoxicology Workgroup provides expertise in identifying and evaluating in vitro and in silico methods for ecological hazard assessment [12].

The FDA requires that analytical methods supporting applications demonstrate identity, strength, quality, purity, and potency [13] [9]. Its guidance aligns with the International Council for Harmonisation (ICH) Q2(R2) guidelines, which define the validation characteristics for analytical procedures [14].

The Validation and Submission Pathway to ICCVAM

ICCVAM has a defined pathway for the submission and evaluation of new test methods. The process emphasizes early engagement and alignment with agency needs.

Key Steps for Researchers:

- Early Consultation: Engage with the National Toxicology Program Interagency Center for the Evaluation of Alternative Toxicological Methods (NICEATM) early in development to discuss the method's regulatory alignment [15].

- Agency Alignment: Ensure the method addresses the needs of at least one ICCVAM member agency willing to sponsor its evaluation [15].

- Validation Studies: Design and conduct studies that adequately characterize the method's usefulness and limitations for a specific regulatory application [15].

- Submission: Prepare a complete package per ICCVAM guidelines (NIH Publication No. 03-4508) and submit for review [15].

Technical Support Center: Troubleshooting Guides & FAQs

Foundational Q&A

Q1: What is the core difference between "validating a method" and "qualifying a method" for FDA submission? A: Validation is a comprehensive, end-stage requirement for methods used to make decisions about product quality for commercial release. It must fully address all ICH Q2(R2) parameters [14]. Qualification is a precursor activity used during drug development to provide confidence that a method is "fit-for-purpose" for generating reliable data to support early-stage decisions (e.g., formulation screening, stability indicators). The data from a qualified method may not be sufficient for a final marketing application without full validation [8].

Q2: Our lab focuses on ecotoxicology for environmental safety. How does ICCVAM's work apply to our field? A: ICCVAM's scope explicitly includes ecotoxicology. Its Ecotoxicology Workgroup is tasked with identifying and evaluating non-animal approaches for ecological hazard assessment [12]. A key current activity is evaluating alternatives to the in vivo acute fish toxicity test [12]. If you are developing a NAM (e.g., a fish cell line assay or computational model) to predict ecotoxicity, engaging with this workgroup is the primary pathway for regulatory consideration in the U.S. [15] [12].

Q3: What are the most common reasons for regulatory agencies to reject a method validation package? A: Based on common audit findings, rejections or major deficiencies often stem from [13] [9]:

- Using non-validated methods for critical decisions (e.g., releasing a clinical trial batch).

- Inadequate validation that fails to fully assess required parameters like specificity or robustness.

- Poor control of the validation process, including incomplete documentation or only reporting favorable data while omitting out-of-specification results.

Troubleshooting Common Experimental & Submission Issues

Issue 1: Failing Specificity/Separation in an Impurity Method

- Problem: In a chromatographic assay (e.g., for analyzing drug substance or environmental metabolite), the peak of interest co-elutes with an impurity or matrix component, failing specificity requirements [9].

- Solution:

- Revisit Mobile Phase: Systematically adjust the pH, organic solvent ratio, or buffer concentration. A robustness study during development should have identified sensitive parameters [13].

- Consider Alternative Columns: Switch to a column with different particle chemistry (e.g., from C18 to phenyl-hexyl).

- Sample Preparation Optimization: Introduce or modify a solid-phase extraction (SPE) or derivatization step to separate or modify the interfering component [9].

- Preventive Action: During early method development, use diode array or mass spectrometric detectors to confirm peak purity. Stress samples (acid/base/heat/light) should be used to generate potential degradants for specificity testing [13].

Issue 2: High Variability (Poor Precision) in a Cell-Based Bioassay

- Problem: An in vitro ecotoxicity assay (e.g., a fish cell line cytotoxicity assay) shows unacceptably high inter-assay or inter-operator variability.

- Solution:

- Standardize Critical Reagents: Use aliquoted, characterized batches of key components like fetal bovine serum (FBS), growth factors, or the frozen cell stock [9].

- Tighten Procedural Controls: Strictly control passage number, cell seeding density, incubation times, and use automated plate washers to minimize manual handling differences.

- Implement System Suitability: Define and use control compounds with expected response ranges in each run. The assay is only valid if these controls pass.

- Preventive Action: Perform a formal robustness test during qualification, deliberately varying likely influential factors (e.g., incubation temperature ±1°C, reagent incubation time ±5%) to establish acceptable operating ranges [10].
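
The system suitability rule described above (a run is valid only if every control falls in its expected range) is easy to encode as a run-acceptance gate. The sketch below is illustrative: the control names and acceptance ranges are hypothetical and would in practice come from the qualification or robustness data.

```python
def run_is_valid(control_results, expected_ranges):
    """Return (valid, failed_controls) for one assay run.

    A control fails if it is missing or falls outside its (low, high) range.
    """
    failures = []
    for name, (low, high) in expected_ranges.items():
        value = control_results.get(name)
        if value is None or not (low <= value <= high):
            failures.append(name)
    return (not failures), failures


# Hypothetical acceptance ranges established during qualification.
expected = {
    "negative_control_viability_pct": (90.0, 110.0),
    "reference_toxicant_ec50_mg_per_l": (0.5, 2.0),
}

# Hypothetical control results from a single run.
controls = {
    "negative_control_viability_pct": 98.0,
    "reference_toxicant_ec50_mg_per_l": 2.6,
}

valid, failed = run_is_valid(controls, expected)
print("run valid:", valid, "| failed controls:", failed)
```

Treating a missing control as a failure keeps an incomplete run from being accepted by default.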

Issue 3: Method Transfer Failure Between Labs

- Problem: A method that worked perfectly in the development lab fails to meet acceptance criteria when transferred to a QC or partner lab [10].

- Solution:

- Conduct a Gap Analysis: Compare all equipment models, software versions, reagent sources, and analyst techniques between the two sites. Even minor differences in HPLC tubing diameter can affect results.

- Execute a Joint Training & Protocol Review: Have analysts from both labs run the method together to identify unwritten "tribal knowledge" steps.

- Consider Covalidation: If frequent transfers are anticipated, design the original validation to include intermediate precision testing across both laboratories from the start [10].

- Preventive Action: Create an exceptionally detailed procedure that includes troubleshooting notes and photos/videos of critical steps. Specify exact makes and models of key instruments.

Issue 4: Inability to Generate a Suitable "Spiked" Sample for Recovery Studies

- Problem: For validation of an impurity or analyte method, you need to spike the target into a sample matrix, but the pure impurity is unavailable or unstable.

- Solution (Case Study - SEC Aggregates): If measuring protein aggregates or fragments, generate the spike material in-house [10]:

- Generate Aggregates: Subject the parent material to controlled stress (e.g., gentle agitation, elevated temperature).

- Generate Fragments/Low Molecular Weight Species: Use a controlled enzymatic or chemical reduction reaction [10].

- Isolate: Use preparatory-scale chromatography to isolate the generated species for use as spike material.

- Characterize: Briefly characterize the isolated spike material to confirm its identity.

Experimental Protocols for Key Validation Activities

Protocol for a Fit-for-Purpose Spiking Study (Accuracy/Recovery)

This protocol is adapted from a size-exclusion chromatography (SEC) case study but is applicable to various quantitative assays [10].

Objective: To demonstrate the accuracy of an analytical procedure by measuring the recovery of a known amount of analyte spiked into a sample matrix.

Materials:

- Test sample (e.g., drug substance, formulated product, environmental sample).

- Analytically pure reference standard of the target analyte. If unavailable, see "Spike Generation" below.

- Appropriate solvents and reagents for sample preparation.

Spike Generation (if reference standard is unavailable):

- For aggregates: Create via controlled stress (e.g., vortexing a protein solution at medium speed for 60 minutes at room temperature). Isolate aggregates using preparatory SEC.

- For degradants/fragments: Create via controlled chemical reaction (e.g., incubating a monoclonal antibody with a dilute hydrogen peroxide solution for 2 hours at 25°C for oxidation products, or with dithiothreitol for fragments). Quench the reaction and isolate the species.

Procedure:

- Prepare a stock solution of the spike material at a known, high concentration.

- Prepare the test sample matrix at the nominal concentration.

- Spike the sample matrix at three levels across the validated range (e.g., 50%, 100%, 150% of the specification limit or expected concentration). Perform each level in triplicate.

- Prepare an unspiked sample matrix and a spike standard in solvent (representing 100% recovery).

- Analyze all samples using the candidate method.

- Calculation: % Recovery = [(Found concentration in spiked sample – Found concentration in unspiked sample) / Theoretical spike concentration] × 100.

Acceptance Criteria: Establish based on method capability and stage. For a late-stage validation, typical recovery limits are 98-102% for drug substance, 95-105% for drug product or impurities [10].
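The recovery calculation above can be sketched in a few lines of Python. This is a minimal illustration, not part of the cited case study: the function names, the example concentrations, and the default 95-105% window are assumptions chosen only to show the arithmetic.

```python
def percent_recovery(found_spiked, found_unspiked, theoretical_spike):
    """% Recovery = (found in spiked - found in unspiked) / theoretical spike x 100."""
    if theoretical_spike <= 0:
        raise ValueError("theoretical spike concentration must be positive")
    return (found_spiked - found_unspiked) / theoretical_spike * 100.0


def within_limits(recovery, low=95.0, high=105.0):
    """Check a recovery value against an acceptance window (limits are assumptions)."""
    return low <= recovery <= high


# Hypothetical triplicate at one spike level (any consistent concentration unit).
unspiked = 0.20
spike = 1.00
replicates = [1.18, 1.21, 1.19]

recoveries = [percent_recovery(r, unspiked, spike) for r in replicates]
mean_recovery = sum(recoveries) / len(recoveries)
print(f"mean recovery = {mean_recovery:.1f}%, acceptable = {within_limits(mean_recovery)}")
```

In practice the same calculation would be repeated for each of the three spike levels, with the acceptance window taken from the validation plan rather than hard-coded.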

Protocol for a Basic Method Robustness Test

Objective: To identify critical experimental parameters whose small, deliberate variation may affect method results.

Materials: A system suitability sample or a mid-level validation sample.

Design (One-Factor-at-a-Time):

- List 5-7 potentially influential factors (e.g., pH of mobile phase, column temperature, extraction time, sonication power).

- For each factor, select a nominal value (from your procedure) and a high and low value (representing a small, realistic deviation, e.g., pH ±0.2 units).

- Run the method holding all factors at nominal, except for one factor at its high or low value. Use the system suitability sample.

Analysis:

- Record the result (e.g., assay value, retention time, peak area) for each run.

- Compare the results from the high/low runs to the nominal run.

- If a change in a factor causes the result to fall outside pre-defined acceptance criteria (e.g., ±2% for assay), that factor is deemed critical.

- The operating range for that factor should then be narrowed, or the procedure must specify tighter control limits.
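The one-factor-at-a-time comparison described above can be sketched as follows. The factor names, results, and the ±2% tolerance are illustrative assumptions; a real study would take them from the procedure and its pre-defined acceptance criteria.

```python
def flag_critical_factors(nominal_result, ofat_results, tolerance_pct=2.0):
    """Return factors whose high/low run deviates from nominal by more than the tolerance.

    ofat_results maps (factor, level) -> result from the run in which only
    that factor was moved away from its nominal value.
    """
    critical = {}
    for (factor, level), result in ofat_results.items():
        deviation = (result - nominal_result) / nominal_result * 100.0
        if abs(deviation) > tolerance_pct:
            critical.setdefault(factor, []).append((level, round(deviation, 2)))
    return critical


# Hypothetical assay values (% of label claim) for two factors.
nominal = 100.0
runs = {
    ("mobile_phase_pH", "low"): 97.4,
    ("mobile_phase_pH", "high"): 100.6,
    ("column_temp", "low"): 99.5,
    ("column_temp", "high"): 100.9,
}

critical = flag_critical_factors(nominal, runs)
print(critical)
```

A factor that appears in the returned dictionary is a candidate for a narrower operating range or tighter control limits in the procedure.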

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Research Reagents and Materials for Method Development and Validation

| Item | Primary Function | Key Considerations for Validation |

|---|---|---|

| Reference Standards | Provides the definitive benchmark for identity, purity, and concentration of the analyte. | Use the highest certified purity available. Document source, lot number, and certificate of analysis. Stability under storage conditions must be verified [13]. |

| Cell Lines for NAMs | Provides the biological system for in vitro toxicity assays (e.g., fish gill cells, human hepatocytes). | Authenticate and test for mycoplasma regularly. Control passage number tightly. Establish a master cell bank with characterized performance [9]. |

| Chromatography Columns | Separates analytes in complex mixtures (HPLC, UPLC, GC). | Column lot-to-lot variability can impact separation. Document manufacturer, dimension, particle size, and lot number. Specify a "guard column" if needed [9]. |

| Mass Spectrometry-Grade Solvents & Reagents | Used for sample prep and mobile phases in sensitive detection systems. | Low UV cutoff, minimal particulate and ionic impurities are critical to reduce background noise and prevent ion suppression in MS [9]. |

| Quality Control Samples | Used to monitor method performance over time (e.g., system suitability). | Should be stable, homogeneous, and representative of the test material. Create a large, well-characterized batch to last the validation and beyond [10]. |

| Software for Data Acquisition & Analysis | Collects, processes, and reports analytical data. | Must be validated for its intended use (21 CFR Part 11 compliance for regulated work). Ensure audit trail functionality is enabled [13]. |

The Method Validation Lifecycle

Successful method qualification is not a single event but a managed lifecycle that aligns with product and project development stages, from initial concept through regulatory submission and post-approval monitoring [10].

Stage 1: Define the Analytical Target Profile (ATP): This is a prospective summary of the method's required performance (what it needs to measure, and how well). It drives all subsequent development [10].

Stage 2: Method Development & Optimization: Based on the ATP and molecule properties (solubility, pKa, stability), scientists select and optimize the technique. A quality by design (QbD) approach, studying variables via design of experiments (DoE), is recommended [10].

Stage 3: Method Qualification: For early-phase ecotoxicology research, this involves limited testing to show the method is "fit-for-purpose" for generating reliable research data, informing decisions on whether to proceed to full validation [8].

Stage 4: Formal Method Validation: This is the definitive, documented exercise to meet all regulatory requirements (ICH Q2(R2)) for the final intended use [14]. The data is included in submissions to ICCVAM or the FDA [15] [16].

Stage 5: Routine Use & Ongoing Performance Verification: The method is monitored via system suitability tests and quality control charts. If performance trends out of control, the lifecycle loops back to development or the ATP for improvement [10].

Technical Support Center: Troubleshooting Guides & FAQs

This section addresses common technical and scientific barriers encountered when implementing NAMs in ecotoxicology research, framed within the context of ensuring data quality and validation.

| FAQ Category | Question | Potential Issue & Troubleshooting Guidance | Relevance to Data Validation Qualifiers |

|---|---|---|---|

| Assay Performance & Reproducibility | Q: My in vitro assay shows high inter‑experimental variability. How can I improve reproducibility? | A: Variability often stems from inconsistent cell culture conditions, reagent lots, or protocol drift. Standardize by: 1) Using authenticated cell lines and recording passage numbers; 2) Implementing routine positive/negative controls; 3) Following OECD Test Guidelines (e.g., TG 497 for skin sensitization) where available[reference:0]. | Inconsistent data may receive qualifiers (e.g., “J” for estimated value). Document all procedural details to justify data flags. |

| Data Interpretation & Relevance | Q: How do I demonstrate the biological relevance of my NAM data for regulatory submission? | A: Anchor your assay to an Adverse Outcome Pathway (AOP). Describe the molecular initiating event and key events measured, and benchmark against existing in vivo data where possible[reference:1]. For complex endpoints, a combination of NAMs may be needed to cover the biological space[reference:2]. | Data without clear biological relevance may be flagged as being of uncertain reliability under a project‑defined qualifier. A well‑documented AOP linkage supports “valid” status. |

| Validation & Regulatory Acceptance | Q: What are the minimum validation requirements for a NAM to be used in a regulatory context? | A: Validation is a flexible process that establishes fitness for a specific Context of Use (COU). Key steps include: 1) Defining the COU statement; 2) Demonstrating technical reliability and biological relevance; 3) Providing transparent data for independent review[reference:3]. Engage with agencies early (e.g., ICCVAM) to align with agency needs[reference:4]. | The validation process itself determines whether data can be used without qualifiers. Incomplete validation may lead to data being rejected (“R”). |

| Integration of Multi‑Source Data | Q: How can I combine data from different NAMs (e.g., in chemico, in silico) into a single assessment? | A: Use a Defined Approach (DA)—a fixed combination of data sources with a prescribed data‑interpretation procedure. DAs for eye irritation or skin sensitization are codified in OECD TGs 467 and 497[reference:5]. | DAs provide a standardized framework that reduces subjective qualifier assignment. Each input data set should still carry its own validation flags. |

| Technical Barriers in Ecotoxicology | Q: Why are NAMs for systemic ecotoxicity endpoints (e.g., chronic fish toxicity) particularly challenging? | A: Systemic effects involve complex organism‑level interactions that are difficult to capture with a single in vitro assay. Success has been greater for local endpoints (e.g., skin irritation) driven by chemical reactivity[reference:6]. For systemic effects, consider integrating PBK modeling and AOP networks[reference:7]. | Data for complex endpoints may need to be flagged as being of uncertain reliability if the assay’s relevance is not fully established. |

Quantitative Data Comparison: Traditional vs. NAMs Testing

The following table summarizes key performance metrics for traditional animal‑based ecotoxicity testing versus NAMs, highlighting the 3Rs (Replacement, Reduction, Refinement) and innovation benefits.

| Metric | Traditional Animal Testing (e.g., Fish Acute Toxicity Test) | NAMs (e.g., Fish Cell‑Line In Vitro Assay) | Data Source / Rationale |

|---|---|---|---|

| Time to result | 7–10 days (including acclimation, exposure, observation) | 24–48 hours (direct exposure of cells) | Based on OECD TG 203 (fish acute) vs. typical cell‑assay protocols. |

| Cost per chemical | ~$5,000–$15,000 (including animal husbandry, facilities) | ~$500–$2,000 (reagents, cell culture) | Estimates from validation‑study reports[reference:8]. |

| Animal use | 7–10 fish per concentration, multiple concentrations | 0 vertebrate animals | EPA’s strategic plan aims to reduce vertebrate‑animal use[reference:9]. |

| Throughput | Low (few chemicals per month) | High (dozens to hundreds of chemicals per week) | Enabled by high‑throughput screening platforms[reference:10]. |

| Predictive performance | Variable cross‑species extrapolation (rodent predictivity ~40–65% for human toxicity)[reference:11] | Can be tailored to species‑relevant cells (e.g., fish gill cell lines) and anchored to AOPs for mechanistic relevance[reference:12]. | |

| Regulatory acceptance | Fully accepted under existing guidelines (e.g., OECD TG 203) | Growing acceptance for specific endpoints via OECD TGs (e.g., TG 497 for skin sensitization)[reference:13]. | |

Experimental Protocol: Fish Cell‑Line In Vitro Acute Toxicity Assay

This protocol outlines a typical NAM for estimating acute aquatic toxicity using a fish gill cell line (e.g., RTgill‑W1), aligning with the 3Rs by replacing whole‑organism tests.

Materials

- Cell line: RTgill‑W1 (rainbow trout gill epithelium).

- Culture medium: Leibovitz’s L‑15 medium supplemented with 10% fetal bovine serum, 2 mM L‑glutamine, 100 U/mL penicillin‑streptomycin.

- Exposure plates: 96‑well tissue‑culture‑treated plates.

- Test chemicals: Prepared as 1000× stock solutions in DMSO or culture‑compatible solvent (final solvent concentration ≤0.1%).

- Viability reagent: AlamarBlue (resazurin) or MTT.

- Equipment: Temperature‑controlled incubator at 20°C (ambient air; L‑15 medium is formulated for use without CO₂), plate reader (fluorescence/absorbance), laminar‑flow hood.

Procedure

- Cell seeding: Harvest log‑phase RTgill‑W1 cells and seed at 1×10⁴ cells/well in 100 µL complete medium. Incubate at 20°C (non‑CO₂) for 24 h to allow attachment.

- Chemical exposure: Prepare a dilution series of the test chemical in culture medium (typically 6–8 concentrations, plus solvent and positive‑control wells). Replace the medium in each well with 100 µL of exposure solution. Incubate for 24 h.

- Viability assessment: Add 10 µL of AlamarBlue reagent to each well. Incubate for 3–4 h, then measure fluorescence (excitation 560 nm, emission 590 nm) using a plate reader.

- Data analysis: Calculate cell viability as percentage of solvent‑control fluorescence. Fit a dose‑response curve (e.g., 4‑parameter logistic model) to derive IC₅₀ (concentration causing 50% inhibition).

- Quality controls: Include a positive control (e.g., 1% SDS) to confirm assay responsiveness. Run each plate in triplicate. Accept the assay if solvent‑control viability is >90% and positive‑control viability is <30%.
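The data‑analysis and quality‑control steps above can be sketched as follows. Note that this sketch swaps the 4‑parameter logistic fit for a simpler linear interpolation on log‑concentration between the two points that bracket 50% viability, so that it runs with the Python standard library only; the function names and all readings are hypothetical.

```python
import math


def percent_viability(signal, solvent_control_mean):
    """Viability as a percentage of the mean solvent-control fluorescence."""
    return signal / solvent_control_mean * 100.0


def ic50_log_interpolation(concentrations, viabilities):
    """Estimate IC50 by linear interpolation on log10(concentration).

    Concentrations must be positive and in ascending order. Returns None
    when no adjacent pair of points brackets 50% viability.
    """
    points = list(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical plate-reader values (relative fluorescence units).
solvent_mean = 2000.0
concs = [1.0, 10.0, 100.0]      # mg/L, ascending
raw = [1900.0, 1500.0, 500.0]   # mean fluorescence per concentration

viab = [percent_viability(s, solvent_mean) for s in raw]
ic50 = ic50_log_interpolation(concs, viab)

# Positive-control criterion from the protocol: viability below 30%.
positive_control_ok = percent_viability(400.0, solvent_mean) < 30.0
print(f"IC50 ~ {ic50:.1f} mg/L, positive control ok = {positive_control_ok}")
```

Interpolation gives only a rough estimate; for reported values a fitted concentration‑response model with confidence intervals is the more defensible choice.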

Validation & Data Qualifiers

- Context of Use: Screening/prioritization of chemicals for acute aquatic toxicity.

- Performance standards: Benchmark IC₅₀ values against existing in vivo fish acute toxicity data (e.g., from ECOTOX database[reference:14]) to establish correlation.

- Data qualifiers: Assign data‑validation qualifiers based on the observed data quality, e.g., “R” (rejected) if the chemical interferes with the fluorescence signal so that viability cannot be read reliably, or “J” (estimated) if the dose‑response curve fit is uncertain[reference:15].

Visualization: NAMs Workflow & Data‑Validation Process

Visualization: Adverse Outcome Pathway (AOP) Framework for NAMs Anchoring

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in NAMs Ecotoxicology | Example Product / Source |

|---|---|---|

| Fish cell lines | Provide species‑relevant in vitro models for aquatic toxicity testing. | RTgill‑W1 (rainbow trout gill), RT‑Liver (rainbow trout liver). |

| High‑throughput screening assays | Enable rapid concentration‑response profiling of chemicals. | ToxCast assay panels (EPA)[reference:16]. |

| Defined Approach (DA) kits | Standardized combinations of in chemico/in silico/in vitro tests for specific endpoints. | Skin sensitization DA (OECD TG 497)[reference:17]. |

| Adverse Outcome Pathway (AOP) databases | Anchor NAMs to mechanistic frameworks for biological relevance. | AOP‑Wiki (https://aopwiki.org). |

| Toxicokinetic modeling software | Extrapolate in vitro concentrations to in vivo doses. | httk R‑package (EPA)[reference:18]. |

| Data‑validation qualifier guides | Standardize flags for data quality (e.g., “U”, “J”, “UJ”). | EPA Data Validation Guidance[reference:19]. |

| Reference chemical sets | Benchmark NAM performance against traditional in vivo data. | EPA’s ToxRefDB[reference:20]. |

| Omics profiling platforms | Uncover mechanistic insights via transcriptomics, metabolomics. | RNA‑seq kits, mass‑spectrometry‑based metabolomics. |

The field of ecotoxicology is undergoing a significant transformation, driven by the convergence of New Approach Methodologies (NAMs), advanced data science, and heightened demands for predictive, human-relevant safety assessments [17]. This shift away from traditional animal testing towards integrated, mechanistic models places unprecedented importance on data quality and validation. In this context, data validation qualifiers are not mere administrative flags; they are critical tools for interpreting complex datasets, assessing chemical risk, and ensuring the defensibility of scientific conclusions used in multi-million dollar decisions [18] [19].

Concurrent market analysis reveals that the broader life sciences industry is being reshaped by artificial intelligence (AI), a surge in novel therapeutic modalities like protein degraders and radiopharmaceuticals, and an increase in strategic mergers and acquisitions [20] [21]. These trends directly impact ecotoxicology research, creating a demand for faster, more reliable data validation frameworks that can keep pace with AI-accelerated discovery and the safety assessment of complex new chemical entities [22].

This technical support center is designed to address the specific, practical challenges researchers face in this evolving landscape. The following troubleshooting guides, FAQs, and protocols provide actionable solutions for ensuring data integrity, from the bench to the final risk assessment report.

Troubleshooting Guides & FAQs

This section addresses common technical and interpretative challenges encountered during ecotoxicology studies and data validation processes.

Q1: Our laboratory reported data with a "J" qualifier (estimated value). How should I interpret and report this result in my environmental risk assessment?

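- Issue: The "J" flag marks the reported concentration as an estimate. Most often the analyte was detected above the detection limit but below the method's practical quantitation limit, although a validator may also apply "J" when an associated QC result falls outside its limits. This is a common qualifier requiring careful interpretation [18].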

- Solution: Do not automatically dismiss "J"-flagged data. Apply critical thinking by comparing the value to relevant toxicological thresholds (e.g., PNEC - Predicted No-Effect Concentration). If the estimated value is orders of magnitude below the threshold, it may have negligible risk implications. However, for risk-averse assessments or bioaccumulative compounds, a conservative approach using the estimated value may be warranted. Document your decision logic transparently in the data usability assessment [19] [2].

Q2: Method blank contamination was detected for a key analyte. Does this invalidate all my sample results for that compound?

- Issue: Contamination in a method blank suggests potential laboratory-based introduction of the analyte, posing a risk to data integrity for associated samples [18].

- Solution: This requires a comparative analysis. Calculate the blank contamination level. For each field sample, assess if the reported concentration is significantly greater (e.g., 5-10 times higher) than the blank level. Results that are comparable to or lower than the blank may be considered unusable ("non-detect" with a note) or highly qualified. Results substantially higher are likely valid but should still carry a qualifier noting the blank issue. The project's Quality Assurance Project Plan (QAPP) should define the specific multiplier for this decision [18] [23].
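The blank comparison described above can be sketched as a simple decision rule. The default multiplier of 5 and the returned wording are assumptions for illustration; the QAPP governs the actual multiplier and qualifier text.

```python
def assess_blank_impact(sample_conc, blank_conc, multiplier=5.0):
    """Compare a field-sample result with the method-blank level.

    The multiplier is project-specific (the text cites 5-10x as typical).
    """
    if blank_conc <= 0:
        return "no blank qualification"
    if sample_conc > multiplier * blank_conc:
        return "usable, qualify for blank detection"
    return "treat as non-detect or highly qualified"


# Hypothetical results in ug/L with a blank detection of 0.4 ug/L.
blank = 0.4
for sample in (0.5, 1.9, 12.0):
    print(sample, "->", assess_blank_impact(sample, blank))
```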

Q3: Our AI/QSAR model predicted a toxicity concern, but initial in vitro assay results are negative. How do I resolve this conflict?

- Issue: Discrepancies between in silico predictions and in vitro experimental data are common, especially with novel chemical structures or poorly defined applicability domains for the computational model [17].

- Solution: Initiate a tiered investigation:

- Audit the Input: Verify the chemical structure (SMILES) used in the model. Check the model's documented applicability domain to ensure your compound fits.

- Interrogate the Assay: Review the in vitro assay's metabolic competence. Many cell lines lack sufficient cytochrome P450 activity. Consider repeating the assay with an S9 metabolic activation system or using a more physiologically relevant model like a 3D liver spheroid [17].

- Seek Mechanistic Insight: Run a transcriptomic or proteomic screen (an "omics" approach) on the exposed in vitro system. The AI prediction may be correct in identifying a pathway perturbation that is not captured by your single-endpoint assay [17].

Q4: A key quality control parameter (e.g., surrogate recovery) in my batch failed the QAPP acceptance criteria. What are my options for using the associated field sample data?

- Issue: A QC failure questions the accuracy and precision of the entire analytical batch, potentially rendering all data from that batch unusable [2].

- Solution: Follow a structured data usability assessment process [2]:

- Evaluate the Failure Magnitude: Was it a marginal fail (e.g., 65% recovery against a 70-130% criterion) or a gross fail (e.g., 20% recovery)?

- Assess Impact: Did the failure affect all analytes or just a specific, problematic one? Review raw data like chromatograms for signs of interference.

- Apply Professional Judgment: For a marginal, isolated failure, you may justify qualifying the data for specific analytes as estimated ("J") while rejecting others. For a gross failure, re-analysis is likely required. Document every step of this assessment to defend the final use or rejection of the data [19] [23].
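The first step of this assessment, sizing the failure, can be sketched as below. The 70-130% window comes from the example in the text; the 10-percentage-point "marginal" band is purely an assumption for illustration, since the boundary between marginal and gross failures is a matter of project guidance and professional judgment.

```python
def classify_recovery(recovery_pct, low=70.0, high=130.0, marginal_band=10.0):
    """Classify a surrogate recovery as pass, marginal fail, or gross fail.

    marginal_band (percentage points outside the limits) is a hypothetical
    parameter, not a value taken from any guideline.
    """
    if low <= recovery_pct <= high:
        return "pass"
    if low - marginal_band <= recovery_pct <= high + marginal_band:
        return "marginal fail"
    return "gross fail"


for recovery in (85.0, 65.0, 20.0):
    print(recovery, "->", classify_recovery(recovery))
```

A "marginal fail" result would then feed into the impact assessment and documented judgment described above, rather than triggering an automatic qualifier.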

Q5: We are transitioning to a New Approach Methodology (NAM) that isn't yet in standardized guidelines. How do we establish and validate our data review criteria?

- Issue: The lack of prescriptive, method-specific QC limits for novel NAMs (e.g., a high-content imaging assay) creates uncertainty in data review [15] [17].

- Solution: Develop an internal validation plan based on core principles:

- Define the Context of Use: Clearly state what the assay is predicting (e.g., mitochondrial toxicity).

- Establish Performance Standards: Run a set of reference compounds (known positives/negatives) repeatedly to characterize the assay's precision, accuracy, and reproducibility. Statistically derived control limits (e.g., ±3 standard deviations) from this data become your initial QC criteria [15].

- Document in a SOP: Formalize the assay protocol, data analysis pipeline, and review criteria in a Standard Operating Procedure.

- Seek External Benchmarking: Participate in consortium studies or publish your method to gather inter-laboratory feedback, which is a key step towards formal validation and regulatory acceptance [15].
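Deriving initial control limits from repeated reference-compound runs can be sketched as follows. The response values are hypothetical, and the mean ± 3 standard deviations rule simply mirrors the example given in the text.

```python
import statistics


def control_limits(reference_values, k=3.0):
    """Return (lower, upper) limits as mean +/- k sample standard deviations."""
    mean = statistics.mean(reference_values)
    sd = statistics.stdev(reference_values)
    return mean - k * sd, mean + k * sd


def in_control(value, limits):
    """True when a new control result falls inside the limits."""
    lower, upper = limits
    return lower <= value <= upper


# Hypothetical positive-control responses (% of expected) from five runs.
history = [98.0, 102.0, 100.0, 101.0, 99.0]
limits = control_limits(history)
print(f"limits = {limits[0]:.2f} to {limits[1]:.2f}")
print("new run at 103 in control:", in_control(103.0, limits))
```

Five runs are used here only to keep the example short; limits based on so few runs are unstable and would normally be recalculated as more data accumulate.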

Table: Common Data Review Levels and Their Applications in Ecotoxicology Studies

| Review Level | Typical Activities | Best For | Resource Intensity |

|---|---|---|---|

| Level 1: Verification | Check chain of custody (COC), confirm electronic data deliverable (EDD) format, review summary QC against pass/fail flags [2]. | Screening studies, low-risk decisions, initial data triage. | Low |

| Level 2: Validation | Full review of raw data (chromatograms, calibrations); apply project-specific qualifiers; assess all QC samples [18] [23]. | Regulatory submissions, definitive risk assessments, litigation support. | High |

| Level 3: Peer/Usability Review | Evaluation of validated data within the project context (conceptual site model (CSM), risk thresholds); final go/no-go decision [2]. | Final project decision-making, integrating multiple lines of evidence. | Medium |

Experimental Protocols for Key Methods

To ensure robust and reproducible data, follow these detailed protocols for critical techniques in modern ecotoxicology.

Protocol: Cellular Thermal Shift Assay (CETSA) for Target Engagement Validation

CETSA confirms direct drug-target interaction in a physiologically relevant cellular context, bridging in silico prediction and functional outcome [22].

Methodology:

- Cell Preparation: Culture a relevant cell line (e.g., HepG2 for liver toxicity). Harvest and aliquot ~1 million cells per condition into PCR tubes.

- Compound Dosing: Treat cell aliquots with a range of test compound concentrations (e.g., 1 nM – 100 µM) and a vehicle control for 30-60 minutes.

- Heat Challenge: Individually heat each aliquot to a predetermined temperature (e.g., 52°C, 54°C, 56°C) for 3 minutes in a thermal cycler, then return to room temperature.

- Cell Lysis & Clarification: Lyse cells chemically, followed by centrifugation to separate soluble (native) protein from aggregated/precipitated protein.

- Target Quantification: Analyze the soluble fraction by western blot or quantitative mass spectrometry to measure the remaining amount of the target protein. A compound that stabilizes the target will result in more soluble protein post-heat challenge.

- Data Analysis: Plot soluble protein amount versus temperature to generate melt curves. A rightward shift in the melt curve for dosed samples indicates target stabilization and engagement [22].
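The melt-curve comparison in the final step can be sketched as follows. This is a simplified illustration that assumes the soluble-protein signal has already been normalized to the lowest-temperature sample and that estimates the apparent melting temperature by linear interpolation at 50% soluble protein, rather than by fitting a sigmoidal curve; all values are hypothetical.

```python
def melting_temperature(temps, soluble_fraction):
    """Temperature at which the soluble fraction crosses 0.5 (linear interpolation).

    temps must be ascending; returns None if the curve never crosses 0.5.
    """
    points = list(zip(temps, soluble_fraction))
    for (t_lo, f_lo), (t_hi, f_hi) in zip(points, points[1:]):
        if f_lo >= 0.5 >= f_hi and f_lo != f_hi:
            return t_lo + (f_lo - 0.5) / (f_lo - f_hi) * (t_hi - t_lo)
    return None


# Hypothetical normalized soluble fractions across a temperature gradient.
temps = [46.0, 50.0, 54.0, 58.0, 62.0]
vehicle = [1.00, 0.90, 0.60, 0.20, 0.05]
dosed = [1.00, 0.95, 0.85, 0.60, 0.20]

tm_vehicle = melting_temperature(temps, vehicle)
tm_dosed = melting_temperature(temps, dosed)
shift = tm_dosed - tm_vehicle
print(f"Tm vehicle = {tm_vehicle:.1f} C, Tm dosed = {tm_dosed:.1f} C, shift = {shift:+.1f} C")
```

A positive shift corresponds to the rightward movement of the melt curve that the protocol interprets as target stabilization.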

Protocol: Data Validation for Analytical Chemistry Results

This formal process assesses compliance with the QAPP and method specifications [18] [23].

Methodology:

- Receive & Verify Package: Ensure the complete data package (EDD, PDF reports, raw data) is received. Verify Chain of Custody and that all required samples/analytes are reported [18].

- Review Initial QC Flags: Examine laboratory-applied qualifiers and summary QC pass/fail status from the EDD.

- Validate Raw Data: Systematically review:

- Calibration: Linearity, residuals, and correctness of the calibration model.

- Continuing Calibration Verification (CCV): Confirms instrument stability during the run.

- Blanks: Method, trip, and equipment blanks for contamination.

- QC Samples: Surrogate recoveries, matrix spike recoveries, duplicate precision.

- Sample-Specific Data: Chromatographic integration, peak identification, interference examination.

- Apply Project Qualifiers: Based on raw data review, assign standardized data validation qualifiers (e.g., "V" for valid, "J" for estimated, "R" for rejected) to each analyte result, following project-specific guidance [18].

- Generate Validation Report: Document findings, including any qualifications, corrections, and a summary of QC performance. This report is the definitive record of data quality [23].

Table: Common Data Validation Qualifiers and Their Interpretation [18] [2]

| Qualifier | Full Name | Meaning | Typical Cause |

|---|---|---|---|

| V | Valid | Data meets all QC criteria. | N/A |

| J | Estimated | Analyte detected, but the reported concentration is approximate, typically because it is below the quantitation limit. | Low concentration near method limits; minor QC exceedance. |

| U | Not Detected | Analyte was not detected above the method detection limit. | Analyte absent or below MDL. |

| R | Rejected | Data is not usable for its intended purpose. | Major QC failure, contamination, holding time exceedance. |

| NJ | Tentatively Identified, Estimated | Analyte is tentatively identified, and the reported concentration is an estimate. | Presumptive rather than confirmed identification (e.g., incomplete spectral match). |
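As a rough illustration of how the concentration-based qualifiers in the table relate to the detection and quantitation limits, the sketch below assigns "V", "J", "U", or "R" to a single result. It is a deliberately simplified assumption: real validation weighs many QC elements and follows project-specific guidance, and the function name and inputs are hypothetical.

```python
def assign_qualifier(concentration, mdl, quantitation_limit, major_qc_failure=False):
    """Assign a simplified qualifier to one analyte result.

    concentration may be None when the laboratory reports no detection.
    """
    if major_qc_failure:
        return "R"   # rejected: not usable for its intended purpose
    if concentration is None or concentration < mdl:
        return "U"   # not detected above the method detection limit
    if concentration < quantitation_limit:
        return "J"   # detected, but below the quantitation limit (estimated)
    return "V"       # meets all QC criteria


# Hypothetical results in ug/L with MDL = 0.1 and quantitation limit = 0.5.
for result in (None, 0.05, 0.3, 2.0):
    print(result, "->", assign_qualifier(result, mdl=0.1, quantitation_limit=0.5))
```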

Visualizing Workflows and Pathways

Diagram 1: Ecotoxicology Data Quality Review Workflow

This workflow outlines the staged process for ensuring data quality, from planning to final decision-making [18] [2].

Diagram 2: Adverse Outcome Pathway (AOP) Conceptual Framework

The AOP framework provides a mechanistic structure for linking data from various NAMs to an adverse outcome, forming the basis for Integrated Approaches to Testing and Assessment (IATA) [17].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagent Solutions for Ecotoxicology and Data Validation Research

| Item | Function/Description | Key Application in Ecotoxicology |

|---|---|---|

| Electronic Data Deliverable (EDD) Templates | Standardized spreadsheet formats for data reporting [18]. | Ensures consistent data structure from laboratories, enabling automated QC checks and efficient data management. |

| Reference Toxicants & QC Standards | Certified chemical standards with known toxicity and recovery profiles (e.g., surrogate compounds) [18]. | Used to spike samples (matrix spikes) to assess method accuracy and precision under specific study conditions. |

| Metabolic Activation Systems (S9 Fraction) | Post-mitochondrial liver supernatant containing microsomal and cytosolic metabolic enzymes [17]. | Added to in vitro assays (e.g., Ames test) to simulate mammalian metabolic conversion of pro-toxicants. |

| Cryopreserved Primary Hepatocytes | Isolated human or rodent liver cells that maintain metabolic function better than cell lines [17]. | A gold-standard in vitro model for studying hepatotoxicity, biotransformation, and bioaccumulation potential. |

| 3D Culture Matrices (e.g., Basement Membrane Extract) | Hydrogels that support the formation of three-dimensional cell structures like spheroids or organoids [17]. | Enables development of more physiologically relevant tissue models for chronic toxicity and repeated-dose studies. |

| "Omics" Profiling Kits (Transcriptomics/Proteomics) | Kits for genome-wide RNA expression or protein abundance analysis (e.g., RNA-Seq, LC-MS kits) [17]. | Used for biomarker discovery, mechanism of action elucidation, and defining signatures of adversity within AOPs. |

| Data Validation Software/Checklists | Tools or formalized checklists based on EPA or other guidelines [18] [2]. | Guides reviewers through systematic evaluation of raw data against method and QAPP criteria, ensuring consistency. |

| Adverse Outcome Pathway (AOP) Wiki Resources | The curated AOP knowledgebase (aopwiki.org). | Provides a structured, peer-reviewed framework for hypothesizing and testing linkages between molecular events and adverse outcomes. |

Executing Validation: A Step-by-Step Guide from Project Planning to Qualifier Assignment

In ecotoxicology research that relies on data validation qualifiers, the integrity of the Data Validation (DV) and Data Usability Assessment (DUA) processes is paramount. These processes are not standalone checkpoints; they must be embedded in a continuous workflow from initial project design to final reporting. This technical support center provides targeted troubleshooting guidance and FAQs to help researchers, scientists, and drug development professionals navigate common pitfalls, ensure robust experimental design, and strengthen the defensibility of their data for regulatory and research purposes [24] [25].

A Systematic Troubleshooting Methodology for Validation Workflows

Effective troubleshooting is a critical skill for maintaining the integrity of a validation workflow. A structured, six-step approach can be systematically applied to diagnose and resolve issues [26]:

- Identify the Problem: Objectively describe the symptom (e.g., "high variability in replicate LC50 values") without jumping to conclusions about the cause.

- List All Possible Explanations: Brainstorm potential root causes across all workflow stages—from sample integrity and reagent quality to instrumentation calibration and data analysis protocols.

- Collect the Data: Review experimental records, control results, instrument logs, and reagent certifications. Consult relevant standard operating procedures (SOPs).

- Eliminate Explanations: Use the collected data to rule out possibilities. For instance, if positive controls performed as expected, the core assay protocol is likely not the issue.

- Check with Experimentation: Design and execute targeted diagnostic experiments to test the remaining plausible hypotheses.

- Identify the Cause: Synthesize findings to pinpoint the root cause, implement a corrective action, and document the entire process for future reference [26].

Frequently Asked Questions (FAQs) by Workflow Stage

Stage 1: Project Planning & Experimental Design

Q1: How do I design an experiment that will generate data suitable for a formal DUA? A: Robust design is the foundation of valid data. Clearly define your independent (e.g., chemical concentration) and dependent variables (e.g., mortality, growth inhibition) [27] [28]. Implement proper controls (negative, positive, vehicle), determine appropriate replication (biological and technical) to achieve statistical power, and use randomization to avoid bias [27]. For ecotoxicology, adhere to relevant OECD test guidelines (e.g., OECD 203 for fish, OECD 202 for daphnia) which specify critical design elements [29].
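As a minimal sketch of the randomization step mentioned in Q1, the Python snippet below shuffles organism IDs and deals them into treatment chambers. The function name `assign_to_chambers`, the treatment labels, and the group size of 10 are illustrative assumptions and are not taken from any test guideline.

```python
import random


def assign_to_chambers(organism_ids, treatments, per_chamber, seed=None):
    """Randomly allocate organisms to treatment chambers.

    organism_ids: unique organism labels
    treatments:   treatment labels, controls included
    per_chamber:  organisms placed in each chamber
    seed:         optional seed, recorded so the allocation can be reproduced
    """
    needed = len(treatments) * per_chamber
    if len(organism_ids) < needed:
        raise ValueError(f"need {needed} organisms, got {len(organism_ids)}")
    rng = random.Random(seed)
    shuffled = list(organism_ids)
    rng.shuffle(shuffled)
    # Deal consecutive slices of the shuffled list to each treatment.
    return {
        t: sorted(shuffled[i * per_chamber:(i + 1) * per_chamber])
        for i, t in enumerate(treatments)
    }


# Hypothetical design: two controls plus five concentrations, 10 fish each.
treatments = ["control", "vehicle", "C1", "C2", "C3", "C4", "C5"]
fish = [f"F{n:03d}" for n in range(1, 71)]
allocation = assign_to_chambers(fish, treatments, per_chamber=10, seed=2024)
```

Recording the seed alongside the allocation in the study file keeps the assignment reproducible for later review.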

Q2: My chemical of interest is "data-poor." How can I plan a validation workflow? A: For data-poor chemicals, integrate New Approach Methodologies (NAMs) and structured workflows like RapidTox at the planning stage [24]. Begin with in silico assessments (QSAR, read-across from structural analogs) to inform hazard hypotheses [24] [30]. Design experiments that can test these predictions, using in vitro assays for specific key events (e.g., receptor binding, cytotoxicity) before proceeding to more complex in vivo models if necessary.

Stage 2: Assay Execution & Data Generation

Q3: My positive control is failing, or I have no signal in my assay. What should I check? A: Follow the troubleshooting methodology [26]. First, verify the viability and preparation of your test organisms or cell lines [29]. Second, confirm the preparation and stability of all stock and working solutions, including the positive control substance. Third, check equipment calibration (e.g., pH meters, temperature controllers). Fourth, review the procedural steps against the SOP to ensure no deviations occurred.

Q4: I am observing excessive variability in organism responses within treatment groups. What could be the cause? A: High intra-group variability undermines data quality. Investigate these areas:

- Organism Source & Health: Ensure organisms are from a genetically consistent, healthy population and are properly acclimatized to lab conditions.

- Environmental Consistency: Check for fluctuations in water quality parameters (temperature, pH, dissolved oxygen, hardness), lighting cycles, or unintended stressors [29].

- Exposure Preparation: Verify the accuracy of serial dilutions and the stability of the test substance in the exposure medium (e.g., losses through volatilization, degradation, or adsorption to tank walls).
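Two of the checks above lend themselves to a quick script: scanning the water-quality log for out-of-range readings and quantifying within-group spread. The sketch below is one possible way to do this. The parameter names, the limits, and the example readings are hypothetical, and the real limits should come from the applicable test guideline or SOP.

```python
from statistics import mean, stdev


def out_of_range(log, limits):
    """Return (day_index, parameter, value) for each reading outside its limits.

    log:    list of dicts, one per day, mapping parameter name to reading
    limits: dict mapping parameter name to (low, high); None means no bound
    """
    flagged = []
    for day, readings in enumerate(log):
        for name, value in readings.items():
            low, high = limits.get(name, (None, None))
            too_low = low is not None and value < low
            too_high = high is not None and value > high
            if too_low or too_high:
                flagged.append((day, name, value))
    return flagged


def coefficient_of_variation(values):
    """Sample CV in percent; needs at least two values and a non-zero mean."""
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined for a mean of zero")
    return 100.0 * stdev(values) / m


# Hypothetical limits and daily readings, for illustration only.
limits = {"pH": (6.5, 8.5), "DO_percent": (60.0, None), "temp_C": (21.0, 23.0)}
log = [
    {"pH": 7.4, "DO_percent": 88.0, "temp_C": 22.1},
    {"pH": 7.6, "DO_percent": 57.0, "temp_C": 22.4},
    {"pH": 8.7, "DO_percent": 81.0, "temp_C": 22.0},
]
flagged = out_of_range(log, limits)
cv = coefficient_of_variation([10, 12, 14])  # e.g., replicate growth values
```

A flagged reading is a prompt for investigation rather than an automatic rejection; its effect on the data is judged in the usability assessment.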

Stage 3: Data Analysis & Interpretation

Q5: How do I determine if my toxicity data (e.g., LC50) is valid and usable for reporting? A: Apply systematic DUA qualifiers. Assess if test acceptability criteria (e.g., control survival ≥ 90%, positive control response within range) were met [29]. Evaluate the goodness-of-fit for dose-response models. Scrutinize data for outliers with statistical and biological reasoning; never exclude data without justification. Finally, ensure the calculated values are within the tested concentration range and not extrapolated [29].
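The checks listed in Q5 can be expressed as a small screening function. The following is a sketch under stated assumptions: the function name `assess_test_validity` is hypothetical, only two of the criteria are covered (control survival and extrapolation beyond the tested range), and the 90% threshold is passed in as a default that the applicable guideline may override.

```python
def assess_test_validity(control_survivors, control_total, lc50, tested_concs,
                         min_control_survival=0.90):
    """Return a list of issues; an empty list means neither check raised a flag.

    tested_concs should hold the non-zero test concentrations only.
    """
    issues = []
    survival = control_survivors / control_total
    if survival < min_control_survival:
        issues.append(
            f"control survival {survival:.0%} is below {min_control_survival:.0%}"
        )
    lowest, highest = min(tested_concs), max(tested_concs)
    if not lowest <= lc50 <= highest:
        issues.append(
            f"LC50 {lc50} lies outside the tested range {lowest}-{highest}"
        )
    return issues


# Hypothetical results, for illustration only.
ok = assess_test_validity(10, 10, lc50=4.2, tested_concs=[1, 2, 4, 8, 16])
bad = assess_test_validity(8, 10, lc50=20.0, tested_concs=[1, 2, 4, 8, 16])
```

Goodness-of-fit and outlier review still need statistical and biological judgment and are deliberately left out of this sketch.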

Q6: How do I assess the human or environmental relevance of a mechanistic pathway observed in my tests? A: Use a structured human relevance assessment workflow [25]. For an Adverse Outcome Pathway (AOP), evaluate if the Molecular Initiating Event (MIE) and Key Events (KEs) are biologically plausible in the target species (e.g., humans, specific wildlife). Assess the conservation of the target molecule or pathway across species. The relevance of associated NAMs must also be evaluated based on the biological context they represent [25].

Stage 4: Reporting & Integration

Q7: What are the common reasons for drug development failure related to toxicity, and how can robust validation workflows mitigate them? A: Approximately 30% of clinical drug development failures are due to unmanageable toxicity [31]. Common causes include off-target effects, on-target toxicity in vital organs, and poor pharmacokinetic/pharmacodynamic (PK/PD) relationships. Integrating DV/DUA workflows early, including in silico toxicity profiling, thorough in vitro screening against panels of targets (e.g., kinases, hERG), and translational PK/PD modeling, can identify these risks earlier in the pipeline [31] [30].

Q8: How should I document troubleshooting investigations and protocol deviations for regulatory compliance? A: All investigations and deviations must be documented in study records or a dedicated notebook. The record should include the initial observation, hypotheses tested, data collected from diagnostic experiments, the conclusive root cause, the corrective action taken, and the impact assessment on the original data. This transparency is critical for the audit trail and final data usability assessment.
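One way to keep such records consistent is a structured template that mirrors the fields listed in Q8. The sketch below is illustrative only: the class name `InvestigationRecord` and its field names are assumptions, and a regulated laboratory would follow the record format defined in its own quality system.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class InvestigationRecord:
    observation: str
    hypotheses_tested: list = field(default_factory=list)
    diagnostic_data: list = field(default_factory=list)
    root_cause: str = ""
    corrective_action: str = ""
    impact_on_data: str = ""

    def missing_fields(self):
        """Names of fields that are still empty and remain to be documented."""
        return [name for name, value in asdict(self).items() if not value]


# Hypothetical entry started at the moment the deviation is noticed.
record = InvestigationRecord(
    observation="Positive control EC50 above historical range",
    hypotheses_tested=["stock solution degraded", "organism age out of range"],
)
todo = record.missing_fields()
as_json = json.dumps(asdict(record), indent=2)  # serializable for the study file
```

Listing the empty fields before sign-off gives a simple completeness check for the audit trail.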

Detailed Experimental Protocols for Key Validation Studies

This fish acute toxicity protocol follows OECD Test Guideline 203 and is central to generating data for validation qualifiers.

- Objective: To determine the acute lethal toxicity (LC50) of a chemical substance to a freshwater fish species over 96 hours.

- Test Organisms: Use juvenile fish of a standard species (e.g., Danio rerio, Pimephales promelas). Organisms must be healthy, from a documented source, and acclimatized to test conditions for at least 7 days.

- Exposure System:

  - Prepare a geometric series of at least 5 test concentrations plus a negative (dilution-water) control; add a solvent control if a carrier solvent is used.

  - Use a static-renewal or flow-through system as appropriate for the test substance.

  - Randomly assign organisms to test chambers (e.g., 10 organisms per concentration).

  - Maintain water quality: Temperature 20-25°C (±1°C), pH 6.5-8.5, dissolved oxygen >60% saturation.

- Observations & Measurements:

  - Record mortality at 24, 48, 72, and 96 hours. Define mortality as lack of opercular movement or no response to gentle prodding.

  - Monitor and record water chemistry parameters (pH, temperature, DO) daily.

  - Measure actual test concentrations at the start and end of renewal periods for unstable chemicals.

- Data Analysis:

  - Calculate the LC50 value at each time point using a suitable statistical model (e.g., Probit, Trimmed Spearman-Karber).

  - Report the 95% confidence interval and the goodness-of-fit.

- Acceptance Criteria:

  - Control mortality must not exceed 10%.

  - The concentration-response relationship should be monotonic.

  - Water quality must remain within specified ranges.
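The data-analysis step above names Probit and Trimmed Spearman-Karber. As a hedged illustration of the underlying idea, the sketch below implements the simpler untrimmed Spearman-Karber estimator, not the trimmed variant, together with a helper that builds a geometric concentration series. Function names and the example counts are hypothetical. The sketch omits the 95% confidence interval and goodness-of-fit that the protocol requires, so reportable values should come from a validated statistics package.

```python
import math


def geometric_series(lowest, factor, n):
    """Concentrations lowest, lowest*factor, ... (n values)."""
    return [lowest * factor ** i for i in range(n)]


def spearman_karber_lc50(concs, n_exposed, n_dead):
    """Untrimmed Spearman-Karber LC50 estimate.

    concs must be ascending and exclude controls. The untrimmed method
    needs monotonic mortality running from 0% at the lowest concentration
    to 100% at the highest.
    """
    props = [d / n for d, n in zip(n_dead, n_exposed)]
    if any(b < a for a, b in zip(props, props[1:])):
        raise ValueError("mortality proportions are not monotonic")
    if props[0] != 0.0 or props[-1] != 1.0:
        raise ValueError("needs 0% and 100% mortality at the extremes")
    logs = [math.log10(c) for c in concs]
    # Weight each log-midpoint by the increase in mortality across the interval.
    log_lc50 = sum(
        (props[i + 1] - props[i]) * (logs[i] + logs[i + 1]) / 2
        for i in range(len(concs) - 1)
    )
    return 10 ** log_lc50


# Hypothetical 96-hour counts: 10 fish at each of five concentrations.
concs = geometric_series(1.0, 2.0, 5)          # 1, 2, 4, 8, 16 (e.g., mg/L)
lc50 = spearman_karber_lc50(concs, [10] * 5, [0, 2, 5, 9, 10])
```

With these example counts the estimate works out to 2^1.9, or about 3.73 in the same units as the concentrations. The two `ValueError` checks correspond to the monotonicity criterion listed above and to the data requirement of the untrimmed method.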

This qualitative protocol assesses the relevance of an AOP for human risk assessment.

- Objective: To evaluate the biological plausibility of an established AOP (e.g., from animal studies) in humans.

- Input: A defined AOP with Molecular Initiating Event (MIE), Key Events (KEs), and Adverse Outcome (AO).

- Procedure:

  - Deconstruct the AOP: List each element (MIE, KE1, KE2...AO).

  - Gather Biological Evidence: For each element, search scientific literature and databases (e.g., UniProt, GenBank, HGNC) to identify if the homologous molecular target, pathway, and cellular/tissue response exist in humans.

  - Gather Empirical Evidence: Review available human data (e.g., in vitro human cell studies, epidemiological associations, clinical observations) that support or contradict the activation of the pathway in humans.

  - Assess NAM Relevance: For any in vitro or in silico NAMs associated with the AOP, evaluate if the test system (e.g., cell line, protein construct) adequately represents the human biological context of that KE.

- Output & Conclusion:

  - A weight-of-evidence statement on the qualitative likelihood of the AOP operating in humans.

  - An evaluation of which NAMs provide human-relevant data for specific KEs.

  - Documentation of key data gaps and uncertainties.
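The bookkeeping side of this assessment can be sketched as a simple evidence table with one entry per AOP element. Everything in the snippet below is hypothetical: the class `AopElement`, its two evidence flags, and the example entries. It only tracks what has been assessed; the weight-of-evidence conclusion itself remains an expert judgment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AopElement:
    label: str                                      # e.g. "MIE", "KE1", "AO"
    conserved_in_humans: Optional[bool] = None      # None = not yet assessed
    human_empirical_support: Optional[bool] = None  # None = no data reviewed


def data_gaps(elements):
    """Elements with at least one line of evidence still unassessed."""
    return [
        e.label for e in elements
        if e.conserved_in_humans is None or e.human_empirical_support is None
    ]


def contradicted(elements):
    """Elements where some evidence argues against human relevance."""
    return [
        e.label for e in elements
        if e.conserved_in_humans is False or e.human_empirical_support is False
    ]


# Hypothetical assessment state, for illustration only.
aop = [
    AopElement("MIE", conserved_in_humans=True, human_empirical_support=True),
    AopElement("KE1", conserved_in_humans=True),
    AopElement("KE2", conserved_in_humans=False, human_empirical_support=False),
    AopElement("AO"),
]
gaps = data_gaps(aop)
conflicts = contradicted(aop)
```

The `gaps` list feeds directly into the documentation of data gaps and uncertainties called for in the output.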

Table 1: Benchmark Dataset for Ecotoxicology Model Validation (ADORE Dataset Summary) [29]

| Taxonomic Group | Primary Endpoint | Standard Test Duration | Number of Unique Chemicals | Number of Data Points (approx.) | Key Utility for Validation |
|---|---|---|---|---|---|
| Fish | Mortality (LC50) | 96 hours | 2,900+ | 47,000 | Benchmarking traditional in vivo tests; training QSAR/ML models. |
| Crustaceans | Immobilization (EC50) | 48 hours | 3,600+ | 39,000 | Representing invertebrate toxicity; assessing species sensitivity. |
| Algae | Growth Inhibition (EC50) | 72-96 hours | 1,800+ | 29,000 | Representing primary producer toxicity; validating microbial assays. |

Table 2: Analysis of Clinical Drug Development Failures (2010-2017) [31]

| Primary Reason for Failure | Percentage of Failures | Potential Mitigation through Enhanced DV/DUA Workflows |
|---|---|---|
| Lack of Clinical Efficacy | 40% - 50% | Improved preclinical target validation and PK/PD modeling using human-relevant NAMs [25] [30]. |
| Unmanageable Toxicity | ~30% | Earlier integration of in silico safety profiling, phenotypic toxicity screening in human cell models, and thorough off-target panels [31] [30]. |
| Poor Drug-Like Properties | 10% - 15% | Rigorous ADME (Absorption, Distribution, Metabolism, Excretion) profiling and optimization during lead candidate selection. |
| Commercial/Strategic Reasons | ~10% | Not typically addressed by technical validation workflows. |

Workflow Visualization Diagrams

Diagram 1: Modular Decision-Support Validation Workflow (e.g., RapidTox) [24]

Diagram 2: Workflow for Human Relevance Assessment of AOPs & NAMs [25]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Ecotoxicology Validation Studies

| Item | Function in Validation Workflow | Key Considerations for DV/DUA |
|---|---|---|
| Standardized Test Organisms (e.g., D. magna, D. rerio, Raphidocelis subcapitata) | Provide consistent, reproducible biological response systems for toxicity benchmarking. | Source from certified suppliers. Document generation, health status, and acclimatization conditions. Control mortality is a critical acceptance qualifier [29]. |
| Reference Toxicants (e.g., K₂Cr₂O₇, CuSO₄, 3,4-Dichloroaniline) | Serve as positive controls to verify organism sensitivity and assay performance over time. | Include in every test batch. Response must fall within an established historical range for data to be considered valid [29]. |
| Chemical Identifiers & Standards (CAS RN, DTXSID, analytical-grade test substances) | Ensure precise identification of test substance and purity for reproducibility and regulatory submission. | Use the highest purity available. Document source, lot number, and Certificate of Analysis. Store according to stability data [24] [29]. |
| Endpoint-Specific Assay Kits (e.g., for cytotoxicity, oxidative stress, ATP content) | Enable quantitative measurement of Key Events (KEs) in in vitro or in chemico NAMs. | Validate kit performance for your specific cell line/application. Include kit positive and negative controls [25]. |
| High-Quality Data Sources (e.g., ECOTOX, CompTox Dashboard, PubChem) | Provide curated existing data for read-across, QSAR modeling, and weight-of-evidence assessments. | Use as primary sources for in silico modules. Document query dates and parameters for transparency [24] [29]. |
| AOP Knowledgebase (AOP-Wiki from OECD) | Provides structured mechanistic frameworks to guide hypothesis-driven testing and NAM integration. | Essential for planning studies to populate or evaluate specific KERs and for human relevance assessment [25]. |
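The reference-toxicant row in Table 3 requires each new result to fall within an established historical range. A control-chart check is one common way to operationalize this. The sketch below assumes limits of mean ± 2 standard deviations of past results, which is a convention adopted here for illustration; the laboratory's SOP defines the actual rule. Function names and the EC50 values are hypothetical.

```python
from statistics import mean, stdev


def control_limits(historical, k=2.0):
    """Lower and upper limits as mean ± k sample standard deviations.

    Requires at least two historical results.
    """
    m, s = mean(historical), stdev(historical)
    return m - k * s, m + k * s


def within_historical_range(new_value, historical, k=2.0):
    """True when the new result lies inside the control limits."""
    low, high = control_limits(historical, k)
    return low <= new_value <= high


# Hypothetical 48-hour EC50 values (mg/L) from earlier reference-toxicant tests.
historical = [0.9, 1.1, 1.0, 1.2, 0.8]
low, high = control_limits(historical)
accept = within_historical_range(1.25, historical)
reject = within_historical_range(1.5, historical)
```

A result outside the limits does not by itself invalidate a test batch, but it should trigger the troubleshooting and documentation steps described earlier.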

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed to assist researchers in navigating data validation within ecotoxicology. Framed within a thesis on data validation qualifiers, it provides practical guidance for selecting the appropriate review tier and addressing common data quality issues.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between limited and full data validation in ecotoxicology? A1: The core difference lies in the depth and scope of review. Limited validation is a targeted, risk-based review often applied in early research phases or for well-understood methods. It may focus on critical quality control (QC) parameters like calibration verification and a subset of samples[reference:0]. Full validation is a comprehensive assessment required for definitive studies, evaluating all mandated performance characteristics (e.g., accuracy, precision, specificity, detection limit) to ensure data is fully defensible for regulatory decision-making[reference:1].

Q2: What key factors should guide my choice between a limited and a full validation approach? A2: Selection should be based on a risk assessment considering the following factors:

- Stage of Research: Early method development or screening may warrant limited validation, while late-stage or regulatory-submission studies require full validation[reference:2].

- Data Criticality: How will the data be used? Data supporting pivotal conclusions or regulatory actions necessitates full validation.