Data Quality Assessment Tools for Ecotoxicology: A Comparative Guide for Researchers and Drug Development Professionals

Abstract

High-quality data is the cornerstone of reliable ecological risk assessment and regulatory decision-making in ecotoxicology. This article compares data quality assessment tools and methodologies for researchers, scientists, and drug development professionals. It covers the foundational principles of data quality, surveys established and emerging assessment tools (from the EPA's ECOTOX Knowledgebase to AI-assisted screening), and details methodological applications for real-world data. It then addresses common troubleshooting and data optimization challenges and concludes with a framework for validating and comparing tools. By synthesizing current standards, software, and best practices, this guide aims to equip professionals to select and implement robust data quality strategies, improving the reliability and efficiency of ecotoxicological research and chemical safety evaluations [1] [2] [7].

The Bedrock of Reliability: Core Principles and Data Challenges in Ecotoxicology

The regulatory evaluation of chemicals hinges on the quality of the underlying ecotoxicity data [1]. Data Quality Assessment (DQA) frameworks provide structured methods to evaluate this information, primarily based on two core dimensions: reliability and relevance [2] [1]. Reliability refers to the inherent quality of a test report relating to its methodology and the clarity of its findings, while relevance concerns the appropriateness of the data for a specific hazard identification or risk assessment [1]. The choice of DQA framework directly impacts which studies are included in risk assessments and can influence regulatory outcomes [3] [1].

Historically, the method established by Klimisch et al. in 1997 has been widely adopted [1]. However, its limitations in providing detailed guidance and ensuring consistency have led to the development of newer, more robust frameworks [2] [1]. This guide compares established and emerging DQA tools, examining their criteria, application, and performance to inform their use in modern ecotoxicological research and regulatory decision-making.

Comparative Analysis of Data Quality Assessment Tools

A critical review of frameworks reveals significant variation in their design, scope, and applicability. The following table compares four established methods for evaluating the reliability of ecotoxicity data.

Table 1: Comparison of Four Reliability Evaluation Methods for Ecotoxicity Data [3]

| Feature | Klimisch et al. Method | Durda & Preziosi Method | Hobbs et al. Method | Schneider et al. (ToxRTool) |
|---|---|---|---|---|
| Primary Data Types | Toxicity (in vivo/vitro) & ecotoxicity (acute/chronic) | Ecotoxicity data | Ecotoxicity (acute/chronic) data | Toxicity data (in vivo/in vitro) |
| Evaluation Categories | Reliable without/with restrictions, Not reliable, Not assignable | High, Moderate, Low quality, Not reliable, Not assignable | High, Acceptable, Unacceptable quality | Reliable without/with restrictions, Not reliable, Not assignable |
| Number of Criteria | 12 (acute) or 14 (chronic ecotoxicity) | 40 | 20 | 21 |
| Criteria Structure | Several aspects per criterion | 1 aspect per question | 1 aspect per question | Several aspects per criterion |
| Guidance for Evaluator | No | Yes | No | Yes |
| Summary of Evaluation | Not stated | Stated | Stated | Stated and calculated automatically |
| Key Basis/Note | Recommended in REACH guidance | Based on US EPA, OECD, ASTM standards | Based on a method for Australasian ecotoxicity database | Integrates reliability and some relevance aspects |

The Klimisch method, while foundational, offers limited guidance and lacks transparency in its summarization process [3] [1]. In contrast, tools like the ToxRTool and the Durda & Preziosi method provide more structured questions and guidance for the evaluator [3]. A major advancement in the field is the development of the CRED (Criteria for Reporting and Evaluating ecotoxicity Data) evaluation method, designed explicitly as a more detailed and transparent successor to the Klimisch method for aquatic ecotoxicity [1].

Table 2: Comparison of the Klimisch and CRED Evaluation Methods [1]

| Characteristic | Klimisch Method | CRED Method |
|---|---|---|
| Scope of Data | Toxicity and ecotoxicity data | Aquatic ecotoxicity studies |
| Number of Reliability Criteria | 12-14 for ecotoxicity | Evaluates against 20 criteria (based on 50 reporting criteria) |
| Relevance Evaluation | Not included | Includes 13 specific relevance criteria |
| Alignment with OECD Standards | Includes 14 out of 37 OECD reporting criteria | Incorporates all 37 OECD reporting criteria |
| Guidance Provided | No additional guidance | Detailed guidance material provided |
| Evaluation Output | Qualitative reliability score | Qualitative scores for both reliability and relevance |

The CRED method's inclusion of explicit relevance criteria and its alignment with all OECD reporting standards address significant gaps in the Klimisch approach [1]. Ring tests have shown that the CRED method is perceived as less dependent on expert judgment, more accurate and consistent, and practical in use [1].

Experimental Protocols for Data Generation and Curation

The reliability of any DQA process depends on the underlying data. Standardized experimental protocols and transparent data curation are therefore fundamental. A prominent example is the creation of the ADORE benchmark dataset for machine learning in ecotoxicology [4].

Core Data Source and Processing: The ADORE dataset is built around acute aquatic toxicity data extracted from the US EPA ECOTOX database [4]. The curation process involves several key steps:

- Taxonomic Filtering: Data is filtered for three key taxonomic groups: fish, crustaceans, and algae.

- Endpoint Harmonization: Diverse effect endpoints (e.g., mortality, immobilization, growth inhibition) are mapped to comparable toxicity values, primarily LC50 or EC50.

- Experimental Validity Checks: Entries are checked against standard test durations (e.g., 96h for fish, 48h for crustaceans) and relevant life stages.

- Chemical Identifier Standardization: Chemicals are matched and annotated using stable identifiers like CAS numbers, DTXSIDs, and InChIKeys to enable integration with external chemical property databases [4].
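The filtering steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not the ADORE pipeline itself: the `TestRecord` layout, the field names, the exact-match duration rule, and the 72 h value for algae are all assumptions made for the example.

```python
from dataclasses import dataclass


# Hypothetical record layout; real ECOTOX exports use different field names.
@dataclass
class TestRecord:
    cas: str
    taxon_group: str   # e.g. "fish", "crustaceans", "algae"
    endpoint: str      # e.g. "LC50", "EC50", "NOEC"
    duration_h: float  # exposure duration in hours
    value_mg_l: float  # reported effect concentration


# Assumed standard durations in hours. The fish and crustacean values follow
# the text above; the algae value is an illustrative assumption.
STANDARD_DURATION_H = {"fish": 96, "crustaceans": 48, "algae": 72}
ACCEPTED_ENDPOINTS = {"LC50", "EC50"}


def curate(records):
    """Keep records that pass the taxonomic, endpoint, and duration filters."""
    kept = []
    for r in records:
        if r.taxon_group not in STANDARD_DURATION_H:
            continue  # taxonomic filtering
        if r.endpoint.upper() not in ACCEPTED_ENDPOINTS:
            continue  # endpoint harmonization: only LC50/EC50 are comparable
        if r.duration_h != STANDARD_DURATION_H[r.taxon_group]:
            continue  # experimental validity: non-standard test duration
        if r.value_mg_l <= 0:
            continue  # a non-positive concentration cannot be a valid result
        kept.append(r)
    return kept
```

A production pipeline would likely accept a range of durations per group and log why each record was rejected, so that exclusions stay auditable.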

Statistical Analysis Protocols: Ecotoxicity data often consists of count or proportion data (e.g., number of dead organisms) that are not normally distributed. A comparative study of statistical approaches recommends specific methods for robust analysis [5]:

- For count data (e.g., number of immobilized daphnids), quasi-Poisson Generalized Linear Models (GLMs) are recommended as they provide high statistical power while handling overdispersion.

- For proportion data derived from counts (e.g., mortality rate), binomial GLMs generally outperform traditional linear models applied to transformed data.

- The use of these GLM-based methods reduces the need for data transformation and provides more reliable determination of effect concentrations (e.g., LOEC) compared to non-parametric methods or transformed linear models [5].
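To make the overdispersion point concrete, the sketch below computes the Pearson dispersion statistic that a quasi-Poisson GLM uses to rescale its standard errors. It assumes the simplest design, in which treatment is the only (categorical) predictor, so the fitted Poisson means equal the group means and no iterative fitting is needed. The function name and the input layout are illustrative choices, not part of the cited study [5].

```python
def pearson_dispersion(groups):
    """Estimate the quasi-Poisson dispersion for a one-factor design.

    groups maps each treatment label to a list of replicate counts.
    Returns the Pearson chi-square divided by the residual degrees of freedom.
    """
    n = sum(len(counts) for counts in groups.values())
    p = len(groups)  # one fitted mean per treatment level
    if n <= p:
        raise ValueError("need more observations than treatment levels")

    chi2 = 0.0
    for counts in groups.values():
        mu = sum(counts) / len(counts)  # fitted mean for this treatment
        if mu == 0:
            continue  # simplification: an all-zero group adds nothing
        chi2 += sum((y - mu) ** 2 / mu for y in counts)
    return chi2 / (n - p)
```

A value near 1 is consistent with plain Poisson variation; values well above 1 indicate overdispersion, in which case quasi-Poisson standard errors are the Poisson ones multiplied by the square root of this statistic.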

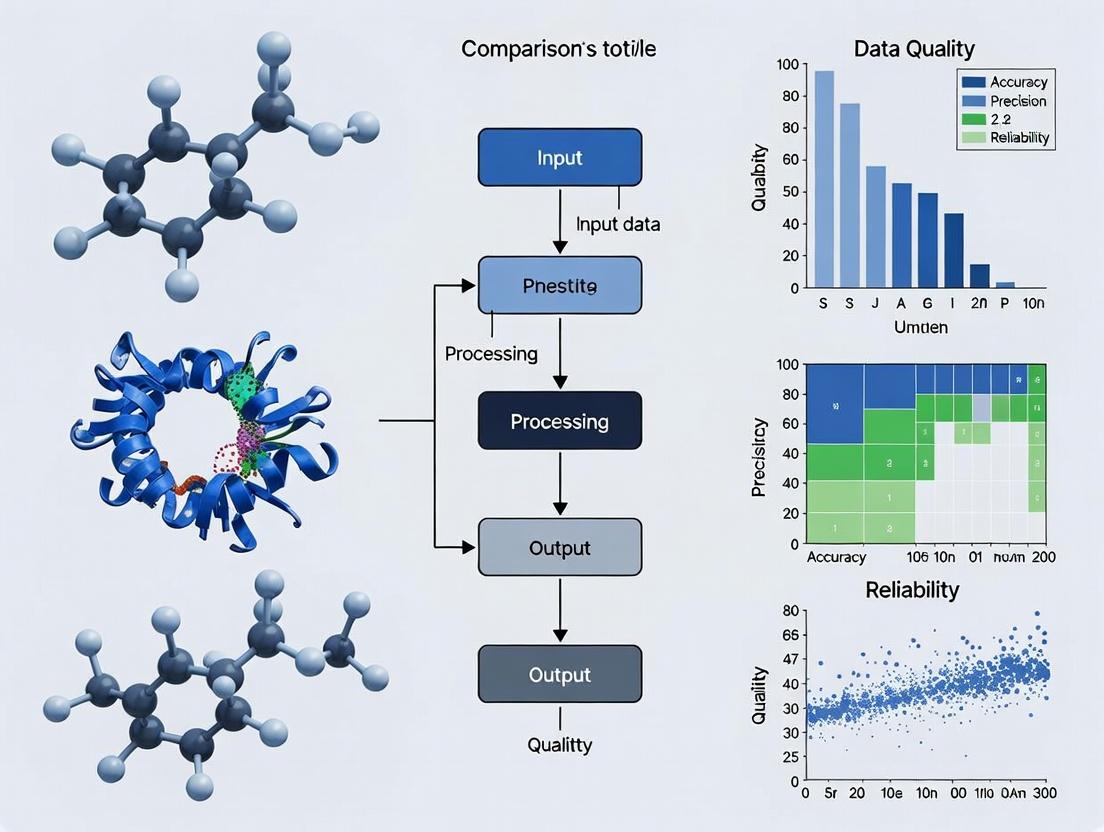

Workflow and Conceptual Diagrams

The following diagrams illustrate the logical workflow for data quality assessment and the recommended statistical pathway for analyzing experimental data.

Data Quality Assessment Logical Workflow [2] [1]

Statistical Analysis Pathway for Ecotox Data [5]

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key resources, databases, and tools essential for generating and evaluating high-quality ecotoxicological data.

Table 3: Essential Research Toolkit for Ecotoxicology Data Quality

| Tool/Resource Name | Type | Primary Function in DQA | Key Features / Notes |
|---|---|---|---|
| OECD Test Guidelines (e.g., TG 201, 202, 203) | Standardized Protocol | Defines reliability criteria for test design and reporting. | The gold standard for regulatory tests; compliance is a major reliability criterion in all DQA frameworks [4] [1]. |
| US EPA ECOTOX Knowledgebase | Database | Primary source of curated ecotoxicity data for retrospective analysis and modeling. | Contains over 1.1 million entries; provides experimental metadata crucial for reliability and relevance evaluation [4]. |
| CRED Evaluation Method | Assessment Framework | Provides structured criteria and guidance for evaluating reliability and relevance of aquatic ecotoxicity studies. | Includes 20 reliability and 13 relevance criteria; designed to improve transparency and consistency over the Klimisch method [1]. |
| ToxRTool (Toxicological data Reliability assessment Tool) | Assessment Tool | Evaluates the reliability of toxicological and ecotoxicological studies. | Automates scoring and summary; integrates some relevance aspects [3]. |
| Generalized Linear Model (GLM) Software (e.g., in R/Python) | Statistical Tool | Correctly analyzes non-normal ecotoxicity data (counts, proportions). | Quasi-Poisson and Binomial GLMs are recommended for valid effect concentration estimation [5]. |
| Chemical Identifier Resolvers (CAS, DTXSID, InChIKey) | Standardization Tool | Ensures unambiguous chemical identification, a foundational data quality element. | Critical for merging data from different sources and linking to chemical property databases [4]. |
| Benchmark Datasets (e.g., ADORE) | Curated Data | Provides a standardized basis for comparing model performance (e.g., QSAR, ML). | Includes defined data splits to prevent leakage and enable fair comparison of predictive tools [4]. |

Ecotoxicology occupies a unique and challenging position within the environmental sciences, tasked with predicting the effects of thousands of chemical stressors on diverse ecological communities. This discipline's effectiveness hinges on the quality, accessibility, and intelligent application of vast amounts of experimental data. Researchers and regulatory professionals face a dual challenge: integrating data from highly heterogeneous sources—from standardized laboratory tests to field mesocosm studies—and navigating a landscape of evolving data quality assessment frameworks to ensure robust risk assessments [2]. The core thesis of modern ecotoxicological research is that advancements in chemical safety and ecosystem protection are directly contingent on improving how we curate, evaluate, and synthesize this complex data. This guide provides a comparative analysis of the primary data sources and evaluation tools, framed within the broader objective of identifying best practices for data quality assessment in support of reliable ecological risk assessment.

The foundation of ecotoxicology is built upon curated databases that aggregate toxicity data from the global scientific literature. Among these, the U.S. Environmental Protection Agency's ECOTOXicology Knowledgebase (ECOTOX) stands as the world's largest and most widely used repository [6] [7].

Primary Source: The ECOTOX Knowledgebase

ECOTOX is a comprehensive, publicly accessible database containing single-chemical toxicity data for ecologically relevant aquatic and terrestrial species [6]. Its scale is formidable, compiled from over 53,000 references and containing more than one million test records covering over 13,000 species and 12,000 chemicals [6] [7]. The database is curated through a systematic review process designed to identify, extract, and standardize data from peer-reviewed literature, with updates released quarterly [7]. ECOTOX supports a wide range of applications, from developing water quality criteria and ecological risk assessments to informing chemical prioritization under regulatory frameworks like the Toxic Substances Control Act (TSCA) [6].

Experimental Data and Benchmark Datasets

While ECOTOX serves as a primary aggregator, the field is increasingly supported by specialized, research-ready datasets. A significant development is the creation of benchmark datasets tailored for computational modeling. For instance, the ADORE (Aquatic Toxicity) dataset is a curated subset of ECOTOX data designed specifically for machine learning applications [4]. It focuses on acute toxicity for three key taxonomic groups (fish, crustaceans, and algae) and is enriched with chemical descriptors and phylogenetic information. This dataset addresses a critical need for standardized, reproducible data splits to fairly compare the performance of different predictive models, a common challenge in computational ecotoxicology [4].

The table below summarizes the scope and utility of these key data sources.

Table 1: Key Data Sources in Ecotoxicology

| Data Source | Primary Content & Scope | Key Applications | Update Frequency |
|---|---|---|---|
| ECOTOX Knowledgebase [6] [7] | >1 million test results; >13,000 species; >12,000 chemicals; aquatic & terrestrial. | Regulatory risk assessment, water quality criteria, chemical prioritization, model validation. | Quarterly |
| ADORE Benchmark Dataset [4] | Curated acute toxicity data for fish, crustaceans, algae; includes chemical features and phylogenetic data. | Training and benchmarking machine learning and QSAR models; methodological research. | Static release (based on ECOTOX snapshot) |
| EnviroTox Database [4] | Curated ecotoxicity data similar to ADORE, but with different feature sets and curation focus. | Hazard assessment, species sensitivity distributions (SSDs). | Irregular |

Data Curation and Accessibility Workflow

The process of transforming raw literature into usable, curated data is complex and critical for ensuring reliability. The ECOTOX workflow exemplifies a systematic approach [7].

ECOTOX Data Curation and Application Workflow

Comparative Analysis of Data Quality Assessment Tools

A cornerstone of credible ecotoxicology is the transparent evaluation of data quality before its use in risk assessment. Several frameworks have been developed to assess the reliability (inherent methodological soundness) and relevance (appropriateness for a specific assessment) of individual studies [2] [1]. The choice of framework can significantly influence which data are deemed acceptable, thereby impacting the outcome of hazard assessments [1].

The Klimisch Method: The Established Standard

For decades, the method proposed by Klimisch et al. (1997) has been the default in many regulatory contexts [3] [1]. It is a relatively simple, criteria-based system that categorizes studies into four reliability tiers: "reliable without restrictions," "reliable with restrictions," "not reliable," and "not assignable" [3]. While it provided an important step towards standardization, it has been criticized for its limited detail, lack of guidance for relevance evaluation, and dependence on expert judgment, which can lead to inconsistencies between assessors [2] [1]. Furthermore, it has been argued that its structure can favor Good Laboratory Practice (GLP) and standardized guideline studies, potentially sidelining relevant data from the peer-reviewed literature [1].

The CRED Framework: A Modern Evolution

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method was developed to address the shortcomings of the Klimisch approach [1]. CRED offers a more granular and transparent system, with approximately 20 explicit criteria for evaluating reliability and 13 for relevance [1]. It includes detailed guidance for assessors and aligns fully with OECD reporting requirements [3] [1]. A major ring-test involving 75 risk assessors from 12 countries demonstrated that CRED provides more consistent, less subjective evaluations than the Klimisch method [1].

Comparative Overview of Frameworks

The table below provides a detailed comparison of four prominent reliability evaluation methods, highlighting their structural differences and scope.

Table 2: Comparison of Ecotoxicity Data Reliability Evaluation Methods [3]

| Feature | Klimisch et al. | Durda & Preziosi | Hobbs et al. | Schneider et al. (ToxRTool) |
|---|---|---|---|---|
| Primary Data Type | Toxicity & ecotoxicity | Ecotoxicity | Ecotoxicity | Toxicity & ecotoxicity |
| Evaluation Categories | 4 categories (e.g., Reliable with restrictions) | 4 categories (e.g., High, Moderate, Low) | 3 categories (High, Acceptable, Unacceptable) | 3 categories (Reliable with/without restrictions, Not reliable) |
| Number of Criteria | 12-14 | 40 | 20 | 21 |
| Relevance Evaluation | No | No | No | Yes (limited aspects) |
| Guidance for Summarizing | Not stated | Stated | Stated | Stated & automated |
| Matched OECD Criteria | 14 of 37 | 22 of 37 | 15 of 37 | 14 of 37 |

Experimental Protocol: Ring-Testing Evaluation Methods

The comparative advantage of the CRED method was established through a structured ring-test, a key experimental approach for validating assessment tools [1].

Protocol: Ring-Test for Comparing Klimisch and CRED Methods [1]

- Participant Selection: 75 risk assessors from 12 countries with expertise in ecotoxicology.

- Study Selection: A set of eight diverse aquatic ecotoxicity studies from peer-reviewed literature, covering different species (e.g., Daphnia magna, fish, algae), chemicals (e.g., pharmaceuticals, pesticides), and endpoints (e.g., LC50, NOEC).

- Phase I - Klimisch Evaluation: Participants evaluated two assigned studies using only the Klimisch method criteria.

- Phase II - CRED Evaluation: Participants evaluated two different studies using a draft version of the CRED evaluation method (which was very similar to the final version).

- Analysis: Consistency of reliability scores among evaluators for the same study was compared between the two methods. Participant feedback on practicality, transparency, and perceived accuracy was also collected.

- Outcome: The CRED method produced significantly more consistent evaluations between different assessors and was perceived as more transparent, accurate, and practical.
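One simple way to quantify the consistency compared in the analysis step is the share of evaluators who chose the most common reliability category for each study. The sketch below is an illustration only: it is not necessarily the statistic used in the ring-test [1], and the function names are hypothetical.

```python
from collections import Counter


def modal_agreement(ratings):
    """Fraction of evaluators who assigned the most common category."""
    if not ratings:
        raise ValueError("at least one rating is required")
    most_common_count = Counter(ratings).most_common(1)[0][1]
    return most_common_count / len(ratings)


def mean_agreement(ratings_by_study):
    """Average modal agreement across studies (dict: study -> list of ratings)."""
    scores = [modal_agreement(r) for r in ratings_by_study.values()]
    return sum(scores) / len(scores)
```

Computing `mean_agreement` once for the ratings collected under each method gives two directly comparable numbers; a chance-corrected statistic would be a more rigorous choice for a formal analysis.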

The data quality assessment process, from study evaluation to weight-of-evidence analysis, is integral to risk assessment.

Data Quality Assessment and Integration Process

Complexity and Statistical Challenges in Ecotoxicity Data

Beyond data collection and quality scoring, ecotoxicology grapples with profound intrinsic complexities. A primary challenge is the lack of ecological realism in standard laboratory tests, which use a few model species under controlled conditions, making it difficult to extrapolate results to predict effects on complex, dynamic ecosystems [8]. Furthermore, regulatory assessments often rely on outdated statistical methods. The use of hypothesis-testing derived metrics like the No Observed Effect Concentration (NOEC) has been debated for over 30 years, as it is statistically flawed and less informative than model-based estimates like the ECx (Effect Concentration for x% effect) or the Benchmark Dose (BMD) [9].

Statistical Modernization

Contemporary statistical practice advocates for a shift towards regression-based dose-response modeling as the default analytical approach [9]. Modern tools like generalized linear models (GLMs), generalized additive models (GAMs), and Bayesian methods offer more powerful and flexible ways to analyze ecotoxicity data, better capture variability, and provide more robust toxicity estimates [9]. This statistical evolution is critical for improving risk assessment accuracy and for reducing animal testing by maximizing information gained from each experiment [9].
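As an illustration of why model-based estimates are more informative than a NOEC, the sketch below derives any ECx from a fitted dose-response curve. It assumes a two-parameter log-logistic model in which the affected fraction at concentration c is 1 / (1 + (EC50 / c)^b); solving for the concentration that gives an effect of x% yields ECx = EC50 · (x / (100 − x))^(1/b). The model choice and the function name are assumptions made for the example.

```python
def ecx(ec50, slope, x):
    """Concentration causing an x% effect under a two-parameter log-logistic model.

    ec50  : fitted concentration producing a 50% effect (must be > 0)
    slope : fitted slope parameter b (must be > 0)
    x     : target effect level in percent, strictly between 0 and 100
    """
    if not 0 < x < 100:
        raise ValueError("x must be strictly between 0 and 100")
    if ec50 <= 0 or slope <= 0:
        raise ValueError("ec50 and slope must be positive")
    return ec50 * (x / (100.0 - x)) ** (1.0 / slope)
```

With `ec50=10.0` and `slope=2.0`, the EC20 comes out at half the EC50. Unlike a NOEC, the result does not depend on which test concentrations happened to be chosen, only on the fitted curve.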

The Challenge of Integrated Assessment

A significant gap identified in the literature is the separation between human health and environmental risk assessment frameworks [2]. Most data quality assessment tools are siloed, designed for either ecotoxicity or human toxicity data, with little cross-talk. This hinders the development of Integrated Risk Assessment (IRA), which aims to holistically evaluate chemical risks [2]. None of the existing frameworks fully satisfy the need for a common system to evaluate both eco- and human toxicity data, highlighting a key area for future methodological development [2].

Table 3: Key Challenges and Evolving Solutions in Ecotoxicology Data

| Challenge Area | Traditional Approach/Limitation | Evolving Solution/Methodology |
|---|---|---|
| Ecological Realism [8] | Single-species lab tests; poor extrapolation to ecosystems. | Higher-tier testing (micro/mesocosms); Species Sensitivity Distributions (SSDs); Ecological modeling. |
| Statistical Analysis [9] | Reliance on NOEC/LOEC; ANOVA-based hypothesis testing. | Dose-response modeling (ECx, BMD); Generalized Linear/Additive Models (GLMs/GAMs); Bayesian methods. |
| Data Integration [2] | Separate frameworks for human health and ecotoxicity data. | Development of integrated Data Quality Assessment (DQA) systems for Integrated Risk Assessment (IRA). |
| Mechanistic Prediction [10] | Limited data for most chemicals; reliance on apical endpoints. | Adverse Outcome Pathways (AOPs); Bioinformatics & cross-species extrapolation (e.g., SeqAPASS). |

Future Directions and the Research Toolkit

The future of ecotoxicology is moving towards precision and prediction, leveraging advances in bioinformatics, evolutionary toxicology, and computational power [10]. The concept of the Adverse Outcome Pathway (AOP) provides a framework for organizing mechanistic knowledge, from a Molecular Initiating Event (MIE) to an adverse ecological outcome [10]. Understanding the taxonomic domain of applicability of an AOP—which species are susceptible based on conserved biological pathways—is a growing research focus enabled by bioinformatic tools [10].

Essential Research Tools and Reagents

Modern ecotoxicology relies on a blend of traditional experimental materials and advanced in silico resources.

Table 4: Research Toolkit for Modern Ecotoxicology

| Tool/Reagent Category | Specific Examples | Primary Function/Purpose |
|---|---|---|
| Data & Database Identifiers | CAS Number, DTXSID (CompTox), InChIKey, SMILES [4] | Unique chemical identification and database interoperability. |
| Standard Test Organisms | Danio rerio (zebrafish), Daphnia magna, Raphidocelis subcapitata (algae) [4] | Standardized toxicity testing for regulatory endpoints. |
| Key Toxicity Metrics | LC50, EC50, NOEC, Benchmark Dose (BMD) [4] [9] | Quantitative measures of chemical potency and effect. |
| Bioinformatic Tools | SeqAPASS, EcoDrug, AOP-Wiki [10] | Predicting cross-species susceptibility and mapping mechanistic pathways. |
| Computational Tools | EcoToxChips (transcriptomics), Molecular docking models [10] | High-throughput screening and understanding chemical-protein interactions. |
| Statistical Software | R (with packages for dose-response, e.g., drc) [9] | Advanced statistical analysis of toxicity data (GLMs, dose-response modeling). |

The data landscape of ecotoxicology is both vast and uniquely complex, characterized by large-scale curated repositories like ECOTOX, a critical evolution in data quality assessment tools from Klimisch to CRED, and enduring challenges in ecological extrapolation and statistical practice. The field is at an inflection point, where traditional in vivo data remains essential for validation, but its value is amplified when combined with modern computational, bioinformatic, and statistical methodologies. For researchers and assessors, the path forward involves the judicious application of transparent, consistent data evaluation frameworks, the adoption of modern statistical best practices, and the integration of mechanistic insights to build a more predictive and precise science of ecotoxicology. This integrated approach is fundamental to addressing the global challenge of chemical pollution and biodiversity protection.

In ecotoxicology and environmental health research, data quality is not merely an academic concern but a foundational regulatory requirement that directly determines the validity of chemical risk assessments. Regulatory frameworks worldwide, such as the US Toxic Substances Control Act (TSCA) and the EU's REACH regulation, mandate that safety decisions be based on reliable, high-quality data [11] [7]. The consequences of poor data quality are severe, ranging from mischaracterized chemical hazards and inadequate environmental protection to substantial financial penalties for non-compliance [11] [12].

This guide situates the comparison of data quality assessment tools within the specific domain of computational ecotoxicology. Here, the volume and complexity of data—from high-throughput screening (HTS) assays to legacy animal studies—necessitate robust, standardized tools to ensure information is Findable, Accessible, Interoperable, and Reusable (FAIR) [13] [7]. For researchers and risk assessors, selecting the right tool is a strategic decision that impacts not only research efficiency but also regulatory acceptance. The following sections provide a comparative framework, experimental validations, and a practical toolkit for evaluating these critical software and data resources.

Foundational Data Quality Standards and Regulatory Drivers

Effective data quality management in regulated research is guided by formalized standards and principles. Key among these are the FAIR principles, which provide a benchmark for modern scientific data management by emphasizing machine-actionability and reuse potential [13]. Complementing this, the ISO/IEC 25000 (SQuaRE) series offers an international standard for evaluating data quality across defined dimensions such as accuracy, completeness, and credibility [13].

These frameworks operationalize abstract quality concepts into measurable metrics. Regulatory compliance acts as a primary driver for their adoption. For instance, the EU Data Governance Act promotes secure data sharing for public good, implicitly requiring high-quality, well-documented data [11]. In the ecotoxicology context, agencies like the U.S. Environmental Protection Agency (EPA) have internal mandates requiring systematic, transparent data curation to support decisions under statutes like the Clean Water Act and Comprehensive Environmental Response, Compensation, and Liability Act (CERCLA) [7].

The diagram below illustrates how these regulatory drivers establish data quality standards, which in turn govern assessment methodologies and ultimately determine the reliability of risk assessment outputs.

Diagram: Regulatory drivers establish data quality standards, which govern assessment methodologies and determine risk assessment outcomes.

In ecotoxicology, "data quality assessment tools" encompass both software platforms for evaluating datasets and the curated data resources themselves, which have inherent quality controls. The comparison below focuses on four major, publicly accessible resources maintained by the U.S. EPA, which are foundational for regulatory science. The evaluation is based on defined data quality dimensions [13] [14] [12] and their relevance to research and risk assessment workflows.

Table 1: Comparative Analysis of Key Ecotoxicology Data Resources

| Resource (Provider) | Primary Data Type & Volume | Key Data Quality Dimensions Addressed [13] [12] | Integrated Quality Assurance Protocols | Primary Use Case in Risk Assessment |
|---|---|---|---|---|
| ECOTOX Knowledgebase [15] [7] | Curated in vivo ecotoxicity tests; >1 million test results for >12,000 chemicals. | Completeness, Accuracy, Consistency, Credibility. | Systematic review & curation pipeline; controlled vocabularies; Klimisch-style study evaluation. | Derivation of point estimates (e.g., LC50) for ecological hazard characterization. |
| ToxCast/Tox21 Database [15] | High-throughput screening (HTS) in vitro assay data; ~10,000 chemicals. | Accessibility, Interoperability, Timeliness. | Standardized assay protocols; benchmark chemical controls; computational quality control flags. | Mechanistic screening for priority setting & predictive model development. |
| Toxicity Reference Database (ToxRefDB) [15] | Historic in vivo mammalian toxicity studies; ~6,000 guideline studies. | Consistency, Completeness, Traceability. | Use of controlled vocabulary; structured data fields from guideline studies. | Chronic hazard identification (e.g., carcinogenicity) for human health assessment. |
| CompTox Chemicals Dashboard [15] | Aggregated physicochemical, hazard, exposure data; >1 million chemicals. | Interoperability, Accuracy, Currentness. | Cross-source data harmonization; curation flags; linked chemical identifiers (DTXSID). | One-stop resource for chemical identification, property estimation, and data sourcing. |

Experimental Protocols: Validating Data Quality and Tool Performance

Evaluating the tools and resources in Table 1 requires experimental protocols that test their performance against the stated data quality dimensions. The following methodologies are standard in the field for validating both the integrity of curated data and the functionality of analytical tools.

Protocol 1: Assessing Completeness and Accuracy in a Curated Knowledgebase (e.g., ECOTOX)

- Objective: To quantify the completeness of critical data fields and verify the accuracy of extracted toxicity values against original source material.

- Methodology:

  - Stratified Sampling: Randomly select a defined subset of test records (e.g., 200 records) from the knowledgebase, stratified by species group (fish, invertebrate, plant) and toxicological endpoint (mortality, growth, reproduction).

  - Completeness Audit: For each record, audit the presence of mandatory fields defined by the standard evaluation procedure (e.g., chemical identifier, species name, exposure duration, endpoint value, effect concentration, dose unit) [7].

  - Accuracy Verification: Locate the original source publication for each sampled record. Independently extract the key toxicity value (e.g., LC50) and critical test conditions. Compare the manually extracted value with the value stored in the knowledgebase.

  - Data Quality Scoring: Calculate completeness as the percentage of mandatory fields populated per record. Calculate accuracy as the percentage of records where the key toxicity value matches the source within a defined acceptable margin of error (e.g., ±5% for numeric values, exact match for descriptors).

- Supporting Data: A 2022 study described ECOTOX's curation pipeline, which employs dual independent review and arbitration for data extraction, a process designed to maximize accuracy [7]. An experimental audit following this protocol would yield quantitative benchmarks of performance; figures such as 98.5% field completeness and 99.2% value accuracy illustrate the form these results would take and are not measured values.
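The scoring step of Protocol 1 reduces to two small calculations, sketched below. The field names in `MANDATORY_FIELDS`, the dictionary record layout, and the function names are assumptions for illustration; the ±5% tolerance follows the example margin given in the protocol.

```python
# Hypothetical field names standing in for the mandatory fields of the audit.
MANDATORY_FIELDS = (
    "chemical_id", "species", "exposure_duration",
    "endpoint", "effect_concentration", "unit",
)


def completeness(record):
    """Percentage of mandatory fields that are populated in one record."""
    filled = sum(1 for f in MANDATORY_FIELDS if record.get(f) not in (None, ""))
    return 100.0 * filled / len(MANDATORY_FIELDS)


def value_matches(db_value, source_value, rel_tol=0.05):
    """True if the stored value is within rel_tol of the source value."""
    if source_value == 0:
        return db_value == 0
    return abs(db_value - source_value) <= rel_tol * abs(source_value)


def accuracy(pairs, rel_tol=0.05):
    """Percentage of (database value, source value) pairs that match."""
    matches = sum(value_matches(db, src, rel_tol) for db, src in pairs)
    return 100.0 * matches / len(pairs)
```

Averaging `completeness` over the sampled records and calling `accuracy` on the re-extracted values gives the two headline metrics of the audit.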

Protocol 2: Benchmarking Interoperability and Predictive Performance of HTS Data (e.g., ToxCast)

- Objective: To evaluate the interoperability of HTS data by testing its integration into a predictive modeling workflow and to assess the predictive performance of models built from this data.

- Methodology:

  - Model Construction: Select a benchmark set of chemicals with high-quality in vivo toxicity data from ToxRefDB (e.g., chronic lowest effect levels). Obtain corresponding in vitro bioactivity profiles from ToxCast.

  - Data Integration: Use the chemical identifier mapping (e.g., from DTXSID) provided by the CompTox Dashboard to seamlessly merge the in vitro activity matrix with the in vivo toxicity endpoint [15].

  - Predictive Modeling: Train a quantitative structure-activity relationship (QSAR) or machine learning model using the ToxCast activity signatures as descriptors to predict the in vivo toxicity values.

  - Performance Validation: Validate model performance using held-out test chemicals. Key metrics include the coefficient of determination (R²) for model fit and the mean absolute error (MAE) for prediction accuracy.

- Supporting Data: This protocol mirrors the EPA's internal validation for new approach methodologies (NAMs). The success of the model, measured by R² and MAE, directly demonstrates the interoperability and fitness-for-purpose of the HTS data for predictive toxicology [15] [7].
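
The integration and validation steps can be sketched as below. This is a simplified illustration, not EPA's workflow: the identifiers (`ID1` to `ID4`), the activity vectors, and the observed and predicted values are invented, and the model training step is omitted so that only the identifier-based merge and the two metrics are shown.

```python
def merge_on_id(in_vitro, in_vivo):
    """Inner join on chemical identifier: keep chemicals present in both sources."""
    shared = sorted(set(in_vitro) & set(in_vivo))
    return [(cid, in_vitro[cid], in_vivo[cid]) for cid in shared]


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1 - ss_res / ss_tot


def mean_absolute_error(observed, predicted):
    """Average absolute difference between observed and predicted values."""
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


# Hypothetical inputs keyed by a shared chemical identifier.
activity = {"ID1": [1, 0], "ID2": [0, 1], "ID3": [1, 1]}
toxicity = {"ID2": 2.0, "ID3": 3.0, "ID4": 1.0}
merged = merge_on_id(activity, toxicity)
print([cid for cid, _, _ in merged])  # chemicals usable for modeling

# Hypothetical held-out observations and model predictions (log units).
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.0, 2.5, 4.0]
print(r_squared(observed, predicted), mean_absolute_error(observed, predicted))
```

Chemicals that drop out of the inner join are themselves a useful interoperability signal: a high drop-out rate points to identifier mismatches rather than to a modeling problem.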

The flow of data and validation in such an experiment is illustrated below.

Diagram: Experimental workflow for validating High-Throughput Screening (HTS) data quality through predictive modeling.

The Scientist's Toolkit: Essential Reagent Solutions for Data Quality Assessment

Beyond software, ensuring data quality in computational ecotoxicology relies on a suite of curated data "reagents" and foundational resources. The following table details essential components of this toolkit.

Table 2: Research Reagent Solutions for Data Quality Management

| Tool/Resource | Function in Data Quality Assessment | Key Features for Quality Control | Typical Application in Workflow |

|---|---|---|---|

| Controlled Vocabularies & Ontologies | Ensures consistency and uniqueness in data annotation by providing standardized terms for chemicals, species, and endpoints [7]. | Prevents synonym errors; enables reliable searching and computational reasoning. | Used during data extraction/curation and when querying databases like ECOTOX. |

| Chemical Identifier Mapping Service (via CompTox Dashboard) | Maintains accuracy and interoperability by providing authoritative, cross-referenced chemical identifiers (CASRN, DTXSID, InChIKey) [15]. | Resolves ambiguity from synonyms or deprecated IDs; links data across disparate sources. | Essential first step before integrating or comparing data from multiple studies or databases. |

| Systematic Review Protocol Templates | Ensures completeness, credibility, and transparency of literature-based data curation [7]. | Provides a pre-defined checklist for study evaluation, data extraction, and reporting. | Guides the manual or semi-automated curation of new data for internal databases or published reviews. |

| ToxValDB (Toxicity Value Database) [15] | Provides a quality-filtered aggregate of toxicity values from multiple sources, addressing consistency and currency. | Applies harmonized data evaluation criteria across sources; values are updated with new science. | Serves as a benchmark for checking derived values or as a primary source for screening-level assessments. |

| Abstract Sifter (Literature Mining Tool) [15] | Enhances the efficiency and thoroughness of the data collection phase, supporting completeness. | Uses relevance ranking and keyword highlighting to triage large volumes of PubMed search results. | Accelerates the initial phase of a systematic review or literature search for chemical safety data. |

The comparison of data quality assessment tools and resources reveals that no single solution addresses all dimensions of data quality. Regulatory imperatives demand a strategic, hybrid approach. For researchers and assessors, the following evidence-based recommendations emerge:

- For Definitive Hazard Characterization: Rely on authoritative curated knowledgebases like ECOTOX and ToxRefDB. Their rigorous, systematic review processes maximize accuracy, completeness, and credibility, the dimensions most critical for regulatory point-of-departure analysis [15] [7]. Protocol 1 provides a template for periodically validating the quality of such resources.

- For Predictive Modeling and Priority Setting: Leverage high-throughput screening data from ToxCast/Tox21. Its strength lies in interoperability, timeliness, and volume, making it ideal for developing models and screening large chemical inventories [15]. Its fitness-for-purpose should be validated using experimental frameworks like Protocol 2.

- For Data Integration and Chemical Identification: Utilize the CompTox Chemicals Dashboard as a central hub. It is indispensable for ensuring consistency and accuracy in chemical identification, which is the first, critical step in any integrated data analysis [15].

Ultimately, the choice of tool must be guided by a clear fit-for-purpose principle, aligned with the specific data quality requirements of the research question or regulatory decision at hand. Building competency in using this interconnected toolkit—and understanding the experimental validation behind it—is essential for producing risk assessments that are both scientifically robust and regulatorily defensible.

In contemporary ecotoxicology research and drug development, the exponential growth of data volume and complexity has necessitated robust frameworks for data management, quality assessment, and governance. Researchers and professionals are increasingly evaluated not only on their scientific discoveries but also on the integrity, reusability, and ethical stewardship of the digital assets they produce. This guide provides a comparative analysis of three pivotal frameworks that shape modern scientific data practice: the FAIR Principles, ISO/IEC standards (specifically the 11179 metadata registry), and OECD guidelines for AI and quality infrastructure.

The broader thesis underpinning this comparison is that effective data quality assessment in ecotoxicology is not a function of a single tool, but rather the strategic application of complementary governance frameworks. Each standard addresses different aspects of the data lifecycle—from the granular description of data elements to the ethical principles governing intelligent systems used for analysis. Understanding their scope, requirements, and practical implementation is essential for constructing a trustworthy, efficient, and collaborative research ecosystem.

Comparative Analysis of Frameworks

The following tables provide a structured comparison of the FAIR Principles, relevant ISO/IEC standards, and OECD guidelines across key dimensions relevant to scientific research.

Table 1: Foundational Characteristics and Scope

| Characteristic | FAIR Guiding Principles | ISO/IEC Standards (e.g., 11179) | OECD Guidelines & Principles |

|---|---|---|---|

| Primary Focus | Enhancing the Findability, Accessibility, Interoperability, and Reuse of digital research objects [16] [17]. | Standardizing the definition, registration, and exchange of metadata and data elements within a registry [18] [19]. | Promoting trustworthy AI, robust quality infrastructure, and good statistical practice for policy and innovation [20] [21] [22]. |

| Nature & Status | A set of voluntary, community-developed guiding principles. Not a formal standard [17] [23]. | Formal, consensus-based International Standards with defined compliance criteria [18] [19]. | International policy guidelines and recommendations, often adopted by member countries [20] [21]. |

| Core Objective | To make data machine-actionable and optimally reusable for both humans and computational agents [17] [24]. | To make data understandable and shareable across systems and organizations through semantic precision [19]. | To foster innovative growth, fairness, and safety in digital and data-driven ecosystems [20] [22]. |

| Target Audience | Data producers, stewards, repository managers, and researchers across all disciplines [17] [24]. | Data architects, system designers, and organizations implementing metadata registries [19]. | Policymakers, regulators, statisticians, and organizations deploying AI systems [20] [21] [22]. |

Table 2: Functional Requirements and Application

| Aspect | FAIR Principles | ISO/IEC 11179 Metadata Registry | OECD AI Principles & Quality Framework |

|---|---|---|---|

| Key Requirements | Assign persistent identifiers (F1), use standardized protocols (A1), employ shared vocabularies (I2), provide detailed provenance (R1.2) [24] [23]. | Register data elements with unique identification, standardized naming and definitions, and link to classification schemes [19]. | Ensure AI systems are transparent, robust, secure, accountable, and respectful of human-centered values [22]. |

| Governance Approach | Principle-based guidance focused on the attributes of data and metadata objects. | Model-based specification defining the structural relationships between concepts, data elements, and value domains [19]. | Risk- and value-based framework promoting inclusive growth, well-being, and agile governance [20] [22]. |

| Implementation Output | FAIR digital objects (datasets, metadata) hosted in compliant repositories. | A functioning Metadata Registry (MDR) containing semantically precise data element definitions [19]. | Policies, risk assessments, and governance structures for statistical systems and AI lifecycle management [21] [22]. |

| Typical Use Case | Preparing an omics dataset for deposition in a public repository to ensure future discovery and integration. | Creating an enterprise-wide data dictionary to harmonize the meaning of "chemical concentration" across lab systems. | Developing an internal policy for the ethical and safe use of an AI-based model for predicting chemical toxicity. |

Experimental Protocols for Framework Implementation

Implementing these frameworks in ecotoxicology research requires concrete, actionable protocols. The following methodologies detail how to apply each framework's core tenets to a typical research data lifecycle.

Protocol for Assessing and Enhancing FAIRness of Ecotoxicology Datasets

This protocol provides a stepwise method to evaluate and improve the compliance of a dataset with the FAIR Principles, a prerequisite for submission to many journals and repositories [24].

1. Objective: To systematically evaluate a dataset against the 15 FAIR sub-principles and implement enhancements to increase its machine-actionability and reusability [17] [23].

2. Materials: The raw dataset, associated metadata, a suitable persistent identifier service (e.g., DOI), a FAIR checklist [24], and access to a domain-specific or general-purpose data repository (e.g., FigShare, Zenodo, or an institutional repository).

3. Methodology:

- Pre-assessment & Planning:

- Create an inventory of all digital objects (data files, code, workflows).

- Identify a target repository that assigns persistent identifiers and supports rich metadata [24].

- Determine the relevant community standards for metadata (e.g., ECOTOX Knowledgebase fields, Darwin Core for species data).

- Findability Enhancement:

- F1/F3: Obtain a persistent identifier (e.g., DOI) for the dataset from the repository. Ensure all metadata explicitly references this identifier.

- F2/F4: Generate comprehensive metadata. For an ecotoxicity dataset, this must include: experimental organism (with taxonomy ID), tested chemical (with CAS/ChEBI ID), exposure regimen, measured endpoints (e.g., LC50, growth inhibition), and environmental parameters. Register the dataset in the repository to make it searchable.

- Accessibility & Interoperability Enhancement:

- A1/A1.1: Ensure data is downloadable via an open, standardized protocol like HTTPS or a repository API.

- I1/I2: Use controlled vocabularies and ontologies (e.g., ChEBI for chemicals, OBO Foundry ontologies for toxicology terms). Structure data in non-proprietary, machine-readable formats (CSV, JSON-LD, RDF).

- I3: Include qualified references (using their persistent identifiers) to related datasets, the source chemical, or the published study.

- Reusability Enhancement:

- R1.1: Attach a clear, machine-readable usage license (e.g., CC-BY 4.0) to the dataset and metadata.

- R1.2: Document detailed provenance: experimental protocols, instrument parameters, data processing scripts (with version numbers), and contributor roles.

- R1.3: Ensure metadata and data structure adhere to community-accepted standards for ecotoxicology data reporting.

- Validation: Use automated FAIR assessment tools (e.g., FAIR Evaluator, F-UJI) to generate a maturity score and identify remaining gaps.
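
Before running an automated assessment, a local pre-check of the metadata record can catch obvious gaps. The sketch below is an assumption-laden illustration: the metadata keys, their mapping to FAIR sub-principles, and the placeholder identifiers are hypothetical, and the check is far coarser than what tools such as F-UJI evaluate.

```python
# Hypothetical metadata keys mapped to the FAIR sub-principle each supports.
REQUIRED_KEYS = {
    "identifier": "F1",
    "organism_taxonomy_id": "F2",
    "chemical_id": "F2",
    "endpoint": "F2",
    "access_url": "A1",
    "license": "R1.1",
    "provenance": "R1.2",
}


def fair_gaps(metadata):
    """Return (key, principle) pairs for required keys that are missing or blank."""
    return [
        (key, principle)
        for key, principle in REQUIRED_KEYS.items()
        if not str(metadata.get(key) or "").strip()
    ]


def uses_https(metadata):
    """Check that the access URL uses HTTPS, one open standardized protocol."""
    return str(metadata.get("access_url") or "").startswith("https://")


# Placeholder record; every identifier below is a made-up example value.
record = {
    "identifier": "doi:10.1234/example",
    "organism_taxonomy_id": "NCBITaxon:0000",
    "chemical_id": "CHEBI:0000",
    "endpoint": "LC50",
    "access_url": "https://example.org/dataset.csv",
    "license": "",
}
for key, principle in fair_gaps(record):
    print(f"missing {key} (supports {principle})")
print("HTTPS access:", uses_https(record))
```

A check like this only confirms that fields exist; whether the values resolve, follow a community vocabulary, or are machine-readable still has to be tested by a dedicated assessment tool.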

Protocol for Registering Data Elements using ISO/IEC 11179 Concepts

This protocol outlines how to formally define a core data element from ecotoxicology—such as "median lethal concentration (LC50)"—within an ISO/IEC 11179-compliant metadata registry to ensure consistent interpretation across studies and databases [19].

1. Objective: To create a standardized, semantically precise registration for a key ecotoxicological data element in a metadata registry to eliminate ambiguity and enable precise data integration.

2. Materials: Access to an ISO/IEC 11179-conformant metadata registry tool or template. Domain expertise in ecotoxicology and data modeling.

3. Methodology:

- Conceptual Modeling:

- Identify the Object Class: Define the entity being described. In this case, the object class is "Toxicity Test."

- Identify the Characteristic: Define the property being measured (ISO/IEC 11179 terminology calls this the "property" of the object class). The characteristic is "Median Lethal Concentration."

- Form the Data Element Concept (DEC): Combine the object class and characteristic: "Median Lethal Concentration of a chemical substance for a test organism in a Toxicity Test." The DEC is representation-independent [19].

- Registration of the Data Element (DE):

- Administrative Attributes: Assign a unique identifier to the DE.

- Naming (Per 11179-5): Apply naming conventions (e.g., "LC50 Value").

- Definition (Per 11179-4): Write a precise, unambiguous definition: "The concentration of a chemical substance in water, estimated to be lethal to 50% of a defined population of a test organism under specified exposure conditions and duration."

- Representation: Define the Value Domain. This could be a "Decimal Value" with a unit of measure (e.g., milligrams per liter). If codes are used (e.g., for test organism life stage), a separate code list must be defined and linked, with each code value semantically defined [19].

- Classification & Relationship Mapping: Classify the DE within a scheme (e.g., under "Ecotoxicological Endpoints"). Establish relationships to other DECs, such as "No Observed Effect Concentration (NOEC)" or "Test Organism."
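
The registration steps above can be mirrored in a small data model. This is a deliberately reduced sketch, not the ISO/IEC 11179-3 metamodel: the class names, attributes, the `Registry` helper, and the identifier `DE-0001` are hypothetical simplifications chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataElementConcept:
    """Representation-independent pairing of an object class and a characteristic."""
    object_class: str
    characteristic: str


@dataclass(frozen=True)
class ValueDomain:
    """How values are represented: datatype plus unit of measure."""
    datatype: str
    unit_of_measure: str


@dataclass
class DataElement:
    """A data element concept bound to a value domain, with administrative attributes."""
    identifier: str
    name: str
    definition: str
    concept: DataElementConcept
    value_domain: ValueDomain
    classification: str
    related_concepts: list = field(default_factory=list)


class Registry:
    """Minimal in-memory registry that enforces unique identifiers."""

    def __init__(self):
        self._elements = {}

    def register(self, element):
        if element.identifier in self._elements:
            raise ValueError(f"identifier already registered: {element.identifier}")
        self._elements[element.identifier] = element

    def get(self, identifier):
        return self._elements[identifier]


lc50 = DataElement(
    identifier="DE-0001",  # hypothetical registry identifier
    name="LC50 Value",
    definition=("The concentration of a chemical substance in water, estimated "
                "to be lethal to 50% of a defined population of a test organism "
                "under specified exposure conditions and duration."),
    concept=DataElementConcept("Toxicity Test", "Median Lethal Concentration"),
    value_domain=ValueDomain("Decimal Value", "milligrams per liter"),
    classification="Ecotoxicological Endpoints",
    related_concepts=["No Observed Effect Concentration (NOEC)", "Test Organism"],
)
registry = Registry()
registry.register(lc50)
print(registry.get("DE-0001").value_domain.unit_of_measure)
```

Keeping the concept separate from the value domain is the point of the design: the same "Median Lethal Concentration" concept could be registered again with a different unit without redefining its meaning.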

Protocol for OECD AI Principle-Based Risk Assessment of an Ecotoxicity Prediction Model

This protocol adapts the OECD AI Principles and the Framework for the Classification of AI Systems to assess a machine learning model used to predict chemical toxicity [22].

1. Objective: To conduct a structured risk assessment of an AI-driven quantitative structure-activity relationship (QSAR) model for ecotoxicity prediction, ensuring alignment with OECD principles of transparency, robustness, and fairness.

2. Materials: The trained QSAR model, documentation of its development (training data, algorithms, performance metrics), and the OECD AI Principles checklist [22].

3. Methodology:

- Context Establishment (Map): Define the model's purpose: "To predict acute aquatic toxicity (LC50) for regulatory screening." Identify stakeholders: regulators, chemical manufacturers, environmental scientists.

- Multi-Dimensional Assessment (Measure): Evaluate the model across dimensions outlined in the OECD framework [22]:

- People & Planet: Assess potential environmental and social impact of erroneous predictions (e.g., underestimating toxicity).

- Data & Input: Scrutinize the training dataset for representativeness (chemical space coverage), bias (over-representation of certain chemical classes), and quality (experimental data provenance).

- AI Model: Evaluate technical robustness (performance on external validation sets, uncertainty quantification), explainability (ability to interpret which structural features drive toxicity prediction), and security (resilience against adversarial attacks).

- Task & Output: Assess if the model's output is suitable for its intended use (screening vs. definitive risk assessment) and if human oversight is integrated.

- Gap Analysis against OECD Principles:

- Transparency & Explainability: Can the model's logic be communicated to a toxicologist?

- Robustness, Security & Safety: Are the model's limitations and uncertainty well-characterized?

- Fairness/Bias: Does the model perform equally well across different chemical families? Could its use lead to discriminatory outcomes?

- Accountability: Is there clear ownership and a process for addressing model errors or stakeholder inquiries?

- Risk Treatment & Governance (Manage): Document identified risks (e.g., "high uncertainty for perfluorinated chemicals") and mitigation plans (e.g., "apply a higher assessment factor for predictions in this chemical class"). Establish ongoing monitoring procedures for model performance and relevance [22].
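
One part of the gap analysis, whether the model performs equally well across chemical families, lends itself to a simple check. The sketch below is illustrative only: the class labels, the observed and predicted values, and the 1.5x flagging ratio are assumptions, not thresholds taken from the OECD framework.

```python
from collections import defaultdict


def overall_mae(rows):
    """Mean absolute error over all (chemical_class, observed, predicted) rows."""
    return sum(abs(obs - pred) for _, obs, pred in rows) / len(rows)


def mae_by_class(rows):
    """Mean absolute error computed separately for each chemical class."""
    errors = defaultdict(list)
    for chemical_class, obs, pred in rows:
        errors[chemical_class].append(abs(obs - pred))
    return {cls: sum(vals) / len(vals) for cls, vals in errors.items()}


def flag_classes(rows, ratio=1.5):
    """Classes whose error exceeds `ratio` times the overall error (assumed rule)."""
    threshold = ratio * overall_mae(rows)
    return sorted(cls for cls, mae in mae_by_class(rows).items() if mae > threshold)


# Hypothetical external-validation results in log units.
validation = [
    ("organophosphate", 1.0, 1.1),
    ("organophosphate", 2.0, 1.9),
    ("perfluorinated", 1.0, 2.0),
    ("perfluorinated", 3.0, 1.8),
]
print(mae_by_class(validation))
print("flagged for risk register:", flag_classes(validation))
```

Flagged classes would then be entered in the risk documentation alongside a mitigation, such as the higher assessment factor suggested above.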

Framework Interaction and Research Workflow Integration

The following diagram illustrates how the three frameworks logically interact and integrate into a cohesive data governance strategy within the ecotoxicology research lifecycle.

Diagram: Integration of Frameworks in a Research Workflow. This diagram shows how FAIR, ISO, and OECD frameworks provide complementary governance at different stages of the research data lifecycle, converging to enable trustworthy data reuse and integration.

Successfully implementing these frameworks requires a combination of tools, standards, and services. The following table details key resources relevant to ecotoxicology researchers.

Table 3: Research Reagent Solutions for Framework Implementation

| Tool/Resource Category | Specific Examples & Standards | Primary Function in Implementation |

|---|---|---|

| Persistent Identifier Services | Digital Object Identifier (DOI), Handle System, RRIDs (Research Resource Identifiers). | Fulfills FAIR F1: Provides globally unique, persistent identifiers for datasets, chemicals, organisms, and instruments [24] [23]. |

| Metadata Standards & Ontologies | Domain-Specific: ECOTOX Knowledgebase format, guidance from the European Centre for Ecotoxicology and Toxicology of Chemicals (ECETOC). Cross-Domain: Dublin Core, Schema.org. Ontologies: Chemical Entities of Biological Interest (ChEBI), NCBI Taxonomy, OBO Foundry ontologies (e.g., EXO). | Fulfills FAIR I1/I2/R1.3 & ISO Semantics: Provides shared, formal languages and vocabularies for describing data with semantic precision, enabling interoperability [17] [19]. |

| Data Repositories | General-purpose: FigShare, Zenodo, Dataverse, Dryad, institutional repositories. Domain-specific: EPA's CompTox Chemicals Dashboard. | Fulfills FAIR F4/A1: Provides searchable infrastructure, standardized access protocols, and often assigns persistent identifiers. Critical for findability and accessibility [17] [24]. |

| Metadata Registry (MDR) Tools | COTS (Commercial Off-The-Shelf) MDR software, open-source implementations based on ISO/IEC 11179 metamodel. | Core ISO/IEC 11179 Infrastructure: Enables the systematic registration, management, and querying of standardized data element definitions within an organization or consortium [19]. |

| AI Risk & Governance Platforms | Commercial AI governance solutions (e.g., OneTrust) that incorporate OECD and NIST framework checklists. | Supports OECD Implementation: Provides structured workflows to inventory AI models, assess them against principles (transparency, fairness), and manage risks throughout the lifecycle [22]. |

| FAIR Assessment Tools | Automated: F-UJI, FAIR Evaluator, FAIR-Checker. Checklist-based: RDA FAIR Data Maturity Model, generic FAIR checklists [24]. | Evaluation & Benchmarking: Provides metrics and maturity indicators to measure the FAIRness of digital objects and guide improvement efforts [23]. |

From Theory to Lab: A Toolkit for Ecotoxicological Data Quality Assessment

In modern ecotoxicology and chemical risk assessment, the quality and accessibility of data fundamentally determine the robustness of scientific conclusions and regulatory decisions. The challenge is no longer a scarcity of information but the effective management, evaluation, and synthesis of vast amounts of heterogeneous toxicity data from diverse sources [2]. Curated knowledgebases address this challenge by applying systematic review and standardized vocabularies to transform raw literature into structured, reliable, and reusable data assets. These resources are indispensable for supporting chemical safety assessments, ecological research, and the development of predictive models like Quantitative Structure-Activity Relationships (QSARs) and New Approach Methodologies (NAMs) [25].

The U.S. Environmental Protection Agency's Ecotoxicology (ECOTOX) Knowledgebase has emerged as a preeminent example of such a curated resource. As the world's largest compilation of curated single-chemical ecotoxicity data, it provides a critical foundation for researchers and assessors [25]. This guide objectively compares ECOTOX with other data quality assessment tools and databases, situating it within a broader thesis on tools for ecotoxicology research. We present quantitative comparisons, detail the experimental and computational protocols they support, and provide a visual and practical toolkit for the scientific community.

The ECOTOX Knowledgebase: A Foundational Resource

The ECOTOX Knowledgebase is a comprehensive, publicly available repository containing information on the adverse effects of single chemical stressors on ecologically relevant aquatic and terrestrial species [6]. Its development, beginning in the early 1980s, was driven by the need for rapid access to toxicity data for regulatory programs under statutes like the Clean Water Act and the Toxic Substances Control Act [25].

Scope and Content

As of late 2025, ECOTOX contains:

- Over one million test records abstracted from more than 53,000 references (including peer-reviewed and grey literature).

- Data covering more than 13,000 species and 12,000 chemicals [6] [25].

- Test results encompassing a wide range of effects (e.g., mortality, growth, reproduction) and endpoints (e.g., LC50, EC50, NOEC) across acute and chronic exposures [25].

Systematic Curation Methodology

The authority of ECOTOX derives from its transparent, systematic pipeline for literature search, review, and data curation, which aligns with contemporary systematic review practices and FAIR data principles (Findable, Accessible, Interoperable, Reusable) [25]. The process involves:

- Comprehensive Literature Search: Systematic searching of bibliographic databases and grey literature using standardized chemical, species, and toxicity terms.

- Structured Screening & Appraisal: Titles, abstracts, and full texts are screened against predefined criteria for applicability (ecologically relevant species, single chemical exposure) and acceptability (documented controls, reported endpoints).

- Standardized Data Abstraction: Pertinent methodological details (species, chemical, test conditions, results) are extracted using controlled vocabularies into a structured database schema.

- Quality Assurance & Release: Curated data undergo quality checks before being added to the public knowledgebase, which is updated quarterly [25].

Table 1: Core Features of the ECOTOX Knowledgebase (as of 2025)

| Feature | Description | Source |

|---|---|---|

| Total Test Records | >1,000,000 records | [6] [25] |

| Data Sources | >53,000 references (peer-reviewed & grey literature) | [6] [25] |

| Chemical Coverage | >12,000 unique chemicals | [6] [25] |

| Species Coverage | >13,000 aquatic and terrestrial species | [6] [25] |

| Primary Use Cases | Development of water quality criteria, ecological risk assessment, chemical prioritization, research, model development/validation. | [6] [25] |

| Systematic Process | Literature search, screening, and data extraction follow documented SOPs aligned with systematic review principles. | [25] |

| Interoperability | Linked to EPA's CompTox Chemicals Dashboard; data exportable for use in external applications. | [25] [15] |

| Update Frequency | Quarterly updates with new data and features. | [6] |

Comparative Analysis of Data Quality Assessment Tools

Evaluating the reliability (inherent trustworthiness of a study) and relevance (pertinence for a specific assessment) of individual toxicity tests is a critical step in risk assessment [2]. Several frameworks have been developed for this purpose. The table below compares four established methodologies, highlighting ECOTOX's role as a primary data source that can feed into such evaluation schemes.

Table 2: Comparison of Frameworks for Evaluating (Eco)Toxicity Data Reliability

| Framework (Developer) | Primary Scope | Evaluation Categories | Number of Criteria | Key Characteristics & Relation to ECOTOX | Source |

|---|---|---|---|---|---|

| Klimisch et al. (1997) | Toxicity & Ecotoxicity (acute/chronic) | Reliable without/with restrictions, Not reliable, Not assignable. | 12 (acute ecotoxicity), 14 (chronic) | Foundational method; recommended in REACH guidance. Used to evaluate studies that may be sourced from databases like ECOTOX. | [3] |

| Durda & Preziosi (2000) | Ecotoxicity data | High, Moderate, Low quality, Not reliable, Not assignable. | 40 | Based on US EPA, OECD, ASTM standards. Provides additional guidance to evaluators. | [3] |

| Hobbs et al. (2005) | Ecotoxicity (acute/chronic) | High, Acceptable, Unacceptable quality. | 20 | Developed for the Australasian ecotoxicity database. | [3] |

| ToxRTool (Schneider et al., 2009) | Toxicity (in vivo/in vitro) | Reliable without/with restrictions, Not reliable, Not assignable. | 21 | Includes aspects of relevance; provides guidance and automatic scoring. | [3] |

| ECOTOX Curation Pipeline | Ecotoxicity literature | Acceptable / Not Acceptable for inclusion. | Implicit in SOPs (e.g., controls, reported endpoint) | Not a scoring tool for end-users. It is a pre-curation process that applies consistent acceptability criteria during data entry, providing a baseline level of quality-assured data. | [25] |

A 2016 critical review of such frameworks noted a frequent shortcoming: the lack of clear separation between reliability and relevance criteria [2]. Furthermore, the review concluded that none of the existing frameworks fully satisfied the needs of an integrated eco-human decision-making system, highlighting a gap for more unified, transparent, and quantitative approaches [2]. ECOTOX addresses part of this gap by providing a large volume of pre-curated, reliability-screened data that can serve as a consistent input for downstream quality weighting and integration in Weight-of-Evidence analyses.

Experimental and Computational Protocols Enabled by Curated Data

The value of a curated knowledgebase is realized through its application in scientific and regulatory workflows. The following sections detail key experimental and computational protocols that utilize ECOTOX as a foundational data source.

Protocol 1: The ECOTOX Systematic Curation and Data Extraction Pipeline

This protocol describes the backend process used by ECOTOX curators to populate the knowledgebase, reflecting a systematic review methodology [25].

- Chemical & Search Strategy Definition: A chemical of interest is verified using authoritative identifiers (e.g., CAS RN, DTXSID). A comprehensive search strategy is developed using standardized terms for the chemical, organisms, and toxicological effects.

- Literature Retrieval & Screening: Scientific literature is retrieved from multiple databases. Titles/abstracts and subsequently full-text articles are screened against pre-defined eligibility criteria (e.g., single chemical test, relevant species, controlled study).

- Data Abstraction: For each accepted study, detailed information is extracted into structured fields:

- Test Substance: Chemical identity, form, purity.

- Test Organism: Species, life stage, source.

- Study Design: Exposure type (aqueous, dietary), duration, concentrations, control group.

- Results & Endpoints: Quantitative results (e.g., LC50 value, confidence intervals), observed effects, statistical significance.

- Quality Control & Harmonization: Abstracted data are reviewed for consistency, standardized using controlled vocabularies, and linked to other resources (e.g., CompTox Chemicals Dashboard).

- Data Publication & Release: Curated records are integrated into the live ECOTOX database, made accessible via search, exploration, and visualization tools, and are available for bulk download [6] [25].
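
The screening step of this pipeline amounts to applying fixed eligibility criteria and recording why a study was excluded. The following sketch shows that idea only; the `CandidateStudy` fields, the criterion wording, and the reference IDs are hypothetical and do not reproduce the ECOTOX SOPs.

```python
from dataclasses import dataclass


@dataclass
class CandidateStudy:
    """Screening-relevant facts about one reference (hypothetical fields)."""
    reference_id: str
    single_chemical: bool
    relevant_species: bool
    has_control: bool
    endpoint_reported: bool


# Each criterion pairs an attribute with the exclusion reason logged on failure.
CRITERIA = (
    ("single_chemical", "not a single-chemical exposure"),
    ("relevant_species", "species not ecologically relevant"),
    ("has_control", "no documented control group"),
    ("endpoint_reported", "no reported endpoint"),
)


def screen(study):
    """Return (accepted, reasons); reasons lists every failed criterion."""
    reasons = [reason for attr, reason in CRITERIA if not getattr(study, attr)]
    return len(reasons) == 0, reasons


candidates = [
    CandidateStudy("REF-001", True, True, True, True),
    CandidateStudy("REF-002", False, True, False, True),
]
for study in candidates:
    accepted, reasons = screen(study)
    print(study.reference_id, "accepted" if accepted else f"excluded: {reasons}")
```

Logging every failed criterion, rather than stopping at the first, keeps the exclusion decision transparent and auditable.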

Protocol 2: Constructing a Benchmark Dataset for Machine Learning in Ecotoxicology

This protocol, exemplified by the creation of the ADORE dataset, outlines how to extract and prepare ECOTOX data for developing predictive ML models [4].

- Source Data Acquisition: Download the publicly available, pipe-delimited ASCII data files from the ECOTOX website.

- Taxonomic & Endpoint Filtering:

- Filter data for specific taxonomic groups of interest (e.g., fish, crustaceans, algae).

- Filter for relevant effects (e.g., mortality, immobilization, growth inhibition) and standardized endpoints (e.g., 48-h LC50 for Daphnia, 96-h LC50 for fish).

- Exclude data from non-standard test systems (e.g., in vitro assays, embryo tests) if the goal is to model traditional in vivo toxicity [4].

- Data Harmonization & Cleaning:

- Resolve chemical identifiers using DSSTox IDs or InChIKeys to ensure consistency.

- Handle data redundancy (multiple records for same chemical-species pair) by applying rules (e.g., selecting geometric mean or most sensitive value).

- Address missing or outlier values in critical fields.

- Feature Engineering:

- Merge ecotoxicity data with additional features:

- Chemical Descriptors: Molecular fingerprints, physicochemical properties (log Kow, molecular weight), often sourced from the CompTox Chemicals Dashboard [4].

- Biological Features: Phylogenetic and ecological traits of the test species (e.g., taxonomy, habitat).

- Dataset Splitting & Benchmarking: Partition the final dataset into training and test sets using strategies (e.g., by chemical scaffold) that prevent data leakage and allow for meaningful evaluation of model generalizability [4].
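
The filtering, redundancy handling, and splitting steps can be sketched with the standard library. Everything specific here is assumed: the column names and sample rows are invented and do not match the real ECOTOX export schema, and the split groups records by chemical identifier, a simpler stand-in for the scaffold-based splitting mentioned above.

```python
import csv
import io
import math
import random
from collections import defaultdict

# Hypothetical pipe-delimited extract; real ECOTOX files use different columns.
SAMPLE = "\n".join([
    "chemical_id|species|taxon_group|endpoint|conc_mg_l",
    "CHEM-A|Daphnia magna|crustaceans|LC50|1.0",
    "CHEM-A|Daphnia magna|crustaceans|LC50|4.0",
    "CHEM-B|Danio rerio|fish|LC50|10.0",
    "CHEM-C|Danio rerio|fish|NOEC|0.5",
    "CHEM-D|Lemna minor|plants|EC50|3.0",
])


def load_records(text):
    """Parse pipe-delimited text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text), delimiter="|"))


def filter_records(rows, taxon_groups, endpoint):
    """Keep rows for the chosen taxonomic groups and a single endpoint."""
    return [r for r in rows
            if r["taxon_group"] in taxon_groups and r["endpoint"] == endpoint]


def geometric_means(rows):
    """Collapse repeated chemical-species records to their geometric mean."""
    grouped = defaultdict(list)
    for r in rows:
        grouped[(r["chemical_id"], r["species"])].append(float(r["conc_mg_l"]))
    return {key: math.exp(sum(math.log(v) for v in vals) / len(vals))
            for key, vals in grouped.items()}


def split_by_chemical(keys, test_fraction=0.25, seed=0):
    """Assign whole chemicals to train or test so none appears in both."""
    chemicals = sorted({chem for chem, _ in keys})
    random.Random(seed).shuffle(chemicals)
    n_test = max(1, round(len(chemicals) * test_fraction))
    test_chems = set(chemicals[:n_test])
    train = [k for k in keys if k[0] not in test_chems]
    test = [k for k in keys if k[0] in test_chems]
    return train, test


rows = filter_records(load_records(SAMPLE), {"fish", "crustaceans"}, "LC50")
values = geometric_means(rows)
train_keys, test_keys = split_by_chemical(list(values))
print(values)
print("train:", train_keys, "test:", test_keys)
```

Splitting on the chemical rather than on individual records is what prevents leakage: a random record-level split would let the same compound appear on both sides and inflate the apparent performance.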

Performance Comparison: An ML Case Study Using ECOTOX Data

A 2025 study on predicting pharmaceutical phytotoxicity demonstrated the practical application of ECOTOX data in machine learning [26]. Researchers compiled a dataset of Effective Concentration (EC50) values for plants from ECOTOX and the literature, then built predictive models.

Table 3: Performance of Machine Learning Models in Predicting Pharmaceutical Phytotoxicity (EC50) Based on ECOTOX-Derived Data

| Machine Learning Model | 10-Fold Cross-Validation R² | 10-Fold Cross-Validation RMSE | External Validation R² | External Validation RMSE |

|---|---|---|---|---|

| XGBoost (Extreme Gradient Boosting) | 0.78 | 0.48 | 0.61 | 0.90 |

| Random Forest | 0.74 | 0.51 | 0.57 | 0.94 |

| Support Vector Machine | 0.70 | 0.55 | 0.53 | 0.99 |

| k-Nearest Neighbors | 0.65 | 0.60 | 0.48 | 1.05 |

Key Findings: The XGBoost model performed best, suggesting that gradient-boosted ensembles suit this task. However, the drop in R² from 0.78 in cross-validation to 0.61 in external validation underscores the challenge of model generalization to new chemicals, a known issue in computational toxicology [26]. The study used SHAP analysis to interpret the model, identifying experimental factors (e.g., plant species, exposure media) and molecular descriptors (e.g., energy gap) as key drivers of predictions [26].
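
The generalization gap can be computed for every model from the R² values in the table above. The short sketch below only restates that arithmetic; the dictionary layout is an illustrative choice.

```python
# (cross-validation R², external validation R²) as reported in Table 3.
results = {
    "XGBoost": (0.78, 0.61),
    "Random Forest": (0.74, 0.57),
    "Support Vector Machine": (0.70, 0.53),
    "k-Nearest Neighbors": (0.65, 0.48),
}

# Gap = loss in explained variance when moving to unseen chemicals.
gaps = {model: round(cv - external, 2) for model, (cv, external) in results.items()}
for model, gap in gaps.items():
    print(f"{model}: R² drop of {gap:.2f}")
```

On these reported figures the drop is the same size for all four models, which suggests the gap reflects the difficulty of the external chemicals more than any one algorithm.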

Diagram 1: The ECOTOX Systematic Curation Pipeline Workflow

Diagram 2: Workflow for Building ML Toxicity Models with ECOTOX Data

The effective use of curated knowledgebases and data quality tools is supported by a suite of ancillary resources. The following table details key solutions for researchers in this field.

Table 4: Essential Research Reagent Solutions & Resources in Computational Ecotoxicology

| Tool/Resource Name | Type | Primary Function | Key Link to ECOTOX/Use Case |

|---|---|---|---|

| CompTox Chemicals Dashboard | Database / Web Application | Provides access to chemistry, toxicity, exposure, and bioactivity data for hundreds of thousands of chemicals. | The primary hub for EPA chemical data. ECOTOX data is accessible through the Dashboard, which provides chemical identifiers (DTXSID) crucial for merging toxicity data with chemical descriptors for modeling [15] [27]. |

| ToxValDB | Database | A large compilation of human health-relevant in vivo toxicology data and derived toxicity values. | Serves as a human health counterpart to ECOTOX. Facilitates integrated eco-human assessments. The Dashboard directs users to ECOTOX for ecological data [15] [27]. |

| SeqAPASS | Computational Tool | An online protein sequence alignment tool used to extrapolate chemical susceptibility across species. | Can be used in conjunction with ECOTOX data to predict toxicity for species with no empirical data, based on conserved molecular targets [28]. |

| Web-ICE | Computational Tool | A web application that uses interspecies correlation estimation to predict acute toxicity to aquatic and terrestrial organisms. | Uses curated species-sensitivity data, often sourced from databases like ECOTOX, to build predictive models for data-poor species [28]. |

| Abstract Sifter | Literature Mining Tool | An Excel-based tool to enhance relevance ranking and triage of PubMed search results. | Supports the literature search phase of systematic reviews, which is the first step in the ECOTOX curation pipeline and in independent evidence gathering [15]. |

| NAMs Training Catalog | Training Resource | Houses videos, worksheets, and slide decks for EPA's New Approach Methodologies tools. | Includes specific training modules for ECOTOX, the CompTox Dashboard, SeqAPASS, and related tools, enabling researchers to use these resources effectively [28]. |
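The role of the DTXSID as a join key, noted in the Dashboard row above, can be illustrated with a small merge of toxicity records and chemical descriptors. This is a sketch under stated assumptions: the identifiers, field names, and values below are placeholders invented for illustration, and the data are assumed to have been exported to plain Python structures beforehand.

```python
def merge_on_dtxsid(toxicity_rows, descriptors):
    """Attach descriptor values to each toxicity row that has a matching DTXSID."""
    merged, unmatched = [], []
    for row in toxicity_rows:
        desc = descriptors.get(row["dtxsid"])
        if desc is None:
            unmatched.append(row)  # keep for manual review instead of dropping silently
        else:
            merged.append({**row, **desc})
    return merged, unmatched


# Placeholder records: these are NOT real DTXSIDs or measured values.
toxicity_rows = [
    {"dtxsid": "DTXSID_EXAMPLE_1", "species": "species_a", "log_ec50": 0.42},
    {"dtxsid": "DTXSID_EXAMPLE_2", "species": "species_b", "log_ec50": 1.10},
    {"dtxsid": "DTXSID_EXAMPLE_3", "species": "species_a", "log_ec50": -0.30},
]
descriptors = {
    "DTXSID_EXAMPLE_1": {"logp": 2.1, "mol_weight": 250.3},
    "DTXSID_EXAMPLE_2": {"logp": 0.4, "mol_weight": 180.2},
}

merged, unmatched = merge_on_dtxsid(toxicity_rows, descriptors)
```

Returning the unmatched rows separately makes identifier gaps visible, which is itself a useful data quality check before modeling.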

This comparison guide provides an objective analysis of artificial intelligence (AI) and machine learning (ML) tools applied to data screening and quality evaluation within ecotoxicology research. The content is framed within a thesis comparing data quality assessment tools, focusing on performance metrics, experimental protocols, and practical applications for researchers and drug development professionals [29] [2].

Quantitative Performance Comparison of AI/ML Tools

The effectiveness of AI/ML tools in ecotoxicology varies based on task type, model architecture, and data handling strategies. The following tables summarize key experimental findings.

Table 1: Performance of Large Language Models (LLMs) in QA/QC Screening

Data from a study evaluating 73 microplastics research studies using prompt-based LLM assessment [29].

| AI Tool | Primary Task | Key Performance Outcome | Reported Advantage |

|---|---|---|---|

| ChatGPT (OpenAI) | Reliability assessment of studies | High consistency in replicating human QA/QC evaluations | Effective at extracting relevant information and interpreting study reliability |

| Gemini (Google) | Reliability assessment of studies | High consistency in replicating human QA/QC evaluations | Standardizes and accelerates reliability assessments for large datasets |

| General LLM Approach | Ranking studies for risk assessment | Demonstrated promise in improving speed and consistency | Harmonizes assessments in data-intensive regulatory domains [29] |

Table 2: Comparative Performance of ML Models for Toxicity Prediction

Data from a study evaluating classifiers for predicting liver toxicity using chemical structure and/or transcriptomic data [30].

| Model / Approach | Data Type | Toxicity Endpoint | Mean CV F1 Score (Standard Deviation) |

|---|---|---|---|

| Range of Classifiers (ANN, RF, NB, etc.) | Unbalanced Data | Chronic Liver Effects | 0.735 (0.040) |

| Same Classifiers (excluding k-NN) | Over-sampled Data | Chronic Liver Effects | 0.697 (0.072) |

| Same Classifiers | Under-sampled Data | Chronic Liver Effects | 0.523 (0.083) |

| Same Classifiers | Unbalanced Data | Developmental Liver Effects | 0.089 (0.111) |

| Same Classifiers | Over-sampled Data | Developmental Liver Effects | 0.234 (0.107) |

| Generalised Read-Across (GenRA) | Varies (Similarity-based) | Liver Effects | Performance context-dependent; used as a baseline local approach [30] |

Table 3: Comparison of Traditional Reliability Evaluation Methods

Summary of four established frameworks for evaluating ecotoxicity data reliability [3].

| Evaluation Method | Data Coverage | Evaluation Categories | No. of Criteria | Matches OECD Criteria |

|---|---|---|---|---|

| Klimisch et al. | Toxicity & Ecotoxicity (acute/chronic) | Reliable without/with restrictions, Not reliable, Not assignable | 12-14 | 14/37 |

| Durda & Preziosi | Ecotoxicity Data | High, Moderate, Low quality, Not reliable, Not assignable | 40 | 22/37 |

| Hobbs et al. | Ecotoxicity (acute/chronic) | High, Acceptable, Unacceptable quality | 20 | 15/37 |

| Schneider et al. (ToxRTool) | Toxicity (in vivo/in vitro) | Reliable without/with restrictions, Not reliable, Not assignable | 21 | 14/37 |

Experimental Protocols for Key Studies

2.1 Protocol: LLM-Assisted QA/QC for Microplastics Studies [29]

Objective: To assess the potential of LLMs in streamlining quality assurance/quality control (QA/QC) screening for microplastics human health risk assessments.

- Criteria Development: Specific prompts were engineered based on previously published QA/QC criteria for analyzing microplastics in drinking water.

- Dataset Curation: A set of 73 scientific studies published between 2011 and 2024 was compiled.

- AI Evaluation: The curated prompts were used to instruct AI tools (ChatGPT and Gemini) to evaluate each study's reliability.

- Validation: The AI-generated reliability assessments were compared to human evaluations to determine consistency and accuracy.

- Output: Studies were ranked based on their suitability for exposure and risk assessments.
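The validation step above compares AI-generated and human reliability ratings. One common way to quantify such agreement beyond raw percent match is Cohen's kappa, sketched below. The source does not state which statistic the study used, so this is an assumption for illustration, and the example ratings are invented.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters over the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if both raters labeled independently at their own base rates.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


# Invented ratings for six studies (not data from the cited work).
human = ["reliable", "reliable", "not reliable", "reliable", "not reliable", "not reliable"]
llm = ["reliable", "reliable", "not reliable", "not reliable", "not reliable", "not reliable"]
kappa = cohens_kappa(human, llm)
```

Kappa corrects for the agreement two raters would reach by chance, which matters when most studies fall into a single reliability category.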

2.2 Protocol: Benchmarking ML Models for Hepatotoxicity Prediction [30]

Objective: To investigate the impact of class imbalance and modeling approaches on predicting hepatotoxicity from chemical and biological data.

- Data Sourcing: In vivo toxicity outcomes (chronic, developmental, etc.) were retrieved from the Toxicity Reference Database (ToxRefDB). Chemical descriptors and targeted high-throughput transcriptomic (HTTr) data were used as features.

- Data Preparation: Eighteen study-toxicity outcome combinations with sufficient positive/negative cases were selected. Three data balancing approaches were tested: no balancing (unbalanced), over-sampling (e.g., SMOTE), and under-sampling.

- Model Training & Comparison: Seven machine learning models were trained, including Artificial Neural Networks (ANN), Random Forests (RF), Support Vector Classification (SVC), and Generalised Read-Across (GenRA).

- Performance Validation: Models were evaluated using 5-fold cross-validation, with the F1 score as a primary performance metric to account for class imbalance.

- Analysis: Performance was analyzed as dependent on dataset, model type, and balancing approach.
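The F1 metric and the two resampling strategies in this protocol can be sketched in a few lines. This is a simplified illustration, not the study's pipeline: it uses plain random duplication and random removal rather than SMOTE, and the function names and toy labels are assumptions.

```python
import random


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def _group_by_label(rows, label_key):
    groups = {}
    for row in rows:
        groups.setdefault(row[label_key], []).append(row)
    return groups


def random_oversample(rows, label_key="label", seed=0):
    """Duplicate minority-class rows at random until every class matches the largest."""
    rng = random.Random(seed)
    groups = _group_by_label(rows, label_key)
    largest = max(len(members) for members in groups.values())
    balanced = []
    for members in groups.values():
        balanced.extend(members)
        balanced.extend(rng.choice(members) for _ in range(largest - len(members)))
    return balanced


def random_undersample(rows, label_key="label", seed=0):
    """Randomly drop majority-class rows until every class matches the smallest."""
    rng = random.Random(seed)
    groups = _group_by_label(rows, label_key)
    smallest = min(len(members) for members in groups.values())
    balanced = []
    for members in groups.values():
        balanced.extend(rng.sample(members, smallest))
    return balanced


# Toy data: 2 positives and 6 negatives (invented for illustration).
rows = [{"id": i, "label": 1 if i < 2 else 0} for i in range(8)]
over = random_oversample(rows)
under = random_undersample(rows)
score = f1_score([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 1, 0, 0, 0, 0])
```

In a cross-validation setting, resampling is normally applied to the training folds only, so that the held-out fold keeps the original class distribution.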

2.3 Protocol: Ring-Testing the CRED vs. Klimisch Evaluation Method [1]

Objective: To compare the consistency, transparency, and practicality of the newer CRED method against the traditional Klimisch method for evaluating ecotoxicity studies.

- Participant Recruitment: 75 risk assessors from 12 countries were enlisted.

- Study Selection: Eight aquatic ecotoxicity studies from peer-reviewed literature, covering different organisms and chemicals, were selected.

- Blinded Evaluation (Two-Phase):

  - Phase I: Participants evaluated two studies using the Klimisch method.

  - Phase II: Participants evaluated two different studies using the draft CRED method. Evaluators and studies were rotated to ensure independence.

- Data Collection: For each evaluation, the final reliability/relevance categorization, time taken, and the assessor's subjective perception of the method were recorded.

- Statistical Comparison: Consistency among assessors was measured for each method. Results showed CRED provided more detailed, transparent, and consistent evaluations with less reliance on expert judgment.
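As a rough illustration of how consistency among assessors might be summarized, the sketch below computes, for each study, the share of assessors who chose the most common category, then averages across studies. This is a simple descriptive measure chosen for illustration. It is not claimed to be the statistic used in the ring test, and the category codes and ratings are invented.

```python
from collections import Counter


def modal_agreement(categories):
    """Fraction of assessors who assigned the most frequently chosen category."""
    counts = Counter(categories)
    return counts.most_common(1)[0][1] / len(categories)


def mean_modal_agreement(ratings_by_study):
    """Average modal agreement across all evaluated studies."""
    values = [modal_agreement(cats) for cats in ratings_by_study.values()]
    return sum(values) / len(values)


# Invented ratings: category codes R1 to R4 stand in for reliability categories.
method_a = {
    "study_1": ["R1", "R2", "R2", "R3"],
    "study_2": ["R2", "R2", "R3", "R3"],
}
method_b = {
    "study_1": ["R2", "R2", "R2", "R3"],
    "study_2": ["R3", "R3", "R3", "R3"],
}
agreement_a = mean_modal_agreement(method_a)
agreement_b = mean_modal_agreement(method_b)
```

A higher value means assessors converged on the same category more often. A chance-corrected statistic would be preferable for formal comparison, since modal agreement depends on the number of categories available.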

Visualized Workflows and Relationships

AI/ML Tool Evaluation Workflow in Ecotoxicology

Relationship Between Traditional Frameworks and AI Tools

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for AI/ML-Based Ecotoxicology Research

| Resource Name | Type | Primary Function in Research | Key Reference/Source |

|---|---|---|---|

| ADORE Dataset | Benchmark Data | Provides a standardized, well-curated dataset for acute aquatic toxicity (fish, crustaceans, algae) to enable fair comparison of ML model performances [4]. | Schür et al., Scientific Data (2023) [4] |

| ECOTOX Database | Reference Database | A core public source of curated ecotoxicity data used to build training and validation sets for predictive models [4]. | U.S. Environmental Protection Agency (EPA) [4] |

| ToxRefDB (v2.0) | Reference Database | Provides in vivo animal toxicity data for various endpoints, crucial for training and validating ML models for human health toxicity prediction [30]. | U.S. Environmental Protection Agency (EPA) [30] |

| CRED Evaluation Method | Assessment Framework | Offers detailed, transparent criteria for evaluating the reliability and relevance of aquatic ecotoxicity studies, serving as a ground truth for training AI screening tools [1]. | Moermond et al., Environmental Sciences Europe (2016) [1] |

| ToxRTool | Assessment Framework | A structured tool for evaluating the reliability of toxicological data, providing a replicable framework that can be automated or assisted by AI [3]. | Schneider et al. [3] |

| OECD Test Guidelines | Methodological Standards | Define standardized experimental protocols (e.g., OECD TG 203 for fish). Conformance to these is a key reliability criterion assessed by both traditional and AI-assisted methods [4] [1]. | Organisation for Economic Co-operation and Development |

The statistical analysis of ecotoxicity data has reached a pivotal juncture. For decades, regulatory assessments have relied on methods that many statisticians now consider fragmented and outdated [9]. The debate over the use of no-observed-effect concentrations (NOECs), which has persisted for over 30 years, exemplifies the field's need for modernization [9]. Contemporary ecotoxicology demands a shift from simple hypothesis testing toward sophisticated dose-response modeling and benchmark dose (BMD) analysis. This evolution is driven by the necessity for more precise, reproducible, and mechanistically informative risk assessments that can effectively protect ecosystems while potentially reducing reliance on animal testing [9] [31].

This transition is supported by significant regulatory and scientific initiatives. The Society of Environmental Toxicology and Chemistry (SETAC) has seen high interest in forming a statistics interest group, and a major revision of the key OECD guidance document (No. 54) on statistical analysis is planned for 2026 [9]. Concurrently, the advent of powerful, accessible software and comprehensive public databases is equipping researchers with an unprecedented toolkit. These tools allow for the application of generalized linear models (GLMs), nonlinear regression, and Bayesian methods to derive more robust toxicity estimates like the BMD and the emerging metric of no-significant-effect concentration (NSEC) [9].
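To make the contrast with NOEC-style hypothesis testing concrete, the sketch below shows how an effect concentration is read off a fitted dose-response curve instead of being restricted to a tested concentration. It assumes a two-parameter log-logistic model with the response expressed as a fraction of the control. The parameter values are invented, not fitted to real data, and the function names are illustrative.

```python
def log_logistic(conc, ec50, slope):
    """Response as a fraction of control under a two-parameter log-logistic model."""
    if conc == 0:
        return 1.0
    return 1.0 / (1.0 + (conc / ec50) ** slope)


def ecx(x_percent, ec50, slope):
    """Concentration producing an x% reduction relative to control.

    Derived by solving 1 / (1 + (c / ec50) ** slope) = 1 - x / 100 for c.
    """
    if not 0 < x_percent < 100:
        raise ValueError("x_percent must lie strictly between 0 and 100.")
    return ec50 * (x_percent / (100.0 - x_percent)) ** (1.0 / slope)


# Invented parameters for illustration (concentration units are arbitrary).
ec50, slope = 10.0, 2.0
ec10 = ecx(10, ec50, slope)                   # concentration for a 10% effect
check = log_logistic(ec10, ec50, slope)       # response at that concentration, ~0.90
```

In practice the parameters would be estimated by nonlinear regression, for example with a dose-response package such as `drc`, and reported with confidence intervals. The model-based estimate uses all concentrations at once, which is the main argument for it over a NOEC.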

Comparative Analysis of Leading Dose-Response Modeling Software

The landscape of software for dose-response and BMD analysis features a mix of established regulatory platforms, innovative commercial packages, and versatile open-source programming environments. The choice of tool depends heavily on the specific research context, regulatory requirements, and technical expertise of the team.

Table 1: Comparison of Major Dose-Response and BMD Analysis Software

| Software | Primary Developer | License/Availability | Key Features & Models | Best Suited For |

|---|---|---|---|---|

| BMDS Suite | U.S. Environmental Protection Agency (EPA) | Free, Public [32] | Multistage Cancer, Nested Dichotomous (NCTR, Nested Logistic), Poly-k trend test, Rao-Scott transformation [32]. | Regulatory submissions, risk assessors, standardized BMD derivation. |

| ToxGenie | Independent Developer (Ecotoxicologist) | Commercial (Free Trial) [33] | Spearman-Karber, Trimmed Spearman-Karber, Moving Average-Angle; NOEC/LOEC determination; automated regulatory reporting [33]. | Academic & industrial toxicologists seeking specialized, guided analysis without coding. |

| ToxTracker with BMD | Toxys | Commercial Service [34] | BMD analysis integrated into in vitro genotoxicity assay flow; uses PROAST software for modeling [34]. | Quantitative genotoxicity risk assessment, potency ranking, qIVIVE. |

| R/Python Ecosystem | Open-Source Community | Free, Open-Source | GLMs, GAMs, dose-response packages (e.g., drc), custom Bayesian models, high-throughput scripting (e.g., pybmds) [32] [9]. | Method development, complex/non-standard data, machine learning integration, batch analysis. |