CRED vs Klimisch: Advancing Reliability and Relevance in Ecotoxicity Data Evaluation



Abstract

This article provides a comprehensive comparison of the Klimisch and CRED methods for evaluating the reliability and relevance of ecotoxicity studies, targeting researchers, scientists, and drug development professionals. It explores the foundational evolution from the established Klimisch method to the more detailed CRED framework, delves into their methodological application and criteria, addresses common troubleshooting and optimization strategies, and validates their performance through comparative ring test analysis. The synthesis highlights CRED's advantages in transparency, consistency, and practical utility for harmonizing chemical hazard and risk assessments across regulatory frameworks.

Foundations of Ecotoxicity Evaluation: Evolution from Klimisch to CRED

The Critical Role of Data Reliability and Relevance in Chemical Risk Assessment

The regulatory assessment of chemicals hinges on the quality of the underlying ecotoxicity data. For decades, the Klimisch method has been the cornerstone for evaluating study reliability, yet its dependence on expert judgment and lack of explicit relevance criteria have raised concerns about consistency and transparency. These concerns spurred the development of the Criteria for Reporting and Evaluating ecotoxicity Data (CRED) method. This article compares the two approaches and details the application notes and protocols needed to apply them, giving researchers and drug development professionals the tools to implement robust, science-based evaluations.

Application Notes and Protocols

The following tables synthesize key quantitative findings from a comprehensive ring test comparing the Klimisch and CRED evaluation methods[reference:0].

Table 1: Structural Characteristics of the Evaluation Methods[reference:1]

| Characteristic | Klimisch Method | CRED Method |
|---|---|---|
| Data Type | Toxicity and ecotoxicity | Aquatic ecotoxicity |
| Number of Reliability Criteria | 12–14 (ecotoxicity) | 20 (evaluating), 50 (reporting) |
| Number of Relevance Criteria | 0 | 13 |
| OECD Reporting Criteria Included | 14 of 37 | 37 of 37 |
| Additional Guidance | No | Yes |
| Evaluation Summary | Qualitative (reliability only) | Qualitative (reliability & relevance) |

Table 2: Reliability Categorization Outcomes from the Ring Test[reference:2]

| Reliability Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |
|---|---|---|
| Reliable without restrictions (R1) | 8% | 2% |
| Reliable with restrictions (R2) | 45% | 24% |
| Not reliable (R3) | 42% | 54% |
| Not assignable (R4) | 6% | 20% |
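As a quick arithmetic check on Table 2, the share of evaluations falling in the two "reliable" categories (R1 or R2) can be summed per method. The sketch below simply hard-codes the percentages from the table; the dictionary and function names are illustrative and not part of either method.

```python
# Percentages copied from Table 2 (ring test). Names are illustrative only.
RELIABILITY_SHARES = {
    "Klimisch": {"R1": 8, "R2": 45, "R3": 42, "R4": 6},
    "CRED": {"R1": 2, "R2": 24, "R3": 54, "R4": 20},
}


def reliable_share(method: str) -> int:
    """Return the combined percentage of R1 and R2 evaluations."""
    shares = RELIABILITY_SHARES[method]
    return shares["R1"] + shares["R2"]


for method in RELIABILITY_SHARES:
    print(f"{method}: {reliable_share(method)}% of evaluations rated R1 or R2")
```

With the tabulated values this gives 53% for Klimisch and 26% for CRED. The Klimisch column sums to 101%, presumably because the published figures are rounded.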

Table 3: Relevance Categorization Outcomes from the Ring Test[reference:3]

| Relevance Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |
|---|---|---|
| Relevant without restrictions (C1) | 32% | 57% |
| Relevant with restrictions (C2) | 61% | 35% |
| Not relevant (C3) | 7% | 8% |

Table 4: Risk Assessor Confidence in Evaluation Results[reference:4]

| Confidence Level | Klimisch Method | CRED Method |
|---|---|---|
| "Very confident" or "Confident" | 37% | 72% |

Experimental Protocol: The CRED-Klimisch Ring Test

This protocol details the two-phase ring test designed to compare the Klimisch and CRED evaluation methods[reference:5].

Study Design and Participants

- Objective: To compare the consistency, transparency, and user perception of the Klimisch and CRED methods for evaluating the reliability and relevance of aquatic ecotoxicity studies.

- Design: A two-phase, crossover design where each participant evaluated different studies using each method.

- Participants: 75 risk assessors from 12 countries, representing 35 organizations including regulatory agencies, consultancies, and industry[reference:6].

- Materials: Eight peer-reviewed ecotoxicity studies covering diverse taxonomic groups (algae, higher plants, crustaceans, fish), test designs (acute, chronic), and chemical classes[reference:7].

Phase I: Klimisch Method Evaluation (Nov-Dec 2012)

- Assignment: Each participant was assigned two of the eight studies based on their expertise.

- Evaluation: Participants evaluated the reliability of their assigned studies using the Klimisch method, categorizing each as:

  - R1: Reliable without restrictions.

  - R2: Reliable with restrictions.

  - R3: Not reliable.

  - R4: Not assignable.

- Relevance Evaluation: As the Klimisch method lacks formal relevance criteria, participants used ad-hoc judgment to categorize relevance as C1, C2, or C3 (mirroring the reliability categories)[reference:8].

- Data Submission: Completed evaluations and a feedback questionnaire were submitted.

Phase II: CRED Method Evaluation (Mar-Apr 2013)

- Assignment: Each participant evaluated two different studies from the same set of eight.

- Evaluation: Participants used a draft version of the CRED method, applying its 19 reliability and 11 relevance criteria (later refined to 20 and 13)[reference:9].

- Categorization: Studies were categorized using the same R1-R4 and C1-C3 scales for direct comparison.

- Questionnaire: Participants completed a detailed questionnaire on their perception of the method's accuracy, consistency, transparency, and the confidence they had in their evaluations[reference:10].

Data Analysis

- Consistency Analysis: The proportion of agreement among assessors for each criterion and overall category was calculated.

- Statistical Comparison: Non-parametric tests (Wilcoxon rank-sum) were used to compare categorization outcomes between methods[reference:11].

- Perception Analysis: Responses to perception statements and confidence levels were analyzed using Chi-square tests.
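The consistency analysis can be illustrated with a small calculation. The text does not give the exact agreement formula, so the sketch below assumes one simple definition: the share of assessors who chose the most common category for a study. The function name and the example data are hypothetical and are not ring-test results.

```python
from collections import Counter


def modal_agreement(categories: list[str]) -> float:
    """Share of assessors who chose the most common category for one study."""
    if not categories:
        raise ValueError("at least one evaluation is required")
    counts = Counter(categories)
    # most_common(1) returns [(category, count)] for the modal category.
    top_count = counts.most_common(1)[0][1]
    return top_count / len(categories)


# Hypothetical evaluations of a single study by five assessors.
example = ["R2", "R2", "R3", "R2", "R4"]
print(f"Agreement on the modal category: {modal_agreement(example):.0%}")
```

The same function could be applied per criterion (for example to Y/P/N/NA answers) as well as to the overall R1-R4 or C1-C3 category.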

Visualization of Workflows and Processes

This table lists key tools and resources required for implementing rigorous reliability and relevance evaluations in chemical risk assessment.

| Tool/Resource | Function & Purpose | Key Features / Examples |
|---|---|---|
| CRED Evaluation Checklist | Provides the structured criteria for evaluating study reliability (20 items) and relevance (13 items). Ensures transparent and consistent assessments. | Available as Excel-based assessment sheets from the SciRAP platform[reference:12]. |
| OECD Test Guidelines (TGs) | Define standardized test protocols for generating ecotoxicity data. Serve as the benchmark for evaluating methodological reliability. | e.g., TG 201 (Algal growth inhibition), TG 210 (Fish early-life stage), TG 211 (Daphnia reproduction). |
| Statistical Analysis Software | Enables the statistical comparison of evaluation outcomes and analysis of ring-test or validation study data. | R or Python packages for non-parametric tests (e.g., Wilcoxon rank-sum), consistency analysis, and data visualization. |
| Good Laboratory Practice (GLP) Standards | Define a quality system for the organizational process and conditions under which non-clinical safety studies are planned, performed, and reported. | While not a guarantee of reliability, GLP compliance is a weighted factor in many evaluation frameworks. |
| Reference Databases & Reporting Standards | Provide access to published ecotoxicity studies and define minimum reporting requirements to ensure all necessary information is available for evaluation. | Databases like ECOTOX (US EPA); Reporting standards like the ARRIVE guidelines for in vivo studies. |
| Specialized CRED Extensions | Tailor the evaluation framework for novel data types or specific environmental compartments. | NanoCRED (for nanomaterials), EthoCRED (for behavioral studies), CRED for sediment and soil studies[reference:13]. |

In the mid-1990s, the regulatory landscape for chemical safety, particularly under the European Union's Existing Substances Regulation, faced a significant challenge: the need for a harmonized, systematic approach to evaluate the vast and inconsistent body of toxicological and ecotoxicological data submitted by industry [1]. Prior to 1997, assessments relied heavily on unstructured expert judgment, leading to potential inconsistencies and a lack of transparency in how data influenced regulatory decisions [2]. It was within this context that scientists H.J. Klimisch, M. Andreae, and U. Tillmann from BASF AG introduced a seminal framework. Their 1997 paper, "A systematic approach for evaluating the quality of experimental toxicological and ecotoxicological data," proposed a standardized scoring system designed to categorize the reliability of individual studies [3] [4]. The Klimisch method was developed explicitly to fulfill regulatory obligations, providing a structured process for assessing data for entry into the IUCLID database (International Uniform Chemical Information Database), which was becoming the central repository for regulatory information on chemicals [1]. Its primary objective was to bring clarity and consistency to the hazard assessment process, enabling regulators and industry scientists to distinguish between high-quality guideline studies and those with methodological limitations [3].

Core Methodology: The Four-Tiered Scoring System

The Klimisch method's innovation lies in its simplified, four-category classification system for judging study reliability. The assignment is based on a study's adherence to international testing standards (like OECD guidelines), Good Laboratory Practice (GLP), and the completeness of its documentation [5] [6].

Table 1: The Klimisch Scoring Categories and Criteria

| Klimisch Score | Category Title | Core Assignment Criteria |
|---|---|---|
| 1 | Reliable without restriction | Studies performed according to internationally accepted testing guidelines (e.g., OECD, EPA) preferably under GLP, or where all parameters are closely comparable to a guideline method [5] [6]. |
| 2 | Reliable with restriction | Studies where test parameters do not fully comply with a specific guideline but are sufficiently documented and scientifically acceptable. This also includes well-documented non-guideline studies and accepted calculation methods [5] [6]. |
| 3 | Not reliable | Studies with significant methodological deficiencies, use of irrelevant test systems, or insufficient documentation that precludes a credible assessment [5]. |
| 4 | Not assignable | Studies where experimental details are absent, such as those found only in short abstracts or secondary literature sources [5]. |

In regulatory practice, only studies scoring 1 or 2 are typically considered sufficient on their own to fulfill a data requirement for a specific hazard endpoint [5] [6]. Studies scored as 3 (Not reliable) or 4 (Not assignable) may still be used in a supporting role or as part of a "weight of evidence" approach but cannot be the primary basis for a decision [5].
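The rule described above, that scores 1 and 2 can cover an endpoint on their own while scores 3 and 4 are limited to a supporting role, can be expressed as a small lookup. This is a minimal sketch; the enum and function names are illustrative and do not come from IUCLID or any regulatory tool.

```python
from enum import IntEnum


class KlimischScore(IntEnum):
    """The four Klimisch reliability categories."""
    RELIABLE_WITHOUT_RESTRICTION = 1
    RELIABLE_WITH_RESTRICTION = 2
    NOT_RELIABLE = 3
    NOT_ASSIGNABLE = 4


def can_stand_alone(score: KlimischScore) -> bool:
    """True if a study with this score can typically cover an endpoint by itself."""
    return score in (
        KlimischScore.RELIABLE_WITHOUT_RESTRICTION,
        KlimischScore.RELIABLE_WITH_RESTRICTION,
    )


for score in KlimischScore:
    role = "key study candidate" if can_stand_alone(score) else "supporting / weight of evidence"
    print(f"Score {score.value}: {role}")
```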

The method also introduced important definitions for reliability, relevance, and adequacy. Reliability was defined as "the inherent quality of a test report... relating to preferably standardized methodology and the way the experimental procedure and results are described to give evidence of the clarity and plausibility of the findings" [7]. This definition intrinsically links reliability to standardized methods and reporting completeness.

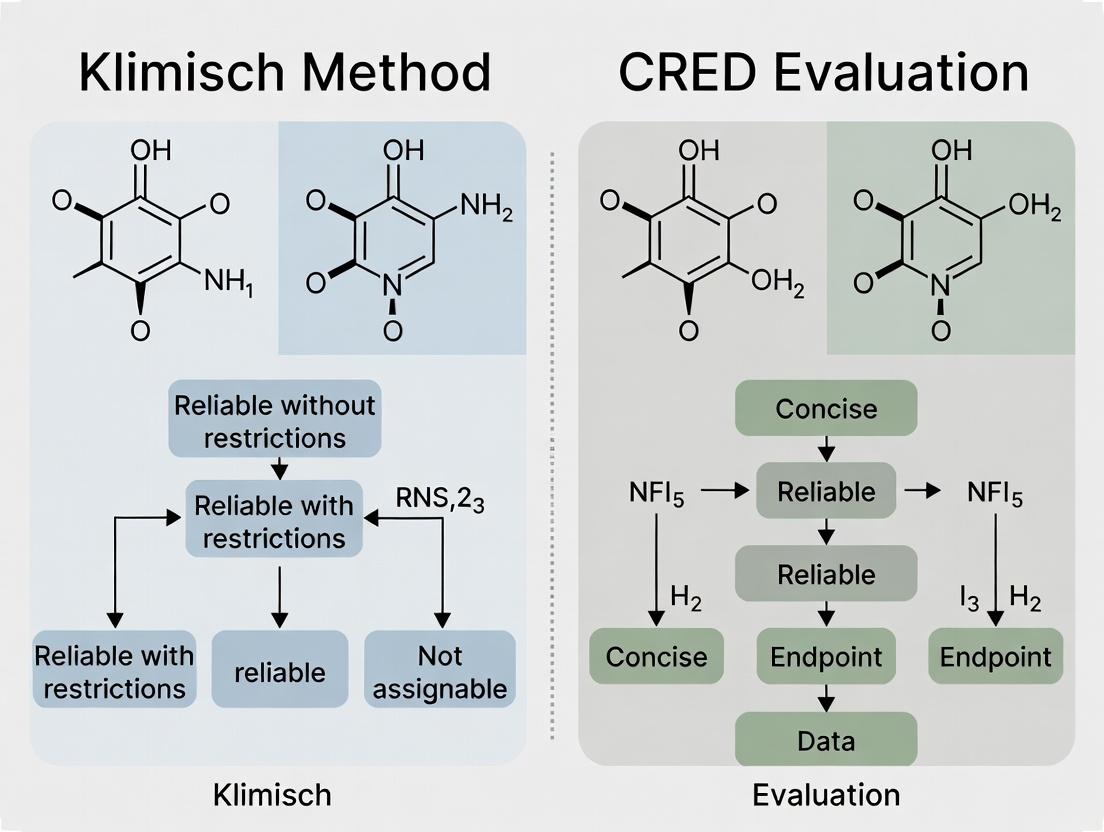

Diagram 1: Klimisch Method Evaluation Workflow

Initial Adoption and Evolution into a Regulatory Backbone

Following its publication, the Klimisch method was rapidly adopted as the de facto standard within emerging EU regulatory frameworks. Its integration was driven by a clear need for efficiency and harmonization. The method became formally recommended in the Technical Guidance for the REACH regulation (Registration, Evaluation, Authorisation and Restriction of Chemicals), which required registrants to evaluate and report the quality of all submitted study data [1]. Its structured approach allowed for the consistent organization of thousands of studies within the IUCLID database, making hazard assessments "scientifically valid, repeatable and... consistent across substances" [1].

To address criticisms about the lack of detailed guidance for applying the Klimisch categories, supporting tools were developed. The most notable is the ToxRTool (Toxicological data Reliability Assessment Tool), an Excel-based checklist released by the European Centre for the Validation of Alternative Methods (ECVAM) [6] [7]. ToxRTool breaks down the evaluation into specific criteria across areas like test substance identification, study design, and results documentation, providing a more transparent and consistent path to assigning a Klimisch score [7].

The method's influence also expanded beyond its original scope. Researchers proposed adaptations to systematically evaluate the quality of human epidemiological data, creating a parallel framework that mirrored the Klimisch categories to allow for the combined assessment of human and animal studies within weight-of-evidence evaluations [1].

The Klimisch Method in Modern Context: Comparison with CRED

The Klimisch method's historical role as the backbone of data evaluation is now being re-examined against newer, more detailed frameworks such as the Criteria for Reporting and Evaluating ecotoxicity Data (CRED), published in 2016 [8].

CRED was developed explicitly to address perceived shortcomings in the Klimisch method, which were highlighted through ring-tests and scholarly critique [9] [2]. Key criticisms of the Klimisch method include its over-reliance on GLP and guideline adherence, which can cause assessors to overlook methodological flaws in guideline studies or undervalue sound non-guideline research [2]. It was also criticized for providing insufficient guidance, leading to inconsistencies between evaluators, and for lacking explicit criteria to assess a study's relevance (its appropriateness for a specific hazard assessment) [8] [2].

Table 2: Comparison of the Klimisch Method and the CRED Framework

| Feature | Klimisch Method (1997) | CRED Framework (2016) |
|---|---|---|
| Primary Scope | Toxicological & Ecotoxicological data [3]. | Aquatic ecotoxicity data (with extensions for nano, behavior, sediment) [8] [10]. |
| Evaluation Dimensions | Reliability (4 categories) [5]. | Reliability (20 criteria) & Relevance (13 criteria) [8] [2]. |
| Guidance Detail | Limited, high-level criteria [2]. | Extensive guidance for each criterion [8]. |
| Bias Toward GLP/Guidelines | High; GLP/guideline studies are favored for top scores [2]. | Reduced; evaluates methodological soundness independent of formal compliance [9]. |
| Outcome Transparency | Single category score [5]. | Detailed qualitative summary of strengths/weaknesses in reliability and relevance [2]. |
| Regulatory Status | Embedded in REACH guidance & IUCLID [1] [5]. | Piloted in EU EQS revisions and scientific databases (e.g., NORMAN) [9]. |

A major ring test involving 75 risk assessors from 12 countries found that participants perceived the CRED method as more accurate, consistent, transparent, and less dependent on subjective expert judgment than the Klimisch method [8] [2]. CRED's structured criteria aim to make the evaluation process more reproducible and its conclusions more transparent.

Diagram 2: Conceptual Evolution from Klimisch to CRED

Application Notes & Protocols

Protocol: Performing a Klimisch Evaluation for Regulatory Submission

Purpose: To systematically assign a reliability score to a toxicological/ecotoxicological study for inclusion in a regulatory dossier (e.g., REACH, biocides).

Materials & Tools:

- Full study report or publication.

- Relevant OECD, EPA, or other applicable test guideline.

- ToxRTool (recommended for in vivo/in vitro studies) or institutional score sheet [6] [7].

- IUCLID 6 database fields for recording rationale [6].

Procedure:

- Document Review: Obtain the complete study document. Abstracts or secondary summaries alone are insufficient and typically lead to a Score 4.

- Guideline Compliance Check:

  - Determine if the study was conducted according to a specific OECD, EPA, or equivalent international testing guideline.

  - Verify if it was performed under Good Laboratory Practice (GLP).

  - If fully compliant with a guideline (GLP preferred), assign Score 1.

- Scientific Assessment (if not fully guideline-compliant):

  - Evaluate if the methodology is well-documented and scientifically sound (e.g., appropriate controls, dose selection, statistical analysis).

  - Assess if any deviations from guidelines are justified and do not invalidate the core findings.

  - If the study is deemed scientifically acceptable despite restrictions, assign Score 2.

- Deficiency Identification:

  - Identify any fatal methodological flaws (e.g., contaminated controls, unsuitable test system, exposure route irrelevant to human/environmental scenario).

  - If such flaws are present and undermine the study's conclusions, assign Score 3.

- Documentation and Rationale:

  - In the corresponding IUCLID field or assessment report, clearly document the rationale for the assigned score, referencing the specific criteria met or violated.
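The procedure above can be sketched as a small decision function. This is only an illustration of the step order, not a substitute for expert judgment: the `StudyFacts` fields are hypothetical yes/no findings an assessor would record, and the final fall-through to Score 3 for poorly documented studies is an assumption based on the "Not reliable" definition.

```python
from dataclasses import dataclass


@dataclass
class StudyFacts:
    """Assessor's findings for one study (all field names are hypothetical)."""
    full_report_available: bool   # False for abstracts or secondary summaries
    guideline_compliant: bool     # fully follows an OECD/EPA-type guideline
    documented_and_sound: bool    # deviations justified, methods well reported
    fatal_flaw: bool              # e.g. contaminated controls


def klimisch_score(study: StudyFacts) -> tuple[int, str]:
    """Return (score, rationale), checking conditions in the protocol's order."""
    if not study.full_report_available:
        return 4, "Experimental details unavailable"
    if study.guideline_compliant:
        return 1, "Conducted according to an accepted test guideline"
    if study.documented_and_sound and not study.fatal_flaw:
        return 2, "Deviates from guideline but documented and scientifically acceptable"
    # Assumption: anything left is treated as not reliable.
    return 3, "Methodological deficiencies or insufficient documentation"


score, rationale = klimisch_score(
    StudyFacts(full_report_available=True, guideline_compliant=False,
               documented_and_sound=True, fatal_flaw=False)
)
print(f"Score {score}: {rationale}")
```

Returning the rationale alongside the score reflects the final documentation step. The function mirrors the written step order, so guideline compliance is checked before flaws; reordering those checks is a design choice for the assessor.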

The Scientist's Toolkit: Essential Resources for Study Evaluation

| Tool/Resource | Function in Evaluation | Key Features & Notes |
|---|---|---|
| OECD Guidelines for the Testing of Chemicals | The international benchmark for test methodology. Used as the primary reference to assess study design compliance [5]. | Provides detailed protocols for specific endpoints (e.g., acute toxicity, repeated dose). |
| ToxRTool (ECVAM) | An Excel-based tool to standardize the Klimisch scoring process for in vivo and in vitro studies [6] [7]. | Uses weighted criteria checklists to generate a score. Improves consistency between evaluators. |
| IUCLID Database | The regulatory data management system for chemicals under REACH and other frameworks. The Klimisch score is a mandatory field for each study record [1] [5]. | Ensures evaluation rationale is systematically archived and reviewed by authorities. |
| CRED Evaluation Method (Excel Tool) | A modern, criteria-based tool for evaluating aquatic ecotoxicity studies. Useful as a more detailed reference even when a Klimisch score is required [9] [10]. | Contains 20 reliability and 13 relevance criteria with extensive guidance. |
| GLP Principles (OECD Series) | Defines standards for organizational process and study conditions. Not a measure of scientific validity, but a key indicator of process quality for regulators [5]. | Focuses on data traceability, quality assurance, and reporting integrity. |

The Klimisch method emerged in 1997 as a pragmatic, harmonizing solution to an urgent regulatory need. By introducing a simple, four-tiered scoring system focused on reliability, it brought unprecedented structure to chemical hazard assessment and became deeply embedded in EU regulations like REACH [1] [5]. Its historical role as the backbone of data evaluation is undeniable. However, the evolution of best practices in scientific assessment has revealed its limitations, particularly regarding transparency, detail, and the separation of reliability from relevance. The development of the CRED framework represents a direct and evidence-based response to these limitations, offering a more granular, transparent, and consistent approach for the modern era [8] [2]. For today's researcher, understanding the Klimisch method is essential for navigating historical data and existing regulatory systems, while familiarity with CRED principles points toward the future of more robust and reproducible ecotoxicological risk assessment.

The evaluation of toxicological and ecotoxicological data is a cornerstone of regulatory hazard and risk assessment for chemicals, pharmaceuticals, and other substances [11]. For over two decades, the method proposed by Klimisch and colleagues in 1997 has served as the de facto standard for assessing study reliability within many regulatory frameworks, notably the European Union's REACH regulation [5] [12]. The Klimisch method assigns studies to one of four categories: "Reliable without restriction" (1), "Reliable with restriction" (2), "Not reliable" (3), and "Not assignable" (4) [5] [6]. Generally, only studies scoring 1 or 2 are used to cover an endpoint independently, while scores of 3 or 4 may serve as supporting evidence [5].

Despite its widespread adoption, the Klimisch method has faced sustained and growing criticism from the scientific community. Critiques center on its inconsistency among assessors, lack of detailed operational guidance, and an inherent bias favoring studies conducted under Good Laboratory Practice (GLP) [5] [11] [12]. These shortcomings can introduce subjectivity, limit the use of valuable peer-reviewed science, and ultimately compromise the transparency and robustness of regulatory decisions [11] [7].

In response, alternative, more structured evaluation tools have been developed. The most prominent is the Criteria for Reporting and Evaluating ecotoxicity Data (CRED) method [11] [12]. Developed through a collaborative, international effort, CRED aims to provide a transparent, consistent, and detailed framework for evaluating both the reliability and relevance of aquatic ecotoxicity studies [12]. This article details the emerging criticisms of the Klimisch method, contrasts its framework with the CRED approach, and provides application protocols to guide researchers and risk assessors in implementing robust study evaluation practices.

Core Criticisms of the Klimisch Method

The criticisms of the Klimisch method can be organized into three principal, interconnected deficiencies that undermine its utility for modern, evidence-based risk assessment.

Inconsistency and Subjectivity in Evaluation

The Klimisch method provides only broad category descriptions, lacking a concrete checklist of criteria to assess [5] [11]. This absence of standardized operational guidance places a heavy burden on expert judgment, leading to low inter-assessor consistency. A study may be categorized as "reliable with restrictions" by one assessor and "not reliable" by another, creating significant discrepancies in the data used for hazard characterization [11]. This inconsistency directly threatens the reproducibility and fairness of regulatory outcomes, as the same evidence base can yield different conclusions depending on the assessor [12].

Lack of Detailed Guidance and Criteria

The method's original formulation is brief, offering minimal guidance on how to interpret its categories or evaluate specific study elements [7]. It does not explicitly assess fundamental study design criteria such as randomization, blinding, sample size calculation, or statistical power [5]. Furthermore, it entirely omits a structured process for evaluating a study's relevance, that is, the extent to which its design and findings are appropriate for a specific regulatory question [11] [12]. For example, an acute mortality test is a poor fit for assessing the chronic effects of an endocrine disruptor, however reliably it was conducted. Without explicit relevance criteria, assessors either fold relevance into the reliability score or rely on unspecified personal judgment.

Bias Towards Good Laboratory Practice (GLP)

The Klimisch method explicitly prioritizes studies performed "according to generally valid and/or internationally accepted testing guidelines (preferably performed according to GLP)" [5]. This has been criticized for institutionalizing a bias that equates procedural compliance with scientific validity [11] [12]. A GLP-compliant guideline study can receive a high reliability score even if it contains fundamental scientific flaws (e.g., excessive control mortality) [5] [11]. Conversely, a well-designed and thoroughly reported peer-reviewed study may be downgraded solely for not following GLP [12]. This bias can marginalize independent academic research and restrict the evidence base primarily to industry-submitted studies [11].

Comparative Analysis: Klimisch Method vs. CRED Evaluation

The CRED method was developed explicitly to address the flaws in the Klimisch approach. A comparative analysis highlights fundamental differences in structure, application, and outcome.

Table 1: Foundational Comparison of the Klimisch and CRED Evaluation Methods

| Aspect | Klimisch Method | CRED Evaluation Method |
|---|---|---|
| Primary Purpose | Assign a reliability category for regulatory acceptance [5]. | Evaluate reliability and relevance separately to support transparent risk assessment [12]. |
| Core Components | Four reliability categories (1-4) with brief descriptive definitions [5] [6]. | 20 reliability criteria and 13 relevance criteria, each with detailed guidance [12]. |
| Evaluation of Relevance | Not formally addressed [11]. | Structured evaluation across 13 criteria (e.g., test organism, endpoint, exposure scenario) [12]. |
| Basis for Decision | High-level expert judgment based on adherence to guidelines/GLP [5]. | Systematic assessment against predefined, detailed criteria [11]. |
| Tool Format | Descriptive text; often applied via tools like ToxRTool [6]. | Criteria checklist with extensive guidance; supported by worksheets [12]. |
| Handling of GLP | GLP/guideline compliance is a primary determinant of high reliability [5]. | GLP is one factor among many; scientific rigor and reporting quality are paramount [11]. |

Empirical evidence from a major international ring test underscores the practical impact of these differences. The test involved 75 risk assessors from 12 countries evaluating ecotoxicity studies using both methods [11].

Table 2: Key Quantitative Findings from the CRED-Klimisch Ring Test [11]

| Evaluation Metric | Finding | Implication |
|---|---|---|
| Inter-assessor Consistency | Lower consistency with Klimisch. Higher agreement among assessors when using the structured CRED criteria. | CRED reduces subjectivity and promotes harmonized assessments. |
| Perceived Accuracy & Transparency | A majority of ring test participants perceived CRED as more accurate and transparent. | Structured criteria build confidence in the evaluation process and its conclusions. |
| Dependence on Expert Judgement | CRED was perceived as less dependent on subjective expert judgement. | Reduces variability and potential for unconscious bias. |
| Practicality | CRED was rated as practical regarding time and use of criteria. | A more detailed method can be efficient and user-friendly. |
| Study Categorization Outcome | Evaluations could lead to different final classifications for the same study. | The choice of method can directly alter the data entering a risk assessment. |

Detailed Application Notes and Experimental Protocols

Protocol for Applying the Klimisch Method (with ToxRTool)

Given the Klimisch method's lack of native guidance, the ToxRTool (Toxicological data Reliability Assessment Tool) is frequently used as an operational intermediary [6] [7]. The following protocol is recommended for a standardized assessment.

Objective: To assign a Klimisch reliability score (1-4) to a toxicological or ecotoxicological study report or publication.

Materials: Study to be evaluated; ToxRTool (Excel-based tool for in vivo or in vitro studies).

Procedure:

- Tool Selection: Download and open the appropriate ToxRTool module (in vivo or in vitro) from the EU Reference Laboratory for alternatives to animal testing (EURL ECVAM) [5].

- Initial Screening: Read the study thoroughly. Confirm it falls within the tool's scope (empirical toxicological data).

- Criteria Assessment: Navigate through the ToxRTool's tabs (e.g., Test Substance, Test System, Study Design). For each of the ~21 criteria, answer the binary (Yes/No) or multiple-choice questions based solely on the information reported in the study. The tool contains "red" (critical) and "white" (standard) criteria [7].

- Score Calculation: The tool automatically calculates a weighted score based on your responses, with red criteria carrying more weight.

- Category Assignment: Based on the final score, ToxRTool suggests a Klimisch category:

  - ≥ 80% of total points: Reliable without restrictions (1).

  - 50-79%: Reliable with restrictions (2).

  - <50%: Not reliable (3).

  - The tool may also indicate "Not assignable" (4) if information is profoundly lacking.

- Documentation: Record the final Klimisch score and the key reasons for any deductions (e.g., "control mortality not reported," "test substance characterization insufficient") as required by databases like IUCLID [6].
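The category assignment step can be sketched as a simple threshold function. It uses only the percentage bands quoted in the steps above and is not the ToxRTool's own implementation; the tool's actual scoring rules, including how the red criteria are handled, should be taken from its documentation. The function and parameter names are hypothetical.

```python
def category_from_percent(percent: float, information_lacking: bool = False) -> int:
    """Map a percentage of total points to a suggested Klimisch category.

    Bands follow the protocol text above: >= 80 -> 1, 50 to < 80 -> 2, < 50 -> 3.
    `information_lacking` models the "Not assignable" (4) case and is an assumption.
    """
    if information_lacking:
        return 4
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent >= 80:
        return 1
    if percent >= 50:
        return 2
    return 3


for value in (92.0, 65.0, 40.0):
    print(f"{value:.0f}% of points -> suggested Klimisch category {category_from_percent(value)}")
```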

Workflow for Klimisch Scoring Using the ToxRTool Intermediary

Protocol for Applying the CRED Evaluation Method

The CRED method involves separate, parallel evaluations of reliability and relevance.

Objective: To transparently evaluate and document the reliability and relevance of an aquatic ecotoxicity study for a defined regulatory purpose.

Materials: Study to be evaluated; CRED evaluation worksheet (Excel); CRED guidance document [12].

Procedure:

- Define Assessment Context: Clearly state the regulatory purpose (e.g., "Derivation of a PNEC for freshwater under REACH"). This frames the relevance evaluation.

- Reliability Evaluation (20 Criteria):

  - Using the worksheet, assess each of the 20 reliability criteria (e.g., "Test concentrations are reported and appropriate," "Control performance is acceptable").

  - For each criterion, choose: Fully addressed (Y), Partially addressed (P), Not addressed (N), or Not applicable (NA).

  - Provide a brief text justification for each score, referencing specific lines/sections in the study.

  - Do not combine criteria into an overall numerical score. The strength/weakness profile is more informative than a composite number [7].

- Relevance Evaluation (13 Criteria):

  - Evaluate the 13 relevance criteria (e.g., "Appropriateness of test organism," "Exposure duration relative to the assessment endpoint") against the defined assessment context from Step 1.

  - Use the same scoring scheme (Y/P/N/NA) and provide justifications.

  - This determines if the study is fit for purpose, independent of its reliability.

- Integration and Conclusion:

  - Summarize the major strengths and weaknesses from both evaluations.

  - Conclude on the study's usability for the defined purpose. A study can be:

    - Usable: High reliability and high relevance.

    - Usable with limitations: Minor issues in reliability/relevance.

    - Supporting only: Major limitations in one or both domains.

    - Not usable: Fatally flawed or irrelevant.
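One way to keep such an evaluation transparent is to store each criterion's rating together with its justification and to report a rating profile rather than a composite score. The sketch below is a hypothetical data structure, not the CRED worksheet itself; the class name, function name, and example entries are assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass

# Rating codes from the protocol: fully, partially, not addressed, not applicable.
VALID_RATINGS = ("Y", "P", "N", "NA")


@dataclass
class CriterionResult:
    """One evaluated criterion with its rating and written justification."""
    criterion: str
    rating: str
    justification: str

    def __post_init__(self) -> None:
        if self.rating not in VALID_RATINGS:
            raise ValueError(f"rating must be one of {VALID_RATINGS}")
        if not self.justification.strip():
            raise ValueError("a justification is required for every rating")


def rating_profile(results: list[CriterionResult]) -> dict[str, int]:
    """Count ratings per code without collapsing them into a single number."""
    counts = Counter(result.rating for result in results)
    return {code: counts.get(code, 0) for code in VALID_RATINGS}


# Hypothetical reliability entries for one study.
reliability = [
    CriterionResult("Test concentrations are reported and appropriate", "Y",
                    "Nominal and measured concentrations given in the methods section"),
    CriterionResult("Control performance is acceptable", "P",
                    "Control mortality reported but validity criterion not stated"),
]
print(rating_profile(reliability))
```

A second list of `CriterionResult` entries would hold the relevance evaluation, so the two profiles stay separate until the final usability conclusion.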

Dual-Path Evaluation Workflow of the CRED Method

The Scientist's Toolkit: Essential Research Reagents and Solutions

Conducting and evaluating toxicological studies requires methodological tools as well as physical reagents. The table below lists key evaluation frameworks, guidelines, and data resources for professionals in this field.

Table 3: Key Research Reagent Solutions for Toxicology Study Execution and Evaluation

| Tool/Reagent Category | Specific Example/Name | Primary Function in Research/Evaluation |

|---|---|---|

| Evaluation Framework | CRED Evaluation Worksheets [12] | Provides the structured checklist and guidance for performing transparent reliability and relevance assessments. |

| Evaluation Framework | ToxRTool [5] [6] [7] | An Excel-based tool that operationalizes Klimisch scoring with defined criteria, aiding consistency. |

| Evaluation Framework | SciRAP Tool [7] | An online resource for evaluating and reporting in vivo (eco)toxicity studies, promoting structured assessment. |

| Reporting Guideline | CRED Reporting Recommendations [12] | A checklist of 50 criteria across 6 categories to guide researchers in publishing regulatory-ready studies. |

| In Silico Predictive Tool | QSAR Models (e.g., VEGA, EPI Suite) [13] | Provides predicted data for persistence, bioaccumulation, and toxicity to fill gaps, especially for data-poor substances. |

| Data Management | IUCLID Database [5] | The standard software for compiling, evaluating, and submitting chemical data under REACH; includes Klimisch score fields. |

| Regulatory Guideline | OECD Test Guidelines [5] [11] | Internationally agreed test methods defining standard protocols for generating reliable safety data. |

| Quality System | Good Laboratory Practice (GLP) [14] | A quality management system ensuring studies are planned, performed, monitored, and reported to high standards of traceability. |

The Klimisch method played a historic role in introducing systematic thinking to toxicological data evaluation. However, its structural deficiencies—inconsistency, lack of guidance, and GLP bias—render it inadequate for contemporary demands for transparency, reproducibility, and comprehensive evidence integration in regulatory science [11] [12]. Empirical evidence demonstrates that the CRED evaluation method effectively mitigates these shortcomings by providing a detailed, criteria-based framework that separately assesses reliability and relevance [11].

For researchers, adopting the CRED reporting recommendations during study design and publication increases the likelihood that their work will meet regulatory standards [12]. For risk assessors and drug development professionals, transitioning from the Klimisch method to structured tools like CRED or SciRAP is a critical step toward more robust, objective, and defensible hazard and risk assessments. This evolution supports a broader and more rigorous evidence base, ultimately leading to better-informed decisions that protect human health and the environment.

The regulatory assessment of chemicals hinges on the robust evaluation of ecotoxicity studies. For decades, the Klimisch method, established in 1997, served as the cornerstone for determining study reliability within frameworks like REACH and the Water Framework Directive [2]. It categorizes studies as "reliable without restrictions," "reliable with restrictions," "not reliable," or "not assignable" [2]. However, mounting evidence has revealed critical shortcomings in this approach. Its limited criteria and lack of guidance for relevance evaluation lead to inconsistent assessments that depend heavily on individual expert judgment [2]. This inconsistency can directly impact risk assessments, potentially resulting in underestimated environmental hazards or unnecessary mitigation costs [2].

The Criteria for Reporting and Evaluating ecotoxicity Data (CRED) project emerged from a 2012 initiative to address these deficiencies [2]. Its genesis was driven by the need for a transparent, science-based, and harmonized method that could systematically evaluate both the reliability and relevance of aquatic ecotoxicity studies. Developed through international collaboration and expert consultation, CRED aims to strengthen the consistency and robustness of environmental hazard assessments, thereby supporting more reliable regulatory decisions [9]. This document provides detailed application notes and experimental protocols for implementing the CRED method, framed within a broader research thesis comparing it to the traditional Klimisch approach.

Comparative Methodologies: Klimisch vs. CRED

The fundamental difference between the Klimisch and CRED methods lies in their structure, granularity, and scope. The following table summarizes their core characteristics.

Table 1: Core Characteristics of the Klimisch and CRED Evaluation Methods

| Characteristic | Klimisch Method | CRED Method |

|---|---|---|

| Primary Focus | Reliability of toxicity/ecotoxicity studies [2]. | Reliability and relevance of aquatic ecotoxicity studies [2]. |

| Number of Reliability Criteria | 12-14 for ecotoxicity studies [2]. | 20 detailed evaluation criteria [2] [9]. |

| Number of Relevance Criteria | 0 (not formally addressed) [2]. | 13 specific criteria [2] [9]. |

| Guidance Provided | Limited, qualitative summary [2]. | Comprehensive guidance for each criterion [2]. |

| Basis for Evaluation | General expert judgement based on broad categories. | Specific, documented criteria aligned with OECD test guideline reporting requirements [2]. |

| Outcome | A single reliability category (e.g., "reliable with restrictions"). | Separate, qualitative summaries for reliability and relevance, with documented justification [2]. |

The CRED method's enhanced detail is visualized in the following workflow, which outlines the sequential steps for a comprehensive study evaluation.

CRED Evaluation Workflow

Experimental Protocol: The CRED Ring Test Methodology

The comparative efficacy of the CRED and Klimisch methods was empirically validated through a formal, two-phase international ring test [2]. The following protocol details the experimental design and procedure.

Protocol: International Ring Test for Method Comparison

Objective: To characterize differences in study categorization, consistency, and user perception between the Klimisch and CRED evaluation methods.

Materials & Resources:

- Eight peer-reviewed ecotoxicity studies: Covering different taxonomic groups (algae, crustaceans, fish, higher plants), chemical classes (industrial, biocide, pharmaceutical), and endpoints (EC50, NOEC) [2].

- Evaluation Materials: Klimisch method guidelines and the draft CRED evaluation matrix [2].

- Participant Cohort: 75 risk assessors from 12 countries with expertise in regulatory ecotoxicology [2].

Procedure:

Phase I – Klimisch Evaluation (November-December 2012):

- Randomly assign each participant two distinct studies from the pool of eight, ensuring no institutional overlap for a given study.

- Instruct participants to evaluate the reliability of their assigned studies using the standard Klimisch method. Relevance is evaluated ad-hoc if required by the participant's usual practice.

- Collect completed evaluations, including the final Klimisch score (1-4) and any supporting notes.

Phase II – CRED Evaluation (March-April 2013):

- Assign each participant two new studies from the same pool, different from their Phase I assignments, again preventing institutional overlap.

- Provide participants with the draft CRED evaluation tool and its accompanying guidance. The draft used in the ring test contained 19 reliability and 11 relevance criteria; the final method has 20 and 13 [2].

- Instruct participants to evaluate both the reliability and relevance of their assigned studies using the CRED criteria.

- Collect completed CRED evaluation matrices.

Data Analysis:

- Consistency Calculation: For each study and method, calculate the percentage agreement among all evaluators on the final categorization (e.g., reliable vs. not reliable for Klimisch; high/medium/low for CRED dimensions).

- Performance Metrics: Compare the time required to complete evaluations and the perceived practicality of each method via participant questionnaire.

- Categorization Comparison: Map CRED outcomes to Klimisch categories to analyze shifts in study acceptability for regulatory use.
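The consistency calculation can be illustrated with a short sketch. It assumes that "percentage agreement" means the share of evaluators who chose the most frequent category for a study; the source does not spell out the exact formula, so this definition, the function name, and the example scores are all assumptions for illustration.

```python
from collections import Counter

def percent_agreement(categories):
    """Share (in %) of evaluators who chose the most frequent category."""
    if not categories:
        raise ValueError("no evaluations supplied")
    _, top_count = Counter(categories).most_common(1)[0]
    return 100.0 * top_count / len(categories)

# Made-up Klimisch scores (1-4) from eight evaluators of one study.
example_scores = [2, 2, 3, 3, 1, 2, 2, 4]
agreement = percent_agreement(example_scores)
```

In this made-up example four of the eight evaluators chose score 2, so the agreement is 50%. Other consistency measures, such as chance-corrected agreement, could be substituted without changing the rest of the workflow.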

Results and Comparative Analysis

The ring test yielded quantitative and qualitative data demonstrating the advantages of the CRED framework. Key results are consolidated below.

Table 2: Key Quantitative Outcomes from the CRED-Klimisch Ring Test

| Metric | Klimisch Method Results | CRED Method Results | Implication |

|---|---|---|---|

| Participant Consensus | Lower inter-evaluator agreement on study reliability [2]. | Higher consistency among evaluators for both reliability and relevance judgments [2]. | CRED reduces subjective interpretation, promoting harmonization. |

| Evaluation Scope | Focused solely on reliability; relevance not systematically assessed [2]. | Comprehensive assessment of reliability (20 criteria) and relevance (13 criteria) [2]. | Enables more scientifically robust and fit-for-purpose study selection. |

| Bias Toward GLP | Strong preference for GLP (Good Laboratory Practice) studies, potentially overlooking flaws [2]. | Criteria-driven assessment that evaluates methodological soundness regardless of GLP status [2]. | Facilitates the inclusion of high-quality peer-reviewed literature, expanding the data pool. |

| Perceived Utility | Viewed as dependent on expert judgement [2]. | Perceived as more accurate, transparent, and practical by a majority of ring test participants [2]. | Higher user acceptance and confidence in evaluation outcomes. |

The following diagram illustrates the conceptual shift from the Klimisch method's linear, judgment-based process to CRED's structured, criteria-based analysis.

Methodological Comparison: Expert vs. Criteria-Driven

Application Notes: Implementing CRED in Regulatory and Research Contexts

Protocol: Conducting a CRED Evaluation for a Single Study

Objective: To perform a standardized, transparent evaluation of the reliability and relevance of an aquatic ecotoxicity study.

Materials:

- CRED Evaluation Tool: The official Excel-based checklist containing the 20 reliability and 13 relevance criteria [9].

- Study Manuscript: The complete peer-reviewed publication or study report to be evaluated.

- Relevant Test Guideline: The appropriate OECD (e.g., OECD 210 for fish early-life stage) or EPA test guideline corresponding to the study design.

Procedure:

- Documentation: Record study identifier, test substance, organism, and endpoint.

- Reliability Assessment (Criteria 1-20): For each criterion (e.g., "Test concentrations are reported and confirmable"), select "Yes," "No," or "Not Applicable." Provide a brief justification for each response based on the study text. This covers experimental design, chemical analysis, statistical methods, and reporting clarity.

- Reliability Summary: Based on the pattern of responses, assign an overall reliability rating: High (most criteria met, no critical flaws), Medium (some deficiencies not affecting core conclusions), or Low (critical flaws present).

- Relevance Assessment (Criteria A-M): Evaluate the study's relevance for a specific assessment context (e.g., deriving a Water Framework Directive EQS). Criteria cover ecological representativeness, exposure pathway, endpoint sensitivity, and environmental realism.

- Relevance Summary: Assign a relevance rating: High (ideal for the assessment purpose), Medium (useful with caveats), or Low (marginal or inappropriate relevance).

- Final Documentation: Combine the reliability and relevance summaries to produce a final statement on the study's utility for the regulatory or research question at hand. The completed CRED tool serves as the audit trail.

Integration in Systematic Review and Weight-of-Evidence

CRED is designed for integration into systematic review processes. In a Weight-of-Evidence assessment, multiple studies are evaluated individually using CRED. The transparent outputs—specific ratings and justifications—allow for the explicit, defensible weighing of studies. A study with "High" reliability and "High" relevance would typically carry more weight than one with "Medium" reliability and "Low" relevance for a particular context. This moves beyond the Klimisch-based binary acceptance/rejection, enabling nuanced, tiered use of available science.
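One way to make this tiered weighing explicit is to order studies by their two ratings. The sketch below is an assumption, not a rule from CRED: it ranks each study first by the weaker of its two ratings and then by the sum of both. The study records and function names are hypothetical.

```python
RANK = {"High": 0, "Medium": 1, "Low": 2}

def weight_key(study):
    """Sort key: weaker of the two ratings first, then their sum."""
    reliability = RANK[study["reliability"]]
    relevance = RANK[study["relevance"]]
    return (max(reliability, relevance), reliability + relevance)

def order_by_weight(studies):
    """Return the studies sorted from most to least weight."""
    return sorted(studies, key=weight_key)

# Hypothetical evaluation outcomes for three studies.
studies = [
    {"id": "Study A", "reliability": "Medium", "relevance": "Low"},
    {"id": "Study B", "reliability": "High", "relevance": "High"},
    {"id": "Study C", "reliability": "High", "relevance": "Medium"},
]
ordered_ids = [s["id"] for s in order_by_weight(studies)]
```

The ordering is only a starting point for discussion. The documented justifications behind each rating remain the basis for the final weight given to a study.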

Table 3: Key Research Reagent Solutions for CRED Implementation

| Tool/Resource | Function in CRED Evaluation | Source/Availability |

|---|---|---|

| CRED Excel Evaluation Tool | The primary instrument containing all criteria, guidance, and fields for documenting the evaluation [9]. | Freely available for download from the CRED project resources [9]. |

| OECD Test Guidelines | The international standard for test methodology. Used as the benchmark for evaluating study design and reporting adequacy against the CRED reliability criteria [2]. | OECD Publishing. |

| GLP Principles | A quality system for non-clinical studies. Understanding GLP helps evaluate study conduct aspects but, per CRED, does not override specific scientific quality criteria [2]. | OECD Series on Principles of GLP. |

| Systematic Review Software | Platforms (e.g., HAWC, DistillerSR) can be configured to incorporate CRED criteria for blinding, managing, and documenting evaluations across a large evidence base. | Various commercial and open-source platforms. |

| Chemical-Specific Guidance | Documents like the EU's Technical Guidance for deriving Environmental Quality Standards (TGD-EQS) provide the context for determining study relevance [9]. | European Commission and other regulatory bodies. |

CRED represents a shift from reliance on expert judgment to a structured, criteria-based, and transparent model. As demonstrated by the ring test, it provides a more consistent, detailed, and balanced framework for evaluating ecotoxicity data than the Klimisch method [2]. Its ongoing adoption in regulatory pilots, such as the revision of the EU's Technical Guidance Document and its use by the Joint Research Centre, underscores its utility in promoting international harmonization [9].

For researchers and assessors, adopting CRED mitigates the subjectivity inherent in the Klimisch approach, leading to more defensible and reproducible hazard assessments. The provision of separate reliability and relevance summaries offers nuanced insight that directly informs Weight-of-Evidence decisions. Future research directions include expanding the CRED principles to terrestrial ecotoxicity endpoints and further automating the evaluation process within systematic review platforms.

In regulatory ecotoxicology, the quality of data used for hazard and risk assessment is paramount. Two core concepts underpin this evaluation: reliability (the inherent quality of a study based on its methodology and reporting) and relevance (the appropriateness of the data for a specific regulatory purpose)[reference:0]. Regulatory frameworks such as REACH and the Water Framework Directive, and agencies such as the US EPA, require that studies undergo a formal evaluation of these attributes before use.

For decades, the Klimisch method (1997) has been the dominant evaluation system. It categorizes study reliability but offers limited criteria and no formal guidance for assessing relevance, leading to reliance on expert judgment and potential inconsistency[reference:1]. In response, the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method was developed to provide a more transparent, detailed, and consistent framework for evaluating both reliability and relevance[reference:2].

This document, framed within a thesis comparing the Klimisch and CRED methodologies, provides detailed application notes and experimental protocols derived from the seminal ring-test comparison study.

Core Conceptual Definitions

- Reliability: “The inherent quality of a test report or publication relating to preferably standardized methodology and the way the experimental procedure and results are described to give evidence of the clarity and plausibility of the findings.”[reference:3]

- Relevance: “The extent to which data and tests are appropriate for a particular hazard identification or risk characterisation.”[reference:4]

- Regulatory Context: The specific legal framework (e.g., REACH, pesticide registration, derivation of Environmental Quality Standards) that defines the purpose for which a study is being evaluated, thereby influencing relevance judgments.

Quantitative Comparison: Klimisch vs. CRED

The following tables summarize key data from the ring test involving 75 risk assessors from 12 countries, which directly compared the two methods[reference:5].

Table 1: Method Characteristics

| Characteristic | Klimisch Method | CRED Evaluation Method |

|---|---|---|

| Data Type | General toxicity & ecotoxicity | Aquatic ecotoxicity |

| Number of Reliability Criteria | 12–14 (ecotoxicity) | 20 (evaluating) / 50 (reporting) |

| Number of Relevance Criteria | 0 | 13 |

| OECD Reporting Criteria Included | 14 of 37 | 37 of 37 |

| Additional Guidance | No | Yes, extensive |

| Evaluation Summary | Qualitative (reliability only) | Qualitative (reliability & relevance)[reference:6] |

Table 2: Reliability Evaluation Outcomes (Ring Test)

| Reliability Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |

|---|---|---|

| Reliable without restrictions (R1) | 8% | 2% |

| Reliable with restrictions (R2) | 45% | 24% |

| Not reliable (R3) | 42% | 54% |

| Not assignable (R4) | 6% | 20%[reference:7] |
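A quick worked calculation on the percentages in Table 2 shows how the share of evaluations rated reliable (R1 or R2) shifts between methods. The dictionary layout and function name are illustrative; the numbers are copied from the table.

```python
# Percentages copied from Table 2 (ring test reliability outcomes).
reliability_pct = {
    "Klimisch": {"R1": 8, "R2": 45, "R3": 42, "R4": 6},
    "CRED": {"R1": 2, "R2": 24, "R3": 54, "R4": 20},
}

def reliable_share(distribution):
    """Percentage of evaluations rated R1 or R2."""
    return distribution["R1"] + distribution["R2"]

shares = {m: reliable_share(d) for m, d in reliability_pct.items()}
totals = {m: sum(d.values()) for m, d in reliability_pct.items()}
```

Here `shares` gives 53% for Klimisch and 26% for CRED. The Klimisch column sums to 101%, presumably because the reported values are rounded.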

Table 3: Relevance Evaluation Outcomes (Ring Test)

| Relevance Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |

|---|---|---|

| Relevant without restrictions (C1) | 32% | 57% |

| Relevant with restrictions (C2) | 61% | 35% |

| Not relevant (C3) | 7% | 8%[reference:8] |

Table 4: Risk Assessor Confidence in Evaluation

| Confidence Level | Klimisch Method (% of respondents) | CRED Method (% of respondents) |

|---|---|---|

| Very confident / Confident | 37% | 72%[reference:9] |

Table 5: Participant Perception of Method Attributes

Statement: "The method is accurate, applicable, consistent, transparent, and less dependent on expert judgement."

- CRED was rated significantly higher than Klimisch across all attributes by participants[reference:10].

Experimental Protocol: The CRED-Klimisch Ring Test

This protocol details the two-phase ring test designed to compare the Klimisch and CRED evaluation methods.

Objective

To characterize differences in outcomes and user perception between the Klimisch and CRED methods for evaluating the reliability and relevance of aquatic ecotoxicity studies.

Study Design & Timeline

- Type: Prospective, cross-over ring test.

- Phases: Two sequential evaluation phases.

- Duration: Phase I (Nov-Dec 2012), Phase II (Mar-Apr 2013)[reference:11].

Participant Selection & Demographics

- Recruitment: Via professional networks (SETAC), regulatory meetings, and email.

- Cohort: 75 risk assessors from 35 organizations across 12 countries.

- Background: Represented regulatory agencies (9), consultancies (17), industry (9), and academia. 58-62% had >5 years of evaluation experience[reference:12].

Test Article Selection

- Number: 8 peer-reviewed aquatic ecotoxicity studies.

- Selection Criteria: Covered diverse taxonomic groups (cyanobacteria, algae, crustaceans, fish), test designs (acute/chronic), and chemical classes (industrial, pharmaceutical, biocide)[reference:13].

- Assignment: Each participant evaluated 2 unique studies per phase, with no overlap within institutes to ensure independence[reference:14].

Evaluation Procedure

- Phase I (Klimisch): Participants evaluated the reliability and relevance of two assigned studies using the standard Klimisch method. Relevance was categorized ad-hoc (C1-C3) as the Klimisch method lacks formal criteria[reference:15].

- Phase II (CRED): Participants evaluated two different studies using a draft version of the CRED method, which included 19 reliability and 11 relevance criteria with guidance[reference:16].

- Questionnaire: After each phase, participants completed a survey on their experience, perception of the method's accuracy/consistency, and confidence in their evaluations[reference:17].

Data Collection & Analysis

- Primary Outcome: Assigned reliability (R1-R4) and relevance (C1-C3) categories for each study.

- Analysis: Consistency of categorization across assessors was calculated. Differences between methods were analyzed using the non-parametric Wilcoxon paired rank-sum test. Participant perception data were analyzed using Chi-square tests[reference:18].

- Output: Arithmetic means of conclusive categories (R1-R3, C1-C3) were calculated for comparative analysis[reference:19].
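The arithmetic mean of conclusive categories can be sketched as follows. The helper treats category labels such as "R2" as the number 2 and drops the "not assignable" category before averaging; the function name and the example evaluations are hypothetical.

```python
from statistics import mean

def mean_conclusive(categories, prefix="R", inconclusive=4):
    """Mean category number, ignoring the 'not assignable' category."""
    numbers = [int(c[len(prefix):]) for c in categories]
    conclusive = [n for n in numbers if n != inconclusive]
    if not conclusive:
        return None  # nothing conclusive to average
    return mean(conclusive)

# Made-up reliability categories from six evaluators of one study.
example = ["R2", "R3", "R3", "R4", "R1", "R3"]
result = mean_conclusive(example)
```

For the made-up list above, the single R4 entry is excluded and the remaining five values average to 2.4. Relevance categories can be handled the same way by passing `prefix="C"`.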

Visualizing Workflows and Relationships

Diagram 1: Ring Test Design for Method Comparison

Diagram 2: Evaluation Criteria Comparison

Diagram 3: Reliability, Relevance & Regulatory Decision Pathway

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key materials essential for conducting standardized aquatic ecotoxicity tests, which form the basis of the studies evaluated by the Klimisch and CRED methods.

Table 6: Key Reagents & Materials for Aquatic Ecotoxicity Testing

| Item | Function & Relevance to Evaluation |

|---|---|

| Standard Test Organisms (e.g., Daphnia magna, Pseudokirchneriella subcapitata, Danio rerio) | Required for OECD/EPA guideline tests. Study reliability is assessed based on proper organism identification, husbandry, and health[reference:20]. |

| Reference Toxicants (e.g., Potassium dichromate, Sodium lauryl sulfate) | Used in periodic quality control tests to demonstrate organism sensitivity and test system validity—a key reliability criterion. |

| Culture Media & Reconstituted Water (e.g., ISO, OECD standard media) | Standardized exposure media ensure reproducibility. Deviations from recommended compositions can affect reliability evaluations[reference:21]. |

| Analytical Grade Test Substances | Purity and stability of the test substance must be characterized. Lack of analytical confirmation of exposure concentration is a common reason for downgrading reliability[reference:22]. |

| Solvent Controls (e.g., Acetone, DMSO) | Necessary for testing poorly soluble substances. Exceeding OECD-recommended solvent concentrations can render a study "not reliable"[reference:23]. |

| Positive & Negative Control Articles | Essential for validating test performance and distinguishing treatment effects from background variability. |

| Data Reporting Templates (e.g., CRED checklist) | Facilitate comprehensive reporting of all methodological details (50 CRED criteria), directly improving the clarity and evaluability of a study[reference:24]. |

Concluding Notes for Researchers

The transition from the Klimisch method to the CRED framework represents a significant evolution in regulatory ecotoxicology. CRED's structured, criteria-based approach enhances transparency, reduces undesirable reliance on expert judgment, and improves consistency among assessors. For researchers, adhering to detailed reporting recommendations (like those provided by CRED) increases the likelihood that their work will be deemed reliable and relevant for regulatory use. For risk assessors, using CRED provides greater confidence in evaluation outcomes, contributing to more robust and defensible hazard and risk assessments across global regulatory frameworks.

Methodology in Practice: Deconstructing Klimisch and CRED Evaluation Criteria

The evaluation of study reliability is a cornerstone of chemical hazard and risk assessment. In 1997, Klimisch and colleagues introduced a standardized four-category scoring system to assess the reliability of toxicological and ecotoxicological data[reference:0]. This method, widely adopted by regulatory authorities, categorizes studies as "reliable without restrictions," "reliable with restrictions," "not reliable," or "not assignable." Despite its widespread use, the Klimisch method has been criticized for lacking detailed criteria and guidance, leading to inconsistencies among assessors. This prompted the development of the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) method, which offers a more structured and transparent framework[reference:1]. This article provides detailed application notes and protocols for the Klimisch method, framed within the broader thesis of comparing its performance and utility against the CRED evaluation system.

Klimisch Method Application Notes

The Klimisch method assigns a reliability score based on the study's adherence to standardized methodologies and the completeness of its documentation[reference:2].

The Four-Category Scoring System

The core of the method is the four-tier classification, detailed in Table 1.

Table 1: Klimisch Scoring System Categories and Assignment Criteria[reference:3]

| Score | Description | Assignment Criteria (Excerpt from IUCLID) |

|---|---|---|

| 1 | Reliable without restriction | Guideline study (preferably GLP); comparable to guideline study; test procedure in accordance with national standard methods or generally accepted scientific standards and described in detail. |

| 2 | Reliable with restriction | Guideline study without detailed documentation; guideline study with acceptable restrictions; test procedure in accordance with national standard methods with acceptable restrictions; well-documented study meeting generally accepted scientific principles. |

| 3 | Not reliable | Study has significant methodological deficiencies or uses an unsuitable test system. |

| 4 | Not assignable | Information is insufficient for assessment (e.g., abstract, secondary literature). |

Protocol for Applying Klimisch Scoring

Protocol 1: Step-by-Step Klimisch Evaluation

- Study Acquisition: Obtain the full study report or publication.

- Guideline Check: Determine if the study follows a recognized test guideline (e.g., OECD, ISO) or is comparable.

- Documentation Review: Assess the completeness of the methodological description, including test substance characterization, organism details, exposure conditions, and statistical analysis.

- Quality Assessment: Identify any methodological flaws, such as inadequate controls, poor compliance with validity criteria, or missing critical data.

- Category Assignment: Based on the review, assign the appropriate Klimisch score (1–4) using the criteria in Table 1. Expert judgment is required, particularly for borderline cases[reference:4].

- Document Rationale: Record the justification for the assigned score, citing specific strengths or deficiencies.
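The category assignment step can be sketched as a small decision helper. This is a deliberate simplification of Table 1, not an official algorithm: the four yes/no inputs and the function name are assumptions, and the helper returns `None` for cases the table does not clearly cover, leaving them to expert judgment.

```python
def klimisch_score(sufficient_information, significant_deficiencies,
                   guideline_or_comparable, documented_in_detail):
    """Suggest a Klimisch score (1-4) from four yes/no answers.

    Returns None when the simplified rules do not apply and
    expert judgment is needed.
    """
    if not sufficient_information:
        return 4  # not assignable (e.g., abstract only)
    if significant_deficiencies:
        return 3  # not reliable
    if guideline_or_comparable and documented_in_detail:
        return 1  # reliable without restriction
    if guideline_or_comparable or documented_in_detail:
        return 2  # reliable with restriction
    return None   # borderline case: defer to the assessor

# A guideline study with incomplete documentation and no major flaws.
suggested = klimisch_score(True, False, True, False)
```

The suggested score is meant as a prompt for the assessor, who still records the justification in the final step of the protocol.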

CRED Evaluation Method Application Notes

The CRED method was developed to provide a more detailed, consistent, and transparent tool for evaluating aquatic ecotoxicity studies. It expands upon the Klimisch framework by introducing explicit criteria for both reliability and relevance[reference:5].

CRED Reliability Categories

CRED uses four reliability categories analogous to Klimisch scores: Reliable without restrictions (R1), Reliable with restrictions (R2), Not reliable (R3), and Not assignable (R4)[reference:6]. Detailed descriptions are provided in Table 2.

Table 2: CRED Reliability Categories[reference:7]

| Score | Description |

|---|---|

| R1 | Reliable without restrictions: All critical reliability criteria for this study are fulfilled. The study is well designed and performed without flaws affecting reliability. |

| R2 | Reliable with restrictions: The study is generally well designed and performed, but some minor flaws in documentation or setup may be present. |

| R3 | Not reliable: Not all critical reliability criteria are fulfilled. The study has clear flaws in design and/or performance. |

| R4 | Not assignable: Information needed to assess the study is missing (e.g., insufficient experimental details in an abstract or secondary literature). |

CRED Reliability and Relevance Criteria

The CRED method is defined by a set of 20 reliability criteria and 13 relevance criteria, providing a structured checklist for evaluators[reference:8]. The reliability criteria span six categories: General information, Test setup, Test compound, Test organism, Exposure conditions, and Statistical design & biological response[reference:9].

Table 3: CRED Relevance Categories

- C1: Relevant without restrictions.

- C2: Relevant with restrictions.

- C3: Not relevant.

- C4: Not assignable (added in the final method)[reference:10].

Protocol for Conducting CRED Evaluation

Protocol 2: Step-by-Step CRED Evaluation

- Preparation: Download the CRED Excel evaluation tool.

- Study Screening: Perform an initial relevance check based on the title and abstract (e.g., aquatic vs. terrestrial test).

- Criteria Assessment: For the full study, systematically evaluate and score each of the 20 reliability criteria and 13 relevance criteria against the provided guidance.

- Category Assignment: Based on the criteria scores, assign final reliability (R1-R4) and relevance (C1-C4) categories.

- Documentation: The Excel tool automatically summarizes the evaluation, promoting transparency and consistency.

Comparative Analysis: Klimisch vs. CRED

A ring test involving 75 risk assessors from 12 countries directly compared the two methods[reference:11]. Key comparative data are summarized below.

Table 4: General Characteristics of Klimisch and CRED Evaluation Methods[reference:12]

| Characteristic | Klimisch | CRED |

|---|---|---|

| Data type | Toxicity and ecotoxicity | Aquatic ecotoxicity |

| Number of reliability criteria | 12–14 (ecotoxicity) | Evaluating: 20 (Reporting: 50) |

| Number of relevance criteria | 0 | 13 |

| Number of OECD reporting criteria included | 14 (of 37) | 37 (of 37) |

| Additional guidance | No | Yes |

| Evaluation summary | Qualitative for reliability | Qualitative for reliability and relevance |

Table 5: Ring Test Results - Reliability Evaluation Outcomes[reference:13]

| Reliability Category | Klimisch Method (% of evaluations) | CRED Method (% of evaluations) |

|---|---|---|

| Reliable without restrictions | 8% | 2% |

| Reliable with restrictions | 45% | 24% |

| Not reliable | 42% | 54% |

| Not assignable | 6% | 20% |

Table 6: Ring Test Results - Practicality and Perception

- Time Requirement: A higher percentage of evaluations was completed in under 60 minutes with the Klimisch method, making it the faster of the two, but the CRED method was still perceived as practical for routine use[reference:14][reference:15].

- Consistency: The CRED method produced more consistent categorization among assessors, reducing the high discrepancies seen with the Klimisch method where studies were often split between "reliable" and "not reliable" categories[reference:16].

- Perception: Ring test participants rated the CRED method as more accurate, consistent, applicable, and less dependent on expert judgment than the Klimisch method[reference:17][reference:18].

- Confidence: 72% of assessors felt "very confident" or "confident" using CRED, compared to 37% using the Klimisch method[reference:19].

Experimental Protocols for Method Comparison

Protocol 3: Ring Test Design for Comparing Evaluation Methods

This protocol is based on the CRED ring test methodology[reference:20].

- Participant Recruitment: Recruit risk assessors from diverse sectors (regulatory, industry, academia, consultancy) with experience in study evaluation.

- Study Selection: Select a set of 8-10 ecotoxicity studies representing different organisms, substances, and study types (guideline, non-guideline).

- Phased Evaluation:

- Phase I (Klimisch): Each participant evaluates 2 studies using the Klimisch method only.

- Phase II (CRED): Each participant evaluates 2 different studies using the CRED method.

- Data Collection: Collect the assigned reliability/relevance categories, time taken for evaluation, and perceived confidence via a standardized questionnaire.

- Analysis: Calculate consistency metrics (agreement among assessors), compare category distributions, and analyze perception data.
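The phased assignment can be sketched with a simple allocation routine. It is a deterministic stand-in for the assignment used in the ring test, under stated assumptions: studies are split into fixed blocks, participants from the same institute receive different blocks, and Phase II shifts every participant to the next block so that no one sees the same study twice. All names are hypothetical.

```python
from collections import defaultdict

def assign_studies(participants, studies, per_person=2, phase_offset=0):
    """Assign `per_person` studies to each participant.

    `participants` is a list of (name, institute) pairs. No study is
    repeated within an institute in a given phase.
    """
    slots = len(studies) // per_person  # distinct blocks per institute
    seen = defaultdict(int)             # participants handled per institute
    assignment = {}
    for name, institute in participants:
        k = seen[institute]
        if k >= slots:
            raise ValueError(f"too many participants from {institute!r}")
        start = ((k + phase_offset) % slots) * per_person
        assignment[name] = studies[start:start + per_person]
        seen[institute] += 1
    return assignment

studies = [f"S{i}" for i in range(1, 9)]           # pool of eight studies
participants = [("A", "X"), ("B", "X"), ("C", "Y")]
phase1 = assign_studies(participants, studies, phase_offset=0)
phase2 = assign_studies(participants, studies, phase_offset=1)
```

With eight studies and two per person, this scheme supports at most four participants per institute. A randomized variant would shuffle the study pool before forming blocks.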

Visualization of Evaluation Workflows

Diagram 1: Klimisch Scoring Decision Workflow

Diagram 2: CRED Evaluation Systematic Process

Diagram 3: Comparative Decision Pathways: Expert vs. Criteria-Driven

The Scientist's Toolkit

Table 7: Essential Materials for Ecotoxicity Study Evaluation

| Item Category | Specific Item | Function/Purpose |

|---|---|---|

| Reference Documents | OECD/ISO Test Guidelines (e.g., 201, 210, 211) | Provide standardized methodology against which studies are evaluated. |

| Reference Documents | Good Laboratory Practice (GLP) Principles | Benchmark for assessing study conduct and documentation quality. |

| Reference Documents | REACH Guidance Documents | Define regulatory context and requirements for reliability/relevance. |

| Evaluation Tools | Klimisch Score Criteria Table (Table 1) | Quick reference for assigning Klimisch categories. |

| Evaluation Tools | CRED Evaluation Excel Tool | Structured checklist for systematic, transparent assessment. |

| Evaluation Tools | ToxRTool (ECVAM) | Excel-based tool that provides detailed criteria leading to a Klimisch category[reference:21]. |

| Test Materials | Certified Reference Substances | Ensure test substance purity and identity are verifiable. |

| Test Materials | Control Substances (Solvent, Positive/Negative) | Essential for validating test system performance. |

| Test Materials | Cultured Test Organisms (e.g., Daphnia magna, algae) | Standardized biological material for toxicity testing. |

| Laboratory Equipment | Analytical Chemistry Equipment (HPLC, GC-MS) | For verifying test substance concentrations during exposure. |

| Laboratory Equipment | Environmental Chambers | To maintain stable temperature, light, and pH conditions. |

| Software | Statistical Analysis Software (e.g., R, GraphPad Prism) | To evaluate dose-response data and calculate endpoints (EC50, NOEC). |

| Software | Reference Management Software | To organize and cite evaluated studies. |

The Klimisch four-category scoring system provided a foundational step toward standardizing reliability assessments in regulatory toxicology. However, its reliance on expert judgment and lack of detailed criteria can lead to inconsistency. The CRED evaluation method addresses these shortcomings by offering a structured, criteria-based framework that encompasses both reliability and relevance, resulting in greater transparency and consistency among assessors. For robust regulatory decision-making, especially where data inclusivity is valued, the CRED method presents a scientifically rigorous alternative or successor to the Klimisch approach. The choice of method ultimately depends on the need for speed versus the demand for detailed, defensible, and harmonized study evaluations.

The regulatory evaluation of ecotoxicity studies is fundamental for deriving Predicted No-Effect Concentrations (PNECs) and Environmental Quality Standards (EQSs) [12]. For decades, the predominant method for this task has been the system proposed by Klimisch and colleagues in 1997 [2]. While representing an initial step toward systematic evaluation, the Klimisch method has faced sustained criticism for its lack of detail, insufficient guidance, and failure to ensure consistency among different risk assessors [12] [2]. Its broad categorization of studies as "reliable without restrictions," "reliable with restrictions," "not reliable," or "not assignable" leaves considerable room for subjective expert judgment [2]. Furthermore, it has been criticized for favoring Good Laboratory Practice (GLP) and standardized guideline studies, potentially excluding pertinent and high-quality data from the peer-reviewed scientific literature [12] [15].

The Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) framework was developed to address these shortcomings. Its primary aim is to improve the reproducibility, transparency, and consistency of reliability and relevance evaluations across different regulatory frameworks, countries, and individual assessors [12] [9]. The core innovation of CRED is its detailed, criteria-based approach, comprising 20 reliability criteria and 13 relevance criteria, supported by extensive guidance [12]. This structured methodology provides a more granular and transparent alternative to the Klimisch method, reducing ambiguity and promoting harmonized data assessment in environmental hazard and risk evaluations [2].

Core Framework: The CRED Evaluation Criteria

The CRED framework is built on clear, distinct definitions. Reliability refers to the inherent scientific quality of a study, assessing the clarity, plausibility, and reproducibility of its experimental procedure and findings. Relevance, in contrast, is purpose-dependent and evaluates how appropriate the data and test are for a specific hazard identification or risk characterization [12] [2]. A study can be highly reliable but irrelevant for a particular assessment, and vice versa [12].

The 20 reliability criteria are organized into key categories that scrutinize every aspect of a study's design, conduct, and reporting. The 13 relevance criteria ensure the study's purpose, test organism, substance, endpoint, and exposure conditions align with the specific regulatory question at hand [12]. The comprehensive nature of these criteria is summarized below.

Table 1: Overview of CRED Reliability and Relevance Criteria Categories

| Evaluation Dimension | Number of Criteria | Primary Focus Areas |
|---|---|---|
| Reliability | 20 | Test substance characterization, test organism details, exposure system & conditions, study design & methodology, data reporting & statistical analysis [12]. |
| Relevance | 13 | Assessment purpose, test organism appropriateness, exposure pathway, measured endpoints, and ecological realism of the tested concentration and duration [12]. |

Application Notes and Experimental Protocols

Protocol 1: Performing a CRED Evaluation

A systematic workflow is essential for consistent application. The following protocol, derived from the CRED method and supporting literature, details the steps for evaluators [12] [2].

1. Definition of Assessment Purpose: Clearly articulate the regulatory context (e.g., derivation of an EQS, pesticide authorization) and the specific ecological compartment (e.g., freshwater, marine) and protection goals involved. This defines the lens for all relevance evaluations [16].

2. Initial Screening & Gateway Check: Perform a preliminary scan of the study's title, abstract, and materials section. Verify the presence of minimum information: correct test organism, relevant substance, reported ecotoxicological endpoint, and basic methodological description. Studies failing this gateway are not evaluated further unless missing information can be obtained [16].

3. Independent Reliability and Relevance Assessment:

   - Reliability Assessment: Systematically review the full study against each of the 20 reliability criteria. For each criterion, determine if it is "Fully Met," "Partially Met," "Not Met," or "Not Reported." Document the rationale for any score other than "Fully Met," citing specific sections of the study [12] [16].

   - Relevance Assessment: Using the defined assessment purpose from Step 1, evaluate the study against the 13 relevance criteria. This determines the "fitness for purpose." A chronic reproduction study on fish, for example, is highly relevant for assessing an endocrine disruptor but may be less relevant for a baseline acute hazard classification [12].

4. Overall Categorization and Integration: Synthesize the individual ratings into overall reliability and relevance categories (e.g., Reliable/Relevant Without Restrictions, With Restrictions, Not Reliable/Relevant, Not Assignable). Finally, integrate these two judgments to determine the study's overall usability for the defined purpose [16]. A study must be both reliable and relevant to be usable without restrictions.

5. Documentation: Transparently document all scores, justifications, and final conclusions. This creates an audit trail, which is critical for regulatory transparency and for reconciling differences between evaluators [12].

Diagram: CRED Study Evaluation Workflow. The process begins by defining the purpose, proceeds through parallel reliability and relevance assessments informed by the purpose, and concludes with integrated categorization and documentation [12] [16].
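
Steps 3 to 5 of the protocol lend themselves to a simple record structure. The sketch below is illustrative only and is not part of the CRED Excel tool: the class and function names are hypothetical, the criterion labels and example rationale are placeholders, and the sketch deliberately does not automate the overall categorization, which remains a judgment made by the evaluator. It encodes only two rules stated in the protocol: every rating other than "Fully Met" needs a documented rationale, and a study is usable without restrictions only when both reliability and relevance are rated without restrictions.

```python
from dataclasses import dataclass

# Rating options named in Step 3 of the protocol.
ALLOWED_RATINGS = {"Fully Met", "Partially Met", "Not Met", "Not Reported"}

@dataclass
class CriterionRating:
    criterion_id: str    # placeholder label such as "R-2"; not an official identifier list
    rating: str
    rationale: str = ""  # should cite the relevant section of the study

    def __post_init__(self):
        if self.rating not in ALLOWED_RATINGS:
            raise ValueError(f"unknown rating: {self.rating!r}")

def missing_rationales(ratings):
    """Return ids of criteria rated below 'Fully Met' that lack a written rationale."""
    return [
        r.criterion_id
        for r in ratings
        if r.rating != "Fully Met" and not r.rationale.strip()
    ]

def usable_without_restrictions(reliability_category, relevance_category):
    """True only if both overall categories are 'Without Restrictions'."""
    target = "Without Restrictions"
    return reliability_category == target and relevance_category == target

# Invented example ratings for one study.
ratings = [
    CriterionRating("R-1", "Fully Met"),
    CriterionRating("R-2", "Partially Met", "Purity not stated (Methods, section 2.1)"),
    CriterionRating("R-3", "Not Reported"),
]

if __name__ == "__main__":
    print("Criteria still needing a rationale:", missing_rationales(ratings))
    print(usable_without_restrictions("Without Restrictions", "With Restrictions"))
```

Running a check like `missing_rationales` before finalizing an evaluation is one way to keep the audit trail described in Step 5 complete.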

Protocol 2: The CRED Ring Test Methodology for Comparative Validation

The comparative superiority of CRED over the Klimisch method was demonstrated through a structured ring test [2]. The protocol for this validation is as follows:

1. Participant Selection: Recruit a diverse cohort of risk assessors from industry, academia, consultancy, and government institutions across multiple countries to ensure representativeness [12] [2].

2. Study Selection & Assignment: Curate a set of 8 ecotoxicity studies from the peer-reviewed literature covering various organisms (algae, Daphnia, fish, macrophytes), substance classes (pharmaceuticals, pesticides, industrial chemicals), and endpoints (acute, chronic) [2]. Assign each participant two unique studies to evaluate in each phase to prevent learning bias.

3. Phased Evaluation:

   - Phase I - Klimisch: Participants evaluate their assigned studies using the traditional Klimisch method, providing a reliability category and any relevance notes based on its limited guidance [2].

   - Phase II - CRED: Participants evaluate two different studies using the draft CRED evaluation method, which included the detailed reliability and relevance criteria [2].

4. Data Collection & Analysis: Collect completed evaluations, including final categories and written comments. Analyze for:

   - Inter-evaluator consistency for the same study within each method.

   - Perceived utility of each method via participant questionnaires (clarity, ease of use, time required).

   - Critical analysis of discrepancies to refine the CRED criteria [12] [2].

5. Method Refinement: Use quantitative results and qualitative feedback from Phase II to fine-tune the wording and guidance of the CRED criteria, finalizing the published version [12].
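
The assignment rule in Step 2 (two studies per phase, with no participant seeing the same study in both phases) can be illustrated with a simple rotation. This is a hypothetical scheme written for this article, not the allocation actually used in the ring test; the function name, participant labels, and study identifiers are all assumptions.

```python
def assign_studies(participants, studies, per_phase=2):
    """Rotate through the study list so each participant gets distinct
    studies for the Klimisch phase and the CRED phase."""
    n = len(studies)
    if n < 2 * per_phase:
        raise ValueError("need at least 2 * per_phase studies to avoid overlap")
    plan = {}
    for i, person in enumerate(participants):
        # Shift the starting point by per_phase for each participant so that,
        # across participants, each study is evaluated with both methods.
        start = (i * per_phase) % n
        picks = [studies[(start + k) % n] for k in range(2 * per_phase)]
        plan[person] = {
            "klimisch": picks[:per_phase],
            "cred": picks[per_phase:],
        }
    return plan

# Invented identifiers: eight studies and four assessors.
studies = [f"S{k}" for k in range(1, 9)]
participants = ["A", "B", "C", "D"]
plan = assign_studies(participants, studies)

if __name__ == "__main__":
    for person, phases in plan.items():
        print(person, phases)
```

With eight studies and four participants, this rotation has each study evaluated once under each method, always by different people. A real design would typically add more assessors per study and randomize the order.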

Table 2: Ring Test Comparison of Klimisch and CRED Methods [2]

| Comparison Aspect | Klimisch Method | CRED Method | Implication from Ring Test |
|---|---|---|---|
| Scope of Evaluation | Primarily reliability; vague relevance consideration. | Explicit, separate evaluation of reliability (20 crit.) and relevance (13 crit.). | CRED provides a more comprehensive and structured assessment. |
| Guidance Specificity | Low; minimal descriptive guidance for criteria. | High; extensive guidance text for applying each criterion. | Reduced ambiguity and lower dependency on expert judgment with CRED. |
| Basis for Categorization | Holistic expert judgment based on 12-14 broad criteria. | Summative judgment based on scoring many specific criteria. | CRED evaluations were perceived as more accurate, consistent, and transparent. |
| Inherent Bias | Criticized for bias toward GLP/guideline studies. | Criteria are applied equally to guideline and non-guideline studies. | CRED facilitates the inclusion of high-quality peer-reviewed literature. |
| Participant Perception | Less consistent, more subjective. | More consistent, practical, and useful for regulatory application. | CRED was endorsed as a suitable replacement for Klimisch. |

The Scientist's Toolkit: Essential Reagents and Materials for Aquatic Ecotoxicity Testing

Implementing and evaluating studies under the CRED framework requires an understanding of core experimental materials. The following table lists key reagent solutions and their functions in standard aquatic tests, the quality of which is scrutinized under CRED's reliability criteria.

Table 3: Key Research Reagent Solutions in Aquatic Ecotoxicity Testing

| Reagent/Material | Function in Ecotoxicity Testing | CRED Reliability Consideration |
|---|---|---|
| Reconstituted Standardized Test Water (e.g., ISO, OECD recipes) | Provides a consistent, defined medium for exposing test organisms, controlling water hardness, pH, and ion composition. | Critical for reporting exposure conditions (Criterion R-10). Deviations must be justified and documented [12]. |
| Test Substance Stock Solution (in solvent if needed) | The concentrated source of the chemical for serial dilution to create exposure concentrations. | Purity, concentration verification, and solvent identity/volume must be reported (Criteria R-2, R-3). Solvent controls are mandatory [12]. |
| Algal Growth Medium (e.g., OECD TG 201 medium) | Supplies essential macro and micronutrients for unicellular algal growth in tests assessing inhibition of growth rate. | Composition must be specified. Nutrient levels can affect substance bioavailability and toxicity [12]. |
| Daphnia Food Suspension (e.g., algae, yeast, trout chow) | Sustains test organisms during chronic (>48 h) reproduction and survival tests. | Type, quantity, and feeding regimen must be reported. Inadequate feeding is a common reason for study de-rating [12]. |
| Aeration Systems (air pumps, stones) | Maintains dissolved oxygen levels above critical thresholds for fish and invertebrates. | Aeration status must be reported. Lack of aeration can cause hypoxia, confounding toxicity results [12]. |
| Positive Control Substance (e.g., K₂Cr₂O₇ for Daphnia, 3,5-DCP for algae) | Used periodically to verify the sensitivity of the test organism population is within historical ranges. | Use of positive controls, while not always mandatory, strengthens the reliability of the biological response [12]. |

Visualizing the Conceptual Relationship Between Evaluation Frameworks

The evolution from Klimisch to CRED, and its extension to exposure assessment with CREED, represents a paradigm shift toward greater structure and transparency in environmental data evaluation [16].

Diagram: Evolution of Data Evaluation Frameworks. The diagram shows the progression from the initial Klimisch method, through critique and development, to the detailed CRED framework for ecotoxicity data, and its recent extension to exposure data via the CREED framework [12] [2] [16].