Comparative Sensitivity in Ecological Risk Assessment: A Critical Analysis of Methods for Biomedical and Environmental Research

This article provides a comprehensive analysis of the comparative sensitivity of various ecological risk assessment (ERA) methodologies, tailored for researchers and drug development professionals.


Abstract

This article provides a comprehensive analysis of the comparative sensitivity of various ecological risk assessment (ERA) methodologies, tailored for researchers and drug development professionals. We explore foundational principles, including comparative risk assessment (CRA) frameworks and the reductionist versus holistic model debate [citation:3]. The analysis delves into advanced methodological applications, highlighting the integration of machine learning models like Ridge regression and Random Forest for predicting risks from pollutants [citation:1] and the use of ecosystem services in landscape-level assessments [citation:5]. We address key troubleshooting considerations, such as managing uncertainty with safety factors [citation:9] and ensuring methodological compliance with international standards [citation:8]. Finally, the article examines validation paradigms, using insights from clinical model comparisons [citation:7] to discuss metrics for evaluating ERA model performance. The synthesis aims to guide the selection and optimization of sensitive, reliable ERA methods in complex biomedical and environmental contexts.

Defining the Landscape: Core Principles and Frameworks of Comparative Ecological Risk Assessment

Comparative Risk Assessment (CRA) is a foundational methodological framework for quantifying the contributions of various risk factors to population health burdens or ecological impacts within a unified, consistent structure [1] [2]. Its core paradigm involves the systematic comparison of current exposure distributions against a theoretical minimum risk counterfactual to calculate attributable burden, most commonly quantified using metrics like Disability-Adjusted Life Years (DALYs) [1]. This guide provides a comparative analysis of the CRA paradigm against alternative risk assessment methodologies, situating it within ongoing research on the sensitivity and specificity of ecological and public health risk evaluation tools. We present synthesized experimental data, detailed procedural protocols, and essential research tools to inform its application by scientists and drug development professionals.

Comparative Analysis of Risk Assessment Methodologies

The selection of a risk assessment methodology is dictated by the nature of the risk, data availability, and the decision-making context. The CRA paradigm occupies a specific niche within a broader ecosystem of approaches, each with distinct strengths and limitations. The following table provides a structured comparison of CRA against other prevalent methodologies.

Table 1: Comparison of Risk Assessment Methodologies

| Methodology | Core Approach | Primary Output | Key Strengths | Major Limitations | Best-Suited Applications |
|---|---|---|---|---|---|
| Comparative Risk Assessment (CRA) | Quantifies the disease/impact burden attributable to specific risk factors by comparing current exposure to a theoretical minimum [1] [2]. | Population Attributable Fraction (PAF), attributable DALYs or other burden metrics [1]. | Enables systematic ranking and comparison of diverse risk factors; provides a unified framework for priority-setting in public health and environmental policy [2]. | Highly data-demanding; requires strong causal evidence; counterfactual (TMREL) can be difficult to define [1]. | Global Burden of Disease studies, ranking health risks, informing population-level intervention strategies [1] [2]. |
| Cumulative Risk Assessment (CumRA) | Evaluates combined risks from aggregate exposures to multiple chemical and non-chemical stressors acting through multiple pathways and routes [3]. | Cumulative risk index or hazard index; characterization of combined effects [3] [4]. | Holistic by considering combined effects of multiple real-world exposures; can integrate psychosocial and environmental stressors [3] [4]. | Extremely complex due to interactions; models for combined toxicity are resource-intensive and uncertain [3]. | Community-level environmental justice studies, regulatory assessment of pesticide mixtures, ecosystem impact assessments [5] [3] [4]. |
| Qualitative Risk Assessment | Uses descriptive scales and expert judgment to categorize risks based on likelihood and impact without numerical quantification [6] [7]. | Risk rankings (e.g., High/Medium/Low), risk matrices, heat maps [6] [7]. | Fast, flexible, and resource-efficient; useful when data are scarce; incorporates expert insight on intangible factors [6] [7]. | Subjective and difficult to compare precisely; can be influenced by bias; provides no quantitative basis for cost-benefit analysis [6]. | Early-stage project screening, initial hazard identification, assessing reputational or operational risks [7]. |
| Quantitative Risk Assessment | Employs numerical data and probabilistic models to estimate the likelihood and magnitude of risk [6] [7]. | Probabilistic risk estimates (e.g., mortality probability, financial loss) [6] [8]. | Provides objective, numerical outputs for direct comparison and decision-making; supports statistical confidence intervals [6] [8]. | Relies on availability of high-quality, quantitative data; complex models can be opaque and require specialized expertise [6]. | Engineering safety (Fault Tree Analysis), financial risk modeling (VaR), clinical outcome prediction (e.g., TRISS, NSQIP-SRC) [7] [8]. |

A critical research frontier is the integration and sensitivity analysis across these paradigms. For instance, qualitative ecosystem-based principles are being integrated into strategic environmental assessments to guide more quantitative Cumulative Effects Assessments (CEA) in marine planning [5]. Similarly, validation studies comparing quantitative tools—such as the Trauma and Injury Severity Score (TRISS) and the National Surgical Quality Improvement Program Surgical Risk Calculator (NSQIP-SRC)—highlight how predictive performance varies by outcome (mortality vs. complications), a lesson directly applicable to evaluating ecological risk models [8].

Experimental Data and Performance Benchmarks

The empirical validation of CRA and related methodologies relies on large-scale data synthesis. The following table summarizes key quantitative findings from major studies, illustrating the output and scope of the CRA approach.

Table 2: Experimental Data from Burden of Disease and Risk Assessment Studies

| Study / Framework | Key Metric | Quantitative Finding | Implication for Risk Priority |
|---|---|---|---|
| Global Burden of Disease (GBD) 2019 [1] | Attributable DALYs (Millions) | High systolic blood pressure: ~235 million; Smoking: ~200 million; High fasting plasma glucose: ~172 million | Metabolic and behavioral risk factors dominate the global disease burden. |
| GBD 2019 Risk Factor Groups [1] | Attributable DALYs by Group (Millions) | Behavioral risks: 831 million; Metabolic risks: 463 million; Environmental/Occupational: 397 million | Provides a high-level categorization for targeted policy interventions. |
| Community CRA Case Study (Philadelphia) [4] | Correlation & Increased Mortality Risk | Cumulative risk scores correlated with a 2-6% increase in total mortality and an 8-23% increase in respiratory mortality per incremental score increase. | Demonstrates CRA's utility in identifying local environmental health inequities independent of socioeconomic confounders. |
| Trauma Tool Comparison Study [8] | Predictive Performance (Brier Score / AUC) | Mortality prediction: TRISS Brier Score: 0.02; NSQIP-SRC/ASA-PS Brier Score: 0.03 (lower is better) | Highlights that even robust quantitative tools have differential sensitivity based on the specific outcome measured. |

Detailed Experimental Protocols

Protocol 1: Core CRA Workflow for Population Health Burden Estimation

This protocol, derived from the standardized CRA methodology used in Global Burden of Disease studies, details the five consecutive steps for estimating the burden attributable to a specific risk factor [1].

- Problem Formulation & Exposure Definition: Define the risk factor of interest (e.g., ambient particulate matter, smoking) and specify the exposure metric (e.g., annual mean PM2.5 concentration, pack-years) [1]. This stage aligns with the planning phase in broader cumulative risk frameworks [9] [3].

- Exposure Assessment: Model or measure the distribution of exposure in the target population. This often uses national surveys, environmental monitoring data, or satellite-derived models to estimate population-level exposure [1].

- Identification of Risk-Outcome Pairs: Establish causal relationships between the risk factor and health outcomes (e.g., PM2.5 and lung cancer). Inclusion is typically based on systematic review and criteria evaluating the strength of evidence (e.g., Bradford-Hill criteria) [1].

- Exposure-Response Quantification: Meta-analyze epidemiological studies to derive a continuous mathematical function (e.g., a relative risk curve) that defines the relationship between exposure level and the probability of each health outcome [1].

- Burden Calculation: Compute the Population Attributable Fraction (PAF) using the exposure distribution and exposure-response functions. The theoretical minimum risk exposure level (TMREL) serves as the counterfactual. The PAF is then applied to the total burden of the health outcome to yield the attributable burden, expressed in DALYs [1].
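The burden calculation step can be sketched in a few lines. The example below is a minimal illustration, assuming a categorical exposure and the standard categorical PAF formula (the sum of proportion times relative risk under current exposure, minus the same sum under the counterfactual, divided by the current sum). The function names and all numbers are hypothetical and are not taken from the GBD studies.

```python
def population_attributable_fraction(current, counterfactual, relative_risk):
    """Return the PAF for a categorical exposure.

    current, counterfactual: proportion of the population in each exposure
        category (each list sums to 1); counterfactual plays the TMREL role.
    relative_risk: relative risk of the outcome in each category.
    """
    if not (len(current) == len(counterfactual) == len(relative_risk)):
        raise ValueError("all inputs must have the same number of categories")
    observed = sum(p * rr for p, rr in zip(current, relative_risk))
    minimum = sum(p * rr for p, rr in zip(counterfactual, relative_risk))
    return (observed - minimum) / observed


def attributable_burden(total_dalys, paf):
    """Attributable DALYs = total DALYs x PAF."""
    return total_dalys * paf


# Hypothetical inputs: three exposure categories (low, medium, high).
current = [0.5, 0.3, 0.2]
counterfactual = [1.0, 0.0, 0.0]  # everyone at the minimum-risk level
relative_risk = [1.0, 1.5, 2.0]

paf = population_attributable_fraction(current, counterfactual, relative_risk)
print(f"PAF = {paf:.3f}")
print(f"Attributable DALYs = {attributable_burden(1000.0, paf):.1f}")
```

Continuous exposures, as used for risk factors such as PM2.5, would replace the sums with integrals over the exposure distribution and the relative risk curve.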

Protocol 2: Validation Study for Comparative Predictive Accuracy of Risk Tools

This protocol, modeled on clinical tool comparison studies, is adaptable for evaluating different ecological risk assessment models [8].

- Study Design & Cohort Definition: Conduct a prospective observational study. Define clear inclusion/exclusion criteria for the subjects (e.g., specific trauma patients, a defined ecosystem unit) [8].

- Data Collection & Tool Application: Collect baseline data on all variables required for the risk tools being compared (e.g., physiological parameters, injury codes, ecosystem variables). Apply each tool's algorithm to generate parallel risk predictions for each subject [8].

- Outcome Measurement: Record actual, observed outcomes (e.g., mortality, length of stay, complication incidence, or ecosystem state change) during a specified follow-up period [8].

- Statistical Comparison: Use metrics like the Brier Score (overall accuracy of probabilistic predictions, reflecting both calibration and discrimination) and Area Under the ROC Curve (AUC; discrimination) to compare predictive accuracy for each outcome. Use regression models and likelihood ratio tests to assess which tool best explains variation in continuous outcomes like length of stay [8].
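The two metrics named in the statistical comparison step are simple to compute for a binary outcome. The sketch below is illustrative only: the predictions for `tool_a` and `tool_b` are invented, and the AUC is computed with the pairwise (Mann-Whitney) formulation rather than any particular package.

```python
def brier_score(predicted, observed):
    """Mean squared difference between predicted probability and outcome (0/1)."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(predicted)


def auc(predicted, observed):
    """Probability that a random positive case is scored above a random negative.

    Ties count as half a win (pairwise Mann-Whitney formulation).
    """
    positives = [p for p, o in zip(predicted, observed) if o == 1]
    negatives = [p for p, o in zip(predicted, observed) if o == 0]
    if not positives or not negatives:
        raise ValueError("both outcome classes must be present")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Hypothetical observed outcomes and predictions from two risk tools.
observed = [1, 0, 0, 1, 0]
tool_a = [0.9, 0.2, 0.1, 0.7, 0.3]
tool_b = [0.6, 0.5, 0.4, 0.4, 0.7]

for name, predictions in (("tool_a", tool_a), ("tool_b", tool_b)):
    print(name, round(brier_score(predictions, observed), 3),
          round(auc(predictions, observed), 3))
```

A lower Brier Score and a higher AUC both indicate better performance, so in this invented example `tool_a` would be preferred on both criteria.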

The Scientist's Toolkit: Essential Reagents and Models

Table 3: Key Research Reagent Solutions for CRA and Related Assessments

| Item / Metric | Primary Function | Application Context |
|---|---|---|
| Disability-Adjusted Life Year (DALY) | A summary measure of population health that combines years of life lost due to premature mortality and years lived with disability [1] [2]. | The standard outcome metric for quantifying and comparing health burdens across diseases and risks in CRA studies. |
| Population Attributable Fraction (PAF) | The proportion of disease burden in a population that would be eliminated if exposure were reduced to the TMREL [1]. | The core output of a CRA used to calculate attributable burden (e.g., Attributable DALYs = Total DALYs * PAF). |
| Theoretical Minimum Risk Exposure Level (TMREL) | The counterfactual exposure distribution that minimizes population risk, against which current exposure is compared [1]. | A critical and often challenging parameter to define in CRA; foundational for PAF calculation. |
| Hazard Quotient (HQ) / Hazard Index (HI) | HQ is the ratio of a single substance's exposure level to its safe reference dose. HI is the sum of HQs for substances with similar toxic effects [3]. | A core metric in chemical cumulative risk assessment for evaluating potential additive effects. |
| Cumulative Exposure Models (e.g., CARES, SHEDS) | Probabilistic models that estimate exposure to multiple chemicals via multiple pathways (dietary, residential, environmental) [3]. | Used in higher-tier regulatory cumulative risk assessments, such as for pesticides under the FQPA. |
| Geographic Information Systems (GIS) | A framework for gathering, managing, and analyzing spatial and geographic data [5]. | Critical for ecosystem-based CEA and spatial CRA to map exposure gradients, vulnerable populations, and cumulative stressors. |
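The Hazard Quotient and Hazard Index definitions in the table reduce to a ratio and a sum. The following sketch shows the arithmetic, assuming all substances share a similar toxic effect so that their quotients may be added; the substance names, exposures, and reference doses are hypothetical.

```python
def hazard_quotient(exposure, reference_dose):
    """Ratio of exposure to the reference dose (same units for both)."""
    if reference_dose <= 0:
        raise ValueError("reference dose must be positive")
    return exposure / reference_dose


def hazard_index(exposures, reference_doses):
    """Sum of hazard quotients over substances with a similar toxic effect."""
    return sum(
        hazard_quotient(exposures[name], reference_doses[name])
        for name in exposures
    )


# Hypothetical exposures and reference doses, both in mg/kg/day.
exposures = {"substance_a": 0.002, "substance_b": 0.03, "substance_c": 0.001}
reference_doses = {"substance_a": 0.01, "substance_b": 0.05, "substance_c": 0.004}

hi = hazard_index(exposures, reference_doses)
print(f"Hazard Index = {hi:.2f}")
```

In this invented case every individual quotient is below 1 while the index exceeds 1, which is the kind of combined effect a single-substance assessment would not flag.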

Visualizing Workflows and Relationships

Comparative Risk Assessment (CRA) Core Computational Workflow

Relationship Between Risk Assessment Method Families

Implications for Research and Development

For researchers and drug development professionals, the CRA paradigm offers a powerful, standardized framework for contextualizing the population health impact of a specific pathogen, toxin, or behavioral risk factor relative to other competing priorities. Its sensitivity is highly dependent on the quality of input data—particularly the exposure-response functions and exposure assessments [1]. Integration with cumulative risk approaches is a key direction, as it moves from single-risk silos toward a more holistic reality where individuals face multiple, simultaneous exposures [3] [4]. Furthermore, the validation techniques used in clinical tool comparisons [8] should be adopted to rigorously test the predictive performance of different ecological and public health risk models, ensuring that the most sensitive and specific tools guide resource allocation and intervention strategies.

The assessment of genetically modified organisms (GMOs) and novel environmental stressors operates at the intersection of two dominant scientific philosophies: reductionism and holism. Reductionism, a cornerstone of molecular biology, seeks to explain complex systems by studying their isolated, simpler components [10]. In contrast, holistic approaches, exemplified by systems biology, contend that "the whole is more than the sum of its parts," focusing on emergent properties arising from interconnectedness [10] [11]. Within ecological risk assessment (ERA), this dichotomy translates into fundamental differences in methodology, sensitivity, and applicability. Reductionist strategies are characterized by controlled, single-variable experiments and comparative safety assessments against unmodified counterparts [12]. Holistic strategies employ systems-level analyses, network models, and ecologically realistic simulations to capture complex interactions [13] [14]. As GMOs evolve with new genomic techniques—enabling complex, multiplexed genetic modifications and systemic metabolic changes—and as novel chemical stressors present multifaceted ecological threats, the limitations of purely reductionist frameworks become apparent [12] [13]. This guide objectively compares the performance of these divergent philosophical approaches within the context of research on the comparative sensitivity of ecological risk assessment methods.

Comparative Framework: Principles and Applications

The choice between reductionist and holistic models dictates every stage of risk assessment, from experimental design to data interpretation and final risk characterization. The table below summarizes their core principles and primary applications.

Table: Foundational Comparison of Reductionist and Holistic Assessment Models

| Aspect | Reductionist Model | Holistic Model |
|---|---|---|
| Core Philosophy | Understand the whole by isolating and studying its constituent parts [10]. | The whole system exhibits emergent properties not predictable from parts alone [10] [11]. |
| Primary Goal | Establish clear, causal mechanisms for specific traits or toxic effects. | Understand system-level behavior, interactions, and long-term dynamics under complexity. |
| Typical Approach | Comparative assessment (e.g., GMO vs. non-GMO isoline); controlled single-stressor tests [12]. | Systems biology; eco-evolutionary modeling; mesocosm/field studies [13] [14]. |
| Level of Analysis | Molecular, biochemical, single-organism. | Population, community, ecosystem, landscape. |
| Handling Complexity | Reduces complexity by controlling variables. May miss synergistic or indirect effects. | Embraces and seeks to quantify complexity, interactions, and feedback loops. |
| Key Strength | High internal validity, precise mechanistic insight, regulatory familiarity [10]. | Higher ecological realism, can predict emergent outcomes and landscape-scale effects [14]. |
| Major Limitation | May lack ecological relevance; poor predictability for complex traits or novel stressors [12]. | High resource demand; can be data-hungry; results may be less precise and more uncertain. |

Assessment of Methodological Sensitivity and Performance

The sensitivity of an assessment method refers to its ability to detect and accurately quantify adverse effects, particularly under realistic conditions of complexity. The performance of reductionist and holistic models diverges significantly based on the nature of the stressor.

Assessing Genetically Modified Organisms (GMOs)

For GMOs, the established regulatory paradigm has heavily relied on reductionist, comparative assessment. However, its sensitivity is challenged by next-generation modifications.

Reductionist Performance (Comparative Safety Assessment): This method involves detailed comparison of the GMO and a near-isogenic non-GM comparator for agronomic, phenotypic, and compositional parameters [12]. Its sensitivity is high for detecting unintended changes in single metabolites or well-defined phenotypic traits.

- Experimental Protocol: A standard protocol involves growing GM and non-GM lines under identical controlled conditions in replicated field trials. Measurements include yield, plant height, disease resistance, and the concentration of key nutrients, toxins, and allergens. Statistical equivalence testing is used to conclude safety [12].

- Performance Data & Limitations: This approach works effectively for first-generation GMOs with simple, single-trait modifications (e.g., herbicide tolerance). However, for GMOs with complex modifications—such as multiplexed gene editing, reprogrammed metabolic pathways, or altered transcription factors—the search for a valid comparator breaks down, and the method's sensitivity plummets [12]. A GM plant engineered for systemic drought tolerance may be fundamentally different from its parent, making a line-by-line comparison misleading for ecological risk [12].
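The statistical equivalence testing mentioned in the protocol can be illustrated with a two one-sided tests (TOST) procedure. The sketch below is a simplification under stated assumptions: it uses a normal approximation in place of the t distribution so that it runs on the standard library alone, which is only reasonable for large samples, and the measurements and equivalence bounds are invented.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def tost_equivalence(test, comparator, lower, upper, alpha=0.05):
    """Two one-sided tests for equivalence of means (normal approximation).

    Equivalence is concluded when the mean difference is shown to lie
    above `lower` AND below `upper`, each at significance level alpha.
    """
    diff = mean(test) - mean(comparator)
    se = sqrt(variance(test) / len(test) + variance(comparator) / len(comparator))
    z = NormalDist()
    p_lower = 1.0 - z.cdf((diff - lower) / se)  # H0: diff <= lower
    p_upper = z.cdf((diff - upper) / se)        # H0: diff >= upper
    p_value = max(p_lower, p_upper)
    return diff, p_value, p_value < alpha


# Hypothetical compositional measurements (e.g., % protein) per plot.
gm_line = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 10.1, 9.7]
comparator = [10.0, 10.2, 9.9, 10.1, 9.8, 10.0, 10.3, 9.9]

diff, p_value, equivalent = tost_equivalence(gm_line, comparator, -0.5, 0.5)
print(f"difference = {diff:.4f}, p = {p_value:.4g}, equivalent = {equivalent}")
```

With the small replicate numbers typical of field trials, a t-based version of the same procedure would be needed, and the equivalence bounds would come from the variation observed in reference varieties rather than from fixed values.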

Holistic Performance (Systems-Level Assessment): Holistic models address GMO risk by focusing on the organism's interaction with its environment, independent of a direct comparator.

- Experimental Protocol: Hypothesis-driven, whole-plant assessments in environments mimicking release conditions. This includes evaluating impacts on non-target organisms, soil microbial communities, and gene flow potential. For gene drives, eco-evolutionary dynamic models are used, incorporating genetics, demography, spatial ecology, and environmental variables to predict population-level outcomes [14].

- Performance Data & Advantages: A 2023 review of gene drive models found that outcomes were most strongly influenced (>50% of models) by demographic factors (e.g., density-dependence) and spatial ecology (e.g., dispersal rates), features entirely outside the scope of reductionist lab studies [14]. Holistic models are uniquely sensitive to these higher-order, network-based, and long-term evolutionary risks.

Table: Performance Comparison for GMO Assessment

| Assessment Scenario | Reductionist Model Output | Holistic Model Output | Comparative Sensitivity Insight |
|---|---|---|---|
| Simple, Single-Trait GM Crop | Confirms compositional equivalence; identifies no significant difference from comparator [12]. | May identify minor, ecologically irrelevant shifts in rhizosphere microbes. | Reductionist model is sufficiently sensitive and more efficient. Holistic model may detect "noise" without clear risk implications. |
| Complex GM Crop (e.g., Metabolic Pathway Engineering) | May show numerous "non-equivalence" results that are difficult to interpret for risk [12]. | Assesses fitness, invasiveness, and community-level impacts in simulated ecosystems; provides functional risk metrics. | Reductionist model loses sensitivity (generates uninterpretable data). Holistic model provides functionally sensitive, actionable risk conclusions. |
| Gene Drive Organism | Can characterize molecular function and inheritance pattern in the lab. | Predicts invasion dynamics, resistance evolution, and non-target population impacts in a spatial context [14]. | Reductionist model is insensitive to population-scale fate. Holistic dynamic modeling is essential for sensitive prediction of ecological outcomes. |

Assessing Novel Chemical and Environmental Stressors

The assessment of novel chemical stressors, such as oxidation by-products (OBPs) from advanced oxidation processes, further illustrates the sensitivity divide.

Reductionist Performance (Dose-Response & Standardized Toxicity Tests): This relies on standardized laboratory toxicity tests (e.g., on algae, daphnia, fish) to generate dose-response curves and endpoints like LC50 (lethal concentration for 50% of a population).

- Experimental Protocol: Organisms are exposed to a range of concentrations of a single, purified OBP in a controlled lab setting over a defined period (e.g., 48 or 96 hours). Mortality, growth inhibition, or reproduction are measured. The Species Sensitivity Distribution (SSD) method uses data from multiple single-species tests to estimate a "hazard concentration" protecting most species [13].

- Performance Data & Limitations: This provides precise, reproducible toxicity thresholds. However, its sensitivity is limited to the tested species and the isolated chemical. It is largely insensitive to mixture toxicity, indirect ecological interactions, and trophic transfer effects common with novel stressors in the environment [13].
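The Species Sensitivity Distribution step can be sketched as a log-normal fit to single-species endpoints. This is a minimal illustration, assuming a log-normal SSD fitted by the mean and sample standard deviation of log10-transformed values; the LC50 values are hypothetical, and regulatory practice typically adds goodness-of-fit checks and confidence limits that are omitted here.

```python
from math import log10
from statistics import NormalDist, mean, stdev


def fit_ssd(endpoints):
    """Fit a log-normal SSD; returns a normal distribution on the log10 scale."""
    logs = [log10(value) for value in endpoints]
    return NormalDist(mean(logs), stdev(logs))


def hazard_concentration(ssd, fraction=0.05):
    """Concentration at which `fraction` of species are expected to be affected."""
    return 10 ** ssd.inv_cdf(fraction)


def fraction_affected(ssd, concentration):
    """Expected fraction of species whose endpoint lies below `concentration`."""
    return ssd.cdf(log10(concentration))


# Hypothetical LC50 values (ug/L) for six species.
lc50_values = [1.2, 3.5, 8.0, 15.0, 40.0, 110.0]

ssd = fit_ssd(lc50_values)
hc5 = hazard_concentration(ssd, 0.05)
print(f"HC5 = {hc5:.2f} ug/L")
```

Note that the estimate rests entirely on isolated single-species data, so it inherits the limitation described above: it says nothing about mixtures or species interactions.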

Holistic Performance (Community and Ecosystem-Level Assessment): These methods assess stressors within complex biotic and abiotic networks.

- Experimental Protocol:

  - Microcosm/Mesocosm Studies: Enclosed, simplified ecosystems (e.g., sediment-water systems with multiple species) are exposed to the stressor. Community structure, nutrient cycling, and ecosystem function (e.g., primary productivity, decomposition) are monitored over time [13].

  - Probabilistic Ecological Risk Assessment (PERA): Integrates distributions of exposure data and toxicity data (from SSDs) using simulation models (e.g., Monte Carlo) to quantify the probability and magnitude of adverse effects, explicitly accounting for uncertainty [13].

- Performance Data & Advantages: Mesocosm studies on complex effluents have shown the ability to detect indirect effects (e.g., predator decline due to prey loss) and functional disruptions that single-species tests miss [13]. PERA provides a more sensitive and realistic risk estimate by moving beyond a single "risk quotient" to a probability distribution of outcomes.
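The probabilistic approach can be sketched as a small Monte Carlo simulation. The example assumes that both the exposure concentration and the SSD are log-normal (normal on the log10 scale); all distribution parameters, the 10% threshold, and the function name are hypothetical choices for illustration.

```python
import random
from statistics import NormalDist


def probability_of_exceedance(ssd, exposure, paf_threshold, n_draws=20000, seed=1):
    """Estimate P(potentially affected fraction of species > paf_threshold).

    ssd, exposure: NormalDist objects on the log10 concentration scale.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    exceedances = 0
    for _ in range(n_draws):
        log_conc = rng.gauss(exposure.mean, exposure.stdev)  # one exposure draw
        affected = ssd.cdf(log_conc)  # fraction of species affected at that level
        if affected > paf_threshold:
            exceedances += 1
    return exceedances / n_draws


# Hypothetical distributions (log10 ug/L).
ssd = NormalDist(mu=1.0, sigma=0.7)
exposure = NormalDist(mu=-0.3, sigma=0.5)

risk = probability_of_exceedance(ssd, exposure, paf_threshold=0.10)
print(f"P(more than 10% of species affected) = {risk:.3f}")
```

The output is a probability statement of the kind described above, in place of a single risk quotient. A fuller analysis would also propagate uncertainty in the SSD parameters themselves, which this sketch holds fixed.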

Table: Performance Comparison for Novel Stressor Assessment

| Assessment Method | Typical Output | Key Strength (Sensitivity) | Key Limitation |
|---|---|---|---|
| Single-Species Toxicity Test (Reductionist) | LC50, EC50, NOEC values. | High precision for acute toxicity in a standard organism. | Insensitive to ecological complexity, chronic low-dose effects, and species interactions. |
| Species Sensitivity Distribution - SSD (Transitional) | HC5 (Hazard Concentration for 5% of species). | More sensitive to variation in sensitivity across a taxonomic community. | Still relies on isolated single-species data; insensitive to ecological dynamics. |
| Microcosm/Mesocosm (Holistic) | Changes in community diversity, dominance, and ecosystem process rates. | Sensitive to indirect effects, biotic interactions, and recovery dynamics. | Highly resource-intensive; results can be system-specific and difficult to generalize. |
| Probabilistic ERA - PERA (Holistic) | Probability distribution of impacted fraction of species (e.g., 30% chance >10% of species are affected). | Sensitively incorporates real-world variability and uncertainty; produces quantifiable risk probabilities [13]. | Requires extensive data; complexity can hinder communication to decision-makers. |

Experimental Protocols for Key Methodologies

Comparative safety assessment of a GM crop (reductionist):

- Selection of Comparators: Identify the near-isogenic non-transformed parental line as the primary comparator. Select a set of commercial reference varieties representing the range of natural variation.

- Experimental Design: Conduct a minimum of eight field trial sites over two growing seasons, using a randomized complete block design with sufficient replication.

- Phenotypic & Agronomic Analysis: Measure key characteristics (plant height, flowering time, yield, disease susceptibility) at appropriate growth stages.

- Compositional Analysis: At harvest, analyze grains/leaves for proximates (protein, fat, carbohydrates), key nutrients, anti-nutrients, and known toxins. Use established analytical methods (e.g., HPLC, GC-MS).

- Statistical Analysis: Perform analysis of variance (ANOVA). Use equivalence testing (e.g., two-one-sided t-tests) to determine if differences between the GMO and comparator fall within a pre-defined equivalence interval based on natural variation observed in reference varieties.

Eco-evolutionary dynamic modeling of a gene drive organism (holistic):

- Problem Formulation & Feature Identification: Define the risk question (e.g., probability of regional population suppression). Identify key biological features across five categories: Genetic (drive efficiency, resistance alleles), Demographic (population growth rate, carrying capacity), Spatial (dispersal kernel, landscape structure), Environmental (temporal variability), and Implementation (release size, timing).

- Model Selection and Parameterization: Select an appropriate dynamic process-based model (e.g., individual-based model, reaction-diffusion model). Parameterize the model using a combination of laboratory data, literature values, and expert elicitation for uncertain parameters.

- Iterative Modeling and Validation: Run simulations to predict outcomes (e.g., spread rate, persistence). Conduct sensitivity and uncertainty analysis to identify which parameters most influence outcomes. Refine parameter estimates through targeted lab or confined field experiments ("ground-truthing").

- Risk Characterization: Output probabilistic predictions (e.g., distribution of potential invasion distances). Integrate model results with qualitative risk assessment frameworks to inform confinement decisions and risk management plans.
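The modeling and sensitivity-analysis steps can be illustrated with a deliberately simple deterministic model. The sketch assumes a homing gene drive in a single, randomly mating population with no fitness cost, no resistance alleles, and no spatial structure, so that the drive allele frequency follows p' = p + c·p·(1 − p), where c is the conversion efficiency. It is far simpler than the individual-based or reaction-diffusion models named above, and all parameter values are hypothetical.

```python
def next_frequency(p, conversion):
    """One generation of a homing drive under random mating, no fitness cost.

    Heterozygotes transmit the drive allele with probability (1 + c) / 2,
    which gives p' = p + c * p * (1 - p).
    """
    return p + conversion * p * (1.0 - p)


def generations_to_threshold(p0, conversion, threshold=0.99, max_generations=1000):
    """Generations until drive frequency reaches `threshold` (None if never)."""
    p = p0
    for generation in range(1, max_generations + 1):
        p = next_frequency(p, conversion)
        if p >= threshold:
            return generation
    return None


# One-at-a-time sensitivity analysis on conversion efficiency
# (hypothetical values), starting from a 1% release frequency.
for conversion in (0.5, 0.7, 0.9, 1.0):
    generations = generations_to_threshold(0.01, conversion)
    print(f"conversion = {conversion:.1f}: {generations} generations to 99%")
```

Even this toy model shows how strongly the predicted spread depends on a single genetic parameter; the demographic and spatial features listed in the protocol would add further dimensions to the same kind of sweep.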

Philosophical and Methodological Pathways

The following diagram illustrates the logical relationship between the core philosophies, their methodological implementations, and their ultimate outputs in risk assessment, highlighting that they are complementary rather than mutually exclusive.

Integrated Workflow for Assessing Complex Stressors

A modern, sensitive risk assessment for complex GMOs or novel stressors requires an integrated workflow that strategically combines both philosophical approaches. The following diagram outlines this iterative process.

The Scientist's Toolkit: Essential Reagent Solutions

Table: Key Research Reagents and Materials for GMO and Novel Stressor Assessment

| Tool / Reagent | Function in Assessment | Primary Model Association |
|---|---|---|
| CRISPR/Cas9 Genome Editing Systems | Enables precise creation of gene knockouts, knock-ins, and multiplexed modifications to study gene function and create complex GM models for testing [11]. | Reductionist (mechanistic) & Holistic (creating complex systems). |
| dCas9 Fusion Proteins (e.g., dCas9-KRAB, dCas9-p300) | Allows for targeted epigenetic silencing or activation without altering DNA sequence, used to study gene regulatory networks and epigenetic landscapes [11]. | Holistic (network analysis). |
| Species Sensitivity Distribution (SSD) Databases | Curated collections of toxicity endpoints (e.g., LC50) for multiple species and chemicals, used to derive protective concentration thresholds [13]. | Transitional (bridges single-species data to community protection). |
| Environmental DNA (eDNA) Metabarcoding Kits | For comprehensive, non-invasive monitoring of biodiversity and community composition changes in mesocosm or field studies post-stressor exposure. | Holistic (community-level assessment). |
| Stable Isotope Tracers (e.g., ¹⁵N, ¹³C) | Used to trace nutrient flow, trophic transfer of stressors, and measure ecosystem process rates (e.g., decomposition, primary production) in holistic studies. | Holistic (ecosystem function). |
| Individual-Based Model (IBM) Software Platforms (e.g., NetLogo) | Provides flexible frameworks for building and simulating eco-evolutionary dynamic models incorporating individual variation, spatiality, and stochasticity [14]. | Holistic (predictive modeling). |
| Probabilistic Risk Software (e.g., @Risk, mcsim) | Facilitates Monte Carlo simulation and other probabilistic analyses to integrate exposure and effects distributions for PERA [13]. | Holistic (risk quantification). |

The comparison reveals that neither reductionist nor holistic models hold universal superiority; their sensitivity is context-dependent. Reductionist models offer high precision and are well suited to identifying defined, simple hazards. Holistic models provide the breadth and ecological sensitivity needed to understand complex, interacting systems. The most robust and sensitive risk assessment framework for novel GMOs and stressors is not a choice between philosophies, but their strategic integration. An iterative approach capitalizes on the strengths of both: holistic models generate hypotheses and identify critical risk pathways, reductionist experiments provide precise mechanistic data and parameter validation, and these inputs feed back into refined holistic models for probabilistic prediction [11] [14]. This synthesis represents the future of sensitive ecological risk assessment in an era of increasing biological and environmental complexity.

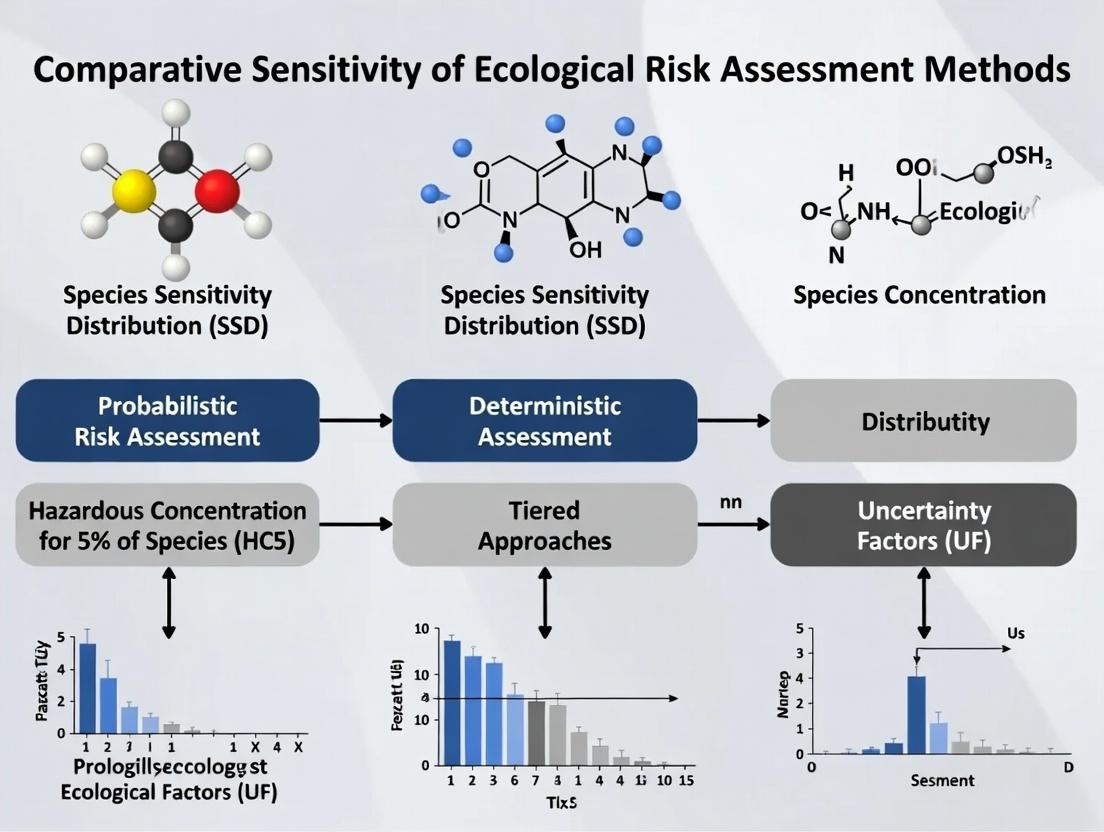

Ecological Risk Assessment (ERA) is fundamental to environmental protection, requiring robust methods to balance scientific accuracy with regulatory feasibility. A central challenge lies in the comparative sensitivity of different assessment methodologies—how varying approaches yield different estimations of risk from the same chemical threat. This guide objectively compares the performance of conventional, probabilistic, and next-generation tiered assessment frameworks. Tiered approaches begin with conservative, screening-level evaluations and progress iteratively to more data-intensive refinements, optimizing resource allocation while striving for scientific precision [15]. The evolution from simple Assessment Factor (AF) methods to Species Sensitivity Distributions (SSD) and, more recently, to Next-Generation Risk Assessment (NGRA) integrating New Approach Methodologies (NAMs), represents a paradigm shift towards mechanistic, internal dose-based evaluations [15] [16]. Framed within broader thesis research on comparative sensitivity, this guide examines experimental data to determine how methodological choice influences the detection and quantification of ecological risk.

Comparative Analysis of Methodological Performance

The performance of ERA methods is not absolute but is contingent on data quality and the ecological context. The following analysis compares the defining principles, outputs, and performance drivers of three core methodologies.

Table 1: Comparative Performance of Core Ecological Risk Assessment Methods

| Methodology | Core Principle | Primary Output | Key Performance Drivers | Reported Performance Insights |

|---|---|---|---|---|

| Conventional Assessment Factor (AF) | Applies a fixed, conservative divisor (e.g., 10, 100, 1000) to the lowest available toxicity endpoint (e.g., NOEC, LC50). | Predicted No-Effect Concentration (PNEC) as a single point estimate. | Magnitude of the chosen default factor; quality of the single most sensitive test endpoint. | Performance declines as interspecies variation increases. It may misrepresent risk when sensitivity among species is highly variable [17]. |

| Species Sensitivity Distribution (SSD) | Fits a statistical distribution (e.g., log-normal) to multiple species toxicity data to estimate a hazardous concentration (HCp). | HCp (e.g., HC5), often divided by a small AF (e.g., 1-5) to derive PNEC. | Sample size (number of species) and statistical variation in the dataset. | Performance improves with larger sample size. More accurate than AF when sensitivity variation is high [17]. Considered more data-driven and less arbitrary. |

| Tiered NGRA/NAM Framework | Integrates bioactivity data, toxicokinetics (TK), and toxicodynamics (TD) in a stepwise, hypothesis-driven tiered process. | Bioactivity-based Margin of Exposure (MoE), internal dose estimates, and pathway-specific risk characterization [15]. | Availability of high-throughput in vitro bioactivity data (e.g., ToxCast); validity of TK models for extrapolation. | Provides nuanced, mechanism-based assessment for combined exposures. Can identify tissue-specific risk drivers and refine safety margins using internal concentrations [15]. |

A critical quantitative finding is that the relative precision of the AF and SSD methods is not fixed but depends on data properties [17]. Research shows that with small sample sizes (e.g., <5 species) and low variation in species sensitivity, the conventional AF method can perform adequately. However, its performance deteriorates significantly as the variation in sensitivity across species increases. Conversely, the SSD method's reliability is strongly enhanced by larger sample sizes, becoming more robust and accurate as more toxicity data points are included [17]. This underscores a fundamental trade-off: simpler methods are less data-intensive but more vulnerable to misrepresentation, while more complex methods require greater investment but offer increased precision and transparency.
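The contrast between the two derivations can be made concrete with a minimal sketch. It assumes a log-normal SSD fitted by simple moments on log10-transformed data (dedicated SSD software typically uses maximum likelihood and reports confidence intervals), and the toxicity values, function names, and chosen factors are hypothetical illustrations rather than data from [17].

```python
import math
from statistics import NormalDist, mean, stdev


def pnec_assessment_factor(toxicity_values, factor=100.0):
    """AF method: lowest available endpoint divided by a fixed factor."""
    return min(toxicity_values) / factor


def hc_p_lognormal(toxicity_values, p=0.05):
    """HCp from a log-normal SSD (moment fit on log10 values; an assumption)."""
    logs = [math.log10(v) for v in toxicity_values]
    mu, sigma = mean(logs), stdev(logs)
    # The p-th quantile of the fitted normal, back-transformed to concentration.
    return 10 ** NormalDist(mu, sigma).inv_cdf(p)


# Hypothetical chronic NOECs (ug/L) for eight species; not real data.
noecs = [12.0, 35.0, 48.0, 90.0, 150.0, 310.0, 620.0, 1400.0]

pnec_af = pnec_assessment_factor(noecs, factor=10.0)
hc5 = hc_p_lognormal(noecs, p=0.05)
pnec_ssd = hc5 / 5.0  # small AF applied to the HC5, as described in Table 1

print(f"PNEC (AF): {pnec_af:.2f} ug/L")
print(f"HC5 (SSD): {hc5:.2f} ug/L, PNEC (SSD): {pnec_ssd:.2f} ug/L")
```

The AF result depends only on the single lowest value, whereas the HC5 shifts with both the spread and the number of species in the dataset, which is the dependence on data properties described above.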

Experimental Data from Tiered Assessment Applications

Case Study: Tiered NGRA for Pyrethroid Insecticides

A 2025 study applied a five-tiered NGRA framework to six pyrethroids (bifenthrin, cyfluthrin, cypermethrin, deltamethrin, lambda-cyhalothrin, permethrin), generating comparative data versus conventional risk assessment [15].

Table 2: Outcomes from a Tiered NGRA Case Study on Pyrethroids [15]

| Assessment Tier | Activity & Data Input | Key Comparative Finding vs. Conventional RA |

|---|---|---|

| Tier 1: Bioactivity Screening | Analysis of ToxCast in vitro assay data (AC50 values) across tissue and gene pathways. | Identified bioactivity patterns inconsistent with a single, common mode of action, challenging a core assumption of conventional cumulative risk assessment for this class. |

| Tier 2: Combined Risk Exploration | Calculation of relative potencies from ToxCast data and comparison to regulatory NOAEL/ADI-derived potencies. | Found poor correlation between in vitro bioactivity potency and in vivo NOAEL/ADI values, highlighting limitations of using apical endpoints alone for mixture assessment. |

| Tier 3: Exposure & TK Screening | Application of Margin of Exposure (MoE) analysis using TK modeling to estimate internal doses. | Shifted assessment basis from external dose to internal concentration, identifying tissue-specific pathways as critical risk drivers—a refinement not possible with standard ADI methods. |

| Tier 4: Bioactivity Refinement | In vitro to in vivo extrapolation using TK models to compare bioactivity concentrations with interstitial fluid levels. | Achieved coherent results based on interstitial concentrations, though intracellular estimates remained uncertain, demonstrating the potential and current limits of NAM-based extrapolation. |

| Tier 5: Integrated Risk Characterization | Comparison of bioactivity MoEs with dietary and aggregate (dietary + non-dietary) exposure estimates. | Concluded dietary exposure alone yielded MoEs within standard safety factors, but aggregate exposure brought MoEs close to thresholds of concern, revealing a risk gap missed by conventional dietary-only assessment. |

Experimental Protocol: NGRA Tiered Workflow

The protocol for the pyrethroid case study exemplifies a modern, integrated approach [15]:

- Data Gathering (Tier 1): Bioactivity data (AC50 values) for the six pyrethroids were retrieved from the EPA's ToxCast database via the CompTox Chemicals Dashboard. Assays were categorized by relevance to specific tissues (e.g., liver, brain, kidney) and gene pathways (e.g., neuroreceptor activity, cytochrome P450).

- Potency Calculation & Hypothesis Testing (Tier 2): For each category, the average AC50 was calculated. Relative potencies were determined by normalizing all values to the most potent pyrethroid in that category (assigned a value of 1). These bioactivity-derived potencies were plotted against relative potencies calculated from regulatory NOAEL and ADI values to test for correlation.

- TK Modeling & MoE Calculation (Tiers 3 & 4): Physiologically Based Toxicokinetic (PBTK) models were used to translate oral doses from rodent studies into predicted internal concentrations in blood and tissues. These modeled internal concentrations were compared to in vitro bioactivity concentrations to calculate bioactivity MoEs. Human exposure estimates were derived from food monitoring and human biomonitoring data.

- Integrated Assessment (Tier 5): Bioactivity MoEs for realistic human exposure scenarios were calculated. The final risk characterization compared these MoEs to predefined thresholds of concern and evaluated the contribution of non-dietary exposure pathways.
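The arithmetic behind Tiers 2 through 5 can be sketched as follows. This is an illustration under stated assumptions: relative potency is taken as the lowest AC50 divided by each chemical's AC50 (so the most potent chemical scores 1 and less potent ones score below 1), the MoE is taken as a bioactive concentration divided by a modeled internal concentration, and all numbers and function names are hypothetical rather than values from [15].

```python
def relative_potencies(ac50_by_chemical):
    """Normalize to the most potent chemical (lowest AC50), which is assigned 1.0."""
    most_potent = min(ac50_by_chemical.values())
    return {name: most_potent / ac50 for name, ac50 in ac50_by_chemical.items()}


def margin_of_exposure(bioactivity_conc, internal_exposure_conc):
    """Bioactivity MoE: bioactive concentration over predicted internal concentration."""
    if internal_exposure_conc <= 0:
        raise ValueError("internal exposure concentration must be positive")
    return bioactivity_conc / internal_exposure_conc


# Hypothetical mean AC50 values (uM) for one assay category; not ToxCast data.
mean_ac50 = {"bifenthrin": 8.0, "cypermethrin": 20.0, "permethrin": 40.0}
potency = relative_potencies(mean_ac50)

# Hypothetical internal concentrations (uM) from a TK model for two scenarios.
dietary_conc = 0.02
aggregate_conc = 0.10  # dietary plus non-dietary

moe_dietary = margin_of_exposure(mean_ac50["bifenthrin"], dietary_conc)
moe_aggregate = margin_of_exposure(mean_ac50["bifenthrin"], aggregate_conc)

print(potency)
print(f"MoE dietary: {moe_dietary:.0f}, MoE aggregate: {moe_aggregate:.0f}")
```

A lower MoE under the aggregate scenario mirrors the Tier 5 observation that adding non-dietary pathways narrows the margin.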

Visualizing Assessment Workflows and Logic

Tiered Ecological Risk Assessment Decision Logic [15] [17]

Species Sensitivity Distribution (SSD) Methodology Workflow [17]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Advanced Tiered ERA

| Tool/Reagent | Primary Function in ERA | Application Context |

|---|---|---|

| ToxCast/Tox21 Bioassay Libraries | Provide high-throughput in vitro bioactivity screening data across hundreds of cellular and molecular pathways. | Tier 1 Screening & Tier 2 Hazard ID: Used to generate initial bioactivity indicators, identify potential modes of action, and calculate relative potencies for chemicals or mixtures [15]. |

| Physiologically Based Kinetic (PBK) Models | Mathematical models simulating the absorption, distribution, metabolism, and excretion (ADME) of chemicals in organisms. | Tier 3-4 Refinement: Critical for in vitro to in vivo extrapolation (IVIVE), translating external doses or in vitro concentrations to relevant internal target-site doses for MoE calculation [15]. |

| OECD Test Guideline (TG) Alternative Methods | Standardized in vitro and in chemico assays (e.g., fish cell line acute toxicity, vertebrate embryo tests). | All Tiers (3Rs Principle): Provide mechanistic data while reducing vertebrate testing. Examples include TG 249 (Fish Cell Line Acute Toxicity) for replacing some fish acute tests [16]. |

| Adverse Outcome Pathway (AOP) Frameworks | Organize mechanistic knowledge linking a molecular initiating event to an adverse ecological outcome across biological levels. | Hypothesis Formulation: Guides the selection of relevant in vitro assays and endpoints for NAM-based assessments, ensuring biological relevance [16]. |

| SSD Statistical Software Packages | Specialized software (e.g., ETX, SSD Master) or R packages (e.g., fitdistrplus, ssdtools) to fit and analyze species sensitivity distributions. | Tier 2 Analysis: Used to fit statistical distributions to toxicity data, estimate HCp values, and calculate confidence intervals [17]. |

This guide provides a comparative analysis of the three core analytical components of Ecological Risk Assessment (ERA). Framed within broader research on the comparative sensitivity of ERA methods, it contrasts the objectives, methodologies, and outputs of Hazard Identification, Exposure Assessment, and Ecological Response Characterization, supported by experimental data and case studies [18] [19] [20].

The analysis phase of an ERA systematically evaluates the interactions between stressors and ecological receptors [19]. The following table compares the three fundamental components.

Table 1: Comparative Summary of Key ERA Components

| Component | Primary Objective | Key Outputs | Core Methodological Approaches | Major Sources of Uncertainty |

|---|---|---|---|---|

| Hazard Identification | Determine the inherent potential of a stressor to cause adverse ecological effects. | List of potential hazards; Qualitative/quantitative toxicity profiles; Mode of action. | Literature review; Database mining (e.g., ECOTOX); In vitro and single-species bioassays; QSAR modeling. | Extrapolation from lab to field; Limited data for novel stressors; Interaction effects. |

| Exposure Assessment | Estimate the co-occurrence, intensity, and duration of contact between the stressor and ecological receptors. | Exposure profile (magnitude, frequency, duration, spatial scale); Predicted Environmental Concentrations (PECs). | Environmental monitoring & fate modeling; GIS-based spatial analysis; Bioaccumulation models. | Environmental variability; Model parameterization; Measurement limits. |

| Ecological Response Characterization | Evaluate the relationship between stressor magnitude and the likelihood/severity of ecological effects, culminating in risk estimation. | Stressor-response profile; Risk quotients or probabilistic risk estimates; Characterization of adversity and recovery potential. | Species Sensitivity Distributions (SSD); Population/community modeling; Field surveys; Mesocosm studies. | Ecological complexity; Selection of assessment endpoints; Temporal scaling of effects. |

Experimental Protocols and Data Integration

This section details specific methodologies that generate data for the comparative evaluation of ERA components, drawing from contemporary case studies.

Protocol: Deriving Water Quality Benchmarks via Species Sensitivity Distribution (SSD)

This protocol, applied to ferric iron (Fe³⁺) in Chinese surface waters, exemplifies the integration of hazard and ecological response data [21] [22].

- Toxicity Data Compilation: Collect acute (e.g., LC/EC/IC50) and chronic (e.g., NOEC, LOEC) toxicity endpoints from standardized laboratory tests for a defined set of species. For Fe³⁺, 47 data points across 22 species were sourced from ECOTOX, CNKI, and Web of Science [22].

- Data Screening & Aggregation: Apply quality criteria (e.g., test guideline compliance, reported exposure conditions). For multiple data points per species, calculate the geometric mean. Ensure representation across taxonomic groups (e.g., fish, invertebrates, algae) [22].

- SSD Model Fitting: Fit a statistical distribution (e.g., logistic, log-normal) to the sorted, log-transformed toxicity data for a selected endpoint (e.g., acute toxicity). This models the variation in sensitivity among species [21].

- Benchmark Derivation: Calculate the HC5 (Hazardous Concentration for 5% of species) from the fitted SSD curve. For Fe³⁺, the acute HC5 was 689 μg/L. A Short-Term Water Quality Criterion (SWQC) of 345 μg/L (HC5/2) and a Long-Term WQC (LWQC) of 28 μg/L were derived [21].

- Risk Quotient Calculation: Compare environmental exposure concentrations (EECs) to the benchmarks. Risk Quotient (RQ) = EEC / Benchmark. An RQ > 1 indicates potential risk. The Fe³⁺ study found 30% of sites had acute RQ > 1 and 83% had chronic RQ > 1 [21].
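The aggregation and risk quotient steps above can be sketched in a few lines. The benchmark values are the SWQC and LWQC quoted in the protocol; the toxicity records, site concentrations, and function names are hypothetical placeholders, not data from [21] or [22].

```python
import math
from collections import defaultdict


def species_geometric_means(records):
    """Collapse multiple toxicity values per species into one geometric mean."""
    by_species = defaultdict(list)
    for species, value in records:
        by_species[species].append(value)
    return {
        species: math.exp(sum(math.log(v) for v in values) / len(values))
        for species, values in by_species.items()
    }


def risk_quotient(eec, benchmark):
    """RQ = environmental exposure concentration / benchmark."""
    return eec / benchmark


def fraction_at_risk(eecs, benchmark):
    """Share of sites whose RQ exceeds 1."""
    return sum(1 for c in eecs if risk_quotient(c, benchmark) > 1) / len(eecs)


SWQC = 345.0  # ug/L, short-term criterion quoted in the protocol
LWQC = 28.0   # ug/L, long-term criterion quoted in the protocol

# Hypothetical acute endpoints (ug/L); two values for one species.
records = [("Daphnia magna", 400.0), ("Daphnia magna", 900.0), ("Danio rerio", 2500.0)]
per_species = species_geometric_means(records)

# Hypothetical measured Fe concentrations (ug/L) at four sites.
site_eecs = [15.0, 60.0, 120.0, 500.0]
acute_fraction = fraction_at_risk(site_eecs, SWQC)
chronic_fraction = fraction_at_risk(site_eecs, LWQC)

print(per_species)
print(f"Sites with acute RQ > 1: {acute_fraction:.0%}; chronic RQ > 1: {chronic_fraction:.0%}")
```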

Protocol: Quantitative Risk-Benefit Assessment for Ecosystem Services

This novel protocol integrates ecosystem services (ES) as assessment endpoints, moving beyond traditional hazard assessment [18].

- Define ES Endpoint: Select a quantifiable ecosystem service (e.g., waste remediation via sediment denitrification). Define metrics (e.g., denitrification rate in μmol N m⁻² h⁻¹) [18].

- Establish Baselines and Thresholds: Use field data to define baseline service levels and identify critical benefit (upper) and risk (lower) thresholds for service supply [18].

- Model Stressor-ES Relationships: Develop empirical or mechanistic models linking stressor gradients (e.g., changes in sediment organic matter from offshore wind farms) to ES metrics. In the case study, a multiple linear regression linked sediment traits to denitrification rates [18].

- Construct Cumulative Distribution Functions (CDFs): For a given scenario, model the probability distribution of the potential ES supply level. Overlay risk and benefit thresholds on the CDF [18].

- Calculate Risk/Benefit Metrics: Quantify the probability and magnitude of the ES supply exceeding (benefit) or falling below (risk) the defined thresholds. This allows direct comparison of management scenarios [18].
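The last two steps reduce to reading probabilities off a distribution of simulated service supply. The sketch below assumes a normally distributed supply purely for illustration; the mean, spread, thresholds, and function names are hypothetical and are not taken from the case study in [18].

```python
import random


def simulate_supply(mean_rate, sd_rate, n=10_000, seed=1):
    """Draw hypothetical ES supply values (e.g., denitrification, umol N m^-2 h^-1)."""
    rng = random.Random(seed)
    return [rng.gauss(mean_rate, sd_rate) for _ in range(n)]


def empirical_cdf(samples, x):
    """Fraction of simulated supply values at or below x."""
    return sum(1 for s in samples if s <= x) / len(samples)


def risk_benefit_probabilities(samples, risk_threshold, benefit_threshold):
    """Probability of falling below the risk threshold or above the benefit threshold."""
    n = len(samples)
    p_risk = sum(1 for s in samples if s < risk_threshold) / n
    p_benefit = sum(1 for s in samples if s > benefit_threshold) / n
    return p_risk, p_benefit


# Hypothetical scenario: mean supply 50, standard deviation 10.
samples = simulate_supply(50.0, 10.0)
p_risk, p_benefit = risk_benefit_probabilities(
    samples, risk_threshold=30.0, benefit_threshold=60.0
)
print(f"P(risk) = {p_risk:.3f}, P(benefit) = {p_benefit:.3f}")
```

Running the same calculation for each management scenario gives directly comparable risk and benefit probabilities.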

Comparative Methodological Sensitivity Analysis

The choice of methodology within each component significantly influences the sensitivity and outcome of the ERA.

Table 2: Sensitivity of Methodological Choices Within ERA Components

| ERA Component | Methodological Choice | Impact on Assessment Sensitivity | Supporting Data / Case Example |

|---|---|---|---|

| Hazard Identification | Endpoint Selection: Acute lethality vs. chronic reproduction. | Chronic endpoints are typically more sensitive, leading to lower effect thresholds and higher perceived hazard. | Chronic LWQC for Fe³⁺ (28 μg/L) was roughly an order of magnitude lower than the acute SWQC (345 μg/L) [21]. |

| Exposure Assessment | Spatial Scale: Local point-scale vs. landscape-scale modeling. | Landscape-scale assessment captures diffuse sources and cumulative exposure, often revealing higher risks than local models. | Landscape-based pesticide ERA considers combined runoff from multiple fields, increasing predicted exposure [23]. |

| Ecological Response Characterization | Assessment Entity: Single species vs. ecosystem service. | ES endpoints integrate structural and functional impacts, potentially showing risk (or benefit) where single-species endpoints do not. | Offshore wind farms showed a 95% probability of providing a benefit to waste remediation services, a nuance missed by single-species tests [18]. |

| Cross-Component | Data Type: Deterministic (fixed value) vs. probabilistic (distribution). | Probabilistic methods (e.g., SSD, exposure distributions) quantify uncertainty, allowing for more nuanced risk estimates (e.g., probability of exceeding a threshold). | SSD provides an HC5 (protecting 95% of species) rather than a single most sensitive value, informing management confidence [21] [22]. |

Visualizing ERA Workflows and Method Relationships

ERA Phases and Analytical Integration

SSD and Ecosystem Services Method Integration

Table 3: Key Research Reagents and Tools for ERA Component Analysis

| Tool/Resource | Primary Function in ERA | Relevant Component | Example/Source |

|---|---|---|---|

| ECOTOX Database | Repository of curated chemical toxicity data for aquatic and terrestrial species. | Hazard Identification, Ecological Response | Source for Fe³⁺ toxicity data for 22 species [22]. |

| Standard Test Organisms (e.g., Daphnia magna, fathead minnow, algae) | Provide standardized, reproducible toxicity endpoints for hazard ranking. | Hazard Identification | Recommended by EPA guidelines for effects assessment [19]. |

| Species Sensitivity Distribution (SSD) Models | Statistical models to derive protective concentrations for communities based on single-species data. | Ecological Response Characterization | Used to derive HC5 and WQC for Fe³⁺ [21]. |

| Geographic Information Systems (GIS) | Analyze and visualize spatial patterns of stressor release, fate, and receptor distribution. | Exposure Assessment | Core for landscape-based pesticide exposure assessment [23]. |

| Ecosystem Service Models (e.g., InVEST, ARIES) | Quantify and map the supply and value of ecosystem services under different scenarios. | Ecological Response Characterization | Enables ES integration as an assessment endpoint [18]. |

| Fate and Transport Models | Predict environmental distribution and concentration of stressors (e.g., chemicals). | Exposure Assessment | Used to estimate Predicted Environmental Concentrations (PECs). |

From Theory to Practice: Advanced and Emerging Methodologies in Ecological Risk Analysis

Ecological risk assessment for soil contamination has traditionally relied on chemical analysis to quantify pollutant concentrations. While precise, these methods fail to capture the biological impact and ecological consequences of contaminants on soil life and function [24]. Within the broader thesis comparing the sensitivity of ecological risk assessment methods, biological indicators—particularly soil nematode communities—provide a critical integrative measure of soil health. Nematodes, as the most abundant and diverse metazoans in soil, occupy multiple trophic levels in the soil food web and participate in essential processes such as organic matter decomposition, nutrient mineralization, and energy cycling [25] [26]. Their community structure responds predictably to various stressors, including heavy metals [24] [27], polycyclic aromatic hydrocarbons (PAHs) [25], salinity [26], and physical disturbance [28].

This guide objectively compares the performance of nematode-based bioindication with traditional chemical assessment and alternative biological methods. It provides supporting experimental data demonstrating that nematode community indices offer a more sensitive, ecologically relevant, and cost-effective measure of soil contamination and ecosystem degradation.

Comparative Performance: Nematode Indices vs. Traditional Chemical Analysis

The table below summarizes key findings from comparative studies, highlighting how nematode community indices detect ecological impact where chemical data alone is insufficient.

Table 1: Comparative Sensitivity of Nematode Indices vs. Chemical Analysis in Contamination Studies

| Contaminant & Study Focus | Chemical Analysis Findings | Nematode Community Indices Findings | Key Superiority of Nematode Indices |

|---|---|---|---|

| Heavy Metals (Pb, Zn, As) [24] | Measured gradient of pseudo-total metals (e.g., As: 120–490 mg/kg). | Maturity Index (MI) and MI2-5 showed strong negative correlation with metal content. Structure Index (SI) indicated degraded food web at polluted sites. | Indices quantified functional impairment (simplified food web) not predicted by total metal concentration alone. |

| Lead (Pb) Contamination [29] | Soil Pb concentration ranged 74–290 µg/g. Plant biomass showed only a 3.6% decrease at highest level. | Structure Index (SI) decreased consistently with increasing Pb, showing high sensitivity. Trophic structure was the most affected parameter. | Detected significant biological impact even when plant growth (a common endpoint) showed minor change. |

| Long-term Heavy Metal Pollution [27] | Quantified total and mobile fractions of As, Cd, Cr, Cu, Pb, Zn along a transect. | MI2-5, SI, and Shannon diversity (H') negatively correlated with metals. Community near source was depauperate, dominated by tolerant taxa. | Reflected the integrated, long-term biological stress and recovery gradient better than metal fractions. |

| Saline-Alkaline Land Reclamation [26] | Reclamation lowered Electrical Conductivity (EC) and increased pH, Organic Carbon (SOC), Total Nitrogen (TN). | Increased total abundance, Shannon index, and metabolic footprints of fungivores, herbivores, and omnivores-predators post-reclamation. | Provided a direct measure of biological recovery and food web functionality following physicochemical improvement. |

Nematode Community Response to Specific Contaminant Classes

Heavy Metal Contamination

Heavy metals exert chronic, non-degradable stress on soil ecosystems. Studies consistently show that nematode communities provide a sensitive and integrative response [24] [27]. Research along a pollution gradient from a smelter in the Czech Republic found that the total nematode abundance, number of genera, and biomass were significantly lower at the most contaminated sites [24]. Indices based on life strategy were particularly sensitive: the Maturity Index (MI) and the MI2-5 (excluding disturbance-tolerant c-p 1 guilds) were the most sensitive indicators of disturbance, showing strong negative correlations with arsenic, lead, and zinc content [24]. Furthermore, the Structure Index (SI), which reflects the complexity of the soil food web, and the Enrichment Index (EI), indicative of nutrient availability, were both suppressed, indicating a shift towards a degraded, basal, and less structured ecosystem [24].

Organic Pollutants (Polycyclic Aromatic Hydrocarbons - PAHs)

PAHs represent a major class of persistent organic pollutants with complex toxicological effects. Nematodes respond to PAHs at multiple levels: molecular (activation of xenobiotic metabolic pathways), individual (slowed physiological processes), and community (shifts in sensitive taxa) [25]. The toxicity of PAHs to nematodes depends on their bioavailability, which is influenced by soil organic matter content. Community-level indices, similar to those used for heavy metals, can indicate PAH stress through a reduction in diversity and a shift towards colonizer (r-strategist) species. However, research dedicated specifically to nematode-based indication of PAHs is less extensive than for heavy metals, highlighting a need for further standardized study [25].

Combined and Abiotic Stresses

Nematode communities also integrate responses to non-chemical stresses and habitat changes, which is crucial for holistic risk assessment. A study on invasive tree (Pinus contorta) removal demonstrated that nematode taxon richness in invaded plots was half that of uninvaded plots, and community composition was significantly altered [28]. Furthermore, management timing mattered: removing saplings allowed the nematode community to recover to a state resembling uninvaded conditions, whereas removing mature trees did not, demonstrating the method's sensitivity to ecological legacies [28]. Similarly, in drought-stressed habitats, nematode community composition and stability were highly sensitive to pH shifts and water level changes, with lakes showing more pronounced effects than shorelines or prairies [30].

Experimental Protocols for Nematode-Based Bioindication

A standardized methodological workflow is essential for generating comparable and reliable data for ecological risk assessment. The following protocol synthesizes best practices from the reviewed studies [24] [29] [26].

1. Site Selection & Soil Sampling:

- Design sampling along a defined gradient of contamination (distance from point source [24] or across defined contamination levels [29]).

- Collect composite soil samples (e.g., 5 sub-samples per replicate) from the surface horizon (0-20 cm).

- Collect a minimum of 4-5 field replicates per site or treatment.

- Process samples for nematode extraction immediately or store at 4°C for very short periods.

2. Nematode Extraction:

- Common Method: Use a combination of Cobb's sieving and decanting followed by modified Baermann funnel technique for 48-72 hours [24].

- Alternative for Fine Soils: Centrifugal-flotation using a sucrose solution (specific gravity 1.15-1.18) is effective [29].

- Fix extracted nematodes with hot (e.g., 60°C) 4% formaldehyde or a formaldehyde-glycerol solution to prevent decomposition [24].

3. Identification and Counting:

- Identify at least 100-150 nematode individuals per sample to the genus level under a compound microscope (400-1000x magnification) using taxonomic keys.

- Assign each genus to a trophic group (bacterivore, fungivore, herbivore, predator, omnivore) [24] and a colonizer-persister (c-p) value (1-5, from r-strategists to K-strategists).

4. Community Index Calculation:

- Calculate ecological indices based on trophic and c-p classifications:

- Maturity Index (MI): Weighted mean c-p value of all nematodes [31].

- Structure Index (SI): Reflects food web complexity, weighted by c-p values of omnivores and predators [31].

- Enrichment Index (EI): Indicates resource availability and bacterial-dominated pathways [31].

- Channel Index (CI): Reflects the dominant decomposition channel (fungal vs. bacterial) [31].

- Shannon Diversity Index (H'): Measures generic diversity [27].

- Metabolic Footprint Analysis: An advanced approach to estimate the carbon used by nematodes for production and respiration, providing a functional measure of their contribution to ecosystem processes [26].

5. Soil Physicochemical Analysis:

- Conduct parallel analysis of soil properties: pH, organic carbon, total nitrogen, texture, and target contaminants (e.g., heavy metals via ICP-MS, PAHs via GC-MS) [24].

- This enables direct statistical correlation between contamination levels and biological response.

6. Data Analysis:

- Use multivariate statistics (e.g., Principal Component Analysis, Redundancy Analysis) to visualize community shifts and link them to environmental variables [29].

- Apply correlation or regression analysis to test relationships between specific indices and contaminant concentrations [24] [27].
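The simpler indices from step 4 can be computed directly from genus counts. The sketch below follows the description given above (abundance-weighted mean c-p value, with MI2-5 excluding c-p 1 taxa) and the standard Shannon formula; the counts and c-p assignments are illustrative only, and function names are hypothetical. Many applications restrict the MI to free-living taxa, in which case plant-feeding genera would be filtered out before the call. SI, EI, and CI require functional-guild weightings and are not covered here.

```python
import math


def maturity_index(counts, cp_values, min_cp=1):
    """Abundance-weighted mean c-p value; min_cp=2 gives the MI2-5 variant."""
    kept = {genus: n for genus, n in counts.items() if cp_values[genus] >= min_cp}
    total = sum(kept.values())
    if total == 0:
        raise ValueError("no individuals left after filtering by c-p value")
    return sum(cp_values[genus] * n for genus, n in kept.items()) / total


def shannon_diversity(counts):
    """Shannon index H' over genera, using natural logarithms."""
    total = sum(counts.values())
    return -sum((n / total) * math.log(n / total) for n in counts.values() if n > 0)


# Illustrative sample of 100 identified individuals; not data from the cited studies.
counts = {"Rhabditis": 40, "Cephalobus": 30, "Aphelenchus": 20,
          "Mononchus": 5, "Aporcelaimellus": 5}
cp_values = {"Rhabditis": 1, "Cephalobus": 2, "Aphelenchus": 2,
             "Mononchus": 4, "Aporcelaimellus": 5}

mi = maturity_index(counts, cp_values)
mi_2_5 = maturity_index(counts, cp_values, min_cp=2)
h_prime = shannon_diversity(counts)

print(f"MI = {mi:.2f}, MI2-5 = {mi_2_5:.2f}, H' = {h_prime:.2f}")
```

Computing these values per replicate yields the response variables for the correlation and multivariate analyses in step 6.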

Graphviz workflow: Nematode-Based Ecological Risk Assessment Protocol

Interpretation Frameworks and Index Selection

Selecting the correct nematode-based indices (NBIs) is hypothesis-driven and must be focus-oriented [31]. The following table provides a guide for interpreting common indices in a contamination context.

Table 2: Guide to Key Nematode Indices for Contamination Assessment

| Index | Ecological Interpretation | Typical Response to Contamination/Disturbance | Best Used For |

|---|---|---|---|

| Maturity Index (MI) | Weighted mean life strategy (c-p). High MI = stable, mature environment; Low MI = disturbed, enriched environment. | Decreases due to loss of sensitive K-strategists (high c-p) and increase of tolerant r-strategists (low c-p). | General disturbance detection, including chronic pollution [24] [27]. |

| Structure Index (SI) | Reflects complexity and connectivity of the soil food web, based on weighted abundance of omnivores and predators. | Strongly decreases as higher trophic levels (predators, omnivores) are lost first, simplifying the food web. | Assessing ecosystem degradation and stability loss from persistent stressors [24] [29]. |

| Enrichment Index (EI) | Indicates opportunistic, bacterial-driven responses to nutrient enrichment. | Initially may increase with organic enrichment; under toxic stress, it can decrease due to suppression of all trophic groups. | Differentiating between enriching (e.g., manure) vs. toxic (e.g., metals) disturbances [31]. |

| Channel Index (CI) | Indicates the dominant decomposition pathway: fungal (>50%) vs. bacterial (<50%). | Can increase or decrease. Often increases if bacterial pathways are suppressed more than fungal ones. | Understanding shifts in fundamental ecosystem processes due to stress [31]. |

| Nematode Metabolic Footprint | Estimates the carbon metabolism and contribution of nematodes to ecosystem functions. | Total footprint often decreases with contamination, reflecting reduced energy flow. Shifts in trophic group footprints indicate functional changes. | Quantitative assessment of ecosystem function and service provision [26]. |

Graphviz workflow: Nematode Trophic Groups in the Soil Food Web and Stress Response

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Nematode Bioindication Research

| Item | Function/Application | Key Notes |

|---|---|---|

| Formaldehyde-Glycerol Fixative (e.g., 4% formaldehyde, 1% glycerol) | Heat-killing and long-term preservation of extracted nematodes for taxonomy. Prevents distortion and decomposition [24]. | Glycerol prevents desiccation. Prepare fresh from formalin stock. |

| Sucrose Flotation Solution (Specific gravity 1.15-1.18) | Extraction of nematodes from soil/sediment via centrifugal-flotation [29]. | Concentration (e.g., 484 g/L water) must be precise for optimal recovery. |

| Baermann Funnel Setup (Funnel, mesh, rubber tubing, clamp) | Passive extraction of active nematodes from soil suspension over 48-72 hours [24]. | Standard method; relies on nematode movement through mesh into water. |

| Taxonomic Identification Keys (e.g., "Nematodes of the World") | Essential reference for identifying nematodes to genus level based on morphological characteristics. | Accurate identification is the foundation for all subsequent index calculations. |

| pH & Electrolyte Solution (e.g., 1M KCl for soil pH) | Standardization of soil pH measurement, a key covariate influencing nematode communities [24]. | Required for parallel physicochemical analysis to interpret biological data. |

| Microwave-Assisted Wet Digestion System (e.g., Ethos 1) | Preparation of soil samples for pseudo-total heavy metal analysis via ICP-MS or AAS [24]. | Provides high-quality contaminant concentration data for correlation studies. |

Within the framework of comparative ecological risk assessment methods, nematode community analysis proves to be a highly sensitive and diagnostically powerful bioindicator system. It surpasses traditional chemical analysis by translating contaminant presence into measurable ecological impact, reflecting the health of the entire soil food web. Its key advantages include:

- Integrative Sensitivity: Responds to a wide array of chemical, physical, and biological stressors.

- Functional Relevance: Measures direct effects on biodiversity, trophic structure, and ecosystem processes (decomposition, nutrient cycling).

- Diagnostic Capability: Specific indices (e.g., EI, SI, MI) help differentiate between types of disturbance (enrichment vs. toxicity).

- Cost-Effectiveness: Once expertise is established, it provides more ecological information per unit cost than exhaustive chemical screening.

For researchers and assessors, the adoption of nematode-based indices offers a path towards more ecologically grounded risk assessments. Future development should prioritize the calibration of molecular-based NBIs (e.g., from metabarcoding data) to enhance throughput and taxonomic resolution, further solidifying the role of nematodes as indispensable sentinels of soil health [31].

Within ecological risk assessment (ERA), the shift towards data-driven modeling necessitates a rigorous comparison of analytical tools. This guide objectively evaluates two prominent machine learning approaches—Ridge Regression (a linear, penalized model) and Random Forest (a non-linear, ensemble model)—within the specific context of predicting ecological risk indices [32]. The core thesis investigates the comparative sensitivity of these models: their responsiveness to underlying data patterns, parameterization, and data perturbations, which directly impacts the reliability and interpretability of ERA predictions. Ridge Regression introduces sensitivity through its regularization parameter (alpha or k), which controls the trade-off between coefficient stability and model bias [33]. In contrast, Random Forest's sensitivity is governed by its structural parameters (e.g., mtry, ntree), which influence the diversity of the tree ensemble and its propensity to capture complex, non-linear interactions [34]. Understanding these divergent sensitivity profiles is crucial for researchers, scientists, and drug development professionals who rely on predictive models to prioritize chemical hazards, assess ecosystem impacts, and support regulatory decisions [35].

Deciphering Model Sensitivity: Mechanisms and Meaning

The sensitivity of a predictive model in ERA refers to how its outputs—risk indices, hazard concentrations, or classification outcomes—change in response to variations in input data or model parameters. This characteristic is not inherently negative; a model appropriately sensitive to true ecological signals is desirable. However, excessive sensitivity to noise, outliers, or specific parameter settings undermines robustness and generalizability.

Ridge Regression: Sensitivity Through Constrained Linearity

Ridge Regression modifies ordinary least squares by adding a penalty proportional to the square of the magnitude of the coefficients [33]. This penalty is controlled by the regularization parameter (α or k). The sensitivity of Ridge is thus dual-faceted:

- Coefficient Stability vs. Bias: As α increases, coefficient estimates shrink toward zero, reducing their variance and sensitivity to multicollinearity in the data. However, this introduces bias [33]. The optimal α balances this trade-off, minimizing overall prediction error.

- Data Point Influence: The sensitivity of the model's slope (coefficients) to individual data points can be mathematically quantified. Points with extreme predictor (x) values or those lying far from the regression line can exert greater influence on the fitted model [36]. Ridge regularization mitigates but does not eliminate this influence.
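The two facets above can be illustrated with a minimal sketch, assuming two centered predictors and no intercept so that the ridge solution reduces to a 2x2 closed form. The data values, the helper name `ridge_2d`, and the chosen α grid are hypothetical and serve only to show the direction of the effect, not results from the cited studies.

```python
import math

def ridge_2d(x1, x2, y, alpha):
    """Closed-form ridge fit for two centered predictors (no intercept).

    Solves (X'X + alpha * I) beta = X'y for the 2x2 case.
    """
    s11 = sum(a * a for a in x1) + alpha
    s22 = sum(b * b for b in x2) + alpha
    s12 = sum(a * b for a, b in zip(x1, x2))
    t1 = sum(a * c for a, c in zip(x1, y))
    t2 = sum(b * c for b, c in zip(x2, y))
    det = s11 * s22 - s12 * s12
    return ((s22 * t1 - s12 * t2) / det, (s11 * t2 - s12 * t1) / det)

# Hypothetical, nearly collinear predictors (already centered).
x1 = [-2.0, -1.0, 0.0, 1.0, 2.0]
x2 = [-2.1, -0.9, 0.1, 0.9, 2.0]
y = [-4.0, -2.2, 0.1, 1.9, 4.2]
y_perturbed = [-4.5] + y[1:]  # nudge a single observation

alphas = [0.0, 1.0, 10.0]
norms = []   # coefficient magnitude at each alpha
shifts = []  # how far the coefficients move after the perturbation
for alpha in alphas:
    beta = ridge_2d(x1, x2, y, alpha)
    beta_p = ridge_2d(x1, x2, y_perturbed, alpha)
    norms.append(math.hypot(*beta))
    shifts.append(math.hypot(beta_p[0] - beta[0], beta_p[1] - beta[1]))
    print(f"alpha={alpha}: |beta|={norms[-1]:.3f}, shift={shifts[-1]:.3f}")
```

With α = 0 (ordinary least squares) the single perturbed observation moves the coefficients substantially because the predictors are nearly collinear. Larger α shrinks the coefficients and damps that movement, at the cost of bias.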

Random Forest: Sensitivity Through Ensemble Structure

Random Forest is an ensemble of many decision trees, each built on a bootstrap sample of the data, with a random subset of features considered at each split [37]. Its sensitivity is intrinsically linked to its parameters:

- Parameter Dependence: Contrary to some assumptions, RF performance is not universally robust to parameter choices. The number of variables considered at each split (mtry) is often highly influential. A parameter-sweep study reported that mtry can be strongly negatively correlated with prediction accuracy (AUC), while the number of trees (ntree) may have a less dramatic effect [34].

- Interaction and Non-linearity: RF inherently models complex interactions and non-linear relationships without explicit specification. Its sensitivity is therefore directed towards detecting these patterns, which can be a major advantage over linear models for ecological data where such interactions are common but poorly defined a priori.
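The role of mtry in ensemble diversity can be sketched without fitting any trees. The snippet below is an illustration only: it draws one bootstrap sample and the candidate features for a single split, then measures how much the candidate sets of different trees overlap. The function names are hypothetical, and the dimensions (720 samples, 15 features) are borrowed from the low p/n dataset described in Protocol 2 purely for scale.

```python
import random
from itertools import combinations

def draw_tree_inputs(n_samples, n_features, mtry, rng):
    """Return bootstrap row indices and the candidate features for one split."""
    bootstrap_rows = [rng.randrange(n_samples) for _ in range(n_samples)]
    split_candidates = rng.sample(range(n_features), mtry)
    return bootstrap_rows, split_candidates

def mean_candidate_overlap(n_features, mtry, n_trees, rng):
    """Average Jaccard overlap between split-candidate sets across tree pairs."""
    sets = [set(rng.sample(range(n_features), mtry)) for _ in range(n_trees)]
    pairs = list(combinations(sets, 2))
    return sum(len(a & b) / len(a | b) for a, b in pairs) / len(pairs)

rng = random.Random(0)  # fixed seed so the sketch is repeatable
rows, candidates = draw_tree_inputs(720, 15, 4, rng)

# Rows never drawn into the bootstrap sample are "out-of-bag" for this tree.
oob_fraction = 1 - len(set(rows)) / 720

overlap_small = mean_candidate_overlap(15, 2, 50, rng)   # small mtry: diverse trees
overlap_full = mean_candidate_overlap(15, 15, 50, rng)   # mtry = all features

print(f"out-of-bag fraction: {oob_fraction:.2f}")
print(f"candidate overlap, mtry=2:  {overlap_small:.2f}")
print(f"candidate overlap, mtry=15: {overlap_full:.2f}")
```

When mtry equals the number of features, every split sees the same candidates and only the bootstrap sample differentiates the trees. In scikit-learn the corresponding arguments are `max_features` and `n_estimators`. An actual forest redraws the candidate set at every split, which this sketch does not model.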

Experimental Performance Data and Comparative Analysis

A direct application in ERA research provides a clear basis for comparison. A 2025 study assessed pollution from Potentially Toxic Elements (PTEs) near coal mines using soil nematode communities as bioindicators [32]. The study developed models to predict three comprehensive ecological risk indices—Nemerow Synthetic Pollution Index (NSPI), Potential Ecological Risk Index (RI), and Pollution Load Index (PLI)—from a suite of nematode community indices.

Table 1: Model Performance on Ecological Risk Indices [32]

| Ecological Risk Index | Best-Performing Model | Key Performance Note |
|---|---|---|
| Nemerow Synthetic Pollution Index (NSPI) | Ridge Regression | Led performance among linear models tested. |
| Potential Ecological Risk Index (RI) | Ridge Regression | Led performance among linear models tested. |
| Pollution Load Index (PLI) | Random Forest | Topped performance among non-linear models tested. |

The study also performed a feature importance analysis for the Random Forest models, revealing which nematode indices were most sensitive in predicting the risk indices.

Table 2: Feature Importance in Random Forest Models for Risk Prediction [32]

| Predictor (Nematode Index) | Importance for NSPI | Importance for RI |
|---|---|---|
| Nematode Channel Ratio (NCR) | 21.08% | 20.90% |
| Maturity Index (MI) | 20.78% | 20.90% |
| Shannon-Weaver Diversity (H) | 18.48% | 19.50% |

A separate comparative study in psychiatry, which methodologically parallels many ERA prediction tasks, found that Ridge Regression and Random Forest could achieve statistically equivalent performance (AUC ~0.79), though the most important predictors differed between the models [38].

Detailed Experimental Protocols

Protocol 1: ERA Modeling with Nematode Indices [32]

- Site Selection & Sampling: Soil samples were collected from areas near active coal mines across seven cities. Sampling accounted for spatial and seasonal variation.

- Data Collection: Two parallel data streams were generated: (a) Chemical: Concentrations of PTEs (Pb, Hg, Mn, Zn, etc.) were measured. (b) Biological: Nematodes were extracted, identified, and counted to calculate general community indices (e.g., Shannon-Weaver diversity) and nematode-specific indices (e.g., Maturity Index, Structure Index).

- Index Calculation: Comprehensive ecological risk indices (NSPI, RI, PLI) were calculated from the PTE concentration data to serve as the model target variables.

- Model Development & Comparison: Multiple linear (including Ridge) and non-linear (including RF) models were trained to predict the comprehensive risk indices from the biological nematode indices. Models were validated, and performance was compared to identify the best predictor for each risk index.

- Sensitivity Analysis: For RF models, a feature importance analysis was conducted to determine the relative contribution of each nematode index to the prediction.
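The index-calculation step of Protocol 1 can be sketched with the formulations of these indices that are commonly used in the soil-pollution literature. This is an assumption: the source does not state which variants, background values, or toxic-response factors the cited study used, and the concentrations below are hypothetical.

```python
import math

# Hakanson toxic-response factors as commonly cited (assumption: the study
# in [32] may use a different set or include additional elements).
TOXIC_RESPONSE = {"Hg": 40, "Cd": 30, "As": 10, "Pb": 5, "Cu": 5, "Ni": 5, "Cr": 2, "Zn": 1}

def pollution_indices(conc, background):
    """Single-element pollution index: measured / background concentration."""
    return {el: conc[el] / background[el] for el in conc}

def nemerow_index(pi):
    """NSPI = sqrt((max(PI)^2 + mean(PI)^2) / 2)."""
    vals = list(pi.values())
    return math.sqrt((max(vals) ** 2 + (sum(vals) / len(vals)) ** 2) / 2)

def pollution_load_index(pi):
    """PLI = geometric mean of the single-element pollution indices."""
    vals = list(pi.values())
    return math.prod(vals) ** (1 / len(vals))

def potential_ecological_risk(pi, toxic_response=TOXIC_RESPONSE):
    """RI = sum over elements of toxic-response factor * pollution index."""
    return sum(toxic_response[el] * pi[el] for el in pi)

# Hypothetical concentrations and background values (mg/kg) for one sample.
conc = {"Pb": 52.0, "Hg": 0.18, "Zn": 74.0}
background = {"Pb": 26.0, "Hg": 0.06, "Zn": 74.0}

pi = pollution_indices(conc, background)
nspi = nemerow_index(pi)
pli = pollution_load_index(pi)
ri = potential_ecological_risk(pi)
print(f"NSPI={nspi:.2f}, PLI={pli:.2f}, RI={ri:.1f}")
```

The three indices weight the same measurements differently. NSPI is driven by the worst element, PLI averages multiplicatively across elements, and RI is dominated by elements with high toxic-response factors such as Hg. This may help explain why different model families performed best for different targets.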

Protocol 2: Assessing Random Forest Parameter Sensitivity [34]

- Dataset Preparation: Two biological datasets with distinct features-to-samples (p/n) ratios were selected: one with low p/n (15 features, 720 samples) and one with high p/n (12,135 features, 255 samples).

- Parameter Grid Definition: A wide grid of RF parameters was defined, focusing on ntree (e.g., 10 to 500,000), mtry, and sampsize.

- Exhaustive Model Fitting: An RF model was fitted for each unique parameter combination in the grid. Each model was trained on a training cohort and evaluated on a held-out validation set.

- Performance Metric: Prediction accuracy was quantified using the Area Under the Receiver Operating Characteristic Curve (AUC).

- Analysis: The relationship between parameter values and AUC was analyzed using rank correlation. The performance of default parameters was compared to the optimal tuned parameters found through the grid search.
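The performance-metric and analysis steps of Protocol 2 can be sketched in plain Python: AUC computed as the probability that a positive case outranks a negative one, and a Spearman rank correlation between a parameter and the resulting AUC. The grid values and AUC numbers below are hypothetical placeholders, not results from [34].

```python
import math

def auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)

example_auc = auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])

# Hypothetical grid-search results: one AUC per parameter value.
mtry_grid = [1, 2, 4, 8, 15]
auc_by_mtry = [0.81, 0.80, 0.78, 0.75, 0.74]
ntree_grid = [10, 100, 1000, 10000, 100000]
auc_by_ntree = [0.74, 0.78, 0.79, 0.79, 0.79]

rho_mtry = spearman(mtry_grid, auc_by_mtry)
rho_ntree = spearman(ntree_grid, auc_by_ntree)
print(f"example AUC={example_auc}, rho(mtry)={rho_mtry:.2f}, rho(ntree)={rho_ntree:.2f}")
```

Rank correlation is a reasonable choice here because parameter grids such as ntree span several orders of magnitude and AUC tends to plateau, so a linear correlation on raw values would be misleading.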

Visualizing Workflows and Sensitivity Relationships

[Figure: Comparative ERA Model Sensitivity Workflow]

[Figure: Random Forest Parameter Sensitivity Relationships]

Table 3: Key Reagents and Resources for Machine Learning in ERA

| Item / Resource | Function in ERA Research | Example / Note |
|---|---|---|
| Soil Nematode Communities | Bioindicators that provide an integrated, biological response to soil contamination, used as model predictors [32]. | Taxa are identified to calculate indices like Maturity Index (MI) and Structure Index (SI). |
| Curated Toxicity Databases | Provide the foundational chemical and biological effect data required to train and validate predictive models [35]. | U.S. EPA ECOTOX database [35]. |
| Ecological Risk Indices | Quantitative targets for machine learning models, integrating multiple contaminant measurements into a unified risk metric [32]. | Nemerow Synthetic Pollution Index (NSPI), Potential Ecological Risk Index (RI). |
| Machine Learning Software Packages | Provide implementations of algorithms, sensitivity analysis tools, and validation functions. | scikit-learn (Python), caret or ranger (R), JuMP/DiffOpt (Julia) for advanced sensitivity [36]. |
| Species Sensitivity Distribution (SSD) Models | A computational framework for extrapolating from single-species data to ecosystem-level protection thresholds, often enhanced by ML [35]. | Used to predict Hazardous Concentrations (e.g., HC-5) for untested chemicals. |

The choice between Ridge Regression and Random Forest for ecological risk assessment is not a matter of identifying a universally superior algorithm but of matching model sensitivity to the problem context. For predicting certain integrated risk indices (like NSPI and RI) where linear relationships with bioindicators may dominate, Ridge Regression offers stable, interpretable, and accurate results [32]. Its sensitivity is usefully confined to a single regularization parameter, which guards against the coefficient instability that multicollinearity causes. Conversely, for predicting indices that may encapsulate more complex interactions (like PLI), or when working with high-dimensional data with unknown non-linearities, Random Forest's sensitivity to complex patterns is a clear advantage [32] [39]. However, this comes at the cost of higher computational demand and a critical need for parameter tuning, as its performance is sensitive to choices like mtry [34]. Therefore, the broader thesis on comparative sensitivity concludes that a strategic, context-aware application, potentially even an ensemble of both approaches, will yield the most robust and insightful ERA predictions.

The projection and assessment of landscape ecological risk (LER) under changing land use patterns represent a critical frontier in sustainable development research. Effectively evaluating LER is foundational for sustainable land use planning and regional development [40]. Within this domain, comparative analysis of methodological sensitivity—the degree to which different models respond to variations in input parameters and scenarios—is essential for robust scientific and policy outcomes. This guide provides a comparative evaluation of two prominent modeling frameworks: the Patch-generating Land Use Simulation (PLUS) model and the Integrated Valuation of Ecosystem Services and Tradeoffs (InVEST) model. The PLUS model specializes in simulating future land use patterns by analyzing the drivers of change and employing a patch-generation strategy [40] [41]. In contrast, InVEST is a suite of spatially explicit, open-source models designed to map and quantify the economic and biophysical values of ecosystem services, such as carbon storage, water conservation, and habitat quality [42] [43]. The integration of these tools—using PLUS to project land use change and InVEST to evaluate the resulting impacts on ecosystem services—has emerged as a powerful, synergistic approach for prospective ecological risk assessment [41] [44]. This comparison is framed within a broader thesis on the comparative sensitivity of ecological risk assessment methods, examining how different tools capture, quantify, and communicate risks arising from anthropogenic and natural stressors [20].

Model Comparison: Core Characteristics and Methodological Foundations

PLUS and InVEST serve distinct yet complementary functions within the ecological risk assessment workflow. Their integration forms a comprehensive pipeline from scenario projection to service valuation.

Table 1: Core Characteristics of the PLUS and InVEST Models

| Feature | PLUS Model | InVEST Model |
|---|---|---|
| Primary Purpose | Simulates future land use/cover change under multiple scenarios. | Quantifies and values ecosystem services provided by land/water. |
| Core Methodology | Integrates a land expansion analysis strategy and a cellular automata (CA) model based on multi-type random patch seeds. | Uses production functions that define how ecosystem structure affects service flows; spatially explicit mapping. |
| Key Inputs | Historical land use maps, driving factors (e.g., slope, distance to roads), neighborhood weights, transition costs, demand constraints. | Land use/cover maps, biophysical tables (e.g., carbon stocks per land class), climate data, socio-economic data. |
| Typical Outputs | Projected future land use maps, transition matrices, gain/loss statistics. | Maps and total values of ecosystem services (e.g., tons of carbon, volume of water yield, habitat quality index). |
| Spatial Explicitness | High; generates spatially explicit projections of land use patterns. | High; produces maps showing the spatial distribution of service supply and value. |
| Scenario Capability | Strong; designed for multi-scenario simulation (e.g., SSPs, ND, EP). | Dependent on input scenarios; typically assesses outcomes of provided land use scenarios. |
| Major Strength | High simulation accuracy and ability to model patch-level changes. | Modular, standardized ecosystem service valuation; accessible to non-programmers. |

The PLUS model's sensitivity is heavily influenced by its algorithm configuration. Research in the Fujian Delta region showed that coupling multiple linear regression with a Markov chain significantly improved prediction accuracy (Figure of Merit, FoM = 0.244) compared to using a Markov chain alone (FoM = 0.146) [40]. This highlights the sensitivity of outcomes to the chosen analytical method within the model framework. The InVEST model's sensitivity, conversely, is most closely tied to the accuracy and resolution of its biophysical input parameters. For instance, carbon stock calculations depend critically on the carbon pool values (above-ground, below-ground, soil, and dead organic matter) assigned to each land cover class [43] [44].
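Both quantities mentioned above reduce to simple arithmetic, sketched here under stated assumptions. The Figure of Merit follows the common definition of hits divided by the sum of hits, misses, wrong hits, and false alarms over all cells. The carbon total sums the four pool densities per land cover class, weighted by area. The function names, the toy eight-cell maps, and the pool densities are hypothetical. Neither function is part of the PLUS or InVEST software.

```python
def figure_of_merit(start, observed, simulated):
    """FoM = hits / (hits + misses + wrong hits + false alarms), counted per cell."""
    hits = misses = wrong_hits = false_alarms = 0
    for s, o, p in zip(start, observed, simulated):
        changed_obs, changed_sim = o != s, p != s
        if changed_obs and changed_sim:
            if o == p:
                hits += 1          # change predicted, correct new class
            else:
                wrong_hits += 1    # change predicted, wrong new class
        elif changed_obs:
            misses += 1            # real change predicted as persistence
        elif changed_sim:
            false_alarms += 1      # persistence predicted as change
    total = hits + misses + wrong_hits + false_alarms
    return hits / total if total else float("nan")

def total_carbon(area_ha, pools):
    """Sum of area * (above + below + soil + dead) carbon density per class."""
    return sum(area_ha[c] * sum(pools[c]) for c in area_ha)

# Toy land use maps flattened to lists of cells (hypothetical).
start     = ["crop", "crop", "forest", "forest", "crop", "urban", "crop", "forest"]
observed  = ["urban", "crop", "forest", "crop", "urban", "urban", "urban", "forest"]
simulated = ["urban", "urban", "forest", "forest", "forest", "urban", "crop", "forest"]
fom = figure_of_merit(start, observed, simulated)

# Hypothetical carbon densities in t C/ha: (above-ground, below-ground, soil, dead).
pools = {"forest": (120, 30, 90, 10), "crop": (5, 2, 50, 1), "urban": (2, 1, 20, 0)}
area_ha = {"forest": 100, "crop": 200, "urban": 50}
carbon = total_carbon(area_ha, pools)

print(f"FoM={fom}, total carbon={carbon} t C")
```

Because FoM ignores cells that correctly persist, it is a stricter measure than overall accuracy or Kappa, which is why values that look low in absolute terms, such as those reported above, can still represent a meaningful improvement. The carbon function shows why InVEST results scale directly with the pool values assigned to each class.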

Table 2: Documented Performance Metrics from Integrated PLUS-InVEST Applications

| Study Region | Key Performance Metric (PLUS) | Key Ecosystem Service (InVEST) | Key Finding | Source |

|---|---|---|---|---|

| Fujian Delta, China | FoM = 0.244 (with MLR & Markov) | Landscape Ecological Risk Index | Localized SSP1 scenario yielded minimal risk; SSP4 highest risk. | [40] |

| Chengdu-Chongqing, China | Kappa coefficient for calibration | Water Conservation | EP scenario projected higher water conservation than ND scenario by 2050. | [41] |