Bridging the Translation Gap: A Strategic Guide to Integrating Epidemiological and Animal Evidence in Systematic Reviews

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the strategic integration of epidemiological (human) and animal evidence within systematic reviews. The synthesis addresses four core objectives: establishing the foundational rationale and current landscape for evidence integration; detailing methodological frameworks and practical application steps; identifying common challenges and optimization strategies to enhance review rigor; and presenting frameworks for validating integrated evidence and comparing outcomes across species. By synthesizing findings from both human population data and preclinical animal models, this approach enhances the translational validity of systematic reviews, reduces research waste, and provides a more robust evidence base to inform clinical trials and public health decisions.

The Why and What: Defining the Imperative for Integrating Human and Animal Evidence in Research Synthesis

The high attrition rate in drug development underscores a critical translational gap between preclinical discovery and clinical success [1]. While epidemiological studies identify associations and risk factors in human populations, and preclinical animal models elucidate biological mechanisms and therapeutic potential, each approach has intrinsic limitations. Epidemiological data can signal correlation but not causation, whereas preclinical findings often suffer from poor external validity, failing to predict human responses [2] [1]. Systematic reviews (SRs) that integrate these two evidence streams offer a powerful methodological "bridge" to overcome these limitations. A preclinical SR provides a formal, unbiased synthesis of animal data, assessing the robustness, reproducibility, and translational readiness of a proposed intervention [3] [4]. When its findings are directly contextualized with epidemiological evidence on disease burden and human pathophysiology, it creates a stronger, more holistic rationale for clinical translation or for the refinement of preclinical research. This integrated approach mitigates research waste, supports ethical animal use, and provides empirical evidence to inform clinical trial design and funding decisions [3] [4].

Quantitative Landscape of Preclinical and Epidemiological Systematic Reviews

The field of evidence synthesis is expanding rapidly in both preclinical and clinical domains. The tables below summarize key quantitative data illustrating this growth, the characteristics of preclinical SRs, and persistent methodological challenges.

Table 1: Growth and Volume of Systematic Review Evidence

| Evidence Stream | Key Metric | Data | Source & Context |
|---|---|---|---|
| Preclinical SRs | Approximate total published (1992-2023) | ~3000 SRs | One-third included a meta-analysis [4]. |
| Preclinical SRs | Annual Growth Trend | Increasing exponentially | 54% focused on pharmacological interventions (2015-2018 data) [4] [5]. |
| Clinical SRs (for comparison) | Publications in Pediatric Medicine (2022) | >130,000 publications | Highlights the vast scale of clinical literature vs. preclinical [4]. |
| Clinical Prediction Model SRs | Total Published (2001-2023) | 1004 SRs | 66.6% published after 2020, indicating rapid recent growth [6]. |

Table 2: Epidemiological Characteristics of Preclinical Systematic Reviews (2015-2018 Sample)

| Characteristic | Category | Prevalence / Finding |
|---|---|---|
| Geographic Distribution | Published across 43 countries | Global activity with concentration in North America and Europe [5]. |
| Disease Domain Coverage | Spanning 23 different domains | Demonstrates wide application across biomedical research [5]. |
| Animal Species Reviewed | Use of 26 different species | Rodents (mice, rats) are most common; includes dogs, primates, etc. [5]. |
| Methodological Reporting | Risk of Bias Assessment Reported | <50% of reviews [5]. |
| Methodological Reporting | Construct Validity Assessment Reported | 0% of reviews [5]. |

Table 3: Methodological Gaps in Systematic Review Reporting

| Review Type | Reporting Gap | Percentage/Evidence | Implication |
|---|---|---|---|
| Clinical Prediction Model SRs [6] | Lacked a standardized review question (e.g., PICO) | 88.3% | Compromises reproducibility and focus. |
| Clinical Prediction Model SRs [6] | Did not follow a standardized checklist for data extraction | 79.8% | Increases risk of error and bias in data collection. |
| Clinical Prediction Model SRs [6] | Did not assess certainty of evidence (e.g., GRADE) | 94.8% | Limits interpretation of findings' reliability. |
| All SRs [4] | Had a preregistered/published protocol (2020-2021) | 38% | Improves transparency; reduces duplication and bias. |

Foundational Protocols for Integrated Evidence Synthesis

Protocol 1: Conducting a Preclinical Systematic Review and Meta-Analysis

This protocol outlines the core steps for synthesizing preclinical animal evidence, forming one pillar of the translational bridge [3] [4].

- I. Protocol Development & Registration (Mandatory): Define a focused research question (e.g., "What is the efficacy of compound X in animal models of disease Y?"). Develop a detailed statistical analysis plan. Register the protocol on platforms like PROSPERO, SyRF, or Open Science Framework to prevent bias and duplication [3] [4].

- II. Systematic Search Strategy: Collaborate with a biomedical librarian. Search multiple electronic databases (e.g., PubMed, Embase, Web of Science) using animal search filters [3]. Do not rely solely on Google Scholar. Search strategies must be reproducible.

- III. Study Selection & Screening: Two independent reviewers screen titles/abstracts and then full-text articles against pre-defined inclusion/exclusion criteria. A PRISMA flow diagram records the process [3].

- IV. Data Extraction & Annotation: Two reviewers independently extract data using standardized forms. Extract details on study design, animal model, intervention, outcomes, and risk of bias. Use tools like WebPlotDigitizer to extract data from graphs [3].

- V. Risk of Bias & Quality Assessment: Use a domain-based tool like the SYRCLE Risk of Bias tool for animal studies [3] [1]. Assess internal validity (randomization, blinding, etc.) and report on external validity factors (model relevance, sex of animals) [1] [5].

- VI. Data Synthesis & Meta-Analysis: Perform a narrative synthesis of study characteristics. If sufficient homogeneous data exist, conduct a meta-analysis to calculate a summary effect size. Use random-effects models. Explore heterogeneity via subgroup analysis or meta-regression (e.g., by species, model type, risk of bias) [3].

- VII. Assessment of Publication Bias: Use funnel plots and statistical tests (e.g., Egger's) to assess small-study effects, which may indicate publication bias [3].

- VIII. Reporting & Dissemination: Report according to the PRISMA-Preclinical guidelines. Submit data and code to a repository (e.g., Figshare). Publish in an open-access format to maximize reach [3] [4].
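Stages VI and VII above can be illustrated with a short sketch. It assumes the DerSimonian-Laird estimator for the random-effects model (one common choice; the protocol does not prescribe a specific estimator) and computes only the Egger regression intercept, not its significance test. The function names and the example numbers are hypothetical.

```python
import math


def random_effects_meta(effects, variances):
    """Pool per-study effect sizes with a DerSimonian-Laird random-effects model."""
    k = len(effects)
    w = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    # Cochran's Q: weighted squared deviations from the fixed-effect estimate
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0  # between-study variance
    w_re = [1.0 / (v + tau2) for v in variances]  # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    # I^2: share of total variation attributed to heterogeneity, in percent
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return {
        "pooled": pooled,
        "se": se,
        "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
        "tau2": tau2,
        "q": q,
        "i2": i2,
    }


def egger_intercept(effects, variances):
    """Intercept of the OLS regression of (effect / SE) on (1 / SE).

    An intercept far from zero hints at small-study effects; a formal test
    also needs the intercept's standard error, which is omitted here.
    """
    se = [math.sqrt(v) for v in variances]
    x = [1.0 / s for s in se]  # precision
    y = [e / s for e, s in zip(effects, se)]  # standardized effect
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("studies need differing precisions for Egger's regression")
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx


if __name__ == "__main__":
    # Hypothetical standardized mean differences and their variances
    effects = [0.9, 0.4, 1.2, 0.3]
    variances = [0.10, 0.05, 0.30, 0.04]
    print(random_effects_meta(effects, variances))
    print(egger_intercept(effects, variances))
```

In practice, dedicated meta-analysis packages provide these estimators with additional options (for example alternative heterogeneity estimators), so a hand-rolled version like this is mainly useful for checking intermediate quantities.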

Protocol 2: Integrating Epidemiological Data with Preclinical SR Findings

This protocol describes how to contextualize preclinical SR results within the human disease context.

- I. Define the Epidemiological Scope: Based on the preclinical research question, define key epidemiological parameters to search for: disease incidence/prevalence, key demographic risk factors (age, sex, ethnicity), major environmental or genetic determinants, and natural history/progression data [1].

- II. Targeted Epidemiological Evidence Search: Conduct a focused, narrative review of high-quality epidemiological sources. Search for large cohort studies, disease registries, and authoritative reports from organizations like the WHO or CDC. The goal is not an exhaustive SR but to gather relevant contextual data [4].

- III. Align Preclinical Models with Human Disease: Systematically compare the models used in the preclinical SR to human disease. Utilize a framework like the Framework to Identify Models of Disease (FIMD), which scores models across eight domains: Epidemiology, Symptomatology/Natural History, Genetics, Biochemistry, Aetiology, Histology, Pharmacology, and Endpoints [1]. This identifies which human disease features are recapitulated.

- IV. Comparative Analysis & Gap Identification: Create a side-by-side analysis:

- Consistency: Do the mechanistic pathways and intervention effects in animals align with known human pathophysiology and risk factor data?

- Population Relevance: Do the animal models reflect key at-risk human demographics (e.g., age, sex, co-morbidities)?

- Outcome Translation: Are the preclinical outcome measures clinically relevant biomarkers or surrogates for patient-centered outcomes?

- V. Generate Integrated Conclusions & Recommendations: Synthesize findings into a translational assessment:

- Strong Case for Translation: Preclinical evidence is robust, low bias, and effects are consistent across models that faithfully mirror key human epidemiological and pathophysiological features.

- Case for Refined Preclinical Research: Preclinical evidence is promising but models lack critical human disease features (e.g., a model lacks genetic heterogeneity present in the human population). Recommend next-step studies using more representative models.

- Case Against Current Translation: Preclinical evidence is weak, inconsistent, or derived from models with poor face/construct validity for the human condition [1].
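Steps III to V of this protocol can be sketched as a small scoring routine. The eight domain names come from the FIMD description above; everything else is an assumption made for illustration: the per-domain scores are taken as fractions between 0 and 1, the domains are weighted equally, and the thresholds (0.7, 0.4, 0.5) are hypothetical and are not part of the published framework.

```python
from dataclasses import dataclass

FIMD_DOMAINS = (
    "Epidemiology", "Symptomatology/Natural History", "Genetics", "Biochemistry",
    "Aetiology", "Histology", "Pharmacology", "Endpoints",
)


@dataclass
class ModelAssessment:
    name: str
    domain_scores: dict        # domain name -> fraction in [0, 1] (assumed scale)
    low_risk_of_bias: bool     # summary judgement from the preclinical SR
    consistent_effects: bool   # effects consistent across studies/models


def validity_score(a):
    """Unweighted mean across the eight domains (equal weighting is an assumption)."""
    missing = [d for d in FIMD_DOMAINS if d not in a.domain_scores]
    if missing:
        raise ValueError(f"missing domain scores: {missing}")
    return sum(a.domain_scores[d] for d in FIMD_DOMAINS) / len(FIMD_DOMAINS)


def weak_domains(a, cutoff=0.5):
    """Domains where the model poorly recapitulates the human disease."""
    return [d for d in FIMD_DOMAINS if a.domain_scores[d] < cutoff]


def translational_case(a, strong=0.7, minimum=0.4):
    """Map the evidence onto the three conclusions listed in step V."""
    score = validity_score(a)
    if not (a.low_risk_of_bias and a.consistent_effects) or score < minimum:
        return "Case Against Current Translation"
    if score >= strong:
        return "Strong Case for Translation"
    return "Case for Refined Preclinical Research"


if __name__ == "__main__":
    scores = {d: 0.8 for d in FIMD_DOMAINS}
    scores["Genetics"] = 0.1  # e.g. inbred strain lacking human genetic heterogeneity
    model = ModelAssessment("hypothetical transgenic mouse", scores, True, True)
    print(translational_case(model), weak_domains(model))
```

The list returned by `weak_domains` is what feeds the "next-step studies" recommendation: it names the human disease features that a more representative model would need to capture.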

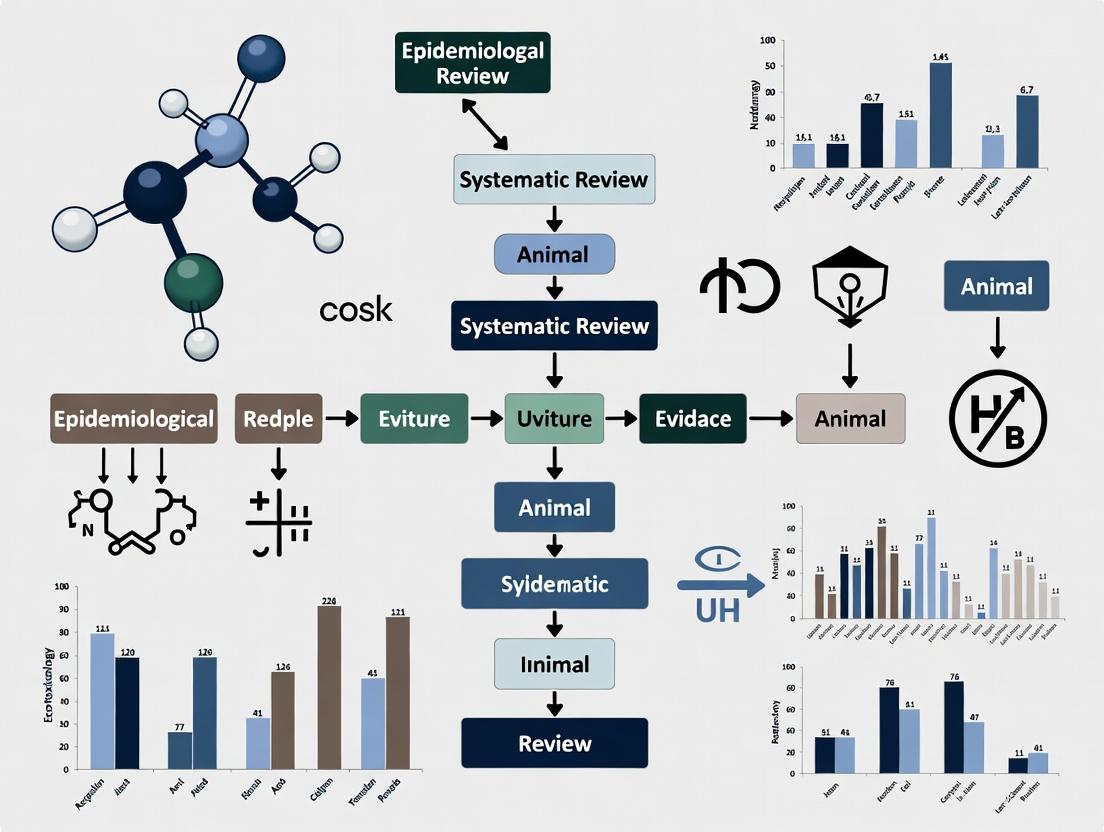

Visualizing the Translational Workflow and Methodology

Figure 1: The Translational Bridge Workflow. This diagram illustrates the bidirectional integration of epidemiological and preclinical evidence streams through a formal systematic review process to produce translational decisions.

Figure 2: Preclinical Systematic Review Workflow. A linear representation of the mandatory stages for conducting a rigorous preclinical SR (see Protocol 1), with an optional feedback loop [3] [4].

Figure 3: Framework to Identify Models of Disease (FIMD). This radial diagram shows the eight domains used to systematically score and compare how well an animal model recapitulates key features of a human disease, critical for assessing external validity [1].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Integrated Translational Reviews

| Tool / Resource Name | Type | Primary Function in Integration | Key Reference/Source |
|---|---|---|---|
| SYRCLE Risk of Bias Tool | Methodological Tool | Assesses internal validity (e.g., selection, performance bias) of individual animal studies within an SR. | [3] [1] |
| Framework to Identify Models of Disease (FIMD) | Analytical Framework | Systematically scores and compares animal models across 8 domains (Epidemiology, Aetiology, etc.) for translational relevance. | [1] |
| ARRIVE & PREPARE Guidelines | Reporting Guidelines | Ensures complete and transparent reporting of animal experiments, improving the quality of primary data for SRs. | [1] |
| Systematic Review Facility (SyRF) | Online Platform | Provides a free, integrated platform for managing the preclinical SR process (screening, data extraction, analysis). | [3] |
| Compound 48/80-Induced Ocular Allergy Model | Preclinical Disease Model | A non-immunogenic mast cell degranulation model used to study allergic conjunctivitis and test antihistamines (e.g., alcaftadine). | [7] |
| Antigen-Challenge Ocular Allergy Model | Preclinical Disease Model | Uses sensitization and topical challenge (e.g., with ovalbumin) to induce a T-cell mediated response, modeling chronic ocular allergy. | [7] |
| Pharmacokinetic/Pharmacodynamic (PK/PD) Modeling | Analytical Method | Integrates data on a drug's absorption, distribution, metabolism, excretion (ADME) with its biological effects to predict human dosing. | [8] |
| PROSPERO Registry | Protocol Registry | International prospective register for SR protocols in health-related fields; mandatory for Cochrane reviews and best practice for all SRs. | [4] [6] |

Application Notes: From Theory to Practice

- Note 1: Informing Clinical Trial Design with Preclinical SRs. A robust preclinical SR and meta-analysis can provide quantitative estimates of treatment effect size, optimal therapeutic time window, and effective dose ranges across species. These data directly inform the design of Phase I/II clinical trials, including first-in-human dosing, escalation schemes, and candidate biomarker selection [3] [8]. Conversely, an SR revealing inconsistent effects or high risk of bias in animal studies can justify halting translation, preventing unnecessary human trials [3].

- Note 2: Selecting the Optimal Animal Model. The integration of epidemiological data is crucial for model selection. For a pediatric disease, an SR should critically appraise whether the age of animals used corresponds to the relevant human developmental stage [4]. The FIMD framework can guide this choice: for a disease with strong genetic determinants, prioritize models with high scores in the "Genetics" domain; for a disease driven by environmental factors, "Aetiology" and "Epidemiology" become more critical [1].

- Note 3: Addressing the "Valley of Death" in Drug Development. The high failure rate between preclinical success and clinical efficacy is often attributed to poor predictive validity of animal models [1]. Integrated SRs address this by forcing an explicit, structured comparison between animal and human data before costly clinical trials begin. This process de-risks development by identifying fundamental disconnects early—such as a drug working only in acute injury models when the human disease is chronic—and directing resources toward more promising candidates or better models [1] [8].

Application Notes: Thesis Context and Research Imperative

This document synthesizes the current state of systematic reviews (SRs) of animal studies, framing the evidence within the broader thesis objective of integrating epidemiological and animal evidence to strengthen translational research. Animal SRs are critical for distilling preclinical evidence, identifying robust findings from often heterogeneous animal studies, and directly informing the design and justification of human clinical trials and public health interventions [9]. Their role is not merely to summarize animal data but to act as a bridge in the translational pathway, highlighting which animal findings have sufficient promise, mechanistic insight, and safety profiles to warrant human investigation [10] [9]. However, the utility of this bridge depends entirely on the rigor, coverage, and accessibility of the animal SRs themselves. These application notes detail the empirical landscape, identify persistent gaps, and provide standardized protocols to enhance the quality and integration potential of future animal SRs, thereby directly serving the thesis goal of creating a more cohesive and predictive evidence ecosystem.

Section 1: The Evolving Landscape of Animal Systematic Reviews

Quantitative Growth and Publication Metrics

The field of animal SRs has experienced substantial growth, particularly over the past decade. Empirical analyses reveal key metrics regarding their production and publication lifecycle.

Table 1: Growth and Publication Metrics for Animal Systematic Reviews

| Metric | Findings | Data Source & Context |
|---|---|---|
| Cumulative Volume | Over 3,113 SRs indexed in a dedicated database (as of June 2019) [11]; 1,358 SRs in neuroscience alone (1997-2023) [10]. | Demonstrates significant scholarly activity and a foundation for evidence synthesis [11]. |
| Annual Growth Trajectory | In neuroscience, yearly publications grew from 5 (2007) to 305 (2022), indicating rapid adoption [10]. | Reflects increasing recognition of the value of evidence synthesis in preclinical research [10] [9]. |
| Protocol-to-Publication Rate | 51% (694/1,365) of protocols registered in PROSPERO result in a published SR [12]. | Suggests substantial publication bias or attrition due to resource constraints, potentially distorting the evidence base [12]. |
| Median Time to Completion | 11.5 months (range: 0.13–44.9 months) from start to submission [12]. | Provides realistic timelines for researchers and funders; actual time often exceeds authors' anticipation [12]. |
| Median Time to Publication | 16.2 months (range: 1.0–49.7 months) from start to final publication [12]. | Highlights the full timeline from inception to disseminated knowledge. |

Geographic Distribution and Collaborative Trends

The production of animal SRs is a global endeavor, but with notable concentrations of activity.

Table 2: Geographic Distribution of Animal Systematic Review Production

| Rank | Country | Primary Research Context | Implications for Integration |
|---|---|---|---|
| 1 | United States | Most prolific producer of neuroscience SRs [10]. | Leads in volume; sets methodological trends. |
| 2 | China | Among the top producers [10]. | Major and growing contributor to the evidence base. |
| 3 | United Kingdom | Top producer; leads in adoption of non-animal methods (NAMs) in several disease areas [10] [13]. | Strong focus on methodology, quality (e.g., CAMARADES, SYRCLE), and the 3Rs principle [13]. |
| 4 | Brazil | Among the top producers [10]. | Indicates active evidence-synthesis communities in multiple regions. |
| 5 | Iran | Among the top producers [10]. | Highlights global distribution of research expertise. |

Collaboration impact: International collaboration (≥2 countries) is common but does not significantly alter publication likelihood or timeline [12]. This supports globalized science but suggests that complex logistics may offset efficiency gains.

Topical Coverage and Translational Gaps

While SRs cover many disease areas, significant mismatches exist between research focus and global health burden.

Table 3: Topical Coverage and Identified Gaps in Neuroscience SRs

| Disease Area | Level of SR Coverage | Notes and Translational Implications |
|---|---|---|
| Neurodegenerative and cerebrovascular (e.g., Alzheimer's disease, stroke) | High | Well-covered, aligning with high disease burden and extensive animal modeling [10]. |
| Psychiatric Disorders (e.g., Depression) | Moderate | Covered, but often with less mechanistic depth from animal models [10]. |
| Schizophrenia | Low | A major gap despite significant clinical burden [10]. |
| Brain Tumours | Low | A major gap despite significant clinical burden [10]. |
| Other Psychiatric Disorders | Low | Generally underrepresented [10]. |
| General Observation | The ratio of SRs to disease prevalence is uneven [10]. | Research investment does not fully align with epidemiological need, creating an integrative gap where clinical demand outpaces synthesized preclinical evidence. |

Section 2: Experimental Protocols for Rigorous Evidence Synthesis

Protocol: Registration, Search, and Automated Quality Assessment

This integrated protocol combines best practices for conducting an animal SR, from inception to quality evaluation [12] [10].

Phase 1: Protocol Development and Registration

- Define Question: Formulate a clear, focused PICO (Population, Intervention, Comparator, Outcome) question relevant to translational research.

- Draft Protocol: Detail all planned methods: search strategy, inclusion/exclusion criteria, data extraction items, risk of bias (RoB) assessment tool, and synthesis plan.

- Mandatory Registration: Register the finalized protocol on a public registry before starting the review. PROSPERO is the internationally preferred registry (PROSPERO4animals section) [12]. Registration reduces bias, increases transparency, and allows for the tracking of protocol-to-publication attrition [12].

Phase 2: Systematic Literature Search and Screening

- Database Search: Execute the registered search string in at least two major biomedical databases (e.g., PubMed/MEDLINE, Embase) [10]. Use validated animal study filters like the SYRCLE animal filter to improve precision [10].

- Supplementary Search: Search specialized resources (e.g., Web of Science, Google Scholar for grey literature) and scan reference lists [12].

- Deduplication & Screening: Use reference management and screening software (e.g., Rayyan). Conduct title/abstract and full-text screening independently by two reviewers, with conflicts resolved by consensus or a third reviewer [10].
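The deduplication step is normally handled by reference management or screening software, but its logic is simple enough to sketch. The example below assumes each record is a dictionary with optional `doi` and `title` fields (hypothetical field names) and treats two records as duplicates when either the DOI or the normalized title matches; real tools typically use fuzzier matching on additional fields.

```python
import re


def normalize_title(title):
    """Lowercase and collapse punctuation/whitespace so trivial variants compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record seen for each DOI or normalized title."""
    seen = set()
    unique = []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        title = normalize_title(rec.get("title") or "")
        keys = set()
        if doi:
            keys.add(("doi", doi))
        if title:
            keys.add(("title", title))
        if keys & seen:
            continue  # already retrieved from another database
        seen |= keys
        unique.append(rec)
    return unique


if __name__ == "__main__":
    # Hypothetical search results exported from two databases
    hits = [
        {"source": "PubMed", "doi": "10.0000/example.1", "title": "Compound X in a rat model of disease Y"},
        {"source": "Embase", "doi": "10.0000/EXAMPLE.1", "title": "Compound X in a Rat Model of Disease Y."},
        {"source": "Embase", "doi": None, "title": "Compound X: dose-response in mice"},
    ]
    for rec in deduplicate(hits):
        print(rec["source"], rec["title"])
```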

Phase 3: Automated Quality Assessment (AQA) of Included SRs

For umbrella reviews or methodological studies assessing the quality of many SRs, manual assessment is impractical. This protocol details an automated, high-reliability method [10].

- Tool Setup: Develop a script in R (or similar) using regular expressions (regex). Create regex libraries for 11 key quality items (e.g., presence of a protocol, dual screening, RoB assessment, search string provided) [10].

- Text Processing: The script imports full-text PDFs of SRs, segments text by section (e.g., Methods, Results), and removes references.

- Pattern Matching: The script searches each text segment for matches to the predefined regex patterns for each quality item.

- Validation & Scoring: Manually check a random subset (e.g., 10%) of SRs and measure how well the automated results agree with these manual assessments (F1-score). The tool achieved >80% F1-score for most items [10]. Assign a point for each fulfilled item to generate a normalized quality score (0-1).
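The pattern-matching and validation steps can be sketched as follows. The source describes an R script covering 11 quality items; this is a Python sketch of the same idea covering only three items, and the regular expressions are hypothetical illustrations rather than the patterns used in the cited tool [10].

```python
import re

# Hypothetical patterns for three of the quality items named above
QUALITY_PATTERNS = {
    "protocol": re.compile(r"\b(prospero|pre-?registered|protocol was registered)\b", re.I),
    "dual_screening": re.compile(
        r"\b(two|2)\s+(independent\s+)?(reviewers|authors|investigators)\b", re.I
    ),
    "risk_of_bias": re.compile(r"\brisk[- ]of[- ]bias\b", re.I),
}


def assess(methods_text):
    """Flag each quality item as fulfilled if its pattern occurs in the text."""
    return {item: bool(p.search(methods_text)) for item, p in QUALITY_PATTERNS.items()}


def quality_score(flags):
    """One point per fulfilled item, normalized to the 0-1 range."""
    return sum(flags.values()) / len(flags)


def f1_score(predicted, reference):
    """F1 of automated flags against manual assessments for one quality item."""
    tp = sum(p and r for p, r in zip(predicted, reference))
    fp = sum(p and not r for p, r in zip(predicted, reference))
    fn = sum(r and not p for p, r in zip(predicted, reference))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


if __name__ == "__main__":
    text = "The protocol was registered in PROSPERO. Two independent reviewers screened all titles."
    flags = assess(text)
    print(flags, round(quality_score(flags), 2))
    # Automated vs. manual judgement of one item across four hypothetical SRs
    print(f1_score([True, True, False, True], [True, False, False, True]))
```

Validating each item separately, as here, shows which patterns need refinement before the aggregate score is trusted.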

Phase 4: Data Synthesis and Reporting

- Data Extraction: Use a standardized, pilot-tested form. Extract study characteristics, results, and RoB assessments. Perform in duplicate.

- Synthesis: Conduct narrative synthesis. If studies are sufficiently homogeneous, perform a meta-analysis to calculate summary effect estimates.

- Reporting: Adhere to PRISMA guidelines. Clearly report the fate of the protocol (registration ID, amendments) and discuss findings in the context of translational potential and human evidence [10].
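Before summary effect estimates can be pooled, each comparison has to be expressed as an effect size with a variance. The sketch below assumes the standardized mean difference with Hedges' small-sample correction, a common choice for continuous outcomes in animal studies; the protocol itself does not prescribe an effect measure, and the input values are hypothetical.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference (treatment minus control) with Hedges' correction."""
    df = n_t + n_c - 2
    # Pooled standard deviation of the two groups
    s_pooled = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / df)
    d = (mean_t - mean_c) / s_pooled  # Cohen's d
    j = 1.0 - 3.0 / (4.0 * df - 1.0)  # small-sample correction factor
    g = j * d
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2.0 * (n_t + n_c))
    return g, j ** 2 * var_d


if __name__ == "__main__":
    # Hypothetical outcome: mean score, SD, and group size per arm
    g, var_g = hedges_g(12.0, 2.0, 10, 10.0, 2.0, 10)
    print(round(g, 3), round(var_g, 3))
```

The returned pair (effect size, variance) is the per-study input that a random-effects model expects.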

Protocol: Assessing Protocol Publication Status and Timelines

This protocol is designed for methodological research tracking the publication output and efficiency of registered SR protocols [12].

Objective: To determine the proportion of registered animal SR protocols that result in publication and to calculate real-world completion timelines.

Methods:

- Data Source: Manually download all records from the "Reviews of animal studies for human health" section of the PROSPERO registry [12].

- Eligibility Filter: Exclude protocols with a start date within the median time-to-publication period (e.g., 466 days) prior to data extraction to avoid misclassifying ongoing reviews as unpublished [12].

- Data Extraction (Manual & Automated):

- Publication Status Verification:

- Primary Check: Search PROSPERO's "Details of final report/publication" field [12].

- Secondary Search: If primary check is negative, search Google Scholar, PubMed, and Embase using the protocol title and author names [12].

- Categorization: Classify each protocol as "Published" (linked SR found) or "Unpublished" (no SR found after exhaustive search).

- Timeline Calculation:

- Analysis: Calculate descriptive statistics (median, IQR) for proportions and timelines. Compare actual vs. anticipated times using non-parametric tests (e.g., Wilcoxon signed-rank test) [12].
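The eligibility filter and descriptive statistics above can be sketched with the standard library alone. The record fields (`start`, `published`) and the example dates are hypothetical; the 466-day window is the example value given in the eligibility step. The paired comparison of actual versus anticipated times (e.g., a Wilcoxon signed-rank test) is not shown here.

```python
from datetime import date
from statistics import median, quantiles

DAYS_PER_MONTH = 30.44  # average month length, used to express durations in months


def eligible(protocols, extraction_date, min_age_days=466):
    """Drop protocols started too recently to be fairly judged as unpublished."""
    return [p for p in protocols if (extraction_date - p["start"]).days >= min_age_days]


def publication_rate(protocols):
    """Proportion of protocols with a linked, published SR."""
    published = [p for p in protocols if p.get("published") is not None]
    return len(published) / len(protocols)


def timeline_summary(protocols):
    """Median and IQR (in months) from review start to publication."""
    months = [
        (p["published"] - p["start"]).days / DAYS_PER_MONTH
        for p in protocols
        if p.get("published") is not None
    ]
    iqr = None
    if len(months) >= 2:  # statistics.quantiles needs at least two values
        q1, _, q3 = quantiles(months, n=4)
        iqr = (q1, q3)
    return {"median": median(months), "iqr": iqr}


if __name__ == "__main__":
    records = [
        {"start": date(2021, 1, 1), "published": date(2022, 1, 1)},
        {"start": date(2021, 6, 1), "published": None},
        {"start": date(2023, 6, 1), "published": None},  # too recent, excluded
        {"start": date(2020, 1, 1), "published": date(2021, 7, 1)},
    ]
    kept = eligible(records, extraction_date=date(2024, 1, 1))
    print(len(kept), publication_rate(kept), timeline_summary(kept))
```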

Section 3: Visualizing Workflows and Relationships

Animal SR Workflow and Evidence Gaps

Integration of Animal SR and Human Evidence

Section 4: The Scientist's Toolkit for Animal Systematic Reviews

Table 4: Essential Research Reagent Solutions and Resources

| Tool / Resource | Primary Function | Relevance to Integration Thesis |
|---|---|---|
| PROSPERO (PROSPERO4animals) | International prospective register for SR protocols [12]. | Mandatory for transparency. Mitigates publication bias, allows tracking of the animal evidence pipeline, a prerequisite for integration. |
| SYRCLE Animal Filter | Search filter to efficiently identify animal studies in PubMed [10]. | Increases efficiency and recall of primary animal studies, improving the foundation of the SR. |
| CAMARADES / SYRCLE Guidelines | Methodological guidance & checklists for conducting animal SRs and meta-analyses. | Critical for quality. Directly improves SR rigor, enhancing the reliability of evidence to be integrated with human data. |
| Database of Animal SRs | Curated database of >3,100 published animal SRs [11]. | Prevents duplication, enables mapping of existing evidence, and facilitates meta-epidemiological studies for integration research. |
| Automated Quality Assessment (AQA) Script | R tool using regex to extract quality indicators from SR full texts [10]. | Enables large-scale evaluation of the animal evidence base's reliability, identifying strengths/weaknesses for integration. |
| PRISMA Reporting Checklist | Standard for transparent reporting of systematic reviews [10]. | Ensures animal SRs are reported with sufficient detail for critical appraisal and comparison with human evidence. |
| One Health Integration Frameworks | Models for combining human, animal, and environmental surveillance data [14]. | Provides a direct conceptual and methodological model for integrating animal and human epidemiological evidence at the systems level. |

Definitions and Comparative Roles in Drug Development

Real-World Data (RWD) refers to data relating to patient health status and/or the delivery of health care routinely collected from a variety of sources outside of traditional clinical trials [15]. Real-World Evidence (RWE) is the clinical evidence regarding the usage and potential benefits or risks of a medical product derived from the analysis of RWD [15] [16]. Preclinical Evidence encompasses all research on a drug or treatment conducted before human testing, including basic research, drug discovery, lead optimization, and safety studies in animal and cellular models [17].

These three evidence streams serve distinct but complementary purposes throughout the therapeutic development lifecycle and its subsequent evaluation within systematic reviews. The following table summarizes their core characteristics and roles.

Table 1: Comparative Analysis of Preclinical, Clinical Trial, and Real-World Evidence

| Aspect | Preclinical Evidence | Randomized Controlled Trial (RCT) Evidence | Real-World Evidence (RWE) |
|---|---|---|---|
| Primary Purpose | Establish biological plausibility, mechanism of action, initial safety, and dosing [17]. | Establish efficacy and safety under controlled, ideal conditions (internal validity) [18] [16]. | Demonstrate effectiveness, safety, and utilization in routine clinical practice (external validity/generalizability) [18] [16]. |
| Typical Setting | Laboratory (in vitro, in vivo animal models) [17]. | Experimental, protocol-driven clinical setting [18]. | Observational, routine healthcare delivery setting [18] [19]. |
| Subject Population | Cellular systems, selected animal species (e.g., mice, rats, non-rodents) [20]. | Highly selective patient population based on strict inclusion/exclusion criteria [18] [16]. | Heterogeneous patient population with comorbidities, reflecting actual clinical practice [16] [21]. |
| Key Strength | Reveals disease mechanisms; essential for first-in-human dose estimation and initial go/no-go decisions [17]. | Gold standard for establishing causal efficacy with high internal validity due to randomization and blinding [18]. | Assesses long-term outcomes, rare adverse events, and effectiveness in diverse, representative populations [18] [16]. |
| Primary Limitation | Limited direct translatability to human physiology and disease [17]. | Results may not generalize to broader, more complex real-world populations [18] [16]. | Susceptible to confounding and bias due to lack of randomization; data quality and standardization challenges [16] [19]. |
| Regulatory Use | Supports Investigational New Drug (IND) application to initiate human trials [17]. | Supports New Drug Application (NDA) for initial market approval [15]. | Supports post-approval safety monitoring, label expansions, and updates to treatment guidelines [15] [16]. |

Integrated Evidence Synthesis Workflow for Systematic Reviews

Systematic reviews aiming to provide a comprehensive therapeutic assessment must integrate preclinical, RCT, and real-world evidence. The following workflow outlines a protocol for their synthesis.

Figure 1: Integrated workflow for synthesizing preclinical, RCT, and real-world evidence in systematic reviews.

Detailed Methodological Protocols

Protocol for Generating and Analyzing Real-World Evidence

A. RWD Source Selection & Acquisition:

- Primary Sources: Identify and access fit-for-purpose databases. Common sources include [15] [18] [16]:

- Electronic Health Records (EHRs): Contain clinical notes, lab results, and treatment histories.

- Claims & Billing Databases: Provide data on diagnoses, procedures, and prescriptions for insured populations.

- Disease/Product Registries: Prospective, structured data collection on specific patient populations.

- Patient-Generated Data: From wearables, mobile apps, or patient-reported outcome surveys.

- Data Linkage: Use privacy-preserving methods to link data across sources (e.g., EHR to registry) to create a more comprehensive patient journey [22].

B. Study Design & Analytical Methodology:

- Design Choice: Select based on the research question.

- Retrospective Cohort Study: Efficient for assessing long-term outcomes; identifies exposed/non-exposed groups from past data [18].

- Prospective Cohort Study: Collects data forward in time; reduces recall bias but is time-intensive [18].

- Case-Control Study: Efficient for studying rare outcomes; compares cases (with outcome) to controls (without) [16].

- Pragmatic Clinical Trial: A randomized trial integrated into routine care, blending RCT and RWE features [16] [19].

- Bias Mitigation: Employ advanced statistical techniques to address confounding and selection bias inherent in observational data [16]. Standard methods include multivariable regression, propensity score matching, and instrumental variable analysis.
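Of the bias-mitigation methods listed, propensity score matching is the easiest to illustrate in a few lines. The sketch below covers only the matching stage and assumes the propensity scores have already been estimated (for example by logistic regression on baseline covariates). It uses greedy 1:1 nearest-neighbour matching without replacement; the caliper of 0.05 and all identifiers are hypothetical.

```python
def match_on_propensity(treated, controls, caliper=0.05):
    """Greedy 1:1 nearest-neighbour matching without replacement.

    `treated` and `controls` map patient IDs to pre-estimated propensity scores.
    Treated patients with no control within the caliper stay unmatched.
    """
    available = dict(controls)
    pairs = []
    # Process treated patients in order of increasing score for reproducibility
    for t_id, t_score in sorted(treated.items(), key=lambda kv: kv[1]):
        if not available:
            break
        c_id = min(available, key=lambda cid: abs(available[cid] - t_score))
        if abs(available[c_id] - t_score) <= caliper:
            pairs.append((t_id, c_id))
            del available[c_id]  # each control is used at most once
    return pairs


if __name__ == "__main__":
    treated = {"t1": 0.30, "t2": 0.62, "t3": 0.90}
    controls = {"c1": 0.28, "c2": 0.60, "c3": 0.45}
    print(match_on_propensity(treated, controls))
```

After matching, covariate balance between the matched groups should be checked before any outcome comparison; unmatched patients (such as `t3` in the example) limit the population to which the estimate applies.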

C. Data Standardization Protocol (Critical for Integration):

- Transform RWD to a Common Data Model (CDM): Map heterogeneous source data (e.g., different EHR codes) to a standardized structure like the OMOP CDM to enable pooling and analysis across datasets [22].

- Map to Research Standards: For regulatory submissions, further map CDM data to clinical trial standards like CDISC SDTM to facilitate combined analysis with RCT data [22].

Protocol for Generating Preclinical Evidence

A. Experimental Progression:

- Basic Research & Target Identification: Investigate disease pathophysiology to identify molecular targets [17].

- Drug Discovery (in vitro): Screen compound libraries in cellular disease models to identify "hits" that modulate the target [17].

- Lead Optimization (in vivo): Test promising "lead" compounds in animal disease models (e.g., transgenic mice). Assess efficacy, pharmacokinetics (PK), and preliminary toxicity. Chemically modify leads to improve properties [17].

- IND-Enabling Studies: Conduct Good Laboratory Practice (GLP) compliant toxicology, PK, and safety pharmacology studies in two species (one rodent, one non-rodent) to support regulatory filing for human trials [17] [20].

B. Key In Vivo Experiment Protocol: Efficacy in Animal Model

- Objective: To evaluate the therapeutic effect of a lead compound in a validated animal model of Disease X.

- Materials: Animal model (e.g., specific transgenic mouse), test compound, vehicle control, positive control drug (if available), dosing apparatus, equipment for outcome measurement (e.g., behavioral assay, imaging, biomarker analysis).

- Methods:

- Randomization & Blinding: Randomly assign age/weight-matched animals to Vehicle, Test Compound (multiple doses), and Positive Control groups. Ensure blinding of personnel during dosing and outcome assessment.

- Dosing Regimen: Administer compound via clinically relevant route (e.g., oral gavage, injection) daily for defined study duration.

- Outcome Assessment: Measure primary (e.g., tumor volume, cognitive score) and secondary (e.g., biomarker levels, histological changes) endpoints at predefined time points.

- Toxicology Monitoring: Record body weight and clinical signs, and conduct terminal blood/tissue analysis for signs of toxicity.

- Statistical Analysis: Use ANOVA with post-hoc tests to compare group means. Report effect size and statistical power.
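
The statistical analysis step can be sketched as a one-way ANOVA computed by hand, with eta-squared as one possible effect size. The group values below are invented for illustration. The sketch stops at the F statistic: a p-value requires the F distribution (available in a statistics library), and post-hoc tests and power calculations are not shown.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F statistic, eta-squared, (df_between, df_within))."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, eta_squared, (df_between, df_within)


# Hypothetical endpoint values (e.g., a tumor volume score) per group.
vehicle = [10, 12, 11, 13]
low_dose = [9, 10, 8, 9]
high_dose = [5, 6, 7, 6]

f_stat, eta_squared, dfs = one_way_anova([vehicle, low_dose, high_dose])
print(f"F{dfs} = {f_stat:.2f}, eta-squared = {eta_squared:.2f}")
```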

The Scientist's Toolkit: Essential Reagent Solutions for Integrated Evidence Generation

Table 2: Key Research Reagents and Materials for Integrated Evidence Studies

| Item Category | Specific Examples | Primary Function in Integrated Evidence Synthesis |

|---|---|---|

| Preclinical Biological Models | Genetically engineered mouse models (GEMMs), patient-derived xenografts (PDXs), induced pluripotent stem cell (iPSC)-derived cells. | Provide mechanistic insight and proof-of-concept for therapeutic targets. Findings help explain molecular subgroups observed in human RWE or heterogeneous RCT responses [17] [20]. |

| In Vivo Imaging Agents | Bioluminescent reporters (e.g., luciferin), fluorescent dyes, contrast agents for MRI/CT. | Enable non-invasive, longitudinal tracking of disease progression and treatment response in animal models, paralleling imaging biomarkers used in human RCTs and RWD [17]. |

| Biomarker Assay Kits | ELISA kits, multiplex immunoassays (e.g., Luminex), PCR panels for gene expression. | Quantify molecular biomarkers in animal tissues and human biospecimens (from trials or biobanks linked to RWD). Essential for translational bridging between preclinical mechanism and clinical outcome [17]. |

| Data Standardization Tools | OMOP Common Data Model (CDM) vocabularies, CDISC SDTM/ADaM mapping guides, FHIR to CDISC implementation guides [22]. | Convert disparate RWD and structured trial data into standardized formats, enabling pooled analysis and direct comparison across evidence streams. |

| Advanced Analytics Software | Propensity score matching packages (R, Python), machine learning libraries (scikit-learn), pharmacovigilance signal detection tools. | Mitigate confounding in RWE analyses, identify novel subgroups or predictors from integrated datasets, and detect safety signals across preclinical and post-market data [16]. |

| Bioinformatics Databases | Public genomics repositories (e.g., GEO, TCGA), drug-target databases (e.g., DrugBank), protein interaction networks (e.g., STRING). | Contextualize preclinical findings within human disease biology and drug mechanisms, informing the design of RWE studies that investigate genetic or molecular treatment effect modifiers. |

The 'One Health' Paradigm as a Framework for Integration

The One Health paradigm is an integrative approach that recognizes the fundamental interconnectedness of human, animal, and environmental health [23]. This framework is predicated on the understanding that health challenges such as zoonotic diseases, antimicrobial resistance (AMR), and food safety cannot be effectively addressed within isolated disciplinary silos [23]. For researchers conducting systematic reviews, particularly those aimed at informing drug development and public health policy, One Health provides an essential structure for synthesizing evidence across species and ecosystems. The approach advocates for collaborative, transdisciplinary, and multisectoral interventions to tackle complex health issues whose root causes often span traditional boundaries [23]. Applying this paradigm to systematic review methodology necessitates the deliberate and structured integration of epidemiological, veterinary, and ecological data, moving beyond anthropocentric evidence synthesis to a more holistic model of health evidence [24].

Quantitative Data on One Health Priority Areas

The rationale for a One Health approach in systematic reviews is underscored by quantitative data highlighting shared burdens across human and animal domains. The following tables summarize key areas where integrated evidence synthesis is critical.

Table 1: Global Burden of Select Zoonotic Diseases and One Health Implications

| Disease | Estimated Annual Human Cases/Deaths | Primary Animal Reservoir/Vector | Key Environmental Driver | Systematic Review Integration Need |

|---|---|---|---|---|

| Influenza (Zoonotic) | Variable; pandemics cause millions of deaths [23] | Wild birds, poultry, swine [23] | Agricultural intensification, land use change [23] | Joint analysis of human surveillance, poultry farm outbreaks, and wild bird migration data. |

| Rabies | ~59,000 human deaths annually [23] | Domestic dogs (>99% of human cases) [23] | Urbanization, low dog vaccination coverage [23] | Synthesis of human post-exposure prophylaxis efficacy, canine vaccination campaign success, and cost-effectiveness studies. |

| Lyme Disease | ~30,000 reported cases in the USA annually | Wild rodents, transmitted by ticks [23] | Climate change, habitat fragmentation [23] | Integrated review of human incidence, wildlife host seroprevalence, and climatic/tick distribution models. |

Table 2: Antimicrobial Resistance (AMR) Data Under a One Health Lens

| Parameter | Human Health Sector Data | Animal Health & Agriculture Sector Data | Environmental Sector Data | Integrated Review Focus |

|---|---|---|---|---|

| Resistance Prevalence | Percentage of clinical E. coli isolates resistant to 3rd-gen cephalosporins [23]. | Percentage of E. coli from livestock resistant to same antibiotics [23]. | Concentration of antibiotic resistance genes (ARGs) in river systems near farms [23]. | Correlating resistance trends across sectors to identify transmission hotspots and drivers. |

| Driver: Antibiotic Use | Defined daily doses (DDD) per 1,000 hospital patient-days [23]. | Milligrams of antibiotics per population correction unit (mg/PCU) in livestock [23]. | Not directly applicable. | Comparing the impact of stewardship interventions (e.g., reduced use in animals) on human resistance patterns. |

| Economic Impact | Projected global GDP loss due to AMR by 2050 [23]. | Cost of increased animal morbidity and reduced productivity [23]. | Cost of water treatment to remove ARGs and pathogens [23]. | Holistic economic models for intervention planning that account for cross-sector costs and benefits. |

Application Notes & Protocols for Systematic Review Integration

Protocol: Developing a One Health Systematic Review Question

A clearly formulated review question is the cornerstone of an integrated One Health systematic review. The PECO(S) framework (Population, Exposure, Comparator, Outcome, Study Design/Sector) is recommended over the standard PICO to explicitly incorporate multiple sectors.

Detailed Methodology:

- Define Multi-Sector Populations:

- Human: Specify demographic and clinical characteristics (e.g., "children under 5", "hospitalized patients").

- Animal: Specify species, health status, and context (e.g., "domestic poultry in intensive farms", "wild Rattus species in urban settings").

- Environment: Specify relevant compartments (e.g., "freshwater sources", "soil in agricultural land").

- Define Exposure/Intervention Across Sectors: The exposure (e.g., a pathogen, an antibiotic) or intervention (e.g., a vaccination campaign, an agricultural practice) must be defined in terms relevant to each sector. For example, an exposure might be "presence of Campylobacter jejuni," which is measured differently in human stool samples, poultry cecal swabs, and surface water samples.

- Define Comparable Outcomes: Identify health outcomes that are analogous or linked across sectors. For a review on influenza transmission, relevant outcomes could be "seroconversion" in humans, "viral shedding" in animals, and "viral detection in air samples" in the environment.

- Specify Study Designs and Sector of Origin: Explicitly plan to include study designs from various fields (e.g., human cohort studies, veterinary field trials, environmental surveillance reports). The search strategy must be tailored to retrieve literature from all relevant databases (e.g., PubMed, CAB Abstracts, GreenFILE).
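
One way to keep a multi-sector question explicit is to record it as a small data structure and check that no sector is missing. The sketch below is an assumption about how a team might do this, not a published schema. The class and field names are hypothetical, the populations and exposure measures reuse the examples above, and the comparator and outcome entries are illustrative placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class SectorElement:
    population: str
    exposure_measure: str
    outcome: str
    study_designs: list = field(default_factory=list)


@dataclass
class OneHealthQuestion:
    exposure: str
    comparator: str
    sectors: dict  # sector name -> SectorElement

    def missing_sectors(self, required=("human", "animal", "environment")):
        """List required sectors for which nothing has been defined yet."""
        return [s for s in required if s not in self.sectors]


question = OneHealthQuestion(
    exposure="presence of Campylobacter jejuni",
    comparator="absence of Campylobacter jejuni",  # placeholder
    sectors={
        "human": SectorElement(
            "children under 5", "stool samples",
            "diarrheal illness (placeholder)", ["cohort study"]),
        "animal": SectorElement(
            "domestic poultry in intensive farms", "cecal swabs",
            "flock-level carriage (placeholder)", ["veterinary field trial"]),
    },
)
print(question.missing_sectors())
```

Running the check before protocol registration gives a quick prompt that, in this example, the environment sector has not yet been specified.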

Protocol: Integrated Search Strategy & Study Screening

This protocol ensures comprehensive evidence gathering from all relevant disciplines while maintaining methodological rigor.

Detailed Methodology:

- Database Selection: Search a combination of biomedical, agricultural, veterinary, and environmental science databases. Core databases should include PubMed/MEDLINE, Embase, CAB Abstracts, Web of Science Core Collection, and Scopus. Consider specialized databases like AGRICOLA or GreenFILE for environmental aspects [24].

- Development of Search Strings:

- Create a core set of concepts related to the disease or health issue.

- For each concept, compile a comprehensive list of synonyms and controlled vocabulary terms (MeSH, Emtree, CAB Thesaurus) relevant to human, veterinary, and environmental literature. For example, for "influenza," include terms like "human influenza," "avian influenza," "swine flu," and "orthomyxoviridae infections" in animals.

- Combine concepts with Boolean operators (AND, OR). Avoid overly restrictive filters by study type in the initial search.

- Screening with a One Health PRISMA Flow Diagram: Adapt the standard PRISMA flow diagram to track the identification and screening of records from different disciplinary sources [25] [26]. The diagram below visualizes this integrated screening workflow.

One Health Systematic Review Screening Workflow

- Dual Screening with Cross-Disciplinary Teams: Implement dual, independent screening of titles/abstracts and full texts. The screening team should include reviewers with expertise in human health, veterinary science, and/or environmental science to correctly interpret discipline-specific literature [24]. Disagreements should be resolved by consensus or a third arbitrator.
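
The search-string step of this protocol (synonyms joined with OR inside a concept, concepts joined with AND) can be sketched as a small helper. The function name and the choice of a second "outcome" concept are assumptions made for illustration. Database-specific syntax such as field tags or controlled-vocabulary qualifiers is deliberately left out and would need to be added per database.

```python
def build_search_string(concepts):
    """OR the synonyms within each concept, then AND the concepts together."""
    blocks = []
    for terms in concepts.values():
        # Quote multi-word terms so they are searched as phrases.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


concepts = {
    "disease": ["human influenza", "avian influenza", "swine flu",
                "orthomyxoviridae infections"],
    "outcome": ["seroconversion", "viral shedding"],
}
query = build_search_string(concepts)
print(query)
```

Keeping the concept lists in one place makes it easier to translate the same logic into the syntax of each database and to report the final strings in the review.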

Protocol: Integrated Data Extraction and Quality Appraisal

This protocol guides the extraction of data from studies across sectors into a unified framework and the assessment of their quality.

Detailed Methodology:

- Design a Unified Data Extraction Form:

- Create a form with core modules applicable to all studies: Citation, Study Design, Objectives, Funding Source.

- Include sector-specific modules:

- Human Module: Population details, case definitions, diagnostic methods, confounders.

- Animal Module: Species, husbandry/wild, sampling method, diagnostic assay.

- Environment Module: Sample type (water, soil, air), location, collection method, lab processing.

- Include an "Integration" module to capture data on cross-species transmission routes, shared risk factors, or direct comparisons of outcomes between sectors within a single study.

- Extraction Process: Reviewers with matched expertise should extract data from studies in their field. A lead reviewer should oversee the integration module to ensure consistency in capturing links.

- Quality Appraisal Using Hybrid Tools: No single tool fits all study types. Use a hybrid approach:

- Human Clinical/Epidemiological Studies: Use ROBINS-I for observational studies or Cochrane Risk of Bias 2.0 for trials.

- Animal (in vivo) Studies: Use SYRCLE's risk of bias tool.

- Environmental Sampling Studies: Adapt tools based on design, focusing on sampling representativeness, confounding control, and assay validity.

- Note and document the different appraisal criteria across sectors during synthesis.
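
The hybrid appraisal approach above amounts to a lookup from sector and study design to an appraisal tool, which can be written down so that the choice is documented for every study. The sketch below is a hypothetical helper: the sector and design labels and the study records are assumptions, while the tool names are those listed in the protocol.

```python
# Tool assignments taken from the hybrid approach described above.
ROB_TOOLS = {
    ("human", "observational"): "ROBINS-I",
    ("human", "randomized_trial"): "Cochrane Risk of Bias 2.0",
    ("animal", "in_vivo"): "SYRCLE's risk of bias tool",
}
ENVIRONMENT_TOOL = ("adapted tool (sampling representativeness, "
                    "confounding control, assay validity)")


def select_rob_tool(sector, design):
    """Return the appraisal tool for a study, or fail loudly if none is set."""
    if sector == "environment":
        return ENVIRONMENT_TOOL
    key = (sector, design)
    if key not in ROB_TOOLS:
        raise ValueError(f"No appraisal tool configured for {key}")
    return ROB_TOOLS[key]


def appraisal_plan(studies):
    """Map each study id to the tool that will be used to appraise it."""
    return {s["id"]: select_rob_tool(s["sector"], s["design"]) for s in studies}


# Hypothetical included studies.
studies = [
    {"id": "S1", "sector": "human", "design": "observational"},
    {"id": "S2", "sector": "animal", "design": "in_vivo"},
    {"id": "S3", "sector": "environment", "design": "surveillance"},
]
plan = appraisal_plan(studies)
print(plan)
```

Raising an error for unconfigured combinations is a design choice: it forces the team to decide on a tool rather than silently skipping the appraisal.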

Protocol: Synthesis of Integrated Evidence

The synthesis phase must explicitly model the interactions between evidence from different sectors, as conceptualized in the following diagram.

One Health Evidence Integration Synthesis Framework

Detailed Methodology for Narrative Synthesis:

- Develop a Preliminary Synthesis: Summarize the findings from each sector (Human, Animal, Environment) separately using text, tables, and maps (e.g., geographic distribution of findings).

- Explore Relationships Within and Between Sectors:

- Use juxtaposition matrices to display data from different sectors side-by-side (e.g., a table showing human case incidence, animal reservoir prevalence, and a key environmental variable like rainfall over the same time period and region).

- Generate causal loop diagrams or conceptual models based on extracted data to hypothesize how drivers and outcomes in one sector influence another. For example, model how antibiotic use in aquaculture (animal/environment) leads to resistant bacteria in waterways (environment), impacting human health through recreational exposure.

- Assess the Robustness of the Integrated Synthesis: Critically evaluate the strength and coherence of the links drawn between sectors. Consider the quantity, quality, and consistency of evidence supporting each proposed interaction. Clearly state where evidence for integration is strong versus speculative.

Detailed Methodology for Quantitative Synthesis (if feasible):

- Assess Statistical Heterogeneity Across Sectors: Recognize that direct meta-analysis of, for example, human clinical trial outcomes and animal infection prevalence rates is not statistically valid due to fundamentally different outcome measures.

- Employ Complementary Meta-Analyses: Conduct separate, parallel meta-analyses for each sector where outcomes are comparable (e.g., efficacy of the same vaccine antigen in human trials and animal challenge studies). Present these results together and discuss the concordance or discordance.

- Use Integrated Modeling: If sufficient quantitative data are extracted, a more advanced approach is to use the systematic review findings to parameterize an integrated mathematical model (e.g., a transmission model that explicitly includes human, animal, and environmental compartments). The review provides the evidence base for model structure and inputs.
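
The complementary meta-analysis step can be sketched as the same pooling routine run separately for each sector, with the results reported side by side rather than combined. The example uses the DerSimonian-Laird random-effects estimator, chosen here for illustration since the protocol does not prescribe one. All effect sizes and variances are invented, and the sector labels are hypothetical.

```python
import math


def random_effects_meta(effects, variances):
    """DerSimonian-Laird random-effects pooling.

    Returns (pooled effect, standard error, between-study variance tau^2).
    """
    w = [1.0 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    # Cochran's Q measures dispersion around the fixed-effect estimate.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, se, tau2


# Hypothetical log effect sizes and variances, one list per sector.
sector_data = {
    "human_trials": ([-0.40, -0.25, -0.55], [0.04, 0.06, 0.09]),
    "animal_challenge": ([-0.90, -0.60, -1.20, -0.75], [0.10, 0.08, 0.15, 0.12]),
}

results = {name: random_effects_meta(y, v) for name, (y, v) in sector_data.items()}
for name, (pooled, se, tau2) in results.items():
    low, high = pooled - 1.96 * se, pooled + 1.96 * se
    print(f"{name}: {pooled:.2f} (95% CI {low:.2f} to {high:.2f}), tau^2 = {tau2:.3f}")
```

Each sector keeps its own pooled estimate and heterogeneity, so concordance or discordance between sectors is discussed in the narrative rather than hidden inside a single combined number.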

The Scientist's Toolkit: Research Reagent Solutions

Implementing a One Health systematic review requires specific tools and resources to handle multi-sector evidence. The following table details key components of this toolkit.

Table 3: Essential Research Toolkit for One Health Systematic Reviews

| Tool/Resource Category | Specific Item or Platform | Function in One Health Review | Key Consideration |

|---|---|---|---|

| Reference Management & Screening | Covidence, Rayyan, EndNote | Manages citations from diverse databases, facilitates dual screening, and tracks reasons for exclusion across disciplines [26]. | Ensure the platform can handle large, heterogeneous imports and allows custom screening forms. |

| Data Extraction & Management | REDCap, Systematic Review Data Repository (SRDR+), custom spreadsheets (Excel, Google Sheets). | Hosts structured, multi-module extraction forms; enables secure data storage and collaboration among geographically dispersed, cross-disciplinary teams. | Form must be rigorously piloted to ensure sector-specific fields are clear to all reviewers. |

| Quality Appraisal Hybrid Toolkit | ROBINS-I, Cochrane RoB 2.0, SYRCLE's RoB tool, bespoke tools for environmental studies [24]. | Enables standardized, sector-appropriate critical appraisal of included studies, highlighting different biases relevant to human trials vs. field ecology studies. | Review team must be trained on multiple tools. Document which tool was used for each study type. |

| Data Synthesis & Visualization | NVivo, Atlas.ti (for thematic synthesis); R (e.g., the metafor package) or Python (e.g., the statsmodels library); GIS software (QGIS, ArcGIS). | Supports coding of qualitative themes across sectors; performs statistical meta-analysis where possible; creates maps to visualize spatial relationships between human, animal, and environmental data points. | Thematic analysis software helps find connections across disparate qualitative findings. GIS is crucial for spatial One Health analysis. |

| Regulatory & Guidance Reference | FDA IND Guidance Documents [27], WOAH Terrestrial Animal Health Code, WHO International Health Regulations (2005). | Informs the translation of review findings for regulatory submissions (e.g., for a zoonotic drug) [27] and ensures recommendations align with international health standards across sectors. | Critical for reviews intended to directly inform drug development or international policy [24]. |

The How-To: Methodological Frameworks and Practical Steps for Evidence Integration

The systematic review of scientific evidence represents a cornerstone of evidence-based medicine and public health decision-making. Within this domain, a critical challenge and opportunity lie in the integration of disparate evidence streams, particularly epidemiological (human) studies and preclinical (animal) research. This integration is not merely a technical exercise but a fundamental methodological advancement for understanding disease etiology, assessing chemical risks, and translating basic research into clinical applications [9]. Historically, these evidence streams have existed in parallel, with epidemiological studies providing direct human relevance but often limited by observational design constraints, and animal studies offering controlled experimental settings and mechanistic insights but facing questions regarding translational validity [9] [28].

The drive toward integration is fueled by several factors. Firstly, frameworks such as One Health explicitly recognize the interconnectedness of human, animal, and environmental health, necessitating surveillance and research that transcend traditional disciplinary boundaries [14]. Secondly, regulatory and risk assessment bodies increasingly seek to leverage all available evidence to reduce uncertainty; for instance, using human epidemiological data can eliminate uncertainties associated with interspecies extrapolation when deriving toxicological reference values [28]. Thirdly, integrating evidence can enhance the biological plausibility of observed associations in human studies and ground animal findings in real-world human exposure scenarios, thereby strengthening causal inference [29] [28].

This article details the mechanisms, protocols, and applications for integrating epidemiological and animal evidence within systematic reviews. It is structured within the context of a broader thesis arguing that such convergent integration is essential for robust, translational, and ethically efficient scientific synthesis.

Defining the Integration Mechanism Spectrum

Integration in the context of health evidence synthesis is a multi-faceted concept. A seminal systematic review categorized approaches to integrating human and animal health surveillance systems into four primary mechanisms, which provide a valuable framework for evidence synthesis in systematic reviews [14]. These mechanisms exist on a continuum from simple data exchange to full methodological and conceptual fusion.

Table 1: Spectrum of Integration Mechanisms for Evidence Synthesis [14]

| Mechanism | Core Principle | Key Activities in Systematic Reviews | Level of Integration |

|---|---|---|---|

| Interconnectivity | Basic exchange of information or data between independent systems. | Manual cross-referencing of reference lists between human and animal reviews; separate searches in PubMed for human and animal studies with post-hoc comparison. | Low |

| Interoperability | Systems or components work together using shared standards, enabling communication and data exchange. | Using common, controlled vocabularies (e.g., MeSH terms) across searches; applying harmonized data extraction fields (PECO/PICO) to both study types; depositing shared datasets in interoperable repositories (e.g., GenBank for genetic data). | Medium |

| Semantic Consistency | Implementation of common data models, definitions, and formats to ensure consistent interpretation. | Defining and applying standardized outcome measures across species (e.g., behavioral assays for aggression) [29]; using common risk-of-bias frameworks adapted for both observational and experimental studies; implementing FAIR (Findable, Accessible, Interoperable, Reusable) principles for all data [30]. | High |

| Convergent Integration | Merging of technology, processes, and knowledge to create a unified system with emergent properties. | A priori protocol defining a single, integrated review question addressing both human and animal evidence [29]; unified synthesis methodology (e.g., narrative synthesis across streams, integrated quantitative models); joint assessment of strength of evidence and causality using frameworks like GRADE or OHAT that explicitly consider both streams [4] [28]. | Very High |

The progression from interconnectivity to convergent integration represents a shift from post-hoc linkage to unified design. While a 2020 review found interoperability and semantic consistency to be the most commonly attempted mechanisms in health surveillance [14], the most robust systematic reviews strive for convergent integration to answer complex questions such as the causal association between lead exposure and antisocial behavior [29].

Performance and Reporting Landscape of Integrated Reviews

The implementation of integrated systematic reviews is growing but faces significant challenges in reporting quality and data availability. Understanding this landscape is crucial for developing effective protocols.

Table 2: Performance Metrics and Reporting Characteristics of Integrated Evidence Synthesis

| Aspect | Findings from Current Evidence | Implication for Integration |

|---|---|---|

| System Performance | Integrated health surveillance systems showed: sensitivity 63.9-100% (median 79.6%), data quality improvement 73-95.4% (median 87%), and timeliness improvement 10-91% (median 67.3%) [14]. | Demonstrates the tangible benefits of integration for key system attributes like sensitivity and timeliness, which are analogous to the completeness and efficiency of evidence synthesis. |

| Reporting Quality | A cross-sectional study of preclinical systematic reviews (2015-2018) found inconsistent reporting. Key methods like risk of bias assessment were reported in less than half of reviews, and construct validity (model relevance) was rarely assessed [31]. | Poor reporting hampers reproducibility and effective integration. Highlights the need for strict adherence to reporting guidelines like PRISMA. |

| Data Availability (FAIRness) | A review of veterinary epidemiological studies found most non-molecular datasets were not publicly available. Where data was shared, interoperability was the weakest FAIR principle [30]. | Lack of accessible, interoperable data is a major barrier to semantic consistency and convergent integration. Mandates data-sharing policies and use of standardized formats. |

| Review Volume & Scope | Over 3,000 systematic reviews of animal studies have been published, covering preclinical research, toxicology, and veterinary medicine [11]. A 2021 sample identified 442 preclinical reviews across 43 countries and 23 disease domains [31]. | Provides a substantial evidence base for integration but also indicates a risk of duplication and waste without coordinated, integrated approaches. |

Foundational Protocols for Integrated Systematic Reviews

The following protocols provide a methodological blueprint for conducting systematic reviews that aim for convergent integration of human epidemiological and animal evidence.

Protocol 1: Developing the A Priori Integrated Review Protocol

A pre-registered, detailed protocol is the bedrock of a high-quality integrated review [4].

- Define the Integrated Review Question: Formulate a question that explicitly requires both evidence streams. Use a structured framework like PECO (Population, Exposure, Comparator, Outcome), ensuring definitions are applicable across species.

- Example from lead review: P (Human populations & laboratory animals), E (Lead exposure), C (Lower/no lead exposure), O (Antisocial behavior, aggression, social norm violation) [29].

- Establish Harmonized Search Strategies:

- Develop comprehensive search strings for bibliographic databases (e.g., PubMed, Web of Science, Embase).

- Use both subject headings (MeSH, Emtree) and free-text terms tailored for human and animal literature. Avoid species-specific filters in the primary search to maximize sensitivity [29].

- Search for both primary studies and existing systematic reviews in each domain to map the evidence landscape [11].

- Set Inclusion/Exclusion Criteria: Define criteria for study design, language, and date limits. Critically, establish principles for evidence bridgeability, detailing how outcomes in animal models (e.g., specific social behavior tests) map to human outcomes (e.g., psychiatric diagnoses or recorded offenses) [29] [4].

- Register the Protocol: Submit the protocol to a public registry such as PROSPERO or an open science platform (e.g., Open Science Framework) before commencing the review [4].

Protocol 2: Unified Screening, Data Extraction, and Risk of Bias Assessment

This phase operationalizes semantic consistency.

- Screening: Use systematic review software (e.g., Rayyan, Covidence). Conduct title/abstract and full-text screening in duplicate, with reviewers screening records from both evidence streams to ensure consistent application of criteria.

- Data Extraction: Develop a single, piloted extraction form with dedicated sections for human-specific (e.g., confounders adjusted for) and animal-specific (e.g., species, strain, model induction method) data, plus common fields (e.g., exposure/metric, outcome definition, effect size) [31].

- Integrated Risk of Bias (RoB) Assessment: Apply RoB tools appropriate to each study design but within a unified framework.

- For animal studies, use SYRCLE's RoB tool or the CAMARADES checklist [31].

- For observational human studies, use tools like ROBINS-E or the NTP/OHAT framework [28].

- The integrated assessment should explicitly evaluate confounding (key in human studies) and fidelity of the animal model (construct validity) as sources of bias affecting the overall evidence body [28] [31].

Protocol 3: Synthesis, Integration, and Grading of Evidence

This is the stage of convergent integration, where evidence streams are fused to draw a unified conclusion.

- Stratified Synthesis: Initially, synthesize human and animal evidence separately. For human studies, if heterogeneity in outcome assessment is too high, a narrative synthesis may be necessary [29]. For animal studies, a meta-analysis may be feasible to calculate a summary effect size [9].

- Cross-Stream Evidence Integration: Use a structured framework to integrate the synthesized bodies of evidence. Adapted approaches from the U.S. EPA or NTP OHAT are recommended [29] [28].

- Assess coherence/consistency: Do effect directions align across species and study designs?

- Assess biological plausibility and mechanistic evidence: Do animal studies provide a supporting mechanism for observed human associations?

- Assess exposure-response: Is there evidence of a gradient in both streams?

- Triangulation: Deliberately seek consistencies across evidence streams with different, non-overlapping sources of bias to strengthen causal inference [28].

- Grading the Integrated Body of Evidence: Apply an evidence grading system like GRADE or the OHAT approach to rate the overall confidence in the conclusion (e.g., "high," "moderate," "low"), considering the contributions and limitations of both evidence streams [4] [28].
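
The coherence question in the cross-stream integration step ("do effect directions align?") can be made explicit with a simple check on confidence intervals. This is a hypothetical heuristic for illustration only; it is not part of GRADE, OHAT, or any EPA framework, and the stream names and interval values are invented.

```python
def effect_direction(ci_low, ci_high):
    """Classify an effect by whether its confidence interval excludes zero."""
    if ci_low > 0:
        return "positive"
    if ci_high < 0:
        return "negative"
    return "inconclusive"


def assess_coherence(streams):
    """streams: dict of stream name -> (ci_low, ci_high) on a common scale."""
    directions = {name: effect_direction(*ci) for name, ci in streams.items()}
    decided = set(directions.values()) - {"inconclusive"}
    if len(decided) > 1:
        verdict = "conflicting"
    elif len(decided) == 1 and "inconclusive" not in directions.values():
        verdict = "coherent"
    else:
        verdict = "uncertain"
    return directions, verdict


# Hypothetical pooled intervals from the stratified syntheses.
streams = {"human": (0.05, 0.55), "animal": (0.40, 1.20)}
directions, verdict = assess_coherence(streams)
print(directions, verdict)
```

A check like this only flags agreement in direction. Judgments about biological plausibility, exposure-response gradients, and the final confidence rating still require the structured frameworks named above.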

Integrated Systematic Review Workflow for Convergent Evidence Synthesis

The Scientist's Toolkit: Essential Reagents for Integration

Successfully implementing the protocols above requires a suite of methodological tools and resources.

Table 3: Research Reagent Solutions for Integrated Evidence Synthesis

| Tool/Resource Category | Specific Item & Source | Primary Function in Integration |

|---|---|---|

| Protocol & Reporting Guidelines | PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) [4] [31] | Ensures transparent and complete reporting of the integrated review process. |

| Protocol & Reporting Guidelines | PROSPERO International Register of Systematic Reviews [4] | Platform for a priori protocol registration to prevent bias and duplication. |

| Risk of Bias Assessment Tools | SYRCLE's Risk of Bias Tool for Animal Studies [31] | Standardized assessment of internal validity in preclinical studies. |

| Risk of Bias Assessment Tools | ROBINS-E (Risk Of Bias In Non-randomized Studies - of Exposures) [28] | Assesses risk of bias in human observational studies, enabling parallel appraisal. |

| Evidence Grading Frameworks | GRADE (Grading of Recommendations, Assessment, Development, and Evaluations) / OHAT (Office of Health Assessment and Translation) [4] [28] | Provides a structured system to rate the overall confidence in synthesized evidence from multiple streams. |

| Data Management & Sharing | FAIR Guiding Principles [30] | Framework (Findable, Accessible, Interoperable, Reusable) for managing data to enable semantic consistency and reuse. |

| Data Management & Sharing | Disciplinary Repositories (e.g., GenBank, ENA) & General Repositories (e.g., Figshare, Dryad) [30] | Platforms for sharing interoperable data underlying the review. |

| Evidence Databases | Database of Systematic Reviews of Animal Studies [11] | Resource to identify existing preclinical reviews, preventing redundancy and facilitating integration. |

Advanced Applications: From Integration to Causality and Translation

Convergent integration enables powerful applications that extend beyond simple synthesis.

Quantitative Bias Assessment and Triangulation: Moving beyond qualitative RoB assessment, integrated reviews can employ quantitative bias analysis (e.g., to adjust for unmeasured confounding) and triangulation. Triangulation strengthens causal inference by seeking consistent findings from human studies (with different confounding structures) and animal experiments (free of human-style confounding but with different construct validity issues) [28].
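
One widely used form of quantitative bias analysis for unmeasured confounding is the E-value. The source does not name a specific technique, so it is used here only as an illustration. For an observed risk ratio RR > 1, the E-value is RR + sqrt(RR × (RR − 1)); for RR < 1 the reciprocal is taken first. The input risk ratio below is hypothetical.

```python
import math


def e_value(risk_ratio):
    """E-value for an observed risk ratio (point estimate).

    It is the minimum strength of association, on the risk ratio scale,
    that an unmeasured confounder would need with both exposure and
    outcome to fully explain away the observed association.
    """
    if risk_ratio <= 0:
        raise ValueError("risk ratio must be positive")
    rr = risk_ratio if risk_ratio >= 1 else 1.0 / risk_ratio
    return rr + math.sqrt(rr * (rr - 1.0))


observed_rr = 2.0  # hypothetical pooled estimate from human studies
print(f"E-value for RR = {observed_rr}: {e_value(observed_rr):.2f}")
```

A large E-value suggests that only a strong unmeasured confounder could account for the human association, which complements the triangulation argument from animal experiments.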

Informing Chemical Risk Assessment: Integrated reviews are pivotal for modern risk assessment. A workshop highlighted that epidemiologic data can be used not just for hazard identification but for quantitative dose-response assessment, especially when supported by coherent animal evidence providing biological plausibility and mechanistic data [28]. This reduces reliance on default uncertainty factors for interspecies extrapolation.

Guiding Translational Research: Preclinical systematic reviews can catalyze translational efficiency. By synthesizing animal evidence, they identify the most promising interventions and robust models, informing the design of clinical trials. Conversely, they can reveal irreproducible animal findings, preventing futile or unethical human trials. Institutions like Radboud University have reported a 35% reduction in animal use following the implementation of systematic review methodology, underscoring the ethical and efficiency gains of rigorous, integrated evidence assessment [4].

Advanced Analysis and Applications of Integrated Evidence

The synthesis of preclinical animal evidence and clinical epidemiological data is a cornerstone of translational research, aiming to inform drug development and therapeutic strategies. This process hinges on methodological rigor to ensure transparency, minimize bias, and yield reproducible conclusions. This article details the application of four core standards—protocol registration, PRISMA, SYRCLE, and CAMARADES—that together provide a structured framework for conducting systematic reviews (SRs) that integrate evidence across the translational spectrum. Adherence to these standards addresses critical issues in evidence synthesis, such as selective reporting, poor methodological quality in animal studies, and the challenges of managing complex preclinical data, thereby strengthening the bridge from bench to bedside [32] [33] [34].

Core Methodological Standards: Purpose, Components, and Application

Protocol Registration

Purpose and Rationale: Protocol registration is the a priori publication of a review's design, committing researchers to a predetermined plan. This practice is fundamental for transparency, as it reduces bias from post-hoc changes in methods based on knowledge of the results, deters duplication of effort, and allows peer feedback on proposed methods. Registration is increasingly a requirement for publication in peer-reviewed journals [35].

Key Registries and Data: For SRs of animal studies, PROSPERO's dedicated section (PROSPERO4animals) is a primary registry [33]. Empirical data from 2025 indicate that, although registration is growing, only 51% of registered animal study SR protocols culminate in publication, which points to substantial attrition or publication bias. The median time from protocol registration to published review is 16.2 months, roughly 2.4 times the 6.8 months that authors typically anticipate [33].

Table 1: Protocol Registration Metrics for Animal Study Systematic Reviews (2025 Data)

| Metric | Value | Implication |
|---|---|---|
| Eligible Protocols Analyzed | 1,365 protocols | Large, growing evidence base [33] |
| Publication Rate | 51% (694/1,365) | Half of initiated reviews remain unpublished, indicating potential bias/waste [33] |
| Median Actual Time to Publish | 16.2 months | Sets realistic expectations for project planning [33] |
| Median Anticipated Time to Publish | 6.8 months | Highlights a widespread underestimation of required effort [33] |
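
The derived quantities behind Table 1 can be checked with a few lines of arithmetic. The sketch below uses only the figures reported in the table; the variable names are illustrative.

```python
# Figures as reported in Table 1 [33].
eligible_protocols = 1365
published_reviews = 694
median_actual_months = 16.2
median_anticipated_months = 6.8

publication_rate = published_reviews / eligible_protocols
unpublished = eligible_protocols - published_reviews
delay_ratio = median_actual_months / median_anticipated_months
extra_months = median_actual_months - median_anticipated_months

print(f"Publication rate: {publication_rate:.1%}")
print(f"Unpublished protocols: {unpublished}")
print(f"Actual vs. anticipated time: {delay_ratio:.2f}x")
print(f"Months beyond anticipation: {extra_months:.1f}")
```

For planning purposes, the ratio suggests budgeting well over twice the time a team initially expects between registration and publication.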

Essential Protocol Components: A robust protocol must include the review title, research question (e.g., PICO: Population, Intervention, Comparator, Outcome), a detailed search strategy with databases and draft queries, explicit inclusion/exclusion criteria, plans for data extraction and risk of bias assessment, and the intended approach to data synthesis [35].
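
One way to keep a draft protocol honest before registration is to treat the components listed above as a checklist. The following is a minimal sketch; the `ReviewProtocol` class and `missing_components` helper are hypothetical names, not part of any registry's submission format.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ReviewProtocol:
    """Components a protocol should state before registration."""
    title: str = ""
    research_question: str = ""  # e.g. framed as PICO
    databases: list = field(default_factory=list)
    draft_queries: list = field(default_factory=list)
    inclusion_criteria: list = field(default_factory=list)
    exclusion_criteria: list = field(default_factory=list)
    data_extraction_plan: str = ""
    risk_of_bias_plan: str = ""
    synthesis_plan: str = ""


def missing_components(protocol):
    """Return the names of components that are still empty."""
    return [f.name for f in fields(protocol) if not getattr(protocol, f.name)]


# Hypothetical draft with only the first three components filled in.
draft = ReviewProtocol(
    title="Drug X in animal models of disease Y and in human trials",
    research_question="P: ..., I: drug X, C: placebo/vehicle, O: ...",
    databases=["PubMed/MEDLINE", "Embase"],
)
missing = missing_components(draft)
print("Still to complete:", ", ".join(missing))
```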

PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses)

Purpose and Evolution: PRISMA is an evidence-based reporting guideline, not a direct quality assessment tool. Its purpose is to ensure the complete, transparent, and replicable reporting of SRs and meta-analyses. The 2020 update refines the original standard to address newer forms of evidence synthesis [36] [37].

Core Components and Checklist: The guideline consists of a 27-item checklist and a flow diagram for reporting study selection. Key items cover the rationale, objectives, eligibility criteria, information sources, search strategy, study selection process, data collection process, risk of bias assessment, synthesis methods, and discussion of limitations and conclusions. The flow diagram visually documents the flow of studies from identification through screening to inclusion [37] [38].

Application in Integrated Reviews: For reviews integrating animal and human evidence, PRISMA provides the overarching reporting structure. Reviewers should clearly delineate how evidence streams are handled separately and together. The PRISMA checklist ensures that the methods for both the preclinical and clinical arms of the review are reported with equal rigor [36].
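
Keeping separate flow-diagram counts for each evidence stream makes it easier to report them with equal rigor. The sketch below is a simplified bookkeeping aid, not the full PRISMA 2020 diagram (it omits, for example, reports not retrieved); the `FlowCounts` class and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FlowCounts:
    """Simplified study-selection counts for one evidence stream."""
    identified: int
    duplicates_removed: int
    excluded_at_title_abstract: int
    excluded_at_full_text: int

    @property
    def screened(self):
        return self.identified - self.duplicates_removed

    @property
    def full_text_assessed(self):
        return self.screened - self.excluded_at_title_abstract

    @property
    def included(self):
        return self.full_text_assessed - self.excluded_at_full_text

    def validate(self):
        """Raise if the counts cannot describe a real selection process."""
        stages = (self.screened, self.full_text_assessed, self.included)
        if min(stages) < 0:
            raise ValueError("exclusions exceed the records available at a stage")


# Hypothetical counts, one set per stream.
streams = {
    "animal": FlowCounts(1200, 300, 700, 150),
    "human": FlowCounts(800, 200, 450, 120),
}
for name, counts in streams.items():
    counts.validate()
    print(f"{name}: screened={counts.screened}, "
          f"full text={counts.full_text_assessed}, included={counts.included}")
```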

SYRCLE (SYstematic Review Centre for Laboratory animal Experimentation)

Purpose and Tools: SYRCLE develops methodology tailored to SRs of animal intervention studies. Its flagship tool is the SYRCLE Risk of Bias (RoB) tool, a critical adaptation of the Cochrane RoB tool for preclinical specifics [32] [39].

Risk of Bias Tool (10 Domains): The tool contains 10 entries, referred to here as domains, that together cover six types of bias (selection, performance, detection, attrition, reporting, and other) [32]:

- Sequence generation (selection bias).

- Baseline characteristics (selection bias): Assesses if groups were similar at baseline.

- Allocation concealment (selection bias).

- Random housing (performance bias): Specific to animal studies, as housing conditions can affect outcomes.

- Blinding of personnel/caregivers (performance bias).

- Random outcome assessment (detection bias): Accounts for circadian rhythms and other time-sensitive measures.

- Blinding of outcome assessor (detection bias).

- Incomplete outcome data (attrition bias).

- Selective outcome reporting (reporting bias).

- Other sources of bias.
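
The ten domains listed above map naturally onto a small lookup structure that can be used to tally judgments across studies. This is a sketch under stated assumptions: the judgment labels ("low", "high", "unclear") and the `summarise_by_item` helper are illustrative choices, and the study data are hypothetical.

```python
from collections import Counter

# The 10 domains and their bias types, as listed in the text.
SYRCLE_ITEMS = [
    ("Sequence generation", "selection"),
    ("Baseline characteristics", "selection"),
    ("Allocation concealment", "selection"),
    ("Random housing", "performance"),
    ("Blinding of personnel/caregivers", "performance"),
    ("Random outcome assessment", "detection"),
    ("Blinding of outcome assessor", "detection"),
    ("Incomplete outcome data", "attrition"),
    ("Selective outcome reporting", "reporting"),
    ("Other sources of bias", "other"),
]


def summarise_by_item(assessments):
    """Tally judgments per domain.

    assessments maps a study ID to a list of 10 judgments, in domain order.
    """
    summary = {name: Counter() for name, _ in SYRCLE_ITEMS}
    for study_id, judgments in assessments.items():
        if len(judgments) != len(SYRCLE_ITEMS):
            raise ValueError(f"{study_id}: expected {len(SYRCLE_ITEMS)} judgments")
        for (name, _), judgment in zip(SYRCLE_ITEMS, judgments):
            summary[name][judgment] += 1
    return summary


# Hypothetical judgments for two studies.
assessments = {
    "study_A": ["low", "low", "unclear", "unclear", "high",
                "unclear", "low", "low", "unclear", "low"],
    "study_B": ["unclear", "low", "unclear", "unclear", "unclear",
                "unclear", "low", "high", "unclear", "low"],
}
summary = summarise_by_item(assessments)
for name, counts in summary.items():
    print(f"{name}: {dict(counts)}")
```

Per-domain tallies of this kind are the raw material for the summary tables and traffic light plots commonly used to present risk of bias.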

Additional Resources: SYRCLE provides a step-by-step guide for comprehensive search strategies, including validated search filters for PubMed and Embase to efficiently identify animal studies. It also promotes the Gold Standard Publication Checklist (GSPC) to improve primary study reporting [39].

CAMARADES (Collaborative Approach to Meta-Analysis and Review of Animal Data from Experimental Studies) & SyRF

Purpose and Evolution: CAMARADES provides support, mentoring, and infrastructure for preclinical meta-research. It has evolved from a collaborative group to offering a practical online platform: the Systematic Review Facility (SyRF) [40].

The SyRF Platform: SyRF is a free, online, end-to-end platform designed to manage the entire SR workflow for preclinical studies. It supports protocol development, reference importing and deduplication, collaborative screening and data extraction (with user blinding), custom annotation, and data export for analysis. It is engineered to facilitate large, crowdsourced projects and the integration of automation tools [40].

CAMARADES Checklist: An earlier contribution was a quality checklist for animal studies, often used alongside SYRCLE's RoB tool. It includes items like peer-reviewed publication, statement of control of temperature, and use of animals with relevant comorbidities [34].

Emerging and Unifying Tools: CRIME-Q

Development and Purpose: The CRIME-Q tool (Critical Appraisal of Methodological Quality, Quality of Reporting and Risk of Bias in Animal Research) is a 2024 development that unifies assessment across three domains: Quality of Reporting (QoR), Methodological Quality (MQ), and Risk of Bias (RoB). It integrates items from SYRCLE's RoB, ARRIVE 2.0, and CAMARADES while adding unique items, particularly to assess technical ("bench-top") laboratory quality. It is designed to be universally applicable across interventional and non-interventional animal studies [34].

Validation: An internal validation study reported high inter-rater agreement. Cohen’s kappa indices were 0.86 for QoR items, 0.83 for MQ items, and 0.68 for RoB items, indicating substantial to almost perfect agreement [34].
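
For readers who want to compute inter-rater agreement on their own appraisals, Cohen's kappa for two raters can be calculated as sketched below. The interpretation bands follow the widely used Landis and Koch convention, which matches the "substantial" and "almost perfect" wording above; the rater data are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    categories = freq_a.keys() | freq_b.keys()
    # Agreement expected by chance, from each rater's marginal frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / n ** 2
    if expected == 1:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


def interpret(kappa):
    """Landis and Koch descriptive bands."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"),
                         (0.80, "substantial"), (1.00, "almost perfect")]:
        if kappa <= upper:
            return label
    raise ValueError("kappa cannot exceed 1")


# Hypothetical item-level judgments from two appraisers.
rater_a = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
rater_b = ["yes", "yes", "no", "yes", "yes", "no", "yes", "no", "no", "no"]
kappa = cohens_kappa(rater_a, rater_b)
print(f"kappa = {kappa:.2f}")

# The values reported for CRIME-Q [34], mapped onto the same bands.
for domain, value in [("QoR", 0.86), ("MQ", 0.83), ("RoB", 0.68)]:
    print(domain, value, interpret(value))
```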

Table 2: Comparison of Core Methodological Standards and Tools

| Standard/Tool | Primary Purpose | Key Components/Items | Specific Application Context |
|---|---|---|---|
| Protocol Registration | Pre-commitment to plan; prevent bias | Research question, search strategy, inclusion criteria | Recommended first step for all SRs, including animal and integrated reviews; increasingly required by journals [33] [35] |
| PRISMA 2020 | Reporting guideline | 27-item checklist; flow diagram | Final reporting of any SR, ensuring transparency [36] [37] |
| SYRCLE RoB Tool | Risk of bias assessment | 10 domains adapted for animal studies | Critical appraisal of internal validity of animal intervention studies [32] |
| CAMARADES/SyRF | Conduct support & infrastructure | Online platform (SyRF); quality checklist | Managing workflow for preclinical SRs; historical quality assessment [40] |
| CRIME-Q Tool | Unified critical appraisal | 3 domains: QoR, MQ, RoB | Holistic quality assessment of any animal study (interventional/non-interventional) [34] |

Integrated Application: Experimental Protocols for a Translational Systematic Review

Phase I: Protocol Development and Registration

- Define the Integrated Research Question: Formulate a translational question (e.g., "What is the efficacy and safety profile of drug X in animal models of disease Y and in human phase II/III trials?").

- Draft the Full Protocol: Using PRISMA-P as a guide, detail separate but parallel strategies for animal and human evidence streams. Specify databases (e.g., PubMed/MEDLINE, Embase, clinical trial registries), develop separate search strings using SYRCLE's animal filters where appropriate, and define inclusion criteria for both study types [39] [35].

- Register the Protocol: Submit the finalized protocol to PROSPERO (PROSPERO4animals) or another suitable registry like the Open Science Framework (OSF) before commencing the formal search. Record the unique registration number [33] [35].

Phase II: Search, Screening, and Data Management

- Execute Searches and Manage References: Run the registered searches across all databases. Import all references into a management platform. SyRF is strongly recommended for this phase, especially for the animal evidence stream, because it handles deduplication and supports collaborative screening within a single project [40].

- Screen Studies: Perform title/abstract and full-text screening in duplicate, independently, using the pre-defined inclusion/exclusion criteria. SyRF facilitates this by blinding reviewers to each other's decisions and tracking conflicts for resolution [40].

- Extract Data: Design and pilot data extraction forms. Extract study characteristics (e.g., species, model, intervention, human population, trial design) and quantitative outcome data in duplicate. SyRF supports customized annotation forms and extraction of data from figures with built-in tools [40].
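
The logic of duplicate, independent screening can be sketched in a few lines: each reviewer records decisions without seeing the other's, and only then are disagreements surfaced for resolution. The code below is a generic illustration with hypothetical record IDs and function names; it does not represent SyRF's interface or data format.

```python
def find_conflicts(reviewer_a, reviewer_b):
    """Return record IDs where two independent reviewers disagree.

    Each argument maps a record ID to "include" or "exclude".
    """
    shared = reviewer_a.keys() & reviewer_b.keys()
    return sorted(r for r in shared if reviewer_a[r] != reviewer_b[r])


def awaiting_second_review(reviewer_a, reviewer_b):
    """Return record IDs screened by only one of the two reviewers."""
    return sorted(reviewer_a.keys() ^ reviewer_b.keys())


# Hypothetical title/abstract decisions.
reviewer_a = {"rec1": "include", "rec2": "exclude", "rec3": "include", "rec4": "exclude"}
reviewer_b = {"rec1": "include", "rec2": "include", "rec3": "exclude", "rec5": "exclude"}

conflicts = find_conflicts(reviewer_a, reviewer_b)
pending = awaiting_second_review(reviewer_a, reviewer_b)
print("Resolve by discussion or third reviewer:", conflicts)
print("Awaiting second screening decision:", pending)
```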

Phase III: Critical Appraisal and Risk of Bias Assessment

- Assess Animal Studies: Use the SYRCLE RoB tool to judge the internal validity of each animal study across 10 domains. Alternatively, for a more comprehensive assessment covering reporting and technical quality, employ the CRIME-Q tool. Perform assessments in duplicate [32] [34].

- Assess Human Studies: Use a tool appropriate to the study design, such as the Cochrane RoB 2 tool for randomized trials.

- Document and Synthesize Appraisals: Create summary tables and graphs (e.g., traffic light plots) to present RoB judgments across all studies within each evidence stream.

Phase IV: Synthesis, Analysis, and Reporting

- Synthesize Evidence: Conduct narrative synthesis for each evidence stream, exploring relationships between study characteristics and findings. If appropriate, perform meta-analysis separately for animal and human data; the two streams differ fundamentally in design, outcome measures, and expected heterogeneity, so they should not be pooled into a single estimate.

- Integrate Findings: Create a structured summary table comparing the consistency, magnitude, and direction of effects between animal models and human trials. Discuss possible reasons for discordance (e.g., model validity, RoB, dosing, outcome timing).

- Write the Final Report: Adhere strictly to the PRISMA 2020 checklist and flow diagram. Report the process and results for animal and human evidence transparently. Discuss the implications of the integrated findings for translational science and future research [37].
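
The separate-stream synthesis and direction comparison described in Phase IV can be sketched as follows. The example uses a DerSimonian-Laird random-effects model, which is one common choice and is not prescribed by the standards above; all effect sizes and variances are hypothetical, and the sketch assumes both streams are coded so that a negative value means benefit.

```python
import math


def random_effects_pool(effects, variances):
    """DerSimonian-Laird random-effects pooling.

    Returns (pooled estimate, standard error, between-study variance tau^2).
    """
    if len(effects) != len(variances) or not effects:
        raise ValueError("need one variance per effect, and at least one study")
    weights = [1 / v for v in variances]
    total_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / total_w
    tau2 = 0.0
    if len(effects) > 1:
        q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
        c = total_w - sum(w ** 2 for w in weights) / total_w
        if c > 0:
            tau2 = max(0.0, (q - (len(effects) - 1)) / c)
    re_weights = [1 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1 / sum(re_weights))
    return pooled, se, tau2


def same_direction(estimate_a, estimate_b):
    """True if both pooled estimates are non-zero and share a sign."""
    return estimate_a * estimate_b > 0


# Hypothetical data: standardized mean differences (animal) and log risk ratios (human).
animal = random_effects_pool([-0.9, -0.4, -1.2], [0.10, 0.08, 0.15])
human = random_effects_pool([-0.15, -0.05], [0.02, 0.03])

for label, (pooled, se, tau2) in [("animal", animal), ("human", human)]:
    low, high = pooled - 1.96 * se, pooled + 1.96 * se
    print(f"{label}: {pooled:.2f} (95% CI {low:.2f} to {high:.2f}), tau^2 = {tau2:.3f}")
print("Direction concordant:", same_direction(animal[0], human[0]))
```

Because the two streams are measured on different scales, only direction and consistency are compared here; magnitudes belong in the structured summary table alongside risk of bias and model validity.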

Visualizing the Integrated Systematic Review Workflow

Figure: Integrated Systematic Review Workflow for Translational Research. The diagram outlines the integrated workflow, highlighting the points of application for each core standard.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for Conducting Integrated Systematic Reviews

| Item / Solution | Function / Purpose | Key Features / Notes |
|---|---|---|
| PROSPERO Registry | International prospective register for SR protocols. | Dedicated section for animal study SRs (PROSPERO4animals). Registration is free and provides a time-stamped, unique ID [33]. |
| SyRF (Systematic Review Facility) | Online end-to-end platform for managing preclinical SRs. | Supports collaborative screening, data extraction, custom annotation, and data management. Facilitates blinding and conflict resolution. Free to use [40]. |
| SYRCLE's Risk of Bias Tool | Critical appraisal tool for animal intervention studies. | 10-domain tool adapted from Cochrane for animal-specific biases (e.g., random housing, blinding of caregivers) [32]. |
| CRIME-Q Tool | Unifying critical appraisal tool for animal research. | Assesses Quality of Reporting, Methodological Quality, and Risk of Bias in one tool. Applicable to interventional and non-interventional studies [34]. |
| PRISMA 2020 Checklist & Flow Diagram | Reporting guideline for systematic reviews. | 27-item checklist and standardized flow diagram template to ensure complete and transparent reporting [37] [38]. |
| SYRCLE Search Filters | Validated search strings for PubMed/Embase. | Filters designed to efficiently and sensitively retrieve animal studies, reducing irrelevant clinical trial results [39]. |
| Reference Management Software | Software for storing, organizing, and deduplicating citations. | Tools like EndNote, Zotero, or Mendeley are essential. SyRF has built-in management for projects on its platform [40]. |