Bridging the Predictive Gap: Advances in Correlating In Vitro and In Vivo Toxicity for Modern Drug Development

Abstract

This article provides a comprehensive examination of the correlation between in vitro and in vivo toxicity data, a critical nexus in pharmaceutical and chemical safety assessment. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts of in vitro-in vivo correlations (IVIVC) and extrapolation (IVIVE), reviews advanced predictive methodologies including computational models and microphysiological systems, addresses common challenges and optimization strategies for complex formulations, and analyzes validation frameworks and regulatory acceptance. By synthesizing insights from current regulatory science, computational toxicology, and advanced in vitro models, this review aims to equip the target audience with a holistic understanding of how to build more reliable, human-relevant pathways for toxicity prediction.

The Core Concepts: Defining the Relationship Between In Vitro Data and In Vivo Outcomes

The process of bringing a new drug to market remains prohibitively expensive and inefficient, with total development costs often exceeding a billion dollars and timelines stretching beyond a decade [1]. A central contributor to these costs, and to the high rate at which drug candidates fail during development, is the persistent failure of traditional toxicity models to accurately predict human adverse effects. Historically, this has produced two kinds of error: safe drugs being incorrectly categorized as unsafe, and, more dangerously, unsafe drugs reaching patients [1]. The reliance on animal (in vivo) models, while providing whole-organism data, is fundamentally limited by significant biological differences between species, from metabolic pathways to organ system physiology [1]. Concurrently, conventional in vitro (cell-based) models, though more human-relevant, have often been oversimplified, failing to capture the complexity of organ systems, physiological rhythms, and homeostatic responses [1].

This guide argues that the imperative for modern drug development lies in building next-generation predictive models. These models must bridge the gap between simplified in vitro assays and complex in vivo outcomes by integrating high-quality, curated data, advanced in vitro systems, and computational analytics. The thesis is that enhancing the quantitative correlation between in vitro bioactivity and in vivo toxicity through measured exposure data, human-relevant systems, and ensemble computational modeling is key to reducing late-stage failures and accelerating the delivery of safe therapeutics [2] [3].

Comparison Guide: Current Toxicity Testing Models and Predictive Approaches

The following tables compare the core methodologies, their applications, and the performance of emerging predictive frameworks.

Table 1: Comparison of Core Preclinical Toxicity Testing Models

| Model Type | Key Description | Primary Advantages | Major Limitations & Correlation Challenges | Typical Application in Pipeline |
|---|---|---|---|---|
| In Vivo (Animal Models) | Studies using whole living organisms (e.g., rodents, non-human primates). | Provides data on systemic toxicity, pharmacokinetics, and complex organ interactions [1]. Mandated for certain endpoints by regulators [4]. | Limited human predictivity due to interspecies differences [1]. Ethical concerns, high cost, and low throughput [5]. | Late preclinical stages; required for regulatory submissions on immunotoxicity, carcinogenicity [4]. |
| In Vitro (Cell-Based Models) | Studies using human or animal cells/tissues in a controlled environment. | Human-relevant, high-throughput, cost-effective for early screening [1]. Enables mechanistic studies. | Traditional 2D models lack tissue complexity and systemic feedback [1]. Uncertain chemical exposure due to binding, degradation [2]. | Early screening, mechanistic toxicity, prioritizing compounds for in vivo studies. |
| Advanced In Vitro NAMs (New Approach Methodologies) | Enhanced systems like 3D cultures, organoids, and organ-on-chip. | Better mimics human tissue structure, function, and cellular diversity [6]. Can model multi-organ interactions. | Technical complexity, standardization challenges, and high cost relative to simple assays. Still evolving for regulatory acceptance. | Investigating organ-specific toxicity (e.g., DILI, nephrotoxicity), disease modeling [4]. |
| In Silico (Computational Models) | Predictive models using QSAR, machine learning, and bioinformatics. | Extremely high-throughput, low cost. Can predict toxicity for data-poor chemicals [7]. | Highly dependent on quality and quantity of input data. Can be a "black box"; validation against robust datasets is critical [3]. | Early virtual screening, prioritizing chemical libraries, filling data gaps for risk assessment [7]. |

Table 2: Predictive Modeling Platforms and Validation Metrics

| Model/Platform Approach | Core Predictive Function | Key Differentiators & Data Strategy | Reported Applications & Advantages |
|---|---|---|---|
| CAS BioFinder Discovery Platform | Predicts ligand-target activity, metabolite profiles, and toxicity [3]. | Uses an ensemble of 5+ distinct models (structure-based, etc.) for consensus prediction [3]. Employs deep human curation to disambiguate entities and harmonize data from literature/patents [3]. | Increases confidence by combining multiple predictive methodologies. Reported performance improvement when models are trained on curated rather than public data. |
| Toxicity Values Database (ToxValDB) v9.6.1 | A curated resource of in vivo toxicity results and derived values for model benchmarking [7]. | Standardizes 242,149 records from 36 sources into a consistent vocabulary [7]. Serves as a gold-standard benchmark for developing and validating New Approach Methodologies (NAMs) [7]. | Enables chemical screening, QSAR model training, and read-across. Used in EPA's Database Calibrated Assessment Process (DCAP) for data-poor chemicals [7]. |
| Measured Exposure In Vitro Protocol [2] | Quantifies bioavailable (freely dissolved) concentration of test chemicals in assay media. | Uses solid-phase microextraction (SPME) in 96-well plates to measure concentration at dosing and after 24h [2]. Directly addresses the uncertainty of exposure in traditional assays. | Identifies chemicals with low bioavailability or instability. Provides critical data for quantitative in vitro-to-in vivo extrapolation (IVIVE) [2]. |
| SOPHiA DDM for Multimodal AI | Integrates clinical, genomic, and imaging data to predict patient outcomes and adverse events [8]. | Multimodal data integration for patient-stratified predictions. Focus on clinical trial optimization and post-market safety [8]. | Shown to predict post-operative outcomes in renal cell carcinoma, outperforming standard risk scores [8]. Aims to improve trial efficiency and safety prediction. |

Detailed Experimental Protocols for Key Predictive Approaches

Protocol 1: Measured-Exposure In Vitro Assay Using SPME

Objective: To generate robust, quantitative in vitro toxicity data by directly measuring the freely dissolved concentration (C~free~) of test chemicals in cell assay media, thereby accounting for losses due to sorption, metabolism, and degradation.

Materials:

- Cell-based bioassay: e.g., human cell line reporter gene assay for cytotoxicity or oxidative stress response in 96-well format.

- Test chemicals: A diverse set covering a range of hydrophobicity (log K~ow~).

- Solid-phase microextraction (SPME) fibers: Compatible with 96-well plate format.

- Analytical instrument: GC-MS or LC-MS for chemical quantification.

- Dosing system: For precise application of test chemical to assay medium.

Methodology:

- Assay Preparation: Seed cells in 96-well assay plates and incubate until appropriate confluence is reached.

- Chemical Dosing & T0 Measurement: Dose the test chemical across a range of concentrations into the assay medium (with or without cells). Immediately afterward, use SPME fibers to sample the medium from designated wells to measure the initial freely dissolved concentration (C~free~ at T0).

- Exposure Incubation: Incubate the assay plates under standard conditions (e.g., 24 hours at 37°C, 5% CO₂).

- Endpoint Measurement & T24 Sampling: At the end of the incubation period:

  - Measure the biological endpoint (e.g., cell viability, reporter gene activity).

  - Use SPME fibers again to sample medium from the same wells to measure the final freely dissolved concentration (C~free~ at T24).

- Chemical Analysis: Analyze the SPME fibers by GC-MS or LC-MS to quantify the amount of chemical sorbed to the fiber, then back-calculate C~free~ in the medium using the fiber-medium partition coefficient.

- Data Analysis: Calculate the freely dissolved fraction (f~free~) and the percentage loss of C~free~ over 24 hours. Plot dose-response curves against the measured C~free~ values rather than the nominal dosed concentrations; these data can be used directly in IVIVE modeling.

Significance: This protocol moves beyond nominal dosing, identifies chemicals with unstable exposure, and provides a quantitative foundation for extrapolating in vitro bioactivity to in vivo doses, directly addressing a major source of predictive failure [2].
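The data-analysis step of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the published method: it assumes all concentrations share one unit (µM), defines f~free~ as C~free~ at T0 divided by the nominal concentration, summarizes the 24 h exposure as the mean of the T0 and T24 measurements (i.e., a linear decline between the two samples), and uses a hypothetical 30% loss cut-off to flag unstable exposure. The class and function names are illustrative only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WellExposure:
    """Measured exposure for one assay well (all concentrations in µM)."""
    nominal_uM: float    # concentration dosed into the medium
    cfree_t0_uM: float   # freely dissolved concentration right after dosing
    cfree_t24_uM: float  # freely dissolved concentration after 24 h

    @property
    def f_free(self) -> float:
        """Freely dissolved fraction at dosing: C_free(T0) / nominal."""
        return self.cfree_t0_uM / self.nominal_uM

    @property
    def percent_loss_24h(self) -> float:
        """Percentage of C_free lost between T0 and T24."""
        return 100.0 * (1.0 - self.cfree_t24_uM / self.cfree_t0_uM)

    @property
    def mean_cfree_uM(self) -> float:
        """Time-weighted average C_free over 0-24 h, assuming a linear decline."""
        return 0.5 * (self.cfree_t0_uM + self.cfree_t24_uM)


def flag_unstable(wells: List[WellExposure],
                  max_loss_percent: float = 30.0) -> List[WellExposure]:
    """Return wells whose C_free fell by more than a hypothetical cut-off."""
    return [w for w in wells if w.percent_loss_24h > max_loss_percent]


if __name__ == "__main__":
    # Hypothetical wells, for illustration only.
    wells = [
        WellExposure(nominal_uM=10.0, cfree_t0_uM=4.0, cfree_t24_uM=3.6),
        WellExposure(nominal_uM=10.0, cfree_t0_uM=2.0, cfree_t24_uM=0.5),
    ]
    for w in wells:
        # A dose-response fit would use mean_cfree_uM, not nominal_uM.
        print(f"f_free={w.f_free:.2f} loss={w.percent_loss_24h:.0f}% "
              f"mean C_free={w.mean_cfree_uM:.2f} uM")
    print(len(flag_unstable(wells)), "well(s) with unstable exposure")
```

If the chemical decline is clearly non-linear, a better exposure summary would need more than two sampling times; the two-point mean is only the simplest choice.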

Protocol 2: Ensemble Predictive Modeling on Curated Data

Objective: To create a high-confidence predictive model for chemical toxicity or bioactivity by leveraging multiple, distinct computational methodologies.

Materials:

- Curated training dataset: A high-quality dataset of chemical structures linked to toxicological endpoints (e.g., from ToxValDB [7]).

- Computational infrastructure: Sufficient processing power for model training and validation.

- Modeling algorithms: Diverse set (e.g., random forest, deep neural networks, support vector machines, Bayesian methods).

Methodology:

- Data Curation & Harmonization: Apply rigorous curation to the raw data. This involves:

  - Disambiguation: Clustering all synonyms for a given protein, gene, or chemical structure under a single, consistent identifier.

  - Normalization: Converting all experimental results (e.g., IC₅₀, LOEL) to standard units and formats by expert review.

- Model Training: Train several (e.g., five) independent predictive models on the curated dataset. Each model should employ a different core algorithm or data representation (e.g., one model focused purely on chemical structure fingerprints, another on molecular docking simulations, a third on known metabolic pathways).

- Validation & Benchmarking: Rigorously validate each model using hold-out test sets and benchmark against known external datasets (like those in ToxValDB).

- Ensemble Consensus Prediction: For a new query chemical, run predictions with all individual models. The final prediction is a weighted consensus or other aggregation of the individual model outputs, and confidence metrics are derived from the level of agreement among models.

Significance: This ensemble approach mitigates the limitations of any single algorithm. The emphasis on deep data curation ensures that each chemical-target-measurement "triple" is reliable, which is foundational for model accuracy [3].
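The consensus step can be illustrated with a minimal Python sketch. It assumes each model returns a probability that the query chemical is toxic, that per-model weights come from something like hold-out validation performance, and that confidence is simply the fraction of models whose own call (at a 0.5 threshold) matches the consensus call. The model names and numbers are hypothetical and do not describe any specific platform's internals.

```python
from typing import Dict, Tuple


def consensus_prediction(probs: Dict[str, float],
                         weights: Dict[str, float],
                         threshold: float = 0.5) -> Tuple[float, bool, float]:
    """Return (weighted probability, consensus call, agreement-based confidence)."""
    if set(probs) != set(weights):
        raise ValueError("every model needs both a prediction and a weight")
    total_w = sum(weights.values())
    if total_w <= 0:
        raise ValueError("weights must sum to a positive number")
    # Weighted average of the individual model probabilities.
    p = sum(probs[m] * weights[m] for m in probs) / total_w
    call = p >= threshold
    # Fraction of models whose own call agrees with the consensus call.
    agree = sum((probs[m] >= threshold) == call for m in probs)
    return p, call, agree / len(probs)


if __name__ == "__main__":
    # Hypothetical outputs from five models built on different representations.
    probs = {"fingerprint_rf": 0.82, "docking": 0.64, "pathway": 0.71,
             "dnn": 0.58, "bayes": 0.35}
    # Hypothetical weights, e.g., hold-out AUROC of each model.
    weights = {"fingerprint_rf": 0.84, "docking": 0.70, "pathway": 0.75,
               "dnn": 0.80, "bayes": 0.72}
    p, call, conf = consensus_prediction(probs, weights)
    print(f"consensus p={p:.2f}, toxic={call}, agreement={conf:.0%}")
```

A weighted mean is only one aggregation rule; majority voting or stacking a meta-model on the individual outputs are common alternatives.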

Predictive Model Development and Validation Workflow

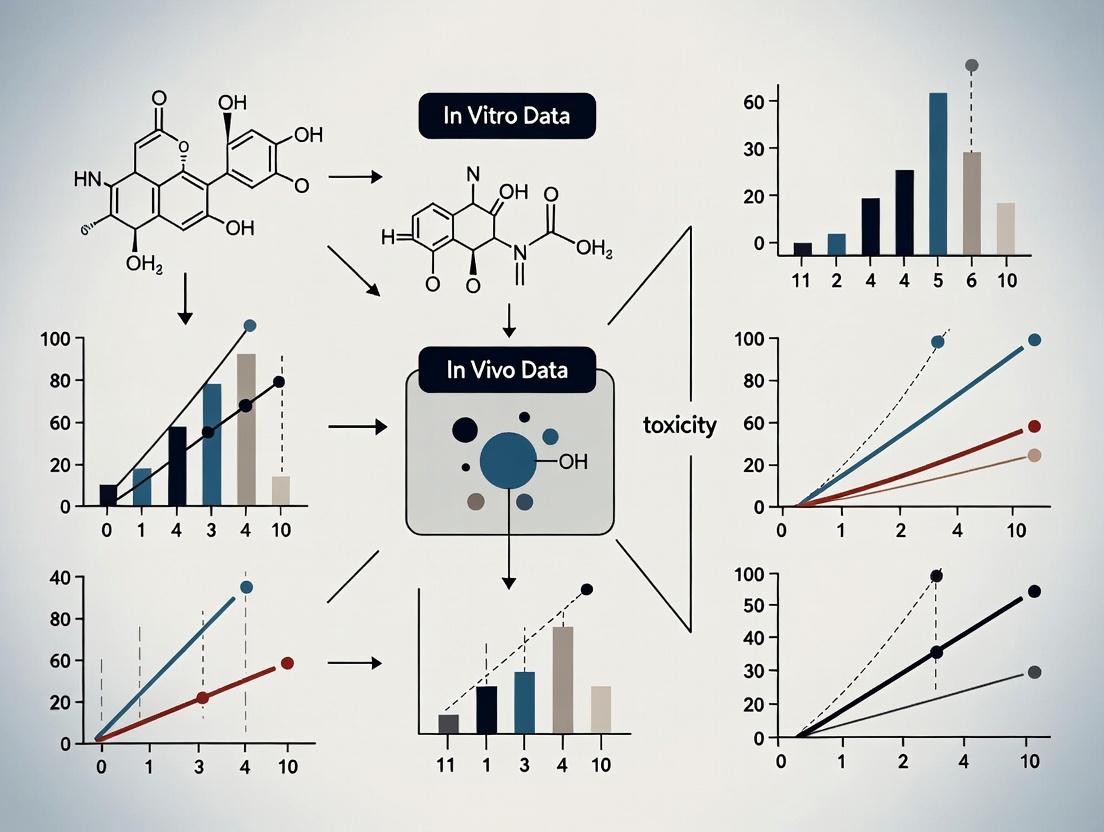

Diagram 1: Integrated workflow for developing high-confidence predictive toxicity models.

Ensemble Predictive Modeling Architecture

Diagram 2: Ensemble modeling architecture combining diverse algorithms for consensus prediction.

The Scientist's Toolkit: Essential Reagents and Technologies

Table 3: Key Research Reagent Solutions for Advanced Predictive Toxicology

| Item/Tool | Function in Predictive Toxicology | Key Rationale for Use |
|---|---|---|
| Human Primary Cells & iPSC-Derived Cells | Provide genetically diverse, physiologically relevant cell sources for in vitro assays. | Overcome limitations of immortalized cell lines; enable patient-specific toxicity screening and personalized medicine applications [6]. |
| Organ-on-Chip Platforms (e.g., Emulate Chip-R1 [4], CN Bio PhysioMimix [4]) | Microfluidic devices that emulate human organ physiology, tissue-tissue interfaces, and vascular flow. | Model complex organ responses and systemic toxicity in a human-relevant context; reduce compound loss via specialized materials [4]. |
| Solid-Phase Microextraction (SPME) Probes | Measure freely dissolved chemical concentrations directly in in vitro assay media [2]. | Critical for defining real exposure concentrations, calculating bioavailability, and generating data usable for quantitative IVIVE [2]. |
| Curated Toxicology Databases (e.g., ToxValDB [7], CAS Content Collection [3]) | Provide standardized, high-quality data for model training, validation, and benchmarking. | Foundational for developing reliable ML models. ToxValDB’s curated in vivo data is essential for validating NAMs [7]. |
| Multimodal Data Integration Platforms (e.g., SOPHiA DDM [8]) | Integrate genomic, clinical, and imaging data to predict patient-specific outcomes and adverse events. | Bridges preclinical findings to clinical reality; aims to optimize trial design and predict safety in heterogeneous human populations [8]. |
| AI-Driven Discovery Platforms (e.g., Merck AIDDISON [5]) | Use generative AI and ML to design novel compounds with optimized toxicity and efficacy profiles. | Accelerates early discovery by virtually screening ultra-large libraries and predicting key drug-like properties before synthesis [5]. |

Core Concepts and Comparative Framework

In the pursuit of more predictive and efficient drug development, establishing robust links between laboratory tests and clinical outcomes is paramount. In Vitro-In Vivo Correlation (IVIVC) and In Vitro-In Vivo Extrapolation (IVIVE) are two fundamental, complementary methodologies that serve this purpose. While both aim to bridge in vitro and in vivo data, their primary objectives, applications, and methodological frameworks differ significantly.

IVIVC is defined as "a predictive mathematical model describing the relationship between an in vitro property of a dosage form and a relevant in vivo response," most commonly between drug dissolution/release and pharmacokinetic (PK) parameters like plasma concentration [9]. Its principal goal is to use in vitro dissolution testing as a surrogate for in vivo bioavailability or bioequivalence studies, thereby supporting formulation development, quality control, and regulatory submissions for specific drug products [10].

IVIVE refers to the qualitative or quantitative transposition of in vitro experimental results to predict in vivo PK and pharmacological outcomes. It often relies on Physiologically Based Pharmacokinetic (PBPK) modeling or Physiologically Based Biopharmaceutics Modeling (PBBM). The goal of IVIVE is broader: to forecast the human PK behavior of a drug substance early in development by integrating intrinsic drug properties (e.g., metabolism, permeability) with physiological system data [11]. It is a key tool in Model-Informed Drug Development (MIDD).

The following table provides a structured comparison of these two cornerstone approaches.

Table 1: Fundamental Comparison of IVIVC and IVIVE

| Aspect | In Vitro-In Vivo Correlation (IVIVC) | In Vitro-In Vivo Extrapolation (IVIVE) |
|---|---|---|
| Primary Objective | To establish a predictive relationship between in vitro drug release from a specific formulation and its in vivo absorption profile [9] [10]. | To predict in vivo pharmacokinetics and pharmacodynamics by translating in vitro measurements of intrinsic drug substance properties through physiological models [11]. |
| Typical Application | Formulation development and optimization for modified-release dosage forms (oral, injectable); setting clinically relevant dissolution specifications; supporting biowaivers [9] [10]. | Early drug discovery and candidate selection; predicting human clearance, dose, drug-drug interactions, and tissue exposure; risk assessment [11]. |
| Key Input Data | In vitro dissolution/release profiles of multiple formulations; in vivo pharmacokinetic profiles (e.g., from human or animal studies) [12]. | In vitro intrinsic data (e.g., metabolic stability in hepatocytes, permeability, plasma protein binding) [13]. |
| Core Methodology | Convolution/deconvolution techniques to relate dissolution and absorption time courses; statistical moment analysis [14]. | Scaling factors and mechanistic modeling (e.g., PBPK/PBBM) that incorporate physiological parameters (organ volumes, blood flows) [11] [15]. |
| Regulatory Context | Formally defined in FDA/EMA guidances for oral extended-release products; used to justify biowaivers for formulation and manufacturing changes [10]. | Increasingly used to inform trial design and regulatory decisions within a Model-Informed Drug Development (MIDD) paradigm; supports Investigational New Drug (IND) and New Drug Application (NDA) submissions [11]. |
| Correlation Focus | Product-specific. Correlates the performance of a particular drug product's design. | Drug substance/system-specific. Correlates inherent drug properties within a biological system. |

The IVIVC Correlation Spectrum: Levels A, B, and C

The predictive strength and regulatory utility of an IVIVC are classified into distinct levels. These levels are hierarchically arranged based on the complexity of the relationship established between in vitro and in vivo data [10] [14].

Table 2: Hierarchy and Characteristics of IVIVC Levels

| Aspect | Level A | Level B | Level C |
|---|---|---|---|
| Definition | A point-to-point correlation between the in vitro dissolution curve and the in vivo absorption (or dissolution) curve [10]. | A correlation based on statistical moment analysis, comparing the mean in vitro dissolution time to the mean in vivo residence or absorption time [10] [14]. | A single-point correlation relating one dissolution time point (e.g., % dissolved at 4h) to one PK parameter (e.g., AUC or Cmax) [10]. |
| Predictive Value | High. Can predict the complete plasma concentration-time profile. Considered the most robust and informative [10] [14]. | Moderate/Low. Reflects general trends but does not predict the shape of the absorption profile. Useful for rank-order comparisons [10]. | Low. Provides only a limited snapshot of the relationship. Does not predict the full PK profile [10]. |
| Regulatory Acceptance | Most Preferred. Can support biowaivers for major formulation and process changes, and set dissolution specifications, if validation criteria are met [10]. | Limited. Generally not acceptable as a standalone justification for biowaivers due to lack of profile prediction [10]. | Limited. May support early development insights but is insufficient for biowaivers. A Multiple Level C (correlating several time points to PK parameters) is more useful [10] [16]. |
| Primary Use Case | Regulatory submissions for modified-release products; optimizing and controlling formulations with high confidence [10] [12]. | Early formulation screening and understanding overall release characteristics [10]. | Early development to gain initial insights, or as a supportive element alongside more robust analyses [10]. |

Experimental Protocols for Establishing Correlation

Protocol 1: Developing a Level A IVIVC Using Biphasic Dissolution

This protocol, based on a 2025 study for bicalutamide (BCS Class II) immediate-release tablets, outlines a biorelevant approach to establish a predictive Level A correlation [12].

1. Formulation & Study Design:

- Utilize at least two formulations (e.g., reference and test) with differing release rates. For novel drugs, develop slow, medium, and fast-releasing variants [12] [16].

- Conduct a single-dose, crossover pharmacokinetic study in human volunteers to obtain plasma concentration-time profiles for each formulation [12].

2. In Vitro Biphasic Dissolution Testing:

- Apparatus: USP Apparatus II (paddle), modified with a second paddle in the organic layer.

- Media: A biorelevant two-phase system.

  - Aqueous Phase: 300 mL of phosphate buffer (pH 6.8), simulating intestinal fluid.

  - Organic Phase: 200 mL of 1-octanol, pre-saturated with buffer, simulating the absorptive compartment.

- Procedure:

  - Saturate both phases by stirring for 45 min at 37°C ± 0.5°C.

  - Introduce the dosage form into the aqueous phase using a sinker.

  - Operate both paddles at 50 rpm.

  - Withdraw simultaneous samples from both phases at predefined time points (e.g., 15, 30, 60, 120, 240 min).

  - Filter samples and analyze drug concentration using a validated UV-spectrophotometric or HPLC method.

  - Calculate cumulative drug amount partitioned into the organic phase, representing the combined dissolution-absorption process [12].

3. In Vivo Data Deconvolution:

- Apply a model-dependent (e.g., Wagner-Nelson for one-compartment, Loo-Riegelman for two-compartment) or model-independent deconvolution method to the PK profiles.

- This step calculates the in vivo fraction of drug absorbed over time for each formulation [12] [16].

4. Model Development and Validation:

- Correlate the in vitro fraction partitioned into octanol (from Step 2) with the in vivo fraction absorbed (from Step 3) using a linear or non-linear regression model.

- Internal Validation: Evaluate the predictability of the model by calculating the percent prediction error (%PE) for key PK parameters (AUC, Cmax). For regulatory acceptance, the mean absolute %PE should be ≤10%, and the %PE for each individual formulation should not exceed 15% [16].

- The validated model can then predict the in vivo performance of new batches or formulations based solely on their biphasic dissolution profile [12].
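Step 4 can be sketched in Python as an ordinary least-squares fit plus the %PE check. This is a minimal illustration under stated assumptions: the in vitro fraction partitioned into octanol and the in vivo fraction absorbed are already paired at matching time points, a straight line is an adequate model, %PE is defined as (observed − predicted) / observed × 100, and the thresholds are those quoted in step 4 (mean absolute %PE ≤10% per parameter, each formulation ≤15%). All data values are hypothetical.

```python
from typing import Dict, Sequence, Tuple


def fit_linear(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Ordinary least squares y = slope * x + intercept; returns (slope, intercept, R^2)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot


def percent_prediction_error(observed: float, predicted: float) -> float:
    """%PE = (observed - predicted) / observed * 100."""
    return (observed - predicted) / observed * 100.0


def passes_internal_validation(pk: Dict[str, Dict[str, Tuple[float, float]]]) -> bool:
    """pk[formulation][parameter] = (observed, predicted) for "AUC" and "Cmax"."""
    for param in ("AUC", "Cmax"):
        pes = [abs(percent_prediction_error(*pk[f][param])) for f in pk]
        # Mean absolute %PE <= 10% and every formulation <= 15%.
        if sum(pes) / len(pes) > 10.0 or max(pes) > 15.0:
            return False
    return True


if __name__ == "__main__":
    f_vitro = [0.10, 0.35, 0.60, 0.85, 0.95]  # hypothetical fraction in octanol
    f_vivo = [0.08, 0.33, 0.58, 0.80, 0.92]   # hypothetical fraction absorbed
    slope, intercept, r2 = fit_linear(f_vitro, f_vivo)
    print(f"Fabs = {slope:.3f} * Fvitro + {intercept:.3f} (R^2 = {r2:.3f})")
    pk = {"slow": {"AUC": (100.0, 95.0), "Cmax": (10.0, 9.5)},
          "fast": {"AUC": (100.0, 108.0), "Cmax": (12.0, 11.0)}}
    print("internal validation passed:", passes_internal_validation(pk))
```

Non-linear relationships (e.g., with a time-scaling term) are also used in practice; the linear form is just the simplest starting point.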

Protocol 2: Foundational In Vitro Assays for IVIVE

IVIVE relies on data from a suite of in vitro DMPK assays. The following are standardized protocols for key assays [13].

1. Metabolic Stability Assay:

- System: Human liver microsomes (HLM) or cryopreserved human hepatocytes.

- Procedure: Incubate the test compound (1 µM) with HLM (0.5 mg/mL, with NADPH as cofactor) or hepatocytes (1 million cells/mL) in appropriate buffer at 37°C. Terminate reactions at multiple time points (e.g., 0, 5, 15, 30, 60 min) by adding an organic solvent.

- Analysis: Quantify the remaining parent compound using LC-MS/MS. Determine the first-order disappearance rate constant (k) from the log-linear decline of the parent compound, then normalize it to the protein or cell concentration in the incubation to obtain the in vitro intrinsic clearance (CLint). This value is scaled with physiological factors to predict hepatic clearance in vivo.

2. Permeability Assay (Caco-2):

- System: Differentiated monolayer of Caco-2 cells cultured on a transwell membrane.

- Procedure: Add the test compound to the donor compartment (apical for A→B transport). Sample from the receiver compartment (basolateral) at set intervals (e.g., 30, 60, 90, 120 min).

- Analysis: Quantify compound concentration. Calculate apparent permeability (Papp). High Papp indicates good potential for passive intestinal absorption.

3. Cytochrome P450 Inhibition Assay (Reversible):

- System: Human liver microsomes with a probe substrate (e.g., phenacetin for CYP1A2, bupropion for CYP2B6).

- Procedure: Co-incubate the test compound at multiple concentrations with the probe substrate and NADPH cofactor. Measure the formation rate of the probe's specific metabolite.

- Analysis: Calculate the concentration of test compound that inhibits 50% of enzyme activity (IC50). This data predicts the potential for clinical drug-drug interactions.
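The three readouts above can be turned into IVIVE inputs with a few standard relationships, sketched below in Python. This is a minimal illustration, not a validated workflow. The physiological constants are illustrative assumptions that a real analysis would replace: 40 mg microsomal protein per g liver (MPPGL), 20 g liver per kg body weight, and hepatic blood flow of 20.7 mL/min/kg. Hepatic clearance uses the well-stirred liver model and, for simplicity, ignores microsomal binding and the blood-to-plasma ratio. Papp uses (dQ/dt)/(A × C₀). For the CYP assay, IC50 is converted to Ki with the Cheng-Prusoff relation, which assumes competitive inhibition, and fed into the basic static ratio R1 = 1 + I/Ki; the applicable regulatory guidance defines which inhibitor concentration to use and the cut-off for R1.

```python
import math
from typing import Sequence


def depletion_rate_constant(t_min: Sequence[float], pct_remaining: Sequence[float]) -> float:
    """First-order k (1/min): negative slope of ln(% remaining) versus time."""
    y = [math.log(p) for p in pct_remaining]
    n = len(t_min)
    mt, my = sum(t_min) / n, sum(y) / n
    slope = (sum((t - mt) * (v - my) for t, v in zip(t_min, y))
             / sum((t - mt) ** 2 for t in t_min))
    return -slope


def hepatic_clearance(k_per_min: float, protein_mg_per_ml: float, fu_plasma: float,
                      mppgl: float = 40.0, liver_g_per_kg: float = 20.0,
                      qh_ml_min_kg: float = 20.7) -> float:
    """Scale microsomal CLint to hepatic clearance (mL/min/kg), well-stirred model."""
    clint_ml_min_mg = k_per_min / protein_mg_per_ml             # in vitro CLint
    clint_ml_min_kg = clint_ml_min_mg * mppgl * liver_g_per_kg  # scaled CLint
    # CLh = Qh * fu * CLint / (Qh + fu * CLint)
    return (qh_ml_min_kg * fu_plasma * clint_ml_min_kg
            / (qh_ml_min_kg + fu_plasma * clint_ml_min_kg))


def apparent_permeability(dq_dt_nmol_s: float, area_cm2: float, c0_nmol_ml: float) -> float:
    """Papp (cm/s) = (dQ/dt) / (A * C0); nmol/mL equals nmol/cm^3."""
    return dq_dt_nmol_s / (area_cm2 * c0_nmol_ml)


def basic_static_r1(ic50_uM: float, substrate_uM: float, km_uM: float,
                    inhibitor_uM: float) -> float:
    """R1 = 1 + I / Ki, with Ki = IC50 / (1 + S/Km) (competitive inhibition assumed)."""
    ki = ic50_uM / (1 + substrate_uM / km_uM)
    return 1 + inhibitor_uM / ki


if __name__ == "__main__":
    # Hypothetical depletion data generated with k = 0.05 /min.
    ts = [0, 5, 15, 30, 60]
    pct = [100 * math.exp(-0.05 * t) for t in ts]
    k = depletion_rate_constant(ts, pct)
    print(f"k = {k:.3f} /min, CLh = {hepatic_clearance(k, 0.5, 0.1):.1f} mL/min/kg")
    print(f"Papp = {apparent_permeability(0.01, 1.12, 10.0):.2e} cm/s")
    print(f"R1 = {basic_static_r1(10.0, 5.0, 5.0, 1.0):.2f}")
```

Keeping every physiological constant as a keyword argument makes the assumptions explicit and easy to swap for system-specific values.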

Visualizing the Correlation Workflow

The following diagram illustrates the integrated workflow for developing an IVIVC, highlighting the parallel streams of in vitro and in vivo data that converge into a predictive model.

Diagram 1: IVIVC Development and Application Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for IVIVC and IVIVE Research

| Category | Item / Reagent | Primary Function in Research |
|---|---|---|
| Dissolution & IVIVC | USP Apparatus I (Basket), II (Paddle), or IV (Flow-Through Cell) | Standardized equipment for performing in vitro drug release/dissolution testing under controlled conditions [12]. |
| | Biorelevant Dissolution Media (e.g., FaSSIF, FeSSIF, Biphasic systems) | Simulate the pH, surface tension, and composition of gastrointestinal or injection site fluids to provide more physiologically relevant in vitro release data [12] [16]. |
| | Organic Solvent for Partitioning (e.g., 1-Octanol) | In biphasic dissolution systems, acts as an absorptive compartment to mimic drug partitioning across biological membranes, crucial for IVIVC of poorly soluble drugs [12]. |
| DMPK & IVIVE | Human Liver Microsomes (HLM) / Cryopreserved Hepatocytes | Provide the enzymatic machinery (CYPs, UGTs) to assess metabolic stability and generate intrinsic clearance data for IVIVE to human hepatic clearance [13]. |
| | Caco-2 Cell Line | A validated in vitro model of the human intestinal epithelium used to assess passive and active drug permeability, a key parameter for predicting absorption [13]. |
| | Specific CYP450 Probe Substrates and Inhibitors (e.g., Midazolam for CYP3A4) | Tools to identify which enzymes metabolize a drug and to quantify the potential for drug-drug interactions via enzyme inhibition or induction [13]. |
| Analytical & General | High-Performance Liquid Chromatography (HPLC) / LC-MS/MS Systems | Essential for separating, identifying, and quantifying drugs and their metabolites in complex biological (plasma) and in vitro matrices with high sensitivity and specificity. |
| | Physiologically Based Pharmacokinetic (PBPK) Software (e.g., GastroPlus, Simcyp) | Platform for building mechanistic models that integrate in vitro DMPK data with population physiology to perform IVIVE and simulate clinical outcomes [11]. |

The translational gap in drug development represents the critical failure of preclinical data to accurately predict clinical outcomes in humans. This disconnect is most starkly observed in toxicity assessment, where unanticipated severe adverse events (SAEs) remain a leading cause of clinical trial failures and post-market withdrawals [17]. Despite remarkable advancements in basic research, the journey from "bench to bedside" remains fraught with challenges, primarily due to disparities between how compounds behave in controlled laboratory settings versus the complex systems of living organisms [18].

The fundamental thesis of modern translational research posits that the correlation between in vitro and in vivo toxicity data is compromised by both biological divergences (species-specific physiology, disease heterogeneity, genetic variations) and methodological limitations (oversimplified models, non-physiological assays, validation deficiencies) [19] [20]. This article provides a comparative analysis of these sources through the lens of experimental data, established protocols, and emerging technologies that aim to bridge this persistent gap for researchers and drug development professionals.

Biological sources of the translational gap arise from inherent physiological and genetic differences between preclinical models and humans. These differences directly affect drug metabolism, target engagement, and toxicity manifestation.

Table 1: Comparative Analysis of Biological Sources of the Translational Gap

| Biological Source | Impact on Toxicity Prediction | Supporting Experimental Data | Representative Example |
|---|---|---|---|
| Species-Specific Physiology [19] [21] | Differing drug metabolism, immune responses, and organ system functions lead to missed human toxicities. | Ipilimumab showed minimal safety concerns in NHPs but has a high incidence of immune-related adverse events (irAEs) in humans [21]. | TGN1412 cytokine release storm in humans was not predicted by NHP models [21]. |
| Disease Heterogeneity [19] | Controlled preclinical models fail to capture the genetic diversity and evolving tumor microenvironments in human patient populations. | Fewer than 1% of published cancer biomarkers enter clinical practice, partly due to population heterogeneity [19]. | Biomarkers robust in controlled conditions often show poor performance in diverse patient cohorts [19]. |
| Genotype-Phenotype Differences (GPD) [17] | Variations in gene essentiality, tissue expression, and network connectivity between models and humans alter toxicological outcomes. | A GPD-based ML model significantly outperformed chemical-based models (AUROC: 0.75 vs. 0.50) in predicting human toxicity [17]. | The drug sibutramine was safe in preclinical models but withdrawn due to human cardiovascular risks [17]. |
| Target Homology & Expression [21] | Drugs designed for human-specific targets or pathways with poor species homology have unreliable preclinical toxicity profiles. | Bispecific T cell engagers have advanced to trials supported mainly by in vitro human assays due to lack of relevant animal models [21]. | Checkpoint inhibitors showed inconsistent safety signals between NHPs and humans [21]. |

Methodological sources stem from the technical and strategic limitations of the tools and protocols used in preclinical research, which fail to capture human in vivo complexity.

Table 2: Comparative Analysis of Methodological Sources and Technological Solutions

| Methodological Source | Limitation / Failure Rate | Emerging Solution / Model | Improved Predictive Performance |
|---|---|---|---|
| Oversimplified 2D Cell Cultures [20] [22] | Lack tissue structure, mechanical forces, and multicellular interactions, leading to poor physiological relevance. | 3D Organoids & Spheroids: Retain tissue architecture and patient-specific biomarker expression [19]. | 3D liver spheroids were more representative of in vivo liver response to toxicants than 2D HepG2 cells [20]. |
| Traditional Animal Models [19] [21] | Poor human correlation due to biological differences; ethical and cost concerns. | Patient-Derived Xenografts (PDX): Better recapitulate human tumor progression and drug response [19]. | KRAS mutant PDX models correctly predicted resistance to cetuximab, a finding later validated in humans [19]. |
| Static In Vitro Assays [22] | Fail to simulate dynamic bodily processes (e.g., fluid flow, digestion, perfusion). | Dynamic Microphysiological Systems (MPS/Organ-Chips): Integrate fluid flow and mechanical forces [22]. | A human Liver-Chip correctly identified 87% of drugs causing drug-induced liver injury (DILI) in humans [22]. |
| Poor In Vitro-In Vivo Correlation (IVIVC) [23] [10] | Complex formulations like Lipid-Based Formulations (LBFs) have dynamic processes not captured by standard dissolution tests. | Biorelevant Dissolution & PBPK Integration: Combines physiologically-relevant in vitro tests with computational modeling [23] [10]. | Only 50% of drugs studied with a pH-stat lipolysis device correlated well with in vivo data, highlighting the need for better methods [23]. |
| Lack of Functional & Longitudinal Validation [19] | Single time-point, correlative biomarker data lacks biological relevance and dynamic context. | Longitudinal Sampling & Functional Assays: Measures biomarker dynamics and confirms biological activity [19]. | Cross-species transcriptomic analysis has been used to successfully prioritize novel therapeutic targets [19]. |

Experimental Protocols for Key Assessments

Protocol 1: Establishing a Level A In Vitro-In Vivo Correlation (IVIVC)

- Objective: To develop a point-to-point predictive mathematical model between in vitro dissolution and in vivo absorption for oral dosage forms [10].

- Methodology:

- Formulation Preparation: Develop at least two formulations (e.g., slow, medium, fast release) of the drug product with differing release rates [10].

- In Vitro Dissolution Testing: Perform dissolution testing on each formulation using a biorelevant medium (e.g., FaSSIF/FeSSIF for LBFs) and a USP apparatus. Collect samples at multiple time points to establish a full release profile [23].

- In Vivo Pharmacokinetic Study: Administer each formulation in a crossover study using an appropriate animal model or human subjects. Collect serial blood samples to determine plasma drug concentration over time [10].

- Data Analysis: Use deconvolution techniques (e.g., Wagner-Nelson, Loo-Riegelman) to estimate the in vivo absorption-time profile. Correlate the fraction of drug dissolved in vitro with the fraction absorbed in vivo at matched time points to establish the Level A correlation [23] [10].

- Validation: Predict the in vivo profile of a formulation not used to build the model (e.g., one with a minor change) from its in vitro dissolution data and the IVIVC model, then compare the prediction with the observed in vivo profile. For regulatory acceptance, the average absolute percent prediction error (%PE) for C~max~ and AUC should generally be 10% or less, with no individual formulation exceeding 15% [10].
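The Wagner-Nelson step and the Level A regression can be sketched in Python as below. This is a minimal illustration, not a validated IVIVC tool: it assumes one-compartment disposition with a known elimination rate constant `ke`, sampling that starts at t = 0, and in vitro and in vivo profiles sampled at matched time points. The function names and the synthetic first-order data are hypothetical. The `percent_prediction_error` helper computes the per-parameter %PE used in the validation step.

```python
import numpy as np


def wagner_nelson_fabs(t, conc, ke):
    """Fraction of the absorbable dose absorbed at each sampling time (Wagner-Nelson).

    Assumes one-compartment disposition, a known elimination rate constant ke (1/h),
    and t[0] == 0.
    """
    t = np.asarray(t, dtype=float)
    conc = np.asarray(conc, dtype=float)
    # Cumulative AUC(0..t) by the linear trapezoidal rule.
    auc_t = np.concatenate(([0.0], np.cumsum(np.diff(t) * (conc[1:] + conc[:-1]) / 2.0)))
    # AUC(0..inf): observed AUC plus log-linear tail extrapolation C_last / ke.
    auc_inf = auc_t[-1] + conc[-1] / ke
    return (conc + ke * auc_t) / (ke * auc_inf)


def level_a_fit(f_diss, f_abs):
    """Point-to-point linear regression F_abs = slope * F_diss + intercept."""
    slope, intercept = np.polyfit(f_diss, f_abs, 1)
    r = np.corrcoef(f_diss, f_abs)[0, 1]
    return slope, intercept, r ** 2


def percent_prediction_error(observed, predicted):
    """%PE = 100 * |observed - predicted| / observed (e.g., for Cmax or AUC)."""
    return 100.0 * abs(observed - predicted) / observed


# Synthetic example: first-order absorption (ka) and elimination (ke), arbitrary units.
ka, ke = 1.0, 0.2
t = np.linspace(0.0, 48.0, 2001)
conc = ka / (ka - ke) * (np.exp(-ke * t) - np.exp(-ka * t))
f_abs = wagner_nelson_fabs(t, conc, ke)
f_diss = 1.0 - np.exp(-ka * t)  # hypothetical in vitro profile that matches in vivo release
slope, intercept, r2 = level_a_fit(f_diss, f_abs)
```

With these idealized inputs the recovered fraction absorbed tracks 1 - exp(-ka·t), so the fitted slope is close to 1 and the intercept close to 0. Real data usually show time scaling or an offset between dissolution and absorption that the correlation model has to accommodate.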

Protocol 2: Genotype-Phenotype Difference (GPD) Feature Extraction for ML Toxicity Prediction

- Objective: To compute biologically grounded features that quantify differences between preclinical models and humans for machine learning-based toxicity prediction [17].

- Methodology:

- Data Curation: For a given drug target gene, collect species-specific datasets:

- Gene Essentiality: CRISPR knockout screens from human (e.g., DepMap) and model organism (e.g., mouse) cell lines [17].

- Tissue Expression: Transcriptomic data (RNA-seq) from human (e.g., GTEx) and mouse (e.g., ENCODE) tissues [17].

- Network Connectivity: Protein-protein interaction networks for human and mouse from dedicated databases [17].

- Feature Calculation:

- Compute the absolute difference or correlation distance between the human and model organism profiles for each data type.

- For gene essentiality, calculate the difference in essentiality scores across a panel of cell lines.

- For tissue expression, compute a dissimilarity metric (e.g., 1 - Spearman correlation) between the tissue expression profiles.

- Model Integration: Use the calculated GPD features (e.g., essentiality difference, expression profile dissimilarity) as input variables alongside traditional chemical descriptors in a machine learning classifier (e.g., Random Forest) to predict human toxicity risk [17].
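The feature calculations above can be sketched as follows. This is a hypothetical illustration, not the published GPD pipeline [17]: it assumes the human and mouse tissue expression vectors are already aligned to the same tissues in the same order, summarizes essentiality as the difference between panel means, and uses protein-protein interaction degree as an assumed proxy for network connectivity. Spearman correlation is computed as the Pearson correlation of average ranks so the sketch needs only NumPy.

```python
import numpy as np


def average_ranks(x):
    """Ranks starting at 1, with tied values assigned their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x), dtype=float)
    ranks[order] = np.arange(1, len(x) + 1)
    for value in np.unique(x):
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks


def spearman(a, b):
    """Spearman correlation as the Pearson correlation of average ranks."""
    return np.corrcoef(average_ranks(a), average_ranks(b))[0, 1]


def gpd_features(human_essentiality, mouse_essentiality,
                 human_tissue_expr, mouse_tissue_expr,
                 human_degree, mouse_degree):
    """Hypothetical GPD feature vector for one drug-target gene."""
    return {
        # Difference in mean CRISPR essentiality score across each species' cell-line panel.
        "essentiality_diff": abs(np.mean(human_essentiality) - np.mean(mouse_essentiality)),
        # 1 - Spearman correlation over tissues matched between species.
        "expression_dissimilarity": 1.0 - spearman(human_tissue_expr, mouse_tissue_expr),
        # Absolute difference in interaction-network degree (assumed connectivity proxy).
        "network_degree_diff": abs(human_degree - mouse_degree),
    }


feats = gpd_features(
    human_essentiality=[-1.0, -0.8], mouse_essentiality=[-0.2, 0.0],
    human_tissue_expr=[5.0, 1.0, 3.0, 8.0], mouse_tissue_expr=[50.0, 12.0, 30.0, 70.0],
    human_degree=40, mouse_degree=25,
)
```

The resulting dictionary would then be concatenated with the chemical descriptors for each drug before training a classifier such as a Random Forest.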


Visualization of Pathways and Workflows

Diagram 1: Sources and solutions for the translational gap in toxicity.

Diagram 2: ML workflow using genotype-phenotype differences for toxicity prediction.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools and Reagents for Translational Toxicity Research

| Tool / Reagent | Category | Primary Function in Translation | Key Advantage / Note |
|---|---|---|---|
| Patient-Derived Organoids [19] | Advanced In Vitro Model | Retains patient-specific tumor biology and microenvironment for efficacy/toxicity testing. | More predictive of therapeutic response than 2D cultures; used for personalized treatment selection [19]. |
| Organ-Chips (e.g., Liver-Chip) [22] | Microphysiological System (MPS) | Replicates human organ-level physiology with dynamic flow and mechanical forces for safety assessment. | Correctly identified 87% of human DILI-causing drugs; accepted into FDA's ISTAND pilot program [22]. |
| CETSA (Cellular Thermal Shift Assay) [24] | Target Engagement Assay | Measures drug-target binding and engagement in intact cells and native tissue environments. | Provides quantitative, system-level validation, closing the gap between biochemical potency and cellular efficacy [24]. |
| Biorelevant Dissolution Media (FaSSIF/FeSSIF) [23] | In Vitro Test Reagent | Simulates human gastrointestinal fluid composition to predict formulation performance and solubility. | Critical for establishing IVIVC for poorly soluble drugs and lipid-based formulations (LBFs) [23]. |
| pH-Stat Lipolysis Assay [23] | Functional In Vitro Test | Models the dynamic digestion of lipid-based formulations in the GI tract, a key process for drug release. | Essential for LBF development, though correlations with in vivo data can be inconsistent, requiring careful interpretation [23]. |
| Multi-Omics Profiling Suites [19] | Analytical Toolset | Integrates genomics, transcriptomics, and proteomics to identify clinically actionable, context-specific biomarkers. | Moves beyond single targets to capture complex biology; helps identify biomarkers for early detection and prognosis [19]. |
| Validated GPD Feature Datasets [17] | Computational Resource | Provides pre-curated data on cross-species differences in gene essentiality, expression, and network connectivity. | Enables the implementation of biologically-grounded machine learning models for human toxicity prediction [17]. |

The U.S. Food and Drug Administration (FDA) has launched a concerted, agency-wide effort to spur the development and regulatory use of New Alternative Methods (NAMs). This initiative is driven by the goals of replacing, reducing, and refining animal testing (the 3Rs), improving the predictivity of nonclinical safety assessments, and accelerating the development of FDA-regulated products [25]. A cornerstone of this effort is the New Alternative Methods Program, which received $5 million in new funding through the Fiscal Year 2023 budget [25].

The FDA's strategy is built on a "qualification" process, where an alternative method is evaluated for a specific context of use—defining the precise manner and purpose for which the method is deemed acceptable [25]. This process is managed through various qualification programs, including the Drug Development Tool (DDT) programs and the Innovative Science and Technology Approaches for New Drugs (ISTAND) program, which is designed to expand the types of tools accepted, such as microphysiological systems [25].

This regulatory shift is now accelerating. In April 2025, the FDA announced a plan to phase out animal testing requirements for monoclonal antibody therapies and other drugs, leveraging AI-based computational models, organoids, and organ-on-a-chip systems [26] [27]. The agency has published a roadmap outlining a strategic, stepwise approach, starting with monoclonal antibodies and intending to expand to other biological molecules and new chemical entities [27] [28]. This initiative is empowered by the FDA Modernization Act 2.0, passed in late 2022, which authorized the use of non-animal alternatives in investigational new drug applications [28]. The ultimate goal is to make animal studies the exception rather than the norm within three to five years [29] [28].

Within the context of research on the correlation between in vitro and in vivo toxicity data, this guide compares the performance of emerging NAMs (in silico models, advanced in vitro systems, and curated data platforms) against traditional methods and summarizes the supporting experimental data relevant to researchers and drug development professionals.

Performance Comparison of Key NAMs Categories

The following tables provide a quantitative and qualitative comparison of major NAMs categories, highlighting their performance, advantages, and regulatory standing relative to traditional methods.

In Silico & Computational Toxicology Models

Table 1: Comparison of In Silico Predictive Toxicology Models with Traditional Methods

| Method | Primary Function | Key Performance Metrics (vs. Traditional) | Regulatory Status & Context of Use | Major Advantages | Key Limitations |
|---|---|---|---|---|---|
| MT-Tox Model (Knowledge Transfer ML) [30] | Predicts in vivo toxicity (carcinogenicity, DILI, genotoxicity) from chemical structure & in vitro data. | Outperformed baseline models; utilizes sequential transfer from chemical→in vitro→in vivo data to overcome data scarcity. | Emerging; cited as example of AI/ML for regulatory use [26] [20]. | Integrates multiple data levels; improves prediction in low-data regimes; provides mechanistic insight. | Performance dependent on quality/quantity of underlying in vitro and in vivo training data. |
| QSAR & Read-Across Models [20] [7] | Predict toxicity based on chemical structure similarity and quantitative structure-activity relationships. | Used for priority screening of data-poor chemicals; benchmarked against in vivo databases like ToxValDB. | Accepted for assessing mutagenic impurities (e.g., per ICH M7) [25]; part of EPA's assessment process [7]. | Fast, low-cost screening for large chemical libraries. | Limited by chemical domain of training set; may struggle with novel structures. |
| Virtual Population (ViP) Models [25] | High-resolution anatomical models for in silico biophysical modeling (e.g., medical device safety). | Cited and used in over 600 CDRH premarket applications; considered a gold standard for specific applications. | Qualified for specific contexts of use within medical device submissions [25]. | Enables patient-specific simulations; reduces need for physical testing. | Highly specialized; development requires significant expertise and data. |
| Traditional Animal Toxicity Studies | Empirical observation of adverse effects in live animal models. | Establishes benchmark data (e.g., NOAEL, LOAEL); often poor predictors of human efficacy/toxicity [27]. | Longstanding regulatory requirement; current benchmark for many endpoints. | Whole-system, integrated biology. | High cost, time, ethical concerns; species translation uncertainties. |

Advanced In Vitro and Microphysiological Systems (MPS)

Table 2: Comparison of Advanced In Vitro Models with Traditional 2D Assays and Animal Studies

| Method | Physiological Relevance | Typical Assay Readouts | Predictive Performance for Human Toxicity | Throughput & Cost Relative to Animal Studies | Regulatory Adoption Examples |
|---|---|---|---|---|---|
| 3D Organoids & Spheroids [20] [29] | Moderate-High; 3D architecture allows for better cell-cell communication. | Cytotoxicity, gene expression (omics), specific pathway activation. | More representative of in vivo organ response than 2D models (e.g., liver spheroids) [20]. | Medium throughput; cost-effective compared to animals. | Used in research; being validated for specific contexts (e.g., ISTAND pilot programs). |
| Organ-on-a-Chip (Microphysiological Systems) [25] [29] | High; microfluidic systems can mimic tissue-tissue interfaces, fluid flow, and mechanical cues. | Functional metrics (e.g., barrier integrity, contractility), metabolic activity, secreted biomarkers. | Shown to be as or more predictive of human effects than animal models for some endpoints [29]. | Low-Medium throughput; higher cost per chip than simple assays but lower than long-term animal studies. | Focus of FDA-funded research (e.g., radiation countermeasures) [25]; part of qualification programs. |
| Human iPSC-Derived Cell Models (e.g., Cardiomyocytes, Neurons) [29] | High; human-derived cells with relevant functional phenotypes. | Functional electrical activity (MEA), contractility, impedance, calcium handling. | Human in vitro cardiotoxicity assays (CiPA) show improved predictive value for clinical cardiac risk. | Medium-High throughput for screening. | Maestro MEA for cardiotoxicity used by 9 of top 10 pharma companies; cross-site validation studies [29]. |
| Standard 2D In Vitro Assays | Low; immortalized cell lines in monolayer lack tissue complexity. | Cell viability, reporter gene activity, specific enzyme/target inhibition. | Can have good mechanistic correlation but poor quantitative extrapolation to in vivo due to over-simplification. | Very High throughput; low cost. | Accepted for specific endpoints (e.g., phototoxicity under ICH S10, mutagenicity under ICH M7) [25]. |
| Reconstructed Human Tissue Models (e.g., Epidermis, Cornea) | Moderate; 3D human-derived tissue with stratified layers. | Cytotoxicity, inflammation markers. | Validated for skin/eye irritation; OECD Test Guideline 439 (reconstructed human epidermis) and Test Guideline 437 (an ex vivo bovine cornea method) have replaced rabbit tests for some applications [25]. | Medium-High throughput. | OECD accepted for pharmaceuticals; referenced in FDA guidance [25]. |

Toxicity Data Repositories and Benchmarking Tools

Table 3: Comparison of Key Toxicity Data Resources for NAMs Development and Validation

| Database/Resource | Primary Content & Scope | Key Utility for NAMs & IVIVE Correlation | Unique Features & Data Metrics | Access & Integration |
|---|---|---|---|---|
| ToxValDB (v9.6.1) [7] | Curated in vivo toxicity study results, derived values, and exposure guidelines. 242,149 records for 41,769 unique chemicals. | Primary resource for benchmarking NAMs predictions against traditional in vivo outcomes. Enables meta-analysis for chemical prioritization. | Contains harmonized data from 36 sources; includes NOAELs, LOAELs, BMDs; mapped to regulatory chemical lists. | Open-source; accessible via U.S. EPA's CompTox Chemicals Dashboard [7]. |
| Tox21 Dataset [30] | In vitro bioactivity data from 12 quantitative high-throughput screening assays targeting stress response and nuclear receptor pathways. | Provides a standardized in vitro toxicity "context" for training computational models (e.g., MT-Tox) to improve in vivo prediction. | ~8,000 compounds with activity calls for assays like NR-ER, SR-ARE, etc. | Publicly available from NCATS. |
| ChEMBL [30] | Large-scale database of bioactive drug-like molecules, with curated bioactivity data. | Used for general chemical knowledge pre-training of ML models, teaching fundamental structure-activity relationships. | Contains over 1.5 million compounds; focuses on drug discovery space. | Publicly available. |
| FDA NAMs Program & Qualification Reports [25] | Details on qualified alternative methods, guidance documents, and ongoing pilot programs (e.g., ISTAND). | Defines the regulatory context of use for accepted NAMs, providing a clear pathway for sponsor adoption. | Examples: Qualified CHRIS calculator for color additives; First ISTAND submission for off-target protein binding [25]. | Information and guidance published on FDA website. |

Experimental Protocols for Key NAMs Studies

The advancement and validation of NAMs rely on rigorous, standardized experimental methodologies. Below are detailed protocols for two critical approaches: a computational model for in vivo toxicity prediction and an in vitro assay protocol incorporating exposure measurement for improved IVIVE.

Protocol 1: Sequential Knowledge Transfer Training for the MT-Tox In Vivo Toxicity Prediction Model

This protocol, based on the MT-Tox study [30], outlines a three-stage training strategy to predict in vivo toxicity endpoints by transferring knowledge from large-scale chemical and in vitro datasets.

1. General Chemical Knowledge Pre-training

- Objective: To initialize a graph neural network (GNN) encoder with a fundamental understanding of molecular structure and functional groups.

- Materials: Processed ChEMBL dataset (∼1.58 million compounds) [30], RDKit software for SMILES standardization [30].

- Procedure:

- Standardize all compound structures: Convert SMILES strings using RDKit's StandardizeSmiles function. Filter out inorganic compounds and molecules with molecular weight >1,000 Da [30].

- Model Architecture: Employ a Directed Message Passing Neural Network (D-MPNN) as the GNN backbone to learn molecular graph representations [30].

- Training Task: Train the model in a self-supervised manner on the ChEMBL dataset to learn general chemical representations without specific toxicity labels.

2. In Vitro Toxicological Auxiliary Training

- Objective: To adapt the pre-trained model to the domain of toxicological bioactivity.

- Materials: Tox21 dataset (12 assay endpoints, ∼8,000 compounds) [30].

- Procedure:

- Data Preparation: Assign binary labels ('Active'/'Inactive') based on Tox21 assay outcomes. Remove 'Inconclusive' results [30].

- Multi-Task Learning: Fine-tune the pre-trained GNN using the Tox21 data. The model is trained simultaneously on all 12 assay endpoints, allowing it to learn shared and specific features of in vitro toxicity [30].

- Output: A model capable of generating "in vitro toxicity context" embeddings for input molecules.
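Removing 'Inconclusive' calls leaves gaps in the compound-by-assay label matrix, so multi-task training needs a loss that skips missing entries. The NumPy sketch below shows one common way to do this, a masked binary cross-entropy averaged over observed compound-assay pairs only. It is an assumption for illustration, not necessarily the exact loss used in MT-Tox [30], and the helper names and label map are hypothetical.

```python
import numpy as np

# 'Active' -> 1, 'Inactive' -> 0; 'Inconclusive' or untested (None) -> NaN (missing).
LABEL_MAP = {"Active": 1.0, "Inactive": 0.0}


def encode_labels(rows):
    """rows: per-compound lists of assay outcome strings (or None if untested)."""
    return np.array([[LABEL_MAP.get(v, np.nan) for v in row] for row in rows], dtype=float)


def masked_multitask_bce(logits, labels, eps=1e-7):
    """Mean binary cross-entropy over observed (non-NaN) compound-assay pairs only."""
    logits = np.asarray(logits, dtype=float)
    labels = np.asarray(labels, dtype=float)
    observed = ~np.isnan(labels)
    probs = np.clip(1.0 / (1.0 + np.exp(-logits)), eps, 1.0 - eps)
    y = np.where(observed, labels, 0.0)  # placeholder value; masked out below
    loss = -(y * np.log(probs) + (1.0 - y) * np.log(1.0 - probs))
    return loss[observed].mean()


labels = encode_labels([["Active", "Inconclusive"], ["Inactive", "Active"]])
loss = masked_multitask_bce(np.zeros((2, 2)), labels)
```

Because missing entries contribute nothing to the loss, compounds tested in only a subset of the 12 assays can still be used for training.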

3. In Vivo Toxicity Fine-Tuning

- Objective: To leverage the chemical and in vitro knowledge for accurate prediction of specific in vivo toxicity endpoints.

- Materials: Curated in vivo datasets for Carcinogenicity, Drug-Induced Liver Injury (DILI), and Genotoxicity (∼2,600 compounds) [30].

- Procedure:

- Data Curation: Apply rigorous standardization to SMILES strings, remove duplicates, and resolve conflicting activity labels [30].

- Knowledge Integration via Cross-Attention: For each in vivo endpoint, implement a cross-attention mechanism. This allows the model to selectively query and transfer the most relevant information from the learned in vitro toxicity context for the final prediction task [30].

- Multi-Task Fine-Tuning: Perform final model training jointly on the three in vivo endpoints, utilizing the refined representations from the previous stages.
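The cross-attention step can be illustrated with standard scaled dot-product attention, softmax(QKᵀ/√d_k)V, in which each in vivo endpoint query attends over a set of in vitro assay context embeddings. The shapes, random weights, and one-token-per-assay layout below are hypothetical simplifications (single head, no training loop) and are not taken from the MT-Tox implementation [30].

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(query, context, w_q, w_k, w_v):
    """Scaled dot-product cross-attention.

    query:   (n_queries, d_model), e.g., one token per in vivo endpoint for a molecule
    context: (n_tokens, d_model),  e.g., one in vitro context embedding per Tox21 assay
    w_q, w_k, w_v: (d_model, d_k) projection matrices (learned in practice)
    """
    q, k, v = query @ w_q, context @ w_k, context @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1 over the assay tokens
    return weights @ v, weights


rng = np.random.default_rng(0)
d_model, d_k = 16, 8
endpoints = rng.normal(size=(3, d_model))       # carcinogenicity, DILI, genotoxicity
assay_context = rng.normal(size=(12, d_model))  # 12 Tox21 assay embeddings
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
out, attn = cross_attention(endpoints, assay_context, w_q, w_k, w_v)
```

The attention weights (`attn`, one row per endpoint) indicate which assay contexts each endpoint draws on, which is the basis for the mechanistic interpretability claimed for this kind of design.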

Protocol 2: In Vitro Bioassay with Parallel Measurement of Freely Dissolved Concentration (C~free~)

This protocol, derived from recent research [2], enhances standard in vitro toxicity testing by quantifying the bioavailable fraction of a test chemical, a critical parameter for robust IVIVE.

1. Assay Setup and Dosing

- Objective: To expose cells to the test chemical in a standardized 96-well plate format while preparing for concurrent chemical analysis.

- Materials: Cell line of choice (e.g., human reporter gene line), 96-well cell culture plates, test chemical stock solutions, solid-phase microextraction (SPME) fibers or other suitable sampling tools [2].

- Procedure:

- Plate cells according to standard protocols for the desired endpoint (e.g., cytotoxicity, oxidative stress response).

- Prepare a dilution series of the test chemical in cell culture medium.

- At the time of dosing (T=0), simultaneously add the chemical dilutions to the cells and to separate, cell-free wells containing only medium and SPME fibers.

2. Measurement of Exposure Concentration

- Objective: To determine the freely dissolved concentration (C~free~), representing the bioavailable fraction, at the start and end of the exposure period.

- Materials: Solid-phase microextraction (SPME) apparatus, analytical instrumentation (e.g., GC-MS, LC-MS) [2].

- Procedure:

- T=0 Measurement: Immediately after dosing, sample the medium from the cell-free wells using SPME to measure the initial C~free~ [2].

- T=24h Measurement: After a 24-hour incubation period, sample medium from both cell-free and cell-containing wells using SPME to measure the final C~free~ [2].

- Analysis: Calculate the freely dissolved fraction (f~free~) and quantify any loss of chemical over time due to sorption, degradation, or cellular metabolism.

3. Toxicity Endpoint Assessment & IVIVE

- Objective: To correlate the measured bioavailable dose with the observed biological effect.

- Procedure:

- Measure the relevant toxicity endpoint (e.g., cell viability, reporter gene activity) at 24 hours using standard methods.

- Data Integration: Plot dose-response curves using the measured C~free~ instead of the nominal administered concentration. This corrects for bioavailability limitations in the in vitro system [2].

- Application to IVIVE: The C~free~-based effective concentrations (e.g., EC~50~) provide a more accurate and physiologically relevant input for quantitative in vitro to in vivo extrapolation models, leading to improved human toxicity predictions [2].
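The effect of switching from nominal to measured concentrations on a potency estimate can be shown with a simple log-scale interpolation of the 50% effect level. This is a sketch under stated assumptions: the concentrations, effect values, and a constant 10% free fraction are hypothetical, and linear interpolation on log concentration stands in for the Hill-model fit that would normally be used.

```python
import numpy as np


def ec50_log_interp(conc, effect, level=50.0):
    """Concentration at which `effect` first crosses `level`, interpolated on a log10 scale.

    A simple interpolation, not a fitted Hill model; returns NaN if the level is never crossed.
    """
    conc = np.asarray(conc, dtype=float)
    effect = np.asarray(effect, dtype=float)
    order = np.argsort(conc)
    conc, effect = conc[order], effect[order]
    log_c = np.log10(conc)
    for i in range(len(conc) - 1):
        e0, e1 = effect[i], effect[i + 1]
        if e0 != e1 and (e0 - level) * (e1 - level) <= 0:
            frac = (level - e0) / (e1 - e0)
            return float(10 ** (log_c[i] + frac * (log_c[i + 1] - log_c[i])))
    return float("nan")


nominal = np.array([1.0, 10.0, 100.0, 1000.0, 10000.0])  # hypothetical nominal doses
effect = np.array([0.0, 10.0, 40.0, 60.0, 90.0])         # hypothetical % effect
c_free = 0.1 * nominal  # hypothetical measured C_free (f_free = 10% at every dose)

ec50_nominal = ec50_log_interp(nominal, effect)
ec50_free = ec50_log_interp(c_free, effect)
```

Because f~free~ is constant in this toy case, the EC~50~ shifts by exactly that factor (from about 316 to about 32 in these units). With measured data, f~free~ usually varies across the dilution series, so the correction has to be applied concentration by concentration before fitting.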

Visualizing NAMs Development and Application Workflows

MT-Tox Sequential Knowledge Transfer Training Pipeline

FDA NAMs Qualification and Regulatory Integration Pathway

IVIVE Workflow Incorporating Measured In Vitro Exposure

The Scientist's Toolkit: Essential Reagents & Platforms for NAMs

Table 4: Key Research Reagent Solutions for Implementing New Alternative Methods

| Item/Category | Primary Function in NAMs Research | Key Features & Examples | Relevance to IVIVE & Correlation |
|---|---|---|---|
| Multielectrode Array (MEA) Systems | Measures real-time, functional electrical activity of neurons and cardiomyocytes for neuro- and cardiotoxicity screening. | Maestro MEA: Industry-standard for cardiotoxicity (CiPA) and seizurogenic assays; used in 9 of top 10 pharma companies [29]. | Provides functional human-relevant data that correlates better with clinical cardiac/neurological risk than animal models [29]. |
| Impedance-Based Analyzers | Tracks cell viability, proliferation, and barrier integrity in a label-free, non-invasive manner. | Maestro Z: Used for cytotoxicity, immune response, and Transepithelial/Transendothelial Electrical Resistance (TEER) in barrier models (gut, BBB) [29]. | Enables kinetic assessment of cell health, critical for accurate in vitro potency determination for IVIVE. |
| Live-Cell Imaging Systems | Automatically visualizes and quantifies dynamic biological processes in 2D and 3D cultures. | Omni & Lux Imagers: Monitor complex models in well plates and microfluidic devices [29]. | Facilitates high-content analysis in complex models like organoids, capturing phenotypic changes relevant to in vivo outcomes. |
| Microphysiological Systems (Organ-on-a-Chip) | Mimics human organ physiology and interactions in microfluidic devices for disease modeling and drug testing. | Various commercial and custom devices (lung-, liver-, heart-on-a-chip). FDA is evaluating liver-chip for food chemical safety [25] [29]. | Aims to replicate human tissue-tissue interfaces and pharmacokinetics, directly improving in vitro to in vivo correlation. |
| Human iPSC-Derived Cells | Provides a renewable source of human cells (cardiomyocytes, neurons, hepatocytes) with relevant genotype and phenotype. | Commercially available differentiated cells. Essential for functional assays on MEA and other platforms [29]. | Source of human biology for in vitro systems, reducing species translation uncertainty inherent in animal data. |
| Chemical Analysis for Exposure | Quantifies the freely dissolved/bioavailable concentration of test chemicals in in vitro assays. | Solid-Phase Microextraction (SPME) fibers coupled with GC-/LC-MS [2]. | Critical for moving from nominal to bioeffective dose in in vitro assays, a fundamental requirement for accurate QIVIVE [2]. |
| Curated Toxicity Databases | Provides standardized in vivo and in vitro data for model training, validation, and benchmarking. | ToxValDB [7], Tox21 [30], ChEMBL [30]. | The foundational data layer for developing and validating any computational or correlation-based NAM. |

Modern Toolbox: Methodologies for Building and Applying Predictive Correlations

A central challenge in drug development is the accurate prediction of human toxicity from preclinical data. Historically, this has relied on animal models, which are costly, time-consuming, and most critically, often poorly predictive of human outcomes due to species differences [31]. This translational gap has driven the innovation of in vitro New Approach Methodologies (NAMs) designed to be more human-relevant, ethical, and efficient [2] [31].

This guide objectively compares the evolution of these systems, from conventional 2D cultures to advanced 3D Microphysiological Systems (MPS), within the critical context of improving the correlation between in vitro bioactivity and in vivo toxicity. The maturation of these technologies coincides with a significant regulatory shift. Recent guidance from the U.S. Food and Drug Administration (FDA) now permits sponsors to forgo comparative clinical efficacy studies for biosimilars when "advanced analytical technologies can structurally characterize... and model in vivo functional effects with a high degree of specificity and sensitivity using in vitro biological and biochemical assays" [32] [33] [34]. This policy underscores the growing confidence in sophisticated in vitro models and the data they generate for critical decision-making.

Comparative Analysis of In Vitro Model Systems

The following table summarizes the key characteristics, experimental outputs, and correlation potential of major in vitro model classes.

Table 1: Comparison of In Vitro Model Systems for Toxicity Assessment

| Model Type | Key Characteristics & Components | Primary Experimental Readouts | Strengths | Limitations for IVIVE |
|---|---|---|---|---|
| 2D Monoculture | Single cell type on flat, rigid plastic surface (e.g., multi-well plates) [31]. | Cell viability (MTT, CCK-8), membrane integrity, apoptosis, reporter gene activity [2] [35]. | Simple, high-throughput, low-cost, reproducible. Excellent for mechanistic single-endpoint studies [31]. | Lacks tissue structure and cell-cell/cell-matrix interactions. Altered cell phenotype/function. Poor pharmacokinetic (PK) modeling [31]. |
| 3D Spheroids/Organoids | Self-assembled aggregates or stem cell-derived structures with 3D architecture [36]. | Viability, growth kinetics, spatial differentiation markers, zone-specific toxicity (e.g., necrotic core) [31]. | Better mimicry of cell morphology, gradients (O₂, nutrients), and some tissue functions. Useful for cancer and developmental toxicity studies. Medium-to-high throughput possible [31]. | Often lack perfusion, leading to necrotic cores. Limited control over microenvironment (e.g., mechanical forces) [31]. |
| Single-Organ-Chip (OoC) | Microfluidic device with cultured cells in a controlled, perfused microenvironment. May include tissue-tissue interfaces, extracellular matrix (ECM), and mechanical cues (e.g., cyclic stretch) [37] [31] [38]. | Real-time barrier integrity (TEER), metabolic activity, albumin/urea production (liver), contraction analysis (heart), cytokine release, sensitive biomarker discovery [31]. | Recapitulates dynamic, tissue-specific physiology and PK (absorption, metabolism). Provides human-relevant mechanistic data. Medium throughput. | Higher cost and complexity than static models. Requires specialized expertise. Standardization and reproducibility across labs is a key challenge [37] [31]. |
| Multi-Organ Microphysiological System (MPS) | Two or more organ chips (e.g., liver, kidney, gut, heart) linked via microfluidic circulation to mimic systemic ADME (Absorption, Distribution, Metabolism, Excretion) [31]. | System-level PK parameters (metabolic clearance, inter-organ metabolite transfer), organ-specific toxicity from circulating metabolites, identification of off-target effects [31]. | Enables study of complex, systemic toxicity and metabolite-mediated effects. Most holistic in vitro model for predicting human PK/PD and in vivo outcomes [31]. | Highest cost and technical complexity. Low current throughput. Challenges in scaling organ sizes and media composition to match physiological ratios [31]. |

Detailed Experimental Protocols for Key Methodologies

Protocol: Measuring Bioavailable Concentration in 96-Well Cytotoxicity Assays

A major confounder in in vitro-in vivo extrapolation (IVIVE) is the undefined and unstable exposure concentration of test chemicals in cell media [2]. This protocol details how to measure freely dissolved concentration (C~free~), a critical parameter for accurate bioactivity assessment.

- Objective: To complement standard cytotoxicity (e.g., CCK-8) and specific endpoint (e.g., oxidative stress reporter gene) bioassays with measured exposure concentrations to account for bioavailability and chemical stability [2].

- Materials:

- Test chemicals (spanning a range of hydrophobicity).

- Appropriate cell line and assay media.

- 96-well cell culture plates.

- Solid-phase microextraction (SPME) fibers or alternative partitioning-based sampling tools.

- Analytical instrument (e.g., GC-MS, LC-MS) for chemical quantification [2].

- Method:

- Cell Seeding & Exposure: Seed cells in a 96-well plate and allow them to adhere. Prepare serial dilutions of test chemicals in assay medium.

- Initial (T~0~) Sampling: Immediately after dosing a parallel set of blank (cell-free) wells, use SPME to sample the medium to determine the initial C~free~ for each concentration.

- Incubation: Transfer the assay plate to the incubator for the exposure period (e.g., 24h).

- Terminal (T~24~) Sampling: After 24h, use SPME to sample medium from both cell-free and cell-containing wells to determine the remaining C~free~ [2].

- Data Analysis:

- Calculate the freely dissolved fraction (f~free~ = C~free~ / nominal concentration).

- Plot f~free~ against log K~ow~ (octanol-water partition coefficient) to visualize the relationship with hydrophobicity. Hydrophilic chemicals (e.g., atenolol) may have f~free~ >90%, while hydrophobic ones (e.g., chrysene) can be <2% [2].

- Identify chemicals with significant loss (>20% decrease in C~free~ over 24h), indicating instability (sorption, degradation, metabolism) [2].

- IVIVE Relevance: The dose-response relationship derived from measured C~free~, rather than nominal concentration, provides a quantitative bioactivity metric that can be used directly in physiologically based pharmacokinetic (PBPK) modeling and IVIVE, which can substantially improve in vitro-in vivo correlation [2].
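The data-analysis calculations in this protocol can be sketched as below. The sketch assumes f~free~ is computed from the T~0~ cell-free measurement and that stability is judged from the change in C~free~ between T~0~ and T~24~; the function name and example values are hypothetical, and the 20% loss threshold follows the protocol text [2].

```python
import numpy as np


def exposure_summary(nominal, cfree_t0, cfree_t24, loss_threshold=0.20):
    """Freely dissolved fraction at T0 and fractional C_free loss over the exposure.

    Assumes paired arrays per test concentration, all in the same units,
    with C_free measured by SPME.
    """
    nominal = np.asarray(nominal, dtype=float)
    cfree_t0 = np.asarray(cfree_t0, dtype=float)
    cfree_t24 = np.asarray(cfree_t24, dtype=float)
    f_free = cfree_t0 / nominal            # f_free = C_free / nominal concentration
    loss = 1.0 - cfree_t24 / cfree_t0      # fractional decrease in C_free over 24 h
    unstable = loss > loss_threshold       # flags sorption, degradation, or metabolism
    return f_free, loss, unstable


f_free, loss, unstable = exposure_summary(
    nominal=[10.0, 10.0],
    cfree_t0=[9.5, 0.15],   # hypothetical: a hydrophilic vs. a hydrophobic chemical
    cfree_t24=[9.3, 0.05],
)
```

Plotting `f_free` against log K~ow~ for a chemical set then gives the hydrophobicity relationship described above.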

Protocol: Establishing a Liver-Kidney Multi-Organ MPS for Nephrotoxic Metabolite Detection

This protocol outlines the creation of a linked MPS to model metabolite-mediated organ toxicity, a common failure mode in drug development.

- Objective: To co-culture liver and kidney tissues in a fluidically coupled system to detect the generation of hepatically derived metabolites and their subsequent renal clearance or toxicity [31].

- Materials:

- Two-chamber or modular MPS platform supporting separate tissue culture and shared perfusion.

- Primary human hepatocytes or stem cell-derived hepatocyte-like cells.

- Primary human proximal tubule kidney cells or suitable cell line.

- Tissue-specific or common circulation medium [31] [38].

- Pumps and tubing for recirculating flow.

- Functional assay kits (e.g., for albumin, urea, CYP450 activity for liver; KIM-1, NGAL for kidney injury).

- Method:

- Chip Preparation & Cell Seeding: Coat liver and kidney chambers with appropriate ECM (e.g., collagen I for liver, Matrigel for kidney). Seed each cell type in its respective chamber and allow for tissue formation under static conditions for 1-3 days [31] [38].

- System Connection & Perfusion: Connect the outflow of the liver chamber to the inflow of the kidney chamber via microfluidic channels, establishing a unidirectional or recirculating flow. Begin perfusion with medium at a physiologically low shear stress.

- Dosing & Experiment: Introduce the parent drug compound into the circulating medium reservoir. Maintain the system under flow for 7-14 days, with periodic medium sampling [31].

- Endpoint Analysis:

- System PK: Measure parent drug and metabolite concentrations in medium over time using LC-MS.

- Liver Function: Assess albumin secretion, urea synthesis, and CYP450 activity.

- Kidney Injury: Measure release of injury biomarkers (KIM-1, NGAL), barrier integrity (TEER if applicable), and cell viability [31].

- IVIVE Relevance: This system can reveal nephrotoxicity from a stable parent compound that is metabolized in the liver to a toxic species—a scenario often missed in single-organ tests. The PK data generated (in vitro clearance rates) can be scaled to predict human in vivo clearance, improving the correlation of systemic toxicity outcomes [31].
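Scaling an in vitro clearance to a predicted human hepatic clearance is commonly done with physiological scaling factors and the well-stirred liver model, CL~h~ = Q~h~·f~u~·CL~int~ / (Q~h~ + f~u~·CL~int~). The sketch below assumes a hepatocyte-normalized intrinsic clearance (µL/min/10^6 cells) has already been derived from the MPS parent-drug depletion data. The default hepatocellularity, liver weight, and hepatic blood flow are typical literature values used here as assumptions, and the function names are hypothetical.

```python
def scale_hepatocyte_clint(clint_ul_min_per_million, hepatocellularity=120.0,
                           liver_g_per_kg=21.4):
    """Scale in vitro CLint (µL/min/10^6 cells) to in vivo CLint (mL/min/kg body weight).

    hepatocellularity: 10^6 cells per g liver (assumed); liver_g_per_kg: g liver per kg (assumed).
    """
    # µL/min/10^6 cells * 10^6 cells/g * g/kg = µL/min/kg; divide by 1000 for mL/min/kg.
    return clint_ul_min_per_million * hepatocellularity * liver_g_per_kg / 1000.0


def well_stirred_clh(clint_ml_min_kg, fu_blood, qh_ml_min_kg=20.7):
    """Hepatic clearance (mL/min/kg) from the well-stirred liver model.

    fu_blood: unbound fraction in blood; qh_ml_min_kg: hepatic blood flow (assumed default).
    """
    unbound_clint = fu_blood * clint_ml_min_kg
    return qh_ml_min_kg * unbound_clint / (qh_ml_min_kg + unbound_clint)


clint_vivo = scale_hepatocyte_clint(10.0)        # hypothetical MPS-derived CLint
clh = well_stirred_clh(clint_vivo, fu_blood=0.5)  # hypothetical unbound fraction
```

The well-stirred model is only one choice; parallel-tube or dispersion models give different predictions for high-clearance compounds, and in every case the predicted CL~h~ cannot exceed hepatic blood flow.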

Visualizing Workflows and System Integration

Evolution and Integration of Advanced In Vitro Models

Multi-Organ MPS Workflow for Systemic ADME-Tox

AI-Enhanced Predictive Toxicology Data Integration

The Scientist's Toolkit: Essential Research Reagents & Materials

The successful implementation of advanced in vitro models depends on specialized materials and reagents.

Table 2: Essential Research Reagents and Materials for Advanced In Vitro Systems

| Category | Item | Function in Experiment | Key Considerations |
|---|---|---|---|
| Platform Fabrication | Polydimethylsiloxane (PDMS) | The most common polymer for soft lithography of microfluidic chips. Its transparency, gas permeability, and flexibility make it well suited to OoC devices [37] [38]. | Can absorb small hydrophobic molecules, potentially skewing drug exposure data. Surface modification is often required [38]. |
| Platform Fabrication | Extracellular Matrix (ECM) Hydrogels (e.g., Collagen I, Matrigel, Fibrin) | Provides a 3D, biomechanical scaffold that mimics the native tissue microenvironment, supporting cell polarization, differentiation, and function [31] [38]. | Choice of ECM is organ-specific. Batch-to-batch variability (especially in Matrigel) can affect reproducibility. |
| Cellular Biology | Primary Human Cells (e.g., hepatocytes, proximal tubule cells) | Gold standard for MPS because they retain a mature phenotype and the metabolic/transport functions critical for accurate ADME modeling [31]. | Limited availability, donor variability, and rapid de-differentiation in culture. |
| Cellular Biology | Induced Pluripotent Stem Cell (iPSC)-Derived Cells | Enables creation of patient- or disease-specific tissue models. Essential for studying genetic diseases and personalized toxicology [36]. | Differentiation protocols must yield mature, functional cell types; functional maturity can be variable. |
| Assay & Analytics | Solid-Phase Microextraction (SPME) Fibers | Measures the freely dissolved concentration (C_free) of test chemicals in cell culture media, critical for accurate dose-response and IVIVE [2]. | Requires calibration for each chemical. Integration into standard 96-well workflows is key. |
| Assay & Analytics | Transepithelial/Transendothelial Electrical Resistance (TEER) Electrodes | Non-invasive, real-time quantification of barrier integrity in models of gut, lung, kidney, or blood-brain barrier [31] [38]. | Requires specialized electrodes that fit the OoC device. Measurements can be sensitive to temperature and medium composition. |
| Assay & Analytics | Organ-Specific Functional Assay Kits | Quantifies tissue-specific output (e.g., liver albumin/urea, cardiac beat analysis, renal KIM-1/NGAL); more predictive of toxicity than simple viability [31]. | Assay compatibility with microfluidic culture medium and small volumes must be validated. |
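Two of the quantitative readouts in Table 2 reduce to simple conversions, sketched below under stated assumptions. For SPME, the sketch assumes equilibrium, negligible-depletion sampling, so that the concentration in the fiber coating equals a chemical-specific fiber-water partition coefficient (K_fw) times C_free; K_fw is the per-chemical calibration the table refers to. For TEER, it assumes the common practice of subtracting a cell-free blank and multiplying by the membrane area. Function names and example numbers are illustrative only.

```python
def free_concentration_spme(amount_on_fiber_nmol, coating_volume_ul, k_fiber_water):
    """Freely dissolved concentration (µM) from an equilibrium, negligible-depletion
    SPME measurement: C_free = C_fiber / K_fw."""
    # nmol/µL equals mM, so multiplying by 1000 gives µM.
    c_fiber_um = amount_on_fiber_nmol / coating_volume_ul * 1000.0
    return c_fiber_um / k_fiber_water


def free_fraction(c_free_um, c_nominal_um):
    """Fraction of the nominal concentration that is freely dissolved in the medium."""
    return c_free_um / c_nominal_um


def unit_area_teer(r_sample_ohm, r_blank_ohm, membrane_area_cm2):
    """TEER normalized to membrane area (ohm*cm^2): blank-corrected resistance times area."""
    return (r_sample_ohm - r_blank_ohm) * membrane_area_cm2


if __name__ == "__main__":
    # Hypothetical readings for illustration only.
    c_free = free_concentration_spme(0.002, coating_volume_ul=0.5, k_fiber_water=400.0)
    print(c_free, free_fraction(c_free, c_nominal_um=1.0))
    print(unit_area_teer(1200.0, 150.0, membrane_area_cm2=0.33))
```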

The evolution from 2D cultures to perfused, multi-tissue MPS represents a paradigm shift towards human-relevant, mechanistic toxicology. As evidenced by the regulatory pivot towards advanced in vitro analytics for biosimilars, confidence in these NAMs is growing [32] [33]. The critical advancement is the move from qualitative hazard identification to quantitative bioactivity assessment—enabled by measuring real exposure in assays [2] and generating human PK-relevant clearance data from MPS [31].

The future of in vitro-in vivo correlation lies in the systematic integration of the four layers visualized in Figure 1: Biology (iPSCs, organ-specific cells), Technology (sensor-integrated MPS), Data Science (high-content omics), and Predictive Modeling (AI and PBPK). Promising AI models, like the Communicative Message Passing Neural Network (CMPNN) for reproductive toxicity (area under the ROC curve of ~0.95) [39], demonstrate the power of computational integration. The ultimate goal is a closed-loop framework where AI predicts toxicity, MPS tests and refines the predictions, and new MPS data continuously improve the AI models, dramatically accelerating the development of safer therapeutics.

This guide provides a comparative analysis of modern in silico methodologies—Quantitative Structure-Activity Relationship (QSAR), Physiologically Based Pharmacokinetic (PBPK) modeling, and Machine Learning (ML) models—within the critical context of correlating in vitro and in vivo toxicity data. The integration of these computational tools is revolutionizing predictive toxicology and drug development by enhancing the accuracy of extrapolations from biochemical assays to whole-organism outcomes, thereby reducing ethical, temporal, and financial costs associated with traditional animal studies. We objectively compare the performance of standalone and hybrid approaches, supported by recent experimental data, and detail the protocols that underpin these comparisons. The analysis concludes that while hybrid ML-PBPK models and consensus AI platforms show superior predictive performance, the choice of tool must be aligned with the specific research question, data availability, and required interpretability.

A central thesis in modern toxicology and drug development is establishing a robust, predictive correlation between in vitro assays and in vivo outcomes. Traditional drug discovery relies heavily on in vitro experiments and animal studies to assess pharmacokinetics (PK) and toxicity, a process that is time-consuming, expensive, and faces increasing ethical scrutiny [40]. The challenge of in vitro to in vivo extrapolation (IVIVE) lies in accurately translating the behavior of a compound in a controlled cellular environment to its complex absorption, distribution, metabolism, excretion, and toxicological (ADMET) profile in a living organism [40].

Computational and in silico approaches have emerged as indispensable tools for bridging this gap. This guide compares three pivotal methodologies: Quantitative Structure-Activity Relationship (QSAR) models, which predict biological activity from molecular structure; Physiologically Based Pharmacokinetic (PBPK) models, which mechanistically simulate a compound's journey through the body; and Machine Learning (ML) models, which identify complex patterns from large datasets. The most significant contemporary advance is the strategic integration of these approaches, particularly the use of ML to generate accurate input parameters for PBPK models, creating a powerful hybrid paradigm for predictive toxicology [40] [41].

Core Methodologies and Comparative Workflows

Quantitative Structure-Activity Relationship (QSAR) Models

QSAR models are foundational computational tools that establish a mathematical relationship between a compound's physicochemical descriptors (e.g., molecular weight, lipophilicity, electronic properties) and its biological activity or property.

- Traditional Workflow: The classic QSAR pipeline involves: (1) curating a dataset of compounds with known activity, (2) calculating molecular descriptors, (3) selecting relevant features, (4) training a statistical model (e.g., linear regression, partial least squares), and (5) validating the model's predictive capability.

- Modern ML-Enhanced QSAR: Machine learning has broadened QSAR's arsenal. Algorithms like Random Forest (RF), Support Vector Machines (SVM), and Graph Neural Networks (GNNs) can handle high-dimensional, non-linear relationships, significantly improving predictions for complex endpoints like toxicity [42] [43]. These models leverage extensive public databases (e.g., ToxCast, ChEMBL) to predict critical ADME and toxicity parameters directly from chemical structure [40].
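The five-step traditional workflow can be illustrated with a deliberately tiny, pure-Python sketch. The dataset is invented, the descriptors are assumed to be precomputed (in practice a cheminformatics toolkit would calculate them), feature selection is reduced to keeping the single descriptor most correlated with activity, and validation uses leave-one-out q². It shows the structure of a QSAR pipeline, not a publishable model.

```python
# Hypothetical toy dataset (invented for illustration, not real compounds):
# each row is (logP, molecular weight / 100, activity as -log10 EC50).
DATA = [
    (1.0, 2.1, 4.1), (1.5, 3.0, 4.4), (2.0, 2.5, 5.0), (2.5, 3.8, 5.2),
    (3.0, 2.9, 5.9), (3.5, 3.3, 6.1), (4.0, 4.0, 6.6),
]
DESCRIPTOR_NAMES = ["logP", "MW/100"]


def mean(values):
    return sum(values) / len(values)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def fit_line(x, y):
    """Step 4: ordinary least-squares fit y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx


def loo_q2(x, y):
    """Step 5: leave-one-out cross-validated q^2 = 1 - PRESS / SS_total."""
    press = 0.0
    for i in range(len(x)):
        s, b = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (s * x[i] + b)) ** 2
    my = mean(y)
    return 1.0 - press / sum((v - my) ** 2 for v in y)


# Steps 1-3: curated data, descriptor columns, keep the most correlated descriptor.
columns = [[row[j] for row in DATA] for j in range(len(DESCRIPTOR_NAMES))]
activity = [row[-1] for row in DATA]
best = max(range(len(columns)), key=lambda j: abs(pearson_r(columns[j], activity)))
slope, intercept = fit_line(columns[best], activity)
q2 = loo_q2(columns[best], activity)
print(DESCRIPTOR_NAMES[best], round(slope, 3), round(q2, 3))
```

Replacing `fit_line` with a Random Forest or GNN changes step 4 only; curation, descriptor calculation, selection, and validation keep the same shape in ML-enhanced QSAR.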

Physiologically Based Pharmacokinetic (PBPK) Modeling

PBPK models are mechanistic, compartmental models that simulate the time-course concentration of a compound in plasma and various tissues based on species-specific physiology and compound-specific ADME parameters [40].

- "Bottom-Up" Approach: This approach builds models primarily from in vitro assay data (e.g., hepatic clearance from microsomes, cell-based permeability) and is crucial for IVIVE [41]. However, its accuracy can be limited by errors in in vitro assays and the challenge of capturing all clearance pathways.

- The Data Input Challenge: Developing a robust PBPK model is resource-intensive, as it requires numerous validated compound-specific parameters that are often unavailable for new chemical entities [40]. This bottleneck has driven the integration of ML for parameter prediction.
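To make the compartmental structure concrete, the sketch below integrates a deliberately small, flow-limited PBPK model (blood, liver, and a lumped rest-of-body compartment) after an intravenous bolus. All parameter values are hypothetical placeholders rather than a validated physiology; elimination is assumed to be hepatic only and driven by the unbound concentration in venous blood leaving the liver; and a fixed-step explicit Euler scheme is used for transparency, where a production model would use an adaptive ODE solver. The compound-specific inputs (fu, CLint, Kp values) are exactly the parameters the data input challenge refers to.

```python
# Hypothetical parameters for a generic adult (illustrative only; units L, h, mg).
PARAMS = {
    "V_blood": 5.0, "V_liver": 1.8, "V_rest": 60.0,   # compartment volumes (L)
    "Q_liver": 90.0, "Q_rest": 300.0,                 # blood flows (L/h)
    "Kp_liver": 2.0, "Kp_rest": 1.0,                  # tissue:blood partition coefficients
    "fu": 0.1, "CLint": 100.0,                        # unbound fraction; intrinsic clearance (L/h)
}


def simulate_pbpk(dose_mg, p, t_end_h=72.0, dt_h=0.001, record_every_h=1.0):
    """Explicit-Euler integration of a flow-limited PBPK model with blood, liver
    (the only eliminating organ) and rest-of-body compartments after an IV bolus.
    Returns the recorded (time, blood concentration) profile and the blood AUC."""
    a_blood, a_liver, a_rest = dose_mg, 0.0, 0.0  # amounts in each compartment (mg)
    auc, profile = 0.0, []
    stride = int(round(record_every_h / dt_h))
    for step in range(int(round(t_end_h / dt_h))):
        c_blood = a_blood / p["V_blood"]
        c_liver_out = a_liver / p["V_liver"] / p["Kp_liver"]  # venous blood leaving the liver
        c_rest_out = a_rest / p["V_rest"] / p["Kp_rest"]
        if step % stride == 0:
            profile.append((step * dt_h, c_blood))
        auc += c_blood * dt_h  # rectangle rule, consistent with the Euler update
        d_blood = (p["Q_liver"] * c_liver_out + p["Q_rest"] * c_rest_out
                   - (p["Q_liver"] + p["Q_rest"]) * c_blood)
        d_liver = (p["Q_liver"] * (c_blood - c_liver_out)
                   - p["fu"] * p["CLint"] * c_liver_out)
        d_rest = p["Q_rest"] * (c_blood - c_rest_out)
        a_blood += d_blood * dt_h
        a_liver += d_liver * dt_h
        a_rest += d_rest * dt_h
    return profile, auc


if __name__ == "__main__":
    profile, auc = simulate_pbpk(100.0, PARAMS)
    q, fu_cl = PARAMS["Q_liver"], PARAMS["fu"] * PARAMS["CLint"]
    cl_h = q * fu_cl / (q + fu_cl)  # well-stirred hepatic clearance (9 L/h here)
    print(round(auc, 2), round(100.0 / cl_h, 2))  # the two values should agree closely
```

The final comparison is a mass-balance check: with hepatic-only elimination, the blood AUC after an IV dose should equal the dose divided by the well-stirred hepatic clearance, so a mismatch would signal an error in the model equations.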

Machine Learning Models in Predictive Toxicology

ML, a subset of artificial intelligence (AI), employs algorithms that learn patterns from data without being explicitly programmed. In toxicology, supervised learning is predominant, where models are trained on known chemical structures and their associated toxicological outcomes [40].

- Model Types: Commonly used algorithms include RF, SVM, k-Nearest Neighbors (k-NN), and advanced deep learning architectures like Message-Passing Neural Networks (MPNNs) [41] [43].

- Application: ML models excel at predicting discrete toxicity endpoints (e.g., hepatotoxicity, mutagenicity) and continuous PK parameters (e.g., clearance, volume of distribution) [42] [43]. Their performance is heavily dependent on the quality, quantity, and diversity of the training data.
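Supervised learning on structure-outcome pairs can be illustrated with the simplest of the listed algorithms, k-NN. In this hypothetical sketch, each molecule's fingerprint is represented as a set of "on" bit positions (in practice these would come from a cheminformatics toolkit), similarity is the Tanimoto coefficient commonly used for binary fingerprints, and the predicted probability of toxicity is the fraction of toxic compounds among the k most similar training molecules. The training data are invented, and the 0.5 decision threshold is an assumption.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def knn_toxicity_probability(query, training, k=3):
    """Fraction of the k most similar training compounds labelled toxic (1)."""
    ranked = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)
    nearest = ranked[:k]
    return sum(label for _, label in nearest) / len(nearest)


# Hypothetical training set: (fingerprint on-bits, label) with 1 = toxic, 0 = non-toxic.
TRAINING = [
    ({1, 2, 3, 4}, 1), ({1, 2, 3, 5}, 1), ({1, 2, 4, 6}, 1),
    ({7, 8, 9}, 0), ({7, 8, 10}, 0), ({8, 9, 10, 11}, 0),
]

if __name__ == "__main__":
    p = knn_toxicity_probability({1, 2, 3}, TRAINING)
    print(p, "toxic" if p >= 0.5 else "non-toxic")
```

Because k-NN predicts directly from the stored training set, its output is only as reliable as that set's coverage of chemical space, which is the data dependence noted above.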

The Integrated Paradigm: ML-PBPK Hybrid Models

The most promising development is the hybrid ML-PBPK paradigm, which combines the data-driven power of ML with the mechanistic rigor of PBPK models [40] [41]. This workflow (Diagram 1) involves three key steps: data aggregation, ML prediction of ADME parameters, and PBPK simulation for final PK/toxicity prediction [40].

Diagram 1: Integrated ML-PBPK Workflow for IVIVE. This three-step paradigm illustrates how machine learning is used to predict critical input parameters from chemical structure, which are then used to parameterize mechanistic PBPK models for final prediction of in vivo outcomes [40] [41].
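The three steps in Diagram 1 can be chained in a compact sketch. Everything here is hypothetical: the aggregated training set is invented, the ML step is a k-nearest-neighbour regressor on a two-number descriptor vector (averaging in log space, since clearance and volume span orders of magnitude), and a one-compartment intravenous model stands in for the full PBPK simulation to keep the example short. What it does show is the hand-off: predicted parameters, rather than measured in vitro values, parameterize the mechanistic step.

```python
import math

# Step 1 (hypothetical aggregated data): descriptor vector, observed CL (L/h), Vss (L).
TRAINING = [
    ((1.2, 0.30), 12.0, 40.0),
    ((2.5, 0.45), 6.0, 90.0),
    ((3.1, 0.50), 4.0, 120.0),
    ((0.8, 0.20), 20.0, 25.0),
]


def predict_adme(descriptors, k=2):
    """Step 2: k-NN regression returning the geometric means of CL and Vss of the
    k nearest training compounds (Euclidean distance in descriptor space)."""
    nearest = sorted(TRAINING, key=lambda row: math.dist(descriptors, row[0]))[:k]
    cl = math.exp(sum(math.log(row[1]) for row in nearest) / len(nearest))
    vss = math.exp(sum(math.log(row[2]) for row in nearest) / len(nearest))
    return cl, vss


def simulate_iv_one_compartment(dose_mg, cl_l_h, vss_l):
    """Step 3 (simplified stand-in for a PBPK model): AUC (mg*h/L) and
    terminal half-life (h) after an IV bolus."""
    return dose_mg / cl_l_h, math.log(2) * vss_l / cl_l_h


if __name__ == "__main__":
    cl, vss = predict_adme((2.8, 0.48))
    auc, t_half = simulate_iv_one_compartment(100.0, cl, vss)
    print(f"CL={cl:.2f} L/h, Vss={vss:.1f} L, AUC={auc:.1f} mg*h/L, t1/2={t_half:.1f} h")
```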

Comparative Analysis of Tool Performance

Predictive Accuracy: ML-PBPK vs. Traditional PBPK

A direct comparison between a hybrid ML-PBPK platform and a traditional in vitro-informed PBPK model shows a clear advantage for the integrated approach. A 2024 study evaluated both methods on a set of 40 compounds for predicting the human area under the concentration-time curve (AUC) [41].

Table 1: Performance Comparison of ML-PBPK vs. Traditional PBPK Modeling [41]

| Model Type | Key Input Source | Accuracy (% of compounds with predicted AUC within 2-fold of observed) | Primary Advantage | Key Limitation |
|---|---|---|---|---|
| Traditional PBPK | In vitro assays (e.g., microsomal CL, Caco-2 permeability) | 47.5% | Based on measurable biochemical data; mechanistically transparent. | Accuracy limited by assay error and incomplete pathway coverage. |
| Hybrid ML-PBPK | In silico predictions from chemical structure | 65.0% | Higher accuracy; eliminates need for initial in vitro experiments, speeding discovery. | "Black box" nature of some ML models can reduce interpretability. |

The superior performance of the ML-PBPK model is attributed to the ML models' ability to predict total plasma clearance (CLt) more holistically than in vitro assays, which often focus only on hepatic metabolic clearance and miss renal or biliary elimination [41]. This directly addresses a major IVIVE challenge.
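The 2-fold criterion in Table 1 is a simple count: a prediction passes if it lies between half and twice the observed value. With 40 compounds, the reported 47.5% and 65.0% correspond to 19 and 26 compounds, respectively. A minimal sketch of the calculation, using invented values; counting a fold error of exactly 2 as a pass is an assumption about the boundary convention.

```python
def fold_error(predicted, observed):
    """Symmetric fold error: always >= 1, and equal to 1 for a perfect prediction."""
    return max(predicted / observed, observed / predicted)


def percent_within_fold(pairs, fold=2.0):
    """Percentage of (predicted, observed) pairs whose fold error is <= fold."""
    hits = sum(1 for predicted, observed in pairs if fold_error(predicted, observed) <= fold)
    return 100.0 * hits / len(pairs)


if __name__ == "__main__":
    # Hypothetical predicted vs observed AUC values (arbitrary units).
    pairs = [(10.0, 10.0), (15.0, 10.0), (25.0, 10.0), (4.0, 10.0), (20.0, 10.0)]
    print(percent_within_fold(pairs))  # prints 60.0 (3 of 5 within 2-fold)
```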

Comparison of In Silico Metabolism Prediction Tools

Metabolism prediction is crucial for toxicity assessment. A study comparing four open-access tools for predicting the metabolism of New Psychoactive Substances (NPS) highlights that performance varies, and a consensus approach is beneficial [44].

Table 2: Performance of In Silico Metabolism Prediction Tools for NPS [44]

| Tool | Predicted Metabolites (for 7 NPS) | Strength | Weakness |
|---|---|---|---|
| SyGMa | 437 (most) | Excellent at predicting Phase II (conjugation) metabolites. | May overpredict the number of metabolites. |
| GLORYx | 191 | Can identify unique glutathione conjugates. | Predicts fewer Phase II metabolites than SyGMa. |
| BioTransformer 3.0 | 91 | Effective for Phase I (functionalization) reactions. | Limited Phase II predictions (only for 3/7 NPS). |
| MetaTrans | 80 (fewest) | Not reported. | Did not predict any Phase II metabolites. |
| Consensus (All Tools) | Greatest coverage | Maximizes coverage of potential metabolites; increases confidence in identifying key biomarkers. | Requires integration of multiple outputs. |

No single tool provided complete coverage of experimentally observed metabolites, but their combined use significantly improved the identification of key metabolic biomarkers [44]. This underscores the value of using multiple computational approaches to mitigate individual model limitations.
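The consensus idea can be expressed with plain set operations: take the union of all tools' predictions to maximize coverage of observed metabolites, and count how many tools predicted each metabolite to prioritize candidate biomarkers. The sketch below is hypothetical; the tool names and metabolite identifiers are invented placeholders, not outputs of the tools in Table 2.

```python
from collections import Counter

# Hypothetical outputs: metabolite identifiers predicted by three unnamed tools,
# and the set observed experimentally. Invented for illustration only.
PREDICTIONS = {
    "tool_A": {"M1", "M2", "M5"},
    "tool_B": {"M2", "M3"},
    "tool_C": {"M1", "M6"},
}
OBSERVED = {"M1", "M2", "M3", "M4"}


def coverage(predicted, observed):
    """Fraction of experimentally observed metabolites that were predicted."""
    return len(predicted & observed) / len(observed)


def consensus(predictions):
    """Union of all tools' predictions plus a per-metabolite vote count; metabolites
    predicted by several tools can be prioritised as candidate biomarkers."""
    union = set().union(*predictions.values())
    votes = Counter(m for predicted in predictions.values() for m in predicted)
    return union, votes


if __name__ == "__main__":
    for tool, predicted in PREDICTIONS.items():
        print(tool, coverage(predicted, OBSERVED))
    union, votes = consensus(PREDICTIONS)
    print("consensus", coverage(union, OBSERVED), votes.most_common(2))
```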

Performance of AI Toxicity Prediction Platforms

For discrete toxicity endpoints, comprehensive platforms that use consensus modeling from multiple algorithms show state-of-the-art performance. VenomPred2.0, an in silico platform, exemplifies this approach [43].

Table 3: Selected Performance Metrics of VenomPred2.0 vs. Other Methods [43]

| Toxicity Endpoint | VenomPred2.0 (MCC) | Comparison Method A (MCC) | Comparison Method B (MCC) |
|---|---|---|---|
| Mutagenicity | 0.72 | 0.69 | n.d. |
| Carcinogenicity | 0.77 | 0.75 | 0.68 |
| Skin Sensitization | 0.73 | 0.63 | 0.60 |
| Acute Oral Toxicity | 0.80 | 0.75 | 0.72 |

Note: MCC (Matthews Correlation Coefficient) is a robust metric for binary classification: 1 indicates perfect prediction, 0 indicates performance no better than random guessing, and -1 indicates complete disagreement between prediction and observation.

VenomPred2.0's strength lies in its consensus strategy, which averages predictions from multiple underlying ML models (RF, SVM, k-NN, and multilayer perceptron, MLP) trained on different chemical fingerprints. This ensemble method consistently outperformed single-model approaches [43]. The platform also incorporates SHAP (SHapley Additive exPlanations) analysis, which provides interpretability by identifying the toxicophores (structural alerts) responsible for each prediction [43].
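The MCC is computed from the four cells of a binary confusion matrix as MCC = (TP·TN - FP·FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN)). The sketch below implements that formula in plain Python; returning 0 when the denominator is zero is an assumed (though common) convention, and the example counts are hypothetical. The second example shows why MCC is preferred to accuracy on imbalanced data: a model that labels every compound non-toxic in a set with 5 toxicants out of 100 reaches 95% accuracy but an MCC of 0.

```python
import math


def mcc_from_counts(tp, tn, fp, fn):
    """Matthews correlation coefficient from confusion-matrix counts.
    Returns 0.0 when any marginal total is zero (assumed convention)."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0
    return (tp * tn - fp * fn) / denominator


if __name__ == "__main__":
    # Hypothetical balanced test set of 100 compounds.
    print(round(mcc_from_counts(tp=40, tn=45, fp=5, fn=10), 3))
    # Hypothetical imbalanced set: every compound predicted non-toxic.
    print(mcc_from_counts(tp=0, tn=95, fp=0, fn=5))  # prints 0.0 despite 95% accuracy
```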

Diagram 2: Consensus Modeling Strategy for Toxicity Prediction. Platforms like VenomPred2.0 improve reliability by aggregating predictions from multiple independent ML models. The final consensus score, compared against a threshold (e.g., 0.5), yields the classification, which is then explained via SHAP analysis [43].
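The consensus step described in Diagram 2 amounts to averaging and thresholding. A minimal sketch under stated assumptions: each underlying model is taken to output a probability for the toxic class, the consensus is an unweighted mean, and 0.5 is used as the threshold (the example value in the caption), with scores at or above it classed as toxic. Model names and probabilities are hypothetical, and the SHAP explanation step is not shown.

```python
from statistics import mean


def consensus_prediction(model_probabilities, threshold=0.5):
    """Average per-model probabilities of toxicity and apply a decision threshold.
    Scores at or above the threshold are classed as toxic (assumed convention)."""
    score = mean(list(model_probabilities.values()))
    return score, ("toxic" if score >= threshold else "non-toxic")


if __name__ == "__main__":
    # Hypothetical outputs of four models for one compound.
    probs = {"RF": 0.70, "SVM": 0.60, "kNN": 0.40, "MLP": 0.55}
    print(consensus_prediction(probs))
```

Averaging tempers the effect of any single model's error (here the kNN model's 0.40 is outvoted), which is the reliability argument the caption makes for ensembles.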