Beyond Rodent Models: A Systematic Guide to Cross-Species LD50 Comparison for Predictive Toxicology and Risk Assessment


Abstract

This article provides a comprehensive analysis of methodologies and challenges in comparing Lethal Dose 50 (LD50) values across different species—a critical task for researchers, toxicologists, and drug development professionals. It explores the foundational principles of species sensitivity and variability, reviews advanced methodological approaches including in silico QSAR models and database-calibrated assessments, addresses common troubleshooting issues in data interpretation and extrapolation, and establishes frameworks for the validation and comparative analysis of cross-species toxicity data. By synthesizing current research and regulatory perspectives, this guide aims to enhance the accuracy of human health and ecological risk predictions derived from animal studies.

Decoding Species Sensitivity: The Scientific and Ecological Basis of Variable LD50 Values

The median lethal dose (LD50) is a foundational metric in toxicology, defined as the dose of a substance required to kill 50% of a test population within a specified time frame [1] [2]. First introduced by J.W. Trevan in 1927, this standardized measure was developed to compare the relative acute toxicity of various substances, such as drugs and chemicals, by using death as a common, unambiguous endpoint [1] [3]. The value is typically expressed as the mass of substance administered per unit mass of the test subject (e.g., milligrams per kilogram of body weight) [1]. A core principle is that a lower LD50 value indicates higher toxicity [2].

The utility of LD50 extends beyond a simple toxicity ranking. It is critical for risk assessment, informing safety standards in pharmacology, industrial chemical handling, and environmental protection [4]. By providing a quantitative benchmark, LD50 allows researchers and regulators to establish exposure limits, design protective measures, and prioritize chemicals for further study [5]. Its determination involves constructing a dose-response curve, which models the relationship between increasing doses and the percentage mortality in a population [6].

Standardized Experimental Protocol for LD50 Determination

Determining an LD50 value follows a standardized experimental protocol designed to ensure reproducibility and comparability. The following workflow outlines the key stages from animal model selection to final statistical calculation.

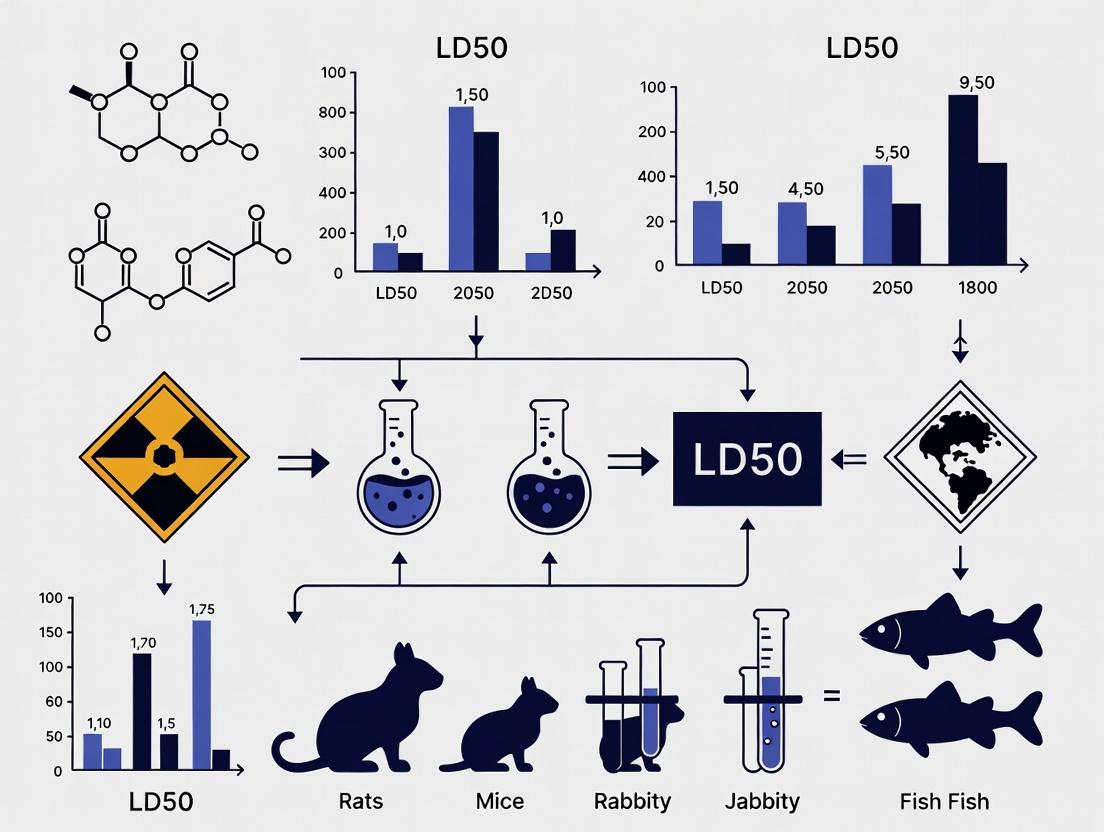

Figure 1: Standard workflow for determining median lethal dose (LD50).

Detailed Methodology

- Animal Model Selection: Tests are most commonly performed on rats and mice. The species, strain, age, sex, and body weight must be standardized and documented, as these factors significantly influence results [3] [4].

- Route of Administration: The substance is administered via a single, controlled route. Common routes include:

  - Oral (Gavage): Mimics ingestion.

  - Dermal: Application to shaved skin to assess absorption.

  - Inhalation: Exposure to a controlled concentration of aerosol or gas in a chamber, yielding an LC50 (Lethal Concentration 50) value [3].

  - Injection: Intravenous (IV), intraperitoneal (IP), or intramuscular (IM) for precise dosing [3].

- Experimental Design: Animals are randomly allocated into several groups (typically 4-6). Each group receives a different dose of the test substance, with doses spaced on a logarithmic scale, while a control group receives the vehicle alone. Group sizes commonly range from 5 to 10 animals [4].

- Observation Period: After administration, animals are closely monitored for 14 days for signs of toxicity (lethargy, convulsions, etc.) and mortality [3]. The time of death is recorded.

- Data Analysis and Calculation: Mortality data at each dose level are used to plot a dose-response curve. The LD50 value and its confidence interval are calculated using statistical methods such as probit analysis, logit analysis, or the Spearman-Kärber method [7] [6]. The resulting value must be reported alongside the test species, route of administration, and observation period (e.g., LD50 (oral, rat) = 250 mg/kg) [3].
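As a rough illustration of the final calculation step, the sketch below applies the untrimmed Spearman-Kärber estimator, one of the methods named above. The dose groups and mortality counts are hypothetical, the function name is illustrative, and the sketch omits the confidence interval that a full analysis would report.

```python
import math

def spearman_karber_ld50(doses_mg_per_kg, deaths, group_sizes):
    """Estimate the LD50 with the untrimmed Spearman-Karber method.

    Requires doses in ascending order, 0% mortality at the lowest dose,
    100% mortality at the highest, and non-decreasing mortality in between.
    """
    log_doses = [math.log10(d) for d in doses_mg_per_kg]
    mortality = [k / n for k, n in zip(deaths, group_sizes)]

    if mortality[0] != 0.0 or mortality[-1] != 1.0:
        raise ValueError("mortality must span 0% to 100%")
    if any(b < a for a, b in zip(mortality, mortality[1:])):
        raise ValueError("mortality must be non-decreasing with dose")

    # Weight each log-dose midpoint by the mortality increase across that interval.
    log_ld50 = sum(
        (p_hi - p_lo) * (x_lo + x_hi) / 2
        for x_lo, x_hi, p_lo, p_hi in zip(
            log_doses, log_doses[1:], mortality, mortality[1:]
        )
    )
    return 10 ** log_ld50

# Hypothetical data: five dose groups of ten animals each.
doses = [10, 20, 40, 80, 160]  # mg/kg
deaths = [0, 2, 5, 8, 10]
ld50 = spearman_karber_ld50(doses, deaths, [10] * 5)
print(f"Estimated LD50: {ld50:.1f} mg/kg")
```

Because the hypothetical mortality pattern is symmetric around the middle dose on a log scale, the estimate lands at 40 mg/kg. Real data sets that do not reach 0% and 100% mortality would need a trimmed variant or a probit/logit fit instead.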

Cross-Species Comparison of LD50 Values

A central thesis in comparative toxicology is that LD50 values for a given substance can vary dramatically between species. This variability is driven by differences in physiology, metabolism, absorption, and genetic makeup [1]. Understanding this is critical for extrapolating animal data to human risk assessment.

Comparative Data: Rodenticides in Target vs. Non-Target Species

The following table illustrates stark interspecies differences using data for common rodenticides. It shows the grams of commercial bait required to deliver an LD50 dose, highlighting how a product toxic to a pest rodent can pose vastly different risks to birds, pets, or livestock [8].

Table 1: Cross-Species Comparison of Bait Required for LD50 Dose of Rodenticides [8]

| Species (1 kg body weight) | Difenacoum (50 ppm) | Bromadiolone (50 ppm) | Brodifacoum (50 ppm) | Warfarin (200 ppm) |
|---|---|---|---|---|
| Rat (Target) | 40 g | 20 g | 5.8 g | 3,200 g |
| Mouse (Target) | Not Provided | Not Provided | Not Provided | Not Provided |
| Dog (Non-Target) | 200 g | 200 g | 5 g | 80 g |
| Cat (Non-Target) | 2,000 g | 500 g | 500 g | 40 g |
| Chicken (Non-Target) | 1,000 g | 1,000 g | 200 g | 4,000 g |
| Pig (Non-Target) | 1,600 g | 60 g | 10 g | 4 g |

Key Insights from Data:

- Potency Variance: Brodifacoum is highly potent in rats, dogs, and pigs, requiring only 5.8 g of bait for a rat, 5 g for a dog, and 10 g for a pig to reach an LD50 dose, although cats (500 g) and chickens (200 g) tolerate far more. In contrast, the first-generation anticoagulant warfarin requires kilogram-scale quantities of bait for rats (3,200 g) [8].

- Differential Sensitivity: A substance dangerous to one species may be less so to another. For example, a pig is far more sensitive to warfarin (4 g of bait) than a rat (3,200 g), while a cat is more sensitive to bromadiolone (500 g) than a chicken (1,000 g) [8].

- Risk to Non-Target Species: The data directly inform ecological risk assessments. The small amount of brodifacoum bait needed to deliver an LD50 dose to a dog (5 g) indicates a high risk of accidental poisoning, whether through direct bait consumption or secondary exposure, a critical factor in pest management strategies [8].
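The bait quantities in the table can be converted back into active-ingredient doses with simple arithmetic. The sketch below assumes that ppm means milligrams of active ingredient per kilogram of bait (weight for weight) and that all of the ingested active ingredient counts toward the dose; the function names are illustrative.

```python
def implied_ld50_mg_per_kg(bait_g, bait_ppm, body_weight_kg=1.0):
    """Active-ingredient dose (mg/kg body weight) delivered by a mass of bait.

    Assumes 1 ppm = 1 mg active ingredient per kg of bait = 0.001 mg per gram.
    """
    active_mg = bait_g * bait_ppm / 1000.0
    return active_mg / body_weight_kg

def bait_for_ld50_g(ld50_mg_per_kg, bait_ppm, body_weight_kg=1.0):
    """Grams of bait an animal must eat to ingest an LD50 dose."""
    active_mg = ld50_mg_per_kg * body_weight_kg
    return active_mg * 1000.0 / bait_ppm

# Values taken from the table above, for a 1 kg animal.
rat_brodifacoum = implied_ld50_mg_per_kg(5.8, 50)   # 0.29 mg/kg
pig_warfarin = implied_ld50_mg_per_kg(4, 200)       # 0.8 mg/kg
rat_warfarin = implied_ld50_mg_per_kg(3200, 200)    # 640 mg/kg

print(rat_brodifacoum, pig_warfarin, rat_warfarin)
```

Expressing the table as mg/kg makes the comparison independent of bait concentration, which matters here because the warfarin bait is four times more concentrated than the other three.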

The Challenge of Human Extrapolation

The interspecies variability shown above underscores the primary challenge in toxicology: animal LD50 data cannot be directly applied to humans [5] [9]. For instance, the dioxin TCDD is extremely toxic to guinea pigs but has not been unambiguously linked to acute human death from short-term exposure [9]. Therefore, human risk assessment uses animal-derived LD50 values as a starting point, applying safety factors (often 10-fold for interspecies differences and another 10-fold for human variability) to estimate a "virtually safe" dose for humans [5].

Advanced Concepts and Comparative Metrics

Therapeutic Index (TI)

For pharmaceuticals, the LD50 alone is insufficient. It must be compared to the drug's efficacy. The Therapeutic Index (TI) = LD50 / ED50, where ED50 is the median effective dose [1]. A high TI indicates a wide safety margin between the therapeutic and toxic doses. This is a more meaningful comparative metric for drug development than LD50 alone.

Alternative and Related Metrics

Other dose-response parameters provide a more complete toxicological profile:

- LD₀₁ / LD₉₉: The dose lethal to 1% or 99% of the population, describing the extremes of the dose-response curve [1].

- NOAEL/NOEL: The No-Observed-Adverse-Effect Level and No-Observed-Effect Level, critical for establishing safe chronic exposure limits [5].

- LCt₅₀: Used for inhalation hazards (especially chemical warfare agents), integrating Concentration (C) and exposure Time (t) based on Haber's Law, though it does not apply to substances rapidly detoxified by the body [1].

Algorithmic and Modeling Advances

Traditional testing is being supplemented by computational methods. New algorithms, such as one developed for snake venom antiserum, aim to derive accurate LD50 and ED50 values using fewer animals by leveraging mathematical relationships between dose, body weight, and response [7]. Furthermore, quantitative structure-activity relationship (QSAR) models and machine learning are being used to predict LD50 values based on chemical properties, reducing reliance on animal testing [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Conducting robust LD50 studies requires specialized materials and tools. The following table details key components of the research toolkit.

Table 2: Essential Research Toolkit for LD50 Studies

| Tool/Reagent Category | Specific Examples | Primary Function in LD50 Protocol |
|---|---|---|
| Test Substance Preparation | Pure chemical compound, Vehicle (e.g., corn oil, saline, carboxymethylcellulose), Solvents (if required) | To prepare accurate, stable, and administrable dose formulations for the chosen route (oral, injectable, etc.). |
| Animal Models | Specific pathogen-free (SPF) rodents (e.g., Sprague-Dawley rats, Swiss-Webster mice), with defined age, sex, and weight. | To provide a standardized, reproducible biological system for dose-response testing. |
| Dosing Apparatus | Oral gavage needles, Precision syringes, Inhalation exposure chambers, Dermal applicators. | To ensure precise, consistent, and controlled administration of the test substance via the specified route. |
| Clinical Observation Tools | Standardized clinical scoring sheets, Video recording equipment, Body weight scales, Feed/water consumption monitors. | To systematically record signs of toxicity, morbidity, and changes in physiological parameters during the observation period. |
| Statistical Analysis Software | Software capable of probit or logit analysis (e.g., SAS, R, Prism, specialized LC50/LD50 calculators). | To fit dose-response data to a statistical model and accurately calculate the median lethal dose (LD50) with confidence intervals. |

Visualization: From Animal Dose-Response to Human Risk Assessment

The final diagram synthesizes the core challenge of interspecies research: translating animal experimental data into meaningful human safety guidance. It shows the parallel processes in animal testing and human risk assessment, connected by the crucial, yet uncertain, step of interspecies extrapolation.

Figure 2: Translating animal LD50 data to human safety assessment involves key uncertainties.

The determination of chemical toxicity via the median lethal dose (LD50) or concentration (LC50) is a cornerstone of safety science, used to make critical go/no-go decisions in drug development and to assess ecological risk [10]. The LD50 represents the amount of a substance that causes death in 50% of a test population, providing a standardized measure of acute toxicity [3]. Historically, these assessments have relied heavily on rodent models, primarily rats and mice, due to their physiological and genetic similarity to humans, small size, and ease of genetic manipulation [11] [12]. Over 95% of the mouse genome is shared with humans, making them a primary model for studying disease mechanisms and toxicity [13] [12].

However, the critical challenge lies in extrapolating data from these standardized rodent tests to predict outcomes in humans and diverse ecological species. This cross-species extrapolation is fraught with complexity due to interspecies differences in anatomy, physiology, metabolism, and genetics [14] [15]. For instance, a chemical's toxicity can vary significantly based on the route of exposure (oral, dermal, inhalation) and the test species [3]. Furthermore, regulatory requirements are increasingly demanding a reduction in animal testing, pushing the field toward New Approach Methodologies (NAMs), including in silico models and computational toxicology [10].

This guide frames the comparison of LD50 values within the broader thesis that robust cross-species comparison is not merely an academic exercise but an imperative. It is essential for accurate human health risk assessment, effective ecological protection, and the development of reliable alternative methods that can reduce reliance on animal testing while improving predictive accuracy.

Comparative Analysis: Rodents, Humans, and Ecological Receptors

Rodents as Proxies for Human Health: Rodent models are invaluable for biomedical research, contributing to approximately 90% of Nobel Prize-winning studies in Physiology or Medicine [15]. Their utility stems from their ability to model complex disease processes, test therapeutic interventions, and provide toxicity data required by regulatory authorities before human trials [11]. Specific models, such as genetically engineered mice (GEMs) and patient-derived tumor xenografts (PDTXs), allow for sophisticated studies of cancer biology and drug efficacy [11]. For metabolic diseases like obesity and type 2 diabetes, rodent models have been instrumental in unraveling pathophysiological mechanisms, though researchers must critically assess species-specific differences in physiology [16].

Limitations and Interspecies Variability: Despite their advantages, rodents are imperfect human surrogates. Key physiological differences can lead to translational failures. For example, significant differences exist in drug metabolism, immune system function, and disease progression [13]. A chemical with a high LD50 in rats (indicating low toxicity) may still pose a significant risk to humans due to unique metabolic pathways, and vice-versa [3]. This variability underscores why testing in a single species is insufficient for comprehensive risk assessment and why understanding the mechanisms of toxicity is crucial for reliable extrapolation [14].

Extension to Ecological Risk Assessment (ERA): The paradigm of cross-species extrapolation extends beyond human health to protect ecosystems. Ecological Risk Assessment (ERA) requires toxicity data for a wide array of non-target species, including birds, mammals, fish, aquatic invertebrates, and plants [17]. It is logistically and ethically impossible to test every chemical on every potential species. Therefore, regulators rely on extrapolation from standard test species (e.g., laboratory rats, fathead minnows, daphnia) to predict effects on thousands of others [14]. The U.S. Environmental Protection Agency (EPA) uses a deterministic "quotient method," comparing an estimated environmental exposure concentration to a toxicity endpoint (like an LC50) to calculate a Risk Quotient (RQ) [17]. The reliability of this entire framework depends on the accuracy of the cross-species extrapolation models employed.

Data Presentation: Quantitative Comparisons Across Species and Models

The following tables summarize key quantitative data, illustrating the variability in toxicity metrics across species and the performance of modern predictive models designed to bridge these gaps.

Table 1: Acute Toxicity (LD50/LC50) Classifications and Comparative Values

| Toxicity Rating | Oral LD50 (Rat) mg/kg | Inhalation LC50 (Rat) ppm/4hr | Dermal LD50 (Rabbit) mg/kg | Probable Lethal Dose for 70 kg Human | Example Chemical (Dichlorvos) |
|---|---|---|---|---|---|
| 1: Extremely Toxic | ≤ 1 | ≤ 10 | ≤ 5 | A taste, a drop (<7 drops) | Inhalation LC50: 1.7 ppm [3] |
| 2: Highly Toxic | 1-50 | 10-100 | 5-43 | 4 ml (1 tsp) | Intraperitoneal LD50 (rat): 15 mg/kg [3] |
| 3: Moderately Toxic | 50-500 | 100-1000 | 44-340 | 30 ml (1 fl. oz.) | Dermal LD50 (rat): 75 mg/kg [3] |
| 4: Slightly Toxic | 500-5000 | 1000-10,000 | 350-2810 | 600 ml (1 pint) | Oral LD50 (rat): 56 mg/kg [3] |
| 5: Practically Non-toxic | 5000-15000 | 10,000-100,000 | 2820-22590 | 1 Litre | Oral LD50 (dog): 100 mg/kg [3] |

Note: The dichlorvos values in the final column are listed for illustration and do not all fall within the rating of the row in which they appear. For example, the oral LD50 of 56 mg/kg in rats falls in the moderately toxic range (50-500 mg/kg), not the slightly toxic range.

Table 2: Performance of Machine Learning Models for Acute Toxicity Prediction (Selected Examples)

| Species | Endpoint | Model Type | Dataset Size (Compounds) | Key Performance Metric | Notes |
|---|---|---|---|---|---|
| Rat | Oral LD50 | Collaborative Acute Toxicity Modeling Suite (CATMoS) | >11,000 (classification) | High accuracy & robustness vs. in vivo [10] | Consensus model from multiple algorithms; tested on pharmaceutical compounds [10]. |
| Rat | Oral LD50 | Bayesian Classification (Assay Central) | 11,297 | Balanced Accuracy: 0.61–0.84 [10] | Eight models based on different toxicity categories [10]. |
| Mouse | Oral LD50 | Regression & Classification | 803 (oral) | 5-fold cross-validation statistics [10] | Data curated from ChEMBL for multiple administration routes [10]. |
| Fish | LC50 | Classification | Up to 2,983 | Based on threshold (≤1 / ≥100 mg/L) [10] | Combined data from ECOTOX and other databases [10]. |
| Daphnia | LC50 | Classification | Up to 1,377 | Based on threshold (≤1 / ≥100 mg/L) [10] | Data from ECOTOX and other sources [10]. |

Experimental Protocols: From In Vivo Tests to In Silico Models

1. Standard In Vivo LD50 Test Protocol (OECD Guidelines): This traditional method involves exposing groups of healthy, young adult animals (typically rats or mice) to a range of single doses of the test substance [3].

- Procedure: Animals are assigned to dose groups. The substance is administered via the relevant route (oral gavage, dermal application, inhalation). For inhalation tests (LC50), animals are exposed in chambers to a known concentration of a gas or vapor for a set period (often 4 hours) [3].

- Observation: Animals are clinically observed individually for signs of toxicity and mortality for a minimum of 14 days post-administration [3].

- Analysis: The number of deaths in each group is recorded. The LD50/LC50 value and its confidence interval are calculated using statistical methods (e.g., probit analysis) from the dose-response data [4].

- Endpoint: The dose/concentration estimated to kill 50% of the test population is derived [10] [3].

2. Computational LD50 Model Building Protocol (as per recent ML studies): This protocol outlines the creation of machine learning models to predict toxicity, reducing animal use [10].

- Data Curation: Large datasets are compiled from public sources (e.g., ChEMBL, ECOTOX) [10]. Entries are sanitized: duplicates and salts are removed, charges are neutralized, and molecular structures are standardized. For regression, LD50 values are converted to –log(mg/kg). For aquatic toxicity classification, the most sensitive species value is retained, and compounds are binarized as high or low toxicity based on thresholds (e.g., ≤1 mg/L and ≥100 mg/L) [10].

- Descriptor Generation & Model Training: Numerical descriptors (e.g., Extended Connectivity Fingerprints - ECFP6) representing molecular structures are generated. Multiple machine learning algorithms (e.g., Naïve Bayesian, Random Forest, Deep Learning) are trained on the curated data to classify or regress the toxicity endpoint [10].

- Validation: Model performance is rigorously evaluated using 5-fold cross-validation. For the rat CATMoS models, an external curated test set was used to validate predictions against known in vivo results [10].

- Applicability Domain: The chemical space for which the model makes reliable predictions is defined, which is a key requirement for OECD-compliant QSAR models [10].
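The data curation step of this protocol can be sketched in a few lines of plain Python. This is only an illustration of the transformations described above, not the pipeline used in the cited studies: the compound identifiers and values are hypothetical, the logarithm is assumed to be base 10, and compounds falling between the two thresholds are assumed to be excluded from the classification set.

```python
import math

def to_neg_log(ld50_mg_per_kg):
    """Regression target: -log10 of the LD50 in mg/kg (higher = more toxic)."""
    return -math.log10(ld50_mg_per_kg)

def most_sensitive(records):
    """Keep the lowest LC50 (mg/L) reported for each compound across species."""
    lowest = {}
    for compound, lc50 in records:
        if compound not in lowest or lc50 < lowest[compound]:
            lowest[compound] = lc50
    return lowest

def binarize(lc50_mg_per_l, high_cutoff=1.0, low_cutoff=100.0):
    """Return 1 (high toxicity) at <= 1 mg/L, 0 (low toxicity) at >= 100 mg/L.

    Values between the cutoffs return None and are dropped (an assumption).
    """
    if lc50_mg_per_l <= high_cutoff:
        return 1
    if lc50_mg_per_l >= low_cutoff:
        return 0
    return None

# Hypothetical aquatic records: (compound id, LC50 in mg/L) for various species.
records = [("cmpd_A", 0.4), ("cmpd_A", 3.0), ("cmpd_B", 250.0), ("cmpd_C", 12.0)]
labels = {c: binarize(v) for c, v in most_sensitive(records).items()}
training_set = {c: y for c, y in labels.items() if y is not None}

print(training_set)
print(to_neg_log(100.0))
```

Structure standardization, descriptor generation, and model training would follow using a cheminformatics toolkit and a machine learning library; those steps are left out here because they depend on the specific software chosen.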

3. Ecological Risk Quotient (RQ) Calculation Protocol (EPA Method): This is a screening-level method used in pesticide risk assessment [17].

- Exposure Estimation (EEC): The Estimated Environmental Concentration is calculated using models that consider pesticide use patterns, fate, transport, and organism behavior. For birds, this could be the concentration in diet (mg/kg diet) or the dose per unit area (mg a.i./ft²) [17].

- Toxicity Endpoint Selection: The most sensitive relevant toxicity endpoint from laboratory studies is selected. For an acute avian risk assessment, this is the lowest available LD50 (single oral dose) or LC50 (dietary) [17].

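- Risk Quotient Calculation: A point estimate RQ is calculated: RQ = EEC / Toxicity Endpoint, with both terms expressed in the same units. For a dietary-based acute avian assessment: RQ = (Concentration in Diet, mg/kg diet) / (Avian Dietary LC50, mg/kg diet). A dose-based assessment instead compares an estimated dose with the oral LD50 [17].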

- Risk Characterization: The calculated RQ is compared to pre-defined Levels of Concern (LOCs). An RQ exceeding the LOC indicates potential risk and may trigger more refined assessments or risk mitigation measures [17].
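The quotient method reduces to a single division followed by a threshold comparison. The sketch below uses hypothetical exposure and toxicity values, and the Level of Concern passed in is an illustrative placeholder rather than a value taken from the cited guidance; the only requirement is that exposure and endpoint share the same units.

```python
def risk_quotient(exposure, toxicity_endpoint):
    """RQ = estimated exposure / toxicity endpoint (same units for both)."""
    if toxicity_endpoint <= 0:
        raise ValueError("toxicity endpoint must be positive")
    return exposure / toxicity_endpoint

def exceeds_level_of_concern(rq, level_of_concern):
    """True when the RQ exceeds the supplied Level of Concern."""
    return rq > level_of_concern

# Hypothetical dietary-based example, both values in mg/kg diet.
eec_diet = 120.0
avian_dietary_lc50 = 600.0
rq = risk_quotient(eec_diet, avian_dietary_lc50)

# Illustrative LOC only; consult the applicable guidance for real values.
flagged = exceeds_level_of_concern(rq, level_of_concern=0.5)
print(rq, flagged)
```

Keeping the LOC as an explicit argument makes it easy to screen the same RQ against different concern levels, for example a stricter one for listed species.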

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for Cross-Species Toxicity Research

| Tool/Resource | Type | Primary Function in Research |
|---|---|---|
| Assay Central Software | Software Platform | Enables building, validating, and deploying machine learning models for toxicity prediction using Bayesian algorithms and other methods [10]. |
| RDKit / Indigo Toolkit | Open-Source Cheminformatics | Provides fundamental functions for molecular sanitization, descriptor calculation, and structure standardization during data curation for computational modeling [10]. |
| CATMoS (Collaborative Acute Toxicity Modeling Suite) | Consensus Model Platform | Provides a curated dataset and consensus predictions from multiple modeling groups for rat oral LD50, offering high robustness for prioritization [10]. |
| EPA T-REX Model | Ecological Exposure Model | Calculates dietary- and dose-based Risk Quotients (RQs) for birds and mammals exposed to pesticides via spray, granular, or seed treatment applications [17]. |
| Genetically Engineered Mouse (GEM) Models | Biological Model | Allows for the study of disease mechanisms and compound efficacy in vivo by activating oncogenes or inactivating tumor-suppressor genes in a tissue-specific manner [11]. |
| Patient-Derived Tumor Xenograft (PDTX) Models | Biological Model | Conserves original human tumor characteristics (heterogeneity, genetics) when engrafted into immunodeficient mice, providing a more clinically relevant model for oncology drug testing [11]. |
| Rat Resource & Research Center (RRRC) / Mutant Mouse Regional Resource Centers (MMRRC) | Biorepository | Preserves and distributes genetically characterized rat and mouse strains, ensuring accessibility and reproducibility of research using these animal models [12]. |

The imperative for cross-species comparison is undeniable. Moving from rodent LD50 data to informed decisions about human health and ecological safety requires a multifaceted strategy that acknowledges both the power and the limitations of animal models. The future of the field lies in the integrated use of comparative biology, mechanistic toxicology, and advanced computational tools.

The development and regulatory adoption of New Approach Methodologies (NAMs), including the machine learning models detailed here, are critical for reducing animal use while potentially improving the accuracy and scope of toxicity predictions [10] [14]. Success will depend on improving data quality and accessibility, developing frameworks that combine relatedness-, traits-, and genomics-based extrapolation methods, and maintaining rigorous validation against high-quality experimental data [14]. By critically evaluating rodent data within this broader comparative framework, researchers and risk assessors can make more reliable, protective, and ethical decisions that safeguard both human and environmental health.

Visualizing Frameworks and Workflows

Diagram Title: A framework for cross-species toxicity extrapolation [14].

Diagram Title: EPA ecological risk quotient workflow [17].

Determining the median lethal dose (LD50) is a fundamental component of acute toxicity testing, providing a standardized point of comparison for chemical hazards [1] [3]. However, translating these animal-derived values into accurate human health risk assessments presents a significant scientific challenge due to profound interspecies variability. Historically, this uncertainty has been addressed through the application of default safety factors [18]. For decades, a 100-fold factor has been commonly used, divided into two 10-fold components: one for interspecies differences and another for intraspecies (human) variability [18]. While practical, these defaults are recognized as largely arbitrary and may not be protective or scientifically appropriate for all substances [18] [19].

The core thesis of this guide is that moving beyond these generic defaults requires a mechanistic understanding of the key biological drivers of variability. Empirical data increasingly show that predictable physiological and metabolic principles, rather than random differences, govern much of the observed variation in LD50 values across species [19]. This guide will objectively compare the performance of traditional default approaches with more sophisticated, data-driven methods for interspecies extrapolation, focusing on three primary factors: metabolism, physiology (allometry), and route of exposure. We will provide supporting experimental data and protocols to equip researchers with the tools for more accurate and scientifically defensible cross-species comparisons in toxicology and drug development.

Comparative Analysis of Extrapolation Methodologies

A critical step in risk assessment is extrapolating an effect level (like an LD50) from an animal model to a human equivalent. The table below compares the prevailing methodologies, their scientific basis, performance, and ideal applications.

Table 1: Comparison of Methodologies for Interspecies LD50 Extrapolation

| Methodology | Core Principle | Typical Performance & Uncertainty | Key Advantages | Major Limitations | Best Use Context |
|---|---|---|---|---|---|
| Default Safety Factor | Application of a generic 10-fold interspecies uncertainty factor [18]. | Variable; can be under- or over-protective. Factor of 10 may be inadequate for certain kinetics [18]. | Simple, requires no chemical-specific data, provides consistent regulatory benchmark. | Arbitrary, ignores chemical-specific toxicokinetics/dynamics, can lead to inaccurate risk estimates [18]. | Screening-level assessment; data-poor situations. |
| Body Weight Scaling (Allometric Exponent 1.0) | Assumes toxicity is equivalent when dose is normalized per kg of body weight [19]. | Poor agreement with empirical LD50 data; generally not supported [19]. | Intuitively simple. | Biologically implausible; fails to account for fundamental metabolic rate differences between species [19]. | Not recommended as a generic approach. |
| Caloric Demand/Metabolic Body Size Scaling (Allometric Exponent 0.75) | Scales dose based on metabolic rate, which correlates with body weight^0.75 [19]. | Strong empirical support for pharmacokinetic parameters (AUC, Clearance); reliable for interspecies extrapolation [19]. | Biologically grounded, data-driven, widely applicable across diverse chemicals. | Requires identification of appropriate point of departure; less accurate for locally acting agents. | Default data-informed approach for systemic toxicants when PBPK models are unavailable. |
| Physiologically Based Pharmacokinetic (PBPK) Modeling | Mathematical modeling of absorption, distribution, metabolism, and excretion based on species-specific physiology [19]. | High accuracy; reduces uncertainty to chemical-specific parameters. | Most scientifically rigorous, accounts for complex kinetics and metabolism, species- and route-specific. | Resource-intensive, requires substantial chemical-specific and physiological data. | Priority chemicals with rich data; drug development; refining risk assessments. |

Factor 1: Metabolic Pathways and Biotransformation

Role in Variability: Interspecies differences in drug metabolism are a primary source of variability in toxic response [20]. Variations in the expression, activity, and polymorphism of enzymes (e.g., CYPs, UGTs) can drastically alter the internal dose of a parent compound or toxic metabolite [18] [20]. These differences can render a species more susceptible or resistant compared to humans.

Supporting Experimental Data: Evidence underscores the limitation of default factors. A meta-analysis of enzyme activities suggests that chemical-specific adjustment factors can vary widely, often exceeding the standard 10-fold factor [18]. For instance, studies on human variability in susceptibility to cancer have suggested a factor of 25 may be more appropriate than 10 [18]. This metabolic variability is a key reason why a one-size-fits-all safety factor is problematic.

Detailed Experimental Protocol: In Vitro Metabolic Stability Assay for Cross-Species Comparison

Objective: To quantify interspecies differences in the metabolic clearance of a test compound using hepatic subcellular fractions.

Materials:

- Test Compound: Solution of known concentration.

- Liver Microsomes/S9 Fractions: From human, rat, mouse, and dog (commercially available).

- Co-factor Solutions: NADPH regenerating system (for Phase I), UDPGA (for Phase II).

- Buffers: Potassium phosphate buffer (pH 7.4).

- Stop Solution: Acetonitrile with internal standard.

- Analytical Instrumentation: LC-MS/MS system.

Procedure:

- Incubation: Combine microsomes (0.5 mg/mL protein), test compound (1 µM), and co-factors in buffer. Run duplicates.

- Time Course: Stop aliquots of the reaction with ice-cold acetonitrile (containing internal standard) at pre-set times (e.g., 0, 5, 15, 30, 60 min).

- Sample Processing: Centrifuge stopped reactions; analyze supernatant via LC-MS/MS to determine parent compound concentration.

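- Data Analysis: Plot ln(compound concentration) vs. time. The negative of the slope gives the first-order elimination rate constant (k, in min⁻¹). Calculate in vitro intrinsic clearance: CLint, in vitro = k / (microsomal protein concentration), which yields mL/min/mg protein when the protein concentration is in mg/mL.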

- Comparison: Normalize clearance values across species (e.g., per mg microsomal protein). A 10-fold or greater difference in CLint indicates significant metabolic variability not captured by default factors.
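The data analysis and comparison steps can be sketched as follows. The concentration-time values are synthetic (generated from assumed first-order decay rather than measured), the species labels are placeholders, and the function names are illustrative.

```python
import math

def elimination_rate_constant(times_min, concentrations):
    """Return k (1/min) as the negative least-squares slope of ln(conc) vs. time."""
    ys = [math.log(c) for c in concentrations]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    return -sxy / sxx

def intrinsic_clearance(k_per_min, protein_mg_per_ml):
    """CLint in mL/min/mg microsomal protein."""
    return k_per_min / protein_mg_per_ml

times = [0, 5, 15, 30, 60]  # minutes, as in the time course above
protein = 0.5               # mg/mL microsomal protein

# Synthetic parent-compound concentrations (µM) from assumed first-order decay.
human_conc = [1.0 * math.exp(-0.01 * t) for t in times]
rat_conc = [1.0 * math.exp(-0.12 * t) for t in times]

k_human = elimination_rate_constant(times, human_conc)
k_rat = elimination_rate_constant(times, rat_conc)
fold_difference = intrinsic_clearance(k_rat, protein) / intrinsic_clearance(k_human, protein)

print(f"CLint human: {intrinsic_clearance(k_human, protein):.3f} mL/min/mg")
print(f"CLint rat:   {intrinsic_clearance(k_rat, protein):.3f} mL/min/mg")
print(f"Rat/human fold difference: {fold_difference:.1f}")
```

With real data, duplicate incubations would be averaged or fitted jointly, and time points where the parent compound falls below the quantification limit would be excluded before the regression.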

Factor 2: Physiological Scaling and Allometry

Role in Variability: Fundamental physiological processes—such as cardiac output, glomerular filtration, and metabolic rate—scale predictably with body size across mammalian species. Ignoring these relationships is a major source of error in dose extrapolation [19].

Supporting Experimental Data: Empirical analysis of pharmacokinetic data from 71 substances across species shows strong agreement with caloric demand scaling (exponent 0.75), but poor agreement with simple body weight scaling (exponent 1.0) [19]. For example, clearance (CL) scales with BW^0.75, meaning a smaller animal clears a proportionally larger dose per kg body weight per unit time. Consequently, administering equal mg/kg doses to a mouse and a human does not result in equivalent systemic exposure; the mouse experiences a lower exposure, making it appear less sensitive if raw LD50 values are compared directly.

Allometric Scaling Calculation: The general allometric equation is P = a × BW^b, where P is the physiological parameter (e.g., the total equivalent dose per animal), a is the allometric coefficient, BW is body weight, and b is the allometric exponent (0.75 for caloric demand scaling). Because the total dose scales with BW^b, the dose per kg of body weight scales with BW^(b − 1).
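One way to turn the allometric equation into a per-kilogram conversion is sketched below. It assumes that the scaled quantity P is the total dose per animal, so that the dose per kg of body weight varies with BW^(b − 1); the body weights (0.25 kg rat, 70 kg human) and function names are illustrative choices.

```python
def per_kg_dose_divisor(animal_bw_kg, human_bw_kg=70.0, exponent=0.75):
    """Factor by which an animal mg/kg dose is divided to get a human mg/kg dose.

    If total dose scales as BW**exponent, dose per kg scales as
    BW**(exponent - 1), so the divisor is (human_bw / animal_bw)**(1 - exponent).
    """
    return (human_bw_kg / animal_bw_kg) ** (1.0 - exponent)

def human_equivalent_dose(animal_dose_mg_per_kg, animal_bw_kg,
                          human_bw_kg=70.0, exponent=0.75):
    """Human equivalent dose in mg/kg under the chosen allometric exponent."""
    divisor = per_kg_dose_divisor(animal_bw_kg, human_bw_kg, exponent)
    return animal_dose_mg_per_kg / divisor

# Rat LD50 of 100 mg/kg, assuming a 0.25 kg rat and a 70 kg human.
hed_caloric = human_equivalent_dose(100.0, animal_bw_kg=0.25)                    # b = 0.75
hed_body_weight = human_equivalent_dose(100.0, animal_bw_kg=0.25, exponent=1.0)  # b = 1.0

print(f"Caloric demand scaling: {hed_caloric:.1f} mg/kg")
print(f"Body weight scaling:    {hed_body_weight:.1f} mg/kg")
```

With an exponent of 1.0 the divisor is 1 and the mg/kg dose carries over unchanged, which is exactly the assumption of simple body weight scaling. The result is sensitive to the body weights assumed, so these should be reported alongside any converted value.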

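Table 2: Allometric Scaling Factors for Interspecies Extrapolation (Based on a 70 kg Human)

| Species | Typical Body Weight (kg) | Body Weight Ratio (Human ÷ Species) | Per-kg Dose Divisor, Body Weight Scaling (b = 1.0) | Per-kg Dose Divisor, Caloric Demand Scaling (b = 0.75) |
|---|---|---|---|---|
| Mouse | 0.025 | 2800 | 1.0 | 7.3 |
| Rat | 0.25 | 280 | 1.0 | 4.1 |
| Dog | 10 | 7 | 1.0 | 1.6 |
| Human | 70 | 1 | 1.0 | 1.0 |

The divisors are calculated from the body weights shown as (human BW ÷ species BW)^(1 − b); dividing an animal dose in mg/kg by the divisor gives the human equivalent dose in mg/kg.

Interpretation: To estimate a human equivalent dose (HED) from a rat LD50 of 100 mg/kg using caloric demand scaling: HED = 100 mg/kg ÷ 4.1 ≈ 24 mg/kg. Body weight scaling assumes that equal mg/kg doses are equipotent and would therefore give an HED of 100 mg/kg, roughly four times higher, underestimating human sensitivity relative to the caloric demand approach.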

Factor 3: Route of Exposure and Toxicokinetics

Role in Variability: The route of exposure (oral, intravenous, dermal, inhalation) dramatically affects the internal dose by influencing absorption rate, first-pass metabolism, and bioavailability [21]. This introduces significant variability when comparing LD50 values derived from different routes.

Supporting Experimental Data: Analysis of 527 compounds shows lethal toxicity (log 1/LD50) is well correlated among intravenous (IV), intraperitoneal (IP), and intramuscular (IM) routes (R > 0.91), but less strongly correlated between oral and IV routes (R = 0.82) [21]. A systematic bias exists: IV administration typically yields the lowest LD50 (highest apparent toxicity) and oral administration the highest, primarily due to differences in absorption rates and first-pass hepatic metabolism [21]. For example, the insecticide dichlorvos shows an oral LD50 in rats of 56 mg/kg but an intraperitoneal LD50 of just 15 mg/kg [3].

Table 3: Impact of Exposure Route on Acute Toxicity in Rats (Illustrative Data)

| Chemical | Oral LD50 (mg/kg) | Intraperitoneal LD50 (mg/kg) | Dermal LD50 (mg/kg) | Inhalation LC50 (ppm/4h) |

|---|---|---|---|---|

| Dichlorvos [3] | 56 | 15 | 75 | 1.7 |

| Ethanol [1] | 7060 | Not Provided | Not Provided | Not Provided |

| Sodium Chloride [1] | 3000 | Not Provided | Not Provided | Not Provided |

Detailed Experimental Protocol: Toxicokinetic Study Across Routes

Objective: To characterize the effect of exposure route on systemic exposure (AUC) and acute toxicity for a test compound.

Materials:

- Test Animals: Groups of male/female rats (e.g., Sprague-Dawley).

- Test Compound: Formulated for oral gavage, IV injection, and dermal application.

- Dosing Supplies: Gavage needles, syringes, IV catheters, occlusive dressings for dermal route.

- Blood Collection: Catheters or microsampling techniques.

- Bioanalytical Equipment: LC-MS/MS.

Procedure:

- Dosing: Administer a single sub-lethal dose of the compound to separate animal groups via oral, IV, and dermal routes. Dose levels should be equimolar.

- Serial Blood Sampling: Collect blood samples at multiple time points post-administration (e.g., 5, 15, 30 min, 1, 2, 4, 8, 24h).

- Bioanalysis: Quantify parent compound (and major metabolites) concentration in plasma.

- Toxicokinetic Analysis: Calculate AUC, Cmax, Tmax, and bioavailability (F) for each route. Bioavailability (F) = (AUCoral / Doseoral) / (AUCIV / DoseIV).

- Correlation with LD50: Compare the AUC at the LD50 dose for each route (if data available). The route with the lowest AUC at lethality is the most systemically potent.
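The toxicokinetic analysis step can be sketched as follows. This is a simplified illustration that assumes a linear trapezoidal AUC from the first to the last sampling time (no extrapolation to infinity); the function names and the concentration values in the example are hypothetical.

```python
from typing import Sequence


def auc_trapezoid(times_h: Sequence[float], conc: Sequence[float]) -> float:
    """Linear trapezoidal AUC between the first and last sampling times."""
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need at least two matched time/concentration points")
    pairs = list(zip(times_h, conc))
    return sum((t1 - t0) * (c0 + c1) / 2
               for (t0, c0), (t1, c1) in zip(pairs, pairs[1:]))


def bioavailability(auc_route: float, dose_route: float,
                    auc_iv: float, dose_iv: float) -> float:
    """F = (AUC_route / Dose_route) / (AUC_IV / Dose_IV)."""
    return (auc_route / dose_route) / (auc_iv / dose_iv)


if __name__ == "__main__":
    times = [0, 1, 2, 4]          # hours (illustrative)
    iv_conc = [10, 6, 3, 0]       # hypothetical plasma concentrations
    oral_conc = [0, 4, 3, 1]      # hypothetical plasma concentrations
    f_oral = bioavailability(auc_trapezoid(times, oral_conc), 10.0,
                             auc_trapezoid(times, iv_conc), 10.0)
    print(f"Oral bioavailability F = {f_oral:.2f}")
```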

Visualization of Key Concepts

Diagram 1: Framework for Interspecies LD50 Extrapolation

Table 4: Key Research Reagent Solutions for Interspecies Toxicity Research

| Tool / Resource | Function in Interspecies Research | Example/Source |

|---|---|---|

| Population-Based In Vivo Models | Model genetic diversity within a species to quantify variability in toxic response and identify sensitive subpopulations. | Diversity Outbred (DO) mice, Collaborative Cross (CC) mice [18]. |

| In Vitro Metabolic Systems | Compare species-specific metabolic rates and pathways without in vivo studies. | Liver microsomes, hepatocytes, or S9 fractions from human, rat, dog, mouse [20]. |

| Genetically Diverse Cell Lines | Assess inter-individual human variability in toxicodynamic response in a controlled system. | Population-based human cell line models (e.g., from 1000 Genomes donors) [18]. |

| Consensus QSAR Platforms | Generate health-protective LD50 predictions when experimental data are absent, using consensus of multiple models. | CATMoS, VEGA, TEST models combined into a Conservative Consensus Model (CCM) [22]. |

| Curated Reference LD50 Databases | Provide high-quality, replicated in vivo data to understand baseline variability and validate alternative methods. | AcutoxBase, EPA CompTox Chemicals Dashboard, ECHA database [23]. |

| PBPK Modeling Software | Integrate physiological, physicochemical, and metabolic data to mechanistically simulate internal dose across species and routes. | Commercial (GastroPlus, Simcyp) and open-source (PK-Sim) platforms. |

| Chemical Descriptor Software | Calculate physicochemical properties (logP, pKa, molecular weight) critical for QSAR and kinetic modeling. | OPERA, RDKit, ChemAxon. |

The comparative analysis of median lethal dose (LD50) values across different species represents a foundational pillar in ecotoxicology and drug development. To synthesize these discrete comparisons into a robust, predictive framework for ecological risk assessment, researchers employ Species Sensitivity Distributions (SSDs). An SSD is a statistical model that characterizes the variation in sensitivity to a toxicant across a community of species, typically by fitting a probability distribution (often log-normal) to a set of toxicity data points, such as LD50 or EC50 values [24]. The core parameters of this distribution—the mean and the standard deviation (SD)—quantify the central tendency and the variability in species sensitivity, respectively [24].

Accurately determining these parameters is critical. They are used to calculate the Hazardous Concentration for 5% of species (HC5), a key metric for deriving predicted no-effect concentrations (PNECs) for environmental pollutants [25] [24]. However, constructing a reliable SSD traditionally requires toxicity data for a minimum of 8-10 species, a significant barrier for new or data-poor chemicals [24]. This challenge has driven innovation in two key areas: 1) the development of computational models to predict SSD parameters with limited data, and 2) the refinement of statistical frameworks to quantify uncertainty in SSD estimates. This guide objectively compares these emerging methodologies against traditional laboratory-based testing, providing researchers with a clear overview of the tools available for quantifying variability in species sensitivity.

Product Comparison Guide: QSAR Platforms for LD50 and Toxicity Prediction

A primary alternative to extensive biological testing is the use of Quantitative Structure-Activity Relationship (QSAR) models. These computational tools predict toxicological endpoints, like rat oral LD50, based on the chemical structure of a compound [22]. The following table compares the performance of leading QSAR platforms and a consensus approach in predicting Globally Harmonized System (GHS) acute toxicity categories.

Table 1: Performance Comparison of QSAR Models for Acute Oral Toxicity Prediction (Rat LD50) [22]

| Model / Approach | Key Description | Over-prediction Rate (Toxicity Overestimated; Health-Protective) | Under-prediction Rate (Toxicity Underestimated; Not Health-Protective) | Primary Utility |

|---|---|---|---|---|

| TEST | Standalone QSAR model for toxicity estimation. | 24% | 20% | Initial screening, data gap filling. |

| CATMoS | Comprehensive Automated Toxicological Model using Simulations. | 25% | 10% | High-throughput toxicity prediction. |

| VEGA | Platform hosting multiple validated QSAR models. | 8% | 5% | Regulatory assessments, robust predictions. |

| Conservative Consensus Model (CCM) | Adopts the lowest predicted LD50 from TEST, CATMoS, and VEGA. | 37% | 2% | Health-protective screening under high uncertainty. |

Comparative Analysis: Individual models such as VEGA offer balanced performance with low under-prediction (5%), whereas the Conservative Consensus Model (CCM) is explicitly built on the precautionary principle [22]. By selecting the lowest predicted LD50 value from three independent models, the CCM minimizes the risk of underestimating toxicity (2% under-prediction) at the cost of a higher over-prediction rate (37%) [22]. This makes the CCM particularly valuable for prioritizing chemicals of concern or for making initial safety decisions in the absence of experimental data.

Experimental Protocol: Consensus QSAR Modeling

The methodology for developing and applying the CCM, as detailed in the research, involves a defined workflow [22].

- Input Compilation: The chemical structures of 6,229 organic compounds are standardized.

- Parallel Prediction: Each compound is submitted to three independent QSAR models: TEST, CATMoS, and VEGA to generate three distinct rat oral LD50 predictions.

- Consensus Application: For each compound, the lowest predicted LD50 value among the three outputs is identified and assigned as the CCM prediction.

- Validation & Categorization: Predicted LD50 values are converted into GHS toxicity categories. Performance is evaluated by comparing these predicted categories to the categories derived from experimental LD50 values, calculating rates of over- and under-prediction.
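The consensus and categorization steps above can be sketched as follows. This is a minimal illustration, not the code used in [22]: the model outputs in the example are hypothetical, the function names are introduced here, and the category cut-offs are the standard GHS acute oral thresholds (5, 50, 300, 2000, 5000 mg/kg).

```python
from typing import Dict, Optional

# GHS acute oral toxicity upper bounds (mg/kg) for Categories 1-5.
GHS_ORAL_UPPER_BOUNDS = [(5.0, 1), (50.0, 2), (300.0, 3), (2000.0, 4), (5000.0, 5)]


def ghs_oral_category(ld50_mg_per_kg: float) -> Optional[int]:
    """Return the GHS oral category, or None if above 5000 mg/kg (not classified)."""
    for upper, category in GHS_ORAL_UPPER_BOUNDS:
        if ld50_mg_per_kg <= upper:
            return category
    return None


def conservative_consensus(predictions: Dict[str, float]) -> float:
    """CCM rule: adopt the lowest predicted LD50 among the models."""
    return min(predictions.values())


def compare_categories(predicted_ld50: float, experimental_ld50: float) -> str:
    """Label a prediction as over-, under-, or correctly predicting toxicity."""
    def rank(cat: Optional[int]) -> int:
        return 6 if cat is None else cat  # treat 'not classified' as least toxic

    pred = rank(ghs_oral_category(predicted_ld50))
    exp = rank(ghs_oral_category(experimental_ld50))
    if pred < exp:
        return "over-prediction"   # predicted more toxic than observed
    if pred > exp:
        return "under-prediction"  # predicted less toxic than observed
    return "match"


if __name__ == "__main__":
    # Hypothetical model outputs (mg/kg) for a single compound.
    preds = {"TEST": 420.0, "CATMoS": 310.0, "VEGA": 650.0}
    ccm = conservative_consensus(preds)
    print(ccm, ghs_oral_category(ccm), compare_categories(ccm, 2500.0))
```

Taking the minimum guarantees the consensus is never less conservative than any single model, which is why under-prediction falls while over-prediction rises.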

Diagram 1: Workflow of a Conservative Consensus QSAR Model for LD50 Prediction

Core Methodology: Quantifying SSD Parameters and Their Uncertainty

When experimental data for multiple species are available, the standard approach is to fit a log-normal distribution to the log10-transformed toxicity values (e.g., LD50s). The mean (μ) and standard deviation (σ) of this fitted distribution are the foundational SSD parameters [24].
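As a rough sketch of this fitting step, the snippet below estimates μ and σ as the sample mean and sample SD of the log10-transformed values and derives a point estimate of the HC5 as the 5th percentile of the fitted distribution. The function names are hypothetical, the input values are illustrative only, and the HC5 here carries no confidence adjustment.

```python
import math
from statistics import NormalDist, mean, stdev
from typing import Sequence, Tuple


def fit_lognormal_ssd(toxicity_values: Sequence[float]) -> Tuple[float, float]:
    """Return (mu, sigma) of log10-transformed toxicity values."""
    if len(toxicity_values) < 2 or any(v <= 0 for v in toxicity_values):
        raise ValueError("need at least two positive toxicity values")
    logs = [math.log10(v) for v in toxicity_values]
    return mean(logs), stdev(logs)


def hazardous_concentration(mu: float, sigma: float, fraction: float = 0.05) -> float:
    """Point estimate of the concentration affecting `fraction` of species."""
    z = NormalDist().inv_cdf(fraction)  # about -1.645 for the HC5
    return 10 ** (mu + z * sigma)


if __name__ == "__main__":
    # Illustrative toxicity values for three species (same units throughout).
    mu, sigma = fit_lognormal_ssd([1.0, 10.0, 100.0])
    print(f"mu={mu:.2f}, sigma={sigma:.2f}, HC5={hazardous_concentration(mu, sigma):.3f}")
```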

Advanced Protocol: Estimating SSD Parameters from Minimal Data

One protocol addresses the data scarcity problem by using toxicity data from just three species to predict the μ and σ of a full SSD [24]. The predictive models are first trained on data-rich chemicals as follows:

- Data Curation: Collect quality-assured acute toxicity data (LC50/EC50) for at least 8 species per chemical, spanning algae, crustaceans, and fish. Data are log10-transformed [24].

- Full SSD Parameterization: For each chemical, calculate the sample mean and sample SD of the log-transformed data from all available species (the "true" reference values) [24].

- Three-Species Subsampling: For each chemical, randomly select one species from each of the three core taxonomic groups (algae, crustacean, fish). Calculate the mean and SD from only these three data points [24].

- Model Development: Build multiple linear regression models where the full SSD's μ and σ are the dependent variables. Predictors include physicochemical descriptors (e.g., log KOW) and, crucially, the mean and SD calculated from the three-species subset [24].

- Validation: Models are validated using a hold-out set of chemicals not used in the training phase [24].
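The three-species subsampling step in the protocol above can be sketched as follows. This is only an illustration of the sampling and summary calculation, not the regression models of [24]; the species labels and toxicity values are hypothetical placeholders.

```python
import math
import random
from statistics import mean, stdev
from typing import Dict, Tuple

CORE_GROUPS = ("algae", "crustacean", "fish")


def three_species_summary(data_by_group: Dict[str, Dict[str, float]],
                          rng: random.Random) -> Tuple[float, float]:
    """Pick one species per core group; return mean and SD of log10 values."""
    logs = []
    for group in CORE_GROUPS:
        # Sort for reproducibility before drawing a random species.
        _species, value = rng.choice(sorted(data_by_group[group].items()))
        logs.append(math.log10(value))
    return mean(logs), stdev(logs)


if __name__ == "__main__":
    # Hypothetical toxicity values (mg/L) for one chemical.
    chemical = {
        "algae": {"alga_1": 0.8, "alga_2": 1.5},
        "crustacean": {"crustacean_1": 3.2, "crustacean_2": 5.0},
        "fish": {"fish_1": 12.0, "fish_2": 20.0},
    }
    print(three_species_summary(chemical, random.Random(0)))
```

The resulting mean and SD would then serve as predictors, alongside physicochemical descriptors, in the regression step.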

Table 2: Performance of Models Predicting Full SSD Parameters from Limited Data [24]

| Prediction Target | Predictor Variables Used | Coefficient of Determination (R²) | Key Interpretation |

|---|---|---|---|

| SSD Mean (μ) | Physicochemical descriptors only (e.g., log KOW) | 0.62 | Descriptors alone explain a moderate amount of variance. |

| SSD Mean (μ) | Mean & SD from 3 species + descriptors | 0.96 | Three-species data drastically improves prediction accuracy. |

| SSD SD (σ) | Physicochemical descriptors only | 0.49 | Poor predictive power for variability using structure alone. |

| SSD SD (σ) | Mean & SD from 3 species + descriptors | 0.75 | Substantial improvement, enabling feasible σ estimation. |

Quantifying Uncertainty: The Assessment Factor Framework

The uncertainty in an estimated HC5 is formally quantified using an Assessment Factor (AF). The required AF size depends on the sample size (number of species tested) and the true, unknown variability (σ) in species sensitivity [25]. A derived statistical relationship shows that to protect 95% of species with 95% confidence, the AF can be calculated as [25]:

AF = exp( t × σ × √(1 + 1/n) )

where t is the critical value from the non-central t-distribution, σ is the estimated SD of the log-normal SSD, and n is the sample size [25]. This equation explicitly guides researchers on how much to adjust an HC5 estimate based on the quality and variability of their data.
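A minimal sketch of the AF expression as written above is given below. It assumes the critical value t has already been obtained by the procedure in [25] and simply evaluates the formula; the function name and the numbers in the example are hypothetical.

```python
import math


def assessment_factor(t_crit: float, sigma: float, n: int) -> float:
    """Evaluate AF = exp(t * sigma * sqrt(1 + 1/n)) for a supplied t."""
    if n < 2:
        raise ValueError("n must be at least 2 to estimate sigma")
    if sigma < 0:
        raise ValueError("sigma must be non-negative")
    return math.exp(t_crit * sigma * math.sqrt(1 + 1 / n))


if __name__ == "__main__":
    # Illustrative inputs only; t must come from the method in the cited work.
    for n in (3, 5, 10):
        print(n, round(assessment_factor(2.0, 0.5, n), 2))
```

For a fixed t and σ, the factor shrinks as n grows, reflecting the reduced uncertainty of larger datasets.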

Diagram 2: From Toxicity Data to Protected Concentration with Assessment Factors

Case Study: Beyond LD50 - The BeeGUTS TKTD Modeling Approach

A case study on bee pesticide risk assessment illustrates a paradigm shift from comparing static LD50 values to modeling dynamic toxicological processes. Traditional bee risk assessment relies on comparing 48-hour LD50 values between honey bees and other species [26]. However, the LD50 is time-dependent and influenced by physiological differences (e.g., body size, honey stomach volume), confounding true sensitivity comparisons [26].

The Toxicokinetic-Toxicodynamic (TKTD) modeling approach (BeeGUTS) separates these factors [26].

- Toxicokinetics (TK): Models the uptake, distribution, and elimination of the chemical within the bee over time.

- Toxicodynamics (TD): Models the damage accumulation and resulting mortality, characterized by an internal effect threshold, a time-independent parameter.

Table 3: Comparison of Traditional LD50 vs. TKTD Modeling for Bee Sensitivity Assessment

| Aspect | Traditional 48-hr LD50 Comparison | BeeGUTS TKTD Modeling Approach |

|---|---|---|

| Core Metric | Median Lethal Dose at 48 hours. | Internal threshold concentration (TD parameter). |

| Time Dependency | Highly time-dependent; value valid only for the test duration. | Time-independent; separates kinetics from dynamics. |

| Physiology Influence | Confounded by species size, feeding rates, and detoxification speed. | Explicitly accounted for in the toxicokinetic module. |

| Comparative Outcome | Can overestimate sensitivity differences between species [26]. | Reveals that honey bees are among the more sensitive species, with smaller inter-species differences than LD50s suggest [26]. |

| Data Requirement | Standard acute test results. | Time-series survival data from acute or chronic tests. |

This approach provides a more robust basis for cross-species extrapolation than simple LD50 comparisons, aligning with the SSD goal of understanding intrinsic sensitivity distributions [26].

Table 4: Key Research Reagent Solutions for SSD and LD50 Comparison Studies

| Item / Solution | Function in Research | Example / Note |

|---|---|---|

| Standard Test Organisms | Provide consistent, comparable toxicity endpoints (LC50, EC50) for SSD construction. | Algae (Pseudokirchneriella subcapitata), Crustacean (Daphnia magna), Fish (Oncorhynchus mykiss) [24]. |

| QSAR Software Platforms | Predict toxicity endpoints and classify hazards for data-poor chemicals. | VEGA, TEST, CATMoS models for rat oral LD50 prediction [22]. |

| Physicochemical Descriptors | Serve as predictors in models linking chemical structure to SSD parameters. | Log KOW (octanol-water partition coefficient), molecular weight [24]. |

| Curated Toxicity Databases | Source of quality-assured experimental data for model training and validation. | Data from regulatory assessments (e.g., Japan's IERAs) [24]. |

| Statistical Software & Packages | Perform distribution fitting, calculate HC5/PNEC, and implement uncertainty analysis. | R or Python with packages for SSD modeling and non-central t-distribution calculations [25]. |

| TKTD Modeling Software | Analyze time-series toxicity data to derive intrinsic sensitivity parameters. | BeeGUTS model framework for bee toxicity data [26]. |

Diagram 3: Logical Workflow Integrating Key Toolkit Components for SSD Analysis

Core Mechanistic Comparison of Toxicant Classes

This guide organizes toxicants by their primary Mode of Action (MoA), defined as a general functional or anatomical change leading to adverse outcomes, distinct from the precise biochemical mechanism [27]. This framework is essential for hazard assessment, predicting additive effects in mixtures, and interpreting cross-species differences in toxicity metrics like LD₅₀.

Table 1: Foundational Comparison of Narcotic, Neurotoxic, and Specific Toxicant Modes of Action

| Characteristic | Narcotics (Baseline Toxicity) | Neurotoxicants | Specific Receptor/Enzyme Toxicants |

|---|---|---|---|

| Primary MoA | Nonspecific membrane disruption (narcosis) [27]. | Disruption of nervous system function, structure, or development [28]. | Specific interaction with a defined molecular target (e.g., receptor, enzyme). |

| Biochemical Target | Lipid bilayer of cellular membranes [27]. | Ion channels, neurotransmitter systems, neuronal or glial cell integrity [29] [28]. | Specific proteins (e.g., Ah receptor, acetylcholinesterase) [27]. |

| Key Molecular Event | Accumulation in membranes, altering integrity and function [27]. | Oxidative stress, excitotoxicity, mitochondrial dysfunction, neuroinflammation [29] [30]. | High-affinity binding or catalytic inhibition/activation. |

| Potency Correlation | Correlates with lipophilicity (Kow); compounds are approximately equipotent based on tissue concentration [27]. | Varies widely; depends on affinity for neural targets and pharmacokinetics [29]. | Correlates with affinity for the specific target; potency varies dramatically between congeners [27]. |

| Additivity in Mixtures | Concentrations are directly additive (similar action) [27]. | May be additive if they share the same neurotoxic pathway (e.g., oxidative stress) [29]. | Additive only for compounds acting on the same specific target [27]. |

| Tissue Concentration at Effect | Acute lethality at ~2–8 μmol/g (lipid-normalized) [27]. | Sub-lethal effects can occur at very low concentrations; lethal dose varies [29]. | Can trigger effects at very low tissue concentrations (e.g., mutagenicity) [27]. |

| Representative Agents | Simple PAHs, many inert organic solvents [27]. | Methamphetamine, cocaine, lead, manganese, organophosphates (chronic) [29] [28]. | TCDD (AhR agonist), organophosphate pesticides (AChE inhibitors), MPP+ [27] [31]. |

Table 2: Comparison of Neurotoxicant Subcategories and Their Specific Mechanisms

| Neurotoxicant Class | Primary Molecular Target/Event | Downstream Consequences | Functional/Clinical Manifestation |

|---|---|---|---|

| Psychostimulants (e.g., Methamphetamine, Cocaine) | Monoamine transporter disruption → excessive dopamine/serotonin release [29]. | Oxidative stress, mitochondrial dysfunction, excitotoxicity [29]. | Cognitive impairment, psychosis, motor deficits [29]. |

| Dissociative Anesthetics (e.g., Ketamine, N₂O) | NMDA receptor antagonism (Ketamine) [29]; NMDAR antagonism & cobalamin oxidation (N₂O) [32]. | Glutamatergic disruption, excitotoxicity, impaired methylation & myelination (N₂O) [29] [32]. | Cognitive deficits, demyelinating neuropathy, paralysis [29] [32]. |

| Opioids (e.g., Heroin) | μ-opioid receptor agonism [29]. | Oxidative stress, neuroinflammation, mitochondrial dysfunction [29]. | Respiratory depression, cognitive deficits, leukoencephalopathy [29]. |

| Developmental Neurotoxicants (e.g., lead, PCBs) | Disruption of neural cell proliferation, migration, differentiation, synaptogenesis [31]. | Altered neural circuitry and connectivity [31]. | Lifelong cognitive, motor, or social deficits [31]. |

| Local Anesthetic Toxicity (e.g., high-dose lidocaine) | Disruption of mitochondrial function, uncoupling of oxidative phosphorylation [28]. | ATP depletion, oxidative stress, neuronal damage [28]. | Persistent neurological injury (e.g., cauda equina syndrome) [28]. |

Key Experimental Protocols for MoA Identification and Toxicity Assessment

In Vivo Acute Oral LD₅₀ Determination (OECD TG 423, 223)

This protocol establishes a foundational toxicity metric for cross-species and cross-compound comparison [4].

- Objective: Determine the single dose of a substance that causes lethality in 50% of a test population within a specified period [4].

- Test System: Typically rodents (rats/mice) or birds (e.g., Bobwhite quail) [4] [33]. Species selection is critical for extrapolation.

- Procedure:

  - Healthy young adult animals are randomly assigned to dose groups (typically 4-6).

  - Test compound is administered once via oral gavage in a graduated dose series.

  - Animals are observed meticulously for 14 days for signs of morbidity, mortality, and toxic response (clinical observations can provide initial MoA clues).

  - Time of death and gross pathological findings are recorded.

- Data Analysis: Mortality data are plotted (dose vs. % mortality) to generate a dose-response curve. The LD₅₀ value and its confidence interval are calculated using probit or logit analysis [4].

- Interpretation for MoA: Steep dose-response curves may indicate a specific receptor-mediated MoA, while shallower curves can suggest nonspecific toxicity. Notable differences in LD₅₀ across species can hint at variations in toxicokinetics or the presence/absence of a specific target [33].
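The data analysis step can be illustrated with a simplified log-probit calculation. This sketch fits an ordinary least-squares line to probit-transformed mortality against log10 dose, which approximates but is not the same as a full maximum-likelihood probit analysis, and it does not produce a confidence interval. Dose groups with 0% or 100% mortality are dropped because the probit is undefined there. The function name and example data are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Sequence, Tuple


def probit_ld50(doses: Sequence[float], n_tested: Sequence[int],
                n_dead: Sequence[int]) -> Tuple[float, float]:
    """Return (LD50, slope) from a least-squares fit of probit vs log10(dose)."""
    xs, ys = [], []
    for dose, n, dead in zip(doses, n_tested, n_dead):
        p = dead / n
        if 0 < p < 1:  # probit undefined at 0% and 100% mortality
            xs.append(math.log10(dose))
            ys.append(NormalDist().inv_cdf(p))
    if len(xs) < 2:
        raise ValueError("need at least two groups with partial mortality")
    x_bar, y_bar = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    if slope == 0:
        raise ValueError("no dose-response relationship detected")
    intercept = y_bar - slope * x_bar
    # The LD50 is the dose at which the fitted probit equals 0 (50% mortality).
    return 10 ** (-intercept / slope), slope


if __name__ == "__main__":
    # Hypothetical study: three dose groups of 10 animals each.
    ld50, slope = probit_ld50([10, 100, 1000], [10, 10, 10], [1, 5, 9])
    print(f"LD50 = {ld50:.1f} mg/kg, probit slope = {slope:.2f}")
```

The fitted slope also gives a quick numerical handle on the curve steepness discussed in the interpretation step.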

High-Throughput In Vitro Neurotoxicity Screening (NeuriTox Assay)

This protocol identifies specific neurotoxicants by assessing neurite outgrowth impairment, distinguishing them from general cytotoxins [31].

- Objective: Screen chemical libraries for specific developmental neurotoxicity potential using human-derived neurons.

- Test System: LUHMES cells (Lund human mesencephalic cells), differentiated into dopaminergic neurons over 6 days [31].

- Procedure [31]:

  - Cell Culture & Differentiation: LUHMES progenitors are seeded and differentiated using tetracycline, cAMP, and GDNF to mature neurons.

  - Compound Exposure: On day 6 of differentiation, cells are exposed to test compounds (e.g., a range up to 20 µM) for 48 hours in a 96-well plate format.

  - High-Content Imaging: Cells are fixed, stained for neuronal markers (e.g., β-III-tubulin) and a nuclear dye.

  - Image Analysis: Automated analysis quantifies total neurite length per neuron and cell viability (nuclear count/health).

- Data Analysis: Concentration-response curves are generated for both neurite length and viability. A compound is flagged as a specific neurotoxicant if it significantly inhibits neurite outgrowth at concentrations that do not reduce cell viability [31].

- Interpretation for MoA: This assay can pinpoint compounds with specific MoAs affecting neuronal connectivity (e.g., microtubule disruptors like colchicine) and differentiate them from baseline narcotics, which typically show concurrent cytotoxicity [31].
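The flagging logic in the data analysis step can be sketched as below. The source describes a statistical criterion ("significantly inhibits"); this sketch substitutes fixed percent-of-control cut-offs, which are assumptions chosen for illustration and not thresholds from [31]. The function names and example readouts are likewise hypothetical.

```python
from typing import List, Sequence


def specific_effect_concentrations(concs_um: Sequence[float],
                                   neurite_pct_control: Sequence[float],
                                   viability_pct_control: Sequence[float],
                                   neurite_cutoff: float = 75.0,
                                   viability_cutoff: float = 90.0) -> List[float]:
    """Concentrations where neurite length is reduced but viability is preserved."""
    return [c for c, neurite, viab
            in zip(concs_um, neurite_pct_control, viability_pct_control)
            if neurite <= neurite_cutoff and viab >= viability_cutoff]


def is_specific_neurotoxicant(concs_um: Sequence[float],
                              neurite_pct_control: Sequence[float],
                              viability_pct_control: Sequence[float]) -> bool:
    """Flag a compound if any tested concentration shows a specific effect."""
    return bool(specific_effect_concentrations(
        concs_um, neurite_pct_control, viability_pct_control))


if __name__ == "__main__":
    concs = [0.1, 1.0, 10.0, 20.0]          # µM
    neurite = [100.0, 80.0, 50.0, 20.0]     # hypothetical % of control
    viability = [100.0, 98.0, 95.0, 60.0]   # hypothetical % of control
    print(specific_effect_concentrations(concs, neurite, viability))  # [10.0]
```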

Visualizing Mechanistic Pathways and Screening Workflows

Diagram 1: Comparative Pathways from Exposure to Adverse Outcome by MoA

Comparative Toxicant Pathways: Narcotics, Neurotoxicants, Specific Toxicants

Diagram 2: High-Throughput Screening Workflow for Neurotoxicant Identification

In Vitro Screening Workflow for Specific Neurotoxicity [31]

The Scientist's Toolkit: Essential Reagents and Models

Table 3: Key Research Reagent Solutions for MoA-Driven Toxicology

| Reagent / Model System | Function in MoA Research | Application Example |

|---|---|---|

| LUHMES Cells | Human-derived dopaminergic neuronal precursor cell line. Differentiate into mature, homogeneous neurons for high-throughput neurotoxicity screening [31]. | Identifying specific developmental neurotoxicants by measuring neurite outgrowth inhibition (NeuriTox assay) [31]. |

| Neuronal Differentiation Media | Typically contains tetracycline, cAMP, and glial cell-derived neurotrophic factor (GDNF). Drives and supports terminal differentiation of progenitor cells into functional neurons [31]. | Essential for preparing LUHMES or similar cells for neurotoxicity testing that requires mature neuronal phenotypes [31]. |

| Poly-L-Ornithine (PLO) / Fibronectin Coating | Provides a substrate that enhances neuronal attachment, survival, and neurite outgrowth in vitro. | Standard coating protocol for culturing primary neurons or neuronal cell lines like LUHMES [31]. |

| High-Content Imaging Assay Kits | Kits containing fluorescent dyes for β-III-tubulin (neurons), MAP2 (neurites), and nuclear/viability markers (e.g., DAPI, propidium iodide). Enable multiplexed, automated quantification of neuronal health. | Critical for endpoint analysis in high-throughput neurotoxicity screens to quantify neurite length and cell viability simultaneously [31]. |

| NTP80 Compound Library | A curated library of 75+ chemicals with known or suspected neuro/developmental toxicity, PAHs, flame retardants, etc. Serves as a benchmark for assay validation [31]. | Used as a test set to evaluate the predictive performance of new in vitro toxicity assays (e.g., NeuriTox, PeriTox) [31]. |

| Bobwhite Quail (Colinus virginianus) | Standard avian model for in vivo acute oral toxicity testing (OECD TG 223). Used for determining species-specific LD₅₀ values [33]. | Developing cross-species toxicity extrapolation models and QSARs for ecological risk assessment [33]. |

From Animal Data to Human Predictions: Methodologies for Extrapolating and Applying Cross-Species LD50

Lethal Dose 50 (LD50) and Lethal Concentration 50 (LC50) are standard measures of acute toxicity, representing the dose or concentration of a substance required to cause death in 50% of a treated test population within a defined period [3]. These values provide a foundational, quantitative method for comparing the relative poisoning potency of different chemicals, overcoming the challenge of comparing diverse toxic effects by using mortality as a common, unambiguous endpoint [3]. The concept was pioneered by J.W. Trevan in 1927 to standardize the evaluation of drugs and medicines [3] [5].

Acute toxicity refers to adverse effects occurring shortly after a single administration or a short-term exposure (typically no longer than 24 hours), with animals usually observed for up to 14 days afterward [3]. LD50 applies to administered doses (oral, dermal, injection), while LC50 specifically refers to concentrations in an environmental medium, most commonly air for inhalation studies [3]. The values are expressed as the weight of chemical per unit body weight of the test animal (e.g., mg/kg for LD50) or as a concentration in air (e.g., ppm or mg/m³ for LC50) [3].

These tests are crucial for hazard identification, safety labeling (e.g., signal words like "Danger" or "Warning"), and establishing initial safety guidelines for human exposure, particularly in occupational and environmental health [34] [35]. They help define the toxic potency of a substance, where a lower LD50/LC50 value indicates higher toxicity [3] [4].

Comparative Analysis of Oral, Dermal, and Inhalation Study Designs

The route of exposure fundamentally influences a chemical's toxicity profile and is a critical variable in hazard assessment. Oral, dermal, and inhalation studies are designed to reflect different real-world exposure scenarios.

Oral LD50 Studies are the most frequently performed lethality tests [3]. This route is relevant for assessing risks from ingestion, such as drug overdose, food contamination, or accidental poisoning [3]. Experimentally, it is simpler and less expensive than other methods [3].

Dermal LD50 Studies evaluate toxicity when a chemical is applied to the skin, which is vital for occupational safety where skin contact is common [3]. The test measures the dose required to cause systemic toxicity after percutaneous absorption.

Inhalation LC50 Studies assess the toxicity of airborne substances, including gases, vapors, and particulates [3]. This is critical for setting workplace air quality standards and evaluating the risk from airborne pollutants. Exposure in these studies is typically for a fixed duration, often 4 hours [3].

The following table summarizes the core design elements and regulatory applications of these three primary test paradigms.

Table 1: Core Design Elements of Traditional Acute Toxicity Tests by Exposure Route [3] [34] [35]

| Design Parameter | Oral LD50 Study | Dermal LD50 Study | Inhalation LC50 Study |

|---|---|---|---|

| Primary Objective | Determine lethal dose by ingestion. | Determine lethal dose via skin absorption. | Determine lethal concentration by respiration. |

| Typical Test Species | Rat, mouse [3]. | Rat, rabbit [3]. | Rat, mouse [3]. |

| Exposure Duration | Single administration (gavage or feeding). | Single application to clipped skin (often 24-hour occluded contact). | Fixed period (commonly 4 hours) [3]. |

| Observation Period | Usually 14 days post-administration [3]. | Usually 14 days post-application [3]. | Usually 14 days post-exposure [3]. |

| Key Expression of Result | LD50 (mg of substance/kg body weight). | LD50 (mg of substance/kg body weight). | LC50 (ppm or mg/m³) with exposure time (e.g., 4-hr LC50). |

| Primary Regulatory Use | Drug safety, food poisoning, consumer product labeling [3]. | Occupational safety for chemicals handled by workers [3] [35]. | Workplace air quality standards, volatile chemical labeling [3]. |

| GHS Hazard Category (Example) | Cat. 1: ≤5 mg/kg; Cat. 2: >5-50 mg/kg [35]. | Cat. 1: ≤50 mg/kg; Cat. 2: >50-200 mg/kg [35]. | (Based on 4-hr exposure, dusts and mists) Cat. 1: ≤0.05 mg/L; Cat. 2: >0.05-0.5 mg/L [34]. |

Detailed Experimental Protocols and Methodologies

Oral Acute Toxicity (OECD Guidelines)

Standardized guidelines, such as those from the Organisation for Economic Co-operation and Development (OECD), ensure consistency and reliability. The traditional oral LD50 test (OECD TG 401) has been superseded by three refined methods: the Fixed Dose Procedure (OECD TG 420), the Acute Toxic Class Method (OECD TG 423), and the Up-and-Down Procedure (OECD TG 425) [34]. These methods use fewer animals, and the Fixed Dose Procedure relies on signs of evident toxicity rather than death as its endpoint.

A typical Fixed Dose Procedure involves [34]:

- Animal Selection: Healthy young adult rodents (usually rats), fasted prior to dosing.

- Dose Administration: A single dose is administered by oral gavage. Testing starts at the fixed dose level expected to produce clear signs of toxicity without severe or lethal effects (the guideline's fixed levels are 5, 50, 300, and 2000 mg/kg).

- Clinical Observations: Animals are observed intensively for toxic signs (e.g., changes in motor activity, tremors, convulsions, lethargy) for at least 14 days.

- Pathological Examination: Survivors are necropsied, and gross pathological changes are noted.

- Endpoint Determination: The study identifies the dose causing clear, observable toxicity. An LD50 estimate can be derived, but the primary goal is to classify the substance into a potency band (e.g., highly toxic, moderately toxic).

Dermal Acute Toxicity (OECD Guidelines)

The Dermal LD50 Test (OECD TG 402) evaluates systemic toxicity after a single topical application [34].

Key Protocol Steps:

- Skin Preparation: Fur is closely clipped from the dorsal/lumbar region of the test animal (e.g., rat, rabbit) 24 hours before application.

- Substance Application: The test substance, often moistened with water or a vehicle, is uniformly applied over approximately 10% of the body surface area. The site is covered with a porous gauze dressing and occlusive wrapping to prevent ingestion and evaporation for a 24-hour period.

- Post-Exposure: After 24 hours, the wrapping is removed, and residual test substance is washed off.

- Observations: Animals are observed daily for 14 days for mortality, clinical signs of toxicity, and local skin reactions (irritation).

- Data Analysis: Mortality data are analyzed using probit or logistic regression to calculate the dermal LD50 value in mg/kg.

Inhalation Acute Toxicity (OECD Guidelines)

The Acute Inhalation Toxicity Test (OECD TG 403) determines the LC50 of a substance in air [34].

Key Protocol Steps:

- Generation of Exposure Atmosphere: The test substance (gas, vapor, aerosol, or dust) is generated in a calibrated inhalation chamber at a constant, measurable concentration.

- Exposure: Groups of animals (usually rats) are placed in the chamber and exposed nose-only or whole-body to the target concentration for a fixed period, typically 4 hours [3].

- Post-Exposure Monitoring: Animals are removed, monitored for clinical signs, and observed for a standard 14-day period.

- Analytical Verification: The actual concentration of the test substance in the chamber air is measured analytically throughout the exposure period.

- Endpoint Calculation: The LC50 (expressed in mg/L or ppm) is calculated based on mortality at the end of the observation period.

Experimental Workflow for Acute Toxicity Testing

Comparative Toxicity Data and Interspecies Variation

A chemical's measured toxicity can vary dramatically depending on the route of exposure and the species tested. These differences are critical for accurate risk extrapolation.

Route-to-Route Comparisons

Data for specific chemicals illustrate how toxicity can change with the exposure pathway. For example, the insecticide dichlorvos shows significantly higher toxicity via inhalation compared to oral or dermal routes [3]:

- Oral LD50 (rat): 56 mg/kg

- Dermal LD50 (rat): 75 mg/kg

- Inhalation LC50 (rat): 1.7 ppm (4-hour exposure)

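This pattern is consistent with efficient absorption through the respiratory system and rapid delivery of toxins to systemic circulation. Note that the inhalation value is an air concentration (ppm) while the oral and dermal values are doses (mg/kg), so the routes are compared through route-specific classification scales rather than by the raw numbers. Consequently, a substance classified as "moderately toxic" orally may be "extremely toxic" by inhalation according to standard toxicity scales [3].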
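
As a small worked example, the dichlorvos inhalation LC50 can be re-expressed as a mass concentration. This is only a sketch: it assumes ideal-gas behavior at 25 °C and 1 atm (molar volume 24.45 L/mol) and a dichlorvos molar mass of about 220.98 g/mol. Neither value appears in the source data, and the function name is illustrative.

```python
# Assumption: ideal gas at 25 °C and 1 atm, molar volume 24.45 L/mol.
MOLAR_VOLUME_L_PER_MOL = 24.45

# Assumption: molar mass of dichlorvos (C4H7Cl2O4P), not given in the source.
DICHLORVOS_MOLAR_MASS_G_PER_MOL = 220.98

def ppm_to_mg_per_m3(ppm, molar_mass_g_per_mol):
    """Convert a gas-phase concentration from ppm (v/v) to mg/m^3."""
    return ppm * molar_mass_g_per_mol / MOLAR_VOLUME_L_PER_MOL

lc50_ppm = 1.7  # rat, 4-hour exposure, from the list above
lc50_mg_m3 = ppm_to_mg_per_m3(lc50_ppm, DICHLORVOS_MOLAR_MASS_G_PER_MOL)
lc50_mg_l = lc50_mg_m3 / 1000.0  # 1 m^3 = 1000 L

print(f"LC50 = {lc50_mg_m3:.1f} mg/m^3 = {lc50_mg_l:.4f} mg/L")
```

Under these assumptions the LC50 works out to roughly 15 mg/m^3. Turning that into an inhaled dose in mg/kg would additionally require breathing rate, exposure duration, and absorption fraction, which are not provided here.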

Interspecies Sensitivity

Significant differences in sensitivity exist even among avian species, as shown in a dataset comparing oral and dermal LD50 values for various pesticides [36]. For instance, for the insecticide fensulfothion:

- Oral LD50 for House Sparrow: 0.32 mg/kg

- Oral LD50 for Mallard Duck: 0.749 mg/kg

- Dermal LD50 for House Sparrow: 1.00 mg/kg

- Dermal LD50 for Mallard Duck: 2.86 mg/kg

These data show the house sparrow is more sensitive than the mallard to this compound by both routes. A broader analysis of bird species data found a statistically significant but moderate correlation (r=0.55) between oral and dermal LD50 values, leading to the predictive model: log Dermal LD50 = 0.84 + 0.62 * log Oral LD50 [36]. This model suggests dermal toxicity is generally lower (higher LD50) but can be estimated from oral data with caution, as the relationship is not perfectly predictive.
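
The regression can be applied directly, as in the sketch below. It assumes base-10 logarithms and LD50 values in mg/kg, which the equation as quoted does not state explicitly; the function name is illustrative.

```python
import math

def predict_dermal_ld50(oral_ld50_mg_per_kg):
    """Estimate a dermal LD50 from an oral LD50 using
    log10(dermal) = 0.84 + 0.62 * log10(oral)  (assumed base-10 logs)."""
    log_dermal = 0.84 + 0.62 * math.log10(oral_ld50_mg_per_kg)
    return 10 ** log_dermal

# Fensulfothion values quoted above: (oral LD50, observed dermal LD50) in mg/kg.
fensulfothion = {
    "House Sparrow": (0.32, 1.00),
    "Mallard Duck": (0.749, 2.86),
}

for species, (oral, observed_dermal) in fensulfothion.items():
    predicted = predict_dermal_ld50(oral)
    ratio = predicted / observed_dermal
    print(f"{species}: predicted {predicted:.2f} mg/kg, "
          f"observed {observed_dermal:.2f} mg/kg (ratio {ratio:.1f})")
```

For both fensulfothion examples the prediction overshoots the observed dermal LD50 by a factor of about two to three. That is in line with the moderate correlation (r=0.55) and shows why the model is best treated as a screening estimate.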

Table 2: Interspecies Variation in Acute Toxicity for Selected Chemicals [3] [36]

| Chemical | Species | Oral LD50 (mg/kg) | Dermal LD50 (mg/kg) | Inhalation LC50 | Notes |

|---|---|---|---|---|---|

| Dichlorvos | Rat | 56 | 75 | 1.7 ppm (4h) | Demonstrates high inhalation hazard [3]. |

| Dichlorvos | Rabbit | 10 | - | - | Rabbit is ~5.6x more sensitive than rat orally [3]. |

| Dichlorvos | Pig | 157 | - | - | Pig is ~2.8x less sensitive than rat orally [3]. |

| Carbofuran (Carbamate) | House Sparrow | 1.3 | 100.0 | - | Large (>75x) difference between oral and dermal routes [36]. |

| Parathion (Organophosphate) | Mallard Duck | 2.34 | 28.3 | - | Dermal LD50 is ~12x higher than oral [36]. |

| Disulfoton (Organophosphate) | Mallard Duck | 6.54 | 192.0 | - | Dermal LD50 is ~29x higher than oral [36]. |

| Disulfoton (Organophosphate) | Red-winged Blackbird | 3.2 | 1.00 | - | Exception: Dermal toxicity higher than oral [36]. |
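
The fold differences quoted in the Notes column are simple ratios of LD50 values. The short sketch below reproduces them from the tabulated numbers; the helper name `fold_difference` is illustrative.

```python
def fold_difference(ld50_numerator, ld50_denominator):
    """Return the ratio of two LD50 values (same units)."""
    return ld50_numerator / ld50_denominator

# Oral LD50 values for dichlorvos (mg/kg), from Table 2.
oral_dichlorvos = {"rat": 56.0, "rabbit": 10.0, "pig": 157.0}

# A lower LD50 means higher sensitivity, so rat/rabbit > 1 means the
# rabbit is more sensitive than the rat.
rat_vs_rabbit = fold_difference(oral_dichlorvos["rat"], oral_dichlorvos["rabbit"])
pig_vs_rat = fold_difference(oral_dichlorvos["pig"], oral_dichlorvos["rat"])

# Avian (oral, dermal) LD50 pairs in mg/kg, from Table 2.
route_pairs = {
    "Carbofuran, House Sparrow": (1.3, 100.0),
    "Parathion, Mallard Duck": (2.34, 28.3),
    "Disulfoton, Mallard Duck": (6.54, 192.0),
    "Disulfoton, Red-winged Blackbird": (3.2, 1.00),
}
dermal_to_oral = {
    name: fold_difference(dermal, oral)
    for name, (oral, dermal) in route_pairs.items()
}

print(f"rat/rabbit oral ratio: {rat_vs_rabbit:.1f}")
print(f"pig/rat oral ratio: {pig_vs_rat:.1f}")
for name, ratio in dermal_to_oral.items():
    print(f"{name}: dermal/oral = {ratio:.2f}")
```

A dermal/oral ratio below 1, as for disulfoton in the red-winged blackbird, flags the exceptional case where the dermal route is the more hazardous one.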

The Scientist's Toolkit: Essential Research Reagents and Materials

Conducting standardized LD50/LC50 studies requires specific materials and reagents to ensure consistent, reproducible results.

Table 3: Key Research Reagent Solutions and Materials for LD50/LC50 Studies

| Item | Function in Study | Typical Specification / Example |

|---|---|---|

| Test Substance (Pure Chemical) | The agent whose toxicity is being evaluated. Must be characterized for identity and purity. | >98% purity. Mixtures are rarely studied in classic LD50 tests [3]. |

| Vehicle/Control Article | A solvent or medium used to dissolve or suspend the test substance for administration. Ensures effects are due to the test substance alone. | Water, saline, corn oil, methylcellulose, or acetone (for dermal contact tests) [26]. |

| Laboratory Animal Diets | Provides standardized nutrition before, during (if applicable), and after exposure to maintain animal health and reduce confounding variables. | Certified rodent or rabbit feed, ad libitum except during fasting before oral gavage. |

| Anesthetic/Analgesic Agents | Used ethically for humane endpoints or necessary procedures (e.g., blood collection) during the observation period. | Isoflurane (inhalant), ketamine/xylazine (injectable). Their use is protocol-dependent. |

| Clinical Pathology Reagents | Used to analyze blood and urine samples collected during or post-study to assess organ function and metabolic disturbances. | Kits for serum chemistry (ALT, AST, BUN, creatinine), hematology (CBC), and urinalysis. |

| Fixative Solution | Preserves tissue morphology for subsequent histological examination from necropsied animals. | 10% Neutral Buffered Formalin. |

| Inhalation Chamber & Analytics | For LC50 studies: generates, houses, and analytically verifies the constant concentration of test atmosphere. | Whole-body or nose-only exposure chamber with real-time aerosol monitors or gas analyzers [34]. |

| Dosing Apparatus | Ensures accurate and precise delivery of the test substance. | Oral gavage needle (ball-tipped for safety), calibrated syringe, topical application syringe, occlusive dressing for dermal studies. |

Modern Context: From Traditional LD50 to Cross-Species Prediction

Traditional LD50/LC50 tests provide crucial hazard identification data but have limitations, including high animal use, a single-endpoint focus (death), and difficulty translating results directly to human health [5] [4]. Modern toxicology is addressing the problem of cross-species comparison through more sophisticated frameworks.

1. Mechanistic and Modeling Approaches: Studies now emphasize understanding toxicokinetics (what the body does to the chemical) and toxicodynamics (what the chemical does to the body) [26]. For example, research on bees shows that a 48-hour LD50 is a poor comparator between species because differences in body size, metabolism, and exposure kinetics confound the result [26]. Toxicokinetic-Toxicodynamic (TKTD) models, like the Bee General Uniform Threshold Model for Survival (BeeGUTS), separate physiological kinetics from inherent species sensitivity, providing a more robust basis for cross-species extrapolation [26].

2. Computational and In Vitro Methods: To reduce animal testing and improve human relevance, New Approach Methodologies (NAMs) are being prioritized [35]. These include computational models that use chemical properties and biological data to predict toxicity. Advanced machine learning frameworks now integrate genotype-phenotype differences between preclinical models (e.g., mice) and humans to better predict human-specific toxicities, such as neurotoxicity, which are poorly extrapolated from traditional animal LD50 data alone [37].

Diagram: Evolution from Traditional LD50 to Predictive Frameworks

A core challenge in ecotoxicology and comparative risk assessment is translating toxicity data across different exposure routes and species. Externally measured endpoints, such as the Lethal Concentration 50 (LC50) for aquatic organisms, are route-specific and difficult to compare directly with Lethal Dose 50 (LD50) values from oral or dermal studies in mammals [38]. This creates significant uncertainty when extrapolating hazards in a broader ecological or translational context.

This guide objectively compares the standard external (LC50) approach with the alternative method of converting LC50 to an internal dose using Bioconcentration Factors (BCF). The central thesis is that internal dose, estimated as a Critical Body Residue (CBR) or Lethal Residue 50 (LR50), provides a more unified and mechanistic basis for comparing toxicity across species and exposure routes than external concentrations alone [39] [38]. By bridging aquatic and terrestrial exposure routes, this conversion facilitates a more accurate comparison of LD50 values, advancing the goal of understanding intrinsic species sensitivity.

Performance Comparison: External LC50 vs. BCF-Derived Internal Dose

The primary advantage of converting to an internal dose is the reduction of variability introduced by differences in chemical uptake and pharmacokinetics across species. The following tables summarize key comparative findings.

Table 1: Comparison of Core Concepts and Metrics

| Aspect | Standard LC50 Approach | BCF-Based Internal Dose Approach |

|---|---|---|

| Primary Metric | Lethal Concentration 50 (LC50) in water (e.g., mg/L) [40]. | Critical Body Residue (CBR) or Lethal Residue 50 (LR50) in tissue (e.g., mmol/kg) [39] [38]. |

| Basis | External exposure in the medium. | Internal concentration at the presumed site of toxic action. |

| Key Converting Parameter | Not applicable. | Bioconcentration Factor (BCF) or Bioaccumulation Factor (BAF) [40]. |

| Relationship | LC50 = CBR / BCF (at steady-state) [39]. | LR50 = LC50 × BCF [38]. |

| Primary Application | Setting water quality criteria (e.g., CMC, CCC) [40]. | Cross-species and cross-route toxicity extrapolation; mode of action analysis [39] [38]. |

Table 2: Quantitative Comparison of Variability Across Test Types [38]

| Toxicity Metric | Exposure Route | Typical Test Organisms | Average Standard Deviation (log10 units) | Interpretation of Variability |

|---|---|---|---|---|

| LC50 | Aquatic (water) | Fish, invertebrates, algae | ~0.5 | Higher variability. Reflects differences in species' uptake efficiency, gill physiology, and water chemistry. |

| LD50 | Oral (dietary) | Birds, mammals | ~0.3 | Lower variability. Less influenced by external medium, but affected by gut absorption and metabolism. |

| LR50 (LC50 × BCF) | Internal (tissue) | Derived for aquatic species | Reduced vs. LC50 | Reduced variability relative to LC50. Internal concentration is a better predictor of toxic effect because it normalizes for uptake differences. |

The data indicate that internal lethal residues (LR50) derived from LC50 and BCF exhibit reduced variability compared to raw LC50 values [38]. This supports the thesis that internal dose serves as a more consistent metric for comparing intrinsic toxicity across diverse species, a foundation for robust LD50 comparisons.
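
To make the variability metric concrete, the sketch below computes a standard deviation in log10 units for one chemical across species. It is an illustration only: it reuses the three dichlorvos oral LD50 values from the earlier interspecies table, not the dataset behind Table 2 [38], and three values are far too few for a stable estimate.

```python
import math
import statistics

def log10_sd(values):
    """Sample standard deviation of log10-transformed toxicity values."""
    return statistics.stdev([math.log10(v) for v in values])

# Dichlorvos oral LD50 (mg/kg) for rat, rabbit, and pig, as tabulated earlier.
dichlorvos_oral_ld50 = [56.0, 10.0, 157.0]

sd = log10_sd(dichlorvos_oral_ld50)
print(f"Interspecies SD = {sd:.2f} log10 units")
```

An SD of 0.3 log10 units corresponds to roughly a twofold spread around the geometric mean, and 0.5 to roughly a threefold spread, which puts the differences in Table 2 on an intuitive scale.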

Experimental Protocol for Conversion

The conversion of LC50 to internal dose is grounded in toxicokinetic modeling. The following protocol details the application of a one-compartment model, as used in the Chemical Exposure Toxicity Space (CETS) model [39].

Core Principle and Equation

The model assumes the organism is a single compartment where chemical uptake from water and elimination are first-order processes. The internal concentration in the organism (C_F) over time is given by [39]:

C_F = C_W × (k₁/(k₂ + k_M)) × (1 − exp[−(k₂ + k_M)t])

Where:

- C_W = Dissolved concentration in water (mg/L)

- k₁ = First-order uptake rate constant (L/kg/day)

- k₂ = First-order elimination rate constant (1/day)

- k_M = First-order metabolic transformation constant (1/day)

- t = Exposure time (days)

At a lethal endpoint (e.g., 96-hour LC50), the internal concentration C_F is assumed to equal the Critical Body Residue for 50% lethality (CBR50). The ratio k₁/(k₂ + k_M) is the Bioconcentration Factor (BCF). For a non-metabolized chemical (k_M = 0), the steady-state BCF simplifies to k₁/k₂ [39].
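
The one-compartment equation can be sketched in a few lines. The rate constants and water concentration below are hypothetical placeholders chosen only to show the calculation; they do not describe any real chemical or species.

```python
import math

def bioconcentration_factor(k1, k2, k_m=0.0):
    """Steady-state BCF (L/kg) = k1 / (k2 + k_M)."""
    return k1 / (k2 + k_m)

def internal_concentration(c_w, k1, k2, k_m, t_days):
    """C_F = C_W * (k1/(k2+k_M)) * (1 - exp(-(k2+k_M)*t)), in mg/kg."""
    k_total = k2 + k_m
    return c_w * (k1 / k_total) * (1.0 - math.exp(-k_total * t_days))

# Hypothetical parameters (assumptions for illustration only).
c_w = 0.1   # mg/L, dissolved water concentration
k1 = 100.0  # L/kg/day, uptake rate constant
k2 = 0.5    # 1/day, elimination rate constant
k_m = 0.0   # 1/day, no metabolic transformation

bcf = bioconcentration_factor(k1, k2, k_m)
c_f_96h = internal_concentration(c_w, k1, k2, k_m, t_days=4.0)
fraction_of_steady_state = c_f_96h / (c_w * bcf)

print(f"BCF = {bcf:.0f} L/kg")
print(f"C_F at 96 h = {c_f_96h:.2f} mg/kg "
      f"({fraction_of_steady_state:.0%} of steady state)")
```

With these placeholder values the organism reaches only about 86% of its steady-state residue after 96 hours. For slowly eliminated chemicals, multiplying a 96-hour LC50 by the steady-state BCF can therefore overestimate the body residue actually present at death.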

Step-by-Step Conversion Methodology

- Determine the LC50: Obtain a validated LC50 value (preferably time-specific, e.g., 96-hr LC50) for the chemical and species of interest from standard toxicity tests (e.g., OECD Test Guideline 203) [39].

- Obtain or Estimate the BCF:

- Preferred: Use a measured BCF from a standardized test (e.g., OECD TG 305) [39].

- Estimated: For non-polar organic chemicals, a BCF can be estimated from the octanol-water partition coefficient (K_OW) and the organism's lipid fraction (L): BCF ≈ L × K_OW [39].

- For metabolized chemicals, use a metabolism-corrected BCF (BCF_M = k₁/(k₂ + k_M)).

- Calculate the Internal Dose (CBR50): Apply the fundamental conversion equation:

CBR_50 = LC_50 × BCF

This yields an estimated Lethal Residue 50 (LR50) in units of mass or moles per kg of organism tissue [38].

- Apply to Cross-Species Comparison: The derived CBR50 (LR50) can be compared directly with:

- LR50 values from other aquatic species for the same chemical.

- Internally-derived doses from mammalian LD50 studies after accounting for mammalian pharmacokinetics (reverse dosimetry) [41].

- Critical target-site concentrations proposed for specific modes of toxic action (e.g., narcosis baseline) [39].
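
The conversion steps above can be strung together as a short sketch. All input values (log K_OW, lipid fraction, LC50, molar mass) are hypothetical, the function names are illustrative, and the K_OW-based estimate assumes a non-metabolized, non-polar chemical at steady state with lipid density close to 1 kg/L.

```python
def estimate_bcf(log_kow, lipid_fraction):
    """Estimate BCF (L/kg) for a non-polar organic as L * K_OW."""
    return lipid_fraction * 10 ** log_kow

def lc50_to_cbr50(lc50_mg_per_l, bcf_l_per_kg):
    """CBR50 (mg/kg tissue) = LC50 (mg/L) * BCF (L/kg)."""
    return lc50_mg_per_l * bcf_l_per_kg

def mg_per_kg_to_mmol_per_kg(residue_mg_per_kg, molar_mass_g_per_mol):
    """Convert a tissue residue from mg/kg to mmol/kg."""
    return residue_mg_per_kg / molar_mass_g_per_mol

# Hypothetical inputs (assumptions for illustration only).
log_kow = 4.0         # octanol-water partition coefficient, log10
lipid_fraction = 0.05  # kg lipid per kg organism
lc50 = 1.0            # mg/L, 96-h LC50
molar_mass = 200.0    # g/mol

bcf = estimate_bcf(log_kow, lipid_fraction)
cbr50_mg = lc50_to_cbr50(lc50, bcf)
cbr50_mmol = mg_per_kg_to_mmol_per_kg(cbr50_mg, molar_mass)

print(f"BCF = {bcf:.0f} L/kg")
print(f"CBR50 = {cbr50_mg:.0f} mg/kg = {cbr50_mmol:.2f} mmol/kg")
```

Expressing the result in mmol/kg puts it in the molar tissue units used for CBR and LR50 in Table 1. A measured BCF from a standardized test should replace the K_OW estimate whenever one is available.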

Conceptual and Analytical Workflows

Diagram 1: Workflow for Converting Aquatic LC50 to Internal Dose

Diagram 2: Methodology for Cross-Species Toxicity Comparison [38]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Tools for BCF-Based Internal Dose Research

| Tool/Reagent | Function in Conversion Research | Typical Source/Example |

|---|---|---|

| Standardized Toxicity Test Kits (e.g., OECD 203) | To generate high-quality, reproducible LC50 data for the chemical of interest. | Commercial lab suppliers; regulatory test guidelines. |

| Bioconcentration Test Systems (e.g., OECD 305) | To generate empirical BCF values via controlled uptake/elimination studies. | Flow-through or semi-static exposure chamber systems. |

| Chemical Analysis Standards (Pure analyte & internal standards) | For accurate quantification of chemical concentration in water and tissue matrices. | Certified reference material (CRM) providers. |

| Passive Sampling Devices (e.g., SPMD, POCIS) | To measure freely dissolved chemical concentration (C_W), improving BCF/LC50 accuracy. | Environmental sampling suppliers. |

| Toxicokinetic Modeling Software | To implement one-compartment or PBPK models for BCF prediction and dose conversion. | E.g., AQUAWEB model, EPA ECOSAR [39]. |

| High-Throughput Log KOW Analyzers | To measure octanol-water partition coefficient, a key parameter for estimating baseline BCF. | Shake-flask or HPLC-based analytical methods. |