Beyond LD50: The Scientific and Regulatory Revolution in Human-Relevant In Vitro Toxicology Testing

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the paradigm shift away from the classical LD50 animal test. It explores the scientific limitations and ethical concerns driving the search for alternatives [citation:1][citation:6], examines the suite of modern in vitro methodologies from high-throughput assays to organ-on-chip systems [citation:3][citation:4], addresses key technical and validation challenges in implementation [citation:2][citation:7], and evaluates the comparative performance and regulatory acceptance of these New Approach Methodologies (NAMs) [citation:5][citation:8]. The synthesis concludes that integrated in vitro and in silico strategies are poised to become the new standard for predictive safety assessment.

From LD50 to NAMs: Understanding the Imperative for In Vitro Alternatives

Acute systemic toxicity testing evaluates the adverse effects that occur after a single exposure, or multiple exposures within 24 hours, to a substance via the oral, dermal, or inhalation route [1]. For nearly a century, the median lethal dose (LD50), the dose estimated to kill 50% of a test animal population, has been a cornerstone metric for quantifying this toxicity and comparing the hazardous potential of different chemicals [1] [2]. First introduced by J.W. Trevan in 1927, the LD50 test was designed to standardize the measurement of a substance's poisoning potency, using death as a universal, quantal endpoint [1] [2] [3].

Regulatory bodies have historically required LD50 data for the classification, labeling, and risk assessment of chemicals, pharmaceuticals, and consumer products [1] [4]. The resulting value, expressed as mass of substance per kilogram of animal body weight (e.g., mg/kg), places a chemical on a toxicity scale [2]. As shown in Table 1, a lower LD50 value indicates higher toxicity [1] [5].

Table 1: Acute Oral Toxicity Classification Based on LD50 Values (Rat)

| LD50 Range (mg/kg) | Toxicity Class | Probable Lethal Dose for a 70 kg Human |
|---|---|---|
| ≤ 5 | Extremely Toxic | A taste (< 7 drops) |
| 5 – 50 | Highly Toxic | 1 tsp (4 ml) |
| 50 – 500 | Moderately Toxic | 1 oz (30 ml) |
| 500 – 5000 | Slightly Toxic | 1 pint (600 ml) |
| > 5000 | Practically Non-toxic | > 1 quart (1 L) |
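The banding in Table 1 can be expressed as a simple lookup. The following is a minimal sketch, not a regulatory tool: the function name is hypothetical, and because the table's ranges share their boundary values (5, 50, 500, 5000), the sketch assumes each upper bound is inclusive.

```python
def classify_oral_ld50(ld50_mg_per_kg: float) -> str:
    """Map a rat oral LD50 (mg/kg) to the toxicity class listed in Table 1."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be a positive dose in mg/kg")
    # (upper bound in mg/kg, class label); upper bounds are treated as
    # inclusive, which is an assumption since the table ranges overlap.
    bands = [
        (5, "Extremely Toxic"),
        (50, "Highly Toxic"),
        (500, "Moderately Toxic"),
        (5000, "Slightly Toxic"),
    ]
    for upper, label in bands:
        if ld50_mg_per_kg <= upper:
            return label
    return "Practically Non-toxic"


if __name__ == "__main__":
    for value in (3, 200, 6000):
        print(value, "mg/kg ->", classify_oral_ld50(value))
```

Because a lower LD50 means higher toxicity, the bands are checked from the most toxic class downward and the first match wins.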

Despite its historical role, the scientific validity and ethical justifiability of the classical LD50 test are now fundamentally questioned. This critique has driven a paradigm shift toward the 3Rs principle (Replacement, Reduction, and Refinement of animal use) and accelerated the development of human-relevant in vitro and in silico methodologies [1] [6].

Scientific Critique and Historical Evolution of Methods

The classical LD50 test, developed in the 1920s, required large numbers of animals (up to 100) distributed across several dose groups to precisely calculate the lethal dose [1]. This method was fraught with significant scientific limitations: high biological variability, substantial cost, and the provision of limited mechanistic data beyond a mortality percentage [1] [7]. Furthermore, the requirement to observe severe suffering and death as primary endpoints raised profound ethical issues [7] [8].

These limitations spurred the development of alternative in vivo methods designed to refine procedures and reduce animal numbers. Regulatory bodies like the Organisation for Economic Co-operation and Development (OECD) have endorsed several of these approaches [1].

Table 2: Evolution of Key Methods for Acute Toxicity Assessment

| Method (OECD Guideline) | Year Introduced | Key Principle | Typical Animal Use | Regulatory Status |
|---|---|---|---|---|
| Classical LD50 | 1920s | Mortality curve across multiple doses | 40-100 animals | Largely abandoned |
| Fixed Dose Procedure (FDP, 420) | 1992 | Identifies toxicity signs at fixed doses, avoids mortality | 5-20 animals | Approved |
| Acute Toxic Class (ATC, 423) | 1996 | Uses stepwise dosing with 3 animals per step | 6-18 animals | Approved |
| Up-and-Down Procedure (UDP, 425) | 1998/2008 | Sequential dosing of single animals | 6-15 animals | Approved |

While these refined in vivo methods represent progress, they do not constitute a full replacement for animal use. A more transformative shift is underway with New Approach Methodologies (NAMs), which include advanced in vitro models and in silico tools. This transition is being actively supported by regulatory agencies; for example, the U.S. FDA announced a plan in 2025 to phase out animal testing requirements for certain drugs, promoting the use of NAMs instead [6].

Ethical and Translational Concerns

The ethical objections to the LD50 test are severe and center on the intense and prolonged suffering inflicted on test animals. Symptoms preceding death can include tremors, convulsions, diarrhea, internal bleeding, and difficulty breathing over a period that may extend to days or weeks [7] [8]. As mortality is the primary endpoint, dying animals are typically not euthanized to relieve suffering, which contravenes modern ethical standards for animal welfare [7].

Beyond ethics, a core scientific failing is the poor human translatability of animal-derived LD50 data. Interspecies differences in anatomy, physiology, and metabolism mean that toxicity results in rodents or rabbits often do not accurately predict human responses [1] [4]. This lack of predictive validity creates tangible human health risks, as dangerous products might be deemed safe or vice versa [4]. The scientific critique is clear: the LD50 test is increasingly viewed as a crude and unreliable tool for modern safety assessment, which demands mechanistic understanding and human-relevant data [7] [5].

Application Notes: In Vitro and Alternative Methodologies

The limitations of the LD50 paradigm have catalyzed the development and validation of non-animal methods that align with the ultimate goal of full replacement. These methodologies offer greater human relevance, mechanistic insight, and throughput.

1. Advanced In Vitro Cell-Based Assays: Engineered human cell lines represent a direct replacement for specific, high-impact animal tests. A landmark example is the development of engineered human neuroblastoma cells for testing botulinum and tetanus toxins, which are otherwise tested in mouse LD50 assays. Researchers modified the cells to express the proteins needed for toxin uptake (the receptor Synaptotagmin II and NTNH). This cell-based assay not only replaces animal use but also demonstrated tenfold greater sensitivity to botulinum B toxin than the traditional mouse bioassay [9].

2. Microphysiological Systems (MPS) and Organoids: Organ-on-a-chip devices and 3D organoids model complex tissue-level and organ-level functions. These systems use human cells to create miniature models of organs like the liver, lung, or kidney, allowing researchers to study systemic toxic effects and absorption in a more physiologically relevant context than static cell cultures [6].

3. In Silico and Computational Toxicology: Computer models and artificial intelligence (AI) are used to predict acute toxicity based on a compound's chemical structure and existing data from similar compounds. These quantitative structure-activity relationship (QSAR) models are fast, cost-effective, and can prioritize chemicals for further testing, significantly reducing animal use [1] [6].

4. Antibody-Based Assays: Immunoassays like the enzyme-linked immunosorbent assay (ELISA) use antibodies to detect and quantify specific toxins with high sensitivity and specificity. Such assays are now viable alternatives for potency testing of biologics like vaccines and antitoxins, replacing animal-based methods [10].

5. Integrated Testing Strategies: A single alternative method may not capture all aspects of in vivo toxicity. Therefore, the most robust approach is an Integrated Testing Strategy (ITS), which combines information from multiple sources (e.g., in silico predictions, in vitro cytotoxicity data, and in chemico reactivity assays) within a defined framework to make a reliable hazard classification without animal testing [6].

Protocols for Key Methodologies

Protocol 1: Refined In Vivo Acute Oral Toxicity Test – Fixed Dose Procedure (OECD 420)

This protocol is used for hazard identification and classification while avoiding lethality as an endpoint.

- Test System: Young adult rats (typically females). Healthy, acclimatized animals are used.

- Dose Selection: A sighting study may be performed to choose an appropriate starting dose from predetermined levels (5, 50, 300, 2000 mg/kg).

- Dosing: A single dose is administered orally via gavage to a group of 5 animals.

- Observation: Animals are observed individually for signs of toxicity (e.g., changes in skin, fur, eyes, respiration) at least twice daily for 14 days. Detailed clinical records are kept.

- Decision Tree:

  - If animals show clear signs of toxicity but survive, the test is concluded at that dose level.

  - If mortality or severe suffering occurs, the test is repeated at the next lower dose.

  - If no toxicity is observed, the test is repeated at the next higher dose.

- Classification: The substance is classified based on the dose that produced clear signs of toxicity, without the need to determine a precise LD50 [1].
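The decision tree in this protocol can be sketched as a small state-transition function. This is an illustration only: the outcome labels and function name are hypothetical, and the behaviour at the lowest and highest fixed doses (stopping rather than stepping further) is an assumption, since the text above does not describe those edge cases.

```python
# Predetermined fixed dose levels from the protocol text (mg/kg).
FIXED_DOSES_MG_PER_KG = [5, 50, 300, 2000]


def fdp_next_step(dose, outcome):
    """Return ("stop", dose) or ("test", next_dose) for one dosing round.

    outcome is one of the hypothetical labels:
      "evident_toxicity"    - clear signs of toxicity, animals survive
      "mortality_or_severe" - death or severe suffering observed
      "no_toxicity"         - no signs of toxicity observed
    """
    i = FIXED_DOSES_MG_PER_KG.index(dose)  # ValueError if not a fixed dose
    if outcome == "evident_toxicity":
        return ("stop", dose)
    if outcome == "mortality_or_severe":
        if i == 0:  # assumption: nothing lower to test, so stop here
            return ("stop", dose)
        return ("test", FIXED_DOSES_MG_PER_KG[i - 1])
    if outcome == "no_toxicity":
        if i == len(FIXED_DOSES_MG_PER_KG) - 1:  # assumption: stop at top dose
            return ("stop", dose)
        return ("test", FIXED_DOSES_MG_PER_KG[i + 1])
    raise ValueError(f"unknown outcome: {outcome!r}")


if __name__ == "__main__":
    print(fdp_next_step(300, "no_toxicity"))
    print(fdp_next_step(300, "mortality_or_severe"))
```

The point of the sketch is that classification comes from the dose at which testing stops, not from a fitted mortality curve.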

Protocol 2: In Vitro Neutral Red Uptake (NRU) Cytotoxicity Assay (OECD 129)

This baseline cytotoxicity assay identifies substances that are not classified for acute systemic toxicity.

- Cell Culture: Maintain 3T3 mouse fibroblast or normal human keratinocyte (NHK) cells in standard culture conditions.

- Seeding: Seed cells into 96-well plates at a density ensuring logarithmic growth and allow to attach for 24 hours.

- Test Substance Exposure: Prepare a series of test substance dilutions in culture medium. Replace the medium in the wells with the dilutions, including a vehicle control. Incubate for 48 hours.

- Neutral Red Uptake: After exposure, carefully wash cells and add a medium containing Neutral Red dye. Incubate for 3 hours to allow viable cells to incorporate the dye into lysosomes.

- Wash and Extract: Quickly wash cells to remove unincorporated dye. Add a desorb solution (e.g., ethanol/acetate buffer) to extract the dye from the cells.

- Quantification: Measure the absorbance of the extracted dye at 540 nm using a plate reader.

- Data Analysis: Calculate cell viability in each well as a percentage of the vehicle control. Determine the IC50 (the concentration that reduces Neutral Red uptake by 50% relative to the control). According to the guideline, a substance with an IC50 > 1000 µM (or 2000 mg/L for low solubility substances) in both cell lines may be considered not classified for acute oral toxicity [1].
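The data analysis step can be illustrated with a short calculation. This is a minimal sketch under stated assumptions: the function names are hypothetical, blank subtraction is optional, and the IC50 is estimated by linear interpolation on log10 concentration between the two points that bracket 50% viability. A fitted concentration-response model would normally be preferred.

```python
import math


def percent_viability(sample_od, vehicle_od, blank_od=0.0):
    """Absorbance at 540 nm expressed as a percentage of the vehicle control."""
    return 100.0 * (sample_od - blank_od) / (vehicle_od - blank_od)


def ic50_log_interpolation(concentrations, viabilities):
    """Estimate the IC50 from ascending concentrations and % viability values.

    Returns None when viability never falls below 50% in the tested range.
    """
    points = list(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 > v_hi:
            # Fraction of the way from c_lo to c_hi (on a log scale)
            # at which viability crosses 50%.
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (
                math.log10(c_hi) - math.log10(c_lo)
            )
            return 10 ** log_ic50
    return None


if __name__ == "__main__":
    conc_um = [1, 10, 100, 1000]
    viab = [100.0, 90.0, 10.0, 0.0]
    print(ic50_log_interpolation(conc_um, viab))
```

Returning None when 50% inhibition is never reached keeps "IC50 above the highest tested concentration" distinct from a numerical estimate.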

Protocol 3: Cell-Based Assay for Botulinum Toxin Potency (Replacement for Mouse LD50)

This specific protocol outlines the core steps for replacing the mouse bioassay for botulinum neurotoxin type B (BoNT/B).

- Engineered Cell Line: Use engineered human neuroblastoma cells (e.g., SiMa cells) stably expressing the human receptor Synaptotagmin II (Syt II) and the universal booster protein NTNH to enable high-sensitivity toxin uptake [9].

- Cell Preparation: Seed the engineered cells into 96-well assay plates and culture until they reach 80-90% confluency.

- Toxin Exposure and Internalization: Serially dilute the BoNT/B test sample and reference standard in assay buffer. Apply dilutions to the cells. Incubate to allow receptor binding, internalization, and intracellular proteolytic activity.

- Detection of Cleavage Product: Lyse the cells. Detect the cleaved target substrate (e.g., VAMP-2) using a specific antibody pair in a sandwich immunoassay format (e.g., ELISA or electrochemiluminescence).

- Dose-Response Analysis: Plot the signal from the cleavage product against the log of the toxin concentration. Generate a 4-parameter logistic curve for both the test sample and the reference standard.

- Potency Calculation: Calculate the relative potency of the test sample by comparing its half-maximal effective concentration (EC50) to that of the reference standard. This assay has shown tenfold higher sensitivity than the mouse LD50 bioassay [9].
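The dose-response and potency steps can be written down compactly. The sketch below shows one common parameterisation of the 4-parameter logistic curve and a relative potency computed as the ratio of EC50 values. The function names are hypothetical, curve fitting is left to a fitting library, and comparing EC50 values assumes the test and reference curves are parallel (similar slope and asymptotes).

```python
def four_pl(conc, bottom, top, ec50, hill):
    """Four-parameter logistic: signal rises from bottom to top with conc."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def relative_potency(ec50_reference, ec50_test):
    """Potency of the test sample relative to the reference (1.0 = equal).

    A lower EC50 means a more potent sample, so the ratio is
    reference EC50 divided by test EC50.
    """
    if ec50_reference <= 0 or ec50_test <= 0:
        raise ValueError("EC50 values must be positive")
    return ec50_reference / ec50_test


if __name__ == "__main__":
    # At conc == ec50 the curve sits halfway between bottom and top.
    print(four_pl(2.0, bottom=0.0, top=100.0, ec50=2.0, hill=1.5))
    print(relative_potency(ec50_reference=10.0, ec50_test=5.0))
```

A test sample with half the EC50 of the reference therefore has a relative potency of 2.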

The Scientist's Toolkit: Essential Reagents for In Vitro Alternatives

Table 3: Key Research Reagent Solutions for In Vitro Acute Toxicity Assessment

| Reagent/Material | Function in Experiment | Example Application |
|---|---|---|
| Engineered Neuroblastoma Cells (e.g., expressing Syt II & NTNH) | Engineered to express human toxin receptors, enabling sensitive measurement of toxin internalization and enzymatic activity. | Potency testing of botulinum and tetanus toxins, replacing mouse LD50 bioassay [9]. |
| Toxin-Specific Monoclonal Antibodies | High-specificity binders used in ELISA to detect and quantify toxins or their cleaved substrates. | Quantifying toxin potency in cell lysates; detecting contaminants [10]. |
| Neutral Red Dye Solution | A vital dye taken up and retained by the lysosomes of viable cells; serves as a cytotoxicity endpoint. | 3T3 NRU or NHK NRU assays for baseline cytotoxicity (OECD 129) [1]. |
| Organ-on-a-Chip/Microphysiological System (MPS) | Microfluidic device containing human cells that mimics tissue/organ structure and function for mechanistic toxicity studies. | Modeling absorption and systemic toxicity in human-relevant liver, lung, or gut models [6]. |
| Matrices for 3D Cell Culture (e.g., Basement Membrane Extracts) | Provides a scaffold for cells to form 3D organoid structures with better physiological cell-cell interactions. | Growing hepatic or neuronal organoids for repeated-dose or mechanistic toxicity studies [6]. |
| Cytokine/Apoptosis Detection Kits (e.g., Caspase-3/7 assays) | Measures specific biomarkers of cellular stress, immune response, or programmed cell death pathways. | Identifying mechanistic toxicity pathways activated by test substances in human cell lines. |

Regulatory Landscape and Future Perspectives

The regulatory acceptance of non-animal methods is accelerating. The OECD has approved several in vitro test guidelines for endpoints like skin sensitization and phototoxicity [1] [10]. A pivotal moment occurred with the U.S. FDA Modernization Act 2.0 (2022), which explicitly allowed the use of alternatives to animal testing for drug safety. This was followed in April 2025 by an FDA announcement of a concrete plan to phase out animal testing requirements, starting with monoclonal antibodies [6].

The future of acute toxicity assessment lies in Integrated Approaches to Testing and Assessment (IATA) that combine in silico predictions, high-throughput in vitro data, and targeted in vitro assays on advanced MPS models. The goal is a human-centric, mechanism-based framework that provides superior protection of human health while fully replacing the scientifically and ethically obsolete LD50 test [4] [6].

The LD50 test (median lethal dose), introduced in 1927 for the biological standardization of dangerous drugs, became a widespread benchmark for acute toxicity testing [11]. However, its reliance on administering high doses of substances to large numbers of animals until 50% perish has long been criticized on ethical, scientific, and economic grounds [12] [11]. The pain, distress, and death experienced by animals, coupled with the test's high resource demands and sometimes questionable human relevance, necessitated a paradigm shift [12].

This shift is guided by the 3Rs principles—Replacement, Reduction, and Refinement—first articulated by William Russell and Rex Burch in 1959 [12] [13]. Originally conceived as a framework for humane experimental technique, the 3Rs have evolved into a dynamic engine for scientific innovation, increasingly aligned with regulatory modernisation [14] [13]. Within the context of developing in vitro alternatives to LD50 testing, the 3Rs provide a structured approach: Replacement seeks non-animal methods like advanced cell models and computer simulations; Reduction employs rigorous statistical design and preliminary in vitro screening to minimize animal numbers; and Refinement improves husbandry and procedures to alleviate suffering for animals still required [12].

Today, regulatory acceptance of 3Rs-aligned approaches is accelerating. In the United States, for example, the FDA Modernization Act 2.0 (signed into law in December 2022) removed the mandatory requirement for animal testing before human clinical trials, opening the door for alternative methods [14]. This regulatory evolution, alongside advancements in biology and computation, positions the 3Rs not merely as an ethical guideline but as a core framework driving the development of more predictive, human-relevant safety assessments.

The Evolving 3Rs Framework: From Ethics to Regulatory Integration

Foundational Definitions and Modern Reinterpretations

The original 3Rs definitions were established in the context of 1950s science. Their contemporary reinterpretation ensures relevance for modern biomedical research [13].

- Replacement: Originally defined as "the substitution for conscious living higher animals of insentient material" [13]. A modern, proactive interpretation is to "conduct research that completely avoids the use of animals in scientific investigation, regulatory testing, and education" [13]. This encompasses New Approach Methodologies (NAMs), such as organ-on-chip systems or sophisticated in silico models, which may provide novel, sometimes superior, ways to answer research questions rather than simply substituting an existing animal test [13].

- Reduction: Defined as "reduction in the numbers of animals used to obtain information of a given amount and precision" [13]. This is achieved through improved experimental design (e.g., powerful statistical methods), sharing of data and resources, and the use of in vitro screening to prioritize compounds for any necessary in vivo testing [12].

- Refinement: Defined as "any decrease in the incidence or severity of inhumane procedures applied to those animals which still have to be used" [13]. This extends beyond minimizing acute pain to include enhancing housing, enrichment, and care to improve overall animal welfare, which in turn can increase the reliability and translatability of scientific data [12] [13].

Regulatory Adoption and the Rise of NAMs

Global regulatory bodies are increasingly integrating the 3Rs into their guidelines, creating a pivotal driver for change.

- European Union: Directive 2010/63/EU mandates that non-animal methods must be used wherever scientifically possible [13]. The European Medicines Agency (EMA) has published guidelines on the regulatory acceptance of 3Rs testing approaches [14].

- United States: The FDA Modernization Act 2.0 (enacted in December 2022) represents a watershed moment, explicitly allowing the use of alternative methods (including cell-based assays, organ chips, and computer models) in place of traditional animal studies for drug efficacy and safety testing [14].

- International Harmonisation: The Organisation for Economic Co-operation and Development (OECD) and the International Council for Harmonisation (ICH) develop and validate internationally agreed test guidelines for alternatives, such as validated in vitro skin corrosion and phototoxicity assays, facilitating global acceptance [14].

This regulatory shift is underpinned by the development of New Approach Methodologies (NAMs). NAMs are defined as non-animal, human-relevant approaches for hazard and safety assessment, encompassing advanced in vitro models (3D tissues, organoids), in silico tools (QSAR, machine learning), and 'omics technologies [14] [15]. Their integration into Integrated Approaches to Testing and Assessment (IATA) provides a holistic framework for decision-making, combining multiple information sources to replace, reduce, and refine animal use [14] [16].

Table 1: Key Regulatory Milestones Advancing the 3Rs in Toxicology

| Year | Region/Agency | Policy/Milestone | Impact on 3Rs |
|---|---|---|---|
| 1959 | Global (Scientific Community) | Publication of The Principles of Humane Experimental Technique by Russell & Burch [12] [13]. | Established the foundational 3Rs framework. |
| 2010 | European Union | Directive 2010/63/EU on animal protection in science [13]. | Legally mandated Replacement where possible and established ethics committees. |
| 2016 | European Medicines Agency (EMA) | Guideline on regulatory acceptance of 3Rs approaches [14]. | Provided pathway for non-animal methods in drug development. |
| 2022 | United States (FDA) | FDA Modernization Act 2.0 [14]. | Ended mandatory animal testing for new drugs, opening door for NAMs. |
| Ongoing | OECD/ICH | Development and validation of IATA and NAM-based test guidelines [14] [16]. | Facilitates international harmonization and acceptance of alternatives. |

Application Notes: Implementing the 3Rs in Modern Toxicity Testing

Replacement: Core In Vitro Methodologies for Acute Toxicity Assessment

Replacement strategies for LD50 testing are multi-faceted, moving from simple cell death assays to complex, mechanistic systems.

1. Modern Cytotoxicity Testing: Classical assays like MTT (metabolic activity), LDH release (membrane integrity), and Neutral Red Uptake (lysosomal function) remain regulatory benchmarks but are now used as part of targeted batteries rather than standalone predictors [16]. Best practice involves using at least two orthogonal assays to distinguish between specific cytotoxic mechanisms and general cell stress [16].

- Example Protocol (Multiparametric Cytotoxicity Screening): Seed human hepatocellular carcinoma cells (HepG2) or primary hepatocytes in 96-well plates. After compound exposure, assay the same wells sequentially with: i) Resazurin (alamarBlue) for metabolic activity (fluorescence, Ex/Em 560/590 nm), ii) a fluorometric LDH assay (Ex/Em 540/590 nm), and iii) a nuclear stain (e.g., Hoechst 33342) for normalized cell count. This provides concurrent data on metabolism, membrane damage, and cell number from a single experimental replicate.

2. Stem Cell-Derived and 3D Models: These models offer superior physiological relevance.

- Organoids: Self-organizing 3D structures derived from pluripotent or adult stem cells that mimic organ microarchitecture and function. Liver organoids, for instance, can model repeated-dose hepatotoxicity and metabolic idiosyncrasies better than 2D hepatocytes [16] [17].

- Organ-on-a-Chip (OOC): Microfluidic devices housing living cells in a dynamic, tissue-relevant microenvironment. A liver-on-a-chip with endothelial and Kupffer cells can model not just hepatocyte death but also inflammation-driven toxicity [16] [17]. Multi-organ chips (e.g., liver-heart-kidney) can assess systemic effects and metabolite-mediated toxicity, moving closer to an in vitro "human-on-a-chip" [18] [17].

3. In Silico and Computational Toxicology: Computer models can predict acute toxicity by leveraging existing data, preventing unnecessary animal and lab work.

- Quantitative Structure-Activity Relationship (QSAR): Uses mathematical models to relate a compound's molecular descriptors to a toxicological outcome [14] [19].

- Machine Learning (ML) Models: Advanced algorithms, such as those in the Collaborative Acute Toxicity Modeling Suite (CATMoS), can classify compounds into toxicity categories (e.g., EPA and GHS hazard categories) based on rat LD50 data with high accuracy [19]. These models are built following OECD QSAR validation principles, ensuring defined endpoints, applicability domains, and rigorous performance metrics [19].

- Physiologically Based Kinetic (PBK) Modeling: Integrates in vitro concentration-response data with human physiological parameters to extrapolate an in vitro effective concentration to a human equivalent dose, a process known as Quantitative In Vitro to In Vivo Extrapolation (QIVIVE) [16].
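The extrapolation idea behind QIVIVE can be illustrated with the simplest form of reverse dosimetry. This is a deliberately reduced sketch, not a PBK model: it assumes linear kinetics and steady state, and it assumes that a separate kinetic model or measurement has already supplied the steady-state plasma concentration produced by a unit dose of 1 mg/kg/day. The function and parameter names are hypothetical.

```python
def oral_equivalent_dose(in_vitro_conc_um, css_um_per_unit_dose):
    """Dose (mg/kg/day) expected to yield the in vitro effective concentration.

    in_vitro_conc_um      -- effective concentration from the assay, in µM
    css_um_per_unit_dose  -- steady-state plasma concentration (µM) predicted
                             for a dose of 1 mg/kg/day (assumed input)
    Linear scaling between dose and plasma concentration is assumed.
    """
    if css_um_per_unit_dose <= 0:
        raise ValueError("steady-state concentration must be positive")
    return in_vitro_conc_um / css_um_per_unit_dose


if __name__ == "__main__":
    # An assay EC50 of 10 µM with a predicted Css of 2 µM per mg/kg/day
    # corresponds to an equivalent dose of 5 mg/kg/day.
    print(oral_equivalent_dose(10.0, 2.0))
```

A full PBK model replaces the single steady-state factor with compartment-level absorption, distribution, metabolism, and excretion, but the direction of the calculation (from concentration back to dose) is the same.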

Table 2: Comparison of Key Replacement Technologies for Acute Toxicity Assessment

| Technology | Description | Key Advantages | Current Limitations | Primary 3Rs Contribution |
|---|---|---|---|---|
| High-Throughput Cytotoxicity Assays (e.g., multiplexed imaging) | Automated screening of cell health parameters (viability, oxidative stress, apoptosis) in 2D or 3D cultures [16]. | Rapid, cost-effective; enables screening of large compound libraries; high-content mechanistic data. | Limited physiological complexity; may miss organ-specific or systemic effects. | Reduction, Replacement (for prioritization). |
| Induced Pluripotent Stem Cell (iPSC)-Derived Cells | Patient-specific or tissue-specific cells (cardiomyocytes, neurons, hepatocytes) differentiated from iPSCs [16] [17]. | Human genetic background; can model population variability and genetic diseases; ethically preferable to embryonic stem cells. | Variability in differentiation efficiency; functional immaturity compared to adult cells. | Replacement, Refinement (of disease modeling). |
| Organ-on-a-Chip | Microfluidic culture of human cells under dynamic flow and mechanical cues [16] [17]. | Recapitulates tissue-tissue interfaces, shear stress, and mechanical forces; allows for real-time analysis. | Technically complex; costly to operate; standardization challenges. | Replacement (for complex organ-level functions). |
| Machine Learning / QSAR Models | Computational models predicting toxicity from chemical structure and existing data [14] [19]. | Extremely fast and cheap for virtual screening; can predict for data-poor chemicals; no biological resources needed. | Dependent on quality/quantity of training data; defined applicability domain; requires experimental validation. | Replacement, Reduction (of experimental testing). |

4. Integrated Testing Strategies (ITS) and Adverse Outcome Pathways (AOPs): A full replacement of a complex endpoint like lethality often requires a weight-of-evidence approach, not a single test. The Adverse Outcome Pathway (AOP) framework is critical here. An AOP is a conceptual model linking a molecular initiating event (e.g., protein binding) through key biological events to an adverse outcome (e.g., organ failure) [14]. By designing in vitro tests to measure specific key events within a relevant AOP (e.g., mitochondrial dysfunction, cytotoxicity in a specific organ model), data can be integrated within an IATA to make a robust prediction of the in vivo outcome without animals [14] [16].

Reduction and Refinement: Optimizing Necessary In Vivo Studies

When in vivo data is still scientifically or regulatorily required, Reduction and Refinement are rigorously applied.

Reduction in Acute Oral Toxicity Testing: Modern in vivo protocols have drastically reduced animal use. The Up-and-Down Procedure (UDP), an OECD guideline, uses sequential dosing of single animals, significantly reducing the number required (typically 6-10) compared to the classic LD50 protocol which could use 40-60 animals or more [11] [19]. Furthermore, testing in one sex is often justified unless there is evidence of significant sex-specific toxicity [11].
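The sequential logic of an up-and-down design can be sketched in a few lines. The values used here are assumptions for illustration: a dose progression factor of 3.2 (roughly half a log unit), a starting dose of 175 mg/kg, and bounds of 1.75 and 2000 mg/kg. The guideline's stopping rules and the statistical estimation of the LD50 are not modelled, and the function names are hypothetical.

```python
def udp_next_dose(current_dose, animal_died, factor=3.2,
                  lower=1.75, upper=2000.0):
    """Step the dose down after a death and up after survival.

    factor, lower and upper are assumed defaults for this sketch.
    """
    proposed = current_dose / factor if animal_died else current_dose * factor
    return min(max(proposed, lower), upper)


def udp_dose_sequence(start_dose, outcomes, **kwargs):
    """Doses given to each animal, one outcome (True = died) per animal."""
    doses = [start_dose]
    # Each animal's outcome determines the dose for the next animal,
    # so the final outcome does not add another dose to the list.
    for died in outcomes[:-1]:
        doses.append(udp_next_dose(doses[-1], died, **kwargs))
    return doses


if __name__ == "__main__":
    # Animal 1 survives, animal 2 dies, animal 3 survives.
    print(udp_dose_sequence(175.0, [False, True, False]))
```

Because each animal's dose depends on the previous outcome, dosing concentrates around the lethal threshold, which is why far fewer animals are needed than in a design with fixed multi-dose groups.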

Refinement in Practice: Refinement encompasses all aspects of animal well-being. This includes:

- Husbandry: Providing species-specific housing with environmental enrichment (nesting material, shelters, social housing) to reduce stress and improve welfare [12].

- Procedural Refinements: Using refined methods of substance administration (e.g., precise gavage techniques), applying analgesia for potentially painful procedures, and establishing humane endpoints (e.g., predefined clinical scores triggering early euthanasia) to prevent severe suffering [12] [13].

- Scientific Benefit: Reduced stress translates to more stable physiology and less data variability, enhancing scientific quality and potentially reducing the number of animals needed to achieve statistical power—a direct link between Refinement and Reduction [12].

Detailed Experimental Protocols

Protocol: Machine Learning-Guided Prediction of Acute Oral Toxicity Category

This protocol outlines the use of a publicly available computational model to prioritize or screen compounds.

Objective: To classify a new chemical entity into a Globally Harmonized System (GHS) acute oral toxicity category using a validated in silico model.

Principle: A machine learning model (e.g., from the CATMoS project) trained on thousands of existing chemical structures and their corresponding rat LD50 values learns to associate structural features with toxicity [19]. The model predicts a category for novel compounds within its applicability domain.

Materials:

- Chemical structure of the test compound (in SMILES, SDF, or MOL2 format).

- Access to an in silico prediction platform (e.g., EPA's CompTox Chemicals Dashboard, commercial QSAR software with acute toxicity models, or a publicly available CATMoS implementation [19]).

- Computer with standard specifications.

Procedure:

- Structure Preparation: Draw or import the 2D chemical structure of the test compound into the software. Generate standardized descriptors (e.g., Extended Connectivity Fingerprints (ECFP6) or physicochemical descriptors) [19].

- Model Selection & Prediction: Select a validated acute oral toxicity (rat) classification model. Submit the prepared structure for prediction. The software will output:

  - Predicted Toxicity Category (e.g., GHS Category 1-5 or EPA Category I-IV).

  - Prediction Confidence/Probability (e.g., a value between 0 and 1).

  - Applicability Domain Assessment: An indicator of whether the test compound's structure falls within the chemical space of the model's training set. Predictions for compounds outside the applicability domain should be treated with extreme caution [19].

- Interpretation & Decision:

  - High Confidence, Low Toxicity Prediction: The compound may be a candidate for a refined in vivo test (e.g., UDP starting at a high dose) or for direct progression to other in vitro assays.

  - High Confidence, High Toxicity Prediction: Supports the need for stringent handling controls. May justify in vitro mechanistic follow-up instead of, or prior to, any in vivo testing.

  - Low Confidence or Outside Applicability Domain: Indicates a need for experimental data. Consider initiating a tiered testing strategy starting with in vitro cytotoxicity assays.
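The interpretation rules above amount to a three-way triage, sketched below. The thresholds are hypothetical and chosen only for illustration: a confidence cutoff of 0.8, and GHS categories 1 and 2 treated as "high toxicity". Neither value comes from the source or from any model's documentation, and the function name and return labels are likewise invented for this sketch.

```python
def triage_prediction(ghs_category, confidence, in_domain,
                      confidence_cutoff=0.8, high_tox_categories=(1, 2)):
    """Map a model output to one of the three follow-up routes in the protocol.

    confidence_cutoff and high_tox_categories are illustrative assumptions.
    """
    # Low confidence or an out-of-domain structure overrides the category.
    if not in_domain or confidence < confidence_cutoff:
        return "needs_experimental_data"
    if ghs_category in high_tox_categories:
        return "high_toxicity_mechanistic_followup"
    return "low_toxicity_progress"


if __name__ == "__main__":
    print(triage_prediction(5, 0.93, True))
    print(triage_prediction(1, 0.90, True))
    print(triage_prediction(3, 0.95, False))
```

Checking the applicability domain first reflects the protocol's caution that an out-of-domain prediction should not drive a decision, however confident it appears.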

Validation: For regulatory purposes, positive and negative control compounds with known LD50 values should be run periodically to verify model performance. Experimental validation of predictions for novel chemical series is strongly recommended [19].

Protocol: Tiered In Vitro Assessment of Acute Cytotoxic Potential Using a Liver Spheroid Model

This protocol describes a mechanistic, human-relevant in vitro strategy to assess acute toxicity potential, focusing on hepatic response.

Objective: To evaluate the cytotoxic potential of a test substance on 3D human liver spheroids using multiplexed, high-content endpoints.

Principle: Primary human hepatocyte spheroids maintain liver-specific functions (metabolism, albumin secretion) longer than 2D cultures. Multiple fluorescent dyes are used simultaneously to measure different cell health parameters, providing a mechanistic profile of toxicity [16] [17].

Materials:

- Cells: Primary human hepatocytes or iPSC-derived hepatocyte-like cells.

- Cultureware: Ultra-low attachment 96-well U-bottom plates for spheroid formation.

- Assay Reagents: Commercial multicomponent live-cell staining kit (e.g., containing dyes for viability/cytotoxicity, caspase-3/7 activity for apoptosis, and glutathione depletion for oxidative stress). Examples include CellEvent Caspase-3/7 Green, H2DCFDA for ROS, and TMRM for mitochondrial membrane potential.

- Instrumentation: Automated fluorescence microscope or high-content imaging system.

Procedure:

Week 1: Spheroid Formation & Maturation

- Seed hepatocytes at 1,000-2,000 cells/well in U-bottom plates in spheroid formation medium.

- Centrifuge plates gently (200 x g, 5 min) to aggregate cells at the well bottom.

- Culture for 5-7 days, allowing spheroid compaction and functional maturation, with medium changes every 2-3 days.

Day of Experiment: Compound Treatment & Staining

- Prepare a dilution series of the test compound (typically 8 concentrations, e.g., 100 µM to 0.1 µM) and positive control (e.g., 50 µM acetaminophen for hepatotoxicity).

- Replace medium in spheroid plates with treatment medium containing the compounds. Include vehicle control wells.

- Incubate for 24-48 hours at 37°C, 5% CO₂.

- At the end of treatment, add the multicomponent live-cell staining cocktail directly to the wells as per kit instructions. Incubate for 30-60 min.

- Image spheroids using a high-content imager with appropriate filter sets for each fluorescent channel.

Data Analysis:

- Use image analysis software to segment individual spheroids and quantify the fluorescence intensity for each channel per spheroid.

- Normalize all data to the vehicle control (set as 100% viability, 0% activation).

- Generate concentration-response curves for each endpoint (viability, apoptosis, oxidative stress).

- Calculate benchmark concentrations (e.g., IC50 for viability, EC10 for caspase activation).
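As a rough illustration of the normalization and benchmark steps, the sketch below scales raw signals to the vehicle control and estimates an IC50 by linear interpolation on the log-concentration axis. Interpolation is a deliberately simpler stand-in for a full concentration-response curve fit, and the function names and example numbers are assumptions made for illustration.

```python
import math


def percent_of_vehicle(signal, vehicle_mean):
    """Express a raw viability signal as a percentage of the vehicle control."""
    return 100.0 * signal / vehicle_mean


def interpolate_ic(concs, viability, level=50.0):
    """Concentration at which viability first falls to `level` percent.

    Uses linear interpolation on log10(concentration) between the two
    bracketing data points; returns None if the level is never crossed.
    """
    pairs = sorted(zip(concs, viability))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(pairs, pairs[1:]):
        if v_lo >= level >= v_hi and v_lo != v_hi:
            frac = (v_lo - level) / (v_lo - v_hi)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None


# Hypothetical per-spheroid viability signals at four concentrations (µM).
raw = [2000.0, 1800.0, 800.0, 100.0]
viability = [percent_of_vehicle(s, vehicle_mean=2000.0) for s in raw]
ic50 = interpolate_ic([0.1, 1.0, 10.0, 100.0], viability)
```

Calling `interpolate_ic` with `level=90.0` gives a comparable estimate for a 10% effect level; for activation endpoints such as caspase signal, the comparison direction would need to be reversed.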

Interpretation: A substance causing cytotoxicity (loss of viability) at low concentrations with concurrent activation of apoptosis and oxidative stress indicates a high acute toxic potential. This mechanistic profile can be mapped to relevant Key Events in an Adverse Outcome Pathway, informing a higher-level risk assessment and potentially replacing a preliminary in vivo acute toxicity study.

Table 3: Research Reagent Solutions for Advanced In Vitro Toxicology

| Reagent / Material | Function / Description | Key Considerations for 3Rs Alignment |

|---|---|---|

| Induced Pluripotent Stem Cells (iPSCs) | Patient/disease-specific source for deriving human cardiomyocytes, neurons, hepatocytes, etc. [16] [17]. | Enables human-relevant disease modeling and toxicity screening, directly supporting Replacement. Avoids ethical issues of embryonic stem cells. |

| Defined, Xeno-Free Cell Culture Medium | Chemically defined medium free of animal-derived components like fetal bovine serum (FBS) [15]. | Eliminates batch variability and ethical concerns of FBS harvesting. Moves toward a fully animal-free test system, a progressive refinement of Replacement [15]. |

| Basement Membrane Extract (BME) / Synthetic Hydrogels | Extracellular matrix for supporting 3D cell culture, organoid growth, and cell differentiation. | Animal-derived BME raises ethical concerns [15]. Synthetic or recombinant human protein-based hydrogels are preferred for human-relevant models and full Replacement. |

| High-Content Imaging Dye Sets | Multiplexed fluorescent probes for viability, apoptosis, mitochondrial health, oxidative stress, etc. [16]. | Allows deep mechanistic profiling from a single experiment, maximizing data from each in vitro assay. This supports Reduction (of follow-up tests) and Refinement (of mechanistic understanding). |

| Microfluidic Organ-on-a-Chip Kits | Pre-fabricated chips (e.g., liver, kidney, multi-organ) with integrated microchannels and membranes [16] [17]. | Provides a platform for human-relevant, dynamic tissue models that can replace certain animal studies for absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiling (Replacement). |

Implementing the Transition: Pathways and Considerations

A Strategic Roadmap for Laboratories

Transitioning to a 3Rs-centric paradigm requires a deliberate strategy:

- Audit and Prioritize: Review current testing workflows. Identify which animal tests are most costly, use the most animals, or have the poorest human predictivity. These are prime candidates for alternative method development.

- Invest in Training: Equip scientists with skills in in vitro model development, high-content data analysis, and computational toxicology. This may involve workshops, collaborations with 3Rs centers, or hiring specialists [17].

- Start with Tiered Screening: Implement computational (in silico) and simple in vitro cytotoxicity screens as mandatory first steps in compound evaluation. Use these to prioritize compounds for any further, more complex testing (animal or advanced in vitro).

- Pilot and Validate: For a specific endpoint (e.g., skin irritation), select a validated non-animal method (e.g., reconstructed human epidermis model) and run a pilot project alongside the traditional assay to build internal confidence and procedural competence.

- Engage with Regulators Early: When developing a new alternative method for a regulatory purpose, initiate dialogue with relevant agencies (FDA, EMA) early to understand validation and acceptance criteria [14].

Navigating Challenges: Scientific and Ethical Nuances

The path to full Replacement is not without obstacles:

- Scientific Validation: A major hurdle is demonstrating that a new alternative method is as reliable and predictive as the animal test it seeks to replace, especially for complex systemic endpoints. This requires large, collaborative validation studies [14].

- The Animal-Derived Materials Paradox: Many "non-animal" methods rely on animal-derived components like fetal bovine serum (FBS), antibodies, or enzymes [15]. The ethical sourcing and ultimate replacement of these materials with defined, synthetic, or human recombinant alternatives is the next frontier for the 3Rs, sometimes termed moving toward "xeno-free" or fully "animal-free" science [15].

- Cultural and Regulatory Inertia: Established protocols and regulatory requirements can be slow to change. Continued advocacy, publication of robust case studies, and clear demonstration of business benefits (cost, speed, quality) are essential to drive adoption.

The 3Rs framework has matured from an ethical plea into a powerful, science-driven paradigm that is fundamentally reshaping toxicology. In the specific mission to replace the classic LD50 test, the 3Rs guide a multi-pronged attack: Replacement via human organoids, organs-on-chips, and predictive machine learning models; Reduction through sophisticated experimental design and in vitro prioritization; and Refinement by ensuring the utmost welfare for any animal still in use.

The recent regulatory shifts, epitomized by the FDA Modernization Act 2.0, have transformed the 3Rs from a voluntary guideline into a strategic imperative for drug development [14]. The future of acute toxicity assessment lies not in a single alternative but in Integrated Approaches to Testing and Assessment (IATA) that intelligently combine in silico predictions, mechanistic in vitro data from human cells, and targeted in vivo studies only when essential. By fully embracing the 3Rs, the scientific community can deliver more human-relevant safety data, accelerate innovation, and fulfill an ethical responsibility, proving that superior science and animal welfare are mutually achievable goals.

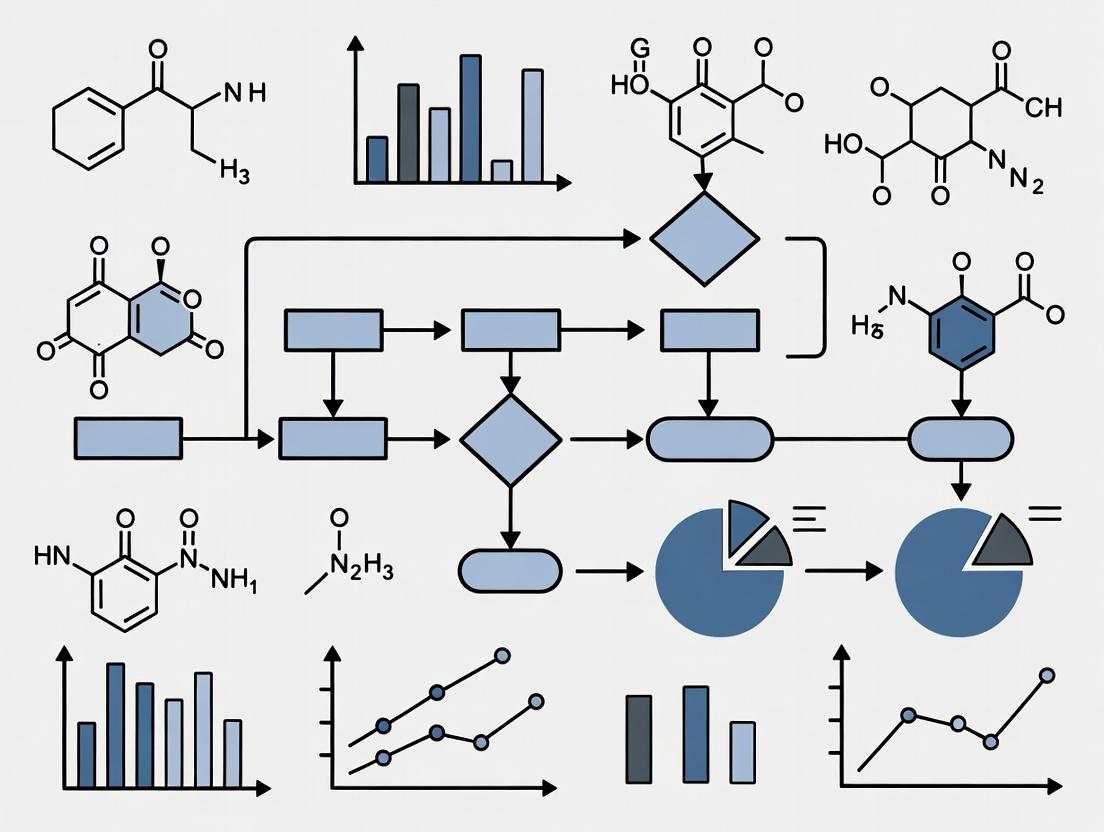

Toxicity Testing Strategy Integrating 3Rs Principles

QIVIVE: Quantitative In Vitro to In Vivo Extrapolation

The global pharmaceutical market is projected to reach approximately $1.6 trillion in 2025, driven by innovation in areas like oncology, immunology, and metabolic diseases [20]. However, this innovation is underpinned by a research and development (R&D) model of exceptionally high risk and cost. The average cost to bring a new drug from discovery to launch is estimated at $2.3 billion, with a clinical trial failure rate as high as 90% [21] [22]. A significant portion of this staggering cost is attributed to extensive preclinical safety testing, which has historically relied on animal models like the LD50 (median lethal dose) test.

Concurrently, a major regulatory shift is underway. The U.S. Food and Drug Administration (FDA) has announced a plan to phase out animal testing requirements for monoclonal antibodies and other drugs, encouraging the use of New Approach Methodologies (NAMs) [23]. This initiative, fueled by the 2022 FDA Modernization Act 2.0, aims to make animal testing "the exception rather than the norm" within 3-5 years [24]. This paradigm shift is driven by the dual imperatives of economic efficiency and scientific relevance. Animal models are not only costly and time-consuming but can also be poor predictors of human safety, particularly for complex biologics [23] [24]. Replacing, reducing, and refining (the 3Rs) animal use with human-relevant in vitro and in silico models presents a critical opportunity to de-risk drug development, lower R&D costs, and accelerate the delivery of safer therapies to patients [25].

Quantitative Landscape: Costs, Failures, and Market Drivers

The financial anatomy of drug development reveals an enterprise of immense scale and risk. The following tables summarize key quantitative data on global markets, development costs, and the evolving therapeutic focus, which collectively underscore the economic drivers for adopting more efficient and predictive non-animal methodologies.

Table 1: Global Pharmaceutical Market and R&D Investment (2025 Projections)

| Metric | Value | Details & Implications |

|---|---|---|

| Global Market Size | ~$1.6 Trillion | Excludes COVID-19 vaccines; reflects steady growth [20]. |

| Annual R&D Investment | >$200 Billion | All-time high, fueling pipeline innovation [20]. |

| Top Therapeutic Areas by Spend | 1. Oncology (~$273B); 2. Immunology (~$175B); 3. Metabolic Diseases (mid-$100B range) | Oncology and immunology show 9-12% annual growth. GLP-1 drugs for obesity/diabetes are a transformational market [20]. |

| Share of Specialty Medicines | ~50% of global spending | Advanced therapies (biologics, targeted therapies) dominate expenditure, demanding complex safety assessment [20]. |

Table 2: Drug Development Costs and Failure Risks

| Metric | Value | Impact & Context |

|---|---|---|

| Average Cost to Launch | $2.3 Billion | From discovery to market approval [22]. |

| Clinical Trial Failure Rate | Up to 90% | A primary contributor to financial risk and sunk costs [21]. |

| Return on R&D Investment | 4.1% (2023) | Improved from pandemic lows but remains a thin margin on high risk [22]. |

| Cost of Pivotal Trials | Median $48 million per approved drug | For trials supporting FDA approval (2015-2017) [22]. |

| Estimated Annual Cost of Failed Oncology Trials | ~$60 Billion | Highlights sector-specific financial waste [22]. |

Table 3: Regulatory Shift and Adoption of Non-Animal Methods

| Aspect | Current Status / Metric | Significance for Drug Development |

|---|---|---|

| FDA Timeline for Animal Testing | Phase out to be "exception rather than norm" in 3-5 years [24]. | Creates urgent need for validated human-relevant alternatives. |

| Initial Focus of FDA Policy | Monoclonal Antibodies [23]. | Animal models are particularly poor predictors for this drug class. |

| Key Legislative Driver | FDA Modernization Act 2.0 (2022) [24]. | Removed the statutory requirement for animal testing in investigational drug and biosimilar applications, enabling regulatory use of NAMs. |

| Electronic Adherence Monitoring in Trials | Used in ~2.7% of trials [22]. | Example of a superior, non-animal method that improves data quality and reduces trial failure risk. |

Scientific and Regulatory Framework for Animal Testing Alternatives

The transition from animal-based to human-biology-based testing is guided by a robust framework centered on New Approach Methodologies (NAMs). NAMs are defined as modern, human-relevant testing methods that can replace, reduce, or refine (the 3Rs) the use of animals [25]. They are categorized based on their scientific approach [25]:

- In chemico: Experiments performed on biological molecules (e.g., proteins, DNA) outside of cells to study interactions.

- In silico: Experiments using computational platforms, including mathematical modeling, simulation, and artificial intelligence/machine learning (AI/ML) to predict biological effects.

- In vitro: Experiments using cells cultured outside the body. This includes advanced models like:

- Microphysiological Systems (MPS or Organs-on-Chips): Complex, cell-based devices that mimic key physiological aspects of human tissues or organs by incorporating microenvironments with flow, shear stress, and other physiologically relevant cues [25].

- Organoids: 3D tissue-like structures derived from stem cells that replicate the complexity and function of human mini-organs [25].

U.S. and global agencies are actively promoting this shift. The FDA's recent roadmap encourages drug sponsors to embrace these NAMs [23] [24]. Furthermore, the NIH Common Fund's Complement-ARIE program aims to accelerate the development, standardization, and validation of human-based NAMs [25]. A pivotal case study is the development of a cell-based assay for potency testing of clostridial toxin products (e.g., Botox, tetanus vaccine), which has traditionally required the mouse LD50 test. Researchers engineered human neuroblastoma cell lines to be sensitive to these toxins, creating an assay that is ten times more sensitive for botulinum toxin type B than the traditional animal test [9]. This assay, developed with funding from the UK's NC3Rs and now undergoing multi-manufacturer validation for use under Good Manufacturing Practice (GMP), demonstrates the potential for a complete, superior replacement of a long-standing animal test [9].

Detailed Experimental Protocols for Key In Vitro Alternatives

Protocol 1: Engineered Human Neuroblastoma Cell Assay for Botulinum Toxin Potency Testing

This protocol details the replacement of the murine LD50 assay for botulinum neurotoxin (BoNT) potency testing [9].

Cell Line Preparation:

- Cell Line: Use an engineered human neuroblastoma cell line (e.g., SH-SY5Y) stably transfected to overexpress the relevant toxin receptors (e.g., SV2 for BoNT/A) and a reporter gene (e.g., luciferase) under the control of a promoter responsive to toxin-mediated cleavage of intracellular targets like SNAP-25 [9].

- Culture: Maintain cells in a 1:1 mixture of Dulbecco's Modified Eagle Medium (DMEM) and Ham's F12 nutrient mix, supplemented with 10% fetal bovine serum (FBS), 1% non-essential amino acids, and appropriate selection antibiotics (e.g., 200 µg/mL hygromycin B) at 37°C with 5% CO₂.

Assay Execution:

- Seed the engineered neuroblastoma cells into 96-well white-walled, clear-bottom assay plates at a density of 20,000 cells per well. Incubate for 24 hours to allow adherence.

- Prepare serial dilutions of the botulinum toxin standard and test samples in assay buffer (culture medium with 0.1% bovine serum albumin).

- Remove the medium from the cells and add 100 µL of each toxin dilution to the wells. Include a vehicle-only control (0 toxin) and a maximum activity control (e.g., lysed cells). Incubate the plate for 48-72 hours at 37°C, 5% CO₂.

Detection and Quantification:

- Following incubation, equilibrate the plate to room temperature for 15 minutes.

- Add 100 µL of a luciferase assay reagent (e.g., One-Glo) to each well. Shake the plate gently for 2 minutes and incubate for 10 minutes to stabilize the luminescent signal.

- Measure luminescence using a plate reader. Toxin activity inhibits neurotransmitter release, which is linked to the reporter signal; measured luminescence therefore decreases as toxin potency increases.

Data Analysis:

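- Normalize the luminescence data: % Response = (Sample RLU − Average Max Toxin RLU) / (Average Vehicle Control RLU − Average Max Toxin RLU) × 100, where RLU is relative light units and the Max Toxin wells correspond to the maximum activity control included during assay execution.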

- Plot % Response against the log10 of toxin concentration. Fit a 4-parameter logistic (4PL) curve to the standard dilutions.

- Calculate the half-maximal effective concentration (EC₅₀) for the standard and the test samples. The relative potency of the test sample is determined by comparing its EC₅₀ to that of the reference standard.
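The normalization and potency comparison can be sketched in a few lines. The function names are illustrative, and the direction of the potency ratio (reference EC₅₀ divided by test EC₅₀, so that values above 1 mean the test sample is more potent) is an assumed convention that the protocol text does not specify.

```python
def percent_response(sample_rlu, vehicle_rlu_mean, max_toxin_rlu_mean):
    """Scale luminescence so vehicle wells read 100% and max-toxin wells read 0%."""
    span = vehicle_rlu_mean - max_toxin_rlu_mean
    if span <= 0:
        raise ValueError("vehicle control must read higher than the max-toxin control")
    return (sample_rlu - max_toxin_rlu_mean) / span * 100.0


def relative_potency(ec50_reference, ec50_test):
    """Ratio of EC50 values (assumed convention: >1 means the test sample is more potent)."""
    return ec50_reference / ec50_test


# Hypothetical plate values in relative light units (RLU).
response = percent_response(5500.0, vehicle_rlu_mean=10000.0, max_toxin_rlu_mean=1000.0)
potency = relative_potency(ec50_reference=2.0, ec50_test=4.0)
```

In practice the EC₅₀ values passed to `relative_potency` would come from the 4PL fits described above, and parallelism between the two curves would normally be checked before the ratio is reported.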

Protocol 2: Quantitative Systems Pharmacology (QSP) Model for Preclinical Safety Integration

This protocol outlines the development of a mechanistic QSP model to integrate in vitro toxicity data and predict in vivo human safety margins, reducing reliance on animal pharmacokinetic/pharmacodynamic (PK/PD) studies [26].

Define Scope and Gather Data:

- Objective: Predict human cardiac safety margin for a new small molecule drug based on in vitro hERG channel inhibition data.

- Data Collection: Collate all available in vitro data: IC₅₀ for hERG inhibition, cellular cytotoxicity (CC₅₀) in human cardiomyocytes, physicochemical properties (logP, pKa). Gather preclinical animal PK data (if any) and known human physiological parameters (heart rate, ion channel densities, serum protein binding).

Model Structure Development:

- Construct a minimal physiological model of human cardiac electrophysiology. Key model "states" (variables) may include plasma drug concentration, myocardial tissue concentration, and the percentage of inhibited hERG channels.

- Define the flow between states using ordinary differential equations (ODEs). For example:

- Rate of change in plasma concentration = (Absorption - Distribution - Metabolism - Excretion).

- Myocardial concentration = Plasma concentration * Tissue-to-plasma partition coefficient.

- % hERG inhibition = f(Myocardial concentration, IC₅₀) (using a Hill equation).

- Link hERG inhibition to a biomarker of toxicity, such as simulated QT interval prolongation, using a published mathematical relationship.
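A minimal numerical sketch of this model structure is shown below. It assumes a one-compartment model with first-order oral absorption, lumps distribution, metabolism, and excretion into a single first-order loss term, and integrates with a simple Euler step. All parameter values (`ka`, `ke`, `vd_l`, `kp`, `ic50_mg_l`) are hypothetical placeholders, not values for any real drug.

```python
from typing import Tuple


def hill_inhibition(conc: float, ic50: float, hill: float = 1.0) -> float:
    """Fraction of hERG channels inhibited (0 to 1) at a given concentration."""
    if conc <= 0.0:
        return 0.0
    return conc**hill / (ic50**hill + conc**hill)


def simulate_peak(dose_mg: float, ka: float = 1.0, ke: float = 0.2,
                  vd_l: float = 50.0, kp: float = 2.0, ic50_mg_l: float = 1.0,
                  hours: float = 24.0, dt: float = 0.01) -> Tuple[float, float]:
    """Return (peak plasma concentration in mg/L, peak fraction of hERG inhibited)."""
    gut_mg, plasma_mg = dose_mg, 0.0
    peak_conc, peak_inhib = 0.0, 0.0
    for _ in range(int(hours / dt)):
        absorbed = ka * gut_mg * dt        # first-order absorption from the gut
        eliminated = ke * plasma_mg * dt   # lumped distribution/metabolism/excretion
        gut_mg -= absorbed
        plasma_mg += absorbed - eliminated
        plasma_conc = plasma_mg / vd_l
        myocardial_conc = plasma_conc * kp  # tissue-to-plasma partition coefficient
        peak_conc = max(peak_conc, plasma_conc)
        peak_inhib = max(peak_inhib, hill_inhibition(myocardial_conc, ic50_mg_l))
    return peak_conc, peak_inhib
```

A production QSP model would use a dedicated ODE solver and a validated electrophysiology component to translate channel inhibition into QT prolongation; the Euler loop here only makes the flow between states explicit.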

Model Calibration and Simulation:

- Parameter Estimation: Use the in vitro IC₅₀ data to calibrate the drug-specific inhibition constant in the model. Use human physiological literature values for system parameters.

- "What-if" Simulations: Run virtual trials by simulating a range of human doses. For each dose, the model outputs a time course of plasma concentration and predicted QT prolongation.

- Safety Margin Calculation: Determine the simulated dose that produces a QT prolongation equal to a regulatory threshold (e.g., 10 ms). Compare this to the simulated dose required for therapeutic efficacy (from a separate PD model). The ratio defines the predicted human cardiac safety margin.
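The safety margin step amounts to a root-finding problem: find the dose at which the simulated QT prolongation reaches the threshold, then divide by the efficacious dose. The sketch below uses bisection and assumes the effect rises monotonically with dose. The `toy_qt_ms` function is a hypothetical stand-in for the output of the QSP model, and the 20 mg efficacious dose is likewise invented for illustration.

```python
from typing import Callable


def dose_at_threshold(effect: Callable[[float], float], threshold: float,
                      lo: float = 0.0, hi: float = 1000.0, tol: float = 1e-6) -> float:
    """Bisection search for the dose where a monotonically increasing effect hits threshold."""
    if effect(hi) < threshold:
        raise ValueError("threshold not reached within the simulated dose range")
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if effect(mid) < threshold:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def toy_qt_ms(dose_mg: float) -> float:
    """Hypothetical saturating dose-QT relationship standing in for the QSP model."""
    return 30.0 * dose_mg / (200.0 + dose_mg)


def safety_margin(dose_at_qt_threshold: float, efficacious_dose: float) -> float:
    """Ratio of the QT-threshold dose to the efficacious dose."""
    return dose_at_qt_threshold / efficacious_dose


qt_dose = dose_at_threshold(toy_qt_ms, threshold=10.0)   # 10 ms threshold
margin = safety_margin(qt_dose, efficacious_dose=20.0)
```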

Iterative Refinement:

- As new data becomes available (e.g., from Phase I clinical trials on drug concentration), compare model predictions with observed outcomes.

- Refine the model structure or parameters to improve its predictive accuracy (the "learn and confirm" paradigm) [26].

Protocol 3: Multi-organ Microphysiological System (MPS) for Off-Target Toxicity Screening

This protocol describes using interconnected organ-on-chip modules (e.g., liver, heart, kidney) to assess compound toxicity and metabolism in a dynamic, human-relevant system [23] [25].

System Setup and Priming:

- Acquire a commercial or custom-built multi-organ MPS platform with separate but fluidically linked chambers for liver, cardiac, and renal tissues.

- Seed each chamber with relevant human primary cells or induced pluripotent stem cell (iPSC)-derived cells under optimized flow conditions. For example, seed liver spheroids in the liver chamber, cardiac microtissues in the heart chamber, and proximal tubule epithelial cells in the kidney chamber.

- Circulate a serum-free, cell-compatible medium through the system at a physiologically relevant flow rate using a microfluidic pump. Allow the system to stabilize and the tissues to mature for 5-7 days, monitoring barrier function (e.g., transepithelial electrical resistance) and tissue-specific biomarkers (e.g., albumin for liver).

Compound Dosing and Circulation:

- Prepare the test compound at the desired therapeutic concentration in the circulating medium. Include a vehicle control.

- Introduce the compound-containing medium into the MPS reservoir to initiate the closed-loop circulation. The system recapitulates systemic exposure, where the liver module metabolizes the compound, and metabolites are circulated to the heart and kidney modules.

Real-time Monitoring and Endpoint Analysis:

- Continuously monitor functional parameters using integrated biosensors: beat rate and force of cardiac microtissues; albumin and urea production from liver spheroids; and oxygen consumption across all tissues.

- After 24-72 hours of exposure, collect effluent medium and tissue samples from each module.

- Analyze medium for biomarkers of injury: Troponin I (cardiac injury), ALT/AST (liver injury), KIM-1/NGAL (kidney injury).

- Perform targeted metabolomics on the medium to profile parent compound depletion and metabolite formation.

- Fix tissues for histopathological analysis (e.g., apoptosis staining, cytoskeletal integrity).

Data Integration and Hazard Identification:

- Correlate the timing and magnitude of functional deficits (e.g., arrhythmia in heart chip) with the appearance of specific metabolites and tissue injury biomarkers.

- Identify the primary organ of toxicity and hypothesize mechanisms (parent compound vs. metabolite-driven). Compare the results to historical animal data to assess the MPS model's predictive value for human outcomes.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for In Vitro Alternative Methods

| Item | Function | Example Application/Notes |

|---|---|---|

| Engineered Human Neuroblastoma Cell Lines | Engineered to overexpress specific toxin receptors and reporter genes for sensitive, quantitative measurement of neurotoxin activity [9]. | Replacement of mouse LD50 for botulinum and tetanus toxin potency testing. |

| Induced Pluripotent Stem Cell (iPSC)-Derived Cells | Provide a source of human cardiomyocytes, hepatocytes, neurons, etc., for constructing organ-specific models with patient- or disease-specific genetic backgrounds. | Used in organoid and organ-on-chip systems for disease modeling and toxicity screening. |

| Specialized 3D Culture Matrices | Mimic the extracellular matrix to support the formation and function of 3D tissue structures like spheroids and organoids. | Essential for liver spheroid formation in MPS and for growing organoids with proper polarity and cell-cell interactions. |

| Microfluidic Organ-on-Chip Devices | Provide a controlled microenvironment with fluid flow, mechanical forces, and multi-tissue integration to mimic human physiology [25]. | Platforms for multi-organ toxicity and efficacy studies, such as linked liver-heart-kidney systems. |

| Luciferase Reporter Assay Kits | Enable highly sensitive, quantitative measurement of cellular responses based on luminescence output. | Used as a readout in engineered cell assays where biological activity (e.g., toxin-mediated cleavage) regulates reporter gene expression [9]. |

| QSP/Modeling Software | Platforms for building, simulating, and calibrating mechanistic mathematical models of biological systems and drug effects [26]. | Used to integrate in vitro data and predict in vivo human outcomes, supporting dose selection and risk assessment. |

| Multiplex Biomarker Assay Kits | Allow simultaneous measurement of multiple proteins (e.g., cytokines, injury biomarkers) from small-volume samples. | Critical for assessing specific tissue injuries in MPS effluent media (e.g., troponin, ALT, KIM-1). |

| Electronic Medication Adherence Monitors | Digitally track and record the timing of medication intake with high accuracy, superior to patient self-report [22]. | Used in clinical trials to ensure data integrity, correct dose optimization, and reduce failure risk due to poor adherence. |

Definition and Core Principles of NAMs

New Approach Methodologies (NAMs) represent a transformative paradigm in toxicology and safety science. They are defined as any in vitro (cell-based), in chemico (chemical reactivity), or in silico (computational) method that, when used alone or in combination, enables improved chemical safety assessment through more protective and/or human-relevant models, thereby reducing reliance on animal testing [27]. The fundamental premise of NAMs is not to create a direct, one-to-one replacement for an animal test but to provide more relevant information on a chemical to enable an exposure-based, hypothesis-driven safety assessment [27]. This shift aligns with the vision of Next Generation Risk Assessment (NGRA), where NAMs are the tools used to achieve an exposure-led, risk-based evaluation [27].

A core principle of NAMs is their foundation in human biology, aiming to elucidate pertinent biological pathways and mechanisms of action (MOA) relevant to human health, rather than replicating overt toxicity in a different species [27]. This approach acknowledges that traditional animal models, particularly rodents, have a documented true positive human toxicity predictivity rate of only 40–65% [27]. Therefore, the goal is to improve the overall protection of human health, not necessarily to replicate the specific outcomes of an animal test.

The Historical Context: Transitioning from the LD50

The classical LD50 (median lethal dose) test, introduced in 1927, has been a cornerstone of acute toxicity testing for decades [1]. It involves administering increasing doses of a substance to groups of animals to determine the dose that kills 50% of the test population. Its primary use has been for hazard classification and labeling [1].

Table 1: Historical Progression of Acute Toxicity Testing Methods

| Method (Year Introduced) | Key Principle | Animal Use | Regulatory & Scientific Limitations |

|---|---|---|---|

| Classical LD50 (1927) | Direct determination of dose causing 50% mortality. | Very high (e.g., 40-100 animals) [1]. | High animal suffering, high cost, limited mechanistic insight, high inter-species uncertainty. |

| Refined Animal Tests (1990s), e.g., Fixed Dose Procedure (OECD TG 420) | Identify dose causing evident toxicity, not mortality. | Reduced (e.g., 5-15 animals) [1]. | Significant reduction in suffering but still uses animals and inherits species translation issues. |

| Full Replacement NAMs | Mechanism-based assessment using human biology. | None. Relies on in vitro, in chemico, in silico tools. | Requires validation and regulatory acceptance; addresses human relevance directly. |

The ethical and scientific limitations of the LD50, including significant animal suffering and poor human translatability, catalyzed the search for alternatives guided by the 3Rs principle (Replacement, Reduction, Refinement) [1]. Initial successes came with refined animal tests that used fewer animals and minimized suffering [1]. However, NAMs aim for the ultimate goal of full replacement, moving beyond refining animal use to eliminating it entirely for specific endpoints.

A persistent example is the Mouse Lethality Bioassay (MLB), the mandated test for batch potency testing of Botulinum Neurotoxin (BoNT) products [28]. Despite the severe suffering involved and the existence of validated cell-based alternatives, regulatory requirements and validation hurdles have slowed its replacement, illustrating the systemic barriers NAMs face [28].

Components of the Modern NAM Toolkit

The modern NAM toolkit is a diverse and integrated suite of technologies. Their combined use in Defined Approaches (DAs)—specific combinations of NAMs with a fixed data interpretation procedure—is key to regulatory acceptance [27].

Table 2: Core Components of the Integrated NAM Toolkit

| Technology Category | Description | Example Methods/Tools | Primary Application in Hazard Assessment |

|---|---|---|---|

| Computational & Modeling | In silico prediction of properties, toxicity, and exposure. | QSAR, Read-Across, PBPK modeling, Machine Learning classifiers. | Priority setting, screening, hazard identification, risk quantification. |

| In Chemico & Biochemical Assays | Measures a chemical's intrinsic reactivity or interaction with biomolecules. | Direct Peptide Reactivity Assay (DPRA) for skin sensitization. | Identifying molecular initiating events (e.g., protein binding). |

| Cell-Based In Vitro Assays | Uses cell lines, primary cells, or stem cells to measure biological responses. | 3T3 NRU cytotoxicity, gene reporter assays, high-content imaging. | Measuring cellular toxicity, pathway activation, and key events. |

| Tissue & Complex Co-Culture Models | More physiologically relevant models incorporating multiple cell types. | Reconstructed human epidermis, organoids, microphysiological systems (organ-on-a-chip). | Assessing tissue-level effects and functional responses. |

| Omics Technologies | High-throughput analysis of biological molecules. | Transcriptomics, proteomics, metabolomics. | Uncovering mechanisms of action and biomarker discovery. |

A key conceptual framework linking these tools is the Adverse Outcome Pathway (AOP). An AOP describes a sequential chain of measurable events, from a Molecular Initiating Event (MIE) through cellular Key Events (KEs), leading to an adverse outcome in an organism. NAMs are designed to measure specific points along this pathway, providing a mechanistically grounded assessment [29].

Figure 1: Integrating NAMs with the Adverse Outcome Pathway (AOP) Framework.

Application Note: A Defined Approach for Skin Sensitization

Objective: To classify the skin sensitization hazard potential of a chemical without animal testing.

Background: The AOP for skin sensitization is well-established, involving covalent binding to skin proteins (MIE), keratinocyte activation (KE1), and dendritic cell activation (KE2) [29].

Defined Approach (OECD TG 497): This DA integrates results from three NAMs:

- Direct Peptide Reactivity Assay (DPRA) (in chemico): Measures the chemical's reactivity with model peptides, addressing the MIE.

- KeratinoSens (in vitro): Uses a reporter gene in keratinocytes to detect activation of the Nrf2 pathway, a key cellular stress response (KE1).

- h-CLAT (in vitro): Measures changes in surface markers on human dendritic cell-like cells, addressing KE2.

Protocol Execution: The test chemical is run through each assay according to OECD protocols. The results (percent depletion, fold induction, fluorescence index) are entered into a fixed Bayesian Network or Integrated Testing Strategy (ITS) prediction model. The model outputs a probability-based classification (Sensitizer/Non-Sensitizer) [27].

Interpretation: This DA does not replicate the murine Local Lymph Node Assay (LLNA) but provides a human biology-based assessment. Validation studies show it can outperform the LLNA in specificity when benchmarked against human data [27].
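To show what a fixed data interpretation procedure looks like in code, the sketch below implements a simple majority rule across the three assays. Note that this is a different and simpler rule than the ITS or Bayesian network models named above: it mirrors the idea behind the "2 out of 3" defined approach and omits the borderline-range and inconclusive-result handling that a real implementation would need. The function name and boolean inputs are illustrative.

```python
def two_out_of_three(dpra_positive: bool, keratinosens_positive: bool,
                     hclat_positive: bool) -> str:
    """Classify by majority vote across the three key-event assays."""
    positives = sum([dpra_positive, keratinosens_positive, hclat_positive])
    return "Sensitizer" if positives >= 2 else "Non-Sensitizer"


# Hypothetical chemical: reactive in DPRA and positive in h-CLAT, negative in KeratinoSens.
call = two_out_of_three(dpra_positive=True, keratinosens_positive=False, hclat_positive=True)
```

Because the rule is fixed in advance, two laboratories given the same assay calls reach the same classification, which is the property that distinguishes a defined approach from expert weight-of-evidence judgment.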

Protocol: Replacing the Mouse Lethality Assay for Botulinum Neurotoxin

Title: Cell-Based Assay (CBA) Protocol for Botulinum Neurotoxin Type A (BoNT/A) Potency Testing.

Purpose: To quantitatively measure the functional neurotoxic activity of BoNT/A batches, replacing the Mouse Lethality Bioassay (MLB) [28].

Principle: The assay measures the cleavage of the BoNT/A target protein, SNAP-25, in a sensitive neuroblastoma cell line. The extent of cleavage, quantified via immunoassay, is proportional to the toxin's enzymatic activity and potency.

Materials & Reagents:

- Cell Line: SiMa human neuroblastoma cells (or other SNAP-25 expressing neuronal cell line).

- Test Articles: BoNT/A reference standard and unknown batch samples.

- Essential Reagents: Cell culture media and supplements, assay buffers, lysis buffer, anti-SNAP-25 primary antibodies (cleavage-specific and total), and fluorescent or chemiluminescent secondary antibodies.

- Specialized Equipment: CO2 incubator, biosafety cabinet, multi-well cell culture plates, precision pipettes, plate washer, automated imaging or luminescence/fluorescence reader.

Procedure:

- Cell Seeding: Seed SiMa cells in a 96-well plate at a density ensuring ~80% confluence after 24 hours. Incubate at 37°C, 5% CO2.

- Sample Preparation & Serial Dilution: Prepare a serial dilution series of the BoNT/A reference standard (e.g., 6 concentrations) and the unknown sample(s) in assay buffer.

- Intoxication: Remove culture media from cells. Apply 100 µL of each dilution of the standard and samples to designated wells. Include a vehicle control (buffer only). Incubate for a defined period (e.g., 24-48 hours).

- Cell Lysis: Remove toxin-containing media. Lyse cells in situ with an appropriate lysis buffer.

- SNAP-25 Cleavage Quantification:

  a. Transfer lysates to an ELISA plate or use the cell culture plate if compatible.

  b. Perform a dual-antibody sandwich immunoassay: capture total SNAP-25 with one antibody, then detect using a cleavage-specific antibody that binds only to the BoNT/A-cleaved fragment of SNAP-25.

  c. Alternatively, use Western blot or mass spectrometry-based methods for higher specificity.

- Signal Detection & Analysis: Develop the assay using a fluorescent or chemiluminescent substrate. Measure signal intensity.

- Data Analysis: Plot the dose-response curve for the reference standard (signal vs. log concentration). Use a 4-parameter logistic fit to generate a standard curve. Interpolate the potency of the unknown sample relative to the standard.
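The data analysis step above can be sketched in a few lines. The example below is a minimal illustration, assuming the four curve parameters have already been estimated from the reference standard with a nonlinear least-squares routine (the fitting itself is not shown). The function names, the parameter values, and the dilution factor are hypothetical and are not taken from any validated BoNT/A method.

```python
def four_pl(conc, bottom, top, ec50, hill):
    """Four-parameter logistic response at concentration `conc` (conc > 0)."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def inverse_four_pl(signal, bottom, top, ec50, hill):
    """Concentration on the standard curve that produces `signal`."""
    if not (min(bottom, top) < signal < max(bottom, top)):
        raise ValueError("signal lies outside the asymptotes of the curve")
    return ec50 / ((top - bottom) / (signal - bottom) - 1.0) ** (1.0 / hill)


def potency_from_signal(signal, dilution_factor, curve):
    """Read a diluted unknown off the standard curve and correct for dilution.

    The result is expressed in the same units as the reference standard.
    """
    return inverse_four_pl(signal, **curve) * dilution_factor


# Hypothetical fitted parameters for the reference standard (arbitrary units).
standard_curve = {"bottom": 100.0, "top": 2100.0, "ec50": 1.0, "hill": 1.0}

# Hypothetical unknown: measured signal of 1100 at a 10-fold dilution.
estimated_potency = potency_from_signal(1100.0, 10.0, standard_curve)
```

Interpolation is only meaningful for signals that fall between the two asymptotes, which is why the inverse function rejects values outside that range rather than extrapolating.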

Validation Note: For regulatory submission, this CBA must undergo rigorous validation against the MLB for multiple product-specific BoNT/A formulations to demonstrate equivalent or superior accuracy, precision, and reliability [28] [29]. A formal Context of Use statement must be defined (e.g., "For batch release potency testing of BoNT/A product X") [29].

The Scientist's Toolkit: Essential Reagents & Solutions

Table 3: Key Research Reagent Solutions for NAM Development

| Reagent/Model System | Function | Application Example |
|---|---|---|
| Induced Pluripotent Stem Cells (iPSCs) | Provides a source of genetically diverse, human-derived differentiated cells (neurons, cardiomyocytes, hepatocytes). | Modeling organ-specific toxicity and inter-individual variability in drug response [29]. |
| Reconstructed Human Tissues (EpiDerm, EpiAirway) | 3D, differentiated tissue models with realistic morphology and barrier function. | Assessing skin corrosion/irritation and respiratory toxicity [27]. |
| Microphysiological Systems (Organ-on-a-Chip) | Microfluidic devices that emulate tissue-tissue interfaces, mechanical forces, and perfusion. | Studying complex organ interactions and systemic ADME/Tox in a human-relevant context [27]. |
| Panels of Genetically Diverse Cell Lines | Cell line arrays capturing human population genetic diversity. | Identifying genetic biomarkers of susceptibility and assessing toxicity risks across subpopulations [29]. |
| High-Content Screening (HCS) Assay Kits | Multiplexed fluorescent kits for measuring multiple cellular endpoints (cell health, ROS, apoptosis). | High-throughput mechanistic profiling of chemical libraries. |

Pathways to Regulatory Acceptance & Validation

Regulatory acceptance is the critical translational step for NAMs. The process moves from scientific development to formal acceptance via validation and qualification [29].

Figure 2: The Pathway for NAM Validation and Regulatory Acceptance.

- Context of Use (COU): The cornerstone of validation. The COU is a detailed statement defining the specific regulatory purpose of the NAM (e.g., "to classify eye irritation hazard for liquids") [29]. It dictates the validation criteria.

- Building Scientific Confidence: This involves demonstrating:

  - Reliability: The method is reproducible within and between laboratories.

  - Relevance: The method is biologically meaningful for its COU, ideally anchored to an AOP or mechanistic understanding [29].

  - Performance: For hazard identification, predictive capacity is assessed against high-quality reference data, which may include human data or animal data (with recognition of its limitations) [27] [29].

- Regulatory Implementation: Agencies like the U.S. EPA and FDA have strategic plans to develop, qualify, and implement NAMs [30] [31]. Successful case studies, such as the Defined Approaches for skin sensitization and eye irritation (OECD TGs 497 and 467), provide a blueprint for future endpoints [27].
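The performance criterion above is usually summarized with standard binary classification metrics. The sketch below shows one way to compute sensitivity, specificity, and balanced accuracy for a NAM against reference classifications. The function name and the example calls are hypothetical and do not come from any validation study.

```python
def predictive_capacity(predictions, references):
    """Compare binary NAM calls with reference classifications.

    Both arguments are equal-length sequences of booleans,
    where True means "classified as hazardous".
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have equal length")

    pairs = list(zip(predictions, references))
    tp = sum(1 for p, r in pairs if p and r)          # true positives
    tn = sum(1 for p, r in pairs if not p and not r)  # true negatives
    fp = sum(1 for p, r in pairs if p and not r)      # false positives
    fn = sum(1 for p, r in pairs if not p and r)      # false negatives

    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("reference set needs both positives and negatives")

    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2.0,
    }


# Hypothetical calls for eight reference chemicals.
nam_calls = [True, True, True, False, False, False, True, False]
reference = [True, True, False, False, False, False, True, True]
metrics = predictive_capacity(nam_calls, reference)
```

Balanced accuracy is included because reference sets are often unbalanced between positives and negatives, in which case raw accuracy can be misleading.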

NAMs constitute a modern, evidence-based toolkit that shifts safety assessment away from observing toxicity in animals and toward understanding perturbations of human biology. The trajectory points toward increasingly integrated testing strategies that combine computational predictions, high-throughput in vitro screening, and sophisticated tissue models to generate safety data more predictive of human outcomes. Fully realizing this paradigm depends on continued scientific innovation, collaborative validation efforts, and proactive engagement with regulatory agencies to move validated NAMs from the research bench into standardized decision-making frameworks. The ultimate goal is a more humane, efficient, and human-relevant system for protecting public health.

The In Vitro Toolbox: Core Assays, Advanced Models, and Integrated Testing Strategies

Cytotoxicity testing is a cornerstone of modern toxicology, providing critical data for hazard identification, risk evaluation, and drug safety assessment [16]. The ethical and scientific drive to implement the 3Rs principle (Replacement, Reduction, and Refinement of animal testing) has accelerated the development and adoption of in vitro methodologies [16]. Foundational assays like MTT (3-(4,5-dimethylthiazol-2-yl)-2,5-diphenyltetrazolium bromide) and LDH (Lactate Dehydrogenase) release have established the methodological basis for this field. They serve as essential, reproducible tools for initial cytotoxicity screening and are recognized benchmarks in regulatory contexts [16] [32].

This article details the application and protocol for these foundational assays within the critical framework of developing in vitro alternatives to the classical in vivo LD50 test. Regulatory bodies like the OECD provide guidance on using such cytotoxicity data to estimate starting doses for acute oral systemic toxicity tests, which can significantly reduce animal use [33]. However, the limitations of single-endpoint assays in capturing complex biology have prompted an evolution towards more predictive, human-relevant strategies. This includes the integration of high-content screening (HCS) and other multiparametric approaches into New Approach Methodologies (NAMs) and Integrated Approaches to Testing and Assessment (IATA) [16]. The following sections provide a comparative analysis, detailed standardized protocols, and a discussion on the integration of these tools into a modern toxicology workflow aimed at replacing animal testing.

Comparative Analysis of Foundational Cytotoxicity Assays

Selecting an appropriate cytotoxicity assay requires understanding each method's principle, advantages, and limitations. The following table provides a structured comparison of MTT, LDH, and High-Content Screening, based on endpoint, key strengths, and common interferences [16] [34] [32].

Table 1: Comparative Characteristics of Cytotoxicity Assays

| Assay | Primary Endpoint / Principle | Key Advantages | Key Limitations & Common Interferences |
|---|---|---|---|
| MTT Assay | Metabolic activity (mitochondrial reduction of tetrazolium salt to formazan) [32]. | Simple, cost-effective, widely used and accepted. Provides quantitative data suitable for high-throughput formats [32]. | End-point assay only. False signals from compounds that affect mitochondrial function or non-specifically reduce MTT. Insoluble formazan requires solubilization step [16] [32]. |
| LDH Release Assay | Membrane integrity (measurement of cytosolic LDH enzyme released upon cell damage) [32]. | Simple, rapid, and can be performed on culture supernatant without cell lysis. Direct marker of cell death [32]. | Background LDH in serum-containing media. Can underestimate toxicity if cell debris absorbs LDH. Less specific for apoptotic vs. necrotic death [16]. |
| High-Content Screening (HCS) | Multiparametric (nuclear morphology, membrane integrity, mitochondrial potential, etc.) via automated imaging [16]. | Provides rich, mechanistic data on single-cell level. Distinguishes between death modes (apoptosis, necrosis). Suitable for complex models (3D) [16]. | Higher cost and expertise requirement. Complex data analysis. Throughput is lower than simple colorimetric assays [16]. |

A critical consideration is that no single assay is universally reliable. For instance, a comparative study on hepatoma cells exposed to cadmium chloride found the neutral red (lysosomal function) and MTT assays were more sensitive in detecting early cytotoxic events than the LDH leakage assay [34]. This underscores the importance of a multiparametric strategy—using at least two independent endpoints with different biological principles—to improve accuracy and avoid artefacts [16].

Detailed Experimental Protocols

Standardized protocols are essential for generating reproducible and reliable data, especially for regulatory applications. The following are detailed methodologies for MTT and LDH assays, incorporating best practices from recent interlaboratory standardization efforts [16] [35].

MTT Assay Protocol for Cell Viability

This protocol measures the metabolic reduction of MTT to purple formazan crystals by viable cells [32].

Materials:

- Cells in culture (e.g., BALB/c 3T3, normal human keratinocytes, HepG2)

- 96-well tissue culture plate

- Test compounds and appropriate vehicle controls

- MTT reagent (e.g., 5 mg/mL stock in PBS)

- Solubilization solution (e.g., DMSO, acidified isopropanol)

- Multi-channel pipette, plate reader (absorbance at 570 nm, reference ~630 nm)

Procedure:

- Cell Seeding: Seed cells in a 96-well plate at an optimized density (e.g., 5–20 x 10³ cells/well) in complete growth medium. Include cell-free wells for background controls. Allow cells to adhere overnight [16].

- Compound Treatment: Prepare serial dilutions of the test compound in culture medium. Replace the seeding medium with treatment medium. Include a vehicle control (0% cytotoxicity) and a positive control (100% cytotoxicity, e.g., 1% Triton X-100). Incubate for the desired exposure period (e.g., 24-72 hours) [16] [33].

- MTT Incubation: Add MTT solution (typically 10% of well volume) directly to each well. Incubate for 2-4 hours at 37°C [16].

- Formazan Solubilization: Carefully remove the medium containing MTT. Add the solubilization solution (e.g., 100 µL DMSO per well) to dissolve the formed purple formazan crystals. Shake the plate gently for 10-15 minutes.

- Absorbance Measurement: Measure the absorbance of each well at 570 nm, using a reference wavelength of 630-650 nm to subtract background [32].

- Data Analysis: Calculate cell viability as:

  % Viability = [(Abs_sample - Abs_blank) / (Abs_vehicle_control - Abs_blank)] × 100

  Then generate dose-response curves to determine IC50/EC50 values.

Critical Notes: Test compounds with intrinsic color or redox activity can interfere. Always include "no-cell" blanks with compound to check for interference [16]. Optimize cell density and MTT incubation time to ensure signal linearity.
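The viability calculation in the data analysis step can be sketched as follows. The IC50 estimate here uses simple log-linear interpolation between the two concentrations that bracket 50% viability. This is an assumption made for brevity, not the protocol's prescribed method, and a fitted dose-response model would normally be preferred. All absorbance values and concentrations are hypothetical.

```python
import math


def percent_viability(abs_sample, abs_vehicle, abs_blank):
    """% Viability from blank-corrected absorbance readings."""
    return (abs_sample - abs_blank) / (abs_vehicle - abs_blank) * 100.0


def ic50_by_interpolation(concentrations, viabilities):
    """Estimate IC50 by log-linear interpolation.

    `concentrations` must be positive and in ascending order.
    Returns None when the data never cross 50% viability.
    """
    points = list(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            fraction = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + fraction * (
                math.log10(c_hi) - math.log10(c_lo)
            )
            return 10.0 ** log_ic50
    return None


# Hypothetical plate data: cell-free blank, vehicle control, four test wells.
abs_blank, abs_vehicle = 0.05, 1.05
concentrations = [1.0, 10.0, 100.0, 1000.0]   # e.g. in µM
absorbances = [0.95, 0.80, 0.30, 0.10]        # read at 570 nm

viabilities = [
    percent_viability(a, abs_vehicle, abs_blank) for a in absorbances
]
ic50 = ic50_by_interpolation(concentrations, viabilities)
```

Returning None when no crossing is found avoids reporting an extrapolated IC50 for compounds that never reach 50% inhibition in the tested range.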

LDH Release Assay Protocol for Cytotoxicity

This protocol measures the activity of LDH released from cells with damaged membranes into the culture supernatant [32].

Materials:

- Cells, culture plate, and test compounds (as in MTT protocol)

- LDH assay kit (typically containing reaction mixture and lysis buffer)

- Sterile, low-protein-binding tubes for supernatant transfer

- Plate reader (absorbance at 490 nm, reference ~680 nm)

Procedure:

- Cell Treatment: Seed and treat cells in a 96-well plate as described in Steps 1 & 2 of the MTT protocol.

- Supernatant Collection: At the end of the exposure period, gently centrifuge the plate (e.g., 250 x g for 5 minutes) to pellet any detached cells. Carefully transfer a portion of the supernatant (typically 50 µL) to a new clear-bottom plate.

- LDH Reaction: Prepare the LDH reaction mix according to the kit instructions. Add an equal volume of the reaction mix to each supernatant sample. Incubate at room temperature, protected from light, for 30 minutes.

- Signal Measurement: Add the stop solution (if provided) or measure absorbance directly. Read absorbance at 490 nm (primary) and 680 nm (reference).

- Controls and Normalization:

  - Spontaneous LDH Release (Low Control): Measure LDH activity from vehicle-treated cells.

  - Maximum LDH Release (High Control): Measure LDH activity from wells treated with lysis buffer (provided in kit) for 45 minutes before supernatant collection.

  - Background Control: Measure LDH activity from culture medium without cells.

- Data Analysis: Calculate cytotoxicity:

% Cytotoxicity = [(Abs_sample - Abs_low_control) / (Abs_high_control - Abs_low_control)] * 100.

Critical Notes: Serum contains LDH. Use serum-free media during the assay or heat-inactivate serum beforehand to reduce background [16]. The assay should be performed promptly after supernatant collection.
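The cytotoxicity formula above, combined with the 490 nm and 680 nm readings from the signal measurement step, can be sketched as follows. Subtracting the reference wavelength from the primary reading before normalization is assumed here as the correction step. Function names and absorbance values are hypothetical.

```python
def reference_corrected(abs_490, abs_680):
    """Subtract the reference-wavelength reading from the primary reading."""
    return abs_490 - abs_680


def percent_cytotoxicity(abs_sample, abs_low_control, abs_high_control):
    """% Cytotoxicity relative to spontaneous (low) and maximum (high) release."""
    span = abs_high_control - abs_low_control
    if span <= 0:
        raise ValueError("high control must exceed low control")
    return (abs_sample - abs_low_control) / span * 100.0


# Hypothetical (490 nm, 680 nm) readings for each well type.
sample = reference_corrected(0.80, 0.05)
low_control = reference_corrected(0.30, 0.05)    # vehicle-treated cells
high_control = reference_corrected(1.30, 0.05)   # lysis-buffer-treated cells

cytotoxicity = percent_cytotoxicity(sample, low_control, high_control)
```

Because the same medium background contributes to the sample, low control, and high control wells, subtracting it from all three leaves this ratio unchanged. The background control is still useful as a check on how much signal the medium itself contributes.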

Integration and Regulatory Context within LD50 Alternative Strategies

The foundational assays described are not standalone replacements for the LD50 test but are vital components of a tiered, integrated strategy. The Interagency Coordinating Committee on the Validation of Alternative Methods (ICCVAM) recommends that in vitro basal cytotoxicity data from assays such as neutral red uptake (which, like MTT, reports on a function of viable cells, in this case lysosomal dye uptake) be used in a weight-of-evidence approach to determine starting doses for in vivo acute oral systemic toxicity tests. This application has been formalized in OECD Guidance Document 129, which helps reduce animal numbers by avoiding starting doses at severely toxic levels [33].