Beyond Animal Testing: How Machine Learning Models Achieve High-Fidelity LD50 Prediction for Health-Protective Risk Assessment

Abstract

This article provides a comprehensive review for researchers and drug development professionals on the application of machine learning (ML) for predicting rat acute oral LD50, a critical parameter for chemical safety classification. We first explore the foundational role of LD50 in regulatory frameworks and the limitations of traditional testing, establishing the necessity for computational alternatives like ML-based Quantitative Structure-Activity Relationship (QSAR) models. The methodological section details the implementation of diverse approaches, from consensus QSAR models and generalized read-across to advanced deep learning algorithms, highlighting key toxicity databases for model training. We then address critical troubleshooting and optimization challenges, including data quality, feature selection, and techniques to combat overfitting, which are paramount for developing robust models. The validation segment critically compares model performance, examining evaluation metrics, the strengths of ensemble strategies, and the application of models to emerging contaminants. We conclude by synthesizing the path toward reliable, health-protective in silico toxicity assessments and their implications for accelerating safer drug and chemical design.

From Lethal Dose to Algorithm: The Foundational Shift to In Silico LD50 Prediction

The Critical Role of LD50 in GHS and Regulatory Hazard Classification

Welcome to the Technical Support Center for Predictive Toxicology & GHS Classification

This center provides technical guidance for researchers and regulatory scientists integrating in silico LD50 predictions into Globally Harmonized System (GHS) hazard classification workflows. Our resources are framed within ongoing research to enhance the accuracy and reliability of machine learning (ML) models for acute oral toxicity prediction, a critical step in modern, animal-sparing chemical safety assessment.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: What are the exact LD50 cut-off values for GHS acute toxicity classification, and how should I apply a predicted LD50 value? The GHS defines five categories for acute oral toxicity based on experimentally derived LD50 values in milligrams per kilogram body weight [1]. Classifying a chemical using a predicted LD50 involves placing the value into the appropriate hazard category band.

- Troubleshooting Scenario: Your QSAR model predicts an LD50 of 120 mg/kg for a novel compound. How is it classified?

- Solution: Refer to the definitive GHS criteria [2] [3]. A value of 120 mg/kg falls between 50 mg/kg and 300 mg/kg, placing the compound in Category 3 for acute oral toxicity.
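The banding step can be expressed in a few lines. The following is a minimal sketch, assuming the oral cut-off values listed in Table 1. The function name `ghs_oral_category` is illustrative, and returning `None` for values above 5000 mg/kg (not classified) is an assumption about how a caller would want that case reported.

```python
from bisect import bisect_left

# Inclusive upper bounds (mg/kg) of GHS acute oral toxicity Categories 1-5.
GHS_ORAL_UPPER_BOUNDS = [5, 50, 300, 2000, 5000]


def ghs_oral_category(ld50_mg_per_kg):
    """Return the GHS category (1-5) for an oral LD50, or None if > 5000 mg/kg."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be a positive dose in mg/kg")
    # bisect_left finds the first band whose inclusive upper bound is >= the value.
    index = bisect_left(GHS_ORAL_UPPER_BOUNDS, ld50_mg_per_kg)
    return index + 1 if index < len(GHS_ORAL_UPPER_BOUNDS) else None


# The troubleshooting scenario above: a predicted LD50 of 120 mg/kg.
print(ghs_oral_category(120))
```

Because the upper bounds are inclusive, a value exactly on a boundary (for example 300 mg/kg) stays in the more severe category.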

FAQ 2: My ML model's predicted LD50 value differs from a limited experimental result. Which value should be used for initial GHS classification? Under regulatory frameworks like OSHA's Hazard Communication Standard, classification is based on available, scientifically valid data, which can include in silico predictions [4]. A weight-of-evidence approach is required.

- Troubleshooting Scenario: A consensus model predicts an LD50 of 450 mg/kg (Category 4), but a single preliminary test suggests 250 mg/kg (Category 3).

- Solution & Protocol:

- Assess Data Quality: Evaluate the experimental test's adherence to OECD guidelines (e.g., fixed-dose procedure) [1].

- Apply Weight-of-Evidence: Consider the robustness of the ML prediction. Was it derived from a validated, high-performance model applied to a structurally similar compound? [5].

- Apply the Conservative Principle: For health-protective screening, the most conservative (lowest) LD50 estimate should be prioritized to ensure safety [6]. In this case, you would provisionally classify as Category 3 while flagging the need for further verification.

- Recommendation: Generate additional in silico predictions using other validated models (e.g., VEGA, TEST) to build a stronger evidence base [6].

FAQ 3: How do I handle GHS classification for a mixture when I only have predicted LD50 data for its components? For untested mixtures, GHS provides specific rules based on the toxicity of classified ingredients [4]. You can use predicted LD50 values to classify individual components first, then apply the additivity formula for acute toxicity.

- Troubleshooting Scenario: You need to classify a two-ingredient mixture where Component A (10% concentration) has a predicted LD50 of 200 mg/kg (Cat 3), and Component B (90% concentration) has a predicted LD50 of 2000 mg/kg (Cat 4).

- Solution & Protocol:

- Classify Each Ingredient: Use predicted values to assign GHS categories.

- Apply the Additivity Formula: The formula for acute toxicity is used when specific bridging principles are not applicable [4]. It calculates the theoretical toxicity of the mixture based on the potency and concentration of its toxic components.

- Calculation:

- The formula is $AT_{mix} = 100 / \sum_i (C_i / AT_i)$, where $C_i$ is the concentration (%) of ingredient i and $AT_i$ is the acute toxicity estimate (LD50) of ingredient i.

- For this mixture: $AT_{mix} = 100 / [(10/200) + (90/2000)] = 100 / (0.05 + 0.045) \approx 1053$ mg/kg.

- Classify the Mixture: A predicted LD50 of approximately 1053 mg/kg for the mixture corresponds to GHS Category 4.
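The additivity calculation is easy to script when many mixtures need screening. The sketch below is a minimal illustration, assuming every listed ingredient contributes to acute toxicity and that concentrations are given in percent. The function name `mixture_ate` and its input layout are illustrative choices, not part of any GHS tool.

```python
def mixture_ate(components):
    """Acute toxicity estimate of a mixture via the additivity formula.

    components: iterable of (concentration_percent, ate_mg_per_kg) pairs for
    the ingredients that contribute to acute toxicity.
    """
    components = list(components)
    if not components:
        raise ValueError("at least one ingredient is required")
    total = 0.0
    for concentration, ate in components:
        if concentration < 0 or ate <= 0:
            raise ValueError("concentration must be >= 0 and ATE must be > 0")
        total += concentration / ate  # one C_i / AT_i term of the sum
    if total == 0:
        raise ValueError("all concentrations are zero")
    return 100.0 / total


# Component A: 10% at 200 mg/kg; Component B: 90% at 2000 mg/kg.
ate_mix = mixture_ate([(10, 200), (90, 2000)])
print(f"ATmix = {ate_mix:.1f} mg/kg")
```

The result can then be mapped to a GHS category with the cut-off values in Table 1.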

FAQ 4: What key performance metrics should I evaluate when selecting or validating an ML model for LD50-based GHS categorization? Beyond simple regression metrics for predicting the exact LD50 value, the critical performance measure is the model's accuracy in correctly assigning the GHS category [6] [7]. Misclassification into a less severe category (under-prediction) is a critical error.

- Troubleshooting Scenario: You are comparing two models. Model A has a higher overall accuracy but misclassifies some potent toxins as less harmful. Model B is slightly less accurate but never makes this dangerous error.

- Solution & Analysis Protocol:

- Generate a Confusion Matrix: Tabulate model predictions against true GHS categories.

- Calculate Critical Metrics:

- Under-prediction Rate: The proportion of chemicals predicted in a less severe category than their true experimental category. This is the most important safety metric. A low rate is essential [6].

- Over-prediction Rate: The proportion predicted in a more severe category. While conservative and health-protective, a very high rate can lead to excessive false alarms [6].

- Balanced Accuracy: The average of sensitivity and specificity for a binary task (for multi-class GHS categorization, the mean of per-category recall), providing a better measure than simple accuracy for imbalanced datasets [5].

- Recommendation: From a safety and regulatory perspective, Model B is superior. A health-protective model that minimizes under-prediction is crucial for initial screening, even with a higher over-prediction rate [6].
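The metrics in this protocol can be computed directly from paired true and predicted categories. The following is a minimal sketch, assuming categories are coded as integers 1-5 with lower numbers being more toxic, as in Table 1. The name `category_error_rates` and the example labels are hypothetical, and balanced accuracy is computed here as the mean of per-category recall, one common multi-class generalization.

```python
def category_error_rates(true_categories, predicted_categories):
    """Under-/over-prediction rates and balanced accuracy for GHS categories.

    A lower category number means higher toxicity, so predicting a HIGHER
    number than the true category understates the hazard (under-prediction).
    """
    pairs = list(zip(true_categories, predicted_categories))
    if not pairs:
        raise ValueError("no predictions supplied")
    n = len(pairs)
    under = sum(1 for true, pred in pairs if pred > true)
    over = sum(1 for true, pred in pairs if pred < true)
    # Mean of per-category recall over the categories present in the truth set.
    recalls = []
    for category in sorted({true for true, _ in pairs}):
        members = [pred for true, pred in pairs if true == category]
        hits = sum(1 for pred in members if pred == category)
        recalls.append(hits / len(members))
    return {
        "under_prediction_rate": under / n,
        "over_prediction_rate": over / n,
        "balanced_accuracy": sum(recalls) / len(recalls),
    }


# Hypothetical labels for eight compounds (illustration only).
true_cats = [1, 2, 3, 3, 4, 4, 4, 5]
pred_cats = [1, 2, 3, 4, 4, 4, 3, 5]
rates = category_error_rates(true_cats, pred_cats)
print(rates)
```

When comparing two candidate models, running this on the same external set for each makes the trade-off between under-prediction and over-prediction explicit.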

Table 1: GHS Acute Toxicity Hazard Categories (Oral Route) [2] [3] [1]

| GHS Category | LD50 Cut-off Value (mg/kg, oral, rat) | Hazard Statement Code | Hazard Statement | Signal Word | Pictogram |

|---|---|---|---|---|---|

| 1 | ≤ 5 | H300 | Fatal if swallowed | Danger | Skull and crossbones |

| 2 | >5 ≤ 50 | H300 | Fatal if swallowed | Danger | Skull and crossbones |

| 3 | >50 ≤ 300 | H301 | Toxic if swallowed | Danger | Skull and crossbones |

| 4 | >300 ≤ 2000 | H302 | Harmful if swallowed | Warning | Exclamation mark |

| 5 | >2000 ≤ 5000 | (Not mandatory) | May be harmful if swallowed | Warning | - |

Table 2: Performance Comparison of ML/QSAR Models for Acute Oral Toxicity GHS Classification

| Model / Approach | Key Description | Reported Performance Metric | Critical Strength for Regulatory Use | Reference |

|---|---|---|---|---|

| Conservative Consensus Model (CCM) | Combines predictions from TEST, CATMoS, VEGA; selects the lowest (most toxic) LD50 value. | Under-prediction rate: 2% (Lowest among models). Over-prediction rate: 37%. | Maximizes health protection by minimizing dangerous misclassifications. Ideal for priority screening. | [6] |

| Optimized Ensembled Model (OEKRF) | Ensemble of Random Forest and Kstar algorithms, with feature selection and 10-fold cross-validation. | Accuracy in GHS categorization: 93% (under optimized scenario). | Demonstrates high accuracy achievable through advanced model engineering and robust validation. | [8] |

| Multi-domain ML Model | Uses molecular descriptors and fingerprints for emerging contaminants. | Accuracy: >0.86; Recall: >0.84. | Identifies key toxicity-related descriptors (e.g., BCUTp1h, SLogPVSA4) providing mechanistic insight. | [7] |

| Random Forest (RF) Models | Commonly used algorithm in comparative reviews. | Balanced accuracy varies (e.g., ~0.73-0.83 for various endpoints). | A reliable and frequently top-performing baseline algorithm for toxicity prediction tasks. | [5] |

Detailed Experimental Protocols

Protocol 1: Implementing a Health-Protective Consensus Prediction Workflow This protocol is based on the Conservative Consensus Model (CCM) study [6].

- Input Preparation: Prepare a standardized molecular representation (e.g., SMILES string) for the query compound.

- Multi-Model Query: Submit the structure to at least three independent, validated QSAR models for rat acute oral LD50 prediction (e.g., TEST, CATMoS, VEGA platforms).

- Data Collection: Record the quantitative LD50 prediction (in mg/kg) from each model.

- Consensus Application: Apply the "Conservative Consensus" rule: Select the lowest predicted LD50 value from the set of model outputs.

- GHS Classification: Map the consensus LD50 value to the corresponding GHS hazard category using the fixed cut-off values in Table 1.

- Documentation: Report all individual model predictions, the consensus value, the final GHS category, and a note on the health-protective strategy employed.
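The consensus rule and the documentation step can be sketched together. This is a minimal illustration, assuming each platform's prediction has already been collected by hand or by export. The numeric values are hypothetical, the function names are illustrative, and treating `None` as "no usable prediction" (for example, outside a model's applicability domain) is an assumption of this sketch.

```python
GHS_ORAL_UPPER_BOUNDS = (5, 50, 300, 2000, 5000)  # mg/kg, Categories 1-5


def ghs_oral_category(ld50):
    """Map an oral LD50 (mg/kg) to GHS Category 1-5, or None above 5000."""
    for category, upper in enumerate(GHS_ORAL_UPPER_BOUNDS, start=1):
        if ld50 <= upper:
            return category
    return None


def conservative_consensus(predictions):
    """Pick the lowest predicted LD50 and build a report record.

    predictions: dict of model name -> predicted LD50 (mg/kg), or None when
    a model gave no usable prediction (an assumption of this sketch).
    """
    usable = {name: value for name, value in predictions.items() if value is not None}
    if not usable:
        raise ValueError("no model returned a usable prediction")
    source = min(usable, key=usable.get)  # model with the most toxic estimate
    consensus = usable[source]
    return {
        "individual_predictions": dict(predictions),
        "consensus_ld50": consensus,
        "consensus_source": source,
        "ghs_category": ghs_oral_category(consensus),
        "strategy": "conservative consensus (lowest predicted LD50)",
    }


# Hypothetical predictions for one query compound (illustration only).
report = conservative_consensus({"TEST": 450.0, "CATMoS": 310.0, "VEGA": 820.0})
print(report["consensus_ld50"], report["ghs_category"])
```

Keeping the individual predictions in the record preserves the evidence needed for a later weight-of-evidence review.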

Protocol 2: Developing a Robust ML Model with Feature Importance Analysis This protocol synthesizes methodologies from recent studies [7] [8].

- Data Curation: Assemble a high-quality dataset of >6,000 compounds with experimental rat oral LD50 values. Convert LD50 to GHS categories (1-5) as the primary endpoint [7].

- Descriptor Calculation & Feature Selection: Generate a comprehensive set of molecular descriptors and fingerprints. Reduce dimensionality with Principal Component Analysis (PCA), or apply a feature selection method, so that the model is trained on the most informative inputs [8].

- Model Training with Cross-Validation: Split data into training and test sets. Train multiple ML algorithms (e.g., Random Forest, SVM, XGBoost). Use 10-fold cross-validation on the training set to optimize hyperparameters and prevent overfitting [8].

- Model Interpretation: Apply SHapley Additive exPlanations (SHAP) analysis to the best-performing model to identify which molecular features (e.g., polarizability, electronegativity) drive predictions toward higher toxicity [7].

- External Validation & Performance Reporting: Evaluate the final model on the held-out test set. Report balanced accuracy, under-prediction rate, and confusion matrix for GHS category assignment, not just correlation coefficients for LD50 value prediction [6] [5].

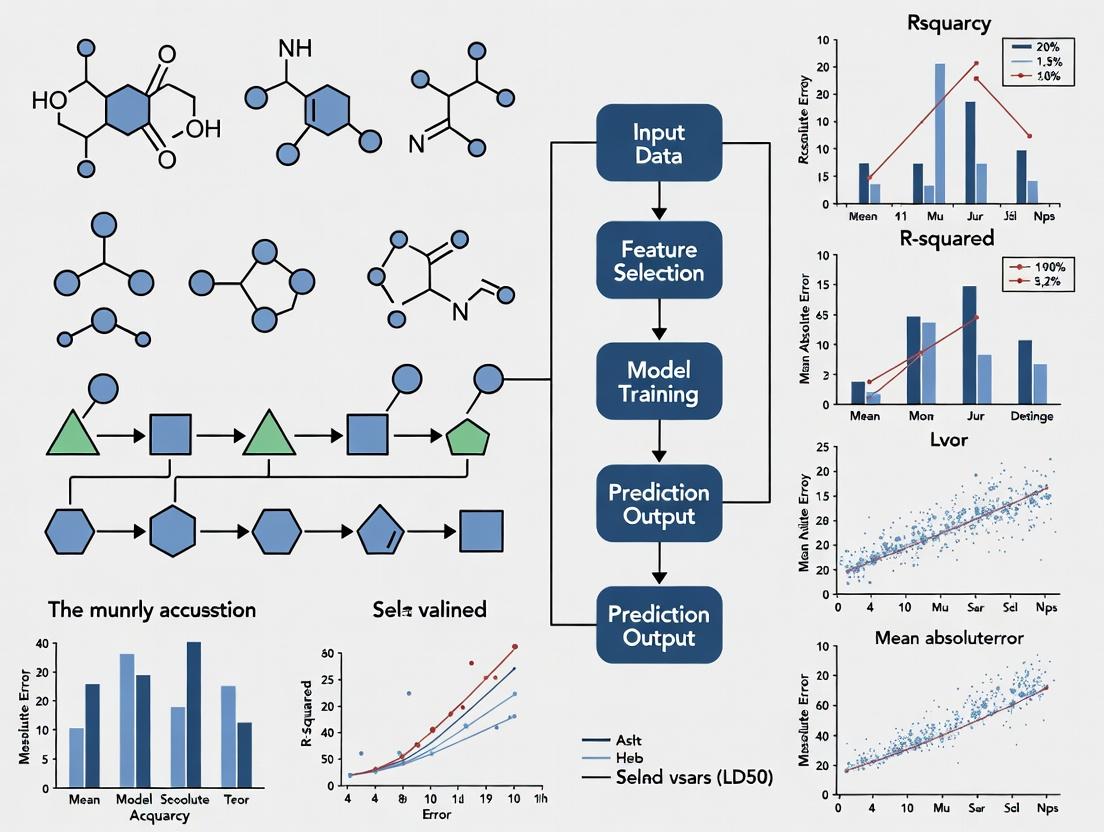

Visual Workflow Diagrams

Diagram 1: GHS classification workflow using ML-predicted LD50

Diagram 2: Development pipeline for a GHS category prediction ML model

| Tool / Resource Category | Specific Example or Name | Primary Function in Workflow | Key Consideration for Researchers |

|---|---|---|---|

| Public Prediction Platforms | VEGA, TEST, CATMoS | Provide immediate, validated QSAR predictions for rat oral LD50, useful for consensus modeling [6]. | Always check the model's applicability domain to ensure your compound is within the structural space it was trained on. |

| Curated Toxicity Data | PubChem GHS Classification Data [3] | Source of experimental LD50 values and official GHS classifications for known compounds, essential for model training and benchmarking. | Be aware of variability and sometimes conflicting classifications for the same compound from different sources [1]. |

| Molecular Descriptor Software | PaDEL-Descriptor, RDKit | Generate quantitative numerical representations (descriptors) and fingerprints from chemical structures for ML model input [7] [5]. | The choice of descriptor set (2D, 3D, fingerprints) significantly impacts model performance and interpretability. |

| Machine Learning Algorithms | Random Forest, XGBoost, Support Vector Machine (SVM) | Core algorithms for building classification models that predict GHS category from molecular descriptors [5] [8]. | Ensemble methods (like Random Forest) often outperform single models. Prioritize algorithms that provide feature importance metrics. |

| Model Interpretation Libraries | SHAP (SHapley Additive exPlanations) | Interprets ML model outputs to identify which structural features contribute most to a prediction of high or low toxicity [7]. | Critical for moving from a "black box" prediction to a mechanistically insightful, trusted tool. |

| Regulatory Guidance Documents | OSHA Appendix A (1910.1200AppA) [4], UN GHS Rev.11 (2025) [3] | Authoritative sources for classification rules, weight-of-evidence guidelines, and mixture rules. | The foundational reference for all regulatory compliance work; essential for justifying in silico classification decisions. |

Technical Support Center: Troubleshooting Guide

This guide addresses common challenges researchers face when transitioning from traditional in vivo LD50 testing to machine learning (ML)-based prediction models. The following table outlines specific issues, their root causes, and recommended solutions [9] [10] [11].

| Problem Symptom | Potential Cause | Recommended Solution |

|---|---|---|

| Poor model accuracy on new compounds | Training data is not representative of your chemical space; model overfitting [5]. | Use applicability domain assessment; employ consensus modeling; integrate more diverse data sources (e.g., ChEMBL, PubChem) [9] [12]. |

| High false negative rate for toxicity | Imbalanced datasets with few toxic examples; model lacks mechanistic insight [5] [10]. | Apply algorithmic techniques (e.g., SMOTE) to balance data; use explainable AI (XAI) to identify missed toxicophores [10] [13]. |

| Inability to predict specific organ toxicity | Model trained only on general acute toxicity (LD50) endpoints [9]. | Adopt a multi-task learning framework that simultaneously trains on LD50 and specific organ toxicity assays [10]. |

| Results not accepted for regulatory submission | Model is a "black box" with no explanation for predictions [10] [13]. | Implement contrastive explanation methods (CEM) to identify pertinent positive/negative structural features [10]. |

| Species translation failure | Model trained on rodent data does not generalize to human predictions [14]. | Incorporate human-relevant in vitro (e.g., organ-on-a-chip) and clinical (FAERS) data into training via transfer learning [10] [11]. |

| Long training times for deep learning models | Complex architecture (e.g., deep neural nets) on large, unfiltered datasets [5]. | Use efficient molecular representations (like Morgan fingerprints); apply feature selection prior to training [5] [10]. |

Frequently Asked Questions (FAQs)

Q1: Our traditional in vivo LD50 testing is too slow and expensive for early-stage compound screening. What is the most efficient computational alternative to start with? A: Begin with Quantitative Structure-Activity Relationship (QSAR) models using software like the EPA's Toxicity Estimation Software Tool (TEST) [12]. TEST provides validated methodologies (e.g., hierarchical, consensus) to estimate oral rat LD50 directly from chemical structure, offering a rapid and cost-effective first-pass screening [12]. This can prioritize compounds for further testing, aligning with the "Reduction" principle of the 3Rs [15].

Q2: How reliable are ML-predicted LD50 values compared to actual animal test results? A: Performance varies by model and dataset. A 2023 review of ML models for acute toxicity prediction reported balanced accuracy values ranging from approximately 0.65 to 0.83 for external validation sets [5]. Notably, modern multi-task deep learning models that integrate in vitro, in vivo, and clinical data can improve predictive accuracy for human-relevant outcomes [10]. However, all models have an applicability domain and should be used within their validated chemical space [5].

Q3: We want to build a custom LD50 prediction model. What are the key data sources we need? A: You will need high-quality, curated toxicity data. Essential sources include:

- In vivo LD50 data: EPA's DSSTox/ToxVal database, TOXRIC, and the Registry of Toxic Effects of Chemical Substances (RTECS) [9] [10].

- Chemical descriptor data: PubChem for molecular structures and properties [9].

- Supplementary bioactivity data: ChEMBL and DrugBank for related pharmacological and ADMET data to enhance model robustness [9]. Always document data sources, curation steps, and any uncertainty associated with the experimental data [5].

Q4: Can ML models completely replace animal testing for acute toxicity in regulatory submissions? A: As of now, they are used for prioritization and screening but not as a sole replacement for final regulatory approval. However, regulatory science is evolving. The U.S. FDA encourages the adoption of advanced technologies, including AI/ML, through initiatives like the FDA Modernization Act 2.0 [11]. Models that are transparent, explainable, and built on high-quality data are more likely to gain regulatory acceptance over time [13] [11]. The current goal is to significantly reduce and refine animal use through these models [15] [16].

Q5: Our in vitro cytotoxicity data doesn't correlate well with in vivo LD50 outcomes. How can ML help? A: This is a common limitation due to differing biological complexity [17]. ML can bridge this gap through advanced modeling techniques:

- Multi-task Learning (MTL): Train a single model on multiple endpoints (e.g., in vitro cytotoxicity, in vivo LD50, hepatotoxicity) simultaneously. This allows the model to learn shared features and improve generalization for the in vivo endpoint [10].

- Transfer Learning: Pre-train a model on a large amount of in vitro data, then fine-tune it on a smaller set of high-quality in vivo LD50 data. This approach can improve performance when in vivo data is limited [10].

Experimental Protocols & Methodologies

Protocol: Building a Multi-Task Deep Neural Network (MTDNN) for LD50 and Organ Toxicity Prediction

This protocol is adapted from state-of-the-art research for predicting clinical toxicity [10].

Objective: To develop a single model that accurately predicts both binary acute oral toxicity (LD50-based) and specific organ toxicity endpoints.

Materials & Software:

- Datasets: RTECS (for LD50 categorization) [10], Tox21 (for in vitro nuclear receptor/stress response assays) [10], and organ-specific data (e.g., hepatotoxicity from reviewed literature) [5].

- Molecular Representations: Morgan fingerprints (radius 2, 2048 bits) and/or pre-trained SMILES embeddings [10].

- Software: Python deep learning libraries (e.g., PyTorch, TensorFlow), RDKit for cheminformatics.

Procedure:

- Data Curation & Integration:

- Standardize compounds (remove salts, neutralize charges, canonicalize SMILES).

- Define a binary label for acute toxicity: Toxic (LD50 ≤ 5000 mg/kg) vs. Non-toxic (LD50 > 5000 mg/kg) per GHS/EPA guidelines [10].

- Merge datasets on canonical SMILES, creating a multi-label dataset where each compound has a label for the LD50 task and for each additional organ toxicity task.

- Model Architecture & Training:

- Input Layer: Accepts the molecular representation vector (e.g., 2048-bit fingerprint).

- Shared Hidden Layers: 2-3 fully connected dense layers with ReLU activation. These layers learn general features relevant to all toxicity tasks.

- Task-Specific Output Branches: Separate output layers for each toxicity endpoint (e.g., one binary output for LD50, another for hepatotoxicity). Use a sigmoid activation function for each.

- Loss Function: Use a weighted sum of binary cross-entropy losses for each task to account for dataset imbalance.

- Training: Train the entire network end-to-end using an optimizer like Adam. Employ early stopping and dropout to prevent overfitting.

- Validation & Explanation:

- Validate using stratified k-fold cross-validation. Report balanced accuracy, sensitivity, and specificity for each task [5].

- Apply a post-hoc explainability method like the Contrastive Explanations Method (CEM) to identify pertinent positive (toxicophore) and pertinent negative (detoxifying) structural features for individual predictions [10].
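The loss described in this protocol, a weighted sum of per-task binary cross-entropy terms, can be illustrated without a deep learning framework. The sketch below is pure Python and shows only the arithmetic; in practice the same computation would be done on tensors in PyTorch or TensorFlow. Skipping compounds that lack a label for a given task is an assumption added here, since merged datasets rarely label every compound for every endpoint, and the function name `masked_weighted_bce` is illustrative.

```python
import math


def masked_weighted_bce(probabilities, labels, task_weights, eps=1e-7):
    """Weighted sum of per-task mean binary cross-entropy.

    probabilities: list of rows; each row holds one sigmoid output per task.
    labels: same shape; 1 or 0, or None where a compound has no label for
        that task (assumption: such entries are skipped).
    task_weights: one non-negative weight per task.
    """
    total = 0.0
    for task, weight in enumerate(task_weights):
        losses = []
        for prob_row, label_row in zip(probabilities, labels):
            y = label_row[task]
            if y is None:  # unlabeled for this task: contributes nothing
                continue
            p = min(max(prob_row[task], eps), 1.0 - eps)  # avoid log(0)
            losses.append(-(y * math.log(p) + (1 - y) * math.log(1.0 - p)))
        if losses:
            total += weight * sum(losses) / len(losses)
    return total


# Hypothetical outputs for three compounds and two tasks
# (task 0: LD50-based label, task 1: hepatotoxicity).
probs = [[0.9, 0.2], [0.1, 0.7], [0.8, 0.5]]
labels = [[1, 0], [0, None], [1, 1]]
loss = masked_weighted_bce(probs, labels, task_weights=[1.0, 2.0])
print(round(loss, 4))
```

Raising the weight of a sparsely labeled task is one way to keep it from being dominated by the larger LD50 dataset; the weights shown are placeholders, not recommended values.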

Protocol: Implementing a QSAR Consensus Model for LD50 Estimation Using TEST

This protocol outlines the use of the EPA's TEST software for reliable single-compound estimation [12].

Objective: To obtain a robust LD50 point estimate for a new chemical entity using multiple QSAR methodologies.

Procedure:

- Input Preparation: Launch TEST (v5.1.2). Draw the 2D chemical structure of the query compound in the built-in sketcher or import a SMILES/MOL file [12].

- Endpoint Selection: In the calculation options, select "Oral rat LD50" as the endpoint.

- Methodology Selection: Choose the "Consensus" method. This method averages predictions from four underlying methodologies: Hierarchical, Single-model, Group contribution, and Nearest neighbor [12].

- Execution & Analysis: Run the calculation. The results will show:

- The consensus predicted LD50 value (in mg/kg).

- Predictions from each individual method.

- A list of the three most structurally similar compounds in the training set with their experimental LD50 values, which aids in assessing prediction reliability [12].

- Reporting: Document the consensus prediction, the range of predictions from individual methods, and the experimental values of the nearest neighbors to communicate uncertainty.
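The reporting step can be captured in a small record so that every run is documented the same way. This is a sketch only: it does not call TEST, the values are hypothetical rather than real software output, and the function name `summarize_test_run` and the "fold spread" summary (highest divided by lowest individual prediction) are illustrative choices rather than anything TEST itself produces.

```python
def summarize_test_run(consensus_ld50, method_predictions, neighbors):
    """Collect the items the reporting step asks for into one record.

    consensus_ld50: consensus LD50 (mg/kg) as reported by the software.
    method_predictions: dict of method name -> LD50 (mg/kg), or None when a
        method returned no prediction.
    neighbors: list of (compound name, experimental LD50 in mg/kg).
    """
    available = {m: v for m, v in method_predictions.items() if v is not None}
    if not available:
        raise ValueError("no individual method produced a prediction")
    low, high = min(available.values()), max(available.values())
    return {
        "consensus_ld50": consensus_ld50,
        "method_range": (low, high),
        "fold_spread": high / low,  # ratio of highest to lowest prediction
        "methods_without_prediction": sorted(set(method_predictions) - set(available)),
        "nearest_neighbors": list(neighbors),
    }


# Hypothetical values for illustration only; not output from a real run.
summary = summarize_test_run(
    consensus_ld50=670.0,
    method_predictions={
        "Hierarchical": 510.0,
        "Single-model": None,
        "Group contribution": 900.0,
        "Nearest neighbor": 600.0,
    },
    neighbors=[("analog A", 480.0), ("analog B", 750.0), ("analog C", 1100.0)],
)
print(summary["method_range"], round(summary["fold_spread"], 2))
```

A wide spread between methods, or neighbors whose experimental values straddle a GHS cut-off, is a signal to treat the point estimate with caution.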

Table 1: Limitations of Traditional In Vivo Testing: Cost, Time, and Predictive Value

| Aspect | Quantitative Measure | Source / Context |

|---|---|---|

| Financial Cost | Rodent carcinogenicity testing adds $2-4 million and 4-5 years to drug development [14]. | Cost for cancer therapeutics development. |

| Predictive Accuracy | Only ~50% of animal experiments are replicated in human studies [14]. | Analysis of 221 animal experiments. |

| Attrition Due to Toxicity | ~30% of drug development failures are due to safety/toxicity [9]. Approximately 56% of halted projects fail due to safety concerns [11]. | Statistical analysis of drug R&D failure reasons. |

| Late-Stage Failure | ~89% of novel drugs fail in human clinical trials, with half due to unanticipated human toxicity [14]. | Overall failure rate in drug development. |

| ML Model Performance | Balanced accuracy for acute toxicity prediction models ranges from ~0.65 to 0.83 in external validation [5]. Multi-task DNNs using SMILES embeddings can achieve high accuracy (AUC > 0.8) for clinical toxicity prediction [10]. | Review of 82 ML model studies [5]; State-of-the-art multi-task model [10]. |

Workflow and Conceptual Diagrams

Title: Workflow for Developing an ML Model to Overcome In Vivo Testing Limits

Title: Architecture of a Multi-Task DNN for Multi-Endpoint Toxicity Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for ML-Driven Predictive Toxicology Research

| Item Name | Type | Primary Function in Research | Key Source / Reference |

|---|---|---|---|

| Toxicity Estimation Software Tool (TEST) | Software | Provides ready-to-use, validated QSAR models for estimating rat oral LD50 and other endpoints from chemical structure. Useful for rapid screening and benchmarking [12]. | U.S. Environmental Protection Agency (EPA) [12]. |

| PubChem Database | Database | Massive public repository of chemical structures, properties, and bioactivity data. Essential for sourcing molecular structures and linking to associated toxicity assay results [9]. | National Institutes of Health (NIH) [9]. |

| ChEMBL Database | Database | Manually curated database of bioactive molecules with drug-like properties. Provides high-quality bioactivity data (IC50, Ki, etc.) crucial for training robust ML models [9]. | European Molecular Biology Laboratory (EMBL-EBI) [9]. |

| TOXRIC / DSSTox | Database | Comprehensive toxicity databases focusing on curated in vivo and in vitro toxicity results. A critical source for experimental LD50 and other toxicological endpoint data [9]. | Multiple academic and regulatory sources [9]. |

| RDKit | Software Library | Open-source cheminformatics toolkit. Used for generating molecular descriptors and fingerprints (e.g., Morgan fingerprints), standardizing structures, and handling chemical data in Python [10]. | Open-source community. |

| Contrastive Explanation Method (CEM) Framework | Algorithmic Framework | A post-hoc explainable AI (XAI) method adapted for chemistry. Identifies minimal substructures that cause a prediction (pertinent positives) and whose absence would flip it (pertinent negatives), adding crucial interpretability [10]. | Adapted from ML explainability research [10]. |

| Organ-on-a-Chip / 3D Spheroid Assays | In vitro Model | Advanced physiological models that provide human-relevant toxicological response data. This data can be integrated into ML models to improve human translatability and reduce reliance on animal data [11]. | Commercial and academic providers [11]. |

Machine Learning as a Paradigm Shift in Predictive Toxicology

Foundations of ML-Driven Predictive Toxicology

What defines the paradigm shift from traditional methods to ML in predictive toxicology? The shift moves from costly, low-throughput, and ethically challenging animal testing to in silico models that analyze massive datasets to predict toxicity [9]. Safety issues account for approximately 30% of drug development failures, and the traditional methods used to detect them are hampered by long cycles and limited accuracy in cross-species extrapolation [9]. ML models address this by learning complex patterns from chemical structures, biological data, and historical toxicity outcomes, enabling early, accurate, and human-relevant risk assessment while aligning with the 3Rs principle (Replacement, Reduction, and Refinement) of animal testing [13].

Which machine learning algorithms are most effective for predicting different toxicity endpoints? Algorithm performance varies by endpoint and dataset. The table below summarizes the balanced accuracy of common algorithms for key toxicity types, based on a review of recent models [5].

Table: Performance of ML Algorithms by Toxicity Endpoint (Balanced Accuracy)

| Toxicity Endpoint | Dataset Size | Algorithm | Validation Type | Reported Balanced Accuracy |

|---|---|---|---|---|

| Carcinogenicity | 829 compounds | Random Forest (RF) | Holdout | 0.724 [5] |

| Carcinogenicity | 829 compounds | Support Vector Machine (SVM) | Cross-validation | 0.802 [5] |

| Cardiotoxicity (hERG) | 620 compounds | Bayesian | Cross-validation | 0.828 [5] |

| Acute Toxicity | 8,000+ compounds | Deep Neural Network (DNN) | External | ~0.85 [9] |

| Hepatotoxicity | 475 compounds | Ensemble Learning | Holdout | 0.703 [5] |

What are the primary data sources for building and validating these models? High-quality, curated data is foundational. Key sources include public toxicity databases, biological experimental data, and clinical reports [9].

Table: Essential Data Sources for ML in Predictive Toxicology

| Data Source Type | Key Examples | Primary Use & Function |

|---|---|---|

| Curated Toxicity Databases | TOXRIC, DSSTox/Toxval, ICE [9] | Provide structured in vivo and in vitro toxicity data (e.g., LD50) for model training. |

| Bioactivity/Chemical Databases | ChEMBL, PubChem, DrugBank [9] | Supply chemical structures, properties, and bioactivity data for featurization. |

| In Vitro Assay Data | High-throughput screening (HTS), cytotoxicity (MTT, CCK-8) [9] | Offer cellular-level toxicity profiles for mechanistic modeling and validation. |

| In Vivo Animal Data | Regulatory study data (OECD guidelines) | Used as benchmark labels, though with caveats for human translatability. |

| Clinical & Post-Market Data | FDA Adverse Event Reporting System (FAERS) [9] | Enable models to learn from human adverse drug reactions (ADRs). |

Technical Troubleshooting: Data, Models, and Interpretation

We have compiled a dataset, but model performance is poor. What are the first issues to check? This is often a data quality problem. Follow this systematic checklist:

- Data Consistency: Verify toxicity labels. A major challenge is inconsistent toxicity assignments for the same chemical across different sources [5]. Standardize endpoints (e.g., use a single, reliable LD50 value source).

- Class Imbalance: For classification (toxic/non-toxic), an imbalanced dataset will bias the model toward the majority class. Apply techniques like SMOTE (Synthetic Minority Over-sampling Technique) or use balanced accuracy as your primary metric instead of simple accuracy [5].

- Descriptor/Feature Relevance: Ensure the molecular descriptors (e.g., molecular weight, logP, fingerprint bits) are relevant to the toxicity endpoint. Use dimensionality reduction (e.g., PCA) or feature selection (e.g., random forest feature importance) to reduce noise and overfitting [5].

- Data Leakage: Ensure no information from the test set (or external validation set) was used during training or feature selection, which artificially inflates performance.
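Several items on this checklist can be screened automatically before any model is trained. The following is a minimal sketch, assuming each compound carries a stable identifier such as a canonical SMILES string or InChIKey. The function names and example records are hypothetical.

```python
from collections import Counter, defaultdict


def find_label_conflicts(records):
    """Return identifiers that carry more than one distinct label.

    records: iterable of (compound_id, label) pairs pooled from all sources.
    """
    labels = defaultdict(set)
    for compound_id, label in records:
        labels[compound_id].add(label)
    return {cid: sorted(found) for cid, found in labels.items() if len(found) > 1}


def class_fractions(labels):
    """Fraction of examples per class, to expose imbalance at a glance."""
    labels = list(labels)
    counts = Counter(labels)
    return {label: count / len(labels) for label, count in counts.items()}


def leaked_compounds(train_ids, test_ids):
    """Identifiers present in both training and test sets."""
    return sorted(set(train_ids) & set(test_ids))


# Hypothetical records: compound "A" is assigned two different GHS categories.
conflicts = find_label_conflicts([("A", 3), ("A", 4), ("B", 2)])
balance = class_fractions([1, 0, 0, 0])
leaks = leaked_compounds(["A", "B"], ["B", "C"])
print(conflicts, balance, leaks)
```

An identifier-level overlap check only catches exact duplicates; leakage through feature selection or scaling fitted on the full dataset has to be prevented in the pipeline itself.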

How do I choose between a traditional ML model (like SVM/RF) and a deep learning model? The choice depends on your data size, complexity, and need for interpretability.

- Use Traditional ML (SVM, RF, Gradient Boosting) when: Your dataset is moderate in size (hundreds to thousands of compounds), you require high model interpretability (e.g., RF feature importance), or you are working with pre-computed molecular descriptors [18] [5]. They are generally less computationally intensive and easier to tune.

- Use Deep Learning (DNN, Graph Neural Networks) when: You have very large datasets (>10,000 compounds), you want the model to learn optimal feature representations directly from raw inputs like SMILES strings or molecular graphs, or you are integrating complex multimodal data (e.g., chemical structure + gene expression) [9] [18]. DL models can capture more complex, non-linear relationships but are "black boxes" and require more data and computational power.

Our model performs well on cross-validation but fails on new external compounds. What causes this overfitting? Overfitting indicates the model learned noise or dataset-specific artifacts rather than generalizable rules.

- Solution 1: Simplify the Model. Reduce model complexity (e.g., decrease tree depth in RF, reduce the number of features). Apply stronger regularization techniques (L1/L2 regularization) [18].

- Solution 2: Improve Data Curation. Broaden the chemical space of your training set. Ensure it is representative of the diverse structures you intend to predict. External failure often stems from predicting compounds outside the model's "applicability domain" [13].

- Solution 3: Adopt Rigorous Validation. Never rely solely on internal cross-validation. Always hold out a completely independent temporal or proprietary external set for final testing. Implement "chronological validation," where the model is trained on data up to a certain date and tested on compounds discovered afterward, as demonstrated in a recent study predicting drug withdrawal with 95% accuracy [19].
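Two of these safeguards are simple to prototype. The sketch below shows a nearest-neighbor applicability domain check and a chronological split in pure Python. It assumes fingerprints are available as sets of on-bit indices and that each record carries a `year` field; the similarity threshold of 0.3 is an arbitrary placeholder, and nearest-neighbor Tanimoto similarity is only one of several ways an applicability domain can be defined.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    fp_a, fp_b = set(fp_a), set(fp_b)
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def in_applicability_domain(query_fp, training_fps, threshold=0.3):
    """True if the query's most similar training compound meets the threshold.

    The threshold is a placeholder; it should be calibrated per model.
    """
    best = max((tanimoto(query_fp, fp) for fp in training_fps), default=0.0)
    return best >= threshold


def chronological_split(records, cutoff_year):
    """Train on records up to cutoff_year, test on anything later."""
    train = [r for r in records if r["year"] <= cutoff_year]
    test = [r for r in records if r["year"] > cutoff_year]
    return train, test


# Hypothetical fingerprints and records (illustration only).
training_fps = [{2, 3, 4}, {7, 8}]
print(in_applicability_domain({1, 2, 3}, training_fps))
print(in_applicability_domain({9, 10}, training_fps))

records = [{"id": "A", "year": 2015}, {"id": "B", "year": 2019}, {"id": "C", "year": 2022}]
train, test = chronological_split(records, cutoff_year=2019)
print([r["id"] for r in train], [r["id"] for r in test])
```

Predictions for compounds that fail the domain check are better flagged than silently reported.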

How can we interpret a "black box" ML model's predictions to understand the drivers of toxicity? Interpretability is critical for scientific acceptance and hypothesis generation.

- Post-hoc Interpretation Tools: Use SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to explain individual predictions by quantifying each feature's contribution [13].

- Mechanistic Anchoring: Link model features or identified chemical substructures to known Adverse Outcome Pathways (AOPs) or structural alerts for toxicity. This connects the model's output to established biological understanding [18].

- Genotype-Phenotype Difference (GPD) Analysis: As shown in pioneering research, integrate biological context by analyzing how drug-target genes differ in essentiality, tissue expression, and network connectivity between preclinical models and humans. This can explain why a compound might fail in humans despite safe animal data [19].

Short title: ML workflow for predictive toxicology

Protocols for Model Development and Validation

Protocol 1: Building a Binary Classification Model for Acute Oral Toxicity (LD50)

This protocol outlines steps to create a model classifying compounds as "highly toxic" (LD50 ≤ 50 mg/kg) or "low toxicity."

- Data Acquisition: Download acute oral toxicity data from the ICE or DSSTox databases [9]. Curate a set of compounds with reliable rat LD50 values.

- Data Curation & Labeling: Remove duplicates and inorganic salts. Assign a binary label (1: LD50 ≤ 50 mg/kg, 0: LD50 > 50 mg/kg). Apply a 75/25 split for training and holdout testing, ensuring stratification by label.

- Molecular Featurization: Compute 2D molecular descriptors (e.g., using RDKit) and extended connectivity fingerprints (ECFP4). Apply range scaling to descriptors.

- Model Training & Tuning: Train a Random Forest classifier. Use 5-fold cross-validation on the training set to tune hyperparameters (number of trees, max depth) via grid search, optimizing for balanced accuracy.

- Evaluation: Predict labels for the held-out test set. Report balanced accuracy, sensitivity, specificity, and AUC-ROC. Analyze misclassifications to check for structural patterns.
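
The labeling and evaluation steps above can be sketched without any ML library. The LD50 values and predicted labels below are invented for illustration, and `label_highly_toxic` and `binary_metrics` are hypothetical helper names; in practice the predictions would come from the tuned Random Forest.

```python
def label_highly_toxic(ld50_mg_per_kg, threshold=50.0):
    """Return 1 when LD50 <= threshold (highly toxic), else 0."""
    return 1 if ld50_mg_per_kg <= threshold else 0


def binary_metrics(y_true, y_pred):
    """Sensitivity, specificity, and balanced accuracy for 0/1 labels.

    Assumes both classes occur in y_true; otherwise a division by zero occurs.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }


# Illustrative (not measured) LD50 values in mg/kg and model predictions
ld50_values = [5.0, 50.0, 51.0, 300.0, 2000.0, 12.0]
y_true = [label_highly_toxic(v) for v in ld50_values]
y_pred = [1, 0, 0, 0, 1, 1]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

Balanced accuracy averages sensitivity and specificity, so it is not inflated when the "low toxicity" class dominates the dataset.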

Protocol 2: Implementing a Cross-Species Genotype-Phenotype Difference (GPD) Analysis

This advanced protocol quantifies biological differences to improve human toxicity prediction [19].

- Input Data Compilation: For a set of drug targets (genes), compile three layers of cross-species data:

  - Essentiality: Gene knockout viability scores from human (e.g., DepMap) and mouse cell lines.

  - Expression: Tissue-specific mRNA expression profiles from human (GTEx) and mouse (ENCODE) consortia.

  - Network Connectivity: Protein-protein interaction degree centrality in human and mouse reference networks.

- GPD Metric Calculation: For each gene and data layer, calculate a difference score (e.g., human value - mouse value). Normalize scores across all genes.

- Model Integration: Use these GPD scores as additional biological features alongside chemical descriptors in a classifier (e.g., XGBoost) trained to distinguish hazardous from safe drugs [19].

- Validation: Perform chronological validation. Train the model on drug data up to a historical year (e.g., 1991) and test its ability to predict drugs withdrawn post-1991 due to toxicity.
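
As a rough sketch of the GPD metric calculation step, the snippet below computes a human-minus-mouse difference per gene for one data layer and normalizes it. The gene names and values are hypothetical, and z-score normalization is an assumption; the protocol only says scores are normalized across genes.

```python
from statistics import mean, pstdev

# Hypothetical per-gene values for one data layer (e.g., essentiality)
human = {"GENE1": 0.9, "GENE2": 0.2, "GENE3": 0.5}
mouse = {"GENE1": 0.4, "GENE2": 0.3, "GENE3": 0.5}


def gpd_scores(human_vals, mouse_vals):
    """Human-minus-mouse difference per shared gene, z-scored across genes."""
    genes = sorted(set(human_vals) & set(mouse_vals))
    raw = {g: human_vals[g] - mouse_vals[g] for g in genes}
    mu = mean(raw.values())
    sigma = pstdev(raw.values())
    if sigma == 0:
        # All genes differ by the same amount: no gene stands out
        return {g: 0.0 for g in genes}
    return {g: (raw[g] - mu) / sigma for g in genes}


scores = gpd_scores(human, mouse)
print(scores)
```

The resulting per-gene scores would be computed once per layer and then joined to the chemical descriptors as extra feature columns.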

Protocol 3: Rigorous External Validation and Applicability Domain Assessment

A critical protocol to ensure model robustness for real-world use.

- External Set Creation: Secure toxicity data for novel compounds not used in any model development phase. This should come from a different source or time period.

- Predict & Evaluate: Run the external compounds through your finalized model. Report key performance metrics. A significant drop versus internal CV signals overfitting or an inappropriate applicability domain.

- Define Applicability Domain: Use methods like leverage (for linear models) or distance-based measures (e.g., k-NN distance to training set in descriptor space) to define the model's reliable prediction space. Flag any external compound falling outside this domain, and report its prediction with low confidence [13].
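
One distance-based way to define the applicability domain can be sketched as follows. The threshold rule (mean plus `z` standard deviations of the training set's own k-NN distances) is one common convention and is an assumption here, as are the toy two-dimensional descriptor vectors and the function names.

```python
import math
from statistics import mean, pstdev


def knn_mean_distance(query, reference, k):
    """Mean Euclidean distance from `query` to its k nearest reference points."""
    distances = sorted(math.dist(query, ref) for ref in reference)
    return sum(distances[:k]) / k


def domain_threshold(training, k=2, z=0.5):
    """Mean + z * std of each training point's k-NN distance to the other points."""
    per_point = [
        knn_mean_distance(x, training[:i] + training[i + 1:], k)
        for i, x in enumerate(training)
    ]
    return mean(per_point) + z * pstdev(per_point)


def in_domain(query, training, k=2, z=0.5):
    """True when the query is at least as close to the training set as typical members."""
    return knn_mean_distance(query, training, k) <= domain_threshold(training, k, z)


# Toy descriptor vectors (already scaled); real descriptors have many more dimensions
training = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
print(in_domain((0.5, 0.5), training), in_domain((5.0, 5.0), training))
```

Compounds for which `in_domain` returns `False` would be flagged and their predictions reported with low confidence rather than silently discarded.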

Short title: Model validation workflow

Table: Key Research Reagent Solutions for ML-Driven Toxicology

| Tool Category | Specific Resource | Function & Application |

|---|---|---|

| Toxicity Databases | DSSTox/Toxval [9] | Provides curated, standardized toxicity values (e.g., LD50) for model training and benchmarking. |

| Bioactivity Databases | ChEMBL [9] | Offers manually curated bioactivity data, including toxicity endpoints, for millions of compounds. |

| Chemical Databases | PubChem [9] | A primary source for chemical structures, properties, and bioassay data for featurization. |

| In Silico Featurization | RDKit, PaDEL-Descriptor [5] | Open-source software to compute molecular descriptors and fingerprints from chemical structures. |

| ML Modeling Platforms | scikit-learn, XGBoost, DeepChem | Libraries providing implementations of classic ML algorithms and deep learning models for chemistry. |

| Model Interpretation | SHAP (SHapley Additive exPlanations) [13] | Explains individual predictions of any ML model, identifying key contributing features. |

| Biological Data Integration | CTD, STRING, GTEx | Resources for gene-disease associations, protein interactions, and tissue expression to enable GPD analysis [19]. |

What are the most common regulatory concerns regarding ML models for toxicity prediction, and how can we address them? Regulatory agencies emphasize reliability, reproducibility, and relevance.

- Concern: Model Transparency and Interpretability. "Black box" models are mistrusted.

- Concern: Applicability Domain. Predictions for chemicals structurally different from the training set are unreliable.

- Mitigation: Explicitly define and report the model's applicability domain. Software should flag out-of-domain predictions [13].

- Concern: Data Quality. "Garbage in, garbage out."

Technical Support Center

This support center provides guidance for researchers developing and applying QSAR models for LD50 prediction, a core methodology in modern toxicology and machine learning-based chemical safety assessment [6] [7]. The resources below address common technical challenges.

Troubleshooting Guides

Issue 1: Model Produces Overly Conservative or Insufficiently Protective Predictions

- Problem: Predictions are consistently too toxic (over-prediction) or not toxic enough (under-prediction), leading to poor risk prioritization.

- Diagnosis: This often stems from model selection bias or unrepresentative training data. High under-prediction is particularly critical as it fails to identify true hazards [6].

- Resolution:

- Implement a Conservative Consensus Model (CCM). Use multiple validated models (e.g., TEST, CATMoS, VEGA) and select the lowest predicted LD50 (most toxic) as the final output. This health-protective approach minimizes under-prediction risk [6].

- Perform structural domain analysis. Check if your compound contains functional groups (e.g., organophosphates with P-O/P-S alerts [7]) outside your training set's applicability domain.

- Calibrate predictions using known reference compounds within the same chemical class to identify and correct systematic bias.

Issue 2: Poor Model Performance on New Chemical Classes

- Problem: A model validated on one dataset performs poorly when predicting for emerging contaminants or novel scaffolds.

- Diagnosis: The model is likely overfitted to specific molecular descriptors or lacks relevant toxicodynamic features for the new class [20].

- Resolution:

- Incorporate mechanism-informed descriptors. Move beyond general physicochemical properties. Use descriptors reflecting polarizability (e.g., BCUT), electronegativity (e.g., ATSC1pe), and surface area (e.g., SLogP_VSA4), which are linked to toxicity mechanisms [7].

- Apply SHAP (Shapley Additive Explanations) analysis. Use this method to interpret your model and identify which structural features drive predictions for the new class, validating them against known toxicology [7].

- Retrain with expanded data. Integrate high-quality experimental data for the new chemical class, ensuring proper train/test splits to avoid data leakage [21].

Issue 3: Inconsistent QSAR Predictions Across Different Software Platforms

- Problem: The same chemical structure yields different LD50 predictions and hazard classifications when processed through different QSAR tools.

- Diagnosis: Discrepancies arise from differences in underlying algorithms, descriptor sets, training data, and classification thresholds [6].

- Resolution:

- Benchmark with a standardized set. Create an internal set of 50-100 compounds with reliable experimental LD50 values. Run this set through all platforms to quantify systematic biases.

- Understand tool-specific principles. Determine if a tool uses a single model, a consensus approach, or a specific applicability domain. For instance, a CCM will inherently produce more conservative estimates than any single constituent model [6].

- Standardize pre-processing. Ensure chemical structures (e.g., SMILES) are identically prepared (tautomer, protonation, stereochemistry) before input into each platform.
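
The benchmarking step for Issue 3 can be made concrete by computing each platform's mean signed error in log10 units against the reference set. All compound IDs, tool names, and values below are invented for illustration.

```python
import math
from statistics import mean

# Hypothetical experimental LD50 values (mg/kg) for a small benchmark set
experimental = {"c1": 100.0, "c2": 1000.0, "c3": 10.0}

# Hypothetical predictions from two platforms for the same compounds
platform_predictions = {
    "tool_A": {"c1": 1000.0, "c2": 10000.0, "c3": 100.0},
    "tool_B": {"c1": 100.0, "c2": 100.0, "c3": 10.0},
}


def mean_log_bias(predicted, reference):
    """Mean of log10(predicted) - log10(experimental).

    Positive: the tool predicts higher LD50 (less toxic) than measured.
    Negative: the tool predicts lower LD50 (more toxic) than measured.
    """
    return mean(
        math.log10(predicted[c]) - math.log10(reference[c]) for c in reference
    )


bias = {
    tool: mean_log_bias(preds, experimental)
    for tool, preds in platform_predictions.items()
}
print(bias)
```

A consistently positive bias is the more worrying direction for safety work, since it means the tool tends to under-predict hazard.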

Frequently Asked Questions (FAQs)

Q1: What is the most reliable strategy for predicting LD50 when no experimental data exists? A: A consensus approach combining multiple QSAR models is considered best practice. Research shows that a Conservative Consensus Model (CCM), which selects the lowest predicted LD50 value from reputable models like CATMoS, VEGA, and TEST, provides the most health-protective classification. While it increases the over-prediction rate (to ~37%), it crucially minimizes the under-prediction rate (to ~2%), ensuring hazardous chemicals are not missed [6].

Q2: Which molecular features are most critical for accurate acute oral toxicity prediction? A: Modern machine learning QSAR models identify several key features. Electron-related and topological descriptors such as BCUT, ATSC1pe, and SLogP_VSA4 are highly influential [7]. Specific alerting substructures are also critical. For example, P-O or P-S bonds, indicative of organophosphates, were identified by the information gain method as strong predictors of high toxicity [7]. Understanding these features links structure directly to potential toxic mechanisms [20].

Q3: How do I ensure my QSAR model is valid and acceptable for regulatory purposes? A: Adherence to the OECD Principles for the Validation of QSARs is essential. These require a defined endpoint (such as LD50), an unambiguous algorithm, a defined applicability domain, appropriate measures of goodness-of-fit, robustness, and predictivity, and a mechanistic interpretation where possible [22]. Models should be built with rigorous train/test/validation splits to demonstrate predictive power, not just internal performance [21].

Q4: What are the essential steps to build a new QSAR model for LD50? A: A robust workflow is essential [21]:

1. Data Curation: Compile a high-quality dataset of chemical structures (SMILES) and experimental LD50 values. Clean and standardize the data.

2. Descriptor Calculation: Generate numerical molecular descriptors (e.g., using RDKit) or fingerprints from the structures.

3. Data Splitting: Split data into training (~80%) and test sets (~20%) using methods like stratified splitting to maintain class balance.

4. Model Training: Train a model (e.g., Random Forest, Gradient Boosting) on the training set. Optimize hyperparameters via cross-validation.

5. Validation & Interpretation: Test the model on the held-out set. Use SHAP analysis to interpret predictions and define the model's applicability domain [7].

Q5: Can QSAR completely replace animal testing for acute toxicity? A: While QSAR is a powerful New Approach Methodology (NAM) that can significantly reduce and replace animal testing, complete replacement for all chemicals and endpoints is not yet feasible. QSAR models are best used for prioritization and screening, identifying high-hazard compounds early in development. They provide crucial data for regulatory submissions under frameworks like REACH, especially when experimental data is lacking [22]. The field is moving towards integrated testing strategies that combine QSAR, in vitro tests, and other NAMs.

Performance Data for Common LD50 Prediction Strategies

The table below summarizes the performance of single models versus a consensus approach for predicting rat acute oral toxicity GHS categories [6]. A lower under-prediction rate is critical for safety.

| Prediction Model | Over-prediction Rate | Under-prediction Rate | Key Characteristic |

|---|---|---|---|

| TEST (Single Model) | 24% | 20% | Moderate conservatism. |

| CATMoS (Single Model) | 25% | 10% | Balanced performance. |

| VEGA (Single Model) | 8% | 5% | Least conservative. |

| Conservative Consensus Model (CCM) | 37% | 2% | Maximizes health protection. |
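
For readers reproducing this kind of comparison on their own benchmark set, over- and under-prediction rates can be computed directly from experimental and predicted GHS category numbers. The sketch below uses invented categories and a hypothetical `prediction_rates` helper; it assumes Category 1 is the most toxic, so a lower predicted number means over-prediction.

```python
def prediction_rates(experimental, predicted):
    """Return (over_prediction_rate, under_prediction_rate).

    Categories are integers where 1 is most toxic. A predicted category
    lower than the experimental one is an over-prediction (more toxic than
    measured); a higher one is an under-prediction.
    """
    n = len(experimental)
    over = sum(1 for e, p in zip(experimental, predicted) if p < e)
    under = sum(1 for e, p in zip(experimental, predicted) if p > e)
    return over / n, under / n


# Invented GHS categories for five compounds
experimental_cat = [3, 4, 2, 5, 4]
predicted_cat = [3, 3, 3, 5, 2]
over_rate, under_rate = prediction_rates(experimental_cat, predicted_cat)
print(over_rate, under_rate)
```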

Experimental & Computational Protocols

Protocol 1: Implementing a Conservative Consensus Prediction

- Objective: To obtain a health-protective LD50 estimate for an untested compound.

- Materials: Chemical structure (SMILES string); Access to TEST, CATMoS, and VEGA platforms (or their standalone tools/APIs).

- Procedure [6]:

- Input: Prepare the canonical SMILES for the query compound.

- Individual Prediction: Submit the SMILES to each of the three models (TEST, CATMoS, VEGA) to obtain predicted LD50 values (usually in mg/kg).

- Consensus Application: Compare the three predicted LD50 values. Select the lowest value (indicating highest toxicity).

- Classification: Convert this consensus LD50 value into a GHS acute oral toxicity category (e.g., Category 1: LD50 ≤ 5 mg/kg; Category 5: 2000 < LD50 ≤ 5000 mg/kg).

- Reporting: Report the consensus LD50, its source model, the GHS category, and note the use of a CCM methodology.
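
The consensus and classification steps of this procedure reduce to a minimum over model outputs followed by a threshold lookup. The sketch below uses the GHS acute oral cut-offs (5, 50, 300, 2000, and 5000 mg/kg); the predicted values are invented, and the function names are hypothetical rather than part of any of the three tools.

```python
# Upper bounds (mg/kg) of the GHS acute oral toxicity categories
GHS_ORAL_UPPER_BOUNDS = [
    (5.0, "Category 1"),
    (50.0, "Category 2"),
    (300.0, "Category 3"),
    (2000.0, "Category 4"),
    (5000.0, "Category 5"),
]


def ghs_category(ld50_mg_per_kg):
    """Map an oral LD50 in mg/kg to its GHS category label."""
    for upper, name in GHS_ORAL_UPPER_BOUNDS:
        if ld50_mg_per_kg <= upper:
            return name
    return "Not classified"


def conservative_consensus(predictions):
    """Pick the lowest predicted LD50 (most toxic) and report its source model."""
    model, ld50 = min(predictions.items(), key=lambda item: item[1])
    return {
        "ld50_mg_per_kg": ld50,
        "source_model": model,
        "ghs_category": ghs_category(ld50),
        "method": "CCM",
    }


# Invented predictions (mg/kg) for one query compound
report = conservative_consensus({"TEST": 420.0, "CATMoS": 250.0, "VEGA": 610.0})
print(report)
```

Keeping the source model in the report makes it possible to audit later which tool is driving the conservative calls.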

Protocol 2: Building a Robust Machine Learning QSAR Model

- Objective: To develop a predictive LD50 classification model using curated data.

- Materials: Python environment with scikit-learn, RDKit, pandas; Curated dataset of SMILES and LD50 values [21].

- Procedure [7] [21]:

- Descriptor Generation: Use RDKit to calculate a set of ~200 molecular descriptors (e.g., constitutional, topological, electronic) for each compound in your dataset.

- Data Preparation: Convert continuous LD50 values into categorical GHS labels. Handle missing values and scale descriptors.

- Train-Test Split: Split the data into training (80%) and test (20%) sets, ensuring chemical diversity is represented in both.

- Model Training: Train a Random Forest or XGBoost classifier on the training set using 5-fold cross-validation to tune key hyperparameters.

- Validation: Predict on the test set. Evaluate performance using accuracy, recall (sensitivity), and area under the ROC curve. Aim for recall >0.84 for hazard classes [7].

- Interpretation: Apply SHAP analysis to the trained model to identify the most important molecular descriptors driving predictions.

Visualization of Workflows

QSAR Model Development and Consensus Prediction Workflow

From Chemical Structure to Predicted Mode of Toxic Action

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item / Resource | Category | Function & Application in QSAR for Toxicity |

|---|---|---|

| SMILES String | Data Input | A standardized text representation of a molecule's structure, serving as the universal starting point for all computational modeling [21]. |

| RDKit | Software Library | An open-source cheminformatics toolkit used to generate molecular descriptors and fingerprints from SMILES strings for model training [21]. |

| OECD QSAR Toolbox | Software Suite | A regulatory tool to fill data gaps by profiling chemicals, identifying analogues, and applying QSAR models, aiding in compliance with regulations like REACH [22]. |

| CATMoS, VEGA, TEST Models | Predictive Models | Established, validated QSAR models for acute oral toxicity. Used individually or in consensus to generate LD50 predictions and hazard classifications [6]. |

| scikit-learn | Software Library | A core Python library for machine learning. Used to build, train, validate, and evaluate QSAR models using algorithms like Random Forest [21]. |

| SHAP (Shapley Additive Explanations) | Interpretation Tool | A game-theoretic method to explain the output of any ML model. Critical for identifying which structural features contribute most to a predicted toxicity, adding mechanistic insight [7]. |

| PredSuite / NAMs.network | Platform / Database | Online platforms hosting ready-to-use QSAR models (PredSuite) or serving as a hub for New Approach Methodologies (NAMs), providing resources for modern risk assessment [22]. |

| High-Quality Experimental LD50 Data | Reference Data | Reliable, well-curated in vivo toxicity data is the essential foundation for training, testing, and validating any predictive QSAR model [6] [21]. |

Building the Predictive Engine: Key Machine Learning Methodologies and Their Applications

Technical Support & Troubleshooting Center

This support center is designed for researchers developing machine learning (ML) models for LD50 and toxicity prediction. It addresses common technical challenges related to data sourcing, curation, and integration from key public databases, framed within the context of improving model accuracy and reliability for drug development.

Frequently Asked Questions (FAQs)

Q1: I am building a model for acute oral toxicity (LD50) prediction. Which databases provide the most reliable and machine-learning-ready data for this specific endpoint? A: For LD50 prediction, your primary sources should be TOXRIC [23] and the Distributed Structure-Searchable Toxicity (DSSTox) database [9]. TOXRIC is particularly valuable as it offers pre-curated, ML-ready datasets for acute toxicity. It contains quantitative LD50 values standardized to consistent units (mg/kg) [23], which is critical for training regression models. DSSTox provides a large volume of searchable toxicity data, including standardized toxicity values through its ToxVal component [9]. For a more specialized, multimodal dataset that includes pesticide LD50 data paired with molecular images and docking data, you can refer to the open dataset on Zenodo [24].

Q2: My model performance is poor, and I suspect issues with my training data. What are the key data quality checks I should perform? A: Poor data quality is a major bottleneck. Implement the following checks based on standardized curation protocols:

- Standardize Units: Ensure all quantitative toxicity values (e.g., LD50) are converted to a single unit (e.g., mg/kg). TOXRIC's methodology details this conversion to prevent model errors from unit mixing [23].

- Remove Non-Standard Compounds: Filter out salts, solvents, inorganic compounds, and mixtures from your compound list. Follow protocols that use Canonical SMILES and PubChem CID for deduplication and filtering [23].

- Resolve Data Conflicts: For data aggregated from multiple sources (e.g., hepatotoxicity from seven databases), establish a rule to handle conflicts. A common method is to assign a label only if a high percentage (e.g., >80%) of sources agree; otherwise, remove the ambiguous sample [23].

- Address Class Imbalance: For classification tasks, inspect the ratio of toxic to non-toxic labels. Use resampling techniques (oversampling or undersampling) during preprocessing, as demonstrated in recent ML model optimizations [8].
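
Two of these checks, unit standardization and conflict resolution, are easy to express in code. The following is a minimal sketch under stated assumptions: the unit table, the `to_mg_per_kg` and `consensus_label` names, and the handling of molar units are illustrative and are not taken from the TOXRIC pipeline.

```python
# Conversion factors to mg/kg for mass-based units (illustrative subset)
UNIT_TO_MG_PER_KG = {"mg/kg": 1.0, "g/kg": 1000.0, "ug/kg": 0.001}


def to_mg_per_kg(value, unit, mol_weight=None):
    """Convert a dose to mg/kg; molar units need the molecular weight in g/mol."""
    if unit in UNIT_TO_MG_PER_KG:
        return value * UNIT_TO_MG_PER_KG[unit]
    if unit == "mmol/kg":
        if mol_weight is None:
            raise ValueError("mol_weight is required for molar units")
        return value * mol_weight  # mmol/kg * g/mol = mg/kg
    raise ValueError(f"Unsupported unit: {unit}")


def consensus_label(source_labels, min_agreement=0.8):
    """Return 1 or 0 when more than `min_agreement` of sources agree, else None."""
    toxic_fraction = sum(source_labels) / len(source_labels)
    if toxic_fraction > min_agreement:
        return 1
    if (1 - toxic_fraction) > min_agreement:
        return 0
    return None  # ambiguous: drop the sample


print(to_mg_per_kg(2.0, "g/kg"), consensus_label([1, 1, 1, 1, 1, 0, 1]))
```

Returning `None` for ambiguous compounds keeps the drop decision explicit, so the number of discarded samples can be reported alongside the curated dataset.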

Q3: I need to integrate diverse data types (e.g., molecular structures, bioactivity, in vitro assay results) to create a multimodal model. Which databases support this, and how do I link them? A: Successful multimodal integration relies on using databases with consistent compound identifiers and leveraging tools that bridge different data spaces.

- Core Linked Databases: Use PubChem CID as a universal key. PubChem itself integrates structure, bioactivity, and toxicity data [9]. ChEMBL provides drug-like compound data, bioactivity, and ADMET information [9], and is often linkable via PubChem.

- Workflow for Integration: Start with a toxicity endpoint from TOXRIC or DSSTox. Use the associated SMILES or PubChem CID to pull 2D/3D molecular descriptors from PubChem or calculate them using toolkits like RDKit. For biochemical context, link to bioactivity data in ChEMBL or target information in DrugBank [9]. An example multimodal dataset for pesticides integrates 2D images, 3D docking tensors, and physicochemical descriptors using this linking principle [24].

- Tool Recommendation: The Python library PubChemPy can programmatically access compound-specific data from PubChem using the CID, facilitating automated data pipeline construction [23].

Q4: How can I validate my model against experimental data that is more translatable to human biology? A: Beyond traditional animal-derived LD50 data, incorporate modern in vitro toxicity data to enhance biological relevance.

- Source High-Content Screening Data: Utilize data from high-content, phenotypic screening assays. For example, protocols using iPSC-derived human hepatocyte spheroids generate rich, multiparametric toxicity data (cell viability, apoptosis, mitochondrial damage) that correlate with human liver toxicity [25].

- Utilize In Vitro Database Resources: The ToxCast/Tox21 data, incorporated into resources like TOXRIC, provides high-throughput screening data on thousands of compounds across hundreds of biological pathways [23]. This can be used as additional input features or for cross-validation.

- Clinical Data for Validation: For late-stage validation checks, the FDA Adverse Event Reporting System (FAERS) offers real-world clinical toxicity signals [9]. While not used for training, it can help assess the translational warning signs your model might capture.

The table below compares the primary databases used for sourcing and curating toxicity data [9] [23].

| Database | Primary Focus & Key Content | Data Scale & Relevance to LD50 | Key Feature for ML |

|---|---|---|---|

| TOXRIC | Comprehensive toxicology resource for intelligent computation; covers 13 toxicity categories [23]. | 113,372 compounds; 1,474 endpoints. Includes acute toxicity (LD50) datasets [23]. | Provides ML-ready, pre-curated, and standardized datasets for direct use. |

| DSSTox | Searchable toxicity database with standardized chemical-structure-toxicity data [9]. | Large volume of structure-toxicity pairs; includes ToxVal for standardized values [9]. | High-quality, curated data ideal for building reliable QSAR/ML models. |

| PubChem | Massive repository of chemical information: structure, properties, bioactivities, toxicity [9]. | Hundreds of millions of compound entries; aggregates data from many sources [9]. | Essential for obtaining molecular descriptors and linking compounds across databases. |

| ChEMBL | Manually curated bioactivity database for drug-like molecules [9]. | Millions of bioactivity data points (e.g., IC50, Ki) [9]. | Provides complementary bioactivity and ADMET data for multimodal modeling. |

| DrugBank | Detailed drug and drug target information, including mechanisms and ADMET profiles [9]. | Contains data on FDA-approved and investigational drugs [9]. | Useful for understanding drug-specific toxicity mechanisms and pathways. |

Detailed Experimental Protocols

Protocol 1: Building an Optimized Ensemble Model for Toxicity Prediction

This protocol is adapted from a study that achieved high accuracy (93%) by combining feature selection, resampling, and ensemble learning [8].

- Data Acquisition & Preprocessing: Obtain a toxicity dataset (e.g., acute toxicity from TOXRIC). Standardize all values and remove duplicates as per FAQ A2.

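- Feature Engineering & Selection: Calculate molecular descriptors (e.g., using RDKit). Apply Principal Component Analysis (PCA) to reduce dimensionality, retaining the components that explain most of the variance in the descriptor set [8]. Note that PCA produces new composite features rather than selecting a subset of the original descriptors.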

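- Handle Class Imbalance: Apply a resampling technique (e.g., SMOTE for oversampling or random undersampling) to balance the classes [8]. Resample the training data only; resampling before the train/test split lets synthetic or duplicated samples leak into the test set and inflates performance estimates.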

- Model Training with Cross-Validation: Split data into training and test sets. Employ 10-fold cross-validation on the training set to tune hyperparameters and prevent overfitting [8].

- Ensemble Model Construction: Train multiple base models (e.g., Random Forest, KStar). Use an ensemble strategy (e.g., voting or stacking) to combine them. The cited study created an "Optimized Ensembled Model (OEKRF)" from Random Forest and KStar [8].

- Comprehensive Evaluation: Move beyond simple accuracy. Evaluate using AUC, sensitivity, specificity, F1-score, and composite scores like the proposed W-saw and L-saw scores to assess robustness [8].
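
To illustrate the class-imbalance step, here is a minimal random oversampling sketch. It is a simpler stand-in for SMOTE (it duplicates minority rows instead of synthesizing new ones), the data are invented, and `random_oversample` is a hypothetical helper; it is meant to be applied to the training portion only.

```python
import random


def random_oversample(X, y, seed=0):
    """Duplicate rows of smaller classes until every class matches the largest."""
    rng = random.Random(seed)
    by_class = {}
    for features, label in zip(X, y):
        by_class.setdefault(label, []).append(features)
    target = max(len(rows) for rows in by_class.values())

    X_out, y_out = [], []
    for label, rows in by_class.items():
        # Sample with replacement to fill the gap to the majority class size
        extra = [rng.choice(rows) for _ in range(target - len(rows))]
        for features in rows + extra:
            X_out.append(features)
            y_out.append(label)
    return X_out, y_out


# Invented training data: four non-toxic rows, one toxic row
X_train = [[0.1], [0.2], [0.3], [0.4], [0.9]]
y_train = [0, 0, 0, 0, 1]
X_bal, y_bal = random_oversample(X_train, y_train)
print(y_bal.count(0), y_bal.count(1))
```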

Protocol 2: Creating a Multimodal Dataset for Deep Learning (Image + Structural Data)

This protocol outlines steps to create a dataset suitable for advanced architectures like CNNs, as demonstrated for pesticide LD50 prediction [24].

- Define Compound List: Start with a list of compounds of interest and their known LD50 values (from TOXRIC or DSSTox).

- Acquire 2D Molecular Images: For each compound, use its PubChem CID to programmatically download the 2D structural depiction image from PubChem [24].

- Generate 3D Biochemical Descriptors: Perform molecular docking simulations for each compound against a relevant protein target (e.g., human acetylcholinesterase for neurotoxins). Convert the docking results into 3D voxelized grids or tensor representations [24].

- Calculate Physicochemical Descriptors: Use the SMILES string of each compound with a toolkit like RDKit to generate a set of standard molecular descriptors (e.g., molecular weight, logP, topological polar surface area) [24].

- Dataset Assembly & Storage: Create a central table (e.g., a CSV file) where each row is a compound, columns include LD50 value, paths to its 2D image file, paths to its 3D tensor file, and its vector of physicochemical descriptors [24]. This structured dataset is ready for multimodal deep learning.
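
The assembly step can be sketched with the standard `csv` module. The column names, file paths, and numeric values below are placeholders chosen for illustration (not measured data and not the layout of the cited Zenodo dataset); the sketch writes to an in-memory buffer instead of a file.

```python
import csv
import io

FIELDS = [
    "compound_id", "smiles", "ld50_mg_per_kg",
    "image_path", "tensor_path", "mol_weight", "logp", "tpsa",
]

# Placeholder row: identifiers, paths, and values are illustrative only
rows = [
    {
        "compound_id": "cmpd_001",
        "smiles": "CCO",
        "ld50_mg_per_kg": 1234.0,
        "image_path": "images/cmpd_001.png",
        "tensor_path": "tensors/cmpd_001.npy",
        "mol_weight": 46.07,
        "logp": -0.1,
        "tpsa": 20.2,
    },
]


def write_manifest(records, handle):
    """Write one row per compound, linking the label to each modality."""
    writer = csv.DictWriter(handle, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(records)


buffer = io.StringIO()
write_manifest(rows, buffer)
buffer.seek(0)
loaded = list(csv.DictReader(buffer))
print(loaded[0]["compound_id"], loaded[0]["image_path"])
```

Storing paths rather than the image and tensor contents keeps the table small and lets a data loader read each modality lazily during training.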

Visualization of Workflows and Data Relationships

Title: Workflow for Building LD50 Prediction Models from Key Databases

Title: Multimodal Data Fusion for Advanced LD50 Modeling

The Scientist's Toolkit: Research Reagent & Resource Solutions

| Item / Resource | Function & Application in Toxicity Prediction Research | Example / Source |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit used to calculate molecular descriptors from SMILES strings, generate molecular fingerprints, and handle chemical data. | Used in creating multimodal datasets [24]. |

| PubChemPy | Python library to access PubChem data (CID, properties, structures) programmatically, essential for building automated data pipelines. | Used for unit conversion in TOXRIC curation [23]. |

| iPSC-derived Hepatocyte Spheroids | Advanced in vitro 3D cell model for hepatotoxicity screening. Provides human-relevant, multiparametric data (viability, apoptosis) for model validation. | iCell Hepatocytes 2.0 [25]. |

| High-Content Imaging System | Confocal imaging system for acquiring 3D images of spheroids. Enables quantification of phenotypic endpoints for toxicity. | ImageXpress Micro Confocal [25]. |

| Molecular Docking Software | Software suite to simulate the binding of a compound to a protein target. Generates 3D interaction data (binding affinity, poses) for use as model features. | Used to create 3D voxelized tensors [24]. |

| MetaXpress Software | High-content image analysis software with custom modules for quantifying 3D objects (spheroids, nuclei), cell viability, and fluorescence intensity. | Used to analyze hepatocyte spheroid assays [25]. |

This Technical Support Center provides guidance for implementing the Conservative Consensus Model (CCM) for the prediction of acute oral toxicity (LD50). Within the context of thesis research on machine learning models for LD50 prediction accuracy, the CCM approach is designed to generate health-protective predictions by integrating multiple individual Quantitative Structure-Activity Relationship (QSAR) models into a more robust, reliable, and conservative framework [26].

The core principle of consensus modeling is that the combined prediction from several validated models often outperforms any single constituent model, offering improved accuracy and broader applicability for new chemicals [26]. The "conservative" aspect prioritizes safety, erring on the side of caution to protect human and environmental health, which is critical in regulatory and drug development settings [5] [27].

This guide addresses practical challenges, outlines step-by-step experimental protocols, and provides solutions to common problems encountered during development and validation.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the primary advantage of using a consensus model over a single QSAR model for LD50 prediction? A: A consensus model averages or combines predictions from multiple individual QSAR models. This approach increases predictive accuracy and robustness on external validation sets compared to single models, as it mitigates the specific weaknesses and biases of any one model [26]. It also can provide a measure of prediction certainty based on the agreement between individual models.
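
A minimal sketch of an averaging consensus with an agreement-based certainty measure is shown below. Averaging in log10 space and using the standard deviation of the log predictions as the spread are assumptions made for illustration; the predicted values are invented.

```python
import math
from statistics import mean, pstdev


def consensus_log_ld50(predictions_mg_per_kg):
    """Average predictions in log10 space and report their spread.

    A small `log_spread` means the individual models agree closely;
    a large one signals a low-certainty consensus.
    """
    logs = [math.log10(v) for v in predictions_mg_per_kg]
    return {
        "consensus_ld50": 10 ** mean(logs),
        "log_spread": pstdev(logs),
    }


agreeing = consensus_log_ld50([900.0, 1000.0, 1100.0])
disagreeing = consensus_log_ld50([100.0, 1000.0, 10000.0])
print(agreeing, disagreeing)
```

Both example sets give a consensus near 1000 mg/kg, but the second has a much larger spread, which is the signal that the prediction deserves less confidence.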

Q2: My consensus model is highly accurate on the training data but performs poorly on new compounds. What could be the cause? A: This is a classic sign of overfitting. Potential causes include:

- Inadequate Applicability Domain (AD): The new compounds may fall outside the chemical space defined by your training set. Ensure your individual models and final consensus incorporate a defined AD to flag extrapolations [26].

- Data Quality Issues: Inconsistencies or errors in the experimental LD50 data for your training set will propagate through the models. Carefully curate your dataset and use the most conservative (lowest) LD50 value for compounds with multiple entries [26].

- Lack of Model Diversity: The individual models in your consensus may be too similar (e.g., same algorithm, same descriptors). Use a combinatorial QSAR approach, pairing different descriptor sets (e.g., Dragon, PaDEL) with different machine learning algorithms (e.g., Random Forest, Support Vector Machine) to create diverse models for consensus [26].

Q3: How can I make my consensus model "conservative" or health-protective? A: The conservatism can be engineered at two stages:

- During Data Curation: Use the most conservative (lowest) experimental LD50 value when multiple values exist for a compound, biasing the model toward higher sensitivity [26].

- During Prediction Interpretation: Implement a decision rule that prioritizes safety. For example, if any reasonable interpretation of the individual model outputs places a compound in a "toxic" or "high concern" class, make the final call the more hazardous one. This aligns with frameworks that restrict actions to a "viable set" satisfying safety constraints [27].

Q4: What are the common data sources for building LD50 prediction models, and how should I manage them? A: Common sources include legacy datasets from regulatory bodies, commercial databases, and publicly available resources like the EPA's ECOTOX [28]. Key management steps are:

- Standardization: Convert all structures to a standard format (e.g., canonical SMILES) and verify them [26] [28].

- Deduplication: Identify and merge duplicate compounds, retaining the most reliable or conservative toxicity value [26] [28].

- Splitting: Perform a stratified random split to create a modeling set and a strict external validation set that is never used during model training or parameter optimization [26] [10].
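
The conservative deduplication rule can be sketched as a single pass over the records. The snippet assumes structures have already been converted to canonical SMILES (so identical compounds share an identical string); the LD50 values are invented and `deduplicate_conservative` is a hypothetical helper name.

```python
def deduplicate_conservative(records):
    """Keep one entry per canonical SMILES, retaining the lowest LD50 (mg/kg)."""
    lowest = {}
    for smiles, ld50 in records:
        if smiles not in lowest or ld50 < lowest[smiles]:
            lowest[smiles] = ld50
    return lowest


# Invented records: (canonical SMILES, LD50 in mg/kg)
raw_records = [
    ("CCO", 7000.0),
    ("c1ccccc1", 930.0),
    ("CCO", 10000.0),
    ("c1ccccc1", 3300.0),
    ("CC(=O)O", 3310.0),
]
curated = deduplicate_conservative(raw_records)
print(curated)
```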

Q5: How much data is needed to build a reliable consensus model? A: While more high-quality data is always better, studies have successfully built consensus models with several thousand compounds. For example, a key study used a modeling set of 3,472 compounds and an external validation set of 3,913 compounds [26]. The focus should be on data diversity and quality rather than just quantity. A smaller, well-curated dataset representing a broad chemical space is more valuable than a large, noisy, or narrow one [5].

Troubleshooting Common Experimental Issues

| Problem Area | Symptom | Potential Cause | Recommended Solution |
|---|---|---|---|
| Data Preparation | Inconsistent molecular structures, failed descriptor calculation. | Non-standardized SMILES, presence of salts/inorganics, incorrect valence. | Use chemical standardization toolkits (e.g., RDKit). Filter out organometallics, salts, and mixtures as done in foundational studies [26]. |
| Model Development | All individual models show poor performance. | Uninformative molecular descriptors, incorrect endpoint encoding (regression vs. classification). | Use established descriptor packages (e.g., Dragon, PaDEL). For classification, verify toxicity class thresholds (e.g., EPA, GHS classification) [10] [28]. |
| Model Validation | High accuracy in cross-validation but low accuracy in hold-out/external testing. | Data leakage, overfitting, or insufficiently diverse training set. | Implement a strict hold-out external validation set that is never used in training. Apply Applicability Domain filters to identify reliable predictions [26]. |
| Consensus Building | Consensus performance is no better than the best single model. | Individual models are highly correlated or make similar errors. | Increase model diversity by combining fundamentally different algorithms (e.g., RF, SVM, kNN) and descriptor types [5] [26]. |
| Interpretability | The model is a "black box"; difficult to explain predictions. | Using complex algorithms like deep neural networks without explanation methods. | Apply post-hoc explanation methods (e.g., contrastive explanations, feature importance) to identify toxicophores. Use more interpretable base models (e.g., SARpy rules) where possible [10] [28]. |

Detailed Experimental Protocols

Protocol 1: Dataset Curation for Rat Oral LD50 Modeling

This protocol is based on the methodology used to create one of the largest public QSAR datasets for acute oral toxicity [26].

Objective: To compile a robust, high-quality dataset for developing predictive LD50 models.

Materials: Source databases (e.g., EPA, historical toxicology reports), chemical standardization software (e.g., RDKit, OpenBabel), spreadsheet or database management system.

Procedure:

- Data Aggregation: Collect experimental rat oral LD50 values (preferred species and route) from multiple credible sources.

- Structure Verification: For each compound, obtain or generate a canonical SMILES string. Use automated and manual checks to verify structural correctness.

- Data Cleaning:

  - Remove inorganic compounds, organometallics, salts, and mixtures.

  - For compounds with multiple LD50 entries, retain the most conservative value (the lowest LD50, indicating highest toxicity).

  - Convert LD50 values (typically in mg/kg) to a uniform scale: log(1/(mol/kg)) for regression modeling [26].

- Deduplication: Merge entries for the same chemical structure, keeping the conservative value.

- Dataset Splitting: Partition the final dataset into a Modeling Set (~50-70%) and an External Validation Set (30-50%). Ensure no structurally similar compounds are split across sets using clustering techniques. Important: The validation set must never be used for model training or feature selection.
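
The unit conversion in the Data Cleaning step can be made concrete with a short sketch. It assumes the logarithm is base 10 (the protocol writes only "log") and that a molecular weight in g/mol is available for each compound. The function name is hypothetical.

```python
import math


def ld50_to_neg_log_mol_per_kg(ld50_mg_per_kg, mol_weight_g_per_mol):
    """Convert an LD50 in mg/kg to log10(1 / (mol/kg)).

    Higher output values mean higher toxicity (a smaller lethal dose).
    """
    if ld50_mg_per_kg <= 0 or mol_weight_g_per_mol <= 0:
        raise ValueError("LD50 and molecular weight must be positive")
    grams_per_kg = ld50_mg_per_kg / 1000.0            # mg/kg -> g/kg
    mol_per_kg = grams_per_kg / mol_weight_g_per_mol  # g/kg -> mol/kg
    return -math.log10(mol_per_kg)


# Round numbers chosen for easy checking, not real data:
# 100 mg/kg at 100 g/mol is 0.001 mol/kg.
example = ld50_to_neg_log_mol_per_kg(100.0, 100.0)
```

Working on the molar scale puts compounds of very different molecular weight on a comparable footing, and the sign flip means that "most conservative" corresponds to the largest transformed value rather than the smallest.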

Protocol 2: Combinatorial QSAR Model Development

Objective: To build a diverse set of individual QSAR models for later consensus [26].

Materials: Descriptor calculation software (e.g., Dragon, PaDEL), machine learning library (e.g., scikit-learn, R caret), computational hardware.

Procedure:

- Descriptor Calculation: For the Modeling Set compounds, calculate 2-3 distinct sets of molecular descriptors (e.g., 2D topological, 3D geometric, electronic).

- Feature Preprocessing: Handle missing values, and apply feature scaling (normalization/standardization). Optionally, use feature selection (e.g., variance threshold, correlation filtering) to reduce dimensionality.

- Model Training: Apply 3-5 different machine learning algorithms to each descriptor set. Common algorithms include Random Forest (RF), Support Vector Machine (SVM), and k-nearest neighbours (kNN).

- Internal Validation: For each model, perform 5-fold or 10-fold cross-validation on the Modeling Set. Use metrics like Balanced Accuracy (classification) or R² (regression) for assessment [5].

- Model Selection: Retain all models that meet a pre-defined performance threshold (e.g., cross-validated Balanced Accuracy > 0.65 or R² > 0.5) for the consensus pool.
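
The pairing and selection logic of this protocol can be sketched without any ML library. The example below enumerates descriptor/algorithm combinations, defines balanced accuracy for a binary toxic/non-toxic endpoint, and filters candidates against the 0.65 threshold mentioned above. The cross-validated scores are placeholders standing in for real training runs, and the function names are hypothetical.

```python
from itertools import product


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (1 = toxic)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    pos = sum(1 for t in y_true if t == 1)
    neg = len(y_true) - pos
    if pos == 0 or neg == 0:
        raise ValueError("both classes must be present")
    return 0.5 * (tp / pos + tn / neg)


def select_for_consensus(cv_scores, threshold=0.65):
    """Return the (descriptor, algorithm) pairs whose score exceeds threshold."""
    return sorted(pair for pair, score in cv_scores.items() if score > threshold)


descriptor_sets = ["Dragon", "PaDEL"]
algorithms = ["RF", "SVM", "kNN"]
candidates = list(product(descriptor_sets, algorithms))  # every pairing

# Placeholder cross-validated scores, one per candidate, for illustration.
cv_scores = dict(zip(candidates, [0.71, 0.66, 0.60, 0.69, 0.64, 0.67]))
pool = select_for_consensus(cv_scores)
```

In a real run each score would come from 5-fold or 10-fold cross-validation on the Modeling Set only, so that the selection step never sees the external validation compounds.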

Protocol 3: Conservative Consensus Model Assembly & Validation

Objective: To integrate individual models into a final consensus predictor and rigorously evaluate its performance [26].

Materials: Trained individual models, external validation set, scripting environment (e.g., Python).

Procedure:

- Prediction Generation: Apply each validated individual model to the External Validation Set.

- Applicability Domain (AD) Filtering: For each prediction, determine if the compound falls within that specific model's AD (e.g., based on leverage or distance). Flag predictions outside the AD as unreliable.

- Consensus Aggregation: Calculate the final prediction. For regression (continuous LD50), use the mean or median of the reliable individual predictions. For classification (toxic/non-toxic), use majority voting.

- Conservative Override: Implement a safety rule. Example: If any reliable model predicts "High Toxicity," the final consensus classification should be "High Toxicity Concern," regardless of the average vote.

- Performance Evaluation: Calculate final evaluation metrics (Balanced Accuracy, Sensitivity, Specificity, R²) on the External Validation Set. Critical: Compare consensus model performance against the best individual model and a naive baseline.

- Reporting: Document the coverage (percentage of compounds for which a reliable consensus prediction was made) and accuracy.
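
The aggregation, override, and coverage steps can be sketched as follows. This is one possible reading of the protocol, not the published implementation: the three class labels, the tie-breaking rule (ties go to the more severe class), and the function names are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical class labels ordered by severity.
SEVERITY = {"low": 0, "moderate": 1, "high": 2}


def conservative_consensus(votes):
    """Combine per-model votes into one conservative call.

    `votes` is a list of (label, in_domain) pairs, one per individual model.
    Returns None when no model is inside its applicability domain.
    """
    reliable = [label for label, in_domain in votes if in_domain]
    if not reliable:
        return None  # no reliable prediction; counts against coverage
    if "high" in reliable:
        return "high"  # conservative override: any reliable "high" wins
    counts = Counter(reliable)
    # Majority vote; ties break toward the more severe class (an assumption).
    return max(counts, key=lambda label: (counts[label], SEVERITY[label]))


def coverage(results):
    """Fraction of compounds that received a reliable consensus call."""
    return sum(r is not None for r in results) / len(results)


overridden = conservative_consensus([("low", True), ("low", True), ("high", True)])
tie_broken = conservative_consensus([("low", True), ("moderate", True), ("high", False)])
```

The override raises sensitivity at the cost of specificity, which is why the evaluation step asks for both metrics and for a comparison against the best individual model.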

Workflow & Model Architecture Visualization

[Figure: CCM Workflow for LD50 Prediction]

[Figure: Logic of Conservative Consensus Modeling]

The Scientist's Toolkit: Key Research Reagent Solutions

The following tools and resources are essential for implementing the CCM approach for LD50 prediction.

| Tool / Resource Name | Type | Primary Function in CCM Development | Key Notes / Reference |
|---|---|---|---|
| PaDEL-Descriptor | Software | Calculates a comprehensive set of 2D and 3D molecular descriptors and fingerprints directly from structures. | Widely used for featurization in QSAR studies; open-source and batch capable [5] [26]. |
| Dragon Software | Software | Commercial platform for calculating a vast array (>5000) of molecular descriptors. | Often used as a complementary descriptor set to PaDEL to increase model diversity [26]. |
| RDKit | Open-Source Cheminformatics Library | Used for chemical standardization, SMILES parsing, descriptor calculation, and molecular operations. | Essential for data curation and preprocessing steps [10]. |
| TOPKAT | Commercial Software | A benchmark toxicity prediction suite. Its training set composition can be used to define external validation sets for fair comparison [26]. | Used in foundational studies to create a modeling set (compounds in TOPKAT) and a pure external set (compounds not in TOPKAT) [26]. |
| SARpy | Software | Automatically extracts Structural Alerts (SAs) or toxicophores from a dataset of active molecules. | Useful for creating interpretable rule-based models and for explaining consensus model predictions [28]. |
| ClinTox Dataset | Data | Contains data on drug candidates that failed clinical trials due to toxicity. | Serves as a valuable benchmark dataset for clinical toxicity prediction within a multi-task learning framework [10]. |
| ECOTOX Database | Data | EPA database providing single-chemical toxicity data for aquatic and terrestrial species. | A key source for experimental avian or wildlife LD50 data for cross-species modeling [28]. |
| scikit-learn / caret | Code Library | Provides unified implementations of machine learning algorithms (RF, SVM, kNN) for model building and validation. | Enables the efficient execution of the combinatorial QSAR modeling protocol. |

Generalized Read-Across (GenRA) represents a pivotal algorithmic advancement in predictive toxicology, transitioning the well-established but subjective practice of chemical read-across into an objective, reproducible computational framework [29] [30]. Within the context of a broader thesis on enhancing machine learning (ML) models for LD50 (median lethal dose) prediction accuracy, GenRA offers a compelling methodology. It operates on a foundational principle: using existing data from chemically "similar" source compounds to fill data gaps for target substances lacking experimental results [29]. Traditional read-across is an expert-driven process, which poses challenges for reproducibility and scalability in large-scale drug development and chemical safety screening [30].

GenRA systematizes this approach by using quantified structural and bioactivity similarity measures to identify candidate source analogues and generate a similarity-weighted prediction of toxicity outcomes [29] [30]. This aligns directly with core ML research objectives aimed at improving predictive accuracy, reducing reliance on animal testing, and accelerating the identification of safe drug candidates by providing a robust, data-driven method for early hazard assessment [13] [31]. By framing GenRA as an ML-informed read-across tool, researchers can critically evaluate its performance in quantitative LD50 prediction, analyze its uncertainty quantification, and explore its integration with other in silico models to build more reliable and generalizable toxicity forecasting systems [5] [13].

Technical Support Center: Troubleshooting Guides and FAQs

This section addresses common technical and methodological challenges researchers may encounter when implementing GenRA for LD50 prediction within an ML-driven research project.

Frequently Asked Questions (FAQs)

Q1: What are the most critical data quality issues that can undermine GenRA prediction accuracy for LD50? A: The principle of "garbage in, garbage out" is paramount. Critical issues include:

- Inconsistent or Low-Quality Experimental LD50 Source Data: The accuracy of GenRA predictions is contingent on the reliability of the toxicity data for the source analogues. Data from unverified sources, studies with poor experimental design, or highly variable results introduce significant noise [32] [5].

- Inappropriate Structural/Bioactivity Descriptors: Using fingerprints or descriptors that do not capture features relevant to acute toxicity mechanisms can lead to identifying "similar" compounds that are not biologically analogous for the LD50 endpoint [30].

- Misapplication of Similarity Metrics: The default Jaccard (Tanimoto) index may not be optimal for all chemical spaces or endpoints. The choice of similarity metric must be justified and its impact on neighbor selection evaluated [30].
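
For reference, the Jaccard (Tanimoto) index on binary fingerprints is the size of the shared feature set divided by the size of the combined feature set. A minimal sketch, assuming each fingerprint is represented as a set of "on" bit indices (the representation and the function name are illustrative choices):

```python
def jaccard_similarity(fp_a, fp_b):
    """Jaccard (Tanimoto) index between two sets of 'on' bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(union)


# Two of four distinct bits are shared.
similarity = jaccard_similarity({1, 2, 3}, {2, 3, 4})
```

Because the index counts only shared "on" bits, its behaviour depends heavily on which fingerprint generated those bits, which is why descriptor choice and metric choice should be evaluated together.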

Q2: How can I prevent data leakage and overfitting when developing and evaluating a GenRA model? A: This is a fundamental ML pitfall [32].

- Strict Data Separation: Your hold-out test set (compounds for final performance evaluation) must never be used during model development or analogue selection. All decisions about similarity metrics, weighting schemes, and the number of neighbours (k) must be made using only the training and validation sets [32].

- Use of Validation Sets: Employ a separate validation set to tune hyperparameters (like the similarity threshold or the value of k in k-nearest neighbours) to avoid overfitting to your training data [32].

- External Validation: The most robust evaluation involves testing your finalized GenRA approach on a completely external dataset from a different source or study [5] [13].
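
The separation of roles can be sketched with a simple three-way partition. A seeded random shuffle is used here only for brevity; stratified or cluster-based splitting may be preferable in practice. The split fractions and the function name are assumptions for illustration.

```python
import random


def three_way_split(items, frac_train=0.6, frac_val=0.2, seed=0):
    """Partition items into disjoint training, validation, and test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_train = round(len(shuffled) * frac_train)
    n_val = round(len(shuffled) * frac_val)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # touched only for the final evaluation
    return train, val, test


compound_ids = list(range(10))  # stand-ins for real compound identifiers
train, val, test = three_way_split(compound_ids)
```

Choices such as k or the similarity threshold would be tuned by scoring on `val` only; `test` is scored once, after those choices are frozen.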

Q3: My GenRA model performs well on the training set but poorly on new compounds. Is this overfitting, and how can I fix it? A: Yes, this is a classic sign of overfitting, where the model has learned noise or specific idiosyncrasies of the training data rather than a generalizable relationship [32] [33].

- Increase the Similarity Threshold: Raise the minimum structural similarity required for a source compound to be considered an analogue. This makes the model more conservative.

- Adjust the Number of Neighbours (k): Using a very small k (e.g., 1 or 2) makes the prediction highly sensitive to individual data points. Increasing k creates a more stable, averaged prediction [30].

- Incorporate Bioactivity Data: Use a hybrid similarity measure that combines structural fingerprints with bioactivity profiles (e.g., from ToxCast assays). This can help ensure similarity is mechanistically relevant, not just structural [29] [30].
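
The effect of the similarity threshold and of k can be seen in a small similarity-weighted nearest-neighbour sketch. This is an illustrative reading of the read-across idea, not the GenRA software itself: fingerprints are sets of "on" bit indices, and the function names, default values, and toy data are assumptions.

```python
def jaccard_similarity(fp_a, fp_b):
    """Jaccard (Tanimoto) index between two sets of 'on' bit indices."""
    union = set(fp_a) | set(fp_b)
    return len(set(fp_a) & set(fp_b)) / len(union) if union else 0.0


def read_across_prediction(target_fp, sources, k=5, min_similarity=0.3):
    """Similarity-weighted mean over the k most similar source compounds.

    `sources` is a list of (fingerprint, value) pairs. Returns None when no
    source compound passes the similarity threshold.
    """
    scored = []
    for fp, value in sources:
        s = jaccard_similarity(target_fp, fp)
        if s >= min_similarity:
            scored.append((s, value))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    neighbours = scored[:k]
    total = sum(s for s, _ in neighbours)
    if total == 0:
        return None  # no usable analogues: report no prediction
    return sum(s * v for s, v in neighbours) / total


# Toy data: values stand in for transformed LD50s, not real measurements.
sources = [({1, 2, 3, 4}, 2.0), ({1, 2}, 4.0), ({9}, 10.0)]
target = {1, 2, 3, 4}
two_neighbours = read_across_prediction(target, sources, k=2)
strict = read_across_prediction(target, sources, k=5, min_similarity=0.9)
```

Raising `min_similarity` shrinks the neighbour set and can leave some targets without a prediction, so coverage should be reported alongside accuracy when tuning it.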

Q4: How does GenRA compare to other ML models like Random Forest or Deep Neural Networks for LD50 prediction? A: GenRA and other ML models are complementary tools with different strengths.

- GenRA (Read-Across): Provides intuitive, "white-box" predictions based on identifiable analogues. Its predictions are highly interpretable ("compound X is predicted toxic because its 5 most similar neighbours are toxic") [29] [30]. It excels when high-quality analogue data exists but can struggle with truly novel scaffolds lacking similar neighbours.