Automating Trust: How AI and Computational Pipelines Are Revolutionizing Data Quality in Ecotoxicology

Abstract

The exponential growth of ecotoxicological data, driven by high-throughput methods and environmental sensor networks, has rendered traditional manual quality assurance (QA) and quality control (QC) processes unsustainable. This article explores the critical transition to automated data quality screening tools, addressing a spectrum of needs from foundational concepts to practical implementation for researchers and drug development professionals. We first establish the urgent need for automation, highlighting challenges like evaluator bias and the impracticality of manually screening millions of data points [citation:1][citation:5]. We then detail methodological applications, from EPA-developed frameworks like TADA for water quality data to advanced computational pipelines like RASRTox that automatically acquire and rank toxicological data [citation:3][citation:9]. The discussion extends to troubleshooting data integration, managing streaming data, and optimizing tool performance. Finally, we examine validation through benchmark datasets like ADORE and the comparative performance of AI-assisted assessments against human evaluation, demonstrating how these tools enhance consistency, speed, and reliability in hazard and risk assessments [citation:1][citation:6].

The Data Deluge: Why Manual QA/QC is Obsolete in Modern Ecotoxicology

Modern ecotoxicology and environmental risk assessment (ERA) are confronting a fundamental shift in scale. The volume of relevant data has expanded from curated collections of hundreds of studies to vast, unstructured repositories containing thousands to millions of individual data points [1] [2]. This expansion is driven by the proliferation of research on emerging contaminants like microplastics and pharmaceuticals, increased monitoring, and the availability of legacy data from diverse sources [3] [4]. Concurrently, regulatory and scientific demands for robust, transparent decision-making require that every data point used in an ERA undergoes a rigorous evaluation of its reliability and relevance [5] [4].

Manual quality assessment, the traditional mainstay, is no longer feasible. Evaluating a single study against comprehensive criteria can take an expert assessor hours. With the exponential growth in published literature, manual screening has become a critical bottleneck, prone to inconsistencies, evaluator bias, and semantic ambiguities [1] [2]. This document details the application notes and protocols for automated data quality screening tools, which represent the essential transition from manual, small-scale review to systematic, high-throughput evaluation. These tools are not intended to replace expert judgment but to augment and standardize the initial screening phases, ensuring that human expertise is focused on the most complex validation tasks [5].

Quantifying the Challenge: Data Volume and Variability

The challenge is twofold: sheer volume and profound variability in data quality. The following tables quantify these aspects, drawing from current research and established frameworks.

Table 1: Scale and Performance of Manual vs. AI-Assisted Data Screening

| Metric | Traditional Manual Review | AI-Assisted Screening (Current) | Target for Automation |
|---|---|---|---|
| Studies Processed Per Assessor Day | 2-5 full evaluations | Dozens of initial screenings & rankings [1] [2] | Hundreds of autonomous evaluations |
| Typical Evaluation Time Per Study | 1-4 hours | Minutes for initial classification [1] | Near real-time |
| Primary Bottleneck | Expert assessor availability & consistency | Refinement of AI prompts & validation of outputs | Integration with diverse data formats |
| Consistency | Variable; subject to evaluator bias and fatigue | High; applies same criteria uniformly [1] [2] | Objectively reproducible |
| Example Throughput | Manual review of 73 microplastics studies would take weeks [2] | AI tools screened 73 studies for QA/QC criteria efficiently [1] [2] | Screening entire digital libraries (10,000+ studies) |

Table 2: Key Sources of Variability in Ecotoxicological Data Quality [6] [4]

| Source of Variability | Impact on Data Quality | Order of Magnitude Influence |
|---|---|---|
| Toxicokinetic/Toxicodynamic Factors (e.g., metabolic rate, lipid content) | Alters the relationship between external exposure and internal effective dose. | Can change reported LC50 by 10-1000x [6] |
| Test Design & Reporting (e.g., exposure duration, control performance) | Affects reproducibility and reliability of the endpoint. | Major factor in Klimisch/CRED scoring; can render data unusable [3] [4] |
| Modifying Factors (e.g., water chemistry, temperature) | Influences chemical bioavailability and organism sensitivity. | Can significantly alter toxicity metrics [6] |
| Data Completeness & Transparency | Determines ability to independently verify or re-analyze results. | Critical for reliability scoring (CRED Criteria 17-20) [4] |

Foundational Frameworks for Data Quality Assessment

Automated tools must be built upon standardized, transparent frameworks to ensure their outputs are meaningful and defensible. Two core frameworks underpin this field.

Data Quality Objectives (DQOs) and the PARCCS Criteria: The foundation of any quality assessment is a clear statement of DQOs, which define the project's data needs [5]. These are operationalized through the PARCCS indicators: Precision, Accuracy, Representativeness, Comparability, Completeness, and Sensitivity [5]. Automated screening tools are configured to check for conformance with these predefined indicators during the verification and validation process.

The CRED (Criteria for Reporting and Evaluating Ecotoxicity Data) Methodology: For evaluating individual studies, the CRED method provides a structured, categorical approach that improves upon earlier Klimisch scores [3] [4]. It assesses 20 criteria across four domains: test substance, test organism, test design/conditions, and data analysis/reporting. Each criterion is judged as "fully," "partially," or "not" fulfilled, leading to a final reliability score (R1-R4) [4]. This structured checklist is ideal for translation into automated screening logic.

Table 3: Core Data Quality Dimensions for Assessment [7] [5]

| Dimension | Definition | Key Questions for Automated Screening |
|---|---|---|
| Integrity | Data is protected from unauthorized alteration. | Is the dataset complete and has its chain of custody been documented? |
| Unambiguity | Data elements are clearly defined and understood. | Are all parameters, units, and codes explicitly defined in metadata? |
| Consistency | Data is uniform across the dataset. | Are naming conventions, formats, and units used consistently? |
| Completeness | Expected data is present. | Are all required fields populated? Is supporting data (e.g., controls, QC) included? |
| Correctness | Data accurately represents the real-world values it is intended to model. | Does the data fall within expected plausible ranges? Do calculated values match reported results? |
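The key questions in the table above map naturally onto simple rule-based checks. The following is a minimal sketch, assuming a record is a flat dictionary; the field names, the allowed unit, and the plausibility bounds are hypothetical placeholders that a real project would derive from its own DQOs, and the two records are dummy values for illustration only.

```python
from typing import Any

# Hypothetical required fields and bounds; real values would come from the
# project's DQOs, not from this sketch.
REQUIRED_FIELDS = ("chemical_id", "species", "endpoint", "value", "unit", "control_reported")
PLAUSIBLE_RANGE_MG_L = (1e-9, 1e6)  # assumed bounds for a concentration in mg/L
ALLOWED_UNITS = {"mg/L"}            # assumed project-wide unit convention


def screen_record(record: dict[str, Any]) -> list[str]:
    """Return a list of quality flags for one record (empty list = no flags)."""
    flags = []
    # Completeness: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            flags.append(f"missing:{field}")
    # Consistency: units must follow the agreed convention.
    unit = record.get("unit")
    if unit not in (None, "") and unit not in ALLOWED_UNITS:
        flags.append(f"unit_not_standard:{unit}")
    # Correctness: a numeric value must fall inside the plausible range.
    value = record.get("value")
    if isinstance(value, (int, float)):
        low, high = PLAUSIBLE_RANGE_MG_L
        if not (low <= value <= high):
            flags.append("value_out_of_range")
    elif value not in (None, ""):
        flags.append("value_not_numeric")
    return flags


# Dummy records, for illustration only.
records = [
    {"chemical_id": "EXAMPLE-1", "species": "Daphnia magna", "endpoint": "EC50",
     "value": 12.5, "unit": "mg/L", "control_reported": True},
    {"chemical_id": "EXAMPLE-1", "species": "", "endpoint": "LC50",
     "value": -3.0, "unit": "ppm", "control_reported": True},
]
for rec in records:
    print(screen_record(rec))
```

Returning flags instead of rejecting records outright keeps the decision with the assessor, in line with the augment-not-replace role described earlier.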

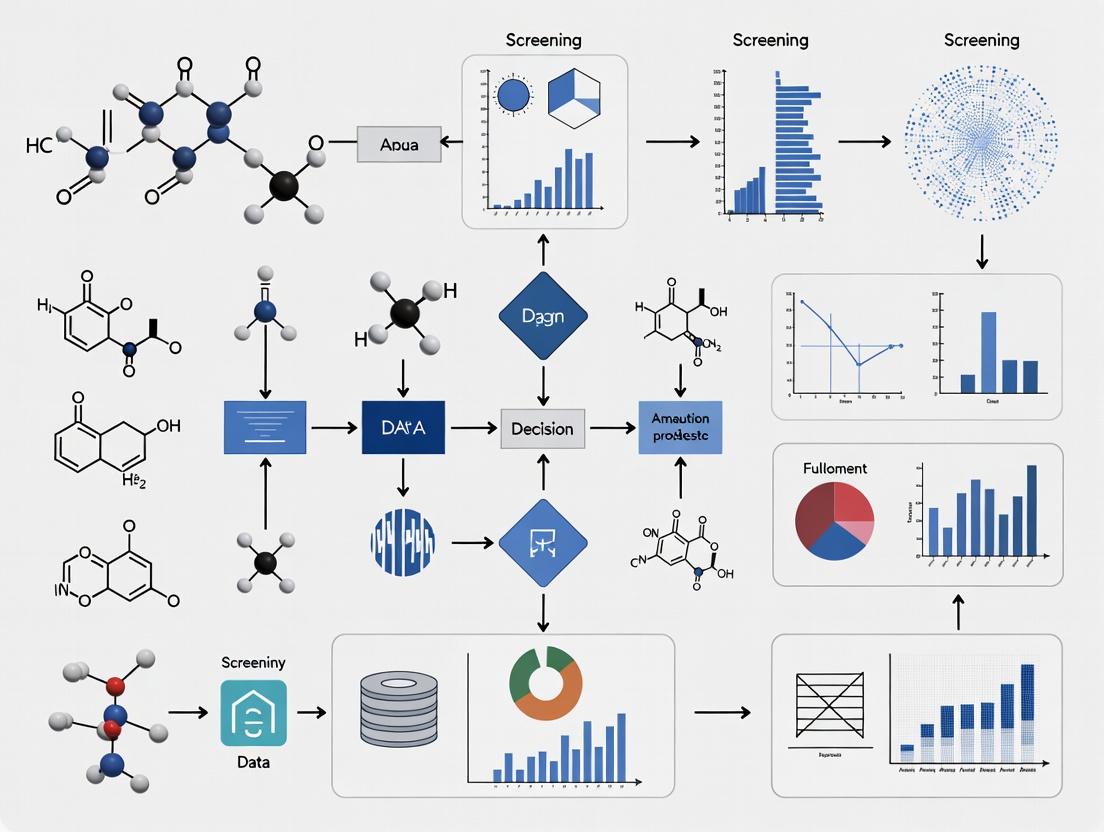

Diagram 1: Evolution from Manual to Automated Data Quality Screening.

Application Notes: Protocols for Automated Screening

Protocol: AI-Assisted QA/QC Screening for Literature Data

This protocol is adapted from a pioneering application using Large Language Models (LLMs) to screen microplastics studies, demonstrating a scalable approach to literature triage [1] [2].

Objective: To rapidly and consistently screen a large corpus of scientific literature against predefined QA/QC criteria, ranking studies for suitability in exposure and risk assessment.

Materials:

- Input Data: Digital corpus of scientific publications (PDFs or plain text).

- AI Tool: Access to a capable LLM (e.g., OpenAI's ChatGPT, Google's Gemini) via API or interface.

- QA/QC Criteria: A structured list of criteria derived from relevant guidelines (e.g., for microplastics in drinking water) [1].

- Prompt Template: A pre-engineered text prompt to instruct the LLM.

Procedure:

- Criteria Formalization: Translate the project's DQOs and PARCCS requirements into a concrete checklist of 10-15 yes/no or categorical questions. Example: "Does the study explicitly state the particle size range of microplastics analyzed?" [1] [2].

- Prompt Engineering: Develop a system prompt that:

  - Defines the AI's role as a data quality screener.

  - Lists the exact evaluation criteria.

  - Instructs the AI to output a structured response (e.g., JSON) containing: Study ID, a score for each criterion, a brief justification, and an overall suitability ranking (e.g., High, Medium, Low).

- Batch Processing: Feed the text of each study into the LLM using the engineered prompt. Automated scripting (via Python, etc.) is used to manage large batches.

- Human-in-the-Loop Validation: Randomly select a subset (e.g., 20%) of the AI-processed studies for parallel, blind evaluation by a human expert. Compare results to calibrate the AI prompt and identify edge cases.

- Output and Triage: Use the AI-generated rankings to prioritize which studies undergo full, manual CRED evaluation. Studies ranked "Low" may be archived or flagged for rapid review.

Key Application Note: The AI acts as a force multiplier, not a replacement. Its strength is in handling the initial, repetitive screening task with high consistency, freeing experts to perform the nuanced, final validation on a pre-filtered, high-priority subset [5].
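The prompt-engineering and batch-processing steps can be sketched as follows. This is an illustration under stated assumptions, not the cited study's implementation: the second criterion, the JSON keys, and all function names are hypothetical, and the model call is passed in as a plain callable (`call_llm`) so that no vendor API is assumed. A stub stands in for the model.

```python
import json
from typing import Callable

CRITERIA = [
    "Does the study explicitly state the particle size range of microplastics analyzed?",
    "Are procedural blanks or controls reported?",  # hypothetical criterion
]
RANKS = {"High", "Medium", "Low"}


def build_prompt(study_id: str, study_text: str) -> str:
    """Assemble the screening prompt: role, criteria, output format, study text."""
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(CRITERIA, start=1))
    return (
        "You are a data quality screener for ecotoxicology literature.\n"
        f"Evaluate study {study_id} against these criteria:\n{numbered}\n"
        'Reply with JSON only, using the keys "study_id", "scores" (one "yes" or '
        '"no" per criterion), "justification", and "ranking" (High, Medium, or Low).\n'
        f"Study text:\n{study_text}"
    )


def parse_response(raw: str) -> dict:
    """Parse and validate the model's JSON reply before it enters the triage list."""
    data = json.loads(raw)
    if len(data["scores"]) != len(CRITERIA):
        raise ValueError("one score per criterion is required")
    if data["ranking"] not in RANKS:
        raise ValueError(f"unexpected ranking: {data['ranking']!r}")
    return data


def screen_study(study_id: str, study_text: str, call_llm: Callable[[str], str]) -> dict:
    return parse_response(call_llm(build_prompt(study_id, study_text)))


def fake_llm(prompt: str) -> str:
    """Stub standing in for a real model call; returns a fixed, made-up reply."""
    return json.dumps({"study_id": "S001", "scores": ["yes", "no"],
                       "justification": "Size range stated; no blanks reported.",
                       "ranking": "Medium"})


result = screen_study("S001", "placeholder study text", fake_llm)
print(result["ranking"])
```

Validating the reply before use matters because a model can return malformed or off-schema output; rejected replies can be retried or routed to manual review.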

Protocol: Systematic Data Evaluation Using CRED Workflow

This protocol details the stepwise evaluation of individual study reliability, a process that can be partially automated through rule-based systems or AI trained on CRED outputs [4].

Objective: To assign a transparent and defensible reliability score (R1-R4) to an ecotoxicology study for use in regulatory decision-making.

Materials:

- Study Report: The full text, tables, and supplementary materials of the study.

- CRED Evaluation Sheet: A digital or physical form listing the 20 CRED criteria [4].

- Relevant Guidelines: OECD test guidelines, ASTM standards, or other methodology references pertinent to the study.

Procedure:

1. Initial Administrative Screen: Confirm the study's basic relevance (organism, endpoint, chemical). Check for fundamental flaws (e.g., lack of control, obvious contamination).

2. Criterion-by-Criterion Assessment: For each of the 20 CRED criteria, examine the study report and assign a fulfillment level:

   - Fully (F): The study report contains all information required by the criterion.

   - Partially (P): The study report contains some, but not all, required information.

   - Not (N): The required information is absent or clearly flawed.

   - Not Applicable (NA): The criterion does not apply to this study design.

3. Expert Judgment Integration: Based on the pattern of F, P, and N scores, assign a final reliability category:

   - R1 (Reliable without restrictions): All key criteria fully met.

   - R2 (Reliable with restrictions): Most key criteria fully met, minor shortcomings.

   - R3 (Not reliable): One or more key criteria not met or partially met, introducing significant uncertainty.

   - R4 (Not assignable): Insufficient information to evaluate.

4. Documentation: Record the justification for each criterion rating and the final score in a standardized format. This audit trail is critical for transparency.

Automation Potential: Steps 1 and 2 are prime candidates for automation. Natural Language Processing (NLP) models can be trained to extract information related to specific criteria (e.g., "identify the text stating the exposure concentration") and flag its presence or absence.
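Once criterion-level ratings exist, whether entered by an assessor or pre-filled by an NLP model, a rule-based pass can propose a provisional category for the expert to confirm or override. The sketch below is only an illustration: CRED leaves the final category to expert judgment, so the set of key criteria and the `MAX_PARTIAL_SHARE` threshold separating R2 from R3 are hypothetical choices, not part of the method.

```python
VALID = {"F", "P", "N", "NA"}
MAX_PARTIAL_SHARE = 0.25  # hypothetical threshold, not part of CRED


def suggest_reliability(ratings: dict[int, str], key_criteria: set[int]) -> str:
    """Propose a provisional category (R1-R4) from criterion ratings."""
    for criterion, rating in ratings.items():
        if rating not in VALID:
            raise ValueError(f"criterion {criterion}: unknown rating {rating!r}")
    # Ratings of the key criteria; a criterion that was never rated yields None.
    key = [ratings.get(c) for c in sorted(key_criteria)]
    applicable = [r for r in key if r != "NA"]
    if not applicable or any(r is None for r in applicable):
        return "R4"  # too little information to evaluate
    if "N" in applicable:
        return "R3"  # at least one key criterion not met
    partial_share = applicable.count("P") / len(applicable)
    if partial_share == 0:
        return "R1"  # all key criteria fully met
    # Few partial ratings are treated as minor shortcomings, many as unreliable.
    return "R2" if partial_share <= MAX_PARTIAL_SHARE else "R3"


KEY = {1, 2, 3, 4}  # hypothetical choice of key criteria
print(suggest_reliability({1: "F", 2: "F", 3: "F", 4: "P"}, KEY))
```

The output should be stored as a suggestion alongside the criterion ratings, so the audit trail shows both the automated proposal and the expert's final decision.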

Diagram 2: AI-Assisted QA/QC Screening Workflow for Literature.

Diagram 3: CRED Reliability Evaluation Workflow and Scoring.

Table 4: Research Reagent Solutions for Automated Data Quality Screening

| Tool Category | Specific Tool/Resource | Function in Automated Screening |
|---|---|---|
| AI & NLP Models | General-purpose LLMs (ChatGPT, Gemini, Claude) | Perform initial literature triage, extract specific information from text, and summarize findings against criteria [1] [2]. |
| Evaluation Frameworks | CRED Evaluation Criteria, EPA QA/G-9 Guidance | Provide the standardized, structured checklist against which studies are programmatically or AI-evaluated [7] [4]. |
| Data Management Systems | Environmental Data Management Systems (EDMS) | Serve as the platform to store raw data, metadata, and the associated quality flags (validation qualifiers) generated by automated or manual review [5]. |
| Rule-Based Screening Scripts | Custom Python/R scripts using regex, statistical checks | Automate verification tasks: checking data ranges, consistency of units, completeness of fields, and conformance to PARCCS thresholds [5]. |
| Reference Databases | OECD Guidelines, ECOTOX Knowledgebase | Provide the authoritative reference points for test acceptability and historical control ranges, which can be used to build validation rules. |

Future Directions and Integration

The future lies in deep integration. Next-generation tools will seamlessly combine NLP information extraction, rule-based PARCCS verification, and predictive modeling to flag potential quality issues before an experiment is finalized. The ultimate goal is a continuous data quality assessment loop embedded within the scientific workflow. As data generation accelerates towards millions of data points—from high-throughput in vitro assays, omics technologies, and real-time environmental sensors—these automated screening protocols will transition from a useful aid to an indispensable component of credible, scalable ecotoxicological science and regulatory decision-making [1] [5]. The scale of the challenge necessitates a fundamental shift in methodology, where intelligent tools handle the scale, and human experts focus on the depth and nuance of environmental protection.

Ecotoxicology research is foundational to environmental safety, regulatory compliance, and sustainable product development. The field generates vast quantities of data from tests on aquatic and terrestrial organisms to assess the hazards of chemicals, pharmaceuticals, and environmental pollutants [8]. However, the traditional manual frameworks for ensuring the quality and reliability of this data are increasingly recognized as a critical bottleneck. These methods are fundamentally challenged by three interconnected limitations: inconsistency in evaluation, the introduction of human bias, and prohibitive time consumption [9] [1].

The demand for high-quality data is escalating, driven by stringent global regulations like the European Union's REACH and growing public awareness of environmental sustainability [8]. Concurrently, the volume and complexity of data are exploding, with over 350,000 chemicals registered worldwide and databases like the US EPA's ECOTOX containing over 1.1 million entries [10]. Manually screening this data for quality assurance and quality control (QA/QC) is no longer practically feasible. This creates an urgent need for automated, scalable solutions. Recent breakthroughs demonstrate that artificial intelligence (AI), particularly large language models (LLMs), can standardize and accelerate data reliability assessments, offering a transformative path forward for harmonized risk assessment in data-intensive regulatory domains [1]. This document details these limitations and provides application notes and protocols for implementing automated screening tools.

Quantifying the Limitations: A Data-Driven Perspective

The constraints of traditional manual methods can be quantified across key dimensions, impacting the efficiency, reliability, and scalability of ecotoxicological research.

Table 1: Comparative Analysis of Traditional vs. AI-Assisted Data Quality Screening

| Dimension | Traditional Manual Methods | AI-Assisted Automated Screening | Quantitative Impact / Evidence |
|---|---|---|---|
| Processing Speed | Labor-intensive, sequential review. | Parallel, high-speed processing of vast datasets. | AI can reduce daily monitoring time from 4 hours to 20 minutes [9]. LLMs evaluate studies at machine speed [1]. |
| Consistency & Standardization | Prone to semantic ambiguity and evaluator drift. | Applies predefined QA/QC criteria uniformly to all data. | AI replicates human assessments with high consistency, overcoming semantic ambiguities [1]. |
| Scalability | Difficulties scaling with data volume or new formats. | Highly scalable and adaptable to new data sources. | Can screen 73+ studies systematically [1]; handles "endless information" [9]. |
| Bias Mitigation | Susceptible to cognitive biases (confirmation, selection). | Can be designed to minimize subjective bias in screening. | Addresses publication bias and small-study effects that distort meta-analysis [11]. |
| Resource Requirement | High demand for expert analyst time. | Reduces demand for repetitive manual screening. | Enables a team of 2 analysts to serve 180k stakeholders [9]. |
| Error Rate | Manual data entry and judgment errors. | Automated extraction reduces transcription errors. | Not quantified in the cited sources; automated extraction is expected to reduce transcription errors. |

Table 2: Statistical Measures of Inconsistency in Meta-Analysis (Traditional Context)

| Statistic | Primary Function | Limitation in Traditional Context | Proposed Advanced Alternative |
|---|---|---|---|
| Q Test / I² | Tests for presence of heterogeneity; quantifies its magnitude. | Low power with few studies; assumes normal between-study distribution [11]. | Hybrid Test: Combines multiple T_p statistics for robust power across skewed or heavy-tailed distributions [11]. |
| Subgroup Analysis | Explores sources of inconsistency. | Often post-hoc and susceptible to "fishing." | Pre-specified, AI-clustered subgrouping based on study features. |
| Outlier Detection | Identifies extreme studies. | Often relies on arbitrary cut-offs (e.g., visual inspection). | T_p Statistics: Use different mathematical powers (e.g., p=1 for robust sum, p→∞ for maximum) to systematically detect outliers [11]. |

Detailed Limitation 1: Inconsistency

Inconsistency refers to unwanted variability in research findings or data quality judgments that arises from differences in methodology, execution, or evaluation, rather than from true biological or chemical effects.

- Root Causes in Ecotoxicology: Inconsistency stems from methodological heterogeneity (e.g., different test species, exposure durations, endpoints like LC50 or EC50), population differences (e.g., species strains, life stages), and, critically, subjective QA/QC judgments [11] [10]. When manually assessing study reliability, evaluators may interpret vague QA/QC criteria differently due to semantic ambiguity [1].

- Impact on Risk Assessment: Inconsistent data integration leads to unreliable meta-analyses and unpredictable risk assessments. Traditional statistical measures like the Q test can fail to detect inconsistency when the number of studies is small or when the distribution of effects is non-normal (e.g., skewed by publication bias) [11]. This undermines regulatory decision-making and chemical safety assurance.
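For reference, the traditional Q statistic and I² mentioned above can be computed in a few lines. This is a minimal sketch using the standard definitions (inverse-variance weights, Q as the weighted sum of squared deviations from the common-effect estimate, and I² as the share of Q in excess of its degrees of freedom); the function name and the input values are illustrative, not data from any study.

```python
def cochran_q(effects: list[float], std_errors: list[float]) -> tuple[float, float, float]:
    """Return (common-effect estimate, Q statistic, I-squared as a fraction)."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need at least two studies with matching inputs")
    weights = [1.0 / s**2 for s in std_errors]  # inverse-variance weights
    theta_hat = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - theta_hat) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of total variability beyond what chance alone would give.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return theta_hat, q, i_squared


# Illustrative inputs only.
theta, q, i2 = cochran_q([0.0, 2.0], [1.0, 1.0])
print(theta, q, i2)
```

With only two studies, as here, Q has a single degree of freedom, which illustrates why the test has little power when few studies are available.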

Application Note: Protocol for AI-Assisted Consistency Screening

This protocol uses Large Language Models (LLMs) to perform standardized QA/QC screening for microplastics in drinking water studies, as validated by recent research [1].

Objective: To automate the consistent application of predefined QA/QC criteria for evaluating the reliability of scientific studies, replicating human expert judgment with high fidelity.

Materials:

- LLM Access: API or interface to a model such as OpenAI's ChatGPT or Google's Gemini [1].

- QA/QC Framework: A published, structured list of criteria for evaluating study reliability (e.g., for microplastics in water) [1].

- Corpus of Studies: Digital texts (PDFs or plain text) of scientific studies to be evaluated.

Procedure:

- Prompt Engineering: Translate the human QA/QC framework into a structured LLM system prompt. The prompt must define the task, list all criteria, and specify the exact output format (e.g., JSON containing scores, confidence, and justifications).

- Study Processing: Convert study PDFs to plain text. For large studies, use a "chunking" strategy: first, instruct the LLM to identify and extract the Methods, Results, and Quality Indicators sections.

- Batch Evaluation: Submit the extracted relevant text chunks for each study to the LLM with the engineered prompt. Use parallel API calls to process multiple studies simultaneously.

- Aggregation & Scoring: Programmatically aggregate the LLM's outputs for each study. Calculate a composite reliability score or rank based on the criteria.

- Validation: For a subset of studies, compare AI-generated scores with scores from independent human experts. Calculate inter-rater agreement metrics (e.g., Cohen's kappa) to validate performance.
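The agreement check in the validation step can be sketched with a small, dependency-free implementation of Cohen's kappa, which compares observed agreement with the agreement expected by chance from each rater's category frequencies. The function name and the example rankings are illustrative, not results from the cited study.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters assigning one category per study."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of studies")
    n = len(rater_a)
    # Observed agreement: share of studies given the same category by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal category frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / n**2
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


# Made-up rankings for four studies.
ai_scores = ["High", "High", "Low", "Low"]
human_scores = ["High", "Low", "Low", "Low"]
print(cohens_kappa(ai_scores, human_scores))
```

Kappa is preferable to raw percent agreement here because screening outcomes are often imbalanced, and two raters who both assign most studies to one category would agree frequently by chance alone.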

AI-Assisted QA/QC Screening Workflow [1]

Detailed Limitation 2: Bias

Bias is a systematic deviation from true representation or judgment. In traditional ecotoxicology, it infiltrates both the evidence base (through study conduct and publication) and the data screening process itself.

- Types of Bias:

  - Publication Bias: Studies with statistically significant or "positive" results are more likely to be published than null studies. This skews the available data for meta-analysis [11].

  - Selection Bias: In manual literature screening, reviewers may unconsciously select studies that align with their hypotheses.

  - Confirmation Bias: During data quality evaluation, reviewers may judge studies supporting their prior beliefs more favorably.

- Compounding Effect: These biases interact. Publication bias creates a skewed pool of data, which human reviewers then filter with their own cognitive biases, compounding the distortion in the final synthesized evidence [11].

Application Note: Protocol for a Hybrid Inconsistency Test

This protocol implements a robust statistical test to detect inconsistency in meta-analysis, which is less susceptible to being masked by biased data distributions [11].

Objective: To powerfully detect between-study inconsistency (heterogeneity) even when the distribution of effect sizes is non-normal (e.g., skewed, heavy-tailed, or contaminated by outliers).

Materials:

- Meta-Analysis Dataset: Effect sizes (Y_i) and their standard errors (s_i) from k independent studies.

- Statistical Software: R or Python with capabilities for resampling methods.

Procedure:

1. Calculate Standardized Deviates: For each study i, compute the standardized deviate Z_i = (Y_i - θ_hat) / s_i, where θ_hat is the common-effect (inverse-variance weighted) estimate.

2. Compute Alternative T_p Statistics: For a set of powers p (e.g., 1, 2, 3, 4, ∞), compute the statistic T_p = Σ_i |Z_i|^p. T_2 is the traditional Q statistic. T_1 is more robust to outliers. T_∞ is defined as the maximum absolute deviate, max_i |Z_i|, and is sensitive to a single outlier.

3. Calculate P-value for Each T_p: Derive the null distribution for each T_p using a parametric resampling method:

   a. Assume homogeneity (τ² = 0) and simulate new effect sizes Y_i* ~ N(θ_hat, s_i²).

   b. For each simulation, recalculate θ_hat* and the T_p* statistics.

   c. Repeat many times (e.g., 10,000) to build the empirical null distribution for each T_p.

   d. The P-value for each test is the proportion of simulated T_p* values that equal or exceed the observed T_p.

4. Compute Hybrid Test P-value: Let P_min be the minimum P-value from the set of individual T_p tests. Derive the null distribution for P_min using the same resampling procedure (step 3), recording the minimum P-value from each simulation. The final hybrid test P-value is the proportion of simulated P_min values that are smaller than or equal to the observed P_min.

5. Interpretation: A significant hybrid test indicates that inconsistency is present. The power of the test remains high across various inconsistency patterns.
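The resampling procedure above can be sketched with NumPy as follows. This is an illustration under stated assumptions rather than the reference implementation from [11]: it reuses a single set of simulations to obtain both the individual null distributions and the null distribution of P_min (instead of nested resampling), and it applies a +1 finite-sample correction so that no P-value is exactly zero. Function names, defaults, and the input values are illustrative.

```python
import numpy as np


def tp_statistics(y: np.ndarray, s: np.ndarray, powers) -> np.ndarray:
    """T_p for each power; y may be one dataset (k,) or many simulated ones (B, k)."""
    w = 1.0 / s**2
    # Common-effect estimate, recomputed for every dataset (row).
    theta_hat = np.sum(w * y, axis=-1, keepdims=True) / np.sum(w)
    z = np.abs((y - theta_hat) / s)
    stats = [z.max(axis=-1) if np.isinf(p) else np.sum(z**p, axis=-1) for p in powers]
    return np.stack(stats, axis=-1)


def hybrid_test(y, s, powers=(1, 2, 3, 4, np.inf), n_sim=2000, seed=0) -> dict:
    y, s = np.asarray(y, dtype=float), np.asarray(s, dtype=float)
    rng = np.random.default_rng(seed)
    observed = tp_statistics(y, s, powers)                  # shape (P,)
    theta_hat = np.sum(y / s**2) / np.sum(1.0 / s**2)
    # Simulate under homogeneity: Y_i* ~ N(theta_hat, s_i^2).
    sims = rng.normal(theta_hat, s, size=(n_sim, y.size))   # shape (B, k)
    null = tp_statistics(sims, s, powers)                   # shape (B, P)
    p_values = (1 + (null >= observed).sum(axis=0)) / (n_sim + 1)
    # P-value of each simulated statistic within the same null sample.
    sim_p = np.empty_like(null)
    for j in range(null.shape[1]):
        col_sorted = np.sort(null[:, j])
        n_below = np.searchsorted(col_sorted, null[:, j], side="left")
        sim_p[:, j] = (n_sim - n_below) / n_sim
    p_min_obs = p_values.min()
    p_min_null = sim_p.min(axis=1)
    hybrid_p = (1 + np.sum(p_min_null <= p_min_obs)) / (n_sim + 1)
    return {"p_values": dict(zip(powers, p_values)), "hybrid_p": float(hybrid_p)}


# Illustrative inputs: four similar effects and one extreme study.
res = hybrid_test([0.1, 0.0, -0.1, 0.05, 5.0], [0.2] * 5)
print(res["hybrid_p"])
```

Comparing P_min against its own null distribution, rather than against a fixed threshold, is what accounts for having run several T_p tests on the same data.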

Detailed Limitation 3: Time Consumption

Time is the most palpable constraint. Manual QA/QC, literature screening, and data extraction are profoundly slow, creating a mismatch between the pace of research and the need for timely decisions.

- The Volume Challenge: The ECOTOX database alone contains over 1.1 million entries [10]. Manually evaluating even a fraction for a systematic review can take months. A case study showed that an AI system reduced the time for daily information monitoring from 4 hours to 20 minutes—a 92% reduction [9].

- Opportunity Cost: The time scientists spend on repetitive screening tasks is diverted from higher-value activities like experimental design, complex data interpretation, and innovation. This slows the entire research lifecycle and delays regulatory submissions and product development [8].

Application Note: Protocol for Creating a Machine Learning Benchmark Dataset

High-quality, standardized datasets are prerequisites for developing and fairly comparing automated screening and prediction tools. This protocol outlines the creation of the ADORE benchmark dataset for aquatic toxicity prediction [10].

Objective: To curate a well-described, feature-rich dataset from the ECOTOX database to serve as a community standard for developing and benchmarking machine learning models in ecotoxicology.

Materials:

- Primary Source: The US EPA ECOTOX database (pipe-delimited ASCII files) [10].

- Secondary Sources: PubChem for chemical identifiers (SMILES, InChIKey), phylogenetic databases for species traits.

- Processing Tools: Scripting language (Python/R) for data wrangling.

Procedure:

- Taxonomic Filtering: Filter the ECOTOX species file to retain only three key taxonomic groups: Fish, Crustaceans, and Algae.

- Endpoint Harmonization:

  - For Fish: Include only mortality (MOR) endpoints (e.g., LC50). Standardize exposure period to ≤96 hours.

  - For Crustaceans: Include mortality (MOR) and immobilization/intoxication (ITX) endpoints. Standardize exposure to ≤48 hours.

  - For Algae: Include effects on population growth: mortality (MOR), growth (GRO), population (POP), physiology (PHY). Standardize exposure to ≤72 hours [10].

- Data Cleaning & Fusion:

  a. Link test results to species taxonomy and chemical identifiers (CAS, DTXSID, InChIKey).

  b. Remove entries with missing critical data (e.g., species group, chemical ID, effect concentration).

  c. Exclude in vitro tests and tests on early life stages (e.g., embryos) to focus on standard acute in vivo toxicity [10].

  d. Merge with external chemical data (molecular descriptors from PubChem) and species data (phylogenetic features).

- Challenge Definition & Splitting:

  a. Define specific ML challenges (e.g., "Can a model trained on fish and algae data predict toxicity for crustaceans?").

  b. Split the curated dataset into training and test sets using scaffold splitting (based on chemical structure) to test a model's ability to generalize to novel chemistries, not just interpolate [10].

- Documentation & Release: Publish the dataset with a complete data descriptor, including a feature glossary, sourcing details, and the rationale for all filtering decisions to ensure reproducibility.
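The filtering and splitting steps can be sketched as below. This is not the ADORE pipeline itself: the endpoint codes and exposure limits are taken from the protocol above, but the record layout and field names are hypothetical, the records are dummy values, and the `scaffold` key is assumed to be precomputed (deriving scaffolds from chemical structures requires a cheminformatics toolkit, which is not shown). The split assigns whole groups to one side, which is the essential property of a scaffold split.

```python
from collections import defaultdict

# Endpoint codes and exposure limits (hours) per taxonomic group, as in the protocol.
RULES = {
    "fish": {"effects": {"MOR"}, "max_hours": 96},
    "crustaceans": {"effects": {"MOR", "ITX"}, "max_hours": 48},
    "algae": {"effects": {"MOR", "GRO", "POP", "PHY"}, "max_hours": 72},
}
REQUIRED = ("chemical_id", "effect", "hours", "concentration")  # hypothetical field names


def keep_record(rec: dict) -> bool:
    """Apply taxonomic filtering, completeness, and endpoint harmonization rules."""
    rule = RULES.get(rec.get("group"))
    if rule is None or any(rec.get(f) is None for f in REQUIRED):
        return False
    return rec["effect"] in rule["effects"] and rec["hours"] <= rule["max_hours"]


def group_split(records: list[dict], key: str, train_fraction: float = 0.8):
    """Split so that all records sharing a key value land on the same side."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec)
    train, test = [], []
    target = train_fraction * len(records)
    # Largest groups first; ties broken by key so the split is deterministic.
    for _, members in sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0])):
        (train if len(train) + len(members) <= target else test).extend(members)
    return train, test


def make(group, effect, hours, scaffold, chem="chem-x"):
    """Build a dummy record for illustration."""
    return {"group": group, "effect": effect, "hours": hours, "scaffold": scaffold,
            "chemical_id": chem, "concentration": 1.0}


raw = [
    make("fish", "MOR", 96, "A"),
    make("fish", "GRO", 96, "A"),            # dropped: not a mortality endpoint
    make("crustaceans", "ITX", 72, "B"),     # dropped: exposure longer than 48 h
    make("algae", "POP", 72, "A"),
    make("algae", "GRO", 48, "A"),
    make("crustaceans", "MOR", 48, "B"),
    make("fish", "MOR", 48, "C", chem=None), # dropped: missing chemical ID
    make("fish", "MOR", 24, "C"),
]
curated = [r for r in raw if keep_record(r)]
train, test = group_split(curated, key="scaffold")
print(len(curated), len(train), len(test))
```

Because no scaffold appears on both sides of the split, test performance reflects generalization to unseen chemistries rather than recall of near-duplicates.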

Benchmark Dataset Creation for ML in Ecotoxicology [10]

Implementing automated data quality screening requires a combination of data, software, and standardized frameworks.

Table 3: Research Reagent Solutions for Automated Ecotoxicology Screening

| Tool / Resource | Type | Primary Function | Source / Example |
|---|---|---|---|
| ECOTOX Database | Reference Data | Provides core ecotoxicology test results (LC50, EC50) for model training and validation. | United States Environmental Protection Agency (US EPA) [10]. |
| ADORE Dataset | Benchmark Data | A curated, feature-rich dataset for fair comparison of ML models predicting acute aquatic toxicity. | Published in Scientific Data [10]. |
| Large Language Models (LLMs) | Analysis Engine | Automates text-based tasks: QA/QC scoring, data extraction from literature, summarizing findings. | OpenAI's ChatGPT, Google's Gemini [1]. |
| QA/QC Criteria Frameworks | Protocol | Provides the standardized rules and questions an AI system is prompted to apply during screening. | Published criteria for specific domains (e.g., microplastics in water) [1]. |
| Hybrid Test Software | Statistical Tool | Implements advanced, robust tests for detecting inconsistency in meta-analyses with non-normal data. | Custom R/Python code based on methodology from [11]. |
| Chemical Identifiers (InChIKey, SMILES) | Standardization | Unique, standardized representations of chemical structure for unambiguous data linking and featurization. | PubChem, CompTox Chemicals Dashboard [10]. |
| OECD Test Guidelines | Regulatory Standard | Defines internationally accepted test methods, providing the basis for judging methodological quality. | Organisation for Economic Co-operation and Development (OECD) [10]. |

In ecotoxicology, data quality determines the reliability of chemical hazard assessments, ecological risk evaluations, and the validity of predictive models. High-quality data is characterized by its accuracy, completeness, consistency, and fitness for purpose, enabling confident decision-making in research and regulation [12]. The increasing volume of data from high-throughput screening (HTS) assays and automated literature curation necessitates robust, automated quality screening tools [13] [14]. Poor data quality, which can cost organizations significant resources, leads to misinterpretation of toxicity, flawed risk assessments, and ultimately, inadequate environmental protection [12]. A model-based analysis has demonstrated that undocumented variability—from factors like exposure duration and species physiology—can cause differences in toxicity metrics (e.g., LC50) by one to three orders of magnitude, highlighting the critical need for stringent quality control [6]. This document outlines the core principles, evaluation protocols, and practical tools for defining and ensuring data quality, framed within the development of automated screening systems.

Core Principles and Dimensions of Data Quality

A structured framework is essential for managing data quality across its lifecycle [12]. In ecotoxicology, quality is multi-dimensional, encompassing both the intrinsic properties of the data and its contextual relevance for specific applications.

Table 1: Core Dimensions of Ecotoxicological Data Quality

| Quality Dimension | Definition | Ecotoxicological Application Example | Common Risk & Source of Error |
|---|---|---|---|
| Accuracy & Integrity | Data correctly represents the true value or phenomenon it describes, free from error or bias. | Correct reporting of a chemical concentration (e.g., mg/L) and the corresponding mortality endpoint (LC50). | Transcription errors; analytical instrument calibration drift; undocumented model assumptions influencing dose metrics [6]. |
| Completeness | All necessary data fields and expected records are present without omission. | A study record includes chemical CAS RN, species name, exposure duration, endpoint value, and control group results. | Missing metadata (e.g., pH, water temperature); partial reporting of sub-lethal endpoints. |
| Consistency & Standardization | Data is uniform in format, definition, and measurement units across different datasets and sources. | Use of controlled vocabularies (e.g., ToxRefDB) [13]; standardized units for toxicity values across the ECOTOX Knowledgebase. | Inconsistent taxonomic nomenclature; mixing of nominal and measured concentrations without annotation. |
| Relevance & Fitness for Purpose | Data is applicable and useful for the specific context of the analysis or decision at hand. | Using a freshwater fish toxicity study to assess risk in a freshwater ecosystem. | Applying data from a non-standard test organism to a regulatory assessment for a standard species. |
| Validity & Plausibility | Data conforms to defined syntax (format, type, range) and biological/chemical plausibility rules. | A reported LC50 value falls within a plausible range based on the chemical's mode of action and similar substances. | Physicochemically impossible solubility values; outlier values resulting from experimental artifact. |
| Traceability & Lineage | The origin of the data and all transformations it has undergone are fully documented. | Ability to trace a summarized toxicity value in ToxValDB back to the original primary literature source [13]. | Lack of provenance documentation for data extracted from secondary literature or reviews. |

These dimensions are interdependent. For instance, a toxicity value cannot be judged accurate or relevant if its record is incomplete (e.g., missing a crucial test condition). Automated screening tools operationalize these principles by checking data against predefined rules and metrics [12].

Automated Data Quality Screening: Tools and Applications

The shift from purely manual curation to automated and semi-automated processes addresses the challenge of managing large-scale ecotoxicology data [14]. These tools integrate into systematic review workflows to enhance efficiency without sacrificing accuracy.

Table 2: Key EPA Databases for Ecotoxicology and Associated Quality Features

| Database/Resource | Primary Content | Key Data Quality Features | Role in Automated Screening |

|---|---|---|---|

| ECOTOX Knowledgebase [13] [14] | Curated ecotoxicity data for aquatic and terrestrial species. | Standardized curation protocols; use of automated tools for literature screening and data evaluation. | Source of high-quality curated data for model training; framework for automated quality checks (e.g., completeness of required fields). |

| Toxicity Reference Database (ToxRefDB v3.0) [13] | In vivo animal toxicity data from guideline studies. | Controlled vocabulary; structured data from over 6,000 studies. | Provides a "gold standard" dataset of high-quality guideline study data for benchmarking. |

| Toxicity Value Database (ToxValDB v9.6) [13] | Aggregated in vivo toxicology data and derived values from over 40 sources. | Standardized summary format; facilitates comparison across sources. | Enables automated consistency checks by providing multiple values for the same chemical-endpoint combination. |

| ToxCast Data [13] | High-throughput screening (HTS) assay data for thousands of chemicals. | Extensive assay metadata and experimental data. | Requires robust QA/QC pipelines to manage and validate large-scale in vitro screening data. |

| CompTox Chemicals Dashboard [13] | Integrates chemical properties, exposure, hazard, and risk data. | Links chemicals across data sources via unique identifiers (DTXSID). | Serves as a platform for automated cross-database consistency verification (e.g., structure-identifier mapping). |

Adopting automated tools within the ECOTOX curation pipeline, such as for title/abstract screening, has demonstrated an 83% reduction in the level of effort required to identify relevant journal articles, while maintaining consistency with manual reviews [14]. This efficiency gain is critical for expanding the coverage and timeliness of this vital resource.

Diagram 1: Automated Literature Curation for ECOTOX Knowledgebase [14].

Experimental Protocols for Data Quality Evaluation

Protocol: Screening and Reviewing Open Literature Toxicity Data

This protocol is adapted from the EPA OPP guidelines for evaluating data from the ECOTOX Knowledgebase and other open literature sources [15].

Objective: To systematically identify, evaluate, and categorize ecotoxicity studies for use in ecological risk assessments.

Materials:

- Access to the ECOTOX Knowledgebase and/or scientific literature databases.

- EPA OPP Evaluation Guidelines [15].

- Data extraction and review summary template (e.g., Open Literature Review Summary - OLRS).

Procedure:

- Literature Acquisition:

- Execute a predefined search strategy for the chemical(s) of concern, typically via the ECOTOX Knowledgebase [15].

- Retrieve the complete text of all potentially relevant studies.

Initial Screening (Acceptance Criteria):

- Evaluate each study against 14 minimum acceptance criteria [15]. Core criteria include:

- Effects from exposure to a single chemical.

- Biological effect on live, whole organisms.

- Reported concurrent concentration/dose and exposure duration.

- Comparison to an acceptable control group.

- The study is a full article in the primary literature published in English.

Data Extraction & Quality Review:

- For studies passing initial screening, extract detailed data into a structured template.

- Assess study reliability based on:

- Methodological Soundness: Adherence to standard test guidelines (e.g., OECD, EPA), clarity of methods, appropriate statistical analysis.

- Reporting Completeness: Documentation of test conditions (e.g., temperature, pH, solvent), chemical verification, raw data availability.

- Result Plausibility: Consistency of results within the study and with existing data for similar chemicals/species.

Categorization and Documentation:

- Categorize the study based on its quality and usefulness (e.g., "Core" for risk assessment, "Supportive", "Not Usable").

- Complete an Open Literature Review Summary (OLRS) for each study, documenting the rationale for acceptance/rejection and categorization.

- Submit OLRS to designated data management staff for archiving and tracking [15].
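The initial screening step lends itself to a simple rule check. The sketch below is a minimal illustration in Python that encodes only the five core criteria listed above, not the full set of 14 in [15]. The record layout and field names (for example `single_chemical`) are hypothetical and would need to be mapped onto whatever extraction template is actually in use.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    """Hypothetical extraction record; field names are illustrative only."""
    single_chemical: bool
    whole_live_organism: bool
    concentration_reported: bool
    duration_reported: bool
    acceptable_control: bool
    primary_full_article: bool
    language: str


# Each criterion is a (label, predicate) pair so failures can be reported by name.
CORE_CRITERIA = [
    ("single chemical exposure", lambda s: s.single_chemical),
    ("effect on live, whole organism", lambda s: s.whole_live_organism),
    ("concentration/dose and duration reported",
     lambda s: s.concentration_reported and s.duration_reported),
    ("acceptable control group", lambda s: s.acceptable_control),
    ("full primary article in English",
     lambda s: s.primary_full_article and s.language.lower() == "english"),
]


def screen(study):
    """Return the labels of all failed criteria (an empty list means the study passes)."""
    return [label for label, check in CORE_CRITERIA if not check(study)]


# Example: a study that reports concentration but not exposure duration.
study = StudyRecord(True, True, True, False, True, True, "English")
failed = screen(study)
print("PASS" if not failed else f"REJECT: {failed}")
```

Returning the failed labels rather than a bare pass/fail keeps the rationale for rejection available for the review summary.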

Protocol: Implementing Automated Quality Checks in a Data Pipeline

Objective: To embed automated data quality validation rules within an ecotoxicological data processing pipeline.

Materials:

- Raw ecotoxicity dataset (e.g., from automated extraction tools).

- Programming environment (e.g., Python, R) or data quality tool.

- Defined quality rules and thresholds.

Procedure:

- Rule Definition:

- Translate quality dimensions into explicit, machine-executable rules. Examples:

- Completeness: Required fields (Chemical ID, Endpoint Value, Species) != NULL.

- Validity: Endpoint Value > 0; Exposure Duration in allowed list ("24h", "48h", "96h").

- Plausibility: LC50 (mg/L) ≤ Water Solubility (mg/L) for the same chemical.

- Consistency: Species name matches an entry in a controlled taxonomy table.

Implementation & Profiling:

- Code the rules into validation scripts or configure them within a data quality tool.

- Execute an initial data profile to measure baseline metrics (e.g., percentage completeness, duplicate count).

Execution and Flagging:

- Run validation scripts on new or updated data batches.

- Flag records that violate rules. Severity levels can be assigned (e.g., "Error" for missing chemical ID, "Warning" for value slightly outside expected range).

Reporting and Remediation:

- Generate a data quality scorecard with metrics for each dimension [12].

- Route flagged records to a review queue for manual investigation by a data steward.

- Document all corrections to maintain data lineage.
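As a rough illustration of this protocol, the following Python sketch codes the four example rules and attaches a severity level to each violation. The field names, the two-species taxonomy set, the severity assignments, and the two example records are all assumptions made for demonstration; a production pipeline would load these from configuration and reference tables.

```python
import math

ALLOWED_DURATIONS = {"24h", "48h", "96h"}          # allowed list from the rule examples
KNOWN_SPECIES = {"Daphnia magna", "Danio rerio"}   # stand-in for a controlled taxonomy table
REQUIRED = ("chemical_id", "endpoint_value", "species")


def validate(record):
    """Return (severity, message) flags for one record; an empty list means clean."""
    flags = []
    # Completeness: required fields must be present and non-null.
    for field in REQUIRED:
        if record.get(field) is None:
            flags.append(("Error", f"missing {field}"))
    value = record.get("endpoint_value")
    is_number = isinstance(value, (int, float)) and math.isfinite(value)
    # Validity: endpoint must be a positive number; duration must be in the allowed list.
    if value is not None and not (is_number and value > 0):
        flags.append(("Error", "endpoint_value must be > 0"))
    if record.get("exposure_duration") not in ALLOWED_DURATIONS:
        flags.append(("Warning", "exposure_duration not in allowed list"))
    # Plausibility: LC50 should not exceed water solubility (both in mg/L).
    solubility = record.get("water_solubility_mg_l")
    if is_number and solubility is not None and value > solubility:
        flags.append(("Warning", "LC50 exceeds water solubility"))
    # Consistency: species must match the controlled vocabulary.
    species = record.get("species")
    if species is not None and species not in KNOWN_SPECIES:
        flags.append(("Warning", "species not in taxonomy table"))
    return flags


# Made-up records with placeholder chemical IDs, for illustration only.
batch = [
    {"chemical_id": "CHEM-001", "endpoint_value": 12.5, "species": "Daphnia magna",
     "exposure_duration": "48h", "water_solubility_mg_l": 1000.0},
    {"chemical_id": None, "endpoint_value": 900.0, "species": "Daphnia magnia",
     "exposure_duration": "72h", "water_solubility_mg_l": 50.0},
]
results = [validate(r) for r in batch]
# Minimal scorecard metric: share of records that passed every rule.
clean_fraction = sum(1 for flags in results if not flags) / len(batch)
for record, flags in zip(batch, results):
    print(record["chemical_id"], flags)
print(f"clean records: {clean_fraction:.0%}")
```

Records with any flag would then be routed to the review queue, with the flag list stored alongside the record so that later corrections remain traceable.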

Diagram 2: Tiered Data Evaluation & Categorization Process [15].

Table 3: Key Research Reagent Solutions & Resources

| Tool/Resource | Function in Quality Assurance | Relevance to Automated Screening |

|---|---|---|

| ECOTOX Knowledgebase [13] [15] | Provides a vast, curated source of ecotoxicity data for benchmarking and validation. | Serves as a reference dataset for training and testing automated data extraction and quality classification algorithms. |

| EPA CompTox Chemicals Dashboard [13] | Central hub for chemical identifiers, properties, and linked hazard data. | Enables automated cross-referencing and validation of chemical identities and associated data across tools. |

| ToxValDB [13] | Aggregates toxicity values from multiple sources in a standardized format. | Allows for automated outlier detection by comparing a new data point against a distribution of existing values. |

| Controlled Vocabularies & Ontologies (e.g., in ToxRefDB) [13] | Standardize terms for species, endpoints, and effects. | Critical for ensuring consistency in data labeling, which enables reliable automated grouping and analysis. |

| Data Quality Rule Engines (e.g., open-source libraries, commercial DQ tools) [12] | Execute predefined validation rules on datasets. | The core implementer of automated checks for completeness, validity, and business logic (e.g., "exposure duration must be positive"). |

| Literature Mining Tools (e.g., Abstract Sifter) [13] | Assist in the semi-automated screening and prioritization of scientific literature. | Reduces manual effort in the initial phases of systematic review, directly supporting the curation pipeline [14]. |
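The outlier check described for ToxValDB in the table above can be sketched as a comparison on the log10 scale, where toxicity values tend to be more symmetric. The example below uses a robust z-score based on the median and the median absolute deviation (MAD). This is one common choice and not necessarily what any EPA tool uses; the threshold of 3 and the example values are assumptions.

```python
import math
import statistics


def log10_outlier(new_value, existing, threshold=3.0):
    """Flag new_value if its log10 lies more than `threshold` robust z-units from the median."""
    logs = [math.log10(v) for v in existing]
    median = statistics.median(logs)
    # MAD scaled by 1.4826 approximates a standard deviation for normal data.
    mad = statistics.median(abs(x - median) for x in logs) * 1.4826
    if mad == 0:
        # All existing values agree; any different value is flagged.
        return math.log10(new_value) != median
    return abs(math.log10(new_value) - median) / mad > threshold


# Hypothetical existing LC50 values (mg/L) for one chemical-endpoint combination.
existing_values = [1.0, 2.0, 2.5, 3.0, 5.0]
print(log10_outlier(4.0, existing_values))    # close to the existing distribution
print(log10_outlier(500.0, existing_values))  # more than two orders of magnitude higher
```

A flag from this check is a prompt for review, not a verdict: a value can sit far from the rest because of a different species or exposure duration, which is exactly the undocumented variability discussed earlier.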

Defining and ensuring data quality in ecotoxicology is a foundational activity that moves from abstract principles to concrete, actionable protocols. The core dimensions of accuracy, completeness, consistency, and relevance provide the framework for evaluation [12]. The integration of automated and semi-automated screening tools, exemplified by advancements in curating the ECOTOX Knowledgebase, offers large gains in efficiency (an 83% reduction in title/abstract screening effort) while maintaining consistency with manual review [14]. For researchers and regulators, the consistent application of detailed evaluation protocols, such as those formalized by the EPA [15], is essential for generating reliable, defensible data. As the field evolves towards greater reliance on high-throughput and in silico methods, the development and refinement of automated data quality screening tools will remain a critical priority, ensuring that the expanding body of ecotoxicological data remains robust and fit for its purpose of protecting environmental health.

The field of toxicology is undergoing a fundamental transformation, driven by converging regulatory pressures and rapid technological innovation. Growing societal and ethical concerns regarding animal testing, embodied in the 3Rs (Replacement, Reduction, and Refinement) principles, are being codified into policy [16]. Simultaneously, regulatory agencies worldwide are acknowledging the scientific limitations of traditional animal models, which have a human toxicity predictivity rate of only 40–65%, and are actively encouraging more human-relevant methods [16] [17]. This regulatory push is a key driver for the development and adoption of New Approach Methodologies (NAMs).

NAMs are defined as any non-animal, human-biology-based approach—encompassing in vitro, in chemico, and in silico (computational) methods—used for chemical safety assessment [16] [17]. They represent a shift from observational animal toxicology to a mechanistic, hypothesis-driven paradigm focused on understanding perturbations of biological pathways relevant to human health. The ultimate goal is Next Generation Risk Assessment (NGRA), an exposure-led framework that integrates diverse NAMs data to ensure protective safety decisions [16].

A critical consequence of this shift is an exponential increase in the volume, velocity, and variety of data generated. Complex in vitro systems like organ-on-a-chip models, high-content screening assays, and multi-omics analyses produce rich, multifaceted datasets [17]. This data-rich environment creates a pressing need for robust, automated tools to ensure data quality, standardization, and reproducibility—cornerstones for regulatory acceptance and scientific confidence. This document details application notes and experimental protocols for implementing automated data quality screening within NAMs-based ecotoxicology research, framed as an essential component of a modern, credible testing strategy.

Application Notes: Implementing Automated Quality Screening for NAMs

The integration of automated data quality screening is not merely a technical convenience but a prerequisite for the reliable use of NAMs in regulatory contexts. Effective implementation addresses several core challenges inherent to complex, next-generation data.

Ensuring Data Integrity and Standardization

NAMs generate complex data from diverse platforms (e.g., gene expression, cellular imaging, kinetic parameters). Manual quality assurance/quality control (QA/QC) is too slow, inconsistent, and prone to evaluator bias to handle this scale [1]. Automated screening applies standardized, pre-defined criteria uniformly across all datasets.

- Objective Metrics: Algorithms can check for adherence to pre-set Minimum Criteria (e.g., positive/negative control performance, coefficient of variation thresholds, signal-to-noise ratios) and Optimal Performance Standards (e.g., dynamic range, Z'-factor for high-throughput screens).

- Consistency: Automated tools eliminate human inconsistency in applying semantic QA/QC criteria, ensuring that a "reliable" study is classified the same way every time [1].

- Traceability: Every data point can be linked to its quality metrics, creating an auditable trail from raw data to processed result, which is vital for regulatory submissions.
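The objective metrics named above are straightforward to compute. The sketch below shows the coefficient of variation and the Z'-factor, defined as 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|, using only the Python standard library. The acceptance thresholds (CV below 20%, Z' of at least 0.5) and the control readings are illustrative assumptions; each assay would define its own.

```python
import statistics


def cv_percent(values):
    """Coefficient of variation in percent, using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def z_prime(positive, negative):
    """Z'-factor: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3.0 * (statistics.stdev(positive) + statistics.stdev(negative))
    return 1.0 - spread / abs(statistics.mean(positive) - statistics.mean(negative))


def plate_passes(positive, negative, max_cv=20.0, min_z=0.5):
    """Apply illustrative minimum criteria to one plate's control wells."""
    return (cv_percent(positive) < max_cv
            and cv_percent(negative) < max_cv
            and z_prime(positive, negative) >= min_z)


# Made-up control-well readings for a single plate.
pos_controls = [100, 102, 98, 101, 99]
neg_controls = [10, 11, 9, 10, 10]
print(f"Z' = {z_prime(pos_controls, neg_controls):.3f}")
print(f"plate passes: {plate_passes(pos_controls, neg_controls)}")
```

Storing these values with each plate, instead of only the pass/fail result, is what makes the audit trail described under Traceability possible.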

Facilitating Reproducibility and Cross-Study Comparison

A major barrier to NAMs acceptance is the perceived difficulty in reproducing results across laboratories. Automated screening mitigates this by enforcing protocol adherence and flagging outliers.

- Protocol Compliance Checks: Software can cross-reference metadata (e.g., cell passage number, serum batch, dosing solvent) against standard operating procedure (SOP) requirements.

- Inter-Laboratory Benchmarking: When multiple labs use the same automated screening pipeline, their data quality can be objectively compared, identifying technical rather than biological variations.

- Data Curation for Reuse: High-quality, screened datasets are primed for entry into shared repositories, fueling the development of more predictive computational models and read-across approaches [18].
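A protocol compliance check of the kind described above can be expressed as a table of metadata fields and acceptance predicates. The following is a minimal sketch; the SOP limits, batch identifiers, and field names are invented for illustration and do not come from any specific SOP.

```python
# Hypothetical SOP requirements: each entry maps a metadata field to a predicate.
SOP_REQUIREMENTS = {
    "cell_passage": lambda v: isinstance(v, int) and 5 <= v <= 25,
    "serum_batch": lambda v: v in {"LOT-A", "LOT-B"},
    "solvent": lambda v: v in {"DMSO", "water"},
    "solvent_percent": lambda v: isinstance(v, (int, float)) and v <= 0.5,
}


def sop_deviations(metadata):
    """List metadata fields that are missing or fall outside the SOP."""
    deviations = []
    for field, is_ok in SOP_REQUIREMENTS.items():
        if field not in metadata:
            deviations.append(f"{field}: missing")
        elif not is_ok(metadata[field]):
            deviations.append(f"{field}: out of specification ({metadata[field]!r})")
    return deviations


# Example run: passage number too high and solvent percentage not recorded.
run_metadata = {"cell_passage": 31, "serum_batch": "LOT-A", "solvent": "DMSO"}
deviations = sop_deviations(run_metadata)
print(deviations)
```

Because every laboratory would run the same requirement table, differences in the deviation lists point to technical rather than biological variation.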

Accelerating Regulatory Acceptance and Defining Approaches

Regulators require confidence that NAMs data is robust and fit-for-purpose. Automated quality control provides transparent, objective evidence of data reliability.

- Building Confidence: Consistent, high-quality data from automated screening supports the validation of new NAMs and helps build the "weight-of-evidence" needed for regulatory decisions [16].

- Enabling Defined Approaches (DAs): DAs are fixed data interpretation procedures applied to data generated from specific NAMs combinations (e.g., for skin sensitization) [16]. Automated screening is essential to ensure the input data for a DA meets the required quality specifications, as outlined in OECD test guidelines like TG 497.

- Efficiency in Review: Regulatory reviewers can more efficiently audit studies where quality metrics are programmatically generated and summarized, accelerating the review cycle.

The following table contrasts the characteristics of traditional versus NAM-based data screening paradigms.

Table 1: Comparison of Traditional vs. NAM-Based Data Screening Paradigms

| Feature | Traditional Animal Study Screening | NAMs Data Screening with Automation |

|---|---|---|

| Primary Focus | Adherence to procedural guideline (OECD TG); historical control ranges. | Adherence to mechanistic performance standards; control performance; technical reliability. |

| Data Volume | Low to moderate (clinical observations, histopathology, clinical pathology). | Very high (high-content imaging, omics, real-time kinetic data). |

| Screening Method | Manual audit by study director and QA unit. | Automated algorithmic checks against predefined criteria, with human oversight of flags. |

| Key Metrics | Mortality, clinical signs, organ weights, histopathology findings. | Cell viability, assay interference, control CVs, Z'-factor, pathway perturbation strength. |

| Speed | Slow (weeks to months for full study audit). | Rapid (real-time to hours for initial quality pass/fail). |

| Consistency | Prone to inter-evaluator variability. | High, due to standardized, programmatic application of rules. |

| Outcome | Study deemed valid or invalid. | Data streams tagged with quality scores; unfit data excluded from downstream analysis. |

Experimental Protocols

The successful deployment of NAMs hinges on rigorous, standardized protocols. Below are detailed methodologies for two critical processes: validating a NAMs-based Defined Approach and implementing an AI-assisted quality screening system.

Protocol: Validation of a Defined Approach (DA) for Skin Sensitization Assessment

This protocol outlines the steps to validate a specific DA, such as the one described in OECD TG 497, which integrates results from the Direct Peptide Reactivity Assay (DPRA), KeratinoSens, and h-CLAT to replace the murine Local Lymph Node Assay (LLNA) [16].

- Objective: To demonstrate that the DA provides equivalent or superior predictivity of human skin sensitization hazard compared to the traditional LLNA, using a set of reference chemicals.

- Materials:

- Test chemicals: A curated list of ≥30 substances covering a range of sensitization potencies and chemical classes.

- In chemico assay: DPRA kit.

- In vitro assays: KeratinoSens (ARE-Nrf2 luciferase reporter gene assay) and h-CLAT (Human Cell Line Activation Test) kits.

- Reference data: Reliable human and/or LLNA data for all test chemicals.

- DA prediction model: The fixed data interpretation procedure (DIP) as specified in OECD TG 497.

- Procedure:

- Assay Execution: Perform the DPRA, KeratinoSens, and h-CLAT assays for all test chemicals in triplicate, strictly following respective OECD Test Guidelines (TGs 442C, 442D, 442E).

- Data Generation: Record raw data and calculate the relevant metrics (e.g., % peptide depletion for DPRA, EC1.5 for KeratinoSens, and CD86/CD54 relative fluorescence intensity for h-CLAT, with CV75 used for dose setting).

- Automated Quality Check: Subject raw data from each assay run to automated screening:

- Verify positive and negative control values fall within historical acceptance ranges.

- Confirm replicate variability (CV) is below a pre-defined threshold (e.g., <20%).

- Flag any assay run where quality checks fail for re-analysis.

- Apply DA DIP: Input the quality-checked results into the standardized DA prediction model (e.g., 2-out-of-3 concordance rule or an integrated scoring model).

- Performance Assessment:

- Compare DA predictions (Sensitizer/Non-sensitizer) against the reference human/LLNA dataset.

- Calculate accuracy, sensitivity, specificity, and concordance.

- Demonstrate that performance meets or exceeds predefined validation criteria (e.g., ≥80% accuracy).

- Expected Outcome: A validated, reproducible testing strategy that does not require new animal data, supported by a transparent record of data quality at each step.
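The last two procedure steps can be illustrated with a short Python sketch: a simplified majority-vote version of the 2-out-of-3 rule, followed by the performance metrics. It deliberately ignores borderline or inconclusive assay results, which the guideline handles explicitly, and the six example chemicals and their calls are made up.

```python
def two_out_of_three(dpra, keratinosens, hclat):
    """Simplified majority call: sensitizer if at least two of three assays are positive."""
    return (int(dpra) + int(keratinosens) + int(hclat)) >= 2


def performance(predicted, reference):
    """Accuracy, sensitivity, and specificity of boolean predictions against a reference."""
    pairs = list(zip(predicted, reference))
    tp = sum(1 for p, r in pairs if p and r)
    tn = sum(1 for p, r in pairs if not p and not r)
    fp = sum(1 for p, r in pairs if p and not r)
    fn = sum(1 for p, r in pairs if not p and r)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Guard against reference sets with no positives or no negatives.
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


# Made-up assay calls (DPRA, KeratinoSens, h-CLAT) and reference classifications.
assay_calls = [
    (True, True, False), (True, False, False), (False, False, False),
    (True, True, True), (False, True, True), (False, False, True),
]
reference = [True, False, False, True, True, True]
predictions = [two_out_of_three(*calls) for calls in assay_calls]
metrics = performance(predictions, reference)
print(metrics)
```

With a real reference set of 30 or more chemicals, the same function would give the figures to compare against the predefined validation criterion.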

Protocol: AI-Assisted QA/QC Screening for Ecotoxicology Literature Data

Adapted from a study on microplastics research, this protocol uses a Large Language Model (LLM) to standardize the evaluation of study reliability for data extraction in systematic reviews or hazard assessment [1].

- Objective: To rapidly and consistently screen a large corpus of scientific literature against predefined QA/QC criteria, identifying studies suitable for inclusion in exposure or risk assessment.

- Materials:

- Literature Corpus: A digital collection of relevant scientific papers (PDF format).

- QA/QC Criteria: A structured list of quality items (e.g., "Was a negative control used?", "Was particle size characterization reported?", "Was the analytical method validated?").

- AI Tool: Access to a commercial or open-source LLM API (e.g., OpenAI GPT, Google Gemini).

- Prompt Engineering Interface: A platform for developing and testing instructional prompts for the LLM.

- Procedure:

- Criteria Formalization: Translate QA/QC criteria into a clear, unambiguous checklist. Assign a weight or points to each item if a scoring system is desired.

- Prompt Development: Create a system prompt that instructs the LLM to act as a data quality evaluator. The prompt must include the criteria and instruct the model to extract evidence and provide a score or classification.

- Example Prompt: "You are an expert in ecotoxicology study quality assessment. For the provided study text, evaluate it based on the following criteria: 1. Reporting of control groups... [list criteria]. For each criterion, state 'Yes,' 'No,' or 'Unclear,' and provide the supporting sentence from the text. Finally, calculate a total score and classify the study as 'High,' 'Medium,' or 'Low' reliability."

- Pilot and Calibration:

- Run the prompt on a small subset of papers (5-10) manually scored by human experts.

- Compare AI and human outputs. Refine the prompt to resolve discrepancies and improve accuracy.

- Batch Processing: Automate the extraction of text from PDFs and submit it in batches to the LLM using the finalized prompt.

- Result Aggregation & Review:

- Compile LLM outputs (scores, classifications, evidence snippets) into a structured database or spreadsheet.

- Implement a human review step for studies on the border between classification tiers or where the LLM indicated "Unclear" for critical criteria.

- Expected Outcome: A consistently screened library of studies with associated quality scores, dramatically reducing the time required for systematic review while improving transparency and reproducibility of the screening process [1].
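The prompt-building and result-aggregation steps can be sketched without committing to any particular vendor API. In the Python example below, `fake_llm` is a stand-in that returns a canned reply so the code runs offline; in practice it would be replaced by a call to whichever LLM service is used. The JSON reply format, the criterion identifiers, and the tier cut-offs are assumptions for illustration and are not taken from the cited study.

```python
import json

# (id, question, critical?) using the example criteria from the materials list.
CRITERIA = [
    ("negative_control", "Was a negative control used?", True),
    ("size_characterization", "Was particle size characterization reported?", False),
    ("method_validated", "Was the analytical method validated?", True),
]


def build_prompt(study_text):
    """Assemble the evaluator prompt from the criteria checklist and the study text."""
    questions = "\n".join(f"- {cid}: {question}" for cid, question, _ in CRITERIA)
    return (
        "You are an expert in ecotoxicology study quality assessment.\n"
        "Answer each criterion with 'Yes', 'No', or 'Unclear' and quote the supporting sentence.\n"
        'Reply as JSON: {"<criterion id>": {"answer": "...", "evidence": "..."}}\n'
        f"Criteria:\n{questions}\n\nStudy text:\n{study_text}"
    )


def evaluate(raw_reply):
    """Parse the model reply, score it, and decide whether a human should review it."""
    answers = json.loads(raw_reply)
    score = sum(1 for cid, _, _ in CRITERIA if answers[cid]["answer"] == "Yes")
    needs_review = any(answers[cid]["answer"] == "Unclear"
                       for cid, _, critical in CRITERIA if critical)
    tier = "High" if score == len(CRITERIA) else "Medium" if score >= 2 else "Low"
    return {"score": score, "tier": tier, "needs_human_review": needs_review}


def fake_llm(prompt):
    """Stand-in for a real API call; returns a fixed reply for demonstration."""
    return json.dumps({
        "negative_control": {"answer": "Yes", "evidence": "Procedural blanks were run."},
        "size_characterization": {"answer": "Yes", "evidence": "Sizes measured by microscopy."},
        "method_validated": {"answer": "Unclear", "evidence": ""},
    })


prompt = build_prompt("...full text or methods section of one study...")
result = evaluate(fake_llm(prompt))
print(result)
```

Requesting structured output and routing "Unclear" answers on critical criteria to a person keeps the human review step focused on the cases where the model is least reliable.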

Table 2: Research Reagent Solutions for NAMs Implementation

| Research Reagent/Platform | Primary Function in NAMs |

|---|---|

| Organ-on-a-Chip (Microphysiological System) | Microengineered devices that mimic organ-level structure and function (e.g., lung, liver, kidney). Used to model systemic toxicity, absorption, and complex tissue-tissue interactions in a human-relevant context [17]. |

| iPSC-Derived Human Cells | Induced pluripotent stem cells differentiated into target cell types (e.g., cardiomyocytes, neurons). Provide a limitless, human-genetic-background source of cells for in vitro assays, overcoming donor variability [17]. |

| High-Content Screening (HCS) Assay Kits | Multiplexed fluorescent assay kits that measure multiple cellular endpoints (e.g., cytotoxicity, oxidative stress, mitochondrial health) via automated imaging. Enable high-throughput mechanistic profiling of chemicals [17]. |

| Multi-Omics Analysis Suites | Integrated platforms for transcriptomics, proteomics, or metabolomics. Used to identify mechanistic signatures of toxicity, map effects onto Adverse Outcome Pathways (AOPs), and discover biomarkers [16] [17]. |

| Physiologically Based Kinetic (PBK) Modeling Software | In silico tools that simulate the absorption, distribution, metabolism, and excretion (ADME) of chemicals. Critical for translating in vitro effective concentrations to human-relevant external doses in NGRA [16] [17]. |

| Defined Approach (DA) Prediction Model | A fixed data interpretation procedure, often software-based, that integrates results from specific in chemico and in vitro NAMs to predict a toxicological endpoint, as per OECD guidelines [16]. |

Integration and Workflow Visualization

The successful application of NAMs within a modern ecotoxicology framework requires the seamless integration of diverse methodologies, all underpinned by automated data quality control. The following diagram illustrates this conceptual workflow and the critical role of quality screening.

NAM Integration and Validation Workflow

The AI-assisted screening protocol itself can be visualized as a sequential, iterative process, as shown in the following diagram.

AI-Assisted QA/QC Screening Protocol

The rise of NAMs is inextricably linked to regulatory pressures demanding more human-relevant, ethical, and predictive toxicology. However, the data-rich, mechanistic nature of NAMs introduces new challenges in quality assurance and standardization that traditional approaches cannot meet. As detailed in these application notes and protocols, automated data quality screening tools—from algorithmic checks of assay performance to AI-driven literature evaluation—are not merely supportive technologies but foundational components of a credible NAMs ecosystem. They provide the consistency, transparency, and efficiency required to build scientific and regulatory confidence. The future of ecotoxicology and risk assessment lies in the seamless integration of advanced biological models with robust digital quality infrastructure, enabling a truly protective and animal-free paradigm for chemical safety [18] [16] [17].

Ecotoxicology faces a dual challenge: escalating volumes of chemical and environmental data, and the imperative for robust, reproducible hazard assessments. Traditional manual quality assurance (QA) and data curation are time-consuming, subjective, and unsustainable[reference:0]. This document frames the adoption of automated screening intelligence within the broader thesis that computational and artificial intelligence (AI) tools are essential for ensuring data quality, accelerating risk assessment, and enabling high-throughput ecotoxicology. The following application notes and detailed protocols illustrate this paradigm shift.

Application Notes: Key Studies in Automated Screening

1. AI-Assisted Data Quality Evaluation for Microplastics Risk Assessment

- Objective: To standardize and accelerate the QA/QC screening of microplastics research data using Large Language Models (LLMs)[reference:1].

- Method: Developed specific prompts based on established QA/QC criteria for microplastics in drinking water. These prompts were used to instruct LLMs (ChatGPT, Gemini) to evaluate 73 published studies (2011-2024)[reference:2].

- Outcome: The AI tools effectively extracted relevant information, interpreted study reliability, and replicated human assessments, demonstrating promise for improving speed, consistency, and applicability in QA/QC tasks[reference:3].

2. Automated Computational Pipeline for Ecological Hazard Assessment (RASRTox)

- Objective: To rapidly acquire, score, and rank toxicological data for streamlined ecological hazard assessments, particularly for Endangered Species Act consultations[reference:4].

- Method: Developed the RASRTox pipeline to automatically extract and categorize ecological toxicity benchmark values from curated data sources (ECOTOX, ToxCast) and QSAR models (TEST, ECOSAR)[reference:5].

- Outcome: As a proof of concept, Points-of-Departure (PODs) generated for 13 chemicals were generally within an order of magnitude of traditional Toxicity Reference Values (TRVs), demonstrating utility for rapidly identifying critical studies for screening value derivation[reference:6].

Table 1: Performance Metrics of Featured Automated Screening Tools

| Tool / Method | Primary Function | Data Source / Scale | Key Performance Outcome | Reference |

|---|---|---|---|---|

| LLM (ChatGPT/Gemini)-Assisted QA/QC | Standardized data quality screening & study ranking | 73 microplastics studies (2011-2024) | High consistency in replicating human reliability assessments; dramatic reduction in screening time. | [reference:7] |

| RASRTox Computational Pipeline | Automated data acquisition, scoring, and ranking for hazard assessment | ECOTOX, ToxCast, TEST, ECOSAR; 13-chemical proof of concept | Generated PODs within an order of magnitude of traditional TRVs for most chemicals. | [reference:8] |

| High-Throughput Ecotoxicology (HiTEC) - Cell Painting | High-content phenotypic profiling for chemical screening | Adapted for non-human (e.g., fish, insect) cell lines | Enables screening at a higher throughput than many current organism-level test methods. | [reference:9] |

Experimental Protocols

Protocol 1: Implementing an LLM-Assisted Data Quality Screen

- Step 1 – Criteria Formalization: Translate existing QA/QC guidelines (e.g., for microplastics in water) into a structured checklist of yes/no or scored items.

- Step 2 – Prompt Engineering: Develop a system prompt that defines the LLM’s role as a data quality auditor. Provide the checklist and instructions for evaluating text from scientific abstracts or full methods sections.

- Step 3 – Batch Processing: Use the LLM’s API (e.g., OpenAI, Gemini) to submit the text of multiple studies (e.g., 73 studies) with the standardized prompt.

- Step 4 – Output Parsing & Validation: Programmatically extract the LLM’s scored evaluations. Validate a subset (e.g., 20%) against independent human expert ratings to calculate concordance metrics (e.g., Cohen’s kappa)[reference:10].
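For the validation in Step 4, Cohen's kappa can be computed directly from the two sets of labels: kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The sketch below uses only the standard library; the ten paired ratings are invented for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two equal-length lists of categorical labels."""
    n = len(rater_a)
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportions, summed over labels.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Made-up reliability tiers for ten studies.
human = ["High", "High", "Low", "Medium", "Low", "High", "Medium", "Low", "High", "Low"]
llm = ["High", "Medium", "Low", "Medium", "Low", "High", "Medium", "High", "High", "Low"]
kappa = cohens_kappa(human, llm)
print(f"kappa = {kappa:.3f}")
```

Kappa is preferable to raw percent agreement here because it discounts the agreement two raters would reach by chance when one tier dominates the corpus.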

Protocol 2: Executing the RASRTox Computational Pipeline

- Step 1 – Data Acquisition: Script automated queries to public toxicity databases (ECOTOX, ToxCast) and QSAR model servers (TEST, ECOSAR) for a target chemical list[reference:11].

- Step 2 – Data Extraction & Categorization: Parse retrieved data to extract key endpoints (e.g., LC50, NOEC), associated species, and test conditions. Categorize data by taxon and toxicity measure.

- Step 3 – Scoring & Ranking: Apply predefined scoring rules based on test guideline adherence, species relevance, and data quality. Rank studies and calculated benchmark values (PODs) by score.

- Step 4 – Benchmark Comparison & Reporting: Compare pipeline-generated PODs against accepted benchmarks (e.g., TRVs, WQC). Generate a report highlighting top-ranked studies and consensus PODs for review by a toxicologist[reference:12].
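Steps 3 and 4 can be sketched as a scoring function, a ranking, and a log-scale comparison against a benchmark. The weights below are purely illustrative and do not reproduce the RASRTox scoring scheme; the record fields and all numeric values are likewise hypothetical.

```python
import math


def score(record):
    """Illustrative score: guideline adherence, measured data, and taxon relevance."""
    points = 0
    points += 2 if record["guideline_study"] else 0
    points += 2 if record["source"] == "experimental" else 0  # measured over QSAR-predicted
    points += 1 if record["taxon"] == record["target_taxon"] else 0
    return points


def rank(records):
    """Highest score first; ties broken by the lower (more protective) value."""
    return sorted(records, key=lambda r: (-score(r), r["value_mg_l"]))


def within_order_of_magnitude(pod, benchmark):
    """True if the two values differ by no more than a factor of 10."""
    return abs(math.log10(pod / benchmark)) <= 1.0


# Made-up records for one chemical, with fish as the target taxon.
records = [
    {"id": "B", "guideline_study": False, "source": "experimental",
     "taxon": "invertebrate", "target_taxon": "fish", "value_mg_l": 0.4},
    {"id": "C", "guideline_study": False, "source": "qsar",
     "taxon": "fish", "target_taxon": "fish", "value_mg_l": 3.0},
    {"id": "A", "guideline_study": True, "source": "experimental",
     "taxon": "fish", "target_taxon": "fish", "value_mg_l": 1.2},
]
ranked = rank(records)
pod = ranked[0]["value_mg_l"]
print([r["id"] for r in ranked], pod)
print(within_order_of_magnitude(pod, benchmark=0.5))
```

The ranked list and the comparison result are what would go into the report for review by a toxicologist; the pipeline narrows the field but does not replace that judgment.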

Visualization: Workflow Diagrams

Diagram 1: RASRTox Automated Pipeline for Ecological Hazard Assessment

Diagram 2: AI-Assisted Data Quality Screening Workflow

Table 2: Key Research Reagent Solutions & Computational Tools

| Item | Category | Function in Automated Screening |

|---|---|---|

| ECOTOX Knowledgebase | Database | Curated repository of ecotoxicological effects data for chemicals, serving as a primary source for automated pipelines like RASRTox[reference:13]. |

| EPA ToxCast/Tox21 Data | Database | High-throughput screening data for thousands of chemicals, used for in vitro bioactivity profiling and predictive modeling. |

| TEST & ECOSAR | QSAR Software | Automated tools for predicting toxicity endpoints based on chemical structure, filling data gaps in hazard assessment[reference:14]. |

| Cell Painting Assay Kits | Wet-Lab Reagent | Enable high-content, phenotypic profiling in non-human cell lines, forming the basis for high-throughput in vitro screening in ecotoxicology[reference:15]. |

| Python/R with Bio‑/Eco‑ Libraries | Programming Environment | Essential for scripting data acquisition, analysis, pipeline automation, and integrating with AI/ML frameworks (e.g., scikit-learn, TensorFlow). |

| LLM APIs (OpenAI, Gemini) | AI Service | Provide the core engine for natural language processing tasks in automated study evaluation, data extraction, and summarization[reference:16]. |

| Automated Liquid Handlers & Imagers | Laboratory Hardware | Enable the physical execution of high-throughput assays (e.g., cell painting) by performing repetitive pipetting and image capture without manual intervention. |

From Theory to Toolbox: Implementing Automated Screening Pipelines and Frameworks

The advancement of ecotoxicology research and chemical risk assessment is increasingly constrained by the volume and heterogeneity of environmental data. Manual data quality screening has become a critical bottleneck: it is too time-consuming and inconsistent to keep pace with the number of studies being published [1]. This document frames the architecture of automated workflows within the context of a broader thesis on automated data quality screening tools. The thesis posits that robust, modular pipeline architectures are fundamental to deploying artificial intelligence (AI) and computational toxicology methodologies effectively. These architectures standardize and accelerate the evaluation of data reliability, thereby harmonizing risk assessments and enabling faster, evidence-based environmental protection decisions [1] [19].

Foundational Components of Automated Pipelines

Automated pipeline architectures are structured workflows that connect data processing, model training, evaluation, and deployment into seamless, repeatable systems [20]. In the context of ecotoxicology, they transform raw, disparate data into validated, actionable insights for hazard assessment. Effective architectures balance automation with the flexibility required for scientific experimentation [20].

Core Architectural Modules

A robust pipeline for data quality screening can be decomposed into modular components, each with a distinct function. This modularity enhances reuse, simplifies testing, and improves system reliability [20].

Table 1: Core Components of an Automated Data Screening Pipeline

| Pipeline Component | Primary Function | Key Output |
|---|---|---|
| Data Ingestion & Acquisition | Programmatically collects data from diverse curated sources (e.g., ECOTOX, ToxCast, literature databases). | Raw, structured, and unstructured datasets. |
| Preprocessing & Feature Engineering | Cleanses data (handles null values, normalizes units), extracts relevant entities (chemical names, endpoints), and structures information for analysis [21]. | Standardized, analysis-ready data frames. |
| Quality Assessment & Scoring | Applies rule-based and AI-driven checks to evaluate data against predefined QA/QC criteria (e.g., completeness, methodology reliability) [1] [22]. | Quality scores, reliability flags, and identified data gaps. |
| Modeling & Prediction | Utilizes New Approach Methodologies (NAMs) like quantitative structure-activity relationships (QSAR) or AI models to fill data gaps or predict toxicity [19]. | Predicted toxicity values (e.g., points-of-departure). |
| Ranking & Prioritization | Synthesizes experimental and predicted data to rank chemicals or studies based on hazard potential or data confidence. | Prioritized lists for further expert review. |
| Reporting & Visualization | Generates standardized assessment reports, visual summaries, and data lineage documentation. | Audit trails, interactive dashboards, and regulatory submission documents. |

Architectural Patterns: Orchestration and Flow

These modular components are coordinated by an orchestration tool that manages their execution order, dependencies, and failure handling. The workflow is typically represented as a directed acyclic graph (DAG), ensuring a logical, non-circular flow of data [20]. The choice of orchestration tool (e.g., Apache Airflow, Kubeflow, Prefect) depends on the team's infrastructure and the need for ML-specific capabilities [20].
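The dependency-ordered execution described above can be sketched with Python's standard-library `graphlib` module. This is a minimal illustration, not how Airflow, Kubeflow, or Prefect work internally: the stage names are hypothetical labels loosely mirroring Table 1, and the tasks are placeholders that do nothing.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical stage names; each maps to the set of stages it depends on.
PIPELINE_DAG = {
    "ingest": set(),
    "preprocess": {"ingest"},
    "quality_scoring": {"preprocess"},
    "qsar_prediction": {"preprocess"},
    "ranking": {"quality_scoring", "qsar_prediction"},
    "reporting": {"ranking"},
}


def is_acyclic(dag):
    """Return True if the dependency graph contains no circular dependency."""
    try:
        TopologicalSorter(dag).prepare()  # raises CycleError on a cycle
    except CycleError:
        return False
    return True


def run_pipeline(dag, tasks):
    """Run each task once, only after all of its upstream stages have run."""
    executed = []
    for stage in TopologicalSorter(dag).static_order():
        tasks[stage]()
        executed.append(stage)
    return executed


# Placeholder tasks; a real stage would read its inputs and write its outputs.
tasks = {name: (lambda: None) for name in PIPELINE_DAG}
order = run_pipeline(PIPELINE_DAG, tasks)
print(order)
```

A dedicated orchestrator adds scheduling, retries, and failure handling on top of this ordering guarantee, which is the main reason to adopt one rather than hand-rolling the loop.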

Diagram 1: Modular Architecture for Automated Data Screening

Data Quality Testing: Techniques and Integration

The quality assessment module is the core of the screening pipeline. It systematically applies a suite of tests to ensure data is fit for purpose. Moving from manual, subjective checks to an automated framework is critical for scalability and consistency [1] [21].

Essential Data Testing Methods for Ecotoxicology

Different testing methods address specific quality dimensions. An effective pipeline integrates multiple techniques.

Table 2: Key Data Quality Testing Methods and Their Application [21] [22]

| Testing Method | Description | Application in Ecotoxicology Screening | When to Use |
|---|---|---|---|
| Completeness Testing | Verifies all required data fields and records are present. | Checks for missing critical fields (e.g., chemical CAS RN, dose concentration, species, effect endpoint). | Initial data ingestion and during study evaluation [22]. |
| Consistency Testing | Ensures data follows uniform rules across sources. | Validates consistent use of units (μg/L vs. ppb), taxonomic nomenclature, and endpoint terminology. | When integrating data from multiple studies or databases [21]. |
| Accuracy/Plausibility Testing | Assesses if data correctly represents real-world values. | Applies boundary checks (e.g., flagging negative concentration values) or compares reported results against known chemical properties. | During scoring of individual study reliability [22]. |
| Uniqueness Testing | Identifies unintended duplicate records. | Flags potentially duplicate entries for the same chemical-species-endpoint combination from the same study. | During database compilation and deduplication. |
| Referential Integrity Testing | Validates relationships between linked data points. | Ensures that a cited test guideline or a species ID links to a valid entry in a master reference table. | In structured relational databases linking studies to references. |
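As a rough illustration of how the five test types in Table 2 might translate into code, the sketch below uses plain Python dictionaries. The field names, the unit table, the species reference set, and the sample records are all hypothetical and invented for illustration. Treating ppb as equivalent to μg/L is an approximation that holds only for dilute aqueous solutions.

```python
# All names and values below are illustrative assumptions, not a real schema.
REQUIRED_FIELDS = ("cas_rn", "species", "endpoint", "value", "unit")
# Conversion factors to ug/L; ppb ~ ug/L is assumed for dilute aqueous samples.
TO_UG_PER_L = {"ug/L": 1.0, "ppb": 1.0, "mg/L": 1000.0, "ng/L": 0.001}
KNOWN_SPECIES = {"Daphnia magna", "Danio rerio"}  # stand-in reference table


def check_record(rec):
    """Return a list of quality issues found in a single toxicity record."""
    issues = []
    # Completeness: every required field must be present and non-empty.
    missing = [f for f in REQUIRED_FIELDS if rec.get(f) in (None, "")]
    if missing:
        issues.append("missing: " + ", ".join(missing))
    # Consistency: the unit must be one we know how to convert.
    unit = rec.get("unit")
    if unit and unit not in TO_UG_PER_L:
        issues.append(f"unrecognized unit: {unit}")
    # Plausibility: a concentration cannot be negative.
    value = rec.get("value")
    if isinstance(value, (int, float)) and value < 0:
        issues.append("negative concentration")
    # Referential integrity: species must exist in the reference table.
    species = rec.get("species")
    if species and species not in KNOWN_SPECIES:
        issues.append(f"unknown species: {species}")
    return issues


def find_duplicates(records):
    """Uniqueness: return indices of records that repeat an earlier key."""
    seen, dupes = set(), []
    for i, rec in enumerate(records):
        key = (rec.get("study_id"), rec.get("cas_rn"),
               rec.get("species"), rec.get("endpoint"))
        if key in seen:
            dupes.append(i)
        seen.add(key)
    return dupes


records = [
    {"study_id": "S1", "cas_rn": "50-00-0", "species": "Daphnia magna",
     "endpoint": "LC50", "value": 5.8, "unit": "mg/L"},
    {"study_id": "S1", "cas_rn": "50-00-0", "species": "Daphnia magna",
     "endpoint": "LC50", "value": 5.8, "unit": "mg/L"},
    {"study_id": "S2", "cas_rn": "", "species": "Unknown sp.",
     "endpoint": "NOEC", "value": -1.0, "unit": "ppm"},
]
for i, rec in enumerate(records):
    print(i, check_record(rec))
print("duplicates at:", find_duplicates(records))
```

Frameworks such as Great Expectations or Deequ express the same kinds of checks declaratively; hand-written functions like these are mainly useful for small pipelines or for prototyping the rules first.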

The Role of AI in Quality Assessment

Large Language Models (LLMs) like GPT and Gemini offer transformative potential for automating the screening of textual data, such as journal articles and regulatory reports. They can be prompted to extract specific information and evaluate study reliability based on predefined QA/QC criteria with high consistency, replicating human assessments at scale [1]. This AI-assisted screening acts as a force multiplier for expert toxicologists.

Diagram 2: AI-Assisted Data Quality Assessment Workflow

Implementation Protocols and Case Studies

Protocol: Implementing an Automated Screening Pipeline (RASRTox Model)

The following protocol is adapted from the development of RASRTox (Rapidly Acquire, Score, and Rank Toxicological data), an automated computational pipeline for ecological hazard assessment [19].

Objective: To create a reproducible pipeline that extracts, scores, and ranks ecological toxicity data from multiple sources to support efficient Biological Evaluations under the Endangered Species Act.

Materials: See "The Scientist's Toolkit" (Section 6).

Procedure:

- Data Acquisition:

  - Configure connectors to extract data from curated sources (ECOTOX, ToxCast).

  - Implement web scraping or API-based ingestion for systematic literature review, focusing on predefined chemical lists and species.

- Data Processing & Standardization:

  - Apply text parsing to identify and extract key entities: chemical identifiers, test species, effect endpoints, and toxicity values (e.g., LC50, NOEC).

  - Standardize all units of measurement and normalize taxonomic names to a common ontology (e.g., ITIS).

  - Structure the extracted data into a unified schema.

- Quality Scoring:

  - Apply rule-based checks derived from established QA/QC frameworks (e.g., EPA Guidance) [7]. For example, flag studies that lack control group data or clear dose-response information.

  - (Optional AI Integration) For textual methodology sections, use a prompted LLM to score reliability based on criteria like test guideline adherence and statistical reporting [1].

  - Assign a categorical score (e.g., High, Medium, Low Reliability) to each data point.

- Predictive Modeling for Data Gaps:

  - For chemicals or species with insufficient experimental data, trigger QSAR models (e.g., ECOSAR, TEST) to predict toxicity values [19].

  - Clearly label all predicted values and associate uncertainty metrics.

- Data Ranking & Synthesis:

  - Integrate experimental and predicted data.

  - Rank chemicals based on the potency (toxicity value) and confidence (quality score) of the supporting data.

  - Generate a summarized report highlighting the most critical studies and primary data gaps.

Validation: As performed in the RASRTox study, validate pipeline output by comparing machine-derived points-of-departure (PODs) for a set of chemicals against traditional benchmarks like Toxicity Reference Values (TRVs). Successful pipelines show PODs generally within an order of magnitude of TRVs [19].
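The scoring, ranking, and validation steps of this protocol can be sketched as follows. This is not the RASRTox implementation: the two boolean flags, the mapping from passed checks to High/Medium/Low, the tie-breaking rule, and the sample values are all assumptions made for illustration. Only the "within an order of magnitude" criterion comes from the validation step above.

```python
import math

# Hypothetical weights used only to break ties between equal toxicity values.
RELIABILITY_WEIGHT = {"High": 3, "Medium": 2, "Low": 1}


def score_study(study):
    """Assign a reliability category from two illustrative rule-based checks."""
    passed = sum([study["has_control"], study["has_dose_response"]])
    return {2: "High", 1: "Medium", 0: "Low"}[passed]


def rank_data_points(points):
    """Sort most potent first (lowest value); ties go to higher reliability."""
    return sorted(
        points,
        key=lambda p: (p["value_ug_per_l"], -RELIABILITY_WEIGHT[p["reliability"]]),
    )


def within_order_of_magnitude(pod, trv):
    """True if the pipeline POD is within a factor of 10 of the benchmark TRV."""
    return abs(math.log10(pod / trv)) <= 1.0


# Made-up data points for three placeholder chemicals.
studies = [
    {"chemical": "A", "value_ug_per_l": 120.0,
     "has_control": True, "has_dose_response": True},
    {"chemical": "B", "value_ug_per_l": 3.5,
     "has_control": True, "has_dose_response": False},
    {"chemical": "C", "value_ug_per_l": 3.5,
     "has_control": True, "has_dose_response": True},
]
for s in studies:
    s["reliability"] = score_study(s)

ranked = [s["chemical"] for s in rank_data_points(studies)]
print(ranked)
print(within_order_of_magnitude(pod=5.0, trv=20.0))
```

Comparing on a log scale reflects the fact that toxicity values routinely span several orders of magnitude, so a ratio is more informative than an absolute difference.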

Protocol: AI-Assisted QA/QC Screening for Literature

This protocol details the method for using LLMs to evaluate individual studies, as demonstrated in microplastics research [1].

Objective: To standardize and accelerate the evaluation of study reliability for inclusion in systematic reviews or risk assessments.

Materials: Access to an LLM API (e.g., OpenAI GPT, Google Gemini); a set of scientific studies in digital text format; a defined QA/QC checklist.

Procedure:

- Prompt Engineering:

  - Develop a structured prompt that includes: (a) the role ("You are an expert toxicologist"), (b) the task ("Evaluate the reliability of this study"), (c) the explicit criteria from the QA/QC checklist (e.g., "Was the sampling method clearly described?", "Were appropriate controls used?"), and (d) the required output format (e.g., a JSON object with scores and text rationale).

- Batch Processing:

  - Chunk study texts to fit within the LLM's context window, prioritizing Methods and Results sections.

  - Automate the sending of prompts and collection of responses using a scripting language (Python).

- Response Parsing and Aggregation:

  - Parse the LLM's structured output to extract numerical scores and categorical flags.

  - Aggregate scores across a corpus of studies to enable comparative ranking.

Validation: Manually score a random subset of studies and calculate inter-rater consistency (e.g., Cohen's Kappa) between the human reviewer and the AI tool to ensure acceptable agreement [1].
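The prompt construction, chunking, response parsing, and agreement check in this protocol might look like the sketch below. It deliberately omits the API call itself, since client libraries differ between providers. The JSON shape, the character-based chunk size (a crude stand-in for a token limit), and the example ratings are assumptions for illustration.

```python
import json
from collections import Counter

CRITERIA = [
    "Was the sampling method clearly described?",
    "Were appropriate controls used?",
]


def build_prompt(study_text, criteria=CRITERIA):
    """Assemble role, task, criteria, and output format into one prompt."""
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(criteria, 1))
    return (
        "You are an expert toxicologist.\n"
        "Evaluate the reliability of this study against each criterion.\n"
        f"Criteria:\n{numbered}\n"
        'Respond only with JSON: {"scores": [0 or 1 per criterion], '
        '"rationale": "..."}\n'
        f"Study text:\n{study_text}"
    )


def chunk_text(text, max_chars=8000):
    """Split text into fixed-size pieces; characters approximate tokens here."""
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


def parse_response(raw, n_criteria=len(CRITERIA)):
    """Return the list of scores, or None if the reply is malformed."""
    try:
        scores = json.loads(raw)["scores"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return None
    if not isinstance(scores, list) or len(scores) != n_criteria:
        return None
    return scores


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two equal-length lists of categorical ratings."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if expected == 1.0:  # both raters used a single identical category
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up ratings for four studies, used only to exercise the function.
human = ["High", "High", "Low", "Low"]
ai = ["High", "High", "Low", "High"]
print(cohens_kappa(human, ai))
```

Returning `None` for malformed replies, rather than raising, lets a batch job log the failure and retry or route the study to manual review without halting the whole run.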

Quantitative Analysis and Visualization of Pipeline Output

The output of an automated screening pipeline is quantitative and requires appropriate statistical analysis and visualization to support decision-making.

Key Analytical Steps:

- Descriptive Statistics: Summarize the compiled dataset. Calculate central tendency (mean, median) and dispersion (range, standard deviation) of toxicity values for key chemical-species groups [23].

- Group Comparison: Perform statistical tests (e.g., t-tests, ANOVA) to determine if derived toxicity values or quality scores differ significantly between chemical classes, taxonomic groups, or test methodologies [23].

- Uncertainty Characterization: Quantify and visualize uncertainty, which may arise from model predictions (QSAR), interspecies extrapolation, or data quality variance.
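The descriptive-statistics step above can be sketched with Python's standard `statistics` module. The grouping keys and the numbers are invented for illustration. The geometric mean is an addition not named in the list above, included on the assumption that it is a useful companion summary when values span orders of magnitude.

```python
import statistics
from collections import defaultdict


def summarize(values):
    """Central tendency and dispersion for one group of toxicity values."""
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "range": (min(values), max(values)),
        # Sample standard deviation needs at least two observations.
        "stdev": statistics.stdev(values) if len(values) > 1 else 0.0,
        "geometric_mean": statistics.geometric_mean(values),
    }


def summarize_by_group(rows):
    """Group (chemical, species, value) rows and summarize each group."""
    groups = defaultdict(list)
    for chemical, species, value in rows:
        groups[(chemical, species)].append(value)
    return {key: summarize(vals) for key, vals in groups.items()}


# Made-up LC50 values (ug/L) for a placeholder chemical-species pair.
rows = [
    ("chem_A", "Daphnia magna", 1.0),
    ("chem_A", "Daphnia magna", 10.0),
    ("chem_A", "Daphnia magna", 100.0),
    ("chem_A", "Danio rerio", 40.0),
]
summary = summarize_by_group(rows)
print(summary[("chem_A", "Daphnia magna")])
```

In this example the arithmetic mean (37.0) sits well above the median (10.0), which shows how a single large value can dominate the mean for skewed toxicity data.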

Table 3: Recommended Visualizations for Pipeline Output Analysis [24] [23]

| Analytical Goal | Recommended Visualization | Purpose in Ecotoxicology |
|---|---|---|
| Compare toxicity across chemicals | Bar Chart (with error bars) | Visually compare mean LC50 values for multiple chemicals, showing variance. |
| Show distribution of data quality scores | Histogram or Pie Chart | Illustrate the proportion of studies rated as High, Medium, or Low reliability. |
| Track data completeness over time | Line Chart | Show the trend in the average number of reported QA criteria in published studies per year. |
| Correlate predicted vs. experimental values | Scatter Plot | Validate QSAR model performance by plotting predicted toxicity against experimental data. |
| Rank chemical hazard | Dot Plot or Ordered Bar Chart | Rank chemicals by hazard potency, often incorporating confidence intervals or quality score shading. |

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key software, tools, and resources required to implement the automated workflows described.

Table 4: Essential Toolkit for Building Automated Screening Pipelines

| Tool/Resource Category | Specific Examples | Function in the Pipeline |
|---|---|---|
| Orchestration & Workflow Management | Apache Airflow, Kubeflow, Prefect, Nextflow [20] | Coordinates the execution of pipeline components as Directed Acyclic Graphs (DAGs). |
| Data Version Control & Reproducibility | DVC (Data Version Control), MLflow, Git LFS [20] | Versions datasets, models, and experiments to ensure full reproducibility of any analysis run. |
| Computational Toxicology & QSAR | EPA TEST, ECOSAR [19] | Provides predictive models to estimate toxicity for chemicals lacking experimental data. |
| Data Quality Testing Frameworks | Great Expectations, Deequ, custom scripts using pandas | Implements and executes automated data quality tests (completeness, uniqueness, etc.) [21]. |
| AI/LLM for Text Analysis | OpenAI API, Google Gemini API, spaCy [1] | Automates the extraction and reliability scoring of information from textual study reports. |
| Curated Data Sources | EPA ECOTOXicology Knowledgebase (ECOTOX), EPA ToxCast [19] | Provides high-quality, structured experimental toxicity data for ingestion and validation. |
| Visualization & Reporting | Python (Matplotlib, Seaborn, Plotly), R (ggplot2), ChartExpo [23] | Generates publication-quality charts, interactive dashboards, and final assessment reports. |