Animal LD50 Data in Human Risk Assessment: Reliability, Limitations, and the Rise of New Approach Methodologies



Abstract

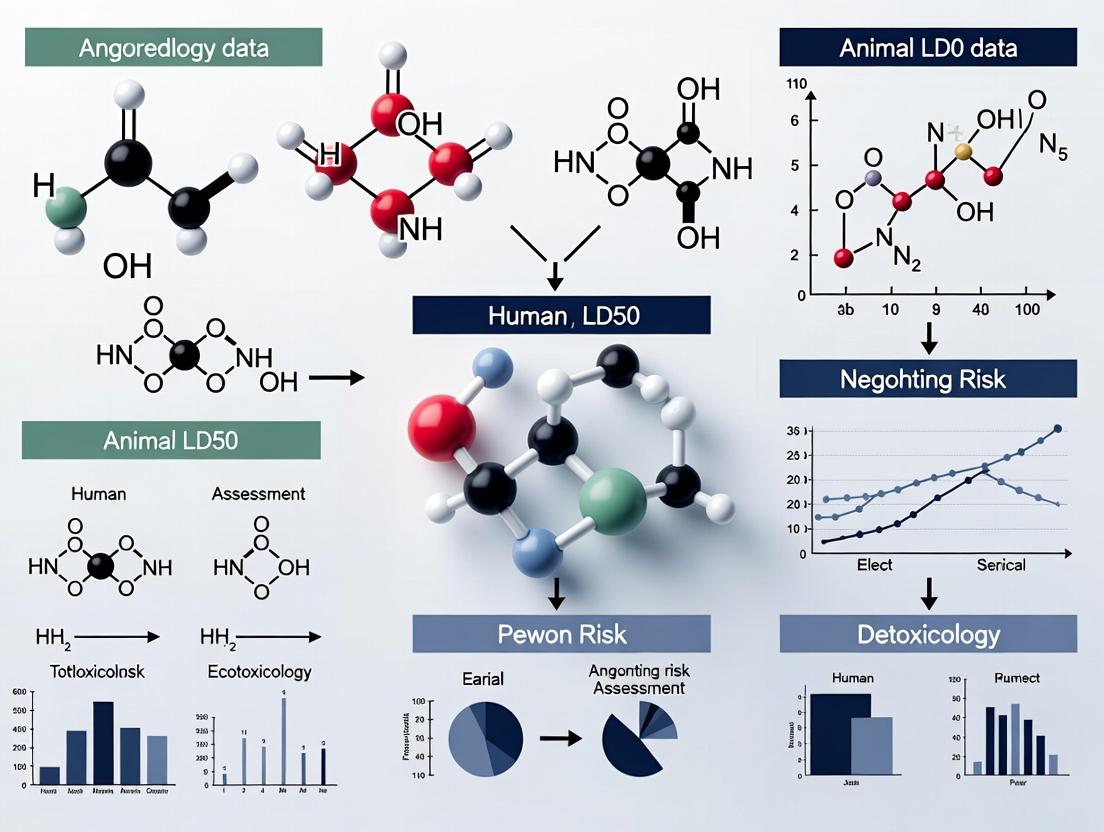

This article provides a critical evaluation of the reliability of animal LD50 data for predicting human health risks, tailored for researchers, scientists, and drug development professionals. The scope progresses from establishing the foundational definition and role of LD50 in regulatory history to detailing its methodological application in formal risk assessment frameworks like the EPA's four-step process. It then tackles core scientific limitations—including interspecies variation, ethical concerns, and high resource costs—and explores optimization strategies via computational models and conservative consensus approaches. Finally, the article validates the ongoing paradigm shift towards human-relevant New Approach Methodologies (NAMs), examining in vitro and in silico alternatives, integrated assessment frameworks, and the barriers to their regulatory acceptance. The synthesis offers a forward-looking perspective on transitioning to a more predictive, efficient, and ethical safety science.

The Bedrock of Toxicity Testing: Defining LD50 and Its Historical Role in Hazard Identification

What is LD50? Quantifying the Lethal Dose for 50% of a Test Population

The median lethal dose (LD50) is a foundational toxicological metric, defined as the dose of a substance required to kill 50% of a test population under controlled conditions within a specified time [1] [2]. Originally developed by J.W. Trevan in 1927 to standardize the potency of drugs and biological agents, it provides a quantifiable benchmark for comparing the acute toxicity of diverse chemicals [1] [2]. For researchers and drug development professionals, the LD50 value serves as a critical initial data point for hazard classification, informing safety protocols and regulatory decisions [3] [4].

However, within the context of human risk assessment, the reliability of animal-derived LD50 data is a subject of ongoing scientific scrutiny. While it offers a standardized comparison, significant limitations arise from interspecies physiological differences, genetic variability within test populations, and the inherent ethical and practical constraints of traditional testing methods [1] [5]. This guide compares classical in vivo LD50 determination methods with contemporary alternative protocols, examining their experimental workflows, data output, and relevance for extrapolating risk to humans. The evolution toward computational and refined animal methods reflects the field's pursuit of more predictive, humane, and human-relevant toxicological data [3] [4].

Defining the Metric: Core Concepts and Calculation

The LD50 value is expressed as the mass of a substance administered per unit mass of the test subject, most commonly as milligrams per kilogram (mg/kg) of body weight [1]. This normalization allows toxicity to be compared across substances and animal sizes, although toxicity does not always scale linearly with body mass [1]. The related term LC50 (Lethal Concentration 50) refers to the concentration of a chemical in air or water that kills 50% of the test population; because the outcome depends on how long the animals are exposed, the exposure duration (e.g., 4 hours) must always be stated [1] [2].

The choice of a 50% mortality endpoint is a statistical compromise: the dose-response curve is steepest at its midpoint, so the LD50 can be estimated with the greatest precision and the fewest animals, whereas effects at the extremes (e.g., LD01 or LD99) are far more variable [1] [6]. The underlying assumption is a monotonic dose-response relationship, in which mortality increases with the administered dose [6]. The resulting sigmoidal curve plots the percentage of mortality against the dose (usually log-transformed), with the LD50 at its midpoint [7] [6].

Table 1: Key Dose-Response Terms in Toxicology

| Term | Definition | Primary Use |
|---|---|---|
| LD50 | Lethal dose for 50% of the population [1]. | Gold standard for comparing acute toxicity. |
| LD01 / LD99 | Lethal dose for 1% or 99% of the population [1]. | Assessing threshold or extreme lethality. |
| LC50 | Lethal concentration in air/water for 50% of population [2]. | Inhalation or aquatic toxicity studies. |
| ED50 | Effective dose for 50% of the population. | Pharmacology (therapeutic effect). |
| Therapeutic Index | Ratio of LD50 to ED50 [1]. | Quantifying drug safety margin. |

Statistical estimation is required in practice because the exact dose-response curve is unknown. Based on mortality counts from groups of animals administered different doses, researchers use models like logistic regression or probit analysis to interpolate the dose corresponding to 50% mortality [6]. The precision of the LD50 estimate depends on the number of animals, the number of dose groups, and the spacing of the doses [6].
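The interpolation step can be sketched in Python. This is a minimal illustration, assuming grouped mortality data (dose in mg/kg, animals per group, deaths per group) and a logit model fitted by Newton-Raphson maximum likelihood; probit analysis would replace the logistic function with the standard normal CDF and usually gives a similar LD50. The function name `fit_logistic_ld50` is hypothetical, the sketch omits confidence intervals, and it will not converge when responses are completely separated (all survivors below one dose, all deaths above it).

```python
import math
from typing import Sequence, Tuple


def fit_logistic_ld50(doses: Sequence[float], n: Sequence[int],
                      deaths: Sequence[int], tol: float = 1e-10,
                      max_iter: int = 100) -> Tuple[float, float]:
    """Fit P(death) = 1 / (1 + exp(-(a + b * xc))), with xc = log10(dose) - mean,
    by maximum likelihood and return (LD50 in dose units, slope b)."""
    x = [math.log10(d) for d in doses]
    x_mean = sum(x) / len(x)
    xc = [xi - x_mean for xi in x]  # centring log-dose improves numerical stability
    a, b = 0.0, 0.0
    for _ in range(max_iter):
        p = [1.0 / (1.0 + math.exp(-(a + b * xi))) for xi in xc]
        # Score vector (gradient of the binomial log-likelihood)
        g_a = sum(k - m * pi for k, m, pi in zip(deaths, n, p))
        g_b = sum((k - m * pi) * xi for k, m, pi, xi in zip(deaths, n, p, xc))
        # Fisher information matrix [[s0, s1], [s1, s2]]
        w = [m * pi * (1.0 - pi) for m, pi in zip(n, p)]
        s0 = sum(w)
        s1 = sum(wi * xi for wi, xi in zip(w, xc))
        s2 = sum(wi * xi * xi for wi, xi in zip(w, xc))
        det = s0 * s2 - s1 * s1
        da = (s2 * g_a - s1 * g_b) / det
        db = (s0 * g_b - s1 * g_a) / det
        a, b = a + da, b + db
        if abs(da) < tol and abs(db) < tol:
            break
    if b <= 0:
        raise ValueError("mortality does not increase with dose; LD50 undefined")
    # P(death) = 0.5 where a + b * xc = 0, i.e. log10(LD50) = x_mean - a / b
    return 10 ** (x_mean - a / b), b


ld50, slope = fit_logistic_ld50([10, 100, 1000], [10, 10, 10], [2, 5, 8])
print(f"LD50 ~ {ld50:.1f} mg/kg, slope = {slope:.2f} per log10 unit")
```

Because the example mortality counts are symmetric around 100 mg/kg on the log scale, the fitted LD50 falls at 100 mg/kg.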

Experimental Methodologies: From Classical to Contemporary Protocols

Classical LD50 Test

The original "classical" protocol, developed in the 1920s, involved administering the test substance to large groups of animals (often 50-100 rodents) across 4-6 predefined dose levels [4]. Animals were observed for 14 days for signs of toxicity and mortality [2]. The LD50 and its confidence interval were calculated with statistical methods such as the graphical log-probit method of Litchfield and Wilcoxon or the arithmetic method of Reed and Muench [4]. Although this method aimed for statistical precision, it required large numbers of animals and caused severe distress, drawing ethical and scientific criticism [4] [8].

Refined Animal-Based Methods (The 3Rs Principle)

To address the drawbacks of the classical test, the OECD has adopted refined test guidelines that follow the "3Rs" principle (Reduction, Refinement, Replacement) [4].

- Fixed Dose Procedure (FDP, OECD TG 420): This method does not aim to find a lethal dose. Instead, it identifies a dose that causes clear signs of toxicity (but not death) in a small group of animals, using that information to classify the substance into a hazard category [4].

- Acute Toxic Class (ATC) Method (OECD TG 423): This sequential testing procedure uses even fewer animals (typically 3 per step) and employs a stepwise dosing strategy to assign the substance to a defined toxicity class [4].

- Up-and-Down Procedure (UDP, OECD TG 425): This is the most significant reduction alternative. It doses animals sequentially, one at a time, with the dose for the next animal adjusted up or down based on the outcome for the previous one. Sophisticated computer programs (e.g., EPA's AOT425StatPgm) calculate the LD50 and confidence interval, typically using 6-9 animals instead of 40-50 [4] [9].

Table 2: Comparison of Key Acute Oral Toxicity Testing Methods

| Method (OECD Guideline) | Approx. Animal Use (Rodents) | Primary Objective | Key Advantage | Regulatory Status |
|---|---|---|---|---|
| Classical LD50 (TG 401, historical) | 40-100 [4] | Determine precise LD50 value with confidence intervals. | Historical standard, extensive data. | Deleted from the OECD guidelines (2002); not compliant with the 3Rs. |
| Fixed Dose Procedure (420) | 10-20 [4] | Identify hazard class based on evident toxicity. | Avoids lethal endpoints, reduces suffering. | Approved (1992). |
| Acute Toxic Class (423) | 6-18 [4] | Assign substance to a pre-defined toxicity class. | Efficient stepwise approach, uses few animals. | Approved (1996). |
| Up-and-Down (425) | 6-9 [4] [9] | Estimate LD50 and confidence interval. | Drastic animal reduction, precise statistical output. | Approved (1998). |
| In Silico (Q)SAR (Non-Test) | 0 | Predict toxicity from chemical structure. | High-throughput, no animals, useful for screening. | Used for prioritization; gaining regulatory acceptance [3]. |

In Silico and In Vitro Alternatives

Computational toxicology methods are emerging as replacements. (Quantitative) Structure-Activity Relationship [(Q)SAR] models predict LD50 values by analyzing the relationship between a chemical's structural properties and its known toxicological activity [3]. A major collaborative project by NICEATM and the U.S. EPA compiled a database of ~12,000 rat oral LD50 values to develop and validate such models for regulatory endpoints [3]. These models can predict whether a chemical is "very toxic" (LD50 < 50 mg/kg) or "non-toxic" (LD50 > 2000 mg/kg) with balanced accuracies over 0.80 [3]. While not yet a full replacement for all regulatory needs, they are invaluable for prioritization and screening.
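The two binary endpoints and the balanced-accuracy metric quoted above can be made concrete in a short Python sketch. Balanced accuracy is the mean of sensitivity and specificity, which prevents a model from scoring well simply by predicting the majority class. The cut-offs follow the text (LD50 < 50 mg/kg for "very toxic", > 2000 mg/kg for "non-toxic"); the function names and the example LD50 values are hypothetical, not data from the NICEATM/EPA project.

```python
from typing import Sequence

VERY_TOXIC_MAX = 50.0   # mg/kg: "very toxic" if LD50 < 50
NON_TOXIC_MIN = 2000.0  # mg/kg: "non-toxic" if LD50 > 2000


def very_toxic(ld50: float) -> bool:
    return ld50 < VERY_TOXIC_MAX


def non_toxic(ld50: float) -> bool:
    return ld50 > NON_TOXIC_MIN


def balanced_accuracy(y_true: Sequence[bool], y_pred: Sequence[bool]) -> float:
    """Mean of sensitivity (true-positive rate) and specificity (true-negative rate)."""
    pos = sum(1 for t in y_true if t)
    neg = len(y_true) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need both positive and negative reference chemicals")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    tn = sum(1 for t, p in zip(y_true, y_pred) if not t and not p)
    return 0.5 * (tp / pos + tn / neg)


# Hypothetical experimental vs. predicted rat oral LD50 values (mg/kg)
experimental = [10.0, 30.0, 300.0, 5000.0]
predicted = [20.0, 80.0, 250.0, 4000.0]
ba = balanced_accuracy([very_toxic(x) for x in experimental],
                       [very_toxic(x) for x in predicted])
print(f"'very toxic' balanced accuracy: {ba:.2f}")  # 0.75
```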

Comparative Data Analysis: LD50 in Context

LD50 values span an immense range, illustrating the vast differences in acute toxicity between common substances. These values are specific to the test animal, route, and conditions and cannot be translated directly into a "human LD50" [1] [5].

Table 3: Comparative LD50 Values for Selected Substances (Rodent, Oral Route) [1] [5]

| Substance | Approximate LD50 (mg/kg) | Toxicity Classification | Context for Human Risk |
|---|---|---|---|
| Botulinum Toxin | 0.000001 (1 ng/kg) [5] | Extremely Toxic | Human lethal dose is minute; extreme hazard. |
| Sodium Cyanide | 6.4 [5] | Highly Toxic | Well-known rapid poison; high acute risk. |
| Nicotine | 50 [5] | Highly Toxic | Toxic if ingested; hazard different from smoking. |
| Aspirin | 200 [5] | Moderately Toxic | Human therapeutic drug; overdose possible. |
| Paracetamol (Acetaminophen) | 2000 [1] | Slightly Toxic | Human hepatotoxicity at high doses; species difference is key. |
| Sodium Chloride (Table Salt) | 3000 [1] | Slightly Toxic | Essential nutrient; toxicity from extreme intake. |
| Ethanol | 7060 [1] | Practically Non-Toxic | Recreational drug; chronic toxicity differs. |
| Water | >90,000 [1] | Relatively Harmless | Practically non-toxic; illustrates scale. |
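The classification labels in Table 3 are consistent with the Hodge and Sterner scale for single oral doses in rats. A minimal Python lookup reproduces them; the function name is hypothetical, and assigning values that fall exactly on a boundary (such as nicotine's 50 mg/kg) to the more toxic class is an assumption of this sketch.

```python
from typing import List, Tuple

# (inclusive upper LD50 bound in mg/kg, label); anything above the last bound
# is "Relatively Harmless". Boundary handling is an assumption.
HODGE_STERNER: List[Tuple[float, str]] = [
    (1.0, "Extremely Toxic"),
    (50.0, "Highly Toxic"),
    (500.0, "Moderately Toxic"),
    (5000.0, "Slightly Toxic"),
    (15000.0, "Practically Non-Toxic"),
]


def hodge_sterner_class(ld50_mg_per_kg: float) -> str:
    """Map an oral rat LD50 (mg/kg) to a Hodge and Sterner toxicity label."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper, label in HODGE_STERNER:
        if ld50_mg_per_kg <= upper:
            return label
    return "Relatively Harmless"


for name, ld50 in [("Sodium Cyanide", 6.4), ("Aspirin", 200.0), ("Ethanol", 7060.0)]:
    print(f"{name}: {ld50} mg/kg -> {hodge_sterner_class(ld50)}")
```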

The therapeutic index (TI = LD50 / ED50) is a more relevant metric than LD50 alone for drug development, as it quantifies the margin between efficacy and lethality [1]. A substance with a low LD50 can be safely used if its effective dose is much lower (high TI), whereas a substance with a high LD50 can be dangerous if its effective dose is close to its toxic dose (low TI).
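As a worked illustration of the ratio, the Python sketch below computes TI for two hypothetical compounds whose names and doses are invented for the example. It assumes LD50 and ED50 come from the same species and route and are expressed in the same units; otherwise the ratio is meaningless.

```python
def therapeutic_index(ld50: float, ed50: float) -> float:
    """TI = LD50 / ED50 (same species, route and units assumed)."""
    if ld50 <= 0 or ed50 <= 0:
        raise ValueError("doses must be positive")
    return ld50 / ed50


# Hypothetical compounds: A has a low LD50 but a wide margin;
# B has a high LD50 but its effective dose sits close to its lethal dose.
compounds = {"compound_A": (10.0, 0.1), "compound_B": (2000.0, 1000.0)}
for name, (ld50, ed50) in compounds.items():
    ti = therapeutic_index(ld50, ed50)
    print(f"{name}: LD50={ld50} mg/kg, ED50={ed50} mg/kg, TI={ti:.0f}")
```

Compound A, despite being far more lethal per milligram, has the larger safety margin (TI of about 100 versus 2).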

Reliability for Human Risk Assessment: Critical Limitations

Extrapolating animal LD50 data to predict human acute toxicity involves substantial uncertainty, which is the central reason these data have limited reliability for human risk assessment.

- Interspecies Variability: Metabolic, physiological, and anatomical differences can lead to dramatically different responses. For example, paracetamol is significantly more toxic to humans than to rats [1] [5]. Chocolate is harmless to humans but toxic to dogs [1].

- Intraspecies Variability: LD50 is a population median. It does not account for genetic diversity, age, sex, health status, or microbiome differences within the human population that can create susceptible subpopulations [1] [7].

- Route of Exposure: The LD50 varies drastically with the route of administration (oral, dermal, intravenous, inhalation) [1] [2]. Human exposure scenarios may not match the test route.

- Mechanistic vs. Descriptive Data: A single LD50 number provides no information on the mechanism of toxicity, time course of death, or specific organ pathology, which are crucial for understanding human risk and developing treatments [8].

- Acute vs. Chronic Effects: LD50 measures only acute lethality. It offers no insight into long-term effects of low-dose exposure, such as carcinogenicity, endocrine disruption, or neurotoxicity [2].

Therefore, while animal LD50 data are essential for initial hazard identification and classification, they are merely the first step in a comprehensive risk assessment. Reliable human risk assessment requires data from multiple sources: mechanistic studies, in vitro assays using human cells or tissues, pharmacokinetic modeling, epidemiological data, and a thorough understanding of expected human exposure scenarios.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagents and Materials for LD50-Related Studies

| Item | Function in LD50 Research |
|---|---|
| Laboratory Rodents (e.g., Sprague-Dawley Rats) | The standard in vivo model organism for determining mammalian acute oral toxicity [2] [3]. |
| Test Substance (High Purity) | The chemical agent being evaluated. Testing is nearly always performed using a pure form to ensure accurate dosing and interpretation [2]. |
| Vehicle (e.g., Carboxymethylcellulose, Corn Oil) | A neutral substance used to dissolve or suspend the test compound for accurate oral gavage or other administration. |
| Gavage Needle/Syringe | For precise oral administration of the test substance to rodents. |
| Clinical Chemistry & Hematology Assay Kits | To quantify biomarkers of organ damage (e.g., liver enzymes, creatinine) in sub-acute studies or satellite animal groups. |
| Histopathology Supplies (Fixatives, Stains) | For microscopic examination of tissues (liver, kidney, heart, etc.) to identify target organs and pathological lesions. |
| Computer with Statistical Software (e.g., AOT425StatPgm) | Essential for designing UDP studies, calculating sequential doses, and determining the final LD50 estimate with confidence intervals [9]. |
| (Q)SAR Software & Chemical Databases | For in silico prediction of toxicity. Relies on large curated databases like the EPA's ~12,000 compound rat LD50 inventory [3]. |

Historical Development and Core Principles

The median lethal dose (LD50) test, formally introduced by J.W. Trevan in 1927, was designed to quantify the acute toxicity of substances by determining the dose expected to kill 50% of a tested animal population within a specified timeframe [4]. This metric provided a standardized, reproducible point for comparing the toxicity of chemicals, which was rapidly adopted for drug and chemical safety assessment. The original "Classical LD50" protocol, developed in the 1920s, required large numbers of animals—often up to 100 individuals across five dose groups—to generate a precise dose-response curve [4].

The conceptual foundation of the LD50 test is its role in evaluating acute systemic toxicity: adverse effects that follow a single dose, or multiple doses given within 24 hours, by the oral, dermal, or inhalation route [4]. Its results became crucial for the hazard classification and labeling of substances, providing a foundational metric for regulatory decisions worldwide [4] [10]. Although the test's endpoint is mortality, it also involves observing signs of toxicity, which can offer insights into a substance's mechanism of action and target organs [4].

Evolution of Protocols: From Classical LD50 to Modern Alternatives

The drive to reduce animal use and refine testing procedures led to significant methodological evolution, guided by the "3Rs" framework (Replacement, Reduction, and Refinement) established by Russell and Burch in 1959 [4].

Table 1: Evolution of Key LD50 Estimation Methods

| Method (Year Introduced) | Key Principle | Typical Animal Number | Regulatory Status (OECD Guideline) | Primary Advantage |
|---|---|---|---|---|
| Classical LD50 (1920s) | Precise mortality curve across multiple doses. | 40-100+ | Superseded | Historical benchmark for toxicity ranking. |
| Fixed Dose Procedure (1992) | Identifies a dose causing evident toxicity but not death. | 10-20 | OECD 420 | Significantly reduces mortality and distress. |
| Acute Toxic Class Method (1996) | Uses stepwise dosing to assign a toxicity class. | 6-18 | OECD 423 | Requires fewer animals than classical test. |
| Up-and-Down Procedure (1998; revised 2001) | Adjusts dose for each animal based on previous outcome. | 6-10 | OECD 425 | Most efficient reduction in animal numbers. |

The transition from the Classical LD50 began in earnest in the 1980s and 1990s with the development and OECD adoption of alternative in vivo methods that prioritize the 3Rs [4]. The Fixed Dose Procedure (FDP), adopted in 1992 (OECD 420), abandons the goal of precisely finding the 50% lethality point. Instead, it focuses on identifying a dose that produces clear signs of toxicity without necessarily causing death, thereby minimizing animal suffering [4]. The Acute Toxic Class (ATC) method and the Up-and-Down Procedure (UDP) further reduce animal numbers by using sequential, step-wise dosing strategies [4]. These modern protocols accept a less precise estimate of the lethal dose in exchange for ethical gains and efficiency, while still providing robust data for hazard classification under systems like the Globally Harmonized System (GHS) [4].
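For reference, the GHS acute oral toxicity categories can be expressed as a simple lookup. The sketch below uses the GHS LD50 cut-offs of 5, 50, 300, 2000 and 5000 mg/kg; the fixed doses used by the FDP and ATC methods (5, 50, 300 and 2000 mg/kg) coincide with these boundaries, so the outcome of those tests maps directly onto a category without a point estimate of the LD50. Category 5 is optional and not adopted in every jurisdiction; the function name is hypothetical.

```python
from typing import Optional

# (inclusive upper LD50 bound in mg/kg, GHS acute oral toxicity category)
GHS_ORAL_CUTOFFS = [(5.0, 1), (50.0, 2), (300.0, 3), (2000.0, 4), (5000.0, 5)]


def ghs_acute_oral_category(ld50_mg_per_kg: float) -> Optional[int]:
    """Return GHS category 1-5, or None if LD50 > 5000 mg/kg (not classified)."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper, category in GHS_ORAL_CUTOFFS:
        if ld50_mg_per_kg <= upper:
            return category
    return None


for ld50 in (3.0, 120.0, 1500.0, 8000.0):
    print(ld50, "mg/kg ->", ghs_acute_oral_category(ld50))
```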

Modern Computational Models for Acute Toxicity Prediction

The most recent evolution involves the partial or complete replacement of animal testing through New Approach Methodologies (NAMs), particularly advanced computational models [11] [4]. These in silico tools leverage large historical datasets to predict toxicity, addressing the need to assess millions of commercially available chemicals that lack experimental data [11].

Quantitative Structure-Activity Relationship (QSAR) models are a cornerstone of this approach. They operate on the principle that a chemical's structure determines its biological activity and toxicity. For regulatory use, QSAR models must fulfill specific validation principles, including having a defined endpoint, an unambiguous algorithm, and a defined domain of applicability [11]. Major software tools implementing these models include the OECD QSAR Toolbox, OASIS, and Derek [11].

A significant advancement is the development of consensus models that combine predictions from multiple individual algorithms to improve reliability. The Collaborative Acute Toxicity Modeling Suite (CATMoS) is a prominent example, generating consensus predictions from various machine-learning models built on a large, curated dataset of rat acute oral toxicity [11]. Studies show that a Conservative Consensus Model (CCM), which selects the lowest predicted LD50 value from multiple models (like CATMoS, VEGA, and TEST), offers a health-protective approach. It exhibits a very low under-prediction rate (2%), meaning it rarely underestimates toxicity, though it has a higher over-prediction rate (37%) [12].
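The selection rule behind the CCM is simple enough to sketch in Python. This is an illustration only: the model names are placeholders rather than outputs of TEST, CATMoS or VEGA, the LD50 values are invented, and under- and over-prediction are defined here on raw LD50 values (predicted above or below the experimental value), whereas the cited evaluation [12] may compare hazard categories instead. Because the consensus takes the minimum, its under-prediction rate can never exceed that of any single contributing model, at the cost of more over-prediction.

```python
from typing import Dict, List, Sequence, Tuple


def conservative_consensus(predictions: Dict[str, float]) -> Tuple[str, float]:
    """Pick the lowest (most toxic) predicted LD50 among models for one chemical."""
    if not predictions:
        raise ValueError("no model predictions supplied")
    model = min(predictions, key=lambda name: predictions[name])
    return model, predictions[model]


def prediction_rates(pred: Sequence[float], exp: Sequence[float]) -> Tuple[float, float]:
    """Return (under-prediction rate, over-prediction rate) of toxicity.

    Under-prediction: predicted LD50 higher (less toxic) than experimental.
    Over-prediction: predicted LD50 lower (more toxic) than experimental.
    """
    n = len(exp)
    under = sum(1 for p, e in zip(pred, exp) if p > e) / n
    over = sum(1 for p, e in zip(pred, exp) if p < e) / n
    return under, over


# Hypothetical data for three chemicals (mg/kg)
experimental = [100.0, 1000.0, 40.0]
models: Dict[str, List[float]] = {
    "model_a": [320.0, 800.0, 60.0],
    "model_b": [95.0, 1200.0, 30.0],
}
ccm = [conservative_consensus({m: v[i] for m, v in models.items()})[1]
       for i in range(len(experimental))]
for name, values in [*models.items(), ("CCM", ccm)]:
    under, over = prediction_rates(values, experimental)
    print(f"{name}: under={under:.2f}, over={over:.2f}")
```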

Table 2: Performance of Selected Computational Models for Rat Acute Oral Toxicity Prediction

| Model | Model Type | Key Performance Metrics | Strengths | Application Context |
|---|---|---|---|---|
| TEST | Statistical QSAR Consensus (Hierarchical Clustering, FDA, Nearest Neighbor) | External Test Set: R²: 0.626, MAE: 0.431 [10]. Broad chemical coverage [10]. | Makes predictions for a wide array of chemicals; freely available. | Screening and priority-setting where experimental data is absent [10]. |
| TIMES | Hybrid Expert System (Mechanistic SARs + QSARs) | Training Set R²: 0.85 [10]. Performance similar to TEST but for fewer chemicals [10]. | Incorporates mechanistic reasoning and AOP-like constructs. | Useful for chemicals within its well-defined mechanistic categories [10]. |
| CATMoS | Consensus Machine Learning Model | High accuracy and robustness vs. in vivo results [11]. | Leverages collective strengths of multiple algorithms; high predictive confidence. | Prioritizing in vivo testing; used in pharmaceutical industry for compound triage [11]. |
| Conservative Consensus Model (CCM) | Consensus of multiple models (e.g., TEST, CATMoS, VEGA) | Under-prediction rate: 2%; Over-prediction rate: 37% [12]. | Maximizes health protection by selecting the most conservative (lowest) LD50 prediction. | Hazard identification under conditions of high uncertainty or for priority risk assessment [12]. |

Experimental Protocols and Methodologies

Modern In Vivo Protocol: OECD Guideline 425 (Up-and-Down Procedure)

The UDP represents the state of the art in refined animal testing for acute oral toxicity [4]. When the substance is expected to be of low toxicity, the procedure can begin with a limit test at 2000 mg/kg; if little or no mortality occurs, the test is concluded and the substance is placed in a low-toxicity category. Otherwise, the main test uses a sequential dosing strategy. A single animal is dosed at a best-estimate starting dose. If it survives, the next animal receives a higher dose; if it dies, the next receives a lower dose, with doses stepped by a constant progression factor. Dosing continues until predefined stopping criteria are met, which requires a minimum of six animals. The LD50 and its confidence interval are calculated using a maximum likelihood method. This protocol typically uses only 6-10 animals, a drastic reduction from classical methods, and limits severe suffering [4].
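The dosing logic of the main test can be sketched as a small simulation. The sketch below assumes the TG 425 default dose sequence (1.75, 5.5, 17.5, 55, 175, 550 and 2000 mg/kg, a progression factor of about 3.2) with the default 175 mg/kg starting dose, and implements only two of the guideline's stopping rules: three consecutive survivors at the top dose, and five reversals in six consecutive animals. The likelihood-ratio stopping rule, the waiting period between animals, and the maximum-likelihood LD50 calculation performed by programs such as AOT425StatPgm are omitted; `max_animals` is an illustrative cap, and the function name and deterministic "animal" are hypothetical.

```python
from typing import Callable, List, Tuple

# TG 425 default dose sequence in mg/kg (progression factor of about 3.2)
DOSES: List[float] = [1.75, 5.5, 17.5, 55.0, 175.0, 550.0, 2000.0]


def up_and_down(animal_dies: Callable[[float], bool],
                start_dose: float = 175.0,
                max_animals: int = 15) -> List[Tuple[float, bool]]:
    """Dose animals one at a time; return (dose, died) for each animal.

    Only two stopping rules are implemented; max_animals is an illustrative
    safety cap, not a value taken from the guideline.
    """
    idx = DOSES.index(start_dose)  # raises ValueError if start_dose is not in DOSES
    history: List[Tuple[float, bool]] = []
    while len(history) < max_animals:
        dose = DOSES[idx]
        died = animal_dies(dose)
        history.append((dose, died))
        # Rule 1: three consecutive survivors at the top dose
        last3 = history[-3:]
        if len(last3) == 3 and all(d == DOSES[-1] and not o for d, o in last3):
            break
        # Rule 2: five reversals (outcome changes) among six consecutive animals
        last6 = [o for _, o in history[-6:]]
        if len(last6) == 6 and sum(a != b for a, b in zip(last6, last6[1:])) >= 5:
            break
        # Death -> step down one dose; survival -> step up (clamped to the range)
        idx = max(idx - 1, 0) if died else min(idx + 1, len(DOSES) - 1)
    return history


# Hypothetical deterministic "animal" that dies at doses of 100 mg/kg or more
for dose, died in up_and_down(lambda d: d >= 100.0):
    print(f"{dose:>7} mg/kg -> {'died' if died else 'survived'}")
```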

In Silico Prediction Protocol Using QSAR Models

A standard workflow for computational prediction involves several key steps [11] [10]:

- Chemical Standardization: The input chemical structure (e.g., via SMILES notation or CAS number) is converted into a QSAR-ready format. This involves removing salts, neutralizing charges, and standardizing tautomers using toolkits like RDKit [11] [10].

- Descriptor Calculation: Numerical representations (molecular descriptors) or structural fingerprints (e.g., Extended Connectivity Fingerprints - ECFP6) are generated to characterize the molecule computationally [11].

- Model Application & Prediction: The descriptors are submitted to the model(s). In tools like TEST, multiple underlying methods (hierarchical, FDA) generate predictions, and a consensus value is derived [10]. For the CCM, predictions from several platforms (TEST, CATMoS, VEGA) are collected, and the lowest predicted LD50 value is selected as the final, health-protective estimate [12].

- Applicability Domain Assessment: A critical step determines whether the query chemical falls within the structural and property space of the chemicals used to train the model. Predictions for chemicals outside this domain are considered unreliable [11].
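One common way to implement the applicability-domain step is a similarity check against the training set. The Python sketch below assumes fingerprints are already available as sets of "on" bit indices (for example, exported from an ECFP calculation) and flags a query as in-domain when its Tanimoto similarity to the nearest training chemical reaches a threshold. The 0.3 threshold and the function names are illustrative assumptions; real tools may instead use descriptor ranges, leverage, or local-density measures.

```python
from typing import FrozenSet, Iterable, Tuple

Fingerprint = FrozenSet[int]  # indices of fingerprint bits set to 1


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """|A & B| / |A | B|; defined here as 0.0 for two empty fingerprints."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def in_applicability_domain(query: Fingerprint,
                            training_set: Iterable[Fingerprint],
                            threshold: float = 0.3) -> Tuple[bool, float]:
    """Return (inside domain?, similarity to the nearest training chemical)."""
    nearest = max((tanimoto(query, t) for t in training_set), default=0.0)
    return nearest >= threshold, nearest


training = [frozenset({1, 2, 3, 5}), frozenset({10, 11, 12})]
print(in_applicability_domain(frozenset({1, 2, 3, 4}), training))  # (True, 0.6)
print(in_applicability_domain(frozenset({20, 21}), training))      # (False, 0.0)
```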

The Reliability of Animal LD50 Data for Human Risk Assessment

The core thesis regarding the reliability of animal LD50 data for human risk assessment must account for inherent variability and uncertainty. A critical analysis of a large reference dataset (~16,713 studies) reveals substantial inherent variability in experimental animal LD50 values [10]. For chemicals with multiple studies, the range of reported values can span an order of magnitude or more. This variability stems from factors like animal strain, sex, laboratory protocol, and housing conditions.

This inherent noise in the biological benchmark itself challenges the validation of alternative methods. When evaluating computational models like TEST and TIMES, their prediction errors must be weighed against the background variability of the experimental data [10]. A model prediction falling within the 95% confidence interval of the experimental data may be considered sufficiently accurate for classification purposes, even if it does not match a single idealized value.
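The idea of judging a prediction against the spread of replicate studies can be sketched in Python. This is an assumption-laden illustration, not the procedure used in [10]: it treats replicate LD50 values as log-normally distributed and takes an approximate 95% range as the log10 mean plus or minus 1.96 standard deviations, which is crude for the handful of replicates typical of real chemicals. The replicate values and function names are hypothetical.

```python
import math
import statistics
from typing import Sequence, Tuple


def replicate_range(ld50s: Sequence[float], z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% range (mg/kg) of replicate LD50s on a log10 scale."""
    if len(ld50s) < 2:
        raise ValueError("need at least two replicate studies")
    logs = [math.log10(v) for v in ld50s]
    mean, sd = statistics.mean(logs), statistics.stdev(logs)
    return 10 ** (mean - z * sd), 10 ** (mean + z * sd)


def within_experimental_variability(prediction: float, ld50s: Sequence[float]) -> bool:
    """True if a predicted LD50 lies inside the replicate range."""
    low, high = replicate_range(ld50s)
    return low <= prediction <= high


replicates = [50.0, 100.0, 200.0]  # hypothetical studies of one chemical (mg/kg)
low, high = replicate_range(replicates)
print(f"approx. range: {low:.0f}-{high:.0f} mg/kg")
print(within_experimental_variability(300.0, replicates))  # True
print(within_experimental_variability(500.0, replicates))  # False
```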

Furthermore, the translation from rat to human introduces another layer of uncertainty due to interspecies differences in toxicokinetics (absorption, distribution, metabolism, excretion) and toxicodynamics [4]. Regulatory frameworks generally address such uncertainties by applying assessment factors (e.g., a default 10-fold factor for interspecies differences) when extrapolating animal data to humans. The trend toward more health-protective models, such as the Conservative Consensus Model, which intentionally errs on the side of over-predicting toxicity, is a direct response to these uncertainties, aiming to ensure safety despite data limitations and biological variability [12].

Integrated Hazard Assessment and Future Directions

Modern chemical hazard assessment, as exemplified by frameworks like the Enhesa GHS+, no longer relies on a single LD50 value [13]. Acute oral toxicity is integrated as one supplemental endpoint within a comprehensive evaluation of human health and environmental hazards. The overall process is systematic [13]:

- Chemical Identity Confirmation using CAS RN or SMILES.

- List Screening against hundreds of regulatory and authoritative lists.

- Endpoint-Level Assessment, where data from experiments, read-across analogs, and in silico models are weighed using weight-of-evidence and often a precautionary principle.

- Overall Hazard Categorization using a rules-based system that synthesizes all endpoint findings into a final hazard category (e.g., Green, Yellow, Red) [13].

In this integrated system, a predicted or experimental LD50 value feeds into the acute toxicity endpoint assessment. If high-quality experimental data is lacking, a conservative QSAR prediction or data from a read-across analog is used to fill the gap, ensuring a complete assessment [13]. The future of acute toxicity testing lies in further developing and validating these integrated testing strategies (ITS). These strategies intelligently combine in silico tools, high-throughput in vitro assays (like 3T3 NRU cytotoxicity), and targeted in vivo tests only when absolutely necessary. The goal is to maximize the use of non-animal data for screening and prioritization, enhance human relevance, and provide a more mechanistic understanding of toxicity, all while firmly adhering to the principles of the 3Rs [11] [4].

[Figure: Timeline of LD50 Test Evolution]

[Figure: Integrated Hazard Assessment Workflow]

- OECD Testing Guidelines (420, 423, 425): The definitive international standards for conducting refined in vivo acute oral toxicity tests. They provide step-by-step protocols to ensure scientific validity, reproducibility, and ethical compliance [4].

- QSAR-Ready Chemical Structures: Standardized molecular representations (typically as SMILES strings) with salts removed and charges neutralized. This is a mandatory pre-processing step for reliable computational toxicity prediction, ensuring models interpret the correct molecular entity [11] [10].

- Reference LD50 Datasets (e.g., NICEATM/EPA): Large, curated collections of historical animal toxicity data. These are essential for training, validating, and benchmarking new predictive models. The ICCVAM reference set of ~16,713 studies is a prime example [10].

- Consensus Modeling Software (e.g., CATMoS Platform): Software that aggregates predictions from multiple underlying machine learning or QSAR models. Using a consensus approach improves prediction reliability and robustness, making it a key tool for modern hazard assessment [12] [11].

- Chemical Hazard Assessment Framework (e.g., GHS+): A structured rule-based system that integrates data from multiple endpoints (acute toxicity, mutagenicity, ecotoxicity, etc.) to assign an overall hazard category. This moves decision-making beyond a single LD50 value to a holistic profile [13].

- Applicability Domain Assessment Tool: A method or module (often integrated into QSAR software) to determine whether a chemical of interest falls within the structural space of a model's training set. It is critical for qualifying predictions and avoiding unreliable extrapolations [11].

1. Introduction: The LD50 in Human Risk Assessment

The median lethal dose (LD50) test, introduced by Trevan in 1927, is a standardized measure of the acute toxicity of substances: it determines the dose that causes death in 50% of a test animal population [14] [2]. Despite its historical role in toxicological hazard ranking and regulatory classification, its reliability for direct human risk assessment is fundamentally limited. This limitation stems from interspecies variability, the ethical and statistical inefficiencies of the classical test design, and the critical distinction between external exposure and the internal, biologically effective dose [15] [16] [17]. This guide compares traditional in vivo LD50 protocols with modern alternative approaches, framing the analysis around the need to improve predictive reliability for human health by standardizing how exposure routes are assessed and by integrating human-relevant data.

2. Standardizing Exposure: Routes, Dosimetry, and Internal Dose

Accurate risk assessment requires moving beyond administered dose to understand how exposure route affects internal dose. The U.S. EPA defines multiple dose metrics: potential dose (inhaled/ingested amount), applied dose (at absorption barrier), internal dose (absorbed into bloodstream), and biologically effective dose (interacting with target tissues) [18]. For inhalation, a primary occupational route, the internal dose can be significantly lower than the potential dose due to respiratory physiology and clearance mechanisms [18].

Table 1: Key Metrics for Inhalation vs. Oral Exposure Assessment

| Exposure Metric | Inhalation Route (Air) | Oral Route | Critical Parameter |
|---|---|---|---|
| Primary Metric | Concentration (C~air~: mg/m³ or ppm) [18] | Dose (mg/kg body weight) [2] | Media-specific measurement |
| Temporal Adjustment | C~air-adj~ = C~air~ × (ET/24) × EF × (ED/AT) [18] | Not typically applied to single-dose LD50 | Exposure time (ET), frequency (EF), duration (ED), averaging time (AT) |
| Intake/Dose Calculation | ADD = (C~air~ × InhR × ET × EF × ED) / (BW × AT) [18] | Dose = administered mass / body weight; the LD50 is the dose causing 50% mortality [2] | Inhalation rate (InhR), body weight (BW), average daily dose (ADD) |
| Species Conversion Challenge | Lung anatomy, ventilation rate, deposition efficiency [18] | Gastrointestinal physiology, metabolism, absorption [17] | Requires dosimetric adjustment factors |
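The two inhalation equations in Table 1 can be checked with a short Python sketch. The parameter values in the example are illustrative assumptions, not EPA default exposure factors; the sketch assumes ET in hours/day, EF in days/year, ED in years and AT in days, so that the adjusted concentration stays in mg/m³ and the average daily dose comes out in mg/kg-day.

```python
def adjusted_air_concentration(c_air: float, et: float, ef: float,
                               ed: float, at: float) -> float:
    """C_air-adj = C_air x (ET/24) x EF x (ED/AT), in mg/m3."""
    return c_air * (et / 24.0) * ef * (ed / at)


def average_daily_dose(c_air: float, inh_rate: float, et: float, ef: float,
                       ed: float, bw: float, at: float) -> float:
    """ADD = (C_air x InhR x ET x EF x ED) / (BW x AT), in mg/kg-day."""
    return (c_air * inh_rate * et * ef * ed) / (bw * at)


# Illustrative worker scenario (assumed values, not regulatory defaults):
# 0.5 mg/m3, 8 h/day, 250 days/yr, 25 yr, averaged over 25 yr in days
params = dict(c_air=0.5, et=8.0, ef=250.0, ed=25.0, at=25 * 365.0)
print(f"C_air-adj = {adjusted_air_concentration(**params):.3f} mg/m3")
print(f"ADD = {average_daily_dose(inh_rate=1.0, bw=70.0, **params):.4f} mg/kg-day")
```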

3. Comparative Analysis of Experimental Protocols

This section details and compares the classical LD50 test with a refined alternative and a non-animal method.

3.1. Classical Oral LD50 Test (OECD Guideline 401 - Historical)

- Objective: Determine the single oral dose causing 50% mortality in a defined animal population.

- Methodology: Groups of 8-10 animals (typically rodents) per dose level are administered the substance via gavage. Four or more dose levels are used to span the expected mortality range from 0% to 100% [2]. Animals are observed for 14 days for mortality and clinical signs of toxicity.

- Endpoint & Analysis: The LD50 and its confidence interval are calculated using probit or logistic regression analysis of mortality vs. log(dose).

- Limitations: High animal use (40-100+); significant suffering; results are highly sensitive to protocol variables (species, strain, sex, housing) [14] [16]. The endpoint (death) provides limited mechanistic insight for human risk assessment.

3.2. Fixed Dose Procedure (FDP - OECD Guideline 420)

- Objective: Identify a dose that produces evident, non-lethal toxicity in order to classify a substance's hazard, avoiding mortality as an endpoint.

- Methodology: A sighting study selects a starting dose from the fixed levels of 5, 50, 300, and 2000 mg/kg. A single group of 5 animals (one sex) is then dosed at this level. If evident toxicity (but not death) is observed, the test stops and the substance is classified; if mortality occurs, a lower fixed dose is tested [14].

- Endpoint & Analysis: The procedure identifies the dose causing clear signs of "evident toxicity." Classification is based on predefined dose bands.

- Advantages: Significant reduction (up to 70%) in animal use; emphasizes morbidity over mortality, providing more relevant toxicity data; aligns with the 3Rs (Replacement, Reduction, Refinement) [14].

3.3. In Vitro Cytotoxicity Assays (Baseline Toxicity Screening)

- Objective: Predict starting points for acute oral systemic toxicity using mammalian cell lines.

- Methodology: Mammalian cells, such as BALB/c 3T3 mouse fibroblasts or normal human keratinocytes (NHK), are exposed to a range of substance concentrations for 24-72 hours. Cell viability is measured using endpoints such as neutral red uptake, MTT reduction, or ATP content [14] [16].

- Endpoint & Analysis: The concentration inhibiting 50% of cell viability (IC50) is calculated. Regression models can correlate IC50 ranges with in vivo LD50 classification bands.

- Advantages: No live animals used; high throughput; can provide mechanistic data; can use human cells (e.g., NHK) for greater relevance [16].

- Limitations: Cannot model complex pharmacokinetics or organ-system interactions; best used in integrated testing strategies.
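The IC50-to-LD50 regression above can be sketched as an ordinary least-squares fit on log-transformed values. The reference-chemical data below are hypothetical and chosen to lie exactly on a line. Expressing both quantities in molar units (mM and mmol/kg) is an assumption. A real prediction model would be calibrated on a curated reference set and would report its prediction interval.

```python
import math

def fit_log_regression(ic50_mM, ld50_mmol_per_kg):
    """Least squares of log10(LD50) on log10(IC50); returns (slope, intercept)."""
    xs = [math.log10(v) for v in ic50_mM]
    ys = [math.log10(v) for v in ld50_mmol_per_kg]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def predict_ld50(ic50_mM, slope, intercept):
    """Back-transform the fitted line to a predicted LD50 (mmol/kg)."""
    return 10 ** (slope * math.log10(ic50_mM) + intercept)

# Hypothetical reference chemicals: IC50 (mM) and in vivo LD50 (mmol/kg)
ic50 = [0.01, 0.1, 1.0, 10.0]
ld50 = [0.5, 1.0, 2.0, 4.0]
slope, intercept = fit_log_regression(ic50, ld50)
print(slope, intercept, predict_ld50(0.5, slope, intercept))
```

The predicted value would serve only as a starting-dose estimate for an in vivo study, not as a replacement for it.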

Table 2: Protocol Comparison for Acute Toxicity Testing

| Feature | Classical LD50 | Fixed Dose Procedure | In Vitro Cytotoxicity |
|---|---|---|---|
| Primary Endpoint | Mortality (LD50) [2] | Evident (non-lethal) toxicity [14] | Cell viability (IC50) |
| Animals/Cells per Test | 40-100+ animals [16] | 5-15 animals [14] | 0 animals; multi-well plates |
| Duration | 14 days observation [2] | 14 days observation | 24-72 hours |
| Statistical Output | Precise LD50 with confidence intervals | Hazard classification band | IC50 with confidence intervals |
| Predictive Value for Human Acute Toxicity | Limited by interspecies differences [15] | Improved by using signs of morbidity rather than death | Promising for ranking; requires validation |
| Regulatory Acceptance | Historically required; now largely retired | Accepted for classification (OECD, EPA, EU) | Accepted as part of weight-of-evidence; growing acceptance |

4. Data Analysis: Interspecies Variability and Visualization

A core challenge in applying animal data is interspecies variability. A meta-analysis of LD50, LC50 (lethal concentration), and LR50 (lethal tissue residue) data found that internal doses (biologically effective doses) are more comparable across species and routes. External lethal doses, by contrast, can vary over several orders of magnitude [17]. Dichlorvos shows variation by both route and species: its oral LD50 in the rat is 56 mg/kg, whereas its 4-hour inhalation LC50 in the rat is 1.7 ppm [2]. One value is an administered dose and the other an air concentration, so the two cannot be compared directly without dosimetric assumptions.
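A first step toward comparing the two dichlorvos values is to express the inhalation LC50 as a mass concentration. The sketch below assumes ideal-gas behaviour at 25 °C and 1 atm, which gives a molar volume of 24.45 L/mol. It also assumes a molecular weight of about 220.98 g/mol, computed from the formula C4H7Cl2O4P. The conversion yields roughly 15 mg/m³. Turning that into an inhaled dose in mg/kg would also require an assumed breathing rate, body weight, and absorbed fraction, none of which are given here.

```python
MOLAR_VOLUME_L = 24.45  # litres per mole of ideal gas at 25 °C and 1 atm (assumed conditions)

def ppm_to_mg_per_m3(ppm, molecular_weight_g_per_mol):
    """Convert a vapour concentration from ppm (by volume) to mg/m^3."""
    return ppm * molecular_weight_g_per_mol / MOLAR_VOLUME_L

# Dichlorvos, C4H7Cl2O4P: molecular weight about 220.98 g/mol
print(f"{ppm_to_mg_per_m3(1.7, 220.98):.1f} mg/m^3")
```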

Table 3: Illustrative Interspecies Variability in Acute Toxicity (Sample Values)

| Substance | Species | Route | LD50/LC50 | Toxicity Class (Hodge & Sterner) |
|---|---|---|---|---|
| Dichlorvos [2] | Rat | Oral | 56 mg/kg | Moderately Toxic |
| Dichlorvos [2] | Rat | Inhalation | 1.7 ppm (4 h) | Extremely Toxic |
| Dichlorvos [2] | Rabbit | Oral | 10 mg/kg | Highly Toxic |
| Dichlorvos [2] | Dog | Oral | 100 mg/kg | Moderately Toxic |
| Nicotine [15] | Rat | Oral | 50 mg/kg | Highly Toxic |
| Ethanol [15] | Rat | Oral | 7000 mg/kg | Practically Non-toxic |
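The oral classes in Table 3 can be checked with a small lookup. This sketch assumes the commonly tabulated Hodge & Sterner oral rat LD50 bands: ≤1, 1–50, 50–500, 500–5,000, 5,000–15,000, and >15,000 mg/kg. Published bands share their edges, so this sketch assigns a value exactly on an edge to the more toxic class, which is an assumption. Inhalation values use separate ppm bands and are not handled here.

```python
# Hodge & Sterner oral rat LD50 bands: (upper limit in mg/kg, class), most to least toxic.
# A value exactly on a shared band edge is assigned to the more toxic class (assumption).
HODGE_STERNER_ORAL = [
    (1, "Extremely Toxic"),
    (50, "Highly Toxic"),
    (500, "Moderately Toxic"),
    (5000, "Slightly Toxic"),
    (15000, "Practically Non-toxic"),
]

def hodge_sterner_class(oral_ld50_mg_per_kg):
    """Return the Hodge & Sterner class for an oral rat LD50 (mg/kg)."""
    for upper_limit, label in HODGE_STERNER_ORAL:
        if oral_ld50_mg_per_kg <= upper_limit:
            return label
    return "Relatively Harmless"

for name, ld50 in [("dichlorvos, rat", 56), ("dichlorvos, rabbit", 10),
                   ("dichlorvos, dog", 100), ("nicotine, rat", 50),
                   ("ethanol, rat", 7000)]:
    print(name, hodge_sterner_class(ld50))
```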

Effective visualization of such comparative data is essential. Bar charts are well suited to comparing discrete values (e.g., LD50 across species) [19] [20]. Dose-response curves, typically line charts, visualize the continuous relationship between dose and effect, highlighting the slope and critical values like LD50, LD10, and NOAEL [15]. A box plot (or whisker plot) is a robust way to display the distribution, median, and variability of LD50 data across multiple studies or species [21].
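The summary statistics behind a box plot can be computed directly. This sketch uses the three oral dichlorvos LD50 values from Table 3. It works on a log10 scale, an assumption that suits LD50 data spanning orders of magnitude. With only three values the interquartile range coincides with the full range, so a real box plot would need many more studies or species.

```python
import math
import statistics

# Oral dichlorvos LD50 values (mg/kg) for three species, from Table 3
oral_ld50 = {"rat": 56, "rabbit": 10, "dog": 100}

log_values = sorted(math.log10(v) for v in oral_ld50.values())
q1, median, q3 = statistics.quantiles(log_values, n=4)  # default 'exclusive' method
spread_orders = log_values[-1] - log_values[0]  # max/min ratio in orders of magnitude

print(f"median LD50 = {10 ** median:.0f} mg/kg, IQR = {10 ** q1:.0f}-{10 ** q3:.0f} mg/kg")
print(f"range spans {spread_orders:.1f} orders of magnitude")
```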

Table 4: Data Visualization Methods for Toxicity Data

| Chart Type | Best For | Example in Toxicity Assessment | Pros/Cons |
|---|---|---|---|
| Bar Chart [19] [20] | Comparing exact values across categories. | Directly comparing LD50 values of 5 chemicals for the same species/route. | Pro: Simple, universally understood. Con: Can oversimplify variability. |
| Dose-Response Curve (Line Chart) [15] | Showing the relationship between dose and effect (mortality, response %). | Plotting % mortality vs. log(dose) to derive LD50 and curve slope. | Pro: Shows complete relationship, slope indicates toxicity range. Con: Requires multiple data points per agent. |
| Box Plot (Whisker Plot) [21] | Displaying data distribution (median, range, interquartile range). | Showing variability in reported LD50s for one chemical across different labs or species. | Pro: Excellent for showing variability and outliers. Con: Less familiar to some general audiences. |

Diagram 1: Workflow for Analyzing Interspecies Toxicity Data

5. The Scientist's Toolkit: Reagents & Essential Materials

- Test Substances: High-purity chemical or well-characterized mixture [2].

- Vehicle/Control Article: Solvent (e.g., corn oil, saline, methyl cellulose) for dose formulation and control groups.

- Cell Culture System (In Vitro): Validated cells (e.g., BALB/c 3T3 fibroblasts or normal human keratinocytes, NHK), culture medium, sera, trypsin/EDTA [16].

- Viability Assay Kits: MTT, Neutral Red, or ATP-based assay kits for quantifying cytotoxicity [16].

- Animal Models (When Required): Defined strain, sex, and age of rodents (e.g., Sprague-Dawley rat, CD-1 mouse) from accredited breeders [2].

- Dosing Apparatus: Oral gavage needles, calibrated inhalation chambers, precision micropipettes.

- Data Analysis Software: Software for probit/logit analysis (e.g., EPA BMDS), statistical packages (R, SAS, GraphPad Prism), and visualization tools.

6. Alternative Approaches and the Expression of Modern Values

The field is moving beyond a singular focus on lethality toward approaches that prioritize human relevance, efficiency, and ethics. These include:

- Integrated Testing Strategies (ITS): Combining computational (in silico) models like QSAR, high-throughput in vitro assays, and targeted in vivo tests only for confirmation [14] [16].

- Adoption of Subacute Endpoints: Using more informative endpoints like organ toxicity biomarkers, clinical pathology, and histopathology.

- Dosimetric Adjustment: Applying physiologically based pharmacokinetic (PBPK) modeling to extrapolate animal doses to human equivalent doses based on internal dosimetry, addressing the core limitation of route-to-route and species extrapolation [18] [17].
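A full PBPK model is too large for a short example. The idea of extrapolating on internal rather than external dose can still be shown with a much simpler stand-in. The sketch below is not PBPK. It assumes linear kinetics, under which the area under the plasma concentration-time curve (AUC) equals bioavailability × dose / clearance. It then finds the human dose that matches the animal AUC. All parameter values and function names are hypothetical.

```python
def auc_per_unit_dose(bioavailability, clearance_l_per_h_per_kg):
    """AUC (mg*h/L) produced by 1 mg/kg under linear kinetics (simplifying assumption)."""
    return bioavailability / clearance_l_per_h_per_kg

def human_equivalent_dose(animal_dose_mg_per_kg, animal_f, animal_cl, human_f, human_cl):
    """Human dose (mg/kg) giving the same AUC as the animal dose."""
    target_auc = animal_dose_mg_per_kg * auc_per_unit_dose(animal_f, animal_cl)
    return target_auc / auc_per_unit_dose(human_f, human_cl)

# Hypothetical parameters: rat F=0.5, CL=2.0 L/h/kg; human F=0.8, CL=0.4 L/h/kg
print(human_equivalent_dose(100, 0.5, 2.0, 0.8, 0.4))
```

A PBPK model replaces the single clearance term with organ-level physiology and metabolism. That is what lets it address route-to-route as well as species extrapolation.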

Diagram 2: Integrated Testing Strategy Workflow

7. Conclusion

Reliable human risk assessment cannot rely on the classical LD50 as a standalone, direct metric. Standardization must account for the route of exposure, so that internal dosimetry is understood. It must also favor human-relevant, mechanistic, and ethical testing strategies [18] [16] [17]. Animal-derived acute toxicity data provide a historical benchmark, but their future utility lies within integrated approaches. These use modern in silico and in vitro tools to prioritize and refine testing, ultimately enhancing the predictive accuracy and human relevance of safety assessments.

The assessment of chemical toxicity has evolved from systems based on observational animal data to globally harmonized frameworks designed for human protection. The Hodge and Sterner scale, developed in the mid-20th century, established a systematic but simplistic method for categorizing substance toxicity based primarily on oral rat LD₅₀ values [22]. This scale categorized chemicals from "extremely toxic" to "relatively harmless" and was foundational for early hazard communication. However, its reliance on a single, animal-derived metric presented significant limitations for accurate human risk prediction.

This historical approach contrasts with the modern Globally Harmonized System of Classification and Labelling of Chemicals (GHS), developed by the United Nations. The GHS is an integrated, evidence-based system that classifies chemicals according to their physical, health, and environmental hazards and communicates this information through standardized labels and safety data sheets (SDS) [23]. Its core objectives are to enhance the protection of human health and the environment, facilitate international trade, and reduce the burden of compliance for companies operating in multiple jurisdictions [23]. The transition from Hodge and Sterner to GHS represents a paradigm shift from animal-centric lethality data to a multifaceted, human-focused assessment that incorporates a broader spectrum of toxicological endpoints.

Comparative Analysis of Classification Systems

The fundamental difference between the two systems lies in their data inputs, classification criteria, and intended application. The table below sets their oral toxicity categories side by side. Rows are aligned by rank order only, because the band boundaries of the two systems do not coincide.

Table 1: Comparison of Hodge & Sterner Oral Toxicity Scale and GHS Acute Oral Toxicity Categories

| Hodge & Sterner Category | Rat Oral LD₅₀ (mg/kg) | GHS Acute Oral Toxicity Category | GHS Hazard Statement & Signal Word | GHS Oral LD₅₀ Range (mg/kg) |
|---|---|---|---|---|
| Relatively Harmless | > 15,000 | Not Classified | None | > 5,000 |
| Practically Non-Toxic | 5,000 – 15,000 | Category 5 | H303: May be harmful if swallowed (Warning) | > 2,000 – 5,000 |
| Slightly Toxic | 500 – 5,000 | Category 4 | H302: Harmful if swallowed (Warning) | > 300 – 2,000 |
| Moderately Toxic | 50 – 500 | Category 3 | H301: Toxic if swallowed (Danger) | > 50 – 300 |
| Highly Toxic | 1 – 50 | Category 2 | H300: Fatal if swallowed (Danger) | > 5 – 50 |
| Extremely Toxic | ≤ 1 | Category 1 | H300: Fatal if swallowed (Danger) | ≤ 5 |
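The GHS columns of Table 1 translate into a lookup on a single oral LD₅₀ point estimate. This is only a sketch. In practice, GHS classification can also use acute toxicity estimates and weight of evidence, and some jurisdictions do not adopt Category 5. The example inputs are the rat oral LD₅₀ values quoted earlier for dichlorvos (56 mg/kg) and ethanol (7000 mg/kg).

```python
# GHS acute oral toxicity: (upper LD50 limit in mg/kg, category, hazard statement, signal word)
GHS_ACUTE_ORAL = [
    (5, "Category 1", "H300: Fatal if swallowed", "Danger"),
    (50, "Category 2", "H300: Fatal if swallowed", "Danger"),
    (300, "Category 3", "H301: Toxic if swallowed", "Danger"),
    (2000, "Category 4", "H302: Harmful if swallowed", "Warning"),
    (5000, "Category 5", "H303: May be harmful if swallowed", "Warning"),
]

def ghs_acute_oral(ld50_mg_per_kg):
    """Return (category, hazard statement, signal word) for an oral LD50 point estimate."""
    for upper_limit, category, statement, signal in GHS_ACUTE_ORAL:
        if ld50_mg_per_kg <= upper_limit:
            return category, statement, signal
    return "Not classified", None, None

print(ghs_acute_oral(56))    # dichlorvos, rat oral
print(ghs_acute_oral(7000))  # ethanol, rat oral
```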

The GHS system introduces critical refinements. First, it uses standardized hazard statements (H-codes) and signal words ("Danger" or "Warning") to convey the nature and severity of a hazard [23]. Second, GHS classification is not based solely on LD₅₀ but considers the weight of evidence from various sources, including in vitro studies and human experience [24]. Third, GHS mandates a suite of communication elements, including pictograms and precautionary statements. This makes it a more robust and actionable hazard communication tool than the single-number output of the Hodge and Sterner scale [25].

The Scientific Challenge: Reliability of Animal LD₅₀ Data for Human Risk

The central thesis questioning the reliability of animal LD₅₀ data for human risk assessment is supported by substantial scientific evidence. Traditional acute toxicity testing protocols, such as the OECD Up-and-Down Procedure, are designed to determine the dose lethal to 50% of a test population (typically rodents) with statistical confidence [22]. While standardized, these protocols face inherent limitations:

- Interspecies Metabolic and Physiological Differences: Fundamental genetic and phenotypic variations mean that a compound's metabolic pathway, rate of clearance, or target organ sensitivity can differ drastically between rodents and humans [26].

- Ethical and Resource Cost: LD₅₀ tests require significant numbers of animals and can cause severe suffering. Efforts to reduce animal use, like the limit test (using a maximum of 5 animals at a high dose), highlight the ethical and resource-intensive nature of these assays [22].

- Narrow Scope of Endpoints: LD₅₀ measures only one endpoint—death—over a short period. It provides no data on organ-specific toxicity, long-term chronic effects, carcinogenicity, or reproductive harm, which are critical for comprehensive human safety assessment [24].

This translational gap has direct consequences. A significant proportion of drug candidates that appear safe in preclinical animal studies fail in clinical trials or are withdrawn post-marketing. The cause is often unforeseen human adverse events, such as cardiovascular toxicity or neurotoxicity [26] [27]. For example, the appetite suppressant sibutramine showed no severe cytotoxicity in preclinical studies but was later withdrawn due to life-threatening cardiovascular risks in humans [26]. This underscores that lethality in animals is a poor stand-alone predictor of complex human toxicities.

Modern Alternatives and Performance Data

The field is rapidly moving toward New Approach Methodologies (NAMs) that aim to reduce, refine, and replace animal testing [24]. These include integrated testing strategies that combine in vitro assays (like 3D organoids), in silico models (like QSAR and PBPK modeling), and omics technologies (transcriptomics, proteomics) [24]. A prominent advancement is the use of artificial intelligence and machine learning to integrate diverse data streams for human-specific toxicity prediction.

A 2025 study reports a genotype-phenotype differences (GPD) machine learning model [26]. This model moves beyond chemical structure to incorporate biological disparities between preclinical models (e.g., mice, cell lines) and humans in areas like gene essentiality and tissue expression profiles.

Table 2: Performance Comparison of Toxicity Prediction Models

| Model / Approach | Core Data Input | Reported Performance (AUROC) | Key Strength | Primary Limitation |
|---|---|---|---|---|
| Traditional Animal LD₅₀ | Rodent lethality dose | Not applicable (single endpoint) | Standardized, historical data baseline | Poor human translatability, ethical cost, narrow scope [22]. |
| Chemical Structure-Based AI | Molecular descriptors & fingerprints | ~0.50 (Baseline in GPD study) | High-throughput, early screening | Misses biologically-driven human-specific toxicity [26]. |
| GPD-Integrated AI Model [26] | Chemical features + interspecies genotype-phenotype differences | 0.75 | Captures human-specific biological risk; identifies neuro/cardio toxicity signals. | Requires high-quality genetic and phenotypic data across species. |
| NAM/IATA Framework [24] | In vitro, in silico, read-across, omics data | Varies by endpoint (qualitative "weight of evidence") | Mechanistic insight, addresses multiple toxicity endpoints, reduces animal use. | Lack of standardized validation frameworks for regulatory acceptance. |

The experimental protocol for the GPD model involved compiling a dataset of 434 "risky" drugs (associated with clinical trial failure or post-market withdrawal) and 790 approved drugs [26]. For each drug target, differences in gene essentiality, tissue expression profiles, and biological network connectivity between humans and preclinical models were quantified. A Random Forest model integrating these GPD features with chemical descriptors significantly outperformed models based on chemical structure alone, particularly for predicting hard-to-detect toxicities like neurotoxicity [26].
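The AUROC values in Table 2 can be read as a ranking probability. AUROC is the chance that a randomly chosen risky drug receives a higher model score than a randomly chosen approved drug. On this reading, 0.50 is chance-level ranking and 1.0 is perfect separation. The pairwise sketch below computes AUROC that way, counting ties as one half. The scores are hypothetical, and a library routine would be preferable for large datasets.

```python
def auroc(risky_scores, approved_scores):
    """Probability that a risky drug outscores an approved drug (ties count 0.5).

    Simple O(n*m) pairwise form, adequate for small illustrative sets."""
    wins = 0.0
    for r in risky_scores:
        for a in approved_scores:
            if r > a:
                wins += 1.0
            elif r == a:
                wins += 0.5
    return wins / (len(risky_scores) * len(approved_scores))

# Hypothetical model scores for three risky and three approved drugs
print(auroc([0.9, 0.8, 0.4], [0.7, 0.3, 0.2]))
```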

The workflow for modern, human-focused hazard assessment integrates these new methodologies, as shown in the following diagram.

Diagram: Workflow Shift from Animal-Centric to Integrated Evidence-Based Hazard Assessment. The traditional path (top, yellow) relies on linear extrapolation from animal data, while the modern GHS/NAMs path (bottom, green) integrates diverse human-relevant evidence streams for a more robust classification.

The Scientist's Toolkit: Research Reagent Solutions

Transitioning to human-focused assessment requires new tools. The following table details essential reagents and materials for contemporary toxicology research.

Table 3: Essential Research Reagent Solutions for Modern Toxicity Assessment

| Reagent/Material | Function in Research | Application Context |
|---|---|---|
| Human Primary Cells & iPSC-Derived Cells | Provide human-specific biological response data, overcoming interspecies differences. Used in 2D/3D culture systems. | In vitro toxicity screening, organ-on-a-chip models, mechanistic studies [24]. |
| Toxicogenomics Panels (Transcriptomics/Proteomics) | Measure genome-wide gene expression or protein changes in response to toxicants. Identifies biomarkers and modes of action. | Developing Adverse Outcome Pathways (AOPs), calculating benchmark doses (BMD), understanding mechanistic toxicity [24]. |
| QSAR Software & Chemical Databases | Predict toxicity based on chemical structure similarity and properties. Enables high-throughput virtual screening. | Early compound prioritization, read-across justification for data-poor chemicals, filling data gaps [24]. |
| PBPK (Physiologically Based Pharmacokinetic) Modeling Software | Simulates the absorption, distribution, metabolism, and excretion (ADME) of chemicals in humans and animals. | Interspecies and inter-route extrapolation, predicting internal target organ doses [24]. |
| Machine Learning Platforms (e.g., for GPD Analysis) | Integrate diverse data types (chemical, genomic, phenotypic) to identify complex patterns predicting human toxicity. | Building advanced models like GPD-based classifiers for human-specific risk prediction [26] [27]. |
| Standardized GHS Label Elements (Pictograms, H/P Codes) | Tools for the accurate communication of classified hazards in laboratory and workplace settings. | Ensuring compliant labeling of research chemicals and secondary containers, supporting hazard communication programs [23] [25]. |

Regulatory Implementation and Future Directions

The adoption of GHS and NAMs is actively reshaping the regulatory landscape. In the United States, OSHA's updated Hazard Communication Standard (HCS) aligns with GHS Rev. 7, with key compliance deadlines approaching: substance reclassification by January 19, 2026, and mixture reclassification by July 19, 2027 [28]. This update emphasizes clearer labels, especially for small containers, and more detailed Safety Data Sheets [23] [28].

Regulatory agencies are increasingly building frameworks for NAM acceptance. The European Chemicals Agency (ECHA) and the U.S. EPA are developing guidance for using read-across, QSAR, and PBPK models within Integrated Approaches to Testing and Assessment (IATA) [24]. The future direction involves greater automation, data harmonization (FAIR principles), and the use of AI to manage the vast data generated by high-throughput NAMs [24]. The continued integration of human-relevant biology into hazard identification and classification promises to close the reliability gap left by traditional animal data, leading to more robust protection of human health.

The Fundamental Role of LD50 in Initial Hazard Identification for Chemicals and Pharmaceuticals

For decades, the median lethal dose (LD50) has served as a cornerstone metric in toxicology, providing a standardized measure of a substance's acute toxicity [7]. Defined as the dose required to kill 50% of a test population within a specified time, its numerical value (expressed in mg/kg of body weight) offers a seemingly straightforward basis for classifying chemical hazards, prioritizing risks, and establishing initial safety guidelines [7]. In pharmaceutical development, it traditionally defined the starting point for safety margins, informing the calculation of the therapeutic index.
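In its classical form, the therapeutic index compares the median lethal and median effective doses measured in the same animal model. The minimal worked equation below uses hypothetical values of 200 and 10 mg/kg. Because it compares two medians, the ratio ignores the slopes of the two dose-response curves. For this reason, a more conservative margin such as LD1/ED99 is sometimes quoted alongside it.

$$
\mathrm{TI} = \frac{\mathrm{LD}_{50}}{\mathrm{ED}_{50}}, \qquad \text{e.g.}\quad \frac{200\ \mathrm{mg/kg}}{10\ \mathrm{mg/kg}} = 20
$$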

However, the reliability of animal-derived LD50 data for predicting human risk forms a critical point of scholarly and practical debate. This comparison guide examines the fundamental role of the LD50 test within the contemporary landscape of hazard identification. It objectively evaluates its performance against a suite of New Approach Methodologies (NAMs), framing the discussion within a broader thesis on the translational reliability of animal data for human safety. The evolution toward integrated testing strategies and computational toxicology reflects a concerted effort to address the scientific, ethical, and extrapolation challenges inherent in the classical paradigm [24] [29].

The Traditional LD50 Protocol: Methodology and Inherent Limitations

The classical determination of LD50 follows a standardized in vivo experimental protocol. Researchers administer logarithmically spaced doses of a test compound to groups of laboratory animals, typically rats or mice, via a relevant route (e.g., oral, dermal, intravenous) [7]. Mortality is recorded over a fixed observation period, usually 14 days. The resulting data is plotted on a dose-response curve, with the dose corresponding to 50% mortality interpolated as the LD50 value [7].
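The interpolation step can be sketched as linear interpolation on the log-dose scale between the two dose groups that bracket 50% mortality. This is one simple approach, assumed here for illustration. Probit or logistic regression uses all dose groups and also yields confidence intervals. `interpolate_ld50` and the mortality data are hypothetical.

```python
import math

def interpolate_ld50(doses, mortality_fractions):
    """Interpolate the 50% point linearly on log10(dose) between bracketing groups.

    Assumes doses are in ascending order and mortality rises monotonically."""
    points = list(zip(doses, mortality_fractions))
    for (d_lo, m_lo), (d_hi, m_hi) in zip(points, points[1:]):
        if m_lo <= 0.5 <= m_hi and m_hi > m_lo:
            fraction = (0.5 - m_lo) / (m_hi - m_lo)
            log_ld50 = math.log10(d_lo) + fraction * (math.log10(d_hi) - math.log10(d_lo))
            return 10 ** log_ld50
    raise ValueError("50% mortality is not bracketed by the tested doses")

# Hypothetical log-spaced doses (mg/kg) and observed mortality fractions
print(round(interpolate_ld50([50, 100, 200], [0.2, 0.4, 0.8]), 1))
```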

Table 1: Key Limitations of the Traditional Animal LD50 Test

| Limitation Category | Specific Issues | Impact on Human Risk Assessment |
|---|---|---|
| Ethical & Resource | High animal use; subject to the 3Rs (Replacement, Reduction, Refinement) principle; costly and time-consuming [24]. | Impedes high-throughput screening; increasing regulatory and societal pressure to find alternatives [29]. |
| Interspecies Extrapolation | Metabolic, physiological, and genetic differences between rodents and humans [30]. | Introduces uncertainty in predicting the effective toxic dose in humans. |
| Protocol Variability | Results can vary with species, strain, sex, age, and laboratory conditions [7]. | Affects the reproducibility and consistency of data used for global hazard classification. |
| Endpoint Specificity | Measures only mortality, providing no mechanistic insight into the pathway of toxicity or target organ effects [31]. | Limits utility for understanding mode of action and for assessing chemicals with similar LD50s but different underlying toxicities. |
| Statistical & Predictive Value | A single point estimate (LD50) may not accurately reflect the shape of the entire dose-response curve for human-relevant outcomes [32]. | Correlation with human lethal doses is poor for some chemical classes [32]. |

Diagram 1: Traditional animal LD50 test workflow and key limitations.

Performance Comparison: LD50 versus New Approach Methodologies (NAMs)

The limitations of the traditional test have catalyzed the development and validation of NAMs. These include in vitro assays, in silico models, and omics technologies, which are often integrated within a weight-of-evidence framework [24]. The table below provides a comparative performance analysis of these alternatives against the classical LD50.

Table 2: Comparative Performance of Hazard Identification Methods for Acute Toxicity

| Methodology | Key Description | Experimental Performance & Data | Relative Advantages | Relative Disadvantages |
|---|---|---|---|---|
| Traditional LD50 (Rat) | In vivo mortality endpoint in rodents [7]. | Long historical dataset; regulatory acceptance. | Direct measure of systemic toxicity. | High animal use, cost, time. Poor human extrapolation for some classes. No mechanistic insight [32]. |
| Consensus QSAR Models | Computational models predicting toxicity from chemical structure [12]. | CCM model: Under-prediction rate 2% (health protective), over-prediction 37% on 6,229 compounds [12]. | Very fast, low cost, no animals. Good for screening/prioritization [12] [30]. | Reliant on quality training data. Can be a "black box." Limited for novel scaffolds [31]. |
| Mechanistic QSAR/Hybrid Models | QSAR integrated with molecular docking & DFT calculations for mechanism [31]. | For nerve agents: Model identified AChE binding affinity as key predictor; enabled LD50 prediction for novel agents (e.g., Novichok) [31]. | Provides mechanistic insight (e.g., AChE inhibition). Better for novel, highly toxic substances [31]. | Computationally intensive. Requires expert knowledge. |
| Integrated Testing Strategies (IATA) | Defined approaches combining in chemico, in vitro, & in silico data within a framework [24]. | Used for skin sensitization & other endpoints. Aims to replicate Adverse Outcome Pathways (AOPs). | Animal-free, mechanistically informative. Can improve accuracy via weight-of-evidence [24]. | Complex to develop and validate. Regulatory acceptance can be slow [29]. |
| Human Cell-Based In Vitro Assays | High-throughput screening using human cell lines (2D/3D) or organoids [24] [30]. | Can generate IC50 values correlating to organ-specific toxicity. Data feeds into in vitro to in vivo extrapolation (IVIVE) models. | Human-relevant biology. Medium-high throughput. Can elucidate cellular mechanisms. | May not capture complex systemic physiology (ADME). |
| Rodent-to-Human Extrapolation Analysis | Retrospective correlation of historical rodent LD50 with human lethal dose [32]. | Study of 36 chemicals: Best correlation was mouse intraperitoneal LD50 to human dose (r²=0.838) [32]. | Maximizes utility of existing historical data. Can inform quantitative extrapolation factors. | Based on limited, high-quality human data. Route-specific correlations vary [32]. |

The data reveals a critical trade-off. While traditional LD50 provides a whole-organism systemic response, its predictive reliability for humans is variable [32]. Conversely, QSAR models offer exceptional throughput and are increasingly robust for conservative hazard classification, as shown by the very low under-prediction rate of the Conservative Consensus Model (CCM) [12]. The most significant advancement may be mechanistically grounded hybrid models, which for specific toxicants like nerve agents can surpass the predictive utility of a stand-alone rodent LD50 by identifying the molecular initiating event (e.g., AChE binding energy) [31].

Experimental Protocols for Key Alternative Methods

Consensus Quantitative Structure-Activity Relationship (QSAR) Modeling

This protocol leverages multiple computational models to generate a health-protective prediction [12].

- Input Chemical Structure: A standardized chemical structure (e.g., SMILES string) is prepared.

- Multi-Model Prediction: The structure is run through several established QSAR platforms (e.g., TEST, CATMoS, VEGA) to obtain individual predicted LD50 values [12].

- Apply Consensus Rule: A conservative consensus model (CCM) selects the lowest predicted LD50 value from the individual model outputs [12].

- Hazard Classification: The consensus LD50 value is converted into a Globally Harmonized System (GHS) toxicity category for classification.

- Performance Validation: Model performance is benchmarked against a large, curated dataset of experimental rodent LD50 values, with accuracy measured by rates of under-prediction (non-conservative error) and over-prediction (conservative error) [12].
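The consensus and benchmarking steps can be sketched in a few lines. The sketch assumes each QSAR tool has already returned an LD50 in mg/kg. It also assumes errors are scored at the GHS category level, so an under-prediction is a predicted category less severe than the experimental one. All function names and values are hypothetical, and the cited study's exact scoring rule may differ.

```python
import bisect

GHS_CUTOFFS = [5, 50, 300, 2000, 5000]  # upper LD50 limits (mg/kg) for Categories 1-5

def ghs_category(ld50_mg_per_kg):
    """Return 1-5 for the GHS acute oral categories, 6 for 'not classified'."""
    return bisect.bisect_left(GHS_CUTOFFS, ld50_mg_per_kg) + 1

def conservative_consensus(predicted_ld50s):
    """CCM rule: keep the lowest (most toxic) LD50 among the individual models."""
    return min(predicted_ld50s)

def prediction_error_rates(consensus_ld50s, experimental_ld50s):
    """Return (under, over) fractions. Under-prediction means the predicted
    category is less severe (higher number) than the experimental category."""
    under = over = 0
    for predicted, experimental in zip(consensus_ld50s, experimental_ld50s):
        p, e = ghs_category(predicted), ghs_category(experimental)
        if p > e:
            under += 1
        elif p < e:
            over += 1
    n = len(experimental_ld50s)
    return under / n, over / n

# Hypothetical outputs of three QSAR tools (mg/kg) for three chemicals
model_outputs = [[320, 150, 900], [2500, 4100, 1800], [12, 40, 8]]
experimental = [250, 3000, 60]  # hypothetical experimental rat LD50s (mg/kg)

consensus = [conservative_consensus(preds) for preds in model_outputs]
under_rate, over_rate = prediction_error_rates(consensus, experimental)
print(consensus, under_rate, over_rate)
```

Taking the minimum makes under-prediction rare by construction, at the cost of more over-prediction. This is the trade-off behind the low under-prediction and higher over-prediction rates reported for the CCM.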

Hybrid Mechanistic QSAR for Organophosphorus Agents

This detailed protocol integrates computational chemistry to capture toxicodynamic mechanisms [31].

- Descriptor Calculation:

  - Conventional Descriptors: Calculate physicochemical properties (e.g., log P, molecular weight).

  - Quantum Chemical Descriptors: Use Density Functional Theory (DFT) to compute the serine phosphorylation interaction energy, a key step in acetylcholinesterase (AChE) inhibition [31].

- Molecular Docking: Perform docking simulations of the agent into the AChE active site to estimate binding affinity (docking score) [31].

- Model Training & Validation: Combine all descriptors into a dataset. Use machine learning algorithms (e.g., Random Forest) to build a model correlating descriptors with experimental LD50 values. Validate using cross-validation techniques [31].

- Prediction & Interpretation: Apply the trained model to predict LD50 for novel compounds. Use feature importance analysis to identify which mechanistic descriptor (e.g., binding energy) drives the prediction [31].
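The validation step can be illustrated with k-fold cross-validation. To keep the sketch dependency-free, it swaps the Random Forest named in the protocol for a one-descriptor least-squares line. The cross-validation loop is the part being illustrated. The docking scores and log10 LD50 values are hypothetical and lie exactly on a line, so the cross-validated RMSE should be essentially zero. Real data would give a nonzero error to compare across candidate models, and the compounds would normally be shuffled before splitting.

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept for a single descriptor."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def k_fold_rmse(xs, ys, k):
    """k-fold cross-validated RMSE; fold f holds out compounds f, f+k, f+2k, ..."""
    squared_errors = []
    for fold in range(k):
        held_out = set(range(fold, len(xs), k))
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        slope, intercept = fit_line(train_x, train_y)
        for i in held_out:
            predicted = slope * xs[i] + intercept
            squared_errors.append((ys[i] - predicted) ** 2)
    return (sum(squared_errors) / len(squared_errors)) ** 0.5

# Hypothetical docking scores (kcal/mol) and log10 LD50 values on an exact line
docking_scores = [-6.0, -7.0, -8.0, -9.0, -10.0, -11.0]
log_ld50 = [0.5 * s + 4.0 for s in docking_scores]
rmse = k_fold_rmse(docking_scores, log_ld50, k=3)
print(f"3-fold CV RMSE: {rmse:.3g} log10 units")
```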

Diagram 2: Integrating New Approach Methodologies (NAMs) for hazard identification.

Transitioning from traditional methods to integrated strategies requires a new toolkit. The table below details key resources for implementing modern hazard identification approaches.

Table 3: Research Reagent Solutions for Modern Hazard Identification

| Item/Resource | Type | Primary Function in Hazard ID | Example/Notes |
|---|---|---|---|
| OECD QSAR Toolbox | Software | Filling data gaps via read-across from structurally similar chemicals with existing data [24]. | Uses chemical categorization and workflow to predict toxicity. |
| Defined Approaches (DAs) | Protocol | Standardized, animal-free testing strategies for specific endpoints (e.g., skin sensitization) [29]. | Combines specific in chemico and in vitro assay results in a fixed formula. |
| Physiologically Based Kinetic (PBK) Models | Software | In vitro to in vivo extrapolation (IVIVE); translates in vitro concentration to in vivo dose [24]. | Tools like httk R package model human pharmacokinetics. |
| Toxicity Databases | Database | Provide curated experimental data for model training and validation [30]. | PubChem, ChEMBL, DSSTox provide LD50, assay data [30]. |
| Human Cell Lines & Organoids | Biological | Provide human-relevant mechanistic data on cellular stress and death pathways [24] [30]. | Primary hepatocytes, 3D liver spheroids, iPSC-derived cells. |
| High-Throughput Screening (HTS) Assays | Assay | Rapidly profile chemical bioactivity across many targets or cellular pathways [24]. | Used in programs like the U.S. EPA's ToxCast. |
| Transcriptomics Platforms | Platform | Generate omics data to identify gene expression signatures of toxicity and inform AOPs [24]. | Used in benchmark dose (BMD) modeling for point-of-departure derivation. |
| Molecular Docking Software | Software | Predicts binding affinity of chemicals to biological targets (e.g., enzymes, receptors) [31]. | Key for mechanistic QSAR of agents like acetylcholinesterase inhibitors [31]. |
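The IVIVE role of PBK models often reduces, under linear kinetics, to reverse dosimetry. The in vitro active concentration is divided by the steady-state plasma concentration (Css) predicted for a unit daily dose. The sketch assumes linear kinetics and that the Css value has already been obtained from a toxicokinetic model. Both inputs and the function name are hypothetical.

```python
def oral_equivalent_dose(in_vitro_active_conc_uM, css_uM_per_mg_kg_day):
    """Reverse dosimetry under linear kinetics (assumption): the daily oral dose
    (mg/kg/day) whose predicted steady-state plasma concentration equals the
    in vitro active concentration."""
    return in_vitro_active_conc_uM / css_uM_per_mg_kg_day

# Hypothetical inputs: active concentration 10 uM; Css of 2 uM per 1 mg/kg/day
print(oral_equivalent_dose(10.0, 2.0))
```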

The fundamental role of LD50 is undeniably evolving. Its strength as a standardized, systemic endpoint ensures its data will remain a valuable component in hazard classification and for validating new approaches. However, within the thesis focusing on reliability for human risk assessment, its stand-alone utility is limited by interspecies differences and a lack of mechanistic insight.

The comparative analysis demonstrates that no single method fully replaces the traditional LD50. Instead, the future lies in tiered, integrated strategies. Initial hazard can be rapidly and conservatively screened using consensus QSAR models [12]. For priority compounds, mechanistically driven NAMs—which may include targeted in vitro assays, omics, and PBK modeling—can provide human-relevant data on the pathway of toxicity, offering more reliable insight into potential human risk than an animal LD50 alone [24] [31]. The ultimate goal is a Next-Generation Risk Assessment (NGRA) paradigm where the "fundamental role" of LD50 is fulfilled by a suite of fit-for-purpose, reliable, and human-biology-focused methods [24] [29].

From Animal Dose to Human Risk: The Methodological Framework for Applying LD50 Data

Integrating Animal Data into the Human Health Risk Assessment Paradigm

This guide compares the traditional paradigm of using animal toxicity data, primarily the median lethal dose (LD50), for human health risk assessment against modern, alternative approaches. The analysis is framed by the central question of how reliably animal data predict human outcomes, and it provides researchers with a data-driven comparison of methodologies.

Performance Comparison: Traditional vs. Modern Risk Assessment Approaches

The following table summarizes the core characteristics, advantages, and limitations of the established animal-based paradigm versus emerging alternative methodologies [11] [33] [34].

Table: Comparison of Animal-Based and Alternative Risk Assessment Paradigms

| Aspect | Traditional Animal Testing (LD50 Focus) | Modern Alternative Approaches (NAMs) |
|---|---|---|
| Core Data | In vivo LD50/LC50 from rodents (rat, mouse) [11]. | In silico predictions, high-throughput in vitro assays, human cell-based models, and curated databases [11] [35]. |
| Regulatory Foundation | Long-established OECD guidelines; basis for hazard classification [11]. | Governed by OECD principles for QSAR validation; gaining regulatory acceptance [11]. |
| Primary Advantage | Provides a whole-organism, systemic response under controlled conditions [34]. | Dramatically reduces animal use; faster, cheaper; can handle thousands of data-poor chemicals [11]. |
| Key Reliability Limitation | Interspecies extrapolation uncertainty; reliance on default uncertainty factors (e.g., 10-fold for interspecies differences); variable human concordance [33] [34]. | Models are limited by their training data quality and applicability domain [11]. |
| Major Throughput Limitation | Low-throughput, time-consuming, and resource-intensive [11]. | Very high-throughput for screening; final validation for novel chemicals may still be needed [11]. |
| Predictive Performance | For pharmaceuticals, rodent studies predicted 43% of human toxicities; non-rodents predicted 63% [34]. | Consensus models like CATMoS show high accuracy (e.g., >80% for rat oral acute toxicity) in external validations [11]. |

Experimental Data on Model Performance and Interspecies Concordance

Performance of Computational LD50 Models

Recent collaborative efforts have developed machine learning models to predict acute oral toxicity. Key performance metrics from the Collaborative Acute Toxicity Modeling Suite (CATMoS) and related studies are summarized below [11].

Table: Performance Metrics of Computational LD50 Prediction Models

| Model / Study | Species/Endpoint | Algorithm/Type | Key Performance Metric | Result |
|---|---|---|---|---|
| CATMoS (Consensus) [11] | Rat, Acute Oral LD50 | Multiple algorithm consensus | External validation accuracy | Demonstrated high accuracy and robustness vs. in vivo data |
| Assay Central Models [11] | Rat, Acute Oral Toxicity | Bayesian classification (ECFP6) | Balanced accuracy (external) | Range: 0.61–0.84 across eight models |
| Multi-Species Models [11] | Mouse, Fish, Daphnia | Classification & Regression | 5-fold cross-validation | Performance varies by dataset; enables prioritization for testing |
| Industry Validation [11] | Pharmaceutical Compounds | CATMoS application | Separation of low/high toxicity | Effectively identified compounds with LD50 >2000 mg/kg |
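Several of the results above are reported as balanced accuracy rather than plain accuracy. Balanced accuracy is the mean of sensitivity and specificity. This stops a model from scoring well just by predicting the majority class in an imbalanced toxicity dataset.

The sketch below is a generic illustration, not code from Assay Central or CATMoS. The function name `classification_metrics` and the label convention (1 = toxic, 0 = non-toxic) are assumptions.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, sensitivity, specificity and balanced accuracy for 0/1 labels (1 = toxic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # toxic, predicted toxic
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # non-toxic, predicted non-toxic
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative example")
    sensitivity = tp / (tp + fn)  # fraction of truly toxic compounds caught
    specificity = tn / (tn + fp)  # fraction of non-toxic compounds correctly cleared
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }

# Hypothetical imbalanced test set: 2 toxic, 8 non-toxic; the model calls everything non-toxic
metrics = classification_metrics([1, 1, 0, 0, 0, 0, 0, 0, 0, 0], [0] * 10)
```

In this example plain accuracy is 0.8, yet balanced accuracy is 0.5, the level of random guessing. That gap is why balanced accuracy is preferred for skewed toxicity datasets.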

Reliability and Concordance of Animal LD50 Data for Human Prediction

The reliability of animal data is fundamentally challenged by interspecies differences. The following table compiles key concordance rates from large-scale analyses [33] [34] [36].

Table: Interspecies Concordance of Toxicity Data

| Analysis Focus | Data Source | Concordance Rate Finding | Implication for Human Risk Assessment |
|---|---|---|---|
| Pharmaceutical Toxicity [34] | 150 compounds, 12 companies | 61% overall concordance (same target organ, severe effects). Rodents alone: 43%. Non-rodents alone: 63%. | Animal studies miss a substantial fraction of human toxicities; non-rodents may be more predictive. |
| Acute Oral LD50 Variability [36] | ACuteTox project (97 substances) | Rat and mouse LD50s were highly correlated (R² = 0.8–0.9), although substance-specific differences were significant for some (e.g., warfarin). | High rodent-to-rodent correlation supports internal reliability but does not establish predictivity for humans. |
| EPA Toxicity Values [34] | 22 chemicals (IRIS database) | For ~32% of chemicals, human-based RfDs were lower (i.e., more protective) than the corresponding animal-based RfDs. | Default safety factors applied to animal data may, in some cases, under-protect human health. |
| Stroke Treatment Translation [33] | Meta-analysis of interventions | Only 3 of 494 interventions effective in animal stroke models showed a convincing effect in patients. | Highlights a major "translational gap" for complex disease endpoints beyond acute toxicity. |
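The rat–mouse comparison in the ACuteTox row is a correlation between LD50 values, assumed here to be on a log scale. R² measures linear association, not agreement. Two species whose LD50s differ by a constant factor will therefore still correlate perfectly on the log scale.

The minimal sketch below makes that caveat concrete. The function name and the data are hypothetical.

```python
import math

def r_squared_log(ld50_a, ld50_b):
    """Squared Pearson correlation between log10 LD50 values for two species."""
    if len(ld50_a) != len(ld50_b) or len(ld50_a) < 2:
        raise ValueError("need two equal-length series with at least two values")
    xs = [math.log10(v) for v in ld50_a]
    ys = [math.log10(v) for v in ld50_b]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy ** 2 / (sxx * syy)

# Hypothetical LD50s (mg/kg): the second species is uniformly 3x less sensitive
rat = [5.0, 40.0, 300.0, 1500.0]
mouse = [15.0, 120.0, 900.0, 4500.0]
r2 = r_squared_log(rat, mouse)
```

Here `r2` equals 1 up to floating-point rounding, even though every mouse value is three times the rat value. A high interspecies R² therefore does not, by itself, mean the absolute LD50 values can be transferred between species.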

Detailed Experimental and Computational Protocols

Protocol for Traditional In Vivo Acute Oral Toxicity Testing (OECD Guideline 423/425)

The standard protocol for deriving an LD50 or classifying acute toxicity involves [34]:

- Test System: Use healthy young adult rodents (typically rats), of a single sex (usually females), acclimatized to laboratory conditions.

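- Dosing: Administer a single dose of the test chemical by oral gavage. Dosing is sequential to minimize animal use. Under the Up-and-Down Procedure (TG 425), animals are dosed one at a time, with the dose adjusted up or down after each outcome. Under the Acute Toxic Class method (TG 423), small groups are dosed at fixed dose steps.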

- Observation Period: Monitor animals meticulously for 14 days post-administration for signs of morbidity, mortality, and clinical symptoms.

- Pathology: Conduct gross necropsy on all animals found dead or sacrificed at termination.

- Data Analysis: Estimate the LD50 (dose causing 50% mortality) with a statistical method: maximum likelihood estimation for TG 425, or probit analysis in classical designs. Alternatively, assign a toxicity class (e.g., GHS category) based on mortality at the fixed doses (TG 423).
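When a point estimate of the LD50 is available, assigning a GHS acute oral category is a lookup against fixed cut-offs. The sketch below hard-codes the GHS acute oral cut-offs in mg/kg body weight:

- Category 1: ≤5
- Category 2: ≤50
- Category 3: ≤300
- Category 4: ≤2000
- Category 5: ≤5000

Category 5 is optional and not adopted in every jurisdiction. The function name is hypothetical. TG 423 itself classifies from mortality observed at its fixed dose steps rather than from a calculated LD50, so this mapping applies to LD50 estimates such as those from TG 425.

```python
# GHS acute oral toxicity categories: inclusive upper LD50 bounds in mg/kg body weight.
# Category 5 is optional under GHS and not used by every regulatory system.
GHS_ORAL_CUTOFFS = [
    (5.0, "Category 1"),
    (50.0, "Category 2"),
    (300.0, "Category 3"),
    (2000.0, "Category 4"),
    (5000.0, "Category 5"),
]

def ghs_acute_oral_category(ld50_mg_per_kg: float) -> str:
    """Return the GHS acute oral category for an LD50 point estimate (hypothetical helper)."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper_bound, category in GHS_ORAL_CUTOFFS:
        if ld50_mg_per_kg <= upper_bound:
            return category
    return "Not classified"
```

Because each upper bound is inclusive, an LD50 of exactly 50 mg/kg falls in Category 2, while 51 mg/kg falls in Category 3.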

Protocol for Building a Machine Learning LD50 Prediction Model

A modern workflow for creating an in silico prediction model, as employed in recent studies [11], includes:

- Data Curation: Acquire and sanitize high-quality in vivo LD50 data from public sources (e.g., EPA's ToxRefDB, ChEMBL). Remove duplicates, neutralize salts, and standardize structures using cheminformatics tools (e.g., RDKit).

- Data Preparation: For classification models, binarize LD50 values using a threshold (e.g., ≤ 50 mg/kg for "highly toxic"). For regression models, convert LD50 to –log(mg/kg). Split data into training and external test sets.

- Descriptor Generation: Calculate numerical representations (descriptors) for each chemical structure, such as extended connectivity fingerprints (ECFP6) or physicochemical properties.

- Model Training & Validation: Train multiple algorithms (e.g., Naïve Bayes, Random Forest, deep learning) on the training set. Evaluate them with 5-fold cross-validation, measuring accuracy, sensitivity, and specificity. Keep the external test set out of all training and tuning steps to avoid data leakage.

- External Validation & Consensus: Test the final model(s) on a held-out external dataset. In consortia like CATMoS, predictions from multiple models are aggregated into a single consensus prediction to improve robustness [11].

- Applicability Domain Assessment: Define the chemical space for which the model's predictions are reliable, based on the training data.
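Several of the workflow steps above can be sketched in plain Python: target preparation, cross-validation splits, consensus aggregation, and a simple applicability-domain check. This is a minimal sketch, not the CATMoS or Assay Central implementation. Its assumptions are:

- The 50 mg/kg threshold and the −log10(mg/kg) transform follow the text.
- Fingerprints are represented as Python sets of "on" bit indices, standing in for ECFP6 bit vectors.
- All function names are hypothetical.
- The 0.3 similarity cut-off is a hypothetical example value.
- The tie-breaking rule in the consensus vote is an assumption.

```python
import math
import random
from statistics import mean

HIGHLY_TOXIC_MG_PER_KG = 50.0  # example threshold from the text (<= 50 mg/kg -> "highly toxic")

def binarize(ld50_mg_per_kg, threshold=HIGHLY_TOXIC_MG_PER_KG):
    """Classification label: 1 = highly toxic (LD50 <= threshold), 0 = otherwise."""
    return 1 if ld50_mg_per_kg <= threshold else 0

def regression_target(ld50_mg_per_kg):
    """Regression target -log10(LD50 in mg/kg); larger values mean more toxic."""
    return -math.log10(ld50_mg_per_kg)

def kfold_indices(n_samples, k=5, seed=0):
    """Shuffle sample indices once and deal them into k roughly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def consensus_class(votes):
    """Majority vote over models' 0/1 predictions; ties go to 'toxic' (assumed conservative rule)."""
    if not votes:
        raise ValueError("no model predictions to combine")
    return 1 if 2 * sum(votes) >= len(votes) else 0

def consensus_value(predictions):
    """Consensus regression prediction as the mean of individual model outputs."""
    return mean(predictions)

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def in_domain(query_fp, training_fps, min_similarity=0.3):
    """Nearest-neighbour applicability domain: is any training compound similar enough?"""
    return max(tanimoto(query_fp, fp) for fp in training_fps) >= min_similarity

# Hypothetical curated records: (compound_id, LD50 in mg/kg)
records = [("cpd-1", 12.0), ("cpd-2", 850.0), ("cpd-3", 3100.0)]
labels = [binarize(ld50) for _, ld50 in records]  # [1, 0, 0]
targets = [regression_target(ld50) for _, ld50 in records]
```

Folds are assigned after curation so that duplicates removed earlier cannot leak between training and validation folds. Breaking consensus ties toward "toxic" trades some specificity for sensitivity, which may suit screening and prioritization.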

Visualizing the Integrated Risk Assessment Workflow

The following diagram illustrates how traditional and modern data streams converge within a contemporary, integrated human health risk assessment paradigm.

Diagram: Integrated Data Workflow for Modern Risk Assessment

Researchers integrating animal data into risk assessment can leverage these key public resources and tools [11] [35].

Table: Key Research Reagent Solutions and Data Resources

| Resource Name | Provider | Primary Function | Relevance to LD50 & Risk Assessment |
|---|---|---|---|
| Toxicity Reference Database (ToxRefDB v3.0) | U.S. EPA [35] | Curated database of in vivo animal toxicity studies. | Provides structured, high-quality animal data from thousands of studies for model training and retrospective analysis. |
| Toxicity Value Database (ToxValDB v9.6) | U.S. EPA [35] | Compilation of summarized toxicity values and experimental data. | Offers a standardized format for comparing toxicity data across chemicals and sources, aiding weight-of-evidence reviews. |
| CompTox Chemicals Dashboard | U.S. EPA [35] | Interactive portal for chemical property, exposure, and hazard data. | Integrates multiple data streams (ToxCast, ToxRefDB, predictions) for a unified chemical safety assessment. |
| ECOTOX Knowledgebase | U.S. EPA [11] [35] | Database of ecotoxicological effects of chemicals on aquatic and terrestrial species. | Enables cross-species comparisons and modeling for ecological and human health endpoints. |
| Assay Central / CATMoS Models | Academic/Industry Consortium [11] | Software and consensus models for predicting acute and other toxicities. | Provides validated machine learning models to prioritize chemicals for testing and fill data gaps for LD50. |
| High-Throughput Toxicokinetics (HTTK) Package | U.S. EPA [35] | In vitro toxicokinetic data and models for chemical clearance. | Supports interspecies extrapolation by linking external dose to internal concentration, refining PBPK models. |

This guide provides a comparative analysis of traditional animal-derived toxicity data, primarily the median lethal dose (LD₅₀), within the U.S. Environmental Protection Agency's (EPA) standardized risk assessment framework. It objectively evaluates the performance, uncertainties, and evolving alternatives to animal data at each stage of the process, contextualized within the broader thesis on the reliability of such data for human risk assessment [37] [38].

Comparative Analysis: LD₅₀ Performance in Risk Assessment

The table below summarizes the role and key challenges of using animal LD₅₀ data within the four-step EPA risk assessment paradigm [39] [37] [38].

| Assessment Step | Primary Role of Animal LD₅₀ Data | Key Performance Limitations & Uncertainties | Emerging Alternative Approaches |
|---|---|---|---|
| Hazard Identification | Identify potential for acute lethal toxicity and classify hazard (e.g., "highly toxic") [4]. | Species-specific physiology may not predict human response [33]; the binary endpoint (death) ignores subtler, clinically relevant toxicity [4]. | In vitro cytotoxicity assays (e.g., 3T3 NRU) [4]; computational (in silico) structure-activity models [4]. |
| Dose-Response Assessment | Provide a quantitative potency metric (dose causing 50% mortality) for extrapolation [39]. | High-dose to low-dose extrapolation introduces uncertainty [39]; interspecies kinetic and dynamic differences are poorly quantified [33]. | Fixed Dose Procedure (FDP) and Acute Toxic Class (ATC) methods (animal reduction) [4]; pathway-based assays using human cells [4]. |
| Exposure Assessment | Not directly applicable; defines a severe toxicological endpoint for safety margin calculations. | LD₅₀ values from controlled laboratory exposure do not reflect real-world human exposure scenarios [39]. | N/A |
| Risk Characterization | Informs hazard quotients or safety margins for acute exposure scenarios. | Compounds all preceding uncertainties (species, dose, exposure) [40]; the "standardization fallacy" may reduce real-world predictive value [33]. | Integrated testing strategies combining alternative methods [4]. |

The Scientist's Toolkit: Research Reagent Solutions for Toxicity Testing

The following table details essential materials and their functions in traditional and modern toxicity testing protocols.

| Item/Category | Function in Toxicity Testing | Example & Notes |
|---|---|---|
| In Vivo Test Models | Provide a whole-organism system to assess complex systemic toxicity, absorption, and distribution. | Rodents (rats, mice), rabbits, dogs. Use is guided by the 3Rs principle (Reduction, Refinement, Replacement) [4]. |
| In Vitro Cell Cultures | Replace or reduce animal use by screening for basal cytotoxicity or specific mechanistic endpoints. | 3T3 fibroblast cell line (for Neutral Red Uptake assay) [4]; Normal Human Keratinocytes (for skin irritation) [4]. |
| Toxicant Standards & Reagents | Ensure consistency and reproducibility in experimental dosing and endpoint measurement. | Chemical reference standards, cell culture media, vital dyes (e.g., Neutral Red for cell viability) [4]. |
| In Silico Platforms | Use computational models to predict toxicity based on chemical structure and known data, prioritizing chemicals for testing. | QSAR (Quantitative Structure-Activity Relationship) software and databases [4]. |
| OECD Test Guidelines | Provide internationally standardized, validated experimental protocols for regulatory acceptance. | e.g., OECD TG 420 (Fixed Dose Procedure), TG 423 (Acute Toxic Class Method), TG 425 (Up-and-Down Procedure) [4]. |

Detailed Methodologies & Workflows

EPA's Four-Step Risk Assessment Process

The EPA's process is the foundation for evaluating human health risks from environmental chemicals [37] [38]. Animal toxicity data, including LD₅₀, are primarily integrated in the first two steps.

EPA Risk Assessment Process & Animal Data Integration

- Planning and Scoping: The process begins by defining the risk assessment's purpose, scope, and technical approach, identifying the populations and health effects of concern [39] [37].

- Step 1: Hazard Identification: Determines if a chemical can cause adverse health effects and characterizes the strength of evidence. Animal studies are a primary data source when human epidemiological data are unavailable [39]. An LD₅₀ value is used here to classify a chemical's acute toxicity potential (e.g., from "extremely toxic" to "relatively harmless") [4].

- Step 2: Dose-Response Assessment: Quantifies the relationship between exposure dose and the probability or severity of an effect [39]. The LD₅₀ is a key data point from animal studies for acute effects. A major challenge is extrapolating from the high doses used in animal studies to the lower doses typical of human environmental exposure, and from animal species to humans, which introduces significant uncertainty [39] [33].

- Step 3: Exposure Assessment: Estimates the intensity, frequency, and duration of human exposure to the chemical through various pathways (e.g., air, water, food) [37]. Animal data are not used directly in this step, which relies instead on environmental monitoring and human activity data.

- Step 4: Risk Characterization: Synthesizes information from the previous steps to produce an overall risk estimate, including a description of associated uncertainties [37] [40]. For acute risks, the estimated human exposure level might be compared to the animal-derived LD₅₀ (with uncertainty factors applied) to calculate a margin of safety.
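The margin-of-safety comparison in Step 4 reduces to simple arithmetic. This minimal sketch assumes conventional default uncertainty factors of 10 for interspecies and 10 for intraspecies variability. The function name and input values are hypothetical.

In regulatory practice, acute reference values are usually derived from no-effect levels or benchmark doses rather than from an LD₅₀. The sketch therefore mirrors only the simplified comparison described above.

```python
import math

def margin_of_safety(ld50_mg_per_kg, exposure_mg_per_kg, uncertainty_factors=(10.0, 10.0)):
    """Ratio of an uncertainty-adjusted animal LD50 to an estimated human acute exposure.

    uncertainty_factors defaults to (interspecies, intraspecies) = (10, 10), an assumption.
    A result above 1 means the exposure is below the adjusted animal value.
    """
    if ld50_mg_per_kg <= 0 or exposure_mg_per_kg <= 0:
        raise ValueError("LD50 and exposure must be positive")
    total_uf = math.prod(uncertainty_factors)  # combined factor, 100 by default
    adjusted_value = ld50_mg_per_kg / total_uf  # uncertainty-adjusted dose in mg/kg
    return adjusted_value / exposure_mg_per_kg

# Hypothetical example: LD50 of 500 mg/kg, estimated acute exposure of 0.5 mg/kg
mos = margin_of_safety(500.0, 0.5)
```

With these inputs the adjusted value is 5 mg/kg and the margin is 10. Every default factor of 10 that is removed or replaced by a chemical-specific value changes the margin proportionally.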

Experimental Protocol: The Classical LD₅₀ Test

The classical LD₅₀ test, introduced in the 1920s, was designed to precisely determine the dose that kills 50% of a test population [4].

Objective: To quantitatively determine the median lethal dose (LD₅₀) of a chemical substance in a defined animal population.

Procedure:

- Animals: A large number (historically 40-100) of healthy, young adult animals (typically rodents) of a single species and strain are used [4] [33].

- Dosing: Animals are randomly divided into several groups (e.g., 5 groups). Each group receives a different single dose of the test substance, usually by oral gavage. Dose levels are spaced logarithmically (e.g., each dose twice the one below it) to bracket the expected lethal range.

- Observation: Following administration, animals are observed intensively for signs of toxicity (e.g., lethargy, convulsions) and mortality over a fixed period, typically 14 days [4].

- Analysis: The number of deaths in each dose group is recorded. The LD₅₀ and its confidence interval are then estimated by probit analysis, which fits a straight line relating the probit-transformed mortality proportion to the logarithm of the dose [4].
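The probit step can be illustrated with a simplified calculation: an ordinary least-squares line through probit-transformed mortality proportions versus log10(dose). This is a hedged sketch, not the full maximum-likelihood probit analysis used in formal studies, and it omits the confidence interval.

Assumptions:

- The probit is taken on the standard normal (z) scale, so 50% mortality corresponds to a probit of 0.
- Groups with 0% or 100% mortality are dropped because their probits are infinite.
- The function name is hypothetical.
- Doses must be positive.

```python
from math import log10
from statistics import NormalDist

def probit_ld50(doses, deaths, group_sizes):
    """Estimate the LD50 from dose-group mortality by least squares on the probit scale.

    Returns (ld50, slope), where slope is the change in probit per log10 unit of dose.
    """
    xs, ys = [], []
    for dose, dead, n in zip(doses, deaths, group_sizes):
        p = dead / n
        if 0 < p < 1:  # 0% and 100% groups have infinite probits; this sketch drops them
            xs.append(log10(dose))
            ys.append(NormalDist().inv_cdf(p))  # probit on the z scale
    if len(xs) < 2:
        raise ValueError("need at least two groups with partial mortality")
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("partial-mortality groups must have different doses")
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sxx
    intercept = y_mean - slope * x_mean
    # 50% mortality <=> probit 0, so log10(LD50) = -intercept / slope
    return 10 ** (-intercept / slope), slope

# Hypothetical study: 5 groups of 10 animals, doses spaced by a factor of 2
ld50, slope = probit_ld50([25, 50, 100, 200, 400], [1, 3, 5, 7, 9], [10] * 5)
```

For these symmetric hypothetical data the estimate is 100 mg/kg. The slope indicates how steep the dose-mortality curve is, which matters when comparing substances with similar LD₅₀ values.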

Limitations & Refinements: Because it uses many animals and causes severe distress, the classical method has been largely replaced by refined methods such as the OECD Fixed Dose Procedure (OECD TG 420). That method uses fewer animals and relies on signs of evident toxicity, rather than death, as its endpoint [4].