Advancing Ecological Risk Assessment: A Comprehensive Guide to Evidence Synthesis Methods for Biomedical and Environmental Research

Abstract

This article provides a comprehensive guide to evidence synthesis methodologies essential for modern ecological risk assessment (ERA), tailored for researchers, scientists, and drug development professionals. It begins by establishing the foundational principles and regulatory frameworks that underpin ERA. The guide then explores the practical application of advanced methods, including systematic review, meta-analysis, and novel prospective modeling techniques. It addresses common challenges in data integration and heterogeneity, offering troubleshooting strategies and optimization approaches. Finally, the article examines methods for validating and comparing different assessment models, emphasizing robustness and reliability. The synthesis highlights how these methods translate environmental safety data into critical insights for biomedical research, supporting the development of safer pharmaceuticals and a deeper understanding of chemical-environment interactions.

The Cornerstones of Ecological Risk Assessment: Frameworks, Principles, and Problem Formulation

Ecological Risk Assessment (ERA) is defined as the application of a formal framework to estimate the effects of human actions on natural resources and to interpret the significance of those effects in light of the uncertainties identified throughout the assessment process [1]. It provides a systematic method for organizing and analyzing data, information, assumptions, and uncertainties to evaluate the likelihood of adverse ecological effects resulting from exposure to one or more environmental stressors [2]. These stressors can be chemical (e.g., pesticides, heavy metals), physical (e.g., land-use change, habitat alteration), or biological (e.g., invasive species, pathogens) [1] [2].

The process is foundational to evidence-based environmental decision-making, serving to protect ecological resources by identifying and quantifying potential risks to ecosystems, habitats, and species [2]. Its applications are wide-ranging, supporting regulatory actions for hazardous waste sites and pesticides, informing watershed management, and aiding in the protection of ecosystems from diverse stressors [1]. Framed within the context of evidence synthesis for research, ERA transcends simple data collection; it is a structured scientific process that necessitates the rigorous integration, evaluation, and interpretation of disparate lines of evidence—from laboratory toxicology and field monitoring to epidemiological observations—to produce a coherent and defensible characterization of risk [3] [4] [5].

Core Objectives and Phases of ERA

The overarching objective of ERA is to support environmental decision-making by providing a transparent, scientifically defensible estimate of risk that clearly communicates the likelihood, magnitude, and uncertainty of potential ecological effects [6] [2]. This is operationalized through a phased framework that ensures thorough problem definition, analysis, and synthesis.

Table 1: Core Objectives of Ecological Risk Assessment

| Primary Objective | Description | Key Output |
|---|---|---|
| Informed Decision-Making | To provide risk managers with a scientific basis for evaluating different risk management options, such as setting environmental limits, approving pesticides, or prioritizing remediation actions [6]. | A risk characterization that integrates exposure and effects, summarizing findings and uncertainties [1]. |
| Predictive & Retrospective Analysis | To predict the likelihood of future effects from proposed actions (prospective) or to evaluate the cause of observed ecological impacts (retrospective) [1]. | An assessment that supports forecasting or diagnostic conclusions. |
| Evidence Synthesis | To systematically gather, appraise, and integrate multiple lines of evidence (e.g., toxicity data, field studies, biomonitoring) into a coherent risk estimate [3] [4]. | A weight-of-evidence conclusion, potentially quantified using advanced statistical methods [4]. |
| Uncertainty Characterization | To explicitly identify, analyze, and communicate the uncertainties and data gaps inherent in the assessment, defining the confidence in the final risk estimates [2]. | A detailed uncertainty analysis that qualifies the risk description. |

The foundational process for achieving these objectives, as established by the U.S. EPA and widely adopted, consists of three primary phases, preceded by a critical planning stage [1] [6].

Table 2: The Primary Phases of Ecological Risk Assessment

| Phase | Core Activities | Key Outputs |
|---|---|---|
| Planning | Dialogue between risk managers and assessors to define goals, scope, complexity, and team roles. Identifies the natural resources of concern [1] [6]. | A documented plan outlining management goals, assessment scope, and team agreements. |
| Problem Formulation | Identification of assessment endpoints (valued ecological entities and their attributes), development of a conceptual model linking stressors to endpoints, and creation of an analysis plan [1] [6]. | Assessment endpoints, a conceptual model, and a definitive analysis plan for the study. |
| Analysis | Exposure Assessment: Characterizes the sources, pathways, and magnitude of contact between stressors and ecological receptors. Effects Assessment: Evaluates the relationship between stressor magnitude and the type and severity of ecological effects [1] [6]. | An exposure profile and a stressor-response profile. |
| Risk Characterization | Integration of exposure and effects analyses to estimate and describe risk. Includes risk estimation, uncertainty analysis, and a summary of the evidence and its significance [1] [6] [2]. | A final risk characterization report detailing estimated risks, confidence levels, and major uncertainties. |

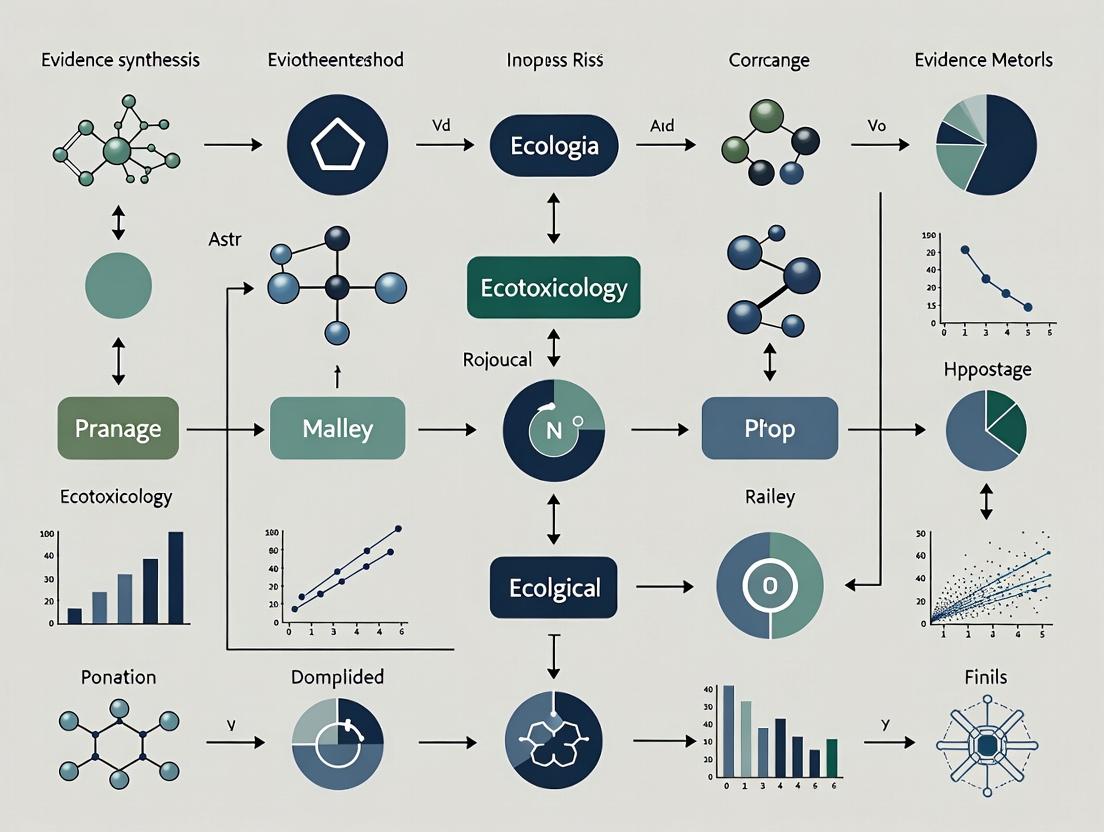

Flow of Ecological Risk Assessment Process

Evidence Synthesis Methods in ERA

Within the ERA framework, evidence synthesis is the critical practice of systematically locating, appraising, and combining results from multiple studies to inform the analysis and risk characterization phases [3] [7]. These methods underpin any rigorous treatment of ERA methodology.

Systematic Reviews (SR) and Systematic Maps (SM) are two foundational synthesis methods. A Systematic Review aims to answer a specific, closed-framed research question (e.g., "Does exposure to chemical X at concentration Y reduce reproduction in species Z?") through mandatory critical appraisal of studies and quantitative or qualitative synthesis of results [7]. In contrast, a Systematic Map seeks to provide a broad overview of the evidence base on a topic, cataloguing and describing the available research to identify knowledge gaps and clusters. Critical appraisal is optional in mapping, and the output is typically a searchable database and visualizations of the evidence landscape [3] [7].

Systematic Evidence Mapping (SEM), as applied by the EPA, is a powerful tool for assessment upkeep. It uses a structured process (e.g., based on PECO criteria—Population, Exposure, Comparator, Outcome) to screen new literature against existing assessment endpoints. This helps determine if new data are sufficient to trigger a full reassessment of a chemical or stressor [3].
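The PECO-based screening step described above can be pictured with a minimal sketch. The keyword lists, the record texts, and the `screen` helper are hypothetical illustrations rather than the EPA's tooling, and simple substring matching stands in for the reviewer judgment that real evidence mapping relies on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PecoCriteria:
    """Hypothetical keyword sets, one per PECO element."""
    population: frozenset
    exposure: frozenset
    comparator: frozenset
    outcome: frozenset


def screen(abstract: str, criteria: PecoCriteria) -> dict:
    """Flag which PECO elements an abstract mentions (simple substring match)."""
    text = abstract.lower()
    hits = {
        element: any(term in text for term in getattr(criteria, element))
        for element in ("population", "exposure", "comparator", "outcome")
    }
    # A record passes title/abstract screening only if all four elements match.
    hits["include"] = all(hits.values())
    return hits


# Illustrative criteria and records; none of these come from an EPA assessment.
criteria = PecoCriteria(
    population=frozenset({"daphnia magna", "fathead minnow"}),
    exposure=frozenset({"permethrin"}),
    comparator=frozenset({"control"}),
    outcome=frozenset({"reproduction", "mortality"}),
)
records = {
    "rec-1": "Permethrin reduced reproduction in Daphnia magna relative to control.",
    "rec-2": "A review of permethrin use patterns in agriculture.",
}
decisions = {rec_id: screen(text, criteria) for rec_id, text in records.items()}
included = [rec_id for rec_id, d in decisions.items() if d["include"]]
print(included)
```

In practice the count of included records per assessment endpoint, rather than any single record, would inform whether a reassessment is worth triggering.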

For quantitative integration, Bayesian Markov Chain Monte Carlo (MCMC) methods represent an advanced synthesis technique. This approach allows for the formal statistical combination of seemingly disparate lines of evidence—such as risk assessment quotients, biomonitoring data, and epidemiological observations—into a single, updated probability distribution of risk [4]. The power of Bayesian inference lies in its ability to quantitatively incorporate prior knowledge and explicitly account for uncertainty, generating outputs such as the probability that a risk quotient exceeds a regulatory level of concern [4].

Evidence Synthesis Methodologies for ERA

Case Studies and Quantitative Data

Case 1: Quantitative Integration for Insecticide Risk

A study demonstrated the use of Bayesian MCMC to integrate multiple lines of evidence for insecticides malathion and permethrin, used in mosquito control [4]. The methodology synthesized data from human-health risk assessments, biomonitoring studies, and epidemiology studies to generate a unified, probabilistic risk estimate.

Table 3: Bayesian Synthesis of Risk for Insecticides [4]

| Insecticide | Mean Risk Quotient (RQ) | Variance | Probability that RQ > 1 (Level of Concern) |
|---|---|---|---|
| Malathion | 0.4386 | 0.0163 | < 0.0001 |
| Permethrin | 0.3281 | 0.0083 | < 0.0001 |
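As a rough plausibility check on the last column of Table 3, the sketch below computes the exceedance probability from the reported means and variances. It assumes the posterior for each RQ is approximately normal, which is an assumption made here for illustration; the study itself derived these probabilities from MCMC output, not from this formula.

```python
import math


def prob_exceeds(mean: float, variance: float, threshold: float = 1.0) -> float:
    """Upper-tail probability P(RQ > threshold) under an assumed normal distribution."""
    z = (threshold - mean) / math.sqrt(variance)
    # 1 - Phi(z), written with the complementary error function for tail accuracy.
    return 0.5 * math.erfc(z / math.sqrt(2.0))


# Means and variances as reported in Table 3.
table3 = {"Malathion": (0.4386, 0.0163), "Permethrin": (0.3281, 0.0083)}
exceedance = {name: prob_exceeds(m, v) for name, (m, v) in table3.items()}
for name, p in exceedance.items():
    print(f"{name}: P(RQ > 1) is approximately {p:.2e}")
```

Under this approximation both probabilities fall below 0.0001, in line with the table.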

Protocol 1: Bayesian MCMC Integration for Risk Synthesis

- Define Parameter of Interest: Establish the Risk Quotient (RQ) as the key parameter, calculated as Potential Exposure (PE) divided by a toxicological endpoint value [4].

- Literature Review: Conduct a comprehensive search across academic and government databases to identify all relevant risk assessment, biomonitoring, and epidemiology studies for the stressor.

- Extract Data: For each study, extract or calculate the RQ estimate and its associated measure of variance or uncertainty.

- Specify Prior Distribution: Define a prior probability distribution for the RQ based on existing knowledge or use a non-informative prior if no prior information exists [4].

- Model Specification: Construct a Bayesian statistical model that links the observed RQ data from each study to the underlying "true" population RQ.

- MCMC Simulation: Use Markov Chain Monte Carlo software (e.g., JAGS, Stan) to draw thousands of samples from the joint posterior distribution of the parameters, which represents updated knowledge after incorporating all new evidence [4].

- Output Analysis: Calculate summary statistics (mean, variance, credible intervals) from the posterior distribution. Determine the probability that the true RQ exceeds the regulatory Level of Concern (typically 1.0) [4].
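The steps of Protocol 1 can be sketched with a small random-walk Metropolis sampler written in plain Python. This is an illustration under stated assumptions, not the model from [4]: the study-level RQ values and variances are hypothetical, each study estimate is assumed to be normally distributed around one shared "true" RQ, and the prior is an assumed weakly informative normal. A real analysis would more likely use JAGS or Stan, as the protocol notes.

```python
import math
import random
import statistics

# Hypothetical study-level (RQ estimate, variance) pairs; NOT the data from [4].
STUDIES = [(0.52, 0.04), (0.38, 0.02), (0.45, 0.03)]
PRIOR_MEAN, PRIOR_VAR = 0.5, 1.0   # assumed weakly informative normal prior
LEVEL_OF_CONCERN = 1.0


def log_posterior(theta: float) -> float:
    """Unnormalized log posterior: normal prior plus normal likelihoods."""
    value = -0.5 * (theta - PRIOR_MEAN) ** 2 / PRIOR_VAR
    for rq, var in STUDIES:
        value += -0.5 * (rq - theta) ** 2 / var
    return value


def metropolis(n_draws: int = 20000, burn_in: int = 2000,
               step: float = 0.2, seed: int = 1) -> list:
    """Random-walk Metropolis sampler for the pooled ("true") RQ."""
    rng = random.Random(seed)
    theta = PRIOR_MEAN
    current = log_posterior(theta)
    draws = []
    for i in range(n_draws + burn_in):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        if i >= burn_in:
            draws.append(theta)
    return draws


# Output analysis: summary statistics and exceedance probability.
draws = sorted(metropolis())
post_mean = statistics.fmean(draws)
post_var = statistics.variance(draws)
ci_95 = (draws[int(0.025 * len(draws))], draws[int(0.975 * len(draws)) - 1])
p_exceed = sum(d > LEVEL_OF_CONCERN for d in draws) / len(draws)
print(f"mean={post_mean:.3f} var={post_var:.4f} "
      f"95% CrI=({ci_95[0]:.3f}, {ci_95[1]:.3f}) P(RQ>1)={p_exceed:.4f}")
```

Because this normal-normal model has a closed-form posterior (a precision-weighted mean), the sampler's output can be checked analytically; MCMC becomes necessary once the model includes hierarchical terms or non-conjugate priors.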

Case 2: Prospective ERA for Mining Areas

The ERA based on Exposure and Ecological Scenarios (ERA-EES) method was developed to prospectively assess soil heavy metal risks around metal mining areas (MMAs) before costly field sampling [8]. It uses two Multi-Criteria Decision Analysis (MCDA) tools, the Analytic Hierarchy Process (AHP) and Fuzzy Comprehensive Evaluation (FCE), to weigh and combine scenario indicators.

Table 4: Indicator Weights for the Prospective ERA-EES Method [8]

| Scenario Layer | Indicator | Weight | Description |
|---|---|---|---|
| Exposure Scenario (70%) | Mine Type | 36% | e.g., Nonferrous vs. Ferrous metal mining |
| Exposure Scenario (70%) | Mining Method | 19% | Open-pit vs. Underground mining |
| Exposure Scenario (70%) | Mining Scale | 15% | Small, Medium, or Large operation |
| Ecological Scenario (30%) | Ecosystem Type | 49% (of ecological layer) | e.g., farmland, forest, residential area |
| Ecological Scenario (30%) | Climatic Zone | 32% (of ecological layer) | Influences fate/transport and receptor sensitivity |
| Ecological Scenario (30%) | Soil Type | 19% (of ecological layer) | Affects metal bioavailability |

Protocol 2: Developing a Prospective ERA-EES Model

- Indicator Selection: Select key exposure scenario indicators (related to stressor release and transport) and ecological scenario indicators (related to receptor vulnerability and ecosystem service value) based on literature and expert knowledge [8].

- Expert Elicitation: Convene a panel of domain experts (e.g., ≥50) to perform pairwise comparisons of indicators using standardized AHP questionnaires to determine their relative importance [8].

- Calculate Weights: Synthesize expert judgments to construct a consensus comparison matrix. Calculate the normalized principal eigenvector of the matrix to derive the final weights for each indicator (as in Table 4) [8].

- Establish Grading System: Define criteria and risk levels (e.g., Low, Medium, High) for each qualitative (e.g., mining method) and quantitative indicator [8].

- Fuzzy Comprehensive Evaluation: For a specific site, assign membership degrees for each indicator to the different risk levels based on its attributes. Combine these membership degrees with the AHP-derived weights using fuzzy mathematics to compute an overall risk vector [8].

- Risk Classification: Apply the principle of maximum membership to the final risk vector, or use a composite score, to assign the site to a final prospective risk level [8].

- Validation: Validate the model's performance by applying it to a set of well-characterized sites (e.g., 67 MMAs in China) and comparing its predictions with traditional, measurement-based risk indices [8].
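The weight calculation and fuzzy evaluation steps of Protocol 2 can be sketched as follows. The pairwise comparison matrix and the site membership degrees are hypothetical, and the weighted-average fuzzy operator is an assumption made for illustration; [8] may use a different operator. Only the global weights are taken from Table 4.

```python
def ahp_weights(matrix, iterations=100):
    """Normalized principal eigenvector of a pairwise matrix via power iteration."""
    n = len(matrix)
    weights = [1.0 / n] * n
    for _ in range(iterations):
        product = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        weights = [value / total for value in product]
    return weights


def fuzzy_evaluate(weights, memberships):
    """Weighted-average fuzzy operator: B_k = sum_i w_i * r_ik."""
    n_levels = len(memberships[0])
    return [sum(w * row[k] for w, row in zip(weights, memberships))
            for k in range(n_levels)]


# Hypothetical consensus comparison matrix for three exposure indicators
# (mine type, mining method, mining scale); not the matrix used in [8].
pairwise = [[1.0, 2.0, 2.0],
            [0.5, 1.0, 1.0],
            [0.5, 1.0, 1.0]]
exposure_weights = ahp_weights(pairwise)

# Global weights from Table 4; ecological weights are scaled by the 30% layer weight.
global_weights = [0.36, 0.19, 0.15, 0.30 * 0.49, 0.30 * 0.32, 0.30 * 0.19]

# Hypothetical membership degrees of one site to (Low, Medium, High) per indicator.
LEVELS = ("Low", "Medium", "High")
memberships = [
    [0.1, 0.3, 0.6],  # mine type
    [0.2, 0.5, 0.3],  # mining method
    [0.5, 0.4, 0.1],  # mining scale
    [0.1, 0.3, 0.6],  # ecosystem type
    [0.3, 0.5, 0.2],  # climatic zone
    [0.4, 0.4, 0.2],  # soil type
]
risk_vector = fuzzy_evaluate(global_weights, memberships)
# Principle of maximum membership: pick the level with the largest degree.
risk_level = LEVELS[risk_vector.index(max(risk_vector))]
print(exposure_weights, [round(b, 4) for b in risk_vector], risk_level)
```

Power iteration is used here to avoid a linear algebra dependency; with real expert judgments the matrix is rarely perfectly consistent, so a consistency check on the comparisons would normally accompany the weights.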

Quantitative Methodologies for Risk Synthesis

Table 5: Key Research Reagent Solutions and Tools for ERA

| Tool/Reagent Category | Specific Item/Example | Function in ERA |
|---|---|---|
| Evidence Synthesis Software | Systematic Review platforms (e.g., Rayyan, CADIMA), Bayesian MCMC software (e.g., JAGS, Stan, WinBUGS) | Aids in screening literature for systematic reviews/maps; performs statistical integration of diverse data streams into probabilistic risk estimates [4] [7]. |
| Toxicity & Ecotoxicity Databases | ECOTOX (EPA), CompTox Chemicals Dashboard, PubMed, Web of Science | Sources for stressor-response data, toxicological endpoints, and literature for developing effects assessments and conducting evidence maps [3] [6]. |
| Exposure & Fate Models | Fugacity models, GIS-based transport models, Bioaccumulation models | Predicts the distribution, transformation, and concentration of stressors in environmental media to characterize exposure pathways and magnitudes [6] [2]. |
| Multicriteria Decision Analysis (MCDA) Tools | Analytic Hierarchy Process (AHP) software, Fuzzy Logic toolboxes | Supports the weighting and integration of qualitative and quantitative indicators in prospective or complex risk assessments, such as the ERA-EES method [8]. |
| Guidance & Framework Documents | EPA's Guidelines for Ecological Risk Assessment, EcoBox Toolbox, Workshop reports on evidence-based frameworks [6] [5] | Provide standardized protocols, checklists, and conceptual frameworks for planning, conducting, and interpreting ERAs, ensuring consistency and regulatory compliance. |
| Standard Test Organisms & Assays | Algae (e.g., Pseudokirchneriella subcapitata), Crustaceans (e.g., Daphnia magna), Fish (e.g., Pimephales promelas), Earthworms (e.g., Eisenia fetida) | Provide standardized, reproducible toxicity data for effects assessment. These model receptors are used in laboratory tests to generate dose-response relationships [2]. |

Ecological Risk Assessment is a dynamic and evolving scientific discipline whose core objective is to synthesize complex environmental evidence into actionable knowledge for decision-makers. The integration of robust evidence synthesis methods—from systematic mapping to Bayesian statistics—is transforming ERA from a qualitative, weight-of-evidence exercise into a more quantitative, transparent, and reproducible science [3] [4] [5]. This evolution directly supports the thesis that advanced evidence synthesis methodologies are critical for the next generation of ecological risk research.

Future directions will likely involve greater adoption of systematic evidence mapping as a maintenance tool for existing chemical assessments [3], the development of standardized frameworks for integrating "new approach methodologies" (NAMs) like high-throughput in vitro assays and computational toxicology data into the ERA evidence stream [5], and the refinement of probabilistic and spatial modeling techniques to better characterize and visualize uncertainty. The continued development of accessible tools and reagents, as outlined in the toolkit, will be essential to empower researchers and assessors to implement these advanced methods, ultimately leading to more efficient, predictive, and protective ecological risk management worldwide.

The discipline of Ecological Risk Assessment (ERA) represents a specialized, applied domain within the broader universe of evidence synthesis methods. While systematic reviews and meta-analyses synthesize evidence from primary research studies, ERA synthesizes disparate lines of environmental evidence—from toxicity tests and field monitoring to chemical fate modeling and population studies—to evaluate the likelihood of adverse ecological effects [9]. The evolution of ERA guidelines, from the foundational 1992 Framework to today's dynamic processes, mirrors a paradigm shift in evidence synthesis at large: a move from linear, sequential procedures toward iterative, adaptive, and stakeholder-engaged approaches. This evolution is driven by the need to address complex ecological systems, manage uncertainty explicitly, and provide timely evidence for environmental decision-making, balancing scientific rigor with practical applicability [10] [9].

The Foundational 1992 Framework: Principles and Linear Process

The U.S. Environmental Protection Agency's (EPA) 1992 Framework for Ecological Risk Assessment established the core paradigm that has guided the field for decades [10]. It formalized a three-phase, linear process designed to separate scientific assessment from policy-driven risk management, thereby ensuring objectivity and transparency [9].

Core Conceptual Pillars:

- Risk Triad: The framework is built on the interdependent relationship between stressors (e.g., chemicals, habitat alteration), exposure (co-occurrence of stressor and receptor), and ecological effects [10] [9].

- Separation of Analysis and Management: It strictly delineates the scientific risk assessment process from the socio-political risk management process, a principle aimed at preserving the integrity of the scientific analysis [9].

- Baseline and Retrospective Focus: Initially, the framework was predominantly applied to retrospective assessments (evaluating existing contamination) and relied on establishing a historical "natural condition" as a baseline for comparison [9].

The Linear Assessment Process: The original framework prescribed a sequential workflow, where the completion of one phase triggered the initiation of the next.

Diagram: Linear ERA process per the 1992 Framework. The traditional, sequential workflow, showing clear separation between assessment and management phases.

The Drivers of Evolution: From Framework to Iterative Guidelines

The static nature of the initial framework soon confronted the dynamic realities of ecological systems and regulatory needs. Key drivers for its evolution included:

- Complexity of Regional Assessments: Site-specific assessments expanded to watershed or landscape scales, requiring integration of multiple stressors and cumulative effects, which the simple linear model could not easily accommodate [11].

- Demand for Prospective Forecasting: Growing need for predictive ERA for new chemicals, genetically modified organisms, and land-use changes demanded more flexible, scenario-based approaches [9].

- The "Timeliness" Imperative: Environmental crises and rapid policy cycles created demand for rapid evidence synthesis methodologies, challenging the timeframe of traditional, comprehensive ERA [12] [13].

- Stakeholder Integration: The recognized value of early and ongoing engagement with risk managers, regulated entities, and the public to ensure the relevance and utility of the assessment [10].

In response, the EPA published the Guidelines for Ecological Risk Assessment in 1998, which explicitly replaced the 1992 Framework. These Guidelines retained the core phases but introduced critical flexibility, emphasizing planning and iterative interaction between risk assessors and managers [10].

Modern Iterative ERA: Core Principles and Adaptive Workflow

Modern ERA is characterized by its cyclical and adaptive nature. The process is no longer a straight line but a spiral of increasing refinement, where feedback loops allow for re-scoping and adjustment as new information emerges [10] [11].

Key Principles of Modern Iterative ERA:

- Planning and Problem Formulation as a Keystone: This initial stage is vastly expanded. It involves collaborative dialogue among assessors, managers, and stakeholders to define clear assessment endpoints (e.g., survival of a fish population), conceptual models, and an analysis plan [10].

- Iteration and Feedback: The process is explicitly iterative. Findings from the risk characterization phase often feed back to refine the problem formulation or request additional analysis [11].

- Transparency in Uncertainty: Modern guidelines mandate explicit documentation and communication of data gaps, assumptions, and quantitative uncertainties throughout the assessment [10] [14].

- Integration of Multiple Evidence Streams: It synthesizes data from chemical monitoring, biological effect monitoring (using biomarkers), ecosystem monitoring, and modeling to form a weight-of-evidence conclusion [9].

Diagram: Modern iterative ERA process, featuring feedback loops and continuous stakeholder dialogue, adapted from contemporary EPA guidance [10] [11].

Table 1: Evolution of Key ERA Components from 1992 to Modern Iterative Approaches

| Component | 1992 Framework (Linear) | Modern Iterative Guideline (Adaptive) |
|---|---|---|
| Core Process | Sequential, linear phases. | Cyclical with formal feedback loops; planning is continuous [10] [11]. |
| Problem Formulation | Initial scoping step. | Keystone, collaborative activity; involves conceptual models and explicit assessment endpoints [10]. |
| Role of Risk Manager | Primarily at the end, to make decisions based on assessment. | Engaged throughout, especially in planning and problem formulation [10]. |
| Uncertainty Handling | Often implicit or summarized at the end. | Explicitly identified, quantified where possible, and communicated in each phase [10] [14]. |
| Primary Application | Retrospective, site-specific contamination. | Both retrospective and prospective; applied from site-specific to regional scales [11] [9]. |
| Evidence Synthesis | Primarily toxicity and exposure data. | Weight-of-evidence approach integrating chemical, biological, and ecological monitoring data [9]. |
| Temporal Focus | Single point-in-time assessment. | May include long-term monitoring feedback for validation and adaptive management [11]. |

Parallels with Rapid Evidence Synthesis: The ERA Initiative as a Case Study

The push for timely, decision-relevant evidence is not unique to ecology. The health policy sector has pioneered Rapid Evidence Synthesis (RES) and Rapid Reviews, methodologies that directly parallel and inform the evolution toward iterative ERA [12] [13] [15].

The WHO's Embedding Rapid Reviews in Health Systems Decision-Making (ERA) Initiative provides a useful analogue; its acronym coincides with, but is unrelated to, ecological risk assessment. It established rapid-response platforms in low- and middle-income countries to produce timely syntheses for health policy makers [12]. The initiative's core lessons transfer readily to ecological risk assessment:

- Integration with Decision-Makers: Platforms were embedded within policy-making institutions, ensuring relevance and uptake—mirroring the modern ERA emphasis on assessor-manager dialogue [12].

- Structured Flexibility: It employed a structured yet flexible protocol, balancing speed with methodological rigor, akin to tailoring an ERA's depth to the management question [12] [13].

- Capacity Building: A Technical Assistance Centre provided tailored training, a model for building capacity in agencies conducting iterative ERA [12].

Table 2: Protocol for a Rapid Evidence Synthesis (RES) for Health Innovations [13]

This protocol exemplifies the structured, rapid methodologies influencing modern iterative assessment.

| Stage | Key Activities | Timeline (Within 2-week target) | Personnel |
|---|---|---|---|
| Request & Scoping | Iterative discussion between reviewers and decision-makers to define key questions and scope. | Days 1-2 | Review lead, decision-maker liaison |
| Search & Screening | Targeted, pragmatic database searches; accelerated dual screening based on title/abstract. | Days 3-5 | Information specialist, two reviewers |
| Data Extraction & Appraisal | Streamlined extraction into pre-defined tables; rapid critical appraisal using checklists (e.g., GRADE for evidence certainty). | Days 6-8 | Two reviewers |
| Synthesis & Reporting | Narrative synthesis structured around decision criteria; clear reporting of certainty and relevance of evidence. | Days 9-10 | Review lead |
| Integration | Presentation of findings to decision-making body; discussion of implications for the specific context. | Days 11-14 | Review team, stakeholders |

The Scientist's Toolkit: Essential Reagents and Methods for Modern ERA

Conducting a modern, iterative ERA requires a sophisticated toolkit that extends beyond traditional ecotoxicology.

Table 3: Research Reagent Solutions for Modern Ecological Risk Assessment

| Tool / Reagent Category | Specific Example / Method | Function in Modern Iterative ERA |
|---|---|---|
| Monitoring & Biomarkers | Fish Bioaccumulation Markers (e.g., PCB levels in liver tissue) [9] | Provides direct evidence of exposure and internal dose for hydrophobic contaminants; supports effects-driven assessments. |
| Monitoring & Biomarkers | Biological Effect Monitoring (BEM) (e.g., acetylcholinesterase inhibition, DNA adducts) [9] | Measures early sub-lethal biological responses (biomarkers) to stressors, linking exposure to potential adverse outcomes. |
| Evidence Synthesis Frameworks | GRADE-CERQual (Confidence in Evidence from Reviews of Qualitative research) [15] | Framework for assessing confidence in synthesized qualitative findings (e.g., from stakeholder input); ensures transparency. |
| Evidence Synthesis Frameworks | Weight-of-Evidence (WoE) Frameworks (e.g., EPA's WoE for carcinogen assessment) | Systematic method for integrating lines of evidence (strength, consistency, relevance) to support a risk conclusion. |
| Computational & Modeling | Exposure Assessment Models (e.g., fugacity-based models, GIS-based watershed models) | Predicts environmental fate and exposure concentrations under various scenarios, crucial for prospective ERA. |
| Computational & Modeling | Population Viability Analysis (PVA) Software | Models long-term ecological effects at the population level, addressing a key assessment endpoint. |
| Stakeholder Engagement | Conceptual Model Diagramming Tools (e.g., causal networks) | Facilitates collaborative problem formulation by visually mapping stressors, exposures, effects, and ecological receptors. |

The evolution from the 1992 ERA Framework to today's iterative guidelines represents a maturation of environmental science into a more responsive, inclusive, and pragmatic discipline. It has converged with parallel advancements in evidence synthesis from the health sciences, particularly the principles of rapid review and integrated knowledge translation [12] [13]. The future of ERA lies in further embracing these methodologies—developing standardized yet flexible protocols for rapid ecological assessments, deepening the use of systematic review methods to evaluate ecotoxicological evidence, and formalizing stakeholder engagement as a core component of the scientific process. This evolution ensures that ecological risk assessment remains a robust, credible, and indispensable tool for guiding sustainable decisions in a complex and rapidly changing world.

Problem formulation represents the critical, upfront process of defining the purpose, scope, and methodological pathway for any scientific assessment intended to inform decision-making. Within the context of evidence synthesis for ecological risk assessment (ERA)—a cornerstone of sustainable drug development and environmental protection—this stage determines the entire assessment's relevance, efficiency, and ultimate utility [16]. A well-executed problem formulation aligns the scientific investigation with the specific needs of risk managers, ensuring that the resulting evidence synthesis directly addresses the decisions at hand, whether they concern the approval of a new veterinary pharmaceutical, the setting of an occupational exposure limit, or the management of an environmental contaminant [17].

The consequences of inadequate problem formulation are severe. Assessments can become unmanageably broad, miss critical endpoints, consume excessive resources, or produce conclusions that are misaligned with management options [16]. The National Academies of Sciences, Engineering, and Medicine has emphasized that "increased emphasis on planning and scoping and on problem formulation has been shown to lead to risk assessments that are more useful and better accepted by decision-makers" [16]. This guide synthesizes principles from project scope management [18] [19], formal problem-solving frameworks [20], and established ecological risk assessment guidelines [21] [17] to provide researchers and drug development professionals with a rigorous, practical framework for this essential phase.

Conceptual Foundation: Core Elements of Problem Formulation

Effective problem formulation in evidence synthesis for ERA is built upon three interdependent pillars: a clear management goal, a precisely defined scientific question, and a structured assessment plan.

The process begins with planning, a collaborative dialogue between risk assessors and risk managers. The goal is to determine if a risk assessment is the appropriate tool to support a decision and to agree upon the assessment's goals, scope, timing, and available resources [17]. This step ensures the scientific work remains grounded in a real-world decision-making context.

Following planning, the core of problem formulation involves integrating available information to define the problem. Key factors considered include [17]:

- Stressors: Their type (e.g., chemical, biological), characteristics, mode of action, and patterns of release.

- Sources: The origin, status, and spatial scale of the stressor.

- Exposure: The environmental media involved, timing, and pathways through which receptors encounter the stressor.

- Receptors: The ecological entities (species, communities, ecosystems) potentially at risk, including their life history, susceptibility, and legal protection status.

From this integration, two vital products are developed:

- Assessment Endpoints: Explicit expressions of the environmental values to be protected, defined by a specific ecological entity and its attribute (e.g., the reproduction of fathead minnows in freshwater systems, the survival of Gyps vulture populations) [17].

- Conceptual Model: A written description and visual representation of the predicted relationships between stressors, exposures, and assessment endpoints. It consists of risk hypotheses that diagrammatically illustrate how a stressor is expected to move from source to receptor and cause an effect [17].

The final pillar is the creation of an Analysis Plan. This document outlines how the risk hypotheses will be evaluated, specifying data needs, analytical methods, and measures for characterizing risk. It explicitly identifies uncertainties and ensures the planned analysis will fulfill the risk manager's needs [17].
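The products of problem formulation described above lend themselves to a simple data structure. The sketch below is one hypothetical way to record assessment endpoints and risk hypotheses so that an analysis plan can be checked for endpoints that no hypothesis yet addresses. The class names, fields, and the example source, stressor, and pathway are illustrative assumptions, not part of any guideline.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AssessmentEndpoint:
    entity: str      # valued ecological entity
    attribute: str   # attribute of that entity to be protected


@dataclass(frozen=True)
class RiskHypothesis:
    source: str
    stressor: str
    pathway: str
    endpoint: AssessmentEndpoint


@dataclass
class ConceptualModel:
    endpoints: list
    hypotheses: list = field(default_factory=list)

    def unaddressed_endpoints(self) -> list:
        """Endpoints not yet linked to any risk hypothesis."""
        covered = {h.endpoint for h in self.hypotheses}
        return [e for e in self.endpoints if e not in covered]


minnow = AssessmentEndpoint("fathead minnow", "reproduction")
vulture = AssessmentEndpoint("Gyps vulture populations", "survival")

model = ConceptualModel(endpoints=[minnow, vulture])
# Hypothetical source, stressor, and pathway, used only to show the structure.
model.hypotheses.append(
    RiskHypothesis("wastewater effluent", "hypothetical chemical X",
                   "surface water", minnow)
)
gaps = model.unaddressed_endpoints()
print([f"{e.entity}: {e.attribute}" for e in gaps])
```

A check of this kind is a convenience for drafting the analysis plan; it does not replace the written description and diagram that make up the conceptual model.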

Methodological Framework: A Six-Step Process for Researchers

Adapting proven project scope management processes [18] [19] to the scientific domain, the following six-step framework provides a replicable methodology for problem formulation.

Step 1: Plan Scope Management

Before defining the scientific question, create a Scope Management Plan. This document serves as a playbook, detailing how the assessment's boundaries will be defined, validated, and controlled [18]. It should outline roles, responsibilities, and protocols for managing changes to the scope. Engaging all key stakeholders (e.g., toxicologists, ecologists, risk managers, regulatory experts) in this initial planning is critical for establishing shared understanding and buy-in [17].

Step 2: Collect Requirements

Systematically gather and document all requirements the assessment must satisfy. This involves translating broad management goals into specific, technical needs. Requirements fall into categories such as:

- Business/Management: Regulatory deadlines, budget constraints, and the required format for decision-making.

- Stakeholder: Concerns from community groups, public health agencies, or industry representatives.

- Technical/Scientific: Specific endpoints of concern (e.g., endocrine disruption, acute mortality), required sensitivity of tests, and applicable regulatory guidelines (e.g., VICH GL6 for veterinary products) [21] [19]. A Requirements Traceability Matrix is invaluable for linking each requirement to later tasks and deliverables.

Step 3: Define the Scope Statement

Synthesize the requirements into a definitive Project Scope Statement. This document acts as the contract for the scientific work, explicitly listing what is included and, just as importantly, what is excluded [18] [19]. For an ERA, it should clearly state the stressor(s) under investigation, the geographic and temporal boundaries, the receptor systems considered, and the specific health or ecological outcomes assessed. A signed scope statement prevents "scope creep", the uncontrolled expansion of the assessment that leads to budget overruns, missed deadlines, and unclear conclusions [18].

Step 4: Create the Work Breakdown Structure (WBS)

Decompose the total scope of the assessment into smaller, manageable work packages. For an evidence synthesis, the WBS might break down into phases such as: 1) Systematic Literature Search, 2) Study Screening & Eligibility, 3) Data Extraction, 4) Risk of Bias Assessment, 5) Data Synthesis, and 6) Report Drafting. Each package is assigned an owner, a budget (in time or resources), and a deliverable. This structure enables effective scheduling, budgeting, and progress tracking [18].

Step 5: Validate Scope

Establish a formal process for obtaining stakeholder sign-off on key deliverables at predetermined milestones, not just at the project's end [18]. In an ERA, this could involve reviewing and approving the finalized protocol, the completed evidence gap map, or the draft conceptual model before proceeding to full-scale analysis. Validation ensures the work remains aligned with management needs and provides opportunities for course correction.

Step 6: Control Scope

Implement a monitoring system to track progress against the baseline scope and manage any necessary changes through a formal change control process [19]. Any request to add a new stressor, receptor, or endpoint must be evaluated for its impact on timeline and resources and formally approved before implementation. This step is essential for maintaining the assessment's rigor and feasibility.

Integrating Problem Formulation with Evidence Synthesis Methods

The choice of evidence synthesis type is a direct outcome of problem formulation. The specific management question dictates the most appropriate methodological approach [22]. The table below aligns common synthesis types with assessment objectives born from problem formulation.

Table 1: Aligning Evidence Synthesis Types with Assessment Objectives from Problem Formulation

| Evidence Synthesis Type | Primary Objective | Typical Output in ERA Context | Key References |

|---|---|---|---|

| Systematic Review (SR) | Answer a focused question on specific health/ecological effects; highest level of rigor. | A quantitative or qualitative summary of the relationship between a pharmaceutical concentration and a specific adverse outcome. | [23] [22] |

| Scoping Review / Evidence Map | Identify the volume, nature, and gaps in available literature on a broad topic. | A map of existing ecotoxicity data for a drug class (e.g., benzimidazoles) across species and endpoints, highlighting data-poor areas. | [16] [22] |

| Rapid Review | Provide timely evidence using streamlined SR methods for urgent decision-making. | An accelerated assessment of acute risks of a drug spill to inform immediate mitigation measures. | [23] [22] |

| Living Review | Maintain an ongoing, continuously updated synthesis as new evidence emerges. | A dynamic assessment of the environmental risks of a widely used antiparasitic, updated with new post-market monitoring studies. | [22] |

A pivotal tool for transitioning from problem formulation to systematic review is the PECO(S) framework (Population, Exposure, Comparator, Outcome, Study Design). It operationalizes the review question into structured eligibility criteria [16]. For example, in assessing the risk of a veterinary antibiotic:

- Population (P): Aquatic macroinvertebrates (e.g., Daphnia magna)

- Exposure (E): Environmental concentrations of drug X and its major metabolites

- Comparator (C): Untreated controls or ambient background levels

- Outcome (O): Acute immobilization (EC50) and chronic reproductive impairment

- Study Design (S): Standardized laboratory toxicity tests (e.g., OECD guidelines)
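
The PECO(S) elements above can be turned into machine-checkable eligibility criteria for screening. The following is a minimal sketch, not a prescribed tool: the class `PecosCriteria`, the function `failed_elements`, and the controlled labels used to code each study are all hypothetical, and it assumes each study has already been coded with one label per element.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PecosCriteria:
    """Allowed labels for each PECO(S) element (illustrative vocabulary)."""
    populations: frozenset
    exposures: frozenset
    comparators: frozenset
    outcomes: frozenset
    study_designs: frozenset


def failed_elements(study: dict, criteria: PecosCriteria) -> list:
    """Return the PECO(S) elements a coded study fails; an empty list means eligible."""
    checks = [
        ("population", criteria.populations),
        ("exposure", criteria.exposures),
        ("comparator", criteria.comparators),
        ("outcome", criteria.outcomes),
        ("study_design", criteria.study_designs),
    ]
    return [key for key, allowed in checks if study.get(key) not in allowed]


# Criteria mirroring the veterinary antibiotic example (labels are assumptions).
criteria = PecosCriteria(
    populations=frozenset({"Daphnia magna"}),
    exposures=frozenset({"drug X", "drug X metabolite"}),
    comparators=frozenset({"untreated control", "ambient background"}),
    outcomes=frozenset({"acute immobilization", "chronic reproduction"}),
    study_designs=frozenset({"OECD guideline test"}),
)

eligible_study = {
    "population": "Daphnia magna",
    "exposure": "drug X",
    "comparator": "untreated control",
    "outcome": "acute immobilization",
    "study_design": "OECD guideline test",
}
# Same study, but on a fish species and from a field survey.
excluded_study = dict(eligible_study, population="Danio rerio", study_design="field survey")

print(failed_elements(eligible_study, criteria))   # []
print(failed_elements(excluded_study, criteria))   # ['population', 'study_design']
```

Returning the failed elements rather than a bare yes/no gives reviewers a recorded reason for exclusion, which is what full-text screening normally requires.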

The iterative nature of problem formulation must be emphasized. As a scoping review or preliminary search reveals the available evidence, the PECO statement or conceptual model may need refinement [17]. Furthermore, emerging technologies like Artificial Intelligence (AI) are transforming evidence synthesis. AI tools can accelerate literature screening and data extraction, but their use requires careful justification and transparent reporting to maintain methodological integrity, as outlined in the RAISE recommendations [23]. The decision to use AI must be weighed as a trade-off, considering the specific synthesis context, risk tolerance for errors, and availability of validation for the AI tool [23].

Experimental Protocols & Case Application

This section details a protocol for a Tiered Environmental Risk Assessment (ERA) of a veterinary medicinal product (VMP), demonstrating the application of the problem formulation framework.

Protocol: Tiered ERA for a Novel Antiparasitic Veterinary Drug

1. Problem Formulation & Scoping

- Objective: To determine if the environmental concentrations of novel antiparasitic drug "Compound Alpha," used in livestock, pose an unacceptable risk to soil and aquatic organisms.

- Management Goal: Inform the European Medicines Agency (EMA) marketing authorization decision under Regulation (EU) 2019/6 [21].

- Scoping Activity: Conduct a preliminary evidence map of ecotoxicity data for structurally similar compounds (e.g., other benzimidazoles). This reveals that benzimidazoles bind to evolutionarily conserved β-tubulin, indicating potential risk to non-target eukaryotes [21].

- Conceptual Model Development:

- Source: Treated cattle excretion onto pasture.

- Stressors: Compound Alpha and its primary metabolite.

- Pathways: Runoff to surface water, leaching to groundwater, retention in soil.

- Receptors: Soil-dwelling organisms (earthworms, microbes), aquatic organisms (algae, daphnids, fish).

- Assessment Endpoints: Survival and reproduction of the earthworm Eisenia fetida; growth of the alga Pseudokirchneriella subcapitata; survival and reproduction of the water flea Daphnia magna.

2. Analysis Plan: The Tiered Approach

The assessment follows the VICH GL6/38 tiered strategy [21].

- Phase I (Exposure Estimation): Calculate the Predicted Environmental Concentration in soil (PECsoil) using standardized equations based on dosage, animal excretion rate, and manure application practices. Decision Point: If PECsoil ≥ 100 µg/kg, proceed to Phase II; below this trigger value, the assessment can generally stop at Phase I [21].

- Phase II Tier A (Initial Hazard Assessment):

- Experimental Tests:

- Earthworm Acute Toxicity (OECD 207): Adult E. fetida are exposed to Compound Alpha in artificial soil for 14 days. Endpoint: LC50 (lethal concentration for 50%).

- Algal Growth Inhibition (OECD 201): P. subcapitata is exposed to Compound Alpha in culture medium for 72 hours. Endpoint: ErC50 (concentration causing 50% reduction in growth rate).

- Daphnia Acute Immobilization (OECD 202): D. magna neonates (<24h old) are exposed to Compound Alpha in water for 48 hours. Endpoint: EC50.

- Data Analysis: Calculate the Predicted No-Effect Concentration (PNEC) by applying an assessment factor (e.g., 1000) to the lowest reliable LC/EC50 value. Compute the Risk Quotient: PEC/PNEC. Decision Point: If PEC/PNEC > 1, proceed to Tier B [21].

- Phase II Tier B (Refined Assessment): Conduct chronic toxicity tests (e.g., earthworm reproduction OECD 222, daphnia reproduction OECD 211) to derive a more robust PNEC. Perform fate studies (degradation, sorption) to refine the PEC. Recalculate the Risk Quotient.

- Phase II Tier C (Risk Mitigation): If risk persists, design field studies or evaluate risk mitigation measures (e.g., mandatory manure storage period) [21].
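
The Tier A arithmetic described above (PNEC from the lowest effect concentration divided by an assessment factor, then the risk quotient PEC/PNEC) can be sketched as follows. This is an illustration for a single compartment, surface water, and not the regulatory calculation itself: the function names, the PEC, and the effect concentrations for "Compound Alpha" are hypothetical, and the assessment factor of 1000 is simply the example value given in the protocol.

```python
def derive_pnec(effect_concentrations_mg_l: dict, assessment_factor: float = 1000.0) -> float:
    """PNEC = lowest reliable effect concentration / assessment factor."""
    if not effect_concentrations_mg_l:
        raise ValueError("at least one effect concentration is required")
    return min(effect_concentrations_mg_l.values()) / assessment_factor


def tier_a_screen(pec_mg_l: float, effect_concentrations_mg_l: dict,
                  assessment_factor: float = 1000.0) -> tuple:
    """Return (risk quotient, decision) for one environmental compartment."""
    pnec = derive_pnec(effect_concentrations_mg_l, assessment_factor)
    risk_quotient = pec_mg_l / pnec
    decision = "proceed to Tier B" if risk_quotient > 1 else "no Tier B trigger"
    return risk_quotient, decision


# Hypothetical aquatic Tier A results, in mg/L.
aquatic_endpoints = {
    "algae ErC50 (OECD 201)": 2.0,
    "daphnia EC50 (OECD 202)": 0.5,
}

rq, decision = tier_a_screen(pec_mg_l=0.001, effect_concentrations_mg_l=aquatic_endpoints)
print(f"RQ = {rq:.2f}: {decision}")
```

Soil and water endpoints are kept apart deliberately: an earthworm LC50 in mg/kg soil and a daphnid EC50 in mg/L are not comparable, so each compartment needs its own PEC and PNEC.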

Table 2: Critical Data Gaps in Ecotoxicology for Legacy Pharmaceuticals [21]

| Data Gap | Quantitative Scope | Implication for Problem Formulation |

|---|---|---|

| Missing Chronic Ecotoxicity Data | Only 12% of all drugs have a comprehensive set of ecotoxicity data; 281 of 404 APIs on the German market lack ERA data. | Assessments for older drugs must begin with extensive scoping and may rely heavily on predictive (Q)SAR models or read-across approaches. |

| Lack of Data for Transformation Products | Most ERAs focus on the parent compound, though metabolites can be equally or more toxic. | The conceptual model must explicitly include major transformation products as potential stressors. |

| Limited Real-World Exposure Scenarios | Standard tests use constant exposure, whereas environmental exposure is often pulsed (e.g., after manure application). | The analysis plan may need to incorporate more complex, time-variable exposure studies in higher tiers. |

Diagram 1: Problem Formulation Workflow for Evidence Synthesis in ERA. This flowchart visualizes the three-phase, iterative process from stakeholder engagement to final protocol development.

Diagram 2: The PECO(S) Framework Operationalizing a Review Question. This diagram shows how each PECO(S) element contributes to defining a precise and answerable systematic review question.

Table 3: Research Reagent Solutions & Key Resources for Problem Formulation

| Tool / Resource | Function in Problem Formulation | Key Features / Examples |

|---|---|---|

| Evidence Synthesis Taxonomy (e.g., ESTI) [22] | Guides the selection of the most appropriate type of review (systematic, scoping, rapid) based on the management question and available evidence. | Clarifies distinctions between review types (e.g., systematic review vs. scoping review) to ensure methodological alignment with objectives. |

| RAISE Recommendations for AI Use [23] | Provides a framework for deciding if and how to use AI tools (e.g., for screening, data extraction) while maintaining rigor and transparency. | Offers tailored guidance for evidence synthesists, mandating justification, transparency in reporting, and adherence to ethical standards. |

| Cochrane Handbook & Methodological Updates [24] | Provides the gold-standard methodology for designing and executing systematic reviews, including problem formulation elements like PICO development. | Continuously updated; includes chapters on integrating non-randomized studies, equity considerations, and specific guidance for network meta-analysis and qualitative synthesis. |

| EPA Guidelines for Ecological Risk Assessment [17] | The definitive regulatory framework for structuring ERA problem formulation, including developing assessment endpoints and conceptual models. | Details the iterative planning and problem formulation phase, emphasizing integration of risk managers and stakeholders. |

| Project Scope Management Software (e.g., Monograph) [18] | Facilitates the operational aspects of scope management: creating WBS, tracking deliverables, controlling scope creep, and validating scope with stakeholders. | Enables real-time budget and schedule tracking against the project scope baseline, providing visibility into potential overruns. |

| Systematic Review Software (e.g., RevMan, Covidence) | Supports the execution of the analysis plan derived from problem formulation, managing screening, data extraction, and synthesis. | Cochrane's RevMan now includes advanced random-effects methods and prediction intervals [24]. Covidence streamlines the screening and extraction process. |

| Citizen Science Platforms [25] | Can be integrated into the conceptual model as a source of exposure or monitoring data, particularly for identifying real-world exposure scenarios or affected receptors. | Useful for gathering large-scale environmental data; requires careful design to ensure data quality and representativeness. |

This whitepaper provides a technical guide for implementing integrative approaches at the critical interface between risk assessors, managers, and stakeholders. Framed within a broader thesis on evidence synthesis methods for ecological risk assessment research, it details systematic methodologies for harmonizing disparate data streams and fostering collaborative decision-making. The content is structured for researchers, scientists, and drug development professionals, focusing on actionable protocols, visualized workflows, and standardized toolkits to translate complex risk evidence into robust, transparent, and actionable management strategies [26] [27].

Foundational Concepts: Integration in Risk Analysis

Integrated Risk Management (IRM) is an organization-wide approach that centralizes risk activities to drive efficient management across all business segments [28]. In scientific and ecological contexts, this philosophy translates to a structured, collaborative process where evidence generation (assessment), decision-making (management), and value-based input (stakeholders) are interconnected.

The core objective is to move from siloed operations to a holistic, risk-aware culture [28]. For researchers, this means designing evidence synthesis projects—such as Systematic Evidence Maps (SEMs) or integrative data analyses—with explicit inputs for and from managers and stakeholders from the outset [26] [27]. Successful integration yields a comprehensive view of an organization's or ecosystem's risk profile, enabling better performance, stronger resilience, and cost-effective compliance [28] [29].

Table 1: Core Components of an Integrative Risk Framework [28] [29]

| Component | Primary Actor | Key Activities | Output for Integration |

|---|---|---|---|

| Strategy & Planning | Senior Management / Lead Researchers | Establish risk appetite; align activities with business/ecological objectives; select evidence synthesis framework. | Documented protocol defining scope, objectives, and stakeholder engagement plan. |

| Evidence Assessment | Risk Assessors / Scientists | Identify, evaluate, and prioritize risks via systematic reviews, SEMs, or experimental data generation [26]. | Harmonized data register; prioritized risk list; gap analysis. |

| Response Planning | Risk Managers | Develop treatment/mitigation plans based on assessed risks and organizational goals. | Action plans with assigned responsibilities, resources, and timelines. |

| Communication & Reporting | All Parties | Establish communication plans; report progress; translate technical findings for diverse audiences. | Dashboards; interactive evidence maps [26]; tailored reports for technical and non-technical audiences. |

| Monitoring & Review | Managers & Assessors | Track mitigation progress, control effectiveness, and emerging risks. | Key Risk Indicator (KRI) metrics; updated risk assessments. |

| Technology & Support | All Parties | Utilize software for data aggregation, visualization, and collaborative workflow management [28] [29]. | Integrated platform providing a single source of truth for all risk-related data. |

Methodological Protocols for Evidence Synthesis and Integration

This section details experimental and analytical protocols essential for generating the robust, synthesized evidence required at the assessor-manager-stakeholder interface.

Protocol for Conducting a Systematic Evidence Map (SEM)

Systematic Evidence Maps (SEMs) are a form of evidence synthesis that provides a structured overview of a research landscape, identifying trends and gaps without necessarily performing a full meta-analysis [26]. They are particularly valuable for scoping complex ecological risks and prioritizing future research or assessment efforts.

Detailed Methodology [26]:

- Define Research Scope and Question: Collaboratively define the scope with managers and stakeholders. Formulate a primary question using PECO/PICO elements (Population, Exposure, Comparator, Outcome for ecological settings).

- Develop and Execute a Systematic Search Strategy:

- Identify bibliographic databases (e.g., PubMed, Web of Science, Scopus, specialist ecological databases).

- Design a comprehensive search string using controlled vocabulary (e.g., MeSH terms) and free-text keywords.

- Document the full search strategy for reproducibility.

- Supplement database searches with grey literature searching (regulatory reports, theses, preprints).

- Screen Studies Systematically:

- Use dual-independent screening for titles/abstracts and full texts against pre-defined eligibility criteria.

- Resolve conflicts by consensus or via a third reviewer.

- Record reasons for exclusion at the full-text stage.

- Code Data and Extract Variables: Develop a standardized data extraction form. Code studies for key characteristics:

- Descriptive: Author, year, location, study design (e.g., cohort, case-control, experimental).

- Methodological: Exposure/risk factor measurement, outcome assessment, sample size.

- Content: Specific exposure/intervention, measured outcome, direction of effect (if reported).

- Critical Appraisal (Optional but Recommended): Assess the risk of bias or quality of individual studies using tools appropriate to the study design (e.g., Cochrane RoB tool, SYRCLE's tool for animal studies). This step is crucial when evidence is intended to inform subsequent syntheses or decision-making [26].

- Synthesis and Visualization:

- Conduct a narrative synthesis of the evidence.

- Create interactive heatmaps to visualize the volume and distribution of evidence across exposure-outcome pairs.

- Generate network diagrams to illustrate linkages between studied variables.

- Host outputs on an interactive website or platform to facilitate exploration by all parties [26].
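
The counting step behind an evidence heatmap can be sketched in a few lines. The example below assumes studies have already been coded with one exposure and one outcome label each; the function names and the coded records are hypothetical, and a real map would feed the resulting matrix into a plotting or dashboard tool.

```python
from collections import Counter


def evidence_matrix(coded_studies: list, exposures: list, outcomes: list) -> dict:
    """Count coded studies for every exposure-outcome pair, including empty cells."""
    counts = Counter((s["exposure"], s["outcome"]) for s in coded_studies)
    return {e: {o: counts.get((e, o), 0) for o in outcomes} for e in exposures}


def evidence_gaps(matrix: dict) -> list:
    """List exposure-outcome pairs with no studies at all."""
    return [(e, o) for e, row in matrix.items() for o, n in row.items() if n == 0]


# Hypothetical coded records from the data extraction form.
coded_studies = [
    {"id": "S1", "exposure": "benzimidazoles", "outcome": "mortality"},
    {"id": "S2", "exposure": "benzimidazoles", "outcome": "mortality"},
    {"id": "S3", "exposure": "benzimidazoles", "outcome": "reproduction"},
    {"id": "S4", "exposure": "macrocyclic lactones", "outcome": "mortality"},
]
exposures = ["benzimidazoles", "macrocyclic lactones"]
outcomes = ["mortality", "reproduction"]

matrix = evidence_matrix(coded_studies, exposures, outcomes)
for exposure, row in matrix.items():
    print(exposure, row)
print("Gaps:", evidence_gaps(matrix))
```

Passing the full lists of exposures and outcomes explicitly keeps zero-count cells in the matrix, which is what makes the gaps visible.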

Protocol for Integrative Data Analysis Across Multiple Studies

Integrative data analysis combines individual-level data from multiple independent studies (e.g., different birth cohorts, panel studies, or experimental datasets) to increase power, explore consistency, and examine context-dependent effects [27]. This is key for assessing ecological risks across diverse populations or conditions.

Detailed Methodology [27]:

- Establish Collaborative Consortium: Form a multi-study research team with agreement on goals, governance, data sharing, and authorship.

- Data Harmonization: This is the most critical technical step.

- Define a Common Model: Create a theoretical model specifying constructs of interest (e.g., "socioeconomic stress," "ecosystem resilience").

- Develop a Cross-Walk Algorithm: For each construct, map how variables from each source study (with different instruments, units, or scales) are transformed into a common, comparable metric.

- Apply Harmonization: Transform individual study data using the algorithms. This may involve recoding, scaling, or creating latent variables.

- Advanced Statistical Analysis:

- Pooled Analysis: Merge harmonized datasets into a single file for analysis, using statistical adjustments (e.g., fixed effects for study identity) to account for clustering.

- Meta-Analytic Techniques: Analyze each study separately and then pool the effect estimates using random- or fixed-effects meta-analysis models.

- Moderator Analysis: Use the integrated data to investigate whether associations between risk and outcome vary by factors like study location, population characteristics, or methodological features.

- Interpretation and Reporting: Interpret findings in the context of both the common model and the unique aspects of contributing studies. Clearly report the harmonization process to allow for critique and replication.
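
For the meta-analytic route, pooling per-study effect estimates can be sketched with inverse-variance weights. The example below uses the DerSimonian-Laird estimator for the between-study variance, which is one common choice and is not named in the source; the function name and the input values are hypothetical.

```python
import math


def pool_effects(effects: list, variances: list, random_effects: bool = True) -> tuple:
    """Inverse-variance pooling; returns (pooled estimate, standard error, tau^2)."""
    if len(effects) != len(variances) or not effects:
        raise ValueError("effects and variances must be non-empty and equal in length")

    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w

    tau2 = 0.0
    if random_effects:
        # Cochran's Q and the DerSimonian-Laird moment estimator of tau^2.
        q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
        df = len(effects) - 1
        c = sum_w - sum(w * w for w in weights) / sum_w
        if c > 0:
            tau2 = max(0.0, (q - df) / c)

    # With tau2 == 0 these weights reduce to the fixed-effect weights.
    star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(star, effects)) / sum(star)
    se = math.sqrt(1.0 / sum(star))
    return pooled, se, tau2


# Two hypothetical studies with clearly different effects.
pooled, se, tau2 = pool_effects([0.0, 1.0], [0.1, 0.1])
print(f"pooled={pooled:.3f} se={se:.3f} tau2={tau2:.3f}")
```

When the studies disagree, the random-effects standard error is wider than the fixed-effect one, which is the practical reason to report which model was used.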

Diagram 1: Systematic Evidence Map (SEM) Workflow

Integration Mechanisms at the Interface

Effective translation of synthesized evidence into management action requires deliberate structural and procedural mechanisms.

The Iterative IRM Cycle in a Research Context

The six key activities of IRM form a cyclical, iterative process rather than a linear one [28]. In a research-driven context, this cycle is fueled by continuous evidence synthesis and stakeholder feedback.

Diagram 2: Iterative IRM Cycle for Evidence-Based Decisions

Quantitative Framework for Prioritizing Risks and Actions

Following evidence assessment, a standardized framework is needed to prioritize risks for management action. This involves evaluating both the magnitude of the risk and organizational context.

Table 2: Risk Prioritization Matrix: Integrating Evidence with Management Context [28] [29]

| Risk ID | Description (From Evidence Assessment) | Likelihood (1-5) | Impact Severity (Ecological/Business) (1-5) | Inherent Risk Score (LxI) | Current Control Effectiveness (1-5) | Residual Risk Score | Stakeholder Concern (High/Med/Low) | Priority for Action |

|---|---|---|---|---|---|---|---|---|

| RQ-01 | Population decline of Species A linked to Pollutant X | 4 | 5 | 20 | 2 (Partial regulation) | 10 | High | Critical |

| RQ-02 | Habitat fragmentation effect on ecosystem service Y | 5 | 4 | 20 | 3 (Existing protections) | 12 | High | High |

| RQ-03 | Emerging pathogen Z in isolated sub-population | 2 | 5 | 10 | 1 (No monitoring) | 10 | Medium | Medium |

| RQ-04 | Non-significant effect of Stressor B in SEM | 3 | 2 | 6 | 4 (Naturally resilient) | 3 | Low | Low |
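
The inherent risk score in the matrix is the product of likelihood and impact (L x I), which is simple to compute and rank. The sketch below covers only that step: the table does not state how the residual score is derived from control effectiveness, so no residual formula is assumed here. The `Risk` class and `rank_by_inherent_score` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    risk_id: str
    likelihood: int  # 1-5 scale
    impact: int      # 1-5 scale

    def __post_init__(self):
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def inherent_score(self) -> int:
        """Inherent risk score: likelihood multiplied by impact."""
        return self.likelihood * self.impact


def rank_by_inherent_score(risks: list) -> list:
    """Sort risks from highest to lowest inherent score (ties keep input order)."""
    return sorted(risks, key=lambda r: r.inherent_score, reverse=True)


# Likelihood and impact values taken from the matrix above.
register = [
    Risk("RQ-04", 3, 2),
    Risk("RQ-03", 2, 5),
    Risk("RQ-01", 4, 5),
    Risk("RQ-02", 5, 4),
]

for risk in rank_by_inherent_score(register):
    print(risk.risk_id, risk.inherent_score)
```

Stakeholder concern and control effectiveness would then adjust this ranking, as the final columns of the matrix do, through a rule the assessment team agrees on and documents.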

The Scientist's Toolkit: Essential Reagent Solutions for Integrative Risk Research

This table details key "reagent solutions"—both conceptual and technological—required to execute the methodologies described and facilitate integration.

Table 3: Research Reagent Solutions for Integrative Risk Assessment

| Item / Solution | Function / Purpose | Application in Integrative Process |

|---|---|---|

| Systematic Review Management Software (e.g., Covidence, Rayyan) | Supports collaborative study screening, data extraction, and conflict resolution during evidence synthesis. | Enables transparent and efficient execution of the SEM protocol, allowing assessors to manage large evidence bases and share progress with managers [26]. |

| Data Harmonization Tools & Frameworks | Provides methodologies and sometimes software (e.g., synthetic data generation, common model scripting in R/Python) for aligning disparate datasets [27]. | Critical for the integrative data analysis protocol, transforming multi-study data into a format suitable for pooled or comparative analysis. |

| Interactive Data Visualization Platforms (e.g., Tableau, R Shiny) | Creates dynamic dashboards, heatmaps, and network diagrams from synthesized data [26]. | Serves as the core of the "Communication & Reporting" phase, allowing managers and stakeholders to interact with evidence findings intuitively [28] [30]. |

| Integrated Risk Management (IRM) Platform | Centralized software to document risks, controls, actions, and KRIs; facilitates workflow management and reporting [28] [29]. | Acts as the "Technology & Support" backbone, housing the risk register, tracking mitigation progress, and providing a single source of truth for all parties. |

| Structured Stakeholder Engagement Protocol | A planned approach (e.g., interviews, workshops, Delphi methods) to gather input, values, and perspectives systematically. | Informs "Strategy" and ensures "Communication" is bi-directional, integrating stakeholder values into the risk assessment and management framework from start to finish. |

Understanding Exposure and Ecological Scenarios as Foundational Concepts

Ecological risk assessment is a structured scientific process used to estimate the likelihood and magnitude of adverse ecological effects resulting from exposure to stressors, such as chemical contaminants. Its primary purpose is to provide decision-makers with a scientifically defensible basis for actions to protect ecosystems and human health [31]. Within this framework, the accurate characterization of exposure and the construction of realistic ecological scenarios are fundamental. These concepts define the bridge between a stressor's presence in the environment and its potential to cause harm to ecological receptors [31].

This guide frames these core concepts within the emerging paradigm of systematic evidence synthesis. Traditional risk assessments can be challenged by vast, heterogeneous, and sometimes conflicting scientific literature. Evidence synthesis methods, such as systematic review and systematic evidence mapping, offer a transparent, rigorous, and reproducible approach to navigating this complexity [3] [7]. These methods ensure that risk assessments are built upon a comprehensive and unbiased summary of the available science, thereby strengthening the credibility and reliability of exposure estimates and scenario development for informed environmental decision-making [3].

Foundational Concepts: Exposure and Dose

Exposure is defined as the contact or co-occurrence of a stressor (e.g., a chemical, physical agent, or biological entity) with an ecological receptor (e.g., an organism, population, or community). The quantification of this contact is the cornerstone of risk estimation. A critical related concept is dose, which refers to the amount of a stressor that is absorbed, deposited within, or otherwise interacts with the receptor [31].

Key Metrics and Terminology

Exposure and dose are characterized through several key metrics:

- Intake/Uptake: The process by which a stressor crosses an outer boundary of an organism (e.g., through ingestion, inhalation, or dermal absorption).

- Applied Dose: The amount of a stressor presented at an absorption barrier.

- Internal Dose: The amount that has been absorbed and is available for interaction with internal tissues.

- Biologically Effective Dose: The fraction of the internal dose that reaches and interacts with a specific target site (e.g., a cell or molecule), initiating a toxicological effect [31].

Doses can be expressed as instantaneous, average daily, or average lifetime measures, depending on the assessment's temporal scope. The choice of metric has significant implications for the relevance of hazard data and the ultimate risk characterization [31].

The Exposure Assessment Workflow

A systematic exposure assessment follows a defined workflow, progressing from problem formulation to data analysis and uncertainty characterization. The following diagram outlines this critical pathway.

Exposure Assessment Conceptual Workflow

Constructing and Applying Ecological Scenarios

An exposure scenario is a set of facts, assumptions, and inferences that describe how exposure occurs. It translates a conceptual understanding of the system into a quantitative framework for estimation [31]. Scenarios are essential for structuring assessments, identifying data needs, and ensuring calculations are relevant to the specific environmental context and management question.

Core Components of an Exposure Scenario

A robust ecological exposure scenario integrates several key elements:

- Source Characterization: Identification of the stressor's origin (e.g., industrial effluent, agricultural runoff, atmospheric deposition) and its release properties.

- Fate and Transport Analysis: Description of the processes that move and transform the stressor from the source through environmental media (air, water, soil, sediment) to the location of the receptor. This involves concepts like partitioning (e.g., using octanol-water partition coefficients) and degradation [31].

- Exposure Pathway Identification: The course a stressor takes from the source to the receptor (e.g., factory stack → atmosphere → deposition → soil → earthworm → robin). A single source can create multiple pathways.

- Receptor Characterization: Definition of the ecological entities at risk, including their life stages, behaviors (e.g., foraging patterns, habitat use), and relevant exposure factors (e.g., dietary intake rates, soil ingestion rates for wildlife) [31] [32].

- Exposure Route Specification: The specific mechanism by which the stressor enters the receptor (dermal, inhalation, ingestion).
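
To show how these components combine into a number, the sketch below estimates an oral dose for a wildlife receptor by summing ingestion pathways and normalising by body weight. The formulation (concentration times intake rate, summed over media, divided by body weight) is a commonly used simplification and is an assumption here, as are the class and function names and every parameter value for the robin example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngestionPathway:
    medium: str                      # e.g. diet item or incidentally ingested soil
    concentration_mg_per_kg: float   # stressor concentration in the medium
    intake_rate_kg_per_day: float    # amount of the medium ingested per day


def oral_dose_mg_per_kg_bw_day(pathways: list, body_weight_kg: float) -> float:
    """Sum intake across ingestion pathways and normalise by body weight."""
    if body_weight_kg <= 0:
        raise ValueError("body weight must be positive")
    total_intake_mg_per_day = sum(
        p.concentration_mg_per_kg * p.intake_rate_kg_per_day for p in pathways
    )
    return total_intake_mg_per_day / body_weight_kg


# Hypothetical scenario for the soil -> earthworm -> robin pathway.
robin_pathways = [
    IngestionPathway("earthworms", concentration_mg_per_kg=2.0, intake_rate_kg_per_day=0.09),
    IngestionPathway("soil", concentration_mg_per_kg=10.0, intake_rate_kg_per_day=0.005),
]

dose = oral_dose_mg_per_kg_bw_day(robin_pathways, body_weight_kg=0.08)
print(f"Estimated oral dose: {dose:.3f} mg/kg bw/day")
```

Keeping each pathway as its own record makes it easy to see which medium dominates the dose and to add or drop pathways as the scenario is refined.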

Tiered Approach to Scenario Development

Risk assessments often employ a tiered approach, where simple, conservative scenarios are used initially (screening tiers). If potential risks are indicated, more complex and realistic scenarios are developed in higher tiers. Higher tiers may involve probabilistic modeling, spatially explicit data, and detailed ecosystem modeling [33].

Table 1: Tiered Approach to Exposure Assessment and Uncertainty Analysis [33]

| Tier | Description | Typical Analysis | Output |

|---|---|---|---|

| Tier 1 | Screening-Level | Uses conservative, health-protective single-point estimates (e.g., Reasonable Maximum Exposure). | Single, high-end exposure estimate to identify substances requiring further investigation. |

| Tier 2 | Deterministic Range-Finding | Uses more realistic, yet still deterministic, high and low values for key inputs. | A plausible range (Low to High) of exposures. |

| Tier 3 | Probabilistic (1-Dimensional) | Uses probability distributions for input variables to characterize variability in the exposed population/system. | A full distribution of exposure (e.g., CDF), but does not separate variability from uncertainty. |

| Tier 4 | Probabilistic (2-Dimensional) | Uses nested probability distributions to separately characterize variability (inner loop) and uncertainty (outer loop). | Separate distributions showing the confidence bounds around the variability distribution. |

Critical Distinction: Variability vs. Uncertainty

A foundational principle in quantitative risk assessment is the clear distinction between variability and uncertainty. Confusing these concepts can lead to poor decision-making [32] [33].

- Variability represents inherent heterogeneity in a system. It is a property of nature that cannot be reduced by more study (only better characterized). Examples include differences in body weight among individuals in a population, spatial variation in soil contaminant concentrations, or temporal variation in river flow rates [32] [33].

- Uncertainty represents a lack of perfect knowledge about the true value of a quantity or the correctness of a model. It can often be reduced through further research or better data. Examples include measurement error, extrapolation from animal models to humans, or uncertainty in a model's structure [32] [33].

Table 2: Comparison of Variability and Uncertainty [32] [33]

| Aspect | Variability | Uncertainty |

|---|---|---|

| Nature | Inherent heterogeneity or diversity in the real world. | Lack of knowledge about the true state or value. |

| Reducibility | Cannot be reduced; can be better characterized with more data. | Can be reduced with more or better information. |

| Sources in Exposure Assessment | Inter-individual differences (age, behavior), spatial/temporal differences in environmental concentrations, genetic diversity in susceptibility. | Scenario uncertainty (missing pathways), model uncertainty (simplified processes), parameter uncertainty (measurement error, sampling error). |

| Quantitative Expression | Characterized using statistical ranges, percentiles, summary statistics (e.g., standard deviation), and probability distributions. | Characterized using confidence intervals, credible intervals, or qualitative statements about knowledge gaps. |

The following diagram illustrates the primary sources and relationships of uncertainty within the modeling process for socio-ecological systems, a core component of advanced ecological scenarios [34].

[Figure: Sources of Uncertainty in Socio-Ecological Scenario Modeling]

Evidence Synthesis Methods for Robust Risk Assessment

Systematic methodology is crucial for transparently and comprehensively gathering and evaluating the scientific evidence that underpins exposure scenarios and dose-response assessments [3] [7].

Systematic Review vs. Systematic Evidence Mapping

Two primary synthesis methods support risk assessment:

- Systematic Review: A rigorous method to answer a specific, focused question (e.g., "What is the effect of uranium exposure on zebrafish embryo development?"). It involves critical appraisal of individual study validity and often uses meta-analysis to quantitatively combine results [7].

- Systematic Evidence Map (SEM): Used to survey a broad evidence base on a topic. It catalogs and describes available studies through visualizations (e.g., interactive databases, heat maps) to identify knowledge clusters and gaps. It is particularly valuable when the research field is broad or heterogeneous, making a full systematic review premature [3] [7].
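
As an illustration of the quantitative step in a systematic review, the following minimal sketch pools study effect sizes with inverse-variance (fixed-effect) weighting. This is one common meta-analytic approach and is not necessarily the method used in the cited sources; the function name and the example numbers are hypothetical. When studies are heterogeneous, a random-effects model is often more appropriate.

```python
import math


def fixed_effect_pool(effects, standard_errors):
    """Inverse-variance weighted pooled effect with an approximate 95% CI."""
    if len(effects) != len(standard_errors) or not effects:
        raise ValueError("effects and standard_errors must be non-empty and equal length")
    # Each study is weighted by the inverse of its variance (1 / SE^2),
    # so more precise studies contribute more to the pooled estimate.
    weights = [1.0 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


if __name__ == "__main__":
    # Hypothetical standardized mean differences from three studies.
    pooled, se, ci = fixed_effect_pool([-0.40, -0.25, -0.55], [0.15, 0.10, 0.20])
    print(f"pooled effect = {pooled:.3f}, SE = {se:.3f}, 95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```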

Table 3: Core Differences Between Systematic Review and Evidence Mapping [7]

| Feature | Systematic Review | Systematic Evidence Map |

|---|---|---|

| Primary Objective | Answer a specific question with a synthesized finding. | Provide an overview of the evidence landscape; identify gaps and clusters. |

| Research Question | Narrow, focused (PECO/PICO-driven). | Broad, exploratory. |

| Critical Appraisal | Mandatory; influences synthesis and conclusions. | Optional; if done, does not typically filter studies from the map. |

| Synthesis Method | Quantitative (meta-analysis) and/or qualitative synthesis. | Visual, graphical, and descriptive synthesis (databases, charts, matrices). |

| Key Output | An answer to the question, often with an effect size estimate. | A searchable database and visualizations of evidence distribution. |

Application in Risk Assessment: The Uranium Case Study

A demonstrated application is the use of SEM to assess whether newly published literature warrants updating the health reference values for uranium [3]. The process involved:

- Defining the PECO Framework: (Populations, Exposure, Comparators, Outcomes) to guide literature search and screening.

- Systematic Search & Screening: Retrieving literature from 2011-2022 and filtering against PECO criteria.

- Mapping and Comparison: Cataloging new studies against the principal health outcomes identified in a prior 2013 assessment.

- Informing Hazard Evaluation: Using the map to determine if new evidence was sufficient to change existing toxicity values or to prioritize endpoints for new dose-response analysis [3].
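
The screening step of such a workflow can be sketched as a simple filter over bibliographic records. The example below is a hedged illustration: the `Record` fields, the `PECO` dictionary, and the listed populations and outcomes are hypothetical placeholders; only the uranium exposure and the 2011 to 2022 date range come from the case study described above.

```python
from dataclasses import dataclass


@dataclass
class Record:
    title: str
    year: int
    population: str
    exposure: str
    has_comparator: bool
    outcome: str


# Hypothetical PECO criteria; a real protocol would define these in detail.
PECO = {
    "populations": {"human", "rodent", "zebrafish"},
    "exposures": {"uranium"},
    "outcomes": {"renal", "developmental", "reproductive"},
    "years": (2011, 2022),
}


def meets_peco(record, criteria=PECO):
    """Return True when a record satisfies every PECO element and the date range."""
    first_year, last_year = criteria["years"]
    return (
        first_year <= record.year <= last_year
        and record.population in criteria["populations"]
        and record.exposure in criteria["exposures"]
        and record.has_comparator
        and record.outcome in criteria["outcomes"]
    )


def screen(records, criteria=PECO):
    """Split records into included and excluded lists, preserving order."""
    included = [r for r in records if meets_peco(r, criteria)]
    excluded = [r for r in records if not meets_peco(r, criteria)]
    return included, excluded
```

Keeping the excluded list alongside the included one supports the auditable record of screening decisions that systematic methods require.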

This case shows how SEM provides a structured, auditable process for determining when new science necessitates a resource-intensive full re-assessment.

Quantitative Modeling in Exposure and Scenario Analysis

Quantitative models are indispensable tools for estimating exposure where direct measurement is impractical, exploring complex system dynamics, and forecasting the outcomes of different management scenarios [35].

A Taxonomy of Ecological Models

Models can be classified along two axes: their level of mechanistic detail and the extent to which they rely on numerical data [35].

Table 4: Taxonomy and Examples of Quantitative Ecological Models [35]

| Model Type | Description | Typical Use in Exposure/Risk | Example |

|---|---|---|---|

| Correlative (Statistical) | Models empirical relationships between variables without specifying underlying mechanisms. | Predicting species distribution in contaminated habitats; linking land use to water quality. | Generalized Linear Model (GLM) of fish abundance vs. pollutant concentration. |

| Strategic (Mechanistic) | Captures key processes with simplified representation to provide general insights. | Exploring population-level consequences of reduced fecundity due to exposure. | Logistic growth model with a contaminant-induced reduction in carrying capacity. |

| Tactical (Detailed Mechanistic) | Highly detailed, process-based models intended for specific, realistic predictions. | Spatially explicit individual-based models (IBMs) of foraging animals in a contaminated landscape; Physiologically Based Pharmacokinetic (PBPK) models. | IBM simulating small mammal exposure to soil pesticides across a farm plot. |
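
The strategic example in Table 4, a logistic growth model with a contaminant-induced reduction in carrying capacity, can be sketched in a few lines. This is a minimal illustration using simple Euler integration; the function name, the `k_reduction` parameter, and all numerical values are hypothetical assumptions.

```python
def simulate_logistic(n0, r, k, k_reduction=0.0, years=50.0, dt=0.1):
    """Integrate dN/dt = r * N * (1 - N / K_eff) with Euler steps.

    K_eff = k * (1 - k_reduction), where k_reduction is the assumed
    fractional loss of carrying capacity caused by contaminant exposure.
    """
    if not 0.0 <= k_reduction < 1.0:
        raise ValueError("k_reduction must be in [0, 1)")
    effective_k = k * (1.0 - k_reduction)
    n = n0
    trajectory = [n]
    for _ in range(int(round(years / dt))):
        n += r * n * (1.0 - n / effective_k) * dt
        trajectory.append(n)
    return trajectory


if __name__ == "__main__":
    baseline = simulate_logistic(n0=50, r=0.5, k=1000)
    exposed = simulate_logistic(n0=50, r=0.5, k=1000, k_reduction=0.3)
    print(f"baseline equilibrium ~ {baseline[-1]:.0f}")
    print(f"exposed equilibrium  ~ {exposed[-1]:.0f}")
```

A model this simple gives only general insight (the exposed population settles at a lower equilibrium); site-specific prediction would call for a tactical model.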

Best Practices for Model Development and Evaluation

To ensure models are "fit-for-purpose" and credible, researchers should adhere to established good practices [35]:

- Design Phase: Clearly address a management question and consult end-users.

- Specification Phase: Balance model complexity with available data and explicitly state all assumptions.

- Evaluation Phase: Rigorously evaluate the model against independent data (validation) and assess its sensitivity to parameter changes.

- Inference Phase: Include measures of uncertainty, communicate them clearly, avoid over-reliance on arbitrary thresholds, focus on relevance, and publish model code for transparency [35].
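
The sensitivity assessment named in the evaluation phase can take many forms. One simple option, sketched below under stated assumptions, is a one-at-a-time analysis that perturbs each parameter by a fixed fraction and reports the normalized change in model output. The `daily_dose` model and its baseline values are hypothetical; global methods are generally preferable when parameters interact.

```python
def one_at_a_time_sensitivity(model, baseline, perturbation=0.10):
    """Normalized sensitivity: relative output change per relative input change."""
    base_output = model(**baseline)
    sensitivities = {}
    for name, value in baseline.items():
        # Perturb a single parameter while holding all others at baseline.
        perturbed = dict(baseline)
        perturbed[name] = value * (1.0 + perturbation)
        relative_change = (model(**perturbed) - base_output) / base_output
        sensitivities[name] = relative_change / perturbation
    return sensitivities


def daily_dose(concentration, intake_rate, body_weight):
    """Hypothetical exposure model: dose = concentration * intake / body weight."""
    return concentration * intake_rate / body_weight


if __name__ == "__main__":
    baseline = {"concentration": 10.0, "intake_rate": 0.05, "body_weight": 70.0}
    for name, value in one_at_a_time_sensitivity(daily_dose, baseline).items():
        print(f"{name}: {value:+.3f}")
```

A value near +1 means the output scales proportionally with the parameter; a negative value means the output falls as the parameter rises.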

The Scientist's Toolkit: Essential Reagents & Methodological Components

This section outlines key methodological "reagents"—the standardized protocols, data sources, and analytical tools—required to conduct robust exposure and scenario-based risk assessments within an evidence synthesis framework.

Table 5: Research Reagent Solutions for Evidence Synthesis in Risk Assessment

| Tool/Reagent | Function/Purpose | Key Source/Example |

|---|---|---|

| PECO/PICO Framework | Provides a structured protocol for formulating the research question, guiding literature search strategy, and establishing study inclusion/exclusion criteria. | Population, Exposure, Comparator, Outcome framework for systematic evidence mapping [3]. |

| Systematic Review Software | Platforms that manage and document the workflow of a systematic review/map, including reference management, deduplication, screening, and data extraction. | Rayyan, Covidence, EPPI-Reviewer. |

| Exposure Factors Data | Compilations of quantitative data on human and ecological receptor characteristics and behaviors that influence exposure (e.g., ingestion rates, inhalation rates, body weights, activity patterns). | EPA's Exposure Factors Handbook; Child-Specific Exposure Factors Handbook [31]. |

| Fate & Transport Parameters | Physicochemical constants used to model the movement and partitioning of stressors in the environment. | Henry's Law Constant, Octanol-Water Partition Coefficient (Kow), organic carbon partition coefficient (Koc), degradation half-lives [31]. |

| Probabilistic Analysis Tools | Software for performing Monte Carlo simulation and other probabilistic techniques to characterize variability and uncertainty. | @Risk, Crystal Ball, or programming environments such as R with the mc2d package. |

| Biomonitoring Data | Data from programs that measure concentrations of chemicals or their metabolites in tissues or fluids (e.g., blood, urine) of organisms, providing integrated measures of exposure from all routes. | National Health and Nutrition Examination Survey (NHANES) data for human biomonitoring [31]. |

| Pharmacokinetic (PK) Models | Mathematical models (e.g., 1-compartment, PBPK) used to interpret biomonitoring data by relating internal tissue concentrations to external exposure doses, in either "forward" (external dose to internal concentration) or "backward" (internal concentration to external dose) calculation mode [31]. | Simple 1-compartment first-order model for bioaccumulative contaminants [31]. |
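
The forward and backward uses of a pharmacokinetic model listed in Table 5 can be illustrated with a one-compartment, first-order model under constant intake. The sketch below is a simplified assumption-laden example, not the formulation from the cited source: the function names, the choice of a body-weight-normalized dose rate (mg/kg-day) and volume of distribution (L/kg), and the example values are all hypothetical.

```python
import math


def elimination_rate(half_life_days):
    """First-order elimination rate constant k = ln(2) / half-life."""
    return math.log(2) / half_life_days


def forward_concentration(dose_rate, half_life_days, volume_of_distribution,
                          t_days, absorbed_fraction=1.0):
    """Forward mode: external dose rate -> internal concentration (mg/L) at time t."""
    k = elimination_rate(half_life_days)
    steady_state = absorbed_fraction * dose_rate / (k * volume_of_distribution)
    return steady_state * (1.0 - math.exp(-k * t_days))


def backward_dose_rate(steady_state_concentration, half_life_days,
                       volume_of_distribution, absorbed_fraction=1.0):
    """Backward mode: measured steady-state concentration -> external dose rate."""
    k = elimination_rate(half_life_days)
    return steady_state_concentration * k * volume_of_distribution / absorbed_fraction


if __name__ == "__main__":
    # Hypothetical contaminant: 100-day half-life, Vd = 0.5 L/kg.
    conc = forward_concentration(0.001, 100.0, 0.5, t_days=3650.0)
    print(f"concentration after 10 years: {conc:.4f} mg/L")
    print(f"reconstructed dose rate: {backward_dose_rate(conc, 100.0, 0.5):.6f} mg/kg-day")
```

The backward calculation assumes the biomonitoring measurement reflects steady state; applying it to a recently exposed organism would underestimate the dose.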

Data Visualization for Communicating Risk and Uncertainty

Effective communication of complex exposure and risk information to diverse audiences—from scientists to risk managers to the public—is critical. Data visualization transforms numerical results into accessible insights [36].

Visualization of Risk Assessment Outputs

- Risk Matrices (Heat Maps): A standard tool for plotting risks based on their likelihood and impact, using color coding (e.g., red for high risk) for immediate visual prioritization [37] [38].

- Probability Density Functions (PDFs) & Cumulative Distribution Functions (CDFs): Essential for communicating the results of probabilistic (Tier 3/4) assessments, showing the full distribution of exposure or risk across a population [33].

- Risk Trajectory Charts: Show how individual risks move within a risk matrix over time, indicating whether they are escalating, stable, or being mitigated [37].

- Bow-Tie Diagrams: Visually map the causes of a risk on one side and its potential consequences on the other, with control measures displayed in the center, providing a holistic view of risk management [38].

Visualizing Evidence Synthesis Products

For systematic maps, visualization is the primary synthesis output [7]. Interactive evidence atlases, bubble plots, and heat maps can display the volume and distribution of studies across different dimensions, such as:

- Type of stressor and receptor studied.

- Geographic location of studies.

- Outcomes measured (e.g., mortality, growth, reproduction).

- Study quality ratings.

These visual tools instantly reveal where robust evidence exists and where critical knowledge gaps persist, directly guiding future research and assessment priorities [3] [7].
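
The data structure behind such a heat map is a cross-tabulation of study counts. The following minimal sketch builds that matrix and lists empty cells as candidate knowledge gaps. The field names (`stressor`, `outcome`) and the example records are hypothetical, and a real evidence map would typically feed the matrix into a plotting or database tool.

```python
from collections import Counter


def build_evidence_map(studies, row_key="stressor", col_key="outcome"):
    """Count studies for every (row, column) combination, including zeros."""
    counts = Counter((s[row_key], s[col_key]) for s in studies)
    rows = sorted({row for row, _ in counts})
    cols = sorted({col for _, col in counts})
    return {row: {col: counts.get((row, col), 0) for col in cols} for row in rows}


def find_gaps(matrix):
    """Return (row, column) pairs with no studies, i.e. potential knowledge gaps."""
    return sorted(
        (row, col)
        for row, cells in matrix.items()
        for col, count in cells.items()
        if count == 0
    )


if __name__ == "__main__":
    studies = [
        {"stressor": "pesticide", "outcome": "mortality"},
        {"stressor": "pesticide", "outcome": "mortality"},
        {"stressor": "pesticide", "outcome": "reproduction"},
        {"stressor": "heavy metal", "outcome": "growth"},
    ]
    evidence_map = build_evidence_map(studies)
    print(evidence_map)
    print("gaps:", find_gaps(evidence_map))
```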

Within the domain of ecological risk assessment (ERA) and next-generation risk assessment (NGRA), the synthesis of diverse, complex, and often uncertain evidence poses a significant scientific challenge. Tiered and refined assessment strategies have emerged as a critical methodological framework to address this challenge, providing a structured, iterative, and resource-efficient pathway from initial screening to comprehensive, ecologically realistic evaluation. These strategies are fundamentally grounded in the principle of progressing from conservative, screening-level models to increasingly realistic and complex analyses only as necessitated by the initial findings [39]. This phased approach allows risk assessors to efficiently triage low-risk scenarios while focusing sophisticated resources on cases where potential risk is indicated [40].

Within evidence synthesis, tiered methodologies offer a systematic protocol for integrating heterogeneous data streams, from high-throughput in vitro bioactivity assays and toxicokinetic modeling to field-scale ecological surveys and population models. They formalize the process of hypothesis testing and iterative refinement, where each tier seeks to reduce uncertainty by relaxing conservative assumptions, incorporating more site-specific data, or employing more mechanistically detailed models [41] [39]. This section provides an in-depth technical guide to the core principles, operational frameworks, and experimental protocols that define modern tiered assessment strategies, and to their role in producing robust, defensible, and actionable syntheses of evidence for ecological and human health protection.

Core Principles of Tiered Assessment

The efficacy of a tiered strategy hinges on several foundational principles that govern its design and execution. Understanding these principles is essential for deploying the framework correctly and interpreting its outcomes.

The Efficiency Principle: The primary objective is to reach a "no-risk" or "low-risk" determination at the earliest possible tier using the simplest adequate model [39]. Lower tiers employ conservative assumptions (e.g., upper-bound exposure estimates, sensitive toxicity endpoints) designed to overestimate risk. If a substance passes this protective screen, no further resource-intensive assessment is needed. Escalation occurs only when a potential risk is flagged, ensuring efficient allocation of scientific and regulatory resources.
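
The escalation logic of the efficiency principle can be sketched with a simple risk quotient (exposure estimate divided by effect threshold) evaluated tier by tier. This is a hedged illustration: the quotient method, the level of concern of 1.0, the `run_tiers` function, and the example values are assumptions chosen for clarity, and real frameworks define their own decision criteria.

```python
def run_tiers(tiers, level_of_concern=1.0):
    """Evaluate tiers in order and stop at the first one that indicates low risk.

    Each tier is a (name, exposure_estimate, effect_threshold) tuple, ordered
    from most conservative to most realistic.
    """
    if not tiers:
        raise ValueError("at least one tier is required")
    history = []
    for name, exposure, effect_threshold in tiers:
        risk_quotient = exposure / effect_threshold
        history.append((name, risk_quotient))
        if risk_quotient < level_of_concern:
            # The screen is passed: no further tiers are needed.
            return {"decision": "low risk", "stopped_at": name, "history": history}
    # Every tier was exhausted and the quotient still exceeds the level of concern.
    return {"decision": "potential risk", "stopped_at": history[-1][0], "history": history}


if __name__ == "__main__":
    # Hypothetical exposure estimates that become less conservative at each tier.
    tiers = [
        ("Tier 1", 12.0, 10.0),  # upper-bound screening estimate
        ("Tier 2", 4.0, 10.0),   # refined deterministic estimate
        ("Tier 3", 1.0, 10.0),   # not evaluated if Tier 2 passes
    ]
    print(run_tiers(tiers))
```

In practice each tier's inputs would be generated only when the previous tier flags a concern, since producing refined estimates is the expensive part.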

Progressive Refinement and Realism: As the assessment escalates, each successive tier incorporates greater ecological, biological, or exposure realism to replace the conservative defaults of the lower tier. This may involve replacing generic models with spatially explicit ones, laboratory toxicity data with field or mesocosm studies, or simple quotient methods with dynamic population models [39] [40]. The goal is to converge on an accurate, unbiased estimate of risk.

Iterative Hypothesis Testing: A tiered assessment is not a linear checklist but an iterative, hypothesis-driven process. The outcomes from one tier inform the specific questions and design of the next. For example, a Tier 1 screen might identify a potential hazard to a specific organ system, prompting a Tier 2 investigation focused on the toxicokinetics and bioactivity pathways for that system [41].