Advancing Conceptual Models for Ecological Risk Assessment: Integrating Frameworks, Methodologies, and Applications for Researchers

Abstract

This article provides a comprehensive guide to conceptual model development for ecological risk assessment, tailored for researchers, scientists, and drug development professionals. It explores the foundational role of problem formulation and stakeholder engagement, details methodological advances from exposure pathways to complex system models, addresses common challenges and optimization strategies, and examines validation through comparative case studies. The synthesis aims to enhance the accuracy, relevance, and predictive power of ecological risk assessments in supporting environmental safety and biomedical research decisions.

Laying the Groundwork: Core Principles and Problem Formulation in Ecological Risk Assessment

The Central Role of Problem Formulation as the Assessment Blueprint

Within the structured paradigm of ecological risk assessment (ERA), problem formulation is not merely a preliminary step but the foundational blueprint that dictates the entire scientific and regulatory endeavor [1] [2]. It represents a critical planning and scoping phase where risk assessors and managers collaboratively define the assessment's purpose, scope, and methodological pathway [1]. This phase transforms broad protection goals—often derived from legal statutes like the Clean Water Act—into a tractable, hypothesis-driven scientific investigation [1] [2]. For research concerning conceptual model development, problem formulation is the process through which abstract concerns about ecosystem health are translated into explicit models that diagram predicted relationships among stressors, exposures, and ecological receptors [1] [3]. A rigorously constructed problem formulation ensures the assessment is relevant, efficient, and ultimately capable of supporting informed environmental decision-making, while a deficient one can lead to misallocated resources, ambiguous results, and compromised decisions [2] [4].

Core Components of Problem Formulation

The problem formulation phase is an integrative process that synthesizes regulatory context, scientific knowledge, and management needs into a clear assessment plan. Its core components, developed iteratively between risk managers and assessors, include the following key agreements and products [1].

The Planning Dialogue: Establishing the Framework

Before technical assessment begins, a planning dialogue sets the strategic framework. Key agreements include [1]:

- Management Goals: The desired ecological conditions to be protected (e.g., "maintaining a sustainable aquatic community") [1].

- Regulatory Context: The specific action triggering the assessment (e.g., registration of a new pesticide) [1].

- Assessment Scope & Complexity: Determined by data availability, resources, and the tolerable level of uncertainty, often structured as a tiered evaluation progressing from simple screening to complex analyses [1].

Key Outputs of Problem Formulation

The technical work of problem formulation yields three critical outputs that guide the subsequent risk analysis and characterization phases [1] [2].

- Assessment Endpoints: These operationalize management goals by specifying the ecological entity (e.g., a fish species) and its valued attribute (e.g., reproductive success) to be protected [1].

- Conceptual Models: Diagrams and risk hypotheses that illustrate the predicted relationships between a stressor (e.g., a chemical), potential exposure pathways, and the assessment endpoints [1] [3]. They identify what is known and where critical knowledge gaps exist.

- Analysis Plan: A detailed protocol outlining how data will be evaluated, which risk hypotheses will be tested, and what measures (e.g., LC50, estimated environmental concentration) will be used to characterize risk [1].

Table 1: Quantitative Criteria for Refining Conceptual Model Exposure Pathways in Ecological Risk Assessment [3]

| Exposure Pathway | Trigger for Inclusion in Conceptual Model | Key Quantitative Thresholds |
|---|---|---|
| Sediment Exposure (Acute) | Pesticide half-life in sediment ≤ 10 days AND one of: | Soil-water distribution coefficient (Kd) ≥ 50 L/kg; log Kow ≥ 3; Koc ≥ 1,000 L/kg OC |
| Sediment Exposure (Acute & Chronic) | Estimated Environmental Concentration (EEC) in sediment > 0.1 of acute LC50/EC50 AND half-life ≥ 10 days AND one of the above partitioning thresholds. | (Same thresholds as above) |
| Ground Water Exposure | Meets any one of four criteria, including: | Detections in prospective ground water studies; Kd < 5 (mobility) AND hydrolysis half-life > 30 days (persistence) |
| Bioaccumulation (for piscivorous birds/mammals) | Consider for hydrophobic organic pesticides when all characteristics are met: | Non-ionic, organic compound; log Kow between 4 and 8; potential to reach aquatic habitats |

Developing the Conceptual Model: A Practical Framework

The conceptual model is the visual and narrative heart of problem formulation. It provides a shared understanding of the system and the risk hypotheses to be investigated [5]. For ecological risk research, its development follows a systematic process.

Construction Process

Development begins by integrating all available information on stressor characteristics, ecosystem attributes, and potential effects [1]. Using this information, assessors draft a diagram—typically a flow chart of boxes and arrows—that maps plausible routes from source to receptor [3]. This model is not static; it is refined to be site- or stressor-specific by adding, removing, or weighting pathways based on evidence (see Table 1) [3]. For instance, a model for a volatile pesticide would emphasize atmospheric transport pathways with solid lines, while a non-volatile compound would depict them as minor or dotted lines [3].
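The box-and-arrow structure described above can be sketched as a small directed graph. This is a minimal illustration, not a tool from the cited guidance: the `ConceptualModel` class, its method names, the node labels, and the "major"/"minor" weights (standing in for solid and dotted lines) are all assumptions made for the example.

```python
from collections import defaultdict


class ConceptualModel:
    """Directed graph of boxes (nodes) and arrows (pathways)."""

    def __init__(self):
        # node -> {downstream node: "major" | "minor"}
        self._edges = defaultdict(dict)

    def add_pathway(self, src, dst, weight="major"):
        # "major" stands in for a solid line, "minor" for a dotted line.
        if weight not in ("major", "minor"):
            raise ValueError("weight must be 'major' or 'minor'")
        self._edges[src][dst] = weight

    def remove_pathway(self, src, dst):
        self._edges[src].pop(dst, None)

    def routes(self, source, receptor, _path=()):
        """Yield every acyclic route from source to receptor as a list of nodes."""
        path = list(_path) + [source]
        if source == receptor:
            yield path
            return
        for nxt in list(self._edges.get(source, {})):
            if nxt not in path:
                yield from self.routes(nxt, receptor, path)

    def is_minor(self, route):
        """A route counts as minor if any arrow along it is drawn dotted."""
        return any(self._edges[a][b] == "minor" for a, b in zip(route, route[1:]))


# Hypothetical model for a non-volatile pesticide: atmospheric transport is minor.
model = ConceptualModel()
model.add_pathway("pesticide application", "spray drift")
model.add_pathway("pesticide application", "runoff")
model.add_pathway("pesticide application", "volatilization", weight="minor")
model.add_pathway("spray drift", "surface water")
model.add_pathway("runoff", "surface water")
model.add_pathway("volatilization", "air")
model.add_pathway("surface water", "fish")
model.add_pathway("air", "birds")

fish_routes = list(model.routes("pesticide application", "fish"))
bird_routes = list(model.routes("pesticide application", "birds"))
for route in fish_routes + bird_routes:
    style = "dotted" if model.is_minor(route) else "solid"
    print(style, " -> ".join(route))
```

Refining the model for a specific stressor then amounts to calling `add_pathway` or `remove_pathway` as evidence accumulates, and re-enumerating the routes that reach each assessment endpoint.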

Experimental Protocols for Pathway Validation

The conceptual model generates specific, testable risk hypotheses. The following protocols are central to testing exposure pathway hypotheses in ERAs.

Protocol for Evaluating Sediment Exposure Pathway: This test determines if sediment dwelling organisms are at risk [3].

- Objective: Assess whether the sediment compartment is a significant exposure pathway for benthic organisms.

- Procedure: Determine the pesticide's half-life in sediment via aerobic soil or aquatic metabolism studies. Concurrently, analyze its partitioning behavior by measuring the soil-water distribution coefficient (Kd), the octanol-water coefficient (Kow), or the organic carbon normalized coefficient (Koc).

- Data Analysis: Apply the criteria in Table 1. If the chemical meets both the persistence and partitioning thresholds, the sediment pathway is included as a solid line in the conceptual model and a sediment toxicity evaluation is added to the analysis plan.
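The sediment screen above can be expressed as a short decision function. The sketch below is a literal reading of the two sediment rows of Table 1; the function names, argument names, and return labels are illustrative assumptions, and the exposure and toxicity values are assumed to share the same units.

```python
def partitions_to_sediment(kd=None, log_kow=None, koc=None):
    """True if any one partitioning threshold from Table 1 is met.

    kd in L/kg, koc in L/kg OC; parameters left as None are treated as unmeasured.
    """
    return (
        (kd is not None and kd >= 50.0)
        or (log_kow is not None and log_kow >= 3.0)
        or (koc is not None and koc >= 1000.0)
    )


def sediment_testing_tier(half_life_days, sediment_eec, acute_lc50,
                          kd=None, log_kow=None, koc=None):
    """Return 'none', 'acute', or 'acute+chronic' per a literal reading of Table 1.

    sediment_eec and acute_lc50 must be expressed in the same units.
    """
    if not partitions_to_sediment(kd, log_kow, koc):
        return "none"
    # Acute & chronic row: EEC > 0.1 of the acute LC50/EC50 AND half-life >= 10 days.
    if half_life_days >= 10 and sediment_eec > 0.1 * acute_lc50:
        return "acute+chronic"
    # Acute row: half-life <= 10 days.
    if half_life_days <= 10:
        return "acute"
    return "none"


# Hypothetical chemicals, for illustration only.
short_lived = sediment_testing_tier(5, 0.01, 1.0, log_kow=3.5)
persistent = sediment_testing_tier(30, 0.5, 1.0, koc=2000.0)
mobile = sediment_testing_tier(5, 0.01, 1.0, kd=10.0)
print(short_lived, persistent, mobile)
```

A result other than `"none"` corresponds to drawing the sediment pathway as a solid line and adding a sediment toxicity evaluation to the analysis plan.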

Protocol for Screening Inhalation Exposure for Terrestrial Organisms: This evaluates the risk from airborne pesticides [3].

- Objective: Determine if inhalation of volatile compounds or spray droplets is a viable exposure route for mammals and birds.

- Procedure: Use the Screening Tool for Inhalation Risk (STIR) for an initial screening-level assessment. Input chemical-specific properties (e.g., vapor pressure, Henry's Law constant) and application data.

- Data Analysis: Model outputs estimate inhalation exposure concentrations. If exposures approach or exceed levels of concern, the inhalation pathway is formally incorporated into the terrestrial conceptual model (as in Figure 3 of the cited guidance), and targeted monitoring or higher-tier modeling may be prescribed [3].

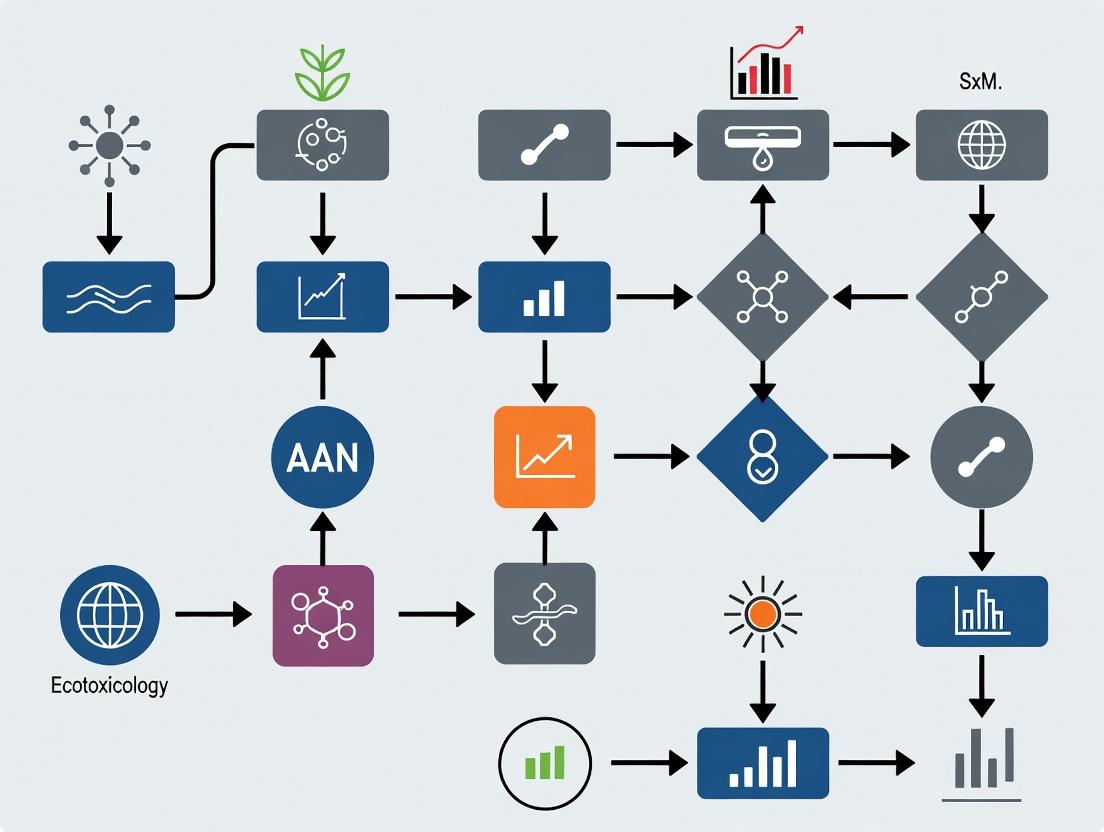

Diagram 1: The Problem Formulation Workflow within the ERA Process

Visualization of Risk Pathways and Relationships

Effective visualization is paramount for communicating the complex relationships captured in conceptual models. Diagrams translate hypotheses into a universal format, clarifying exposure scenarios and fostering consensus among stakeholders [6] [7].

Standardizing Visual Communication

Authoritative guidance provides standardized templates for common scenarios. For example, the U.S. EPA supplies generic models for aquatic and terrestrial organisms, which risk assessors modify for specific stressors [3]. These diagrams use consistent notation: boxes represent entities (e.g., stressor sources, ecological receptors), and arrows depict the pathways and directions of interaction [1] [3]. Visual refinements, such as using solid versus dotted lines to indicate the relative importance of a pathway, convey qualitative judgments based on data [3].

Diagram 2: Generic Aquatic and Terrestrial Exposure Pathway Relationships

Advanced Visual Tools for Analysis

Beyond static diagrams, interactive data visualization tools play an increasing role in analyzing complex risk data. These tools allow researchers to [6] [8]:

- Perform Comparative Analysis: Use clustered bar charts to compare risks across multiple species or sites [7].

- Conduct Trend Analysis: Implement multi-axis line charts or control charts to track risk indicators over time and identify spikes [7].

- Prioritize Risks: Employ heatmaps or matrix charts to plot risks based on likelihood and impact, focusing resources on high-priority concerns [9] [7].
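As a rough sketch of the likelihood-and-impact prioritization in the last item, the snippet below scores risks and bins them into the cells a heatmap would color. The 1-to-5 ordinal scales, the product score, and the example risks are assumptions chosen for illustration; they are not taken from the cited sources.

```python
def score_risks(risks):
    """Rank risks by likelihood x impact (both on an assumed 1-5 ordinal scale)."""
    scored = [(name, likelihood * impact)
              for name, (likelihood, impact) in risks.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


def risk_matrix(risks, size=5):
    """Bin risk names into a size x size grid indexed as grid[impact-1][likelihood-1]."""
    grid = [[[] for _ in range(size)] for _ in range(size)]
    for name, (likelihood, impact) in risks.items():
        if not (1 <= likelihood <= size and 1 <= impact <= size):
            raise ValueError(f"scores for {name!r} must lie between 1 and {size}")
        grid[impact - 1][likelihood - 1].append(name)
    return grid


# Hypothetical risks: name -> (likelihood, impact).
risks = {
    "sediment toxicity to benthos": (4, 5),
    "inhalation by birds": (2, 3),
    "ground water leaching": (3, 2),
}

ranked = score_risks(risks)
grid = risk_matrix(risks)
for name, score in ranked:
    print(f"{score:>2}  {name}")
```

The grid can be handed to any plotting library for rendering; the ranking alone is often enough to decide which pathways receive higher-tier analysis first.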

The Scientist's Toolkit: Essential Reagents & Materials

The execution of an ERA guided by a robust problem formulation requires specific research tools and materials. The following table details key solutions and their functions in generating data for the assessment.

Table 2: Key Research Reagent Solutions for Ecological Risk Assessment Experiments [1] [3] [2]

| Tool/Reagent Category | Specific Example/Name | Primary Function in ERA |
|---|---|---|
| Surrogate Test Organisms | Laboratory rat (Rattus norvegicus), fathead minnow (Pimephales promelas), earthworm (Eisenia fetida). | Serve as standardized test surrogates for broad taxonomic groups (mammals, fish, soil invertebrates) in toxicity studies to estimate effects endpoints [1]. |
| Toxicity Endpoint Standards | LC50 (Median Lethal Concentration), EC50 (Median Effect Concentration), NOAEC/NOAEL (No Observed Adverse Effect Concentration/Level). | Quantitative measures derived from toxicity tests used as benchmarks to compare with exposure estimates in the risk characterization phase [1]. |
| Environmental Fate Tracers | Radiolabeled (e.g., ¹⁴C) pesticide compounds, stable isotope labels. | Used in metabolism and degradation studies (e.g., aerobic soil metabolism) to trace the breakdown pathways of a stressor and accurately measure its half-life in different compartments [3]. |
| Partitioning Coefficient Standards | Reference solvents for Octanol-Water Partition Coefficient (Kow) tests, standardized soils for Soil-Water Distribution Coefficient (Kd) tests. | Used in laboratory assays to determine a chemical's affinity for different environmental media (water, soil, organic carbon), which predicts its mobility and potential exposure pathways [3]. |
| Exposure Estimation Models | KABAM (Kow-based Aquatic BioAccumulation Model), STIR (Screening Tool for Inhalation Risk), various runoff and drift models. | Simulation tools used to estimate exposure concentrations (EECs) for receptors when direct monitoring data are unavailable, based on chemical properties and use patterns [3]. |

Data Analysis and Interpretation Guided by the Blueprint

The analysis plan from problem formulation explicitly dictates how data will be processed and interpreted. This moves the assessment from qualitative diagrams to quantitative risk estimates.

From Measurement to Assessment Endpoints

Research data analysis in ERA typically follows a diagnostic and inferential approach [10]. Measurement endpoints (e.g., a fish LC50 from a lab test) are statistically analyzed and then logically linked to the assessment endpoints (e.g., population sustainability of a native fish species) defined in the problem formulation [1] [2]. This linkage is a critical inference that must be justified by the conceptual model.

Tiered Data Analysis Strategy

The scope and complexity agreed upon during planning manifest as a tiered analysis strategy [1].

- Tier 1: Uses simple, conservative models and screening-level toxicity data to identify substances posing negligible risk. A substance that fails this screen, meaning risk cannot be ruled out, advances to a higher-tier analysis.

- Tier 2 & Higher: Employs more sophisticated, realistic models (e.g., probabilistic models) and data (e.g., field studies) to refine risk estimates for chemicals of concern [1]. The problem formulation blueprint pre-defines the triggers and methods for moving between tiers.

Diagram 3: Linking Management Goals to Testable Risk Hypotheses

A meticulously crafted problem formulation is the indispensable blueprint for credible and actionable ecological risk research. It ensures scientific rigor by forcing the explicit statement of risk hypotheses, enhances efficiency by targeting resources at the most plausible pathways of concern, and provides regulatory clarity by creating an auditable trail from management goals to analysis plans [2] [4]. For drug development professionals, particularly those assessing environmental impacts of pharmaceuticals or agrochemicals, adopting this structured approach mitigates the risk of late-stage regulatory failures. It shifts the focus from merely generating data to answering specific, regulatory-relevant questions framed at the project's inception. Ultimately, embedding robust problem formulation and conceptual model development into the research lifecycle is a best practice that yields more defensible science, more predictable regulatory outcomes, and more effective protection of ecological systems.

Defining Management Goals and Ecological Assessment Endpoints

In the structured process of ecological risk assessment (ERA), the deliberate definition of management goals and ecological assessment endpoints constitutes the critical first phase. This phase is foundational to the development of a robust conceptual model, which is a schematic hypothesis describing the predicted relationships between a stressor (e.g., a pharmaceutical effluent, an agricultural chemical) and the ecological components of a system [11]. A well-articulated conceptual model ensures scientific rigor and decision-relevance by explicitly linking measurable scientific endpoints to the societal values they represent.

This guide provides a technical framework for researchers and drug development professionals to establish these foundational elements. By integrating principles from regulatory science and contemporary methodological approaches—specifically mixed methods research—this process moves beyond conventional ecotoxicological endpoints to incorporate a broader consideration of ecosystem services and stakeholder values [11] [12]. The outcome is a defensible, transparent, and actionable roadmap for ecological risk research.

Core Definitions and Regulatory Context

- Management Goals: Broad, value-based statements of desired environmental outcomes. They answer the question, "What do we want to protect or sustain?" Examples include "protect aquatic life in the receiving watershed," "maintain soil biodiversity and productivity," or "conserve avian populations."

- Ecological Assessment Endpoints: Explicit, operationally defined expressions of the ecological entity (e.g., a species, community, functional group, or habitat) and its key attribute (e.g., survival, reproduction, growth, community structure) that are tied to a management goal and can be quantitatively or qualitatively measured [11]. A clear assessment endpoint is essential for a focused conceptual model.

- Conceptual Model Role: The conceptual model visually and narratively formalizes the pathway from a stressor (source, release, exposure) to its effect on the chosen assessment endpoint(s). It identifies intervening variables, mitigating processes, and alternative causal pathways, ensuring the research design adequately tests the risk hypothesis.

Recent guidelines, such as the EPA's Generic Ecological Assessment Endpoints, emphasize expanding endpoint selection to include ecosystem services—the benefits humans derive from ecosystems [11]. This shift makes risk assessments more relevant to decision-makers by connecting ecological impacts to societal outcomes like water purification, carbon sequestration, or nutrient cycling. This connection is a key integrative step in modern conceptual model development.

Methodological Framework: A Mixed Methods Approach

Defining goals and endpoints requires synthesizing diverse data types: quantitative (e.g., toxicity thresholds, population census data) and qualitative (e.g., stakeholder interviews, regulatory policy analysis, landscape value assessments). A mixed methods research framework provides a systematic methodology for this integration, strengthening the validity and comprehensiveness of the resulting conceptual model [12] [13].

The table below summarizes the primary mixed methods designs applicable to this phase of ecological risk research.

Table 1: Mixed Methods Research Designs for Endpoint Definition and Conceptual Model Development [12] [13]

| Design Name | Sequence & Priority | Primary Purpose in ERA Context | Integration Point |
|---|---|---|---|
| Exploratory Sequential | QUAL → quan | To use qualitative data (e.g., stakeholder workshops) to identify, define, or prioritize key concerns and values, which then inform the selection of quantitative metrics for monitoring or testing. | Findings from initial qualitative phase determine the variables and endpoints for the subsequent quantitative phase. |
| Explanatory Sequential | QUAN → qual | To use quantitative data (e.g., screening-level risk calculations) to identify unexpected or priority areas of risk requiring deeper investigation via qualitative methods (e.g., site-specific exposure scenario development). | Quantitative results guide the sampling strategy and questioning for the follow-up qualitative phase. |
| Convergent (Concurrent) | QUAN + QUAL | To collect both data types independently but simultaneously on related aspects of the same problem, then merge results to develop a complete, validated picture. | Datasets are compared, contrasted, or transformed during analysis to generate meta-inferences. |

Detailed Experimental and Procedural Protocols

Protocol 1: Exploratory Sequential Design for Stakeholder-Driven Endpoint Selection

This protocol is ideal for new or complex risk scenarios where societal values are not fully codified.

- Qualitative Phase (Exploration):

  - Data Collection: Conduct structured focus groups or semi-structured interviews with key stakeholders (regulators, community representatives, industry experts) [12]. Use a moderator guide focused on perceived risks, valued ecological resources, and management priorities.

  - Analysis: Perform thematic analysis (e.g., using NVivo or similar software) on interview transcripts to identify recurring themes, concerns, and valued ecosystem components [13].

- Integration & Design: Transform qualitative themes into a structured survey or a list of candidate assessment endpoints and associated metrics. This is the building approach to integration [12].

- Quantitative Phase (Expansion & Prioritization):

  - Data Collection: Administer the developed survey to a larger, representative sample of stakeholders or subject matter experts.

  - Analysis: Use statistical methods (e.g., frequency analysis, multi-criteria decision analysis) to rank and prioritize the candidate endpoints based on the survey data.
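The prioritization step in the quantitative phase can be illustrated with a simple weighted-sum form of multi-criteria decision analysis. This is one possible sketch under stated assumptions: the criteria, weights, rating scale, and survey responses below are invented for the example, and a real study might prefer a different aggregation method.

```python
from statistics import mean


def rank_endpoints(ratings, weights):
    """Rank candidate endpoints by the weighted mean of their survey ratings.

    ratings: {endpoint: {criterion: [one score per respondent]}}
    weights: {criterion: relative weight}; weights are normalized to sum to 1.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    scores = {}
    for endpoint, by_criterion in ratings.items():
        scores[endpoint] = sum(
            (weight / total_weight) * mean(by_criterion[criterion])
            for criterion, weight in weights.items()
        )
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical survey results on an assumed 1-5 rating scale.
ratings = {
    "fathead minnow reproduction": {
        "ecological relevance": [5, 4, 5],
        "measurability": [4, 4, 5],
    },
    "benthic invertebrate diversity": {
        "ecological relevance": [4, 4, 3],
        "measurability": [3, 2, 3],
    },
}
weights = {"ecological relevance": 0.6, "measurability": 0.4}

ranked_endpoints = rank_endpoints(ratings, weights)
for endpoint, score in ranked_endpoints:
    print(f"{score:.2f}  {endpoint}")
```

Because the weights encode value judgments, they are best elicited from the same stakeholder groups and reported alongside the ranking.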

Protocol 2: Convergent Design for Comprehensive Site Assessment

This protocol is suited for complex site-specific assessments where existing data are available but fragmented.

- Parallel Data Collection:

  - Quantitative Strand: Compile existing monitoring data (e.g., chemical concentrations, standardized toxicity test results, species abundance surveys).

  - Qualitative Strand: Collect data through ethnographic field observations, historical land-use analysis, and interviews with local experts or communities about observed ecological changes.

- Separate Analysis: Analyze each dataset using appropriate methods (statistical analysis for QUAN; thematic or content analysis for QUAL).

- Integration via Data Transformation and Joint Display:

  - Procedure: Quantitize the qualitative data by coding interview themes and counting their frequency or intensity across respondents [13]. Alternatively, qualify quantitative data by creating narrative profiles for different statistical clusters (e.g., "high-impact" vs. "low-impact" zones).

  - Merging: Create a joint display table. One column lists quantitative findings (e.g., "Amphipod density < 100 individuals/m² in Zone A"), an adjacent column lists related qualitative findings (e.g., "Local fishers report absence of bottom-feeding fish in Zone A for 3 years"), and a third column provides the meta-inference ("Consistent evidence of benthic community impairment in Zone A") [13] [14].
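The quantitizing and merging steps above can be sketched in a few lines. The helper names, theme codes, and interview data are hypothetical; the single joint-display row reuses the Zone A example from the protocol.

```python
from collections import Counter


def quantitize(coded_interviews):
    """Count how many interviews mention each theme code (once per interview)."""
    counts = Counter()
    for codes in coded_interviews:
        counts.update(set(codes))
    return counts


def joint_display(rows):
    """Render (quantitative, qualitative, meta-inference) rows as a pipe table."""
    lines = [
        "| Quantitative finding | Qualitative finding | Meta-inference |",
        "|---|---|---|",
    ]
    lines += [f"| {quan} | {qual} | {meta} |" for quan, qual, meta in rows]
    return "\n".join(lines)


# Hypothetical theme codes assigned to three interview transcripts.
coded_interviews = [
    ["benthic fish absent", "turbid water"],
    ["benthic fish absent"],
    ["turbid water", "benthic fish absent", "benthic fish absent"],
]
theme_counts = quantitize(coded_interviews)

table = joint_display([
    (
        "Amphipod density < 100 individuals/m² in Zone A",
        "Local fishers report absence of bottom-feeding fish in Zone A for 3 years",
        "Consistent evidence of benthic community impairment in Zone A",
    ),
])
print(theme_counts.most_common())
print(table)
```

Counting each theme once per interview reports prevalence across respondents; counting every mention instead would approximate intensity, which the protocol names as the alternative.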

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Mixed Methods Ecological Research

| Tool / Reagent Category | Specific Examples | Primary Function in Endpoint Definition |
|---|---|---|
| Stakeholder Engagement Platforms | Structured interview guides, focus group protocols, Delphi method questionnaires. | To systematically elicit and document qualitative data on values, perceptions, and management priorities from diverse groups [12]. |
| Qualitative Data Analysis Software | NVivo, ATLAS.ti, Dedoose, MAXQDA. | To code, organize, and perform thematic analysis on unstructured text data from interviews, open-ended surveys, or policy documents [13]. |
| Data Integration & Visualization Software | Dedicated mixed methods tools (e.g., features in ATLAS.ti), or general-purpose tools (Microsoft Excel, R with ggplot2, Python with Matplotlib). | To create joint displays (e.g., side-by-side comparison tables, integrated charts) that visually merge quantitative and qualitative findings for interpretation [13] [14]. |
| Ecosystem Services Classification Frameworks | The Common International Classification of Ecosystem Services (CICES), EPA's GEAE guidelines [11]. | To provide a standardized lexicon and structure for translating ecological functions (e.g., nutrient cycling) into societally relevant assessment endpoints. |
| Standardized Ecotoxicological Assays | OECD Test Guidelines (e.g., for algal growth, Daphnia reproduction, fish acute toxicity), ASTM standards. | To generate quantitative, reproducible dose-response data for specific biological attributes, forming the core of many conventional assessment endpoints. |

Integration, Reporting, and Advanced Applications

Effective integration is the defining feature of a mixed methods approach. The "fit" of the integrated data—the degree to which the qualitative and quantitative findings cohere—must be explicitly evaluated [12]. Conflicting results are not necessarily a failure; they can indicate a flawed assumption in the conceptual model, an unidentified variable, or a need for further research.

Joint displays are the premier tool for representing integration. Moving beyond simple tables, advanced displays can incorporate visuals such as graphs, charts, maps, or qualitative conceptual diagrams adjacent to statistical outputs [14]. For example, a map showing chemical concentration gradients (QUAN) can be juxtaposed with a thematic map derived from interview data about observed wildlife health (QUAL), with the overlapping areas highlighting priority zones for a refined assessment endpoint.

A key advanced application is the explicit linkage of assessment endpoints to ecosystem services [11]. This involves:

- Identifying the relevant ecosystem service(s) (e.g., water filtration).

- Specifying the ecological structures and functions that provide that service (e.g., healthy benthic invertebrate community processing sediments).

- Selecting measurable attributes of those structures/functions as the assessment endpoint (e.g., diversity and abundance of filter-feeding bivalves). This chain forms a transparent, defensible logic within the conceptual model, directly connecting scientific measurement to societal value.

Defining management goals and ecological assessment endpoints is a sophisticated, integrative process central to conceptual model development. By adopting a deliberate mixed methods research framework, scientists can ensure this process is systematic, transparent, and inclusive of both measurable ecological attributes and human values. The use of structured protocols, joint displays for integration, and ecosystem services frameworks produces a robust foundation for ecological risk research that is scientifically credible and decision-relevant, ultimately supporting more effective environmental management and sustainable drug development.

Integrating Stakeholder Engagement and Communicative Planning Models

Ecological Risk Assessment (ERA) is a critical, formal process for evaluating the likelihood that adverse ecological effects may occur due to exposure to one or more stressors, including chemicals, land-use change, or biological agents [15]. Traditionally, ERA has often relied on deterministic tools like risk quotients (RQs), which compare a single exposure estimate to a single effects threshold, and has focused on narrow assessment endpoints such as the survival of standard test species [16]. This approach contains extensive, unquantified uncertainty and creates a significant gap between the measured endpoints in controlled studies and the ultimate protection goals for ecosystems and the services they provide to society [15].

To develop more relevant and robust conceptual models for ecological risk research, a transformative integration of two paradigms is essential. First, stakeholder engagement and communicative planning models must be embedded throughout the research lifecycle. Second, the assessment framework itself must evolve to quantitatively evaluate risks and benefits to Ecosystem Services (ES)—the benefits people obtain from ecosystems [17]. This integration addresses a core challenge in transdisciplinary research: the "usability gap" between what scientists produce and what decision-makers need for actionable, evidence-based policy [18].

This section provides a technical guide for researchers and drug development professionals, who must increasingly consider environmental fate and ecotoxicology, on implementing this integrated approach. We detail a multi-model, tiered engagement framework and a quantitative Ecosystem Services-based Ecological Risk Assessment (ERA-ES) methodology, providing the protocols and tools necessary to bridge scientific analysis and societal relevance [19] [17].

Conceptual Foundations: From Deterministic Endpoints to Co-Created Knowledge

The Limitations of Conventional Ecological Risk Assessment

Current ERA practices, particularly for chemicals, are frequently governed by tiered guidelines. Initial screening-level assessments often use deterministic RQs, which are dimensionless numbers calculated by dividing an estimated environmental concentration (EEC) by a toxicity benchmark (e.g., LC50) [15] [16]. This method simplifies complex, probabilistic realities into a binary outcome against an arbitrarily set Level of Concern (LOC).
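The deterministic calculation described above is short enough to write out directly. In this sketch the function names are illustrative, the Level of Concern is passed in as a parameter rather than fixed, and treating "RQ strictly greater than LOC" as an exceedance is an assumption; the example concentrations are invented.

```python
def risk_quotient(eec, toxicity_benchmark):
    """RQ = estimated environmental concentration / toxicity benchmark.

    Both inputs must be in the same units (e.g., µg/L); the result is dimensionless.
    """
    if toxicity_benchmark <= 0:
        raise ValueError("toxicity benchmark must be positive")
    return eec / toxicity_benchmark


def exceeds_level_of_concern(eec, toxicity_benchmark, loc):
    """Binary screening outcome: does the RQ exceed the chosen LOC?"""
    return risk_quotient(eec, toxicity_benchmark) > loc


# Hypothetical values: EEC of 12 µg/L, LC50 of 100 µg/L, LOC of 0.5.
rq = risk_quotient(12.0, 100.0)
flagged = exceeds_level_of_concern(12.0, 100.0, loc=0.5)
print(f"RQ = {rq:.2f}, exceeds LOC: {flagged}")
```

The single number returned here carries no information about how often or for how long the EEC is approached, which is the oversimplification the following table itemizes.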

Table 1: Limitations of Deterministic Risk Quotient (RQ) Approach in ERA

| Limitation Category | Specific Issue | Consequence for Risk Assessment |
|---|---|---|
| Exposure Oversimplification | Uses a single point estimate (e.g., 90th percentile EEC) instead of a full distribution [16]. | Fails to capture the frequency, magnitude, or timing of peak exposures, which may be critical for life-cycle impacts. |
| Effects Oversimplification | Relies on acute toxicity for limited surrogate species (e.g., Daphnia magna) [15]. | Poorly extrapolates to chronic, population-level, or ecosystem-service-level effects for diverse species. |
| Neglect of Ecological Context | Ignores species life history, recovery potential, and ecological interactions [16]. | Over- or under-protects species and functions, leading to inefficient resource allocation for risk management. |
| Opaque Uncertainty | Uses safety factors that are arbitrary and not quantitatively linked to uncertainty [16]. | Provides a false sense of precision; hampers transparent communication of risk confidence. |

The Imperative for Stakeholder Engagement

Stakeholders are "any person or group who has an interest in the research topic and/or who stands to gain or lose from a possible policy change" influenced by the findings [20]. Engaging them transforms ERA from a purely technical exercise into a legitimate and salient process for decision-making [18]. Engagement is not merely the dissemination of final results but an iterative process of actively soliciting knowledge, experience, judgment, and values to create shared understanding and inform decisions [20]. This co-creation of knowledge ensures that models address the right problems, incorporate local and indigenous knowledge, and that results are actionable [19] [20].

Core Framework: A Multi-Model Approach for Integration and Engagement

A single model is rarely sufficient to meet all project needs, from rapid stakeholder interaction to answering complex, system-level questions [19]. A suite of models of varying complexity, deployed adaptively throughout a project, is more effective.

Table 2: Multi-Model Toolkit for Stakeholder-Integrated Ecological Risk Research [19]

| Model Type | Primary Purpose | Complexity & Development Time | Key Role in Engagement & Research |
|---|---|---|---|
| Conceptual Models | Map main system drivers, components, and relationships. | Low; qualitative or simple diagrams. | Creates a shared mental model; foundational for problem formulation with stakeholders. |
| Toy / Simple Quantitative Models | Simplify system to a handful of key components for exploration. | Low to Medium; rapid deployment. | Trains stakeholders in system dynamics; tests initial hypotheses interactively in workshops. |
| Industry / Sector-Specific Models | Detailed analysis of a single sector or stressor (e.g., a specific fishery or chemical fate). | Medium; requires targeted data. | Addresses immediate, focused stakeholder questions; builds credibility and provides early results. |
| Shuttle Models | Incorporate the minimum core processes needed for a basic understanding of the overall problem. | Medium; focused on key linkages. | Facilitates fast, iterative feedback on core system logic before full model development. |
| Whole-of-System Models | Fully integrated representation of environmental, social, and economic processes. | High; long development time, resource-intensive. | Addresses complex, interconnected management questions; validates insights from simpler models. |

This adaptive approach de-couples the long development cycle of complex models from the need for continuous stakeholder interaction, maintaining engagement and allowing for mid-course corrections in research focus [19].

Quantitative Methodology: Integrating Ecosystem Services into Risk Assessment

The Ecosystem Services-based Ecological Risk Assessment (ERA-ES) method provides a quantitative framework to assess both risks and benefits to ES supply [17].

ERA-ES Protocol

Objective: To quantify the probability and magnitude of changes in ecosystem service supply exceeding defined risk or benefit thresholds following a human intervention.

Case Study Context: Applied to assess the regulating service of waste remediation (via sediment denitrification) in marine offshore developments [17].

Phase 1: Problem Formulation & ES Selection

- Define the Intervention: Clearly specify the human activity (e.g., installation of an offshore wind farm, OWF).

- Select Relevant Ecosystem Services: Identify and prioritize ES relevant to the ecosystem and stakeholders (e.g., waste remediation, food provision, carbon sequestration) [17].

- Identify Underlying Ecosystem Processes: Determine the key biophysical processes that deliver the selected ES (e.g., for waste remediation: sediment denitrification rate driven by total organic matter and fine sediment fraction) [17].

Phase 2: Quantitative Modeling & Threshold Definition

- Model ES Supply: Develop or apply a quantitative model linking environmental parameters to ES supply. Example: A multiple linear regression model predicting sediment denitrification rate based on sediment characteristics [17].

- Establish Risk & Benefit Thresholds (RBT, BBT):

- Risk Threshold (RBT): The level of ES supply below which ecosystem function is considered impaired and service delivery is compromised. This can be set based on historical baselines, reference conditions, or regulatory standards.

- Benefit Threshold (BBT): The level of ES supply above which a clear net benefit is realized. This can be set as an improvement over baseline or a management target [17].

- Simulate Intervention Impacts: Use the ES supply model to simulate conditions with and without the intervention, generating probability distributions of ES supply outcomes.

Phase 3: Risk-Benefit Calculation & Visualization

- Calculate Metrics: Using cumulative distribution functions (CDFs) of post-intervention ES supply:

- Risk Metric: Probability that ES supply < RBT, multiplied by the average magnitude of the deficit when it occurs.

- Benefit Metric: Probability that ES supply > BBT, multiplied by the average magnitude of the surplus when it occurs [17].

- Compare Scenarios: Calculate and compare these metrics across different management or development scenarios to evaluate trade-offs.
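The Phase 3 metrics can be sketched numerically as follows. This is a minimal illustration rather than the implementation used in [17]: the function name `risk_benefit_metrics`, the simulated supply distributions, and the threshold values are all hypothetical, and each metric is read literally as the exceedance probability multiplied by the mean deficit (or surplus) among the exceeding outcomes.

```python
import random
from statistics import mean

def risk_benefit_metrics(supply, rbt, bbt):
    """Return (risk, benefit) for a sample of simulated ES supply values.

    risk    = P(supply < rbt) * mean deficit (rbt - supply) when supply < rbt
    benefit = P(supply > bbt) * mean surplus (supply - bbt) when supply > bbt
    """
    n = len(supply)
    deficits = [rbt - s for s in supply if s < rbt]
    surpluses = [s - bbt for s in supply if s > bbt]
    risk = (len(deficits) / n) * mean(deficits) if deficits else 0.0
    benefit = (len(surpluses) / n) * mean(surpluses) if surpluses else 0.0
    return risk, benefit

# Hypothetical Monte Carlo draws of ES supply (arbitrary units), standing in
# for the output of an ES supply model run with and without the intervention.
rng = random.Random(42)
scenarios = {
    "without intervention": [rng.gauss(10.0, 1.5) for _ in range(10_000)],
    "with intervention": [rng.gauss(11.0, 2.0) for _ in range(10_000)],
}

RBT, BBT = 8.0, 12.0  # assumed risk and benefit thresholds

for label, sample in scenarios.items():
    risk, benefit = risk_benefit_metrics(sample, RBT, BBT)
    print(f"{label}: risk={risk:.3f}, benefit={benefit:.3f}")
```

Because both metrics are expressed in the units of ES supply, they can be compared directly across scenarios, which is what the scenario comparison step relies on.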

Diagram 1: ERA-ES Workflow: A 3-Phase Methodology

Stakeholder Engagement Protocol for Co-Creation

Objective: To iteratively engage stakeholders throughout the ERA-ES process to ensure relevance, incorporate diverse knowledge, and foster shared understanding [20].

Phase 1: Setting-Up

- Stakeholder Identification: Identify groups based on interest, influence, data access, and decision-making authority related to the problem [20].

- Initial Scoping Workshop: Convene stakeholders to jointly define management concerns, frame core questions, and outline initial conceptual models [19].

Phase 2: Development & Design

- Iterative Model Review: Use simple "toy" or conceptual models in workshops to train stakeholders, elicit feedback on model structure, and validate key relationships [19].

- Data Co-Design: Collaborate with stakeholders to identify and access relevant data sources, and define acceptable methods for data collection and gap-filling [20].

Phase 3: Implementation & Communication

- Feedback on Interim Outputs: Present preliminary risk maps or model outputs for stakeholder critique and interpretation [20].

- Joint Interpretation Sessions: Facilitate meetings where researchers present findings (objectives, methods, assumptions, results, limitations) and stakeholders discuss opportunities for use, challenges, and necessary refinements [20].

Phase 4: Output & Dissemination

- Co-Development of Products: Jointly design final outputs (e.g., interactive risk maps, dashboards, policy briefs) to ensure usability [20].

- Transparent Documentation: Share meeting minutes and summary reports with all participants to ensure clarity and build trust [20].

Diagram 2: Iterative Stakeholder Engagement Cycle

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Tools for Integrated ERA-ES Research

| Category | Item / Solution | Primary Function in Research |

|---|---|---|

| Modeling & Analysis Software | R, Python (with NumPy, SciPy, Pandas) | Statistical analysis, implementation of ES supply models, and calculation of risk/benefit metrics from probability distributions. |

| Geospatial Analysis Tools | ArcGIS, QGIS, R (sf package) | Spatial data management, analysis, and visualization; critical for creating risk maps and analyzing spatially-explicit ES. |

| Participatory Modeling Platforms | Stella/iThink, NetLogo, Miro | Developing interactive "toy" and system dynamics models for use in stakeholder workshops to facilitate shared learning [19]. |

| Ecological & Ecotoxicological Data | Standardized bioassay kits (e.g., Daphnia, algal toxicity tests); Sediment core samplers. | Generating effects data for chemical stressors and collecting field samples to parameterize and validate ES process models (e.g., sediment characteristics for denitrification) [17] [15]. |

| Stakeholder Engagement Facilitation | Professional facilitator services; Secure data sharing portals; Interactive voting/polling tools. | Ensuring productive, inclusive meetings and secure, transparent exchange of pre-reads, data, and model outputs between researchers and stakeholders [20]. |

Moving beyond deterministic risk quotients requires a dual advancement in ecological risk research: the adoption of quantitative Ecosystem Services assessment and the deep integration of stakeholders through communicative planning models. The multi-model toolkit allows for adaptive, iterative engagement, maintaining stakeholder interest and ensuring research relevance [19]. The ERA-ES methodology provides a rigorous, quantitative framework to assess trade-offs, evaluating not just risks but also potential benefits of interventions [17]. For researchers, especially in fields like drug development where environmental implications are scrutinized, mastering this integrated approach is key to producing conceptual models and final assessments that are scientifically robust, socially legitimate, and actionable for sustainable decision-making.

The PRISM (Partial Risk Map) model represents a paradigmatic evolution in ecological risk assessment, transitioning from traditional, top-down knowledge-deficit approaches to integrative, participatory frameworks. Developed initially for high-reliability sectors like nuclear power, its application to ecological systems addresses the critical need for multi-stakeholder, transparent, and quantifiable risk prioritization in complex environmental management [21]. This technical guide details the AHP-PRISM integration, which synergizes the Analytic Hierarchy Process's structured decision-making with PRISM's granular, multi-dimensional risk mapping. By converting qualitative expert judgments into consistent quantitative rankings, the model facilitates the identification and management of partial risks within interconnected ecological processes, such as land-use change impacts on habitat provision and water purification services [22] [21]. The framework's core strength lies in its ability to decompose systemic risks into analyzable components, enabling targeted interventions and fostering a collaborative safety culture essential for sustainable ecosystem management.

Conceptual models in ecological risk research serve as abstract representations of the causal pathways linking stressors to ecosystem effects. Traditional models often operate on a knowledge-deficit premise, where scientists unidirectionally communicate risks to decision-makers and the public. This approach fails to capture the pluralistic values, localized knowledge, and perceptual diversity inherent in environmental management. The development of the PRISM model is contextualized within a broader thesis advocating for participatory conceptual modeling. This paradigm shift recognizes stakeholders not merely as recipients of information but as co-producers of knowledge, integrating scientific data with community insights, expert heuristics, and managerial priorities [21]. In ecological contexts, such as assessing the impacts of urbanization on watershed services, this is vital [22]. Participatory frameworks like PRISM provide a structured lexicon and a visual common ground—the Partial Risk Map—that enables diverse groups to collaboratively define risk scenarios, weight assessment criteria, and interpret multi-dimensional outcomes, thereby bridging the gap between ecological complexity and actionable risk governance.

The PRISM Model: Core Architecture and Theoretical Foundations

The PRISM model is a novel risk assessment methodology designed to address limitations in traditional techniques like Failure Mode and Effects Analysis (FMEA) and Risk Matrices (RM). Its theoretical foundation is built on the principle of partial risk disaggregation [21].

Fundamental Components

Unlike conventional methods that aggregate risk into a single metric (e.g., Risk Priority Number), PRISM evaluates and visualizes risk across three independent, cardinal dimensions:

- Occurrence (O): The probability or frequency of an initiating event or stressor.

- Severity (S): The magnitude of the adverse ecological consequence.

- Detection (D): The ease with which the onset of the effect or the failure of a control measure can be identified before consequential damage occurs.

Each dimension is assessed on a deterministic scale (typically 1-10). The core innovation is that these scores are not multiplied but are treated as coordinates, plotting each risk event as a distinct point or vector within a three-dimensional "Partial Risk Space." This prevents the information loss and ranking ambiguities common in multiplicative models and allows for the nuanced comparison of risks that may have similar aggregate scores but fundamentally different profiles (e.g., a high-severity, low-probability event vs. a low-severity, high-probability one) [21].
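The information loss caused by multiplication can be shown with a small sketch. The two score triples below are invented for illustration, and Euclidean distance is used only as one convenient way to show that the points stay separated; it is not claimed to be part of the PRISM method.

```python
from math import dist, prod

# Hypothetical (O, S, D) scores on a 1-10 scale
rare_but_severe = (2, 9, 4)
frequent_but_mild = (9, 2, 4)

# A multiplicative Risk Priority Number cannot tell the two events apart
rpn_a = prod(rare_but_severe)
rpn_b = prod(frequent_but_mild)
print(rpn_a, rpn_b)  # 72 72

# Treated as coordinates, the two events occupy clearly different positions
separation = dist(rare_but_severe, frequent_but_mild)
print(round(separation, 2))  # 9.9
```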

The AHP-PRISM Synthesis

The foundational PRISM method relies on direct expert scoring, which can introduce subjectivity and inconsistency. The AHP-PRISM synthesis integrates the Analytic Hierarchy Process to robustly weight criteria and calibrate judgments [21].

- AHP Function: AHP provides a systematic pairwise comparison framework for weighting the relative importance of different risk events, assessment criteria, or even the O, S, and D dimensions themselves within a specific ecological context. It generates a priority vector of weights and a Consistency Ratio (CR) to validate the coherence of expert judgments [21].

- Integration Mechanism: AHP-derived weights are used to adjust or synthesize the scores plotted in the PRISM space. This creates a weighted partial risk map, where the position of risks reflects not just raw scores but their prioritized importance as determined by structured stakeholder consensus. This synthesis is crucial for participatory frameworks, as it makes the valuation process transparent, debatable, and mathematically consistent.

Diagram 1: AHP-PRISM Integration Workflow. This flowchart details the synthesis of the Analytic Hierarchy Process (AHP) with the PRISM model, showing the feedback loop for ensuring judgment consistency before generating the final risk map [21].

Quantitative Framework and Data Presentation

The AHP-PRISM model's quantitative rigor stems from the mathematical formalisms of AHP and the spatial logic of PRISM. The following tables summarize the core quantitative scales and a hypothetical data output from an ecological risk assessment.

Table 1: Fundamental Scale for AHP Pairwise Comparisons [21]

| Intensity of Importance | Definition | Explanation |

|---|---|---|

| 1 | Equal Importance | Two activities contribute equally to the objective. |

| 3 | Moderate Importance | Experience and judgment slightly favor one activity over another. |

| 5 | Strong Importance | Experience and judgment strongly favor one activity over another. |

| 7 | Very Strong Importance | An activity is favored very strongly over another; its dominance demonstrated in practice. |

| 9 | Extreme Importance | The evidence favoring one activity over another is of the highest possible order of affirmation. |

| 2, 4, 6, 8 | Intermediate Values | Used to compromise between the above judgments. |

Table 2: Exemplary PRISM Risk Assessment Output for Watershed Ecological Risks [22] [21]

| Risk Event ID | Ecological Stressor | Occurrence (O) | Severity (S) | Detection (D) | AHP-Derived Weight | Risk Vector (O, S, D) |

|---|---|---|---|---|---|---|

| RE-01 | Expansion of Construction Land | 8 | 9 | 3 | 0.32 | (8, 9, 3) |

| RE-02 | Non-Point Source Pollution Diffusion | 7 | 8 | 5 | 0.28 | (7, 8, 5) |

| RE-03 | Fragmentation of Forest Habitat | 6 | 7 | 4 | 0.18 | (6, 7, 4) |

| RE-04 | Decline in Water Purification Service | 5 | 9 | 6 | 0.15 | (5, 9, 6) |

| RE-05 | Soil Erosion from Cultivated Land | 4 | 6 | 7 | 0.07 | (4, 6, 7) |

Note: O, S, D scores are on a 1-10 scale. The AHP weight (summing to 1.0) represents the relative overall importance of each risk event based on stakeholder-derived criteria. The Risk Vector is the core input for the 3D Partial Risk Map.

Diagram 2: PRISM 3D Partial Risk Map Conceptual Axes. This diagram illustrates the three independent dimensions defining the PRISM assessment space, each representing a core component of risk profile [21].

Experimental Protocols for Ecological Application

Implementing the AHP-PRISM model for ecological risk research requires a structured, replicable protocol. The following methodology is adapted from its application in safety-critical industries and tailored for ecological systems, such as assessing watershed risks [22] [21].

Phase 1: Problem Structuring & Expert Panel Assembly

- Objective: Define the system boundaries and constitute the participatory panel.

- Protocol:

- System Definition: Clearly bound the ecological system (e.g., Houxi Basin watershed [22]). Draft a conceptual model linking anthropogenic drivers (e.g., urbanization) to pressures (e.g., land-use change), states (e.g., habitat quality), and impacts (e.g., loss of provisioning services).

- Risk Event Identification: Using workshops or Delphi techniques with stakeholders (scientists, land-use planners, community representatives), generate a comprehensive list $N$ of potential risk events (e.g., "Conversion of forest to construction land leading to habitat fragmentation").

- Panel Formation: Assemble a multidisciplinary panel of $K$ experts (typically 5-10). Ensure representation from ecological modeling, toxicology, local ecology, resource management, and community stakeholders.

Phase 2: AHP Weighting of Risk Events or Criteria

- Objective: Derive consistent, consensus-based weights for risk events or assessment criteria.

- Protocol:

- Hierarchy Construction: Structure a hierarchy. The top goal is "Prioritize Ecological Risks." Level 2 can be assessment criteria (e.g., "Impact on Biodiversity," "Impact on Water Security," "Economic Cost of Mitigation"), or the risk events themselves can be at Level 2 for direct pairwise comparison.

- Pairwise Comparison Surveys: Each expert $k$ independently completes pairwise comparison matrices using the scale in Table 1. For $n$ elements, $n(n-1)/2$ comparisons are made.

- Consistency Validation:

- For each expert's matrix, compute the Consistency Index (CI) [21]: $CI = (\lambda_{\max} - n) / (n - 1)$, where $\lambda_{\max}$ is the principal eigenvalue of the comparison matrix.

- Compute the Consistency Ratio (CR) [21]: $CR = CI / RI$, where $RI$ is the Random Index (a known value based on $n$).

- A CR ≤ 0.10 is considered acceptable. Matrices with CR > 0.10 must be revised by the expert [21].

- Aggregation of Judgments: Use the geometric mean method to aggregate the valid individual judgment matrices from all $K$ experts into a single group comparison matrix.

- Priority Vector Calculation: Compute the normalized principal eigenvector of the aggregated matrix. This yields the priority weight vector $W = [w_1, w_2, \ldots, w_n]$, where $\sum_i w_i = 1$.
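The Phase 2 calculations can be sketched in plain Python. This is an illustrative outline under stated assumptions, not a reference implementation: the two expert matrices are invented, the function names are hypothetical, the principal eigenvector is approximated by power iteration, and the Random Index values are the ones commonly tabulated for AHP and should be checked against the source used in a given study.

```python
from math import prod

# Random Index values as commonly tabulated for AHP, keyed by matrix size n
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}

def priority_vector(matrix, iterations=100):
    """Approximate the normalized principal eigenvector and lambda_max."""
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(iterations):  # power iteration, renormalized to sum to 1
        v = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(v)
        w = [x / total for x in v]
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    return w, lambda_max

def consistency_ratio(matrix):
    """CR = CI / RI with CI = (lambda_max - n) / (n - 1); defined here for n >= 3."""
    n = len(matrix)
    _, lambda_max = priority_vector(matrix)
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]

def aggregate(matrices):
    """Element-wise geometric mean of the individual judgment matrices."""
    k, n = len(matrices), len(matrices[0])
    return [[prod(m[i][j] for m in matrices) ** (1.0 / k) for j in range(n)]
            for i in range(n)]

# Invented pairwise comparisons of three criteria by two experts
expert_1 = [[1, 3, 5], [1 / 3, 1, 2], [1 / 5, 1 / 2, 1]]
expert_2 = [[1, 2, 4], [1 / 2, 1, 3], [1 / 4, 1 / 3, 1]]
experts = [expert_1, expert_2]

# Keep only matrices that pass the consistency check, then aggregate
valid = [m for m in experts if consistency_ratio(m) <= 0.10]
group_matrix = aggregate(valid)
weights, _ = priority_vector(group_matrix)
print([round(w, 3) for w in weights])
```

In practice a matrix that fails the check would be returned to the expert for revision rather than silently dropped; the filter above only marks where that decision point sits in the workflow.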

Phase 3: PRISM Dimensional Scoring

- Objective: Score each risk event on the O, S, and D dimensions.

- Protocol:

- Calibration Workshop: Conduct a facilitated workshop with the expert panel. Provide background data (e.g., GIS land-use maps, pollution export coefficients [22], species vulnerability indices).

- Dimensional Scoring: For each risk event $i$, the panel discusses and assigns consensus scores $O_i$, $S_i$, $D_i$ on a defined scale (e.g., 1-10). Definitions must be anchored (e.g., Severity: 1 = Negligible impact on service, 10 = Collapse of service/local extinction).

- Vector Formation: Each risk event $i$ is now defined by its risk vector $V_i = (O_i, S_i, D_i)$ and its aggregated AHP weight $w_i$.

Phase 4: Mapping, Analysis & Scenario Testing

- Objective: Visualize and analyze risks to inform management.

- Protocol:

- 3D Mapping: Plot all risk vectors $V_i$ in a 3D scatter plot (PRISM Map). Point size can be scaled by $w_i$.

- Cluster Analysis: Identify clusters of risks with similar profiles (e.g., high-severity, hard-to-detect cluster).

- Scenario Testing (What-If Analysis): Model the effect of proposed management interventions. For example, if a policy reduces the Occurrence score of "Non-Point Source Pollution" from 7 to 4, replot its new position on the map to visualize the risk reduction.

- Sensitivity Analysis: Test the robustness of the prioritization by varying the AHP weights or dimensional scores within plausible bounds.
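A what-if step of this kind can be sketched with the exemplary values from Table 2. The data structure, the helper `apply_scenario`, and the marker-size scaling factor are assumptions made for illustration; the resulting vectors and sizes could be passed to any 3D plotting library.

```python
from math import dist

# Risk vectors (O, S, D) and AHP weights transcribed from Table 2
risks = {
    "RE-01": {"vector": (8, 9, 3), "weight": 0.32},
    "RE-02": {"vector": (7, 8, 5), "weight": 0.28},
    "RE-03": {"vector": (6, 7, 4), "weight": 0.18},
    "RE-04": {"vector": (5, 9, 6), "weight": 0.15},
    "RE-05": {"vector": (4, 6, 7), "weight": 0.07},
}

def apply_scenario(risks, event_id, occurrence=None, severity=None, detection=None):
    """Return a copy of `risks` with selected scores of one event replaced."""
    o, s, d = risks[event_id]["vector"]
    updated = {key: dict(value) for key, value in risks.items()}
    updated[event_id]["vector"] = (
        o if occurrence is None else occurrence,
        s if severity is None else severity,
        d if detection is None else detection,
    )
    return updated

# What-if: a policy lowers the Occurrence score of RE-02 from 7 to 4
scenario = apply_scenario(risks, "RE-02", occurrence=4)
before = risks["RE-02"]["vector"]
after = scenario["RE-02"]["vector"]
print(before, "->", after, "| shift in partial risk space:", dist(before, after))

# Marker sizes for a 3D scatter plot, scaled by AHP weight (factor is arbitrary)
marker_sizes = {key: 1000 * value["weight"] for key, value in risks.items()}
```

Returning a modified copy keeps the baseline map intact, so baseline and scenario positions can be plotted side by side.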

The Scientist's Toolkit: Research Reagent Solutions

Implementing the AHP-PRISM framework requires both conceptual and analytical tools. The following toolkit is essential for researchers.

Table 3: Essential Research Toolkit for AHP-PRISM Implementation

| Tool Category | Specific Item/Technique | Function & Rationale |

|---|---|---|

| Stakeholder Engagement | Facilitated Workshop Protocol | Structured process to elicit knowledge, define system boundaries, and build consensus among diverse participants. |

| Judgment Elicitation & Analysis | AHP Pairwise Comparison Software (e.g., ExpertChoice, SuperDecisions, R ahp package) | Supports the creation of comparison matrices, calculates eigenvectors, and, crucially, validates the Consistency Ratio (CR) to ensure logical coherence of judgments [21]. |

| Spatial & Ecological Data | GIS Software (e.g., ArcGIS, QGIS), Ecosystem Service Models (e.g., InVEST, ARIES) | Provides quantitative input for scoring the O, S, D dimensions. For example, InVEST habitat quality or nutrient retention models can inform severity scores for land-use change [22]. |

| Statistical & Visualization | 3D Graphing Software (e.g., Python Matplotlib, R rgl), Statistical Packages (e.g., R, SPSS) | Generates the Partial Risk Map and performs cluster analysis or sensitivity testing on the results. |

| Documentation & Transparency | Decision Audit Trail Document | Records all assumptions, participant inputs, weight justifications, and scoring rationales. Critical for reproducibility and building trust in the participatory process. |

Case Integration: Watershed Ecological Risk Assessment

The AHP-PRISM model is directly applicable to complex ecological challenges, such as the risk assessment of the Houxi Basin under urbanization pressure [22].

- Problem: Urbanization drives changes in land use/land cover (LUCC), which simultaneously degrade terrestrial habitat provision and aquatic water purification services through non-point source (NPS) pollution [22].

- AHP-PRISM Application:

- Risk Events: Defined from LUCC transitions (e.g., "Forest -> Construction Land," "Cultivated Land -> Orchard").

- AHP Weighting: Criteria could include "Impact on Habitat Connectivity," "Contribution to NPS Pollution Load," and "Irreversibility." Local and scientific experts weight these criteria.

- PRISM Scoring:

- Occurrence: Modeled from projected land-use change scenarios.

- Severity: Quantified using ecosystem service models (e.g., InVEST for habitat quality, export coefficient models for NPS pollution [22]).

- Detection: Based on the monitorability of the service degradation (e.g., water quality is more readily monitored than genetic diversity loss).

- Outcome: The resulting Partial Risk Map visually identifies which land-use transitions pose the most severe, likely, and insidious risks. The study by [22] concluded that optimizing spatial layout is more effective than merely increasing green area, a policy insight readily communicable from a PRISM map showing clustered risks related to spatial configuration. The AHP-PRISM synthesis would add a layer of democratic validation to such findings, ensuring management priorities reflect shared values.

Limitations and Future Directions

While powerful, the AHP-PRISM model has inherent limitations. The quality of output remains dependent on expert competency and the honesty of the participatory process. The framework can be computationally intensive for very large sets of risk events. Future development trajectories include:

- Integration with Probabilistic Modeling: Combining the deterministic PRISM scores with Bayesian networks or Monte Carlo simulations to explicitly account for uncertainty in O, S, and D estimates.

- Dynamic PRISM: Developing temporal versions of the risk map to visualize how risk vectors migrate over time under different climate or policy scenarios.

- Automated Elicitation Platforms: Creating online, real-time collaboration tools for distributed expert panels to conduct AHP comparisons and PRISM scoring synchronously or asynchronously.

- Cross-Scale Integration: Linking PRISM assessments across spatial scales (e.g., from a local watershed to a regional biome) to understand risk cascades.

The PRISM model, particularly when synthesized with AHP, embodies the necessary shift from knowledge-deficit to participatory frameworks in ecological risk research. It moves beyond simply calculating risk to structuring democratic deliberation about risk. By making the dimensions of risk explicit, separable, and debatable, it provides a common visual and analytical language for scientists, policymakers, and communities. The resulting Partial Risk Map is more than an analytical output; it is a boundary object that facilitates co-learning and transparent decision-making. In confronting the interconnected, evolving risks facing ecosystems, such frameworks are not merely advantageous—they are essential for developing resilient and legitimate management strategies. The PRISM model offers a robust, flexible, and transparent pathway to this goal, turning the assessment of ecological risk into a participatory process of building shared understanding and commitment to action.

This whitepaper establishes the theoretical and methodological foundations of the Hierarchical Patch Dynamics (HPD) paradigm integrated with systems thinking, framing it as a critical framework for developing conceptual models in ecological risk research [23] [5]. It details how the HPD paradigm addresses complexity through a spatially explicit, multi-scale perspective, which is essential for structuring causal hypotheses and predictive scenarios in complex ecological and biomedical systems [23]. The guide provides actionable methodologies for model construction, data integration, and analysis, accompanied by standardized visualization and data presentation protocols tailored for researchers and scientists engaged in conceptual model development.

Ecological and biomedical systems for risk research are characterized by inherent complexity: a large number of diverse components, nonlinear interactions, spatial heterogeneity, and processes operating across multiple scales [23]. Traditional reductionist models often fail to capture the emergent properties and unexpected dynamics that arise from these interactions. The development of conceptual models for ecological risk assessment and management, therefore, requires a theoretical framework that can render this complexity comprehensible and analyzable [5].

The Hierarchical Patch Dynamics (HPD) paradigm, emerging from the integration of hierarchy theory and patch dynamics, provides this framework [23]. When coupled with systems thinking, it offers a powerful approach for constructing conceptual models that link societal or clinical actions to environmental or physiological stressors and their ultimate effects on valued endpoints [5]. This integrated perspective is not merely an ecological tool; it is a generalizable methodology for understanding any complex system where modularity, scale, and feedback are critical, including applications in toxicology and drug development.

Theoretical Core: Principles of Hierarchical Patch Dynamics

The HPD paradigm is built upon several core principles that directly inform conceptual model structure [23].

- Ecological Systems as Hierarchical Patchworks: Systems are composed of nested, interacting patches. A "patch" is a spatially explicit, heterogeneous landscape unit differing from its surroundings in nature or appearance. These patches form hierarchical levels (e.g., leaf, tree, forest stand, watershed), where each level operates at a distinct scale in space and time.

- Dynamics as the Cross-Scale Interaction of Pattern and Process: Pattern refers to the spatial arrangement of patches, while process denotes the flows of energy, material, and information. The two are inseparable: patterns influence processes, and processes create and modify patterns. This interaction is mediated across hierarchical scales.

- The Scaling Ladder Strategy: This is a methodological principle for modeling. Instead of seeking a single "correct" scale, investigators use a sequence of scales (a ladder) to traverse from fine to broad scales. Models are developed at each rung, with explicit rules for translating information (e.g., aggregation, disaggregation) between adjacent levels. This acknowledges that different mechanisms may dominate at different scales.

This hierarchical organization is not merely an observer's construct but is often intrinsic to the system, evolving for greater stability and efficiency [23]. It stands in contrast to theories like self-organized criticality (SOC), which may describe some systems but de-emphasize the critical role of top-down constraints and multi-scale organization prevalent in biological systems [23].

Methodological Integration: From Theory to Conceptual Model Workflow

The transition from HPD theory to a formal conceptual model for risk research involves a structured workflow. This process transforms theoretical understanding into a testable framework for hypothesis generation and scenario analysis [5].

Table 1: Core Phases in HPD-Informed Conceptual Model Development

| Phase | Objective | Key Actions | Output for Risk Assessment |

|---|---|---|---|

| 1. System Delineation & Hierarchical Decomposition | Define system boundaries and identify nested hierarchical levels. | Identify focal level for assessment. Define finer (mechanistic) and broader (contextual) levels. | A multi-scale system description linking cellular/organ to population/ecosystem effects. |

| 2. Patch Classification & Pattern Analysis | Characterize the spatial-temporal structure of the system at each relevant level. | Classify patch types based on structure/function. Quantify patch metrics (size, shape, arrangement). | Identification of heterogeneous exposure units and critical habitats or tissue types. |

| 3. Process Mapping & Interaction Modeling | Link ecological/biological processes to the patch structure across scales. | Diagram drivers, stressors, and effects. Specify process rates and feedback loops within and between patches. | A causal diagram hypothesizing pathways from management actions or drug exposure to ecological/health endpoints [5]. |

| 4. Scaling and Integration | Formally integrate understanding across hierarchical levels. | Apply scaling rules (e.g., aggregation, parameterization) to translate information. Use the "scaling ladder" to connect models. | A predictive, multi-scale model capable of projecting risk under different future scenarios [5]. |

Diagram 1: HPD Conceptual Model Development Workflow

Practical Application: Protocols for Model Construction and Analysis

Implementing the HPD approach requires specific protocols for constructing and analyzing models. The following methodologies are adapted from established spatially explicit hierarchical modeling efforts [23].

Protocol for Constructing a Hierarchical Patch Dynamics Model (HPDM)

This protocol outlines the steps for building a model akin to the HPDM-PHX (Phoenix urban landscape model) [23].

- Problem Framing & Scale Selection: Define the primary ecological risk question. Select the focal hierarchical level (e.g., a specific organ, a population). Establish the immediate finer (component) and broader (context) levels.

- Spatial Data Structuring: Organize input data into a tabular format where each row represents a unique spatial unit (a patch) at a given hierarchical level, and columns represent attributes [24]. Essential columns include a Unique Identifier (UID), patch type, spatial coordinates, and relevant measures (e.g., nutrient load, receptor density, stressor concentration) [24].

- Patch Dynamics Algorithm Development: For each patch type at the focal level, formalize the rules of change. These are often conditional statements based on the state of the patch itself, states of neighboring patches (within-level interaction), and constraints from the broader level (top-down control). This can be implemented via cellular automata, agent-based models, or differential equations.

- Cross-Level Coupling: Develop explicit functions or rules for information transfer between hierarchical levels. This often involves:

- Aggregation (Bottom-Up): Summarizing fine-scale patch states (e.g., mean stressor level) to provide input to the broader level.

- Disaggregation (Top-Down): Using broad-scale constraints (e.g., total allowable load) to allocate resources or limits to finer-scale patches.

- Model Calibration & Scenario Analysis: Calibrate the model using retrospective data. Then, employ the integrated conceptual model to project the outcomes of different management or exposure scenarios, analyzing the spatial and temporal patterns of risk [5].
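The rule-of-change and cross-level coupling steps can be sketched as follows. This is a deliberately small illustration and not the HPDM-PHX implementation: the patch table, field names, the area-weighted aggregation, the proportional allocation, and the relaxation rule are all assumptions chosen to show the shape of the logic.

```python
from statistics import mean

# Hypothetical fine-level patch table: one row per patch, columns as attributes
patches = [
    {"uid": "P1", "type": "forest", "area_ha": 40.0, "stressor": 0.2},
    {"uid": "P2", "type": "urban", "area_ha": 10.0, "stressor": 0.9},
    {"uid": "P3", "type": "urban", "area_ha": 30.0, "stressor": 0.7},
    {"uid": "P4", "type": "forest", "area_ha": 20.0, "stressor": 0.3},
]

def aggregate_stressor(patches):
    """Bottom-up: area-weighted mean stressor level passed to the broader level."""
    total_area = sum(p["area_ha"] for p in patches)
    return sum(p["stressor"] * p["area_ha"] for p in patches) / total_area

def disaggregate_load(patches, total_allowable_load):
    """Top-down: split a broad-scale limit among patches in proportion to area."""
    total_area = sum(p["area_ha"] for p in patches)
    return {p["uid"]: total_allowable_load * p["area_ha"] / total_area
            for p in patches}

def update_patch(state, neighbor_states, broad_limit):
    """One rule-of-change step for a patch.

    The new state depends on the patch's own state, the mean state of its
    neighbors (within-level interaction), and a cap imposed by the broader
    level (top-down control).
    """
    new_state = 0.5 * state + 0.5 * mean(neighbor_states)
    return min(new_state, broad_limit)

print("Broad-level stressor:", aggregate_stressor(patches))
print("Per-patch load limits:", disaggregate_load(patches, 50.0))
print("Updated state of P2:", update_patch(0.9, [0.7, 0.2], broad_limit=0.5))
```

Keeping aggregation and disaggregation as separate, explicit functions makes the translation rules between rungs of the scaling ladder easy to inspect and to replace with nonlinear alternatives.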

Protocol for Quantitative Data Comparison Across Scales or Groups

A critical step in HPD analysis is comparing system metrics (e.g., recovery rate, biomarker expression) across different patch types, hierarchical levels, or experimental groups. Data must be structured for clear comparison [25].

Table 2: Structure for Comparing a Quantitative Variable Between Groups

| Group (Patch Type / Level) | Sample Size (n) | Mean | Standard Deviation | Median | Interquartile Range (IQR) |

|---|---|---|---|---|---|

| Group A (e.g., Forest Patches) | 14 | 2.22 | 1.270 | 1.70 | 1.50 |

| Group B (e.g., Urban Patches) | 11 | 0.91 | 1.131 | 0.80 | 1.05 |

| Difference (A - B) | — | 1.31 | — | 0.90 | — |

Note: This table format, adapted from quantitative comparison guidelines [25], provides a complete numerical summary for each group. The difference between group means/medians is a key comparative statistic [25].

Visualization Protocol: To complement the table, use side-by-side boxplots to visually compare the distributions. Boxplots effectively show the median, quartiles, range, and potential outliers for each group, facilitating direct visual comparison of central tendency and variability [25]. For small datasets, a 2-D dot chart with jittered points may be preferable to show individual observations [25].
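A summary with the same columns as Table 2 can be produced from raw observations with the Python standard library. The observations below are invented placeholders, not the data behind Table 2, and the group names are illustrative. The IQR is computed with the default "exclusive" method of `statistics.quantiles`; other quartile conventions give slightly different values.

```python
from statistics import mean, median, quantiles, stdev

def summarize(values):
    """Numerical summary of one group: n, mean, sample SD, median, IQR."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles, default 'exclusive' method
    return {
        "n": len(values),
        "mean": mean(values),
        "sd": stdev(values),
        "median": median(values),
        "iqr": q3 - q1,
    }

# Invented placeholder observations (e.g., a recovery rate per patch)
groups = {
    "Forest patches": [1.2, 1.5, 1.7, 2.0, 2.4, 3.1, 4.0],
    "Urban patches": [0.2, 0.5, 0.8, 0.9, 1.3, 1.9],
}

summaries = {name: summarize(vals) for name, vals in groups.items()}
for name, s in summaries.items():
    print(f"{name}: n={s['n']}, mean={s['mean']:.2f}, sd={s['sd']:.3f}, "
          f"median={s['median']:.2f}, IQR={s['iqr']:.2f}")

# Difference row: group A minus group B
a, b = summaries["Forest patches"], summaries["Urban patches"]
print(f"Difference (A - B): mean={a['mean'] - b['mean']:.2f}, "
      f"median={a['median'] - b['median']:.2f}")
```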

Diagram 2: Hierarchical Levels and Scale Interactions

The Scientist's Toolkit: Essential Reagents for HPD Research

Implementing the HPD approach requires a suite of conceptual and technical "reagents."

Table 3: Research Reagent Solutions for HPD Modeling

| Item | Function in HPD Research | Example / Note |
|---|---|---|
| Hierarchical Patch Dynamics Modeling Platform (HPD-MP) [23] | Software environment designed to facilitate the construction, linkage, and execution of multi-scale spatial models. | Provides libraries for common scaling functions and patch interaction algorithms [23]. |
| Geographic Information System (GIS) | The primary tool for defining, classifying, and analyzing spatial patches and their patterns. Used for structuring spatial data into rows (patches) and columns (attributes) [24]. | Essential for Protocols 4.1 (steps 2 & 3). |
| Spatially Explicit Data | The fundamental input for characterizing pattern. Includes remote sensing imagery, land cover maps, or spatially registered biomarker/sensor data. | Data must be structured with clear granularity (what one row represents) [24]. |
| Scaling Functions | Mathematical or statistical rules for aggregating and disaggregating information between hierarchical levels. | Includes averaging, summation, or more complex nonlinear functions [23]. |
| Conceptual Diagramming Tool | Software for creating clear diagrams of causal pathways and system structure, as required in conceptual model development [5]. | Outputs must adhere to visual accessibility standards, including sufficient color contrast [26] [27]. |

Future Directions and Integration with Risk Assessment

The HPD framework's future lies in tighter integration with formal ecological risk assessment (ERA) and analogous frameworks in biomedical research. Conceptual models developed using HPD are not just descriptive; they are the foundational structure for effects-directed assessment and predictive scenario analysis [5]. They explicitly link management or therapeutic actions to stressors and ecological or health endpoints, allowing for the testing of causal hypotheses and the projection of recovery under different intervention scenarios [5].

Key advancements will involve automating the scaling processes within modeling platforms, improving the integration of qualitative and quantitative data, and developing standardized HPD-based conceptual model templates for common risk assessment problems. By providing a systematic way to decompose, analyze, and reconstitute complex systems, the integration of Hierarchical Patch Dynamics and systems thinking offers a robust and necessary foundation for the next generation of conceptual models in ecological and biomedical risk research.

From Theory to Practice: Methodologies for Building and Applying Conceptual Models

Step-by-Step Guidance for Developing Generic and Chemical-Specific Conceptual Models

Within the systematic framework of ecological risk assessment (ERA), the development of a conceptual model is the critical bridge between planning and scientific analysis [28]. This guide provides step-by-step instructions for constructing both generic and chemical-specific conceptual models, which are foundational to the Problem Formulation phase as defined by the U.S. Environmental Protection Agency (EPA) [28]. A well-constructed model visually and descriptively hypothesizes the relationships between stressors, ecological receptors, and exposure pathways, thereby guiding the entire scope of the risk investigation and ensuring it remains focused on management goals [28].

This process is not performed in isolation. It is an integral component of a larger, iterative assessment, in which the model is both an output of initial planning and a blueprint for the subsequent analysis and risk characterization phases [28].

Foundational Framework: The Ecological Risk Assessment Process

The development of a conceptual model is embedded within the formal, three-phase structure of an ecological risk assessment: Problem Formulation, Analysis, and Risk Characterization [28]. The following workflow diagram illustrates this overarching process and the central role of conceptual model development.

Diagram 1: Iterative Ecological Risk Assessment Workflow

Phase I: Problem Formulation and Conceptual Model Development

Problem Formulation transforms the broad goals from the Planning phase into a precise, actionable scientific investigation [28]. Its primary objectives are to refine assessment objectives, identify ecological entities at risk, and define the characteristics to protect (assessment endpoints) [28].

Core Components of a Conceptual Model

A conceptual model is a schematic representation consisting of the following key elements [28]:

- Sources & Stressors: The origin (e.g., manufacturing effluent, pesticide application) and the physical, chemical, or biological agent causing change.

- Exposure Pathways: The physical routes a stressor takes from the source to the receptor (e.g., runoff, leaching, atmospheric deposition, dietary uptake).

- Receptors & Assessment Endpoints: The ecological entities (e.g., fathead minnow, honey bee colony, wetland ecosystem) and their specific attributes chosen for protection (e.g., survival, reproductive success, community diversity).

- Ecological Effects / Risk Hypotheses: Predicted biological responses of the assessment endpoints to the stressor (e.g., reduced growth, mortality, population decline).

Step-by-Step Development Protocol

Step 1: Define Assessment Endpoints. Select endpoints using three principal criteria: ecological relevance, susceptibility to known stressors, and relevance to management and societal goals [28]. This involves professional judgment to prioritize entities such as endangered species, commercially important species, or critical ecosystem functions [28].

Step 2: Identify Stressors and Sources. Based on the management question, characterize the stressor's properties. For a chemical-specific model, this includes its chemical identity, formulation, release patterns, and environmental fate properties.

Step 3: Diagram Exposure Pathways. Map the plausible environmental routes connecting the source to each receptor. Consider transport media (water, air, soil), transformation processes (degradation, metabolism), and exposure matrices (water column, sediment, prey items) [28].

Step 4: Articulate Risk Hypotheses. For each "source → pathway → receptor → effect" chain, state a clear, testable hypothesis about the expected adverse effect. This formalizes the model's predictive power.

Step 5: Create the Visual Schematic and Narrative. Translate the compiled information into a diagram (see Diagram 2 below) accompanied by a detailed written description that justifies each component and linkage.
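Before drawing the schematic, it can help to record each "source → pathway → receptor → effect" chain as structured data so that hypotheses are explicit and easy to review. The sketch below is one possible representation. The class names, field names, and sentence template are hypothetical and are not prescribed by the EPA framework; the example entries reuse illustrative receptors and pathways named earlier in this section.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RiskHypothesis:
    """One source -> pathway -> receptor -> effect chain."""
    source: str
    stressor: str
    pathway: str
    receptor: str
    endpoint: str
    predicted_effect: str

    def statement(self) -> str:
        """Render the chain as a testable hypothesis sentence."""
        return (
            f"If {self.stressor} from {self.source} reaches {self.receptor} "
            f"via {self.pathway}, then {self.endpoint} will show "
            f"{self.predicted_effect}."
        )


@dataclass
class ConceptualModel:
    name: str
    hypotheses: list = field(default_factory=list)

    def add(self, hypothesis: RiskHypothesis) -> None:
        self.hypotheses.append(hypothesis)

    def receptors(self) -> list:
        """All distinct receptors in the model, sorted by name."""
        return sorted({h.receptor for h in self.hypotheses})

    def pathways_to(self, receptor: str) -> list:
        """Exposure pathways hypothesized to reach a given receptor."""
        return [h.pathway for h in self.hypotheses if h.receptor == receptor]


# Illustrative entries only; not results from any assessment.
model = ConceptualModel("generic insecticide model")
model.add(RiskHypothesis("pesticide application", "insecticide", "runoff",
                         "fathead minnow", "survival", "increased mortality"))
model.add(RiskHypothesis("pesticide application", "insecticide", "dietary uptake",
                         "honey bee colony", "reproductive success", "a decline"))

for h in model.hypotheses:
    print(h.statement())
```

Keeping the chains in this form makes it straightforward to check that every receptor has at least one pathway and that every pathway ends in a stated effect before the diagram and narrative are written.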

Diagram 2: Generic Chemical-Specific Conceptual Model Example

From Generic to Chemical-Specific Models

A generic model outlines general pathways (e.g., for a broad class like "insecticides"). The chemical-specific model refines this by incorporating compound-specific data, which is critical for accurate exposure and effects analysis [28].

Table 1: Key Distinctions Between Generic and Chemical-Specific Model Components

| Component | Generic Conceptual Model | Chemical-Specific Conceptual Model |
|---|---|---|
| Stressor Identity | Broad class (e.g., organophosphate insecticide) | Specific compound (e.g., chlorpyrifos; CAS No. 2921-88-2) |
| Exposure Pathways | All plausible pathways for the chemical class | Pathways prioritized based on chemical properties (e.g., high Koc favors soil adsorption; low volatility minimizes vapor drift) |
| Fate Processes | General processes (hydrolysis, photolysis, biodegradation) | Quantified rates and dominant degradation pathways (e.g., aqueous hydrolysis t1/2 = 35 d at pH 7) |
| Bioaccumulation Potential | Qualitative statement (e.g., "may bioaccumulate") | Quantitative assessment using Log Kow or BCF data (e.g., Log Kow = 4.7, indicating high potential) |
| Ecological Effects | General modes of action (e.g., acetylcholinesterase inhibition) | Species- and endpoint-specific toxicity data (e.g., 48-h EC50 for Daphnia magna = 0.1 µg/L) |
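To show how a quantified fate parameter refines a generic model, the sketch below converts a half-life into the fraction of chemical remaining over time. It assumes simple first-order kinetics, which is an assumption of this example and not something stated for any particular compound, and it reuses the illustrative 35-day hydrolysis half-life from the table. The function name is hypothetical.

```python
import math


def fraction_remaining(days, half_life_days):
    """Fraction of the initial concentration left after `days`,
    assuming first-order decay: C(t)/C0 = exp(-k t), k = ln(2) / t_half."""
    if half_life_days <= 0:
        raise ValueError("half-life must be positive")
    rate_constant = math.log(2) / half_life_days
    return math.exp(-rate_constant * days)


HALF_LIFE_D = 35.0  # illustrative value from the table (pH 7)

after_one_half_life = fraction_remaining(35.0, HALF_LIFE_D)
after_two_half_lives = fraction_remaining(70.0, HALF_LIFE_D)
```

A persistence estimate of this kind helps decide which exposure pathways in the generic model remain plausible over the time scale of the assessment.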

Phase II: Analysis Plan Informed by the Conceptual Model

The conceptual model directly informs the Analysis Plan, which specifies the data and methods needed to evaluate the risk hypotheses [28]. The plan has two core components: the Exposure Assessment and the Ecological Effects Assessment.

Exposure Assessment Protocol

The exposure profile describes the "course a stressor takes from the source to the receptor" [28]. For chemicals, bioavailability—whether the chemical is in a form an organism can absorb—is a critical determinant [28].

Key Experimental & Assessment Methodologies:

- Environmental Fate Studies: Determine chemical distribution using standardized OECD or EPA guidelines for hydrolysis, photodegradation, soil adsorption/desorption (Kd), and leaching potential.

- Monitoring Studies: Measure chemical concentrations in relevant environmental compartments (water, sediment, soil, biota) at the site of concern. Use validated analytical methods (e.g., LC-MS/MS, GC-ECD).

- Bioaccumulation/Biomagnification Studies: Conduct laboratory waterborne exposure tests to derive a Bioconcentration Factor (BCF), or dietary exposure tests to derive a Biomagnification Factor (BMF). Assess biomagnification potential in the field by analyzing chemical concentrations across a sampled food web.

- Modeling: Use fugacity-based or process-based models (e.g., EQC, EXAMS) to predict environmental distribution and concentration (Predicted Environmental Concentration - PEC).
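Several of the quantities named in the list above are simple ratios of measured concentrations. The sketch below shows the standard definitions of the soil-water distribution coefficient (Kd), its organic-carbon normalization (Koc), and the steady-state bioconcentration factor (BCF). Function names and all input values are hypothetical, and the units noted in the comments are assumptions of the example.

```python
def distribution_coefficient(c_soil_mg_per_kg, c_water_mg_per_l):
    """Kd (L/kg): sorbed concentration divided by dissolved concentration at equilibrium."""
    return c_soil_mg_per_kg / c_water_mg_per_l


def organic_carbon_normalized(kd_l_per_kg, fraction_organic_carbon):
    """Koc (L/kg): Kd divided by the soil's organic carbon fraction (0 to 1)."""
    return kd_l_per_kg / fraction_organic_carbon


def bioconcentration_factor(c_organism_mg_per_kg, c_water_mg_per_l):
    """Steady-state BCF (L/kg): tissue concentration divided by water concentration."""
    return c_organism_mg_per_kg / c_water_mg_per_l


# Hypothetical measurements, for illustration only.
kd = distribution_coefficient(50.0, 2.0)
koc = organic_carbon_normalized(kd, 0.02)
bcf = bioconcentration_factor(500.0, 0.5)
```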

Ecological Effects Assessment Protocol

The stressor-response profile evaluates "evidence that exposure...causes effects of concern" [28]. Data from both guideline studies and the open literature are integrated [29].

Guideline for Evaluating Open Literature Toxicity Data [29]: The EPA Office of Pesticide Programs uses a rigorous two-phase screen to determine the utility of open literature studies for quantitative risk assessment.

Table 2: EPA Acceptance Criteria for Open Literature Ecological Toxicity Studies [29]

| Phase | Criterion | Description |
|---|---|---|
| Phase I: Acceptability Screen | 1. Single Chemical | Effects must be attributable to a single chemical exposure. |
| | 2. Whole Organism | Effects must be on live, whole aquatic or terrestrial plants/animals. |
| | 3. Reported Concentration | A concurrent environmental concentration, dose, or application rate is reported. |
| | 4. Explicit Duration | The exposure duration is explicitly stated. |
| Phase II: Usability Evaluation | 5. Calculated Endpoint | A quantifiable endpoint (e.g., LC50, NOEC) is reported or can be derived. |
| | 6. Acceptable Control | Treatments are compared to an appropriate control group. |
| | 7. Verified Species | The test species is reported and can be verified taxonomically. |
| | 8. Study Type & Source | The paper is a full, primary-source article published in English. |
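The two-phase screen in the table can be expressed as a simple checklist routine, which is useful when triaging a large literature search. The sketch below is a simplification for illustration and is not an EPA tool: the record fields, the outcome labels, and the rule that Phase II is evaluated only after Phase I passes are assumptions of this example.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    # Phase I criteria (1-4)
    single_chemical: bool
    whole_organism: bool
    concentration_reported: bool
    duration_stated: bool
    # Phase II criteria (5-8)
    endpoint_calculable: bool
    acceptable_control: bool
    species_verified: bool
    full_primary_english: bool


PHASE_I = ("single_chemical", "whole_organism",
           "concentration_reported", "duration_stated")
PHASE_II = ("endpoint_calculable", "acceptable_control",
            "species_verified", "full_primary_english")


def screen(study):
    """Return (outcome label, list of failed criteria)."""
    failed = [name for name in PHASE_I if not getattr(study, name)]
    if failed:
        return ("rejected in Phase I", failed)
    failed = [name for name in PHASE_II if not getattr(study, name)]
    if failed:
        return ("not usable quantitatively", failed)
    return ("usable", [])


# Hypothetical study that passes Phase I but lacks a control group.
example = StudyRecord(True, True, True, True, True, False, True, True)
outcome, reasons = screen(example)
```

Returning the failed criteria alongside the outcome keeps the screening decision auditable, which matters when exclusions need to be documented.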

Key Experimental Methodologies for Effects Data:

- Acute Toxicity Tests: Short-term tests (24-96 hour) to determine lethal or effect concentrations (e.g., LC50, EC50) for fish, invertebrates, and algae (OECD 203, 202, and 201, respectively).

- Chronic Toxicity Tests: Longer-term tests (e.g., 21-day Daphnia reproduction, early life-stage fish tests) to determine endpoints like growth, reproduction, and survival (OECD 211, 210).

- Mesocosm/Field Studies: Semi-field studies (ponds, streams) to assess community- and ecosystem-level effects under more realistic conditions.

- Dose-Response Modeling: Fit toxicity data to statistical models (e.g., probit, logistic) to derive point estimates and confidence intervals.
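As a minimal illustration of dose-response modeling, the sketch below fits a two-parameter log-logistic curve by ordinary least squares on logit-transformed response proportions, then solves for the concentration producing a chosen effect level. This linearized fit is a teaching simplification chosen for the example: it cannot use observed proportions of exactly 0 or 1, it yields no confidence intervals, and a maximum-likelihood fit in a dedicated statistics package would normally be preferred. The data and function names are hypothetical.

```python
import math


def logit(p):
    """Log-odds of a proportion strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def fit_log_logistic(concentrations, proportions):
    """Least-squares fit of logit(p) = intercept + slope * log10(concentration)."""
    xs = [math.log10(c) for c in concentrations]
    ys = [logit(p) for p in proportions]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def effect_concentration(intercept, slope, fraction=0.5):
    """Concentration at which the fitted curve predicts `fraction` affected."""
    return 10 ** ((logit(fraction) - intercept) / slope)


# Hypothetical test data: concentration (µg/L) and proportion of organisms affected.
concs = [1.0, 10.0, 100.0]
affected = [0.1, 0.5, 0.9]

intercept, slope = fit_log_logistic(concs, affected)
lc50 = effect_concentration(intercept, slope, 0.5)
```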

Phase III: Risk Characterization and Model Iteration

In Risk Characterization, results from the exposure and effects analyses are integrated to estimate risk [28]. The conceptual model is revisited to evaluate which risk hypotheses were supported and to identify dominant exposure pathways or particularly sensitive receptors.

Key Outputs:

- Risk Quotients (RQs): Calculated as PEC / PNEC (Predicted No-Effect Concentration).

- Probabilistic Risk Estimates: Using joint probability distributions of exposure and effects.

- Uncertainty Analysis: Qualitative or quantitative description of uncertainties inherent in the data and model structure.

- Risk Description: A narrative synthesis interpreting the adversity, spatial/temporal scale, and potential for recovery of ecological effects [28].
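The first two outputs above can be illustrated numerically. The sketch below computes a deterministic risk quotient and then a simple Monte Carlo estimate of the probability that exposure exceeds an effect threshold. The lognormal distributions, their parameters, and the assumption that exposure and sensitivity are independent are choices made for this example only; a real assessment would justify each from data.

```python
import random


def risk_quotient(pec, pnec):
    """RQ = PEC / PNEC; values above 1 indicate potential concern."""
    if pnec <= 0:
        raise ValueError("PNEC must be positive")
    return pec / pnec


def exceedance_probability(exp_mu, exp_sigma, eff_mu, eff_sigma,
                           n_draws=10_000, seed=1):
    """Estimate P(exposure > effect threshold) by sampling two independent
    lognormal distributions (parameters are on the natural-log scale)."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    exceedances = 0
    for _ in range(n_draws):
        exposure = rng.lognormvariate(exp_mu, exp_sigma)
        threshold = rng.lognormvariate(eff_mu, eff_sigma)
        if exposure > threshold:
            exceedances += 1
    return exceedances / n_draws


# Hypothetical point estimates (same units for both).
rq = risk_quotient(0.5, 2.0)

# Hypothetical distributions: exposure centered well below the effect threshold.
p_exceed = exceedance_probability(exp_mu=-1.0, exp_sigma=0.5,
                                  eff_mu=1.0, eff_sigma=0.5)
```

The point estimate and the probabilistic estimate answer different questions: the quotient compares two single values, while the exceedance probability reflects how much the two distributions overlap.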

This characterization provides the scientific basis for risk management decisions, potentially triggering a refined, iterative cycle of assessment beginning with an updated conceptual model [28].

The Scientist's Toolkit: Essential Research Reagent Solutions

Developing and testing conceptual models requires specialized materials and databases. The following toolkit is essential for professionals in this field.

Table 3: Key Research Reagent Solutions for Conceptual Model Development and Testing

| Tool / Material | Function in Conceptual Model Context | Key Features / Examples |

|---|---|---|

| EPA ECOTOX Database [29] | The primary search engine for obtaining curated ecotoxicological effects data from the open literature for use in stressor-response profiles. | Contains single-chemical toxicity data for aquatic and terrestrial species. Used to fulfill data requirements for Registration Review and endangered species assessments [29]. |

| Analytical Reference Standards | Essential for quantifying chemical stressors in environmental and tissue samples during exposure monitoring and bioaccumulation studies. | High-purity certified reference materials (CRMs) for target analytes. Used to calibrate instruments and ensure data quality. |