Adapting the GRADE Framework for Environmental Health Systematic Reviews: A Practical Guide for Researchers and Practitioners

Abstract

This article provides a comprehensive guide to adapting the Grading of Recommendations Assessment, Development, and Evaluation (GRADE) framework for environmental and occupational health (EOH) systematic reviews. Aimed at researchers, scientists, and drug development professionals, it addresses the growing demand for a structured, transparent process to evaluate and integrate diverse evidence streams, including human, animal, in vitro, and in silico studies [1]. The article explores the foundational principles of GRADE, details the application of the newly developed GRADE Evidence-to-Decision (EtD) framework for EOH [2] [3], troubleshoots common methodological challenges, and validates the approach through comparative case studies and conceptual advances. It synthesizes current guidance to empower professionals in producing robust, actionable evidence for environmental health risk assessment and decision-making.

Understanding GRADE: The Foundational Framework for Transparent Environmental Health Assessments

The Growing Demand for Structured Evidence Assessment in Environmental Health

The field of environmental health faces a critical challenge: synthesizing complex evidence from diverse sources—including human epidemiology, animal toxicology, and in vitro studies—to inform robust public health decisions and policies. The demand for structured evidence assessment has never been greater, driven by the proliferation of scientific data and the need for transparency in risk assessment and guideline development [1]. Traditional narrative reviews are insufficient for this task, as they are prone to bias and lack explicit methodology.

Within this context, the adaptation of the Grading of Recommendations Assessment, Development and Evaluation (GRADE) framework presents a transformative opportunity. Originally developed for clinical medicine, GRADE provides a systematic and transparent process for rating the certainty of evidence and the strength of recommendations [2]. Its application to environmental health questions—such as assessing whether an exposure constitutes a hazard or evaluating intervention effectiveness—requires careful methodological consideration and adaptation [1]. This article details the essential application notes and protocols for implementing a GRADE-based framework in environmental health systematic reviews, providing researchers and risk assessors with the tools to meet this growing demand for rigor and clarity.

Core Application Notes for GRADE Adaptation

Adapting GRADE for environmental health involves addressing the unique nature of the evidence. Key application notes are summarized in the table below.

Table 1: Key Adaptations of the GRADE Framework for Environmental Health Systematic Reviews

| GRADE Component | Standard Clinical Application | Adaptation for Environmental Health | Rationale & Key Tools |
|---|---|---|---|
| Formulating the Question | Focused on interventions (PICO: Population, Intervention, Comparator, Outcome). | Expands to include exposure assessment & hazard identification. Formats include PECO (Population, Exposure, Comparator, Outcome) or PEST (Population, Exposure, Study design, Time) [1]. | Questions often address "Is exposure X a risk factor for health outcome Y?" rather than "Is intervention A effective?" [1]. |
| Evidence Integration | Primarily integrates evidence from human randomized controlled trials (RCTs). | Requires integration of streams of evidence from human observational studies, animal models, in vitro assays, and in silico models [1]. | No single study type is considered a priori as high certainty. Mechanistic evidence from non-human studies is crucial for establishing biological plausibility. |
| Risk of Bias Assessment | Uses tools like Cochrane RoB 2 for RCTs. | Employs domain-specific tools. For exposure prevalence studies, the Risk of Bias in Studies estimating Prevalence of Exposure to Occupational factors (RoB-SPEO) tool is recommended [3]. | Study designs are heterogeneous. Tools must be fit-for-purpose, assessing biases specific to exposure measurement (e.g., recall bias, exposure misclassification). |
| Assessing Certainty | Starts RCTs as high certainty, downgrades for limitations. | Often starts human observational studies as low certainty, with potential for upgrading based on strong evidence of association (e.g., large effect size, dose-response) [1] [2]. | Recognizes the inherent limitations of observational designs while allowing for confidence in compelling evidence. |
| Expected Heterogeneity | Unexplained heterogeneity lowers certainty rating. | "Expected heterogeneity" in exposure prevalence across space/time is acknowledged and planned for in analysis, not automatically a limitation [3]. | Exposure levels vary geographically and temporally due to real-world factors; this variability is an important finding, not merely statistical noise. |

A central challenge is the integration of evidence streams. The GRADE framework for environmental health must explicitly outline how data from human, animal, and mechanistic studies are combined to form a single body of evidence and an overall certainty rating for a health outcome [1]. This process often involves using systematic evidence maps (SEMs) as a preliminary step to identify and categorize the available evidence before undertaking a full quantitative synthesis [4].

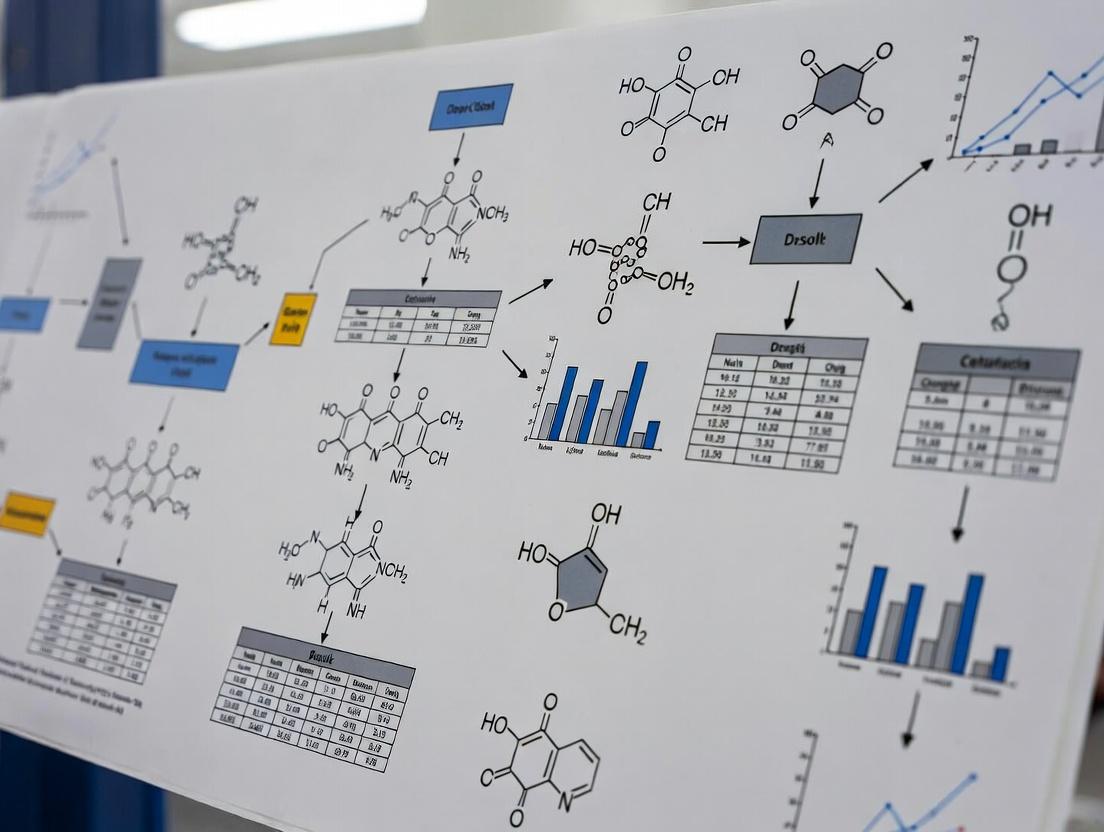

Diagram Title: Workflow for GRADE Adaptation in Environmental Health Reviews

Detailed Experimental Protocols

Protocol for a Systematic Review of Exposure Prevalence

This protocol follows guidance for systematic reviews of the prevalence of exposure to environmental and occupational risk factors [3] and aligns with the updated SPIRIT 2025 principles for comprehensive protocol reporting [5].

1. Protocol Registration and Team Assembly:

- Registration: Prior to beginning, register the review title and objectives on a platform like PROSPERO. The protocol must include a structured summary and detailed methods [5].

- Team: Assemble a multidisciplinary team including a subject matter expert, a systematic review methodologist, a statistician, and an information specialist. Document all roles [5].

2. Defining the Scope and Question:

- Formulate the question using the PEST format (Population, Exposure, Study design, Time) [3].

- Example: "What is the global prevalence of exposure to silica dust (E) among construction workers (P) as measured in cross-sectional surveys (S) between 2010 and 2023 (T)?"

- Pre-define how expected heterogeneity (e.g., by region, sub-sector) will be analyzed and presented [3].

3. Systematic Search and Screening:

- The information specialist will design and execute a comprehensive search across multiple databases (e.g., PubMed, Embase, specialized environmental indices).

- Searches will combine terms for the population, exposure, and study design filter for prevalence/observational studies.

- At least two reviewers will independently screen titles/abstracts and full texts against pre-defined eligibility criteria, using software such as Rayyan or Covidence. Disagreements will be resolved by consensus or a third reviewer.

4. Data Extraction and Risk of Bias Assessment:

- Develop and pilot a standardized data extraction form. Key data items include: study location/time, sample size, sampling method, exposure assessment method (e.g., air monitoring, self-report), and prevalence metric.

- Assess the risk of bias for each included study using the RoB-SPEO tool [3]. This tool evaluates domains such as the representativeness of the target population, the validity of the exposure assessment, and the handling of missing data.

- Conduct this assessment in duplicate, measuring inter-rater agreement (e.g., Cohen's kappa).

5. Data Synthesis and Certainty Assessment:

- If meta-analysis is appropriate, use random-effects models to pool prevalence estimates, acknowledging expected heterogeneity. Present results via forest plots and perform subgroup analyses (e.g., by continent, measurement type).

- If quantitative synthesis is not feasible, conduct a structured narrative synthesis organized by key themes or subgroups.

- Assess the overall certainty of the evidence for the prevalence estimate using a GRADE adaptation. Start the rating as low (due to the cross-sectional design) and consider downgrading for risk of bias, inconsistency, indirectness, imprecision, or publication bias. Upgrading is generally not applicable for prevalence estimates.
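As a minimal sketch of the pooling step in the protocol above, the following Python function applies DerSimonian-Laird random-effects pooling to logit-transformed prevalence estimates. The function name, the continuity correction applied to every study, and the example counts are illustrative assumptions and are not taken from the cited guidance; a real review would more likely rely on an established meta-analysis package and run the subgroup analyses planned in the protocol.

```python
import math


def pool_prevalence_dl(events, totals):
    """Pool prevalence estimates with a DerSimonian-Laird random-effects model.

    events[i] / totals[i] is the exposed count / sample size of study i.
    Pooling is done on the logit scale and back-transformed to a proportion.
    """
    if len(events) != len(totals) or len(events) < 2:
        raise ValueError("need at least two studies with matching lengths")

    y, v = [], []
    for e, n in zip(events, totals):
        # Assumption: add 0.5 to exposed and unexposed counts in every study
        # so the logit stays finite when a study reports 0% or 100%.
        e_adj, n_adj = e + 0.5, n + 1.0
        p = e_adj / n_adj
        y.append(math.log(p / (1.0 - p)))              # logit prevalence
        v.append(1.0 / e_adj + 1.0 / (n_adj - e_adj))  # its approximate variance

    # Fixed-effect weights and Cochran's Q.
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    y_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - y_fixed) ** 2 for wi, yi in zip(w, y))
    df = len(y) - 1

    # Between-study variance (tau^2), truncated at zero.
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)

    # Random-effects weights, pooled logit and its standard error.
    w_re = [1.0 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))

    def inv_logit(x):
        return 1.0 / (1.0 + math.exp(-x))

    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return {
        "pooled": inv_logit(mu),
        "ci_low": inv_logit(mu - 1.96 * se),
        "ci_high": inv_logit(mu + 1.96 * se),
        "tau2": tau2,
        "i2": i2,
    }


# Hypothetical counts from three surveys (exposed workers, workers sampled).
result = pool_prevalence_dl([12, 45, 30], [150, 200, 400])
print(result)
```

The returned `tau2` and `i2` values describe between-study variability; in line with the protocol, large values here may reflect expected heterogeneity across regions or sub-sectors rather than a flaw in the evidence.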

Protocol for Integrating Evidence Streams for Hazard Identification

This protocol outlines the methodology for integrating human, animal, and mechanistic evidence to assess the hazard of an environmental chemical, a core task in environmental health [1].

1. Problem Formulation and Systematic Evidence Map (SEM):

- Formulate a clear health-outcome-specific question (e.g., "Does chronic exposure to chemical Z cause hepatotoxicity?").

- Conduct a Systematic Evidence Map (SEM) to identify, categorize, and visualize the available evidence across all streams [4]. This involves systematic searches for each evidence stream, high-level coding of study characteristics (e.g., species, model system, exposure duration, outcome), and creation of interactive databases or heatmaps to identify evidence clusters and gaps.

2. Stream-Specific Systematic Review and Quality Appraisal:

- Human Evidence: Conduct a systematic review of epidemiological studies. Assess risk of bias using a tool like the Navigation Guide risk of bias tool or OHAT's tool for observational studies. Extract data on effect estimates (e.g., risk ratios).

- Animal Evidence: Conduct a systematic review of in vivo toxicology studies. Assess risk of bias using a tool like SYRCLE's RoB tool. Extract data on dose-response and effect size.

- Mechanistic Evidence: Conduct a systematic review of in vitro and in silico studies. Apply a suitable risk of bias tool (e.g., for high-throughput screening). Extract data on key events in a hypothesized adverse outcome pathway (AOP).

3. Evidence Integration and Synthesis:

- Use a pre-defined framework (e.g., the OHAT or Navigation Guide approach) to integrate findings.

- Step 1: Rate confidence in each stream individually based on study quality, consistency, and relevance.

- Step 2: Determine if bodies of evidence support each other. Do animal models show the same health effect? Do mechanistic studies explain the biological plausibility?

- Step 3: Develop a combined confidence rating for the hazard conclusion. Strong, consistent evidence across multiple streams with minimal unexplained inconsistencies leads to a higher confidence rating.

- Document the integration process transparently in an evidence profile table.

4. Final Certainty Rating using GRADE:

- The integrated confidence rating is translated into a GRADE certainty rating (High, Moderate, Low, Very Low) for the health outcome.

- Create a Summary of Findings (SoF) table presenting the health outcome, the integrated evidence, and the final GRADE certainty rating for use in the Evidence-to-Decision (EtD) framework [2].

Diagram Title: Protocol for Multi-Stream Evidence Integration in Hazard Assessment
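The coding-and-mapping part of the systematic evidence map in step 1 can be sketched as a simple cross-tabulation of studies by evidence stream and outcome. The record fields (`stream`, `outcome`), the function name, and the example studies are hypothetical; real evidence maps usually code many more characteristics and use dedicated visualization tools.

```python
from collections import Counter


def evidence_map(studies, streams, outcomes):
    """Count studies per (stream, outcome) cell and list the empty cells.

    studies: iterable of dicts with 'stream' and 'outcome' keys (hypothetical
    coding scheme). Returns (grid, gaps), where grid[stream][outcome] is a
    study count and gaps lists every cell with no studies.
    """
    counts = Counter((s["stream"], s["outcome"]) for s in studies)
    grid = {st: {o: counts.get((st, o), 0) for o in outcomes} for st in streams}
    gaps = [(st, o) for st in streams for o in outcomes if grid[st][o] == 0]
    return grid, gaps


# Hypothetical coded studies for "chemical Z".
coded = [
    {"stream": "human", "outcome": "hepatotoxicity"},
    {"stream": "animal", "outcome": "hepatotoxicity"},
    {"stream": "animal", "outcome": "hepatotoxicity"},
    {"stream": "in vitro", "outcome": "oxidative stress"},
]
grid, gaps = evidence_map(
    coded,
    streams=["human", "animal", "in vitro"],
    outcomes=["hepatotoxicity", "oxidative stress"],
)
for stream, row in grid.items():
    print(stream, row)
print("gaps:", gaps)
```

The `grid` is the data behind a heatmap, and `gaps` is a plain list of stream and outcome combinations for which no study was found.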

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Essential Toolkit for Conducting GRADE-Based Environmental Health Systematic Reviews

| Tool / Resource Name | Type | Primary Function in Evidence Assessment | Key Reference / Source |
|---|---|---|---|
| GRADE Handbook | Methodology Guide | The definitive reference for applying GRADE principles, including defining certainty and using EtD frameworks. | [2] |
| RoB-SPEO Tool | Risk of Bias Tool | Assesses risk of bias in individual studies estimating prevalence of exposure to occupational or environmental factors. | [3] |
| Navigation Guide / OHAT Risk of Bias Tool | Risk of Bias Tool | Assesses risk of bias in human observational studies (e.g., cohort, case-control) for environmental health questions. | [1] |
| SYRCLE's RoB Tool | Risk of Bias Tool | Assesses risk of bias in animal intervention studies. Critical for evaluating the internal validity of toxicology evidence. | [1] |
| Systematic Evidence Map (SEM) Guidance | Methodological Framework | Provides a structured approach to scoping and categorizing a broad evidence base before a full review, identifying gaps and clusters. | [4] |
| PECO/PEST Framework | Question Formulation Template | Guides the structuring of a focused, answerable environmental health research question for a systematic review. | [3] [1] |
| SPIRIT 2025 Checklist | Protocol Reporting Standard | A 34-item checklist developed for reporting clinical trial protocols, applied here by analogy to support comprehensive and transparent reporting of systematic review protocols. | [5] |
| Covidence / Rayyan | Software Platform | Facilitates collaborative management of the review process, including reference screening, data extraction, and conflict resolution. | N/A (Industry Standard) |
| GRADEpro GDT | Software Platform | A web-based tool for creating Summary of Findings tables, Evidence Profiles, and interactive EtD frameworks. | [2] |

The Grading of Recommendations Assessment, Development and Evaluation (GRADE) framework is a systematic and transparent methodology for evaluating the certainty of a body of scientific evidence and for developing and grading health-related recommendations [2]. Originally developed for clinical medicine, its application has expanded into environmental and occupational health (EOH), a field characterized by complex evidence from human observational studies, animal toxicology, in vitro assays, and exposure models [6]. The core objectives of GRADE are to separate the certainty of evidence from the strength of recommendations, ensuring that decision-making is based on both the confidence in effect estimates and contextual factors like equity, feasibility, and values [7] [2].

The adaptation of GRADE for EOH addresses a critical need for a structured process to evaluate and integrate diverse evidence streams, particularly for questions concerning environmental exposures, hazards, and risk-mitigating interventions [8] [6]. The GRADE Evidence-to-Decision (EtD) framework for EOH, formalized in recent guidance, provides a tailored structure with twelve assessment criteria, incorporating considerations such as socio-political context, timing of effects, and a broadened scope of equity [9] [10]. This structured approach moves beyond traditional narrative reviews, offering policymakers and scientists a rigorous tool to translate evidence into clear, actionable guidance for managing environmental health risks [9] [11].

Assessing the Certainty of Evidence

The assessment of the certainty of evidence (also termed quality of evidence or confidence in effect estimates) is a foundational GRADE principle. It represents the degree of confidence that the true effect of an exposure or intervention lies close to its estimated effect [12].

Initial Rating and Domains for Rating Down

GRADE classifies study designs into two groups for an initial certainty rating. Randomized controlled trials (RCTs) start as high certainty. Observational studies (or non-randomized studies, NRS) typically start as low certainty due to inherent risks of confounding, but may start as high if evaluated with a rigorous tool like ROBINS-I that adequately assesses confounding [12]. This initial rating is then modified by assessing five domains that may decrease the certainty level.

Table 1: GRADE Domains for Rating Down Certainty of Evidence [12]

| Domain | Definition | Examples in Environmental Health |
|---|---|---|
| Risk of Bias | Limitations in study design or execution. | Lack of blinding in outcome assessment, inadequate control for confounding in cohort studies [12]. |
| Inconsistency | Unexplained variability in results across studies. | Widely varying effect estimates (heterogeneity) for the association between an air pollutant and a health outcome across different study populations [12]. |
| Indirectness | Differences between the studied and relevant PECO (Population, Exposure, Comparator, Outcome) questions. | Evidence from animal studies applied to human health, or studies using a surrogate exposure biomarker instead of direct personal exposure measurement [6]. |
| Imprecision | Results are based on sparse data or wide confidence intervals. | A small total sample size or a confidence interval for a risk ratio that includes both an appreciable effect (harm or benefit) and no effect [12]. |
| Publication Bias | Systematic under- or over-publication of studies based on results. | Funnel plot asymmetry (a small-study effect), suggesting that smaller studies with null findings were not published [12]. |

Domains for Rating Up (Observational Studies)

For bodies of evidence from observational studies, three factors can increase the certainty rating [12]. These are generally not applied to RCTs.

Table 2: GRADE Domains for Rating Up Certainty of Evidence from Observational Studies [12]

| Domain | Definition | Application Criteria |
|---|---|---|
| Large Magnitude of Effect | A large effect estimate. | A relative risk >2 or <0.5 based on consistent evidence from observational studies with no obvious bias [12]. |
| Dose-Response Gradient | Evidence of a changing effect with changing exposure level. | A monotonic relationship where increased exposure correlates with increased risk of the health outcome [12]. |
| Effect of Plausible Residual Confounding | All plausible confounding would reduce the demonstrated effect. | Evidence suggests that any unmeasured or residual confounding is likely to bias the results toward the null, meaning the true effect may be larger [12]. |

The final certainty is expressed using one of four levels: High, Moderate, Low, or Very Low [12]. This judgment is made for each critical or important outcome and is presented transparently in an evidence profile or summary of findings table [7].
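The rating logic described above (a starting level by study design, moved down by the five downgrading domains and up by the three upgrading domains) can be sketched as simple bookkeeping. This is an illustrative simplification: GRADE ratings are structured judgments rather than arithmetic, and the function name, domain keys, and the cap of two levels per domain are assumptions made for this example.

```python
LEVELS = ("Very Low", "Low", "Moderate", "High")

DOWNGRADE_DOMAINS = {
    "risk_of_bias", "inconsistency", "indirectness",
    "imprecision", "publication_bias",
}
UPGRADE_DOMAINS = {"large_effect", "dose_response", "residual_confounding"}


def grade_certainty(start, downgrades=None, upgrades=None):
    """Return a certainty level after applying downgrades and upgrades.

    start: initial level, e.g. "High" for RCTs or "Low" for observational
    studies. downgrades / upgrades: dicts mapping a domain name to the number
    of levels moved (0, 1 or 2; the cap is an assumption of this sketch).
    The result is clamped to the Very Low .. High range.
    """
    downgrades = downgrades or {}
    upgrades = upgrades or {}
    if start not in LEVELS:
        raise ValueError(f"unknown starting level: {start!r}")
    unknown = (set(downgrades) - DOWNGRADE_DOMAINS) | (set(upgrades) - UPGRADE_DOMAINS)
    if unknown:
        raise ValueError(f"unknown domain(s): {sorted(unknown)}")
    steps = list(downgrades.values()) + list(upgrades.values())
    if any(n not in (0, 1, 2) for n in steps):
        raise ValueError("each domain may move the rating by 0, 1 or 2 levels")

    idx = LEVELS.index(start) - sum(downgrades.values()) + sum(upgrades.values())
    return LEVELS[max(0, min(idx, len(LEVELS) - 1))]


# Observational evidence with a clear dose-response gradient.
print(grade_certainty("Low", upgrades={"dose_response": 1}))
# Animal evidence rated down for indirectness and imprecision.
print(grade_certainty("High", downgrades={"indirectness": 1, "imprecision": 1}))
```

In practice each number passed in would be backed by a documented reason in the evidence profile footnotes, which is where the transparency of the approach comes from.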

Formulating and Grading the Strength of Recommendations

Moving from evidence to recommendations involves balancing the certainty in evidence with other critical factors. Recommendations are characterized by their direction (for or against an intervention/exposure control) and their strength (strong or conditional, also called weak) [2].

The Evidence-to-Decision (EtD) Framework

The GRADE EtD framework structures this deliberative process. The EOH-specific EtD framework includes twelve criteria grouped into several categories [9] [10].

Table 3: Core Criteria in the GRADE EtD Framework for Environmental & Occupational Health [9] [10]

| Criterion Category | Specific Criteria | Key Considerations for EOH |
|---|---|---|
| Problem & Alternatives | Priority of the problem; Feasibility of alternatives. | Includes socio-political context and timing of implementing alternatives [9]. |
| Benefits, Harms & Evidence | Desirable effects; Undesirable effects; Certainty of evidence. | Timing of benefits/harms is explicitly considered [9]. |
| Values, Equity & Acceptability | Values and preferences; Equity; Acceptability. | Equity broadened beyond health to include environmental justice; explicit handling of variable stakeholder views [9] [10]. |
| Resources & Feasibility | Resource use; Cost-effectiveness; Feasibility. | -- |

Determining Recommendation Strength

The strength of a recommendation is determined by how confident the guideline panel is that the desirable consequences of adhering to it outweigh the undesirable consequences across a population [7]. Four key factors inform this judgment.

Table 4: Determinants of the Strength of a Recommendation

| Determinant | Favors a Strong Recommendation When | Favors a Conditional/Weak Recommendation When |
|---|---|---|
| Balance of Effects | Desirable effects clearly outweigh undesirable effects (or vice versa). | Desirable and undesirable effects are closely balanced, or uncertainty exists about the balance. |
| Certainty of Evidence | Based on high- or moderate-certainty evidence. | Based on low- or very low-certainty evidence. |
| Values and Preferences | Homogeneous values and preferences; little variability in what people value. | Heterogeneous or uncertain values and preferences. |
| Resource Use | Net benefits clearly justify the costs (or clearly do not). | Net benefits may not be worth the costs, or uncertainty exists about resource implications. |

A strong recommendation implies that most individuals should follow the recommended course of action, and it can be adopted as policy in most situations. A conditional recommendation requires deliberation and context-specific adaptation, as different choices may be appropriate for different individuals or groups [7] [2].
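One way to record how the four determinants in Table 4 feed into a panel's choice is sketched below. The rule used here (suggest "strong" only when every determinant favors a strong recommendation) is a deliberately conservative assumption for illustration; GRADE panels weigh these factors through deliberation, not a fixed formula, and the function and key names are hypothetical.

```python
DETERMINANTS = (
    "balance_of_effects",
    "certainty_of_evidence",
    "values_and_preferences",
    "resource_use",
)


def suggest_strength(favors_strong):
    """Suggest a recommendation strength from panel judgments.

    favors_strong maps each determinant to True (the panel judged that it
    favors a strong recommendation) or False. Assumption of this sketch:
    a single determinant that does not favor 'strong' makes the suggestion
    'conditional'.
    """
    missing = [d for d in DETERMINANTS if d not in favors_strong]
    if missing:
        raise ValueError(f"missing judgments for: {missing}")
    return "strong" if all(favors_strong[d] for d in DETERMINANTS) else "conditional"


# Hypothetical panel judgments: evidence certainty is low, all else favorable.
judgments = {
    "balance_of_effects": True,
    "certainty_of_evidence": False,
    "values_and_preferences": True,
    "resource_use": True,
}
print(suggest_strength(judgments))
```

Requiring a judgment for every determinant mirrors the documentation goal of the EtD process: a missing entry is treated as an error, not as a silent default.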

Adaptation for Environmental Health Systematic Reviews

Applying GRADE to environmental health requires specific adaptations to address the field's unique challenges, such as integrating multiple evidence streams and the predominance of observational data on hazards [8] [6].

Integrating Multiple Evidence Streams

A primary challenge is evaluating and integrating evidence from human, animal, in vitro, and in silico (computational) studies [8] [6]. GRADE provides a framework for assessing each stream separately and then integrating judgments.

- Human Evidence: Typically from observational studies, assessed starting at low certainty, using ROBINS-I for risk of bias, with attention to critical confounding [12] [6].

- Animal Evidence: Experimental animal studies start at high certainty but are almost always rated down for indirectness (difference between animal population and humans) [6].

- Modeled Evidence: For exposure or health outcome models, the certainty of model outputs depends on the certainty of model inputs (evidence feeding the model) and the credibility of the model itself [13].

Framing the Research Question: PECO

In EOH, the clinical PICO (Population, Intervention, Comparator, Outcome) is adapted to PECO (Population, Exposure, Comparator, Outcome) [8]. This reframes the question around an environmental exposure and a comparator exposure level (e.g., low vs. high, or exposed vs. unexposed).
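A PECO question can be captured as a small structured record so that every element is stated explicitly before the review starts. The class below is a minimal sketch; the class name, the sentence template, and the example values (which reuse the hypothetical "chemical Z" question) are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECO:
    """A review question framed as Population, Exposure, Comparator, Outcome."""

    population: str
    exposure: str
    comparator: str
    outcome: str

    def as_question(self) -> str:
        # Hypothetical sentence template; reviews may phrase this differently.
        return (
            f"Among {self.population}, is {self.exposure}, "
            f"compared with {self.comparator}, associated with {self.outcome}?"
        )


question = PECO(
    population="adults",
    exposure="chronic exposure to chemical Z",
    comparator="low or no exposure",
    outcome="hepatotoxicity",
)
print(question.as_question())
```

Making the record immutable (`frozen=True`) reflects the idea that the question is fixed in the registered protocol and should not drift during the review.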

Experimental Protocols and Application Notes

Protocol for Assessing Certainty of Evidence for an Environmental Exposure

This protocol outlines the steps for conducting a GRADE certainty assessment for a systematic review investigating a suspected environmental hazard.

Objective: To assess the certainty of evidence for the association between a specified environmental exposure (e.g., perfluorooctanoic acid [PFOA]) and a critical health outcome (e.g., reduced antibody response).

Materials:

- Systematic review team with expertise in toxicology, epidemiology, and GRADE methodology.

- Completed systematic review with extracted data for the PECO question.

- GRADE handbook or guidance documents [7] [12].

- Software: GRADEpro Guideline Development Tool (GDT) or equivalent.

Procedure:

- Define the PECO: Finalize the Population, Exposure, Comparator, and Outcomes with stakeholder input. Pre-rate outcomes as "critical" or "important" for decision-making [8].

- Map Evidence Streams: Categorize included studies into streams: human observational, animal experimental, in vitro.

- Assess Each Stream per Outcome:

  - Human Studies: Use ROBINS-I tool to evaluate risk of bias. Initial certainty: Low. Apply GRADE domains (Table 1 & 2). Document reasons for downgrading/upgrading.

  - Animal Studies: Use SYRCLE's RoB tool or similar. Initial certainty: High. Rate down for indirectness (P: animal species, E: high-dose vs. human low-dose). Assess other domains.

- Integrate Certainty Across Streams: Develop a single certainty rating for the outcome. Higher certainty in one stream (e.g., a large, consistent human effect) may dominate. Alternatively, if streams are concordant, certainty may be increased beyond that of any single stream [6].

- Create Summary of Findings Table: Use GRADEpro GDT to generate a table presenting for each critical outcome: number of participants/studies, effect estimates, and the final certainty rating (High/Moderate/Low/Very Low) with footnotes explaining key judgments [7].

- Report: The certainty assessment is reported as the final output of the systematic review. Guideline panels will use this table in the subsequent EtD process.
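The integration step in the procedure above can be illustrated with a toy rule: the highest-rated stream sets the baseline, and concordance across at least two streams raises the rating by one level. This rule is an assumption chosen to mirror the two possibilities described in the procedure; it is not a published GRADE algorithm, and the function name and inputs are hypothetical.

```python
LEVELS = ("Very Low", "Low", "Moderate", "High")


def integrate_streams(stream_ratings, concordant):
    """Combine per-stream certainty ratings into one rating for an outcome.

    stream_ratings: dict such as {"human": "Low", "animal": "Moderate"}.
    concordant: True if the panel judged that the streams point the same way.
    Assumed rule: start from the highest stream rating; if two or more
    streams are concordant, move up one level (capped at High).
    """
    if not stream_ratings:
        raise ValueError("at least one evidence stream is required")
    idx = max(LEVELS.index(r) for r in stream_ratings.values())
    if concordant and len(stream_ratings) >= 2:
        idx = min(idx + 1, len(LEVELS) - 1)
    return LEVELS[idx]


ratings = {"human": "Low", "animal": "Moderate"}
print(integrate_streams(ratings, concordant=True))
print(integrate_streams(ratings, concordant=False))
```

Whatever rule a review team adopts, stating it this explicitly in the protocol makes the final rating reproducible by another team.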

Protocol for Conducting an EtD Framework Meeting

This protocol guides a panel in using the EOH EtD framework to move from evidence to a recommendation.

Objective: To formulate a graded recommendation on whether to implement a specific intervention to reduce occupational exposure to silica dust.

Materials:

- Completed Summary of Findings table (from the preceding certainty assessment protocol).

- Pre-populated EtD framework table with available evidence on all 12 criteria [9].

- Stakeholder representatives (e.g., workers, industry, regulators).

- Facilitator trained in GRADE EtD methods.

Procedure:

- Preparation: The technical team pre-populates the EtD framework with summaries of evidence for each criterion (e.g., estimated costs, stakeholder survey results on acceptability).

- Panel Discussion: The multi-disciplinary panel reviews each criterion sequentially:

  - Problem & Alternatives: Discuss the burden of silicosis and feasibility of engineering controls vs. personal protective equipment.

  - Balance of Effects: Review the certainty and magnitude of health benefits (reduced disease) versus harms (cost, inconvenience).

  - Values, Equity & Acceptability: Elicit input from stakeholder representatives. Discuss impacts on vulnerable sub-populations.

  - Resources & Feasibility: Review cost-effectiveness analyses and implementation barriers.

- Judgments and Conclusions: For each criterion, the panel agrees on a descriptive judgment (e.g., "Probably no important uncertainty"). The panel then synthesizes these judgments to decide the direction (for the intervention) and strength (strong or conditional) of the recommendation, following the determinants in Table 4.

- Documentation: The final EtD table, including all judgments, research evidence, and the final recommendation with justification, is published transparently [9] [10].

Table 5: Key Research Reagent Solutions for GRADE in Environmental Health

| Tool/Resource | Function | Application Note |
|---|---|---|
| GRADE Handbook & Official Guidance [7] | Core reference manuals detailing concepts, procedures, and examples for applying GRADE. | Essential for training and ensuring fidelity to the GRADE approach. The 2019 Environment International series provides EOH-specific guidance [8]. |
| GRADEpro GDT (Guideline Development Tool) [7] | Web-based software to create systematic review summaries (SoF tables) and structure EtD frameworks. | Streamlines the technical process, ensures format consistency, and facilitates collaboration among review team members. |
| ROBINS-I (Risk Of Bias In Non-randomized Studies - of Interventions) [12] | Structured tool for assessing risk of bias in comparative observational studies. | Critical for evaluating human evidence in EOH. Allows observational studies to start at a high certainty rating if confounding is adequately addressed. |
| PECO Framework [8] | Adaptation of PICO for exposure questions: Population, Exposure, Comparator, Outcome. | Foundational for correctly framing the environmental health systematic review question at the outset. |
| GRADE EtD Framework for EOH [9] [10] | The 12-criteria framework tailored for environmental and occupational health decisions. | The structured template that guides panels from evidence synthesis to a final recommendation, incorporating socio-political context and broad equity. |
| CHANGE Tool [14] | A standardized tool for assessing study quality in weight-of-evidence reviews on climate change and health. | An example of a domain-specific adaptation for a critical area within environmental health, assessing transparency, bias, and covariate selection. |
| Models Certainty Assessment Framework [13] | Conceptual approach for grading the certainty of evidence derived from mathematical models (e.g., exposure, climate, economic). | Vital for integrating modeled evidence, distinguishing uncertainty in model inputs from the credibility of the model itself. |

The field of environmental and occupational health (EOH) is defined by complex questions concerning hazardous exposures, population risk, and the effectiveness of mitigation interventions [15]. Making trustworthy, evidence-informed decisions in this domain requires the systematic and transparent synthesis of diverse evidence streams, including human observational studies, animal toxicology, in vitro assays, and in silico models [6]. Historically, the Grading of Recommendations Assessment, Development and Evaluation (GRADE) framework has been a cornerstone for clinical and public health guideline development. Its rigorous, structured approach to rating the certainty of evidence and moving from evidence to recommendations represents a significant, yet underutilized, opportunity for the EOH field [15].

The adaptation of GRADE for EOH is not merely an academic exercise but a practical necessity to address critical gaps. Traditional EOH risk assessments can lack transparency in how different types of evidence are weighted and integrated. The GRADE Evidence-to-Decision (EtD) framework provides a mechanism to make this process explicit, incorporating not only the certainty of the evidence but also other crucial factors like equity, feasibility, and stakeholder values into final recommendations [9]. This article details the application notes and protocols for implementing the GRADE framework in EOH systematic reviews, providing researchers and assessors with the practical tools to harness its potential.

The application of GRADE in EOH necessitates an understanding of the unique evidentiary landscape. Key adaptations include the use of the PECO (Population, Exposure, Comparator, Outcome) framework for formulating questions and the integration of non-human evidence [8].

Table 1: Characteristics of Evidence Streams in Environmental Health Systematic Reviews

| Evidence Stream | Typical Study Designs | Initial GRADE Certainty Rating | Common Reasons for Downgrading | Key Role in Evidence Integration |
|---|---|---|---|---|
| Human Observational | Cohort, Case-Control, Cross-Sectional | Low [6] | Risk of bias (confounding), Imprecision, Inconsistency, Indirectness [6] | Provides direct evidence on exposure-outcome relationships in relevant populations. |
| Animal Toxicology | Randomized controlled experiments (in vivo) | High [6] | Indirectness (to human population), Risk of bias, Imprecision [6] | Informs biological plausibility, mechanisms, and dose-response in controlled settings. |
| In Vitro / Mechanistic | Cell culture, isolated tissue assays | Not formally rated by default; used as supportive evidence. | High indirectness to whole organism. | Elucidates molecular and cellular mechanisms of action. |
| In Silico / Modeling | Computational, PBPK, QSAR models | Not formally rated by default; used as supportive evidence. | Model validation and uncertainty. | Supports extrapolation and hypothesis generation. |

A core challenge is integrating these streams into a single assessment. The GRADE approach for EOH involves evaluating each stream separately for a given outcome and then synthesizing the findings to form an overall judgment on the certainty that an exposure causes a health effect [15] [6].

Table 2: Key Adaptation Criteria in the GRADE EtD Framework for Environmental & Occupational Health [9] [16]

| EtD Criterion | Standard GRADE Application | EOH-Specific Adaptation & Considerations |
|---|---|---|
| Priority of the Problem | Focus on disease burden and healthcare priority. | Explicitly includes consideration of the socio-political context and population vulnerability (e.g., environmental justice) [9]. |
| Benefits & Harms | Assesses desired and undesired health effects. | Timing of effects is critically considered (e.g., acute vs. chronic, latent periods) [9]. Includes ecological benefits. |
| Certainty of Evidence | Judgment on confidence in effect estimates. | Integrates certainty ratings across multiple evidence streams (human, animal, etc.) [15] [6]. |
| Values & Acceptability | Importance of outcomes to those affected. | Acknowledges and accommodates variable or conflicting stakeholder views (e.g., industry, community, regulator perspectives) [9]. |
| Feasibility | Practicality of implementation. | Assesses technical, logistical, and political feasibility. Timing is a key factor (e.g., urgent vs. long-term interventions) [9]. |
| Equity | Impact on health equity. | Broadened beyond health equity to include social, economic, and environmental justice dimensions [9]. |

Detailed Experimental Protocols for GRADE Application in EOH

Protocol 1: Formulating the Research Question and Protocol Registration

Objective: To define a clear, actionable systematic review question using the PECO framework and to establish a publicly available review protocol to minimize bias.

Procedure:

- Define PECO Elements:

  - Population (P): Specify the exposed population(s) of interest (e.g., "pregnant women," "occupational workers in semiconductor manufacturing").

  - Exposure (E): Define the environmental or occupational agent, specifying metrics (e.g., "chronic exposure to airborne PM2.5," "dermal exposure to solvent X").

  - Comparator (C): Define the alternative exposure scenario (e.g., "low or non-exposed population," "exposure below a specific threshold").

  - Outcome (O): List critical and important health outcomes, specifying measurement methods and timing (e.g., "incidence of childhood asthma diagnosis," "mean change in lung FEV1").

- Develop and Register Protocol: Document the full methodology, including PECO, search strategy, eligibility criteria, risk of bias assessment tool, and synthesis plan. Register the protocol on a platform like PROSPERO, the Open Science Framework (OSF), or INPLASY [17].
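The PECO elements defined in this protocol can be captured in a small data structure so that the question is stated once and reused in the protocol, search, and eligibility forms. The following is a minimal sketch, not part of any published GRADE tooling; the class name `PECOQuestion` and its methods are hypothetical, and the example values are taken from the illustrations above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECOQuestion:
    """One review question, split into its four PECO elements (hypothetical helper)."""
    population: str
    exposure: str
    comparator: str
    outcomes: tuple[str, ...]  # list critical outcomes first

    def as_sentence(self) -> str:
        """Render the question in a single reportable sentence."""
        outcome_text = "; ".join(self.outcomes)
        return (
            f"Among {self.population}, what is the effect of {self.exposure} "
            f"compared with {self.comparator} on {outcome_text}?"
        )

    def missing_elements(self) -> list[str]:
        """Return the names of any elements left blank."""
        missing = [
            name
            for name in ("population", "exposure", "comparator")
            if not getattr(self, name).strip()
        ]
        if not self.outcomes:
            missing.append("outcomes")
        return missing


question = PECOQuestion(
    population="pregnant women",
    exposure="chronic exposure to airborne PM2.5",
    comparator="a low or non-exposed population",
    outcomes=("incidence of childhood asthma diagnosis",),
)
print(question.as_sentence())
print(question.missing_elements())
```

Checking `missing_elements()` before registration is one simple way to confirm that no PECO component was left undefined in the protocol draft.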

Protocol 2: Systematic Search, Screening, and Evidence Stream Stratification

Objective: To conduct a comprehensive, reproducible literature search and categorize studies by evidence stream for parallel assessment.

Procedure:

- Search Strategy: Develop Boolean search strings using keywords and controlled vocabulary (e.g., MeSH, EMTREE) for all PECO elements across multiple databases (PubMed, Scopus, Web of Science, Embase, specialized environmental indices) [17].

- Dual Screening: Use structured software (e.g., Rayyan, Covidence) for title/abstract and full-text screening by two independent reviewers, resolving conflicts by consensus.

- Evidence Stream Stratification: Upon full-text inclusion, tag each study by its primary evidence stream (Human Observational, Animal, In Vitro). Model-based studies (In Silico) are typically identified separately for supportive use.
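The Boolean search strings described in the first step can be assembled programmatically, which keeps the strategy reproducible across databases. The sketch below assumes a generic syntax (synonyms joined with OR, concepts joined with AND, phrases in double quotes); database-specific field tags and controlled vocabulary such as MeSH or EMTREE are not handled, and the function names and search terms are illustrative only.

```python
def or_block(terms):
    """Join synonyms with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_search_string(concepts):
    """AND together one OR-block per PECO concept, skipping empty concepts."""
    blocks = [or_block(terms) for terms in concepts.values() if terms]
    return " AND ".join(blocks)


# Illustrative terms only; a real strategy needs a far fuller synonym list.
concepts = {
    "population": ["pregnancy", "pregnant women"],
    "exposure": ["PM2.5", "fine particulate matter"],
    "outcome": ["asthma", "childhood asthma"],
}
search_string = build_search_string(concepts)
print(search_string)
```

Storing the term lists alongside the protocol, rather than only the final string, makes later updates to the search easier to audit.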

Protocol 3: Assessing Certainty of Evidence for a Body of Human Observational Studies

Objective: To apply GRADE domains to rate the certainty (high, moderate, low, very low) for a specific exposure-outcome pair from human studies.

Procedure:

- Initial Rating: Start at Low certainty for observational evidence [6].

- Assess & Downgrade:

  - Risk of Bias: Use a tool like ROBINS-I (Risk Of Bias In Non-randomized Studies - of Interventions), adapted for exposures [8]. Downgrade for serious or very serious limitations across studies.

  - Inconsistency: Downgrade for unexplained heterogeneity in effect direction or magnitude (e.g., high I² statistic, non-overlapping confidence intervals).

  - Indirectness: Downgrade if the PECO of the available studies differs meaningfully from the review question (e.g., different exposure biomarker, surrogate outcome).

  - Imprecision: Downgrade if the confidence interval around the summary effect estimate is wide enough to include both an appreciable harm and no effect (or an effect in the opposite direction).

  - Publication Bias: Downgrade if publication bias is suspected or likely, for example from funnel plot asymmetry or other small-study effects.

- Assess & Upgrade (Rare in EOH): Consider upgrading for a large magnitude of effect (e.g., relative risk >2.0 or <0.5), a dose-response gradient, or if all plausible residual confounding would reduce the observed effect [6].
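The bookkeeping behind this protocol (a starting level, domain-by-domain moves down, occasional moves up) can be sketched in a few lines. This is an illustration under simplifying assumptions: GRADE ratings are panel judgments rather than arithmetic, and the function name `rate_certainty` and the rule that each domain moves the rating by 0, 1, or 2 levels are conventions adopted for this sketch.

```python
LEVELS = ["very low", "low", "moderate", "high"]


def rate_certainty(start, downgrades, upgrades=None):
    """Move from a starting level by the summed domain steps, clamped to the scale.

    downgrades / upgrades map a domain name to the number of levels moved (0, 1 or 2).
    """
    upgrades = upgrades or {}
    for steps in list(downgrades.values()) + list(upgrades.values()):
        if steps not in (0, 1, 2):
            raise ValueError("each domain moves the rating by 0, 1 or 2 levels")
    index = LEVELS.index(start) - sum(downgrades.values()) + sum(upgrades.values())
    index = max(0, min(index, len(LEVELS) - 1))  # cannot leave the four-level scale
    return LEVELS[index]


# Hypothetical judgments for one exposure-outcome pair from observational studies.
rating = rate_certainty(
    start="low",
    downgrades={
        "risk of bias": 1,
        "inconsistency": 0,
        "indirectness": 0,
        "imprecision": 0,
        "publication bias": 0,
    },
    upgrades={"dose-response gradient": 1, "large effect": 0},
)
print(rating)  # low
```

Recording the per-domain steps in a dictionary, rather than only the final level, preserves the rationale that the Summary of Findings table needs to report.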

Protocol 4: Applying the Evidence-to-Decision (EtD) Framework

Objective: To structure a transparent decision-making process for formulating a recommendation or policy based on synthesized evidence [9] [18].

Procedure:

- Scoping & Context: Define the decision, alternatives (e.g., "set exposure limit at X," "implement engineering control Y"), and relevant stakeholders.

- Populate EtD Table: For each criterion (Problem, Benefits/Harms, Evidence Certainty, Values, Resources, Equity, Acceptability, Feasibility), summarize the best available evidence or judgments [16].

- Judgment & Conclusion: For each criterion, the panel makes a judgment (e.g., "Probably no important trade-offs," "Probably favors the intervention"). These feed into an overall strength of recommendation (e.g., "Strong recommendation for," "Conditional recommendation against").

- Document Rationale: The EtD framework requires explicit documentation of the reasons for each judgment and the final recommendation, ensuring full transparency [9].
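Because the EtD process requires a documented judgment and rationale for every criterion, a simple completeness check can flag what a panel still has to address. The sketch below uses the criteria named in the procedure above; the `Judgment` class, the `unfinished_criteria` function, and the example entry are hypothetical and do not reproduce the GRADEpro data model.

```python
from dataclasses import dataclass

# Criteria as listed in the "Populate EtD Table" step above.
ETD_CRITERIA = (
    "Problem", "Benefits/Harms", "Evidence Certainty", "Values",
    "Resources", "Equity", "Acceptability", "Feasibility",
)


@dataclass
class Judgment:
    criterion: str
    evidence_summary: str
    judgment: str
    rationale: str


def unfinished_criteria(judgments):
    """List criteria with no entry, or with a blank judgment or rationale."""
    by_name = {j.criterion: j for j in judgments}
    pending = []
    for name in ETD_CRITERIA:
        entry = by_name.get(name)
        if entry is None or not entry.judgment.strip() or not entry.rationale.strip():
            pending.append(name)
    return pending


# Hypothetical, partially completed table.
judgments = [
    Judgment(
        criterion="Problem",
        evidence_summary="(hypothetical) summary of burden and context",
        judgment="Probably yes",
        rationale="(hypothetical) panel rationale",
    ),
]
print(unfinished_criteria(judgments))
```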

Diagram 1: GRADE for EOH Systematic Review & Decision Workflow. This workflow illustrates the sequential and parallel steps from question formulation to final recommendation, highlighting the integration of multiple evidence streams into the EtD framework [9] [6].

Diagram 2: Multi-Stream Evidence Synthesis for GRADE Certainty. This diagram details how evidence from different streams is assessed against GRADE domains to reach an integrated judgment on the certainty of evidence for a specific exposure-outcome relationship [15] [6].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Frameworks for Implementing GRADE in EOH Reviews

| Tool / Framework | Primary Function | Application in EOH GRADE Protocol |
|---|---|---|
| PECO Framework | Question formulation for exposure studies. | Defines the key elements of the systematic review question (Population, Exposure, Comparator, Outcome) [8]. |
| GRADEpro GDT (Guideline Development Tool) | Software for creating Summary of Findings and EtD tables. | Platform to manage evidence assessments, document certainty ratings, and populate the structured EtD framework [8]. |
| ROBINS-I (adapted) | Risk of bias assessment for non-randomized studies of interventions/exposures. | Critical tool for assessing the "Risk of Bias" GRADE domain for human observational studies. An adapted version for exposures is recommended [8]. |
| Navigation Guide Methodology | A systematic review methodology for EOH based on GRADE. | Provides a detailed, stepwise case study for applying GRADE, including evidence integration from human and animal studies [6]. |
| GRADE EtD Framework for EOH | Structured template for moving from evidence to a decision. | The finalized framework incorporating EOH-specific criteria (e.g., socio-political context, timing, broad equity) to guide panel judgment and recommendation formulation [9] [18]. |
| PRISMA 2020 Checklist | Reporting guideline for systematic reviews. | Ensures transparent and complete reporting of the review process, from search to synthesis [17]. |

The translation of environmental health science into protective policy and regulation has historically been hampered by inconsistent and non-transparent methods for synthesizing evidence [19]. Traditional expert-led narrative reviews, dominant in the field for decades, were vulnerable to bias and often failed to incorporate new scientific findings in a timely manner, leading to delays in addressing public health threats [19]. The urgent need for rigorous, transparent methodologies became clear, mirroring the evolution that occurred in clinical medicine over 20 years prior, where systematic review approaches like Cochrane and GRADE revolutionized evidence-based practice [19].

This document details the application notes and protocols for adapted systematic review frameworks within environmental health. Framed within a broader thesis on the adaptation of the GRADE framework, it focuses on the key methodologies developed and adopted by leading agencies: the Navigation Guide, the NTP/OHAT Handbook, and the recently published GRADE Evidence-to-Decision (EtD) framework for Environmental and Occupational Health (EOH) [9] [19] [20]. These frameworks represent a concerted effort to bring the rigor of evidence-based medicine to the complex challenges of environmental exposures, characterized by observational human data, extensive animal toxicology studies, and the need to protect populations from harm [21].

The Methodological Evolution: From Clinical Medicine to Environmental Health

The adaptation of systematic review methodology for environmental health required significant modifications to address the field's unique evidentiary challenges. The following diagram illustrates this conceptual evolution and the relationships between the key frameworks.

Figure 1: Evolution and Integration of Key Methodological Frameworks in Environmental Health.

The foundational shift began with the recognition that environmental health decisions, like clinical ones, require a structured, transparent process to separate scientific assessment from policy values [19]. The Navigation Guide methodology, developed around 2009, was pioneering in explicitly coupling the rigor of systematic review from clinical sciences with the hazard identification approach of the International Agency for Research on Cancer (IARC) [19]. A critical adaptation was the treatment of human observational studies. Unlike clinical GRADE, which typically rates such evidence as low quality initially, the Navigation Guide assigned a default "moderate" quality rating, acknowledging their central role in environmental epidemiology [19].

Subsequent development by the National Toxicology Program's Office of Health Assessment and Translation (NTP/OHAT) further standardized procedures for integrating human and animal evidence [20]. The most recent and formalized adaptation is the GRADE-EOH EtD framework, published in 2025, which extends the generic GRADE EtD structure with specific modifications for environmental and occupational health contexts, such as considering socio-political context, timing of effects, and broad equity considerations [9] [10]. This progression represents a harmonization of pioneering field-specific methods with the internationally recognized GRADE standard.

Detailed Protocols and Application Notes

Protocol: The Navigation Guide Systematic Review Methodology

The Navigation Guide provides a four-step, protocol-driven process for translating environmental health science into evidence-based conclusions [19]. The following workflow details the operational steps for conducting a review.

Figure 2: Navigation Guide Systematic Review Workflow.

Application Notes:

- Step 1 - Specifying the Question: The clinical PICO format is adapted to environmental health as PECO: the "Intervention" (I) is replaced by an environmental exposure (E) (e.g., chemical, air pollutant), and the "Comparator" (C) is often a lower or non-exposed group [19].

- Step 2 - Selecting Evidence: A comprehensive, unbiased search is critical. The protocol must pre-specify sources (multiple bibliographic databases, grey literature) and eligibility criteria. Documenting the study flow (e.g., PRISMA diagram) is mandatory for transparency [22].

- Step 3 - Rating Evidence (Core Protocol):

  - Risk of Bias Assessment: Use tailored tools for human observational studies (e.g., modified Newcastle-Ottawa Scale) and for animal studies (e.g., SYRCLE's risk of bias tool). This assessment judges the internal validity of each primary study [22].

  - Body of Evidence Rating: For human evidence, the initial rating is "moderate" rather than the "low" that clinical GRADE assigns to observational designs. The rating is then moved down for risk of bias, imprecision, inconsistency, indirectness, or publication bias, or up for a large magnitude of effect or a dose-response gradient [19]. For animal evidence, a parallel process is conducted using domains relevant to toxicology studies.

  - Evidence Integration: The separate ratings for human and animal streams are integrated using a pre-specified algorithm (e.g., a matrix or set of rules) to reach one of five hazard-related conclusions about the strength of the overall evidence [19].

- Step 4 - Grading Recommendations: This step moves from hazard identification to a health-protective recommendation. It explicitly integrates the strength of evidence with information on exposure prevalence, availability of safer alternatives, and stakeholder values and preferences [19].

Protocol: The GRADE Evidence-to-Decision (EtD) Framework for EOH

The 2025 GRADE-EOH EtD framework provides a structured template for panels to deliberate and document judgments across key criteria to move from evidence to a decision or recommendation [9] [10]. The framework's structure is displayed below.

Figure 3: Structure of the GRADE-EOH Evidence-to-Decision Framework.

Application Notes:

- Scoping and Contextualization: This preliminary step defines the scope of the decision, identifies relevant stakeholders (e.g., communities, industry, regulators), and clarifies the decision context (e.g., regulatory standard setting, public health guideline development) [9].

- Criterion-by-Criterion Judgment:

  - Problem Priority: Judges the importance of the problem, explicitly considering the socio-political context (e.g., public concern, legal mandates) [9].

  - Benefits, Harms, and Balance: Requires estimation of desirable and undesirable consequences. A key EOH adaptation is the explicit consideration of the timing of effects (e.g., immediate vs. intergenerational) [9].

  - Certainty of Evidence: Uses the standard GRADE approach to rate confidence in effect estimates for each critical outcome, often drawing on a systematic review conducted via Navigation Guide or OHAT methods [9].

  - Values and Acceptability: Assesses the relative importance of outcomes to those affected. The EOH framework more explicitly accommodates variable or conflicting stakeholder views [9].

  - Equity: Broadened beyond health equity to consider distributional effects across subgroups, communities, and generations [9].

  - Feasibility: Assesses practical, resource, and political feasibility, again considering timing and context [9].

- Reaching a Conclusion: The panel synthesizes judgments across all criteria to formulate a decision or recommendation, specifying its strength (e.g., "The panel recommends...") and any implementation considerations [9].

Protocol: NTP/OHAT Handbook for Systematic Review

The NTP/OHAT Handbook provides standard operating procedures for evidence integration, particularly strong in integrating human and animal data and reaching hazard conclusions [20].

Core Phases:

- Problem Formulation & Protocol Development: Define the scope, develop an analytic framework (linking exposure, key events, and outcomes), and publish a detailed protocol.

- Evidence Search & Selection: Conduct comprehensive literature searches, screen studies using pre-defined forms, and manage data with systematic review software.

- Risk of Bias & Quality Assessment: Apply OHAT-designed tools to evaluate internal validity of individual human and animal studies.

- Evidence Synthesis & Integration: This is the hallmark phase.

- Hazard Identification: For each outcome, synthesize data within each evidence stream (human, animal). Rate confidence in each body of evidence (High, Moderate, Low, or Very Low) based on risk of bias, consistency, directness, and precision.

- Integrate Streams: Use a pre-defined matrix to combine confidence ratings from human and animal evidence, leading to a hazard conclusion level (e.g., "Known to be a hazard," "Suspected to be a hazard") [20].

- Dose-Response Analysis: Where data permit, model the relationship between exposure and effect.

- Reporting: Document all steps and conclusions in a final report following standardized formats.

Application Notes: The OHAT approach is highly mechanistic and key event-oriented, making it particularly suited for complex toxicological assessments. It provides highly granular tools for evaluating animal toxicity studies. Recent updates clarify processes for reaching conclusions based on human data alone and for handling multiple outcomes or exposures [20].

Case Studies in Application

Case Study: Application of the Navigation Guide to Triclosan

A seminal application of the Navigation Guide methodology evaluated the developmental and reproductive toxicity of the antimicrobial agent triclosan [22].

- Question: "Does exposure to triclosan have adverse effects on human development or reproduction?" [22]

- Process: The reviewers followed Steps 1-3 of the Navigation Guide, focusing on thyroid hormone (thyroxine, T4) levels as a critical upstream outcome [22].

- Evidence Synthesis: They performed a meta-analysis of data from 8 rat studies, finding a statistically significant dose-dependent decrease in T4 with postnatal exposure [22].
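For readers unfamiliar with how such a pooled estimate is produced, the following sketch shows a fixed-effect, inverse-variance meta-analysis with Cochran's Q and the I² heterogeneity statistic. It is a generic illustration: the source does not state which model or software the triclosan reviewers used, and the study estimates and standard errors below are hypothetical, not data from the review.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance fixed-effect pooling with a 95% CI and I-squared (%)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    # Cochran's Q: weighted squared deviations of each study from the pooled value.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, estimates))
    df = len(estimates) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, ci, i_squared


# Hypothetical per-study slopes (% change per unit dose) and standard errors.
estimates = [-0.30, -0.35, -0.25]
std_errors = [0.05, 0.08, 0.06]
pooled, ci, i_squared = pool_fixed_effect(estimates, std_errors)
print(f"pooled={pooled:.3f}, 95% CI=({ci[0]:.3f}, {ci[1]:.3f}), I2={i_squared:.1f}%")
```

A random-effects model would usually be preferred when I² indicates substantial heterogeneity; the fixed-effect form is shown here only because it is the shortest to state.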

Table 1: Quantitative Findings from Navigation Guide Case Study on Triclosan [22]

| Evidence Stream | Number of Studies | Risk of Bias Assessment | Quality of Body of Evidence | Key Quantitative Finding (Meta-Analysis) | Conclusion for Stream |
|---|---|---|---|---|---|
| Human Evidence | 3 studies on T4 | Low to Moderate risk of bias | Moderate/Low (rated down for imprecision, inconsistency) | Not performed (insufficient exposure data) | "Inadequate" evidence of association |
| Animal Evidence (Rats) | 8 studies on T4 | Moderate to High risk of bias | Moderate | Postnatal exposure: -0.31% T4 change per mg/kg-bw (95% CI: -0.38, -0.23) | "Sufficient" evidence of association |
| Overall Integrated Hazard Conclusion | | | | | "Possibly Toxic" to reproductive/developmental health |
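The integration step in this case study can be read as a lookup against pre-specified rules: a pair of stream-level conclusions maps to one overall hazard conclusion. The sketch below encodes only the single combination reported in the table above; it is not the full Navigation Guide integration matrix, and the names `INTEGRATION_RULES` and `integrate_streams` are hypothetical.

```python
# Only the cell reported in the triclosan case study is filled in; every other
# cell must come from the review protocol and is deliberately left out here.
INTEGRATION_RULES = {
    ("inadequate", "sufficient"): "possibly toxic",
}


def integrate_streams(human, animal, rules=INTEGRATION_RULES):
    """Look up the overall conclusion for a (human, animal) pair of stream conclusions."""
    key = (human.lower(), animal.lower())
    if key not in rules:
        raise KeyError(f"no pre-specified rule for human={human!r}, animal={animal!r}")
    return rules[key]


print(integrate_streams("Inadequate", "Sufficient"))  # possibly toxic
```

Raising an error for unlisted combinations, instead of returning a default, mirrors the requirement that integration rules be fixed in the protocol before the evidence is rated.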

Comparative Analysis of Framework Outputs

The choice of methodology can influence the format and emphasis of the final output, though conclusions are generally aligned when applied rigorously.

Table 2: Comparative Outputs of Key Methodological Frameworks

| Framework | Primary Output Format | Strength of Evidence Conclusion | Decision/Recommendation Output | Key Differentiating Features |
|---|---|---|---|---|
| Navigation Guide | Hazard identification statement; Quality ratings for human/animal streams. | "Known," "Probably," "Possibly," "Not Classifiable," or "Probably Not" Toxic [19]. | Optional Step 4. Explicitly integrates exposure, alternatives, values for a health-protective recommendation [19]. | Integrates EBM rigor with IARC-style hazard language. Default "moderate" rating for human studies. |
| NTP/OHAT Handbook | Hazard conclusion level; Confidence ratings (High, Moderate, Low, Very Low) for each evidence stream. | "Known to be a hazard," "Suspected to be a hazard," etc. [20]. | Primarily focused on hazard identification for NTP report. Can inform risk assessment. | Highly structured SOPs. Strong focus on integrating mechanistic animal data and key events. |
| GRADE-EOH EtD | Structured judgment table across 12 criteria; Final recommendation/decision. | Certainty of evidence for each outcome (High, Moderate, Low, Very Low) [9]. | Explicit, graded recommendation (e.g., strong/weak) with implementation notes [9]. | Full EtD process. Incorporates socio-political context, broad equity, timing, and stakeholder acceptability explicitly [9]. |

Table 3: Research Reagent Solutions for Environmental Health Systematic Reviews

| Item/Tool | Primary Function | Application Notes & Source |
|---|---|---|
| GRADEpro GDT (Guideline Development Tool) | Software to create Summary of Findings tables, manage evidence, and develop EtD frameworks. | Essential for implementing the GRADE-EOH framework. Facilitates structured data entry and transparent reporting [7]. |
| Systematic Review Management Software (e.g., Covidence, Rayyan, DistillerSR) | Manages the review process: deduplication, blinded screening, data extraction. | Critical for ensuring efficiency and reducing error in large-scale reviews, especially during study selection [22]. |
| Risk of Bias (RoB) Tools | Assesses internal validity of primary studies. | Human observational studies: ROBINS-I, modified Newcastle-Ottawa Scale [21]. Animal studies: SYRCLE's RoB tool. Systematic reviews themselves: ROBIS tool [21]. |
| Generic Protocol for Environmental Health SRs | A template protocol following COSTER recommendations. | Provides a starting point for planning and registering a review, ensuring key methodological steps are pre-specified [23]. |
| PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) Guidelines & Flow Diagram | Reporting standard to ensure transparency and completeness. | The PRISMA 2020 checklist and flow diagram for study selection are mandatory for publication [21]. |
| PECO Search Filter Hedge | A pre-tested combination of search terms to identify environmental health observational studies. | Increases sensitivity and specificity of database searches. Customized versions exist for PubMed, Embase, etc. [21]. |

Analysis of Key Agency Adoption and Impact

The adoption of these rigorous methodologies by leading agencies marks a paradigm shift in environmental health science.

- The Navigation Guide served as a proof-of-concept and catalyst, demonstrating that systematic, transparent review was achievable in environmental health. It directly influenced methodological developments at the U.S. EPA and NTP [19].

- NTP/OHAT has institutionalized systematic review within a major U.S. federal research program. Its handbook provides a living, evolving standard for toxicological evidence integration, actively promoting harmonization with other agencies [20].

- The GRADE-EOH Framework (2025) represents formal international methodological endorsement. Published as part of the official GRADE guidance series, it provides a common standard for global bodies like the World Health Organization (WHO) and national health/environmental agencies to structure complex decisions about exposures and interventions [9] [10].

This trajectory, from innovative pilot to federal standardization to international guidance, reflects the maturation of systematic review methods in environmental health. These frameworks equip researchers, risk assessors, and policymakers to navigate complex evidence transparently, reducing bias and providing a clear audit trail from science to action in support of the core mission of preventing harm and protecting public health [19].

Step-by-Step Application: Implementing the GRADE EtD Framework in Environmental Health Reviews

The formulation of a precisely structured research question is the critical first step in any rigorous evidence synthesis. In environmental and occupational health (EOH), the PECO framework (Population, Exposure, Comparator, Outcome) is the established standard for defining questions about the association between exposures and health outcomes [24] [25]. This framework is foundational to conducting systematic reviews that inform guideline development and risk assessment.

The integration of PECO within the broader Grading of Recommendations Assessment, Development and Evaluation (GRADE) methodology represents a significant evolution in EOH research [8]. While GRADE provides a structured process for assessing the certainty of evidence and moving from evidence to decisions, a well-constructed PECO question ensures the review addresses a problem relevant to decision-makers and defines the scope of evidence to be gathered [10] [8]. This article details the application of the PECO framework, providing protocols for its operationalization within the context of adapting GRADE for systematic reviews of environmental health interventions and exposures.

Core Components of the PECO Framework

The PECO framework deconstructs a research question into four essential, interrelated components. Precise definition of each is crucial for developing study inclusion criteria and guiding the subsequent review [24] [25].

- Population (P): The group of individuals (or other species, in animal studies) of interest, defined by characteristics such as age, health status, occupation, or geographical location. A clearly defined population ensures the review's relevance to specific at-risk groups [24].

- Exposure (E): The environmental agent, occupational hazard, or other factor whose effect is being studied. In EOH, defining exposure involves specifying the agent, its magnitude, duration, timing, and route of exposure, which often presents a significant methodological challenge [24].

- Comparator (C): The alternative against which the exposure is compared. This is a key differentiator from clinical PICO (Population, Intervention, Comparator, Outcome) questions. In PECO, the comparator is typically a different level of exposure (e.g., lower dose, absent background exposure), an alternative exposure, or a pre-intervention state [24].

- Outcome (O): The health or disease measures that may be influenced by the exposure. Outcomes should be prioritized as critical or important for decision-making and defined with specificity (e.g., "incidence of ischemic stroke" rather than "cardiovascular disease") [24].

Operational Framework: Five PECO Scenarios for Systematic Reviews

The application of PECO is not uniform; it depends on the decision-making context and the existing knowledge about the exposure-outcome relationship. The following framework outlines five paradigmatic scenarios for formulating PECO questions [24].

Table 1: PECO Formulation Scenarios for Environmental Health Systematic Reviews [24]

| Scenario & Context | Objective | PECO Formulation Approach | Example Question |
|---|---|---|---|
| 1. Exploring an Association | To determine if a relationship exists and characterize its shape (e.g., linear, threshold). | Compare the entire range of observed exposures. | Among urban adults, what is the effect of each 10 µg/m³ increase in long-term PM2.5 exposure on the incidence of asthma? |
| 2. Evaluating Quantile-Based Effects | To compare health effects across high vs. low exposure groups within the available data. | Use cut-offs (e.g., tertiles, quartiles) defined by the distribution in identified studies. | Among industrial workers, what is the effect of exposure to noise in the highest quartile compared to the lowest quartile on hearing impairment? |
| 3. Applying External Reference Values | To assess risk relative to a known standard or population benchmark. | Use cut-offs derived from external sources (e.g., other populations, regulatory standards). | Among children, what is the effect of blood lead levels ≥5 µg/dL compared to <5 µg/dL on cognitive development score? |
| 4. Identifying Harm-Mitigating Thresholds | To evaluate if staying below a specific exposure level ameliorates harm. | Use a predefined, health-based exposure limit as the comparator. | Among factory workers, what is the effect of exposure to organic solvents below the occupational exposure limit (OEL) compared to above the OEL on liver enzyme function? |
| 5. Evaluating an Intervention's Impact | To assess the health effect of an intervention that reduces exposure. | The comparator is the pre-intervention or no-intervention state. | Among a community using groundwater, what is the effect of installing a filtration system (reducing arsenic exposure by ≥50%) compared to no filtration on the prevalence of skin lesions? |

Experimental Protocols for PECO Application

Protocol 4.1: Iterative PECO Development for a Systematic Review

This protocol guides the collaborative process of defining the review scope.

- Convene the Panel: Assemble a team including subject matter experts, systematic review methodologists, and end-users (e.g., risk managers, policymakers).

- Define the Problem: Collaboratively draft a broad problem statement (e.g., "the potential cardiovascular effects of traffic-related air pollution in Europe").

- Populate PECO Components Iteratively:

  - Population: Refine from broad categories to specific, actionable definitions (e.g., from "Europeans" to "adults >30 years residing in urban EU areas").

  - Exposure: Specify the agent(s) (e.g., NO₂), metrics (e.g., annual mean concentration), and measurement context (e.g., ambient monitoring at residence).

  - Comparator: Select the appropriate scenario from Table 1 based on review purpose and data availability.

  - Outcome: Prioritize a final list of critical and important outcomes (e.g., critical: acute myocardial infarction hospitalization; important: hypertension incidence).

- Finalize and Validate: Draft the full PECO question. Check that each component is sufficiently precise to guide the search strategy and study eligibility screening.

Protocol 4.2: Exposure Quantification and Comparator Definition

This methodology is essential for implementing Scenarios 2-5 from Table 1.

- Preliminary Evidence Scan: Conduct a scoping search to understand the range and distribution of exposure measures reported in the literature.

- Quantification Strategy:

  - For Scenario 2, plan to extract reported exposure metrics (means, medians, ranges) and group studies based on their reported quantiles or create common quantiles post-hoc for meta-analysis.

  - For Scenarios 3 & 4, identify the source of the external cut-off (e.g., WHO Air Quality Guideline, OSHA Permissible Exposure Limit) and document its rationale.

  - For Scenario 5, define the intervention's exposure-reduction efficacy target based on pilot data or engineering estimates.

- Sensitivity Analysis Plan: Pre-define how alternative exposure classifications or cut-off values will be tested to assess the robustness of the primary findings.
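The difference between data-driven cut-offs (Scenario 2) and external cut-offs (Scenarios 3 and 4), and the sensitivity analysis over alternative cut-offs, can be illustrated with a short sketch. The exposure values are hypothetical; the 5 µg/dL cut-off echoes the blood lead example in Table 1, and the alternative cut-offs of 3.5 and 10.0 are arbitrary values chosen for illustration. The function names are likewise assumptions of this sketch.

```python
import statistics


def classify_by_quartile(values):
    """Scenario 2: label each value Q1-Q4 using cut-offs from the data itself."""
    q1, q2, q3 = statistics.quantiles(values, n=4)
    labels = []
    for v in values:
        if v <= q1:
            labels.append("Q1")
        elif v <= q2:
            labels.append("Q2")
        elif v <= q3:
            labels.append("Q3")
        else:
            labels.append("Q4")
    return labels


def classify_by_cutoff(values, cutoff):
    """Scenarios 3 and 4: split at an externally defined reference value."""
    return ["at or above" if v >= cutoff else "below" for v in values]


def sensitivity_counts(values, cutoffs):
    """Count how many values fall at or above each alternative cut-off."""
    return {c: classify_by_cutoff(values, c).count("at or above") for c in cutoffs}


# Hypothetical blood lead measurements in µg/dL.
blood_lead = [1.2, 2.8, 3.5, 4.9, 5.0, 6.3, 7.7, 9.1]
print(classify_by_quartile(blood_lead))
print(classify_by_cutoff(blood_lead, cutoff=5.0))
print(sensitivity_counts(blood_lead, cutoffs=[3.5, 5.0, 10.0]))
```

Pre-specifying the list passed to `sensitivity_counts` in the protocol keeps the choice of alternative cut-offs from being influenced by the results.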

Integration with the GRADE Framework

A well-formulated PECO question directly feeds into the subsequent GRADE evidence assessment and Evidence-to-Decision (EtD) process [10] [8]. The PECO defines the evidence base, which GRADE then evaluates for certainty.

Table 2: Linking PECO Development to GRADE Certainty Assessment Domains

| GRADE Certainty Domain | Influence of PECO Formulation | Considerations for Environmental Health |
|---|---|---|
| Risk of Bias | A clear 'Comparator' (C) defines the target experiment for assessing bias using tools like ROBINS-I [8]. | Assessing how well observational studies approximate the ideal comparison defined in the PECO. |
| Indirectness (P) | A narrowly defined 'Population' (P) may limit directness to other groups, lowering certainty for broader recommendations. | May be traded off against precision. Requires explicit judgment in the EtD framework [10]. |
| Indirectness (E/C/O) | Imprecise definition of 'Exposure' (E) or 'Outcome' (O) leads to indirect comparisons across studies. | Using biomarker-based exposure (E) may be more direct than environmental proxy measures. |
| Imprecision | The choice of PECO 'Scenario' affects required sample size. Comparing extreme quantiles (Scenario 2) may yield more precise estimates than analyzing incremental changes (Scenario 1). | Confidence intervals around effect estimates inform judgments on imprecision. |
| Publication Bias | A comprehensive PECO-based search strategy is the primary defense against missing studies. | Specialized environmental health databases and grey literature sources are critical [26]. |

The PECO question structures the Evidence-to-Decision (EtD) framework by defining the "Problem," the "Options" (exposure scenarios or interventions), and the "Important Outcomes" [10] [27]. Subsequent EtD judgments about the balance of effects, equity, acceptability, and feasibility are all grounded in the evidence synthesized to answer the PECO question [10] [27].

PECO to GRADE Evidence Workflow

The Scientist's Toolkit: Essential Reagents for PECO-Driven Reviews

Table 3: Key Methodological Tools for PECO Formulation and Application

| Tool / Resource | Primary Function | Application Notes |
|---|---|---|
| ROBINS-I Tool | Assesses risk of bias in non-randomized studies of interventions or exposures [8]. | The PECO 'Comparator' defines the "target experiment" against which bias is judged. |
| GRADEpro GDT (Guideline Development Tool) | Software to create 'Summary of Findings' tables and manage the EtD framework [8]. | The prioritized outcomes from the PECO question are directly imported to structure evidence profiles. |
| PECO Scenario Framework (Table 1) | Provides a typology for defining the Exposure and Comparator based on review purpose [24]. | Prevents misalignment between the research question and the analytical approach. |
| Exposure Assessment Databases | Sources of data on environmental concentrations, biomonitoring, or modeling estimates. | Critical for defining external cut-offs (Scenarios 3/4) or interpreting exposure quantiles (Scenario 2). |
| PRISMA-P & PRISMA 2020 Checklists | Reporting standards for systematic review protocols and completed reviews [26]. | Ensure the PECO question is explicitly reported and the methods for its application are transparent. |

Systematic Review Experimental Protocol

The Grading of Recommendations Assessment, Development and Evaluation (GRADE) Evidence-to-Decision (EtD) framework for environmental and occupational health (EOH) represents a tailored methodological advancement designed to support transparent and structured decision-making in a field characterized by complex evidence and diverse stakeholders [9] [10]. Developed by the GRADE Working Group, this framework addresses a critical gap, as many EOH decision-makers had not adopted existing EtD frameworks due to their limited applicability to non-clinical contexts such as exposure regulation and hazard control [11] [28].

The framework was developed through a rigorous multi-phase process. This began with a systematic review and narrative synthesis of published and public EOH decision frameworks, followed by a modified Delphi process involving content experts from risk assessment, management, and socio-economic analysis [29]. A draft framework was then pilot-tested through virtual workshops, with results presented for iterative feedback and final approval by the GRADE Working Group in May 2023 [9] [10]. The foundational work for applying GRADE to EOH questions was initiated by the Environmental and Occupational Health Project Group in 2014, which prioritized adapting GRADE to evaluate exposure risk and interventions, and to integrate evidence across diverse streams (e.g., human observational, animal, in vitro) [8].

This EOH EtD framework retains the core structure of existing GRADE EtDs—comprising a scoping process and twelve assessment criteria—but incorporates key modifications to address the unique socio-political, evidentiary, and stakeholder landscape of environmental and occupational health [28].

Core Structure and Key Modifications from Standard GRADE

The GRADE EtD framework for EOH maintains consistency with the overarching GRADE philosophy but introduces critical adaptations to its criteria and their application. The table below summarizes the core criteria and highlights the principal modifications specific to the EOH context.

Table 1: Core Assessment Criteria of the GRADE EtD Framework for EOH and Key Modifications

| Assessment Criterion | Standard GRADE Consideration | Key Modifications for EOH Context |
|---|---|---|
| Priority of the Problem | Is the health problem a priority? | Explicit inclusion of the socio-political context in judging priority [9]. |
| Benefits & Harms | How substantial are the desirable and undesirable anticipated effects? | Addition of timing (e.g., latency of effects, immediacy of benefits) as a key factor in judgments [10] [28]. |
| Certainty of Evidence | What is the overall certainty of the evidence of effects? | Adapted for diverse evidence streams (human, animal, mechanistic) common in EOH [8]. |
| Values | Is there important uncertainty/variability in how people value outcomes? | More explicit accommodation of variable or conflicting stakeholder views (e.g., industry, community, regulator) [9]. |
| Balance of Effects | Do desirable effects outweigh undesirable effects? | Consideration of timing of effects influences the balance judgment [10]. |
| Resource Use | How large are the resource requirements (costs)? | Applied to interventions like exposure mitigation or remediation technologies. |
| Equity | What would be the impact on health equity? | Broadened beyond health equity to include social, economic, and environmental justice considerations [28]. |
| Acceptability | Is the option acceptable to key stakeholders? | Explicitly addresses potentially profound conflicts in acceptability among different stakeholder groups [9]. |
| Feasibility | Is the option feasible to implement? | Assesses feasibility in light of socio-political context and timing constraints [10]. |

The development process confirmed that while no entirely new decision criteria were needed for EOH, the nomenclature and granularity of considerations required significant tailoring [29]. For instance, EOH decisions must grapple with concepts like the "precautionary principle" and "toxicity," which are integrated into the standard criteria (e.g., benefits/harms, certainty of evidence) but require domain-specific guidance for consistent interpretation [29].

Methodological Development and Validation Protocol

The creation of the EOH EtD framework followed a validated protocol involving evidence synthesis and expert consensus. The following workflow details the sequence of methods used.

Diagram 1: Development Workflow for the EOH EtD Framework

Detailed Experimental Protocols

Protocol 1: Systematic Review and Narrative Synthesis of EOH Frameworks [29]

- Objective: To identify and synthesize existing decision frameworks used in EOH to inform the adaptation of the GRADE EtD.

- Search Strategy: Systematic searches in MEDLINE, EMBASE, and Cochrane Library (2011-2021), supplemented by manual grey literature searches.

- Screening & Selection: Two reviewers independently screened titles/abstracts and full texts against pre-defined inclusion criteria (frameworks informing decision-making about EOH exposures).

- Data Extraction & Synthesis: Decision considerations were abstracted from each included framework. A narrative synthesis was performed, mapping considerations to the structure of the existing GRADE EtD to identify gaps and needs for adaptation.

- Outcome: Identification of 38 source frameworks and 560 individual decision considerations, 104 of which informed the adaptation [29].

Protocol 2: Modified Delphi Consensus Process [29]

- Objective: To refine and reach consensus on EOH-specific decision considerations and their formulation.

- Panel Composition: Stakeholders from EOH sub-fields (risk assessment/management, nutrition/food safety, cancer, socio-economic analysis).

- Procedure:

  - Round 1: Panelists rated the relevance and clarity of extracted considerations on a 7-point Likert scale and provided free-text comments. Considerations not meeting a pre-defined threshold were excluded or aggregated.

  - Round 2: Panelists re-rated revised/aggregated considerations and commented further.

- Consensus Definition: Pre-defined statistical thresholds for agreement on relevance and wording.

- Outcome: A finalized set of 47 core decision considerations for the EOH EtD framework [29].
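
The rating step of such a Delphi round reduces to a simple threshold check per consideration. The sketch below is illustrative only: the source states that thresholds were pre-defined but does not report them, so the rule used here (at least 75% of panelists rating 5 or higher on the 7-point scale) is an assumption, as are the example considerations and ratings.

```python
from statistics import median

def summarize_round(ratings, agree_min=5, agree_share=0.75):
    """Summarize 7-point Likert ratings and flag items that meet the threshold.

    agree_min and agree_share are assumed values, not those of the cited study.
    """
    summary = {}
    for item, scores in ratings.items():
        share = sum(score >= agree_min for score in scores) / len(scores)
        summary[item] = {
            "median": median(scores),
            "share_agreeing": share,
            "retain": share >= agree_share,
        }
    return summary

# Hypothetical panel ratings for two candidate considerations.
round_one = {
    "timing of effects": [6, 7, 5, 6, 4, 7, 6, 5],
    "brand reputation": [3, 4, 2, 5, 3, 6, 4, 2],
}

for item, stats in summarize_round(round_one).items():
    print(item, stats)
```

Items flagged `retain: False` would then be excluded or aggregated with related considerations before the next round, alongside the panelists' free-text comments.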

Protocol 3: Pilot Testing via Virtual Workshops [9] [10]

- Objective: To test the usability and applicability of the draft EOH EtD framework with potential end-users.

- Design: A series of interactive virtual workshops where participants applied the draft framework to realistic EOH case studies (e.g., setting exposure thresholds, evaluating interventions).

- Data Collection: Facilitated discussion and structured feedback were collected on clarity, comprehensiveness, and practicality of the framework and its guidance.

- Analysis: Qualitative analysis of feedback to identify points of confusion, missing elements, and practical barriers.

- Outcome: Iterative refinement of the framework structure and the development of a supporting user guide [9].

Application Notes for Systematic Reviews and Decision-Making

Framing the Question: The PECO Framework

In EOH systematic reviews that feed into the EtD process, the research question must be precisely structured. The recommended format is the PECO (Population, Exposure, Comparator, Outcome) statement [8], which substitutes Exposure for the Intervention element of the clinical PICO (Patient, Intervention, Comparator, Outcome) framework.

- Population: The human population affected (e.g., workers in manufacturing, communities near a point source).

- Exposure: The environmental or occupational agent or condition (e.g., fine particulate matter, shift work).

- Comparator: The alternative exposure scenario (e.g., lower exposure level, absence of the agent, an alternative intervention).

- Outcome: The critical health or non-health outcomes (e.g., incidence of lung cancer, quality of life, healthcare costs).
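
Recording the four PECO elements in a fixed structure makes it harder to leave one undefined in a protocol. The following is a minimal sketch; the `PECO` class and its `question` method are hypothetical helpers for illustration, not part of any GRADE tool, and the example values are drawn from the illustrations in the list above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PECO:
    """Hypothetical container for a PECO statement."""
    population: str
    exposure: str
    comparator: str
    outcomes: tuple[str, ...]

    def __post_init__(self):
        # Reject statements with any element left empty.
        if not all([self.population, self.exposure, self.comparator, self.outcomes]):
            raise ValueError("All four PECO elements must be specified")

    def question(self) -> str:
        """Render the statement as a single review question."""
        return (
            f"Among {self.population}, what is the effect of {self.exposure} "
            f"compared with {self.comparator} on {', '.join(self.outcomes)}?"
        )

peco = PECO(
    population="workers in manufacturing",
    exposure="shift work",
    comparator="absence of shift work",
    outcomes=("quality of life", "healthcare costs"),
)
print(peco.question())
```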

Assessing Certainty of Evidence from Diverse Streams

A central challenge in EOH is synthesizing evidence of different types. The EtD framework requires explicit judgment on the certainty of evidence, which for EOH involves integrating:

- Human Evidence (typically observational): Assessed with tools such as ROBINS-I (Risk Of Bias In Non-randomized Studies - of Interventions), adapted for use in exposure studies [8].

- Animal & Mechanistic Evidence: Rated using GRADE domains (risk of bias, inconsistency, indirectness, imprecision, publication bias), acknowledging the inherent indirectness for human decisions [8].

The overall certainty rating reflects a structured integration of these streams, often starting with human evidence and adjusting based on supportive or conflicting evidence from other streams.
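
For a single body of evidence, the rating-down logic across the five GRADE domains can be sketched as simple arithmetic on an ordered scale. This is a simplified illustration under stated assumptions: the starting level and the number of levels deducted per domain are panel judgments supplied as inputs, rating up is not modeled, and the cross-stream integration described above is not attempted. The function name `rate_certainty` is hypothetical.

```python
LEVELS = ["very low", "low", "moderate", "high"]
DOMAINS = (
    "risk of bias",
    "inconsistency",
    "indirectness",
    "imprecision",
    "publication bias",
)

def rate_certainty(start, downgrades):
    """Apply per-domain downgrades to a starting certainty level.

    start: one of LEVELS (a judgment, not derived here).
    downgrades: mapping of domain name to levels deducted (non-negative int).
    """
    unknown = set(downgrades) - set(DOMAINS)
    if unknown:
        raise ValueError(f"Unknown domains: {sorted(unknown)}")
    if any(not isinstance(v, int) or v < 0 for v in downgrades.values()):
        raise ValueError("Downgrades must be non-negative integers")
    index = LEVELS.index(start) - sum(downgrades.values())
    return LEVELS[max(index, 0)]  # certainty cannot fall below "very low"

# Hypothetical judgments for one outcome in one evidence stream.
print(rate_certainty("high", {"risk of bias": 1}))
print(rate_certainty("high", {"risk of bias": 2, "indirectness": 1}))
```

The sketch makes one property of the approach visible: because the scale has a floor, the documented reasons for each deduction carry more information than the final label alone.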

Populating the EtD Framework: A Practical Workflow

The logical flow for applying the framework in a decision-making panel is structured as follows.

Diagram 2: EtD Application Workflow for Decision Panels

Key Steps for Researchers and Methodologists:

- Technical Team Prepares Evidence Synthesis: Following a systematic review, the team populates the "Research Evidence" column for each EtD criterion. For benefits/harms, this includes structured summaries of effect estimates and certainty ratings. For criteria like equity and acceptability, it may include synthesized qualitative evidence or stakeholder survey data.

- Panel Makes Judgments: The decision-making panel reviews the evidence summaries and formulates a judgment for each criterion (e.g., "Probably no important equity impacts," "Probably acceptable to most stakeholders"). The modified EOH criteria guide them to explicitly consider timing, socio-political context, and broader equity.

- Reaching a Conclusion: The panel weighs the judgments across all criteria to make a final decision or recommendation (e.g., "Set the occupational exposure limit at X level," "Implement the engineering control in settings Y and Z"). The rationale, tied directly to the evidence and judgments, is documented transparently in the framework.
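
The division of labor in these steps (the technical team supplies evidence, the panel supplies judgments) can be mirrored in a simple record that is checked for completeness before a conclusion is drafted. The sketch below is an assumption-laden illustration: it uses the nine criteria summarized in Table 1, the names `CriterionEntry`, `new_etd`, and `outstanding` are hypothetical, and it does not reflect the data format of GRADEpro GDT or any other tool.

```python
from dataclasses import dataclass

CRITERIA = (
    "Priority of the Problem", "Benefits & Harms", "Certainty of Evidence",
    "Values", "Balance of Effects", "Resource Use", "Equity",
    "Acceptability", "Feasibility",
)

@dataclass
class CriterionEntry:
    research_evidence: str = ""  # populated by the technical team
    judgment: str = ""           # populated by the decision-making panel

def new_etd():
    """Create an empty record with one entry per criterion."""
    return {name: CriterionEntry() for name in CRITERIA}

def outstanding(etd):
    """List criteria still lacking evidence or a judgment, in table order."""
    return [
        name for name in CRITERIA
        if not (etd[name].research_evidence and etd[name].judgment)
    ]

etd = new_etd()
etd["Equity"].research_evidence = "Hypothetical summary of stakeholder survey data"
etd["Equity"].judgment = "Probably no important equity impacts"
print(outstanding(etd))
```

A panel would proceed to the conclusion step only once `outstanding` returns an empty list, which keeps the final rationale tied to a documented judgment for every criterion.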

Table 2: Key Research Reagent Solutions for Implementing the GRADE EOH EtD Framework

| Tool/Resource Name | Function/Purpose | Key Features for EOH |
|---|---|---|
| GRADEpro GDT (Guideline Development Tool) | Software to create and manage SoF tables, EtD frameworks, and guidelines. | Supports structuring of PECO questions and integration of non-randomized study evidence [7]. |
| ROBINS-I (Risk Of Bias In Non-randomized Studies - of Interventions) | Tool to assess risk of bias in non-randomized studies of interventions. | Adapted and piloted for use in studies of exposures, forming a basis for rating down certainty for risk of bias [8]. |
| RoB-SPEO (Risk of Bias in Studies estimating Prevalence of Exposure to Occupational risk factors) | Tool to assess risk of bias in studies estimating prevalence of an exposure. | Tailored for EOH exposure prevalence studies, a common evidence source for problem prioritization [30]. |
| Navigation Guide Methodology | A systematic review framework for environmental health. | Provides a parallel, compatible roadmap for evidence synthesis that feeds directly into the GRADE EtD framework [10] [8]. |
| PECO Framework Template | Protocol template for framing EOH research questions. | Ensures systematic reviews address the correct Population, Exposure, Comparator, and Outcome for decision-making [8]. |
| WHO/ILO Systematic Review Protocol for Exposure Prevalence | Standardized protocol for reviewing prevalence data. | Enables rigorous synthesis of data critical for assessing the "Priority of the Problem" criterion [30]. |

| WHO/ILO Systematic Review Protocol for Exposure Prevalence | Standardized protocol for reviewing prevalence data. | Enables rigorous synthesis of data critical for assessing the "Priority of the Problem" criterion [30]. |